Validating Homology in Biomedical Research: From Computational Criteria to Clinical Applications

Abstract

This comprehensive guide addresses the critical process of homology validation for researchers and drug development professionals. It explores foundational principles of homology inference from sequence and structural data, detailing established methodological pipelines for model building and refinement. The article provides practical troubleshooting strategies for low-sequence-identity scenarios and systematic validation frameworks to assess model quality. By synthesizing traditional bioinformatics approaches with recent advances in multi-template modeling and machine learning, this resource offers actionable insights for employing reliable homology models in structure-based drug design and functional annotation.

Defining Molecular Homology: Principles, Inference, and Evolutionary Foundations

In comparative biology, and particularly in research on validating process homology, distinguishing homology from similarity is a prerequisite for accurate inference. Although the two terms are sometimes used interchangeably in informal contexts, they denote distinct biological concepts with different implications for evolutionary analysis and functional prediction. Homology describes a qualitative evolutionary relationship based on common ancestry, whereas similarity is a quantitative measure of observable resemblance [1]. The distinction matters for interpreting biological data in applications ranging from gene function annotation to protein structure prediction and drug discovery.

The historical development of these concepts shows that they are independent of each other. The concept of phenotypic homology was formalized by Richard Owen in the 19th century to describe corresponding structures across different species. Charles Darwin's evolutionary theory later reinterpreted these homologous structures as evidence of derivation from a common ancestral structure [1]. With the advent of modern sequencing technologies, the concepts were extended from morphology to molecular sequences, giving rise to sequence homology and gene homology analysis.

In contemporary research, particularly in drug development and functional genomics, maintaining this distinction remains crucial yet challenging. As computational biology increasingly relies on automated annotation pipelines and large-scale comparative analyses, understanding the relationship—and lack thereof—between sequence similarity and functional similarity becomes essential for avoiding erroneous conclusions and misguided experimental designs.

Conceptual Foundations: Qualitative Relationships Versus Quantitative Measures

The Fundamental Dichotomy

The core distinction between homology and similarity can be summarized succinctly: homology is a qualitative inference about evolutionary history, while similarity is a quantitative measurement of observable characteristics [1]. This dichotomy mirrors the difference between a binary state and a continuous spectrum. Sequences are either homologous or non-homologous—they share a common evolutionary origin or they do not. There is no intermediate state or "partial homology" in rigorous scientific terms. An apt analogy illustrates this distinction: "a person can either be pregnant or not pregnant; they can't be 55% pregnant" [1].

In contrast, similarity represents a measurable spectrum. Researchers can legitimately state that two sequences "share 55% similarity" or "have 80% identity" at the nucleotide or amino acid level [1]. This quantitative nature makes similarity an empirical observation rather than an evolutionary inference. The confusion often arises because significant sequence similarity frequently serves as evidence for inferring homology, but the concepts themselves remain logically distinct.

Inference Pathways in Comparative Biology

The relationship between observable similarity and inferred homology follows a specific logical pathway. Statistically significant similarity between sequences is evidence for homology: similarity in excess of what would be expected by chance is most simply explained by common ancestry [2]. Sequence search tools such as BLAST, FASTA, and HMMER are designed to detect this excess similarity. Their statistical estimates are accurate enough that non-homologs rarely receive significant scores (few false positives), although some true homologs fail to reach significance (false negatives) [2].

This inference framework, however, contains important caveats. Homologous sequences do not always share statistically significant similarity at the sequence level, particularly when considering deeply divergent relationships. In such cases, intermediate sequences or structural conservation may provide evidence for homology even when direct sequence comparison fails [2]. This complexity underscores why homology represents an inference drawn from multiple lines of evidence rather than a direct observation from any single similarity measure.

Quantitative Perspectives: Measuring Similarity and Inferring Homology

Sequence Similarity Metrics and Statistical Evaluation

Several quantitative approaches exist for assessing sequence similarity, each with distinct advantages and limitations. The most straightforward is percentage identity, the proportion of identical residues at aligned positions. More informative statistical measures include expectation values (E-values), which estimate how many alignments scoring at least as well as the observed one would be expected by chance in a database of a given size [2] [3]. Because E-values depend on database size, the same alignment score is less significant when a larger database is searched [2].
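
Percentage identity also depends on which denominator is used, so the same alignment can yield different "identities". The following is a minimal Python sketch, assuming the two sequences are already aligned as equal-length strings with '-' marking gaps. The function name and the three denominator options are illustrative choices, not the convention of any particular tool.

```python
def percent_identity(aligned_a: str, aligned_b: str, denominator: str = "aligned") -> float:
    """Percent identity between two pre-aligned sequences ('-' marks a gap).

    denominator:
      "aligned"  -> columns where neither sequence has a gap
      "columns"  -> all alignment columns, gapped ones included
      "shorter"  -> ungapped length of the shorter sequence
    """
    if len(aligned_a) != len(aligned_b):
        raise ValueError("aligned sequences must have equal length")
    identical = 0
    gap_free = 0
    for x, y in zip(aligned_a.upper(), aligned_b.upper()):
        if x == "-" or y == "-":
            continue  # gapped column: never counted as identical
        gap_free += 1
        if x == y:
            identical += 1
    if denominator == "aligned":
        total = gap_free
    elif denominator == "columns":
        total = len(aligned_a)
    elif denominator == "shorter":
        total = min(len(aligned_a.replace("-", "")), len(aligned_b.replace("-", "")))
    else:
        raise ValueError(f"unknown denominator: {denominator}")
    return 100.0 * identical / total if total else 0.0


# One alignment, three different identity values
a = "MKT-AYIAKQR"
b = "MKTQAYLAK--"
for d in ("aligned", "columns", "shorter"):
    print(d, round(percent_identity(a, b, d), 1))
```

For this pair, the three denominators give 87.5%, 63.6%, and 77.8%. This is one reason identity values reported by different tools are not directly comparable.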

Local sequence alignment scores follow the extreme value distribution, and modern search tools report E-values that account for multiple testing across an entire database [2]. For protein sequences, empirical studies indicate that alignments with E-values < 0.001 can reliably be used to infer homology. DNA-DNA alignments, by contrast, require much more stringent thresholds (often E < 1e-10) because DNA alignment statistics are less accurate. DNA searches also have a much shorter evolutionary look-back time [2].

Table 1: Statistical Guidelines for Inferring Homology from Sequence Similarity

| Sequence Type | Significance Threshold | Evolutionary Look-Back Time | Primary Applications |
|---|---|---|---|
| Protein-Protein | E-value < 0.001 | >2.5 billion years | Functional annotation, structural prediction |
| DNA-DNA | E-value < 1e-10 | 200-400 million years | Gene finding, regulatory element identification |
| Translated DNA-Protein | E-value < 0.001 | >2.5 billion years | Metagenomic analysis, gene characterization |

The Relationship Between Sequence Similarity and Functional Similarity

Understanding the correlation between sequence similarity and functional similarity represents a critical application in genomics and drug discovery. Quantitative studies across model organisms reveal that the probability of functional conservation increases with increasing sequence conservation [3]. For proteins with sequence identity exceeding 70%, there is approximately 90% probability that they share the same biological process across various Gene Ontology index levels [3].

However, this relationship varies significantly across different functional categories and sequence similarity ranges. Molecular function annotations tend to be more conserved at lower sequence identities compared to biological process annotations [3]. The "twilight zone" of sequence similarity (typically below 30% identity) presents particular challenges, as alignments in this range may not reliably indicate homology or functional conservation [3].

Table 2: Probability of Functional Conservation Based on Sequence Identity

| Sequence Identity Range | Biological Process Conservation | Molecular Function Conservation | Typical Inference |
|---|---|---|---|
| >70% | ~90% probability | ~95% probability | Strong evidence for homology and functional conservation |
| 40-70% | 70-90% probability | 75-95% probability | Likely homology with probable functional similarity |
| 20-40% | 40-70% probability | 45-75% probability | Possible homology with cautious functional inference |
| <20% | <40% probability | <45% probability | Uncertain homology with limited functional inference |

Methodological Approaches: Experimental Protocols and Workflows

Sequence Homology Analysis Workflow

The standard workflow for gene homology analysis involves several sequential steps that turn raw sequence data into evolutionary inferences [1]. In automated pipelines such as the one implemented in Lasergene 17.6, the process begins with sequence acquisition and annotation. It then applies homology-defining criteria, builds a multiple sequence alignment, and constructs a phylogenetic tree [1]. Because it compares gene sets at the amino acid level rather than the nucleotide level, this workflow supports phylogenetic analysis of distantly related species [1].

A critical methodological consideration is whether the sequences are annotated. Unannotated sequences must first be processed by an annotation pipeline such as NCBI's Prokaryotic Genome Annotation Pipeline (PGAP) before homology analysis [1]. Raw, unassembled reads must be assembled, for example with SeqMan NGen, before annotation. The homology analysis itself extracts and compares gene sets from the annotated genomes: the protein sequences of homologous genes present in all genomes are concatenated and aligned with a multiple sequence alignment algorithm such as MAFFT [1].
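
The core-gene concatenation step can be sketched as follows. This is a minimal Python illustration, not the Lasergene implementation. It assumes a hypothetical input that maps each gene family to a {genome: protein sequence} dictionary, keeps only families present in every genome, and writes one concatenated FASTA record per genome that could then be passed to an aligner such as MAFFT.

```python
from typing import Dict, List


def concatenate_core_genes(
    families: Dict[str, Dict[str, str]], genomes: List[str]
) -> Dict[str, str]:
    """Concatenate protein sequences of gene families present in every genome.

    families: {family_id: {genome_id: protein_sequence}} (hypothetical layout)
    Families are joined in sorted order so that every genome's record lists
    its genes in the same order.
    """
    core = sorted(f for f, members in families.items()
                  if all(g in members for g in genomes))
    return {g: "".join(families[f][g] for f in core) for g in genomes}


def to_fasta(records: Dict[str, str], width: int = 60) -> str:
    """Format {name: sequence} as FASTA text with wrapped sequence lines."""
    lines = []
    for name, seq in records.items():
        lines.append(f">{name}")
        lines.extend(seq[i:i + width] for i in range(0, len(seq), width))
    return "\n".join(lines) + "\n"


families = {
    "gyrB": {"g1": "MSKE", "g2": "MSKD", "g3": "MTKE"},
    "recA": {"g1": "AIDL", "g2": "AIEL", "g3": "AVDL"},
    "plasmid_x": {"g1": "MMMM"},  # missing from g2 and g3, so excluded
}
concat = concatenate_core_genes(families, ["g1", "g2", "g3"])
print(to_fasta(concat))
```

Many pipelines instead align each gene family separately and then concatenate the alignments. The order used here follows the description in the text.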

Advanced Structural Similarity Assessment

Beyond sequence-based methods, structural similarity approaches provide powerful complementary evidence for homology inference, particularly for distantly related proteins. For small molecules, three-dimensional shape similarity methods have gained prominence in drug discovery for applications including virtual screening, molecular target prediction, and drug repurposing [4]. These methods can be broadly classified as alignment-free (non-superposition) and alignment-based (superposition) methods [4].

Alignment-free methods such as Ultrafast Shape Recognition (USR) summarize molecular shape by the distributions of atomic distances from a few reference points. They compare these summaries directly, without superimposing the molecules [4]. These approaches offer significant computational advantages; USR-VS can screen 55 million 3D conformers per second [4]. Alignment-based methods are more computationally intensive, but they provide superior visualization and allow direct comparison of surface properties such as hydrophobicity and polarity [4].
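
The following is a minimal Python sketch of USR-style descriptors, assuming atoms are given as (x, y, z) tuples. It uses four reference points: the centroid, the atom closest to the centroid, the atom farthest from the centroid, and the atom farthest from that atom. Three moments per distance distribution give 12 numbers in total. Published implementations differ in how they normalize the third moment, so the signed cube root used here is an assumption.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def _moments(values: Sequence[float]) -> List[float]:
    """Mean, variance, and signed cube root of the third central moment."""
    n = len(values)
    mean = sum(values) / n
    var = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    return [mean, var, math.copysign(abs(m3) ** (1.0 / 3.0), m3)]


def usr_descriptor(atoms: Sequence[Point]) -> List[float]:
    """12-number shape descriptor: 3 moments x 4 reference points."""
    ctd = tuple(sum(a[i] for a in atoms) / len(atoms) for i in range(3))
    cst = min(atoms, key=lambda a: math.dist(a, ctd))  # closest to centroid
    fct = max(atoms, key=lambda a: math.dist(a, ctd))  # farthest from centroid
    ftf = max(atoms, key=lambda a: math.dist(a, fct))  # farthest from fct
    descriptor: List[float] = []
    for ref in (ctd, cst, fct, ftf):
        descriptor.extend(_moments([math.dist(a, ref) for a in atoms]))
    return descriptor


def usr_similarity(d1: Sequence[float], d2: Sequence[float]) -> float:
    """1 / (1 + mean absolute difference); 1.0 means identical descriptors."""
    mean_abs_diff = sum(abs(x - y) for x, y in zip(d1, d2)) / len(d1)
    return 1.0 / (1.0 + mean_abs_diff)


mol = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.2, 0.0), (3.1, 1.4, 0.5)]
shifted = [(x + 10.0, y - 4.0, z + 2.5) for x, y, z in mol]  # translated copy
print(usr_similarity(usr_descriptor(mol), usr_descriptor(shifted)))
```

Because the descriptor uses only distances, a translated (or rotated) copy of the same conformer scores essentially 1.0 without any superposition step. This is the property that makes alignment-free screening fast.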

Applications in Research and Drug Discovery

Practical Implications for Genomic Analysis and Drug Development

The distinction between homology and similarity has significant practical consequences across multiple research domains. In drug discovery, applying homology modeling genome-wide to predict the structures of drug target proteins is a valuable way to make the drug discovery process more effective [5]. The usefulness of such models depends critically on the sequence identity between the target protein and the available templates. Models built on templates with >50% identity are generally sufficient for detailed protein-ligand interaction studies, whereas those below 30% identity are mainly useful for general fold assignment [5].

In functional genomics, the relationship between sequence similarity and functional similarity provides a benchmark for estimating confidence in function assignments. Studies have shown that annotations derived from computational techniques alone follow different conservation patterns from experimentally validated annotations [3]. Specifically, electronically inferred annotations show consistently high probabilities of function conservation regardless of sequence similarity, whereas experimentally validated annotations show the expected correlation with sequence identity [3]. This highlights the risk of error propagation when computational annotations are used without considering the strength of the underlying sequence evidence.

Research Reagent Solutions for Homology and Similarity Analysis

Table 3: Essential Research Tools for Homology and Similarity Analysis

| Tool/Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| Sequence Search Tools | BLAST, PSI-BLAST, FASTA, HMMER | Identify similar sequences in databases | Initial homology inference, functional annotation |
| Multiple Sequence Alignment | MAFFT, MUSCLE, Clustal Omega | Align multiple related sequences | Phylogenetic analysis, conserved domain identification |
| Protein Structure Prediction | AlphaFold, RoseTTAFold, ESMFold | Predict 3D protein structures from sequences | Remote homology detection, functional inference |
| Structural Comparison | USR, ROCS, TM-align | Compare molecular shapes and structures | Scaffold hopping, binding site analysis |
| Genomic Analysis Platforms | CoGe SynMap, Lasergene MegAlign Pro | Comparative genomics and synteny analysis | Whole genome duplication detection, orthology assignment |
| Homology Modeling | MODELLER, SWISS-MODEL, I-TASSER | Build 3D models from related structures | Drug target characterization, functional site prediction |

Current Advancements and Future Directions

Emerging Methodologies in Protein Complex Prediction

Recent advances in protein structure prediction have begun to address protein complex modeling, with implications for process homology validation. DeepSCFold is a pipeline that improves protein complex structure modeling by using sequence-based deep learning to predict protein-protein structural similarity and interaction probability [6]. Rather than relying solely on sequence-level co-evolutionary signals, it uses sequence-derived, structure-aware information to capture intrinsic and conserved protein-protein interaction patterns [6].

Benchmark results highlight significant improvements, with DeepSCFold achieving 11.6% and 10.3% improvement in TM-score compared to AlphaFold-Multimer and AlphaFold3 respectively for multimer targets from CASP15 [6]. For antibody-antigen complexes, the method enhances prediction success rates for binding interfaces by 24.7% and 12.4% over the same benchmarks [6]. These advances illustrate how the conceptual framework of structural complementarity extends beyond simple sequence similarity to enable more accurate biological inferences.

Integration of Protein Language Models

The integration of protein language models (PLMs) is another significant advance in homology detection and structure prediction. DeepFold-PLM shows how PLMs can accelerate multiple sequence alignment (MSA) generation while keeping prediction accuracy comparable to AlphaFold [7]. Using high-dimensional embeddings and contrastive learning, it generates MSAs 47 times faster than standard JackHMMER-based methods and increases sequence diversity [7].

These developments mark a shift from traditional sequence similarity measures toward evolutionary information embedded by self-supervised learning on massive protein sequence databases. PLM-based remote homology detection also extends modeling to multimeric protein complexes and is particularly useful for orphan proteins with sparse evolutionary context [7].

The distinction between homology as a qualitative evolutionary statement and similarity as a quantitative measure remains fundamental to rigorous biological research. This conceptual clarity, framed within process homology validation criteria, enables researchers to properly interpret computational predictions and experimental observations across diverse applications from functional genomics to drug discovery. As methodological advances continue to enhance our ability to detect increasingly subtle biological relationships, maintaining precise terminology becomes ever more critical for valid scientific inference and effective translation of basic research into practical applications.

The continuing evolution of bioinformatic tools—from sequence-based homology detection to structural similarity assessment and protein language model applications—provides an expanding toolkit for exploring biological relationships. However, these technical capabilities must be grounded in conceptual precision, with researchers maintaining clear distinctions between observable similarities and inferred homologous relationships across the hierarchy of biological organization, from sequence and structure to process and function.

In biological research, homology is defined as the relationship between structures, genes, or sequences in different organisms that derive from a common ancestor. Resemblance arising from such shared descent reflects divergent evolution rather than independent adaptation [8]. This foundational concept provides the basis for inferring evolutionary relationships across species, from anatomical features such as vertebrate forelimbs to molecular sequences such as genes and proteins [9] [8]. Accurately identifying homologous relationships lets researchers transfer functional annotations from well-characterized model organisms to less-studied species. This practice is particularly important in biomedical and drug development research, where model organisms serve as proxies for human biology [10].

The statistical inference of homology represents a critical methodological framework for distinguishing true evolutionary relationships from random similarities. While early homology assessments relied primarily on morphological comparisons, modern bioinformatics approaches leverage statistical significance testing to evaluate sequence relationships, with E-values serving as a primary metric for quantifying the reliability of putative homologies [11] [2]. This guide examines the experimental protocols, significance thresholds, and analytical frameworks essential for validating homology in computational biology, providing researchers with evidence-based criteria for distinguishing homologous from analogous relationships in genomic data.

Statistical Foundations: E-values and Homology Inference

E-value Calculation and Interpretation

The E-value (expectation value) is a fundamental statistical parameter in sequence similarity searching. It gives the number of alignments with a score ≥ S that one would expect to find by chance in a database of a given size [11]. It is derived from the extreme value distribution of local alignment scores. For a bit score b, the probability of a chance alignment scoring at least b is bounded by p(b) ≤ 1 - exp(-m n 2^(-b)), where m and n are the lengths of the two compared sequences. Multiplying this probability by the number of sequences in the database gives the E-value [2]. The E-value therefore depends on both database size and query length, and lower E-values indicate alignments that are less likely to have arisen by chance [11] [12].
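
The bound above can be evaluated directly. The following is a minimal Python sketch, assuming the simplified form given in the text: p(b) = 1 - exp(-m n 2^(-b)) and E = p(b) times the number of database sequences D. Production search programs apply further corrections (for example, effective lengths for edge effects and composition-based statistics) that are omitted here, so the numbers are illustrative only.

```python
import math


def pvalue_from_bits(bits: float, m: int, n: int) -> float:
    """Upper bound on P(chance score >= bits) for sequence lengths m and n."""
    # -expm1(-x) equals 1 - exp(-x) but stays accurate when x is tiny
    return -math.expm1(-m * n * 2.0 ** (-bits))


def evalue_from_bits(bits: float, m: int, n: int, db_size: int) -> float:
    """Expected number of chance hits at least this good among db_size sequences."""
    return pvalue_from_bits(bits, m, n) * db_size


# The same 50-bit score for a 300 x 300 residue comparison,
# searched against a small and a very large database
for db_size in (10_000, 100_000_000):
    print(db_size, f"{evalue_from_bits(50.0, 300, 300, db_size):.2e}")
```

With everything else fixed, the 10,000-fold larger database raises E from about 8e-07 to about 8e-03. This shows why an identical score can be clearly significant in a small database and only marginal in a large one.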

In practice, an E-value of 1 means that one match with a similar score would be expected purely by chance in a database of the same size [12]. As E-values approach zero, statistical significance increases, and values below established thresholds provide evidence for homology. Because the probability of at least one chance match is P = 1 - exp(-E), P and E are nearly equal for small values; for E < 1e-2 (0.01), P ≈ E [11]. This framework allows researchers to separate biologically meaningful similarity from random background noise in database searches.

Homology Inference from Statistical Significance

The inference of homology from sequence similarity follows a fundamental principle: when two sequences share statistically significant similarity beyond what would be expected by chance, the simplest explanation is common evolutionary ancestry [2]. This relationship between statistical significance and homology enables powerful computational approaches for identifying evolutionary relationships across diverse organisms. As Pearson (2013) notes, "Sequence similarity searching to identify homologous sequences is one of the first, and most informative, steps in any analysis of newly determined sequences" [2].

It is crucial to recognize that while statistically significant similarity reliably indicates homology, the converse is not necessarily true. Homologous sequences, particularly those separated by vast evolutionary distances, may not always share statistically significant sequence similarity due to extensive divergence over time [2]. This distinction is critical for proper interpretation of negative results; the absence of significant similarity does not definitively demonstrate non-homology, as more sensitive methods or intermediate sequences might reveal the relationship.

Table 1: E-value Interpretation Guidelines for Homology Inference

| E-value Range | Statistical Interpretation | Biological Significance | Recommended Action |
|---|---|---|---|
| E ≤ 1e-10 | Highly significant | Strong evidence for homology | Accept as homologous; proceed with functional transfer |
| 1e-10 < E ≤ 1e-5 | Significant | Good evidence for homology | Likely homologous; verify with additional analysis |
| 1e-5 < E ≤ 0.01 | Marginally significant | Possible homology | Investigate further with domain architecture or structural data |
| E > 0.01 | Not significant | Little evidence for homology | Treat as potential random match; unlikely to be homologous |
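
The tiers in the table above translate directly into a triage rule. The following is a minimal Python sketch. The boundaries are the guideline values from the table, not universal constants, and the returned labels are hypothetical shorthand.

```python
def classify_evalue(e: float) -> str:
    """Map an E-value onto the interpretation tiers of the table."""
    if e < 0:
        raise ValueError("E-values cannot be negative")
    if e <= 1e-10:
        return "highly significant: accept as homologous"
    if e <= 1e-5:
        return "significant: likely homologous, verify"
    if e <= 0.01:
        return "marginal: check domain architecture or structure"
    return "not significant: treat as possible chance match"


for e in (1e-25, 3e-7, 0.004, 0.2):
    print(f"{e:g}", "->", classify_evalue(e))
```

Each upper boundary is inclusive (for example, E = 1e-5 falls in the "significant" tier), matching the ≤ signs in the table.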

Significance Thresholds and Best Practices

Established E-value Thresholds for Homology

Experimental and computational studies have established field-standard E-value thresholds for inferring homology with confidence. For protein-based searches, the widely accepted threshold is E ≤ 1e-5, with lower values indicating stronger evidence for homology [11]. In practice, extremely low E-values (e.g., 1e-25) provide unambiguous evidence of common ancestry, while values between 1e-5 and 1e-10 offer strong support for homologous relationships [11] [2]. For nucleic acid searches, more stringent thresholds are typically required (E ≤ 1e-6 to 1e-10) due to the reduced complexity of DNA sequence space and less accurate alignment statistics compared to protein sequences [11] [2].

These thresholds are not absolute. They are guidelines that must be weighed alongside other factors such as sequence length, database size, and biological context. Short sequences may produce only moderately significant E-values despite representing true homologies, while long sequences can reach highly significant E-values through the accumulation of many marginal similarities [11] [12]. As [11] puts it, "The E-value is influenced by the query length. A moderately good alignment involving two very long sequences will produce a higher E-value than an extremely good alignment involving two smaller sequences."

Comparative Performance of Search Methods

The sensitivity of homology detection varies considerably across search methods and sequence types. Protein-based searches reach much further back in evolution than DNA-based searches. Protein-protein alignments routinely detect homology between sequences that diverged more than 2.5 billion years ago, whereas DNA-DNA alignments rarely detect homology beyond 200-400 million years of divergence [2]. One reason is that protein sequences change more slowly than the DNA that encodes them: synonymous substitutions permitted by codon degeneracy alter the DNA without changing the protein. Another is that protein alignments scored with substitution matrices carry more information per aligned position.

Table 2: Performance Comparison of Homology Detection Methods

| Method | Optimal E-value Threshold | Evolutionary Look-back Time | Primary Applications | Limitations |
|---|---|---|---|---|
| Protein BLAST (blastp) | E ≤ 1e-5 | 2.5+ billion years | Functional annotation, phylogenetic analysis | May miss highly divergent homologs |
| Nucleotide BLAST (blastn) | E ≤ 1e-6 to 1e-10 | 200-400 million years | cis-regulatory elements, non-coding RNAs | Reduced sensitivity for ancient relationships |
| Translated BLAST (blastx) | E ≤ 1e-5 | 2+ billion years | Unknown coding sequences, metagenomics | Frameshift errors in the query can fragment alignments |
| Profile Methods (HMMER) | E ≤ 0.01 | 2.5+ billion years | Protein family membership, distant homologs | Requires multiple sequence alignment |

The selection of database size significantly impacts E-value calculations and consequently homology detection sensitivity. Since E-values scale with database size (E(b) ≤ p(b)D, where D is the number of sequences in the database), the same alignment score may be significant in a smaller database but non-significant in a comprehensive database [2]. This database-size effect means that researchers working with specialized organism-specific databases may detect homologs that would be obscured by background noise in comprehensive databases like NR, highlighting the importance of database selection in homology searches.

Experimental Protocols for Homology Validation

Standard BLAST Workflow for Homology Detection

BLAST (Basic Local Alignment Search Tool) is the most widely used tool for initial homology inference. The standard protein BLAST (blastp) workflow begins with query preparation: the sequence should be in FASTA format and free of contaminants or vector sequence. The next critical step is database selection, where researchers choose a protein database that fits the research question: non-redundant (nr) for comprehensive searches, RefSeq for curated sequences, or organism-specific databases for targeted analyses [12].

Parameter optimization follows. Key settings include the E-value cutoff, low-complexity filtering to avoid artifactual matches, and a scoring matrix suited to the evolutionary distance (BLOSUM62 for most applications, BLOSUM45 for distant relationships) [11] [12]. The default E-value cutoff is typically 10 and should be lowered to 0.05-0.001 so that only significant hits are reported. For short queries or specific applications such as primer testing, the program adjusts parameters automatically, although manual intervention may be necessary [11]. Results should be interpreted using not only E-values but also bit scores, sequence identities, and alignment coverage to distinguish true homologs from chance matches.
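
This workflow can be sketched with the standalone BLAST+ `blastp` program. The sketch assumes that BLAST+ is installed and that a formatted protein database exists; the file and database names are placeholders. The command uses real BLAST+ options (`-evalue`, `-matrix`, `-seg`, `-outfmt` with the `qcovs` coverage column). The identity and coverage filters in `parse_hits` are illustrative choices, not recommendations from the cited sources.

```python
import subprocess
from typing import Dict, List

FIELDS = ["qseqid", "sseqid", "pident", "length", "qcovs", "evalue", "bitscore"]


def blastp_command(query: str, db: str, evalue: float = 1e-3,
                   matrix: str = "BLOSUM62") -> List[str]:
    """Argument list for a blastp run with tabular output."""
    return ["blastp", "-query", query, "-db", db,
            "-evalue", str(evalue), "-matrix", matrix,
            "-seg", "yes",                       # low-complexity filtering
            "-outfmt", "6 " + " ".join(FIELDS)]  # tab-separated, chosen columns


def parse_hits(tabular: str, max_evalue: float = 1e-3,
               min_coverage: float = 50.0) -> List[Dict[str, object]]:
    """Keep hits that are both significant and cover enough of the query."""
    hits = []
    for line in tabular.strip().splitlines():
        row = dict(zip(FIELDS, line.split("\t")))
        hit = {"query": row["qseqid"], "subject": row["sseqid"],
               "pident": float(row["pident"]), "length": int(row["length"]),
               "qcovs": float(row["qcovs"]), "evalue": float(row["evalue"]),
               "bitscore": float(row["bitscore"])}
        if hit["evalue"] <= max_evalue and hit["qcovs"] >= min_coverage:
            hits.append(hit)
    return hits


def run_blastp(query: str, db: str) -> List[Dict[str, object]]:
    """Run blastp (must be on PATH) and return filtered hits."""
    result = subprocess.run(blastp_command(query, db),
                            capture_output=True, text=True, check=True)
    return parse_hits(result.stdout)


# Invented example output: one good hit, one short partial hit, one chance hit
example = ("q1\tsp|P00001|A\t45.2\t310\t95\t2e-60\t210\n"
           "q1\tsp|P00002|B\t28.0\t80\t22\t4e-04\t41.2\n"
           "q1\tsp|P00003|C\t31.5\t250\t88\t0.3\t30.1")
print([h["subject"] for h in parse_hits(example)])
```

The second example hit passes the E-value cutoff but covers only 22% of the query, so it is dropped. This illustrates why coverage is checked alongside significance. For distant relationships, the text's suggestion corresponds to passing `matrix="BLOSUM45"`.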

Orthology and Paralogy Distinction

Beyond establishing general homology, rigorous evolutionary analyses require distinguishing orthologs (homologs separated by speciation events) from paralogs (homologs separated by gene duplication events) [8]. This distinction is critical in functional genomics and drug target identification, because orthologs typically retain equivalent biological functions across species, whereas paralogs often evolve new functions [10]. Computational protocols for this distinction typically start with reciprocal best hits analysis. In this analysis, two sequences from different species are considered orthologs if each is the other's best match in reciprocal searches [2].
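
The reciprocal best hits rule can be sketched in a few lines of Python. This minimal version assumes that each search has already been reduced to a mapping from query to a list of (subject, bit score) pairs. The gene identifiers and scores are invented toy inputs.

```python
from typing import Dict, List, Set, Tuple

Hits = Dict[str, List[Tuple[str, float]]]  # query -> [(subject, bitscore), ...]


def best_hits(hits: Hits) -> Dict[str, str]:
    """Best-scoring subject for each query (ties go to the first listed)."""
    return {q: max(subjects, key=lambda s: s[1])[0]
            for q, subjects in hits.items() if subjects}


def reciprocal_best_hits(a_vs_b: Hits, b_vs_a: Hits) -> Set[Tuple[str, str]]:
    """Pairs (a, b) where b is a's best hit and a is b's best hit."""
    best_ab = best_hits(a_vs_b)
    best_ba = best_hits(b_vs_a)
    return {(a, b) for a, b in best_ab.items() if best_ba.get(b) == a}


human_vs_mouse = {"hsA": [("mmA", 850.0), ("mmA2", 610.0)],
                  "hsB": [("mmA2", 300.0)]}
mouse_vs_human = {"mmA": [("hsA", 845.0)],
                  "mmA2": [("hsA", 605.0), ("hsB", 298.0)]}
print(reciprocal_best_hits(human_vs_mouse, mouse_vs_human))
```

Only (hsA, mmA) is returned. The best hit of hsB is mmA2, but the best hit of mmA2 is hsA, so that pair is not reciprocal. Because each gene keeps only one partner, the strict rule can also miss one-to-many orthologs after lineage-specific duplications, which is one reason tree-based methods are used as well.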

More advanced methods incorporate phylogenetic tree reconciliation, constructing gene trees and comparing them to established species trees to identify duplication and speciation events [10]. The growth of comparative genomics resources has enabled sophisticated orthology prediction databases such as OrthoDB and EggNOG, which provide pre-computed orthologous groups across multiple species. However, for novel sequences or non-model organisms, manual verification through domain architecture analysis and conserved synteny examination provides additional evidence for orthology-paralogy distinctions.
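
Full reconciliation compares a gene tree against a species tree. The species-overlap rule is a simpler heuristic that is sometimes used instead: an internal node is labeled a duplication when its two child subtrees share a species, and a speciation otherwise. The Python sketch below implements that heuristic, not reconciliation itself. It assumes a hypothetical tree encoding as nested pairs with leaves named `species|gene`.

```python
from typing import List, Set, Tuple, Union

Tree = Union[str, Tuple["Tree", "Tree"]]  # leaf "species|gene" or (left, right)


def species_of(tree: Tree) -> Set[str]:
    """Set of species names under a (sub)tree."""
    if isinstance(tree, str):
        return {tree.split("|", 1)[0]}
    left, right = tree
    return species_of(left) | species_of(right)


def label_nodes(tree: Tree, events: List[Tuple[Set[str], str]]) -> None:
    """Append (species under node, event) for each internal node, post-order."""
    if isinstance(tree, str):
        return
    left, right = tree
    label_nodes(left, events)
    label_nodes(right, events)
    overlap = species_of(left) & species_of(right)
    events.append((species_of(tree), "duplication" if overlap else "speciation"))


# Two human paralogs (hs|A1, hs|A2), each with a mouse counterpart
gene_tree = (("hs|A1", "mm|A1"), ("hs|A2", "mm|A2"))
events: List[Tuple[Set[str], str]] = []
label_nodes(gene_tree, events)
for species, event in events:
    print(sorted(species), event)
```

The two lower nodes are speciations, so hs|A1 and mm|A1 are orthologs. The root is a duplication, so hs|A1 and hs|A2 are paralogs. The heuristic needs no species tree, but it can mislabel nodes when gene loss or errors in the gene tree create spurious species overlap.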

Advanced Methodologies and Emerging Approaches

Long-Read Sequencing for Complex Genomic Regions

Recent advances in long-read sequencing technologies from Oxford Nanopore and PacBio have enabled more accurate homology assessment in genetically challenging regions that were previously intractable with short-read technologies [13]. These platforms generate reads spanning tens to hundreds of kilobases, allowing for resolution of repetitive elements, structural variants, and genes with highly homologous pseudogenes that confound traditional short-read approaches [13]. The experimental protocol involves library preparation from high-molecular-weight DNA, sequencing on platforms such as PromethION or Sequel II, and specialized bioinformatic processing for variant calling.

In validation studies, long-read sequencing platforms have demonstrated exceptional performance in comprehensive genetic diagnosis, detecting diverse variant types including single nucleotide variants (98.87% sensitivity), small insertions/deletions, complex structural variants, and repetitive expansions with overall concordance of 99.4% for clinically relevant variants [13]. This approach is particularly valuable for resolving homology in complex gene families like the major histocompatibility complex (MHC), cytochrome P450 genes important for drug metabolism, and highly duplicated gene families where precise phylogenetic relationships inform functional predictions.

Structural and Network-Based Homology Inference

When sequence similarity becomes too weak to reach statistical significance, structural comparison provides an alternative route to evolutionary relationships. The underlying principle is that protein structure is more conserved than sequence over evolutionary time, so homology can be detected even when sequences have diverged beyond recognition [2]. Structural homology protocols begin by obtaining protein structures, either experimentally (X-ray crystallography, NMR) or by computational prediction. The structures are then aligned with algorithms such as DALI or CE and assessed with statistics specific to structural comparison, such as P-values, E-values, or (in DALI's case) Z-scores.

Emerging network-based approaches go beyond pairwise comparisons to examine similarity within biological networks. Persistent homology, a technique from computational topology, has been applied to functional brain networks in neurological disorders. Its higher-order topological features distinguished mild cognitive impairment subtypes with up to 85.7% accuracy [14]. Here "homology" is used in its algebraic-topology sense (connected components, loops, and voids), not in the evolutionary sense used elsewhere in this guide. These methods use filtrations (Vietoris-Rips or graph filtration) to capture topological features that persist across multiple scales. The resulting summaries are compared with distance metrics such as the Wasserstein distance for subsequent statistical analysis [14].
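
For the 0-dimensional case (connected components), the persistence barcode of a Vietoris-Rips filtration can be computed with a single union-find pass over edges sorted by length. This is the same computation as single-linkage clustering. The following minimal Python sketch assumes points in Euclidean space. For a brain correlation matrix, one might use something like 1 - r as the edge length, but that choice is an assumption. Higher-dimensional features and Wasserstein comparison of diagrams would need a dedicated topology library and are not shown.

```python
import math
from itertools import combinations
from typing import List, Sequence, Tuple


def h0_barcode(points: Sequence[Sequence[float]]) -> List[Tuple[float, float]]:
    """(birth, death) pairs for connected components in a Vietoris-Rips filtration.

    Every point is born at scale 0. A component dies at the length of the
    edge that first merges it into another component; one component never
    dies (death = inf).
    """
    parent = list(range(len(points)))

    def find(i: int) -> int:
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    edges = sorted((math.dist(points[i], points[j]), i, j)
                   for i, j in combinations(range(len(points)), 2))
    bars: List[Tuple[float, float]] = []
    for length, i, j in edges:
        ri, rj = find(i), find(j)
        if ri != rj:  # edge joins two components: one of them dies here
            parent[ri] = rj
            bars.append((0.0, length))
    if points:
        bars.append((0.0, math.inf))
    return bars


print(h0_barcode([(0.0,), (1.0,), (3.0,), (3.5,)]))
```

The bar that dies at 2.0 reflects the gap between the clusters {0, 1} and {3, 3.5}. Long bars of this kind are the "persistent" features, whereas short bars are usually treated as noise.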

Table 3: Essential Research Reagents and Computational Resources for Homology Research

| Tool/Resource | Category | Primary Function | Application Context |
|---|---|---|---|
| BLAST Suite | Software | Sequence similarity search | Initial homology screening, functional annotation |
| HMMER | Software | Profile hidden Markov models | Protein family analysis, distant homology detection |
| ClusteredNR | Database | Non-redundant protein clusters | Efficient searching with taxonomic context |
| OrthoDB | Database | Curated ortholog groups | Orthology inference across species |
| PDBe Fold | Software | Structural alignment | Structural homology assessment |
| DOT | Visualization | Graph visualization | Network homology representation |
| Oxford Nanopore | Platform | Long-read sequencing | Complex genomic region analysis |
| DUST / SEG | Algorithm | Low-complexity filtering | Prevention of spurious alignments |

Statistical inference of homology is a multifaceted process that integrates evidence from sequence similarity, structural conservation, evolutionary models, and, increasingly, network-based relationships. E-values provide a crucial statistical foundation for assessing sequence homology, but robust conclusions require multiple lines of evidence. These include conserved domain architecture, gene order and synteny, and, when available, structural data. Commonly used thresholds of E ≤ 1e-5 for protein sequences and E ≤ 1e-6 to 1e-10 for DNA sequences are useful guidelines. Biological context and experimental validation nevertheless remain essential components of rigorous homology assessment.
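The sketch below shows how an E-value threshold might be applied in practice. It filters BLAST+ tabular output (`-outfmt 6`, whose default twelve columns are listed in the code) and uses the protein guideline of 1e-5 from the text. The sequence identifiers and values in the usage lines are invented for illustration. For DNA searches, a stricter cutoff in the 1e-6 to 1e-10 range would be passed instead.

```python
import csv
import io
from typing import Dict, Iterable, List

# Default column order of BLAST+ tabular output (-outfmt 6).
OUTFMT6_COLUMNS = [
    "qseqid", "sseqid", "pident", "length", "mismatch", "gapopen",
    "qstart", "qend", "sstart", "send", "evalue", "bitscore",
]


def filter_hits(lines: Iterable[str], max_evalue: float = 1e-5) -> List[Dict[str, str]]:
    """Keep tabular BLAST hits whose E-value is at or below max_evalue."""
    reader = csv.DictReader(lines, fieldnames=OUTFMT6_COLUMNS, delimiter="\t")
    return [row for row in reader if float(row["evalue"]) <= max_evalue]


# Hypothetical two-hit result: one strong hit, one weak hit.
example = io.StringIO(
    "q1\ts1\t45.2\t210\t110\t3\t1\t210\t5\t214\t2e-40\t150\n"
    "q1\ts2\t22.0\t95\t70\t4\t30\t120\t12\t104\t0.03\t33.1\n"
)
print([row["sseqid"] for row in filter_hits(example)])  # ['s1']
```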

Emerging technologies in long-read sequencing and topological data analysis are expanding the horizons of homology inference, enabling researchers to tackle previously intractable questions in genome evolution and comparative genomics. For drug development professionals and research scientists, these advanced methods offer new opportunities for identifying therapeutic targets based on evolutionary relationships and understanding the genetic basis of disease through improved homology detection across diverse species. The integration of traditional statistical approaches with these innovative methodologies promises to further refine our understanding of evolutionary relationships and their functional consequences in biological systems.

Sequence identity is a fundamental metric in computational biology, giving a quantitative measure of the evolutionary and functional relationships between proteins. Different analyses become feasible at different points along the identity spectrum, which runs from near-identical sequences to those with barely detectable similarity. High identity supports high-resolution structural modeling, while low identity supports only the detection of distant evolutionary relationships. Understanding this spectrum is essential when validating process homology criteria. Process homology refers to the conservation of developmental or biochemical processes rather than merely of structural components, and it can persist even when sequence identity becomes minimal [15]. This guide systematically compares the performance of modern bioinformatics tools across the identity spectrum. It gives researchers objective data for selecting methods suited to their experimental needs, particularly in drug development, where accurate functional annotation is critical.
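Reported identities are not always directly comparable, because tools divide by different denominators. Common choices include aligned columns, the shorter sequence length, or the full alignment length. The minimal sketch below uses one convention: identical residues over columns where neither sequence has a gap. The aligned strings in the example are hypothetical.

```python
def percent_identity(aligned_a: str, aligned_b: str) -> float:
    """Percent identity over gap-free aligned columns (one of several conventions)."""
    if len(aligned_a) != len(aligned_b):
        raise ValueError("aligned sequences must have equal length")
    # Keep only columns where both sequences have a residue.
    pairs = [(x, y) for x, y in zip(aligned_a, aligned_b) if x != "-" and y != "-"]
    if not pairs:
        return 0.0
    identical = sum(1 for x, y in pairs if x.upper() == y.upper())
    return 100.0 * identical / len(pairs)


# 6 gap-free columns, 5 identical.
print(round(percent_identity("ACDE-FG", "ACDQHFG"), 1))  # 83.3
```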

The concept of homology extends beyond simple sequence matching; it represents common evolutionary ancestry. As articulated in research on process homology, biological processes "can be homologous without homology of the underlying genes or gene networks, since the latter can diverge over evolutionary time, while the dynamics of the process remain the same" [15]. This dissociation between different levels of biological organization explains why the sequence identity spectrum must be carefully interpreted—conserved functions can persist in proteins with remarkably low sequence identity, necessitating sophisticated tools capable of detecting these remote relationships.

Theoretical Framework: Process Homology and Sequence Divergence

The Conceptual Basis of Process Homology

Process homology represents a distinct level of biological organization that can persist despite significant genetic divergence. According to recent research, ontogenetic processes "can be homologous without homology of the underlying genes or gene networks, since the latter can diverge over evolutionary time, while the dynamics of the process remain the same" [15]. This conceptual framework is crucial for understanding why distant homology detection matters—conserved biological processes often maintain similar dynamic properties even when their underlying sequences have diverged beyond the recognition threshold of simple alignment tools.

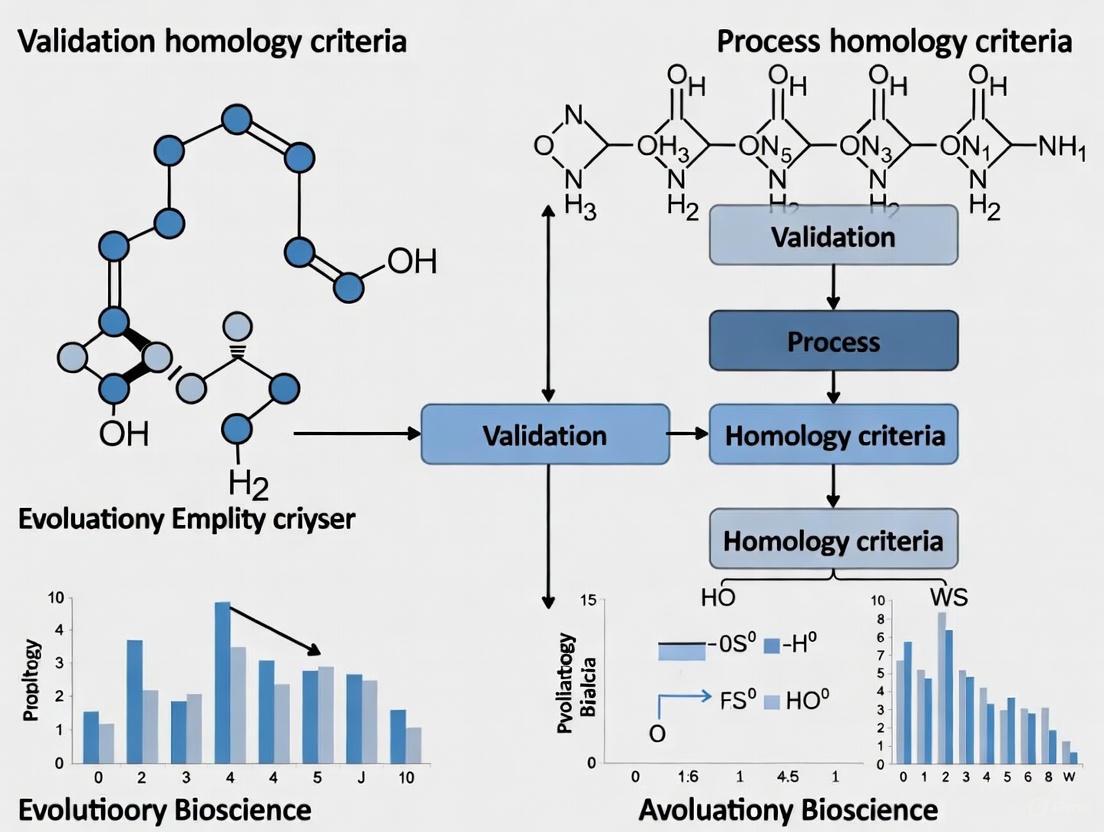

The validation of process homology requires specific criteria that combine traditional indicators (sameness of parts, morphological outcome, and topological position) with novel approaches derived from dynamical systems modeling (sameness of dynamical properties, dynamical complexity, and evidence for transitional forms) [15]. This multi-faceted approach to homology mirrors the methodological progression in sequence analysis, where researchers must employ increasingly sophisticated tools as sequence identity decreases, moving from simple pairwise comparisons to structural and deep learning-based methods.

The Relationship Between Sequence Identity and Structural Conservation

Protein structure is significantly more conserved throughout evolution than primary sequence, enabling the detection of deep evolutionary relationships that escape sequence-based methods [16]. This principle explains why structural alignment can reveal homologous relationships between proteins with sequence identities below 20%, where traditional sequence-based methods often fail. The conservation hierarchy runs from function, to structure, to sequence. Biological processes can therefore remain conserved even when sequences have diverged considerably, because functional constraints preserve the structural motifs essential for mechanism while allowing sequence variation in non-essential regions.

Tool Comparison: Performance Across the Identity Spectrum

Sequence-Based Alignment Tools

Traditional sequence alignment tools form the foundation of homology detection and remain most effective at moderate to high sequence identities.

Table 1: Sequence-Based Alignment Tools for Homology Detection

| Tool | Primary Function | Optimal Sequence Identity Range | Strengths | Limitations |
|---|---|---|---|---|
| BLAST [17] [2] | Local sequence alignment | >25-30% | Fast, reliable statistical estimates, widely integrated | Limited sensitivity below 25% identity |
| PSI-BLAST [2] | Position-specific iterated search | 15-30% | Improved detection of distant relationships through profiles | Depends on the starting query; errors can propagate across iterations |
| MMseqs2 [18] | Sequence similarity search | >25-30% | Much faster than BLAST at comparable sensitivity | Similar limitations to BLAST for very distant relationships |
| HMMER3 [2] | Profile hidden Markov models | 15-30% | Sensitive for protein domain detection | Requires multiple sequences, complex model building |

BLAST and similar pairwise tools lose reliability below roughly 25% protein sequence identity. The type of sequence compared also matters: "DNA:DNA alignments rarely detect homology after more than 200–400 million years of divergence; protein:protein alignments routinely detect homology in sequences that last shared a common ancestor more than 2.5 billion years ago" [2]. When expectation values (E-values) are significant, the statistical estimates these programs report are highly reliable for inferring homology. For protein searches, E-values < 0.001 generally indicate homologous relationships [2].
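The link between a normalized bit score S′ and the E-value follows the Karlin-Altschul relationship E ≈ m · n · 2^(−S′). Here m is the query length and n is the total database length. The sketch below is an approximation only, because BLAST applies effective-length (edge-effect) corrections that are not reproduced here. The query and database sizes in the example are hypothetical. The sketch also shows why the same bit score becomes less significant as the database grows: doubling the search space requires one extra bit for the same E-value.

```python
import math


def evalue_from_bitscore(bit_score: float, query_len: int, db_len: int) -> float:
    """Approximate E-value from a normalized bit score: E = m * n * 2**(-S')."""
    return query_len * db_len * 2.0 ** (-bit_score)


def bitscore_for_evalue(target_evalue: float, query_len: int, db_len: int) -> float:
    """Bit score needed to reach target_evalue in a search space of m * n."""
    return math.log2(query_len * db_len / target_evalue)


# Hypothetical search: 300-residue query against a 1e8-residue database.
print(evalue_from_bitscore(50.0, 300, 100_000_000))
print(bitscore_for_evalue(0.001, 300, 100_000_000))
```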

Structure-Based Alignment Tools

When sequence identity falls below 25%, structure-based alignment methods become essential for detecting remote homologous relationships.

Table 2: Structure-Based Alignment Tools for Remote Homology Detection

| Tool | Methodology | Effective Identity Range | Accuracy | Speed |
|---|---|---|---|---|
| TM-align [19] | Structural superposition using TM-score | <25% | High (94.1%) [20] | Moderate |
| DALI [16] [19] | 3D structure comparison using distance matrix | <25% | High | Slow for large databases |
| Foldseek [16] [20] | 3Di structural string alignment | <25% | High (95.9%) [20] | Very fast |
| SARST2 [20] | Integrated primary/secondary/tertiary features | <25% | Very high (96.3%) [20] | Fastest |

Structure-based methods exploit the principle that "protein structure is much more conserved along evolution than primary sequences, allowing to reveal relationships across evolutionary remote organisms" [16]. These tools measure structural similarity using metrics like TM-score, where values >0.5 generally indicate the same fold in SCOP/CATH classifications, and values >0.8 indicate highly similar structures [19].
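For reference, the TM-score sums 1/(1 + (dᵢ/d₀)²) over aligned residue pairs and normalizes by the target length L. The distance scale is d₀ = 1.24·(L − 15)^(1/3) − 1.8 Å. The minimal sketch below scores one fixed superposition only, whereas programs such as TM-align also search for the superposition that maximizes the score. The 0.5 Å floor on d₀ for short chains mirrors common implementations and should be treated as an assumption.

```python
from typing import Sequence


def tm_d0(target_length: int) -> float:
    """Length-dependent distance scale d0 (in Angstroms) from the TM-score definition.

    The 0.5 Angstrom floor for short chains is an assumption based on common
    implementations.
    """
    if target_length <= 15:
        return 0.5
    return max(0.5, 1.24 * (target_length - 15) ** (1.0 / 3.0) - 1.8)


def tm_score(distances: Sequence[float], target_length: int) -> float:
    """TM-score for ONE given superposition.

    distances: distances (Angstroms) between aligned residue pairs after superposition.
    target_length: length of the reference structure; normalizing by it keeps
    scores comparable across protein sizes.
    """
    d0 = tm_d0(target_length)
    return sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances) / target_length


# Half of a 100-residue target perfectly superposed, the rest unaligned.
print(tm_score([0.0] * 50, 100))  # 0.5
```

A score above 0.5 under this definition corresponds to the "same fold" rule of thumb quoted above.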

Deep Learning and Integrated Approaches

Recent advances in deep learning have created hybrid approaches that bridge sequence and structure analysis.

Table 3: Deep Learning Tools for Remote Homology Detection

| Tool | Approach | Innovation | Performance |
|---|---|---|---|
| TM-Vec [19] | Twin neural networks predicting TM-scores | Structure-aware search from sequence alone | r=0.97 correlation with TM-align [19] |
| DeepBLAST [19] | Differentiable Needleman-Wunsch with protein language models | Structural alignments from sequence information | Outperforms sequence methods at low identity |
| HiPHD [21] | Hierarchical classification with sequential/structural integration | Combines language models and graph neural networks | State-of-the-art on SCOPe and CATH benchmarks |

TM-Vec is a significant advance because it "can resolve structural differences (and detect significant structural similarity) between sequence pairs with percentage sequence identity less than 0.1" [19]. This extends homology detection into highly divergent regions of the sequence identity spectrum, where sequence alignment methods generally lose sensitivity.
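The search step of an embedding-based approach can be sketched independently of the neural network. As we understand the TM-Vec design, each sequence is mapped to a vector, and the cosine similarity between two vectors approximates their TM-score. Treat this as an assumption to check against [19]. The sketch below ranks a database of precomputed embeddings by cosine similarity. The three-dimensional vectors and protein names are toy placeholders, not real model outputs.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k_neighbors(
    query: Sequence[float], database: Dict[str, Sequence[float]], k: int = 5
) -> List[Tuple[str, float]]:
    """Return the k database entries most similar to the query embedding."""
    scored = [(name, cosine_similarity(query, vec)) for name, vec in database.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# Toy embeddings; real ones would come from a trained model (not shown).
db = {"protA": [1.0, 0.0, 0.0], "protB": [0.9, 0.1, 0.0], "protC": [0.0, 1.0, 0.0]}
print([name for name, _ in top_k_neighbors([1.0, 0.0, 0.0], db, k=2)])  # ['protA', 'protB']
```

A brute-force scan is shown for clarity. At database scale, an approximate nearest-neighbor index would normally replace the linear loop.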

Experimental Protocols and Workflows

Standard Protocol for High-Identity Sequence Analysis

For sequences with expected identity >30%, traditional sequence alignment methods provide the most efficient workflow. The recommended protocol begins with a BLAST search against a comprehensive database such as UniProt or GenBank, using an E-value cutoff of 0.001 for initial hits [2] [18]. Significant matches should then be validated through reciprocal BLAST searches. Each top hit is used as a query against the original query's proteome or database, and the relationship is confirmed when the original query is returned as the best hit. For functional annotation, conserved domains should be identified with tools such as InterPro or Pfam. Multiple sequence alignments should be constructed with tools such as Clustal Omega or MAFFT to resolve ambiguous regions [17]. Finally, phylogenetic analysis can establish evolutionary relationships among confirmed homologs.

High-Identity Homology Detection Workflow
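The reciprocal check can be sketched as a reciprocal-best-hit (RBH) comparison between two search results. Each hit is a (query, subject, bit score) triple, for example taken from the `qseqid`, `sseqid`, and `bitscore` columns of BLAST tabular output. Ranking by bit score is an assumption of this sketch, and ties keep the first hit seen. The identifiers in the example are hypothetical.

```python
from typing import Dict, Iterable, Set, Tuple

Hit = Tuple[str, str, float]  # (query id, subject id, bit score)


def best_hits(hits: Iterable[Hit]) -> Dict[str, str]:
    """Map each query to its highest-scoring subject (first seen wins ties)."""
    best: Dict[str, Tuple[str, float]] = {}
    for query, subject, score in hits:
        if query not in best or score > best[query][1]:
            best[query] = (subject, score)
    return {query: subject for query, (subject, _) in best.items()}


def reciprocal_best_hits(a_vs_b: Iterable[Hit], b_vs_a: Iterable[Hit]) -> Set[Tuple[str, str]]:
    """Pairs (a, b) where b is a's best hit AND a is b's best hit."""
    forward = best_hits(a_vs_b)
    backward = best_hits(b_vs_a)
    return {(a, b) for a, b in forward.items() if backward.get(b) == a}


a_vs_b = [("a1", "b1", 200.0), ("a1", "b2", 150.0), ("a2", "b2", 90.0)]
b_vs_a = [("b1", "a1", 198.0), ("b2", "a1", 160.0), ("b2", "a2", 80.0)]
print(reciprocal_best_hits(a_vs_b, b_vs_a))  # {('a1', 'b1')}
```

Here `a2` is not confirmed. Its best hit `b2` points back to `a1`, which is the kind of inconsistency the reciprocal search is meant to catch.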

Advanced Protocol for Remote Homology Detection

For sequences with low identity (<25%) where standard BLAST searches fail, an integrated approach combining sequence profiles and structural information is necessary. Begin with iterative sequence profile methods like PSI-BLAST (3 iterations, E-value threshold 0.001) to detect weakly conserved patterns [2]. If structures are available or can be predicted (using AlphaFold2 or ESMFold), proceed with structural alignment using Foldseek or SARST2 against structural databases, prioritizing hits with TM-scores >0.5 [20] [19]. For sequences without structures, employ deep learning methods like TM-Vec to identify structurally similar proteins directly from sequence, validating top hits with DeepBLAST for alignment accuracy [19]. Finally, integrate evidence using hierarchical frameworks like HiPHD, which combines sequential and structural information for confident remote homology assignment [21].

Remote Homology Detection Workflow
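The PSI-BLAST step of this protocol might be scripted as shown below. The flags (`-query`, `-db`, `-num_iterations`, `-evalue`, `-inclusion_ethresh`, `-outfmt`, `-out`) are BLAST+ `psiblast` options. We read "E-value threshold 0.001" as applying to both reporting and profile inclusion, and that reading is an assumption. The file and database names are hypothetical. The sketch only builds and prints the command. Running it with `subprocess.run(cmd, check=True)` requires a local BLAST+ installation and a formatted database.

```python
import shlex
from typing import List


def psiblast_command(
    query_fasta: str, db: str, out_path: str, iterations: int = 3, evalue: float = 0.001
) -> List[str]:
    """Build a psiblast argument list for an iterative profile search."""
    return [
        "psiblast",
        "-query", query_fasta,
        "-db", db,
        "-num_iterations", str(iterations),
        "-evalue", str(evalue),              # reporting threshold
        "-inclusion_ethresh", str(evalue),   # threshold for adding hits to the profile
        "-outfmt", "6",                      # tabular output
        "-out", out_path,
    ]


cmd = psiblast_command("query.fasta", "uniref50", "hits.tsv")
print(shlex.join(cmd))
```

Passing an argument list rather than a single shell string avoids quoting problems with file paths.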

Benchmarking and Validation Methodology

Rigorous benchmarking of homology detection tools requires standardized datasets and evaluation metrics. The recommended protocol uses curated databases like SCOP and CATH that provide hierarchical structural classifications [20] [19]. For performance assessment, researchers should employ information retrieval metrics including recall (sensitivity) and precision, calculating these measures at different sequence identity thresholds [20]. Statistical significance should be evaluated through pairwise comparison tests with multiple hypothesis correction, and runtime performance should be measured against standardized database sizes to ensure practical utility [20]. For method development, cross-validation should include held-out folds to assess generalization to novel protein families not present in training data [19].
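The per-threshold evaluation described above can be sketched as precision and recall computed within sequence-identity bins. Each record is assumed to hold a pair's percent identity, whether the tool predicted homology, and whether the pair is homologous according to a reference classification such as SCOP or CATH. The bin edges and records are illustrative choices, not values from [20].

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Sequence, Tuple

# (percent identity, predicted homolog?, true homolog per reference classification?)
Record = Tuple[float, bool, bool]


def metrics_by_identity(
    records: Iterable[Record], edges: Sequence[float] = (0, 20, 30, 101)
) -> Dict[Tuple[float, float], Tuple[float, float]]:
    """Return {(low, high): (precision, recall)} for each identity bin."""
    counts: Dict[Tuple[float, float], List[int]] = defaultdict(lambda: [0, 0, 0])  # tp, fp, fn
    for identity, predicted, actual in records:
        for low, high in zip(edges, edges[1:]):
            if low <= identity < high:
                c = counts[(low, high)]
                if predicted and actual:
                    c[0] += 1  # true positive
                elif predicted:
                    c[1] += 1  # false positive
                elif actual:
                    c[2] += 1  # false negative (missed homolog)
                break
    result = {}
    for bin_range, (tp, fp, fn) in counts.items():
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        result[bin_range] = (precision, recall)
    return result


records = [(15, True, True), (15, True, False), (18, False, True), (25, True, True), (50, True, True)]
print(metrics_by_identity(records)[(0, 20)])  # (0.5, 0.5)
```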

Table 4: Key Research Reagents and Computational Resources

| Resource | Type | Function | Access |
|---|---|---|---|
| UniProt Knowledgebase [2] | Protein Sequence Database | Comprehensive sequence and functional annotation | https://www.uniprot.org/ |
| Protein Data Bank (PDB) [18] | Structural Database | Experimentally determined protein structures | https://www.rcsb.org/ |
| AlphaFold Database [20] | Predicted Structure Database | 214 million predicted structures | https://alphafold.ebi.ac.uk/ |
| SCOPe/CATH [19] | Structural Classification | Hierarchical protein structure classification | Public databases |
| PSSM Profiles [20] | Position-Specific Scoring Matrix | Evolutionary information for sensitive search | Generated by PSI-BLAST |
| 3Di Alphabets [16] | Structural Alphabet | Compact representation of protein 3D structure | Used in Foldseek |

These resources provide the essential data infrastructure for homology detection across the sequence identity spectrum. The AlphaFold Database in particular has transformed the field by providing "214 million predicted structures" [20]. This makes structural information available for a large fraction of known protein sequences and enables structure-based methods even for proteins without experimentally determined structures.

The sequence identity spectrum demands a strategic approach to tool selection, with different methods exhibiting optimal performance in specific ranges. For high-identity sequences (>30%), traditional tools like BLAST and MMseqs2 provide rapid, reliable results. In the twilight zone (20-30%), profile methods like PSI-BLAST and HMMER extend detection sensitivity. For remote homology (<20%), structure-based methods and deep learning approaches like SARST2, Foldseek, and TM-Vec become essential.
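The rule of thumb in the preceding paragraph can be written as a small lookup. The boundaries (>30%, 20-30%, <20%) are taken from the text and are approximate. A pair at exactly 30% falls into the twilight-zone tier here, which is an arbitrary choice. In practice, adjacent tiers are often run together rather than treated as exclusive.

```python
from typing import List


def suggested_methods(expected_identity: float) -> List[str]:
    """Map an expected percent identity to the tool tier described in the text."""
    if expected_identity > 30:
        return ["BLAST", "MMseqs2"]            # high identity: fast pairwise search
    if expected_identity >= 20:
        return ["PSI-BLAST", "HMMER"]          # twilight zone: profile methods
    return ["Foldseek", "SARST2", "TM-Vec"]    # remote homology: structure / deep learning


print(suggested_methods(25))  # ['PSI-BLAST', 'HMMER']
```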

This progression mirrors the biological reality that "protein structure is much more conserved along evolution than primary sequences" [16], explaining why structural methods can detect relationships invisible to sequence-based approaches. For researchers validating process homology criteria, this toolkit enables the detection of conserved biological processes even when sequences have diverged beyond recognition by conventional means, supporting critical applications in functional annotation, drug target identification, and evolutionary studies.

The future of homology detection lies in integrated systems that seamlessly combine sequence, structure, and deep learning approaches, with tools like HiPHD already demonstrating the power of hierarchical classification [21]. As these methods mature, they will further illuminate the deep evolutionary connections hidden within the sequence identity spectrum.

For decades, sequence-based analysis has served as the primary tool for inferring evolutionary relationships. However, growing evidence reveals that protein structure often provides a more accurate and conserved record of evolutionary history, particularly where sequence signals have faded. This guide objectively compares sequence-based and structure-based assessment methods, providing a framework for researchers to evaluate their applications in evolutionary biology and drug discovery. We present quantitative data demonstrating structural conservation's superiority in identifying distant homologies and its critical implications for validating process homology criteria.

Traditional evolutionary biology has relied heavily on sequence information to infer relationships between genes and proteins. The underlying assumption has been that sequence similarity implies functional and evolutionary relatedness. However, this approach encounters significant limitations in the "twilight zone" of sequence alignment, where identities fall below 20-25%, making evolutionary relationships difficult or impossible to detect through sequence alone [22].

Protein structures offer a solution to this fundamental limitation. Tertiary structures are known to be more conserved than amino acid sequences, with structural similarity often persisting long after sequence signals become statistically marginal or undetectable [22]. For example, the globin family exhibits nearly identical tertiary structures despite sequence identities as low as 16% [22]. This conservation occurs because structures are more directly linked to biological function than sequences.

The recent revolution in structural biology, powered by machine learning tools like AlphaFold2, has enabled proteome-wide structural analyses that were previously impossible due to limited experimental data [22]. This technological advancement now allows researchers to move beyond sequence-based assessments and adopt structural conservation as the gold standard for evolutionary analysis, particularly for deep evolutionary comparisons and process homology validation.

Comparative Analysis: Sequence-Based vs. Structure-Based Approaches

Fundamental Principles and Methodologies

Sequence-Based Methods primarily rely on alignment algorithms (e.g., BLAST, CLUSTAL) that quantify similarity through position-by-position comparison of amino acid or nucleotide sequences. These methods use substitution matrices (e.g., BLOSUM, PAM) to score matches and mismatches, producing identity percentages and similarity scores that serve as proxies for evolutionary relatedness [23].

Structure-Based Methods compare three-dimensional protein architectures through structural alignment algorithms (e.g., DALI, CE, TM-align). These methods quantify similarity using metrics like Root-Mean-Square Deviation (RMSD), Template Modeling Score (TM-score), and structural overlap, which measure spatial agreement between protein folds regardless of sequence similarity [22].
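RMSD is the square root of the mean squared distance between matched atoms. The minimal sketch below assumes the two coordinate lists are matched residues (for example, Cα atoms) that have already been optimally superposed. Finding that superposition, for example with the Kabsch algorithm, and finding the residue correspondence are the hard parts that alignment programs solve and are not shown. Because RMSD is length-dependent and dominated by the worst-fitting regions, TM-score is often preferred for fold-level comparisons.

```python
import math
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]


def rmsd(a: Sequence[Coord], b: Sequence[Coord]) -> float:
    """Root-mean-square deviation between matched, already-superposed coordinates."""
    if len(a) != len(b) or not a:
        raise ValueError("need two non-empty coordinate lists of equal length")
    squared = sum(
        (xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
        for (xa, ya, za), (xb, yb, zb) in zip(a, b)
    )
    return math.sqrt(squared / len(a))


print(rmsd([(0.0, 0.0, 0.0)], [(3.0, 4.0, 0.0)]))  # 5.0
```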

Performance Comparison and Quantitative Assessment

The table below summarizes the key performance characteristics of both approaches based on comparative genomic analyses:

Table 1: Quantitative Comparison of Assessment Methods

| Performance Metric | Sequence-Based Methods | Structure-Based Methods |
|---|---|---|
| Twilight Zone Detection | Loses sensitivity below 20-25% sequence identity | Identifies structurally similar twilight-zone homologs for ~8-32% of protein-coding genes, depending on the species pair [22] |
| Evolutionary Timescale | Effective for recent to moderate divergence | Reveals relationships across billions of years of evolution |
| Conservation Pattern | Diverges relatively rapidly as substitutions accumulate | Remains stable despite significant sequence drift |
| Functional Prediction | Indirect, through sequence motifs | Direct, through active-site geometry and binding surfaces |
| Multi-domain Protein Analysis | Challenging due to recombination events | Enables domain-by-domain evolutionary tracing |

Proteome-wide structural analyses reveal that structurally similar homologous protein pairs in the twilight zone make up only about 0.004%-0.021% of all possible protein pair combinations. These pairs nevertheless involve 8%-32% of protein-coding genes, depending on the species under comparison [22]. This represents a substantial portion of evolutionary relationships that would remain undetected through sequence analysis alone.

Experimental Validation and Case Studies

The Rossmann Fold Example: The Rossmann fold, a nucleotide-binding motif, is a quintessential case in which strictly conserved structures show no detectable sequence similarity between distantly related proteins [22]. This structural motif appears across vast evolutionary distances in proteins with nucleotide-binding functions, demonstrating how structural conservation reveals functional relationships invisible to sequence analysis.

Cross-Domain Structural Comparisons: Comparative analysis of human proteins with bacterial (E. coli) and archaeal (M. jannaschii) homologs reveals distinct conservation patterns. Human proteins involved in energy supply show greater structural similarity to bacterial homologs, while proteins relating to the central dogma are more similar to archaeal homologs [22]. This structural evidence supports the chimera-like origin of eukaryotic genomes from both bacterial and archaeal ancestors.

Structural Conservation in Process Homology Validation

The Challenge of Process Homology

Process homology presents particular challenges for evolutionary biologists. Homologous morphological traits are often generated by processes involving non-homologous genes (developmental system drift), while homologous genes are frequently co-opted in generating non-homologous traits (deep homology) [15]. This dissociation between evolutionary levels means that neither gene sequences nor morphological outcomes alone can reliably establish process homology.

The concept of "homology of process" requires establishing that ontogenetic processes themselves are homologous, regardless of genetic underpinnings or morphological outcomes [15]. This is particularly relevant for complex, dynamic processes like insect segmentation and vertebrate somitogenesis, which can be homologous without homology of the underlying genes or gene networks [15].

Structural Conservation as a Criterion for Process Homology

Protein structural conservation offers a powerful criterion for establishing process homology through several mechanisms:

Conserved Structural Components: Even when sequences diverge, the preservation of key structural domains in proteins involved in developmental processes indicates conserved functional mechanisms. For example, DNA-binding domains that maintain similar folds despite sequence divergence suggest conservation of gene regulatory processes.

Structural Evidence for Dynamic Conservation: The conservation of allosteric regions and conformational dynamics in enzymes and signaling proteins indicates maintained regulatory processes, even when primary sequences have diversified.

Multi-protein Complex Architecture: The preservation of overall architecture in protein complexes, such as the nuclear pore or transcription pre-initiation complex, provides evidence for conserved cellular processes across evolutionary distances where sequence-based analyses fail.

Table 2: Criteria for Process Homology Validation

| Criterion | Traditional Approach | Structure-Enhanced Approach |
|---|---|---|
| Same Parts | Gene orthology based on sequence | Structural domains and folds |
| Morphological Outcome | Adult structure comparison | Developmental trajectory dynamics |
| Topological Position | Anatomical position in embryo | Structural interfaces and spatial relationships |
| Dynamical Properties | Inferred from genetic networks | Directly from protein dynamics and conformational ensembles |
| Dynamical Complexity | Gene expression patterns | Structural flexibility and allosteric regulation |
| Transitional Forms | Fossil morphological series | Intermediate structural states |

Experimental Protocols for Structural Conservation Analysis

Proteome-Wide Structural Comparison Methodology

The following protocol, adapted from a proteome-wide comparative structural genomics study, enables systematic identification of structurally conserved homologs beyond the sequence twilight zone [22]:

Sample Preparation and Data Collection:

- Obtain experimental structures from the Protein Data Bank (PDB) and complement with AlphaFold2-predicted structures for comprehensive proteome coverage

- For human proteome analysis: utilize 36,764 structures from PDB and 29,527 modeled structures from AlphaFold2 database

- Implement graph-based community clustering using Leiden algorithm to separate multidomain proteins into individual domains based on Predicted Aligned Error (PAE) matrix

- Trim unstructured regions and define domain boundaries using PAE matrix connectivity
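The protocol uses Leiden community clustering on the PAE matrix. The sketch below deliberately swaps in a simpler technique so that it stays dependency-free. It links residues i and j when the PAE is below a cutoff in both directions and reports connected components as candidate domains. The 5 Å cutoff and the minimum domain size are hypothetical parameters. Leiden clustering (available through third-party graph libraries) would handle weakly coupled regions more gracefully than plain connected components.

```python
from typing import List, Sequence


def split_domains(
    pae: Sequence[Sequence[float]], cutoff: float = 5.0, min_size: int = 1
) -> List[List[int]]:
    """Candidate domains as connected components of a thresholded PAE graph.

    Residues i and j are linked when max(PAE[i][j], PAE[j][i]) < cutoff.
    This is a simplified stand-in for Leiden clustering, not the method of [22].
    """
    n = len(pae)
    seen = [False] * n
    domains: List[List[int]] = []
    for start in range(n):
        if seen[start]:
            continue
        seen[start] = True
        stack, component = [start], []
        while stack:  # depth-first traversal of one component
            i = stack.pop()
            component.append(i)
            for j in range(n):
                if not seen[j] and max(pae[i][j], pae[j][i]) < cutoff:
                    seen[j] = True
                    stack.append(j)
        if len(component) >= min_size:
            domains.append(sorted(component))
    return domains


# Toy 4-residue PAE matrix with two confidently packed blocks.
toy_pae = [[0, 1, 20, 20], [1, 0, 20, 20], [20, 20, 0, 2], [20, 20, 2, 0]]
print(split_domains(toy_pae))  # [[0, 1], [2, 3]]
```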

Structural Comparison and Analysis:

- Employ sequence-independent protein structural comparison algorithms for all-against-all pairwise comparisons

- Calculate structural similarity metrics (TM-score, RMSD) for each protein pair

- Establish significance thresholds for structural homology (typically TM-score >0.5 indicates same fold)

- Correlate structural similarity measures with sequence identity to identify twilight zone pairs

- Perform functional annotation of structurally conserved homologs to identify process-related conservation

Figure 1: Experimental workflow for structural conservation analysis
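The final comparison steps amount to selecting pairs that are structurally similar but fall below the sequence twilight-zone boundary. The sketch below uses the TM-score > 0.5 cutoff from the protocol. It takes 25% identity as the twilight-zone boundary, which is one reading of the 20-25% range given earlier. The pair records are hypothetical.

```python
from typing import Iterable, List, Tuple

# (protein A, protein B, TM-score, percent sequence identity)
Pair = Tuple[str, str, float, float]


def twilight_structural_homologs(
    pairs: Iterable[Pair], tm_cutoff: float = 0.5, max_identity: float = 25.0
) -> List[Tuple[str, str]]:
    """Pairs with the same fold (TM > cutoff) but twilight-zone sequence identity."""
    return [(a, b) for a, b, tm, identity in pairs if tm > tm_cutoff and identity < max_identity]


pairs = [("p1", "q1", 0.72, 18.0), ("p2", "q2", 0.41, 15.0), ("p3", "q3", 0.80, 60.0)]
print(twilight_structural_homologs(pairs))  # [('p1', 'q1')]
```

Pair p2 fails the fold criterion, and pair p3 is similar enough in sequence that ordinary alignment would already find it.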

Structural Conservation Scoring Methods

Multiple scoring systems have been developed to quantify structural conservation. Based on comparative studies of conservation and variation scores applied to catalytic sites, these methods fall into two primary categories [23]:

Substitution Matrix-Based Scores (Cluster A):

- Karlin96, Sander91sp, Pei01sp(w): Use scoring matrices to evaluate amino acid replacements

- Valdar01: Applies different sequence weighting and scoring matrices

- Thompson97: Measures deviation from consensus residue using scoring matrices

Frequency-Based Scores (Cluster B):

- Phylogeny-based scores (Mihalek04, Zhang08): Utilize phylogenetic trees in conservation calculations

- Non-phylogeny scores (Shannon entropy, relative entropy methods): Rely on amino acid frequencies and background distributions

Empirical analysis reveals that frequency-based scores considering background distributions generally perform best in predicting functionally critical sites like catalytic residues [23].
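The two non-phylogenetic frequency-based scores can be sketched for a single alignment column. Low Shannon entropy means the column is conserved. High relative entropy (Kullback-Leibler divergence from a background distribution) means the residue mix departs strongly from what would be expected by chance. This is a generic sketch, not any of the specific published scores listed above. It applies no sequence weighting, ignores gaps, and uses a hypothetical uniform background of 1/20 per amino acid in place of empirically estimated frequencies.

```python
import math
from collections import Counter
from typing import Dict


def shannon_entropy(column: str) -> float:
    """Shannon entropy (bits) of residue frequencies in one column; gaps ignored."""
    residues = [r for r in column if r != "-"]
    if not residues:
        return 0.0
    n = len(residues)
    return -sum((c / n) * math.log2(c / n) for c in Counter(residues).values())


def relative_entropy(column: str, background: Dict[str, float]) -> float:
    """KL divergence (bits) of column frequencies from background frequencies."""
    residues = [r for r in column if r != "-"]
    if not residues:
        return 0.0
    n = len(residues)
    return sum((c / n) * math.log2((c / n) / background[a]) for a, c in Counter(residues).items())


uniform = {aa: 0.05 for aa in "ACDEFGHIKLMNPQRSTVWY"}  # hypothetical background
print(shannon_entropy("AACC"))             # 1.0
print(relative_entropy("AAAA", uniform))  # about log2(20), i.e. ~4.32 bits
```

With a non-uniform background, a fully conserved column of a rare residue scores higher than one of a common residue. This is the extra information that background-aware scores add.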

Table 3: Essential Resources for Structural Conservation Research

| Resource Category | Specific Tools/Methods | Primary Function | Application Context |
|---|---|---|---|
| Structural Databases | Protein Data Bank (PDB), AlphaFold2 Database | Source experimental and predicted structures | Primary data for structural comparisons |
| Structural Alignment | DALI, CE, TM-align | Quantify structural similarity between proteins | Identify structural homologs beyond sequence twilight zone |
| Domain Parsing | Predicted Aligned Error (PAE) analysis, Graph-based clustering | Define structural domain boundaries in multidomain proteins | Preprocessing for accurate domain-level comparisons |
| Conservation Scoring | Frequency-based scores (Shannon entropy), Relative entropy methods | Identify evolutionarily constrained residues | Functional site prediction and process homology assessment |
| Process Modeling | Dynamical systems modeling, Regulatory network analysis | Characterize developmental process dynamics | Establish homology of process beyond structural components |

Implications for Drug Discovery and Design

The adoption of structural conservation as a gold standard has profound implications for structure-guided drug discovery. While traditional approaches have relied heavily on static, cryo-cooled structures, the recognition of conserved structural dynamics enables more effective drug design [24].

Identifying Novel Drug Targets: Structural conservation analysis can reveal functionally important but previously unrecognized drug targets by identifying structurally conserved regions across protein families. This approach is particularly valuable for identifying allosteric sites that may be conserved despite sequence variation.

Understanding Binding Site Evolution: Comparing structurally conserved binding sites across homologs provides insights into how drug binding evolves and helps predict potential resistance mechanisms. This enables the design of more robust therapeutic compounds that target evolutionarily constrained regions.

Temperature-Sensitive Structural Analysis: Recent studies highlight the importance of performing structural studies at physiologically relevant temperatures to "unfreeze" structural ensembles, revealing conformational states that may be missed in traditional cryo-cooled samples [24]. These dynamic details are often conserved across homologs and provide critical information for drug design.

The cumulative evidence from proteome-wide structural analyses demonstrates that structural conservation provides a more reliable and evolutionarily deeper record of homology than sequence-based assessments alone. The quantitative data in this comparison guide show that structural approaches can detect twilight-zone homologous relationships involving 8-32% of protein-coding genes, depending on the species pair [22]. These relationships would otherwise remain undetected by sequence analysis.

For researchers validating process homology criteria, structural conservation offers a crucial intermediate level of evidence that bridges the gap between genetic sequences and morphological outcomes. By establishing homology at the structural level, scientists can more reliably trace the evolutionary history of developmental processes and their underlying mechanisms.

As structural biology continues to advance with tools like AlphaFold2 making structural data increasingly accessible, the research community stands at the threshold of a new era in evolutionary analysis. Embracing structural conservation as the gold standard will enable more accurate reconstruction of evolutionary history, more reliable identification of therapeutic targets, and deeper understanding of the fundamental processes that shape biological diversity.

In evolutionary and comparative biology, homology refers to the presence of the same biological structures in different species due to shared ancestry, regardless of potential differences in form and function [8] [25]. This concept stands in contrast to analogy, where similar structures arise independently in different lineages due to convergent evolution or similar functional pressures [8] [25]. The central challenge in homology research lies in establishing reliable criteria for determining "sameness" of biological traits across species [26]. For researchers in drug development and biomedical science, accurately identifying homologous structures, particularly at the molecular level, provides critical insights for extrapolating findings from model organisms to humans and for identifying potential therapeutic targets across related proteins [27] [28].

Historically, three principal criteria have emerged for establishing homologies: structural (including positional and compositional criteria), developmental (embryological origin and genetic programs), and functional correlates [8] [25]. These criteria do not always align perfectly; structures may be homologous despite functional divergence, or similar developmental genes may underlie non-homologous structures [26] [28]. This guide objectively compares the performance of these criteria based on experimental data, providing a framework for validating homology in basic research and drug discovery applications.

Comparative Analysis of Homology Criteria

The following table summarizes the core principles, strengths, and limitations of the three primary homology criteria, providing a quick reference for researchers selecting appropriate methodologies.

Table 1: Comparative Performance of Primary Homology Criteria

| Criterion | Core Principle | Key Strengths | Principal Limitations | Representative Experimental Approaches |
|---|---|---|---|---|
| Structural | Sameness in relative position, connections, and composition [8] [26] | High objectivity; applicable to fossil specimens; allows anatomical comparisons across diverse taxa [8] [25] | May miss homologies with radical structural modification; position can shift evolutionarily [26] | Comparative anatomy, 3D structural alignment [8], protein homology modeling [27] |
| Developmental | Derivation from same embryonic precursor or developmental pathway [25] | Can reveal deep homologies unrecognizable in adult forms [25]; provides mechanistic insight | Homologous structures can develop from different precursors ("de Beer's paradox") [26] [25] | Fate mapping, gene expression analysis (e.g., in situ hybridization), CRISPR/Cas9 gene editing |
| Functional | Performance of similar biological roles | Direct relevance to physiological and biochemical research; functional conservation often indicates selective pressure | High risk of misidentifying analogies as homologies; function evolves rapidly [8] | Physiological recording, enzymatic assays, behavioral studies, pharmacological tests |

Structural Criteria: From Gross Anatomy to Molecular Modeling

Foundational Principles and Classic Examples

The structural criterion, particularly the principle of connections established by Geoffroy Saint-Hilaire, posits that homologous structures maintain their relative topological positions and connections to other structures, even when their form and function diverge [26] [25]. A canonical example is the vertebrate forelimb, where the wings of bats, flippers of whales, and arms of humans all contain the same fundamental skeletal elements (humerus, radius, ulna) in consistent topological arrangements despite their divergent functions [8].

In modern research, structural homology extends to the molecular level: protein homology modeling exploits the structural similarity of homologous proteins to predict a target's three-dimensional configuration from known structures [27]. For instance, researchers constructed a reliable homology model of the human voltage-gated proton channel (hHV1) by leveraging its structural homology to the voltage-sensing domains (VSDs) of potassium and sodium channels, even though its sequence identity to these templates falls below the roughly 30% threshold usually considered reliable [27].
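Identity thresholds such as the 30% figure depend on how identity is counted. The sketch below is a minimal illustration that assumes two already-aligned sequences of equal length, with `-` as the gap character; it counts identical residues over columns where neither sequence has a gap. Other tools divide by the full alignment length or by the shorter sequence, so reported values can differ. The function name `percent_identity` is hypothetical.

```python
def percent_identity(aligned_a: str, aligned_b: str, gap: str = "-") -> float:
    """Percent identity over the gap-free columns of a pairwise alignment.

    Assumption: both strings come from the same alignment and have equal length.
    """
    if len(aligned_a) != len(aligned_b):
        raise ValueError("aligned sequences must have the same length")
    # Keep only columns where both sequences contain a residue (no gap).
    pairs = [(a, b) for a, b in zip(aligned_a, aligned_b) if a != gap and b != gap]
    if not pairs:
        raise ValueError("alignment has no gap-free columns")
    identical = sum(a.upper() == b.upper() for a, b in pairs)
    return 100.0 * identical / len(pairs)


# Toy alignment: 3 identical residues out of 4 gap-free columns.
print(percent_identity("AC-DE", "ACFDQ"))  # 75.0
```

Excluding gapped columns keeps the value comparable between short and long alignments, but it can inflate identity when large regions fail to align, so the alignment coverage should be reported alongside it.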

Experimental Protocol: Protein Homology Modeling and Validation

Table 2: Key Research Reagents for Structural Homology Modeling

| Research Reagent | Specific Function in Homology Assessment |
|---|---|
| Template Structures (e.g., from PDB) | Provide high-resolution 3D structural templates for model building [27] |
| Multiple Sequence Alignment Tools | Identify conserved residues and inform alignment between target and template [27] |
| Molecular Dynamics (MD) Simulation Software | Assess structural stability and refine models in membrane mimetic or solvent environments [27] |
| AlphaFoldDB Database | Source of predicted protein structures for comparative analysis when experimental structures are unavailable [29] [30] |
| Structural Comparison Algorithms (Dali, MATRAS, Foldseek) | Quantify structural similarity between models and templates to validate homology [29] |

Protocol: Homology Model Construction and Validation for a Membrane Protein (e.g., hHV1) [27]

- Template Identification and Alignment: Identify suitable structural templates (e.g., VSDs from Kv1.2/2.1 paddle-chimera channel) through sequence database searching. Perform multiple sequence alignment guided by phylogenetic analysis and conserved functional residues.

- Model Building: Use homology modeling software to construct initial 3D models of the target protein. The software copies coordinates from aligned regions of the template and models loops for unaligned regions.

- Structure-Stability Testing via Molecular Dynamics: Conduct multiple repeated MD simulations (e.g., 125 repeats of 100-ns simulations) in an explicit membrane mimetic to allow relaxation from initial conformation.

- Consensus Model Selection: Analyze structural deviations across simulations to identify features consistently retained. Select a consensus model demonstrating stable salt-bridge networks and structural integrity compatible with known function.

- Experimental Validation: Design accessibility experiments (e.g., His-scanning mutagenesis followed by Zn²⁺ probing under voltage clamp) to test structural predictions regarding residue exposure, confirming the model's accuracy.
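The consensus-selection step can be sketched as a persistence analysis over repeated runs. This is a minimal illustration, not the procedure used in [27]. It assumes each run has already been reduced to per-frame distances (Å) for each candidate salt-bridge pair, and it treats a pair as formed when that distance is at most 4.0 Å (a common heuristic). A pair is kept if it is formed in at least half the frames of at least 80% of runs. All thresholds, the feature names, and the helper names are hypothetical.

```python
SALT_BRIDGE_CUTOFF = 4.0  # Å; assumed heuristic distance for a formed salt bridge


def retention_fraction(distances, cutoff=SALT_BRIDGE_CUTOFF):
    """Fraction of frames in one trajectory where the pair stays within cutoff."""
    if not distances:
        raise ValueError("empty trajectory")
    return sum(d <= cutoff for d in distances) / len(distances)


def consensus_features(runs, min_frame_fraction=0.5, min_run_fraction=0.8):
    """Return {feature: share of runs in which it was stable} for consensus features.

    runs: list of dicts mapping feature name -> per-frame distances (Å).
    A run missing a feature counts as a run where that feature is not stable.
    """
    features = set().union(*(run.keys() for run in runs))
    kept = {}
    for feature in sorted(features):
        stable_runs = sum(
            retention_fraction(run[feature]) >= min_frame_fraction
            for run in runs
            if feature in run
        )
        share = stable_runs / len(runs)
        if share >= min_run_fraction:
            kept[feature] = share
    return kept


# Two toy runs with two hypothetical salt-bridge pairs.
runs = [
    {"pair_a": [3.0, 3.2, 3.5], "pair_b": [6.0, 7.0, 3.5]},
    {"pair_a": [3.1, 3.9, 4.5], "pair_b": [5.0, 5.5, 6.0]},
]
print(consensus_features(runs))  # {'pair_a': 1.0}
```

Requiring stability in most runs, not just on average, guards against one long-lived trajectory masking many runs in which the interaction breaks.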

Performance Assessment of Structural Criteria

Structural criteria provide a highly objective and empirically testable framework for homology assessment. The advent of AI-based structure prediction tools like AlphaFold2 has significantly enhanced this approach, enabling reliable homology detection even for proteins with low sequence similarity [29] [30]. Studies demonstrate that structural comparisons of AlphaFold2-predicted models can detect remote homologies that escape detection by sequence-based methods alone [29]. However, structural criteria face challenges with highly divergent homologs where structural conservation is minimal, and may be less effective for establishing homology in non-proteinaceous biological structures.
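Structural similarity is often summarized with the TM-score, which weights each aligned residue pair by its distance relative to a length-dependent scale $d_0 = 1.24\sqrt[3]{L-15} - 1.8$ Å. A TM-score above about 0.5 is commonly read as indicating the same fold. The sketch below evaluates the score for one fixed superposition only. Programs such as TM-align also search for the superposition and alignment that maximize it, so this is an illustration of the formula, not a replacement for those tools. The helper name `tm_score` is hypothetical.

```python
from collections.abc import Sequence


def tm_score(distances: Sequence[float], target_length: int) -> float:
    """TM-score for one fixed superposition.

    distances: per-residue distances (Å) between aligned residue pairs after
    superposition. target_length: number of residues in the reference/target.
    """
    if target_length <= 0:
        raise ValueError("target_length must be positive")
    # Length-dependent distance scale d0, floored at 0.5 Å for short chains.
    if target_length > 15:
        d0 = 1.24 * (target_length - 15) ** (1.0 / 3.0) - 1.8
    else:
        d0 = 0.5
    d0 = max(d0, 0.5)
    return sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances) / target_length


# Half of a 100-residue target superposed perfectly, the rest unaligned.
print(tm_score([0.0] * 50, 100))  # 0.5
```

Because the sum is divided by the target length, unaligned residues lower the score, which is why the TM-score penalizes partial matches that a plain RMSD over aligned residues would hide.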

Developmental and Genetic Criteria: Tracing Embryological Origins

Conceptual Foundations and Modern Applications

The developmental criterion asserts that structures are homologous if they develop from the same embryonic precursors [25]. This approach, enriched by von Baer's laws stating that related animals begin development as similar embryos and then diverge, allows researchers to identify deep homologies obscured in adult morphology [8] [25]. A powerful extension of this criterion is the concept of "deep homology," in which distantly related organisms share an ancient genetic regulatory apparatus used to build morphologically disparate structures [8] [26]. For example, Pax6 genes control eye development in both vertebrates and arthropods, even though their eyes are anatomically dissimilar and are thought to have evolved independently [8].

However, a significant limitation is that homologous structures can develop from different embryonic precursors or through different genetic pathways, a problem documented in detail by de Beer [26]. Conversely, the same developmental genes (e.g., fringe) can be involved in building non-homologous structures, such as arthropod and vertebrate limbs, which have largely different developmental mechanisms and separate evolutionary origins [28].

Diagram 1: Developmental Path to Homology

Experimental Protocol: Assessing Genetic Homology

Protocol: Gene Expression and Functional Analysis for Homology Assessment [28]

Comparative Gene Expression Analysis:

- Select candidate developmental regulator genes (e.g., transcription factors, signaling molecules) based on phylogenetic conservation.

- Perform whole-mount in situ hybridization on embryonic tissue from multiple species at comparable developmental stages.

- Precisely document expression domains relative to anatomical landmarks and developing structures of interest.

Functional Validation through Gene Perturbation:

- Design CRISPR/Cas9 constructs or RNAi reagents to target candidate genes in model organisms.

- Introduce loss-of-function or gain-of-function mutations and assess phenotypic consequences in developing embryos.

- Compare phenotypic outcomes across species: similar defects in putatively homologous structures provide evidence for conserved developmental requirements.

Integration with Phylogenetic Data:

- Map expression patterns and functional requirements onto established phylogenetic trees.

- Distinguish conserved developmental programs (potential homology) from convergent co-option of genetic networks.
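Mapping a trait onto a tree and counting the changes it requires is one simple way to separate a single conserved origin from repeated, possibly convergent, acquisition. The sketch below uses Fitch parsimony for one binary character (e.g., 1 = gene expressed in the structure, 0 = absent) on a rooted binary tree written as nested pairs. This is an illustration under stated assumptions: the tree shapes and species names are hypothetical, and parsimony alone cannot tell two independent gains from a gain followed by a loss.

```python
def fitch(tree, states):
    """Fitch parsimony for one character on a rooted binary tree.

    tree: a leaf name (str) or a 2-tuple (left_subtree, right_subtree).
    states: dict mapping leaf name -> observed state (e.g. 1 = expressed, 0 = absent).
    Returns (candidate states at this node, minimum number of state changes).
    """
    if isinstance(tree, str):
        return {states[tree]}, 0
    left, right = tree
    left_set, left_cost = fitch(left, states)
    right_set, right_cost = fitch(right, states)
    shared = left_set & right_set
    if shared:
        # Children agree on at least one state: no extra change is needed here.
        return shared, left_cost + right_cost
    # Disjoint state sets: one change must occur on a branch below this node.
    return left_set | right_set, left_cost + right_cost + 1


expression = {"sp_A": 1, "sp_B": 1, "sp_C": 0, "sp_D": 0}
# Expressing species form a clade: one change, consistent with a single origin.
print(fitch((("sp_A", "sp_B"), ("sp_C", "sp_D")), expression)[1])  # 1
# Expressing species are interleaved: at least two changes are required.
print(fitch((("sp_A", "sp_C"), ("sp_B", "sp_D")), expression)[1])  # 2
```

A trait that needs more than one change on a well-supported phylogeny is a flag for convergent co-option, which should then be tested with the expression and perturbation data described above.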

This approach identified a deep homology between the dorsal side of vertebrates and the ventral side of arthropods, based on conserved but inverted expression of dorsoventral patterning genes, supporting a hypothesis first proposed by Geoffroy Saint-Hilaire in the 19th century [26].

Integration and Validation: A Combined Evidence Approach

Hierarchical Nature of Biological Homology

Contemporary evolutionary biology recognizes that homology exists at multiple hierarchical levels (gene, protein, cell type, tissue, organ), and homologies at different levels may not always align [25] [28]. A gene can be homologous across species while participating in the development of non-homologous structures, and homologous structures can be built using non-homologous genes or developmental pathways [26] [25]. This non-reductive, hierarchical view suggests that no single criterion is absolute, and the most robust homology assessments integrate multiple lines of evidence.

Diagram 2: Multi-level Evidence Integration

Decision Framework for Homology Assessment

For research and drug development applications, the following workflow provides a systematic approach to homology validation:

- Initial Structural Comparison: Use 3D structural alignment tools (Dali, Foldseek) with experimental or AlphaFold2-predicted models to identify potential structural homologs [29] [30].

- Developmental/Genetic Analysis: Investigate expression patterns and functional requirements of developmental genes in putatively homologous structures.

- Phylogenetic Mapping: Map all characterized traits onto phylogenetic trees to test for consistency with common ancestry.

- Functional Correlates: Assess functional conservation while remaining cautious of analogous similarities.

Table 3: Decision Matrix for Conflicting Homology Evidence

| Evidence Pattern | Structural | Developmental | Functional | Likely Interpretation |
|---|---|---|---|---|
| Pattern A | + | + | + | Strong evidence for homology |
| Pattern B | + | + | - | Likely homology with functional divergence |
| Pattern C | - | + | + | Possible deep homology with structural divergence |
| Pattern D | + | - | + | Caution required; may represent analogy |
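Table 3 works as a lookup from an evidence pattern to an interpretation. The sketch below encodes the four tabulated patterns exactly as listed and uses a hypothetical helper, `interpret_evidence`. It returns a neutral message for combinations the table does not cover, such as developmental evidence alone, rather than guessing an interpretation.

```python
# Keys: (structural, developmental, functional); True = "+", False = "-".
DECISION_MATRIX = {
    (True, True, True): "Strong evidence for homology",
    (True, True, False): "Likely homology with functional divergence",
    (False, True, True): "Possible deep homology with structural divergence",
    (True, False, True): "Caution required; may represent analogy",
}


def interpret_evidence(structural: bool, developmental: bool, functional: bool) -> str:
    """Map one evidence pattern to the interpretation given in Table 3."""
    return DECISION_MATRIX.get(
        (structural, developmental, functional),
        "Pattern not covered by Table 3; gather further evidence",
    )


print(interpret_evidence(True, True, False))  # Likely homology with functional divergence
```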

The validation of homology criteria remains fundamental to evolutionary developmental biology and has practical implications for drug discovery research. Based on this comparative analysis:

- Structural criteria provide the most objective starting point, especially with advances in protein structure prediction enabling reliable homology detection even with low sequence similarity [29].

- Developmental and genetic criteria offer powerful insights, particularly for deep homologies, but cannot be used in isolation due to the evolutionary dissociation between genetic programs and morphological outcomes [26].

- Functional criteria should be applied most cautiously, as functional similarities frequently arise convergently rather than through common descent [8].

For researchers in drug development, structural homology modeling remains an indispensable tool for target identification and characterization, particularly for membrane proteins and other targets difficult to crystallize [27]. The most robust conclusions emerge from integrating multiple criteria while acknowledging the hierarchical nature of biological organization, where homology at one level does not necessarily predict homology at others. This integrated approach ensures accurate cross-species extrapolation in preclinical research and provides evolutionary context for target selection in therapeutic development.

Homology Modeling Workflows: From Template Selection to Refined Structures

Template identification and fold assignment are fundamental steps in computational biology, enabling researchers to infer protein structure, function, and evolutionary relationships. Detecting homology, that is, shared evolutionary ancestry, is crucial for transferring functional and structural annotations from characterized proteins to novel sequences. For researchers and drug development professionals, the choice of computational method can significantly affect the accuracy of downstream analyses, from functional annotation to structure-based drug design. This guide compares three cornerstone methods, BLAST, PSI-BLAST, and profile Hidden Markov Models (HMMs), and frames their performance within ongoing research to validate process homology criteria.

The core challenge these methods address is detecting increasingly distant evolutionary relationships. As sequences diverge over evolutionary time, their pairwise similarity drops into the "twilight zone," where conventional pairwise comparison can no longer reliably separate true homologs from chance matches, so more sensitive, evolution-aware approaches are needed [31]. This evaluation summarizes experimental data on their sensitivity, speed, and accuracy to inform method selection for biological discovery.
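One widely cited way to make the twilight zone concrete is Rost's (1999) length-dependent identity curve, in which the identity needed to infer homology rises as alignments get shorter. The sketch below assumes the form $p(L) = 480 \cdot L^{-0.32(1 + e^{-L/1000})}$ (percent identity) for alignment lengths up to 450 residues and a flat 19.5% beyond that. The helper names and the optional `margin` offset are illustrative, and the curve is a rule of thumb, not a substitute for E-values or profile-based methods.

```python
import math


def twilight_cutoff(length: int) -> float:
    """Approximate % identity needed to infer homology for an alignment of `length` residues.

    Assumed curve: 480 * L^(-0.32 * (1 + exp(-L/1000))) for L <= 450, else 19.5.
    """
    if length <= 0:
        raise ValueError("alignment length must be positive")
    if length > 450:
        return 19.5
    return 480.0 * length ** (-0.32 * (1.0 + math.exp(-length / 1000.0)))


def above_twilight(identity_pct: float, length: int, margin: float = 0.0) -> bool:
    """True if identity clears the curve, optionally by an extra safety margin."""
    return identity_pct >= twilight_cutoff(length) + margin


for L in (50, 100, 250, 450):
    print(L, round(twilight_cutoff(L), 1))
```

Under this curve, 25% identity over a 100-residue alignment falls below the cutoff (about 29%), which is exactly the situation in which the profile-based methods described next become necessary.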

Core Algorithmic Principles

The three methods represent a progression in evolutionary inference, from simple pairwise comparisons to complex models incorporating evolutionary information from multiple sequences.

BLAST (Basic Local Alignment Search Tool): The foundational method, BLAST, identifies candidate homologs through pairwise local alignments between a query sequence and each database sequence. It uses a word-based seeding heuristic to find high-scoring local alignments quickly (modern BLASTp extends these seeds into gapped alignments) and reports the statistical significance of each hit as an E-value. Its speed comes at the cost of sensitivity for remote homologs: it scores every position with the same substitution matrix and therefore ignores position-specific conservation across a protein family [32].
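The E-value follows Karlin-Altschul statistics. A raw score $S$ is converted to a bit score $S' = (\lambda S - \ln K)/\ln 2$, and the expected number of chance hits at least that good is $E = m\,n\,2^{-S'}$ for query length $m$ and database length $n$. The sketch below applies these two formulas directly. The $\lambda$ and $K$ values are an assumption (values commonly tabulated for BLOSUM62 with gap costs 11/1), and the helper names are hypothetical. BLAST itself corrects $m$ and $n$ to effective lengths and, by default for protein searches, applies composition-based statistics, so its reported E-values will not match this simple calculation exactly.

```python
import math


def bit_score(raw_score: float, lam: float, k: float) -> float:
    """Normalized (bit) score: S' = (lambda * S - ln K) / ln 2."""
    return (lam * raw_score - math.log(k)) / math.log(2)


def e_value(bits: float, query_len: int, db_len: int) -> float:
    """Expected number of chance hits with bit score >= bits: E = m * n * 2^(-S')."""
    return query_len * db_len * 2.0 ** (-bits)


# Assumed gapped parameters for BLOSUM62 with gap costs 11/1.
LAMBDA, K = 0.267, 0.041
bits = bit_score(raw_score=120, lam=LAMBDA, k=K)
print(round(bits, 1), e_value(bits, query_len=300, db_len=50_000_000))
```

Because $E$ scales with $m\,n$, the same alignment becomes less significant as the database grows, which is one reason E-value cutoffs should be chosen with the search space in mind.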

PSI-BLAST (Position-Specific Iterated BLAST): PSI-BLAST enhances BLAST's sensitivity through an iterative, profile-based strategy. Its core innovation is using results from each search to refine the "probe" for the next search.

- Initial Search: A standard BLASTp search is performed.

- Build PSSM: Hits that pass an inclusion E-value threshold are aligned to the query to form a query-anchored Multiple Sequence Alignment (MSA). A Position-Specific Scoring Matrix (PSSM) is calculated from this MSA, capturing evolutionary conservation at each position [33].

- Iterative Search: The PSSM is used as the new "query" to search the database again, identifying more distant homologs.

- Convergence: The process repeats until no new significant sequences are found [33], or until a preset maximum number of iterations is reached. This iterative refinement allows PSI-BLAST to detect remote homologs that a single BLAST search misses.
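The PSSM step can be illustrated with a simplified log-odds calculation. The sketch below makes several assumptions. It ignores gaps and non-standard residues when counting a column, and it uses a uniform background of 1/20 per amino acid. It also adds a single pseudocount weight `beta`, spread in proportion to the background, and scores each position as $\log_2(q/p)$. Real PSI-BLAST additionally applies sequence weighting and substitution-matrix-based pseudocounts, so its scores will differ. `build_pssm` and `score_sequence` are hypothetical names.

```python
import math

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
UNIFORM_BACKGROUND = {aa: 1.0 / len(AMINO_ACIDS) for aa in AMINO_ACIDS}


def build_pssm(msa, background=UNIFORM_BACKGROUND, beta=1.0):
    """Log-odds PSSM (bits) from equal-length aligned sequences.

    Gaps and non-standard residues are ignored when counting a column.
    """
    length = len(msa[0])
    if any(len(seq) != length for seq in msa):
        raise ValueError("all aligned sequences must have the same length")
    pssm = []
    for col in range(length):
        residues = [seq[col] for seq in msa if seq[col] in background]
        n = len(residues)
        column_scores = {}
        for aa in AMINO_ACIDS:
            # Pseudocount-smoothed frequency: (count + beta * p) / (n + beta).
            q = (residues.count(aa) + beta * background[aa]) / (n + beta)
            column_scores[aa] = math.log2(q / background[aa])
        pssm.append(column_scores)
    return pssm


def score_sequence(pssm, seq):
    """Ungapped score of a same-length sequence against the PSSM."""
    return sum(column[aa] for column, aa in zip(pssm, seq))


msa = ["AC", "AC", "AD"]
pssm = build_pssm(msa)
print(round(pssm[0]["A"], 2), round(pssm[0]["C"], 2))  # 3.93 -2.0
print(score_sequence(pssm, "AC") > score_sequence(pssm, "CA"))  # True
```

Conserved columns receive large positive scores for the observed residue and negative scores for everything else, which is how the profile rewards family-specific conservation that a single substitution matrix cannot capture.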