The Ornstein-Uhlenbeck Model: A Mean-Reverting Framework for Evolutionary Biology and Precision Medicine

Abstract

This article provides a comprehensive exploration of the Ornstein-Uhlenbeck (OU) process, a cornerstone stochastic model renowned for its mean-reverting property. Tailored for researchers and drug development professionals, we dissect the model's mathematical foundations, from its formulation as a stochastic differential equation to its explicit solution and stationary distribution. The scope extends to practical methodological applications, including parameter estimation and the modeling of complex, multi-trait systems. We address common challenges in model fitting and selection, offering optimization strategies and troubleshooting guidance. Finally, the article presents a rigorous comparative analysis, contrasting the OU process with alternative models like Brownian motion and evaluating its predictive power through advanced validation techniques, including supervised learning and multi-model fusion. This synthesis of theory and application highlights the OU model's transformative potential in evolutionary biology and the development of personalized therapeutic regimens.

Demystifying the Ornstein-Uhlenbeck Process: Core Principles and Mathematical Formulation

The Ornstein-Uhlenbeck (OU) process is a stochastic process that serves as a standard mathematical framework for modeling mean reversion across diverse scientific fields. In trait evolution research, it models how biological traits evolve under stabilizing selection, tending toward an optimal value over time [1]. The process is named after Leonard Ornstein and George Eugene Uhlenbeck, who first applied it in physics to model the velocity of a massive Brownian particle under friction [2]. It is a continuous-time, mean-reverting process that is stationary, Gaussian, and Markovian, and it is the only nontrivial process satisfying these three conditions, up to linear transformations of the space and time variables [2].

The core conceptual foundation of the OU process lies in its mean-reversion property – the tendency of a stochastic variable to gravitate toward a long-term mean level over time. This contrasts sharply with random walk models, where deviations persist indefinitely rather than reverting to historical averages [3]. In evolutionary biology, this mathematical framework translates to modeling how traits evolve toward specific optimal values (optima) dictated by environmental pressures and evolutionary constraints, with the rate of reversion reflecting the strength of stabilizing selection [1].

Mathematical Formulation

Stochastic Differential Equation Representation

The OU process is formally defined by the stochastic differential equation:

[ dX_t = \theta(\mu - X_t)dt + \sigma dW_t ]

Where:

- ( X_t ) is the value of the process at time ( t )

- ( \mu ) represents the long-term mean or equilibrium level

- ( \theta > 0 ) is the rate of mean reversion, determining the speed at which the process reverts to the mean

- ( \sigma > 0 ) represents the volatility or diffusion coefficient

- ( dW_t ) is the increment of a Wiener process (Brownian motion)

The term ( \theta(\mu - X_t)dt ) is the drift component, which exerts a pull toward the mean proportional to the deviation from that mean, while ( \sigma dW_t ) is the stochastic component that introduces random fluctuations [2] [4].

Discrete-Time Formulation

For practical applications with empirical data, the continuous-time OU process is commonly discretized. Using the Euler-Maruyama discretization scheme with time step ( \Delta t ), we obtain:

[ X_{t+1} = \theta\mu\Delta t + (1 - \theta\Delta t)X_t + \sigma\sqrt{\Delta t}\,\epsilon_t ]

Where ( \epsilon_t \sim \mathcal{N}(0,1) ) is standard Gaussian noise [5]. This discrete-time representation forms the basis for most parameter estimation methods and reveals the AR(1) character of the process.
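As a minimal sketch of this discretization, the snippet below simulates one path with the Euler-Maruyama update, using `theta` for the reversion rate and `mu` for the long-term mean as in the SDE above. The function name, parameter values, and seed are illustrative assumptions rather than part of any cited method. It also compares the AR(1) coefficient implied by the scheme with the exact coefficient ( e^{-\theta\Delta t} ), which shows why the approximation needs a small ( \theta\Delta t ).

```python
import numpy as np


def simulate_ou_euler(x0, theta, mu, sigma, dt, n_steps, rng):
    """Simulate one OU path with the Euler-Maruyama scheme (illustrative helper)."""
    x = np.empty(n_steps + 1)
    x[0] = x0
    noise = rng.standard_normal(n_steps)
    for t in range(n_steps):
        # X_{t+1} = theta*mu*dt + (1 - theta*dt)*X_t + sigma*sqrt(dt)*eps_t
        x[t + 1] = theta * mu * dt + (1.0 - theta * dt) * x[t] + sigma * np.sqrt(dt) * noise[t]
    return x


# Assumed example values, chosen only for illustration.
theta, mu, sigma, dt = 1.0, 0.0, 0.5, 0.01
rng = np.random.default_rng(0)
path = simulate_ou_euler(x0=5.0, theta=theta, mu=mu, sigma=sigma, dt=dt, n_steps=2000, rng=rng)

# AR(1) coefficient implied by Euler-Maruyama versus the exact transition.
euler_coef = 1.0 - theta * dt
exact_coef = np.exp(-theta * dt)
print(f"Euler coefficient: {euler_coef:.6f}, exact coefficient: {exact_coef:.6f}")
```

The two coefficients agree to first order in ( \theta\Delta t ); for coarse sampling the exact transition density is usually the safer basis for estimation.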

Table 1: Key Parameters of the Ornstein-Uhlenbeck Process

| Parameter | Symbol | Biological Interpretation | Mathematical Role |

|---|---|---|---|

| Long-term mean | (\mu) | Optimal trait value | Equilibrium level |

| Mean reversion rate | (\theta) | Strength of stabilizing selection | Speed of reversion to mean |

| Volatility | (\sigma) | Rate of random trait evolution | Diffusion coefficient |

| Half-life | ( \ln(2)/\theta ) | Time for deviation to halve | Natural timescale |

Analytical Properties and Solution

The OU process admits an analytical solution, providing a closed-form expression for the process value at any time ( t ):

[ X_t = X_0 e^{-\theta t} + \mu(1 - e^{-\theta t}) + \sigma\int_0^t e^{-\theta(t-s)}dW_s ]

This solution illuminates several fundamental properties. The conditional expectation evolves as:

[ \mathbb{E}[X_t | X_0] = X_0 e^{-\theta t} + \mu(1 - e^{-\theta t}) ]

exponentially transitioning from the initial value ( X_0 ) to the long-term mean ( \mu ) at a rate determined by ( \theta ). The conditional variance is given by:

[ \text{Var}(X_t | X_0) = \frac{\sigma^2}{2\theta}(1 - e^{-2\theta t}) ]

which converges to ( \frac{\sigma^2}{2\theta} ) as ( t \to \infty ), in contrast to the unbounded variance of Brownian motion [2].
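The conditional mean and variance above can be evaluated directly, and they also give an exact way to simulate the process without discretization error. The following is a sketch under assumed parameter values; the helper names are hypothetical.

```python
import numpy as np


def ou_conditional_moments(x0, theta, mu, sigma, t):
    """Conditional mean and variance of X_t given X_0 = x0."""
    decay = np.exp(-theta * t)
    mean = x0 * decay + mu * (1.0 - decay)
    var = sigma**2 / (2.0 * theta) * (1.0 - decay**2)
    return mean, var


def simulate_ou_exact(x0, theta, mu, sigma, dt, n_steps, rng):
    """Simulate a path by sampling from the exact Gaussian transition."""
    x = np.empty(n_steps + 1)
    x[0] = x0
    for t in range(n_steps):
        mean, var = ou_conditional_moments(x[t], theta, mu, sigma, dt)
        x[t + 1] = mean + np.sqrt(var) * rng.standard_normal()
    return x


# Assumed example values: start two units above the long-term mean.
mean_short, var_short = ou_conditional_moments(x0=2.0, theta=0.5, mu=0.0, sigma=1.0, t=0.1)
mean_long, var_long = ou_conditional_moments(x0=2.0, theta=0.5, mu=0.0, sigma=1.0, t=50.0)
stationary_var = 1.0**2 / (2.0 * 0.5)  # sigma^2 / (2 * theta)

example_path = simulate_ou_exact(2.0, 0.5, 0.0, 1.0, dt=0.1, n_steps=100,
                                 rng=np.random.default_rng(1))
```

For large ( t ) the conditional mean approaches ( \mu ) and the conditional variance approaches ( \sigma^2/(2\theta) ), regardless of the starting value.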

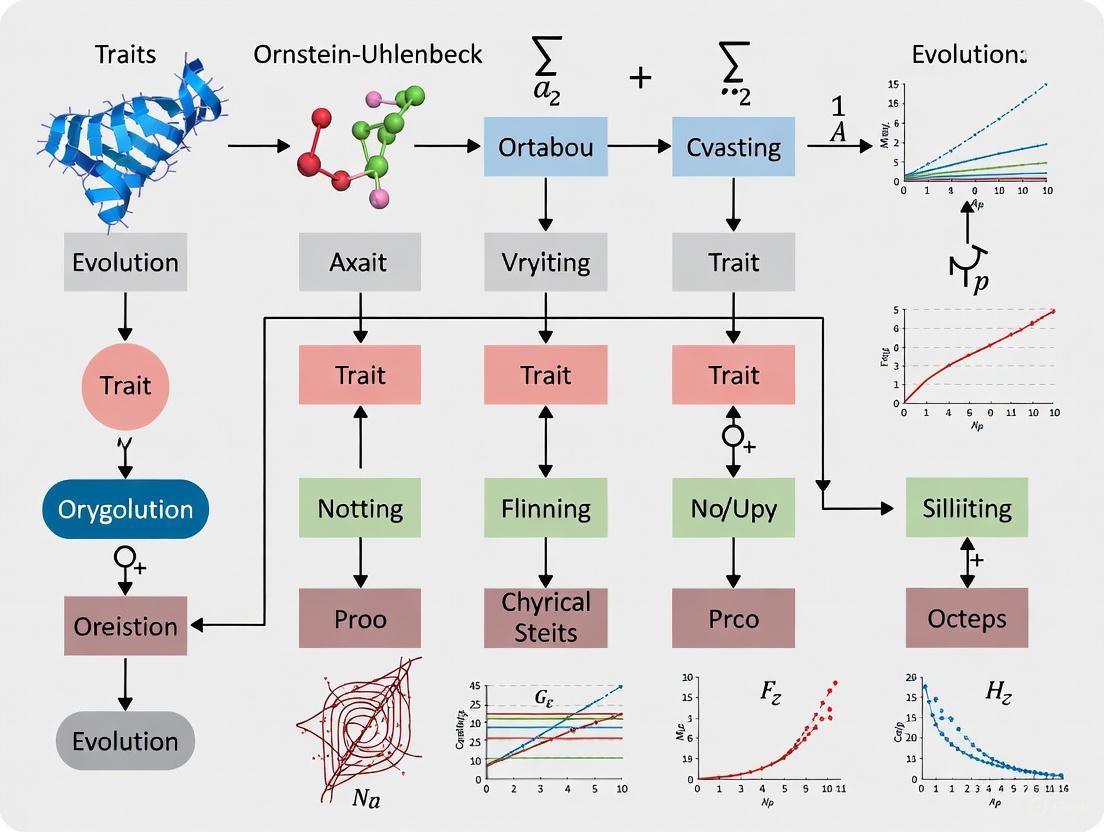

The following diagram illustrates the fundamental structure and relationships within the OU process framework:

Parameter Estimation Methods

Maximum Likelihood Estimation

For a discrete-time sample ( \{X_0, X_1, \ldots, X_N\} ) observed at intervals ( \Delta t ), the conditional distribution is normal:

[ X_{t+1} | X_t \sim \mathcal{N}\left(\mu(1 - e^{-\theta\Delta t}) + X_t e^{-\theta\Delta t}, \frac{\sigma^2}{2\theta}(1 - e^{-2\theta\Delta t})\right) ]

The log-likelihood function is given by [4]:

[ \ell(\theta, \mu, \sigma) = -\frac{N}{2}\ln(2\pi) - \frac{N}{2}\ln\left(\frac{\sigma^2}{2\theta}(1 - e^{-2\theta\Delta t})\right) - \sum_{t=1}^N \frac{\left(X_t - \mu(1 - e^{-\theta\Delta t}) - X_{t-1}e^{-\theta\Delta t}\right)^2}{\frac{\sigma^2}{\theta}(1 - e^{-2\theta\Delta t})} ]

Maximizing this likelihood function provides asymptotically efficient estimators, though this typically requires numerical optimization.
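A minimal sketch of this numerical optimization is shown below, using `scipy.optimize.minimize` on the negative conditional log-likelihood. The log-parameterization of ( \theta ) and ( \sigma ), the Nelder-Mead method, the starting values, and the simulated test data are all assumptions made for illustration; they are not prescribed by the cited sources.

```python
import numpy as np
from scipy.optimize import minimize


def simulate_ou_exact(theta, mu, sigma, dt, n_steps, rng):
    """Draw an OU path from the exact Gaussian transition density."""
    b = np.exp(-theta * dt)
    step_sd = sigma * np.sqrt((1.0 - b**2) / (2.0 * theta))
    x = np.empty(n_steps + 1)
    x[0] = mu
    for t in range(n_steps):
        x[t + 1] = mu * (1.0 - b) + b * x[t] + step_sd * rng.standard_normal()
    return x


def ou_neg_log_likelihood(params, x, dt):
    """Negative conditional log-likelihood; theta and sigma enter on the log scale."""
    log_theta, mu, log_sigma = params
    theta, sigma = np.exp(log_theta), np.exp(log_sigma)
    b = np.exp(-theta * dt)
    cond_var = sigma**2 / (2.0 * theta) * (1.0 - b**2)
    resid = x[1:] - mu * (1.0 - b) - b * x[:-1]
    n = resid.size
    return 0.5 * n * np.log(2.0 * np.pi * cond_var) + 0.5 * np.sum(resid**2) / cond_var


def fit_ou_mle(x, dt):
    """Maximize the likelihood numerically from a crude, assumed starting point."""
    start = np.array([np.log(0.5), x.mean(), np.log(x.std())])
    result = minimize(ou_neg_log_likelihood, start, args=(x, dt), method="Nelder-Mead")
    log_theta, mu, log_sigma = result.x
    return np.exp(log_theta), mu, np.exp(log_sigma)


# Synthetic data with known (assumed) parameters, used only to exercise the fit.
rng = np.random.default_rng(1)
data = simulate_ou_exact(theta=1.0, mu=2.0, sigma=0.5, dt=0.1, n_steps=5000, rng=rng)
theta_hat, mu_hat, sigma_hat = fit_ou_mle(data, dt=0.1)
print(theta_hat, mu_hat, sigma_hat)
```

Optimizing on the log scale keeps ( \theta ) and ( \sigma ) positive without explicit constraints, which is one common way to reduce sensitivity to initialization.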

Regression-Based Approaches

The discretized OU process can be represented as an AR(1) process:

[ X_{t+1} = a + bX_t + \varepsilon_t ]

where ( \varepsilon_t \sim \mathcal{N}(0, \sigma_\varepsilon^2) ). The ordinary least squares (OLS) estimates relate to the OU parameters as follows [5] [4]:

[ \hat{b} = e^{-\hat{\theta}\Delta t}, \quad \hat{a} = \hat{\mu}(1 - \hat{b}), \quad \hat{\sigma}^2 = \frac{2\hat{\theta}\hat{\sigma}_\varepsilon^2}{1 - \hat{b}^2} ]

This regression framework provides a computationally efficient estimation method, though it may exhibit bias in small samples.
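The mapping from OLS coefficients to OU parameters can be sketched as follows. The function name, the use of `np.polyfit` for the regression, and the synthetic data are illustrative assumptions; the conversion itself follows the three relations above.

```python
import numpy as np


def fit_ou_ols(x, dt):
    """Estimate (theta, mu, sigma) from an AR(1) regression of X_{t+1} on X_t."""
    x_prev, x_next = x[:-1], x[1:]
    b, a = np.polyfit(x_prev, x_next, deg=1)  # returns slope first, then intercept
    if not 0.0 < b < 1.0:
        raise ValueError("AR(1) slope outside (0, 1); the OU mapping is undefined")
    resid = x_next - (a + b * x_prev)
    sigma_eps2 = resid.var(ddof=2)  # two regression coefficients were estimated
    theta = -np.log(b) / dt
    mu = a / (1.0 - b)
    sigma = np.sqrt(2.0 * theta * sigma_eps2 / (1.0 - b**2))
    return theta, mu, sigma


# Synthetic AR(1) data generated from assumed OU parameters.
rng = np.random.default_rng(2)
true_theta, true_mu, true_sigma, dt = 1.0, 2.0, 0.5, 0.1
b_true = np.exp(-true_theta * dt)
step_sd = true_sigma * np.sqrt((1.0 - b_true**2) / (2.0 * true_theta))
x = np.empty(5001)
x[0] = true_mu
for t in range(5000):
    x[t + 1] = true_mu * (1.0 - b_true) + b_true * x[t] + step_sd * rng.standard_normal()

theta_hat, mu_hat, sigma_hat = fit_ou_ols(x, dt)
print(theta_hat, mu_hat, sigma_hat)
```

The guard on the slope matters in practice: with short or noisy series the estimated slope can fall outside (0, 1), in which case no positive reversion rate is implied.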

Method of Moments

For processes observed at low frequency, moment-based estimators can be employed. Using the unconditional mean and variance, and autocovariance at lag 1 [4]:

[ \hat{\mu} = \frac{1}{N}\sum_{t=1}^N X_t, \quad \hat{\gamma}(0) = \frac{1}{N}\sum_{t=1}^N (X_t - \hat{\mu})^2, \quad \hat{\gamma}(1) = \frac{1}{N-1}\sum_{t=1}^{N-1} (X_t - \hat{\mu})(X_{t+1} - \hat{\mu}) ]

The parameter estimates are then:

[ \hat{\theta} = -\frac{1}{\Delta t}\ln\left(\frac{\hat{\gamma}(1)}{\hat{\gamma}(0)}\right), \quad \hat{\sigma}^2 = 2\hat{\theta}\hat{\gamma}(0) ]

The second expression follows from matching ( \hat{\gamma}(0) ) to the stationary variance ( \sigma^2/(2\theta) ).
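A short sketch of the moment-based estimator is given below. It matches the sample mean, variance, and lag-1 autocovariance to their stationary counterparts, so that ( \gamma(1)/\gamma(0) = e^{-\theta\Delta t} ) and ( \gamma(0) = \sigma^2/(2\theta) ), which gives ( \sigma^2 = 2\theta\gamma(0) ). The function name and the simulated data are assumptions for illustration.

```python
import numpy as np


def fit_ou_moments(x, dt):
    """Method-of-moments estimates of (theta, mu, sigma) for a stationary OU series."""
    mu = x.mean()
    dev = x - mu
    gamma0 = np.mean(dev**2)
    gamma1 = np.sum(dev[:-1] * dev[1:]) / (len(x) - 1)
    rho = gamma1 / gamma0  # lag-1 autocorrelation, equal to exp(-theta * dt) in theory
    if not 0.0 < rho < 1.0:
        raise ValueError("lag-1 autocorrelation outside (0, 1)")
    theta = -np.log(rho) / dt
    sigma = np.sqrt(2.0 * theta * gamma0)  # from gamma0 = sigma^2 / (2 * theta)
    return theta, mu, sigma


# Synthetic stationary data generated from assumed OU parameters.
rng = np.random.default_rng(3)
true_theta, true_mu, true_sigma, dt = 1.0, 2.0, 0.5, 0.1
b = np.exp(-true_theta * dt)
step_sd = true_sigma * np.sqrt((1.0 - b**2) / (2.0 * true_theta))
x = np.empty(5001)
x[0] = true_mu + true_sigma / np.sqrt(2.0 * true_theta) * rng.standard_normal()
for t in range(5000):
    x[t + 1] = true_mu * (1.0 - b) + b * x[t] + step_sd * rng.standard_normal()

theta_hat, mu_hat, sigma_hat = fit_ou_moments(x, dt)
print(theta_hat, mu_hat, sigma_hat)
```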

Table 2: Comparison of Parameter Estimation Methods for OU Processes

| Method | Principles | Advantages | Limitations |

|---|---|---|---|

| Maximum Likelihood | Probability maximization | Statistical efficiency; confidence intervals | Computational complexity; sensitivity to initialization |

| Regression (AR(1)) | Linear least squares | Computational simplicity; intuitive | Small-sample bias; sensitive to measurement noise |

| Method of Moments | Moment matching | No distributional assumptions; robustness | Statistical inefficiency; requires large samples |

| Modified Least Squares [6] | Bias-corrected regression | Reduced small-sample bias; improved inference with low-frequency data | More complex implementation |

| Bayesian Methods [7] | Posterior distribution | Uncertainty quantification; incorporation of priors | Computational intensity; subjective priors |

Experimental Protocols for Trait Evolution Research

Model Selection Framework

In phylogenetic comparative studies, a critical initial step involves selecting the appropriate evolutionary model that best explains trait variation across species. The standard protocol involves [1]:

- Specifying Candidate Models: Standard candidates include Brownian motion (BM), Ornstein-Uhlenbeck (OU), and Early-Burst (EB) models.

- Model Fitting: Estimating parameters for each candidate model using maximum likelihood or Bayesian methods.

- Model Comparison: Employing information criteria (AIC, AICc, BIC) to compare fitted models, balancing goodness-of-fit against complexity.
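The comparison step can be sketched with the standard definitions of AIC, AICc, and BIC. The log-likelihood values, parameter counts, and sample size below are hypothetical placeholders, not results from any fitted data set.

```python
import math


def aic(log_lik, k):
    """Akaike information criterion for a model with k free parameters."""
    return 2.0 * k - 2.0 * log_lik


def aicc(log_lik, k, n):
    """Small-sample corrected AIC for n observations (requires n > k + 1)."""
    return aic(log_lik, k) + 2.0 * k * (k + 1) / (n - k - 1)


def bic(log_lik, k, n):
    """Bayesian information criterion."""
    return k * math.log(n) - 2.0 * log_lik


def akaike_weights(scores):
    """Convert information-criterion scores into relative model weights."""
    best = min(scores.values())
    rel = {name: math.exp(-0.5 * (score - best)) for name, score in scores.items()}
    total = sum(rel.values())
    return {name: value / total for name, value in rel.items()}


# Hypothetical fitted log-likelihoods and parameter counts for n = 40 species.
n_species = 40
fits = {"BM": (-52.3, 2), "OU": (-49.1, 3), "EB": (-52.0, 3)}

scores = {name: aicc(ll, k, n_species) for name, (ll, k) in fits.items()}
weights = akaike_weights(scores)
best_model = min(scores, key=scores.get)
print(best_model, weights)
```

Lower scores indicate a better trade-off between fit and complexity; the weights give a rough sense of how decisively one model is preferred.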

The OU process in comparative biology typically incorporates a phylogenetic tree structure, where the trait value for species ( i ) is modeled as:

[ X_i = \mu + \int_0^{T_i} e^{-\theta(T_i - t)}\sigma dW_t ]

where ( T_i ) represents the phylogenetic distance from the root to species ( i ).

Handling Measurement Error

Biological trait measurements often contain substantial error, which must be accounted for in evolutionary models. The measurement model extends the basic OU process [7] [1]:

[ Y_t = X_t + \eta_t ]

where ( Y_t ) is the observed trait value, ( X_t ) is the true (unobserved) trait value following an OU process, and ( \eta_t \sim \mathcal{N}(0, \sigma_\eta^2) ) represents measurement error. Failure to account for measurement error can substantially bias parameter estimates, particularly the mean reversion rate ( \theta ).
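One way to see the direction of this bias is to work out what a naive autocorrelation-based estimator converges to when the noise is ignored. For a stationary series sampled at interval ( \Delta t ), independent measurement error inflates the variance of ( Y_t ) but not its lag-1 autocovariance, so the observed autocorrelation is attenuated and the implied reversion rate is inflated. The sketch below evaluates that large-sample limit; the parameter values are assumptions for illustration.

```python
import math


def naive_theta_under_noise(theta, sigma, sigma_eta, dt):
    """Large-sample limit of -ln(rho_Y)/dt when measurement error is ignored."""
    gamma0 = sigma**2 / (2.0 * theta)          # stationary variance of the true trait
    rho_true = math.exp(-theta * dt)            # lag-1 autocorrelation of X
    rho_obs = rho_true * gamma0 / (gamma0 + sigma_eta**2)  # attenuated by noise variance
    return -math.log(rho_obs) / dt


theta_no_noise = naive_theta_under_noise(theta=1.0, sigma=0.5, sigma_eta=0.0, dt=0.1)
theta_with_noise = naive_theta_under_noise(theta=1.0, sigma=0.5, sigma_eta=0.2, dt=0.1)
print(theta_no_noise, theta_with_noise)
```

Under these assumed values the naive estimate is several times larger than the true rate, which would be read as much stronger stabilizing selection than is actually present.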

The following workflow diagram outlines the key decision points in designing a robust analysis of trait evolution using OU processes:

Table 3: Essential Analytical Tools for OU Process Research in Trait Evolution

| Tool Category | Specific Resources | Application Context | Key Functions |

|---|---|---|---|

| Programming Environments | R, Python | General statistical analysis | Data manipulation, visualization, model implementation |

| Phylogenetic Software | GEIGER, OUwie, phytools | Comparative methods | Phylogenetic tree handling, comparative analyses |

| Optimization Libraries | optim (R), scipy.optimize (Python) | Parameter estimation | Numerical maximization of likelihood functions |

| Stochastic Simulation | Custom OU simulators | Method validation | Synthetic data generation, power analysis |

| Bayesian Platforms | Stan, MrBayes | Bayesian inference | MCMC sampling, posterior distribution estimation |

| Specialized Methods | Evolutionary Discriminant Analysis (EvoDA) [1] | Model selection | Supervised learning for evolutionary model prediction |

Advanced Considerations and Research Frontiers

Multi-Optima OU Models

A significant extension of the basic OU model in evolutionary biology allows different optimal values (( \mu )) across distinct lineages or adaptive zones. The multi-optima OU model accommodates this scenario:

[ dX_t = \theta(\mu_i - X_t)dt + \sigma dW_t ]

where ( \mu_i ) represents the optimal value for the specific selective regime ( i ) that the lineage occupies [1]. Such models enable researchers to test hypotheses about adaptive radiation and divergent selection across clades.
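Because the drift is linear, the expected trait value of a lineage that passes through several regimes can be computed by chaining the single-regime conditional mean across segments. The sketch below assumes a shared reversion rate `theta` across regimes and a hypothetical regime history given as (duration, optimum) pairs from root to tip.

```python
import math


def expected_trait_along_lineage(x0, theta, regimes):
    """Expected tip value after a sequence of (duration, optimum) regime segments."""
    mean = x0
    for duration, optimum in regimes:
        decay = math.exp(-theta * duration)
        # Within each segment the mean relaxes exponentially toward that segment's optimum.
        mean = mean * decay + optimum * (1.0 - decay)
    return mean


# Hypothetical history: one time unit under optimum 0, then half a unit under optimum 3.
tip_mean = expected_trait_along_lineage(x0=0.0, theta=1.0, regimes=[(1.0, 0.0), (0.5, 3.0)])
print(tip_mean)
```

A lineage that switched regimes recently still carries a memory of its earlier optimum, which is what makes the timing of regime shifts on the tree informative.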

Statistical Power and Estimation Challenges

A well-documented challenge in OU process estimation is the difficulty in precisely estimating the mean reversion rate ( \theta ), particularly with limited time series or phylogenetic data [4]. The sampling properties of ( \hat{\theta} ) depend critically on:

- The length of the observation period relative to the mean reversion timescale

- The frequency of observations within that period

- The signal-to-noise ratio (( \sigma/\theta ))

For finite samples, the maximum likelihood estimator of ( \theta ) exhibits positive bias, potentially leading to overestimation of the strength of mean reversion [4]. This has direct implications for biological interpretation, as overestimation of ( \theta ) would exaggerate the inferred strength of stabilizing selection.
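A small Monte Carlo experiment can make this bias visible. The sketch below simulates many short series from a known OU process and estimates ( \theta ) from the AR(1) slope, which is used here as a stand-in for the conditional likelihood estimator. The series length, parameter values, number of replicates, and seed are assumptions chosen for illustration.

```python
import numpy as np


def simulate_ou_exact(theta, mu, sigma, dt, n_steps, rng):
    """Simulate a stationary OU path using the exact transition density."""
    b = np.exp(-theta * dt)
    step_sd = sigma * np.sqrt((1.0 - b**2) / (2.0 * theta))
    x = np.empty(n_steps + 1)
    x[0] = mu + sigma / np.sqrt(2.0 * theta) * rng.standard_normal()
    for t in range(n_steps):
        x[t + 1] = mu * (1.0 - b) + b * x[t] + step_sd * rng.standard_normal()
    return x


def theta_from_ar1(x, dt):
    """Reversion-rate estimate implied by the fitted AR(1) slope."""
    b, _ = np.polyfit(x[:-1], x[1:], deg=1)
    return -np.log(b) / dt if b > 0 else np.nan


rng = np.random.default_rng(42)
true_theta, dt, n_steps = 0.5, 0.1, 100  # observation window of 10 time units
estimates = np.array([
    theta_from_ar1(simulate_ou_exact(true_theta, 0.0, 1.0, dt, n_steps, rng), dt)
    for _ in range(500)
])
mean_estimate = np.nanmean(estimates)
print(f"true theta = {true_theta}, mean estimate = {mean_estimate:.3f}")
```

Lengthening the observation window relative to the half-life ( \ln(2)/\theta ) should shrink the gap between the average estimate and the true value.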

Emerging Methodological Approaches

Recent methodological innovations aim to address longstanding challenges in OU process inference:

- Evolutionary Discriminant Analysis (EvoDA): A supervised learning approach that trains discriminant functions to predict evolutionary models from trait data, potentially offering improved performance with noisy measurements [1].

- Modified Least Squares Estimators: Specifically designed for low-frequency observational data, these estimators reduce bias in drift parameter estimation [6].

- Measurement Error Modeling: Explicit incorporation of measurement error processes to mitigate bias in parameter estimates [7].

These advanced methods represent the cutting edge of statistical methodology for inferring evolutionary processes from comparative trait data, enabling more robust biological conclusions about the mode and tempo of trait evolution.

Stochastic Differential Equations (SDEs) provide a powerful mathematical framework for modeling systems that evolve randomly over time. In evolutionary biology, the Ornstein-Uhlenbeck (OU) process has emerged as a fundamental model for understanding trait evolution under selective constraints. Unlike the simple Brownian motion model, which describes random, unconstrained evolution, the OU model incorporates a stabilizing force that pulls traits toward an optimal value, providing a more biologically realistic representation of evolution under stabilizing selection [8]. This model is particularly valuable for researchers investigating phylogenetic patterns, as it helps quantify the relative roles of random processes and selective pressures in shaping trait distributions across species. The OU process extends the Brownian motion model by introducing a mean-reverting component, which can be interpreted as the effect of stabilizing selection drawing traits toward a physiological or ecological optimum [9]. This tutorial provides an in-depth technical examination of the two fundamental components of SDEs in general, and the OU model in particular: the drift coefficient, which determines the deterministic pull toward an optimum, and the diffusion coefficient, which governs the stochastic, random-walk component of evolution.

Mathematical Foundations: Drift and Diffusion in SDEs

General Form of Stochastic Differential Equations

SDEs are most commonly written in differential form: $$dX(t) = \beta(X,t)dt + \alpha(X,t)dW(t)$$ or equivalently in integral form: $$X(t) = X(0) + \int_{0}^{t} \beta(X,s)ds + \int_{0}^{t} \alpha(X,s)dW(s)$$ where $X(t)$ represents the state of the process at time $t$ [10]. In this formulation, the function $\beta(X,t)$ is the drift coefficient, which captures the deterministic dynamics of the system, while $\alpha(X,t)$ is the diffusion coefficient, which characterizes the system's response to random perturbations represented by the Brownian motion $W(t)$ [10]. The interplay between these two components allows SDEs to model complex systems subject to both deterministic forces and random noise.
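The separation into drift and diffusion maps naturally onto a generic simulator that takes the two coefficient functions as arguments. The sketch below uses the Euler-Maruyama scheme, which is one simple choice among several; the function names and the OU example values (`rate`, `optimum`) are assumptions for illustration.

```python
import numpy as np


def euler_maruyama(drift, diffusion, x0, t_end, n_steps, rng):
    """Simulate dX = drift(X, t) dt + diffusion(X, t) dW on [0, t_end]."""
    dt = t_end / n_steps
    times = np.linspace(0.0, t_end, n_steps + 1)
    x = np.empty(n_steps + 1)
    x[0] = x0
    for i in range(n_steps):
        dw = np.sqrt(dt) * rng.standard_normal()  # Brownian increment over dt
        x[i + 1] = x[i] + drift(x[i], times[i]) * dt + diffusion(x[i], times[i]) * dw
    return times, x


# OU example with assumed values: linear drift toward an optimum, constant diffusion.
rate, optimum, sigma = 1.0, 1.0, 0.3
rng = np.random.default_rng(0)
times, noisy_path = euler_maruyama(
    drift=lambda x, t: -rate * (x - optimum),
    diffusion=lambda x, t: sigma,
    x0=3.0, t_end=5.0, n_steps=10_000, rng=rng,
)

# With the diffusion switched off, the path follows the deterministic relaxation curve.
_, det_path = euler_maruyama(
    drift=lambda x, t: -rate * (x - optimum),
    diffusion=lambda x, t: 0.0,
    x0=3.0, t_end=5.0, n_steps=10_000, rng=rng,
)
exact_end = optimum + (3.0 - optimum) * np.exp(-rate * 5.0)
```

Swapping in a different `drift` or `diffusion` function changes the model without touching the simulation loop, which mirrors how the two coefficients play independent roles in the SDE.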

For the Ornstein-Uhlenbeck process, these components take specific forms that implement the mean-reverting behavior central to the model's utility in evolutionary biology. The drift coefficient becomes a linear function that pulls the trait value toward an optimum, while the diffusion coefficient is typically constant, representing background stochasticity in the evolutionary process [9] [8].

Biological Interpretation in Trait Evolution

In the context of trait evolution, the OU model conceptualizes species traits as evolving toward an adaptive optimum $\theta$, with the rate of adaptation controlled by the parameter $\alpha$ [9]. This follows the phylogenetic convention: $\alpha$ and $\theta$ here play the roles of the reversion rate $\theta$ and the long-term mean $\mu$ in the formulation given earlier in this article. Larger values of $\alpha$ indicate a stronger pull toward the optimum, much like a "rubber band" effect [9]. The stochastic component, controlled by $\sigma^2$, represents the intensity of random fluctuations in trait values, which may arise from genetic drift, random environmental changes, or other unmeasured stochastic influences [9] [8].

Table 1: Core Parameters of the Ornstein-Uhlenbeck Model in Trait Evolution

| Parameter | Mathematical Symbol | Biological Interpretation | Effect on Trait Evolution |

|---|---|---|---|

| Drift Coefficient | $\alpha$ | Strength of stabilizing selection | Pulls trait toward optimum $\theta$; larger $\alpha$ = stronger pull |

| Diffusion Coefficient | $\sigma^2$ | Rate of stochastic evolution | Controls random fluctuations in trait values |

| Optimal Trait Value | $\theta$ | Adaptive optimum | Trait value that selection favors |

| Phylogenetic Half-life | $t_{1/2} = \ln(2)/\alpha$ | Time to move halfway to optimum | Measures rate of adaptation; smaller $t_{1/2}$ = faster adaptation |

The Ornstein-Uhlenbeck Model: A Special Case of SDE

Formal Model Specification

The Ornstein-Uhlenbeck process represents a special case of SDEs with specific forms for the drift and diffusion terms. For a continuous trait $X(t)$, the OU process is defined by the following SDE: $$dX(t) = -\alpha(X(t) - \theta)dt + \sigma dW(t)$$ where $\alpha > 0$ is the rate of adaptation, $\theta$ is the optimal trait value, $\sigma$ is the diffusion parameter, and $W(t)$ is a standard Brownian motion process [9] [8]. The drift term $-\alpha(X(t) - \theta)$ explicitly creates the mean-reverting behavior, as it always acts in the direction opposite to the deviation from the optimum $\theta$. When the trait value $X(t)$ is above the optimum, the drift term becomes negative, pulling the trait downward; when $X(t)$ is below the optimum, the drift becomes positive, pulling the trait upward.

The strength of this pull is proportional to both the parameter $\alpha$ and the distance from the optimum. The diffusion term $\sigma dW(t)$ adds stochastic noise to the system, representing the random component of evolution. When $\alpha = 0$, the OU model reduces to a simple Brownian motion model: $$dX(t) = \sigma dW(t)$$ where traits evolve purely randomly without any systematic pull toward an optimum [9] [8].

Extended OU Models for Complex Evolutionary Scenarios

Recent extensions of the basic OU model have expanded its applicability to more complex evolutionary scenarios. The coupled OU system allows modeling of correlated evolution between multiple traits or across different fields: $$dX(t) = -\lambda_1 X(t)dt + \sigma_{11}dZ_1(\lambda_1 t) + \sigma_{12}dZ_2(\lambda_2 t)$$ $$dY(t) = -\lambda_2 Y(t)dt + \sigma_{21}dZ_1(\lambda_1 t) + \sigma_{22}dZ_2(\lambda_2 t)$$ where $X(t)$ and $Y(t)$ represent two potentially correlated processes, $\lambda_1$ and $\lambda_2$ are intensity parameters, and the $\sigma$ parameters determine both volatility and correlation between the processes [11]. The terms $\sigma_{12}$ and $\sigma_{21}$ specifically describe the correlation structure between the datasets [11].

Another important extension is the Integrated Ornstein-Uhlenbeck (IOU) process, which incorporates both serial correlation and derivative tracking into the model [12]. The IOU process is particularly useful for modeling longitudinal data where subjects' biomarker trajectories may maintain consistency over short time periods but vary over longer intervals [12]. In the linear mixed IOU model, the $\alpha$ parameter represents the degree of derivative tracking, with smaller values indicating stronger derivative tracking (i.e., measurements more closely follow the same trajectory over long periods) [12].

Figure 1: Dynamics of the Ornstein-Uhlenbeck Process in Trait Evolution

Parameter Estimation and Statistical Implementation

Likelihood-Based Estimation Methods

Estimating OU model parameters from empirical data typically involves maximum likelihood (ML) or restricted maximum likelihood (REML) methods [12]. For the basic OU model with a constant optimum, the parameters $\alpha$, $\theta$, and $\sigma^2$ can be estimated using Markov Chain Monte Carlo (MCMC) algorithms in a Bayesian framework [9]. The implementation involves setting prior distributions for parameters—commonly a loguniform prior for $\sigma^2$, an exponential prior for $\alpha$, and a uniform prior for $\theta$—then using MCMC to sample from the posterior distribution [9].

The REML approach is particularly valuable for addressing the small-sample bias inherent in ML estimation, as it accounts for degrees of freedom lost in estimating fixed effects [12]. Various optimization algorithms can be employed for REML estimation, including:

- Newton-Raphson (NR) algorithm: Fast convergence but sensitive to poor starting values

- Fisher Scoring (FS) algorithm: More robust to poor starting values than NR

- Average Information (AI) algorithm: Computational efficiency for large datasets

- Expectation-Maximization (EM) algorithm: Numerically stable but slow convergence [12]

For challenging estimation problems with correlated parameters, specialized MCMC proposals such as the multivariate normal proposal with learned covariance (mvAVMVN) can significantly improve convergence [9].

Derived Evolutionary Metrics

Beyond the core parameters, the OU model enables calculation of several biologically informative derived metrics:

Phylogenetic half-life ($t_{1/2} = \ln(2)/\alpha$): Represents the expected time for a trait to evolve halfway from its initial state to the optimum [9]. This metric provides an intuitive measure of the rate of adaptation in real time units.

Stationary variance ($\sigma^2/(2\alpha)$): The expected trait variance under the stationary distribution of the OU process, representing the balance between stochastic perturbations and stabilizing selection [8].

Percent decrease in variance due to selection ($p_{th} = 1 - (1 - \exp(-2\alpha t))/(2\alpha t)$): Quantifies how much selection has reduced trait variance compared to pure drift (Brownian motion) over the study period [9].
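These three derived quantities are simple functions of the fitted parameters, as the following sketch shows. The function names and the example values are illustrative assumptions; `math.expm1` is used only to keep the variance ratio numerically stable when $\alpha t$ is small.

```python
import math


def phylogenetic_half_life(alpha):
    """Expected time to move halfway from the initial state to the optimum."""
    return math.log(2.0) / alpha


def stationary_variance(alpha, sigma2):
    """Trait variance under the stationary distribution of the OU process."""
    return sigma2 / (2.0 * alpha)


def percent_decrease_in_variance(alpha, t):
    """1 - Var_OU(t) / Var_BM(t): share of variance removed by the pull to the optimum."""
    two_alpha_t = 2.0 * alpha * t
    return 1.0 - (-math.expm1(-two_alpha_t)) / two_alpha_t


# Assumed example: alpha = 0.2 per million years, sigma^2 = 0.1, tree age 10 million years.
half_life = phylogenetic_half_life(0.2)
v_stat = stationary_variance(0.2, 0.1)
p_th = percent_decrease_in_variance(0.2, 10.0)
print(half_life, v_stat, p_th)
```

Comparing the half-life with the age of the tree is a quick sanity check: a half-life much longer than the tree implies dynamics that are hard to distinguish from Brownian motion.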

Table 2: Experimental Protocols for OU Model Parameter Estimation

| Protocol Step | Methodological Details | Software Implementation |

|---|---|---|

| Data Preparation | Exclude non-focal traits; include only trait of interest | RevBayes: data.excludeAll(), data.includeCharacter() [9] |

| Prior Specification | $\sigma^2 \sim \text{Loguniform}(10^{-3}, 1)$; $\alpha \sim \text{Exponential}(\text{abs}(\text{root\_age}/2/\ln(2)))$; $\theta \sim \text{Uniform}(-10, 10)$ | RevBayes [9] |

| MCMC Proposal Mechanisms | mvScale for $\sigma^2$ and $\alpha$; mvSlide for $\theta$; mvAVMVN for multivariate proposals | RevBayes: moves.append() [9] |

| Model Monitoring | mnModel for full parameter output; mnScreen for real-time monitoring | RevBayes: monitors.append() [9] |

| Convergence Assessment | Trace plots; joint posterior distribution examination | R packages: RevGadgets, gridExtra [9] |

Interpretation Challenges and Biological Meaning

Relating Parameters to Evolutionary Processes

Interpreting OU model parameters in terms of biological processes requires considerable caution. The $\alpha$ parameter is frequently described as representing the "strength of stabilizing selection," but this interpretation can be misleading [8]. In population genetics, stabilizing selection specifically refers to selection within a population toward a fitness optimum on an adaptive landscape [8]. However, in comparative phylogenetic applications, the OU model operates at a different scale, modeling trait evolution among species where the "selection" is more analogous to a trait tracking movement of the adaptive optimum itself [8].

The stationary distribution of the OU process provides important biological insights. Under the OU process, traits evolve toward a stationary normal distribution with mean $\theta$ and variance $\sigma^2/(2\alpha)$ [9] [8]. This stationary distribution represents the balance between the pull toward the optimum (drift) and random perturbations (diffusion). The ratio $\sigma^2/(2\alpha)$ thus quantifies the expected trait variation under this equilibrium, providing a measure of how much phenotypic diversity is maintained despite stabilizing selection.

Statistical Caution and Best Practices

Several statistical challenges complicate the application and interpretation of OU models:

Parameter correlation: When evolutionary rates are high or branches in the phylogeny are relatively long, it can be difficult to estimate $\sigma^2$ and $\alpha$ separately, as both contribute to the long-term variance of the process [9]. This identifiability problem can lead to high uncertainty in parameter estimates.

Small sample bias: With small phylogenies, likelihood ratio tests frequently incorrectly favor the more complex OU model over simpler Brownian motion models [8]. This problem is exacerbated by measurement error, which can profoundly affect parameter estimates [8].

Model misspecification: The single-optimum OU model may be incorrectly favored when the true evolutionary process involves shifting optima or other complexities not captured by the model [8].

Best practices for OU model application include:

- Using simulations to validate fitted models and verify that parameter estimates accurately recover known simulation parameters [8]

- Exercising caution when interpreting the $\alpha$ parameter, particularly with small datasets [8]

- Examining joint posterior distributions of parameters to identify correlation issues [9]

- Considering more complex multi-optima models when there is evidence for shifts in selective regimes [8]

Figure 2: Research Workflow for OU Model Analysis in Trait Evolution

Advanced Applications and Extensions

Coupled OU Systems for Interdependent Processes

The coupled OU system framework enables modeling of interdependent evolutionary processes, with applications ranging from finance to geophysics to epidemiology [11]. This approach is particularly valuable when analyzing traits or systems that potentially influence each other's evolution. For example, researchers might apply coupled OU systems to model:

- Coordinated evolution of morphological and behavioral traits

- Cross-system influences between environmental factors and species traits

- Phylogenetic comparative methods for multivariate trait evolution

The general form for a coupled system with two processes $X(t)$ and $Y(t)$ is: $$dX(t) = -\lambda_1 X(t)dt + \sigma_{11}dZ_1(\lambda_1 t) + \sigma_{12}dZ_2(\lambda_2 t)$$ $$dY(t) = -\lambda_2 Y(t)dt + \sigma_{21}dZ_1(\lambda_1 t) + \sigma_{22}dZ_2(\lambda_2 t)$$ where the parameters $\sigma_{12}$ and $\sigma_{21}$ explicitly capture the coupling between the two processes [11]. When these cross-diffusion parameters are zero, the system reduces to two independent OU processes.

Non-Gaussian Extensions with Lévy Processes

Recent extensions of OU models replace standard Brownian motion with a Lévy process, referred to as the background driving Lévy process (BDLP) [11]. These extensions are particularly useful for modeling evolutionary processes with jump components or heavy-tailed distributions. Two commonly used Lévy processes in this context are:

Gamma process ($\Gamma(a,b)$): A stochastic process $X = \{X_t, t \geq 0\}$ with independent increments where $X_t - X_s$ has a gamma distribution $\Gamma(a(t-s), b)$ for $s < t$ [11].

Inverse Gaussian process ($IG(a,b)$): Similar to the gamma process but with inverse Gaussian distributed increments [11].

These non-Gaussian OU models can better capture empirical patterns in real-world data that display non-Brownian characteristics, such as heavy-tailed distributions or jump dynamics [11].
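The independent-increments definition of the gamma process translates directly into a simulation recipe: draw each increment from a gamma distribution whose shape scales with the time step, then accumulate. The sketch below assumes that $b$ is a rate parameter, so NumPy's `scale` argument is set to $1/b$; the source does not state which parameterization is intended, and the example values are arbitrary.

```python
import numpy as np


def simulate_gamma_process(a, b, t_end, n_steps, rng):
    """Simulate a gamma process on [0, t_end], assuming b is a rate parameter."""
    dt = t_end / n_steps
    # Each increment X_{t+dt} - X_t is Gamma(shape=a*dt, rate=b), independent of the others.
    increments = rng.gamma(shape=a * dt, scale=1.0 / b, size=n_steps)
    return np.concatenate(([0.0], np.cumsum(increments)))


rng = np.random.default_rng(7)
gamma_path = simulate_gamma_process(a=2.0, b=4.0, t_end=10.0, n_steps=1000, rng=rng)
print(gamma_path[-1])  # the theoretical mean at t_end is a * t_end / b under this parameterization
```

Unlike Brownian motion, the resulting path never decreases and moves in jumps, which is the kind of behavior these non-Gaussian extensions are meant to capture.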

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for OU Model Implementation

| Tool/Resource | Function in Analysis | Implementation Considerations |

|---|---|---|

| RevBayes [9] | Bayesian phylogenetic analysis with MCMC | Primary platform for OU model specification; enables custom prior specification and MCMC sampling |

| R Statistical Environment [9] | Data visualization, trace plot examination, posterior analysis | Essential for diagnostics; key packages: RevGadgets, gridExtra, ggplot2 |

| Stata IOU Implementation [12] | Linear mixed IOU model for longitudinal data | Handles unbalanced designs with missing data; uses REML estimation |

| MATLAB SDEDDO Class [13] | General SDE simulation with drift/diffusion objects | Creates SDE objects from drift/diffusion components; simulates sample paths |

| StochVol R Package [11] | Stochastic volatility estimation | Used in coupled OU systems for volatility parameter estimation |

The drift and diffusion coefficients in Stochastic Differential Equations provide the fundamental mathematical structure for modeling complex evolutionary processes with both deterministic and stochastic components. In the Ornstein-Uhlenbeck model, these terms take specific forms that enable researchers to quantify the strength of stabilizing selection ($\alpha$), the adaptive optimum ($\theta$), and the rate of stochastic evolution ($\sigma^2$). The careful decomposition of these components offers powerful insights into evolutionary dynamics, but requires appropriate statistical safeguards and thoughtful biological interpretation. As model formulations become increasingly sophisticated—incorporating coupling between systems, non-Gaussian processes, and more complex phylogenetic structures—the core distinction between drift as deterministic pull and diffusion as stochastic perturbation remains essential to understanding both the mathematical foundations and biological applications of Stochastic Differential Equations in evolutionary research.

Closed-Form Solution and Conditional Distributions

The Ornstein-Uhlenbeck (OU) process is a stochastic model that describes the evolution of a trait, ( X_t ), over time, ( t ), incorporating both deterministic drift and random fluctuations [2]. Within phylogenetic comparative methods, it serves as a foundational model for testing hypotheses about adaptive evolution and stabilizing selection in continuous traits across species [8] [14]. The process is defined by the stochastic differential equation: [ dX_t = \alpha (\theta - X_t) dt + \sigma dW_t ] where:

- ( X_t ) is the trait value at time ( t ),

- ( \theta ) is the long-term optimum trait value,

- ( \alpha > 0 ) is the selection strength or rate of adaptation towards ( \theta ),

- ( \sigma > 0 ) is the diffusion coefficient controlling the intensity of random perturbations,

- ( W_t ) is the standard Wiener process (Brownian motion) [2] [14].

The deterministic component, ( \alpha (\theta - X_t) dt ), represents a "rubber band" effect, continuously pulling the trait towards the optimum ( \theta ). The stochastic term, ( \sigma dW_t ), introduces random changes due to genetic drift or environmental fluctuations [9] [2]. When ( \alpha = 0 ), the OU process simplifies to a Brownian motion model, representing neutral evolution without stabilizing selection [9] [8].

Closed-Form Solution and Conditional Distributions

The OU process is mathematically tractable, with known transition probabilities between time points. Given an initial trait value ( X0 = x0 ) at time ( 0 ), the conditional distribution of the trait value ( Xt ) at time ( t ) is Gaussian [15]: [ Xt \mid X0 = x0 \ \sim \ \mathcal{N}\left( \mu(t), V(t) \right) ] where the conditional mean ( \mu(t) ) and variance ( V(t) ) are: [ \mu(t) = \mathbb{E}[Xt \mid X0 = x0] = x0 e^{-\alpha t} + \theta \left(1 - e^{-\alpha t}\right) ] [ V(t) = \operatorname{Var}(Xt \mid X0 = x_0) = \frac{\sigma^2}{2\alpha} \left(1 - e^{-2\alpha t}\right) ]

This analytical solution is derived using stochastic calculus techniques, such as Itô's lemma [2] [15]. The conditional mean represents the expected trait value at time ( t ), which is a weighted average between the initial value ( x_0 ) and the optimum ( \theta ), with the weight on ( x_0 ) decaying exponentially at rate ( \alpha ). The conditional variance describes the uncertainty around this expected value, which increases with time until reaching a stationary state [15].
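The conditional mean and variance above translate directly into a few lines of code. The following is a minimal sketch rather than a reference implementation; the function name `ou_conditional_moments` and the numerical values are illustrative assumptions, not taken from the cited sources.

```python
import math

def ou_conditional_moments(x0, t, alpha, theta, sigma):
    """Mean and variance of X_t given X_0 = x0 under an OU process."""
    if alpha <= 0 or t < 0:
        raise ValueError("alpha must be positive and t non-negative")
    decay = math.exp(-alpha * t)                # weight remaining on x0
    mean = x0 * decay + theta * (1.0 - decay)   # weighted average of x0 and theta
    var = sigma**2 / (2.0 * alpha) * (1.0 - decay**2)
    return mean, var

# Hypothetical parameters: alpha = ln(2) gives a half-life of one time unit,
# so after t = 1 the expected trait sits halfway between x0 and theta.
mean_1, var_1 = ou_conditional_moments(x0=0.0, t=1.0, alpha=math.log(2),
                                       theta=10.0, sigma=1.0)

# For large t the moments approach the stationary values theta and sigma^2/(2 alpha).
mean_inf, var_inf = ou_conditional_moments(x0=0.0, t=1000.0, alpha=math.log(2),
                                           theta=10.0, sigma=1.0)
```

Evaluating the function at increasing values of `t` shows the variance rising from zero toward its stationary ceiling while the mean relaxes toward the optimum.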

Table 1: Parameters of the Ornstein-Uhlenbeck Process and Their Biological Interpretations

| Parameter | Mathematical Symbol | Biological Interpretation | Effect on Trait Evolution |

|---|---|---|---|

| Selection Strength | ( \alpha ) | Rate of adaptation toward the optimum | Higher ( \alpha ) causes faster return to ( \theta ) after perturbation |

| Long-Term Optimum | ( \theta ) | Adaptive peak or optimal trait value | Trait values evolve toward this value over long time periods |

| Diffusion Coefficient | ( \sigma ) | Rate of random trait change | Higher ( \sigma ) increases trait variance around the optimum |

For a phylogenetic tree with branching times, these closed-form expressions allow calculation of the likelihood of observing specific trait values at the tips, given the model parameters and phylogenetic relationships [9]. As ( t \to \infty ), the process reaches a stationary distribution [2]: [ X_\infty \sim \mathcal{N}\left( \theta, \frac{\sigma^2}{2\alpha} \right) ] This stationary distribution implies that even under stabilizing selection, traits exhibit standing variation due to ongoing random perturbations.

Key Metrics for Interpretation

Two derived metrics are particularly useful for interpreting OU models in evolutionary contexts [9]:

Phylogenetic Half-Life: ( t_{1/2} = \ln(2) / \alpha )

- This represents the time required for the expected trait value to move halfway from its initial state ( x_0 ) to the optimum ( \theta ).

- Shorter half-lives indicate faster adaptation.

Percent Decrease in Variance: ( p_{th} = 1 - \frac{1 - \exp(-2\alpha T)}{2\alpha T} )

- This quantifies how much selection has reduced trait variance over the study period ( T ) compared to neutral Brownian motion.

- For example, ( p_{th} = 0.25 ) means selection has reduced trait variance by 25%.
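Both metrics are simple functions of ( \alpha ) and the tree height ( T ), so they can be computed for every posterior sample or for a point estimate. The sketch below assumes hypothetical function names and an illustrative tree height; it is not taken from any package.

```python
import math

def phylogenetic_half_life(alpha):
    """t_1/2 = ln(2) / alpha."""
    return math.log(2.0) / alpha

def percent_decrease_in_variance(alpha, tree_height):
    """p_th = 1 - (1 - exp(-2 alpha T)) / (2 alpha T)."""
    x = 2.0 * alpha * tree_height
    # -expm1(-x) equals 1 - exp(-x) and stays accurate when x is small
    return 1.0 - (-math.expm1(-x)) / x

# Illustrative values only: a tree of height 10 and alpha = 0.2
t_half = phylogenetic_half_life(0.2)
p_th = percent_decrease_in_variance(0.2, 10.0)
```

As ( \alpha ) approaches zero, `percent_decrease_in_variance` approaches zero as well, matching the Brownian motion limit in which selection removes no variance.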

Table 2: Closed-Form Solutions for the Ornstein-Uhlenbeck Process

| Quantity | Mathematical Expression | Interpretation |

|---|---|---|

| Conditional Mean | ( \mu(t) = x_0 e^{-\alpha t} + \theta (1 - e^{-\alpha t}) ) | Expected trait value at time ( t ) |

| Conditional Variance | ( V(t) = \frac{\sigma^2}{2\alpha} (1 - e^{-2\alpha t}) ) | Uncertainty in trait value at time ( t ) |

| Stationary Variance | ( V_{\infty} = \frac{\sigma^2}{2\alpha} ) | Long-term equilibrium variance |

| Phylogenetic Half-Life | ( t_{1/2} = \frac{\ln(2)}{\alpha} ) | Time for expected trait to move halfway to optimum |

Experimental Protocols for Phylogenetic OU Modeling

Data Preparation and Model Specification

Protocol 1: Data Input and Tree Handling

- Input: A time-calibrated phylogenetic tree (e.g., in Newick format) and a dataset of continuous trait measurements for species at the tree tips [9].

- Tree Processing: Import the tree and compute its height (root age). This provides the temporal scale ( T ) for parameter interpretations like ( p_{th} ) [9].

- Trait Data Handling: Format trait data into a numerical vector, ensuring correct matching between species names in the tree and trait data [9].

Protocol 2: Parameter Identification and Model Specification

- Parameter Definition:

- Define the selection strength parameter ( \alpha ) with an exponential prior: ( \alpha \sim \text{Exponential}( \frac{\text{root_age}}{2 \ln(2)} ) ). This centers the prior on a phylogenetic half-life of half the tree height [9].

- Define the optimum ( \theta ) with a vague uniform prior (e.g., ( \theta \sim \text{Uniform}(-10, 10) )), adjusting bounds based on expected trait ranges [9].

- Define the diffusion coefficient ( \sigma ) with a loguniform prior (e.g., ( \sigma \sim \text{Loguniform}(10^{-3}, 1) )) to express ignorance about its order of magnitude [9].

- Model Specification: Construct the OU model using a function such as dnPhyloOrnsteinUhlenbeckREML(), which computes the phylogenetic likelihood efficiently using the REML algorithm [9].

Parameter Estimation and Model Assessment

Protocol 3: Markov Chain Monte Carlo (MCMC) Estimation

- MCMC Setup: Implement MCMC with at least 50,000 generations to sample from the joint posterior distribution of parameters ( (\alpha, \theta, \sigma) ) [9].

- Proposal Mechanisms:

- Use scaling moves (mvScale) for individual parameters.

- Incorporate a multivariate proposal (mvAVMVN) that learns the covariance structure among parameters during the MCMC to improve efficiency, as OU parameters are often correlated [9].

- Monitors: Track parameters and derived metrics (e.g., ( t_{1/2} ), ( p_{th} )) throughout the MCMC chain [9].

Protocol 4: Model Diagnostics and Interpretation

- Convergence Checking: Ensure MCMC chains have reached stationarity and sufficient effective sample sizes (ESS > 200) for all parameters [9].

- Posterior Analysis: Calculate posterior means and credible intervals for ( \alpha ), ( \theta ), and ( \sigma ).

- Biological Interpretation:

- Compute the posterior distribution of the phylogenetic half-life ( t_{1/2} ) to understand the time scale of adaptation.

- Calculate ( p_{th} ) to quantify the influence of selection in reducing trait variation [9].

- Caveat: Be aware that parameter estimates, particularly of ( \alpha ) and ( \sigma ), can be highly correlated, as both influence the long-term variance ( \sigma^2/(2\alpha) ) [9] [8].

Figure 1: Experimental workflow for phylogenetic OU model fitting, showing the sequence from data input through to biological interpretation.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for OU Model Analysis

| Tool Category | Specific Software/Package | Primary Function | Application Context |

|---|---|---|---|

| Bayesian MCMC Framework | RevBayes [9] | Implements phylogenetic OU models with Bayesian inference using MCMC | Full probabilistic model fitting with flexible priors |

| R Packages | ouch [8], geiger [8], POUMM [15] | Fit OU models to comparative data | Maximum likelihood or Bayesian estimation |

| Visualization & Analysis | RevGadgets [9] | Plotting MCMC output and parameter distributions | Posterior analysis and diagnostics |

| Programming Languages | R [9], Python | Data manipulation, custom analysis scripts | Pre-processing data and post-analysis interpretation |

Advanced Extensions and Practical Cautions

Multi-Optima OU Models

The basic OU model can be extended to scenarios where species evolve toward different optimal trait values ( \theta_i ) in different selective regimes (e.g., different ecological niches) [8]. These multi-regime OU models allow testing hypotheses about adaptive radiation and convergent evolution.

Modeling Interactions and Migration

Recent extensions incorporate interactions between species or gene flow between populations [14]: [ dX_t = \alpha (\theta - X_t) dt + \gamma (Y_t - X_t) dt + \sigma dW_t ] where ( \gamma ) models the effect of migration or ecological interaction with a species having trait value ( Y_t ). Ignoring such interactions can lead to misinterpreting migration-driven similarity as very strong convergent evolution [14].

Important Limitations and Cautions

- Parameter Identifiability: With limited phylogenetic data (small trees), it can be difficult to reliably estimate ( \alpha ) and ( \sigma ) separately, as both influence the stationary variance [8].

- Measurement Error: Even small amounts of intraspecific variation or measurement error can profoundly bias parameter estimates, particularly inflating estimates of ( \alpha ) [8].

- Biological Interpretation: While often described as "stabilizing selection," the OU model in comparative analyses does not directly estimate selection within populations but rather a macroevolutionary pull toward an optimum [8].

Figure 2: Logical relationships between the basic Ornstein-Uhlenbeck process and its advanced extensions for modeling complex evolutionary scenarios.

The Ornstein-Uhlenbeck (OU) process describes the velocity of a massive Brownian particle under friction and serves as a foundational model in evolutionary biology for modeling continuous trait evolution under stabilizing selection [16] [17]. Unlike Brownian motion, which exhibits unbounded variance over time, the OU process incorporates a mean-reverting property that pulls the trait value toward an optimal state, making it particularly suitable for modeling evolutionary processes where stabilizing selection maintains traits within functional ranges [18]. This paper examines the stationary properties of the OU process within the context of trait evolution research, focusing specifically on the long-term behavior that emerges when the process reaches statistical equilibrium.

The OU process's significance in evolutionary biology stems from its ability to model both random drift and selective pressures simultaneously [19]. When applied to trait evolution, the parameters of the OU process gain biological interpretations: the mean-reversion strength corresponds to the strength of stabilizing selection, while the process variance reflects the intensity of random fluctuations [20]. Understanding the stationary state of this process provides researchers with critical insights into the long-term evolutionary equilibrium of phenotypic traits, gene expression levels, and other biological characteristics subject to evolutionary constraints [19] [18].

Mathematical Foundation of the Ornstein-Uhlenbeck Process

Stochastic Differential Equation Formulation

The Ornstein-Uhlenbeck process is defined by the following stochastic differential equation [2] [16]:

[ dX_t = \theta(\mu - X_t)dt + \sigma dW_t ]

Where:

- (X_t) represents the state of the process (trait value) at time (t)

- (\mu) is the long-term mean or optimal trait value

- (\theta > 0) is the mean-reversion rate (strength of selection)

- (\sigma > 0) is the volatility parameter (magnitude of random fluctuations)

- (W_t) is a standard Wiener process (Brownian motion)

The drift term, (\theta(\mu - X_t)dt), acts as a restoring force that pulls the process toward its long-term mean (\mu), with the strength of this pull proportional to the distance from the mean [16]. The diffusion term, (\sigma dW_t), introduces random fluctuations that disperse the trait value [16].

Explicit Solution and Time-Dependent Moments

The OU process admits an explicit solution, allowing direct calculation of its moments [2] [17]:

[ X_t = X_0 e^{-\theta t} + \mu(1 - e^{-\theta t}) + \sigma \int_0^t e^{-\theta(t-s)} dW_s ]

From this solution, the time-dependent mean and variance can be derived [16] [17]:

[ \mathbb{E}[X_t] = X_0 e^{-\theta t} + \mu(1 - e^{-\theta t}) ]

[ \text{Var}[X_t] = \frac{\sigma^2}{2\theta}(1 - e^{-2\theta t}) ]

Table 1: Time-Dependent Moments of the OU Process

| Moment | Expression | Biological Interpretation |

|---|---|---|

| Mean | (\mathbb{E}[X_t] = X_0 e^{-\theta t} + \mu(1 - e^{-\theta t})) | Expected trait value at time (t) |

| Variance | (\text{Var}[X_t] = \frac{\sigma^2}{2\theta}(1 - e^{-2\theta t})) | Variability around expected trait value |

| Covariance | (\text{Cov}[X_s, X_t] = \frac{\sigma^2}{2\theta}(e^{-\theta \lvert t-s \rvert} - e^{-\theta(t+s)})) | Trait correlation across evolutionary time |

The parameter (\theta^{-1}) represents the relaxation time or decorrelation time of the process, characterizing the time scale over which the process "forgets" its initial conditions [17].
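One way to build confidence in the time-dependent moments is to compare them with a brute-force simulation of the stochastic differential equation. The sketch below uses the Euler-Maruyama discretization, which is a generic numerical scheme and not something prescribed by the cited sources; the parameter values, seed, and function name are illustrative assumptions.

```python
import math
import random

def simulate_ou_euler(x0, theta, mu, sigma, t_end, n_steps, rng):
    """Return X at time t_end from one Euler-Maruyama path of the OU SDE."""
    dt = t_end / n_steps
    x = x0
    for _ in range(n_steps):
        # drift pulls toward mu; diffusion adds Gaussian noise scaled by sqrt(dt)
        x += theta * (mu - x) * dt + sigma * math.sqrt(dt) * rng.gauss(0.0, 1.0)
    return x

rng = random.Random(42)  # fixed seed so the sketch is reproducible
theta, mu, sigma, x0, t_end = 1.5, 2.0, 0.8, 0.0, 1.0

samples = [simulate_ou_euler(x0, theta, mu, sigma, t_end, 100, rng)
           for _ in range(2000)]
sample_mean = sum(samples) / len(samples)
sample_var = sum((s - sample_mean) ** 2 for s in samples) / (len(samples) - 1)

# Closed-form moments from the expressions above
exact_mean = x0 * math.exp(-theta * t_end) + mu * (1.0 - math.exp(-theta * t_end))
exact_var = sigma**2 / (2.0 * theta) * (1.0 - math.exp(-2.0 * theta * t_end))
```

The simulated and closed-form moments should agree up to Monte Carlo error and a small discretization bias that shrinks as the number of steps grows.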

The Stationary State of the OU Process

Convergence to Stationarity

As time approaches infinity, the OU process converges to a stationary state where its statistical properties become time-invariant [2] [17]. This convergence occurs regardless of the initial state (X_0), provided (\theta > 0). The characteristic time scale for this convergence is governed by the mean reversion rate (\theta), with the process reaching equilibrium more quickly for larger values of (\theta) [16].

The stationary distribution represents the long-term equilibrium of the evolutionary process, where the forces of random drift and stabilizing selection balance each other [21] [19]. In trait evolution, this corresponds to a phenotypic equilibrium where traits fluctuate around an optimal value with stable variance [18].

Stationary Mean and Variance

In the stationary limit ((t \to \infty)), the time-dependent moments simplify considerably [16] [17]:

[ \lim_{t \to \infty} \mathbb{E}[X_t] = \mu ]

[ \lim_{t \to \infty} \text{Var}[X_t] = \frac{\sigma^2}{2\theta} ]

Table 2: Stationary Moments of the OU Process

| Parameter | Symbol | Expression | Biological Interpretation |

|---|---|---|---|

| Long-term Mean | (\mu) | (\mu) | Optimal trait value under stabilizing selection |

| Stationary Variance | (\sigma^2_s) | (\frac{\sigma^2}{2\theta}) | Equilibrium trait diversity around optimum |

| Decorrelation Time | (\tau) | (\theta^{-1}) | Time scale over which the trait loses correlation with its past values |

The stationary variance (\frac{\sigma^2}{2\theta}) reveals a fundamental trade-off between stochastic forces ((\sigma^2)) and stabilizing selection ((\theta)) [21]. Stronger selection (larger (\theta)) reduces equilibrium diversity, while greater random fluctuations (larger (\sigma^2)) increase it [19].

Figure 1: Parameter Relationships in OU Stationary State. The stationary variance emerges from the balance between drift (σ²) and selection (θ).

Gaussian Stationary Distribution

The stationary distribution of the OU process is Gaussian [16] [17]:

[ X_\infty \sim \mathcal{N}\left(\mu, \frac{\sigma^2}{2\theta}\right) ]

With probability density function:

[ f(x) = \sqrt{\frac{\theta}{\pi\sigma^2}} \exp\left(-\frac{\theta}{\sigma^2}(x-\mu)^2\right) ]

This Gaussian stationary distribution is a consequence of the linear drift term and the Gaussian nature of the diffusion term in the OU stochastic differential equation [16]. The distribution is fully characterized by its mean (\mu) and variance (\frac{\sigma^2}{2\theta}), with all cumulants beyond the second being zero [22].

The Gaussian distribution represents the most probable distribution of trait values under the constraints of random mutations and stabilizing selection [21] [22]. In evolutionary terms, traits are most likely to be found near the optimum (\mu), with probability decreasing exponentially with squared distance from the optimum [19].

Biological Interpretation in Trait Evolution

Evolutionary Quantitative Genetics Perspective

In evolutionary quantitative genetics, the OU process models trait evolution under stabilizing selection [19] [18]. The parameters gain specific biological interpretations:

- Long-term mean ((\mu)): The fitness optimum for the trait in a given selective regime

- Mean-reversion rate ((\theta)): The strength of stabilizing selection pulling traits toward the optimum

- Volatility parameter ((\sigma)): The rate of input of genetic variance through mutation and drift

The stationary variance (\frac{\sigma^2}{2\theta}) represents the standing genetic variance maintained at mutation-selection-drift balance [21]. This balance occurs because new mutations introduce variation while selection eliminates it [19].

Multi-Optima OU Models and Evolutionary Shifts

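In more complex evolutionary scenarios, species may experience shifts in selective regimes due to environmental changes or niche transitions [20] [18]. These can be modeled using multi-optima OU models where the optimal value changes at specific points in the phylogeny. The model is written here in the notation common in the phylogenetic literature, with (\alpha) as the selection strength and (\theta(t)) as the optimum, which correspond to (\theta) and (\mu) in the equations above: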

[ dY(t) = \alpha[\theta(t) - Y(t)]dt + \sigma dB(t) ]

Where (\theta(t)) is a piecewise constant function representing different selective optima on different branches of the phylogeny [18]. The detection of these shifts forms an important research area in phylogenetic comparative methods [20].
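Because the optimum is piecewise constant, the expected trait value along a single lineage can be obtained by chaining the single-regime conditional mean across successive regimes. The sketch below illustrates this for one root-to-tip path; the function name and the regime durations and optima are hypothetical.

```python
import math

def expected_trait_through_regimes(y0, alpha, regimes):
    """Expected trait at the end of a lineage crossing several regimes.

    regimes: list of (duration, optimum) pairs in chronological order.
    """
    y = y0
    for duration, optimum in regimes:
        decay = math.exp(-alpha * duration)
        # within each regime the mean relaxes toward that regime's optimum
        y = y * decay + optimum * (1.0 - decay)
    return y

# Hypothetical lineage: one half-life under optimum 4, then one under optimum 8
alpha = math.log(2.0)
tip_expectation = expected_trait_through_regimes(0.0, alpha, [(1.0, 4.0), (1.0, 8.0)])
```

With these illustrative values the lineage moves halfway to the first optimum and then halfway from there to the second, so the tip expectation still carries a trace of the earlier regime.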

Figure 2: Evolutionary Shifts in Multi-Optima OU Models. Environmental changes trigger shifts in selective optima, leading to different stationary distributions.

Applications to Gene Expression Evolution

The OU process has been successfully applied to model the evolution of gene expression levels [19]. In this context:

- The trait value (X_t) represents the expression level of a gene

- The optimum (\mu) represents the expression level that maximizes fitness

- The stationary variance reflects the standing variation in gene expression maintained in populations

Comparative studies of gene expression across species have revealed that different genes experience different strengths of stabilizing selection, with essential genes typically showing lower stationary variance (stronger constraint) than non-essential genes [19].

Experimental Protocols and Methodologies

Parameter Estimation from Comparative Data

Estimating OU parameters from phylogenetic comparative data involves maximum likelihood or Bayesian methods [20] [18]. The key steps include:

- Data Collection: Measure trait values for multiple species with known phylogenetic relationships

- Model Specification: Define the OU process on the phylogenetic tree

- Likelihood Calculation: Compute the probability of observed data under the model

- Parameter Optimization: Find parameter values that maximize the likelihood

The likelihood function for a univariate OU process on a phylogeny follows a multivariate normal distribution with structured covariance [18].

Detecting Shifts in Selective Regimes

Recent methodological advances allow detection of shifts in optimal values ((\mu)) and variance parameters ((\sigma^2)) using penalized likelihood approaches [20]:

- Variable Selection Framework: Formulate shift detection as a variable selection problem

- LASSO Regularization: Apply (\ell_1) penalty to induce sparsity in shift locations

- Model Selection: Use information criteria (BIC, pBIC) to select optimal shift configuration

- Ensemble Methods: Combine results from multiple selection criteria for robust inference

These methods are implemented in software packages such as ℓ1ou, PhylogeneticEM, and ShiVa [20] [18].

Accounting for Within-Species Variation

Extended OU models incorporate within-species variation to distinguish evolutionary changes from non-evolutionary sources of variation [19]:

[ Y_{ij} = X_i + \epsilon_{ij} ]

Where (Y_{ij}) is the trait value for individual (j) in species (i), (X_i) is the species mean evolving under an OU process, and (\epsilon_{ij}) represents within-species variation due to genetic, environmental, or technical factors [19].
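When species means are estimated from a finite sample of individuals, one common way to carry this decomposition into the likelihood is to add the sampling variance of each mean to the diagonal of the between-species covariance matrix. The sketch below assumes independent, equal-variance residuals within species; this is an illustrative simplification and not necessarily how the cited method [19] is implemented, and all names and numbers are hypothetical.

```python
def add_within_species_variance(V, within_var, n_individuals):
    """Covariance of observed species means under Y_ij = X_i + eps_ij.

    V              : between-species covariance matrix (list of lists)
    within_var     : variance of eps_ij, assumed equal across species
    n_individuals  : number of sampled individuals per species
    """
    V_obs = [row[:] for row in V]  # copy so the input is left untouched
    for i, n_i in enumerate(n_individuals):
        # averaging n_i independent residuals leaves variance within_var / n_i
        V_obs[i][i] += within_var / n_i
    return V_obs

# Hypothetical two-species example
V = [[1.0, 0.4],
     [0.4, 1.0]]
V_obs = add_within_species_variance(V, within_var=0.5, n_individuals=[5, 10])
```

Only the diagonal changes, because residuals in different species are assumed independent; off-diagonal covariances still reflect shared evolutionary history alone.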

Table 3: Research Reagent Solutions for OU Process Analysis

| Tool/Software | Function | Application Context |

|---|---|---|

| ℓ1ou R package | Detect shifts in optimal values | Phylogenetic comparative analysis |

| PhylogeneticEM | EM algorithm for shift detection | Large phylogenies with unknown shift locations |

| ShiVa R package | Detect shifts in both optima and variance | Models with changing evolutionary rates |

| OUwie | Fit multi-optima OU models | Predefined selective regimes |

| PCMFit | Bayesian fitting of OU models | Complex models with parameter uncertainty |

Quantitative Relationships in the Stationary State

Moment Analysis in Stationary Distribution

Moment analysis provides a mathematical framework for characterizing the stationary distribution [22]. For a Gaussian distribution, the first two moments fully characterize the distribution:

- Zeroth Moment: Total probability (normalized to 1)

- First Moment: Mean ((\mu))

- Second Moment: Variance ((\sigma^2/2\theta))

- Third Moment: Skewness (zero for Gaussian)

- Fourth Moment: Kurtosis (the fourth central moment is (3(\sigma^2/2\theta)^2), three times the squared variance, for Gaussian)

In practical applications, sample moments are calculated from observed data and used to estimate process parameters [22].

Scaling Relationships with Population Parameters

The parameters of the OU process connect to underlying population-genetic parameters [21]. For example:

- The volatility parameter (\sigma^2) scales with the mutational variance and effective population size

- The mean-reversion rate (\theta) reflects the strength of stabilizing selection

- The product (N_e\sigma^2) determines the relative power of selection versus drift

These scaling relationships help interpret OU parameters in biological terms and connect microevolutionary processes to macroevolutionary patterns [21].

Covariance Structure in Phylogenetic Data

Under the OU process, the covariance between species traits depends on their shared evolutionary history [18]:

[ \text{Cov}[Y_i, Y_j] = \frac{\sigma^2}{2\theta} e^{-\theta d_{ij}} ]

Where (d_{ij}) is the phylogenetic distance between species (i) and (j). This covariance structure decays exponentially with phylogenetic distance, unlike the linear decay under Brownian motion [18].
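The stationary covariance matrix can be assembled directly from a matrix of pairwise phylogenetic distances. The following sketch uses a hypothetical three-species distance matrix and illustrative parameter values.

```python
import math

def ou_stationary_covariance(dist, theta, sigma):
    """Cov[Y_i, Y_j] = sigma^2 / (2 theta) * exp(-theta * d_ij)."""
    stat_var = sigma**2 / (2.0 * theta)
    return [[stat_var * math.exp(-theta * d) for d in row] for row in dist]

# Hypothetical pairwise distances: species A and B are close relatives,
# species C is distant from both.
dist = [[0.0, 2.0, 6.0],
        [2.0, 0.0, 6.0],
        [6.0, 6.0, 0.0]]
cov = ou_stationary_covariance(dist, theta=0.5, sigma=1.0)
```

The diagonal equals the stationary variance, and the covariance between the close relatives exceeds that between distant species, as the exponential decay implies.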

The stationary state of the Ornstein-Uhlenbeck process provides a powerful framework for modeling trait evolution under stabilizing selection. The Gaussian equilibrium distribution with mean (\mu) and variance (\frac{\sigma^2}{2\theta}) represents the balance between random drift and selective forces that characterizes long-term evolutionary stasis. The mathematical properties of this stationary state enable researchers to estimate selection strengths, identify shifts in selective regimes, and understand the maintenance of trait variation in natural populations.

Methodological advances in detecting evolutionary shifts continue to enhance our ability to extract biological insights from comparative data. The integration of within-species variation, multi-optima models, and sophisticated statistical regularization techniques represents the current frontier in OU-based phylogenetic comparative methods. As comparative datasets grow in size and complexity, the OU process and its extensions will remain essential tools for unraveling the evolutionary processes that shape biological diversity.

This technical guide provides an in-depth examination of the Ornstein-Uhlenbeck (OU) process, focusing on its three defining properties—autocorrelation, continuous-time Markov behavior, and mean-reverting drift—within the context of trait evolution research. The OU process serves as a fundamental stochastic model for analyzing phenotypic adaptation across phylogenies, offering a mathematically tractable framework for testing evolutionary hypotheses. We synthesize the theoretical foundations, quantitative properties, and practical implementation protocols essential for researchers investigating evolutionary patterns in biological traits. By integrating rigorous mathematical formulations with empirical applications, this whitepaper aims to equip scientists with the analytical tools necessary to leverage OU models in evolutionary biology, comparative phylogenetics, and related disciplines.

The Ornstein-Uhlenbeck process represents a cornerstone model in evolutionary biology for analyzing trait dynamics across phylogenetic trees. Originally developed in physics to model the velocity of a particle under friction [2] [16], the process was adapted to evolutionary biology by Hansen (1997) as a model for stabilizing selection [8] [9]. Unlike Brownian motion, which describes unbounded trait divergence, the OU process incorporates a restorative force that pulls traits toward an optimal value, thereby modeling the constraint imposed by stabilizing selection [8] [23]. This mean-reverting property makes it particularly valuable for testing hypotheses about adaptive evolution, phylogenetic niche conservatism, and the strength of selection across different selective regimes [8] [24].

In phylogenetic comparative methods, the OU process helps researchers infer evolutionary processes from contemporary species data by modeling trait evolution as a continuous-time stochastic process along phylogenetic branches [9] [24]. The model's mathematical properties—specifically its autocorrelation structure, Markov behavior, and mean-reverting drift—provide the mechanistic foundation for interpreting patterns of trait variation across species in terms of underlying evolutionary forces. Despite its widespread application, researchers must exercise caution in interpreting OU parameters, as statistical limitations can lead to misinterpretation without proper validation [8].

Mathematical Foundations

Stochastic Differential Equation Representation

The Ornstein-Uhlenbeck process is formally defined by the stochastic differential equation (SDE):

dXₜ = θ(μ - Xₜ)dt + σdWₜ

Where:

- Xₜ represents the trait value at time t

- θ is the strength of selection (mean reversion rate)

- μ is the optimal trait value (long-term mean)

- σ is the volatility parameter controlling stochastic effects

- dWₜ is the increment of a Wiener process (Brownian motion) [2] [25] [23]

The SDE consists of two distinct components: a deterministic drift term θ(μ - Xₜ)dt that pulls the trait toward its optimum, and a stochastic diffusion term σdWₜ that introduces random fluctuations [16] [23]. This formulation captures the essential tension in evolutionary trait dynamics between selective constraints (deterministic component) and evolutionary innovation or drift (stochastic component).

Explicit Solution and Transition Densities

The OU process admits an explicit solution through application of Itô's lemma:

Xₜ = X₀e^{-θt} + μ(1 - e^{-θt}) + σ∫₀ᵗ e^{-θ(t-s)} dW_s [2] [23]

This solution reveals how the trait value depends on its initial state (X₀), the optimal value (μ), and accumulated stochastic effects. The transition probability density between states follows a normal distribution:

P(x,t|x',t') = √[θ/(2πD(1-e^{-2θ(t-t')}))] × exp[-θ(x - x'e^{-θ(t-t')} - μ(1-e^{-θ(t-t')}))²/(2D(1-e^{-2θ(t-t')}))]

where D = σ²/2 [2]. This transition density forms the basis for likelihood calculations in phylogenetic comparative methods.
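To illustrate how the transition density feeds into a likelihood, the sketch below evaluates its logarithm and sums it over consecutive observations of a single lineage. This is a simplified time-series setting rather than a full phylogenetic likelihood, and the function names, observations, and parameter values are illustrative assumptions.

```python
import math

def ou_transition_logpdf(x, x_prev, dt, theta, mu, sigma):
    """Log of P(x, t | x_prev, t') with dt = t - t'."""
    decay = math.exp(-theta * dt)
    mean = x_prev * decay + mu * (1.0 - decay)
    var = sigma**2 / (2.0 * theta) * (1.0 - decay**2)  # equals D(1 - e^{-2 theta dt}) / theta
    return -0.5 * (math.log(2.0 * math.pi * var) + (x - mean) ** 2 / var)

def ou_path_loglik(path, dt, theta, mu, sigma):
    """Log-likelihood of equally spaced observations, conditioning on the first."""
    return sum(ou_transition_logpdf(b, a, dt, theta, mu, sigma)
               for a, b in zip(path, path[1:]))

# Hypothetical observations spaced 0.5 time units apart
path = [0.5, 0.9, 1.4, 1.6]
loglik = ou_path_loglik(path, dt=0.5, theta=1.5, mu=2.0, sigma=0.8)
```

Working on the log scale avoids numerical underflow when many transitions are multiplied together.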

Table 1: Mathematical Components of the OU Process Solution

| Component | Mathematical Expression | Biological Interpretation |

|---|---|---|

| Initial Value Influence | X₀e^{-θt} | Decaying dependence on ancestral trait value |

| Optimal Value Attraction | μ(1 - e^{-θt}) | Progressive attraction toward evolutionary optimum |

| Stochastic Accumulation | σ∫₀ᵗ e^{-θ(t-s)} dW_s | Integrated random evolutionary innovations |

The Mean-Reverting Drift

Mechanism and Biological Interpretation

The mean-reverting drift component θ(μ - Xₜ)dt represents the central adaptive mechanism of the OU process, modeling how natural selection pulls trait values toward an optimal state μ at a rate proportional to the displacement from that optimum [16] [9]. The parameter θ quantifies the strength of stabilizing selection, with larger values indicating stronger selective constraints and faster return to the optimum after perturbation [25] [9]. Biologically, this drift term can be interpreted as the effect of an adaptive landscape, with μ representing the fitness peak and θ characterizing the steepness of the landscape around that peak [8] [9].

In evolutionary applications, the optimal value μ may remain constant across a phylogeny or shift at specific nodes corresponding to hypothesized adaptive regimes [9] [24]. These selective regime shifts model adaptations to new environmental conditions or ecological niches, allowing researchers to test hypotheses about the relationship between trait evolution and historical events such as colonization of new habitats or evolution of key innovations [24].

Characteristic Time Scales and Half-Life

The mean-reversion process operates on a characteristic time scale of 1/θ, known as the relaxation or decorrelation time [17]. A more intuitive metric is the phylogenetic half-life:

t₁/₂ = ln(2)/θ

which represents the expected time for a trait to cover half the distance between its initial value and the optimum [9]. The half-life determines the phylogenetic scale at which adaptation occurs: when t₁/₂ is short compared to branch lengths, traits adapt rapidly to new optima; when long, traits retain evidence of deeper ancestral influences.

The percent decrease in trait variance due to selection over time period t is given by:

p_th = 1 - (1 - exp(-2θt))/(2θt) [9]

This metric quantifies how much stabilizing selection reduces trait variation relative to the neutral expectation under Brownian motion.

Continuous-Time Markov Behavior

Markov Property and Evolutionary Implications

The OU process exhibits the continuous-time Markov property, meaning that the future evolution of a trait depends only on its current state and not on its historical path [2] [16]. Formally, for any sequence of times t₁ < t₂ < ... < tₙ < t, the conditional distribution of Xₜ given X_{t₁}, X_{t₂}, ..., X_{tₙ} depends only on X_{tₙ}. This memoryless property makes the OU process mathematically tractable while providing a biologically plausible approximation for many evolutionary processes [2].

The Markov property enables efficient computation of likelihoods for phylogenetic data through recursive algorithms that traverse the tree from tips to root [24]. This computational efficiency facilitates fitting complex OU models with multiple selective regimes to large phylogenetic trees, enabling researchers to test sophisticated evolutionary hypotheses [9] [24].

Fokker-Planck Equation and Probability Flux

The temporal evolution of the trait probability distribution under the OU process is governed by the Fokker-Planck (Kolmogorov forward) equation:

∂P(x,t)/∂t = θ∂/∂x[(x - μ)P(x,t)] + D∂²P(x,t)/∂x²

where D = σ²/2 [2] [17]. This partial differential equation describes how the probability density of trait values changes over time, with the first term representing the deterministic drift toward the optimum and the second term accounting for the diffusive spread of probability due to stochastic effects.

The stationary solution to this equation, representing the long-term trait distribution, is a Gaussian with mean μ and variance σ²/(2θ):

P_s(x) = √[θ/(πσ²)] × exp[-θ(x-μ)²/σ²] [2] [16] [17]

This stationary distribution reflects the equilibrium between selective forces pulling traits toward the optimum and stochastic forces dispersing trait values.
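The claim that this Gaussian is stationary can be checked numerically: substituting it into the right-hand side of the Fokker-Planck equation, with the drift written relative to the optimum μ, should give zero. The sketch below approximates the derivatives with central finite differences; the step size, parameter values, and function names are illustrative assumptions.

```python
import math

def stationary_pdf(x, theta, mu, sigma):
    """P_s(x) = sqrt(theta / (pi sigma^2)) * exp(-theta (x - mu)^2 / sigma^2)."""
    return math.sqrt(theta / (math.pi * sigma**2)) * math.exp(-theta * (x - mu) ** 2 / sigma**2)

def fokker_planck_rhs(p, x, theta, mu, sigma, h=1e-3):
    """theta * d/dx[(x - mu) p(x)] + D * d^2 p / dx^2, by central differences."""
    D = sigma**2 / 2.0

    def drift_flux(y):
        return (y - mu) * p(y)

    d_drift = (drift_flux(x + h) - drift_flux(x - h)) / (2.0 * h)
    d2_p = (p(x + h) - 2.0 * p(x) + p(x - h)) / h**2
    return theta * d_drift + D * d2_p

theta, mu, sigma = 1.5, 2.0, 0.8

def p_s(x):
    return stationary_pdf(x, theta, mu, sigma)

# Residuals at a few trait values; each should be close to zero
residuals = [fokker_planck_rhs(p_s, x, theta, mu, sigma) for x in (1.0, 1.7, 2.0, 2.6, 3.2)]
```

A density with the wrong mean or variance leaves a clearly non-zero residual away from its peak, which makes this a quick sanity check on any proposed equilibrium.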

Table 2: Time-Dependent Properties of the OU Process

| Property | Time-Dependent Expression | Stationary Limit (t→∞) |

|---|---|---|

| Mean | E[Xₜ] = X₀e^{-θt} + μ(1 - e^{-θt}) | μ |

| Variance | Var[Xₜ] = σ²/(2θ)(1 - e^{-2θt}) | σ²/(2θ) |

| Covariance | Cov[Xₛ,Xₜ] = σ²/(2θ)(e^{-θ\|t-s\|} - e^{-θ(t+s)}) | σ²/(2θ)e^{-θ\|t-s\|} |

Autocorrelation Structure

Temporal Covariance and Phylogenetic Signal

The autocorrelation structure of the OU process quantifies how trait values remain correlated through evolutionary time, providing a mechanistic model for phylogenetic signal. For times s < t, the covariance between Xₛ and Xₜ is:

Cov[Xₛ, Xₜ] = σ²/(2θ)(e^{-θ(t-s)} - e^{-θ(t+s)}) [2]

For a process that has reached stationarity (s, t → ∞ with t-s fixed), this simplifies to:

Covₛ[Xₛ, Xₜ] = σ²/(2θ)e^{-θ|t-s|} [17]

This exponentially decaying autocorrelation distinguishes the OU process from Brownian motion, where covariance grows linearly with shared evolutionary time rather than decaying with the time separating two observations. The autocorrelation function reveals that traits evolving under OU processes maintain stronger correlations over shorter phylogenetic distances, with the strength of correlation decaying exponentially with evolutionary time separation.
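The two covariance expressions above can be compared numerically to see how quickly the dependence on the starting point fades. The function names and parameter values in this sketch are illustrative.

```python
import math

def ou_covariance(s, t, theta, sigma):
    """Cov[X_s, X_t] for an OU process started from a fixed X_0 at time 0."""
    stat_var = sigma**2 / (2.0 * theta)
    return stat_var * (math.exp(-theta * abs(t - s)) - math.exp(-theta * (t + s)))

def ou_stationary_covariance_lag(lag, theta, sigma):
    """Stationary covariance, which depends only on the time lag |t - s|."""
    return sigma**2 / (2.0 * theta) * math.exp(-theta * abs(lag))

theta, sigma = 1.5, 0.8
early = ou_covariance(0.2, 1.2, theta, sigma)    # still influenced by X_0
late = ou_covariance(50.0, 51.0, theta, sigma)   # effectively stationary
limit = ou_stationary_covariance_lag(1.0, theta, sigma)
```

For the same lag, the early covariance is smaller than the stationary value because variance has not yet built up from the fixed starting state; at late times the two agree.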

Comparative Analysis with Other Models

The autocorrelation structure of the OU process bridges the gap between Brownian motion (strong, linear-distance phylogenetic signal) and white noise (no phylogenetic signal). When θ → 0, the OU process reduces to Brownian motion with linearly decaying covariance, while large θ values produce rapidly decaying autocorrelation approaching white noise [8] [23]. This continuum allows researchers to quantify where a particular trait falls on the spectrum from strong phylogenetic conservatism to rapid adaptation.

Experimental Protocols and Implementation

Parameter Estimation via Maximum Likelihood

Estimating OU parameters from trait data requires maximizing the likelihood function derived from the multivariate normal distribution of tip species values. For a phylogeny with n species, the trait vector X follows a multivariate normal distribution with mean vector E[X] = μM and covariance matrix V with elements:

Vᵢⱼ = σ²/(2θ)e^{-θdᵢⱼ}(1 - e^{-2θtᵢⱼ})

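where dᵢⱼ is the phylogenetic distance between species i and j (the total branch length separating them), and tᵢⱼ is the time from the root to their most recent common ancestor, that is, the length of their shared evolutionary history [2] [24]. With this definition the expression agrees with the single-lineage covariance given earlier: the factor (1 - e^{-2θtᵢⱼ}) is the variance accumulated along the shared branch, and e^{-θdᵢⱼ} is the decay after the two lineages diverge. The log-likelihood function is: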

ℓ(θ, μ, σ²|X) = -½[(X - μM)ᵀV⁻¹(X - μM) + log|V| + nlog(2π)]

Numerical optimization techniques such as the Nelder-Mead algorithm or subplex are employed to find parameter values that maximize this likelihood [24].
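
The likelihood calculation can be sketched in a few lines of Python. This is an illustrative implementation, not the code used by ouch or any other package. It assumes NumPy is available, takes tᵢⱼ to be the root-to-ancestor (shared) time, and assumes a single optimum with the root at that optimum, so the mean vector is μ for every species. The function names and the three-species tree are hypothetical.

```python
import numpy as np


def ou_covariance_matrix(shared, theta, sigma2):
    """V_ij = sigma2/(2 theta) * exp(-theta d_ij) * (1 - exp(-2 theta t_ij)).

    shared[i][j] is t_ij, the time from the root to the most recent common
    ancestor of i and j; shared[i][i] is the root-to-tip time of species i.
    """
    shared = np.asarray(shared, dtype=float)
    depth = np.diag(shared)
    # Tip-to-tip path length d_ij through the most recent common ancestor.
    dist = depth[:, None] + depth[None, :] - 2.0 * shared
    decay = np.exp(-theta * dist)
    accumulated = 1.0 - np.exp(-2.0 * theta * shared)
    return sigma2 / (2.0 * theta) * decay * accumulated


def ou_log_likelihood(x, shared, theta, mu, sigma2):
    """Multivariate normal log-likelihood of tip values x (mean mu for all tips)."""
    x = np.asarray(x, dtype=float)
    V = ou_covariance_matrix(shared, theta, sigma2)
    resid = x - mu
    _, logdet = np.linalg.slogdet(V)
    quad = resid @ np.linalg.solve(V, resid)
    return -0.5 * (quad + logdet + x.size * np.log(2.0 * np.pi))


# Hypothetical ultrametric tree of height 1: A and B share 0.6 time units
# of history, while C splits off at the root.
shared_times = [[1.0, 0.6, 0.0],
                [0.6, 1.0, 0.0],
                [0.0, 0.0, 1.0]]
traits = [1.2, 0.9, -0.3]  # made-up trait values
print(ou_log_likelihood(traits, shared_times, theta=1.0, mu=0.5, sigma2=2.0))
```

A numerical optimizer would then minimize the negative of this function over (θ, μ, σ²), for example with `scipy.optimize.minimize` and `method="Nelder-Mead"`.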

Bayesian Implementation with MCMC

For complex models with multiple selective regimes, Bayesian approaches using Markov chain Monte Carlo (MCMC) provide an alternative estimation framework [9]. The RevBayes software implements such an approach with the following protocol:

Note that this protocol follows the RevBayes notation, in which α is the selection strength and θ is the optimum (the roles played by θ and μ, respectively, in the equations above).

Specify Priors:

- σ² ~ Loguniform(10⁻³, 1) for the diffusion parameter

- α ~ Exponential(root_age / (2ln(2))) for the selection strength

- θ ~ Uniform(-10, 10) for the optimum [9]

Define Model: Trait evolution follows X ~ dnPhyloOrnsteinUhlenbeckREML(tree, α, θ, (σ²)^0.5)

MCMC Sampling: Run chains with sufficient generations (typically 50,000+) to ensure convergence [9]

Posterior Analysis: Compute posterior distributions for biologically meaningful transformations such as phylogenetic half-life (t₁/₂ = ln(2)/α) and percent variance reduction due to selection (p_th = 1 - (1 - exp(-2αt))/(2αt)) [9]
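
The two posterior transformations are simple functions of each sampled α. The Python sketch below applies them to a list of posterior draws. It assumes that t in the p_th formula is the root age of the tree, which the text does not state explicitly. The α values are made up for illustration and are not RevBayes output.

```python
import math
import statistics


def phylogenetic_half_life(alpha):
    """t_1/2 = ln(2) / alpha."""
    return math.log(2.0) / alpha


def percent_variance_reduction(alpha, t):
    """p_th = 1 - (1 - exp(-2*alpha*t)) / (2*alpha*t)."""
    return 1.0 - (1.0 - math.exp(-2.0 * alpha * t)) / (2.0 * alpha * t)


def summarize_posterior(alpha_samples, root_age):
    """Posterior medians of the half-life and of p_th."""
    half_lives = [phylogenetic_half_life(a) for a in alpha_samples]
    reductions = [percent_variance_reduction(a, root_age) for a in alpha_samples]
    return statistics.median(half_lives), statistics.median(reductions)


# Hypothetical posterior draws of alpha and a hypothetical root age.
alpha_draws = [0.8, 1.1, 0.95, 1.4, 0.7, 1.05]
print(summarize_posterior(alpha_draws, root_age=10.0))
```

Transforming each draw before summarizing, rather than transforming a point estimate of α, keeps the posterior uncertainty in the derived quantities.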

Simulation-Based Model Checking

Given the documented challenges with OU model selection and parameter estimation [8], simulation-based validation is essential:

- Simulate trait data under the fitted OU model using the formal solution: Xₜ = X₀e^{-θt} + μ(1 - e^{-θt}) + σ√[(1 - e^{-2θt})/(2θ)]·Z, where Z ~ N(0,1)

- Compare empirical statistics (mean, variance, autocorrelation) between observed and simulated data

- Conduct parametric bootstrap to assess confidence intervals for parameter estimates [8]
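
The simulation step can be sketched as follows. This minimal Python example applies the formal solution repeatedly over a time grid (each step is an exact draw, not a discretization) and computes the summary statistics that would be compared with the observed data. It simulates a single lineage rather than a full phylogeny, and the function names and parameter values are illustrative assumptions.

```python
import math
import random


def simulate_ou_exact(x0, mu, theta, sigma, dt, n_steps, rng):
    """Simulate one OU path using the exact Gaussian transition over each step."""
    decay = math.exp(-theta * dt)
    step_sd = sigma * math.sqrt((1.0 - math.exp(-2.0 * theta * dt)) / (2.0 * theta))
    path = [x0]
    for _ in range(n_steps):
        z = rng.gauss(0.0, 1.0)
        path.append(path[-1] * decay + mu * (1.0 - decay) + step_sd * z)
    return path


def mean_and_variance(values):
    """Sample mean and unbiased sample variance."""
    m = sum(values) / len(values)
    v = sum((x - m) ** 2 for x in values) / (len(values) - 1)
    return m, v


def lag1_autocorrelation(path):
    """Sample lag-1 autocorrelation of a path."""
    m = sum(path) / len(path)
    num = sum((a - m) * (b - m) for a, b in zip(path[:-1], path[1:]))
    den = sum((a - m) ** 2 for a in path)
    return num / den


rng = random.Random(42)  # fixed seed so the sketch is reproducible
# Made-up "fitted" parameters: mu = 2.0, theta = 1.0, sigma = 1.0.
endpoints = [simulate_ou_exact(0.0, 2.0, 1.0, 1.0, 0.5, 10, rng)[-1]
             for _ in range(2000)]
print(mean_and_variance(endpoints))
print(lag1_autocorrelation(simulate_ou_exact(2.0, 2.0, 1.0, 1.0, 0.5, 5000, rng)))
```

In a parametric bootstrap, each simulated data set would be refitted with the same estimation procedure, and the spread of the refitted parameters would give the confidence intervals.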

Table 3: Research Reagent Solutions for OU Modeling

| Research Tool | Specification/Function | Implementation Examples |

|---|---|---|

| Phylogenetic Tree | Time-calibrated phylogeny with branch lengths | ouchtree object in R [24] |

| Likelihood Calculator | Computes multivariate normal probability | hansen() function in ouch package [24] |

| Optimization Algorithm | Maximizes likelihood function | Nelder-Mead, subplex, or BFGS methods [24] |

| Bayesian MCMC | Samples from parameter posterior distributions | RevBayes mcmc() function [9] |

| Parameter Diagnostic | Assesses convergence and effective sample size | Trace plots, Geweke statistic [9] |

Research Applications and Considerations

Biological Interpretation of Parameters

In trait evolution research, OU parameters translate directly to evolutionary hypotheses:

- Selection strength (θ): Intensity of stabilizing selection around an optimum

- Optimal value (μ): Phenotypic value favored by selection in a given selective regime

- Stochasticity (σ): Rate of unpredictable trait change due to genetic drift or unmeasured influences [9]

The phylogenetic half-life (t₁/₂ = ln(2)/θ) indicates the time scale over which adaptation occurs, helping distinguish between rapid recent adaptation versus deep phylogenetic constraints [9].

Statistical Considerations and Limitations

Researchers must be aware of several statistical challenges when applying OU models:

- Parameter identifiability: Strong correlation between θ and σ estimates, especially with small datasets [8] [9]

- Model selection bias: Likelihood ratio tests often incorrectly favor OU over simpler Brownian motion models [8]

- Measurement error: Even small intraspecific variation can substantially bias parameter estimates [8]

- Regime specification: A priori assignment of selective regimes may not reflect true evolutionary history [24]

To address these issues, researchers should:

- Use simulation-based model checking [8]

- Employ information-theoretic criteria (AICc) rather than nested hypothesis tests [8]

- Incorporate measurement error explicitly in models [8]

- Consider multivariate extensions to improve parameter identifiability [24]

The Ornstein-Uhlenbeck process provides a powerful mathematical framework for modeling trait evolution, with its key properties—autocorrelation, continuous-time Markov behavior, and mean-reverting drift—offering mechanistic insights into evolutionary processes. The autocorrelation structure captures how phylogenetic signal decays with evolutionary time, the Markov property enables computational tractability for complex phylogenies, and the mean-reverting drift models the fundamental action of stabilizing selection. When implemented with appropriate statistical care and biological interpretation, OU models allow researchers to test sophisticated hypotheses about adaptation, constraint, and the tempo and mode of trait evolution across the tree of life. As phylogenetic comparative methods continue to develop, the OU process remains a foundational model for connecting microevolutionary processes to macroevolutionary patterns.

From Theory to Practice: Implementing the OU Model in Biological and Clinical Research

The Ornstein-Uhlenbeck (OU) process has emerged as a fundamental stochastic model for studying the evolution of continuous traits along phylogenetic trees. Originally developed in physics to model the velocity of a massive Brownian particle under friction, the OU process was introduced to evolutionary biology to model phenotypic traits subject to both random perturbations and stabilizing selection [2]. Unlike simple Brownian motion, which describes purely random trait evolution with variance increasing linearly over time, the OU process incorporates a centralizing tendency that pulls traits toward an optimal value, making it particularly suitable for modeling adaptive evolution under constraints [14] [8]. This mean-reverting property allows researchers to test hypotheses about evolutionary forces such as genetic drift, stabilizing selection, and adaptation to different selective regimes across phylogenetic lineages.

The application of OU processes in phylogenetic comparative methods (PCMs) has grown substantially, with over 2,500 ecology, evolution, and paleontology papers referencing Ornstein-Uhlenbeck models between 2012 and 2014 alone [8]. This popularity stems from the model's ability to capture both the phylogenetic structure of trait data (similarity due to shared ancestry) and adaptive evolution toward optimal trait values, while providing a statistically tractable framework for hypothesis testing. The OU process serves as the continuous-time analogue of the discrete-time autoregressive process AR(1), bridging statistical time series analysis with evolutionary biology [2].

Mathematical Foundation of the OU Process

Core Stochastic Differential Equation

The OU process is defined by the stochastic differential equation:

dX(t) = -α(X(t) - θ)dt + σdW(t) [2]

Where:

- X(t) represents the trait value at time t

- α ≥ 0 is the strength of selection parameter, measuring the rate at which the trait is pulled toward the optimum

- θ is the optimal trait value (stationary mean)

- σ > 0 is the diffusion coefficient, controlling the rate of stochastic motion

- dW(t) represents the differential of the Wiener process (Brownian motion)

An alternative parameterization includes a mean parameter explicitly:

dX(t) = θ(μ - X(t))dt + σdW(t) [2]

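In this formulation, μ represents the long-term mean of the process and θ is the rate of mean reversion, so μ and θ here play the roles of θ and α, respectively, in the first form. The process can also be written as a Langevin equation, dX(t)/dt = θ(μ - X(t)) + ση(t), where η(t) represents white noise [2].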
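
To see how the stochastic differential equation generates trajectories, the μ-parameterization above can be discretized with the Euler-Maruyama scheme. The Python sketch below is an approximation whose accuracy depends on the step size dt. It is not the exact solution, and the function name and parameter values are illustrative assumptions.

```python
import math
import random


def euler_maruyama_ou(x0, mu, theta, sigma, dt, n_steps, rng):
    """Approximate dX = theta*(mu - X) dt + sigma dW on a grid of step dt."""
    sqrt_dt = math.sqrt(dt)
    x = x0
    path = [x0]
    for _ in range(n_steps):
        drift = theta * (mu - x) * dt                  # pull toward the mean
        diffusion = sigma * sqrt_dt * rng.gauss(0.0, 1.0)  # Wiener increment
        x = x + drift + diffusion
        path.append(x)
    return path


rng = random.Random(7)  # fixed seed for reproducibility
# Made-up values: start far from the long-term mean and watch the reversion.
path = euler_maruyama_ou(x0=5.0, mu=1.0, theta=1.0, sigma=0.5,
                         dt=0.001, n_steps=5000, rng=rng)
print(path[0], path[1000], path[-1])
```

With σ = 0 the scheme reduces to a deterministic exponential approach to μ, which is a convenient sanity check on the drift term.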

Statistical Properties and Interpretation

The OU process exhibits several important statistical properties that make it valuable for evolutionary modeling. Conditioned on an initial value X(0) = x₀, the expected trait value at time t is:

E[X(t) | X(0) = x₀] = x₀e^(-θt) + μ(1 - e^(-θt)) [2]

This expectation shows that the process exponentially forgets its initial condition while converging toward the long-term mean μ. The covariance between time points s and t is:

Cov(X(s), X(t)) = σ²/(2θ) (e^(-θ|t-s|) - e^(-θ(t+s))) [2]

For the stationary process (s, t → ∞ with |t-s| fixed), the covariance simplifies to σ²/(2θ)e^(-θ|t-s|), and the variance becomes σ²/(2θ). This bounded variance contrasts with the Brownian motion model, where variance increases without bound over time, making the OU process more biologically realistic for modeling traits under stabilizing selection [2] [8].

Table 1: Key Parameters of the Ornstein-Uhlenbeck Process in Evolutionary Biology

| Parameter | Biological Interpretation | Mathematical Meaning | Expected Range |

|---|---|---|---|

| α | Strength of stabilizing selection | Rate of reversion to optimum | 0 (neutral) to high (strong constraint) |

| θ | Optimal trait value | Stationary mean | Variable depending on trait |

| σ | Rate of stochastic evolution | Diffusion coefficient | > 0 |

| μ | Long-term trait mean | Asymptotic mean | Variable depending on trait |

Implementation Framework for Phylogenetic Comparative Studies

Model Extensions for Complex Evolutionary Scenarios

Recent extensions of the basic OU process have enhanced its applicability to realistic biological scenarios. The multi-optima OU model allows different selective regimes across a phylogeny, with each regime having its own optimal trait value [8]. This approach enables researchers to test hypotheses about adaptation to different environments or ecological niches. For example, one can specify different θ values for different clades or for species occupying different habitats.

Another significant extension incorporates species interactions and migration. The standard OU model assumes species evolve independently, which may be violated when gene flow or ecological interactions affect trait evolution. The interacting species OU model includes migration effects between populations or closely related species, preventing misinterpretation of similarity due to migration as convergent evolution [14]. This model can be formulated as:

dXᵢ(t) = -α(Xᵢ(t) - θᵢ)dt + Σⱼ mᵢⱼ(Xⱼ(t) - Xᵢ(t))dt + σdWᵢ(t)

where mᵢⱼ represents migration rates between species i and j [14].
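
The effect of the migration term can be illustrated with a small simulation. The Python sketch below discretizes the interacting-species equation with the Euler-Maruyama scheme. The discretization, the function name, and the two-species parameter values are illustrative assumptions and are not taken from [14].

```python
import math
import random


def simulate_interacting_ou(x0, optima, alpha, migration, sigma, dt, n_steps, rng):
    """Euler-Maruyama steps for OU traits coupled by migration rates m_ij."""
    x = list(x0)
    n = len(x)
    sqrt_dt = math.sqrt(dt)
    for _ in range(n_steps):
        new_x = []
        for i in range(n):
            selection = -alpha * (x[i] - optima[i])
            gene_flow = sum(migration[i][j] * (x[j] - x[i]) for j in range(n))
            noise = sigma * sqrt_dt * rng.gauss(0.0, 1.0)
            new_x.append(x[i] + (selection + gene_flow) * dt + noise)
        x = new_x
    return x


rng = random.Random(1)  # fixed seed for reproducibility
optima = [0.0, 4.0]                     # made-up optima for two species
no_flow = [[0.0, 0.0], [0.0, 0.0]]
with_flow = [[0.0, 0.5], [0.5, 0.0]]    # symmetric migration, made-up rate
for m in (no_flow, with_flow):
    print(simulate_interacting_ou([0.0, 0.0], optima, 1.0, m, 0.0, 0.01, 5000, rng))
```

With noise switched off (σ = 0), migration pulls the two equilibrium trait values toward each other and away from their optima. This is the kind of similarity that could be mistaken for convergent evolution if migration were ignored.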

For gene expression evolution, an extended OU model incorporates within-species variation:

dXᵢ(t) = -α(Xᵢ(t) - θᵢ)dt + σdWᵢ(t) + εᵢ

where εᵢ represents non-evolutionary variation due to environmental, technical, or individual genetic factors [19]. This extension is crucial because failing to account for within-species variation can lead to misinterpreting non-evolutionary variation as strong stabilizing selection [19].

Parameter Estimation and Model Selection Protocols

Implementing OU processes in phylogenetic comparative studies involves several methodological steps:

Data Preparation Protocol:

- Phylogenetic Tree: Obtain a time-calibrated phylogenetic tree with branch lengths proportional to time

- Trait Data: Collect species-level trait measurements, ideally with multiple observations per species to estimate within-species variance

- Selective Regimes: Define hypothesized selective regimes based on a priori biological knowledge (e.g., habitat, diet, morphology)

Parameter Estimation Procedure:

- Likelihood Calculation: Compute the multivariate normal likelihood for the observed trait data given the phylogenetic tree and OU parameters

- Numerical Optimization: Use optimization algorithms (e.g., Nelder-Mead, BFGS) to find parameter values that maximize the likelihood

- Uncertainty Quantification: Calculate confidence intervals using profile likelihood or bootstrap methods

Model Selection Workflow:

- Define Candidate Models: Specify biologically plausible models (Brownian motion, single-optimum OU, multi-optima OU)

- Likelihood Comparison: Calculate likelihoods for all candidate models

- Statistical Testing: Use likelihood ratio tests for nested models or information criteria (AIC, AICc, BIC) for non-nested models

- Model Adequacy Check: Simulate data from fitted models and compare empirical patterns with simulated data [8]
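
The comparison steps of this workflow can be sketched in Python once each candidate model has been fitted. The log-likelihoods, parameter counts, and species number below are made-up placeholders, and the function names are illustrative. The likelihood ratio p-value uses the chi-square distribution with one degree of freedom, which applies to nested models that differ by a single parameter.

```python
import math


def aicc(log_lik, k, n):
    """Small-sample corrected AIC; k = free parameters, n = number of species."""
    aic = 2.0 * k - 2.0 * log_lik
    return aic + (2.0 * k * (k + 1)) / (n - k - 1)


def lrt_p_value_1df(log_lik_null, log_lik_alt):
    """Likelihood ratio test p-value for nested models differing by one parameter."""
    stat = max(0.0, 2.0 * (log_lik_alt - log_lik_null))
    # Chi-square survival function with 1 df: P(Z^2 > stat) = erfc(sqrt(stat/2)).
    return math.erfc(math.sqrt(stat / 2.0))


def akaike_weights(scores):
    """Relative support for each model given its AICc score."""
    best = min(scores.values())
    rel = {name: math.exp(-0.5 * (s - best)) for name, s in scores.items()}
    total = sum(rel.values())
    return {name: r / total for name, r in rel.items()}


# Hypothetical fits for 30 species: (maximized log-likelihood, parameter count).
n_species = 30
fits = {"BM": (-42.1, 2), "OU1": (-40.3, 3), "OUM": (-37.9, 5)}
scores = {name: aicc(ll, k, n_species) for name, (ll, k) in fits.items()}
weights = akaike_weights(scores)
for name in fits:
    print(name, round(scores[name], 2), round(weights[name], 3))
print("LRT p-value, BM vs OU1:", lrt_p_value_1df(-42.1, -40.3))
```

Because AICc penalizes each extra parameter more heavily when n is small, it can rank the models differently from the likelihood ratio test.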

Table 2: Software Packages for OU Model Implementation

| Software/Package | Key Features | OU Model Variants | Reference |

|---|---|---|---|

| geiger | Comparative analysis of evolutionary radiations | Basic OU, BM | [8] |

| ouch | OU models with possibly complex, regime-based selection | Multi-optima OU | [8] |

| OUwie | OU models incorporating multiple selective regimes | OUM, OUMV, OUMA | [8] |

| phyloseq | Microbiome data analysis | Extended OU with ecological data | [26] |

| ggtree | Phylogenetic tree visualization | Integration with OU analysis | [26] |

Experimental Design and Workflow

The following diagram illustrates the complete workflow for phylogenetic comparative analysis using OU processes: