Testing Assumptions of Phylogenetic Independent Contrasts: An Essential Guide for Robust Evolutionary Analysis


Abstract

This article provides a comprehensive guide for researchers and scientists on the critical practice of testing assumptions for Phylogenetic Independent Contrasts (PIC). PIC is a foundational method for accounting for phylogenetic non-independence in comparative biology, but its valid application hinges on verifying key assumptions about phylogenetic accuracy and evolutionary models. We cover the foundational logic of why phylogenetic non-independence invalidates standard statistical tests, detail the methodological steps for calculating contrasts and diagnosing assumptions, troubleshoot common pitfalls and optimization strategies, and validate findings through comparison with alternative methods like PGLS. This guide emphasizes that rigorous assumption testing is not optional but essential for producing reliable, interpretable, and reproducible results in evolutionary biology and biomedical research.

Why Independence Matters: The Phylogenetic Non-Independence Problem in Comparative Biology

The Problem of Phylogenetic Non-Independence and Inflated Type I Error

Frequently Asked Questions

1. What is phylogenetic non-independence, and why is it a problem for statistical analysis?

Phylogenetic non-independence refers to the phenomenon where species or populations sharing a recent common ancestor are more similar to each other than they are to more distantly related taxa due to their shared evolutionary history [1]. This is a problem because most standard statistical tests, like ordinary least squares regression, assume that all data points are independent. When this assumption is violated—as it is with phylogenetic data—it can lead to pseudo-replication, misleading error rates, and an inflated chance of finding a significant relationship where none exists (an inflated Type I error rate) [1] [2].

2. How does phylogenetic non-independence lead to an inflated Type I error rate?

Type I error is the incorrect rejection of a true null hypothesis (a false positive). Closely related species often have similar trait values, not because of a direct evolutionary relationship between the traits, but simply because they have inherited them from a common ancestor [3]. A statistical test that treats these species as independent data points will effectively count the same evolutionary signal multiple times. This reduces the effective sample size of the analysis and can create a spurious, statistically significant correlation between traits [3] [4]. One simulation demonstrated that phylogeny can easily induce a highly significant (p < 2.2e-16)—but entirely spurious—relationship between two uncorrelated traits [3].
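The sketch below shows how shared ancestry alone can produce "significant" correlations. It simulates two traits that evolve independently under Brownian motion on a hypothetical tree with two deep clades, then tests their correlation as if the 20 tips were independent. The tree shape, branch lengths, seed, and function names are illustrative assumptions, and the critical t value is hard-coded for 20 tips.

```python
import math
import random

def simulate_two_clade_tips(n_per_clade, stem, tip, rng):
    """One Brownian-motion trait (rate 1) on a hypothetical two-clade tree.

    The root splits into two clades, each joined to the root by a branch of
    length `stem`; every species hangs from its clade ancestor by a branch of
    length `tip`. Returns the tip values, clade by clade.
    """
    values = []
    for _ in range(2):
        ancestor = rng.gauss(0.0, math.sqrt(stem))        # change along the stem
        for _ in range(n_per_clade):
            values.append(ancestor + rng.gauss(0.0, math.sqrt(tip)))
    return values

def pearson_r(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def naive_rejection_rate(n_reps=500, stem=1.0, tip=0.01, seed=1):
    """Share of replicates in which treating tips as independent 'detects' a
    correlation at alpha = 0.05, although the two traits evolve independently."""
    rng = random.Random(seed)
    n_per_clade = 10          # 20 tips in total
    t_crit = 2.101            # two-sided 5% critical t for df = 20 - 2 = 18
    n = 2 * n_per_clade
    hits = 0
    for _ in range(n_reps):
        x = simulate_two_clade_tips(n_per_clade, stem, tip, rng)
        y = simulate_two_clade_tips(n_per_clade, stem, tip, rng)  # independent of x
        r = pearson_r(x, y)
        t = r * math.sqrt((n - 2) / (1 - r * r))
        hits += abs(t) > t_crit
    return hits / n_reps

print(naive_rejection_rate())  # a correct test would reject about 5% of the time
```

Because most of the variation sits on the two long stem branches, each simulated dataset behaves like two data points repeated ten times, which is exactly the pseudo-replication described above.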

3. What does it mean if a correlation is significant in the raw data but not among the Phylogenetically Independent Contrasts (PICs)?

If you observe a significant correlation using raw species data but find no correlation between the PICs, the most likely interpretation is that the correlation in the raw data is primarily an effect of phylogeny, not a true evolutionary relationship [4]. The PIC method has successfully removed the phylogenetic autocorrelation, revealing that there is no underlying relationship between the two traits once shared ancestry is accounted for.

4. Are predictive equations from regression models sufficient for predicting trait values in a phylogenetic context?

No. Using predictive equations derived from ordinary least squares (OLS) or phylogenetic generalized least squares (PGLS) is common but suboptimal. A 2025 study demonstrated that phylogenetically informed predictions, which directly incorporate the phylogenetic relationship of the target species, outperform predictive equations [2]. In simulations, phylogenetically informed predictions showed a four- to five-fold improvement in performance (measured by the variance of prediction errors) compared to OLS or PGLS predictive equations. Performance was so much better that predictions from weakly correlated traits using phylogenetically informed methods were more accurate than predictions from strongly correlated traits using standard equations [2].

5. What methods, besides PICs, can control for phylogenetic non-independence?

Several methods have been developed to address this issue, each with its own assumptions and applications [1]:

- Generalized Least Squares (GLS): Uses a phylogenetic variance-covariance matrix to weight the data in the regression model.

- Phylogenetic Mixed Models: A framework that incorporates phylogeny as a random effect, similar to the "animal model" in quantitative genetics.

- Phylogenetic Autoregression: Focuses on removing phylogenetic effects to examine patterns in the residual trait variation.

- "Mixed Models": These are particularly powerful as they can incorporate the effects of both shared common ancestry and gene flow between populations [1].

Troubleshooting Guides

Guide 1: Diagnosing and Correcting for Phylogenetic Non-Independence

Problem: My analysis of trait correlations across species shows a significant result, but I am concerned it might be a false positive driven by phylogenetic relationships.

Investigation & Solution:

- Perform a Preliminary Check: Begin by visualizing the distribution of your traits on the phylogeny. A phylomorphospace plot can quickly show if closely related species cluster together in trait space, which is a visual indicator of phylogenetic signal [3].

- Calculate Phylogenetically Independent Contrasts (PICs): This method transforms the raw trait data at the tips of the tree into independent comparisons (contrasts) at each node in the phylogeny [1] [3]. The following workflow outlines the core steps for implementing and interpreting PICs.

- Choose an Appropriate Model: If PICs are not suitable for your question (e.g., if you need to include fixed effects or account for gene flow), consider alternative methods. Phylogenetic Generalized Least Squares (PGLS) and Phylogenetic Mixed Models are powerful, general-purpose alternatives that are widely used [1] [2].

Guide 2: Implementing Phylogenetically Informed Predictions

Problem: I need to impute missing trait values for my dataset or predict traits for extinct species, and I want to use the most accurate method available.

Solution: Move beyond simple predictive equations and use a full phylogenetically informed prediction framework.

Experimental Protocol for Phylogenetically Informed Prediction

This protocol is based on findings that this method drastically outperforms predictive equations from OLS and PGLS [2].

- Data and Model Setup: Begin with a phylogenetic tree and a dataset of species with known values for the trait you want to predict (the dependent variable) and your predictor traits (independent variables). Fit a phylogenetic regression model (e.g., PGLS or a mixed model).

- Incorporate Phylogeny for Prediction: To predict a value for a new species (with or without known predictor traits), its phylogenetic position is explicitly incorporated into the model. The model uses the phylogenetic covariance to inform the prediction based on the traits and phylogenetic proximity of known species.

- Generate Prediction Intervals: A key advantage of this method is that it generates prediction intervals that logically increase with increasing phylogenetic distance from species with known data. This accurately reflects the greater uncertainty in predicting traits for more distantly related or isolated taxa [2].
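A minimal sketch of the idea, assuming an intercept-only Brownian motion model (no predictor traits) so that the arithmetic stays visible: the prediction is the GLS mean plus a correction borrowed from measured relatives through their shared branch length, and the conditional variance shrinks only when the target shares history with measured species. The tree, trait values, and function names are hypothetical; a full phylogenetically informed prediction would add the regression on predictor traits, and the variance shown ignores the extra uncertainty from estimating the mean.

```python
import math

def solve(a, b):
    """Solve a @ z = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]  # augmented copy
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    z = [0.0] * n
    for r in range(n - 1, -1, -1):
        z[r] = (m[r][n] - sum(m[r][c] * z[c] for c in range(r + 1, n))) / m[r][r]
    return z

def phylo_predict(V, y, c, v_target):
    """Intercept-only phylogenetically informed prediction under Brownian motion.

    V        : shared-branch-length (BM covariance) matrix of measured species
    y        : their trait values
    c        : shared branch length between the target and each measured species
    v_target : root-to-tip length of the target species
    Returns (prediction, conditional variance in units of the BM rate sigma^2).
    """
    w = solve(V, [1.0] * len(y))                        # V^-1 1
    mu = sum(wi * yi for wi, yi in zip(w, y)) / sum(w)  # GLS estimate of the mean
    a = solve(V, c)                                     # V^-1 c
    pred = mu + sum(ai * (yi - mu) for ai, yi in zip(a, y))
    var = v_target - sum(ai * ci for ai, ci in zip(a, c))
    return pred, var

# Hypothetical tree ((A:1,B:1):1,C:2); only A and C are measured.
V_AC = [[2.0, 0.0], [0.0, 2.0]]
y_AC = [4.0, 0.0]
pred_b, var_b = phylo_predict(V_AC, y_AC, c=[1.0, 0.0], v_target=2.0)  # B: sister of A
pred_d, var_d = phylo_predict(V_AC, y_AC, c=[0.0, 0.0], v_target=2.0)  # D: hypothetical, joins at the root
sigma2 = 1.0  # assumed BM rate used to scale the interval
for label, p, v in (("B", pred_b, var_b), ("D", pred_d, var_d)):
    half = 1.96 * math.sqrt(sigma2 * v)
    print(f"{label}: {p:.2f} ± {half:.2f}")
```

Species B, which shares history with the measured species A, receives a prediction pulled toward A and a narrower interval; the unattached species D falls back to the mean with the widest interval.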

Performance Comparison of Prediction Methods (Simulation Results)

The table below summarizes the quantitative performance of different prediction methods from a large simulation study on ultrametric trees, measured by the variance of prediction errors (lower is better) [2].

| Prediction Method | Weak Correlation (r = 0.25) | Moderate Correlation (r = 0.50) | Strong Correlation (r = 0.75) |
|---|---|---|---|
| Phylogenetically Informed Prediction | 0.007 | 0.004 | 0.002 |
| OLS Predictive Equation | 0.030 | 0.017 | 0.014 |
| PGLS Predictive Equation | 0.033 | 0.018 | 0.015 |

The Scientist's Toolkit

Research Reagent Solutions

| Item | Function in Analysis |
|---|---|
| Ultrametric Phylogenetic Tree | The fundamental input for most comparative methods. It represents the evolutionary relationships and relative divergence times of the species in your study [2]. |
| Phylogenetic Variance-Covariance Matrix | A matrix derived from the phylogeny that quantifies the expected shared evolutionary history between all pairs of species. It is used to weight analyses in GLS and PGLS [1]. |
| Comparative Method Software (R packages: ape, phytools, nlme) | Software environments that provide functions for calculating PICs, fitting PGLS models, running phylogenetic mixed models, and simulating trait evolution [3]. |
| Bivariate Brownian Motion Model | A common null model for simulating the evolution of continuous traits along a phylogeny. It is used for power analysis, model testing, and method validation [2]. |

# Frequently Asked Questions (FAQs)

Q1: What is the core statistical problem caused by evolutionary history in comparative studies? Evolutionary history creates statistical dependence among species data because species share common ancestors. Closely related species are more similar in their traits than distantly related species due to their shared phylogenetic heritage. This non-independence of data points violates the fundamental assumption of independence in standard statistical tests, such as ordinary least squares (OLS) regression, leading to pseudo-replication, misleading error rates, and spurious results [2].

Q2: How do Phylogenetic Independent Contrasts (PIC) resolve this issue? PIC resolves the issue of non-independence by transforming the original trait data into a set of independent comparisons, or "contrasts." Each contrast is calculated at a node in the phylogenetic tree and represents the standardized difference in trait values between two lineages that diverged from a common ancestor. Provided the tree and the Brownian motion model are correct, these contrasts are statistically independent and can be used in standard parametric statistical tests that require data independence [2].

Q3: My PIC analysis is yielding unexpected results. What are the key assumptions I should test? The key assumptions of a PIC analysis are:

- Brownian Motion Evolution: PIC assumes traits evolve according to a Brownian motion model, in which the expected change in a trait is zero and the variance of change is proportional to time.

- Accurate and Fully Resolved Phylogeny: The analysis assumes the provided phylogenetic tree (including branch lengths) is correct. Polytomies (unresolved nodes with more than two descendants) can be problematic.

- Adequate Model Fit: The chosen evolutionary model should provide a good fit to the actual trait data. You can test this by checking whether the standardized contrasts are independent of their standard deviations and whether they show a normal distribution [2].
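For the normality check, a QQ plot of the standardized contrasts against normal quantiles is the usual visual tool. The sketch below reduces that plot to one number, the correlation between sorted contrasts and standard-normal quantiles (a probability-plot correlation); values near 1 are consistent with normality. The Blom plotting positions and the example contrasts are assumptions for illustration, and this is a descriptive summary, not a formal test with a p-value.

```python
import math
from statistics import NormalDist, fmean

def qq_correlation(contrasts):
    """Probability-plot correlation between sorted standardized contrasts and
    standard-normal quantiles; values close to 1 are consistent with normality."""
    n = len(contrasts)
    observed = sorted(contrasts)
    # Blom plotting positions (i - 3/8) / (n + 1/4), one common convention
    expected = [NormalDist().inv_cdf((i + 1 - 0.375) / (n + 0.25)) for i in range(n)]
    mo, me = fmean(observed), fmean(expected)
    sxy = sum((o - mo) * (e - me) for o, e in zip(observed, expected))
    sxx = sum((o - mo) ** 2 for o in observed)
    syy = sum((e - me) ** 2 for e in expected)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical standardized contrasts
print(qq_correlation([-1.4, -1.6, 0.3, 0.9, -0.2, 1.1, 0.5, -0.7]))
```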

Q4: What is the practical performance difference between phylogenetically informed prediction and standard predictive equations? Simulation studies show that phylogenetically informed predictions significantly outperform predictive equations from OLS and Phylogenetic Generalized Least Squares (PGLS). The performance improvement can be substantial: the variance in prediction errors for phylogenetically informed predictions is about 4.3 to 4.7 times smaller than for OLS or PGLS equations when traits are weakly correlated (r = 0.25), and about 7 to 7.5 times smaller when they are strongly correlated (r = 0.75). This means phylogenetically informed predictions are consistently more accurate. In fact, predictions using weakly correlated traits (r = 0.25) via phylogenetic methods can be twice as accurate as predictions from strongly correlated traits (r = 0.75) using standard predictive equations [2].

Table 1: Comparison of Prediction Method Performance on Ultrametric Trees

| Prediction Method | Correlation Strength (r) | Variance of Prediction Errors (σ²) | Relative Performance vs. PIP |
|---|---|---|---|
| Phylogenetically Informed Prediction (PIP) | 0.25 | 0.007 | Baseline |
| OLS Predictive Equations | 0.25 | 0.030 | 4.3x worse |
| PGLS Predictive Equations | 0.25 | 0.033 | 4.7x worse |
| Phylogenetically Informed Prediction (PIP) | 0.75 | 0.002 | Baseline |
| OLS Predictive Equations | 0.75 | 0.014 | 7x worse |
| PGLS Predictive Equations | 0.75 | 0.015 | 7.5x worse |
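The "Relative Performance" column is simply the ratio of each method's error variance to the PIP error variance at the same correlation. The short sketch below recomputes those ratios from the values in Table 1, plus the comparison behind the "twice as accurate" statement (PIP with r = 0.25 against the OLS equation with r = 0.75).

```python
# Error variances transcribed from Table 1 (ultrametric trees) [2]
variance = {
    ("PIP", 0.25): 0.007, ("OLS", 0.25): 0.030, ("PGLS", 0.25): 0.033,
    ("PIP", 0.75): 0.002, ("OLS", 0.75): 0.014, ("PGLS", 0.75): 0.015,
}

def fold_worse(method, r):
    """Ratio of a method's error variance to that of PIP at the same correlation."""
    return variance[(method, r)] / variance[("PIP", r)]

for r in (0.25, 0.75):
    for method in ("OLS", "PGLS"):
        print(f"{method}, r = {r}: {fold_worse(method, r):.1f}x the PIP error variance")

# Weakly correlated traits with PIP versus strongly correlated traits with OLS
print(variance[("OLS", 0.75)] / variance[("PIP", 0.25)])
```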

# Troubleshooting Guides

## Issue 1: Diagnosing and Handling Violations of the Brownian Motion Assumption

Problem: The standardized contrasts from your PIC analysis are correlated with their standard deviations or the data does not fit the Brownian motion model.

Solution - A Step-by-Step Diagnostic Protocol:

- Calculate Standardized Independent Contrasts: Use your preferred software (e.g., R packages like ape, geiger, or phytools) to compute the contrasts.

- Create a Diagnostic Plot: Plot the absolute values of the standardized contrasts against their expected standard deviations (which are the square roots of the sums of the branch lengths leading to the two species being compared).

- Interpret the Plot:

- No Pattern: A scatter plot with no discernible trend is consistent with the Brownian motion model being adequate.

- Significant Trend: A relationship in either direction indicates that the branch lengths do not correctly scale the expected variance of change under Brownian motion. Consider transforming the branch lengths or fitting alternative models such as the Ornstein-Uhlenbeck (OU) model, which can account for stabilizing selection.

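- Model Comparison: Fit your data using alternative evolutionary models (e.g., Brownian motion, OU, Early-Burst). Use model selection criteria like the Akaike Information Criterion (AIC) to identify the best-fitting model for your data.

- Re-run Analysis: Repeat the analysis under the best-fitting model from the model-comparison step. Because PIC itself assumes Brownian motion on the supplied branch lengths, this usually means computing contrasts on a tree whose branch lengths have been transformed according to the fitted model, or switching to a method such as PGLS that fits the model directly.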
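Once each model has been fitted (tools such as geiger's fitContinuous() in R report the maximized log-likelihood for models like BM, OU, and EB), the AIC comparison itself is simple arithmetic: AIC = 2k - 2 ln L, and Akaike weights express relative support. The log-likelihoods and parameter counts below are hypothetical placeholders, not results from any dataset; parameter counts depend on how each model is parameterized.

```python
import math

def aic(log_lik, n_params):
    """Akaike Information Criterion: AIC = 2k - 2 ln L."""
    return 2 * n_params - 2 * log_lik

def akaike_weights(aics):
    """Relative support: w_i = exp(-delta_i / 2) / sum_j exp(-delta_j / 2)."""
    best = min(aics.values())
    rel = {m: math.exp(-(a - best) / 2) for m, a in aics.items()}
    total = sum(rel.values())
    return {m: v / total for m, v in rel.items()}

# Hypothetical (log-likelihood, number of parameters) pairs, for illustration only
fits = {"BM": (-10.0, 2), "OU": (-8.0, 3), "EB": (-9.5, 3)}
aics = {m: aic(ll, k) for m, (ll, k) in fits.items()}
print(aics)
print(akaike_weights(aics))
```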

## Issue 2: Implementing Phylogenetically Informed Prediction vs. Predictive Equations

Problem: Uncertainty about the correct method to predict unknown trait values, leading to inaccurate inferences.

Solution - Methodology for Phylogenetically Informed Prediction:

This method explicitly uses the phylogenetic relationship between species to predict missing values, unlike predictive equations which only use regression coefficients [2].

- Data and Tree Preparation: Assemble your dataset of known trait values and a fully resolved phylogenetic tree with branch lengths for all taxa, including those with missing data.

- Model Specification: Use a phylogenetic comparative method such as Phylogenetic Generalized Least Squares (PGLS) to model the relationship between traits. The phylogenetic variance-covariance matrix is an integral part of the model.

- Prediction Execution: Use software functions designed for phylogenetic prediction, which use the known trait data and the phylogenetic relationships to impute the missing values for the target taxa. Check what a given function actually does: a generic predict() method for a fitted model (e.g., in R's nlme or caper packages) may return only the regression-equation prediction and ignore the target's phylogenetic covariance with measured species.

- Validation: Where possible, use a cross-validation approach to assess the prediction accuracy of your model by intentionally removing known data points and predicting them.

Table 2: Essential Research Reagent Solutions for Phylogenetic Contrast Analysis

| Item | Function | Example/Tool |
|---|---|---|
| Phylogenetic Tree | Represents the evolutionary relationships and branch lengths between taxa, serving as the backbone for calculating contrasts. | Time-calibrated molecular phylogeny from a database (e.g., TimeTree). |
| Trait Dataset | Contains the phenotypic or ecological measurements for the species in the phylogeny. May include missing values to be imputed. | Species-specific data on morphology, physiology, or behavior. |
| Statistical Software | Provides the computational environment and specialized packages for performing phylogenetic comparative analyses. | R environment with packages ape, nlme, caper, phytools. |
| Evolutionary Model | A mathematical description of the trait evolution process along the phylogeny, used to compute the contrasts. | Brownian Motion, Ornstein-Uhlenbeck, Early-Burst. |

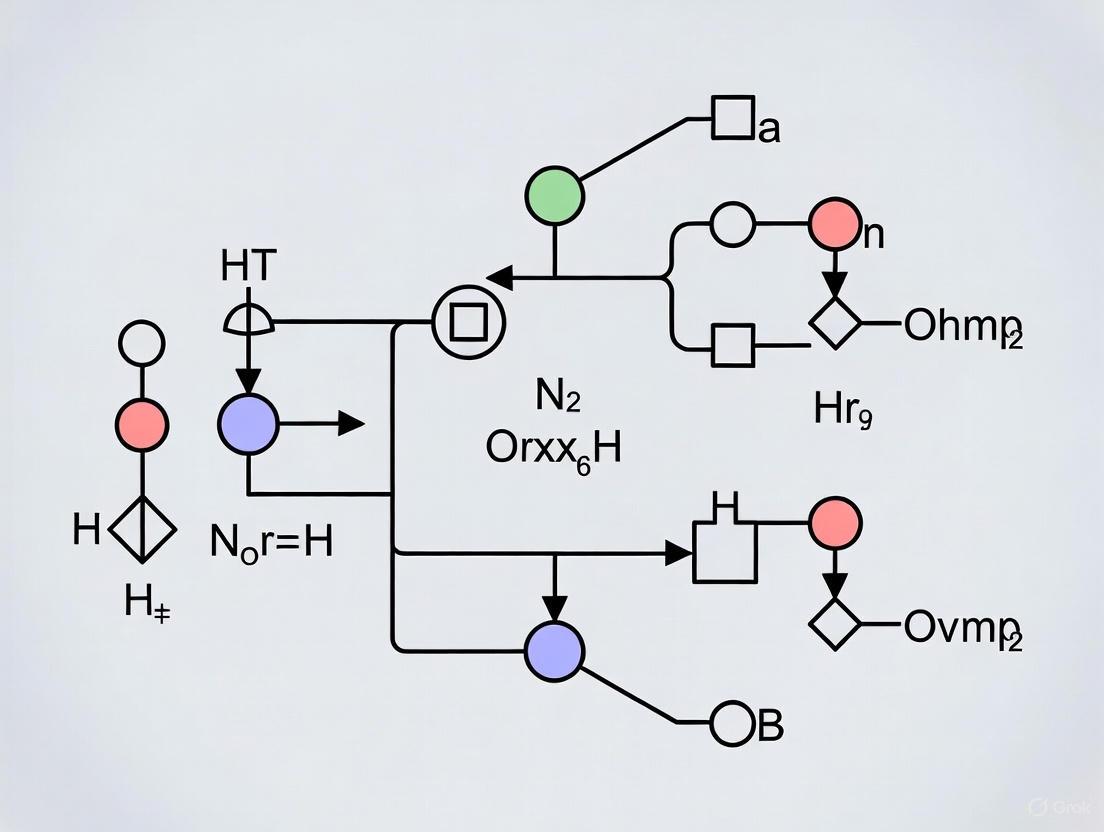


### Phylogenetic Independent Contrasts Workflow

### Prediction Methods Comparison

FAQs: Core Concepts of Independent Contrasts

Q1: What fundamental statistical problem do Phylogenetic Independent Contrasts (PICs) solve? PICs address the problem of phylogenetic non-independence. Standard statistical tests like ANOVA and regression assume that each data point is an independent sample [5] [6]. However, species are related through a branching phylogenetic tree; two closely related species (e.g., mice and rats) are likely to have similar traits because they inherited them from a recent common ancestor, not because of independent evolution [5] [6] [7]. Treating them as independent creates pseudoreplication and inflates the risk of Type I errors (false positives) [5] [6] [7]. PICs correct for this by transforming raw species trait data into a set of independent comparisons [8] [9].

Q2: What is the core logical idea behind the PIC algorithm? The core logic is to use a "pruning algorithm" to calculate evolutionary differences across each node in the phylogeny [8]. The algorithm starts at the tips of the tree and works inward toward the root, iteratively doing the following [8]:

- It finds two tips that are sister species and have a common ancestor.

- It calculates a raw contrast—the difference in their trait values [8].

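- This raw contrast is then standardized by dividing it by its expected standard deviation under a Brownian motion model of evolution, which accounts for the branch lengths leading to the two species [8].

- The two tips are then replaced by their common ancestor, whose trait value is estimated as a branch-length-weighted average of the two tips and whose own branch is lengthened to reflect the uncertainty in that estimate. The procedure repeats until the root is reached.

Under Brownian motion, the resulting standardized contrasts are statistically independent and identically distributed, making them suitable for standard statistical analyses like correlation or regression [8].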
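As a worked example, take a hypothetical three-species tree ((A:1,B:1):1,C:2) (Newick notation, branch lengths after the colons) with made-up trait values $x_A = 1$, $x_B = 3$, and $x_C = 5$. Applying Felsenstein's pruning steps, including the weighted ancestral value and the branch-length correction, gives two contrasts, one fewer than the number of species:

$$
\begin{aligned}
s_{AB} &= \frac{x_A - x_B}{\sqrt{v_A + v_B}} = \frac{1 - 3}{\sqrt{1 + 1}} = -\sqrt{2} \approx -1.414 \\
x_{AB} &= \frac{x_A / v_A + x_B / v_B}{1/v_A + 1/v_B} = \frac{1 + 3}{2} = 2 \\
v'_{AB} &= v_{AB} + \frac{v_A v_B}{v_A + v_B} = 1 + \frac{1}{2} = 1.5 \\
s_{\text{root}} &= \frac{x_{AB} - x_C}{\sqrt{v'_{AB} + v_C}} = \frac{2 - 5}{\sqrt{1.5 + 2}} \approx -1.604
\end{aligned}
$$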

Q3: What are the key assumptions of the PIC method, and why is testing them critical? The PIC method relies on three major assumptions, and failing to test them is a primary source of error in comparative studies [7].

- Accurate Phylogeny: The provided phylogenetic tree's topology (branching order) is correct [7].

- Correct Branch Lengths: The branch lengths in the tree are proportional to time or the expected amount of evolutionary change [7].

- Brownian Motion Evolution: The trait(s) under study have evolved according to a Brownian motion model, where variance accumulates in direct proportion to time [8] [7].

Troubleshooting Guides: Common Issues & Solutions

Problem: Significant correlation between the absolute value of standardized contrasts and their standard deviations.

- Diagnosis: This suggests a problem with the branch lengths or the evolutionary model [7]. The Brownian motion assumption may be violated.

- Solution:

- Check the diagnostic plots provided in software packages like caper or ape [7].

- Consider transforming the branch lengths (e.g., using a logarithmic transformation) or the trait data.

- Explore alternative models of evolution, such as the Ornstein-Uhlenbeck model, which can account for stabilizing selection [7].

Problem: The results of a PIC analysis are non-significant when non-phylogenetic methods find a strong signal.

- Diagnosis: This is an expected and correct outcome when a correlation is driven entirely by phylogenetic pseudoreplication. The strong signal in the raw data comes from a few major evolutionary splits and is not evidence of a consistent evolutionary relationship across the tree [9].

- Solution: Provided the PIC assumptions hold, trust the PIC result. Phylogenetic methods also guard against Simpson's paradox, in which a relationship is negative within each clade but the pooled, non-phylogenetic analysis shows a positive trend [9].

Problem: How do I handle a phylogeny that is not fully resolved (contains polytomies)?

- Diagnosis: A polytomy (a node with more than two descendant branches) represents uncertainty about the exact branching order. The original PIC algorithm requires a fully bifurcating tree [10].

- Solution: Use methods and software that can handle polytomies by calculating a set of possible independent contrasts or by using an algorithm to assign arbitrary branch lengths to resolve the polytomy [10].
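The second option can be sketched as follows: each node with more than two children is split into a ladder of bifurcations joined by new branches of a chosen length. This resembles what ape's multi2di() does in R (it inserts zero-length branches and resolves randomly by default); the deterministic ladder, the `Node` class, and the function names here are simplifying assumptions. Zero-length internal branches are workable in the contrast algorithm because each internal node's effective branch length also receives the correction term from its descendants.

```python
from dataclasses import dataclass, field
from typing import Iterator, List, Optional

@dataclass
class Node:
    length: float = 0.0                  # branch length above this node
    name: Optional[str] = None           # tip label; None for internal nodes
    children: List["Node"] = field(default_factory=list)

def resolve_polytomies(node: Node, new_length: float = 0.0) -> Node:
    """Return a copy of the tree in which each node with k > 2 children is split
    into a ladder of k - 1 bifurcations joined by branches of `new_length`."""
    kids = [resolve_polytomies(child, new_length) for child in node.children]
    while len(kids) > 2:
        right = kids.pop()
        left = kids.pop()
        kids.append(Node(new_length, None, [left, right]))  # new internal node
    return Node(node.length, node.name, kids)

def walk(node: Node) -> Iterator[Node]:
    """Pre-order traversal of all nodes."""
    yield node
    for child in node.children:
        yield from walk(child)

def tip_labels(node: Node) -> List[str]:
    return [n.name for n in walk(node) if not n.children]

# Hypothetical star-shaped polytomy of four species
star = Node(0.0, children=[Node(1.0, t) for t in "ABCD"])
resolved = resolve_polytomies(star)
print(tip_labels(resolved))
print(all(len(n.children) in (0, 2) for n in walk(resolved)))
```

Because any resolution is arbitrary, it is worth checking whether conclusions change across alternative resolutions.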

Diagnostic Methods for Testing PIC Assumptions

The table below summarizes key diagnostic methods for testing the major assumptions of PICs.

| Assumption | Diagnostic Test | Interpretation | Protocol/Workflow |
|---|---|---|---|
| Accurate Topology & Branch Lengths | Examine residual plots for heteroscedasticity [7]. | A fan-shaped pattern in residuals indicates a problem with branch lengths or the evolutionary model. | 1. Calculate PICs. 2. Run a regression through the origin. 3. Plot standardized residuals against fitted values. |
| Brownian Motion Evolution | Plot standardized contrasts against their node heights [7]. | A significant relationship suggests the Brownian motion model is inadequate. | 1. Calculate PICs and associated node heights. 2. Plot contrasts vs. node heights. 3. Test for a significant correlation. |
| Proper Standardization | Plot the absolute value of standardized contrasts against their standard deviations [7]. | No significant relationship should exist. A significant correlation indicates improper standardization. | 1. Calculate PICs. 2. Plot absolute values of contrasts vs. their standard deviations. 3. Test for a significant correlation. |
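For the first row, the regression of one trait's contrasts on another's is fitted through the origin, because the sign of each contrast depends only on the arbitrary order in which the two descendants are subtracted. The sketch below returns the slope, fitted values, and residuals scaled by the residual standard error (a simple scaling, not leverage-adjusted studentized residuals), which are the quantities you would plot to look for a fan shape. The contrast values and function name are hypothetical.

```python
import math

def regress_through_origin(x, y):
    """Regress y contrasts on x contrasts with no intercept.

    Returns (slope, fitted values, residuals scaled by the residual standard error).
    """
    sxx = sum(xi * xi for xi in x)
    slope = sum(xi * yi for xi, yi in zip(x, y)) / sxx
    fitted = [slope * xi for xi in x]
    resid = [yi - fi for yi, fi in zip(y, fitted)]
    s = math.sqrt(sum(r * r for r in resid) / (len(resid) - 1))  # one parameter fitted
    return slope, fitted, [r / s for r in resid]

# Hypothetical standardized contrasts for two traits
x_c = [1.0, 2.0, 3.0]
y_c = [2.0, 4.0, 7.0]
slope, fitted, std_resid = regress_through_origin(x_c, y_c)
for f, r in zip(fitted, std_resid):
    print(f"fitted {f:6.2f}  scaled residual {r:6.2f}")
```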

The Scientist's Toolkit: Essential Research Reagents

The following table lists key software solutions essential for conducting a Phylogenetic Independent Contrasts analysis.

| Research Reagent | Function & Explanation |
|---|---|
| R Statistical Environment | The primary platform for implementing phylogenetic comparative methods, including PICs [5] [6]. |
| ape (R package) | A core package for phylogenetic analysis in R. It provides the function pic() to calculate Phylogenetic Independent Contrasts [5] [6] [9]. |
| caper (R package) | An R package that implements PICs and, crucially, provides comprehensive diagnostic functions to test the assumptions of the method, such as plotting contrasts against node heights [7]. |
| phytools (R package) | An extensive R package for phylogenetic comparative biology that offers a wide array of tools for fitting evolutionary models and visualizing phylogenetic data [5] [6]. |

Workflow Diagram: The PIC Algorithm & Diagnostics

The diagram below visualizes the step-by-step workflow for calculating PICs and the subsequent diagnostic checks.

PIC Calculation & Analysis Workflow

Diagnostic Framework for PIC Assumptions

This diagram outlines the logical process for testing the critical assumptions of a PIC analysis.

PIC Diagnostic Checks

Core Principle and Workflow

The Phylogenetic Independent Contrasts (PIC) method is a statistical technique developed by Felsenstein (1985) to account for the non-independence of species data due to shared evolutionary history. Its core principle is to transform raw trait values from related species into independent data points (contrasts) that can be used in standard statistical analyses, thus preventing inflated Type I errors.

The following diagram illustrates the logical workflow and calculations involved in the PIC method.

Experimental Protocol: Calculating PICs

The standard algorithm for calculating PICs follows these methodological steps [8]:

Input Requirements: A phylogenetic tree with branch lengths and trait measurements for all tip species.

Step-by-Step Protocol:

Identify Tips and Ancestor: Find two adjacent tips on the phylogeny (nodes i and j) that share a common ancestor (node k).

Compute Raw Contrast: Calculate the difference in their trait values:

$$c_{ij} = x_i - x_j$$

Under a Brownian motion model of evolution, this raw contrast has an expectation of zero and a variance proportional to $v_i + v_j$ (the sum of their branch lengths from the common ancestor).

Standardize the Contrast: Divide the raw contrast by its expected standard deviation:

$$s_{ij} = \frac{c_{ij}}{\sqrt{v_i + v_j}} = \frac{x_i - x_j}{\sqrt{v_i + v_j}}$$

These standardized contrasts are independent and identically distributed under the Brownian motion model.

Iterate Through the Tree: Replace tips i and j by their ancestor k, with trait value

$$x_k = \frac{x_i / v_i + x_j / v_j}{1/v_i + 1/v_j}$$

and lengthen the branch below k by $v_i v_j / (v_i + v_j)$ to account for the uncertainty in $x_k$. This process is repeated using the calculated values at internal nodes, moving towards the root of the tree. The algorithm is a "pruning algorithm" that trims pairs of sister taxa to create a smaller tree, eventually covering all nodes [8].

Statistical Analysis: The resulting set of standardized contrasts can be used in standard statistical tests (e.g., correlation, regression) that require independent data points.
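The protocol above can be sketched in a few dozen lines. This is a minimal recursive implementation of Felsenstein's pruning algorithm for a fully bifurcating tree, not the code of any particular package; the `Node` class, the tree, and the trait values are hypothetical. It returns the standardized contrast and its expected standard deviation for each internal node, which are also the inputs needed for the diagnostic checks.

```python
import math
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

@dataclass
class Node:
    length: float                                  # branch length above this node
    name: Optional[str] = None                     # tip label; None for internal nodes
    children: List["Node"] = field(default_factory=list)

def pic(node: Node, traits: Dict[str, float],
        out: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Felsenstein-style pruning on a fully bifurcating tree.

    Appends (standardized contrast, expected SD) for every internal node to
    `out`, children before parents. Returns (value at node, effective branch
    length above node). Branch lengths must be positive.
    """
    if not node.children:
        return traits[node.name], node.length
    if len(node.children) != 2:
        raise ValueError("PIC needs a bifurcating tree; resolve polytomies first")
    (x_i, v_i), (x_j, v_j) = (pic(child, traits, out) for child in node.children)
    sd = math.sqrt(v_i + v_j)
    out.append(((x_i - x_j) / sd, sd))                    # standardized contrast
    x_k = (x_i / v_i + x_j / v_j) / (1 / v_i + 1 / v_j)  # weighted ancestral value
    extra = v_i * v_j / (v_i + v_j)                       # uncertainty in x_k
    return x_k, node.length + extra

# Hypothetical tree ((A:1,B:1):1,C:2) with made-up trait values
tree = Node(0.0, children=[
    Node(1.0, children=[Node(1.0, "A"), Node(1.0, "B")]),
    Node(2.0, "C"),
])
contrasts: List[Tuple[float, float]] = []
root_value, _ = pic(tree, {"A": 1.0, "B": 3.0, "C": 5.0}, contrasts)
for s, sd in contrasts:
    print(f"contrast {s:+.3f}  (expected SD {sd:.3f})")
```

A tree with n tips yields n - 1 contrasts. The value computed at the root is the weighted estimate of the root state under Brownian motion, which is why it is not itself a contrast.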

Troubleshooting Common Issues

Researchers often encounter specific issues when applying PICs in their analyses. The following table summarizes common problems and their solutions.

| Problem / Symptom | Likely Cause | Solution / Diagnostic Step |
|---|---|---|
| Significant correlation disappears after PIC application [11]. | The initial correlation was a byproduct of shared ancestry (phylogenetic non-independence), not a true functional relationship. | Interpret the loss of significance as evidence that phylogeny explains the apparent relationship. The PIC result is the correct one. |
| Correlation strength changes significantly with PICs. | Phylogenetic inertia is confounding the trait relationship. | Trust the PIC analysis. The initial correlation was biased, and the PICs give a better estimate of the true evolutionary relationship. |
| Software errors during contrast calculation. | Incorrect tree format, missing branch lengths, or missing trait data for some species. | Ensure the tree is ultrametric (if required), all branch lengths are present and positive, and trait data exists for all tips in the tree. |
| Contrasts are not independent of their standard deviations. | Violation of the Brownian motion (BM) evolutionary model. | Consider alternative evolutionary models (e.g., Ornstein-Uhlenbeck, Early-Burst) that may be more appropriate for your data. |

Frequently Asked Questions (FAQs)

Q1: What does it mean if a significant correlation between two traits disappears after applying PICs?

This is a classic outcome indicating that the original, significant correlation was likely a statistical artifact caused by phylogenetic non-independence [11]. Closely related species tend to have similar trait values, which can create an apparent correlation between traits. PICs remove this phylogenetic effect, and the disappearance of the correlation suggests there is no evidence for a functional relationship between the traits independent of evolutionary history.

Q2: How do I know if the Brownian motion model is appropriate for my data?

A key diagnostic check is to test whether the absolute values of your standardized contrasts are independent of their standard deviations (or the square root of the sum of their branch lengths). If a relationship is found, it indicates a violation of the Brownian motion assumption. Visually inspecting a plot of contrasts against their expected standard deviations can reveal this. In such cases, you may need to use different branch length transformations or consider different evolutionary models.

Q3: My data includes a continuous and a categorical trait. Can I use PICs to test for a correlation?

PICs are designed for continuous traits. To investigate the relationship between a continuous trait and a categorical one (e.g., diet type), you can use the continuous trait to calculate PICs and then use these contrasts in an analysis of variance (ANOVA), testing whether the contrasts differ significantly among the categories of the other trait. Note that the categorical trait must also be mapped onto the tree.

Essential Research Reagents and Tools

The following table lists key software solutions and their primary functions for conducting PIC analysis.

| Tool / Reagent | Primary Function | Key Utility in PIC Analysis |
|---|---|---|
| R with ape package | Statistical computing and phylogenetics. | Provides the core pic() function for calculating phylogenetic independent contrasts. It is the most common and versatile environment for this analysis. |
| phytools R package | Phylogenetic tools and visualization. | Offers a wide range of functions for fitting evolutionary models, visualizing trait data on trees, and conducting comparative analyses that complement PICs [12]. |
| *ggtree R package* | Visualization of phylogenetic trees. | Specializes in annotating and plotting phylogenetic trees with associated data, which is crucial for visualizing the input and output of PIC analyses [13] [14]. |
| *iTOL (Interactive Tree Of Life)* | Online tree visualization and annotation. | A web-based tool for rapidly visualizing and annotating phylogenetic trees, helpful for exploring tree structure and data before formal analysis [15]. |
| Mesquite | Modular system for evolutionary biology. | Provides a graphical user interface for managing phylogenetic data and performing some comparative analyses, which can be useful for preparing data for PIC analysis. |

Executing PIC and Diagnosing Its Core Assumptions: A Step-by-Step Methodology

Phylogenetic Independent Contrasts (PIC) is a foundational statistical technique in evolutionary biology that enables researchers to test hypotheses about correlated trait evolution while accounting for shared evolutionary history among species. The validity of any PIC analysis rests upon three core assumptions derived from its underlying model of evolution. This guide provides experimental protocols and troubleshooting advice to help researchers validate these critical assumptions before drawing biological conclusions from their analyses.

The Three Pillars: Core Assumptions of PIC

The PIC method is built upon a Brownian motion model of trait evolution, which requires these three essential conditions to be met [2]:

- The tree must be known and correctly specified. The phylogenetic hypothesis used must accurately reflect the evolutionary relationships of the taxa in your study.

- Traits must evolve according to a Brownian motion model. Character evolution should resemble a random walk along the branches of the tree.

- The variance of evolutionary change must be proportional to branch length. Longer branches in units of time should accumulate more evolutionary change.

Frequently Asked Questions (FAQs) & Troubleshooting

How do I test if my data fits the Brownian motion model?

Answer: A key diagnostic is to check for a relationship between the absolute value of standardized contrasts and their standard deviations (which are functions of branch length).

Protocol: Diagnostic Regression

- Calculate standardized independent contrasts for your trait(s).

- Plot the absolute values of the standardized contrasts against their standard deviations.

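- Fit an ordinary linear regression (with an intercept) of the absolute contrasts on their standard deviations, or equivalently test their correlation. Do not force this regression through the origin: absolute values are never negative, so a line through the origin tends to show a positive slope even when no trend exists.

- A significant relationship, positive or negative, indicates that the Brownian motion assumption (or the branch lengths) may be inadequate, because under Brownian motion the size of a standardized contrast should not depend on branch length.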
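The diagnostic regression can be sketched as below. It fits an ordinary least-squares line with an intercept (a deliberate choice, since absolute contrasts are always non-negative) and returns the slope with its t statistic on n - 2 degrees of freedom, to be compared against a t table. In R, ape's pic() can return each contrast's expected variance (argument var.contrasts = TRUE), whose square root would serve as the standard deviation here. The example values and the function name are hypothetical.

```python
import math

def contrast_sd_diagnostic(contrasts, sds):
    """Regress |standardized contrast| on its expected SD, with an intercept.

    Returns (slope, t statistic with len(sds) - 2 degrees of freedom).
    Under Brownian motion on adequate branch lengths the slope should be near zero.
    """
    y = [abs(c) for c in contrasts]
    n = len(y)
    mx, my = sum(sds) / n, sum(y) / n
    sxx = sum((x - mx) ** 2 for x in sds)
    slope = sum((x - mx) * (yi - my) for x, yi in zip(sds, y)) / sxx
    intercept = my - slope * mx
    rss = sum((yi - intercept - slope * x) ** 2 for x, yi in zip(sds, y))
    se = math.sqrt(rss / (n - 2) / sxx)
    return slope, slope / se

# Hypothetical contrasts whose size grows with their SD
slope, t = contrast_sd_diagnostic([1.0, -2.0, 3.0, -4.2], [1.0, 2.0, 3.0, 4.0])
print(f"slope = {slope:.2f}, t = {t:.1f} on 2 df")
```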

Troubleshooting: If you find a significant relationship, consider:

- Transforming your data: Log-transformation of trait values can often stabilize variance and improve model fit.

- Using a different evolutionary model: Explore models like Ornstein-Uhlenbeck, which can model stabilizing selection, or Early Burst, which models decreasing rates of evolution over time.

What should I do if my contrasts are not independent of branch length?

Answer: This is a common violation that suggests an incorrect evolutionary model or inaccurate branch lengths.

Protocol: Investigating Branch Lengths

- Re-check the source and estimation of your phylogenetic tree's branch lengths. Ensure they are in meaningful units (e.g., time, molecular substitutions).

- Use a diagnostic test, as described in the previous FAQ, to quantify the problem.

- Apply branch length transformations. Common methods include Pagel's λ or Grafen's ρ, scaling parameters that can be optimized to improve the fit of the tree to your data.

Troubleshooting:

- Low support for internal nodes: If the phylogeny has regions with low bootstrap support or posterior probability, consider running analyses on a distribution of trees to incorporate phylogenetic uncertainty.

- Systematic bias in contrasts: If contrasts are consistently too large or small at specific nodes, investigate the biology of those clades for unusual evolutionary rates (e.g., adaptive radiation).

How does an incorrect tree topology affect my PIC results?

Answer: The consequences can be severe. Assuming an incorrect phylogenetic tree is a form of model misspecification that can lead to inflated Type I error rates (false positives) [16]. One study found that as the number of traits and species in an analysis increases, the false positive rate can soar to nearly 100% when the wrong tree is used [16].

Protocol: Robustness Testing

- Perform your PIC analysis on a set of alternative phylogenies (e.g., from a Bayesian posterior distribution of trees).

- Compare the results, such as the correlation coefficient between contrasts, across all trees.

- Report the range of statistical outcomes and whether your central conclusion is consistent.

Troubleshooting:

- Consider robust regression methods: Recent research shows that using robust sandwich estimators in phylogenetic regression can "rescue" analyses and maintain acceptable false positive rates even when the tree is misspecified [16].

- Justify your tree choice: Clearly state the source of your phylogeny and any potential limitations in your manuscript's methods section.

What are the best practices for testing the three pillars?

Answer: A comprehensive validation combines diagnostic plots with formal statistical tests, applied to each of the three pillars in turn: the topology, the branch lengths, and the Brownian motion model.

How can I visualize and interpret the diagnostic plots for PIC?

Answer: Diagnostic plots are essential for a quick, visual assessment of model fit. The table below summarizes the key plots and how to interpret them.

| Diagnostic Plot | Purpose | What a "Good" Result Looks Like | What a "Bad" Result Looks Like |
|---|---|---|---|
| Absolute Contrasts vs. Standard Deviations | Tests if evolutionary change is independent of branch length. | No clear pattern; points are randomly scattered. | A significant positive or negative trend in the data points. |
| Q-Q Plot of Standardized Contrasts | Tests the normality of the contrasts. | Points fall approximately along a straight line. | Points deviate substantially from the reference line, especially at the tails. |
| Plot of Ancestral State Estimates | Checks for biological plausibility of reconstructed values. | Estimated values are within a realistic range for the trait. | Estimates are biologically impossible (e.g., negative body size). |

Research Reagent Solutions

Essential computational tools and resources for conducting robust PIC analysis.

| Tool / Resource | Function | Implementation Notes |
|---|---|---|
| ape package (R) | Core functions for reading trees, calculating PICs, and basic diagnostics. | The pic function calculates contrasts. ace can reconstruct ancestral states. |
| nlme package (R) | Fits phylogenetic regression models using GLS, correlating species based on a tree. | Allows for the incorporation of Pagel's λ and other evolutionary models. |
| phytools package (R) | Comprehensive toolkit for phylogenetic comparative methods, including visualization. | Useful for advanced diagnostics and fitting alternative evolutionary models. |
| Robust Phylogenetic Regression | A statistical method to reduce sensitivity to incorrect tree choice [16]. | Can be implemented using robust variance-covariance estimators (e.g., "sandwich" estimators). |
| Bayesian Posterior Tree Sample | A set of trees from a Bayesian analysis (e.g., from MrBayes, BEAST2) that represents phylogenetic uncertainty. | Use to test the robustness of your PIC results by running the analysis across hundreds of trees. |

Why is an accurate phylogenetic topology critical for Phylogenetically Independent Contrasts (PIC)?

An accurate phylogenetic topology is the foundational assumption of the PIC method because the algorithm uses the evolutionary relationships and branch lengths specified in the tree to calculate independent contrasts [3]. The PIC method, developed by Felsenstein (1985), fundamentally solves the statistical problem of non-independence in species data resulting from their shared evolutionary history [3] [17]. If the tree topology is incorrect, the calculated contrasts will be biased, as they will be based on erroneous relationships. This can lead to inflated Type I error rates (false positives) and invalid conclusions about evolutionary correlations [3].

What are the consequences of using an incorrect topology?

Using an incorrect or poorly supported phylogenetic topology can severely compromise your PIC analysis:

- Spurious Correlations: Closely related species often share similar traits due to common ancestry. An incorrect topology fails to correctly account for this non-independence, making it seem like traits are correlated when they are not. Simulations show that this can easily produce statistically significant but entirely spurious evolutionary relationships [3] [17].

- Loss of Statistical Power: Conversely, an incorrect topology can obscure a true relationship. PIC is meant to provide an unbiased test by transforming species data into independent contrasts [3], not to "weaken" the analysis, so a wrong tree undermines it in both directions.

- Misleading Visualizations: Projecting a phylogeny into morphospace using tools like phylomorphospace will visually misrepresent the evolutionary trajectories of traits if the underlying topology is wrong [3] [18].

How can I test the robustness of my PIC results to topological uncertainty?

You should not assume your initial tree is correct. Instead, test the sensitivity of your PIC results using these methodological approaches:

- Bootstrapping: Perform a phylogenetic bootstrap analysis (e.g., 1000 replicates) to assess the support for the key nodes in your topology that might influence the contrasts.

- Alternative Topologies: Run your PIC analysis on multiple, equally plausible topologies (e.g., from different tree inference methods or models). If your results are consistent across these different trees, you can be more confident in your findings.

- Tree Distances: Compare your primary topology to alternative hypotheses using topological distance metrics (e.g., Robinson-Foulds distance) to quantify the differences.
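A topological distance of this kind can be sketched in a few lines of Python. This minimal sketch, using a hypothetical dictionary tree encoding and illustrative function names, computes the rooted (cluster-based) variant: it collects the set of tips below every internal node and counts clusters found in only one of the two trees. It assumes both trees share the same tip set. The classic unrooted Robinson-Foulds distance compares bipartitions (splits) instead; for the two four-taxon trees below it would be 2 rather than 4.

```python
def clusters(tree, root):
    """Non-trivial clusters: the tip set below each internal node except the root."""
    found = set()

    def tips_below(node):
        if node not in tree:
            return frozenset([node])
        tips = frozenset().union(*(tips_below(child) for child, _ in tree[node]))
        found.add(tips)
        return tips

    all_tips = tips_below(root)
    found.discard(all_tips)  # the full tip set is shared by every tree
    return found


def rooted_rf_distance(tree_a, root_a, tree_b, root_b):
    """Number of clusters present in exactly one of the two rooted trees."""
    return len(clusters(tree_a, root_a) ^ clusters(tree_b, root_b))


# Hypothetical encoding: internal node -> list of (child, branch_length).
T1 = {"root": [("n1", 1.0), ("n2", 1.0)],
      "n1": [("A", 1.0), ("B", 1.0)], "n2": [("C", 1.0), ("D", 1.0)]}
T2 = {"root": [("n1", 1.0), ("n2", 1.0)],
      "n1": [("A", 1.0), ("C", 1.0)], "n2": [("B", 1.0), ("D", 1.0)]}

d = rooted_rf_distance(T1, "root", T2, "root")
```

Branch lengths play no role here; the distance only reflects how the topologies differ.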

The table below summarizes a hypothetical experimental protocol for testing topology robustness.

Table 1: Experimental Protocol for Testing Topology Robustness in PIC

| Step | Action | Objective |
|---|---|---|
| 1 | Generate a primary phylogenetic tree using your preferred method and dataset. | To establish a best-estimate hypothesis of evolutionary relationships. |
| 2 | Conduct PIC analysis on the primary tree. | To obtain an initial estimate of the evolutionary correlation. |
| 3 | Generate a set of alternative topologies (e.g., via bootstrapping, different genes, or alternative inference models). | To create a distribution of plausible evolutionary histories. |
| 4 | Run the same PIC analysis on all alternative topologies. | To assess the stability of the correlation coefficient across different tree hypotheses. |
| 5 | Compare the distribution of correlation coefficients and their p-values from all analyses. | To determine if the initial conclusion is robust to topological uncertainty. A consistent result strengthens the inference. |

What are the essential tools and reagents for testing phylogenetic topology?

A successful analysis requires a combination of bioinformatics software, phylogenetic data, and programming tools.

Table 2: Research Reagent Solutions for Topology Testing

| Item Name | Function in Analysis |
|---|---|
| Sequence Alignment Software (e.g., MAFFT, MUSCLE) | Aligns nucleotide or amino acid sequences to establish positional homology for phylogenetic inference. |
| Phylogenetic Inference Software (e.g., RAxML, MrBayes, BEAST2) | Constructs phylogenetic trees from aligned sequence data using various models of evolution. |
| R Statistical Environment | The primary platform for conducting statistical analyses and running PIC. |
| R packages: ape, phytools | Provide core functions for reading, writing, and manipulating phylogenetic trees and for computing PICs with pic() (ape), plus related comparative methods (phytools) [17] [18]. |
| Molecular Dataset (e.g., multi-gene alignment, genome-wide SNPs) | The character data used to infer the phylogenetic topology. The choice of markers can impact the resulting tree. |

A Technical Workflow for Testing the Topology Assumption

The following diagram illustrates the logical workflow for testing if your PIC results are dependent on your specific phylogenetic topology. This process helps you validate the robustness of your conclusions.

A Practical R Code Example for Sensitivity Analysis

Below is a conceptual R code snippet demonstrating how you might structure a sensitivity analysis for PIC using different topologies. This example assumes you have multiple tree files and a dataset of traits.
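The same sensitivity-analysis structure can be sketched in self-contained Python: compute contrasts for both traits on each candidate tree, correlate them through the origin (contrast signs are arbitrary, so no intercept is fitted), and report the range across trees. This is a minimal sketch, not the R snippet the heading refers to; the dictionary tree encoding, the two toy topologies, the trait values, and the function names are all hypothetical. It assumes fully bifurcating trees with positive branch lengths.

```python
import math


def pic(tree, root, traits):
    """Standardized independent contrasts (Felsenstein 1985) in postorder.

    Hypothetical encoding: internal node -> [(child, length), (child, length)].
    """
    out = []

    def visit(node):
        if node not in tree:
            return traits[node], 0.0
        (left, len_l), (right, len_r) = tree[node]
        x_l, e_l = visit(left)
        x_r, e_r = visit(right)
        v_l, v_r = len_l + e_l, len_r + e_r
        out.append((x_l - x_r) / math.sqrt(v_l + v_r))
        return (x_l * v_r + x_r * v_l) / (v_l + v_r), v_l * v_r / (v_l + v_r)

    visit(root)
    return out


def pic_correlation(tree, root, x, y):
    """Correlation between x and y contrasts, computed through the origin."""
    cx, cy = pic(tree, root, x), pic(tree, root, y)
    sxy = sum(a * b for a, b in zip(cx, cy))
    return sxy / math.sqrt(sum(a * a for a in cx) * sum(b * b for b in cy))


def sensitivity(trees, x, y):
    """Run the same PIC correlation on every candidate (tree, root) pair."""
    return [pic_correlation(tree, root, x, y) for tree, root in trees]


# Two alternative four-taxon topologies with unit branch lengths.
TREE_1 = {"root": [("n1", 1.0), ("n2", 1.0)],
          "n1": [("A", 1.0), ("B", 1.0)], "n2": [("C", 1.0), ("D", 1.0)]}
TREE_2 = {"root": [("n1", 1.0), ("n2", 1.0)],
          "n1": [("A", 1.0), ("C", 1.0)], "n2": [("B", 1.0), ("D", 1.0)]}
x = {"A": 1.0, "B": 2.0, "C": 3.0, "D": 5.0}
y = {"A": 2.0, "B": 3.0, "C": 5.0, "D": 8.0}

rs = sensitivity([(TREE_1, "root"), (TREE_2, "root")], x, y)
print(f"r ranges from {min(rs):.3f} to {max(rs):.3f}")
```

In a real analysis the list of trees would come from a bootstrap or posterior sample, and the p-value for each tree would be reported alongside r.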

## Frequently Asked Questions

Q1: Why is verifying branch length correctness critical for Phylogenetic Independent Contrasts (PIC)? Branch lengths are fundamental to the PIC algorithm because they quantify the expected variance of character evolution under a Brownian motion model. Incorrect branch lengths can invalidate the core assumption of the method—that contrasts are independent and identically distributed—leading to increased Type I or Type II errors in your hypothesis tests [19]. Using PIC with incorrect branch lengths is akin to using an incorrect scale in a physical measurement; all subsequent results become unreliable.

Q2: What are the most common sources of error in branch length estimation? Common sources include:

- Topological Uncertainty: Incorrect tree topology directly misinforms branch length estimates.

- Substitution Model Misspecification: Using an overly simple model (e.g., Jukes-Cantor) for complex DNA evolution can bias branch length estimates.

- Incomplete Taxon Sampling: Missing taxa can distort the inferred evolutionary paths and lengths.

- Methodological Limitations: Branch lengths from molecular data often reflect the number of substitutions per site, which may not perfectly correlate with the amount of divergence in phenotypic traits.

Q3: My branch lengths are based on molecular data. Are these suitable for phenotypic trait analysis? Molecular branch lengths are a common and often the only available proxy. However, they are not a perfect substitute for the true evolutionary divergence in phenotypic traits. The key is to test whether your specific phenotypic trait data exhibit a significant phylogenetic signal consistent with those branch lengths. A low or non-significant phylogenetic signal indicates that the trait may not be evolving according to the Brownian motion model assumed by PIC on the given tree, suggesting the branch lengths may be unsuitable for your analysis [20].

Q4: What diagnostic checks can I perform after calculating contrasts? A primary diagnostic is to check for a relationship between the absolute value of standardized contrasts and their standard deviations (which are a function of branch length). A strong positive correlation suggests that the assumed branch lengths may be incorrect, as the contrasts have not been adequately standardized [19].

## Troubleshooting Guides

### Problem: Low or Non-Significant Phylogenetic Signal

Symptoms:

- Blomberg's K or Pagel's λ is close to 0 or not statistically significant [20].

- PIC diagnostics may nevertheless show no correlation between the absolute values of contrasts and their standard deviations, so that check alone does not reveal the problem.

Solutions:

- Re-evaluate Your Tree:

- Action: Re-estimate branch lengths using a more appropriate molecular clock model or substitution model for your genetic data.

- Rationale: More realistic models can provide branch lengths that better reflect the evolutionary process of your traits.

- Apply a Branch Length Transformation:

- Action: Use a branch length transformation, such as Pagel's λ [19], to optimize the fit of your tree to the trait data. This statistically "stretches" or "compresses" the tree to find the best-fitting model.

- Protocol:

- Fit a phylogenetic generalized least squares (PGLS) model with a Pagel's λ transformation for your trait of interest.

- The optimized λ value (between 0 and 1) indicates the best-fitting transformation of the branch lengths for that trait.

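- Rescale the tree with this λ value: multiply the internal branch lengths by λ and lengthen the tip branches so that every root-to-tip distance is unchanged, then use the transformed tree for PIC analysis. (Multiplying all branch lengths by the same constant would not change the standardized contrasts' pattern.)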

- Consider Alternative Evolutionary Models:

- Action: If phylogenetic signal remains low, your trait may not evolve via Brownian motion. Explore other models (e.g., Ornstein-Uhlenbeck) using PGLS, which may be more appropriate [19].
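The λ rescaling step in the protocol above can be sketched as follows. This is a minimal Python sketch under a hypothetical dictionary tree encoding; the function name is illustrative, and it assumes λ has already been estimated (for example from the PGLS fit). It follows the usual definition in which λ multiplies the shared (off-diagonal) history in the phylogenetic covariance matrix: internal branches are scaled by λ, and each tip branch is set to λ·length + (1 − λ)·(root-to-tip distance), which keeps every root-to-tip distance unchanged.

```python
def pagel_lambda(tree, root, lam):
    """Return a copy of the tree rescaled by Pagel's lambda (0 <= lam <= 1)."""
    new_tree = {}

    def visit(node, depth):
        # depth is the root-to-node distance in the ORIGINAL tree.
        children = []
        for child, length in tree[node]:
            if child in tree:
                # Internal branch: shared history, scaled by lambda.
                children.append((child, lam * length))
                visit(child, depth + length)
            else:
                # Tip branch: stretched so the root-to-tip distance is preserved.
                tip_depth = depth + length
                children.append((child, lam * length + (1 - lam) * tip_depth))
        new_tree[node] = children

    visit(root, 0.0)
    return new_tree


# Hypothetical encoding: internal node -> list of (child, branch_length).
TREE = {"root": [("n1", 1.0), ("n2", 1.0)],
        "n1": [("A", 1.0), ("B", 1.0)], "n2": [("C", 1.0), ("D", 1.0)]}
half = pagel_lambda(TREE, "root", 0.5)
```

At λ = 1 the tree is returned unchanged; at λ = 0 every internal branch collapses to zero, giving the star phylogeny that corresponds to no phylogenetic signal.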

### Problem: Significant Correlation Between Contrasts and Their Standard Deviations

Symptoms: A significant positive correlation is found when the absolute values of standardized contrasts are regressed against their expected standard deviations (or the square root of the sum of their branch lengths) [19].

Solutions:

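- Transform Branch Lengths:

- Action: Rescale the branch lengths in your phylogeny (for example with a power transformation) and re-run the PIC analysis and diagnostic. A positive slope means standardized contrasts on long branches are still too large, so long branches need to be lengthened relative to short ones (an exponent above 1). Log-type transformations such as ln(1 + length), which shorten long branches, address a negative slope instead; note that a plain natural log turns branch lengths below 1 into negative values.

- Rationale: The goal is a scaling under which the variance of each contrast is proportional to its (transformed) branch lengths, so the standardized contrasts become independent of their standard deviations.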

- Use Branch Length Transformations:

- Action: As in the previous guide, employ Pagel's λ or other transformations (e.g., δ, κ) to find an optimal scaling of the tree that removes this correlation.

## Experimental Protocols

### Protocol 1: Diagnosing Branch Length Adequacy using PIC Residuals

This protocol tests the key assumption that standardized contrasts are independent of their branch lengths.

Methodology:

- Calculate standardized independent contrasts for your trait data using your phylogeny.

- For each contrast, calculate its standard deviation as sqrt(br1 + br2), where br1 and br2 are the lengths of the two branches descending from the node being contrasted (for internal daughter nodes, use the branch lengths as extended during the contrast calculation).

- Perform a linear regression of the absolute values of the standardized contrasts against their standard deviations.

- Interpretation: The regression should have a slope that is not significantly different from zero. A significant positive slope indicates that contrasts with larger expected variance are larger than they should be, suggesting the branch lengths are too short. A significant negative slope suggests branch lengths are too long.

### Protocol 2: Quantifying Phylogenetic Signal with Blomberg'sK

This protocol assesses whether your trait data conform to the Brownian motion expectation on your given tree.

Methodology:

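- Calculate the Mean Squared Error (MSE₀): Compute the MSE of the trait values around the phylogenetic mean $\hat{a}$ (the root state estimated under Brownian motion), treating species as independent: $\mathrm{MSE}_0 = (\mathbf{x}-\hat{a}\mathbf{1})^{\top}(\mathbf{x}-\hat{a}\mathbf{1})/(n-1)$.

- Calculate the phylogenetic MSE (MSE): Weight the same deviations by the inverse of the variance-covariance matrix $\mathbf{C}$ implied by the tree's topology and branch lengths: $\mathrm{MSE} = (\mathbf{x}-\hat{a}\mathbf{1})^{\top}\mathbf{C}^{-1}(\mathbf{x}-\hat{a}\mathbf{1})/(n-1)$.

- Compute the expected value of MSE₀/MSE under Brownian motion. This value depends only on the topology and branch lengths of the tree.

- Calculate Blomberg's K [20] as the observed ratio divided by its expectation:

$$K = \frac{\mathrm{MSE}_0/\mathrm{MSE}}{\mathbb{E}\left[\mathrm{MSE}_0/\mathrm{MSE}\right]}, \qquad \mathbb{E}\left[\mathrm{MSE}_0/\mathrm{MSE}\right] = \frac{1}{n-1}\left(\operatorname{tr}(\mathbf{C}) - \frac{n}{\mathbf{1}^{\top}\mathbf{C}^{-1}\mathbf{1}}\right)$$

where $n$ is the number of species, $\operatorname{tr}(\mathbf{C})$ is the sum of root-to-tip distances, and $\mathbf{1}^{\top}\mathbf{C}^{-1}\mathbf{1}$ is the sum of all elements of $\mathbf{C}^{-1}$.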

- Significance Testing:

- Perform a permutation test by randomly shuffling trait values across the tips of the tree and re-calculating K for each shuffle.

- The p-value is the proportion of permuted K values that are greater than or equal to the observed K.

- Interpretation:

- K ≈ 1: Trait evolution is consistent with a Brownian motion model on the given tree.

- K > 1: More phylogenetic signal than expected under Brownian motion (traits are more similar among close relatives).

- K < 1: Less phylogenetic signal than expected (close relatives resemble each other less than Brownian motion predicts).
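Blomberg's K and its permutation test can be sketched without inverting C. This minimal Python sketch relies on standard properties of Felsenstein's pruning algorithm: the root value it returns equals the GLS mean $\hat{a}$, the sum of squared standardized contrasts equals $(\mathbf{x}-\hat{a}\mathbf{1})^{\top}\mathbf{C}^{-1}(\mathbf{x}-\hat{a}\mathbf{1})$, and the variance carried to the root equals $1/(\mathbf{1}^{\top}\mathbf{C}^{-1}\mathbf{1})$. The dictionary tree encoding and function names are hypothetical, and a fully bifurcating tree with positive branch lengths is assumed. The p-value counts the observed value as one of the permutations, a common convention.

```python
import random


def _prune(tree, node, traits, depth, acc):
    """Felsenstein pruning: returns (node estimate, extra variance above node).

    Also accumulates in `acc` the sum of squared standardized contrasts
    ("ss") and the sum of root-to-tip distances ("trace", i.e. tr(C)).
    """
    if node not in tree:
        acc["trace"] += depth
        return traits[node], 0.0
    (left, len_l), (right, len_r) = tree[node]
    x_l, e_l = _prune(tree, left, traits, depth + len_l, acc)
    x_r, e_r = _prune(tree, right, traits, depth + len_r, acc)
    v_l, v_r = len_l + e_l, len_r + e_r
    acc["ss"] += (x_l - x_r) ** 2 / (v_l + v_r)
    return (x_l * v_r + x_r * v_l) / (v_l + v_r), v_l * v_r / (v_l + v_r)


def blomberg_k(tree, root, traits):
    acc = {"ss": 0.0, "trace": 0.0}
    a_hat, v_root = _prune(tree, root, traits, 0.0, acc)  # v_root = 1 / (1' C^-1 1)
    n = len(traits)
    observed = sum((v - a_hat) ** 2 for v in traits.values()) / acc["ss"]  # MSE0 / MSE
    expected = (acc["trace"] - n * v_root) / (n - 1)
    return observed / expected


def k_permutation_test(tree, root, traits, n_perm=999, seed=1):
    """Shuffle trait values across tips; p = share of K values >= observed."""
    rng = random.Random(seed)
    observed = blomberg_k(tree, root, traits)
    names, values = list(traits), list(traits.values())
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(values)
        if blomberg_k(tree, root, dict(zip(names, values))) >= observed:
            hits += 1
    return observed, (hits + 1) / (n_perm + 1)


# Hypothetical encoding: internal node -> list of (child, branch_length).
TREE = {"root": [("n1", 1.0), ("n2", 1.0)],
        "n1": [("A", 1.0), ("B", 1.0)], "n2": [("C", 1.0), ("D", 1.0)]}
traits = {"A": 1.0, "B": 3.0, "C": 2.0, "D": 6.0}
k, p = k_permutation_test(TREE, "root", traits, n_perm=199)
```

With four species there are only 24 distinct arrangements, so this toy example illustrates the calculation rather than a meaningful test. In R, phytools' phylosig() offers a packaged version.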

### Protocol 3: A Unified Test for Phylogenetic Signal Using the M Statistic

This protocol uses a newer, versatile method to detect phylogenetic signals for continuous, discrete, and multiple trait combinations [20].

Methodology:

- Calculate Phylogenetic Distance Matrix: Compute a pairwise distance matrix for all taxa based on the provided phylogeny (e.g., cophenetic distances).

- Calculate Trait Distance Matrix: Compute a pairwise distance matrix for all taxa based on the trait data. For continuous traits, use Euclidean distance. For mixed-type traits, use Gower's distance.

- Compute the M Statistic [20]:

- The M statistic is calculated by comparing the ranks of distances in the phylogenetic matrix to the ranks in the trait matrix. It strictly adheres to the definition of phylogenetic signal as the tendency for related species to resemble each other more than random.

- Significance Testing:

- A null distribution is generated by applying the same random permutation to the rows and columns of the trait distance matrix and re-calculating M each time.

- The p-value is the proportion of permuted M statistics that are greater than or equal to the observed M statistic.

- Interpretation: A significant result indicates detectable phylogenetic signal in your trait data, which supports the use of the given tree and branch lengths for phylogenetic comparative methods like PIC.
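The two distance matrices that feed the M statistic (steps 1 and 2 above) can be sketched in Python; the M statistic itself is not reproduced here. This minimal sketch assumes a hypothetical dictionary tree encoding and illustrative function names. Cophenetic (patristic) distance is the sum of branch lengths between two tips, computed through their most recent common ancestor; Gower's distance averages, over variables, |a − b| / range for numeric traits and a 0/1 mismatch for categorical ones.

```python
def cophenetic(tree, root):
    """Pairwise patristic distances between tips: depth(a) + depth(b) - 2 * depth(MRCA)."""
    parent, depth = {}, {root: 0.0}
    stack = [root]
    while stack:
        node = stack.pop()
        for child, length in tree.get(node, []):
            parent[child] = node
            depth[child] = depth[node] + length
            stack.append(child)
    tips = sorted(n for n in depth if n not in tree)

    def ancestors(node):
        # Path from the node up to the root, nearest ancestor first.
        path = [node]
        while node in parent:
            node = parent[node]
            path.append(node)
        return path

    dist = {}
    for i, a in enumerate(tips):
        anc_a = set(ancestors(a))
        for b in tips[i + 1:]:
            mrca = next(n for n in ancestors(b) if n in anc_a)
            dist[a, b] = dist[b, a] = depth[a] + depth[b] - 2 * depth[mrca]
    return dist


def gower(traits):
    """Gower distance for species described by tuples of numeric/categorical values."""
    names = sorted(traits)
    n_vars = len(next(iter(traits.values())))
    ranges = []
    for k in range(n_vars):
        col = [traits[name][k] for name in names]
        numeric = isinstance(col[0], (int, float))
        ranges.append(max(col) - min(col) if numeric else None)  # None = categorical
    dist = {}
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            parts = []
            for k, rng in enumerate(ranges):
                va, vb = traits[a][k], traits[b][k]
                if rng is None:
                    parts.append(0.0 if va == vb else 1.0)
                else:
                    parts.append(abs(va - vb) / rng if rng > 0 else 0.0)
            dist[a, b] = dist[b, a] = sum(parts) / n_vars
    return dist


TREE = {"root": [("n1", 1.0), ("n2", 1.0)],
        "n1": [("A", 1.0), ("B", 1.0)], "n2": [("C", 1.0), ("D", 1.0)]}
phylo_d = cophenetic(TREE, "root")
trait_d = gower({"A": (1.0, "red"), "B": (3.0, "red"), "C": (5.0, "blue")})
```

For purely continuous traits, Euclidean distance would replace the Gower step, as the protocol notes.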

Table 1: Performance Comparison of Phylogenetic Prediction Methods on Simulated Ultrametric Trees

This table summarizes key findings from a large-scale simulation study comparing prediction methods, highlighting the importance of using phylogenetically informed approaches over simple predictive equations. Performance was measured by the variance (σ²) of prediction errors across 1000 simulated trees; lower variance indicates better and more consistent performance [2].

| Method | Trait Correlation Strength (r) | Prediction Error Variance (σ²) | Relative Performance vs. PIP |
|---|---|---|---|
| Phylogenetically Informed Prediction (PIP) | 0.25 | 0.007 | Baseline |
| PGLS Predictive Equations | 0.25 | 0.033 | 4.7x worse |
| OLS Predictive Equations | 0.25 | 0.030 | 4.3x worse |
| Phylogenetically Informed Prediction (PIP) | 0.75 | Not specified | Baseline |
| PGLS Predictive Equations | 0.75 | 0.015 | ~2x worse |
| OLS Predictive Equations | 0.75 | 0.014 | ~2x worse |

Table 2: Interpretation of Key Phylogenetic Signal Indices

| Index | Value | Implication for PIC/Branch Lengths |
|---|---|---|
| Blomberg's K | K ≈ 1 | Signal matches the Brownian motion expectation; branch lengths and model appear adequate. |
| Blomberg's K | K < 1 | Weak signal; branch lengths may be poor or trait evolution is non-Brownian. |
| Blomberg's K | K > 1 | Stronger-than-Brownian signal; branch lengths may be adequate. |
| Pagel's λ | λ ≈ 1 | Strong signal; branch lengths are adequate. |
| Pagel's λ | λ ≈ 0 | No signal (equivalent to a star phylogeny); PIC on the original tree is invalid. |
| Pagel's λ | 0 < λ < 1 | Intermediate signal; a λ-transformation of branch lengths is recommended. |
| PIC diagnostic (absolute contrasts vs. SD) | Slope ≈ 0 (not significant) | Assumption met; contrasts are independent of branch lengths. |
| PIC diagnostic (absolute contrasts vs. SD) | Significant slope (positive or negative) | Assumption violated; branch lengths may be incorrect. |

## Research Reagent Solutions

Table 3: Essential Software and Statistical Tools for Branch Length Verification

| Tool Name | Function | Application in Verification |
|---|---|---|
| APE (R pkg) | Analysis of Phylogenetics and Evolution | Core functions for reading, manipulating trees, and calculating PICs and diagnostic plots [20]. |
| PHYTOOLS (R pkg) | Phylogenetic Tools for Evolutionary Biology | Contains functions for estimating Pagel's λ, Blomberg's K, and other evolutionary models [20]. |
| PHYLOSIGNALDB (R pkg) | Phylogenetic Signal Detection | Implements the unified M statistic for detecting phylogenetic signal in continuous, discrete, and multiple traits [20]. |
| GEIGER (R pkg) | Analysis of Evolutionary Diversification | Offers tools for fitting macroevolutionary models and transforming branch lengths. |
| PAUP*/BEAST/MrBayes | Phylogenetic Inference Software | Used for the initial estimation of phylogenetic trees and branch lengths under various molecular clock and substitution models. |

## Workflow Visualization

Figure: Branch Length Verification Workflow; M Statistic Signal Testing.

Diagnostic Tests for Model Adequacy

Goodness-of-Fit Tests and Diagnostic Procedures

| Diagnostic Method | Purpose | Interpretation Guide | Implementation Tools |
|---|---|---|---|
| Phylogenetic Residual Diagnostics | Check for heavier-tailed residuals than expected under multivariate normality [21]. | Patterns in residuals suggest violation of BM assumptions; heavier tails indicate multivariate-t distribution may be better [21]. | Novel residual diagnostic plots for multivariate-t models [21]. |
| Analysis of Model Fit Statistics | Compare fit of BM model against more complex models [21]. | Improved fit (e.g., lower AIC) of fBM or multivariate-t models indicates BM inadequacy [21]. | Akaike's Information Criterion (AIC) [21]. |
| Simulation-Based Assessments | Evaluate biases in parameter estimates from BM models under censoring [21]. | Substantial bias in estimates (e.g., mean slope of decline) suggests BM model inadequacy [21]. | Cohort simulation from fitted models [21]. |

Frequently Asked Questions (FAQs)

General Model Questions

Q: What is the core assumption of the Brownian Motion model in phylogenetics? A: The BM model assumes that trait evolution follows a random walk whose changes are independent, normally distributed, and accumulate at a constant rate over time [22]. This implies that closely related species are expected to have more similar trait values due to shared evolutionary history.
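The random-walk assumption can be made concrete by simulating it. In this minimal Python sketch (hypothetical dictionary tree encoding, illustrative function name), the change along a branch of length t is drawn from a normal distribution with mean 0 and variance σ²·t, independently of every other branch, so each tip's variance equals σ² times its root-to-tip distance and sister species share the changes on their common ancestral branches.

```python
import math
import random


def simulate_bm(tree, root, sigma2=1.0, root_value=0.0, seed=None):
    """Simulate Brownian motion from the root down to the tips.

    Each branch of length t adds an independent N(0, sigma2 * t) change.
    Returns a dict of tip values.
    """
    rng = random.Random(seed)
    values = {root: root_value}
    stack = [root]
    while stack:
        node = stack.pop()
        for child, length in tree.get(node, []):
            values[child] = values[node] + rng.gauss(0.0, math.sqrt(sigma2 * length))
            stack.append(child)
    return {node: v for node, v in values.items() if node not in tree}


# Hypothetical encoding: internal node -> list of (child, branch_length).
TREE = {"root": [("n1", 1.0), ("n2", 1.0)],
        "n1": [("A", 1.0), ("B", 1.0)], "n2": [("C", 1.0), ("D", 1.0)]}
tips = simulate_bm(TREE, "root", sigma2=1.0, seed=42)
```

Repeating the simulation many times and computing the variance of tip A should give a value close to 2.0 here (σ² = 1 times a root-to-tip distance of 2), and A and B will covary more than A and C because they share the n1 branch.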

Q: Why is it critical to test the adequacy of the BM model? A: Applying an overly simplistic model like BM to complex biological data can lead to substantial biases in parameter estimates, particularly when data are unbalanced or censored [21]. This can result in incorrect biological inferences and flawed predictions.

Q: My residuals suggest a multivariate-t distribution. What does this mean? A: This indicates that your trait data have heavier tails than expected under a normal distribution. This is biologically plausible and can be addressed by generalizing your model to follow a multivariate-t distribution, which has been shown to substantially improve model fit in some applications [21].

Troubleshooting Guide

Q: What should I do if diagnostic plots show my BM model is inadequate? A: Consider these alternative models:

- Fractional Brownian Motion (fBM): Allows for more erratic variation over time and incorporates long-range dependence [21] [22].

- Multivariate-t Models: Accommodates heavier-tailed residuals than the normal distribution [21].

- Ornstein-Uhlenbeck Process: A mean-reverting process suitable when traits are under stabilizing selection [22].

Q: How does censoring of data affect my model choice? A: Censoring, such as treatment initiation in longitudinal studies based on observed biomarker levels, can strongly bias parameter estimates from standard random slopes (BM) models. More flexible models like those incorporating fBM have been shown to be less susceptible to this bias [21].

Experimental Protocols for Model Assessment

Protocol 1: Residual Analysis for Multivariate-t Distribution

Objective: To assess whether the residuals from a BM model exhibit heavier tails than expected under multivariate normality.

- Model Fitting: Fit a standard BM model to your phylogenetic trait data.

- Residual Calculation: Extract the residuals from the fitted model.

- Diagnostic Plotting: Create novel residual diagnostic plots as proposed by [21].

- Interpretation: Visually inspect the tails of the residual distribution. If they are heavier than a normal distribution, consider a multivariate-t model.

- Model Refitting: Refit the model using a multivariate-t distribution and compare the AIC to the original model [21].

Protocol 2: Comparative Model Fit using Fractional Brownian Motion

Objective: To evaluate if a more flexible model provides a significantly better fit to the data.

- Baseline Model: Fit a standard BM model and record its AIC value.

- Alternative Model: Fit a fractional Brownian motion (fBM) model. This model generalizes BM by incorporating a Hurst parameter (H) to account for long-range dependence [21] [22].

- Model Comparison: Compare the AIC values of the BM and fBM models. A substantial improvement in AIC indicates the fBM model is more appropriate [21].

- Validation: Use the superior model for parameter estimation and inference to avoid biases.
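The AIC comparison in Protocol 2 needs each model's maximized log-likelihood. The Python sketch below covers only the BM side and the AIC arithmetic; fitting fBM is not sketched, and its log-likelihood would have to come from its own fitting routine. It relies on the standard factorization of the BM likelihood into independent contrasts plus the root estimate, with σ² and the root state set to their maximum-likelihood values (so BM has k = 2 parameters). The dictionary tree encoding and function names are hypothetical, and a fully bifurcating tree with positive branch lengths is assumed.

```python
import math


def bm_loglik(tree, root, traits):
    """Maximized BM log-likelihood computed through independent contrasts."""
    sq_std, log_var = [], []

    def visit(node):
        if node not in tree:
            return traits[node], 0.0
        (left, len_l), (right, len_r) = tree[node]
        x_l, e_l = visit(left)
        x_r, e_r = visit(right)
        v_l, v_r = len_l + e_l, len_r + e_r
        sq_std.append((x_l - x_r) ** 2 / (v_l + v_r))  # squared standardized contrast
        log_var.append(math.log(v_l + v_r))
        return (x_l * v_r + x_r * v_l) / (v_l + v_r), v_l * v_r / (v_l + v_r)

    _, v_root = visit(root)
    n = len(traits)
    sigma2 = sum(sq_std) / n  # ML estimate (REML would divide by n - 1)
    log_det = sum(log_var) + math.log(v_root)  # log |C|
    return -0.5 * n * math.log(2 * math.pi * sigma2) - 0.5 * log_det - 0.5 * n


def aic(loglik, n_params):
    return 2 * n_params - 2 * loglik


TREE = {"root": [("n1", 1.0), ("n2", 1.0)],
        "n1": [("A", 1.0), ("B", 1.0)], "n2": [("C", 1.0), ("D", 1.0)]}
traits = {"A": 1.0, "B": 3.0, "C": 2.0, "D": 6.0}
ll_bm = bm_loglik(TREE, "root", traits)
aic_bm = aic(ll_bm, n_params=2)
```

The alternative model's AIC would be computed the same way from its own log-likelihood and parameter count; the model with the lower AIC is preferred.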

Workflow Visualization

Figure: Model Adequacy Assessment Workflow.

The Scientist's Toolkit

Key Research Reagent Solutions

| Reagent / Tool | Function / Purpose | Example / Notes |
|---|---|---|
| R package 'ape' | Environment for modern phylogenetics and evolutionary analyses in R [5] [6]. | Used for reading trees, basic comparative analyses, and calculating PICs. |
| R package 'phytools' | R package for phylogenetic comparative biology [5] [17]. | Provides tools for fitting and simulating evolutionary models, including BM. |
| Phylogenetic Independent Contrasts (PIC) | Algorithm to correct for phylogenetic non-independence in comparative data [5] [3] [6]. | The foundational method for which BM is a common underlying model. |
| Fractional Brownian Motion (fBM) Model | A flexible generalization of BM for modeling erratic trajectories and long-range dependence [21] [22]. | Implemented when standard BM provides poor fit. |
| Multivariate-t Model | A model extension for handling heavier-tailed residuals than the normal distribution [21]. | Used when residual diagnostics indicate non-normality. |

Diagnostic Workflow for Phylogenetic Independent Contrasts

Q: What is the complete diagnostic workflow after calculating Phylogenetically Independent Contrasts (PIC) to validate model assumptions?

After calculating phylogenetic independent contrasts, you must validate three critical assumptions before interpreting results. The following workflow provides a comprehensive diagnostic approach:

Table 1: Key Diagnostic Tests for PIC Assumptions Validation

| Assumption | Diagnostic Test | Expected Result | Implementation in R |
|---|---|---|---|
| Accurate Phylogeny Topology | Contrasts ~ Node Heights | No significant correlation | plot(pic_model) in caper [23] [7] |
| Correct Branch Lengths | Absolute Contrasts vs Standard Deviations | No relationship | caic.diagnostics() in caper [23] |
| Brownian Motion Evolution | Residual Heteroscedasticity | Homogeneous variance | plot(pic_model) residual checks [23] |

The diagnostic workflow specifically tests Felsenstein's three major assumptions: (1) accurate phylogenetic topology, (2) correct branch lengths, and (3) Brownian motion trait evolution [7]. Research indicates that the majority of studies using phylogenetic independent contrasts do not adequately test these assumptions, potentially compromising their conclusions [7].
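The "Contrasts ~ Node Heights" check in Table 1 can be sketched in Python by pairing each standardized contrast with the position of its node and correlating the absolute contrasts with that position. This minimal sketch measures node position as distance from the root (on an ultrametric tree, height above the tips is the total depth minus this value, which flips the sign of the correlation). The dictionary tree encoding and function names are hypothetical. A trend means contrasts near the root are systematically larger or smaller than recent ones, as expected if the evolutionary rate changed through time.

```python
import math


def contrasts_with_depths(tree, root, traits):
    """Standardized contrasts paired with each node's distance from the root."""
    out = []

    def visit(node, depth):
        if node not in tree:
            return traits[node], 0.0
        (left, len_l), (right, len_r) = tree[node]
        x_l, e_l = visit(left, depth + len_l)
        x_r, e_r = visit(right, depth + len_r)
        v_l, v_r = len_l + e_l, len_r + e_r
        out.append(((x_l - x_r) / math.sqrt(v_l + v_r), depth))
        return (x_l * v_r + x_r * v_l) / (v_l + v_r), v_l * v_r / (v_l + v_r)

    visit(root, 0.0)
    return out


def pearson(xs, ys):
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(xs, ys))
    sxx = sum((a - mx) ** 2 for a in xs)
    syy = sum((b - my) ** 2 for b in ys)
    return sxy / math.sqrt(sxx * syy)


TREE = {"root": [("n1", 1.0), ("n2", 1.0)],
        "n1": [("A", 1.0), ("B", 1.0)], "n2": [("C", 1.0), ("D", 1.0)]}
traits = {"A": 1.0, "B": 3.0, "C": 2.0, "D": 6.0}
pairs = contrasts_with_depths(TREE, "root", traits)
r = pearson([d for _, d in pairs], [abs(c) for c, _ in pairs])
```

A real analysis would test r against a t distribution (or plot the pairs) over many more nodes than this toy tree provides.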

Troubleshooting Common PIC Errors

Q: What are the most common errors when implementing PIC in R and how can they be resolved?

Data-Tree Mismatch Resolution

The most frequent error occurs when species names in your data frame don't match tip labels in your phylogeny. The comparative.data() function in caper automatically handles this mapping:

Table 2: Common PIC Implementation Errors and Solutions

| Error Message | Root Cause | Solution | Code Example |
|---|---|---|---|
| "Tips do not match" | Data-tree name mismatch | Use comparative.data() as intermediary | comp_data <- comparative.data(tree, data, names.col="binomial") [23] |
| "Contrasts did not converge" | Incorrect branch lengths | Check and transform branch lengths | pic(x, phy, scaled=TRUE) [24] |
| "NA/NaN/Inf in foreign function call" | Missing data in traits | Use na.omit = FALSE or impute missing values | comparative.data(..., na.omit=FALSE) [23] |
| Significant correlation between contrasts and node heights | Violation of Brownian motion assumption | Consider alternative evolutionary models | Check caic.diagnostics() plots [23] [7] |
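The name-matching logic behind the first row can be sketched in Python to show what a mismatch report looks like before any contrasts are computed. This is not caper's implementation: the normalization rule (trimming whitespace and replacing spaces with underscores) and the function names are hypothetical assumptions, chosen because spaces versus underscores is a common source of mismatches.

```python
def normalize(name):
    """Hypothetical normalization: trim whitespace, use underscores for spaces."""
    return name.strip().replace(" ", "_")


def match_names(tip_labels, data):
    """Split tree tips and data rows into matched and unmatched names.

    `data` maps species name -> trait record; matched records are keyed
    by the tree's tip label.
    """
    tips = {normalize(t): t for t in tip_labels}
    rows = {normalize(k): (k, v) for k, v in data.items()}
    shared = sorted(tips.keys() & rows.keys())
    return {
        "matched": {tips[k]: rows[k][1] for k in shared},
        "missing_from_data": sorted(tips[k] for k in tips.keys() - rows.keys()),
        "missing_from_tree": sorted(rows[k][0] for k in rows.keys() - tips.keys()),
    }


res = match_names(["Homo_sapiens", "Pan_troglodytes"],
                  {"Homo sapiens": 1.2, "Gorilla gorilla": 1.5})
```

Tips missing from the data would then be pruned from the tree (and data rows missing from the tree dropped) before calculating contrasts.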

Branch Length Transformation

When diagnostics indicate branch length issues, apply transformations within the crunch() function:

Research Reagent Solutions: Essential R Tools for PIC Analysis

Table 3: Essential R Packages and Functions for PIC Research

| Package/Function | Purpose | Key Features | Thesis Application |
|---|---|---|---|
| ape::pic() [24] | Calculate independent contrasts | Core PIC algorithm, returns contrasts with variances | Foundation for all PIC analyses |
| caper::crunch() [23] | PIC linear models | Automated diagnostics, model fitting | Testing evolutionary hypotheses |
| caper::comparative.data() [23] | Data-phylogeny integration | Handles name matching, data sorting | Data preparation step |
| caper::caic.diagnostics() [23] | Model assumption validation | Comprehensive diagnostic plots | Method validation section |
| phytools [17] | Phylogenetic analysis | Alternative methods, visualization | Supplementary analyses |

Advanced PIC Diagnostic Protocols

Q: What advanced diagnostic protocols should be included in a rigorous thesis methodology?

Beyond basic assumption checking, these advanced diagnostics ensure robust conclusions:

Protocol 1: Standardized Contrasts Validation

Protocol 2: Evolutionary Model Comparison

When PIC assumptions are violated, compare against alternative models:

PIC Workflow Integration in Experimental Research

This integrated workflow emphasizes that PIC should not be blindly applied to all comparative analyses [6] [5]. Specific cases like unreplicated evolutionary events may require different approaches [7].

Quantitative Data Presentation Standards

Table 4: Essential PIC Output Reporting Requirements for Thesis Research

| Output Component | Reporting Standard | Statistical Notation | R Function for Extraction |
|---|---|---|---|
| Contrast Values | Report raw and standardized | C_i, Var(C_i) | pic(x, phy, var.contrasts=TRUE) [24] |
| Regression Results | Slope through origin | Y = βX + ε | summary(crunch_model) [23] |
| Diagnostic Metrics | Correlation coefficients | r, p-value | cor.test(pic.x, pic.y) [17] |
| Model Fit | R-squared, F-statistic | R², F, df | anova(caic_model) [23] |
| Effect Size | Standardized coefficients | β, SE(β) | coef(pic_model) [23] |
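The "slope through origin" row can be illustrated with a short Python sketch. Regressions on contrasts omit the intercept because each contrast's sign is arbitrary and its expected value is zero; with one estimated parameter, the residual degrees of freedom are the number of contrasts minus one, and R² is the uncentered version. The function name is illustrative, and the inputs are assumed to be paired standardized contrasts from the same tree (for example, from pic()); the standard error is zero for a perfect fit, so t is undefined in that case.

```python
import math


def regression_through_origin(x, y):
    """OLS slope through the origin for paired contrasts, with SE, t, df, and R^2."""
    n = len(x)
    sxx = sum(a * a for a in x)
    beta = sum(a * b for a, b in zip(x, y)) / sxx
    rss = sum((b - beta * a) ** 2 for a, b in zip(x, y))
    df = n - 1  # one parameter estimated, no intercept
    se = math.sqrt(rss / df / sxx)
    r2 = 1 - rss / sum(b * b for b in y)  # uncentered R^2
    return {"beta": beta, "se": se, "t": beta / se, "df": df, "r2": r2}


fit = regression_through_origin([1.0, 2.0, 3.0], [1.0, 3.0, 2.0])
```

The slope, its standard error, t, and df map directly onto the β, SE(β), and df entries the table asks you to report.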

This practical guide provides the essential troubleshooting framework and diagnostic protocols needed to robustly implement phylogenetic independent contrasts in evolutionary and comparative research, with specific application to thesis-level investigations.

Frequently Asked Questions

What is the purpose of creating diagnostic plots for Phylogenetic Independent Contrasts (PICs)? Diagnostic plots, specifically plots of contrasts versus their standard deviations or node heights, are essential for validating the Brownian motion (BM) evolutionary model assumption. They help you identify if your data meets the model's expectations or if there might be model violations, such as unusual evolutionary rates or the need for data transformation, which could invalidate your comparative analyses [8].

I see a pattern in my 'Contrasts vs. Standard Deviations' plot. What does it mean? A fan-shaped pattern or a significant positive correlation in this plot usually indicates that the branch lengths are not adequately standardizing the contrasts, for example because evolutionary rates are not constant across the tree. This heteroscedasticity suggests transforming the branch lengths (for example, taking their logarithms) or log-transforming the trait data before calculating contrasts to stabilize the variance [8].

My 'Contrasts vs. Node Heights' plot shows a trend. Is this a problem? Yes, a trend in this plot can be problematic. Under Brownian motion, the magnitude of the standardized contrasts should be independent of node height. A systematic relationship may suggest that the Brownian motion model is not a good fit for your data, and you may need to consider alternative evolutionary models for your analysis [8].

What should I do if my diagnostic plots indicate a problem? If your diagnostic plots suggest a model violation, consider the following steps:

- Transform your data: For morphological or other positive-valued data, a log-transformation can often stabilize variance.

- Re-check your tree and branch lengths: Ensure your phylogenetic tree and its branch lengths are correct, as errors here directly impact contrast calculation.

- Explore other models: Consider evolutionary models that relax Brownian motion, such as the Ornstein-Uhlenbeck model (constrained evolution toward an optimum) or early-burst models (evolutionary rates that change through time).

How reliable are independent contrasts if my data slightly deviates from the model? Independent contrasts are relatively robust to minor deviations. However, significant violations, especially those showing strong patterns in diagnostic plots, can lead to inflated Type I error rates (false positives). It is crucial to diagnose and address these issues to ensure the validity of your statistical conclusions [8].

Troubleshooting Guides

Issue 1: Significant Correlation in Contrasts vs. Standard Deviations

- Problem: A positive correlation between the absolute value of standardized contrasts and their standard deviations is observed.

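- Diagnosis: This pattern suggests that the branch lengths do not adequately standardize the contrasts, for example because the evolutionary rate (the Brownian motion parameter, σ²) is not constant across the tree. Under Brownian motion the variance of a raw contrast is σ²(v_i + v_j), proportional to the sum of the two branch lengths; dividing by sqrt(v_i + v_j) should remove that dependence, so a fan shape indicates that the standardization is not working as intended [8].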

- Solution:

- Apply a log-transformation to your raw trait data and recalculate the PICs.

- Replot the contrasts against their standard deviations.

- If the pattern persists, re-examine your phylogenetic tree's branch lengths for potential errors, or try transforming the branch lengths (e.g., taking their logarithms) and re-plotting.
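A minimal Python sketch of the check described in this issue: correlate the absolute standardized contrasts with their standard deviations and convert r into a t statistic with n - 2 degrees of freedom. The function names and example values are hypothetical; a p-value would come from a t distribution (for example, via a statistics package), which this sketch leaves out to stay dependency-free.

```python
import math
from typing import List, Tuple


def pearson(x: List[float], y: List[float]) -> float:
    """Ordinary (mean-centered) Pearson correlation coefficient."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need at least three paired values")
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("a variable has zero variance")
    return sxy / math.sqrt(sxx * syy)


def sd_diagnostic(contrasts: List[float], sds: List[float]) -> Tuple[float, float]:
    """Correlate |standardized contrast| with its SD; returns (r, t) on n - 2 df."""
    r = pearson(sds, [abs(c) for c in contrasts])
    n = len(contrasts)
    t = r * math.sqrt((n - 2) / (1 - r * r)) if abs(r) < 1 else math.copysign(math.inf, r)
    return r, t


# Made-up example in which larger contrasts sit on longer (larger-SD) branches.
r, t = sd_diagnostic([0.4, -0.9, 1.3, -1.6, 2.2], [0.5, 1.0, 1.5, 2.0, 2.5])
print(f"r = {r:.3f}, t = {t:.2f} on 3 df")
```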

Issue 2: Significant Correlation in Contrasts vs. Node Heights

- Problem: The standardized contrasts show a significant correlation with their node heights (the age of the node from the present).

- Diagnosis: Under a pure Brownian motion model, the magnitude of contrasts should be independent of node height. A correlation indicates that the model does not adequately describe the evolutionary process. This could signal that evolutionary rates changed through time (for example, rapid early divergence followed by a slowdown) or other directional trends in the data [8].

- Solution:

- This is a more serious violation of model assumptions.

- Consider using alternative comparative methods that do not rely solely on the Brownian motion assumption, such as phylogenetic generalized least squares (PGLS) with more complex correlation structures.
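The same idea applies to node heights. The Python sketch below swaps in a rank-based (Spearman) correlation, which is less sensitive to the outlying contrasts discussed in Issue 3; the choice of Spearman over Pearson, the function names, and the example numbers are illustrative assumptions rather than a prescribed test.

```python
from typing import List


def average_ranks(values: List[float]) -> List[float]:
    """1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x: List[float], y: List[float]) -> float:
    """Spearman's rho computed as the Pearson correlation of average ranks."""
    if len(x) != len(y) or len(x) < 3:
        raise ValueError("need at least three paired values")
    rx, ry = average_ranks(x), average_ranks(y)
    m = (len(x) + 1) / 2                   # mean rank, also when there are ties
    sxy = sum((a - m) * (b - m) for a, b in zip(rx, ry))
    sxx = sum((a - m) ** 2 for a in rx)
    syy = sum((b - m) ** 2 for b in ry)
    if sxx == 0 or syy == 0:
        raise ValueError("a variable has constant ranks")
    return sxy / (sxx * syy) ** 0.5


def height_diagnostic(contrasts: List[float], node_heights: List[float]) -> float:
    """Rank correlation between |standardized contrast| and node height."""
    return spearman(node_heights, [abs(c) for c in contrasts])


# Made-up example: contrasts tend to be larger at older (taller) nodes.
print(height_diagnostic([0.1, -0.5, 0.4, -2.0], [1.0, 2.0, 3.0, 4.0]))
```

A clearly positive value means larger contrasts at older nodes, as rapid early divergence might produce; a negative value means larger contrasts near the present.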

Issue 3: Outliers in Diagnostic Plots

- Problem: One or a few data points lie far away from the majority of contrasts in the diagnostic plot.

- Diagnosis: Outliers can be caused by errors in the original trait data, incorrect species assignment on the tree, or a genuine, exceptionally high or low evolutionary rate at a specific node.

- Solution:

- Verify your data: Double-check the trait values and phylogenetic placement for the species corresponding to the outlier contrast.

- Re-run analyses: Perform your analysis with and without the outlier to determine its influence on your conclusions.

- Biological interpretation: If the data are correct, investigate if there is a biological reason for the exceptional evolutionary change.
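Re-running the analysis without each suspect contrast can be automated with a leave-one-out loop. The Python sketch below assumes a regression through the origin on contrasts (as in Table 4) and ranks nodes by how far the slope moves when their contrast is dropped; the function names, labels, and values are invented for illustration.

```python
from typing import List, Tuple


def slope_through_origin(x: List[float], y: List[float]) -> float:
    """Least-squares slope of y on x with no intercept."""
    sxx = sum(a * a for a in x)
    if sxx == 0:
        raise ValueError("all x contrasts are zero")
    return sum(a * b for a, b in zip(x, y)) / sxx


def leave_one_out(x: List[float], y: List[float],
                  labels: List[str]) -> Tuple[float, List[Tuple[str, float]]]:
    """Refit with each contrast dropped; rank nodes by how much the slope moves."""
    if not (len(x) == len(y) == len(labels)) or len(x) < 3:
        raise ValueError("need at least three labelled contrast pairs")
    full = slope_through_origin(x, y)
    shifts = []
    for k in range(len(x)):
        reduced = slope_through_origin(x[:k] + x[k + 1:], y[:k] + y[k + 1:])
        shifts.append((labels[k], reduced - full))
    shifts.sort(key=lambda item: abs(item[1]), reverse=True)
    return full, shifts


# Made-up contrasts in which node "n3" looks unusual.
full, shifts = leave_one_out([1.0, 2.0, 3.0], [1.0, 2.0, 9.0], ["n1", "n2", "n3"])
print(f"slope with all contrasts: {full:.3f}")
for label, shift in shifts:
    print(f"  without {label}: slope changes by {shift:+.3f}")
```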

Diagnostic Patterns and Interpretations

Table 1: Common Patterns in 'Contrasts vs. Standard Deviations' Plots

| Pattern Observed | Potential Interpretation | Recommended Action |
|---|---|---|
| No pattern; random scatter | Consistent with Brownian motion assumption. | Proceed with analysis. |
| Positive correlation (fan-shaped) | Contrasts not adequately standardized; evolutionary rate may not be constant, so variance depends on branch length. | Transform branch lengths or log-transform trait data, then re-plot. |
| Outlier points | Possible data error or genuine exceptional evolution. | Verify data and taxonomy for affected nodes. |

Table 2: Common Patterns in 'Contrasts vs. Node Heights' Plots

| Pattern Observed | Potential Interpretation | Recommended Action |
|---|---|---|
| No pattern; random scatter | Consistent with Brownian motion assumption. | Proceed with analysis. |
| Positive or negative trend | Model violation; possible directional trend or change in evolutionary rate through time. | Consider alternative evolutionary models (e.g., OU or early burst). |
| Outlier points | Possible data error or localized extreme evolution. | Investigate specific node and its descendant species. |

Experimental Protocols

Protocol 1: Calculating and Diagnosing Phylogenetic Independent Contrasts

This protocol outlines the core method for calculating PICs and generating the essential diagnostic plots, based on the algorithm presented by Felsenstein (1985) [8].

- Input Data: You will need:

- A rooted, fully bifurcating phylogenetic tree with branch lengths.

- A continuous trait value for each tip (species) in the tree.

- Calculate Raw Contrasts: Starting from the tips, work iteratively towards the root. For each pair of sister nodes (i, j) with a common ancestor (k), compute the raw contrast $c_{ij} = x_i - x_j$ [8].

- Standardize Contrasts: Divide each raw contrast by its expected standard deviation under BM: $s_{ij} = (x_i - x_j) / \sqrt{v_i + v_j}$, where $v_i$ and $v_j$ are the branch lengths leading to nodes i and j [8].

- Update the Ancestor Before Moving Rootward: Estimate the value at k as the branch-length-weighted average $x_k = (x_i / v_i + x_j / v_j) / (1/v_i + 1/v_j)$ and lengthen the branch below k by $v_i v_j / (v_i + v_j)$ to account for the uncertainty in that estimate; these adjusted values are used when k itself enters a contrast.

- Calculate Node Height: For each internal node k, calculate its height as the distance from the node to the present time.

- Generate Diagnostic Plots:

- Create a scatter plot of the absolute values of standardized contrasts against their standard deviations, $\sqrt{v_i + v_j}$.

- Create a scatter plot of the absolute values of standardized contrasts against their node heights.

- Interpretation: Analyze the plots using Table 1 and Table 2 above to assess the fit of the Brownian motion model.
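The calculation steps above can be sketched in Python as a single post-order traversal. This is a minimal illustration of Felsenstein's algorithm under stated assumptions (a rooted, fully bifurcating tree with strictly positive branch lengths, and a hypothetical Node class); in practice ape's pic function is the usual tool. Node height here is the distance from the node to its furthest descendant tip, which equals node age on an ultrametric tree.

```python
import math
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Node:
    """Hypothetical minimal tree node: name, branch length to parent, children."""
    name: str
    length: float = 0.0
    children: List["Node"] = field(default_factory=list)


def independent_contrasts(root: Node, traits: Dict[str, float]) -> List[Tuple[str, float, float, float]]:
    """Felsenstein-style contrasts for a rooted, fully bifurcating tree.

    Returns (node name, standardized contrast, SD, node height) for every
    internal node, children before parents (post-order).
    """
    results: List[Tuple[str, float, float, float]] = []

    def visit(node: Node) -> Tuple[float, float, float]:
        # Returns (trait value at node, extra length for its stem, height above tips).
        if not node.children:
            return traits[node.name], 0.0, 0.0
        if len(node.children) != 2:
            raise ValueError(f"node {node.name!r} is not bifurcating")
        left, right = node.children
        x_i, extra_i, h_i = visit(left)
        x_j, extra_j, h_j = visit(right)
        v_i = left.length + extra_i          # stems lengthened for estimated ancestors
        v_j = right.length + extra_j
        if v_i <= 0 or v_j <= 0:
            raise ValueError("branch lengths must be strictly positive")
        sd = math.sqrt(v_i + v_j)            # expected SD of the raw contrast under BM
        height = max(h_i + left.length, h_j + right.length)  # distance to furthest tip
        results.append((node.name, (x_i - x_j) / sd, sd, height))
        # Branch-length-weighted ancestral estimate and the extra stem length it carries.
        x_k = (x_i / v_i + x_j / v_j) / (1.0 / v_i + 1.0 / v_j)
        return x_k, v_i * v_j / (v_i + v_j), height

    visit(root)
    return results


# Toy tree ((A:1,B:1)AB:1,C:2)root with made-up trait values.
tree = Node("root", children=[
    Node("AB", 1.0, [Node("A", 1.0), Node("B", 1.0)]),
    Node("C", 2.0),
])
for name, contrast, sd, height in independent_contrasts(tree, {"A": 1.0, "B": 3.0, "C": 4.0}):
    print(f"{name}: contrast={contrast:.3f} sd={sd:.3f} height={height:.1f}")
```

For this toy tree the sketch reports a contrast of about -1.414 at AB and about -1.069 at the root; their absolute values, standard deviations, and heights are what the diagnostic plots use.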

Protocol 2: Data Transformation for Variance Stabilization

This protocol is used when a fan-shaped pattern is observed in the contrasts vs. standard deviations plot.

- Apply Transformation: Transform the original trait data (y) using the natural logarithm: $y_{\mathrm{transformed}} = \ln(y)$. Ensure all data are strictly positive before transformation.

- Re-calculate PICs: Repeat the PIC calculation in Protocol 1 using the transformed data.

- Re-generate Plots: Create new diagnostic plots with the new set of standardized contrasts.

- Validation: Check the new "Contrasts vs. Standard Deviations" plot to see if the fan shape has been eliminated, indicating stabilized variance.
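The effect of the log transformation is easiest to see on sister pairs that differ by the same proportion. In this hypothetical Python example, raw contrasts grow with the magnitude of the trait while log-scale contrasts are identical, which is the kind of variance stabilization this protocol looks for. The cherry_contrast helper is illustrative and handles only two sister tips on their own branches, not a full tree.

```python
import math
from typing import List


def log_transform(values: List[float]) -> List[float]:
    """Natural log of trait values; refuses zero or negative values."""
    if any(v <= 0 for v in values):
        raise ValueError("log-transformation requires strictly positive trait values")
    return [math.log(v) for v in values]


def cherry_contrast(x_i: float, x_j: float, v_i: float, v_j: float) -> float:
    """Standardized contrast between two sister tips."""
    return (x_i - x_j) / math.sqrt(v_i + v_j)


# Hypothetical sister pairs that each differ by a factor of 2, all on unit branches.
pairs = [(1.0, 2.0), (10.0, 20.0), (100.0, 200.0)]
raw = [cherry_contrast(a, b, 1.0, 1.0) for a, b in pairs]
logged = [cherry_contrast(*log_transform([a, b]), 1.0, 1.0) for a, b in pairs]
print("raw:", [round(c, 3) for c in raw])
print("log:", [round(c, 3) for c in logged])
```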

Workflow for Diagnostic Analysis

The following diagram illustrates the logical workflow for creating and interpreting diagnostic plots for Phylogenetic Independent Contrasts.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item / Software | Function in PIC Analysis | Key Feature / Note |
|---|---|---|
| Phylogenetic Tree | The evolutionary hypothesis used to calculate contrasts and node heights. | Must be rooted and have branch lengths proportional to time or evolutionary change. |
| Trait Data | The continuous phenotypic or ecological measurements for each species. | Data should be checked for normality and may require log-transformation. |
| R Statistical Environment | A primary platform for implementing PIC and diagnostic plot calculations. | Packages like ape, phytools, and geiger provide essential functions [25] [26]. |
| Phylo-rs Library | A high-performance library for phylogenetic analysis, including distance metrics [25]. | Useful for large-scale analyses; written in Rust for speed and memory safety [25]. |
| Independent Contrasts Algorithm | The core method to compute evolutionarily independent data points from tip data [8]. | Standardizes differences based on branch lengths under a Brownian motion model [8]. |
| iTOL (Interactive Tree Of Life) | Web-based tool for visualizing and annotating phylogenetic trees [27]. | Helpful for exploring tree structure and confirming branch lengths before analysis. |

Navigating the Dark Side: Troubleshooting Common PIC Pitfalls and Biases

Identifying and Correcting Heteroscedasticity in Standardized Contrasts

Frequently Asked Questions

What is heteroscedasticity in the context of standardized contrasts? Heteroscedasticity refers to the non-constant variance of the residuals or contrasts. In Phylogenetic Independent Contrasts (PIC), the standardized contrasts are supposed to be independent and identically distributed. Heteroscedasticity occurs when the variance of these contrasts changes systematically with the estimated node values, the contrasts' standard deviations, or node heights, violating a key assumption of the method and leading to biased statistical tests [7].

Why is heteroscedasticity a problem for my analysis? If heteroscedasticity is present and not corrected, the standard errors for regression parameters become biased and inconsistent [28]. This undermines the validity of hypothesis tests (e.g., for trait correlations), potentially leading to false positives or false negatives. It indicates that the model is not adequately accounting for the evolutionary process or the structure of the data [7] [3].

What are the main causes of heteroscedasticity in PICs? Common causes include:

- Incorrect Branch Lengths: The assumed branch lengths in the phylogeny do not reflect the true evolutionary time or rate of change [7].

- Violation of Evolutionary Model: The trait evolution did not follow a Brownian Motion model, or the model is too simplistic for the data [7].

- Measurement Error: The error in measuring the trait value is itself heteroscedastic, for example, if measurement error increases with the body size of the species [28].

Troubleshooting Guide: Diagnosing and Correcting Heteroscedasticity

This guide provides a step-by-step workflow for identifying and addressing heteroscedasticity in your PIC analysis.

Diagnosis: How to Detect Heteroscedasticity

The primary method for diagnosing heteroscedasticity in PICs is through diagnostic plots.

Protocol: Creating and Interpreting Diagnostic Plots

- Calculate Contrasts: Compute the phylogenetic independent contrasts for your trait(s) of interest using software such as the pic function in R's ape package [3].

- Create Diagnostic Plots: Generate diagnostic plots of the kind provided by packages such as caper [7], for example the absolute standardized contrasts against their standard deviations and against node heights.

- Interpretation: The points in these plots should show no obvious pattern. A significant trend (increasing or decreasing spread) is evidence of heteroscedasticity.

The diagram below outlines the diagnostic and correction workflow.

Correction: How to Remedy Heteroscedasticity

If heteroscedasticity is detected, here are several strategies to correct it.

1. Data Transformation

- Method: Apply a transformation to your trait data, most commonly a logarithmic (log) transformation, before calculating contrasts [29].

- Rationale: Biological data often exhibit geometric normality (approximately log-normal distributions), in which the standard deviation scales with the mean. Log-transforming the data can stabilize the variance, making it constant across the range of measurements and reducing heteroscedasticity [29].

- Protocol:

- Transform your original trait data, e.g., log_trait <- log(original_trait).

- Recalculate phylogenetic independent contrasts using the transformed data.

- Re-run the diagnostic plots to check whether the heteroscedastic pattern has disappeared.

2. Check and Correct Branch Lengths

- Method: Re-evaluate the branch lengths of your phylogeny. PIC assumes that branch lengths are proportional to time or the expected amount of evolution [7].

- Rationale: Heteroscedasticity can be a direct result of incorrect branch length information [7]. Using arbitrary branch lengths (e.g., all set to 1) is a common cause.

- Protocol:

- Ensure your phylogeny has meaningful branch lengths (e.g., time-calibrated).

- If branch lengths are unknown, consider methods to estimate them, or try alternative branch-length assignments and transformations (e.g., Grafen's branch lengths) to see whether this resolves the issue.

- Recalculate PICs and check diagnostic plots again.
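To see what Grafen's branch lengths look like, the following Python sketch assigns them in place: each node's height is its number of descendant tips minus one, scaled so the root is 1 and raised to a power rho, and each branch spans the difference between parent and child heights. This follows the usual description of Grafen's method (as offered, for example, by the "Grafen" option of ape's compute.brlen), but the Node class and function names here are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Node:
    """Hypothetical minimal tree node: name, branch length to parent, children."""
    name: str
    length: float = 0.0
    children: List["Node"] = field(default_factory=list)


def count_tips(node: Node, cache: Dict[int, int]) -> int:
    """Number of tips descending from node (memoized by object identity)."""
    key = id(node)
    if key not in cache:
        cache[key] = 1 if not node.children else sum(count_tips(c, cache) for c in node.children)
    return cache[key]


def grafen_branch_lengths(root: Node, rho: float = 1.0) -> None:
    """Assign Grafen-style branch lengths in place.

    Height = ((descendant tips - 1) / (total tips - 1)) ** rho, so tips sit at 0
    and the root at 1; each branch spans parent height minus child height.
    """
    if rho <= 0:
        raise ValueError("rho must be positive")
    cache: Dict[int, int] = {}
    total = count_tips(root, cache)
    if total < 2:
        raise ValueError("tree needs at least two tips")

    def height(node: Node) -> float:
        return ((count_tips(node, cache) - 1) / (total - 1)) ** rho

    def assign(node: Node) -> None:
        for child in node.children:
            child.length = height(node) - height(child)
            assign(child)

    root.length = 0.0
    assign(root)


# Toy topology ((A,B)AB,C)root with no branch lengths yet.
a, b, c = Node("A"), Node("B"), Node("C")
ab = Node("AB", children=[a, b])
tree = Node("root", children=[ab, c])
grafen_branch_lengths(tree)
print({n.name: n.length for n in (ab, a, b, c)})
```

With rho = 1 the toy tree receives lengths of 0.5 below AB, A and B, and 1.0 below C, so every tip ends up at the same distance from the root; smaller rho values push nodes toward the root and lengthen the terminal branches.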

3. Use an Alternative Comparative Method

- Method: Switch to a more flexible modeling framework, such as Phylogenetic Generalized Least Squares (PGLS) [7] [16].