Strategic Balance: Optimizing Network Performance and Resource Costs in Biomedical Research

Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to navigate the critical trade-offs between computational network performance and associated resource costs. It explores the foundational principles of cost-performance balance, details methodological applications in areas like federated learning and AI-driven drug discovery, offers best practices for troubleshooting and optimization, and establishes validation techniques for comparing different strategic approaches. The insights are tailored to help biomedical organizations build efficient, cost-effective, and high-performing computational infrastructures that accelerate innovation without compromising financial sustainability.

The Fundamental Trade-Off: Understanding Performance-Cost Dynamics in Research Networks

Defining Network Performance and Resource Costs in a Biomedical Context

Frequently Asked Questions (FAQs)

Network Performance Fundamentals

What is network performance in a biomedical research context? Network performance refers to the efficiency and reliability of data transfer and communication systems that support research activities. In a biomedical setting, this encompasses everything from local lab networks handling large genomic datasets to the digital infrastructure that enables collaboration between institutions. Key components include bandwidth management (regulating data flow), latency reduction (minimizing delays), and traffic shaping (controlling network traffic to reduce congestion) [1] [2]. High performance is crucial for handling genome-scale experiments which can identify hundreds to thousands of previously unsuspected entities related to biological phenomena [3].

How do I identify if my network is underperforming? Common signs of network bottlenecks include: prolonged start-up times for research projects or clinical trials, slow data transfer speeds for large genomic files, sluggish access to shared computational resources or cloud-based analysis tools, and inconsistent performance during peak usage times [4] [1]. These bottlenecks often stem from hardware limitations, outdated software configurations, or insufficient bandwidth for the data demands of modern multi-omics research [1].

Resource Management and Cost Control

What are the main resource costs in biomedical research networks? Research costs are typically divided into two categories. Direct costs include researcher salaries, specific materials, and project-specific lab equipment. Indirect costs (Facilities and Administration or F&A) support shared research infrastructure including research facilities, shared lab supplies, data resources, research safety, utilities, and regulatory compliance functions [5]. The current indirect cost recovery (ICR) system helps institutions cover these infrastructure expenses, though effective rates have remained around 40% despite negotiated rates often being higher [5].

How can I optimize network performance cost-effectively? Implement Quality of Service (QoS) policies to prioritize critical research applications, utilize open-source monitoring tools, perform regular firmware updates, and leverage network segmentation to isolate research data traffic [1] [2] [6]. These strategies often yield significant performance improvements without substantial financial investment. Consolidating IT assets and actively managing hardware resources through regular audits can also minimize redundant infrastructure spending [1].

Troubleshooting Common Scenarios

Our collaborative research team is experiencing slow file transfers between institutions. What should we check? First, establish a performance baseline to understand current conditions during different usage scenarios [1]. Monitor bandwidth usage patterns, check for packet loss, and analyze which applications are consuming the most resources. Implement traffic shaping techniques to control non-essential data flows during critical research operations. For geographically dispersed teams, consider Content Delivery Networks (CDNs) or caching strategies to reduce latency [2] [6].

Data analysis from our genome-scale experiments is taking longer than expected. Could this be network-related? Yes, the interpretation of results from genome-scale experiments is computationally intensive and often requires creating integrated data-knowledge networks that combine experimental results with existing knowledge from biomedical databases and literature [3]. Ensure your network has sufficient bandwidth for these large-scale data operations and consider implementing load balancing to distribute computational workloads across available resources [2]. Network segmentation can also help by creating dedicated pathways for data analysis traffic separate from general network usage [6].

Troubleshooting Guides

Guide 1: Diagnosing Network Bottlenecks in High-Throughput Data Environments

Symptoms: Slow data processing, delayed analysis completion, inability to handle multi-omics datasets efficiently.

Step-by-Step Diagnosis:

- Establish Baseline Performance: Document average and peak bandwidth usage, typical latency metrics, and regular user counts during different research phases [1].

- Identify Bottleneck Sources:
  - Hardware Limitations: Check routers, switches, and servers for capacity limitations
  - Software Issues: Verify QoS settings and firmware versions
  - Bandwidth Constraints: Monitor usage during peak research activities [1]

- Implement Monitoring Tools: Use network analyzers for real-time traffic visualization and bandwidth monitors to track usage patterns [1] [6]

- Prioritize Resolution: Focus first on bottlenecks affecting the most users or critical research functions [1]

Solution Implementation:

- Apply QoS settings to prioritize research applications

- Update network equipment firmware

- Implement traffic shaping for non-essential applications [1] [6]

Guide 2: Optimizing Resource Allocation for Multi-Institutional Collaborations

Symptoms: Inconsistent performance across sites, difficulty sharing large biomedical datasets, variable access to computational resources.

Assessment Protocol:

- Map Collaborative Workflows: Identify all data hand-off points between institutions and researchers [7]

- Analyze Data Flow Patterns: Track how multi-omics data moves between basic science, translational research, and clinical applications [7]

- Evaluate Current Infrastructure: Catalog existing network infrastructure and its utilization rates [4]

- Identify Integration Gaps: Look for disparities in data interoperability standards and security protocols [7]

Optimization Strategies:

- Implement SD-WAN solutions to simplify wide area network policies across locations [2]

- Establish clear data collaboration protocols throughout the discovery process [7]

- Create network segmentation by research function or department to improve performance [6]

- Leverage caching and content delivery optimization for frequently accessed datasets [2] [6]

Table 1: Network Performance Metrics for Biomedical Research Environments

| Metric Category | Specific Metric | Optimal Range | Biomedical Research Impact |
|---|---|---|---|
| Bandwidth Management | Bandwidth utilization | <70% capacity during peak operations | Ensures critical genomic data transfers complete without delay [2] |
| Latency Requirements | Network latency | 30-40 milliseconds | Maintains real-time collaboration and computational processing [2] |
| Traffic Prioritization | QoS for research applications | Highest priority for data analysis tools | Prevents interruption of time-sensitive experimental processes [1] [6] |
| Infrastructure Metrics | Support staff to researcher ratio | Tracked for efficiency assessment | Affects research productivity and operational costs [4] |
| Collaboration Metrics | Number of active research collaborations | Monitor for network impact | Increased collaborations strain shared resources [4] |
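The two numeric ranges in Table 1 can be turned into a simple automated check. The sketch below is only illustrative: the function name, parameter names, and the sample measurements are hypothetical, and the thresholds are read directly from the table (under 70% peak utilization, 40 ms as the upper end of the latency range).

```python
# Thresholds read from Table 1; all names here are illustrative, not from a real tool.
PEAK_UTILIZATION_LIMIT = 0.70   # keep peak usage below 70% of capacity
LATENCY_LIMIT_MS = 40.0         # upper end of the 30-40 ms range


def check_link(peak_mbps: float, capacity_mbps: float, latency_ms: float) -> list:
    """Return human-readable warnings for one network link."""
    warnings = []
    utilization = peak_mbps / capacity_mbps
    if utilization >= PEAK_UTILIZATION_LIMIT:
        warnings.append(
            f"peak utilization {utilization:.0%} is at or above 70% of capacity"
        )
    if latency_ms > LATENCY_LIMIT_MS:
        warnings.append(f"latency {latency_ms:.0f} ms exceeds the 40 ms upper bound")
    return warnings


if __name__ == "__main__":
    # Hypothetical measurements for a 10 Gbit/s inter-site link.
    for message in check_link(peak_mbps=8200, capacity_mbps=10000, latency_ms=55):
        print("WARNING:", message)
```

In practice the measurements would come from whichever monitoring tool is already deployed; the check itself stays the same.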

Table 2: Research Resource Cost Structures and Performance Trade-offs

| Cost Category | Typical Allocation | Performance Implications | Optimization Strategies |
|---|---|---|---|
| Direct Costs (Project-specific) | Researcher salaries, specialized reagents, project-specific equipment [5] | Directly enables research progress; insufficient funding delays timelines | Strategic allocation to critical path activities; shared equipment protocols |
| Indirect Costs (Infrastructure) | Research facilities, shared data resources, compliance functions [5] | Maintains research environment; underfunding creates bottlenecks | Effective ICR rates average 40% despite negotiated rates of 55-70% [5] |
| Network Optimization | Monitoring tools, QoS implementation, traffic management | 14.6% greater throughput and 13.7% better resource use when optimized [8] | Open-source tools; phased implementation; AI-driven optimization [1] [2] |
| Data Management | Storage, transfer, and analysis of multi-omics data | Handling diverse, high-dimensional data requires robust infrastructure [7] | Compression techniques; caching; standardized data formats [2] [7] |

Experimental Protocols

Protocol 1: Assessing Network Impact on Biomedical Data Analysis Workflows

Purpose: To quantitatively measure how network performance affects the analysis of genome-scale experimental data.

Background: The interpretation of results from genome-scale experiments is computationally intensive and requires efficient networks to handle large, complex datasets [3].

Materials:

- Research-grade network monitoring tool (e.g., PRTG Network Monitor, Nagios Core) [6]

- Standardized multi-omics test dataset (e.g., a sample from TCGA, GTEx, or UK Biobank) [9]

- Computational analysis pipeline for biomedical data (e.g., Cytoscape with RenoDoI framework) [3]

Methodology:

Baseline Establishment:

Controlled Testing:

- Execute standardized analysis pipeline on test dataset during peak and off-peak hours

- Measure time to complete integrated data-knowledge network creation [3]

- Record instances of network-related delays or failures

Intervention Phase:

Data Collection:

- Document completion times for each analysis phase

- Record resource utilization metrics

- Calculate cost-benefit ratios of network optimizations

Analysis: Compare processing times, success rates, and resource utilization between baseline and optimized configurations. Evaluate return on investment for network improvements based on researcher time savings and increased throughput.

Protocol 2: Cost-Benefit Analysis of Network Infrastructure Investments

Purpose: To evaluate the economic and performance impact of network optimization strategies in biomedical research settings.

Background: Indirect cost recovery mechanisms help support research infrastructure, but institutions must make strategic decisions about network investments [5].

Materials:

- Financial records of direct and indirect research costs [5]

- Network performance monitoring data [6]

- Research productivity metrics (publications, grants, collaborations) [4]

Methodology:

Cost Documentation:

Performance Benchmarking:

- Measure current network performance against biomedical research needs

- Quantify time losses due to network limitations across research teams

- Estimate opportunity costs of delayed research outcomes

Intervention Scenarios:

- Model performance improvements from specific optimization techniques

- Calculate implementation costs for each optimization strategy

- Project researcher time savings and throughput increases

Return on Investment Calculation:

- Compare projected research productivity gains against implementation costs

- Calculate payback period for network investments

- Assess impact on competitive grant positioning and institutional reputation

Analysis: Identify optimization strategies with the best cost-benefit ratio for biomedical research environments. Develop tiered implementation plan prioritizing high-impact, cost-effective interventions.
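The return-on-investment step of Protocol 2 can be sketched in a few lines. This is a minimal illustration that assumes savings accrue evenly each month and ignores discounting; the function names and the figures in the usage example are hypothetical.

```python
def payback_period_months(implementation_cost: float, monthly_savings: float) -> float:
    """Months needed for cumulative savings to equal the up-front cost."""
    if monthly_savings <= 0:
        raise ValueError("monthly savings must be positive for the investment to pay back")
    return implementation_cost / monthly_savings


def simple_roi(implementation_cost: float, monthly_savings: float, horizon_months: int) -> float:
    """Undiscounted return on investment over a fixed horizon, as a fraction of cost."""
    total_savings = monthly_savings * horizon_months
    return (total_savings - implementation_cost) / implementation_cost


if __name__ == "__main__":
    # Hypothetical upgrade: 60,000 up front, 5,000 per month of recovered researcher time.
    print(payback_period_months(60_000, 5_000))
    print(simple_roi(60_000, 5_000, horizon_months=36))
```

Ranking candidate interventions by payback period gives a first cut at the tiered implementation plan; a fuller analysis would also weigh the harder-to-quantify items such as grant positioning.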

Research Reagent Solutions

Table 3: Essential Tools for Network Performance and Resource Management

| Tool Name | Primary Function | Application in Biomedical Research |
|---|---|---|
| Network Monitoring Software (e.g., PRTG, Nagios Core, Zabbix) | Real-time traffic visualization and bandwidth analysis [6] | Identifies performance bottlenecks during large-scale data analysis; ensures QoS for critical research applications [1] |
| Cytoscape with RenoDoI Framework | Visualization and analysis of biological networks using degree-of-interest functions [3] | Filters complex integrated data-knowledge networks to identify plausible mechanistic explanations for observed biological phenomena [3] |
| Quality of Service (QoS) Configuration | Network traffic prioritization based on business/research needs [2] [6] | Ensures computational analysis tools receive necessary bandwidth while limiting non-essential traffic during critical research phases |
| Content Delivery Networks (CDNs) | Distributed servers providing content from locations closest to users [2] | Accelerates access to shared biomedical databases and computational resources for geographically dispersed research teams |
| Load Balancers | Distributing network traffic across multiple servers or paths [2] [6] | Prevents computational overload during peak analysis periods; provides redundancy for critical research applications |
| Deep Reinforcement Learning Systems | AI-driven resource allocation in dense networks [8] | Optimizes network resources for healthcare applications prioritizing medical needs in research hospital environments |

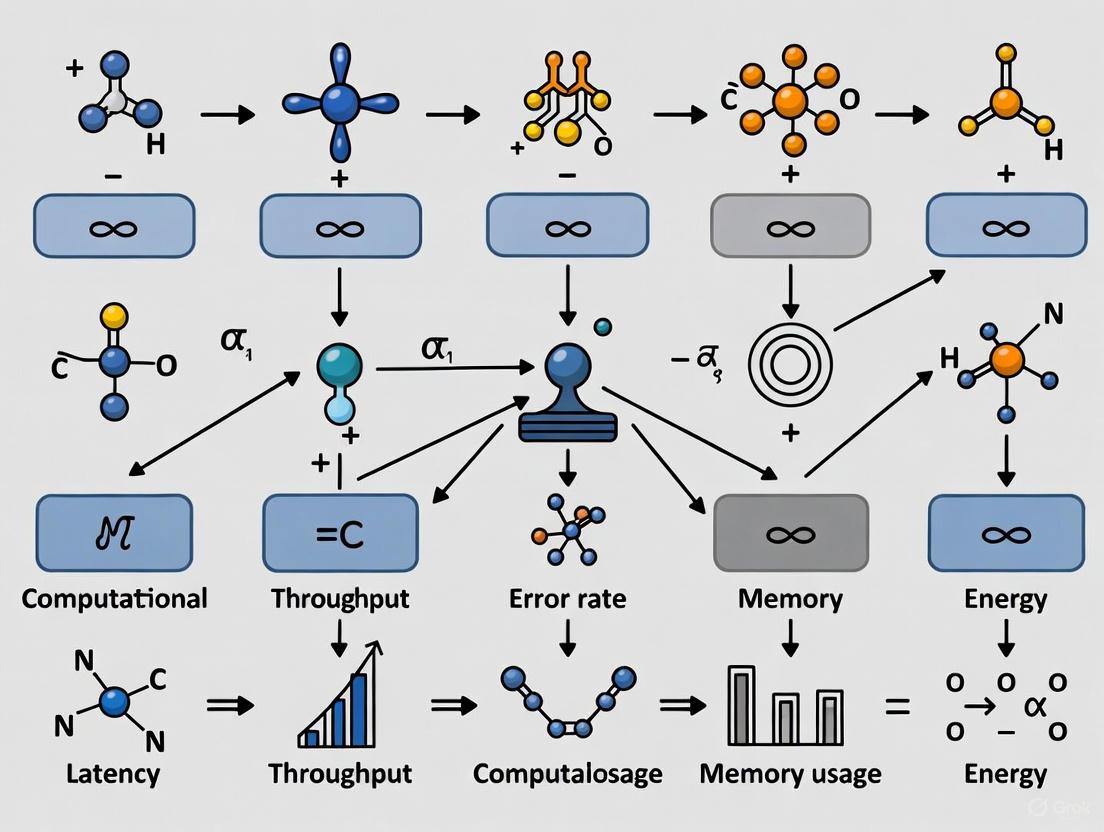

System Visualization

Network Performance Research Framework

Biomedical Data Network Optimization

Resource Cost Allocation Model

Fundamental Cost Concepts: Fixed vs. Variable

What is the core difference between a fixed cost and a variable cost?

Fixed costs are business expenses that remain constant and stable over time, regardless of the level of goods or services your business produces and sells. They do not fluctuate with activity levels [10]. Examples include rent, lease payments, salaried employee wages, insurance premiums, and loan repayments [10] [11].

Variable costs are business expenses that change in direct proportion to the level of business activity. When your business produces more, variable costs increase, and vice versa. They remain consistent on a per-unit basis but fluctuate in total based on business volume [11]. Examples include raw materials, production supplies, direct labor, sales commissions, and shipping costs [10] [12].

How do semi-variable or mixed costs fit into this framework?

Semi-variable or mixed costs contain elements of both fixed and variable costs. They combine a fixed component that exists regardless of activity level with a variable component that changes with business volume [11].

The total cost equation for mixed expenses is: Total Mixed Cost = Fixed Component + (Variable Component × Activity Level) [11].

Common examples include:

- Utilities: A base connection fee (fixed) plus usage-based charges (variable) [11].

- Sales Compensation: A base salary (fixed) plus performance-based commissions (variable) [11].

- Equipment Maintenance: Essential scheduled maintenance (fixed) plus additional repairs from increased utilization (variable).

What is the fundamental equation for Total Cost?

The total cost equation that combines fixed and variable components is [11]:

Total Cost = Total Fixed Cost + Total Variable Cost

Example Calculation: If a research operation has $150,000 in monthly fixed costs and variable costs of $75 per experimental run with 2,000 runs performed, the total cost equals: Total Cost = $150,000 + ($75 × 2,000) = $150,000 + $150,000 = $300,000
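The same calculation can be written as a small helper. This is a minimal sketch; the function name is illustrative, and the mixed-cost equation given earlier has exactly the same form with the fixed component and the per-unit variable component as inputs.

```python
def total_cost(fixed_cost: float, variable_cost_per_unit: float, quantity: float) -> float:
    """Total Cost = Total Fixed Cost + (Variable Cost per Unit x Quantity)."""
    return fixed_cost + variable_cost_per_unit * quantity


if __name__ == "__main__":
    # Figures from the worked example: $150,000 fixed, $75 per run, 2,000 runs.
    print(total_cost(150_000, 75, 2_000))  # 300000
```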

Cost Analysis & Troubleshooting

How do I calculate the break-even point for a research project or service?

Break-even analysis determines the sales or output volume required to cover all costs. The basic break-even formula is [11]:

Break-Even Quantity = Total Fixed Costs ÷ (Price per Unit - Variable Cost per Unit)

Example: If a project has $200,000 in fixed costs, a grant funding of $500 per unit of output, and variable costs of $300 per unit: Break-Even Quantity = $200,000 ÷ ($500 - $300) = $200,000 ÷ $200 = 1,000 units

The project must deliver 1,000 units to cover all costs. This is crucial for grant applications and project feasibility studies [11].
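A short sketch of the break-even formula follows. The function name is illustrative, and rounding up to a whole unit is an added assumption, since a fractional unit of output usually cannot be delivered.

```python
import math


def break_even_quantity(fixed_costs: float, price_per_unit: float,
                        variable_cost_per_unit: float) -> int:
    """Smallest whole number of units whose contribution margin covers fixed costs."""
    margin = price_per_unit - variable_cost_per_unit
    if margin <= 0:
        raise ValueError("price per unit must exceed variable cost per unit")
    return math.ceil(fixed_costs / margin)


if __name__ == "__main__":
    # Figures from the worked example: $200,000 fixed, $500 funding and $300 cost per unit.
    print(break_even_quantity(200_000, 500, 300))  # 1000
```

The guard on the margin matters in practice: if funding per unit does not exceed the variable cost per unit, no output volume will ever cover the fixed costs.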

Our computational resource costs are too high. What is a systematic way to troubleshoot this?

A general troubleshooting methodology can be applied to cost-related issues [13]:

- Identify the Problem: Clearly define the issue without assuming the cause (e.g., "AWS bills exceeded Q3 budget by 40%").

- List All Possible Explanations: Brainstorm potential drivers (e.g., increased data storage, inefficient code leading to longer compute times, new team members provisioning oversized instances, changes in cloud provider pricing).

- Collect the Data: Gather information on the easiest explanations first. Analyze usage logs, cost allocation tags, and compare against historical data.

- Eliminate Explanations: Based on the data, rule out causes that are not supported.

- Check with Experimentation: Implement controlled changes (e.g., implementing auto-scaling policies, switching to spot instances for non-critical workloads) and monitor the impact on cost.

- Identify the Cause: After testing, pinpoint the primary cost driver and implement a permanent fix (e.g., establishing resource provisioning guidelines) [13].
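The data-collection and elimination steps above often come down to asking which cost allocation tag grew the most between two billing periods. The sketch below assumes billing line items have already been exported as `(tag, cost)` pairs; the function name, the tags, and the figures are hypothetical, and no particular cloud provider's API is implied.

```python
from collections import defaultdict


def cost_change_by_tag(previous, current):
    """Rank tags by cost increase between two periods.

    previous, current: iterables of (tag, cost) billing line items.
    Returns a list of (tag, change) pairs, largest increase first.
    """
    prev_totals = defaultdict(float)
    cur_totals = defaultdict(float)
    for tag, cost in previous:
        prev_totals[tag] += cost
    for tag, cost in current:
        cur_totals[tag] += cost

    all_tags = set(prev_totals) | set(cur_totals)
    changes = {
        tag: cur_totals.get(tag, 0.0) - prev_totals.get(tag, 0.0) for tag in all_tags
    }
    return sorted(changes.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical line items for two quarters.
    q2 = [("storage", 100.0), ("compute", 400.0)]
    q3 = [("storage", 120.0), ("compute", 700.0), ("compute", 50.0)]
    for tag, change in cost_change_by_tag(q2, q3):
        print(f"{tag}: {change:+.2f}")
```

Tags at the top of the ranking are the explanations worth testing first; tags with negligible change can usually be ruled out.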

What strategies can we use to optimize high fixed costs?

Reducing fixed costs often requires strategic, structural changes [10] [11]:

- Outsourcing Non-Core Functions: Convert internal fixed overhead for functions like IT or specialized data analysis into variable service expenses.

- Technology Leverage: Replace fixed labor costs with scalable automation and software solutions for data processing.

- Space Optimization: Rightsize laboratory and office facilities, negotiate favorable lease terms, or embrace hybrid work models to reduce real estate footprints.

- Consolidate Services: Look for opportunities to combine software subscriptions or service contracts to take advantage of bulk discounts [10].

What strategies can we use to manage and reduce variable costs?

Managing variable costs demands continuous operational attention [10] [12]:

- Negotiate with Suppliers: Secure better prices for reagents and materials by agreeing to longer contracts or buying in bulk, aligned with sales forecasts.

- Improve Process Efficiency: Standardize experimental protocols to reduce reagent waste and optimize material handling to prevent spoilage or damage.

- Implement Tiered Pricing Models: For services like sequencing or core facility use, establish tiered rates based on volume to balance access and cost.

- Review Utility Contracts: Conduct energy audits and shift high-consumption computational tasks to off-peak hours to reduce utility rates [12].

Cost Scenarios & Strategic Decisions

How should the cost structure influence our "Build vs. Buy" decisions for research tools?

The fixed/variable distinction is critical when deciding whether to develop tools in-house or purchase them [11].

| Factor | Build In-House | Buy/Subscribe |
|---|---|---|
| Cost Nature | Higher fixed costs (hiring developers, infrastructure) and potentially lower variable costs. | Lower initial fixed costs, but ongoing variable subscription/licensing fees. |
| Control & Customization | High control and ability to customize for specific research needs. | Limited by the vendor's feature set and roadmap. |
| Best Suited For | Tools that provide a long-term strategic advantage and will be used consistently at high volume. | Specialized, non-core tools or those requiring frequent updates and vendor support. |

Internal production (Build) typically converts variable supplier costs into a combination of fixed equipment/overhead costs plus lower variable costs. This makes financial sense when volumes are consistently high enough to offset the additional fixed cost burden [11].

When scaling operations, is it better to add a new shift (variable cost) or invest in a new facility (fixed cost)?

The optimal decision depends on projected volume, stability, and risk tolerance [11].

| Consideration | Add Shift (Higher Variable Cost) | New Facility (Higher Fixed Cost) |
|---|---|---|
| Financial Risk | Lower risk. Costs decrease automatically if output needs to scale down. | Higher risk. Fixed obligations remain even if output or funding decreases. |
| Profit Potential | Limited operational leverage. Profits grow linearly with output. | High operational leverage. Once break-even is passed, profits accelerate rapidly. |
| Best For | Uncertain or volatile project pipelines, shorter-term projects. | Predictable, sustained long-term growth and high, stable demand. |

Higher fixed costs create greater operational leverage, magnifying both profits in good times and losses during downturns [11].
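The leverage effect is easy to see numerically. The sketch below compares two hypothetical cost structures at the same price per unit; every figure is invented for illustration and chosen so that both options earn the same surplus at 1,000 units.

```python
def profit(units: float, price: float, fixed_cost: float,
           variable_cost_per_unit: float) -> float:
    """Surplus after covering fixed and variable costs at a given output level."""
    return units * (price - variable_cost_per_unit) - fixed_cost


# Hypothetical structures: both sell output at 500 per unit.
ADD_SHIFT = {"fixed_cost": 100_000, "variable_cost_per_unit": 350}
NEW_FACILITY = {"fixed_cost": 250_000, "variable_cost_per_unit": 200}

if __name__ == "__main__":
    for units in (500, 1_000, 1_500):
        shift = profit(units, 500, **ADD_SHIFT)
        facility = profit(units, 500, **NEW_FACILITY)
        print(f"{units:>5} units  add shift: {shift:>9,.0f}  new facility: {facility:>9,.0f}")
```

Below the crossover volume the high-fixed-cost option loses more, and above it the same option gains more, which is the magnification in both directions that the paragraph describes.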

Cost Management Toolkit

Key Financial Formulas and Metrics

The following table summarizes essential formulas for cost analysis [11].

| Metric | Formula | Purpose |
|---|---|---|
| Total Cost | Total Fixed Cost + (Variable Cost per Unit × Quantity) | Calculate the total cost at a given production level. |
| Break-Even Point (Units) | Total Fixed Costs ÷ (Price per Unit - Variable Cost per Unit) | Determine the number of units that must be sold to cover all costs. |
| Contribution Margin per Unit | Price per Unit - Variable Cost per Unit | Understand how much each unit contributes to covering fixed costs. |
| Total Mixed Cost | Fixed Component + (Variable Component × Activity Level) | Model costs that have both fixed and variable elements. |

Experimental Protocol: Cost Behavior Analysis for Mixed Costs

Objective: To separate the fixed and variable components of a semi-variable cost (e.g., a lab's total electricity bill).

Methodology: High-Low Method [11]

- Data Collection: Gather data on the cost (electricity bill) and the associated activity level (e.g., machine hours, number of experiments) for several periods.

- Identify Extremes: Select the periods with the highest and lowest activity levels.

- Calculate Variable Rate:
  - Variable Cost per Unit = (Cost at High Activity - Cost at Low Activity) ÷ (High Activity Level - Low Activity Level)

- Determine Fixed Cost:
  - Total Fixed Cost = Total Cost at High Activity - (Variable Cost per Unit × High Activity Level)
  - (This can also be calculated using the low activity data.)

- Create Cost Formula: Use the results to create a formula:
  Total Electricity Cost = Total Fixed Cost + (Variable Cost per Unit × Activity Level).
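The high-low steps can be sketched as follows. The function name and the sample electricity data are hypothetical; the arithmetic follows the two formulas in the protocol.

```python
def high_low(observations):
    """Split a mixed cost into fixed and variable parts with the high-low method.

    observations: list of (activity_level, total_cost) pairs, one per period.
    Returns (fixed_cost, variable_cost_per_unit).
    """
    low = min(observations, key=lambda obs: obs[0])
    high = max(observations, key=lambda obs: obs[0])
    if high[0] == low[0]:
        raise ValueError("activity levels must differ between periods")

    variable_rate = (high[1] - low[1]) / (high[0] - low[0])
    fixed_cost = high[1] - variable_rate * high[0]
    return fixed_cost, variable_rate


if __name__ == "__main__":
    # Hypothetical periods: (machine hours, electricity bill in dollars).
    periods = [(1000, 4000), (1500, 5200), (2200, 6400), (1800, 5900)]
    fixed, rate = high_low(periods)
    print(f"Total Electricity Cost = {fixed:.2f} + {rate:.2f} x machine hours")
```

Because the method uses only the two extreme periods, an unusual month at either end distorts the estimate; the regression approach mentioned in the FAQs uses every period and is less sensitive to this.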

Decision Framework for Cost-Related Issues

This workflow visualizes the troubleshooting and decision-making process for managing cost drivers, integrating both the systematic troubleshooting method [13] and strategic cost considerations [10] [11].

Frequently Asked Questions (FAQs)

Is direct labor always a variable cost?

Not always. In many research and manufacturing contexts, direct labor for hourly workers involved in production or experiments is treated as a variable cost because it fluctuates with output levels [12]. However, the salaries of principal investigators, lab managers, and core technical staff are typically considered fixed costs, as they are paid consistently regardless of short-term fluctuations in experimental throughput [10] [11].

How can we forecast variable costs more accurately for grant proposals?

The simplest way is to analyze historical data to understand past cost behavior [12]. For greater accuracy, use regression analysis, a statistical technique that examines the relationship between a specific variable expense (e.g., cost of reagents) and an activity driver (e.g., number of assays run). This helps establish a numerical relationship for forecasting. Additionally, employ scenario analysis to model how changes in market demand or supply chain disruptions could impact costs, moving beyond what historical data alone can show [12].
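As a rough illustration of the regression approach, the sketch below fits a straight line by ordinary least squares, with reagent cost as the dependent variable and assay count as the activity driver. The function names and the sample data are hypothetical, and a single linear driver is itself an assumption that should be checked against the historical records.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need at least two paired observations")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("activity levels must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def forecast(intercept, slope, activity_level):
    """Predicted cost at a planned activity level."""
    return intercept + slope * activity_level


if __name__ == "__main__":
    # Hypothetical history: assays run per quarter and reagent spend in dollars.
    assays = [100, 200, 300, 400]
    reagent_cost = [1350, 2250, 3400, 4250]
    intercept, slope = fit_line(assays, reagent_cost)
    print(f"Forecast for 500 assays: {forecast(intercept, slope, 500):.0f}")
```

The slope estimates the variable cost per assay and the intercept estimates the fixed component, so the fitted line doubles as a mixed-cost formula for the proposal budget.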

What is the most common mistake in classifying a fixed cost versus a variable cost?

A common error is misclassifying semi-variable costs. For example, a software subscription might have a fixed monthly fee for a base tier plus a variable, usage-based fee for exceeding certain limits. It's crucial to break down these mixed costs into their fixed and variable components for accurate modeling and decision-making [11]. Another mistake is assuming a cost is fixed without considering the relevant range; rent may be fixed until you need to expand to a larger facility, at which point it becomes a step-fixed cost.

For researchers, scientists, and drug development professionals, optimizing computational workflows is crucial for accelerating discovery while managing resources. This guide provides practical methodologies for diagnosing and resolving common performance issues, framed within the critical context of balancing latency, throughput, and accuracy.

Foundational Concepts and Trade-offs

Latency is the time taken to complete a single task or produce a single result, often measured in milliseconds or seconds. Throughput is the number of such tasks completed within a given time period, measured in operations per second. Computational Accuracy refers to the correctness and precision of the results generated by a system or algorithm [14].

These three metrics exist in a state of tension. Optimizing for one often means making compromises in the others. Understanding these trade-offs is essential for configuring systems to meet specific research goals [15] [14].

- The Latency-Accuracy Trade-off: Lower latency often requires simplifying processes, which can reduce accuracy. For instance, a machine learning model might achieve high accuracy by using complex algorithms or larger datasets, but this complexity slows down prediction times. Conversely, using a faster, simpler model might slightly reduce accuracy for a significant speed gain [14].

- The Latency-Throughput Trade-off: A system with constantly full request queues maximizes throughput but forces new requests to wait, resulting in high latency. Conversely, a system designed for low latency must keep request queues nearly empty to process tasks immediately, which can sacrifice maximum possible throughput [16]. You cannot optimize a single system for both the lowest possible latency and the highest possible throughput simultaneously [16].
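The queueing tension described above can be illustrated with the textbook M/M/1 model, in which mean time in the system is 1 / (service rate - arrival rate). This model is an assumption introduced here for illustration, not something taken from the cited sources, and real workloads rarely match its idealized arrival and service patterns.

```python
def mm1_mean_latency(arrival_rate: float, service_rate: float) -> float:
    """Mean time a request spends in an M/M/1 system (waiting plus service).

    Rates are in requests per second; the result is in seconds.
    """
    if arrival_rate >= service_rate:
        raise ValueError("arrival rate must be below service rate for a stable queue")
    return 1.0 / (service_rate - arrival_rate)


if __name__ == "__main__":
    service_rate = 100.0  # hypothetical server capacity, requests per second
    for arrival_rate in (10.0, 50.0, 90.0, 99.0):
        utilization = arrival_rate / service_rate
        latency_ms = mm1_mean_latency(arrival_rate, service_rate) * 1000
        print(f"utilization {utilization:.0%}: mean latency {latency_ms:.1f} ms")
```

Pushing utilization toward 100% raises delivered throughput only slightly while latency grows without bound, which is why the two goals cannot both be maximized on one system.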

The table below summarizes common strategies and their impacts on these core metrics.

Table: Common Optimization Strategies and Their Impact on Performance Metrics

| Strategy | Mechanism | Impact on Latency | Impact on Throughput | Impact on Accuracy |
|---|---|---|---|---|
| Replication/Redundancy [15] | Issuing multiple concurrent requests and using the fastest response. | Reduces mean and tail latency, especially under low load. | Increases system utilization, can reduce net throughput under high load. | Typically no direct impact. |
| Caching [15] | Storing frequently accessed data closer to the computation. | Reduces data access latency. | Can increase overall system throughput. | No direct impact; ensures accuracy by serving correct cached data. |
| Model Quantization [14] | Reducing numerical precision of calculations in ML models. | Speeds up inference time. | Allows more inferences per second. | May slightly reduce output quality. |
| Hybrid/Tiered Systems [14] | Using a fast, low-accuracy model first, then a slower, high-accuracy one. | Provides quick initial results. | Maximizes resource utilization for different query types. | Maintains or improves overall result quality. |
| Dynamic Adaptation [15] | Profiling workloads and adjusting precision or resources in real-time. | Can lower latency for latency-sensitive tasks. | Improves throughput for batch tasks. | Minimizes accuracy loss by applying it selectively. |

Frequently Asked Questions (FAQs)

1. How can I reduce my model's inference latency without changing the hardware? Consider implementing model quantization, which reduces the numerical precision of the model's weights and activations (e.g., from 32-bit floating-point to 8-bit integers). This decreases the computational complexity and memory bandwidth needed, significantly speeding up inference at a potential slight cost to accuracy [14]. For a layered approach, frameworks like FPX can adaptively reduce precision for "compression-tolerant" layers, delivering speedups with minimal loss in output quality [15].
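To make the precision trade-off concrete, the sketch below applies symmetric linear quantization to a handful of weights in plain Python. It is a toy illustration under stated assumptions: the function names and sample weights are hypothetical, it is not how FPX or any production framework implements quantization, and real deployments would rely on the quantization tooling of their ML framework.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the quantized integers."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.01, 1.0]  # hypothetical 32-bit weights
    quantized, scale = quantize_int8(weights)
    restored = dequantize(quantized, scale)
    worst_error = max(abs(w - r) for w, r in zip(weights, restored))
    print("quantized:", quantized)
    print("worst reconstruction error:", worst_error)
```

Each restored value differs from the original by at most half the scale factor, which is the small, bounded accuracy cost exchanged for storing and multiplying 8-bit integers.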

2. My high-throughput data processing job is causing unacceptable latency for my interactive users. What should I do? This is a classic trade-off. The most effective solution is to split your computational resources. Dedicate a "low-latency" cluster for interactive users where request queues are kept short, and a separate "high-throughput" cluster for batch processing jobs where queues can be kept full. This prevents the two types of workloads from interfering with each other [16].

3. My database queries are accurate but slow. What are my options? For analytical tasks where perfect precision is not always critical, consider using approximate queries. Techniques like sampling return faster results with less precise outcomes. This trades a known, small margin of error for a significant reduction in latency [14].
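The sampling idea can be sketched without a database. The example below estimates a mean from a random sample of an in-memory list; the function name and data are hypothetical, and a real system would push the sampling into the query engine, whose sampling syntax varies by product.

```python
import random


def approximate_mean(values, sample_size, seed=0):
    """Estimate the mean of a list from a uniform random sample without replacement."""
    rng = random.Random(seed)  # fixed seed so the illustration is repeatable
    size = min(sample_size, len(values))
    sample = rng.sample(values, size)
    return sum(sample) / len(sample)


if __name__ == "__main__":
    # Hypothetical column of 100,000 measurements.
    measurements = list(range(1, 100_001))
    exact = sum(measurements) / len(measurements)
    estimate = approximate_mean(measurements, sample_size=1_000)
    print(f"exact mean: {exact:.1f}, estimate from 1% sample: {estimate:.1f}")
```

The estimate touches only 1% of the rows, and its expected error shrinks roughly with the square root of the sample size, so the margin of error can be tuned against the latency budget.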

4. How do I know if my system's performance is a hardware or a software issue? Begin by isolating the issue. Profile your application to identify if the bottleneck is in CPU, memory, disk I/O, or network. Use monitoring tools to establish a performance baseline. Often, bottlenecks are caused by software configuration, inefficient code, or resource contention rather than pure hardware limitations [1]. A systematic troubleshooting process is outlined in the next section.
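For Python workloads, a first profiling pass can use the standard library's `cProfile` and `pstats` modules. In the sketch below, `slow_sum_of_squares` is a hypothetical stand-in for an analysis step, and `profile_call` is an illustrative helper, not part of any library.

```python
import cProfile
import io
import pstats


def slow_sum_of_squares(n):
    """Stand-in for a CPU-bound analysis step."""
    total = 0
    for i in range(n):
        total += i * i
    return total


def profile_call(func, *args):
    """Run func under cProfile; return its result and a text report."""
    profiler = cProfile.Profile()
    profiler.enable()
    result = func(*args)
    profiler.disable()

    stream = io.StringIO()
    stats = pstats.Stats(profiler, stream=stream)
    stats.sort_stats("cumulative").print_stats(5)  # top 5 entries by cumulative time
    return result, stream.getvalue()


if __name__ == "__main__":
    _, report = profile_call(slow_sum_of_squares, 1_000_000)
    print(report)
```

If the report shows most time inside the application's own functions, the bottleneck is likely software; if the process spends most of its wall-clock time waiting on disk or network, system-level monitoring is the better next step.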

Troubleshooting Guide: A Structured Methodology

Effective troubleshooting follows a logical progression from understanding the problem to implementing a permanent fix. The workflow below provides a high-level overview of this structured methodology.

Phase 1: Understand the Problem

Before diving in, gather crucial information to define the problem scope.

- Ask Targeted Questions: Go beyond "it's slow." Ask:

- Gather Information and Context: Collect relevant system logs, resource utilization metrics (CPU, memory, network), and profiling data. If possible, use tracking software or jump on a screen share to observe the issue in real-time [17].

- Reproduce the Issue: Attempt to recreate the problem in your own environment. This confirms the bug, helps you understand the user's experience, and is essential for verifying a fix later on [17].

Phase 2: Isolate the Root Cause

Narrow down the problem to a specific component or configuration.

- Remove Complexity: Simplify the system to a known functioning state. This could involve:
  - Testing with a minimal dataset.
  - Disabling non-essential services or background processes.
  - Running the task in a clean, standardized environment (e.g., a new container instance) [17].

- Change One Variable at a Time: A core principle of scientific troubleshooting. If you alter multiple settings or conditions simultaneously and the problem resolves, you won't know which change was effective. Methodically test hypotheses one by one [17].

- Compare to a Working Baseline: Compare the broken setup against a similar one that functions correctly. Differences in software versions, library dependencies, hardware, or configuration files can illuminate the root cause [17].

Phase 3: Find a Fix or Workaround

Develop and validate a solution.

- Propose a Solution: Based on the isolated cause, determine the best path forward. This could be a configuration change, a software update, a data correction, or a temporary workaround that allows the user to continue their work [17].

- Test Thoroughly: Do not make the user your guinea pig. Validate the fix in your own reproduction of the issue first, and check for any unintended side effects [17].

- Communicate Clearly: Explain the solution to the user in a clear, step-by-step manner. Use numbered lists in written communication for easy follow-through. Empathize with their frustration and position yourself as an advocate working with them to resolve the issue [17] [18].

Phase 4: Fix for the Future

Prevent the problem from recurring.

- Document the Solution: Add the issue and its resolution to your team's internal knowledge base or troubleshooting guide [17].

- Update FAQs: If the issue is likely to affect other users, create or update a public-facing FAQ entry to deflect future tickets and empower users to self-serve [18].

- Report Upstream: If you've identified a genuine bug in the software or system, pass a detailed bug report to the engineering or development team for a permanent code-level fix [17].

The Scientist's Toolkit: Key Research Reagents

This table details essential "reagents" for performance optimization experiments.

Table: Essential Tools and Materials for Performance Analysis

| Tool/Resource | Category | Primary Function |
|---|---|---|
| Profiling Tools (e.g., CPU Profiler) | Software | Identifies specific functions or lines of code that consume the most CPU time, pinpointing computational bottlenecks. |
| System Monitoring (e.g., Nagios, Zabbix) | Software | Tracks real-time and historical resource utilization (CPU, memory, disk I/O, network) to establish baselines and detect anomalies [1]. |
| Traffic Analyzers (e.g., Wireshark) | Software | Captures and analyzes network traffic to diagnose latency, packet loss, or protocol issues that impact distributed systems [1]. |
| Model Quantization Framework (e.g., FPX, TensorFlow Lite) | Software Library | Reduces the precision of neural network models to decrease latency and increase throughput with a controlled trade-off in accuracy [15] [14]. |
| A/B Testing Platform | Methodology | Allows for the comparative testing of two different system configurations (e.g., different cache sizes) on live traffic to objectively measure performance impact. |
| Clinical Trial Management System (CTMS) | Platform | In life sciences contexts, platforms like Veeva Vault Analytics provide built-in dashboards and KPI tracking for trial performance metrics [19]. |

Experimental Protocol: Quantifying the Latency-Accuracy Trade-off

This protocol provides a reproducible method for measuring the impact of optimization techniques on model performance.

Objective: To quantitatively assess the effect of model quantization on inference latency and prediction accuracy.

Hypothesis: Applying post-training quantization to a machine learning model will significantly reduce inference latency and model size while causing a statistically quantifiable, minor reduction in prediction accuracy.

Materials:

- A pre-trained machine learning model (e.g., an image classification model like ResNet-50).

- A calibrated evaluation dataset appropriate for the model's task.

- A machine with standard CPU (and optional GPU) capabilities.

- Profiling software (e.g., TensorFlow Profiler, PyTorch Profiler).

- A model quantization library (e.g., TensorFlow Lite, PyTorch Quantization).

Methodology:

1. Baseline Measurement:

- Load the pre-trained, full-precision (FP32) model.

- Run 1000 inferences on the evaluation dataset, recording the latency for each individual inference.

- Calculate the mean latency, P95 latency (tail latency), and throughput (inferences/second).

- Calculate the model's baseline accuracy (e.g., top-1 and top-5 accuracy for classification) on the dataset.

2. Intervention:

- Apply dynamic-range or full-integer quantization to the model using the chosen library, converting it to an INT8 format.

- Serialize and save the quantized model, noting the reduction in file size.

3. Post-Intervention Measurement:

- Load the quantized (INT8) model.

- Repeat the latency and throughput measurement process from Step 1 using the identical dataset and hardware.

- Calculate the accuracy of the quantized model on the same evaluation dataset.

4. Data Analysis:

- Create comparative tables for latency, throughput, model size, and accuracy.

- Calculate the percentage change for each metric.

- Use statistical tests (e.g., a paired t-test) to determine if the observed change in accuracy is statistically significant.
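The latency portion of this protocol can be sketched with the standard library alone. In the example below, `infer` is a hypothetical stand-in for a call to the FP32 or INT8 model, and the P95 value uses the nearest-rank definition, which is one of several common percentile conventions. The accuracy comparison and significance test are not covered here.

```python
import statistics
import time


def measure_latencies(infer, inputs):
    """Time each call to infer(x); return per-inference latencies in seconds."""
    latencies = []
    for x in inputs:
        start = time.perf_counter()
        infer(x)
        latencies.append(time.perf_counter() - start)
    return latencies


def summarize(latencies):
    """Mean latency, nearest-rank P95 latency, and throughput (inferences/second)."""
    ordered = sorted(latencies)
    rank = max(1, -(-95 * len(ordered) // 100))  # ceil(0.95 * n) in integer math
    return {
        "mean_s": statistics.fmean(ordered),
        "p95_s": ordered[rank - 1],
        "throughput_per_s": len(ordered) / sum(ordered),
    }


def percent_change(baseline, new):
    """Signed percentage change of a metric relative to its baseline value."""
    return 100.0 * (new - baseline) / baseline


# Usage with a dummy workload in place of real model inference
demo = summarize(measure_latencies(lambda x: sum(range(2000)), range(50)))
print(demo)
```

Running the same two functions against the baseline and quantized models, on identical inputs and hardware, gives the values needed for the comparative tables, and `percent_change` reports the difference for each metric.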

Visualization: The results are best summarized in a series of comparative bar charts, one per metric (latency, throughput, model size, and accuracy).

At the core of modern computational drug development is a critical balancing act: achieving high-performance outcomes while managing significant resource costs. This technical support center provides targeted troubleshooting guides and FAQs to help researchers navigate this trade-off, particularly when implementing advanced, resource-sensitive methodologies like Federated Learning (FL) for in-situ drug testing [20].

Frequently Asked Questions for Research Systems

Q: Our federated learning process is consuming excessive bandwidth and causing project delays. What are the primary strategies to reduce communication costs?

A: High communication costs are a recognized challenge in FL. Your primary strategy should be to reduce the total number of parameters shared during the FL process [20]. Instead of transmitting the entire model in every communication round, explore Learning Strategies (LS) that share only a critical subpart of the model, such as the dense layers. One study demonstrated that this approach can reduce communication overheads by 6% to 95.64% while maintaining model accuracy between 89.25% and 96.6% [20]. Begin by profiling your model to measure the parameter size and contribution of each layer to identify the best candidates for selective sharing.

Q: We are establishing a new fiber optic dissolution system (FODS). What are the key steps for its validation and common pitfalls?

A: Validating an in-situ Fiber Optic Dissolution System (FODS) should be treated with the same rigor as validating an HPLC method [21]. The protocol must be systematically assessed for:

- Linearity: Test across a range of concentrations (e.g., 25-125% of the expected concentration).

- Accuracy: Validate at multiple levels (e.g., 80%, 100%, and 120%).

- Precision: Conduct multiple runs (e.g., six) at the 100% level.

- Robustness: Evaluate the impact of small, deliberate changes in operating conditions like probe depth, orientation, analytical wavelength, and paddle speed [21].

Common troubleshooting areas for FODS include issues with media preparation, probe sensitivity, and interference from formulation excipients [21]. Ensure your team documents all method parameters meticulously to facilitate rapid diagnosis.

Q: How can we effectively present the trade-offs between performance and resource consumption in our research reports?

A: Clearly structured quantitative data is essential. Summarize your experimental results in a comparative table that lists different configurations (e.g., various Learning Strategies) alongside their resulting performance metrics (e.g., accuracy) and resource costs (e.g., data transmitted, training time). This provides a clear, at-a-glance view of the trade-offs. Furthermore, using a standardized workflow diagram (see below) to visualize your experimental process ensures clarity and reproducibility for your team and reviewers.

Troubleshooting Guides

Guide 1: Troubleshooting High Communication Costs in Federated Learning

This guide addresses the common FL problem of excessive network usage, which directly impacts the balance between research progress and operational cost [20].

Problem Definition: The federated learning process is moving large volumes of data (gigabytes per round), leading to high communication costs and training bottlenecks.

Isolation Steps:

- Profile Parameter Size: Determine the size (in MB) of each layer in your deep learning model. Identify the largest layers contributing most to the data transfer.

- Analyze Layer Criticality: Evaluate the contribution of each layer to the model's final accuracy. This can be done by selectively freezing layers during initial experiments and observing the performance impact.

- Monitor Convergence: Track the global model's accuracy over communication rounds. Note if the cost-saving strategy leads to unstable training or failure to converge.
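The parameter-size profiling step can be approximated with simple arithmetic before touching the training code. The sketch below assumes FP32 weights (4 bytes per parameter) and uses made-up layer names and parameter counts; replace them with counts read from your own model.

```python
BYTES_PER_FP32 = 4  # assumption: weights are exchanged as 32-bit floats


def layer_sizes_mb(param_counts):
    """Approximate transfer size of each layer in megabytes."""
    return {name: n * BYTES_PER_FP32 / 1e6 for name, n in param_counts.items()}


def transfer_reduction_pct(param_counts, shared_layers):
    """Percentage of per-round traffic saved by sending only shared_layers."""
    total = sum(param_counts.values())
    shared = sum(param_counts[name] for name in shared_layers)
    return 100.0 * (total - shared) / total


# Hypothetical model: two convolutional layers and two dense layers
param_counts = {"conv1": 1_200_000, "conv2": 2_400_000,
                "dense1": 300_000, "dense2": 100_000}

sizes = layer_sizes_mb(param_counts)
saving = transfer_reduction_pct(param_counts, ["dense1", "dense2"])
print(sizes, saving)
```

In this invented example the dense layers hold 10% of the parameters, so sharing only them would cut per-round traffic by 90%. The real figure depends entirely on the architecture.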

Solution & Workaround: Implement a custom Learning Strategy (LS) that shares only a subset of model parameters. Empirical results show that sharing only the dense layers can often achieve performance comparable to sharing the full model while drastically reducing costs [20]. If a full model update is unavoidable, increase the number of local training epochs between communication rounds to reduce the total number of rounds required.

Preventive Measures:

- Architect your models with communication efficiency in mind from the start.

- Establish a monitoring dashboard that tracks data transfer per client and overall training time.

- Document the performance-cost trade-off of different LSs for future reference.

Guide 2: Troubleshooting a New Fiber Optic Dissolution Method

This guide helps ensure the reliability of your dissolution testing, a critical quality control tool in drug development [22] [21].

Problem Definition: A newly developed dissolution method using FODS is yielding high variability, inaccurate results, or is failing validation parameters.

Isolation Steps:

- Reproduce the Issue: Confirm the problem is consistent across multiple runs and with different tablet samples from the same batch.

- Verify Linearity & Accuracy: Ensure your validation results for linearity and accuracy meet pre-defined criteria (e.g., R² > 0.99). Failure here often points to issues with calibration or the chemical stability of the analyte in the media.

- Test Robustness Parameters: Systematically vary one parameter at a time (e.g., paddle speed by ±5 rpm, probe sampling depth, wavelength by ±1 nm) to identify if the method is overly sensitive to a specific condition [21].
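A one-parameter-at-a-time robustness plan can be enumerated programmatically so that no run changes two conditions at once. This is a small sketch: the paddle speed and the ±5 rpm and ±1 nm deltas follow the text, while the nominal wavelength of 270 nm is a placeholder assumption rather than a recommended value.

```python
def one_factor_at_a_time(nominal, deltas):
    """Yield (label, conditions) runs that each change exactly one parameter."""
    yield "nominal", dict(nominal)
    for name, delta in deltas.items():
        for signed in (-delta, +delta):
            run = dict(nominal)
            run[name] = nominal[name] + signed
            yield f"{name}{signed:+g}", run


nominal = {"paddle_speed_rpm": 50, "wavelength_nm": 270}  # wavelength is hypothetical
deltas = {"paddle_speed_rpm": 5, "wavelength_nm": 1}

runs = list(one_factor_at_a_time(nominal, deltas))
for label, conditions in runs:
    print(label, conditions)
```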

Solution & Workaround:

- For media/excipient interference: Change the dissolution media composition or add surfactants to mitigate excipient effects on the fiber optic probe readings [21].

- For high variability: Optimize tablet coating formulations or ensure consistent de-aeration of the dissolution media. Also, verify the alignment and stability of the fiber optic probes.

- As a last resort, if FODS proves too problematic for your specific formulation, consider reverting to the traditional off-line sampling method with UV spectrophotometric analysis as a benchmark while you continue to troubleshoot the FODS [21].

Preventive Measures:

- Follow a rigorous, documented method development and validation process analogous to ICH Q2(R1) and ICH Q14 guidelines [22].

- During development, proactively test for robustness to identify optimal and stable operating ranges for all critical parameters.

Experimental Protocols & Data

Federated Learning Performance-Cost Trade-off Analysis

Objective: To evaluate the trade-off between classification performance and communication costs in a federated learning environment by testing different parameter-sharing strategies [20].

Methodology:

- Federated Environment Setup: Implement an environment with one aggregation server and five client nodes. Each node stores a local portion of the dataset (e.g., the SHARE dataset for patient risk identification).

- Model Definition: Select a hybrid deep learning model appropriate for the data (e.g., a model with Convolutional and Dense layers for time-series analysis).

- Define Learning Strategies (LS): Establish seven different LSs that dictate which parts of the local model (e.g., all layers, only convolutional layers, only dense layers) are sent to the server for aggregation.

- Training & Evaluation: Run the FL process for a fixed number of communication rounds. For each LS, record the final model accuracy and the total volume of data transmitted.
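To illustrate what a learning strategy does on the server side, the sketch below averages only the layers a strategy shares, weighting each client by its number of local samples as in standard federated averaging. It is a simplified illustration, not the implementation used in [20]. Representing weights as plain lists, and leaving unshared layers unchanged on the server, are assumptions made for this example.

```python
def aggregate_selected(global_weights, client_updates, shared_layers):
    """Sample-weighted average of the shared layers; other layers stay unchanged.

    client_updates is a list of (num_samples, {layer_name: [floats]}) tuples.
    """
    total_samples = sum(n for n, _ in client_updates)
    new_weights = {name: list(values) for name, values in global_weights.items()}
    for layer in shared_layers:
        averaged = [0.0] * len(global_weights[layer])
        for n, weights in client_updates:
            for i, w in enumerate(weights[layer]):
                averaged[i] += w * n / total_samples
        new_weights[layer] = averaged
    return new_weights


# Two hypothetical clients that send only their dense layer
global_model = {"conv": [1.0, 1.0], "dense": [0.0, 0.0]}
updates = [(1, {"dense": [2.0, 4.0]}), (3, {"dense": [6.0, 8.0]})]

updated = aggregate_selected(global_model, updates, shared_layers=["dense"])
print(updated)
```

Each learning strategy then corresponds to a different `shared_layers` list, which makes it straightforward to record accuracy and transmitted volume per strategy.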

Quantitative Results Summary:

| Learning Strategy (LS) | Model Accuracy (%) | Data Transmitted (MB) | Communication Cost Reduction vs. FedAvg |
|---|---|---|---|
| FedAvg (Baseline - All Layers) | 90.5 | 100.0 | 0% |
| LS 1 (Dense Layers Only) | 96.6 | 4.36 | 95.64% |
| LS 2 | 92.1 | 15.80 | 84.20% |
| LS 3 | 89.25 | 94.00 | 6.00% |

Table 1: Example results from a federated learning trade-off study. Data shows that sharing only dense layers (LS1) can maximize performance while minimizing costs [20].

Protocol for Validation of a Fiber Optic Dissolution System

Objective: To develop and validate a robust, discriminatory dissolution method for an immediate-release (IR) tablet using an in-situ Fiber Optic Dissolution System (FODS) [21].

Methodology:

- Apparatus & Conditions: Use USP Apparatus II (paddles). Set temperature to 37 ± 0.2°C and rotation speed to 50 rpm. The dissolution volume is 500 mL of 0.01 N hydrochloric acid.

- Linearity: Prepare standard solutions at five concentration levels spanning 25% to 125% of the expected drug concentration. Measure the absorbance and plot a calibration curve.

- Accuracy: Perform recovery studies by spiking a placebo with the drug at 80%, 100%, and 120% of the target concentration. Calculate the percentage recovery.

- Precision: Assess repeatability by conducting six independent dissolution runs of the same drug product batch.

- Robustness: Deliberately vary parameters like probe sampling depth, probe orientation, analytical wavelength, and paddle speed within a small, predefined range to evaluate the method's resilience.
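The linearity, accuracy, and precision checks reduce to three short calculations: the coefficient of determination of the calibration line, percentage recovery, and relative standard deviation (%RSD). The sketch below uses invented absorbance and recovery numbers purely to exercise the functions. Acceptance limits are not encoded here and should come from your own validation plan.

```python
import statistics


def r_squared(x, y):
    """Coefficient of determination for a simple linear fit of y on x."""
    mean_x, mean_y = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy * sxy / (sxx * syy)


def percent_recovery(measured, spiked):
    """Measured amount as a percentage of the amount spiked into the placebo."""
    return 100.0 * measured / spiked


def percent_rsd(values):
    """Relative standard deviation (sample standard deviation / mean) in percent."""
    return 100.0 * statistics.stdev(values) / statistics.fmean(values)


# Hypothetical data: five calibration levels and six repeat runs
levels_pct = [25, 50, 75, 100, 125]
absorbance = [0.101, 0.198, 0.302, 0.399, 0.501]
repeat_runs = [98.9, 100.4, 99.7, 101.2, 100.1, 99.5]

print(r_squared(levels_pct, absorbance))
print(percent_recovery(9.9, 10.0))
print(percent_rsd(repeat_runs))
```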

Visualizations

Federated Learning with Selective Aggregation

Diagram 1: A Federated Learning workflow demonstrating a communication-efficient strategy where clients train locally and send only critical sub-models (e.g., dense layers) for aggregation, reducing data transfer [20].

Fiber Optic Dissolution System Validation

Diagram 2: A protocol for developing and validating a Fiber Optic Dissolution System (FODS), highlighting key validation steps and potential troubleshooting loops [21].

The Scientist's Toolkit: Research Reagent & System Solutions

| Item | Function in Research |
|---|---|
| Federated Learning Framework | A software platform that enables the training of machine learning models across decentralized edge devices (like research labs) without exchanging the raw data, thus addressing privacy and data governance concerns [20]. |
| USP Dissolution Apparatus | Standardized equipment (e.g., Apparatus I [baskets] or II [paddles]) used to assess the drug release characteristics of solid oral dosage forms under controlled conditions, ensuring product quality and consistency [22]. |
| Fiber Optic Dissolution System | An in-situ analytical system that uses fiber optic probes to measure drug concentration in the dissolution vessel in real-time, eliminating the need for manual sampling and enabling faster, more efficient data collection [21]. |
| Biopharmaceutics Classification System | A scientific framework for classifying drug substances based on their aqueous solubility and intestinal permeability. It is used to determine when a biowaiver (an exemption from conducting in-vivo bioequivalence studies) can be granted [22]. |
| ICH Q2(R1) / ICH Q14 Guidelines | International regulatory guidelines that provide a framework for the validation and lifecycle management of analytical procedures, ensuring that methods like dissolution testing are reliable, reproducible, and fit for their intended purpose [22]. |

The Impact of Service Level Expectations on Network Design and Budget

In the context of modern computational research, particularly in data-intensive fields like drug development, the "network" can be understood as two interdependent layers: the logistical supply chain that delivers physical materials and the digital data pipeline that enables analysis. The design of both layers is critically shaped by service level expectations, which are formalized targets for performance, reliability, and responsiveness [23] [24].

This creates a fundamental tension: higher service levels (e.g., faster data processing, shorter lead times for lab supplies) typically require a more robust and complex network design, which invariably increases costs [25] [26]. Conversely, a singular focus on minimizing budget can lead to network designs that are fragile, slow, and ultimately hinder research progress. This article, framed within broader thesis research on balancing these trade-offs, provides a technical support framework to help scientists and researchers navigate these critical design decisions.

Frequently Asked Questions (FAQs)

Q1: What are the most common metrics for defining "service level" in a research supply chain? Service levels are quantified using Key Performance Indicators (KPIs) that directly impact research timelines. Common metrics include [23] [26] [24]:

- Order Cycle Time: The total time from placing an order to receipt.

- On-Time Delivery Rate: The percentage of orders delivered by the promised date.

- Fill Rate: The percentage of ordered items that are successfully fulfilled immediately from available stock.

- Data Throughput/Latency: For digital workflows, the speed and volume at which data is processed and transferred.
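The supply-side KPIs above can be computed directly from order records. The following sketch assumes a hypothetical record layout (`placed`, `promised`, `received`, `qty_ordered`, `qty_filled_from_stock`) that you would map onto whatever your procurement system exports.

```python
from datetime import date


def service_kpis(orders):
    """Mean order cycle time (days), on-time delivery rate (%), and fill rate (%)."""
    cycle_days = [(o["received"] - o["placed"]).days for o in orders]
    on_time = sum(o["received"] <= o["promised"] for o in orders)
    ordered = sum(o["qty_ordered"] for o in orders)
    filled = sum(o["qty_filled_from_stock"] for o in orders)
    return {
        "mean_cycle_days": sum(cycle_days) / len(orders),
        "on_time_rate_pct": 100.0 * on_time / len(orders),
        "fill_rate_pct": 100.0 * filled / ordered,
    }


# Two hypothetical reagent orders
orders = [
    {"placed": date(2024, 3, 1), "promised": date(2024, 3, 3),
     "received": date(2024, 3, 3), "qty_ordered": 10, "qty_filled_from_stock": 10},
    {"placed": date(2024, 3, 4), "promised": date(2024, 3, 6),
     "received": date(2024, 3, 8), "qty_ordered": 10, "qty_filled_from_stock": 5},
]

kpis = service_kpis(orders)
print(kpis)
```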

Q2: How does increasing service level expectations directly impact network design? Elevated service level targets often necessitate a structural redesign of the network [25] [26] [24]:

- Increased Nodes: To reduce delivery times, you may need more distribution centers or regional cloud instances strategically located closer to research hubs.

- Higher Inventory Buffers: Achieving high fill rates requires holding more safety stock of critical reagents and materials, increasing warehousing costs.

- Enhanced Transportation Modes: Faster delivery often means shifting from ground to air freight, significantly increasing transportation costs.

- IT Infrastructure Upgrades: Lower data latency and higher throughput may require investments in higher-bandwidth connections, more powerful servers, and advanced load-balancing systems [2] [6].

Q3: What are the primary cost drivers that escalate with higher service levels? The main cost drivers can be categorized as follows [24]:

Table: Key Network Cost Drivers

| Cost Category | Description | Impact of Higher Service Level |
|---|---|---|
| Transportation Costs | Costs for moving goods/data (freight, fuel, data transfer fees). | Increases with faster, more premium shipping and data transfer modes. |
| Inventory Costs | Costs of holding stock (holding costs, capital tied up, storage). | Increases to maintain higher safety stock levels for better fill rates. |
| Warehousing Costs | Facility costs (rent, labor, utilities). | Increases with more or larger facilities to decentralize inventory. |
| Infrastructure Costs | IT hardware, software, and network infrastructure. | Increases with investments in higher-performance computing and networking gear [2]. |

Q4: What analytical methods can we use to find the optimal balance? Researchers and planners can leverage several quantitative approaches [23] [25] [24]:

- Cost-Service Curve Analysis: Plotting cost against service level to visually identify the "sweet spot" where cost increases begin to yield diminishing returns in service improvement.

- Optimization Modeling: Using mathematical models (e.g., linear programming, multi-objective optimization) to find a network design that minimizes cost for a given service level constraint, or maximizes service level for a given budget.

- Simulation & Digital Twins: Creating a virtual replica ("digital twin") of the supply chain or data pipeline. This allows for risk-free testing of how different designs perform under various "what-if" scenarios, such as demand spikes or supplier disruptions [25] [24].

- Multi-Criteria Decision Analysis (MCDA): A structured method to evaluate different network designs based on multiple, often conflicting, criteria such as cost, service level, risk, and sustainability [23].
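Of the methods listed, MCDA is the easiest to prototype. The sketch below implements one simple variant, a weighted sum over min-max normalized criteria. The three network designs, their scores, and the equal weights are invented for illustration, and other MCDA methods may rank the same options differently.

```python
def mcda_rank(options, weights, lower_is_better):
    """Rank options by a weighted sum of min-max normalized criteria (best first)."""
    scores = {name: 0.0 for name in options}
    for criterion, weight in weights.items():
        values = [options[name][criterion] for name in options]
        low, high = min(values), max(values)
        for name in options:
            if high == low:
                normalized = 1.0  # criterion does not discriminate between options
            else:
                normalized = (options[name][criterion] - low) / (high - low)
                if criterion in lower_is_better:
                    normalized = 1.0 - normalized
            scores[name] += weight * normalized
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical designs: annual cost (arbitrary units) and on-time service level (%)
designs = {
    "A": {"cost": 100, "service": 90},
    "B": {"cost": 150, "service": 98},
    "C": {"cost": 200, "service": 99},
}
ranking = mcda_rank(designs, {"cost": 0.5, "service": 0.5}, lower_is_better={"cost"})
print(ranking)
```

With equal weights, the middle design B ranks first in this example because it captures most of the service gain at half of the extra cost. Changing the weights is a quick way to test how sensitive the decision is to stakeholder priorities.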

Troubleshooting Common Experimental Scenarios

Scenario 1: Inconsistent Reagent Delivery Delays Critical Experiments

Problem: Your cell culture assays are consistently delayed because essential growth media and reagents are not arriving within the expected 2-day lead time, causing planned experiments to be pushed back.

Investigation & Diagnosis:

- Verify Internal Processes: Confirm that your internal ordering and approval workflows are not introducing delays.

- Analyze Supplier Performance: Review the supplier's on-time delivery history and track the shipping methods used. Is the delay occurring at the supplier, in transit, or at your receiving dock?

- Evaluate Network Design: Assess the physical location of the supplier's warehouse relative to your lab. A supplier located across the country may be inherently unable to consistently meet a 2-day lead time via ground transport.

Resolution Steps:

- Short-Term Mitigation: Work with procurement to identify a local or regional backup supplier for critical items to mitigate single-source risk [25].

- Supplier Collaboration: Present performance data to the primary supplier to collaboratively solve the logistics issue. They may need to switch carriers or adjust their fulfillment process.

- Long-Term Network Redesign: If the problem is fundamental to the network design, model the cost of switching to a supplier with a closer distribution center or paying for a premium shipping contract from the current supplier. Use digital twin simulation to validate this change before implementation [24].

Scenario 2: Computational Analysis Bottlenecks Slow Down Data-Processing Workflows

Problem: Your automated image analysis pipeline, which processes high-throughput screening data, is taking longer than expected, creating a bottleneck that delays subsequent analysis stages.

Investigation & Diagnosis:

- Identify the Bottleneck: Use system monitoring tools to check CPU, memory, disk I/O, and network utilization on your analysis server or cloud instance. The bottleneck is likely the resource running at or near 100% capacity [1] [6].

- Profile the Workflow: Time the different stages of your analysis script. The inefficiency may be in the code itself (e.g., an unoptimized algorithm) rather than the hardware.

- Check for Resource Competition: Determine if other processes or users are consuming shared resources on the same system.
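Timing each stage of a pipeline can be done with a small context manager before reaching for a full profiler. In this sketch the stage names and the toy workload are placeholders; wrap your real loading, segmentation, and feature-extraction steps in the same way.

```python
import time
from contextlib import contextmanager

stage_seconds = {}  # accumulated wall-clock time per stage


@contextmanager
def timed(stage):
    """Add the wall-clock duration of the enclosed block to stage_seconds[stage]."""
    start = time.perf_counter()
    try:
        yield
    finally:
        elapsed = time.perf_counter() - start
        stage_seconds[stage] = stage_seconds.get(stage, 0.0) + elapsed


def slowest_stage(timings):
    """Name of the stage with the largest accumulated time."""
    return max(timings, key=timings.get)


# Toy pipeline standing in for real image-analysis stages
with timed("load"):
    data = list(range(1000))
with timed("analyze"):
    total = sum(x * x for x in data)

print(stage_seconds, slowest_stage(stage_seconds))
```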

Resolution Steps:

- Immediate Fixes: If the code is the issue, optimize the algorithm or enable parallel processing. For hardware, if the budget allows, vertically scale (upgrade) the server or choose a more powerful cloud instance type [27].

- Architectural Optimization: Implement load balancing to distribute analysis jobs across multiple machines [2] [6]. For cloud deployments, use auto-scaling to automatically add resources during peak demand and remove them during lulls, optimizing cost and performance [27].

- Cost-Effective Resource Selection: For non-time-sensitive parts of the workflow, consider using lower-cost cloud compute options like spot instances to reduce overall computational expense [27].
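The auto-scaling idea can be reduced to a threshold rule for reasoning about cost and performance. The function below is a deliberately simplified sketch with assumed thresholds (scale out above 80% CPU, scale in below 30%) and assumed worker limits. Managed cloud auto-scalers expose their own policies and should be configured through the provider's tooling.

```python
def desired_workers(current, cpu_utilization, low=0.30, high=0.80,
                    min_workers=1, max_workers=16):
    """Return the worker count for the next interval under a simple threshold rule."""
    if cpu_utilization > high:
        target = current + 1  # add capacity during peak demand
    elif cpu_utilization < low:
        target = current - 1  # release capacity during lulls
    else:
        target = current
    return max(min_workers, min(max_workers, target))


for utilization in (0.95, 0.50, 0.10):
    print(utilization, desired_workers(4, utilization))
```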

Diagram: A logical workflow for troubleshooting performance bottlenecks, illustrating the relationship between problem diagnosis and resolution strategies.

The Scientist's Toolkit: Essential Research Reagents & Solutions

In the context of designing a resilient research network, the following "reagents" are essential for planning, analysis, and execution.

Table: Essential Research Reagents for Network Design & Analysis

| Item / Solution | Function / Explanation |
|---|---|
| Digital Twin Software | A virtual replica of your physical supply chain or data pipeline. Its function is to simulate, visualize, and analyze real-world operations in a risk-free environment, allowing you to test "what-if" scenarios before implementation [25] [24]. |
| Supply Chain Network Design Platform | Specialized software that uses advanced analytics and optimization algorithms to model different network configurations (facility locations, transportation routes) and evaluate their cost and service level performance [24]. |
| AI/ML-Driven Optimization Tools | Tools that leverage artificial intelligence and machine learning to predict network bottlenecks, optimize inventory placement, and enhance demand forecasting accuracy, leading to more informed trade-off decisions [25] [28]. |
| Multi-Criteria Decision Analysis (MCDA) Framework | A structured methodology for evaluating different network design options against multiple, conflicting criteria (e.g., cost, service, risk, sustainability), helping researchers make balanced, objective decisions [23]. |
| Network Performance Monitoring | Tools that provide real-time visibility into the performance of your computational and data networks, enabling proactive identification of bottlenecks as outlined in Scenario 2 [2] [6]. |

Quantitative Data for Experimental Modeling

To effectively model the trade-offs in your research, the following table summarizes key quantitative relationships derived from industry analysis. These figures can serve as initial benchmarks or parameters for your own simulation models.

Table: Service Level Impact on Key Network Metrics

| Service Level Metric | Baseline Scenario (Lower Cost) | Enhanced Scenario (Higher Cost) | Quantitative Impact on Network |
|---|---|---|---|
| Target Delivery Lead Time | 5 days | 2 days | Transportation costs may increase by 50-100%+ (shift from ground to air freight) [25] [24]. |
| Inventory Target (Fill Rate) | 90% | 98% | Required safety stock inventory can increase by 20-50%+, raising holding costs [23] [26]. |
| Compute Resource Availability | On-demand instances | Reserved Instances | Commitment to reserved instances can reduce cloud compute costs by up to 75% compared to on-demand pricing [27]. |
| Data Processing Speed | Standard Computing | High-Performance Computing (HPC) | HPC cluster costs can be 3-5x higher than standard cloud instances, but reduce processing time by 80-90% [2]. |
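The HPC row is worth working through, because a higher hourly rate does not necessarily mean a higher cost per job. The sketch below assumes that cost scales linearly with runtime and ignores idle time, storage, and data-transfer fees; `relative_job_cost` is a name introduced here for illustration.

```python
def relative_job_cost(rate_multiplier, time_reduction):
    """Per-job cost relative to baseline: rate multiple x remaining runtime fraction."""
    return rate_multiplier * (1.0 - time_reduction)


# Bounds taken from the table: 3-5x hourly cost, 80-90% shorter processing time
best_case = relative_job_cost(3.0, 0.90)   # cheapest rate, largest speed-up
worst_case = relative_job_cost(5.0, 0.80)  # most expensive rate, smallest speed-up
print(round(best_case, 2), round(worst_case, 2))
```

Under these assumptions the per-job cost on HPC falls between roughly 0.3x and 1.0x of the standard-instance cost, so the faster option can be cost-neutral or cheaper per job when the cluster is kept busy.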

Applied Strategies: Methodologies for Cost-Effective High-Performance Computing

Leveraging AI and Machine Learning for Predictive Resource Allocation

Technical Support Center

This support center provides practical guidance for researchers implementing AI-driven predictive resource allocation in computational drug discovery. The following troubleshooting guides and FAQs address common technical challenges, framed within the critical research context of balancing network performance and computational resource costs.

Troubleshooting Guides

Issue 1: High Computational Resource Costs During Model Training

- Problem: Training complex models like deep neural networks on large datasets (e.g., high-throughput screening results, genomic sequences) consumes excessive GPU/CPU time and memory, leading to unsustainable costs.

- Diagnosis: This often occurs when using inappropriately large models for the task or when data pipelines are inefficient. Monitor your system's resource utilization (GPU memory, CPU usage) during training. A consistent >90% utilization indicates a fundamental resource bottleneck.

- Solution:

- Model Simplification: Begin with simpler, more efficient models like gradient boosting machines (Scikit-learn) or small language models (SLMs) for initial experimentation [29].

- Adopt a Staged Workflow: Implement a progressive filtering strategy. Use a lightweight model (e.g., for initial virtual screening) to narrow down candidates before employing more resource-intensive models for detailed analysis [30].

- Leverage Cloud & MLOps: Utilize cloud platforms (AWS, Azure, GCP) with auto-scaling capabilities and implement MLOps practices to automate and optimize resource allocation during training, potentially improving resource utilization by up to 30% [31] [32].
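The staged workflow can be expressed as a two-pass filter: a cheap scorer ranks everything, and the expensive scorer runs only on the shortlist. In this sketch `cheap_score` and `expensive_score` are hypothetical callables (for example, a fast descriptor-based model and a slower docking or deep-learning model), and the 10% keep fraction is an arbitrary assumption.

```python
def staged_screen(candidates, cheap_score, expensive_score, keep_fraction=0.1):
    """Rank all candidates cheaply, then re-rank only the top fraction expensively."""
    ranked = sorted(candidates, key=cheap_score, reverse=True)
    keep = max(1, int(len(ranked) * keep_fraction))
    shortlist = ranked[:keep]
    return sorted(shortlist, key=expensive_score, reverse=True)


# Toy example: count how often the expensive scorer is actually called
expensive_calls = []


def toy_expensive_score(compound_id):
    expensive_calls.append(compound_id)
    return -compound_id


top = staged_screen(list(range(100)), cheap_score=lambda c: c,
                    expensive_score=toy_expensive_score)
print(top, len(expensive_calls))
```

Here the expensive scorer runs on 10 of 100 candidates, which is where the compute saving comes from. The risk is that the cheap model discards a true positive, so the keep fraction should be validated against known actives.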

Issue 2: Poor Model Generalization to New Experimental Data

- Problem: A model trained on one dataset (e.g., from a specific cell line) fails to accurately predict outcomes for new, slightly different data (e.g., a related cell line), rendering it useless for real-world decision-making.

- Diagnosis: This is typically a data quality and bias issue. The training data may not be representative, or data leakage may have occurred during preprocessing.

- Solution:

- Data Curation: Invest in robust data preprocessing and feature engineering to ensure data quality and representativeness [33].

- Causal ML Techniques: Move beyond purely predictive models. Integrate Causal Machine Learning (CML) methods, such as advanced propensity score modeling or doubly robust estimation, to better identify true cause-effect relationships from observational data, improving the validity of predictions [34].

- Continuous Validation: Implement a robust model monitoring system to detect data drift and concept drift in real-time, triggering model retraining as needed [29].
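A lightweight way to start monitoring data drift is the Population Stability Index (PSI), which compares the binned distribution of a feature in new data against the training reference. The sketch below is a standard-library implementation with equal-width bins derived from the reference data. The bin count and the small floor used for empty bins are assumptions. A commonly quoted rule of thumb treats values above roughly 0.25 as a substantial shift, but any alert threshold should be calibrated on your own data.

```python
import math


def population_stability_index(reference, current, bins=10):
    """PSI between two samples of one numeric feature (0.0 means identical bins)."""
    low, high = min(reference), max(reference)
    width = (high - low) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            index = int((v - low) / width)
            counts[min(max(index, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty
        return [max(c / len(values), 1e-6) for c in counts]

    ref, cur = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


# Hypothetical feature values: training data versus shifted production data
training = [i / 100 for i in range(100)]
production = [0.5 + i / 200 for i in range(100)]
print(population_stability_index(training, production))
```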

Issue 3: Inefficient Resource Allocation in Clinical Trial Simulations

- Problem: Simulations of clinical trials using digital twins or other AI models are slow and cannot efficiently explore different allocation strategies (e.g., patient cohort selection, site resource distribution).

- Diagnosis: The simulation model may not be optimized for performance, or the allocation logic is not integrated with the predictive model.

- Solution:

- Implement AI Agents: Deploy autonomous AI agents capable of goal-oriented planning. These agents can break down the complex task of trial simulation into executable steps, dynamically allocating computational resources to explore the most promising strategies [29] [35].

- Performance Tuning: Apply AI-driven performance optimization to your simulation code. This can lead to a 30% reduction in execution time and a 25% decrease in server load, allowing for faster iteration [31].

- Workload-Specific Tuning: Tailor your computing infrastructure to the simulation workload. For compute-intensive tasks, ensure access to high-performance GPUs and leverage auto-scaling policies [31].

Frequently Asked Questions (FAQs)

Q1: What are the most resource-efficient AI models for initial drug discovery phases? Small Language Models (SLMs) and traditional machine learning models offer a compelling balance of performance and efficiency. They are ideal for tasks like literature mining, initial compound property prediction, and prioritizing experiments, significantly reducing computational costs compared to large foundation models [29].

Q2: How can we balance the trade-off between model accuracy and the cost of the compute infrastructure needed to run it? This is a core research trade-off. The key is to adopt a "right-sizing" strategy:

- Use simpler models for high-throughput initial screening.

- Reserve complex, expensive models (like large multimodal models) only for final, critical decision points.

- Implement MLOps for continuous monitoring and cost control. Studies show that a strategic, tiered approach can improve operational efficiency by up to 40% while maintaining research velocity [31] [29].

Q3: Our AI models for predicting compound activity work well in validation but fail in production. What is the likely cause? This "production drift" is often due to differences between the clean, controlled data used for training and the noisy, real-world data encountered in production. Solutions include:

- Continuous Monitoring: Deploy tools to monitor model performance and input data distributions in real time.

- Retraining Pipeline: Establish an automated pipeline to retrain models periodically on newly generated experimental data.

- Causal ML: Use Causal ML techniques to ensure the model learns robust, causal relationships rather than spurious correlations from the training set [34].
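As one illustration of the continuous-monitoring idea, the sketch below computes a Population Stability Index (PSI) for a single numeric feature, comparing a training-time reference sample against recent production values. The function name, the bin count, and the clamping of out-of-range values are assumptions made for this example; PSI is only one of several possible drift statistics.

```python
import math
from typing import Sequence


def population_stability_index(reference: Sequence[float],
                               production: Sequence[float],
                               n_bins: int = 10) -> float:
    """PSI between a reference (training) sample and a production sample."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / n_bins or 1.0  # guard against a constant feature

    def bin_fractions(values: Sequence[float]) -> list:
        counts = [0] * n_bins
        for v in values:
            # Clamp values outside the reference range into the edge bins.
            idx = min(max(int((v - lo) / width), 0), n_bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    ref = bin_fractions(reference)
    prod = bin_fractions(production)
    return sum((p - r) * math.log(p / r) for r, p in zip(ref, prod))
```

A commonly cited rule of thumb treats a PSI above roughly 0.25 as a substantial shift, but the alert threshold should be calibrated on your own assay data before it triggers retraining.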

Q4: What is the role of AI agents in resource allocation, and how do they differ from traditional models? Traditional models make predictions, but AI agents take actions. In resource allocation, an AI agent can autonomously execute tasks based on predictions. For example, instead of just predicting a high server load, an agent can proactively auto-scale cloud resources. They operate with goal-oriented planning and can coordinate with other agents, transforming predictive insights into automated, efficient resource management [29] [35].
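The difference between predicting and acting can be sketched in a few lines. In this hypothetical example, a forecast of server load (expressed in units of fully busy nodes) is converted into a concrete scaling action. `ScalingPolicy`, `plan_scaling_action`, and the default values are illustrative assumptions; a real agent would pass the resulting action to a cloud provider's scaling API.

```python
import math
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class ScalingPolicy:
    target_utilization: float = 0.6  # assumed desired average load per node
    min_nodes: int = 1
    max_nodes: int = 16


def plan_scaling_action(predicted_load: float,
                        current_nodes: int,
                        policy: Optional[ScalingPolicy] = None) -> Tuple[str, int]:
    """Turn a load forecast into an action and a target node count."""
    policy = policy or ScalingPolicy()
    needed = math.ceil(predicted_load / policy.target_utilization)
    # Keep the request within the budgeted range.
    target = min(max(needed, policy.min_nodes), policy.max_nodes)
    if target > current_nodes:
        return ("scale_up", target)
    if target < current_nodes:
        return ("scale_down", target)
    return ("hold", target)
```

The `max_nodes` cap is where the cost side of the trade-off enters: the agent may act autonomously, but only inside a budget that a person has set.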

Research Reagent Solutions: The AI Toolbox

The following table details key computational tools and their functions for building an AI-driven resource allocation system.

| Research Reagent (Tool/Framework) | Function in Predictive Resource Allocation |
|---|---|
| PyTorch / TensorFlow | Core machine learning frameworks used for building and training custom predictive models, such as those for forecasting computational needs or compound efficacy [32]. |
| Scikit-learn | A library for classical machine learning algorithms (e.g., regression, clustering), ideal for building efficient, less resource-intensive models for initial data analysis [32]. |
| MLflow | An MLOps platform for tracking experiments, packaging code, and managing model lifecycles. Essential for reproducibility and managing the cost of failed experiments [32]. |
| Docker & Kubernetes | Containerization and orchestration tools that ensure consistent environments from a researcher's laptop to high-performance computing clusters, optimizing deployment resources [32]. |
| Hugging Face Transformers | A library providing access to thousands of pre-trained models, including many Small Language Models (SLMs), which can be fine-tuned for domain-specific tasks without the cost of training from scratch [29] [32]. |
| Causal ML Libraries (e.g., EconML, CausalML) | Specialized libraries implementing Causal Machine Learning techniques (e.g., meta-learners, doubly robust methods) to move from correlation to causation in predictive modeling [34]. |

Experimental Protocol: Implementing a Cost-Aware Predictive Pipeline

This protocol details a methodology for building a predictive model that explicitly balances prediction accuracy with computational resource costs, directly addressing the core research trade-off.

1. Objective: To develop a two-stage predictive pipeline for virtual screening that maximizes predictive performance while minimizing total computational expenditure.

2. Materials & Software:

- Dataset: A curated library of chemical compounds with associated assay results (e.g., from ChEMBL).

- Software: Python, Scikit-learn, PyTorch, a hyperparameter optimization library (e.g., Optuna), and a resource monitoring tool (e.g., `psutil` or cloud monitoring APIs).

3. Step-by-Step Procedure:

Step 1: Data Preparation & Feature Engineering

- Split the compound dataset into training, validation, and test sets (e.g., 70/15/15).

- Generate molecular descriptors or fingerprints for each compound. This represents the initial, fixed resource cost.
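A minimal sketch of the 70/15/15 split, using only the standard library. The function name and the fixed seed are assumptions for illustration. For compound libraries, a purely random split can overstate performance when close structural analogues land in both training and test sets, so a scaffold-based split may be preferable in practice.

```python
import random
from typing import List, Sequence, Tuple


def split_dataset(items: Sequence,
                  train_frac: float = 0.70,
                  val_frac: float = 0.15,
                  seed: int = 0) -> Tuple[List, List, List]:
    """Shuffle reproducibly, then cut into train / validation / test."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # whatever remains (about 15%)
    return train, val, test
```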

Step 2: Model Selection & Tiered Architecture

- Stage 1 Model (High-Throughput, Low-Cost): Train a lightweight model, such as a Random Forest or Gradient Boosting Classifier from Scikit-learn, on the entire training set. This model's goal is to filter out clearly inactive compounds with high confidence.

- Stage 2 Model (High-Accuracy, High-Cost): Train a more complex, resource-intensive model, such as a Graph Neural Network (GNN), on a subset of the training data that passes the Stage 1 filter. This model performs detailed analysis on the most promising candidates.
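The tiered architecture can be sketched as a simple cascade. Here `cheap_score` and `expensive_score` are placeholders for the Stage 1 and Stage 2 models' predicted activity probabilities, and `stage1_threshold` is an assumed cut-off; none of these names come from a specific library.

```python
from typing import Callable, Iterable, List, Tuple


def cascade_screen(compounds: Iterable,
                   cheap_score: Callable[[object], float],
                   expensive_score: Callable[[object], float],
                   stage1_threshold: float = 0.2) -> Tuple[List[tuple], int]:
    """Score every compound cheaply; send only survivors to the costly model."""
    results = []
    n_expensive_calls = 0
    for compound in compounds:
        p1 = cheap_score(compound)
        if p1 < stage1_threshold:
            # Confidently inactive according to Stage 1: stop here.
            results.append((compound, p1, "stage1_rejected"))
            continue
        n_expensive_calls += 1
        results.append((compound, expensive_score(compound), "stage2_scored"))
    return results, n_expensive_calls
```

Returning the number of expensive calls alongside the scores makes the cost side of the trade-off directly measurable: lowering `stage1_threshold` reduces the risk of discarding true actives, but increases Stage 2 compute.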

Step 3: Multi-Objective Hyperparameter Optimization

- Define the optimization goal: Maximize the Area Under the Curve (AUC) of the overall pipeline on the validation set, while penalizing the total CPU/GPU time consumed.

- Objective Function for Optuna:

- Run the optimization to find the Pareto-optimal frontier between AUC and cost.
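The objective function itself does not appear in the text above, so the following is a hypothetical sketch rather than a reconstruction. It shows two common ways to express the goal: a single penalized score (AUC minus a weighted compute cost), and a Pareto filter over (AUC, cost) pairs. The `cost_weight` value is an assumption that would need tuning. With Optuna, the penalized score could be returned from the objective passed to `study.optimize`, or both values could be returned to a multi-objective study created with `optuna.create_study(directions=["maximize", "minimize"])`.

```python
from typing import List, Tuple


def penalized_objective(auc: float,
                        compute_seconds: float,
                        cost_weight: float = 1e-4) -> float:
    """Single scalar to maximize: accuracy minus a compute-time penalty."""
    return auc - cost_weight * compute_seconds


def pareto_front(trials: List[Tuple[float, float]]) -> List[Tuple[float, float]]:
    """Keep (auc, cost) trials that no other trial beats on both criteria."""
    front = []
    for auc, cost in trials:
        dominated = any(
            (a >= auc and c <= cost) and (a > auc or c < cost)
            for a, c in trials
        )
        if not dominated:
            front.append((auc, cost))
    return sorted(set(front), key=lambda t: t[1])  # cheapest first
```

The scalarized form is simpler to optimize but fixes the exchange rate between accuracy and compute in advance; the Pareto view defers that decision until the candidate pipelines are known.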

Step 4: Validation & Analysis

- Evaluate the final chosen pipeline on the held-out test set.

- Report both the final predictive performance (AUC, Precision, Recall) and the total computational cost.

- Compare the cost-performance trade-off against a single-model baseline.
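To report cost alongside accuracy, each pipeline run can be wrapped in a small timer. The sketch below uses only the standard library; `track_cost` and the `ledger` dictionary are names invented for this example. It records wall-clock and process CPU time only, so GPU time or cloud billing data would have to come from another source such as `psutil` or a cloud monitoring API.

```python
import time
from contextlib import contextmanager
from typing import Dict


@contextmanager
def track_cost(label: str, ledger: Dict[str, dict]):
    """Record wall-clock and CPU seconds for the enclosed block."""
    wall_start = time.perf_counter()
    cpu_start = time.process_time()
    try:
        yield
    finally:
        ledger[label] = {
            "wall_s": time.perf_counter() - wall_start,
            "cpu_s": time.process_time() - cpu_start,
        }


# Usage: wrap the baseline and the tiered pipeline with different labels,
# then compare the ledger entries next to the AUC of each.
ledger: Dict[str, dict] = {}
with track_cost("single_model_baseline", ledger):
    sum(i * i for i in range(100_000))  # stand-in for real inference
```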

Workflow Visualization

The diagram below illustrates the logical flow and decision points of the tiered, cost-aware experimental protocol.

Implementing Federated Learning to Reduce Data Transfer Costs and Enhance Privacy

Troubleshooting Guide: Common Federated Learning Issues

This guide addresses specific technical issues you might encounter while implementing Federated Learning (FL) systems, framed within the research context of balancing network performance and resource costs.

FAQ: Slow Global Model Convergence

Q: The global model in our FL setup is taking many more rounds to converge than traditional centralized training. What strategies can improve convergence speed?

A: Slow convergence is a common challenge in FL due to statistical and system heterogeneity [36]. You can implement the following strategies:

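- Increase Local Epochs: Allow clients to perform more training epochs on their local data before sending updates [37]. On strongly non-IID data, too many local epochs can cause client models to drift apart, so tune this value rather than simply maximizing it.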

- Adaptive Learning Rates: Use learning rate schedules that decrease over time to stabilize training in later rounds [37].

- Client Sampling: Prioritize and select clients with higher-quality or more relevant data for participation in training rounds [37] [36].

- Advanced Aggregation: Employ algorithms like Federated Averaging (FedAvg) with momentum to smooth the update process and accelerate convergence [37] [36].
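The aggregation-with-momentum idea can be sketched on plain Python lists. This is an illustrative form of server-side momentum applied to FedAvg, assuming each client sends a delta (its local weights minus the global weights) and its local sample count; the function names and default `beta` are assumptions, not the API of any FL framework.

```python
from typing import List, Sequence, Tuple


def weighted_average(updates: Sequence[Sequence[float]],
                     weights: Sequence[float]) -> List[float]:
    """FedAvg-style average, weighting each client by its sample count."""
    total = sum(weights)
    dim = len(updates[0])
    return [sum(w * u[i] for u, w in zip(updates, weights)) / total
            for i in range(dim)]


def server_momentum_step(global_weights: Sequence[float],
                         client_deltas: Sequence[Sequence[float]],
                         client_sizes: Sequence[float],
                         velocity: Sequence[float],
                         beta: float = 0.9,
                         server_lr: float = 1.0) -> Tuple[List[float], List[float]]:
    """One aggregation round: average the deltas, then apply momentum."""
    avg_delta = weighted_average(client_deltas, client_sizes)
    new_velocity = [beta * v + d for v, d in zip(velocity, avg_delta)]
    new_weights = [w + server_lr * v
                   for w, v in zip(global_weights, new_velocity)]
    return new_weights, new_velocity
```

With `beta=0` and `server_lr=1` this reduces to plain FedAvg, which makes it straightforward to run both variants under identical conditions and compare rounds to convergence.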

FAQ: Managing Unreliable Client Connections

Q: In our cross-device FL experiment, client nodes frequently disconnect, causing significant delays in aggregation. How can we make the system more robust to node dropout?

A: Node dropout is expected in large-scale, real-world deployments. Mitigation strategies focus on asynchronous operations and fault tolerance [37] [36]:

- Asynchronous Aggregation: Modify the aggregation protocol so the server does not need to wait for all selected clients in each round. This prevents stragglers from halting the entire process [37] [36].

- Checkpointing: Implement a system where clients can save their progress. The training process can resume from the last checkpoint after a disconnection and reconnection [37].

- Reliability Scoring: Develop a heuristic to track client reliability and weight their participation or their updates accordingly in future rounds [37].
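One possible reliability heuristic is an exponential moving average of each client's round completions. The class name, smoothing factor, and neutral starting score below are assumptions chosen for illustration.

```python
from typing import Dict, Hashable, Iterable, List


class ReliabilityTracker:
    """Exponential moving average of per-client round completion."""

    def __init__(self, alpha: float = 0.3, initial: float = 0.5):
        self.alpha = alpha      # weight given to the most recent round
        self.initial = initial  # neutral score for clients not seen yet
        self.scores: Dict[Hashable, float] = {}

    def record(self, client_id: Hashable, completed: bool) -> None:
        prev = self.scores.get(client_id, self.initial)
        outcome = 1.0 if completed else 0.0
        self.scores[client_id] = (1 - self.alpha) * prev + self.alpha * outcome

    def score(self, client_id: Hashable) -> float:
        return self.scores.get(client_id, self.initial)


def select_clients(tracker: ReliabilityTracker,
                   candidates: Iterable[Hashable],
                   k: int) -> List[Hashable]:
    """Pick the k candidates with the highest reliability score."""
    return sorted(candidates, key=tracker.score, reverse=True)[:k]
```

Purely greedy selection tends to favor well-connected sites and can skew the global model toward their data, so in practice the score is often better used as a sampling weight than as a hard ranking.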

FAQ: High Communication Overhead

Q: The communication cost of exchanging model updates is becoming prohibitive in our network. What techniques can reduce this bottleneck?

A: Communication efficiency is a primary research focus in FL [36] [38]. Effective techniques include:

- Update Compression: Apply methods like quantization (reducing the numerical precision of weights) and sparsification (only sending the largest weight updates) to dramatically shrink the size of transmitted messages [36].

- Reduced Communication Frequency: Let clients perform more substantial local computation (multiple epochs) between communication rounds, reducing the total number of sync rounds needed [36].

- Knowledge Distillation: Explore advanced techniques where a smaller, compressed "student" model is trained to mimic the larger "teacher" model, significantly reducing the data that needs to be transmitted [39].
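The two update-compression methods mentioned above can be sketched on a flat list of weight updates. This is a simplified illustration: top-k sparsification keeps only the largest-magnitude entries, and uniform symmetric quantization maps values to signed 8-bit integers with one shared scale. Function names are assumptions, and production systems usually add refinements such as error feedback for the entries that were dropped.

```python
from typing import List, Sequence, Tuple


def top_k_sparsify(update: Sequence[float], k: int) -> List[Tuple[int, float]]:
    """Keep the k largest-magnitude entries as (index, value) pairs."""
    keep = sorted(range(len(update)),
                  key=lambda i: abs(update[i]), reverse=True)[:k]
    return sorted((i, update[i]) for i in keep)


def quantize_8bit(values: Sequence[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] plus one float scale."""
    max_abs = max((abs(v) for v in values), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(v / scale) for v in values], scale


def dequantize(quantized: Sequence[int], scale: float) -> List[float]:
    """Server-side reconstruction of the approximate update values."""
    return [q * scale for q in quantized]
```

A client would send the kept indices, the 8-bit values, and the scale instead of the full-precision vector; the reconstruction error per entry is bounded by half the scale.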

FAQ: Addressing Data Heterogeneity and Bias

Q: The data across our client nodes is non-IID (not Independent and Identically Distributed), leading to a biased global model that performs poorly on some clients. How can we address this?

A: Statistical heterogeneity (non-IID data) is a fundamental FL challenge [36]. Solutions include:

- Data Quality Validation: Implement pre-training validation gates using tools that flag nodes with extreme class imbalance, anomalous data distributions, or insufficient sample sizes [37].

- Personalized FL: Instead of a single global model, develop strategies that create slightly personalized models for different client clusters or data distributions [36].

- Robust Aggregation: Use aggregation algorithms that are less sensitive to updates from clients with divergent data distributions, such as those that detect and filter statistical outliers [37] [36].
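As one example of robust aggregation, the coordinate-wise median replaces the mean so that a single divergent client cannot pull a parameter arbitrarily far. The sketch assumes each client update is a flat list of equal length; it is one of several robust rules (trimmed means are another) and is not tied to a specific framework.

```python
import statistics
from typing import List, Sequence


def coordinate_median(updates: Sequence[Sequence[float]]) -> List[float]:
    """Aggregate client updates by taking the median of each coordinate."""
    return [statistics.median(coordinate) for coordinate in zip(*updates)]


# Usage: two similar clients and one outlier.
updates = [[1.0, 2.0], [1.2, 1.8], [100.0, -50.0]]
aggregated = coordinate_median(updates)
```

The trade-off is statistical efficiency: when no client is anomalous, the median discards information that a weighted mean would use, which can slow convergence.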

FAQ: Ensuring Privacy Against Inference Attacks

Q: While raw data never leaves the device, we are concerned that model updates could be reverse-engineered to reveal sensitive information. How can we enhance privacy guarantees?

A: This is a valid concern, as model updates can potentially leak information [40] [36]. A layered privacy approach is recommended:

- Differential Privacy (DP): Add a carefully calibrated amount of random noise to the model updates before they are sent from the client. This provides a mathematical guarantee that the output of the computation does not depend significantly on any single data point, making it difficult to infer individual contributions [36].

- Secure Multi-Party Computation (SMPC): Use cryptographic protocols that allow the server to aggregate the model updates without ever being able to inspect any single client's update in isolation [36] [39].

- Homomorphic Encryption (HE): Encrypt the model updates on the client side in such a way that the server can perform mathematical operations on the ciphertext. The server aggregates the encrypted updates and only the final, aggregated result is decrypted [39].
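The client-side step of the differential privacy approach is commonly implemented as "clip, then add noise". The sketch below shows that mechanism on a flat update vector, with Gaussian noise scaled to the clipping norm. The parameter names and defaults are assumptions. The code does not compute the resulting privacy budget: the actual (epsilon, delta) guarantee depends on the noise multiplier, client sampling rate, and number of rounds, and needs a separate privacy accountant.

```python
import math
import random
from typing import List, Optional, Sequence


def clip_update(update: Sequence[float], clip_norm: float) -> List[float]:
    """Scale the update down so its L2 norm is at most clip_norm."""
    norm = math.sqrt(sum(x * x for x in update))
    factor = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [x * factor for x in update]


def privatize_update(update: Sequence[float],
                     clip_norm: float = 1.0,
                     noise_multiplier: float = 1.0,
                     rng: Optional[random.Random] = None) -> List[float]:
    """Clip the update, then add Gaussian noise to every coordinate."""
    rng = rng or random.Random()
    clipped = clip_update(update, clip_norm)
    sigma = noise_multiplier * clip_norm  # noise scale tied to sensitivity
    return [x + rng.gauss(0.0, sigma) for x in clipped]
```

Clipping bounds how much any single client can influence the aggregate, which is what allows a fixed noise scale to provide a guarantee; it is also the source of the accuracy loss discussed in the table below.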

Quantitative Data on FL Optimization Techniques

The table below summarizes the performance impact of various optimization techniques, providing a basis for cost-benefit analysis in your research on network-resource tradeoffs.

Table 1: Impact of Federated Learning Optimization Techniques

| Technique | Primary Benefit | Typical Performance Impact | Key Consideration |
|---|---|---|---|
| Dynamic Tiered Scheduling (DTS) [39] | Computational Efficiency | Reduced total training time by 48.1% compared to traditional FL [39] | Requires mechanism to dynamically profile client resource capabilities. |
| Knowledge Distillation [39] | Communication Efficiency & Accuracy | Reduced communication epochs by 11.4% under high data heterogeneity; improved accuracy by ~12% [39] | Introduces additional complexity of managing teacher-student models. |
| Network Propagation Dynamics (NET-D-DFL) [38] | Communication Efficiency | Enhanced communication efficiency and reduced communication time, albeit with a potential slight accuracy trade-off in some scenarios [38] | Performance is influenced by the underlying network topology (e.g., ER, WS). |
| Differential Privacy [36] | Privacy Enhancement | Provides mathematical privacy guarantees but typically leads to a reduction in final model accuracy [36] | The level of privacy (epsilon) must be balanced against model utility loss. |
| Model Compression [36] | Communication Efficiency | Can reduce update size by 10x or more, directly lowering bandwidth use per round [37] | Excessive compression can slow down overall convergence, requiring more rounds. |

Experimental Protocol: Implementing a Robust FL System

This protocol provides a detailed methodology for setting up a federated learning experiment that is robust to common issues like data heterogeneity and communication bottlenecks, aligning with research into efficient resource utilization.

The following diagram illustrates the core iterative workflow of a centralized Federated Learning system, which forms the basis for the experimental protocol.

Detailed Methodology

Initialization:

- Central Server Setup: Deploy a central aggregation server using an open-source framework like TensorFlow Federated or FATE (Federated AI Technology Enabler) [37] [39].

- Global Model Definition: Define and initialize the shared global model architecture (e.g., a deep neural network for image classification or a support vector machine for stress detection [41]).

Client Configuration:

- Data Partitioning: Distribute the training data across multiple simulated or physical clients in a non-IID fashion to mimic real-world conditions. For example, sort data by label and distribute disproportionately among clients [36].

- Client Manager: Implement a `NodeManager` class [37] to handle client check-ins, track metrics (last update time, data size), and manage the participation lifecycle.
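The two client-configuration steps can be sketched together. `label_sorted_shards` implements the sort-by-label partitioning described above, and the `NodeManager` shown here is a hypothetical minimal registry written for this example; it is not the implementation from [37], and its method names and staleness rule are assumptions.

```python
import time
from dataclasses import dataclass
from typing import Dict, Hashable, List, Optional, Sequence


def label_sorted_shards(labels: Sequence[int], n_clients: int,
                        shards_per_client: int = 2) -> Dict[int, List[int]]:
    """Return {client_id: sample indices} with skewed label distributions."""
    order = sorted(range(len(labels)), key=lambda i: labels[i])
    n_shards = n_clients * shards_per_client
    shard_size = len(order) // n_shards  # leftover samples are dropped
    shards = [order[s * shard_size:(s + 1) * shard_size]
              for s in range(n_shards)]
    # Client c receives shards c, c + n_clients, ... so it sees few labels.
    return {c: [i for s in range(c, n_shards, n_clients) for i in shards[s]]
            for c in range(n_clients)}


@dataclass
class ClientRecord:
    data_size: int
    last_update: float


class NodeManager:
    """Hypothetical registry tracking check-ins and client staleness."""

    def __init__(self, stale_after_s: float = 300.0):
        self.stale_after_s = stale_after_s
        self.clients: Dict[Hashable, ClientRecord] = {}

    def check_in(self, client_id: Hashable, data_size: int,
                 now: Optional[float] = None) -> None:
        timestamp = time.time() if now is None else now
        self.clients[client_id] = ClientRecord(data_size, timestamp)

    def active_clients(self, now: Optional[float] = None) -> List[Hashable]:
        """Clients that have checked in within the staleness window."""
        now = time.time() if now is None else now
        return [cid for cid, rec in self.clients.items()
                if now - rec.last_update <= self.stale_after_s]
```

Fewer shards per client gives each client a narrower set of labels and therefore stronger heterogeneity, which is useful for stress-testing aggregation strategies before moving to real sites.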

Federated Training Loop:

- Client Selection: In each communication round, the server selects a subset of available clients. You may implement random selection or more advanced, resource-aware strategies [36].

- Local Training: