Strategic Approaches for Handling Infeasible Solutions in Constrained Evolutionary Optimization

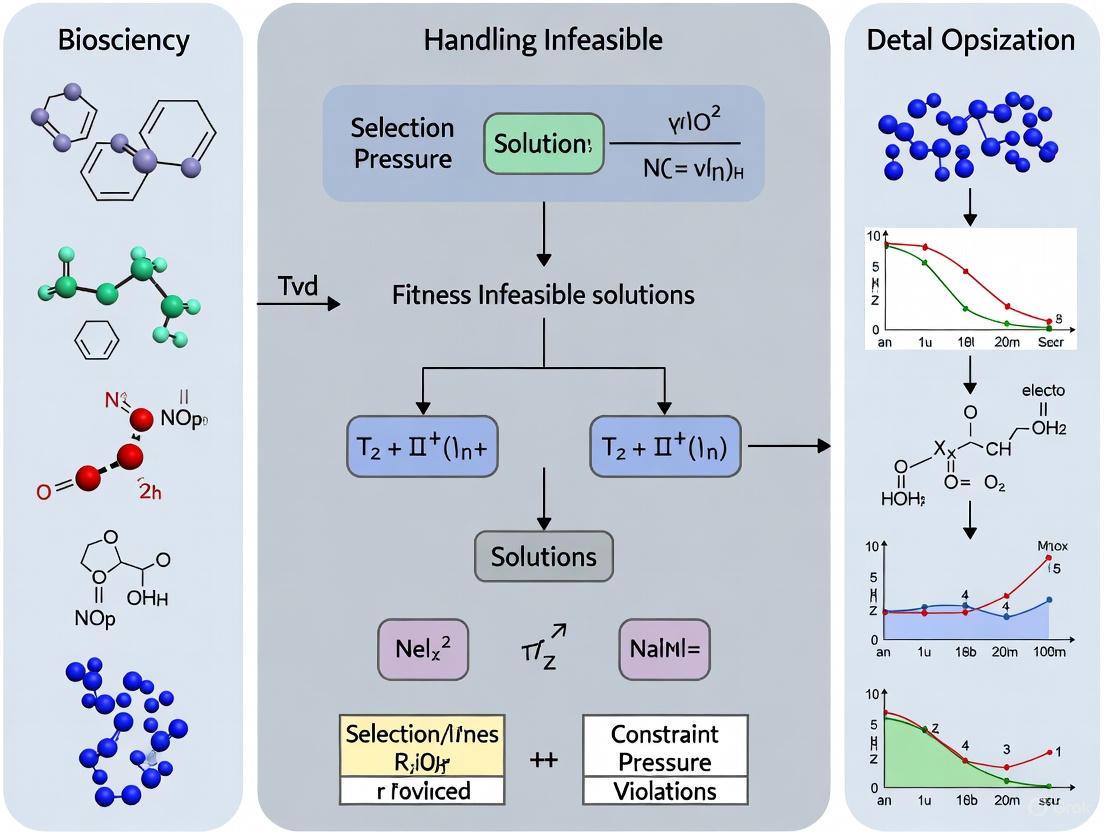



Abstract

This article provides a comprehensive analysis of advanced methodologies for strategically leveraging infeasible solutions in constrained evolutionary optimization. Targeting researchers and drug development professionals, we explore the paradigm shift from discarding to strategically utilizing infeasible solutions through four key dimensions: fundamental principles of constraint violation metrics and feasibility rules; methodological implementations including multi-stage frameworks and adaptive penalty functions; optimization strategies for premature convergence and complex feasible regions; and rigorous validation using benchmark problems and industrial case studies. The synthesis of these approaches demonstrates significant potential for enhancing global search capability and solution quality in complex optimization scenarios prevalent in biomedical research and drug development.

Understanding Infeasible Solutions: Concepts and Significance in Constrained Optimization

Defining Constrained Optimization Problems and Constraint Violation Metrics

Frequently Asked Questions (FAQs)

1. What does it mean when my constrained optimization model is infeasible? An infeasible model is one where no solution exists that can satisfy all constraints simultaneously. This means the search space defined by the constraints has no overlapping region where all conditions are met. This can occur due to errors in the model formulation or because the real-world scenario described by the input data genuinely has no solution that meets all the requirements [1] [2].

2. What is a constraint violation metric? A constraint violation metric quantifies how severely a solution fails to meet the problem's constraints. A common formulation for the degree of constraint violation, G(x), is the sum of the violations over all constraints [3]:

G(x) = Σᵢ max( gᵢ(x), 0 ) + Σⱼ max( |hⱼ(x)| - δ, 0 )

Here, gᵢ(x) ≤ 0 are the inequality constraints, hⱼ(x) = 0 are the equality constraints, and δ is a small tolerance for converting equalities to inequalities [3]. A solution is feasible exactly when G(x) = 0. This metric is crucial for algorithms that handle infeasible solutions.

3. What is an Irreducible Inconsistent Subsystem (IIS)? An IIS is a minimal set of constraints and variable bounds that is itself infeasible. If any single constraint or bound is removed from this set, the subsystem becomes feasible [1] [4]. Identifying an IIS helps you pinpoint the core conflicting rules in your model. Solvers like Gurobi, XPRESS, and CPLEX offer features to compute IISs [1] [5].

4. How can I resolve an infeasible model? Two primary approaches are analyzing the model and relaxing constraints.

- Diagnosis: Use tools like the IIS to identify the root cause of the conflict [1] [5].

- Relaxation: Transform "hard" constraints into "soft" constraints by introducing slack variables that are penalized in the objective function. This allows the solver to find a solution that minimally violates the constraints [1] [3]. This can be done manually or by using the built-in feasibility relaxation utilities in solvers like Gurobi and FICO Xpress [4] [5].

5. What is the difference between relaxable and unrelaxable constraints? This classification is vital for handling infeasibility [6]:

- Relaxable Constraints: These do not need to be strictly satisfied to obtain a meaningful output from the simulation or model. Slight violations are acceptable. An example could be a desired target output power.

- Unrelaxable Constraints: These must be satisfied for the solution to be physically or logically meaningful. An example is a constraint ensuring a positive pinch-point temperature difference in a heat exchanger, without which the process is thermodynamically impossible [6]. These are often handled with an extreme barrier approach, assigning an extremely high cost to any violation [6].
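The violation metric G(x) from question 2 and the extreme barrier from question 5 can be sketched in a few lines of Python. This is a minimal illustration, assuming constraints are written as g(x) ≤ 0 and h(x) = 0; the function names and the toy constraints are hypothetical and do not come from the cited sources.

```python
import math

def constraint_violation(x, ineq, eq, delta=1e-6):
    """G(x) = sum_i max(g_i(x), 0) + sum_j max(|h_j(x)| - delta, 0)."""
    g_part = sum(max(g(x), 0.0) for g in ineq)
    h_part = sum(max(abs(h(x)) - delta, 0.0) for h in eq)
    return g_part + h_part

def extreme_barrier(objective, unrelaxable):
    """Wrap a minimization objective so that violating any unrelaxable
    constraint g(x) <= 0 yields +infinity."""
    def wrapped(x):
        if any(g(x) > 0.0 for g in unrelaxable):
            return math.inf
        return objective(x)
    return wrapped

# Toy problem (illustrative): x0 + x1 <= 1 and x0 == x1.
ineq = [lambda x: x[0] + x[1] - 1.0]
eq = [lambda x: x[0] - x[1]]
G_feasible = constraint_violation((0.5, 0.5), ineq, eq)
G_violating = constraint_violation((1.0, 0.5), ineq, eq)

# Unrelaxable constraint x0 >= 0, written as -x0 <= 0.
cost = extreme_barrier(lambda x: x[0] ** 2 + x[1] ** 2, [lambda x: -x[0]])
```

Relaxable constraints would typically go through `constraint_violation` so that the size of the violation stays visible to the search, while unrelaxable ones go through the barrier.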

Troubleshooting Guide: My Model is Infeasible

Follow this logical workflow to diagnose and remedy an infeasible constrained optimization problem.

Step 1: Verify Input Data and Model Formulation

Before complex diagnosis, perform basic checks.

- Data Accuracy: Perform thorough sanity checks on your input data. Overpromising beyond capacity (e.g., production or resource limits) is a common cause of infeasibility [1].

- Model Accuracy: Check for simple coding errors: incorrect variable types, indexing mistakes, wrong bounds, or misusing equality instead of inequality [1].

- Best Practice: Always start with an algebraic formulation of your model before coding. This simplifies verification and sharing with colleagues [1].

Step 2: Compute an IIS

Use your solver's functionality to find an Irreducible Inconsistent Subsystem (IIS). This tool will return a minimal set of conflicting constraints [1] [5].

- In Python with Gurobi: Use the `Model.computeIIS()` method [5].

- In Mosel with FICO Xpress: Use the `conflict_refiner` functionality [4].

- Note: For large Mixed-Integer Programming (MIP) models, computing an IIS can be computationally expensive. For such cases, a feasibility relaxation (see Step 4) may be a faster alternative [5].
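To make the idea of an IIS concrete, the sketch below applies a deletion filter to a toy model in which every constraint is an interval on a single variable. It is an illustration of the concept only and is not how any particular solver computes an IIS; the feasibility oracle, the constraint names, and the data are all hypothetical.

```python
def is_feasible(constraints):
    """Feasibility oracle for 1-D interval constraints {name: (lo, hi)}."""
    if not constraints:
        return True
    lo = max(bounds[0] for bounds in constraints.values())
    hi = min(bounds[1] for bounds in constraints.values())
    return lo <= hi

def deletion_filter(constraints):
    """Return one irreducible infeasible subset of the constraints."""
    if is_feasible(constraints):
        raise ValueError("model is feasible; no IIS exists")
    iis = dict(constraints)
    for name in list(constraints):
        trial = {k: v for k, v in iis.items() if k != name}
        if not is_feasible(trial):
            # Still infeasible without this constraint, so it is not needed.
            iis = trial
    return iis

INF = float("inf")
model = {
    "capacity": (0.0, 3.0),    # x <= 3
    "demand": (5.0, INF),      # x >= 5
    "budget": (0.0, 10.0),     # x <= 10
    "min_batch": (1.0, INF),   # x >= 1
}
iis = deletion_filter(model)
```

Here the filter keeps only `capacity` and `demand`: removing either one makes the remaining system feasible, which is the defining property of an IIS.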

Step 3: Analyze the IIS Output

Examine the constraints and bounds in the IIS. This is the core of your problem. Ask yourself:

- Are the constraints in the IIS logically contradictory (e.g., x ≥ y+1 and y ≥ x+1) [2]?

- Do they reflect a real-world impossibility given the current data (e.g., total demand exceeding total capacity) [2]?

Step 4: Choose a Resolution Strategy

Based on your analysis, choose a path forward.

Path A: Correct the Model or Data: If the IIS reveals a formulation error or incorrect data, correct it directly. This is the preferred solution during model development [1].

Path B: Relax Constraints: If the infeasibility is genuine (e.g., in production data), you need to relax constraints. This can be done manually or automatically.

- Using Slack Variables: Add slack variables to constraints and penalize their use in the objective function. This transforms hard constraints into soft ones [1].

- Using Built-in Relaxation: Solvers like Gurobi offer `Model.feasRelaxS()` to automatically find the minimal relaxation needed for feasibility [5].

Step 5: Implement and Re-solve

Apply your chosen correction and solve the model again. If using constraint relaxation, the solution will indicate which constraints were violated and by how much, providing valuable business or research insights [2].

The Scientist's Toolkit: Key Reagents for Constrained Optimization

The table below outlines essential "research reagents"—methods and solver functions—for diagnosing and handling infeasibility.

Table 1: Essential Reagents for Handling Infeasibility

| Research Reagent | Function | Application Context |

|---|---|---|

| IIS Finder | Identifies a minimal set of conflicting constraints and bounds [1] [5]. | Primary diagnosis for linear and mixed-integer models to understand the root cause of infeasibility. |

| Slack Variables | Auxiliary variables added to constraints to absorb violations, transforming hard constraints into soft ones [1]. | Manual implementation of constraint relaxation; penalized in the objective function to find minimally violating solutions. |

| Feasibility Relaxation | A solver utility that automatically relaxes constraints and bounds to find a solution with minimal violation according to a specified metric [4] [5]. | An alternative to IIS for large models (especially MIP) or for directly obtaining a relaxed solution. |

| Constraint Violation Metric (G(x)) | A scalar measure quantifying the total infeasibility of a solution [3]. | Used in evolutionary algorithms to compare and steer infeasible solutions toward feasibility, e.g., in feasibility rules or ε-constraint methods [3]. |

| Extreme Barrier Function | Assigns an extremely poor (or infinite) objective value to any infeasible solution [6]. | Handling unrelaxable constraints that must be satisfied for a solution to be physically meaningful [6]. |

Experimental Protocols

Protocol 1: Implementing an IIS Analysis in Gurobi (Python)

This protocol details the steps to identify the cause of infeasibility using Gurobi's IIS functionality.

Methodology:

- Model Definition: Formulate and build the optimization model as usual.

- Solve and Check Status: After calling `model.optimize()`, check if the model status is `GRB.INFEASIBLE`.

- Compute IIS: Call `model.computeIIS()` to instruct the solver to find the irreducible inconsistent subsystem.

- Output Results: Write the IIS to a file for analysis using `model.write("model.ilp")`. The `.ilp` file will contain the minimal set of conflicting constraints and bounds.

Protocol 2: Implementing a Simple Feasibility Relaxation

This protocol describes how to use slack variables to handle infeasibility, a method applicable across various solvers and research codes.

Methodology:

- Define Slack Variables: For each constraint that can be relaxed, create a non-negative slack variable.

- Modify Constraints: Incorporate the slack variable to relax the constraint.

  - For a constraint `f(x) ≤ b`, it becomes `f(x) - s ≤ b`, with `s ≥ 0`.

  - For a constraint `f(x) ≥ b`, it becomes `f(x) + s ≥ b`, with `s ≥ 0`.

- Penalize in Objective: Add a penalty term to the objective function to discourage the use of slack. The new objective becomes `Minimize: Original_Cost + P * Σ s`, where `P` is a sufficiently large penalty weight.
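The slack-variable steps above can be sketched on a deliberately infeasible toy model: minimize x subject to x ≥ 5 and x ≤ 3. The example below is a hedged illustration that needs no solver; it computes the smallest slack each softened constraint requires at a given x and searches a coarse grid. The model, the grid, and the penalty value are assumptions chosen for clarity.

```python
def relaxed_cost(x, penalty):
    """Penalized cost of x after softening x >= 5 and x <= 3."""
    s1 = max(0.0, 5.0 - x)   # smallest s1 >= 0 with x + s1 >= 5
    s2 = max(0.0, x - 3.0)   # smallest s2 >= 0 with x - s2 <= 3
    return x + penalty * (s1 + s2), (s1, s2)

# Coarse grid over x in [0, 10]; a real model would use an LP/MIP solver.
candidates = [i * 0.5 for i in range(21)]
best_x = min(candidates, key=lambda x: relaxed_cost(x, penalty=10.0)[0])
best_cost, best_slacks = relaxed_cost(best_x, penalty=10.0)
```

The nonzero slack in `best_slacks` identifies which constraint had to give way and by how much, which is the diagnostic information a relaxed solve is meant to provide.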

Table 2: Comparison of Infeasibility Resolution Methods

| Method | Key Advantage | Key Disadvantage | Best Used When |

|---|---|---|---|

| IIS Analysis | Pinpoints the exact source of conflict for precise debugging [5]. | Can be computationally expensive for large MIP models [5]. | The root cause is unknown and the model is an LP or a manageable MIP. |

| Built-in Relaxation (e.g., feasRelaxS) | Fast, automated, and minimizes the relaxation according to a defined metric [5]. | Provides a "black-box" solution with less insight into the core conflict. | Speed is critical or the goal is simply to find a "close-to-feasible" solution. |

| Manual Slack Variables | Offers full control over which constraints are relaxed and the penalty structure [1]. | Requires manual setup and careful tuning of penalty weights to avoid numerical issues [1]. | Specific constraints are known to be soft, or full integration into a custom algorithm is needed. |

FAQs: Leveraging Infeasible Solutions in Constrained Optimization

Q1: Why should I keep infeasible solutions in my population? Won't they slow down convergence to a feasible optimum?

A1: Traditionally, infeasible solutions were often discarded. However, modern research shows that strategically maintaining infeasible solutions can be highly beneficial. They provide valuable information about the problem's landscape, help navigate around infeasible regions, and maintain population diversity. Crucially, infeasible solutions close to the feasible region boundary can be genetically closer to optimal feasible solutions than distant feasible ones, providing better genetic material for evolutionary operators [7]. The key is not to discard them, but to manage them intelligently.

Q2: What are the primary strategies for handling and utilizing infeasible solutions?

A2: The main strategies identified in current literature include:

- Feasibility Rules and Stochastic Ranking: Prefer feasible over infeasible solutions, but allow some high-quality infeasible solutions to remain based on a probability [8].

- Multi-Objective Transformation: Treat constraint satisfaction as separate objectives to be optimized alongside your primary objective. This allows you to work with a population that has varying degrees of feasibility and objective quality [9] [8].

- ε-Constrained Methods: Relax the constraints to allow solutions with a small, acceptable violation (ε) to be treated as feasible. This helps the population cross narrow infeasible regions [8].

- Dual-Population Approaches: Use one population to explore the feasible region and another to explore promising infeasible regions, allowing for information exchange between them [9].

- Preference-Based Optimization: Use a framework that prioritizes feasible solutions but also prefers infeasible solutions with lower constraint violations over those with higher violations, eliminating the need for careful penalty parameter tuning [10].
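Two of the strategies listed above, feasibility rules and the ε-constrained comparison, share one pairwise comparison rule and differ only in the tolerance. The sketch below is a minimal version for minimization, where each solution is an `(objective, violation)` pair. The polynomial decay used in `epsilon_schedule` is one commonly used form and is an assumption here, as are all parameter names.

```python
def better(a, b, eps=0.0):
    """True if a is preferred over b; a and b are (objective, violation).

    eps = 0 reproduces feasibility rules; eps > 0 gives the
    epsilon-level comparison.
    """
    fa, ga = a
    fb, gb = b
    if (ga <= eps and gb <= eps) or ga == gb:
        return fa < fb      # both acceptable (or tied): compare objectives
    return ga < gb          # otherwise: smaller violation wins

def epsilon_schedule(t, t_control, eps0, cp=5.0):
    """Shrink the tolerance from eps0 to 0 over the first t_control generations."""
    if t >= t_control:
        return 0.0
    return eps0 * (1.0 - t / t_control) ** cp

feasible = (10.0, 0.0)
near_feasible = (1.0, 0.05)
strict = better(feasible, near_feasible)              # feasibility rules
relaxed = better(near_feasible, feasible, eps=0.1)    # epsilon-level comparison
```

Early in a run, a large ε lets the near-feasible solution win on objective value; as ε decays to zero the comparison reverts to strict feasibility rules.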

Q3: My algorithm gets stuck in a local feasible region. How can infeasible solutions help me escape it?

A3: This is a classic problem in constrained optimization, especially when feasible regions are disconnected. Allowing the population to traverse through promising infeasible regions can bridge isolated feasible zones. Techniques like the Feasible Search Boundary (CHT-FSB) dynamically define a boundary around feasible solutions, allowing infeasible solutions within this boundary to be considered potential candidates. This enables the algorithm to "cross" an infeasible valley to reach a separate, and potentially better, feasible region [9].

Q4: In a multi-objective setting, how can feasible solutions guide the search?

A4: In Constrained Multi-Objective Optimization Problems (CMOPs), feasible non-dominated solutions can be used to identify potential regions of the Constrained Pareto Front (CPF). A technique like the Feasible non-dominated reference set based Dominance Principle (FDP) uses these solutions to guide the search towards areas where the CPF is likely to exist, promoting a uniform and thorough exploration of the objective space [9].

Q5: Are there specific evolutionary algorithms where the handling of infeasible solutions is particularly critical?

A5: Yes. The handling method is a critical component that significantly impacts performance and reproducibility. For instance, in Differential Evolution (DE), the specific strategy for dealing with solutions generated outside the domain (even for simple box constraints) induces notably different behaviors in performance, disruptiveness, and population diversity. This effect grows with problem dimensionality. It is recommended to formally specify this strategy in any algorithmic description to ensure reproducibility [11].
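To illustrate why the box-constraint strategy should be stated explicitly, the sketch below applies four common repair rules to the same out-of-bounds trial vector. The selection of strategies and their names are assumptions for illustration; they are not claimed to be the set studied in [11].

```python
import random

def clip(v, lo, hi):
    """Saturation: move the value to the nearest bound."""
    return min(max(v, lo), hi)

def reflect(v, lo, hi):
    """Mirror the value back across the violated bound until it is inside."""
    while v < lo or v > hi:
        v = 2 * lo - v if v < lo else 2 * hi - v
    return v

def wrap(v, lo, hi):
    """Toroidal: re-enter the domain from the opposite side."""
    return lo + (v - lo) % (hi - lo)

def resample(v, lo, hi, rng):
    """Replace an out-of-bounds value with a uniform random one."""
    return v if lo <= v <= hi else rng.uniform(lo, hi)

trial = [1.3, -0.25, 0.4]          # bounds are [0, 1] in every dimension
rng = random.Random(0)
repaired = {
    "clip": [clip(v, 0.0, 1.0) for v in trial],
    "reflect": [reflect(v, 0.0, 1.0) for v in trial],
    "wrap": [wrap(v, 0.0, 1.0) for v in trial],
    "resample": [resample(v, 0.0, 1.0, rng) for v in trial],
}
```

The same trial vector lands on the boundary, near the boundary, on the far side of the domain, or at a random interior point depending on the rule, which is why the choice affects diversity and reproducibility.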

Troubleshooting Guides

Problem 1: Population Stagnation in a Local Feasible Region

Symptoms: Convergence to a suboptimal feasible solution; rapid loss of population diversity; inability to find better solutions across disconnected feasible regions.

Solution Protocol:

- Diagnosis: Confirm that the problem has a complex constraint landscape (e.g., disconnected or narrow feasible regions). Check the population's feasibility history—a rapid, early jump to 100% feasibility often indicates over-preference for feasibility at the cost of exploration.

- Implementation: Integrate a dual-population mechanism.

  - Implement an exploration-guided population (`P1`) tasked with searching across both feasible and infeasible regions. Equip it with a constraint-handling technique like CHT-FSB that dynamically adjusts a search boundary to preserve promising infeasible solutions [9].

  - Maintain an exploitation-guided population (`P2`) that focuses on converging within the feasible regions identified.

  - Enable periodic information exchange between `P1` and `P2` to allow the entire search process to benefit from both exploration and exploitation.

- Verification: Monitor the evolution of both populations. You should observe `P1` gradually discovering new feasible regions, while `P2`'s solutions should show improvement in quality as it receives new genetic material.

Problem 2: Poor Balance Between Feasibility and Optimality

Symptoms: The algorithm finds feasible solutions easily, but they are of low quality; or, it finds high-quality solutions that are, however, infeasible.

Solution Protocol:

- Diagnosis: Evaluate your constraint-handling technique. Classic penalty function methods with fixed parameters are a common culprit.

- Implementation: Adopt a hybrid or advanced constraint-handling technique.

  - Option A (Multi-Objective): Reformulate your single-objective constrained problem as a bi-objective problem where you simultaneously optimize the original objective `f(x)` and the total constraint violation `G(x)`. This allows you to find a trade-off front between performance and feasibility [8].

  - Option B (Preference-Based): Implement a preference-based optimization framework like UCPO. This method uses a universal constrained preference loss function that inherently prioritizes feasibility and lower constraint violations without needing to tune sensitive penalty parameters [10].

- Verification: The output should be a set of solutions that represent a better trade-off. In the multi-objective case, you will get a Pareto front showing the relationship between objective value and constraint violation.
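For Option A, the trade-off front between objective value and constraint violation is simply the non-dominated subset of `(f(x), G(x))` pairs. The sketch below shows a minimal quadratic-time filter, assuming both quantities are minimized; the sample population is made up for illustration.

```python
def dominates(a, b):
    """a dominates b if it is no worse in every component and better in one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def non_dominated(points):
    """Return the points that no other point dominates."""
    return [p for p in points
            if not any(dominates(q, p) for q in points if q is not p)]

# Each tuple is (objective f, total violation G); values are illustrative.
population = [(1.0, 0.8), (2.0, 0.3), (3.0, 0.0), (2.5, 0.5), (4.0, 0.0)]
front = non_dominated(population)
```

The point with `G = 0` on the front is the best feasible solution found, while the other front members show how much objective value is gained per unit of violation.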

Problem 3: Algorithm is Overly Sensitive to Hyperparameters

Symptoms: Small changes in parameters (especially penalty factors) lead to drastically different results; requires extensive and problem-specific tuning.

Solution Protocol:

- Diagnosis: This is a known weakness of Lagrangian-based methods and penalty functions, where the Lagrange multipliers or penalty factors are notoriously sensitive to calibration [10].

- Implementation: Shift to a method that reduces or eliminates the need for such parameters.

- Verification: The algorithm's performance should become more robust and consistent across different runs and problem instances, with a significant reduction in time spent on parameter tuning.
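The sensitivity described in the diagnosis is easy to reproduce with a static penalty of the form f(x) + r·G(x). In the hedged sketch below, three hypothetical candidates are ranked under three penalty factors; the candidate values and factor choices are assumptions picked to make the effect visible.

```python
def penalized(f, g, r):
    """Static penalty fitness for minimization: objective plus r times violation."""
    return f + r * g

# name -> (objective f, total violation G); values are illustrative.
candidates = {
    "feasible": (10.0, 0.0),
    "slightly_infeasible": (4.0, 0.5),
    "badly_infeasible": (1.0, 3.0),
}

def winner(r):
    """Name of the candidate with the lowest penalized fitness."""
    return min(candidates, key=lambda name: penalized(*candidates[name], r))

winners = {r: winner(r) for r in (1.0, 5.0, 100.0)}
```

Each penalty factor selects a different candidate, so the outcome of selection is decided by `r` rather than by the problem, which is the behavior that parameter-free comparison rules aim to avoid.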

Experimental Protocols & Data

Protocol 1: Implementing a Dual-Population Approach (UICMO)

Methodology: This protocol is based on the UICMO framework for constrained multi-objective optimization [9].

- Initialization: Randomly generate an initial population and split it into two sub-populations: `P1` (exploration-guided) and `P2` (exploitation-guided).

- Constraint Handling Assignment:

  - For `P1`, implement the Constraint Handling Technique based on Feasible Search Boundary (CHT-FSB). Calculate a dynamic boundary factor `β`. An infeasible solution is considered "promising" and retained if a feasible solution exists within a Euclidean distance `β` from it.

  - For `P2`, implement the Feasible non-dominated reference set based Dominance Principle (FDP). Maintain an archive of feasible non-dominated solutions. Use this set to guide the selection pressure towards unexplored regions of the potential constrained Pareto front.

- Evolution and Collaboration: Evolve `P1` and `P2` for a defined number of generations using your chosen evolutionary algorithm (e.g., DE, GA). After every `K` generations, allow a percentage of individuals to migrate between `P1` and `P2` to share information.

- Termination: Combine the final `P1` and `P2` and output the non-dominated feasible solutions as your approximation of the Pareto front.
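The retention test used for `P1` can be sketched as a distance filter. This is a simplified reading of the rule stated above, with `β` passed in as a fixed number because the protocol does not spell out how the dynamic boundary factor is computed; the function name and the toy constraint are hypothetical.

```python
import math

def promising_infeasible(population, violation, beta):
    """Keep infeasible points that lie within distance beta of a feasible point."""
    feasible = [x for x in population if violation(x) == 0.0]
    infeasible = [x for x in population if violation(x) > 0.0]
    return [x for x in infeasible
            if any(math.dist(x, y) <= beta for y in feasible)]

# Toy constraint (illustrative): x0 + x1 <= 1.
def violation(x):
    return max(0.0, x[0] + x[1] - 1.0)

population = [(0.2, 0.2), (0.6, 0.5), (2.0, 2.0)]
kept = promising_infeasible(population, violation, beta=0.6)
```

Only the infeasible point close to the feasible one survives; the distant point is dropped, which keeps exploration focused near the feasible boundary.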

Logical Workflow:

Protocol 2: Preference Optimization for Hard Constraints (UCPO)

Methodology: This protocol uses the UCPO framework to fine-tune a pre-trained neural solver for combinatorial problems with hard constraints [10].

- Warm-Start: Obtain a pre-trained model checkpoint for your target combinatorial problem (e.g., a model trained on TSP). This serves as a high-quality initializer.

- Fine-Tuning with Preference Loss:

  - For a set of problem instances, sample solution pairs `(x, x')`.

  - Compute the UCPO loss, which comprises:

    - Feasibility Margin Loss: Ensures a margin between the scores of feasible and infeasible solutions.

    - Primal Refinement Loss: Encourages improvement in the objective value.

    - Dual Exploration Loss: Promotes exploration by increasing the score of infeasible solutions with low constraint violation.

  - Perform gradient-based updates on the model parameters using this aggregated loss.

- Inference: Use the fine-tuned model to generate solutions for new, constrained problem instances (e.g., TSP with Time Windows).

Quantitative Comparison of Multi-Objective Optimization Algorithms

Table: Performance of MOO Algorithms on a Pharmaceutical Formulation Problem [12]

| Algorithm | Hypervolume | Generational Distance | Inverted Generational Distance | Spacing | Weighted Sum Method Score |

|---|---|---|---|---|---|

| NSGA-III | Highest | Smallest | Smallest | Most Uniform | 82.08 |

| MOEA/D | High | Small | Small | Uniform | 80.89 |

| RVEA | Competitive | Competitive | Competitive | Competitive | Slightly Lower |

| C-TAEA | Competitive | Competitive | Competitive | Competitive | Slightly Lower |

| AGE-MOEA | Competitive | Competitive | Competitive | Competitive | Slightly Lower |
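Two of the indicators in the table, Generational Distance (GD) and Inverted Generational Distance (IGD), can be sketched as follows. This uses the plain arithmetic-mean variant with Euclidean distance, which is an assumption; other definitions exist, and the points below are illustrative rather than data from [12].

```python
import math

def generational_distance(approx, reference):
    """Mean distance from each obtained point to its nearest reference point."""
    return sum(min(math.dist(a, r) for r in reference) for a in approx) / len(approx)

def inverted_generational_distance(approx, reference):
    """Mean distance from each reference point to its nearest obtained point."""
    return generational_distance(reference, approx)

reference_front = [(0.0, 1.0), (0.5, 0.5), (1.0, 0.0)]
obtained = [(0.0, 1.0), (1.0, 0.0)]     # misses the middle of the front

gd = generational_distance(obtained, reference_front)
igd = inverted_generational_distance(obtained, reference_front)
```

In this example GD is zero because every obtained point lies on the reference front, while IGD is positive because part of the front is not covered, which is why the two are usually reported together.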

The Scientist's Toolkit: Key Research Reagents

Table: Essential Computational "Reagents" for Constrained Evolutionary Optimization

| Research Reagent | Function / Role in the Experiment |

|---|---|

| Differential Evolution (DE) | A versatile evolutionary algorithm framework that operates on real-valued vectors. Its mutation and crossover operators are highly effective for continuous optimization problems [13] [8]. |

| Reference Point Set (e.g., for NSGA-III) | A set of predefined points in objective space that guide the population towards a well-distributed set of Pareto-optimal solutions, crucial for many-objective problems [12]. |

| Constraint Violation Metric G(x) | A single, aggregate measure of the total violation of all constraints by a solution x. This is the fundamental quantity for any constraint-handling technique [9] [8]. |

| Feasible Non-Dominated Archive | A dynamic memory that stores the best feasible, non-dominated solutions found so far during the search. Used in techniques like FDP to guide the exploitation population [9]. |

| Preference Optimization Loss Function | A composite loss function (e.g., from UCPO) that allows fine-tuning neural solvers to handle hard constraints without manual penalty tuning or complex masking logic [10]. |

Taxonomy of Constraint Handling Techniques in Evolutionary Algorithms

Frequently Asked Questions (FAQs)

Q1: What is the core challenge when handling constraints in Evolutionary Algorithms (EAs)? The primary challenge is effectively balancing the search for optimal solutions with the need to satisfy all problem constraints. This involves making decisions about how to guide the population towards the feasible region while not prematurely converging or losing valuable genetic information from infeasible solutions that may be close to the global optimum, which often lies on constraint boundaries [14].

Q2: My algorithm is converging to a suboptimal feasible region. How can I improve its exploration capability? This is a common issue when the algorithm over-penalizes infeasible solutions too early. Consider implementing a two-stage approach. In the first stage, relax the constraints or use an exploratory population that ignores constraints to explore the entire search space and approximate the unconstrained Pareto Front. In the second stage, strictly enforce constraints or use a feasibility-driven population to exploit the feasible regions and refine the solutions [15] [16]. This balances exploration and exploitation.

Q3: How should I handle equality constraints? Equality constraints are typically converted into inequality constraints using a tolerance value δ. The constraint violation for an equality constraint hⱼ(x) is calculated as max(0, |hⱼ(x)| - δ), where δ is a small positive tolerance (e.g., 10⁻⁶) [8] [16]. This transformation allows the algorithm to treat near-feasible solutions as feasible, making the search process more manageable.

Q4: What should I do when my population contains very few or no feasible solutions? When feasible solutions are rare, pure feasibility-based rules can fail. Instead, use techniques that leverage information from infeasible solutions. Methods like the Infeasibility Driven Evolutionary Algorithm (IDEA) explicitly maintain a small percentage of "good" infeasible solutions close to the constraint boundaries [14]. Alternatively, ranking-based methods like E-BRM create separate ranking lists for feasible and infeasible solutions, then merge them, giving higher priority to feasible solutions but also valuing infeasible ones with low constraint violation [17].

Q5: Are there strategies to automatically select the best constraint handling technique during a run? Yes, ensemble and adaptive strategies address this. For instance, one can use a Multi-Armed Bandit (MAB)-based decision-making strategy. This approach runs multiple populations, each with a different Constraint Handling Technique (CHT), and adaptively selects the most suitable parent population for offspring generation based on real-time performance feedback [15]. This is particularly useful without prior knowledge of the problem's constraint characteristics.

Troubleshooting Guides

Problem 1: Premature Convergence to a Local Feasible Optimum

Symptoms: The population becomes feasible quickly but the objective function value stagnates at a non-optimal value. Diversity is lost early in the run.

Possible Causes and Solutions:

- Cause: Overly greedy feasibility preference.

- Solution: Integrate an infeasibility-driven mechanism. Modify your selection process to rank some marginally infeasible solutions higher than feasible ones if they have better objective values, forcing the population to explore near constraint boundaries [14].

- Cause: Single-population approach with a fixed CHT.

- Cause: Ineffective balance between exploration and exploitation.

- Solution: Implement a dynamic two-stage strategy. Let the first stage focus primarily on exploration (e.g., by ignoring constraints or using a multi-objective technique to find the unconstrained Pareto Front). Then, switch to a second stage that focuses on exploiting the feasible regions identified [15].

Problem 2: Inability to Find Any Feasible Solutions

Symptoms: The algorithm completes its run without discovering a single feasible solution, or finds feasible solutions only very late in the process.

Possible Causes and Solutions:

- Cause: The feasible region is extremely small or disconnected.

- Solution: Use a constraint relaxation mechanism. For example, in a two-stage archive-based algorithm (CMOEA-TA), constraints can be relaxed in the first stage based on the proportion of feasible solutions and the degree of constraint violation, encouraging the population to approach the feasible region gradually [16].

- Cause: Penalty coefficients are too high or too low.

- Cause: Population is not directed towards feasibility.

- Solution: For large-scale problems, hybridize the EA with a local constraint-handling method. One approach is to use the Constraint Consensus (DBmax) method, which can be applied to infeasible solutions within a cooperative coevolution framework to actively improve their feasibility [19].

Problem 3: Poor Performance on Large-Scale Constrained Problems

Symptoms: The algorithm's performance degrades significantly as the number of decision variables and constraints increases. Computation time becomes prohibitive.

Possible Causes and Solutions:

- Cause: The "curse of dimensionality" exacerbates the search complexity.

- Solution: Implement a cooperative coevolution (CC) framework. Decompose the large-scale problem into smaller, more manageable subproblems using techniques like Recursive Differential Grouping. Then, evolve these subproblems separately [19].

- Cause: Inefficient handling of many constraints.

- Solution: Use a classification-collaboration constraint handling technique. Randomly classify the constraints into `K` groups, decompose the original problem into `K` subproblems, and assign each to a subpopulation. These subpopulations can then interact through learning strategies to generate better solutions for the original problem [8].
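The random classification step of the last solution can be sketched as a shuffled round-robin split of constraint indices. Only the grouping is shown; the collaboration and learning strategies between subpopulations are not specified here and are left out. Function names and the toy constraints are hypothetical.

```python
import random

def classify_constraints(n_constraints, k, rng):
    """Randomly partition constraint indices 0..n-1 into k groups."""
    indices = list(range(n_constraints))
    rng.shuffle(indices)
    return [sorted(indices[i::k]) for i in range(k)]

def group_violation(x, constraints, group):
    """Total violation of x restricted to one group of constraints g(x) <= 0."""
    return sum(max(constraints[i](x), 0.0) for i in group)

# Seven toy constraints x <= c for c = 0..6, written as x - c <= 0.
constraints = [lambda x, c=c: x - c for c in range(7)]
groups = classify_constraints(len(constraints), k=3, rng=random.Random(42))
per_group = [group_violation(3.5, constraints, g) for g in groups]
```

Each subpopulation would then be evaluated against its own entry in `per_group`, and the group violations add up to the violation of the original problem.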

Experimental Protocols & Methodologies

Protocol 1: Implementing a Two-Stage Ensemble Algorithm (CMOEA-TENS)

This protocol is effective for constrained multi-objective problems where the balance between convergence and diversity in the feasible region is critical [15].

- Initialization: Initialize an ensemble of multiple populations (e.g., four). Each population is assigned a different CHT, focusing on aspects like feasibility, diversity, or convergence.

- Stage 1 - Exploration:

- Designate one population as the "exploratory" population, which ignores all constraints.

- The goal of this stage is for the exploratory population to drive the evolution and converge towards the Unconstrained Pareto Front (UPF).

- The other populations in the ensemble co-evolve, leveraging the solutions found by the exploratory population.

- Stage 2 - Exploitation:

- Switch the driving force of the evolution to the ensemble of CHT-based populations.

- The goal is to refine the search and converge to the Constrained Pareto Front (CPF).

- Offspring Generation & Selection:

- Implement a Multi-Armed Bandit (MAB) strategy. The MAB dynamically selects the most suitable parent population from the ensemble for generating offspring in each generation, based on real-time performance feedback.

- Select parents from the chosen population and generate offspring using evolutionary operators (e.g., crossover, mutation).

- Environmental Selection: Combine parents and offspring, and select the next generation based on the specific CHT assigned to each population and Pareto dominance principles.
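The bandit-based choice of parent population can be sketched with UCB1, one standard multi-armed bandit rule. The protocol above does not say which bandit algorithm or reward signal is used, so both are assumptions here: each arm is a population, and the reward is a hypothetical success rate in [0, 1], such as the fraction of offspring that survive selection.

```python
import math

class UCB1:
    """Upper-confidence-bound bandit over a fixed number of arms."""

    def __init__(self, n_arms, c=math.sqrt(2)):
        self.counts = [0] * n_arms      # times each arm was chosen
        self.values = [0.0] * n_arms    # running mean reward per arm
        self.c = c

    def select(self):
        # Try every arm once before using the confidence bound.
        for arm, n in enumerate(self.counts):
            if n == 0:
                return arm
        total = sum(self.counts)
        scores = [v + self.c * math.sqrt(math.log(total) / n)
                  for v, n in zip(self.values, self.counts)]
        return max(range(len(scores)), key=scores.__getitem__)

    def update(self, arm, reward):
        self.counts[arm] += 1
        self.values[arm] += (reward - self.values[arm]) / self.counts[arm]

# Hypothetical fixed success rates for four CHT-based populations.
rewards = [0.2, 0.8, 0.5, 0.1]
bandit = UCB1(n_arms=len(rewards))
for _ in range(500):
    arm = bandit.select()
    bandit.update(arm, rewards[arm])
most_used = max(range(len(rewards)), key=bandit.counts.__getitem__)
```

The exploration bonus keeps weaker populations sampled occasionally, so a technique that becomes useful later in the run can still be rediscovered.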

Protocol 2: Implementing the Infeasibility Driven Evolutionary Algorithm (IDEA)

This protocol is designed to improve performance on problems where the optimal solution lies on a constraint boundary by explicitly maintaining useful infeasible solutions [14].

- Initialization: Generate a random initial population.

- Evaluation and Classification: Evaluate each individual for objective function value and constraint violation. Classify the population into feasible and infeasible solutions.

- Ranking:

- Rank the infeasible solutions based on a combination of their objective function value and constraint violation. The key is to rank some "good" infeasible solutions (those with low objective value and low violation) higher than feasible solutions.

- Rank the feasible solutions based primarily on their objective function value.

- Selection and Reproduction: Use a selection operator (e.g., tournament selection) that considers this special ranking, ensuring that the best infeasible solutions are preserved and can participate in creating offspring.

- Replacement: Form the new population by selecting top-ranked individuals from the combined list of feasible and infeasible solutions, guaranteeing that a small percentage of the best infeasible solutions are retained.
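The replacement step can be sketched as a quota-based selection. This is a simplified stand-in: infeasible solutions are ordered lexicographically by violation and then objective, which is an assumption and may differ from the ranking used in the published IDEA. The `infeasible_ratio` parameter name and the sample data are hypothetical.

```python
def idea_replacement(population, pop_size, infeasible_ratio=0.2):
    """Select survivors from (objective, violation) pairs, minimization.

    A quota of the best infeasible solutions is placed ahead of the
    feasible ones; the rest of the slots go to the best feasible ones.
    """
    feasible = sorted((p for p in population if p[1] == 0.0),
                      key=lambda p: p[0])
    infeasible = sorted((p for p in population if p[1] > 0.0),
                        key=lambda p: (p[1], p[0]))
    n_inf = min(len(infeasible), round(infeasible_ratio * pop_size))
    n_fea = min(len(feasible), pop_size - n_inf)
    survivors = infeasible[:n_inf] + feasible[:n_fea]
    # If one class ran short, fill the remaining slots from the leftovers.
    leftovers = infeasible[n_inf:] + feasible[n_fea:]
    survivors += leftovers[:pop_size - len(survivors)]
    return survivors

population = [(5.0, 0.0), (3.0, 0.0), (9.0, 0.0), (7.0, 0.0),
              (1.0, 0.1), (0.5, 2.0), (2.0, 0.4)]
survivors = idea_replacement(population, pop_size=5, infeasible_ratio=0.2)
```

With a 20% quota and five slots, exactly one marginally infeasible solution is carried forward alongside the four best feasible ones.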

Research Reagent Solutions

Table 1: Essential Computational Tools and Techniques for Constrained Evolutionary Optimization.

| Research Reagent | Function & Purpose |

|---|---|

| Feasibility Rules (e.g., CDP) | A simple, popular method that strictly prefers any feasible solution over any infeasible one. Serves as a baseline and is effective when feasible regions are easy to find [15]. |

| Stochastic Ranking (SR) | Balances the influence of objective and constraint functions by using a probability P to compare individuals based on objective function, even when infeasible. Helps prevent domination by either the objective or constraints [8]. |

| ε-Constraint Method | Relaxes constraints by allowing solutions with violation below a threshold ε to be treated as feasible. The parameter ε can be adaptively decreased during the run, providing a smooth transition from exploration to exploitation [8] [15]. |

| Penalty Function | Degrades the fitness of infeasible solutions by adding a penalty term proportional to their constraint violation. Adaptive versions self-tune the penalty coefficient, reducing parameter sensitivity [8] [17] [18]. |

| Multi-Objective CHT | Transforms the constrained problem into an unconstrained multi-objective one by treating constraint violations as additional objectives to minimize. Allows the use of well-established MOEAs [8]. |

| Test Suites (CEC2006, CEC2010, CEC2017) | Standard sets of benchmark constrained optimization problems. Used for rigorous validation, performance comparison, and ablation studies of new algorithms [8] [17]. |

| Performance Indicators (IGD, HV) | Quantitative metrics like Inverted Generational Distance (IGD) and Hypervolume (HV) used to assess the convergence and diversity of the obtained solution set against the true Pareto front [16]. |

Visualization of Key Concepts

Diagram 1: Two-Stage Evolutionary Workflow for Constrained Optimization

Diagram 2: High-Level Taxonomy of Constraint Handling Techniques

Feasibility Rules and Stochastic Ranking Approaches

Troubleshooting Guide: Common Issues with Constrained Evolutionary Optimization

Problem 1: Algorithm Converges on Infeasible Solutions

- Description: The evolutionary algorithm consistently produces solutions that violate problem constraints, failing to find the feasible region.

- Possible Causes & Solutions:

- Cause: Poor balance between objective function and constraint minimization. The search is overly biased toward better objective values, even in infeasible space [20].

- Solution: Implement a dynamic knowledge transfer mechanism. Classify your problem's objective as "simple" (clear minimizing direction) or "complex" (highly nonlinear). For simple objectives, use an objective-oriented constraint handling technique (CHT) that transfers knowledge from the objective to help satisfy constraints. For complex objectives, use a constraint-oriented CHT that first drives the population toward feasibility before considering the objective [20].

- Cause: Inadequate diversity maintenance near feasible region boundaries.

- Solution: Deliberately maintain and utilize "useful infeasible solutions" close to the feasible region. These solutions can, through genetic operators, help generate new solutions inside the feasible region and enable better sampling near its boundaries [7].

Problem 2: Algorithm is Trapped in Local Feasible Regions

- Description: The algorithm finds a small, suboptimal feasible region but cannot escape to discover better, potentially larger feasible areas.

- Possible Causes & Solutions:

- Cause: Infeasible barriers between disjoint feasible regions are too difficult for the algorithm to cross.

- Solution: Temporarily relax constraints using an ε-constraint method or a stochastic ranking technique. This allows the population to traverse infeasible regions to reach other, more promising feasible areas [20].

- Cause: Loss of diversity in the population after discovering a feasible solution.

- Solution: Introduce a diversity mechanism into your evolution strategy. This can involve specific mutation operators or a niching technique to maintain a diverse population and continue exploring the search space [7].

Problem 3: Infeasibility in Complex Mathematical Optimization Models

- Description: When solving complex models (e.g., MIPs), the solver reports the model as infeasible.

- Possible Causes & Solutions:

- Cause: A small subset of conflicting constraints is making the entire model infeasible.

- Solution: Use an Irreducible Infeasible Set (IIS) finder. Many modern solvers (like CPLEX) can identify an IIS, a minimal set of constraints and variable bounds that are mutually contradictory, which helps you pinpoint the exact source of infeasibility [21].

- Cause: Model formulation errors or incorrect data inputs.

- Solution: Systematically test your model. Build the model one constraint at a time, testing feasibility frequently. Fix variables to a known feasible solution (if one exists) to verify each new constraint's correctness [21].

Problem 4: Slow Convergence and High Computational Cost

- Description: The algorithm finds feasible solutions but is computationally expensive and takes too long to converge to a high-quality result.

- Possible Causes & Solutions:

- Cause: Evaluating the fitness of a large population over many generations is inherently computationally intensive [22].

- Solution: Analyze the problem landscape. If the objective function and constraints are correlated, use methods that leverage this relationship (like the KTR indicator) to guide the search more efficiently. For problems with complex objectives, avoid premature use of objective information that can lead the population to local optima [20].

- Cause: Inefficient handling of constraints for every individual in every generation.

- Solution: Consider using penalty functions that add a penalty for constraint violations to the objective function. To avoid numerical issues, use a reasonable penalty weight instead of an extremely large one. Alternatively, use a feasibility rule that prioritizes feasible solutions over infeasible ones, and between infeasible solutions, prioritizes those with a lower total constraint violation [20] [21].

Frequently Asked Questions (FAQs)

Q1: What are the main categories of Constraint Handling Techniques (CHTs)? The popular CHTs can be broadly divided into six categories [20]:

- Penalty Functions: Add a penalty for constraint violation to the objective value.

- Feasibility Rules: Prioritize solutions based on feasibility and degree of constraint violation.

- Stochastic Ranking: Rank solutions with a probability of switching between objective and constraint violation as the ranking criteria.

- ε-Constraint: Allow a controllable level of constraint violation.

- Multiobjective Optimization: Treat constraints as separate objectives to be minimized.

- Hybrid Methods: Combine two or more of the above techniques.

Q2: How can "useful infeasible solutions" improve my optimization? Maintaining infeasible solutions that are very close to the feasible region, especially those located in promising areas, can be highly beneficial. Using genetic operators (crossover and mutation), these solutions can help generate new offspring inside the feasible region. They also ensure the feasible region boundaries are well-sampled, which can lead to discovering better feasible solutions than if only feasible solutions were maintained [7].

Q3: My model is infeasible. How can I quickly diagnose the cause? Beyond using an IIS, you can [21]:

- Add slack variables: Convert hard constraints into soft ones by adding slack variables with a high penalty in the objective function. Solving this penalized model will show you which constraints are being violated (and by how much), helping to locate the source of infeasibility.

- Build and test incrementally: Add constraints to your model one by one, solving the model after each new addition. The point at which the model becomes infeasible immediately identifies the problematic constraint or the interaction causing the issue.

Q4: What is the core process of an Evolutionary Algorithm (EA)? EAs generally follow an iterative process with these key steps [22]:

- Initialization: Generate an initial population of random potential solutions.

- Fitness Evaluation: Assess each solution using a fitness function that measures how well it solves the problem.

- Selection: Select the fittest solutions to be parents for reproduction.

- Reproduction (Crossover/Mutation): Create new offspring by combining parts of parent solutions (crossover) and introducing small random changes (mutation).

- Replacement: Form a new population from the offspring and, optionally, some parents.

- Termination: Repeat from step 2 until a stopping condition (e.g., max iterations, fitness threshold) is met.
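The six steps above map onto a short loop. The sketch below is a generic, unconstrained illustration under assumed settings: the function name `evolve`, binary tournament selection, uniform crossover, Gaussian mutation, single-individual elitism, and all default parameter values are choices made for this example, not prescriptions from the cited work.

```python
import random


def evolve(fitness, dim, pop_size=20, generations=50, sigma=0.3, seed=0):
    """Minimize `fitness` with a basic elitist evolutionary loop."""
    rng = random.Random(seed)
    # Initialization: random real-valued vectors in [-5, 5]^dim.
    pop = [[rng.uniform(-5, 5) for _ in range(dim)] for _ in range(pop_size)]
    history = []  # best fitness at the start of each generation

    def tournament(candidates):
        # Selection: binary tournament, the lower fitness wins.
        a, b = rng.sample(candidates, 2)
        return a if fitness(a) <= fitness(b) else b

    for _ in range(generations):
        # Fitness evaluation: sort so the best individual comes first.
        scored = sorted(pop, key=fitness)
        history.append(fitness(scored[0]))

        offspring = []
        while len(offspring) < pop_size - 1:
            p1, p2 = tournament(scored), tournament(scored)
            # Reproduction: uniform crossover followed by Gaussian mutation.
            child = [rng.choice(pair) for pair in zip(p1, p2)]
            child = [gene + rng.gauss(0, sigma) for gene in child]
            offspring.append(child)

        # Replacement: keep the single best parent (elitism) plus offspring.
        pop = [scored[0]] + offspring

    # Termination: here simply a fixed number of generations.
    return min(pop, key=fitness), history
```

For example, `evolve(lambda v: sum(g * g for g in v), dim=3)` minimizes a sphere function; because the best parent always survives, the recorded best fitness never gets worse from one generation to the next.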

Experimental Protocol: Benchmarking CHT Performance

To rigorously compare the performance of different Constraint Handling Techniques within an Evolutionary Algorithm framework, follow this structured experimental methodology, adapted from recent literature [23] [20].

1. Benchmark Problems: Utilize established test suites to ensure a comprehensive evaluation.

- Recommended Suites: IEEE CEC2006, CEC2010, and CEC2017 for constrained optimization [20].

- Rationale: These suites provide a variety of problem landscapes with different challenges, such as disjoint feasible regions and nonlinear constraints.

2. Performance Measures: Use multiple metrics for a fair comparison. The expected run-time (fixed-target perspective) is a robust metric [23].

- Primary Metric: Function Evaluations (FE): Record the number of times the objective function is evaluated until the algorithm finds a solution with an objective value at or below a predefined target (e.g., the known global optimum or a best-known value). This is preferred over CPU time, which can be influenced by the computing environment [23].

- Secondary Metric: Best Achievable Solution Quality (Fixed-Budget): For a fixed budget of FEs, record the best solution quality achieved. This measures how close the solution is to the global optimum given limited resources [23].

3. Statistical Comparison Protocol: Due to the stochastic nature of EAs, perform multiple independent runs and use robust statistical analysis.

- Runs: Execute each algorithm-CHT combination on each problem for a minimum of 20-30 independent runs [23].

- Analysis: Use a bootstrapping-based hypothesis testing procedure that incorporates the principles of severity. This approach goes beyond simple p-values by considering the magnitude of performance differences and their practical relevance [23].

- Ranking: A novel ranking scheme analogous to a football league can be employed. Algorithms get points for wins/draws against others on each problem, and the magnitude of performance difference ("goal difference") serves as a tie-breaker and quantitative performance measure [23].
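A league-style ranking of this kind could be tallied as in the sketch below. The details are assumptions for illustration only: the function name `league_table`, the 3/1/0 points scheme, and the relative-difference tolerance `draw_tol` used to call a draw are placeholders. In the cited procedure the win/draw decision would come from the severity-based statistical test, not from a fixed tolerance on summary scores.

```python
from itertools import combinations


def league_table(scores, draw_tol=0.05, win_pts=3, draw_pts=1):
    """Rank algorithms from scores[alg][problem], where lower is better.

    A typical score would be the mean number of function evaluations
    needed to reach the target on that problem.
    """
    algs = sorted(scores)
    table = {a: {"points": 0, "goal_diff": 0.0} for a in algs}
    problems = list(scores[algs[0]])

    for a, b in combinations(algs, 2):
        for prob in problems:
            sa, sb = scores[a][prob], scores[b][prob]
            scale = max(abs(sa), abs(sb), 1e-12)
            margin = (sb - sa) / scale  # positive means `a` performed better
            table[a]["goal_diff"] += margin
            table[b]["goal_diff"] -= margin
            if abs(margin) <= draw_tol:
                table[a]["points"] += draw_pts
                table[b]["points"] += draw_pts
            elif margin > 0:
                table[a]["points"] += win_pts
            else:
                table[b]["points"] += win_pts

    # Sort by points, then by accumulated margin as the tie-breaker.
    ranking = sorted(algs, key=lambda a: (-table[a]["points"],
                                          -table[a]["goal_diff"]))
    return ranking, table
```

Normalizing each margin by the larger of the two scores keeps problems with very different evaluation budgets from dominating the tie-breaker; that normalization is also an assumption of this sketch.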

| Technique | Core Principle | Key Advantages | Potential Drawbacks |

|---|---|---|---|

| Penalty Functions | Adds a penalty for constraint violation to the objective function. | Simple to implement; widely applicable. | Performance highly sensitive to the choice of penalty weights; can cause numerical issues [21]. |

| Feasibility Rules (FR) | Gives strict precedence to feasible solutions; compares infeasibles by their violation. | No parameters to tune; strong push towards feasibility. | May reject promising infeasible solutions that are close to the global optimum. |

| Stochastic Ranking | Ranks solutions with a probability of using objective or violation. | Balances objective and constraints without hard rules. | Performance depends on the chosen ranking probability. |

| ε-Constraint | Allows a dynamically controlled level of constraint violation. | Can bridge infeasible regions between feasible areas. | Requires a strategy for adaptively managing ε. |

| Multiobjective | Treats constraints as separate objectives. | Leverages powerful multi-objective algorithms. | Increases problem complexity; can be computationally expensive. |

| Knowledge Transfer (CLBKR) | Classifies objective as simple/complex and applies tailored CHT [20]. | Dynamically adapts to problem characteristics. | Requires a problem classification step. |

The Scientist's Toolkit: Research Reagents & Materials

This table lists key algorithmic components and their functions for implementing and experimenting with feasibility rules and stochastic ranking.

| Item | Function in the Experiment |

|---|---|

| Evolutionary Algorithm Engine | The core optimizer (e.g., Differential Evolution, Evolution Strategy) that handles population management, selection, and genetic operators [20]. |

| Constraint Violation Calculator | A function that calculates the degree of violation for all constraints for a given solution, often summed into a single scalar value G(x) [20]. |

| Feasibility Rule (FR) Comparator | A procedure for comparing two solutions that prioritizes: 1) feasible over infeasible, 2) if both infeasible, the one with lower total constraint violation [20]. |

| Stochastic Ranking Procedure | A ranking algorithm that probabilistically switches between comparing solutions based on their objective value and their constraint violation [20]. |

| IIS (Irreducible Infeasible Set) Finder | A solver tool (e.g., in CPLEX) that identifies the minimal set of conflicting constraints in an infeasible model, crucial for debugging [21]. |

| Benchmark Test Suites (CEC2006, etc.) | Standardized sets of constrained optimization problems used to ensure fair and comprehensive performance testing of new algorithms [20]. |

| Performance Analysis Toolkit | Software for statistical comparison of results (e.g., using severity-based testing) and visualization (e.g., IOHanalyzer) [23]. |
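Three of the components in this table (the constraint violation calculator, the feasibility rule comparator, and the stochastic ranking procedure) can be sketched in a few lines. The function names, the `(objective, violation)` tuple layout, and the default values `delta=1e-4` and `p_f=0.45` are assumptions for this example. The comparator also adds the usual case that two feasible solutions are compared by objective value, and the ranking follows the familiar bubble-sort-style formulation of stochastic ranking.

```python
import random


def total_violation(g_values, h_values, delta=1e-4):
    """G(x): summed violation of g_i(x) <= 0 and |h_j(x)| <= delta."""
    return (sum(max(g, 0.0) for g in g_values)
            + sum(max(abs(h) - delta, 0.0) for h in h_values))


def feasibility_rule_better(a, b):
    """Return True if a = (objective, violation) is preferred over b."""
    fa, va = a
    fb, vb = b
    if va == 0 and vb == 0:      # both feasible: lower objective wins
        return fa < fb
    if va == 0 or vb == 0:       # exactly one feasible: it wins
        return va == 0
    return va < vb               # both infeasible: lower violation wins


def stochastic_rank(pop, p_f=0.45, rng=None):
    """Return indices of pop, best first, using stochastic ranking.

    pop is a list of (objective, violation) tuples. Adjacent pairs are
    compared by objective if both are feasible or with probability p_f,
    and by violation otherwise.
    """
    rng = rng or random.Random()
    order = list(range(len(pop)))
    for _ in range(len(order)):
        swapped = False
        for j in range(len(order) - 1):
            fa, va = pop[order[j]]
            fb, vb = pop[order[j + 1]]
            if (va == 0 and vb == 0) or rng.random() < p_f:
                swap = fa > fb
            else:
                swap = va > vb
            if swap:
                order[j], order[j + 1] = order[j + 1], order[j]
                swapped = True
        if not swapped:
            break
    return order
```

With `p_f=0` the ranking reduces to a deterministic feasibility-first ordering, while larger values let low-objective infeasible solutions climb the ranking more often.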

Workflow for Selecting a Constraint Handling Strategy

This diagram illustrates a logical decision pathway for selecting an appropriate constraint handling approach, based on problem characteristics.

The Role of Infeasible Solutions in an EA Cycle

This diagram integrates the strategic use of infeasible solutions into the standard evolutionary algorithm workflow.

The Role of Infeasible Solutions in Maintaining Population Diversity

Frequently Asked Questions

1. What is an infeasible solution, and why is it important in constrained optimization? An infeasible solution is a candidate answer generated during the evolutionary process that violates one or more constraints of the problem. Unlike in traditional approaches where they are immediately discarded, modern research shows that selectively retaining certain infeasible solutions is crucial. They help maintain population diversity, enable the algorithm to cross infeasible "valleys" to reach separate feasible regions, and prevent the population from getting trapped in local optima, especially in problems with complex, narrow, or disjoint feasible spaces [11] [24] [25].

2. My algorithm is converging prematurely. Could my handling of infeasible solutions be the cause? Yes, this is a common issue. Overly strict constraint-handling, which eliminates all infeasible solutions, can drastically reduce population diversity and lead to premature convergence. This is particularly problematic when the feasible region is small or complex. To mitigate this, consider implementing strategies that preserve some well-distributed infeasible solutions. Algorithms like EGDCMO and DP-NSGA-III explicitly maintain an archive of infeasible solutions with good objective values or distribution to guide the population and improve exploration [24] [25] [26].

3. How do I choose which infeasible solutions to keep? Not all infeasible solutions are equally valuable. The key is to prioritize those that contribute to population diversity or have promising objective values. Effective strategies include:

- Global Diversity: Using weight vectors to partition the objective space and selecting infeasible solutions that are well-distributed across these subregions [24].

- Balanced Fitness: Evaluating infeasible solutions with a fitness function that balances their constraint violation degree with their objective function quality [24] [26].

- ε-Constraint Method: Allowing solutions with a constraint violation below a dynamically decreasing threshold (ε) to participate in the evolution, effectively treating them as "quasi-feasible" [25].

4. Are there strategies for leveraging infeasible solutions in drug discovery and molecule optimization? Absolutely. In drug discovery, the chemical space is vast and complex. Methods like the Swarm Intelligence-Based Method for Single-Objective Molecular Optimization (SIB-SOMO) use operations like "Random Jump" on particles (molecules) that are not improving. This introduces random changes, effectively creating novel infeasible structures that can help the swarm escape local optima and explore a wider area of the molecular space to find better, feasible candidates [27].

Troubleshooting Guides

Problem: Population Lacks Diversity in Problems with Disjoint Feasible Regions

- Symptoms: The population converges to a single, small feasible area, missing other potentially better regions. The algorithm performs poorly on problems where the feasible Pareto front is composed of multiple disconnected parts.

- Solution: Implement a Dual-Population or Multi-Archive Approach.

- Description: Maintain two co-evolving populations. The main population focuses on solving the constrained problem. An auxiliary population ignores constraints to explore the unconstrained Pareto front, providing genetic material to help the main population cross infeasible barriers.

- Protocol: The DP-NSGA-III algorithm is a prime example [25].

- Initialize two populations: Pop1 (main) and Pop2 (auxiliary).

- Evolve Pop1 using a constrained handling method (e.g., ε-constraint).

- Evolve Pop2 without considering any constraints, focusing solely on objective optimization.

- Exchange Offspring between the two populations every generation.

- Output the feasible solutions from Pop1 as the final result.

- Expected Outcome: The main population receives high-quality genetic information from the unconstrained search, enabling it to discover all disjoint segments of the feasible Pareto front and significantly improving convergence and diversity [25].

Problem: Algorithm Struggles with Balancing Objectives and Constraints

- Symptoms: The search is either dominated by constraint satisfaction, leading to poorly optimized objectives, or by objective optimization, leading to a high proportion of infeasible solutions.

- Solution: Adopt an Adaptive Penalty or Fitness Function.

- Description: Instead of treating all constraints equally, assign them different weights based on their violation severity or significance to guide the search more intelligently.

- Protocol: As implemented in the CdEA-SCPD algorithm [26]:

- Investigate Stage: During evolution, automatically calculate the significance (Sig_j) for each constraint j based on the population's current violation severity.

- Adaptive Penalty: Calculate the total constraint violation for a solution x as Total_CV(x) = Σ (Sig_j * CV_j(x)), where CV_j(x) is the violation of the j-th constraint.

- Fitness Evaluation: Combine the objective value f(x) and Total_CV(x) into a single fitness function to rank solutions.

- Expected Outcome: The algorithm gains "interpretability" by understanding which constraints are most critical, leading to faster and more stable convergence toward the true constrained optimum [26].
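The weighted violation described in this protocol can be sketched as below. The source does not spell out how Sig_j is computed or how f(x) and Total_CV(x) are combined, so two assumptions are made here and should not be read as the CdEA-SCPD definitions: significance is taken as each constraint's share of the population's mean violation, and fitness is a simple weighted sum. All function names are hypothetical.

```python
def constraint_significance(cv_matrix):
    """Assumed Sig_j: share of the population's mean violation per constraint.

    cv_matrix[k][j] is the violation CV_j of individual k (0.0 if satisfied).
    """
    n_con = len(cv_matrix[0])
    means = [sum(row[j] for row in cv_matrix) / len(cv_matrix)
             for j in range(n_con)]
    total = sum(means)
    if total == 0:  # nothing violated: fall back to equal weights
        return [1.0 / n_con] * n_con
    return [m / total for m in means]


def total_cv(cv_row, sig):
    """Total_CV(x) = sum_j Sig_j * CV_j(x)."""
    return sum(s * c for s, c in zip(sig, cv_row))


def penalized_fitness(objective, cv_row, sig, weight=1.0):
    """Assumed combination: objective plus weighted total violation."""
    return objective + weight * total_cv(cv_row, sig)
```

Recomputing the significance values every generation lets the weights follow whichever constraints the current population struggles with most.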

Problem: Premature Convergence in Molecular Optimization

- Symptoms: In molecule generation tasks, the algorithm repeatedly produces similar molecular structures and fails to discover novel candidates with improved properties.

- Solution: Integrate Exploration-Enhancing Operations.

- Description: Incorporate specific operations that actively disrupt convergence to push the search into new areas of the chemical space.

- Protocol: Following the SIB-SOMO framework for molecule optimization [27]:

- MIX Operation: Combine a current molecule (particle) with its local best and the global best molecule to create new candidates.

- MOVE Operation: Select the best candidate from the original and mixed molecules.

- Random Jump/Vary Operation: If the original particle remains the best after MIX, apply a "Random Jump" that randomly alters a portion of its structure (e.g., atoms or bonds). This introduces infeasible or novel intermediates that help escape local optima.

- Expected Outcome: Enhanced exploration of the vast molecular space, leading to the discovery of more diverse and novel molecular structures with desired properties in a shorter time [27].

Experimental Protocols for Key Studies

Protocol 1: Utilizing a Global Diversity Strategy for CMOPs (EGDCMO) This protocol is based on the EGDCMO algorithm designed for constrained multi-objective problems with small feasible regions [24].

- Initialization: Generate an initial population P and a set of weight vectors to partition the objective space into subregions.

- Loop for a maximum number of generations:

- Reproduction: Create offspring Q from P using genetic operators.

- Combination: Let R = P ∪ Q.

- Feasible Selection: Select all feasible solutions from R to form F.

- Infeasible Selection (Global Diversity): From the remaining infeasible solutions, select a set I that is well-distributed across the subregions defined by the weight vectors. Use a new fitness function that balances constraint violation and objective value for selection.

- New Population: Form the next generation P by combining F and I.

- Output: The final feasible, non-dominated solutions.

Protocol 2: A Dual-Population Approach for Many-Objective Problems (DP-NSGA-III) This protocol is for challenging constrained many-objective problems (CMaOPs) and is derived from the DP-NSGA-III algorithm [25].

- Initialization: Create two populations: Pop1 (main) and Pop2 (auxiliary). Initialize an ε value using the provided formula based on initial constraint violation.

- Loop for a maximum number of generations:

- Evolve Pop1: Use NSGA-III with an ε-constraint method. Solutions with violation < ε are treated as feasible during environmental selection.

- Evolve Pop2: Use NSGA-III but completely ignore all constraints during environmental selection.

- Offspring Sharing: Combine the offspring from both populations. Each population uses the combined offspring pool for its next evolution step.

- Update ε: Decrease the ε value according to the monotonically decreasing function.

- Output: The final Pop1 population.
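The ε-related steps of this protocol (treating low-violation solutions as feasible and shrinking ε over time) can be sketched as follows. The protocol refers to a specific initialization and decay formula that is not reproduced here, so the polynomial schedule below is a commonly used stand-in, not the DP-NSGA-III formula. The names `epsilon_schedule`, `eps_better`, `cutoff_gen`, and `cp` are assumptions for this example.

```python
def epsilon_schedule(eps0, gen, cutoff_gen, cp=2.0):
    """Assumed decay: eps0 * (1 - gen / cutoff_gen) ** cp, then 0."""
    if gen >= cutoff_gen:
        return 0.0
    return eps0 * (1.0 - gen / cutoff_gen) ** cp


def eps_better(a, b, eps):
    """Compare a and b, each an (objective, violation) tuple.

    A violation of at most eps counts as feasible, so eps = 0 recovers
    the plain feasibility rule.
    """
    fa, va = a
    fb, vb = b
    a_ok, b_ok = va <= eps, vb <= eps
    if a_ok and b_ok:        # both quasi-feasible: lower objective wins
        return fa < fb
    if a_ok != b_ok:         # only one quasi-feasible: it wins
        return a_ok
    return va < vb           # both beyond eps: lower violation wins
```

For example, a solution `(1.0, 0.3)` beats the feasible solution `(2.0, 0.0)` while ε is 0.5, but loses once ε has shrunk to 0.1, which is the intended shift from exploration to strict feasibility.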

Research Reagent Solutions

The table below lists key algorithmic components and their functions in research on infeasible solutions.

| Component/Strategy | Primary Function in Research |

|---|---|

| ε-Constraint Method [25] | Dynamically relaxes constraints during early evolution, allowing beneficial infeasible solutions to participate and guide the search. |

| Dual-Population Coevolution [25] | Enables one population to explore the unconstrained objective space, providing genetic information to help a second population satisfy complex constraints. |

| Global Diversity Archive [24] | Actively maintains a diverse set of infeasible solutions across the objective space to prevent premature convergence and aid in exploring disjoint regions. |

| Adaptive Penalty Function [26] | Automatically assigns different weights to constraints based on their violation severity, improving interpretability and convergence. |

| Random Jump Operation [27] | In molecular optimization, it randomly modifies a solution to escape local optima and explore new areas of the chemical space. |

Optimization Strategy Workflow

The following diagram illustrates the logical relationships and workflow of a dual-population approach that leverages infeasible solutions, synthesizing concepts from the cited research.

Frequently Asked Questions: KKT Conditions and Constraints

What do the KKT conditions represent in a constrained optimization problem? The Karush-Kuhn-Tucker (KKT) conditions are first-order necessary conditions for a solution in nonlinear programming to be optimal, provided that some regularity conditions are satisfied. They generalize the method of Lagrange multipliers to allow for inequality constraints. A key interpretation is that at the optimum, the gradient of the objective function can be expressed as a linear combination of the gradients of the active constraints, effectively balancing the "forces" that keep the solution within the feasible region [28].

What does the *Stationarity* condition mean? The stationarity condition requires that at the optimal point ( x^* ), the gradient of the Lagrangian function ( L ) with respect to ( x ) is zero. For a minimization problem, this is expressed as ( \partial f(x^*) + \sum_{j=1}^{\ell} \lambda_j \partial h_j(x^*) + \sum_{i=1}^{m} \mu_i \partial g_i(x^*) \ni \mathbf{0} ). This means the objective function's gradient is balanced by the gradients of the constraints [28].

Why is *Dual Feasibility* ( \mu_i \geq 0 ) required for inequality constraints? The dual feasibility condition requires the Lagrange multipliers ( \mu_i ) of the inequality constraints to be non-negative. This guarantees that, at the optimum, an active inequality constraint pushes against the direction in which the objective decreases. A negative multiplier would imply that the objective could be reduced by moving off the constraint boundary into the interior of the feasible region, so the point could not be a minimizer [28] [29].

What is the practical interpretation of *Complementary Slackness*? Complementary Slackness ( \mu_i g_i(x^*) = 0 ) means that for each inequality constraint, either the constraint is active ( g_i(x^*) = 0 ), or its corresponding Lagrange multiplier is zero ( \mu_i = 0 ), or both. If a constraint is not active ( g_i(x^*) < 0 ), it has no direct influence on the solution (its multiplier is zero). Conversely, a non-zero multiplier ( \mu_i > 0 ) indicates that the constraint is active at the optimum [28] [29].

A solution satisfies the KKT conditions but is clearly not the global minimum. What might be wrong? The KKT conditions are necessary for optimality under certain regularity conditions (like constraint qualifications). If a solution meets the KKT conditions but is not the global minimum, the problem might be non-convex. For convex problems (convex objective and feasible region), the KKT conditions are sufficient, and any point satisfying them is a global minimizer. For non-convex problems, a KKT point could be a local minimum or a saddle point [28] [30].

How do I handle a case where my optimization algorithm converges to an infeasible solution? Convergence to an infeasible solution often indicates issues with the constraint handling method. In evolutionary algorithms, one advanced approach is to use adaptive penalty functions that assign different weights to constraints based on their violation severity. This helps guide the search back towards the feasible region by treating more severely violated constraints as more significant. Additionally, maintaining an archive of infeasible solutions with good objective values can provide directional information to help cross narrow feasible regions [26].

What does it mean if most of my Lagrange multipliers are zero at the solution? This is a common occurrence explained by complementary slackness. It means that the corresponding inequality constraints are not active at the solution ( g_i(x^*) < 0 ) and thus do not directly influence the optimal point. In practical terms, you could potentially remove these constraints from your model without changing the optimal solution, simplifying the problem [28] [29]. For non-convex problems this holds only locally, since an inactive constraint may still exclude a better region elsewhere in the search space.

In the context of evolutionary constrained optimization, why is it ineffective to treat all constraints equally? In real-world problems, constraints have varying levels of "significance." Treating them equally fails to exploit their individual characteristics. Research shows that assigning different weights to constraints based on their violation severity enhances an algorithm's interpretability and helps it converge more rapidly toward the global optimum. The significance of each constraint can even be investigated spontaneously during the evolution process [26].

Troubleshooting Common KKT Issues

| Problem Symptom | Potential Cause | Diagnostic Steps | Solution & Recommendations |

|---|---|---|---|

| Infeasible KKT System: The KKT equations and complementarity conditions yield no solution. | Incorrect assumption about which constraints are active. | 1. Verify primal feasibility of the candidate point. 2. Check all combinations of active/inactive constraints (e.g., for 3 constraints, check all 8 cases) [30]. | Re-solve the problem by systematically enumerating all active-set combinations. For convex problems, a graphical analysis can identify the active constraints [30]. |

| Violated Regularity: KKT conditions do not hold at a point that appears optimal. | Failure of constraint qualifications (e.g., the LICQ). | Check if the gradients of the active constraints at the point are linearly independent. | Reformulate the constraints to ensure linear independence or use numerical methods less sensitive to CQ failures, such as primal-dual interior point methods [28] [31]. |

| Numerical Instability: Difficulty solving the stationarity conditions due to ill-conditioning. | The problem may be poorly scaled, or the solution may lie very close to the constraint boundary. | Evaluate the condition number of the Hessian of the Lagrangian. | Implement a primal-dual interior point method that follows the "central path," keeping iterates in the interior and improving numerical stability [31]. |

| Trivial Multipliers: All Lagrange multipliers (μ) for inequality constraints are zero at the suspected solution. | No inequality constraints are active, meaning the solution is an unconstrained minimum inside the feasible region. | Check the values of ( g_i(x^*) ). If all are strictly negative, constraints are inactive. | Verify the unconstrained optimum. If it is feasible, the constraints are redundant and can be disregarded for determining the solution [28] [29]. |

Experimental Protocol: Identifying Active Constraints via KKT Analysis

This protocol provides a systematic methodology for empirically verifying the Karush-Kuhn-Tucker conditions in a numerical optimization experiment, a common task in evolutionary constrained optimization research.

1. Objective To verify whether a candidate solution ( x^* ) obtained from a constrained optimization algorithm satisfies the KKT conditions and to correctly identify the set of active constraints.

2. Materials and Computational Environment

- Software: A numerical computing environment (e.g., MATLAB, Python with NumPy/SciPy).

- Algorithm: A constrained optimization solver (e.g., an Evolutionary Algorithm with constraint-handling technique, fmincon in MATLAB, or scipy.optimize.minimize).

- Problem Definition: The objective function ( f(x) ) and constraint functions ( g_i(x) ) and ( h_j(x) ) must be explicitly defined and differentiable.

3. Procedure

- Step 1, Obtain a Candidate Solution: Run your chosen optimization algorithm on the problem to obtain a proposed solution ( x^* ).

- Step 2, Verify Primal Feasibility: Calculate the values of all constraints at ( x^* ). For inequality constraints, confirm ( g_i(x^*) \leq 0 ) for all ( i ). For equality constraints, confirm ( h_j(x^*) = 0 ) for all ( j ). In floating-point practice, test these conditions within a small numerical tolerance. If primal feasibility is violated, the point is not a candidate optimum.

- Step 3, Identify Active Inequality Constraints: The set of active inequality constraints ( A ) is defined as ( A = \{ i \mid g_i(x^*) = 0 \} ). All equality constraints are considered active by definition.

- Step 4, Check Linear Independence of Active Constraints: Compute the gradients ( \nabla g_i(x^*) ) for all ( i \in A ) and ( \nabla h_j(x^*) ) for all ( j ). Verify that this set of gradient vectors is linearly independent. This is a common Constraint Qualification (CQ). If this fails, the KKT conditions may not be necessary at ( x^* ).

- Step 5, Form and Solve the KKT System: Construct the Lagrangian ( L(x, \mu, \lambda) = f(x) + \sum_i \mu_i g_i(x) + \sum_j \lambda_j h_j(x) ). Write the stationarity condition ( \nabla_x L(x^*, \mu, \lambda) = \nabla f(x^*) + \sum_i \mu_i \nabla g_i(x^*) + \sum_j \lambda_j \nabla h_j(x^*) = 0 ). With ( \mu_i = 0 ) for every inactive constraint, this is a system of linear equations in the remaining multipliers ( \mu_i ), ( i \in A ), and ( \lambda_j ). Solve this system for the Lagrange multipliers.

- Step 6, Verify Dual Feasibility and Complementary Slackness: For dual feasibility, check that ( \mu_i \geq 0 ) for all inequality constraints. For complementary slackness, confirm that ( \mu_i g_i(x^*) = 0 ) holds for all inequality constraints. This is automatically satisfied if multipliers for inactive constraints are zero.

4. Expected Results If all steps are completed successfully—primal and dual feasibility are satisfied, the stationarity condition holds, and complementary slackness is met—then the candidate solution ( x^* ) is a KKT point and a strong candidate for a local (or global, for convex problems) optimum.
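The protocol above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not a reference implementation: the function name `check_kkt`, its `(value_fn, gradient_fn)` interface, the tolerance `tol`, and the two-variable test problem are all hypothetical choices made for this example. The multipliers are obtained by least squares because the stationarity system usually has more equations than unknowns.

```python
import numpy as np

def check_kkt(grad_f, ineq, eq, x, tol=1e-6):
    """Check the KKT conditions at x.

    ineq and eq are lists of (value_fn, gradient_fn) pairs for
    g_i(x) <= 0 and h_j(x) = 0 (a hypothetical interface for this sketch).
    """
    x = np.asarray(x, dtype=float)
    g_vals = np.array([g(x) for g, _ in ineq], dtype=float)
    h_vals = np.array([h(x) for h, _ in eq], dtype=float)

    # Step 2: primal feasibility, up to a numerical tolerance.
    if np.any(g_vals > tol) or np.any(np.abs(h_vals) > tol):
        return {"is_kkt": False, "reason": "primal infeasible"}

    # Step 3: inequality constraints holding with (near) equality are active.
    active = np.array([i for i, v in enumerate(g_vals) if abs(v) <= tol], dtype=int)
    grads = [ineq[i][1](x) for i in active] + [dh(x) for _, dh in eq]

    mu = np.zeros(len(ineq))   # multipliers of inactive constraints stay zero,
    lam = np.zeros(len(eq))    # so complementary slackness holds by construction
    gf = np.asarray(grad_f(x), dtype=float)
    residual = gf
    if grads:
        J = np.column_stack(grads)  # one column per active constraint gradient
        # Step 4: LICQ holds when the columns of J are linearly independent.
        if np.linalg.matrix_rank(J) < J.shape[1]:
            return {"is_kkt": None, "reason": "LICQ fails; test inconclusive"}
        # Step 5: solve J @ [mu_A, lam] = -grad f in the least-squares sense.
        sol, *_ = np.linalg.lstsq(J, -gf, rcond=None)
        mu[active] = sol[:len(active)]
        lam[:] = sol[len(active):]
        residual = gf + J @ sol

    # Step 6: stationarity residual and dual feasibility.
    stationary = bool(np.linalg.norm(residual) <= tol)
    dual_ok = bool(np.all(mu >= -tol))
    return {"is_kkt": stationary and dual_ok, "mu": mu, "lam": lam}

# Illustrative problem: minimize (x1 - 2)^2 + (x2 - 1)^2
# subject to x1 + x2 - 2 <= 0 and -x1 <= 0.
grad_f = lambda x: np.array([2 * (x[0] - 2), 2 * (x[1] - 1)])
ineq = [
    (lambda x: x[0] + x[1] - 2, lambda x: np.array([1.0, 1.0])),
    (lambda x: -x[0], lambda x: np.array([-1.0, 0.0])),
]
result = check_kkt(grad_f, ineq, [], [1.5, 0.5])    # the constrained minimizer
rejected = check_kkt(grad_f, ineq, [], [1.0, 1.0])  # feasible but not stationary
```

For solutions returned by an evolutionary algorithm, constraints are rarely active to machine precision, so `tol` will usually need to be loosened to match the accuracy of the candidate point.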

5. Visualization of KKT Verification Logic The workflow for the experimental protocol described above can be logically represented by the following decision tree:

Research Reagent Solutions: Key Components for KKT Experiments

| Item Name | Function / Role in Experiment | Technical Specifications |

|---|---|---|

| Analytical Gradient Function | Provides exact first-derivative information for the objective and constraints, essential for forming the stationarity condition. | Must output \( \nabla f(x) \), \( \nabla g_i(x) \), and \( \nabla h_j(x) \) as vectors/matrices. Symbolic differentiation tools are ideal. |

| Lagrangian Formulation | The core function that combines the objective and constraints into a single unconstrained-looking function, incorporating the Lagrange multipliers. | \( L(x, \mu, \lambda) = f(x) + \sum_i \mu_i g_i(x) + \sum_j \lambda_j h_j(x) \) [28]. |

| Linear System Solver | A numerical routine to solve the system of equations arising from the stationarity condition \( \nabla_x L = 0 \) for the multiplier values. | Should handle overdetermined and ill-conditioned systems (e.g., least squares via QR factorization or SVD). |

| Constraint Qualification Check | A procedure to verify that the gradients of active constraints are linearly independent, ensuring the necessity of the KKT conditions. | Algorithm to compute the rank of the matrix formed by \( \nabla g_i(x^*) \) and \( \nabla h_j(x^*) \). |

| Primal-Dual Interior Point Solver | An optimization algorithm that naturally generates sequences of primal variables and Lagrange multipliers, useful for benchmarking and validation. | Configured to follow the central path, providing both the solution and valid multipliers [31]. |

Advanced Methodologies for Leveraging Infeasible Solutions in Evolutionary Algorithms

Multi-Stage and Multi-Population Evolutionary Frameworks

Frequently Asked Questions (FAQs)

1. What does "infeasible solution" mean in the context of evolutionary optimization? An infeasible solution is a candidate solution proposed by the evolutionary algorithm that violates one or more of the problem's constraints. In a constrained optimization problem, the goal is to find a solution that not only optimizes an objective function (e.g., minimizes cost or maximizes efficacy) but also satisfies all given limitations, such as budgetary caps, resource capacity, or physical laws. When an algorithm produces an infeasible solution, it is not a valid answer to the problem as posed [1] [2].

2. What are the first steps I should take when my model is consistently infeasible? The first steps involve verifying the correctness of your model and data [1].

- Check Input Data: Perform thorough sanity checks on your input data. Inaccuracies, such as overpromising client commitments beyond your actual production capacity, are a common source of infeasibility [1].

- Review Model Formulation: Check for simple coding errors, including incorrect variable types, indexing mistakes, erroneous bounds, or using an equality constraint where an inequality would be more appropriate. It is highly recommended to start with an algebraic formulation of your model before coding to simplify verification [1].

3. What is an IIS and how can it help me? An IIS (Irreducible Inconsistent Subsystem or Irreducible Infeasible Set) is a powerful tool provided by solvers like Gurobi, CPLEX, and XPRESS. An IIS is a minimal subset of your model's constraints and variable bounds that is itself infeasible. If any single constraint or bound is removed from this subset, the subsystem becomes feasible. Analyzing the IIS helps you pinpoint the specific set of conflicting rules causing the infeasibility, saving considerable time in debugging large models [1] [2].
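To make the idea of an irreducible set concrete, here is a minimal sketch of the classic deletion filter, which shrinks an infeasible constraint set to an IIS using only a feasibility oracle. It is not the algorithm any particular solver uses internally. The toy oracle `is_feasible`, the single-variable interval constraints, and the constraint names are assumptions made for illustration; in practice the oracle would be a call to your solver.

```python
def is_feasible(constraints):
    """Toy oracle: each constraint is (name, low, high), meaning low <= x <= high."""
    low = max((c[1] for c in constraints), default=float("-inf"))
    high = min((c[2] for c in constraints), default=float("inf"))
    return low <= high

def deletion_filter(constraints, feasible=is_feasible):
    """Reduce an infeasible constraint list to an irreducible infeasible subset."""
    if feasible(constraints):
        raise ValueError("model is feasible; no IIS exists")
    core = list(constraints)
    for c in list(constraints):
        trial = [k for k in core if k is not c]
        # If the model stays infeasible without c, then c is not needed
        # to explain the conflict and can be dropped for good.
        if not feasible(trial):
            core = trial
    return core

inf = float("inf")
model = [
    ("demand", 120.0, inf),     # x >= 120
    ("capacity", -inf, 100.0),  # x <= 100
    ("min_batch", 10.0, inf),   # x >= 10
    ("budget", -inf, 500.0),    # x <= 500
]
iis = deletion_filter(model)
iis_names = [name for name, _, _ in iis]  # ["demand", "capacity"]
```

Removing either remaining constraint makes the toy model feasible, which is exactly the irreducibility property described above.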

4. What are slack variables and the penalty method? This is a widely used strategy to manage infeasibility by softening hard constraints [1] [2].

- Slack Variables: A slack variable is added to a constraint, effectively allowing it to be "violated" by a certain amount.

- Penalty Method: The value of the slack variable, which measures the amount of violation, is then penalized in the objective function. The optimizer must then balance the original goal (e.g., minimizing cost) with the new goal of minimizing constraint violations. This approach often reflects real-world scenarios where some constraints can be bent at a cost (e.g., hiring temporary workers to overcome a labor shortage) [1].
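In symbols, one common way to write the softened problem is shown below. It assumes inequality constraints \( g_i(x) \leq 0 \), nonnegative slack variables \( s_i \), and user-chosen penalty weights \( w_i > 0 \); the weights are an illustrative assumption and are not prescribed by the cited sources.

\[
\begin{aligned}
\min_{x,\, s} \quad & f(x) + \sum_i w_i s_i \\
\text{s.t.} \quad & g_i(x) \leq s_i, \quad s_i \geq 0 \quad \text{for all } i .
\end{aligned}
\]

Because each \( s_i \) appears only in its own constraint and is penalized, an optimal solution has \( s_i = \max( g_i(x), 0 ) \), so the penalty term is a weighted version of the inequality part of the violation metric \( G(x) \).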

5. How does the Boundary Update (BU) method work? The Boundary Update method is an implicit constraint handling technique that dynamically adjusts the lower and upper bounds of decision variables during the optimization process. It uses the problem's constraints to iteratively cut away portions of the infeasible search space, thereby guiding the algorithm toward the feasible region more quickly. However, this twisting of the search space can make the optimization problem more challenging. To counter this, switching mechanisms can be implemented to revert to the original problem landscape once the feasible region is found [32].

6. What is a Random Key Genetic Algorithm and how does it handle constraints? A Random Key Genetic Algorithm (RKGA) is a genetic algorithm variant that ensures feasibility through a specialized decoding function. In an RKGA, chromosomes are encoded as vectors of real numbers (random keys). A decoding function is then designed to map any given chromosome to a feasible solution to the original problem. Because the decoder guarantees feasibility, the evolutionary algorithm operates without ever evaluating an infeasible solution. Designing this decoder is problem-specific, but when such a decoder can be constructed the approach is often superior to penalty-based methods [33].
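As a small illustration of the decoding idea, the sketch below uses a common decoder for sequencing problems: sorting positions by their key values turns any real-valued chromosome into a valid permutation. The function names and the biased uniform crossover are assumptions for this example and are not taken from [33].

```python
import random

def decode_random_keys(keys):
    """Map a vector of random keys to a permutation by sorting indices by key."""
    return sorted(range(len(keys)), key=lambda i: keys[i])

def uniform_crossover(parent_a, parent_b, rng, bias=0.7):
    """Take each key from parent_a with probability `bias`, else from parent_b."""
    return [a if rng.random() < bias else b for a, b in zip(parent_a, parent_b)]

rng = random.Random(0)
parent_a = [rng.random() for _ in range(5)]
parent_b = [rng.random() for _ in range(5)]
child = uniform_crossover(parent_a, parent_b, rng)

order = decode_random_keys([0.46, 0.91, 0.33, 0.75, 0.51])  # [2, 0, 4, 3, 1]
child_order = decode_random_keys(child)  # always a permutation of 0..4
```

Crossover acts on the keys rather than on the permutation, so every offspring decodes to a feasible ordering and no repair step is needed.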

Troubleshooting Guides

Guide 1: Diagnosing and Resolving Infeasibility in Optimization Models

This guide outlines a systematic workflow for handling infeasible models. The process begins when a solver returns an infeasible status.

Diagram 1: A systematic workflow for diagnosing and resolving model infeasibility.

Step 1: Initial Verification Before deep diving, confirm the basics. Manually check your input data for accuracy and review your model's code for simple errors. A known feasible solution can be input into the model to see if it is correctly recognized as feasible [1].

Step 2: Identify the Core Conflict Use your solver's built-in tool to compute the Irreducible Inconsistent Subsystem (IIS). This report is the most direct way to identify the minimal set of constraints that are mutually exclusive [1] [2].

Step 3: Apply a Resolution Strategy Based on the IIS analysis, choose a resolution path:

- Model Reformulation: If the IIS reveals a modeling error (e.g., an incorrect sign or an overly restrictive bound), correct the formulation directly [1] [2].

- Slack Variables and Penalization: If the constraints are correct but too rigid, introduce slack variables. This transforms hard constraints into soft constraints, making the model feasible by construction and guiding the solver toward a solution that minimizes both the original objective and the constraint violations [1] [2].

- Constraint Prioritization: For complex models, assign priority levels to different constraints. During the resolution process, the solver can then relax lower-priority constraints first. This can be implemented by setting different penalty weights for the slack variables of different constraints [2].
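Constraint prioritization through penalty weights can be sketched as follows. The constraint names, the weight values, and the two candidate plans are invented for illustration; the only point is that a larger weight makes the optimizer prefer violating the lower-priority constraint.

```python
def penalized_cost(base_cost, slacks, weights):
    """Original objective plus a weighted penalty on each constraint's slack."""
    return base_cost + sum(weights[name] * max(s, 0.0) for name, s in slacks.items())

# Higher weight = higher priority (illustrative values only).
weights = {"safety_stock": 1000.0, "overtime_cap": 10.0}

# Plan A bends the low-priority constraint; plan B bends the high-priority one.
plan_a = {"cost": 100.0, "slacks": {"safety_stock": 0.0, "overtime_cap": 5.0}}
plan_b = {"cost": 90.0, "slacks": {"safety_stock": 1.0, "overtime_cap": 0.0}}

cost_a = penalized_cost(plan_a["cost"], plan_a["slacks"], weights)  # 100 + 10 * 5
cost_b = penalized_cost(plan_b["cost"], plan_b["slacks"], weights)  # 90 + 1000 * 1
preferred = "A" if cost_a < cost_b else "B"
```

Plan B has the lower original cost, yet plan A wins once penalties are included, because relaxing the overtime cap is far cheaper than relaxing the safety-stock requirement.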

Guide 2: Implementing a Hybrid Boundary Update Framework

This guide provides a methodology for implementing a multi-stage framework that uses the Boundary Update method to quickly find feasibility, then switches to a standard optimization process.

Experimental Protocol

- Objective: To enhance the convergence speed of evolutionary algorithms on constrained problems by rapidly locating the feasible region.

- Algorithms: This framework can be coupled with any evolutionary algorithm (e.g., Genetic Algorithm, Particle Swarm Optimization) for both single and multi-objective problems [32].

- Core Mechanism: The BU method is an implicit constraint handling technique that iteratively updates the bounds of decision variables to cut off infeasible regions of the search space [32].

- Key Innovation (Switching Mechanism): Since continuous BU can distort the search space, two switching thresholds are proposed to transition the optimization process back to the original problem landscape [32].

Diagram 2: A two-stage optimization framework using the Boundary Update method.

Detailed Methodology:

- Initialization: Start with a population of randomly generated individuals within the original variable bounds [34] [35].

- Stage 1 (BU Phase):

  - Evaluation: Calculate the fitness and constraint violation for each individual [32].

  - Boundary Update: Use the constraints to calculate new, tighter bounds for the decision variables. This reduces the available search space, cutting away infeasible regions [32].

  - Evolution: Apply standard evolutionary operators (selection, crossover, mutation) within the updated bounds to create a new generation [36] [35].

  - Switching Condition Check: After each generation, evaluate one of two conditions [32]:

    - Hybrid-cvtol: Switch when the total constraint violation across the entire population reaches zero.

    - Hybrid-ftol: Switch when the improvement in the objective function value stalls for a predefined number of generations.

- Stage 2 (Standard EA Phase): Once the switching condition is met, disable the BU method. Continue the evolutionary optimization using the original, untransformed search space and a standard constraint handling technique (e.g., feasibility rules) until the termination condition is met [32].
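The two switching conditions can be expressed as simple predicates evaluated once per generation. This is one possible reading of the descriptions above, not the implementation from [32]: the function names, the `tol`, `patience`, and `ftol` parameters, and the interpretation of "stalls" as "the best objective changed by less than `ftol` over the last `patience` generations" are assumptions.

```python
def cvtol_switch(violations, tol=0.0):
    """Hybrid-cvtol: the population's total constraint violation has reached zero."""
    return sum(violations) <= tol

def ftol_switch(best_history, patience, ftol=1e-8):
    """Hybrid-ftol: the best objective has stalled for `patience` generations."""
    if len(best_history) <= patience:
        return False  # not enough generations recorded yet
    return abs(best_history[-1 - patience] - best_history[-1]) < ftol

# Per-individual violations for two hypothetical populations.
still_infeasible = cvtol_switch([0.0, 0.3, 0.0])  # False: keep using BU
all_feasible = cvtol_switch([0.0, 0.0, 0.0])      # True: switch to Stage 2

# Best objective value recorded at the end of each generation.
stalled = ftol_switch([5.0, 4.0, 3.0, 3.0, 3.0, 3.0], patience=3)  # True
improving = ftol_switch([5.0, 4.0, 3.0, 2.0], patience=3)          # False
```

In a main loop, the chosen predicate would be checked after each Stage 1 generation; once it returns True, the bounds are reset to the original ones and the run continues with the standard constraint handling technique.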

Research Reagent Solutions

The following table details key algorithmic components and their functions in implementing advanced constrained evolutionary frameworks.

| Research Reagent | Function in the Experimental Framework |

|---|---|

| Boundary Update (BU) Method | An implicit constraint handling technique that dynamically tightens variable bounds to steer the population toward the feasible region [32]. |

| Switching Mechanism (Hybrid-cvtol/ftol) | A critical control that transitions the algorithm from the distorted BU landscape back to the original problem space to facilitate better convergence [32]. |

| Slack Variables with Penalty Weights | An explicit method to soften hard constraints, allowing controlled violations that are penalized in the objective function, thus ensuring the relaxed model is feasible [1] [2]. |

| IIS (Irreducible Inconsistent Subsystem) Analyzer | A diagnostic tool within mathematical solvers that identifies the minimal set of conflicting constraints, invaluable for model debugging [1] [2]. |

| Feasibility Rules | An explicit constraint handling method often used in tandem with BU; it prioritizes selection of feasible solutions over infeasible ones [32]. |
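As a hedged sketch of how feasibility rules are commonly applied in selection, the snippet below pairs the violation metric G(x) with a sort key for a minimization problem. The ordering used here (feasible before infeasible, feasible ranked by objective, infeasible ranked by violation) is the widely used form of the rules; the function names and the sample population are illustrative assumptions.

```python
def total_violation(g_values, h_values, delta=1e-4):
    """G(x) = sum of max(g_i, 0) + sum of max(|h_j| - delta, 0)."""
    return (sum(max(g, 0.0) for g in g_values)
            + sum(max(abs(h) - delta, 0.0) for h in h_values))

def feasibility_key(individual):
    """Sort key for minimization; individual is (objective, violation)."""
    objective, violation = individual
    if violation == 0.0:
        return (0, objective)  # feasible: ranked by objective value
    return (1, violation)      # infeasible: ranked by degree of violation

population = [(3.0, 0.0), (1.0, 0.5), (5.0, 0.0), (0.5, 2.0)]
ranked = sorted(population, key=feasibility_key)
# ranked == [(3.0, 0.0), (5.0, 0.0), (1.0, 0.5), (0.5, 2.0)]

v = total_violation([-1.0, 0.3], [0.0])  # only the second inequality is violated
```

Note that the infeasible individual with the best objective value (0.5) is ranked last, which is exactly the behaviour that methods retaining infeasible solutions try to soften.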

The following table summarizes performance comparisons of constraint handling methods as reported in the literature.

| Method / Algorithm | Key Performance Findings | Comparative Basis |

|---|---|---|

| Improved PSO with Sparse Penalty [37] | Average value increased by at least 15x on single-peak test functions; always found global optimum on multi-peak functions. | Compared to 3 other PSO algorithms on 6 test functions. |

| Hybrid BU-Switching Method [32] | Significantly boosted convergence speed and found better solutions for constrained problems. | Benchmarked against EA with and without BU over the entire search process. |

| Improved PSO for Image Enhancement [37] | Performance indicators saw at least a 5% increase; algorithm running time increased by a minimum of 15%. | Compared to other evolutionary algorithms for contrast enhancement on multiple datasets. |

Adaptive Penalty Functions with Constraint-Specific Weighting

This technical support resource provides troubleshooting guides and detailed methodologies for researchers implementing adaptive penalty functions in constrained evolutionary optimization.

Troubleshooting Guide: Frequently Asked Questions

FAQ 1: My optimization converges to an infeasible solution even with an adaptive penalty. What could be wrong? This often occurs when the penalty method fails to effectively balance the objective function and constraints. The adaptive penalty method (APM) is designed to behave like a primal-dual active set method as the solution residual decreases, ensuring exact imposition of constraints in the limit [38]. Check whether your penalty parameter is adapting correctly using the auxiliary problem at each iteration. Also, verify that your method transitions properly from exploring infeasible regions to exactly enforcing constraints as the solution converges.

FAQ 2: How do I determine appropriate initial weights for constraint-specific weighting?