Solving Knowledge Transfer Failure in EMTO: A Troubleshooting Guide for Biomedical Researchers

This article provides a comprehensive framework for diagnosing and resolving knowledge transfer failures in Evolutionary Multi-task Optimization (EMTO), with a special focus on applications in drug development and clinical research.


Abstract

This article provides a comprehensive framework for diagnosing and resolving knowledge transfer failures in Evolutionary Multi-task Optimization (EMTO), with a special focus on applications in drug development and clinical research. It explores the foundational principles of EMTO, details advanced methodological approaches for facilitating positive transfer, offers a systematic troubleshooting guide for common failure modes, and presents validation strategies for comparative algorithm analysis. The content is tailored to help researchers and scientists enhance optimization efficiency, avoid negative transfer, and accelerate complex biomedical research processes such as multi-target drug discovery and clinical trial optimization.

Understanding EMTO and Knowledge Transfer: Core Concepts and Common Pitfalls

Evolutionary Multi-task Optimization (EMTO) and Its Relevance to Biomedical Research

Defining Evolutionary Multi-task Optimization (EMTO)

What is Evolutionary Multi-task Optimization (EMTO)?

Evolutionary Multi-task Optimization (EMTO) is a paradigm in evolutionary computation that aims to solve multiple optimization problems (tasks) simultaneously within a single evolutionary algorithm [1]. Unlike traditional evolutionary algorithms that handle one problem at a time, EMTO capitalizes on the implicit parallelism of population-based search and the existence of underlying commonalities between tasks. It facilitates bidirectional knowledge transfer between tasks, allowing the problem-solving experience gained for one task to assist in, and benefit from, solving other related tasks [1] [2].

How does EMTO differ from traditional optimization methods?

- Traditional Evolutionary Algorithms: Optimize a single task independently. Solving multiple tasks requires separate, independent optimization runs [1].

- EMTO: Creates a multi-task environment, typically evolving a single population of individuals that are evaluated across multiple tasks. It introduces mechanisms for knowledge transfer (KT) across tasks during the evolutionary process, promoting mutual enhancement [1].

The Relevance of EMTO to Biomedical Research

EMTO holds significant promise for biomedical research, where complex, correlated optimization problems are common. The following table summarizes its potential applications and associated data types.

Table 1: Potential EMTO Applications in Biomedical Research

| Application Area | Description of Multi-Task Scenario | Data/Model Types |

|---|---|---|

| Drug Discovery | Concurrently optimizing multiple molecular properties (e.g., efficacy, solubility, metabolic stability) for a single compound or a series of related compounds [3]. | Molecular structures, Quantitative Structure-Activity Relationship (QSAR) models. |

| Medical Image Analysis | Simultaneously performing multiple analysis tasks on medical images (e.g., segmentation, feature extraction, and classification for different disease markers) [3]. | MRI, CT, or X-ray images; annotated image datasets. |

| Clinical Decision Support | Optimizing multiple treatment outcome predictions or diagnostic rules simultaneously, leveraging commonalities between patient subgroups or related conditions [3]. | Electronic Health Records (EHRs), patient demographic and clinical data. |

The core principle is that by exploiting the synergies between related biomedical optimization tasks, EMTO can achieve performance gains, such as faster convergence to high-quality solutions or the discovery of more robust and generalizable solutions, compared to tackling each task in isolation [1] [3].

EMTO Technical Support Center

FAQ: Core Concepts

1. What is "Knowledge Transfer" in EMTO? Knowledge Transfer (KT) is the fundamental mechanism in EMTO where information or "knowledge" gleaned from the evolutionary search of one task is used to influence and potentially improve the search for another task [1]. This knowledge is often embedded in the genetic material of the population. Effective KT is critical for EMTO's success, as it allows tasks to help each other, leading to performance improvements over single-task optimization.

2. What is "Negative Transfer" and why is it a problem? Negative transfer occurs when knowledge from one task, upon being transferred to another, hinders the optimization performance of the recipient task [1]. This typically happens when the tasks are unrelated or have low correlation, and the transferred knowledge is misleading in the context of the target task. Negative transfer is a central challenge in EMTO research, as it can deteriorate performance compared to independent optimization [1].

3. What are the main algorithmic approaches to EMTO? A key distinction lies in how knowledge transfer is facilitated:

- Implicit Knowledge Transfer: This is seamlessly integrated into genetic operations. A prominent example is the Multifactorial Evolutionary Algorithm (MFEA) [4] [1]. In MFEA, crossover can occur between individuals from different tasks with a certain probability (rmp), allowing for a blending of genetic material without an explicit mapping.

- Explicit Knowledge Transfer: This involves directly constructing a mapping between the search spaces of different tasks [1]. For instance, if the relationship between tasks is known or can be modeled (e.g., one task is a noisy version of another), a mapping function can be designed to transform solutions from one task's space to another's before transfer.
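
To make the role of rmp in implicit transfer concrete, the following is a minimal sketch of one MFEA-style mating event. It is not the reference implementation from [4]: the function name `assortative_mating`, the uniform crossover (a simple stand-in for SBX), the Gaussian mutation width, and the unified [0, 1] encoding are all assumptions made for illustration.

```python
import random


def assortative_mating(parent_a, parent_b, skill_a, skill_b, rmp, rng):
    """Return (child, skill_factor) for one mating event.

    Parents assigned to the same task always cross over. Parents from
    different tasks cross over only with probability rmp; otherwise the
    first parent is mutated alone and no knowledge is transferred.
    """
    same_task = skill_a == skill_b
    if same_task or rng.random() < rmp:
        # Uniform crossover in the unified search space (stand-in for SBX).
        child = [a if rng.random() < 0.5 else b
                 for a, b in zip(parent_a, parent_b)]
        # The child imitates the skill factor of one parent at random.
        skill = skill_a if rng.random() < 0.5 else skill_b
    else:
        # Gaussian mutation, clipped to the assumed [0, 1] encoding.
        child = [min(1.0, max(0.0, a + rng.gauss(0.0, 0.05)))
                 for a in parent_a]
        skill = skill_a
    return child, skill


rng = random.Random(42)
child, skill = assortative_mating([0.1, 0.2], [0.8, 0.9], 0, 1, rmp=0.3, rng=rng)
```

With `rmp=0.0` parents from different tasks never exchange genes, and with `rmp=1.0` they always do, which is why this single parameter governs transfer intensity so directly.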

Troubleshooting Guide: Knowledge Transfer Failures

This guide addresses common issues related to ineffective or detrimental knowledge transfer in EMTO experiments.

Problem: Performance Degradation (Suspected Negative Transfer)

- Symptoms: The algorithm's performance on one or more tasks is worse than if the tasks were optimized independently. Convergence is slower, or the final solution quality is poorer.

- Potential Causes and Solutions:

| Cause | Diagnostic Checks | Resolution Strategies |

|---|---|---|

| Tasks are unrelated | Measure and analyze the similarity between tasks before or during evolution. | Implement adaptive task selection: Dynamically adjust inter-task transfer probabilities based on measured similarity or the success rate of past transfers [1] [2]. |

| Fixed/Excessive Transfer Probability | The Random Mating Probability (rmp) or similar parameter is set too high, forcing excessive transfer between unrelated tasks. | Use adaptive rmp: Instead of a fixed rmp, implement a self-regulated mechanism that automatically adapts the intensity of cross-task knowledge transfer based on the observed degree of relatedness as the search proceeds [2]. |

| Inappropriate Evolutionary Search Operator (ESO) | A single ESO (e.g., only GA or only DE) is used for all tasks, but it may be unsuitable for some [4]. | Adopt a multi-operator strategy: Use multiple ESOs (e.g., both GA and DE) and adaptively control the selection probability of each based on its recent performance on different tasks [4]. |

Problem: Ineffective or Unstable Knowledge Transfer

- Symptoms: The algorithm does not show significant improvement from knowledge transfer. Performance gains are inconsistent across different runs.

- Potential Causes and Solutions:

| Cause | Diagnostic Checks | Resolution Strategies |

|---|---|---|

| Poor Quality of Transferred Solutions | The solutions chosen for transfer are not high-quality or representative of useful building blocks. | Implement quality-based selection: Favor individuals with high fitness or those identified as "elites" for knowledge transfer [5]. Use reasoning methods that consider both search space distribution and objective space evolution information [5]. |

| Lack of Balance in Multi-Objective EMTO | In multi-objective multitask problems, knowledge transfer disrupts the balance between convergence and diversity. | Use collaborative knowledge transfer: Design a mechanism that adaptively performs different knowledge transfer patterns based on the evolutionary stage, using metrics like information entropy to balance convergence and diversity [5]. |

| Naive Transfer in Dissimilar Search Spaces | Transferring solutions directly between tasks with vastly different search space characteristics. | Develop explicit mapping functions: For tasks with known relationships, use techniques like denoising autoencoders or subspace alignment to learn a mapping function between task spaces before transfer [4] [1]. |

Experimental Protocols and Methodologies

Standardized Benchmarking for EMTO

To validate any EMTO algorithm and troubleshoot its performance, standardized benchmarks are crucial. The CEC17 and CEC22 Multitasking Benchmark suites are widely used for this purpose [4]. These benchmarks contain predefined sets of optimization tasks with varying degrees of similarity (e.g., CIHS: Complete-Intersection, High-Similarity; CILS: Complete-Intersection, Low-Similarity), allowing researchers to systematically test an algorithm's ability to handle both positive and negative transfer scenarios.

Table 2: Exemplar Benchmark Problems from CEC17

| Problem Type | Similarity Level | Key Challenge |

|---|---|---|

| CIHS | High | Tests the algorithm's ability to leverage strong commonalities between tasks. |

| CIMS | Medium | Presents an intermediate challenge for knowledge transfer. |

| CILS | Low | Tests the algorithm's robustness against negative transfer. |

Protocol: Evaluating Knowledge Transfer Effectiveness

- Baseline Establishment: Run a traditional single-task evolutionary algorithm (e.g., DE, GA) independently on each task in the benchmark. Record the convergence speed and final best fitness.

- EMTO Execution: Run the proposed EMTO algorithm on the entire set of tasks simultaneously.

- Performance Comparison: For each task, compare the convergence curve and final solution obtained by the EMTO against the single-task baseline.

- Success Metric: A successful EMTO will show faster convergence and/or a better final solution on at least some tasks, without significant degradation on others, demonstrating positive knowledge transfer.
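
The comparison step of this protocol can be sketched as follows, assuming minimization and convergence curves stored as one best-fitness value per generation. The helper names `generations_to_target` and `compare_task`, and the idea of a user-chosen `target` fitness, are illustrative assumptions rather than part of any benchmark suite.

```python
def generations_to_target(curve, target):
    """First generation whose best fitness is <= target, or None if never reached."""
    for generation, fitness in enumerate(curve):
        if fitness <= target:
            return generation
    return None


def compare_task(baseline_curve, emto_curve, target):
    """Summarise final quality and convergence speed for one task."""
    return {
        "baseline_final": baseline_curve[-1],
        "emto_final": emto_curve[-1],
        "baseline_hit": generations_to_target(baseline_curve, target),
        "emto_hit": generations_to_target(emto_curve, target),
    }


report = compare_task([10.0, 5.0, 2.0, 1.0], [10.0, 3.0, 0.5, 0.4], target=1.0)
```

In practice the curves would be averaged over several independent runs before being compared, since a single run says little about either algorithm.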

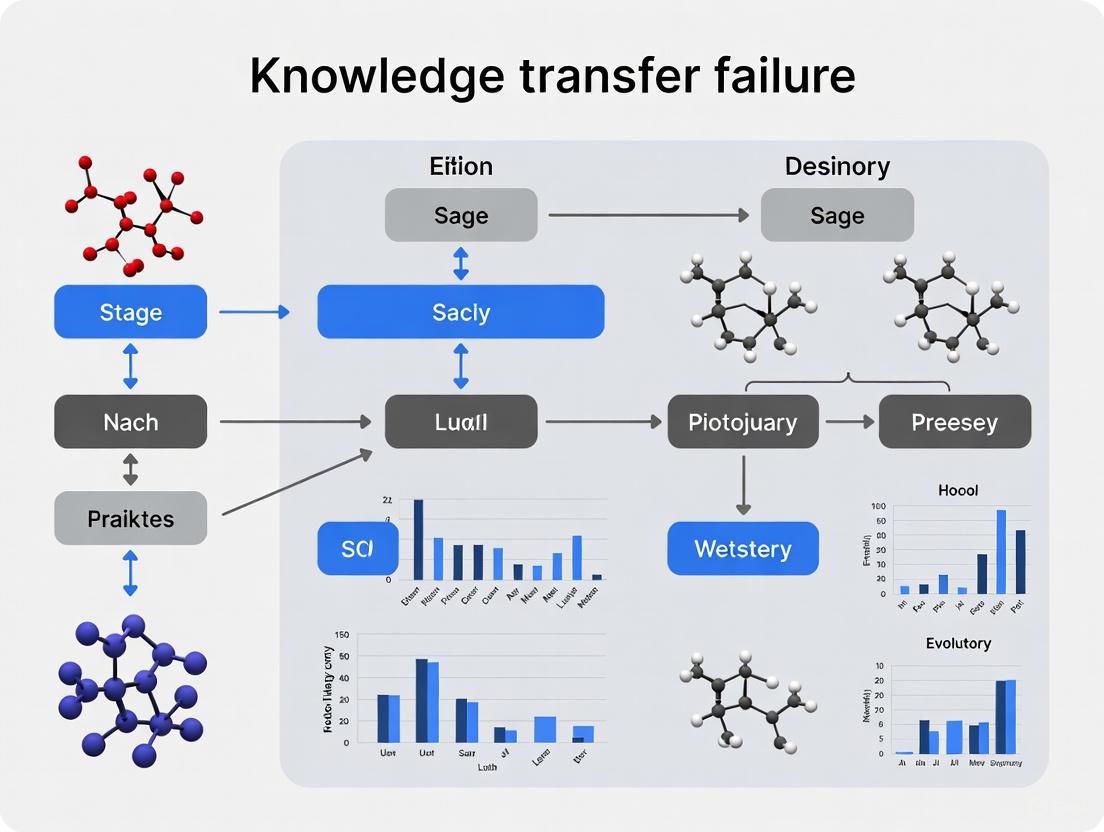

Visualization of a Generic EMTO Framework

The following diagram illustrates the core workflow and logical structure of a typical Evolutionary Multi-task Optimization algorithm, highlighting the central role of knowledge transfer.

Generic EMTO Workflow

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key "Research Reagent Solutions" for EMTO Experimentation

| Item / Concept | Function / Purpose in EMTO Research |

|---|---|

| Multifactorial Evolutionary Algorithm (MFEA) | A foundational and representative EMTO algorithm inspired by biocultural models. It provides a baseline framework for implementing implicit knowledge transfer using skill factors and assortative mating [4] [1]. |

| Random Mating Probability (rmp) | A critical parameter in algorithms like MFEA that controls the frequency of crossover between individuals from different tasks. It directly governs the intensity of knowledge transfer [4] [1]. |

| CEC17/CEC22 Benchmark Suites | Standardized sets of multitask optimization problems used to rigorously test, compare, and validate the performance of new EMTO algorithms against established benchmarks [4]. |

| Differential Evolution (DE) Operators | A family of evolutionary search operators (e.g., DE/rand/1) known for their strong exploration capabilities. Often used in combination with other operators like GA in adaptive strategies [4]. |

| Simulated Binary Crossover (SBX) | A crossover operator commonly used in Genetic Algorithms (GAs) and EMTO variants like MFEA. It creates offspring near parents, promoting a focused search [4]. |

| Explicit Mapping Functions | Tools (e.g., autoencoders, subspace alignment) used in explicit knowledge transfer to transform solutions from one task's search space to another, mitigating transfer issues between dissimilar spaces [1] [5]. |

| Adaptive Parameter Control | A strategy where key algorithm parameters (e.g., rmp, operator choice) are not fixed but are dynamically adjusted during the run based on feedback from the search process, which is crucial for mitigating negative transfer [4] [2]. |

The Critical Role of Knowledge Transfer in Accelerating Concurrent Optimization

Frequently Asked Questions (FAQs)

1. What are the most common causes of knowledge transfer failure in Evolutionary Multitask Optimization (EMTO)? Most failures take the form of negative transfer or transfer bias, in which knowledge from one task disrupts the optimization of another. The usual causes are:

- Incorrect transfer source selection: Choosing a source task that is not sufficiently similar to the target task [6].

- Improper transfer timing and intensity: Using fixed, non-adaptive knowledge transfer probabilities that do not align with the evolutionary stage of the tasks [7] [6].

- Over-reliance on a single knowledge space: Focusing knowledge transfer solely on the search space while ignoring valuable evolutionary information in the objective space, which can lead to degraded performance [5].

2. How can I adaptively control knowledge transfer to prevent negative transfer? You can implement strategies that dynamically adjust knowledge transfer based on real-time feedback:

- Reinforcement Learning: Use a Deep Q-Network (DQN) to learn the relationship between evolutionary scenarios (states) and the most effective scenario-specific strategies (actions) [7].

- Information Entropy: Divide the population evolution into stages and use information entropy to adaptively switch between different knowledge transfer patterns, balancing convergence and diversity [5].

- Anomaly Detection: Integrate anomaly detection to identify and filter out potentially detrimental individuals before they are transferred from a source task [6].
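
As one illustration of the anomaly detection idea, the sketch below drops transfer candidates that lie unusually far from the target population. The distance-to-centroid rule and the `z_max` cutoff are simple assumptions chosen for brevity; they are not the detector described in [6].

```python
import math
import statistics


def filter_transfer_candidates(candidates, target_population, z_max=2.0):
    """Keep candidates whose distance to the target centroid is not anomalous."""
    dims = len(target_population[0])
    centroid = [statistics.fmean(ind[d] for ind in target_population)
                for d in range(dims)]
    # Distances of the target's own individuals define what counts as "normal".
    dists = [math.dist(ind, centroid) for ind in target_population]
    limit = statistics.fmean(dists) + z_max * statistics.pstdev(dists)
    return [c for c in candidates if math.dist(c, centroid) <= limit]


target = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]
kept = filter_transfer_candidates([[0.6, 0.4], [4.0, 4.0]], target)
```

Here the candidate at `[4.0, 4.0]` is rejected because it sits far outside the region the target population currently occupies.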

3. What metrics can I use to select the most similar tasks for knowledge transfer? To improve transfer source selection, use metrics that assess multiple facets of similarity:

- Population Distribution Similarity: Calculate the Maximum Mean Discrepancy (MMD) to quantify the similarity between the probability distributions of two task populations [6].

- Evolutionary Trend Similarity: Apply Grey Relational Analysis (GRA) to measure the similarity in the evolutionary trajectories of tasks [6].

- Multi-Feature Ensemble: Develop an ensemble method that characterizes the evolutionary scenario from multiple views, including both intra-task and inter-task features [7].
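
For evolutionary trend similarity, a minimal sketch of the standard grey relational grade is given below, applied to best-fitness trajectories. Min-max normalisation of each trajectory and the distinguishing coefficient `rho=0.5` are conventional choices assumed here; the exact variant used in [6] may differ.

```python
def _normalise(seq):
    """Min-max scale a sequence to [0, 1]; constant sequences map to zeros."""
    lo, hi = min(seq), max(seq)
    span = hi - lo
    return [0.0 if span == 0 else (x - lo) / span for x in seq]


def grey_relational_grades(reference, comparisons, rho=0.5):
    """Grey relational grade of each comparison trajectory against the reference.

    A grade close to 1.0 means the trajectory follows the reference closely.
    """
    ref = _normalise(reference)
    delta_rows = [[abs(r - c) for r, c in zip(ref, _normalise(seq))]
                  for seq in comparisons]
    d_min = min(min(row) for row in delta_rows)
    d_max = max(max(row) for row in delta_rows)
    if d_max == 0.0:
        return [1.0] * len(comparisons)
    return [sum((d_min + rho * d_max) / (d + rho * d_max) for d in row) / len(row)
            for row in delta_rows]


# Target task trajectory versus two candidate source tasks.
grades = grey_relational_grades([10, 6, 3, 1], [[20, 12, 6, 2], [5, 5, 5, 1]])
```

The first candidate has the same shape as the reference after scaling, so it receives the higher grade and would be preferred as a transfer source.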

4. My EMTO algorithm suffers from slow convergence. How can knowledge transfer accelerate it? Effective knowledge transfer directly addresses slow convergence by leveraging learned information across tasks:

- Bi-Space Knowledge Reasoning: Systematically exploit not only population distribution in the search space but also particle evolutionary information in the objective space. This provides a more comprehensive knowledge base to guide the search [5].

- Domain Adaptation: Employ techniques like Progressive Auto-Encoding (PAE) to continuously align the search spaces of different tasks throughout the optimization process, facilitating more effective and efficient knowledge transfer [8].

Troubleshooting Guides

Problem: Negative Knowledge Transfer

Symptoms: The convergence curve of a task plateaus or regresses; the population diversity collapses prematurely; the algorithm performs worse than if tasks were solved independently.

| Diagnosis Step | Action | Reference |

|---|---|---|

| Check Source Similarity | Quantify task similarity using MMD (for population distribution) and GRA (for evolutionary trends). Select transfer sources only when similarity exceeds a threshold. | [6] |

| Inspect Transfer Content | Implement an anomaly detection filter to prevent the transfer of "outlier" individuals that do not fit the local distribution of the target task. | [6] |

| Verify Transfer Mapping | For cross-domain tasks, use a domain adaptation method like auto-encoding to learn a non-linear mapping between search spaces, rather than transferring raw solutions. | [8] |

Problem: Stagnation in Multi-Objective Multitask Optimization (MOMTO)

Symptoms: The Pareto Front (PF) fails to improve or spread; the algorithm struggles to balance convergence and diversity across multiple tasks and objectives.

| Diagnosis Step | Action | Reference |

|---|---|---|

| Analyze Knowledge Spaces | Implement a bi-space knowledge reasoning method to acquire and transfer knowledge from both the search space and the objective space, providing more complete guidance. | [5] |

| Adjust Transfer Pattern | Use an Information Entropy-based Collaborative Knowledge Transfer (IECKT) mechanism. This automatically switches knowledge transfer patterns based on the current evolutionary stage (e.g., exploration vs. exploitation). | [5] |

| Evaluate Task Relationships | Re-assess the potential relationships between tasks in the objective space, which may have been overlooked in favor of search-space relationships. | [5] |

Problem: Poor Performance on Many-Task Problems

Symptoms: Performance degrades significantly as the number of concurrent tasks increases; increased computational overhead from managing transfers.

| Diagnosis Step | Action | Reference |

|---|---|---|

| Audit Transfer Probability | Replace fixed random mating probability (rmp) with an enhanced adaptive strategy. Dynamically control the knowledge transfer probability for each task based on its current knowledge needs. | [6] |

| Simplify Transfer Strategy | Consider a grouping-based method (e.g., K-means clustering) to partition tasks into groups with similar characteristics, restricting knowledge transfer within groups to reduce complexity and risk. | [6] |

| Adopt a Scalable Framework | Utilize a multi-population framework instead of a unified multifactorial one. This provides more explicit control over inter-task interactions and is better suited for a large number of dissimilar tasks. | [8] |

Experimental Protocols

Protocol 1: Implementing an Adaptive Knowledge Transfer Probability Strategy

This methodology dynamically balances task self-evolution and knowledge transfer based on accumulated experience [6].

1. Objective: To enhance EMTO performance by dynamically adjusting the knowledge transfer probability for each task, preventing both insufficient and excessive transfer.

2. Materials/Reagents:

- Algorithm Base: A multi-population Evolutionary Algorithm (EA) framework.

- Similarity Metrics: Code for calculating Maximum Mean Discrepancy (MMD) and Grey Relational Analysis (GRA).

- Probability Model: A symmetric matrix (e.g., RMP matrix) to store inter-task transfer probabilities.

3. Procedure:

Step 1: Initialize the knowledge transfer probability matrix, typically with uniform values.

Step 2: At each generation, for every task, calculate its similarity to other tasks using MMD (population distribution) and GRA (evolutionary trend).

Step 3: Rank potential source tasks based on a composite similarity score.

Step 4: Adjust the transfer probability for each task pair based on the similarity score and the historical success of past transfers between them. Feedback from generated offspring can be used to measure success.

Step 5: Perform knowledge transfer operations (e.g., crossover) using the updated probabilities.

Step 6: Repeat Steps 2-5 until termination criteria are met.
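
Step 4 of this procedure can be sketched as a smoothed update of the symmetric probability matrix. The blending rule (average of similarity and observed success rate), the `learning_rate`, and the clipping bounds are assumptions introduced for illustration; [6] may combine these signals differently. Similarity scores are assumed to be pre-scaled to [0, 1].

```python
def update_transfer_matrix(prob, similarity, successes, attempts,
                           learning_rate=0.3, p_min=0.05, p_max=0.9):
    """Return a new symmetric matrix of inter-task transfer probabilities."""
    n = len(prob)
    new = [row[:] for row in prob]
    for i in range(n):
        for j in range(i + 1, n):
            tried = attempts[i][j] + attempts[j][i]
            won = successes[i][j] + successes[j][i]
            # Neutral prior when a pair has not been tried yet.
            success_rate = won / tried if tried else 0.5
            target = 0.5 * (similarity[i][j] + success_rate)
            value = (1 - learning_rate) * prob[i][j] + learning_rate * target
            new[i][j] = new[j][i] = min(p_max, max(p_min, value))
    return new


prob = [[0.0, 0.3], [0.3, 0.0]]
similarity = [[1.0, 0.9], [0.9, 1.0]]
successes = [[0, 8], [0, 0]]   # transferred offspring that survived selection
attempts = [[0, 10], [0, 0]]   # transferred offspring that were created
prob = update_transfer_matrix(prob, similarity, successes, attempts)
```

The clipping bounds keep every pair from being switched off permanently, so a relationship that becomes useful later in the run can still be rediscovered.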

Protocol 2: Integrating Bi-Space Knowledge Reasoning for MOMTO

This method improves solution quality in multi-objective problems by leveraging knowledge from both search and objective spaces [5].

1. Objective: To acquire comprehensive knowledge (search space and objective space) and use it to prevent transfer bias and improve the balance between convergence and diversity.

2. Materials/Reagents:

- Algorithm Base: A multi-objective multitask Particle Swarm Optimization (PSO) or EA.

- Knowledge Extraction Modules: Components to analyze population distribution (search space) and particle evolutionary paths (objective space).

- Transfer Mechanism: An entropy-based selector for different transfer patterns.

3. Procedure:

Step 1 - Knowledge Acquisition:

- Search Space Knowledge: Analyze the distribution information of similar populations across tasks.

- Objective Space Knowledge: Extract the evolutionary information of particles, such as progression towards Pareto fronts.

Step 2 - Knowledge Reasoning: Use the bi-space knowledge to reason about the most promising directions for offspring generation and transfer.

Step 3 - Collaborative Transfer:

- Use information entropy to identify the current evolutionary stage (e.g., early-exploration, mid-convergence, late-refinement).

- Adaptively activate one of three knowledge transfer patterns:

  - Pattern A: Favor search space knowledge for diversity.

  - Pattern B: Favor objective space knowledge for convergence.

  - Pattern C: Balanced use of both knowledge types.

Step 4: Integrate the new offspring into the population and repeat.
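
The entropy-based selector in Step 3 might look like the sketch below. It assumes each individual has already been assigned to a grid cell in objective space, and it assumes a particular mapping from entropy to pattern (low entropy triggers Pattern A to restore diversity, high entropy triggers Pattern B to push convergence). Both the mapping and the thresholds are illustrative guesses, not values taken from [5].

```python
import math
from collections import Counter


def normalised_entropy(cell_ids, n_cells):
    """Shannon entropy of grid-cell occupancy, scaled to [0, 1]."""
    if n_cells <= 1 or not cell_ids:
        return 0.0
    total = len(cell_ids)
    counts = Counter(cell_ids)
    h = -sum((c / total) * math.log(c / total) for c in counts.values())
    return h / math.log(n_cells)


def select_pattern(entropy, low=0.3, high=0.7):
    """Map an entropy value to transfer pattern 'A', 'B', or 'C' (assumed rule)."""
    if entropy <= low:
        return "A"   # diversity has collapsed: favour search space knowledge
    if entropy >= high:
        return "B"   # population is spread out: favour objective space knowledge
    return "C"       # in between: balanced use of both


pattern = select_pattern(normalised_entropy([0, 1, 2, 3], n_cells=4))
```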

The Scientist's Toolkit: Research Reagent Solutions

| Item Name | Function in EMTO Experiment | Key Reference |

|---|---|---|

| Multi-factorial Evolutionary Algorithm (MFEA) | A foundational framework that uses a unified population and implicit genetic transfer via a fixed random mating probability (rmp). | [5] |

| Progressive Auto-Encoder (PAE) | A domain adaptation technique that continuously aligns the search spaces of different tasks throughout the evolutionary process, enabling more robust knowledge transfer. | [8] |

| Deep Q-Network (DQN) Model | A reinforcement learning model used to autonomously learn the optimal mapping between an observed evolutionary scenario and the most effective knowledge transfer strategy. | [7] |

| Anomaly Detection Filter | A filter applied during knowledge transfer to identify and exclude outlier individuals from the source task, reducing the risk of negative transfer. | [6] |

| Information Entropy Module | A metric used to divide the evolutionary process into distinct stages, allowing for the adaptive activation of different knowledge transfer patterns. | [5] |

| Maximum Mean Discrepancy (MMD) | A statistical metric used to quantify the similarity between the probability distributions of two task populations, aiding in source task selection. | [6] |

Frequently Asked Questions (FAQs)

Q1: What is negative transfer in the context of Evolutionary Multi-Task Optimization (EMTO)? In EMTO, negative transfer refers to the phenomenon where the transfer of knowledge (e.g., genetic material or solutions) from one optimization task to another interferes with the evolutionary search process, thereby degrading performance compared to solving the tasks independently [1] [9]. It occurs when tasks are not sufficiently related or when the knowledge transfer mechanism is poorly designed, leading to the introduction of unhelpful or misleading information into a task's population [10].

Q2: What are the common symptoms that my EMTO experiment is suffering from negative transfer? The primary symptom is a degradation in optimization performance for one or more tasks within the multi-task environment. Specifically, you may observe [1] [9]:

- Slower Convergence Rate: The algorithm takes significantly longer to find satisfactory solutions for a task compared to a single-task evolutionary algorithm.

- Premature Convergence: The population for a task gets trapped in a local optimum from which it cannot escape.

- Reduced Solution Quality: The best-found solutions for a task are consistently inferior to those found by independent optimization.

Q3: Which factors most commonly contribute to negative transfer? The main contributing factors align with the core challenges of knowledge transfer design [1] [9]:

- Low Inter-Task Relatedness: Transferring knowledge between tasks that have different global optima or landscape characteristics.

- Inappropriate Transfer Timing: Initiating knowledge transfer at a stage in the evolutionary process where the population is not receptive.

- Ineffective Knowledge Selection: Transferring solutions that are not useful or are harmful to the target task's search process.

- Fixed/Static Parameters: Using a fixed random mating probability (rmp) that does not adapt to the evolving relationships between tasks [9].

Q4: Are there quantitative metrics to detect and measure the severity of negative transfer? Yes, researchers employ several metrics to quantify negative transfer. The table below summarizes key performance indicators that can be monitored during experiments.

Table 1: Quantitative Metrics for Detecting Negative Transfer

| Metric Name | Description | How it Indicates Negative Transfer |

|---|---|---|

| Success Rate [9] | The ratio of successful runs where the algorithm finds a satisfactory solution. | A lower success rate in the EMTO setting compared to single-task baselines. |

| Inter-task vs. Intra-task Evolution Rate [9] | The relative improvement contributed by cross-task offspring versus within-task offspring. | A high proportion of inter-task offspring that do not survive selection suggests negative transfer. |

| Performance Loss Margin | The degree to which multi-task performance is worse than single-task performance. | A larger negative margin indicates more severe negative transfer [11]. |
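
Two of these metrics are simple enough to sketch directly, assuming minimization and one final fitness value per independent run. The sign convention for the margin (negative means the multitask run is worse) follows the table above; the function names and the relative normalisation are assumptions.

```python
import statistics


def success_rate(final_fitness, threshold):
    """Fraction of runs whose final fitness reached the threshold (minimization)."""
    return sum(f <= threshold for f in final_fitness) / len(final_fitness)


def performance_loss_margin(single_task_final, multi_task_final, eps=1e-12):
    """Relative margin of the multitask mean over the single-task mean.

    Negative values mean the multitask run is worse (higher mean fitness).
    """
    single = statistics.fmean(single_task_final)
    multi = statistics.fmean(multi_task_final)
    return (single - multi) / max(abs(single), eps)


rate = success_rate([0.1, 0.5, 2.0], threshold=1.0)
margin = performance_loss_margin([1.0, 1.0], [1.5, 1.5])
```

In this toy example the multitask mean is 50% worse than the single-task mean, so the margin is -0.5.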

Q5: What are the primary strategy categories for mitigating negative transfer? Mitigation strategies generally focus on making the knowledge transfer process adaptive and selective [1] [9]:

- Adaptive Transfer Control: Dynamically adjusting the probability of knowledge transfer based on its observed benefits.

- Similarity-based Task Selection: Measuring inter-task similarity (e.g., using Maximum Mean Discrepancy - MMD) and only permitting transfer between highly related tasks [9].

- Loss Balancing: In adjacent fields like Multi-Task Learning, scaling individual task losses based on their magnitudes to prevent one task from dominating, which is a related form of negative transfer [11].

Troubleshooting Guides

Guide 1: Diagnosing Negative Transfer in Your EMTO Workflow

Follow this experimental protocol to confirm and diagnose a negative transfer problem.

Objective: To determine if and to what extent negative transfer is impacting the performance of your EMTO algorithm.

Required Materials:

- Your EMTO algorithm implementation.

- The set of benchmark or real-world tasks you are optimizing.

- Baseline single-task evolutionary algorithm (EA) implementations.

Experimental Protocol:

- Establish Baselines: For each task T_i, run a single-task EA. Record the performance (e.g., best fitness, convergence generation) over multiple independent runs. Calculate average performance.

- Run EMTO: Execute your EMTO algorithm on the entire set of tasks, simultaneously. Ensure all other conditions (population size, function evaluations, etc.) are kept identical to the baseline runs.

- Comparative Analysis: For each task, compare the final performance and convergence trajectory of the EMTO run against the single-task baseline.

- Result Interpretation:

- Positive Transfer Suspected: If performance for a task is superior in the EMTO run.

- No Significant Transfer: If performance is statistically similar.

- Negative Transfer Confirmed: If performance for a task is significantly worse in the EMTO run [1].
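
The interpretation step needs some test of statistical significance, which the protocol leaves open. The sketch below uses a two-sided permutation test on the difference in mean final fitness, chosen here only because it needs nothing beyond the standard library; a rank-based test is an equally reasonable choice. Minimization, the function names, `alpha=0.05`, and the resample count are all assumptions.

```python
import random
import statistics


def permutation_p_value(a, b, n_resamples=2000, seed=0):
    """Two-sided permutation test on the difference in means."""
    rng = random.Random(seed)
    observed = abs(statistics.fmean(a) - statistics.fmean(b))
    pooled = list(a) + list(b)
    hits = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)
        diff = abs(statistics.fmean(pooled[:len(a)])
                   - statistics.fmean(pooled[len(a):]))
        if diff >= observed:
            hits += 1
    # Add-one correction keeps the estimate strictly positive.
    return (hits + 1) / (n_resamples + 1)


def classify_transfer(baseline, emto, alpha=0.05):
    """Label one task's outcome from per-run final fitness values."""
    if permutation_p_value(baseline, emto) >= alpha:
        return "no significant transfer"
    if statistics.fmean(emto) < statistics.fmean(baseline):
        return "positive transfer"
    return "negative transfer"


baseline = [1.0, 1.1, 0.9, 1.05, 0.95, 1.0, 1.02, 0.98]
emto = [2.0, 2.1, 1.9, 2.05, 1.95, 2.0, 2.02, 1.98]
verdict = classify_transfer(baseline, emto)
```

When many tasks are tested at once, a multiple-comparison correction on `alpha` is worth considering before declaring any single task affected.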

The following workflow diagram illustrates this diagnostic process:

Guide 2: Implementing an Adaptive Knowledge Transfer Strategy

This guide provides a methodology for implementing a density-based clustering strategy to mitigate negative transfer, as proposed in recent literature [9].

Objective: To dynamically control knowledge transfer by selecting related tasks and regulating interaction intensity.

Principle: The strategy adapts based on the relative success of inter-task versus intra-task evolution and uses clustering to group individuals from different tasks, allowing for more controlled knowledge exchange within clusters [9].

Experimental Workflow:

The following diagram outlines the key stages of this adaptive strategy within a single generation of an EMTO algorithm.

Detailed Methodology:

Adaptive Mating Selection Mechanism:

- For each task, track the number of offspring created through intra-task crossover that survive to the next generation (intra-task evolution rate).

- Simultaneously, track the number of offspring created through inter-task knowledge transfer that survive (inter-task evolution rate).

- The probability of engaging in knowledge transfer for a task is dynamically adjusted by comparing the relative strength of these two rates. If inter-task evolution is consistently less successful, its probability is reduced [9].
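
The adaptive mating selection mechanism above can be sketched as a small per-task update rule. The fixed `step` size and the clipping bounds are assumptions made for illustration; [9] may use a different update formula.

```python
def adapt_transfer_probability(p, intra_survived, intra_created,
                               inter_survived, inter_created,
                               step=0.05, p_min=0.05, p_max=0.95):
    """Nudge a task's transfer probability toward the more successful offspring source."""
    intra_rate = intra_survived / intra_created if intra_created else 0.0
    inter_rate = inter_survived / inter_created if inter_created else 0.0
    if inter_rate > intra_rate:
        p += step   # cross-task offspring survive more often: transfer more
    elif inter_rate < intra_rate:
        p -= step   # cross-task offspring survive less often: transfer less
    return min(p_max, max(p_min, p))


# 30 of 60 intra-task offspring survived, but only 5 of 40 inter-task offspring.
p = adapt_transfer_probability(0.30, 30, 60, 5, 40)
```

Keeping `p_min` above zero means a small amount of transfer is always attempted, so the inter-task rate can still be measured in later generations.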

Correlation Task Evaluation and Selection:

- For a target task, calculate the similarity to every other source task.

- Use the Maximum Mean Discrepancy (MMD) metric with a Gaussian kernel to quantify the difference in population distributions between the target task and each source task.

- Select the top k source tasks with the smallest MMD values as the most related tasks for potential knowledge transfer [9].
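
A minimal sketch of this selection step follows, using the biased estimator of squared MMD with a Gaussian kernel. The kernel bandwidth `sigma`, the task names, and the helper `top_k_sources` are assumptions; in practice the bandwidth is often set from the data (for example with a median-distance heuristic).

```python
import math


def gaussian_kernel(x, y, sigma):
    """Gaussian (RBF) kernel between two individuals."""
    sq = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq / (2.0 * sigma ** 2))


def mmd_squared(pop_x, pop_y, sigma=1.0):
    """Biased estimate of squared MMD between two populations."""
    def mean_kernel(p, q):
        total = sum(gaussian_kernel(a, b, sigma) for a in p for b in q)
        return total / (len(p) * len(q))
    return (mean_kernel(pop_x, pop_x) + mean_kernel(pop_y, pop_y)
            - 2.0 * mean_kernel(pop_x, pop_y))


def top_k_sources(target_pop, source_pops, k, sigma=1.0):
    """Names of the k source tasks with the smallest MMD to the target."""
    scores = {name: mmd_squared(target_pop, pop, sigma)
              for name, pop in source_pops.items()}
    return sorted(scores, key=scores.get)[:k]


target = [[0.0, 0.0], [0.1, 0.1]]
sources = {
    "near": [[0.05, 0.05], [0.1, 0.0]],
    "far": [[3.0, 3.0], [3.1, 3.2]],
}
selected = top_k_sources(target, sources, k=1)
```

The estimator is quadratic in population size, so for large populations it may be worth computing it on a random subsample every few generations rather than on every generation.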

Density-Based Knowledge Interaction:

- Merge the subpopulations of the target task and the selected related source tasks.

- Apply a density-based clustering algorithm (e.g., DBSCAN) to this merged population. This forms groups of individuals that are close in the solution space, regardless of their task of origin.

- During mating selection, restrict parent selection to individuals within the same cluster. Favor the selection of parents from different tasks within the same cluster to promote useful knowledge exchange while maintaining diversity [9].
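
The density-based interaction step can be sketched with a compact DBSCAN and a cluster-restricted mate picker. This is a plain textbook DBSCAN written out for self-containment; the `eps` and `min_pts` values, the helper `pick_mate`, and the rule for noise points (no mate) are assumptions rather than details from [9].

```python
import math
import random


def dbscan(points, eps, min_pts):
    """Return one label per point: a cluster id >= 0, or -1 for noise."""
    labels = [None] * len(points)

    def neighbours(i):
        return [j for j, q in enumerate(points) if math.dist(points[i], q) <= eps]

    cluster = -1
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbours(i)
        if len(seeds) < min_pts:
            labels[i] = -1          # provisionally noise
            continue
        cluster += 1
        labels[i] = cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster  # noise becomes a border point
            if labels[j] is not None:
                continue
            labels[j] = cluster
            nbrs = neighbours(j)
            if len(nbrs) >= min_pts:  # only core points expand the cluster
                queue.extend(nbrs)
    return labels


def pick_mate(index, labels, task_of, rng):
    """Prefer a same-cluster partner from another task; else any same-cluster partner."""
    if labels[index] == -1:
        return None
    same = [j for j, lab in enumerate(labels)
            if j != index and lab == labels[index]]
    cross = [j for j in same if task_of[j] != task_of[index]]
    pool = cross or same
    return rng.choice(pool) if pool else None


merged = [[0, 0], [0.1, 0], [0, 0.1], [5, 5], [5.1, 5], [5, 5.1], [20, 20]]
task_of = [0, 1, 0, 1, 1, 0, 0]   # task of origin for each merged individual
labels = dbscan(merged, eps=0.3, min_pts=3)
mate = pick_mate(0, labels, task_of, random.Random(1))
```

Individuals labelled as noise get no cross-task mate in this sketch; an implementation could instead let them mate within their own task.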

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for Advanced EMTO Research

| Item / Concept | Function / Relevance in EMTO |

|---|---|

| Multifactorial Evolutionary Algorithm (MFEA) | The foundational EMTO framework that uses a unified population and implicit genetic transfer via a fixed random mating probability (rmp) [1] [9]. |

| Maximum Mean Discrepancy (MMD) | A kernel-based statistic that measures the distance between the probability distributions of two populations; a smaller value indicates greater similarity. It is employed to select the most related tasks for knowledge transfer, thereby reducing negative transfer [9]. |

| Density-Based Clustering (e.g., DBSCAN) | An unsupervised learning method used to group individuals from different tasks based on their proximity in the search space. This creates niches where productive knowledge transfer can occur [9]. |

| Exponential Moving Average (EMA) Loss Weighting | A technique adapted from Multi-Task Learning to balance the contribution of different task losses during gradient-based optimization. It helps mitigate a form of negative transfer where one task dominates the update process [11]. |

| Random Mating Probability (rmp) | A key parameter in many EMTO algorithms that controls the likelihood of crossover between individuals from different tasks. Modern approaches make this parameter adaptive or replace it with more sophisticated mechanisms [1] [9]. |

In scientific research and drug development, the effective transfer of knowledge is fundamental to maintaining project continuity, ensuring reproducibility, and building upon existing discoveries. However, this process is fraught with potential failure points that can compromise research integrity, delay timelines, and waste valuable resources. Knowledge transfer failures represent a critical vulnerability in experimental research, particularly in complex, multidisciplinary fields like EMTO (Experimental Methods and Technical Operations) where specialized expertise is distributed across teams and institutions. This article provides a comprehensive taxonomy of knowledge transfer failure modes and offers practical troubleshooting guidance to help researchers, scientists, and drug development professionals identify, prevent, and mitigate these failures in their experimental workflows.

The consequences of knowledge transfer failures in scientific settings can be severe, ranging from minor inefficiencies that slow project progress to complete corruption of experimental data that invalidates months or years of research. When critical methodological details, procedural nuances, or contextual insights fail to transfer effectively between researchers or across teams, the result is often experimental irreproducibility, flawed conclusions, and substantial financial losses. By understanding the specific failure modes and implementing targeted solutions, research organizations can significantly enhance the reliability and efficiency of their knowledge-intensive operations.

Taxonomy of Knowledge Transfer Failures

Knowledge transfer failures can be systematically categorized into distinct types based on their underlying mechanisms and manifestations. The following taxonomy identifies seven primary failure modes that commonly occur in research and development environments, particularly in pharmaceutical and life sciences settings where complex experimental knowledge must be accurately preserved and transferred.

Table 1: Knowledge Transfer Failure Taxonomy

| Failure Mode | Primary Manifestation | Common Causes in Research Settings |

|---|---|---|

| Slow Transfer | Knowledge arrives too late to inform critical experimental decisions | Bureaucratic approval processes; inefficient documentation systems; information siloing between departments |

| Inadequate Articulation | Recipients cannot understand or apply transferred knowledge | Expert blindness to novice needs; overuse of jargon; missing contextual details; poorly documented methods |

| Inadvertent Omission | Critical methodological details are accidentally excluded | Human error; over-reliance on memory; assumption of shared basic knowledge; time pressures |

| Deliberate Omission | Knowledge is intentionally withheld or filtered | Political considerations; competition for resources; intellectual property concerns; publication biases |

| Knowledge Hoarding | Information is not shared at all | Lack of incentive structures; cultural barriers; fear of losing competitive advantage; organizational silos |

| Failed Reuse | Transferred knowledge is not applied in new contexts | Not applicable to local conditions; poor findability; lack of trust in source; insufficient implementation guidance |

| Lack of Co-creation | Knowledge is transferred one-way without collaborative refinement | Power dynamics; lack of feedback mechanisms; time constraints; cultural resistance to collaborative development |

Failure Mode 1: Slow Knowledge Transfer

Slow knowledge transfer occurs when critical information moves through organizational systems too slowly to inform experimental decisions or procedures. In research environments, this failure mode manifests when methodological insights, procedural updates, or technical notifications arrive after key experimental milestones have passed. This temporal misalignment can result in researchers using outdated protocols, repeating failures that others have already documented, or missing opportunities to incorporate important technical improvements.

Root Causes:

- Overly complex approval processes for methodological changes

- Inefficient documentation distribution systems

- Organizational silos that impede cross-functional information flow

- Absence of clear protocols for communicating procedural updates

Failure Mode 2: Inadequate Knowledge Articulation

The "curse of knowledge" frequently affects senior researchers and technical experts, who may underestimate the difficulty less-experienced colleagues face when attempting to understand and apply specialized methodologies. This failure mode occurs when knowledge is expressed in forms that are incomplete, poorly contextualized, or overly reliant on implicit understanding. The result is often misinterpretation of experimental protocols, incorrect application of techniques, and ultimately, compromised research outcomes.

Root Causes:

- Assumption of shared baseline understanding

- Failure to articulate tacit knowledge and procedural nuances

- Use of undefined specialized terminology or laboratory-specific shorthand

- Incomplete methodological descriptions in protocols and SOPs

Failure Mode 3: Inadvertent Omission

Similar to a recipe that accidentally excludes a critical ingredient, inadvertent omission in knowledge transfer occurs when essential elements of experimental knowledge are unintentionally left out of documentation or verbal instructions. This failure mode is particularly problematic in complex multi-step procedures where certain steps have become automatic for experienced researchers but are absolutely critical for protocol success. The consequences include experimental failures, irreproducible results, and significant resource waste.

Root Causes:

- Reliance on human memory for complex procedures

- Assumption that certain steps are "obvious" or universally known

- Time pressures that lead to shortcuts in documentation

- Lack of systematic verification processes for methodological completeness

Failure Mode 4: Deliberate Omission

When knowledge is intentionally filtered, modified, or withheld for strategic, political, or competitive reasons, deliberate omission occurs. In research environments, this might manifest as downplaying methodological challenges, obscuring technical difficulties, or selectively reporting conditions to make results appear more robust. This failure mode represents a severe form of knowledge corruption that can lead to widespread replication failures and misdirected research efforts across entire scientific fields.

Root Causes:

- Competition for funding, publications, or recognition

- Intellectual property concerns

- Organizational power dynamics

- Publication biases favoring "clean" results over methodologically complex ones

Failure Mode 5: Knowledge Hoarding

The failure to share knowledge at all represents a complete breakdown in knowledge transfer systems. Knowledge hoarding occurs when researchers or technical experts retain critical information rather than disseminating it to colleagues who could benefit from it. This failure mode may stem from cultural factors, perceived threats to expertise-based authority, or inadequate organizational incentives for knowledge sharing.

Root Causes:

- Cultural norms that reward individual expertise over collective capability

- Fear that knowledge sharing diminishes personal value or job security

- Absence of technical systems that facilitate easy knowledge sharing

- Lack of recognition or reward for sharing practices

Failure Mode 6: Failed Reuse

Even when knowledge is successfully transferred, it may fail to be applied in new contexts due to various barriers. This failure mode occurs when researchers understand the transferred knowledge but cannot or will not implement it in their specific experimental context. The knowledge remains theoretically available but practically unused, representing a significant waste of knowledge acquisition and transfer resources.

Root Causes:

- Perceived misalignment with local conditions or constraints

- Difficulty adapting generalized knowledge to specific applications

- Low confidence in the reliability or applicability of the knowledge

- Organizational cultures that privilege original work over application of existing knowledge

Failure Mode 7: Lack of Co-creation

The traditional unidirectional model of knowledge transfer (from expert to novice) often fails to account for the collaborative nature of knowledge development and refinement. This failure mode occurs when knowledge is treated as a fixed commodity to be delivered rather than a dynamic resource to be developed jointly through interaction and adaptation. The result is often knowledge that fails to address the specific needs and contexts of recipients.

Root Causes:

- Hierarchical organizational structures that privilege certain voices

- Lack of mechanisms for feedback and iterative refinement

- Time constraints that discourage collaborative development

- Cultural assumptions about the directionality of expertise flows

Troubleshooting Guides and FAQs

Diagnostic Framework for Knowledge Transfer Failures

This diagnostic flowchart provides researchers with a systematic approach to identifying specific knowledge transfer failure modes in their experimental workflows. By following the decision points, research teams can quickly pinpoint the nature of their knowledge transfer challenges and implement targeted solutions.

Frequently Asked Questions

Q1: How can we distinguish between inadvertent and deliberate knowledge omission in our research team's documentation?

A1: Inadvertent omission typically shows patterns of inconsistency across different documents prepared by the same individual, affects seemingly "obvious" steps that experts perform automatically, and correlates with time pressure situations. Deliberate omission often affects the same types of sensitive information consistently across multiple documents, aligns with organizational incentives or political considerations, and may be accompanied by defensive justification when questioned. Conducting periodic knowledge capture interviews with multiple team members independently can help identify systematic gaps that suggest deliberate omission.

Q2: What specific strategies can help overcome the "curse of knowledge" when senior researchers train new lab members?

A2: Implement structured "knowledge articulation" protocols that require experts to: (1) demonstrate procedures while verbalizing each step, (2) identify and explain the three most common mistakes and how to avoid them, (3) provide historical context for why specific methodological choices were made, and (4) observe novices performing the procedure and provide corrective feedback. This approach helps surface tacit knowledge that experts may not realize they possess [12].

Q3: How can we measure knowledge transfer effectiveness in experimental research settings?

A3: Implement a multi-dimensional assessment approach tracking both process and outcome metrics: protocol reproduction success rates, time from training to independent competency, error frequency in technique application, and cross-researcher consistency in results generation. Additionally, track system-level metrics including time spent searching for information and employee estimates of time spent on inefficient workarounds [13].

Q4: What organizational structures best support knowledge co-creation in pharmaceutical R&D?

A4: Matrix structures that facilitate cross-functional collaboration combined with formal knowledge broker roles have proven effective. Additionally, establishing communities of practice around key methodological areas, implementing structured peer mentoring programs, and creating "lessons learned" repositories with mandatory contribution requirements foster co-creation environments. These approaches help transition from unidirectional knowledge transfer to collaborative knowledge development [14] [15].

Experimental Protocols for Assessing Knowledge Transfer Effectiveness

Knowledge Loss Risk Assessment Protocol

Purpose: To systematically identify and prioritize knowledge vulnerabilities within research teams, particularly focusing on specialized technical expertise that resides with few individuals.

Materials:

- Knowledge risk assessment template

- Interview guides for subject matter experts

- Risk matrix scoring framework

- Team organizational charts and responsibility assignments

Procedure:

- Identify critical knowledge domains essential for research continuity

- Map current knowledge distribution across team members

- Conduct structured interviews using knowledge capture forms [12]

- Assess position risk based on uniqueness of knowledge and difficulty of replacement

- Evaluate attrition risk based on expected departure timelines

- Calculate total knowledge loss risk using assessment matrix

- Develop mitigation strategies for high-risk knowledge areas

- Document assessment results and review quarterly

Validation Metrics:

- Percentage of critical knowledge domains with documented backups

- Reduction in single-point knowledge dependencies

- Improved onboarding time for new researchers in technical roles
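
The scoring steps of the protocol above could be sketched as follows. The source does not specify the assessment matrix, so the 1 to 5 scales, the multiplication of position risk by attrition risk, and the high-risk threshold of 15 are all assumptions chosen for illustration; the role names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class KnowledgeHolder:
    name: str
    position_risk: int   # assumed scale: 1 (widely shared knowledge) .. 5 (unique, hard to replace)
    attrition_risk: int  # assumed scale: 1 (departure unlikely soon) .. 5 (departure imminent)

    @property
    def total_risk(self) -> int:
        # Assumed scoring rule: product of the two factors (range 1..25).
        return self.position_risk * self.attrition_risk


def prioritize(holders, high_risk_threshold=15):
    """Rank holders by total risk and flag those at or above the assumed threshold."""
    ranked = sorted(holders, key=lambda h: h.total_risk, reverse=True)
    return [(h.name, h.total_risk, h.total_risk >= high_risk_threshold) for h in ranked]


# Hypothetical team used only to exercise the scoring rule.
team = [
    KnowledgeHolder("assay lead", position_risk=5, attrition_risk=4),
    KnowledgeHolder("data analyst", position_risk=2, attrition_risk=3),
    KnowledgeHolder("instrument specialist", position_risk=4, attrition_risk=2),
]
ranking = prioritize(team)
for name, score, high in ranking:
    print(f"{name}: risk={score}, mitigation needed={high}")
```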

Knowledge Transfer Fidelity Measurement Protocol

Purpose: To quantitatively assess the completeness and accuracy of knowledge transfer between researchers, particularly for complex experimental techniques.

Materials:

- Standardized experimental procedure for assessment

- Evaluation checklist of critical procedural elements

- Video recording equipment (optional)

- Inter-rater reliability assessment tools

Procedure:

- Select a standardized experimental procedure with well-documented steps

- Have Subject Matter Expert (SME) demonstrate the procedure while being recorded

- SME trains novice researcher using normal knowledge transfer processes

- Novice researcher performs the procedure while evaluators assess fidelity

- Score performance against checklist of critical elements

- Identify specific points of knowledge degradation or omission

- Analyze patterns across multiple transfer events to identify systemic gaps

- Implement corrective measures for consistently problematic transfer points

Validation Metrics:

- Percentage of critical procedural steps correctly replicated

- Time to achieve competency benchmark

- Error rate in initial independent performances

- Inter-rater reliability in assessments
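
Two of the validation metrics above lend themselves to a short calculation. The sketch below computes the fraction of critical steps replicated and Cohen's kappa as one possible inter-rater reliability measure; the protocol does not name a specific statistic, so the choice of kappa and the example ratings are assumptions.

```python
def fidelity_score(step_results):
    """Fraction of critical steps replicated correctly (1 = correct, 0 = missed)."""
    return sum(step_results) / len(step_results)


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters giving binary pass/fail labels per step."""
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    p_a = sum(rater_a) / n
    p_b = sum(rater_b) / n
    # Agreement expected by chance from each rater's marginal pass rate.
    expected = p_a * p_b + (1 - p_a) * (1 - p_b)
    if expected == 1.0:
        # Both raters used a single identical label; kappa is undefined,
        # so perfect agreement is reported by convention.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical ratings of eight critical steps by two evaluators.
rater_a = [1, 1, 1, 0, 1, 0, 1, 1]
rater_b = [1, 1, 0, 0, 1, 0, 1, 1]

score = fidelity_score(rater_a)
kappa = cohens_kappa(rater_a, rater_b)
print(f"fidelity (rater A): {score:.2f}, Cohen's kappa: {kappa:.3f}")
```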

Table 2: Knowledge Transfer Assessment Metrics

| Assessment Dimension | Primary Metric | Benchmark Target | Measurement Frequency |

|---|---|---|---|

| Transfer Speed | Time from knowledge availability to application | <48 hours for critical updates | Weekly |

| Articulation Quality | Recipient comprehension scores | >90% correct on assessment | Per transfer event |

| Completeness | Percentage of critical elements retained | 100% for safety-critical steps | Per procedure |

| Utilization Rate | Percentage of transferred knowledge applied | >80% for high-value knowledge | Quarterly |

| Co-creation Index | Number of collaborative improvements | >2 improvements per procedure | Semi-annually |

Research Reagent Solutions for Knowledge Transfer Studies

Table 3: Essential Research Reagents for Knowledge Transfer Studies

| Reagent/Resource | Primary Function | Application in KT Research |

|---|---|---|

| Knowledge Capture Interview Forms | Structured data collection from experts | Systematic extraction of tacit knowledge from subject matter experts [12] |

| Knowledge Loss Risk Assessment Matrix | Risk visualization and prioritization | Identifying and ranking knowledge vulnerabilities based on position and attrition risks [12] |

| Digital Knowledge Repositories | Centralized knowledge storage and retrieval | Creating accessible organizational memory systems with version control [13] [16] |

| Structured Mentoring Program Frameworks | Facilitated knowledge exchange | Creating formal channels for tacit knowledge transfer between experienced and novice researchers [14] [15] |

| Knowledge Audit Protocols | Comprehensive knowledge mapping | Assessing knowledge assets, identifying gaps, and evaluating utilization patterns [16] |

| Cross-training Implementation Kits | Redundant capability development | Building backup expertise for critical technical procedures across multiple researchers [16] |

Knowledge Transfer Process Visualization

This visualization illustrates the four-stage knowledge transfer process (identification, capture, sharing, application) and maps the seven failure modes to the specific stages where they most commonly occur. The feedback loop from application back to identification represents the dynamic, cyclical nature of effective knowledge transfer systems that continuously improve through application and refinement.

The taxonomy presented in this article provides a comprehensive framework for understanding, diagnosing, and addressing knowledge transfer failures in research environments. By recognizing these distinct failure modes and implementing the targeted troubleshooting strategies, research organizations can significantly enhance the reliability and efficiency of their knowledge-intensive operations. The experimental protocols and assessment methods offer practical tools for proactively managing knowledge transfer risks, particularly in complex, technically specialized fields like pharmaceutical research and development where the costs of knowledge failure are exceptionally high.

A systematic approach to knowledge transfer troubleshooting represents not merely an operational improvement but a fundamental requirement for research excellence and reproducibility. As research methodologies grow increasingly complex and interdisciplinary collaboration becomes more essential, the ability to transfer knowledge effectively between researchers, teams, and institutions will increasingly determine scientific productivity and innovation capacity.

FAQs: Core Concepts and Problem Identification

Q1: What is the fundamental principle behind using Evolutionary Multi-task Optimization (EMTO) in multi-target drug discovery?

EMTO is an optimization paradigm designed to solve multiple tasks (e.g., optimizing for different target proteins) simultaneously [1]. It operates on the principle that related optimization tasks often possess implicit common knowledge or skills [1]. In multi-target drug discovery, this means that the knowledge gained while searching for compounds active against one target can be transferred to accelerate the discovery process for other, related targets, thereby improving overall optimization performance and efficiency [1] [17].

Q2: What is "negative transfer" and why is it a critical challenge in this field?

Negative transfer occurs when knowledge shared between tasks is not beneficial and instead deteriorates optimization performance compared to solving each task independently [1]. This is a common and serious challenge in EMTO research. Experiments have shown that performing knowledge transfer between tasks with low correlation can lead to worse outcomes [1]. In the context of drug discovery, this could mean that sharing information between two unrelated protein targets might lead the search process towards compounds that are ineffective for both.

Q3: How can we determine which tasks are suitable for knowledge transfer to avoid negative effects?

Determining task suitability primarily involves measuring the similarity or relatedness between tasks [1]. For drug-target interaction (DTI) prediction, a ligand-based similarity approach, such as the Similarity Ensemble Approach (SEA), can be used [18]. SEA computes the similarity between targets based on the structural similarity of their known active ligands [18]. Targets with high similarity scores can then be grouped into clusters, and multi-task learning can be applied within these clusters to promote positive knowledge transfer [18].
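
The grouping step can be sketched once pairwise similarity scores are available. The code below does not implement SEA; it takes precomputed scores as input and groups targets whose similarity meets a threshold, using connected components as the clustering rule. That rule, the target names, and the scores are assumptions for illustration; the 0.74 default echoes the example raw-score threshold reported in [18].

```python
def cluster_targets(targets, similarity, threshold=0.74):
    """Group targets into connected components of the 'similar enough' graph.

    similarity maps (target_a, target_b) pairs to a precomputed score.
    """
    parent = {t: t for t in targets}

    def find(t):
        # Union-find lookup with path halving.
        while parent[t] != t:
            parent[t] = parent[parent[t]]
            t = parent[t]
        return t

    for (a, b), score in similarity.items():
        if score >= threshold:
            parent[find(a)] = find(b)

    clusters = {}
    for t in targets:
        clusters.setdefault(find(t), []).append(t)
    return sorted(sorted(members) for members in clusters.values())


# Hypothetical targets and similarity scores.
targets = ["T1", "T2", "T3", "T4", "T5"]
similarity = {
    ("T1", "T2"): 0.81,
    ("T2", "T3"): 0.77,
    ("T1", "T3"): 0.40,
    ("T3", "T4"): 0.10,
    ("T4", "T5"): 0.30,
}
clusters = cluster_targets(targets, similarity)
print(clusters)
```

Targets that end up alone in a cluster would, under this scheme, be trained as single tasks rather than forced into a multi-task group.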

Q4: Beyond task selection, what advanced strategies can improve knowledge transfer?

- Self-Adaptive Transfer: Some EMTO algorithms incorporate a knowledge transfer adaptation strategy. They dynamically learn the probability of positively transferring knowledge from one task to another based on past success and failure records, adjusting transfer rates accordingly during the optimization process [17].

- Knowledge Distillation: This involves using a pre-trained "teacher" model (e.g., a single-task model) to guide the training of a "student" multi-task model. Techniques like "teacher annealing" can help the multi-task model achieve higher average performance while minimizing performance degradation on individual tasks [18].
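
A minimal sketch of teacher annealing for a single binary label is shown below, assuming the common formulation in which the training target is a mix of the gold label and the teacher's prediction, with the gold-label weight rising linearly from 0 to 1 over training. The function names and the example values are hypothetical, and the method in [18] may differ in detail.

```python
import math


def annealed_target(gold_label, teacher_prob, step, total_steps):
    """Mix gold label and teacher prediction; weight on gold grows linearly with step."""
    lam = min(1.0, step / total_steps)
    return lam * gold_label + (1.0 - lam) * teacher_prob


def binary_cross_entropy(target, student_prob, eps=1e-12):
    """Cross-entropy between a (possibly soft) target and the student's prediction."""
    p = min(max(student_prob, eps), 1.0 - eps)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))


# Hypothetical example: gold label is "active" (1.0), the single-task teacher predicts 0.6.
gold, teacher, student = 1.0, 0.6, 0.7
for step in (0, 50, 100):
    target = annealed_target(gold, teacher, step, total_steps=100)
    loss = binary_cross_entropy(target, student)
    print(f"step {step:3d}: target={target:.2f}, loss={loss:.4f}")
```

Early in training the student mostly imitates the teacher; by the end it is trained on the gold labels alone, which is what allows it to eventually exceed the teacher.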

Q5: How can Large Language Models (LLMs) assist in overcoming knowledge transfer challenges?

Recent research explores using LLMs to autonomously design and generate effective knowledge transfer models for EMTO [19]. This approach aims to reduce the heavy reliance on domain-specific expertise required to hand-craft these models. An LLM-based framework can search for and produce high-performing knowledge transfer models by optimizing for both transfer effectiveness and computational efficiency [19].

Troubleshooting Guides

Guide 1: Diagnosing and Mitigating Negative Transfer

Symptoms: The multi-task optimization model performs significantly worse on one or more tasks than a single-task model would. The search process appears to converge prematurely or is misdirected.

| Diagnosis Step | Action | Reference |

|---|---|---|

| Check Task Relatedness | Quantify the similarity between the targets in your multi-task problem. Use a method like the Similarity Ensemble Approach (SEA) to compute ligand-set-based similarity. | [18] |

| Analyze Transfer History | If using an adaptive algorithm, examine the "success memory" and "failure memory" for each task. A high failure rate for a specific knowledge source indicates a likely negative transfer relationship. | [17] |

| Compare to Baseline | Always run single-task optimization baselines. Performance degradation against these baselines is a clear indicator of negative transfer. | [18] |

Solutions:

- Re-cluster Tasks: If tasks are not sufficiently similar, re-group them into more coherent clusters. One study found that multi-task learning on dissimilar targets worsened performance, while learning on similar targets improved it [18].

- Adjust Transfer Parameters: In self-adaptive algorithms, ensure the learning period (LP) and base probability (bp) parameters are set appropriately to allow the algorithm to accurately learn inter-task relationships [17].

- Implement Focus Search: Activate a focus search strategy for tasks that consistently fail to benefit from transfer. This strategy temporarily restricts the task to only use its own knowledge, preventing interference from other tasks [17].
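
The "Compare to Baseline" diagnosis step can be sketched as a per-task comparison of repeated runs. The example assumes a minimization problem (lower fitness is better); the task names, fitness values, and `tolerance` parameter are hypothetical. For real experiments, a statistical test over many independent runs would be a more reliable criterion than a comparison of means.

```python
from statistics import mean


def negative_transfer_report(single_task_runs, multi_task_runs, tolerance=0.0):
    """Flag tasks whose mean final fitness is worse under multi-task optimization.

    Both arguments map task name -> list of final best fitness values (minimization).
    """
    report = {}
    for task, st_runs in single_task_runs.items():
        st_mean = mean(st_runs)
        mt_mean = mean(multi_task_runs[task])
        report[task] = {
            "single": st_mean,
            "multi": mt_mean,
            "negative_transfer": mt_mean > st_mean + tolerance,
        }
    return report


# Hypothetical final fitness values from three independent runs per setting.
single = {"target_A": [0.12, 0.10, 0.11], "target_B": [0.30, 0.28, 0.29]}
multi = {"target_A": [0.08, 0.09, 0.07], "target_B": [0.41, 0.39, 0.40]}

report = negative_transfer_report(single, multi)
for task, row in report.items():
    print(task, row)
```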

Guide 2: Addressing Data Sparsity and Model Generalizability

Symptoms: The model performs well on training data but poorly on validation/test data or new, unseen targets. Predictions for tasks with limited data are highly inaccurate.

Solutions:

- Leverage Multi-task Data Amplification: Use the combined data from all related tasks in the shared layers of the model to create a more robust feature representation, effectively amplifying the learning signal for data-sparse tasks [18].

- Utilize Pre-trained Representations: Represent drug targets using pre-trained protein language models (e.g., ESM, ProtBERT). These embeddings capture deep biological information and can improve generalizability [20].

- Apply Knowledge Distillation: Train a multi-task "student" model using the predictions of well-trained single-task "teacher" models. This guides the multi-task model to retain task-specific performance while benefiting from shared learning [18].

Experimental Protocols & Data

Protocol 1: Evaluating Knowledge Transfer with a Self-Adaptive EMTO Algorithm

This protocol outlines how to test the effectiveness of knowledge transfer using an algorithm like Self-adaptive Multi-task Differential Evolution (SaMTDE) [17].

1. Objective: To validate that knowledge transfer between two related drug optimization tasks improves performance and to measure the algorithm's ability to avoid negative transfer.

2. Materials:

- Algorithm: Self-adaptive Multi-task Differential Evolution (SaMTDE) code.

- Tasks: At least two formulated optimization problems representing different drug-target interactions. The relatedness should be known or estimated.

- Benchmark: Standard single-task optimization algorithms (e.g., classic DE) for baseline comparison.

3. Methodology:

- Initialization: For each task, initialize the knowledge source pool with all tasks. Set the initial choosing probability for all knowledge sources to be equal: $p_{t,k} = 1/K$ [17].

- Optimization Loop:

  - For each individual in each task's subpopulation, select a knowledge source via roulette wheel selection based on the current probabilities $p_{t,k}$.

  - Generate new candidate solutions (offspring) using the mutation and crossover operators, incorporating knowledge from the selected source.

  - Evaluate the offspring.

  - Update the success memory ($ns_{t,k}^{g}$) and failure memory ($nf_{t,k}^{g}$) for each task based on whether offspring generated via each knowledge source entered the next generation [17].

  - Every LP generations, update the choosing probabilities $p_{t,k}$ using the formula [17]:

$$p_{t,k} = \frac{SR_{t,k}}{\sum_{k'} SR_{t,k'}}, \qquad SR_{t,k} = \frac{\sum_{j} ns_{t,k}^{j}}{\sum_{j} ns_{t,k}^{j} + \sum_{j} nf_{t,k}^{j} + \varepsilon} + bp$$

4. Measurements:

- Record the convergence speed (number of generations to reach a fitness threshold).

- Record the final best fitness achieved for each task.

- Monitor the evolution of $p_{t,k}$ values to observe which knowledge sources the algorithm deems most useful.
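
The probability update and roulette wheel selection from the methodology above can be sketched as follows. This is an illustration of the stated formula only, not the reference SaMTDE implementation; the function names, the values of `bp` and `eps`, and the success and failure counts are assumptions.

```python
import random


def update_probabilities(success, failure, bp=0.01, eps=1e-12):
    """Recompute choosing probabilities for one task from its memories.

    success[k] and failure[k] hold per-generation counts for knowledge source k
    over the learning period; bp is the base probability, eps avoids division by zero.
    """
    success_rates = []
    for ns, nf in zip(success, failure):
        s, f = sum(ns), sum(nf)
        success_rates.append(s / (s + f + eps) + bp)
    total = sum(success_rates)
    return [sr / total for sr in success_rates]


def select_source(probabilities, rng=random):
    """Roulette wheel selection: return the index of the chosen knowledge source."""
    r = rng.random()
    cumulative = 0.0
    for k, p in enumerate(probabilities):
        cumulative += p
        if r < cumulative:
            return k
    return len(probabilities) - 1  # guard against floating-point round-off


# Hypothetical memories for one task with K = 3 knowledge sources over LP = 2 generations.
success = [[8, 6], [1, 0], [3, 4]]
failure = [[2, 4], [9, 10], [7, 6]]

probs = update_probabilities(success, failure)
print([round(p, 3) for p in probs])
chosen = select_source(probs, rng=random.Random(42))
```

Because of the `bp` term, a source with no recorded successes still keeps a small nonzero probability, so the algorithm can rediscover it if the inter-task relationship changes later in the run.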

Quantitative Data on Multi-task Learning Performance

The table below summarizes experimental results from a study on drug-target interaction prediction, comparing single-task learning (STL) with two multi-task learning (MTL) approaches [18].

| Learning Model | Tasks | Mean Target-AUROC | Standard Deviation | Robustness (Tasks with improved AUROC) |

|---|---|---|---|---|

| Single-Task Learning (STL) | 268 targets | 0.709 | 0.183 | (Baseline) |

| Classic MTL (All Tasks) | 268 targets | 0.690 | Not Specified | 37.7% |

| MTL on Similar Targets | Clustered targets | 0.719 | 0.172 | Not Specified |

- Key Insight: Classic MTL on all 268 targets simultaneously led to an average performance drop and performance degradation in over 60% of tasks. However, when MTL was applied only to clusters of similar targets, the average performance surpassed that of STL, demonstrating the importance of task selection [18].

The Scientist's Toolkit: Research Reagent Solutions

| Item Name | Function in Multi-target Drug Discovery | Key Details / Examples |

|---|---|---|

| Drug-Target Interaction Databases | Provide structured, experimental data on known drug-target interactions for model training and validation. | ChEMBL: Bioactivity data for drug-like small molecules [20]. DrugBank: Comprehensive drug and target data with mechanistic information [20]. BindingDB: Binding affinity data for protein targets [20]. |

| Protein Language Models | Generate informative numerical representations (embeddings) of protein targets from their amino acid sequences. | ESM & ProtBERT: Pre-trained models that capture structural and functional information about proteins, useful as input features for ML models [20]. |

| Similarity Ensemble Approach (SEA) | A computational method to estimate the similarity between targets based on the chemical similarity of their known ligands. Used for clustering tasks before MTL [18]. | Helps prevent negative transfer by grouping related targets. A raw score threshold (e.g., 0.74) can be used to define similarity [18]. |

| Graph Neural Networks (GNNs) | A deep learning architecture ideal for learning from molecular structures represented as graphs (atoms as nodes, bonds as edges). | Excels at capturing the topological structure of molecules, which is crucial for predicting their interaction with multiple biological targets [20]. |

| Knowledge Distillation Framework | A training methodology where a compact "student" model is trained to mimic the behavior of a larger or ensemble "teacher" model. | Application: A multi-task student model is guided by predictions from single-task teacher models, helping to avoid performance degradation in MTL [18]. |

Visualizations of Workflows and Relationships

Knowledge Transfer in Evolutionary Multi-task Optimization

Multi-task Learning with Knowledge Distillation

Task Clustering to Prevent Negative Transfer

Advanced EMTO Methodologies: Implementing Effective Knowledge Transfer Systems

This technical support center provides targeted troubleshooting guides and frequently asked questions (FAQs) for researchers encountering knowledge transfer failures in Evolutionary Multi-task Optimization (EMTO) experiments. The content is structured to help scientists, particularly those in drug development and related fields, diagnose and resolve specific issues when working with single-population and multi-population transfer models. Knowledge transfer, the process of translating knowledge into action, is framed here as a complex, multidirectional process involving problem identification, knowledge selection, context analysis, transfer activities, and utilization [21].

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between single-population and multi-population transfer models in EMTO research?

Single-population transfer models typically involve transferring knowledge from one source domain to one target domain (e.g., reusing a pre-trained model's feature layers for a new classification task) [22]. The process is often more linear. In contrast, multi-population models involve knowledge integration from multiple, potentially diverse, source domains or populations. This introduces greater complexity, as seen when attempting to merge models from different deep-learning frameworks like TensorFlow and PyTorch, requiring careful handling of differing APIs, internal graph representations, and tensor operations [23].

2. Why does my multi-population model fail to converge, even when the constituent single-population models perform well?

This is a common symptom of knowledge transfer failure. Key troubleshooting areas include:

- Contextual Misalignment: The analytical context of your source populations may be too dissimilar, leading to conflicting gradient signals during fine-tuning [21].

- Batch Normalization Inconsistencies: If your model uses layers like `BatchNormalization`, incorrect settings (e.g., wrong `epsilon` values or improperly set `moving_mean` and `moving_var` during weight transfer) can prevent learning. A known solution is to ensure these layers are in inference mode (`training=False`) during transfer learning to prevent the destruction of pre-trained weights [24] [25].

- Framework Discrepancies: In multi-population setups that combine models from different frameworks, subtle differences in default hyperparameters (e.g., optimizer behavior, learning rate schedules, or data normalization) can cause one population to dominate or destabilize the training [23] [26].
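
To make the batch normalization point concrete, the NumPy sketch below contrasts the two modes on a batch whose statistics differ from the stored moving statistics. It is a simplified stand-in for a framework layer (it does not update the moving statistics), and the function name and data are hypothetical. The `epsilon` default of 1e-3 matches Keras, whereas PyTorch's batch normalization layers default to 1e-5, which is one source of the parameter mismatches mentioned above.

```python
import numpy as np


def batch_norm(x, moving_mean, moving_var, gamma=1.0, beta=0.0,
               epsilon=1e-3, training=False):
    """Simplified batch normalization over the batch axis (axis 0)."""
    if training:
        # Training mode: normalize with the statistics of the current batch.
        mean, var = x.mean(axis=0), x.var(axis=0)
    else:
        # Inference mode: normalize with the stored moving statistics.
        mean, var = moving_mean, moving_var
    return gamma * (x - mean) / np.sqrt(var + epsilon) + beta


# Moving statistics learned on the source data (hypothetical values).
moving_mean = np.zeros(3)
moving_var = np.ones(3)

# A target-domain batch whose distribution is shifted relative to the source.
rng = np.random.default_rng(0)
batch = rng.normal(5.0, 2.0, size=(64, 3))

inference_out = batch_norm(batch, moving_mean, moving_var, training=False)
training_out = batch_norm(batch, moving_mean, moving_var, training=True)
print("inference-mode mean:", inference_out.mean().round(3))
print("training-mode mean: ", training_out.mean().round(3))
```

In training mode the layer renormalizes every batch with its own statistics, so the activations reaching the frozen pre-trained layers no longer match what those layers saw during pre-training; inference mode keeps the stored statistics in use.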

3. How can I quantitatively decide between a single-population and multi-population approach for my specific dataset?

The decision should be guided by a structured analysis of your data and the available knowledge sources. The following table summarizes key quantitative and qualitative factors to consider:

Table 1: Framework Selection Guide: Single-Population vs. Multi-Population Models

| Factor | Single-Population Model | Multi-Population Model |

|---|---|---|

| Data Availability in Target Domain | Limited (the primary use case) | Limited, but multiple relevant source domains are available. |

| Similarity Between Source & Target | High similarity is required. | Can leverage multiple, partially similar sources. |

| Computational Cost | Generally lower, faster training cycles [22]. | Higher, due to increased model complexity and data integration. |

| Representation Power | Limited to knowledge from one source. | Higher potential for robust and generalizable representations. |

| Risk of Negative Transfer | Lower (if source is well-chosen). | Higher; requires mechanisms to weight or filter source contributions. |

| Implementation Complexity | Lower, well-supported by standard libraries (e.g., Keras). | High, may require custom integration layers and loss functions. |

4. What are the best practices for converting a model from one framework to another in a multi-population setup?

Automated conversion (e.g., via ONNX or Keras 3) is a viable strategy, but it is not foolproof [23]. Manual conversion, while labor-intensive, often yields the most reliable results. Key pitfalls to avoid during manual conversion include [25]:

- Inconsistent Padding: Frameworks can pad differently for the same kernel size and stride (e.g., PyTorch vs. Keras), which leads to misaligned feature maps.

- Channel Ordering: Differences in default tensor formats (channels_first vs. channels_last) will break model layers if not correctly handled.

- Parameter Mismatches: Epsilon values in normalization layers and the assignment of non-trainable parameters (like batch statistics) must be meticulously checked.
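The padding pitfall can be made concrete with a small calculation. The sketch below is illustrative only: the helper names `tf_same_padding` and `symmetric_output_size` are hypothetical, and it assumes the TensorFlow/Keras "same" rule (any odd padding pixel goes at the end of the axis) versus a single symmetric padding value of the kind PyTorch's convolution layers accept.

```python
import math
from typing import Tuple


def tf_same_padding(in_size: int, kernel: int, stride: int) -> Tuple[int, int]:
    """Padding (before, after) along one axis under the TensorFlow 'SAME' rule."""
    out_size = math.ceil(in_size / stride)
    total = max((out_size - 1) * stride + kernel - in_size, 0)
    before = total // 2
    # When the total is odd, the extra pixel is placed after the data.
    return before, total - before


def symmetric_output_size(in_size: int, kernel: int, stride: int, pad: int) -> int:
    """Output length when the same padding is applied on both sides."""
    return (in_size + 2 * pad - kernel) // stride + 1


# Compare a few common layer configurations on a 224-pixel axis.
for kernel, stride in [(3, 1), (3, 2), (7, 2)]:
    print(kernel, stride, tf_same_padding(224, kernel, stride))
```

For a 3x3 kernel with stride 2, the "same" rule pads (0, 1), while a symmetric padding of 1 gives the same output size of 112 but shifts every window by one pixel. Ported weights then see slightly different inputs, which is one reason to compare layer outputs numerically after conversion.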

Troubleshooting Guides

Guide 1: Diagnosing Knowledge Transfer Failures

The following diagram outlines a high-level workflow for diagnosing common knowledge transfer failures, applicable to both single- and multi-population scenarios.

Guide 2: Resolving Single-Population Transfer Issues

A frequent issue in single-population transfer is a model that fails to learn, characterized by high loss and stagnant validation accuracy.

Symptoms:

- Training and validation loss do not decrease, or do so very slowly [27].

- Validation accuracy remains near random chance.

Methodology:

- Freeze Feature Layers Correctly: Ensure the pre-trained base model's layers are set to non-trainable. However, pay special attention to BatchNormalization layers.

- Set BN Layers to Inference Mode: When building your model, pass training=False when calling the base model to ensure BatchNormalization layers use their stored moving statistics instead of batch statistics [24].

- Validate Data Preprocessing: Confirm that input data preprocessing (e.g., rescaling, normalization) exactly matches the protocol used by the original pre-trained model.

- Adjust Learning Rate: Use a small learning rate for the newly added, unfrozen classification layers (e.g., 0.001 or lower) to avoid distorting the pre-trained features initially.
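To see why the BatchNormalization advice matters, the following toy sketch mimics a single scalar normalization layer in plain Python. It is not Keras code: `ToyBatchNorm` is a hypothetical class, and the momentum and epsilon defaults are assumed to match the Keras defaults (0.99 and 0.001). It shows that a call in training mode overwrites part of the stored statistics, while a call in inference mode leaves them untouched.

```python
class ToyBatchNorm:
    """Scalar batch normalization with stored moving statistics (illustrative)."""

    def __init__(self, moving_mean, moving_var, momentum=0.99, epsilon=1e-3):
        self.moving_mean = moving_mean
        self.moving_var = moving_var
        self.momentum = momentum
        self.epsilon = epsilon

    def __call__(self, batch, training=False):
        if training:
            # Training mode normalizes with the current batch statistics...
            mean = sum(batch) / len(batch)
            var = sum((x - mean) ** 2 for x in batch) / len(batch)
            # ...and blends them into the stored (pre-trained) statistics.
            self.moving_mean = self.momentum * self.moving_mean + (1 - self.momentum) * mean
            self.moving_var = self.momentum * self.moving_var + (1 - self.momentum) * var
        else:
            # Inference mode only reads the stored statistics.
            mean, var = self.moving_mean, self.moving_var
        return [(x - mean) / (var + self.epsilon) ** 0.5 for x in batch]


bn = ToyBatchNorm(moving_mean=0.0, moving_var=1.0)
target_batch = [10.0, 12.0, 14.0]

bn(target_batch, training=False)
mean_after_inference = bn.moving_mean  # stored statistic is unchanged

bn(target_batch, training=True)
mean_after_training = bn.moving_mean  # stored statistic has drifted toward the batch mean
```

Each training-mode call moves the stored mean a little further toward the target data, so over many batches the pre-trained statistics are gradually replaced. That drift is what the training=False call on the base model is meant to prevent.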

Guide 3: Resolving Multi-Population Integration Failures

Multi-population models fail when knowledge from different sources conflicts or is integrated poorly.

Symptoms:

- Training is unstable, with large swings in loss.

- The model performs worse than any single-source model.

- Convergence is significantly slower than expected [26].

Methodology:

- Standardize Inputs and Representations: Ensure all input populations are preprocessed and normalized consistently. For neural networks, this may involve ensuring all inputs use the same tensor format (e.g., channels_last).

- Employ Weighted or Adaptive Fusion: Instead of simple concatenation or averaging, use learned mechanisms to combine features from different populations. An attention-based gating mechanism can allow the model to dynamically weight the importance of each source population.

- Staged Training (Curriculum Learning):

- Stage 1: Train the model on the most similar or easiest source population first to establish a good baseline.

- Stage 2: Progressively introduce data from other, more diverse populations, potentially fine-tuning the entire model or just the fusion layers with a reduced learning rate.

- Framework Alignment Protocol: When integrating models from different frameworks (e.g., TensorFlow and PyTorch):

- Option A: Use ONNX. Convert all models to the ONNX format as an intermediary, then load them into a unified framework for integration [23].

- Option B: Manual Weight Porting. Manually extract weights from the source model and assign them layer-by-layer to the corresponding model in the target framework. Rigorously test each layer's output [25].
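The weighted fusion step from the methodology above can be sketched in a few lines. This is a minimal illustration rather than a reference architecture: `attention_fuse` is a hypothetical helper, the query vector is fixed here although it would normally be a trainable parameter, and scaled dot-product scoring is only one of several possible gating choices.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_fuse(source_features, query):
    """Fuse one feature vector per source population using attention weights."""
    scale = math.sqrt(len(query))
    # Score each source by its scaled dot product with the query vector.
    scores = [sum(f * q for f, q in zip(feat, query)) / scale for feat in source_features]
    weights = softmax(scores)
    # The fused vector is the attention-weighted sum of the source vectors.
    fused = [
        sum(w * feat[i] for w, feat in zip(weights, source_features))
        for i in range(len(query))
    ]
    return fused, weights


# Three source populations, each summarized by a 2-dimensional feature vector.
features = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
query = [2.0, 0.0]  # assumed fixed here; learned in a real model
fused, weights = attention_fuse(features, query)
print(weights)
```

Because the weights are normalized, a source whose features disagree with the query is down-weighted rather than removed, which keeps the fusion differentiable while limiting the influence of a conflicting population.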

The following diagram illustrates a robust multi-population integration architecture that mitigates common failures.

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational "reagents" and their functions for building robust transfer models in EMTO research.

Table 2: Essential Research Reagents for Knowledge Transfer Experiments

| Reagent / Tool | Function / Purpose |
|---|---|
| Pre-trained Model Weights | Provides the foundational knowledge (features) from a source population, drastically reducing the need for large target datasets [22]. |
| Batch Normalization Layer | Stabilizes and accelerates deep network training; requires careful configuration during transfer (e.g., training=False) to preserve knowledge [24]. |
| GlobalAveragePooling2D | Reduces spatial dimensions, converting feature maps into a fixed-size vector for the classifier; often preferred over Flatten() in transfer learning [24]. |
| ONNX (Open Neural Network Exchange) | An intermediary format for model conversion, facilitating multi-population integration by translating models between different frameworks [23]. |
| Feature Fusion Layer (e.g., Attention Gate) | A critical component for multi-population models; dynamically learns the importance of features from different source populations for a given task. |
| Learning Rate Scheduler | Systematically adjusts the learning rate during training, which is crucial for fine-tuning pre-trained models without overwriting valuable pre-trained knowledge. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common causes of knowledge transfer failure in Evolutionary Multi-task Optimization (EMTO) for genetic data?

Knowledge transfer failures in EMTO occur primarily for three reasons [28]:

- Chaotic Task Matching: Blind or random selection of auxiliary tasks for knowledge sharing, leading to negative transfer between unrelated genetic tasks [28].

- Fixed Transfer Intensity: Using a pre-defined, static intensity for knowledge transfer across all task pairs, which fails to adapt to the varying relatedness between different genetic concepts [28].

- Domain Mismatch: Significant discrepancies in the search spaces or data distributions (e.g., between different genomic or phenotypic datasets) that are not properly aligned before transfer [29] [28].

FAQ 2: How can unified representation schemes improve the integration of genomic and clinical data?

Unified representation schemes address interoperability challenges by using language models to encode biomedical concepts based on their natural language descriptions, bypassing inconsistencies in clinical coding systems (like SNOMED CT or EFO) [29]. These frameworks construct a common embedding space where both biomedical concepts (e.g., diseases, medications) and genomic features (e.g., SNPs) can be aligned, enabling a more holistic biological understanding and facilitating data integration from heterogeneous sources like biobanks and GWAS catalogs [29].

FAQ 3: What practical steps can I take to mitigate negative transfer when working with multiple optimization tasks?

You can implement an adaptive EMTO solver with online inter-task learning. Key steps include [28]:

- Adaptive Task Selection: Use a mechanism like maximum mean discrepancy to reasonably select source tasks for each constitutive task.

- Dynamic Intensity Control: Employ a multi-armed bandit model to adaptively control the intensity of knowledge transfer for different task pairs based on online feedback.

- Domain Adaptation: Utilize models like a Restricted Boltzmann Machine to extract latent features and reduce the discrepancy between the search spaces of different tasks.
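As a rough illustration of the task selection step, the sketch below ranks candidate source tasks by the squared maximum mean discrepancy (MMD) between their populations and the target population. Several things here are assumptions rather than details from [28]: the populations are compared directly in a shared, normalized search space, the kernel is an RBF kernel with an arbitrary bandwidth, and the function and task names are hypothetical.

```python
import math
import random


def rbf_kernel(x, y, gamma=1.0):
    """Gaussian (RBF) kernel between two points."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * sq_dist)


def mmd_squared(pop_a, pop_b, gamma=1.0):
    """Biased estimate of the squared MMD between two sample sets."""
    def mean_kernel(xs, ys):
        return sum(rbf_kernel(x, y, gamma) for x in xs for y in ys) / (len(xs) * len(ys))

    return mean_kernel(pop_a, pop_a) + mean_kernel(pop_b, pop_b) - 2 * mean_kernel(pop_a, pop_b)


def rank_source_tasks(target_pop, candidate_pops, gamma=1.0):
    """Candidate task names sorted from smallest to largest MMD to the target."""
    scores = {name: mmd_squared(target_pop, pop, gamma) for name, pop in candidate_pops.items()}
    return sorted(scores, key=scores.get)


def sample_population(center, spread, size, rng):
    """Draw a synthetic population around a center point (demo data only)."""
    return [[rng.gauss(c, spread) for c in center] for _ in range(size)]


rng = random.Random(0)
target = sample_population((0.5, 0.5), 0.05, 30, rng)
candidates = {
    "related_task": sample_population((0.52, 0.5), 0.05, 30, rng),
    "unrelated_task": sample_population((0.9, 0.1), 0.05, 30, rng),
}
ranking = rank_source_tasks(target, candidates)
print(ranking)
```

In practice the top-ranked tasks would be kept as transfer sources and the rest excluded for that generation. The kernel bandwidth strongly affects the scores, so it is worth checking that the ranking is stable across a few values of `gamma`.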

Troubleshooting Guides

Problem: Poor Performance Due to Negative Knowledge Transfer

Symptoms

- The optimization algorithm converges slower when multiple tasks are solved together compared to solving them in isolation.

- The quality of solutions deteriorates after knowledge exchange between tasks.

Resolution Steps

- Diagnose Task Relatedness: Calculate the pairwise similarity or divergence (e.g., using maximum mean discrepancy) between the data distributions or search spaces of your tasks [28]. This helps identify which tasks are sufficiently related for beneficial knowledge exchange.

- Implement Adaptive Control: Integrate an online learning mechanism, such as a multi-armed bandit model, to dynamically adjust the intensity of knowledge transfer (the rmp parameter, i.e., the random mating probability) between task pairs based on how successful their past exchanges were [28].

- Verify with Ablation: Run an ablation study by disabling knowledge transfer for specific task pairs. If performance improves for a task when isolated, it confirms negative transfer, and you should re-evaluate your task selection criteria [29].
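One way to picture the adaptive control step is a bandit that chooses among a few candidate rmp values for a single task pair. The sketch below uses the standard UCB1 rule, which is an assumption: the source does not specify which bandit algorithm [28] uses. The class name, the candidate rmp levels, the binary reward (1 when a transferred offspring survives selection), and the simulated success probabilities are all hypothetical.

```python
import math
import random


class RmpBandit:
    """UCB1 bandit over discrete candidate rmp values for one task pair."""

    def __init__(self, rmp_levels=(0.1, 0.3, 0.5, 0.7)):
        self.rmp_levels = list(rmp_levels)
        self.counts = [0] * len(self.rmp_levels)
        self.mean_rewards = [0.0] * len(self.rmp_levels)

    def select(self):
        # Try every arm once before applying the UCB rule.
        for arm, count in enumerate(self.counts):
            if count == 0:
                return arm
        total = sum(self.counts)
        ucb = [
            mean + math.sqrt(2 * math.log(total) / count)
            for mean, count in zip(self.mean_rewards, self.counts)
        ]
        return max(range(len(ucb)), key=ucb.__getitem__)

    def update(self, arm, reward):
        # Incremental update of the running mean reward for this arm.
        self.counts[arm] += 1
        self.mean_rewards[arm] += (reward - self.mean_rewards[arm]) / self.counts[arm]


def simulate(success_probs, rounds=3000, seed=1):
    """Simulated feedback loop; a real solver would use observed transfer success."""
    rng = random.Random(seed)
    bandit = RmpBandit()
    for _ in range(rounds):
        arm = bandit.select()
        reward = 1.0 if rng.random() < success_probs[arm] else 0.0
        bandit.update(arm, reward)
    return bandit


bandit = simulate(success_probs=[0.05, 0.2, 0.8, 0.3])
best_arm = max(range(len(bandit.counts)), key=bandit.counts.__getitem__)
print(bandit.rmp_levels[best_arm], bandit.counts)
```

In a full solver there would be one such bandit per task pair, with `select` called before each generation and `update` called once the survival of transferred offspring is known.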

Symptoms

- Inability to map or align concepts from different coding systems (e.g., EFO from GWAS Catalog to SNOMED CT from a clinical biobank).

- The model fails to capture the distinct biological mechanisms behind clinically similar concepts.

Resolution Steps

- Adopt a Description-Based Encoding: Move away from relying solely on code mappings. Instead, use a language model to generate embeddings for biomedical concepts directly from their textual descriptions (e.g., "type 1 diabetes," "rs12345 SNP") [29].

- Employ Multi-Task Contrastive Learning: Fine-tune the language model using a multi-task learning paradigm. Train it on objectives that align biomedical concepts and genomic variants using data from diverse sources like GWAS summaries, eQTL data, and biomedical knowledge graphs [29].

- Enrich with Biological Context: Ensure your training data includes sources that provide genetic context (e.g., odds ratios from GWAS, correlation scores from eQTL) to infuse the embeddings with functional biological knowledge, helping to distinguish concepts with different underlying mechanisms [29].

Experimental Protocols & Data

Protocol 1: Implementing an Adaptive EMTO Solver

This protocol outlines the methodology for creating a solver that mitigates negative transfer [28].

Objective: To solve many-task optimization problems competitively by adaptively selecting auxiliary tasks, controlling transfer intensity, and reducing inter-task discrepancy.

Methodology:

- Task Selection: For each constitutive task, select potential auxiliary source tasks by measuring the divergence of their task-specific subspaces using a metric like maximum mean discrepancy [28].

- Intensity Control: Model the intensity of knowledge transfer for each task pair as a multi-armed bandit problem. The bandit algorithm learns to allocate appropriate transfer resources based on rewarding successful knowledge exchanges [28].

- Domain Adaptation: Use a Restricted Boltzmann Machine (RBM) to extract latent features from the populations of different tasks. This non-linear transformation helps narrow the discrepancy between tasks, making knowledge transfer more robust [28].

- Validation: Conduct experiments on numerical benchmarks and compare the performance against existing EMTO counterparts using standard performance metrics [28].

Protocol 2: Constructing a Unified Embedding Space for Genomic and Biomedical Concepts

This protocol details the procedure for training a framework like GENEREL [29].

Objective: To generate a unified representation (embedding) of single-nucleotide polymorphisms (SNPs) and biomedical concepts that captures their complex relationships.

Methodology:

- Data Collection: Gather data from multiple sources:

- Patient-level data from biobanks (e.g., UK Biobank).

- Summary-level genomic data from GWAS Catalogs and eQTL repositories.

- Biomedical knowledge graphs from sources like PrimeKG and UMLS [29].

- Model Architecture: Use a pre-trained language model (e.g., PubMedBERT, BioBERT) as the base encoder for biomedical concept descriptions [29].

- Multi-Task Training: Fine-tune the model using a weighted multi-task contrastive learning paradigm with three key tasks:

- Task 1 (Relatedness): Learn from biomedical knowledge graphs (PrimeKG) to understand concept relatedness.

- Task 2 (Alignment): Align embeddings of biomedical concepts and SNPs using data from GWAS, UK Biobank, and eQTL. Contrastive losses are adjusted based on effect sizes (e.g., odds ratios) or correlation scores.

- Task 3 (Synonyms): Identify synonymous concepts from the UMLS knowledge base [29].

- Evaluation:

- Evaluate biomedical concept embeddings on benchmarks derived from independent databases like DisGeNET and DrugBank.

- Evaluate SNP embeddings using independent GWAS results from cohorts like the Million Veteran Program (MVP) [29].
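The alignment objective in the multi-task training step can be illustrated with a generic weighted contrastive loss. The source does not give the exact loss used in [29], so this is only a sketch under stated assumptions: it uses an InfoNCE-style loss with in-batch negatives, weights each positive pair by the absolute log odds ratio, and relies on hypothetical helper names and toy 2-dimensional embeddings.

```python
import math


def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def weighted_info_nce(concepts, snps, pair_weights, temperature=0.1):
    """Weighted InfoNCE: snps[i] is the positive for concepts[i], the rest are negatives."""
    total, weight_sum = 0.0, 0.0
    for i, concept in enumerate(concepts):
        logits = [cosine(concept, snp) / temperature for snp in snps]
        peak = max(logits)
        # Stable log-sum-exp over all candidates in the batch.
        log_denominator = peak + math.log(sum(math.exp(l - peak) for l in logits))
        total += pair_weights[i] * (log_denominator - logits[i])
        weight_sum += pair_weights[i]
    return total / weight_sum


# Toy batch: two concept embeddings and two SNP embeddings.
concepts = [[1.0, 0.0], [0.0, 1.0]]
snps_aligned = [[1.0, 0.0], [0.0, 1.0]]
snps_swapped = [[0.0, 1.0], [1.0, 0.0]]

# Assumed weighting: stronger associations (larger |log OR|) count more.
odds_ratios = [1.8, 1.2]
weights = [abs(math.log(ratio)) for ratio in odds_ratios]

loss_aligned = weighted_info_nce(concepts, snps_aligned, weights)
loss_swapped = weighted_info_nce(concepts, snps_swapped, weights)
print(loss_aligned, loss_swapped)
```

The loss is small when each concept sits closest to its associated SNP and large when the pairing is swapped, and the weighting lets strongly associated pairs dominate the gradient. Correlation scores from eQTL data could play the same role as the odds ratios here.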

Table 1: Common Causes and Solutions for Knowledge Transfer Failure in EMTO

| Cause of Failure | Symptoms | Recommended Solution | Key Reference |

|---|---|---|---|

| Chaotic Task Matching | Slow convergence, solution deterioration | Adaptive task selection via maximum mean discrepancy | [28] |