Small-World and Scale-Free Architectures in Biological Networks: From Foundational Principles to Therapeutic Applications

Abstract

This article explores the prevalence, significance, and application of small-world and scale-free properties within biological networks. Tailored for researchers, scientists, and drug development professionals, it synthesizes foundational graph theory with cutting-edge methodological advances. We examine how high clustering and short path lengths (small-world) and hub-dominated, power-law degree distributions (scale-free) shape the robustness and dynamics of systems from gene regulation to protein-protein interactions. The content critically addresses ongoing debates, such as the empirical rarity of strongly scale-free networks, and presents state-of-the-art computational tools for network inference and analysis. Furthermore, it highlights practical applications in identifying essential genes, understanding disease mechanisms, and pioneering network-based drug repurposing strategies, ultimately providing a comprehensive resource for leveraging network science in biomedical research.

Unraveling the Blueprint: Core Principles of Small-World and Scale-Free Networks in Biology

The study of complex networks has provided a powerful framework for understanding the structure and function of diverse biological systems. From the intricate wiring of neuronal networks to the sophisticated interactions between proteins and genes, network science offers mathematical tools to decode biological complexity. Two architectural paradigms have proven particularly influential in this domain: small-world networks, characterized by high local clustering and short global path lengths, and scale-free networks, defined by a power-law degree distribution that gives rise to highly connected hubs. These topological patterns are not merely abstract mathematical concepts; they have profound implications for the robustness, dynamics, and functional capabilities of biological systems [1] [2] [3].

The significance of these network architectures extends directly to pharmaceutical research and drug development. Understanding whether a biological network exhibits small-world or scale-free properties can inform therapeutic strategies, particularly in identifying potential drug targets. For instance, in scale-free networks, hub nodes often represent critical control points whose disruption could significantly impact the entire system, whereas small-world organization supports both specialized processing in clustered regions and efficient information transfer across the network [4] [5]. This technical guide provides researchers with a comprehensive framework for distinguishing these architectural pillars, complete with methodological protocols for empirical analysis and theoretical foundations for interpreting results in biological contexts.

Small-World Networks: Definition and Properties

Core Architectural Principles

Small-world networks represent a unique topological class that combines elements of both regular lattices and random graphs. Formally, a small-world network exhibits two defining characteristics: a high clustering coefficient and a short average path length [1] [6]. The clustering coefficient (C) quantifies the degree to which nodes in a network tend to cluster together, calculated as the probability that two neighbors of a common node are also connected to each other. Mathematically, for a node with degree ki, its local clustering coefficient is given by Ci = (2ei)/(ki(ki-1)), where ei represents the number of edges between the ki neighbors of node i [7]. The network's overall clustering coefficient is the average of all local Ci values.
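As a concrete illustration of the formula above, the following minimal Python sketch computes Ci and the network average C for an undirected graph stored as a dictionary of neighbor sets. The function names, the toy graph, and the convention of assigning Ci = 0 to nodes with fewer than two neighbors are assumptions made for this example, not part of the cited definition.

```python
from itertools import combinations


def local_clustering(adj, node):
    """Ci = 2*ei / (ki*(ki-1)); set to 0 when ki < 2 (an assumed convention)."""
    neighbors = adj[node]
    k = len(neighbors)
    if k < 2:
        return 0.0
    # e_i: number of edges that link two neighbors of `node` to each other
    e = sum(1 for u, v in combinations(neighbors, 2) if v in adj[u])
    return 2.0 * e / (k * (k - 1))


def average_clustering(adj):
    """Network-level C: the mean of the local coefficients over all nodes."""
    return sum(local_clustering(adj, n) for n in adj) / len(adj)


# Hypothetical toy graph: a triangle (a, b, c) plus a pendant node d attached to c.
toy = {
    "a": {"b", "c"},
    "b": {"a", "c"},
    "c": {"a", "b", "d"},
    "d": {"c"},
}

for name in sorted(toy):
    print(name, local_clustering(toy, name))
print("C =", average_clustering(toy))
```

Node c illustrates the effect of a non-clustered neighbor: only one of the three possible links among its neighbors exists, so its coefficient drops to one third.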

The second defining property, short average path length (L), measures the typical separation between any two nodes in the network. It is calculated as the mean of the shortest geodesic distances between all possible node pairs: L = (1/(N(N-1)))∑dij, where dij is the shortest distance between nodes i and j, and N is the total number of nodes [7]. This combination of high clustering and short path length creates a network architecture that supports both specialized local processing and efficient global integration—properties highly desirable for biological systems ranging from neural circuits to metabolic networks [8] [3].
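The path-length formula can be evaluated with one breadth-first search per node. The sketch below is a minimal version for unweighted, undirected graphs; the function names and the choice to raise an error for disconnected graphs (where some dij is infinite) are assumptions of this example.

```python
from collections import deque


def bfs_distances(adj, source):
    """Shortest hop counts from `source` to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def average_path_length(adj):
    """L = (1 / (N(N-1))) * sum of d_ij over all ordered pairs i != j."""
    n = len(adj)
    total = 0
    for source in adj:
        dist = bfs_distances(adj, source)
        if len(dist) != n:
            raise ValueError("graph is disconnected; L is undefined")
        total += sum(dist.values())
    return total / (n * (n - 1))


# Hypothetical toy graph: a simple path 0 - 1 - 2 - 3.
path4 = {0: {1}, 1: {0, 2}, 2: {1, 3}, 3: {2}}
print("L =", average_path_length(path4))
```

For real biological networks, which are often fragmented, a common workaround is to restrict the calculation to the largest connected component; that choice should be reported alongside the result.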

Quantitative Metrics and Detection

Accurately identifying small-world properties requires rigorous quantification. The most prevalent metric has been the small-world coefficient (σ), introduced by Humphries and colleagues, which compares a network's clustering (C) and path length (L) to those of an equivalent random network (with measures Crand and Lrand): σ = (C/Crand)/(L/Lrand) [1] [7]. A network is typically classified as small-world if σ > 1, indicating C ≫ Crand and L ≈ Lrand. However, this approach has limitations, as comparing clustering to a random network doesn't fully capture the lattice-like local structure of true small-world networks [7].

To address this limitation, a revised metric ω has been proposed that compares clustering to an equivalent lattice network (Clatt) while maintaining the comparison of path length to a random network: ω = (Lrand/L) - (C/Clatt) [7]. This metric ranges between -1 and 1, with values near zero indicating small-world structure, positive values signaling more random characteristics (L ≈ Lrand and C ≪ Clatt), and negative values suggesting more regular, lattice-like properties (L ≫ Lrand and C ≈ Clatt). This more nuanced quantification better aligns with the original conceptualization of small-world networks as existing in an intermediate regime between regular and random topologies [7].
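Both coefficients reduce to simple arithmetic once the observed and null-model quantities are available. The sketch below assumes those five values have already been measured; the function names are hypothetical and the numbers are invented purely for illustration.

```python
def small_world_sigma(c, l, c_rand, l_rand):
    """sigma = (C / Crand) / (L / Lrand)."""
    return (c / c_rand) / (l / l_rand)


def small_world_omega(c, l, c_latt, l_rand):
    """omega = (Lrand / L) - (C / Clatt)."""
    return (l_rand / l) - (c / c_latt)


# Invented example values (not from any data set).
C, L = 0.45, 3.2          # observed network
C_RAND, L_RAND = 0.05, 2.9  # mean over an ensemble of random networks
C_LATT = 0.60             # equivalent lattice network

sigma = small_world_sigma(C, L, C_RAND, L_RAND)
omega = small_world_omega(C, L, C_LATT, L_RAND)
print(f"sigma = {sigma:.3f}, omega = {omega:.3f}")
```

Because σ divides by Crand, which is often very small in sparse networks, its value can be large and sensitive to network size, whereas ω stays bounded.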

Table 1: Key Metrics for Characterizing Small-World Networks

| Metric | Formula | Interpretation | Threshold for Small-Worldness |
|---|---|---|---|
| Clustering Coefficient (C) | C = (1/N)∑Ci, where Ci = (2ei)/(ki(ki-1)) | Measures local connectivity density | Significantly higher than random network |
| Average Path Length (L) | L = (1/(N(N-1)))∑dij | Measures global integration efficiency | Similar to random network |
| Small-World Coefficient (σ) | σ = (C/Crand)/(L/Lrand) | Ratio of clustering to path length relative to random | σ > 1 |
| Omega (ω) | ω = (Lrand/L) - (C/Clatt) | Compares clustering to lattice, path to random | ω ≈ 0 |

Scale-Free Networks: Definition and Properties

Core Architectural Principles

Scale-free networks constitute another fundamental architectural class distinguished by a particular pattern of connectivity. The defining feature of a scale-free network is a degree distribution that follows a power law for large degrees: P(k) ~ k^(-γ), where P(k) represents the probability that a randomly selected node has degree k, and γ is the power-law exponent [2] [9]. This mathematical relationship means that while most nodes in the network have relatively few connections, a few nodes (called "hubs") have an exceptionally large number of connections. The term "scale-free" originates from the fact that power laws are the only functional form that remains unchanged (up to a multiplicative factor) under rescaling of the independent variable, satisfying P(ak) = a^(-γ)P(k) [9].
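The scale-invariance identity P(ak) = a^(-γ)P(k) is easy to verify numerically. The short sketch below does so for an unnormalized power law and contrasts it with an exponential, which lacks this property; the parameter values and function names are illustrative assumptions.

```python
import math


def power_law(k, gamma, c=1.0):
    """Unnormalized power law P(k) = c * k**(-gamma)."""
    return c * k ** (-gamma)


def exponential(k, scale):
    """Exponential decay exp(-k / scale), used only as a contrast."""
    return math.exp(-k / scale)


gamma, a = 2.5, 3.0  # illustrative values, not taken from any data set
ks = (1.0, 10.0, 100.0)

power_ratios = [power_law(a * k, gamma) / power_law(k, gamma) for k in ks]
exp_ratios = [exponential(a * k, 10.0) / exponential(k, 10.0) for k in ks]

# Each power-law ratio equals a**(-gamma), whatever the value of k ...
print(power_ratios, a ** (-gamma))
# ... whereas the exponential ratio changes with k, revealing a characteristic scale.
print(exp_ratios)
```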

The topological implications of this degree distribution are profound. In contrast to random networks where the maximum degree scales logarithmically with network size (kmax ~ log N), in scale-free networks the maximum degree scales polynomially (kmax ~ N^(1/(γ-1))) [2]. This results in extreme degree heterogeneity, with a measure κ = 〈k²〉/〈k〉 that increases with network size for 2 < γ < 3, unlike random networks where κ is largely independent of size. This structural organization has significant consequences for network robustness and vulnerability—scale-free networks are typically resilient to random failures (deletion of random nodes) but highly vulnerable to targeted attacks on hubs [1] [5].
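To make the heterogeneity measure and the hub scaling concrete, the sketch below computes κ from a degree sequence and evaluates the polynomial scaling of the largest degree. The prefactor `k_min` in the second function is an assumption added so the result has the units of a degree; the text above only gives the proportionality. The degree sequences are toy inputs.

```python
def degree_heterogeneity(degrees):
    """kappa = <k^2> / <k> for a list of node degrees."""
    n = len(degrees)
    mean_k = sum(degrees) / n
    mean_k2 = sum(k * k for k in degrees) / n
    return mean_k2 / mean_k


def expected_max_degree(n, gamma, k_min=1.0):
    """k_max ~ k_min * N**(1 / (gamma - 1)); the k_min prefactor is assumed."""
    return k_min * n ** (1.0 / (gamma - 1.0))


regular = [3, 3, 3, 3]   # every node has the same degree
star = [8] + [1] * 8     # one hub connected to eight leaves

print("kappa (regular):", degree_heterogeneity(regular))
print("kappa (star):   ", degree_heterogeneity(star))

# Hub growth with network size for an illustrative exponent of 2.5.
for size in (10**3, 10**4, 10**5):
    print(size, round(expected_max_degree(size, gamma=2.5), 1))
```

In the regular sequence κ simply equals the common degree, while the star's single hub raises κ well above the mean degree of roughly 1.8.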

Generative Mechanisms and Biological Relevance

The most widely recognized mechanism for generating scale-free networks is the preferential attachment model introduced by Barabási and Albert [2] [5]. This model incorporates two key processes: growth (the network expands over time by adding new nodes) and preferential attachment (new nodes tend to connect to existing nodes with probability proportional to their current degree). The "rich-get-richer" dynamics that emerge from this process naturally produce power-law degree distributions with an exponent γ = 3 [5]. In biological contexts, variations of preferential attachment may operate through mechanisms like gene duplication and divergence, where duplicated genes initially share interaction partners but gradually diverge to establish new connections [5].
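The growth and preferential attachment steps can be sketched in a few lines of standard-library Python. This is a simplified illustration rather than a reference implementation: the function name, the seeding with `m` unconnected initial nodes, and the parameter values are assumptions of this example.

```python
import random
from collections import Counter


def barabasi_albert(n, m, seed=None):
    """Grow an undirected graph to n nodes; each new node attaches to m
    distinct existing nodes chosen with probability proportional to degree."""
    if not 1 <= m < n:
        raise ValueError("need 1 <= m < n")
    rng = random.Random(seed)
    edges = []
    repeated = []             # each node appears once per incident edge
    targets = list(range(m))  # the first new node links to the m initial nodes
    for new in range(m, n):
        edges.extend((new, t) for t in targets)
        repeated.extend(targets)
        repeated.extend([new] * m)
        # Sampling uniformly from `repeated` is sampling proportionally to degree.
        chosen = set()
        while len(chosen) < m:
            chosen.add(rng.choice(repeated))
        targets = list(chosen)
    return edges


edges = barabasi_albert(2000, 2, seed=42)
degree = Counter(node for edge in edges for node in edge)
mean_degree = sum(degree.values()) / len(degree)
print("mean degree:", round(mean_degree, 2), "max degree:", max(degree.values()))
```

The gap between the mean and the maximum degree reflects the "rich-get-richer" dynamics: early nodes accumulate links throughout the growth process.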

Despite the theoretical appeal of scale-free networks, their empirical prevalence in biological systems requires careful statistical validation. A comprehensive study analyzing nearly 1,000 real-world networks found that strongly scale-free structure is actually rare, with log-normal distributions fitting most networks as well as or better than power laws [10]. The same study reported that while social networks are at best weakly scale-free, a handful of biological and technological networks do appear strongly scale-free. These findings highlight the importance of rigorous statistical testing rather than presuming scale-free architecture in biological networks [10].

Table 2: Key Metrics for Characterizing Scale-Free Networks

| Metric | Formula | Interpretation | Biological Significance |
|---|---|---|---|
| Power-Law Exponent (γ) | P(k) ∝ k^(-γ) | Determines hub dominance | 2 < γ < 3: Infinite variance; governs robustness |
| Degree Heterogeneity (κ) | κ = 〈k²〉/〈k〉 | Measures inequality in connections | Increases with network size in scale-free networks |
| Maximum Degree Scaling | kmax ~ N^(1/(γ-1)) | How the largest hub grows with system size | Polynomial growth enables persistent hubs |
| Hub Dominance | Proportion of edges connected to top 5% of nodes | Measures centralization around hubs | High values indicate functional specialization |
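The hub dominance entry in Table 2 does not prescribe an exact procedure. One possible reading, sketched below, is the fraction of edges with at least one endpoint among the top 5% of nodes ranked by degree. The function name, the rounding of the top set up to at least one node, and the toy graphs are assumptions of this example.

```python
import math
from collections import Counter


def hub_dominance(edges, top_fraction=0.05):
    """Fraction of edges touching the highest-degree `top_fraction` of nodes.
    Nodes without any edge are not represented in `edges` and are ignored."""
    degree = Counter()
    for u, v in edges:
        degree[u] += 1
        degree[v] += 1
    n_top = max(1, math.ceil(top_fraction * len(degree)))
    top = {node for node, _ in degree.most_common(n_top)}
    touching = sum(1 for u, v in edges if u in top or v in top)
    return touching / len(edges)


# Hypothetical toy graphs: a star (one hub, 20 leaves) and a 20-node ring.
star_edges = [("hub", f"leaf{i}") for i in range(20)]
ring_edges = [(i, (i + 1) % 20) for i in range(20)]

print("star:", hub_dominance(star_edges))
print("ring:", hub_dominance(ring_edges))
```

The star concentrates every edge on its hub, while in the ring any single node touches only two of the twenty edges.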

Comparative Analysis: Architectural and Functional Implications

Structural and Dynamic Differences

The architectural differences between small-world and scale-free networks translate into distinct functional capabilities and dynamic behaviors. Small-world topology, with its combination of high clustering and short path lengths, facilitates both local specialization and global integration [7]. This organization is particularly beneficial for systems that require modular processing of information while maintaining efficient communication between modules. In contrast, scale-free architecture, with its hub-dominated connectivity, enables efficient broadcasting from central nodes but creates potential vulnerabilities and bottlenecks at these critical hubs [1] [2].

These structural differences have profound implications for system dynamics. In small-world networks, the high clustering supports the formation of functional modules and stable local dynamics, while the short path lengths facilitate rapid synchronization and information propagation across the entire system [6]. Scale-free networks exhibit distinct dynamic behaviors shaped by their hub-centric organization—processes like information spread, contagion, and synchronization are predominantly governed by the highly connected hubs [2] [5]. The table below summarizes key comparative properties of these two network architectures.

Table 3: Comparative Properties of Small-World vs. Scale-Free Networks

| Property | Small-World Networks | Scale-Free Networks |
|---|---|---|
| Defining Feature | High clustering, short path length | Power-law degree distribution |
| Hub Presence | Limited; degrees are relatively homogeneous | Extreme; a few high-degree hubs |
| Robustness to Random Failure | Moderate | High |
| Robustness to Targeted Attacks | Moderate | Low (vulnerable to hub removal) |
| Clustering Distribution | Uniformly high | Decreases with node degree |
| Typical Generative Mechanism | Watts-Strogatz rewiring | Preferential attachment |
| Biological Examples | Neural connectivity, protein conformations | Protein-protein interactions, metabolic networks |

Biological Manifestations and Research Applications

In biological contexts, both architectural patterns appear across different scales of organization. Small-world properties have been identified in chemical library networks used for drug discovery, where the topological structure influences compound diversity and screening efficiency [4]. Similarly, brain networks consistently exhibit small-world architecture, balancing functional specialization (supported by high clustering) with integrated processing (enabled by short path lengths) [7] [3]. Scale-free organization has been reported in protein-protein interaction networks and metabolic networks, where hub molecules play disproportionately important roles in cellular functions [8] [3].

The distinction between these architectures has direct implications for pharmaceutical research and therapeutic development. In target identification, recognizing whether a disease-related network follows small-world or scale-free principles informs intervention strategies. For scale-free networks, targeting hub proteins may offer potent effects but risks systemic toxicity, while targeting peripheral nodes in small-world modules might enable more precise therapeutic effects with fewer off-target consequences [4] [5]. Understanding these architectural principles provides a conceptual framework for network pharmacology and polypharmacology, where multi-target interventions are designed based on the topological organization of biological systems.

Experimental Protocols for Network Analysis

Protocol for Identifying Small-World Properties

Objective: To quantitatively determine whether a biological network exhibits small-world architecture.

Materials and Software: Network data (adjacency matrix or edge list), programming environment (Python/R), graph analysis libraries (NetworkX, igraph), statistical computing packages.

Procedure:

- Network Construction: Represent biological entities as nodes and their interactions as edges. For weighted networks, preserve weight information.

- Compute Basic Metrics: Calculate the network's clustering coefficient (C) and average shortest path length (L).

- Generate Equivalent Random Networks: Create an ensemble of Erdős-Rényi random networks with the same number of nodes and edges as the biological network. Calculate Crand and Lrand as mean values across this ensemble.

- Generate Equivalent Lattice Networks: Create regular lattice networks with equivalent connectivity constraints for comparison.

- Calculate Small-World Metrics: Compute both σ = (C/Crand)/(L/Lrand) and ω = (Lrand/L) - (C/Clatt).

- Statistical Assessment: Classify the network as having small-world properties if σ > 1. For ω, values near zero (typically |ω| < 0.1) indicate small-world structure.

Interpretation Guidelines: A genuine small-world network should demonstrate both significantly higher clustering than random networks (C/Crand ≫ 1) and similar path length (L/Lrand ≈ 1). The ω metric provides more reliable discrimination, with values between -0.1 and 0.1 strongly suggesting small-world organization [7].
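The two null-model steps of the protocol can be sketched with the standard library alone. The generators below build an Erdős-Rényi graph with a fixed number of nodes and edges and a ring lattice in which every node links to its k nearest neighbors; treating the ring lattice as the "equivalent lattice" is an assumption of this sketch, as are the function names and sizes.

```python
import random


def random_graph_gnm(n, m, seed=None):
    """Erdős-Rényi G(n, m): m distinct undirected edges chosen uniformly."""
    if m > n * (n - 1) // 2:
        raise ValueError("too many edges for a simple graph")
    rng = random.Random(seed)
    edges = set()
    while len(edges) < m:
        u, v = rng.sample(range(n), 2)  # two distinct nodes, so no self-loops
        edges.add((min(u, v), max(u, v)))
    return edges


def ring_lattice(n, k):
    """Ring in which each node is linked to its k nearest neighbors (k even)."""
    if k % 2 or not 0 < k < n:
        raise ValueError("k must be even and satisfy 0 < k < n")
    edges = set()
    for i in range(n):
        for step in range(1, k // 2 + 1):
            j = (i + step) % n
            edges.add((min(i, j), max(i, j)))
    return edges


# Ensemble of random graphs and one lattice matched to a hypothetical
# observed network with 50 nodes and 100 edges (mean degree 4).
ensemble = [random_graph_gnm(50, 100, seed=s) for s in range(10)]
lattice = ring_lattice(50, 4)
print(len(ensemble), "random graphs;", len(lattice), "lattice edges")
```

Sparse random graphs are frequently disconnected, in which case Lrand has to be computed on the largest component or the affected ensemble members discarded; whichever choice is made should be applied consistently to the observed network.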

Protocol for Identifying Scale-Free Properties

Objective: To rigorously test whether a biological network exhibits scale-free architecture through statistical analysis of its degree distribution.

Materials and Software: Network data, maximum-likelihood estimation tools, power-law fitting packages (powerlaw in Python), statistical comparison frameworks.

Procedure:

- Degree Distribution Extraction: Calculate the degree k for each node and construct the probability distribution P(k).

- Visual Inspection: Plot P(k) versus k on log-log scales as an initial assessment. A straight line suggests potential power-law behavior.

- Parameter Estimation: Using maximum-likelihood methods, estimate the power-law exponent γ and the lower bound k_min where the power-law behavior begins.

- Goodness-of-Fit Test: Compute the Kolmogorov-Smirnov distance between the data and the fitted power law, then obtain a p-value by comparing this distance with the distances found for synthetic data sets drawn from the fitted model. A p-value > 0.1 suggests the power law is a plausible hypothesis.

- Alternative Distribution Comparison: Compare the power-law fit to alternative distributions (exponential, log-normal, stretched exponential) using likelihood ratio tests. Compute normalized log-likelihood ratios to determine the best-fitting model.

- Hub Identification: Identify hubs as nodes with degree significantly higher than the network average (typically more than two standard deviations above the mean degree).
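The hub identification step can be sketched as a simple threshold on the degree sequence. The function name, the use of the population standard deviation, and the toy degrees below are assumptions of this example.

```python
from statistics import mean, pstdev


def find_hubs(degree, n_sd=2.0):
    """Return nodes whose degree exceeds mean + n_sd * standard deviation."""
    values = list(degree.values())
    threshold = mean(values) + n_sd * pstdev(values)
    return sorted(node for node, k in degree.items() if k > threshold)


# Hypothetical degrees: one highly connected node among nine peripheral ones.
toy_degrees = {"hub": 30}
toy_degrees.update({f"n{i}": 2 for i in range(9)})

hubs = find_hubs(toy_degrees)
print(hubs)
```

In strongly heavy-tailed networks the standard deviation is itself dominated by the hubs, so a percentile cutoff is sometimes used instead; the threshold rule should be stated explicitly in any analysis.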

Interpretation Guidelines: A network can be considered scale-free if: (1) the power-law distribution is statistically plausible (p > 0.1), (2) it fits better than alternative distributions, and (3) the estimated exponent γ typically falls between 2 and 3 for real-world networks [10]. Recent research emphasizes the importance of comparing multiple distributions, as log-normal distributions often fit degree distributions as well or better than power laws [10].
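The parameter-estimation step can be illustrated with the widely used closed-form approximation to the discrete maximum-likelihood estimator, γ ≈ 1 + n / Σ ln(ki / (kmin - 1/2)), which is reasonably accurate when kmin is about 6 or larger. The sketch below covers only this step for a fixed, user-supplied kmin; selecting kmin, the goodness-of-fit p-value, and the likelihood ratio comparisons are left to dedicated tools such as the `powerlaw` package named above. The sampler exists only to produce synthetic test data, and all names are hypothetical.

```python
import math
import random


def fit_power_law_exponent(degrees, k_min):
    """Approximate discrete MLE of gamma for the tail k >= k_min.
    Returns (gamma_hat, approximate standard error, tail size)."""
    tail = [k for k in degrees if k >= k_min]
    n = len(tail)
    if n == 0:
        raise ValueError("no degrees at or above k_min")
    log_sum = sum(math.log(k / (k_min - 0.5)) for k in tail)
    gamma_hat = 1.0 + n / log_sum
    std_err = (gamma_hat - 1.0) / math.sqrt(n)
    return gamma_hat, std_err, n


def sample_power_law_degrees(n, gamma, k_min, seed=None):
    """Synthetic integer degrees: continuous power-law draws starting at
    k_min - 1/2, rounded to the nearest integer (an approximation)."""
    rng = random.Random(seed)
    draws = []
    for _ in range(n):
        u = rng.random()
        x = (k_min - 0.5) * (1.0 - u) ** (-1.0 / (gamma - 1.0))
        draws.append(math.floor(x + 0.5))
    return draws


synthetic = sample_power_law_degrees(20000, gamma=2.5, k_min=6, seed=0)
gamma_hat, std_err, n_tail = fit_power_law_exponent(synthetic, k_min=6)
print(f"gamma_hat = {gamma_hat:.3f} +/- {std_err:.3f} (n = {n_tail})")
```

A least-squares line through the log-log plot is not a substitute for this estimator, since regression on binned frequencies is known to give biased exponents.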

Visualization and Analytical Workflows

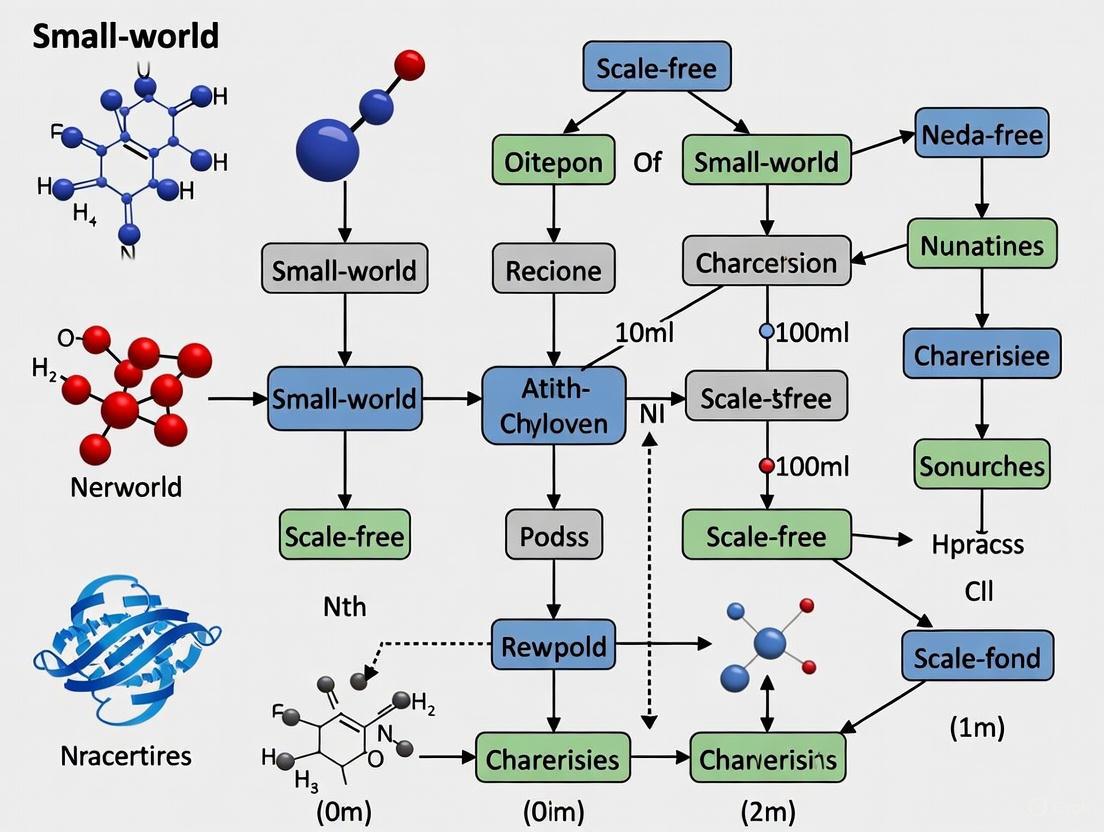

To support rigorous analysis of network architectures, standardized visualization and analytical workflows are essential. The following diagram illustrates the key decision points and analytical steps for classifying biological networks based on their topological properties:

Network Architecture Classification Workflow

The Researcher's Toolkit: Essential Methodologies

Table 4: Research Reagent Solutions for Network Analysis

| Tool/Reagent | Function/Purpose | Application Context |
|---|---|---|
| Adjacency Matrix | Mathematical representation of network connectivity | Fundamental data structure for all network analyses |
| Maximum-Likelihood Estimation (MLE) | Statistical method for parameter estimation | Accurate fitting of power-law exponents to degree distributions |
| Erdős-Rényi Random Network Model | Null model with random connectivity | Baseline comparison for small-world and scale-free properties |
| Watts-Strogatz Model | Generative model with tunable randomness | Producing small-world networks for controlled experiments |
| Barabási-Albert Model | Generative model with preferential attachment | Producing scale-free networks for controlled experiments |
| Spectral Graph Analysis | Study of network eigenvalues | Complementary method for network classification [3] |
| Likelihood Ratio Tests | Statistical comparison of distribution fits | Determining whether power-law fits better than alternatives [10] |
| Kolmogorov-Smirnov Test | Goodness-of-fit measurement | Assessing plausibility of power-law distribution [10] |

The architectural distinction between small-world and scale-free networks provides fundamental insights into the organization of biological systems. While small-world architecture emphasizes a balance between local clustering and global efficiency, scale-free organization highlights the functional significance of highly connected hubs. Rather than existing as mutually exclusive categories, these architectural principles represent complementary perspectives for understanding biological complexity, with many real-world networks exhibiting features of both or falling along a continuum between these idealized types [8].

For researchers in biological networks and drug development, recognizing these architectural patterns has practical implications. Small-world properties suggest systems optimized for both specialized processing and integrated function, while scale-free properties indicate systems whose robustness and vulnerability are heavily dependent on hub elements. As statistical methodologies continue to advance, particularly with more rigorous testing of power-law hypotheses and improved small-world metrics [10] [7], our understanding of these architectural principles will further refine their application in biological contexts. The ongoing challenge lies not in forcing biological networks into rigid architectural categories, but in developing nuanced understandings of how their specific topological features support biological function and how these might be therapeutically modulated.

The small-world network is a fundamental concept in network science, describing systems that are highly clustered locally yet have short global path lengths, meaning that any two nodes can be connected via a surprisingly small number of steps [1]. This phenomenon, famously known as "six degrees of separation" in social networks, is also a prevalent architectural feature in biological systems. In the context of gene regulation, small-world properties are increasingly recognized as a crucial structural determinant of the robustness, dynamics, and functional capabilities of transcriptional networks.

This architectural principle helps reconcile seemingly contradictory views of gene regulation. On one hand, experiments like cellular reprogramming show that cell fate can be switched by overexpressing a few "master regulator" transcription factors, suggesting a relatively simple, hierarchical control structure. On the other hand, Genome-Wide Association Studies (GWAS) reveal that complex phenotypic traits are often influenced by hundreds of genetic loci, each with a small effect, indicating a highly distributed and complex regulatory system [11]. The small-world model provides a framework to unify these perspectives, suggesting that local actions can have system-wide consequences due to the network's short characteristic path lengths.

Structural Evidence for Small-World Topology in Transcriptional Networks

A small-world network is formally characterized by two key metrics when compared to an equivalent random graph: a significantly higher clustering coefficient and a comparably short average path length [1]. Evidence from multiple studies confirms that gene regulatory networks (GRNs) exhibit these features.

- High Clustering: Genes within GRNs tend to form tightly interconnected groups or modules. This high clustering arises from the cooperative action of transcription factors on multiple target genes and the presence of recurring network motifs, such as feed-forward loops (FFLs) and bi-fans (BFs) [12]. These motifs act as functional building blocks and contribute directly to the local density of connections.

- Short Path Lengths: Despite this local clustering, the average number of steps (or interactions) between any two randomly chosen genes in a GRN is typically low. This is facilitated by highly connected "hub" genes that act as bridges between different regulatory modules, ensuring efficient communication across the network.

A key driver of small-world structure in GRNs is the three-dimensional (3D) organization of the genome. Simulations using polymer models demonstrate that spatial proximity and clustering of transcription factors and their target sites, driven by a "bridging-induced attraction," naturally lead to a small-world topology where the transcriptional activity of each genomic region can subtly affect almost all others [11]. This results in a pan-genomic regulatory network that is inherently complex and interconnected.

Table 1: Key Properties of Small-World Transcriptional Networks

| Property | Description | Functional Implication in GRNs |
|---|---|---|
| High Clustering Coefficient | Measures the degree to which nodes tend to cluster together; the probability that two neighbors of a node are connected themselves. | Enables coordinated regulation of gene modules and functional redundancy. |
| Short Characteristic Path Length | The average shortest distance between any two nodes in the network is small. | Allows for rapid propagation of regulatory signals and systemic responses to perturbations. |
| Emergence of Hubs | Presence of nodes with a very high number of connections. | Hubs integrate and distribute regulatory information; their perturbation can have large effects. |
| Modularity | The presence of groups of highly interconnected nodes. | Supports specialized cellular functions and modular organization of genetic programs. |

A Quantitative Framework: Metrics and Experimental Validation

Quantifying the small-world nature of a network requires precise metrics. The small-world coefficient \( \sigma \) and the small-world measure \( \omega \) are two common quantitative tools used for this purpose [1].

The small-world coefficient is defined as \( \sigma = \frac{C/C_r}{L/L_r} \), where a value of \( \sigma > 1 \) indicates small-world structure. Here, \( C \) and \( L \) are the observed clustering coefficient and characteristic path length of the network, while \( C_r \) and \( L_r \) are the same metrics for an equivalent random network.

Experimental validation of small-world topology often leverages high-throughput data. For instance, in protein-protein interaction networks, the Mutual Clustering Coefficient (Cvw) has been used to assess the reliability of individual interactions based on how well they fit the small-world pattern of neighborhood cohesiveness [13]. This principle can be extended to transcriptional networks by analyzing interaction data from techniques like ChIP-seq and Perturb-seq.
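The text does not spell out how the mutual clustering coefficient is computed, and several normalizations are in use. The sketch below assumes a Jaccard-style form, the number of shared neighbors of v and w divided by the number of nodes adjacent to either, purely to illustrate the idea of scoring an edge by the cohesiveness of its neighborhood. The function name and the toy network are hypothetical.

```python
def mutual_clustering_jaccard(adj, v, w):
    """Assumed Jaccard form: |N(v) & N(w)| / |N(v) | N(w)|."""
    union = adj[v] | adj[w]
    if not union:
        return 0.0
    return len(adj[v] & adj[w]) / len(union)


# Hypothetical interaction network: v and w share two partners (a and b),
# whereas c and d interact only with each other.
ppi = {
    "v": {"w", "a", "b"},
    "w": {"v", "a", "b"},
    "a": {"v", "w"},
    "b": {"v", "w"},
    "c": {"d"},
    "d": {"c"},
}

print("v-w:", mutual_clustering_jaccard(ppi, "v", "w"))
print("c-d:", mutual_clustering_jaccard(ppi, "c", "d"))
```

Under this scoring the v-w interaction, embedded in a cohesive neighborhood, ranks above the isolated c-d interaction, which matches the intuition that edges supported by shared partners are more likely to be genuine.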

Table 2: Key Experimental and Computational Methods for Studying Small-World GRNs

| Method/Reagent | Function in Network Analysis |
|---|---|
| Chromatin Conformation Capture (3C) | Maps the 3D spatial organization of chromatin, providing data on physical interactions between genomic regions. |
| Perturb-seq (CRISPR-screening) | Enables high-throughput measurement of transcriptional consequences of single-gene perturbations, revealing causal regulatory relationships. |
| Polymer Modeling & Brownian Dynamics | In silico simulation of chromosome folding and transcription factor binding to study emergent network properties. |
| Mutual Clustering Coefficient (Cvw) | A topological metric to assess the local cohesiveness around an edge, indicating its confidence in a small-world context. |

Experimental Protocols and Workflows

Protocol 1: Inferring Small-World Properties from 3D Genome Data

This protocol outlines how to derive evidence for small-world regulatory networks from chromatin conformation data and polymer models, based on the methodology described in [11].

- System Representation: Model a chromatin fragment or a whole chromosome as a polymer chain. Each bead in the chain represents a segment of DNA (e.g., 3 kbp).

- Define Transcription Units (TUs): Designate a set of beads as TUs, either at random or according to genome annotation. These beads contain binding sites for transcription factors (TFs).

- Simulate TF Binding and 3D Dynamics: Perform 3D Brownian dynamics simulations. TFs are represented as spheres that bind reversibly and multivalently to TU beads with strong affinity and to non-TU beads with weak affinity.

- Define Transcriptional Activity: A TU is considered "transcribed" when a TF is bound to it. The transcriptional activity of a TU is calculated as the fraction of simulation time it is bound.

- Analyze Emergent Clustering: Observe the spontaneous formation of TF/TU clusters due to "bridging-induced attraction," a hallmark of high local clustering.

- Construct and Analyze the Network: Create a network where nodes are TUs. Connect two nodes if their co-transcription or spatial co-localization exceeds a random expectation. Calculate the network's clustering coefficient \( C \) and average shortest path length \( L \).

- Compare to Null Models: Generate equivalent lattice (\( C_{\ell}, L_{\ell} \)) and random (\( C_r, L_r \)) networks. Compute the small-world measure \( \omega = \frac{L_r}{L} - \frac{C}{C_{\ell}} \). A value of \( \omega \) close to zero indicates small-world structure.
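The activity and network-construction steps of this protocol can be sketched on toy data. The example below assumes each TU is described by a binary trace (1 when a TF is bound in a given simulation frame) and links two TUs when they are bound simultaneously more often than expected if they were independent. That independence baseline, the `margin` parameter, the function names, and the traces are assumptions of this sketch and are not taken from [11].

```python
from itertools import combinations


def transcriptional_activity(trace):
    """Fraction of simulation frames in which the TU is bound."""
    return sum(trace) / len(trace)


def cotranscription_network(traces, margin=1.0):
    """Link TUs whose joint-bound fraction exceeds margin * p_a * p_b,
    where p_a * p_b is the expectation under independence (assumed baseline)."""
    activity = {tu: transcriptional_activity(t) for tu, t in traces.items()}
    edges = set()
    for a, b in combinations(sorted(traces), 2):
        frames = len(traces[a])
        both = sum(x and y for x, y in zip(traces[a], traces[b])) / frames
        if both > margin * activity[a] * activity[b]:
            edges.add((a, b))
    return activity, edges


# Invented binding traces for three TUs over eight frames.
traces = {
    "tu1": [1, 1, 0, 0, 1, 0, 1, 0],
    "tu2": [1, 1, 0, 0, 1, 0, 0, 0],
    "tu3": [0, 0, 1, 1, 0, 1, 0, 1],
}

activity, edges = cotranscription_network(traces)
print(activity)
print(edges)
```

The resulting edge set can then be passed to whatever routines are used to compute the clustering coefficient and path length, followed by the null-model comparison.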

Workflow for 3D Polymer Modeling of GRNs

Protocol 2: Generating Realistic GRN Structures with Small-World Properties

This protocol describes a computational algorithm for generating synthetic GRNs with properties like those observed biologically, incorporating insights from small-world and scale-free theory [14] [12].

- Initialize Network: Begin with a small, connected directed network (e.g., a simple motif like a downlink).

- Define Growth Unit: Instead of adding single nodes, use a small transcriptional motif (e.g., a downlink or feed-forward loop) as the fundamental unit for network growth.

- Preferential Attachment: The probability that a new motif attaches to an existing node in the substrate network is proportional to the node's current out-degree and/or in-degree (a linear or non-linear attachment kernel).

- Node Integration: Attach the new motif to the existing network. Nodes from the incoming motif can be new or can be merged with existing nodes in the substrate network, based on the attachment probabilities.

- Iterate: Repeat the growth-unit, preferential-attachment, and node-integration steps until the network reaches the desired size.

- Validate Topology: Analyze the final network for key properties: sparsity, power-law-like degree distribution, high clustering (small-worldness), and enrichment for specific transcriptional motifs.
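The growth loop described in this protocol can be sketched in a heavily simplified form. The version below uses a feed-forward loop (X→Y, X→Z, Y→Z) as the growth unit, merges only the motif's X node with an existing node, and uses a linear attachment kernel on total degree. These simplifications and all names are assumptions of this sketch; the algorithm in [14] [12] allows richer merging rules and kernels.

```python
import random


def grow_grn_by_ffl(n_motifs, seed=None):
    """Grow a directed network by repeatedly attaching feed-forward loops.
    Returns (set of directed edges, dict of total degree per node)."""
    rng = random.Random(seed)
    edges = {(0, 1), (0, 2), (1, 2)}  # seed network: a single FFL
    degree = {0: 2, 1: 2, 2: 2}       # in-degree plus out-degree
    next_id = 3
    for _ in range(n_motifs):
        nodes = list(degree)
        # Linear kernel: attachment probability proportional to total degree.
        anchor = rng.choices(nodes, weights=[degree[n] for n in nodes])[0]
        y, z = next_id, next_id + 1
        next_id += 2
        # The anchor plays the role of X; Y and Z are new nodes.
        for u, v in ((anchor, y), (anchor, z), (y, z)):
            edges.add((u, v))
            degree[u] = degree.get(u, 0) + 1
            degree[v] = degree.get(v, 0) + 1
    return edges, degree


grn_edges, grn_degree = grow_grn_by_ffl(500, seed=1)
print(len(grn_degree), "nodes,", len(grn_edges), "edges,",
      "max degree", max(grn_degree.values()))
```

Because every added edge belongs to a closed triangle, clustering is high by construction, and the network stays sparse with three edges added per two new nodes. Whether the degree distribution is heavy-tailed enough for a given application has to be checked in the validation step rather than assumed.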

Workflow for Motif-Based GRN Generation

Functional and Dynamical Consequences

The small-world architecture of GRNs has profound implications for their function and dynamic behavior.

- Robustness and Fragility: Small-world GRNs that contain hubs are generally robust to random perturbations: the deletion of a random, typically low-connected node has little effect on the network's overall connectivity and path length. This property buffers the system against random mutations [1]. However, this robustness comes with a vulnerability, because such networks are fragile to targeted attacks on hubs. The perturbation of a highly connected regulator can lead to catastrophic failure of the network, which may explain the pathogenicity of mutations in certain key transcription factors.

- Perturbation Propagation: The short average path length means that the effect of a perturbation, such as a gene knockout, can propagate widely and rapidly through the network. However, high clustering and modularity tend to dampen the effects of these perturbations, confining them to some extent and preventing total system failure [14]. This results in a distribution of perturbation effects where most genes have limited impact, while a few hub perturbations have large, system-wide consequences.

- Emergence of Complex Dynamics: Small-world structure, combined with biochemical realities like time delays in transcription and translation, can give rise to rich dynamical behaviors. Recent research has shown that even simple two-node GRN models with delays can exhibit extreme events, such as occasional, large-amplitude bursts in protein concentration, via routes like interior crisis-induced intermittency [15]. These dynamics are theorized to have potential links to abnormal physiological processes and disease states.

Discussion: Reconciling Scale-Free and Small-World Views

The discourse on network topology in biology has often intertwined the concepts of small-world and scale-free networks. A scale-free network is characterized by a degree distribution that follows a power law, leading to a few highly connected hubs and many poorly connected nodes. While often discussed together, it is crucial to recognize that these are distinct properties.

However, the universality of strict scale-free structure in real-world networks is controversial. A large-scale, rigorous statistical analysis of nearly 1,000 networks found that strongly scale-free structure is empirically rare, with log-normal distributions fitting many networks as well as or better than power laws [10]. Social networks, which share some organizational principles with biological networks, were found to be at best weakly scale-free.

This finding reframes our understanding of GRN architecture. The small-world property may be a more fundamental and universal feature of transcriptional networks than a strict power-law degree distribution. The small-world model—with its emphasis on high clustering, short path lengths, and the presence of some hub genes—accommodates a range of degree distributions and provides a robust explanation for the observed dynamics and functional capabilities of GRNs without relying on a strict scale-free hypothesis.

The small-world phenomenon provides a powerful and empirically supported model for understanding the architecture and function of gene regulatory networks. Evidence from 3D genome organization, network analysis of perturbation data, and computational modeling consistently points to a system architecture characterized by localized clustering and global efficiency. This topology facilitates coordinated gene expression, confers robustness against random failures, and allows for the rapid, widespread propagation of regulatory signals. It also provides a framework for reconciling the localized action of master transcription factors with the distributed complexity revealed by GWAS. As a fundamental organizational principle, the small-world structure deeply informs our understanding of cellular function, the phenotypic impact of genetic variation, and the dynamic underpinnings of disease.

Protein-protein interaction (PPI) networks model the intricate physical contacts between proteins, thereby underpinning the functional organization of cells. These networks are essential for understanding a vast array of cellular processes, including signal transduction, metabolic regulation, and the molecular mechanisms underlying disease states [16]. The physical interaction of proteins, which leads to their compilation into large, densely connected networks, is a fundamental subject of investigation in systems biology [17]. The study of these networks facilitates the understanding of pathogenic mechanisms that trigger the onset and progression of complex diseases. Consequently, this knowledge is being translated into the development of effective diagnostic and therapeutic strategies [17]. Within the broader context of biological networks research, PPI networks exhibit distinctive architectural properties. Two of the most significant are the small-world property, characterized by shorter than expected path lengths and high clustering coefficients, and the scale-free property, which is defined by a specific pattern of connectivity [17]. This whitepaper will delve into the prevalence and profound implications of scale-free topology in PPI networks, providing a technical guide for researchers, scientists, and drug development professionals.

Defining Scale-Free Topology and Its Prevalence in PPI Networks

Fundamental Principles of Scale-Free Networks

Scale-free networks are a class of complex networks whose topology is not random but follows a precise mathematical pattern. They were first formally introduced by Barabási and Albert [17]. The defining feature of a scale-free network is that the degree distribution, the probability P(k) that a randomly selected node has exactly k connections, follows a power law. This is expressed as $P(k) \sim k^{-\gamma}$, where γ is a constant exponent typically ranging between 2 and 3 for real-world networks [17]. This mathematical principle leads to a network structure that is highly heterogeneous. Unlike random graphs, where most nodes have a comparable number of links, a power-law distribution implies that the vast majority of nodes have a very low degree, while a small number of nodes, known as hubs, possess a very high number of connections [17]. It was subsequently suggested that PPI networks obey this power-law distribution, a finding that has been confirmed in PPI networks from multiple species [17].

Quantitative Evidence in Biological Networks

The scale-free nature of PPI networks is not merely a theoretical construct but is supported by empirical data from numerous studies. Research has discovered that regardless of species, known protein networks are scale-free, meaning that a few hub proteins account for a huge proportion of the interactions while most proteins possess only a small fraction [17]. The power-law nature of these networks has significant consequences for their robustness, vulnerability, and functional organization. Recent machine learning studies continue to account for this "scale-free property of biological networks," noting that in such networks, a few nodes have many connections while most have very few [18]. The following table summarizes key topological characteristics of PPI networks, including those indicative of scale-free structure.

Table 1: Key Topological Indices and Distributions for Characterizing PPI Networks

| Term | Definition | Implication for Scale-Free Networks |

|---|---|---|

| Node (Vertex) | Each protein in the network [17]. | The fundamental unit of the network. |

| Edge (Link) | A physical or functional interaction between proteins [17]. | Represents a binary relationship. |

| Degree (k) | The number of connections a node has [17]. | The central measure for power-law distribution. |

| Hub | A "high-degree" node with a disproportionate number of links [17]. | A defining feature of scale-free networks. |

| Power Law | $P(k) \sim k^{-\gamma}$, the probability distribution of node degrees [17]. | The mathematical signature of scale-free topology. |

| Betweenness Centrality | Measures how often a node occurs on the shortest paths between other nodes [17]. | Hubs often have high betweenness. |

| Heterogeneity | The coefficient of variation of the degree distribution [17]. | High in scale-free networks due to hub presence. |
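As a minimal sketch of how the indices in Table 1 can be computed from an edge list, the following Python example derives node degrees, the empirical degree distribution P(k), heterogeneity (coefficient of variation of the degrees), and a list of hubs. The function name `degree_summary`, the `hub_fraction` cutoff, and the toy protein names are illustrative assumptions; the source does not prescribe a hub threshold.

```python
from collections import Counter
from statistics import mean, pstdev

def degree_summary(edges, hub_fraction=0.1):
    """Return degrees, P(k), heterogeneity, and hubs for an undirected edge list."""
    # Deduplicate undirected edges and drop self-loops.
    unique = {tuple(sorted(e)) for e in edges if e[0] != e[1]}
    degree = Counter()
    for u, v in unique:
        degree[u] += 1
        degree[v] += 1
    ks = list(degree.values())
    n = len(ks)
    # Empirical degree distribution P(k): fraction of nodes with degree k.
    p_k = {k: count / n for k, count in sorted(Counter(ks).items())}
    # Heterogeneity as the coefficient of variation of the degrees.
    heterogeneity = pstdev(ks) / mean(ks)
    # Assumed hub rule: the top `hub_fraction` of nodes by degree (at least one).
    n_hubs = max(1, int(n * hub_fraction))
    hubs = [node for node, _ in degree.most_common(n_hubs)]
    return degree, p_k, heterogeneity, hubs

# Toy network: one hub with six partners plus two extra links (placeholder names).
edges = [("HUB", f"P{i}") for i in range(1, 7)] + [("P1", "P2"), ("P6", "P7")]
degree, p_k, heterogeneity, hubs = degree_summary(edges)
print(hubs, p_k, round(heterogeneity, 2))
```

In practice the hub cutoff is a modeling choice; a percentile rule is used here only because it is easy to state.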

Methodologies for Mapping and Analyzing PPI Networks

Experimental Workflows for Interactome Mapping

The systematic analysis of PPI networks relies on diverse experimental methods to identify interactions. These can be broadly categorized into biophysical methods, which provide detailed structural information, and high-throughput methods, which enable large-scale mapping [17]. Selecting the appropriate method depends on the research goal, the nature of the PPI (e.g., stable vs. transient), and practical constraints like time and cost [19].

Table 2: Key Experimental Methods for Identifying Protein-Protein Interactions

| Method | Principle | Key Strengths | Key Limitations |

|---|---|---|---|

| Yeast Two-Hybrid (Y2H) | A transcription factor is split into its DNA-binding domain (BD) and activation domain (AD), each fused to a candidate protein. Interaction between the candidates reconstitutes the factor and activates a reporter gene [17] [19]. | Simple, established, low-cost, scalable, effective for binary interactions in an in vivo environment [19]. | High false-positive rate; requires nuclear localization; proteins may lack necessary post-translational modifications (PTMs) in yeast; over-expression can cause non-specific interactions [19]. |

| Affinity Purification Mass Spectrometry (AP-MS) | A bait protein is purified using a tag or antibody, and co-purifying proteins are identified via mass spectrometry [20]. | Identifies stable protein complexes; can detect interactions for low-abundance proteins when optimized [20]. | Less suitable for transient interactions; scaling up to hundreds of targets is challenging [20]. |

| Membrane Yeast Two-Hybrid (MYTH) | A split-ubiquitin system where interaction between bait (membrane protein) and prey releases a transcription factor [19]. | Designed specifically for the analysis of membrane protein interactions. | Shares some limitations with Y2H regarding the yeast cellular environment. |

| Biophysical Methods (X-ray, NMR) | Direct structural analysis of protein complexes [17]. | Provide atomic-level detail about binding interfaces and mechanisms. | Expensive, laborious, and low-throughput [17]. |

The following diagram illustrates a generic workflow for AP-MS, a cornerstone method for mapping stable complexes.

AP-MS Workflow for PPI Mapping

Computational and Emerging Analytical Methods

Computational methods are crucial for predicting PPIs and analyzing network topology. With the growth of available interaction data, the focus has shifted to understanding the networks underlying human disease [17]. Machine learning (ML) techniques are extensively employed, but their evaluation must carefully account for scale-free topology, as standard random negative sampling can introduce severe biases. Models may learn to predict interactions based on node degree rather than biological features, leading to over-optimistic performance estimates [18]. To mitigate this, strategies like the Degree Distribution Balanced (DDB) sampling have been proposed [18].
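The degree bias described above can be illustrated with a small sketch. The example below is not the published DDB procedure from [18]; it is a simplified, assumed variant that draws negative (non-interacting) pairs with endpoint probabilities proportional to each node's degree in the positive set, so that hubs appear in negatives about as often as in positives. The function name `degree_matched_negatives` and the toy edge list are hypothetical.

```python
import random
from collections import Counter

def degree_matched_negatives(positive_edges, seed=0, max_tries=100_000):
    """Sample as many non-edges as positives, with endpoints drawn by positive degree."""
    rng = random.Random(seed)
    positives = {frozenset(e) for e in positive_edges}
    # Each node appears once per positive edge it belongs to, so a uniform
    # draw from this list selects a node with probability proportional to degree.
    stubs = [node for edge in positives for node in edge]
    negatives = set()
    tries = 0
    while len(negatives) < len(positives) and tries < max_tries:
        tries += 1
        u, v = rng.choice(stubs), rng.choice(stubs)
        pair = frozenset((u, v))
        if u != v and pair not in positives:
            negatives.add(pair)
    return sorted(tuple(sorted(pair)) for pair in negatives)

# Toy interactome: node 0 is a hub with ten partners, followed by a chain 10-11-...-19.
positives = [(0, i) for i in range(1, 11)] + [(i, i + 1) for i in range(10, 19)]
negatives = degree_matched_negatives(positives)

pos_degree = Counter(node for edge in positives for node in edge)
neg_degree = Counter(node for edge in negatives for node in edge)
print("hub degree in positives:", pos_degree[0], "in negatives:", neg_degree[0])
```

With uniform random negative sampling, the hub would rarely appear in the negative set, which is what lets a model separate classes by degree alone.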

Network embedding is another powerful approach that transforms networks into a low-dimensional space while preserving key topological properties. Recent advances include integrating overlapping clustering algorithms, such as Hierarchical Link Clustering (HLC), before embedding to better represent the overlapping community structure of biological systems [21]. On the frontier of computational research, quantum computing algorithms are being explored for analyzing biological networks. For instance, quantum interior-point methods have been demonstrated on metabolic modeling problems, suggesting a future potential for tackling the computational burden of massive biological networks as hardware matures [22].

Implications of Scale-Free Topology for Network Function and Dysfunction

Robustness, Vulnerability, and Disease Pathogenesis

The scale-free architecture of PPI networks has profound functional consequences. A key property is robustness against random attacks. Because the vast majority of nodes have few links, the random failure of a node is unlikely to severely disrupt the network. However, this comes with a critical vulnerability: sensitivity to targeted attacks on hubs [17]. The removal of a major hub can fragment the network, leading to catastrophic failure. This topological principle translates directly to human disease. Diseases are often caused by mutations that affect binding interfaces or lead to biochemically dysfunctional changes in proteins [17]. Given their central position, hubs are critical for cellular function, and mutations in hub proteins are frequently associated with severe pathologies, including cancer, autoimmune disorders, and neurodegenerative diseases [17] [20]. The dynamics of gene expression integrated with the static PPI network reveal a "just-in-time" model for dynamic complex assembly, where the expression of a single key hub protein can activate an entire complex at a specific time [17].

Applications in Drug Discovery and Therapeutics

The understanding of scale-free topology directly informs modern drug discovery. The traditional paradigm of targeting single proteins is shifting towards a network-based approach, where the PPI network itself becomes the therapeutic target for complex multi-genic diseases [17]. Hubs represent attractive but challenging drug targets. Disrupting a central hub could be highly efficacious but may also lead to toxicity due to its pleiotropic roles. An alternative strategy is to target less central nodes that are critical within specific disease modules [16]. Furthermore, network pharmacology utilizes PPI networks to identify multiple targets for complex diseases and to understand the mechanism of multi-component drugs [23]. Advanced computational frameworks, such as TCoCPIn, now integrate graph neural networks with topological metrics to predict chemical-protein interactions, thereby identifying novel therapeutic opportunities by analyzing the topology of interaction networks [23].

Table 3: Key Research Reagent Solutions for PPI Network Studies

| Reagent / Resource | Function and Application | Relevant Methods |

|---|---|---|

| Tandem Affinity Purification (TAP) Tag | Allows two-step purification of protein complexes under native conditions, reducing non-specific binding [20]. | AP-MS |

| Sequential Peptide Affinity (SPA) Tag | Similar to TAP, uses a different set of tags for high-efficiency purification of complexes for MS [20]. | AP-MS |

| Gateway ORFeome Libraries | Comprehensive collections of open reading frames (ORFs) cloned into a universal system, enabling rapid transfer into various expression vectors for Y2H or AP-MS [19]. | Y2H, AP-MS |

| Stable Isotope Labeling (e.g., SILAC) | Allows for accurate quantitative comparison of protein abundance between samples using mass spectrometry [20]. | Quantitative AP-MS |

| STRING Database | A database of known and predicted PPIs, including direct and indirect associations, crucial for network analysis and validation [21]. | Bioinformatics Analysis |

| BioGRID Database | An open-access repository of curated physical and genetic interactions from major model organisms and humans [16]. | Bioinformatics Analysis |

The evidence overwhelmingly confirms the prevalence of scale-free topology in protein-protein interaction networks across species. This architectural principle is not a mere curiosity but a fundamental determinant of cellular organization, with deep implications for understanding biological function, disease mechanisms, and therapeutic development. The inherent robustness and vulnerability of this topology explain why certain proteins are critical and why their dysfunction leads to disease. Moving forward, the field is embracing more dynamic and context-specific models of the interactome, integrating other data types such as gene expression and structural information [16]. While challenges remain—such as the inherent bias in machine learning models trained on scale-free networks and the incomplete coverage of current interactome maps—the network perspective is firmly established [18]. The continued development of experimental techniques, sophisticated computational tools, and a deeper topological understanding promises to accelerate the translation of PPI network biology into tangible clinical benefits.

Biological networks, ranging from molecular interactions within a cell to species relationships within an ecosystem, exhibit distinct architectural patterns that underpin their functionality. Among the most studied of these patterns are scale-free and small-world topologies, which are argued to contribute significantly to key biological advantages: robustness, efficient information transfer, and specialization. This whitepaper synthesizes current research on these network properties, examining the evidence for their prevalence and their mechanistic roles in generating system-level behaviors. We present a critical analysis of the claim that scale-free structures are universal, discuss quantitative frameworks for measuring specialization, and detail experimental and computational methodologies for probing network robustness. The content is framed for researchers, scientists, and drug development professionals, with a focus on providing a technical foundation for understanding how network architecture influences biological function and resilience.

The representation of biological systems as networks—where nodes represent entities like proteins, genes, or species, and edges represent interactions, regulations, or trophic relationships—has revolutionized systems biology. This framework allows for the application of graph theory and statistical physics to decipher the organizational principles of life. Two conceptual paradigms have been particularly influential: the scale-free network and the small-world network.

A network is considered scale-free if the probability that a node has degree k (i.e., connections to k other nodes) follows a power-law distribution, Pr(k) ∝ k^(-α), where α is the scaling exponent [10]. This structure implies that the network lacks a characteristic scale for node connectivity, resulting in a few highly connected hubs and a majority of sparsely connected nodes. This topology is often associated with mechanisms like preferential attachment, where new nodes are more likely to link to already well-connected nodes. The small-world property, on the other hand, is characterized by short average path lengths between any two nodes (facilitating rapid propagation of signals or effects) and high clustering (nodes tend to form tightly knit groups). These properties are not mutually exclusive; a network can be both scale-free and small-world.

The core thesis of this whitepaper is that these architectural features are not merely topological curiosities but are fundamental to understanding the evolutionary advantages embedded in biological systems. Robustness—the ability to maintain function despite perturbations—is often linked to the presence of hubs and redundant pathways. Efficient information transfer is a direct consequence of short path lengths and is critical in signaling networks and neural circuits. Specialization, the division of biological labor, is enabled by a heterogeneous network structure where nodes can adopt distinct functional roles. The following sections will dissect the evidence for these relationships, providing a quantitative and methodological guide for researchers.

The Scale-Free Hypothesis: A Critical Examination

The claim that scale-free networks are ubiquitous in biology has been a central tenet of network science. The canonical definition requires that the degree distribution of the network follows a power law, a pattern with profound implications for network dynamics and resilience [10]. For instance, the theoretical synchronizability of oscillators on a network and the spread of information can be critically dependent on the power-law exponent α [10].

Current Evidence and Prevalence

Recent large-scale analyses, however, have challenged the universality of strongly scale-free structures. A seminal study testing nearly 1000 real-world networks—spanning social, biological, technological, transportation, and information domains—found that robust, strongly scale-free structure is empirically rare [10]. The study employed state-of-the-art statistical tools to fit power-law models and compare them to alternative distributions like the log-normal.

Table 1: Prevalence of Scale-Free Structure Across Network Domains [10]

| Network Domain | Prevalence of Strongly Scale-Free Structure | Commonly Observed Alternative Distribution |

|---|---|---|

| Social Networks | Weakly scale-free or non-scale-free | Log-normal |

| Biological Networks | A handful of strongly scale-free examples; most are not | Log-normal |

| Technological Networks | A handful of strongly scale-free examples | Log-normal |

| Information Networks | Mixed evidence | Log-normal |

| Transportation Networks | Rarely scale-free | Log-normal |

This analysis revealed that for most networks, log-normal distributions fit the degree data as well as, or better than, power laws [10]. This finding highlights the structural diversity of real-world networks and suggests that the scale-free hypothesis, in its strongest form, may not be as universal as once thought. This does not negate the value of the concept but rather emphasizes the need for careful statistical evaluation and for new theoretical explanations of these non-scale-free patterns.

Methodological Protocol for Identifying Scale-Free Topology

Accurately determining if an empirical network exhibits scale-free properties requires a rigorous statistical approach. The following protocol, based on the methods of Broido & Clauset (2019), should be followed [10].

- Data Preparation: Transform the raw network data (e.g., directed, weighted) into a simple, undirected graph. This step may generate multiple simple graphs from a single complex dataset, all of which should be tested.

- Power-Law Fitting: For the degree distribution of the simple graph, use maximum-likelihood methods to estimate the scaling parameter α and the lower bound k_min above which the power-law tail is hypothesized to hold.

- Goodness-of-Fit Test: Perform a hypothesis test (using a method like the Kolmogorov-Smirnov test) to evaluate the statistical plausibility of the power-law model. A high p-value (e.g., > 0.1) indicates the model is a plausible fit for the data.

- Model Comparison: Compare the power-law model to alternative heavy-tailed distributions, such as the log-normal, exponential, and stretched exponential, using a normalized likelihood-ratio test [10]. This step determines if the power law is the best among competing models.

This protocol formalizes the varying definitions of "scale-free" and provides a severe test of its empirical evidence, moving beyond visual inspection of log-log plots, which is notoriously unreliable.
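The fitting and goodness-of-fit steps can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: it uses the continuous maximum-likelihood estimator with a fixed lower bound `x_min` and reports only the Kolmogorov-Smirnov distance. The full protocol also selects k_min from the data, treats degrees as discrete, derives a p-value by bootstrapping, and compares against alternative distributions, all of which are omitted here. Function names and the synthetic data are hypothetical.

```python
import math
import random

def fit_power_law_tail(values, x_min):
    """Continuous maximum-likelihood estimate of alpha for values >= x_min."""
    tail = [x for x in values if x >= x_min]
    n = len(tail)
    alpha = 1.0 + n / sum(math.log(x / x_min) for x in tail)
    return alpha, tail

def ks_distance(tail, x_min, alpha):
    """Largest gap between the empirical CDF and the fitted power-law CDF."""
    ordered = sorted(tail)
    n = len(ordered)
    worst = 0.0
    for i, x in enumerate(ordered):
        model_cdf = 1.0 - (x / x_min) ** (1.0 - alpha)
        # Compare with the empirical CDF just before and just after x.
        worst = max(worst, abs(model_cdf - i / n), abs(model_cdf - (i + 1) / n))
    return worst

# Synthetic heavy-tailed data drawn by inverse-transform sampling (not real degrees).
rng = random.Random(42)
true_alpha, x_min = 2.5, 1.0
samples = [x_min * (1.0 - rng.random()) ** (-1.0 / (true_alpha - 1.0))
           for _ in range(5000)]

alpha_hat, tail = fit_power_law_tail(samples, x_min)
distance = ks_distance(tail, x_min, alpha_hat)
print(f"alpha = {alpha_hat:.2f}, KS distance = {distance:.3f}")
```

A small KS distance alone does not establish scale-free structure; the model-comparison step is what rules out a log-normal or other heavy-tailed alternative.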

Robustness in Biological Systems

Biological robustness is defined as the ability of a system to maintain specific functions or traits when exposed to a set of perturbations [24]. This property is observed at all organizational levels, from protein folding and gene expression to metabolic flux, physiological homeostasis, and ecosystem resilience.

Paradigms and Mechanisms

Robustness is often stabilized by specific system architectures and mechanisms. Perturbations can be mutational (e.g., gene knockouts) or environmental (e.g., temperature fluctuations), and research indicates that similar mechanisms often stabilize the system against different perturbation types [24]. System sensitivities to perturbations frequently display a long-tailed distribution, meaning that while the system is robust to most perturbations, it is highly sensitive to a few critical ones [24].

Key system properties associated with robustness include:

- Modularity: Decomposable subsystems that can fail independently.

- Bow-tie Architectures: A structure with diverse inputs, a core central process, and diverse outputs, promoting stability and efficient resource use.

- Degeneracy: The ability of structurally distinct elements to perform the same function, providing functional redundancy.

- Redundancy: The presence of duplicate elements that can substitute for one another.

These topological features often contribute to robustness through two primary underlying mechanisms: functional redundancy (multiple components can perform the same task) and response diversity (components respond differently to perturbations, regulated by competitive exclusion and cooperative facilitation) [24].

Experimental Analysis of Robustness

Experimental techniques for evaluating robustness are diverse, ranging from in silico simulations to in vivo genetic perturbations.

Table 2: Research Reagent Solutions for Probing Biological Robustness

| Reagent / Material | Function in Robustness Research |

|---|---|

| Gene Knockout Libraries (e.g., in E. coli, yeast) | Systematically tests mutational robustness by removing individual genes and assessing the impact on cell fitness and function. |

| Modified Regulatory Networks (e.g., promoter-swap constructs) | Evaluates robustness of cellular fitness to changes in genetic regulation, as demonstrated in E. coli [24]. |

| Chemical Perturbagens (e.g., kinase inhibitors) | Probes environmental robustness by disrupting specific signaling pathways and observing functional outputs. |

| Computational Network Models | In silico platforms to simulate thousands of perturbations (e.g., parameter variations, node deletions) that are infeasible to test experimentally. |

A notable experimental study by Isalan et al. (2008) constructed 598 modified regulatory networks in E. coli by recombining promoters with different transcription factor genes [24]. They found that 95% of these networks were tolerated by the bacteria, demonstrating a high degree of inherent robustness, and that some variants even provided a selective advantage in new environments. This highlights the link between robustness and evolvability.

Quantifying Specialization in Interaction Networks

Specialization describes the degree to which a species or molecule interacts with a specific, limited set of partners. In network terms, it represents the breadth of a node's interaction niche.

From Qualitative to Quantitative Indices

Traditional measures of specialization, such as the number of links (degree) or network-level connectance (the proportion of possible interactions that are realized), are qualitative as they ignore interaction frequencies [25]. These measures are also strongly dependent on network size, making cross-comparisons difficult. To overcome these limitations, information-theoretic indices that incorporate interaction strengths have been developed.

Table 3: Metrics for Quantifying Specialization in Networks

| Metric | Level | Formula / Principle | Interpretation |

|---|---|---|---|

| Number of Links (L) | Species | L = count of partners | A simple, qualitative measure of niche breadth. Ignores interaction strength. |

| Connectance (C) | Network | C = I / (r * c) [I=links, r=rows, c=columns] | The fraction of all possible interactions that occur. A qualitative, network-wide measure. |

| Specialization Index (d') | Species | Derived from Shannon entropy; compares an observed interaction distribution to a null model that assumes proportional interaction by availability [25]. | Ranges from 0 (generalist) to 1 (perfect specialist). Accounts for interaction frequencies and partner availability. |

| Network Specialization (H₂') | Network | Also derived from Shannon entropy; characterizes the degree of interaction partitioning between two parties across the entire network [25]. | Ranges from 0 (no specialization) to 1 (perfect specialization). Useful for comparisons across networks of different sizes. |

The species-level index d' is calculated by comparing the observed distribution of a species' interactions across its partners to a null model where interactions are distributed in proportion to the general availability of each partner [25]. This controls for partner availability: a species that uses partners in proportion to their abundance scores as a generalist even if most of its interactions fall on a few common partners, whereas a species that interacts disproportionately with rare partners scores as more specialized. The network-level index H₂' is mathematically related to the species-level d' and provides a robust, size-independent measure for comparing different ecological or molecular interaction webs [25].
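To make the contrast between qualitative and frequency-based measures concrete, the sketch below computes connectance and, for a single row, the unnormalized divergence between its observed interaction distribution and partner availability. This divergence is the quantity that underlies d'; the rescaling to the 0 to 1 range is deliberately left out, so the values are not d' itself. The matrix `web` and the function names are hypothetical.

```python
import math

def connectance(matrix):
    """Fraction of possible links that are realized: C = I / (r * c)."""
    rows, cols = len(matrix), len(matrix[0])
    links = sum(1 for row in matrix for a in row if a > 0)
    return links / (rows * cols)

def availability_divergence(matrix, i):
    """Divergence of row i's interaction shares from overall partner availability."""
    total = sum(sum(row) for row in matrix)
    row_total = sum(matrix[i])
    d = 0.0
    for j, a_ij in enumerate(matrix[i]):
        if a_ij == 0:
            continue  # a zero share contributes nothing to the sum
        p = a_ij / row_total                        # share of i's interactions with j
        q = sum(row[j] for row in matrix) / total   # partner j's share of all interactions
        d += p * math.log(p / q)
    return d

# Hypothetical interaction counts: rows are species (or proteins), columns are partners.
web = [
    [10, 10, 0],  # spreads interactions over the two common partners
    [0, 0, 5],    # interacts only with the rare partner
    [5, 5, 0],
]
print(connectance(web))
print([round(availability_divergence(web, i), 3) for i in range(len(web))])
```

Rows 0 and 2 differ in total interaction count but receive the same divergence, which illustrates why frequency-based indices are less sensitive to sampling intensity than raw link counts.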

Visualization of Network Properties and Analysis Workflows

Visual representations are crucial for understanding the relationships and workflows in network biology. The following diagrams illustrate key concepts.

Preferential Attachment Mechanism

This diagram illustrates the "rich-get-richer" process often used to explain the emergence of scale-free networks.

Diagram 1: The Preferential Attachment Mechanism in Scale-Free Networks. A new node (blue) is more likely to connect to an existing hub (red) than to a less-connected node (gray), reinforcing the hub's centrality.
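The "rich-get-richer" process in Diagram 1 can be sketched as a short growth simulation. This is a minimal illustration in the spirit of the preferential attachment model, not a reproduction of any specific published implementation; the function name, the seed graph, and the parameter `m` (links added per new node) are assumptions.

```python
import random
from collections import Counter

def preferential_attachment(n_nodes, m=2, seed=0):
    """Grow a network in which new nodes prefer already well-connected targets."""
    rng = random.Random(seed)
    # Start from a small fully connected seed of m + 1 nodes.
    edges = [(i, j) for i in range(m + 1) for j in range(i)]
    # Every node is listed once per edge endpoint, so a uniform draw from
    # this list picks a node with probability proportional to its degree.
    stubs = [node for edge in edges for node in edge]
    for new in range(m + 1, n_nodes):
        targets = set()
        while len(targets) < m:
            targets.add(rng.choice(stubs))
        for target in sorted(targets):
            edges.append((new, target))
            stubs.extend((new, target))
    return edges

edges = preferential_attachment(2000)
degree = Counter(node for edge in edges for node in edge)
print("max degree:", max(degree.values()),
      "median degree:", sorted(degree.values())[len(degree) // 2])
```

The gap between the maximum and the median degree is the hub signature discussed in the text; whether the resulting tail is a statistically plausible power law still has to be tested separately.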

Small-World Network Connectivity

This diagram contrasts a highly clustered, small-world architecture with a more regular lattice.

Diagram 2: Small-World Network Topology. Characterized by high local clustering (blue and green modules) and a few long-range shortcuts (yellow and red) that drastically reduce the average path length between any two nodes.

Workflow for Network Robustness Analysis

This flowchart outlines a standard methodology for computationally assessing the robustness of a biological network.

Diagram 3: Computational Workflow for Network Robustness Analysis. This protocol involves building a network model, defining a functional output, and systematically testing its resilience to perturbations to identify key vulnerabilities.
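A minimal sketch of this workflow, under simplifying assumptions, is shown below. The network model is a hypothetical hub-and-spoke graph, the functional output is assumed to be the fraction of surviving nodes in the largest connected component, and the perturbations are node removals chosen either at random or by degree. Real analyses would substitute an empirical network and a biologically motivated readout.

```python
import random
from collections import defaultdict

def hub_and_spoke_network(n_hubs=5, leaves_per_hub=20):
    """Toy model: hubs joined in a ring, each with its own low-degree partners."""
    adj = defaultdict(set)
    def link(u, v):
        adj[u].add(v)
        adj[v].add(u)
    for h in range(n_hubs):
        link(f"hub{h}", f"hub{(h + 1) % n_hubs}")
        for leaf in range(leaves_per_hub):
            link(f"hub{h}", f"hub{h}_leaf{leaf}")
    return dict(adj)

def largest_component_fraction(adj, removed):
    """Share of surviving nodes that belong to the largest connected component."""
    alive = set(adj) - set(removed)
    seen, best = set(), 0
    for start in alive:
        if start in seen:
            continue
        seen.add(start)
        stack, size = [start], 0
        while stack:
            node = stack.pop()
            size += 1
            for neighbour in adj[node]:
                if neighbour in alive and neighbour not in seen:
                    seen.add(neighbour)
                    stack.append(neighbour)
        best = max(best, size)
    return best / len(alive) if alive else 0.0

rng = random.Random(7)
network = hub_and_spoke_network()
# Perturbation 1: remove five nodes chosen uniformly at random.
random_hits = rng.sample(sorted(network), 5)
# Perturbation 2: remove the five highest-degree nodes (targeted attack).
targeted_hits = sorted(network, key=lambda node: len(network[node]), reverse=True)[:5]

random_score = largest_component_fraction(network, random_hits)
targeted_score = largest_component_fraction(network, targeted_hits)
print(f"random removal: {random_score:.2f}, targeted removal: {targeted_score:.2f}")
```

Repeating the random perturbation many times and ranking nodes by the damage their removal causes is one way to identify the key vulnerabilities that the workflow aims to expose.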

Small-world networks represent a fundamental topological structure that strikes a balance between regular lattices and random graphs, characterized by high local clustering and short global path lengths [1] [26]. This organization enables both specialized processing in densely interconnected regions and efficient information transfer across the entire system—properties exceptionally well-suited to biological networks. The concept, originally inspired by Stanley Milgram's "six degrees of separation" social experiments, was formalized mathematically by Watts and Strogatz in 1998 [26]. In their model, a regular lattice is transformed by randomly rewiring a small fraction of its connections, introducing "shortcuts" that dramatically reduce the network's diameter while preserving local clustering [1].

In biological systems, from neural circuits to gene regulatory networks, this architectural principle facilitates efficient information transfer, functional specialization, and robustness to random failure [1]. Mounting evidence suggests that communication is optimized in networks with small-world topology, with recent studies demonstrating that information processing capacity in 2D neuronal networks peaks at a specific small-world coefficient (SW = 4.8 ± 1) [27]. The accurate quantification of small-world properties is therefore not merely a theoretical exercise but a practical necessity for understanding the structure-function relationships that underlie complex biological phenomena, from brain connectivity to protein-protein interactions and the dynamics of disease propagation.

Quantitative Metrics for Small-World Networks

The Small-World Coefficient (σ)

The small-world coefficient (σ), introduced by Humphries and colleagues, provides a quantitative measure of small-worldness by comparing a network's clustering and path length to those of an equivalent random network [7]. It is defined as:

σ = (C / Crand) / (L / Lrand) [1] [7] [27]

where C is the observed clustering coefficient of the network, L is its characteristic path length, Crand is the average clustering coefficient of an ensemble of random networks with the same number of nodes and edges, and Lrand is their average characteristic path length [7]. The condition for a network to be classified as small-world is typically σ > 1, indicating that the network has a clustering coefficient significantly greater than that of a random network (C ≫ Crand) while maintaining a similar path length (L ≈ Lrand) [1] [7].

However, this metric has notable limitations. The value of σ can be disproportionately influenced by the very low values of Crand commonly found in random networks, potentially overestimating small-worldness in networks with low absolute clustering [7]. Additionally, σ depends on network size, with larger networks exhibiting higher σ values than smaller networks with identical topological properties [7].
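A short worked example makes the first limitation concrete. The numbers below are hypothetical and chosen only for illustration: network A has very low absolute clustering (C = 0.02) and network B has high clustering (C = 0.50), while both have the same path lengths (L = 3.0, Lrand = 2.9).

```latex
\begin{align*}
\sigma_A &= \frac{C / C_{\mathrm{rand}}}{L / L_{\mathrm{rand}}}
          = \frac{0.02 / 0.002}{3.0 / 2.9} \approx 9.67 \\
\sigma_B &= \frac{C / C_{\mathrm{rand}}}{L / L_{\mathrm{rand}}}
          = \frac{0.50 / 0.10}{3.0 / 2.9} \approx 4.83
\end{align*}
```

Network A receives the higher σ even though only 2% of its possible neighbor pairs are connected, because the ratio is driven by the small denominator Crand rather than by strong clustering.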

The Omega (ω) Metric

To address the limitations of σ, an alternative metric, omega (ω), was proposed that more closely aligns with the original Watts and Strogatz conception of small-world networks [7]. The ω metric compares a network's clustering to that of an equivalent lattice network and its path length to an equivalent random network:

ω = (Lrand / L) - (C / Clatt) [7]

where Clatt is the clustering coefficient of an equivalent lattice network [7]. The ω metric ranges between -1 and 1, with values close to zero (typically |ω| < 0.05) indicating a small-world network [7]. Values of ω significantly greater than zero suggest more random-like characteristics, while values significantly less than zero indicate more lattice-like properties [7].

This metric offers several advantages: it is less sensitive to network size, provides information about where a network falls on the continuum between lattice and random topologies, and more accurately identifies networks with simultaneously high absolute clustering and short path lengths [7].
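The two definitions can be compared side by side with a small, dependency-free sketch. Several choices here are assumptions rather than part of the cited methods: the random reference is a uniform random graph with the same number of nodes and edges, the lattice reference is a ring lattice with the same mean degree `k`, path length is averaged over reachable pairs only, and the test network is produced by Watts-Strogatz style rewiring.

```python
import random
from collections import deque

def ring_lattice(n, k):
    """Ring of n nodes, each linked to its k nearest neighbours (k even)."""
    adj = {i: set() for i in range(n)}
    for i in range(n):
        for step in range(1, k // 2 + 1):
            j = (i + step) % n
            adj[i].add(j)
            adj[j].add(i)
    return adj

def rewire(adj, p, rng):
    """Move one end of each edge to a random new node with probability p."""
    n = len(adj)
    for u in range(n):
        for v in sorted(adj[u]):
            if v > u and rng.random() < p:
                choices = [w for w in range(n) if w != u and w not in adj[u]]
                if choices:
                    w = rng.choice(choices)
                    adj[u].discard(v)
                    adj[v].discard(u)
                    adj[u].add(w)
                    adj[w].add(u)
    return adj

def random_graph(n, m, rng):
    """Reference graph with n nodes and m edges placed uniformly at random."""
    adj = {i: set() for i in range(n)}
    placed = 0
    while placed < m:
        u, v = rng.sample(range(n), 2)
        if v not in adj[u]:
            adj[u].add(v)
            adj[v].add(u)
            placed += 1
    return adj

def average_clustering(adj):
    """Mean local clustering coefficient; nodes with degree < 2 count as zero."""
    total = 0.0
    for nbrs in adj.values():
        k = len(nbrs)
        if k >= 2:
            links = sum(1 for a in nbrs for b in nbrs if a < b and b in adj[a])
            total += 2.0 * links / (k * (k - 1))
    return total / len(adj)

def mean_path_length(adj):
    """Mean shortest-path length over all reachable ordered pairs (BFS)."""
    total, pairs = 0, 0
    for source in adj:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs

def small_world_metrics(adj, k, n_random=10, seed=0):
    """Return (sigma, omega) using random and ring-lattice reference networks."""
    rng = random.Random(seed)
    n = len(adj)
    m = sum(len(nbrs) for nbrs in adj.values()) // 2
    c, l = average_clustering(adj), mean_path_length(adj)
    refs = [random_graph(n, m, rng) for _ in range(n_random)]
    c_rand = sum(average_clustering(g) for g in refs) / n_random
    l_rand = sum(mean_path_length(g) for g in refs) / n_random
    c_latt = average_clustering(ring_lattice(n, k))
    return (c / c_rand) / (l / l_rand), (l_rand / l) - (c / c_latt)

lattice = ring_lattice(200, 8)
small_world = rewire(ring_lattice(200, 8), p=0.05, rng=random.Random(1))
sigma_sw, omega_sw = small_world_metrics(small_world, k=8)
sigma_latt, omega_latt = small_world_metrics(lattice, k=8)
print(f"rewired: sigma={sigma_sw:.2f}, omega={omega_sw:.2f}")
print(f"lattice: sigma={sigma_latt:.2f}, omega={omega_latt:.2f}")
```

For empirical networks with heterogeneous degrees, degree-preserving randomizations are usually a fairer reference than the uniform random graph used in this sketch.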

Comparative Analysis of σ and ω

Table 1: Comparative Analysis of Small-World Network Metrics

| Feature | Small-World Coefficient (σ) | Omega (ω) Metric |

|---|---|---|

| Theoretical Basis | Comparison to random networks only [7] | Comparison to both random and lattice networks [7] |

| Range of Values | 0 to ∞ [7] | -1 to 1 [7] |

| Small-World Threshold | σ > 1 [1] | \|ω\| < 0.05 (ω close to zero) [7] |

| Size Dependency | Dependent on network size [7] | Less sensitive to network size [7] |

| Interpretive Value | Indicates deviation from randomness | Places network on lattice-random continuum [7] |

| Biological Application | Commonly used but may overestimate small-worldness | More accurate for identifying true small-world topology [7] |

Methodological Protocols for Small-World Analysis

Network Construction and Data Preparation

The initial step in small-world analysis involves constructing networks from raw biological data. The specific approach varies by domain:

- Neuronal Networks: Use microelectrode arrays or calcium imaging data to create connectivity matrices where nodes represent neurons and edges represent functional connections based on cross-correlation or transfer entropy between firing patterns [27].

- Gene Regulatory Networks: Employ RNA-seq or ChIP-seq data to construct networks where nodes represent genes and edges represent regulatory interactions (transcription factor binding or expression correlation).

- Protein-Protein Interaction Networks: Utilize mass spectrometry data from co-immunoprecipitation experiments to identify physical interactions between proteins.

For all network types, ensure proper thresholding to eliminate weak connections while preserving true biological interactions. The resulting adjacency matrix should be validated against known biological interactions before proceeding with topological analysis.
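As a minimal sketch of the thresholding step, the example below turns a set of node-level signals into a binary adjacency matrix by keeping only pairs whose absolute Pearson correlation reaches a cutoff. The synthetic signals, the function names, and the cutoff of 0.7 are assumptions made for illustration; appropriate thresholds depend on the data type and should be justified for each study.

```python
import math
import random

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def correlation_adjacency(signals, threshold):
    """Binary, symmetric adjacency matrix: edge if |r| >= threshold, no self-loops."""
    n = len(signals)
    adj = [[0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            if abs(pearson(signals[i], signals[j])) >= threshold:
                adj[i][j] = adj[j][i] = 1
    return adj

# Synthetic data: nodes 0-2 share a common driver, nodes 3-5 are independent noise.
rng = random.Random(0)
shared = [rng.gauss(0, 1) for _ in range(200)]
coupled = [[s + 0.3 * rng.gauss(0, 1) for s in shared] for _ in range(3)]
independent = [[rng.gauss(0, 1) for _ in range(200)] for _ in range(3)]

adj = correlation_adjacency(coupled + independent, threshold=0.7)
for row in adj:
    print(row)
```

A fixed correlation cutoff is only one option; density-based (proportional) thresholding is a common alternative when networks of different conditions need to be compared at equal edge counts.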

Computational Implementation of σ and ω

Table 2: Computational Requirements for Small-World Analysis

| Component | Specification | Purpose |
|---|---|---|
| Programming Environment | Python (NetworkX, NumPy) or MATLAB | Network construction and metric calculation |
| Random Network Models | Erdős-Rényi or degree-preserving randomizations | Generation of equivalent random networks for comparison |
| Lattice Reference | Regular ring lattice with same average degree | Reference for clustering coefficient comparison |
| Statistical Testing | Goodness-of-fit tests (Kolmogorov-Smirnov) | Validation of distribution fits |
| Visualization Tools | Graphviz, Gephi, Cytoscape | Network visualization and exploration |

To calculate σ and ω for a biological network:

- Compute fundamental metrics: Calculate the clustering coefficient (C) and characteristic path length (L) of your empirical network.

- Generate reference networks: Create an ensemble of at least 20 random networks with the same number of nodes and the same degree distribution as the empirical network, using an appropriate randomization (for example, degree-preserving edge swaps).

- Calculate σ: Compute the mean Crand and Lrand from the random network ensemble, then calculate γ = C/Crand and λ = L/Lrand, yielding σ = γ/λ [1] [27].

- Calculate ω: Generate an equivalent lattice network with the same number of nodes and average degree, compute its clustering coefficient (Clatt), then calculate ω = (Lrand/L) - (C/Clatt) [7].

- Statistical validation: Perform goodness-of-fit testing to ensure metric reliability, typically using bootstrapping methods to establish confidence intervals.
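The first four steps above can be sketched in plain Python as follows. This is an illustrative outline under stated assumptions rather than a reference implementation: the "empirical" network is a toy rewired ring lattice, all function names are hypothetical, the random references are produced by double-edge swaps, and path length is averaged over reachable pairs so that a disconnected reference graph does not break the calculation. The statistical validation step is not covered.

```python
import random
from collections import deque

def ring_lattice(n, k):
    """Ring lattice: every node is linked to its k nearest neighbours (k even)."""
    adj = {i: set() for i in range(n)}
    for i in range(n):
        for j in range(1, k // 2 + 1):
            adj[i].add((i + j) % n)
            adj[(i + j) % n].add(i)
    return adj

def rewired_lattice(n, k, p, rng):
    """Toy stand-in for empirical data: rewire each lattice edge with probability p."""
    adj = ring_lattice(n, k)
    for j in range(1, k // 2 + 1):
        for i in range(n):
            v = (i + j) % n
            if v in adj[i] and rng.random() < p:
                free = [w for w in range(n) if w != i and w not in adj[i]]
                if free:
                    w = rng.choice(free)
                    adj[i].discard(v); adj[v].discard(i)
                    adj[i].add(w); adj[w].add(i)
    return adj

def average_clustering(adj):
    """Mean over nodes of the fraction of neighbour pairs that are themselves linked."""
    total = 0.0
    for nbrs in adj.values():
        k = len(nbrs)
        if k >= 2:
            links = sum(1 for a in nbrs for b in nbrs if a < b and b in adj[a])
            total += 2.0 * links / (k * (k - 1))
    return total / len(adj)

def characteristic_path_length(adj):
    """Mean shortest-path length over all reachable ordered node pairs (BFS)."""
    total = pairs = 0
    for s in adj:
        dist = {s: 0}
        queue = deque([s])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs

def degree_preserving_random(adj, rng, swaps_per_edge=10):
    """Randomise by double-edge swaps (a-b, c-d -> a-d, c-b); degrees are unchanged."""
    adj = {u: set(vs) for u, vs in adj.items()}
    edges = [(u, v) for u in adj for v in adj[u] if u < v]
    for _ in range(swaps_per_edge * len(edges)):
        i, j = rng.sample(range(len(edges)), 2)
        (a, b), (c, d) = edges[i], edges[j]
        if rng.random() < 0.5:
            c, d = d, c
        if len({a, b, c, d}) < 4 or d in adj[a] or b in adj[c]:
            continue  # swap would create a self-loop or a duplicate edge
        adj[a].discard(b); adj[b].discard(a)
        adj[c].discard(d); adj[d].discard(c)
        adj[a].add(d); adj[d].add(a)
        adj[c].add(b); adj[b].add(c)
        edges[i], edges[j] = (a, d), (c, b)
    return adj

rng = random.Random(0)
n, k = 100, 6
G = rewired_lattice(n, k, p=0.1, rng=rng)

# Step 1: metrics of the (toy) empirical network.
C, L = average_clustering(G), characteristic_path_length(G)

# Step 2: ensemble of 20 degree-preserving random references.
ensemble = [degree_preserving_random(G, rng) for _ in range(20)]
C_rand = sum(average_clustering(R) for R in ensemble) / len(ensemble)
L_rand = sum(characteristic_path_length(R) for R in ensemble) / len(ensemble)

# Step 3: sigma = gamma / lambda.
sigma = (C / C_rand) / (L / L_rand)

# Step 4: omega, using a ring lattice with the same n and average degree.
C_latt = average_clustering(ring_lattice(n, k))
omega = (L_rand / L) - (C / C_latt)

print(f"C={C:.3f} L={L:.3f} C_rand={C_rand:.3f} L_rand={L_rand:.3f}")
print(f"sigma={sigma:.2f} omega={omega:.2f}")
```

For real analyses, NetworkX provides `sigma` and `omega` functions that follow a similar recipe, so a hand-written version like this is mainly useful for seeing what each step does and for checking how sensitive the result is to the choice of reference models.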

Experimental Validation in Biological Systems

For neuronal networks, experimental protocols may involve:

- Culturing dissociated cortical neurons on microelectrode arrays (MEA)

- Recording spontaneous activity across multiple days in vitro

- Constructing functional connectivity networks from the cross-correlation of spike trains

- Applying σ and ω metrics to quantify developing small-world properties

- Correlating topological metrics with functional measures of synchronization or information transfer [27]

For gene co-expression networks in disease states:

- Collecting transcriptomic data from diseased and control tissues

- Constructing co-expression networks using weighted correlation coefficients

- Calculating small-world metrics for each condition

- Statistically comparing network topology between groups

- Relating changes in σ or ω to clinical outcomes or pathological markers
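The group-comparison step can be illustrated with a simple permutation test, which avoids distributional assumptions about the metric. This is a sketch only: the per-subject σ values are invented for illustration, the function name is hypothetical, and a permutation test on the difference in means is one reasonable choice among several rather than a method prescribed by the cited studies.

```python
import random

def permutation_test(group_a, group_b, n_perm=10000, seed=0):
    """Two-sided permutation test on the difference in group means."""
    rng = random.Random(seed)
    n_a = len(group_a)
    observed = sum(group_a) / n_a - sum(group_b) / len(group_b)
    pooled = list(group_a) + list(group_b)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)  # random relabelling of subjects
        diff = sum(pooled[:n_a]) / n_a - sum(pooled[n_a:]) / (len(pooled) - n_a)
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add-one correction keeps the estimated p-value strictly positive.
    return observed, (extreme + 1) / (n_perm + 1)

# Hypothetical per-subject sigma values, invented purely for illustration.
sigma_control = [1.90, 2.10, 2.00, 2.20, 1.80, 2.05, 2.15, 1.95]
sigma_disease = [1.50, 1.60, 1.40, 1.70, 1.55, 1.45, 1.65, 1.50]

difference, p_value = permutation_test(sigma_control, sigma_disease)
print(f"mean difference = {difference:.3f}, permutation p = {p_value:.4f}")
```

The same function can be applied to ω or to any other per-subject topological metric; when several metrics are tested, a multiple-comparison correction would also be needed.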

Figure 1: Computational Workflow for Small-World Network Analysis

Small-World and Scale-Free Properties in Biological Networks

The Relationship Between Small-World and Scale-Free Topologies

Small-world and scale-free properties represent distinct but overlapping topological features of complex networks. While small-world networks emphasize high clustering and short path lengths, scale-free networks are characterized by a power-law degree distribution (P(k) ~ k^(-α)), where a few hubs possess many connections while most nodes have few links [8]. These topological classes are not mutually exclusive; a network can exhibit both small-world and scale-free properties simultaneously.
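To make the hub-dominated degree pattern tangible, the following sketch grows a toy network by preferential attachment, a classic generative model that yields an approximately power-law degree distribution. The model, the function name, and the parameters (2000 nodes, 2 links per new node) are assumptions used for illustration; they are not taken from the biological datasets discussed here.

```python
import random
from collections import Counter
from statistics import median

def preferential_attachment(n, m, rng):
    """Grow a graph in which each new node links to m distinct existing nodes,
    chosen with probability proportional to their current degree."""
    degree = Counter()
    edges = []
    endpoints = []             # every node appears once per incident edge
    targets = list(range(m))   # the first arrival links to the m seed nodes
    for new in range(m, n):
        for t in targets:
            edges.append((new, t))
            degree[new] += 1
            degree[t] += 1
        endpoints.extend(targets)
        endpoints.extend([new] * m)
        chosen = set()
        while len(chosen) < m:  # sampling endpoints is degree-proportional
            chosen.add(rng.choice(endpoints))
        targets = list(chosen)
    return edges, degree

rng = random.Random(0)
edges, degree = preferential_attachment(n=2000, m=2, rng=rng)

histogram = Counter(degree.values())  # histogram[k] = number of nodes with degree k
print("median degree:", median(degree.values()))
print("maximum degree:", max(degree.values()))
for k in sorted(histogram)[:5]:
    print(f"P(k={k}) = {histogram[k] / len(degree):.3f}")
```

Most nodes end up with only a few links while a small number of early nodes accumulate many, which is the qualitative signature of a hub-dominated network. Whether a given empirical network is genuinely scale-free still requires formal statistical testing of the degree distribution.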

In scale-free networks, the presence of hubs naturally creates short paths between nodes (fulfilling one requirement for small-worldness), but this doesn't necessarily guarantee high clustering [8]. True small-world networks combine the efficient navigation of scale-free topologies with the specialized processing capabilities of modular, clustered organizations. The three classes of small-world networks identified in empirical studies include: (a) scale-free networks with power-law degree distributions, (b) broad-scale networks with power-law regimes followed by sharp cutoffs, and (c) single-scale networks with fast-decaying tails [8].

Prevalence of Scale-Free Networks in Biological Systems

Despite early enthusiasm suggesting the universality of scale-free networks across biological systems, recent rigorous statistical analyses of nearly 1000 networks reveal that strongly scale-free structure is empirically rare [10]. Across social, biological, technological, transportation, and information domains, researchers found that for most real-world networks a log-normal distribution fits the degree data as well as or better than a power law [10]. Although a handful of technological and biological networks appear strongly scale-free, most do not and are better described by other degree distributions.

This has significant implications for biological network research. The supposed universality of scale-free topology has influenced models of network growth, robustness, and function, but these findings highlight the structural diversity of real-world biological networks [10]. Factors such as aging of components (e.g., proteins with limited functional lifetimes) and physical constraints (e.g., spatial limitations in cellular environments) may limit the formation of scale-free architectures in many biological contexts [8].

Applications in Biological Networks Research

Case Studies in Neural Systems

Small-world topology has been extensively documented in neural systems across multiple species and scales. In the nematode C. elegans, the synaptic connectivity network exhibits small-world properties with σ > 1, enabling both functional segregation and integration [8]. Macaque cortical connectivity and human brain networks derived from diffusion tensor imaging also demonstrate characteristic small-world architecture [26].

Crucially, small-world topology is not merely a structural feature but has functional consequences for information processing. Recent research on 2D neuronal networks has identified an optimal small-world coefficient of SW = 4.8 ± 1 that maximizes information transmission [27]. In these simulations, information processing capacity steadily increased with SW until this threshold, beyond which performance degraded, establishing an inverted U-shaped relationship between small-worldness and computational capability [27].

Figure 2: Optimal Small-World Coefficient for Information Processing

Implications for Disease and Drug Development

The disruption of optimal small-world architecture represents a promising frontier for understanding neurological and psychiatric disorders. Alzheimer's disease research has revealed aberrant small-world properties in functional brain networks, including elevated path length and reduced clustering compared to healthy controls. Similar disruptions have been documented in schizophrenia, epilepsy, and autism spectrum disorders.

From a therapeutic perspective, small-world metrics offer:

- Biomarkers for early detection of network-level pathologies before overt symptoms emerge

- Quantitative endpoints for evaluating treatment efficacy in restoring normal network dynamics

- Guiding principles for neuromodulation therapies (e.g., DBS, TMS) targeting critical network nodes

- Framework for understanding how pharmacological interventions alter information flow in neural circuits

In drug development, in vitro neuronal networks on microelectrode arrays provide a platform for screening compound effects on network topology. Compounds can be evaluated for their ability to restore optimal small-world characteristics in disease models, potentially identifying novel mechanisms of therapeutic action beyond single-target approaches.

Table 3: Essential Resources for Small-World Network Research

| Resource Category | Specific Examples | Application in Research |
|---|---|---|
| Data Acquisition Systems | Microelectrode arrays (MEA), Calcium imaging setups, RNA-seq platforms | Recording neural activity, gene expression, or protein interactions for network construction |
| Network Analysis Software | MATLAB with Brain Connectivity Toolbox, Python with NetworkX/igraph, Cytoscape | Network construction, visualization, and calculation of σ and ω metrics |
| Reference Databases | Connectome databases (WormWiring, Allen Brain Atlas), Protein-protein interaction databases | Validation of biologically relevant network topologies and comparison with established circuits |
| *In Vitro* Model Systems | Primary neuronal cultures, iPSC-derived neurons, Organoid models | Controlled experimental manipulation of network development and function |
| Statistical Frameworks | Bootstrapping algorithms, Null model implementations, Graph statistical packages | Robust statistical comparison of network metrics against appropriate null hypotheses |

The accurate quantification of small-world properties through metrics like σ and ω provides crucial insights into the organizational principles of biological networks. While σ offers an established method for identifying small-world topology through comparison with random networks, the ω metric provides a more nuanced classification that places networks along the continuum between lattice and random topologies. The identification of an optimal small-world coefficient for information processing in neuronal networks underscores the functional significance of these architectural principles.

As research progresses, integrating these topological metrics with spatial constraints, temporal dynamics, and multi-scale analyses will further enhance our understanding of biological complexity. For researchers and drug development professionals, these network-based approaches offer promising frameworks for identifying pathological states and developing targeted interventions that restore optimal network function rather than merely modulating individual components.

From Theory to Therapy: Computational Methods and Biomedical Applications

Inference of directed biological networks is a fundamental challenge in computational biology, with profound implications for understanding complex traits and identifying therapeutic targets [28]. The recent proliferation of large-scale CRISPR perturbation data, particularly from technologies like Perturb-seq, has created an ideal setting for tackling this problem by leveraging transcriptional responses to genetic perturbations [28]. However, existing causal discovery methods often assume strong intervention models, return unweighted graphs, prove computationally intractable for large graphs, or generally assume that the underlying graph is acyclic and unconfounded [28]. The INSPRE (inverse sparse regression) algorithm represents a significant methodological advancement that addresses these limitations while explicitly accommodating the small-world and scale-free properties believed to characterize biological networks [28].

The "small-world" property, characterized by high transitivity (clustering) combined with low average path length, has been widely observed in networks across biological disciplines [29]. Meanwhile, the "scale-free" hypothesis proposes that biological networks follow a power-law degree distribution (P(k) ~ k^(-α)), though recent rigorous statistical analyses have challenged the universality of this pattern, finding strong scale-free structure to be empirically rare across most real-world networks [10]. Understanding these topological properties is crucial as they have broad implications for network dynamics, robustness, and control strategies [10] [29].

The INSPRE Algorithm: Core Methodological Framework

Theoretical Foundation and Mathematical Formulation

INSPRE employs a two-stage procedure for causal discovery from interventional data. The approach treats guide RNAs as instrumental variables and leverages standard procedures for estimating the marginal average causal effect (ACE) of every feature on every other, represented as a matrix R [28]. The key theoretical insight is that the causal graph G can be obtained from the ACE matrix R through the relationship G = I - R^(-1)D[1/R^(-1)], where / indicates element-wise division and the operator D[A] sets off-diagonal entries of the matrix A to 0 [28].
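The identity G = I - R^(-1)D[1/R^(-1)] can be checked numerically on a small example. The sketch below is not the INSPRE implementation: it assumes a noise-free linear model in which total effects are (I - G)^(-1), and it assumes the ACE matrix is normalised so that each diagonal entry equals 1. The three-node graph and the function name are hypothetical.

```python
import numpy as np

def graph_from_ace(R):
    """G = I - R^{-1} D[1 / R^{-1}], where D[.] keeps only the diagonal."""
    A = np.linalg.inv(R)
    return np.eye(R.shape[0]) - A @ np.diag(1.0 / np.diag(A))

# Hypothetical 3-node graph with a feedback loop: 0 -> 1 -> 2 -> 0.
G_true = np.array([[0.0, 0.5, 0.0],
                   [0.0, 0.0, 0.4],
                   [0.3, 0.0, 0.0]])

T = np.linalg.inv(np.eye(3) - G_true)  # total effects, summed over all paths
R = T / np.diag(T)[:, None]            # assumed normalisation: R[i, i] = 1

G_hat = graph_from_ace(R)
print(np.round(G_hat, 3))
```

With an exact R the direct-effect matrix is recovered even though the graph contains a cycle. With a noisy estimate the plain matrix inverse can be unstable, which is the difficulty the sparse approximate inverse is designed to address.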

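Since only a noisy estimate R̂ is available in practice, which may not be well-conditioned or invertible, INSPRE's primary contribution is a procedure for estimating a sparse approximate inverse of the ACE matrix by solving the constrained optimization problem:

min over U, V subject to VU = I of (1/2)‖W ∘ (R̂ - U)‖_F² + λ Σ_{i≠j} |V_ij| [28]

where ∘ denotes the element-wise product and ‖·‖_F the Frobenius norm.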

This approximate inverse is then used to estimate G via Ĝ = I - VD[1/V] [28]. Here, U approximates R̂ while its left inverse V has sparsity controlled via the L1 optimization parameter λ. The weight matrix W allows the algorithm to place less emphasis on entries of R̂ with high standard error [28].

Workflow and Implementation