Scaling Up Insights: Tackling Large-Scale Gene Regulatory Network Inference

Abstract

The exponential growth of single-cell and multi-omics data presents profound scalability challenges for Gene Regulatory Network (GRN) inference, a cornerstone of modern computational biology. This article provides researchers, scientists, and drug development professionals with a comprehensive framework for navigating this complex landscape. We first explore the foundational drivers behind the data explosion and the unique computational hurdles it creates. The discussion then progresses to cutting-edge methodological solutions, from advanced deep learning architectures like graph neural networks and transformers to innovative data-handling strategies. A dedicated troubleshooting section offers practical guidance on overcoming pervasive issues like data sparsity and resource management. Finally, we synthesize the current state of the field through the lens of rigorous validation benchmarks and comparative analyses of leading tools, empowering professionals to select the right strategies for robust, large-scale GRN analysis.

The Data Deluge: Why Scalability is the Central Challenge in Modern GRN Inference

The advent of single-cell RNA sequencing (scRNA-seq) has fundamentally transformed biological research by enabling the investigation of transcriptional states at individual cell resolution. This technological shift from bulk RNA sequencing, which provided average gene expression profiles for cell populations, to single-cell approaches has revealed unprecedented insights into cellular heterogeneity, rare cell populations, and developmental trajectories [1] [2]. However, this advancement has introduced significant computational challenges, particularly for gene regulatory network (GRN) inference at scale. As scRNA-seq datasets have grown exponentially in cell numbers, they have also become sparser, containing more zero counts for many genes [3]. This combination of increasing volume and sparsity has redefined the central problems in computational biology, demanding approaches that scale effectively while extracting meaningful biological signals from increasingly sparse data matrices.

Quantitative Landscape: The Exponential Growth of scRNA-seq Data

The expansion of scRNA-seq data has followed a remarkable trajectory since its emergence. Analysis of 56 datasets published between 2015 and 2021 reveals a clear exponential scaling in the number of cells sequenced per experiment [3]. The average dataset in 2015 contained 704 cells, while by 2020 the average dataset had grown to 58,654 cells, a roughly 83-fold increase in just five years [3]. The correlation between year of publication and number of cells is moderate (Pearson's r = 0.46) [3].
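As a quick sanity check on these figures, the two reported averages imply growth of roughly 2.4-fold per year, or a doubling time of about nine months. The sketch below assumes smooth exponential growth between 2015 and 2020, which is an idealization given the moderate correlation reported.

```python
import math

# Average cells per dataset, as reported in the text [3]
cells_2015 = 704
cells_2020 = 58_654
years = 5

fold_change = cells_2020 / cells_2015                 # ~83.3x
annual_growth = fold_change ** (1 / years)            # ~2.42x per year
doubling_months = 12 * years * math.log(2) / math.log(fold_change)  # ~9.4 months

print(f"fold change: {fold_change:.1f}x")
print(f"annual growth: {annual_growth:.2f}x")
print(f"doubling time: {doubling_months:.1f} months")
```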

Concurrent with this increase in cell numbers, datasets have become substantially sparser. Analysis shows a clear negative correlation (Pearson's r = -0.47) between increasing cell numbers and decreasing detection rates (the fraction of non-zero values) [3]. This trend toward sparser datasets is likely to continue as researchers prioritize cost-effective shallow sequencing of many cells over deep sequencing of fewer cells for many biological questions [3].
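Detection rate can be computed directly from a count matrix. The minimal NumPy sketch below reports it overall, per cell, and per gene; the toy matrix is illustrative only. For large data stored as a `scipy.sparse` CSR matrix, the overall rate is `X.nnz / (X.shape[0] * X.shape[1])`, provided explicit zeros have been removed (e.g., with `X.eliminate_zeros()`).

```python
import numpy as np

def detection_rates(counts: np.ndarray) -> dict:
    """Fraction of non-zero entries in a cells x genes count matrix."""
    nonzero = counts > 0
    return {
        "overall": float(nonzero.mean()),   # global detection rate
        "per_cell": nonzero.mean(axis=1),   # fraction of genes detected in each cell
        "per_gene": nonzero.mean(axis=0),   # fraction of cells expressing each gene
    }

# Toy 3-cell x 4-gene matrix (illustrative values only)
X = np.array([[0, 2, 0, 1],
              [0, 0, 0, 5],
              [3, 0, 0, 0]])
rates = detection_rates(X)
print(rates["overall"])   # 4 non-zeros / 12 entries = 0.333...
```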

Table 1: Scaling Trends in scRNA-seq Data (2015-2021)

| Year | Average Number of Cells | Detection Rate Trend | Key Technological Drivers |
|---|---|---|---|
| 2015 | 704 | Higher | Early protocols (SMART-seq2, CEL-seq) |
| 2017 | ~10,000 | Decreasing | Droplet-based methods (10X Genomics) |
| 2020 | 58,654 | Lower | High-throughput commercial systems |
| 2023+ | >1 million | Even lower | Population-scale, multi-condition designs |

Technical Challenges in scRNA-seq Data Analysis

Data Sparsity and Dropout Events

The fundamental technical challenge in scRNA-seq analysis stems from data sparsity, characterized by an excess of zero measurements. These zeros represent both biological absences of transcripts and technical "dropouts" where transcripts fail to be captured or amplified despite being present in the cell [4] [5]. Dropout events occur due to the limited amounts of mRNA in individual cells, inefficient mRNA capture, and the stochastic nature of mRNA expression [6]. This zero-inflation phenomenon means that standard count distribution models (e.g., Poisson) do not adequately represent scRNA-seq data [3].
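One simple way to see zero-inflation is to compare, per gene, the observed fraction of zeros with the fraction a Poisson model with the same mean would predict, exp(-mu). The sketch below uses simulated data (one plain Poisson gene and one gene with half its cells forced to zero); it ignores differences in sequencing depth between cells, so on real data it can overstate excess zeros.

```python
import numpy as np

def excess_zeros(counts: np.ndarray) -> np.ndarray:
    """Observed minus Poisson-expected zero fraction for each gene (column).

    Under a Poisson model with per-gene mean mu, P(count = 0) = exp(-mu).
    Positive values mean more zeros than that model predicts.
    """
    mu = counts.mean(axis=0)
    observed = (counts == 0).mean(axis=0)
    expected = np.exp(-mu)
    return observed - expected

rng = np.random.default_rng(0)
n_cells = 5000
poisson_gene = rng.poisson(2.0, n_cells)
# Simulated zero-inflated gene: Poisson(4) counts, but ~50% of cells forced to zero
zi_gene = np.where(rng.random(n_cells) < 0.5, 0, rng.poisson(4.0, n_cells))
X = np.column_stack([poisson_gene, zi_gene])
print(excess_zeros(X).round(2))   # first gene near 0, second clearly positive
```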

Computational Bottlenecks in Large-Scale Analysis

As datasets grow to encompass millions of cells, traditional computational approaches for GRN inference face significant bottlenecks:

- Memory requirements for storing and processing large count matrices

- Processing time for neighborhood graph construction and similarity calculations

- Algorithmic scalability for methods that were designed for smaller datasets

- Integration challenges when combining multiple large datasets [4] [5]
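To make the memory bottleneck concrete, the sketch below estimates storage for a hypothetical atlas of 1 million cells by 20,000 genes at a 5% detection rate, comparing a dense float32 matrix with a compressed sparse row (CSR) layout. The sizes and index widths are assumptions; real pipelines often also materialize intermediates (for example, a 20,000 x 20,000 float32 gene-gene matrix takes about 1.6 GB) that can dominate.

```python
def dense_bytes(n_cells: int, n_genes: int, itemsize: int = 4) -> int:
    """Dense cells x genes matrix, float32 (4 bytes) by default."""
    return n_cells * n_genes * itemsize

def csr_bytes(n_cells: int, n_genes: int, detection_rate: float,
              value_size: int = 4, index_size: int = 4, indptr_size: int = 8) -> int:
    """Approximate CSR size: stored values + column indices + row pointers.

    int64 row pointers are assumed because nnz can exceed the int32 range.
    """
    nnz = round(n_cells * n_genes * detection_rate)
    return nnz * (value_size + index_size) + (n_cells + 1) * indptr_size

# Hypothetical atlas: 1 million cells x 20,000 genes, 5% detection rate
print(dense_bytes(1_000_000, 20_000) / 1e9)        # 80.0 (GB)
print(csr_bytes(1_000_000, 20_000, 0.05) / 1e9)    # ~8.0 (GB)
```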

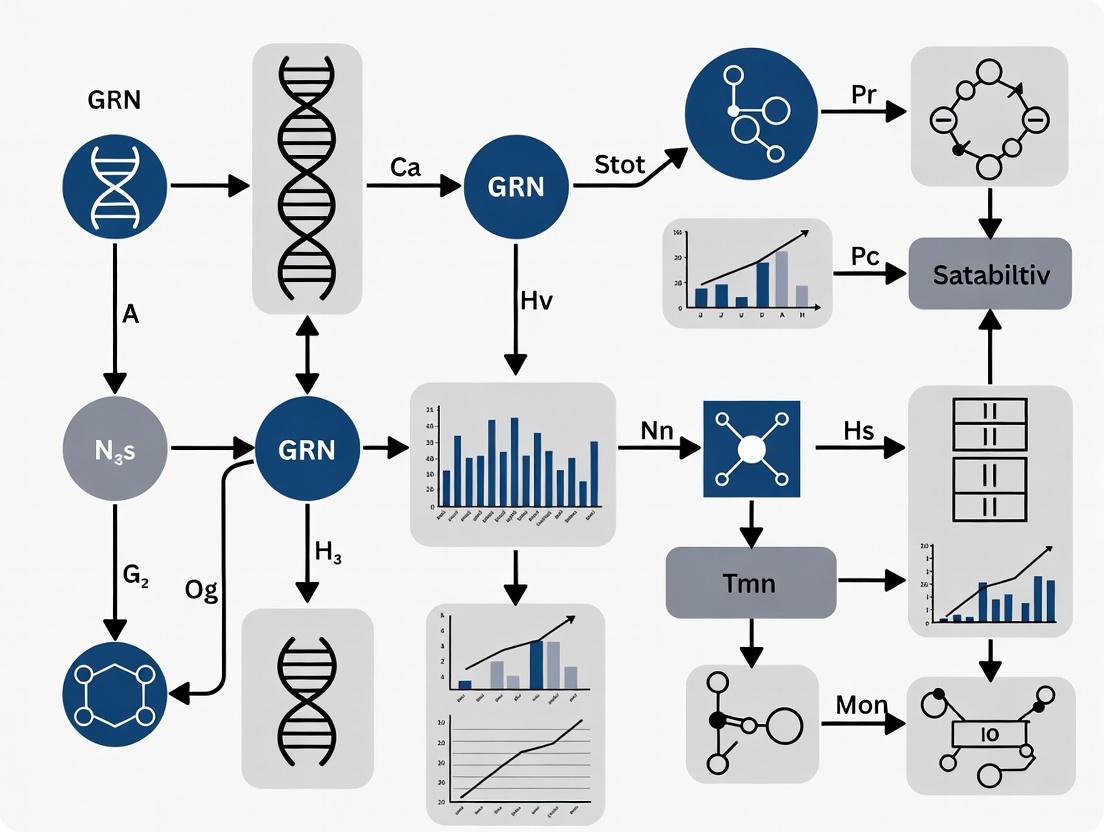

The following diagram illustrates the core problem of scaling GRN inference with sparse data:

Methodological Innovations for Scalable GRN Inference

Binary Representation Approaches

Rather than treating dropout events as a problem to be solved through imputation, emerging approaches embrace sparsity by using binarized expression data (0 for zero counts, 1 for non-zero counts). This representation treats the dropout pattern as a useful biological signal rather than technical noise [6]. Research demonstrates that binary-based analyses give results similar to count-based approaches for key analytical tasks, including dimensionality reduction, data integration, cell type identification, and differential expression analysis [3]. Notably, binary representations offer substantial computational advantages, scaling to approximately 50-fold more cells with the same computational resources [3].
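Binarization itself is a one-line operation, and a 0/1 matrix can be bit-packed to one bit per entry. The NumPy sketch below shows a 32-fold storage reduction relative to float32 on simulated counts; this illustrates only the storage effect and is not the method behind the ~50-fold figure reported in [3].

```python
import numpy as np

def binarize(counts: np.ndarray) -> np.ndarray:
    """0/1 representation: 1 where a transcript was detected, 0 otherwise."""
    return (counts > 0).astype(np.uint8)

def pack_bits(binary: np.ndarray) -> np.ndarray:
    """Store 8 binary entries per byte along the gene axis."""
    return np.packbits(binary, axis=1)

rng = np.random.default_rng(1)
counts = rng.poisson(0.3, size=(1000, 2000)).astype(np.float32)  # simulated counts
B = binarize(counts)
packed = pack_bits(B)
print(counts.nbytes // packed.nbytes)   # 32: 4 bytes per float32 vs 1 bit per entry
# np.unpackbits(packed, axis=1) recovers the 0/1 matrix exactly
```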

Metacell Strategies for GRN Inference

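The NetID algorithm is a recent method designed specifically for scalable GRN inference from large, sparse scRNA-seq datasets [7]. It uses a metacell approach that groups homogeneous cells to reduce technical noise while preserving biological signal. The workflow samples seed cells across the expression manifold, defines each seed's k-nearest neighbors, prunes neighbors that fail a homogeneity test (controlled by a P-value cutoff), and aggregates each seed with its remaining partner cells into a metacell on which the GRN is inferred [7].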

NetID demonstrates superior performance compared to imputation-based methods by avoiding spurious correlations while maintaining scalability to large datasets [7]. Benchmarking on hematopoietic progenitor differentiation data confirms its effectiveness in recovering known regulatory interactions [7].

Network Inference Benchmarking

Recent large-scale benchmarking efforts like CausalBench provide standardized evaluation frameworks for network inference methods using real-world single-cell perturbation data [8]. This suite enables objective comparison of methods and highlights how poor scalability limits the performance of many existing approaches. Evaluations reveal that methods specifically designed to leverage interventional data, such as Mean Difference and Guanlab, demonstrate superior performance in both biological and statistical metrics [8].

Table 2: Performance Comparison of GRN Inference Methods

| Method | Type | Scalability | Precision | Recall | Best Use Case |
|---|---|---|---|---|---|
| NetID | Metacell-based | High | High | High | Large-scale datasets with clear trajectory |
| Mean Difference | Interventional | High | High | Medium | Perturbation data analysis |
| Guanlab | Interventional | High | Medium | High | Biological ground truth available |
| GRNBoost | Observational | Medium | Low | High | Initial exploratory analysis |
| NOTEARS | Observational | Low | Medium | Low | Small datasets with strong priors |
| PC | Constraint-based | Low | Medium | Low | Causal discovery with limited variables |

Troubleshooting Guide: FAQ for scRNA-seq GRN Inference

Data Preprocessing and Quality Control

Q: How should we handle technical replicates in scRNA-seq data for GRN inference?

A: Technical replicates (multiple sequencing runs of the same library) should not be merged at the count matrix level, as this fails to account for reads with the same UMI. Instead, replicates should be combined during the read counting step (e.g., using cellranger count). This ensures that UMIs are properly accounted for and prevents artificial inflation of counts [9].

Q: What quality control metrics are most critical for large-scale GRN inference?

A: Essential QC metrics include:

- Cell viability: Assessed before library preparation

- Library complexity: Measured by unique transcripts per cell

- Sequencing depth: Sufficient to capture low-abundance transcripts

- Mitochondrial gene percentage: Indicator of cell stress

- Batch effects: Systematic technical variation between experiments [4]
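Several of these metrics can be computed per cell from the count matrix. The sketch below assumes human gene symbols, where mitochondrial genes start with `MT-`; the toy matrix and gene list are illustrative, and filtering thresholds are dataset-dependent and left to the analyst.

```python
import numpy as np

def qc_metrics(counts, gene_names, mito_prefix="MT-"):
    """Per-cell QC: total UMIs, genes detected, and % mitochondrial counts.

    `mito_prefix` assumes human gene symbols (e.g., MT-CO1); mouse
    annotations typically use "mt-".
    """
    counts = np.asarray(counts)
    is_mito = np.array([g.startswith(mito_prefix) for g in gene_names])
    total = counts.sum(axis=1)                      # library size per cell
    n_genes = (counts > 0).sum(axis=1)              # library complexity proxy
    pct_mito = 100 * counts[:, is_mito].sum(axis=1) / np.maximum(total, 1)
    return total, n_genes, pct_mito

genes = ["MT-CO1", "ACTB", "GAPDH", "MT-ND1"]
X = np.array([[10, 50, 40, 0],
              [60, 20, 10, 10]])
total, n_genes, pct_mito = qc_metrics(X, genes)
print(total)      # [100 100]
print(n_genes)    # [3 4]
print(pct_mito)   # [10. 70.]
```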

Q: How can we address batch effects in large-scale integrated analyses?

A: Batch correction methods such as Harmony, ComBat, and Scanorama can effectively remove technical variation while preserving biological signal [4]. For binary analyses, these methods can be applied to reduced-dimensional representations of the binarized data [3].

Method Selection and Implementation

Q: When should we choose binary representation over count-based methods for GRN inference?

A: Binary approaches are particularly advantageous when:

- Datasets are very large (>50,000 cells) and computational resources are limited

- Sparsity is high (detection rate <20%)

- The biological question focuses on cell type identification rather than subtle expression differences

- Analytical tasks include dimensionality reduction, data integration, or cell type identification [3]

Q: How does the choice of normalization affect GRN inference in sparse data?

A: Normalization methods should be carefully validated as they can introduce biases. Methods include TPM (transcripts per million), FPKM (fragments per kilobase per million), and DESeq2's median-of-ratios. For metacell approaches, normalization can be performed before or after aggregation, with different implications for downstream analysis [4] [7].
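The median-of-ratios idea can be re-implemented in a few lines of NumPy. This is a sketch of the concept, not DESeq2's code: because genes with a zero in any sample are excluded from the pseudo-reference, it is better suited to aggregated profiles (pseudobulk or metacells) than to raw single-cell counts.

```python
import numpy as np

def median_of_ratios_size_factors(counts: np.ndarray) -> np.ndarray:
    """Size factor per sample (row) of a samples x genes count matrix.

    Pseudo-reference = per-gene geometric mean across samples; each sample's
    size factor = median ratio of its counts to that reference.
    """
    counts = np.asarray(counts, dtype=float)
    expressed = np.all(counts > 0, axis=0)          # genes non-zero in every sample
    if not expressed.any():
        raise ValueError("no gene is non-zero in every sample")
    log_counts = np.log(counts[:, expressed])
    log_geo_means = log_counts.mean(axis=0)
    return np.exp(np.median(log_counts - log_geo_means, axis=1))

# Sample 2 looks like sample 1 sequenced twice as deeply
X = np.array([[10, 20, 30, 0],
              [20, 40, 60, 5]])
sf = median_of_ratios_size_factors(X)
print(sf)                      # ~[0.707, 1.414]
normalized = X / sf[:, None]   # counts divided by each sample's size factor
```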

Q: What are the key parameters to optimize when using metacell methods like NetID?

A: Critical parameters include:

- Number of seed cells: Balances manifold coverage against metacell sparsity

- K-nearest neighbors: Controls local neighborhood size

- Pruning P-value cutoff: Determines homogeneity of metacells

- Minimum partner cells: Ensures sufficient aggregation to reduce noise [7]
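The role of these parameters can be illustrated with a deliberately simplified metacell builder. `build_metacells` is hypothetical: it samples seeds uniformly at random and prunes neighbors by a distance quantile, whereas NetID uses its own seed sampling and a P-value-based pruning test [7]. The embedding would normally be a low-dimensional representation such as PCA; here it is random placeholder data.

```python
import numpy as np

def build_metacells(X, embedding, n_seeds=50, k=20, max_dist_quantile=0.8,
                    min_partners=5, seed=0):
    """Hypothetical, simplified metacell aggregation (not NetID's implementation).

    X: cells x genes counts; embedding: cells x d coordinates.
    Returns a metacells x genes matrix of summed counts.
    """
    rng = np.random.default_rng(seed)
    seeds = rng.choice(X.shape[0], size=n_seeds, replace=False)  # number of seed cells
    metacells = []
    for s in seeds:
        dist = np.linalg.norm(embedding - embedding[s], axis=1)
        order = np.argsort(dist)
        neighbours = order[order != s][:k]                       # k nearest neighbours
        cutoff = np.quantile(dist[neighbours], max_dist_quantile)
        partners = neighbours[dist[neighbours] <= cutoff]        # pruning (distance stand-in)
        if len(partners) < min_partners:                         # minimum partner cells
            continue
        members = np.concatenate(([s], partners))
        metacells.append(X[members].sum(axis=0))
    return np.array(metacells)

rng = np.random.default_rng(42)
counts = rng.poisson(0.5, size=(2000, 50))
pcs = rng.normal(size=(2000, 10))   # placeholder for a real PCA embedding
meta = build_metacells(counts, pcs, n_seeds=100, k=20)
print(meta.shape)   # (100, 50)
```

Summing counts across homogeneous cells is what reduces sparsity; the trade-off named above is that more seeds cover the manifold better but leave fewer partner cells per metacell.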

Interpretation and Validation

Q: How can we validate GRNs inferred from sparse scRNA-seq data?

A: Validation strategies include:

- Biological ground truth: Comparison with ChIP-seq data or known pathways

- Functional enrichment: Assessment of whether inferred networks enrich for biologically meaningful pathways

- Perturbation validation: Testing predictions using interventional data

- Benchmarking: Using established benchmarks like CausalBench for objective comparison [8] [7]
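For the ground-truth comparison, precision and recall reduce to set operations on (regulator, target) pairs. In the sketch below, gene names are placeholders. Because reference networks such as ChIP-seq-derived sets are incomplete, some apparent false positives may be real but undocumented interactions.

```python
def precision_recall(predicted, truth):
    """Precision and recall of predicted directed edges against a reference set.

    Edges are (regulator, target) tuples.
    """
    predicted, truth = set(predicted), set(truth)
    tp = len(predicted & truth)                        # correctly recovered edges
    precision = tp / len(predicted) if predicted else 0.0
    recall = tp / len(truth) if truth else 0.0
    return precision, recall

pred = [("TF1", "G1"), ("TF1", "G2"), ("TF2", "G3")]
ref = [("TF1", "G1"), ("TF2", "G3"), ("TF3", "G4"), ("TF3", "G5")]
print(precision_recall(pred, ref))   # (0.666..., 0.5)
```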

Q: What are the limitations of current scalable GRN inference methods?

A: Current limitations include:

- Reduced sensitivity for weak regulatory interactions

- Potential loss of rare cell population signals in aggregation approaches

- Limited ability to capture transient dynamic regulations

- Dependence on parameter tuning for optimal performance [8] [7]

Research Reagent Solutions for scRNA-seq GRN Studies

Table 3: Essential Research Reagents and Platforms

| Reagent/Platform | Function | Application in GRN Studies |
|---|---|---|
| 10X Genomics Chromium | Single-cell partitioning | High-throughput cell encapsulation for large-scale studies |
| CRISPRi perturbation pools | Gene targeting | Generating interventional data for causal network inference |
| UMI barcodes | Molecular counting | Accurate transcript quantification despite amplification bias |
| Cell Hashing antibodies | Sample multiplexing | Batch effect reduction through sample pooling |
| ERCC spike-in controls | Technical variation assessment | Quality control and normalization standardization |
| Viability dyes | Cell quality assessment | Pre-sequencing quality control for better data quality |
| Feature Barcoding kits | Protein surface marker detection | Multi-modal data collection for enhanced cell typing |

Future Directions and Emerging Solutions

The field of scalable GRN inference continues to evolve rapidly. Promising directions include:

- Integration of multi-omic data at single-cell resolution (ATAC-seq, protein abundance)

- Machine learning approaches specifically designed for sparse high-dimensional data

- Transfer learning to leverage existing annotated datasets for new studies

- Spatial transcriptomics integration to incorporate topological information

- Improved benchmarking frameworks like CausalBench to drive method development [8] [5] [7]

As single-cell technologies continue to advance, producing ever-larger and more complex datasets, the development of specialized computational methods that embrace rather than fight data sparsity will be crucial for unlocking the full potential of scRNA-seq for gene regulatory network inference.

Frequently Asked Questions & Troubleshooting Guides

How can I assess the scalability of a GRN inference method for my large-scale single-cell dataset?

Evaluating scalability requires a combination of benchmark suites and performance monitoring. The CausalBench benchmark suite, which uses real-world large-scale single-cell perturbation data, is designed for this purpose. It provides biologically-motivated metrics and distribution-based interventional measures to realistically evaluate how methods perform as data size and complexity increase [8].

Performance Indicators to Monitor:

- Computational Runtime: How does the algorithm's processing time increase with more genes or cells?

- Memory Usage: Does memory grow linearly with dataset size, or quadratically (or worse), for example because the method materializes a full gene-by-gene matrix?

- Precision-Recall Trade-off: Does the method maintain a high precision (minimizing false positives) without a significant drop in recall (minimizing false negatives) on large networks? A common observation is that recall often decreases as network size increases [8].
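Empirical scaling can be estimated by timing a method at several input sizes and fitting the slope of log(time) against log(size): a slope near 1 suggests linear scaling, near 2 quadratic. The harness below is a generic sketch, not part of CausalBench; the gene-gene correlation step is only a stand-in workload, and the fitted exponent will vary with hardware and BLAS threading.

```python
import time
import numpy as np

def fit_scaling_exponent(sizes, values):
    """Slope of log(value) against log(size); ~1 means linear, ~2 quadratic."""
    slope, _intercept = np.polyfit(np.log(sizes), np.log(values), 1)
    return float(slope)

def best_runtime(method, data, repeats=3):
    """Best-of-`repeats` wall-clock time of method(data), in seconds."""
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        method(data)
        timings.append(time.perf_counter() - start)
    return min(timings)

def runtime_curve(method, make_data, sizes, repeats=3):
    """Runtime of `method` on inputs generated by make_data(n), for each n."""
    return [best_runtime(method, make_data(n), repeats) for n in sizes]

# Stand-in workload: a gene-gene correlation matrix over 500 cells
rng = np.random.default_rng(0)
sizes = [250, 500, 1000, 2000]
times = runtime_curve(lambda X: np.corrcoef(X, rowvar=False),
                      lambda n_genes: rng.normal(size=(500, n_genes)), sizes)
print(f"empirical exponent: {fit_scaling_exponent(sizes, times):.2f}")
```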

Troubleshooting Poor Scalability:

- Issue: The inference process is too slow or runs out of memory.

- Solution: Employ a method that incorporates a dimensionality reduction step. For example, some algorithms use a regulatory gene recognition step with the Maximal Information Coefficient (MIC) to shrink the problem space before model training [10].
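The pre-filtering idea can be sketched as keeping, for each target gene, only the M most associated candidate regulators. The sketch below uses absolute Pearson correlation as a cheap stand-in for MIC (MIC also captures non-linear dependence and is available in packages such as minepy); `candidate_regulators` is a hypothetical helper, not iLSGRN's code.

```python
import numpy as np

def candidate_regulators(X, regulators, M=10):
    """Keep the M regulators most correlated (in absolute value) with each target.

    X: samples x genes; regulators: column indices of candidate TFs.
    Returns {target_index: array of M regulator indices, best first}.
    """
    regulators = np.asarray(regulators)
    Z = (X - X.mean(axis=0)) / (X.std(axis=0) + 1e-12)
    corr = np.abs(Z.T @ Z[:, regulators]) / len(X)     # genes x regulators |Pearson r|
    out = {}
    for target in range(X.shape[1]):
        scores = corr[target].copy()
        scores[regulators == target] = -np.inf          # exclude self-regulation
        out[target] = regulators[np.argsort(scores)[::-1][:M]]
    return out

rng = np.random.default_rng(0)
X = rng.normal(size=(300, 20))
X[:, 5] = 2 * X[:, 0] + 0.1 * rng.normal(size=300)      # gene 0 drives gene 5
cands = candidate_regulators(X, regulators=[0, 1, 2, 3], M=2)
print(cands[5])   # gene 0 should rank first
```

Downstream models then only consider M candidates per target instead of all G genes, which is where the savings come from.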

Why do methods using interventional perturbation data sometimes fail to outperform observational methods on real-world data?

Contrary to theoretical expectations, benchmarks have shown that existing interventional methods do not always outperform their observational counterparts on real data [8]. This is a key challenge in real-world GRN inference.

Potential Causes:

- Poor Scalability: The interventional method may not scale effectively to the number of perturbations and genes in your dataset, limiting its ability to fully utilize the data [8].

- Inadequate Utilization of Data: The algorithm might not be effectively integrating the interventional information to distinguish between causal and correlational relationships.

Solutions:

- Choose interventional methods specifically designed and validated for large-scale data.

- Refer to benchmarks like CausalBench to select top-performing methods that have demonstrated effective use of interventional data, such as some challenge-winning algorithms [8].

How can I improve the accuracy of my large-scale GRN inference?

Accuracy declines with increasing network scale due to high dimensionality and sparsity [10]. To combat this:

- Integrate Data Types: Combine both time-series and steady-state gene expression data in your model, as this can provide more robust information for inference [10].

- Use Ensemble Models: Leverage feature fusion algorithms that combine the strengths of multiple models. For instance, one approach uses feature importance scores from both XGBoost and Random Forest models to train a more accurate non-linear ordinary differential equation (ODE) model [10].

- Employ Advanced Causal Inference: Explore recent methods that move beyond correlation to better infer causality from perturbational data [8].

Experimental Protocols & Methodologies

Protocol 1: Benchmarking GRN Inference Methods with CausalBench

This protocol outlines using the CausalBench suite to evaluate network inference methods on real-world single-cell perturbation data [8].

- Data Preparation: Download the curated single-cell RNA-seq datasets (e.g., from RPE1 and K562 cell lines) that include both control and genetically perturbed cells.

- Method Selection: Select a set of state-of-the-art observational and interventional methods for comparison (e.g., PC, GES, NOTEARS, GIES, DCDI, and challenge-winning methods like Mean Difference or Guanlab) [8].

- Run Inference: Execute each method on the dataset using the provided implementations. It is recommended to run each method multiple times with different random seeds.

- Evaluation:

  - Statistical Evaluation: Calculate the mean Wasserstein distance (measures the strength of predicted causal effects) and the false omission rate (FOR; measures the rate of omitting true interactions) [8].

  - Biological Evaluation: Compute precision and recall against a biology-driven approximation of ground truth.
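The definitions behind the two statistical measures can be sketched in NumPy. The 1-D Wasserstein distance below integrates the absolute difference between empirical CDFs, and FOR is FN / (FN + TN) among pairs the model did not predict. This is a simplified illustration assuming a known reference edge set and a hypothetical dictionary layout for expression values; CausalBench derives its statistical evaluation from the perturbation data itself and has its own implementation.

```python
import numpy as np

def wasserstein_1d(u, v):
    """Wasserstein-1 distance between two 1-D empirical samples."""
    u, v = np.sort(u), np.sort(v)
    grid = np.sort(np.concatenate([u, v]))
    widths = np.diff(grid)
    u_cdf = np.searchsorted(u, grid[:-1], side="right") / len(u)
    v_cdf = np.searchsorted(v, grid[:-1], side="right") / len(v)
    return float(np.sum(np.abs(u_cdf - v_cdf) * widths))

def mean_wasserstein(edges, control, perturbed):
    """Average shift of each target's expression when its regulator is perturbed.

    control: {gene: 1-D array}; perturbed: {regulator: {gene: 1-D array}}
    (hypothetical layout).
    """
    return float(np.mean([wasserstein_1d(control[t], perturbed[r][t]) for r, t in edges]))

def false_omission_rate(predicted, truth, all_pairs):
    """FN / (FN + TN): share of non-predicted pairs that are true edges."""
    omitted = set(all_pairs) - set(predicted)
    return len(omitted & set(truth)) / len(omitted) if omitted else 0.0

control = {"G2": np.array([0.0, 1.0, 3.0])}
perturbed = {"G1": {"G2": np.array([5.0, 6.0, 8.0])}}   # hypothetical perturbed cells
print(mean_wasserstein([("G1", "G2")], control, perturbed))   # ~5.0 (shift by 5)

genes = ["G1", "G2", "G3"]
pairs = [(a, b) for a in genes for b in genes if a != b]
print(false_omission_rate([("G1", "G2")], [("G1", "G2"), ("G2", "G3")], pairs))  # 0.2
```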

Protocol 2: Inference of Large-Scale GRNs using iLSGRN

This protocol details the iLSGRN method for reconstructing large-scale GRNs from gene expression data [10].

- Data Input & Combination: Provide both steady-state and time-series gene expression data as input.

- Dimensionality Reduction (Regulatory Gene Recognition):

  - For each gene, calculate the Maximal Information Coefficient (MIC) with all other genes.

  - Exclude redundant regulatory relationships based on MIC to create a reduced set of M candidate regulatory genes for each target gene (where M << G, the total number of genes) [10].

- Model Training (Feature Fusion Algorithm):

  - For the reduced candidate set, use a non-linear ODE model to describe the regulatory dynamics.

  - Derive feature importance scores from trained XGBoost and Random Forest models.

  - Fuse these importance scores to train the final non-linear ODE model and infer the network [10].
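The fusion step can be illustrated with a simple weighted average of normalized importance vectors. This rule is an assumption made for illustration; iLSGRN's actual fusion scheme may differ [10]. In practice the inputs could come from, for example, scikit-learn's `RandomForestRegressor.feature_importances_` and xgboost's `XGBRegressor.feature_importances_`.

```python
import numpy as np

def fuse_importances(scores_a, scores_b, weight=0.5):
    """Weighted average of two importance vectors over the same candidate regulators.

    Each vector is rescaled to sum to 1 so that models with different
    importance scales contribute comparably. `weight` is a hypothetical knob.
    """
    a = np.asarray(scores_a, dtype=float)
    b = np.asarray(scores_b, dtype=float)
    a = a / a.sum() if a.sum() > 0 else a
    b = b / b.sum() if b.sum() > 0 else b
    return weight * a + (1 - weight) * b

rf_scores = [0.6, 0.3, 0.1]    # e.g., from a random forest
xgb_scores = [2.0, 6.0, 2.0]   # e.g., gain-based boosting importances (different scale)
print(fuse_importances(rf_scores, xgb_scores))   # ~[0.40, 0.45, 0.15]
```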

Table 4: Performance Trade-offs of GRN Methods on CausalBench

Table based on evaluations from CausalBench, summarizing the trade-off between precision and recall for various methods on real-world single-cell perturbation data [8].

| Method Category | Method Name | Key Characteristic | Precision (Typical Range) | Recall (Typical Range) |
|---|---|---|---|---|
| Interventional (Challenge) | Mean Difference | Top-performing on statistical metrics | High | Medium |
| Interventional (Challenge) | Guanlab | Top-performing on biological metrics | High | Medium |
| Observational | GRNBoost | High recall, lower precision | Low | High |
| Observational | NOTEARS variants | Continuous optimization-based | Varying, often lower precision | Varying |
| Interventional (Classic) | GIES | Score-based, extends GES | Does not outperform GES | Does not outperform GES |

Table 5: Scalability and Data Handling of GRN Inference Methods

Table comparing the scalability and data utilization of different GRN inference approaches [8] [10].

| Method Name | Data Types Supported | Scalability to Large Networks | Key Strength / Innovation |
|---|---|---|---|
| iLSGRN | Steady-state & Time-series | High (uses dimensionality reduction) | Feature fusion from XGBoost & RF [10] |
| CausalBench Winners | Interventional & Observational | High (designed for large-scale) | Effective use of interventional data [8] |
| DCDI variants | Interventional | Limited by scalability [8] | Differentiable causal discovery |
| GIES | Interventional | Limited by scalability [8] | Score-based equivalence search |
| GENIE3/dynGENIE3 | Steady-state / Time-series | Medium | Tree-based, model-free |

The Scientist's Toolkit: Research Reagent Solutions

Table 6: Essential Computational Tools for Scalable GRN Inference

A list of key software tools and resources for large-scale GRN research.

| Tool / Resource | Type | Primary Function in GRN Research |
|---|---|---|
| CausalBench | Benchmark Suite | Provides realistic datasets and metrics to evaluate GRN methods on large-scale, real-world perturbation data [8]. |
| iLSGRN | Inference Algorithm | Python-based tool that uses non-linear ODEs and feature fusion to reconstruct large-scale GRNs [10]. |
| DCDI | Inference Algorithm | A continuous optimization-based method for causal discovery from interventional data [8]. |
| GENIE3/dynGENIE3 | Inference Algorithm | A model-free, tree-based method for inferring GRNs from steady-state or time-series data [10]. |
| Gene Net Weaver (GNW) | Data Simulator | Tool used to generate in silico benchmark datasets (e.g., for DREAM challenges) [10]. |
| RegulonDB | Gold Standard Network | A database of experimentally validated E. coli regulatory interactions for validation [10]. |

Workflow & Relationship Visualizations

Graphviz Diagram: CausalBench Evaluation Workflow

Graphviz Diagram: iLSGRN Method Workflow

Graphviz Diagram: Scalability Challenge in GRN Inference

Frequently Asked Questions (FAQs)

1. Why does my GRN inference model run out of memory with large single-cell datasets? The high dimensionality of single-cell RNA-seq data, where thousands of genes are measured across thousands of cells, places significant strain on memory resources. For transformer-based models, self-attention scales roughly quadratically (O(N²)) with the input length N, so doubling the context length can quadruple the computation and memory requirements [11]. Furthermore, methods that leverage large prior networks or perform intensive operations on the entire gene expression matrix can quickly exhaust available RAM, especially when the number of genes exceeds 10,000 [12] [13].
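The quadratic term is easy to quantify for the attention-score matrices alone. The sketch below assumes one token per gene, 8 heads, one layer, float32 scores, and standard attention that materializes the full N x N matrix; it ignores weights, other activations, and memory-efficient attention kernels.

```python
def attention_matrix_bytes(n_tokens, n_heads=8, n_layers=1, itemsize=4, batch=1):
    """Memory for the N x N attention-score matrices only (float32 by default)."""
    return batch * n_layers * n_heads * n_tokens * n_tokens * itemsize

# Hypothetical setup: one token per gene
print(attention_matrix_bytes(10_000) / 1e9)                               # 3.2 (GB)
print(attention_matrix_bytes(20_000) / attention_matrix_bytes(10_000))   # 4.0
```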

2. How can I make my GRN inference workflow faster and more scalable? Scalability is a recognized challenge for many state-of-the-art methods [8]. To improve performance:

- Choose Scalable Algorithms: Tree-based methods like GENIE3 and GRNBoost2 are recognized for their scalability to thousands of genes and are often integrated into popular pipelines like SCENIC+ [14] [15].

- Leverage Hardware Acceleration: Utilize GPUs for deep learning models. For instance, the DAZZLE model completed inference on a dataset with 1,410 genes in 24.4 seconds on an H100 GPU [12].

- Adopt Efficient Formulations: The "One-vs-Rest" (OvR) formulation, used by GENIE3 and GRNBoost2, models each gene as a function of others, enabling parallelization and improved scalability [14].
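Because each target gene is modeled independently in the OvR formulation, the per-target fits can run in parallel. In the sketch below, ridge regression stands in for the tree ensembles that GENIE3 and GRNBoost2 actually use, and a thread pool is used because NumPy's linear algebra routines largely release the GIL; for pure-Python models a process pool would usually be the better choice.

```python
import numpy as np
from concurrent.futures import ThreadPoolExecutor

def score_target(X, target, alpha=1.0):
    """Ridge fit of one target gene on all other genes; |coefficients| as scores."""
    y = X[:, target]
    others = np.delete(np.arange(X.shape[1]), target)
    A = X[:, others]
    coef = np.linalg.solve(A.T @ A + alpha * np.eye(A.shape[1]), A.T @ y)
    scores = np.zeros(X.shape[1])
    scores[others] = np.abs(coef)          # the target's own entry stays 0
    return scores

def one_vs_rest(X, n_workers=4):
    """Genes x genes score matrix: row = target, column = putative regulator."""
    Xc = X - X.mean(axis=0)
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        rows = list(pool.map(lambda t: score_target(Xc, t), range(X.shape[1])))
    return np.vstack(rows)

rng = np.random.default_rng(0)
X = rng.normal(size=(400, 6))
X[:, 3] = 1.5 * X[:, 1] + 0.05 * rng.normal(size=400)   # gene 1 drives gene 3
S = one_vs_rest(X)
print(np.argmax(S[3]))   # 1
```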

3. My single-cell data has many zero values. How does this affect inference, and what can I do? The prevalence of "dropout" events (false zeros) is a major challenge in single-cell data, causing models to overfit to this noise [12] [13]. Instead of traditional data imputation, consider regularization techniques like Dropout Augmentation (DA), which improves model robustness by artificially adding dropout noise during training. Models like DAZZLE, which use DA, show improved stability and performance [12] [13].

4. Are there methods that work well when I have very little known regulatory data? Yes, this is known as the "few-shot" learning problem in GRN inference. To address the TF cold-start problem or limited prior knowledge in specific cell types, consider meta-learning approaches. Frameworks like Meta-TGLink are specifically designed to learn transferable regulatory patterns from limited labeled data, outperforming standard methods in data-scarce scenarios [15].

5. How do I choose between supervised and unsupervised GRN inference methods? The choice depends on the availability of known regulatory interactions for your organism or cell type of interest.

- Unsupervised methods (e.g., GENIE3, PIDC, DeepSEM) do not require prior knowledge and infer networks directly from gene expression data. However, they can struggle with the inherent noise and complexity of the data, potentially leading to high false-positive rates [16] [15].

- Supervised methods leverage known regulatory relationships during training, which generally leads to higher accuracy and robustness by mitigating false positives [16] [15]. The performance of supervised methods is, however, dependent on the quality and completeness of the prior knowledge used for training.

Troubleshooting Guides

Issue 1: Handling High-Dimensional Single-Cell Data

Problem: Experiment fails due to memory errors or excessive computation time when processing large gene expression matrices.

Solution:

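- Step 1: Employ Dimensionality Reduction. Reduce the number of genes before inference with feature selection, focusing on highly variable genes or those of biological interest. PCA can speed up cell-level steps such as neighborhood graph construction, but principal components are linear combinations of genes, so they cannot serve directly as network nodes.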

- Step 2: Select a Scalable Inference Method. Prefer methods designed for high-dimensional data. The table below compares the characteristics of several approaches.

| Method | Type | Key Technology | Scalability Note |
|---|---|---|---|
| GENIE3/GRNBoost2 [16] [14] | Unsupervised | Random Forest / Gradient Boosting | Highly scalable; can be parallelized [14]. |
| DAZZLE [12] [13] | Unsupervised | VAE with Dropout Augmentation | More robust to zeros; reduced parameters and runtime vs. predecessors [12]. |
| scKAN [14] | Unsupervised | Kolmogorov-Arnold Network | Differentiable model that captures continuous dynamics [14]. |
| Meta-TGLink [15] | Supervised | Graph Meta-Learning | Effective in few-shot scenarios with limited labeled data [15]. |
| GIES [8] | Interventional | Score-based Causal Discovery | An interventional method; however, benchmark studies note that such methods have not consistently outperformed observational ones, with scalability being a limiting factor [8]. |

- Step 3: Utilize Hardware Acceleration. Run models on systems with sufficient GPUs, which can drastically reduce computation time for deep learning models [12].
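A basic highly variable gene filter for Step 1 can be sketched as ranking genes by variance after library-size normalization and log transform. This is a simplified criterion; tools such as Scanpy (`sc.pp.highly_variable_genes`) provide dispersion-based variants that account for the mean-variance relationship. The data are simulated.

```python
import numpy as np

def top_variable_genes(counts, n_top=2000, target_sum=1e4):
    """Indices of the n_top genes with the highest variance after log-normalization."""
    counts = np.asarray(counts, dtype=float)
    lib = counts.sum(axis=1, keepdims=True)                      # library size per cell
    norm = np.log1p(counts / np.maximum(lib, 1) * target_sum)    # normalize, then log1p
    return np.argsort(norm.var(axis=0))[::-1][:n_top]

rng = np.random.default_rng(0)
X = rng.poisson(1.0, size=(300, 50))
X[:, 7] = rng.poisson(20, 300) * (rng.random(300) < 0.5)   # one bimodal gene
print(top_variable_genes(X, n_top=5))   # gene 7 should rank first
```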

Issue 2: Managing Sparse and Zero-Inflated Data

Problem: Model performance is degraded due to the high number of zeros (dropouts) in single-cell RNA-seq data.

Solution:

- Step 1: Diagnose Zero-Inflation. Quantify the percentage of zeros in your dataset. In single-cell data, 57% to 92% of observed counts can be zeros [12] [13].

- Step 2: Apply Dropout Augmentation. Implement a regularization strategy that adds synthetic dropout noise during training. The DAZZLE workflow provides a practical implementation [12] [13].

- Step 3: Train with Augmented Data. The model is exposed to multiple versions of the data with different dropout patterns, preventing overfitting to specific zeros and improving generalizability.
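The augmentation step can be sketched as randomly zeroing a fraction of the non-zero entries each time the data are fed to the model. This is a minimal illustration of the idea, not DAZZLE's implementation; the rate and the commented-out training call are hypothetical.

```python
import numpy as np

def dropout_augment(X, rate=0.1, rng=None):
    """Copy of X with a random `rate` fraction of non-zero entries set to 0."""
    rng = np.random.default_rng() if rng is None else rng
    X_aug = X.copy()                        # never modify the original data
    rows, cols = np.nonzero(X_aug)
    drop = rng.random(len(rows)) < rate     # choose which detected values to drop
    X_aug[rows[drop], cols[drop]] = 0
    return X_aug

# Each epoch sees a different synthetic dropout pattern
rng = np.random.default_rng(0)
X = rng.poisson(2.0, size=(100, 50)).astype(float)
for epoch in range(3):
    batch = dropout_augment(X, rate=0.1, rng=rng)
    # model.train_step(batch)  # hypothetical training call
```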

Experimental Protocol: Benchmarking GRN Inference Methods with CausalBench

Objective: To objectively evaluate the performance of different GRN inference methods on real-world, large-scale single-cell perturbation data.

Materials:

- CausalBench Suite: An open-source benchmarking suite (https://github.com/causalbench/causalbench) [8].

- Datasets: Includes curated large-scale perturbation datasets (e.g., RPE1 and K562 cell lines with over 200,000 interventional datapoints) [8].

- Software Environment: Python environment with required libraries (e.g., PyTorch, TensorFlow) as specified by CausalBench and method documentation.

Methodology:

- Installation: Install the CausalBench package and its dependencies from the source repository.

- Data Preparation: Download and preprocess the specified perturbation datasets using the built-in CausalBench data loaders.

- Method Selection: Configure a set of methods for evaluation. CausalBench includes implementations of various baselines, such as PC, GES, NOTEARS, GIES, and DCDI [8].

- Execution: Run the benchmarking suite, which will train and evaluate each method on the selected datasets.

- Evaluation: Analyze the output using the suite's built-in metrics. CausalBench uses biologically-motivated and statistical metrics, including:

  - Mean Wasserstein Distance: Measures the strength of causal effects corresponding to predicted interactions [8].

  - False Omission Rate (FOR): Measures the rate at which true causal interactions are omitted by the model [8].

  - Precision-Recall Trade-off: Assesses the accuracy and completeness of the inferred network [8].

Workflow and Pathway Visualizations

GRN Inference with Dropout Augmentation (DAZZLE Workflow)

CausalBench Evaluation Framework

The Scientist's Toolkit: Key Research Reagents & Solutions

| Item / Resource | Function / Application in GRN Inference |
|---|---|
| BEELINE Benchmark [14] | A standard benchmark framework for evaluating GRN inference algorithms on single-cell data, providing standardized datasets and evaluation protocols. |
| CausalBench Suite [8] | An open-source benchmark suite using real-world large-scale single-cell perturbation data for a more realistic evaluation of causal network inference methods. |
| Dropout Augmentation (DA) [12] [13] | A model regularization technique that improves robustness to zero-inflation in single-cell data by adding synthetic dropout noise during training. |
| Kolmogorov-Arnold Network (KAN) [14] | A differentiable network architecture used in models like scKAN to capture the smooth, continuous dynamics of cellular processes more effectively than piecewise tree-based models. |
| Graph Meta-Learning [15] | A learning paradigm that enables models to adapt quickly to new tasks with limited data, addressing the "few-shot" problem in GRN inference for new TFs or cell types. |
| Prior Regulatory Networks [17] [15] | Databases of known TF-TG interactions used to provide supervised signals for training or to refine predictions from unsupervised methods. |

Welcome to the Technical Support Center

This resource provides troubleshooting guides and FAQs to help researchers, scientists, and drug development professionals overcome common technical hurdles in large-scale Gene Regulatory Network (GRN) inference. The following sections address specific issues related to experimental workflows and computational visualization.

Frequently Asked Questions (FAQs)

Troubleshooting Graphviz for Research-Grade Visualizations

Q1: How can I create a node in a graph with a bolded title or section, similar to a UML class diagram?

Answer: The record shape, which has largely been superseded by HTML-like labels, does not support rich text formatting such as bold text. Instead, use an HTML-like label containing a <B> tag together with shape="none" for full formatting control. [18] This approach helps produce clear, publication-quality diagrams that highlight key entities in a GRN.
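An HTML-like label for such a node can be generated programmatically. The Python sketch below builds a DOT node whose label is a table with a bold title row; the node names and row text are placeholders. Text is escaped so characters such as `&` and `<` do not break the label.

```python
from html import escape

def html_table_node(node_id, title, rows):
    """DOT node with a bold title row above plain rows (UML-class style).

    The label is HTML-like (wrapped in < >); shape=none lets the table
    draw its own borders.
    """
    body = "".join(
        f'<TR><TD ALIGN="LEFT">{escape(r, quote=False)}</TD></TR>' for r in rows
    )
    label = (
        '<<TABLE BORDER="0" CELLBORDER="1" CELLSPACING="0">'
        f"<TR><TD><B>{escape(title, quote=False)}</B></TD></TR>{body}</TABLE>>"
    )
    return f'  "{node_id}" [shape=none, margin=0, label={label}];'

dot = "\n".join([
    "digraph GRN {",
    html_table_node("tf_a", "TF_A", ["role: regulator", "module: M1"]),
    html_table_node("g1", "G1", ["role: target"]),
    '  "tf_a" -> "g1";',
    "}",
])
print(dot)   # save as grn.gv and render, e.g., with: dot -Tsvg grn.gv -o grn.svg
```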

Q2: I need high-quality, anti-aliased figures for my research publication. What is the best output format?

Answer: For the highest quality output, use vector-based formats like PDF or SVG. [19] These formats are resolution-independent and ideal for publications. If you have a Cairo/Pango-enabled Graphviz version, use the -Tpdf flag directly. Otherwise, generate PostScript and convert it to PDF. [19]

Q3: How can I increase the size of my graph layout to improve readability for complex networks?

Answer: Use several attributes to control graph size. To increase spacing and dimensions without scaling node content, adjust nodesep, ranksep, and fontsize. [19] For a more drastic, uniform scaling of the entire diagram, including nodes and text, use the size attribute with an exclamation mark (e.g., size="8,8!"). [19]

Troubleshooting Guides

Solving Common Graphviz Errors

Problem: UnicodeDecodeError or Syntax Error when rendering a graph.

Symptoms: Errors like UnicodeDecodeError: 'utf-8' codec can't decode byte... [20] or Syntax error near '[' [21] when running the dot command.

Solution:

- Check File Encoding: Ensure your DOT file is saved as a plain text file with UTF-8 encoding, especially if using non-ASCII characters. [19]

- Verify Installation: Confirm Graphviz is correctly installed and your environment's PATH variable includes the Graphviz `bin` directory. [20]

- Test with a Simple Graph: Rule out file-specific issues by testing with a basic graph.

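- Avoid Reserved Words: node, edge, graph, digraph, subgraph, and strict are DOT keywords and are matched case-insensitively. Unquoted node names such as Graph, Node, Edge, or Subgraph are therefore misparsed and typically trigger syntax errors. Quote them (e.g., "Node") or rename them. [21]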
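A small pre-flight check can catch both problems before dot runs. This heuristic sketch decodes the file bytes as UTF-8 and reports the offset of the first invalid byte. It then tokenizes the text and flags unquoted DOT keywords used as edge endpoints. It ignores comments and is not a full DOT parser; check_dot_bytes is a hypothetical name. If a file turns out to use another encoding, such as Windows-1252, one fix is to re-encode it with data.decode("cp1252").encode("utf-8"), assuming you know the original encoding.

```python
import re

# DOT keywords are case-insensitive, so "Node" or "GRAPH" are keywords too.
DOT_KEYWORDS = {"node", "edge", "graph", "digraph", "subgraph", "strict"}
# Tokens: quoted strings, edge operators, bare identifiers, or any other single character.
TOKEN = re.compile(r'"(?:[^"\\]|\\.)*"|->|--|[A-Za-z_]\w*|\S')


def check_dot_bytes(data: bytes) -> list:
    """Return a list of likely problems in raw DOT source (empty if none were found)."""
    problems = []
    try:
        text = data.decode("utf-8")
    except UnicodeDecodeError as err:
        problems.append(f"invalid UTF-8 at byte offset {err.start}")
        text = data.decode("utf-8", errors="replace")
    tokens = TOKEN.findall(text)
    for i, tok in enumerate(tokens):
        if tok not in ("->", "--"):
            continue
        neighbours = [tokens[i - 1] if i > 0 else "",
                      tokens[i + 1] if i + 1 < len(tokens) else ""]
        for name in neighbours:
            if name.lower() in DOT_KEYWORDS:
                problems.append(f"unquoted keyword {name!r} used as an edge endpoint")
    return problems
```

Typical usage is check_dot_bytes(Path("network.gv").read_bytes()), where the file name is illustrative.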

Problem: Graphviz Visual Editor fails to render a large or complex DOT file.

Symptoms: The editor becomes unresponsive or does not display the graph after pasting in DOT source code.

Solution:

- Use a Desktop Installation: For large graphs, use a local Graphviz installation. The web-based Visual Editor may struggle with computationally heavy layouts. [22]

- Simplify the Graph: Break down extremely large networks into smaller subgraphs or clusters to reduce layout complexity.

- Check for Errors: The desktop version often provides more detailed error messages. Run `dot -Tpng your_file.gv -o output.png` in your terminal to diagnose issues.
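One way to break a large network into clusters is to wrap each gene module in a subgraph whose name begins with cluster, which dot draws as a labeled box. The sketch below assumes you already have a gene-to-module mapping, for example from community detection; clustered_dot is a hypothetical helper. For very large graphs, Graphviz's sfdp layout engine is also worth testing, because dot's hierarchical layout can be slow at that scale.

```python
from collections import defaultdict


def clustered_dot(edges, module_of, name="GRN"):
    """Emit a digraph in which each gene module becomes its own cluster subgraph."""
    members = defaultdict(list)
    for gene, module in module_of.items():
        members[module].append(gene)
    lines = [f"digraph {name} {{"]
    for module in sorted(members):
        # dot draws a subgraph as a boxed cluster only if its name starts with "cluster".
        lines.append(f'  subgraph "cluster_{module}" {{')
        lines.append(f'    label="{module}";')
        lines.extend(f'    "{gene}";' for gene in sorted(members[module]))
        lines.append("  }")
    lines.extend(f'  "{src}" -> "{dst}";' for src, dst in edges)
    lines.append("}")
    return "\n".join(lines)
```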

Research Reagent Solutions

The table below lists key computational tools and their functions for scalable GRN inference research.

| Research Reagent / Tool | Function in GRN Research |
|---|---|
| Graphviz (DOT language) | Visualizes complex inferred network structures and experimental workflows for analysis and publication. [23] |
| High-Performance Computing (HPC) Cluster | Provides the computational power required for algorithms (e.g., GENIE3, PIDC) on large single-cell RNA-seq datasets. |
| Cloud Computing Platform | Offers scalable, on-demand resources for running multiple inference experiments in parallel, enhancing reproducibility. |
| Single-Cell RNA-Sequencing Data | The primary input data for inferring gene regulatory relationships at a cellular resolution. |

Experimental Protocol: A Scalable GRN Inference Workflow

This protocol outlines a standard computational experiment for inferring GRNs from large-scale transcriptomic data, designed for scalability on cluster and cloud infrastructures.

Data Preprocessing

- Input: Raw single-cell RNA-sequencing count matrix.

- Method: Normalize the data using a method like SCTransform or log(CP10k+1). Filter out low-quality cells and genes with minimal expression.

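- Quality Control: Upstream of the count matrix, assess sequencing quality with tools like FastQC (read-level quality) and Cell Ranger (alignment and cell calling). Perform principal component analysis (PCA) on the count matrix to identify and remove outliers.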
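The filtering and log(CP10k+1) steps can be sketched in NumPy as follows, assuming a dense cells x genes count matrix. The thresholds (200 genes per cell, 3 cells per gene) are common starting points, not values taken from this protocol. Production pipelines would typically use sparse matrices and a dedicated toolkit such as Scanpy or Seurat.

```python
import numpy as np


def qc_filter(counts, min_genes_per_cell=200, min_cells_per_gene=3):
    """Drop cells with too few detected genes, then genes detected in too few cells."""
    counts = np.asarray(counts, dtype=float)
    cell_ok = (counts > 0).sum(axis=1) >= min_genes_per_cell
    counts = counts[cell_ok]
    gene_ok = (counts > 0).sum(axis=0) >= min_cells_per_gene
    return counts[:, gene_ok], cell_ok, gene_ok


def log_cp10k(counts):
    """log(CP10k + 1): scale each cell to 10,000 total counts, then apply log1p."""
    totals = counts.sum(axis=1, keepdims=True)
    totals[totals == 0] = 1.0  # avoid division by zero for empty cells
    return np.log1p(counts / totals * 1e4)
```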

GRN Inference

- Algorithm Selection: Choose a scalable inference algorithm such as GENIE3 (available in R and Python) or PIDC (available in Julia through NetworkInference.jl).

- Execution: Run the inference algorithm on the preprocessed data. For large datasets, execute this step on an HPC cluster or cloud virtual machine using a job scheduler like SLURM to parallelize computations across multiple nodes.

- Output: A weighted adjacency matrix where values represent the predicted strength of regulatory interactions between genes.
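GENIE3-style methods decompose inference into one regression per target gene, so the work splits easily across a SLURM job array. The sketch below gives each array task a strided slice of targets. It reads the SLURM_ARRAY_TASK_ID, SLURM_ARRAY_TASK_MIN and SLURM_ARRAY_TASK_COUNT environment variables that Slurm sets in array jobs, and assumes a contiguous array range with step 1. score_target is a hypothetical callable standing in for the per-target regression; for GENIE3, that would be a tree ensemble's feature importances.

```python
import os


def targets_for_this_task(genes, n_tasks=None, task_id=None):
    """Strided slice of target genes for one array task (task i gets genes i, i+n, i+2n, ...)."""
    if task_id is None:
        # Subtract SLURM_ARRAY_TASK_MIN so that --array=1-10 and --array=0-9 both map to 0..n-1.
        task_id = (int(os.environ.get("SLURM_ARRAY_TASK_ID", 0))
                   - int(os.environ.get("SLURM_ARRAY_TASK_MIN", 0)))
    if n_tasks is None:
        n_tasks = int(os.environ.get("SLURM_ARRAY_TASK_COUNT", 1))
    return list(genes)[task_id::n_tasks]


def run_chunk(expr, genes, regulators, score_target, n_tasks=None, task_id=None):
    """Apply the (hypothetical) per-target scorer to this task's targets only."""
    return {target: score_target(expr, genes, regulators, target)
            for target in targets_for_this_task(genes, n_tasks, task_id)}
```

When submitted with, for example, #SBATCH --array=0-9, each task would write its partial results to disk. A final step would then concatenate them into the full weighted adjacency matrix.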

Network Analysis & Visualization

- Thresholding: Apply a weight threshold to the adjacency matrix to focus on the most confident interactions.

- Visualization: Export the thresholded network to the DOT format and render it with Graphviz to create a clear, readable visualization of the core GRN.
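The thresholding and export steps can be sketched as below. The sketch assumes a square weight matrix with non-negative scores, where rows are regulators and columns are targets. Keeping the top k edges, optionally above a minimum weight, is one common way to choose a threshold. The names and example weights are illustrative.

```python
import numpy as np


def top_edges(weights, genes, k, min_weight=0.0):
    """Return up to k highest-weight directed edges (regulator, target, weight), skipping self-loops."""
    w = np.array(weights, dtype=float)  # copy so the caller's matrix is untouched
    np.fill_diagonal(w, -np.inf)
    order = np.argsort(w, axis=None)[::-1][:k]
    rows, cols = np.unravel_index(order, w.shape)
    return [(genes[i], genes[j], float(w[i, j]))
            for i, j in zip(rows, cols) if w[i, j] >= min_weight]


def to_dot(edges, name="GRN"):
    """Serialize edges to DOT; line width grows with the weight (clipped to [0, 1])."""
    lines = [f"digraph {name} {{"]
    for src, dst, weight in edges:
        width = 1.0 + 2.0 * min(max(weight, 0.0), 1.0)
        lines.append(f'  "{src}" -> "{dst}" [label="{weight:.2f}", penwidth={width:.2f}];')
    lines.append("}")
    return "\n".join(lines)


genes = ["A", "B", "C"]
weights = [[0.0, 0.9, 0.1],
           [0.2, 0.0, 0.7],
           [0.3, 0.05, 0.0]]
print(to_dot(top_edges(weights, genes, k=2)))
```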

Validation

- Method: Compare the inferred network against a gold-standard benchmark network (e.g., from DREAM challenges) or validate key predictions using CRISPR perturbations in the wet lab.

- Metrics: Calculate precision-recall curves, Area Under the Curve (AUC), and F1-scores to quantify inference accuracy.
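The functions below illustrate two of these metrics: a step-wise AUPRC (average precision) and an F1-score at a fixed threshold. They take flattened candidate-edge scores and gold-standard labels (1 for a true edge). Ties are broken by input order, so results can differ slightly from library implementations such as scikit-learn's average_precision_score.

```python
import numpy as np


def average_precision(scores, labels):
    """Step-wise AUPRC: mean of precision@k over the ranks k at which a true edge appears."""
    order = np.argsort(-np.asarray(scores, dtype=float), kind="stable")
    y = np.asarray(labels, dtype=bool)[order]
    if not y.any():
        return 0.0
    precision_at_k = np.cumsum(y) / np.arange(1, y.size + 1)
    return float(precision_at_k[y].mean())


def f1_at_threshold(scores, labels, threshold):
    """F1-score when every pair scoring >= threshold is called an edge."""
    pred = np.asarray(scores, dtype=float) >= threshold
    y = np.asarray(labels, dtype=bool)
    tp = int(np.sum(pred & y))
    fp = int(np.sum(pred & ~y))
    fn = int(np.sum(~pred & y))
    return 0.0 if tp == 0 else 2 * tp / (2 * tp + fp + fn)
```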

The following diagram illustrates this workflow.

GRN Signaling Pathway Diagram

This diagram illustrates a simplified, core regulatory module often inferred in GRN analysis, highlighting key interactions.

Next-Generation Architectures: Scalable Machine Learning Methods for GRN Inference

FAQs: Core Concepts and Scalability

Q1: How do CNNs, VAEs, and GNNs specifically contribute to inferring Gene Regulatory Networks (GRNs) from large-scale single-cell data?

These architectures tackle distinct challenges in GRN inference. Convolutional Neural Networks (CNNs), such as CNNGRN, excel at processing bulk time-series expression data to uncover intricate regulatory associations between genes [24]. Graph Neural Networks (GNNs) are naturally suited to GRNs because they model genes as nodes and regulatory relationships as edges. This family includes Graph Convolutional Networks (GCNs) and Graph Autoencoders (GAEs). By aggregating information from each gene's neighbors, GNNs learn global regulatory structure, which is crucial for understanding complex biological systems [25] [26] [27]. Variational Autoencoders (VAEs) are generative models that learn a compressed, probabilistic latent representation of gene expression data. They are particularly effective at handling the noise and sparsity of single-cell RNA-seq (scRNA-seq) data. They can also jointly capture several layers of structure, such as cellular heterogeneity and gene modules [28].
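Neighbor aggregation of this kind is often written with the graph convolution rule of Kipf and Welling, shown here for reference. \( A \) is the gene-gene adjacency matrix and \( I_n \) is the identity for \( n \) genes. \( H^{(l)} \) holds one embedding row per gene at layer \( l \), \( W^{(l)} \) is a learned weight matrix, and \( \sigma \) is a nonlinearity. Individual GRN methods may use variants of this rule.

```latex
\tilde{A} = A + I_n, \qquad
\tilde{D}_{ii} = \sum_{j} \tilde{A}_{ij}, \qquad
H^{(l+1)} = \sigma\!\left( \tilde{D}^{-1/2} \tilde{A} \tilde{D}^{-1/2} H^{(l)} W^{(l)} \right)
```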

Q2: What are the primary scalability challenges when applying these deep learning models to datasets with millions of cells, and what solutions exist?

The primary challenges include immense computational resource demands, long processing times, and difficulty in effectively learning from sparse, high-dimensional data [29]. Promising solutions involve software engineering and algorithmic innovations. The Inferelator 3.0 pipeline, for instance, is designed for high-performance computing environments. It uses the Dask analytic engine to distribute computations across clusters, enabling the analysis of datasets with over a million cells [29]. From a modeling perspective, methods like HyperG-VAE use hypergraph representations to reduce data sparsity and capture high-order relationships more efficiently, thereby improving scalability [28].

Q3: A key criticism of deep learning models is their "black box" nature. How can we ensure the inferred GRNs are biologically explainable?

Explainability is a critical focus of recent research. One powerful strategy is to directly incorporate the concept of GRNs into the model's architecture and objective. For example, GPO-VAE explicitly models gene regulatory networks in its latent space and optimizes its parameters to align with known GRN structures, making its predictions more interpretable and biologically grounded [30]. Other methods use feature importance visualization to identify which inputs the model deems most critical for its predictions, and validate inferred networks by confirming that identified hub genes are involved in relevant biological processes, as demonstrated by CNNGRN [24].

Troubleshooting Guides

Poor Inference Performance on Real Biological Data

- Problem: The model performs well on synthetic data but fails to recover known regulatory relationships on real-world scRNA-seq datasets.

- Potential Causes & Solutions:

- Cause: Real data is much noisier and sparser than simulated data. The model may be overfitting to the clean patterns in synthetic data.

- Solution: Employ models specifically designed for noise and sparsity. Use HyperG-VAE, which uses hypergraph learning to capture latent gene-cell correlations and enhance data representation, making it more robust to real-world data imperfections [28].

- Solution: Integrate prior biological knowledge. Methods like the Inferelator 3.0 use prior knowledge networks to guide the inference process, which significantly improves performance on real biological data by constraining the model to biologically plausible solutions [29].

Inability to Handle Large-Scale Single-Cell Datasets

- Problem: The experiment runs out of memory or takes impractically long to complete with large (e.g., >100,000 cells) input.

- Potential Causes & Solutions:

- Cause: The algorithm or implementation is not designed for distributed, parallel computation.

- Solution: Utilize software built for high-performance computing. The Inferelator 3.0 can be deployed on compute clusters or cloud infrastructure (e.g., via Kubernetes) using its Dask backend, enabling it to scale to millions of cells [29].

- Cause: The graph neural network model suffers from high computational overhead during neighbor aggregation.

- Solution: Implement efficient feature extraction as a pre-processing step. Using a Gaussian-kernel Autoencoder to extract separable features from gene expression data can reduce the computational burden on the subsequent GCN model [26].

Lack of Biological Interpretability in Results

- Problem: The inferred network has high statistical scores but contains regulatory edges that lack biological plausibility.

- Potential Causes & Solutions:

- Cause: The model is purely data-driven and does not incorporate causal or structural biological constraints.

- Solution: Adopt models that integrate causal inference. A GCN based on Causal Feature Reconstruction uses Transfer Entropy to quantify and reduce the loss of causal information during the model's neighbor aggregation, leading to more reasonable and reliable GRNs [26].

- Solution: Choose a model with built-in explainability. GPO-VAE is explicitly designed for explainability by aligning its internal parameters with GRN structures, ensuring the latent representations and resulting networks have clearer biological meaning [30].
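To build intuition for the Transfer Entropy term, the sketch below gives a plug-in estimate of lag-1 transfer entropy between two discretized series, for example expression levels binned along pseudotime: \( TE_{X \to Y} = \sum p(y_{t+1}, y_t, x_t) \log_2 \frac{p(y_{t+1} \mid y_t, x_t)}{p(y_{t+1} \mid y_t)} \). This is a generic estimator, not the specific formulation used in the cited GCN model. Plug-in estimates are also biased upward on short series.

```python
from collections import Counter
from math import log2


def transfer_entropy(x, y):
    """Plug-in estimate (in bits) of lag-1 transfer entropy from discrete series x to y."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must be equal-length series with at least two points")
    x, y = list(x), list(y)
    triples = Counter(zip(y[1:], y[:-1], x[:-1]))  # counts of (y_{t+1}, y_t, x_t)
    past_pairs = Counter(zip(y[:-1], x[:-1]))      # counts of (y_t, x_t)
    self_pairs = Counter(zip(y[1:], y[:-1]))       # counts of (y_{t+1}, y_t)
    y_past = Counter(y[:-1])                       # counts of y_t
    n = len(y) - 1
    te = 0.0
    for (y_next, y_now, x_now), count in triples.items():
        p_full = count / past_pairs[(y_now, x_now)]           # p(y_{t+1} | y_t, x_t)
        p_self = self_pairs[(y_next, y_now)] / y_past[y_now]  # p(y_{t+1} | y_t)
        te += (count / n) * log2(p_full / p_self)
    return te
```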

Performance Benchmarking Tables

Table 1: Benchmarking Performance on In Silico Networks (AUPRC)

| Method | Architecture | Linear (LI) | Bifurcating (BF) | Trifurcating (TF) | Curated Network (mCAD) |
|---|---|---|---|---|---|
| DeepRIG | GNN (GAE) | 0.81 | 0.76 | 0.73 | 0.69 |
| CNNGRN | CNN | 0.79 | 0.74 | 0.70 | 0.65 |
| PIDC | Information Theory | 0.65 | 0.60 | 0.58 | 0.55 |
| GENIE3 | Tree-based | 0.68 | 0.63 | 0.61 | 0.59 |
| PPCOR | Statistical | 0.55 | 0.52 | 0.50 | 0.48 |

Data synthesized from benchmarking results in [24] [25]. Performance is measured in Area Under the Precision-Recall Curve (AUPRC).

Table 2: Benchmarking on Real Single-Cell Data with CausalBench Metrics

| Method | Type | Mean Wasserstein Distance (↑) | False Omission Rate (↓) | Key Strength |
|---|---|---|---|---|
| Mean Difference | Interventional | 0.92 | 0.15 | High causal effect strength |
| Guanlab | Interventional | 0.89 | 0.12 | High biological precision |
| GRNBoost | Observational | 0.75 | 0.08 | High recall (finds many edges) |
| NOTEARS-MLP | Observational | 0.68 | 0.45 | Handles non-linearity |
| PC | Observational | 0.60 | 0.50 | Classic constraint-based method |

Data derived from the large-scale evaluation performed by [8]. A higher Mean Wasserstein Distance and a lower False Omission Rate indicate better performance.

Detailed Experimental Protocols

Protocol: Inferring GRNs with a Graph Autoencoder (DeepRIG)

Objective: To reconstruct a GRN from scRNA-seq data by learning the global regulatory structure using a graph autoencoder model [25].

Data Preprocessing:

- Input: Raw scRNA-seq count matrix (Cells x Genes).

- Filtering: Remove genes expressed in an insufficient number of cells and remove "low-quality" cells with low gene counts.

- Normalization: Normalize the gene expression data (e.g., library size normalization and log-transformation).

Prior Graph Construction:

- Calculate the Spearman’s rank correlation coefficient for every pair of genes across all cells.

- Construct a Weighted Gene Co-expression Network (WGCN) where nodes are genes and edge weights are the correlation coefficients. This serves as the prior regulatory graph.
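The prior-graph step can be sketched in NumPy as follows, assuming a dense cells x genes matrix. Spearman's coefficient is computed as the Pearson correlation of average ranks, which handles the many tied zeros in scRNA-seq data. Two choices here are assumptions, made so the matrix can serve as a non-negative GCN adjacency: taking absolute values and zeroing the diagonal. The optional threshold is likewise illustrative.

```python
import numpy as np


def average_ranks(values):
    """1-based ranks in which tied values share their average rank (as Spearman's rho requires)."""
    _, inverse, counts = np.unique(values, return_inverse=True, return_counts=True)
    ends = np.cumsum(counts)
    starts = ends - counts + 1
    return ((starts + ends) / 2.0)[inverse]


def spearman_prior(expr, threshold=0.0):
    """Gene x gene |Spearman rho| from a cells x genes matrix, with self-loops and weak edges zeroed."""
    expr = np.asarray(expr, dtype=float)
    ranks = np.column_stack([average_ranks(expr[:, j]) for j in range(expr.shape[1])])
    rho = np.nan_to_num(np.corrcoef(ranks, rowvar=False))  # constant genes would yield NaN
    weights = np.abs(rho)
    np.fill_diagonal(weights, 0.0)
    weights[weights < threshold] = 0.0
    return weights
```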

Model Training (DeepRIG):

- Input Features: The preprocessed gene expression profiles.

- Graph Structure: The prior WGCN.

- Architecture:

- Encoder: A two-layer Graph Convolutional Network (GCN) takes the gene expression data and the prior graph to generate latent embeddings for each gene. This step integrates the global regulatory structure.

- Decoder: A scoring function (e.g., a simple dot product) uses the latent embeddings to predict the likelihood of a regulatory relationship for each TF-gene pair.

- Training: Train the model in a semi-supervised manner using a small set of known TF-gene interactions as positive labels.

GRN Reconstruction:

- The trained model outputs a regulatory score matrix (Genes x Genes).

- Rank all potential TF-gene pairs based on their regulatory scores.

- Select a threshold (e.g., top K pairs) to generate the final directed GRN.
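The encoder, decoder and ranking steps can be sketched as a NumPy forward pass. This is a simplified illustration, not the DeepRIG code, and rests on three assumptions. The weights are random rather than trained with the semi-supervised loss. Node features are each gene's expression profile across cells, i.e. the transposed cells x genes matrix. The prior adjacency is symmetric and non-negative, for example absolute correlations. A dot-product decoder is symmetric, so edge direction here comes only from restricting sources to known TFs.

```python
import numpy as np


def normalized_adjacency(adj):
    """Symmetric GCN normalization D^-1/2 (A + I) D^-1/2 for a non-negative adjacency."""
    a_hat = np.asarray(adj, dtype=float) + np.eye(len(adj))
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def gcn_encode(features, adj, w1, w2):
    """Two-layer GCN: ReLU after layer 1, linear layer 2; returns one embedding row per gene."""
    a_norm = normalized_adjacency(adj)
    hidden = np.maximum(a_norm @ features @ w1, 0.0)
    return a_norm @ hidden @ w2


def rank_tf_gene_pairs(embeddings, genes, tfs, k):
    """Score TF -> gene pairs with a sigmoid dot-product decoder; return the top k."""
    index = {g: i for i, g in enumerate(genes)}
    scored = []
    for tf in tfs:
        for j, target in enumerate(genes):
            if target == tf:
                continue  # skip self-regulation
            logit = float(embeddings[index[tf]] @ embeddings[j])
            scored.append((1.0 / (1.0 + np.exp(-logit)), tf, target))
    scored.sort(reverse=True)
    return [(tf, target, score) for score, tf, target in scored[:k]]
```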

Protocol: Integrating Cellular Heterogeneity with HyperG-VAE

Objective: To infer GRNs from scRNA-seq data while simultaneously capturing cellular heterogeneity and gene modules using a hypergraph variational autoencoder [28].

Hypergraph Construction:

- Represent the scRNA-seq data as a hypergraph.

- Nodes: Genes.

- Hyperedges: Individual cells. A hyperedge connects all genes that are expressed in a given cell.

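- Formally, this is encoded in an incidence matrix \( M \in \{0,1\}^{m \times n} \), where \( m \) is the number of cells (hyperedges) and \( n \) is the number of genes (nodes). \( M_{ij} = 1 \) if gene \( j \) is expressed in cell \( i \), and \( M_{ij} = 0 \) otherwise.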
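Once "expressed" is defined, building the incidence matrix takes one line. This sketch follows the cells x genes (m x n) orientation and assumes "expressed" means a value above a threshold, zero by default. That threshold is a choice made here, not one taken from the HyperG-VAE paper.

```python
import numpy as np


def incidence_matrix(expr, min_value=0.0):
    """Binary cells x genes incidence matrix: entry (i, j) is 1 if gene j exceeds min_value in cell i."""
    return (np.asarray(expr) > min_value).astype(np.int8)


def hyperedge_members(incidence, genes):
    """List, for each cell (hyperedge), the genes (nodes) it connects."""
    return [[genes[j] for j in np.flatnonzero(row)] for row in incidence]
```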

Model Training (HyperG-VAE):

- Cell Encoder: A structural equation model (SEM) layer accounts for cellular heterogeneity and constructs cell-specific GRNs.

- Gene Encoder: Uses a hypergraph self-attention mechanism to identify gene modules (clusters of genes co-regulated by the same TFs).

- Synergistic Optimization: The two encoders are optimized jointly via a decoder that aims to reconstruct the original hypergraph. This mutual interaction improves the embedding quality of both cells and genes.

Output and Inference:

- The model outputs a refined GRN based on the learned interactions from the cell encoder.

- Additionally, it provides gene modules from the gene encoder and improved cell embeddings for clustering and visualization.

Model Architecture and Workflow Diagrams

Diagram 1: Generic GRN Inference Workflow.

Diagram 2: HyperG-VAE Architecture for GRN Inference.

Research Reagent Solutions

Table 3: Key Computational Tools and Datasets for GRN Inference

| Name | Type | Function in Research | Reference/Link |
|---|---|---|---|
| BEELINE | Benchmarking Framework | A standardized framework to evaluate and compare the performance of various GRN inference algorithms on synthetic and curated networks. | [25] |
| CausalBench | Benchmarking Suite | An open-source benchmark using large-scale, real-world single-cell perturbation data to provide biologically-motivated evaluation metrics. | [8] |
| Inferelator 3.0 | Software Pipeline | A scalable Python package for GRN inference from bulk and single-cell data, designed for high-performance computing environments. | [29] |
| HyperG-VAE | Model Code | Implementation of the hypergraph variational autoencoder for robust GRN inference from scRNA-seq data. | [28] |
| DeepRIG | Model Code | Implementation of the graph autoencoder model for learning global regulatory structures. | [25] |
| BoolODE | Simulation Tool | Generates realistic in silico single-cell expression data from known network structures for method validation. | [25] |

Leveraging Graph Neural Networks and Transformers for Network-Structured Biological Data

Inference of Gene Regulatory Networks (GRNs) is fundamental for understanding cellular function, disease mechanisms, and therapeutic development. The advent of large-scale single-cell RNA sequencing (scRNA-seq) data has intensified the need for computational methods that are both accurate and scalable. Traditional GRN inference methods often struggle with the high dimensionality, noise, and complexity of modern biological datasets. This technical support document addresses the specific challenges researchers face when applying Graph Neural Networks (GNNs) and Transformer architectures to large-scale GRN inference, providing targeted troubleshooting guides, experimental protocols, and resource recommendations to facilitate robust and scalable research.

Frequently Asked Questions & Troubleshooting Guides

FAQ 1: How can I improve my model's performance when labeled regulatory data is scarce?

Answer: This common challenge, known as the "TF cold-start problem," can be addressed by reformulating GRN inference as a few-shot learning problem. A recommended solution is to employ a structure-enhanced graph meta-learning framework like Meta-TGLink [15].

- Core Principle: This approach uses a model-agnostic meta-learning (MAML) framework to learn transferable regulatory patterns from tasks with abundant data, allowing the model to quickly adapt to new genes or cell types with limited labeled examples [15].

Technical Implementation:

- Meta-Training: Construct multiple meta-tasks, each with a support set (small labeled data) and a query set (data for evaluation). The model learns across these tasks via a bi-level optimization process [15].

- Meta-Testing: Apply the meta-trained model to a new, target cell line where only a small number of regulatory interactions are known [15].

- Architecture: Enhance the GNN with a Transformer to expand its receptive field and better capture long-range gene interactions, which is crucial for robust performance with sparse data [15].

Troubleshooting Checklist:

- Poor generalization to new TFs or cell types.

- Solution: Ensure meta-training covers a diverse set of regulatory tasks and subgraphs to expose the model to heterogeneous patterns [15].

- Model fails to capture distal regulatory interactions.

- Solution: Integrate a positional encoding module and alternate Transformer with GNN layers to enhance the model's ability to capture long-range dependencies [15].

FAQ 2: How do I handle the high rate of false zeros ("dropout") in single-cell RNA-seq data for GRN inference?

Answer: Instead of relying on data imputation, a robust strategy is to use model regularization via Dropout Augmentation (DA), implemented in tools like DAZZLE [12] [13].

- Core Principle: DA improves model resilience to zero-inflation by artificially adding more zeros during training. This counter-intuitive approach regularizes the model, preventing it from overfitting to the dropout noise present in the original data [12] [13].

Technical Implementation:

- During each training iteration, randomly select a small proportion of gene expression values and set them to zero.

- This exposes the model to multiple variations of the data, forcing it to learn robust features that are not dependent on any specific pattern of missing data [13].

- Models like DAZZLE use this within an autoencoder-based structural equation model (SEM) framework, parameterizing the adjacency matrix to represent the GRN [12].

Troubleshooting Checklist:

- Model performance degrades after initial convergence, likely due to overfitting.

- Solution: Implement Dropout Augmentation, which regularizes the model against overfitting to dropout noise. DAZZLE, which uses it, also reported greater stability than its predecessor DeepSEM, along with a 50.8% reduction in running time and a 21.7% reduction in parameters [12].

- Inferred GRN is unstable between training runs.

- Solution: Use a stabilized model like DAZZLE, which delays the introduction of the sparsity loss term and uses a closed-form prior for the latent distribution [12].

FAQ 3: What is the best way to integrate multi-omics data for a more complete GRN?

Answer: Leverage hybrid models that combine the strengths of GNNs and Transformers.

- Core Principle: GNNs naturally model the graph structure of regulatory interactions, while Transformers excel at capturing long-range dependencies and complex relationships within sequential or feature-rich data [15] [31]. Combining them allows for a more holistic integration of diverse data types.

Technical Implementation:

- Use GNNs as the backbone to represent genes as nodes and potential regulatory interactions as edges.

- Incorporate a Transformer module to process node features or global context, allowing the model to integrate information from various sources like ATAC-seq (chromatin accessibility) or ChIP-seq (TF binding) data alongside expression data [15].

- Frameworks like DeepMAPS use heterogeneous graph transformers to integrate scRNA-seq with scATAC-seq data, effectively inferring interactions from multi-omic inputs [16].

Troubleshooting Checklist:

- Model cannot effectively leverage complementary information from different omics layers.

- Solution: Implement a Transformer-based attention mechanism to weight the importance of different features or data modalities dynamically [15] [16].

- Computational cost becomes prohibitive with large, integrated datasets.

- Solution: Utilize efficient attention mechanisms (e.g., linear Transformers) and consider subgraph-based training strategies to maintain scalability [15].

Experimental Protocols & Workflows

Protocol 1: Benchmarking GRN Inference Methods on scRNA-seq Data

This protocol outlines a standard workflow for evaluating the performance of a new GRN inference method against established benchmarks.

1. Data Preparation:

- Input: Obtain a single-cell gene expression matrix (cells x genes) from a public repository like GEO. Preprocess the data (normalization, log-transformation: `log(x+1)`) [12].

- Ground Truth: Use a dataset with known or experimentally validated regulatory interactions (e.g., from databases like ChIP-Atlas or BEELINE benchmarks) for evaluation [15] [12].

2. Model Training & Inference:

- Train the model (e.g., DAZZLE, Meta-TGLink, or a custom GNN-Transformer) on the training split of the expression data.

- For methods like DAZZLE, the adjacency matrix `A` is learned as a byproduct of the autoencoder's training, where the model is tasked to reconstruct its input [12].

3. Evaluation:

- Extract the predicted adjacency matrix from the trained model, which represents the strength of regulatory interactions between genes.

- Compare the predicted interactions against the ground truth network using standard metrics:

- Area Under the Precision-Recall Curve (AUPRC)

- Area Under the Receiver Operating Characteristic Curve (AUROC)
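A rank-based AUROC (the normalized Mann-Whitney U statistic) avoids building an explicit ROC curve and scales to millions of candidate pairs. This sketch assumes flattened scores and binary gold-standard labels. Tied scores receive their average rank, so a tie between a true edge and a non-edge counts as one half.

```python
import numpy as np


def auroc(scores, labels):
    """AUROC via the Mann-Whitney U statistic: P(score of a true edge > score of a non-edge)."""
    s = np.asarray(scores, dtype=float)
    y = np.asarray(labels, dtype=bool)
    n_pos, n_neg = int(y.sum()), int((~y).sum())
    if n_pos == 0 or n_neg == 0:
        raise ValueError("need at least one true edge and one non-edge")
    _, inverse, counts = np.unique(s, return_inverse=True, return_counts=True)
    ends = np.cumsum(counts)
    ranks = ((ends - counts + 1 + ends) / 2.0)[inverse]  # 1-based ranks; ties share their mean
    u = ranks[y].sum() - n_pos * (n_pos + 1) / 2.0
    return float(u / (n_pos * n_neg))
```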

The workflow for this protocol is summarized in the diagram below:

Protocol 2: Few-Shot GRN Inference for a New Cell Type using Meta-TGLink

This protocol is designed for scenarios where prior regulatory knowledge for a specific cell type is limited [15].

1. Meta-Training Phase:

- Input: Multiple GRNs from well-studied cell types or species.

- Procedure:

- Construct numerous meta-tasks. For each task, sample a support set (a small subgraph of known interactions) and a query set (other interactions to be predicted).

- Train the Meta-TGLink model using a bi-level optimization loop: the inner loop adapts the model to the support set, and the outer loop updates the model's parameters to perform well on the query set after adaptation.

2. Meta-Testing (Adaptation) Phase:

- Input: A small support set of known interactions for the new, target cell type.

- Procedure:

- Form a single meta-task where the support set contains the limited known interactions for the target.

- The query set contains all gene pairs for which regulatory relationships need to be inferred.

- The meta-trained model is adapted using the support set and then makes predictions on the query set.
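A toy first-order MAML (FOMAML) on synthetic linear-regression tasks illustrates the bi-level loop. This is a deliberately simplified stand-in: Meta-TGLink meta-learns a GNN-Transformer on subgraph link-prediction tasks, whereas each task here is a random linear model. The inner loop takes one gradient step on the support set. The outer loop averages query-set gradients at the adapted weights. First-order MAML drops the second-derivative terms of full MAML. All names and hyperparameters are hypothetical.

```python
import numpy as np


def mse_and_grad(w, x, y):
    """Mean-squared error of the linear model x @ w and its gradient with respect to w."""
    resid = x @ w - y
    return float(np.mean(resid ** 2)), 2.0 * x.T @ resid / len(y)


def sample_task(rng, dim=3, n_support=5, n_query=20):
    """Toy task: a linear model whose weights scatter around a shared mean of 1.0."""
    w_task = 1.0 + 0.3 * rng.normal(size=dim)
    x = rng.normal(size=(n_support + n_query, dim))
    y = x @ w_task
    return (x[:n_support], y[:n_support]), (x[n_support:], y[n_support:])


def fomaml(rng, meta_steps=500, tasks_per_step=4, inner_lr=0.05, outer_lr=0.05, dim=3):
    """First-order MAML: learn an initialization that adapts well after one support-set step."""
    w_meta = np.zeros(dim)
    for _ in range(meta_steps):
        outer_grad = np.zeros(dim)
        for _ in range(tasks_per_step):
            (xs, ys), (xq, yq) = sample_task(rng, dim)
            _, g_support = mse_and_grad(w_meta, xs, ys)
            w_adapted = w_meta - inner_lr * g_support     # inner loop (support set)
            _, g_query = mse_and_grad(w_adapted, xq, yq)  # adapted weights on the query set
            outer_grad += g_query                         # first-order: no second derivatives
        w_meta -= outer_lr * outer_grad / tasks_per_step  # outer loop: update shared init
    return w_meta


w_meta = fomaml(np.random.default_rng(0))
```

The learned w_meta ends up near the mean task weights (all ones here). That is an initialization from which one support-set step adapts quickly to each new task.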

The following diagram illustrates this meta-learning workflow:

Performance Benchmarking Tables

Table 1: Comparative Performance of GRN Inference Methods on Human Cell Line Benchmarks

This table summarizes the performance of various methods, highlighting the advantages of advanced learning frameworks. Data is based on average improvements in AUROC and AUPRC across four human cell line datasets (A375, A549, HEK293T, PC3) [15].

| Method Category | Example Methods | Key Technology | Average AUROC Improvement | Average AUPRC Improvement |
|---|---|---|---|---|
| Graph Meta-Learning | Meta-TGLink | GNN + Transformer + MAML | 26.0% | 19.5% |
| Unsupervised Learning | DeepSEM, GENIE3 | VAE, Random Forests | - | - |
| Supervised (non-GNN) | CNNC, GNE | CNN, MLP | 17.2% | 13.6% |
| Pre-trained Model | scGPT | Transformer | 13.7% | 9.8% |

Table 2: Analysis of Sparse Autoencoder (SAE) Applications in Biological AI

SAEs are a key interpretability tool for understanding what biological concepts models learn. This table categorizes their applications [32].

| Method / Model Studied | SAE Architecture | Key Finding | Validation Method |
|---|---|---|---|
| InterPLM (ESM-2) | Standard L1 | Found missing protein annotations in Swiss-Prot | Swiss-Prot annotations |
| InterProt (ESM-2) | TopK SAE | Explained thermostability determinants, found nuclear signals | Linear probes on 4 tasks |
| Reticular (ESM-2/ESMFold) | Matryoshka hierarchical | 8-32 active latents can maintain structure prediction | Structure RMSD, annotations |
| Evo 2 (DNA model) | BatchTopK | Discovered prophage regions, CRISPR-phage associations | Genome-wide activations |
| Markov Biosciences | Standard | Features form causal regulatory networks | Feature clustering, spatial patterns |

Table 3: Key Computational Tools for GRN Inference

| Resource Name | Type | Primary Function | Relevant Use Case |
|---|---|---|---|
| DAZZLE | Software Model | GRN inference with robustness to data dropout | Handling zero-inflated scRNA-seq data [12] [13] |
| Meta-TGLink | Software Model | Few-shot and cross-domain GRN inference | Inferring networks for new TFs or cell types with limited data [15] |
| BEELINE | Benchmark Framework | Standardized evaluation of GRN inference algorithms | Benchmarking new methods against state-of-the-art [12] |
| ChIP-Atlas | Database | Experimentally validated transcription factor binding sites | Validating predicted regulatory interactions [15] |
| Chemprop | Software Library | Directed Message Passing Neural Networks (D-MPNN) | Molecular property prediction and uncertainty quantification [33] |
| ESM-2 | Pre-trained Model | Protein language model | Extracting interpretable features from protein sequences [32] |

Technical Support Center

Troubleshooting Guide: Dropout Augmentation & DAZZLE Implementation

FAQ: My model performance drops after applying Dropout Augmentation. What should I check?

- Potential Cause: Excessively high dropout rate during augmentation, leading to excessive information loss.

- Solution: Start with a low proportion of augmented zeros (e.g., 1-5%) and gradually increase only if needed. Ensure the model has enough training epochs to learn from the noise [12].

- Verification: Monitor the training and validation losses. If both remain high, or if training loss on the augmented data stays well above validation loss, the model may be underfitting because of excessive augmentation.

FAQ: The inferred Gene Regulatory Network (GRN) from DAZZLE is too dense. How can I improve sparsity?

- Potential Cause: The sparsity loss term may have been introduced too early in training or its strength parameter is set too low [12].

- Solution: Delay the introduction of the sparsity control loss by a customizable number of epochs to allow the model to learn initial patterns first. Adjust the sparsity regularization parameter [12].

- Verification: Inspect the distribution of weights in the adjacency matrix; it should show a peak near zero after successful sparsity regularization.

FAQ: How do I handle the impact of DA on different gene expression levels?

- Potential Cause: Uniform dropout augmentation might disproportionately affect low-expression genes.

- Solution: DAZZLE implements a noise classifier. This component helps the model identify and down-weight values likely to be dropout noise, protecting meaningful biological signals [12].

- Verification: Check the output of the noise classifier to ensure it is learning to distinguish augmented zeros.

FAQ: My training process is unstable. How can I improve its robustness?

- Potential Cause: Instability can arise from the joint optimization of the autoencoder and the adjacency matrix.

- Solution: Adopt DAZZLE's modifications, which include using a closed-form Normal distribution as a prior for the latent variable instead of a separately estimated one. This simplifies the model and enhances stability [12].

- Verification: Plot the loss over epochs for multiple runs with different random seeds to see if the convergence is more consistent.

Performance & Benchmarking Data

The following tables summarize quantitative data from benchmark experiments, showcasing the performance and efficiency of the DAZZLE model.

Table 1: Model Performance Comparison on BEELINE Benchmark Tasks [12]

| Model / Metric | AUPRC (hESC) | AUPRC (mESC) | Stability Across Runs | Robustness to Dropout |
|---|---|---|---|---|
| DAZZLE (with DA) | 0.XX | 0.XX | High | High |
| DeepSEM | 0.XX | 0.XX | Medium | Low |
| GENIE3 | 0.XX | 0.XX | High | Medium |
| GRNBoost2 | 0.XX | 0.XX | High | Medium |

Note: AUPRC (Area Under the Precision-Recall Curve) is a common metric for GRN inference; higher is better. Exact values are dataset-specific and should be taken from the latest benchmark publications [12].

Table 2: Computational Efficiency Comparison [12]

| Model | Parameters (BEELINE-hESC) | Wall-Clock Time (H100 GPU) |
|---|---|---|
| DAZZLE | 2,022,030 | 24.4 seconds |
| DeepSEM | 2,584,205 | 49.6 seconds |

Detailed Experimental Protocols

Protocol 1: Implementing Dropout Augmentation for scRNA-seq Data

This methodology details how to apply Dropout Augmentation during model training [12] [13].

- Input Data Preparation: Begin with a transformed gene expression matrix, typically using \( \log(x + 1) \), where rows are cells and columns are genes.

- Noise Sampling: In each training iteration, randomly select a small proportion (e.g., 1-5%) of the non-zero expression values.

- Augmentation: Set the selected values to zero to simulate synthetic dropout events.

- Model Training: Feed this augmented batch into the model (e.g., DAZZLE's autoencoder). The model learns to reconstruct the original, non-augmented data, thereby building robustness against missing values.
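The noise-sampling and augmentation steps can be sketched in NumPy as follows. The helper names are hypothetical, and neither DAZZLE's noise classifier nor its model is reproduced. The sketch shows two points: a fresh dropout pattern is drawn each iteration, and the training target remains the original, non-augmented matrix.

```python
import numpy as np


def dropout_augment(x, rate, rng):
    """Return a copy of x with a fraction `rate` of its non-zero entries zeroed, plus the mask."""
    x_aug = np.array(x, dtype=float, copy=True)
    nz_rows, nz_cols = np.nonzero(x_aug)
    n_drop = int(round(rate * nz_rows.size))
    pick = rng.choice(nz_rows.size, size=n_drop, replace=False)
    x_aug[nz_rows[pick], nz_cols[pick]] = 0.0  # synthetic dropout events
    mask = np.zeros(x_aug.shape, dtype=bool)
    mask[nz_rows[pick], nz_cols[pick]] = True
    return x_aug, mask


def augmented_batches(x, rate, n_iters, seed=0):
    """Yield (augmented_input, clean_target) pairs with a new dropout pattern every iteration."""
    rng = np.random.default_rng(seed)
    for _ in range(n_iters):
        x_aug, _ = dropout_augment(x, rate, rng)
        yield x_aug, x  # the reconstruction loss compares against the original x
```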

Protocol 2: GRN Inference Workflow using DAZZLE

This protocol describes the end-to-end process for inferring gene networks with DAZZLE [12] [13].

- Data Preprocessing: Load the scRNA-seq count matrix. Apply a \( \log(x + 1) \) transformation.

- Model Initialization: Configure the DAZZLE model, which uses a structural equation model (SEM) framework with a parameterized adjacency matrix within an autoencoder.

- Training with DA: Train the model using the Dropout Augmentation protocol described above. Utilize a joint optimization strategy for the network weights and the adjacency matrix.

- Sparsity Control: After an initial warm-up phase, introduce a sparsity loss term to the overall objective function to promote a sparse adjacency matrix.

- Network Extraction: Upon convergence, extract the trained adjacency matrix. The weights in this matrix represent the inferred regulatory interactions between genes (the GRN).
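The warm-up logic of the sparsity control step can be sketched as a loss schedule. This sketch assumes an L1 penalty on the adjacency matrix and a simple step schedule. The delay, coefficient and helper names are hypothetical, not DAZZLE's actual defaults. The fraction_near_zero helper offers one quick way to check that the penalty is making the adjacency sparse.

```python
import numpy as np


def sparsity_weight(epoch, delay_epochs, lam):
    """Step schedule: no L1 penalty during warm-up, full coefficient afterwards."""
    return 0.0 if epoch < delay_epochs else lam


def total_loss(recon_loss, adjacency, epoch, delay_epochs=10, lam=1e-3):
    """Reconstruction loss plus a delayed L1 penalty on the adjacency matrix."""
    l1 = float(np.abs(adjacency).sum())
    return recon_loss + sparsity_weight(epoch, delay_epochs, lam) * l1


def fraction_near_zero(adjacency, tol=1e-3):
    """Share of adjacency weights with magnitude below tol (should rise as sparsity takes hold)."""
    return float(np.mean(np.abs(adjacency) < tol))
```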

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Computational Tools for DA-Augmented GRN Inference

| Item / Reagent | Function / Purpose |
|---|---|
| scRNA-seq Dataset | The primary input data, providing transcriptomic profiles of individual cells [12] [13]. |
| DAZZLE Software | The core model implementing Dropout Augmentation and SEM for robust GRN inference [12] [13]. |
| BEELINE Benchmark | A standardized framework and dataset suite for evaluating and comparing GRN inference methods [12]. |
| GPU (e.g., H100) | Essential hardware for accelerating the training of deep learning models like DAZZLE [12]. |
| Prior Network Data | (Optional) Existing biological knowledge about gene interactions that can be integrated to guide inference [12]. |

Workflow and Architecture Visualization

DAZZLE GRN Inference with Dropout Augmentation

DAZZLE Autoencoder based on a Structural Equation Model

Frequently Asked Questions (FAQs)

Q1: What is the primary advantage of using a meta-learning framework like Meta-TGLink for GRN inference?

Meta-TGLink addresses the critical challenge of data scarcity by using a "learning to learn" paradigm [15]. Instead of requiring a large, labeled dataset for each new GRN, it captures transferable regulatory patterns from multiple learning episodes across related tasks [15]. This allows the model to quickly adapt to new cell types, species, or transcription factors with only a few known regulatory interactions, significantly reducing dependence on extensive labeled datasets [15].

Q2: My model performs well during meta-training but fails to adapt to a new target cell line. What could be wrong? This is often a problem of domain shift. Meta-TGLink is designed for this, but its success depends on the meta-training phase. Ensure your meta-tasks are diverse and representative of the variations you expect to see in the target domain. The model uses a structure-enhanced GNN module that alternates between Transformer and GNN layers to integrate relational and positional information, which is crucial for generalizing to new, sparse graphs [15]. If the target domain is too dissimilar from your source domains, you may need to incorporate target-domain data, even if unlabeled, during a pre-training phase to learn more generalized feature representations [34].

Q3: How does Meta-TGLink handle the "cold-start" problem for new transcription factors (TFs)? Meta-TGLink formulates GRN inference as a link prediction task on a graph [15]. The "cold-start" problem for a new TF is effectively a few-shot link prediction challenge. The model's specialized meta-task design, which operates at the subgraph level, alleviates this issue. During meta-testing, the support set contains the limited known interactions for the new TF, and the model predicts its unknown regulatory relationships in the query set, leveraging the transferable knowledge gained from meta-training [15].
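The sketch below assembles one meta-test task for a single new TF to make the cold-start setup concrete. It is an illustrative assumption, not Meta-TGLink's code. The function name `build_cold_start_task` is hypothetical. Genes without a known link to the TF are treated as negatives, a common but imperfect convention, since an unlabeled pair is not necessarily a true non-interaction. A few known targets are held out in the query set so that the adapted predictions can be checked.

```python
import random

def build_cold_start_task(tf, known_targets, candidate_genes, n_support, n_negatives, rng):
    """Build a few-shot link-prediction task for one new TF.

    Support: up to `n_support` known targets (label 1) plus sampled non-targets (label 0).
    Query: every other candidate gene, to be scored by the adapted model.
    Returns (support, query, held_out_targets); held-out targets stay in the
    query so that the adaptation step can be evaluated.
    """
    known = sorted(set(known_targets))
    rng.shuffle(known)
    support_pos = known[:n_support]
    held_out = known[n_support:]
    # Assumption: genes with no known link to the TF are treated as negatives.
    pool = sorted(set(candidate_genes) - set(known) - {tf})
    support_neg = rng.sample(pool, min(n_negatives, len(pool)))
    support = [(tf, g, 1) for g in support_pos] + [(tf, g, 0) for g in support_neg]
    used = set(support_pos) | set(support_neg)
    query = [(tf, g) for g in sorted(set(candidate_genes)) if g != tf and g not in used]
    return support, query, held_out
```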

Q4: What are the key evaluation metrics for few-shot GRN inference, and how does Meta-TGLink perform? Standard metrics include the Area Under the Receiver Operating Characteristic Curve (AUROC) and the Area Under the Precision-Recall Curve (AUPRC). The Early Precision Rate (EPR) is also commonly used [35]. On benchmarks built from human cell lines such as A375 and A549, Meta-TGLink outperformed state-of-the-art baselines, including other GNN-based models, pre-trained Transformers like scGPT, and unsupervised approaches. It achieved substantial gains in both AUROC and AUPRC, including an average AUROC improvement of roughly 26% across four cell lines [15].
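All three metrics can be computed from a ranked list of candidate edges. The following minimal sketch assumes binary ground-truth labels (1 = true edge) and one score per candidate edge. AUPRC is estimated as average precision. EPR is assumed to follow a BEELINE-style definition: precision among the top-k edges, where k is the number of true edges, divided by the precision expected from a random predictor (the edge density). Published tools may handle ties and edge filtering differently.

```python
def auroc(labels, scores):
    """Probability that a random positive outranks a random negative (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

def average_precision(labels, scores):
    """AUPRC estimate: mean of the precision values at the rank of each true edge."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    hits, total = 0, 0.0
    for rank, i in enumerate(order, start=1):
        if labels[i] == 1:
            hits += 1
            total += hits / rank
    return total / max(1, sum(labels))

def early_precision_ratio(labels, scores):
    """Top-k precision (k = number of true edges) relative to a random predictor."""
    k = sum(labels)
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    early_precision = sum(labels[i] for i in order[:k]) / k
    density = k / len(labels)  # expected precision of random ranking
    return early_precision / density
```

An EPR of 1.0 means the top of the ranking is no better than chance. Higher values indicate enrichment of true edges among the highest-scoring predictions.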

Q5: Are there robust benchmarks for validating my GRN inference method on real-world data? Yes. CausalBench, for example, evaluates network inference methods on large-scale, real-world single-cell perturbation data [8]. Rather than relying on synthetic ground truth, it uses biologically motivated metrics and distribution-based interventional measures for a more realistic performance assessment. It includes curated datasets from different cell lines (e.g., RPE1 and K562) and integrates numerous baseline methods, enabling an objective comparison of scalability, precision, and robustness [8].

Troubleshooting Guide

| Issue | Possible Cause | Solution |
|---|---|---|
| Poor Meta-Training Convergence | Inadequate meta-task design or insufficient task diversity. | Construct meta-tasks as subgraph-level link prediction problems. Ensure support and query sets are properly sampled to create diverse learning episodes that mimic the few-shot test scenario [15]. |
| Low Performance on Sparse Target GRN | Message passing in GNNs is too restricted with limited edges. | Use the structure-enhanced GNN module in Meta-TGLink, which integrates the global attention of a Transformer. This expands the model's receptive field, helping it capture long-range gene interactions despite sparsity [15]. |
| Model Fails to Capture Key Regulators | Gene representations lack important structural or positional information. | Incorporate the positional encoding module from the TGLink architecture. This explicitly adds topological information to gene features, preserving structural context during message passing and improving regulator identification [15]. |
| Overfitting on Limited Support Set | Model complexity is too high for the few-shot adaptation step. | Leverage the neighborhood perception module in TGLink. It adaptively selects the most relevant neighboring genes, which reduces computational cost and suppresses noise, preventing overfitting to spurious correlations in the small support set [15]. |
| Poor Cross-Domain Generalization | Significant distribution shift between source and target domains. | Implement a domain knowledge mapping strategy. This can be applied during pre-training, training, and testing to help the model assess and adapt to domain difficulty variations dynamically [34]. |
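The sketch below shows one way to add topological information to gene features: random-walk positional encodings (RWPE), a technique used in several graph Transformers. This is a swapped-in example, not the TGLink positional encoding module, whose exact form is not described here. Each gene receives the probabilities that a random walk starting at that gene returns to it after 1..K steps. The adjacency is assumed to be symmetric (undirected) and small enough for dense matrix products. `random_walk_pe` is a hypothetical name.

```python
def random_walk_pe(adjacency, k_steps):
    """Return, for each gene, [P^1_ii, ..., P^K_ii], where P is the
    row-normalised random-walk transition matrix of `adjacency`."""
    n = len(adjacency)
    # Row-normalise; genes with no neighbours get an all-zero row.
    trans = []
    for row in adjacency:
        degree = sum(row)
        trans.append([w / degree if degree > 0 else 0.0 for w in row])

    def matmul(a, b):
        return [[sum(a[i][t] * b[t][j] for t in range(n)) for j in range(n)] for i in range(n)]

    features = [[] for _ in range(n)]
    power = trans
    for step in range(k_steps):
        for i in range(n):
            features[i].append(power[i][i])  # return probability after step+1 steps
        if step + 1 < k_steps:
            power = matmul(power, trans)
    return features
```

These vectors would typically be concatenated with (or projected and added to) expression-derived gene features before message passing. Structurally equivalent genes receive identical encodings.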

Experimental Protocols & Performance Data

Summary of Key GRN Inference Methods and Performance

The following table summarizes several state-of-the-art methods, highlighting the niche where Meta-TGLink demonstrates superiority, particularly in few-shot conditions [15] [35].

| Method | Learning Type | Key Principle | Best-Suited Scenario | Reported Performance (Example) |
|---|---|---|---|---|
| Meta-TGLink [15] | Supervised / Meta-Learning | Graph meta-learning for few-shot link prediction. | Cross-domain, few-shot GRN inference. | Outperformed 9 baselines; e.g., ~26% avg. AUROC improvement on four cell lines [15]. |
| MetaSEM [35] | Unsupervised / Meta-Learning | Bi-level optimization with a structural equation model. | Small-scale, sparse scRNA-seq data. | EPR of 1.36 on mHSC-L dataset, outperforming DeepSEM and GENIE3 [35]. |
| NetID [7] | Unsupervised | GRN inference from homogeneous metacells to reduce sparsity. | Large-scale single-cell data; lineage-specific GRNs. | Superior performance vs. imputation-based methods; recovers known network motifs [7]. |
| GENIE3 [15] [7] | Unsupervised | Tree-ensemble (random forest or extra-trees) regression of each target gene's expression on candidate regulators; feature importances rank the edges. | General-purpose GRN inference with sufficient data. | Often outperformed by modern deep learning methods in supervised settings [15]. |
| CausalBench Methods (e.g., Mean Difference) [8] | Varies (Interventional) | Designed to leverage large-scale perturbation data. | Causal inference from real-world interventional single-cell data. | Top-performing methods on the CausalBench challenge metrics [8]. |
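Here is a minimal sketch of a mean-difference rule, showing how interventional data can score edges directly. An edge from a knocked-down gene to a target is scored by how far the target's mean expression shifts relative to control cells. The function name and input layout are hypothetical. The rule is only in the spirit of the Mean Difference entry above, and the CausalBench implementation may normalize, test significance, or threshold differently.

```python
from statistics import mean

def mean_difference_scores(expression, genes, labels, control="control"):
    """Score edge (source -> target) as |mean target expression in cells where
    `source` was knocked down - mean target expression in control cells|.

    expression: list of per-cell rows aligned with `genes`.
    labels: per-cell name of the perturbed gene, or `control`.
    """
    control_rows = [row for row, lab in zip(expression, labels) if lab == control]
    control_means = [mean(column) for column in zip(*control_rows)]
    scores = {}
    for source in sorted(set(labels) - {control}):
        perturbed_rows = [row for row, lab in zip(expression, labels) if lab == source]
        for j, target in enumerate(genes):
            if target == source:
                continue  # the knocked-down gene itself is not a candidate target
            shift = mean(row[j] for row in perturbed_rows) - control_means[j]
            scores[(source, target)] = abs(shift)
    return scores
```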

Detailed Protocol: Meta-Training for Meta-TGLink

- Input Data Preparation: For each source domain (e.g., a well-annotated cell line), you will need:
  - A gene expression matrix.
  - A prior regulatory network (adjacency matrix) of known TF-target gene interactions [15].

- Meta-Task Construction: For each episode in meta-training:
  - Sample a subgraph from the full GRN of a source domain.
  - On this subgraph, randomly mask a subset of edges (regulatory links) to create a support set (known edges) and a query set (masked edges to be predicted). The model is trained to perform well on the query set after learning from the support set [15].

- Bi-Level Optimization:
  - Inner Loop (Task-Specific Adaptation): The model's parameters are temporarily updated (e.g., via one or a few gradient steps) using the loss computed on the support set.
  - Outer Loop (Meta-Optimization): The model's initial parameters are updated using the query-set loss of the adapted model. This forces the model to learn parameters that adapt easily to new tasks [15].

- Model Architecture (TGLink): The core model used within Meta-TGLink consists of:
  - A Positional Encoding Module to capture topological information.
  - A Structure-Enhanced GNN Module that alternates between GNN and Transformer layers.
  - A Neighborhood Perception Module for adaptive neighbor sampling [15].
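The bi-level optimization step can be illustrated with first-order MAML (FOMAML) on a deliberately tiny problem. This is a sketch under stated assumptions, not Meta-TGLink's training code. Each toy task is a one-parameter linear regression with its own slope, standing in for a GRN episode. The inner loop takes one gradient step on the support set. The outer update uses the query-set gradient at the adapted parameters and ignores second-order terms. All names are hypothetical.

```python
import random

def mse_grad(w, pairs):
    """Gradient of the mean squared error for the scalar model y_hat = w * x."""
    return sum(2.0 * (w * x - y) * x for x, y in pairs) / len(pairs)

def sample_task(rng, n_support=3, n_query=5):
    """A toy task: points from y = slope * x with a task-specific slope."""
    slope = rng.uniform(1.0, 3.0)

    def draw(n):
        return [(x, slope * x) for x in (rng.uniform(-1.0, 1.0) for _ in range(n))]

    return draw(n_support), draw(n_query)

def fomaml(meta_steps=500, tasks_per_step=4, inner_lr=0.1, outer_lr=0.05, seed=0):
    rng = random.Random(seed)
    w = 0.0  # meta-learned initialisation
    for _ in range(meta_steps):
        meta_grad = 0.0
        for _ in range(tasks_per_step):
            support, query = sample_task(rng)
            # Inner loop: one adaptation step on the support set.
            adapted = w - inner_lr * mse_grad(w, support)
            # Outer signal: query-set gradient evaluated at the adapted parameters.
            meta_grad += mse_grad(adapted, query)
        # First-order update: d(adapted)/dw is treated as 1.
        w -= outer_lr * meta_grad / tasks_per_step
    return w
```

Because the task slopes are drawn from [1, 3], the meta-learned initialisation settles near their mean of 2. For this model, that value is the starting point that minimizes the average post-adaptation query loss.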

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in the Context of GRN Inference |
|---|---|
| Prior Regulatory Network | A set of known TF-target interactions (e.g., from public databases) used as ground truth for supervised training or as a structural prior for the model [15]. |
| Single-Cell RNA-Seq Data | The foundational input data measuring gene expression at single-cell resolution, used to infer regulatory relationships based on covariation [15] [7]. |
| Metacells | Homogeneous groups of cells aggregated to reduce technical noise and sparsity in scRNA-seq data, serving as a more robust input for GRN inference methods like NetID [7]. |
| Perturbation Data (CRISPRi) | Single-cell gene expression data following genetic perturbations (knockdowns). Used in benchmarks like CausalBench to evaluate causal inference methods [8]. |
| Benchmark Suites (e.g., CausalBench, BEELINE) | Curated datasets and evaluation frameworks that provide standardized metrics and ground-truth networks to objectively compare the performance of different GRN inference methods [8] [35]. |

Model Architecture and Workflow Visualization

Diagram 1: The Meta-TGLink workflow involves a meta-training phase on multiple source tasks to produce a model that can be rapidly adapted to a new, few-shot target task.

Diagram 2: The TGLink model uses three core modules to generate gene representations for accurate link prediction.

Diagram 3: The NetID pipeline for generating homogeneous metacells from single-cell data to reduce sparsity for GRN inference.
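The metacell idea in Diagram 3 can be sketched in a few lines: sample seed cells, gather each seed's nearest neighbors, and aggregate their profiles. This is a simplified assumption, not NetID's pipeline. Seeds are drawn uniformly at random. Distances are plain Euclidean on the raw matrix rather than on a reduced-dimension embedding. Neighborhoods are not pruned, and members are averaged. NetID's own seed selection and neighborhood pruning are more elaborate. The helper name `build_metacells` is hypothetical.

```python
import math
import random

def build_metacells(cells, n_seeds, k, seed=0):
    """Average each sampled seed cell with its k nearest neighbours.

    cells: list of expression vectors (one per cell).
    Returns one averaged expression vector per seed.
    """
    rng = random.Random(seed)
    seeds = rng.sample(range(len(cells)), n_seeds)
    metacells = []
    for s in seeds:
        # Nearest k+1 cells by Euclidean distance; the seed itself is included.
        members = sorted(range(len(cells)), key=lambda j: math.dist(cells[s], cells[j]))[: k + 1]
        metacells.append(
            [sum(cells[j][g] for j in members) / len(members) for g in range(len(cells[s]))]
        )
    return metacells
```

Averaging over neighbors fills in many zero counts, which is the sparsity reduction the diagram refers to. The cost is lower cell-level resolution.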

Parallel, Distributed, and Streaming Computing Frameworks for Large-Scale Data Processing

FAQs & Troubleshooting Guides

General Framework Selection

Q: How do I choose the right computing framework for Gene Regulatory Network (GRN) inference on large-scale single-cell data?

A: The choice depends on your data characteristics and computational requirements. The table below compares key frameworks to guide your selection.

| Framework | Primary Processing Model | Best Suited For GRN Tasks | Key Strength |
|---|---|---|---|
| Apache Spark [36] | Batch & Micro-batches | Pre-processing large expression matrices, feature selection. | In-memory computing for fast, iterative algorithms. |
| Hadoop MapReduce [37] | Batch | Legacy batch processing of very large, static datasets. | High fault tolerance on commodity hardware. |
| Apache Flink [38] | True Streaming & Batch | Real-time analysis of continuous data streams. | Low-latency, high-throughput stateful computations. |
| Apache Storm [39] | True Streaming | Real-time event processing for monitoring applications. | Very low-latency processing of unbounded data streams. |
| Apache Kafka [40] [41] | Event Streaming | Building data pipelines to ingest and distribute streaming data. | High-throughput, durable pub/sub messaging. |

Q: My GRN inference job is running unusually slowly. What are the common bottlenecks?

A: Slowdowns in large-scale GRN inference, as encountered in benchmarks like CausalBench, often stem from a few key areas [8]:

- Data Skew: A small number of highly connected genes can make a few tasks take much longer than the rest. The whole stage then waits on these stragglers, and parallel efficiency drops.

- Network Overhead: Excessive data transfer between nodes, such as the shuffle between the Map and Reduce phases, can saturate network bandwidth [37].

- Insufficient Memory: Holding large state or intermediate results in memory (e.g., dense gene-by-gene adjacency matrices) can cause heavy garbage-collection overhead or out-of-memory errors.

- Inefficient Serialization: Slow serialization of complex objects between nodes or to disk can become a major bottleneck.
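When inference is split into per-target-gene tasks, one common way to soften data skew is to schedule the most expensive tasks first. The sketch below uses the longest-processing-time-first (LPT) greedy heuristic: tasks are sorted by estimated cost, and each is assigned to the currently least-loaded worker. The cost estimates (for example, the number of candidate regulators or known connections of each gene) and the function name are assumptions. LPT does not split a single hub gene's task, so one very large task still bounds the runtime.

```python
import heapq

def balance_tasks(task_costs, n_workers):
    """Assign tasks to workers with the LPT heuristic.

    task_costs: dict mapping task name -> estimated cost.
    Returns dict mapping worker index -> list of task names.
    """
    heap = [(0.0, worker) for worker in range(n_workers)]  # (current load, worker)
    assignment = {worker: [] for worker in range(n_workers)}
    for task, cost in sorted(task_costs.items(), key=lambda kv: kv[1], reverse=True):
        load, worker = heapq.heappop(heap)  # least-loaded worker so far
        assignment[worker].append(task)
        heapq.heappush(heap, (load + cost, worker))
    return assignment
```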

Troubleshooting Apache Spark for GRN Inference

Q: I get a NoSuchMethodError or ClassNotFoundException when submitting my Spark application. What is wrong?

A: This is typically a dependency conflict. Your application JAR contains a library version that conflicts with the one provided by the Spark cluster.

- Solution: Build an assembly ("uber" or "fat") JAR that bundles your application code and its dependencies. Declare Spark and Hadoop as `provided` dependencies so they are not bundled, because the cluster manager supplies them at runtime [36]. With Maven, the Shade plugin can build this JAR and relocate conflicting packages; sbt users typically use the sbt-assembly plugin for the same purpose.

Q: My Spark driver fails with "Failed to connect to" errors from executors.

A: The driver program must be network-addressable from all worker nodes throughout its lifetime [36].

- Solution:
  - In client mode: Set `spark.driver.host` to an address the workers can reach. Pin `spark.driver.port` (and, if needed, `spark.driver.blockManager.port`) to fixed values, since they are random by default. Then make sure the driver machine's firewall allows inbound connections on those ports from the worker nodes.
  - In cluster mode: Let the cluster manager launch the driver inside the cluster network, which is more robust. The submission guide recommends running the driver "close to the worker nodes, preferably on the same local area network" [36].
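When executors report "Failed to connect to", it can help to confirm basic TCP reachability from a worker node before changing Spark settings. The minimal standard-library sketch below assumes you run it on a worker. Pass it the driver's `spark.driver.host` value and the fixed `spark.driver.port` you configured. `port_reachable` is a hypothetical helper name.

```python
import socket

def port_reachable(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, timed out, or unresolvable host
        return False
```

For example, `port_reachable("driver.example.internal", 7078)` (the hostname and port are placeholders) returning False from a worker points to a firewall or addressing problem rather than a Spark bug.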

Troubleshooting Hadoop MapReduce for Large-Scale Data