Robust Multi-Objective Evolutionary Optimization: Foundations and Advances for Computational Drug Discovery


Abstract

This article provides a comprehensive examination of robust multi-objective evolutionary optimization (RMOEO), a critical computational approach for solving complex problems with conflicting objectives under uncertainty. Tailored for researchers and drug development professionals, we explore the foundational principles of multi-objective optimization and robustness measures, detail cutting-edge algorithmic frameworks including survival rate-based approaches and constrained optimization methods, address key implementation challenges in noisy environments, and present rigorous validation methodologies. With a special focus on molecular optimization applications, this review synthesizes recent advances to equip practitioners with both theoretical understanding and practical strategies for deploying RMOEO in biomedical research and therapeutic development.

The Principles of Robust Multi-Objective Optimization: Balancing Competing Objectives Under Uncertainty

Multi-objective optimization (MOO) represents a fundamental class of problems in operational research, engineering, and drug development where decision-makers must simultaneously optimize several conflicting objectives [1]. Traditional single-objective optimization methods, which yield a single optimal solution, are inadequate for these scenarios as they cannot capture the inherent trade-offs between competing goals [2]. In MOO, the concept of an optimal solution is redefined through the principle of Pareto optimality, named after the Italian economist Vilfredo Pareto, which formalizes the idea of an outcome that cannot be improved in any objective without degrading another [3]. The set of all such optimal solutions constitutes the Pareto front, which reveals the complete spectrum of trade-offs available to the decision-maker [4]. Within the broader thesis on robust multi-objective evolutionary optimization, understanding these core concepts is paramount, as they form the mathematical foundation upon which advanced algorithms and decision-support tools are built for navigating complex, high-dimensional search spaces prevalent in scientific domains such as pharmaceutical development.

Mathematical Foundations of Pareto Optimality

The mathematical framework for Pareto optimality provides the formal language for defining and identifying optimal solutions in multi-objective problems. A multi-objective optimization problem with \( m \) objectives is formally stated as minimizing a vector of objective functions [1]:

\[ \text{minimize} \quad (f_1(\mathbf{x}), f_2(\mathbf{x}), \dots, f_m(\mathbf{x})), \quad \mathbf{x} \in X \]

where \( \mathbf{x} \) is a decision vector from the feasible decision space \( X \), and the \( f_i \) are the objective functions.

The core relational concept for comparing solutions in this context is Pareto dominance. For two decision vectors \( \mathbf{x}^{(1)} \) and \( \mathbf{x}^{(2)} \) [3] [1]:

- \( \mathbf{x}^{(1)} \) is said to dominate \( \mathbf{x}^{(2)} \) (denoted \( \mathbf{x}^{(1)} \prec \mathbf{x}^{(2)} \)) if and only if:

  - \( f_i(\mathbf{x}^{(1)}) \leq f_i(\mathbf{x}^{(2)}) \) for all \( i \in \{1, 2, \dots, m\} \), and

  - \( f_j(\mathbf{x}^{(1)}) < f_j(\mathbf{x}^{(2)}) \) for at least one \( j \in \{1, 2, \dots, m\} \).

A solution \( \mathbf{x}^* \in X \) is Pareto optimal (or efficient) if no other feasible solution dominates it [3]. The set of all Pareto optimal solutions in the decision space \( X \) constitutes the Pareto set. When these solutions are mapped into the objective space \( \mathbb{R}^m \), the resulting set of objective vectors \( \{\mathbf{f}(\mathbf{x}^*) \mid \mathbf{x}^* \text{ is Pareto optimal}\} \) forms the Pareto front (also called the Pareto frontier or Pareto curve) [4]. The Pareto front provides a complete representation of the trade-offs between conflicting objectives, where improvement in one objective necessarily requires deterioration in at least one other [2].
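The dominance relation and the resulting non-dominated filter can be sketched in a few lines of Python. This is a minimal illustration for minimization problems; the function names and the candidate objective vectors are made up for the example and do not come from any cited work.

```python
from typing import List, Sequence


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """Return True if objective vector a Pareto-dominates b (minimization)."""
    no_worse = all(ai <= bi for ai, bi in zip(a, b))
    strictly_better = any(ai < bi for ai, bi in zip(a, b))
    return no_worse and strictly_better


def pareto_front(points: List[Sequence[float]]) -> List[Sequence[float]]:
    """Keep every point that no other point in the list dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical two-objective values, both to be minimized.
candidates = [(1.0, 5.0), (2.0, 3.0), (3.0, 4.0), (4.0, 1.0)]
front = pareto_front(candidates)
print(front)  # (3.0, 4.0) is dropped because (2.0, 3.0) dominates it
```

The filter compares every pair of points, so it scales quadratically with the number of candidates; that is acceptable for small sets but not for large populations.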

Table 1: Key Variants of Pareto Efficiency

| Efficiency Type | Formal Definition | Key Characteristics |
|---|---|---|
| Strong Pareto Efficiency | No alternative exists where all agents are at least as well-off and at least one is strictly better-off [3]. | Standard definition; difficult to achieve in practice with discrete allocations. |
| Weak Pareto Efficiency | No alternative exists where all agents are strictly better-off [3]. | Less strict criterion; a solution can be weakly efficient even if some agents can be made better-off without harming others. |
| Fractional Pareto Efficiency (fPE/fPO) | An allocation of indivisible items is not Pareto-dominated even by allocations where items are split between agents [3]. | Relevant for fair item allocation problems; stronger than standard Pareto efficiency. |
| Constrained Pareto Efficiency | A planner cannot improve upon a decentralized outcome due to the same informational or institutional constraints faced by individual agents [3]. | Accounts for real-world limitations in information and implementation. |

The Pareto Front in Multi-Objective Optimization

Conceptual and Visual Representation

The Pareto front serves as the fundamental "map of trade-offs" in multi-objective optimization. In a typical two-objective minimization problem, the Pareto front can be visualized as a curve in the two-dimensional objective space, where each point on the curve represents a non-dominated solution [2]. Solutions lying on the front are considered equally optimal from a Pareto perspective; the choice among them depends on the decision-maker's specific preferences regarding the trade-off between objectives [5]. All solutions not on the Pareto front are dominated, meaning there exists at least one solution that is better in at least one objective without being worse in any other [1]. The visual representation makes immediately apparent which solutions are candidates for selection and which are unequivocally suboptimal.

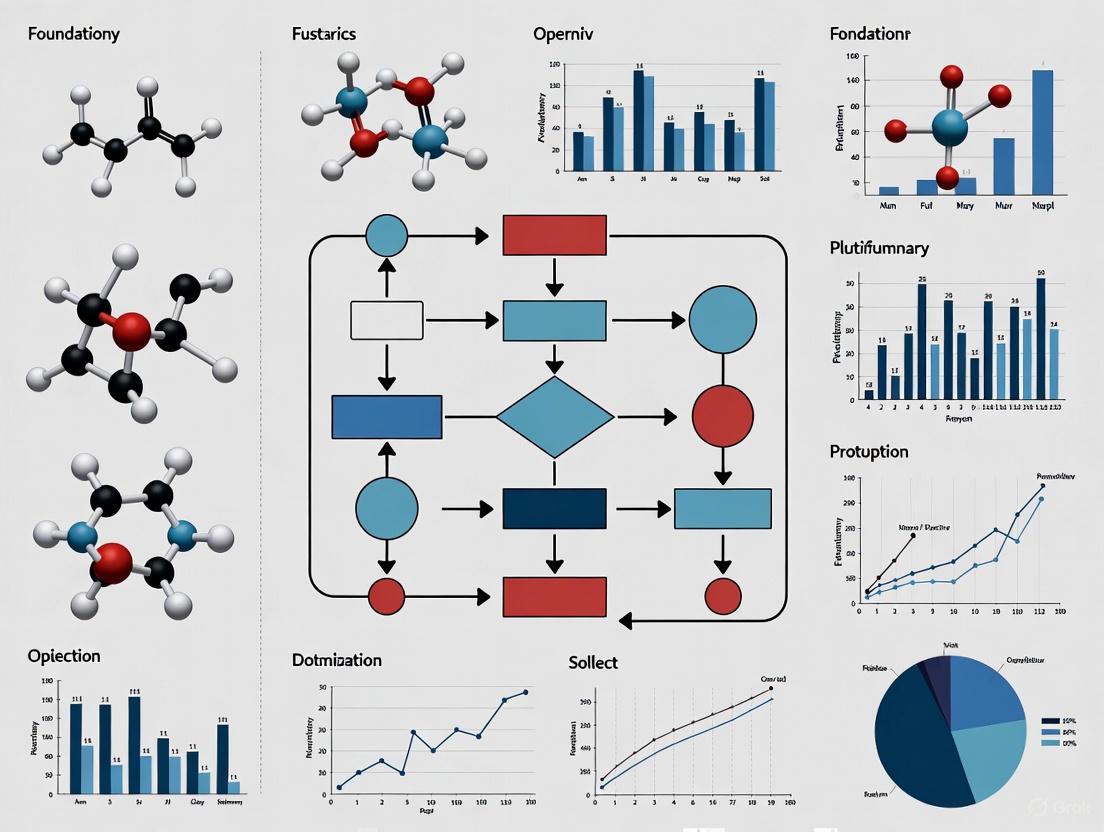

Diagram 1: Pareto front visualization

Marginal Rate of Substitution and Economic Interpretation

A crucial economic insight regarding the Pareto front is that at any Pareto-efficient allocation, the marginal rate of substitution (MRS) between any two goods must be identical for all consumers [4]. This principle extends to multi-objective optimization more broadly. For a system with multiple consumers and goods, where each consumer \( i \) has a utility function \( z_i = f^i(x^i) \) defined over their consumption bundle \( x^i = (x_1^i, x_2^i, \ldots, x_n^i) \), and subject to resource constraints \( \sum_{i=1}^m x_j^i = b_j \), Pareto optimality requires that for any two goods \( j \) and \( s \), and any two consumers \( i \) and \( k \) [4]:

\[ \frac{f^i_{x_j^i}}{f^i_{x_s^i}} = \frac{\mu_j}{\mu_s} = \frac{f^k_{x_j^k}}{f^k_{x_s^k}} \]

where \( f^i_{x_j^i} \) denotes the partial derivative of \( f^i \) with respect to \( x_j^i \), and \( \mu_j \) is the Lagrange multiplier associated with the resource constraint for good \( j \). This equality of MRS across all consumers indicates that Pareto-efficient allocations represent points where no further mutually beneficial trade can occur, reflecting an efficient distribution of resources given individual preferences.

Computational Methodologies for Pareto Front Approximation

Algorithmic Approaches and Classification

Computing the exact Pareto front is often computationally challenging, particularly for problems with complex, high-dimensional, or non-convex objective spaces. Consequently, researchers have developed numerous algorithmic strategies to approximate the Pareto front. These approaches can be broadly classified into mathematical programming-based methods and population-based metaheuristics [6].

Table 2: Computational Methods for Pareto Front Approximation

| Algorithm Class | Representative Methods | Key Characteristics | Application Context |
|---|---|---|---|
| Mathematical Programming | Weighted Sum Method, \(\epsilon\)-Constraint Method [4] [6] | Deterministic; converts MOO to single-objective problems via scalarization; well-suited for convex problems. | Continuous optimization problems with smooth, well-defined objective functions and constraints. |
| Multi-Objective Evolutionary Algorithms (MOEAs) | NSGA-II, SPEA2, MOEA/D, SMS-EMOA [1] [6] | Population-based; handles non-convex and discontinuous fronts; provides multiple diverse solutions in a single run. | Complex, black-box, or non-differentiable problems; approximation of entire Pareto fronts. |
| Hybrid Methods | Mathematical programming combined with evolutionary approaches [6] | Leverages strengths of both approaches; uses mathematical programming for refinement and evolutionary search for exploration. | Problems where both global exploration and local refinement are critical. |

Detailed Experimental Protocol: MOEA/D for Drug Design Optimization

The Multi-Objective Evolutionary Algorithm Based on Decomposition (MOEA/D) provides a powerful framework for solving complex multi-objective problems in drug development. Below is a detailed experimental protocol suitable for implementation in research settings.

1. Problem Formulation:

- Objective 1 (Potency): Maximize \( f_1(\mathbf{x}) = -\log(IC_{50}) \), where \( IC_{50} \) represents the half-maximal inhibitory concentration of a candidate compound.

- Objective 2 (Synthetic Cost): Minimize \( f_2(\mathbf{x}) = \text{estimated synthetic complexity score} \).

- Objective 3 (Toxicity): Minimize \( f_3(\mathbf{x}) = \text{predicted hepatotoxicity score} \).

- Decision Variables: \( \mathbf{x} \) represents molecular descriptors (e.g., topological indices, functional group counts, 3D descriptors).

- Constraints: Include drug-likeness filters (Lipinski's Rule of Five), chemical feasibility constraints, and structural alerts for toxicity [1].
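As an illustration of the drug-likeness constraint, the sketch below counts violations of Lipinski's Rule of Five from precomputed descriptors. It assumes the descriptors (molecular weight, logP, hydrogen-bond donor and acceptor counts) are already available from a cheminformatics toolkit; the function names and the two example compounds are hypothetical.

```python
def lipinski_violations(mol_weight: float, log_p: float,
                        h_bond_donors: int, h_bond_acceptors: int) -> int:
    """Count how many of the four Rule-of-Five thresholds are exceeded."""
    checks = [
        mol_weight > 500.0,     # molecular weight above 500 Da
        log_p > 5.0,            # octanol-water partition coefficient above 5
        h_bond_donors > 5,      # more than 5 hydrogen-bond donors
        h_bond_acceptors > 10,  # more than 10 hydrogen-bond acceptors
    ]
    return sum(checks)


def is_drug_like(mol_weight: float, log_p: float, h_bond_donors: int,
                 h_bond_acceptors: int, max_violations: int = 1) -> bool:
    """Treat a compound as feasible if it has at most max_violations violations."""
    count = lipinski_violations(mol_weight, log_p, h_bond_donors, h_bond_acceptors)
    return count <= max_violations


# Hypothetical descriptor values for two candidate compounds.
print(is_drug_like(350.0, 2.1, 2, 5))   # no violations
print(is_drug_like(620.0, 5.6, 3, 8))   # two violations (weight and logP)
```

In an evolutionary algorithm the violation count could serve directly as a constraint-violation value for penalty or constraint-domination schemes, although that coupling is a design choice rather than part of the rule itself.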

2. Algorithm Initialization:

- Population Size (\( N \)): Set to 100-500 individuals, depending on computational resources.

- Decomposition Method: Use the Tchebycheff approach with uniformly distributed weight vectors \( \lambda^1, \lambda^2, \dots, \lambda^N \).

- Neighborhood Size (\( T \)): Set to 10-20% of population size to balance exploration and exploitation.

- Genetic Operators:

  - Crossover: Simulated Binary Crossover (SBX) with probability \( p_c = 0.9 \) and distribution index \( \eta_c = 20 \).

  - Mutation: Polynomial mutation with probability \( p_m = 1/n \) (where \( n \) is the number of variables) and distribution index \( \eta_m = 20 \).

- Stopping Criterion: Maximum of 50,000 function evaluations or convergence threshold (e.g., <0.001 change in hypervolume for 100 generations).

3. Execution Workflow:

- Step 1: Generate an initial population of candidate compounds randomly or via space-filling design.

- Step 2: Compute objective function values for each candidate.

- Step 3: For each weight vector \( \lambda^i \), identify its \( T \) closest neighbors based on Euclidean distance between weight vectors.

- Step 4: For each subproblem \( i \) (corresponding to \( \lambda^i \)):

  - Randomly select two neighbors from its neighborhood.

  - Apply crossover and mutation to create offspring.

  - Evaluate offspring and update neighboring solutions if improvement occurs based on the Tchebycheff function.

- Step 5: Repeat Step 4 until the stopping criterion is met.

- Step 6: Output non-dominated solutions from the final population as the approximated Pareto front.
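The workflow above can be sketched compactly in plain Python. This is a simplified illustration under stated assumptions, not a reference implementation: the toy bi-objective function stands in for the drug-design objectives, blend crossover and Gaussian mutation are swapped in for SBX and polynomial mutation to keep the code short, and all parameter defaults are illustrative.

```python
import math
import random


def objectives(x):
    """Toy bi-objective problem (an assumption, not a drug-design model)."""
    n = len(x)
    return (sum(v * v for v in x) / n, sum((v - 1.0) ** 2 for v in x) / n)


def tchebycheff(f, weight, ideal):
    """Tchebycheff scalarization: max_i w_i * |f_i - z_i|."""
    return max(w * abs(fi - zi) for fi, w, zi in zip(f, weight, ideal))


def non_dominated(fits):
    """Step 6: keep objective vectors that no other vector dominates."""
    def dom(a, b):
        return all(p <= q for p, q in zip(a, b)) and any(p < q for p, q in zip(a, b))
    return [f for f in fits if not any(dom(g, f) for g in fits)]


def moead(n_sub=20, n_var=3, n_neighbors=4, generations=50, seed=0):
    rng = random.Random(seed)
    # Uniformly spread weight vectors for two objectives.
    weights = [(i / (n_sub - 1), 1.0 - i / (n_sub - 1)) for i in range(n_sub)]
    # Step 3: the T closest weight vectors (Euclidean distance) per subproblem.
    neighbors = [
        sorted(range(n_sub), key=lambda j: math.dist(weights[i], weights[j]))[:n_neighbors]
        for i in range(n_sub)
    ]
    # Steps 1-2: random initial population and its objective values.
    pop = [[rng.random() for _ in range(n_var)] for _ in range(n_sub)]
    fit = [objectives(x) for x in pop]
    ideal = [min(f[k] for f in fit) for k in range(2)]
    for _ in range(generations):  # Step 5: repeat until the budget is spent
        for i in range(n_sub):
            # Step 4: mate two randomly chosen neighbors.
            a, b = rng.sample(neighbors[i], 2)
            child = []
            for va, vb in zip(pop[a], pop[b]):
                alpha = rng.random()
                v = alpha * va + (1.0 - alpha) * vb  # blend crossover
                if rng.random() < 1.0 / n_var:
                    v += rng.gauss(0.0, 0.1)         # Gaussian mutation
                child.append(min(1.0, max(0.0, v)))  # clip to [0, 1]
            f_child = objectives(child)
            ideal = [min(z, fc) for z, fc in zip(ideal, f_child)]
            # Replace neighbors whose subproblem the child solves at least as well.
            for j in neighbors[i]:
                if tchebycheff(f_child, weights[j], ideal) <= tchebycheff(fit[j], weights[j], ideal):
                    pop[j], fit[j] = child, f_child
    return pop, fit


population, fitness = moead()
front = non_dominated(fitness)
print(len(front), "non-dominated objective vectors")
```

A production run would typically use an established library such as pymoo or PlatEMO rather than a hand-written loop, and would replace the toy objectives with the potency, cost, and toxicity predictors described in the problem formulation.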

4. Performance Assessment:

- Hypervolume Indicator: Measure the volume of objective space dominated by the approximated front relative to a reference point.

- Inverted Generational Distance (IGD): Calculate average distance from points on true Pareto front to nearest point on approximated front.

- Spacing Metric: Assess distribution uniformity of solutions along the front.
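The three indicators can be sketched as follows for the two-objective minimization case. The hypervolume routine is a simple sweep that only works in two dimensions, the spacing function follows Schott's definition with Manhattan nearest-neighbor distances, and the example front and reference point are made up for illustration.

```python
import math


def hypervolume_2d(front, ref):
    """Area dominated by a 2-D front and bounded by the reference point."""
    pts = sorted(p for p in front if p[0] < ref[0] and p[1] < ref[1])
    hv, prev_f2 = 0.0, ref[1]
    for f1, f2 in pts:
        if f2 < prev_f2:  # dominated points add no new area and are skipped
            hv += (ref[0] - f1) * (prev_f2 - f2)
            prev_f2 = f2
    return hv


def igd(reference_front, approx_front):
    """Mean distance from each reference point to its nearest approximation point."""
    total = sum(min(math.dist(r, a) for a in approx_front) for r in reference_front)
    return total / len(reference_front)


def spacing(front):
    """Schott's spacing: spread of nearest-neighbor distances (needs >= 2 points)."""
    d = [
        min(sum(abs(a - b) for a, b in zip(p, q)) for j, q in enumerate(front) if j != i)
        for i, p in enumerate(front)
    ]
    mean_d = sum(d) / len(d)
    return math.sqrt(sum((mean_d - di) ** 2 for di in d) / (len(d) - 1))


# Hypothetical approximated front and reference point.
approx = [(1.0, 3.0), (2.0, 2.0), (3.0, 1.0)]
print(hypervolume_2d(approx, ref=(4.0, 4.0)))
print(igd(approx, approx))
print(spacing(approx))
```

For the three-objective protocol described here, a general hypervolume algorithm from an optimization library would be needed; the sweep above does not extend beyond two objectives.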

Diagram 2: MOEA/D algorithm workflow

The Scientist's Toolkit: Essential Reagents for Multi-Objective Optimization Research

Table 3: Key Computational Tools and Conceptual "Reagents" for Multi-Objective Optimization Research

| Research "Reagent" | Function/Purpose | Implementation Notes |
|---|---|---|
| Scalarization Functions | Transform a multi-objective problem into single-objective problems to apply traditional optimization methods [4] [1]. | Includes Weighted Sum, Tchebycheff, Achievement Scalarizing Functions; choice affects ability to find all Pareto optimal points. |
| Pareto Dominance Ranking | Classifies solutions into non-dominated fronts (Rank 1 = Pareto front) for selection in evolutionary algorithms [2]. | Critical for NSGA-II and similar algorithms; computational complexity is \( O(mN^2) \) for \( m \) objectives and \( N \) solutions. |
| Performance Indicators | Quantitatively assess quality of approximated Pareto fronts (convergence, diversity, uniformity) [1]. | Hypervolume, IGD, Spacing, Maximum Spread; hypervolume is strictly Pareto compliant but computationally expensive. |
| Data-Driven Uncertainty Sets | Handle uncertain parameters in robust multi-objective optimization without assuming known probability distributions [7]. | Constructed from historical data; used in distributionally robust optimization frameworks for problems like energy scheduling. |
| Constraint Handling Techniques | Manage feasible regions in problems with constraints that cannot be easily eliminated [1]. | Includes penalty methods, constraint domination, feasible rules; choice impacts algorithm performance on problems with complex feasible regions. |
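The note that the choice of scalarization affects which Pareto optimal points can be found is easy to demonstrate on a tiny example. In the sketch below, three hypothetical objective vectors are mutually non-dominated, but point B lies on a non-convex part of the front; the weight grid and the ideal point (0, 0) are assumptions made for the illustration.

```python
# Hypothetical objective vectors (both objectives minimized); B is non-dominated
# but lies above the straight line joining A and C.
points = {"A": (0.0, 1.0), "B": (0.6, 0.6), "C": (1.0, 0.0)}


def weighted_sum(f, w):
    """Weighted-sum scalarization: sum_i w_i * f_i."""
    return sum(wi * fi for wi, fi in zip(w, f))


def tchebycheff(f, w, ideal):
    """Tchebycheff scalarization: max_i w_i * |f_i - z_i|."""
    return max(wi * abs(fi - zi) for wi, fi, zi in zip(w, f, ideal))


weight_grid = [(i / 10, 1.0 - i / 10) for i in range(11)]

# Which point wins each scalarized subproblem?
ws_winners = {min(points, key=lambda name: weighted_sum(points[name], w))
              for w in weight_grid}
tch_winners = {min(points, key=lambda name: tchebycheff(points[name], w, (0.0, 0.0)))
               for w in weight_grid}

print(sorted(ws_winners))   # B never wins under the weighted sum
print(sorted(tch_winners))  # B wins for balanced weights under Tchebycheff
```

This is the usual motivation for preferring Tchebycheff-style decomposition when the shape of the front is unknown.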

Current Research Trends and Applications

The field of multi-objective optimization continues to evolve, with several prominent research directions emerging within the context of robust evolutionary optimization. Distributionally Robust Optimization (DRO) represents a significant advancement, combining robust optimization with statistical learning to make decisions that perform well under a set of probability distributions constructed from data [8]. Recent applications include newsvendor models under capital constraints [8], medical supplies distribution in humanitarian aid [8], and construction waste reverse logistics with joint chance constraints [8]. These approaches are particularly valuable for drug development professionals who must make decisions under profound uncertainty regarding compound efficacy, toxicity, and manufacturing costs.

The integration of multi-objective optimization with machine learning has created powerful synergies, particularly in hyperparameter tuning where multiple error rates (e.g., false positives and false negatives) must be balanced [1]. Similarly, contextual robust optimization frameworks are being developed to handle multi-period decision-making in environments where contextual information arrives sequentially, such as in online energy applications where scheduling decisions must be updated every few minutes based on new data [7].

In pharmaceutical applications, multi-objective optimization has been successfully applied to therapeutic drug design, where researchers simultaneously optimize for drug potency, minimal synthesis costs, and minimal side effects [1]. The Pareto front approach enables medicinal chemists to visualize the fundamental trade-offs between these competing objectives and select candidate compounds that represent the best possible compromises based on project priorities and constraints.

Pareto optimality and the Pareto front constitute the fundamental theoretical framework for understanding and solving multi-objective optimization problems across scientific disciplines. For researchers in robust multi-objective evolutionary optimization, these concepts provide both the mathematical foundation for algorithm development and the practical mechanism for decision support in complex, high-dimensional problems with conflicting objectives. The continuing evolution of computational methods—from sophisticated decomposition-based evolutionary algorithms to data-driven distributionally robust approaches—ensures that these foundational concepts remain highly relevant for addressing contemporary challenges in fields ranging from engineering design to pharmaceutical development. As optimization problems grow in complexity and scale, the principles of Pareto optimality will continue to guide the development of methods that effectively map trade-offs and support informed decision-making in the face of competing objectives.

In the realm of multi-objective evolutionary optimization, the presence of uncertainties represents a fundamental challenge that can significantly compromise the performance of solutions in real-world applications. Robust optimization addresses this critical issue by pursuing solutions that maintain their performance in the face of disturbances, striking an optimal balance between convergence and robustness [9]. This balance holds immense significance across numerous real-world applications faced with noisy inputs, from manufacturing processes with unavoidable production errors to aerodynamic design with variations in nominal geometry [9].

Uncertainty in optimization problems manifests in two primary forms: input perturbation uncertainty (also called parameter uncertainty) and structural uncertainty. Input perturbation occurs when the objective function has a structure consistent with the true objective function, but its input variables are subject to perturbations within a certain neighborhood due to disturbances. In contrast, structural uncertainty involves a model bias between the objective function being optimized and the true objective function within a certain neighborhood [9]. Both forms present distinct challenges that require specialized approaches for effective mitigation.

The concept of robustness in this context refers to the degree to which a solution's performance resists disturbances in its variables. A solution is deemed robust when it exhibits insensitivity to disturbances in decision variables, meaning its performance remains stable despite fluctuations or noise in the operating environment [9]. This property is particularly crucial in critical applications such as drug discovery and development, where uncertainties can lead to costly failures or safety issues in later stages [10].

Foundational Concepts and Definitions

Problem Formulations

Multi-objective optimization problems without uncertainty can be formulated as minimizing a vector function F(x) = (f₁(x), f₂(x), ..., fₘ(x)) subject to x ∈ Ω, where x = (x₁, x₂, ..., xₙ) is an n-dimensional solution, m is the number of objectives, and Ω ⊆ Rⁿ represents the decision search space [9]. When considering input perturbation uncertainty, this formulation extends to:

min F(x') = (f₁(x'), f₂(x'), ..., fₘ(x')) with x' = (x₁ + δ₁, x₂ + δ₂, ..., xₙ + δₙ) subject to x ∈ Ω

where δᵢ represents noise added to the i-th dimension of x [9]. Given the maximum disturbance degree δᵐᵃˣ = (δ₁ᵐᵃˣ, ..., δₙᵐᵃˣ), each δᵢ satisfies -δᵢᵐᵃˣ ≤ δᵢ ≤ δᵢᵐᵃˣ for i ∈ {1, ..., n} [9].

Robustness Measures and Evaluation

The evaluation of robustness typically employs three main strategies. The first uses expectation or variance measures, where extensive function evaluations estimate the expectation and variance values of a single solution by integrating fitness values from all solutions within its neighborhood [9]. The second approach utilizes explicit robustness measures, which may include statistical indicators beyond expectation and variance. The third strategy employs implicit methods that evaluate robustness through neighborhood sampling without explicit metrics [11].

Each approach has distinct advantages and limitations. Expectation-based methods are mathematically tractable but may overlook performance stability. Variance-focused approaches prioritize consistency but might compromise optimality. Composite metrics attempt to balance both concerns but introduce additional complexity in parameter tuning [11].

Table 1: Classification of Robustness Measures in Multi-Objective Optimization

| Measure Type | Key Characteristics | Advantages | Limitations |
|---|---|---|---|
| Expectation-based | Focuses on average performance under perturbations | Simple interpretation, mathematically tractable | May select solutions with high performance variance |
| Variance-focused | Emphasizes performance stability | Identifies consistent performers | May overlook solutions with superior average performance |
| Composite metrics | Combines multiple statistical indicators | Balanced perspective on performance and stability | Requires careful weighting of different components |
| Survival rate | Measures solution persistence under disturbances | Direct assessment of robustness | Computationally intensive to evaluate |
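The expectation-based and variance-focused measures can be estimated by Monte Carlo sampling inside the perturbation box around a solution. The sketch below assumes uniformly distributed noise within ±δᵐᵃˣ, which is a modeling choice made for the example rather than something the cited work prescribes; the toy objective function and the sample count are likewise illustrative.

```python
import random
import statistics


def perturbed_stats(f, x, delta_max, n_samples=200, seed=0):
    """Estimate per-objective mean and variance of f under input noise."""
    rng = random.Random(seed)
    samples = []
    for _ in range(n_samples):
        # x' = x + delta, with each delta_i drawn from [-delta_max_i, +delta_max_i].
        x_pert = [xi + rng.uniform(-d, d) for xi, d in zip(x, delta_max)]
        samples.append(f(x_pert))
    n_obj = len(samples[0])
    means = [statistics.fmean(s[k] for s in samples) for k in range(n_obj)]
    variances = [statistics.pvariance([s[k] for s in samples]) for k in range(n_obj)]
    return means, variances


def toy_objectives(x):
    """Hypothetical two-objective function of one variable."""
    return (x[0] ** 2, (x[0] - 2.0) ** 2)


means, variances = perturbed_stats(toy_objectives, [1.0], [0.1])
print(means, variances)
```

Each call costs `n_samples` function evaluations per solution, which is the main reason these measures are considered expensive when objective evaluations are slow.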

Methodological Frameworks for Robust Multi-Objective Optimization

Surviving Rate-Based Approaches

A novel approach in robust multi-objective evolutionary optimization introduces the concept of surviving rate as a new optimization objective [9]. This algorithm comprises two distinct stages: the evolutionary optimization stage and the construction stage of the robust optimal front. In the former stage, the surviving rate acts as a robustness measure for archive updates, so that robustness and convergence are considered equally [9]. Non-dominated sorting then retains only the first-rank solutions, ensuring that only solutions with good robustness and convergence are preserved in the archive.

The methodology incorporates two key mechanisms: precise sampling and random grouping. The precise sampling mechanism applies multiple smaller perturbations around a solution after adding initial noise, calculating the average value in objective space in the vicinity to provide a more accurate evaluation of the solution's performance in actual operating processes [9]. The random grouping mechanism introduces an element of randomness in individual allocations to maintain population diversity [9].

Uncertainty-Related Pareto Front (UPF) Framework

The Uncertainty-related Pareto Front (UPF) framework represents a paradigm shift from traditional approaches by balancing robustness and convergence as equal priorities rather than treating robustness as secondary to convergence [11]. This framework explicitly accounts for decision variables with noise perturbation by quantifying their effects on both convergence guarantees and robustness preservation within a theoretically grounded and general framework [11].

Building upon UPF, researchers have developed RMOEA-UPF, a population-based robust multi-objective optimization algorithm. This method searches efficiently by calculating and optimizing the UPF during the evolutionary process [11]. It features an archive-centric framework in which the elite archive acts as the core population: parents are generated directly from this archive, tightly integrating the selection of high-performing solutions with the creation of new candidates [11].

Performance Assessment in Robust Optimization

Evaluating the performance of robust optimization approaches requires specialized metrics that capture both conventional performance indicators and robustness-specific considerations. The table below summarizes key quantitative metrics employed in recent robust multi-objective optimization research:

Table 2: Performance Metrics for Robust Multi-Objective Optimization Algorithms

| Metric Name | Mathematical Formulation | Interpretation | Application Context |
|---|---|---|---|
| Survival Rate | SR(x) = Pr[‖F(x+δ) - F(x)‖ ≤ ε] | Probability of maintaining performance under perturbation | General robust optimization [9] |
| Expected Performance | E[F(x)] = ∫ F(x+δ)p(δ)dδ | Average performance across perturbations | Type I robustness [11] |
| Performance Variance | Var[F(x)] = E[(F(x+δ) - E[F(x)])²] | Stability of performance under uncertainty | Consistency-focused applications [11] |
| Robustness-Convergence Metric | RCM = Conv(X) × Robust(X) | Combined measure of optimality and stability | Comprehensive assessment [9] |
| Utopian Robust Indicator | URI = ‖F(x) - F*‖ × (1 + CV(F(x))) | Distance to ideal performance with variability penalty | Multi-scenario optimization [12] |

Experimental Protocols and Methodologies

Benchmark Problems and Evaluation Frameworks

Experimental validation in the cited robust multi-objective optimization studies employs nine benchmark problems that incorporate various forms of uncertainty [9] [11]. These benchmarks are designed to represent different challenge characteristics including multi-modality, deception, and variable interaction under noisy conditions. The evaluation framework assesses algorithm performance across multiple dimensions including convergence speed, solution quality, diversity maintenance, and robustness stability.

The standard experimental protocol involves multiple independent runs of each algorithm on the benchmark problems with careful measurement of performance metrics. Statistical significance testing (typically using Wilcoxon rank-sum tests with α = 0.05) validates whether observed differences in performance metrics are statistically significant [9]. The performance assessment includes both quantitative metrics and qualitative analysis of the obtained Pareto fronts.
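The significance check can be sketched without external dependencies using the normal approximation to the Wilcoxon rank-sum statistic. The version below assigns average ranks to ties but applies neither a tie correction to the variance nor a continuity correction, so it is only a rough guide for small samples; the two result lists are hypothetical metric values from independent runs, not data from any cited experiment.

```python
import math


def rank_sum_test(a, b):
    """Two-sided Wilcoxon rank-sum test via the normal approximation."""
    combined = sorted((v, g) for g, vals in enumerate((a, b)) for v in vals)
    ranks = [0.0] * len(combined)
    i = 0
    while i < len(combined):
        j = i
        # Extend j over a run of tied values so they share an average rank.
        while j + 1 < len(combined) and combined[j + 1][0] == combined[i][0]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # ranks are 1-based
        for t in range(i, j + 1):
            ranks[t] = avg_rank
        i = j + 1
    n1, n2 = len(a), len(b)
    w = sum(r for r, (_, g) in zip(ranks, combined) if g == 0)  # rank sum of sample a
    mean_w = n1 * (n1 + n2 + 1) / 2
    sd_w = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (w - mean_w) / sd_w
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail probability
    return z, p_value


# Hypothetical IGD values (lower is better) from ten runs of two algorithms.
algo_a = [0.021, 0.019, 0.023, 0.020, 0.022, 0.018, 0.024, 0.021, 0.020, 0.019]
algo_b = [0.035, 0.031, 0.038, 0.033, 0.036, 0.030, 0.039, 0.034, 0.032, 0.037]
z, p = rank_sum_test(algo_a, algo_b)
print(z, p, "significant at 0.05" if p < 0.05 else "not significant")
```

In practice a statistics library routine such as `scipy.stats.ranksums` would normally be used instead of a hand-written test.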

Detailed Methodology for Surviving Rate Calculation

The surviving rate computation follows a precise sampling methodology:

- Initialization: For each solution x in the population, define perturbation range based on δᵐᵃˣ

- Primary Perturbation: Apply initial noise δₚ to create x' = x + δₚ

- Secondary Sampling: Generate k secondary perturbations δₛ₁, δₛ₂, ..., δₛₖ around x'

- Evaluation: Compute objective values for all perturbed solutions F(x' + δₛᵢ) for i = 1 to k

- Survival Assessment: Count solutions maintaining performance within threshold ε

- Rate Calculation: SR(x) = (number of surviving solutions) / k

This methodology provides a more accurate evaluation of the solution's performance in actual operating processes compared to single-stage perturbation approaches [9].
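The steps listed above can be sketched as follows. Several details are assumptions made for illustration: noise is drawn uniformly, secondary perturbations are scaled to a fraction (`secondary_scale`) of δᵐᵃˣ, and a sample "survives" when its objective vector stays within Euclidean distance ε of the unperturbed objective vector. The cited algorithm may define these choices differently.

```python
import math
import random


def surviving_rate(f, x, delta_max, eps, k=50, secondary_scale=0.1, seed=0):
    """Two-stage sampling estimate of the surviving rate of solution x."""
    rng = random.Random(seed)
    f_nominal = f(x)
    # Primary perturbation: x' = x + delta_p within the allowed range.
    x_prime = [xi + rng.uniform(-d, d) for xi, d in zip(x, delta_max)]
    survivors = 0
    for _ in range(k):
        # Secondary sampling: smaller perturbations around x'.
        x_sample = [
            xp + rng.uniform(-secondary_scale * d, secondary_scale * d)
            for xp, d in zip(x_prime, delta_max)
        ]
        # Survival assessment: performance stays within eps of the nominal value.
        if math.dist(f(x_sample), f_nominal) <= eps:
            survivors += 1
    return survivors / k  # SR(x) = surviving samples / k


def toy_objectives(x):
    """Hypothetical two-objective function of one variable."""
    return (x[0] ** 2, (x[0] - 2.0) ** 2)


rate = surviving_rate(toy_objectives, [1.0], [0.1], eps=0.2)
print(rate)
```

Because the estimate depends on a single primary perturbation, repeating the procedure with several seeds and averaging would reduce its variance at the cost of more evaluations.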

Implementation of the UPF Framework

The UPF framework implementation involves these key computational steps:

- Uncertainty Modeling: Characterize the distribution and magnitude of input perturbations

- Solution Evaluation: Assess each solution under multiple perturbation scenarios

- UPF Construction: Identify solutions that are non-dominated considering both original objectives and robustness measures

- Archive Maintenance: Preserve diverse, high-performing solutions across different perturbation scenarios

- Termination Check: Evaluate convergence criteria based on UPF stability and iteration limits

The algorithm terminates when the UPF shows minimal improvement over successive generations or when a predetermined computational budget is exhausted [11].

Applications in Pharmaceutical Research and Drug Development

Robust Optimization in Drug Discovery Pipeline

The drug discovery and development process faces numerous uncertainties throughout its pipeline, from early target identification to post-market surveillance [10]. This structured process includes five main stages: discovery, preclinical research, clinical research, regulatory review, and post-market monitoring [10]. Each stage presents distinct optimization challenges with inherent uncertainties that robust multi-objective approaches can address.

Model-Informed Drug Development (MIDD) has emerged as an essential framework for advancing drug development and supporting regulatory decision-making in the face of these uncertainties [10]. MIDD plays a pivotal role by providing quantitative predictions and data-driven insights that accelerate hypothesis testing, assess potential drug candidates more efficiently, reduce costly late-stage failures, and accelerate market access for patients [10]. Evidence from drug development and regulatory approval has demonstrated that a well-implemented MIDD approach can significantly shorten development cycle timelines, reduce discovery and trial costs, and improve quantitative risk estimates [10].

Table 3: Multi-Objective Optimization Challenges in Drug Development Stages

| Development Stage | Key Uncertainties | Optimization Objectives | Robustness Considerations |
|---|---|---|---|
| Target Identification | Biological complexity, disease heterogeneity | Target druggability, novelty, therapeutic potential | Resilience to biological variability [13] |
| Lead Optimization | Chemical synthesis variability, ADME unpredictability | Potency, selectivity, safety, synthesizability | Performance stability across biological systems [14] |
| Preclinical Testing | Species translation limitations, toxicity prediction | Efficacy, safety margin, pharmacokinetics | Consistency across model systems [10] |
| Clinical Trials | Patient population diversity, adherence variability | Efficacy, safety, dosage convenience | Robustness across subpopulations [10] |
| Post-Market Surveillance | Real-world usage patterns, long-term effects | Benefit-risk balance, adherence, outcomes | Performance under diverse real-world conditions [10] |

Case Study: Automated Drug Design with Robust Optimization

A recent advancement in pharmaceutical informatics introduces the optSAE + HSAPSO framework, which integrates a stacked autoencoder for robust feature extraction with a hierarchically self-adaptive particle swarm optimization algorithm for adaptive parameter optimization [13]. This approach addresses critical limitations in existing drug classification and target identification methods, including inefficiencies, overfitting, and limited scalability [13].

The experimental implementation achieved an accuracy of 95.52% on datasets from DrugBank and Swiss-Prot, with low computational cost (0.010 seconds per sample) and high stability (±0.003) [13]. The robust optimization framework demonstrated superior performance across various classification metrics while remaining consistent on both validation and unseen datasets [13].

Research Reagent Solutions for Robust Optimization Experiments

Table 4: Essential Research Materials and Computational Tools for Robust Optimization Experiments

| Item Category | Specific Examples | Function in Research | Application Context |
|---|---|---|---|
| Benchmark Libraries | ZDT, DTLZ, WFG problem suites | Algorithm validation and performance comparison | General robust MOEA testing [9] [11] |
| Pharmaceutical Datasets | DrugBank, Swiss-Prot, ChEMBL | Real-world validation of optimization approaches | Drug discovery applications [13] |
| Optimization Frameworks | PlatEMO, pymoo, EvoTorch | Implementation and testing of algorithms | Experimental prototyping [15] |
| Uncertainty Modeling Tools | Monte Carlo simulation libraries, perturbation generators | Simulation of input disturbances and structural uncertainties | Robustness assessment [9] |
| Performance Metrics | Hypervolume, IGD, survival rate calculators | Quantitative assessment of algorithm performance | Comparative analysis [9] [11] |

The critical need for robustness in addressing input perturbations and structural uncertainties has established robust multi-objective evolutionary optimization as an essential methodology across scientific and engineering domains, particularly in pharmaceutical research and drug development. The emerging approaches discussed—including surviving rate-based algorithms, Uncertainty-related Pareto Front frameworks, and specialized applications in drug discovery—demonstrate significant advances in simultaneously optimizing for both performance and stability under uncertainty.

Future research directions should focus on enhancing computational efficiency for large-scale problems, developing more sophisticated robustness measures that better capture real-world uncertainty patterns, and creating specialized frameworks for emerging application domains. The integration of robust optimization principles with artificial intelligence and machine learning approaches presents particularly promising avenues for advancing pharmaceutical research and addressing complex challenges in drug discovery and development. As these methodologies continue to mature, they hold the potential to significantly reduce development timelines, lower costs, and improve success rates in critical applications ranging from healthcare to energy systems.

In the realm of multi-objective evolutionary optimization, the pursuit of optimal solutions is fundamentally challenged by the presence of uncertainties in real-world applications. Robustness measures provide the critical framework for evaluating solution quality under these uncertainties, ensuring that performance remains effective when applied to real systems with noisy inputs or perturbed parameters. This technical guide examines three foundational approaches to robustness assessment—surviving rate, expectation strategies, and quality metrics—providing researchers and drug development professionals with methodologies for designing optimization algorithms that deliver reliable, high-performing solutions in practical scenarios.

The significance of robustness extends across domains from complex network design to healthcare quality measurement. In industrial processes, design parameters are vulnerable to random input disturbances, often resulting in products that perform less effectively than anticipated [9]. Similarly, in healthcare, robust quality measures are essential for accurately evaluating the implementation of evidence-based practices and for assuring accountability across provider systems [16] [17]. This guide synthesizes recent advances in robustness quantification, offering structured protocols for their implementation within multi-objective optimization frameworks.

Core Concepts of Robustness Measures

Surviving Rate

The surviving rate represents a novel approach to robustness quantification in multi-objective evolutionary optimization algorithms (MOEAs). It functions as a robustness indicator that evaluates a solution's ability to maintain performance quality when subjected to input disturbances or variable perturbations [9]. Unlike traditional metrics that may prioritize convergence alone, surviving rate equally weights robustness and convergence, treating robustness as a distinct optimization objective rather than a secondary consideration.

Within robust multi-objective optimization problems (RMOOPs), a solution is considered robust when it exhibits insensitivity to disturbances in decision variables [9]. The surviving rate formally captures this insensitivity by measuring the proportion of evaluations in which a solution maintains acceptable performance across multiple samples within a neighborhood around the design point. This approach enables algorithms to directly optimize for stability in performance, creating solutions that deliver consistent outcomes despite operational variances.
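As a rough illustration of this idea, the sketch below estimates a surviving rate as the fraction of perturbed copies of a design point whose objective value stays within an acceptance threshold. The uniform noise model, the threshold, and all function names are assumptions made for this example; RMOEA-SuR [9] defines its own sampling scheme, which is not reproduced here.

```python
import random


def surviving_rate(f, x, delta_max, threshold, n_samples=500, seed=0):
    """Fraction of perturbed copies of x with f(x') <= threshold (minimization)."""
    rng = random.Random(seed)
    survived = 0
    for _ in range(n_samples):
        # Assumed noise model: independent uniform noise in [-d, d] per variable.
        x_pert = [xi + rng.uniform(-d, d) for xi, d in zip(x, delta_max)]
        if f(x_pert) <= threshold:
            survived += 1
    return survived / n_samples


def sharp_valley(x):
    """Hypothetical objective with a better but very narrow optimum at 0."""
    return 100.0 * x[0] ** 2


def flat_valley(x):
    """Hypothetical objective with a slightly worse but wide optimum at 0."""
    return x[0] ** 2 + 0.05


sr_sharp = surviving_rate(sharp_valley, [0.0], [0.2], threshold=0.5)
sr_flat = surviving_rate(flat_valley, [0.0], [0.2], threshold=0.5)
print(sr_sharp, sr_flat)
```

The flat optimum has a slightly worse nominal value (0.05 versus 0) yet survives every perturbation, which is the kind of trade-off a robustness objective is meant to expose.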

Expectation Strategies

Expectation strategies constitute a classical approach to robustness measurement, employing statistical estimators to approximate performance under uncertainty. These methods typically use Monte Carlo integration or similar sampling techniques to estimate the expectation and variance values of a solution by aggregating fitness values from numerous points within its neighborhood [9].

In practice, expectation strategies replace the original objective function with an estimate of its expected value, optionally combined with its variance, over the neighborhood of the considered solution. By evaluating a solution across a distribution of perturbations, these methods generate probabilistic guarantees of performance and guide optimization algorithms toward regions of the search space that exhibit stable performance characteristics. While computationally intensive, expectation strategies offer mathematically rigorous foundations for robustness assessment, particularly when the distribution of uncertainties is well characterized.

Quality Metrics

Quality metrics provide standardized, quantitative measures for evaluating specific attributes of system performance, particularly in applied domains such as healthcare. These metrics transform theoretical concepts of quality into operationalized indicators that enable consistent measurement, comparison, and benchmarking across different systems, providers, or time periods [16].

In healthcare contexts, quality metrics are defined as "quantitative measures that provide information about the effectiveness, safety, and/or people-centredness of care" [16]. Effective quality metrics incorporate three essential components: a quality goal (clear statement of the intended objective), a measurement concept (specified method for data collection and calculation), and an appraisal concept (description of how the measure is used to judge quality) [16]. This structured approach ensures that metrics produce consistent, interpretable results that can reliably inform decision-making processes across diverse implementation contexts.

Table 1: Classification of Robustness Measures

| Measure Type | Fundamental Principle | Primary Application Context | Key Advantages |
|---|---|---|---|
| Surviving Rate | Solution insensitivity to input disturbances | Multi-objective evolutionary optimization with noisy inputs | Equally considers robustness and convergence as objectives |
| Expectation Strategies | Statistical estimation of performance expectation | Problems with well-characterized uncertainty distributions | Mathematically rigorous probabilistic guarantees |
| Quality Metrics | Standardized quantitative indicators of performance | Healthcare quality measurement and implementation research | Enables benchmarking and accountability across systems |

Methodologies and Experimental Protocols

Surviving Rate Implementation in RMOEA-SuR

The RMOEA-SuR (Robust Multi-Objective Evolutionary Algorithm based on Surviving Rate) implements surviving rate through a structured two-stage process that combines evolutionary optimization with robust optimal front construction [9]:

Stage 1: Evolutionary Optimization

- Solution Representation: Define the chromosome structure appropriate to the problem domain, typically real-valued vectors for continuous optimization problems.

- Surviving Rate Calculation: For each solution in the population, apply multiple smaller perturbations after adding initial noise. Calculate the average objective value across these perturbations to estimate performance in actual operating conditions.

- Multi-Objective Optimization: Introduce surviving rate as an additional optimization objective alongside traditional fitness functions. Employ non-dominated sorting to identify solutions that balance convergence and robustness.

- Diversity Maintenance: Implement a random grouping mechanism to introduce randomness in individual allocations, preventing premature convergence and maintaining population diversity.

Stage 2: Robust Optimal Front Construction

- Performance Integration: Combine convergence and robustness using a performance measure that multiplies the L0 norm average value in objective space (convergence) by the surviving rate (robustness). This multiplicative approach mitigates scaling issues between different measures.

- Pareto Front Identification: Apply selection pressure toward solutions that demonstrate balanced performance across all objectives, including surviving rate.

- Validation: Evaluate the resulting solution set under realistic noisy conditions to verify robustness improvements.

Expectation Strategy Protocol

The implementation of expectation strategies for robustness measurement follows a structured sampling approach:

Neighborhood Definition: For each solution x in the population, define a neighborhood N(x) based on the known or estimated distribution of input disturbances. This neighborhood typically represents the range of possible perturbations during actual operation.

Monte Carlo Sampling: Within N(x), generate k sample points x₁, x₂, ..., xₖ using Monte Carlo or Latin Hypercube sampling techniques. The sample size should balance computational cost with estimation accuracy.

Function Evaluation: Evaluate the objective function f(xᵢ) for each sample point in the neighborhood.

Statistical Aggregation: Calculate the expected performance using the arithmetic mean: E[f(x)] = (1/k) × Σ f(xᵢ)

Simultaneously, compute performance variance: Var[f(x)] = (1/(k-1)) × Σ (f(xᵢ) - E[f(x)])²

Fitness Assignment: Replace the original objective function value with the expected value E[f(x)] or a composite measure incorporating both expectation and variance.

Optimization Guidance: Utilize these robustness-enhanced fitness values to guide the evolutionary search toward regions with superior expected performance and reduced sensitivity to perturbations.
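The protocol above can be sketched in a few lines of Python. This is a minimal illustration, assuming independent uniform perturbations inside a box neighborhood; the function names and the mean-plus-standard-deviation composite are choices made for the example, not prescriptions from [9].

```python
import random
import statistics


def expectation_and_variance(f, x, delta_max, k=100, seed=0):
    """Monte Carlo estimate of E[f(x)] and Var[f(x)] over the neighborhood N(x)."""
    rng = random.Random(seed)
    values = []
    for _ in range(k):
        # Assumed neighborhood: a box of half-width delta_max around x.
        sample = [xi + rng.uniform(-d, d) for xi, d in zip(x, delta_max)]
        values.append(f(sample))
    mean = statistics.fmean(values)          # (1/k) * sum of f(x_i)
    variance = statistics.variance(values)   # sample variance, 1/(k-1) normalization
    return mean, variance


def robust_fitness(f, x, delta_max, weight=1.0, k=100, seed=0):
    """One possible composite fitness: expected value plus a weighted spread term."""
    mean, variance = expectation_and_variance(f, x, delta_max, k=k, seed=seed)
    return mean + weight * variance ** 0.5


def sphere(x):
    """Hypothetical test objective (minimization)."""
    return sum(v * v for v in x)


mean, variance = expectation_and_variance(sphere, [0.0, 0.0], [0.1, 0.1], k=200)
print(mean, variance, robust_fitness(sphere, [0.0, 0.0], [0.1, 0.1], k=200))
```

Each call costs k extra function evaluations per solution, which is the computational overhead the protocol asks the sample size to balance against estimation accuracy.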

Quality Metric Development and Validation

The development of robust quality metrics for implementation research follows a rigorous methodological framework:

Conceptual Definition: Clearly define the theoretical concept of quality being measured, specifying the target domain (e.g., effectiveness, safety, patient-centeredness) and the specific aspect of care being evaluated.

Operationalization: Translate the conceptual definition into a measurable quantity by specifying:

- Numerator: The count of events, actions, or outcomes representing the quality concept

- Denominator: The population at risk or eligible for the measurement

- Data Sources: Specific data elements required from existing systems (EMR, claims, administrative data)

Stakeholder Review: Engage clinical experts, operational leaders, and implementation stakeholders to review the metric for face validity, relevance, and actionability.

Pilot Testing: Calculate the metric using historical data to identify potential issues with data availability, computational feasibility, and result interpretability.

Appraisal Concept Definition: Establish thresholds or benchmarks for interpreting metric values, defining what constitutes "good" or "poor" performance.

Validation: Assess the metric's reliability, sensitivity to change, and correlation with relevant outcomes through statistical analysis.
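The operationalization and appraisal steps can be illustrated with a small ratio-metric sketch. The record fields (`has_oud_dx`, `on_moud`) and the benchmark value are hypothetical and serve only to show how a numerator, a denominator, and an appraisal threshold fit together.

```python
def quality_metric(records, in_denominator, in_numerator):
    """Proportion of eligible records that meet the quality concept."""
    eligible = [r for r in records if in_denominator(r)]
    if not eligible:
        return None  # metric is undefined when nobody is eligible
    met = sum(1 for r in eligible if in_numerator(r))
    return met / len(eligible)


def appraise(value, benchmark):
    """Appraisal concept: compare the metric against a predefined benchmark."""
    if value is None:
        return "not measurable"
    return "meets benchmark" if value >= benchmark else "below benchmark"


# Hypothetical patient records; field names are illustrative only.
records = [
    {"has_oud_dx": True, "on_moud": True},
    {"has_oud_dx": True, "on_moud": False},
    {"has_oud_dx": True, "on_moud": True},
    {"has_oud_dx": True, "on_moud": False},
    {"has_oud_dx": False, "on_moud": False},
]

ratio = quality_metric(
    records,
    in_denominator=lambda r: r["has_oud_dx"],
    in_numerator=lambda r: r["on_moud"],
)
print(ratio, appraise(ratio, benchmark=0.35))
```

Keeping the numerator and denominator definitions as explicit functions makes it easier to detect when an operational definition changes between measurement periods.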

Table 2: Experimental Protocols for Robustness Measurement

| Protocol Phase | Key Procedures | Data Requirements | Validation Approaches |
|---|---|---|---|
| Surviving Rate Calculation | Precise sampling with multiple perturbations; Random grouping for diversity | Noisy input distributions; Performance evaluation metrics | Comparison of solution performance under clean vs. noisy conditions |
| Expectation Strategy Implementation | Monte Carlo sampling; Statistical aggregation of neighborhood performance | Characterization of uncertainty distributions; Function evaluation capabilities | Analysis of variance in performance across sampled points |
| Quality Metric Development | Operationalization of quality concepts; Stakeholder review; Pilot testing | Administrative data (claims, EMR); Population definitions | Reliability testing; Correlation with relevant outcomes |

Table 3: Research Reagent Solutions for Robustness Measurement

| Reagent/Resource | Function | Application Context |
|---|---|---|
| Graph Isomorphism Network (GIN) | Surrogate model for approximating network robustness | Complex network robustness optimization [18] |
| Multi-Objective Particle Swarm Optimization (MOPSO) | Evolutionary algorithm for handling multiple objectives | Smart building energy management [19] |
| Non-dominated Sorting Genetic Algorithm II (NSGA-II) | Pareto-based multi-objective evolutionary algorithm | General robust multi-objective optimization [20] |
| Precise Sampling Mechanism | Multiple smaller perturbations around solutions | Surviving rate calculation in RMOEA-SuR [9] |
| Random Grouping Mechanism | Introduces randomness in individual allocations | Diversity maintenance in evolutionary algorithms [9] |
| ε-Constraint Method | Generates Pareto optimal solutions | Closed-loop supply chain optimization [21] |
| Three-Part Composite Crossover Operator | Enhances convergence in network optimization | Network robustness enhancement [22] |

Applications and Case Studies

Robust Optimization in Noisy Industrial Environments

Industrial design processes frequently encounter random input disturbances that degrade performance from anticipated levels. The application of surviving rate within multi-objective evolutionary optimization has demonstrated significant improvements in solution robustness for these environments [9]. In experimental evaluations across nine test problems, the RMOEA-SuR algorithm achieved superior convergence and robustness compared to existing approaches under noisy conditions.

The greenhouse-crop system exemplifies this application challenge, where conflicting objectives of increasing crop yield and reducing energy consumption create a multi-objective optimization problem [9]. Uncertain microclimate data and imperfect control of environmental parameters introduce input disturbances that must be addressed through robust optimization. By implementing surviving rate as an optimization objective, solutions maintain stable performance despite these operational variances, delivering more reliable real-world performance.

Healthcare Quality Measurement in Implementation Research

Healthcare represents a critical domain for quality metric application, where robust measurement directly impacts patient outcomes and system efficiency. The Advancing Pharmacological Treatments for Opioid Use Disorder (ADaPT-OUD) implementation study illustrates both the advantages and challenges of healthcare quality measurement [17]. This study utilized an operations-calculated quality metric representing the proportion of patients with an opioid use disorder diagnosis who receive medication treatment (MOUD/OUD ratio).

The experience revealed critical lessons in robust quality measurement:

- Operations-calculated measures facilitated stakeholder communication but introduced risks when operational definitions changed mid-study

- Researcher-calculated measures provided consistency across study phases but required additional validation for stakeholder acceptance

- A hybrid approach using operations-calculated measures for monitoring and researcher-calculated measures for outcomes evaluation balanced stakeholder engagement with methodological rigor

This case underscores the necessity of measurement consistency throughout implementation research, particularly when evaluating the effectiveness of strategies for promoting evidence-based practices.

Network Robustness Optimization

Complex networks require robustness to maintain functionality despite component failures or targeted attacks. The Eff-R-Net framework addresses this challenge through an efficient evolutionary algorithm that incorporates prior structural knowledge [22]. This approach employs a novel three-part composite crossover operator and specialized mutation operators that guide the evolution toward "onion-like" network structures demonstrated to exhibit superior robustness.

Similarly, the MOEA-GIN algorithm utilizes a graph isomorphism network as a surrogate model to approximate expensive robustness evaluations, reducing computational cost by approximately 65% while maintaining optimization performance [18]. This approach formulates network robustness as a multi-objective optimization problem balancing robustness against structural modification costs, enabling practical application to large-scale networks where direct simulation would be computationally prohibitive.

Comparative Analysis and Implementation Guidelines

Performance Trade-offs and Selection Criteria

Each robustness measure demonstrates distinctive strengths and limitations across application contexts:

Surviving Rate excels in problems with significant input disturbances where maintaining consistent performance is as important as achieving optimal performance. Its integration directly into the optimization objective provides explicit pressure toward robust solutions, but requires careful implementation of sampling mechanisms to accurately estimate robustness without excessive computational overhead.

Expectation Strategies offer mathematical rigor for problems with well-characterized uncertainty distributions, providing probabilistic performance guarantees. These methods are particularly valuable in safety-critical applications where the expected performance and its variability under perturbation must be quantified; because they average over the neighborhood, they do not by themselves bound worst-case behavior. They also typically require substantial computational resources for comprehensive neighborhood sampling.

Quality Metrics provide standardized, interpretable measures for applied domains where stakeholder communication and benchmarking are priorities. Their structured development process supports consistent implementation across systems, but requires meticulous definition and maintenance to prevent conceptual drift or calculation inconsistencies over time.

Implementation Recommendations

Based on comparative analysis across domains, the following implementation guidelines support effective robustness measurement:

Problem Characterization: Begin with comprehensive analysis of uncertainty sources, distinguishing between input disturbances (affecting decision variables) and structural uncertainties (model bias) to select appropriate robustness measures [9].

Computational Budget Allocation: Balance resources between optimization iterations and robustness evaluation, considering surrogate models like GIN networks [18] for complex evaluations.

Stakeholder Alignment: In applied settings, engage domain experts early in metric development to ensure relevance and actionability while maintaining methodological rigor [17].

Multi-Faceted Validation: Employ complementary validation approaches, including historical data analysis, sensitivity testing, and prospective validation in implementation contexts.

Adaptive Framework Design: Implement self-adaptive hyper-parameters where possible, enabling dynamic adjustment of operator execution probabilities during optimization [22].

The strategic integration of robustness measures within multi-objective optimization frameworks provides essential capabilities for addressing real-world uncertainty across domains from engineering design to healthcare implementation. By selecting appropriate measures based on problem characteristics and implementation constraints, researchers can develop solutions that deliver consistent, high-quality performance despite operational variances and disturbances.

Drug discovery is inherently a multi-criteria optimization problem involving a tremendously large chemical space, where each compound can be characterized by multiple molecular and biological properties [23]. The identification of novel therapeutics that balance requirements for potency, safety, metabolic stability, and pharmacodynamic profile presents a major challenge, which is further exacerbated by recent interest in designing compounds with properties that enable them to engage multiple targets [24]. This entails balancing different, sometimes competing chemical features, which can be particularly challenging without computational methodologies. Modern computational approaches strive to efficiently explore the chemical space in search of molecules with the desired combination of properties, often leveraging multi-objective optimization methods to help design novel small molecules optimized for conflicting pharmacological attributes with generative models [24] [23].

The transition from traditional trial-and-error approaches to AI-powered discovery engines represents a paradigm shift in pharmacology, replacing labor-intensive, human-driven workflows with systems capable of compressing timelines, expanding chemical and biological search spaces, and redefining the speed and scale of modern drug development [25]. This whitepaper examines the foundations of robust multi-objective evolutionary optimization research within this context, providing technical guidance on methodologies, implementations, and experimental protocols for addressing the core conflicting objectives in drug discovery.

Computational Frameworks for Multi-Objective Molecular Optimization

Problem Formulation and Mathematical Foundations

Constrained multi-property molecular optimization can be expressed mathematically as a constrained multi-objective optimization problem, where each property to be optimized is treated as an objective and strict requirements are treated as constraints [26]. Written here in minimization form:

min F(x) = (f₁(x), f₂(x), ..., fₙ(x)) subject to gᵢ(x) ≤ 0, i = 1, ..., m; hⱼ(x) = 0, j = 1, ..., p; x ∈ X

where x represents a molecule in the molecular search space X, F(x) is the objective vector consisting of n optimization properties, gᵢ(x) represents the m inequality constraints, and hⱼ(x) represents the p equality constraints [26]. The constraint violation (CV) aggregation function measures the degree of constraint violation for a molecule:

CV(x) = Σᵢ max(0, gᵢ(x)) + Σⱼ |hⱼ(x)|, with i = 1, ..., m and j = 1, ..., p

If CV(x) = 0, the molecule is feasible; otherwise, it is infeasible [26]. This formulation differs from both single-objective optimization and unconstrained multi-objective optimization, as it must explore molecules that not only compromise between different molecular properties but also satisfy predefined drug-like constraints, which may result in a narrow, disconnected, and irregular feasible molecular space [26].
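A minimal sketch of the feasibility check follows, assuming the common aggregation that sums max(0, gᵢ(x)) over the inequality constraints and |hⱼ(x)| over the equality constraints. The molecule is represented as a dictionary of precomputed descriptors, and the descriptor names and limits are hypothetical, not the constraints used by CMOMO [26].

```python
def constraint_violation(x, inequality=(), equality=()):
    """Aggregate constraint violation: 0 means every constraint is satisfied."""
    cv = sum(max(0.0, g(x)) for g in inequality)   # g(x) <= 0 is satisfied
    cv += sum(abs(h(x)) for h in equality)         # h(x) == 0 is satisfied
    return cv


def is_feasible(x, inequality=(), equality=()):
    return constraint_violation(x, inequality, equality) == 0.0


# Hypothetical drug-like constraints written in the form g(x) <= 0.
inequality = [
    lambda mol: mol["mol_weight"] - 500.0,   # molecular weight at most 500
    lambda mol: mol["max_ring_size"] - 6,    # no ring larger than 6 atoms
]

mol_a = {"mol_weight": 420.0, "max_ring_size": 6}
mol_b = {"mol_weight": 530.0, "max_ring_size": 7}

cv_a = constraint_violation(mol_a, inequality)
cv_b = constraint_violation(mol_b, inequality)
print(cv_a, is_feasible(mol_a, inequality))
print(cv_b, is_feasible(mol_b, inequality))
```

Because the raw violations are summed, constraints on very different scales (for example, a molecular weight in daltons and a ring size in atoms) would normally be normalized before aggregation; that step is omitted here for brevity.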

Algorithmic Approaches and Implementation Frameworks

Multiple algorithmic strategies have emerged to address these challenges, each with distinct advantages for handling conflicting objectives in drug discovery:

Table 1: Multi-Objective Optimization Algorithms in Drug Discovery

| Algorithm | Optimization Approach | Key Features | Application Examples |
|---|---|---|---|
| NSGA-II [27] | Multi-objective evolutionary algorithm | Non-dominated sorting, crowding distance | PCL microsphere formulation optimization |
| MOAHA [27] | Multi-objective metaheuristic | Inspired by flight patterns of hummingbirds | Pharmaceutical formulation design |
| CMOMO [26] | Constrained multi-objective framework | Two-stage dynamic constraint handling | Molecular multi-property optimization with constraints |
| VIKOR [23] | Multi-criteria decision analysis | Compromise ranking with utility and regret measures | Compound ranking in generative chemistry |
| IDOLpro [28] | Diffusion-based generative AI | Differentiable scoring functions | Structure-based drug design |

The CMOMO framework implements a two-stage dynamic constraint handling strategy that first solves the unconstrained multi-objective molecular optimization problem to find molecules with good properties, then considers both properties and constraints to identify feasible molecules with promising properties [26]. This approach balances the optimization of multiple properties against constraint satisfaction through cooperative optimization between the discrete chemical space and the continuous implicit space.

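The VIKOR method (VIšekriterijumsko KOmpromisno Rangiranje) provides a structured approach for ranking compounds by calculating utility (S) and regret (R) measures and combining them into a compromise index (Q) [23]:

Sⱼ = Σᵢ wᵢ (fᵢ* − fᵢⱼ) / (fᵢ* − fᵢ⁻)

Rⱼ = maxᵢ [ wᵢ (fᵢ* − fᵢⱼ) / (fᵢ* − fᵢ⁻) ]

Qⱼ = v (Sⱼ − S*) / (S⁻ − S*) + (1 − v) (Rⱼ − R*) / (R⁻ − R*)

where fᵢⱼ is the value of criterion i for compound j, fᵢ* and fᵢ⁻ are the ideal and anti-ideal values for criterion i, wᵢ is the weight assigned to criterion i, S* and S⁻ (R* and R⁻) are the minimum and maximum of Sⱼ (Rⱼ) over all compounds, and v is a preference parameter (typically 0.5) reflecting the decision maker's tendency toward group benefit or individual satisfaction [23]. Compounds are ranked in ascending order of Qⱼ.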
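The ranking can be sketched as follows, using the standard VIKOR definitions of the utility S (weighted sum of normalized distances to the ideal), the regret R (largest single weighted distance), and the compromise index Q. The compound scores and weights are invented for the example, and every criterion is assumed to be scaled so that larger values are better.

```python
def vikor(scores, weights, v=0.5):
    """Return (S, R, Q) lists for alternatives scored on benefit criteria."""
    n_crit = len(weights)
    best = [max(row[i] for row in scores) for i in range(n_crit)]    # ideal values
    worst = [min(row[i] for row in scores) for i in range(n_crit)]   # anti-ideal values

    S, R = [], []
    for row in scores:
        terms = []
        for i in range(n_crit):
            span = best[i] - worst[i]
            # A criterion on which all alternatives tie contributes nothing.
            terms.append(0.0 if span == 0 else weights[i] * (best[i] - row[i]) / span)
        S.append(sum(terms))   # group utility
        R.append(max(terms))   # individual regret

    def scaled(value, low, high):
        return 0.0 if high == low else (value - low) / (high - low)

    Q = [
        v * scaled(s, min(S), max(S)) + (1 - v) * scaled(r, min(R), max(R))
        for s, r in zip(S, R)
    ]
    return S, R, Q


# Hypothetical compounds scored on (potency, safety), both scaled to [0, 1].
compounds = ["A", "B", "C"]
scores = [[0.9, 0.2], [0.6, 0.6], [0.2, 0.9]]
S, R, Q = vikor(scores, weights=[0.5, 0.5])
ranking = [compounds[j] for j in sorted(range(len(Q)), key=lambda j: Q[j])]
print(ranking[0])
```

The balanced compound B ranks first because the regret term penalizes alternatives that are very poor on any single criterion, even when their weighted sums are similar.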

Experimental Protocols and Methodologies

Workflow for Constrained Multi-Objective Molecular Optimization

Figure: The complete CMOMO workflow for balancing molecular property optimization with constraint satisfaction.

Detailed Experimental Protocol: CMOMO Implementation

Phase 1: Population Initialization

- Bank Library Construction: Curate a library of high-property molecules similar to the lead molecule from public databases (e.g., ChEMBL, PubChem) [26]

- Molecular Encoding: Use a pre-trained encoder to embed the lead molecule and Bank library molecules into continuous latent space

- Linear Crossover: Perform linear crossover between the latent vector of the lead molecule and each molecule in the Bank library to generate high-quality initial population
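The linear crossover step can be pictured as interpolation between latent vectors. The sketch below is illustrative only: the latent vectors are plain lists of floats standing in for encoder outputs, and drawing the mixing coefficient uniformly at random is an assumption, since the text does not specify how CMOMO chooses it [26].

```python
import random


def linear_crossover(z_lead, z_bank, alpha):
    """Blend two latent vectors: alpha * z_lead + (1 - alpha) * z_bank."""
    return [alpha * a + (1.0 - alpha) * b for a, b in zip(z_lead, z_bank)]


def initial_population(z_lead, bank_vectors, seed=0):
    """Create one latent individual per Bank molecule by crossover with the lead."""
    rng = random.Random(seed)
    # Assumed: a fresh mixing coefficient in [0, 1) for every Bank molecule.
    return [linear_crossover(z_lead, z, rng.random()) for z in bank_vectors]


# Hypothetical 3-dimensional latent vectors (real encoders use many more dimensions).
z_lead = [0.0, 1.0, -1.0]
bank_vectors = [[2.0, 1.0, 0.0], [-1.0, 0.5, 1.0], [0.5, 0.5, 0.5]]

population = initial_population(z_lead, bank_vectors)
print(len(population), population[0])
```

Each resulting vector lies on the line segment between the lead molecule and a Bank molecule, so the initial population stays close to latent regions that already decode to high-property molecules.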

Phase 2: Dynamic Cooperative Optimization

- Unconstrained Scenario Optimization:

  - Apply Vector Fragmentation-based Evolutionary Reproduction (VFER) strategy on implicit molecular population

  - Decode parent and offspring molecules from continuous implicit space to discrete chemical space using pre-trained decoder

  - Filter invalid molecules using RDKit-based validity verification

  - Evaluate molecular properties (potency, safety, pharmacokinetics)

  - Select molecules with better property values using environmental selection strategy

- Constrained Scenario Optimization:

  - Apply dynamic constraint handling to balance property optimization and constraint satisfaction

  - Evaluate constraint violation degree using CV aggregation function

  - Identify feasible molecules adhering to all drug-like constraints

  - Select Pareto-optimal solutions satisfying both property and constraint requirements

Phase 3: Validation and Analysis

- Experimental Validation: Synthesize and test top-ranked molecules for experimental verification

- Deviation Analysis: Compare measured vs. predicted values (target: <5% deviation)

- Success Rate Calculation: Calculate percentage of molecules satisfying all target requirements [26] [27]
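The two validation quantities in Phase 3 reduce to simple arithmetic, sketched below. Expressing the deviation relative to the predicted value is an assumption (the text does not state the reference), and the property names and requirement thresholds are hypothetical.

```python
def percent_deviation(measured, predicted):
    """Absolute deviation of a measurement from its prediction, in percent."""
    return abs(measured - predicted) * 100.0 / abs(predicted)


def success_rate(molecules, requirements):
    """Percentage of molecules that satisfy every target requirement."""
    if not molecules:
        return 0.0
    passed = sum(
        1
        for mol in molecules
        if all(check(mol[name]) for name, check in requirements.items())
    )
    return 100.0 * passed / len(molecules)


# Hypothetical requirements and candidate molecules.
requirements = {
    "qed": lambda value: value >= 0.6,    # drug-likeness score
    "logp": lambda value: value <= 5.0,   # lipophilicity
}
molecules = [
    {"qed": 0.72, "logp": 3.1},
    {"qed": 0.55, "logp": 2.4},
    {"qed": 0.81, "logp": 5.6},
    {"qed": 0.66, "logp": 4.9},
]

deviation = percent_deviation(measured=48.5, predicted=50.0)
rate = success_rate(molecules, requirements)
print(deviation, deviation < 5.0, rate)
```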

Multi-Objective Optimization in Practice: Case Studies and Applications

Formulation Optimization Using Intelligent Algorithms

In a study optimizing polycaprolactone microsphere (PCL-MS) formulations for tissue filling, researchers applied multi-objective optimization to balance particle size and distribution width [27]. The experimental protocol included:

- Experimental Design: Box-Behnken design investigated three factors: PCL concentration (X₁), polyvinyl alcohol concentration (X₂), and water-oil ratio (X₃)

- Mathematical Modeling: Developed models to predict particle size (Y₁) and particle size distribution width (Y₂)

- Multi-Objective Optimization: Applied NSGA-II and MOAHA to determine optimal preparation schemes

- Validation: Experimental confirmation showed no significant statistical difference (P>0.05) between measured and predicted values, with deviations under 5% [27]

This approach yielded three ideal PCL-MS formulations that facilitated production of microspheres with smaller particle sizes and narrower distributions, advancing formulation development while balancing competing objectives [27].
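For readers unfamiliar with the experimental design used in this study, the sketch below generates a three-factor Box-Behnken design in coded units: each pair of factors is varied over the four (±1, ±1) combinations while the third factor is held at its center level, followed by replicated center runs. The number of center runs and the physical factor ranges are assumptions for illustration; the study's actual levels are not given in the text.

```python
from itertools import combinations, product


def box_behnken_3(n_center=3):
    """Coded (-1, 0, +1) runs of a three-factor Box-Behnken design."""
    runs = []
    for i, j in combinations(range(3), 2):
        for a, b in product((-1, 1), repeat=2):
            run = [0, 0, 0]          # third factor stays at its center level
            run[i], run[j] = a, b
            runs.append(tuple(run))
    runs.extend((0, 0, 0) for _ in range(n_center))
    return runs


def decode(run, ranges):
    """Map coded levels to physical values given a (low, high) pair per factor."""
    return tuple(
        low + (level + 1) * (high - low) / 2
        for level, (low, high) in zip(run, ranges)
    )


# Hypothetical ranges for X1 (PCL conc.), X2 (PVA conc.), X3 (water-oil ratio).
ranges = [(5.0, 15.0), (0.5, 2.0), (5.0, 15.0)]
design = box_behnken_3()
settings = [decode(run, ranges) for run in design]
print(len(design))  # 12 edge-midpoint runs + 3 center runs = 15
```

The responses measured at these settings would then be fitted with quadratic models for Y₁ and Y₂, which in turn serve as the objective functions passed to NSGA-II or MOAHA.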

Structure-Based Drug Design with Generative AI

The IDOLpro platform demonstrates the application of multi-objective optimization in structure-based drug design through a diffusion-based generative AI approach [28]. The methodology includes:

- Differentiable Scoring Functions: Guide latent variables of diffusion model to explore uncharted chemical space

- Multi-Property Optimization: Simultaneously optimize binding affinity and synthetic accessibility

- Benchmark Validation: Tested on benchmark sets and experimental complexes

- Performance Comparison: Head-to-head comparison against exhaustive virtual screening

Results demonstrated that IDOLpro generated molecules with binding affinities 10-20% higher than state-of-the-art methods, producing more drug-like molecules with better synthetic accessibility scores [28]. The platform was over 100× faster and less expensive than virtual screening while generating superior molecules, including the first instances of molecules with better binding affinities than experimentally observed ligands on test sets of experimental complexes [28].

Clinical Pipeline Applications

AI-driven drug discovery platforms have demonstrated substantial improvements in development efficiency across multiple clinical programs:

Table 2: Clinical Pipeline Applications of Multi-Objective Optimization

| Company/Platform | Therapeutic Area | Optimization Approach | Results and Clinical Status |
|---|---|---|---|
| Insilico Medicine [25] | Idiopathic Pulmonary Fibrosis | Generative AI for target discovery and molecule design | Progressed from target discovery to Phase I in 18 months (typical: 5+ years) |
| Exscientia [25] | Oncology, Immuno-oncology | Centaur Chemist approach integrating AI with human expertise | AI-designed drug candidates reached clinical trials with ~70% faster design cycles |
| Schrödinger [25] | Immunology (TYK2 inhibitor) | Physics-plus-ML design strategy | Advanced zasocitinib (TAK-279) to Phase III clinical trials |
| BenevolentAI [25] [29] | Glioblastoma | Knowledge-graph driven target discovery | Identified novel targets in glioblastoma through multi-omics data integration |

Successful implementation of multi-objective optimization in drug discovery requires specialized computational tools and research reagents:

Table 3: Essential Research Reagents and Computational Tools

| Category | Specific Tools/Reagents | Function and Application |
|---|---|---|
| Computational Frameworks | ADMET Predictor with AIDD module [23] | Generative chemistry engine with MPO algorithms and MCDA integration |
| Optimization Algorithms | NSGA-II, MOAHA [27] | Multi-objective optimization for formulation and molecular design |
| Constraint Handling | CMOMO framework [26] | Dynamic constraint handling for molecular multi-property optimization |
| Generative AI Platforms | IDOLpro [28] | Diffusion-based generative AI with multi-objective optimization for structure-based design |
| Chemical Representation | SMILES strings, Molecular graphs [23] [28] | Chemical structure representation for generative models |
| Property Prediction | QSAR, PBPK, QSP models [10] | Predictive modeling of pharmacokinetics, toxicity, and efficacy |
| Decision Support | VIKOR, TOPSIS, AHP [23] | Multi-criteria decision analysis for compound ranking and selection |
| Validation Tools | RDKit [26] | Cheminformatics toolkit for molecular validity verification and manipulation |

The integration of multi-objective optimization methodologies represents a fundamental advancement in addressing the conflicting objectives of potency, safety, and pharmacokinetics in drug discovery. Frameworks such as CMOMO demonstrate that deliberate balancing of property optimization and constraint satisfaction through dynamic multi-stage approaches can successfully identify high-quality molecules exhibiting desired molecular properties while adhering rigorously to drug-like constraints [26]. The mathematical foundations of these approaches, particularly when integrated with multi-criteria decision analysis methods like VIKOR, provide structured frameworks for evaluating multiple molecular properties simultaneously and making informed trade-offs between often competing objectives [23].

The continuing evolution of these methodologies—including the integration of generative AI with multi-objective optimization [28], the development of more sophisticated constraint handling strategies [26], and the implementation of federated learning approaches to overcome data privacy barriers [29]—promises to further enhance our ability to navigate the complex landscape of drug discovery. These advances in robust multi-objective optimization research ultimately support the accelerated delivery of safer, more effective therapeutics to patients by systematically addressing the core conflicting objectives that have traditionally challenged drug development.

Multi-objective optimization problems (MOPs) are fundamental to numerous scientific and industrial domains, where decisions must balance multiple, often conflicting, objectives simultaneously. In real-world applications, from aerodynamic design to manufacturing processes, decision variables are often subject to input noise—unavoidable perturbations that cause the realized solution to differ from the intended one [9]. This discrepancy can lead to significant performance degradation, rendering a theoretically optimal solution practically useless. Consequently, robust multi-objective optimization has emerged as a critical research area, focusing on finding solutions that are not only optimal but also insensitive to input perturbations.

This technical guide establishes the mathematical foundations for formulating and solving Robust Multi-objective Optimization Problems (R-MOPs) under input noise. Framed within a broader thesis on robust evolutionary optimization, this work synthesizes current methodologies and theoretical models designed to handle uncertainty, providing researchers with the formal groundwork and practical tools necessary for advancing the field.

Problem Formulation and Core Concepts

Standard Multi-Objective Optimization

A deterministic multi-objective optimization problem (MOP) typically seeks to minimize multiple conflicting objectives simultaneously and can be formulated as:

min F(x) = (f₁(x), f₂(x), ..., f_M(x)) subject to x ∈ Ω

where x = (x₁, x₂, ..., xₙ) is an n-dimensional decision vector from the feasible decision space Ω ⊆ Rⁿ, and M is the number of objectives [9]. The solution to an MOP is not a single point but a set of Pareto-optimal solutions, representing the best possible trade-offs among the objectives.
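The notion of Pareto optimality used here can be made concrete with a small dominance check. The following is a minimal sketch for minimization problems; the function names `dominates` and `non_dominated` and the toy objective vectors are illustrative assumptions, not taken from [9].

```python
import numpy as np

def dominates(a, b):
    """Return True if objective vector a Pareto-dominates b (minimization)."""
    a, b = np.asarray(a, dtype=float), np.asarray(b, dtype=float)
    # a must be no worse in every objective and strictly better in at least one.
    return bool(np.all(a <= b) and np.any(a < b))

def non_dominated(points):
    """Return the indices of points that no other point dominates."""
    pts = np.asarray(points, dtype=float)
    keep = []
    for i, p in enumerate(pts):
        if not any(dominates(q, p) for j, q in enumerate(pts) if j != i):
            keep.append(i)
    return keep

# Toy objective vectors (f1, f2), both minimized; values are illustrative only.
F_values = [(1.0, 4.0), (2.0, 2.0), (3.0, 3.0), (4.0, 1.0)]
front = non_dominated(F_values)
```

In this toy set, (3.0, 3.0) is dominated by (2.0, 2.0), while the remaining three vectors are mutually non-dominated and together form the trade-off set.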

Robust Multi-Objective Optimization under Input Noise

When decision variables are subject to input noise, the realized solution becomes x' = (x₁ + δ₁, x₂ + δ₂, ..., xₙ + δₙ), where δᵢ represents the noise added to the i-th dimension within a maximum disturbance degree δᵢᵐᵃˣ [9]. The R-MOP is then formulated as optimizing the original objectives F evaluated at the perturbed point x'.

The core goal shifts from finding the Pareto-optimal set for F(x) to finding a robust Pareto-optimal set whose members exhibit acceptable performance under perturbations. A solution is considered robust if it exhibits insensitivity to disturbances in its decision variables [9].
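To illustrate how a realized solution x' departs from the intended x, the sketch below draws one perturbation inside the box defined by the maximum disturbance degrees. The uniform noise distribution, the helper name `perturb`, and the toy bi-objective function `F` are assumptions made for illustration; the source does not specify a noise distribution.

```python
import numpy as np

def perturb(x, delta_max, rng):
    """Draw one realized solution x' = x + delta with |delta_i| <= delta_max_i.

    Uniform noise is an assumption; other bounded distributions could be used.
    """
    x = np.asarray(x, dtype=float)
    delta_max = np.broadcast_to(np.asarray(delta_max, dtype=float), x.shape)
    delta = rng.uniform(-delta_max, delta_max)
    return x + delta

def F(x):
    """Toy bi-objective function (hypothetical), both objectives minimized."""
    x = np.asarray(x, dtype=float)
    return np.array([np.sum(x ** 2), np.sum((x - 1.0) ** 2)])

rng = np.random.default_rng(0)
x = np.array([0.5, 0.5])
delta_max = np.array([0.1, 0.05])
x_real = perturb(x, delta_max, rng)

nominal = F(x)        # objectives at the intended solution
realized = F(x_real)  # objectives at the perturbed solution
```

Comparing `nominal` with `realized` over many draws shows how sensitive a candidate is to input noise, which is the quantity the robustness measures in the next subsection try to summarize.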

Key Mathematical Robustness Measures

Three primary strategies are employed to quantify solution robustness:

- Expectation and Variance Measures: The robustness of a solution is estimated by evaluating the expectation (mean) and variance of its objective values within a neighborhood. The expected performance is often used as a new objective to be optimized [9].

- Threshold-Based Measures: These measures evaluate the probability that a solution's performance will remain acceptable (e.g., within a specified degradation threshold) under perturbations.

- Surviving Rate (SuR): A novel measure that redefines the R-MOP by adding robustness as an explicit objective. The Surviving Rate of a solution represents its ability to maintain performance within a desirable region of the objective space after perturbation, effectively acting as a robustness measure for archive updates [9].
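The first two families of measures can be estimated by Monte Carlo sampling in the neighborhood of a solution. The sketch below is a hedged illustration: the uniform noise model, the degradation threshold `eta`, and the toy function `F` are assumptions. The returned fraction is a generic threshold-style estimate and should not be read as the exact Surviving Rate definition of [9], which is not reproduced here.

```python
import numpy as np

def sample_objectives(F, x, delta_max, n_samples, rng):
    """Evaluate F at n_samples uniformly perturbed copies of x."""
    x = np.asarray(x, dtype=float)
    deltas = rng.uniform(-delta_max, delta_max, size=(n_samples, x.size))
    return np.array([F(x + d) for d in deltas])

def robustness_summary(F, x, delta_max, eta, n_samples=200, seed=0):
    """Return (mean, variance, acceptable_fraction) of the perturbed objectives.

    acceptable_fraction is the share of samples in which no objective is worse
    than its nominal value by more than eta (an illustrative threshold).
    """
    rng = np.random.default_rng(seed)
    x = np.asarray(x, dtype=float)
    nominal = np.asarray(F(x), dtype=float)
    samples = sample_objectives(F, x, delta_max, n_samples, rng)
    mean = samples.mean(axis=0)
    var = samples.var(axis=0)
    acceptable = np.all(samples <= nominal + eta, axis=1)
    return mean, var, float(acceptable.mean())

def F(x):
    """Toy bi-objective function (hypothetical), both objectives minimized."""
    x = np.asarray(x, dtype=float)
    return np.array([np.sum(x ** 2), np.sum((x - 2.0) ** 2)])

mean, var, frac = robustness_summary(F, [1.0], delta_max=0.2, eta=0.1)
```

A solution with low variance and a high acceptable fraction is insensitive to the sampled perturbations; the mean vector can replace the nominal objectives when expected performance is optimized directly.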

Methodological Approaches and Algorithms

The following table summarizes and compares the core methodological approaches for solving R-MOPs with input noise.

Table 1: Core Methodological Approaches for Robust Multi-Objective Optimization with Noisy Inputs

| Methodological Approach | Core Idea | Key Mechanism | Primary Citation |
|---|---|---|---|
| Robust Multi-Objective Bayesian Optimization (Robust MBO) | Uses Bayesian surrogates to efficiently optimize expensive black-box functions under input noise. | Formalizes the goal as optimizing the multivariate value-at-risk (MVaR) and uses random scalarizations for a scalable solution. | [30] |
| Surviving Rate-based RMOEA (RMOEA-SuR) | Treats robustness and convergence as equally important objectives in an evolutionary algorithm. | Introduces Surviving Rate (SuR) as a new optimization objective; employs precise sampling and random grouping. | [9] |
| Stochastic Dominance-based MOEA | Extends non-dominated sorting for ranking solutions with stochastic objective evaluations. | Incorporates concepts of stochastic dominance and significant dominance to discriminate between solutions in noisy environments. | [31] |

Robust Multi-Objective Bayesian Optimization

For expensive-to-evaluate black-box functions, Robust MBO provides a sample-efficient framework. Daulton et al. [30] formalize the goal as optimizing the multivariate value-at-risk (MVaR), which is a risk measure for uncertain objectives. Since directly optimizing MVaR is computationally challenging, they propose a theoretically-grounded approach using random scalarizations, which efficiently identifies optimal robust designs that satisfy specifications across multiple metrics with high probability [30].
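The idea of replacing a multi-objective risk measure with scalar risk measures under random weights can be sketched without a surrogate model. The code below is not the algorithm of [30]: it omits the Bayesian surrogate and acquisition function entirely, and the Chebyshev scalarization with ideal point at the origin, the Dirichlet weight sampling, the uniform noise model, and the name `scalarized_var` are all assumptions chosen for illustration. It only shows how a value-at-risk (here the `alpha`-quantile of a loss under input noise) can be computed per random scalarization and then averaged.

```python
import numpy as np

def chebyshev(y, w):
    """Weighted Chebyshev scalarization for minimization (ideal point at 0)."""
    return np.max(w * y, axis=-1)

def scalarized_var(F, x, delta_max, alpha=0.9, n_noise=256, n_weights=16, seed=0):
    """Average, over random weights, of the alpha-quantile of the scalarized loss."""
    rng = np.random.default_rng(seed)
    x = np.asarray(x, dtype=float)
    deltas = rng.uniform(-delta_max, delta_max, size=(n_noise, x.size))
    Y = np.array([F(x + d) for d in deltas])                  # shape (n_noise, M)
    W = rng.dirichlet(np.ones(Y.shape[1]), size=n_weights)    # shape (n_weights, M)
    # losses[k, s]: scalarized loss of noise sample s under weight vector k.
    losses = chebyshev(Y[None, :, :], W[:, None, :])
    var_per_weight = np.quantile(losses, alpha, axis=1)
    return float(var_per_weight.mean())

def F(x):
    """Toy bi-objective function with non-negative objectives (hypothetical)."""
    x = np.asarray(x, dtype=float)
    return np.array([np.sum(x ** 2), np.sum((x - 1.0) ** 2)])

risk = scalarized_var(F, [0.5], delta_max=0.1)
```

Under these assumptions, a lower `risk` indicates a design whose scalarized losses stay small in all but the worst (1 - alpha) fraction of noise realizations.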

Evolutionary Algorithms with Surviving Rate

The RMOEA-SuR algorithm introduces a two-stage process [9]:

- Evolutionary Optimization Stage: The Surviving Rate is incorporated as a new optimization objective. A non-dominated sorting approach is then applied to find a front that balances convergence and robustness.

- Construction Stage of the Robust Optimal Front: A performance measure integrating both convergence and robustness guides the final selection of solutions.

To enhance performance, RMOEA-SuR incorporates two key mechanisms:

- Precise Sampling: Applies multiple smaller perturbations after an initial noise injection, calculating the average objective value in the vicinity for a more accurate performance assessment.

- Random Grouping: Introduces randomness in individual allocations to maintain population diversity and avoid local optima [9].
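The two mechanisms described above can be sketched as follows. This is an interpretation of the prose rather than the implementation in [9]: the shrink factor for the smaller perturbations, the uniform noise model, the number of inner samples, and the function names `precise_sample` and `random_groups` are all assumptions.

```python
import numpy as np

def precise_sample(F, x, delta_max, rng, n_inner=10, shrink=0.1):
    """Average objectives around one noisy realization of x.

    Step 1 injects an initial perturbation within delta_max; step 2 applies
    n_inner smaller perturbations (scaled by the assumed factor `shrink`)
    around that point and averages the resulting objective vectors.
    """
    x = np.asarray(x, dtype=float)
    delta_max = np.asarray(delta_max, dtype=float)
    x_noisy = x + rng.uniform(-delta_max, delta_max, size=x.shape)
    inner = rng.uniform(-shrink * delta_max, shrink * delta_max,
                        size=(n_inner, x.size))
    values = np.array([F(x_noisy + d) for d in inner])
    return values.mean(axis=0)

def random_groups(pop_size, n_groups, rng):
    """Randomly allocate population indices to n_groups groups."""
    order = rng.permutation(pop_size)
    return [g.tolist() for g in np.array_split(order, n_groups)]

def F(x):
    """Toy bi-objective function (hypothetical), both objectives minimized."""
    x = np.asarray(x, dtype=float)
    return np.array([np.sum(x ** 2), np.sum((x - 1.0) ** 2)])

rng = np.random.default_rng(1)
avg = precise_sample(F, [1.0, 1.0], 0.1, rng)
groups = random_groups(10, 3, rng)
```

Re-drawing the groups each generation changes which individuals compete or recombine together, which is one plausible way the random allocation could help preserve diversity.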

Experimental Protocols and Evaluation

General Workflow for Robust MOP Experimentation

The following diagram illustrates a generalized experimental workflow for evaluating robust MOP algorithms, synthesizing elements from the cited methodologies.

Performance Metrics for Algorithm Evaluation

Evaluating algorithms for R-MOPs requires metrics that assess both the quality of the Pareto front and the robustness of the solutions.

- Inverted Generational Distance (IGD): Measures the average distance from each point in the true Pareto front to the nearest solution in the obtained front. A lower IGD indicates better convergence and diversity. The Prim-NSGAII algorithm was shown to improve the IGD index by 39.3% over traditional NSGA-II [32].

- Spread Metric (SM): Assesses the diversity and spread of solutions along the Pareto front. An improvement of 69.1% in the SM index was reported for the Prim-NSGAII algorithm [32].

- Solution Quality: The actual performance on the primary objectives (e.g., cost, carbon emissions). Enhancements of 0.59% and 0.86% in solution quality were noted for the robust Prim-NSGAII model [32].

- Convergence-Robustness Integrated Measure: A composite measure, as used in RMOEA-SuR, that multiplies a convergence indicator (like the L0 norm average) with a robustness indicator (like Surviving Rate) to balance both properties [9].
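The IGD definition given in the list above translates directly into a few lines of array code. The sketch below assumes Euclidean distance and a known reference front; the function name `igd` and the toy fronts are illustrative.

```python
import numpy as np

def igd(reference_front, obtained_front):
    """Inverted Generational Distance: mean distance from each reference
    point to its nearest neighbor in the obtained front (lower is better)."""
    R = np.asarray(reference_front, dtype=float)
    A = np.asarray(obtained_front, dtype=float)
    # Pairwise Euclidean distances, shape (len(R), len(A)).
    d = np.linalg.norm(R[:, None, :] - A[None, :, :], axis=2)
    return float(d.min(axis=1).mean())

# Toy fronts for illustration only.
reference = [[0.0, 1.0], [0.5, 0.5], [1.0, 0.0]]
obtained = [[0.0, 1.0], [1.0, 0.0]]
score = igd(reference, obtained)
```

Because the average runs over the reference points, an obtained front that misses a region of the true front (here the middle point) is penalized even if every obtained solution lies exactly on the front.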

The Researcher's Toolkit

This section details key computational reagents and resources essential for conducting research in robust MOPs with noisy inputs.

Table 2: Essential Research Reagents and Computational Tools for Robust MOPs

| Research Reagent / Tool | Function / Purpose | Application Context |
|---|---|---|
| Box Uncertainty Set | A mathematical set used to characterize and bound the fluctuations of uncertain parameters (e.g., demand, return volumes). [32] | Modeling parameter uncertainty in robust optimization frameworks. |
| Multivariate Value-at-Risk (MVaR) | A risk measure used to evaluate and optimize objectives under uncertainty, focusing on worst-case scenarios. [30] | Defining robustness in Robust Multi-Objective Bayesian Optimization. |
| Non-Dominated Sorting | A ranking procedure that classifies solutions into non-domination fronts based on Pareto dominance. [9] | Core selection mechanism in Multi-Objective Evolutionary Algorithms (MOEAs). |
| Stochastic Nondomination-Based Ranking | An extension of non-dominated sorting that incorporates concepts of stochastic dominance to handle noisy evaluations. [31] | Ranking solutions when objective functions are stochastic or noisy. |
| Precise Sampling Mechanism | A technique that applies multiple, smaller perturbations to a solution to accurately estimate its average performance in a noisy neighborhood. [9] | Accurately evaluating solution fitness and robustness in RMOEAs. |
| Random Grouping Mechanism | Introduces randomness in population management to maintain diversity and prevent premature convergence. [9] | Enhancing population diversity in evolutionary algorithms. |
| Double Deep Q-Network (DDQN) | A reinforcement learning algorithm that approximates state and decision spaces using artificial neural networks. [33] | Solving attacker-defender game frameworks in robust optimization. |

The mathematical formulation of robust multi-objective optimization problems under input noise represents a critical advancement for applying optimization techniques to real-world, uncertain environments. This guide has detailed the core formulations, from the basic problem structure incorporating perturbed decision variables to advanced robustness measures like MVaR and Surviving Rate.

The featured methodologies—spanning Bayesian optimization with random scalarizations and evolutionary algorithms with novel survival metrics—provide a robust theoretical and practical foundation for researchers. The experimental protocols and performance metrics outlined offer a standardized framework for validating new algorithms and contributions in this field. As industrial and scientific problems grow in complexity and uncertainty, these foundations will become increasingly vital for developing reliable, high-performing systems across domains such as drug development, supply chain logistics, and sustainable design. Future work will likely focus on scaling these approaches to higher dimensions and blending them with other uncertainty-handling techniques like fuzzy programming for even greater applicability.

Algorithmic Frameworks and Real-World Applications in Biomedical Research

Robust Multi-Objective Evolutionary Optimization (RMOEO) addresses a critical challenge in real-world engineering and scientific applications: finding solutions that remain effective despite uncertainties in decision variables or environmental conditions. In many manufacturing and design processes, parameters are vulnerable to random disturbances, causing final products to perform less effectively than anticipated during optimization [9]. Traditional Multi-Objective Evolutionary Algorithms (MOEAs) prioritize convergence to the Pareto optimal front while treating robustness as a secondary consideration, potentially yielding solutions highly sensitive to perturbations [11].

This technical guide examines two advanced approaches addressing these limitations: the Multi-Objective Evolutionary Algorithm based on Decomposition (MOEA/D) and the novel Surviving Rate-based RMOEA (RMOEA-SuR). These frameworks represent paradigm shifts in how robustness is conceptualized and optimized alongside convergence. MOEA/D provides a decomposition-based foundation for handling multiple objectives, while RMOEA-SuR introduces innovative mechanisms to balance robustness and convergence as equally important criteria [9] [34]. Within the broader thesis of RMOEO foundations, these algorithms demonstrate how evolutionary computation can evolve to handle the inherent uncertainties present in practical optimization problems across fields ranging from drug development to agricultural planning and energy systems.

Theoretical Foundations of Robust Multi-Objective Optimization

Problem Formulation

A conventional Multi-Objective Optimization Problem (MOP) aims to minimize a vector of M conflicting objectives [9]:

min F(x) = (f₁(x), f₂(x), ..., f_M(x)) subject to x ∈ Ω

where x = (x₁, x₂, ..., xₙ) is an n-dimensional decision vector, and Ω ⊆ Rⁿ represents the feasible decision space [9].

In Robust Multi-Objective Optimization Problems (RMOPs) with input perturbation uncertainty, this formulation extends to account for disturbances in decision variables [11]:

min F(x') = (f₁(x'), f₂(x'), ..., f_M(x')) with x' = x + δ, subject to x ∈ Ω

where δ = (δ₁, δ₂, ..., δₙ) represents a noise vector affecting each decision variable within specified bounds -δᵢᵐᵃˣ ≤ δᵢ ≤ δᵢᵐᵃˣ [11].

Robustness Measures in Evolutionary Computation

Three primary strategies exist for assessing solution robustness in RMOPs:

- Expectation and Variance Measures: Estimate expected performance and variability through multiple function evaluations within a solution's neighborhood [9].

- Statistical Aggregations: Combine expectation and variance through weighted sums or ratios to create composite robustness indicators [11].

- Surviving Rate: A novel approach evaluating a solution's ability to maintain performance across multiple perturbations, representing robustness as a distinct optimization objective [9].

Table 1: Classification of Robustness Measures in RMOEO

| Measure Type | Key Characteristics | Advantages | Limitations |
|---|---|---|---|
| Expectation-Based | Uses average objective values from neighborhood samples | Simple implementation, intuitive interpretation | May favor solutions with inconsistent performance |
| Variance-Based | Focuses on performance stability under perturbations | Directly measures consistency | Computationally expensive |
| Surviving Rate | Treats robustness as separate optimization objective | Equal consideration of robustness and convergence | Requires careful parameter tuning |
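The limitation noted for expectation-based measures, and the motivation for statistical aggregation, can be seen in a small numeric example. The sketch below uses a weighted sum of mean and variance; the weight of 0.5, the function name `aggregate`, and the sample values are assumptions for illustration, and [11] may use a different aggregation form.

```python
import numpy as np

def aggregate(samples, weight=0.5):
    """Weighted sum of mean and variance of sampled objective values
    (minimization: lower is better for both terms)."""
    s = np.asarray(samples, dtype=float)
    return weight * s.mean(axis=0) + (1.0 - weight) * s.var(axis=0)

# Sampled values of one objective under perturbation (illustrative numbers).
unstable = [0.0, 2.0, 0.0, 2.0]   # mean 1.0, variance 1.0
stable = [1.1, 1.1, 1.1, 1.1]     # mean 1.1, variance 0.0

prefers_unstable_by_mean = np.mean(unstable) < np.mean(stable)
prefers_stable_by_aggregate = aggregate(stable) < aggregate(unstable)
```

Ranking by the mean alone favors the unstable solution, whereas the aggregated score favors the stable one; how strongly variance is penalized depends entirely on the chosen weight.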

MOEA/D: A Decomposition-Based Approach

Core Algorithmic Framework