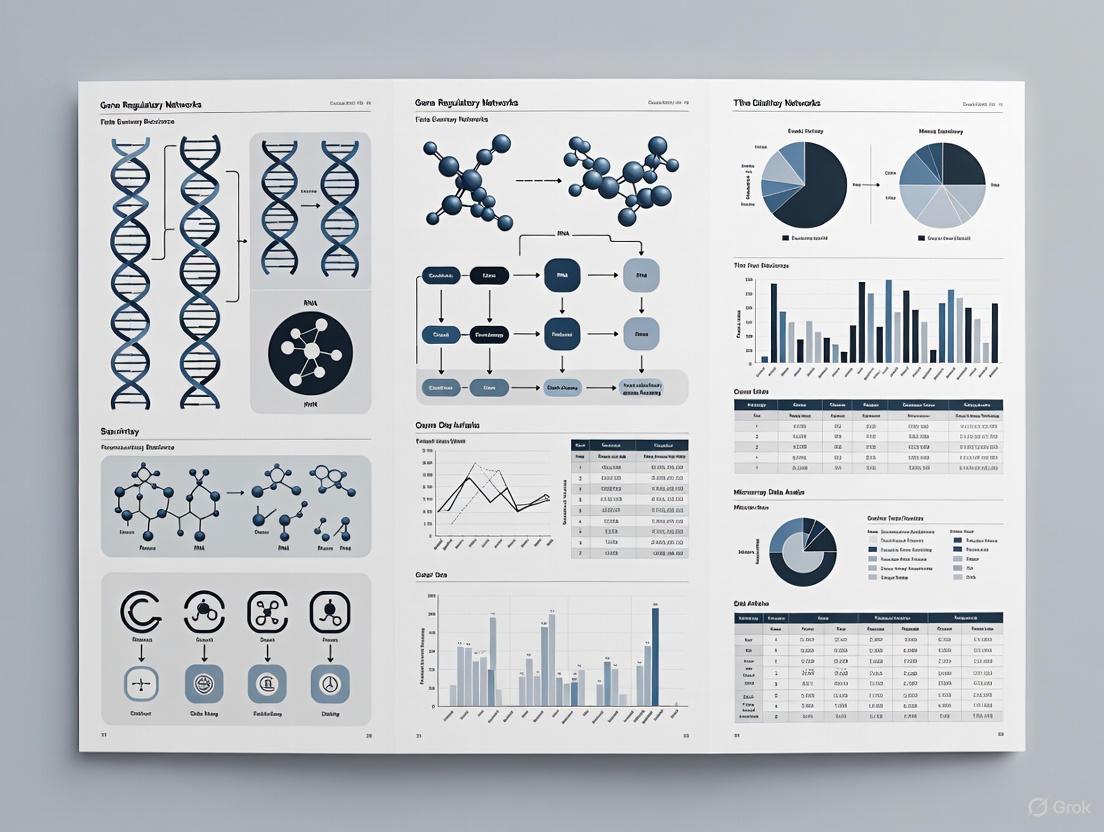

Reverse Engineering Gene Regulatory Networks from Microarray Data: A Comprehensive Guide for Biomedical Research

Abstract

This article provides a comprehensive overview of the methods and challenges in reverse engineering Gene Regulatory Networks (GRNs) from microarray data, tailored for researchers, scientists, and drug development professionals. It explores the foundational concepts of GRNs and their critical role in understanding cellular processes and complex diseases like cancer. The scope spans from core principles and a diverse array of inference methodologies—including linear time-variant models, Bayesian networks, and modern machine learning techniques—to essential steps for troubleshooting data quality and optimizing computational approaches. Finally, it details rigorous validation protocols and comparative analyses of methods, synthesizing key takeaways and future directions for translating network models into clinical and therapeutic applications.

Understanding Gene Regulatory Networks: From Core Concepts to Biomedical Significance

Gene Regulatory Networks (GRNs) represent the complex web of interactions where transcription factors (TFs) and other molecular regulators control the expression of target genes, ultimately governing cellular processes and fate decisions [1]. Reverse engineering—the process of inferring these networks from high-throughput expression data—remains a central challenge in computational biology. With the advent of microarray and RNA-sequencing technologies, statistical and machine learning approaches have become indispensable for transforming quantitative expression profiles into predictive network models [2] [1]. This application note provides a statistical perspective on GRN definition, detailing key methodologies, experimental protocols, and practical tools for researchers and drug development professionals working within the context of reverse engineering networks from expression data.

Statistical Foundations of GRN Inference

A variety of statistical and computational methodologies are employed to infer GRNs from gene expression data. The choice of method depends on the type of data available, the size of the network, and the desired balance between interpretability and predictive power.

Table 1: Core Statistical Methods for GRN Inference

| Method Category | Key Principle | Representative Algorithms | Strengths | Limitations |
|---|---|---|---|---|
| Correlation & Association | Measures co-expression or statistical dependence between genes. | Pearson/Spearman Correlation, Mutual Information, ARACNE, CLR [1] [3] | Computationally efficient; intuitive. | Cannot distinguish direct vs. indirect regulation or infer causality. |
| Regression Models | Models a gene's expression as a function of its potential regulators. | Linear Regression, LASSO, GENIE3 [1] [3] | Infers directionality; provides interpretable coefficients. | Struggles with highly correlated predictors (e.g., co-regulated TFs). |
| Probabilistic Models | Uses graphical models to represent dependencies between variables. | Bayesian Networks [1] | Naturally handles noise and uncertainty. | Can be computationally intensive for large networks. |
| Dynamical Systems | Models the system's behavior over time using differential equations. | Custom ODE models [4] | Captures temporal dynamics and causality. | Requires time-series data; depends on prior knowledge for parametrization. |
| Machine/Deep Learning | Learns complex, non-linear relationships from data. | Support Vector Machines (SVM), Convolutional Neural Networks (CNNs), Hybrid Models [4] [3] | High predictive accuracy; can integrate diverse data types. | Often requires large datasets; models can be "black boxes." |

A critical advancement in the field is the use of perturbation experiments to move beyond correlation and establish causality. By systematically perturbing specific genes and observing the steady-state changes in expression across the network, researchers can more accurately deduce the direction of regulatory influence [2] [5]. Furthermore, integrating prior knowledge (such as experimentally determined TF-target interactions from ChIP-seq or DAP-seq) into supervised machine learning models has been shown to significantly improve reconstruction accuracy [4] [3].

Detailed Experimental Protocol for Perturbation-Based GRN Inference

This protocol outlines the key steps for inferring a GRN using gene perturbation data, based on the TopNet framework [5]. The overall workflow is designed to establish causal relationships from transcriptomic data.

Cell Culture and Perturbation

Materials & Reagents:

- Cell Line: Young Adult Mouse Colon (YAMC) cells or other relevant model (e.g., DLD-1, PC3) [5].

- Culture Media: RPMI 1640 supplemented with ~9% FBS, 1× ITS-A, 2.5 μg/mL gentamycin, and 5 U/mL interferon γ for YAMC cells [5].

- Perturbagens: Retroviral vectors encoding cDNAs for overexpression or shRNAs for knockdown of specific target genes [5].

- Coating Reagent: Rat tail collagen, type I, diluted to 1 μg/cm² for coating tissue culture dishes [5].

Procedure:

- Cell Maintenance: Culture YAMC cells at the permissive temperature of 33°C on collagen-coated dishes. Passage cells every 3-5 days to maintain 40-90% confluence [5].

- Perturbagen Production:

  - Culture ΦNX-E (Phoenix-ECO) viral packaging cells in DMEM with 10% FBS.

  - Transfect the packaging cells with the retroviral vector of interest using a calcium phosphate or lipid-based method.

  - Collect the viral supernatant containing the perturbagen 48-72 hours post-transfection [5].

- Cell Perturbation:

  - Infect the target YAMC cells with the viral supernatant in the presence of a transduction enhancer such as polybrene.

  - Select successfully transduced cells using appropriate antibiotics (e.g., puromycin for shRNA vectors) for several days [5].

Expression Measurement and Data Preprocessing

Materials & Reagents:

- RNA Extraction Kit: TRIzol or column-based kit.

- Microarray/Sequencing Platform: Appropriate chip (e.g., Affymetrix) or RNA-seq library prep kit.

Procedure:

- RNA Extraction: Harvest perturbed cells and extract total RNA following the manufacturer's protocol. Ensure RNA Integrity Number (RIN) > 9.0 for high-quality data [5].

- Expression Profiling: Perform gene expression profiling using microarray or, preferably, RNA-seq. Include biological replicates for each perturbation condition and controls.

- Data Preprocessing:

  - For RNA-seq data: Use Trimmomatic to remove adapter sequences and low-quality bases. Assess quality with FastQC [3].

  - Align reads to the reference genome using a splice-aware aligner like STAR [3].

  - Generate raw gene-level read counts and normalize them using methods like TMM (weighted trimmed mean of M-values) in edgeR to account for compositional biases [3].

Network Inference using TopNet

Procedure:

- Input Data Preparation: Format the normalized expression matrix from all perturbation experiments and controls for TopNet input. Rows represent genes, and columns represent samples [5].

- Network Modeling: Run the TopNet algorithm. TopNet is specifically designed to model data where nodes are both perturbed and measured, using the perturbation status of genes to infer directed regulatory influences on others [5].

- Output and Summarization: The primary output is a network file (e.g., in .csv or .graphml format) listing inferred regulatory interactions (TF → Target). Use network analysis and visualization tools (e.g., Cytoscape) to summarize the global topology and identify hub genes [5].

- Validation: Perform genetic validation (e.g., additional knockdown experiments) of key dependencies revealed by the network model to confirm predictions [5].
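The hub-identification step above can be sketched without any network library. The example below is a minimal illustration, assuming the inferred network was exported as a two-column CSV with the hypothetical headers `regulator` and `target`; the actual TopNet output layout may differ, and the gene names are placeholders.

```python
import csv
import io
from collections import Counter

# Hypothetical edge list in an assumed "regulator,target" CSV layout.
EDGE_CSV = """regulator,target
GeneA,GeneB
GeneA,GeneC
GeneA,GeneD
GeneB,GeneC
GeneD,GeneA
"""

def out_degrees(csv_text):
    """Count outgoing edges (number of inferred targets) per regulator."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return Counter(row["regulator"] for row in reader)

def top_hubs(csv_text, n=2):
    """Return the n regulators with the most inferred targets."""
    return out_degrees(csv_text).most_common(n)

hubs = top_hubs(EDGE_CSV)
print(hubs)
```

Out-degree is only one possible notion of a hub; in-degree or centrality measures computed in a tool such as Cytoscape may be more appropriate depending on the question.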

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful GRN inference relies on a combination of wet-lab reagents and computational tools.

Table 2: Key Research Reagent Solutions for GRN Inference

| Category | Item | Function/Application |
|---|---|---|
| Biological Models | YAMC cells, ΦNX-E packaging cells | A genetically tractable cell model for perturbation studies and virus production [5]. |
| Perturbation Tools | shRNA vectors, cDNA overexpression vectors | To knock down or overexpress specific genes, creating a causal signal in the expression data for network inference [5]. |
| Culture Reagents | Collagen I, Interferon γ, Specialty Media | To provide the necessary extracellular matrix and growth signals for maintaining specific cell lines in culture [5]. |
| Profiling Technology | Microarray platforms, RNA-seq kits | For genome-wide measurement of gene expression changes resulting from perturbations [2] [3]. |
| Computational Tools | TopNet, FRANK, GENIE3, TIGRESS | Algorithms and software for inferring network structure from expression data [5] [4] [3]. |
| Validation Methods | ChIP-seq, DAP-seq, Y1H | Orthogonal experimental techniques to validate predicted TF-target interactions [4]. |

Advanced Concepts and Future Directions

The field of GRN inference is rapidly evolving. Two key advanced concepts are the incorporation of multi-omic data and the use of cross-species transfer learning.

The integration of single-cell multi-omic data (e.g., simultaneous scRNA-seq and scATAC-seq) allows for a more nuanced, cell-state-specific resolution of regulatory networks by linking transcription factor binding site accessibility to target gene expression [1]. Furthermore, for non-model organisms with limited data, transfer learning has emerged as a powerful strategy. This involves training a model on a well-annotated, data-rich species (like Arabidopsis thaliana) and applying it to infer GRNs in a less-characterized species (like poplar or maize), significantly improving prediction performance [3]. Hybrid models that combine the feature extraction power of deep learning (e.g., CNNs) with the interpretability of traditional machine learning have also been shown to consistently outperform traditional methods, achieving over 95% accuracy in some holdout tests [3].

The Biological Significance of GRNs in Cellular Processes and Disease

A Gene Regulatory Network (GRN) is an abstract mapping of gene regulations within living cells, representing the complex interplay of molecular components that control cellular functions [6] [7]. These networks form the fundamental circuitry that governs cellular identity, fate decisions, and responses to environmental stimuli by regulating transcriptional dynamics [1] [8]. The reverse engineering of GRNs from experimental data, such as microarray datasets, enables researchers to decipher the regulatory logic underlying cellular processes and disease states [6] [7] [9]. Understanding GRN architecture provides critical insights for developing novel therapeutic strategies, particularly for complex diseases like cancer, where disrupted regulatory networks contribute to pathogenesis and treatment resistance [8].

GRN inference has evolved significantly with technological advancements in molecular profiling. Early approaches relied primarily on microarray data, which measures the expression of thousands of genes in parallel [6] [7]. Contemporary methods now leverage single-cell multi-omics technologies, including simultaneous profiling of RNA expression and chromatin accessibility, enabling more precise reconstruction of regulatory relationships at cellular resolution [1]. This progression from bulk to single-cell analysis has revolutionized our ability to capture cellular heterogeneity and identify rare cell populations with distinct regulatory states, such as drug-resistant cancer cells [1] [8].

Methodological Foundations of GRN Reconstruction

Computational Frameworks for GRN Inference

Multiple computational approaches have been developed to infer GRNs from gene expression data, each with distinct strengths and limitations for specific applications and data types [1].

Table 1: Computational Methods for GRN Inference

| Method Category | Key Principle | Advantages | Limitations |
|---|---|---|---|
| Correlation-based | Measures co-expression using association metrics (Pearson, Spearman, Mutual Information) | Simple implementation, captures linear/non-linear relationships | Cannot distinguish directionality; confounded by indirect relationships |
| Regression Models | Models gene expression as function of potential regulators | Interpretable coefficients indicate regulatory strength | Prone to overfitting with many predictors; unstable with correlated TFs |
| Dynamical Systems | Uses differential equations to model system behavior over time | Captures diverse factors affecting expression; highly interpretable parameters | Computationally intensive; less scalable to large networks |
| Probabilistic Models | Graphical models estimating most probable regulatory relationships | Enables filtering/prioritization of interactions | Often assumes specific gene expression distributions |
| Deep Learning | Neural networks learning complex regulatory patterns from data | Highly versatile architectures; can integrate multi-modal data | Requires large datasets; computationally intensive; less interpretable |

Linear Time-Variant Modeling for Microarray Data

The linear time-variant model represents a powerful approach for reverse engineering GRNs from time-series microarray data. This method models gene regulatory dynamics using the equation:

$$\frac{dX(t)}{dt} = W(t)\,X(t)$$

where X(t) represents the vector of gene expression levels at time t, and W(t) is the time-variant connectivity matrix encoding regulatory relationships [6] [7]. Unlike linear time-invariant systems, this approach can capture nonlinear behaviors through its time-dependent parameters, providing greater flexibility in modeling complex biological systems while maintaining computational efficiency compared to fully nonlinear models [7].

The parameters of the linear time-variant model can be effectively estimated using evolutionary algorithms, such as Self-Adaptive Differential Evolution (SADE). This optimization approach efficiently navigates the high-dimensional parameter space typical of GRN inference problems, demonstrating robust performance even with noisy expression data [6] [7]. Validation studies using synthetic networks and biological datasets, including the SOS DNA repair system in Escherichia coli, have confirmed the method's accuracy in identifying correct regulatory interactions with substantially reduced computational time compared to alternative approaches [6] [7].
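To make the model concrete, the sketch below integrates dX/dt = W(t)X(t) for a toy two-gene system with the forward Euler method. The connectivity function, its coefficients, and the step size are all hypothetical choices for illustration; the parameter estimation step with SADE described above is not shown.

```python
import math

def connectivity(t):
    """Hypothetical time-variant connectivity matrix W(t) for two genes."""
    # Gene 0 only decays; gene 1 is activated by gene 0 with a
    # time-dependent strength and decays on its own.
    return [[-0.5, 0.0],
            [1.0 + 0.5 * math.sin(t), -0.3]]

def simulate(x0, t_end, dt=0.01):
    """Integrate dX/dt = W(t) X(t) with the forward Euler method."""
    x = list(x0)
    t = 0.0
    trajectory = [(t, list(x))]
    steps = int(round(t_end / dt))
    for _ in range(steps):
        w = connectivity(t)
        # Matrix-vector product W(t) X(t), one entry per gene.
        dx = [sum(w[i][j] * x[j] for j in range(len(x))) for i in range(len(x))]
        x = [x[i] + dt * dx[i] for i in range(len(x))]
        t += dt
        trajectory.append((t, list(x)))
    return trajectory

traj = simulate([1.0, 0.0], t_end=5.0)
final_time, final_state = traj[-1]
print(round(final_time, 2), [round(v, 3) for v in final_state])
```

In a fitting setting, the entries of W(t) would be the unknowns, and an optimizer would compare simulated trajectories like this one against the measured time series.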

Application Note: Analyzing Drug Resistance Mechanisms in Melanoma

Experimental Protocol: scMemorySeq for Cellular Memory Tracking

Purpose: To track heritable gene expression states associated with drug resistance in melanoma cells at single-cell resolution.

Principle: This protocol combines cellular barcoding with single-cell RNA sequencing (scRNA-seq) to map transcriptional states and their persistence across cell divisions, enabling quantification of cellular memory in drug-resistant populations [8].

Reagents and Equipment:

- WM989 BRAF V600E-mutated melanoma cell line

- High-complexity transcribed barcode library

- TGF-β1 cytokine (e.g., human recombinant TGF-β1)

- PI3K inhibitor (PI3Ki)

- Single-cell RNA sequencing platform (10x Genomics or similar)

- Cell culture reagents and equipment

- Bioinformatics tools for scRNA-seq analysis (Seurat, Scanpy, or similar)

Procedure:

- Library Preparation: Transduce WM989 cells with a high-complexity barcode library to enable lineage tracing.

- Treatment Conditions:

  - Condition A: Treat with TGF-β1 (10 ng/mL for 72 hours) to induce transition to primed state

  - Condition B: Treat with PI3Ki (concentration optimized for specific inhibitor) to promote drug-susceptible state

  - Control: Maintain untreated cells in parallel

- Single-Cell Sequencing: Harvest cells and prepare scRNA-seq libraries according to platform-specific protocols

- Data Analysis:

  - Perform quality control, normalization, and clustering of scRNA-seq data

  - Identify transcriptional clusters using Louvain clustering

  - Map barcode distributions across clusters to infer lineage relationships

  - Calculate cellular memory metrics based on lineage-specific expression patterns
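The last two analysis steps can be illustrated with a simple proxy. The sketch below computes, for each barcode lineage, the fraction of cells that fall in the lineage's most common cluster. This "lineage purity" score, the barcode names, and the cluster labels are assumptions made for illustration only; they are not the memory metric defined in the scMemorySeq study.

```python
from collections import Counter, defaultdict

# Hypothetical per-cell records: (lineage barcode, transcriptional cluster).
cells = [
    ("BC1", "primed"), ("BC1", "primed"), ("BC1", "primed"), ("BC1", "susceptible"),
    ("BC2", "susceptible"), ("BC2", "susceptible"),
    ("BC3", "primed"), ("BC3", "susceptible"),
]

def lineage_purity(records):
    """Fraction of cells in each lineage that share its most common cluster."""
    by_lineage = defaultdict(list)
    for barcode, cluster in records:
        by_lineage[barcode].append(cluster)
    purity = {}
    for barcode, clusters in by_lineage.items():
        top_count = Counter(clusters).most_common(1)[0][1]
        purity[barcode] = top_count / len(clusters)
    return purity

purity = lineage_purity(cells)
print(purity)
```

A purity near 1 suggests that related cells tend to stay in the same state, while values near the population-wide cluster frequency suggest little heritable memory. Small lineages give noisy estimates, so a minimum lineage size is usually worth enforcing.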

Troubleshooting Tips:

- Optimize barcode transduction efficiency to ensure adequate lineage resolution

- Include spike-in controls to account for technical variability in scRNA-seq

- Use batch correction methods when processing multiple sequencing runs

- Validate key findings using orthogonal methods (e.g., flow cytometry for marker genes)

Signaling Pathways in Melanoma Cell State Transitions

The application of scMemorySeq to melanoma cells has identified key signaling pathways regulating transitions between drug-susceptible and drug-resistant states [8]. The TGF-β signaling pathway promotes transition to a primed, drug-resistant state characterized by elevated expression of NGFR, FGFR1, FOSL1, and JUN. Conversely, PI3K inhibition drives cells toward a drug-susceptible state with high expression of SOX10 and MITF [8].

Table 2: Key Signaling Pathways and Inhibitors in Melanoma Drug Resistance

| Signaling Pathway | Role in Melanoma | Therapeutic Inhibitors | Effect on Cellular State |
|---|---|---|---|
| TGF-β | Promotes transition to drug-resistant state; upregulates EMT markers | Galunisertib, SB-431542 | Increases primed-state cells (NGFR-high, AXL⁺) |
| PI3K | Supports survival and proliferation in drug-resistant cells | PI3Ki (e.g., Pictilisib, Alpelisib) | Reduces primed-state cells by 93%; promotes drug susceptibility |
| MAPK | Driver pathway in BRAF-mutated melanoma | BRAFi (Vemurafenib) + MEKi (Trametinib) | Primary therapeutic target; efficacy enhanced by PI3Ki pretreatment |

Figure 1: Signaling Pathways Regulating Melanoma Cell State Transitions

Data Interpretation and Analysis

Analysis of scMemorySeq data from 12,531 melanoma cells (including 7,581 barcode-labeled cells) reveals two primary transcriptional populations: one exhibiting high expression of primed-state markers (EGFR, AXL) and another expressing drug-susceptible genes (SOX10, MITF) [8]. Cellular memory is quantified by tracking the persistence of these transcriptional states across cell divisions within barcoded lineages.

Treatment with TGF-β1 increases the proportion of primed-state cells, confirming its role in promoting drug resistance. Conversely, PI3Ki treatment reduces primed-state cells by 93% across lineages, demonstrating its potency in reversing the resistant phenotype [8]. These state transitions occur without genetic alterations, highlighting the importance of non-genetic mechanisms in therapeutic resistance.

Research Reagent Solutions

Table 3: Essential Research Reagents for GRN Studies in Drug Resistance

| Reagent Category | Specific Examples | Function in GRN Research |
|---|---|---|
| Lineage Tracing Systems | High-complexity barcode libraries, Lentiviral barcodes | Enables tracking of cell fate decisions and cellular memory across divisions |
| Signaling Modulators | TGF-β1 cytokine, PI3K inhibitors (Pictilisib), MAPK pathway inhibitors | Perturbs specific pathways to test their role in regulatory network dynamics |
| Single-Cell Multi-omics Platforms | 10x Multiome, SHARE-seq | Simultaneously profiles gene expression and chromatin accessibility in single cells |
| CRISPR Screening Tools | CRISPRi, CRISPRa, Epigenetic editors | Enables targeted perturbation of regulatory elements and transcription factors |
| Bioinformatics Tools | Seurat, Scanpy, SCENIC, Dynamical systems modeling | Analyzes single-cell data and infers regulatory relationships from expression patterns |

Regulatory Network Dynamics in Cellular Memory

Theoretical Framework of Cellular Memory

Cellular memory represents the ability of cells to preserve information from past experiences and maintain specific gene expression patterns across multiple cell divisions [8]. This memory operates through bistable network configurations that alternate between active ("on") and inactive ("off") states, ensuring precise control of essential genes. In cancer, cellular memory contributes critically to drug resistance, where cells dynamically transition between drug-susceptible and drug-resistant states [8].

The double positive feedback loop represents a fundamental GRN motif that sustains cellular memory through mutual reinforcement of gene expression. In this configuration, two genes mutually enhance each other's expression, creating self-sustaining states that persist through cell divisions [8]. However, these memory states remain reversible and vulnerable to disruption by stochastic fluctuations in gene expression ("noise") that accumulate over time.

Figure 2: Double Positive Feedback Loop in Cellular Memory Maintenance

Protocol: Mathematical Modeling of Noise in GRNs

Purpose: To quantify how stochastic fluctuations affect information transmission in gene regulatory networks and cellular memory stability.

Principle: This protocol uses mutual information metrics from information theory to measure the dependency between gene expression states in the presence of intrinsic and extrinsic noise sources [8].

Mathematical Framework:

Model Definition:

- Represent the GRN as a system of stochastic differential equations

- Incorporate both intrinsic (transcriptional bursting) and extrinsic (cellular component variation) noise sources

- Parameterize feedback loop strengths based on experimental measurements

Mutual Information Calculation:

- Compute mutual information I(X;Y) between transcription factors in the network

- Use kernel density estimation for probability distributions of expression states

- Calculate information transmission capacity under varying noise conditions

Simulation Procedure:

- Implement numerical simulations using Euler-Maruyama or Gillespie algorithms

- Quantify memory stability as persistence of expression states across simulated cell divisions

- Perform parameter sensitivity analysis to identify critical network components
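The simulation procedure can be sketched for the double positive feedback loop using the Euler-Maruyama scheme. All rate constants, the Hill parameters, and the additive Gaussian noise term below are hypothetical simplifications; intrinsic noise from transcriptional bursting is usually state dependent and would be better captured by a Gillespie simulation.

```python
import math
import random

def hill(z, k=0.5, n=4):
    """Hill activation: fraction of maximal activation at expression level z."""
    return z**n / (k**n + z**n)

def simulate_loop(x0, y0, sigma, steps=5000, dt=0.01, seed=0):
    """Euler-Maruyama integration of a two-gene double positive feedback loop."""
    rng = random.Random(seed)
    basal, vmax, decay = 0.05, 1.0, 1.0  # hypothetical rate constants
    x, y = x0, y0
    path = [(x, y)]
    for _ in range(steps):
        # Independent Gaussian increments with variance dt for each gene.
        dwx = rng.gauss(0.0, math.sqrt(dt))
        dwy = rng.gauss(0.0, math.sqrt(dt))
        new_x = x + (basal + vmax * hill(y) - decay * x) * dt + sigma * dwx
        new_y = y + (basal + vmax * hill(x) - decay * y) * dt + sigma * dwy
        # Expression levels cannot be negative, so clip at zero.
        x, y = max(new_x, 0.0), max(new_y, 0.0)
        path.append((x, y))
    return path

# Without noise, the two initial conditions settle into different stable states.
high = simulate_loop(1.0, 1.0, sigma=0.0)[-1]
low = simulate_loop(0.0, 0.0, sigma=0.0)[-1]
# With noise, the trajectory fluctuates and may eventually switch states.
noisy = simulate_loop(1.0, 1.0, sigma=0.2)
print(high, low)
```

Memory stability could then be estimated by repeating the noisy run over many seeds and recording how long each trajectory stays near its starting state.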

Application Notes:

- Higher mutual information values indicate more robust communication between network components

- Noise can disrupt information transmission, leading to memory loss and phenotypic switching

- Therapeutic interventions can be modeled as targeted modifications to network parameters
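The mutual information I(X;Y) used in this protocol can be estimated in several ways. The sketch below uses a plug-in estimate on equal-width bins, which is a simpler stand-in for the kernel density estimation mentioned above; the bin count and the toy expression vectors are assumptions for illustration.

```python
import math
from collections import Counter

def discretize(values, bins=4):
    """Assign each value to one of `bins` equal-width bins."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / bins or 1.0  # avoid division by zero for constant input
    return [min(int((v - lo) / width), bins - 1) for v in values]

def mutual_information(x, y, bins=4):
    """Plug-in estimate of I(X;Y) in bits from binned expression values."""
    bx, by = discretize(x, bins), discretize(y, bins)
    n = len(bx)
    px, py, pxy = Counter(bx), Counter(by), Counter(zip(bx, by))
    mi = 0.0
    for (i, j), count in pxy.items():
        p_joint = count / n
        mi += p_joint * math.log2(p_joint / ((px[i] / n) * (py[j] / n)))
    return mi

# Toy data: a perfectly dependent pair and an independent pair.
dependent_x = [0, 1, 2, 3, 4, 5, 6, 7]
dependent_y = list(dependent_x)
indep_x = [0, 0, 7, 7, 0, 0, 7, 7]
indep_y = [0, 7, 0, 7, 0, 7, 0, 7]
print(mutual_information(dependent_x, dependent_y))
print(mutual_information(indep_x, indep_y))
```

Binned estimates are biased upward for small samples, so comparisons across noise conditions are more reliable when sample sizes and bin counts are held fixed.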

The reverse engineering of gene regulatory networks from microarray and single-cell data provides powerful insights into the biological significance of GRNs in cellular processes and disease. The integration of computational modeling with experimental validation has revealed how network dynamics control cellular memory and contribute to therapeutic resistance in cancer. Future directions in GRN research will likely focus on multi-scale modeling approaches that integrate transcriptional, epigenetic, and spatial information to generate more comprehensive models of cellular regulation. Additionally, the development of sophisticated perturbation tools, including CRISPR-based epigenetic editors and synthetic biology circuits, will enable precise manipulation of GRNs for therapeutic purposes, potentially allowing reprogramming of pathological cellular states in cancer and other diseases.

Reverse engineering of Gene Regulatory Networks (GRNs) is a fundamental process in computational biology that aims to infer the complex web of causal interactions between genes and their products from experimental data [10] [11]. By analyzing patterns in gene expression outputs, researchers can reconstruct the regulatory circuitry that controls cellular processes without prior knowledge of the network topology. Microarray technology has served as a cornerstone for this reverse engineering process, enabling simultaneous measurement of mRNA expression levels for thousands of genes in parallel and providing the numerical input for GRN inference [10] [12]. The primary goal is to represent the abstract mapping of regulatory rules underlying gene expression, which can provide breakthroughs in understanding complex diseases and designing novel therapeutic interventions [10].

The challenge of GRN reconstruction is substantial due to the high-dimensional nature of transcriptomic data, where the number of genes (p) vastly exceeds available samples (n), creating a "large-p-small-n" problem [13] [12]. Additional complexities include nonlinear gene interactions, time-delayed regulatory effects, noisy measurements, and the combinatorial nature of transcriptional control [11]. Despite these challenges, advances in computational methods have made remarkable progress in extracting meaningful biological insights from microarray data through sophisticated modeling approaches.

Computational Frameworks for GRN Inference

Key Algorithmic Approaches

Multiple computational frameworks have been developed for reconstructing GRNs from microarray data, each with distinct strengths and limitations for capturing different aspects of regulatory relationships.

Table 1: Comparison of Major GRN Inference Methods

| Method Category | Key Algorithms | Strengths | Limitations | Typical Data Requirements |
|---|---|---|---|---|
| Bayesian Networks | Hill Climbing (HC), Dynamic Bayesian Networks (DBN), TDBN [11] [13] | Captures complex dependency structures; Models direct and indirect relationships; Handles uncertainty well | High computational cost for large networks; Static Bayesian networks are restricted to directed acyclic graphs (no feedback loops) | Time-series or multi-condition data |
| Information Theory | MICRAT, ARACNE, PCA_CMI [11] | Detects non-linear relationships; No distributional assumptions; Identifies functional associations | Large sample sizes needed for reliability; May miss linear relationships | Steady-state or time-series data |
| Regression Models | Linear Time-Variant, S-system [10] | Models quantitative regulation strengths; Faster computation; Clear interpretation | Limited to linear or pre-defined non-linearity; May oversimplify complex interactions | Time-series data preferred |
| Undirected Graphical Models | Graphical Lasso (Glasso), Scale-Free Glasso (SFGlasso) [13] | Handles high-dimensional data well; Efficient sparse network estimation | Undirected edges (no causality); Limited to linear dependencies | Multi-condition data |

Advanced Hybrid Methods

Recent approaches combine multiple strategies to overcome limitations of individual methods. The MICRAT algorithm employs maximal information coefficient (MIC) to construct an undirected graph representing gene associations, then directs edges using conditional relative average entropy combined with time-series mutual information to account for regulatory delays [11]. Non-Gaussian pair-copula Bayesian networks address distributional limitations by using copula functions to model dependencies when normality assumptions are violated, assigning different bivariate copula functions for each local term to capture complex dependency structures [14]. Linear time-variant models incorporated with Self-Adaptive Differential Evolution optimization can discover nonlinear relationships through linear frameworks while handling noisy expression data effectively [10].

Experimental Protocols for GRN Reconstruction

Microarray Data Generation Workflow

Figure 1: Microarray experimental workflow for gene expression profiling

Protocol: Microarray-Based Gene Expression Profiling

RNA Isolation and Quality Control

cDNA Synthesis and Labeling

- Generate single-stranded cDNA from total RNA using reverse transcriptase with T7-linked oligo(dT) primer

- Convert to double-stranded cDNA using DNA polymerase and RNase H

- Perform in vitro transcription (IVT) with biotinylated UTP/CTP using T7 RNA polymerase to produce labeled cRNA [16]

- Purify labeled cRNA and fragment to ~50-200 nucleotides using Mg²⁺ at 94°C

Hybridization and Scanning

- Hybridize fragmented cRNA to microarray chips (e.g., Affymetrix GeneChip platforms) at 45°C for 16 hours in hybridization oven [16]

- Wash and stain chips using fluidics station with specific protocols for array type

- Scan chips using high-resolution scanner (e.g., GeneChip Scanner 3000) to generate DAT image files

- Process images with software (e.g., Affymetrix GeneChip Command Console) to produce CEL intensity files

Data Preprocessing and Normalization

Protocol: Microarray Data Processing Pipeline

Background Correction and Normalization

- Import CEL files into analysis environment (R/Bioconductor recommended)

- Apply Robust Multi-array Average (RMA) algorithm for background adjustment, quantile normalization, and summarization [16]

- For two-color arrays, implement LOESS normalization to correct dye biases
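Of the three RMA stages, quantile normalization is the easiest to illustrate in isolation. The sketch below forces every array (column) to share the same intensity distribution; it is a minimal pure-Python illustration on a toy matrix, not the Bioconductor implementation, and it omits background correction and summarization. Ties are broken by row order here, whereas production implementations typically average tied ranks.

```python
def quantile_normalize(matrix):
    """Quantile-normalize a probes x arrays matrix given as a list of rows."""
    n_rows, n_cols = len(matrix), len(matrix[0])
    columns = [[matrix[r][c] for r in range(n_rows)] for c in range(n_cols)]
    # Reference distribution: mean of the k-th smallest value across arrays.
    sorted_cols = [sorted(col) for col in columns]
    reference = [sum(col[k] for col in sorted_cols) / n_cols for k in range(n_rows)]
    normalized = [[0.0] * n_cols for _ in range(n_rows)]
    for c, col in enumerate(columns):
        # Rank probes within the array; ties are broken by row order.
        order = sorted(range(n_rows), key=lambda r: col[r])
        for rank, r in enumerate(order):
            normalized[r][c] = reference[rank]
    return normalized

# Toy intensities: 4 probes (rows) measured on 3 arrays (columns).
raw = [
    [5.0, 4.0, 3.0],
    [2.0, 1.0, 4.0],
    [3.0, 4.0, 6.0],
    [4.0, 2.0, 8.0],
]
norm = quantile_normalize(raw)
for row in norm:
    print([round(v, 3) for v in row])
```

After normalization each column contains exactly the same set of values, so remaining differences between arrays reflect changes in probe rank rather than overall intensity scale.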

Quality Assessment

- Check for spatial artifacts, RNA degradation trends, and outlier arrays

- Verify positive and negative control probes meet expected performance

- Assess between-array consistency using correlation metrics and clustering

Expression Matrix Generation

- Export normalized, log2-transformed expression values for downstream analysis

- Filter out low-intensity probes (e.g., lowest 30% quantile across samples) [15]

- Annotate probes with current gene symbols and genomic coordinates
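The low-intensity filter can be sketched as follows. The example assumes the values are already normalized and log2-transformed, and it ranks probes by their mean across samples, which is one reasonable reading of "lowest 30% quantile across samples"; the probe IDs and values are placeholders.

```python
def filter_low_intensity(expr, drop_fraction=0.3):
    """Drop the lowest-intensity probes, ranked by mean log2 expression.

    expr maps probe ID -> list of log2 expression values across samples.
    """
    mean_level = {probe: sum(vals) / len(vals) for probe, vals in expr.items()}
    ranked = sorted(mean_level, key=mean_level.get)  # lowest mean first
    n_drop = int(len(ranked) * drop_fraction)
    kept = set(ranked[n_drop:])
    return {probe: vals for probe, vals in expr.items() if probe in kept}

# Hypothetical matrix: 10 probes, 2 samples each.
expr = {f"probe_{i}": [float(i), float(i) + 0.5] for i in range(1, 11)}
filtered = filter_low_intensity(expr)
print(sorted(filtered))
```

Ranking by variance instead of mean is a common alternative when the goal is to keep probes that change across conditions.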

GRN Inference Using Bayesian Approaches

Protocol: Gaussian Bayesian Network Reconstruction

Data Preparation

- Format expression matrix with genes as columns and samples as rows

- For time-series data, ensure proper temporal ordering and equal sampling intervals

- Standardize expression values for each gene to mean=0, variance=1

Network Structure Learning

- Implement Hill Climbing (HC) algorithm to search through directed acyclic graph space [13]

- Use Bayesian Information Criterion (BIC) as score function to balance fit and complexity

- Execute multiple runs with different random seeds to assess stability

Network Validation

- Perform bootstrap resampling to estimate edge confidence

- Compare with known regulatory interactions from literature or databases

- Validate predictions using independent datasets or experimental follow-up
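The bootstrap step can be illustrated independently of the structure-learning algorithm. In the sketch below, a simple Pearson correlation threshold stands in for Hill Climbing so the example stays short; the threshold, the number of resamples, and the toy data are assumptions. With a real Bayesian network learner, the inner call would be replaced by a full structure search on each resampled dataset.

```python
import math
import random

def pearson(a, b):
    """Pearson correlation; returns 0.0 if either vector is constant."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    var_a = sum((x - ma) ** 2 for x in a)
    var_b = sum((y - mb) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / math.sqrt(var_a * var_b)

def bootstrap_edge_confidence(data, threshold=0.8, n_boot=200, seed=0):
    """Fraction of bootstrap resamples in which each gene pair is called an edge.

    data maps gene -> list of expression values over the same samples.
    """
    rng = random.Random(seed)
    genes = sorted(data)
    n_samples = len(data[genes[0]])
    hits = {(g, h): 0 for i, g in enumerate(genes) for h in genes[i + 1:]}
    for _ in range(n_boot):
        # Resample sample indices with replacement.
        idx = [rng.randrange(n_samples) for _ in range(n_samples)]
        for g, h in hits:
            r = pearson([data[g][i] for i in idx], [data[h][i] for i in idx])
            if abs(r) >= threshold:
                hits[(g, h)] += 1
    return {edge: count / n_boot for edge, count in hits.items()}

g1 = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
data = {"g1": g1, "g2": [2 * v + 1 for v in g1], "g3": [5.0, 1.0, 4.0, 2.0, 8.0, 3.0, 7.0, 6.0]}
conf = bootstrap_edge_confidence(data)
print(conf)
```

Edges that appear in only a small fraction of resamples are sensitive to which samples were included and are natural candidates for removal before biological interpretation.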

Protocol: MICRAT Algorithm Implementation

Undirected Network Construction

- Calculate Maximal Information Coefficient (MIC) between all gene pairs [11]

- Apply statistical threshold to identify significant associations

- Construct initial undirected graph representing potential regulatory relationships

Edge Directionality Inference

- Compute conditional relative average entropy for each connected gene pair

- Calculate time-delayed mutual information for time-series data

- Combine metrics to infer directionality of regulatory interactions

Network Refinement

- Remove edges with weak or inconsistent directional evidence

- Integrate with transcription factor binding information if available

- Export final directed network for biological interpretation

Research Reagent Solutions and Computational Tools

Essential Research Materials

Table 2: Key Reagents and Platforms for Microarray Studies

| Category | Specific Product/Platform | Application Notes | Quality Control Metrics |
|---|---|---|---|
| RNA Isolation | TRIzol Reagent, RNeasy Kits | Maintain RNA integrity; Prevent degradation | RIN > 8.0, 260/280 ratio 1.8-2.1 |
| Labeling Kits | GeneChip 3' IVT PLUS Kit | Optimized for 3' bias in mRNA amplification | Yield > 1.5 μg cRNA, fragmentation efficiency |
| Microarray Platforms | Affymetrix GeneChip PrimeView, Illumina BeadChip | Platform-specific protocols required | Background levels, control probe performance |
| Hybridization | GeneChip Hybridization Oven | Temperature stability critical | Consistent hybridization across arrays |
| Scanning | GeneChip Scanner 3000 | Regular calibration essential | Intensity distribution, spatial artifacts |

Computational Tools and Software

Table 3: Bioinformatics Resources for GRN Reconstruction

| Tool Name | Application | Algorithmic Basis | Input Data Requirements |

|---|---|---|---|

| Mana | mRNA quality analysis | Sequencing data processing | Raw sequencing reads, reference sequence |

| Glasso | Sparse inverse covariance estimation | Graphical lasso regularization | Continuous expression matrix |

| Hill Climbing | Bayesian network learning | Score-based greedy search | Continuous or discrete expression data |

| MICRAT | Time-series network inference | Maximal information coefficient | Time-course expression data |

| ARACNE | Mutual information networks | Information theory | Multi-sample expression data |

| GeneNetWeaver | Benchmark generation | Biological network simulation | Network topology for in silico data |

Comparative Analysis of Method Performance

Benchmarking on Reference Networks

Figure 2: GRN method evaluation framework using benchmark data

Evaluation studies using DREAM challenge benchmarks and well-characterized biological networks provide performance insights:

- Hill Climbing Gaussian Bayesian Networks demonstrate superior accuracy in capturing complex dependency structures, balancing both strong local and significant global connections in expression data [13]

- MICRAT shows particular strength on 100-gene networks, outperforming other methods on time-series data by effectively accounting for regulatory delays [11]

- Linear time-variant models with evolutionary optimization achieve high reconstruction accuracy from both noise-free and noisy time-series data (5-10% noise) while requiring considerably less computation time [10]

- Non-Gaussian pair-copula Bayesian networks effectively handle distributional violations (non-normality, multi-modality, heavy-tailed distributions) common in genomic data [14]

Application to Real Biological Networks

SOS DNA Repair Network in E. coli: Multiple algorithms have successfully reconstructed key aspects of this well-characterized network, with linear time-variant models identifying more correct and biologically plausible regulatory interactions than existing approaches [10].

Breast Cancer Subtypes: Non-Gaussian pair-copula Bayesian networks have been applied to reveal differences in GRNs across molecular subtypes, potentially informing targeted therapeutic strategies [14].

cAMP Oscillations in Dictyostelium discoideum: Reverse engineering approaches correctly identified regulatory parameters from simulated expression data, demonstrating utility for analyzing dynamic biological processes [10].

Integration with Emerging Technologies

Microarray and RNA-Seq Complementarity

While RNA sequencing offers advantages including wider dynamic range and detection of novel transcripts, microarray platforms remain viable for traditional transcriptomic applications due to lower cost, smaller data size, and well-established analytical pipelines [16]. Recent comparisons show both technologies yield similar functional pathway identification and concentration-response modeling outcomes, supporting continued use of microarray data for GRN reconstruction, particularly when combined with appropriate computational methods [16].

Protocols for cross-platform data integration have been developed, including batch correction methods like ComBat, enabling researchers to leverage extensive existing microarray data while incorporating newer RNA-seq datasets [15]. This approach maximizes resource utilization and facilitates meta-analyses across experimental platforms.

Future Methodological Directions

The field continues to evolve with several promising avenues:

- Multi-omics integration: Combining microarray data with proteomic, epigenomic, and metabolomic data sources

- Single-cell resolution: Adapting network inference methods for single-cell transcriptomics

- Machine learning enhancements: Incorporating deep learning architectures for capturing higher-order interactions

- Dynamic network modeling: Developing approaches that capture time-varying network structures

Microarray data continues to provide a robust foundation for reverse engineering gene regulatory networks when analyzed with sophisticated computational methods. The protocols and applications outlined here provide researchers with practical frameworks for extracting biologically meaningful networks from high-throughput mRNA expression measurements.

The intricate mapping of gene regulatory networks (GRNs) is a fundamental objective in computational biology, providing critical insights into the molecular mechanisms that control cellular functions in development, health, and disease [17] [10]. Gene regulatory networks represent abstract mappings of gene regulations in living cells, forming the functional circuitry that guides cellular responses to environmental and developmental cues [10] [18]. The reverse engineering of GRNs from experimental data such as microarrays presents substantial computational challenges due to the high-dimensional nature of genomic data, nonlinear relationships between regulators, and the combinatorial complexity of molecular interactions [17] [10] [19].

Understanding regulatory interactions requires integrating multiple layers of biological organization. Transcription factors (TFs) regulate gene expression by binding to specific DNA sequences, often interacting with other TFs to form complex regulatory modules [17] [20]. These protein-protein interactions extend the regulatory lexicon far beyond what individual TFs can accomplish alone [20]. Meanwhile, gene-gene interactions (epistasis) represent another critical dimension of complexity, where the effect of one gene on a phenotype depends on the status of other genes [21] [19]. The integration of these interaction types enables researchers to move from static gene lists to dynamic network models that better reflect biological reality.

Transcription Factor Interactions and DNA-Mediated Complexes

Transcription factors rarely function in isolation. Instead, they commonly interact with other TFs through DNA-mediated complexes that significantly expand the regulatory code's complexity [20]. The human gene regulatory code involves more than 1,600 TFs that frequently interact with each other, creating an intricate network of regulatory possibilities far more complex than the genetic code itself [20].

CAP-SELEX for Mapping TF-TF Interactions

The CAP-SELEX (consecutive-affinity-purification systematic evolution of ligands by exponential enrichment) method has emerged as a powerful high-throughput approach for identifying cooperative binding motifs for pairs of TFs [20]. This technique enables simultaneous identification of individual TF binding preferences, TF-TF interactions, and the specific DNA sequences bound by these interacting complexes.

Table 1: Key Protein-Protein Interaction Methods and Applications

| Method | Interaction Types Detected | Key Applications | Strengths |

|---|---|---|---|

| Co-immunoprecipitation (Co-IP) | Stable or strong interactions | Protein complex identification; validation of suspected interactions | Considered gold standard; works with endogenous proteins |

| Pull-down assays | Stable or strong interactions | Initial screening for interacting proteins; when no antibody available | Amenable to screening; uses tagged bait proteins |

| Crosslinking | Transient or weak interactions | Stabilizing temporary interactions for analysis | Captures transient complexes; can be combined with other methods |

| CAP-SELEX | DNA-mediated TF-TF interactions | High-throughput mapping of cooperative TF binding | Identifies orientation/spacing preferences; discovers novel composite motifs |

| Surface Plasmon Resonance (SPR) | Label-free biomolecular interactions | Kinetic parameter determination (on/off rates, Kd) | Label-free; quantitative kinetic data |

| Bio-layer Interferometry (BLI) | Label-free biomolecular interactions | Protein-protein and protein-small molecule interactions | Real-time kinetics; works with crude samples |

Experimental Protocol: CAP-SELEX for TF-TF Interaction Screening

Purpose: To identify cooperative DNA binding between transcription factor pairs and determine their binding specificities.

Materials:

- Library of human TFs (enriched for conserved mammalian proteins)

- 384-well microplate format system

- CAP-SELEX reagents (binding buffer, washing solutions)

- High-throughput sequencing platform

- Positive control TF pairs (e.g., CEBPD–ETV5, FOXO1–ETV5)

Procedure:

- TF Pair Preparation: Combine TFs into pairs (58,754 pairs in a recent study) in 384-well format [20].

- CAP-SELEX Cycles: Perform three consecutive CAP-SELEX cycles with the TF pairs.

- DNA Sequencing: Sequence selected DNA ligands using massively parallel sequencing.

- Data Analysis:

- Apply mutual information algorithm to identify TF pairs with preferred spacing and orientation.

- Use composite motif detection algorithm to identify novel binding motifs different from individual TF specificities.

- Validation: Compare identified composite motifs with ENCODE ChIP-seq data to confirm in vivo relevance.

Gene-Gene Interactions (Epistasis) in Complex Traits

Gene-gene interactions, or epistasis, represent a fundamental aspect of complex trait architecture where the effect of one gene on a phenotype depends on the status of other genes [21]. The detection and characterization of these interactions are complicated by the curse of dimensionality, where the number of possible multilocus genotype combinations increases exponentially with each additional gene considered [21].

Deep Learning Framework for Gene-Gene Interaction Detection

Recent advances in deep learning have enabled more powerful detection of gene-gene interactions by considering all SNPs within genes rather than just top SNPs [19]. The gene interaction neural network employs a structured sparse architecture that reflects biological reality: SNPs within a gene affect gene behavior, and the combined behavior of multiple genes affects the phenotype.

Table 2: Comparison of Gene-Gene Interaction Detection Methods

| Method | Gene Representation | Interaction Model | Key Features | Limitations |

|---|---|---|---|---|

| Top-SNP Approaches | Single most significant SNP | Multiplicative (linear) | Simple implementation; computationally efficient | Ignores information from other SNPs in gene |

| PCA-Based Methods | Unsupervised dimensionality reduction | Multiplicative or parametric | Accounts for multiple SNPs; reduces dimensionality | Learned representations neglect phenotype information |

| Boosting Trees | Original SNP data | Non-parametric | Captures complex patterns; handles nonlinearities | Limited integration of representation learning |

| Gene Interaction Neural Network | Learned hidden nodes combining all SNPs | Implicit in deep network | End-to-end learning; captures complex interactions | Computationally intensive; requires careful permutation testing |

Experimental Protocol: Neural Network-Based Gene-Gene Interaction Detection

Purpose: To detect statistically significant gene-gene interactions for complex phenotypes using deep learning.

Materials:

- Genotype data (SNPs) for candidate genes

- Phenotype measurements

- Computational resources (GPU recommended)

- Deep learning framework (e.g., PyTorch, TensorFlow)

Procedure:

Data Preparation:

- Select genes associated with phenotype of interest

- Perform quality control on SNP data

- Split data into training and validation sets

Network Architecture:

- Implement structured sparse neural network with separate MLPs for each gene

- Create gene representation layer where each node represents a single gene

- Add fully connected layers after gene representation layer

Model Training:

- Train neural network to predict phenotype from SNP data

- Use appropriate regularization to prevent overfitting

Interaction Scoring:

- Calculate Shapley interaction scores between gene representation nodes

- Compute interaction effects for all gene pairs

Significance Testing:

- Implement permutation procedure tailored for neural networks

- Generate null distribution by permuting residuals from main effects model

- Calculate false discovery rates for multiple testing correction
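
The significance-testing steps above can be sketched without the neural network itself. In the sketch below, the residuals are assumed to come from an already fitted main-effects model, and a simple product-moment statistic stands in for the Shapley interaction score; both are assumptions for illustration, as are the function names and toy data. The Benjamini-Hochberg procedure is used here as one common way to control the false discovery rate.

```python
import random

def interaction_score(residuals, g1, g2):
    """|mean(residual * g1 * g2)|: a simple stand-in for a Shapley interaction score."""
    n = len(residuals)
    return abs(sum(r * a * b for r, a, b in zip(residuals, g1, g2)) / n)

def permutation_pvalue(residuals, g1, g2, n_perm=500, seed=0):
    """Empirical p-value from a null built by permuting main-effects residuals."""
    rng = random.Random(seed)
    observed = interaction_score(residuals, g1, g2)
    shuffled = list(residuals)
    exceed = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if interaction_score(shuffled, g1, g2) >= observed:
            exceed += 1
    return (exceed + 1) / (n_perm + 1)

def benjamini_hochberg(pvalues):
    """Benjamini-Hochberg adjusted p-values (q-values), in the input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    qvalues = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * m / rank)
        qvalues[idx] = running_min
    return qvalues

# Toy data (assumed): only the g1 x g2 interaction is present in the residuals.
rng = random.Random(1)
n = 150
g1 = [rng.gauss(0, 1) for _ in range(n)]
g2 = [rng.gauss(0, 1) for _ in range(n)]
g3 = [rng.gauss(0, 1) for _ in range(n)]
residuals = [0.8 * a * b + rng.gauss(0, 0.5) for a, b in zip(g1, g2)]

pairs = {("g1", "g2"): (g1, g2), ("g1", "g3"): (g1, g3), ("g2", "g3"): (g2, g3)}
pvalues = {name: permutation_pvalue(residuals, a, b) for name, (a, b) in pairs.items()}
qvalues = dict(zip(pvalues, benjamini_hochberg(list(pvalues.values()))))
print(pvalues, qvalues)
```

Permuting residuals rather than raw phenotypes keeps the main effects fixed, so the null distribution reflects only the absence of interaction.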

Protein-Protein Interactions: Experimental Methods and Analysis

Protein-protein interactions (PPIs) form the physical basis of most cellular processes, with the vast majority of proteins interacting with others for proper biological activity [22]. These interactions can be stable (forming multi-subunit complexes) or transient (temporary associations requiring specific conditions) [22].

Research Reagent Solutions for Protein Interaction Studies

Table 3: Essential Research Reagents for Protein Interaction Analysis

| Reagent/Method | Function | Application Context |

|---|---|---|

| Co-IP Antibodies | Immunoprecipitation of target protein | Isolation of protein complexes; validation of interactions |

| Crosslinkers (BS3, DTSSP) | Covalent stabilization of interactions | Capturing transient interactions; complex isolation |

| Affinity Tags (GST, polyHis) | Purification of recombinant proteins | Pull-down assays; protein complex purification |

| Surface Plasmon Resonance Chips | Label-free interaction measurement | Kinetic parameter determination; affinity measurements |

| Fluorescence Labels | Detection and visualization | FRET, BiFC, and fluorescence polarization assays |

| CAP-SELEX Reagents | High-throughput TF interaction screening | Mapping DNA-mediated TF-TF interactions |

Experimental Protocol: Co-immunoprecipitation for Protein Complex Isolation

Purpose: To isolate and identify protein interaction partners under near-physiological conditions.

Materials:

- Specific antibody for target protein

- Protein A/G magnetic beads or agarose resin

- Cell lysis buffer (with appropriate protease inhibitors)

- Wash buffers (varying stringency if needed)

- Elution buffer (low pH or competitive analyte)

Procedure:

- Cell Lysis: Prepare cell lysate using mild non-denaturing lysis buffer to preserve protein interactions.

- Antibody Binding: Incubate target protein antibody with cell lysate to form immune complexes.

- Bead Immobilization: Add Protein A/G magnetic beads to capture antibody-protein complexes.

- Washing: Wash beads thoroughly with appropriate buffers to remove non-specifically bound proteins.

- Elution: Elute bound proteins using low pH buffer or competitive elution.

- Analysis: Identify co-precipitated proteins by Western blotting or mass spectrometry.

Key Considerations:

- Include appropriate controls (non-specific IgG, bead-only control)

- Optimize antibody concentration to avoid saturation

- Consider crosslinking for weak or transient interactions

- Note that co-IP detects both direct and indirect interactions

Integrative Network Inference Frameworks

The integration of multiple data types and prior knowledge has emerged as a powerful approach for enhancing the accuracy of GRN inference. Methods like KEGNI (Knowledge graph-Enhanced Gene regulatory Network Inference) demonstrate how combining single-cell RNA-seq data with structured biological knowledge can improve cell type-specific network reconstruction [23].

The KEGNI Framework Protocol

Purpose: To infer cell type-specific gene regulatory networks by integrating scRNA-seq data with prior biological knowledge.

Materials:

- Single-cell RNA-seq data with cell type annotations

- Prior knowledge graphs (KEGG, TRRUST, RegNetwork)

- Computational resources for graph autoencoders

- Cell type marker database (CellMarker 2.0)

Procedure:

Base Graph Construction:

- Compute Euclidean distances from gene expression profiles

- Apply k-nearest neighbors algorithm to construct initial graph

Masked Graph Autoencoder:

- Implement self-supervised learning strategy

- Randomly mask subset of node features (gene expressions)

- Train model to reconstruct masked features

Knowledge Graph Integration:

- Construct cell type-specific knowledge graph from databases

- Apply contrastive learning with negative sampling

- Generate knowledge graph embeddings

Multi-task Learning:

- Jointly optimize objectives of both autoencoder and knowledge embedding models

- Share embeddings between models for common genes

- Infer final GRN from combined model
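
The base graph construction step of this procedure (Euclidean distances followed by k-nearest neighbors) can be sketched in a few lines. The function name `knn_graph`, the symmetric treatment of edges, and the toy profiles are assumptions; the masked autoencoder and knowledge graph components of KEGNI are not reproduced here.

```python
import math

def knn_graph(profiles, k=2):
    """Undirected k-nearest-neighbor graph over genes.

    profiles: dict mapping gene name -> expression vector across cells.
    """
    genes = list(profiles)
    edges = set()
    for g in genes:
        # Euclidean distance from gene g to every other gene's expression profile.
        dists = sorted((math.dist(profiles[g], profiles[h]), h)
                       for h in genes if h != g)
        for _, h in dists[:k]:
            edges.add(tuple(sorted((g, h))))
    return edges

# Toy expression profiles (assumed): two pairs of co-expressed genes.
profiles = {
    "A": [1.0, 2.0, 3.0],
    "B": [1.1, 2.1, 2.9],
    "C": [9.0, 8.0, 7.0],
    "D": [9.2, 7.9, 7.1],
}
base_graph = knn_graph(profiles, k=1)
print(sorted(base_graph))
```

The resulting edge set would serve only as the initial graph on which the autoencoder operates; the choice of k controls how dense that starting graph is.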

Performance Comparison and Validation

Rigorous benchmarking of GRN inference methods is essential for assessing their effectiveness. The BEELINE framework provides a standardized approach for evaluating accuracy, robustness, and efficiency across different algorithms and datasets [23].

Quantitative Performance Assessment

Table 4: Performance Comparison of GRN Inference Methods on BEELINE Benchmark

| Method | Early Precision Ratio (EPR) | Key Strengths | Consistency Across Benchmarks |

|---|---|---|---|

| KEGNI | Best performance (12/16 benchmarks) | Integration of prior knowledge; self-supervised learning | Consistently outperforms random predictors |

| MAE Model | Second best performance (4/16 benchmarks) | Effective capture of gene relationships from scRNA-seq | Consistently outperforms random predictors |

| GENIE3 | Top performance (4/16 benchmarks) | Tree-based ensemble method; handles nonlinearities | Variable performance across benchmarks |

| PIDC | Top performance (1/16 benchmarks) | Information theoretic approach | Variable performance across benchmarks |

| Other Methods | Below top performance | Varies by specific method | Inconsistent performance across benchmarks |

Validation approaches for inferred networks include comparison with ground truth datasets such as cell type-specific ChIP-seq networks, non-specific ChIP-seq networks, functional interaction networks from the STRING database, and loss-of-function/gain-of-function networks [23]. Evaluation metrics such as the Early Precision Ratio (EPR), defined as the fraction of true positives among the top-k predicted edges relative to that of a random predictor, provide a standardized performance assessment [23].
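
A small worked example may make the EPR definition concrete. The sketch assumes that k equals the number of edges in the ground truth network and that a random predictor's expected precision equals the ground truth network density; both conventions, along with the function name and toy edges, are assumptions made for illustration.

```python
def early_precision_ratio(ranked_edges, true_edges, n_candidates):
    """Early precision among the top-k edges divided by a random predictor's precision.

    ranked_edges: predicted edges sorted from most to least confident.
    true_edges:   set of ground truth edges (k is taken as its size, an assumption).
    n_candidates: number of possible edges, so density = len(true_edges) / n_candidates.
    """
    k = len(true_edges)
    top_k = ranked_edges[:k]
    early_precision = sum(edge in true_edges for edge in top_k) / k
    random_precision = len(true_edges) / n_candidates
    return early_precision / random_precision

# Toy example (assumed): 4 genes, so 4 * 3 = 12 possible directed edges.
true_edges = {("A", "B"), ("B", "C"), ("C", "D")}
ranked_edges = [("A", "B"), ("B", "C"), ("A", "D"), ("C", "D"), ("D", "A")]
epr = early_precision_ratio(ranked_edges, true_edges, n_candidates=12)
print(epr)
```

Here two of the top three predictions are correct (early precision 2/3) against a density of 3/12, so the EPR is 8/3; a value of 1 corresponds to random performance.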

The reverse engineering of gene regulatory networks from microarray and other omics data has evolved from simple correlation-based approaches to sophisticated integrative frameworks that capture the multi-layered nature of regulatory interactions. The key advances include the development of high-throughput methods for mapping TF-TF interactions, deep learning approaches for detecting nonlinear gene-gene interactions, and integrative frameworks that combine multiple data types with prior knowledge.

The field continues to face challenges including the integration of unpaired multi-omics data, reconstruction of cell type-specific networks, and validation of predicted interactions. However, the ongoing development of methods like CAP-SELEX for comprehensive TF interaction mapping, neural networks for epistasis detection, and knowledge-enhanced frameworks like KEGNI promises to advance our understanding of the complex regulatory codes underlying cellular function and dysfunction in disease states.

As these technologies mature, they will increasingly enable researchers to move from static gene lists to dynamic, context-specific network models that better reflect biological reality and provide stronger foundations for therapeutic development.

Methodologies in Practice: A Guide to GRN Inference Algorithms and Their Applications

Gene Regulatory Networks (GRNs) represent the complex circuitry of interactions where transcription factors and other molecules control the expression of target genes, playing key roles in development, phenotypic plasticity, and evolution [24] [25]. In the context of reverse engineering from microarray data, which allows simultaneous measurement of thousands of gene expression levels, computational models serve as essential tools for deciphering these interactions [7] [26]. These models can be systematically categorized into distinct classes based on their complexity and the aspects of regulation they capture. We propose a classification into three fundamental model classes: topology models that describe connection patterns, control logic models that define combinatorial regulatory effects, and dynamic models that simulate real-time system behavior [27]. This framework provides researchers with a structured approach for selecting appropriate modeling strategies based on their specific experimental goals and data resources.

Topological Models

Conceptual Foundation and Applications

Topological models describe GRNs as graphs with nodes representing genes and edges representing regulatory interactions, effectively creating wiring diagrams of the regulatory system [27]. These models focus exclusively on the connectivity pattern between network components without specifying the detailed kinetics of interactions. In graph terminology, genes with outgoing edges are termed source genes, while genes with incoming edges from a particular source are its target genes [27]. The scale-free property is a particularly relevant feature of biological networks, including GRNs: it provides resilience against random node removal and is consistent with genome evolution by gene duplication [24]. Topological analysis has revealed that life-essential subsystems are governed mainly by transcription factors with intermediate average nearest neighbor degree (Knn) and high page rank or degree, whereas specialized subsystems are primarily regulated by transcription factors with low Knn [24].

Key Topological Features and Their Biological Significance

Table 1: Key Topological Features in GRNs and Their Biological Interpretation

| Topological Feature | Mathematical Definition | Biological Significance | Experimental Validation |

|---|---|---|---|

| Degree | Number of connections to a node | Hubs (high-degree nodes) often correspond to key transcription factors | Essential genes show higher centralities [24] |

| Knn (Average Nearest Neighbor Degree) | Average degree of a node's neighbors | Distinguishes regulators from targets; indicates network modularity | Low Knn regulators control specialized subsystems [24] |

| Page Rank | Measure of node importance based on connection importance | Identifies critical nodes in life-essential subsystems | High page rank ensures robustness against perturbation [24] |

| Betweenness Centrality | Number of shortest paths passing through a node | Identifies bottleneck genes connecting network modules | Disease-related genes show specific betweenness ranges [24] |
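
To make the first two rows of the table concrete, the sketch below computes degree and average nearest neighbor degree (Knn) on a small network treated as undirected. The edge list and the function name `degree_and_knn` are assumptions for illustration; page rank and betweenness are left to dedicated graph libraries.

```python
def degree_and_knn(edges):
    """Degree and average nearest neighbor degree (Knn) for an undirected edge list."""
    neighbors = {}
    for u, v in edges:
        neighbors.setdefault(u, set()).add(v)
        neighbors.setdefault(v, set()).add(u)
    degree = {node: len(nb) for node, nb in neighbors.items()}
    # Knn of a node is the mean degree of its direct neighbors.
    knn = {node: sum(degree[m] for m in nb) / len(nb)
           for node, nb in neighbors.items()}
    return degree, knn

# Toy network (assumed): TF1 regulates three genes, TF2 shares one target.
edges = [("TF1", "g1"), ("TF1", "g2"), ("TF1", "g3"), ("TF2", "g3")]
degree, knn = degree_and_knn(edges)
print(degree)
print(knn)
```

In this toy network the hub TF1 has the highest degree but a low Knn, because most of its neighbors are targets with a single regulator, while those targets have a high Knn because their only neighbor is the hub.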

Experimental Protocol for Topological Network Inference

Protocol: Reconstructing Topological Networks from Microarray Data

Data Preprocessing: Begin with normalized gene expression data from time-series or perturbation microarray experiments. Perform quality control, background correction, and normalization using established methods [28] [29].

Differential Expression Analysis: Identify significantly differentially expressed genes using appropriate statistical methods. The Significance Analysis of Microarrays (SAM) method is recommended; it performs gene-specific t-tests and computes, for each gene, a statistic that measures the strength of the relationship between gene expression and a response variable [28].

Network Inference: Calculate correlation coefficients (Pearson, Spearman) or mutual information scores between all gene pairs to establish potential regulatory relationships. Construct an adjacency matrix where elements represent the strength of regulatory interactions [27].

Threshold Determination: Apply significance thresholds to eliminate spurious connections. Use false discovery rate (FDR) correction for multiple testing [28].

Topological Analysis: Calculate key network metrics using computational tools. For small to medium networks, use specialized packages in R or Python; for large-scale networks, employ distributed computing resources [24].

Biological Validation: Compare inferred network topology with known pathways from databases such as KEGG. Perform experimental validation through chromatin immunoprecipitation (ChIP) or gene knockout studies [30].

Control Logic Models

Conceptual Foundation and Applications

Control logic models describe the combinatorial effects of regulatory signals that determine gene expression outcomes, answering questions about which transcription factor combinations activate or repress target genes [27]. These models move beyond simple connectivity to capture the integration of multiple inputs that genes receive from various transcription factors. A longstanding question in this field is how these tangled interactions jointly contribute to cellular decision-making [25]. Regulatory logic can be represented through various formalisms including Boolean networks, where gene states are binary (ON/OFF) and regulations follow logical rules (AND, OR, NOT) [7] [25]. In the E. coli lac operon system, researchers observed that a single-base change can shift the regulatory logic significantly, suggesting that the logic functions of GRNs can adapt to the functional demands of the organism [25].

Common Logic Motifs and Their Functional Roles

Table 2: Common Regulatory Logic Motifs in GRNs

| Logic Motif | Formal Representation | Biological Function | Example System |

|---|---|---|---|

| AND Gate | Gene = TF1 AND TF2 | Combinatorial control requiring multiple factors | Enhanceosome assembly [25] |

| OR Gate | Gene = TF1 OR TF2 | Redundant regulation; multiple activation paths | Stress response genes [25] |

| NOT Gate | Gene = NOT TF1 | Repression; silencing of gene expression | Cell cycle inhibitors [25] |

| CIS (Cross-Inhibition with Self-activation) | X = X AND NOT Y; Y = Y AND NOT X | Mutual exclusion; binary fate decisions | Gata1-PU.1 in hematopoiesis [25] |
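
The CIS row of the table can be checked directly by enumerating the Boolean state space. The sketch below applies the two update rules synchronously (synchronous updating is an assumption; the table does not specify an update scheme) and lists the fixed points.

```python
from itertools import product

def cis_step(state):
    """One synchronous update of X = X AND NOT Y; Y = Y AND NOT X."""
    x, y = state
    return (x and not y, y and not x)

# Enumerate all four Boolean states and keep those that map to themselves.
all_states = list(product([False, True], repeat=2))
fixed_points = [s for s in all_states if cis_step(s) == s]

for s in all_states:
    print(s, "->", cis_step(s))
print("fixed points:", fixed_points)
```

Under these rules the two mutually exclusive states (X on, Y off; X off, Y on) are stable, which corresponds to the binary fate decision listed in the table. The state with both genes on collapses to both off in one step, and both off is also a fixed point.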

Experimental Protocol for Control Logic Modeling

Protocol: Inferring Logic Rules from Expression Data

Data Collection: Obtain time-series gene expression data under multiple perturbation conditions (knockdown, overexpression of transcription factors). Ensure sufficient replicates to account for biological variability.

Network Discretization: Convert continuous expression values to discrete states (0/1 for OFF/ON) using appropriate thresholding methods. Z-score based thresholds or mixture models are commonly employed.

Candidate Logic Rule Generation: For each target gene, identify potential regulator combinations using prior knowledge or correlation analysis. The number of possible regulators per gene should be limited to avoid combinatorial explosion.

Logic Rule Optimization: Evaluate candidate logic rules against experimental data using fitness measures. For small networks (3-5 genes), exhaustive search is feasible; for larger networks, use evolutionary algorithms or Bayesian approaches.

Model Validation: Test predicted logic rules against independent perturbation data not used in model training. Perform experimental validation through reporter assays with mutated cis-regulatory elements.

Biological Interpretation: Analyze the distribution of logic motifs in the context of biological functions. Identify key motifs such as the Cross-Inhibition with Self-activation (CIS) network commonly found in cell fate decisions [25].
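
The discretization and exhaustive rule search steps of this protocol can be sketched for a single target gene with two candidate regulators. The z-score threshold of zero, the restriction to two inputs, the fitness measure (number of matching samples), and the toy data are assumptions for illustration.

```python
from itertools import product

def discretize(values, z_threshold=0.0):
    """Binarize expression values: 1 (ON) if the z-score exceeds the threshold."""
    mean = sum(values) / len(values)
    sd = (sum((v - mean) ** 2 for v in values) / len(values)) ** 0.5 or 1.0
    return [1 if (v - mean) / sd > z_threshold else 0 for v in values]

def best_two_input_rule(reg1, reg2, target):
    """Exhaustively score all 16 Boolean functions of two regulators."""
    input_states = list(product([0, 1], repeat=2))
    best_matches, best_table = -1, None
    for outputs in product([0, 1], repeat=4):
        table = dict(zip(input_states, outputs))  # truth table of one candidate rule
        matches = sum(table[(a, b)] == t for a, b, t in zip(reg1, reg2, target))
        if matches > best_matches:
            best_matches, best_table = matches, table
    return best_matches, best_table

# Toy binary data (assumed): the target is ON only when both regulators are ON.
tf1 = [0, 0, 1, 1, 0, 1, 1, 0]
tf2 = [0, 1, 0, 1, 1, 1, 0, 0]
target = [0, 0, 0, 1, 0, 1, 0, 0]

matches, rule = best_two_input_rule(tf1, tf2, target)
print(matches, rule)
print(discretize([1.0, 1.2, 5.0, 5.3]))
```

The recovered truth table is that of an AND gate. With n candidate regulators there are 2^(2^n) Boolean functions to score, which is why the protocol limits the number of regulators per gene and falls back to evolutionary or Bayesian search for larger networks.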

Dynamic Models

Conceptual Foundation and Applications

Dynamic models simulate the real-time behavior of gene regulatory networks, enabling prediction of system responses to various environmental changes, external stimuli, or internal perturbations [27]. These models capture the temporal evolution of gene expression states, making them particularly valuable for understanding cellular processes that unfold over time, such as cell cycle regulation, circadian rhythms, and developmental processes [30]. Dynamic models can be implemented using various mathematical frameworks, including ordinary differential equations (ODEs), stochastic models, and rule-based systems [7]. A particularly promising nonlinear model is the S-system, which possesses a rich structure capable of capturing various dynamics of complex regulation [7]. However, the large number of parameters required for S-systems has limited their application to small-scale networks, leading to the development of alternative approaches such as linear time-variant models that can handle nonlinear relationships while maintaining computational efficiency [7].

Mathematical Frameworks for Dynamic Modeling

Table 3: Mathematical Frameworks for Dynamic GRN Modeling

| Model Type | Mathematical Formulation | Advantages | Limitations |

|---|---|---|---|

| Linear Time-Variant | dX/dt = A(t)X + B(t)U | Captures nonlinearity via time-variance; computationally efficient | Limited biological interpretability of time-varying parameters [7] |

| S-system (Power Law) | dXᵢ/dt = αᵢ ΠXⱼ^{gᵢⱼ} - βᵢ ΠXⱼ^{hᵢⱼ} | Rich structure for complex dynamics; canonical nonlinear form | 2n(n+1) parameters; curse of dimensionality [7] |

| Dynamic Bayesian Networks | P(X(t+1) \| X(t), X(t-1), ...) | Handles uncertainty; incorporates prior knowledge | Computational complexity with large networks [30] |

| Boolean Dynamical Models | Xᵢ(t+1) = Fᵢ(X₁(t),...,Xₙ(t)) | Simple implementation; minimal parameters | Oversimplification of continuous dynamics [7] |
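
The S-system row of the table can be illustrated with a forward Euler simulation of a two-gene system. The parameter values, step size, and function names are assumptions chosen so that the steady state is easy to verify by hand; this is a forward simulation only, not a parameter estimation procedure.

```python
import math

def s_system_rhs(x, alpha, beta, g, h):
    """dX_i/dt = alpha_i * prod_j X_j^g_ij - beta_i * prod_j X_j^h_ij."""
    n = len(x)
    rates = []
    for i in range(n):
        production = alpha[i] * math.prod(x[j] ** g[i][j] for j in range(n))
        degradation = beta[i] * math.prod(x[j] ** h[i][j] for j in range(n))
        rates.append(production - degradation)
    return rates

def simulate(x0, alpha, beta, g, h, dt=0.01, steps=2000):
    """Forward Euler integration; concentrations are kept strictly positive."""
    x = list(x0)
    for _ in range(steps):
        dx = s_system_rhs(x, alpha, beta, g, h)
        x = [max(xi + dt * di, 1e-9) for xi, di in zip(x, dx)]
    return x

# Toy parameters (assumed): gene 1 is produced at a constant rate,
# gene 2 is activated by gene 1 with kinetic order 0.5; both decay linearly.
alpha, beta = [2.0, 1.0], [1.0, 1.0]
g = [[0.0, 0.0], [0.5, 0.0]]
h = [[1.0, 0.0], [0.0, 1.0]]

steady_state = simulate([1.0, 1.0], alpha, beta, g, h)
n = len(alpha)
n_params = 2 * n * (n + 1)  # alpha, beta, and the two n-by-n kinetic order matrices
print(steady_state, n_params)
```

Setting the right-hand side to zero gives X1 = 2 and X2 = sqrt(2), which the simulation approaches. Even this two-gene system carries 12 parameters, and the count grows quadratically with network size, as noted in the Limitations column.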

Experimental Protocol for Dynamic Model Construction

Protocol: Developing Dynamic Models from Time-Series Data

Experimental Design: Collect high-resolution time-series microarray data with sufficient temporal points to capture dynamics of interest. For cell cycle studies, sample at least 8-12 time points per cycle [30].

Data Preprocessing: Apply normalization specific to time-series data. Use methods such as moving average smoothing to reduce noise while preserving dynamic features [30] [28].

Model Selection: Choose appropriate mathematical framework based on network size, data quality, and biological questions. For large networks (>100 genes), consider linear time-variant models; for small networks (<10 genes), S-systems or ODE models are feasible [7].

Parameter Estimation: Optimize model parameters to fit experimental data. For nonlinear models, use evolutionary algorithms such as Self-Adaptive Differential Evolution [7]. For Bayesian approaches, employ variational Bayesian structural expectation maximization (VBSEM) algorithms [30].

Model Validation: Assess model performance using cross-validation and independent data sets. Evaluate predictive accuracy for unseen time points or perturbation conditions [30] [7].

Dynamic Analysis: Simulate network behavior under various conditions. Perform stability analysis, identify attractors, and analyze transient dynamics in the context of biological function [25].
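
The moving average smoothing mentioned in the preprocessing step of this protocol can be sketched as a centered window that shrinks at the ends of the series. The window size of three time points and the function name are assumptions; the appropriate window depends on the sampling density of the time course.

```python
def moving_average(series, window=3):
    """Centered moving average; the window is truncated at the series boundaries."""
    half = window // 2
    smoothed = []
    for t in range(len(series)):
        lo, hi = max(0, t - half), min(len(series), t + half + 1)
        smoothed.append(sum(series[lo:hi]) / (hi - lo))
    return smoothed

# Toy expression time course for one gene (assumed values).
raw = [1.0, 3.0, 2.0, 6.0, 4.0]
smoothed = moving_average(raw)
print(smoothed)
```

A wider window suppresses more noise but also flattens genuine transients, so with only 8-12 time points per cycle a small window is usually the safer choice.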

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Research Reagents and Computational Tools for GRN Modeling

| Reagent/Tool Category | Specific Examples | Function/Application | Implementation Notes |
|---|---|---|---|
| Microarray Platforms | Affymetrix GeneChip, Agilent DNA Microarrays | Genome-wide expression profiling | Follow MAQC protocols for standardization [28] |
| Normalization Software | RMA, MAS5, FARMS | Background correction and data normalization | FARMS outperforms others in sensitivity/specificity [28] |
| Differential Expression Tools | SAM (Significance Analysis of Microarrays) | Identifying statistically significant expression changes | Uses gene-specific t-tests with permutation analysis [28] |
| Clustering Algorithms | Hierarchical Clustering, K-means, SOM | Grouping genes with similar expression patterns | K-means generally outperforms hierarchical methods [26] |
| Network Inference Software | VBSEM, Self-Adaptive DE | Parameter estimation for dynamic models | VBSEM provides posterior distribution of parameters [30] [7] |
| Pathway Analysis Tools | Gene Set Enrichment Analysis (GSEA) | Linking expression changes to biological pathways | Identifies enriched gene sets between biological states [28] [26] |
| Validation Databases | KEGG, Biocarta, Gene Ontology | Comparing inferred networks with known pathways | KEGG used as gold standard for network validation [30] |

Linear Time-Variant Models and Evolutionary Algorithms for Network Learning

Reverse engineering gene regulatory networks (GRNs) from microarray data is a fundamental challenge in computational biology, crucial for understanding cellular processes, disease mechanisms, and drug development [10]. This process involves inferring the unknown network of causal interactions between genes from observed gene expression data. The complexity of biological systems, combined with typically limited sample sizes and noisy data, makes this a computationally intensive inverse problem [10] [31].

Among various modeling frameworks, Linear Time-Variant (LTV) models offer a powerful compromise: they retain the simplicity and computational tractability of linear models while being capable of capturing a richer range of dynamic responses, including some nonlinear behaviors present in real biological systems [10]. When combined with evolutionary algorithms (EAs) as robust optimization engines, this approach provides an effective method for elucidating GRN topology and dynamics from time-series expression data.

Key Concepts and Theoretical Framework

Linear Time-Variant Models for GRN Inference

Traditional linear time-invariant models identify a fixed set of parameters for a predetermined model structure. However, LTV models introduce time-dependent parameters, enabling them to approximate nonlinear system behavior through a linear formalism [10]. In the context of GRNs, this allows the model to adapt to different regulatory regimes that might occur under varying cellular conditions.

For a network of n genes, the system dynamics can be represented as:

Ẋ(t) = A(t)X(t)

Where:

- Ẋ(t) is the vector of time derivatives of gene expression levels at time t

- X(t) is the vector of gene expression concentrations at time t

- A(t) is the time-varying connectivity matrix encoding regulatory relationships
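To make the notation concrete, the following minimal sketch integrates Ẋ(t) = A(t)X(t) with the forward Euler method for a hypothetical two-gene network. The form of A(t), the initial state, and the step size are assumptions chosen for illustration, not values from the cited work. Gene 0 decays at a constant rate, while its activating effect on gene 1 fades over time, which is the kind of regime change a constant matrix cannot express.

```python
import numpy as np

def simulate_ltv(A_of_t, x0, t_grid):
    """Integrate dX/dt = A(t) X(t) with the forward Euler method."""
    x = np.asarray(x0, dtype=float)
    trajectory = [x.copy()]
    for t0, t1 in zip(t_grid[:-1], t_grid[1:]):
        x = x + (t1 - t0) * A_of_t(t0) @ x
        trajectory.append(x.copy())
    return np.array(trajectory)          # shape: (time points, genes)

def A_of_t(t):
    # Hypothetical network: row i, column j is the effect of gene j on gene i.
    return np.array([[-0.5, 0.0],
                     [np.exp(-t), -0.3]])  # activation of gene 1 by gene 0 fades with time

t_grid = np.linspace(0.0, 10.0, 1001)
X = simulate_ltv(A_of_t, x0=[1.0, 0.0], t_grid=t_grid)
print(X.shape)
```

A fixed-step Euler scheme keeps the example short; a stiff or fast-changing A(t) would call for an adaptive ODE solver instead.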

The primary advantage of this approach is its reduced parameter space compared to fully nonlinear models like S-systems, which require estimation of 2n(n+1) parameters [10]. This efficiency makes LTV models particularly suitable for medium to large-scale network inference.
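The arithmetic behind this comparison can be checked with a short sketch. The S-system count follows the 2n(n+1) formula quoted above. The n × n figure is simply the number of entries in one connectivity matrix, since the total for an LTV model depends on how A(t) is parameterized over time, which is not specified here. The function names are illustrative.

```python
def s_system_parameter_count(n: int) -> int:
    """2n(n+1): n*n kinetic orders plus n rate constants, for production and for degradation."""
    return 2 * n * (n + 1)

def connectivity_matrix_entries(n: int) -> int:
    """Number of entries in a single n x n connectivity matrix A."""
    return n * n

for n in (5, 10, 100):
    print(n, s_system_parameter_count(n), connectivity_matrix_entries(n))
# prints:
# 5 60 25
# 10 220 100
# 100 20200 10000
```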

Evolutionary Algorithms as Optimization Engines

Evolutionary Algorithms are population-based stochastic optimization techniques inspired by biological evolution. They are particularly well-suited for GRN reverse engineering due to their ability to:

- Handle high-dimensional, multimodal search spaces common in network inference

- Avoid local optima through mechanisms like mutation and recombination

- Work effectively with noisy data and incomplete information [31] [32]

In the EA framework, candidate network structures are encoded as chromosomes, and a fitness function (typically the Bayesian Information Criterion or a similar scoring metric) evaluates how well each candidate explains the observed expression data while penalizing excessive complexity [31].

Table 1: Comparison of Modeling Approaches for GRN Inference

| Model Type | Key Characteristics | Parameter Count | Ability to Capture Nonlinearity | Computational Complexity |
|---|---|---|---|---|
| Boolean Networks | Discrete, logical interactions | Low | Limited | Low |
| Bayesian Networks | Probabilistic, acyclic graphs | Moderate | Limited | Moderate |
| S-system | Nonlinear differential equations | High (2n(n+1)) | Excellent | Very High |
| Linear Time-Invariant | Linear differential equations | Moderate | Poor | Low-Moderate |
| Linear Time-Variant | Time-varying linear parameters | Moderate | Good | Moderate |

Application Notes: LTV Models with Evolutionary Optimization

Experimental Validation and Performance

The combination of LTV models with evolutionary optimization has been validated across multiple experimental scenarios, demonstrating robust performance in both synthetic and real biological networks [10].

For synthetic network inference, the approach successfully reconstructed network topology and regulatory parameters with high accuracy from both noise-free and noisy time-series data (5% and 10% noise levels) [10]. This demonstrates the method's resilience to experimental noise commonly found in microarray data.

When applied to simulated cAMP oscillation expression data for Dictyostelium discoideum, the method identified the correct regulatory relationships in considerably less computation time than alternative approaches [10]. This efficiency makes it particularly suitable for larger network reconstruction.

For real biological systems, analysis of the SOS DNA repair network in Escherichia coli recovered more correct and biologically plausible regulatory interactions than several existing methods [10], including key interactions known from the experimental literature.

Implementation Considerations

Successful implementation requires careful consideration of several factors:

- Data Requirements: Time-series gene expression data with sufficient temporal resolution to capture network dynamics

- Parameter Tuning: EA parameters such as population size, mutation rates, and selection pressure must be optimized for specific applications

- Model Validation: Robust validation through cross-validation, bootstrap analysis, or comparison with known biological networks is essential

- Computational Infrastructure: For large networks, distributed computing approaches may be necessary [32]

Table 2: Performance Metrics for LTV-EA Approach in Network Reconstruction

| Test Case | Network Size | Data Conditions | Reconstruction Accuracy | Computational Time |
|---|---|---|---|---|
| Synthetic Network | 5-10 genes | Noise-free | High | Low |
| Synthetic Network | 5-10 genes | 5-10% noise | Moderate-High | Low |
| cAMP Oscillation (D. discoideum) | 6 genes | Simulated data | High | Low |
| SOS DNA Repair (E. coli) | 8 genes | Real microarray data | Moderate-High | Moderate |

Experimental Protocols

Protocol 1: Reverse Engineering GRNs Using LTV Models and Self-Adaptive Differential Evolution

This protocol details the complete workflow for inferring gene regulatory networks from time-series microarray data using Linear Time-Variant models with Self-Adaptive Differential Evolution as the optimization engine.

Research Reagent Solutions and Computational Tools

Table 3: Essential Research Reagents and Computational Tools

| Item | Function/Specification | Application Context |
|---|---|---|
| Time-Series Microarray Data | mRNA expression measurements across multiple time points | Primary input data for network inference |
| Self-Adaptive Differential Evolution Algorithm | Evolutionary optimization with adaptive parameter control | Parameter estimation for LTV model |
| Linear Time-Variant Model Framework | Mathematical representation of gene regulatory dynamics | Core modeling formalism |
| Bayesian Information Criterion (BIC) | Scoring metric balancing model fit and complexity | Fitness evaluation in evolutionary algorithm |
| Computational Grid Infrastructure | Distributed computing resources (e.g., Condor, JavaSpaces) | Handling computational demands of large networks [32] |

Step-by-Step Procedure

Data Preparation and Preprocessing

- Collect time-series gene expression data from microarray experiments

- Perform quality control, normalization, and missing value imputation

- For noisy data, apply appropriate smoothing filters while preserving underlying dynamics
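The imputation and smoothing steps can be sketched for a single gene's time series as follows. Linear interpolation and a centered moving average are assumptions chosen for brevity, since the protocol does not prescribe specific methods, and the expression values are hypothetical.

```python
import numpy as np

def impute_linear(series: np.ndarray, times: np.ndarray) -> np.ndarray:
    """Fill NaNs in one gene's time series by linear interpolation over time.

    Missing values beyond the last observed point take the last observed value.
    """
    missing = np.isnan(series)
    filled = series.copy()
    filled[missing] = np.interp(times[missing], times[~missing], series[~missing])
    return filled

def moving_average(series: np.ndarray, window: int = 3) -> np.ndarray:
    """Centered moving average (odd window); edges are padded with the end values."""
    pad = window // 2
    padded = np.pad(series, pad, mode="edge")
    return np.convolve(padded, np.ones(window) / window, mode="valid")

times = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
expr = np.array([1.0, np.nan, 3.0, 2.0, np.nan])   # hypothetical values for one gene
filled = impute_linear(expr, times)
smooth = moving_average(filled, window=3)
print(filled)
print(smooth)
```

A wide smoothing window can flatten genuine transients, so the window should stay small relative to the sampling interval of the dynamics of interest.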

Model Initialization

- Define gene set and potential regulatory relationships based on biological knowledge

- Initialize LTV model structure with random parameter values

- Set up Self-Adaptive Differential Evolution parameters:

  - Population size: 50-100 candidate networks

  - Mutation and recombination rates: initially set to default values (self-adaptation will adjust these)

  - Stopping criterion: maximum generations or convergence threshold

Evolutionary Optimization Loop

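- Evaluation: Calculate fitness for each candidate network using the BIC score [31]:

  $$\mathrm{BIC}(G) = \sum_{i} \left[ -2 \sum_{k} \sum_{l} N_{ikl} \log \theta_{ikl} + K_{G_i} \log s \right]$$

  where $N_{ikl}$ is the co-occurrence count in the data, $\theta_{ikl}$ is the corresponding parameter estimate, $K_{G_i}$ is the parameter count for node $i$, and $s$ is the number of samples.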

- Selection: Apply deterministic crowding or tournament selection to maintain population diversity [31]

- Recombination: Generate offspring networks through crossover operations

- Mutation: Introduce structural changes to networks through edge additions/deletions

- Parameter Self-Adaptation: Automatically adjust DE parameters based on performance
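The loop above can be illustrated with a compact self-adaptive differential evolution sketch. It uses the jDE scheme, in which each individual carries its own scale factor F and crossover rate CR that are occasionally resampled. This is a substituted, well-known variant and not necessarily the algorithm of the cited work. To stay short, it estimates the entries of a constant 2 × 2 matrix from synthetic data and uses mean squared error as fitness. Structural edge mutations and the BIC score are omitted, and all numeric settings are assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)

def simulate(A, x0, steps, dt=0.1):
    """Forward-Euler trajectory of dX/dt = A X."""
    X = [np.asarray(x0, dtype=float)]
    for _ in range(steps):
        X.append(X[-1] + dt * A @ X[-1])
    return np.array(X)

# Synthetic "observed" data from a hypothetical 2-gene network.
A_true = np.array([[-1.0, 0.5], [0.8, -0.6]])
x0 = np.array([1.0, 0.5])
data = simulate(A_true, x0, steps=30)

def fitness(theta):
    """Mean squared error between simulated and observed trajectories (lower is better)."""
    return float(np.mean((simulate(theta.reshape(2, 2), x0, 30) - data) ** 2))

def jde(fitness, dim, pop_size=30, generations=200, bounds=(-2.0, 2.0)):
    lo, hi = bounds
    pop = rng.uniform(lo, hi, size=(pop_size, dim))
    F = np.full(pop_size, 0.5)     # per-individual scale factor
    CR = np.full(pop_size, 0.9)    # per-individual crossover rate
    cost = np.array([fitness(p) for p in pop])
    history = [cost.min()]
    for _ in range(generations):
        for i in range(pop_size):
            # Self-adaptation: with probability 0.1, resample F and CR for this trial.
            Fi = 0.1 + 0.9 * rng.random() if rng.random() < 0.1 else F[i]
            CRi = rng.random() if rng.random() < 0.1 else CR[i]
            # DE/rand/1 mutation from three distinct individuals other than i.
            others = [j for j in range(pop_size) if j != i]
            a, b, c = rng.choice(others, 3, replace=False)
            mutant = np.clip(pop[a] + Fi * (pop[b] - pop[c]), lo, hi)
            # Binomial crossover with one guaranteed mutant component.
            mask = rng.random(dim) < CRi
            mask[rng.integers(dim)] = True
            trial = np.where(mask, mutant, pop[i])
            trial_cost = fitness(trial)
            # Greedy selection: control parameters survive only with a successful trial.
            if trial_cost <= cost[i]:
                pop[i], cost[i], F[i], CR[i] = trial, trial_cost, Fi, CRi
        history.append(cost.min())
    return pop[int(np.argmin(cost))], history

best_theta, history = jde(fitness, dim=4)
A_est = best_theta.reshape(2, 2)
print(A_est)
print(history[0], history[-1])
```

Because selection is greedy, the best cost in `history` never increases from one generation to the next, which gives a simple convergence check for the stopping criterion.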

Model Validation

- Perform cross-validation using held-out temporal data points

- Compare inferred network with known biological pathways if available

- Assess robustness through bootstrap resampling
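The held-out time point check can be sketched as follows. As a stand-in for the full LTV model, the example fits a discrete-time linear map X[t+1] ≈ M X[t] by least squares on the early time points and forecasts the final ones. The surrogate model, the data, and the function names are assumptions for illustration. Bootstrap resampling is not shown.

```python
import numpy as np

def fit_discrete_linear(X: np.ndarray) -> np.ndarray:
    """Least-squares estimate of M in X[t+1] ≈ M @ X[t] from a (time, genes) array."""
    # Solve X[:-1] @ M.T ≈ X[1:] for M.T.
    M_T, *_ = np.linalg.lstsq(X[:-1], X[1:], rcond=None)
    return M_T.T

def heldout_error(X: np.ndarray, n_test: int) -> float:
    """Fit on the early time points, then forecast the last n_test points."""
    train, test = X[:-n_test], X[-n_test:]
    M = fit_discrete_linear(train)
    x, preds = train[-1], []
    for _ in range(n_test):
        x = M @ x
        preds.append(x)
    return float(np.mean((np.array(preds) - test) ** 2))

# Hypothetical noise-free data from a known 2-gene discrete-time system.
M_true = np.array([[0.9, 0.1], [-0.2, 0.8]])
X = [np.array([1.0, 0.5])]
for _ in range(19):
    X.append(M_true @ X[-1])
X = np.array(X)

err = heldout_error(X, n_test=5)
print(err)
```

Holding out the final time points, rather than random ones, respects the temporal order of the data and tests forecasting instead of interpolation.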

Network Interpretation

- Extract significant regulatory interactions from optimized LTV model

- Annotate network edges with direction and strength of regulation

- Integrate with additional biological knowledge for functional interpretation
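Edge extraction and annotation can be sketched as a simple thresholding of a connectivity matrix. The matrix, the gene labels, the threshold, and the convention that entry (i, j) is the effect of gene j on gene i are all assumptions for illustration. For a time-varying A(t), a summary such as a time average would have to be chosen first.

```python
import numpy as np

def extract_edges(A, genes, threshold):
    """List (regulator, target, sign, strength) for entries with |A[i, j]| >= threshold.

    Convention assumed here: A[i, j] is the effect of gene j on gene i.
    Self-regulation (the diagonal) is skipped.
    """
    edges = []
    for i, target in enumerate(genes):
        for j, regulator in enumerate(genes):
            w = float(A[i, j])
            if i != j and abs(w) >= threshold:
                sign = "activation" if w > 0 else "repression"
                edges.append((regulator, target, sign, abs(w)))
    return sorted(edges, key=lambda e: -e[3])   # strongest interactions first

genes = ["g1", "g2", "g3"]                      # hypothetical gene labels
A = np.array([[-1.0, 0.8, 0.0],
              [-0.6, -1.0, 0.05],
              [0.0, 0.3, -1.0]])
edges = extract_edges(A, genes, threshold=0.2)
for edge in edges:
    print(edge)
```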

Protocol 2: Bayesian Network Inference Using Evolutionary Algorithms with Niching

For comparative analysis, this protocol describes an alternative approach using Bayesian Networks with enhanced evolutionary strategies for situations where static rather than time-series data are available.

Methodological Steps

Data Discretization

- Convert continuous gene expression values to discrete states (e.g., low, medium, high)

- Ensure sufficient samples per discrete state for reliable probability estimation
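A minimal discretization sketch, assuming per-gene tertile cut points (one of several reasonable choices, not prescribed by the protocol). Cutting at quantiles gives roughly equal counts per state, which addresses the sample-sufficiency concern directly. The data are randomly generated placeholders.

```python
import numpy as np

def discretize_tertiles(expr: np.ndarray) -> np.ndarray:
    """Map each gene's values (rows = samples, columns = genes) to 0/1/2 = low/medium/high."""
    cuts = np.quantile(expr, [1 / 3, 2 / 3], axis=0)   # shape (2, genes)
    return (expr > cuts[0]).astype(int) + (expr > cuts[1]).astype(int)

rng = np.random.default_rng(0)
expr = rng.normal(size=(30, 4))        # hypothetical data: 30 samples, 4 genes
states = discretize_tertiles(expr)

# Samples per state for each gene, to check that no state is sparsely populated.
counts = np.array([np.bincount(states[:, g], minlength=3) for g in range(states.shape[1])])
print(counts)
```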

Evolutionary Algorithm with Niching

- Implement steady-state genetic algorithm with population of candidate BN structures

- Apply deterministic crowding niching strategy to maintain population diversity

- Use specialized recombination operators that preserve graph structural properties
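Deterministic crowding can be sketched on plain bitstrings, which could stand for flattened adjacency matrices. The toy objective (count of ones), the uniform crossover, and the mutation rate are assumptions. A real implementation would score BN structures and use operators that keep the graph acyclic. The key idea shown is that each child competes only against the parent it most resembles, so distinct niches are not overwritten by a single dominant solution.

```python
import numpy as np

rng = np.random.default_rng(1)

def hamming(a, b):
    return int(np.sum(a != b))

def deterministic_crowding_step(pop, fitness, mutation_rate=0.05):
    """One generation of deterministic crowding (fitness is maximized)."""
    order = rng.permutation(len(pop))            # random pairing of parents
    new_pop = pop.copy()
    for p1, p2 in zip(order[0::2], order[1::2]):
        # Uniform crossover followed by bit-flip mutation.
        mask = rng.random(pop.shape[1]) < 0.5
        c1 = np.where(mask, pop[p1], pop[p2])
        c2 = np.where(mask, pop[p2], pop[p1])
        for child in (c1, c2):
            flip = rng.random(child.size) < mutation_rate
            child[flip] = 1 - child[flip]
        # Pair each child with the more similar parent.
        same = hamming(c1, pop[p1]) + hamming(c2, pop[p2])
        cross = hamming(c1, pop[p2]) + hamming(c2, pop[p1])
        if same > cross:
            c1, c2 = c2, c1
        # A child replaces its paired parent only if it is at least as fit.
        for parent, child in ((p1, c1), (p2, c2)):
            if fitness(child) >= fitness(pop[parent]):
                new_pop[parent] = child
    return new_pop

def fitness(bits):
    return int(bits.sum())                       # toy objective, not a BN score

pop = rng.integers(0, 2, size=(20, 16))          # 20 candidates, 16 bits each
start = np.array([fitness(ind) for ind in pop])
for _ in range(20):
    pop = deterministic_crowding_step(pop, fitness)
end = np.array([fitness(ind) for ind in pop])
print(start.max(), end.max())
```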

Structure Learning and Evaluation

- Score candidate structures using Bayesian Information Criterion

- Enforce directed acyclic graph constraint inherent to BN formalism

- Estimate conditional probability tables for each parental configuration
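Scoring and conditional probability estimation can be sketched for a single node. The function below computes one node's BIC contribution as -2 Σ N_kl log(θ_kl) + K log(s), where N_kl counts how often the child takes state l under parent configuration k, θ_kl = N_kl / N_k is the maximum-likelihood table entry, K is the number of free parameters, and s is the number of samples. Under this sign convention, lower scores are better. The data and function name are hypothetical, and the acyclicity check is not shown.

```python
import numpy as np
from itertools import product

def node_bic(data, child, parents, n_states=3):
    """BIC contribution of one node given its parent set (lower is better)."""
    s = data.shape[0]
    loglik = 0.0
    for config in product(range(n_states), repeat=len(parents)):
        # Rows where the parents take this configuration (all rows if no parents).
        rows = np.all(data[:, parents] == config, axis=1) if parents else np.ones(s, dtype=bool)
        counts = np.bincount(data[rows, child], minlength=n_states)   # N_kl
        total = counts.sum()                                          # N_k
        nz = counts > 0
        loglik += np.sum(counts[nz] * np.log(counts[nz] / total))     # theta_kl = N_kl / N_k
    K = (n_states - 1) * n_states ** len(parents)   # free parameters in the probability table
    return float(-2.0 * loglik + K * np.log(s))

rng = np.random.default_rng(0)
g0 = rng.integers(0, 3, size=200)
g1 = g0.copy()                        # gene 1 is fully determined by gene 0
g2 = rng.integers(0, 3, size=200)     # an unrelated gene
data = np.column_stack([g0, g1, g2])

bic_with_parent = node_bic(data, child=1, parents=[0])
bic_without = node_bic(data, child=1, parents=[])
print(bic_with_parent, bic_without)
```

In this toy case the true parent yields a much lower score, because the gain in fit outweighs the penalty for the larger probability table.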

Visual Representation of Workflows

[Figure: LTV Model Reverse Engineering Workflow]

[Figure: Gene Regulatory Network Inference Methodology Comparison]

Discussion and Future Perspectives

The integration of Linear Time-Variant models with Evolutionary Algorithms represents a promising approach for balancing biological realism with computational feasibility in GRN reverse engineering. The LTV framework provides sufficient flexibility to capture essential dynamics of gene regulation while avoiding the parameter explosion of fully nonlinear models [10].

Key advantages of this approach include:

- Computational Efficiency: Significantly faster computation compared to nonlinear models, enabling application to larger networks

- Noise Tolerance: Demonstrated robustness to experimental noise common in microarray data

- Biological Relevance: Successful identification of known regulatory relationships in real biological systems

Future developments in this area will likely focus on:

- Integration of multi-omics data sources beyond gene expression

- Incorporation of prior biological knowledge to constrain network search space

- Development of hybrid approaches combining evolutionary computation with machine learning techniques [33]

- Enhanced visualization methods for interpreting complex network structures [34] [35]

As microarray technologies continue to evolve, producing higher quality temporal data at reduced cost, the LTV-EA framework is well-positioned to contribute significantly to our understanding of gene regulatory networks and their implications for drug development and therapeutic interventions.

Reverse engineering of Gene Regulatory Networks (GRNs) is a fundamental challenge in computational biology, aiming to reconstruct the complex web of interactions that control cellular processes from high-throughput data [10]. Microarray technology, which allows for the parallel measurement of thousands of gene expression levels, has been a primary data source for this task [10] [36]. However, the statistical reconstruction of these networks is fraught with challenges, primarily due to the high-dimensional nature of the data, where the number of genes (variables) vastly exceeds the number of available samples (observations) [13] [37]. This "large-p-small-n" problem is often compounded by the non-Gaussian characteristics of genomic data, including multi-modality, heavy-tailed distributions, and complex, non-linear dependency structures between genes [38].