Rare Variant Association Studies: Unlocking Complex Trait Heritability from Methodology to Clinical Translation


Abstract

This article provides a comprehensive overview of Rare Variant Association Studies (RVAS) for complex traits, addressing a critical need for researchers and drug development professionals. It explores the foundational role of rare variants in explaining missing heritability, contrasting them with common variants from Genome-Wide Association Studies (GWAS). The content delves into evolving methodological approaches, including burden and kernel tests, and addresses key analytical challenges like population stratification and power limitations. Finally, it examines validation strategies and the growing convergence of common and rare variant findings, highlighting how advanced sequencing, imputation, and AI are accelerating the translation of RVAS discoveries into therapeutic targets and precise clinical insights.

The Foundation of Rare Variants: From Missing Heritability to Biological Significance in Complex Traits

Rare genetic variants, characterized by their low frequency in a population, have emerged as essential components in the study of complex disease genetics, offering unique insights beyond what can be gleaned from common variants alone [1]. The biology of rare variants underscores their significance, as they can exert profound effects on phenotypic variation and disease susceptibility [1]. Next-generation sequencing (NGS) technologies now make it feasible to survey the full spectrum of genetic variation in coding regions or the entire genome, enabling researchers to systematically investigate the role of rare variants in complex traits [2]. Unlike common variants, which typically have modest effect sizes, rare variants can include high-penetrance alleles that provide hypothesis-free evidence for gene causality, serve as precise targets for functional analysis, offer new targets for drug development, and function as genetic markers with high disease risk for personalized medicine [3].

A fundamental challenge in rare variant association studies (RVAS) lies in defining what constitutes a "rare" variant. There is no universally accepted minor allele frequency (MAF) threshold, and the appropriate cutoff can vary depending on study design, sample size, and population genetics [3]. The establishment of a MAF threshold is a critical first step in any RVAS, as it directly influences which variants are included in association tests and ultimately affects the statistical power and interpretation of results. This protocol examines the spectrum of MAF thresholds used in the field, their statistical implications, and provides detailed methodologies for implementing RVAS in complex trait research.

Establishing MAF Thresholds: Definitions and Statistical Considerations

The Spectrum of MAF Thresholds in Current Research

The definition of rare variants varies across studies and contexts, with different thresholds applied based on specific research questions and methodological considerations. There is currently no consensus on a single standard MAF cutoff, leading to a range of thresholds in the literature as illustrated in Table 1 [3].

Table 1: MAF Thresholds in Rare Variant Association Studies

| MAF Threshold | Classification | Typical Applications | Key Considerations |
|---|---|---|---|
| < 0.1-0.5% | Very rare/Ultra-rare | Mendelian disorders, severe phenotypes | Extreme allelic heterogeneity; very large sample sizes required |
| < 1% | Rare | Complex diseases, large biobanks | Balance between variant inclusion and power |
| 1-5% | Low-frequency/Less common | Bridge between common and rare variants | Often analyzed separately or in combination with rare variants |

The selection of an appropriate MAF threshold involves balancing multiple competing factors. Lower thresholds (e.g., <0.1%) focus on very rare variants that may have larger effect sizes but require enormous sample sizes for statistical power. Higher thresholds (e.g., <1-5%) include more variants in the analysis, potentially increasing power but possibly diluting signal by including neutral variants [2] [3]. The optimal threshold often depends on the specific genetic architecture of the trait under investigation and the available sample size.
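
To make the threshold choice concrete, the following minimal sketch computes a MAF from an allele count and assigns a frequency class. The function names and the specific cutoffs (0.1%, 1%, 5%) are illustrative assumptions taken from the ranges in Table 1, not a standard; a real study would set them from its own design and sample size.

```python
def minor_allele_frequency(alt_allele_count: int, n_samples: int) -> float:
    """MAF of a biallelic variant observed in n_samples diploid individuals."""
    total_alleles = 2 * n_samples
    if n_samples <= 0 or not 0 <= alt_allele_count <= total_alleles:
        raise ValueError("allele count must lie between 0 and 2 * n_samples")
    freq = alt_allele_count / total_alleles
    # The minor allele is whichever allele is less frequent.
    return min(freq, 1.0 - freq)


def classify_variant(maf: float,
                     very_rare: float = 0.001,      # assumed cutoff: 0.1%
                     rare: float = 0.01,            # assumed cutoff: 1%
                     low_frequency: float = 0.05    # assumed cutoff: 5%
                     ) -> str:
    """Assign a frequency class using study-specific cutoffs."""
    if maf < very_rare:
        return "very rare"
    if maf < rare:
        return "rare"
    if maf < low_frequency:
        return "low-frequency"
    return "common"


# Example: 3 alternate alleles among 10,000 individuals (20,000 chromosomes).
example_class = classify_variant(minor_allele_frequency(3, 10_000))
```

Keeping the cutoffs as parameters makes it easy to rerun an analysis under several thresholds and check how sensitive the results are to the definition of "rare".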

Statistical Implications of MAF Threshold Selection

The choice of MAF threshold has profound implications for statistical power and multiple testing correction in genetic association studies. Single-variant tests for rare variants are inherently underpowered due to the low frequency of the variants, which has led to the development of specialized aggregation methods that pool information across multiple rare variants within genes or other genomic regions [4] [2].

For genome-wide significance, the conventional threshold of 5 × 10⁻⁸ was established under assumptions that may not hold across diverse populations and whole-genome sequencing analyses, particularly for rare variants [5]. Recent research demonstrates that MAF-specific, population-tailored significance thresholds provide a more accurate framework for association studies [5]. The Li-Ji method, which accounts for the complex linkage disequilibrium (LD) structure of the human genome, can be used to derive population-specific significance thresholds that more appropriately control type I error rates, especially for rare variants [5].
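
As a rough illustration of how an LD-aware threshold can be derived, the sketch below implements the Li and Ji estimate of the effective number of independent tests from the eigenvalues of a variant correlation (LD) matrix, followed by a Šidák-style per-test threshold. It assumes the eigenvalues have already been computed elsewhere (for example with a linear algebra library); the function names are hypothetical, and this is not the pipeline used in [5].

```python
import math
from typing import Iterable


def li_ji_effective_tests(eigenvalues: Iterable[float]) -> float:
    """Li-Ji effective number of tests: sum of f(|lambda|) over eigenvalues,
    where f(x) = I(x >= 1) + (x - floor(x))."""
    meff = 0.0
    for lam in eigenvalues:
        x = abs(lam)
        meff += (1.0 if x >= 1.0 else 0.0) + (x - math.floor(x))
    return meff


def sidak_threshold(alpha: float, meff: float) -> float:
    """Per-test threshold that keeps the family-wise error rate at alpha
    across meff effectively independent tests."""
    if meff <= 0:
        raise ValueError("meff must be positive")
    return 1.0 - (1.0 - alpha) ** (1.0 / meff)


# Three uncorrelated variants: the correlation matrix is the identity,
# so all eigenvalues are 1 and there are three effective tests.
independent = li_ji_effective_tests([1.0, 1.0, 1.0])

# Three perfectly correlated variants: eigenvalues are (3, 0, 0),
# which collapses to a single effective test.
redundant = li_ji_effective_tests([3.0, 0.0, 0.0])
```

With about one million effective tests and a family-wise error rate of 0.05, `sidak_threshold` returns a value close to the conventional 5 × 10⁻⁸, which shows where that figure comes from and why it shifts when the LD structure differs.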

Table 2: Statistical Considerations for Different MAF Thresholds

| MAF Range | Effective Number of Independent Tests | Recommended Significance Threshold | Appropriate Statistical Tests |
|---|---|---|---|
| < 0.1% | Highest | Most stringent (< 5 × 10⁻⁸) | Burden tests, aggregation methods |
| 0.1-1% | Intermediate | Population-specific | SKAT, SKAT-O, combined tests |
| 1-5% | Lower than common variants | Less stringent than conventional | Single-variant, variance-component tests |

The statistical power of RVAS depends on multiple factors including sample size (n), region heritability (h²), the number of causal variants (c), and the total number of variants aggregated (v) [4]. Analytic calculations and simulations have revealed that aggregation tests are more powerful than single-variant tests only when a substantial proportion of variants are causal, highlighting the importance of variant selection and functional annotation in study design [4].

Protocols for Rare Variant Association Studies

Quality Control and Variant Annotation

Quality control (QC) of sequencing data is a critical first step in RVAS to ensure the reliability of variant calls and minimize false positives. The following protocol outlines a standardized QC pipeline for whole-exome sequencing (WES) data:

Variant Calling Quality Assessment: Evaluate sequencing depth, mapping quality, and genotype quality metrics. Apply filters based on read depth (typically >10-20×), genotype quality (GQ > 20), and call rate (>95-99%) to ensure high-quality variant calls.

Sample-level QC: Remove samples with excessive missingness (>5%), sex discrepancies, contamination, or outlying heterozygosity rates. Assess relatedness using kinship coefficients and retain unrelated individuals for association analysis.

Variant-level QC: Filter variants based on call rate (>95-99%), Hardy-Weinberg equilibrium (HWE p > 1 × 10⁻⁶), and technical artifacts. For rare variants, HWE filters are often relaxed or computed with an exact test, because asymptotic tests are unreliable at low allele counts and have little power to detect genuine departures.

Variant Annotation and Functional Prioritization: Annotate variants using tools such as ANNOVAR, VEP, or similar packages to predict functional consequences. Prioritize likely functional variants including:

- Protein-truncating variants (PTVs): stop-gain, frameshift, and essential splice-site variants

- Deleterious missense variants: predicted damaging by multiple in silico tools (e.g., SIFT, PolyPhen-2, CADD)

- Variants in conserved or functional domains

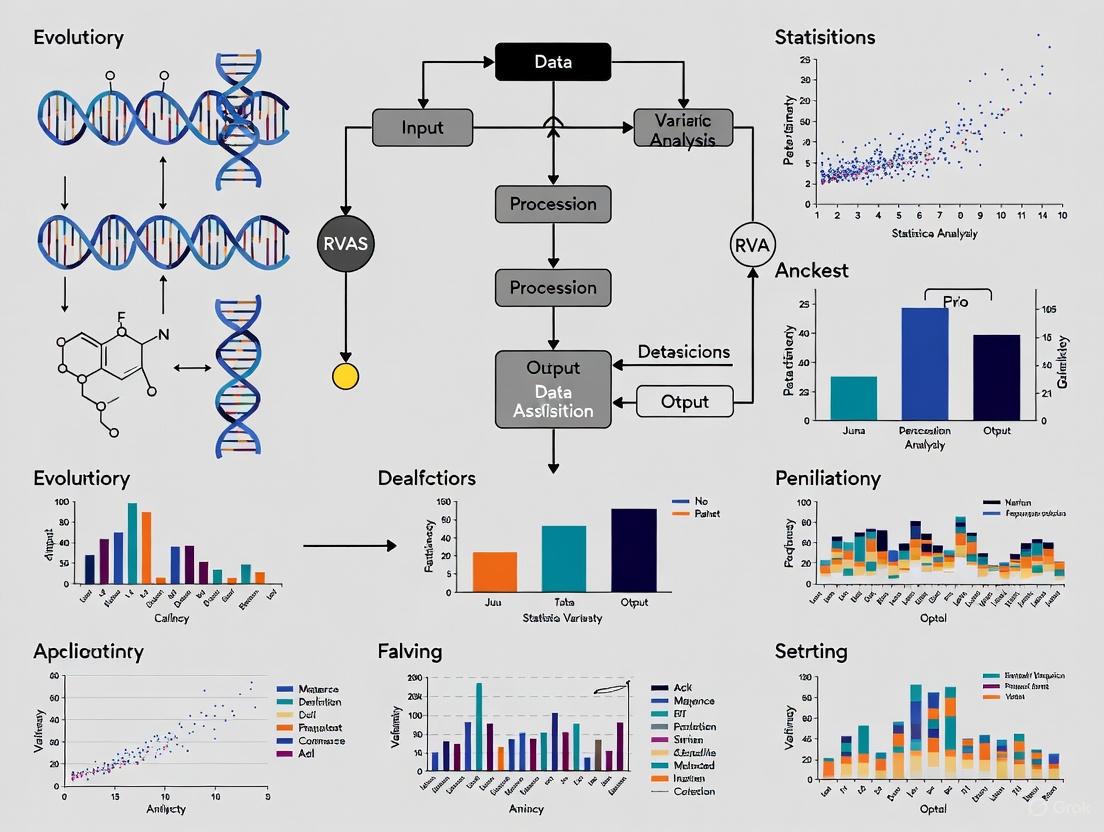

Diagram 1: Quality Control and Variant Annotation Workflow. This workflow outlines the sequential steps for processing raw sequencing data into analysis-ready variants for RVAS, highlighting key QC and annotation stages.
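
The genotype-level and variant-level filters described above can be sketched as follows. This is a minimal illustration under stated assumptions: the `Call` record, the function names, and the default cutoffs (depth > 10, GQ > 20, call rate > 95%) are hypothetical choices drawn from the ranges quoted in the protocol. A production pipeline would read these fields from a VCF with a dedicated parser.

```python
from typing import NamedTuple, Optional


class Call(NamedTuple):
    """One genotype call (hypothetical record layout)."""
    alt_count: Optional[int]  # 0, 1, or 2 alternate alleles; None if missing
    depth: int                # read depth at the site
    gq: int                   # genotype quality


def mask_low_quality(calls: list[Call], min_depth: int = 10,
                     min_gq: int = 20) -> list[Optional[int]]:
    """Set genotypes that fail the depth or GQ filter to missing (None)."""
    return [
        c.alt_count
        if c.alt_count is not None and c.depth > min_depth and c.gq > min_gq
        else None
        for c in calls
    ]


def call_rate(genotypes: list[Optional[int]]) -> float:
    """Fraction of samples with a non-missing genotype."""
    return sum(g is not None for g in genotypes) / len(genotypes)


def passing_variants(calls_by_variant: dict[str, list[Call]],
                     min_call_rate: float = 0.95
                     ) -> dict[str, list[Optional[int]]]:
    """Mask low-quality genotypes, then keep variants above the call-rate cutoff."""
    kept = {}
    for variant_id, calls in calls_by_variant.items():
        genotypes = mask_low_quality(calls)
        if call_rate(genotypes) > min_call_rate:
            kept[variant_id] = genotypes
    return kept
```

Masking individual genotypes before computing the call rate means that a variant with many low-confidence calls is removed even if the caller nominally emitted a genotype for every sample.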

Rare Variant Association Analysis Methods

Association testing for rare variants requires specialized statistical approaches due to the challenges of low allele frequencies and potential allelic heterogeneity. The primary methods can be categorized into three main classes:

Single-Variant Tests: Test each variant individually for association with the trait of interest. While straightforward, these tests are generally underpowered for rare variants unless sample sizes are very large or effect sizes are substantial [4] [2].

Aggregation Tests (Gene-Based or Region-Based): These methods pool information across multiple rare variants to increase statistical power:

- Burden Tests: Calculate a weighted sum of minor allele counts across variants in a gene or region and test this aggregated burden for association with the trait [4] [1]. Burden tests are most powerful when most variants in the region are causal and effects are in the same direction.

- Variance-Component Tests: Evaluate the distribution of variant effect sizes across a region, allowing for both positive and negative effects [4] [1]. The Sequence Kernel Association Test (SKAT) is a widely used variance-component test that is robust to the presence of non-causal variants and variants with opposite effects.

- Combined Tests: Methods like SKAT-O combine burden and variance-component approaches to achieve robust power across different genetic architectures [1] [6].

Meta-Analysis Methods: For combining results across multiple studies, specialized rare variant meta-analysis methods such as Meta-SAIGE provide accurate type I error control and computational efficiency [6]. Meta-SAIGE extends SAIGE-GENE+ to meta-analysis, employing saddlepoint approximation to handle case-control imbalance and reusing linkage disequilibrium matrices across phenotypes to boost computational efficiency.
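
To illustrate the burden idea in its simplest form, the sketch below builds a weighted minor-allele burden score per individual and regresses a quantitative trait on it. It rests on several simplifying assumptions: weights are inverse standard deviations of the allele count (in the spirit of Madsen-Browning weighting), no covariates are included, and the p-value uses a normal approximation to the t statistic. It is not the algorithm implemented in SAIGE-GENE+, STAAR, or REGENIE.

```python
import math


def burden_scores(genotypes: list[list[int]], mafs: list[float]) -> list[float]:
    """Weighted sum of minor-allele counts per individual.

    genotypes[i][j] is the minor-allele count (0, 1, 2) of individual i
    at variant j; rarer variants receive larger weights.
    """
    weights = [1.0 / math.sqrt(p * (1.0 - p)) for p in mafs]
    return [sum(w * g for w, g in zip(weights, row)) for row in genotypes]


def slope_test(x: list[float], y: list[float]) -> tuple[float, float, float]:
    """Simple linear regression of y on x; returns (beta, se, two-sided p)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    beta = sxy / sxx
    residuals = [b - mean_y - beta * (a - mean_x) for a, b in zip(x, y)]
    sigma2 = sum(r * r for r in residuals) / (n - 2)
    se = math.sqrt(sigma2 / sxx)
    # Normal approximation to the t distribution (an assumption that is
    # reasonable only for large n).
    p_value = math.erfc(abs(beta / se) / math.sqrt(2.0))
    return beta, se, p_value


# Toy data: carriers of any of three rare variants have higher trait values.
toy_genotypes = [[0, 0, 0], [1, 0, 0], [0, 1, 0], [0, 0, 0],
                 [0, 0, 1], [0, 0, 0], [1, 1, 0], [0, 0, 0]]
toy_mafs = [0.01, 0.01, 0.005]
toy_trait = [0.1, 1.2, 0.9, -0.2, 1.5, 0.0, 2.3, 0.1]
toy_beta, toy_se, toy_p = slope_test(burden_scores(toy_genotypes, toy_mafs), toy_trait)
```

Because every variant contributes to the score with the same sign, this construction loses power when a gene contains both risk-increasing and protective variants, which is the situation variance-component tests are designed for.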

The following protocol outlines a standard RVAS analysis pipeline:

Define Analysis Units: Group variants into biologically meaningful units, most commonly genes, but also considering protein domains, regulatory regions, or pathways.

Select Variants for Inclusion: Apply MAF thresholds (typically <0.01 or <0.005) and functional filters (e.g., PTVs and deleterious missense variants) to focus on likely functional rare variants.

Choose Appropriate Statistical Tests: Select from burden, variance-component, or combined tests based on the expected genetic architecture. For exploratory analyses, consider multiple methods.

Account for Covariates and Confounders: Include relevant covariates such as age, sex, and principal components to account for population stratification.

Perform Association Testing: Conduct gene-based or region-based tests using specialized software such as SAIGE-GENE+, STAAR, or REGENIE.

Correct for Multiple Testing: Apply exome-wide or genome-wide significance thresholds, typically using Bonferroni correction based on the number of genes or regions tested.

Diagram 2: Rare Variant Aggregation Test Strategies. This diagram illustrates the three main approaches to rare variant association testing and their optimal use cases, highlighting the trade-offs between different methodological strategies.

Significance Calculation and Interpretation

Determining statistical significance in RVAS requires careful consideration of multiple testing burden and appropriate thresholds. The following approaches are recommended:

Gene-Based Significance Thresholds: For exome-wide analyses, a Bonferroni-corrected threshold of 2.5 × 10⁻⁶ (0.05/20,000 genes) is commonly used.

Population-Specific Adjustments: For diverse populations or specialized variant sets, consider methods like the Li-Ji approach that account for linkage disequilibrium patterns and population genetics [5].

Replication and Validation: Whenever possible, replicate findings in independent cohorts or use functional validation to confirm associations.

Gene-Level Intolerance Metrics: Incorporate metrics such as pLI scores from the Exome Aggregation Consortium or missense Z-scores to prioritize genes that are intolerant to variation, as these are more likely to harbor pathogenic variants [7].

Tools like SORVA (Significance Of Rare VAriants) can calculate the statistical significance of observing rare variants in a given proportion of sequenced individuals, which is particularly useful for assessing the significance of findings in smaller studies or for novel gene-disease relationships [7].
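
The gene-based Bonferroni threshold mentioned above is simple arithmetic, shown here as a small sketch. The function names and gene labels are hypothetical placeholders, and the example p-values are made up purely to exercise the code.

```python
def bonferroni_threshold(alpha: float, n_tests: int) -> float:
    """Per-test significance threshold for n_tests tests at family-wise alpha."""
    if n_tests <= 0:
        raise ValueError("n_tests must be positive")
    return alpha / n_tests


def significant_genes(gene_p_values: dict[str, float],
                      alpha: float = 0.05) -> list[str]:
    """Genes whose p-value passes Bonferroni correction for the genes tested."""
    threshold = bonferroni_threshold(alpha, len(gene_p_values))
    return sorted(g for g, p in gene_p_values.items() if p < threshold)


# Exome-wide threshold for roughly 20,000 genes: 0.05 / 20,000 = 2.5e-6.
exome_wide = bonferroni_threshold(0.05, 20_000)
```

If several tests or variant masks are run per gene, the denominator should count every test performed, not just the number of genes.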

Essential Research Reagents and Computational Tools

Table 3: Research Reagent Solutions for Rare Variant Association Studies

| Resource Category | Specific Tools/Platforms | Function and Application |
|---|---|---|
| Variant Annotation | ANNOVAR, VEP, SnpEff | Functional consequence prediction, allele frequency annotation |
| Variant Prioritization | CADD, SIFT, PolyPhen-2 | In silico prediction of variant deleteriousness |
| Association Testing | SAIGE-GENE+, STAAR, REGENIE | Rare variant burden and SKAT tests for large datasets |
| Meta-Analysis | Meta-SAIGE, MetaSTAAR | Cross-study rare variant association meta-analysis |
| Cloud Platforms | NHGRI AnVIL, Terra | Cloud-based genomic data analysis and sharing |
| Reference Data | gnomAD, 1000 Genomes | Population allele frequency references |
| Gene Intolerance | pLI, RVIS, LOEUF | Gene-level constraint metrics for variant interpretation |

The NHGRI Genomic Data Science Analysis, Visualization, and Informatics Lab-space (AnVIL) is a particularly valuable resource that provides a cloud-based genomic data sharing and analysis platform, facilitating integration and computing on large datasets generated by NHGRI programs and other initiatives [8]. AnVIL offers a collaborative environment for researchers with varying levels of computational expertise and incorporates data access and security features essential for working with controlled-access genomic data.

Defining the rare variant spectrum through appropriate MAF thresholds is a fundamental step in RVAS that directly impacts study power and interpretation. There is no one-size-fits-all threshold, and researchers should select MAF cutoffs based on their specific research question, sample size, and expected genetic architecture. The protocols outlined in this document provide a comprehensive framework for conducting RVAS, from quality control and variant annotation to association testing and significance calculation. As sequencing technologies continue to advance and sample sizes grow, rare variant analyses will play an increasingly important role in unraveling the genetic architecture of complex traits and diseases, with particular relevance for drug development and personalized medicine applications.

The quest to fully understand the genetic architecture of complex human diseases has given rise to two complementary yet distinct methodological paradigms: genome-wide association studies (GWAS) focused on common variants and rare variant association studies (RVAS). Common variant GWAS, which tests hundreds of thousands of genetic variants across many genomes, has successfully identified thousands of robust associations between common single nucleotide polymorphisms (SNPs) and complex traits [9]. However, these discoveries typically explain only a fraction of the estimated heritability, prompting increased interest in the contribution of rare genetic variants [10] [11]. RVAS represents a specialized approach designed specifically to detect associations with low-frequency and rare variants, which are typically defined as having a minor allele frequency (MAF) below 0.5-1% [11] [12]. These two approaches differ fundamentally in their underlying genetic hypotheses, technical requirements, analytical frameworks, and translational applications, reflecting the complex spectrum of genetic influences on disease susceptibility.

The distinction between these approaches stems from competing hypotheses about the nature of "missing heritability." The infinitesimal model posits that common variants of very small effect collectively explain most heritability, while the rare allele model argues that numerous rare variants of larger effect account for a substantial portion of genetic risk [10]. Evolutionary theory provides a compelling argument for the rare variant model, suggesting that deleterious alleles likely to influence disease risk will be kept at low population frequencies through purifying selection [10] [11] [12]. In practice, most complex traits appear to be influenced by a combination of both common and rare variants, so researchers need to understand both approaches and their appropriate applications.

Fundamental Principles and Genetic Architecture

Underlying Genetic Models and Hypotheses

The theoretical foundations of common variant GWAS and RVAS reflect divergent views of complex trait architecture. The common disease-common variant (CD-CV) hypothesis initially dominated the field, proposing that common diseases are influenced by common genetic variants present in >5% of the population [10]. This model assumes that disease-associated alleles arose in ancestral human populations and have persisted at relatively high frequencies. In contrast, the rare variant model proposes that a significant portion of complex disease risk is attributable to numerous recently derived rare mutations, each with relatively large effects on disease risk [10]. Under this model, diseases such as schizophrenia or inflammatory bowel disease may actually represent collections of hundreds or even thousands of similar conditions attributable to rare variants at individual loci [10].

The practical implications of these differing models are substantial. The common variant model predicts that risk is distributed across many loci of small effect, with affected individuals carrying a slight excess of risk alleles compared to unaffected individuals [10]. The rare variant model generally refers to dominant effects with higher penetrance, where risk is elevated 2-fold or more over background levels, though penetrance need not be complete [10]. Importantly, these models are not mutually exclusive; emerging evidence suggests that both common and rare variants contribute to most complex traits, though their relative contributions vary across diseases and phenotypes.

Distinct Variant Characteristics and Functional Impacts

Common and rare variants exhibit systematically different characteristics that extend beyond their population frequencies. Common variants identified through GWAS are more likely to reside in non-coding regulatory regions and exert their effects through subtle influences on gene expression [9]. These variants typically have modest effect sizes, with odds ratios generally ranging from 1.1 to 1.5, and explain only small portions of phenotypic variance [9]. Due to extensive linkage disequilibrium (LD) across common variant sites, GWAS signals often implicate broad genomic regions containing multiple correlated variants, making precise identification of causal variants challenging without additional functional studies [9] [13].

In contrast, rare variants accessible through RVAS are enriched for coding changes with more direct impacts on protein structure and function [11] [3]. These include loss-of-function variants (nonsense, frameshift, splice-site), missense mutations with deleterious effects, and other protein-altering changes. Rare variants typically display lower linkage disequilibrium with flanking variants, which can facilitate more precise mapping of causal alleles once associations are detected [3]. While some rare variants have large effect sizes, recent evidence suggests that most have modest-to-small effects on phenotypic variation, contrary to initial expectations [12]. The lower LD around rare variants can be both advantageous, enabling finer mapping, and challenging, reducing the utility of tag-SNP approaches that are fundamental to common variant GWAS.

Table 1: Key Characteristics of Common and Rare Variants

| Characteristic | Common Variants | Rare Variants |
|---|---|---|
| Minor Allele Frequency (MAF) | >5% | <0.5-1% |
| Typical Effect Sizes | Modest (OR: 1.1-1.5) | Variable (moderate to large) |
| Molecular Consequences | Predominantly non-coding regulatory | Enriched for coding/functional |
| Linkage Disequilibrium | Extensive | Limited |
| Evolutionary History | Often ancient alleles | Often recent mutations |
| Penetrance | Low | Variable (often higher) |
| Population Specificity | Shared across populations | Often population-specific |

Methodological Approaches: Study Design and Genotyping

Study Designs and Sampling Strategies

Common variant GWAS typically employ large-scale case-control or cohort designs, often requiring sample sizes in the tens or hundreds of thousands to achieve sufficient statistical power for detecting variants of small effect [9] [13]. The emphasis is on broad population sampling to capture adequate representation of common variants, with meta-analyses of multiple cohorts now standard practice for maximizing discovery power [9]. For quantitative traits, random population sampling is generally preferred, though selective sampling approaches such as extremes of trait distribution can enhance power for detecting genetic associations, particularly for rare variants [12].

RVAS utilizes several specialized study designs to improve power for detecting rare variant associations. Extreme phenotype sampling selects individuals from the tails of trait distributions (e.g., the highest and lowest 5%) to enrich for rare variants of larger effect [11] [12]. This approach has successfully identified rare variants associated with LDL-cholesterol levels and type 2 diabetes risk [12]. Family-based designs leverage the enrichment of rare variants in relatives of affected individuals, particularly for early-onset or severe disease forms [12]. Population isolates offer advantages for RVAS due to reduced genetic diversity, founder effects that amplify rare variant frequencies, and greater environmental homogeneity [12]. Each design presents unique analytical challenges, particularly regarding the control of confounding and generalizability of findings.
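
Extreme phenotype sampling can be sketched in a few lines. The function below picks the individuals in the lowest and highest tails of a quantitative trait for sequencing; the function name, the sample identifiers, and the 5% default are illustrative assumptions that follow the example in the text.

```python
def extreme_phenotype_sample(phenotypes: dict[str, float],
                             tail_fraction: float = 0.05
                             ) -> tuple[list[str], list[str]]:
    """Return (low_tail_ids, high_tail_ids) from the ends of the trait distribution."""
    if not 0.0 < tail_fraction <= 0.5:
        raise ValueError("tail_fraction must be in (0, 0.5]")
    # Rank individuals by trait value, lowest first.
    ranked = sorted(phenotypes, key=phenotypes.get)
    k = max(1, round(len(ranked) * tail_fraction))
    return ranked[:k], ranked[-k:]


# Toy cohort of 100 individuals whose trait value equals their index.
cohort = {f"s{i}": float(i) for i in range(100)}
low_tail, high_tail = extreme_phenotype_sample(cohort)
```

Association statistics from samples selected this way need methods that account for the selection, since the trait distribution in the sequenced subset is no longer representative of the population.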

Genotyping Technologies and Platform Selection

The technological requirements for common variant GWAS and RVAS differ significantly, reflecting their different targets in the allele frequency spectrum. Common variant GWAS primarily relies on high-density genotyping arrays that efficiently interrogate hundreds of thousands to millions of common SNPs across the genome [14] [13]. These arrays are cost-effective for large sample sizes and provide excellent coverage of common variation through linkage disequilibrium-based imputation using reference panels such as the 1000 Genomes Project [9] [13]. Standard quality control procedures include filtering for call rate, Hardy-Weinberg equilibrium, batch effects, and population stratification [14] [15].

RVAS requires more comprehensive variant discovery approaches, primarily using next-generation sequencing technologies [11] [12]. The choice of sequencing strategy involves trade-offs between cost, coverage, and comprehensiveness:

- Whole-genome sequencing (WGS) provides complete coverage of both coding and non-coding regions but remains expensive for large samples [11] [12]

- Whole-exome sequencing (WES) targets protein-coding regions, offering a cost-effective compromise for detecting rare coding variants [11] [1]

- Targeted sequencing focuses on specific genes or regions of interest, enabling deep coverage of prioritized areas [12]

- Low-depth WGS combined with imputation provides a cost-effective alternative for variant discovery in large cohorts [11]

Additionally, exome arrays provide a genotyping-based approach for interrogating previously identified coding variants, though they lack comprehensiveness for very rare variants [11] [12]. Quality control for sequencing-based studies involves additional considerations including sequence coverage, variant calling quality, and contamination checks [11].

Table 2: Comparison of Genotyping and Sequencing Platforms

| Technology | Variant Coverage | Best Applications | Key Limitations |
|---|---|---|---|
| GWAS Arrays | Common variants (MAF>5%) | Large-scale population studies | Limited rare variant coverage |
| Exome Arrays | Coding variants (MAF>0.1%) | Cost-effective rare coding variant screening | Population-specific biases, limited novelty |
| Whole Exome Sequencing | Coding variants across frequency spectrum | Discovery of rare coding variants | Limited to exonic regions |
| Whole Genome Sequencing | Genome-wide across frequency spectrum | Comprehensive variant discovery | Higher cost, computational burden |
| Low-pass WGS + Imputation | Common and low-frequency variants | Large-scale variant discovery | Lower accuracy for rare variants |

Analytical Frameworks and Statistical Approaches

Association Testing Methods

The statistical approaches for common variant GWAS and RVAS differ fundamentally in their unit of analysis and multiple testing burdens. Common variant GWAS typically employs single-variant association tests, using linear regression for quantitative traits or logistic regression for case-control studies [9] [13]. The massive multiple testing burden inherent in testing millions of variants, equivalent to roughly one million independent tests, necessitates stringent significance thresholds, typically p < 5 × 10⁻⁸ for genome-wide significance [9] [13]. Additional analytical considerations include correction for population stratification using principal components or genetic relationship matrices, covariate adjustment, and accounting for relatedness [14] [9].

Figure 1: Statistical Testing Frameworks for GWAS versus RVAS

RVAS employs gene-based or region-based aggregation tests that combine information across multiple rare variants within functional units [11] [3]. These methods can be categorized into three main classes:

- Burden tests collapse multiple variants into a single aggregate score and test for association between this burden and the trait of interest [11] [1]. These tests assume that most rare variants in the region are causal and influence the trait in the same direction.

- Variance-component tests like the Sequence Kernel Association Test (SKAT) evaluate the distribution of variant effect sizes, allowing for both protective and risk-increasing effects within the same gene [11] [1].

- Combined tests such as SKAT-O aim to leverage the advantages of both burden and variance-component approaches by adapting to the underlying genetic architecture [11] [1].

The choice among these methods depends on the expected proportion of causal variants and their effect directionalities. Functional annotation tools (e.g., PolyPhen-2, CADD) are often incorporated to prioritize putatively functional variants within aggregation units [11] [3].
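
A variance-component statistic can be sketched to show why it tolerates opposite effect directions. The code below computes a SKAT-style Q statistic, the weighted sum of squared per-variant score statistics, using Beta(1, 25) density weights on the MAF and no covariates. As a loudly stated simplification, the p-value comes from phenotype permutation. The published SKAT method instead uses an analytic mixture-of-chi-squares distribution, so this is an illustration of the statistic and not a reimplementation of the software.

```python
import random


def beta_1_25_weight(maf: float) -> float:
    """Beta(1, 25) density evaluated at the MAF; up-weights rarer variants."""
    return 25.0 * (1.0 - maf) ** 24


def skat_q(genotypes: list[list[int]], y: list[float], mafs: list[float]) -> float:
    """Q = sum_j (w_j * S_j)^2 with S_j = sum_i g_ij * (y_i - mean(y))."""
    n = len(y)
    mean_y = sum(y) / n
    residuals = [value - mean_y for value in y]
    q = 0.0
    for j, maf in enumerate(mafs):
        score = sum(genotypes[i][j] * residuals[i] for i in range(n))
        q += (beta_1_25_weight(maf) * score) ** 2
    return q


def skat_permutation_p(genotypes: list[list[int]], y: list[float],
                       mafs: list[float], n_perm: int = 999,
                       seed: int = 0) -> tuple[float, float]:
    """Observed Q and a permutation p-value (assumes exchangeable samples)."""
    rng = random.Random(seed)
    observed = skat_q(genotypes, y, mafs)
    shuffled = list(y)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if skat_q(genotypes, shuffled, mafs) >= observed:
            hits += 1
    return observed, (hits + 1) / (n_perm + 1)
```

Each per-variant score is squared before summing, so a risk-increasing variant and a protective variant in the same gene both add to Q, whereas in a burden score they would partly cancel.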

Post-Association Analyses and Interpretation

Following initial association signals, both GWAS and RVAS require specialized analytical pipelines for result interpretation and validation. For common variant GWAS, statistical fine-mapping is essential to distinguish causal variants from non-causal variants in linkage disequilibrium [9] [13]. Methods such as CAVIAR, FINEMAP, and PAINTOR leverage LD structure and association statistics to identify credible sets of putative causal variants [15] [16]. Replication in independent samples remains a gold standard for validating GWAS findings, with true replication requiring the same variant, phenotype, and genetic model [13].

For RVAS, replication is more challenging due to the low frequency and potential population specificity of rare variants [12]. Analytical follow-up often focuses on functional validation through experimental studies in model systems and detailed characterization of variant effects on protein function [1] [3]. Meta-analysis of RVAS requires careful harmonization of gene-based tests across studies, with methods available for combining burden and SKAT statistics [11].

Both approaches benefit from integration with functional genomics resources such as ENCODE, Roadmap Epigenomics, and GTEx to prioritize variants for follow-up and hypothesize biological mechanisms [14] [3]. Mendelian randomization analyses can leverage both common and rare variant associations to infer potential causal relationships between risk factors and health outcomes [16].

Practical Protocols and Workflows

Standard GWAS Protocol for Common Variants

A robust common variant GWAS follows a standardized workflow with particular attention to quality control and multiple testing:

Figure 2: Common Variant GWAS Workflow

- Sample Quality Control: Remove samples with call rates <98%, sex discrepancies, excessive heterozygosity, or ancestry that differs from the target population in population-homogeneous studies (e.g., non-European ancestry in a European-ancestry cohort) [14] [15].

- Variant Quality Control: Exclude variants with call rates <95%, significant deviation from Hardy-Weinberg equilibrium (p < 1×10⁻⁶), or minor allele frequency below the study-specific threshold (typically 1%) [14].

- Imputation: Perform genotype phasing and imputation using reference panels (1000 Genomes, HRC) to increase variant resolution and enable meta-analysis across platforms [14] [9].

- Association Testing: Conduct using linear or logistic regression with adjustment for principal components and relevant covariates [14] [13]. For binary traits, logistic regression is standard, while linear regression is used for quantitative traits.

- Significance Thresholding: Apply genome-wide significance threshold of p < 5×10⁻⁸, with conditional analysis and clumping to identify independent signals [9] [13].

- Fine-Mapping: Implement statistical fine-mapping at associated loci to prioritize causal variants [16] [13].

- Functional Annotation: Integrate with functional genomics resources to prioritize variants and generate biological hypotheses [14] [3].
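
The variant quality control step of the workflow above can be sketched from genotype counts alone. The example below applies the call-rate, Hardy-Weinberg, and MAF filters with the cutoffs quoted in the list. It uses a one-degree-of-freedom chi-square HWE test as a simplifying assumption (exact tests are generally preferred at low allele counts), and the function names are hypothetical.

```python
import math


def hwe_chi_square_p(n_aa: int, n_ab: int, n_bb: int) -> float:
    """P-value of the 1-df chi-square test for Hardy-Weinberg equilibrium."""
    n = n_aa + n_ab + n_bb
    p = (2 * n_aa + n_ab) / (2 * n)
    q = 1.0 - p
    expected = (n * p * p, 2 * n * p * q, n * q * q)
    if min(expected) == 0:
        return 1.0  # monomorphic site: the test is undefined, nothing to reject
    chi2 = sum((o - e) ** 2 / e for o, e in zip((n_aa, n_ab, n_bb), expected))
    # Upper tail of a chi-square distribution with one degree of freedom.
    return math.erfc(math.sqrt(chi2 / 2.0))


def passes_variant_qc(n_aa: int, n_ab: int, n_bb: int, n_missing: int,
                      min_call_rate: float = 0.95,
                      min_maf: float = 0.01,
                      hwe_p_cutoff: float = 1e-6) -> bool:
    """Apply call-rate, MAF, and HWE filters to one variant's genotype counts."""
    n_called = n_aa + n_ab + n_bb
    if n_called == 0:
        return False
    call_rate = n_called / (n_called + n_missing)
    freq_a = (2 * n_aa + n_ab) / (2 * n_called)
    maf = min(freq_a, 1.0 - freq_a)
    return (call_rate >= min_call_rate
            and maf >= min_maf
            and hwe_chi_square_p(n_aa, n_ab, n_bb) >= hwe_p_cutoff)
```

In case-control studies the HWE filter is commonly evaluated in controls only, because a true association can itself distort genotype proportions among cases.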

RVAS Protocol for Rare Variants

RVAS requires specialized approaches from study design through interpretation:

- Variant Calling and Quality Control: Process sequencing data through alignment, variant calling, and rigorous quality filtering. Check for sample contamination (evidenced by excess heterozygosity), sequence depth, transition/transversion ratios, and variant quality metrics [11] [1].

- Variant Annotation: Annotate variants using bioinformatic tools (e.g., PolyPhen-2, SIFT, CADD) to predict functional impact [11] [3]. Focus on protein-altering variants for exome-based studies.

- Variant Filtering: Apply frequency filters (typically MAF < 0.5-1% in population databases) and functional filters to focus on putatively consequential rare variants [11] [12].

- Gene-Based Association Testing: Conduct using burden tests, SKAT, or SKAT-O depending on the expected genetic architecture [11] [1]. Include relevant covariates such as sex, age, and principal components.

- Replication and Validation: Attempt replication in independent samples where possible, though this may be challenging for very rare variants. Functional validation through experimental studies is often necessary [1] [12].
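
The annotation and filtering steps above amount to building a per-gene set of qualifying variants, which then feeds the gene-based test. A minimal sketch follows, assuming each variant is a dictionary with hypothetical keys `id`, `gene`, `consequence`, `ref_maf`, and `cadd`. The consequence labels are Sequence Ontology terms, and the CADD cutoff of 20 and MAF cutoff of 1% are illustrative choices, not recommendations from the cited studies.

```python
from collections import defaultdict

# Sequence Ontology consequence terms treated here as protein-truncating.
PTV_CONSEQUENCES = {
    "stop_gained",
    "frameshift_variant",
    "splice_donor_variant",
    "splice_acceptor_variant",
}


def qualifying_variants_by_gene(variants: list[dict],
                                max_maf: float = 0.01,
                                min_cadd: float = 20.0) -> dict[str, list[str]]:
    """Group rare, putatively functional variants by gene."""
    by_gene = defaultdict(list)
    for v in variants:
        # Frequency filter against a population reference database.
        if v["ref_maf"] >= max_maf:
            continue
        is_ptv = v["consequence"] in PTV_CONSEQUENCES
        is_damaging_missense = (v["consequence"] == "missense_variant"
                                and v["cadd"] >= min_cadd)
        if is_ptv or is_damaging_missense:
            by_gene[v["gene"]].append(v["id"])
    return dict(by_gene)
```

The resulting mapping defines the analysis units: each gene's list of variant identifiers selects the genotype columns passed to a burden, SKAT, or SKAT-O test.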

Applications and Translational Potential

Biological Insights and Drug Discovery

Common variant GWAS and RVAS provide complementary biological insights with distinct implications for therapeutic development. Common variant GWAS has successfully identified thousands of loci associated with complex diseases, revealing novel biological pathways and potential drug targets [9]. For example, GWAS discoveries implicated the autophagy pathway in Crohn's disease and the complement pathway in age-related macular degeneration, opening new avenues for therapeutic intervention [11]. However, the small effect sizes of common variants and their frequent location in non-coding regions can complicate direct translation to drug targets.

RVAS offers unique advantages for biological discovery and drug development. Because rare variants are enriched for coding changes with direct functional consequences, they can provide hypothesis-free evidence for gene causality [3]. For example, rare loss-of-function variants in PCSK9 were found to associate with low LDL-cholesterol and reduced cardiovascular risk, leading to the development of PCSK9 inhibitors as effective cholesterol-lowering therapies [12]. Similarly, rare loss-of-function variants in SLC30A8 were found to protect against type 2 diabetes, highlighting this gene as a therapeutic target [12]. The identification of rare variants with large effects on disease risk provides strong evidence for the biological and potential therapeutic relevance of the genes that carry them.

Clinical Applications and Risk Prediction

The clinical applications of common and rare variant findings differ substantially. Common variant GWAS has enabled the development of polygenic risk scores (PRS) that aggregate the effects of many common variants to identify individuals at high genetic risk for various diseases [9] [13]. For some conditions, such as coronary artery disease and breast cancer, PRS can identify individuals with risk equivalent to monogenic mutations [9]. However, the utility of PRS is currently limited by reduced portability across diverse ancestral backgrounds and incomplete biological interpretation.
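
At its simplest, a polygenic risk score is a weighted sum of risk-allele dosages. The sketch below shows that aggregation only; the effect sizes and genotypes are made up for illustration, and real scores involve thousands to millions of variants plus ancestry-aware calibration.

```python
def polygenic_risk_score(dosages, weights):
    """Weighted sum of risk-allele dosages (0, 1, or 2 copies per variant)."""
    if len(dosages) != len(weights):
        raise ValueError("dosages and weights must have the same length")
    return sum(d * w for d, w in zip(dosages, weights))


# Hypothetical per-allele effect sizes (e.g., log odds ratios) for four variants.
weights = [0.10, -0.05, 0.20, 0.02]

person_a = [2, 0, 1, 1]
person_b = [0, 2, 0, 1]
score_a = polygenic_risk_score(person_a, weights)
score_b = polygenic_risk_score(person_b, weights)
print(f"A: {score_a:.2f}  B: {score_b:.2f}")
```

Because each weight is estimated in a particular study population, the same function can give poorly calibrated scores when applied to individuals of a different ancestry, which is the portability limitation noted above.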

RVAS contributes to clinical medicine through the identification of rare variants with large effect sizes that may have direct diagnostic utility [3]. In some cases, rare variants identified through RVAS approaches have been incorporated into diagnostic testing panels for conditions such as hereditary cancer syndromes and familial hypercholesterolemia [3]. The higher penetrance of some rare variants makes them particularly useful for family counseling and personalized risk assessment. However, the population specificity of many rare variants and their typically low individual frequencies limit their broad utility for population screening.

Table 3: Key Analytical Tools and Resources for Genetic Association Studies

| Tool Category | Specific Tools | Primary Application | Key Features |

|---|---|---|---|

| GWAS Analysis | PLINK [14] [15] | Basic association testing | Comprehensive toolkit for quality control and association |

| | SNPTEST [14] | Single SNP associations | Bayesian and frequentist testing approaches |

| | GENESIS [14] | Association in structured populations | Mixed models for related samples |

| RVAS Analysis | Burden Tests [11] [1] | Rare variant aggregation | Assumes unidirectional effects |

| | SKAT/SKAT-O [11] [1] | Flexible rare variant testing | Accommodates bidirectional effects |

| Quality Control | EIGENSTRAT [14] | Population stratification | Principal component-based correction |

| | GCTA [14] | Heritability estimation | Genetic relationship matrix construction |

| Meta-Analysis | METAL [14] [9] | GWAS meta-analysis | Efficient large-scale coordination |

| | RareMETALS [11] | RVAS meta-analysis | Gene-based test coordination |

| Functional Annotation | PolyPhen-2 [14] [3] | Coding variant impact | Protein structure-based predictions |

| | ANNOVAR [11] | Comprehensive annotation | Integrates multiple functional databases |

| | VEP [15] | Variant consequence | Ensembl's annotation tool |

| Fine-Mapping | FINEMAP [16] | Causal variant identification | Bayesian approach for fine-mapping |

| | CAVIAR [15] | Credible set generation | LD-aware fine-mapping |

The contrasting genetic architectures targeted by RVAS and common variant GWAS represent complementary rather than competing approaches to unraveling the genetic basis of complex traits. Common variant GWAS provides a broad survey of the polygenic background contributing to disease risk, enabling population-level risk prediction and revealing key biological pathways. RVAS delivers high-resolution insights into specific genes and proteins with direct functional consequences, offering compelling targets for therapeutic intervention and opportunities for personalized medicine based on rare, high-impact variants.

The most powerful contemporary genetic studies integrate both approaches, leveraging the respective strengths of each method. As sequencing costs continue to decline and analytical methods improve, the distinction between these approaches is likely to blur, with whole-genome sequencing enabling comprehensive assessment of both common and rare variants within unified frameworks. Nevertheless, the fundamental differences in the genetic architecture of common and rare variants will continue to necessitate specialized analytical approaches and thoughtful study design. Researchers and drug development professionals would be well-served by maintaining expertise in both paradigms while recognizing their distinct contributions to understanding human disease biology.

The 'Missing Heritability' Problem and the CD-RV Hypothesis

The "missing heritability" problem represents a central paradox in human genetics: for most complex traits, the heritability estimated from family-based studies (pedigree-based heritability, or (h_{PED}^{2})) substantially exceeds the heritability explained by common variants identified through genome-wide association studies (GWAS heritability, or (h_{GWAS}^{2})) [17] [18]. This discrepancy has prompted several hypotheses, among which the Common Disease-Rare Variant (CD-RV) hypothesis has gained significant traction. This hypothesis proposes that a substantial portion of the unexplained heritability is attributable to rare genetic variants (minor allele frequency, MAF < 1%), which are poorly captured by standard GWAS arrays [19].

This Application Note frames the CD-RV hypothesis within the context of Rare Variant Association Studies (RVAS). We provide a quantitative summary of recent evidence, detail standardized protocols for conducting RVAS, and visualize key workflows and relationships to support researchers and drug development professionals in this evolving field.

Quantitative Evidence: Partitioning the Heritability of Complex Traits

Recent advances in whole-genome sequencing (WGS) of large biobanks have enabled high-precision estimates of the contribution of rare variants to complex traits. A landmark 2025 study analyzing WGS data from 347,630 UK Biobank participants of European ancestry quantified the heritability explained by 40 million variants across 34 phenotypes [17] [20].

Table 1: Heritability Partitioning from Whole-Genome Sequencing (WGS) Data

| Heritability Component | Average Contribution (across 34 phenotypes) | Key Findings |

|---|---|---|

| Total WGS-based heritability ((h_{WGS}^{2})) | ~88% of (h_{PED}^{2}) | On average, WGS data captures the vast majority of pedigree-based heritability [17]. |

| Rare Variants (MAF < 1%) | ~20% of (h_{PED}^{2}) | Rare variants make a significant, but minority, contribution [17]. |

| Common Variants (MAF ≥ 1%) | ~68% of (h_{PED}^{2}) | Common variants account for the largest fraction of explained heritability [17]. |

| Rare Coding Variants | 21% of rare-variant (h_{WGS}^{2}) | |

| Rare Non-Coding Variants | 79% of rare-variant (h_{WGS}^{2}) | Non-coding regions harbor the majority of rare-variant heritability, underscoring a key challenge for functional interpretation [17]. |

These findings are supported by independent methodologies like Relatedness Disequilibrium Regression (RDR) and Sibling Regression (SR), which converge on average narrow-sense heritability estimates of ~30% for a range of traits, significantly lower than the 50-60% often reported by classic twin studies [18]. The gap between twin studies and molecular estimates suggests that environmental confounders that correlate with genetic relatedness, rather than a massive tranche of undiscovered rare variants, explain a portion of the historical "missing heritability" [18].
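
The partition in Table 1 can be checked with a few lines of arithmetic. The sketch below assumes the rare and common shares are read as fractions of pedigree heritability, which is the reading under which they sum to the ~88% captured by WGS. The variable names are illustrative.

```python
# Average shares from Table 1, read as fractions of pedigree heritability (assumption).
rare_share_of_ped = 0.20
common_share_of_ped = 0.68

# Total pedigree heritability captured by WGS.
wgs_share_of_ped = rare_share_of_ped + common_share_of_ped

# Split of the rare-variant component into coding and non-coding parts.
rare_coding = 0.21 * rare_share_of_ped
rare_noncoding = 0.79 * rare_share_of_ped

# Share of the WGS-captured heritability that comes from rare variants.
rare_share_of_wgs = rare_share_of_ped / wgs_share_of_ped

print(f"WGS captures {wgs_share_of_ped:.0%} of pedigree heritability")
print(f"Rare coding: {rare_coding:.1%}, rare non-coding: {rare_noncoding:.1%}")
print(f"Rare variants make up {rare_share_of_wgs:.1%} of WGS-based heritability")
```

On these averages, rare coding variants account for only about 4% of pedigree heritability, which helps explain why exome-only studies recover a small slice of the total signal.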

Mechanistic Insights: The Role of Rare Non-Coding Variants

While the aggregate contribution of rare variants is quantified in Table 1, understanding their biological mechanisms is crucial. Rare non-coding variants can influence disease through diverse pathways, one of which is the disruption of post-transcriptional regulatory mechanisms.

A 2025 study constructed an atlas of alternative polyadenylation (APA) outliers (aOutliers) from 15,201 RNA-seq samples across 49 human tissues [21]. These aOutliers represent individuals with aberrant usage of polyadenylation sites in 3' UTRs or introns. The study found:

- Unique Molecular Signature: 74.2% of multi-tissue aOutlier genes were not identified by analyses of expression or splicing outliers (eOutliers or sOutliers), indicating APA represents a distinct molecular phenotype [21].

- RV Enrichment: Deleterious rare variants were significantly enriched in regions adjacent to these aOutliers [21].

- Functional Impact: aOutlier-associated RVs were shown to alter poly(A) signals and splice sites, mechanistically linking them to APA events [21].

- Disease Relevance: A Bayesian prediction model pinpointed 1,799 RVs impacting 278 genes with large disease effect sizes, highlighting the utility of APA for identifying functional RVs [21].

Diagram: Mechanistic Link Between Rare Non-Coding Variants and Disease via APA

Experimental Protocols for Rare Variant Association Studies

The following section outlines standardized protocols for conducting an RVAS, from study design to data analysis.

A typical RVAS involves a multi-stage process, from initial study design and quality control to association testing and replication.

Diagram: RVAS Workflow Pipeline

Protocol: Study Design and Sequencing

Objective: To select a cost-effective sequencing strategy that provides adequate power for rare variant detection.

Platform Selection: Choose from the following options based on budget and research goals [11]:

- Whole-Genome Sequencing (WGS): Identifies nearly all variants in the genome with high confidence. Highest cost.

- Whole-Exome Sequencing (WES): Identifies variants in protein-coding regions. Less expensive than WGS but limited to the exome.

- Low-Depth WGS: A cost-effective approach for association mapping when coupled with imputation, but has lower accuracy for rare variant calling [11].

- Custom Genotyping Arrays (e.g., Exome Chip): Much cheaper than sequencing but provides limited coverage for very rare variants and non-European populations [11].

Sample Size Estimation: Well-powered RVAS for complex traits requires large sample sizes, often with at least 25,000 cases for a discovery set, plus a substantial replication cohort [22].

Study Design Considerations: For quantitative traits, extreme-phenotype sampling (selecting individuals at both ends of the phenotypic distribution) can be a cost-effective strategy to enrich for causal rare variants [11].

Protocol: Variant Annotation, Filtering, and Set Definition

Objective: To process raw genetic data and define meaningful units for association testing.

Quality Control (QC):

- Sample QC: Investigate and exclude samples with unusually high heterozygosity, indicating potential DNA contamination. Calculate broad quality indicators like read depth and transition/transversion ratio [11].

- Variant QC: Apply filters based on quality scores (e.g., QUAL), strand bias, and haplotype scores.
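
Two of the sample-level checks above, the transition/transversion ratio and heterozygosity outliers, can be sketched in plain Python. The three-standard-deviation cutoff, the sample IDs, and the heterozygosity values are illustrative assumptions rather than recommended settings.

```python
from statistics import mean, stdev

TRANSITIONS = {("A", "G"), ("G", "A"), ("C", "T"), ("T", "C")}


def titv_ratio(snvs):
    """Transition/transversion ratio for a list of (ref, alt) single-base changes."""
    ti = sum(1 for change in snvs if change in TRANSITIONS)
    tv = len(snvs) - ti
    return ti / tv if tv else float("inf")


def heterozygosity_outliers(het_rates, n_sd=3.0):
    """Return sample IDs whose heterozygosity exceeds mean + n_sd standard deviations."""
    values = list(het_rates.values())
    cutoff = mean(values) + n_sd * stdev(values)
    return [sample for sample, rate in het_rates.items() if rate > cutoff]


# Toy data: 19 typical samples plus one with excess heterozygosity.
het_rates = {f"S{i}": 0.29 + 0.001 * i for i in range(19)}
het_rates["S19"] = 0.45

snvs = [("A", "G"), ("C", "T"), ("G", "A"), ("A", "C"), ("T", "C"), ("G", "T")]

flagged = heterozygosity_outliers(het_rates)
ratio = titv_ratio(snvs)
print(flagged, ratio)
```

In small cohorts a single extreme sample inflates the standard deviation and can mask itself, so a robust alternative such as a median-based cutoff may be preferable.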

Variant Annotation: Use bioinformatics tools (e.g., ANNOVAR, SnpEff) to predict the functional impact of variants. Annotate variants as synonymous, missense, nonsense, or splicing site variants. For non-coding variants, functional annotation from tools like CADD or Eigen can be informative [11] [19].

Variant Set Definition: Group rare variants (typically MAF < 0.5% - 1%) into biologically relevant units for aggregative testing [19].

- The most common unit is the gene.

- Other units include specific functional domains, exons, or sliding genomic windows.
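
Set definition amounts to grouping rare variants by a key, either a gene label or a genomic window. The following sketch assumes variants arrive as hypothetical `(id, gene, position, maf)` tuples on a single chromosome. The window and step sizes are arbitrary.

```python
from collections import defaultdict


def gene_sets(variants, maf_threshold=0.01):
    """Group rare variant IDs by gene. `variants` holds (id, gene, position, maf)."""
    sets = defaultdict(list)
    for variant_id, gene, _position, maf in variants:
        if maf < maf_threshold:
            sets[gene].append(variant_id)
    return dict(sets)


def sliding_windows(variants, window=2000, step=1000, maf_threshold=0.01):
    """Group rare variant IDs into overlapping position windows on one chromosome."""
    rare = sorted((pos, vid) for vid, _gene, pos, maf in variants if maf < maf_threshold)
    if not rare:
        return {}
    windows = {}
    start, last = rare[0][0], rare[-1][0]
    while start <= last:
        members = [vid for pos, vid in rare if start <= pos < start + window]
        if members:  # skip empty windows
            windows[(start, start + window)] = members
        start += step
    return windows


variants = [
    ("v1", "GENE_A", 1000, 0.0020),
    ("v2", "GENE_A", 1500, 0.2000),  # common, excluded from every set
    ("v3", "GENE_A", 2500, 0.0040),
    ("v4", "GENE_B", 9000, 0.0005),
]
by_gene = gene_sets(variants)
by_window = sliding_windows(variants)
print(by_gene)
print(by_window)
```

Overlapping windows test the same variant more than once, so the multiple-testing burden grows with smaller step sizes.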

Protocol: Gene-Based Rare Variant Association Testing

Objective: To test for a statistically significant association between a set of rare variants and a phenotype of interest.

Model Selection: Choose a statistical test based on the assumed genetic architecture of the trait [11] [19].

- Burden Tests: Collapse variants into a single burden score (e.g., a count of rare alleles). Assumes all causal variants influence the trait in the same direction. Examples: CAST, Weighted-Sum Test.

- Variance-Component Tests: Model variant effects flexibly, allowing for causal variants with different effect sizes and directions. Robust to the presence of non-causal variants in the set. The prime example is the Sequence Kernel Association Test (SKAT).

- Omnibus Tests: Combine burden and variance-component tests to achieve robust power across different scenarios. SKAT-O is a widely used omnibus test.

Covariate Adjustment: Adjust for covariates such as age, sex, and genetic principal components (PCs) to control for population stratification and confounding.

Handling Relatedness and Imbalance: For binary traits with highly unbalanced case-control ratios, use methods like SAIGE-GENE+ or STAAR that employ saddlepoint approximation to control Type I error inflation [6].
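
To make the burden idea concrete, the sketch below collapses genotypes into a per-sample rare-allele count and runs a score test against a quantitative phenotype. It is deliberately simplified: there are no covariates, no relatedness adjustment, and the P-value uses a normal approximation rather than the saddlepoint approximation mentioned above. The toy genotypes and phenotypes are invented.

```python
import math


def burden_scores(genotypes):
    """Per-sample count of rare alleles; rows are samples, columns are variants."""
    return [sum(row) for row in genotypes]


def burden_score_test(genotypes, phenotype):
    """Score test of a quantitative phenotype against the burden score.

    No covariates are modelled; a real analysis would adjust for them.
    Returns (z, two-sided P-value from a normal approximation).
    """
    b = burden_scores(genotypes)
    n = len(b)
    y_bar = sum(phenotype) / n
    b_bar = sum(b) / n
    resid = [y - y_bar for y in phenotype]
    sigma2 = sum(r * r for r in resid) / (n - 1)           # phenotype variance under the null
    u = sum(r * (bi - b_bar) for r, bi in zip(resid, b))    # score statistic
    var_u = sigma2 * sum((bi - b_bar) ** 2 for bi in b)     # its null variance
    z = u / math.sqrt(var_u)
    p = math.erfc(abs(z) / math.sqrt(2))
    return z, p


# Eight samples, three rare variants; carriers tend to have higher trait values.
genotypes = [
    [0, 0, 0], [0, 0, 0], [1, 0, 0], [0, 0, 0],
    [0, 1, 0], [0, 0, 0], [0, 0, 1], [0, 0, 0],
]
phenotype = [0.1, -0.2, 1.5, 0.0, 1.2, -0.1, 1.8, 0.3]

z, p = burden_score_test(genotypes, phenotype)
print(f"z = {z:.2f}, p = {p:.3f}")
```

With only three carriers the normal approximation is unreliable, which is one reason established tools use exact or saddlepoint-based P-values for rare variant sets.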

Protocol: Meta-Analysis for Rare Variants (Meta-SAIGE)

Objective: To combine summary statistics from multiple cohorts to increase power for detecting rare variant associations.

With the growth of multiple biobanks, meta-analysis has become a primary approach. The Meta-SAIGE protocol is as follows [6]:

Summary Statistics Preparation: In each cohort, use SAIGE to derive per-variant score statistics (S) and a sparse linkage disequilibrium (LD) matrix for the genomic region. The LD matrix is not phenotype-specific and can be reused across different phenotypes.

Summary Statistics Combination: Combine score statistics from all cohorts into a single superset. For binary traits, apply a genotype-count-based saddlepoint approximation (SPA) to the combined statistics to ensure accurate Type I error control under case-control imbalance.

Gene-Based Testing: Perform Burden, SKAT, and SKAT-O tests on the combined summary statistics, mirroring the analysis done with individual-level data in SAIGE-GENE+.
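
The combination step can be illustrated with a small summary-statistic calculation. This sketch is not the Meta-SAIGE implementation: it assumes each cohort already supplies a score vector and a matching score covariance matrix, it omits the saddlepoint adjustment, and it computes only a burden-style Z-score from the summed statistics. All numbers are invented.

```python
import math


def combine_cohorts(score_vectors, covariance_matrices):
    """Sum per-variant score statistics and their covariance matrices across cohorts."""
    m = len(score_vectors[0])
    s_meta = [sum(s[j] for s in score_vectors) for j in range(m)]
    v_meta = [
        [sum(v[j][k] for v in covariance_matrices) for k in range(m)]
        for j in range(m)
    ]
    return s_meta, v_meta


def burden_from_summary(s_meta, v_meta, weights=None):
    """Burden Z-score from combined summary statistics: w'S / sqrt(w'Vw)."""
    m = len(s_meta)
    w = weights or [1.0] * m
    numerator = sum(w[j] * s_meta[j] for j in range(m))
    variance = sum(w[j] * v_meta[j][k] * w[k] for j in range(m) for k in range(m))
    z = numerator / math.sqrt(variance)
    p = math.erfc(abs(z) / math.sqrt(2))  # normal approximation, no SPA
    return z, p


# Two hypothetical cohorts, two variants in the gene.
scores = [[1.2, 0.8], [0.9, 1.1]]
covariances = [
    [[2.0, 0.1], [0.1, 1.5]],
    [[1.0, 0.0], [0.0, 2.5]],
]
s_meta, v_meta = combine_cohorts(scores, covariances)
z, p = burden_from_summary(s_meta, v_meta)
print(s_meta, v_meta, round(z, 3), round(p, 3))
```

Because only scores and covariance matrices cross cohort boundaries, individual-level genotypes never need to be shared, which is the practical appeal of summary-statistic meta-analysis.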

Table 2: Key Research Reagent Solutions for RVAS

| Item / Resource | Function / Application |

|---|---|

| Whole-Genome Sequencing (WGS) | Gold standard for comprehensive variant discovery across coding and non-coding regions [17]. |

| Whole-Exome Sequencing (WES) | Cost-effective targeted sequencing for discovering coding variants [1] [19]. |

| Functional Annotation Tools | Software (e.g., ANNOVAR, SnpEff) to predict the functional consequences of variants (e.g., missense, LoF) for prioritization [11]. |

| RVAS Analysis Software | Tools like SAIGE-GENE+, STAAR, and SKAT for performing gene-based rare variant association tests while controlling for confounding [23] [6]. |

| Meta-Analysis Platforms | Tools like Meta-SAIGE for scaling RVAS by combining summary statistics from multiple cohorts with accurate error control [6]. |

| Biobank Datasets | Large-scale resources (e.g., UK Biobank, All of Us) providing WES/WGS and phenotype data for well-powered studies [17] [6]. |

The CD-RV hypothesis has driven significant methodological and empirical advances in human genetics. While current evidence indicates that rare variants explain a minority (~20%) of the heritability for most complex traits, their contribution is biologically important and disproportionately enriched in coding and regulatory regions. The remaining "heritability gap" between pedigree-based and WGS-based estimates appears largely attributable to environmental confounders inflating traditional family-based estimates, rather than a massive tranche of undiscovered rare variants.

Future research must focus on three fronts: First, the functional characterization of rare non-coding variants, building on insights into mechanisms like alternative polyadenylation. Second, the development of even more powerful statistical methods to detect ultra-rare variant effects and gene-gene interactions. Finally, the continued expansion of diverse, large-scale biobanks will be essential to fully elucidate the role of rare variation in human complex traits and translate these findings into therapeutic hypotheses.

The study of genetic associations has undergone a significant paradigm shift, moving beyond the common disease-common variant hypothesis to acknowledge the crucial role of rare genetic variants (typically defined as those with Minor Allele Frequency [MAF] < 0.5-1%) in complex disease architecture. Despite the success of genome-wide association studies (GWAS) in identifying thousands of common variant loci, a substantial portion of disease heritability remains unexplained [24] [11]. Rare variants have emerged as a key source of this "missing heritability," distinguished not merely by their frequency but by their characteristically larger effect sizes on phenotypic variation and disease risk compared to common variants [25] [26]. This inverse relationship between allele frequency and effect size represents a fundamental genetic principle with profound implications for understanding disease biology, identifying therapeutic targets, and developing personalized medicine approaches.

The investigation of rare variants demands specialized methodologies, collectively termed Rare Variant Association Studies (RVAS), which differ significantly from common-variant GWAS in their experimental designs, sequencing technologies, and statistical approaches [1] [11]. Framing research within the context of RVAS is essential for drug development professionals seeking to identify novel biological targets, as rare variants with large effects often point directly to causal genes and pathways with potentially transformative therapeutic implications [24]. This application note elucidates the biological and evolutionary basis for the disproportionate impact of rare variants, provides standardized protocols for their investigation, and visualizes the analytical frameworks essential for advancing complex trait research.

Biological and Evolutionary Mechanisms

The Role of Purifying Selection

The inverse correlation between variant frequency and effect size is primarily driven by natural selection, specifically purifying selection acting against deleterious alleles. Evolutionary theory predicts that variants with strong negative effects on fitness are prevented from rising to high frequencies in populations and are instead maintained at low frequencies or continuously eliminated [25] [26]. This selection-pressure gradient creates a systematic relationship where variants with larger detrimental effects on health-related traits tend to be progressively rarer in populations.

- Deleterious Spectrum: Rare variants are enriched for functionally consequential changes, including protein-truncating variants (nonsense, frameshift), splice-site disruptions, and deleterious missense mutations that substantially alter protein function [26]. These variants are more likely to be slightly deleterious polymorphisms (sdSNPs) – deleterious enough to elevate disease risk but not sufficiently harmful to be completely eliminated by selection [26].

- Fitness Consequences: The strength of selection varies across traits. Early-onset diseases show stronger negative selection against risk variants, while late-onset diseases may accumulate variants with larger effects that manifest after reproductive age [26].

- Empirical Evidence: Population genetic analyses reveal that approximately 30-42% of coding variants are moderately deleterious, with rare variants disproportionately affecting evolutionarily constrained genes and pathways [26].

Evolutionary Evidence and Population Genetics

The frequency distribution of genetic variants in human populations directly reflects their evolutionary history and functional impact. Several lines of evidence establish the relationship between rarity and effect size:

- Variant Frequency Spectrum: Over 50% of validated SNPs in the human genome are rare (MAF < 5%), with the proportion increasing sharply at very low frequencies (MAF ≤ 0.025) [26]. This abundance of rare variation provides a substantial reservoir of potentially high-impact alleles.

- Mutation-Selection Balance: The equilibrium between the constant introduction of new deleterious mutations and their removal by purifying selection maintains rare variants in populations [25]. Variants that arose recently in evolutionary history have not had time to reach higher frequencies, particularly when selection acts against them.

- Empirical Effect Size Distributions: Large-scale studies in model organisms provide direct evidence of this relationship. In yeast, rare variants (MAF < 0.01) contribute disproportionately to phenotypic variance, explaining 51.7% of trait variation despite constituting only 27.8% of all variants [25]. This enrichment for large effects is most pronounced for traits under strong selection, such as growth under stress conditions.
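
As a worked illustration of mutation-selection balance, the standard deterministic approximation from population genetics relates the equilibrium frequency of a deleterious allele to its mutation rate and selective cost. The symbols below and the numerical values are illustrative assumptions, not quantities reported in the cited studies.

```latex
% \mu: per-generation mutation rate toward the deleterious allele
% s:   selection coefficient against the deleterious homozygote
% h:   dominance coefficient (fitness cost in heterozygotes is hs)
\hat{q} \approx \frac{\mu}{h s} \quad (h > 0), \qquad
\hat{q} \approx \sqrt{\frac{\mu}{s}} \quad (h = 0)

% Illustrative values: \mu = 10^{-8}, s = 0.01, h = 0.5
\hat{q} \approx \frac{10^{-8}}{0.5 \times 0.01} = 2 \times 10^{-6}
```

Under this approximation, a tenfold stronger selection coefficient lowers the expected equilibrium frequency tenfold, which is the pattern the inverse relationship between frequency and effect size describes.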

Table 1: Evolutionary Evidence Linking Rare Variants to Larger Effect Sizes

| Evolutionary Process | Impact on Rare Variants | Empirical Evidence |

|---|---|---|

| Purifying Selection | Removes deleterious variants from population; strongest against large-effect variants | Enrichment of protein-truncating variants in rare spectrum [26] |

| Mutation-Selection Balance | Maintains deleterious variants at low frequencies | Higher proportion of rare variants in functional genomic regions [26] |

| Recent Origin | Large-effect variants have less time to rise in frequency | Rare variants in yeast explain >50% of trait variance despite being <28% of variants [25] |

| Evolutionary Constraint | Genes under strong selection accumulate rare, large-effect variants | Disease genes show higher levels of evolutionary conservation [26] |

Quantitative Evidence and Heritability Contributions

Heritability Estimates from Sequencing Studies

Large-scale sequencing studies have quantified the substantial contribution of rare variants to complex trait heritability, providing empirical support for their outsized effects. The RARity framework (Rare variant heritability estimator), applied to whole exome sequencing data from 167,348 UK Biobank participants, demonstrates that rare coding variants account for significant portions of phenotypic variance across diverse traits [27].

- Height Heritability: Rare variants explain 21.9% (95% CI: 19.0-24.8%) of height heritability, the highest among 31 complex traits analyzed [27].

- Broad Impact: Among 31 complex traits, 27 showed significant rare variant heritability (h²RV > 5%), confirming the widespread contribution of rare variants across diverse biological domains [27].

- Trait-Specific Patterns: The relative contribution of rare variants varies by trait, with some phenotypes (e.g., growth in cadmium chloride, low pH, high temperature) showing particularly strong rare variant contributions (>75% of variance) in yeast models [25].

Methodological Considerations in Heritability Estimation

Accurate quantification of rare variant heritability requires specialized approaches that address their unique statistical properties:

- Aggregation Limitations: Gene-level burden tests, which aggregate rare variants within genes, suffer from a 79% (95% CI: 68-93%) loss of heritability information compared to variant-level approaches, highlighting the importance of individual variant effects [27].

- LD Pruning Challenges: Rare variants exhibit long-range linkage disequilibrium (LDLD) patterns distinct from those of common variants, requiring stringent pruning (removing variants with r² > 0.1 within 50 Mb windows) to prevent heritability inflation [27].

- Architectural Diversity: The genetic architecture of rare variant effects varies across traits, with different proportions of causal variants and effect size distributions requiring flexible estimation methods [27].
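
The pruning consideration above can be illustrated with a generic greedy procedure. This is a sketch of LD pruning in general, not the RARity implementation: the tuple layout and toy genotype vectors are assumptions, and only the r² and window defaults echo the thresholds quoted above.

```python
def r_squared(x, y):
    """Squared Pearson correlation between two genotype vectors."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0  # monomorphic vectors carry no correlation information
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy * sxy / (sxx * syy)


def greedy_ld_prune(variants, r2_max=0.1, window_bp=50_000_000):
    """Keep a variant only if r^2 <= r2_max with every kept variant within window_bp.

    `variants` is a list of (variant_id, position, genotype_vector) sorted by position.
    """
    kept = []
    for vid, pos, geno in variants:
        if all(
            abs(pos - kpos) > window_bp or r_squared(geno, kgeno) <= r2_max
            for _kid, kpos, kgeno in kept
        ):
            kept.append((vid, pos, geno))
    return [vid for vid, _pos, _geno in kept]


variants = [
    ("v1", 1_000_000, [1, 0, 0, 0, 0, 0, 0, 0]),
    ("v2", 30_000_000, [1, 0, 0, 0, 0, 0, 0, 0]),  # perfectly correlated with v1
    ("v3", 40_000_000, [0, 0, 0, 1, 0, 0, 0, 0]),  # nearly independent of v1
]
pruned = greedy_ld_prune(variants)
print(pruned)
```

Here `v2` is dropped even though it lies 29 Mb from `v1`, which is the long-range behaviour that a short pruning window would miss.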

Table 2: Rare Variant Heritability (h²RV) Estimates for Selected Complex Traits

| Trait Category | Specific Trait | h²RV Estimate | Notes |

|---|---|---|---|

| Anthropometric | Height | 21.9% (19.0-24.8%) | Highest h²RV among measured traits [27] |

| Lipid Metrics | HDL | 10.2% (8.4-12.0%) | Significant contribution to lipid regulation [27] |

| Liver Enzymes | ALP | 13.8% (11.7-15.9%) | Substantial rare variant influence [27] |

| Yeast Growth Traits | Cadmium chloride resistance | >75% variance explained | Extreme example of rare variant dominance [25] |

| Yeast Growth Traits | Copper sulfate resistance | Minimal rare variant contribution | Common variants dominate at CUP locus [25] |

Analytical Frameworks and Experimental Protocols

Statistical Methods for Rare Variant Association

Standard single-variant association tests used in GWAS are underpowered for rare variants due to their low frequency. Specialized aggregation tests have been developed to overcome this limitation by combining signals from multiple rare variants within functional units [28] [29] [11].

- Burden Tests: Aggregate rare variants within a gene or region into a single genetic score, assuming all variants influence the trait in the same direction with similar effect sizes [29] [11]. These include methods like CMC and Weighted Sum Statistic (WSS).

- Variance-Component Tests: Model variant effects as random draws from a distribution, allowing for bidirectional effects and variable effect sizes. SKAT (Sequence Kernel Association Test) is the most prominent example [6].

- Omnibus Tests: Combine burden and variance-component approaches to achieve robustness across different genetic architectures. SKAT-O and ACAT-O optimize power across diverse scenarios [29] [6].

- Advanced Methods: Recent methods like LRT-q (Likelihood Ratio Test for quantitative traits) incorporate functional annotations and genotype data to prioritize causal variants, demonstrating improved power through nonlinear aggregation of variant statistics [29].
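
The difference between burden and variance-component statistics is easiest to see when two variants act in opposite directions. The sketch below computes only the test statistics, with no covariates and no P-values, since the null distribution of the SKAT statistic is a mixture of chi-squares that needs dedicated numerical methods. The weights follow the Beta(1, 25) density commonly used as a default in SKAT, and the genotypes, phenotypes, and MAFs are invented.

```python
def beta_1_b_weight(maf, b=25.0):
    """Beta(1, b) density at the MAF, which upweights rarer variants."""
    return b * (1.0 - maf) ** (b - 1.0)


def variant_scores(genotypes, phenotype):
    """Per-variant score S_j = sum_i g_ij * (y_i - mean(y)), with no covariates."""
    y_bar = sum(phenotype) / len(phenotype)
    resid = [y - y_bar for y in phenotype]
    n_variants = len(genotypes[0])
    return [
        sum(row[j] * r for row, r in zip(genotypes, resid))
        for j in range(n_variants)
    ]


def burden_statistic(scores, weights):
    """Squared weighted sum of scores: opposite-signed effects cancel."""
    return sum(w * s for w, s in zip(weights, scores)) ** 2


def skat_statistic(scores, weights):
    """Weighted sum of squared scores: opposite-signed effects accumulate."""
    return sum((w * s) ** 2 for w, s in zip(weights, scores))


# Variant 1 raises the trait, variant 2 lowers it by the same amount.
genotypes = [[1, 0], [1, 0], [0, 1], [0, 1], [0, 0], [0, 0]]
phenotype = [2.0, 2.0, -2.0, -2.0, 0.0, 0.0]
mafs = [0.001, 0.001]  # hypothetical population frequencies

weights = [beta_1_b_weight(m) for m in mafs]
scores = variant_scores(genotypes, phenotype)
burden_q = burden_statistic(scores, weights)
skat_q = skat_statistic(scores, weights)
print(scores, burden_q, skat_q)
```

The burden statistic collapses to zero here while the SKAT statistic stays large, which is the scenario omnibus tests such as SKAT-O are designed to cover.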

Meta-Analysis Protocols for Multi-Cohort Studies

Meta-analysis substantially enhances power for rare variant discovery by combining data across multiple studies. Meta-SAIGE provides a standardized protocol for scalable rare variant meta-analysis [6]:

Diagram 1: Meta-SAIGE Workflow for Rare Variant Meta-Analysis. This protocol combines summary statistics from multiple cohorts with accurate type I error control [6].

Protocol Steps for Meta-SAIGE Implementation:

Per-Cohort Processing:

- Apply SAIGE to compute per-variant score statistics (S) and their variances

- Generate sparse LD matrix (Ω) capturing variant correlations

- Implement saddlepoint approximation (SPA) for accurate P-values with case-control imbalance

Summary Statistic Combination:

- Combine score statistics across K cohorts: ( S_{\text{meta}} = \sum_{k=1}^{K} S_k )

- Recalculate variances by inverting SPA-adjusted P-values

- Apply genotype-count-based SPA for improved type I error control

Gene-Based Association Testing:

- Conduct Burden, SKAT, and SKAT-O tests using combined statistics

- Incorporate functional annotations and multiple MAF cutoffs

- Collapse ultrarare variants (MAC < 10) to enhance power

- Combine P-values using Cauchy combination method

This protocol effectively controls type I error rates for low-prevalence binary traits (e.g., 1% prevalence) where traditional methods show >100-fold inflation, while achieving power comparable to individual-level data analysis [6].
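
The final step of the protocol, merging P-values from several tests or MAF cutoffs, can be sketched with the Cauchy combination formula. This is a minimal version that assumes equal weights unless others are supplied and that all P-values lie strictly between 0 and 1. Production code typically adds a separate approximation for extremely small P-values.

```python
import math


def cauchy_combination(p_values, weights=None):
    """Combine P-values with the Cauchy combination test (equal weights by default)."""
    k = len(p_values)
    w = weights or [1.0 / k] * k
    total = sum(w)
    # Each P-value maps to a standard Cauchy variate; the weighted mean is again Cauchy.
    t = sum(wi * math.tan((0.5 - p) * math.pi) for wi, p in zip(w, p_values)) / total
    return 0.5 - math.atan(t) / math.pi


# Hypothetical P-values from Burden, SKAT, and SKAT-O on the same gene.
combined = cauchy_combination([0.01, 0.5, 0.8])
print(f"combined P = {combined:.4f}")
```

A useful property is that the combined P-value remains valid when the individual tests are correlated, which matters here because Burden, SKAT, and SKAT-O reuse the same variants.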

Study Design Considerations for RVAS

Appropriate study design is critical for successful rare variant association studies. Key considerations include [11]:

- Sequencing Depth: Balance between sample size and sequencing depth based on research goals. Low-depth (4-7x) whole genome sequencing enables larger sample sizes, while deep exome sequencing (>80x) provides more accurate variant calling in coding regions.

- Extreme Phenotype Sampling: Enriching for individuals with extreme phenotypes increases power to detect rare variant associations by increasing the prevalence of causal variants in the sample [11].

- Variant Functional Annotation: Prioritize variants based on predicted functional impact using tools like CADD (Combined Annotation Dependent Depletion) and gene-level constraint metrics (pLI scores) [29].

- Replication Strategy: Independent replication is essential, requiring large sample sizes and careful consideration of variant frequency across populations.
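
Extreme phenotype sampling, one of the design choices listed above, reduces to selecting the tails of a ranked phenotype distribution before sequencing. The sketch below assumes a quantitative phenotype stored in a dictionary keyed by hypothetical sample IDs. The tail fraction is arbitrary.

```python
def extreme_phenotype_sample(phenotypes, fraction=0.1):
    """Return (low_ids, high_ids): the bottom and top `fraction` of samples by phenotype."""
    if not 0 < fraction <= 0.5:
        raise ValueError("fraction must be in (0, 0.5]")
    ranked = sorted(phenotypes, key=phenotypes.get)
    n_tail = max(1, int(len(ranked) * fraction))
    return ranked[:n_tail], ranked[-n_tail:]


# Ten hypothetical samples with a quantitative trait value each.
phenotypes = {
    "s0": 1.2, "s1": -0.4, "s2": 3.1, "s3": 0.0, "s4": -2.7,
    "s5": 0.8, "s6": 2.2, "s7": -1.5, "s8": 0.3, "s9": -0.1,
}
low, high = extreme_phenotype_sample(phenotypes, fraction=0.2)
print(low, high)
```

Association tests run on such a sample need to account for the selection, since the phenotype distribution is no longer representative of the source population.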

Research Reagents and Computational Tools

Table 3: Essential Research Reagents and Computational Tools for RVAS

| Tool Category | Specific Tools | Function/Application | Key Features |

|---|---|---|---|

| Variant Callers | GATK, FreeBayes | Identify genetic variants from sequencing data | Address rare-variant-specific challenges such as low-frequency false positives |

| Functional Prediction | CADD, SIFT, PolyPhen-2 | Annotate variant functional impact | Prioritize deleterious variants for aggregation tests [29] |

| Association Testing | SAIGE-GENE+, SKAT-O, LRT-q | Gene-based rare variant association tests | Adjust for case-control imbalance, sample relatedness [29] [6] |

| Meta-Analysis | Meta-SAIGE, MetaSTAAR | Combine results across studies | Control type I error for binary traits, reusable LD matrices [6] |

| Heritability Estimation | RARity, GREML | Estimate variance explained by rare variants | Architecture-free estimation, gene-level partitioning [27] |

| Reference Panels | UK10K, Haplotype Reference Consortium | Improve variant imputation accuracy | Enhanced coverage of rare variants in specific populations [24] |

| Annotation Databases | gnomAD, dbSNP | Determine population allele frequencies | Filter technical artifacts, identify truly rare variants [11] |

The disproportionate impact of rare variants on complex traits represents both a challenge and opportunity for therapeutic development. The biological rationale—rooted in evolutionary principles of purifying selection—provides a coherent framework for interpreting rare variant associations. From a drug development perspective, rare variants with large effects offer high-value targets for several reasons:

- Causal Priority: Rare variants are more likely to directly disrupt coding sequences with clear functional consequences, facilitating target validation and mechanistic studies [24] [26].

- Pathway Identification: Genes harboring multiple rare variants associated with a disease pinpoint critical biological pathways with potential for therapeutic intervention.

- Genetic Validation: Targets with rare variant support have approximately twice the success rate in clinical development, highlighting the value of these findings for portfolio prioritization.

Future directions in rare variant research will require even larger sample sizes through international consortia, improved functional annotation of noncoding rare variation, and integration of multi-omics data to fully elucidate the biological mechanisms through which rare variants influence complex disease risk. The continued refinement of RVAS methodologies and their application to diverse populations will further accelerate the translation of genetic discoveries into transformative therapies.

Genome-wide association studies (GWAS) and rare variant association studies (RVAS) represent two fundamental approaches for identifying trait-relevant genes in complex trait research. While conceptually similar, these methods systematically prioritize different genes, raising critical questions about biological interpretation and therapeutic target selection. This application note examines how specificity, gene length, and distinct methodological frameworks drive these ranking differences. We provide detailed protocols for implementing both approaches, visualizations of their underlying mechanisms, and practical guidance for researchers and drug development professionals navigating these complementary tools for uncovering disease biology.

The identification of genes influencing complex traits and diseases represents a cornerstone of modern genetic research with profound implications for therapeutic development. Two primary methodological frameworks have emerged: genome-wide association studies (GWAS), which assess common genetic variants (typically with minor allele frequency, MAF, above 5%), and rare variant association studies (RVAS), which focus on rare and low-frequency variants (MAF below a study-specific cutoff, typically 1-5%) and often use burden tests that aggregate multiple variants within genes [11]. Despite conceptual similarities, these approaches systematically prioritize different genes, creating interpretation challenges and opportunities for biological discovery [30] [31].

Recent systematic analysis of 209 quantitative traits in the UK Biobank reveals that GWAS and RVAS rank genes discordantly, with only approximately 26% of genes showing burden support falling within top GWAS loci [31]. This discrepancy persists even after controlling for technical artifacts and power differences, suggesting fundamental biological and methodological drivers. Understanding these systematic differences is essential for accurately interpreting genetic findings and prioritizing genes for functional validation and drug target development.

Quantitative Comparison: GWAS versus RVAS

Table 1: Fundamental methodological differences between GWAS and RVAS

| Characteristic | GWAS | RVAS (Burden Tests) |
|---|---|---|
| Variant Frequency Focus | Common variants (MAF > 5%) | Rare variants (MAF below a study-specific cutoff, typically 0.5-5%) |
| Primary Unit of Analysis | Single variants across genome | Aggregated variants within genes |
| Typical Platform | Genotyping arrays | Sequencing (whole exome/genome) |
| Key Statistical Approach | Single-variant association tests | Gene-based burden tests |
| Typical Effect Sizes | Modest effects | Potentially larger effects per variant |
| Power Considerations | Large sample sizes required | Extreme-phenotype sampling can be efficient |

Table 2: Empirical differences in gene ranking based on UK Biobank analysis of 209 traits [31]

| Ranking Characteristic | GWAS | RVAS (LoF Burden Tests) |
|---|---|---|
| Prioritization Basis | Genes near trait-specific variants | Trait-specific genes |
| Pleiotropy Handling | Can identify highly pleiotropic genes | Favors genes with specific effects |
| Top Gene Overlap | Limited overlap with top RVAS genes | Limited overlap with top GWAS genes |
| Representative Example | HHIP locus (highly significant GWAS signal, minimal burden signal) | NPR2 (top burden signal, lower GWAS rank) |
| Influencing Factors | Non-coding variant context specificity | Gene length, selective constraint |

Biological and Methodological Drivers of Differential Ranking

Trait Importance versus Trait Specificity

The fundamental ranking discrepancy between GWAS and RVAS stems from their differential emphasis on two distinct gene properties: trait importance and trait specificity [31].

- Trait Importance: Refers to the magnitude of a gene's quantitative effect on a trait of interest, defined as the squared effect of loss-of-function (LoF) variants on the trait.

- Trait Specificity: Measures a gene's importance for the trait of interest relative to its importance across all fitness-relevant traits.

RVAS burden tests prioritize genes primarily by their trait specificity, whereas GWAS can identify both specific and highly pleiotropic genes [31]. This occurs because natural selection constrains LoF variants in genes with broad biological importance, keeping them rare; with few LoF carriers in the sample, burden tests have little power to detect such genes even when their effect on the trait is large. Consequently, RVAS preferentially identifies genes whose disruption affects the trait under study with minimal pleiotropic consequences.
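
The two definitions above can be made concrete with a small sketch. It assumes a hypothetical gene with known LoF effects on a handful of traits and treats those traits as a stand-in for "all fitness-relevant traits". The function names, the effect values, and the simple ratio used for specificity are illustrative assumptions, one plausible reading of the definitions rather than the exact formulation in [31].

```python
def trait_importance(lof_effect):
    """Trait importance: squared LoF effect on the trait."""
    return lof_effect ** 2


def trait_specificity(lof_effects, trait):
    """Importance for `trait` divided by summed importance over all listed traits."""
    total = sum(trait_importance(e) for e in lof_effects.values())
    if total == 0:
        return 0.0
    return trait_importance(lof_effects[trait]) / total


# Hypothetical LoF effects (in trait standard deviations), not real estimates.
specific_gene = {"height": -0.8, "bmi": 0.05, "lung_function": 0.0}
pleiotropic_gene = {"height": -0.8, "bmi": 0.7, "lung_function": -0.9}

for name, effects in [("specific", specific_gene), ("pleiotropic", pleiotropic_gene)]:
    print(name,
          round(trait_importance(effects["height"]), 3),
          round(trait_specificity(effects, "height"), 3))
```

Both hypothetical genes have the same importance for height, but only the first has high specificity, which is the property that burden tests are described as favoring.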

Figure 1: Fundamental drivers of differential gene ranking between GWAS and RVAS. The diagram illustrates how distinct biological and methodological factors influence each approach's prioritization schema.

Distinct Technical and Biological Influences

Both methods are influenced by trait-irrelevant technical factors that complicate interpretation:

- Gene Length Effect: RVAS burden tests show increased power for longer genes because they contain more potential sites for LoF mutations, increasing the aggregate burden signal [31]. This can artificially inflate rankings of longer genes regardless of their biological relevance.

- Variant Context: GWAS identifies predominantly non-coding variants that can be highly context-specific (e.g., cell-type-specific regulatory elements), enabling discovery of pleiotropic genes with trait-specific regulation [31]. Conversely, RVAS directly tests coding variants with more consistent effects across contexts.

- Selection Pressure: Rare variants in RVAS are strongly influenced by purifying selection, which constrains functionally important genes [11] [31]. Common variants in GWAS are much less affected, since alleles under strong purifying selection rarely reach high frequency.

Experimental Protocols and Methodologies

Protocol 1: Genome-Wide Association Study (GWAS) Implementation

Study Design and Quality Control

Sample Collection and Genotyping

- Collect DNA samples from a well-phenotyped cohort (N > 10,000 for common variants)

- Perform genome-wide genotyping using standardized arrays (e.g., Illumina Global Screening Array)

- Implement rigorous quality control: sample call rate > 98%, variant call rate > 95%, Hardy-Weinberg equilibrium p > 1×10⁻⁶, relatedness filtering (PLINK PI_HAT < 0.2)
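
The call-rate and Hardy-Weinberg thresholds listed above can be sketched in a few lines. This is a minimal illustration under stated assumptions: genotypes are coded as 0/1/2 alternate-allele counts with `None` for missing calls, and a 1-df chi-square test stands in for the exact Hardy-Weinberg test that dedicated tools typically use. All function names are hypothetical, and relatedness filtering is not covered.

```python
import math


def hwe_chi2_pvalue(n_aa, n_ab, n_bb):
    """1-df chi-square test of Hardy-Weinberg equilibrium from genotype counts."""
    n = n_aa + n_ab + n_bb
    p = (2 * n_aa + n_ab) / (2 * n)   # frequency of the first allele
    q = 1 - p
    if p == 0 or q == 0:
        return 1.0                    # monomorphic site: nothing to test
    expected = (n * p * p, 2 * n * p * q, n * q * q)
    observed = (n_aa, n_ab, n_bb)
    chi2 = sum((o - e) ** 2 / e for o, e in zip(observed, expected))
    # Survival function of a chi-square with 1 df is erfc(sqrt(x / 2)).
    return math.erfc(math.sqrt(chi2 / 2))


def call_rate(genotypes):
    """Fraction of non-missing calls; missing genotypes are encoded as None."""
    return sum(g is not None for g in genotypes) / len(genotypes)


def sample_passes_qc(genotypes, min_call_rate=0.98):
    """Sample-level filter: one individual's genotypes across variants."""
    return call_rate(genotypes) > min_call_rate


def variant_passes_qc(genotypes, min_call_rate=0.95, min_hwe_p=1e-6):
    """Variant-level filter: one variant's genotypes across individuals."""
    called = [g for g in genotypes if g is not None]
    if not called:
        return False
    counts = [called.count(k) for k in (0, 1, 2)]
    return (call_rate(genotypes) > min_call_rate
            and hwe_chi2_pvalue(*counts) > min_hwe_p)
```

In practice these filters would be applied with a dedicated toolkit on the full genotype matrix; the sketch only makes the thresholds explicit.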

Population Structure Control

- Calculate genetic principal components (PCs) using LD-pruned variants

- Include 5-10 PCs as covariates to control for population stratification

- Consider mixed models for additional stratification control in diverse populations

Association Testing and Interpretation

Phenotype Processing

- For quantitative traits, assess distribution normality

- Apply rank-based inverse normal transformation (INT) for non-normally distributed traits to maintain type I error control [32]

- Consider direct (D-INT) versus indirect (I-INT) transformation based on underlying data generating mechanism
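
As an illustration of the transformation step, the sketch below implements a rank-based inverse normal transformation using only the Python standard library. The Blom offset of 3/8 and average ranks for ties are common conventions assumed here, not choices stated in the protocol. Applied to the raw phenotype, as in the usage lines, it corresponds to the direct form; applying the same function to covariate-adjusted residuals would correspond to the indirect form.

```python
from statistics import NormalDist


def rank_inverse_normal(values, offset=3 / 8):
    """Rank-based inverse normal transformation (Blom offset by default)."""
    n = len(values)
    order = sorted(range(n), key=lambda i: values[i])
    ranks = [0.0] * n
    i = 0
    while i < n:
        # Find the run of tied values and give each the average rank.
        j = i
        while j + 1 < n and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1          # ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    std_normal = NormalDist()
    return [std_normal.inv_cdf((r - offset) / (n - 2 * offset + 1)) for r in ranks]


# Hypothetical skewed phenotype values.
phenotype = [10.0, 1.0, 1000.0, 3.0, 50.0]
print([round(z, 3) for z in rank_inverse_normal(phenotype)])
```

The output depends only on the ranks, so the extreme value 1000.0 has no more influence than any other largest observation would.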

Statistical Analysis

- Perform single-variant association testing using linear or logistic regression

- Include relevant covariates (age, sex, genotyping batch, genetic PCs)

- Apply genome-wide significance threshold (p < 5×10⁻⁸)

- Conduct conditional analysis to identify independent signals
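
The single-variant test can be illustrated with a deliberately simplified sketch: an ordinary least squares regression of a quantitative phenotype on allele dosage, with a two-sided p-value from a normal approximation. Covariates (age, sex, batch, PCs) are omitted for brevity, the function name is hypothetical, and the toy data are invented; a real analysis would fit the full covariate model with software such as PLINK, REGENIE, or SAIGE.

```python
import math

GENOME_WIDE_ALPHA = 5e-8   # significance threshold quoted in the protocol


def single_variant_test(dosages, phenotype):
    """OLS slope of phenotype on allele dosage with a normal-approximation p-value."""
    n = len(dosages)
    mean_g = sum(dosages) / n
    mean_y = sum(phenotype) / n
    sxx = sum((g - mean_g) ** 2 for g in dosages)
    sxy = sum((g - mean_g) * (y - mean_y) for g, y in zip(dosages, phenotype))
    beta = sxy / sxx
    residual_ss = sum((y - mean_y - beta * (g - mean_g)) ** 2
                      for g, y in zip(dosages, phenotype))
    se = math.sqrt(residual_ss / (n - 2) / sxx)
    z = beta / se
    p_value = math.erfc(abs(z) / math.sqrt(2))   # two-sided, normal approximation
    return beta, se, p_value


# Toy data: six individuals, phenotype rises by about one unit per allele.
dosages = [0, 0, 1, 1, 2, 2]
phenotype = [0.1, -0.1, 1.1, 0.9, 2.1, 1.9]
beta, se, p = single_variant_test(dosages, phenotype)
print(beta, se, p < GENOME_WIDE_ALPHA)
```

The function assumes the variant is polymorphic and the fit is not perfect; a monomorphic variant or zero residual variance would cause a division by zero.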

Figure 2: Standard GWAS workflow from sample collection to locus annotation. Critical steps include quality control (QC), population structure control via principal components (PC), and appropriate phenotype transformation.

Protocol 2: Rare Variant Association Study (RVAS) Implementation

Sequencing and Variant Calling

Sequencing Design Considerations

- Select appropriate sequencing approach: whole exome sequencing (cost-effective), whole genome sequencing (comprehensive), or targeted sequencing (hypothesis-driven)

- For large cohorts, consider low-depth whole genome sequencing (4× coverage) with LD-based imputation as a cost-effective alternative [11]

- Where genotypes are called directly rather than imputed, maintain a minimum depth of 20× for confident calling of rare variants

Variant Quality Control and Annotation

- Implement stringent variant filtering: read depth, mapping quality, strand bias, genotype quality

- Annotate variants for functional impact using tools like ANNOVAR, SnpEff, or VEP

- Focus on protein-altering variants (missense, nonsense, splice-site) for burden tests

- Retain rare variants with MAF < 0.01 (or 0.001 for ultra-rare analyses)
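
The frequency and consequence filters above can be sketched as follows. The record layout (a list of dicts with `id`, `gene`, `consequence`, and `alt_allele_count` keys) and the simplified consequence labels are assumptions made for illustration; annotation tools emit their own, more detailed consequence vocabularies, which would need to be mapped onto these categories.

```python
# Simplified, hypothetical consequence labels (not the terms any tool emits).
PROTEIN_ALTERING = {"missense", "nonsense", "splice_site"}


def minor_allele_frequency(alt_allele_count, n_samples):
    """MAF for a biallelic diploid site from the alternate-allele count."""
    alt_freq = alt_allele_count / (2 * n_samples)
    return min(alt_freq, 1 - alt_freq)


def qualifying_variants(variants, n_samples, maf_threshold=0.01):
    """Keep variants that are observed, rare, and protein-altering."""
    kept = []
    for v in variants:
        maf = minor_allele_frequency(v["alt_allele_count"], n_samples)
        if 0 < maf < maf_threshold and v["consequence"] in PROTEIN_ALTERING:
            kept.append(v)
    return kept


# Invented example records for a cohort of 1,000 individuals.
variants = [
    {"id": "v1", "gene": "GENE_A", "consequence": "missense", "alt_allele_count": 5},
    {"id": "v2", "gene": "GENE_A", "consequence": "missense", "alt_allele_count": 500},
    {"id": "v3", "gene": "GENE_A", "consequence": "synonymous", "alt_allele_count": 3},
    {"id": "v4", "gene": "GENE_B", "consequence": "nonsense", "alt_allele_count": 1},
    {"id": "v5", "gene": "GENE_B", "consequence": "splice_site", "alt_allele_count": 0},
]
print([v["id"] for v in qualifying_variants(variants, n_samples=1000)])
```

Passing `maf_threshold=0.001` would give the ultra-rare analysis mentioned in the last bullet.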

Burden Testing and Interpretation

Gene-Based Aggregation

- Aggregate qualifying variants within gene boundaries (±5kb for regulatory regions)

- Consider functional subsets: loss-of-function only (predicted truncating), or all protein-altering

- Calculate burden score for each individual (e.g., number of alternative alleles)
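
A minimal sketch of the aggregation step, assuming genotypes are stored as a mapping from variant ID to a per-individual list of alternate-allele counts and that each qualifying variant has already been assigned to a gene. The data structures and names are hypothetical; the score is the simple allele count given as an example in the last bullet.

```python
def burden_scores(genotypes, variant_genes, gene):
    """Per-individual count of alternate alleles across a gene's qualifying variants.

    genotypes: dict mapping variant_id -> list of alt-allele counts (0/1/2)
    variant_genes: dict mapping variant_id -> gene symbol
    """
    variant_ids = [v for v, g in variant_genes.items() if g == gene]
    if not variant_ids:
        return []
    n_individuals = len(genotypes[variant_ids[0]])
    return [sum(genotypes[v][i] for v in variant_ids) for i in range(n_individuals)]


# Invented genotypes for four individuals and three qualifying variants.
genotypes = {"v1": [0, 1, 0, 0], "v2": [0, 0, 1, 0], "v3": [2, 0, 0, 0]}
variant_genes = {"v1": "GENE_A", "v2": "GENE_A", "v3": "GENE_B"}
print(burden_scores(genotypes, variant_genes, "GENE_A"))
```

The resulting per-individual score can then be used as the predictor in a regression on the phenotype, in place of a single-variant dosage.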

Statistical Analysis

- Apply gene-based aggregation tests (e.g., burden tests, or SKAT-O, which combines burden and variance-component tests) that aggregate variants within genes [11] [1]

- Adjust for covariates including sequencing batch, genetic ancestry

- Account for multiple testing across genes (Bonferroni correction for ~20,000 genes, i.e., p < 2.5×10⁻⁶ at α = 0.05)

- Consider replication in independent cohorts, since effect sizes estimated in the discovery cohort tend to be inflated
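
The multiple-testing step reduces to simple arithmetic, sketched here with hypothetical gene names and p-values. The default of 20,000 tests follows the approximate gene count in the protocol and α = 0.05 is an assumed family-wise error rate; if several variant masks or frequency cutoffs are tested per gene, the number of tests would need to grow accordingly.

```python
def bonferroni_threshold(alpha=0.05, n_tests=20_000):
    """Per-gene significance threshold under Bonferroni correction."""
    return alpha / n_tests


def significant_genes(gene_pvalues, alpha=0.05, n_tests=20_000):
    """Return genes whose p-value passes the corrected threshold, smallest first."""
    threshold = bonferroni_threshold(alpha, n_tests)
    hits = [(p, gene) for gene, p in gene_pvalues.items() if p < threshold]
    return [gene for p, gene in sorted(hits)]


# Hypothetical gene-level p-values for illustration only.
example = {"GENE_A": 1.2e-9, "GENE_B": 3.0e-6, "GENE_C": 4.0e-7, "GENE_D": 0.02}
print(bonferroni_threshold())       # 0.05 / 20,000
print(significant_genes(example))
```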

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key research reagents and computational tools for GWAS and RVAS

| Category | Specific Tools/Reagents | Application | Considerations |
|---|---|---|---|
| Genotyping Platforms | Illumina Global Screening Array, Affymetrix Axiom | GWAS genotyping | Cost-effective for large samples; limited rare variant coverage |
| Sequencing Platforms | Illumina NovaSeq, PacBio HiFi, Oxford Nanopore | RVAS sequencing | Depth requirements vary by application (30× WGS, 100× WES) |
| Variant Callers | GATK, FreeBayes, DeepVariant | Variant identification from sequencing | Parameter tuning critical for rare variants |
| Functional Annotation | ANNOVAR, VEP, SnpEff | Variant functional prediction | Consistent annotation pipeline enables meta-analysis |
| Association Software | PLINK, REGENIE, SAIGE | GWAS analysis | Efficient for large biobank data |
| Burden Testing Tools | SKAT, SKAT-O, Burden tests | RVAS analysis | Differential performance under various genetic architectures |
| Quality Control Tools | QChip, VCFtools, Hail | Data quality assessment | Sample and variant-level metrics essential |

Biological Interpretation and Therapeutic Implications

Case Studies in Discordant Ranking

NPR2 Locus (Height)

- RVAS Ranking: Second most significant gene in LoF burden tests for height

- GWAS Ranking: Contained within the 243rd most significant GWAS locus

- Biological Context: NPR2 mutations cause short stature in humans and mice, representing a specific height gene with minimal pleiotropy [31]

- Interpretation: High trait specificity causes NPR2 to rank highly in RVAS but not in GWAS

HHIP Locus (Height)

- GWAS Ranking: Third most significant locus with P values as small as 10⁻¹⁸⁵

- RVAS Ranking: Essentially no burden signal

- Biological Context: HHIP interacts with Hedgehog proteins involved in body patterning and osteogenesis [31]

- Interpretation: High pleiotropy and potentially regulatory mechanisms cause HHIP to rank highly in GWAS but not in RVAS

Implications for Drug Development

The differential ranking between GWAS and RVAS has profound implications for therapeutic target selection:

- RVAS-Prioritized Targets: Genes with high trait specificity (like NPR2) represent ideal candidates for therapeutic modulation with potentially fewer side effects due to limited pleiotropic consequences [31].

- GWAS-Prioritized Targets: Genes identified through GWAS may have broader biological roles but offer opportunities for context-specific modulation (e.g., tissue-specific regulatory elements).

- Complementary Approaches: Strategic integration of both methods provides a more comprehensive view of trait biology, identifying both specific effector genes and broader regulatory networks.

GWAS and RVAS represent complementary rather than redundant approaches for gene discovery in complex traits. GWAS excels at identifying regulatory networks and pleiotropic genes, while RVAS burden tests prioritize trait-specific genes with direct functional impacts. Understanding the methodological and biological drivers of their systematic ranking differences—particularly the roles of trait specificity, gene length, and selection pressure—enables more accurate biological interpretation and strategic therapeutic target selection. Researchers should implement both approaches where feasible and carefully consider their distinct biases when prioritizing genes for functional validation and drug development.

RVAS in Action: Study Designs, Statistical Methods, and Evolving Best Practices

Rare genetic variants play a crucial role in explaining the "missing heritability" of complex human traits and diseases that genome-wide association studies (GWAS) of common variants have largely failed to capture [33] [34]. The choice of sequencing technology—whole-genome sequencing (WGS), whole-exome sequencing (WES), or targeted approaches—significantly impacts the scope, resolution, and power of rare variant association studies (RVAS). WGS provides a comprehensive view of the entire genome, capturing both coding and non-coding variation, while WES focuses specifically on protein-coding regions at a lower cost. Targeted approaches offer the deepest sequencing for specific genomic regions of interest. This application note provides researchers, scientists, and drug development professionals with a structured comparison of these technologies, detailed experimental protocols, and analytical frameworks to guide the design and implementation of RVAS for complex traits.

Technology Comparison and Selection Guide

Technical Specifications and Performance Metrics

Table 1: Comparison of sequencing technologies for rare variant discovery

| Feature | Whole-Genome Sequencing (WGS) | Whole-Exome Sequencing (WES) | Targeted Sequencing |

|---|---|---|---|

| Genomic Coverage | Comprehensive (≥95% of genome) | 1-2% (protein-coding exons only) | Customizable (specific genes/regions) |

| Variant Types Detected | SNVs, indels, structural variants, non-coding variants | Primarily coding SNVs and indels | Pre-defined variants of interest |

| Rare Variant Detection Power | Highest for all variant classes | Moderate for coding variants | Highest for targeted regions |