Phylogenetic-Informed Monte Carlo Simulations: Advancing Biomedical Research and Drug Discovery

Abstract

This article explores the integration of phylogenetic data with Monte Carlo simulation methods to address complex challenges in biomedical research and drug development. It covers the foundational principles of Monte Carlo techniques in evolutionary biology, detailing their application for simulating sequence evolution, testing evolutionary hypotheses, and performing Bayesian phylogenetic inference. The content provides a methodological guide for implementing these simulations, including optimization strategies to overcome computational bottlenecks and realistic modeling of indel events and rate variation. Further, it examines the validation of these models against empirical data and compares the performance of different algorithms, such as Sequential Monte Carlo versus traditional Markov Chain Monte Carlo. Aimed at researchers, scientists, and drug development professionals, this review synthesizes key insights to empower robust, data-driven decision-making in preclinical research and therapeutic development.

The Convergence of Phylogenetics and Stochastic Simulation: Core Principles and Evolutionary Models

Phylogenetic-Informed Monte Carlo (Phy-IMC) represents a transformative computational framework that marries the statistical sampling power of Monte Carlo methods with the evolutionary models of phylogenetics. Originally developed for complex integration problems in physics, Monte Carlo techniques have been adapted to efficiently traverse the vast combinatorial space of phylogenetic trees, enabling robust Bayesian inference of evolutionary relationships [1]. This approach addresses a critical bottleneck in modern biological research, allowing scientists to integrate evolutionary history into analyses of sequence data, trait evolution, and phylogeographic patterns [2]. The core innovation lies in using phylogenetic models to inform proposal distributions and sampling strategies, creating more efficient algorithms for exploring high-dimensional parameter spaces that include tree topologies, branch lengths, and evolutionary model parameters [1] [2]. For drug development professionals, these methods provide crucial insights into pathogen evolution, drug resistance mechanisms, and host-pathogen co-evolution, ultimately supporting more targeted therapeutic strategies.

Theoretical Foundations

From Nuclear Physics to Biological Inference

The conceptual transition of Monte Carlo methods from nuclear physics to phylogenetic analysis represents a remarkable cross-disciplinary migration. In physics, Monte Carlo approaches solved complex integration problems through random sampling of parameter spaces [1]. Similarly, phylogenetic inference requires integration over tree spaces to estimate posterior distributions, making Monte Carlo methods naturally applicable. The key distinction emerges in the structure of the parameter space: while physical systems often inhabit continuous Euclidean spaces, phylogenetic analyses navigate discrete, combinatorial tree topologies embedded with continuous branch length parameters [1]. This complex landscape necessitates specialized Monte Carlo implementations that can efficiently handle both discrete and continuous parameters while maintaining computational tractability.

Phylogenetic-Informed Monte Carlo extends this foundation by using evolutionary models to guide the sampling process. Where conventional Markov Chain Monte Carlo (MCMC) might propose tree alterations through generic topological moves, Phy-IMC uses phylogenetic likelihoods and priors to make better-targeted proposals, which can substantially speed convergence [2]. This informed approach is particularly valuable in phylodynamic and phylogeographic analyses, where evolutionary parameters directly influence the spatial and temporal inferences used to understand epidemic spread and pathogen adaptation [2].

Algorithmic Spectrum: From MCMC to SMC

The Phy-IMC framework encompasses a spectrum of algorithmic strategies, each with distinct advantages for phylogenetic inference:

Markov Chain Monte Carlo (MCMC): The established workhorse for Bayesian phylogenetics, MCMC constructs a Markov chain that explores the posterior distribution through iterative sampling [1]. Traditional implementations use local search strategies with tree perturbation operations like subtree pruning and regrafting (SPR) and nearest neighbor interchange (NNI) [3]. While highly accurate, MCMC faces challenges with convergence on large datasets and complex models, necessitating development of enhanced variants.

Sequential Monte Carlo (SMC): As an alternative to MCMC, SMC methods employ a sequential search strategy using partial tree states that are progressively extended toward complete phylogenies [1]. The PosetSMC algorithm maintains multiple candidate partial states (particles) that are periodically resampled based on their promise, effectively exploring multiple tree hypotheses simultaneously [1]. This approach offers theoretical advantages including natural parallelization, automatic marginal likelihood estimation, and improved initial convergence [1].

Hybrid MCMC-SMC Schemes: Combining the strengths of both approaches, hybrid methods use SMC for rapid exploration of tree space followed by MCMC for local refinement [1]. These schemes leverage the efficient particle system of SMC to identify promising regions of tree space, then apply MCMC's precise local sampling for detailed estimation within those regions.

Hamiltonian Monte Carlo (HMC): Recently introduced in BEAST X, HMC employs gradient information to enable more efficient exploration of high-dimensional parameter spaces [2]. By leveraging preorder tree traversal algorithms that compute linear-time gradients, HMC achieves substantial improvements in effective sample size per unit time compared to conventional Metropolis-Hastings samplers [2].
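To make the MCMC entry above concrete, the following is a minimal sketch of a Metropolis-Hastings sampler for a single branch length between two aligned sequences under the JC69 model. It is a toy, not the implementation used by MrBayes or BEAST X: the function names, the exponential prior with rate 10, the multiplier proposal, and the tuning value are all assumptions chosen for illustration.

```python
import math
import random


def log_posterior(t, n_sites, n_diff, prior_rate=10.0):
    """Unnormalized log posterior of a JC69 branch length t (subs/site)."""
    if t <= 0.0:
        return -math.inf
    e = math.exp(-4.0 * t / 3.0)
    if e >= 1.0:  # t too small to distinguish from zero in floating point
        return -math.inf
    # JC69 site probabilities (up to a constant): identical vs. different site
    log_lik = (n_sites - n_diff) * math.log(0.25 + 0.75 * e)
    log_lik += n_diff * math.log(0.25 - 0.25 * e)
    return log_lik - prior_rate * t  # exponential prior (assumed)


def mh_branch_length(n_sites, n_diff, n_iter=20000, burn_in=2000,
                     tuning=1.0, seed=1):
    """Sample the branch length with a multiplier (scaling) proposal."""
    rng = random.Random(seed)
    t = 0.1
    lp = log_posterior(t, n_sites, n_diff)
    samples = []
    for i in range(n_iter):
        m = math.exp(tuning * (rng.random() - 0.5))  # multiplier
        t_new = t * m
        lp_new = log_posterior(t_new, n_sites, n_diff)
        # Hastings ratio of the multiplier proposal is m itself
        log_accept = lp_new - lp + math.log(m)
        if math.log(1.0 - rng.random()) < log_accept:
            t, lp = t_new, lp_new
        if i >= burn_in:
            samples.append(t)
    return samples


samples = mh_branch_length(n_sites=1000, n_diff=100)
print("posterior mean branch length:", sum(samples) / len(samples))
```

Full phylogenetic samplers combine many such moves, including topology moves such as NNI and SPR, but each follows the same propose, evaluate, accept-or-reject pattern.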

Table 1: Comparison of Monte Carlo Methods in Phylogenetics

| Method | Search Strategy | State Representation | Key Advantages | Implementation Examples |
|---|---|---|---|---|
| MCMC | Local search | Full phylogenetic tree | Proven reliability, extensive model support | MrBayes [4], BEAST X [2] |
| SMC/PosetSMC | Sequential search | Partial trees (forests) | Natural parallelization, marginal likelihood estimation | PosetSMC [1] |
| HMC | Gradient-informed local search | Full tree with continuous parameters | Efficient high-dimensional sampling, faster convergence | BEAST X for clock models, trait evolution [2] |

Experimental Protocols and Application Notes

Protocol: Bayesian Phylogenetic Inference with MCMC

This protocol provides a systematic workflow for conducting Bayesian phylogenetic analysis using MCMC methods, integrating automated tools to enhance accuracy and reproducibility [4].

A. Sequence Alignment with GUIDANCE2 and MAFFT

Perform robust sequence alignment using GUIDANCE2 with MAFFT to handle evolutionary complexities:

- Access and Upload: Navigate to the GUIDANCE2 server and upload your multi-sequence FASTA file. Ensure sequence names contain only numbers, letters, and underscores [4].

- Tool Selection: Choose MAFFT as the alignment method within the GUIDANCE2 interface [4].

- Parameter Configuration: Adjust MAFFT parameters based on dataset characteristics. For shorter sequences or rapid analyses, select the `6merpair` method. For sequences with local similarities or conserved regions, use `localpair`. For longer sequences requiring global alignment, choose `genafpair` or `globalpair` [4].

- Execution and Evaluation: Run the alignment and download results in FASTA format. Perform initial quality assessment and remove unreliable alignment columns based on confidence scores [4].
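The naming rule in the upload step (numbers, letters, and underscores only) is easy to check before submitting a file. The sketch below is one way to do that; the function name `invalid_fasta_names` is hypothetical and the check treats the whole header line after `>` as the sequence name, which is an assumption about how the server reads headers.

```python
import re

# Only letters, digits, and underscores are allowed in a sequence name.
VALID_NAME = re.compile(r"[A-Za-z0-9_]+")


def invalid_fasta_names(fasta_text):
    """Return the FASTA header names that violate the naming rule."""
    bad = []
    for line in fasta_text.splitlines():
        if line.startswith(">"):
            name = line[1:].strip()
            if not VALID_NAME.fullmatch(name):
                bad.append(name)
    return bad


example = ">seq_1\nACGT\n>seq 2|x\nACGT\n"
print(invalid_fasta_names(example))
```

Renaming the reported headers before upload avoids failures later in the pipeline, where format converters are often stricter than the aligner.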

B. Sequence Format Conversion

Convert aligned sequences to formats required for downstream analysis:

- Use MEGA X for initial conversion from FASTA to NEXUS format [4].

- Employ PAUP* for format refinement, ensuring compatibility with MrBayes requirements [4].

- Verify that NEXUS files begin with the `#NEXUS` declaration and conform to non-interleaved specifications if using PAUP* [4].

C. Evolutionary Model Selection

Identify optimal substitution models using statistical criteria:

- For nucleotide sequences: Use MrModeltest2 in conjunction with PAUP*. Copy the MrModelblock file to your working directory, execute it in PAUP* via `File > Execute`, and use the generated `mrmodel.scores` file for model selection based on Akaike Information Criterion (AIC) or Bayesian Information Criterion (BIC) [4].

- For protein sequences: Use ProtTest 3.4.2, ensuring Java 8 or later is installed. Navigate to the ProtTest directory in a command-line terminal and execute the analysis. ProtTest automatically identifies optimal protein evolution models using statistical criteria [4].
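The two criteria named above are simple functions of the maximized log-likelihood, the number of free parameters k, and (for BIC) the number of sites n: AIC = 2k - 2 ln L and BIC = k ln n - 2 ln L. The sketch below ranks candidate models by either criterion. The log-likelihoods and parameter counts are made-up values for illustration and do not come from MrModeltest2 or ProtTest output.

```python
import math


def aic(log_lik, k):
    """Akaike Information Criterion: 2k - 2 ln L."""
    return 2 * k - 2 * log_lik


def bic(log_lik, k, n_sites):
    """Bayesian Information Criterion: k ln(n) - 2 ln L."""
    return k * math.log(n_sites) - 2 * log_lik


def best_model(scores, n_sites, criterion="AIC"):
    """scores maps model name -> (log-likelihood, free parameters)."""
    def value(item):
        log_lik, k = item[1]
        return aic(log_lik, k) if criterion == "AIC" else bic(log_lik, k, n_sites)
    return min(scores.items(), key=value)[0]


# Illustrative numbers only (not real output).
scores = {
    "JC": (-5200.0, 0),
    "HKY": (-5100.0, 4),
    "GTR+G": (-5090.0, 9),
}
print("AIC choice:", best_model(scores, n_sites=1000, criterion="AIC"))
print("BIC choice:", best_model(scores, n_sites=1000, criterion="BIC"))
```

With these numbers the two criteria disagree, because BIC penalizes each extra parameter by ln n rather than 2. That is a common reason for reporting both.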

D. Bayesian Inference with MrBayes

Execute Bayesian phylogenetic inference under the selected model:

- Software Setup: Download MrBayes 3.2.7 and place NEXUS files in the `bin` directory. Open a command-line terminal in this directory and launch MrBayes by typing `mb` [4].

- Model Specification: In the MrBayes command interface, define the evolutionary model based on MrModeltest or ProtTest results using the `lset` and `prset` commands [4].

- MCMC Configuration: Set two independent runs with four chains each (three heated, one cold) using the `mcmc` command. Use the `samplefreq` parameter to specify sampling frequency and `ngen` to define the number of generations [4].

- Convergence Diagnostics: Monitor convergence using the `sump` command to examine potential scale reduction factors (PSRF) and effective sample sizes (ESS). Ensure ESS values exceed 200 and PSRF approaches 1.000 [4].

- Tree Summarization: Use the `sumt` command to generate a consensus tree with posterior probabilities after confirming convergence [4].
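The commands in this step can also be collected into a MrBayes block appended to the NEXUS file, which makes a run easier to repeat. The sketch below builds such a block as text. The helper name `mrbayes_block` and the default values (GTR with invariant sites and gamma, one million generations, sampling every 1000) are illustrative assumptions, not settings recommended by [4]; substitute the model chosen during model selection.

```python
def mrbayes_block(nst=6, rates="invgamma", ngen=1000000, samplefreq=1000):
    """Return a MrBayes command block as a string (illustrative defaults)."""
    lines = [
        "begin mrbayes;",
        "  set autoclose=yes nowarn=yes;",
        # Substitution model: nst=6 is GTR; rates sets among-site variation
        f"  lset nst={nst} rates={rates};",
        # Example prior: flat Dirichlet on base frequencies
        "  prset statefreqpr=dirichlet(1,1,1,1);",
        # Two independent runs, four chains each (one cold, three heated)
        f"  mcmc nruns=2 nchains=4 ngen={ngen} samplefreq={samplefreq};",
        "  sump;",  # parameter summaries, PSRF and ESS
        "  sumt;",  # consensus tree with posterior probabilities
        "end;",
    ]
    return "\n".join(lines)


print(mrbayes_block())
```

The printed block can be pasted after the data block of the NEXUS file, so that executing the file in MrBayes runs the whole analysis.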

Protocol: Sequential Monte Carlo with PosetSMC

This protocol outlines phylogenetic inference using the PosetSMC algorithm, which can provide faster convergence for certain dataset types [1].

A. Algorithm Configuration

- Software Installation: Download PosetSMC from http://www.stat.ubc.ca/bouchard/PosetSMC and install according to platform-specific instructions [1].

- Particle System Initialization: Set the number of particles based on available computational resources. Begin with the least partial state where each tree in the forest consists of exactly one taxon [1].

- Successor Proposal: Define the successor function for generating new partial states from existing ones. In PosetSMC, successors are obtained by merging two trees in a forest, forming a new forest with one less tree [1].

B. Iterative Tree Construction

- Particle Propagation: At each algorithm step, generate possible successors of current partial states and calculate their weights based on the phylogenetic likelihood [1].

- Resampling: Periodically resample particles based on their normalized weights, pruning unpromising candidates while maintaining diversity in the particle set [1].

- Termination Check: Continue iterations until all particles represent fully specified phylogenetic trees [1].

C. Posterior Estimation

- Marginal Likelihood Estimation: Calculate the marginal likelihood directly from the final particle weights, a key advantage of SMC methods [1].

- Consensus Tree Generation: Generate a consensus tree from the final particle set, optionally incorporating branch length information [1].
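The control flow of this protocol (start from single-taxon forests, merge two trees per step, weight, resample, accumulate a marginal likelihood estimate) can be sketched in a few lines. This is a schematic of the general SMC pattern, not the PosetSMC code: the function names are hypothetical, and `log_weight` is a caller-supplied placeholder standing in for the likelihood-based particle weight described in [1].

```python
import math
import random


def log_mean_exp(log_w):
    """Numerically stable log of the average of exp(log_w)."""
    m = max(log_w)
    return m + math.log(sum(math.exp(x - m) for x in log_w) / len(log_w))


def merge_two(forest, rng):
    """Successor move: join two randomly chosen trees in the forest."""
    i, j = rng.sample(range(len(forest)), 2)
    rest = [t for k, t in enumerate(forest) if k not in (i, j)]
    return rest + [(forest[i], forest[j])]


def forest_smc(taxa, log_weight, n_particles=100, seed=0):
    """Run the merge-weight-resample loop until every particle is one tree."""
    rng = random.Random(seed)
    # Least partial state: each tree in the forest is a single taxon
    particles = [list(taxa) for _ in range(n_particles)]
    log_z = 0.0  # running log marginal likelihood estimate
    for _ in range(len(taxa) - 1):
        proposals = [merge_two(f, rng) for f in particles]
        log_w = [log_weight(old, new) for old, new in zip(particles, proposals)]
        log_z += log_mean_exp(log_w)
        m = max(log_w)
        weights = [math.exp(x - m) for x in log_w]
        # Multinomial resampling in proportion to the normalized weights
        particles = rng.choices(proposals, weights=weights, k=n_particles)
    return particles, log_z


# Placeholder weight (flat); a real analysis would use likelihood ratios.
trees, log_z = forest_smc(["A", "B", "C", "D"], lambda old, new: 0.0)
print(trees[0], log_z)
```

Because each particle is propagated independently between resampling steps, the proposal and weighting loop is the part that parallelizes naturally.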

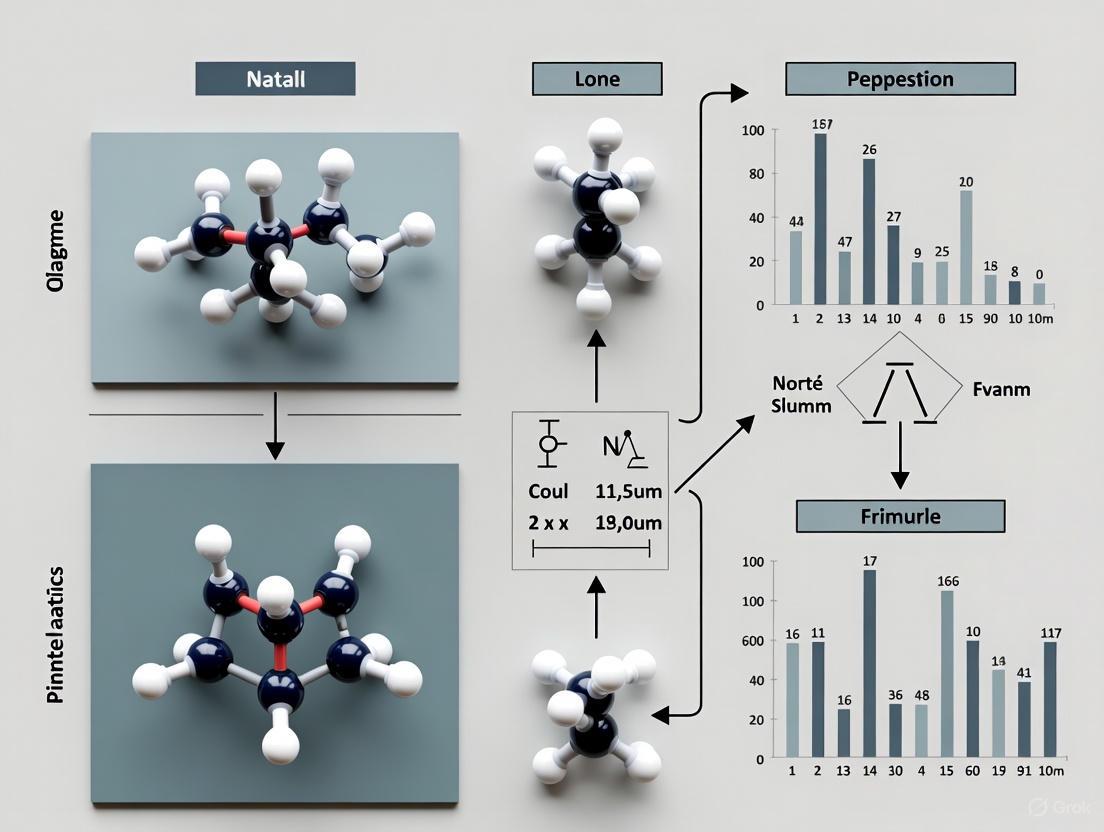

Workflow Visualization

The following diagram illustrates the integrated workflow for Phylogenetic-Informed Monte Carlo analysis:

Diagram 1: Integrated workflow for Phylogenetic-Informed Monte Carlo analysis, showing key stages from sequence data to tree interpretation.

Advanced Implementations and Computational Innovations

Next-Generation Algorithms in BEAST X

The BEAST X platform represents the cutting edge of Phy-IMC implementation, introducing several novel computational approaches that significantly advance the flexibility and scalability of evolutionary analyses [2]:

Hamiltonian Monte Carlo (HMC): BEAST X implements HMC transition kernels that leverage gradient information for more efficient sampling of high-dimensional parameters. By employing preorder tree traversal algorithms that compute linear-time gradients, HMC achieves substantially higher effective sample sizes per unit time compared to conventional Metropolis-Hastings samplers [2]. This approach proves particularly valuable for sampling under complex models including skygrid coalescent, mixed-effects clock models, and continuous-trait evolution processes [2].

Markov-Modulated Models (MMMs): These substitution models allow the evolutionary process to change across each branch and for each site independently within an alignment [2]. MMMs comprise multiple substitution models (e.g., nucleotide, amino acid, or codon models) that construct a high-dimensional instantaneous rate matrix, capturing site- and branch-specific heterogeneity that may reflect varying selective pressures [2].

Random-Effects Substitution Models: This extension of standard continuous-time Markov chain models incorporates additional rate variation by representing the base model as fixed-effect parameters while allowing random effects to capture deviations from the simpler process [2]. These models can identify non-reversible substitution processes, as demonstrated in SARS-CoV-2 evolution where they detected strongly increased C→T versus T→C substitution rates [2].
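One common way to write down a Markov-modulated (covarion-like) rate matrix is on the expanded state space of (model, character) pairs: substitutions happen within the current model, and a separate switching process moves between models while the character stays fixed. The sketch below builds that expanded matrix for K models of dimension S. It is a generic construction under those assumptions, not the BEAST X parameterization, and the names `markov_modulated_q`, `jc_like`, and `switch` are hypothetical.

```python
def jc_like(rate, size=4):
    """Equal-rates matrix with total exit rate `rate` from each state."""
    off = rate / (size - 1)
    return [[-rate if i == j else off for j in range(size)] for i in range(size)]


def markov_modulated_q(models, switch):
    """Build a KS x KS rate matrix from K S x S models and a K x K switch matrix."""
    k_models = len(models)
    s_states = len(models[0])
    n = k_models * s_states
    q = [[0.0] * n for _ in range(n)]
    for k in range(k_models):
        for s in range(s_states):
            row = k * s_states + s
            # Substitutions within model k
            for s2 in range(s_states):
                if s2 != s:
                    q[row][k * s_states + s2] = models[k][s][s2]
            # Switches to another model, keeping the character state
            for l in range(k_models):
                if l != k:
                    q[row][l * s_states + s] = switch[k][l]
            # Diagonal makes the row sum to zero
            q[row][row] = -sum(q[row])
    return q


slow, fast = jc_like(0.1), jc_like(2.0)
switch = [[0.0, 0.05], [0.05, 0.0]]
q = markov_modulated_q([slow, fast], switch)
print(len(q), "x", len(q[0]))
```

With K = 2 nucleotide models the result is an 8 x 8 matrix, which shows why the dimension, and with it the cost of the matrix exponential, grows quickly as models are added.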

Deep Learning Integrations: NeuralNJ

Recent research has explored integrating deep learning with Monte Carlo approaches through the NeuralNJ algorithm, which employs a learnable neighbor-joining mechanism guided by priority scores [3]. This end-to-end framework directly constructs phylogenetic trees from input sequences, avoiding inaccuracies from disjoint inference stages [3]. Key innovations include:

Sequence Encoder: Uses MSA-transformer architecture to compute attention along both species and sequence dimensions, generating site-aware and species-aware representations [3].

Tree Decoder: Iteratively selects and joins subtrees based on learned priority scores, considering both local topology and global ancestry information through a topology-aware gated network [3].

Reinforcement Learning Integration: NeuralNJ-RL variant employs reinforcement learning with likelihood as reward rather than supervised learning, generating multiple complete trees from which the highest likelihood candidate is selected [3].

Table 2: Performance Comparison of Phylogenetic Inference Methods

| Method | Computational Efficiency | Theoretical Guarantees | Marginal Likelihood Estimation | Parallelization Potential | Best-Suited Applications |
|---|---|---|---|---|---|
| MCMC (MrBayes) | Moderate | Asymptotically exact | Requires additional computation | Limited | General-purpose Bayesian phylogenetics [4] |
| SMC (PosetSMC) | High (faster initial convergence) | Consistent estimator | Automatic | High | Medium-sized datasets, marginal likelihood estimation [1] |
| HMC (BEAST X) | High for gradient-compatible models | Asymptotically exact | Requires additional computation | Moderate | High-dimensional models, complex trait evolution [2] |
| NeuralNJ | Very high after training | Data-dependent | Not primary focus | High | Large datasets, rapid screening analyses [3] |

Successful implementation of Phylogenetic-Informed Monte Carlo methods requires both computational tools and biological data resources. The following table details essential components for establishing a Phy-IMC research pipeline:

Table 3: Essential Research Reagents and Computational Resources for Phy-IMC

| Resource Category | Specific Tools/Reagents | Function/Role in Phy-IMC | Implementation Notes |
|---|---|---|---|
| Sequence Alignment | GUIDANCE2 with MAFFT | Handles alignment uncertainty and complex evolutionary events | Default parameters recommended for most datasets; adjust Max-Iterate for high complexity [4] |
| Model Selection | ProtTest (proteins), MrModeltest (nucleotides) | Automates identification of optimal evolutionary models | Uses statistical criteria (AIC/BIC); requires PAUP* for MrModeltest [4] |
| MCMC Inference | MrBayes, BEAST X | Bayesian phylogenetic estimation with MCMC (and HMC in BEAST X) | MrBayes simpler for standard analyses; BEAST X offers advanced models for complex data [4] [2] |
| SMC Inference | PosetSMC | Alternative to MCMC with faster convergence on some datasets | Naturally parallelizable; provides automatic marginal likelihood estimation [1] |
| Format Conversion | MEGA X, PAUP* | Ensures compatibility between tools with different format requirements | Critical for seamless pipeline integration; prevents failures from format mismatches [4] |
| Programming Environment | Python 3.13.1, Java 8+ | Scripting automation, custom analysis extensions | Essential for parsing model selection outputs, pipeline automation [4] |

Applications in Drug Development and Molecular Epidemiology

Phylogenetic-Informed Monte Carlo methods have proven particularly valuable in pharmaceutical and public health contexts, where understanding pathogen evolution directly informs intervention strategies:

SARS-CoV-2 Variant Surveillance: BEAST X has enabled real-time phylodynamic inference of SARS-CoV-2 variant emergence and spread, characterizing the Omicron BA.1 invasion in England through discrete-trait phylogeographic analysis [2]. These approaches integrate environmental and epidemiological predictors within Bayesian inference, providing critical intelligence for public health decision-making.

Antiviral Resistance Modeling: Phy-IMC methods can track the evolution of resistance mutations in viruses like HIV and influenza, identifying selective pressures and compensatory mutations that maintain viral fitness despite drug exposure. The random-effects substitution models in BEAST X are particularly adept at detecting non-reversible substitution processes associated with antiviral resistance [2].

Vaccine Target Identification: Through phylogenetic analysis of pathogen diversity, researchers can identify conserved regions amenable to vaccine targeting. The Markov-modulated models in BEAST X help identify sites under varying selective pressures across different lineages, highlighting regions constrained by functional requirements [2].

Clinical Trial Stratification: Phy-IMC can inform clinical trial design by characterizing the evolutionary relationships among circulating strains, ensuring that vaccine candidates are tested against representative variants. The improved node height estimation in BEAST X's time-dependent evolutionary rate model provides more accurate dating of evolutionary events, supporting trial timing decisions [2].

The following diagram illustrates the application of Phy-IMC in molecular epidemiology and drug development:

Diagram 2: Applications of Phylogenetic-Informed Monte Carlo in drug development and molecular epidemiology.

Phylogenetic-Informed Monte Carlo represents a sophisticated fusion of statistical physics and evolutionary biology that continues to transform our ability to extract meaningful insights from biological sequence data. By guiding Monte Carlo sampling with phylogenetic models, these methods enable efficient exploration of incredibly complex parameter spaces that include tree topologies, evolutionary parameters, and associated continuous traits. The ongoing development of advanced implementations—including Hamiltonian Monte Carlo in BEAST X, Sequential Monte Carlo in PosetSMC, and deep learning approaches in NeuralNJ—promises to further expand the scope and scale of phylogenetic inference in biomedical research. For drug development professionals, these computational advances translate to more precise tracking of pathogen evolution, better identification of therapeutic targets, and more informed clinical trial design, ultimately supporting the development of more effective interventions against evolving infectious diseases.

The Role of Stochastic Simulation in Testing Evolutionary Hypotheses and Rate Constancy

Stochastic simulation has become an indispensable tool in modern evolutionary biology, enabling researchers to model the inherent randomness of biological processes such as mutation, genetic drift, and selection. These methods are particularly valuable for testing evolutionary hypotheses and evaluating rate constancy in molecular evolution, where they provide a framework for comparing alternative models and assessing the fit of empirical data. The power of stochastic simulation lies in its ability to account for heterogeneity in natural systems, moving beyond traditional deterministic models that often overlook critical sources of variation [5].

Within phylogenetic inference, stochastic simulation methods allow scientists to explore complex evolutionary scenarios that would be mathematically intractable using analytical approaches alone. By incorporating Monte Carlo techniques, researchers can generate null distributions of evolutionary parameters, test hypotheses about rate variation across lineages, and evaluate the robustness of phylogenetic conclusions to violations of model assumptions. The BEAST X platform represents the cutting edge in this field, combining molecular phylogenetic reconstruction with complex trait evolution, divergence-time dating, and coalescent demographics in an efficient statistical inference engine [2].

Theoretical Foundation: Stochastic Processes in Evolution

Mathematical Principles of Stochastic Simulation

Stochastic simulation in evolutionary biology typically employs Markov processes, where the future state of a system depends only on its current state, not on its historical path. This memoryless property makes these processes computationally tractable while still capturing the essential randomness of evolutionary change. The fundamental mathematical framework involves:

- Continuous-Time Markov Chains (CTMCs): These model state transitions (e.g., nucleotide substitutions) as Poisson processes with rate parameters defined by an instantaneous rate matrix Q

- Master Equations: These differential equations describe the time evolution of the probability for a system to occupy each of its possible states

- Monte Carlo Integration: This technique uses random sampling to approximate complex integrals that arise in Bayesian phylogenetic inference

The stochastic simulation algorithm (SSA), first developed for chemical reaction systems, has been adapted for evolutionary processes to generate exact sample paths of the underlying stochastic process without introducing temporal discretization error.
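For a single site evolving along one branch, the SSA reduces to two draws per event: an exponential waiting time with rate equal to the total exit rate of the current state, then a choice of the next state in proportion to the off-diagonal entries of Q. The sketch below does this for the JC69 model. The function names and the rate scaling (one expected substitution per unit time) are assumptions made for the example.

```python
import random


def jc69_q(mu=1.0):
    """JC69 rate matrix over A, C, G, T with total exit rate mu per state."""
    return [[-mu if i == j else mu / 3.0 for j in range(4)] for i in range(4)]


def simulate_branch(start, t, q, rng):
    """Exact CTMC path on [0, t): list of (time, state) jump records."""
    state, time = start, 0.0
    history = [(0.0, start)]
    while True:
        rate_out = -q[state][state]
        if rate_out <= 0.0:
            break  # absorbing state
        time += rng.expovariate(rate_out)  # waiting time to the next event
        if time >= t:
            break
        targets = [j for j in range(len(q)) if j != state]
        weights = [q[state][j] for j in targets]
        state = rng.choices(targets, weights=weights)[0]
        history.append((time, state))
    return history


rng = random.Random(42)
path = simulate_branch(start=0, t=0.5, q=jc69_q(), rng=rng)
print(path)
```

Repeating the simulation independently for every site and recursing down the tree from the root yields a simulated alignment with no time-discretization error.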

Testing Rate Constancy with Stochastic Methods

The molecular clock hypothesis posits that evolutionary rates remain constant across lineages, providing a foundation for estimating divergence times. Stochastic simulation enables rigorous testing of this hypothesis through:

- Posterior Predictive Simulation: Generating data under the clock model and comparing summary statistics to those observed in empirical data

- Model Comparison: Calculating Bayes factors or using information criteria to compare clock-like and relaxed clock models

- Local Clock Detection: Identifying lineages with significantly accelerated or decelerated evolutionary rates

BEAST X implements novel clock models including time-dependent evolutionary rate extensions, continuous random-effects clock models, and a more general mixed-effects relaxed clock model that provide enhanced capacity for testing rate constancy hypotheses [2].

Application Notes: Stochastic Methods in Practice

Implementing Stochastic Simulation for Hypothesis Testing

Application 1: Testing Molecular Clock Assumptions

To evaluate whether a dataset conforms to a molecular clock, researchers can implement a posterior predictive simulation approach:

- Estimate model parameters from empirical data under both strict clock and relaxed clock models

- Simulate multiple sequence alignments using parameter estimates from each model

- Calculate appropriate test statistics (e.g., rate variation among lineages) for both empirical and simulated datasets

- Compare the distribution of test statistics from simulated data to the empirical value

A significant discrepancy between empirical and simulated distributions indicates model inadequacy. BEAST X enhances this approach with newly developed, continuous random-effects clock models and a more general mixed-effects relaxed clock model [2].
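The comparison in the final step is a tail-area calculation. The sketch below computes a posterior predictive P-value from an empirical test statistic and a list of simulated ones, using the coefficient of variation of branch rates as the example statistic. The function names and the numbers are illustrative assumptions; the simulated values would come from the simulation step of the workflow.

```python
import statistics


def coefficient_of_variation(rates):
    """Standard deviation of branch rates divided by their mean."""
    return statistics.pstdev(rates) / statistics.fmean(rates)


def posterior_predictive_p(empirical, simulated, tail="upper"):
    """Proportion of simulated statistics at least as extreme as the empirical one."""
    if tail == "upper":
        count = sum(s >= empirical for s in simulated)
    elif tail == "lower":
        count = sum(s <= empirical for s in simulated)
    else:
        raise ValueError("tail must be 'upper' or 'lower'")
    return count / len(simulated)


# Illustrative values only.
empirical_cv = coefficient_of_variation([0.8, 1.0, 1.9, 0.4, 1.1])
simulated_cvs = [0.05, 0.10, 0.08, 0.12, 0.07, 0.09, 0.11, 0.06]
print(posterior_predictive_p(empirical_cv, simulated_cvs))
```

An empirical value far in the tail of the simulated distribution, as in this toy case, is the signal that the model used for simulation does not reproduce the rate variation seen in the data.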

Application 2: Assessing Phylogenetic Uncertainty

Stochastic simulation enables quantification of uncertainty in phylogenetic estimates through:

- Nonparametric Bootstrap: Resampling sites with replacement from sequence alignments and re-estimating trees

- Parametric Bootstrap: Simulating new datasets under the estimated model and comparing tree topologies

- Markov Chain Monte Carlo (MCMC): Sampling from the posterior distribution of trees given the data
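The site-resampling approach in the first bullet amounts to drawing alignment columns with replacement and applying the same draw to every taxon. A minimal sketch follows; the function name `bootstrap_alignment` and the dictionary representation of an alignment are assumptions, and tree re-estimation on each replicate is left to the inference tool.

```python
import random


def bootstrap_alignment(alignment, rng):
    """Resample alignment columns with replacement (same columns for all taxa)."""
    n_sites = len(next(iter(alignment.values())))
    cols = [rng.randrange(n_sites) for _ in range(n_sites)]
    return {name: "".join(seq[c] for c in cols) for name, seq in alignment.items()}


alignment = {
    "taxon_1": "ACGTACGT",
    "taxon_2": "ACGTACGA",
    "taxon_3": "ACGAACGA",
}
rng = random.Random(0)
replicates = [bootstrap_alignment(alignment, rng) for _ in range(3)]
for rep in replicates:
    print(rep)
```

Keeping columns intact preserves the site patterns across taxa, which is what carries the phylogenetic signal; only their frequencies change between replicates.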

BEAST X introduces linear-in-N gradient algorithms that enable high-performance Hamiltonian Monte Carlo (HMC) transition kernels to sample from high-dimensional spaces of parameters that were previously computationally burdensome to learn [2].

Quantitative Comparison of Stochastic Methods

Table 1: Performance Comparison of Stochastic Sampling Methods in BEAST X

| Sampling Method | Effective Sample Size (ESS)/hour | Applicable Models | Computational Complexity |
|---|---|---|---|
| Metropolis-Hastings | Baseline (1.0x) | Standard substitution, clock, and tree models | O(N) to O(N²) |
| Hamiltonian Monte Carlo | 3.5x-8.2x faster [2] | Skygrid, mixed-effects clocks, trait evolution | O(N) with gradients |
| Random-effects substitution | 2.1x-4.7x faster [2] | Non-reversible processes, covarion-like models | O(N·S²) to O(N·S⁴) |
| Gradient-based tree sampling | 5.3x-12.6x faster [2] | Divergence time estimation, node dating | O(N) with preorder traversal |

Table 2: Stochastic Clock Models for Testing Rate Constancy

| Clock Model | Key Parameters | Hypothesis Testing Application | Implementation in BEAST X |
|---|---|---|---|
| Strict Clock | Single rate parameter | Testing global rate constancy | Standard |
| Uncorrelated Relaxed Clock | Rate multipliers per branch | Identifying lineage-specific rate variation | Enhanced with HMC sampling |
| Random Local Clock | Discrete rate categories | Detecting local rate shifts | Shrinkage-based implementation [2] |
| Time-Dependent Clock | Epoch-specific rates | Testing rate changes over time | Phylogenetic epoch modeling [2] |
| Mixed-Effects Clock | Fixed + random effects | Partitioning rate variation sources | Continuous random-effects extension [2] |

Experimental Protocols

Protocol 1: Testing Evolutionary Rate Constancy Using Posterior Prediction

Objective: To evaluate whether an empirical dataset exhibits significant deviation from a molecular clock using posterior predictive simulation.

Materials and Software:

- BEAST X software package (v10.5 or later)

- Sequence alignment in NEXUS or PHYLIP format

- Computing cluster or high-performance workstation (≥16 GB RAM, multi-core processor)

Procedure:

- Model Selection:

  - Load sequence alignment and partition data appropriately

  - Run model selection to determine optimal substitution model using Bayesian Information Criterion (BIC) or stepping-stone sampling

  - Note marginal likelihood estimates for competing models

- Strict Clock Analysis:

  - Configure strict clock model with appropriate substitution model

  - Set up MCMC chain for 10-100 million generations, sampling every 1000 generations

  - Use appropriate tree prior (e.g., coalescent, birth-death) based on data characteristics

  - Run analysis and assess convergence (ESS > 200 for all parameters)

- Relaxed Clock Analysis:

  - Configure uncorrelated lognormal relaxed clock model with same substitution model and tree prior

  - Maintain equivalent MCMC settings as strict clock analysis

  - Run analysis and assess convergence

- Posterior Predictive Simulation:

  - Calculate test statistic (e.g., coefficient of rate variation among lineages) from empirical data under both models

  - Simulate 1000 alignments using parameter values drawn from posterior distribution of each model

  - Calculate test statistic for each simulated alignment

  - Compute posterior predictive P-value as proportion of simulated test statistics more extreme than empirical value

Interpretation:

- P-value < 0.05 indicates significant inadequacy of the model

- Consistently better performance of relaxed clock suggests rate heterogeneity

- Compare marginal likelihoods to calculate Bayes factor for model selection
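Because marginal likelihoods are reported on the log scale, the Bayes factor in the last point is computed as a difference of logs rather than a ratio, which avoids numerical underflow. The sketch below shows the arithmetic; the function name and the two log marginal likelihood values are made up for illustration.

```python
def log_bayes_factor(log_ml_model1, log_ml_model0):
    """ln BF_10 = ln p(D | M1) - ln p(D | M0)."""
    return log_ml_model1 - log_ml_model0


# Made-up log marginal likelihoods, for illustration only.
log_ml_strict = -12345.6
log_ml_relaxed = -12330.1

ln_bf = log_bayes_factor(log_ml_relaxed, log_ml_strict)
print(f"ln BF (relaxed vs strict) = {ln_bf:.1f}")
```

A positive value favors the relaxed clock and a negative value favors the strict clock. How large a difference counts as decisive depends on the interpretive scale the analyst adopts.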

Troubleshooting:

- If MCMC fails to converge, increase chain length or adjust tuning parameters

- If posterior predictive distributions are too narrow, check for model overparameterization

- If computational time is excessive, consider approximate methods or subset analyses
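The ESS > 200 threshold used in the procedure refers to the number of effectively independent draws in an autocorrelated MCMC trace. The sketch below estimates it as N / (1 + 2 Σ ρ_k), summing autocorrelations until the first non-positive value. That truncation rule is a simple assumption made here and may differ from the estimator used by the trace-analysis tool in a given pipeline, so treat the output as approximate.

```python
import random


def effective_sample_size(trace):
    """Estimate ESS from the autocorrelation of a 1-D MCMC trace."""
    n = len(trace)
    mean = sum(trace) / n
    var = sum((v - mean) ** 2 for v in trace) / n
    if var == 0.0:
        return float(n)
    tau = 1.0  # integrated autocorrelation time
    for lag in range(1, n):
        acov = sum((trace[i] - mean) * (trace[i + lag] - mean)
                   for i in range(n - lag)) / n
        rho = acov / var
        if rho <= 0.0:
            break  # truncate at the first non-positive autocorrelation
        tau += 2.0 * rho
    return n / tau


# Toy traces: independent draws versus a strongly autocorrelated AR(1) chain.
rng = random.Random(3)
iid = [rng.gauss(0.0, 1.0) for _ in range(2000)]
ar1 = [0.0]
for _ in range(1999):
    ar1.append(0.9 * ar1[-1] + rng.gauss(0.0, 1.0))

print("ESS iid:", round(effective_sample_size(iid)))
print("ESS AR(1):", round(effective_sample_size(ar1)))
```

The autocorrelated chain has the same length but a far smaller ESS, which is why a longer chain or a better-tuned proposal is the usual remedy when the threshold is not met.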

Protocol 2: Stochastic Mapping of Trait Evolution

Objective: To reconstruct the evolutionary history of discrete traits using stochastic character mapping.

Materials and Software:

- BEAST X with discrete trait evolution package

- Time-calibrated phylogenetic tree

- Trait data for terminal taxa

Procedure:

- Model Configuration:

  - Load fixed time-calibrated tree or set up tree inference simultaneously

  - Configure symmetric or asymmetric transition rate matrix for trait evolution

  - Set up appropriate clock model for sequence data

- MCMC Analysis:

  - Run analysis for sufficient generations to achieve convergence (ESS > 200)

  - Monitor key parameters: transition rates, tree likelihood, trait evolution likelihood

- Stochastic Mapping:

  - Sample 1000 trees from posterior distribution

  - For each tree, simulate stochastic trait histories conditional on trait states at tips

  - Record number and timing of transitions between states

- Summarization:

  - Calculate posterior probabilities of trait states at internal nodes

  - Compute expected number of transitions between states across tree

  - Identify branches with high probability of transition events

Visualization:

- Map posterior expectations onto maximum clade credibility tree

- Use color coding to represent trait states and transition events

- Generate animations showing temporal spread of traits (see Visualization section)

Validation:

- Compare results to maximum parsimony reconstruction

- Perform posterior predictive simulation to assess model adequacy

- Test sensitivity to prior distributions on transition rates
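The core of the stochastic mapping step is sampling a trait history on a branch given the states at both ends. One simple way to do this is rejection sampling: simulate the CTMC forward from the start state and keep the path only if it ends in the required state. The sketch below covers that single-branch step only, with both end states taken as given. Sampling the internal node states across the tree is a separate step not shown, and the function names and the two-state symmetric rate matrix are assumptions for the example.

```python
import random


def simulate_path(start, t, q, rng):
    """Forward CTMC simulation on [0, t): list of (time, state) records."""
    state, time = start, 0.0
    path = [(0.0, start)]
    while True:
        rate_out = -q[state][state]
        if rate_out <= 0.0:
            break
        time += rng.expovariate(rate_out)
        if time >= t:
            break
        targets = [j for j in range(len(q)) if j != state]
        weights = [q[state][j] for j in targets]
        state = rng.choices(targets, weights=weights)[0]
        path.append((time, state))
    return path


def sample_conditioned_history(start, end, t, q, rng, max_tries=100000):
    """Rejection-sample a path that starts in `start` and ends in `end`."""
    for _ in range(max_tries):
        path = simulate_path(start, t, q, rng)
        if path[-1][1] == end:
            return path
    raise RuntimeError("no accepted path; end state may be very unlikely")


q = [[-1.0, 1.0], [1.0, -1.0]]  # symmetric two-state trait model (assumed)
rng = random.Random(11)
history = sample_conditioned_history(start=0, end=1, t=1.0, q=q, rng=rng)
print("transitions:", len(history) - 1, history)
```

Counting the transitions in many such sampled histories, branch by branch, gives the expected numbers and timings summarized in the protocol. Rejection sampling becomes slow when the required end state is improbable, which is one reason production tools use more efficient conditional samplers.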

Visualization and Workflow Diagrams

Stochastic Simulation Workflow for Hypothesis Testing

Stochastic Testing Workflow

Rate Constancy Evaluation Methodology

Rate Constancy Evaluation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Stochastic Evolutionary Simulation

| Tool/Resource | Function/Purpose | Implementation Notes |
|---|---|---|
| BEAST X Platform | Bayesian evolutionary analysis with stochastic simulation | Cross-platform, open-source; supports complex trait evolution, divergence-time dating, and coalescent demographics [2] |
| Hamiltonian Monte Carlo (HMC) | High-dimensional parameter sampling | Linear-in-N gradient algorithms enable efficient sampling; 3.5x-8.2x faster ESS/hour than conventional methods [2] |
| Markov-Modulated Models (MMMs) | Site- and branch-specific heterogeneity modeling | Covarion-like mixture models with K substitution models of dimension S creating KS × KS rate matrix [2] |
| Random-effects Substitution Models | Capturing additional rate variation beyond base CTMC models | Extends standard models with shrinkage priors to regularize random effects; useful for non-reversible processes [2] |
| Preorder/Postorder Tree Traversal | Calculating likelihoods and sufficient statistics | Enables linear-in-N evaluations of high-dimensional gradients for branch-specific parameters [2] |
| Discrete-trait Phylogeography | CTMC modeling of geographic spread | Enhanced with GLM extensions for transition rates and HMC for missing data [2] |
| Relaxed Random Walk (RRW) Models | Continuous-trait phylogeography | Scalable method with HMC sampling for branch-specific rate multipliers [2] |

Advanced Applications and Future Directions

Emerging Methods in Stochastic Phylogenetics

The field of stochastic simulation in evolutionary biology continues to advance rapidly, with several emerging methods showing particular promise:

Gaussian Trait Evolution Models BEAST X now implements scalable Ornstein-Uhlenbeck and other Gaussian trait-evolution models that can efficiently handle high-dimensional trait data with dozens or even thousands of observations per taxon. These models successfully capture dependencies between traits using novel computational inference techniques, particularly phylogenetic factor analysis and phylogenetic multivariate probit models [2].

Missing Data Integration A significant innovation in BEAST X is the ability to integrate out missing data within Bayesian inference procedures using HMC approaches. This is particularly valuable for phylogeographic analyses where parameterizing between-location transition rates as log-linear functions of environmental or epidemiological predictors may encounter missing predictor values for some location pairs [2].

High-Dimensional Gradient Methods The implementation of linear-time gradient algorithms represents a breakthrough in computational efficiency for phylogenetic inference. These methods calculate derivatives using preorder and postorder tree traversal to achieve linear time complexity in the number of taxa, enabling application of HMC transition kernels to previously intractable high-dimensional problems [2].

Protocol 3: Implementing Custom Stochastic Simulations

Objective: To create custom stochastic simulations for testing specific evolutionary hypotheses beyond standard models.

Materials and Software:

- Programming environment (R, Python, or MATLAB)

- Basic familiarity with stochastic processes and probability distributions

- Template code for Monte Carlo simulations

Procedure:

Define State Variables:

- Identify key system components (e.g., population sizes, trait values, sequence states)

- Create data structures to track variables over time

- Example MATLAB structure for microtubule simulation: [6]

Specify Transition Rules:

- Define probabilities for state changes based on biological knowledge

- Convert rates to probabilities using appropriate transformations

- Example probability calculation: [6]

Implement Core Simulation Loop:

- Create time-stepping mechanism with appropriate step size

- Ensure numerical stability and convergence

- Example time loop structure: [6]

Add Boundary Conditions:

- Implement constraints to maintain biological realism

- Example zero-length boundary: [6]

Validation and Sensitivity Analysis:

- Compare simulation output to analytical solutions when available

- Test sensitivity to time step size and initial conditions

- Perform robustness checks across parameter ranges

Implementation Tips:

- Start with simple models before adding complexity

- Use multiple random seeds to assess variability

- Implement comprehensive logging for debugging

- Optimize code for performance when working with large numbers of replicates
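The steps of Protocol 3 can be sketched in Python as follows. This is a minimal illustration, not the MATLAB code cited in [6], which is not reproduced here. It tracks a single length variable that grows or shrinks at random, loosely in the spirit of the microtubule example. The function names (`rate_to_prob`, `simulate_filament`) and all parameter values are assumptions chosen for illustration only.

```python
import math
import random


def rate_to_prob(rate, dt):
    """Probability that an event with constant rate occurs at least once in a step of length dt."""
    return 1.0 - math.exp(-rate * dt)


def simulate_filament(n_steps=10_000, dt=0.01, growth_rate=5.0,
                      shrink_rate=4.0, step_size=1.0, seed=0):
    """Time-stepped Monte Carlo simulation of a length that grows or shrinks at random.

    All rates and the step size are hypothetical values, not taken from [6].
    """
    rng = random.Random(seed)               # seeded generator for reproducibility
    p_grow = rate_to_prob(growth_rate, dt)  # transition rules: rates -> probabilities
    p_shrink = rate_to_prob(shrink_rate, dt)

    length = 0.0                            # state variable
    history = [length]                      # data structure tracking the state over time
    for _ in range(n_steps):                # core simulation loop
        if rng.random() < p_grow:
            length += step_size
        if rng.random() < p_shrink:
            length -= step_size
        length = max(length, 0.0)           # boundary condition: length cannot go below zero
        history.append(length)
    return history


# Repeat with several seeds to assess run-to-run variability.
final_lengths = [simulate_filament(seed=s)[-1] for s in range(5)]
```

Rerunning with a smaller `dt` (and proportionally more steps) is a simple way to test sensitivity to the time step, as recommended in the validation step above.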

Stochastic simulation provides a powerful framework for testing evolutionary hypotheses and evaluating rate constancy in molecular evolution. The methods and protocols outlined in this document offer researchers comprehensive tools for implementing these approaches in their own work, from basic model comparison to advanced custom simulations. As computational methods continue to advance, particularly through platforms like BEAST X with its Hamiltonian Monte Carlo samplers and high-dimensional gradient methods, the scope and accuracy of stochastic evolutionary inference will continue to expand, enabling ever more sophisticated investigations into the patterns and processes of evolution.

Bayesian phylogenetic inference provides a powerful probabilistic framework for reconstructing evolutionary relationships among species, a central problem in computational biology. This framework combines a phylogenetic prior with an evolutionary substitution likelihood model to formulate the posterior distribution over phylogenetic trees. Traditional methods often rely on Markov chain Monte Carlo (MCMC) approaches, which can suffer from slow convergence and local mode trapping in practice. With the integration of molecular phylogenetic reconstruction, complex trait evolution, divergence-time dating, and coalescent demographics, Bayesian methods have become indispensable in evolutionary biology, epidemiology, and conservation genetics. This protocol details advanced computational workflows that leverage recent innovations in variational inference, deep learning, and Hamiltonian Monte Carlo to achieve state-of-the-art inference accuracies for phylogenetic, phylogeographic, and phylodynamic analyses.

The Scientist's Toolkit: Research Reagent Solutions

Table 1: Essential Software and Resources for Bayesian Phylogenetic Analysis

| Tool Name | Primary Function | Key Application | Platform/Requirements |
|---|---|---|---|
| BEAST X | Bayesian evolutionary analysis | Integrates sequence evolution, phylodynamics, phylogeography | Cross-platform, Open-source [2] |
| MrBayes | Bayesian phylogenetic inference | Estimates phylogenetic trees through Bayesian inference | Windows, Command-line [4] |
| GUIDANCE2 with MAFFT | Sequence alignment | Handles alignment uncertainty and evolutionary events | Web server, FASTA/PHYLIP input [4] |
| ProtTest | Model selection | Identifies optimal protein evolution models | Windows, Java-dependent [4] |
| MrModeltest | Model selection | Determines nucleotide substitution models | Windows, PAUP*-dependent [4] |
| PAUP* | Phylogenetic analysis | Comprehensive analysis for nucleotide sequences | Windows, NEXUS format [4] |
| MEGA X | Sequence analysis | Format conversion and preliminary analyses | Windows [4] |
| ARTree | Autoregressive probabilistic model | Models tree topologies using deep learning | Python, Research implementation [7] |

Integrated Phylogenetic Analysis Workflow

The Bayesian phylogenetic analysis workflow comprises five critical stages that form a cohesive pipeline: (1) robust sequence alignment using GUIDANCE2 with MAFFT to handle evolutionary complexities; (2) sequence format conversion for downstream compatibility; (3) optimal evolutionary model selection guided by statistical criteria; (4) execution of Bayesian inference with appropriate diagnostics; and (5) validation and visualization of phylogenetic outputs [4]. This structured approach minimizes manual intervention while ensuring biological rigor, with detailed command-line instructions and custom Python scripts enhancing reproducibility.

Diagram 1: Phylogenetic analysis workflow showing the sequence of computational steps from raw sequence data to final tree output.

Performance Analysis of Computational Methods

Table 2: Comparative Performance of Bayesian Phylogenetic Methods

| Method | Computational Approach | Key Advantages | Typical Effective Sample Size (ESS) Gains | Best Application Context |
|---|---|---|---|---|
| MCMC (Traditional) | Markov chain Monte Carlo | Robust, well-established | Baseline | Standard datasets, Conservative inference [4] |
| HMC in BEAST X | Hamiltonian Monte Carlo | Efficiently traverses high-dimensional spaces | Substantial increase per unit time | Large datasets, Complex models [2] |
| Variational Inference with ARTree | Deep learning autoregressive model | Models tree topologies efficiently | State-of-the-art accuracy | Large trees, Acceleration needed [7] |
| Semi-implicit Construction | Hierarchical Bayesian modeling | Handles branch length uncertainty | Improved convergence | Branch-specific parameter estimation [7] |

Recent advances in BEAST X introduce linear-in-N gradient algorithms that enable high-performance HMC transition kernels to sample from high-dimensional parameter spaces that were previously computationally burdensome [2]. These innovations allow scalable inference under complex models including the nonparametric coalescent-based skygrid model, mixed-effects and shrinkage-based clock models, and various continuous-trait evolution models. Applications of these linear-time HMC samplers achieve substantial increases in effective sample size per unit time compared with conventional Metropolis-Hastings samplers, with the exact speedups being sensitive to dataset size and nature.
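Because the comparison above is expressed as effective sample size per unit time, it may help to see how such a figure can be estimated from a sampled chain. The sketch below uses a simple autocorrelation-based estimator that truncates the sum at the first non-positive autocorrelation. This is one common heuristic and is not necessarily the estimator used by BEAST X or its companion tools; the function names are assumptions.

```python
import numpy as np


def effective_sample_size(chain):
    """Estimate ESS as n / (1 + 2 * sum of positive-lag autocorrelations).

    The sum is truncated at the first non-positive autocorrelation,
    a simple heuristic rather than any specific package's estimator.
    """
    x = np.asarray(chain, dtype=float)
    n = x.size
    centered = x - x.mean()
    variance = np.dot(centered, centered) / n
    if variance == 0.0:
        return float(n)  # constant chain: no autocorrelation can be estimated
    rho_sum = 0.0
    for lag in range(1, n):
        rho = np.dot(centered[:-lag], centered[lag:]) / (n * variance)
        if rho <= 0.0:
            break
        rho_sum += rho
    return n / (1.0 + 2.0 * rho_sum)


def ess_per_hour(chain, runtime_seconds):
    """Sampling efficiency: effective samples obtained per hour of run time."""
    return effective_sample_size(chain) * 3600.0 / runtime_seconds
```

Comparing `ess_per_hour` for two samplers run on the same dataset and parameter gives the kind of relative speedup reported for HMC versus Metropolis-Hastings.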

Advanced Protocol for Bayesian Phylogenetic Inference

Sequence Alignment with GUIDANCE2 and MAFFT

Purpose: To generate reliable multiple sequence alignments while accounting for alignment uncertainty and evolutionary events such as insertions and deletions.

Procedure:

- Access the GUIDANCE2 server and upload your multi-sequence FASTA file

- Select MAFFT as the alignment method in the tool options

- Configure alignment parameters according to dataset characteristics:

- For shorter sequences or rapid preliminary analyses, use the 6mer method

- For sequences with local similarities or conserved regions, apply the localpair approach

- For longer sequences requiring global alignment, implement genafpair or globalpair methods

- Click Submit and await process completion

- Download the resulting alignment file in FASTA format

- Perform initial review to ensure proper sequence alignment

- Remove unreliable alignment columns based on confidence scores [4]

Critical Notes: Sequence names must not contain special characters; use only numbers, letters, and underscores. Default MAFFT parameters are recommended for most datasets, though the Max-Iterate option (0, 1, 2, 5, 10, 20, 50, 80, 100, 1,000) can optimize alignment iterations for high-complexity datasets.

Sequence Format Conversion

Purpose: To ensure seamless data handoffs between tools by addressing format specification differences.

Procedure:

- Utilize MEGA X for initial format conversions

- Employ PAUP* for format refinement, particularly for non-interleaved NEXUS requirements

- Verify all NEXUS files begin with the declaration "#NEXUS" [4]

Technical Considerations: GUIDANCE2 accepts FASTA/PHYLIP inputs, MrBayes requires NEXUS format, and PAUP* demands non-interleaved NEXUS. Converting with MEGA X first and then refining with PAUP* prevents pipeline failures caused by format mismatches.
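For readers who prefer to script this handoff, the following Python sketch converts an aligned FASTA string into a minimal non-interleaved NEXUS data block and checks the `#NEXUS` declaration. It is an illustrative stand-in, not the MEGA X or PAUP* conversion itself, and the function names are assumptions. It also enforces the naming rule noted earlier (letters, numbers, and underscores only).

```python
import re

# Names restricted to letters, digits, and underscores.
NAME_PATTERN = re.compile(r"[A-Za-z0-9_]+")


def read_fasta(text):
    """Parse FASTA text into a dict of name -> sequence, rejecting unsafe names."""
    records = {}
    name = None
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            name = line[1:].strip()
            if not NAME_PATTERN.fullmatch(name):
                raise ValueError(f"unsafe sequence name: {name!r}")
            records[name] = ""
        elif name is not None:
            records[name] += line
    return records


def to_nexus(records, datatype="DNA"):
    """Write aligned sequences as a non-interleaved NEXUS DATA block."""
    lengths = {len(seq) for seq in records.values()}
    if len(lengths) != 1:
        raise ValueError("sequences must be aligned to equal length")
    n_char = lengths.pop()
    lines = [
        "#NEXUS",
        "BEGIN DATA;",
        f"  DIMENSIONS NTAX={len(records)} NCHAR={n_char};",
        f"  FORMAT DATATYPE={datatype} MISSING=? GAP=-;",
        "  MATRIX",
    ]
    lines += [f"  {name} {seq}" for name, seq in records.items()]
    lines += ["  ;", "END;"]
    return "\n".join(lines) + "\n"


def starts_with_nexus_declaration(text):
    """Check that the file content begins with the #NEXUS declaration."""
    return text.startswith("#NEXUS")
```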

Evolutionary Model Selection

Purpose: To identify optimal evolutionary models using statistical criteria for reliable downstream phylogenetic inferences.

Procedure: For nucleotide sequences:

- Execute MrModeltest within PAUP* environment

- Copy the MrModelblock file to your working directory

- Execute via File > Execute in PAUP*

- Use the generated mrmodel.scores file for subsequent analyses

For protein sequences:

- Navigate to ProtTest extraction directory in command line terminal

- Run ProtTest analysis using appropriate parameters

- Evaluate results based on Akaike Information Criterion (AIC) and Bayesian Information Criterion (BIC) values [4]

Implementation: Custom Python scripts can streamline the parsing of model selection outputs, enhancing data handling efficiency. Automated model selection tools like ProtTest and MrModeltest identify optimal evolutionary models using statistical criteria, thereby improving the reliability of downstream phylogenetic inferences.
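As a small illustration of the criteria involved, the sketch below computes AIC and BIC from a log-likelihood and a parameter count and ranks candidate models. It does not parse ProtTest or MrModeltest output. The log-likelihood values in `example_fits` are made up for illustration, and the parameter counts exclude branch lengths.

```python
import math


def aic(log_likelihood, n_params):
    """Akaike Information Criterion: 2k - 2 ln L (lower is better)."""
    return 2.0 * n_params - 2.0 * log_likelihood


def bic(log_likelihood, n_params, n_sites):
    """Bayesian Information Criterion: k ln n - 2 ln L (lower is better)."""
    return n_params * math.log(n_sites) - 2.0 * log_likelihood


def rank_models(fits, n_sites, criterion="AIC"):
    """Return (model, score) pairs sorted from best to worst.

    fits maps model name -> (log_likelihood, number of free parameters).
    """
    scores = {}
    for name, (lnl, k) in fits.items():
        scores[name] = aic(lnl, k) if criterion == "AIC" else bic(lnl, k, n_sites)
    return sorted(scores.items(), key=lambda item: item[1])


# Hypothetical fits for a 1,000-site alignment (values are illustrative only).
example_fits = {"JC69": (-2500.0, 0), "HKY85": (-2450.0, 4), "GTR+G": (-2440.0, 9)}
best_by_aic = rank_models(example_fits, n_sites=1000, criterion="AIC")[0][0]
best_by_bic = rank_models(example_fits, n_sites=1000, criterion="BIC")[0][0]
```

With these made-up numbers, AIC prefers GTR+G, while BIC, which penalizes each parameter more heavily at 1,000 sites, prefers HKY85. Disagreements of this kind are why both criteria are usually inspected.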

Bayesian Inference Implementation

Purpose: To estimate phylogenetic trees and evolutionary parameters using probabilistic frameworks that incorporate uncertainty and prior knowledge.

Procedure using MrBayes:

- Download MrBayes and extract to directory with English characters only (no spaces)

- Rename executable file (mb.3.2.7-win64.exe for 64-bit CPUs) to mb.exe

- Place NEXUS files in the bin directory

- Open command line terminal in this directory

- Type "mb" and press Enter to launch MrBayes

- Execute analysis with parameters determined from model selection step [4]

Procedure using BEAST X:

- Implement novel substitution models including Markov-modulated models (MMMs) for site- and branch-specific heterogeneity

- Apply random-effects substitution models to capture additional rate variation

- Utilize newly developed molecular clock models:

- Time-dependent evolutionary rate model for rate variations through time

- Continuous random-effects clock model

- Shrinkage-based local clock model

- Employ preorder tree traversal algorithms for linear-in-N gradient evaluations [2]

Advanced Features: BEAST X introduces scalable Gaussian trait-evolution models, missing-trait models, phylogenetic factor analysis, and phylogenetic multivariate probit models. These successfully model dependencies between high-dimensional trait data with dozens or even thousands of observations per taxon through novel computational inference techniques.

Diagram 2: Bayesian inference engine integrating multiple input models and parameters to generate posterior tree distributions.

Deep Learning Approaches to Tree Modeling

Recent innovations integrate variational inference with deep learning to address limitations of traditional Monte Carlo approaches. The ARTree model, an autoregressive probabilistic model, and its accelerated version specifically model tree topologies, while a semi-implicit hierarchical construction handles branch lengths. These approaches include representation learning for phylogenetic trees to provide high-resolution representations ready for downstream tasks [7].

Together, the autoregressive topology model and the semi-implicit branch-length construction achieve state-of-the-art inference accuracy and have inspired follow-up innovations in Bayesian phylogenetic inference.

Implementation Considerations:

- Representation learning components provide high-resolution tree representations

- Autoregressive structure enables efficient sampling of tree topologies

- Hierarchical construction effectively captures uncertainty in branch lengths

- Gradient-based optimization facilitates convergence in high-dimensional spaces

These methodological advances demonstrate how integrating deep learning with Bayesian phylogenetic inference can overcome computational bottlenecks while maintaining statistical rigor, particularly for large datasets where traditional MCMC methods face convergence challenges.

The Monte Carlo method is a computational technique that uses repeated random sampling to quantify the impact of uncertainty and to model complex systems that are analytically intractable. Developed at Los Alamos in the 1940s, where it was used to model neutron diffusion, the method has since spread to numerous scientific fields, including evolutionary biology and drug development [8] [9]. The core principle is to build a detailed picture of risk or variability by simulating the system many times, each time drawing random inputs that share the key characteristics of the empirical system being studied [8].

In phylogenetic research and drug discovery, Monte Carlo simulation stands as an invaluable asset, enabling the evaluation of new systems without physical implementation, experimentation with existing systems without operational adjustments, and testing system limits without real-world repercussions [8]. This capability is particularly crucial when dealing with stochastic biological processes where multiple sources of uncertainty coexist, such as genetic sequence evolution, phylogenetic tree topology, and molecular clock rates. The method's ability to account for uncertainties and variability makes it particularly useful when dealing with intricate, dynamic systems inherent in healthcare and evolutionary biology [8].

Table 1: Key Advantages and Limitations of Monte Carlo Simulation

| Advantages | Limitations |
|---|---|
| Allows conclusions about new systems without building them [8] | Cannot optimize; only generates results from "WHAT-IF" queries [8] |
| Visualizes system operations under different conditions [8] | Cannot obtain correct results from inaccurate data [8] |
| Reveals how different components interact and affect overall system performance [8] | Computationally intensive, requiring numerous simulations [8] |
| Provides insight into the essence of processes [8] | Cannot describe system characteristics not included in the model [8] |

Monte Carlo Methods in Phylogenetic Analysis

Bayesian Inference of Phylogenetic Distances

In phylogenetic research, Monte Carlo methods form the computational backbone for Bayesian inference of evolutionary relationships. A recent advancement involves a hybrid approach that incorporates Γ-distributed rate variation and heterotachy (lineage-specific evolutionary rate variations over time) into a hierarchical Bayesian GTR-style framework [10]. This approach is differentiable and amenable to both stochastic gradient descent for optimization and Hamiltonian Monte Carlo for Bayesian inference, enabling researchers to study complex evolutionary hypotheses such as the origins of the eukaryotic cell within the context of a universal tree of life [10].

The mathematical foundation for phylogenetic distance estimation using Monte Carlo methods involves modeling sequence evolution through continuous-time Markov processes that describe state transitions along phylogenetic trees. For DNA sequences, the General Time Reversible (GTR) model provides a framework for estimating evolutionary distances, while more complex phenomena like heterotachy require extensions to this basic model [10]. The Monte Carlo approach allows for the integration of uncertainty in model parameters, leading to more robust estimates of phylogenetic distances and evolutionary relationships.
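To make the continuous-time Markov formulation concrete, the sketch below builds a GTR rate matrix and computes the transition probabilities P(t) = exp(Qt) along a branch of length t. It is a generic textbook construction, not the hierarchical framework of [10]. The function names, the nucleotide order (A, C, G, T), and the example parameter values are assumptions. The matrix exponential uses the fact that a reversible Q can be symmetrized by its stationary frequencies.

```python
import numpy as np


def gtr_rate_matrix(exchangeabilities, freqs):
    """Build a normalized GTR rate matrix Q for states A, C, G, T.

    exchangeabilities are ordered AC, AG, AT, CG, CT, GT;
    freqs are the stationary base frequencies.
    """
    pi = np.asarray(freqs, dtype=float)
    pi = pi / pi.sum()
    a, b, c, d, e, f = exchangeabilities
    R = np.array([[0, a, b, c],
                  [a, 0, d, e],
                  [b, d, 0, f],
                  [c, e, f, 0]], dtype=float)
    Q = R * pi                            # q_ij = r_ij * pi_j for i != j
    np.fill_diagonal(Q, -Q.sum(axis=1))   # rows sum to zero
    Q /= -np.dot(pi, np.diag(Q))          # one expected substitution per unit time
    return Q, pi


def transition_probabilities(Q, pi, t):
    """Compute P(t) = exp(Q t) via the symmetric matrix D^(1/2) Q D^(-1/2)."""
    sqrt_pi = np.sqrt(pi)
    S = (Q * sqrt_pi[:, None]) / sqrt_pi[None, :]   # symmetric for reversible Q
    evals, evecs = np.linalg.eigh(S)
    exp_S = (evecs * np.exp(evals * t)) @ evecs.T
    return (exp_S / sqrt_pi[:, None]) * sqrt_pi[None, :]


# Illustrative parameters: transitions (AG, CT) twice as fast as transversions.
Q, pi = gtr_rate_matrix([1.0, 2.0, 1.0, 1.0, 2.0, 1.0], [0.3, 0.2, 0.2, 0.3])
P = transition_probabilities(Q, pi, t=0.5)
```

Each row of `P` is a probability distribution over the descendant state given the ancestral state, which is the quantity a likelihood calculation or a sequence simulator draws on for every branch.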

Protocol: Bayesian Phylogenetic Inference Using Markov Chain Monte Carlo

Application: Estimating phylogenetic trees and evolutionary parameters from molecular sequence data.

Materials and Reagents:

- Molecular sequence alignment (DNA, RNA, or amino acid sequences)

- Computational environment (BEAST, MrBayes, or custom Bayesian inference software)

- High-performance computing resources

- Substitution model (e.g., GTR, HKY)

- Prior distributions for model parameters

- Markov Chain Monte Carlo (MCMC) sampling algorithm

Procedure:

- Model Specification: Select an appropriate substitution model (e.g., GTR+Γ) that accounts for the pattern of sequence evolution and among-site rate variation [10].

- Prior Selection: Define prior probability distributions for all model parameters, including branch lengths, substitution rates, and tree topology.

- MCMC Initialization: Set initial values for parameters and define the proposal mechanisms for parameter updates.

- Chain Execution: Run the MCMC algorithm for a sufficient number of iterations (typically millions) to ensure adequate sampling of the posterior distribution.

- Convergence Assessment: Monitor chain convergence using diagnostic tools (effective sample sizes, trace plots) to ensure proper mixing and stationarity.

- Posterior Analysis: Summarize the posterior distribution of trees and parameters, computing consensus trees and Bayesian credible intervals.

Troubleshooting Tips:

- If MCMC chains fail to converge, adjust proposal mechanisms or run multiple independent chains.

- For computational efficiency, consider Hamiltonian Monte Carlo for high-dimensional parameter spaces [10].

- Validate model adequacy using posterior predictive simulations.
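The protocol above can be illustrated on the smallest possible problem: the evolutionary distance between two aligned sequences. The sketch below runs a random-walk Metropolis-Hastings sampler under the Jukes-Cantor (JC69) model with an exponential prior on the distance. It is a toy stand-in for what BEAST or MrBayes do over full trees. The JC69 choice, the prior rate, the proposal step, and all function names are assumptions made for illustration.

```python
import math
import random


def jc69_log_likelihood(d, n_sites, n_diff):
    """Log-likelihood of distance d given n_diff mismatches in n_sites aligned sites."""
    p = -0.75 * math.expm1(-4.0 * d / 3.0)  # probability that a site differs
    return n_diff * math.log(p) + (n_sites - n_diff) * math.log(1.0 - p)


def log_posterior(d, n_sites, n_diff, prior_rate):
    """Unnormalized log posterior with an Exponential(prior_rate) prior on d."""
    if d <= 0.0:
        return -math.inf
    return jc69_log_likelihood(d, n_sites, n_diff) - prior_rate * d


def metropolis_hastings(n_sites, n_diff, n_iter=20_000, burn_in=2_000,
                        step=0.02, prior_rate=10.0, seed=1):
    """Sample the posterior of d; returns (post-burn-in samples, acceptance rate)."""
    rng = random.Random(seed)
    d = 0.1                                        # initial value
    current = log_posterior(d, n_sites, n_diff, prior_rate)
    samples, accepted = [], 0
    for i in range(n_iter):
        proposal = d + rng.gauss(0.0, step)        # symmetric proposal
        candidate = log_posterior(proposal, n_sites, n_diff, prior_rate)
        # Accept with probability min(1, posterior ratio); 1 - random() lies in (0, 1].
        if math.log(1.0 - rng.random()) < candidate - current:
            d, current = proposal, candidate
            accepted += 1
        if i >= burn_in:
            samples.append(d)
    return samples, accepted / n_iter


samples, acceptance_rate = metropolis_hastings(n_sites=1000, n_diff=100)
posterior_mean = sum(samples) / len(samples)
```

In practice the same run would be repeated with different seeds, and the trace and effective sample size inspected, before the posterior summary is trusted.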

Monte Carlo Methods in Drug Development

Simulating the Drug Discovery Pipeline

Monte Carlo simulation has emerged as a transformative tool in pharmaceutical research and development, addressing the critical challenge of declining productivity in bringing new molecular entities to market [11]. The method enables researchers to model the entire drug discovery pipeline from conceptualization to candidate selection, incorporating the inherent uncertainties and variabilities at each stage. Simulations predict that there is an optimum number of scientists for a given drug discovery portfolio, beyond which output in the form of preclinical candidates per year will remain flat [11]. The model further predicts that the frequency of compounds successfully passing the candidate selection milestone will be irregular, with projects entering preclinical development in clusters marked by periods of low apparent productivity [11].

The drug discovery pipeline can be categorized into primary transition points including exploratory/screening, hit-to-lead, and lead optimization stages, with go/no-go decisions at each milestone [11]. Virtual projects progress through this pipeline by successfully passing these decision points based on random number assignments compared against user-specified probability of success thresholds. Staffing levels are dynamically adjusted based on project priority and stage, with the algorithm correcting cycle times according to resource availability [11].
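The milestone mechanism described above, in which a random number is compared against a probability-of-success threshold at each stage, can be sketched as follows. This deliberately omits the staffing and cycle-time corrections of the model in [11]. The stage probabilities and function names are hypothetical.

```python
import random

STAGES = ["exploratory", "hit-to-lead", "lead optimization"]


def simulate_project(p_success, rng):
    """Advance one virtual project through the pipeline.

    Returns the stage at which it was stopped, or None if it passed
    every go/no-go decision and reached candidate selection.
    """
    for stage in STAGES:
        if rng.random() >= p_success[stage]:   # random draw vs. success threshold
            return stage
    return None


def simulate_portfolio(n_projects, p_success, seed=0):
    """Return the fraction of virtual projects that reach candidate selection."""
    rng = random.Random(seed)
    outcomes = [simulate_project(p_success, rng) for _ in range(n_projects)]
    return sum(outcome is None for outcome in outcomes) / n_projects


# Hypothetical per-stage probabilities of success (not taken from [11]).
example_probabilities = {"exploratory": 0.5, "hit-to-lead": 0.4, "lead optimization": 0.5}
candidate_fraction = simulate_portfolio(100_000, example_probabilities)
```

With independent stages the expected fraction is simply the product of the stage probabilities, so the value of simulation comes from the extensions left out here, such as resource-dependent cycle times and portfolio-level staffing constraints.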

Table 2: Key Parameters for Drug Discovery Monte Carlo Simulations

| Parameter Category | Specific Parameters | Impact on Simulation Output |
|---|---|---|
| Project Parameters | Project type (biology-driven, chemistry-driven, follow-on), cycle time, milestone transition probabilities [11] | Determines the fundamental structure and progression of virtual projects |
| Resource Parameters | Target number of chemists and biologists, FTE efficiency, DMPK support [11] | Affects project velocity and probability of success |
| Portfolio Parameters | Percentage of follow-on projects, chemistry vs. biology driven projects [11] | Influences overall portfolio diversity and resource allocation |

Pharmacokinetic-Pharmacodynamic Modeling

In antibacterial drug development, Monte Carlo simulation is extensively used for pharmacokinetic-pharmacodynamic (PK-PD) target attainment analyses to support dose selection [9]. The process involves generating simulated patient populations and evaluating whether non-clinical PK-PD targets for efficacy are achieved. These analyses are conducted iteratively throughout drug development, using population pharmacokinetic models that are refined as clinical data accumulate [9]. The approach provides the greatest opportunity to de-risk the development of antibacterial agents by optimizing dosing regimens before expensive late-stage clinical trials.

The PK-PD target represents the magnitude of the PK-PD index for an antibacterial agent associated with a given level of bacterial reduction from baseline, typically ranging from net bacterial stasis to a 2-log10 colony forming unit reduction [9]. Monte Carlo simulation allows researchers to account for variability in drug exposure, pathogen susceptibility, and PK-PD target requirements, providing a comprehensive assessment of the likelihood of achieving therapeutic targets across a patient population.

Protocol: PK-PD Target Attainment Analysis Using Monte Carlo Simulation

Application: Supporting antibacterial dose selection based on achievement of pharmacokinetic-pharmacodynamic targets.

Materials and Reagents:

- Population pharmacokinetic model

- Non-clinical PK-PD targets for efficacy

- Pathogen minimum inhibitory concentration (MIC) distributions

- Monte Carlo simulation software

- Statistical analysis tools

Procedure:

- Pharmacokinetic Model Development: Develop a population PK model using available clinical data, identifying covariates that explain interindividual variability [9].

- PK-PD Target Identification: Determine appropriate PK-PD targets (e.g., AUC/MIC, T>MIC) from non-clinical infection models [9].

- Patient Population Simulation: Generate a virtual patient population (typically 10,000 patients) using the population PK model and relevant demographic and physiologic characteristics.

- Drug Exposure Simulation: Simulate drug exposure (e.g., AUC, Cmax) for each virtual patient under proposed dosing regimens.

- Target Attainment Calculation: For each MIC value in the range of interest, calculate the percentage of virtual patients whose simulated exposure achieves the PK-PD target (the probability of target attainment).

- Cumulative Fraction of Response: Compute the overall probability of target attainment by weighting the value at each MIC by the frequency of that MIC in the distribution of target pathogens.

Troubleshooting Tips:

- Ensure population PK model adequately captures observed variability in drug exposure.

- Validate simulation outputs against observed clinical data when available.

- Consider conducting sensitivity analyses for uncertain parameters.
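A minimal numerical sketch of this protocol is given below. It assumes a deliberately simple exposure model (daily AUC equal to daily dose divided by a log-normally distributed clearance) in place of a real population PK model, and an AUC/MIC target. The dose, clearance distribution, target value, MIC frequencies, and function names are all hypothetical.

```python
import numpy as np


def simulate_auc(n_patients, dose_mg, cl_median=10.0, cl_cv=0.3, seed=0):
    """Simulate daily AUC (mg*h/L) as dose / clearance with log-normal clearance (L/h)."""
    rng = np.random.default_rng(seed)
    sigma = np.sqrt(np.log(1.0 + cl_cv ** 2))  # log-scale SD from the coefficient of variation
    clearance = cl_median * np.exp(rng.normal(0.0, sigma, n_patients))
    return dose_mg / clearance


def probability_of_target_attainment(auc, mics, target_auc_mic):
    """Fraction of virtual patients with AUC/MIC >= target, one value per MIC."""
    auc = np.asarray(auc, dtype=float)[:, None]
    mics = np.asarray(mics, dtype=float)[None, :]
    return (auc / mics >= target_auc_mic).mean(axis=0)


def cumulative_fraction_of_response(pta, mic_frequencies):
    """Average the target attainment over the MIC distribution of the pathogen."""
    weights = np.asarray(mic_frequencies, dtype=float)
    return float(np.dot(pta, weights / weights.sum()))


# Hypothetical inputs: 10,000 virtual patients, 1000 mg/day, AUC/MIC target of 50.
mics = [0.25, 0.5, 1.0, 2.0, 4.0]             # mg/L
mic_frequencies = [0.10, 0.30, 0.35, 0.20, 0.05]
auc = simulate_auc(10_000, dose_mg=1000.0)
pta = probability_of_target_attainment(auc, mics, target_auc_mic=50.0)
cfr = cumulative_fraction_of_response(pta, mic_frequencies)
```

Repeating the calculation for several candidate doses and comparing the resulting target attainment at the highest clinically relevant MIC is the usual way such output feeds into dose selection.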

Essential Research Reagent Solutions

Table 3: Key Research Reagent Solutions for Monte Carlo Simulations

| Reagent/Resource | Function/Application | Implementation Considerations |
|---|---|---|
| Population PK Models | Describe drug disposition and variability in target patient populations [9] | Must be developed using appropriate clinical data and validated against external datasets |
| PK-PD Targets | Define exposure requirements for efficacy based on non-clinical infection models [9] | Should reflect appropriate bacterial reduction endpoints (e.g., stasis, 1-log, 2-log kill) |
| MIC Distributions | Characterize susceptibility of target pathogens to the antibacterial agent [9] | Should be representative of the target patient population and updated regularly |
| Substitution Models | Describe pattern of sequence evolution in phylogenetic analyses [10] | Model selection should be based on statistical criteria and biological plausibility |
| MCMC Algorithms | Sample from posterior distribution in Bayesian phylogenetic inference [10] | Requires careful convergence assessment and may need customization for specific models |
| Hamiltonian MCMC | More efficient sampling for high-dimensional parameter spaces [10] | Particularly useful for complex models with many parameters |

Workflow Visualization

Diagram: Monte Carlo analysis workflow.

Diagram: Drug discovery pipeline simulation.

Monte Carlo simulation of sequence evolution is a cornerstone methodology for assessing the performance of phylogenetic inference methods, sequence alignment algorithms, and ancestral reconstruction techniques [12]. These computational approaches enable researchers to mimic the complex processes of molecular evolution under controlled conditions, providing a critical framework for hypothesis testing in evolutionary biology [13]. The growing complexity of evolutionary models, driven by advances in our understanding of molecular evolution, has created a pressing need for more realistic and flexible simulation tools that can incorporate heterogeneous substitution dynamics, varying selective pressures, and complex indel patterns [12]. Phylogenetic-informed Monte Carlo simulations are particularly valuable because they explicitly account for the shared evolutionary history of sequences, thereby avoiding the pitfalls of assuming independent data points and enabling more accurate reconstruction of evolutionary processes [14].

The R statistical computing environment has emerged as a leading platform for phylogenetic simulation, benefiting from its extensive ecosystem of packages for statistical analysis and visualization [12]. Within this environment, specialized frameworks like PhyloSim provide researchers with object-oriented tools for simulating sequence evolution under a wide range of realistic scenarios, from basic substitution models to complex multi-process evolutionary dynamics [15]. These tools have become indispensable for benchmarking analytical methods, testing evolutionary hypotheses, and designing computational experiments across biological research, including applications in drug development where understanding molecular evolution can inform target selection and therapeutic design [16].

Comparative Analysis of Simulation Tools

Table 1: Phylogenetic Simulation Software Comparison

| Program | Class | Substitution Models | Indels | Rate Variation | Language/Platform |
|---|---|---|---|---|---|
| PhyloSim | Phylogenetic | Nucleotide, amino acid, codon | Yes | Gamma, invariant sites, user-defined | R [15] |
| INDELible | Phylogenetic | Nucleotide, codon, amino acid | Yes | Gamma, invariant sites | Cross-platform [13] |
| Seq-Gen | Phylogenetic | Nucleotide, codon, amino acid | No | Gamma, invariant sites | Cross-platform [13] |
| DAWG | Phylogenetic | Nucleotide | Yes | Gamma, invariant sites | Cross-platform [13] |
| ALF | Phylogenetic | Nucleotide, codon, amino acid | Yes | Gamma, invariant sites | Cross-platform [13] |
| Evolver | Phylogenetic | Nucleotide, codon, amino acid | No | Gamma, invariant sites | PAML package [13] |

Detailed Framework Specifications

PhyloSim represents an extensible object-oriented framework for Monte Carlo simulation of sequence evolution implemented entirely in R [15]. Its architecture builds upon the ape and R.oo packages and utilizes the Gillespie algorithm to simulate substitutions, insertions, and deletions within a unified computational framework [15] [12]. This approach allows PhyloSim to integrate the actions of multiple concurrent evolutionary processes, sampling the time of occurrence of the next event and then modifying the sequence object according to a randomly selected event, with the rate of occurrence determined by the sum of all possible event rates [12]. The framework uniquely supports arbitrarily complex patterns of among-sites rate variation through user-defined R expressions, enabling the simulation of heterotachy and other cases of non-homogeneous evolution through "node hook" functions that alter site properties at internal nodes [15] [12].

INDELible employs a different approach, using a flexible model of indel formation that allows for power-law distributed fragment lengths, providing an alternative methodology for simulating realistic indel events [13]. Unlike PhyloSim, INDELible is implemented in C++ and operates as a standalone application, potentially offering performance advantages for large-scale simulations while sacrificing the tight integration with R's analytical ecosystem [13]. Seq-Gen, one of the earliest and most widely used simulation tools, focuses primarily on substitution processes with support for rate variation across sites but lacks native indel simulation capabilities, making it suitable for more basic evolutionary scenarios [13].

PhyloSim Architecture and Core Capabilities

Computational Framework and Algorithmic Foundation

PhyloSim employs a discrete-event simulation approach based on the Gillespie algorithm, which provides a mathematically rigorous framework for integrating multiple concurrent evolutionary processes [12]. This algorithm operates by repeatedly sampling the exponential distribution for the time to the next event, then selecting which event occurs with probability proportional to its rate [12]. The key innovation in PhyloSim's implementation is its treatment of sequence evolution as a collection of competing processes, including substitutions, insertions, and deletions, each with their own rate parameters and dependencies. The Gillespie algorithm effectively handles this complexity by calculating a total rate parameter λ as the sum of all individual event rates, then sampling the time to the next event from an exponential distribution with parameter λ, and finally selecting a specific event with probability proportional to its contribution to λ [12].
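The two sampling steps of the Gillespie algorithm described above can be sketched in a few lines of Python. This is a simplified illustration, not PhyloSim's R implementation: the event rates are held constant, whereas in a real sequence simulator they are recomputed after every event because the sequence itself changes. The event names, rates, and function name are assumptions.

```python
import random


def gillespie(rates, t_max, seed=0):
    """Simulate competing events with constant rates up to time t_max.

    rates maps event name -> rate. Returns a list of (time, event) pairs.
    """
    rng = random.Random(seed)
    names = list(rates)
    weights = [rates[name] for name in names]
    total_rate = sum(weights)             # lambda: sum of all event rates
    events = []
    if total_rate <= 0.0:
        return events
    t = 0.0
    while True:
        t += rng.expovariate(total_rate)  # waiting time to next event ~ Exp(lambda)
        if t > t_max:
            break
        # Pick which event occurred, with probability proportional to its rate.
        event = rng.choices(names, weights=weights, k=1)[0]
        events.append((t, event))
    return events


# Hypothetical per-sequence rates for three competing processes.
example_rates = {"substitution": 1.0, "insertion": 0.1, "deletion": 0.1}
history = gillespie(example_rates, t_max=5000.0)
```

Because waiting times are drawn exactly rather than approximated with a fixed time step, no step-size tuning is needed, which is one reason this scheme suits simulators that mix fast and slow processes.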

This computational foundation enables PhyloSim to simulate evolution under remarkably complex scenarios, including multiple indel processes with length distributions sampled from arbitrary discrete distributions or R expressions, and site-specific selective constraints on indel acceptance [15] [12]. The framework implements explicit versions of the most popular substitution models for nucleotides, amino acids, and codons, and supports simulation under gamma-distributed rate variation (+G) and invariant sites plus gamma models (+I+G) [15]. Unlike earlier simulation tools that assumed uniform evolutionary dynamics across sequences, PhyloSim allows for different sets of processes and parameters at every site, effectively supporting an arbitrary number of partitions in simulated data without creating unrealistic "edge effects" at partition boundaries [12].

Advanced Features for Realistic Simulation

Table 2: PhyloSim Feature Set for Complex Evolutionary Scenarios

| Feature Category | Specific Capabilities | Research Applications |
|---|---|---|
| Substitution Processes | Arbitrary rate matrices; Combined processes per site; Popular nucleotide, amino acid, codon models | Model selection studies; Method benchmarking [15] |
| Rate Variation | Gamma (+G); Invariant sites (+I); Site-specific rates via R expressions | Among-site rate heterogeneity; Functional constraint modeling [15] [12] |
| Indel Processes | Multiple independent processes; Arbitrary length distributions; Field indel models with tolerance parameters | Alignment algorithm testing; Structural constraint simulation [12] |
| Evolutionary Constraints | Site-process specific parameters; "Node hook" functions at internal nodes | Heterotachy; Non-homogeneous evolution [15] [12] |
| Output and Analysis | Branch statistics export; Event counting; Newick tree format | Comparative method validation; Evolutionary hypothesis testing [15] |

A particularly innovative aspect of PhyloSim is its implementation of "field indel models" that allow for fine-grained control of selective constraints on insertion and deletion events [12]. In this framework, each site in a sequence has an associated tolerance parameter dᵢ ∈ [0,1] representing the probability that it will be deleted if proposed in a deletion event [12]. For multi-site deletions spanning a set of sites ℐ, the deletion is accepted only if every site accepts it, with total probability Πᵢ∈ℐdᵢ [12]. This approach naturally incorporates functionally important "undeletable" sites and regions, as well as deletion hotspots, without requiring arbitrary partition boundaries that create edge effects. For computational efficiency, PhyloSim implements a "fast field deletion model" that rescales the process when deletions are strongly selected against, preventing the waste of computational resources on rejected events [12].
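The acceptance rule for multi-site deletions can be made concrete with a small base R sketch. It illustrates only the product rule stated above; the function name `deletion_accept_prob` and the tolerance values are hypothetical, and the sketch does not reproduce PhyloSim's internal code or its fast field deletion rescaling.

```r
# Acceptance probability of a proposed deletion under a field deletion model:
# the product of the per-site tolerances d_i over the proposed sites.
deletion_accept_prob <- function(tolerance, start, len) {
  stopifnot(len >= 1, start >= 1, start + len - 1 <= length(tolerance))
  stopifnot(all(tolerance >= 0 & tolerance <= 1))
  sites <- start:(start + len - 1)
  prod(tolerance[sites])
}

# Hypothetical 10-site sequence: sites 4 and 5 are "undeletable" (d = 0),
# site 9 is a deletion hotspot (d = 1), all other sites have d = 0.5.
tolerance <- c(0.5, 0.5, 0.5, 0, 0, 0.5, 0.5, 0.5, 1, 0.5)

p_free    <- deletion_accept_prob(tolerance, start = 1, len = 3)  # 0.5^3 = 0.125
p_blocked <- deletion_accept_prob(tolerance, start = 3, len = 2)  # spans site 4, so 0
p_hotspot <- deletion_accept_prob(tolerance, start = 9, len = 1)  # 1

# Accept or reject one proposed deletion with a single uniform draw.
set.seed(42)
accepted <- runif(1) < p_free
```

A single site with tolerance zero is enough to veto any deletion that overlaps it, which is how constrained regions are protected without introducing partition boundaries.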

Experimental Protocols and Implementation

Basic Installation and Setup Protocol

Protocol 1: PhyloSim Installation and Basic Configuration

Installation in R Environment

- Launch R or RStudio and install the devtools package: install.packages("devtools")

- Load the devtools library: library(devtools)

- Install PhyloSim directly from GitHub [15]: install_github("botond-sipos/phylosim", build_manual=TRUE, build_vignettes=FALSE)

- Load PhyloSim into the current R session: library(phylosim)

Verification and Documentation Access

- Verify successful installation by checking the package version and available functions

- Access the comprehensive tutorial available in the package vignette [15]

- Review manual pages for specific classes and methods, particularly the PhyloSim class reference

Dependency Management

- Ensure required packages (ape, R.oo, compoisson, ggplot2) are properly installed [12]

- Verify compatibility with your R version and operating system

- Test basic functionality with example simulations from the documentation

This installation protocol establishes the foundation for all subsequent phylogenetic simulations. The devtools-based installation ensures access to the most recent version of PhyloSim, including any bug fixes or feature enhancements not yet available through standard CRAN distribution channels [15]. The dependency on ape provides compatibility with standard phylogenetic tree formats and manipulation functions, while R.oo enables the object-oriented programming paradigm that underlies PhyloSim's extensible architecture [12].
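The verification and dependency steps can be scripted. The following sketch uses only base R and reports which of the packages named in the protocol are present; it does not install or attach anything, and it says nothing about whether the installed versions are mutually compatible.

```r
# Report which of the packages named in the protocol are installed.
# requireNamespace() loads a namespace if present but does not attach it.
required <- c("ape", "R.oo", "compoisson", "ggplot2", "phylosim")
installed <- vapply(required, requireNamespace, logical(1), quietly = TRUE)
print(installed)

missing_pkgs <- names(installed)[!installed]
if (length(missing_pkgs) > 0) {
  message("Missing packages: ", paste(missing_pkgs, collapse = ", "))
} else {
  message("All packages found; running ", R.version.string)
}
```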

Core Simulation Workflow

Figure 1: PhyloSim Core Simulation Workflow

Protocol 2: Basic Nucleotide Sequence Simulation

Tree and Sequence Initialization

- Load or generate a phylogenetic tree using ape package functions

- Create a nucleotide sequence object with specified length: seq <- NucleotideSequence(length=1000)

- Keep the tree object for the simulation step, where it is passed to PhyloSim() together with the root sequence

Process Configuration

- Create a substitution process: subst.process <- GTR(rate.params=list("a"=1, "b"=2, "c"=3, "d"=1, "e"=2, "f"=1), base.freqs=c(0.25,0.25,0.25,0.25))

- Attach the process to the sequence: attachProcess(seq, subst.process)

- Set among-sites rate variation: setRateMultipliers(seq, subst.process, value=rgamma(1000, shape=1))

Simulation Execution

- Create the PhyloSim object: sim <- PhyloSim(root.seq=seq, phylo=tree)

- Run the simulation: Simulate(sim)

- Extract the resulting alignment: alignment <- getAlignment(sim)

Output and Validation

- Export branch statistics if needed: exportStatTree(sim)

- Validate simulation parameters against expected outcomes

- Save results in appropriate format for downstream analysis

This protocol illustrates a basic nucleotide simulation that can be extended with additional complexity as needed. The GTR substitution model with gamma-distributed rate variation represents a common scenario in evolutionary analysis, providing a balance between biological realism and computational tractability [15]. The site-specific rate multipliers enable heterogeneous substitution rates across the sequence, reflecting realistic variation in evolutionary constraints due to functional importance or structural features [12].
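One detail of the rate-variation step is worth making explicit. The call rgamma(1000, shape=1) yields multipliers with mean 1 only because R's default rate is 1; for any other shape parameter the mean drifts away from 1 unless the rate is set equal to the shape. The base R sketch below, with a hypothetical helper named `draw_rate_multipliers`, draws mean-one multipliers for an arbitrary shape α so that branch lengths keep their interpretation as expected substitutions per site.

```r
# Draw site-specific rate multipliers from a gamma distribution with mean 1.
# In rgamma() the mean is shape / rate, so rate = shape fixes the mean at 1.
draw_rate_multipliers <- function(n_sites, alpha) {
  stopifnot(n_sites >= 1, alpha > 0)
  rgamma(n_sites, shape = alpha, rate = alpha)
}

set.seed(123)
mild   <- draw_rate_multipliers(10000, alpha = 5)    # rates cluster near 1
strong <- draw_rate_multipliers(10000, alpha = 0.5)  # many slow sites, a few fast ones

# Both means should be close to 1; the variance is about 1 / alpha.
print(round(c(mean_mild = mean(mild), mean_strong = mean(strong)), 2))
print(round(c(var_mild = var(mild), var_strong = var(strong)), 2))
```

Smaller values of α give stronger among-site heterogeneity, which is why the same mean rate can correspond to very different alignments.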

Advanced Protocol: Complex Multi-Process Simulation

Protocol 3: Protein-Coding Sequence with Indels and Selective Constraints

Codon Model Setup

- Create a codon substitution process using the GY94 model: codon.process <- GY94(kappa=2, omega.default=0.3)

- Set equilibrium codon frequencies based on empirical data or calculated values

- Attach the process to a protein-coding sequence object

Indel Process Configuration

- Create an insertion process: ins.process <- DiscreteInsertor(rate=0.01, sizes=c(1,2,3,4,5,6), probs=c(0.4,0.2,0.1,0.1,0.1,0.1))

- Create a deletion process: del.process <- DiscreteDeletor(rate=0.01, sizes=c(1,2,3,4,5,6), probs=c(0.4,0.2,0.1,0.1,0.1,0.1))

- Set site-specific tolerance parameters for the deletion process based on functional constraints

Multi-Process Integration

- Attach all processes to the sequence with appropriate relative rates

- Configure process interactions and dependencies if needed

- Set site-specific parameters to model variable functional constraints

Simulation and Analysis

- Execute the simulation with multiple processes: Simulate(sim)

- Extract and analyze the resulting coding sequence alignment

- Calculate summary statistics (dN/dS, indel distribution, etc.)

This advanced protocol demonstrates PhyloSim's capacity for complex multi-process simulation, integrating codon-based substitution models with realistic indel processes under selective constraints [15] [12]. The GY94 codon model with specified kappa (transition-transversion ratio) and omega (dN/dS ratio) parameters allows for the simulation of protein-coding sequences under specific selective regimes, while the discrete insertion and deletion processes with empirically-informed length distributions generate realistic indel patterns [12]. The site-specific tolerance parameters enable the modeling of variable functional constraints across the sequence, creating a more biologically realistic simulation of molecular evolution.
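Before passing a length distribution to an indel process, it can help to check it in isolation. The base R sketch below takes the sizes and probabilities from the protocol, confirms that the probabilities sum to one, and compares the expected indel length with the mean of a large random sample. It is a sanity check on the inputs only and makes no claim about how PhyloSim samples lengths internally.

```r
# Sanity-check the discrete indel length distribution used in the protocol.
sizes <- 1:6
probs <- c(0.4, 0.2, 0.1, 0.1, 0.1, 0.1)
stopifnot(length(sizes) == length(probs), isTRUE(all.equal(sum(probs), 1)))

# Expected length: 0.4 + 0.4 + 0.3 + 0.4 + 0.5 + 0.6 = 2.6
expected_length <- sum(sizes * probs)

# Draw lengths and compare empirical frequencies with the target probabilities.
set.seed(7)
n_draws <- 10000
lengths_drawn <- sample(sizes, size = n_draws, replace = TRUE, prob = probs)
observed_freq <- as.numeric(table(factor(lengths_drawn, levels = sizes))) / n_draws

print(round(rbind(target = probs, observed = observed_freq), 3))
print(c(expected = expected_length, sampled_mean = mean(lengths_drawn)))
```

For a codon sequence the sizes would presumably count codons rather than nucleotides, so an expected length of 2.6 would then correspond to about eight nucleotides per event.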

Essential Research Reagent Solutions

Table 3: Computational Research Reagents for Phylogenetic Simulation

| Reagent Category | Specific Tools/Functions | Purpose and Application |
|---|---|---|
| Substitution Models | GTR, HKY, JC69, GY94, WAG, JTT | Model nucleotide, amino acid, or codon evolution under specified parameters [15] [13] |
| Rate Variation Models | GammaDistribution, InvariantSite | Incorporate among-sites rate heterogeneity; model invariable sites [15] |
| Indel Processes | DiscreteInsertor, DiscreteDeletor | Simulate insertion and deletion events with specified length distributions [12] |
| Tree Handling | ape package (read.tree, rtree) | Import, generate, and manipulate phylogenetic trees for simulation [12] |
| Sequence Objects | NucleotideSequence, AminoAcidSequence, CodonSequence | Container for sequence data and associated evolutionary processes [15] |
| Analysis & Visualization | getAlignment, exportStatTree, ggplot2 | Extract, analyze, and visualize simulation results [15] [12] |

These computational research reagents represent the fundamental building blocks for constructing phylogenetic simulations in PhyloSim. The substitution models encompass the most commonly used in evolutionary biology, from simple single-parameter models like JC69 to complex empirical models like WAG and JTT for amino acid sequences [15] [13]. The rate variation models enable researchers to incorporate biologically realistic heterogeneity in evolutionary rates across sites, while the indel processes provide flexible frameworks for simulating insertion and deletion events with empirically-informed length distributions [12]. The tree handling functions from the ape package ensure compatibility with standard phylogenetic file formats and enable the use of empirical trees or simulated trees as the foundation for sequence evolution [12].

Applications in Evolutionary Research and Drug Development

Methodological Validation and Benchmarking

Phylogenetic Monte Carlo simulations serve as the gold standard for validating new analytical methods in evolutionary biology and comparative genomics [12]. By generating synthetic datasets with known evolutionary parameters, researchers can rigorously assess the performance, accuracy, and limitations of phylogenetic inference methods, sequence alignment algorithms, and ancestral reconstruction techniques [12] [13]. This approach was prominently employed in the validation of PhyloSim itself, where the framework was used to simulate evolution of nucleotide, amino acid, and codon sequences of increasing length, followed by estimation of model parameters and branch lengths from the resulting alignments using the PAML package to verify accuracy [12]. Similarly, a 2025 study leveraged simulated data to demonstrate that phylogenetically informed predictions outperformed predictive equations from ordinary least squares and phylogenetic generalized least squares regression, showing two- to three-fold improvement in performance [14].
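The simulate-then-estimate logic behind such validation can be illustrated at toy scale in base R. The sketch below is not the PhyloSim and PAML validation described above: it simulates the proportion of differing sites between two sequences under the JC69 model at a known distance, applies the standard JC69 distance correction, and summarizes bias and error over replicates. The function names and the chosen distance, sequence length, and replicate count are illustrative assumptions.

```r
# Simulate pairwise divergence under JC69 at a known distance, then check
# how well the JC69 distance estimator recovers that distance.
simulate_jc69_pdiff <- function(n_sites, d) {
  # Under JC69, two sequences separated by d expected substitutions per site
  # differ at a given site with probability 3/4 * (1 - exp(-4d/3)).
  p <- 0.75 * (1 - exp(-4 * d / 3))
  mean(runif(n_sites) < p)             # observed proportion of differing sites
}

estimate_jc69_distance <- function(p_hat) {
  stopifnot(p_hat < 0.75)              # the estimator is undefined at saturation
  -0.75 * log(1 - 4 * p_hat / 3)
}

set.seed(2024)
true_d <- 0.3
estimates <- replicate(200, estimate_jc69_distance(simulate_jc69_pdiff(2000, true_d)))

bias <- mean(estimates) - true_d
rmse <- sqrt(mean((estimates - true_d)^2))
print(round(c(bias = bias, rmse = rmse), 4))
```

Because the generating distance is known, any systematic departure of the estimates from it can be attributed to the estimator rather than to the data, which is the property that makes simulated benchmarks useful.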

The benchmarking applications extend to protein structure and function studies, where simulations incorporating structural constraints can identify critical residues and permissible sequence spaces. As demonstrated in a 2020 study, embedding point-to-point control on the preservation of local structure during sequence evolution provides information about positions not to substitute and about substitutions not to perform at a given position to maintain structural integrity [16]. This approach intrinsically contains information about site-specific rate heterogeneity of substitution and can reproduce sequence diversity observed in natural sequences, making it valuable for protein engineering applications where maintaining structural integrity while enhancing specific properties is paramount [16].

Biomedical and Drug Development Applications

Figure 2: Drug Development Application Workflows

In pharmaceutical research and drug development, phylogenetic simulation approaches have enabled significant advances in vaccine design and therapeutic protein optimization. A prominent example includes the design of a pan-betacoronavirus vaccine candidate through a phylogenetically informed approach, where simulation methodologies helped identify conserved regions across viral lineages that could serve as broad-spectrum vaccine targets [17]. Similarly, PhyloSim has been applied to the problem of weighting genetic sequences in phylogenetic analyses, improving the accuracy of evolutionary reconstructions that inform target selection in antimicrobial and antiviral development [17].