Phylogenetic Generalized Least Squares (PGLS): A Comprehensive Guide for Biomedical and Evolutionary Research



Abstract

This article provides a comprehensive introduction to Phylogenetic Generalized Least Squares (PGLS), a fundamental phylogenetic comparative method for analyzing trait correlations across species. Tailored for researchers, scientists, and drug development professionals, it covers the foundational theory behind PGLS, explaining why it is essential for correcting phylogenetic non-independence in cross-species data. The scope extends to practical methodological implementation, including code snippets, advanced troubleshooting for model misspecification, and a comparative validation of PGLS against other statistical approaches. By synthesizing conceptual knowledge with applied techniques, this guide empowers practitioners to robustly test evolutionary and biomedical hypotheses.

Why Phylogeny Matters: The Foundations of PGLS and Non-Independent Data

The Problem of Phylogenetic Non-Independence in Comparative Data

Phylogenetic non-independence refers to the statistical challenge arising from the shared evolutionary history of species. Due to common ancestry, trait data from closely related species tend to be more similar than those from distantly related species, violating the fundamental assumption of independence in standard statistical tests [1] [2]. This phenomenon, if unaccounted for, can lead to inflated Type I error rates (false positives) in hypothesis testing and reduced precision in parameter estimation [3]. For researchers in comparative biology, ecology, and evolutionary medicine, recognizing and controlling for phylogenetic non-independence is crucial for drawing valid biological inferences about trait correlations, adaptive evolution, and the evolutionary drivers of disease [1] [2].

The need for phylogenetic control exists across taxonomic scales. At the interspecific level, the problem is predominantly driven by shared common ancestry without subsequent gene flow [1]. However, analyses across populations within a single species face the additional complexity of gene flow between populations, which further contributes to the non-independence of data points [1]. The statistical framework of Phylogenetic Generalized Least Squares (PGLS) has emerged as a powerful and flexible solution for addressing these challenges, allowing researchers to test hypotheses about correlated trait evolution while explicitly accounting for the phylogenetic structure in the data [4].

The Statistical Consequences of Ignoring Phylogenetic Non-Independence

Failure to account for phylogenetic non-independence in comparative analyses has serious statistical consequences. When data points are non-independent, the effective sample size is smaller than the number of species measured, leading to underestimated standard errors and an increased likelihood of detecting spurious correlations [3].

- Inflated Type I Error Rates: When traits are in fact uncorrelated, Ordinary Least Squares (OLS) regression applied to phylogenetically structured data rejects the null hypothesis (that the traits are unrelated) far more often than the nominal rate. Simulations show that even standard phylogenetic regression can have unacceptable Type I error rates when the true model of evolution is heterogeneous [3].

- Reduced Precision and Bias: When traits are truly correlated, OLS estimates of the relationship are less precise and their standard errors are biased, so the fitted relationship can be misleading. Phylogenetically structured data carry less unique information than an equivalent number of independent data points, which compromises the accuracy of the estimated relationship between traits [3].

The following table summarizes the key differences between analyses that ignore versus account for phylogenetic non-independence.

Table 1: Consequences of Ignoring Phylogenetic Non-Independence in Comparative Analyses

| Aspect | Ordinary Least Squares (OLS) Regression | Phylogenetic Generalized Least Squares (PGLS) |
|---|---|---|
| Core Assumption | Data points are independent | Data points are correlated according to a specified evolutionary model |
| Type I Error Rate | Inflated | Appropriately controlled (e.g., ~5% at α=0.05) |
| Parameter Estimates | Potentially biased and less precise | Corrected for phylogenetic structure |
| Biological Interpretation | Reflects patterns in raw data, confounded by shared ancestry | Reflects evolutionary correlations, independent of shared ancestry |

Phylogenetic Generalized Least Squares (PGLS): A Conceptual Framework

Core Principles and Mathematical Foundation

PGLS is a model-based approach that incorporates the evolutionary relationships among species directly into the linear regression framework. It modifies the generalized least squares algorithm by using the phylogenetic covariance matrix to weight the analysis, thereby accounting for the expected non-independence of species data [4]. The phylogenetic regression is expressed as:

Y = a + βX + ε

Here, the residual error (ε) is not independently and identically distributed as in OLS, but follows a multivariate normal distribution with a variance-covariance structure proportional to the phylogenetic tree [3]. This structure is defined as σ²C, where σ² represents the residual variance and C is an n × n matrix (for n species) describing the evolutionary relationships [3]. The matrix C encapsulates the shared branch lengths between species, with diagonal elements being the total branch length from root to tip, and off-diagonal elements representing the shared evolutionary path for each species pair [3] [4].

PGLS can be conceptualized as a two-step process: first, it transforms the data and the regression model to remove the phylogenetic correlations; second, it performs a standard regression on these transformed, phylogenetically independent data [4]. This process ensures that the statistical inference is based on independent evolutionary events.

Relationship to Other Comparative Methods

PGLS is a highly general framework that encompasses several other phylogenetic comparative methods. Notably, Phylogenetically Independent Contrasts (PIC), one of the earliest and most widely used methods, is mathematically equivalent to a PGLS model under a Brownian motion model of evolution [1] [4]. The PIC method calculates differences in trait values (contrasts) at each node in a bifurcating phylogeny, effectively breaking down the data into a set of evolutionarily independent comparisons [1]. The PGLS framework, however, offers greater flexibility by allowing the incorporation of more complex and realistic models of trait evolution beyond the simple Brownian motion assumption [3] [4].

Evolutionary Models Underlying PGLS

The accuracy of a PGLS analysis hinges on selecting an appropriate model for how traits evolve along the branches of the phylogeny. The phylogenetic covariance matrix (C) is derived from a specified model of trait evolution.

Common Models of Trait Evolution

Table 2: Common Models of Trait Evolution Used in Phylogenetic Comparative Methods

| Model | Description | Biological Interpretation | Key Parameters |
|---|---|---|---|
| Brownian Motion (BM) | Traits evolve as a random walk through trait space. Variance between species is proportional to their time of independent evolution [5]. | Neutral drift; evolution toward randomly fluctuating selective optima [5]. | σ² (rate of evolution) |
| Ornstein-Uhlenbeck (OU) | A random walk with a centralizing pull. Models stabilizing selection around a trait optimum or adaptive peak [3] [5]. | Stabilizing selection; evolutionary constraints; niche conservatism [2] [5]. | σ² (rate); α (strength of selection); θ (optimum) |
| Pagel's Lambda (λ) | A branch length transformation model that scales the phylogenetic covariance between 0 (star phylogeny) and 1 (Brownian motion) [5]. | Measures the phylogenetic signal, the extent to which closely related species resemble each other [5] [4]. | λ (multiplier of internal branches) |
| Pagel's Delta (δ) | Transforms branch lengths to model accelerating (δ > 1) or decelerating (δ < 1) rates of evolution through time [5]. | Early burst of trait evolution during adaptive radiation; increasing rates of evolution over time. | δ (power transformation of branch lengths) |
| Pagel's Kappa (κ) | Raises all branch lengths to a power, approximating a punctuational model of evolution when κ = 0 [5]. | Trait change associated with speciation events. | κ (power transformation of branch lengths) |
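The λ row of Table 2 can be illustrated with a minimal base R sketch. The covariance matrix `C` below holds hypothetical values (not taken from any real tree), and the helper name `lambda_transform` is introduced here purely for illustration. The sketch multiplies the off-diagonal (shared) covariances by λ and leaves the tip variances on the diagonal unchanged.

```r
# Hypothetical 3-species phylogenetic covariance matrix (root-to-tip height 1).
C <- matrix(c(1.0, 0.6, 0.0,
              0.6, 1.0, 0.0,
              0.0, 0.0, 1.0),
            nrow = 3, byrow = TRUE,
            dimnames = list(c("A", "B", "C"), c("A", "B", "C")))

# Pagel's lambda: scale the shared (off-diagonal) covariance, keep tip variances.
lambda_transform <- function(C, lambda) {
  stopifnot(lambda >= 0, lambda <= 1)
  C_lambda <- C * lambda
  diag(C_lambda) <- diag(C)
  C_lambda
}

C_star <- lambda_transform(C, 0)    # star phylogeny: no covariance between species
C_half <- lambda_transform(C, 0.5)  # intermediate phylogenetic signal
C_bm   <- lambda_transform(C, 1)    # unchanged Brownian motion expectation
print(C_half)
```

With λ = 0 the matrix reduces to the OLS case of independent species, which is why λ is often read as a measure of phylogenetic signal.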

Model Selection and the Challenge of Heterogeneity

A critical advancement in the field is the recognition that evolutionary models are often heterogeneous across a phylogeny. The assumption of a single, constant tempo and mode of evolution (e.g., one global rate σ²) is unlikely to be realistic, particularly in large trees [3]. Ignoring this rate heterogeneity can lead to poorly fitting models and, as with ignoring phylogeny altogether, inflated Type I errors [3].

Modern implementations of PGLS can accommodate this complexity by allowing different parts of the tree to evolve under different models or parameters. For instance, heterogeneous Brownian Motion models allow σ² to vary across clades [3]. Similarly, multi-optima OU models can be fitted, allowing different selective regimes to operate in different lineages [3]. The choice of model can be informed by maximum likelihood or Bayesian information criterion (BIC), allowing researchers to select the model that best fits their data without overfitting [5].
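As a hedged illustration of likelihood-based model choice, the base R sketch below compares three covariance models for a single simulated trait: a star phylogeny (λ = 0), Brownian motion (λ = 1), and a λ estimated by maximum likelihood. The covariance matrix, the simulated trait, and the helper `loglik_lambda` are all assumptions made for this example, and AIC is used as one possible criterion.

```r
set.seed(1)

# Hypothetical 6-species covariance matrix: two clades of three species each.
block <- matrix(0.7, 3, 3)
diag(block) <- 1
C <- rbind(cbind(block, matrix(0, 3, 3)),
           cbind(matrix(0, 3, 3), block))

# Simulate one trait under Brownian motion: L %*% z has covariance C.
y <- as.vector(t(chol(C)) %*% rnorm(nrow(C)))

# Profile log-likelihood of an intercept-only model for a given lambda.
loglik_lambda <- function(lambda, y, C) {
  n <- length(y)
  V <- C * lambda
  diag(V) <- diag(C)
  Vinv <- solve(V)
  theta <- sum(Vinv %*% y) / sum(Vinv)            # GLS estimate of the mean
  r <- y - theta
  sigma2 <- as.numeric(t(r) %*% Vinv %*% r) / n   # ML estimate of the rate
  logdetV <- as.numeric(determinant(V, logarithm = TRUE)$modulus)
  -0.5 * (n * log(2 * pi) + n * log(sigma2) + logdetV + n)
}

ll_star <- loglik_lambda(0, y, C)
ll_bm   <- loglik_lambda(1, y, C)
fit     <- optimize(loglik_lambda, interval = c(0, 1), y = y, C = C,
                    maximum = TRUE)

# AIC = 2k - 2 logLik; k counts theta and sigma2, plus lambda when estimated.
aic <- c(star   = 2 * 2 - 2 * ll_star,
         bm     = 2 * 2 - 2 * ll_bm,
         lambda = 2 * 3 - 2 * fit$objective)
print(aic)
```

The model with the lowest AIC would be preferred; with only six species the differences are unlikely to be decisive, so the sketch shows the mechanics rather than a realistic analysis.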

A Practical Workflow for PGLS Analysis

Experimental and Analytical Protocol

Implementing a robust PGLS analysis involves a series of structured steps, from data collection to the interpretation of results.

- Phylogeny Acquisition and Preparation: Obtain a time-calibrated phylogeny for your study species. This may involve building a molecular phylogeny from sequence data (using software like BEAST, RAxML, or MrBayes) and converting it into a chronogram with branch lengths proportional to time [5].

- Trait Data Compilation: Assemble trait data for the species at the tips of the phylogeny. Mismatches between trait data and phylogeny must be resolved.

- Exploratory Data Analysis: Visually inspect the data and phylogeny. Plot traits onto the phylogeny to gain an initial impression of their distribution.

- Model Selection:

  - Estimate the phylogenetic signal in your traits (e.g., using Blomberg's K or Pagel's λ) [5].

  - Fit a set of candidate evolutionary models (e.g., BM, OU, early burst (EB)) to each trait.

  - Use model selection criteria (e.g., AICc, BIC) to identify the best-supported model for constructing the variance-covariance matrix.

- PGLS Implementation: Run the PGLS regression using the selected evolutionary model. This can be done in R using packages such as `nlme`, `caper`, or `phylolm`.

- Model Diagnostics: Check the assumptions of the PGLS model. This includes ensuring there is no heteroscedasticity or remaining phylogenetic structure in the residuals [2].

- Interpretation and Reporting: Interpret the slope (β) and its confidence interval from the PGLS output, not the OLS output. The R² value from a PGLS model has a different interpretation than in OLS and should be reported with caution.
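The name-matching step in the protocol above can be sketched in base R. The tip labels and trait values below are hypothetical placeholders; in a real analysis the labels would typically come from `tree$tip.label` of an `ape` tree object.

```r
# Hypothetical tip labels, standing in for tree$tip.label.
tip_labels <- c("sp_A", "sp_B", "sp_C", "sp_D")

# Hypothetical trait table with one row per species (placeholder values).
traits <- data.frame(
  species = c("sp_D", "sp_A", "sp_C", "sp_E"),
  trait_x = c(4.0, 1.0, 3.0, 5.0)
)

# Species present in one source but not the other.
missing_from_traits <- setdiff(tip_labels, traits$species)  # tips to prune
missing_from_tree   <- setdiff(traits$species, tip_labels)  # rows to drop

# Keep only shared species, ordered as in the tree.
shared <- intersect(tip_labels, traits$species)
traits_matched <- traits[match(shared, traits$species), ]

print(missing_from_traits)
print(missing_from_tree)
print(traits_matched)
```

Reordering the data to follow the tip order is a defensive choice: some functions match species by name, others by position.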

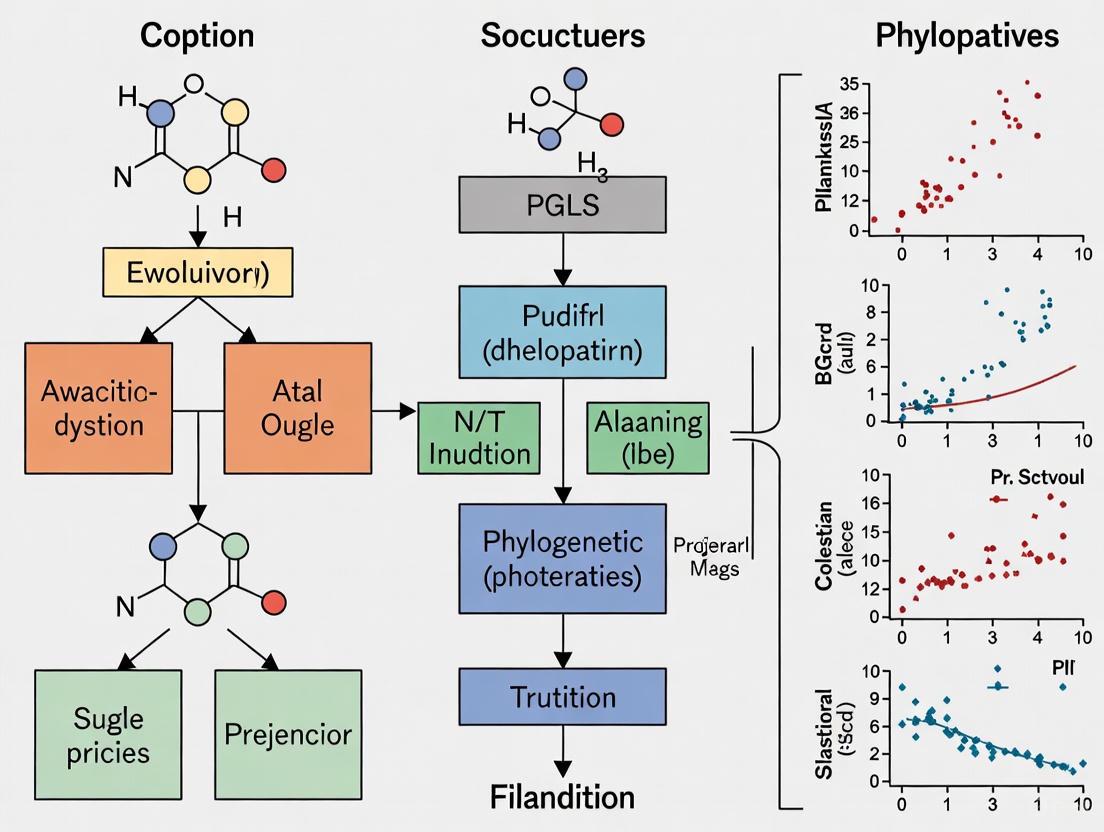

The following diagram illustrates the logical workflow for a PGLS analysis.

Table 3: Key Software and Analytical Resources for PGLS Research

| Resource Name | Type | Primary Function | Relevance to PGLS |
|---|---|---|---|
| R Statistical Environment | Software Platform | General statistical computing and graphics. | The primary environment for implementing phylogenetic comparative analyses. |
| `ape`, `geiger`, `phylolm` | R Packages | Phylogenetic analysis, simulation, and model fitting. | Core utilities for reading, manipulating, and visualizing phylogenies; fitting evolutionary models. |
| `caper`, `nlme` | R Packages | Comparative analyses and mixed models. | Direct implementation of PGLS regression. `caper` is designed for comparative methods, while `nlme` provides a more general GLS framework. |
| BEAST / RAxML | Standalone Software | Bayesian / Maximum Likelihood phylogenetic inference. | Used to generate the phylogenetic hypothesis (tree) required for the PGLS analysis. |
| Blomberg's K / Pagel's λ | Statistical Metric | Quantification of phylogenetic signal in trait data. | Diagnostic tools to assess the degree of phylogenetic non-independence before and after analysis. |

Current Challenges and Future Directions

Despite its power, the application of PGLS is not without challenges. A significant "dark side" of phylogenetic comparative methods lies in the communication gap between method developers and end-users, leading to misunderstandings and misapplications [2]. Common pitfalls include:

- Inadequate Model Diagnostics: Many studies fail to check whether the evolutionary model used is appropriate for their data, or whether the model residuals are free of phylogenetic structure [2].

- Over-interpretation of Models: For example, a good fit to an OU model is often automatically interpreted as evidence of stabilizing selection, although the same fit can arise from other causes, such as error in the data [2].

- Ignoring Phylogenetic Uncertainty: Most analyses treat the input phylogeny as fixed and known, disregarding the uncertainty inherent in phylogenetic reconstruction [4].

Future developments are focused on increasing model complexity and robustness. Key directions include the wider adoption of heterogeneous models that allow different parts of the tree to evolve at different rates or under different selective regimes [3]. Furthermore, there is a growing emphasis on integrating comparative methods with other data types, such as genomic information, and on developing methods that better account for phylogenetic uncertainty in the estimation of trait correlations [3] [4].

Phylogenetic non-independence is a fundamental problem that complicates statistical inference in comparative biology. Phylogenetic Generalized Least Squares provides a powerful and flexible framework for addressing this problem, allowing researchers to disentangle the patterns of correlated evolution from the confounding effects of shared ancestry. Its success, however, depends on the careful application of biological and statistical judgment—from the acquisition of a robust phylogeny to the selection of an appropriate evolutionary model and rigorous diagnostic checking. As the field moves toward more complex and heterogeneous models, PGLS will continue to be an indispensable tool for uncovering the evolutionary processes that have shaped the diversity of life.

Ordinary Least Squares (OLS) is a foundational method for estimating unknown parameters in a linear regression model. The core objective of OLS is to find the line (or hyperplane) that minimizes the sum of the squared differences between the observed values and the values predicted by the model. For a model with dependent variable y and independent variables X, the OLS estimation is represented as \( y = X\beta + \varepsilon \), where β represents the parameters to be estimated, and ε represents the error term. The OLS method computes parameter estimates by solving the optimization problem \( \hat{\beta} = \arg\min_b (y - Xb)^T (y - Xb) \), which yields the well-known closed-form solution \( \hat{\beta} = (X^T X)^{-1} X^T y \) [6]. This method assigns equal weight or importance to each observation when minimizing the residual sum of squares [7].
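The closed-form OLS solution can be checked numerically. The base R sketch below uses simulated data with an arbitrarily chosen intercept and slope (assumptions for illustration only) and compares the normal-equations solution with R's built-in `lm()`.

```r
set.seed(42)
n <- 50
x <- rnorm(n)
y <- 1.5 + 2 * x + rnorm(n)   # hypothetical true intercept 1.5 and slope 2

X <- cbind(intercept = 1, x = x)            # design matrix with intercept column
beta_hat <- solve(t(X) %*% X, t(X) %*% y)   # solves (X'X) b = X'y directly

fit <- lm(y ~ x)
print(cbind(closed_form = as.vector(beta_hat), lm = coef(fit)))
```

Solving the linear system with `solve(A, b)` avoids forming the explicit inverse, which is usually the numerically safer choice.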

The OLS estimator is considered the Best Linear Unbiased Estimator (BLUE) under the Gauss-Markov theorem, but only when specific key assumptions are met. These critical assumptions include that the errors have a constant variance (homoscedasticity), are uncorrelated with each other, and have a mean of zero. When these conditions hold, OLS provides unbiased, consistent, and efficient parameter estimates. However, in practical research settings, particularly with real-world data in fields like biology, pharmacology, and phylogenetics, these assumptions are frequently violated. The presence of heteroscedasticity (non-constant variance) or autocorrelation (correlation between error terms) invalidates the optimality of OLS, leading to inefficient estimates and biased standard errors, which can result in incorrect statistical inferences [8] [7] [6].

The Need for Generalized Least Squares (GLS)

Generalized Least Squares (GLS) extends the OLS framework to handle situations where the basic assumptions of constant variance and uncorrelated errors are violated. In statistical terms, GLS is designed for scenarios where the covariance matrix of the error terms is not a scalar multiple of the identity matrix (σ²I), but rather a general positive-definite matrix Ω. The model is thus expressed as \( y = X\beta + \varepsilon \), with \( E[\varepsilon \mid X] = 0 \) and \( \operatorname{Cov}[\varepsilon \mid X] = \Omega \) [6]. The fundamental difference lies in how each observation is weighted during the estimation process. While OLS treats all observations equally, GLS explicitly weights them, typically giving less weight to observations with higher variance or those that are highly correlated with others [7].

The core principle of GLS involves transforming the original model to create a new system that satisfies the standard OLS assumptions. This is achieved by finding a transformation matrix C such that \( \Omega = CC^T \) (this factor C is distinct from the phylogenetic covariance matrix, which is also denoted C in this guide). Applying the inverse of this transformation, \( C^{-1} \), to the original model yields \( y^* = X^*\beta + \varepsilon^* \), where \( y^* = C^{-1}y \), \( X^* = C^{-1}X \), and \( \varepsilon^* = C^{-1}\varepsilon \). The variance of the transformed errors becomes \( \operatorname{Var}[\varepsilon^* \mid X] = C^{-1}\Omega(C^{-1})^T = I \), which satisfies the homoscedasticity and no-autocorrelation assumptions required for OLS [6]. The GLS estimator is then obtained by applying OLS to this transformed system, resulting in the formula \( \hat{\beta} = (X^T \Omega^{-1} X)^{-1} X^T \Omega^{-1} y \).
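The equivalence between "transform, then run OLS" and the direct GLS formula can be verified with a short base R sketch. The covariance matrix `Omega`, the simulated data, and the variable name `Cfac` (the Cholesky factor playing the role of the transformation matrix) are assumptions made for this example.

```r
set.seed(7)
n <- 6

# Hypothetical error covariance: two clades of three species each.
block <- matrix(0.8, 3, 3)
diag(block) <- 1
Omega <- rbind(cbind(block, matrix(0, 3, 3)),
               cbind(matrix(0, 3, 3), block))

# chol() returns the upper factor, so transpose to get Omega = Cfac %*% t(Cfac).
Cfac <- t(chol(Omega))

x <- rnorm(n)
y <- 0.5 + 1.2 * x + as.vector(Cfac %*% rnorm(n))  # correlated errors
X <- cbind(1, x)

# Step 1: apply the inverse of the factor (forwardsolve avoids an explicit inverse).
X_star <- forwardsolve(Cfac, X)
y_star <- forwardsolve(Cfac, y)

# Step 2: ordinary least squares on the transformed system.
beta_whitened <- solve(crossprod(X_star), crossprod(X_star, y_star))

# Direct GLS formula for comparison.
Omega_inv <- solve(Omega)
beta_direct <- solve(t(X) %*% Omega_inv %*% X, t(X) %*% Omega_inv %*% y)

print(cbind(whitened = as.vector(beta_whitened),
            direct = as.vector(beta_direct)))
```

Any matrix factor with \( \Omega = CC^T \) would serve; the Cholesky factor is used here because it is triangular and cheap to invert.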

Key Properties and Special Cases of GLS

The GLS estimator retains several desirable statistical properties. It is unbiased, consistent, efficient, and asymptotically normal, with \( E[\hat{\beta} \mid X] = \beta \) and \( \operatorname{Cov}[\hat{\beta} \mid X] = (X^T \Omega^{-1} X)^{-1} \) [6]. When the error structure Ω is correctly specified, GLS provides more efficient estimates (smaller variance) than OLS, making it the BLUE in these circumstances. A particularly important special case of GLS is Weighted Least Squares (WLS), which applies when all off-diagonal entries of Ω are zero (no correlation between errors), but the variances are unequal (heteroscedasticity). In WLS, the weight for each unit is proportional to the reciprocal of the variance of its response value [6]. Another variant is Feasible Generalized Least Squares (FGLS), used when the covariance matrix Ω is unknown and must be estimated from the data, though this approach offers fewer guarantees of improvement [6].
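The WLS special case can be confirmed numerically. In the base R sketch below, the error variances `v` are treated as known (an assumption for illustration), Ω is diagonal, and the GLS formula is compared with `lm()` using weights equal to the reciprocal variances.

```r
set.seed(3)
n <- 40
x <- runif(n, 1, 10)
v <- x^2                                    # hypothetical known error variances
y <- 2 + 0.5 * x + rnorm(n, sd = sqrt(v))   # heteroscedastic, uncorrelated errors

X <- cbind(1, x)
Omega_inv <- diag(1 / v)                    # inverse of a diagonal Omega
beta_gls <- solve(t(X) %*% Omega_inv %*% X, t(X) %*% Omega_inv %*% y)

beta_wls <- coef(lm(y ~ x, weights = 1 / v))

print(cbind(gls = as.vector(beta_gls), wls = beta_wls))
```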

Table 1: Key Differences Between OLS and GLS

| Feature | OLS Method | GLS Method |
|---|---|---|
| Assumptions | Assumes homoscedastic, uncorrelated errors | Can handle heteroscedasticity, autocorrelation |
| Method | Minimizes the unweighted sum of squared residuals | Minimizes the weighted sum of squared residuals |
| Efficiency | Can be inefficient when assumptions are violated | More efficient estimates (if assumptions are met) |
| Implementation | Simpler to implement | More complex, requires error covariance matrix estimation |
| Variance Formula | \( \sigma^2 (X^T X)^{-1} \) | \( (X^T \Omega^{-1} X)^{-1} \) |

Phylogenetic Generalized Least Squares (PGLS)

Phylogenetic Generalized Least Squares (PGLS) represents a specialized application of GLS methodology in evolutionary biology, ecology, and comparative phylogenetics. PGLS explicitly incorporates the phylogenetic relationships among species into regression analysis, acknowledging that closely related species may share similar traits due to common ancestry rather than independent evolution. This phylogenetic non-independence violates the standard independence assumption of OLS and, if unaccounted for, can lead to inflated Type I error rates and incorrect conclusions about evolutionary relationships [9]. The PGLS framework models the expected covariance among species under a specific evolutionary model, typically using the phylogenetic tree as the basis for constructing the covariance matrix Ω.

In PGLS, the covariance structure is often defined by an evolutionary model such as Brownian motion, where the covariance between two species is proportional to their shared evolutionary history (the branch length they share on the phylogenetic tree) [9]. Under Brownian motion, trait variance accumulates linearly with time, so the expected divergence between two species grows with the time since they split, while their covariance is set by the length of the path they share from the root. More sophisticated models like Ornstein-Uhlenbeck (OU) can also be implemented in PGLS to model stabilizing selection or other evolutionary processes [9]. The PGLS model can be represented as \( y = X\beta + \varepsilon \), where \( \varepsilon \sim N(0, \sigma^2\Sigma) \), and Σ is a covariance matrix derived from the phylogenetic tree that captures the expected similarity between species due to shared ancestry.

PGLS Implementation and Workflow

The implementation of PGLS begins with reading in the trait data and phylogenetic tree, followed by checking that species names match between the datasets. Researchers can then compute phylogenetically independent contrasts (PIC) as a special case of PGLS or fit full PGLS models using generalized least squares functions with a phylogenetic correlation structure [9]. The core functionality is typically implemented in R using packages such as `ape`, `geiger`, `nlme`, and `phytools`, with the `gls()` function from the `nlme` package being particularly central to the analysis [9]. The basic syntax for a PGLS model in R is `pglsModel <- gls(y ~ x, correlation = corBrownian(phy = tree), data = data, method = "ML")`, where the correlation structure specifies the evolutionary model (e.g., Brownian motion, Pagel's λ, or OU).
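A self-contained version of this call might look like the sketch below. It assumes the `ape` and `nlme` packages are installed and uses a simulated tree and simulated traits rather than real data. The data frame `dat` and its `species` column are names chosen for this example, and the `form = ~species` argument assumes a recent `ape` release in which `corBrownian()` accepts it to match rows to tips by name.

```r
library(ape)   # tree simulation and the corBrownian() correlation structure
library(nlme)  # gls()

set.seed(10)
tree <- rcoal(30)                      # simulated ultrametric tree (illustrative)
x <- rTraitCont(tree, model = "BM")    # predictor evolved under Brownian motion
y <- 0.8 * x + rTraitCont(tree, model = "BM")  # response with a hypothetical slope

dat <- data.frame(species = tree$tip.label,
                  x = x[tree$tip.label],
                  y = y[tree$tip.label])

# PGLS under Brownian motion, fitted by maximum likelihood.
pglsModel <- gls(y ~ x, data = dat, method = "ML",
                 correlation = corBrownian(value = 1, phy = tree,
                                           form = ~species))
print(summary(pglsModel)$tTable)
```

Swapping `corBrownian` for `corPagel` or `corMartins` would change the assumed evolutionary model while leaving the rest of the call unchanged.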

Table 2: Key Research Reagent Solutions for PGLS Analysis

| Research Tool | Function/Role in PGLS | Implementation Example |
|---|---|---|
| Phylogenetic Tree | Represents evolutionary relationships and defines covariance structure | `anoleTree <- read.tree("anolis.phy")` [9] |
| Trait Data | Contains measured characteristics for analysis | `anoleData <- read.csv("anolisDataAppended.csv", row.names=1)` [9] |
| Correlation Structure | Models evolutionary process (BM, OU, etc.) | `correlation = corBrownian(phy=anoleTree)` [9] |
| Model Fitting Function | Implements the GLS estimation with specified covariance | `gls()` function from `nlme` package [9] |
| Model Comparison | Evaluates fit of different evolutionary models | AIC, log-likelihood values [9] |

PGLS offers significant flexibility compared to simple PIC approaches, as it can accommodate multiple predictors, discrete predictor variables (e.g., ecological ecomorph categories), and interaction terms [9]. For example, researchers can fit models with both continuous and categorical predictors: `pglsModel3 <- gls(hostility ~ ecomorph * awesomeness, correlation = corBrownian(phy = anoleTree), data = anoleData, method = "ML")` [9]. The ability to compare different evolutionary models (e.g., Brownian motion vs. OU processes) through likelihood ratio tests or information criteria like AIC represents another significant advantage of the PGLS framework, allowing researchers to test specific hypotheses about evolutionary processes while accounting for phylogenetic structure.

Figure 1: PGLS Analysis Workflow

Comparative Experimental Framework: OLS vs. GLS/PGLS

Experimental Protocol for Method Comparison

To empirically demonstrate the differences between OLS and GLS approaches, researchers can implement a comparative analysis framework using real or simulated phylogenetic data. The following protocol outlines a standardized methodology for such comparison:

Data Collection and Preparation: Begin with a phylogenetic tree and corresponding trait dataset. The tree should be ultrametric or nearly so for time-calibrated analyses. Trait data should include continuous variables for both response and predictor variables [9].

Preliminary Diagnostics: Conduct initial OLS regression and visually inspect residuals for patterns suggesting heteroscedasticity or phylogenetic signal. Calculate phylogenetic signal metrics (e.g., Pagel's λ, Blomberg's K) to quantify phylogenetic dependence [9].

Model Implementation:

- OLS: Fit a standard linear model using the `lm()` function: `olsModel <- lm(y ~ x, data = data)`

- PGLS: Fit a phylogenetic model using `gls()` with an appropriate correlation structure: `pglsModel <- gls(y ~ x, correlation = corBrownian(phy = tree), data = data, method = "ML")` [9]

- Alternative Models: Fit additional PGLS models with different evolutionary assumptions (e.g., `corPagel`, or `corMartins` for OU processes) [9].

Model Comparison: Evaluate models using AIC, BIC, and log-likelihood values. Conduct likelihood ratio tests to compare nested models. Compare parameter estimates and their standard errors across approaches [9].

Validation and Sensitivity Analysis: Perform cross-validation where appropriate. Conduct simulation studies to assess Type I error rates and statistical power under different evolutionary scenarios.
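The simulation step of this protocol can be sketched in base R without any phylogenetics packages. The example assumes a hypothetical two-clade covariance matrix and simulates two traits that evolve independently under Brownian motion, so every significant slope is a false positive. The helper name `slope_p` is introduced for illustration, and PGLS is carried out by whitening with the known covariance matrix.

```r
set.seed(123)

# Hypothetical covariance: two clades of 10 species, strong within-clade covariance.
n_clade <- 10
block <- matrix(0.9, n_clade, n_clade)
diag(block) <- 1
C <- rbind(cbind(block, matrix(0, n_clade, n_clade)),
           cbind(matrix(0, n_clade, n_clade), block))
L <- t(chol(C))   # lower factor, C = L %*% t(L)
n <- nrow(C)

# p-value of the slope from a least-squares fit on the supplied variables;
# X already contains the (possibly transformed) intercept column.
slope_p <- function(y, X) {
  fit <- lm(y ~ X - 1)
  summary(fit)$coefficients[2, 4]
}

n_sim <- 500
p_ols <- p_gls <- numeric(n_sim)
for (s in seq_len(n_sim)) {
  x <- as.vector(L %*% rnorm(n))   # two traits evolved independently under BM
  y <- as.vector(L %*% rnorm(n))
  X <- cbind(1, x)
  p_ols[s] <- slope_p(y, X)                                    # ignores phylogeny
  p_gls[s] <- slope_p(forwardsolve(L, y), forwardsolve(L, X))  # whitened (PGLS)
}

type1 <- c(OLS = mean(p_ols < 0.05), PGLS = mean(p_gls < 0.05))
print(type1)
```

Under these assumptions one would expect the OLS rejection rate to sit well above the nominal 0.05 and the PGLS rate to stay close to it. The same loop could be rerun with a non-zero true slope to estimate power.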

Comparative Analysis of Results

The comparative analysis typically reveals important differences between OLS and PGLS approaches. In a case study analyzing the relationship between "awesomeness" and "hostility" in Anolis lizards, both OLS and PGLS with Brownian motion showed a significant negative relationship between the traits [9]. However, the PGLS approach provides several advantages: (1) it properly accounts for phylogenetic non-independence, yielding more appropriate standard errors and p-values; (2) it allows explicit modeling of different evolutionary processes; and (3) it enables comparison of alternative evolutionary models through formal statistical tests [9].

When comparing OLS and GLS generally, the GLS approach demonstrates superior performance when the specified covariance structure appropriately reflects the true underlying error structure. The key advantage emerges in the efficiency of the estimates - GLS produces estimates with smaller sampling variance when the covariance structure is correctly specified [8] [6]. However, this advantage comes with the crucial caveat that misspecification of the covariance structure in GLS can lead to worse performance than simple OLS, particularly in smaller samples. This highlights the importance of careful model selection and diagnostic checking in applied work.

Figure 2: OLS vs GLS/PGLS Comparative Outcomes

Advanced GLS Applications in Biological Research

Extensions and Specialized Applications

The GLS framework extends beyond standard phylogenetic comparative methods to several advanced applications in biological research. One significant extension involves models of trait evolution beyond Brownian motion, including Ornstein-Uhlenbeck processes for modeling stabilizing selection, early-burst models for adaptive radiations, and multivariate Brownian motion for correlated trait evolution [9]. Each of these evolutionary models corresponds to a specific covariance structure that can be implemented within the PGLS framework. For instance, the OU process can be specified using `corMartins` in the `gls()` function: `pglsModelOU <- gls(y ~ x, correlation = corMartins(1, phy = tree), data = data, method = "ML")` [9].
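To show how an OU-type model changes the covariance structure, the base R sketch below uses one common parameterization in which the correlation between two species decays exponentially with their tip-to-tip (patristic) distance. The matrix values and the helper `ou_correlation` are hypothetical, and whether this matches the exact internals of `corMartins` in a given `ape` version is an assumption.

```r
# Hypothetical 3-species Brownian motion covariance matrix (tree height 1).
C <- matrix(c(1.0, 0.6, 0.0,
              0.6, 1.0, 0.0,
              0.0, 0.0, 1.0), nrow = 3, byrow = TRUE)

# Patristic (tip-to-tip) distance: d_ij = C_ii + C_jj - 2 * C_ij.
patristic <- outer(diag(C), diag(C), "+") - 2 * C

# Exponential-decay (OU-type) correlation: exp(-alpha * d_ij).
ou_correlation <- function(alpha) exp(-alpha * patristic)

R_weak   <- ou_correlation(0.1)  # weak pull: relatives remain strongly correlated
R_strong <- ou_correlation(5)    # strong pull: correlations decay towards zero

print(round(R_weak, 3))
print(round(R_strong, 3))
```

Larger values of α erase the covariance between relatives more quickly, which is why a strongly constrained trait can look almost phylogenetically independent.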

Another important extension involves the integration of GLS with mixed-effects models, creating phylogenetic mixed models that can partition variance components while accounting for phylogenetic non-independence. This approach is particularly valuable for quantitative genetic studies and analyses of heritability in an evolutionary context. Furthermore, GLS methods can be adapted for community phylogenetic analyses, spatial phylogenetic ecology, and comparative analyses of rates of phenotypic evolution. The flexibility of the GLS framework also allows for the incorporation of measurement error, missing data, and fossil taxa through appropriate specification of the covariance structure, making it an increasingly powerful tool for addressing complex biological questions.

Connections to Drug Discovery and Therapeutic Development

While the statistical foundation of GLS and PGLS is primarily applied to evolutionary biology, the conceptual framework of accounting for covariance structure has parallels in biomedical research, particularly in drug discovery. Just as PGLS accounts for phylogenetic covariance in comparative analyses, advanced statistical methods in pharmaceutical research must account for complex covariance structures in high-throughput screening data, genomic analyses, and clinical trial data. The fundamental principle remains consistent: proper statistical inference requires appropriate modeling of the underlying covariance structure, whether that structure arises from evolutionary history, experimental design, or biological replication.

In pharmacological contexts, particularly in cancer research targeting metabolic enzymes like glutaminase (GLS1, a gene symbol unrelated to the statistical acronym GLS), understanding evolutionary relationships can inform drug development strategies [10] [11] [12]. The GLS1 enzyme, which catalyzes the conversion of glutamine to glutamate, represents a promising therapeutic target in oncology due to its role in cancer cell metabolism [10] [11]. While the application differs from phylogenetic comparative methods, the underlying statistical challenge of modeling complex biological systems with non-independent observations connects these seemingly disparate fields. The rigorous approach to covariance modeling developed in phylogenetic comparative methods can thus provide valuable insights for statistical approaches in drug discovery and development.

The progression from Ordinary Least Squares to Generalized Least Squares represents a fundamental advancement in statistical methodology for handling data with complex covariance structures. Phylogenetic Generalized Least Squares embodies this progression in the context of evolutionary biology, providing a robust framework for testing evolutionary hypotheses while appropriately accounting for phylogenetic non-independence. The key advantage of GLS lies in its ability to incorporate specific covariance structures into regression analysis, yielding more accurate parameter estimates and appropriate inference compared to OLS when the covariance structure is correctly specified.

The experimental protocols and comparative analyses presented in this review demonstrate the practical implementation and tangible benefits of GLS/PGLS over traditional OLS approaches for phylogenetic data. As biological datasets continue to grow in size and complexity, the flexibility of the GLS framework to accommodate diverse evolutionary models, incorporate multiple data types, and address complex biological questions ensures its continued relevance and importance in evolutionary biology, ecology, and beyond. The conceptual connections to statistical challenges in biomedical research further highlight the broad utility of this methodological approach across biological disciplines.

Understanding the Phylogenetic Covariance Matrix

In phylogenetic comparative studies, the phylogenetic covariance matrix (denoted as C) is a fundamental algebraic construct that encodes the evolutionary relationships among species based on their shared history [13]. This matrix serves as the backbone for a suite of statistical methods, most notably Phylogenetic Generalized Least Squares (PGLS), which enables researchers to test evolutionary hypotheses while accounting for the non-independence of species data due to common ancestry [13] [9]. The stability and reliability of these analyses are intrinsically linked to the mathematical properties of the C matrix, particularly its condition number [13]. This guide provides an in-depth technical examination of the phylogenetic covariance matrix, its role in PGLS, and the critical methodological considerations for its application in evolutionary biology and drug discovery research.

Core Concepts and Mathematical Foundation

Algebraic Representation of a Phylogenetic Tree

A phylogenetic tree of n taxa can be algebraically transformed into an n × n symmetric phylogenetic covariance matrix C [13]. Each off-diagonal element ( c_{ij} ) represents the evolutionary affinity, or shared branch length, between extant species i and extant species j [13]. The diagonal elements represent the total path length from the root to each tip species [13].

For a hypothetical 5-taxon tree, the phylogenetic covariance matrix C might appear as follows [13]:

( C = \begin{bmatrix} 1.00 & 0.76 & 0.00 & 0.00 & 0.00 \\ 0.76 & 1.00 & 0.00 & 0.00 & 0.00 \\ 0.00 & 0.00 & 1.00 & 0.36 & 0.36 \\ 0.00 & 0.00 & 0.36 & 1.00 & 0.70 \\ 0.00 & 0.00 & 0.36 & 0.70 & 1.00 \end{bmatrix} )

The Brownian Motion Model

A common statistical model for trait evolution along a phylogenetic tree, Brownian Motion (BM), uses this covariance structure [13]. In this model, phenotypic values ( Y = (y_1, y_2, \ldots, y_n)^T ) for n species follow a multivariate normal distribution [13]:

( Y \sim N(\theta\mathbf{1}, \sigma^2C) )

where:

- ( \theta ) is the ancestral state at the root of the phylogeny

- ( \mathbf{1} ) is a vector of 1s

- ( \sigma^2 ) is the evolutionary rate

- ( C ) is the phylogenetic covariance matrix [13]

The corresponding likelihood function is given by [13]:

( L(\theta, \sigma^2 | Y, T) = \frac{1}{(2\pi)^{n/2} |C|^{1/2}} \frac{1}{(\sigma^2)^{n/2}} \exp\left(-\frac{1}{2\sigma^2}(Y - \theta\mathbf{1})^T C^{-1} (Y - \theta\mathbf{1})\right) )

Maximum likelihood estimators for parameters ( \theta ) and ( \sigma^2 ) both require computing the inverse of C (( C^{-1} )) [13].
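As a minimal sketch of why the inverse matters, the standard closed-form estimators under this model are θ̂ = (1ᵀC⁻¹1)⁻¹ 1ᵀC⁻¹Y and σ̂² = (Y − θ̂1)ᵀC⁻¹(Y − θ̂1)/n. The base R code below evaluates them for the 5-taxon matrix above. The trait vector `Y` is made up purely for illustration and is not taken from the source.

```r
# Phylogenetic covariance matrix for the hypothetical 5-taxon tree above
C <- matrix(c(1.00, 0.76, 0.00, 0.00, 0.00,
              0.76, 1.00, 0.00, 0.00, 0.00,
              0.00, 0.00, 1.00, 0.36, 0.36,
              0.00, 0.00, 0.36, 1.00, 0.70,
              0.00, 0.00, 0.36, 0.70, 1.00),
            nrow = 5, byrow = TRUE)

# Hypothetical trait values for the five species (illustration only)
Y <- c(2.1, 2.4, 0.9, 1.3, 1.1)

n    <- length(Y)
one  <- rep(1, n)
Cinv <- solve(C)   # both estimators depend on the inverse of C

# ML estimate of the root state: a C^{-1}-weighted mean of the tip values
theta_hat <- as.numeric((t(one) %*% Cinv %*% Y) / (t(one) %*% Cinv %*% one))

# ML estimate of the rate: C^{-1}-weighted residual sum of squares divided by n
res        <- Y - theta_hat * one
sigma2_hat <- as.numeric(t(res) %*% Cinv %*% res) / n

theta_hat
sigma2_hat
```

Because both quantities pass through `solve(C)`, any numerical error in the inversion propagates directly into the estimates.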

Condition Number and Matrix Stability

Defining the Condition Number

The condition number ( \kappa ) of a phylogenetic covariance matrix C is defined as the ratio of its maximum eigenvalue to its minimum eigenvalue [13]:

( \kappa(C) = \frac{\lambda_{\text{max}}(C)}{\lambda_{\text{min}}(C)} )

where ( \lambda_{\text{max}}(C) = \max\{\lambda_i\}_{i=1}^n ) and ( \lambda_{\text{min}}(C) = \min\{\lambda_i\}_{i=1}^n ), and ( \lambda_i ) are the positive eigenvalues of C [13].

Impact on Statistical Analyses

The condition number is a measure of how stable the matrix is for subsequent operations [13]. Matrices with small condition numbers are more stable, while larger condition numbers indicate less stability [13].

- Stable matrices have less error in downstream algebraic operations, such as matrix inversion, data multiplication, projection, linear model prediction, and data simulation [13].

- Ill-conditioned matrices (( \kappa ) much greater than 1, e.g., ( 10^5 ) for a ( 5 \times 5 ) Hilbert matrix) make these operations unstable and more prone to error propagation [13].

In phylogenetic comparative methods, an ill-conditioned C matrix can lead to unstable calculation of the likelihood and parameter estimates that depend on optimizing the likelihood [13]. The Moore-Penrose inverse, sometimes used to make algebra tractable, may fail to give the exact inverse when C is ill-conditioned [13].
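The definition above can be checked directly in base R. The sketch below computes κ for the hypothetical 5-taxon matrix from its eigenvalues and, for contrast, for a 5 × 5 Hilbert matrix. Variable names are illustrative.

```r
# Hypothetical 5-taxon phylogenetic covariance matrix from the example above
C <- matrix(c(1.00, 0.76, 0.00, 0.00, 0.00,
              0.76, 1.00, 0.00, 0.00, 0.00,
              0.00, 0.00, 1.00, 0.36, 0.36,
              0.00, 0.00, 0.36, 1.00, 0.70,
              0.00, 0.00, 0.36, 0.70, 1.00),
            nrow = 5, byrow = TRUE)

# Condition number as the ratio of the largest to the smallest eigenvalue
eig     <- eigen(C, symmetric = TRUE, only.values = TRUE)$values
kappa_C <- max(eig) / min(eig)

# For a symmetric positive definite matrix, base R's kappa(exact = TRUE)
# returns the same 2-norm condition number
kappa_check <- kappa(C, exact = TRUE)

# A 5 x 5 Hilbert matrix as a classic ill-conditioned comparison
H       <- outer(1:5, 1:5, function(i, j) 1 / (i + j - 1))
kappa_H <- kappa(H, exact = TRUE)

c(tree_matrix = kappa_C, hilbert = kappa_H)
log10(kappa_C)   # the log10 scale is the one reported for simulated trees
```

A small tree like this one is well conditioned. The concern raised in the text applies mainly to large trees and to trees with very short internal branches.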

Empirical Patterns in Condition Numbers

Analysis of empirical trees from the TreeBASE database reveals that the condition number ( \kappa ) generally increases with the number of taxa [13]. Simulation studies show that trees generated under different processes exhibit varying condition numbers:

- Coalescent trees tend to have the highest condition numbers [13].

- Birth-death process trees have intermediate condition numbers [13].

- Pure-birth process trees typically show the lowest condition numbers [13].

Table 1: Average Condition Numbers from Simulated Phylogenies (100 runs per category)

| Tree Simulation Model | Number of Taxa | Average ( \log_{10}\kappa ) |
|---|---|---|
| Coalescent | 100 | ~2.5 |
| Birth-Death Process | 100 | ~1.8 |
| Pure-Birth Process | 100 | ~1.5 |

Tree Transformations for Improving Matrix Condition

In 1999, Pagel introduced three statistical models that transform the elements of the phylogenetic variance-covariance matrix, which can also be conceptualized as transformations of the tree's branch lengths [14]. These transformations can help address issues with matrix conditioning and test whether data deviates from a constant-rate Brownian motion process [14].

Decision Workflow for Tree Transformations

Pagel's Lambda (λ)

The λ transformation multiplies all off-diagonal elements in the phylogenetic variance-covariance matrix by λ, which is restricted to values between 0 and 1 [14].

If the original matrix is:

( \mathbf{C}_o = \begin{bmatrix} \sigma_1^2 & \sigma_{12} & \dots & \sigma_{1r} \\ \sigma_{21} & \sigma_2^2 & \dots & \sigma_{2r} \\ \vdots & \vdots & \ddots & \vdots \\ \sigma_{r1} & \sigma_{r2} & \dots & \sigma_r^2 \end{bmatrix} )

Then the λ-transformed matrix becomes:

( \mathbf{C}_\lambda = \begin{bmatrix} \sigma_1^2 & \lambda\cdot\sigma_{12} & \dots & \lambda\cdot\sigma_{1r} \\ \lambda\cdot\sigma_{21} & \sigma_2^2 & \dots & \lambda\cdot\sigma_{2r} \\ \vdots & \vdots & \ddots & \vdots \\ \lambda\cdot\sigma_{r1} & \lambda\cdot\sigma_{r2} & \dots & \sigma_r^2 \end{bmatrix} )

In terms of branch length transformations, λ compresses internal branches while keeping each root-to-tip distance (the diagonal of the matrix) fixed, so tip branches lengthen to compensate [14]. As λ ranges from 1 (no transformation) to 0, the tree becomes increasingly star-like; at λ = 0 all internal branches have length 0 and every tip branch spans the full root-to-tip distance [14].
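The matrix form of the λ transformation is simple enough to sketch in base R. The helper name `lambda_transform` and the 3-taxon matrix are illustrative assumptions, not part of any package.

```r
# Multiply every off-diagonal element of C by lambda, keeping the diagonal
lambda_transform <- function(C, lambda) {
  stopifnot(lambda >= 0, lambda <= 1)
  C_lambda <- C * lambda       # scale every element ...
  diag(C_lambda) <- diag(C)    # ... then restore the original diagonal
  C_lambda
}

# Hypothetical 3-taxon covariance matrix (illustration only)
taxa <- c("A", "B", "C")
C <- matrix(c(1.0, 0.7, 0.2,
              0.7, 1.0, 0.2,
              0.2, 0.2, 1.0),
            nrow = 3, byrow = TRUE, dimnames = list(taxa, taxa))

C_half <- lambda_transform(C, 0.5)   # intermediate phylogenetic signal
C_star <- lambda_transform(C, 0)     # star phylogeny: diagonal matrix

C_half
# Shrinking the off-diagonals also lowers the condition number in this example
c(original = kappa(C, exact = TRUE), lambda_0.5 = kappa(C_half, exact = TRUE))
```

At λ = 1 the function returns C unchanged, and at λ = 0 it returns a diagonal matrix, matching the two limiting cases described above.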

Pagel's Delta (δ) and Kappa (κ)

While the λ transformation is the most commonly used, Pagel also introduced two additional transformations:

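- Delta (δ): This transformation raises the node heights, and therefore every element of the phylogenetic variance-covariance matrix, to the power δ [14]. Values of δ greater than 1 stretch branches near the tips relative to deeper branches, consistent with evolution that speeds up toward the present, whereas values below 1 concentrate change early in the tree [14].

- Kappa (κ): This transformation raises all branch lengths to the power κ [14]. At κ = 0 every branch has length 1, so expected change depends on the number of branches along a path rather than on elapsed time, which is why κ is used to test speciational models of evolution [14].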

Table 2: Summary of Pagel's Tree Transformations

| Transformation | Matrix Operation | Branch Length Effect | Biological Interpretation |
|---|---|---|---|
| Lambda (λ) | Multiplies off-diagonals by λ (0 ≤ λ ≤ 1) | Compresses internal branches; root-to-tip distances unchanged | Measures phylogenetic signal; λ=1 implies Brownian motion, λ=0 implies star phylogeny |
| Delta (δ) | Raises all elements of C to the power δ | Rescales node heights; δ > 1 lengthens branches near the tips relative to deeper branches | Tests whether rates speed up (δ > 1) or slow down (δ < 1) through time |
| Kappa (κ) | No simple matrix form; the effect depends on the number of branches along each path | Raises all branch lengths to power κ | Tests speciational models of evolution (κ=0) |

Implementation in Phylogenetic Generalized Least Squares (PGLS)

Basic PGLS Framework

Phylogenetic Generalized Least Squares (PGLS) incorporates the phylogenetic covariance matrix into linear models to account for non-independence of species data [9]. The fundamental PGLS model can be implemented in R using the gls function from the nlme package with a Brownian motion correlation structure [9]:
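A minimal sketch of such a call is shown below. It assumes a recent version of ape in which `corBrownian()` accepts a `form` argument naming the column that holds species labels. The simulated tree and the column names `species`, `trait1`, and `trait2` are placeholders standing in for real data.

```r
library(ape)   # tree simulation and corBrownian()
library(nlme)  # gls()

set.seed(1)

# Simulated ultrametric tree and traits (stand-ins for an empirical dataset)
tree <- rcoal(30)
dat  <- data.frame(
  species = tree$tip.label,
  trait2  = as.numeric(rTraitCont(tree, model = "BM", sigma = 1))
)
dat$trait1 <- 0.5 * dat$trait2 +
  as.numeric(rTraitCont(tree, model = "BM", sigma = 1))

# PGLS: regression of trait1 on trait2 with Brownian motion residual covariance
fit <- gls(trait1 ~ trait2,
           data        = dat,
           correlation = corBrownian(phy = tree, form = ~species),
           method      = "ML")

summary(fit)
```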

This model tests the relationship between two continuous traits while accounting for phylogenetic non-independence [9].

Advanced PGLS Applications

PGLS offers considerable flexibility beyond simple linear regression:

- Discrete Predictors: PGLS can include categorical variables as predictors [9].

- Multiple Predictors: The framework supports multiple regression with several continuous and/or discrete predictors [9].

- Interaction Terms: PGLS can test for interactions between predictors [9].

- Alternative Evolutionary Models: The correlation structure can be modified to represent different evolutionary processes beyond Brownian motion [9].

Correlation Structures Beyond Brownian Motion

While Brownian motion is the default model, PGLS can implement various evolutionary models through different correlation structures:
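The sketch below shows how alternative structures might be passed to `gls()`, using `corPagel()` and `corMartins()` from ape alongside `corBrownian()`. To keep the example deterministic, the λ and α values are held fixed with `fixed = TRUE`; setting `fixed = FALSE` asks `gls()` to estimate them, which can fail to converge on some datasets. The data are simulated, and the parameter values 0.5 and 1 are arbitrary assumptions.

```r
library(ape)
library(nlme)

set.seed(2)

# Simulated tree and traits (placeholders for empirical data)
tree <- rcoal(40)
dat  <- data.frame(
  species = tree$tip.label,
  x       = as.numeric(rTraitCont(tree, model = "BM"))
)
dat$y <- 0.3 * dat$x + as.numeric(rTraitCont(tree, model = "BM"))

# Helper that fits the same regression under a given correlation structure
fit_with <- function(cs) {
  gls(y ~ x, data = dat, correlation = cs, method = "ML")
}

fits <- list(
  BM     = fit_with(corBrownian(phy = tree, form = ~species)),
  lambda = fit_with(corPagel(0.5, phy = tree, form = ~species, fixed = TRUE)),
  OU     = fit_with(corMartins(1, phy = tree, form = ~species, fixed = TRUE))
)

# Lower AIC indicates better support among the candidate structures
sapply(fits, AIC)
```

Because the λ and α values are fixed here, the AIC values do not penalize them as estimated parameters; with `fixed = FALSE` the extra parameter is counted.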

Covariance Matrix Scaling and Phylogenetic Signal

Covariance Matrix vs. Correlation Matrix

An important consideration in phylogenetic comparative methods is whether to use the covariance matrix or the correlation matrix derived from a phylogenetic tree [15]:

- Covariance Matrix: ape::vcv(tree, corr = FALSE). Contains actual shared branch lengths [15].

- Correlation Matrix: ape::vcv(tree, corr = TRUE). Standardized version of the covariance matrix [15].

The choice between these matrices can significantly impact estimates of phylogenetic signal [15]. Analyses using the correlation matrix often yield substantially higher phylogenetic signal estimates compared to those using the covariance matrix of an unscaled tree [15].

Tree Scaling Approaches

To address this discrepancy, researchers sometimes scale the tree before generating the covariance matrix [15]:
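One way to do this, sketched below under the assumption that "scaling" means dividing all branch lengths by the maximum root-to-tip distance, uses `ape::node.depth.edgelength()` to find the tree height. The four-taxon Newick string is a made-up, non-ultrametric example chosen so that the three matrices differ.

```r
library(ape)

# Hypothetical non-ultrametric tree (illustration only)
tree <- read.tree(text = "((A:1,B:3):2,(C:2,D:2):1);")

C_cov <- vcv(tree, corr = FALSE)   # shared branch lengths on the original scale
C_cor <- vcv(tree, corr = TRUE)    # standardized so every diagonal element is 1

# Rescale branch lengths so the longest root-to-tip path has length 1
height      <- max(node.depth.edgelength(tree))
tree_scaled <- tree
tree_scaled$edge.length <- tree$edge.length / height
C_scaled    <- vcv(tree_scaled, corr = FALSE)

C_cov
C_scaled
C_cor
```

For an ultrametric tree the scaled covariance matrix and the correlation matrix coincide; they differ here because tip B sits farther from the root than the other tips.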

The effect of these different approaches on phylogenetic signal estimation can be substantial: the same dataset can yield different signal estimates depending on whether it is analyzed with the unscaled covariance matrix, the covariance matrix of a scaled tree, or the correlation matrix [15].

Matrix Scaling Impact on Signal

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Tool/Reagent | Function/Purpose | Implementation Example |
|---|---|---|
| R Statistical Environment | Primary platform for phylogenetic comparative analysis | Base computational environment |
| ape Package | Core phylogenetic operations; generates covariance matrices | tree_vcv <- ape::vcv(tree, corr = FALSE) |
| nlme Package | Fits PGLS models with various correlation structures | gls(trait1 ~ trait2, correlation = corBrownian(phy = tree), data = data) |
| geiger Package | Data-tree reconciliation and utility functions | name.check(anoleTree, anoleData) |
| phytools Package | Comprehensive toolkit for phylogenetic analysis | Phylogenetic tree plotting and manipulation |
| brms Package | Advanced Bayesian phylogenetic modeling | Bayesian mixed models with phylogenetic random effects |
| Pagel's lambda (λ) | Tests phylogenetic signal in comparative data | correlation = corPagel(1, phy = tree, fixed = FALSE) |
| Ornstein-Uhlenbeck Models | Models constrained evolution with stabilizing selection | correlation = corMartins(1, phy = tree) |
| TreeSim Package | Simulates phylogenetic trees under various models | Generating birth-death trees for simulation studies |
| datelife Package | Accesses and processes phylogenetic trees from OpenTree | Obtaining empirical trees for analysis |

The phylogenetic covariance matrix C is a fundamental component of modern comparative methods, particularly Phylogenetic Generalized Least Squares. Its algebraic properties, especially the condition number, directly impact the stability and reliability of statistical inferences about evolutionary processes. Understanding how to construct, evaluate, and when necessary transform this matrix using Pagel's λ, δ, and κ transformations is essential for robust phylogenetic comparative analysis. Furthermore, researchers should be mindful of the important distinction between covariance and correlation matrices derived from phylogenetic trees, as this choice can significantly influence estimates of phylogenetic signal. As phylogenetic methods continue to evolve, particularly with the integration of more complex models and Bayesian approaches, proper handling of the phylogenetic covariance matrix remains crucial for valid inference in evolutionary biology and related fields.

Brownian motion serves as a fundamental model for understanding the evolution of continuous traits in phylogenetic comparative biology. This mathematical framework provides a null model for trait evolution that can be tested against empirical data, forming the essential foundation for more advanced analytical techniques, including Phylogenetic Generalized Least Squares (PGLS). The model originated from physics to describe the random motion of particles suspended in a fluid but was adapted to evolutionary biology to characterize how traits change randomly through time across lineages.

In biological terms, Brownian motion captures evolutionary change resulting from the accumulation of many small, random perturbations over time. Imagine a large ball being passed over a crowd in a stadium; the ball's movement is determined by the sum of many small forces from people pushing it in various directions. Similarly, trait evolution under Brownian motion results from numerous small evolutionary pressures whose combined effect appears random over macroevolutionary timescales [16]. This model is particularly valuable because it provides a mathematically tractable framework for making statistical inferences about evolutionary processes from comparative data.

Mathematical and Statistical Properties of Brownian Motion

Formal Definition and Core Properties

Brownian motion models the evolutionary trajectory of continuous trait values through time as a random walk process. This process is completely described by two fundamental parameters: the starting value of the trait at time zero, denoted as $\bar{z}(0)$, and the evolutionary rate parameter, σ², which determines how rapidly traits wander through phenotypic space [16].

The Brownian motion process exhibits three critical statistical properties that make it invaluable for comparative analysis:

- Constant Expected Value: The expected value of the trait at any time t equals its initial value: $E[\bar{z}(t)] = \bar{z}(0)$. This property indicates that Brownian motion has no directional trends and wanders equally in both positive and negative directions [16].

- Independent Increments: Changes over non-overlapping time intervals are statistically independent of one another. Although the trait values themselves are autocorrelated, the successive changes are independent [16].

- Normally Distributed Traits: The trait value at any time t follows a normal distribution with mean equal to the starting value and variance proportional to both the evolutionary rate and time: $\bar{z}(t) \sim N(\bar{z}(0),\sigma^2 t)$ [16].

These properties ensure that Brownian motion provides a mathematically convenient foundation for deriving statistical estimators and hypothesis tests in phylogenetic comparative methods.
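These properties can be checked numerically. The base R sketch below simulates many independent lineages as sums of small normal increments and compares the mean and variance of the endpoints with the expectations $\bar{z}(0)$ and σ²t. All parameter values are arbitrary choices for illustration.

```r
set.seed(42)

sigma2  <- 0.5    # evolutionary rate
t_total <- 100    # total elapsed time
n_steps <- 100    # number of time steps per lineage
n_reps  <- 5000   # number of independent replicate lineages
z0      <- 10     # starting trait value

dt <- t_total / n_steps

# Each row is one lineage; increments are independent N(0, sigma2 * dt) draws
increments <- matrix(rnorm(n_reps * n_steps, mean = 0, sd = sqrt(sigma2 * dt)),
                     nrow = n_reps)

# Trait value at time t_total is the start value plus the sum of all increments
z_end <- z0 + rowSums(increments)

c(simulated_mean = mean(z_end), expected_mean = z0)
c(simulated_var  = var(z_end),  expected_var  = sigma2 * t_total)
```

The simulated mean should stay close to the starting value, and the simulated variance close to σ²t, up to sampling error.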

Evolutionary Rate Parameter and Trait Variance

The variance of trait values under Brownian motion increases linearly with time, with the evolutionary rate parameter σ² determining the slope of this relationship. The following table summarizes how different evolutionary rates affect the spread of traits over time:

Table 1: Relationship Between Evolutionary Rate Parameters and Trait Variance

| Evolutionary Rate (σ²) | Time Interval (t) | Expected Variance (σ²t) | Biological Interpretation |
|---|---|---|---|
| Low (σ² = 1) | 100 units | 100 | Slow divergence, conserved traits |
| Medium (σ² = 5) | 100 units | 500 | Moderate evolutionary rate |
| High (σ² = 25) | 100 units | 2500 | Rapid evolutionary change, high divergence |

This linear relationship between time and variance has profound implications for phylogenetic comparative studies, as it allows researchers to estimate evolutionary rates from contemporary species data while accounting for shared evolutionary history.

Brownian Motion on Phylogenetic Trees

Extending Brownian Motion to Tree-Like Structures

When modeling trait evolution on phylogenetic trees, Brownian motion proceeds independently along each branch, with changes accumulating from the root to the tips. The covariance between species' traits is proportional to their shared evolutionary history, formally represented by the time since their last common ancestor [17].

For two species i and j sharing evolutionary history for time tᵢⱼ, the expected covariance between their traits is Cov[zᵢ, zⱼ] = σ²tᵢⱼ. This covariance structure forms the basis for the phylogenetic variance-covariance matrix required for PGLS analysis, which explicitly accounts for the non-independence of species data due to shared ancestry.

Phylogenetic Tree and Trait Evolution Visualization

The following Graphviz diagram illustrates how Brownian motion operates along the branches of a phylogenetic tree, showing the accumulation of trait variance from root to tips:

Diagram 1: Brownian Motion on Phylogeny

This visualization shows how trait values evolve from a common ancestral value at the root, with independent Brownian motion processes along each branch. The normal distribution notation (N(0,σ²t)) indicates that evolutionary changes along each branch are drawn from normal distributions with mean zero and variance proportional to branch length.

Experimental Protocols and Methodologies

Simulating Brownian Motion on Phylogenetic Trees

Protocol 1: Basic Brownian Motion Simulation

- Objective: Generate simulated trait data under a Brownian motion model on a known phylogenetic tree.

- Methodology:

  - Begin with a rooted phylogenetic tree with branch lengths proportional to time.

  - Assign the root node an initial trait value $\bar{z}(0)$.

  - For each branch emanating from the root, simulate a trait change by drawing from a normal distribution with mean 0 and variance σ²t, where t is the branch length.

  - Calculate the trait value at each descendant node by adding the simulated change to the ancestral value.

  - Continue this process recursively from root to tips until all terminal taxa have trait values.

  - The resulting tip values represent the trait data one would observe in extant species [17] [16].
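The steps of Protocol 1 can be sketched as a short R function that walks the edge matrix of an ape `phylo` object from the root toward the tips. The function name `simulate_bm` is hypothetical. The sketch relies on two ape conventions: the root is numbered one more than the number of tips, and "cladewise" edge ordering lists each parent edge before its descendants.

```r
library(ape)

simulate_bm <- function(tree, root_value = 0, sigma2 = 1) {
  tree   <- reorder(tree, "cladewise")   # parents are visited before descendants
  n_tips <- length(tree$tip.label)
  values <- numeric(n_tips + tree$Nnode) # one slot per tip and internal node
  values[n_tips + 1] <- root_value       # ape numbers the root as n_tips + 1

  for (k in seq_len(nrow(tree$edge))) {
    parent <- tree$edge[k, 1]
    child  <- tree$edge[k, 2]
    # Change along this branch: N(0, sigma2 * branch length)
    change <- rnorm(1, mean = 0, sd = sqrt(sigma2 * tree$edge.length[k]))
    values[child] <- values[parent] + change
  }

  # Return only the tip values, labelled by species
  setNames(values[seq_len(n_tips)], tree$tip.label)
}

set.seed(7)
tree <- rcoal(8)   # simulated tree standing in for a dated phylogeny
tips <- simulate_bm(tree, root_value = 0, sigma2 = 1)
tips
```

Repeating the call many times and computing the covariance of tip values across replicates should approach σ² times the matrix returned by `vcv(tree)`.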

Table 2: Brownian Motion Simulation Parameters

| Parameter | Symbol | Description | Typical Values |
|---|---|---|---|
| Root trait value | $\bar{z}(0)$ | Starting value at root node | Often set to 0 for simplicity |
| Evolutionary rate | σ² | Rate of trait evolution per unit time | Estimated from data or varied |
| Branch lengths | t | Time duration for each branch | From dated phylogenies |
| Number of simulations | N | Replicates for assessing variance | 100-10,000 |

Statistical Fitting and Model Comparison

Protocol 2: Fitting Brownian Motion to Empirical Data

- Objective: Estimate Brownian motion parameters from observed trait data and phylogenetic relationships.

- Methodology:

  - Obtain a phylogeny with branch lengths for the taxa of interest.

  - Collect continuous trait measurements for each tip species.

  - Construct the phylogenetic variance-covariance matrix C, where each element Cᵢⱼ represents the shared path length between species i and j.

  - Use maximum likelihood or restricted maximum likelihood to estimate the evolutionary rate parameter σ².

  - Calculate the log-likelihood of the Brownian motion model given the data.

  - Compare this likelihood to alternative models (e.g., Ornstein-Uhlenbeck, Early Burst) using information criteria (AIC, AICc, BIC) [16].
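The likelihood and AIC steps of Protocol 2 can be sketched in base R. The function `fit_bm` below is a hypothetical helper that computes the maximized log-likelihood of a two-parameter model (root state and rate) for any covariance matrix. Passing the identity matrix gives a white-noise model, used here as a simple comparison in place of the OU or Early Burst models, which need dedicated software. The matrix and trait values are made up for illustration.

```r
fit_bm <- function(Y, C) {
  n    <- length(Y)
  Cinv <- solve(C)

  theta  <- sum(Cinv %*% Y) / sum(Cinv)   # 1' C^-1 Y / 1' C^-1 1
  res    <- Y - theta
  sigma2 <- as.numeric(t(res) %*% Cinv %*% res) / n

  # Log-likelihood evaluated at the ML estimates
  log_det_C <- as.numeric(determinant(C, logarithm = TRUE)$modulus)
  log_lik   <- -0.5 * (n * log(2 * pi) + n * log(sigma2) + log_det_C + n)

  c(theta = theta, sigma2 = sigma2, logLik = log_lik,
    AIC = 2 * 2 - 2 * log_lik)            # two free parameters
}

# Hypothetical 4-taxon covariance matrix and trait values (illustration only)
C <- matrix(c(3, 2, 0, 0,
              2, 3, 0, 0,
              0, 0, 3, 1,
              0, 0, 1, 3), nrow = 4, byrow = TRUE)
Y <- c(4.1, 3.8, 1.2, 2.0)

bm    <- fit_bm(Y, C)         # Brownian motion on the tree
white <- fit_bm(Y, diag(4))   # white noise: no phylogenetic covariance

rbind(brownian = bm, white_noise = white)
```

With only four species the comparison has little power; the example is meant to show the calculation, not to support a biological conclusion.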

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Brownian Motion Analysis

| Tool/Resource | Function | Application Context |
|---|---|---|
| R Statistical Software | Platform for phylogenetic comparative analysis | All statistical analyses and simulations |
| ape package (R) | Phylogenetic tree manipulation and basic comparative methods | Reading, manipulating, and visualizing phylogenies |
| geiger package (R) | Model fitting and simulation of evolutionary models | Fitting Brownian motion to empirical data |
| phytools package (R) | Advanced phylogenetic comparative methods | Simulation and visualization of trait evolution |
| Graphviz/DOT language | Visualization of phylogenetic trees and evolutionary processes | Creating diagrams of evolutionary relationships |

Biological Interpretations and Limitations

Connecting Models to Biological Processes

While often described as a "neutral" model, Brownian motion can arise from multiple biological processes, not just genetic drift. The model is sufficiently flexible to approximate various evolutionary scenarios:

- Genetic Drift: For traits with high heritability and no selection, Brownian motion accurately describes evolution under neutral genetic drift [16].

- Randomly Fluctuating Selection: When the direction and strength of selection vary randomly through time, the resulting trait dynamics can closely approximate Brownian motion [17].

- Stabilizing Selection with Moving Optima: When a trait experiences stabilizing selection but the optimal value itself undergoes random changes, the trait evolution follows an Ornstein-Uhlenbeck process, which resembles Brownian motion over short timescales.

Critically, finding that a trait pattern is consistent with Brownian motion does not necessarily indicate an absence of selection. As Harmon (2019) notes, "testing for a Brownian motion model with your data tells you nothing about whether or not the trait is under selection" [17]. The model serves as a statistical null hypothesis rather than a complete biological explanation.

Multivariate Extensions

Brownian motion naturally extends to multiple correlated traits through multivariate Brownian motion. This extension allows researchers to model the co-evolution of trait suites and estimate evolutionary correlation matrices. The covariance between trait k and l evolving under multivariate Brownian motion is described by Cov[zₖ(t), zₗ(t)] = Σₖₗt, where Σ is an m × m evolutionary variance-covariance matrix for m traits [17].

Comparative Framework: Brownian Motion in PGLS Analysis

Role in Phylogenetic Generalized Least Squares

Brownian motion provides the evolutionary model that generates the expected covariance structure in PGLS. The phylogenetic variance-covariance matrix derived from Brownian motion is used to weight regression analyses appropriately, accounting for phylogenetic non-independence. This approach enables researchers to test hypotheses about evolutionary relationships between traits while accounting for shared evolutionary history.

The PGLS model takes the form:

y = Xβ + ε

where ε ~ N(0, σ²C), with C being the phylogenetic covariance matrix derived from Brownian motion expectations. When the residuals follow this covariance structure, the GLS estimator β̂ = (XᵀC⁻¹X)⁻¹XᵀC⁻¹y is unbiased and has the smallest variance among linear unbiased estimators, and its standard errors remain valid even when species data exhibit phylogenetic signal.
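To make the weighting concrete, the base R sketch below computes the standard GLS estimator β̂ = (XᵀC⁻¹X)⁻¹XᵀC⁻¹y and its standard errors by hand. The function name `pgls_fit` and the trait values are illustrative assumptions; in practice a package such as nlme would be used instead.

```r
pgls_fit <- function(y, X, C) {
  Cinv   <- solve(C)
  XtCinv <- t(X) %*% Cinv
  V      <- solve(XtCinv %*% X)            # (X' C^-1 X)^-1
  beta   <- V %*% XtCinv %*% y             # GLS coefficient estimates

  res    <- y - X %*% beta
  # Residual variance with the usual n - p degrees of freedom
  sigma2 <- as.numeric(t(res) %*% Cinv %*% res) / (nrow(X) - ncol(X))
  se     <- sqrt(diag(sigma2 * V))

  cbind(estimate = as.numeric(beta), std_error = se)
}

# Hypothetical 5-taxon covariance matrix and trait values (illustration only)
C <- matrix(c(1.00, 0.76, 0.00, 0.00, 0.00,
              0.76, 1.00, 0.00, 0.00, 0.00,
              0.00, 0.00, 1.00, 0.36, 0.36,
              0.00, 0.00, 0.36, 1.00, 0.70,
              0.00, 0.00, 0.36, 0.70, 1.00),
            nrow = 5, byrow = TRUE)
x <- c(1.2, 1.5, 0.3, 0.8, 0.6)
y <- c(2.1, 2.4, 0.9, 1.3, 1.1)
X <- cbind(intercept = 1, x = x)

pgls_fit(y, X, C)
```

Replacing C with the identity matrix reduces the function to ordinary least squares, which is a convenient sanity check.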

Comparison with Alternative Evolutionary Models

Table 4: Comparison of Brownian Motion with Alternative Evolutionary Models

| Model | Key Parameters | Biological Interpretation | When to Use |
|---|---|---|---|
| Brownian Motion | σ² (evolutionary rate) | Random walk; neutral evolution or random selection | Default null model; baseline for comparison |
| Ornstein-Uhlenbeck | σ², α (selection strength), θ (optimum) | Stabilizing selection toward an optimum | When traits are under constraint or adaptation |
| Early Burst | σ², r (rate decay) | Rapid early diversification slowing through time | Adaptive radiation scenarios |
| White Noise | σ² (variance) | No phylogenetic signal; tip independence | Testing for phylogenetic signal in traits |

This comparative framework allows researchers to select the most appropriate evolutionary model for their specific research question and biological system, with Brownian motion serving as the essential starting point for model comparison.

The Interplay between Traits, Phylogeny, and Residual Error

Phylogenetic Generalized Least Squares (PGLS) has emerged as a cornerstone method in evolutionary biology, enabling researchers to test hypotheses about trait correlations while accounting for shared phylogenetic history. This technical guide delves into the core interplay between species traits, phylogeny, and residual error that forms the foundation of PGLS. We demonstrate that standard PGLS implementations assuming homogeneous evolutionary models can produce unacceptable type I error rates when evolutionary processes are heterogeneous across clades [3]. This whitepaper provides a comprehensive framework for implementing PGLS, from core assumptions and mathematical foundations to advanced protocols for handling model uncertainty and heterogeneous evolution, equipping researchers with methodologies to produce more robust evolutionary inferences.

Phylogenetic Generalized Least Squares (PGLS) is a statistical framework that extends generalized least squares regression to incorporate phylogenetic relatedness among species [4]. The method addresses a fundamental challenge in comparative biology: the statistical non-independence of species due to their shared evolutionary history. Conventional regression approaches like Ordinary Least Squares (OLS) assume data points are independent, an assumption violated in cross-species data where closely related species often share similar traits through common descent rather than independent evolution [3]. PGLS resolves this by using the expected covariance structure derived from phylogenetic relationships to appropriately weight the regression analysis [4].

The flexibility of PGLS has made it one of the most widely adopted phylogenetic comparative methods [3]. It serves as a generalization of independent contrasts [4] and can incorporate various models of trait evolution, including Brownian Motion (BM), Ornstein-Uhlenbeck (OU), and lambda (λ) models [3]. This methodological flexibility is particularly valuable for contemporary research dealing with large phylogenetic trees and complex trait datasets, where heterogeneous evolutionary processes are increasingly recognized as the norm rather than the exception [3].

Core Concepts and Mathematical Foundations

The PGLS Regression Model

The fundamental PGLS model structure follows the standard regression equation:

Y = a + βX + ε [3]

Where Y is the dependent trait variable, a is the intercept, β is the slope coefficient, X is the independent trait variable, and ε represents the residual error. The critical distinction from ordinary regression lies in the distribution of these residuals. In PGLS, the residual error ε is distributed according to N(0, σ²C), where σ² represents the residual variance and C is an n × n phylogenetic variance-covariance matrix describing evolutionary relationships among species [3]. The diagonal elements of C represent the total branch length from each tip to the root, while off-diagonal elements represent the shared evolutionary path between species pairs [3].

Models of Trait Evolution

PGLS can incorporate different evolutionary models through the structure of the covariance matrix:

Table 1: Evolutionary Models Implementable in PGLS

| Model | Mathematical Formulation | Biological Interpretation | Parameters |
|---|---|---|---|
| Brownian Motion (BM) | dX(t) = σdB(t) [3] | Neutral evolution; traits diverge via random drift [4] | σ²: rate of evolution |
| Ornstein-Uhlenbeck (OU) | dX(t) = α[θ-X(t)]dt + σdB(t) [3] | Stabilizing selection toward an optimum [3] | α: strength of selection; θ: optimum trait value |
| Lambda (λ) | Rescales internal branches by λ [3] | Modifies phylogenetic signal strength [3] | λ: phylogenetic signal (0-1) |

The Interplay of Traits, Phylogeny, and Residual Error

The relationship between traits, phylogeny, and residual error forms the conceptual core of PGLS. Phylogeny enters the model through the expected covariance structure of the residuals, explicitly modeling how trait covariance decreases with increasing evolutionary distance [4]. When two traits evolve under complex models of evolution, standard PGLS assuming a single evolutionary rate may not be appropriate, leading to inflated type I errors or reduced statistical power [3].

The issue is particularly pronounced in large comparative datasets where evolutionary processes are likely heterogeneous [3]. Phylogenetic signal—the extent to which closely related species resemble each other—resides in the residuals of the regression rather than in the traits themselves [4]. This nuanced understanding is crucial for appropriate model specification and interpretation.

Methodological Framework and Workflow

A typical PGLS analysis follows a structured workflow from data preparation through model interpretation, with particular attention to model diagnostics and validation.

Workflow Components

Input Phase: Begin with properly formatted trait data and phylogenetic tree. Critical step: verify that species names match exactly between datasets using specialized functions like name.check() in R [9].

Computational Phase: Select appropriate evolutionary model and calculate phylogenetic variance-covariance matrix. The covariance matrix encodes expected trait similarities based on shared branch lengths.

Validation Phase: Perform model diagnostics including residual analysis and assessment of phylogenetic signal. Iteratively refine model specification if diagnostics indicate inadequacy.

Experimental Protocols and Analytical Procedures

Basic PGLS Implementation Protocol

Objective: Implement a standard PGLS regression to test for correlation between two continuous traits while accounting for phylogenetic non-independence.

Software Requirements: R packages ape, nlme, and geiger [9].

Procedure:

- Import trait data and phylogenetic tree into R environment

- Verify congruence between data and tree using the name.check() function [9]

- Specify the PGLS model using the gls() function with the correlation = corBrownian(phy = tree) argument [9]

- Fit the model using maximum likelihood (method = "ML") or restricted maximum likelihood

- Extract and interpret coefficients, standard errors, and p-values from model summary

Code Example:
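The original listing is not available here, so what follows is a hedged reconstruction of the procedure rather than the authors' code. It uses simulated data in place of imported files, replaces geiger's name.check() with an equivalent base R comparison to keep dependencies small, and assumes a recent ape version in which corBrownian() accepts a `form` argument. All object and column names are placeholders.

```r
library(ape)
library(nlme)

set.seed(10)

# Step 1: trait data and tree (simulated here; normally read from files)
tree <- rcoal(25)
trait_data <- data.frame(
  species = tree$tip.label,
  trait2  = as.numeric(rTraitCont(tree, model = "BM"))
)
trait_data$trait1 <- 1 + 0.8 * trait_data$trait2 +
  as.numeric(rTraitCont(tree, model = "BM"))
trait_data <- trait_data[-1, ]   # mimic one species with missing trait data

# Step 2: reconcile species names between the tree and the data
in_tree_only <- setdiff(tree$tip.label, trait_data$species)
in_data_only <- setdiff(trait_data$species, tree$tip.label)
tree         <- drop.tip(tree, in_tree_only)
trait_data   <- trait_data[!trait_data$species %in% in_data_only, ]

# Steps 3 and 4: specify and fit the PGLS model by maximum likelihood
fit <- gls(trait1 ~ trait2,
           data        = trait_data,
           correlation = corBrownian(phy = tree, form = ~species),
           method      = "ML")

# Step 5: coefficients, standard errors, t-values, and p-values
coef_table <- summary(fit)$tTable
coef_table
```

Switching `method = "ML"` to `method = "REML"` gives restricted maximum likelihood estimates; ML is the usual choice when comparing models with different fixed effects.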

Advanced Protocol: Handling Heterogeneous Evolution

Objective: Address scenarios where evolutionary rates differ across clades, which can inflate Type I error rates [3].

Procedure:

- Simulate trait evolution under heterogeneous models to assess robustness

- Implement heterogeneous rate models using specialized packages (e.g., phylolm)

- Transform variance-covariance matrix to account for model heterogeneity

- Compare model fit using information criteria (AIC, BIC)

Interpretation: Studies show that standard PGLS exhibits unacceptable type I error rates under heterogeneous evolution, but appropriate transformation of the covariance matrix can correct this bias even when the evolutionary model isn't known a priori [3].

Bayesian PGLS Extension Protocol

Objective: Incorporate uncertainty in phylogeny, evolutionary regimes, and model parameters using Bayesian methods.

Software Requirements: JAGS software with R packages rjags, ape, and phytools [18].

Procedure:

- Prepare posterior distribution of trees (from Bayesian phylogenetic analysis)

- Specify priors for regression parameters and evolutionary rates

- Run MCMC sampling to obtain posterior distributions

- Assess convergence and effective sample size of parameters

- Calculate posterior means and credible intervals for parameters of interest

Advantages: Bayesian PGLS relaxes the homogeneous rate assumption and naturally incorporates multiple sources of uncertainty, providing more robust interval estimates [18].

Quantitative Data and Comparative Analysis

Performance Metrics Under Different Evolutionary Models

Simulation studies provide crucial insights into PGLS performance under various evolutionary scenarios:

Table 2: Statistical Performance of PGLS Under Different Evolutionary Models

| Evolutionary Model | Type I Error Rate | Statistical Power | Recommended Approach |
|---|---|---|---|
| Homogeneous Brownian Motion | Appropriate (≈0.05) | Good | Standard PGLS |
| Ornstein-Uhlenbeck | Inflated (if unmodeled) | Reduced (if unmodeled) | Incorporate OU structure |
| Heterogeneous Rates | Unacceptably high [3] | Good but unreliable [3] | Transform VCV matrix [3] |
| Lambda (λ < 1) | Inflated (if unmodeled) | Variable | Estimate λ parameter |

Comparison of Phylogenetic Comparative Methods

Table 3: Comparison of Methodological Approaches to Phylogenetic Regression

| Method | Key Features | Advantages | Limitations |
|---|---|---|---|
| PGLS | Flexible evolutionary models; Generalization of PICs [4] | Handles continuous and discrete predictors [9] | Assumes known phylogeny and model |
| Independent Contrasts (PIC) | Transforms traits to independent contrasts [9] | Computational simplicity | Less flexible than PGLS |
| Bayesian PGLS | Incorporates multiple uncertainty sources [18] | More robust interval estimates | Computational intensity |
| Methods for Heterogeneous Evolution | Allows different evolutionary rates across clades [3] | Addresses model violation | Complex implementation |

Advanced Applications and Extensions

Complex Model Formulations

Advanced PGLS applications extend beyond simple bivariate regression:

- Multiple Regression: Incorporate multiple predictors including interactions [9]

- Discrete Predictors: Include categorical variables like ecomorph categories [9]

- Model Selection: Compare evolutionary models using information criteria

- Predictive Performance: Validate models using cross-validation approaches

Relationship to Other Comparative Methods

PGLS has deep connections with other phylogenetic comparative methods:

The diagram illustrates how PGLS serves as a central framework that generalizes and connects various phylogenetic comparative approaches. PGLS is a generalization of independent contrasts [4], with current extensions incorporating Bayesian methodology to handle uncertainty [18] and heterogeneous models to address varying evolutionary rates across clades [3].

Essential Research Reagents and Computational Tools

Table 4: Essential Computational Tools for PGLS Research

| Tool/Package | Primary Function | Application Context |
|---|---|---|
| R statistical environment | Core computational platform | All PGLS analyses |
| ape package | Phylogenetic tree handling | Data input and manipulation |
| nlme package | Generalized least squares implementation | PGLS model fitting [9] |
| phytools package | Phylogenetic comparative methods | Simulation and visualization |
| geiger package | Data-tree congruence checking | Pre-analysis validation [9] |
| JAGS | Bayesian analysis | Bayesian PGLS implementation [18] |
| corBrownian() | Brownian motion correlation structure | Standard PGLS [9] |
| corPagel() | Pagel's lambda correlation structure | Estimating phylogenetic signal [9] |

The interplay between traits, phylogeny, and residual error represents a fundamental consideration in evolutionary comparative studies. PGLS provides a flexible framework for addressing this interplay, but its appropriate application requires careful attention to model assumptions, particularly regarding homogeneity of evolutionary processes. The methods and protocols outlined in this guide equip researchers with tools to implement PGLS across diverse research scenarios, from basic bivariate correlations to complex models incorporating heterogeneous evolution and multiple uncertainty sources. As comparative datasets continue to grow in size and complexity, the ongoing development and refinement of PGLS methodologies will remain essential for robust inference in evolutionary biology.

Implementing PGLS: A Step-by-Step Guide from Theory to R Code

Phylogenetic Generalized Least Squares (PGLS) represents a cornerstone of modern comparative biology, providing a statistical framework that explicitly accounts for the non-independence of species data due to shared evolutionary history. By incorporating phylogenetic relationships into regression analyses, PGLS enables researchers to test evolutionary hypotheses while controlling for the phylogenetic structure that can lead to spurious correlations [19]. This methodology has revolutionized our understanding of trait evolution across diverse fields, from ecology and paleontology to biomedical research and drug development.

The power of PGLS stems from its ability to model trait covariation under specific evolutionary models, with the Brownian motion model being frequently employed as a default starting point [20]. Under this model, the variance-covariance matrix Σ is proportional to the shared evolutionary path lengths in the phylogeny, effectively weighting observations by their degree of phylogenetic relatedness [20]. Contemporary implementations have expanded to include more complex evolutionary models and spatial effects, allowing researchers to partition variance between phylogenetic history, geographic proximity, and independent evolution [20].
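To make the "shared evolutionary path lengths" concrete, the following sketch builds Σ for a three-species toy tree with `ape::vcv()`. The tree and its branch lengths are invented for illustration: A and B share one unit of branch length from the root before splitting, while C diverges at the root.

```r
library(ape)

# Hypothetical toy tree: A and B share one unit of history, C shares none
tree <- read.tree(text = "((A:1,B:1):1,C:2);")

# Under Brownian motion, the covariance between two tips is proportional to
# the branch length they share from the root; the diagonal holds each tip's
# total root-to-tip distance.
Sigma <- vcv(tree)
print(Sigma)

# Rescaling to a correlation matrix shows the expected trait correlation
# between each pair of species under this model.
R <- cov2cor(Sigma)
print(R)
```

In this toy case the A-B entry of Σ is 1 (one shared unit out of a root-to-tip distance of 2), and the entries involving C are 0 off the diagonal, which is why closely related species carry partly redundant information in the regression.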

For researchers in drug development and comparative medicine, PGLS offers particularly valuable applications. It enables the prediction of unknown trait values based on phylogenetic position and trait correlations—a capability with significant implications for understanding disease susceptibility, drug response variability, and physiological parameters across species [19]. When properly implemented with appropriate data preparation and robust statistical packages, PGLS provides a powerful tool for unlocking evolutionary patterns in complex biological data.

Essential R Packages for PGLS Research

The R statistical environment hosts a comprehensive suite of packages specifically designed for phylogenetic analysis. Three packages form the essential foundation for PGLS research, each providing distinct but complementary functionality.

Table 1: Core R Packages for Phylogenetic Analysis

| Package | Primary Function | Key Features | Dependencies |
|---|---|---|---|
| ape | Phylogenetics & Evolution | Phylogeny reading/manipulation, comparative methods, tree plotting | Base R |
| nlme | Linear & Nonlinear Mixed Effects | GLS model fitting, correlation structures, variance modeling | Base R |
| geiger | Analysis of Evolutionary Diversification | Taxon name matching, tree-data reconciliation (treedata), model fitting | ape, nlme |

Detailed Package Specifications

ape (Analysis of Phylogenetics and Evolution)

As one of the most foundational packages in phylogenetic analysis, ape provides the essential toolkit for reading, writing, plotting, and manipulating phylogenetic trees. Since 2003, it has been cited more than 15,000 times, and over 300 R packages on CRAN depend on it, establishing it as the community standard for phylogenetic computation [21]. The package supports the entire phylogenetic workflow from tree input/output (supporting Newick, Nexus, and other formats) to complex tree manipulation operations including rooting, pruning, and branching calculations. For PGLS specifically, ape generates the phylogenetic variance-covariance matrices that form the statistical backbone of the analysis [20].

nlme (Linear and Nonlinear Mixed Effects)

The nlme package provides the core engine for fitting generalized least-squares models with specific correlation structures, including the phylogenetic correlation structures central to PGLS [22]. Its gls() function enables the specification of custom correlation structures through the corStruct class system, which can be parameterized with the phylogenetic variance-covariance matrices derived from ape. The package offers comprehensive model diagnostics, likelihood comparison methods, and robust parameter estimation techniques. With approximately 111,000 monthly downloads, it represents one of R's most extensively validated and widely used statistical modeling packages [22].

geiger (Analysis of Evolutionary Diversification)

geiger complements ape and nlme by providing specialized functions for reconciling trees with trait data (for example treedata(), which matches taxon names between phylogenetic trees and trait datasets) and for fitting evolutionary models to comparative data. It serves as a bridge between data management and analytical phases, automating the critical preprocessing steps that often consume significant research time.

Data Preparation Methodologies

Data Structure Requirements

PGLS analysis requires data to be structured in a "long" or "vertical" format where each row represents a single observation (typically a species) with columns specifying the trait measurements, taxonomic identifiers, and any grouping variables [23]. This structure contrasts with "wide" format data where related measurements are spread across multiple columns. Proper data structuring is critical because most phylogenetic comparative methods, including those implemented in ape, nlme, and related packages, assume this organizational framework.

The minimal data frame for a basic bivariate PGLS analysis should include:

- Taxon names that exactly match tip labels in the phylogeny

- Response variable measurements

- Predictor variable measurements

- Grouping factors for any hierarchical structure (e.g., population, subspecies)

Missing data should be explicitly coded as NA values, and categorical predictors must be converted to factors using R's as.factor() function [23]. Taxonomic names should be standardized before analysis to ensure exact matching with phylogeny tip labels.
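A minimal sketch of such a data frame is shown below. All species names, column names, and values are hypothetical placeholders, chosen only to show one row per species, an explicit NA, and a categorical predictor stored as a factor.

```r
# Hypothetical trait table: one row per species, names written exactly as
# they would appear in the phylogeny's tip labels
traits <- data.frame(
  species   = c("sp_A", "sp_B", "sp_C", "sp_D"),
  response  = c(2.31, 1.87, NA, 3.02),           # NA marks a missing measurement
  predictor = c(0.45, 0.39, 0.51, 0.77),
  habitat   = c("forest", "forest", "grassland", "grassland"),
  stringsAsFactors = FALSE
)

# Categorical predictors must be factors before model fitting
traits$habitat <- as.factor(traits$habitat)

# Keep species names as row names as well, which several packages expect
rownames(traits) <- traits$species

# Rows with complete data for the variables in the planned model
complete <- traits[complete.cases(traits[, c("response", "predictor")]), ]
str(traits)
```

Dropping incomplete rows, as in `complete`, is only one option; the corresponding tips then also need to be pruned from the tree before fitting.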

Phylogeny and Trait Data Integration

The critical step in PGLS preparation involves reconciling trait datasets with phylogenetic trees, which often originate from separate sources. This process requires exact matching of taxon names between the data frame and the phylogeny tip labels. The following workflow outlines this essential integration process:

Diagram 1: Workflow for integrating trait data with phylogenetic trees.

Implementation of this workflow in R typically follows this sequence:
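One possible version of that sequence is sketched here, using only ape and base R. The tree, the species names, and the trait values are hypothetical; in a real analysis the tree would come from `read.tree()` or `read.nexus()` on a file and the traits from `read.csv()`. The geiger functions `name.check()` and `treedata()` cover the same mismatch-reporting and pruning steps and could be used instead.

```r
library(ape)

# Hypothetical inputs standing in for a tree file and a trait table
tree <- read.tree(text = "(((sp_A:1,sp_B:1):1,sp_C:2):1,sp_D:3);")
traits <- data.frame(
  species = c("sp_C", "sp_A", "sp_B", "sp_E"),
  y       = c(1.2, 0.8, 0.9, 2.0),
  x       = c(0.3, 0.1, 0.2, 0.9),
  stringsAsFactors = FALSE
)

# 1. Report mismatches in both directions
in_tree_only <- setdiff(tree$tip.label, traits$species)  # tips without data
in_data_only <- setdiff(traits$species, tree$tip.label)  # data without a tip

# 2. Prune tips that have no trait data
tree_pruned <- drop.tip(tree, in_tree_only)

# 3. Drop trait rows absent from the tree, then reorder rows to tip order
traits_matched <- traits[traits$species %in% tree_pruned$tip.label, ]
traits_matched <- traits_matched[match(tree_pruned$tip.label,
                                       traits_matched$species), ]
rownames(traits_matched) <- traits_matched$species

# 4. Final check: names and order agree exactly
stopifnot(identical(tree_pruned$tip.label, traits_matched$species))
```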

This workflow ensures that the taxonomic units between the tree and trait data are perfectly aligned, preventing analytical errors that can arise from mismatched datasets.

Addressing Phylogenetic Uncertainty

Modern best practices in PGLS acknowledge that phylogenetic trees are estimates with inherent uncertainty rather than fixed entities. The PGLS-spatial approach advanced by Atkinson et al. addresses this by incorporating multiple trees from Bayesian posterior distributions [20]. This method involves repeating the analysis across many trees (e.g., 100 replicates) and averaging parameter estimates across replicates, thereby incorporating phylogenetic uncertainty directly into the statistical inference process.
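The "repeat across trees, then summarize" idea can be sketched as follows. This is a simplified illustration, not the PGLS-spatial implementation of [20]: it fits a plain Brownian-motion PGLS with `nlme::gls()` on each tree, the random coalescent trees merely stand in for a posterior sample (which would normally be read from the output of a Bayesian phylogenetic analysis), and the trait data are simulated placeholders.

```r
library(ape)
library(nlme)

set.seed(42)
n_tips  <- 30
n_trees <- 20  # e.g. 100 in practice; kept small here for speed

# Stand-in for a posterior sample of trees sharing the same tip labels
trees <- replicate(n_trees, rcoal(n_tips), simplify = FALSE)

# Placeholder trait data with a known slope of 0.5
dat <- data.frame(species = paste0("t", seq_len(n_tips)),
                  x = rnorm(n_tips),
                  stringsAsFactors = FALSE)
dat$y <- 0.5 * dat$x + rnorm(n_tips, sd = 0.3)

# Fit the same regression on every tree and collect the slope estimate
slopes <- vapply(seq_along(trees), function(i) {
  fit <- gls(y ~ x, data = dat,
             correlation = corBrownian(1, phy = trees[[i]], form = ~species),
             method = "ML")
  coef(fit)[["x"]]
}, numeric(1))

# Summarize across trees: the spread reflects phylogenetic uncertainty
summary_across_trees <- c(mean = mean(slopes),
                          sd   = sd(slopes),
                          quantile(slopes, c(0.025, 0.975)))
print(summary_across_trees)
```

A narrow spread of slopes across trees suggests the conclusion is robust to the choice of tree; a wide spread indicates that the result depends on unresolved parts of the phylogeny.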

The mathematical implementation modifies the standard regression variance matrix V according to:

V = (1 - φ)λΣ + φW + (1 - φ)(1 - λ)hI

Where:

- λ represents the size of the shared ancestry effect

- φ represents the contribution of spatial effects

- Σ is an n × n matrix comprising the shared path lengths on the phylogeny

- W is the spatial matrix comprising pairwise great-circle distances between sites

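- h is the vector of diagonal elements of Σ, i.e., the distance from the root to each sampled tip (a language in the original application [20]; a species in biological datasets), so that hI denotes the diagonal matrix with h on its diagonal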

This approach quantifies the proportional contributions of phylogeny (λ′ = (1 - φ)λ, the coefficient on Σ in the equation above) and spatial effects (φ) to variance while explicitly acknowledging uncertainty in phylogenetic estimation.
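The composition of V can be written out directly in base R. The function name `build_V()` and the small matrices below are hypothetical; in particular, W is treated as an already-constructed n × n spatial matrix, since how distances are converted into W is not specified here.

```r
# Assemble V = (1 - phi) * lambda * Sigma + phi * W + (1 - phi) * (1 - lambda) * H,
# where H is the diagonal matrix holding the root-to-tip distances h = diag(Sigma)
build_V <- function(Sigma, W, lambda, phi) {
  stopifnot(all(dim(Sigma) == dim(W)),
            lambda >= 0, lambda <= 1,
            phi >= 0, phi <= 1)
  H <- diag(diag(Sigma), nrow = nrow(Sigma))
  (1 - phi) * lambda * Sigma + phi * W + (1 - phi) * (1 - lambda) * H
}

# Hypothetical three-taxon example
taxa  <- c("A", "B", "C")
Sigma <- matrix(c(2, 1, 0,
                  1, 2, 0,
                  0, 0, 2), nrow = 3, byrow = TRUE,
                dimnames = list(taxa, taxa))
W     <- matrix(c(1.0, 0.2, 0.6,
                  0.2, 1.0, 0.1,
                  0.6, 0.1, 1.0), nrow = 3, byrow = TRUE,
                dimnames = list(taxa, taxa))

V <- build_V(Sigma, W, lambda = 0.7, phi = 0.2)
print(V)
```

Two limiting cases are a useful sanity check: with φ = 0 and λ = 1 the function returns Σ (pure Brownian-motion PGLS), and with φ = 1 it returns W (purely spatial covariance).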

Experimental Protocols and Analytical Frameworks

Model Fitting and Selection Protocol

The process of fitting and selecting PGLS models follows a systematic protocol that balances model complexity with explanatory power. A robust approach involves:

Initial Data Exploration: Quantify phylogenetic signal using Moran's I or similar metrics and assess trait distributions for outliers or the need for transformation.

Base Model Specification: Define the biological hypothesis in terms of fixed effects structure (e.g., y ~ x1 + x2) and select an appropriate evolutionary model (typically starting with Brownian motion).

Model Fitting: Use the gls() function from the nlme package with a phylogenetic correlation structure such as corBrownian() or corPagel() from ape.

Model Averaging: When multiple ecological or predictor variables are available, rather than using stepwise regression, evaluate all possible predictor combinations and use model averaging based on information criteria (e.g., Akaike's Information Criterion) [20]. Calculate relative variable importance (RVI) as the sum of the corrected Akaike weights of all models including each variable.

Model Diagnostics: Examine residuals for heteroscedasticity, phylogenetic structure, and normality using diagnostic plots and statistical tests.
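The fitting and model-averaging steps of this protocol can be sketched together. Everything below is an illustrative assumption rather than a prescribed implementation: the tree is random, the traits are simulated under Brownian motion with a true effect of x1 only, the candidate set is the four possible combinations of two predictors, and the small-sample correction (AICc) is computed by hand. Models are fitted by maximum likelihood because REML likelihoods are not comparable across different fixed-effects structures.

```r
library(ape)
library(nlme)

set.seed(7)
n_tips <- 40
tree <- rcoal(n_tips)

# Simulate Brownian-motion traits via the Cholesky factor of the tree's VCV
L <- t(chol(vcv(tree)))
sim_bm <- function() drop(L %*% rnorm(n_tips))

dat <- data.frame(species = tree$tip.label, x1 = sim_bm(), x2 = sim_bm(),
                  stringsAsFactors = FALSE)
dat$y <- 1 + 0.8 * dat$x1 + sim_bm()  # x2 has no true effect

# Fit every predictor combination with a Brownian-motion correlation structure
formulas <- list(null = y ~ 1, x1 = y ~ x1, x2 = y ~ x2, both = y ~ x1 + x2)
fits <- lapply(formulas, function(f) {
  gls(f, data = dat,
      correlation = corBrownian(1, phy = tree, form = ~species),
      method = "ML")
})

# AICc and Akaike weights
aicc <- sapply(fits, function(m) {
  ll <- logLik(m)
  k  <- attr(ll, "df")
  -2 * as.numeric(ll) + 2 * k + 2 * k * (k + 1) / (n_tips - k - 1)
})
delta   <- aicc - min(aicc)
weights <- exp(-0.5 * delta) / sum(exp(-0.5 * delta))

# Relative variable importance: summed weights of models containing each term
rvi <- c(x1 = sum(weights[c("x1", "both")]),
         x2 = sum(weights[c("x2", "both")]))
print(round(weights, 3))
print(round(rvi, 3))
```

Replacing `corBrownian()` with `corPagel()` in the same call would estimate Pagel's λ alongside the regression coefficients. For the diagnostics step, `plot(fits$both)` gives a residual-versus-fitted plot and `residuals(fits$both, type = "normalized")` returns residuals with the fitted phylogenetic correlation removed.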

Performance Comparison Framework

Recent research has demonstrated the superior performance of phylogenetically informed predictions compared to predictive equations derived from ordinary least squares (OLS) or PGLS regressions [19]. The experimental framework for quantifying these performance differences involves comprehensive simulations across multiple phylogenetic trees with varying degrees of balance and trait correlations.

Table 2: Performance Comparison of Prediction Methods Across Simulation Studies

| Method | Prediction Error Variance (r = 0.25) | Prediction Error Variance (r = 0.75) | Accuracy Advantage | Key Application Context |
|---|---|---|---|---|
| Phylogenetically Informed Prediction | 0.007 | 0.002 | Reference method | Missing data imputation, fossil trait reconstruction |
| PGLS Predictive Equations | 0.033 | 0.014 | 4-4.7× worse performance | Commonly used but suboptimal for prediction |
| OLS Predictive Equations | 0.030 | 0.015 | 4-4.7× worse performance | Phylogenetically naive approach |