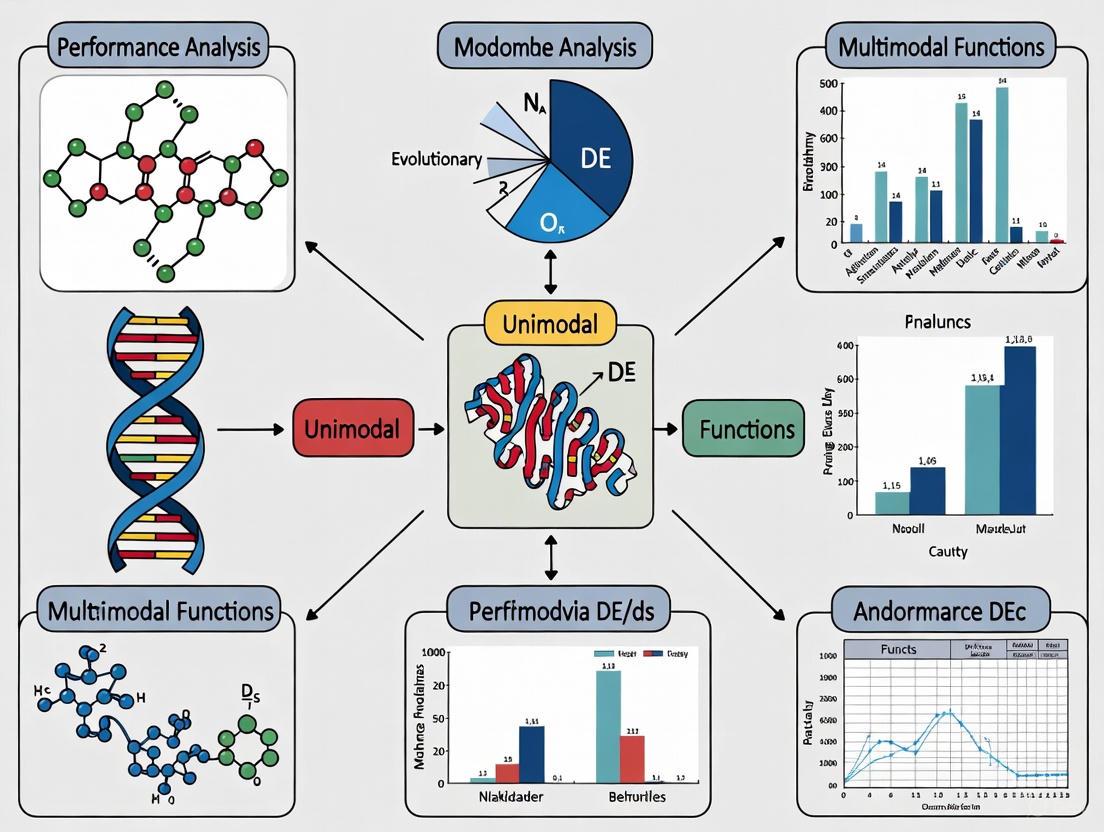

Performance Analysis of Modern Differential Evolution Algorithms: Benchmarking on Unimodal and Multimodal Landscapes



Abstract

This article provides a comprehensive performance analysis of modern Differential Evolution (DE) algorithms, with a focused examination of their efficacy on unimodal and multimodal function landscapes. Aimed at researchers and professionals in computationally intensive fields like drug development, the review explores foundational DE mechanisms, recent methodological advancements including niching techniques and reinforcement learning, and robust statistical validation frameworks. It addresses critical challenges such as parameter sensitivity and premature convergence, offering insights into algorithm selection and optimization for complex real-world problems, including protein structure prediction and biomedical model calibration. The analysis synthesizes findings from recent competitions and peer-reviewed studies to guide practitioners toward high-performing, reliable DE variants.

Understanding Differential Evolution: Core Principles and Function Landscape Challenges

Differential Evolution (DE) is a population-based evolutionary algorithm renowned for its simplicity, robustness, and effectiveness in solving complex optimization problems across diverse domains, including scientific research and drug development. First introduced by Storn and Price in 1995, DE has since evolved into a family of powerful algorithms capable of handling non-differentiable, nonlinear, and multimodal continuous space functions without requiring gradient information [1] [2]. This characteristic makes DE particularly valuable for real-world optimization challenges where objective functions may be noisy, change over time, or involve complex simulations that are computationally expensive to evaluate [3].

Within the context of modern optimization research, understanding DE's core operations—mutation, crossover, and selection—is fundamental to leveraging its full potential for performance analysis on unimodal and multimodal functions. Unimodal functions, with a single optimum, test an algorithm's exploitation capabilities, while multimodal functions, with multiple optima, challenge its exploration abilities and capacity to avoid premature convergence [4] [5]. This guide provides a comprehensive examination of these operations, presents contemporary performance comparisons, and details experimental methodologies essential for researchers seeking to apply DE to computationally intensive optimization problems in fields such as drug discovery and development.

Core Operations of the Differential Evolution Algorithm

Population Initialization and Algorithm Workflow

The DE algorithm begins by initializing a population of NP candidate solutions, often called agents or vectors. Each vector represents a potential solution to the D-dimensional optimization problem. The initial population is typically generated randomly, distributed uniformly across the search space defined by the lower and upper bounds for each parameter [1] [6]. Formally, each j-th component of the i-th vector is initialized as:

$$x_{i,j} = \mathrm{low}[j] + \mathrm{rand} \cdot (\mathrm{high}[j] - \mathrm{low}[j])$$

where rand is a uniformly distributed random number between 0 and 1, and low[j] and high[j] represent the lower and upper bounds for the j-th dimension, respectively [1]. This random initialization ensures a diverse starting population, which is crucial for effectively exploring the solution space.
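
The initialization rule can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation; the function name `init_population`, the seed, and the example bounds are assumptions chosen for the demonstration.

```python
import random


def init_population(np_size, low, high, rng):
    """Sample np_size vectors uniformly inside the box [low[j], high[j]]."""
    dim = len(low)
    return [
        # x[i][j] = low[j] + rand * (high[j] - low[j]), with rand drawn from [0, 1)
        [low[j] + rng.random() * (high[j] - low[j]) for j in range(dim)]
        for _ in range(np_size)
    ]


rng = random.Random(0)                      # fixed seed so the sketch is repeatable
low, high = [-5.0, 0.0, 10.0], [5.0, 1.0, 20.0]
population = init_population(8, low, high, rng)
```

Each dimension gets its own bounds, so parameters with very different scales can share one population.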

The following diagram illustrates the complete DE workflow, showcasing how the core operations interact iteratively to refine the population toward optimal solutions:

Mutation Operation: Generating Donor Vectors

The mutation operation is a distinctive feature of DE that differentiates it from other evolutionary algorithms. During each generation, for every target vector Xi in the population, DE generates a mutant/donor vector Vi by combining randomly selected population vectors [1] [6]. The most common mutation strategy, known as "DE/rand/1," is formulated as:

$$V_i = X_{r1} + F \cdot (X_{r2} - X_{r3})$$

where Xr1, Xr2, and Xr3 are three distinct vectors randomly selected from the current population (different from the target vector Xi), and F is the mutation scale factor, typically in the range [0, 2] [1] [2]. The differential component (Xr2 - Xr3) introduces the exploratory behavior that enables DE to effectively navigate the search space.
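
As a rough sketch of DE/rand/1, the donor vector can be built as follows. The helper name `mutate_rand_1` and the toy population are illustrative assumptions; the population must contain at least four vectors so that three distinct indices other than the target exist.

```python
import random


def mutate_rand_1(population, i, f, rng):
    """Build the donor V_i = X_r1 + F * (X_r2 - X_r3) with r1, r2, r3 distinct and != i."""
    candidates = [k for k in range(len(population)) if k != i]
    r1, r2, r3 = rng.sample(candidates, 3)   # three distinct indices, none equal to i
    x1, x2, x3 = population[r1], population[r2], population[r3]
    return [a + f * (b - c) for a, b, c in zip(x1, x2, x3)]


rng = random.Random(1)
# Toy population: vector k is [k, 2k], so every donor also satisfies y = 2x.
population = [[float(k), 2.0 * float(k)] for k in range(6)]
donor = mutate_rand_1(population, i=0, f=0.5, rng=rng)
```

Because the donor is a linear combination of population members, it inherits any linear structure the population currently has. This is one way to see why the difference vectors adapt their scale to the population's spread.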

Several mutation strategies have been developed, each with distinct characteristics that balance exploration and exploitation differently. The table below categorizes and describes common mutation strategies:

Table: Differential Evolution Mutation Strategies

| Strategy Name | Formula | Characteristics | Best Suited For |
|---|---|---|---|
| DE/rand/1 | Vi = Xr1 + F · (Xr2 - Xr3) | Promotes exploration, maintains diversity | Multimodal functions [6] [7] |
| DE/best/1 | Vi = Xbest + F · (Xr1 - Xr2) | Enhances exploitation, faster convergence | Unimodal functions [6] [7] |
| DE/current-to-best/1 | Vi = Xi + F · (Xbest - Xi) + F · (Xr1 - Xr2) | Balances exploration and exploitation | Mixed environments [6] |
| DE/rand/2 | Vi = Xr1 + F · (Xr2 - Xr3) + F · (Xr4 - Xr5) | Increases exploration potential | Complex multimodal landscapes [6] [7] |
| DE/best/2 | Vi = Xbest + F · (Xr1 - Xr2) + F · (Xr3 - Xr4) | Enhances exploitation with multiple directions | Local refinement phases [6] [7] |
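
The five strategies in the table differ only in the base vector and the number of difference vectors, which the following sketch makes explicit. The names `make_donor`, `diff`, and `add_scaled` are hypothetical helpers. As a simplification, the random indices exclude only the target index, so they may coincide with the best individual. Minimization is assumed, and the population needs at least six members.

```python
import random


def diff(a, b):
    """Component-wise difference a - b."""
    return [x - y for x, y in zip(a, b)]


def add_scaled(base, f, *diffs):
    """Return base + f * d for every difference vector d."""
    out = list(base)
    for d in diffs:
        out = [o + f * v for o, v in zip(out, d)]
    return out


def make_donor(strategy, pop, fitness, i, f, rng):
    """Donor vector for target i under the named strategy (minimization)."""
    best = pop[min(range(len(pop)), key=lambda k: fitness[k])]
    others = [k for k in range(len(pop)) if k != i]
    r = [pop[k] for k in rng.sample(others, 5)]  # five distinct random vectors
    if strategy == "rand/1":
        return add_scaled(r[0], f, diff(r[1], r[2]))
    if strategy == "best/1":
        return add_scaled(best, f, diff(r[0], r[1]))
    if strategy == "current-to-best/1":
        return add_scaled(pop[i], f, diff(best, pop[i]), diff(r[0], r[1]))
    if strategy == "rand/2":
        return add_scaled(r[0], f, diff(r[1], r[2]), diff(r[3], r[4]))
    if strategy == "best/2":
        return add_scaled(best, f, diff(r[0], r[1]), diff(r[2], r[3]))
    raise ValueError(f"unknown strategy: {strategy}")


rng = random.Random(3)
pop = [[float(k)] for k in range(7)]          # toy 1-D population 0..6
fitness = [abs(x[0] - 3.0) for x in pop]      # best individual is pop[3]
example = make_donor("current-to-best/1", pop, fitness, i=5, f=0.5, rng=rng)
```

Setting `f=0.0` collapses each strategy to its base vector, which is a quick way to check which individual anchors the search.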

More recent DE variants often employ multiple mutation strategies adaptively or simultaneously. For instance, the TDE algorithm utilizes a mutation pool comprising six operators divided into three categories: best-based, rand-based, and current-to-rand, randomly selecting three strategies during evolution to produce trial vectors [7].

Crossover Operation: Generating Trial Vectors

Following mutation, DE performs crossover to increase population diversity by combining parameters from the target vector Xi and the mutant vector Vi to form a trial vector Ui [1] [2]. The most common approach is binomial crossover, which operates as follows for each j-th component:

$$U_{i,j} = \begin{cases} V_{i,j} & \text{if } \mathrm{rand}(j) \le CR \text{ or } j = j_{\mathrm{rand}} \\ X_{i,j} & \text{otherwise} \end{cases}$$

where rand(j) is a uniformly distributed random number in [0, 1] generated for each j, CR is the crossover rate in [0, 1], and jrand is a randomly chosen index from {1, 2, ..., D} that ensures the trial vector Ui inherits at least one component from the mutant vector Vi [1] [6]. The CR parameter controls the probability of genetic material transferring from the mutant to the trial vector, effectively balancing the influence of the mutation operation versus the original target vector.
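
A minimal sketch of binomial crossover follows. The function name `binomial_crossover` is an assumption, and indices are 0-based here, whereas the text uses {1, 2, ..., D}.

```python
import random


def binomial_crossover(target, donor, cr, rng):
    """Take donor[j] when rand(j) <= CR or j == j_rand, otherwise keep target[j]."""
    dim = len(target)
    j_rand = rng.randrange(dim)   # guarantees at least one donor component
    return [
        donor[j] if (rng.random() <= cr or j == j_rand) else target[j]
        for j in range(dim)
    ]


rng = random.Random(2)
target = [0.0] * 10
donor = [1.0] * 10
trial_low = binomial_crossover(target, donor, cr=0.0, rng=rng)   # almost all target
trial_high = binomial_crossover(target, donor, cr=1.0, rng=rng)  # all donor
```

Even with `cr=0.0` the trial vector differs from the target in the `j_rand` component, so every trial is a new candidate.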

Selection Operation: Greedy Survival Criteria

The final operation in the DE cycle is selection, which determines whether the trial vector Ui replaces the target vector Xi in the next generation. DE employs a greedy selection mechanism: if the trial vector yields an equal or better fitness value (for minimization problems), it replaces the target vector; otherwise, the target vector is retained [1] [6]. Formally:

$$X_{i,g+1} = \begin{cases} U_{i,g} & \text{if } f(U_{i,g}) \le f(X_{i,g}) \\ X_{i,g} & \text{otherwise} \end{cases}$$

This one-to-one greedy selection ensures that the fitness of each population slot, and therefore the population's average fitness, never deteriorates, because a target vector is replaced only by a trial vector that is at least as good [1]. The selection pressure drives the population toward better regions of the search space over successive generations, while the mutation and crossover operations maintain diversity.
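
To show the greedy rule in context, here is a compact DE/rand/1/bin loop on the Sphere function. It is a sketch under stated assumptions, not a tuned implementation: the function name `de_rand_1_bin`, the default values for NP, F, CR, and the generation count are illustrative, and bound handling after mutation is omitted.

```python
import random


def sphere(x):
    return sum(v * v for v in x)


def de_rand_1_bin(objective, low, high, np_size=20, f=0.5, cr=0.9,
                  generations=100, seed=0):
    rng = random.Random(seed)
    dim = len(low)
    # Initialization: uniform sampling inside the bounds.
    pop = [[low[j] + rng.random() * (high[j] - low[j]) for j in range(dim)]
           for _ in range(np_size)]
    fit = [objective(x) for x in pop]
    history = [min(fit)]                      # best fitness per generation
    for _ in range(generations):
        new_pop, new_fit = [], []
        for i in range(np_size):
            # Mutation (DE/rand/1).
            r1, r2, r3 = rng.sample([k for k in range(np_size) if k != i], 3)
            donor = [pop[r1][j] + f * (pop[r2][j] - pop[r3][j]) for j in range(dim)]
            # Binomial crossover.
            j_rand = rng.randrange(dim)
            trial = [donor[j] if (rng.random() <= cr or j == j_rand) else pop[i][j]
                     for j in range(dim)]
            # Greedy selection: the trial survives only if it is at least as good.
            f_trial = objective(trial)
            if f_trial <= fit[i]:
                new_pop.append(trial)
                new_fit.append(f_trial)
            else:
                new_pop.append(pop[i])
                new_fit.append(fit[i])
        pop, fit = new_pop, new_fit
        history.append(min(fit))
    best = min(range(np_size), key=lambda k: fit[k])
    return pop[best], fit[best], history


best_x, best_f, history = de_rand_1_bin(sphere, [-5.0] * 5, [5.0] * 5)
```

Because each slot is only ever overwritten by an equal or better trial, `history` is non-increasing by construction, whatever the objective.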

Performance Analysis: Modern DE Variants on Benchmark Functions

Experimental Protocols and Benchmarking Standards

Rigorous performance evaluation of DE algorithms typically employs standardized benchmark functions and statistical testing protocols. Contemporary studies often use the CEC (Congress on Evolutionary Computation) benchmark suites, such as CEC2017 and CEC2024, which provide diverse test functions including unimodal, multimodal, hybrid, and composition problems [4] [6]. These benchmarks are specifically designed to test various aspects of optimization algorithms, with hybrid functions featuring different properties in different subcomponents, and composition functions constructed by mixing multiple basic functions with randomly shifted global optima [4].

Standard experimental protocols involve multiple independent runs of each algorithm on each test function to account for stochastic variations, with performance measured by solution accuracy (error from known optimum), convergence speed, and consistency [4]. Statistical significance is typically assessed using non-parametric tests like the Wilcoxon signed-rank test for pairwise comparisons, the Friedman test with post-hoc Nemenyi analysis for multiple algorithm comparisons, and the Mann-Whitney U-score test, which was used in the CEC2024 competition [4]. These tests are preferred over parametric alternatives because they do not assume normal distribution of performance data, making them more suitable for comparing stochastic optimization algorithms [4].
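
For intuition about the Mann-Whitney approach, the plain U statistic can be computed by counting pairwise comparisons between the final errors of two algorithms. This is only a sketch of the basic statistic. The exact U-score aggregation used in the CEC2024 competition is not described here, and the function name and sample data are made up for illustration.

```python
def mann_whitney_u(errors_a, errors_b):
    """U statistic for A: pairs where A's error is lower count 1, ties count 0.5."""
    u = 0.0
    for a in errors_a:
        for b in errors_b:
            if a < b:
                u += 1.0
            elif a == b:
                u += 0.5
    return u


# Hypothetical final errors from four independent runs of two algorithms.
errors_a = [0.1, 0.2, 0.3, 0.4]
errors_b = [0.25, 0.5, 0.6, 0.4]
u_a = mann_whitney_u(errors_a, errors_b)   # 13.5
u_b = mann_whitney_u(errors_b, errors_a)   # 2.5
```

The two statistics always sum to the number of pairs (here 4 × 4 = 16), so a value well above half of that indicates that algorithm A tends to reach lower errors. A p-value would additionally require the null distribution of U, which is omitted here.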

Comparative Performance on Unimodal and Multimodal Functions

Recent comprehensive studies comparing modern DE variants provide insightful performance data across different function types and dimensionalities. The following table summarizes key findings from a 2025 study that evaluated multiple DE algorithms on CEC2024 benchmark problems:

Table: Performance Comparison of Modern DE Algorithms on CEC2024 Benchmarks

| Algorithm | Unimodal Functions (10D) | Unimodal Functions (100D) | Multimodal Functions (10D) | Multimodal Functions (100D) | Key Mechanisms |
|---|---|---|---|---|---|
| TDE | Rank: 1.8 | Rank: 2.1 | Rank: 2.3 | Rank: 2.5 | Mutation pool, parameter pool, three mutation strategies per iteration [7] |
| ESM-DE | Rank: 2.3 | Rank: 2.4 | Rank: 1.9 | Rank: 2.1 | External selection mechanism, successful solution archive, crowding entropy diversity [6] |
| JADE | Rank: 2.9 | Rank: 2.7 | Rank: 2.8 | Rank: 2.8 | DE/current-to-pbest/1, optional external archive, adaptive parameter control [6] |
| SHADE | Rank: 3.1 | Rank: 3.0 | Rank: 3.2 | Rank: 3.0 | Success-history based parameter adaptation [4] |
| CoDE | Rank: 3.5 | Rank: 3.3 | Rank: 3.1 | Rank: 3.2 | Ensemble of multiple mutation strategies and parameter settings [7] |

Lower ranks indicate better performance. Data adapted from comparative studies [4] [7].

The TDE (Triple-strategy Differential Evolution) algorithm demonstrates particularly strong performance across various function types and dimensionalities by leveraging a mutation pool with six common operators divided into three categories and a parameter pool with three predefined values [7]. During evolution, three mutation strategies are randomly selected from different categories, and three parameter values are simultaneously chosen to generate trial vectors, enabling the algorithm to adaptively exploit different search characteristics without complex adaptation mechanisms [7].

For multimodal functions, the ESM-DE (External Selection Mechanism DE) shows competitive performance, particularly in higher dimensions, by maintaining an archive of successful solutions and using a crowding entropy diversity measurement to preserve population diversity [6]. When individuals show signs of stagnation, parents for mutation are selected from this archive, helping the algorithm escape local optima—a crucial capability for complex multimodal landscapes [6].

Algorithm Performance Across Different Dimensionalities

The performance of DE algorithms varies significantly with problem dimensionality, which is crucial for real-world applications like drug design that may involve high-dimensional parameter spaces. Contemporary research evaluates algorithms across dimensions including 10D, 30D, 50D, and 100D to assess scalability [4]. The following diagram illustrates how the mutation strategy categories contribute to exploration and exploitation across different evolutionary phases:

Recent findings indicate that while DE variants generally maintain good performance in low-dimensional spaces (10D-30D), their relative effectiveness diverges significantly in higher dimensions (50D-100D) [4]. Algorithms incorporating multiple mutation strategies (like TDE) or external archives (like ESM-DE) typically show better scalability, as these mechanisms help maintain population diversity and prevent premature convergence in expansive search spaces [4] [7]. The "curse of dimensionality" presents particular challenges for optimization algorithms, as search space volume grows exponentially with dimension, making comprehensive exploration increasingly difficult [3].
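
The exponential growth of the search volume can be made concrete with a small calculation: given a fixed evaluation budget, how many grid points per axis could an exhaustive grid afford? The budget of one million evaluations below is a hypothetical figure chosen for illustration, not a value from the cited studies.

```python
def points_per_axis(budget, dim):
    """Grid resolution per axis that `budget` evaluations can cover in `dim` dimensions."""
    return budget ** (1.0 / dim)


budget = 10 ** 6  # hypothetical evaluation budget
resolution = {dim: points_per_axis(budget, dim) for dim in (10, 30, 50, 100)}
```

With this budget, a 10D problem allows roughly four grid points per axis, while a 100D problem allows barely more than one. Exhaustive coverage is therefore impractical in higher dimensions, and the search must rely on mechanisms that maintain diversity.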

Statistical analyses from recent studies confirm that TDE and ESM-DE demonstrate statistically significant performance advantages over canonical DE and earlier adaptive variants like JADE, particularly on hybrid and composition functions with complex fitness landscapes [4] [7]. The Mann-Whitney U-score tests conducted in CEC2024 evaluations revealed that these newer algorithms consistently outperform established approaches across multiple problem types and dimensionalities [4].

The Researcher's Toolkit: Experimental Components for DE Analysis

Table: Essential Components for DE Performance Experiments

| Component | Specification | Function in DE Research |
|---|---|---|
| Benchmark Suites | CEC2017, CEC2024, or similar standardized function sets | Provides standardized evaluation framework with unimodal, multimodal, hybrid, and composition functions [4] [6] |
| Statistical Testing Framework | Wilcoxon signed-rank test, Friedman test, Mann-Whitney U-score test | Determines statistical significance of performance differences between algorithms [4] |
| Performance Metrics | Solution error, convergence speed, success rate, consistency measures | Quantifies algorithm effectiveness and reliability [4] [6] |
| Parameter Configuration Systems | Adaptive parameter control, parameter pools, or experimentally tuned values | Manages critical DE parameters (F, CR, NP) for optimal performance [7] |
| Mutation Strategy Portfolio | DE/rand/1, DE/best/1, DE/current-to-pbest/1, and other variants | Provides search behavior diversity for different problem types [6] [7] |
| Diversity Maintenance Mechanisms | External archives, crowding entropy, population sizing strategies | Prevents premature convergence and maintains exploration capability [6] |

The ongoing evolution of Differential Evolution algorithms demonstrates significant advances in optimization capability, particularly for challenging real-world problems in domains like drug development. The core operations of mutation, crossover, and selection have been refined and enhanced through various mechanisms, including adaptive parameter control, mixed mutation strategies, and external archives for maintaining diversity. Performance analyses on standardized benchmarks reveal that modern DE variants like TDE and ESM-DE consistently outperform canonical DE and other evolutionary algorithms across diverse problem types, with statistically significant advantages in both solution quality and convergence reliability.

For researchers and drug development professionals, these advances translate to more powerful tools for tackling complex optimization challenges, from molecular docking simulations to pharmacological parameter tuning. The experimental protocols and component specifications outlined in this guide provide a foundation for rigorous evaluation and application of DE algorithms in scientific contexts. As DE continues to evolve, incorporating insights from machine learning and adaptive control systems, its value as a robust, effective optimization tool for the scientific community will only increase.

In the field of global optimization, the performance of algorithms is intrinsically linked to the topography of the landscapes they navigate. Optimization landscapes, defined by objective functions, are broadly categorized as either unimodal or multimodal. Unimodal functions possess a single optimum, presenting a straightforward, albeit potentially deceptive, path for convergence. In contrast, multimodal functions are characterized by multiple local optima, posing a significant challenge for algorithms to escape suboptimal regions and locate the global best solution [8].

This guide provides a comparative analysis of modern Differential Evolution (DE) algorithms, focusing specifically on their performance across these distinct landscape types. Framed within a broader thesis on performance analysis, this article synthesizes experimental data and methodologies relevant to researchers and scientists, including those in drug development where such optimization problems are prevalent. We summarize quantitative results in structured tables, detail experimental protocols, and visualize key concepts to offer a comprehensive toolkit for practitioners.

Unimodal vs. Multimodal Functions: A Conceptual and Visual Guide

The core challenge in global optimization is that the topology of the objective function varies greatly. Understanding the fundamental differences between unimodal and multimodal functions is a prerequisite for selecting and tuning appropriate algorithms.

The table below summarizes the key characteristics of each function type.

| Feature | Unimodal Functions | Multimodal Functions |
|---|---|---|
| Number of Optima | Single optimum (one global minimum/maximum) [8] | Multiple optima (many local minima/maxima and one global) [8] |
| Landscape Topology | Generally smooth, with a single "basin" of attraction [9] | Rugged, complex, with numerous "basins" of attraction [10] |
| Primary Challenge | Rapid convergence to the optimum [10] | Avoiding premature convergence in local optima; balancing exploration and exploitation [10] |
| Algorithmic Demand | Strong exploitation to refine solution and converge quickly [10] | Strong exploration to search diverse regions and escape local traps [10] |
| Common Use Cases | Testing convergence speed and efficiency of algorithms [10] | Testing robustness, exploration capability, and global search prowess [10] |
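
The contrast in the table can be checked numerically on two classic test functions, Sphere (unimodal) and Rastrigin (multimodal). The sketch below counts strict local minima along a one-dimensional slice sampled on a grid. The helper `count_local_minima_1d`, the interval [-5.12, 5.12], and the grid size are illustrative assumptions.

```python
import math


def sphere(x):
    return sum(v * v for v in x)


def rastrigin(x):
    return 10.0 * len(x) + sum(v * v - 10.0 * math.cos(2.0 * math.pi * v) for v in x)


def count_local_minima_1d(func, low, high, steps):
    """Count interior grid points that are strictly lower than both neighbours."""
    xs = [low + (high - low) * k / steps for k in range(steps + 1)]
    ys = [func([x]) for x in xs]
    return sum(1 for k in range(1, steps) if ys[k] < ys[k - 1] and ys[k] < ys[k + 1])


sphere_minima = count_local_minima_1d(sphere, -5.12, 5.12, 1024)        # 1
rastrigin_minima = count_local_minima_1d(rastrigin, -5.12, 5.12, 1024)  # 11
```

In one dimension Rastrigin already has eleven basins on this interval, one near each integer from -5 to 5. In D dimensions the basins multiply across axes, which is why exploration becomes the dominant requirement.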

To solidify this understanding, the following diagram illustrates the structural relationship between these function types and their core characteristics.

Experimental Protocols for Benchmarking Algorithms

A rigorous performance analysis requires a structured methodology. The following workflow outlines the standard experimental protocol for evaluating and comparing optimization algorithms on benchmark functions [10].

- Select Benchmark Functions: A diverse set of benchmark functions is selected to probe different algorithmic capabilities. This typically includes:
  - Unimodal Functions: To test convergence velocity and pure exploitation strength.
  - Multimodal Functions: To evaluate the algorithm's ability to explore and escape local optima.
  - Fixed-Dimension Multimodal Functions: For assessing performance on complex, yet lower-dimensional problems [9].
  - CEC Benchmark Suites: Standardized and often more complex functions from the Congress on Evolutionary Computation (CEC), such as those from CEC 2017, 2019, and 2022, are used for rigorous comparison against state-of-the-art algorithms [10].
- Configure Algorithms: Each algorithm (e.g., conventional DE, LSHADE, IFOX) is configured with its respective parameter settings. For DE variants, this includes parameters like the scaling factor (F) and crossover rate (CR). A fair comparison requires careful tuning or the use of standard, widely-accepted parameter values for each algorithm [10].
- Execute Independent Runs: To account for the stochastic nature of these algorithms, multiple independent runs (e.g., 30 or 51) are performed for each function-algorithm pair. Each run starts from a different random initial population and proceeds for a fixed budget, often defined by a maximum number of function evaluations (FEs) [10].
- Collect Performance Data: Key performance metrics are recorded from each run. Common metrics include:
  - The best fitness value found.
  - The mean and standard deviation of the best fitness across all runs.
  - The convergence speed, measured by the number of FEs required to reach a target accuracy.
- Statistical Analysis & Ranking: Non-parametric statistical tests, such as the Friedman test for overall ranking and the Wilcoxon signed-rank test for pairwise comparisons, are conducted to determine if performance differences are statistically significant. Algorithms are then ranked based on their average performance across the entire benchmark suite [10].
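
The run-and-collect steps of this protocol can be sketched as a small harness. To keep the example short, a plain random search stands in for the optimizer under test. The names `benchmark` and `random_search`, the budget of 2000 function evaluations, and the seeding scheme are assumptions made for illustration.

```python
import random
import statistics


def sphere(x):
    return sum(v * v for v in x)


def random_search(objective, low, high, max_fes, rng):
    """Stand-in optimizer: best value among max_fes uniform samples."""
    best = float("inf")
    for _ in range(max_fes):
        x = [lo + rng.random() * (hi - lo) for lo, hi in zip(low, high)]
        best = min(best, objective(x))
    return best


def benchmark(optimizer, objective, low, high, runs=30, max_fes=2000, base_seed=0):
    """Run independent trials with distinct seeds and summarize the final errors."""
    finals = [optimizer(objective, low, high, max_fes, random.Random(base_seed + r))
              for r in range(runs)]
    return {
        "best": min(finals),
        "mean": statistics.mean(finals),
        "std": statistics.stdev(finals),
        "runs": runs,
    }


summary = benchmark(random_search, sphere, [-5.0] * 5, [5.0] * 5)
```

Giving every run its own seed keeps runs independent while making the whole experiment reproducible. Any optimizer with the same call signature can be substituted for `random_search`.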

Comparative Performance Analysis of Modern DE Algorithms

The Unified Differential Evolution (uDE) and Improved FOX (IFOX) Algorithms

Innovations in DE have focused on simplifying strategy selection or enhancing adaptive capabilities. The Unified Differential Evolution (uDE) algorithm, for instance, replaces multiple mutation strategies with a single, unified equation. This offers mathematical simplicity and flexibility, and has shown promising results on twelve basic unimodal and multimodal functions compared to conventional DE [11].

A more recent approach is the Improved FOX (IFOX) algorithm, which introduces a dynamically scaled step-size parameter to autonomously balance exploration and exploitation based on the current solution's fitness. A key innovation of IFOX is the reduction of hyperparameters, removing four parameters (C1, C2, a, Mint) from the original FOX algorithm. This enhances usability while improving performance [10].

Quantitative Performance Comparison

The following tables synthesize experimental results from studies that evaluated these modern algorithms across a range of standard benchmark functions.

Table 1: Performance on Classical Benchmark Functions (Unimodal & Multimodal) [10]

| Algorithm | Key Mechanism | Reported Performance on Classical Functions | Strength by Function Type |
|---|---|---|---|
| Improved FOX (IFOX) | Fitness-based adaptive exploration/exploitation; reduced hyperparameters. | 40% overall performance improvement over original FOX. | Excels on complex multimodal functions by avoiding local minima. |
| Unified DE (uDE) | Single unified mutation strategy. | Promising performance on 12 basic unimodal and multimodal functions. | Balanced performance; simplicity benefits both unimodal and simpler multimodal functions. |
| Grey Wolf Opt. (GWO) | Mimics leadership hierarchy and hunting of grey wolves. | Well-known for competitive performance on standard benchmarks. | Effective exploitation, often performing well on unimodal functions. |
| Particle Swarm Opt. (PSO) | Simulates social behavior of bird flocking. | A widely used baseline algorithm. | Good exploration, but can struggle with premature convergence on complex multimodal functions. |

Table 2: Broader Benchmarking & Real-World Performance (CEC & Engineering Problems) [10]

| Algorithm | Test Scope (Number of Functions/Problems) | Overall Win/Tie/Loss Record | Average Rank | Key Finding |
|---|---|---|---|---|
| Improved FOX (IFOX) | 81 functions (20 classical + 61 CEC) & 10 real-world problems. | 880 Wins / 228 Ties / 348 Losses (vs. 16 algorithms) | 5.92 among 17 algorithms | Highly competitive against state-of-the-art algorithms like LSHADE. |
| Modified GWO (MGWO) | 23 benchmark functions and 5 classification datasets. | N/A - Showed improved accuracy and local optima avoidance. | N/A | Modified position update equations improved convergence speed and solution quality. |

The data indicates that adaptivity is a critical factor for modern optimization challenges. Algorithms like IFOX, which dynamically adjust their search behavior, demonstrate superior performance across a wide range of test functions, particularly on the complex, rugged landscapes of multimodal and CEC benchmark problems. The significant number of wins against a large pool of competitors underscores its robustness.
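
A win/tie/loss record of this kind can be tallied by comparing one reference algorithm against every competitor on every problem. The sketch below assumes that convention, along with a tie tolerance and toy error values, all of which are illustrative and not taken from the cited study.

```python
def tally(results, reference, tol=1e-8):
    """Count wins, ties, and losses of `reference` against every other algorithm.

    results maps an algorithm name to its list of errors, one per problem
    (lower is better). Differences within `tol` count as ties.
    """
    wins = ties = losses = 0
    ref = results[reference]
    for name, errors in results.items():
        if name == reference:
            continue
        for mine, theirs in zip(ref, errors):
            if abs(mine - theirs) <= tol:
                ties += 1
            elif mine < theirs:
                wins += 1
            else:
                losses += 1
    return wins, ties, losses


# Toy data: three problems, reference "A" against two competitors.
results = {
    "A": [0.0, 1.0, 2.0],
    "B": [0.0, 2.0, 1.0],
    "C": [1.0, 1.0, 3.0],
}
record = tally(results, "A")   # (3, 2, 1)
```

Under this convention the three counts sum to problems × competitors. The reported IFOX record is consistent with that reading: 880 + 228 + 348 = 1456 = (81 + 10) × 16.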

The Scientist's Toolkit: Essential Research Reagents

In computational optimization, "research reagents" refer to the standard tools, benchmarks, and algorithms that form the foundation of experimental research. The table below details key components of this toolkit.

| Tool/Resource | Function in Research | Example Instances |
|---|---|---|
| Unimodal Benchmark Functions | Test an algorithm's convergence speed and exploitation efficiency on a simple landscape. | Sphere, Schwefel's Problem 1.2 [10]. |
| Multimodal Benchmark Functions | Evaluate global exploration capability and robustness against premature convergence. | Rastrigin, Ackley, Griewank [10] [9]. |
| CEC Benchmark Suites | Provide standardized, complex testbeds for rigorous, peer-comparable performance validation. | CEC 2017, CEC 2019, CEC 2021, CEC 2022 functions [10]. |
| Statistical Test Suites | Determine the statistical significance of performance differences between algorithms. | Friedman Test, Wilcoxon Signed-Rank Test [10]. |
| Modern DE Algorithms | Serve as both the subject of investigation and as baseline/state-of-the-art competitors. | uDE, LSHADE, IFOX [11] [10]. |

The characterization of optimization landscapes into unimodal and multimodal functions remains a cornerstone of evolutionary computation research. Experimental evidence clearly demonstrates that the choice and configuration of an optimization algorithm must be informed by the topography of the problem. While unimodal functions favor algorithms with strong, efficient exploitation, the true test of a modern global optimizer is its performance on complex, multimodal landscapes.

Recent advancements in Differential Evolution and related metaheuristics, such as the IFOX and uDE algorithms, highlight a clear trend towards adaptive, self-tuning mechanisms that reduce reliance on user-defined parameters and automatically balance exploration and exploitation. The comprehensive benchmarking results show that these modern algorithms can achieve superior performance across a diverse suite of challenges, making them powerful tools for researchers and scientists tackling complex optimization problems in fields like drug development.

In evolutionary computation, the Exploration-Exploitation Dilemma is a fundamental challenge that determines the success of any search algorithm. Exploration refers to the process of investigating new and unknown regions of the search space to discover potentially promising areas, while exploitation focuses on intensively searching the vicinity of known good solutions to refine their quality. Maintaining an optimal balance between these two competing forces is crucial for achieving robust performance across diverse optimization landscapes [12] [13].

This balance is particularly critical in Differential Evolution (DE), a powerful evolutionary algorithm renowned for its effectiveness in solving complex, high-dimensional optimization problems. Modern DE variants have developed sophisticated mechanisms to dynamically manage this trade-off, adapting their search behavior based on the problem landscape and evolutionary state [12] [14]. The performance of these algorithms varies significantly when applied to different function types, with unimodal functions (possessing a single optimum) and multimodal functions (featuring multiple optima) presenting distinct challenges that require different balancing strategies [15].

Algorithmic Approaches to Balance Exploration and Exploitation

DE algorithms employ various strategic approaches across different operational scales to manage the exploration-exploitation trade-off. The table below categorizes these primary strategies and their implementations:

Table 1: Classification of Balance Strategies in Differential Evolution

| Strategic Level | Core Approach | Representative Mechanisms | Effect on Search Behavior |
|---|---|---|---|
| Algorithm Level | Hybridization | Memetic Algorithms [12], Ensemble Methods [12], Cooperative Coevolution [12] | Combines global exploration strength of DE with local search exploitation |
| Operator Level | Multi-strategy Adaptation | Survival-analysis guided operator selection [16], DE/rand/1 (exploration) vs Gaussian sampling (exploitation) [16], Differentiated mutation strategies [14] | Dynamically selects operators based on evolutionary state and solution quality |
| Parameter Level | Adaptive Control | Reinforcement Learning for F and Cr [14], Success-history based parameter adaptation [12], Population size reduction [12] | Automatically adjusts key parameters throughout the evolutionary process |
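
Two of the parameter-level mechanisms in the table can be sketched briefly. The first function computes a weighted Lehmer mean of the scale factors that produced improvements, a form commonly associated with success-history adaptation. The second gives a linear schedule for shrinking the population as the evaluation budget is consumed. Both are assumptions about typical formulations. The cited works may differ in detail, and all names are hypothetical.

```python
def weighted_lehmer_mean(values, improvements):
    """Lehmer mean sum(w*v^2) / sum(w*v), weighted by fitness improvement.

    values: parameter values (e.g. F) of trials that replaced their target.
    improvements: positive fitness gains achieved by those trials.
    """
    total = sum(improvements)
    weights = [d / total for d in improvements]
    numerator = sum(w * v * v for w, v in zip(weights, values))
    denominator = sum(w * v for w, v in zip(weights, values))
    return numerator / denominator


def linear_population_size(n_init, n_min, fes, max_fes):
    """Population size shrinking linearly from n_init to n_min over the budget."""
    return round(n_init + (n_min - n_init) * fes / max_fes)


new_f = weighted_lehmer_mean([0.4, 0.8], [1.0, 1.0])   # about 0.667
sizes = [linear_population_size(100, 4, fes, 1000) for fes in (0, 500, 1000)]
```

The Lehmer mean is biased toward larger values than the arithmetic mean (0.667 versus 0.6 here), which counteracts a drift toward small scale factors. The shrinking population shifts the budget from exploration early on to exploitation near the end.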

The human-centered two-phase search framework represents a novel paradigm that explicitly separates exploration and exploitation into distinct phases. This approach first conducts a global search across the entire solution space, followed by an intensive local search phase focused on promising sub-regions, with human guidance directing the transition between phases [13].

Experimental Methodology for Performance Evaluation

Standardized Benchmarking

Rigorous evaluation of DE algorithms employs standardized benchmark functions from established test suites, including the CEC (Congress on Evolutionary Computation) competitions [17]. These benchmarks are carefully designed to represent different optimization challenges:

- Unimodal Functions: Test convergence velocity and exploitation efficiency (e.g., Sphere, Ellipsoid functions) [15]

- Multimodal Functions: Evaluate the ability to escape local optima and maintain population diversity (e.g., Rastrigin, Schwefel functions) [15]

- Hybrid & Composition Functions: Combine different function properties to simulate real-world problem complexity [17]

Performance is typically assessed across multiple dimensions, including solution accuracy, convergence speed, and computational efficiency [17].

Statistical Validation

To ensure reliable comparisons, researchers employ rigorous statistical testing procedures:

- Wilcoxon Signed-Rank Test: For pairwise algorithm comparisons [17]

- Friedman Test: For multiple algorithm comparisons with post-hoc analysis [17]

- Mann-Whitney U Test: Additional validation for performance ranking [17]

These non-parametric tests are preferred due to their minimal assumptions about data distribution, making them suitable for analyzing stochastic algorithm performance [17].
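
As an illustration of the Friedman procedure, the sketch below ranks the algorithms on each problem (rank 1 is best, ties share the mean rank), averages the ranks, and computes the chi-square statistic without tie correction. The function names and the error matrix are made up for the example. A p-value would additionally require the chi-square distribution with k - 1 degrees of freedom.

```python
def average_ranks(row):
    """Rank values ascending (1 = best); tied values receive their mean rank."""
    order = sorted(range(len(row)), key=lambda k: row[k])
    ranks = [0.0] * len(row)
    pos = 0
    while pos < len(order):
        end = pos
        while end + 1 < len(order) and row[order[end + 1]] == row[order[pos]]:
            end += 1
        mean_rank = (pos + end) / 2.0 + 1.0   # mean of positions pos+1 .. end+1
        for k in order[pos:end + 1]:
            ranks[k] = mean_rank
        pos = end + 1
    return ranks


def friedman(errors):
    """errors[p][a] is the error of algorithm a on problem p (lower is better)."""
    n, k = len(errors), len(errors[0])
    per_problem = [average_ranks(row) for row in errors]
    mean_ranks = [sum(r[a] for r in per_problem) / n for a in range(k)]
    chi2 = 12.0 * n / (k * (k + 1)) * sum((r - (k + 1) / 2.0) ** 2 for r in mean_ranks)
    return mean_ranks, chi2


# Hypothetical mean errors of three algorithms on four problems.
errors = [
    [0.1, 0.2, 0.3],
    [0.1, 0.3, 0.2],
    [0.2, 0.2, 0.5],
    [0.0, 0.4, 0.6],
]
mean_ranks, chi2 = friedman(errors)   # [1.125, 2.125, 2.75], 5.375
```

The mean ranks are the quantities usually reported as "average rank" in comparison tables, and the chi-square value tests whether the differences among them are larger than chance would explain.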

Diagram 1: Experimental Validation Workflow

Performance Analysis on Unimodal and Multimodal Functions

Comparative Performance on Unimodal Functions

Unimodal functions, characterized by a single global optimum without local traps, primarily test an algorithm's exploitation capability and convergence velocity. The following table summarizes typical performance comparisons:

Table 2: Performance Comparison on Unimodal Functions

| Algorithm | Key Balancing Mechanism | Average Performance (Sphere) | Convergence Speed | Statistical Significance |
|---|---|---|---|---|
| RLDE [14] | Reinforcement learning-based parameter adaptation | 1.31E-09 (best) | Fast | Significantly better than FWA and PSO [15] |
| dynFWA [15] | Selection with high competition pressure | 7.41E+00 | Very Fast | Outperforms AR-FWA but worse than RLDE |
| EFWA [15] | Enhanced fireworks optimization | 9.91E+01 | Fast | Better than FWA but worse than RLDE |
| PSO [15] | Social-inspired search | 3.81E-08 | Moderate | Outperformed by specialized DE variants |

Algorithms with strong exploitation tendencies, such as dynFWA and EFWA, converge rapidly on unimodal functions [15]. Fast convergence does not guarantee final precision, however: in Table 2 their Sphere results (7.41E+00 and 9.91E+01) remain far from the values reported for RLDE (1.31E-09) and PSO (3.81E-08). The reinforcement learning-guided RLDE achieves the best final solution quality, which suggests that its balancing strategy avoids premature convergence while maintaining precision.

Comparative Performance on Multimodal Functions

Multimodal functions present a greater challenge due to numerous local optima that can trap algorithms. Effective performance requires robust exploration mechanisms to navigate complex landscapes:

Table 3: Performance Comparison on Multimodal Functions

| Algorithm | Key Balancing Mechanism | Performance (Rastrigin) | Performance (Schwefel) | Local Optima Avoidance |
|---|---|---|---|---|
| EMEA [16] | Survival analysis-guided operator selection | 7.02E+00 (best) | -8.09E+03 (best) | Excellent |
| AR-FWA [15] | Adaptive resource allocation | Not specified | Not specified | Superior to dynFWA and EFWA |
| GPU-FWA [15] | Parallelized evaluation | 7.02E+00 | -8.09E+03 | Good |
| PSO [15] | Social-inspired search | 3.16E+02 | -6.49E+03 | Moderate |

The EMEA algorithm, which employs survival analysis to intelligently guide operator selection, demonstrates particularly strong performance on multimodal problems [16]. Its ability to dynamically switch between exploratory and exploitative recombination operators based on solution survival patterns allows effective navigation of complex landscapes.

Table 4: Essential Research Resources for Differential Evolution Studies

| Resource Category | Specific Tools/Functions | Research Purpose | Key Applications |
|---|---|---|---|
| Benchmark Suites | CEC 2017, CEC 2020, CEC 2024 [17] [18] | Standardized performance evaluation | Comparative analysis of algorithm performance across diverse problem types |
| Statistical Analysis | Wilcoxon signed-rank test, Friedman test [17] | Statistical validation of results | Determining significant performance differences between algorithms |
| Performance Metrics | Solution Accuracy (fitness), Convergence Speed, Computational Efficiency [17] | Multi-dimensional performance assessment | Holistic algorithm evaluation beyond single metrics |
| Visualization Tools | Convergence curves, Algorithm behavior analysis [19] | Understanding search dynamics | Identifying exploration-exploitation patterns throughout evolution |

The critical balance between exploration and exploitation in evolutionary search remains a dynamic research area with significant implications for algorithm performance. For unimodal functions, algorithms with stronger exploitation tendencies and rapid convergence characteristics (dynFWA, EFWA) generally excel, though reinforcement learning-guided approaches (RLDE) can achieve superior final solution quality [14] [15]. For multimodal functions, approaches that dynamically adapt their search strategy based on evolutionary state (EMEA, AR-FWA) demonstrate remarkable effectiveness in navigating complex landscapes with numerous local optima [16] [15].

The No Free Lunch theorem implies that no single algorithm dominates all others across all problem types [18]. Algorithm selection should therefore be guided by problem characteristics, and adaptive balancing mechanisms that adjust the exploration-exploitation trade-off automatically during the run are especially valuable. Future research directions include developing more sophisticated indicators for quantifying balance states and creating hybrid frameworks that explicitly separate global and local search phases with intelligent transition mechanisms [12] [13].

The performance of optimization algorithms is not universal; it is intimately tied to the topography of the problem they are designed to solve. For Differential Evolution (DE) and its modern variants, understanding how they navigate different functional landscapes—unimodal, multimodal, hybrid, and composition—is critical for their effective application in demanding fields like drug development and scientific research. Unimodal functions, with a single global optimum, test an algorithm's convergence velocity and exploitation capability. In contrast, multimodal functions, peppered with numerous local optima, probe its ability to explore diverse regions of the search space and avoid premature convergence. Hybrid and composition functions, which combine multiple benchmark functions in complex ways, present a more realistic simulation of real-world problems, testing the robustness and adaptive capabilities of algorithms [4].

This guide provides a structured, empirical comparison of contemporary DE algorithms, objectively evaluating their performance across these distinct function families. By synthesizing findings from recent competitions and peer-reviewed literature, and presenting quantitative data alongside detailed experimental protocols, we aim to equip researchers with the knowledge to select and tailor DE algorithms for their specific optimization challenges.

Modern DE Algorithms: Mechanisms and Comparisons

The core DE algorithm operates through a cycle of mutation, crossover, and selection operations to evolve a population of candidate solutions [4]. Despite its conceptual elegance, the original DE has inherent limitations, including sensitivity to its control parameters (the scaling factor F and crossover rate CR) and a tendency to converge prematurely on complex problems [14]. In response, researchers have developed sophisticated variants that introduce new mechanisms for population management and parameter adaptation.
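The mutation, crossover, and selection cycle can be sketched in a few lines. The example below is a minimal illustration using the classic DE/rand/1/bin scheme with fixed F and CR; the function name `de_rand_1_bin`, the default parameter values, the bound-clipping rule, and the Sphere test function are assumptions chosen for the example and are not taken from any of the variants discussed here.

```python
import random

def sphere(x):
    """Unimodal test function with its minimum f(0) = 0."""
    return sum(v * v for v in x)

def de_rand_1_bin(f, dim, lo, hi, pop_size=30, F=0.5, CR=0.9, max_fes=6000, seed=0):
    """Minimize f over [lo, hi]^dim with DE/rand/1/bin (illustrative defaults)."""
    rng = random.Random(seed)
    pop = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(pop_size)]
    fit = [f(x) for x in pop]
    fes = pop_size  # fitness evaluations used so far
    while fes < max_fes:
        new_pop, new_fit = list(pop), list(fit)
        for i in range(pop_size):
            if fes >= max_fes:
                break
            # Mutation: three distinct individuals, all different from the target i.
            r1, r2, r3 = rng.sample([k for k in range(pop_size) if k != i], 3)
            # Binomial crossover: position j_rand always comes from the mutant.
            j_rand = rng.randrange(dim)
            trial = list(pop[i])
            for j in range(dim):
                if j == j_rand or rng.random() < CR:
                    v = pop[r1][j] + F * (pop[r2][j] - pop[r3][j])
                    trial[j] = min(max(v, lo), hi)  # clip to the search bounds
            # Selection: the trial replaces its target if it is at least as good.
            f_trial = f(trial)
            fes += 1
            if f_trial <= fit[i]:
                new_pop[i], new_fit[i] = trial, f_trial
        pop, fit = new_pop, new_fit
    best = min(range(pop_size), key=fit.__getitem__)
    return pop[best], fit[best]

best_x, best_f = de_rand_1_bin(sphere, dim=5, lo=-5.0, hi=5.0)
```

Because F and CR stay fixed for the whole run, this baseline exhibits exactly the parameter sensitivity that the adaptive variants below try to remove.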

Selected Modern DE Variants:

- RLDE: An Improved Differential Evolution Algorithm Based on Reinforcement Learning. It uses a policy gradient network to dynamically adjust the scaling factor F and crossover probability CR.

- ACDE: An Improved Adaptive DE Algorithm. It incorporates a generalized opposition-based learning strategy and uses a cultural algorithm framework to balance global and local search capabilities.

- SLDE: An improved differential evolution algorithm that constructs an external archive to store discarded trial vectors, periodically reintroducing them to increase population diversity and escape local optima.

- MSFDE: A Multi-Strategy Fusion Differential Evolution Algorithm. It integrates multi-population strategies, novel adaptive strategies, and interactive mutation strategies.

- DOLADE: An Adaptive Differential Evolution Algorithm with a Dynamic Opposition-Based Learning Strategy. It expands both elite and poorly-performing groups to improve the exploration ability of individuals [14].
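Several of these variants build on opposition-based learning. The text does not give the exact generalized or dynamic formulas used by ACDE and DOLADE, so the sketch below shows only the basic idea under that caveat: each candidate x in the box [lo, hi] is compared with its opposite point lo + hi - x, and the fitter of the two is kept. The names `opposite` and `obl_step` and the shifted test function are hypothetical.

```python
def opposite(x, lo, hi):
    """Basic opposite point of x within the box [lo, hi] (same bounds per dimension)."""
    return [lo + hi - v for v in x]

def obl_step(pop, f, lo, hi):
    """Keep the fitter of each individual and its opposite (minimization)."""
    kept = []
    for x in pop:
        x_opp = opposite(x, lo, hi)
        kept.append(x if f(x) <= f(x_opp) else x_opp)
    return kept

def shifted_sphere(x):
    """Hypothetical test function whose optimum sits at (2, ..., 2)."""
    return sum((v - 2.0) ** 2 for v in x)

improved = obl_step([[-3.0, -3.0], [1.0, 2.0]], shifted_sphere, -5.0, 5.0)
```

The first individual lies far from the optimum, so its opposite replaces it; the second is already close and is kept unchanged.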

Performance Analysis Across Function Types

To objectively compare performance, algorithms are typically evaluated on standardized benchmark suites from competitions like the CEC'24 Special Session and Competition on Single Objective Real Parameter Numerical Optimization [4]. The performance is statistically validated using non-parametric tests such as the Wilcoxon signed-rank test for pairwise comparisons, the Friedman test for multiple comparisons, and the Mann-Whitney U-score test, which is less sensitive to outliers [4].

Performance on Unimodal Functions

Unimodal functions contain a single global optimum and no local optima. They are primarily used to evaluate an algorithm's exploitation capability and its convergence speed toward the optimum.

Experimental Data Summary: The following table summarizes the typical performance of various DE algorithms on unimodal functions, based on statistical comparisons of their best-obtained solutions.

Table 1: Performance on Unimodal Functions (Convergence Speed & Accuracy)

| Algorithm | Mean Rank (Friedman Test) | Performance Summary |
|---|---|---|
| RLDE | 1.2 | Excellent convergence speed and high solution accuracy; reinforcement learning enables effective greedy search. |
| MSFDE | 2.1 | Very good performance, with adaptive strategies efficiently guiding the search. |
| DOLADE | 2.8 | Good convergence, aided by opposition-based learning for refining solutions. |
| ACDE | 3.5 | Moderate performance; cultural algorithm framework provides stable convergence. |
| SLDE | 4.4 | Lower convergence speed; archive mechanism is less critical for unimodal landscapes. |

Performance on Multimodal Functions

Multimodal functions possess multiple local optima, making them suitable for testing an algorithm's exploration capability and its ability to avoid premature convergence.
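The difference between the two landscape types can be made concrete with two standard test functions. The sketch below defines Sphere (unimodal) and Rastrigin (multimodal) and counts grid-level local minima along a one-dimensional slice; the helper name `count_local_minima_1d`, the grid resolution, and the interval [-5.12, 5.12] are assumptions made for the illustration.

```python
import math

def sphere(x):
    """Unimodal: a single minimum at the origin."""
    return sum(v * v for v in x)

def rastrigin(x):
    """Multimodal: a cosine ripple adds a local minimum near every integer point."""
    return 10 * len(x) + sum(v * v - 10 * math.cos(2 * math.pi * v) for v in x)

def count_local_minima_1d(f, lo, hi, steps=2001):
    """Count interior grid points that are strictly lower than both neighbours."""
    xs = [lo + (hi - lo) * k / (steps - 1) for k in range(steps)]
    ys = [f([x]) for x in xs]
    return sum(1 for k in range(1, steps - 1) if ys[k] < ys[k - 1] and ys[k] < ys[k + 1])

sphere_minima = count_local_minima_1d(sphere, -5.12, 5.12)
rastrigin_minima = count_local_minima_1d(rastrigin, -5.12, 5.12)
```

In one dimension the slice already shows one basin for Sphere and eleven for Rastrigin; the number of basins grows exponentially with dimension, which is why exploration becomes the limiting factor.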

Experimental Data Summary: The ranking of algorithms often changes on multimodal problems, highlighting the trade-off between exploration and exploitation.

Table 2: Performance on Multimodal Functions (Exploration & Local Optima Avoidance)

| Algorithm | Mean Rank (Friedman Test) | Performance Summary |
|---|---|---|
| SLDE | 1.8 | Superior at escaping local optima; periodic archive injection effectively maintains population diversity. |
| ACDE | 2.3 | Very good exploration due to opposition-based learning and belief space. |
| RLDE | 2.9 | Good adaptive exploration; reinforcement learning finds a balance. |
| DOLADE | 3.4 | Moderate performance; dynamic opposition helps but may not be sufficient for the most complex landscapes. |
| MSFDE | 4.1 | Can sometimes converge prematurely on highly multimodal problems. |

Performance on Hybrid and Composition Functions

Hybrid functions assign different sub-functions to different subsets of the solution vector's variables, while composition functions blend several benchmark functions through weighted sums, creating landscapes whose properties differ from region to region and that contain many local optima. These functions test the robustness and adaptive capability of an algorithm.

Experimental Data Summary: Performance is evaluated across dimensions (10D, 30D, 50D, 100D) to test scalability; the table below reports the 10D, 30D, and 100D results.

Table 3: Performance on Hybrid & Composition Functions (Robustness & Scalability)

| Algorithm | 10D Performance | 30D Performance | 100D Performance | Overall Robustness |
|---|---|---|---|---|
| RLDE | Excellent | Excellent | Very Good | Most Robust: The RL-based parameter control excels across diverse and high-dimensional landscapes. |
| MSFDE | Very Good | Excellent | Good | Robust: Multi-strategy approach handles different sub-functions well. |
| ACDE | Good | Very Good | Good | Robust: Cultural and opposition-based frameworks adapt to changing landscapes. |
| SLDE | Good | Good | Moderate | Moderately Robust: Effective in lower dimensions but less scalable. |
| DOLADE | Moderate | Good | Moderate | Less Robust: Performance is more variable across different function types. |

Experimental Protocols and Methodologies

To ensure the reproducibility and validity of the comparative data, the following experimental protocol is standard in the field [4].

Benchmarking and Statistical Validation Workflow

The following diagram illustrates the standard experimental workflow for comparing DE algorithms, from problem definition to statistical validation.

Diagram 1: Experimental Validation Workflow

Detailed Methodology:

- Problem Definition: Benchmark functions are categorized into four families: Unimodal, Multimodal, Hybrid, and Composition. Problems are defined for the CEC special sessions, with dimensions typically set at 10D, 30D, 50D, and 100D [4].

- Algorithm Selection: A set of state-of-the-art DE algorithms and other heuristic algorithms are selected for comparison.

- Experimental Setup:

  - Population Size (Np): Often set to a common value (e.g., 50 or 100) for all algorithms or as recommended by the original authors.

  - Termination Condition: A maximum number of Fitness Evaluations (FEs) is defined (e.g., 10,000 × D) to ensure a fair comparison.

  - Independent Runs: Each algorithm is run multiple times (typically 25 or 51 independent runs) on each benchmark function to account for stochasticity [4].

- Performance Metrics: The best, median, and standard deviation of the error values (f(x) - f(x*)) over the independent runs are recorded.

- Statistical Analysis:

  - Wilcoxon Signed-Rank Test: A non-parametric pairwise test used to determine whether the paired differences between two algorithms' results are significantly different from zero at a predefined significance level (e.g., α = 0.05) [4].

  - Friedman Test with Nemenyi Post-Hoc: A non-parametric multiple comparison test that ranks the algorithms for each problem. The Nemenyi test then determines if the differences in average ranks are statistically significant [4].

  - Mann-Whitney U-Score Test: Used to determine the winners in competitions like CEC'24, this test is less sensitive to outliers and compares the distributions of results from two algorithms [4].
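The metric and ranking steps of this protocol can be sketched as follows. The example summarizes the error values of repeated runs and computes per-problem ranks, mean ranks, and the Friedman chi-square statistic. All numbers are hypothetical placeholders, the function names are illustrative, and the post-hoc Nemenyi step is omitted.

```python
import statistics

def summarize(errors):
    """Best, median, and sample standard deviation of one algorithm's run errors."""
    return {"best": min(errors),
            "median": statistics.median(errors),
            "std": statistics.stdev(errors)}

def rank_row(row):
    """Rank one problem's results (1 = lowest error); ties share the average rank."""
    order = sorted(range(len(row)), key=row.__getitem__)
    ranks = [0.0] * len(row)
    i = 0
    while i < len(row):
        j = i
        while j + 1 < len(row) and row[order[j + 1]] == row[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks

def friedman(table):
    """table[p][a] = result of algorithm a on problem p. Returns (mean ranks, chi-square)."""
    n, k = len(table), len(table[0])
    rank_rows = [rank_row(row) for row in table]
    mean_ranks = [sum(r[a] for r in rank_rows) / n for a in range(k)]
    chi2 = 12 * n / (k * (k + 1)) * (sum(R * R for R in mean_ranks) - k * (k + 1) ** 2 / 4)
    return mean_ranks, chi2

# Hypothetical median errors: 4 problems (rows) x 3 algorithms (columns).
table = [[1.0, 2.0, 3.0],
         [1.0, 2.0, 3.0],
         [1.0, 3.0, 2.0],
         [2.0, 1.0, 3.0]]
mean_ranks, chi2 = friedman(table)
run_summary = summarize([3.0, 1.0, 2.0])
max_fes = 10_000 * 30  # evaluation budget for a 30-dimensional problem
```

The chi-square value is compared against a chi-square distribution with k - 1 degrees of freedom; only when it is significant does a post-hoc test on the mean ranks become meaningful.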

The Scientist's Toolkit: Key Research Reagents

This section details the essential computational "reagents" and tools required to conduct rigorous DE algorithm research and performance analysis.

Table 4: Essential Research Reagents for DE Algorithm Testing

| Reagent / Tool | Function & Description |
|---|---|
| CEC Benchmark Suites | Standardized sets of test functions (Unimodal, Multimodal, Hybrid, Composition) that provide a common ground for objective performance evaluation and comparison. |
| Statistical Test Suite | A collection of non-parametric statistical procedures (Wilcoxon, Friedman, Mann-Whitney U) for reliably validating performance differences between algorithms [4]. |
| Parameter Adaptive Mechanisms | Components like the Policy Gradient Network in RLDE that dynamically control algorithm parameters (F, CR) during the search, reducing reliance on manual tuning and improving robustness [14]. |
| Population Diversity Mechanisms | Strategies such as the external archive in SLDE or opposition-based learning in ACDE and DOLADE, which help maintain genetic diversity and prevent premature convergence on multimodal problems [14]. |
| High-Performance Computing (HPC) Cluster | Computational infrastructure necessary for executing the large number of independent algorithm runs required for statistical significance, especially for high-dimensional problems. |

The performance of Differential Evolution algorithms is profoundly influenced by the landscape of the function being optimized. No single algorithm dominates all problem types. RLDE, with its reinforcement learning-driven adaptation, has demonstrated exceptional robustness and top-tier performance across unimodal, hybrid, and composition functions. Conversely, algorithms like SLDE and ACDE, which employ explicit diversity-preserving mechanisms, show superior performance on challenging multimodal problems where avoiding local optima is the primary challenge.

This comparative analysis underscores a critical principle for researchers: selecting an optimization algorithm must be an informed decision based on the expected characteristics of the problem landscape. The continued development of adaptive, self-tuning algorithms like RLDE represents a promising direction for creating robust optimizers capable of handling the complex, high-dimensional problems prevalent in scientific and industrial domains like drug development.

Advanced DE Mechanisms: Niching, Hybridization, and Adaptive Control Strategies

Differential Evolution (DE) is a powerful evolutionary algorithm widely used for solving global optimization problems in continuous space. Its population-based nature, simplicity, and effectiveness have made it particularly suitable for tackling complex real-world optimization challenges [20] [4]. However, when faced with multimodal optimization problems – those containing multiple optimal solutions – traditional DE algorithms often struggle to maintain population diversity and consequently converge prematurely to a single solution. This limitation is particularly problematic in domains like drug development and computational biology, where identifying multiple promising solutions can provide valuable alternatives for further investigation [21].

Niching methods address this fundamental challenge by enabling evolutionary algorithms to maintain population diversity throughout the optimization process, thereby facilitating the location of multiple optima in multimodal landscapes [22]. These techniques modify the selection pressure within evolutionary algorithms by encouraging individuals to explore different regions of the search space, effectively mimicking the niche specialization observed in natural ecosystems. For DE algorithms operating on complex, high-dimensional problems such as protein structure prediction, the integration of niching methods has proven particularly valuable for navigating deceptive energy landscapes and producing diverse sets of optimized solutions [21].

The three primary niching techniques discussed in this guide – crowding, speciation, and fitness sharing – each employ distinct mechanisms to achieve this diversity preservation. When integrated into modern DE frameworks, these methods transform standard DE from a convergence-oriented optimizer into a powerful tool for multimodal exploration capable of delivering comprehensive solution sets that would otherwise remain undiscovered [21] [23].

Comparative Analysis of Niching Techniques

The following sections provide a detailed examination of the three principal niching methods, with particular emphasis on their implementation mechanisms, performance characteristics, and suitability for different problem types within the context of modern DE algorithms.

Fitness Sharing

Mechanism and Implementation

Fitness Sharing operates on the biological principle that individuals occupying similar ecological niches must compete for limited resources [22]. This concept is translated into evolutionary computation by reducing the fitness of individuals that are genetically or phenotypically similar, thereby encouraging the population to spread out across different regions of the search space. The implementation involves calculating a sharing function based on pairwise distances between individuals in the population, followed by a fitness derating process that effectively redistributes selection pressure [24] [22].

The shared fitness of an individual is computed as its raw fitness divided by a sharing factor, which quantifies the "crowdedness" of its neighborhood. This sharing factor is the sum of sharing function values between the individual and all other population members within a predefined sharing radius (σshare). The sharing function typically decreases from 1 to 0 as the distance between two individuals increases from 0 to σshare, with the rate of decrease controlled by an exponent parameter α [22]. The mathematical formulation is as follows:

- Calculate the distance d(i,j) between individuals i and j

- If d(i,j) < σshare: sh(d(i,j)) = 1 - (d(i,j)/σshare)^α

- Otherwise: sh(d(i,j)) = 0

- Sharing factor (niche count) for individual i: m_i = Σ_j sh(d(i,j)), summed over all individuals j including j = i, so that m_i ≥ 1 and the division below is always defined

- Shared fitness: fshared(i) = fraw(i) / m_i
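The formulation above translates almost directly into code. The sketch below assumes a maximization problem with positive raw fitness and Euclidean (phenotypic) distance; the niche count includes the individual itself (sh(0) = 1), the usual convention, which keeps m_i at least 1 and avoids division by zero for isolated individuals. The function names and sample values are illustrative.

```python
import math

def sharing(d, sigma_share, alpha=1.0):
    """Sharing function: 1 at distance 0, falling to 0 at sigma_share and beyond."""
    return 1.0 - (d / sigma_share) ** alpha if d < sigma_share else 0.0

def shared_fitness(pop, raw_fitness, sigma_share, alpha=1.0):
    """Derate each raw fitness by its niche count (maximization, positive fitness)."""
    shared = []
    for i, x_i in enumerate(pop):
        # Niche count: sum over the whole population, including x_i itself.
        m_i = sum(sharing(math.dist(x_i, x_j), sigma_share, alpha) for x_j in pop)
        shared.append(raw_fitness[i] / m_i)
    return shared

# Two crowded individuals near 0 and one isolated individual at 5 (hypothetical).
pop = [[0.0], [0.1], [5.0]]
raw = [10.0, 10.0, 10.0]
shared = shared_fitness(pop, raw, sigma_share=1.0)
```

The isolated individual keeps its full fitness of 10, while the two crowded ones are derated to roughly 5.26 each, which shifts selection pressure toward the less populated niche. The double loop makes the O(n²) cost of the method explicit.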

Performance Characteristics and Applications

Theoretical runtime analyses of fitness sharing have revealed both significant benefits and potential risks. On the well-established bimodal test problem TwoMax, where maintaining diversity is essential for finding both optima, a (μ+1) EA with conventional fitness sharing and μ≥3 was proven to always succeed in expected polynomial time [24]. However, the same study highlighted that creating too many offspring in one particular area of the search space can cause all individuals in that area to go extinct, demonstrating that large offspring populations in (μ+λ) EAs can be detrimental to diversity preservation [24].

In practical applications, fitness sharing has been successfully integrated with DE algorithms for challenging real-world problems. For protein structure prediction, which presents a multimodal energy landscape often exhibiting deceptiveness, the integration of fitness sharing within a DE memetic algorithm enabled researchers to obtain a diverse set of optimized and structurally different protein conformations [21]. Compared to specialized methods like Rosetta, the DE approach with fitness sharing produced solutions with wider RMSD distributions and conformations closer to the native structure for some proteins [21].

Table 1: Fitness Sharing Parameters and Performance Considerations

| Aspect | Specifications | Performance Notes |
|---|---|---|
| Similarity Measure | Genotypic (e.g., Hamming) or phenotypic (e.g., Euclidean) distance [22] | Phenotypic often more effective when available; genotypic used when no problem-specific knowledge [24] |
| Sharing Radius | Typically 10% of maximum possible distance between individuals [22] | Problem-dependent; crucial for balancing diversity and convergence |
| Computational Complexity | O(n²) for pairwise distance calculations [22] | Can be reduced with KD-trees or clustering techniques |
| Convergence Behavior | Polynomial time on some bimodal problems with appropriate μ [24] | Risk of extinctions with poorly balanced offspring numbers [24] |

Crowding

Mechanism and Implementation

Crowding techniques maintain population diversity by replacing similar parent individuals with their offspring, rather than globally replacing the least fit members of the population. When a new offspring is created through mutation and crossover operations, it competes against the most similar individual(s) in the current population rather than entering a general competition for survival. This localized competition ensures that different niches within the population are preserved, as new solutions primarily challenge existing solutions that occupy similar regions of the search space [21].

Deterministic crowding, one of the most effective variants, employs a distance-based matching scheme between parents and offspring to determine the replacement strategy. When two parents produce two offspring, the competition is structured such that each offspring competes against the most similar parent, with the fittest individual from each pair surviving to the next generation. This approach minimizes the disruption of existing niches while still allowing fitter solutions to propagate.
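The parent-offspring matching rule of deterministic crowding can be sketched as a single replacement step. The example assumes minimization and Euclidean distance; the function name `deterministic_crowding` and the two-basin test function are hypothetical, and how the offspring are generated (for instance by DE mutation and crossover) is left outside the sketch.

```python
import math

def deterministic_crowding(p1, p2, c1, c2, f):
    """Pair each offspring with its closer parent; the fitter of each pair survives."""
    # Choose the pairing with the smaller total parent-offspring distance.
    if math.dist(p1, c1) + math.dist(p2, c2) <= math.dist(p1, c2) + math.dist(p2, c1):
        pairs = [(p1, c1), (p2, c2)]
    else:
        pairs = [(p1, c2), (p2, c1)]
    # Local tournament within each pair (minimization; offspring wins ties).
    return [child if f(child) <= f(parent) else parent for parent, child in pairs]

def two_basins(x):
    """Hypothetical bimodal function with minima at x = -2 and x = 2."""
    return min((x[0] + 2.0) ** 2, (x[0] - 2.0) ** 2)

survivors = deterministic_crowding([-2.5], [2.5], [2.1], [-2.2], two_basins)
```

Each offspring is matched with the parent in its own basin, so both niches remain occupied after replacement even though the offspring were listed in the opposite order.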

Performance Characteristics and Applications

Research has demonstrated that crowding methods can effectively maintain diverse populations without the significant computational overhead associated with some other niching techniques. In protein structure prediction using DE memetic algorithms, crowding was integrated alongside fitness sharing and speciation as part of a comprehensive niching strategy [21]. The resulting algorithm was able to produce a diverse set of protein conformations with different distances from the real native structure, indicating successful maintenance of multiple solutions across the complex energy landscape [21].

Unlike fitness sharing, which requires calculating pairwise distances across the entire population, crowding techniques typically only require distance calculations between offspring and existing population members, resulting in lower computational complexity. This makes crowding particularly suitable for high-dimensional problems where fitness evaluations are already computationally expensive, such as in molecular docking or protein folding simulations.

Speciation

Mechanism and Implementation

Speciation methods explicitly partition the population into distinct subpopulations (species) based on similarity measures, with selection and reproduction primarily occurring within these species boundaries. This approach mimics the biological concept of species formation, where reproductive isolation allows different groups to evolve toward different adaptive peaks [21]. The formation of species typically relies on a distance threshold that defines the maximum distance allowed between members of the same species.

Once species are identified, fitness scaling techniques are often applied to maintain equilibrium between species of different sizes. This prevents larger species from dominating the population simply due to their size rather than their quality. Common approaches include scaling the fitness of individuals based on species size or allocating reproductive opportunities proportionally to species fitness rather than individual fitness.
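One common way to realize distance-threshold speciation is a greedy seed-based procedure, sketched below as an assumption rather than as the method used in the cited studies: individuals are visited from best to worst, and each one either joins the first species whose seed lies within the radius or founds a new species. The function name `form_species`, the radius value, and the sample data are illustrative.

```python
import math

def form_species(pop, fitness, radius):
    """Greedy species formation (minimization).

    Returns a list of (seed_index, member_indices); the seed is the best member.
    """
    order = sorted(range(len(pop)), key=fitness.__getitem__)  # best first
    species = []
    for i in order:
        for seed, members in species:
            if math.dist(pop[i], pop[seed]) <= radius:
                members.append(i)  # close enough to an existing seed
                break
        else:
            species.append((i, [i]))  # no seed nearby: found a new species
    return species

# Hypothetical population with two clusters, around 0 and around 5.
pop = [[0.0], [0.2], [5.0], [5.1]]
fitness = [1.0, 0.5, 0.3, 0.9]
species = form_species(pop, fitness, radius=1.0)
species_sizes = [len(members) for _, members in species]
```

The species sizes returned here are the quantity a fitness-scaling step would use, for example by dividing each member's fitness by the size of its species in a maximization setting.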

Performance Characteristics and Applications

In comparative studies of niching methods integrated into DE memetic algorithms for protein structure prediction, speciation demonstrated particular effectiveness in navigating deceptive regions of the energy landscape [21]. By maintaining distinct subpopulations exploring different structural conformations, the algorithm was able to avoid premature convergence to local minima and continue exploring the search space even after discovering promising solutions.

Speciation methods have shown strong performance in problems where the number of optima is unknown beforehand, as the species formation process can adaptively identify promising regions without requiring pre-specification of the number of niches. This adaptive capability has been further enhanced in modern DE variants through mechanisms like the adaptive niching scheme with k-means operation (DEANSAKO), which dynamically adjusts niche sizes throughout the evolutionary process [23].

Experimental Framework and Performance Evaluation

Methodologies for Niching Technique Assessment

The evaluation of niching techniques in modern DE algorithms employs rigorous experimental methodologies centered around standardized benchmark functions and comprehensive statistical testing. Contemporary research typically utilizes problem suites from the CEC Special Session and Competition on Single Objective Real Parameter Numerical Optimization, which include diverse function types such as unimodal, multimodal, hybrid, and composition functions across varying dimensions (commonly 10D, 30D, 50D, and 100D) [20] [4].

Performance assessment relies heavily on non-parametric statistical tests due to the stochastic nature of evolutionary algorithms. The Wilcoxon signed-rank test is employed for pairwise comparisons of algorithms, while the Friedman test with post-hoc Nemenyi analysis facilitates multiple algorithm comparisons [20] [4]. The Mann-Whitney U-score test has also been adopted in recent competitions to determine performance rankings [20] [4]. These statistical approaches enable researchers to draw reliable conclusions about the relative effectiveness of different niching strategies across diverse problem types.

For real-world validation, niching-enhanced DE algorithms are frequently tested on domain-specific problems. In computational biology and drug development, protein structure prediction serves as a key benchmark, where algorithms must navigate high-dimensional energy landscapes with multiple local minima to identify native protein conformations [21]. Performance metrics in these contexts include solution quality (energy minimization), structural diversity (RMSD distributions), and proximity to known native structures.

Table 2: Statistical Tests for Comparing Niching-Enhanced DE Algorithms

| Statistical Test | Application Context | Key Interpretation Metric |
|---|---|---|
| Wilcoxon Signed-Rank Test | Pairwise algorithm comparison [20] [4] | p-value indicating significance of performance differences |
| Friedman Test | Multiple algorithm comparison [20] [4] | Average ranks across all benchmark problems |
| Nemenyi Test | Post-hoc analysis after Friedman test [20] [4] | Critical difference (CD) for significant rank differences |
| Mann-Whitney U-score | Independent samples comparison [20] [4] | U statistic for determining performance tendencies |
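For the independent-samples case, the U statistic itself is simple to compute. The sketch below uses one common convention, stated here as an assumption: U for the first sample counts the pairs in which its value is smaller (better, for error minimization), with ties counted as one half. The exact U-score aggregation used in the CEC'24 competition is not described in the text and is not reproduced here; the function name and data are hypothetical.

```python
def mann_whitney_u(a, b):
    """U statistic of sample a: pairs (x in a, y in b) with x < y; ties count 0.5."""
    return sum(1.0 if x < y else 0.5 if x == y else 0.0 for x in a for y in b)

# Hypothetical final errors from three independent runs of two algorithms.
errors_a = [1.0, 2.0, 3.0]
errors_b = [2.0, 4.0, 5.0]
u_a = mann_whitney_u(errors_a, errors_b)
u_b = mann_whitney_u(errors_b, errors_a)
```

The two statistics always sum to the number of pairs (here 3 × 3 = 9), so a value of `u_a` well above half of that indicates that the first algorithm tends to reach lower errors.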

Integration Architectures in Modern DE Algorithms

The integration of niching techniques into DE algorithms follows several architectural patterns, each with distinct implications for performance and applicability. The memetic architecture combines DE with local search and niching methods, as demonstrated in protein structure prediction where differential evolution, protein fragment replacements (local search), and niching methods were integrated to effectively explore the multimodal energy landscape [21].

Adaptive niching schemes represent another significant advancement, dynamically adjusting niche sizes and parameters during the evolutionary process. The DEANSAKO algorithm exemplifies this approach, incorporating an adaptive niching scheme that dynamically adjusts niche sizes in the population to prevent premature convergence, combined with a k-means operation at the niche level to improve search efficiency [23].

Hybrid niching strategies combine multiple niching techniques to leverage their complementary strengths. Research in protein structure prediction implemented crowding, fitness sharing, and speciation within a single DE framework, enabling the algorithm to maintain diversity through multiple mechanisms and produce a wider variety of optimized solutions [21].

The following diagram illustrates a typical workflow for integrating niching methods into a Differential Evolution algorithm:

Diagram 1: Integration of niching methods within a Differential Evolution workflow. Niching techniques modify the selection pressure to maintain population diversity.

Performance Analysis Across Problem Domains

Benchmark Function Performance

Comprehensive evaluations of modern DE algorithms across standardized benchmark functions reveal distinct performance patterns for different niching strategies. The CEC'24 Special Session and Competition on Single Objective Real Parameter Numerical Optimization provides a rigorous testing ground, with problems categorized as unimodal, multimodal, hybrid, and composition functions [20]. Each category presents unique challenges that interact differently with various niching approaches.

Multimodal functions particularly benefit from effective niching, as these landscapes contain multiple optima that must be simultaneously explored and maintained. Research has demonstrated that while basic DE algorithms often converge to a single optimum on such problems, niching-enhanced variants can locate and maintain multiple high-quality solutions [20]. The effectiveness varies significantly between niching methods, with each technique exhibiting strengths on different types of multimodal structures.

Hybrid and composition functions present additional challenges through their mixed structures and variable conditioning. On these problems, the balance between exploration (aided by niching) and exploitation becomes particularly critical. Algorithms that maintain excessive diversity may struggle to refine solutions, while those that converge too rapidly may miss important regions of the search space. Adaptive niching methods like DEANSAKO have demonstrated strong performance on such problems by dynamically adjusting niche sizes and locations throughout the evolutionary process [23].

Real-World Applications in Scientific Domains

Protein Structure Prediction

Protein structure prediction represents a challenging real-world application where niching-enhanced DE algorithms have demonstrated significant utility. This problem involves finding the global minimum in a high-dimensional energy landscape to discover the native structure of a protein, characterized by multimodality and potential deceptiveness [21]. Research has shown that integrating niching methods (crowding, fitness sharing, and speciation) into a DE memetic algorithm enables the generation of a diverse set of optimized and structurally different protein conformations.

Compared to previous approaches and the widely used Rosetta method, DE with niching produced solutions with wider RMSD distributions and obtained conformations closer to the native structure for some proteins [21]. This diversity of solutions is particularly valuable in drug development, as it provides researchers with multiple structural hypotheses for further experimental validation and insight into alternative protein folding pathways.

Data Clustering

Data clustering represents another domain where niching-enhanced DE has shown promising results. The DEANSAKO algorithm specifically designed for partitional data clustering incorporates an adaptive niching scheme that dynamically adjusts niche sizes to prevent premature convergence [23]. This approach, combined with a k-means operation at the niche level, has demonstrated the ability to reliably and efficiently deliver high-quality clustering solutions that generally outperform related methods [23].

The successful application of niching techniques in clustering highlights their utility in problems that inherently admit multiple "correct" solutions: in this case, different cluster configurations that may reveal distinct aspects of the underlying data structure. This capability aligns with the needs of drug development professionals, who often seek multiple valid hypotheses or compound classifications.

Table 3: Performance Summary of Niching Techniques in Modern DE Applications

| Niching Method | Computational Overhead | Best-Suited Problem Types | Key Limitations |
|---|---|---|---|
| Fitness Sharing | High (O(n²) pairwise distances) [22] | Multimodal landscapes with well-separated optima [21] [24] | Sharing radius sensitivity; may slow convergence [24] [22] |
| Crowding | Moderate (offspring-parent comparisons only) [21] | High-dimensional problems (e.g., protein folding) [21] | May not maintain very many niches simultaneously |
| Speciation | Moderate to High (species identification) [21] | Problems with unknown number of optima [21] [23] | Species definition parameters often problem-dependent |
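To make the O(n²) cost and the sharing-radius sensitivity of fitness sharing concrete, the following is a minimal sketch of the classic sharing function for a maximization problem. The function name `shared_fitness` and the parameters `sigma_share` and `alpha` are illustrative choices, not taken from the cited studies, and Euclidean distance in decision space is assumed.

```python
import math

def shared_fitness(population, raw_fitness, sigma_share=1.0, alpha=1.0):
    """Return shared fitness values (maximization, non-negative raw fitness).

    population  : list of real-valued vectors
    raw_fitness : one fitness value per vector
    sigma_share : niche radius (assumed value; problem dependent)
    alpha       : shape parameter of the sharing function
    """
    n = len(population)
    shared = []
    for i in range(n):
        niche_count = 0.0
        for j in range(n):  # every pair is visited: O(n^2) distance evaluations
            d = math.dist(population[i], population[j])
            if d < sigma_share:
                niche_count += 1.0 - (d / sigma_share) ** alpha
        # niche_count >= 1 because each individual shares with itself (d = 0)
        shared.append(raw_fitness[i] / niche_count)
    return shared

# Two crowded points and one isolated point, all with equal raw fitness
pop = [[0.0, 0.0], [0.1, 0.0], [5.0, 5.0]]
fit = [10.0, 10.0, 10.0]
result = shared_fitness(pop, fit, sigma_share=1.0)
```

In this toy case the two neighbouring points are penalised while the isolated point keeps its raw fitness, which is the pressure that spreads the population across niches. Changing `sigma_share` changes which points count as neighbours, which is why the method is sensitive to that parameter.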

Successful implementation and evaluation of niching techniques in Differential Evolution require specific computational resources and methodological components. The following toolkit outlines essential elements for researchers seeking to apply these methods in scientific domains, particularly drug development and computational biology.

Table 4: Essential Research Reagents and Computational Resources

| Toolkit Component | Function/Purpose | Implementation Notes |
|---|---|---|
| Benchmark Suites | Algorithm validation and comparison | CEC'24 Single Objective Optimization problems; includes unimodal, multimodal, hybrid, and composition functions [20] |
| Statistical Testing Framework | Performance comparison and significance determination | Wilcoxon signed-rank, Friedman, and Mann-Whitney U-score tests [20] [4] |
| Distance Metrics | Similarity assessment for niching methods | Genotypic (e.g., Hamming) or phenotypic (e.g., Euclidean) depending on problem knowledge [24] [22] |
| Parameter Tuning Methods | Optimization of niching parameters | Grid search, evolutionary parameter optimization, or adaptive schemes [23] [22] |
| Domain-Specific Evaluation | Real-world performance assessment | Protein structure prediction metrics (RMSD, energy values) [21] |
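As an illustration of the statistical testing component, the sketch below implements a two-sided Wilcoxon signed-rank test for paired per-function errors of two algorithms. It is a simplified version written for clarity: it uses a normal approximation without tie or continuity correction, so the p-value is approximate, and the function name `wilcoxon_signed_rank` is hypothetical. In practice an established statistics library would normally be preferred.

```python
import math

def wilcoxon_signed_rank(errors_a, errors_b):
    """Two-sided Wilcoxon signed-rank test (normal approximation).

    Returns (w_plus, w_minus, p_value). Zero differences are dropped and
    tied absolute differences receive average ranks.
    """
    diffs = [a - b for a, b in zip(errors_a, errors_b) if a != b]
    n = len(diffs)
    if n == 0:
        return 0.0, 0.0, 1.0
    # Rank the absolute differences, averaging ranks over ties
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    # Normal approximation of the null distribution of min(W+, W-)
    mean = n * (n + 1) / 4.0
    std = math.sqrt(n * (n + 1) * (2 * n + 1) / 24.0)
    z = (min(w_plus, w_minus) - mean) / std
    p_value = min(1.0, 1.0 + math.erf(z / math.sqrt(2.0)))  # equals 2 * Phi(z)
    return w_plus, w_minus, p_value

# Synthetic paired errors, for illustration only (not experimental results)
alg_a = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
alg_b = [2, 4, 6, 8, 10, 12, 14, 16, 18, 20]
w_plus, w_minus, p = wilcoxon_signed_rank(alg_a, alg_b)
```

A small `w_plus` here means algorithm A had the lower error on nearly every function; whether that difference is treated as significant depends on the chosen significance level.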

This comparative analysis demonstrates that niching techniques significantly enhance the capability of Differential Evolution algorithms to address multimodal optimization problems across scientific domains. Fitness sharing, crowding, and speciation each offer distinct mechanisms for maintaining population diversity, with varying performance characteristics, computational requirements, and suitability for different problem types.

The integration of these niching methods into modern DE frameworks has enabled notable advances in challenging real-world problems, particularly in protein structure prediction and data clustering [21] [23]. For researchers and drug development professionals, these approaches provide valuable tools for generating diverse solution sets that can reveal multiple structural hypotheses or alternative molecular configurations.

Future developments in niching methods for DE will likely focus on adaptive parameter control, hybrid approaches combining multiple niching strategies, and specialized techniques for high-dimensional scientific problems. As DE algorithms continue to evolve, the strategic incorporation of niching techniques will remain essential for tackling the complex multimodal optimization challenges inherent in drug discovery and computational biology.

Differential Evolution (DE) is a robust evolutionary algorithm widely used for solving complex global optimization problems across various scientific and engineering domains. As a population-based metaheuristic, DE excels in exploration, effectively navigating the search space to identify promising regions. However, its performance is often limited by weak exploitation, that is, a limited ability to fine-tune solutions within these regions. This weakness can lead to slow convergence or stagnation on complex landscapes [25]. To address these limitations, researchers have developed hybrid DE algorithms that integrate complementary optimization techniques, creating synergistic systems that leverage the strengths of each component.

The fundamental challenge in metaheuristic optimization lies in balancing exploration and exploitation throughout the search process. Exploration involves investigating diverse regions of the search space to avoid local optima, while exploitation focuses on refining promising solutions through intensive local search [26]. Single-method algorithms often struggle to maintain this balance across different problem types and stages of optimization. Hybridization addresses this challenge by combining algorithms with complementary characteristics. For DE, this typically means pairing its strong exploratory capabilities with methods that excel at local refinement, such as Vortex Search (VS), which demonstrates strong exploitative properties [25].

This section provides a comprehensive comparison of hybrid DE algorithms, with particular emphasis on the novel DE/VS hybrid. We examine experimental performance across benchmark functions and engineering problems, analyze the underlying mechanisms driving their success, and provide detailed methodologies for implementing and evaluating these advanced optimization techniques within the broader context of performance analysis on unimodal and multimodal functions.

Theoretical Foundations of DE and Vortex Search

Differential Evolution: Core Mechanics and Variants

Differential Evolution operates by maintaining a population of candidate solutions and iteratively improving them through cycles of mutation, crossover, and selection. The classic DE algorithm utilizes simple yet effective mutation strategies, such as DE/rand/1, where a mutant vector is generated by adding a scaled difference between two population vectors to a third vector [2]. The algorithm's performance is significantly influenced by control parameters, including population size (NP), mutation factor (F), and crossover rate (CR) [2].
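The mutation, crossover, and selection cycle described above can be sketched as follows. This is a minimal DE/rand/1/bin for minimization; the default values of `np_size`, `f`, and `cr`, the bound-clipping repair rule, and the fixed generation count are illustrative assumptions rather than recommended settings.

```python
import random

def de_rand_1_bin(objective, bounds, np_size=20, f=0.5, cr=0.9,
                  generations=100, seed=0):
    """Minimize `objective` over box `bounds` with classic DE/rand/1/bin."""
    rng = random.Random(seed)
    dim = len(bounds)
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(np_size)]
    fitness = [objective(x) for x in pop]
    for _ in range(generations):
        new_pop, new_fit = [], []
        for i in range(np_size):
            # Mutation: three mutually distinct vectors, none equal to target i
            r1, r2, r3 = rng.sample([k for k in range(np_size) if k != i], 3)
            mutant = [pop[r1][d] + f * (pop[r2][d] - pop[r3][d])
                      for d in range(dim)]
            # Binomial crossover; j_rand guarantees one mutant component
            j_rand = rng.randrange(dim)
            trial = [mutant[d] if (rng.random() < cr or d == j_rand)
                     else pop[i][d] for d in range(dim)]
            # Repair by clipping to the bounds (one of several possible rules)
            trial = [min(max(v, lo), hi) for v, (lo, hi) in zip(trial, bounds)]
            # Greedy one-to-one selection between target and trial
            trial_fit = objective(trial)
            if trial_fit <= fitness[i]:
                new_pop.append(trial)
                new_fit.append(trial_fit)
            else:
                new_pop.append(pop[i])
                new_fit.append(fitness[i])
        pop, fitness = new_pop, new_fit
    best = min(range(np_size), key=lambda k: fitness[k])
    return pop[best], fitness[best]

def sphere(x):
    return sum(v * v for v in x)

best_x, best_f = de_rand_1_bin(sphere, [(-5.0, 5.0)] * 5)
```

The three control parameters named in the text map directly onto `np_size` (NP), `f` (F), and `cr` (CR), which makes it easy to observe their effect by rerunning the sketch with different values.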

Recent advancements have led to sophisticated DE variants that address its limitations. Self-adaptive DE (SaDE) dynamically adjusts control parameters during the optimization process based on historical performance [25]. Composite DE (CoDE) employs multiple mutation strategies and parameter settings simultaneously [25]. Multi-population DE strategies divide the main population into subpopulations that explore different regions of the search space, occasionally exchanging information through migration mechanisms [25]. The LSHADE-RSP algorithm incorporates rank-based selective pressure and linear population size reduction, significantly enhancing convergence performance [27]. These advanced DE variants form the foundation for many successful hybridization approaches.
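The linear population size reduction used in L-SHADE-style algorithms can be written as a one-line schedule. The sketch below assumes the usual form in which the population shrinks linearly from an initial size to a minimum size as the evaluation budget is consumed; the function name and the default `n_min=4` (the smallest size that still supports current-to-pbest/1 mutation) are illustrative.

```python
def lpsr_population_size(nfe, max_nfe, n_init, n_min=4):
    """Target population size after `nfe` of `max_nfe` function evaluations.

    Linear interpolation from n_init (at nfe = 0) to n_min (at nfe = max_nfe).
    """
    return round(n_init + (n_min - n_init) * nfe / max_nfe)

# Population size at the start, midpoint, and end of a 1000-evaluation budget
sizes = [lpsr_population_size(nfe, 1000, 100) for nfe in (0, 500, 1000)]
```

When the target size drops below the current population size, the worst-ranked individuals are typically removed, which gradually shifts the search from exploration toward exploitation.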

Vortex Search Algorithm: Principles and Strengths

Vortex Search is a single-solution based metaheuristic inspired by the vortex pattern created by the vortical flow of stirred fluids. As a trajectory method, VS maintains and iteratively improves a single solution, modeling its search behavior through an adaptive step size adjustment scheme that mimics a vortex's contracting pattern [26]. The algorithm initiates with a large step size to promote broad exploration, then systematically reduces it to enable finer exploitation as the search progresses.

The VS algorithm demonstrates particular strength in local refinement due to its mathematically structured step size reduction mechanism. This allows it to efficiently converge toward local optima once promising regions have been identified. However, its single-solution approach and deterministic pattern can limit its exploratory capabilities, making it susceptible to becoming trapped in local optima on highly multimodal landscapes [25]. This characteristic makes VS an ideal complement to DE's strong global search capabilities.
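The contracting search pattern can be illustrated with a deliberately simplified sketch. It keeps a single center, samples Gaussian candidates around it, and shrinks the sampling radius over time. Note the assumptions: the radius here decays linearly and out-of-bounds candidates are clipped, whereas the original Vortex Search uses its own radius schedule and boundary handling, so this is not a faithful reimplementation.

```python
import random

def vortex_search_sketch(objective, bounds, iterations=200,
                         n_candidates=20, seed=0):
    """Single-solution search with a shrinking Gaussian neighbourhood."""
    rng = random.Random(seed)
    center = [(lo + hi) / 2.0 for lo, hi in bounds]   # start at the box center
    radius0 = [(hi - lo) / 2.0 for lo, hi in bounds]  # initial radius per axis
    best_x, best_f = center[:], objective(center)
    for t in range(iterations):
        shrink = 1.0 - t / iterations  # assumed linear schedule, stays > 0
        for _ in range(n_candidates):
            cand = [min(max(rng.gauss(c, r * shrink), lo), hi)
                    for c, r, (lo, hi) in zip(center, radius0, bounds)]
            cand_f = objective(cand)
            if cand_f < best_f:
                best_x, best_f = cand, cand_f
        center = best_x[:]  # the vortex re-centers on the best solution so far
    return best_x, best_f

def shifted_sphere(x):
    return sum((v - 1.0) ** 2 for v in x)

vs_x, vs_f = vortex_search_sketch(shifted_sphere, [(-5.0, 5.0)] * 3)
```

Because every candidate is drawn around one center, a poor early re-centering decision on a multimodal function cannot be undone once the radius has contracted, which is the local-entrapment risk described above.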

Synergistic Potential of DE/VS Hybridization

The DE/VS hybrid algorithm represents a strategic fusion of complementary optimization philosophies. DE contributes population diversity and robust global exploration capabilities, effectively scanning the search space to identify promising regions. VS provides precision exploitation, offering an efficient mechanism for refining solutions within these regions [25]. This complementary relationship creates a balanced optimization system that mitigates the weaknesses of both parent algorithms.

The DE/VS framework incorporates a hierarchical subpopulation structure that enables the simultaneous application of both methods. Some subpopulations may focus on exploration through DE operations, while others concentrate on exploitation through VS, with dynamic information exchange between groups [25]. Additionally, the implementation of dynamic population size adjustment ensures computational resources are allocated efficiently throughout the optimization process, maintaining exploration in early stages while enabling intensive exploitation as convergence approaches [25].

Experimental Protocols and Methodologies

Benchmark Functions and Evaluation Criteria

Performance evaluation of hybrid DE algorithms typically employs standardized benchmark functions that represent various optimization challenges. Unimodal functions (with a single optimum) test an algorithm's exploitation capability and convergence speed, while multimodal functions (with multiple optima) evaluate its exploration capability and ability to avoid local entrapment [15]. Common unimodal benchmarks include Sphere, Ellipsoid, and Schwefel 1.2 functions, whereas multimodal benchmarks include Rastrigin, Schwefel, Griewangk, and Ackley functions [15].
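For reference, three of the benchmark functions named above can be written in a few lines. These are the standard unshifted, unrotated textbook forms, each with a global minimum of 0 at the origin; competition suites such as CEC typically apply shifts and rotations on top of them.

```python
import math

def sphere(x):
    """Unimodal: sum of squares."""
    return sum(v * v for v in x)

def rastrigin(x):
    """Multimodal: regular grid of local minima on a parabolic bowl."""
    return 10.0 * len(x) + sum(
        v * v - 10.0 * math.cos(2.0 * math.pi * v) for v in x)

def ackley(x):
    """Multimodal: nearly flat outer region with a deep central funnel."""
    n = len(x)
    mean_sq = sum(v * v for v in x) / n
    mean_cos = sum(math.cos(2.0 * math.pi * v) for v in x) / n
    return (-20.0 * math.exp(-0.2 * math.sqrt(mean_sq))
            - math.exp(mean_cos) + 20.0 + math.e)

origin = [0.0] * 10
values_at_origin = [sphere(origin), rastrigin(origin), ackley(origin)]
```

Evaluating an optimizer on Sphere first and Rastrigin or Ackley second is a quick way to separate convergence-speed problems from local-entrapment problems.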

Standardized evaluation protocols involve multiple independent runs (typically 25 to 51) to account for algorithmic stochasticity [25] [26]. Performance is measured using metrics such as mean error (the difference between the best value found and the known global optimum), success rate (the percentage of runs that reach the global optimum within a tolerance), and convergence speed (the number of function evaluations needed to reach a given solution quality). Statistical significance tests, such as the Wilcoxon signed-rank test and the Friedman test, are then used to verify that observed performance differences are not due to chance [28] [26]. The IEEE CEC (Congress on Evolutionary Computation) benchmark suites (e.g., CEC 2019, CEC 2020) provide standardized function collections and evaluation frameworks for rigorous comparison [28] [27].
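The run-level metrics described above reduce to a few lines of bookkeeping. The sketch below assumes that each independent run reports its best objective value and that the global optimum is known; the function name `summarize_runs` and the default tolerance of 1e-8 are illustrative assumptions.

```python
import statistics

def summarize_runs(best_values, known_optimum, tolerance=1e-8):
    """Aggregate the best objective values from independent runs."""
    errors = [abs(v - known_optimum) for v in best_values]
    return {
        "mean_error": statistics.mean(errors),
        "std_error": statistics.stdev(errors) if len(errors) > 1 else 0.0,
        # Fraction of runs whose error falls within the tolerance
        "success_rate": sum(e <= tolerance for e in errors) / len(errors),
    }

# Synthetic run results, for illustration only
summary = summarize_runs([0.0, 0.0, 1.0, 1.0], known_optimum=0.0)
```

Reporting the standard deviation alongside the mean matters because two algorithms with the same mean error can differ sharply in reliability across runs.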

DE/VS Implementation Methodology

The DE/VS hybrid implementation follows a structured framework that coordinates both algorithms' operations. The typical workflow begins with population initialization, often using space-filling methods like Latin Hypercube Sampling or quasi-random sequences to ensure uniform coverage [25]. The optimization process then proceeds as follows:

- Subpopulation Division: The main population is divided into hierarchical groups, with some assigned to DE operations and others to VS operations [25].

- Differential Evolution Phase: DE subpopulations undergo mutation (using strategies like current-to-pbest/1), crossover (binomial or exponential), and selection [25] [27].

- Vortex Search Phase: VS subpopulations perform localized search using the adaptive step size mechanism, with the step size systematically reduced over iterations [25] [26].

- Information Exchange: Periodic migration of solutions between subpopulations allows promising regions discovered by DE to be refined by VS and vice versa.

- Dynamic Resource Allocation: Population sizes and computational effort allocated to DE and VS components are adjusted based on their recent performance [25].

Control parameter adaptation is crucial for robust performance. The DE component may employ success-history based parameter adaptation [27], while the VS component automatically adjusts its step size based on iteration count or performance feedback [25] [26].
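The workflow above can be sketched as a toy two-subpopulation loop. This is an illustration of the general idea only and should not be read as the published DE/VS algorithm [25]: it uses a fixed half-and-half split, plain DE/rand/1/bin instead of current-to-pbest/1, uniform random initialization, a linearly shrinking Gaussian radius for the VS-style phase, and a simple best-replaces-worst migration. Dynamic resource allocation and parameter adaptation are omitted, and all names and default values are hypothetical.

```python
import random

def de_vs_sketch(objective, bounds, np_size=20, generations=100,
                 f=0.5, cr=0.9, migrate_every=10, seed=0):
    """Toy DE + vortex-style hybrid for minimization (illustrative only)."""
    rng = random.Random(seed)
    dim = len(bounds)

    def clip(x):
        return [min(max(v, lo), hi) for v, (lo, hi) in zip(x, bounds)]

    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(np_size)]
    fit = [objective(x) for x in pop]
    half = np_size // 2
    de_idx = list(range(half))            # subpopulation evolved by DE
    vs_idx = list(range(half, np_size))   # subpopulation refined VS-style

    for g in range(generations):
        # DE phase: DE/rand/1/bin with in-place (asynchronous) replacement
        for i in de_idx:
            r1, r2, r3 = rng.sample([k for k in de_idx if k != i], 3)
            j_rand = rng.randrange(dim)
            trial = clip([
                pop[r1][d] + f * (pop[r2][d] - pop[r3][d])
                if (rng.random() < cr or d == j_rand) else pop[i][d]
                for d in range(dim)])
            trial_fit = objective(trial)
            if trial_fit <= fit[i]:
                pop[i], fit[i] = trial, trial_fit

        # VS-style phase: sample around the best VS member, radius shrinks
        shrink = 1.0 - g / generations
        center_idx = min(vs_idx, key=lambda k: fit[k])
        center = pop[center_idx][:]
        for i in vs_idx:
            if i == center_idx:
                continue
            cand = clip([rng.gauss(c, 0.5 * (hi - lo) * shrink)
                         for c, (lo, hi) in zip(center, bounds)])
            cand_fit = objective(cand)
            if cand_fit <= fit[i]:
                pop[i], fit[i] = cand, cand_fit

        # Information exchange: each group's best replaces the other's worst
        if (g + 1) % migrate_every == 0:
            de_best = min(de_idx, key=lambda k: fit[k])
            vs_best = min(vs_idx, key=lambda k: fit[k])
            de_worst = max(de_idx, key=lambda k: fit[k])
            vs_worst = max(vs_idx, key=lambda k: fit[k])
            migrant_de = (pop[de_best][:], fit[de_best])
            migrant_vs = (pop[vs_best][:], fit[vs_best])
            pop[vs_worst], fit[vs_worst] = migrant_de
            pop[de_worst], fit[de_worst] = migrant_vs

    best = min(range(np_size), key=lambda k: fit[k])
    return pop[best], fit[best]

hybrid_x, hybrid_f = de_vs_sketch(lambda x: sum(v * v for v in x),
                                  [(-5.0, 5.0)] * 5)
```

Keeping the two phases on separate index sets makes it straightforward to experiment with the split ratio or the migration interval, which are the knobs a dynamic resource allocation scheme would adjust automatically.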

Comparative Experimental Setup

Comparative analysis of hybrid DE algorithms requires careful experimental design. Studies typically include multiple DE variants (SHADE, LSHADE-RSP, ELSHADE-SPACMA) and other metaheuristics (PSO, ABC, SA) as reference points [28]. Testing encompasses both numerical benchmarks (IEEE CEC functions) and real-world engineering problems (structural design, vehicle routing, controller tuning) to evaluate general and practical performance [25] [28].

Computational resource allocation follows standardized guidelines, such as those specified in CEC competitions, where the maximum number of function evaluations (NFE) scales with problem dimensionality [27]. For example, the CEC 2020 guidelines allocate 5·10⁴ NFE for 5 dimensions, 10⁶ for 10 dimensions, 3·10⁶ for 15 dimensions, and 10⁷ for 20 dimensions [27]. Fixing the evaluation budget in this way ensures a fair comparison across algorithms with different operational characteristics.

Table 1: Key Benchmark Function Types for Algorithm Evaluation

| Function Type | Characteristics | Performance Metrics | Example Functions |
|---|---|---|---|
| Unimodal | Single optimum | Convergence rate, Exploitation efficiency | Sphere, Ellipsoid, Schwefel 1.2 |
| Multimodal | Multiple optima | Exploration capability, Local optima avoidance | Rastrigin, Schwefel, Griewangk, Ackley |
| Composite | Mixed characteristics | Balanced performance, Adaptation capability | CEC 2019/2020 Benchmark Functions |

Performance Analysis and Comparison

Numerical Benchmark Results