Paddy Field Algorithm (PFA) Explained: A Versatile Optimizer for Biomedical and Chemical Research

Abstract

This article provides a comprehensive exploration of the Paddy Field Algorithm (PFA), a nature-inspired evolutionary optimization technique. Tailored for researchers, scientists, and drug development professionals, we detail PFA's core principles, inspired by plant reproduction, and its practical implementation for complex problem-solving. The content covers its application in hyperparameter tuning, molecular generation, and experimental planning, alongside a comparative performance analysis against Bayesian and other evolutionary methods. Practical guidance on parameter tuning and strategies to overcome common challenges is also included, highlighting PFA's potential to accelerate discovery in automated experimentation and clinical research.

Understanding the Paddy Field Algorithm: Biological Inspiration and Core Mechanics

The Evolutionary Algorithm Landscape and a Niche for PFA

Evolutionary Algorithms (EAs) represent a class of population-based metaheuristic optimization techniques inspired by biological evolution. These algorithms use mechanisms such as selection, mutation, crossover, and survival of the fittest to iteratively improve a population of candidate solutions toward an optimal solution for a given problem [1]. Within the broad family of EAs, several distinct approaches have emerged, including genetic algorithms (GAs), evolution strategies, differential evolution, and estimation of distribution algorithms [1].

The development of EAs has primarily centered on the creation of novel selection and mutation operators and, in the case of genetic algorithms, crossover operators that define their behavior and differentiate them from one another [1]. While these algorithms have demonstrated considerable success across numerous domains, certain limitations persist, particularly regarding:

- Premature convergence to local optima

- Difficulty balancing exploration and exploitation

- Sensitivity to parameter tuning

- Computational expense for complex, high-dimensional problems

The Paddy Field Algorithm (PFA) is a more recent evolutionary optimizer that targets these challenges through a biologically inspired approach. Unlike traditional EAs that often rely on direct fitness-based selection alone, PFA adds density-based reinforcement of solutions, which gives it a different way of navigating complex search spaces [1] [2].

Biological Inspirations and Core Metaphors

PFA draws its inspiration from the agricultural processes of rice cultivation, specifically the reproductive behavior of paddy plants and their relationship with environmental factors [2]. The algorithm conceptually maps key biological elements to computational optimization components:

Table 1: Biological to Computational Mapping in PFA

| Biological Concept | Computational Equivalent | Role in Optimization |
|---|---|---|
| Rice seeds | Initial candidate solutions | Starting points for optimization |
| Soil quality | Objective function value | Quality measure of solutions |
| Plant fitness | Fitness score | Quantitative solution quality |
| Pollination | Solution propagation | Generating new candidate solutions |
| Seed dispersal | Parameter space exploration | Maintaining population diversity |
| Farmer collective intelligence | Memory mechanism | Preserving historical search information |

The fundamental reproductive principle in PFA is based on the relationship between soil quality, pollination, and plant propagation to maximize plant fitness. This biological foundation translates to an optimization process that considers both solution quality and population density when generating new candidate solutions [1] [2]. Unlike niching-based genetic algorithms, PFA allows a single parent solution to produce multiple offspring based on both its relative fitness and a pollination factor derived from solution density in its neighborhood [1].

PFA Working Principles and Mathematical Formulation

The Paddy Field Algorithm operates through a structured five-phase process that transforms initial random seeds into optimized solutions through iterative improvement [1] [2]:

The Five-Phase Process

Phase 1: Sowing. The algorithm initializes with a randomly generated set of parameters (seeds) that serve as starting points for evaluation. The size of this initial population represents a trade-off between computational cost and the algorithm's exploratory capabilities [1].

Phase 2: Selection. The objective function ( f(x) ) is evaluated for all candidate solutions, converting seeds into plants with associated fitness values ( y = f(x) ). A user-defined threshold parameter ( H ) selects the top-performing plants based on sorted fitness values, from the threshold value ( y_t ) up to the maximum ( y_{max} ) [1]:

[ H[y] = H[f(x)] = f(x_H) = y_H = \{y_t, \dots, y_{max}\} \quad \forall x_H \in x, \; y_H \in y ]
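As a rough illustration of the selection step, the sketch below sorts evaluated plants by fitness and keeps the top fraction. It assumes maximization and interprets H as a fraction of the population. The function name `select_top` and the `(x, y)` tuple layout are illustrative choices, not the API of the Paddy package.

```python
def select_top(plants, h_fraction):
    """Return the best plants, ordered from y_max down to the threshold y_t.

    plants: list of (x, y) pairs, where y = f(x) is to be maximized.
    h_fraction: assumed reading of H as a fraction in (0, 1].
    """
    if not 0 < h_fraction <= 1:
        raise ValueError("h_fraction must lie in (0, 1]")
    n_keep = max(1, int(round(h_fraction * len(plants))))
    ranked = sorted(plants, key=lambda p: p[1], reverse=True)
    return ranked[:n_keep]


plants = [([0.1], 2.0), ([0.4], 9.0), ([0.7], 5.0), ([0.9], 7.0), ([0.2], 1.0)]
selected = select_top(plants, h_fraction=0.4)
y_max, y_t = selected[0][1], selected[-1][1]  # 9.0 and 7.0 for this toy data
```

With five plants and a fraction of 0.4, two plants survive, and the lower of their two fitness values becomes the threshold value used in the seeding step.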

Phase 3: Seeding. Selected plants ( y^* \in y_H ) generate new seeds based on their normalized fitness values and a user-defined maximum seed count ( s_{max} ) [1]:

[ s = s_{max} \left( \frac{y^* - y_t}{y_{max} - y_t} \right) \quad \forall y^* \in y_H ]
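The seeding formula is easy to check numerically. The sketch below evaluates it for three selected plants. The helper name `seed_count` and the handling of the degenerate case where all selected plants tie are assumptions made for illustration.

```python
def seed_count(y_star, y_t, y_max, s_max):
    """Seeds for one selected plant via min-max normalized fitness."""
    if y_max == y_t:
        # Assumption: when every selected plant has the same fitness,
        # the formula is undefined, so each plant gets the maximum.
        return s_max
    return s_max * (y_star - y_t) / (y_max - y_t)


counts = [seed_count(y, y_t=7.0, y_max=9.0, s_max=10) for y in (9.0, 8.0, 7.0)]
# counts == [10.0, 5.0, 0.0]
```

Note that, under this formula, the plant sitting exactly at the threshold value receives zero seeds, while the best plant receives the full maximum.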

Phase 4: Pollination. The local density of selected plants modulates propagation: plants in denser regions keep more of their seeds, mimicking pollination in dense paddy fields [2].

Phase 5: Dispersion. New seeds disperse through the parameter space via Gaussian mutation, maintaining exploration capabilities while exploiting promising regions identified through previous iterations [2].

Key Algorithm Parameters

PFA's behavior can be tuned through several parameters that control its exploration-exploitation balance:

Table 2: PFA Parameters and Their Roles

| Parameter | Symbol | Role | Impact on Performance |
|---|---|---|---|
| Population size | ( N ) | Number of candidate solutions | Larger values enhance exploration but increase computational cost |
| Selection threshold | ( H ) | Proportion of plants selected | Affects selective pressure and convergence speed |
| Maximum seed count | ( s_{max} ) | Maximum offspring per plant | Controls propagation of high-quality solutions |
| Dispersion factor | ( \sigma ) | Gaussian mutation strength | Balances local refinement vs. global exploration |
| Number of iterations | ( T ) | Termination condition | Determines search exhaustiveness |

Comparative Performance Analysis

Benchmarking Against Alternative Optimizers

PFA has been systematically evaluated against several established optimization approaches across diverse problem domains, demonstrating its versatility and robustness [1]:

Table 3: Performance Comparison Across Optimization Algorithms

| Algorithm | Strengths | Weaknesses | Best-Suited Applications |
|---|---|---|---|
| Paddy Field Algorithm (PFA) | Robust versatility, avoids premature convergence, lower runtime, balanced exploration/exploitation [1] [2] [3] | Sensitive to initial conditions, limited theoretical foundation [2] | Chemical system optimization, hyperparameter tuning, complex multimodal problems [1] |
| Bayesian Optimization (Gaussian Process) | Sample efficiency, uncertainty quantification | Computational overhead for large datasets, limited scalability | Expensive black-box functions, low-dimensional parameter spaces |
| Tree-structured Parzen Estimator (TPE) | Handles complex search spaces, good for hyperparameter optimization | Can struggle with high-dimensional continuous spaces | Neural architecture search, categorical parameter optimization |
| Genetic Algorithm (GA) | Global search capability, handles diverse variable types | Premature convergence, parameter sensitivity | Broad applicability across discrete and continuous domains |
| Evolution Strategy (ES) | Strong local search, self-adaptation | May require problem-specific adaptations | Continuous optimization, reinforcement learning |

Chemical System Optimization Results

In chemical optimization tasks, PFA demonstrated particular effectiveness, outperforming or matching Bayesian optimization approaches while requiring significantly lower computational runtime [1] [3]. Specific applications included:

- Global optimization of bimodal distributions: PFA successfully identified global optima without becoming trapped in local solutions [1]

- Hyperparameter optimization for neural networks: Classification tasks involving solvent classification for reaction components showed improved efficiency [1]

- Targeted molecule generation: Optimization of input vectors for decoder networks demonstrated PFA's capability in generative chemical tasks [1]

- Experimental planning: Efficient sampling of discrete experimental spaces for optimal condition identification [1]

Implementation Protocols and Experimental Setups

Standard PFA Implementation Workflow

PFA Experimental Protocol for Chemical Optimization

The following protocol outlines a standardized approach for applying PFA to chemical optimization problems, based on methodologies successfully implemented in recent studies [1]:

Problem Formulation

- Define the objective function ( f(x) ) representing the chemical outcome to optimize

- Identify parameter constraints and bounds for all variables ( x = \{x_1, x_2, \dots, x_n\} )

- Establish appropriate fitness metrics aligned with chemical objectives

Algorithm Initialization

- Set population size based on problem dimensionality (typically 50-100 for moderate dimensions)

- Define selection threshold ( H ) (commonly 0.2-0.4 of population size)

- Initialize maximum seed count ( s_{max} ) (typically 5-20)

- Configure Gaussian dispersion parameters based on parameter scales

Iteration and Monitoring

- Execute the five-phase PFA process (sowing, selection, seeding, pollination, dispersion)

- Track convergence metrics and population diversity

- Implement early stopping if fitness plateaus

- Maintain memory of historical evaluations for expensive objective functions
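For the last item, one simple way to keep a memory of past evaluations is to cache results by parameter vector, so that an expensive experiment or simulation is never repeated for the same inputs. The wrapper below is a minimal sketch. The names `with_memory` and `expensive_yield` are hypothetical, and the objective is a toy stand-in.

```python
def with_memory(f):
    """Wrap f so that repeated parameter vectors are evaluated only once."""
    memory = {}

    def evaluate(x):
        key = tuple(x)  # lists are not hashable, tuples are
        if key not in memory:
            memory[key] = f(x)
        return memory[key]

    evaluate.memory = memory  # expose the history for later analysis
    return evaluate


calls = []


def expensive_yield(x):
    calls.append(tuple(x))  # stand-in for a costly experiment or simulation
    return -(x[0] - 2) ** 2


objective = with_memory(expensive_yield)
results = [objective([1, 0]), objective([2, 0]), objective([1, 0])]
# Three requests, but only two real evaluations are recorded in `calls`.
```

Exact-match caching mainly pays off in discrete experimental spaces. With continuous Gaussian mutation, two seeds rarely land on exactly the same point.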

Validation and Analysis

- Verify optimal solutions through experimental validation or cross-validation

- Analyze parameter sensitivity and solution robustness

- Compare against baseline optimization approaches

Neural Architecture Search Application

In deep learning applications, PFA has been used to evolve Convolutional Neural Network (CNN) architectures. One study applied PFA to geographical landmark recognition using the Google Landmarks Dataset V2 and reported that optimized hyperparameters raised accuracy from 0.53 to 0.76, a relative improvement of over 40% [4] [5]. The experimental protocol for this application included:

- Representation: Encoding CNN hyperparameters (filter sizes, layer depths, connectivity patterns) as PFA parameters

- Fitness Evaluation: Using validation accuracy as the objective function with cross-validation

- Constraints: Incorporating computational budget limits and architectural constraints

- Validation: Comparing evolved architectures against manually designed baselines and other NAS approaches

Table 4: Research Reagent Solutions for PFA Implementation

| Resource Category | Specific Tools/Libraries | Function | Application Context |
|---|---|---|---|
| Software Libraries | Paddy (Python package) [1] | Primary PFA implementation | Chemical system optimization, general optimization tasks |
| Benchmarking Frameworks | Hyperopt, Ax, EvoTorch [1] | Comparative performance analysis | Algorithm validation and selection |
| Visualization Tools | Matplotlib, Plotly, Graphviz | Results visualization and algorithm analysis | Performance monitoring and interpretation |
| Chemical Simulation | RDKit, Schrödinger Suite, OpenMM | Objective function evaluation | Cheminformatics and molecular optimization |
| Neural Network Framework | TensorFlow, PyTorch, Keras | Fitness function computation | Hyperparameter optimization and NAS |

Advantages and Research Directions

Key Strengths of PFA

The Paddy Field Algorithm offers several distinct advantages that make it particularly suitable for complex optimization scenarios:

- High Convergence Rate: PFA demonstrates rapid convergence to high-quality solutions compared to many alternative approaches [2]

- Balanced Exploration-Exploitation: The density-based pollination mechanism maintains an effective balance between exploring new regions and refining promising areas [2]

- Robustness: PFA maintains strong performance across diverse problem domains, from mathematical functions to real-world chemical and deep learning applications [1] [4]

- Early Convergence Avoidance: The algorithm's structure helps prevent premature convergence to local optima, a common limitation in many evolutionary approaches [1] [3]

- Scalability: PFA effectively handles optimization problems with moderate to high dimensionality [2]

Current Challenges and Future Research Directions

Despite its promising performance, PFA faces several challenges that represent opportunities for further investigation:

- Theoretical Foundation: Limited mathematical analysis of convergence properties and theoretical guarantees compared to established algorithms [2]

- Parameter Sensitivity: Performance can be sensitive to initial conditions and parameter settings, though to a lesser degree than some alternatives [2]

- Constraint Handling: Effective incorporation of complex constraints remains challenging, particularly for highly constrained real-world problems [2]

- High-Dimensional Optimization: Scaling to very high-dimensional spaces (hundreds or thousands of dimensions) requires further algorithmic enhancements

- Multi-objective Extension: Development of multi-objective PFA variants for Pareto-optimal solution identification

The algorithm's performance in chemical optimization and neural architecture search suggests promising applications in drug discovery, materials science, and automated machine learning, where efficient global optimization of expensive black-box functions is paramount [1] [4].

The Paddy Field Algorithm (PFA) is a nature-inspired metaheuristic optimizer whose core operating principles derive from the biological processes observed in rice cultivation. The transition from agricultural practice to computational optimization illustrates how biological metaphors can help tackle hard optimization problems, including NP-hard ones, across scientific disciplines such as drug development and chemical system optimization [4] [6].

Inspired by the natural phenomena of seed sowing, plant growth, and pollination in paddy fields, PFA belongs to the class of population-based evolutionary algorithms. It distinguishes itself through a unique density-based reinforcement mechanism that effectively balances exploration and exploitation within the search space [2] [6]. This technical guide provides an in-depth examination of PFA's core principles, biological foundations, and practical implementations, with a specific emphasis on applications relevant to researchers and scientists in chemical and pharmaceutical development.

Biological Inspiration and Core Principles

The PFA's operational framework is metaphorically built upon the complete lifecycle of rice cultivation, translating agricultural practices into robust optimization strategies.

The Agricultural Foundation

Rice cultivation, a practice refined over millennia, involves a series of deliberate steps: seed selection, planting, growth influenced by soil quality and pollination, and harvesting. The PFA abstracts this process into a computational model where solution candidates are treated as "rice seeds" [2]. These seeds are evaluated for their quality (fitness), with higher-quality plants producing more offspring, analogous to natural selection pressure. The algorithm incorporates the concept of group intelligence, observed in how farmers collectively manage paddies, by grouping seeds into "paddy fields" evaluated on average quality, thus maintaining population diversity and preventing premature convergence [2].

A crucial biological inspiration is the memory mechanism observed in rice plants, which adapt to changing conditions by storing environmental information. The PFA mimics this through a memory structure that retains historical information about solution candidates, effectively guiding the search toward promising regions of the solution space [2].

From Agriculture to Algorithm

The translation of biological observations into mathematical operations follows a structured mapping:

Table: Biological to Computational Mapping in PFA

| Biological Process | Computational Operation | Optimization Function |
|---|---|---|
| Seed Sowing | Initialization of parameter vectors | Define numerical propagation space |
| Soil Quality | Evaluation of objective function | Assess solution fitness |
| Plant Pollination | Density-based propagation | Reinforce promising search regions |
| Seed Dispersal | Gaussian mutation | Explore adjacent parameter space |
| Harvesting | Selection of optimal solutions | Extract best parameter sets |

This biological metaphor enables PFA to perform directed sampling of parameter space without directly inferring the underlying objective function, making it particularly valuable for complex optimization landscapes where gradient information is unavailable or computationally expensive to obtain [6].

The Paddy Field Algorithm: Formal Specification

Algorithmic Formulation

The PFA operates through a five-phase process that transforms a population of solution candidates toward optimality [6]:

- Sowing: Initialization with a random set of parameter vectors (seeds)

- Selection: Evaluation and selection of top-performing plants based on fitness

- Seeding: Determination of offspring count per selected plant based on fitness and density

- Pollination: Density-based reinforcement through elimination of sparse solutions

- Dispersion: Gaussian mutation of parameters to explore adjacent spaces

Mathematically, the seeding and pollination steps combine fitness-proportional seeding with density-dependent reinforcement. The number of seeds produced by a plant is determined by its relative fitness and a pollination factor derived from solution density within its neighborhood [6]. This dual dependence distinguishes PFA from traditional evolutionary approaches, as it considers both solution quality and distribution within the parameter space.
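To make the five phases concrete, here is a deliberately simplified, self-contained sketch of one possible loop. It is not the Paddy package's implementation. It assumes maximization and a selection threshold given as a plant count `h`. The exponential pollination factor exp(v / v_max - 1) is one plausible choice, used here so that isolated plants still keep some seeds. Clipping offspring to the bounds is also an assumption, and all names are illustrative.

```python
import math
import random


def paddy_sketch(f, bounds, n_seeds=30, h=10, s_max=8,
                 radius=0.5, sigma=0.2, iterations=20, seed=0):
    """Maximize f over box `bounds` with a simplified five-phase loop."""
    rng = random.Random(seed)
    # Sowing: uniform random seeds inside the bounds.
    seeds = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_seeds)]
    plants = []  # every evaluated (x, y) pair, kept across iterations
    for _ in range(iterations):
        plants.extend((x, f(x)) for x in seeds)
        # Selection: keep the h best plants seen so far.
        plants.sort(key=lambda p: p[1], reverse=True)
        selected = plants[:h]
        y_max, y_t = selected[0][1], selected[-1][1]
        # Neighbor counts within `radius` (Euclidean) among selected plants.
        counts = [sum(1 for j, (q, _y) in enumerate(selected)
                      if j != i and math.dist(x, q) < radius)
                  for i, (x, _y) in enumerate(selected)]
        v_max = max(counts)
        seeds = []
        for (x, y), v in zip(selected, counts):
            # Seeding: min-max normalized fitness scaled by s_max.
            s = s_max if y_max == y_t else s_max * (y - y_t) / (y_max - y_t)
            # Pollination (assumed form): factor in (0, 1], 1 for densest plants.
            u = math.exp(v / v_max - 1) if v_max > 0 else 1.0
            # Dispersion: Gaussian mutation of the parent, clipped to the bounds.
            for _k in range(round(s * u)):
                seeds.append([min(hi, max(lo, xi + rng.gauss(0.0, sigma)))
                              for xi, (lo, hi) in zip(x, bounds)])
        if not seeds:
            break  # nothing left to evaluate
    return plants[0]  # best (x, y) among all evaluated plants


best_x, best_y = paddy_sketch(
    lambda x: -(x[0] - 1) ** 2 - (x[1] + 2) ** 2,  # toy objective, optimum at (1, -2)
    bounds=[(-5, 5), (-5, 5)],
)
```

Keeping every evaluated plant in `plants` mirrors the option of selecting from the whole history rather than only the current iteration. Selecting from the current seeds alone would be an equally valid variant.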

The dispersion phase employs Gaussian mutation, where new parameter values are generated by sampling from a Gaussian distribution centered on parent values [6] [2]:

x_new = x_parent + N(0, σ)

where σ controls the exploration radius, often adaptively decreased during the optimization process to transition from global exploration to local exploitation.
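The mutation rule and a shrinking σ can be sketched in a few lines. The geometric decay schedule below is only one common choice and is an assumption here, since the text does not say which schedule is used. The function names are illustrative.

```python
import random


def disperse(parent, sigma, rng):
    """Return x_new = x_parent + N(0, sigma), applied per dimension."""
    return [xi + rng.gauss(0.0, sigma) for xi in parent]


def decayed_sigma(sigma0, decay, iteration):
    """Geometric schedule (an assumption): sigma_t = sigma0 * decay ** t."""
    return sigma0 * decay ** iteration


rng = random.Random(1)
sigmas = [decayed_sigma(1.0, 0.5, t) for t in range(4)]  # 1.0, 0.5, 0.25, 0.125
child = disperse([0.3, 1.2], sigmas[-1], rng)
```

Large early values of σ scatter offspring widely, and later small values keep them close to the parent for local refinement.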

Critical Parameterization

Successful implementation of PFA requires appropriate configuration of its key parameters:

Table: PFA Parameters and Their Optimization Impact

| Parameter | Function | Performance Impact |
|---|---|---|
| Population Size | Number of initial solution candidates | Larger sizes improve exploration but increase computational cost |
| Number of Paddy Fields | Grouping mechanism for seeds | Enhances diversity and prevents premature convergence |
| Growth Operators | Problem-specific solution modification | Directly determines solution improvement capability |
| Selection Mechanism | Method for choosing best paddy field | Affects convergence speed and solution quality |
| Memory Mechanism | Storage of historical search information | Guides search toward promising regions |
| Termination Criteria | Conditions for stopping the algorithm | Balances solution quality with computational resources |

Research indicates that PFA combines a high convergence rate with an effective balance between exploration and exploitation, making it suitable for large-scale optimization problems with many variables [2].

Experimental Protocols and Implementation

Workflow Specification

The experimental implementation of PFA follows a structured workflow, described phase by phase in the framework below.

Detailed Methodological Framework

Initialization and Sowing Phase

The algorithm begins by generating an initial population of solution vectors, termed "rice seeds." The population size is user-defined and critically impacts downstream propagation. While larger populations provide better exploratory capability, they come with increased computational costs [6] [2]. Each seed represents a point in the n-dimensional parameter space: x = {x₁, x₂, ..., xₙ}.

Fitness Evaluation and Selection

Each solution candidate is evaluated using the objective function: y = f(x). Parameters yielding high fitness values (y_H ∈ y) are selected for propagation (y* ∈ y_H). The selection operator can be configured to choose only from the current iteration or the entire population, providing flexibility for different optimization scenarios [6].

Seeding and Pollination Mechanism

The number of seeds generated by a selected plant depends on both its relative fitness and local population density. This density-based pollination mechanism reinforces areas with higher concentrations of quality solutions, mimicking how rice plants in dense, healthy areas produce more offspring [6] [2]. The pollination factor is calculated based on the number of neighboring plants within a defined Euclidean distance in the parameter space.
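A neighbor-count pollination factor along these lines could look like the sketch below. Counting neighbors within a Euclidean radius follows the description above. The exponential mapping exp(v / v_max - 1) from counts to a factor in (0, 1] is an assumed form chosen for illustration, and the function names are hypothetical.

```python
import math


def neighbor_counts(points, radius):
    """Number of other points within `radius` (Euclidean) of each point."""
    return [sum(1 for j, q in enumerate(points)
                if j != i and math.dist(p, q) < radius)
            for i, p in enumerate(points)]


def pollination_factors(points, radius):
    """Map neighbor counts to factors in (0, 1]; densest plants get 1.0."""
    counts = neighbor_counts(points, radius)
    v_max = max(counts)
    if v_max == 0:
        # Assumption: with no neighbors anywhere, leave seed counts unchanged.
        return [1.0] * len(points)
    return [math.exp(v / v_max - 1) for v in counts]


points = [[0.0, 0.0], [0.1, 0.0], [0.0, 0.1], [3.0, 3.0]]
factors = pollination_factors(points, radius=0.5)
# The three clustered plants get 1.0; the isolated one gets exp(-1), about 0.37.
```

Multiplying each plant's seed count by its factor then trims offspring from sparse regions while leaving dense, high-quality regions at full strength.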

Dispersion and Termination

The dispersion phase applies Gaussian mutation to the pollinated seeds, scattering them within the parameter space. The degree of dispersion is controlled by the standard deviation of the Gaussian distribution, which can be adaptively tuned [2]. The algorithm terminates when convergence criteria are met or a maximum number of iterations is reached.
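The termination rule can be written as a small predicate. The version below stops on an iteration budget or when the best fitness has not improved by more than a tolerance over a window of recent iterations. The `patience` and `tol` parameters are illustrative assumptions rather than settings prescribed by the algorithm.

```python
def should_stop(best_history, max_iterations, patience=5, tol=1e-6):
    """Decide whether to stop, given the best fitness after each iteration.

    Assumes maximization, so best_history is non-decreasing.
    """
    if len(best_history) >= max_iterations:
        return True  # iteration budget exhausted
    if len(best_history) > patience:
        recent_gain = best_history[-1] - best_history[-1 - patience]
        return recent_gain < tol  # plateau: no meaningful improvement
    return False


plateaued = should_stop([1, 2, 2, 2, 2, 2, 2], max_iterations=100)
improving = should_stop([1, 2, 3, 4, 5, 6, 7], max_iterations=100)
```

Here `plateaued` is true because the best value has been stuck at 2 for five iterations, while `improving` is false.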

Application in Chemical and Drug Development

Chemical System Optimization

The Paddy software package, implementing PFA, has demonstrated robust performance in optimizing chemical systems and processes. In benchmark studies, Paddy outperformed or performed on par with Bayesian optimization methods and other evolutionary algorithms across various chemical optimization tasks [6]. Specific applications include:

- Molecular Generation: Optimizing input vectors for decoder networks in targeted molecule generation

- Experimental Planning: Sampling discrete experimental space for optimal experimental design

- Hyperparameter Optimization: Tuning artificial neural networks for chemical reaction classification

Paddy maintains strong performance while avoiding early convergence to local optima, a critical feature for exploring complex chemical spaces where global optima may be widely separated by energy barriers [6].

Convolutional Neural Network Evolution

In geographical landmark recognition, PFA has been applied to evolve Convolutional Neural Network (CNN) architectures. This neural architecture search (NAS) approach optimized CNN hyperparameters using the Google Landmarks Dataset V2, raising accuracy from 0.53 to 0.76, a relative improvement of over 40% [4].
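For clarity, the figure of over 40% is a relative gain computed from the two reported accuracies, not a gain in percentage points:

```latex
\[
\frac{0.76 - 0.53}{0.53} = \frac{0.23}{0.53} \approx 0.434
\]
```

That is a relative improvement of about 43%, corresponding to an absolute gain of 23 percentage points.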

The PFANET architecture demonstrates PFA's capability in addressing NP-Hard problems like neural architecture search, where the combinatorial explosion of possible architectures makes exhaustive search infeasible [4]. This approach has direct applications in drug discovery for optimizing neural networks used in quantitative structure-activity relationship (QSAR) modeling and molecular property prediction.

Research Reagents and Computational Tools

Implementation of PFA in research settings requires specific computational tools and frameworks:

Table: Essential Research Reagents for PFA Implementation

| Tool/Parameter | Function | Application Context |
|---|---|---|
| Paddy Python Library | Core PFA implementation | General-purpose optimization |
| Hyperopt Library | Benchmark comparison | Bayesian optimization comparison |
| Ax Platform with BoTorch | Bayesian optimization framework | Performance benchmarking |
| EvoTorch | Evolutionary algorithm implementation | Comparison with other evolutionary methods |
| TensorFlow/PyTorch | Neural network framework | CNN architecture evolution |
| Google Landmarks Dataset V2 | Benchmark dataset | Validation of evolved architectures |

Performance Analysis and Comparative Evaluation

Benchmarking Results

In comprehensive benchmarks against established optimization approaches, PFA has demonstrated competitive performance across multiple domains:

Table: Performance Benchmarking of PFA Against Alternative Algorithms

| Algorithm | Mathematical Optimization | Chemical System Optimization | Neural Architecture Search | Computational Efficiency |
|---|---|---|---|---|
| Paddy Field Algorithm (PFA) | Strong global optimization with local minima avoidance | Robust performance across tasks | >40% relative accuracy improvement in CNN evolution | Lower runtime vs. Bayesian methods |
| Bayesian Optimization (Ax) | Varies with acquisition function | Strong sample efficiency | Good performance | Higher computational overhead |
| Tree-structured Parzen Estimator (Hyperopt) | Moderate performance | Varies with problem structure | Limited reporting | Moderate efficiency |
| Evolutionary Algorithm (EvoTorch) | Good for continuous domains | Limited reporting | Established performance | Similar to PFA |
| Genetic Algorithm (EvoTorch) | Effective with crossover | Limited reporting | Established performance | Similar to PFA |

Advantages and Limitations

PFA offers several distinct advantages for research applications [2]:

- High Convergence Rate: Rapid progression toward optimal solutions

- Scalability: Effective performance on large-scale problems with many variables

- Balance of Exploration and Exploitation: Maintains diversity while intensifying search in promising regions

- Implementation Simplicity: Does not require specialized optimization knowledge

However, researchers should consider its limitations [2]:

- Theoretical Foundation: Lacks strong theoretical analysis compared to established algorithms

- Parameter Sensitivity: Performance can be sensitive to initial conditions and parameter settings

- Adoption Level: Relatively new algorithm with limited independent validation

The Paddy Field Algorithm represents a biologically-inspired approach to optimization that translates principles from rice cultivation into an effective computational strategy. Its unique density-based propagation mechanism, combined with fitness-proportional selection, enables robust performance across diverse optimization domains, particularly in chemical and pharmaceutical applications.

For researchers and drug development professionals, PFA offers a valuable tool for addressing complex optimization challenges, from experimental condition optimization to neural architecture search for molecular property prediction. The algorithm's ability to avoid premature convergence while maintaining rapid progression toward global optima makes it particularly suitable for high-dimensional, multimodal optimization landscapes common in chemical and biological domains.

As with any metaheuristic, successful application requires careful parameter tuning and problem-specific adaptation. However, PFA's biological foundation provides an intuitive framework for addressing complex optimization challenges in scientific research and drug development.

The Paddy Field Algorithm (PFA) is a nature-inspired metaheuristic optimization algorithm that emulates the reproductive behavior of rice plants to iteratively evolve optimal solutions for complex problems [1] [2]. Inspired by the biological processes of paddy cultivation, PFA operates on principles of group intelligence and density-based propagation, effectively balancing exploration and exploitation in high-dimensional search spaces [2]. This algorithm has demonstrated significant utility across diverse domains, from optimizing chemical systems and processes to evolving convolutional neural network architectures for geographical landmark recognition [1] [4]. Unlike traditional Bayesian optimization methods or genetic algorithms, PFA incorporates a unique density-based reinforcement mechanism that directs search efforts toward promising regions while maintaining innate resistance to premature convergence on local optima [1] [3]. The algorithm's robust performance, marked by excellent runtimes and versatility, makes it particularly valuable for researchers and drug development professionals dealing with complex optimization landscapes where objective functions may be computationally expensive to evaluate or poorly understood [1] [7].

Detailed Explanation of Core Principles

Sowing: Algorithm Initialization

The sowing phase represents the initialization stage of the Paddy Field Algorithm, where a population of potential solutions is generated to begin the optimization process [1]. In this phase, the algorithm creates a random set of user-defined parameters (denoted as x) that serve as starting seeds for evaluation [1]. These parameters define the numerical propagation space for the optimization problem, with each seed representing a potential solution vector in an n-dimensional space [1]. The exhaustiveness of this initial sowing step significantly influences downstream propagation processes; while larger seed sets provide a stronger foundation for exploration, they also incur higher computational costs [1]. The sowing phase establishes the initial diversity of the population, with the spatial distribution of seeds across the parameter space determining the algorithm's initial exploratory capabilities [2]. Formally, for an objective function y = f(x) with n-dimensional parameters x = {x₁, x₂, ..., xₙ}, the sowing phase generates the initial population P₀ = {x⁽¹⁾, x⁽²⁾, ..., x⁽ᵐ⁾}, where each x⁽ⁱ⁾ is a full parameter vector and m is the user-defined population size [1].
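A minimal sowing routine might look like the following sketch. It assumes box bounds on each parameter and uniform sampling within them; the text only says the seeds are random, so the distribution is an assumption. The name `sow` is illustrative.

```python
import random


def sow(bounds, m, rng=None):
    """Generate the initial population P0 of m seeds.

    bounds: list of (low, high) pairs, one per parameter dimension.
    Uniform sampling within the bounds is an assumption of this sketch.
    """
    rng = rng or random.Random()
    return [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(m)]


population = sow(bounds=[(0.0, 1.0), (-5.0, 5.0)], m=4, rng=random.Random(0))
```

Each entry of `population` is one n-dimensional seed (here n = 2). Increasing `m` widens the initial coverage at the cost of more objective evaluations in the first iteration.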

Selection: Fitness Evaluation and Plant Selection

The selection phase converts seeds into plants by evaluating their fitness through the objective function and identifies the most promising candidates for propagation [1]. After the sowing phase generates the initial population, the algorithm computes the fitness score y = f(x) for each parameter vector x, effectively assessing the "soil quality" for each plant [1]. The selection operator then applies a user-defined threshold parameter (H) to select the top-performing plants based on their sorted fitness values [1]. This process can be mathematically represented as H[y] = H[f(x)] = f(x_H) = y_H = {y_t, ..., y_max} ∀ x_H ∈ x, y_H ∈ y, where y_H is the sorted list of function evaluations, drawn from all current and previous evaluations, that satisfy the threshold H for the corresponding parameters x_H [1]. The threshold parameter H defines the number of plants selected for propagation, and y_t is the lowest fitness value among them; together they define an elite subset of the population with superior fitness [1]. This selective pressure ensures that only the most promising solutions contribute to the next generation, guiding the search toward optimal regions of the solution space.

Seeding: Determining Reproductive Potential

The seeding phase calculates the reproductive potential of each selected plant from its fitness [1]. For each selected plant y* ∈ y_H, the algorithm determines the number of seeds (s) it will produce as a fraction of a user-defined maximum number of seeds (s_max) [1]. The fraction is the plant's min-max normalized fitness, which places it relative to the other selected plants [1]. The mathematical formulation is s = s_max([y* - y_t]/[y_max - y_t]) ∀ y* ∈ y_H, where y* is the fitness value of a selected plant, y_t is the threshold fitness value, and y_max is the maximum fitness value among the selected plants [1]. This ensures that plants with higher fitness values produce more seeds; the density of high-quality solutions in their vicinity is then taken into account in the pollination phase [2]. Together, seeding and pollination implement the algorithm's density-based reinforcement strategy, directing computational resources toward regions of the search space that contain both high-quality and closely clustered solutions [1].

Pollination: Density-Based Reproduction

Pollination represents a distinctive phase in the Paddy Field Algorithm where reproduction is mediated by both solution quality and population density [1] [2]. Unlike traditional evolutionary algorithms that rely solely on fitness-proportional reproduction, PFA incorporates a pollination factor derived from local solution density [1]. In this phase, the number of neighboring plants and their collective fitness scores influence the reproductive success of individual solutions [1]. This density-dependent pollination mechanism allows the algorithm to leverage collective intelligence observed in natural paddy ecosystems, where plants in densely populated high-quality areas exhibit enhanced reproductive success [2]. The pollination process enables a single parent solution to produce multiple offspring through Gaussian mutations, with the quantity determined by both its relative fitness and the pollination factor derived from local solution density [1]. This approach effectively identifies and exploits promising regions in the search space while maintaining diversity through density-aware reproduction, striking a balance between intensification and diversification throughout the optimization process [2].
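A density-dependent pollination step might look like the following sketch. The neighborhood `radius` and the linear scaling rule (neighbor count divided by the largest neighbor count) are assumptions chosen for readability; they are not claimed to be the formula used by the Paddy package.

```python
import math


def neighbor_counts(points, radius):
    """Count, for each plant, the other plants within a Euclidean radius."""
    counts = []
    for i, p in enumerate(points):
        n = sum(1 for j, q in enumerate(points)
                if i != j and math.dist(p, q) <= radius)
        counts.append(n)
    return counts


def pollinate(seeds_per_plant, points, radius):
    """Scale each plant's seed count by its relative neighbor density."""
    counts = neighbor_counts(points, radius)
    most = max(counts)
    if most == 0:
        # Assumption: with no neighbors anywhere, seed counts are left unchanged.
        return list(seeds_per_plant)
    # Plants with fewer neighbors than the densest plant lose seeds proportionally.
    return [math.floor(s * c / most) for s, c in zip(seeds_per_plant, counts)]


# Three clustered plants and one isolated plant (hypothetical coordinates).
plants = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (1.0, 1.0)]
pollinated = pollinate([8, 8, 8, 8], plants, radius=0.2)
```

Under this assumed rule the clustered plants keep all 8 seeds while the isolated plant keeps none, which illustrates how density reinforces already populated regions.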

Dispersion: Offspring Generation via Gaussian Mutation

The dispersion phase implements the actual generation of new candidate solutions through controlled perturbation of selected parent solutions [1] [2]. During this phase, the parameter values (x* ∈ x) corresponding to the selected plants undergo modification by sampling from a Gaussian distribution [1]. This mutation operation introduces variability into the population, facilitating exploration of the search space surrounding promising solutions identified in previous phases. The dispersion process can be mathematically represented as x_new = x* + 𝒩(0,σ), where x* represents a parent solution selected for reproduction and 𝒩(0,σ) denotes a Gaussian random variable with mean zero and standard deviation σ [2]. The degree of dispersion (controlled by σ) determines whether the algorithm performs fine-grained local search around existing solutions or more exploratory movements through the parameter space [1]. This strategic application of Gaussian mutations ensures that the algorithm can effectively navigate complex fitness landscapes, escaping local optima while progressively refining solutions in promising regions [1] [3]. The offspring generated through dispersion then form the next generation of seeds, continuing the evolutionary optimization cycle [2].
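The dispersion rule x_new = x* + 𝒩(0,σ) can be sketched as below. Using a single σ for every dimension and passing an explicit random generator are simplifying assumptions made for this example.

```python
import random


def disperse(parent, n_seeds, sigma, rng):
    """Create n_seeds children by adding zero-mean Gaussian noise to each coordinate."""
    return [tuple(x + rng.gauss(0.0, sigma) for x in parent)
            for _ in range(n_seeds)]


rng = random.Random(0)  # fixed seed so the sketch is reproducible
children = disperse((0.5, 1.5), n_seeds=4, sigma=0.1, rng=rng)
```

A small σ keeps children close to the parent (local refinement), while a larger σ spreads them further across the parameter space (exploration).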

Quantitative Performance Data

Table 1: Benchmark Performance of Paddy Algorithm Across Different Domains

| Application Domain | Performance Metric | Paddy Result | Comparative Algorithms | Improvement/Notes |

|---|---|---|---|---|

| Geographical Landmark Recognition | Classification Accuracy | 0.76 (evolved CNN) [4] | 0.53 (baseline CNN) [4] | >40% improvement after PFA optimization [4] |

| Chemical System Optimization | Runtime & Convergence | Excellent runtime [1] | Bayesian Optimization (Hyperopt, Ax), Evolutionary Algorithms (EvoTorch) [1] | Lower runtime with robust convergence [1] [3] |

| Global Optimization (2D bimodal) | Solution Quality | Strong performance [1] | Tree of Parzen Estimator, Gaussian Process, Population-based methods [1] | Avoids early convergence to local minima [1] |

| Neural Network Hyperparameter Tuning | Optimization Efficiency | Robust performance [1] | Bayesian methods, Genetic Algorithms [1] | Maintains strong performance across varied benchmarks [1] |

Table 2: PFA Parameter Settings and Their Impact on Performance

| Parameter | Mathematical Representation | Effect on Algorithm Behavior | Recommended Settings |

|---|---|---|---|

| Population Size | P = {x₁, x₂, ..., xₘ} [2] | Larger sizes enhance exploration but increase computational cost [1] [2] | Problem-dependent; balance between exhaustiveness and cost [1] |

| Threshold Parameter (H) | H[y] = {yt, ..., ymax} [1] | Controls selective pressure; higher values increase elitism [1] | User-defined based on desired selection intensity [1] |

| Maximum Seeds (smax) | s = smax([y* - yt]/[ymax - yt]) [1] | Influences reproductive potential of high-fitness solutions [1] | Typically set as fraction of population size [2] |

| Dispersion Parameter (σ) | x_new = x* + 𝒩(0,σ) [2] | Controls mutation strength; balances exploration/exploitation [2] | Adaptive strategies often beneficial [1] |

Experimental Protocols and Methodologies

Protocol 1: Chemical System Optimization

The application of PFA to chemical system optimization follows a structured experimental protocol designed to navigate complex parameter spaces efficiently while minimizing costly evaluations [1]. The process begins with defining the chemical objective function, which could represent reaction yield, purity, or another performance metric [1]. Researchers must carefully parameterize the search space, including continuous variables (e.g., temperature, concentration) and discrete variables (e.g., catalyst type, solvent selection) [1]. PFA initialization involves sowing an initial population of experimental conditions, with the population size determined by the computational budget and the dimensionality of the search space [1]. Each iteration proceeds through the selection, seeding, pollination, and dispersion phases, with the objective function evaluated for each proposed experimental condition [1]. For chemical applications, researchers have implemented batch evaluation strategies to parallelize experimental work, significantly shortening the optimization timeline [1]. The algorithm terminates when convergence criteria are met (e.g., minimal improvement over successive generations) or when the experimental budget is exhausted [1]. This protocol has been particularly effective for optimizing neural network hyperparameters in chemical classification tasks and for targeted molecule generation through decoder network optimization [1] [3].

Protocol 2: Neural Architecture Search (NAS)

The PFA-based Neural Architecture Search protocol enables automated design of high-performance convolutional neural networks [4]. The method begins by defining a search space that covers critical CNN hyperparameters, including filter sizes, layer depths, activation functions, and connectivity patterns [4]. The initial population consists of diverse neural architectures randomly sampled from this search space [4]. Each CNN architecture is then trained on a subset of the target dataset (e.g., Google Landmarks Dataset V2) using accelerated computing resources, with validation accuracy serving as the fitness function [4]. The selection phase identifies top-performing architectures, which then produce offspring through the seeding and pollination mechanisms [4]. During dispersion, architectural mutations are applied through Gaussian perturbations of continuous parameters (e.g., learning rates) and discrete changes to structural elements [4]. In geographical landmark recognition, this protocol evolved CNN architectures that raised accuracy from 0.53 for the baseline model to 0.76, a relative improvement of more than 40% [4]. For drug development applications, this approach can be adapted to optimize neural networks for molecular property prediction, chemical reaction optimization, or drug-target interaction analysis.

Workflow Visualization

PFA Optimization Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for PFA Implementation

| Tool/Resource | Function | Application Context |

|---|---|---|

| Paddy Python Package [1] | Primary implementation of PFA algorithm | Chemical system optimization, automated experimentation |

| Hyperopt Library [1] | Comparative Bayesian optimization (Tree of Parzen Estimator) | Benchmarking PFA performance against alternative approaches |

| Ax Framework [1] | Bayesian optimization with Gaussian processes | Performance comparison in chemical optimization tasks |

| EvoTorch [1] | Population-based optimization methods | Benchmarking against evolutionary algorithms and genetic algorithms |

| Google Landmarks Dataset V2 [4] | Benchmark dataset for neural architecture search | Validation of PFA for CNN architecture optimization |

The Paddy Field Algorithm is a robust, nature-inspired optimization method with demonstrated efficacy across diverse scientific domains, including chemical system optimization and neural architecture search [1] [4]. Its five core phases (sowing, selection, seeding, pollination, and dispersion) together enable efficient navigation of complex parameter spaces while resisting premature convergence [1] [3]. The algorithm's density-based reproduction mechanism, implemented in the pollination phase, balances exploratory and exploitative search behaviors [1] [2]. For researchers and drug development professionals, PFA offers a versatile optimization tool for challenging problems where traditional gradient-based methods struggle and where objective function evaluations are computationally expensive [1] [7]. The quantitative benchmarks indicate that PFA is competitive with established optimization approaches, with particular advantages in runtime efficiency and robustness across varied problem domains [1] [4] [3]. As automated experimentation and artificial intelligence continue to transform scientific discovery, evolutionary optimization approaches like PFA provide a valuable foundation for accelerating research cycles and enhancing decision-making in complex scientific landscapes.

The Paddy Field Algorithm (PFA) is a biologically inspired evolutionary optimization algorithm that propagates parameters without direct inference of the underlying objective function [6]. Inspired by the reproductive behavior of rice plants, PFA treats optimization as a process akin to how plants grow and propagate based on soil quality and pollination density [6] [2]. This algorithm operates on a reproductive principle dependent on solution fitness and the distribution of population density among a set of selected solutions [6].

Unlike traditional optimization methods, PFA uses density-based reinforcement of solutions, allowing a single parent vector to produce multiple children via Gaussian mutations based on both its relative fitness and a pollination factor drawn from solution density [6]. This approach provides innate resistance to early convergence and enables effective bypassing of local optima in search of global solutions [6] [7]. The algorithm has demonstrated robust versatility across mathematical and chemical optimization tasks, maintaining strong performance compared to Bayesian optimization and other evolutionary algorithms [6] [8] [7].

Core Terminology and Conceptual Framework

Foundational Terminology

The Paddy Field Algorithm employs a specific biological analogy to frame the optimization process. Understanding these core terms is essential for implementing and applying PFA effectively.

Table 1: Core Terminology of the Paddy Field Algorithm

| Term | Definition | Role in Optimization |

|---|---|---|

| Seeds [6] | Initial random set of user-defined parameters | Starting points for evaluation; represent potential solutions |

| Plants [6] | Seeds that have been evaluated using the objective function | Represent tested solutions with known performance |

| Fitness [6] | Value obtained from evaluating the objective function at specific parameters | Measures solution quality; determines selection for propagation |

| Parameter Space [6] | The n-dimensional space defined by all possible parameter values | The domain where the algorithm searches for optimal solutions |

| Paddy Field [2] | Groupings of rice seeds evaluated based on average quality | Maintains diversity and avoids premature convergence |

The Five-Phase Process of PFA

The PFA operates through a structured five-phase process that transforms initial seeds into optimized solutions [6]:

Sowing: The algorithm begins by generating an initial population of random parameters, known as seeds, within the defined parameter space. The exhaustiveness of this initial step significantly influences downstream processes, with larger seed sets providing better starting points at the cost of computational resources [6].

Selection: After evaluating the objective function for all seeds, a user-defined number of top-performing plants are selected for further propagation. This selection operator can be configured to consider only the current iteration or the entire population [6].

Seeding: The algorithm calculates how many seeds each selected plant should generate, accounting for fitness across the parameter space. This mimics how soil fertility determines the number of flowers a plant can grow [6].

Pollination: This phase reinforces the density of selected plants by eliminating seeds proportionally for those with fewer than the maximum number of neighboring plants within the Euclidean space of the objective function variables [6].

Dispersion: New parameter values are assigned to pollinated seeds by randomly dispersing them using a Gaussian distribution, with the mean being the parameter values of the parent plant [6] [2].

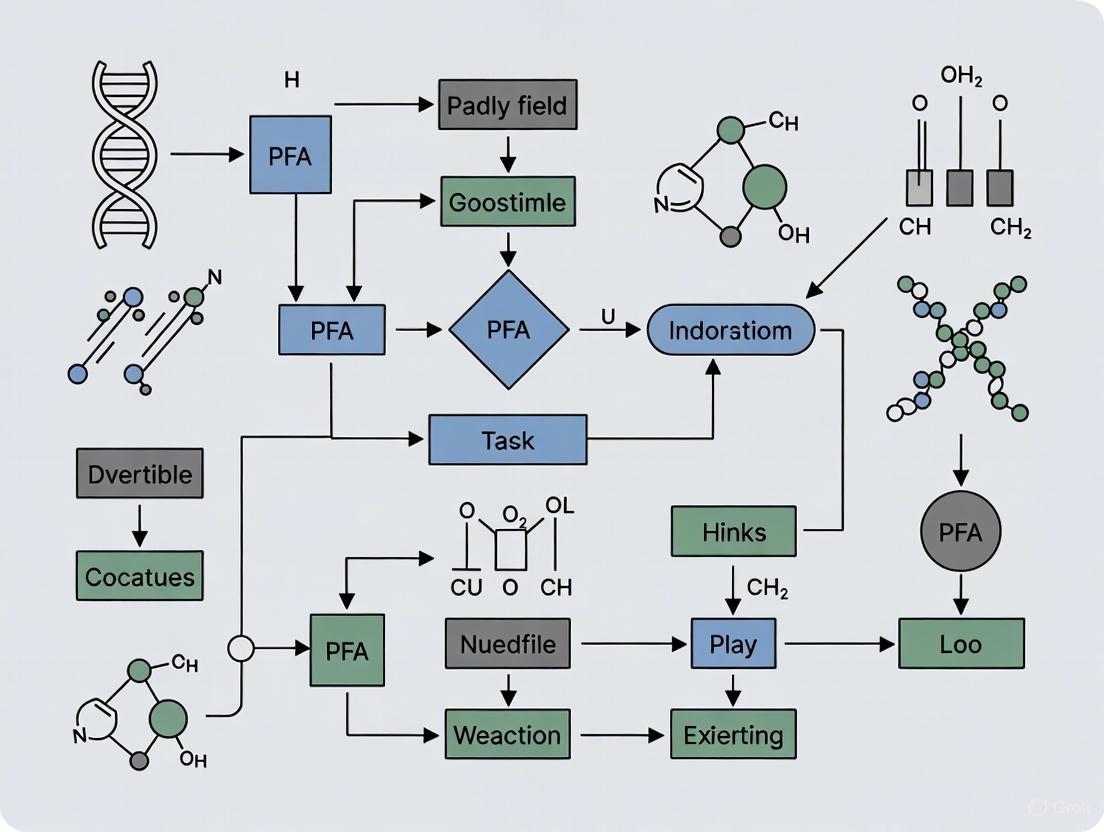

PFA Workflow Overview: The diagram illustrates the iterative five-phase process of the Paddy Field Algorithm, from initial sowing to termination upon convergence.

Quantitative Performance Benchmarks

Comparison with Alternative Optimization Methods

PFA has been systematically benchmarked against several established optimization approaches across diverse tasks. The following table summarizes key performance comparisons:

Table 2: Performance Benchmarking of PFA Against Other Optimization Algorithms

| Algorithm | Mathematical Optimization | Chemical System Optimization | Neural Network Hyperparameter Tuning | Runtime Efficiency |

|---|---|---|---|---|

| Paddy (PFA) [6] [7] | Strong performance in global optimization of bimodal distributions and interpolation of irregular functions | Robust versatility across chemical optimization tasks | Effective hyperparameter optimization for ANN classification | Markedly lower runtime compared to Bayesian methods |

| Bayesian Optimization [6] | Varying performance depending on problem structure | Effective but computationally expensive | Preferred when minimal evaluations are desired | Considerable computational costs for complex search spaces |

| Genetic Algorithms [6] | Moderate performance across mathematical tasks | Less consistent performance across chemical tasks | Moderate effectiveness for architecture search | Moderate computational requirements |

| Tree-structured Parzen Estimator [6] | Competitive but problem-dependent performance | Effective for certain chemical systems | Good performance for hyperparameter optimization | Higher computational demands than PFA |

Application-Specific Performance Metrics

In specific application domains, PFA has demonstrated quantifiable improvements:

- Geographical Landmark Recognition: When used to evolve Convolutional Neural Networks, PFA increased accuracy from 0.53 to 0.76 on the Google Landmarks Dataset V2, a relative improvement of more than 40% [4].

- Chemical System Optimization: Paddy maintains strong performance across all optimization benchmarks compared to other algorithms with varying performance, demonstrating particular strength in avoiding early convergence [6] [7].

- Computational Efficiency: Paddy demonstrates excellent runtime performance compared to Bayesian optimization methods, making it suitable for problems where computational resources are a constraint [7].
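As a quick check on the landmark-recognition figure, the relative and absolute gains implied by the two reported accuracies work out as follows:

```latex
\frac{0.76 - 0.53}{0.53} = \frac{0.23}{0.53} \approx 0.434 \quad (\text{about } 43\% \text{ relative}),
\qquad
0.76 - 0.53 = 0.23 \quad (23 \text{ percentage points absolute})
```

The "more than 40%" figure therefore refers to the relative gain over the baseline, not to an absolute change in accuracy.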

Experimental Protocols and Methodologies

Standard PFA Implementation Protocol

The following protocol provides a detailed methodology for implementing and evaluating the Paddy Field Algorithm:

Phase 1: Algorithm Initialization

- Define the parameter space dimensionality and bounds for each parameter

- Set the initial population size (typically 50-100 seeds)

- Configure algorithm parameters: number of iterations, selection rate, and pollination radius

- Initialize the random seed generation for reproducibility [6] [2]

Phase 2: Fitness Function Implementation

- Implement the objective function specific to the optimization problem

- Define fitness evaluation criteria and constraints

- Establish termination conditions (convergence threshold or maximum iterations) [6]

Phase 3: Iterative Optimization Loop

- Sowing Phase: Generate initial population of seeds randomly within parameter space

- Evaluation Phase: Calculate fitness scores for all seeds

- Selection Phase: Select top-performing plants based on fitness scores

- Seeding Phase: Calculate seed production for each plant based on fitness and local density

- Pollination Phase: Apply density-based reinforcement to seed counts

- Dispersion Phase: Generate new seeds via Gaussian mutation around parent plants [6]
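The loop listed above can be sketched end to end in plain Python. This is a simplified illustration under stated assumptions, not the Paddy package's implementation: the function name `pfa_sketch`, all default parameter values, the linear pollination rule, the clipping of children to the bounds, and the fallback used when no seeds survive pollination are hypothetical choices made for this example.

```python
import math
import random


def _clip(value, lo, hi):
    return min(hi, max(lo, value))


def pfa_sketch(objective, bounds, n_sow=30, n_select=5, s_max=8,
               radius=1.0, sigma=0.3, iterations=15, seed=0):
    """Maximize `objective` inside box `bounds`; returns the best (x, y) pair found."""
    rng = random.Random(seed)

    def mutate(parent):
        # Gaussian perturbation of every coordinate, clipped to the bounds (assumption).
        return tuple(_clip(v + rng.gauss(0.0, sigma), lo, hi)
                     for v, (lo, hi) in zip(parent, bounds))

    # Sowing: uniform random seeds inside the bounds.
    seeds = [tuple(rng.uniform(lo, hi) for lo, hi in bounds) for _ in range(n_sow)]
    plants = []  # every evaluated (x, y) pair so far
    for _ in range(iterations):
        # Evaluation: seeds become plants once their fitness is known.
        plants.extend((x, objective(x)) for x in seeds)
        # Selection: keep the n_select fittest plants from the full history.
        plants.sort(key=lambda plant: plant[1])
        selected = plants[-n_select:]
        y_t, y_max = selected[0][1], selected[-1][1]
        # Seeding: min-max normalized share of s_max.
        span = y_max - y_t
        counts = [s_max if span == 0 else s_max * (y - y_t) / span
                  for _, y in selected]
        # Pollination (assumed linear rule): scale by relative neighbor count.
        xs = [x for x, _ in selected]
        neighbors = [sum(1 for j, q in enumerate(xs)
                         if j != i and math.dist(p, q) <= radius)
                     for i, p in enumerate(xs)]
        most = max(neighbors)
        if most > 0:
            counts = [c * n / most for c, n in zip(counts, neighbors)]
        # Dispersion: each parent produces its (rounded) number of children.
        seeds = [mutate(parent)
                 for parent, count in zip(xs, counts)
                 for _k in range(round(count))]
        if not seeds:
            # Assumption: if no seeds survive, disperse one child from the best plant.
            seeds = [mutate(selected[-1][0])]
    # Seeds from the final dispersion are left unevaluated in this sketch.
    return max(plants, key=lambda plant: plant[1])


def toy_objective(x):
    """Hypothetical test function with its maximum of 0 at (2, -1)."""
    return -((x[0] - 2.0) ** 2) - (x[1] + 1.0) ** 2


best_x, best_y = pfa_sketch(toy_objective, bounds=[(-5.0, 5.0), (-5.0, 5.0)])
```

Fixing `seed` makes a run repeatable, which ties in with the reproducibility step in Phase 1.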

Phase 4: Results Validation

- Execute multiple independent runs to account for stochastic variability

- Compare final fitness values across runs to assess convergence

- Validate optimal parameters against ground truth where available [6]
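The multi-run validation step might be organized as in the sketch below. To stay self-contained, `run_once` is a stand-in optimizer (plain random search on a toy function) that a real study would replace with a PFA run; the function name, the toy objective, and the run count are all assumptions.

```python
import random
import statistics


def run_once(seed, n_evals=200):
    """Stand-in for one optimizer run; returns the best fitness found."""
    rng = random.Random(seed)  # one seed per run keeps each run reproducible
    best = -float("inf")
    for _ in range(n_evals):
        x = rng.uniform(-5.0, 5.0)
        best = max(best, -(x - 1.0) ** 2)  # toy objective, known optimum 0 at x = 1
    return best


# Independent runs differ only in their random seed.
finals = [run_once(seed) for seed in range(10)]
summary = {
    "mean": statistics.mean(finals),
    "stdev": statistics.stdev(finals),
    "worst": min(finals),
    "best": max(finals),
}
```

A small spread between `worst` and `best` suggests consistent convergence, and comparing `best` with the known optimum (0 here) covers the ground-truth check.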

Chemical System Optimization Protocol

For chemical applications, the following specialized protocol has been validated:

Experimental Design

- Define chemical parameters to optimize (e.g., solvent conditions, temperature, concentration)

- Establish objective function based on desired chemical outcome (e.g., yield, purity)

- Set safety and feasibility constraints for parameters [6]

Optimization Procedure

- Initialize PFA with chemically feasible parameter ranges

- Implement batch evaluation for parallel experimental testing

- Incorporate domain knowledge through constrained parameter spaces

- Execute PFA with emphasis on exploratory sampling in early iterations [6]
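Batch evaluation for parallel testing can be sketched with the standard library's thread pool. The objective `mock_yield` is a hypothetical placeholder for a real experiment or simulation, and the condition values are invented for illustration.

```python
from concurrent.futures import ThreadPoolExecutor


def evaluate_batch(objective, batch, max_workers=4):
    """Evaluate all proposed conditions concurrently, preserving input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(objective, batch))


def mock_yield(conditions):
    """Hypothetical response surface peaking at 60 degrees and 0.5 M."""
    temperature, concentration = conditions
    return -((temperature - 60.0) ** 2) / 100.0 - (concentration - 0.5) ** 2


# One generation of proposed (temperature, concentration) pairs.
batch = [(50.0, 0.4), (60.0, 0.5), (70.0, 0.6)]
scores = evaluate_batch(mock_yield, batch)
```

Threads suit objectives that wait on instruments or remote services; for CPU-bound simulations a process pool would be the more usual choice.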

Validation Methodology

- Compare optimized conditions against traditional approaches

- Assess reproducibility across multiple experimental batches

- Validate predictive performance on unseen chemical systems [6]

Advanced Implementation Diagrams

Pollination and Density Mechanism

The pollination phase represents a key innovation of PFA, where solution density directly influences reproduction rates.

Density-Based Pollination: This diagram illustrates how plant density and fitness interact to determine seed production in the pollination phase.

Parameter Propagation Logic

The dispersion mechanism controls how new seeds are generated from parent plants, balancing exploration and exploitation.

Parameter Dispersion Logic: The diagram shows how Gaussian dispersion around parent plants generates new seeds while maintaining exploration of the parameter space.

Research Reagent Solutions

Essential Computational Tools

Implementing and applying PFA requires specific computational tools and frameworks:

Table 3: Essential Research Reagents for PFA Implementation

| Research Reagent | Function | Application Context |

|---|---|---|

| Paddy Python Package [6] | Primary implementation of PFA with save/recovery features | Core optimization engine for chemical and mathematical problems |

| EvoTorch Library [6] | Provides comparison algorithms for benchmarking | Performance validation against evolutionary and genetic algorithms |

| Ax Framework [6] | Bayesian optimization implementation | Benchmarking against Bayesian optimization approaches |

| Hyperopt Library [6] | Tree of Parzen Estimators implementation | Comparison with sequential model-based optimization |

| Custom Fitness Functions [6] | Problem-specific objective function implementation | Domain-specific application of PFA |

For specialized applications, additional resources are required:

- Chemical System Optimization: Domain-specific parameter constraints, experimental validation frameworks, and chemical descriptor libraries [6]

- Neural Architecture Search: Network architecture templates, performance evaluation metrics, and hardware acceleration resources [4]

- Molecular Generation: Chemical decoder networks, molecular property predictors, and structural validity checkers [6]

The Five-Phase Process of the Paddy Field Algorithm (PFA)

The Paddy Field Algorithm (PFA) represents a significant advancement in the domain of evolutionary optimization, particularly for complex chemical systems and drug development research. As a biologically inspired evolutionary optimization algorithm, PFA propagates parameters without direct inference of the underlying objective function, making it particularly valuable for chemical optimization tasks where objective functions may be poorly defined or computationally expensive to evaluate [1]. The algorithm operates on a reproductive principle dependent on solution fitness and the distribution of population density among a set of selected solutions, distinguishing it from traditional evolutionary approaches through its density-based reinforcement mechanism [1]. This technical guide provides an in-depth examination of PFA's core five-phase process, experimental protocols, and implementation methodologies to equip researchers and scientists with the knowledge necessary to leverage this powerful optimization tool in pharmaceutical and chemical research applications.

Compared to other optimization approaches such as Bayesian optimization with Gaussian processes or traditional population-based methods, Paddy demonstrates robust versatility by maintaining strong performance across diverse optimization benchmarks while avoiding early convergence with its innate ability to bypass local optima in search of global solutions [1]. This characteristic is particularly valuable in drug development contexts where chemical space exploration must be both efficient and comprehensive to identify promising candidate compounds amidst complex, multi-modal optimization landscapes.

The Five-Phase Process of PFA

The Paddy Field Algorithm implements a meticulously structured five-phase process that mirrors the reproductive behavior of plants in agricultural settings, leveraging relationships between soil quality, pollination, and plant propagation to maximize fitness. This process transforms initial parameter seeds into optimally evolved solutions through iterative refinement, combining fitness-based selection with density-dependent propagation mechanisms [1]. The complete workflow can be visualized through the following diagram:

Figure 1: The five-phase workflow of the Paddy Field Algorithm showing the iterative optimization process.

Phase 1: Sowing

The Paddy algorithm initiation involves generating a random set of user-defined parameters (x) as starting seeds for evaluation [1]. The exhaustiveness of this initial phase critically influences downstream propagation processes and overall algorithm performance. While larger seed sets provide Paddy with a more comprehensive starting point for exploration, this approach incurs computational costs that must be balanced against available resources and optimization requirements [1]. Conversely, employing fewer initial seeds may constrain the algorithm's exploratory capabilities, though the iterative nature of the five-phase process enables continuous refinement of the solution space. In chemical optimization contexts, these initial seeds typically represent parameter combinations such as chemical concentrations, temperature conditions, reaction times, or molecular descriptors that define the experimental space to be explored.

Technical Implementation Protocol:

- Define parameter boundaries for each dimension of the optimization problem

- Generate uniform random samples within defined boundaries to create initial population

- Determine population size based on computational constraints and problem complexity

- Encode continuous and categorical parameters appropriately for mixed-variable optimization
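The sowing steps above can be sketched as follows. Encoding each categorical parameter as an index into its list of options is one common choice and is an assumption here; the parameter ranges and solvent names are hypothetical.

```python
import random


def sow(continuous_bounds, categorical_choices, n_seeds, rng):
    """Draw n_seeds random parameter sets from a mixed continuous/categorical space."""
    seeds = []
    for _ in range(n_seeds):
        # Continuous parameters: uniform samples within each (low, high) bound.
        continuous = [rng.uniform(lo, hi) for lo, hi in continuous_bounds]
        # Categorical parameters: stored as an index into the option list.
        categorical = [rng.randrange(len(options)) for options in categorical_choices]
        seeds.append((continuous, categorical))
    return seeds


continuous_bounds = [(20.0, 100.0), (0.01, 1.0)]  # e.g. temperature, concentration
categorical_choices = [["water", "ethanol", "DMSO"]]  # e.g. solvent
seeds = sow(continuous_bounds, categorical_choices, n_seeds=50, rng=random.Random(42))
```

Keeping the two parameter types separate makes it easy to apply Gaussian mutation to the continuous part only and a different move, such as resampling, to the categorical part.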

Phase 2: Selection

During the selection phase, the fitness function y = f(x) undergoes evaluation for the complete set of seed parameters (x), effectively converting seeds to plants with associated fitness scores [1]. The algorithm applies a user-defined threshold parameter (H) that implements the selection operator, identifying promising candidates from the sorted list of evaluations (yH) for respective seeds (xH). Mathematically, this selection process can be represented as:

f(x) = y = {ymin, …, ymax}

H[y] = H[f(x)] = f(xH) = yH = {yt, …, ymax} ∀ xH ∈ x, yH ∈ y

where yH represents the sorted list of function evaluations (selected plants) from all current and previous evaluations satisfying threshold H for the set of seeds or parameters xH belonging to all parameters x [1]. In pharmaceutical applications, fitness functions may incorporate multiple objectives such as binding affinity, synthetic accessibility, toxicity metrics, and physicochemical properties, requiring sophisticated multi-objective optimization approaches.

Experimental Protocol for Fitness Evaluation:

- Establish robust fitness function quantifying optimization objectives

- Implement normalization procedures for multi-objective optimization

- Define threshold parameter H based on population characteristics

- Incorporate constraint handling mechanisms for invalid parameter combinations
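One simple way to combine these steps is a weighted sum of min-max normalized objectives with a fixed penalty for infeasible candidates. This scalarization is an assumption chosen for brevity rather than a method prescribed by the source, and the objective names, weights, and scores are hypothetical.

```python
def min_max(values):
    """Rescale values to [0, 1]; identical values all map to 0."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def combined_fitness(objectives, weights, feasible, penalty=1.0):
    """Weighted sum of normalized objectives (higher is better) minus a penalty."""
    normalized = {name: min_max(scores) for name, scores in objectives.items()}
    fitness = []
    for i, is_feasible in enumerate(feasible):
        score = sum(weights[name] * normalized[name][i] for name in objectives)
        if not is_feasible:
            score -= penalty  # constraint handling via a fixed penalty
        fitness.append(score)
    return fitness


# Three candidate molecules with assumed raw scores.
objectives = {
    "binding_affinity": [6.0, 8.0, 7.0],
    "synthetic_accessibility": [4.0, 1.0, 2.5],
}
weights = {"binding_affinity": 0.5, "synthetic_accessibility": 0.5}
fitness = combined_fitness(objectives, weights, feasible=[True, True, False])
```

Normalizing first keeps objectives on different scales from dominating the sum, and the penalty pushes infeasible candidates below every feasible one when it is at least as large as the total weight.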

Phase 3: Seeding

The seeding phase calculates potential seed production (s) for selected plants (y* ∈ yH) as a fraction of a user-defined maximum number of seeds (smax) based on min-max normalized fitness values [1]. This calculation follows the mathematical relation:

s = smax([y* − yt]/[ymax − yt]) ∀ y* ∈ yH

where s is the number of seeds generated by a selected plant with function evaluation y*, which belongs to the sorted list yH (from yt, the minimum, to ymax, the maximum) of plants satisfying threshold H [1]. This approach ensures that higher-fitness solutions produce more offspring, with seed counts proportional to each plant's position within the selected fitness range. The Paddy software implementation uses the variable Qmax in place of the theoretical smax in the formal algorithm description [1].
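A short worked example makes the normalization concrete. The numbers are illustrative assumptions: a maximum of 10 seeds, a threshold fitness of 0.2, a best fitness of 1.0, and a plant with fitness 0.6.

```latex
s = s_{\max}\,\frac{y^{*} - y_t}{y_{\max} - y_t}
  = 10 \cdot \frac{0.6 - 0.2}{1.0 - 0.2}
  = 10 \cdot 0.5
  = 5
```

At the two extremes, the plant at the threshold (y* = yt) receives 0 seeds and the best plant (y* = ymax) receives the full 10.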

Phase 4: Pollination

Pollination represents the distinctive density-mediated phase of PFA that differentiates it from conventional evolutionary approaches. During pollination, the algorithm calculates a pollination factor derived from solution density within the parameter space [1]. Unlike niching-based genetic algorithms, Paddy enables a single parent vector to produce multiple children via Gaussian mutations based on both relative fitness and the pollination factor drawn from solution density [1]. This density-aware reproduction mechanism allows PFA to automatically identify and exploit promising regions of the solution space while maintaining exploration capabilities to avoid premature convergence. The pollination intensity correlates with local solution density, creating a positive feedback loop that efficiently focuses computational resources on high-potential regions of the chemical space.

Phase 5: Dispersion (Propagation)

The final phase, dispersion (also described as propagation), modifies parameter values (x* ∈ x) for selected plants by sampling from a Gaussian distribution centered on the parent solutions [1]. The number of offspring depends on both the fitness of the parent and its local density, so new seeds concentrate in the vicinity of promising solutions identified in previous phases. Following dispersion, the newly generated seeds are evaluated and the cycle repeats from the selection phase until convergence criteria are satisfied. For chemical optimization tasks, convergence might be determined by improvement thresholds, maximum iteration counts, or computational budget limitations. The modified selection operator introduced with Paddy gives users the flexibility to select and propagate exclusively from the current iteration rather than the entire population history, which can be particularly beneficial for chemical optimization problems where parameter relationships may shift across iterations [1].
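The two selection scopes mentioned above (current iteration only versus the entire population history) can be contrasted in a small sketch. The function and the scope labels `"generation"` and `"population"` are hypothetical names for this illustration and are not claimed to match the Paddy package's API.

```python
def select(history, current, n_select, scope="population"):
    """Pick the n_select fittest plants from the chosen pool (maximization)."""
    if scope == "generation":
        pool = list(current)             # only plants evaluated this iteration
    elif scope == "population":
        pool = list(history) + list(current)  # all plants evaluated so far
    else:
        raise ValueError("scope must be 'generation' or 'population'")
    return sorted(pool, key=lambda plant: plant[1])[-n_select:]


# Each plant is a (parameters, fitness) pair with assumed values.
history = [((0.1,), 0.9), ((0.2,), 0.8)]
current = [((0.3,), 0.5), ((0.4,), 0.6)]

from_all = select(history, current, 2, scope="population")
from_now = select(history, current, 2, scope="generation")
```

Selecting from the full history keeps earlier high scorers in play, while selecting from the current generation lets the search move on even when older plants scored higher.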

Key Algorithm Parameters and Configurations

Successful implementation of the Paddy Field Algorithm requires careful configuration of core parameters that control the optimization process. The table below summarizes these critical parameters, their mathematical representations, and their influence on algorithm behavior:

Table 1: Key parameters for configuring the Paddy Field Algorithm

| Parameter | Mathematical Symbol | Description | Impact on Optimization |

|---|---|---|---|

| Initial Population Size | | Number of starting seeds in sowing phase | Larger sizes enhance exploration but increase computational cost [1] |

| Selection Threshold | H | Parameter defining selection operator for choosing plants | Controls selective pressure and population diversity [1] |

| Maximum Seeds | smax (Qmax in implementation) | Maximum number of seeds producible by a plant | Influences reproduction rate and convergence speed [1] |

| Fitness Function | y = f(x) | Objective function mapping parameters to fitness scores | Directs search toward optimal regions of parameter space [1] |

| Mutation Distribution | 𝒩(0,σ) | Gaussian distribution for parameter modification | Balances exploration and exploitation during propagation [1] |

Experimental Implementation and Benchmarking

Research Reagent Solutions

Implementation of PFA for chemical optimization requires both computational resources and domain-specific components. The following table details essential "research reagents" for conducting PFA experiments in chemical and pharmaceutical contexts:

Table 2: Essential research reagents and computational components for PFA implementation

| Component | Function | Implementation Examples |

|---|---|---|

| Parameter Encoder | Transforms chemical parameters to optimization variables | Molecular descriptors, reaction conditions, spectral features [1] |

| Fitness Evaluator | Quantifies solution quality | Binding affinity predictors, yield calculators, property estimators [1] |

| Constraint Handler | Manages boundary conditions and feasibility | Penalty functions, repair mechanisms, feasibility filters [1] |

| Termination Checker | Determines when to stop optimization | Convergence metrics, iteration limits, computational budgets [1] |

| Python Paddy Library | Primary implementation framework | Open-source package providing core PFA functionality [1] |

Benchmarking Protocols and Performance

Extensive benchmarking against established optimization approaches demonstrates PFA's capabilities across diverse problem domains. The algorithm has been evaluated against Tree-structured Parzen Estimators implemented in Hyperopt, Bayesian optimization with Gaussian processes via Meta's Ax framework, and population-based methods from EvoTorch [1]. Performance metrics consistently show that Paddy maintains competitive performance while offering significantly reduced runtime requirements compared to Bayesian methods [1].

In chemical optimization benchmarks, Paddy has been applied to mathematical optimization tasks, hyperparameter optimization of artificial neural networks for solvent classification, targeted molecule generation through decoder network optimization, and sampling discrete experimental spaces for optimal experimental planning [1]. Across these diverse applications, Paddy demonstrated robust versatility, maintaining strong performance where other algorithms showed variable results depending on problem characteristics [1].

Experimental Protocol for Algorithm Benchmarking:

- Define standardized test problems with known optima

- Implement identical fitness evaluation budgets for all algorithms

- Measure performance using convergence speed and solution quality metrics

- Conduct statistical significance testing across multiple runs

- Compare computational efficiency using runtime and resource consumption
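A benchmarking harness following these steps could look like the sketch below. The two optimizers are simple stand-ins (random search and a Gaussian hill climber) used only to show the equal-budget mechanics; they are not the algorithms from the cited study, and every name and default here is an assumption.

```python
import random
import statistics


class BudgetedObjective:
    """Wraps a function and enforces a fixed number of evaluations."""

    def __init__(self, func, budget):
        self.func, self.budget, self.calls = func, budget, 0

    def __call__(self, x):
        if self.calls >= self.budget:
            raise RuntimeError("evaluation budget exhausted")
        self.calls += 1
        return self.func(x)


def sphere(x):
    """Standard test problem; known optimum of 0 at the origin."""
    return -sum(v * v for v in x)


def random_search(obj, rng, dim=2):
    best = -float("inf")
    while obj.calls < obj.budget:
        best = max(best, obj([rng.uniform(-5.0, 5.0) for _ in range(dim)]))
    return best


def hill_climb(obj, rng, dim=2, sigma=0.5):
    x = [rng.uniform(-5.0, 5.0) for _ in range(dim)]
    best = obj(x)
    while obj.calls < obj.budget:
        candidate = [v + rng.gauss(0.0, sigma) for v in x]
        y = obj(candidate)
        if y > best:  # accept only improvements
            x, best = candidate, y
    return best


def benchmark(optimizers, budget=200, runs=10):
    """Run every optimizer with the same budget and the same seeds."""
    results = {}
    for name, optimizer in optimizers.items():
        finals = [optimizer(BudgetedObjective(sphere, budget), random.Random(run))
                  for run in range(runs)]
        results[name] = (statistics.mean(finals), statistics.stdev(finals))
    return results


results = benchmark({"random_search": random_search, "hill_climb": hill_climb})
```

Each entry in `results` holds the mean and standard deviation of the final fitness; a statistical test on the per-run values and wall-clock timing would complete the last two protocol steps.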

Applications in Chemical Research and Drug Development

The Paddy Field Algorithm offers particular utility for optimization challenges in chemical sciences and pharmaceutical development. Its ability to efficiently navigate complex parameter spaces without requiring gradient information or explicit objective function modeling makes it suitable for diverse applications including synthetic methodology optimization, chromatography condition selection, transition state geometry calculations, and drug formulation design [1]. The algorithm's resistance to premature convergence proves especially valuable when exploring chemical spaces containing multiple local optima, such as molecular design optimization where subtle structural modifications can dramatically impact compound properties.

In automated experimentation contexts, PFA's capacity for proposing experiments that efficiently optimize underlying objectives while effectively sampling parameter space aligns with the requirements of closed-loop optimization systems [1]. This capability enables more efficient resource utilization in high-throughput experimentation settings, accelerating the optimization of chemical reactions and materials synthesis protocols. Because the Paddy implementation is open source, it is readily accessible for research use, providing a versatile toolkit for chemical problem-solving tasks with an inherent resistance to early convergence [1].

Mathematical Formulation of the Fitness and Seeding Process

Understanding the mathematical formulation of the fitness and seeding process of the Paddy Field Algorithm (PFA), a nature-inspired metaheuristic, is essential for researchers aiming to apply it to complex optimization problems in fields like drug development and chemical system design [8] [1]. The PFA distinguishes itself from other evolutionary algorithms through its density-based reinforcement of solutions, which is central to its robust performance and ability to avoid premature convergence on local optima [6] [1]. This section provides an in-depth technical examination of the core mathematical operators that govern this process, enabling scientists to effectively implement and adapt the algorithm for their experimental workflows.

Core Concepts of the Paddy Field Algorithm

The Paddy Field Algorithm (PFA) is an evolutionary optimization algorithm inspired by the reproductive behavior of rice plants [2]. It propagates a population of candidate solutions, conceptualized as "plants," without directly inferring the underlying objective function, making it particularly useful for black-box optimization problems common in chemical and pharmaceutical research [8] [3].

The algorithm operates through a five-phase process: Sowing, Selection, Seeding, Pollination, and Dispersion [6] [2]. The fitness of a plant is determined by evaluating the objective function, y = f(x), for its parameter set x [6]. Higher fitness values, yH, indicate superior "soil quality" and lead to the selection of those parameters, xH, for further propagation [6]. The subsequent seeding and pollination phases are critically dependent on both the fitness of a solution and the local density of other high-fitness solutions in the parameter space, allowing the algorithm to effectively balance exploration and exploitation [1].

Table 1: Key Terminology in the Paddy Field Algorithm

| Term | Mathematical Symbol | Description |
|---|---|---|
| Seed/Plant | x = {x1, x2, …, xn} | A candidate solution vector of n parameters [6]. |
| Fitness | y = f(x) | The evaluation of the objective function for a given seed [6]. |
| Selected Plants | yH, xH | The set of high-fitness plants selected for propagation [6]. |
| Maximum Seeds | s_max | A user-defined parameter for the maximum number of seeds a plant can produce [1]. |
| Threshold Parameter | H or y_t | The user-defined threshold that determines how many top-performing plants are selected [6] [1]. |

Mathematical Formulation of Fitness and Selection

The selection phase is the first step in identifying the most promising solutions from the current population.

The Selection Operator

After the fitness function y = f(x) is evaluated for all seeds in an iteration, the algorithm applies a selection operator. This operator selects a subset of plants, yH, based on a user-defined threshold parameter, H, which fixes how many top-performing plants are kept; y_t then denotes the lowest fitness among those selected plants [6] [1]. The selection can be mathematically represented as:

$$H[f(x)] = f(x_H) = y_H, \qquad y_H = \{y_t, \ldots, y_{max}\}$$

In this formulation, yH is the sorted list of function evaluations (from minimum y_t to maximum y_max) that satisfy the threshold H for the set of parameters xH [6]. This mechanism ensures that only the most fit plants are chosen to produce the next generation of seeds.
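As a minimal sketch of this operator, the function below keeps the top plants by fitness and returns them sorted from y_t to y_max. The function name `select_top` and the argument `n_select` (standing in for the threshold H) are hypothetical names chosen for illustration, and the sketch assumes a maximization problem; it is not the Paddy library's own implementation.

```python
def select_top(xs, ys, n_select):
    """Return (x_H, y_H): the n_select fittest plants, sorted ascending by fitness."""
    if not 1 <= n_select <= len(ys):
        raise ValueError("n_select must be between 1 and the population size")
    # Pair each fitness with its parameters and sort by fitness only.
    ranked = sorted(zip(ys, xs), key=lambda pair: pair[0])
    top = ranked[-n_select:]
    y_h = [y for y, _ in top]
    x_h = [x for _, x in top]
    return x_h, y_h


xs = [[0.1, 0.9], [0.5, 0.5], [0.8, 0.2], [0.3, 0.3]]
ys = [1.2, 3.4, 0.7, 2.9]
x_h, y_h = select_top(xs, ys, n_select=2)
y_t, y_max = y_h[0], y_h[-1]
print(x_h)  # [[0.3, 0.3], [0.5, 0.5]]
print(y_h)  # [2.9, 3.4]
```

Returning the list in ascending order means the first element is y_t and the last is y_max, which matches how the two values are used in the seeding step.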

Mathematical Formulation of the Seeding Process

The seeding process determines how many new candidate solutions (seeds) each selected plant is allowed to generate. This number is not based on fitness alone but is a function of both relative fitness and the density of other high-performing solutions.

Seeding Calculation

The number of seeds s that a selected plant with fitness y* will generate is calculated as a fraction of the user-defined maximum number of seeds, s_max [1]. The formula uses min-max normalization to scale the fitness value relative to the other selected plants:

$$s = s_{max} \cdot \frac{y^* - y_t}{y_{max} - y_t}$$

Here, y* is the fitness of an individual selected plant belonging to the sorted list yH, y_max is the highest fitness value in the population, and y_t is the lowest fitness value among the selected plants [1]. This ensures that a plant with higher fitness will produce more seeds than one with lower fitness within the same selected group.
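The min-max scaling described above can be sketched in a few lines. The function name `seeds_per_plant` is hypothetical, and two details are assumptions not stated in the text: the result is rounded to the nearest integer, and when all selected plants share the same fitness each one receives s_max seeds to avoid division by zero.

```python
def seeds_per_plant(y_h, s_max):
    """Scale each selected plant's seed count by min-max normalized fitness."""
    y_t, y_max = min(y_h), max(y_h)
    span = y_max - y_t
    if span == 0:
        # Assumption: identical fitness values give every plant s_max seeds.
        return [s_max for _ in y_h]
    # Rounding to an integer seed count is also an assumption.
    return [round(s_max * (y - y_t) / span) for y in y_h]


print(seeds_per_plant([2.0, 3.0, 4.0], s_max=10))  # [0, 5, 10]
```

Note that under this normalization the plant sitting exactly at the threshold y_t is assigned zero seeds, while the best plant receives the full s_max.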

The Role of Pollination and Density

Following the initial seeding calculation, a crucial pollination step adjusts the number of seeds based on population density [6] [2]. The algorithm reinforces areas with a higher density of selected plants by eliminating seeds proportionally from plants that have fewer than the maximum number of neighbors within a defined Euclidean distance in the parameter space [6]. This density-mediated pollination is a key feature that differentiates PFA from other evolutionary algorithms, as it allows a single parent to produce offspring based on both its fitness and its proximity to other successful solutions [1].
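One way to read this density adjustment is sketched below: count each selected plant's neighbors within a radius, then scale its seed count by that count relative to the largest count observed. The proportional scaling rule, the function names `neighbor_counts` and `pollinate`, the rounding, and the handling of the no-neighbor case are assumptions made for illustration; the Paddy library may use a different scaling function.

```python
import math


def neighbor_counts(x_h, radius):
    """Count, for each selected plant, the other selected plants within radius."""
    counts = []
    for i, xi in enumerate(x_h):
        n = sum(
            1
            for j, xj in enumerate(x_h)
            if i != j and math.dist(xi, xj) <= radius
        )
        counts.append(n)
    return counts


def pollinate(seeds, counts):
    """Scale each seed count by neighbor count relative to the maximum count."""
    n_max = max(counts)
    if n_max == 0:
        # Assumption: if no plant has neighbors, leave seed counts unchanged.
        return list(seeds)
    return [round(s * n / n_max) for s, n in zip(seeds, counts)]


x_h = [[0.0, 0.0], [0.1, 0.0], [0.0, 0.1], [2.0, 2.0]]
seeds = [10, 8, 6, 10]
counts = neighbor_counts(x_h, radius=0.2)
print(counts)                    # [2, 2, 2, 0]
print(pollinate(seeds, counts))  # [10, 8, 6, 0]
```

In this example the three clustered plants keep their seeds, while the isolated plant loses all of its seeds despite its high initial count, which shows how density can override fitness alone.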

The diagram below illustrates the complete workflow of the Paddy Field Algorithm, highlighting the central role of the fitness evaluation and seeding process.

Experimental Protocols and Benchmarking

The performance of Paddy's fitness and seeding formulation has been validated against several state-of-the-art optimization algorithms across diverse tasks.

Benchmarking Algorithms and Tasks

In a comprehensive study, the Paddy algorithm was benchmarked against the following methods [8] [1]:

- Tree-structured Parzen Estimator (TPE): Implemented via the Hyperopt software library.

- Bayesian Optimization (BO): With a Gaussian process via Meta's Ax framework.

- Population-based Methods: From EvoTorch, including an evolutionary algorithm with Gaussian mutation and a genetic algorithm using Gaussian mutation and single-point crossover.

The algorithms were evaluated on several mathematical and chemical optimization tasks [8] [1]:

- Global optimization of a two-dimensional bimodal distribution.

- Interpolation of an irregular sinusoidal function.

- Hyperparameter optimization of an artificial neural network for solvent classification.

- Targeted molecule generation by optimizing input vectors for a decoder network.

- Sampling discrete experimental space for optimal experimental planning.

Key Findings and Performance

The benchmarking revealed that Paddy maintains strong performance across all tasks, often outperforming or matching Bayesian optimization while requiring markedly lower runtime [1] [3]. A critical finding was Paddy's innate resistance to early convergence, attributed to its density-based seeding and pollination process, which allows it to effectively bypass local optima in search of global solutions [8] [6].

Table 2: Key Parameters for Paddy Field Algorithm Implementation

| Parameter | Symbol | Description | Considerations |
|---|---|---|---|
| Population Size | - | Number of initial seeds [2]. | Larger sizes aid exploration but increase computational cost [6]. |
| Threshold Parameter | H (y_t) | Number of top plants selected for propagation [6] [1]. | Directly controls selective pressure. |
| Maximum Seeds | s_max | Maximum number of seeds a plant can produce [1]. | Influences the rate of exploitation in promising regions. |
| Pollination Radius | - | Euclidean distance to determine neighbors [6]. | Affects density calculation and diversity maintenance. |
| Dispersion Factor | σ | Standard deviation for Gaussian mutation [6]. | Governs the degree of exploration during seed dispersal. |
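To make the role of the dispersion factor σ concrete, the sketch below scatters new seeds around a parent plant using Gaussian mutation. The function name `disperse`, the clipping of children to the parameter bounds, and the example values are assumptions for illustration rather than the Paddy library's behavior.

```python
import random


def disperse(parent, n_seeds, sigma, bounds, rng):
    """Draw n_seeds children around parent with Gaussian noise, clipped to bounds."""
    children = []
    for _ in range(n_seeds):
        child = [
            # Clipping to the bounds is an assumption of this sketch.
            min(hi, max(lo, value + rng.gauss(0.0, sigma)))
            for value, (lo, hi) in zip(parent, bounds)
        ]
        children.append(child)
    return children


rng = random.Random(0)
bounds = [(0.0, 1.0), (0.0, 1.0)]
children = disperse([0.5, 0.5], n_seeds=4, sigma=0.1, bounds=bounds, rng=rng)
for child in children:
    print(child)
```

A larger σ spreads children further from the parent and favors exploration, while a smaller σ concentrates them nearby and favors exploitation.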

The Scientist's Toolkit: Research Reagent Solutions

Implementing and experimenting with the Paddy Field Algorithm requires a set of essential computational tools and resources. The following table details key components for researchers in drug development and chemical sciences.

Table 3: Essential Research Reagents and Tools for PFA Research

| Tool/Resource | Type | Function in Research |
|---|---|---|
| Paddy Python Library | Software Library | The primary open-source implementation of the PFA, providing the core optimization toolkit for chemical problem-solving [8] [1]. |
| Hyperopt | Software Library | Provides the Tree-structured Parzen Estimator algorithm, used as a key benchmark for comparing Paddy's performance [1]. |
| Ax Framework | Software Platform | Provides Bayesian optimization with Gaussian processes, serving as another benchmark for high-performance optimization [6] [1]. |
| EvoTorch | Software Library | Provides population-based optimization methods (evolutionary and genetic algorithms) for comparative performance analysis [1]. |
| Objective Function | Experimental Setup | A user-defined function y = f(x) representing the chemical or experimental system to be optimized (e.g., reaction yield, drug potency) [6]. |
| Parameter Space | Experimental Setup | The defined bounds and dimensions of the input variables x for the optimization problem [6]. |

The relationships between these core components and the PFA workflow are visualized below, showing how benchmarks and the algorithm interact within an experimental setup.

The mathematical formulation of the fitness and seeding process is the cornerstone of the Paddy Field Algorithm's efficacy. By integrating a fitness-proportional seeding mechanism with a unique density-based pollination step, Paddy achieves a robust balance between exploration and exploitation. This allows it to efficiently navigate complex parameter spaces, such as those encountered in chemical system optimization and drug development, without requiring excessive computational resources or succumbing to local optima. The provided formulations, parameters, and experimental contexts offer researchers a solid foundation for implementing and adapting this powerful algorithm to their most challenging optimization problems.

Implementing PFA in Practice: From Code to Chemical and Biomedical Applications

Getting Started with the Paddy Python Package

The Paddy Field Algorithm (PFA) is an evolutionary optimization algorithm inspired by the biological processes of rice cultivation, including sowing, growth, pollination, and harvesting [2]. This metaheuristic mimics the collective intelligence observed in natural paddy fields, where the reproductive success of plants is influenced by both their individual fitness and the population density in their vicinity [1]. The Paddy Python package provides a robust implementation of this algorithm, offering researchers and developers a versatile tool for solving complex optimization problems across various domains, including drug development and chemical system optimization [1].

Unlike traditional gradient-based optimization methods or other evolutionary algorithms like Genetic Algorithms (GA), PFA introduces a unique density-based reinforcement mechanism that directs the search process [1]. This approach allows Paddy to maintain an effective balance between exploration (searching new areas of the solution space) and exploitation (refining known good solutions), resulting in robust performance with a marked resistance to premature convergence on local optima [2]. Benchmarks against other optimization approaches, including Bayesian methods (e.g., Gaussian process optimization, Tree-structured Parzen Estimator) and other population-based algorithms, have demonstrated Paddy's strong performance and lower computational runtime across diverse optimization tasks [1].

Biological Inspiration and Theoretical Foundations

Core Biological Concepts