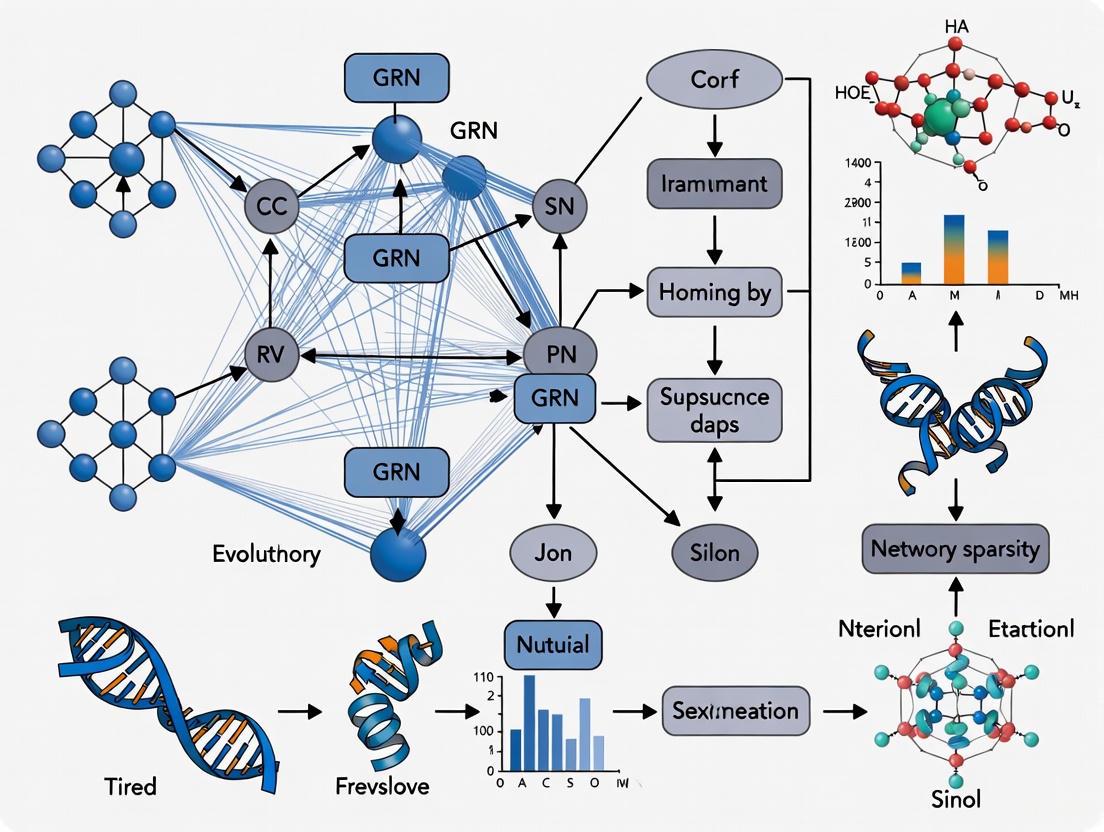

Overcoming the Sparsity Challenge: Advanced Strategies for Robust Gene Regulatory Network Reconstruction


Abstract

Reconstructing Gene Regulatory Networks (GRNs) from single-cell RNA sequencing (scRNA-seq) data is fundamental for understanding cellular identity and disease mechanisms. However, this process is severely challenged by network sparsity, an issue stemming from data dropout, cellular heterogeneity, and the inherent scale-free topology of biological networks. This article provides a comprehensive guide for researchers and drug development professionals on navigating these challenges. We explore the foundational causes of sparsity, detail cutting-edge computational methods, from deep learning to graph theory, designed to mitigate its effects, offer practical troubleshooting and optimization strategies for real-world data, and finally establish a rigorous framework for the validation and comparative analysis of inferred networks to ensure biological relevance and accuracy.

The Sparsity Problem: Understanding the Roots of Network Sparsity in scRNA-seq Data

Defining Network Sparsity in the Context of GRN Topology

Troubleshooting Guides

Guide 1: Resolving Suboptimal Sparsity Selection in GRN Inference

Problem: The inferred Gene Regulatory Network (GRN) is too dense or too sparse, does not reflect biological reality, and contains many false positive or false negative interactions.

Explanation: A major shortcoming of most GRN inference methods is that they do not automatically find the optimal sparsity level—the single best network that balances completeness with accuracy. Instead, sparsity is often controlled by an arbitrarily set hyperparameter without guidance for its optimal value [1]. Biological networks are typically sparse, which is crucial for network stability and explorability [1].

Solution: Implement topology-based sparsity prediction methods that leverage the scale-free property of real GRNs.

Steps:

- Generate candidate networks: Use your chosen GRN inference method (e.g., LASSO, Zscore, LSCON, GENIE3) with a range of hyperparameter values ( \lambda_g \in \{\lambda_1, \ldots, \lambda_G\} ) to create multiple candidate networks ( \hat{A}_g ) of varying sparsity [1].

- Calculate node out-degrees: For each candidate network, compute the out-degree ( c_i(g) ) for each gene ( i ) by counting its nonzero regulatory interactions [1].

- Fit a power law distribution: For each candidate network's out-degree distribution, approximate the Maximum Likelihood estimator ( \alpha_{ML}(g) ) of the power law parameter [1].

- Apply selection metric: Use one of the following metrics to select the optimal network:

- Goodness-of-Fit Metric: Calculate the ( Q_g ) statistic (Equation 6) for each candidate network. The network with the smallest ( Q_g ) value, indicating the best fit to a power law distribution, is selected [1].

- Logarithmic Linearity Metric: Calculate the Pearson's correlation coefficient ( r ) between the logarithm of out-degrees and the logarithm of their observed frequencies. The network with a correlation closest to -1 (strongest negative linear trend) is selected [1].

Verification: The selected network should have a hub structure with few highly connected genes and many genes with few connections, consistent with scale-free topology. Biologically, key hub genes often include well-known master transcription factors.
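The logarithmic linearity metric described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the implementation from [1]: the helper names (`loglog_correlation`, `select_by_linearity`, `network_from_degrees`) and the two toy candidate networks are assumptions made for the example, and the out-degree is taken as the number of nonzero entries in each row of the adjacency matrix.

```python
import numpy as np

def loglog_correlation(adj):
    """Pearson r between log(out-degree) and log(frequency of that out-degree)."""
    deg = np.count_nonzero(adj, axis=1)       # out-degree = nonzero entries per row
    deg = deg[deg > 0]                        # log is undefined for degree 0
    values, counts = np.unique(deg, return_counts=True)
    if values.size < 3:                       # too few distinct degrees to judge a trend
        return np.nan
    return float(np.corrcoef(np.log(values), np.log(counts))[0, 1])

def select_by_linearity(candidates):
    """Return the index of the candidate whose r is closest to -1, plus all r values."""
    r = np.array([loglog_correlation(a) for a in candidates])
    return int(np.nanargmin(r)), r            # r >= -1, so the minimum is closest to -1

def network_from_degrees(degrees):
    """Toy helper (hypothetical): adjacency matrix whose rows have the given out-degrees."""
    n = len(degrees)
    adj = np.zeros((n, n))
    for i, d in enumerate(degrees):
        adj[i, :d] = 1.0                      # which targets are hit does not affect out-degree
    return adj

# Candidate 0: most regulators have MORE targets (not hub-like).
flat_like = network_from_degrees([1] * 5 + [2] * 10 + [3] * 20 + [0] * 15)
# Candidate 1: many low-degree genes and one hub (frequency falls as degree^-2).
hub_like = network_from_degrees([1] * 36 + [2] * 9 + [3] * 4 + [6])

best, r_values = select_by_linearity([flat_like, hub_like])
print(best, r_values)
```

In practice the candidates would come from the inference method run at each hyperparameter value rather than from a toy generator, and networks with fewer than three distinct out-degrees are skipped here because a correlation over two points is always ±1.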

Guide 2: Addressing Inaccurate GRN Inference from High-Dimensional Data

Problem: With a large number of genes (nodes) and limited samples, the inferred GRN is unstable, overfit, and fails to generalize.

Explanation: Regression models become unstable and overfit when the number of predictors (e.g., TFs, CREs) is large and samples are scarce, which is common in genomics [2]. This can lead to a densely connected network that does not reflect true biological sparsity.

Solution: Employ penalized regression methods like LASSO (Least Absolute Shrinkage and Selection Operator) which introduce a constraint on the sum of the absolute values of the model coefficients [2].

Steps:

- Model Setup: For each target gene, model its expression as a response variable regulated by the expression levels of all potential transcription factors (TFs).

- Apply LASSO Regression: Use a LASSO penalty which shrinks the coefficients of irrelevant TFs to exactly zero, effectively performing variable selection and yielding a sparse list of regulators [2].

- Tune Regularization Parameter: Use cross-validation to find the optimal value of the LASSO penalty parameter ( \lambda ) that minimizes prediction error. This ( \lambda ) controls the sparsity level [2].

- Construct the Network: Aggregate the non-zero regulatory links identified for each target gene across all genes to build the final sparse GRN.

Verification: The resulting network should contain mostly zero-weight connections. Stability can be checked using bootstrap methods to see if core regulatory relationships are consistently inferred.
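As a rough sketch of the steps above, the following NumPy code fits a LASSO model for one target gene by coordinate descent and picks ( \lambda ) by k-fold cross-validation. It is written from scratch for illustration only; in a real analysis a maintained implementation (for example scikit-learn's `LassoCV`) would normally be preferable. The function names, the simulated expression matrix, and the choice of TFs 0 and 3 as true regulators are all assumptions of the example.

```python
import numpy as np

def soft_threshold(z, t):
    """Shrink z toward zero by t; values within [-t, t] become exactly zero."""
    return np.sign(z) * np.maximum(np.abs(z) - t, 0.0)

def lasso_cd(X, y, lam, n_iter=100):
    """Coordinate descent for (1/2n)||y - X b||^2 + lam * ||b||_1."""
    n, p = X.shape
    beta = np.zeros(p)
    col_sq = (X ** 2).sum(axis=0) / n
    resid = y.copy()                              # residual when all coefficients are zero
    for _ in range(n_iter):
        for j in range(p):
            if col_sq[j] == 0:
                continue
            resid += X[:, j] * beta[j]            # remove TF j's current contribution
            rho = X[:, j] @ resid / n
            beta[j] = soft_threshold(rho, lam) / col_sq[j]
            resid -= X[:, j] * beta[j]            # add the updated contribution back
    return beta

def choose_lambda(X, y, lambdas, n_folds=5, seed=0):
    """Pick the lambda with the lowest mean squared prediction error across folds."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), n_folds)
    errors = []
    for lam in lambdas:
        fold_err = []
        for k in range(n_folds):
            test = folds[k]
            train = np.concatenate([folds[m] for m in range(n_folds) if m != k])
            beta = lasso_cd(X[train], y[train], lam)
            fold_err.append(np.mean((y[test] - X[test] @ beta) ** 2))
        errors.append(np.mean(fold_err))
    return lambdas[int(np.argmin(errors))]

# Simulated data: 100 cells, 20 candidate TFs, target driven by TF 0 and TF 3 only.
rng = np.random.default_rng(1)
tf_expr = rng.standard_normal((100, 20))
target = 2.0 * tf_expr[:, 0] - 1.5 * tf_expr[:, 3] + 0.1 * rng.standard_normal(100)

lambdas = np.logspace(-3, 0, 8)
best_lam = choose_lambda(tf_expr, target, lambdas)
regulators = np.flatnonzero(lasso_cd(tf_expr, target, best_lam))
strict_regulators = np.flatnonzero(lasso_cd(tf_expr, target, 0.5))  # heavier penalty
print(best_lam, regulators, strict_regulators)
```

Repeating the fit with each gene in turn as the target, and collecting the nonzero coefficients, gives the rows of the sparse network. Note that the ( \lambda ) minimizing prediction error tends to keep some weak spurious regulators, which is one reason the bootstrap stability check in the verification step is useful.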

Guide 3: Handling Non-Linear and Complex Regulatory Relationships

Problem: Standard linear correlation methods fail to capture the complex, non-linear relationships between regulators and target genes.

Explanation: Not all gene regulatory interactions are linear. Some may follow activation thresholds or other complex patterns [2]. Relying solely on linear methods like Pearson correlation can miss these true interactions or produce misleading sparsity estimates.

Solution: Utilize non-parametric association measures that can capture non-linear dependencies.

Steps:

- Choose a Non-linear Method:

- Spearman's Rank Correlation: A non-parametric version of correlation that assesses monotonic relationships, whether linear or not [2].

- Mutual Information (MI): An information-theoretic measure that quantifies the dependence between two variables, capable of detecting any predictable relationship, including complex non-linear ones [2].

- Compute Associations: Calculate the chosen measure (e.g., MI) between all pairs of genes (or between TFs and potential targets).

- Perform Statistical Testing: Use permutation tests to establish a significance threshold for the associations. This threshold acts as a sparsity parameter, where only links above the threshold are retained [2].

- Construct the Network: Build the adjacency matrix of the GRN using the significant interactions.

Verification: Compare the network inferred with non-linear methods to one inferred with linear methods. Look for known non-linear regulatory relationships that are captured by the former but missed by the latter.
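The association and permutation-testing steps can be illustrated with a simple histogram-based mutual information estimate. This is only a sketch under stated assumptions: the bin count, the number of permutations, and the simulated quadratic relationship are arbitrary choices for the example, and histogram estimators are biased upward on small samples, which is exactly why the permutation null is used to set the threshold.

```python
import numpy as np

def mutual_information(x, y, bins=8):
    """Plug-in (histogram) estimate of mutual information, in nats."""
    joint, _, _ = np.histogram2d(x, y, bins=bins)
    pxy = joint / joint.sum()
    px = pxy.sum(axis=1, keepdims=True)           # marginal of x, shape (bins, 1)
    py = pxy.sum(axis=0, keepdims=True)           # marginal of y, shape (1, bins)
    mask = pxy > 0                                # skip empty cells to avoid log(0)
    return float(np.sum(pxy[mask] * np.log(pxy[mask] / (px @ py)[mask])))

def permutation_pvalue(x, y, n_perm=200, bins=8, seed=0):
    """Observed MI and the fraction of shuffled-y MI values at least as large."""
    rng = np.random.default_rng(seed)
    observed = mutual_information(x, y, bins)
    null = np.array([mutual_information(x, rng.permutation(y), bins)
                     for _ in range(n_perm)])
    p_value = (1 + np.sum(null >= observed)) / (1 + n_perm)
    return observed, p_value

# Simulated example: the target depends on the TF through a non-monotonic
# (quadratic) relationship, which linear correlation tends to miss.
rng = np.random.default_rng(0)
tf = rng.uniform(-2, 2, 500)
target = tf ** 2 + 0.1 * rng.standard_normal(500)
unrelated = rng.standard_normal(500)

mi_dep, p_dep = permutation_pvalue(tf, target)
mi_ind, p_ind = permutation_pvalue(tf, unrelated)
print(mi_dep, p_dep, mi_ind, p_ind)
```

When this is applied to all TF-target pairs, the p-values should be corrected for multiple testing before thresholding; the cutoff chosen then plays the role of the sparsity parameter.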

Frequently Asked Questions (FAQs)

Q1: What is network sparsity and why is it critical in GRN topology? Network sparsity refers to the proportion of possible regulatory interactions in a GRN that are effectively zero or non-existent [1]. It is critical because biological networks are inherently sparse, a property believed to be essential for stability and functional robustness [1]. Using an incorrect sparsity level during inference leads to networks flooded with false positives (if too dense) or missing crucial interactions (if too sparse), compromising biological validity and downstream analysis.

Q2: My data is from single-cell RNA-seq experiments. How does this impact sparsity determination? Single-cell data introduces two types of sparsity to consider:

- Technical Sparsity: An artifact where many genes have zero counts due to dropout effects.

- Biological Sparsity: The true underlying sparsity of the regulatory network.

When inferring GRNs from scRNA-seq, it is vital to correct for technical zeros using imputation or modeling approaches before network inference. Furthermore, single-cell multi-omic data (e.g., paired scRNA-seq and scATAC-seq) can provide more direct evidence of potential regulatory interactions (via chromatin accessibility), helping to better constrain and define the true biological sparsity of the network [2].

Q3: Are there specific network motifs I should look for to validate my sparsity level? Yes, the presence of certain overrepresented motifs can be an indicator of a biologically plausible network. For instance, if your inferred network is constrained to perform specific functions like multistability, you might expect to see motifs like mutually inhibitory pairs of genes with self-activation. Conversely, networks constrained to exhibit periodicity (e.g., cell cycle) may be enriched for bifan motifs or diamond-like structures [3]. A correctly sparsified network should recapitulate known motif enrichments found in biological systems.

Q4: How can I quantitatively compare the sparsity of two different GRNs? A direct comparison can be made using the connection density or average node degree. The following table summarizes key quantitative metrics for comparison:

Table: Key Metrics for Quantitative Sparsity Comparison

| Metric | Formula | Interpretation |

|---|---|---|

| Connection Density | ( \frac{L}{N(N-1)} ) | Proportion of possible directed links that are present. Closer to 0 indicates higher sparsity [1]. |

| Average Node Degree | ( \frac{1}{N} \sum_{i=1}^{N} c_i ) | Mean number of connections per node. A lower value indicates a sparser network [1]. |

| Scale-free Fit (( Q_g )) | ( \sum_{d=1}^{n} \frac{(x_d(g) - n(g) p_{X(g)}(d))^2}{n(g) p_{X(g)}(d)} ) | Goodness-of-fit to a power law. A lower value suggests a more biologically plausible, scale-free topology [1]. |
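The first two metrics in the table are straightforward to compute from an adjacency matrix. The sketch below assumes a directed network stored as an N x N NumPy array in which row i holds the outgoing links of gene i, and it ignores self-loops so that the denominator N(N-1) matches the table; the function names and the 4-gene example are illustrative.

```python
import numpy as np

def _without_self_loops(adj):
    """Copy of the adjacency matrix with the diagonal set to zero."""
    out = np.array(adj, dtype=float)
    np.fill_diagonal(out, 0.0)
    return out

def connection_density(adj):
    """L / (N(N-1)): fraction of possible directed links that are present."""
    a = _without_self_loops(adj)
    n = a.shape[0]
    return np.count_nonzero(a) / (n * (n - 1))

def average_out_degree(adj):
    """(1/N) * sum_i c_i, where c_i counts the nonzero outgoing links of gene i."""
    a = _without_self_loops(adj)
    return float(np.count_nonzero(a, axis=1).mean())

# Toy 4-gene network with three directed links: 0->1, 0->2, 2->3.
toy = np.array([[0, 1, 1, 0],
                [0, 0, 0, 0],
                [0, 0, 0, 1],
                [0, 0, 0, 0]])

density = connection_density(toy)        # 3 links out of 12 possible
mean_degree = average_out_degree(toy)    # 3 links spread over 4 genes
print(density, mean_degree)
```

Both numbers depend on network size, so they are most meaningful when the two GRNs being compared are defined over the same gene set.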

Q5: What are the primary data types used for defining GRN sparsity? The choice of data type influences the inference method and how sparsity is constrained.

Table: Common Data Types for GRN Inference and Sparsity

| Data Type | Role in Defining Sparsity | Example Methods |

|---|---|---|

| Time-Series Expression | Allows inference of causal, temporal relationships, helping to eliminate spurious correlations and sparsify the network [4]. | Dynamic Bayesian Networks, ODE-based models [2]. |

| Perturbation Data (e.g., Knockouts) | Provides direct causal information; a gene whose expression changes after a perturbation is likely a direct target, directly informing sparsity [4]. | Zscore, LASSO on differential expression. |

| Single-cell Multi-omics | Combines expression with chromatin accessibility (scATAC-seq) to prioritize interactions where the regulator's binding site is accessible, leading to more confident and sparser networks [2]. | Regression-based methods (like LASSO) that integrate multi-omic features. |

Experimental Protocols

Protocol 1: Topology-Based Sparsity Selection Workflow

This protocol provides a detailed methodology for using network topology to find the optimal sparsity level for a GRN inferred from gene expression data [1].

Key Research Reagent Solutions:

- Gene Expression Dataset: A matrix of gene expression values (e.g., from RNA-seq or microarrays) with genes as rows and samples/conditions as columns.

- GRN Inference Tool: Software capable of generating networks with varying sparsity (e.g., using LASSO, GENIE3).

- Computational Environment: A programming environment (e.g., R, Python) with libraries for statistical analysis and network topology assessment.

Methodology:

- Candidate Network Generation: Run your chosen GRN inference algorithm across a wide, logarithmically spaced range of its key sparsity-controlling hyperparameter ( \lambda ). This will yield a set of candidate networks ( \{ \hat{A}_1, \hat{A}_2, \ldots, \hat{A}_G \} ) [1].

- Out-Degree Calculation: For each candidate network ( \hat{A}_g ), compute the out-degree ( c_i(g) ) for each gene ( i ) using ( c_i(g) = \sum_{j=1}^{n} |\text{sign}(a_{i,j}(g))| ), where ( a_{i,j}(g) ) is the inferred interaction from gene ( i ) to gene ( j ) in network ( g ) [1].

- Power Law Parameter Estimation: For the out-degree distribution of each network, calculate the maximum likelihood estimator for the power law exponent ( \alpha ) using the approximation ( \alpha_{ML}(g) \approx 1 + n \left[ \sum_{i=1}^{n} \ln \frac{c_i(g)}{k_{min} - \frac{1}{2}} \right]^{-1} ), where ( k_{min} ) is typically set to 1 [1].

- Goodness-of-Fit Calculation: For each network, compute the goodness-of-fit statistic ( Q_g = \sum_{d=1}^{n} \frac{(x_d(g) - n(g) p_{X(g)}(d))^2}{n(g) p_{X(g)}(d)} ), where ( x_d(g) ) is the frequency of out-degree ( d ), ( n(g) ) is the number of genes with positive out-degree, and ( p_{X(g)}(d) ) is the probability from the fitted power law model [1].

- Optimal Network Selection: Identify the candidate network ( \hat{A} ) that satisfies ( \hat{A} = \arg\min_{\hat{A}_g} Q_g ). This network is predicted to have the sparsity level closest to the true biological network [1].
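The out-degree, power-law fit, goodness-of-fit, and selection steps of this protocol can be sketched as follows. Several details are assumptions of this example rather than statements about [1]: the sum in the estimator is taken over genes with out-degree at least ( k_{min} ) (and that count is used as its sample size), the fitted power law is normalized over the finite support d = 1..n, and the candidate networks are toy matrices built from prescribed out-degrees instead of real inference output.

```python
import numpy as np

def alpha_ml(deg, k_min=1):
    """Approximate ML estimate of the power-law exponent from out-degrees >= k_min."""
    deg = deg[deg >= k_min]
    return 1.0 + deg.size / np.sum(np.log(deg / (k_min - 0.5)))

def q_statistic(adj):
    """Chi-square-style distance between observed out-degree counts and a fitted power law."""
    n = adj.shape[0]
    deg = np.count_nonzero(adj, axis=1)            # c_i(g): nonzero links per row
    deg = deg[deg > 0]                             # only genes with positive out-degree
    if deg.size == 0:
        return np.inf                              # an empty network cannot be assessed
    alpha = alpha_ml(deg)
    d = np.arange(1, n + 1, dtype=float)
    p = d ** (-alpha)
    p /= p.sum()                                   # assumption: normalize over d = 1..n
    observed = np.bincount(deg, minlength=n + 1)[1:n + 1]   # x_d(g) for d = 1..n
    expected = deg.size * p                        # n(g) * p(d)
    return float(np.sum((observed - expected) ** 2 / expected))

def select_network(candidates):
    """Index of the candidate with the smallest Q, plus all Q values."""
    q = np.array([q_statistic(a) for a in candidates])
    return int(np.argmin(q)), q

def network_from_degrees(degrees):
    """Toy helper (hypothetical): adjacency matrix whose rows have the given out-degrees."""
    n = len(degrees)
    adj = np.zeros((n, n))
    for i, deg_i in enumerate(degrees):
        adj[i, :deg_i] = 1.0
    return adj

hub_like = network_from_degrees([1] * 36 + [2] * 9 + [3] * 4 + [6])
flat_like = network_from_degrees([1] * 5 + [2] * 10 + [3] * 20 + [0] * 15)
best_index, q_values = select_network([hub_like, flat_like])
print(best_index, q_values)
```

With real data, `candidates` would hold one adjacency matrix per hyperparameter value from the candidate network generation step, and the selected index maps back to the corresponding ( \lambda ).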

Protocol 2: Boolean Network Inference from Temporally Sparse Data

This protocol outlines the inference of a sparse Boolean GRN topology from limited time-series data, using Bayesian optimization for efficient search [5].

Key Research Reagent Solutions:

- Time-Series Expression Data: Single-cell or bulk gene expression data measured at multiple time points, often binarized into active/inactive states.

- Boolean Network Model: A computational model where gene states are binary (0/1) and update according to logical rules.

- Bayesian Optimization Library: A software tool (e.g., in Python) for efficient global optimization of expensive black-box functions.

Methodology:

- Model Definition: Define a Boolean Network with Perturbation (BNp) model. The gene state vector ( X_k ) at time ( k ) updates as ( X_k = f(X_{k-1}) \oplus n_k ), where ( \oplus ) is modulo-2 addition, ( n_k ) is Bernoulli noise, and ( f ) is the network function [5].

- Network Function Parameterization: Express the network function ( f ) using a connectivity matrix ( C ), where elements ( c_{ij} ) can be -1 (inhibition), 0 (no interaction), or +1 (activation). The bias vector ( b ) breaks ties [5].

- Likelihood Formulation: Define a likelihood function ( P(D | \theta) ) that measures the probability of observing the time-series data ( D ) given a network topology defined by parameter vector ( \theta ) (which encodes the unknown ( c_{ij} )s) [5].

- Gaussian Process Surrogate Model: Model the expensive-to-compute log-likelihood function using a Gaussian Process (GP) with a topology-inspired kernel. This GP predicts the likelihood for unevaluated topologies [5].

- Bayesian Optimization Loop: Iteratively: a. Use the GP's posterior to select the most promising topology ( \theta ) to evaluate next (balancing exploration and exploitation). b. Compute the actual log-likelihood for the selected ( \theta ). c. Update the GP model with this new data point. The loop continues until convergence, finding the topology with the highest likelihood without exhaustively searching all possibilities [5].
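To make the model definition and likelihood steps concrete, here is a small simulation of a BNp and the log-likelihood of a fully observed trajectory. The source does not spell out the network function, so the threshold form used here (gene i is ON when ( \sum_j c_{ij} x_j + b_i > 0 ), with ( c_{ij} ) read as the effect of gene j on gene i and biases of ±0.5 breaking ties) is an assumption, as are the function names and the three-gene example. The Gaussian process surrogate and the Bayesian optimization loop are not shown.

```python
import numpy as np

def network_function(x, C, b):
    """Assumed threshold rule: gene i is ON when sum_j C[i, j] * x[j] + b[i] > 0."""
    return (C @ x + b > 0).astype(int)

def simulate_bnp(x0, C, b, p_noise, n_steps, seed=0):
    """Simulate X_k = f(X_{k-1}) XOR n_k with independent Bernoulli(p_noise) flips."""
    rng = np.random.default_rng(seed)
    traj = [np.asarray(x0, dtype=int)]
    for _ in range(n_steps):
        noise = (rng.random(len(x0)) < p_noise).astype(int)
        traj.append(network_function(traj[-1], C, b) ^ noise)   # XOR is modulo-2 addition
    return np.array(traj)

def log_likelihood(traj, C, b, p_noise):
    """Log-probability of a directly observed trajectory; requires 0 < p_noise < 1."""
    predicted = np.array([network_function(x, C, b) for x in traj[:-1]])
    flips = int(np.sum(predicted != traj[1:]))     # transitions explained only by noise
    total = predicted.size
    return flips * np.log(p_noise) + (total - flips) * np.log(1 - p_noise)

# Three-gene negative feedback loop: gene 2 inhibits gene 0, 0 activates 1, 1 activates 2.
C_true = np.array([[0, 0, -1],
                   [1, 0, 0],
                   [0, 1, 0]])
bias = np.array([0.5, -0.5, -0.5])

clean = simulate_bnp([1, 0, 0], C_true, bias, p_noise=0.0, n_steps=6)
ll_true = log_likelihood(clean, C_true, bias, p_noise=0.05)
ll_empty = log_likelihood(clean, np.zeros((3, 3)), bias, p_noise=0.05)
print(clean, ll_true, ll_empty)
```

A search procedure such as the Bayesian optimization loop would treat `log_likelihood` as the expensive objective and propose new connectivity matrices to evaluate; here the correct topology simply scores higher than an empty one.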

Frequently Asked Questions

What causes "dropout" in scRNA-seq data? Dropout refers to the phenomenon where a gene is actively expressed in a cell but is not detected during the sequencing process. This occurs due to technical limitations, including imperfect reverse transcription, inefficient amplification, and the low starting amount of mRNA in a single cell. These errors result in a dataset with an excess of zero values, a characteristic known as "zero-inflation" [6] [7] [8].

How does data sparsity negatively impact GRN inference? Sparse data can lead to several critical issues in GRN inference, including over-fitting, where a model learns the noise in the data rather than the true biological signals. It can also cause models to avoid or misrepresent important regulatory relationships hidden within the sparse data points. Furthermore, sparsity increases the computational time and space complexity required for analysis [9].

What is the difference between a biological zero and a technical zero (dropout)? A biological zero accurately represents a gene that is not expressed in a given cell. A technical zero (dropout) is an artifact where a truly expressed gene fails to be captured and measured. Distinguishing between these two types of zeros from the observed data is a major challenge in scRNA-seq analysis [7].

Beyond imputation, what are alternative strategies for handling sparse data? A promising alternative is to build models that are inherently robust to dropout noise. One such method is Dropout Augmentation (DA), which regularizes the model by intentionally adding synthetic dropout events during training. This teaches the model to be less sensitive to missing data, improving its performance on real, zero-inflated datasets [6] [8].

Can external data sources improve GRN inference from sparse single-cell data? Yes. Methods like LINGER use lifelong learning to incorporate large-scale external bulk data from sources like the ENCODE project. This provides a rich prior knowledge base, which helps the model learn complex regulatory mechanisms from limited single-cell data points, significantly boosting inference accuracy [10].

Troubleshooting Guides

Guide 1: Diagnosing and Quantifying Data Sparsity

Before reconstructing GRNs, it is crucial to assess the level of sparsity in your scRNA-seq dataset.

| Step | Action | Interpretation & Tips |

|---|---|---|

| 1. Calculate Basic Metrics | Compute the percentage of zero counts in your expression matrix. | In scRNA-seq, it is common for 57% to 92% of observed values to be zeros [6] [8]. A percentage within this range is typical. |

| 2. Generate Quality Control Reports | Use pipelines like Cell Ranger (10x Genomics) to generate a web_summary.html file and inspect key metrics [11]. | Check the "Median Genes per Cell" and the "Barcode Rank Plot." A low gene count per cell or an unclear separation between cells and background in the plot can indicate high sparsity or other quality issues [11]. |

| 3. Visualize with Dropout Curves | Use tools like the dropR R package to create dropout curves that visualize the rate of non-response or data loss across an experimental process [12]. | dropR was built for participant dropout in web-based studies (e.g., loss at specific questionnaire items), so its use here is by analogy only: the same kind of curve can help diagnose whether specific stages of single-cell library preparation cause disproportionate data loss [12]. |
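Step 1 of the table can be done directly on the count matrix. The sketch below assumes a dense cells x genes array of raw counts; the function name `sparsity_report` and the tiny example matrix are illustrative, and for real datasets stored in sparse formats the same quantities would be computed from the number of stored nonzeros instead.

```python
import numpy as np

def sparsity_report(counts):
    """Percentage of zero entries and median number of detected genes per cell."""
    counts = np.asarray(counts)
    percent_zeros = 100.0 * np.mean(counts == 0)
    genes_per_cell = np.count_nonzero(counts, axis=1)   # rows are cells
    return {
        "percent_zeros": float(percent_zeros),
        "median_genes_per_cell": float(np.median(genes_per_cell)),
    }

# Toy matrix: 3 cells x 4 genes.
toy_counts = np.array([[0, 3, 0, 1],
                       [0, 0, 0, 2],
                       [5, 0, 0, 0]])
report = sparsity_report(toy_counts)
print(report)
```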

Guide 2: Selecting a GRN Inference Method for Sparse Data

Different computational methods handle data sparsity in different ways. The table below summarizes the core strategies.

| Method Category | Representative Tool(s) | Core Strategy for Handling Sparsity | Key Characteristics |

|---|---|---|---|

| Model Regularization | DAZZLE [6] [8] | Dropout Augmentation (DA): Adds synthetic zeros during training to improve model robustness. | Does not alter the original data; focuses on making the inference algorithm more resilient. |

| Integration of External Data | LINGER [10] | Lifelong Learning: Leverages atlas-scale external bulk data as a regularizing prior. | Mitigates the challenge of learning from limited data points by incorporating knowledge from larger, related datasets. |

| Deep Learning with Specialized Distributions | scVI, DCA [7] | Generative Modeling: Uses probabilistic models (e.g., Zero-Inflated Negative Binomial) that inherently account for the sparsity. | Models the data generation process, including technical noise, to learn a denoised latent representation. |

| Graph Machine Learning | scSimGCL [13] | Graph Contrastive Learning: Uses data augmentation (gene/edge masking) and self-supervised learning on cell-cell graphs. | Creates robust cell representations by learning from augmented views of the data, improving downstream clustering. |

Guide 3: Implementing a Dropout-Augmented GRN Inference Workflow

This guide provides a high-level protocol for using a dropout augmentation-based method like DAZZLE.

Experimental Protocol: GRN Inference with DAZZLE

Primary Source: Zhu & Slonim, 2025 [6] [8]

1. Input Data Preparation:

- Input: A single-cell gene expression matrix (cells x genes) containing raw UMI counts.

- Transformation: Apply a log-transform to the count matrix using log(x+1) to reduce variance and avoid taking the logarithm of zero [6] [8].

2. Model Training with Dropout Augmentation:

- The model architecture is an autoencoder-based Structural Equation Model (SEM) where an adjacency matrix (representing the GRN) is parameterized and learned [6] [8].

- During each training iteration, a Dropout Augmentation (DA) step is performed: a small, random proportion of the non-zero expression values is set to zero to simulate additional dropout events.

- A noise classifier is trained concurrently to identify which zeros are likely to be augmented, helping the decoder ignore them during reconstruction [6] [8].

3. Network Extraction and Sparsity Control:

- After training, the weights of the learned adjacency matrix are retrieved as the inferred GRN.

- DAZZLE introduces stability by delaying the application of a sparsity-inducing loss term, preventing premature and suboptimal pruning of network edges [6] [8].
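The input transformation and the dropout augmentation step can be illustrated independently of any particular model. The code below is a generic sketch of the idea, not DAZZLE's own code: the function names, the 10% augmentation rate, and the simulated Poisson counts are assumptions. The returned mask marks which zeros were synthetic, which is the kind of label a noise classifier could be trained on.

```python
import numpy as np

def log_transform(counts):
    """log(x + 1) transform of a raw count matrix."""
    return np.log1p(counts)

def dropout_augment(x, rate=0.1, rng=None):
    """Set a random fraction `rate` of the non-zero entries to zero.

    Returns the augmented matrix and a boolean mask of the entries that were zeroed.
    """
    rng = np.random.default_rng() if rng is None else rng
    mask = (x != 0) & (rng.random(x.shape) < rate)
    return np.where(mask, 0.0, x), mask

# Simulated counts: 200 cells x 50 genes (illustrative only).
rng = np.random.default_rng(0)
raw = rng.poisson(1.0, size=(200, 50))
expr = log_transform(raw)

# In training, a fresh augmentation would be drawn at every iteration.
augmented, synthetic_zero_mask = dropout_augment(expr, rate=0.1, rng=rng)
print(np.mean(expr == 0), np.mean(augmented == 0))
```

Because the augmentation is redrawn each iteration and the original matrix is never modified, the approach differs from imputation: the data stay as observed and only the model's exposure to extra zeros changes.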

Visualization of the DAZZLE Workflow:

The Scientist's Toolkit

Research Reagent Solutions

| Tool / Resource | Function in Experiment |

|---|---|

| 10x Genomics Chromium | A droplet-based single-cell sequencing platform that generates the raw scRNA-seq data. Later protocols have improved detection rates, but dropout remains an issue [6] [8]. |

| BEELINE Benchmark | A standardized framework and set of datasets used to evaluate and compare the performance of different GRN inference methods [6] [8]. |

| ENCODE Project Data | A comprehensive repository of functional genomic data from bulk experiments. Used as a source of external prior knowledge in methods like LINGER to guide inference from sparse single-cell data [10]. |

| dropR Software | An R package and web application designed to analyze and visualize dropout, providing metrics and curves to diagnose data loss patterns [12]. |

Core Computational Concepts

Visualizing the Sparse Data Problem in GRNs:

Troubleshooting Guide: Addressing Common GRN Reconstruction Challenges

FAQ: What are the primary data-related challenges in GRN reconstruction from single-cell data? The primary challenges stem from network sparsity and cellular heterogeneity. Single-cell RNA-seq data is inherently high-dimensional and noisy, which conventional GRN inference approaches often struggle with, leading to issues with robustness and interpretability [14]. Furthermore, the presence of multiple cell states and subtypes within the data can obscure genuine regulatory relationships.

FAQ: How can I improve the stability of my GRN inference under high-noise conditions? A hybrid framework that integrates Gaussian Graphical Models (GGM) for learning conditional independence relationships with Neural Ordinary Differential Equations (Neural ODE) for dynamic modeling has been shown to achieve superior accuracy and stability, particularly under high-noise conditions [14]. Using the undirected graph from GGM as a prior constraint for the Neural ODE reduces the parameter search space and data requirements [14].

FAQ: My reconstructed network seems to miss context-specific interactions. How can I address this? Relying solely on curated databases like KEGG may not capture context-specificity, for instance, in processes like Epithelial-Mesenchymal Transition (EMT) where marker genes show significant environmental specificity [14]. Integrating multiple perturbation datasets (e.g., gene knockouts, drug treatments) can help establish more accurate, context-aware causal relationships [4].

FAQ: What metrics should I use to validate the accuracy of predicted regulatory interactions? Commonly used metrics include the Receiver Operating Characteristic (ROC) curve, Area Under Curve (AUC), F1-score, and precision [14]. These should be applied to benchmark your method against existing algorithms like PCM, GENIE3, and GRNBoost2 [14].
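For reference, these metrics can be computed from a list of candidate edges with a known ground truth. The sketch below assumes the candidate edges have been flattened into one array of confidence scores and one boolean array of true labels; the function names, the six example edges, and the 0.45 threshold are illustrative. AUROC is computed as the probability that a true edge receives a higher score than a non-edge, with ties counted as one half.

```python
import numpy as np

def precision_recall_f1(true_edges, pred_edges):
    """Precision, recall and F1 for a binary edge prediction."""
    tp = int(np.sum(true_edges & pred_edges))
    precision = tp / max(int(pred_edges.sum()), 1)
    recall = tp / max(int(true_edges.sum()), 1)
    f1 = 0.0 if tp == 0 else 2 * precision * recall / (precision + recall)
    return precision, recall, f1

def auroc(true_edges, scores):
    """Area under the ROC curve via pairwise comparison of edge and non-edge scores."""
    pos = scores[true_edges]
    neg = scores[~true_edges]
    greater = np.sum(pos[:, None] > neg[None, :])
    ties = np.sum(pos[:, None] == neg[None, :])
    return float((greater + 0.5 * ties) / (pos.size * neg.size))

# Six candidate edges: ground-truth labels and inferred confidence scores.
truth = np.array([True, True, False, False, False, True])
scores = np.array([0.9, 0.4, 0.5, 0.1, 0.2, 0.8])

auc = auroc(truth, scores)
precision, recall, f1 = precision_recall_f1(truth, scores > 0.45)
print(auc, precision, recall, f1)
```

AUROC summarizes the ranking of all edges, whereas precision and F1 depend on the threshold chosen, so it helps to report the threshold (or the resulting network density) alongside them.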

FAQ: How can I analyze stochastic dynamics and cell fate decisions from my GRN model? The energy landscape theory provides a framework for understanding stochastic dynamics. A hybrid strategy combining a GRN inference method (like GGANO) with a dimension reduction approach of the landscape (DRL) can quantify the energy landscape, helping to identify intermediate cellular states and their plasticity, such as a partial-EMT state in cancer cells [14].

Table 1: Relative Performance of GRN Inference Methods as Reported in [14] (Benchmarking Example)

| Method | AUC Score | F1-Score | Precision | Robustness to High Noise |

|---|---|---|---|---|

| GGANO [14] | Superior | Superior | Superior | High |

| Pure Neural ODE [14] | Lower | Lower | Lower | Moderate |

| GENIE3 [14] | Lower | Lower | Lower | Low |

| GRNBoost2 [14] | Lower | Lower | Lower | Low |

Table 2: Troubleshooting Common Experimental and Computational Issues

| Problem | Potential Cause | Solution | Key References |

|---|---|---|---|

| Unstable network structures | High technical noise and outliers in single-cell data | Apply a temporal Gaussian graphical model with a fused Lasso penalty for temporal homogeneity [14]. | [14] |

| Lack of directional inference | Use of correlation-based methods that infer undirected links | Use dynamic models like Neural ODEs to infer direction and type of regulation [14]. | [14] [4] |

| Inability to identify key regulators | Lack of perturbation data to establish causality | Integrate gene knockout or drug treatment datasets to infer causal links [14] [4]. | [14] [4] |

| Poor generalizability | Overfitting to a specific cell type or condition | Use ensemble concepts to improve model stability and generalizability [14]. | [14] |

Experimental Protocols & Workflows

Detailed Protocol: Applying the GGANO Framework

Objective: To reconstruct a robust, directed GRN from high-dimensional and noisy single-cell time-series data.

Workflow Overview: The following diagram illustrates the integrated GGANO workflow for inferring gene regulatory networks and their dynamics.

GGANO Workflow for GRN Inference

Step-by-Step Methodology:

Data Preprocessing:

- Input: Raw single-cell RNA-seq data from time-course experiments (e.g., across multiple cell lines and EMT-inducing factors) [14].

- Filtering: Apply gene filtering criteria to remove low-quality cells and genes.

- Normalization: Perform normalization steps as detailed in supplementary material of relevant studies [14].

Undirected Structure Inference with Temporal GGM:

- Model Assumption: Assume gene expression data at each time point i and condition j follows a multivariate Gaussian distribution, X_k(i,j) ~ N(μ(i,j), Σ(i,j)) [14].

- Goal: Estimate a set of precision matrices {Θ_ij}, which encode the undirected partial correlation structure of the network over time and across conditions.

- Sparsity Enforcement: Incorporate Lasso regularization to enhance the sparsity of the network structure [14].

- Temporal Homogeneity: Introduce a Fused Lasso penalty to constrain differences between consecutive networks, ensuring smooth temporal changes [14].
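The role of the precision matrix can be seen in a single-condition toy example. The sketch below is not the temporal GGM with Lasso and Fused Lasso penalties used in [14]; as a simpler stand-in it inverts a ridge-stabilized covariance matrix and thresholds the resulting partial correlations, which is enough to show why a precision matrix separates direct from indirect associations. The function names, the ridge term, the 0.2 threshold, and the simulated chain A → B → C are all assumptions of the example.

```python
import numpy as np

def partial_correlations(X, ridge=1e-3):
    """Partial correlation matrix from the inverse of a ridge-stabilized covariance."""
    cov = np.cov(X, rowvar=False)                         # columns of X are genes
    theta = np.linalg.inv(cov + ridge * np.eye(cov.shape[0]))   # precision matrix
    scale = np.sqrt(np.diag(theta))
    pcor = -theta / np.outer(scale, scale)
    np.fill_diagonal(pcor, 1.0)
    return pcor

def undirected_edges(X, threshold=0.2):
    """Boolean adjacency: gene pairs whose |partial correlation| exceeds the threshold."""
    adj = np.abs(partial_correlations(X)) > threshold
    np.fill_diagonal(adj, False)
    return adj

# Simulated regulatory chain A -> B -> C across 2000 cells.
rng = np.random.default_rng(0)
a = rng.standard_normal(2000)
b = 0.8 * a + 0.6 * rng.standard_normal(2000)
c = 0.8 * b + 0.6 * rng.standard_normal(2000)
X = np.column_stack([a, b, c])

marginal_ac = np.corrcoef(a, c)[0, 1]      # sizeable, although A does not regulate C directly
edges = undirected_edges(X)                # partial correlation drops the A-C link
print(marginal_ac, edges)
```

A penalized estimator (for example a graphical lasso) replaces the hard threshold with an L1 penalty on the precision matrix, and the fused penalty described above additionally ties together the estimates at consecutive time points.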

Directed Dynamics Inference with Neural ODE:

- Input: The undirected network structure from the temporal GGM step above is used as a prior constraint.

- Process: The Neural ODE model learns the system's dynamics, inferring the direction and type (activation/inhibition) of regulatory interactions [14].

- Benefit: This prior constraint reduces the search space for neural network parameters and lowers the demands on dataset size during training.

Validation and Landscape Analysis:

- Validation: Compare the inferred GRN against known interactions or use benchmark metrics (AUC, F1-score) against other methods (e.g., GENIE3) [14].

- Stochastic Dynamics: Combine the inferred GRN model with a dimension reduction of the landscape (DRL) approach to calculate the potential energy landscape [14].

- Output: Identify stable attractor states (e.g., Epithelial, Mesenchymal, partial-EMT) and quantify the plasticity and transition probabilities between these states.

Protocol for Identifying Problematic Image Color Contrast

Objective: To ensure that scientific figures, such as GRN diagrams and pathway maps, are accessible to individuals with color vision deficiencies (CVD).

Workflow Overview: This flowchart outlines the process for checking and remediating color contrast in scientific figures.

Color Accessibility Check for Figures

Step-by-Step Methodology:

Image Evaluation:

Problem Identification:

- Manually review figures or use automated tools to identify images that rely solely on color pairs with low contrast (e.g., certain shades of green and red) that would be problematic for deuteranopes [16].

- Note that studies have found a significant portion of images in biological literature to be potentially problematic [16].

Apply Mitigations:

- Avoid Rainbow Color Maps: Use CVD-friendly color schemes with sufficient contrast [16].

- Use Labels and Patterns: Complement color information with direct labels, symbols, or different patterns (e.g., hashing, stripes) [16].

- Increase Spatial Distance: Ensure that low-contrast colors are not placed immediately adjacent to one another [16].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for GRN Studies

| Item Name | Function / Application | Specific Example / Note |

|---|---|---|

| Single-cell RNA-seq Data | Reveals cell-type-specific gene expression patterns and heterogeneity, which is fundamental for building context-aware GRNs. | Data can be generated using technologies like MULTI-seq [14]. |

| Perturbation Datasets | Used to infer causal relationships. Includes data from gene knockouts (CRISPR) or drug treatments. | Essential for moving beyond correlation to causality in network inference [4]. |

| Time-Series Expression Data | Allows for the study of changes in gene expression over time to infer dynamic GRNs and identify regulatory relationships. | Available on platforms like the DREAM Challenges website [4]. |

| Curated Pathway Databases | Provide prior knowledge for network validation and integration. | Examples include KEGG and Ingenuity Pathway Analysis (IPA). Be aware of limitations with context-specificity [14]. |

| GGM with Regularization | A computational "reagent" to infer the undirected, sparse structure of a regulatory network from data. | Incorporates Lasso and Fused Lasso for sparsity and temporal homogeneity [14]. |

| Neural ODE Framework | A computational "reagent" for inferring the direction and nonlinear dynamics of regulatory interactions. | Used after GGM to refine the network into a directed, dynamic model [14]. |

| Color Contrast Checker | A tool to ensure scientific figures are accessible to all, including those with color vision deficiencies. | For example, the WebAIM Contrast Checker [15]. Use a color palette with sufficient contrast [17]. |

Frequently Asked Questions (FAQs)

FAQ 1: Why does network sparsity make it difficult to distinguish direct from indirect regulatory links? In a sparse Gene Regulatory Network (GRN), where each gene connects to only a few others, indirect regulation often creates chains of interactions. For example, Transcription Factor (TF) A regulates TF B, which then regulates gene C. This creates a correlation between the expression of TF A and gene C, even though no direct link exists. In a sparse network, these indirect pathways can be statistically similar to direct, single-step regulations because the number of intermediate nodes is small, making them hard to distinguish using correlation-based methods alone [19]. Sparsity increases the risk of misinterpreting these indirect correlations as direct causal links, leading to false positives in the inferred network.

FAQ 2: What are the primary computational challenges caused by sparsity in GRN inference? Sparsity presents two major computational challenges. First, it creates a large-scale underdetermined problem: the number of potential regulatory links (e.g., between all TFs and all target genes) is vastly greater than the number of available experimental observations or data points. This makes it statistically difficult to uniquely identify the true, sparse set of connections [20]. Second, there is the challenge of efficiently enforcing sparsity during inference. While methods like LASSO use sparsity constraints, they often only provide a single-point estimate of the network. In contrast, advanced Bayesian methods like BiGSM (Bayesian inference of GRN via Sparse Modelling) are engineered to leverage this sparsity more effectively and provide a full posterior distribution, offering insights into the confidence of each predicted link [21].

FAQ 3: Which experimental designs are best suited for identifying direct links in a sparse network? Perturbation-based experiments, such as single-gene knockouts or knockdowns, are crucial for establishing causality and teasing apart direct regulations. When a TF is perturbed, the direct downstream targets will show the most immediate and significant expression changes. Measuring steady-state expression levels after such targeted perturbations provides data that, when analyzed with appropriate sparse reconstruction algorithms, can help pinpoint direct regulators by breaking apart correlation chains [21] [20]. Furthermore, techniques like Chromatin Immunoprecipitation followed by sequencing (ChIP-seq) or microarray (ChIP-chip) can provide direct physical evidence of a TF binding to a gene's promoter region, thereby validating a direct regulatory link inferred from expression data [22] [19].

FAQ 4: How can I assess the confidence of a predicted direct regulatory link? The confidence in a predicted link can be assessed using methods that provide probabilistic outputs. For instance, the BiGSM method infers closed-form posterior distributions for GRN links. This allows researchers to examine the statistical confidence (e.g., credible intervals) of each possible link, rather than relying on a simple binary prediction [21]. Additionally, applying data processing inequality (DPI) principles, often used in mutual information-based methods, can help prune the network of weaker, likely indirect edges, thereby increasing the confidence that the remaining edges are direct [19].
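The DPI pruning step mentioned above can be sketched as follows. This is a minimal illustration in the style of ARACNE, assuming a symmetric mutual-information matrix has already been estimated; the function name `dpi_prune`, the `tol` parameter, and the toy values are hypothetical and not taken from [19].

```python
import numpy as np

def dpi_prune(mi, tol=0.0):
    """Zero out the weakest edge of each gene triplet (data processing inequality).

    mi  : symmetric (n x n) matrix of pairwise mutual information (assumed input).
    tol : optional tolerance; larger values prune fewer edges.
    """
    n = mi.shape[0]
    remove = np.zeros((n, n), dtype=bool)
    for i in range(n):
        for j in range(i + 1, n):
            if mi[i, j] <= 0:
                continue
            for k in range(n):
                if k in (i, j):
                    continue
                # Edge i-j is treated as indirect when it is weaker than
                # both legs of the path i-k-j.
                if mi[i, j] < min(mi[i, k], mi[k, j]) * (1.0 - tol):
                    remove[i, j] = remove[j, i] = True
                    break
    pruned = mi.copy()
    pruned[remove] = 0.0
    np.fill_diagonal(pruned, 0.0)
    return pruned

# Toy chain A -> B -> C: the A-C dependence is only mediated by B.
mi = np.array([[0.0, 0.8, 0.3],
               [0.8, 0.0, 0.7],
               [0.3, 0.7, 0.0]])
pruned = dpi_prune(mi)
print(pruned)
```

All comparisons use the original matrix rather than the partially pruned one, so the result does not depend on the order in which triplets are visited.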

Troubleshooting Guides

Issue: High False Positive Rate in Inferred Network

Problem: Your inferred GRN contains many regulatory links that are likely indirect or non-existent.

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| Reliance on correlation alone | Check if the inference method is based solely on gene co-expression (e.g., Pearson correlation). | Apply methods that distinguish direct/indirect links, such as those using partial correlation or information theory (e.g., DPI) [19]. |

| Insufficient perturbation data | Review your experimental design; a lack of diverse gene perturbations limits causal inference. | Incorporate data from single-gene knockout/knockdown experiments to establish causal directions [21]. |

| Poorly tuned sparsity constraint | Check the density of your inferred network; if it's much denser than known biological networks (~3 links/gene), the sparsity constraint is too weak [21]. | Use a method with an explicit, tunable sparsity parameter (e.g., LASSO, BiGSM) and validate the network density against known benchmarks [21] [20]. |
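The density check in the last row can be made concrete with a short sketch. It assumes the inferred network is available as a weighted adjacency matrix; the helper names `links_per_gene` and `keep_strongest` are hypothetical, and the target of 3 links per gene is simply the reference value quoted above [21], not a universal constant.

```python
import numpy as np

def links_per_gene(weights):
    """Average number of non-zero links per gene in an (n x n) weight matrix."""
    w = np.asarray(weights, dtype=float)
    return np.count_nonzero(w) / w.shape[0]

def keep_strongest(weights, target_links_per_gene=3.0):
    """Keep only the strongest links so that the density matches the target."""
    w = np.asarray(weights, dtype=float)
    k = min(int(round(target_links_per_gene * w.shape[0])), w.size)
    order = np.argsort(np.abs(w), axis=None)[::-1]  # strongest first
    mask = np.zeros(w.size, dtype=bool)
    mask[order[:k]] = True
    return np.where(mask.reshape(w.shape), w, 0.0)

# Toy example: a fully dense 10-gene network (10 links per gene).
rng = np.random.default_rng(0)
dense = rng.normal(size=(10, 10))
sparse = keep_strongest(dense, target_links_per_gene=3.0)
print(links_per_gene(dense), links_per_gene(sparse))
```

Hard thresholding after inference is only a diagnostic aid; tuning the sparsity parameter of the inference method itself is generally preferable.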

Issue: Inability to Replicate Network Topology Across Datasets

Problem: The structure of your inferred GRN changes dramatically when using different but related datasets (e.g., different replicates or perturbation sets).

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| High noise levels in data | Calculate the Signal-to-Noise Ratio (SNR) if possible. Performance drops sharply at low SNR (e.g., 0.01) [21]. | Use methods robust to noise, like BiGSM or Total Least Squares (TLS), and aim to increase data quality through technical replicates [21] [20]. |

| Underpowered experimental data | The number of experiments (samples) may be too low compared to the number of genes. | Increase the number of independent perturbation experiments. Use sparse reconstruction algorithms designed for underdetermined problems [20]. |

Experimental Protocol: Inferring Direct Links from Steady-State Perturbation Data

This protocol outlines a methodology for inferring GRN structure from steady-state gene expression data following targeted perturbations, using a sparse reconstruction framework [20].

1. Experimental Design and Data Collection

- Perturbations: Perform independent single-gene perturbation experiments (e.g., knockdowns) for a significant subset of genes, particularly transcription factors. Each experiment should target a different gene.

- Expression Measurement: For each perturbation, measure the steady-state expression levels of all genes in the network using RNA sequencing or microarrays.

- Data Matrix Construction:

  - Let n be the number of genes.

  - Construct an m x n expression matrix, where m is the number of perturbation experiments. Each element represents the log-fold-change of gene j in perturbation experiment ℓ compared to a wild-type control.

2. Data Preprocessing for Sparse Reconstruction

- For each gene i (considered as a potential target), formulate a linear model based on the causal interaction model [20]:

b = Φα_i

- Vector b: The observation vector b ∈ R^m is formed from the measured expression changes of the target gene i across all m experiments.

- Matrix Φ: The measurement matrix Φ ∈ R^(m x (n-1)) is constructed from the expression changes of all other genes (excluding i). Each column corresponds to a potential regulator gene j.

3. Network Inference via Sparse Reconstruction

- Algorithm Selection: Choose a sparse reconstruction algorithm suitable for underdetermined systems (where m < n). Examples include Sparse Bayesian Learning (SBL) [21] or other ℓ₁-minimization techniques [20].

- Solving for Links: For each gene i, solve the optimization problem to find the sparse vector α_i. The non-zero entries in α_i indicate the identity and strength of the direct regulatory links from other genes to gene i.

- Network Assembly: Assemble the full n x n GRN matrix by collating the vectors α_i for all genes i=1,...,n.
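Steps 2 and 3 of the protocol can be sketched in a few lines. The example below is a minimal illustration, not the algorithm of [20] or [21]: it swaps in a plain ℓ₁-penalized least-squares solver (ISTA) as one possible sparse reconstruction method. The function names, the penalty `lam`, and the synthetic data are all assumptions made for the illustration.

```python
import numpy as np

def soft_threshold(x, t):
    """Proximal operator of the l1 norm."""
    return np.sign(x) * np.maximum(np.abs(x) - t, 0.0)

def ista_lasso(phi, b, lam=0.5, n_iter=3000):
    """Minimise 0.5*||b - phi @ alpha||^2 + lam*||alpha||_1 with ISTA."""
    step = 1.0 / np.linalg.norm(phi, 2) ** 2  # 1 / Lipschitz constant of the gradient
    alpha = np.zeros(phi.shape[1])
    for _ in range(n_iter):
        grad = phi.T @ (phi @ alpha - b)
        alpha = soft_threshold(alpha - step * grad, step * lam)
    return alpha

def infer_grn(lfc, lam=0.5):
    """lfc: (m experiments x n genes) log-fold-change matrix.

    Returns grn with grn[i, j] = inferred weight of regulator j on target i.
    """
    m, n = lfc.shape
    grn = np.zeros((n, n))
    for i in range(n):
        others = np.delete(np.arange(n), i)  # exclude the target itself
        b = lfc[:, i]                        # observation vector, length m
        phi = lfc[:, others]                 # measurement matrix, m x (n-1)
        grn[i, others] = ista_lasso(phi, b, lam)
    return grn

# Synthetic, underdetermined toy data: m = 15 experiments, n = 20 genes.
rng = np.random.default_rng(0)
lfc = rng.normal(size=(15, 20))
# Hypothetical ground truth: gene 0 is driven by genes 3 and 7.
lfc[:, 0] = 0.9 * lfc[:, 3] - 0.7 * lfc[:, 7] + 0.01 * rng.normal(size=15)

grn = infer_grn(lfc)
top_regulators = np.argsort(-np.abs(grn[0]))[:2]
print("strongest inferred regulators of gene 0:", sorted(top_regulators.tolist()))
```

Solving one regression per target gene keeps each subproblem small (m x (n-1)) and makes the loop trivially parallelizable. In practice `lam` should be chosen by cross-validation or against a known network density.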

Workflow Diagram: From Perturbation to Network Inference

Table 1: Benchmark Performance of GRN Inference Methods

The following table summarizes the performance of various GRN inference methods as reported in benchmark studies using datasets like GeneSPIDER and DREAM challenges [21]. A key challenge is maintaining accuracy as noise increases.

| Method | Underlying Principle | Handles Sparsity Explicitly? | Provides Confidence Estimates? | Key Strength / Weakness |

|---|---|---|---|---|

| BiGSM | Bayesian Sparse Learning | Yes [21] | Yes (Posterior distributions) [21] | Overall best performance in benchmarks; robust to noise. |

| GENIE3 | Random Forest / Feature Importance | No | No (Provides importance scores) [21] | High accuracy but can predict overly dense networks. |

| LASSO | L1-penalized Regression | Yes [21] | No (Point estimates only) [21] | Explicit sparsity control, but lacks confidence metrics. |

| LSCON | Regression-based | No | No [21] | Performance varies with noise levels. |

| Zscore | Simple Correlation | No | No [21] | Prone to false positives from indirect links. |

Table 2: Impact of Noise and Data Type on Inference Accuracy

This table synthesizes information on how different factors affect the ability to correctly identify direct regulatory links [21] [20].

| Factor | Impact on Distinguishing Direct vs. Indirect Links | Mitigation Strategy |

|---|---|---|

| Low Signal-to-Noise Ratio (SNR) | Severely reduces accuracy; increases both false positives and false negatives [21]. | Use methods designed for noise (e.g., BiGSM, TLS); increase replicates. |

| Lack of Perturbation Data | Makes inferring causal directionality nearly impossible; networks remain undirected [19]. | Design experiments with targeted gene perturbations (KO/KD). |

| Using Steady-State vs. Time-Series Data | Steady-state data can be effective with perturbations [20]. Time-series allows for analysis of temporal causality (e.g., Granger causality) [19]. | Choose method based on data: sparse reconstruction for steady-state, dynamical models for time-series. |

The Scientist's Toolkit: Research Reagent Solutions

| Resource / Reagent | Function in GRN Research | Key Application Notes |

|---|---|---|

| GeneSPIDER Toolbox | Generates synthetic GRN and simulated expression data for benchmarking [21]. | Allows testing of methods on networks with known, sparse ground-truth topology under controlled noise levels. |

| GRNbenchmark Webserver | Provides an integrated suite for fair and transparent evaluation of GRN inference methods [21]. | Use to objectively compare your method's performance against state-of-the-art algorithms on standardized datasets. |

| DREAM Challenge Datasets | Community-standard in silico and in vivo network datasets (e.g., DREAM3, DREAM4, DREAM5) [21] [20]. | The gold standard for comparative performance assessment of GRN inference methods. |

| ChIP-chip / ChIP-seq | Experimental techniques to map physical protein-DNA interactions genome-wide [22] [19]. | Provides direct evidence of TF binding to distinguish direct regulatory targets from indirect, correlated genes. |

| Perturbation Reagents (siRNA, CRISPR-Cas9) | Tools for performing targeted gene knockouts or knockdowns. | Essential for generating the perturbation data required to establish causal, direct links in the network. |

Logical Pathway Diagram: Direct vs. Indirect Regulation

This diagram illustrates the fundamental difference between a direct regulatory link and two common types of indirect regulation, which is the core challenge in sparse GRN inference.

Computational Arsenal: Methodologies for Robust GRN Inference from Sparse Data

Troubleshooting Guide & FAQs

FAQ: What are the most common causes of poor inference performance in GRLGRN? Poor performance often stems from data sparsity and high dimensionality in single-cell RNA-seq data. GRLGRN addresses this using a graph transformer network to extract implicit links from prior GRNs, moving beyond explicit connections that may be missing in sparse networks [23]. Ensure that your prior GRN is appropriate for your cell type and that the gene expression data is properly normalized.

FAQ: How does GRLGRN prevent over-fitting on sparse gene regulatory data? GRLGRN incorporates a graph contrastive learning regularization term during training. This technique reduces excessive feature smoothing by ensuring the model learns robust gene representations that are invariant to minor perturbations, significantly improving generalization on sparse datasets [23].

FAQ: My Graphviz node fill colors are not displaying. What is wrong?

For fillcolor to work in Graphviz, you must also set style=filled for the node. This is a common requirement that is often overlooked [24] [25].

FAQ: Which benchmark datasets should I use to validate my GRLGRN implementation? The BEELINE database is the standard benchmark, containing seven cell line types including human embryonic stem cells (hESC), human hepatocytes (hHEP), and multiple mouse cell types (mDC, mESC, mHSC-E, mHSC-GM, mHSC-L). Each includes three ground-truth networks (STRING, cell-type-specific ChIP-seq, non-specific ChIP-seq) for comprehensive evaluation [26] [23].

FAQ: How do I handle memory issues with large GRN graphs?

GRLGRN's architecture processes the prior GRN as five directed subgraphs (regulatory, reverse regulatory, TF-TF, reverse TF-TF, and self-connected). For computational efficiency, consider starting with the 500-gene sets before scaling to 1000-gene networks, and utilize the provided batching in utils.py [26] [23].
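To make the five derived graphs concrete, the sketch below builds them from a binary prior adjacency matrix. It is an assumption-laden illustration, not GRLGRN's actual code: the function name `derive_subgraphs`, the convention `prior[i, j] = 1` for "gene i regulates gene j", and the exact definition of each subgraph are guesses based only on the names listed above.

```python
import numpy as np

def derive_subgraphs(prior, tf_idx):
    """Derive five directed graphs from a prior GRN (hypothetical helper).

    prior  : (n x n) array, prior[i, j] != 0 means gene i regulates gene j.
    tf_idx : indices of genes that are transcription factors.
    """
    prior = (np.asarray(prior) != 0).astype(np.int8)
    n = prior.shape[0]
    is_tf = np.zeros(n, dtype=bool)
    is_tf[list(tf_idx)] = True
    # Keep only edges whose source and target are both TFs.
    tf_tf = prior * np.outer(is_tf, is_tf)
    return {
        "regulatory": prior,
        "reverse_regulatory": prior.T,
        "tf_tf": tf_tf,
        "reverse_tf_tf": tf_tf.T,
        "self_connected": np.eye(n, dtype=np.int8),
    }

# Toy prior with 4 genes; genes 0 and 1 are TFs.
prior = np.zeros((4, 4), dtype=int)
prior[0, 1] = prior[0, 2] = prior[1, 3] = 1
graphs = derive_subgraphs(prior, tf_idx=[0, 1])
for name, g in graphs.items():
    print(name, int(g.sum()))
```

For networks with thousands of genes, storing these matrices in a sparse format rather than as dense arrays is one way to reduce memory use.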

Experimental Protocols & Methodologies

GRLGRN Model Training Protocol

Data Preparation: Download your chosen cell line dataset from the BEELINE database. The input requires the gene expression matrix and a prior GRN adjacency matrix. The code automatically partitions gene pairs (positive and negative samples) into training, validation, and test sets [26].

Environment Setup: Configure the Python 3.8 environment with critical packages: PyTorch 2.1.0, scikit-learn 0.23.1, NumPy 1.24.3, pandas 1.3.4, and SciPy 1.4.1 [26].

Gene Embedding Module Execution: The core model uses a graph transformer network to extract implicit links by processing five derived graphs from your prior GRN. This generates enriched gene embeddings that capture latent regulatory dependencies [23].

Feature Enhancement: Process the gene embeddings through the Convolutional Block Attention Module (CBAM). This module emphasizes informative channels and spatial features, refining the representations for the prediction task [26] [23].

Model Training and Evaluation: Execute train.py with your data. The training incorporates an automatic weighted loss and a graph contrastive learning regularization term to combat over-fitting. Evaluate the model on the test set using test.py and compute standard performance metrics with compute_metrics.py [26] [23].

Benchmarking and Ablation Study Protocol

Performance Comparison: Compare GRLGRN against established methods (e.g., GENIE3, CNNC, GRNBoost2, GCNG) on your chosen benchmark dataset. Standard evaluation metrics are Area Under the Receiver Operating Characteristic curve (AUROC) and Area Under the Precision-Recall Curve (AUPRC) [23] [27].
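For reference, both metrics can be computed from a list of labeled gene pairs and predicted scores. The sketch below is a dependency-light NumPy illustration, not the evaluation script of [26]. AUPRC is approximated here by average precision, and the labels and scores are made up for the example.

```python
import numpy as np

def auroc(y_true, scores):
    """Probability that a random true edge outranks a random non-edge."""
    y = np.asarray(y_true, dtype=bool)
    s = np.asarray(scores, dtype=float)
    diff = s[y][:, None] - s[~y][None, :]  # all positive/negative pairs
    return float(((diff > 0).sum() + 0.5 * (diff == 0).sum()) / diff.size)

def average_precision(y_true, scores):
    """Mean of the precision values obtained at each true edge (AUPRC estimate)."""
    y = np.asarray(y_true, dtype=bool)
    s = np.asarray(scores, dtype=float)
    hits = y[np.argsort(-s, kind="stable")]  # labels sorted by decreasing score
    precision_at_k = np.cumsum(hits) / np.arange(1, hits.size + 1)
    return float(precision_at_k[hits].mean())

# Made-up example: 1 = edge present in the ground-truth network.
y_true = [1, 0, 1, 0, 0, 1]
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.2]
roc = auroc(y_true, scores)
ap = average_precision(y_true, scores)
print(round(roc, 4), round(ap, 4))
```

Because true regulatory edges are rare, AUPRC is usually the more informative of the two on sparse networks: a random predictor scores about 0.5 AUROC regardless of class balance, whereas its AUPRC is close to the fraction of true edges.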

Ablation Analysis: To validate the contribution of each GRLGRN component, run controlled experiments by:

- Removing the graph contrastive learning regularization term.

- Replacing the CBAM module with a standard fully connected layer.

- Using only the original explicit links instead of the graph transformer-extracted implicit links. Quantitative results will demonstrate the importance of each innovation to the model's overall performance [23].

Table 1: GRLGRN Performance on Benchmark Datasets

Table comparing the performance of GRLGRN against other models across different cell lines and ground-truth networks, measured in AUROC and AUPRC.

| Cell Line | Ground-Truth Network | GRLGRN (AUROC) | Best Benchmark (AUROC) | GRLGRN (AUPRC) | Best Benchmark (AUPRC) |

|---|---|---|---|---|---|

| mESC | STRING | 0.923 | 0.861 (GENIE3) | 0.891 | 0.682 (GENIE3) |

| mESC | ChIP-seq (cell-type-specific) | 0.895 | 0.843 (GCNG) | 0.845 | 0.594 (GCNG) |

| hHEP | STRING | 0.911 | 0.849 (GRNBoost2) | 0.872 | 0.665 (GRNBoost2) |

| mDC | Non-specific ChIP-seq | 0.882 | 0.827 (CNNC) | 0.823 | 0.601 (CNNC) |

| mHSC-E | STRING | 0.931 | 0.872 (GENIE3) | 0.902 | 0.712 (GENIE3) |

Table 2: Ablation Study Results (Average Across All Datasets)

Table showing the contribution of key GRLGRN components by systematically removing them and measuring the performance drop.

| Model Variant | AUROC | AUPRC | Key Change |

|---|---|---|---|

| GRLGRN (Full Model) | 0.901 | 0.847 | - |

| GRLGRN w/o Contrastive Learning | 0.872 | 0.801 | Removes graph contrastive learning regularization |

| GRLGRN w/o CBAM | 0.885 | 0.823 | Replaces CBAM with a standard layer |

| GRLGRN w/o Implicit Links | 0.841 | 0.752 | Uses only explicit links from prior GRN |

Workflow and Architecture Visualization

GRLGRN Architecture

Implicit Link Extraction

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

Key resources for implementing and experimenting with GRLGRN and similar graph-based GRN inference methods.

| Resource Name | Type | Function in Experiment | Source/Reference |

|---|---|---|---|

| BEELINE Database | Benchmark Data | Provides standardized scRNA-seq datasets & ground-truth GRNs for 7 cell lines to ensure fair model comparison [26] [23]. | Pratapa et al., 2020 |

| STRING Database | Ground-Truth Network | Serves as one of three benchmark networks of known regulatory interactions for validating GRN inferences [26] [23]. | Szklarczyk et al., 2019 |

| Cell-Type-Specific ChIP-seq | Ground-Truth Network | Provides a higher-confidence, context-aware benchmark network derived from experimental ChIP-seq data [26] [23]. | Xu et al., 2013 |

| Graph Transformer Network | Algorithmic Module | Core component of GRLGRN that extracts implicit links from a sparse prior GRN, enabling the discovery of latent regulatory dependencies [23]. | [23] |

| Convolutional Block Attention Module (CBAM) | Algorithmic Module | Enhances gene feature representation by adaptively emphasizing important channels and spatial context within the data [26] [23]. | [26] |

| Graph Contrastive Learning | Regularization Technique | Prevents over-fitting and over-smoothing of gene embeddings during model training, improving generalization on sparse data [23]. | [23] |

FAQs and Troubleshooting Guides

How do attention mechanisms specifically address the problem of network sparsity in GRN reconstruction?

Network sparsity, characterized by many unconfirmed gene-gene links, is a fundamental challenge in GRN reconstruction because conventional methods often miss these latent relationships [28]. Attention mechanisms combat sparsity by dynamically assigning importance weights, forcing the model to focus on the most informative features and connections, even when data is incomplete.

Graph Attention Networks (GATs) directly address sparsity by performing targeted, local aggregation. Instead of treating all neighboring genes in a prior network as equally important, GATs learn to assign a weight (αᵢⱼ) between a target gene i and its neighbor j [29] [30]. This allows the model to focus on the most critical regulatory signals and ignore noisy or irrelevant connections, effectively "filling in" missing information by emphasizing meaningful pathways [31].

Convolutional Block Attention Module (CBAM) tackles sparsity in feature maps. GRN models often generate intermediate feature maps where not all features are equally useful. CBAM refines these maps by sequentially applying channel attention (to highlight the most informative feature channels) and spatial attention (to highlight the most relevant spatial locations within the feature map) [32] [33]. This dual attention amplifies important signals and suppresses noise, leading to more robust gene representations against the backdrop of sparse and heterogeneous single-cell data [31].

My model fails to converge when training a GAT on a large, sparse GRN. What could be the issue?

This is a common problem often related to the attention computation over a large node set. The issue likely stems from the softmax function in the attention mechanism.

- Problem Analysis: The softmax function in a GAT layer normalizes attention scores for a node across all its neighbors [29] [30]. In a very large and sparse graph, the initial, unnormalized attention scores (eᵢⱼ) for non-existent or unimportant edges might dominate the softmax, leading to unstable gradients and preventing convergence.

- Solution: Implement masked attention. Before applying the softmax, mask out attention scores for non-existent edges by setting them to a large negative value (e.g., -9e16). This ensures the softmax only considers actual edges in the graph, stabilizing the learning process [29].
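The masking step can be sketched with NumPy as follows. This is a minimal illustration assuming a dense score matrix and a binary adjacency matrix; the function name `masked_softmax` and the toy inputs are hypothetical, and a real GAT implementation would apply the same idea to framework tensors.

```python
import numpy as np

def masked_softmax(scores, adjacency, neg_fill=-9e16):
    """Row-wise softmax over existing edges only.

    scores    : (n x n) unnormalized attention scores e_ij.
    adjacency : (n x n) matrix, non-zero where edge (i, j) exists.
    """
    mask = np.asarray(adjacency) > 0
    masked = np.where(mask, scores, neg_fill)             # hide non-existent edges
    masked = masked - masked.max(axis=1, keepdims=True)   # numerical stability
    weights = np.exp(masked) * mask
    denom = weights.sum(axis=1, keepdims=True)
    denom[denom == 0] = 1.0                               # isolated nodes get all-zero rows
    return weights / denom

scores = np.array([[2.0, 1.0, 50.0],
                   [0.5, 0.5, 0.5],
                   [1.0, 3.0, 2.0]])
adjacency = np.array([[1, 1, 0],   # the large score 50.0 sits on a non-edge
                      [0, 1, 1],
                      [1, 0, 1]])
attention = masked_softmax(scores, adjacency)
print(attention.round(3))
```

Without the mask, the score of 50.0 on the non-existent edge (0, 2) would absorb almost all of the attention weight in the first row.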

How can I improve the inference accuracy of my GRN model for identifying key hub genes?

To enhance the identification of hub genes, which are crucial for understanding biological functions, you can integrate a gene importance scoring mechanism directly into your model architecture.

- Solution: Incorporate a modified PageRank* algorithm. Traditional PageRank assesses node importance based on in-degree (links pointing to the node). For GRNs, a gene's importance is often defined by its out-degree (how many genes it regulates). The PageRank* algorithm modifies the standard assumptions to focus on a gene's out-degree, effectively identifying genes that regulate many others or that regulate other important genes [34].

- Implementation: Calculate this importance score for each gene during preprocessing or within the model. Fuse this score with the gene's expression features. This directs the model's attention to high-impact genes during the encoding and decoding process, significantly improving the accuracy of hub gene identification and the overall GRN reconstruction [34].
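One way to approximate an out-degree-oriented importance score is to run ordinary PageRank on the graph with all edges reversed, so that score flows from targets back to their regulators. The sketch below does exactly that. It is an assumption standing in for the PageRank* algorithm of [34], whose exact formulation is not reproduced here; the function name and toy network are hypothetical.

```python
import numpy as np

def out_degree_pagerank(adj, damping=0.85, n_iter=100):
    """PageRank on the edge-reversed graph (illustrative stand-in for PageRank*).

    adj[i, j] != 0 means gene i regulates gene j.
    """
    a = (np.asarray(adj) != 0).astype(float)
    n = a.shape[0]
    rev = a.T                                  # rev[j, i] = 1: target j passes score to regulator i
    out = rev.sum(axis=1, keepdims=True)
    # Row-stochastic transitions; genes without regulators spread their score uniformly.
    transition = np.where(out > 0, rev / np.where(out > 0, out, 1.0), 1.0 / n)
    score = np.full(n, 1.0 / n)
    for _ in range(n_iter):
        score = (1.0 - damping) / n + damping * (score @ transition)
    return score

# Toy network: gene 0 regulates genes 1, 2, 3; gene 1 regulates gene 2.
adj = np.zeros((4, 4))
adj[0, 1] = adj[0, 2] = adj[0, 3] = adj[1, 2] = 1
importance = out_degree_pagerank(adj)

# Fuse the score with (made-up) expression features as an extra column.
rng = np.random.default_rng(0)
expression = rng.normal(size=(4, 6))
features = np.column_stack([expression, importance])
print(importance.round(3), features.shape)
```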

What is the difference between global and masked attention in GATs, and which one should I use for GRN inference?

The choice is critical and depends on the structure of your prior GRN and the specific task.

Table: Comparison of Global vs. Masked Attention in GATs

| Feature | Global Attention | Masked Attention |

|---|---|---|

| Graph Structure | Ignores original graph topology; treats graph as fully connected. | Respects the original graph topology; only operates on existing edges [29]. |

| Computational Efficiency | Low; computes attention between all node pairs, expensive for large graphs [35]. | High; only computes attention between connected nodes [35]. |

| Primary Use Case | Graph-level tasks (e.g., graph classification) that require a global context. | Node-level and edge-level tasks (e.g., node classification, link prediction) [35]. |

| Suitability for GRN | Generally not recommended due to high computational cost and potential for noise. | Highly recommended as it is designed for link prediction and works with the inherent sparsity of prior GRNs [31]. |

For GRN inference, which is fundamentally a link prediction task (predicting regulatory edges between genes), masked attention is almost always the appropriate choice. It efficiently leverages the known graph structure to learn meaningful representations without being overwhelmed by the computational cost of a fully-connected graph [31] [35].

Experimental Protocols and Methodologies

Protocol 1: Implementing a Basic GAT Layer for GRN Node Representation

This protocol outlines the steps to create a GAT layer that generates refined gene embeddings from a prior GRN and gene expression data.

Theory: A GAT layer updates a node's representation by performing a weighted aggregation of features from its neighbors. The weights are learned dynamically through a self-attention mechanism [30].

Methodology: The forward pass of a single-head GAT layer involves four key equations [30]:

- Linear Transformation: Transform each node's feature vector h_i using a shared weight matrix W.

\[ z_i^{(l)} = W^{(l)} h_i^{(l)} \]

- Attention Score Calculation: For each edge (i, j), compute an unnormalized attention score e_ij by applying a learnable weight vector a to the concatenation of the transformed node features z_i and z_j, followed by a LeakyReLU activation.

\[ e_{ij}^{(l)} = \text{LeakyReLU}\left(\vec{a}^{(l)^T}\left(z_i^{(l)} \,\|\, z_j^{(l)}\right)\right) \]

- Attention Weight Normalization: Normalize the attention scores across all neighbors j of node i using a softmax function to obtain the final attention weights α_ij.

\[ \alpha_{ij}^{(l)} = \frac{\exp\left(e_{ij}^{(l)}\right)}{\sum_{k \in \mathcal{N}(i)} \exp\left(e_{ik}^{(l)}\right)} \]

- Feature Aggregation: The output embedding for node i is the weighted sum of the transformed neighboring features, passed through a non-linear activation function σ (e.g., ELU).

\[ h_i^{(l+1)} = \sigma\left(\sum_{j \in \mathcal{N}(i)} \alpha_{ij}^{(l)} z_j^{(l)}\right) \]
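The four steps above can be sketched as a single forward pass in NumPy. This is a minimal, untrained illustration with random weights, assuming a dense adjacency matrix in which `adj[i, j] > 0` means j is a neighbor of i and every node has a self-loop; it is not the DGL or PyTorch Geometric implementation.

```python
import numpy as np

def leaky_relu(x, slope=0.2):
    return np.where(x > 0, x, slope * x)

def elu(x):
    return np.where(x > 0, x, np.exp(np.minimum(x, 0.0)) - 1.0)

def gat_layer(h, adj, W, a):
    """Single-head GAT forward pass.

    h   : (N x F) node features.
    adj : (N x N) adjacency, adj[i, j] > 0 if j is in N(i); assumes self-loops.
    W   : (F' x F) shared weight matrix.
    a   : (2F',) attention vector.
    """
    z = h @ W.T                                    # step 1: z_i = W h_i
    f_out = z.shape[1]
    # step 2: a^T [z_i || z_j] = a[:F'] . z_i + a[F':] . z_j
    e = leaky_relu((z @ a[:f_out])[:, None] + (z @ a[f_out:])[None, :])
    mask = adj > 0
    e = np.where(mask, e, -9e16)                   # restrict to existing edges
    e = e - e.max(axis=1, keepdims=True)           # numerical stability
    alpha = np.exp(e) * mask
    alpha = alpha / alpha.sum(axis=1, keepdims=True)  # step 3: softmax over N(i)
    return elu(alpha @ z), alpha                   # step 4: weighted aggregation

rng = np.random.default_rng(0)
n_genes, f_in, f_out = 4, 3, 2
h = rng.normal(size=(n_genes, f_in))
adj = np.array([[1, 1, 0, 0],
                [0, 1, 1, 0],
                [1, 0, 1, 1],
                [0, 0, 0, 1]])
W = rng.normal(size=(f_out, f_in))
a = rng.normal(size=2 * f_out)
h_next, alpha = gat_layer(h, adj, W, a)
print(h_next.shape, alpha.round(2))
```

Splitting the attention vector into a source half and a target half avoids materializing all N x N concatenated feature pairs, which matters for large gene sets.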

Visualization: GAT Layer Workflow

Protocol 2: Integrating CBAM for Feature Map Refinement in a GRN Model

This protocol describes how to integrate the CBAM module into a CNN-based GRN pipeline to enhance feature maps extracted from gene expression data.

Theory: CBAM sequentially infers attention maps along the channel and spatial dimensions of a feature map. The channel attention identifies "what" features are meaningful, while the spatial attention identifies "where" the most informative regions are. These maps are multiplied to the input feature map for adaptive refinement [32] [31].

Methodology:

- Input: An intermediate feature map F of dimensions C x H x W (Channels x Height x Width).

- Channel Attention:

  - Squeeze: Apply global average pooling and global max pooling to F, resulting in two C x 1 x 1 descriptors.

  - Excitation: Feed these descriptors into a shared multi-layer perceptron (MLP) with one hidden layer. The outputs are summed and passed through a sigmoid activation to generate the channel attention map Mc.

  - Refinement: Multiply Mc with the input feature map F to get the channel-refined feature map F'.

- Spatial Attention:

  - Compute Statistics: Apply average pooling and max pooling to F' along the channel dimension, resulting in two 1 x H x W maps. Concatenate them.

  - Convolution: Apply a standard convolution layer to the concatenated map to produce a spatial attention map Ms.

  - Refinement: Multiply Ms with the channel-refined feature map F' to obtain the final refined output feature map F''.
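The two attention stages can be sketched in NumPy as shown below. This is an untrained illustration with random weights, not the module used in [26]. Two details are assumptions: the ReLU hidden activation in the MLP and the sigmoid applied after the spatial convolution, both following the original CBAM design. The 3 x 3 kernel is chosen only to keep the toy example small.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def channel_attention(F, W1, W2):
    """Mc (C x 1 x 1) from global average- and max-pooled descriptors."""
    avg = F.mean(axis=(1, 2))
    mx = F.max(axis=(1, 2))
    mlp = lambda v: W2 @ np.maximum(W1 @ v, 0.0)   # shared MLP, one hidden layer
    return sigmoid(mlp(avg) + mlp(mx))[:, None, None]

def conv2d_same(x, kernel):
    """Zero-padded, stride-1 convolution: (2 x H x W) -> (H x W)."""
    k = kernel.shape[-1]
    p = k // 2
    xp = np.pad(x, ((0, 0), (p, p), (p, p)))
    H, W = x.shape[1:]
    out = np.zeros((H, W))
    for r in range(H):
        for c in range(W):
            out[r, c] = np.sum(xp[:, r:r + k, c:c + k] * kernel)
    return out

def spatial_attention(Fp, kernel):
    """Ms (1 x H x W) from channel-wise average and max maps."""
    stats = np.stack([Fp.mean(axis=0), Fp.max(axis=0)])
    return sigmoid(conv2d_same(stats, kernel))[None, :, :]

def cbam(F, W1, W2, kernel):
    Fp = channel_attention(F, W1, W2) * F          # F'  = Mc * F
    return spatial_attention(Fp, kernel) * Fp      # F'' = Ms * F'

rng = np.random.default_rng(0)
C, H, W_, r = 4, 5, 5, 2                           # r: MLP reduction ratio
F = rng.normal(size=(C, H, W_))
W1 = rng.normal(size=(C // r, C))
W2 = rng.normal(size=(C, C // r))
kernel = rng.normal(size=(2, 3, 3))
refined = cbam(F, W1, W2, kernel)
print(refined.shape)
```

Because both attention maps lie between 0 and 1, the module can only rescale features downward; its effect comes from the relative weighting across channels and positions.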

Visualization: CBAM Refinement Process

Protocol 3: Multi-Source Feature Fusion to Combat Data Sparsity

This protocol is for a more advanced setup that enriches gene representations by fusing multiple sources of information before applying attention mechanisms.

Theory: Relying on a single data source (like a prior network) can perpetuate sparsity issues. Fusing temporal expression patterns, baseline expression levels, and topological attributes provides a multi-faceted view of each gene, allowing the model to cross-reference information and make more confident predictions about regulatory relationships [28].

Methodology:

- Feature Extraction:

- Temporal Features: From time-series scRNA-seq data, extract per-gene statistics: mean, standard deviation, maximum, minimum, skewness, kurtosis, and time-series trend [28].

- Baseline Expression Features: From wild-type expression data, extract baseline expression level, stability, specificity, pattern, and correlation with other genes [28].

- Topological Features: From the prior GRN, compute node-level metrics: degree centrality, in-degree, out-degree, clustering coefficient, betweenness centrality, and PageRank score [28].

- Preprocessing: Normalize each feature type (e.g., Z-score normalization for temporal data) to ensure they are on comparable scales.

- Fusion: Concatenate the normalized feature vectors from all three sources into a single, comprehensive feature vector for each gene. This fused vector is then used as the input to your subsequent GAT or other graph model.
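The extraction, normalization, and fusion steps can be sketched as below. This is a simplified illustration covering only a subset of the features listed above (for example, no clustering coefficient or betweenness centrality). All function names and the random data are hypothetical and not taken from [28].

```python
import numpy as np

def zscore(x):
    """Standardize each feature column across genes."""
    sd = x.std(axis=0, keepdims=True)
    return (x - x.mean(axis=0, keepdims=True)) / np.where(sd > 0, sd, 1.0)

def temporal_features(ts):
    """ts: (genes x timepoints) -> mean, std, max, min, skewness, kurtosis, trend."""
    mu, sd = ts.mean(axis=1), ts.std(axis=1)
    c = (ts - mu[:, None]) / np.where(sd > 0, sd, 1.0)[:, None]
    skew = (c ** 3).mean(axis=1)
    kurt = (c ** 4).mean(axis=1) - 3.0             # excess kurtosis
    t = np.arange(ts.shape[1]) - (ts.shape[1] - 1) / 2.0
    trend = (ts - mu[:, None]) @ t / (t @ t)       # least-squares slope over time
    return np.column_stack([mu, sd, ts.max(axis=1), ts.min(axis=1), skew, kurt, trend])

def baseline_features(wt):
    """wt: (genes x cells) wild-type expression -> level and variability."""
    return np.column_stack([wt.mean(axis=1), wt.std(axis=1)])

def topological_features(adj):
    """adj[i, j] != 0 means gene i regulates gene j -> in-, out-, total degree."""
    a = (np.asarray(adj) != 0).astype(float)
    in_deg, out_deg = a.sum(axis=0), a.sum(axis=1)
    return np.column_stack([in_deg, out_deg, in_deg + out_deg])

rng = np.random.default_rng(1)
n_genes = 5
time_series = rng.normal(size=(n_genes, 8))
wild_type = rng.normal(size=(n_genes, 30))
prior = np.zeros((n_genes, n_genes))
prior[0, 1] = prior[0, 2] = prior[1, 3] = 1

# Normalize each source separately, then concatenate per gene.
fused = np.hstack([zscore(temporal_features(time_series)),
                   zscore(baseline_features(wild_type)),
                   zscore(topological_features(prior))])
print(fused.shape)
```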

Visualization: Multi-Source Feature Fusion Framework

Performance Data and Benchmarks

Table: Quantitative Performance Comparison of GRN Inference Methods

| Model / Method | Core Attention Mechanism | Average AUROC | Average AUPRC | Key Strength |

|---|---|---|---|---|

| GRLGRN [31] | Graph Transformer + CBAM | Highest | ~30.7% improvement | Best overall performance on benchmark datasets. |

| GAEDGRN [34] | Gravity-Inspired Graph Autoencoder | High Accuracy | Strong | Effectively captures directed network topology. |

| GTAT-GRN [28] | Graph Topology-Aware Attention | High | High | Excellent at capturing high-order dependencies. |

| GENELink | Graph Attention Network (GAT) | Good | Good | Baseline GAT model for GRN inference. |

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools and Resources for GRN Research with Attention Mechanisms

| Item / Resource | Function / Purpose | Example / Note |

|---|---|---|

| BEELINE Database [31] | Provides standardized benchmark scRNA-seq datasets and ground-truth GRNs for 7 cell lines. | Essential for fair evaluation and comparison of new methods. |

| DGL (Deep Graph Library) | A Python library for implementing GNNs; includes a built-in GATConv layer [30]. | Simplifies the implementation of GAT models and improves running efficiency. |

| PyTorch Geometric | Another popular library for deep learning on graphs, includes GAT and other attention layers. | Widely used in research communities for rapid prototyping. |

| Graph Transformer Network | An advanced architecture for extracting implicit links from a prior GRN [31]. | Used in GRLGRN to better handle network sparsity. |

| PageRank* Algorithm | A modified algorithm to calculate gene importance scores based on out-degree [34]. | Helps the model focus on high-impact hub genes during reconstruction. |

In the field of single-cell genomics, researchers increasingly rely on multi-modal data integration to gain a comprehensive understanding of cellular identity and function. The integration of single-cell RNA sequencing (scRNA-seq) with single-cell Assay for Transposase-Accessible Chromatin using sequencing (scATAC-seq) presents a powerful approach for reconstructing Gene Regulatory Networks (GRNs), but introduces significant computational challenges related to data sparsity and technical variability. This technical support center provides comprehensive troubleshooting guides and FAQs to help researchers navigate these challenges effectively.

Core Concepts and Workflows

Understanding the Integration Framework

The fundamental principle behind integrating scRNA-seq and scATAC-seq data involves leveraging complementary information to annotate cell types and states with greater confidence. While scRNA-seq provides direct measurement of gene expression, scATAC-seq reveals accessible chromatin regions that indicate regulatory potential. The integration process typically involves using an annotated scRNA-seq dataset to label cells from an scATAC-seq experiment, co-embedding cells from both modalities, and projecting scATAC-seq cells onto a UMAP derived from an scRNA-seq experiment [36].

Key Experimental Protocol

The standard workflow for integrating scRNA-seq and scATAC-seq data consists of several critical steps:

Data Preprocessing: Independently process each modality using standard analysis pipelines. For scRNA-seq, this includes normalization, variable feature identification, scaling, PCA, and UMAP visualization. For scATAC-seq, this involves adding gene annotation information, running TF-IDF normalization, identifying top features, performing LSI (Latent Semantic Indexing), and UMAP projection [36].

Gene Activity Quantification: Calculate gene activity scores from scATAC-seq data by quantifying counts in the 2 kb-upstream region and gene body using the GeneActivity() function from the Signac package. This creates a bridge between chromatin accessibility and transcriptional output [36].

Anchor Identification: Use canonical correlation analysis (CCA) to identify integration anchors between the scRNA-seq and scATAC-seq datasets with the FindTransferAnchors() function, setting reduction = 'cca' to better capture shared feature correlation structure across modalities [36].

Label Transfer: Transfer cell type annotations from the scRNA-seq reference to the scATAC-seq query using the TransferData() function, utilizing the LSI reduction of the ATAC-seq data to compute neighborhood weights [36].

The following diagram illustrates the complete computational workflow:

Technical Support FAQs

Data Quality and Preprocessing

Q: What quality control thresholds should I apply to scATAC-seq data before integration?

A: Recommended QC thresholds for scATAC-seq data include:

- nCount_ATAC: 200-10,000 fragments per cell

- Blacklist fraction: <0.2

- Nucleosome signal: <0.4

- TSS enrichment: >2 [37]

These thresholds help filter out low-quality cells while retaining biologically meaningful data for downstream integration analysis.
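Applied to per-cell QC metrics, the thresholds listed above amount to a boolean filter. The sketch below uses plain NumPy arrays with made-up values; the function name `qc_mask` and its argument names are hypothetical. The cutoffs are exposed as parameters because appropriate values are dataset-dependent.

```python
import numpy as np

def qc_mask(n_count_atac, blacklist_fraction, nucleosome_signal, tss_enrichment,
            count_range=(200, 10_000), max_blacklist=0.2,
            max_nucleosome=0.4, min_tss=2.0):
    """Return a boolean array marking cells that pass every QC threshold."""
    n = np.asarray(n_count_atac)
    return ((n >= count_range[0]) & (n <= count_range[1])
            & (np.asarray(blacklist_fraction) < max_blacklist)
            & (np.asarray(nucleosome_signal) < max_nucleosome)
            & (np.asarray(tss_enrichment) > min_tss))

# Four made-up cells: only the first passes all filters.
keep = qc_mask(
    n_count_atac=[5000, 150, 8000, 12000],
    blacklist_fraction=[0.01, 0.02, 0.01, 0.03],
    nucleosome_signal=[0.2, 0.3, 0.3, 0.1],
    tss_enrichment=[4.5, 5.0, 1.5, 6.0],
)
print(keep)
```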

Q: How can I handle the inherent sparsity of scATAC-seq data during integration?

A: scATAC-seq data exhibits near-digital measurements at individual regulatory elements, with approximately 9.4% of promoters represented in a typical scATAC-seq library [38]. To address this sparsity in GRN reconstruction:

- Aggregate information across sets of genomic features sharing common characteristics

- Calculate "deviation" scores comparing observed vs. expected fragments

- Use specialized algorithms designed for sparse data reconstruction [20]

- Consider sparse reconstruction frameworks that formulate GRN identification as solving an underdetermined system of linear equations (y = Φx) [20]
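The "deviation" idea in the list above can be sketched as follows. This is a simplified illustration, not the published method: it computes only a raw (observed minus expected) / expected score per cell and omits any correction against background peak sets. The function name and the toy count matrix are assumptions made for the example.

```python
def raw_deviation_scores(counts, feature_set):
    """Raw accessibility deviation per cell for one set of peaks.

    counts: list of cells, each a list of fragment counts per peak.
    feature_set: indices of peaks sharing a characteristic (e.g. a TF motif).
    Expected counts assume every cell spreads its fragments across peaks
    in the same proportions as the pooled population.
    """
    total_fragments = sum(sum(cell) for cell in counts)
    set_fragments = sum(cell[p] for cell in counts for p in feature_set)
    set_fraction = set_fragments / total_fragments

    scores = []
    for cell in counts:
        observed = sum(cell[p] for p in feature_set)
        expected = sum(cell) * set_fraction
        # Guard against empty cells or empty feature sets.
        scores.append((observed - expected) / expected if expected > 0 else 0.0)
    return scores


# Toy data: 3 cells x 4 peaks; peaks 0 and 1 form the feature set.
counts = [
    [2, 0, 1, 1],
    [0, 0, 2, 2],
    [1, 1, 0, 0],
]
scores = raw_deviation_scores(counts, feature_set=[0, 1])
print(scores)
```

Aggregating over a feature set in this way trades per-peak resolution for a signal that is far less affected by the near-binary counts at individual regulatory elements.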

Integration Methodology

Q: Why does my second transfer learning step result in messy clustering compared to the first transfer?

A: This common issue arises from several potential causes:

- Data Distribution Shifts: Subsetting data (e.g., selecting only neuronal cells) changes the data distribution, affecting anchor identification [37].

- Inconsistent Feature Selection: Using different variable features between initial and secondary transfers introduces inconsistency.

- Reduction Misalignment: The LSI dimensions used for weight reduction may not optimally capture the structure of subset data.

Solution: Recompute variable features and LSI reduction on the subset data before performing the second transfer, rather than relying on parameters from the full dataset [37].

Q: What are the critical parameter choices for cross-modal integration and why?

A: Key parameters and their rationales include:

| Parameter | Recommended Setting | Rationale |

|---|---|---|

| reduction in FindTransferAnchors() | 'cca' | Better captures shared feature correlation across modalities compared to PCA [36] |

| weight.reduction in TransferData() | LSI dimensions from scATAC-seq | Better captures internal structure of ATAC-seq data [36] |

| dims for weight reduction | 2:30 | Excludes first LSI dimension (typically correlated with sequencing depth) [36] |

| min.cutoff in FindTopFeatures() | "q0" | Retains all features above minimum threshold for comprehensive analysis [37] |

Q: How can I evaluate the success of my integration?

A: Effective evaluation strategies include:

- Quantitative Metrics: Calculate the fraction of cells with correctly predicted annotations. In benchmark tests, correct prediction rates of ~90% are achievable [36].

- Prediction Scores: Monitor the prediction.score.max field. Correctly annotated cells typically show scores >90%, while incorrect annotations often have scores <50% [36].

- Confusion Analysis: Examine misassignments, which typically occur between closely related cell types (e.g., Intermediate vs. Naive B cells) rather than distinct lineages [36].
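The three checks above can be combined into one small summary when ground-truth labels are available. The sketch below is plain Python and assumes the predicted labels and maximum prediction scores have already been exported as lists; the function name and the example values are hypothetical.

```python
from collections import Counter


def summarize_transfer(truth, predicted, scores):
    """Summarize label transfer: accuracy, confusions, and mean score by outcome."""
    correct = [t == p for t, p in zip(truth, predicted)]
    accuracy = sum(correct) / len(correct)

    # Count each (true label, predicted label) pair among the misassigned cells.
    confusions = Counter((t, p) for t, p in zip(truth, predicted) if t != p)

    def mean(values):
        return sum(values) / len(values) if values else float("nan")

    mean_correct = mean([s for s, ok in zip(scores, correct) if ok])
    mean_wrong = mean([s for s, ok in zip(scores, correct) if not ok])
    return accuracy, confusions, mean_correct, mean_wrong


# Hypothetical example: five cells with known annotations.
truth = ["Naive B", "Naive B", "Intermediate B", "CD4 T", "CD4 T"]
predicted = ["Naive B", "Intermediate B", "Intermediate B", "CD4 T", "CD4 T"]
scores = [0.95, 0.45, 0.92, 0.99, 0.97]

accuracy, confusions, mean_correct, mean_wrong = summarize_transfer(truth, predicted, scores)
print(accuracy, dict(confusions), mean_correct, mean_wrong)
```

A large gap between the two mean scores suggests that a score cutoff could be used to flag uncertain cells when no ground truth exists.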

Advanced Applications in GRN Reconstruction

Q: How can integrated scRNA-seq and scATAC-seq data improve GRN reconstruction despite data sparsity?

A: Multi-modal integration addresses GRN sparsity challenges through:

- Cross-Validation: Chromatin accessibility provides orthogonal evidence for regulatory relationships inferred from expression data.

- Context-Specific Regulation: Identifies transcription factors associated with cell-type specific accessibility variance [38].

- Synergistic Effects: Reveals combinations of trans-factors that induce or suppress cell-to-cell variability in regulatory elements [38].

The following diagram illustrates how sparse data from multiple modalities contributes to GRN inference:

Q: What computational approaches help manage sparsity in GRN reconstruction from multi-modal data?

A: Effective strategies include:

- Sparse Reconstruction Frameworks: Formulate GRN identification as a sparse vector reconstruction problem, determining the number, location and magnitude of nonzero entries by solving underdetermined systems of linear equations [20].

- Deviation Scoring: Quantify regulatory variation using changes in accessibility across feature sets, calculating observed minus expected fragments normalized by background signal [38].

- Representation Learning: Use graph autoencoders and deep representation learning to embed network structure into low-dimensional spaces, mitigating sparsity through learned representations [39].
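To make the sparse reconstruction formulation concrete, the sketch below solves a tiny underdetermined system y = Φx with an L1 penalty using iterative soft-thresholding (ISTA). ISTA is a generic sparse solver chosen here for brevity; it is not claimed to be the algorithm used in [20]. Interpreting Φ as regulator measurements and x as regulatory weights is likewise an assumption made for illustration.

```python
def soft_threshold(value, threshold):
    """Shrink a value toward zero by `threshold` (the proximal step for the L1 penalty)."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0


def ista(phi, y, lam=0.05, n_iter=2000):
    """Minimize 0.5 * ||y - phi x||^2 + lam * ||x||_1 by iterative soft-thresholding."""
    m, n = len(phi), len(phi[0])
    # 1 / ||phi||_F^2 is a conservative step size, since ||phi||_2^2 <= ||phi||_F^2.
    step = 1.0 / sum(v * v for row in phi for v in row)
    x = [0.0] * n
    for _ in range(n_iter):
        residual = [y[i] - sum(phi[i][j] * x[j] for j in range(n)) for i in range(m)]
        gradient = [sum(phi[i][j] * residual[i] for i in range(m)) for j in range(n)]
        x = [soft_threshold(x[j] + step * gradient[j], step * lam) for j in range(n)]
    return x


# Two measurements, three candidate regulators: more unknowns than equations.
phi = [
    [1.0, 0.0, 0.5],
    [0.0, 1.0, 0.5],
]
true_x = [2.0, 0.0, 0.0]   # only the first regulator is active
y = [sum(p * v for p, v in zip(row, true_x)) for row in phi]

x_hat = ista(phi, y)
print([round(v, 3) for v in x_hat])
```

The L1 penalty is what selects a single sparse solution among the infinitely many vectors that satisfy the equations; it also shrinks the recovered weight slightly below its true magnitude.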

Visualization and Interpretation

Q: What tools are available for visualizing integrated multi-modal data?

A: Vitessce is an interactive web-based visualization framework specifically designed for exploring multimodal and spatially resolved single-cell data, supporting:

- Simultaneous visualization of transcriptomics, proteomics, genome-mapped, and imaging modalities

- Coordination across multiple views (scatterplots, heatmaps, spatial coordinates)

- Scalable visualization of millions of cells using WebGL and deck.gl [40]

Q: How can I communicate integration results effectively to collaborators?

A: Recommended practices include:

- Multi-Panel Visualization: Show predicted annotations alongside ground-truth annotations when available [36].

- Confidence Scoring: Include prediction score distributions for different cell types.

- Modality Comparison: Use tools like Vitessce to create coordinated views that link gene expression, chromatin accessibility, and spatial localization [40].

The Scientist's Toolkit: Research Reagent Solutions

| Resource | Function | Application in Integration |

|---|---|---|

| Signac Package | Analysis of single-cell chromatin data | Provides GeneActivity() function for creating gene activity scores from scATAC-seq data [36] |

| Vitessce Framework | Interactive visualization of multimodal data | Enables coordinated exploration of scRNA-seq and scATAC-seq data across multiple views [40] |

| Seurat WNN | Weighted nearest neighbors multi-omic analysis | Enables integrated analysis of scRNA-seq and scATAC-seq data collected from the same cells [36] |

| 10x Genomics Multiome | Simultaneous scRNA-seq and scATAC-seq | Provides ground-truth data for evaluating integration accuracy [36] |

| Sparse Reconstruction Algorithms | Solving underdetermined linear systems | Addresses data sparsity in GRN identification from limited measurements [20] |

Successful integration of scRNA-seq and scATAC-seq data requires careful attention to data sparsity, appropriate parameter selection, and robust validation. By following the troubleshooting guidelines and methodologies outlined in this technical support document, researchers can effectively leverage multi-modal single-cell data to reconstruct more accurate Gene Regulatory Networks and gain deeper insights into cellular regulatory mechanisms. The field continues to evolve with advances in sparse reconstruction methods [20] and deep learning approaches [39] that promise to further enhance our ability to integrate heterogeneous genomic data types.

This technical support center provides troubleshooting guides and FAQs for researchers applying advanced deep learning architectures to Gene Regulatory Network (GRN) reconstruction, with a special focus on handling network sparsity.

Frequently Asked Questions (FAQs)

Q1: How can I improve the physical consistency of my deep learning model for GRN inference under noisy or sparse data conditions?

A1: To enhance physical consistency, consider using a Mechanism-Guided Residual Network (MGResNet). This architecture integrates known physical laws or mechanistic equations directly into the training process of a residual network via an improved loss function [41]. This approach constrains the model to produce outputs that are not only data-driven but also consistent with underlying biological or physical principles, significantly improving robustness and accuracy under noisy and data-scarce conditions [41].

Q2: Why does my graph autoencoder for GRN reconstruction have a high loss even when the output graph is functionally correct?

A2: This is a classic symptom of the graph reconstruction loss problem [42]. Standard autoencoders measure reconstruction loss by directly comparing the input and output adjacency matrices. However, graphs can be represented by many different, yet isomorphic, adjacency matrices. If your model outputs a graph that is structurally identical to the input but with a different node ordering, the loss function may be high despite a perfect reconstruction [42]. Solutions include using graph matching algorithms, heuristic node ordering, or replacing the reconstruction loss with a discriminator loss [42].
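The effect is easy to reproduce on a toy graph. In the sketch below, a three-gene directed chain is compared with a relabeled copy of itself: the element-wise loss is non-zero, while a loss minimized over node orderings is zero. The brute-force search over permutations is only feasible for very small graphs and stands in for a real graph matching algorithm; all function names are illustrative.

```python
from itertools import permutations


def adjacency_loss(a, b):
    """Mean squared difference between two adjacency matrices, entry by entry."""
    n = len(a)
    return sum((a[i][j] - b[i][j]) ** 2 for i in range(n) for j in range(n)) / (n * n)


def relabel(a, order):
    """Return the adjacency matrix of the same graph with nodes reordered."""
    n = len(a)
    return [[a[order[i]][order[j]] for j in range(n)] for i in range(n)]


def matched_loss(a, b):
    """Smallest adjacency loss over all node orderings of b (brute force)."""
    return min(adjacency_loss(a, relabel(b, order)) for order in permutations(range(len(a))))


# Directed chain 0 -> 1 -> 2 and the same chain with shuffled node labels.
original = [
    [0, 1, 0],
    [0, 0, 1],
    [0, 0, 0],
]
shuffled = relabel(original, (2, 0, 1))

naive = adjacency_loss(original, shuffled)
matched = matched_loss(original, shuffled)
print(naive, matched)
```

In GRN inference the nodes are usually named genes with a fixed order, so this problem mainly appears in generative settings where the decoder is free to emit nodes in any order.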

Q3: What is a major pitfall in designing spiking residual neural networks for processing sequential data like gene expression time-series?

A3: A key issue is the spike avalanche effect [43]. In standard spiking ResNet architectures, events from the direct and residual paths can sum up, creating sudden peaks of activity that drastically reduce the sparsity of the network [43]. This undermines one of the primary advantages of spiking models—energy efficiency. To counter this, consider using architectures specifically designed to manage spike propagation, such as the Sparse-ResNet [43].

Q4: Which deep learning architecture is most effective for capturing the directional nature of regulatory relationships in GRNs?

A4: For inferring directed networks, standard Graph Autoencoders (GAE) and Variational Graph Autoencoders (VGAE) are insufficient as they often predict undirected graphs [34]. Instead, use a Gravity-Inspired Graph Autoencoder (GIGAE), which is designed to effectively extract the complex directed network topology features essential for modeling causal regulatory relationships between genes [34].
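For intuition, the gravity-inspired decoder proposed in the directed link prediction literature scores an edge from node i to node j asymmetrically. The form below follows that general formulation; whether GAEDGRN [34] uses exactly this parameterization is an assumption.

$$
\hat{A}_{ij} = \sigma\left(\tilde{m}_j - \lambda \log \lVert z_i - z_j \rVert_2^2\right)
$$

Here z_i and z_j are the node embeddings, \tilde{m}_j is a learned "mass" term for the target node, \lambda is a hyperparameter, and \sigma is the sigmoid function. Because \tilde{m}_j and \tilde{m}_i generally differ, \hat{A}_{ij} \neq \hat{A}_{ji}. By contrast, the inner-product decoder \sigma(z_i^\top z_j) used by standard GAE and VGAE models is symmetric in i and j, which is why those models cannot distinguish the direction of a regulatory edge.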

Troubleshooting Guides

Issue 1: Handling Class Imbalance in Supervised GRN Inference

Problem: The model fails to predict true regulatory interactions because positive links (edges) are vastly outnumbered by non-existent ones, leading to poor accuracy.

Solution Guide:

- Algorithm Selection: Employ ensemble-based methods specifically designed for imbalanced data. The EnGRNT framework, which uses ensemble methods with topological feature extraction, is proposed to address this issue [44].

- Ensemble Feature Construction: As a robust alternative, construct an ensemble model that aggregates the predictions of multiple base classifiers. One effective method uses a Flexible Neural Tree (FNT) model, which takes predictions from 13 diverse base algorithms (e.g., Random Forest, XGBoost, SVM) as input. A hybrid evolutionary algorithm then searches for the optimal ensemble structure, demonstrated to outperform individual supervised and unsupervised methods [45].

- Evaluation Metrics: Move beyond standard accuracy. Prioritize metrics that are robust to class imbalance, such as the Area Under the Precision-Recall Curve (AUPRC) and the F1-score [45].
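The reason accuracy misleads on imbalanced edge prediction can be seen in a few lines. The sketch below computes average precision (a common step-wise estimate of AUPRC) and the F1-score in plain Python; the labels and scores are made-up values for ten candidate edges, of which two are true interactions.

```python
def average_precision(labels, scores):
    """Average of the precision values at the rank of each true positive."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits, precision_sum = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label:
            hits += 1
            precision_sum += hits / rank
    return precision_sum / hits if hits else 0.0


def f1_score(labels, scores, threshold=0.5):
    """F1 of the binary predictions obtained by thresholding the scores."""
    predicted = [s >= threshold for s in scores]
    tp = sum(1 for l, p in zip(labels, predicted) if l and p)
    fp = sum(1 for l, p in zip(labels, predicted) if not l and p)
    fn = sum(1 for l, p in zip(labels, predicted) if l and not p)
    return 2 * tp / (2 * tp + fp + fn) if tp else 0.0


# Ten candidate edges, two of them real (label 1).
labels = [1, 0, 0, 0, 1, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.1, 0.2, 0.7, 0.3, 0.1, 0.2, 0.1, 0.05]

ap = average_precision(labels, scores)
f1 = f1_score(labels, scores)

# A model that predicts "no edge" everywhere still looks accurate.
all_negative_accuracy = sum(1 for l in labels if l == 0) / len(labels)
all_negative_f1 = f1_score(labels, [0.0] * len(labels))
print(ap, f1, all_negative_accuracy, all_negative_f1)
```

The trivial all-negative predictor reaches 80% accuracy here but an F1 of zero, which is the gap the precision-recall metrics are meant to expose.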

Issue 2: Managing Network Sparsity and Activity in Spiking Neural Networks (SNNs)

Problem: The SNN model generates excessive spike activity, leading to high energy consumption on neuromorphic hardware and reduced efficiency.

Solution Guide:

- Neuron-Level Sparsification: Adopt multi-level spiking neurons instead of traditional binary neurons. These neurons output multiple bits per timestep, reducing information loss and allowing for minimal inference latency (as low as 1 timestep) without performance degradation. This can reduce energy consumption by a factor of 2 to 3 compared to equivalent binary SNNs [43].

- Architecture-Level Sparsification: Replace standard spiking ResNet architectures with a Sparse-ResNet design [43]. This architecture is specifically engineered to mitigate the "spike avalanche effect" in residual connections. It can reduce overall network spiking activity by more than 20% while maintaining state-of-the-art accuracy [43].

- Energy Estimation: During model design, use energy estimation metrics that account for all memory accesses and synaptic operations in an event-driven execution scenario, not just spike counts. This provides a more realistic assessment of energy gains on neuromorphic hardware [43].

Issue 3: Incorporating Gene Importance into GRN Reconstruction

Problem: The model treats all genes equally, potentially missing the critical regulatory influence of hub genes.

Solution Guide:

- Score Calculation: Implement a gene importance scoring mechanism. An improved PageRank* algorithm can be used, which focuses on a gene's out-degree (how many other genes it regulates) rather than its in-degree, based on the biological hypothesis that genes regulating many others are more important [34].

- Feature Fusion: Integrate the calculated importance scores with the gene expression feature matrix. This weighted fusion directs the model's attention to genes with higher regulatory potential during the encoding and decoding processes [34].

- Latent Space Regularization: To further improve the learning of gene embeddings, apply random walk regularization. This technique helps standardize the latent vectors produced by the encoder by capturing the local topology of the network, leading to a more uniform and effective embedding distribution [34].
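The first two steps can be sketched as follows. This is not the PageRank* algorithm from [34], whose exact update rule is not given here. As an assumed stand-in, it runs ordinary PageRank on the edge-reversed graph so that score flows from targets back to their regulators, which rewards genes with many outgoing edges. The fusion step, multiplying each gene's expression vector by its score, is likewise one simple assumed way to weight features.

```python
def regulator_pagerank(genes, edges, damping=0.85, n_iter=100):
    """PageRank on the reversed graph: each target passes its score to its regulators.

    edges: (regulator, target) pairs from a prior or preliminary network.
    """
    n = len(genes)
    regulators_of = {g: [] for g in genes}
    for regulator, target in edges:
        regulators_of[target].append(regulator)

    scores = {g: 1.0 / n for g in genes}
    for _ in range(n_iter):
        # Genes without regulators have nowhere to send score; spread it uniformly.
        dangling = sum(scores[g] for g in genes if not regulators_of[g])
        updated = {g: (1.0 - damping) / n + damping * dangling / n for g in genes}
        for target, regulators in regulators_of.items():
            for regulator in regulators:
                updated[regulator] += damping * scores[target] / len(regulators)
        scores = updated
    return scores


# Hypothetical network: gene A regulates three genes, gene B regulates one.
genes = ["A", "B", "C", "D"]
edges = [("A", "B"), ("A", "C"), ("A", "D"), ("B", "C")]
importance = regulator_pagerank(genes, edges)

# Weighted fusion: scale each gene's expression features by its importance score.
expression = {"A": [1.0, 2.0], "B": [0.5, 0.5], "C": [3.0, 1.0], "D": [2.0, 2.0]}
fused = {g: [importance[g] * v for v in expression[g]] for g in genes}
print(importance, fused)
```

In this toy network the hub gene A receives the highest score, so its features carry the most weight after fusion.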

Table 1: Performance Comparison of GRN Inference Methods

| Method | Approach | Key Technology | AUROC | AUPRC | F1-Score | Best For |

|---|---|---|---|---|---|---|

| Ensemble w/ FNT [45] | Supervised | Flexible Neural Tree + 13 base models | High | High | High | General purpose, high accuracy |

| GAEDGRN [34] | Supervised | Gravity-Inspired GAE + PageRank* | High Accuracy | Strong Robustness | N/A | Directed GRNs, important genes |