Optimizing S-system Models for Gene Regulatory Networks: From Foundational Theory to Advanced Parameter Estimation


Abstract

This article provides a comprehensive guide to parameter optimization for S-system models in Gene Regulatory Network (GRN) inference. It covers foundational principles of the S-system's power-law formalism and its advantages in modeling complex biological dynamics. The content explores a spectrum of optimization methodologies, from established evolutionary algorithms to cutting-edge deep learning and hierarchical techniques. It addresses critical challenges like computational complexity, noise handling, and structural robustness, offering practical troubleshooting strategies. Furthermore, it presents a rigorous framework for model validation and comparative analysis against other continuous modeling approaches, equipping researchers and drug development professionals with the knowledge to build more accurate and predictive models of gene regulation.

Understanding S-system Foundations: The Power-Law Framework for GRN Dynamics

The S-system formalism is a canonical modeling framework within Biochemical Systems Theory (BST) that provides a powerful mathematical representation of biochemical networks [1]. The key advantage of S-system models lies in their ability to represent steady states as systems of linear algebraic equations through logarithmic transformation of variables, significantly simplifying analysis of biological systems [1] [2].

At the core of the S-system approach is a specific structure for modeling the dynamics of each system component. For a dependent variable \( X_i \), the rate of change is governed by exactly two terms: a synthesis term and a degradation term [1].

Core Mathematical Representation

The general S-system formulation for the dynamics of a dependent variable \( X_i \) is given by:

\[ \dot{X}_i = \alpha_i \prod_{j=1}^{n+m} X_j^{g_{ij}} - \beta_i \prod_{j=1}^{n+m} X_j^{h_{ij}}, \quad i = 1, \ldots, n \]

Where the first product represents the synthesis term and the second product represents the degradation term [1].
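As a minimal sketch, the right-hand side of this equation can be evaluated for all dependent variables at once. The function name `s_system_rhs` and the array layout (one row of kinetic orders per dependent variable, spanning all \( n+m \) variables) are illustrative choices. The products are computed as exponentials of sums of logarithms, which assumes every concentration is strictly positive.

```python
import numpy as np

def s_system_rhs(x_dep, x_ind, alpha, beta, G, H):
    """Return dX_i/dt for the n dependent variables of an S-system.

    x_dep : (n,) dependent variables X_1..X_n (strictly positive)
    x_ind : (m,) independent variables X_{n+1}..X_{n+m} (strictly positive, held fixed)
    alpha, beta : (n,) rate constants for synthesis and degradation
    G, H : (n, n+m) kinetic orders g_ij and h_ij
    """
    x = np.concatenate([np.asarray(x_dep, float), np.asarray(x_ind, float)])
    log_x = np.log(x)
    # prod_j X_j^{g_ij} = exp(sum_j g_ij * ln X_j), evaluated for every row i at once
    synthesis = np.asarray(alpha, float) * np.exp(np.asarray(G, float) @ log_x)
    degradation = np.asarray(beta, float) * np.exp(np.asarray(H, float) @ log_x)
    return synthesis - degradation

# Hypothetical two-gene cascade: input X3 activates X1, X1 activates X2,
# and both genes are degraded with first-order kinetics.
alpha, beta = [2.0, 1.0], [1.0, 1.0]
G = [[0.0, 0.0, 1.0],
     [0.5, 0.0, 0.0]]
H = [[1.0, 0.0, 0.0],
     [0.0, 1.0, 0.0]]
print(s_system_rhs([1.0, 1.0], [4.0], alpha, beta, G, H))
```

In this toy system the equations reduce to \( \dot{X}_1 = 2X_3 - X_1 \) and \( \dot{X}_2 = X_1^{0.5} - X_2 \). A zero kinetic order simply removes a variable from a term.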

Parameter Definitions

| Parameter | Interpretation | Biological Meaning |
|---|---|---|
| \( \alpha_i \) | Rate constant for synthesis | Scales the production rate of \( X_i \) |
| \( \beta_i \) | Rate constant for degradation | Scales the elimination rate of \( X_i \) |
| \( g_{ij} \) | Kinetic order for synthesis | Quantifies the effect of \( X_j \) on the production of \( X_i \) |
| \( h_{ij} \) | Kinetic order for degradation | Quantifies the effect of \( X_j \) on the breakdown of \( X_i \) |
| \( n \) | Number of dependent variables | System components whose dynamics are modeled |
| \( m \) | Number of independent variables | External factors that influence but aren't affected by the system |

Table 1: Key parameters in the S-system formalism and their biological interpretations [1] [2].

Advantages for GRN Modeling

The S-system formalism provides several significant advantages for modeling Gene Regulatory Networks (GRNs) compared to conventional Michaelis-Menten approaches:

- Structural Homogeneity: All regulatory interactions use the same mathematical form, regardless of whether they represent activation or inhibition [2]

- Simplified Steady-State Analysis: At steady state (\( \dot{X}_i = 0 \)), taking logarithms turns the system into a linear one in \( y_j = \ln X_j \): \( A_D y_D + A_I y_I = b \), where \( a_{ij} = g_{ij} - h_{ij} \) and \( b_i = \ln(\beta_i / \alpha_i) \) [1]

- Ease of Model Expansion: Adding new interactions or modifying existing ones is straightforward due to the consistent mathematical structure [2]

- Mitigated Identifiability Issues: The uniform structure reduces some of the parameter estimation problems that plague more complex modeling approaches [2]
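The steady-state linearization can be made concrete. Setting synthesis equal to degradation and taking logarithms gives \( \sum_j (g_{ij} - h_{ij}) \ln X_j = \ln(\beta_i/\alpha_i) \). So \( A_D \) and \( A_I \) are the dependent and independent columns of \( G - H \), and \( b_i = \ln(\beta_i/\alpha_i) \). The sketch below solves this system with NumPy. The helper name is hypothetical, and the code assumes \( A_D \) is invertible, since otherwise no unique steady state exists.

```python
import numpy as np

def s_system_steady_state(alpha, beta, G, H, x_ind):
    """Steady state of the dependent variables from A_D y_D + A_I y_I = b."""
    alpha, beta = np.asarray(alpha, float), np.asarray(beta, float)
    A = np.asarray(G, float) - np.asarray(H, float)   # a_ij = g_ij - h_ij
    n = alpha.size
    A_D, A_I = A[:, :n], A[:, n:]                     # dependent / independent columns
    b = np.log(beta / alpha)                          # b_i = ln(beta_i / alpha_i)
    y_I = np.log(np.asarray(x_ind, float))            # y = ln X
    y_D = np.linalg.solve(A_D, b - A_I @ y_I)         # raises LinAlgError if A_D is singular
    return np.exp(y_D)

# Hypothetical cascade: dX1/dt = 2*X3 - X1 and dX2/dt = X1**0.5 - X2, with input X3 = 4.
G = [[0.0, 0.0, 1.0], [0.5, 0.0, 0.0]]
H = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
x_ss = s_system_steady_state([2.0, 1.0], [1.0, 1.0], G, H, [4.0])
print(x_ss)
```

Solved by hand, the example gives \( X_1 = 2X_3 = 8 \) and \( X_2 = \sqrt{8} \approx 2.83 \). The linear solve reproduces these values without integrating any differential equations.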

Experimental Protocols for Parameter Optimization

Protocol 1: Steady-State Based Parameter Estimation

This protocol leverages the linear algebraic structure of S-system steady states for parameter optimization [1]:

- Collect steady-state data under multiple experimental conditions

- Transform variables using logarithmic transformation: \( y_i = \ln X_i \)

- Formulate the linear system: \( A_D y_D + A_I y_I = b \)

- Apply matrix inversion or regression methods to characterize the admissible solution space

- Validate parameters using hold-out data not used in estimation

- Perform sensitivity analysis to identify most influential parameters
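The steady-state equations are linear in the unknowns \( (a_{i1}, \ldots, a_{i,n+m}, b_i) \), so the admissible solution space in this protocol can be computed as a null space. The sketch below uses a hypothetical function name. It stacks the log-concentrations from K steady states and uses an SVD to return an orthonormal basis of every parameter vector for equation i that is consistent with all of them. When only the independent variables are varied, this space is usually more than one-dimensional, which shows why steady-state data alone rarely pin down every parameter.

```python
import numpy as np

def admissible_space(Y, tol=1e-9):
    """Basis of all (a_i1, ..., a_i,n+m, b_i) with sum_j a_ij * y_j = b_i at every steady state.

    Y : (K, n+m) array; row k holds y_j = ln X_j measured at steady state k.
    Returns a (n+m+1, d) array whose columns span the d-dimensional solution space.
    """
    Y = np.asarray(Y, float)
    M = np.hstack([Y, -np.ones((Y.shape[0], 1))])   # each row encodes a_i . y - b_i = 0
    _, s, Vt = np.linalg.svd(M)                       # full_matrices=True, so Vt is square
    rank = int(np.sum(s > tol * s.max()))
    return Vt[rank:].T                                # right singular vectors with ~zero singular value

# Hypothetical data: four steady states of the cascade dX1/dt = 2*X3 - X1,
# dX2/dt = X1**0.5 - X2, obtained by setting the input X3 to 1, 2, 4 and 8.
y3 = np.log([1.0, 2.0, 4.0, 8.0])
y1 = y3 + np.log(2.0)          # ln X1 = ln 2 + ln X3 at steady state
Y = np.column_stack([y1, 0.5 * y1, y3])
basis = admissible_space(Y)    # solution space for the first equation
print(basis.shape)
```

For the first equation, the true vector \( (a_{11}, a_{12}, a_{13}, b_1) = (-1, 0, 1, -\ln 2) \) lies in the returned two-dimensional space. Every other vector in that plane fits the data equally well, so additional, differently designed experiments would be needed to narrow it down.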

Protocol 2: Time-Series Data Integration

For situations where time-series data is available from sources like scRNA-Seq [3]:

- Preprocess expression data to address technical artifacts (e.g., dropout events in scRNA-Seq)

- Estimate slopes from time-series data for ( \dot{X_i} ) values

- Apply linear regression in the logarithmic domain to estimate kinetic parameters

- Implement cross-validation to prevent overfitting

- Use optimization algorithms (e.g., least-squares) to refine parameter estimates

- Test model predictions against experimental data

Frequently Asked Questions (FAQs)

Q1: How do I determine whether a variable should be treated as dependent or independent in my S-system model?

Independent variables (\( X_i \) for \( i = n+1, \ldots, n+m \)) are external factors that influence system dynamics but are not affected by the system itself. Examples include environmental signals, experimental treatments, or fixed inputs. Dependent variables (\( X_i \) for \( i = 1, \ldots, n \)) are system components whose dynamics are governed by the network interactions. The distinction should be based on your experimental design and biological knowledge of the system [1].

Q2: What is the biological interpretation of kinetic orders (\( g_{ij} \) and \( h_{ij} \)) in GRN applications?

In GRN modeling, kinetic orders quantify the regulatory strength of transcription factors on target genes:

- Positive \( g_{ij} \) values indicate transcriptional activation

- Negative \( g_{ij} \) values indicate transcriptional repression

- The magnitude represents the sensitivity of the regulation

- A value of 0 indicates no direct regulatory effect

Degradation kinetic orders (\( h_{ij} \)) typically represent effects on protein stability or dilution rates [1] [2].

Q3: My S-system model has too many parameters for reliable estimation. What strategies can help?

This common problem in GRN modeling can be addressed through:

- Stepwise model expansion: Start with a core network and gradually add components

- Parameter space constraints: Use biological knowledge to define plausible ranges

- Sensitivity analysis: Focus estimation efforts on most influential parameters

- Data aggregation: Combine multiple data sources to increase estimation power

- Dimensionality reduction: Apply principal component analysis before model fitting [3] [2]

Troubleshooting Common Issues

Problem: Model fails to reach steady state or exhibits unrealistic oscillations

Solution: Check the consistency of your kinetic orders:

- Ensure degradation terms can dominate synthesis terms when concentrations become high

- Verify that independent variables are properly constrained

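- Examine the eigenvalues of the Jacobian at the steady state. For an S-system, its entries are \( V_i (g_{ij} - h_{ij}) / X_j \), where \( V_i \) is the common synthesis and degradation flux of \( X_i \), so the Jacobian is \( A_D \) rescaled by fluxes and concentrations. Local stability requires all eigenvalues to have negative real parts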

- Consider whether time delays need to be explicitly modeled for gene expression [2]
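Local stability depends on the Jacobian evaluated at the steady state. In an S-system, synthesis and degradation of \( X_i \) both equal a common flux \( V_i \) at steady state, so the entries simplify to \( J_{ij} = V_i (g_{ij} - h_{ij}) / X_j \). The minimal sketch below uses hypothetical helper names. It assumes the supplied point really is a steady state, because the formula uses the synthesis flux in place of the degradation flux.

```python
import numpy as np

def s_system_jacobian(x_ss, x_ind, alpha, G, H):
    """Jacobian J_ij = V_i (g_ij - h_ij) / X_j over dependent variables, valid only at a steady state."""
    x_ss = np.asarray(x_ss, float)
    G, H = np.asarray(G, float), np.asarray(H, float)
    n = x_ss.size
    log_x = np.log(np.concatenate([x_ss, np.asarray(x_ind, float)]))
    V = np.asarray(alpha, float) * np.exp(G @ log_x)   # steady-state flux: synthesis == degradation
    A_D = (G - H)[:, :n]
    return V[:, None] * A_D / x_ss[None, :]

def is_locally_stable(J):
    """True if every eigenvalue of J has a strictly negative real part."""
    return bool(np.all(np.linalg.eigvals(J).real < 0))

# Hypothetical cascade dX1/dt = 2*X3 - X1, dX2/dt = X1**0.5 - X2 at its steady state (X3 = 4).
G = [[0.0, 0.0, 1.0], [0.5, 0.0, 0.0]]
H = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
J = s_system_jacobian([8.0, 8.0 ** 0.5], [4.0], [2.0, 1.0], G, H)
print(is_locally_stable(J))
```

Both eigenvalues of this triangular Jacobian equal -1, so the steady state is stable. An eigenvalue with a positive real part would point to the divergence or oscillations described above.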

Problem: Poor fit between model predictions and experimental data

Solution: Implement a systematic diagnostic approach:

- Residual analysis: Identify specific conditions or variables where discrepancies occur

- Parameter identifiability analysis: Determine if parameters can be uniquely estimated from your data

- Model reduction: Eliminate weakly supported interactions

- Alternative topologies: Test competing network structures

- Data quality assessment: Verify that experimental noise isn't obscuring true signals [3] [4]

Problem: Computational challenges in parameter optimization

Solution: Address numerical issues through:

- Logarithmic transformation of variables to improve numerical stability

- Implementation of global optimization algorithms to avoid local minima

- Utilization of parallel computing for large parameter spaces

- Application of regularization techniques to prevent overfitting [3]

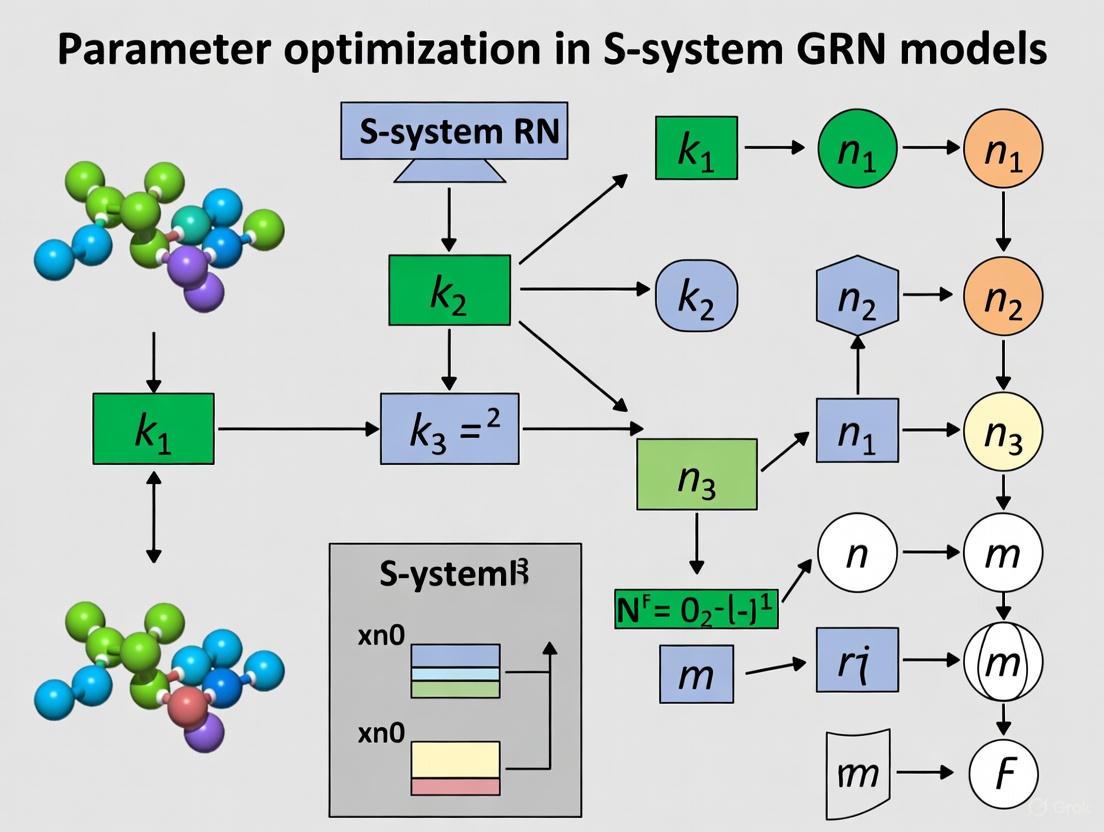

Visualization of S-system Structure

S-system Structure and Dynamics

Research Reagent Solutions

| Reagent/Tool | Function | Application in S-system Modeling |
|---|---|---|
| scRNA-Seq Data | Single-cell resolution gene expression | Provides time-series data for parameter estimation and model validation [4] |
| CRISPR Knockouts | Targeted gene disruption | Generates perturbations to test model predictions and identify network structure [4] |
| Metabolic Inhibitors | Chemical perturbation of specific pathways | Creates controlled interventions for model discrimination [1] |
| Fluorescent Reporters | Real-time monitoring of gene expression | Generates quantitative dynamic data for parameter estimation [2] |
| Bioinformatics Pipelines | Data preprocessing and normalization | Addresses technical artifacts in high-throughput data before model fitting [3] [4] |

Table 2: Essential research reagents and their applications in S-system GRN modeling.

Frequently Asked Questions

Q1: What is the most common reason for parameter estimation failure in S-system models, and how can it be resolved?

A common failure point is the quasi-redundancy of parameters, where errors in some kinetic orders (e.g., g_i,j) can be compensated by adjustments in others or in the rate constants (α_i, β_i), leading to non-identifiable parameters and convergence issues. This is frequently tackled by implementing the Alternating Regression (AR) method, which dissects the nonlinear inverse problem into iterative steps of linear regression, often providing a fast and deterministic solution [5] [6]. If AR does not converge, the problem can often be resolved by dedicating computational resources to identify better start values and search settings, a feasible task given the method's speed [6].

Q2: Why does my identified model fit the time-series data well but fail to represent the correct network topology?

This occurs when a collection of different network topologies can produce essentially identical dynamic time series. The solution is to use time series from multiple different initial conditions (imitating different biological stimulus-response experiments) during the parameter estimation process. This provides the algorithm with a richer set of dynamical information, allowing it to identify the correct network topology out of the collection of models that all fit a single dataset [5].

Q3: What is a practical method for estimating the slopes (derivatives) required for S-system parameterization from noisy experimental data?

The estimation of slopes from time-series data is a crucial step. If data are relatively noise-free, simple methods like linear interpolation, splines, or a three-point method are effective. For noisier data, it is recommended to use smoothing methods such as the Whittaker filter, collocation methods, or artificial neural networks before slope calculation to prevent noise from being magnified in the estimated derivatives [6].
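A minimal sketch of both routes with NumPy follows. `np.gradient` applies second-order central differences, which is the three-point method, at interior points. A basic Whittaker smoother (penalized least squares with a second-order difference penalty) can be applied first for noisy series. The function names and the smoothing weight `lam` are assumptions to tune to your data. The smoother assumes equally spaced samples, and its dense linear solve is only suitable for short time series.

```python
import numpy as np

def whittaker_smooth(x, lam=10.0, d=2):
    """Minimise |x - z|^2 + lam * |D z|^2, D = d-th order difference matrix (equal spacing assumed)."""
    x = np.asarray(x, float)
    D = np.diff(np.eye(x.size), n=d, axis=0)
    return np.linalg.solve(np.eye(x.size) + lam * D.T @ D, x)

def estimate_slopes(t, x, lam=None):
    """Slopes dX/dt at each sample; smooth first when lam is given (noisy data)."""
    x = np.asarray(x, float)
    if lam is not None:
        x = whittaker_smooth(x, lam)
    # three-point central differences inside, second-order one-sided formulas at the ends
    return np.gradient(x, np.asarray(t, float), edge_order=2)

t = np.linspace(0.0, 1.0, 11)
rng = np.random.default_rng(0)
noisy = t ** 2 + rng.normal(scale=0.01, size=t.size)   # hypothetical noisy measurements
slopes = estimate_slopes(t, noisy, lam=1.0)
```

A larger `lam` gives a smoother curve but flattens genuine fast transients, so it is worth checking the estimated slopes against the raw data visually.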

Q4: How can I incorporate prior knowledge about the network structure into the parameter estimation process?

The S-system framework allows for easy integration of prior knowledge to constrain the search space and improve convergence. If it is known that a variable X_j does not affect the production or degradation of X_i, the corresponding kinetic order (g_i,j or h_i,j) can be set to zero and held constant throughout the regression. Similarly, if a variable is known to be an inhibitor or activator, its kinetic order can be constrained to negative or positive values, respectively [6].
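One way to impose these constraints is to drop known-zero kinetic orders from a log-linear regression and bound the remaining ones by sign. The sketch below uses SciPy's `scipy.optimize.lsq_linear` for a single regression step of the kind performed inside Alternating Regression, where `z` would be the log-transformed production or degradation term. The function name and the `sign`/`fixed_zero` encoding are hypothetical.

```python
import numpy as np
from scipy.optimize import lsq_linear

def constrained_log_regression(log_X, z, sign, fixed_zero):
    """Fit z ~ c + sum_j k_j * log_X[:, j] under prior-knowledge constraints.

    sign[j]       : +1 known activator (k_j >= 0), -1 known inhibitor (k_j <= 0), 0 unknown
    fixed_zero[j] : True if X_j is known not to act, so k_j is held at 0 (column dropped)
    Returns (c, k): c is the log rate constant, k the full vector of kinetic orders.
    """
    log_X = np.asarray(log_X, float)
    keep = [j for j in range(log_X.shape[1]) if not fixed_zero[j]]
    A = np.column_stack([np.ones(log_X.shape[0])] + [log_X[:, j] for j in keep])
    lb = [-np.inf] + [0.0 if sign[j] > 0 else -np.inf for j in keep]
    ub = [np.inf] + [0.0 if sign[j] < 0 else np.inf for j in keep]
    res = lsq_linear(A, np.asarray(z, float), bounds=(lb, ub))
    k = np.zeros(log_X.shape[1])
    k[keep] = res.x[1:]
    return res.x[0], k

# Hypothetical target: z = ln 2 + 0.8 ln X1 - 0.5 ln X3, with X2 known to have no effect.
rng = np.random.default_rng(1)
log_X = rng.normal(size=(20, 3))
z = np.log(2.0) + 0.8 * log_X[:, 0] - 0.5 * log_X[:, 2]
c, k = constrained_log_regression(log_X, z, sign=[+1, 0, -1], fixed_zero=[False, True, False])
```

If a sign constraint contradicts the data, the corresponding kinetic order is pushed to the bound (zero). This is a useful signal that the prior assumption may be wrong.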

Troubleshooting Guides

Problem: The Alternating Regression (AR) algorithm fails to converge.

- Potential Cause 1: Poor initial guesses for the parameter values, particularly for the degradation-term parameters (β_i and h_i,j) used in the first phase of the algorithm [6].

- Solution: Utilize experience-based typical S-system parameter values (e.g., kinetic orders between -1 and +2) to select better initial guesses. The speed of the AR method makes it feasible to run it multiple times with different initial values [6].

- Potential Cause 2: The system is highly fragmented due to decoupling, and constraints from the underlying biology are not being applied.

- Solution: Implement an extension of the algorithm that optimizes network topologies with constraints on metabolites and fluxes. These constraints effectively rejoin the system where it was fragmented by decoupling, guiding the algorithm toward a biologically feasible solution [5].

Problem: The estimated parameters are sensitive to small changes in the time-series data.

- Potential Cause: The model is over-parameterized, or the available data does not contain enough information to reliably estimate all parameters, a phenomenon related to quasi-redundancy [5].

- Solution: Perform a sensitivity analysis on the identified rate constants. This involves statistically predicting rate constants that slightly differ from the estimated values and observing their impact on the final output (e.g., product yield in a network). This helps identify which parameters are most critical and which can be adjusted within a range without significantly altering the system's behavior [7].
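For an S-system, the effect of nudging a rate constant on the steady state can be computed directly. The sketch below uses hypothetical helper names. It perturbs each \( \ln \alpha_i \) and \( \ln \beta_i \) by a small step and records the change in \( \ln X \) at steady state, which gives log-gains \( \partial \ln X_k / \partial \ln p \). Using steady-state concentrations as the output is an assumption; the cited example [7] looks at product yield instead. Because the S-system steady state is linear in these logarithms, the finite differences should match \( -A_D^{-1} \) for \( \alpha \) and \( A_D^{-1} \) for \( \beta \).

```python
import numpy as np

def steady_state_log(alpha, beta, G, H, x_ind):
    """ln X at steady state from A_D y_D + A_I y_I = b, a_ij = g_ij - h_ij, b_i = ln(beta_i/alpha_i)."""
    alpha, beta = np.asarray(alpha, float), np.asarray(beta, float)
    A = np.asarray(G, float) - np.asarray(H, float)
    n = alpha.size
    b = np.log(beta / alpha)
    return np.linalg.solve(A[:, :n], b - A[:, n:] @ np.log(np.asarray(x_ind, float)))

def rate_constant_log_gains(alpha, beta, G, H, x_ind, step=1e-4):
    """Finite-difference d ln X_k / d ln p; column i refers to alpha_i (first) or beta_i (second)."""
    alpha, beta = np.asarray(alpha, float), np.asarray(beta, float)
    base = steady_state_log(alpha, beta, G, H, x_ind)
    n = alpha.size
    gains_alpha, gains_beta = np.empty((n, n)), np.empty((n, n))
    for i in range(n):
        a = alpha.copy()
        a[i] *= np.exp(step)                 # ln(alpha_i) += step
        gains_alpha[:, i] = (steady_state_log(a, beta, G, H, x_ind) - base) / step
        b = beta.copy()
        b[i] *= np.exp(step)                 # ln(beta_i) += step
        gains_beta[:, i] = (steady_state_log(alpha, b, G, H, x_ind) - base) / step
    return gains_alpha, gains_beta

# Hypothetical cascade dX1/dt = 2*X3 - X1, dX2/dt = X1**0.5 - X2 with input X3 = 4.
G = [[0.0, 0.0, 1.0], [0.5, 0.0, 0.0]]
H = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
gains_alpha, gains_beta = rate_constant_log_gains([2.0, 1.0], [1.0, 1.0], G, H, [4.0])
print(gains_alpha)
```

In this example, a 1% increase in \( \alpha_1 \) raises \( X_1 \) by about 1% and \( X_2 \) by about 0.5%, while \( \beta_1 \) acts with the opposite sign. Parameters with small gains tolerate looser estimates.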

Problem: The decoupled system's algebraic equations do not accurately represent the original differential system.

- Potential Cause: Inaccurate estimation of the slopes S_i(t_k) from the observed time-series data [6].

- Solution: Revisit the slope estimation step. Ensure an appropriate smoothing or interpolation technique is used that matches the quality (noise level) and quantity of your experimental data. Validate the slopes by checking if the reconstructed time courses from the integrated model match the original data [6].

S-system Parameter Tables

Table 1: Interpretation of Core S-system Parameters [5] [6]

| Parameter | Mathematical Symbol | Biological/Functional Interpretation | Typical Range/Values |
|---|---|---|---|
| Rate Constant (Production) | α_i | Represents the turnover rate or the basal level of production for metabolite X_i. | Non-negative; specific value depends on the system and timescale. |
| Rate Constant (Degradation) | β_i | Represents the rate of removal or degradation of metabolite X_i. | Non-negative; specific value depends on the system and timescale. |
| Kinetic Order (Production) | g_i,j | The influence of metabolite X_j on the production of X_i. | Real numbers, typically between -1 and 2. Positive = activating effect; Negative = inhibiting effect; Zero = no effect. |
| Kinetic Order (Degradation) | h_i,j | The influence of metabolite X_j on the degradation of X_i. | Real numbers, typically between -1 and 2. Positive = enhancing degradation; Negative = suppressing degradation; Zero = no effect. |

Table 2: Troubleshooting Common Parameter Estimation Issues

| Problem | Possible Symptoms | Recommended Solution Pathway |
|---|---|---|
| Parameter Quasi-Redundancy | Multiple parameter sets yield similar goodness-of-fit; algorithm cannot find a unique solution [5]. | Use eigenvector optimization to identify nonlinear constraints [5] or incorporate time series from multiple initial conditions [5]. |
| Non-convergence of AR | Iterative process does not settle on a solution [6]. | Exploit the algorithm's speed to test multiple initial guesses and search settings [6]. |
| Incorrect Topology | Model fits dynamics but has wrong network connections [5]. | Use structure identification algorithms on S-system models with no a priori zero parameters to let the data drive topology discovery [6]. |

Experimental Protocol: Parameter Estimation via Alternating Regression

This protocol details the estimation of S-system parameters from time-series data using the Alternating Regression method, combined with system decoupling [6].

1. Experimental Design and Data Collection

- Stimulus-Response Experiments: Generate time-series data by perturbing your biological system (e.g., Gene Regulatory Network) from different initial concentrations of its variables. This provides a rich dataset that helps the algorithm identify the correct network topology [5].

- Time Points: Collect measurements of all metabolite concentrations (X_1, X_2, ..., X_n) at a series of time points t_1, t_2, ..., t_N.

2. Data Preprocessing and Slope Estimation

- Smoothing: If the experimental data is noisy, apply a smoothing filter (e.g., Whittaker filter, splines) to the time series for each metabolite [6].

- Slope Calculation: For each metabolite i and at each time point t_k, estimate the slope S_i(t_k) = dX_i/dt from the smoothed data. This can be done using methods like linear interpolation, splines, or the three-point method [6].

3. System Decoupling

- At this stage, the original system of n coupled differential equations is reformulated into n × N uncoupled algebraic equations of the form [6]:

\[ S_i(t_k) = \alpha_i \prod_{j} X_j(t_k)^{g_{i,j}} - \beta_i \prod_{j} X_j(t_k)^{h_{i,j}}, \quad i = 1, \ldots, n, \; k = 1, \ldots, N \]

- This allows the parameters for each metabolite to be computed separately.

4. Alternating Regression (AR) Algorithm

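For each metabolite i, AR alternates between two linear regressions in logarithmic space. In phase 1, the current degradation estimates (β_i, h_i,j) are held fixed, and ln(S_i(t_k) + β_i Π X_j(t_k)^h_i,j) is regressed on ln X_j(t_k) to update ln α_i and g_i,j. In phase 2, the new production estimates are held fixed, and ln(α_i Π X_j(t_k)^g_i,j - S_i(t_k)) is regressed on ln X_j(t_k) to update ln β_i and h_i,j. The two phases are repeated until the estimates stop changing. The process must be repeated for all n metabolites.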
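The two regression phases of AR for a single metabolite can be sketched in NumPy. This is a minimal illustration under stated assumptions, not the reference implementation from [6]. The function name is hypothetical, the regressions use ordinary least squares via `np.linalg.lstsq`, and non-positive arguments of the logarithms are simply clipped to a small `floor`. That clipping is a pragmatic guard assumed here; real implementations may handle this case differently.

```python
import numpy as np

def alternating_regression(X, S, beta0, h0, max_iter=200, tol=1e-8, floor=1e-12):
    """Alternating Regression for one equation of a decoupled S-system.

    X : (N, p) positive values X_j(t_k) of the variables that may regulate X_i
    S : (N,) estimated slopes S_i(t_k)
    beta0, h0 : initial guesses for the degradation term (beta_i, h_i,j)
    Returns (alpha_i, g_i, beta_i, h_i).
    """
    X, S = np.asarray(X, float), np.asarray(S, float)
    log_X = np.log(X)
    L = np.column_stack([np.ones(len(S)), log_X])       # regressors [1, ln X_j]
    alpha, g = 1.0, np.zeros(X.shape[1])                # placeholders for the first convergence check
    beta, h = float(beta0), np.asarray(h0, float)
    for _ in range(max_iter):
        # Phase 1: hold degradation fixed; ln(S + beta*prod X^h) = ln(alpha) + g . ln X
        y_d = np.log(np.maximum(S + beta * np.exp(log_X @ h), floor))
        c, *_ = np.linalg.lstsq(L, y_d, rcond=None)
        alpha_new, g_new = np.exp(c[0]), c[1:]
        # Phase 2: hold production fixed; ln(alpha*prod X^g - S) = ln(beta) + h . ln X
        y_p = np.log(np.maximum(alpha_new * np.exp(log_X @ g_new) - S, floor))
        c, *_ = np.linalg.lstsq(L, y_p, rcond=None)
        beta_new, h_new = np.exp(c[0]), c[1:]
        change = np.max(np.abs(np.r_[np.log(alpha_new / alpha), g_new - g,
                                     np.log(beta_new / beta), h_new - h]))
        alpha, g, beta, h = alpha_new, g_new, beta_new, h_new
        if change < tol:
            break
    return alpha, g, beta, h

# Hypothetical synthetic check: exact slopes from known parameters at random positive points.
rng = np.random.default_rng(0)
X = rng.uniform(0.5, 2.0, size=(30, 2))
alpha_true, g_true = 2.0, np.array([0.0, 0.5])
beta_true, h_true = 1.2, np.array([1.0, 0.0])
S = alpha_true * np.prod(X ** g_true, axis=1) - beta_true * np.prod(X ** h_true, axis=1)
alpha_hat, g_hat, beta_hat, h_hat = alternating_regression(X, S, beta0=beta_true, h0=h_true)
```

The synthetic check starts from the true degradation term, so it only confirms that the regression algebra is right. From arbitrary starting values, AR may need several restarts, as noted in the troubleshooting guide.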

5. Validation

- Integrated Validation: Use the identified parameter set (α_i, g_i,j, β_i, h_i,j) to numerically integrate the original S-system differential equations.

- Goodness-of-fit: Compare the integrated time courses with the original experimental time series data. A good fit indicates a successful parameter estimation.
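Integrated validation can be sketched with SciPy's `solve_ivp`. The helper names are hypothetical. LSODA is chosen because it switches automatically between stiff and non-stiff methods. The tight tolerances are an assumption and may need relaxing for larger models.

```python
import numpy as np
from scipy.integrate import solve_ivp

def simulate_s_system(alpha, beta, G, H, x0, x_ind, t_eval):
    """Integrate dX_i/dt = alpha_i prod X_j^g_ij - beta_i prod X_j^h_ij; returns (len(t_eval), n)."""
    alpha, beta = np.asarray(alpha, float), np.asarray(beta, float)
    G, H = np.asarray(G, float), np.asarray(H, float)
    log_x_ind = np.log(np.asarray(x_ind, float))
    t_eval = np.asarray(t_eval, float)

    def rhs(t, x):
        # clip to keep the logarithm defined if the solver overshoots slightly below zero
        log_x = np.concatenate([np.log(np.maximum(x, 1e-300)), log_x_ind])
        return alpha * np.exp(G @ log_x) - beta * np.exp(H @ log_x)

    sol = solve_ivp(rhs, (t_eval[0], t_eval[-1]), np.asarray(x0, float),
                    method="LSODA", t_eval=t_eval, rtol=1e-8, atol=1e-10)
    if not sol.success:
        raise RuntimeError(sol.message)
    return sol.y.T

def sum_squared_error(simulated, observed):
    return float(np.sum((np.asarray(simulated) - np.asarray(observed)) ** 2))

# Hypothetical one-variable system dX/dt = 2 - 0.5*X (g = 0, h = 1), started at X = 1.
t = np.linspace(0.0, 10.0, 21)
sim = simulate_s_system([2.0], [0.5], [[0.0]], [[1.0]], [1.0], [], t)
observed = 4.0 - 3.0 * np.exp(-0.5 * t)   # analytic solution standing in for measured data
print(sum_squared_error(sim[:, 0], observed))
```

With real data, the `observed` array would hold the measured time courses. The SSE, or a per-variable breakdown of it, then serves as the goodness-of-fit measure.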

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational and Analytical Tools for S-system Modeling

| Item/Reagent | Function in S-system Analysis | Example/Notes |
|---|---|---|
| Time-Series Data | The primary experimental input used for parameter estimation and structure identification. | Should ideally be collected from multiple different initial conditions to ensure topological correctness [5]. |
| Slope Estimation Algorithm | Calculates the derivatives of metabolite concentrations, which are needed to decouple the system. | Methods range from simple (three-point) to sophisticated (Whittaker filter, neural networks) depending on data noise [6]. |
| Alternating Regression (AR) Code | A deterministic algorithm that performs fast parameter estimation by iterating between two linear regression phases. | Can be implemented in environments like MATLAB or Python. Known for being several orders of magnitude faster than meta-heuristics [6]. |
| Sequential Quadratic Programming (SQP) | An optimization method used in conjunction with regression to handle constraints and improve convergence. | Used in methods that optimize one term (production or degradation) completely before estimating the complementary term [5]. |
| Sensitivity Analysis Framework | Assesses how variation in a model's output can be apportioned to different input parameters. | Helps identify the most critical rate constants. Can be performed by hypothetically varying rate constants and observing the impact on outputs like product yield [7]. |

Conceptual Diagram of an S-system Model

The diagram below illustrates the structure of a generic S-system model, showing how multiple inputs influence the production and degradation of a metabolite, X_i.

Troubleshooting Guide: S-system Model Experiments

Parameter Optimization Challenges

Issue: Failure of Model Convergence During Parameter Optimization

- Problem: The parameter optimization algorithm fails to converge on a stable solution, resulting in a model that does not accurately represent the gene regulatory network (GRN).

- Causes:

- Poorly chosen initial parameter values.

- An objective function with numerous local minima.

- Insufficient or noisy experimental data for the complexity of the model.

- Solution:

- Re-initialize Parameters: Use a multi-start strategy by running the optimization from multiple, randomly selected initial parameter sets. This improves the chance of finding a global rather than a merely local optimum.

- Regularization: Introduce regularization terms (e.g., L1 or L2) to the objective function to penalize overly complex models and prevent overfitting.

- Data Augmentation: If possible, incorporate additional perturbation data (e.g., from single-cell CRISPR screens) to provide more constraints for the optimization process [8].
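A minimal sketch of the multi-start and regularization ideas follows. It is applied to one decoupled equation so that no ODE solver is needed, and it uses SciPy's `least_squares` with box bounds. The parameterization θ = (ln α_i, g_i, ln β_i, h_i), the optional ridge (L2) penalty on kinetic orders only, and all function names are assumptions made for illustration.

```python
import numpy as np
from scipy.optimize import least_squares

def decoupled_residuals(theta, X, S):
    """Residuals alpha*prod X^g - beta*prod X^h - S for theta = [ln alpha, g..., ln beta, h...]."""
    p = X.shape[1]
    log_X = np.log(X)
    production = np.exp(theta[0] + log_X @ theta[1:p + 1])
    degradation = np.exp(theta[p + 1] + log_X @ theta[p + 2:])
    return production - degradation - S

def multistart_fit(X, S, lb, ub, n_starts=20, seed=0, l2=0.0):
    """Run bounded least squares from n_starts random points and keep the lowest-cost result."""
    X, S = np.asarray(X, float), np.asarray(S, float)
    lb, ub = np.asarray(lb, float), np.asarray(ub, float)
    p = X.shape[1]
    kinetic = np.r_[1:p + 1, p + 2:2 * p + 2]          # positions of g and h inside theta

    def fun(theta):
        # appending sqrt(l2) * kinetic orders adds an L2 penalty to the squared-residual cost
        return np.concatenate([decoupled_residuals(theta, X, S), np.sqrt(l2) * theta[kinetic]])

    rng = np.random.default_rng(seed)
    best = None
    for _ in range(n_starts):
        res = least_squares(fun, rng.uniform(lb, ub), bounds=(lb, ub))
        if best is None or res.cost < best.cost:
            best = res
    return best

# Hypothetical data for one equation with two regulators.
rng = np.random.default_rng(3)
X = rng.uniform(0.5, 2.0, size=(40, 2))
S = 2.0 * X[:, 1] ** 0.5 - 1.2 * X[:, 0]
lb = [-2.0, -1.0, -1.0, -2.0, -1.0, -1.0]   # bounds on [ln alpha, g1, g2, ln beta, h1, h2]
ub = [2.0, 2.0, 2.0, 2.0, 2.0, 2.0]
fit = multistart_fit(X, S, lb, ub, n_starts=10)
print(fit.cost, fit.x)
```

The bounds play the role of the parameter bounding described below under numerical issues. Setting `l2` above zero trades some fit quality for smaller kinetic orders.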

Issue: Model Predictions Do Not Match Validation Data

- Problem: After training, the S-system model performs well on training data but poorly on unseen validation data, indicating overfitting.

- Causes:

- The model has too many degrees of freedom (parameters) compared to the available data.

- The training data does not capture the full dynamic range of the system.

- Solution:

- Cross-Validation: Use k-fold cross-validation during the model training phase to assess generalizability.

- Simplify the Network: Impose sparsity constraints on the GRN structure to reduce the number of parameters, focusing on the most impactful regulatory interactions.

- Utilize Explainable AI Frameworks: Adopt a GRN-aligned parameter optimization approach, which explicitly models the GRN in the latent space to ensure learned parameters have biological meaning, similar to the GPO-VAE methodology [8].

Numerical and Computational Issues

Issue: Numerical Instability in Time-Series Simulations

- Problem: Simulations of the S-system differential equations produce unrealistic values (e.g., NaN or infinities).

- Causes:

- The numerical integrator (e.g., ODE solver) uses a step size that is too large.

- The power-law terms in the model lead to extremely large values.

- Solution:

- Adjust Solver Settings: Switch to a stiff ODE solver and reduce the maximum step size or relative/absolute error tolerances.

- Parameter Bounding: Implement strict bounds on parameter values during optimization to prevent them from reaching numerically unstable regions.

Frequently Asked Questions (FAQs)

Q1: What is the primary advantage of using S-systems over linear models for GRN inference?

S-systems use a power-law formalism that captures the nonlinear dynamics of biological processes. It can approximate mechanisms such as Michaelis-Menten enzyme kinetics and cooperative binding around an operating point. Because power laws do not saturate, these approximations are most accurate near that operating point. Linear models assume additive effects and cannot represent such nonlinear interactions, making S-systems a more structurally faithful representation.

Q2: How do S-systems naturally represent bidirectional regulation?

Unlike many modeling frameworks that require separate mechanisms for activation and inhibition, the S-system structure supports both intrinsically. Each variable's rate of change is governed by a single differential equation whose kinetic orders can be positive (activation) or negative (inhibition). This allows the model to represent the competing effects of multiple regulators on a single gene [8].

Q3: Our parameter optimization is slow. Are there strategies to improve computational efficiency?

Yes. Consider the following:

- Dimensionality Reduction: Before modeling, reduce the number of genes, for example by keeping highly variable genes or known key regulators. PCA loadings can help reveal which genes drive the dominant variation.

- Metaheuristic Algorithms: For objective functions with many local minima, global optimization algorithms such as Particle Swarm Optimization (PSO) or Genetic Algorithms (GAs) can be more reliable than gradient-based local methods, which tend to stall in poor local optima.

- Model Decomposition: If the GRN can be broken into smaller, weakly interconnected modules, optimize these subsystems independently before integrating them.

Experimental Protocol: GRN Inference using S-systems

Title: Protocol for Constructing an Explainable GRN from Perturbation Data using S-system Parameter Optimization.

1. Objective: To reconstruct a gene regulatory network from high-throughput gene perturbation data by optimizing the parameters of an S-system model, ensuring the learned network is biologically explainable.

2. Materials and Reagents:

- Single-cell RNA Sequencing (scRNA-seq) Data: Post-genetic perturbation (e.g., via CRISPR-Cas9) [8].

- Benchmark Datasets: For validation and performance comparison (e.g., from publicly available repositories like the Gene Expression Omnibus).

- Computational Resources: High-performance computing cluster for intensive parameter optimization.

3. Methodology:

- Step 1: Data Preprocessing

- Normalize the scRNA-seq count data.

- Log-transform the data to stabilize variance and make it more compatible with the power-law formalism.

- Split the data into training and validation sets.

- Step 2: Model Initialization

- Define the S-system structure. The number of system variables (X) is equal to the number of genes (N) being modeled.

- If prior knowledge of the GRN is available (e.g., from literature or databases like KEGG), use it to initialize the network connectivity, setting unknown interactions to zero.

- Step 3: Parameter Optimization (GRN-Aligned)

- Objective Function: Define a cost function, typically the Sum of Squared Errors (SSE), between the model's predicted gene expression and the experimental data.

- Optimization Algorithm: Employ a global optimization algorithm (e.g., a Genetic Algorithm) to minimize the cost function by adjusting the S-system parameters (rate constants, kinetic orders).

- Explainability Alignment: Incorporate a regularization term that favors network structures (kinetic orders) that align with known biological pathways or GRN properties, as demonstrated in GPO-VAE [8].

- Step 4: Model Validation

- Simulate the optimized S-system model using initial conditions from the held-out validation dataset.

- Quantify prediction performance using metrics like Mean Absolute Error (MAE) or Pearson correlation.

- Perform additional validation on the inferred GRN topology by comparing it to experimentally validated regulatory pathways [8].
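For an S-system, the GRN-aligned regularization in Step 3 can be approximated by penalizing kinetic orders. The function below is a hypothetical stand-in, not the GPO-VAE objective from [8]. It adds two terms to the SSE: an L1 sparsity penalty on all kinetic orders, and an extra penalty on interactions missing from a prior network given as a Boolean mask. The weights `lam_sparse` and `lam_prior` are illustrative.

```python
import numpy as np

def grn_aligned_cost(pred, obs, G, H, prior_mask, lam_sparse=0.01, lam_prior=0.1):
    """SSE + L1 sparsity on kinetic orders + extra penalty on edges absent from the prior network.

    prior_mask[i, j] is True when X_j is known (or believed) to regulate X_i.
    """
    sse = np.sum((np.asarray(pred, float) - np.asarray(obs, float)) ** 2)
    strength = np.abs(np.asarray(G, float)) + np.abs(np.asarray(H, float))   # |g_ij| + |h_ij|
    sparsity = lam_sparse * strength.sum()
    off_prior = lam_prior * strength[~np.asarray(prior_mask, bool)].sum()
    return float(sse + sparsity + off_prior)

G = [[0.0, 1.0], [0.5, 0.0]]           # X2 -> X1 and X1 -> X2 in the synthesis terms
H = [[0.0, 0.0], [0.0, 0.0]]
prior = [[True, True], [False, True]]  # hypothetical prior: X1 -> X2 is not in the reference pathway
cost = grn_aligned_cost([1.0, 2.0], [1.0, 2.0], G, H, prior)
print(cost)
```

A global optimizer would minimize this cost over the S-system parameters. In practice, self-degradation orders \( h_{ii} \) are often exempted from the sparsity term, because removing them would leave genes without any degradation.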

The Scientist's Toolkit: Research Reagent Solutions

The table below details key computational and data resources essential for S-system GRN modeling.

| Research Reagent / Resource | Function in S-system GRN Research |
|---|---|
| scRNA-seq Data (Post-CRISPR) | Provides high-resolution, single-cell gene expression measurements following genetic perturbations, serving as the primary data for model training and validation [8]. |
| Benchmark Datasets | Publicly available, gold-standard datasets used to objectively evaluate the predictive performance and GRN inference accuracy of a newly developed S-system model against other state-of-the-art methods [8]. |
| Global Optimization Algorithms (e.g., GA, PSO) | Computational engines that efficiently search the high-dimensional, non-convex parameter space of S-systems to find a parameter set that minimizes the difference between model predictions and experimental data. |
| Explainability Regularization | A mathematical constraint applied during optimization that penalizes biologically implausible parameter configurations, steering the model toward a GRN structure that aligns with established biological knowledge [8]. |

The following tables summarize key quantitative aspects of developing and evaluating S-system models.

Table 1: S-system Parameter Bounds and Interpretation

| Parameter Type | Symbol | Typical Bounds | Biological Interpretation |
|---|---|---|---|
| Rate Constant | αᵢ, βᵢ | [0, ∞) | Represents the apparent rate of synthesis or degradation. |
| Kinetic Order (Activation) | gᵢⱼ, hᵢⱼ | [0, 2] | Positive, represents the strength of an activating regulatory interaction. |
| Kinetic Order (Inhibition) | gᵢⱼ, hᵢⱼ | [-2, 0] | Negative, represents the strength of an inhibitory regulatory interaction. |

Table 2: Key Performance Metrics for Model Validation

| Metric | Formula | Ideal Value | What It Measures |
|---|---|---|---|
| Mean Absolute Error (MAE) | (1/n) Σ\|yᵢ - ŷᵢ\| | 0 | The average magnitude of prediction errors. |
| Pearson Correlation (r) | Σ[(yᵢ - ȳ)(ŷᵢ - mean(ŷ))] / √[Σ(yᵢ - ȳ)² · Σ(ŷᵢ - mean(ŷ))²] | +1 | The linear correlation between predicted and actual values. |
| Area Under ROC Curve (AUC) | Area under ROC curve | 1 | The ability of the inferred GRN to correctly identify true regulatory links versus non-links. |
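The three metrics above can be computed in a few lines of NumPy. The function names are hypothetical. For AUC, the sketch scores candidate edges (for example by \( |g_{ij}| + |h_{ij}| \), an assumption) and uses the Mann-Whitney formulation: the probability that a randomly chosen true edge outscores a randomly chosen non-edge, with ties counted as one half.

```python
import numpy as np

def mean_absolute_error(y, y_hat):
    y, y_hat = np.asarray(y, float), np.asarray(y_hat, float)
    return float(np.mean(np.abs(y - y_hat)))

def pearson_r(y, y_hat):
    y, y_hat = np.asarray(y, float), np.asarray(y_hat, float)
    dy, dh = y - y.mean(), y_hat - y_hat.mean()
    return float(np.sum(dy * dh) / np.sqrt(np.sum(dy ** 2) * np.sum(dh ** 2)))

def edge_auc(scores, is_true_edge):
    """ROC AUC of edge scores against a gold-standard network (Mann-Whitney form, ties count 1/2)."""
    scores = np.asarray(scores, float)
    labels = np.asarray(is_true_edge, bool)
    pos, neg = scores[labels], scores[~labels]
    wins = (pos[:, None] > neg[None, :]).sum() + 0.5 * (pos[:, None] == neg[None, :]).sum()
    return float(wins / (pos.size * neg.size))

print(mean_absolute_error([1, 2, 3], [1, 2, 4]))
print(pearson_r([1, 2, 3], [2, 4, 6]))
# Hypothetical edge scores, e.g. |g_ij| + |h_ij| for four candidate regulator-target pairs
print(edge_auc([0.9, 0.8, 0.3, 0.1], [1, 0, 1, 0]))
```

The pairwise comparison uses memory proportional to (true edges) × (non-edges). For genome-scale networks, a rank-based formula or a library routine is more economical.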

Visualizing S-system Concepts and Workflows

Figures: Power Law in S-systems; S-system Optimization Workflow; Bidirectional Regulation.

Technical Support Center

Frequently Asked Questions (FAQs)

FAQ 1: Why is parameter estimation for S-system models computationally so expensive and complex?

Parameter estimation for S-system models is complex due to the high-dimensional, non-linear nature of the problem. The primary challenges include:

- Huge Parameter Space: The number of parameters that need to be estimated grows rapidly with the number of genes (nodes) in your network. Each node can be connected to many others, leading to a combinatorial explosion of possible regulatory interactions that must be quantified [9].

- Structural and Parametric Unidentifiability: Correlations between model parameters and states can make it difficult to find a unique solution. Different combinations of parameter values might fit your time-series expression data equally well, a problem known as equifinality [9] [10].

- Limited Informative Data: The number of biological perturbation experiments is often limited compared to the number of genes and parameters. This lack of data provides insufficient constraints for the optimization algorithm to reliably pinpoint the best parameters [9].
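To see how quickly the parameter space grows, count the parameters in the general S-system. Each of the \( n \) equations has two rate constants plus \( n+m \) kinetic orders in each of its two terms, giving

\[ P = n\,\bigl(2 + 2(n+m)\bigr) = 2n(n+m+1). \]

With no independent variables (\( m = 0 \)), a 10-gene network already has \( 2 \cdot 10 \cdot 11 = 220 \) parameters, and a 100-gene network has \( 2 \cdot 100 \cdot 101 = 20{,}200 \). The count therefore grows quadratically with network size.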

FAQ 2: What strategies can I use to improve the efficiency and accuracy of parameter estimation?

A highly effective strategy is to move away from estimating all parameters simultaneously and instead use a hierarchical estimation methodology based on network topology. This approach involves:

- Decomposing the Network: Analyze your Gene Regulatory Network (GRN) as a directed graph and break it down into its Strongly Connected Components (SCCs). An SCC is a maximal subgraph in which every node is reachable from every other node [9].

- Prioritizing Root SCCs: Identify the root SCCs, which sit upstream in the network's information flow (no regulatory edges enter them from other SCCs). The genes in these components are given top priority for parameter estimation [9].

- Sequential Parameter Estimation: First, estimate the parameters for the genes in the root SCC. Then, use these estimated parameters as fixed, known values to infer the parameters for genes in the next priority level. This process continues sequentially through the decomposed levels of the network [9].

This method reduces computational burden by breaking a large problem into smaller, more manageable sub-problems and has been shown to achieve lower error indexes while consuming fewer resources [9].
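The decomposition step can be sketched in plain Python. The code below uses Tarjan's algorithm to find SCCs and then groups them into estimation levels. Level 0 holds the root SCCs, and each later level holds SCCs whose upstream SCCs have all been handled. The function names are hypothetical, and the recursive implementation is intended for modest network sizes because Python's recursion limit applies.

```python
from collections import defaultdict

def strongly_connected_components(nodes, edges):
    """Tarjan's algorithm; edges are (regulator, target) pairs. Returns a list of frozensets."""
    graph = defaultdict(list)
    for u, v in edges:
        graph[u].append(v)
    index, low, on_stack, stack, sccs = {}, {}, set(), [], []
    counter = 0

    def visit(v):
        nonlocal counter
        index[v] = low[v] = counter
        counter += 1
        stack.append(v)
        on_stack.add(v)
        for w in graph[v]:
            if w not in index:
                visit(w)
                low[v] = min(low[v], low[w])
            elif w in on_stack:
                low[v] = min(low[v], index[w])
        if low[v] == index[v]:            # v is the root of an SCC: pop its members off the stack
            component = set()
            while True:
                w = stack.pop()
                on_stack.discard(w)
                component.add(w)
                if w == v:
                    break
            sccs.append(frozenset(component))

    for v in nodes:
        if v not in index:
            visit(v)
    return sccs

def estimation_levels(nodes, edges):
    """Level 0 = root SCCs; level k = SCCs whose upstream SCCs all lie in earlier levels."""
    sccs = strongly_connected_components(nodes, edges)
    comp_of = {v: c for c in sccs for v in c}
    upstream = {c: set() for c in sccs}
    for u, v in edges:
        if comp_of[u] != comp_of[v]:
            upstream[comp_of[v]].add(comp_of[u])
    levels, placed = [], set()
    while len(placed) < len(sccs):       # terminates because the graph of SCCs is acyclic
        ready = [c for c in sccs if c not in placed and upstream[c] <= placed]
        levels.append(ready)
        placed.update(ready)
    return levels

# Hypothetical GRN: the A <-> B loop feeds the C <-> D loop, which regulates E.
edges = [("A", "B"), ("B", "A"), ("B", "C"), ("C", "D"), ("D", "C"), ("D", "E")]
for level, comps in enumerate(estimation_levels("ABCDE", edges)):
    print(level, [sorted(c) for c in comps])
```

The parameters for the genes in each level would then be estimated with those of earlier levels held fixed, following the sequential scheme described above.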

FAQ 3: My model has many parameters but I only have a single steady-state gene expression profile. Can I still calibrate my model?

Yes, it is possible, but it requires specific approaches and simplifying assumptions. One methodology involves reformulating the problem for a Parameterized Stochastic Boolean Network (PSBN) model. The process can be outlined as follows:

- Model Formulation: Use a stochastic Boolean network model where gene states are ON (1) or OFF (0), and update rules include probabilistic parameters [11].

- Simplifying Assumptions: Apply assumptions that allow the problem to be reformulated from a complex non-linear estimation into a system of linear equations. This ensures ergodicity and the existence of a unique solution [11].

- Simulation-Based Solution: Instead of explicitly solving the large system of linear equations (which can be computationally challenging), use a simulation-based approach to estimate the parameters that make the model's steady-state behavior match your single expression profile [11].

This technique is particularly relevant for personalizing models for specific cell lines or patient samples [11].
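The sketch below gives a rough illustration of the simulation-based idea. It estimates each gene's long-run ON frequency by simulating a small stochastic Boolean network, then grid-searches a noise level that reproduces one observed profile. Several pieces are hypothetical simplifications, not the PSBN parameterization or the solution procedure of [11]:

- the noise model, in which each gene's rule output is flipped with probability q;
- the single q shared by all genes;
- the names `simulate_psbn` and `fit_shared_noise`.

```python
import numpy as np


def simulate_psbn(rules, noise, n_steps=5_000, burn_in=500, seed=0):
    """Estimate each gene's long-run ON frequency by simulating a noisy
    synchronous Boolean network (hypothetical noise model).

    rules: list of callables; rules[i](state) returns 0 or 1 for gene i.
    noise: per-gene probability that the rule's output is flipped.
    """
    rng = np.random.default_rng(seed)
    n = len(rules)
    noise = np.asarray(noise, dtype=float)
    state = rng.integers(0, 2, size=n)
    on_counts = np.zeros(n)
    for t in range(n_steps):
        target = np.array([rule(state) for rule in rules])
        flip = rng.random(n) < noise
        state = np.where(flip, 1 - target, target)
        if t >= burn_in:                 # discard the transient before averaging
            on_counts += state
    return on_counts / (n_steps - burn_in)


def fit_shared_noise(rules, profile, grid, **sim_kwargs):
    """Grid-search one noise level shared by all genes so that the simulated
    steady state best matches a single observed ON-frequency profile."""
    profile = np.asarray(profile, dtype=float)
    errors = [np.sum((simulate_psbn(rules, [q] * len(rules), **sim_kwargs) - profile) ** 2)
              for q in grid]
    return grid[int(np.argmin(errors))]


# Usage: gene 0 is constitutively ON; gene 1 copies gene 0 from the previous step.
rules = [lambda s: 1, lambda s: int(s[0])]
steady_clean = simulate_psbn(rules, [0.0, 0.0])       # without noise both genes stay ON
q_hat = fit_shared_noise(rules, [0.8, 0.68], np.linspace(0.0, 0.5, 11))
```

With q = 0.2, gene 0 is ON with probability 0.8, and gene 1 is ON with probability 0.8² + 0.2² = 0.68. That is why this target profile should recover q ≈ 0.2.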

FAQ 4: How can I incorporate explainability into my deep learning models for perturbation prediction?

To enhance the explainability of deep learning models like Variational Autoencoders (VAEs) in predicting gene perturbation responses, you can integrate prior biological knowledge. The GPO-VAE (GRN-aligned Parameter Optimization VAE) framework provides a solution:

- GRN-Aligned Latent Space: Design your VAE to explicitly model gene regulatory networks in its latent space. The key is to optimize the learnable parameters related to latent perturbation effects toward GRN-aligned explainability [8].

- Explainable Predictions: This approach forces the model to learn features that align with established biological understanding. It not only improves prediction performance but also allows the model to generate meaningful GRNs that can be validated against known regulatory pathways [8].

Troubleshooting Guides

Issue: Optimization Algorithm Fails to Converge or Converges to a Poor Solution

| Symptom | Possible Cause | Recommended Solution |
|---|---|---|
| High error, non-biological parameter values | Unidentifiability due to correlated parameters or insufficient data [9]. | 1. Incorporate prior knowledge (e.g., known interactions) to fix some parameters [9]. 2. Perform a global sensitivity analysis to identify and prioritize the most influential parameters for estimation [10]. |
| Algorithm is slow, no improvement | Huge parameter space leading to slow exploration [9]. | 1. Apply hierarchical estimation based on network topology to reduce problem size [9]. 2. If using a large model, consider the "optimize all parameters" strategy with efficient sampling methods like iterative Importance Sampling, which can handle high-dimensional spaces [10]. |

Issue: Model Does Not Generalize Well to New Validation Data

| Symptom | Possible Cause | Recommended Solution |
|---|---|---|
| Good fit on training data, poor on test data | Overfitting to noise in the limited training dataset [9]. | 1. Utilize multi-variable data assimilation. Constrain your model with a rich dataset of multiple, orthogonal biological metrics to provide stronger, more robust constraints [10]. 2. Ensure your dataset includes diverse perturbation states to better capture the system's dynamics [8]. |

Experimental Protocols & Methodologies

Protocol 1: Hierarchical Parameter Estimation Based on Network Topology

This protocol provides a step-by-step guide for implementing the hierarchical estimation method described in the FAQs [9].

- Network Structure Identification: Begin with an inferred or proposed network topology (directed graph) for your GRN. This structure can be obtained from databases or inferred using algorithms like ARACNE [9].

- SCC Decomposition: Perform a decomposition of the directed graph into its Strongly Connected Components (SCCs). This can be done using standard graph algorithms (e.g., Kosaraju's or Tarjan's algorithm).

- Priority Level Assignment: Identify the root SCCs (those with no incoming edges from other SCCs). Assign genes in the root SCCs to the first (highest) priority level. Subsequent levels are determined by the topological order of the SCCs.

- Sequential Optimization:

- Level 1: Run the parameter optimization algorithm only for the genes and their regulatory connections within the first priority level.

- Level N: Fix the parameters estimated in all previous levels. Use these as constants in the model and run the optimization algorithm for the genes and connections in the current priority level.

- Validation: Validate the final, fully parameterized model against a held-out dataset not used during estimation.
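The decomposition and priority-level steps can be sketched in plain Python. The sketch assumes the GRN is given as a dictionary that maps each regulator to its targets. It uses Kosaraju's algorithm for the SCCs, then layers the condensed (acyclic) graph so that every SCC lands in a later level than all SCCs that regulate it. The function names are illustrative; graph libraries such as NetworkX provide equivalent SCC routines. The per-level parameter optimizer is not shown.

```python
from collections import defaultdict


def strongly_connected_components(graph):
    """Kosaraju's algorithm (iterative). graph maps node -> list of successors
    (regulator -> targets). Returns a list of SCCs as frozensets."""
    nodes = set(graph)
    for succs in graph.values():
        nodes.update(succs)
    # Pass 1: depth-first search on the graph, recording finishing order.
    visited, order = set(), []
    for start in nodes:
        if start in visited:
            continue
        visited.add(start)
        stack = [(start, iter(graph.get(start, ())))]
        while stack:
            node, successors = stack[-1]
            for nxt in successors:
                if nxt not in visited:
                    visited.add(nxt)
                    stack.append((nxt, iter(graph.get(nxt, ()))))
                    break
            else:                       # all successors explored: node finishes
                stack.pop()
                order.append(node)
    # Pass 2: DFS on the reversed graph in decreasing finishing time.
    reverse = defaultdict(list)
    for u, succs in graph.items():
        for v in succs:
            reverse[v].append(u)
    assigned, sccs = set(), []
    for start in reversed(order):
        if start in assigned:
            continue
        component, stack = set(), [start]
        assigned.add(start)
        while stack:
            u = stack.pop()
            component.add(u)
            for v in reverse[u]:
                if v not in assigned:
                    assigned.add(v)
                    stack.append(v)
        sccs.append(frozenset(component))
    return sccs


def priority_levels(graph):
    """Group SCCs into estimation levels: level 0 holds the root SCCs, and every
    SCC appears only after all SCCs that regulate it."""
    sccs = strongly_connected_components(graph)
    comp_of = {node: i for i, comp in enumerate(sccs) for node in comp}
    children = defaultdict(set)
    indegree = [0] * len(sccs)
    for u, succs in graph.items():
        for v in succs:
            cu, cv = comp_of[u], comp_of[v]
            if cu != cv and cv not in children[cu]:
                children[cu].add(cv)
                indegree[cv] += 1
    frontier = [c for c in range(len(sccs)) if indegree[c] == 0]   # root SCCs
    levels = []
    while frontier:
        levels.append([sccs[c] for c in frontier])
        next_frontier = []
        for c in frontier:
            for d in children[c]:
                indegree[d] -= 1
                if indegree[d] == 0:
                    next_frontier.append(d)
        frontier = next_frontier
    return levels


grn = {"A": ["B"], "B": ["A", "C"], "C": ["D"], "D": ["C", "E"], "F": ["E"]}
levels = priority_levels(grn)
# levels[0] holds {A, B} and {F}; levels[1] holds {C, D}; levels[2] holds {E}
```

Each inner list can then be optimized in turn, holding the parameters of all earlier levels fixed.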

Diagram: Workflow for Hierarchical Parameter Estimation

Protocol 2: Global Sensitivity Analysis for Parameter Prioritization

This protocol is adapted from methodologies used in large-scale biogeochemical model optimization, whose high-dimensional calibration problems resemble those of complex GRN models [10].

- Parameter Selection: Define the full set of model parameters to be analyzed. In a large model, this could be 95+ parameters [10].

- Sampling Strategy: Use an efficient sampling method (e.g., Latin Hypercube Sampling) to generate a large number of parameter sets from the pre-defined prior distributions for each parameter.

- Model Execution & Metric Calculation: For each sampled parameter set, run the model and calculate a cost function (e.g., Normalized Root Mean Square Error) that quantifies the difference between model output and experimental data.

- Sensitivity Index Calculation: Apply a variance-based sensitivity analysis method (e.g., Sobol' method) to the results. This calculates two key indices for each parameter:

- Main Effect Index ( S_i ): Measures the direct contribution of a single parameter to the output variance.

- Total Effect Index ( S_{T_i} ): Measures the total contribution of a parameter, including its interactions with all other parameters.

- Parameter Prioritization: Rank parameters based on their Total Effect Index. Parameters with high ( S_{T_i} ) values are the most influential and should be prioritized in the optimization.
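A minimal NumPy sketch of steps 2 to 5 follows, assuming uniform priors between per-parameter bounds. It draws two Latin Hypercube designs, then applies two estimators:

- the Saltelli (2010) estimator for the main effect ( S_i );
- the Jansen estimator for the total effect ( S_{T_i} ).

`toy_cost` is a hypothetical stand-in for running the model and computing an NRMSE. It is chosen so that the answer is known: ( S \approx (0.2, 0.8, 0) ).

```python
import numpy as np


def latin_hypercube(n, d, rng):
    """n samples in [0, 1)^d with exactly one point per stratum in every dimension."""
    u = (np.arange(n)[:, None] + rng.random((n, d))) / n
    for j in range(d):
        u[:, j] = rng.permutation(u[:, j])   # decouple the strata across dimensions
    return u


def sobol_indices(cost_fn, lower, upper, n=10_000, seed=0):
    """First-order (S_i) and total-effect (S_Ti) Sobol' indices.

    cost_fn maps an (n, d) array of parameter sets to an (n,) array of costs,
    e.g. the NRMSE of each model run against data. Uses the Saltelli (2010)
    first-order estimator and the Jansen total-effect estimator.
    """
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    d = lower.size
    A = lower + latin_hypercube(n, d, rng) * (upper - lower)
    B = lower + latin_hypercube(n, d, rng) * (upper - lower)
    f_A, f_B = cost_fn(A), cost_fn(B)
    variance = np.var(np.concatenate([f_A, f_B]))
    S, S_T = np.empty(d), np.empty(d)
    for i in range(d):
        AB_i = A.copy()
        AB_i[:, i] = B[:, i]                  # A with column i taken from B
        f_AB = cost_fn(AB_i)
        S[i] = np.mean(f_B * (f_AB - f_A)) / variance
        S_T[i] = 0.5 * np.mean((f_A - f_AB) ** 2) / variance
    return S, S_T


def toy_cost(P):
    """Hypothetical stand-in for 'run model, compute NRMSE'; the third
    parameter is deliberately inert."""
    return P[:, 0] + 2.0 * P[:, 1]


S, S_T = sobol_indices(toy_cost, [0, 0, 0], [1, 1, 1], n=20_000)
priority = np.argsort(S_T)[::-1]   # most influential parameters first
```

Each index costs one extra batch of ( n ) model runs. With 95+ parameters, that batch count is what usually sets the budget.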

Table 1: Summary of Key Parameter Optimization Strategies

| Strategy | Description | Best Use Case | Computational Cost |
|---|---|---|---|
| Hierarchical Estimation [9] | Decomposes network into levels and estimates parameters sequentially. | Large networks with a modular, hierarchical topology. | Lower |
| Subset Optimization (Main Effects) [10] | Optimizes only parameters with strong direct influence on the output. | Limited computational resources; well-understood systems. | Medium |
| Subset Optimization (Total Effects) [10] | Optimizes parameters with strong direct and interaction-based influence. | Systems where parameter interactions are significant. | High |
| All-Parameters Optimization [10] | Simultaneously optimizes all model parameters. | Comprehensive uncertainty quantification; robust results. | Highest (but scalable with methods like iIS) |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational and Experimental Reagents for GRN Research

| Reagent / Resource | Function / Description | Relevance to S-system Inference |
|---|---|---|
| Time-Series Expression Data (Microarray, RNA-seq) [9] | Provides the dynamic transcriptional profiles used to fit and validate the model. | The primary source of information for calculating the cost function during parameter optimization. |
| Perturbation Datasets (e.g., CRISPR screens) [8] | Data from genetic perturbations (knockdowns, knockouts) that shift network states. | Crucial for providing informative constraints that help resolve unidentifiability and validate predicted network responses. |
| Prior Knowledge Databases (e.g., known TF-target interactions) [9] | Curated information from literature and databases about established regulatory links. | Allows researchers to fix certain parts of the network structure, drastically reducing the parameter space that needs to be estimated. |
| Global Sensitivity Analysis (GSA) [10] | A computational tool to identify which parameters most influence model output. | Guides parameter prioritization, allowing effort to be focused on the most important parameters, thus improving efficiency. |
| Iterative Importance Sampling (iIS) [10] | An advanced sampling algorithm for optimizing a large number of parameters. | Enables the "All-Parameters" optimization strategy, providing a robust and scalable solution for complex models. |

Diagram: Modular Decomposition of a Sample GRN

This diagram illustrates how a complex network is broken down into hierarchical levels for sequential parameter estimation [9].

Frequently Asked Questions (FAQs)

FAQ 1: What are the most important topological features for distinguishing regulators from target genes in a Gene Regulatory Network (GRN)?

Research on diverse GRNs from species including E. coli, S. cerevisiae, and H. sapiens has consistently identified three topological features that distinguish transcription factors (TFs, i.e., regulators) from their target genes: PageRank, the average nearest-neighbor degree (Knn), and degree [12]. The decision-making process for classifying a node based on these features can be visualized with the following logical workflow:

FAQ 2: Why does my S-system parameter estimation fail to converge, and how can I fix it?

Non-convergence in S-system parameter estimation is a common challenge, often stemming from the inherent nonconvexity and ill-conditioning of the inverse problem [13]. The Alternating Regression (AR) algorithm, while fast, can have complex convergence patterns [6]. The following troubleshooting guide outlines common issues and their solutions:

Table: Troubleshooting Guide for S-system Parameter Estimation

| Problem Symptom | Potential Root Cause | Recommended Solution |
|---|---|---|
| Algorithm fails to converge or oscillates between solutions. | Poor initial parameter guesses; ill-conditioned problem; nonconvex objective function. | Use a multi-start strategy with different initial guesses; apply global optimization methods to escape local minima [6] [13]. |
| Model fits calibration data well but has poor predictive performance (overfitting). | Over-parametrization; model too flexible for the available data [13]. | Implement regularization techniques (e.g., Tikhonov regularization) to penalize overly complex solutions; use cross-validation [13]. |
| Parameter estimates are physically unrealistic or have large uncertainties. | Lack of practical identifiability due to insufficient or noisy data [14] [13]. | Incorporate prior knowledge as constraints on parameters; use robust optimization methods combined with uncertainty quantification (e.g., profile likelihood) [15]. |

FAQ 3: How can I incorporate prior biological knowledge into my GRN model?

Prior knowledge can be integrated systematically during the parameter estimation phase. In the S-system formalism, this is achieved by constraining the search space [6]. If it is known that a variable ( X_j ) does not affect the production of ( X_i ), the corresponding kinetic order ( g_{ij} ) can be fixed to zero. Similarly, if ( X_j ) is a known inhibitor, its kinetic order can be constrained to negative values: ( g_{ij} ) if it inhibits production, ( h_{ij} ) if it inhibits degradation [6]. This reduces the number of free parameters, mitigating ill-conditioning and guiding the optimization algorithm toward biologically plausible solutions [13].
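One way to encode such prior knowledge is as per-parameter bounds that an optimizer respects. The sketch rests on two assumptions:

- a hypothetical label matrix with entries `"none"`, `"act"`, `"inhib"`, or `None` for unknown;
- a default search range of [-3, 3] for kinetic orders.

A parameter whose lower and upper bounds coincide is effectively fixed and drops out of the search.

```python
def kinetic_order_bounds(prior, default=(-3.0, 3.0)):
    """Translate a prior-knowledge label matrix into (lower, upper) bounds.

    prior[i][j] describes the effect of X_j on one term of X_i's equation.
    Returns the bounds matrix and the number of parameters left free.
    """
    lo, hi = default                 # assumed search range for unknown kinetic orders
    rule = {"none": (0.0, 0.0),      # no effect: fix the kinetic order at zero
            "inhib": (lo, 0.0),      # known inhibitor: non-positive (upper bound 0)
            "act": (0.0, hi),        # known activator: non-negative
            None: (lo, hi)}          # unknown: full default range
    bounds = [[rule[label] for label in row] for row in prior]
    n_free = sum(low != high for row in bounds for low, high in row)
    return bounds, n_free


# Hypothetical priors for the production-term kinetic orders g_ij of a 3-gene network.
prior_g = [["act", "none", None],
           ["none", None, "inhib"],
           [None, "act", "none"]]
bounds, n_free = kinetic_order_bounds(prior_g)   # 6 of 9 g_ij remain free
```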

Troubleshooting Guides

Guide 1: Resolving Overfitting in Dynamic Models

Overfitting occurs when a model learns the noise in the calibration data instead of the underlying biological signal, leading to poor generalizability [13]. Follow this protocol to combat overfitting:

Step 1: Diagnose the Problem. Split your experimental data into a calibration set (e.g., 70-80%) and a validation set (e.g., 20-30%). Calibrate the model on the first set and test its prediction on the unseen validation set. A significant performance drop on the validation set indicates overfitting [13].

Step 2: Apply Regularization. Reformulate the objective function for parameter estimation to include a regularization term that penalizes model complexity. A common approach is Tikhonov regularization, where the objective function becomes ( J(\theta) = SSE(\theta) + \lambda \lVert \theta - \theta_0 \rVert^2 ). Here, ( SSE(\theta) ) is the sum of squared errors, ( \lambda ) is the regularization parameter, and ( \theta_0 ) is a prior estimate of the parameters (often zero) [13].

Step 3: Tune the Regularization. Systematically vary the regularization parameter ( \lambda ) to find the best trade-off between bias and variance. Use techniques like L-curve analysis to select a ( \lambda ) that significantly reduces parameter variance with a minimal increase in bias [13].
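Steps 1 to 3 can be illustrated on a linear-in-parameters model, such as the regression subproblems that arise in Alternating Regression (Guide 2). The sketch below assumes that setting. For a full nonlinear S-system fit, the same ( \lambda \lVert \theta - \theta_0 \rVert^2 ) term would be added to the simulation-based SSE instead of using the closed form. The data are synthetic. Picking ( \lambda ) by lowest validation SSE is a simple alternative to locating the L-curve corner, and the two L-curve axes are returned as well.

```python
import numpy as np


def tikhonov_fit(X, y, lam, theta0):
    """Minimize ||y - X theta||^2 + lam * ||theta - theta0||^2 in closed form.

    Setting the gradient to zero gives (X^T X + lam I) theta = X^T y + lam theta0.
    """
    p = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(p), X.T @ y + lam * theta0)


def regularization_path(X_cal, y_cal, X_val, y_val, lambdas, theta0):
    """For each lambda: calibration residual norm and ||theta - theta0|| (the two
    axes of an L-curve), plus the SSE on the held-out validation set."""
    rows = []
    for lam in lambdas:
        theta = tikhonov_fit(X_cal, y_cal, lam, theta0)
        residual = np.linalg.norm(y_cal - X_cal @ theta)
        deviation = np.linalg.norm(theta - theta0)
        val_sse = float(np.sum((y_val - X_val @ theta) ** 2))
        rows.append((lam, residual, deviation, val_sse))
    return rows


# Hypothetical data: 6 candidate regressors, only two truly matter.
rng = np.random.default_rng(1)
X = rng.normal(size=(40, 6))
y = X @ np.array([1.0, -0.5, 0.0, 0.0, 0.0, 0.0]) + 0.3 * rng.normal(size=40)
idx = rng.permutation(40)
cal, val = idx[:30], idx[30:]               # 75% calibration / 25% validation
theta0 = np.zeros(6)                         # prior estimate (here: zero)
path = regularization_path(X[cal], y[cal], X[val], y[val],
                           np.logspace(-3, 3, 13), theta0)
best_lam = min(path, key=lambda row: row[3])[0]   # lowest validation SSE
```

For time-series data, holding out whole experiments or trailing time points is often a stricter test than the random split used here.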

Guide 2: Implementing the Alternating Regression (AR) Method for S-systems

The AR method is a fast and efficient technique for estimating S-system parameters (( \alpha_i, g_{ij}, \beta_i, h_{ij} )) from time-series data by decoupling the differential equations [6]. The workflow is illustrated below.

Experimental Protocol:

- Decouple the System: Given time-series data for metabolite concentrations ( X_j(t_k) ) and estimated slopes ( S_i(t_k) ), the S-system differential equation for each metabolite ( i ) is treated as an independent algebraic equation at each time point ( t_k ): ( S_i(t_k) = \alpha_i \prod_{j=1}^{N} X_j(t_k)^{g_{ij}} - \beta_i \prod_{j=1}^{N} X_j(t_k)^{h_{ij}} ) [6].

- Initialize: Make initial guesses for all parameters in the degradation term (( \beta_i ) and ( h_{ij} )). These can be random or based on prior knowledge [6].

- Phase 1 - Production Term Estimation:

- Use the current estimates of ( \beta_i ) and ( h_{ij} ) to compute the transformed observation vector ( \mathbf{y}_d = \log\left(S_i(t_k) + \beta_i \prod_{j=1}^{N} X_j(t_k)^{h_{ij}}\right) ) for all time points.

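- Solve for the production term parameters (( \alpha_i ) and ( g_{ij} )) using multiple linear regression on the model ( \mathbf{y}_d = L_p \mathbf{b}_p + \boldsymbol{\epsilon}_p ). Here ( L_p ) is the matrix of log-concentrations augmented with a leading column of ones, so that ( \mathbf{b}_p = (\log \alpha_i, g_{i1}, \dots, g_{iN})^T ) [6].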

- Phase 2 - Degradation Term Estimation:

- Use the new estimates of ( \alpha_i ) and ( g_{ij} ) to compute the transformed observation vector ( \mathbf{y}_p = \log\left(\alpha_i \prod_{j=1}^{N} X_j(t_k)^{g_{ij}} - S_i(t_k)\right) ).

- Solve for the degradation term parameters (( \beta_i ) and ( h_{ij} )) using multiple linear regression on the model ( \mathbf{y}_p = L_d \mathbf{b}_d + \boldsymbol{\epsilon}_d ), where ( \mathbf{b}_d = (\log \beta_i, h_{i1}, \dots, h_{iN})^T ) [6].

- Iterate: Repeat Phase 1 and Phase 2 until the parameter values converge (i.e., changes between iterations fall below a predefined tolerance) or a maximum number of iterations is reached [6].
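The two phases translate almost line for line into NumPy. The sketch below:

- handles a single equation ( i ), with every variable allowed in both terms (so ( L_p = L_d ));
- drops time points where a logarithm's argument is not positive;
- stops when the largest parameter change falls below `tol`.

Names and defaults are illustrative. The usage example starts from the true degradation parameters only to check the algebra. From random starts, AR may need many iterations or fail to converge [6].

```python
import numpy as np


def alternating_regression(X, S, beta0, h0, tol=1e-8, max_iter=500):
    """Alternating Regression for one decoupled S-system equation
        S_i(t_k) = alpha_i * prod_j X_j(t_k)**g_ij - beta_i * prod_j X_j(t_k)**h_ij

    X: (K, N) array of positive concentrations; S: (K,) slopes of X_i.
    beta0, h0: initial guesses for the degradation term.
    Returns (alpha, g, beta, h, iterations_used).
    """
    log_X = np.log(X)
    L = np.column_stack([np.ones(len(S)), log_X])   # rows: [1, log X_1, ..., log X_N]
    beta, h = float(beta0), np.asarray(h0, dtype=float)
    previous = None
    for iteration in range(1, max_iter + 1):
        # Phase 1: hold degradation fixed, regress y_d = log(S + degradation) on L.
        arg_d = S + beta * np.exp(log_X @ h)
        keep = arg_d > 0                           # log undefined elsewhere: drop points
        if keep.sum() < L.shape[1]:
            raise RuntimeError("too few usable time points in Phase 1")
        b_p = np.linalg.lstsq(L[keep], np.log(arg_d[keep]), rcond=None)[0]
        alpha, g = np.exp(b_p[0]), b_p[1:]
        # Phase 2: hold production fixed, regress y_p = log(production - S) on L.
        arg_p = alpha * np.exp(log_X @ g) - S
        keep = arg_p > 0
        if keep.sum() < L.shape[1]:
            raise RuntimeError("too few usable time points in Phase 2")
        b_d = np.linalg.lstsq(L[keep], np.log(arg_p[keep]), rcond=None)[0]
        beta, h = np.exp(b_d[0]), b_d[1:]
        current = np.concatenate([[alpha], g, [beta], h])
        if previous is not None and np.max(np.abs(current - previous)) < tol:
            break
        previous = current
    return alpha, g, beta, h, iteration


# Synthetic check: exact slopes generated from known parameters at random states.
rng = np.random.default_rng(0)
X = rng.uniform(0.5, 2.0, size=(30, 2))
g_true, h_true = np.array([0.5, -0.3]), np.array([0.0, 0.8])
S = 2.0 * np.exp(np.log(X) @ g_true) - 1.0 * np.exp(np.log(X) @ h_true)
alpha, g, beta, h, n_iter = alternating_regression(X, S, beta0=1.0, h0=h_true)
```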

The Scientist's Toolkit

Key Research Reagent Solutions

Table: Essential Computational Tools for GRN and S-system Modeling

| Tool / Reagent | Function / Description | Relevance to S-system Modeling |
|---|---|---|
| S-system Formalism | A canonical power-law representation within Biochemical Systems Theory (BST) where a differential equation consists of one production and one degradation term [16] [6]. | The core modeling framework that enables the application of Alternating Regression and other parameter estimation methods due to its mathematically convenient structure [6]. |
| Alternating Regression (AR) | An estimation algorithm that dissects the nonlinear inverse problem into iterative steps of linear regression [6]. | Provides a fast method for parameter value identification and structure identification (determining which interactions are present) from time-series data [6]. |
| Regularization Techniques | Methods to combat overfitting by adding a penalty term to the objective function to constrain parameter values [13]. | Improves the robustness and generalizability of calibrated models, especially when dealing with noisy or scarce data [13]. |
| Global Optimization Algorithms | Methods like evolutionary algorithms, differential evolution, or metaheuristics designed to find the global minimum of a cost function [13] [15]. | Addresses the nonconvexity of the parameter estimation problem, helping to avoid convergence to local, suboptimal solutions [13]. |
| PageRank & Centrality Measures | Graph theory metrics used to rank nodes in a network by their importance or influence [12] [17]. | Used to identify key regulators (TFs) from the inferred network topology. PageRank, in combination with Knn and degree, helps distinguish regulators from targets [12]. |
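To make the PageRank, Knn, and degree features concrete, the sketch below computes all three for a directed edge list in plain Python. Two choices here are assumptions rather than the definitions used in [12]:

- Degree and Knn ignore edge direction.
- PageRank runs on the edges as given (regulator to target), which favors targets. Reversing the edge list would favor regulators instead.

```python
def topological_features(edges, damping=0.85, n_iter=100):
    """Degree, Knn and PageRank for every node of a directed GRN.

    edges: iterable of (regulator, target) pairs.
    degree: number of distinct neighbours, ignoring direction (assumption).
    knn: mean degree of those neighbours.
    pagerank: power iteration on the edges as given; dangling nodes spread
    their score uniformly over all nodes.
    """
    edges = list(edges)
    nodes = sorted({node for edge in edges for node in edge})
    successors = {n: [] for n in nodes}
    neighbours = {n: set() for n in nodes}
    for u, v in edges:
        successors[u].append(v)
        neighbours[u].add(v)
        neighbours[v].add(u)
    degree = {n: len(neighbours[n]) for n in nodes}
    knn = {n: sum(degree[m] for m in neighbours[n]) / degree[n] for n in nodes}
    size = len(nodes)
    rank = {n: 1.0 / size for n in nodes}
    for _ in range(n_iter):
        dangling = sum(rank[n] for n in nodes if not successors[n])
        new = {n: (1 - damping) / size + damping * dangling / size for n in nodes}
        for u in nodes:
            for v in successors[u]:
                new[v] += damping * rank[u] / len(successors[u])
        rank = new
    return {n: {"degree": degree[n], "knn": knn[n], "pagerank": rank[n]} for n in nodes}


# A hub regulator T with three targets: the hub has high degree but low Knn.
features = topological_features([("T", "a"), ("T", "b"), ("T", "c")])
```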

Advanced Optimization Techniques: From Evolutionary Algorithms to Deep Learning

Frequently Asked Questions (FAQs)

Q1: What are the fundamental differences between GA and PSO that I should consider for parameter optimization?

A1: GA and PSO are both population-based metaheuristics, but they are inspired by different natural phenomena and have distinct operational mechanics, making them suitable for different types of optimization problems [18].

- Genetic Algorithms (GAs) are inspired by biological evolution. They use crossover (combining parts of two solutions) and mutation (randomly altering a solution) to explore the search space. They are particularly effective for combinatorial and discrete optimization problems [18].

- Particle Swarm Optimization (PSO) is inspired by the social behavior of bird flocking or fish schooling. Particles (solutions) "fly" through the search space by adjusting their positions based on their own experience and the experience of their neighbors. It is known for its simplicity and efficiency in continuous, multi-dimensional spaces [18].

Q2: My optimization run is consistently getting stuck in local optima. How can I improve the global search capability?

A2: Premature convergence is a common challenge. The strategies differ between algorithms [18]:

- For PSO: The tendency to get stuck in local optima is often due to a lack of diversity in the swarm. To mitigate this, you can:

- Introduce mechanisms to maintain swarm diversity.

- Use a neighborhood search mechanism to enhance exploration in complex landscapes [19].

- Adjust the inertia weight to balance exploration and exploitation.

- For GA: GAs are generally more robust against local optima because they maintain diversity longer through genetic operators. Ensure your mutation rate is set appropriately to introduce enough randomness and prevent premature convergence [18].

- Hybrid Approach: Consider integrating an elite evolution strategy, where the best solutions (elites) are refined in each iteration to rapidly improve quality while using selection strategies to maintain diversity [19].

Q3: How do I balance exploration (diversification) and exploitation (intensification) in these algorithms?

A3: Balancing exploration (searching new regions) and exploitation (refining known good regions) is crucial for algorithm performance [19].

- In PSO, this balance is controlled by the inertia weight and acceleration coefficients. A higher inertia favors exploration, while a lower inertia promotes exploitation. Introducing a perturbation term or adaptive inertia can help maintain this balance throughout the run [19].

- In GA, exploration is driven by mutation and crossover, while exploitation is handled by selection. Using a roulette-wheel selection strategy can help maintain exploration diversity by giving poorer solutions a chance to be selected [19].
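A minimal roulette-wheel selection for a minimization problem is sketched below. Because GA fitness must grow as the error shrinks, costs such as SSE are mapped to fitness ( 1/(1 + \text{cost}) ). That particular transform is an assumption, and other monotone mappings are common. NumPy's `Generator.choice` with a `p` argument draws from the same distribution in one call.

```python
import numpy as np


def roulette_select(costs, n_select, rng):
    """Fitness-proportionate (roulette-wheel) selection for a minimization problem.

    Non-negative costs such as SSE are mapped to fitness 1 / (1 + cost), so
    lower-cost candidates get wider slices while every candidate keeps a
    nonzero chance of being chosen.
    """
    fitness = 1.0 / (1.0 + np.asarray(costs, dtype=float))
    cumulative = np.cumsum(fitness / fitness.sum())
    cumulative[-1] = 1.0                      # guard against round-off at the edge
    spins = rng.random(n_select)              # uniform draws in [0, 1)
    return np.searchsorted(cumulative, spins, side="right")


rng = np.random.default_rng(42)
picks = roulette_select([0.0, 1.0, 3.0], 70_000, rng)
share = np.bincount(picks, minlength=3) / picks.size   # roughly 4/7, 2/7, 1/7
```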

Q4: For a high-dimensional parameter optimization problem in an S-system model, which algorithm is typically faster?

A4: PSO generally converges faster than GA because particles learn from each other directly, leading to quicker information propagation through the swarm. However, this can sometimes lead to premature convergence. GAs, while often slower due to the high number of evaluations per generation, can handle complex, rugged search spaces more effectively [18]. The choice may depend on whether your parameter space is primarily continuous (favors PSO) or discrete (favors GA).

Troubleshooting Guides

Problem 1: Poor Convergence or Slow Optimization Speed

Possible Causes and Solutions:

- Cause 1: Poorly tuned algorithm parameters.

- Solution:

- GA: Systematically adjust the population size, mutation rate, and crossover probability. A typical starting point is a mutation rate of 0.01-0.1 and a crossover rate of 0.7-0.9.

- PSO: Optimize the inertia weight, cognitive, and social parameters. An adaptive inertia weight that decreases over the run can improve performance.


- Cause 2: The algorithm is unsuitable for the problem landscape.

- Solution: Refer to the following table to select the appropriate algorithm.

| Problem Characteristic | Recommended Algorithm | Rationale |
|---|---|---|
| Continuous, real-valued parameters | Particle Swarm Optimization (PSO) | Effective in continuous, multi-dimensional spaces; fast convergence [18]. |
| Discrete, combinatorial parameters | Genetic Algorithms (GA) | Excels in discrete problems using crossover and mutation operators [18]. |
| Rugged landscape with many local optima | Genetic Algorithms (GA) or Simulated Annealing (SA) | GA maintains diversity; SA accepts worse solutions to escape local traps [18]. |
| Dynamic or multi-objective optimization | Particle Swarm Optimization (PSO) | Highly adaptable to changing conditions and multiple objectives [18]. |
| High-dimensional combinatorial tasks | Elite-evolution-based discrete PSO (EEDPSO) | Specifically designed for complex discrete optimization; balances exploration/exploitation [19]. |

Problem 2: Algorithm Unable to Find a Feasible Solution

Possible Causes and Solutions:

- Cause 1: Constraints are not handled properly.

- Solution: Implement constraint-handling techniques such as penalty functions, which penalize the fitness of solutions that violate constraints, steering the population towards feasible regions.

- Cause 2: The initial population is poorly distributed.

- Solution: Ensure the initial population of particles (PSO) or individuals (GA) is randomly generated across the entire feasible search space to promote better exploration.
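Both fixes can be sketched briefly, assuming box bounds on every parameter and constraints written as ( c(\theta) \le 0 ). The quadratic static penalty and its weight of ( 10^3 ) are assumptions that usually need tuning. The constraint shown encodes the kind of sign prior discussed in the FAQ on prior knowledge: a known inhibitor's kinetic order must be non-positive.

```python
import numpy as np


def penalized(cost, constraints, weight=1e3):
    """Static quadratic penalty: each constraint c is satisfied when c(theta) <= 0,
    and violations add weight * max(0, c(theta))**2 to the cost."""
    def wrapped(theta):
        violation = sum(max(0.0, c(theta)) ** 2 for c in constraints)
        return cost(theta) + weight * violation
    return wrapped


def initial_population(size, lower, upper, rng):
    """Individuals (GA) or particles (PSO) drawn uniformly over the whole box."""
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    return lower + rng.random((size, lower.size)) * (upper - lower)


def sse(theta):
    # Hypothetical cost whose unconstrained optimum (1.0, 0.5) is infeasible.
    return float(np.sum((theta - np.array([1.0, 0.5])) ** 2))


def inhibitor_constraint(theta):
    # Prior knowledge: theta[1] is an inhibitory kinetic order, so theta[1] <= 0.
    return theta[1]


cost = penalized(sse, [inhibitor_constraint])
population = initial_population(100, [-3.0, -3.0], [3.0, 3.0], np.random.default_rng(0))
```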

Experimental Protocols & Methodologies

Protocol 1: Benchmarking GA vs. PSO for S-system Parameter Optimization

This protocol provides a standardized method for comparing the performance of GA and PSO.

- Objective: To compare the convergence speed, accuracy, and robustness of GA and PSO in estimating parameters of a target S-system model.

- Data Simulation:

- Generate in silico data using a known S-system model with a predefined parameter set.

- Add Gaussian noise to simulate experimental error.

- Algorithm Configuration:

- GA Setup:

- Population Size: 100

- Crossover: Two-point crossover (Rate: 0.8)

- Mutation: Gaussian mutation (Rate: 0.05)

- Selection: Roulette-wheel selection

- PSO Setup:

- Swarm Size: 50

- Inertia Weight (w): 0.7

- Cognitive Coefficient (c1): 1.5

- Social Coefficient (c2): 1.5


- Fitness Function: Use the Sum of Squared Errors (SSE) between the simulated data from the candidate model and the target in silico data.

- Stopping Criterion: Maximum of 10,000 iterations or convergence (e.g., fitness improvement < 1e-6 for 100 consecutive iterations).

- Metrics for Comparison:

- Best-fit error (SSE)

- Computational time

- Number of iterations to convergence
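A compact global-best PSO with the settings above might look like the sketch below. Those settings are:

- swarm size 50;
- ( w = 0.7 ), ( c_1 = c_2 = 1.5 );
- at most 10,000 iterations;
- stop after 100 iterations with improvement below ( 10^{-6} ).

To keep it fast, the usage example fits a hypothetical two-parameter decay curve instead of simulating an S-system. For the protocol itself, `sse` would integrate the candidate S-system and compare it with the in silico data.

```python
import numpy as np


def pso(cost, lower, upper, swarm_size=50, w=0.7, c1=1.5, c2=1.5,
        max_iter=10_000, tol=1e-6, patience=100, seed=0):
    """Global-best PSO. cost maps a (d,) parameter vector to a scalar such as SSE.
    Stops after `patience` consecutive iterations improving the best cost by < tol."""
    rng = np.random.default_rng(seed)
    lower, upper = np.asarray(lower, dtype=float), np.asarray(upper, dtype=float)
    d = lower.size
    x = rng.uniform(lower, upper, size=(swarm_size, d))   # spread over the feasible box
    v = np.zeros_like(x)
    pbest = x.copy()
    pbest_cost = np.array([cost(p) for p in x])
    g = np.argmin(pbest_cost)
    gbest, gbest_cost = pbest[g].copy(), pbest_cost[g]
    stall = 0
    for it in range(1, max_iter + 1):
        r1, r2 = rng.random((2, swarm_size, d))
        # Inertia + cognitive (own best) + social (swarm best) components.
        v = w * v + c1 * r1 * (pbest - x) + c2 * r2 * (gbest - x)
        x = np.clip(x + v, lower, upper)                  # keep particles inside bounds
        c = np.array([cost(p) for p in x])
        improved = c < pbest_cost
        pbest[improved], pbest_cost[improved] = x[improved], c[improved]
        g = np.argmin(pbest_cost)
        stall = stall + 1 if gbest_cost - pbest_cost[g] < tol else 0
        if pbest_cost[g] < gbest_cost:
            gbest, gbest_cost = pbest[g].copy(), pbest_cost[g]
        if stall >= patience:
            break
    return gbest, gbest_cost, it


t = np.linspace(0.0, 5.0, 20)
target = 2.0 * np.exp(-0.7 * t)            # hypothetical noise-free "data"


def sse(params):
    a, b = params
    return float(np.sum((a * np.exp(-b * t) - target) ** 2))


best, best_cost, n_iter = pso(sse, lower=[0.0, 0.0], upper=[5.0, 3.0])
```

A GA run for the same benchmark would reuse `sse` unchanged, so only the optimizer differs between the two arms of the comparison.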

The following workflow outlines this benchmarking protocol:

Protocol 2: Handling High-Dimensionality with a Discrete PSO Variant

For very complex S-system models with a large number of parameters, a standard PSO may struggle. This protocol uses an advanced variant [19].

- Objective: To optimize high-dimensional S-system parameters using a discrete PSO framework.

- Algorithm: Elite-evolution-based discrete PSO (EEDPSO).

- Key Modifications from standard PSO:

- Discretization: Particle positions represent discrete candidate solutions.

- Velocity Redefinition: Velocity is redefined as the number of dimension changes.

- Elite Evolution: The best solutions (p-best and g-best) undergo a neighborhood search for refinement.

- Selection: A roulette-wheel selection guides each dimension's update.

- Workflow:

- Initialize particle positions discretely.

- In each iteration, update velocities and positions using the discrete mechanism.

- Perform a neighborhood search on elite particles.

- Use roulette-wheel selection to maintain diversity.

The Scientist's Toolkit: Research Reagent Solutions

This table lists computational tools and their roles in optimizing S-system models.

| Item/Reagent | Function in Optimization |
|---|---|
| Genetic Algorithm (GA) | An evolution-inspired optimizer that uses selection, crossover, and mutation to evolve a population of candidate parameter sets towards an optimal solution [18]. |
| Particle Swarm Optimization (PSO) | A swarm intelligence-based optimizer where a population of candidate solutions (particles) moves through the parameter space, guided by their own and the swarm's best-found positions [18]. |
| Elite-evolution-based discrete PSO (EEDPSO) | An advanced PSO variant redesigned for high-dimensional discrete problems. It uses elite evolution and neighborhood search to improve efficiency and avoid premature convergence [19]. |
| Fitness Function | A function (e.g., Sum of Squared Errors) that quantifies how well a candidate S-system parameter set fits the experimental or target data. It drives the optimization process. |
| Roulette-wheel Selection | A genetic algorithm operator that selects individuals for reproduction with a probability proportional to their fitness, helping to maintain population diversity [19]. |
| Neighborhood Search | A local search procedure applied to good solutions (elites) to refine them further, intensifying the search in promising regions of the parameter space [19]. |

This technical support center is designed for researchers engaged in the parameter optimization of S-system models for Gene Regulatory Networks (GRNs). The hierarchical estimation methods discussed herein leverage the inherent topology of biological networks to enhance computational efficiency and accuracy. This resource provides targeted troubleshooting guides, frequently asked questions (FAQs), and detailed experimental protocols to address common challenges you may encounter during your computational experiments. The guidance is framed within the context of advanced GRN research, utilizing tools like single-cell RNA-Seq (scRNA-Seq) data and genome-scale metabolic models (GEMs) to refine parameter estimation in complex biological systems [4] [20].

Frequently Asked Questions (FAQs)

Q1: What is hierarchical estimation in the context of S-system GRN models, and why is it more efficient?

A1: Hierarchical estimation is a computational strategy that breaks down the complex problem of estimating all S-system parameters simultaneously into smaller, more manageable sub-problems. This is achieved by leveraging the known or inferred topology of the gene regulatory network. Instead of a monolithic optimization, parameters for individual nodes or small network modules are estimated sequentially or in parallel, often using a "divide and conquer" approach. This method significantly improves efficiency by reducing the computational search space and can help avoid local optima, a common issue in high-dimensional nonlinear optimization [21] [22].

Q2: My parameter optimization fails to converge. What are the primary causes?

A2: Non-convergence in parameter optimization for S-system models typically stems from a few key issues:

- Incorrect Network Topology: The inferred gene-gene interaction topology used to constrain the S-system model may be inaccurate. Noisy or incomplete scRNA-Seq data can lead to erroneous edges (false positives) or missing interactions (false negatives) in the network [4] [23].

- Local Optima: The optimization algorithm may be trapped in a local minimum of the objective function. Hierarchical methods that decompose the problem can make the optimization landscape smoother and less prone to this issue [21].

- Data Pre-processing: A lack of proper pre-processing of gene expression data, such as normalization, smoothing, or handling of excess zeros (dropouts), can introduce biases that prevent the model from fitting the biological reality [4].

Q3: How can I validate the structure of my GRN before using it for hierarchical estimation?

A3: Validating a GRN's ground-truth structure is a major challenge. The recommended approach is to use a combination of:

- Benchmark Datasets: Utilize synthetic and curated real-world benchmark datasets with known reference networks, such as those provided by the DREAM Challenges [4] [23].

- Multi-Omics Integration: Corroborate your inferred network topology with independent data sources, such as ChIP-seq data for transcription factor binding or protein-protein interaction networks [23].

- Perturbation Data: Incorporate data from gene knockout or knockdown experiments. A robust network topology should correctly predict the outcomes of such perturbations [23].

Q4: What are the best practices for handling scRNA-Seq data dropouts in S-system modeling?

A4: The high number of zero values (dropouts) in scRNA-Seq data poses a significant challenge. Best practices include:

- Imputation and Smoothing: Apply specialized computational methods to distinguish technical dropouts from true biological absence of expression. This can involve smoothing the data or imputing likely values for missing data points [4].

- Modeling Zero-Inflated Distributions: Consider using statistical models that explicitly account for zero-inflated data distributions during the parameter inference process, rather than relying on standard least-squares approaches [4].
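As an example of a zero-inflated likelihood, the sketch below uses a zero-inflated Poisson (ZIP) model. An observation is a structural zero with probability ( \pi ), and otherwise it is Poisson with mean ( \lambda ). ZIP is chosen for brevity; zero-inflated negative binomial models are often preferred for overdispersed scRNA-Seq counts. How ( \lambda ) would be linked to S-system predictions is left open here, and the counts are hypothetical.

```python
import math


def zip_logpmf(k, lam, pi):
    """Log-probability of a count k under a zero-inflated Poisson model.

    With probability pi an observation is a structural zero (e.g. a dropout);
    otherwise it is drawn from Poisson(lam). Requires lam > 0 and 0 <= pi < 1.
    """
    if k == 0:
        return math.log(pi + (1.0 - pi) * math.exp(-lam))
    return math.log(1.0 - pi) + k * math.log(lam) - lam - math.lgamma(k + 1)


def zip_negative_log_likelihood(counts, lam, pi):
    """Objective to minimize in place of a least-squares fit to the counts."""
    return -sum(zip_logpmf(k, lam, pi) for k in counts)


counts = [0, 0, 0, 0, 0, 0, 3, 4, 5, 2]                     # hypothetical zero-heavy counts
nll_poisson = zip_negative_log_likelihood(counts, 3.5, 0.0)  # no zero inflation
nll_zip = zip_negative_log_likelihood(counts, 3.5, 0.5)      # excess zeros explained by pi
```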

Q5: Where can I find high-quality, standardized models and data for my research?

A5: Several knowledge bases provide curated resources:

- BiGG Models: A centralized repository of high-quality, genome-scale metabolic models (GEMs). These can be used to constrain or validate aspects of your GRN model, especially in metabolic contexts. All models are manually curated and linked to genome annotations and external databases [24] [20].

- DREAM Challenges: A community resource that provides standardized benchmark problems and datasets for network inference and model evaluation, enabling fair comparison of different methods [4].

Troubleshooting Guides

Troubleshooting Parameter Optimization

Table 1: Common Parameter Optimization Issues and Solutions

| Problem Symptom | Potential Root Cause | Recommended Solution |
|---|---|---|
| Optimization fails to converge | Noisy or poorly pre-processed scRNA-Seq data [4] | Implement rigorous data pre-processing: normalize, smooth, and handle dropouts. |
| Model predictions are biologically implausible | Inaccurate initial network topology [23] | Validate topology with multi-omics data or perturbation experiments before parameter estimation. |
| Algorithm trapped in local optima | High-dimensional, non-linear parameter space [21] | Employ hierarchical optimization; use global search algorithms (e.g., genetic algorithms) for sub-problems. |
| Parameter values exhibit high variance | Insufficient gene expression data points [4] | Increase sample size or leverage multi-strain/model data from resources like BiGG Models [20]. |
| Poor model generalizability | Overfitting to the training data [23] | Use cross-validation; simplify network topology by removing low-confidence edges. |

Troubleshooting Network Topology Inference

Table 2: Common Network Topology Inference Issues and Solutions

| Problem Symptom | Potential Root Cause | Recommended Solution |
|---|---|---|
| High false positive rate in edges | Method not robust to data noise [4] | Use ensemble methods (e.g., GENIE3) [23] or algorithms designed for scRNA-Seq specifics. |
| Inability to detect key interactions | Low statistical power of inference method [4] | Integrate prior knowledge from databases; use methods that combine correlation and causality. |
| Poor performance on benchmark data | Lack of gene selection/pre-processing [4] | Pre-select a subset of genes of interest (e.g., transcription factors) to reduce problem complexity. |
| Inferred network is too dense/connected | Lack of sparsity constraint [23] | Apply regularization techniques (e.g., L1 regularization) to promote sparse network solutions. |
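L1 regularization for one target gene can be sketched as a lasso regression of that gene on all candidate regulators. Here it is solved by ISTA (proximal gradient descent with soft-thresholding). The data, the value of `lam`, and the per-gene regression framing are assumptions for illustration. Larger `lam` prunes more edges, and any `lam` at or above ( \max_j |X_j^T y| / n ) removes them all.

```python
import numpy as np


def lasso_ista(X, y, lam, n_iter=5_000):
    """Minimize (1/2n) ||y - X w||^2 + lam * ||w||_1 by proximal gradient (ISTA).

    Columns of X: expression of candidate regulators; y: expression of one
    target gene. Nonzero entries of w are the retained (sparse) edges.
    """
    n = X.shape[0]
    step = n / np.linalg.norm(X, 2) ** 2      # 1 / Lipschitz constant of the gradient
    w = np.zeros(X.shape[1])
    for _ in range(n_iter):
        z = w - step * (X.T @ (X @ w - y) / n)                    # gradient step
        w = np.sign(z) * np.maximum(np.abs(z) - step * lam, 0.0)  # soft-threshold
    return w


rng = np.random.default_rng(3)
X = rng.normal(size=(100, 8))                         # 8 hypothetical regulators
w_true = np.array([1.5, 0.0, 0.0, -2.0, 0.0, 0.0, 0.0, 0.0])
y = X @ w_true + 0.1 * rng.normal(size=100)
w_hat = lasso_ista(X, y, lam=0.05)
edges = np.flatnonzero(w_hat)                         # indices of retained regulators
```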

Experimental Protocols

Protocol: Hierarchical Parameter Estimation for a Defined S-system Model

This protocol details a hierarchical method for estimating S-system parameters given a fixed network topology.

1. Research Reagent Solutions & Materials

Table 3: Essential Research Reagents and Computational Tools

| Item Name | Function in the Protocol | Specification / Notes |
|---|---|---|
| scRNA-Seq Dataset | Provides single-cell resolution gene expression data for inference and validation. | Should include time-series or perturbation data. Check for UMI counts to ensure quality [4]. |
| BiGG Models Knowledgebase | Source of standardized, curated metabolic networks. Used for cross-validation or as a source of prior knowledge on gene-metabolite interactions [24] [20]. | Access via http://bigg.ucsd.edu. The universal model is recommended for initial exploration. |
| COBRA Toolbox | A MATLAB/Python suite for constraint-based reconstruction and analysis. Useful for simulating metabolic constraints that can inform the GRN [20]. | -- |
| MEMOTE Suite | Model validation tool to test the biochemical consistency and quality of the resulting network model [20]. | Used to ensure final model quality and annotation. |
| DREAM Challenge Datasets | Gold-standard benchmark datasets with known ground-truth networks for method validation [4] [23]. | Essential for validating the inference pipeline before applying it to novel data. |

2. Workflow Diagram

Diagram 1: Hierarchical parameter estimation workflow.

3. Step-by-Step Methodology

- Step 1: Data Pre-processing. Begin with your scRNA-Seq dataset. Perform quality control, normalization, and address the dropout phenomenon using appropriate imputation or smoothing techniques. The goal is to produce a clean, reliable matrix of gene expression values [4].

- Step 2: Network Topology Inference. Apply a GRN inference algorithm (e.g., an information-theoretic method such as PIDC or a tree-based regression method such as GENIE3) to the pre-processed data to generate an initial graph of gene-gene interactions. This graph defines the potential regulatory links for your S-system model [4] [23].

- Step 3: Topology Validation. Critically assess the inferred topology. Use benchmark datasets with known networks (like those from DREAM) to tune your inference method. If possible, integrate transcription factor binding data or plan perturbation experiments to validate key interactions [4] [23].

- Step 4: Hierarchical Decomposition. Decompose the validated global network into smaller, manageable modules or sub-networks. This can be based on network community detection algorithms, functional annotations (e.g., GO terms), or by focusing on individual nodes and their direct regulators [21] [22].

- Step 5: Module Parameter Estimation. For each network module, run the S-system parameter optimization. Because each sub-problem has far fewer parameters, more thorough global optimization algorithms become affordable and local minima are easier to avoid. This step can be parallelized for computational efficiency [21].

- Step 6: Model Integration and Validation. Re-integrate the optimized parameters from all modules into the full S-system model. Validate the integrated model's predictive power on held-out data. Use tools like MEMOTE to check for biochemical consistency and ensure the model generates biologically plausible predictions [20].
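Steps 4 and 5 can be sketched for the simplest decomposition named in Step 4: one module per gene, containing that gene and its direct regulators. The 5-gene adjacency matrix, the row-regulated-by-column convention, and the `fit_module` placeholder are hypothetical; the point is how much smaller each sub-problem becomes once the topology is fixed.

```python
from concurrent.futures import ThreadPoolExecutor

import numpy as np


def node_modules(adjacency: np.ndarray) -> list:
    """Split a network into one module per gene: the gene plus its direct regulators.

    adjacency[i, j] != 0 means gene j regulates gene i (an assumed convention).
    The gene itself is always included so its own degradation term can be fitted.
    """
    modules = []
    for i in range(adjacency.shape[0]):
        inputs = sorted(set(np.flatnonzero(adjacency[i]).tolist()) | {i})
        # alpha_i and beta_i, plus one g_ij and one h_ij per input gene.
        modules.append({"gene": i, "inputs": inputs, "n_params": 2 + 2 * len(inputs)})
    return modules


def fit_module(module: dict) -> dict:
    """Hypothetical placeholder for the per-module optimizer (e.g., a GA)."""
    return {"gene": module["gene"], "n_params_fitted": module["n_params"]}


# Hypothetical topology produced by Steps 2-3.
A = np.array([
    [0, 0, 0, 0, 1],
    [1, 0, 0, 0, 0],
    [0, 1, 0, 0, 0],
    [0, 0, 1, 1, 0],
    [0, 0, 0, 1, 0],
])
N = A.shape[0]
modules = node_modules(A)
full_params = 2 * N + 2 * N * N  # every alpha, beta, g_ij, h_ij free in one problem
print("largest module:", max(m["n_params"] for m in modules), "of", full_params, "parameters")

# Modules are independent, so they can be fitted concurrently (Step 5).
with ThreadPoolExecutor() as pool:
    results = list(pool.map(fit_module, modules))
```

Threads keep the example simple; for CPU-bound fits a `ProcessPoolExecutor` is usually the better choice.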

Visualization and Diagram Specifications

All diagrams are generated using the Graphviz DOT language, adhering to the following specifications to ensure accessibility and visual clarity.

S-system Model Estimation Logic

Diagram 2: Hierarchical parameter focus for a single node.

Key Color Palette for Visualizations

The following colors are approved for use in all diagrams to maintain consistency and accessibility. Always check contrast ratios between foreground (text, arrows) and background colors.

- Blue: #4285F4
- Red: #EA4335
- Yellow: #FBBC05
- Green: #34A853
- White: #FFFFFF
- Light Gray: #F1F3F4
- Dark Gray: #202124
- Mid Gray: #5F6368

Contrast Rule Compliance: All text within colored nodes (e.g., fontcolor="#FFFFFF" on dark blue) is explicitly set to achieve a high contrast ratio, following the WCAG contrast guidelines. For example, light text on dark backgrounds and dark text on light backgrounds are used throughout [25] [26].
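The contrast rule can be checked numerically. The sketch below implements the WCAG 2.x relative-luminance and contrast-ratio formulas for `#RRGGBB` strings; choosing between white and Dark Gray text by whichever contrasts more is an illustrative policy, not part of the diagram specification. By this formula, white text on the palette's Blue (#4285F4) comes out at roughly 3.6:1, which meets the 3:1 WCAG AA threshold for large text but not the 4.5:1 threshold for normal text.

```python
def relative_luminance(hex_color: str) -> float:
    """WCAG 2.x relative luminance of an sRGB color written as '#RRGGBB'."""
    hex_color = hex_color.lstrip("#")
    channels = [int(hex_color[k:k + 2], 16) / 255 for k in (0, 2, 4)]
    r, g, b = [c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4 for c in channels]
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(color_a: str, color_b: str) -> float:
    """WCAG contrast ratio (L_lighter + 0.05) / (L_darker + 0.05), between 1 and 21."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    return (max(la, lb) + 0.05) / (min(la, lb) + 0.05)


palette = {"Blue": "#4285F4", "Red": "#EA4335", "Yellow": "#FBBC05",
           "Green": "#34A853", "Mid Gray": "#5F6368", "Light Gray": "#F1F3F4"}
for name, fill in palette.items():
    white_text = contrast_ratio("#FFFFFF", fill)
    dark_text = contrast_ratio("#202124", fill)
    # Pick whichever text color contrasts more; 4.5:1 is the WCAG AA minimum for normal text.
    text = "#FFFFFF" if white_text >= dark_text else "#202124"
    verdict = "passes" if max(white_text, dark_text) >= 4.5 else "fails"
    print(f"{name:10s} white {white_text:5.2f}  dark {dark_text:5.2f}  use {text} ({verdict} AA)")
```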

FAQs: Core Concepts and Common Problems

FAQ 1: What is the fundamental challenge of "coupled optimization" in inferring S-system GRN models? The core challenge is that inferring a Gene Regulatory Network (GRN) is an under-determined problem. Simply finding a set of parameters that minimizes the error between the model's output and experimental gene expression data is insufficient, as many different parameter combinations (and thus network structures) can fit the same data equally well. True coupled optimization requires simultaneously satisfying two objectives: minimizing the model's behavioral error and ensuring the inferred network structure reflects biologically plausible connectivity [27]. This involves not just parameter optimization but also structural inference to identify the correct regulatory interactions between genes.

FAQ 2: Why do my inferred S-system parameters lead to a dynamically unstable or biologically implausible network?

This is a common issue where the optimization converges on a solution with good numerical fit but poor biological fidelity. It often occurs when the optimization focuses solely on the Mean Squared Error (MSE) without considering structural constraints [27]. The solution is to use a multi-objective evaluation function that incorporates both network behavior and structure:

\[ f_{\text{obj}}(i) = \alpha \cdot \mathrm{MSE}(i) + (1-\alpha) \cdot \mathrm{StructureErr} \] [27]

Here, MSE(i) quantifies the fit to expression data for gene i, StructureErr is a penalty term for unwanted connectivity, and α is a weighting factor. Without this structural guidance, algorithms may infer overly complex, fragile networks not representative of robust biological systems.

FAQ 3: My parameter identification algorithm gets stuck in local minima. What advanced optimization strategies can help? Local minima are a significant challenge in the high-dimensional, non-linear parameter landscape of S-system models. Stochastic, population-based global optimization techniques are better suited for this task than traditional local methods [27]. A recommended approach is a hybrid algorithm, such as a Genetic Algorithm (GA) coupled with Particle Swarm Optimization (PSO) [27]. This hybrid method leverages GA's exploration strength and PSO's efficiency in local refinement. Furthermore, prioritizing the optimization of the most sensitive parameters first, based on sensitivity analysis, can improve convergence and lead to more robust networks [27].
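The hybrid strategy can be sketched in a few dozen lines of NumPy. This is one simple way to combine the two methods, assumed for illustration and not the specific algorithm of [27]: a GA (binary tournament selection, blend crossover, Gaussian mutation, elitism) explores first, and its final population seeds a PSO swarm that refines the best region. All hyperparameters are hypothetical defaults, and `toy_cost` stands in for a real S-system objective.

```python
import numpy as np


def ga_pso(objective, bounds, pop_size=40, ga_gens=60, pso_iters=100, seed=0):
    """Sequential GA -> PSO hybrid (an illustrative variant, not a published algorithm).

    objective: maps a 1-D parameter vector to a scalar cost to be minimized.
    bounds: sequence of [low, high] pairs, one per parameter.
    """
    rng = np.random.default_rng(seed)
    bounds = np.asarray(bounds, dtype=float)
    lo, hi = bounds[:, 0], bounds[:, 1]
    dim = len(lo)
    pop = rng.uniform(lo, hi, size=(pop_size, dim))
    cost = np.array([objective(p) for p in pop])

    # Phase 1: genetic algorithm for broad exploration.
    for _ in range(ga_gens):
        elite = pop[np.argmin(cost)].copy()
        a, b = rng.integers(pop_size, size=(2, pop_size))             # binary tournaments
        parents = np.where((cost[a] < cost[b])[:, None], pop[a], pop[b])
        mix = rng.uniform(size=(pop_size, 1))                         # blend crossover
        children = mix * parents + (1 - mix) * np.roll(parents, 1, axis=0)
        mutate = rng.uniform(size=children.shape) < 0.1               # Gaussian mutation
        children += mutate * rng.normal(0.0, 0.1 * (hi - lo), size=children.shape)
        pop = np.clip(children, lo, hi)
        pop[0] = elite                                                # elitism
        cost = np.array([objective(p) for p in pop])

    # Phase 2: particle swarm seeded with the GA population for local refinement.
    vel = np.zeros_like(pop)
    pbest, pbest_cost = pop.copy(), cost.copy()
    gbest = pbest[np.argmin(pbest_cost)].copy()
    inertia, c1, c2 = 0.7, 1.5, 1.5
    for _ in range(pso_iters):
        r1, r2 = rng.uniform(size=(2, pop_size, dim))
        vel = inertia * vel + c1 * r1 * (pbest - pop) + c2 * r2 * (gbest - pop)
        pop = np.clip(pop + vel, lo, hi)
        cost = np.array([objective(p) for p in pop])
        improved = cost < pbest_cost
        pbest[improved] = pop[improved]
        pbest_cost[improved] = cost[improved]
        gbest = pbest[np.argmin(pbest_cost)].copy()
    return gbest, float(pbest_cost.min())


target = np.array([0.5, -0.3, 1.0])


def toy_cost(x):
    """Stand-in for an S-system objective; a real run would simulate the model here."""
    return float(np.sum((x - target) ** 2))


best_x, best_cost = ga_pso(toy_cost, bounds=[[-2.0, 2.0]] * 3)
print(best_x.round(3), best_cost)
```

Running `ga_pso` with several `seed` values and comparing the returned costs is a cheap way to apply the convergence check described in the troubleshooting guide.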

FAQ 4: How can I validate my inferred network structure in the absence of a completely known ground-truth network? Direct validation is challenging, but several strategies exist:

- Leverage Robustness as a Proxy: Biologically correct network structures often exhibit intrinsic robustness—insensitivity to small parametric or genetic perturbations. Testing your inferred network's stability against such perturbations can build confidence in its structural validity [27].

- Use Benchmark Datasets: Utilize publicly available benchmark datasets and community challenges, such as the DREAM challenges, which provide curated networks and standardized frameworks for comparing inference performance against other methods [28] [4].

- Incorporate Prior Knowledge: When available, use structural knowledge from the literature to define a StructureErr penalty term in your objective function, guiding the inference toward biologically consistent connections [27].
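One concrete way to act on the robustness idea, sketched here as an assumption rather than the procedure of [27]: S-system steady states satisfy linear equations in \( y_j = \log X_j \), namely \( \sum_j (g_{i,j} - h_{i,j}) y_j = \log(\beta_i/\alpha_i) \), so the steady state and its local stability can be computed directly. The sketch perturbs all parameters with a hypothetical 5% multiplicative noise (which keeps absent edges absent) and reports the share of perturbed models whose Jacobian eigenvalues all have negative real parts. It assumes \( G - H \) is invertible.

```python
import numpy as np


def steady_state(alpha, beta, G, H):
    """Solve sum_j (g_ij - h_ij) * log X_j = log(beta_i / alpha_i); needs G - H invertible."""
    y = np.linalg.solve(G - H, np.log(beta / alpha))
    return np.exp(y)


def jacobian(alpha, beta, G, H, X):
    """At a steady state both terms equal V_i, so dF_i/dX_j = V_i * (g_ij - h_ij) / X_j."""
    V = alpha * np.prod(X ** G, axis=1)  # row i of X ** G holds X_j ** g_ij
    return V[:, None] * (G - H) / X[None, :]


def fraction_stable(alpha, beta, G, H, rel_noise=0.05, n_trials=500, seed=0):
    """Share of randomly perturbed parameter sets whose steady state is locally stable."""
    rng = np.random.default_rng(seed)

    def perturb(p):
        # Multiplicative noise keeps zero kinetic orders at zero, so the topology is unchanged.
        return p * (1.0 + rel_noise * rng.standard_normal(p.shape))

    stable = 0
    for _ in range(n_trials):
        a, b, g, h = perturb(alpha), perturb(beta), perturb(G), perturb(H)
        X = steady_state(a, b, g, h)
        if np.all(np.linalg.eigvals(jacobian(a, b, g, h, X)).real < 0):
            stable += 1
    return stable / n_trials


# Hypothetical two-gene network: the second gene represses the first, the first activates the second.
alpha = np.array([2.0, 1.5])
beta = np.array([1.0, 1.0])
G = np.array([[0.0, -0.5], [0.5, 0.0]])
H = np.array([[0.5, 0.0], [0.0, 0.5]])
print("steady state:", steady_state(alpha, beta, G, H))
frac = fraction_stable(alpha, beta, G, H)
print(f"{frac:.0%} of perturbed models remain locally stable")
```

Local stability is only one facet of robustness; simulating perturbed models over the full time course, as the troubleshooting guide suggests, also catches changes in transient behavior.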

FAQ 5: What are the specific data pre-processing challenges with single-cell RNA-seq (scRNA-seq) data for S-system inference? scRNA-seq data introduces unique hurdles that must be addressed before inference [4]:

- Dropout Events: A high proportion of zero counts, where a gene goes undetected in some cells because of technical limitations rather than a true absence of expression.

- Biological Noise: Significant stochastic variation in gene expression, environmental niche effects, and cell-cycle effects can obscure the underlying regulatory signal. Proper pre-processing steps, including gene selection, smoothing, and potentially discretization of gene expression, are critical to mitigate these issues and improve the performance of subsequent inference algorithms [4].
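A minimal sketch of two of the pre-processing steps named above, under stated assumptions: counts form a cells-by-genes matrix, each cell has a pseudotime (or sampling-time) value, and smoothing is a centered moving average along that ordering. This is one simple option for damping dropout zeros, not the pipeline of [4]; the synthetic data and the 30% dropout rate are hypothetical.

```python
import numpy as np


def normalize_log(counts: np.ndarray, target_sum: float = 1e4) -> np.ndarray:
    """Scale each cell (row) to the same total count, then apply log1p."""
    lib_size = counts.sum(axis=1, keepdims=True)
    return np.log1p(counts / np.maximum(lib_size, 1) * target_sum)


def smooth_along_pseudotime(expr: np.ndarray, pseudotime: np.ndarray, window: int = 15):
    """Order cells by pseudotime and apply a centered moving average to each gene.

    `window` should be odd. Returns the smoothed matrix (rows in pseudotime order)
    and the ordering used, so results can be mapped back to the original cells.
    """
    order = np.argsort(pseudotime)
    ordered = expr[order]
    pad = window // 2
    # Repeat the edge cells so the first and last windows do not average in zeros.
    padded = np.pad(ordered, ((pad, pad), (0, 0)), mode="edge")
    kernel = np.ones(window) / window
    smoothed = np.column_stack(
        [np.convolve(padded[:, g], kernel, mode="valid") for g in range(padded.shape[1])]
    )
    return smoothed, order


rng = np.random.default_rng(1)
n_cells, n_genes = 300, 4
pseudotime = rng.uniform(0.0, 1.0, n_cells)
# Hypothetical smooth trends, Poisson sampling, and 30% technical dropout.
rates = 5 + 20 * np.sin(np.pi * pseudotime[:, None] * np.arange(1, n_genes + 1)) ** 2
counts = rng.poisson(rates) * (rng.uniform(size=(n_cells, n_genes)) > 0.3)
expr, order = smooth_along_pseudotime(normalize_log(counts), pseudotime)
print("zero fraction before:", round(float(np.mean(counts == 0)), 3),
      "after:", round(float(np.mean(expr == 0)), 3))
```

Heavier smoothing removes more dropout zeros but also blurs fast regulatory transitions, so the window size trades noise suppression against temporal resolution.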

Troubleshooting Guides

Problem: Poor Model Fit Despite Parameter Tuning

Symptoms:

- High Mean Squared Error (MSE) between simulated and experimental time-course data.

- Model fails to capture the dynamic trends of key genes.

| Diagnostic Step | Action & Validation |
|---|---|
| Check Data Quality | Inspect the gene expression data for excessive noise or dropout events. Apply appropriate smoothing or imputation techniques for scRNA-seq data [4]. |
| Verify Algorithm Convergence | Run the optimization algorithm multiple times with different random seeds. If results vary widely, the algorithm may not be consistently converging, indicating a need for a more robust optimizer like a hybrid GA-PSO [27]. |
| Simplify the Network | The model might be over-parameterized. Impose sparsity constraints to reduce the number of allowable connections (\( g_{i,j} \) and \( h_{i,j} \)), encouraging a simpler, more identifiable network [27]. |

Problem: Structurally Unsound or Overly Complex Inferred Network

Symptoms:

- The inferred network is densely connected, contradicting the known sparsity of biological networks.

- The network is dynamically fragile, becoming unstable with minor parameter changes.

| Diagnostic Step | Action & Validation |
|---|---|
| Adjust the Objective Function | Increase the weight (1-α) of the StructureErr term in the evaluation function. This forces the optimizer to prioritize sparse connectivity [27]. |
| Perform Robustness Analysis | Perturb the inferred parameters slightly and simulate the network. A biologically plausible network should maintain stable dynamics. Use this as a validation step post-inference [27]. |
| Incorporate Prior Knowledge | If some gene interactions are known from literature, fix those parameters and only infer the unknown ones. This reduces the search space and guides the structure [27]. |

Problem: Optimization is Computationally Prohibitive

Symptoms:

- A single optimization run takes an impractically long time.

- Scaling the model to a larger number of genes is infeasible.

| Diagnostic Step | Action & Validation |
|---|---|
| Adopt a Decoupled S-system | Use the decoupled S-system strategy, which breaks the large-scale inference problem into N separate, smaller sub-problems (one for each gene), drastically reducing computational complexity [27]. |
| Leverage Machine Learning | Replace the inner ODE simulation with a trained surrogate model, such as an Artificial Neural Network (ANN), to rapidly predict system behavior given parameters, as demonstrated in other inverse problems [29]. |
| Implement Sensitivity Analysis | Identify and focus optimization on the parameters to which the system output is most sensitive. This reduces the effective number of parameters to be optimized [27]. |
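A common way to realize decoupling, assumed here rather than taken from [27], is slope estimation: estimate \( dX_i/dt \) directly from the (smoothed) time courses and fit each gene's right-hand side algebraically, with the other genes entering as measured data instead of simulated variables. Each gene then becomes an independent problem with \( 2 + 2N \) parameters and no ODE integration inside the objective. The two-gene system below is hypothetical and is simulated only to produce synthetic measurements.

```python
import numpy as np
from scipy.integrate import solve_ivp


def s_system_rhs(t, X, alpha, beta, G, H):
    """Coupled S-system right-hand side, used here only to create synthetic data."""
    return alpha * np.prod(X ** G, axis=1) - beta * np.prod(X ** H, axis=1)


def decoupled_cost(i, params, X_data, slopes):
    """Algebraic cost for gene i alone: no ODE is integrated, other genes enter as data.

    params = [alpha_i, beta_i, g_i1..g_iN, h_i1..h_iN]; X_data has shape (T, N).
    """
    N = X_data.shape[1]
    alpha_i, beta_i = params[0], params[1]
    g_i, h_i = params[2:2 + N], params[2 + N:2 + 2 * N]
    predicted = alpha_i * np.prod(X_data ** g_i, axis=1) - beta_i * np.prod(X_data ** h_i, axis=1)
    return float(np.sum((predicted - slopes[:, i]) ** 2))


alpha = np.array([2.0, 1.5])
beta = np.array([1.0, 1.0])
G = np.array([[0.0, -0.5], [0.5, 0.0]])
H = np.array([[0.5, 0.0], [0.0, 0.5]])
t = np.linspace(0.0, 10.0, 201)
sol = solve_ivp(s_system_rhs, (t[0], t[-1]), [0.5, 0.5], t_eval=t,
                args=(alpha, beta, G, H), rtol=1e-8, atol=1e-10)
X_data = sol.y.T
# With noisy measurements, slopes would come from a smoothed fit rather than raw differences.
slopes = np.gradient(X_data, t, axis=0, edge_order=2)

N = X_data.shape[1]
true_gene0 = np.concatenate([[alpha[0], beta[0]], G[0], H[0]])
wrong_gene0 = true_gene0.copy()
wrong_gene0[3] = -0.3  # change the kinetic order stored at G[0, 1] from -0.5
print("parameters per gene:", 2 + 2 * N, "| coupled problem:", 2 * N + 2 * N * N)
print("cost, true parameters :", decoupled_cost(0, true_gene0, X_data, slopes))
print("cost, wrong parameters:", decoupled_cost(0, wrong_gene0, X_data, slopes))
```

Any global optimizer can then minimize `decoupled_cost` for each gene separately, and the N runs are independent, so they parallelize trivially.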

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools and Data for GRN Inference

| Tool / Reagent | Function in Coupled Optimization |
|---|---|
| Time-Series Gene Expression Data (Bulk or Single-Cell) | The primary experimental input. Used to calculate the MSE and drive the parameter optimization process. High temporal resolution is critical [30] [4]. |
| Reporter Plasmids (e.g., GFP-based) | Allows for real-time, high-resolution monitoring of promoter activity in living cells, providing accurate dynamic data for parameter identification [30]. |
| S-system Model Formalism | Provides the mathematical framework (a set of non-linear ODEs) to represent the synthesis and degradation of gene products and their regulatory interactions [27]. |
| Global Optimization Algorithms (e.g., GA, PSO) | The computational engine for navigating the high-dimensional parameter space to find values that minimize the objective function [27]. |
| Benchmark Networks (e.g., from DREAM Challenges) | Provide standardized, ground-truth datasets to validate, compare, and benchmark the performance of different inference methodologies [28] [4]. |

Experimental Protocol: A Workflow for Coupled Optimization

This protocol outlines a combined approach for inferring an S-system model of a GRN from time-series expression data.

1. Experimental Data Acquisition:

- Method: Use GFP-reporter plasmids or scRNA-seq to obtain high-resolution time-series measurements of gene expression [30] [4].

- Key Consideration: Ensure adequate temporal resolution to capture dynamic transitions, and collect multiple biological replicates.

2. Mathematical Modeling with the S-system:

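- Formulate the network using the decoupled S-system formalism for each gene \( i \) [27]:

\[ \frac{dX_i}{dt} = \alpha_i \prod_{j=1}^{N} X_j^{g_{i,j}} - \beta_i \prod_{j=1}^{N} X_j^{h_{i,j}} \]

- Parameters to Infer: Rate constants \( \alpha_i \) and \( \beta_i \), and kinetic orders \( g_{i,j} \) and \( h_{i,j} \).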
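A minimal simulator for the equation above, assuming SciPy's `solve_ivp` and storing the kinetic orders as matrices `G` and `H` with entries \( g_{i,j} \) and \( h_{i,j} \). The two-gene parameter values are hypothetical; their steady state follows from the log-linear equations \( \sum_j (g_{i,j} - h_{i,j}) \log X_j = \log(\beta_i/\alpha_i) \), which give \( X = (4/3, 3) \).

```python
import numpy as np
from scipy.integrate import solve_ivp


def s_system_rhs(t, X, alpha, beta, G, H):
    """dX_i/dt = alpha_i * prod_j X_j**g_ij - beta_i * prod_j X_j**h_ij, for all genes at once."""
    production = alpha * np.prod(X ** G, axis=1)   # row i of X ** G holds X_j ** g_ij
    degradation = beta * np.prod(X ** H, axis=1)
    return production - degradation


def simulate(alpha, beta, G, H, X0, t_eval):
    """Integrate the S-system and return an array of shape (time points, genes)."""
    sol = solve_ivp(s_system_rhs, (t_eval[0], t_eval[-1]), X0, t_eval=t_eval,
                    args=(alpha, beta, G, H), method="LSODA", rtol=1e-8, atol=1e-10)
    if not sol.success:
        raise RuntimeError(sol.message)
    return sol.y.T


# Hypothetical two-gene network: the second gene represses the first, the first
# activates the second, and each gene's degradation depends on its own level.
alpha = np.array([2.0, 1.5])
beta = np.array([1.0, 1.0])
G = np.array([[0.0, -0.5], [0.5, 0.0]])
H = np.array([[0.5, 0.0], [0.0, 0.5]])
t = np.linspace(0.0, 30.0, 61)
X = simulate(alpha, beta, G, H, X0=np.array([0.5, 0.5]), t_eval=t)
print(X[-1])  # should be close to the steady state (4/3, 3)
```

During inference, the optimizer calls a function like `simulate` once per candidate parameter set and compares its output with the measured time courses.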

3. Define the Coupled Optimization Problem:

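- Objective Function: Formulate a multi-objective function [27]:

\[ f_{\text{obj}}(i) = \alpha \cdot \mathrm{MSE}(i) + (1-\alpha) \cdot \mathrm{StructureErr} \]

- MSE Calculation: Sum the squared relative errors across all \( T \) time points [27]:

\[ \mathrm{MSE}(i) = \sum_{t=1}^{T} \left( \frac{X_i^{a}(t) - X_i^{o}(t)}{X_i^{o}(t)} \right)^2 \]

- Structure Error: Define StructureErr based on a sparsity penalty (L1 norm) or prior knowledge.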
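The objective in this step can be written out directly. In this sketch the L1 norm of gene \( i \)'s kinetic orders serves as StructureErr (one of the two options listed), \( X_i^{o} \) is assumed to denote the observed values and \( X_i^{a} \) the simulated ones, and the weighting factor is renamed `weight` to avoid a clash with the rate constants \( \alpha_i \). The code follows the formula as written, so MSE(i) is a sum, not a mean, over time points.

```python
import numpy as np


def relative_sse(x_sim: np.ndarray, x_obs: np.ndarray) -> float:
    """MSE(i) as written in the protocol: sum over time points of squared relative errors."""
    return float(np.sum(((x_sim - x_obs) / x_obs) ** 2))


def structure_err(g_row: np.ndarray, h_row: np.ndarray) -> float:
    """L1 sparsity penalty on gene i's kinetic orders (one possible StructureErr).

    Some formulations exclude the self-degradation order h_ii; this sketch penalizes all terms.
    """
    return float(np.sum(np.abs(g_row)) + np.sum(np.abs(h_row)))


def f_obj(x_sim, x_obs, g_row, h_row, weight=0.8):
    """f_obj(i) = weight * MSE(i) + (1 - weight) * StructureErr; `weight` is the article's alpha."""
    return weight * relative_sse(x_sim, x_obs) + (1 - weight) * structure_err(g_row, h_row)


# Hypothetical values for one gene over three time points.
x_obs = np.array([1.0, 2.0, 4.0])
x_sim = np.array([1.1, 1.8, 4.0])
g_row = np.array([0.0, -0.5])
h_row = np.array([0.5, 0.0])
score = f_obj(x_sim, x_obs, g_row, h_row, weight=0.8)
print(score)  # 0.8 * 0.02 + 0.2 * 1.0 = 0.216, up to floating-point rounding
```

Because the relative error divides by \( X_i^{o}(t) \), observed values at or near zero (common with dropout) make this term blow up, which is one more reason to pre-process single-cell data before fitting.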

4. Execute Hybrid GA-PSO Optimization: