Optimizing Ornstein-Uhlenbeck Parameter Estimation: Advanced Methods for Biomedical and Clinical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on optimizing parameter estimation for Ornstein-Uhlenbeck (OU) models, which are crucial for analyzing longitudinal biomedical data like disease biomarkers and physiological processes. It covers foundational OU theory, advanced estimation methodologies addressing common challenges like measurement noise and finite-sample bias, optimization techniques for improved accuracy, and rigorous model validation approaches. By synthesizing current research and practical considerations, this resource enables more reliable analysis of serially correlated biological data to enhance drug development and clinical research.

Understanding Ornstein-Uhlenbeck Processes: Foundations for Biomedical Data Analysis

Frequently Asked Questions

Q1: What does the Ornstein-Uhlenbeck process model in biological systems?

The Ornstein-Uhlenbeck (OU) process models systems where a variable tends to revert to a long-term mean over time, balancing random fluctuations with a restoring force. In biological contexts, this is used to model phenomena such as stabilizing selection on trait evolution across species and novel mechanisms for synaptic learning in the brain, where it helps balance exploration and exploitation during learning [1] [2].

Q2: My parameter estimates for the OU process are biased, especially with small datasets. What is wrong?

This is a well-known issue. With small datasets, likelihood ratio tests often incorrectly favor OU models over simpler ones (like Brownian motion), and estimates of the strength of mean reversion (α or θ) can be substantially biased [1]. Even small amounts of measurement error in your data can profoundly affect inference. Always validate your model by simulating data with your fitted parameters and comparing the results to your empirical findings [1].

Q3: Can I interpret a fitted OU model as direct evidence of stabilizing selection?

Caution is required. While the OU model is frequently described as a model of 'stabilizing selection,' this can be misleading. The process modeled among species traits is qualitatively different from stabilizing selection within a population toward a fitness optimum. The OU parameter α measures the strength of pull towards a central trait value, but its interpretation requires careful consideration of the biological context [1].

Q4: What are the main methods for estimating OU parameters from my data?

Two primary classes of methods are used:

- Statistical Methods: Maximum Likelihood Estimation (MLE) is a standard and efficient approach [3] [4]. For a discrete-time series, the process can be modeled as an AR(1) process, and its parameters can be mapped back to the continuous-time OU parameters [5].

- Deep Learning Techniques: Recent research explores using Recurrent Neural Networks (RNNs) for parameter estimation, which may offer higher precision in some cases, though they can be computationally more expensive [3].
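The AR(1) mapping mentioned above can be sketched as follows. This is a minimal illustration, assuming evenly spaced observations and the SDE dX = kappa (mean - X) dt + sigma dW. The function name `fit_ou_ar1` and the parameter names `kappa`, `mean`, and `sigma` are hypothetical choices for this example, not taken from the cited sources. The regression x[i+1] = a + b x[i] + noise gives b = exp(-kappa dt), a = mean (1 - b), and a residual variance of sigma² (1 - b²) / (2 kappa).

```python
import numpy as np

def fit_ou_ar1(x, dt):
    """Estimate OU parameters from an evenly spaced series via AR(1) regression."""
    x = np.asarray(x, dtype=float)
    prev, curr = x[:-1], x[1:]
    # Least-squares fit of curr = a + b * prev (polyfit returns slope first).
    b, a = np.polyfit(prev, curr, 1)
    if not 0.0 < b < 1.0:
        # A slope outside (0, 1) has no valid continuous-time OU counterpart.
        raise ValueError("AR(1) slope outside (0, 1): no usable mean reversion")
    resid = curr - (a + b * prev)
    kappa = -np.log(b) / dt                      # reversion speed
    mean = a / (1.0 - b)                         # long-term mean
    sigma = np.sqrt(resid.var() * 2.0 * kappa / (1.0 - b**2))  # volatility
    return kappa, mean, sigma
```

Calling `fit_ou_ar1(series, dt=0.1)` returns the three continuous-time parameters. The explicit error for a slope outside (0, 1) is a design choice: short or noisy series can produce such slopes, and silently returning a negative or undefined reversion speed would hide the problem.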

Troubleshooting Guides

Problem: Poor Performance and Divergent Transitions during Bayesian Estimation

Issue: When implementing an OU model in a Bayesian framework (e.g., using Stan), you encounter very long runtimes and a high percentage of divergent transitions, sometimes up to 80-90% [6].

Solution:

- Reparameterize the Model: A key strategy is to use non-centered parameterizations for unobserved states. This can dramatically improve sampling efficiency and increase the effective sample size (`n_eff`) [6].

- Constrain Parameters with a Simplex: If your model includes a vector of switching times (`t_switch`), declare it as a `simplex` in the parameters block. A simplex constrains the elements to be non-negative and to sum to one, so their cumulative sums form an ordered sequence, which helps the sampler [6].

  - In Stan, this can look like: `simplex[N] t_switch;`

  - These values can then be transformed to the original time scale inside the model block.
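Outside Stan, the step from a simplex to switching times on the original time scale can be sketched in Python. This is only an illustration of the arithmetic, under the assumption that the switching times lie strictly between a known start and end time. The function name `switch_times_from_simplex` and the arguments `t_start` and `t_end` are hypothetical.

```python
import numpy as np

def switch_times_from_simplex(simplex, t_start, t_end):
    """Map a simplex (positive parts summing to 1) to ordered switching times."""
    simplex = np.asarray(simplex, dtype=float)
    if np.any(simplex <= 0) or not np.isclose(simplex.sum(), 1.0):
        raise ValueError("input must be positive and sum to 1")
    # Cumulative sums are strictly increasing; the last one equals 1 (t_end),
    # so only the first N-1 values are interior switching times.
    fractions = np.cumsum(simplex)[:-1]
    return t_start + fractions * (t_end - t_start)

print(switch_times_from_simplex([0.2, 0.5, 0.3], t_start=0.0, t_end=10.0))
```

Because the cumulative sum of positive increments is increasing by construction, the ordering constraint never has to be enforced separately.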

Problem: Choosing the Wrong Model for Your Biological Data

Issue: You automatically fit an OU process to your data and interpret it as evidence for a specific biological process like stabilizing selection, which may be inaccurate [1].

Solution:

- Compare Multiple Models: Do not fit only the OU model. Always compare its fit against a set of other models, such as the simple Brownian motion model, using appropriate statistical criteria [1].

- Simulate and Validate: After fitting the model, simulate new datasets using the estimated parameters. If the properties of your simulated data do not match your original empirical data, it indicates the model is a poor description of the underlying process [1].

- Consider Biological Interpretation: Remember that a better fit for an OU model does not automatically equal stabilizing selection. The link between the statistical pattern and the biological process is complex and requires careful justification [1].

Problem: Implementing the OU Process for a Pairs Trading Strategy

Issue: You are trying to model the spread between two assets for a pairs trading strategy and need to fit the OU process to find the optimal entry and exit points [4].

Solution:

- Formulate the Log-Likelihood: Define the average log-likelihood function for the OU process based on its probability density function. For a portfolio value \( X_t \) with observations at discrete time steps, the log-likelihood is [4]: \[ \ell(\theta,\mu,\sigma) = -\frac{1}{2}\ln(2\pi) - \ln(\tilde{\sigma}) - \frac{1}{2n\tilde{\sigma}^2}\sum_{i=1}^{n}\left[x_i - x_{i-1}e^{-\mu\Delta t} - \theta\left(1 - e^{-\mu\Delta t}\right)\right]^2 \] where \( \tilde{\sigma}^2 = \sigma^2 \frac{1 - e^{-2\mu\Delta t}}{2\mu} \).

- Apply Maximum Likelihood Estimation (MLE): Maximize this log-likelihood function to estimate the parameters \( \theta \) (long-term mean), \( \mu \) (speed of reversion), and \( \sigma \) (volatility) [4].

- Solve the Optimal Stopping Problem: Use the fitted parameters to solve for the optimal liquidation level \( b^* \) and entry level \( d^* \) based on the expected discounted value, factoring in transaction costs [4].

Experimental Protocols & Workflows

Protocol 1: Parameter Estimation via Maximum Likelihood Estimation (MLE)

This is a standard method for fitting an OU process to discrete time-series data [3] [4].

- Data Preparation: Collect your observed data \( (x_0, x_1, \cdots, x_n) \) at a fixed time interval \( \Delta t \).

- Define the Log-Likelihood Function: Use the formula provided in the Troubleshooting Guide above.

- Numerical Optimization: Use a numerical optimization algorithm (e.g., BFGS, Nelder-Mead) to find the parameters \( (\theta^*, \mu^*, \sigma^*) \) that maximize the log-likelihood function \( \ell \).

- Validation: Simulate the OU process with your estimated parameters and compare the statistical properties (mean, variance, autocorrelation) of the simulated data with your original data.
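The protocol steps above can be sketched as follows. This is a minimal example rather than a reference implementation. It keeps the article's pairs-trading notation (`theta` is the long-term mean, `mu` the reversion speed) and assumes SciPy is available. The function names and the starting values are illustrative assumptions.

```python
import numpy as np
from scipy.optimize import minimize

def ou_avg_log_likelihood(params, x, dt):
    """Average OU log-likelihood; theta = long-term mean, mu = reversion speed."""
    theta, mu, sigma = params
    if mu <= 0 or sigma <= 0:
        return -np.inf  # outside the admissible parameter region
    decay = np.exp(-mu * dt)
    sigma_tilde_sq = sigma**2 * (1.0 - decay**2) / (2.0 * mu)
    resid = x[1:] - x[:-1] * decay - theta * (1.0 - decay)
    n = resid.size
    return (-0.5 * np.log(2.0 * np.pi) - 0.5 * np.log(sigma_tilde_sq)
            - np.sum(resid**2) / (2.0 * n * sigma_tilde_sq))

def fit_ou_mle(x, dt):
    """Maximize the average log-likelihood with the Nelder-Mead simplex method."""
    x = np.asarray(x, dtype=float)
    # Crude starting values (an assumption): sample mean, unit reversion speed,
    # and the increment scale as a volatility guess.
    start = np.array([x.mean(), 1.0, np.std(np.diff(x)) / np.sqrt(dt)])
    result = minimize(lambda p: -ou_avg_log_likelihood(p, x, dt),
                      start, method="Nelder-Mead")
    return result.x, result.success
```

`fit_ou_mle(series, dt)` returns the estimates in the order (theta, mu, sigma) together with the optimizer's convergence flag. Nelder-Mead is used here because it needs no gradients and tolerates the infinite penalty outside the admissible region. A gradient-based method such as BFGS would usually call for a log-transform of `mu` and `sigma` instead.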

Protocol 2: Comparing OU and Brownian Motion Models

This protocol helps determine if the mean-reverting property of the OU model is truly necessary for your data [1].

- Model Fitting: Fit both the OU model and the simpler Brownian motion (BM) model to your dataset.

- Model Selection: Use a model selection criterion like AIC (Akaike Information Criterion) or a likelihood ratio test to compare the goodness-of-fit of the two models. Be aware that likelihood ratio tests may be biased toward preferring the OU model with small datasets [1].

- Simulation-Based Assessment: Simulate many datasets under the fitted BM model. Then, fit both BM and OU models to these simulated datasets. This shows how often you would incorrectly select the OU model even when the data were generated by BM, which estimates the false-positive (type I error) rate of your test [1]. Repeating the exercise with datasets simulated under the fitted OU model estimates its statistical power.
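A time-series analogue of the simulation-based assessment can be sketched as below. It is a simplified illustration, not the phylogenetic procedure of [1]: it assumes a single evenly spaced series, driftless BM, and AIC as the selection rule. All function names are hypothetical.

```python
import numpy as np

def gaussian_max_loglik(resid):
    """Maximized Gaussian log-likelihood for residuals (variance at its MLE)."""
    n = resid.size
    return -0.5 * n * (np.log(2.0 * np.pi * np.mean(resid**2)) + 1.0)

def aic_bm(x):
    # Driftless BM: increments are i.i.d. normal; one free parameter (variance).
    return 2 * 1 - 2 * gaussian_max_loglik(np.diff(x))

def aic_ou(x):
    # A discretely observed OU process is a Gaussian AR(1); three free parameters.
    b, a = np.polyfit(x[:-1], x[1:], 1)
    return 2 * 3 - 2 * gaussian_max_loglik(x[1:] - (a + b * x[:-1]))

def false_positive_rate(n_obs, n_sims=500, seed=0):
    """Fraction of BM-generated series for which AIC prefers the OU model."""
    rng = np.random.default_rng(seed)
    hits = 0
    for _ in range(n_sims):
        # Unit-variance BM suffices: the AIC comparison is scale-invariant.
        x = np.cumsum(rng.standard_normal(n_obs))
        hits += int(aic_ou(x) < aic_bm(x))
    return hits / n_sims

print(false_positive_rate(n_obs=30))
```

Running `false_positive_rate` for several series lengths shows how the spurious preference for OU changes with sample size. Swapping the BM generator for an OU simulator would turn the same loop into a power estimate.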

Research Reagent Solutions

The table below lists key "reagents" — in this context, computational tools and mathematical formulas — essential for working with OU processes.

| Research Reagent | Function & Application | Notes / Formulation |
|---|---|---|
| OU Stochastic Differential Equation (SDE) | Core model defining the continuous-time dynamics of a mean-reverting process [7] [8]. | \( dX_t = \mu(\theta - X_t)\,dt + \sigma\,dW_t \), where \( W_t \) is a Wiener process. |
| MLE Log-Likelihood Function | Objective function for finding the most probable parameters given observed data [4]. | \( \ell = -\frac{1}{2}\ln(2\pi) - \ln(\tilde{\sigma}) - \frac{1}{2n\tilde{\sigma}^2}\sum_{i=1}^{n}\left[x_i - x_{i-1}e^{-\mu\Delta t} - \theta\left(1 - e^{-\mu\Delta t}\right)\right]^2 \) |
| Explicit Solution of OU SDE | Allows for direct simulation and analysis of the process state at any time \( t \) [7] [3]. | \( X_t = X_s e^{-\mu(t-s)} + \theta\left(1 - e^{-\mu(t-s)}\right) + \sigma\int_s^t e^{-\mu(t-u)}\,dW_u \) |
| Euler-Maruyama Discretization | Scheme for numerically simulating the OU process in discrete time [8] [5]. | \( X_{k+1} = X_k + \mu(\theta - X_k)\Delta t + \sigma\sqrt{\Delta t}\,\epsilon_k, \quad \epsilon_k \sim \mathcal{N}(0,1) \) |
| Optimal Stopping Formulae | Used in trading applications to determine mathematically optimal entry and exit points for a mean-reverting strategy [4]. | Liquidation level \( b^* \) solves: \( F(b) - (b - c_s)F'(b) = 0 \) |
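The explicit solution and the Euler-Maruyama scheme from the table can be compared side by side. The sketch below uses the table's notation (`theta` is the mean, `mu` the speed). The function name `simulate_ou` and its `scheme` argument are illustrative assumptions.

```python
import numpy as np

def simulate_ou(theta, mu, sigma, x0, dt, n_steps, scheme="exact", seed=0):
    """Simulate dX = mu * (theta - X) dt + sigma dW on a fixed time grid."""
    rng = np.random.default_rng(seed)
    eps = rng.standard_normal(n_steps)
    x = np.empty(n_steps + 1)
    x[0] = x0
    if scheme == "exact":
        # Transition implied by the explicit solution: exact for any dt.
        decay = np.exp(-mu * dt)
        noise_sd = sigma * np.sqrt((1.0 - decay**2) / (2.0 * mu))
        for k in range(n_steps):
            x[k + 1] = x[k] * decay + theta * (1.0 - decay) + noise_sd * eps[k]
    elif scheme == "euler":
        # First-order approximation: accurate only when mu * dt is small.
        for k in range(n_steps):
            x[k + 1] = x[k] + mu * (theta - x[k]) * dt + sigma * np.sqrt(dt) * eps[k]
    else:
        raise ValueError("scheme must be 'exact' or 'euler'")
    return x
```

For example, `simulate_ou(1.0, 2.0, 1.0, x0=1.0, dt=0.01, n_steps=10_000)` yields a path whose long-run variance approaches σ²/(2μ). The Euler scheme instead has stationary variance σ²/(μ(2 − μΔt)), which is slightly inflated, so the exact transition is usually preferable when it is available.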

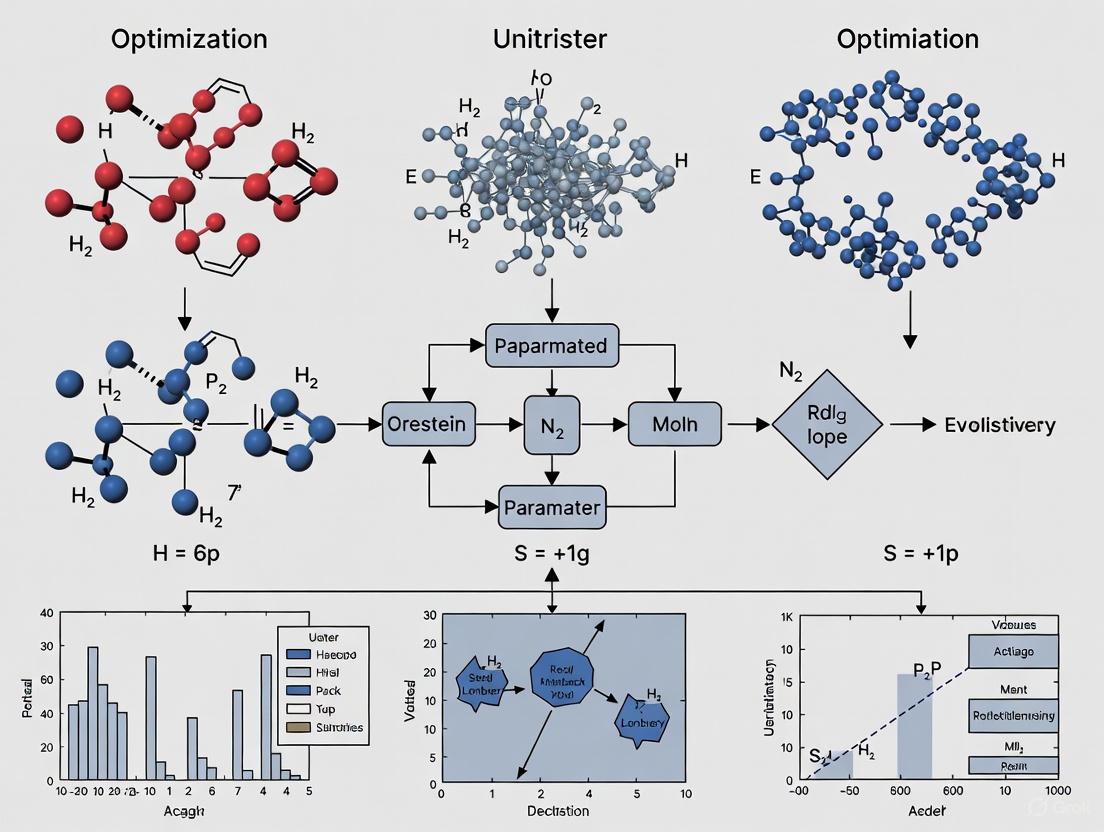

Conceptual Diagrams

OU Process Dynamics and Parameter Estimation Workflow

Balancing Exploration and Exploitation in Neural Learning

This diagram illustrates the application of the OU process for meta-learning in biological and artificial neural systems, where it helps balance the exploration of new parameters with the exploitation of known good ones [2].

Key OU Parameters and Their Biological Interpretations in Clinical Contexts

Frequently Asked Questions

What is the primary biological relevance of the Ornstein-Uhlenbeck process in clinical research?

The OU process is fundamentally used to model biological systems that exhibit mean reversion, where a variable tends to drift towards a long-term equilibrium value over time. This is crucial in clinical contexts for modeling phenomena such as:

- Drug Concentration in Blood: Drug levels often revert to a steady state due to continuous metabolic clearance and periodic dosing schedules [9].

- Biological Homeostasis: Physiological variables like blood glucose, core body temperature, and hormone levels are regulated to stay within a narrow range [10] [11].

- Trait Evolution in Comparative Studies: In evolutionary biology, it helps test adaptive hypotheses by modeling how traits evolve toward optimal values under phylogenetic constraints [12].

In a Model-Informed Drug Development (MIDD) context, how can an OU model be "Fit-for-Purpose"?

A model is "Fit-for-Purpose" when its complexity and assumptions are directly aligned with the key Question of Interest (QOI) and Context of Use (COU). An OU model is appropriate when your QOI involves understanding the rate and strength of a biological system's self-regulation.

- Do Use an OU Model When: You need to quantify how quickly a biomarker (e.g., blood pressure) returns to baseline after a perturbation (e.g., drug administration), or to model the fluctuating concentration of a drug around a mean value [13] [14].

- Do Not Use an OU Model When: The system shows no tendency to revert to a mean (e.g., a random walk), or the data-generating process is not continuous. Oversimplifying a complex system or using poor quality data can render the model not "Fit-for-Purpose" [13].

What are the practical implications of a long phylogenetic half-life (t₁/₂) versus a short one in trait evolution?

The phylogenetic half-life, defined as t₁/₂ = ln(2)/α, quantifies the time for a trait to evolve halfway from its ancestral state to a new optimal value [12].

- Long t₁/₂ (Slow Rate of Adaptation α): Suggests strong phylogenetic inertia, where a trait's evolution is heavily constrained by ancestry. The trait's past history has a prolonged influence, and it adapts slowly to new optimal values [12].

- Short t₁/₂ (Fast Rate of Adaptation α): Indicates weak phylogenetic inertia. The trait can rapidly evolve toward a new optimum, suggesting it is under strong and effective selective pressure [12].
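The half-life relation can be made concrete with a short calculation. The sketch below uses illustrative values of α; the function names are hypothetical, and time is assumed to be measured in the same units as the phylogeny's branch lengths.

```python
import math

def phylogenetic_half_life(alpha):
    """Half-life t_1/2 = ln(2) / alpha for an adaptation rate alpha > 0."""
    if alpha <= 0:
        raise ValueError("alpha must be positive")
    return math.log(2.0) / alpha

def remaining_distance_fraction(alpha, t):
    """Expected fraction of the distance to the optimum not yet covered at time t."""
    return math.exp(-alpha * t)

for alpha in (0.1, 1.0, 10.0):
    t_half = phylogenetic_half_life(alpha)
    print(f"alpha={alpha:5.1f}  t_half={t_half:.3f}  "
          f"fraction left after 1 time unit={remaining_distance_fraction(alpha, 1.0):.3f}")
```

A small α gives a long half-life, so most of the ancestral distance from the optimum remains after one time unit. A large α gives a short half-life and almost none remains.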

My model fails to converge during parameter estimation. What are the key areas to troubleshoot?

Parameter estimation for OU processes, especially with limited data, can be challenging. Key areas to investigate are:

- Prior Distributions: In a Bayesian framework (e.g., using a tool like `Blouch`), poorly chosen priors can lead to identifiability issues. Incorporate any existing biological knowledge to set informative priors and restrict parameter space to meaningful regions [12].

- Data Quality and Quantity: The model may be over-parameterized for the available data. Ensure you have sufficient, high-quality longitudinal data points to capture the mean-reverting behavior [13].

- Likelihood Ridges: Parameters like the mean-reversion rate (α or θ) and the stationary variance (σ²/(2α)) can be correlated, creating "ridges" in the likelihood surface that make it difficult for the algorithm to find a unique maximum [12].

Key Parameter Tables

Table 1: Core OU Process Parameters and Interpretations

| Parameter | Standard Mathematical Symbol | Biological/Drug Development Interpretation | Impact of a Higher Parameter Value |
|---|---|---|---|
| Mean-Reversion Rate | θ, κ, α | Speed at which a system returns to its equilibrium after a perturbation [7] [15] [10]. | Faster return to the long-term mean (equilibrium). Shorter relaxation/decorrelation time [10] [11]. |
| Long-Term Mean | μ, θ | The homeostatic setpoint or target equilibrium value of the system (e.g., steady-state drug concentration, optimal trait value) [7] [10] [11]. | The process fluctuates around a higher average level. |
| Volatility / Noise Intensity | σ | Magnitude of random fluctuations caused by stochastic biological variability or measurement error [7] [15] [10]. | Larger random deviations from the path to the mean, leading to a higher stationary variance [10]. |

Table 2: Derived Statistical Properties

| Property | Formula | Biological/Drug Development Interpretation |
|---|---|---|
| Conditional Mean | E[X(t)] = X₀e^(-θt) + μ(1 - e^(-θt)) [7] [10] | The expected value of the process at time t, given its starting value X₀. Shows the exponential path toward the long-term mean. |
| Stationary Variance | σ²/(2θ) [7] [10] [11] | The equilibrium variance of the process around the long-term mean. Represents the expected variability of the system in a stable state. |
| Phylogenetic Half-Life | t₁/₂ = ln(2)/α [12] | The time it takes for a trait to evolve halfway from its ancestral state to a new primary optimum in evolutionary studies. |
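The formulas in Table 2 translate directly into code. The sketch below uses the table's notation (`theta` is the reversion rate, `mu` the long-term mean). The conditional variance is a standard OU result that the table does not list and is included here as an assumption of the example, as are the function names and the illustrative numbers.

```python
import numpy as np

def ou_conditional_mean(x0, t, theta, mu):
    """E[X(t) | X(0) = x0]: exponential relaxation from x0 toward mu."""
    return x0 * np.exp(-theta * t) + mu * (1.0 - np.exp(-theta * t))

def ou_conditional_variance(t, theta, sigma):
    """Var[X(t) | X(0)]: grows from 0 toward the stationary variance."""
    return sigma**2 / (2.0 * theta) * (1.0 - np.exp(-2.0 * theta * t))

def ou_stationary_variance(theta, sigma):
    """Equilibrium variance sigma^2 / (2 * theta)."""
    return sigma**2 / (2.0 * theta)

# Hypothetical biomarker: perturbed to 140, setpoint 120, theta = 0.5 per hour.
for t in (0.0, 1.0, 5.0, 20.0):
    m = ou_conditional_mean(140.0, t, theta=0.5, mu=120.0)
    v = ou_conditional_variance(t, theta=0.5, sigma=3.0)
    print(f"t={t:5.1f} h  mean={m:7.2f}  sd={np.sqrt(v):.2f}")
```

The printed values show the mean relaxing toward the setpoint while the standard deviation rises toward its stationary level, which is the behavior the table describes in words.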

Experimental Protocols

Protocol 1: Fitting an OU Process to Model Microbial Population Dynamics in a Chemostat

This protocol is adapted from studies analyzing the dynamics of microorganisms under stochastic growth conditions [16].

1. Problem Formulation:

- Objective: To model the fluctuating concentration of microorganisms in a chemostat, where the maximum growth rate is subject to random environmental variations.

- Model: The growth rate `m(t)` is modeled as an OU process: `dm(t) = θ(m̄ - m(t))dt + σ dW(t)`, which then drives the differential equations for nutrient and microorganism concentrations [16].

2. Data Collection:

- Collect high-frequency, longitudinal measurements of microorganism concentration (`X(t)`) and nutrient concentration (`S(t)`) from the chemostat over a sufficiently long time period to observe fluctuations and mean-reverting behavior.

3. Parameter Estimation via Markov Semigroup Theory:

- For systems with degenerate diffusion (like the chemostat model), the Markov semigroup theory is a key mathematical tool to prove the existence of a unique stationary distribution.

- The exact probability density function for the distribution of the system states can be derived using matrix similarity transformations after linearizing the system around its positive equilibrium [16].

4. Interpretation:

- Persistence: A unique stationary distribution indicates that the microorganism population can survive for a long time in the chemostat despite fluctuations.

- Extinction: If the modified growth rate parameter falls below a critical threshold (`R₀ˢ < 0`), the microorganism is predicted to go extinct [16].

Protocol 2: Bayesian OU Modeling for Comparative Trait Evolution

This protocol outlines the workflow for using Bayesian OU models to test adaptive hypotheses in evolutionary biology, as implemented in the Blouch package [12].

1. Problem Formulation:

- Objective: Test if a trait (e.g., antler size in deer) is an adaptation to a predictor variable (e.g., body size or breeding group size), while accounting for phylogenetic relatedness.

- Model: The evolution of the response trait `y` is modeled as an OU process toward an optimal state `θ` that is a function of the predictor: `dy = -α(y - θ(z))dt + σ_y dB_y` [12].

2. Workflow Diagram: Bayesian OU Model Fitting with Blouch

The following diagram illustrates the iterative process of model fitting and validation in a Bayesian framework.

3. Key Steps:

- Specify Priors: Incorporate prior biological knowledge about parameters (e.g., a plausible range for the phylogenetic half-life `t₁/₂` or the stationary variance) to constrain the model and test specific hypotheses [12].

- Model Fitting: Use Hamiltonian Monte Carlo (HMC) sampling in `Stan` to efficiently sample from the posterior distribution of all model parameters. HMC is advantageous for complex models as it requires fewer samples and has reduced autocorrelation [12].

- Model Diagnostics: Check for MCMC convergence (e.g., using R-hat statistics) and perform model validation, such as simulation-based calibration, to ensure the model is well-specified [12].

- Interpretation: Analyze the posterior distributions of key parameters. For example, the compatibility interval for `α` directly informs the strength of adaptation, and the location of the optimum `θ` can be interpreted in the context of different selective regimes [12].
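The R-hat diagnostic mentioned under Model Diagnostics can be illustrated with a short calculation. The sketch below implements the classic Gelman-Rubin statistic for one scalar parameter. It is a simplified stand-in: Stan reports a more refined rank-normalized, split-chain version, and the function name `r_hat` is hypothetical.

```python
import numpy as np

def r_hat(chains):
    """Classic Gelman-Rubin potential scale reduction factor.

    chains: array of shape (n_chains, n_draws) holding post-warmup draws
    of a single scalar parameter.
    """
    chains = np.asarray(chains, dtype=float)
    n = chains.shape[1]
    between = n * chains.mean(axis=1).var(ddof=1)   # between-chain variance B
    within = chains.var(axis=1, ddof=1).mean()      # mean within-chain variance W
    pooled = (n - 1) / n * within + between / n     # pooled variance estimate
    return np.sqrt(pooled / within)

rng = np.random.default_rng(0)
mixed = rng.standard_normal((4, 1000))              # chains exploring one target
stuck = mixed + np.arange(4)[:, None]               # chains stuck in different places
print(r_hat(mixed), r_hat(stuck))
```

Values close to 1 indicate that the chains agree. The shifted chains give a clearly larger value, which is the pattern that signals non-convergence.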

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Computational Tools and Frameworks

| Tool / Reagent | Function in OU Research | Relevant Context / Application |
|---|---|---|
| Stan | A probabilistic programming language for full Bayesian statistical inference using Hamiltonian Monte Carlo (HMC) sampling [12]. | Used as the engine in the Blouch package for fitting Bayesian OU models in phylogenetic comparative methods [12]. |
| Blouch R Package | Fits Bayesian Linear Ornstein-Uhlenbeck models for comparative hypotheses. Allows for fixed or continuous predictors and incorporates measurement error [12]. | Testing adaptive hypotheses in evolutionary biology with phylogenetic data; allows for varying effects and multilevel modeling [12]. |
| Markov Semigroup Theory | A mathematical framework used to prove the existence and uniqueness of a stationary distribution for stochastic systems with degenerate diffusion matrices [16]. | Essential for analyzing the long-term survival probability of microorganisms in stochastic chemostat models [16]. |
| Approximate Maximum Likelihood Estimation (AMLE) | An iterative algorithm for direct parameter estimation based on the likelihood function, without requiring prior assumptions [9]. | Estimating parameters in complex OU extensions, such as two-threshold, three-mode OU processes common in economics and pharmacology [9]. |

The Linear Mixed IOU Model for Longitudinal Biomarker Data

Troubleshooting Guides

FAQ 1: My model fitting fails to converge. What are the primary causes and solutions?

Answer: Convergence failures in Linear Mixed IOU models typically stem from data structure, noise, or algorithmic issues. The table below summarizes common causes and solutions.

| Cause Category | Specific Issue | Recommended Solution |
|---|---|---|
| Data Structure | Small sample size or excessive missing data | Ensure adequate samples; use REML to reduce bias in variance-component estimates with small samples [17]. |
| | Insufficient longitudinal time points | Increase data sampling frequency; assess sampling rate impact on noise separation [18]. |
| Noise & Measurement | High measurement noise obscuring true signal | Implement probabilistic methods (e.g., Expectation-Maximization) to separate signal from noise [18]. |
| | Dominant multiplicative (signal-dependent) noise | Add known white noise to shift noise balance; use Hamiltonian Monte Carlo (HMC) methods [18]. |
| Algorithm & Parameters | Poor optimization algorithm choice | Start with Fisher Scoring (FS) for robustness, then switch to Newton-Raphson (NR) for faster convergence [17]. |
| | Incorrect parameterization of IOU process | Compare model fits using Akaike Information Criterion (AIC) for different parameterizations [17]. |

FAQ 2: How do I handle different types of noise in my longitudinal biomarker data during parameter estimation?

Answer: Accurately distinguishing between noise types is critical for precise parameter estimation in Ornstein-Uhlenbeck processes.

- Separating Thermal (White) Noise: For additive Gaussian white noise, use an Expectation-Maximization (EM) algorithm. This approach provides parameter estimates similar to Hamiltonian Monte Carlo (HMC) methods but with significantly improved computational speed [18].

- Handling Multiplicative Noise: Standard HMC methods often struggle to separate multiplicative noise from the underlying signal due to similar power spectra. To address this:

  - Ensure the ratio of multiplicative to white noise does not exceed a threshold dependent on your data sampling rate.

  - If multiplicative noise dominates, you can add controlled white noise to shift the noise balance, enabling successful parameter estimation [18].

- Probabilistic Modeling: Implement Bayesian approaches that model both the data and noise probabilistically and simultaneously, rather than relying on traditional filtering alone [18].
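One way to model the signal and additive white noise jointly is a linear Gaussian state-space formulation. The sketch below evaluates the corresponding log-likelihood with a scalar Kalman filter. This is a swapped-in illustration, not the EM or HMC procedure of [18], and it does not cover multiplicative noise. The parameter names (`kappa`, `mean`, `sigma`, `noise_sd`) and the stationary initialization are assumptions of the example.

```python
import numpy as np

def ou_noise_loglik(y, dt, kappa, mean, sigma, noise_sd):
    """Log-likelihood of y_k = x_k + white noise, where x is a latent OU process."""
    b = np.exp(-kappa * dt)
    q = sigma**2 * (1.0 - b**2) / (2.0 * kappa)   # OU transition variance
    r = noise_sd**2                               # measurement-noise variance
    # Start from the stationary distribution of the latent process.
    pred_mean, pred_var = mean, sigma**2 / (2.0 * kappa)
    loglik = 0.0
    for obs in np.asarray(y, dtype=float):
        innov = obs - pred_mean
        innov_var = pred_var + r
        loglik += -0.5 * (np.log(2.0 * np.pi * innov_var) + innov**2 / innov_var)
        gain = pred_var / innov_var               # Kalman gain
        filt_mean = pred_mean + gain * innov
        filt_var = (1.0 - gain) * pred_var
        # Propagate the filtered state one step through the OU transition.
        pred_mean = mean + (filt_mean - mean) * b
        pred_var = filt_var * b**2 + q
    return loglik
```

Maximizing this function over all four parameters, for instance with a general-purpose optimizer, estimates the OU parameters and the noise level together instead of filtering the data first.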

FAQ 3: What are the best practices for data preprocessing to ensure robust IOU model estimation?

Answer: Proper data preprocessing is foundational for reliable biomarker analysis and model performance.

- Data Normalization: Apply appropriate normalization methods to make variables comparable and manage heteroskedasticity. Common effective methods include:

  - Log Transformation: Reduces heteroscedasticity and large multiplicative differences, making the data distribution more symmetrical. Use `log(1+x)` if the data contain zeros [19]; values at or below -1 need to be shifted first, because the logarithm is undefined there.

  - Auto Scaling (Z-score): Transforms data to have a mean of 0 and variance of 1, ideal for many machine learning algorithms [19].

  - Pareto Scaling: Preserves the original data structure better than Auto Scaling while still reducing the impact of large outliers [19].

- Quality Control: Perform rigorous quality control checks both before and after preprocessing using data type-specific quality metrics [20].

- Handling Missing Data: Remove features with excessive missing values (>30%). For features with limited missing values, use appropriate imputation methods or algorithms that tolerate missingness [20].
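The three normalization methods can be sketched with NumPy as follows. The functions assume a samples-by-features array and use the sample standard deviation (`ddof=1`). Both choices, like the function names, are assumptions of this example.

```python
import numpy as np

def log_transform(x):
    """Apply log(1 + x); every value must be greater than -1."""
    x = np.asarray(x, dtype=float)
    if np.any(x <= -1.0):
        raise ValueError("log(1 + x) is undefined for values <= -1")
    return np.log1p(x)

def auto_scale(x):
    """Z-score each column: mean 0 and variance 1."""
    x = np.asarray(x, dtype=float)
    return (x - x.mean(axis=0)) / x.std(axis=0, ddof=1)

def pareto_scale(x):
    """Center each column and divide by the square root of its standard deviation."""
    x = np.asarray(x, dtype=float)
    return (x - x.mean(axis=0)) / np.sqrt(x.std(axis=0, ddof=1))
```

After Pareto scaling, a column that started with standard deviation s has standard deviation √s. High-variance features are damped without being forced to unit variance, which is the sense in which more of the original structure is preserved.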

Experimental Protocols

Parameter Estimation Workflow for OU Processes with Measurement Noise

The following protocol details the steps for accurate parameter estimation of an Ornstein-Uhlenbeck process in the presence of measurement noise, a common scenario in longitudinal biomarker studies.

Figure 1. Workflow for OU process parameter estimation with noise.

Procedure:

Data Preprocessing

- Normalization: Apply Log Transformation or Auto Scaling to address heteroskedasticity and make variables comparable [19].

- Quality Control: Perform statistical outlier checks and compute data type-specific quality metrics to ensure data integrity [20].

- Missing Data Handling: Remove features with >30% missing values; use imputation for features with limited missingness [20].

Noise Assessment and Processing

- Characterize Noise: Analyze the power spectrum of the data to identify the dominant noise type (additive white noise vs. signal-dependent multiplicative noise) [18].

- White Noise Processing: If additive white noise dominates, proceed with an Expectation-Maximization (EM) algorithm for efficient parameter estimation [18].

- Multiplicative Noise Processing: If multiplicative noise dominates:

  - Determine the ratio of multiplicative to white noise.

  - If the ratio exceeds manageable thresholds, add controlled white noise to shift the noise balance.

  - Apply Hamiltonian Monte Carlo (HMC) methods with the known noise ratio for parameter estimation [18].
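The power-spectrum check in the first step can be sketched with a plain periodogram. An OU signal has a spectrum that falls off at high frequencies, while additive white noise contributes a flat floor, so elevated power near the Nyquist frequency suggests white measurement noise. The sketch below is a rough heuristic under those assumptions. It does not distinguish multiplicative noise, and the function names and the `top_fraction` setting are hypothetical.

```python
import numpy as np

def periodogram(y, dt):
    """One-sided periodogram of an evenly sampled series (mean removed)."""
    y = np.asarray(y, dtype=float)
    y = y - y.mean()
    n = y.size
    freqs = np.fft.rfftfreq(n, d=dt)
    power = np.abs(np.fft.rfft(y))**2 * dt / n
    return freqs[1:], power[1:]        # drop the zero-frequency bin

def high_frequency_floor(y, dt, top_fraction=0.1):
    """Median power across the highest-frequency bins."""
    _, power = periodogram(y, dt)
    cutoff = int(np.ceil((1.0 - top_fraction) * power.size))
    return np.median(power[cutoff:])
```

Comparing `high_frequency_floor` for the measured series against the value for a simulation of the fitted noise-free OU model gives a quick indication of whether a white-noise term is needed.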

Parameter Estimation

- Optimization Algorithm Selection: For model fitting, begin with the Fisher Scoring (FS) algorithm for robustness to poor starting values, then switch to Newton-Raphson (NR) for faster convergence [17].

- Estimation Method: Use Restricted Maximum Likelihood (REML) estimation to avoid downward bias in variance component estimates, especially with small sample sizes [17].

Validation

- Goodness-of-Fit: Compare the Linear Mixed IOU model against alternative models (e.g., conditional independence model) using fit statistics like Akaike Information Criterion (AIC) [17].

- Robustness Check: Validate parameter estimates through sensitivity analyses, examining their stability under different preprocessing methods or noise handling approaches [20].

Integrated Experimental and Computational Pipeline for Biomarker Discovery

This protocol combines experimental and computational steps for discovering and validating biomarkers using longitudinal data and the Linear Mixed IOU model.

Figure 2. Biomarker discovery and validation pipeline.

Procedure:

Study Design and Sample Collection

- Precise Objectives: Clearly define primary and secondary biomedical outcomes, subject inclusion/exclusion criteria, and sample size requirements to ensure adequate statistical power [20].

- Sample Collection: Implement a biological sampling design that minimizes batch effects and controls for potential confounders through appropriate blocking [20].

Data Generation and Preprocessing

- Multi-modal Data: Generate data from relevant platforms (e.g., genomics, proteomics, metabolomics) depending on the biomarker type [21].

- Data Curation: Ensure values fall within acceptable ranges, resolve inconsistencies (e.g., unit conversions), and transform data to standard formats (e.g., CDISC, OMOP) [20].

- Preprocessing: Apply data type-specific preprocessing pipelines including normalization, transformation, and scaling to reduce technical noise [20].

Biomarker Screening and Feature Selection

- Machine Learning Algorithms: Apply ensemble methods like XGBoost or Random Forest to identify predictive features from high-dimensional data [22].

- Regularized Regression: Use Glmnet for generalized linear models with regularization to prevent overfitting in high-dimensional datasets [22].

- Multi-omics Integration: Leverage early, intermediate, or late data integration strategies to combine information from different data types (e.g., clinical and omics data) [20].

Longitudinal Modeling with Linear Mixed IOU Model

- Model Formulation: Implement the Linear Mixed IOU model to account for serial correlation, nonstationary variance, and derivative tracking in longitudinal biomarker trajectories [17].

- Parameter Interpretation: Interpret the α parameter as a measure of derivative tracking (strong tracking with small α) and τ as a scaling parameter [17].

- Special Cases Considerations: Recognize that as α→∞, the model approaches a Brownian Motion mixed-effects model, and as α→0, it reduces to a conditional independence model with random intercept and slope [17].
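The role of α and τ can be seen from the covariance function of the integrated OU process. The sketch below uses a commonly cited form, Cov(W(s), W(t)) = τ²/(2α³) [2α min(s, t) + e^(-αs) + e^(-αt) - 1 - e^(-α|t-s|)]. This formula is not stated in this article and is an assumption here, so the parameterization may differ from the one used in [17]. The function name `iou_covariance` is hypothetical.

```python
import numpy as np

def iou_covariance(times, alpha, tau):
    """Covariance matrix of an integrated OU process at the given times."""
    t = np.asarray(times, dtype=float)
    s, u = np.meshgrid(t, t, indexing="ij")
    bracket = (2.0 * alpha * np.minimum(s, u)
               + np.exp(-alpha * s) + np.exp(-alpha * u)
               - 1.0 - np.exp(-alpha * np.abs(s - u)))
    return tau**2 / (2.0 * alpha**3) * bracket

times = [0.5, 1.0, 2.0]
# Large alpha with tau/alpha fixed at 1: close to Brownian motion, Cov = min(s, t).
print(np.round(iou_covariance(times, alpha=500.0, tau=500.0), 3))
# Small alpha with tau^2/(2*alpha) fixed at 1: close to a random slope, Cov = s*t.
print(np.round(iou_covariance(times, alpha=1e-3, tau=np.sqrt(2e-3)), 3))
```

Under this parameterization the limits hold when the stated ratios are kept fixed, which is why the two calls scale `tau` along with `alpha`.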

Validation and Clinical Translation

- Independent Validation: Test identified biomarkers in independent assays and datasets to ensure robustness and replicability [22].

- Analytical Validation: Establish assay performance characteristics including sensitivity, specificity, and reproducibility [20].

- Clinical Utility Assessment: Evaluate whether biomarkers provide added value over traditional clinical markers for decision-making [20].

The Scientist's Toolkit

Research Reagent Solutions for Biomarker Discovery

| Category | Item | Function |
|---|---|---|
| Computational Tools | Stata IOU Module | Implements Linear Mixed IOU model for longitudinal biomarker data with REML estimation [17]. |
| | Omics Playground | Integrated platform for biomarker analysis with multiple machine learning algorithms (XGBoost, Random Forest) [22]. |
| | FastQC/FQC | Quality control package for next-generation sequencing data to assess data quality before analysis [20]. |
| Statistical Methods | Hamiltonian Monte Carlo (HMC) | Bayesian method for parameter estimation in complex models with measurement noise [18]. |
| | Expectation-Maximization (EM) Algorithm | Efficient method for parameter estimation of OU processes with white noise [18]. |
| | sparse Partial Least Squares (sPLS) | Simultaneously integrates data and performs variable selection for biomarker identification [22]. |
| Data Standards | CDISC/OMOP | Standardized formats for clinical data organization, ensuring consistency and interoperability [20]. |
| | MIAME/MINSEQE | Reporting standards for microarray and sequencing experiments to ensure reproducibility [20]. |

Core Concepts and Definitions FAQ

Q1: What is the fundamental difference between Brownian Motion and the Ornstein-Uhlenbeck (OU) process?

Brownian Motion (Wiener process) is a continuous random walk where increments are independent, normally distributed, and have a variance proportional to the time step. Its key characteristic is that its variance grows unbounded over time [7] [23]. In contrast, the Ornstein-Uhlenbeck process is a mean-reverting modification of Brownian motion. It drifts towards a long-term mean, with a greater attraction force when the process is further away from the center. This makes it a stationary Gauss-Markov process with bounded variance, which is the continuous-time analogue of the discrete-time AR(1) process [7].

Q2: Why is the OU process often more suitable than the Wiener process for modeling physical degradation or financial mean reversion?

The OU process is often more physically realistic for two main reasons:

- Bounded Variance: The variance of the OU process converges to a finite value, `σ²/(2θ)`, preventing the prediction intervals from becoming unrealistically wide over long horizons [7] [24]. The Wiener process's variance grows linearly with time, leading to unbounded and often physically implausible confidence intervals [24].

- Mean-Reversion: The OU process incorporates a "damping" effect that pulls the process back towards a mean level. This effectively models state-dependent negative feedback mechanisms, such as crack-tip stress redistribution in materials or closed-loop control systems in engineering, which the Wiener process, lacking any restoring drift, cannot capture [24].

Q3: How is the mean-reverting property represented mathematically?

The mean-reverting property is captured by the deterministic drift term in the OU process's stochastic differential equation. The most common form is:

`dX_t = θ(μ - X_t)dt + σdW_t`

where:

- `X_t` is the value of the process at time `t`.

- `θ > 0` is the mean reversion speed or strength.

- `μ` is the long-term mean level.

- `σ > 0` is the volatility.

- `dW_t` is the increment of a Wiener process [7] [25].

Parameter Estimation & Computational Troubleshooting

Q4: What are the primary methods for estimating OU process parameters, and how do they compare?

The two primary methods are statistical approaches like Maximum Likelihood Estimation (MLE) and deep learning techniques like Recurrent Neural Networks (RNNs). Their characteristics are summarized in the table below.

| Method | Key Principle | Computational Cost | Best-Suited Scenario |

|---|---|---|---|

| Maximum Likelihood Estimation (MLE) [25] [3] | Finds parameters that maximize the probability of observing the given data. Based on the analytical properties of the OU process. | Generally lower; provides closed-form solutions in some cases. | Smaller, cleaner datasets; situations requiring high model interpretability. |

| Recurrent Neural Network (RNN) [3] | A deep learning model trained on simulated OU paths to learn a mapping from the data to the parameters (θ, μ, σ). | Higher; requires significant data for training and substantial computational resources. | Large, complex, or noisy datasets where traditional methods may fail. |

Q5: During parameter estimation, my model fails to converge or produces unrealistic values (e.g., negative mean reversion speed). What could be wrong?

This is a common issue with several potential root causes:

- Insufficient or Noisy Data: The dataset may be too short to capture the mean-reverting dynamics reliably. Outliers or high-frequency noise can be mistaken for the process's random fluctuations, corrupting the parameter estimates [3].

- Regime Changes: The underlying process may have undergone a structural shift, meaning the parameters (especially the long-term mean μ) are not constant throughout the entire data sample [25]. Using a single set of parameters for the entire period will lead to poor estimates.

- Incorrect Model Specification: The data might be generated by a more complex process (e.g., with jumps, time-varying parameters, or a different stochastic structure) [25]. Forcing a standard OU model onto it will yield invalid results. Consider using extended models or testing for model fit.

Q6: How can I efficiently compute the Remaining Useful Life (RUL) distribution for a time-varying mean OU process used in Prognostics and Health Management (PHM)?

For a standard OU process, the RUL distribution can be derived analytically. However, for a novel OU process with a time-varying mean (e.g., exponential drift), an analytical solution may not exist. In this case, a highly efficient alternative to Monte Carlo simulations is a numerical inversion algorithm that constructs an exponential martingale. Research has demonstrated that this method can achieve over 80% faster computation than Monte Carlo simulations without sacrificing accuracy, making it suitable for online, real-time RUL prediction [24].

Application in Drug Discovery & Model Implementation

Q7: How are stochastic processes like Brownian Motion and the OU process relevant to drug discovery?

While not typically used to model molecular motion directly in this context, the concepts of random walks and stochastic optimization are fundamental. They underpin advanced AI-driven drug discovery platforms. For instance:

- Molecular Generation: AI tools like DerivaPredict use generative models to create novel natural product derivatives. These models often rely on stochastic sampling techniques to explore the vast "chemical space" [26].

- Optimization Algorithms: The Hierarchically Self-Adaptive Particle Swarm Optimization (HSAPSO) algorithm, used for hyperparameter tuning in deep learning models for drug classification, is inspired by the stochastic, collective behavior of swarms [27]. This is conceptually linked to randomized search processes.

Q8: What are the best practices for simulating a Brownian Motion that will be used to drive an OU process?

Accurate simulation is the foundation of reliable modeling and parameter estimation experiments.

- Get the Units Right: Scale the random displacements correctly. The variance of the increments should be D * dimensions * τ, where D is the diffusion coefficient and τ is the time interval [28].

- Leverage Cumulative Sum: Generate a vector of independent, normally distributed random increments with the correct variance and then use the cumulative sum to obtain the path [28] [23] [29].

- Control for Bulk Flow: In physical simulations, be aware that solvent flow (e.g., from evaporation) can introduce a systematic drift. This manifests as a quadratic component in the displacement-squared-versus-time plot, which should theoretically be a straight line. Using shorter sampling periods can minimize this error [28].
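The cumulative-sum recipe above can be sketched in a few lines. In this illustration the function name `brownian_path` and the argument `var_per_unit_time` are assumptions: the caller chooses the variance scale, for example 1.0 for a standard Wiener process or a value derived from the diffusion coefficient as described in the first bullet.

```python
import math
import random
from itertools import accumulate


def brownian_path(n_steps, dt, var_per_unit_time=1.0, seed=None):
    """Build a Brownian path as the cumulative sum of independent Gaussian increments.

    Each increment has variance var_per_unit_time * dt (hypothetical parametrization).
    """
    rng = random.Random(seed)
    sd = math.sqrt(var_per_unit_time * dt)
    increments = [rng.gauss(0.0, sd) for _ in range(n_steps)]
    return [0.0] + list(accumulate(increments))  # path starts at 0


path = brownian_path(n_steps=10000, dt=0.01, seed=42)
increments = [b - a for a, b in zip(path, path[1:])]
# Empirical increment variance (increments have zero mean by construction).
inc_var = sum(d * d for d in increments) / len(increments)
print(len(path), inc_var)
```

Checking the empirical increment variance against the intended value is a quick way to confirm that the units and scaling are right before the path is used to drive an OU simulation.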

Diagram 1: Simulation workflow for Brownian Motion and OU Process.

Essential Research Reagent Solutions

The following table details key computational tools and datasets used in modern, AI-driven drug discovery research, which often forms the application context for stochastic modeling.

| Reagent / Resource | Type | Primary Function in Research |

|---|---|---|

| DerivaPredict [26] | Software Tool | Generates novel natural product derivatives using curated chemical and metabolic transformation rules, and predicts their binding affinities and ADMET profiles. |

| optSAE + HSAPSO Framework [27] | Deep Learning Model | A stacked autoencoder optimized with a swarm intelligence algorithm for highly accurate (~95.5%) drug classification and target identification. |

| DrugBank / Swiss-Prot [27] | Database | Curated repositories of drug, chemical, and protein data used as benchmark datasets for training and validating predictive models. |

| AlphaFold / AtomNet [30] | AI Model | Predicts protein 3D structures (AlphaFold) and aids in structure-based drug design (AtomNet), accelerating target identification and lead optimization. |

Diagram 2: Parameter estimation experimental protocol.

FAQs and Troubleshooting Guides

FAQ 1: What are the common causes of failure in Ornstein-Uhlenbeck parameter estimation with clinical data, and how can I resolve them?

Answer: Failures in parameter estimation often stem from data quality and model specification issues. The table below outlines common problems and solutions.

| Problem | Symptom | Solution |

|---|---|---|

| Data Heterogeneity [21] | Model fails to generalize across different patient populations. | Implement multi-modal data fusion techniques and ensure diverse training data. |

| Insufficient Standardization [21] | High variance in parameter estimates across datasets. | Apply standardized data pre-processing and governance protocols before estimation. |

| Domain Errors in MLE [31] | Code throws "math domain error" during optimization. | Add a small epsilon value (e.g., 1e-5) to calculated variances to prevent division by zero. Enforce strict parameter bounds. |

| Low Generalizability [21] | Model performs well on training data but poorly on new cohorts. | Incorporate data augmentation and validate models on external, real-world datasets. |

FAQ 2: How can I effectively incorporate cyclical data, like hormonal cycles, into a stochastic process model?

Answer: Integrating cyclical data requires capturing both the periodic pattern and its inherent variability.

- Data Collection: Leverage longitudinal data streams from wearable devices and digital biomarkers to capture dynamic, high-frequency measurements [32]. The Apple Women's Health Study, for example, uses such data to understand changes in physiology across menstrual cycles and pregnancies [32].

- Modeling Approach: Treat the cycle as a vital sign and source of biological state information [33] [34]. Irregularities in the cycle can be both a cause and a consequence of wider health issues, and excluding them can bias research [33]. Model the underlying cyclical trend separately before incorporating it as a time-varying parameter in your Ornstein-Uhlenbeck process to inform the mean-reversion level or volatility.

- Troubleshooting: If the model fails to capture the cycle's impact, ensure you are using a sufficiently long time series to cover multiple cycles. Consider using a Fourier transform to identify and validate the dominant cyclical components in your data.
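As a rough illustration of the Fourier-based check mentioned in the troubleshooting step, the sketch below finds the strongest periodic component of a regularly sampled series with a naive discrete Fourier transform. The function name `dominant_period`, the synthetic 28-day cycle, and the noise level are all assumptions made for the example. For long series a fast FFT routine from a numerical library would be the practical choice.

```python
import cmath
import math
import random


def dominant_period(values, dt=1.0):
    """Return the period of the strongest non-zero frequency using a naive DFT."""
    n = len(values)
    mean = sum(values) / n
    centered = [v - mean for v in values]  # remove the constant (zero-frequency) part
    best_k, best_power = None, -1.0
    for k in range(1, n // 2 + 1):
        coeff = sum(c * cmath.exp(-2j * math.pi * k * t / n)
                    for t, c in enumerate(centered))
        power = abs(coeff) ** 2
        if power > best_power:
            best_k, best_power = k, power
    return n * dt / best_k


# Synthetic daily series: a 28-day cycle plus Gaussian noise, covering 10 cycles.
rng = random.Random(0)
series = [math.sin(2 * math.pi * t / 28) + 0.3 * rng.gauss(0.0, 1.0) for t in range(280)]
period = dominant_period(series, dt=1.0)
print(period)
```

The series here spans ten full cycles, in line with the advice to use a time series long enough to cover multiple cycles. With only one or two cycles the frequency resolution would be too coarse to pin down the period.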

FAQ 3: When modeling CD4 count trajectories, what is a key consideration for ensuring accurate parameter estimation?

Answer: A critical consideration is the strong negative correlation between CD4 cell count and viral load. Research on TB/HIV co-infected patients shows a "very strong negative relationship" between subject-specific changes in CD4 count and viral load over time [35]. Ignoring this relationship can lead to biased estimates.

- Solution: Use a joint modeling approach. A joint model that simultaneously estimates the parameters for both CD4 and viral load trajectories can account for their evolution and provide more accurate and interpretable results [35]. Clinical factors like adherence to antiretroviral therapy (ART), hemoglobin levels, and baseline biomarker values are often joint determinants for both outcomes and should be included as covariates [35].

FAQ 4: What is a major pitfall in disease biomarker discovery, and how can modern approaches overcome it?

Answer: The major pitfall is identifying biomarkers by comparing a single disease only to healthy controls. This method cannot distinguish between proteins specific to that disease and those common to many diseases [36].

- Solution:

- Pan-Disease Proteome Analysis: Cross-reference proteomes across many different diseases to identify profiles that are truly unique to a specific condition [36]. This approach has shown, for instance, that while liver-related diseases have distinct protein clusters, many potential cancer biomarkers are not disease-specific when viewed in a wider context [36].

- Multi-Omics Integration: Leverage data from genomics, proteomics, and metabolomics to build comprehensive biomarker signatures that reflect the complexity of diseases, leading to improved diagnostic accuracy [21] [37].

- AI-Driven Analytics: Use machine learning algorithms to automate the analysis of these complex, high-dimensional datasets and build predictive models of disease progression [37].

Quantitative Data for Experimental Design

Table 1: Joint Determinants of CD4 Cell Count and Viral Load in TB/HIV Co-infected Patients [35]

| Determinant | Category/Unit | Impact on Biomarkers |

|---|---|---|

| Treatment Adherence | Good & Fair | Positive determinant for both CD4 count increase and viral load suppression |

| Hemoglobin Level | ≥ 11 g/dl | Positive joint determinant |

| Baseline CD4 Cell Count | ≥ 200 cells/mm³ | Positive joint determinant |

| Baseline Viral Load | < 10,000 copies/mL | Positive joint determinant |

| White Blood Cell Count | Cells/mm³ | Joint determinant |

| Hematocrit | Percentage | Joint determinant |

| Monocyte Count | Cells/mm³ | Joint determinant |

Table 2: Key Biomarker Types and Clinical Validation Metrics [21]

| Biomarker Type | Key Detection Technologies | Critical Validation Metrics |

|---|---|---|

| Genetic Biomarkers | Whole Genome Sequencing, PCR, SNP arrays | Sensitivity, Specificity, Predictive Value |

| Proteomic Biomarkers | Mass Spectrometry, ELISA, Protein Arrays | Dynamic Range, Technical Reproducibility |

| Metabolomic Biomarkers | LC–MS/MS, GC–MS, NMR | Pathway Specificity, Temporal Stability |

| Digital Biomarkers | Wearable Devices, Mobile Applications | Signal-to-Noise Ratio, Clinical Correlation |

Experimental Protocols

Protocol 1: Estimating fOU Process Parameters using an LSTM Network

This protocol outlines a method for estimating parameters of the fractional Ornstein-Uhlenbeck (fOU) process, a task relevant for modeling mean-reverting biological phenomena with long-memory effects [38].

1. Data Generation and Pre-processing:

- Path Simulation: Use a library like stochastic-rs in Rust to generate multiple synthetic paths of the fOU process. For each path, randomly sample the Hurst exponent (H) and the mean-reversion parameter (theta) from predefined uniform distributions (e.g., H between 0.01 and 0.99) [38].

- Standardization: For each generated path, standardize the data by subtracting its mean and dividing by its standard deviation [38].

- Data Structuring: Pair each standardized path tensor with its corresponding theta value (the target for estimation). Split the data into training and validation sets.

2. LSTM Model Building (using a framework like candle):

- Architecture: Construct a model with:

- Initial linear and PReLU activation layers.

- Multiple LSTM layers.

- Layer normalization.

- A final multi-layer perceptron (MLP) for outputting the estimated parameter [38].

- Training: Use an optimizer like AdamW and a loss function such as Mean Squared Error (MSE) to train the model to map the input paths to the true theta values.

3. Troubleshooting:

- Overfitting: If the model performs well on training data but poorly on validation data, increase dropout rates or use L2 regularization within the MLP layers [38].

- Non-convergence: Adjust the learning rate, inspect gradient norms, and ensure your data is properly standardized.

Protocol 2: Designing a Pan-Disease Biomarker Validation Study

This protocol is for validating the specificity of a candidate biomarker across multiple disease states [36].

1. Cohort Selection:

- Assemble a cohort that includes healthy controls and patients with a wide range of diseases (aim for 50+ different conditions).

- Collect blood samples from all participants for plasma proteome analysis.

2. Proteomic Profiling:

- Use a high-throughput protein quantification platform (e.g., proximity extension assay) to profile a large number of proteins from each sample.

- Create a centralized, open-access database of all proteome measurements.

3. Data Analysis:

- Traditional Case-Control Analysis: Compare the proteome of your disease of interest against the healthy controls to identify differentially expressed proteins.

- Pan-Disease Analysis: Cross-reference the list of candidate proteins against the full pan-disease database. Filter out proteins that are also significantly elevated or reduced in unrelated diseases.

- Machine Learning: Train a classifier (e.g., a random forest or support vector machine) on the pan-disease proteomic data to identify a minimal set of proteins that can accurately distinguish your target disease from all others.

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions

| Reagent / Material | Function in Experiment |

|---|---|

| High-Throughput Protein Quantification Platform | Enables simultaneous measurement of thousands of proteins from a small blood sample for pan-disease biomarker discovery [36]. |

| Single-Cell Analysis Kit | Allows for deep characterization of tumor microenvironments and identification of rare cell populations that drive disease [37]. |

| Liquid Biopsy Assay | Provides a non-invasive method for real-time disease monitoring and detection via circulating tumor DNA (ctDNA) or exosomes [37]. |

| Multi-Omics Data Integration Software | Facilitates the fusion of genomic, transcriptomic, proteomic, and metabolomic data to build comprehensive biological models [21] [37]. |

| Longitudinal Cohort Data | Provides time-series data essential for modeling dynamic processes like CD4 count trajectories or hormonal fluctuations [35] [32]. |

Modeling Workflows and Pathways

Diagram Title: Parameter Estimation Workflow for Biomedical Data

Diagram Title: CD4 and Viral Load Dynamic Relationship

Estimation Methods and Implementation for OU Models in Practice

Frequently Asked Questions (FAQs)

1. Why is my estimated mean-reversion speed (θ) inaccurate even with large datasets?

The mean-reversion speed (θ) is notoriously difficult to estimate accurately. Even with over 10,000 observations, significant estimation errors can persist. This occurs because the estimator's standard deviation decreases very slowly with more data, at a rate proportional to 1/sqrt(N), where N is the number of observations [39]. For finite samples, most estimators have a positive bias, meaning they systematically overestimate the true mean-reversion speed, which is particularly problematic in pairs trading strategies where profitability depends on correct θ values [39].

2. My AR(1) regression produced a negative 'a' coefficient. Can I still calculate the OU parameters?

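Mechanically, yes: use the absolute value of the coefficient a when applying the parameter conversion formulas. The formulas for θ (mean-reversion speed) and σ (volatility) both require ln(a), which is undefined for negative values, so ln(|a|) is used instead [40]. The half-life of mean reversion, measured in sampling intervals, is likewise calculated as -ln(2)/ln(|a|) [40]. Treat the result with caution, however: an exactly discretized OU process has a = e^(-θΔt), which lies strictly between 0 and 1, so a negative coefficient suggests that the data may not be well described by an OU model.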

3. When should I use AR(1) versus Maximum Likelihood estimation for my OU process?

The choice depends on your specific requirements. The AR(1) approach with ordinary least squares (OLS) is simpler and faster to implement but may produce biased estimates, especially for small samples [39] [40]. Maximum Likelihood Estimation (MLE) generally produces more accurate results but is computationally more intensive [39] [41]. For most applications with sufficient data, MLE is preferred, though the difference may be minimal when the lag-1 autocorrelation is close to 1 [39].

4. What does a "singular fit" warning indicate in my estimation output?

A singular fit warning typically indicates perfect multicollinearity in your parameter estimates, often visible as correlations of exactly -1 or 1 between random effects in your variance-covariance matrix [42]. This suggests your model may be overparameterized, or there may be an underlying issue with your data that makes certain parameters unidentifiable. The solution is often to simplify your model by removing unnecessary variables [42].

5. How can I assess the reliability of my parameter estimates?

The Cramér-Rao Lower Bound (CRLB) provides the minimum theoretical variance attainable by an unbiased estimator, offering a benchmark for estimator performance [41]. For practical assessment, Monte Carlo analysis can be used by generating multiple simulated datasets and evaluating the bias and variance of your estimates across these simulations [39] [41]. This approach is particularly valuable for quantifying estimator performance when theoretical analysis is complex [41].
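The Monte Carlo assessment described in the last answer can be sketched as follows. This is a minimal illustration under stated assumptions: paths are simulated with the exact OU transition starting at the long-term mean, θ is estimated with the AR(1)/OLS conversion θ = -ln(a)/Δt, and the helper names (`simulate_ou`, `theta_hat_ar1`, `monte_carlo_theta`) and parameter values are hypothetical.

```python
import math
import random


def simulate_ou(theta, mu, sigma, dt, n, rng):
    """Exact-transition OU path with n steps, started at the long-term mean."""
    decay = math.exp(-theta * dt)
    sd = sigma * math.sqrt((1 - decay**2) / (2 * theta))
    x = [mu]
    for _ in range(n):
        x.append(x[-1] * decay + mu * (1 - decay) + sd * rng.gauss(0, 1))
    return x


def theta_hat_ar1(x, dt):
    """OLS slope of x_i on x_(i-1) (with intercept), converted via theta = -ln(a)/dt."""
    lag, cur = x[:-1], x[1:]
    m_lag, m_cur = sum(lag) / len(lag), sum(cur) / len(cur)
    sxy = sum((u - m_lag) * (v - m_cur) for u, v in zip(lag, cur))
    sxx = sum((u - m_lag) ** 2 for u in lag)
    a = sxy / sxx
    return -math.log(a) / dt if a > 0 else None  # skip unusable fits


def monte_carlo_theta(theta, mu, sigma, dt, n, n_reps, seed=0):
    """Return (bias, standard deviation) of the theta estimator across replications."""
    rng = random.Random(seed)
    estimates = []
    for _ in range(n_reps):
        est = theta_hat_ar1(simulate_ou(theta, mu, sigma, dt, n, rng), dt)
        if est is not None:
            estimates.append(est)
    mean_est = sum(estimates) / len(estimates)
    var_est = sum((e - mean_est) ** 2 for e in estimates) / (len(estimates) - 1)
    return mean_est - theta, math.sqrt(var_est)


bias, sd = monte_carlo_theta(theta=1.0, mu=0.0, sigma=1.0, dt=0.1, n=200, n_reps=300)
print(f"bias={bias:.3f}, sd={sd:.3f}")
```

With a short observation span such as this one, the average estimate typically lies above the true θ, which illustrates the positive finite-sample bias discussed in the first question.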

Troubleshooting Guides

Problem: High Estimation Error in Mean-Reversion Speed (θ)

Symptoms:

- Large confidence intervals for θ estimates

- Inconsistent θ values across different data periods

- Half-life calculations that don't match observed mean-reversion behavior

Diagnosis Steps:

- Check Sample Size: Verify you have sufficient data points. For θ estimation, even 10,000+ observations may be needed for reasonable accuracy [39].

- Analyze Estimator Bias: Use the bias approximation formula Bias ≈ -(1 + 3θΔt)/N, where Δt is the time increment and N is the sample size. This quantifies expected bias in your estimates [39].

- Compare Estimation Methods: Implement multiple approaches (AR(1), MLE, Moment Estimation) to identify consistent results across methods [39].

Solutions:

- Increase Data Quantity: Use longer time series where possible

- Apply Bias Correction: Use the adjusted moment estimator θ_adj = (1 - â)/Δt - (1 + 3θΔt)/(NΔt) to reduce positive bias [39]

- Try Indirect Inference: Consider simulation-based indirect inference estimation, which is unbiased for finite samples though computationally more intensive [39]

Problem: AR(1) Model Fitting Issues

Symptoms:

- Negative autoregressive coefficients preventing parameter calculation

- Poor model fit with low R-squared values

- Residuals that don't satisfy normality assumptions

Diagnosis Steps:

- Verify OU Process Assumptions: Confirm your data genuinely follows a mean-reverting process rather than other time series patterns

- Check Coefficient Significance: Ensure the autoregressive coefficient is statistically significant

- Test Alternative ARIMA Models: Your data might follow ARIMA(p,d,q) with p≠1; experiment with different orders [40]

Solutions:

- Use Absolute Values: For negative 'a' coefficients, use |a| in OU parameter conversion formulas [40]

- Incorporate Known Mean: If the long-term mean is known (e.g., zero in spread trading), use the enhanced AR(1) estimator â = [(1/N) × Σ(x_i × x_(i-1))] / [(1/N) × Σ(x_(i-1)²)] [39]

- Try Exact Maximum Likelihood: Implement the direct MLE approach using the normal conditional density f(x_i|x_(i-1)) = 1/√(2πσ̃²) × exp(-(x_i - x_(i-1)e^(-θΔt) - μ(1-e^(-θΔt)))² / (2σ̃²)), where σ̃² = σ²(1-e^(-2θΔt))/(2θ) is the conditional variance of one transition [39]

Problem: Model Convergence Failures

Symptoms:

- Optimization algorithms failing to converge

- "Singular fit" or "non-convergence" warning messages

- Highly sensitive parameter estimates to initial values

Diagnosis Steps:

- Check Variance-Covariance Matrix: Look for near-zero variances or correlations at exactly ±1 [42]

- Examine Optimization Settings: Review optimizer tolerances, iteration limits, and algorithm choices [42]

- Test for Multicollinearity: Check if predictor variables are highly correlated [43]

Solutions:

- Simplify Model Structure: Reduce random effects or remove correlated parameters [42]

- Adjust Optimizer Settings: Increase iteration limits, change optimization algorithms, or adjust tolerance settings [42]

- Scale Input Variables: Rescale independent variables by 2 times their standard deviation to improve numerical stability [43]

- Try Alternative Start Values: Use method-of-moments estimates as starting points for MLE optimization

Estimation Methods Comparison

Table 1: Comparison of OU Parameter Estimation Methods

| Method | Key Formula/Approach | Advantages | Limitations | Best Use Cases |

|---|---|---|---|---|

| AR(1) with OLS | x_i = a×x_(i-1) + b + ε with θ = -ln(a)/Δt, μ = b/(1-a), σ = stdev(ε)×√(-2ln(a)/(Δt(1-a²))) [39] [40] | Simple implementation; Fast computation [39] | Biased for small samples; Can produce negative coefficients [39] [40] | Initial analysis; Large datasets; Quick prototyping |

| Maximum Likelihood | Maximize L(θ,μ,σ) = Σ log(f(x_i\|x_(i-1))) where f(x_i\|x_(i-1)) is normal density with mean x_(i-1)e^(-θΔt) + μ(1-e^(-θΔt)) and variance σ²(1-e^(-2θΔt))/(2θ) [39] | More accurate; Statistically efficient [39] [41] | Computationally intensive; Sensitive to initial values [39] | Production models; Final analysis; Smaller datasets |

| Moment Estimation | θ = (1 - â)/Δt - (1 + 3θΔt)/(NΔt) where â is from AR(1) [39] | Reduces small-sample bias [39] | More complex implementation; Still some finite-sample bias [39] | Small to medium datasets; Bias-sensitive applications |

Table 2: Performance Metrics for OU Parameter Estimators

| Estimation Context | Bias Characteristics | Variance Convergence | Recommended Sample Size |

|---|---|---|---|

| Fixed time span (T), increasing frequency | Persistent bias even with infinite observations [39] | Limited improvement with higher frequency [39] | Focus on longer time spans rather than higher frequency |

| Fixed frequency (Δt), increasing time span | Bias decreases with longer time series [39] | Standard deviation decreases at rate 1/sqrt(N) [39] | 250+ observations for μ and σ; 10,000+ for accurate θ estimation [39] |

| Known long-term mean (μ) | Reduced bias compared to unknown μ case [39] | Faster convergence for θ and σ [39] | 100+ observations for reasonable estimates |

Experimental Protocols

Protocol 1: AR(1) Estimation with Bias Assessment

Purpose: Estimate OU parameters using AR(1) approach and evaluate estimator bias.

Materials Needed:

- Time series data (minimum 250 observations for basic estimation)

- Statistical software with linear regression capabilities

- Numerical computation tool (e.g., Python, R, MATLAB)

Procedure:

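- Prepare your time series data {x_0, x_1, ..., x_N} with constant time interval Δt

- Create lagged variables: y = {x_1, x_2, ..., x_N} and x_lag = {x_0, x_1, ..., x_(N-1)}

- Perform linear regression with an intercept: y = a × x_lag + b

- Calculate OU parameters: θ = -ln(a)/Δt, μ = b/(1-a), σ = stdev(ε)×√(-2ln(a)/(Δt(1-a²))) [39] [40]

- Estimate bias using: Bias ≈ -(1 + 3θΔt)/N [39]

- Compute bias-adjusted θ: because Bias is dimensionless, divide it by Δt to express it in the units of θ, giving θ_adj = θ + Bias/Δt = θ - (1 + 3θΔt)/(NΔt). This subtracts the same correction term as the adjusted moment estimator and so offsets the positive bias in θ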

Validation:

- Compare estimates across different time periods

- Check half-life (ln(2)/θ) against observed mean-reversion behavior

- Verify residuals approximate white noise
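The AR(1) procedure above can be sketched in plain Python as follows. This is an illustration under assumptions, not a reference implementation: the function name `fit_ou_ar1` is hypothetical, the regression includes an intercept, the conversion formulas are the ones listed in the estimation methods comparison table, and the sketch raises an error for coefficients outside (0, 1) instead of applying the absolute-value workaround.

```python
import math
import random


def fit_ou_ar1(x, dt):
    """Estimate OU parameters from a regularly sampled series via OLS on
    x_i = a * x_(i-1) + b + eps, then convert (a, b, stdev(eps)) to (theta, mu, sigma)."""
    lag, cur = x[:-1], x[1:]
    n = len(lag)
    m_lag, m_cur = sum(lag) / n, sum(cur) / n
    sxx = sum((u - m_lag) ** 2 for u in lag)
    sxy = sum((u - m_lag) * (v - m_cur) for u, v in zip(lag, cur))
    a = sxy / sxx
    b = m_cur - a * m_lag
    if not 0.0 < a < 1.0:
        raise ValueError(f"AR(1) coefficient {a:.4f} is outside (0, 1)")
    resid = [v - (a * u + b) for u, v in zip(lag, cur)]
    resid_sd = math.sqrt(sum(r * r for r in resid) / (n - 2))
    theta = -math.log(a) / dt
    mu = b / (1.0 - a)
    sigma = resid_sd * math.sqrt(-2.0 * math.log(a) / (dt * (1.0 - a * a)))
    return {"theta": theta, "mu": mu, "sigma": sigma, "half_life": math.log(2) / theta}


# Demo on a simulated path with known (assumed) parameters.
rng = random.Random(7)
true_theta, true_mu, true_sigma, dt = 1.5, 2.0, 0.4, 0.05
decay = math.exp(-true_theta * dt)
step_sd = true_sigma * math.sqrt((1 - decay**2) / (2 * true_theta))
x = [true_mu]
for _ in range(20000):
    x.append(x[-1] * decay + true_mu * (1 - decay) + step_sd * rng.gauss(0, 1))

fit = fit_ou_ar1(x, dt)
print(fit)
```

Running the same function on successive sub-periods of a real series gives a simple version of the "compare estimates across different time periods" validation step.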

Protocol 2: Exact Maximum Likelihood Estimation

Purpose: Implement exact MLE for OU parameters using the conditional density formulation.

Materials Needed:

- Time series data with constant time intervals

- Optimization software (e.g., optim in R, fmincon in MATLAB)

- Numerical libraries for normal distribution calculations

Procedure:

- Define the log-likelihood function based on the exact transition density: L(θ,μ,σ) = Σ log[(2πσ̃²)^(-1/2) × exp(-(x_i - x_(i-1)e^(-θΔt) - μ(1-e^(-θΔt)))² / (2σ̃²))], where σ̃² = σ²(1-e^(-2θΔt))/(2θ) is the conditional variance of one transition [39]

- Obtain initial parameter estimates using AR(1) method

- Use optimization algorithm to maximize L(θ,μ,σ) with respect to parameters

- Implement multiple restarts with different initial values to avoid local optima

- Compute Hessian matrix at optimum to estimate parameter standard errors

Validation:

- Compare log-likelihood values across different optimization runs

- Check gradient near optimum is close to zero

- Verify that residuals (standardized innovations) approximate i.i.d. standard normal
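As a starting point for the first procedure step, the sketch below writes the exact negative log-likelihood as a plain Python function. The name `ou_neg_log_likelihood` and the parameter ordering (θ, μ, σ) are assumptions for illustration. The function is written so that it can be handed to a general-purpose minimizer, for example `scipy.optimize.minimize` with the L-BFGS-B method and positivity bounds on θ and σ, although the optimizer call itself is not shown here.

```python
import math
import random


def ou_neg_log_likelihood(params, x, dt):
    """Exact negative log-likelihood of a regularly sampled OU path.

    params = (theta, mu, sigma); returns +inf outside the valid parameter region.
    """
    theta, mu, sigma = params
    if theta <= 0 or sigma <= 0:
        return math.inf
    decay = math.exp(-theta * dt)
    cond_var = sigma**2 * (1 - decay**2) / (2 * theta)  # variance of one transition
    nll = 0.0
    for prev, cur in zip(x[:-1], x[1:]):
        resid = cur - prev * decay - mu * (1 - decay)
        nll += 0.5 * math.log(2 * math.pi * cond_var) + resid**2 / (2 * cond_var)
    return nll


# Simulate a path with known (assumed) parameters and compare likelihood values.
rng = random.Random(3)
theta0, mu0, sigma0, dt = 1.5, 2.0, 0.4, 0.05
decay0 = math.exp(-theta0 * dt)
sd0 = sigma0 * math.sqrt((1 - decay0**2) / (2 * theta0))
x = [mu0]
for _ in range(5000):
    x.append(x[-1] * decay0 + mu0 * (1 - decay0) + sd0 * rng.gauss(0, 1))

nll_true = ou_neg_log_likelihood((theta0, mu0, sigma0), x, dt)
nll_bad_theta = ou_neg_log_likelihood((4.0, mu0, sigma0), x, dt)
nll_bad_sigma = ou_neg_log_likelihood((theta0, mu0, 0.8), x, dt)
print(nll_true, nll_bad_theta, nll_bad_sigma)
```

Returning infinity for non-positive θ or σ is one simple way to keep an unconstrained optimizer out of the invalid region. With a bounded method, the bounds do this job instead.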

Research Reagent Solutions

Table 3: Essential Computational Tools for OU Parameter Estimation

| Tool Category | Specific Implementation | Function | Key Features |

|---|---|---|---|

| Optimization Algorithms | L-BFGS-B, Nelder-Mead, Gradient Descent [39] [44] | Numerical optimization for MLE | Handles parameter constraints; Efficient for medium-scale problems |

| Statistical Libraries | Python: statsmodels, R: arima(), MATLAB: econometric toolbox | AR(1) estimation and diagnostic testing | Built-in model diagnostics; Robust standard errors |

| Monte Carlo Simulation | Custom implementation using exact simulation method [39] | Performance assessment; Bias quantification | x_i = x_(i-1)e^(-θΔt) + μ(1-e^(-θΔt)) + σ√((1-e^(-2θΔt))/(2θ)) × N(0,1) [39] |

| Bias Correction | Adjusted moment estimator [39] | Reduce small-sample bias in θ estimates | θ_adj = (1 - â)/Δt - (1 + 3θΔt)/(NΔt) |

Methodological Workflows

Frequently Asked Questions (FAQs)

Q1: What constitutes an unbalanced factorial design, and why is it a problem for my analysis?

An unbalanced factorial design occurs when you have unequal numbers of observations or replicates across the different treatment combinations of your factors. There are two primary ways this happens [45]:

- Varying Replications: The number of replications for each treatment combination varies. This can result from missing observations that are "missing at random," such as a failed measurement.

- Missing Combinations: Some treatment combinations are entirely absent from the dataset.

The core problem with unbalanced designs is that they are non-orthogonal. This means the factors are correlated, and the standard (Type I) sums of squares for main effects will depend on the order in which you enter the factors into your statistical model. This can lead to misleading conclusions if not handled properly [45].

Q2: My experiment has missing data. When can I still proceed with a factorial ANOVA?

You can often proceed with a factorial ANOVA if the missing observations are Missing At Random (MAR) [45]. For example, if a data point is lost due to an equipment failure that is unrelated to the experimental treatment, it is likely MAR. However, if the treatment itself causes the missing data (e.g., a drug combination is toxic and leads to patient dropouts), the data is not missing at random, and a standard ANOVA would be biased [45].

Q3: What are Type II and Type III sums of squares, and which should I use for my unbalanced design?

With unbalanced designs, it is common to use adjusted sums of squares that are not affected by the order of factors in the model [45].

- Type II SS (Higher-level terms omitted): The sum of squares for a main effect is calculated after adjusting for other main effects, but not for the interaction terms. This is often the recommended approach when interactions are not significant or when testing main effects in the presence of an interaction [45].

- Type III SS (Higher-level terms included): The sum of squares for an effect is calculated after adjusting for all other effects in the model, including interactions.

There is ongoing debate among statisticians regarding the "right" type. A common recommendation is to use Type II sums of squares, as they better align with the principles of hypothesis testing for main effects when interactions are present [45]. However, an alternate approach is to present sequential analyses that best match the specific objectives of your research question [45].

Q4: How does handling unbalanced data relate to parameter estimation in Ornstein-Uhlenbeck models?

The Ornstein-Uhlenbeck (OU) process is a foundational stochastic differential equation used to model phenomena like stock prices and biological processes [46]. Accurate parameter estimation is crucial for these models. While traditional statistical methods like Maximum Likelihood Estimation (MLE) are commonly used, recent research explores deep learning techniques like Recurrent Neural Networks (RNNs) for potentially more precise estimators [46]. Properly handling unbalanced data in the experimental design phase ensures that the input data for these estimation techniques (whether MLE or RNN) is robust and minimizes bias, leading to more reliable and generalizable parameter estimates in your OU models.

Troubleshooting Guide

Problem: Inconsistent Main Effect Results in ANOVA

Symptoms: The significance of a main effect changes dramatically when the order of factors is changed in your statistical software using Type I (sequential) sums of squares.

Diagnosis: This is a classic symptom of a non-orthogonal, unbalanced design. The correlation between factors means their effects cannot be independently assessed.

Solution: Use adjusted sums of squares.

- Switch to Type II or Type III Sums of Squares in your statistical software's ANOVA function.

- For Type II SS: Ensure the model calculates each main effect after accounting for the other main effect(s).

- For Type III SS: Ensure the model calculates each effect after accounting for all others. Be aware that this approach is debated, particularly for designs with interactions [45].

- Report the type of sums of squares used in your methodology.

Problem: Entire Treatment Combination is Missing

Diagnosis: Some combinations of your factors have zero replicates.

Solution: This poses a greater challenge. The most straightforward solution is to:

- Ignore the factorial structure and analyze the data using a one-factor ANOVA.

- Treat each existing combination as a separate, distinct treatment group [45].

- Acknowledge the limitation in your research conclusions, as you cannot estimate the missing interaction or main effect components.

Experimental Protocols

Protocol 1: Handling Unbalanced Data with Adjusted Sums of Squares

Objective: To correctly perform a factorial ANOVA on an unbalanced dataset using adjusted sums of squares.

Materials: Dataset with unequal replication, statistical software (e.g., R).

Methodology:

- Data Preparation: Organize your data with columns for the response variable and each factorial variable.

- Exploratory Analysis: Tabulate your data to confirm the unbalanced nature and check for missing combinations.

- Model Fitting - Type II SS:

- Fit a full model including all main effects and interactions.

- To obtain the adjusted SS for factor A, fit a model where factor A is entered last (e.g., model <- aov(Response ~ B + A)).

- To obtain the adjusted SS for factor B, fit a model where factor B is entered last (e.g., model <- aov(Response ~ A + B)).

- The interaction sum of squares (SSAB) comes from the full model (A + B + A*B) [45].

- Composite Table: Construct a final ANOVA table using the sums of squares from the models above, along with the residual sums of squares.
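The "entered last" logic of this protocol can be sketched outside R as a comparison of residual sums of squares between nested least-squares fits. The Python/NumPy example below is a minimal illustration, not the cited procedure: the helper names (`dummies`, `rss`) and the small 2x2 dataset are hypothetical, and treatment (dummy) coding is assumed.

```python
import numpy as np

def dummies(labels):
    """Treatment-coded indicator columns; the first level is the baseline."""
    levels = sorted(set(labels))
    return np.array([[float(v == lev) for lev in levels[1:]] for v in labels])

def rss(y, *blocks):
    """Residual sum of squares from an OLS fit of y on an intercept plus blocks."""
    X = np.column_stack([np.ones(len(y)), *blocks])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    return float(resid @ resid)

# Hypothetical unbalanced 2x2 layout with cell sizes 3, 1, 2 and 4.
A = ["a1"] * 4 + ["a2"] * 6
B = ["b1"] * 3 + ["b2"] * 1 + ["b1"] * 2 + ["b2"] * 4
y = np.array([4.1, 3.9, 4.4, 6.0, 5.2, 5.0, 7.1, 6.8, 7.4, 7.0])

XA, XB = dummies(A), dummies(B)
# Interaction columns: every product of an A indicator with a B indicator.
XAB = np.hstack([XA[:, [i]] * XB for i in range(XA.shape[1])])

rss_A, rss_B = rss(y, XA), rss(y, XB)
rss_main = rss(y, XA, XB)        # additive model A + B
rss_full = rss(y, XA, XB, XAB)   # full model A + B + A:B

ss_A_first = rss(y) - rss_A      # sequential SS for A when A is entered first
ss_A_adj = rss_B - rss_main      # SS for A entered last (adjusted for B)
ss_B_adj = rss_A - rss_main      # SS for B entered last (adjusted for A)
ss_AB = rss_main - rss_full      # interaction SS from the full model

print(f"A first: {ss_A_first:.3f}  A adjusted: {ss_A_adj:.3f}")
print(f"B adjusted: {ss_B_adj:.3f}  A:B: {ss_AB:.3f}  residual: {rss_full:.3f}")
```

Because the cell sizes differ, the sequential and adjusted sums of squares for factor A do not agree, which is the order dependence described in the troubleshooting section.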

Protocol 2: Parameter Estimation for Ornstein-Uhlenbeck Processes

Objective: To compare traditional statistical and modern deep learning techniques for estimating OU process parameters.

Materials: Simulated or real time-series data that can be described by an OU process, computing environment (e.g., Python with TensorFlow/PyTorch).

Methodology:

- Data Simulation: Simulate data from a known OU process for method validation. The OU process is defined by the stochastic differential equation:

dX(t) = θ(μ - X(t))dt + σdW(t), where θ is the mean reversion rate, μ is the long-term mean, σ is the volatility, and W(t) is a standard Wiener process.

- Traditional Estimation (MLE):

- Implement Maximum Likelihood Estimation to derive parameter estimates (θ, μ, σ).

- Calculate the confidence intervals for the estimates.

- Deep Learning Estimation (RNN):

- Design a Recurrent Neural Network (e.g., LSTM) architecture.

- Train the network on the simulated data, using the known parameters as labels.

- Validate the trained model on a held-out test set.

- Comparison: Compare the accuracy and computational expense of the MLE and RNN estimators against the known true parameter values [46].
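The simulation and MLE steps of this protocol can be sketched as follows. This is a minimal illustration under stated assumptions: observations are equally spaced, the path is simulated with the exact Gaussian transition of the OU process, and the estimator is the conditional MLE obtained from the equivalent AR(1) regression. The function names are hypothetical; confidence intervals and the RNN estimator are not covered here.

```python
import math
import random

def simulate_ou(theta, mu, sigma, x0, dt, n_steps, seed=0):
    """Draw an OU path on a regular grid using the exact Gaussian transition."""
    rng = random.Random(seed)
    b = math.exp(-theta * dt)
    step_sd = sigma * math.sqrt((1.0 - b * b) / (2.0 * theta))
    path = [x0]
    for _ in range(n_steps):
        path.append(mu + (path[-1] - mu) * b + step_sd * rng.gauss(0.0, 1.0))
    return path

def fit_ou_mle(path, dt):
    """Conditional MLE via the AR(1) regression x[t+1] = a + b * x[t] + e."""
    x, y = path[:-1], path[1:]
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    b = sxy / sxx
    if not 0.0 < b < 1.0:
        raise ValueError("AR coefficient outside (0, 1): no mean reversion detected")
    a = my - b * mx
    resid_var = sum((yi - a - b * xi) ** 2 for xi, yi in zip(x, y)) / n
    theta = -math.log(b) / dt                       # mean reversion rate
    mu = a / (1.0 - b)                              # long-term mean
    sigma = math.sqrt(resid_var * 2.0 * theta / (1.0 - b * b))  # volatility
    return theta, mu, sigma

# Validate the estimator against known parameters (theta, mu, sigma).
true_params = (1.0, 2.0, 0.5)
path = simulate_ou(*true_params, x0=2.0, dt=0.1, n_steps=20000, seed=42)
theta_hat, mu_hat, sigma_hat = fit_ou_mle(path, dt=0.1)
print(f"theta={theta_hat:.3f}  mu={mu_hat:.3f}  sigma={sigma_hat:.3f}")
```

Rerunning the same check with a much shorter path (for example, a few dozen steps) is a simple way to see the small-sample bias in the mean reversion rate noted in Table 2.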

Data Presentation

Table 1: Comparison of ANOVA Sums of Squares Types for Unbalanced Designs

| Type | Calculation Method | Adjusts For | Recommended Use Case |
|---|---|---|---|
| Type I (Sequential) | Terms are added to the model in a specified order. | Only previous terms in the sequence. | Balanced designs; a priori ordered hypotheses. |
| Type II (HTO) | Each term is fitted last in a model containing other terms at its same level. | Other main effects (but not interactions). | Testing main effects in the presence of non-significant interactions [45]. |
| Type III (HTI) | Each term is fitted last in the model containing all other terms. | All other effects, including higher-level interactions. | When interactions are significant (subject to debate) [45]. |

Table 2: Comparison of Parameter Estimation Techniques for the Ornstein-Uhlenbeck Process

| Technique | Underlying Principle | Key Advantages | Key Limitations | Computational Expense |
|---|---|---|---|---|
| Maximum Likelihood Estimation (MLE) | Finds parameters that maximize the likelihood of observing the data. | Well-established theory, statistical consistency. | Can be biased for small samples; assumes model correctness. | Low to Moderate [46] |
| Recurrent Neural Network (RNN) | A deep learning model trained to learn the mapping from time-series data to parameters. | High potential accuracy; can capture complex non-linear patterns. | "Black box" nature; requires large amounts of data for training. | High (especially during training) [46] |

Workflow Visualization

Workflow for Data Analysis and OU Parameter Estimation

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Computational Experiments

| Item | Function | Example/Tool |
|---|---|---|
| Statistical Software | To perform complex statistical analyses, including ANOVA with adjusted sums of squares. | R, Python (with statsmodels, scipy) |
| Deep Learning Framework | To build and train models like RNNs for advanced parameter estimation. | TensorFlow, PyTorch |
| Data Simulation Package | To generate synthetic data from known models (e.g., OU process) for method validation. | R (simecol), Python (custom SDE solvers) |
| Color Contrast Checker | To ensure accessibility and readability of all visualizations, in line with WCAG guidelines [47] [48]. | Aditus Button Contrast Checker [49], Coolors Contrast Checker [50] |

Frequently Asked Questions (FAQs)

Q1: What are the primary strengths and weaknesses of Newton-Raphson, Expectation-Maximization (EM), and Bayesian Optimization for parameter estimation?

The table below summarizes the core characteristics of each algorithm to help you select the appropriate one for your experiment.

| Algorithm | Best For | Convergence | Key Requirements | Common Pitfalls |
|---|---|---|---|---|
| Newton-Raphson [51] [52] | Finding roots of real-valued functions (e.g., solving equilibrium points). | Quadratic convergence when near a root. | Function must be differentiable; requires the first derivative. | Divergence with poor initial guess; fails if derivative is zero at root [51]. |
| Expectation-Maximization (EM) [53] | Maximum likelihood estimation with unobserved latent variables or noisy data. | Linear convergence. | A model with latent variables that would simplify the likelihood if observed. | Convergence can be slow; may find local, not global, maxima [18] [53]. |
| Bayesian Optimization [54] [55] [56] | Optimizing expensive, black-box functions (e.g., hyperparameter tuning). | Global search, not dependent on local convergence. | A well-defined search space; a costly-to-evaluate objective function. | Performance degrades in high dimensions (>50 variables); has its own computational overhead [56]. |

Q2: The Newton-Raphson method is failing to converge in my Ornstein-Uhlenbeck parameter estimation. What could be wrong?

This is a common issue. The table below lists specific problems and evidence-based solutions.

| Problem | Diagnostic Check | Solution & Reference |
|---|---|---|
| Poor Initial Guess | The value of the objective function, \( f(x_0) \), is very far from zero. | Start with a guess close to the root. Perform a grid search to find where \( f(x) \) changes sign [52]. |
| Ill-Behaved Derivative | The derivative \( f'(x) \) is close to or equal to zero in the neighborhood of your guess. | Use a robust root-finding method or implement successive over-relaxation to stabilize the algorithm [51]. |
| Model/Noise Mismatch | The optimization is highly sensitive to tiny changes in data. | Reconsider the noise model. For O-U processes with measurement noise, an EM algorithm is often more robust than direct Newton-Raphson application [18]. |
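The first two remedies in the table can be combined in a short sketch: a grid scan locates a sign change, and a Newton iteration is then kept inside that bracket, falling back to bisection whenever a step would leave it or the derivative vanishes. This is one possible "robust root-finding method", not the specific scheme of the cited references; the function names and the arctangent test function (a standard case where plain Newton-Raphson diverges from a distant start) are illustrative assumptions.

```python
import math

def find_sign_change(f, lo, hi, n_grid=50):
    """Scan a grid and return the first sub-interval on which f changes sign."""
    step = (hi - lo) / n_grid
    left, f_left = lo, f(lo)
    for i in range(1, n_grid + 1):
        right = lo + i * step
        f_right = f(right)
        if f_left * f_right <= 0.0:
            return left, right
        left, f_left = right, f_right
    raise ValueError("no sign change found on the grid")

def safeguarded_newton(f, df, lo, hi, tol=1e-10, max_iter=100):
    """Newton-Raphson restricted to a bracket [lo, hi], with bisection fallback."""
    f_lo, f_hi = f(lo), f(hi)
    if f_lo == 0.0:
        return lo
    if f_hi == 0.0:
        return hi
    if f_lo * f_hi > 0.0:
        raise ValueError("root is not bracketed by [lo, hi]")
    x = 0.5 * (lo + hi)
    for _ in range(max_iter):
        fx = f(x)
        if abs(fx) < tol:
            return x
        # Shrink the bracket so that it always contains a sign change.
        if f_lo * fx < 0.0:
            hi = x
        else:
            lo, f_lo = x, fx
        slope = df(x)
        x_new = x - fx / slope if slope != 0.0 else None
        # Reject Newton steps that leave the bracket; bisect instead.
        if x_new is None or not lo < x_new < hi:
            x_new = 0.5 * (lo + hi)
        x = x_new
    raise RuntimeError("no convergence within max_iter iterations")

f = math.atan
df = lambda x: 1.0 / (1.0 + x * x)

root_from_grid = safeguarded_newton(f, df, *find_sign_change(f, -3.0, 10.0))
root_wide_bracket = safeguarded_newton(f, df, -3.0, 10.0)
print(root_from_grid, root_wide_bracket)
```

In an OU setting, `f` would typically be a score equation (the derivative of the log-likelihood with respect to one parameter) and `df` its derivative.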

Q3: How do I choose an acquisition function for Bayesian Optimization of my drug response model?

Your choice balances exploration (testing uncertain regions) and exploitation (refining known good regions). The table compares common functions.

| Acquisition Function | Mechanism | Best Use Case |
|---|---|---|
| Probability of Improvement (PI) [54] [55] | Selects the point with the highest probability of improving over the current best value. | Quick refinement when you have a good initial best guess and want to exploit it. Use a moderate \( \epsilon \) to encourage some exploration [54]. |
| Expected Improvement (EI) [54] [56] | Selects the point offering the highest expected improvement, considering both probability and magnitude. | General-purpose choice; better than PI when the potential magnitude of improvement is important [54]. |
| Upper Confidence Bound (UCB) [55] | Selects points by maximizing a weighted sum of the predicted mean and uncertainty. | When you want explicit control over the exploration/exploitation trade-off via a tunable weight parameter [55]. |
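For a Gaussian surrogate prediction, the three acquisition functions in the table have simple closed forms. The sketch below writes them for a maximization problem; the function names, the parameter names (`eps` for the exploration margin, `kappa` for the UCB weight), and the two candidate points are illustrative assumptions, not values from the cited sources.

```python
import math

def _norm_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def _norm_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def probability_of_improvement(mean, sd, best, eps=0.0):
    """P(f(x) > best + eps) under a Gaussian prediction N(mean, sd^2)."""
    if sd <= 0.0:
        return 1.0 if mean > best + eps else 0.0
    return _norm_cdf((mean - best - eps) / sd)

def expected_improvement(mean, sd, best, eps=0.0):
    """E[max(f(x) - best - eps, 0)] under a Gaussian prediction."""
    gain = mean - best - eps
    if sd <= 0.0:
        return max(gain, 0.0)
    z = gain / sd
    return gain * _norm_cdf(z) + sd * _norm_pdf(z)

def upper_confidence_bound(mean, sd, kappa=2.0):
    """Predicted mean plus kappa times the predictive standard deviation."""
    return mean + kappa * sd

# Two hypothetical candidates, given as (predicted mean, predicted sd).
best_so_far = 1.0
candidates = {"safe": (1.05, 0.05), "risky": (0.90, 0.50)}
for name, (m, s) in candidates.items():
    print(name,
          round(probability_of_improvement(m, s, best_so_far), 3),
          round(expected_improvement(m, s, best_so_far), 3),
          round(upper_confidence_bound(m, s), 3))
```

With these two candidates, PI ranks the low-variance "safe" point first, while EI and UCB rank the uncertain "risky" point first, which illustrates the exploitation and exploration tendencies summarized in the table.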

Q4: My EM algorithm for single-particle tracking is computationally expensive. How can I speed it up?

The computational burden often comes from the E-step. Consider the following method.

| Method | Description | Application Context |
|---|---|---|
| Unscented-EM (U-EM) [53] | Replaces particle-based filters and smoothers with an Unscented Kalman Filter (UKF) and Unscented RTS Smoother. This approximates the state distribution, drastically reducing cost. | Highly effective for SPT data from sCMOS cameras with an Ornstein-Uhlenbeck motion model. It achieves performance similar to SMC-EM with significantly improved speed [53]. |

Experimental Protocols

Protocol 1: Parameter Estimation for an Ornstein-Uhlenbeck Process with Measurement Noise using EM

This protocol is adapted from methods used in single-particle tracking to handle measurement noise [53].

1. Problem Formulation

- Motion Model: The O-U process for a particle's position \( x_t \) is given by: \( x_{t+1} = B x_t + w_t \), where \( B = \exp(-\Delta t / \tau) \) and \( w_t \sim \mathcal{N}(0, A(1-B^2)) \) [18]. Here \( \Delta t \) is the sampling interval, \( \tau \) is the relaxation time, and \( A \) is the stationary variance of the process.

- Observation Model: The measured position \( y_t \) is corrupted by noise: \( y_t \sim \mathcal{N}(x_t, \sigma_N^2 + \sigma_M^2 x_t^2) \), where \( \sigma_N^2 \) is the white noise variance and \( \sigma_M^2 \) is the multiplicative noise variance [18].
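For readers who want to connect this discrete-time form to the continuous-time equation dX(t) = θ(μ - X(t))dt + σdW(t), the mapping below may help. It is a sketch that assumes a zero long-term mean (μ = 0) and a constant sampling interval, and it identifies the relaxation time with 1/θ; these identifications are not stated in the cited protocol.

\[
B = e^{-\theta \Delta t} = e^{-\Delta t/\tau}, \qquad
A = \frac{\sigma^2}{2\theta}, \qquad
\operatorname{Var}(w_t) = A\,(1 - B^2) = \frac{\sigma^2}{2\theta}\left(1 - e^{-2\theta \Delta t}\right).
\]

Under these assumptions, estimates of \( A \) and \( B \) can be converted back with \( \theta = -\ln(B)/\Delta t \) and \( \sigma^2 = 2\theta A \).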

2. Expectation-Maximization Algorithm

- Initialization: Guess initial parameters \( \theta^{(0)} = \{ A, B, \sigma_N, \sigma_M \} \). In this protocol \( \theta \) denotes the full parameter vector, not the mean reversion rate.

- Expectation (E)-Step: Given the current parameters \( \theta^{(k)} \) and all observations \( y_{1:T} \), compute the smoothed state distributions \( p(x_t \mid y_{1:T}, \theta^{(k)}) \) and cross-densities \( p(x_t, x_{t+1} \mid y_{1:T}, \theta^{(k)}) \).

- Tool Choice: For high accuracy and general models, use a Particle Filter and Smoother (SMC-EM). For faster performance with near-Gaussian models, use an Unscented Kalman Smoother (U-EM) [53].

- Maximization (M)-Step: Update the parameters \( \theta^{(k+1)} \) by maximizing the expected complete-data log-likelihood derived from the smoothed distributions.

- Iteration: Repeat E- and M-steps until convergence (e.g., when the change in parameter values falls below a threshold).
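The E- and M-steps above can be sketched for a simplified special case. The example below sets the multiplicative noise variance to zero, so the model is linear and Gaussian and an exact Kalman filter with an RTS smoother can stand in for the particle or unscented smoothers; it is not the SMC-EM or U-EM algorithm of the cited work. The function names, the fixed prior variance `P0` for the first state, and the simulated data are assumptions made for illustration.

```python
import math
import random

def kalman_smoother(y, B, Q, R, P0):
    """Kalman filter + RTS smoother for x[t+1] = B x[t] + w, y[t] = x[t] + v."""
    T = len(y)
    mp, Pp, mf, Pf = [0.0] * T, [0.0] * T, [0.0] * T, [0.0] * T
    loglik = 0.0
    for t in range(T):
        mp[t] = B * mf[t - 1] if t else 0.0           # predicted mean
        Pp[t] = B * B * Pf[t - 1] + Q if t else P0    # predicted variance
        S = Pp[t] + R
        innov = y[t] - mp[t]
        loglik += -0.5 * (math.log(2.0 * math.pi * S) + innov * innov / S)
        K = Pp[t] / S
        mf[t] = mp[t] + K * innov
        Pf[t] = (1.0 - K) * Pp[t]
    ms, Ps, C = mf[:], Pf[:], [0.0] * (T - 1)
    for t in range(T - 2, -1, -1):
        G = Pf[t] * B / Pp[t + 1]
        ms[t] = mf[t] + G * (ms[t + 1] - mp[t + 1])
        Ps[t] = Pf[t] + G * G * (Ps[t + 1] - Pp[t + 1])
        C[t] = G * Ps[t + 1]                          # Cov(x[t], x[t+1] | y)
    return ms, Ps, C, loglik

def em_ou(y, B=0.5, Q=1.0, R=1.0, max_iter=200, tol=1e-6):
    """EM for the AR(1)-plus-white-noise form of the O-U model."""
    T = len(y)
    P0 = sum(v * v for v in y) / T   # fixed prior variance of the first state
    history = []
    for _ in range(max_iter):
        # E-step: smoothed moments under the current parameters.
        ms, Ps, C, loglik = kalman_smoother(y, B, Q, R, P0)
        history.append(loglik)
        Exx = [m * m + P for m, P in zip(ms, Ps)]
        S00, S11 = sum(Exx[:-1]), sum(Exx[1:])
        S10 = sum(ms[t] * ms[t + 1] + C[t] for t in range(T - 1))
        # M-step: closed-form maximizers of the expected log-likelihood.
        B_new = S10 / S00
        Q_new = (S11 - B_new * S10) / (T - 1)
        R_new = sum((y[t] - ms[t]) ** 2 + Ps[t] for t in range(T)) / T
        change = max(abs(B_new - B), abs(Q_new - Q), abs(R_new - R))
        B, Q, R = B_new, Q_new, R_new
        if change < tol:
            break
    A = Q / (1.0 - B * B) if abs(B) < 1.0 else float("inf")
    return {"A": A, "B": B, "sigma_N2": R, "loglik": history}

# Simulated check with hypothetical true values.
rng = random.Random(1)
B_true, A_true, R_true = 0.9, 1.0, 0.25
x, ys = rng.gauss(0.0, math.sqrt(A_true)), []
for _ in range(2000):
    ys.append(x + rng.gauss(0.0, math.sqrt(R_true)))
    x = B_true * x + rng.gauss(0.0, math.sqrt(A_true * (1.0 - B_true ** 2)))
fit = em_ou(ys)
print({k: round(v, 3) for k, v in fit.items() if k != "loglik"})
```

The returned `loglik` list records the observed-data log-likelihood at each iteration; for an exact EM scheme such as this one it should never decrease, which makes it a convenient debugging check.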

Protocol 2: Tuning Model Hyperparameters using Bayesian Optimization

This workflow is standard for optimizing machine learning models and can be adapted for calibrating complex stochastic models [55] [56].

1. Define the Objective Function and Search Space

- Objective Function \( f(x) \): This is the performance metric to optimize (e.g., the negative cross-validation score of a predictive model or the log-likelihood of your O-U model). Treat it as a black box.

- Search Space: Define the hyperparameters and their ranges (e.g., learning rate: [0.01, 0.1], number of trees: {100, 200, 300}).

2. Initialize and Run the Bayesian Optimization Loop

- Initial Sampling: Randomly select a few points \( x_1, x_2, \ldots, x_n \) from the search space and evaluate \( f(x) \) at these points.

- Surrogate Model: Fit a Gaussian Process (GP) model to the data \( \{ (x_i, f(x_i)) \} \). The GP models the objective function and provides a predictive mean and uncertainty at any point \( x \) [54] [55].

- Acquisition Function: Use an acquisition function \( \alpha(x) \) (like Expected Improvement) to determine the most promising point to evaluate next. This balances exploration and exploitation.

- Iterate: Evaluate the objective function at the point suggested by the acquisition function, update the GP model with the new data, and repeat until the budget is exhausted.
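The loop above can be sketched in a few dozen lines of NumPy. This is a deliberately small illustration, not a production optimizer: it assumes a single continuous parameter, a zero-mean GP with a unit-amplitude squared-exponential kernel and a fixed length scale, Expected Improvement maximized over a grid, and a cheap quadratic stand-in for the objective. All function names and settings are hypothetical.

```python
import math
import numpy as np

def rbf_kernel(a, b, length_scale=0.2):
    """Squared-exponential kernel with unit amplitude for 1-D inputs."""
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / length_scale) ** 2)

def gp_posterior(x_obs, y_obs, x_new, jitter=1e-6):
    """Posterior mean and standard deviation of a zero-mean GP at x_new."""
    K = rbf_kernel(x_obs, x_obs) + jitter * np.eye(len(x_obs))
    K_s = rbf_kernel(x_obs, x_new)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_obs))
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.clip(1.0 - np.sum(v * v, axis=0), 1e-12, None)
    return mean, np.sqrt(var)

def expected_improvement(mean, sd, best):
    """Expected Improvement for maximization, evaluated element-wise."""
    z = (mean - best) / sd
    cdf = 0.5 * (1.0 + np.array([math.erf(v / math.sqrt(2.0)) for v in z]))
    pdf = np.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + sd * pdf

def bayes_opt_1d(objective, lo, hi, n_init=4, n_iter=15, seed=0):
    """Maximize objective on [lo, hi] with a GP surrogate and EI."""
    rng = np.random.default_rng(seed)
    x_obs = rng.uniform(lo, hi, size=n_init)            # initial sampling
    y_obs = np.array([objective(x) for x in x_obs])
    grid = np.linspace(lo, hi, 501)                     # candidate points
    for _ in range(n_iter):
        offset = y_obs.mean()                           # center the targets
        mean, sd = gp_posterior(x_obs, y_obs - offset, grid)
        ei = expected_improvement(mean + offset, sd, y_obs.max())
        x_next = grid[int(np.argmax(ei))]               # most promising point
        x_obs = np.append(x_obs, x_next)
        y_obs = np.append(y_obs, objective(x_next))     # update the data
    i_best = int(np.argmax(y_obs))
    return float(x_obs[i_best]), float(y_obs[i_best])

# Hypothetical objective with its maximum at x = 0.3.
x_best, y_best = bayes_opt_1d(lambda x: -(x - 0.3) ** 2, 0.0, 1.0)
print(f"best x = {x_best:.3f}, objective = {y_best:.4f}")
```

In practice the objective would be an expensive evaluation such as a cross-validation score, and a maintained library would normally handle kernel hyperparameters, multiple dimensions, and discrete search spaces.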

Research Reagent Solutions

The following table lists key computational tools and their functions for implementing the advanced algorithms discussed.

| Tool / Technique | Function in Research | Application Example |
|---|---|---|
| Gaussian Process (GP) [54] | A surrogate model that approximates an unknown objective function and provides uncertainty estimates. | Core component of Bayesian Optimization, used to model the cross-validation loss as a function of hyperparameters [54] [56]. |
| Particle Filter (SMC) [53] | A sequential Monte Carlo method used to approximate the state distribution in a dynamical system. | Used in the E-step of the SMC-EM algorithm to handle the nonlinear, non-Gaussian observation model in SPT [53]. |
| Unscented Kalman Filter (UKF) [53] | A filter that uses a deterministic sampling technique to approximate state distributions, often faster than particle methods. | Used in the U-EM algorithm as a computationally efficient alternative to the particle filter for SPT data [53]. |
| Generalized Anscombe Transform [53] | A variance-stabilizing transformation that converts a Poisson-Gaussian noise model into an approximately Gaussian one. | Prepares sCMOS camera data for the UKF in the U-EM algorithm by making the noise additive and Gaussian [53]. |

Incorporating Mixed Effects for Population Modeling (SDMEMs)

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common causes of simulation failure in mixed-effects models, and how can I address them?

Simulation failures often stem from configuration errors, numerical issues, or parameter discontinuities. Common configuration errors include missing solver configuration blocks, missing reference nodes (like electrical ground in analog circuits), or illegal connections of domain-specific sources in parallel or series [57]. Numerical issues frequently involve dependent dynamic states leading to higher-index differential algebraic equations (DAEs) or parameter discontinuities that cause transient initialization failures [57]. To address these, simplify your circuit or model, add small parasitic terms to avoid dependent states, and ensure parameters don't have discontinuities with respect to time or other variables [57].