Optimizing Gene Network Comparison: Advanced Methods for Biomedical Discovery and Drug Development

Abstract

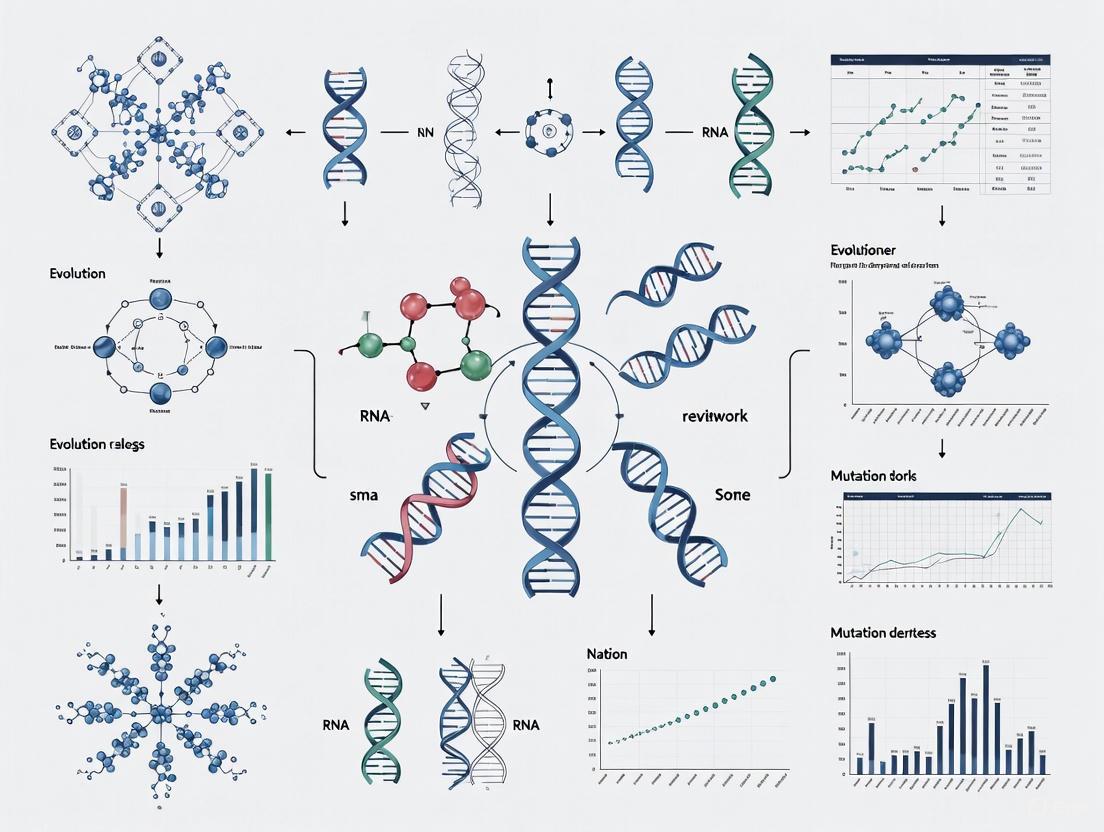

This article provides a comprehensive guide for researchers and drug development professionals on optimizing gene regulatory network (GRN) comparison. It covers foundational principles, from defining GRNs and their components to exploring their critical role in understanding disease mechanisms and cellular identity. The review details cutting-edge computational methodologies, including machine learning, single-cell analysis tools, and role-based embedding techniques, highlighting their applications in identifying key regulators and dynamic network changes. It further addresses common challenges like data sparsity and prediction accuracy, offering optimization strategies and systematic validation frameworks. By synthesizing key takeaways and future directions, this resource aims to equip scientists with the knowledge to leverage GRN comparisons for uncovering novel therapeutic targets and advancing personalized medicine.

The Blueprint of Life: Understanding Gene Regulatory Networks and Their Comparative Power

Core Concepts of Gene Regulatory Networks (GRNs)

A Gene Regulatory Network (GRN) is a collection of molecular regulators that interact with each other and with other substances in the cell to govern the expression levels of mRNAs and proteins. This, in turn, determines the cell's function, fitness, and survival [1]. GRNs are central to understanding how cells make fate decisions and respond to environmental stimuli, and how body structures form during morphogenesis [1] [2].

The structure of a GRN can be broken down into three fundamental components:

- Nodes: These represent the key functional molecules in the network. Typically, nodes are genes, their protein products (transcription factors), or messenger RNAs (mRNAs) [1] [3].

- Edges: These represent the physical and/or regulatory interactions between nodes. An edge can indicate that a transcription factor (TF) binds to a cis-regulatory element of a target gene to activate or inhibit its expression [4] [3].

- Regulatory Logic: This refers to the Boolean or logical function that governs how a node integrates multiple input signals to determine its output state. For example, a gene may be expressed only if TF A is present AND TF B is absent (A AND NOT B) [2].

The following diagram illustrates the basic components and a common network motif, the Cross-Inhibition with Self-Activation (CIS) topology, often found in cell fate decisions [2].

Basic GRN Components and CIS Topology

FAQs and Troubleshooting Guides

FAQ 1: What are the main experimental methods for mapping GRN edges?

Mapping the physical interactions between TFs and their target genes (the "edges" of the GRN) is a foundational step. The table below summarizes two primary high-throughput strategies [4] [3].

| Method | Core Principle | Key Challenge | Suitability |
|---|---|---|---|
| TF-Centered (e.g., ChIP-chip, ChIP-seq) | Starts with a specific TF; identifies all genomic regions it binds to (protein-to-DNA) [3]. | Binding does not prove functional regulation; may not distinguish between activation/repression [4]. | Ideal for studying a specific TF's role across the genome. |
| Gene-Centered (e.g., Yeast One-Hybrid, Y1H) | Starts with a specific gene's regulatory sequence; identifies all TFs that can bind to it (DNA-to-protein) [3]. | Performed in a heterologous host (yeast) rather than in the cell of interest, so results may not reflect the native chromatin state [3]. | Ideal for identifying all regulators of a key gene of interest. |

FAQ 2: How can I infer regulatory logic from experimental data?

Regulatory logic is not directly measured by binding assays alone. It requires integrating multiple data types to understand how a gene responds to combinations of inputs [2].

Detailed Methodology:

- Map Interactions: Use ChIP-chip or Y1H assays to identify which TFs bind to the cis-regulatory region of your target gene [4] [3].

- Perturb and Observe: Perform systematic perturbations (e.g., gene knockdown, knockout, or overexpression) of the identified TFs, both individually and in combination.

- Measure Output: Quantify the expression level of the target gene under each perturbation condition using techniques like RNA-seq or qPCR.

- Model the Logic: Construct a truth table of inputs (TF present/absent) and output (gene on/off). Use this table to infer the logical function (e.g., AND, OR, NOT) that best explains the expression pattern [2]. Computational models, such as logic-incorporated GRN models, can then be used to simulate network behavior and test hypotheses [2].
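The truth-table step can be sketched in a few lines of Python. This is a minimal illustration, assuming binarized (on/off) measurements for two hypothetical regulators, TF A and TF B. The observation values and the candidate rule set are invented for the example and do not come from the cited work.

```python
# Hypothetical perturbation results:
# (TF A present, TF B present) -> target gene expressed?
observations = {
    (False, False): False,
    (True, False): True,
    (False, True): False,
    (True, True): False,
}

# Candidate two-input logic functions to test against the observations.
CANDIDATES = {
    "A AND B": lambda a, b: a and b,
    "A OR B": lambda a, b: a or b,
    "A AND NOT B": lambda a, b: a and not b,
    "NOT A AND B": lambda a, b: (not a) and b,
    "A XOR B": lambda a, b: a != b,
}

def consistent_rules(obs):
    """Return the names of candidate rules that reproduce every observed row."""
    return [
        name
        for name, rule in CANDIDATES.items()
        if all(rule(a, b) == out for (a, b), out in obs.items())
    ]

matching_rules = consistent_rules(observations)
print(matching_rules)
```

With real data, several rules may remain consistent when some input combinations were never measured, which is one reason combinatorial perturbations matter.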

Troubleshooting Guide: Inconsistent GRN Model Predictions

| Problem Area | Potential Cause | Solution |
|---|---|---|
| Network Topology | The underlying map of interactions (edges) is incomplete or contains false positives/negatives [4]. | Validate key interactions with low-throughput assays (e.g., EMSA, reporter assays). Integrate complementary data (e.g., protein-protein interactions) to refine the network [3]. |
| Regulatory Logic | The model assumes incorrect logic functions for nodes, failing to capture combinatorial regulation [2]. | Incorporate perturbation data for multiple TFs in combination to empirically determine the logic, moving beyond simple activation/inhibition assumptions [2]. |
| Context Specificity | The GRN is not static; its structure and logic can change between cell types, developmental stages, or environmental conditions [1] [3]. | Ensure experimental data used to build the model is from a well-defined and consistent biological context. |

Quantitative Data and Network Properties

GRNs are not random; they exhibit distinct global and local topological features that influence their function and evolution [1] [3].

Table: Key Quantitative Properties of GRNs

| Property | Description | Functional Implication |
|---|---|---|
| Scale-Free Topology | The network contains a few highly connected nodes ("hubs") and many poorly connected nodes [1] [3]. | Robust to random failure but vulnerable to targeted attacks on hubs. Evolves via preferential attachment of duplicated genes [1]. |
| Network Motifs | Small, repetitive sub-networks that occur more frequently than in random networks (e.g., Feed-Forward Loop - FFL) [1]. | Considered "computational modules" that perform specific functions, such as accelerating responses or filtering noise [1]. |
| Node Degree | The number of connections a node has. In-degree: TFs regulating a gene. Out-degree: Genes a TF regulates [3]. | Nodes with high out-degree (TF hubs) control large genetic programs. Nodes with high in-degree (gene hubs) integrate multiple signals [3]. |
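As a small illustration of the node-degree row, the sketch below counts in-degree and out-degree from a directed edge list. The edge list and gene names are hypothetical placeholders, not data from the cited sources.

```python
from collections import Counter

# Hypothetical directed edges (regulator, target); names are illustrative only.
edges = [
    ("TF1", "geneA"), ("TF1", "geneB"), ("TF1", "TF2"),
    ("TF2", "geneB"), ("TF3", "geneB"),
]

out_degree = Counter(src for src, _ in edges)  # number of genes each TF regulates
in_degree = Counter(dst for _, dst in edges)   # number of TFs regulating each gene

top_regulator = out_degree.most_common(1)[0]   # candidate TF hub
top_target = in_degree.most_common(1)[0]       # candidate gene hub
print(top_regulator, top_target)
```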

The following diagram visualizes a Feed-Forward Loop (FFL), a common network motif, and its potential function as a noise filter [1].

Feed-Forward Loop Motif
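A feed-forward loop can also be located programmatically. The following is a brute-force sketch, assuming the network is available as a list of directed (regulator, target) pairs; the node names are placeholders, and the cubic-time search is only suitable for small networks.

```python
from itertools import permutations

def find_feed_forward_loops(edges):
    """Return (X, Y, Z) triples where X->Y, Y->Z and X->Z are all edges."""
    edge_set = set(edges)
    nodes = {node for edge in edges for node in edge}
    return sorted(
        (x, y, z)
        for x, y, z in permutations(nodes, 3)
        if (x, y) in edge_set and (y, z) in edge_set and (x, z) in edge_set
    )

# Hypothetical network: X regulates Z directly and indirectly through Y.
edges = [("X", "Y"), ("Y", "Z"), ("X", "Z"), ("Z", "W")]
ffls = find_feed_forward_loops(edges)
print(ffls)
```

Judging whether a motif is over-represented would additionally require comparing these counts with counts from randomized networks, which this sketch does not do.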

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Resource | Function in GRN Research |
|---|---|
| Chromatin Immunoprecipitation (ChIP) | A key technique for mapping TF binding sites (edges) in a TF-centered approach. The crosslinked and immunoprecipitated DNA is analyzed by microarray (ChIP-chip) or sequencing (ChIP-seq) [4]. |
| Yeast One-Hybrid (Y1H) System | A gene-centered method to identify all TFs that can bind a specific DNA regulatory element (e.g., a promoter), helping to map edges pointing to a gene of interest [3]. |
| DNA Microarrays & RNA-seq | Technologies for genome-wide expression profiling. Critical for observing the output of the network and inferring regulatory relationships and logic after perturbations [4] [3]. |
| Cytoscape | An open-source software platform for visualizing complex GRNs and integrating them with expression and other functional data [3]. |
| Logic-Incorporated Computational Models | Mathematical models (e.g., Boolean, ODEs) that incorporate regulatory logic to simulate network dynamics, test hypotheses, and predict cell fate decisions [2]. |

Visualizing a Differentiated Cell State Network

The following diagram represents a simplified, stable GRN configuration for a differentiated cell type, such as a megakaryocyte-erythroid progenitor (MEP), based on the Gata1-PU.1 circuit. It shows how mutual inhibition and self-activation maintain a specific fate [2].

Stable Differentiated State GRN
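The way mutual inhibition and self-activation hold a fate in place can be illustrated with a toy simulation. The sketch below is an assumption-laden example, not the model from the cited work: it uses Hill-type terms, symmetric parameters chosen only for illustration, and simple Euler integration. The variables x and y stand in for the two antagonistic factors (for instance Gata1 and PU.1).

```python
def simulate_cis(x0, y0, a=1.0, b=1.0, k=1.0, theta=0.5, n=4, dt=0.01, steps=5000):
    """Euler integration of a two-gene cross-inhibition, self-activation circuit.

    a: self-activation strength, b: basal production that the other gene
    represses, k: degradation rate, theta/n: Hill threshold and steepness.
    All values are illustrative, not fitted to data.
    """
    x, y = x0, y0
    for _ in range(steps):
        dx = a * x**n / (theta**n + x**n) + b * theta**n / (theta**n + y**n) - k * x
        dy = a * y**n / (theta**n + y**n) + b * theta**n / (theta**n + x**n) - k * y
        x, y = x + dt * dx, y + dt * dy
    return x, y

# A small initial bias is amplified into a stable high/low state.
x_high, y_low = simulate_cis(1.0, 0.1)
mirror = simulate_cis(0.1, 1.0)
print(round(x_high, 3), round(y_low, 3), mirror)
```

Starting with x ahead drives the system to an x-high, y-low state, and the mirrored start gives the opposite fate, which is the qualitative behaviour the diagram describes.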

Frequently Asked Questions (FAQs)

FAQ 1: How can I avoid creating an uninterpretable "hairball" network visualization?

A "hairball" occurs when a network is too dense with nodes and edges to be usefully visualized. Solutions include: reducing the number of nodes to only the most significant ones (e.g., those with edges over a certain weight), grouping nodes into specific categories during data pre-processing, selecting graphics better suited for many nodes (like circos plots), and adjusting graph properties such as image size [5]. For networks with 30 nodes or more, this becomes a significant risk [5].

FAQ 2: What is the first step I should take before creating a biological network figure?

The most important first step is to determine the purpose of your figure and assess the network's characteristics. Before creating the illustration, write down the explanation or caption you wish to convey. Decide if the message relates to the whole network, a subset of nodes, the network's topology, or a functional aspect. This determines the data to include, the figure's focus, and the visual encoding sequence [6].

FAQ 3: When should I use an adjacency matrix instead of a standard node-link diagram?

Adjacency matrices are advantageous for dense networks with many edges, as they can represent every possible edge without clutter. They excel at encoding edge attributes using color or color saturation, showing node neighborhoods and clusters (with optimized node order), and displaying readable node labels where a node-link diagram would be too cluttered [6].

FAQ 4: My Cytoscape layout is failing or running slowly for a large network. How can I fix this?

Layout failures for large networks can often be resolved by increasing the memory and stack size allocated to Cytoscape from the command line. For networks with 70,000-150,000 objects (nodes + edges), allocating 800MB-1GB of memory is suggested. If layout algorithms fail, try adding the -Xss10M flag to increase stack space [7].

java -Xmx1G -Xss10M -jar cytoscape.jar -p plugins

FAQ 5: How can I infer a Gene Regulatory Network (GRN) from my gene expression data?

Machine learning techniques can be applied to various datasets for GRN inference. Common data types include:

- Microarray data: Widely available for various organisms and tissues [8].

- RNA-seq data: Provides more accurate quantification of gene expression [8].

- Single-cell RNA-seq data: Reveals cell-type-specific gene expression patterns [8].

- Time-series expression data: Allows for the inference of dynamic GRNs based on temporal patterns (available via DREAM Challenges) [8].

- Perturbation data: Gene knockouts or drug treatments can help establish causal relationships [8].

Troubleshooting Guides

Issue 1: Network Visualization is Cluttered and Unreadable

Problem: The network figure is a dense "hairball" where relationships are obscured [5].

Solution: Apply a combination of layout optimization and data filtering.

Step-by-Step Guide:

- Filter Nodes: Reduce the number of nodes to the most significant ones. In a tool like Gephi, use a filter (e.g., "Degree Range") to show only nodes with a high number of connections [5].

- Choose a Suitable Layout:

    - In Gephi, use a force-directed layout such as "Force Atlas 2", which disperses groups and gives space around larger nodes, and enable the "Prevent Overlap" parameter; Cytoscape and yEd offer their own force-directed layouts [6] [9].

    - For very dense networks, consider an Adjacency Matrix layout, which is inherently less cluttered [6].

    - For text-based networks or to show connections in a circular shape, use a Circos Plot [5].

- Group Nodes: If your data contains categories, group nodes by these attributes. In a Hive Plot, nodes are assigned to different axes based on their attributes, making inter-group and intra-group connections clear [5].

- Adjust Visual Properties: Increase the image size and lower the opacity of edges to improve contrast with nodes [9].
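The filtering step can also be done before the network reaches a visualization tool. The sketch below is one possible approach, assuming edges are stored as (node, node, weight) tuples; the cutoffs and the example edges are arbitrary illustrations. It applies a single filtering pass and does not iterate until every remaining node satisfies the degree cutoff.

```python
from collections import Counter

def filter_network(edges, min_weight, min_degree):
    """Keep edges above a weight cutoff, then drop nodes with too few connections."""
    strong = [(u, v, w) for u, v, w in edges if w >= min_weight]
    degree = Counter()
    for u, v, _ in strong:
        degree[u] += 1
        degree[v] += 1
    keep = {node for node, d in degree.items() if d >= min_degree}
    return [(u, v, w) for u, v, w in strong if u in keep and v in keep]

# Hypothetical weighted edges (e.g., co-expression strength).
edges = [
    ("A", "B", 0.9), ("A", "C", 0.8), ("B", "C", 0.7),
    ("C", "D", 0.2), ("D", "E", 0.95),
]
filtered = filter_network(edges, min_weight=0.5, min_degree=2)
print(filtered)
```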

Issue 2: Difficulty Interpreting the Spatial Layout of a Node-Link Diagram

Problem: The spatial arrangement of nodes may lead to unintended interpretations, such as perceiving conceptual relationships where none exist [6].

Solution: Select a layout algorithm that intentionally encodes the story you want to tell.

Step-by-Step Guide:

- Define the Intended Message: Determine what spatial principle should guide the layout [6]:

    - Proximity: To show conceptual relatedness, use a layout that places similar nodes close together. Use force-directed algorithms that interpret connectivity strength or node attribute similarity as an attracting force [6].

    - Centrality: To emphasize the importance of a node, use an algorithm that places the most "central" nodes (by a metric like betweenness centrality) in the center of the figure [6].

    - Direction: To show a flow of information or a cascade, use a hierarchical or directed layout that places nodes from left to right or top to bottom [6].

- Select and Run the Algorithm: In Gephi, run a first-pass layout like "Fruchterman Reingold" to untangle the network, then apply "Force Atlas 2" with a high "Scaling" value and "Prevent Overlap" enabled to fine-tune the spatialization [9].

- Validate: Calculate centrality measures (e.g., degree, betweenness) in the Statistics panel and ensure the visual centrality matches the mathematical centrality [9].
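For readers who want to check centrality outside a GUI, the sketch below computes betweenness centrality for a small unweighted, undirected network using Brandes' algorithm. It is an independent illustration and is not how any particular tool computes the statistic; the star-shaped example graph is hypothetical.

```python
from collections import defaultdict, deque

def betweenness(adj):
    """Brandes' algorithm for unweighted, undirected graphs.

    adj maps each node to the set of its neighbours.
    """
    score = dict.fromkeys(adj, 0.0)
    for s in adj:
        stack, preds = [], defaultdict(list)
        sigma = dict.fromkeys(adj, 0)   # number of shortest paths from s
        sigma[s] = 1
        dist = {s: 0}
        queue = deque([s])
        while queue:                     # breadth-first search from s
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = dict.fromkeys(adj, 0.0)
        while stack:                     # accumulate dependencies in reverse order
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                score[w] += delta[w]
    # Each unordered pair was counted from both endpoints.
    return {node: value / 2 for node, value in score.items()}

# Hypothetical star network: hub H connects three otherwise unlinked nodes.
adj = {"H": {"a", "b", "c"}, "a": {"H"}, "b": {"H"}, "c": {"H"}}
centrality = betweenness(adj)
print(centrality)
```

If the node drawn at the center of the figure is not the one with the highest score here, the layout is probably suggesting an importance the data do not support.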

Issue 3: Integrating and Analyzing Omics Data in a Network Context

Problem: Researchers have a list of interesting genes (e.g., from RNA-seq) and want to understand their functional relationships and identify key pathways and regulators.

Solution: Follow a structured pathway and network analysis workflow [10].

Step-by-Step Guide:

- Process Data: Obtain a ranked list of genes. For RNA-seq data, this is typically a list of differentially expressed genes with log2 fold change and p-values [10].

- Identify Pathways:

    - Over-representation Analysis: Use tools like g:Profiler with your list of significant genes as the foreground and all expressed genes as the background. Use a statistical threshold (FDR) and filter gene set sizes (e.g., 3-300 genes) for interpretability [10].

    - Gene Set Enrichment Analysis (GSEA): Use the GSEA desktop application with a ranked list of all genes to identify pathways where genes are coordinately up- or down-regulated, even with subtle changes [10].

- Visualize Pathways: Create an Enrichment Map in Cytoscape to display the landscape of enriched pathways and their relationships [10].

- Build and Analyze the Network:

    - Discover Regulators: Use iRegulon for sequence-based discovery of transcription factors, their targets, and motifs from your set of genes [10].

Experimental Protocols & Data

Protocol 1: Creating a Biological Network Figure for Publication

This methodology follows the 10 simple rules for creating effective biological network figures [6].

1. Determine the Figure's Purpose:

- Write the intended figure caption first. Decide if the message is about network functionality (e.g., a signaling cascade) or structure (e.g., a protein-protein interaction network) [6].

- For functionality: Use a data flow encoding with directed edges (arrows). Node color can show attributes like expression variance [6].

- For structure: Use undirected edges. Node placement should reinforce the network structure. Node color can show attributes like fold change, and size can represent mutation count [6].

2. Choose a Layout and Assess the Network:

- Assess network scale, data type, and structure [6].

- For small to medium networks, a node-link diagram is standard. Use algorithms (force-directed, hierarchical) that match your intended spatial message (proximity, centrality, direction) [6].

- For dense networks, use an adjacency matrix [6].

- For trees, use implicit layouts like icicle or sunburst plots [6].

3. Apply Color and Channels:

- Use a sequential color scheme (e.g., yellow to green) for attributes like expression variance [6].

- Use a divergent color scheme (e.g., red to blue) to emphasize extreme values, like differential expression [6].

4. Provide Readable Labels and Captions:

- Labels must be legible, using a font size the same as or larger than the caption font [6].

- If labels cannot be made larger in the main figure, provide a high-resolution version online that can be zoomed [6].

Protocol 2: Performing Over-Representation Analysis with g:Profiler

A standard method for identifying pathways enriched in a gene list [10].

Methodology:

- Prepare Input Files:

    - Foreground genes: A list of differentially expressed genes (e.g., Log2(FC)>1.0 & FDR<0.01). Using Ensembl IDs is recommended as they are unique [10].

    - Background genes: Can be "Only annotated genes" or a list of all "expressed" genes from your experiment (e.g., genes with normalized counts >=10 in all samples) [10].

- Run Query on g:Profiler Web Tool:

    - Select the correct organism.

    - Paste your foreground gene list.

    - In "Advanced Options," paste your background gene list or select "Only annotated genes."

    - Set statistical threshold to FDR.

    - Select data sources (e.g., GO: Biological Process with "no electronic GO annotations," and Reactome).

- Interpret Results:

    - Save Results: Save the Generic Enrichment Map (GEM) file for direct use with the EnrichmentMap Cytoscape app [10].
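To make the statistics behind over-representation analysis concrete, the sketch below computes a one-sided hypergeometric p-value per pathway and applies a Benjamini-Hochberg correction. It is a generic illustration under stated assumptions, not a reproduction of g:Profiler's implementation; the gene identifiers, pathway names, and set sizes are made up.

```python
from math import comb

def hypergeom_pvalue(k, n, K, N):
    """P(X >= k) when n foreground genes are drawn from N background genes,
    K of which belong to the pathway."""
    upper = min(n, K)
    return sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1)) / comb(N, n)

def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):          # walk from the largest p-value down
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

# Hypothetical inputs: 20 background genes, 5 foreground genes, 2 pathways.
background = {f"g{i}" for i in range(20)}
foreground = {"g0", "g1", "g2", "g3", "g10"}
pathways = {
    "pathway_1": {"g0", "g1", "g2", "g3", "g4"},
    "pathway_2": {f"g{i}" for i in range(10, 20)},
}

names = sorted(pathways)
pvals = [
    hypergeom_pvalue(len(foreground & pathways[p]), len(foreground),
                     len(pathways[p] & background), len(background))
    for p in names
]
fdr = benjamini_hochberg(pvals)
for name, p, q in zip(names, pvals, fdr):
    print(name, round(p, 5), round(q, 5))
```

The choice of background changes N and K directly, which is why the protocol asks for an explicit background list.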

The Scientist's Toolkit

Table 1: Essential Software for Network Analysis and Visualization

| Software/Tool | Primary Function | Key Features & Applications |
|---|---|---|
| Cytoscape [7] | Open-source platform for network visualization and analysis. | Visual integration of biomolecular interaction networks with expression data and phenotypes. Extensible via plugins. Ideal for pathway analysis. |
| Gephi [9] | Open-source network analysis and visualization software. | User-friendly interface for graph spatialization and calculation of centrality measures (degree, betweenness, closeness). |
| g:Profiler [10] | Web tool for functional enrichment analysis. | Performs over-representation analysis to find enriched pathways in a list of genes. Supports various ID types and organisms. |
| GSEA [10] | Desktop application for Gene Set Enrichment Analysis. | Analyzes a ranked gene list to identify coordinated expression changes in pre-defined gene sets/pathways. |
| EnrichmentMap [10] | A Cytoscape app. | Visualizes the results of enrichment analyses as a network of interconnected pathways, providing a landscape view. |
| ReactomeFI [10] | A Cytoscape app. | Used to build and visualize functional interaction networks among genes from enriched pathways. |
| GeneMANIA [10] | A Cytoscape app. | Predicts gene function by finding related genes based on a wide range of interaction networks. |

Table 2: Key Research Reagents and Data Sources for GRN Reconstruction

| Item | Function in Gene Network Analysis |
|---|---|
| Microarray Data [8] | Provides gene expression levels across various conditions for inferring co-expression networks and GRNs. |
| RNA-seq Data [8] | Offers more accurate gene expression quantification than microarrays; used as the primary data source for network inference. |
| Single-cell RNA-seq Data [8] | Reveals cell-type-specific gene expression patterns, enabling the construction of context-specific GRNs. |
| Time-Series Expression Data [8] | Allows for the inference of dynamic GRNs and causal relationships by capturing changes in gene expression over time. |
| Perturbation Data (e.g., Gene Knockouts) [8] | Helps establish causality in regulatory relationships by observing network changes after targeted interventions. |

Workflow and Network Diagrams

Gene Network Analysis Workflow

Network Visualization Layout Selection

Software Integration for Pathway Analysis

Key Biological Questions Addressed by Network Comparisons

Frequently Asked Questions

What is the primary goal of comparing biological networks across species? Comparative network analysis aims to identify evolutionarily conserved interactions and species-specific adaptations. By examining similarities and differences in network architecture, researchers can understand how cellular processes have evolved and which interactions are fundamental to biological function. This is crucial for inferring gene function and understanding phenotypic diversity [11].

My cross-species network alignment has low conservation scores. What could be wrong? Low conservation scores often stem from technical rather than biological differences. Consider these factors: the quality and completeness of the underlying interaction data for each species, the orthology mapping method used to connect nodes between networks, and potential biases in the original experimental data used to construct each network. Incomplete data can make networks appear more different than they actually are [12].

How do I choose between local and global network alignment methods? Your choice depends on the biological question. Use global alignment when you want to identify a comprehensive map of conserved interactions across entire networks, which is useful for studying broad evolutionary patterns. Use local alignment when searching for specific conserved functional modules or pathways, which helps identify key functional units preserved across species [12].

Why do my gene co-expression networks differ significantly between two tissues of the same species? Biological networks are context-dependent and can be "rewired" based on cellular conditions. Gene co-expression patterns naturally differ across tissues due to tissue-specific regulatory programs. These differences often reflect genuine biological variation in how genes interact in different functional contexts, which can provide insights into tissue-specific physiology and disease mechanisms [11].

What are the most common pitfalls in biological network visualization? Common issues include: selecting inappropriate layouts that misrepresent network topology, using colors with insufficient contrast that obscure information, creating label clutter that makes nodes unreadable, and choosing representations that don't align with the figure's intended message about network structure or function [6].

Troubleshooting Guides

Problem: Inconsistent Results in Cross-Species Network Comparisons

Symptoms

- Variable conservation scores across different network regions

- Poor overlap in hub gene identification between species

- Inconsistent functional module preservation

| Possible Cause | Diagnostic Steps | Solution |
|---|---|---|
| Incomplete underlying data | Compare network coverage metrics (nodes, edges) against known proteome size [12] | Use data completeness filters; focus on high-confidence interactions |
| Incorrect orthology mapping | Validate orthology pairs using multiple databases | Use consensus orthology assignments from several sources |
| Algorithm parameter sensitivity | Test alignment with varying stringency parameters | Perform parameter sweeps; use benchmarked settings |
| Biological rather than technical differences | Check if divergent regions correspond to known biological adaptations | Validate findings with functional assays; focus on biologically meaningful differences |

Resolution Protocol

- Data Quality Assessment: First, quantify completeness of each network using standard metrics [12].

- Orthology Validation: Cross-reference orthology mappings using bioDBnet or similar platforms that integrate multiple databases [13].

- Parameter Optimization: Systematically test alignment parameters using known conserved pathways as positive controls.

- Biological Validation: Use functional enrichment analysis to determine if divergent network regions correspond to meaningful biological adaptations.
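The orthology validation step amounts to keeping only pairs that several sources agree on. The sketch below shows one simple voting scheme, assuming the ortholog calls have already been exported from each database as lists of (gene, gene) pairs. The source labels, gene identifiers, and the support threshold are placeholders chosen for illustration.

```python
from collections import Counter

def consensus_orthologs(sources, min_support=2):
    """Keep ortholog pairs reported by at least `min_support` sources."""
    votes = Counter(pair for pairs in sources.values() for pair in set(pairs))
    return {pair for pair, count in votes.items() if count >= min_support}

# Hypothetical ortholog calls from three unnamed sources.
sources = {
    "source_1": [("hs_GATA1", "mm_Gata1"), ("hs_SPI1", "mm_Spi1")],
    "source_2": [("hs_GATA1", "mm_Gata1"), ("hs_SPI1", "mm_Spib")],
    "source_3": [("hs_GATA1", "mm_Gata1"), ("hs_SPI1", "mm_Spi1")],
}
consensus = consensus_orthologs(sources)
print(sorted(consensus))
```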

Problem: Poor Detection of Conserved Functional Modules

Symptoms

- Known conserved pathways appear fragmented in alignment results

- Low statistical significance for module preservation

- High false negative rate for functionally related gene sets

Diagnostic Workflow

Solution Steps

- Input Data Optimization

    - Filter networks to include only high-confidence interactions

    - Use consensus network construction approaches

    - Ensure adequate sample size for co-expression network construction [14]

- Algorithm Selection

    - Choose methods specifically designed for local network alignment

    - Implement multi-scale approaches that detect both small and large modules

    - Use ensemble methods that combine multiple alignment algorithms [12]

- Statistical Validation

    - Apply appropriate multiple testing corrections

    - Use permutation-based approaches to establish significance thresholds

    - Benchmark against known conserved pathways
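A permutation-based significance check can be sketched as follows. The example asks whether a candidate module is more densely connected in a network than random gene sets of the same size. The density statistic, the number of permutations, and the toy network are all assumptions made for illustration, not a prescribed procedure.

```python
import random

def module_density(module, edge_set):
    """Fraction of possible gene pairs in the module that are connected."""
    genes = sorted(module)
    pairs = [(a, b) for i, a in enumerate(genes) for b in genes[i + 1:]]
    hits = sum((a, b) in edge_set or (b, a) in edge_set for a, b in pairs)
    return hits / len(pairs)

def permutation_pvalue(module, all_genes, edge_set, n_perm=1000, seed=0):
    """Empirical p-value: how often a random gene set is at least as dense."""
    rng = random.Random(seed)
    observed = module_density(module, edge_set)
    as_dense = sum(
        module_density(rng.sample(all_genes, len(module)), edge_set) >= observed
        for _ in range(n_perm)
    )
    return (as_dense + 1) / (n_perm + 1)  # add-one keeps the estimate above zero

# Hypothetical network: genes g0-g3 form a fully connected module.
all_genes = [f"g{i}" for i in range(30)]
clique = ["g0", "g1", "g2", "g3"]
edge_set = {(a, b) for i, a in enumerate(clique) for b in clique[i + 1:]}
edge_set |= {("g10", "g11"), ("g12", "g13")}

p_value = permutation_pvalue(clique, all_genes, edge_set)
print(module_density(clique, edge_set), p_value)
```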

Problem: Network Visualization Obscures Important Patterns

Symptoms

- Key biological features not visually apparent

- High edge density creates "hairball" effects

- Difficulty identifying hub nodes or modular structure

| Issue | Diagnostic Method | Resolution Approach |
|---|---|---|
| Layout selection | Test multiple layout algorithms | Choose layout based on network characteristics and message [6] |
| Visual clutter | Calculate node/edge density | Apply filtering or clustering; use adjacency matrices for dense networks [6] |
| Poor color contrast | Check color accessibility standards | Use colorblind-friendly palettes with sufficient luminance contrast [6] |
| Inadequate labeling | Assess label readability at publication size | Use selective labeling or interactive visualization tools [6] |

Visualization Optimization Protocol

Implementation Details

- Purpose-Driven Design

    - Clearly define the main message before creating the visualization

    - Select representations that highlight relevant biological features

    - Tailor the visualization to either structure or function emphasis [6]

- Appropriate Layout Selection

    - Use force-directed layouts for general topological features

    - Apply circular layouts for highlighting modular organization

    - Consider adjacency matrices for dense networks [6]

- Effective Attribute Mapping

    - Map most important node attributes to size and color

    - Use edge thickness or color for interaction strength or type

    - Employ selective labeling to reduce clutter

Experimental Protocols

Protocol 1: Cross-Species Gene Co-expression Network Alignment

Purpose: Identify evolutionarily conserved co-expression modules and rewired interactions between species.

Materials

- RNA-seq or microarray data from both species

- Orthology mapping database (Ensembl Compara, OrthoDB)

- Network construction tools (WGCNA, CEMiTool)

- Network alignment software (IsoRank, NetworkBLAST, AlignNemo)

Methodology

- Network Construction

    - Calculate gene co-expression using appropriate similarity measures

    - Apply soft thresholding to preserve continuous edge weights

    - Construct weighted networks for each species [11]

- Orthology Mapping

    - Obtain high-confidence orthology pairs

    - Resolve one-to-many and many-to-many orthology relationships

    - Filter for orthologs with conserved functional annotations

- Network Alignment

    - Select alignment method based on research question

    - For local alignment: Use methods that identify conserved functional modules

    - For global alignment: Use methods that maximize overall network similarity [11]

- Statistical Analysis

    - Assess significance of aligned regions using permutation tests

    - Calculate conservation scores for network edges and modules

    - Perform functional enrichment analysis of conserved modules
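The network construction step, with its soft thresholding, can be illustrated with a short sketch. It assumes an unsigned network in which the edge weight is the absolute Pearson correlation raised to a power beta. The value beta=6 and the expression values are illustrative only; in practice the power is chosen from the data.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation between two equal-length expression profiles."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def soft_threshold_adjacency(expr, beta=6):
    """Unsigned weighted adjacency a_ij = |cor(i, j)| ** beta for every gene pair."""
    genes = sorted(expr)
    return {
        (g, h): abs(pearson(expr[g], expr[h])) ** beta
        for i, g in enumerate(genes)
        for h in genes[i + 1:]
    }

# Hypothetical expression profiles across five samples.
expr = {
    "g1": [1, 2, 3, 4, 5],
    "g2": [2, 4, 6, 8, 10],
    "g3": [5, 3, 4, 1, 2],
}
adjacency = soft_threshold_adjacency(expr)
for pair, weight in adjacency.items():
    print(pair, round(weight, 4))
```

Raising correlations to a power shrinks weak correlations toward zero while keeping every edge weight continuous, so no hard cutoff has to be chosen.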

Troubleshooting Notes

- If alignment identifies too few conserved regions, verify orthology mapping quality

- If alignment produces fragmented modules, adjust algorithm stringency parameters

- Validate biologically significant findings with independent data sources
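An edge-level conservation score can be sketched as the fraction of edges in one species whose orthologous edge also exists in the other. The example below assumes undirected networks and a one-to-one ortholog map. The gene names are placeholders, and dedicated alignment tools use considerably richer scoring schemes.

```python
def edge_conservation(edges_a, edges_b, orthologs):
    """Fraction of mappable species-A edges whose orthologous edge exists in species B."""
    b_edges = {frozenset(edge) for edge in edges_b}
    mappable = conserved = 0
    for u, v in edges_a:
        if u in orthologs and v in orthologs:   # both endpoints have an ortholog
            mappable += 1
            if frozenset((orthologs[u], orthologs[v])) in b_edges:
                conserved += 1
    return conserved / mappable if mappable else 0.0

# Hypothetical networks and ortholog map (a4 has no ortholog).
edges_a = [("a1", "a2"), ("a2", "a3"), ("a3", "a4")]
edges_b = [("b2", "b1"), ("b3", "b5")]
orthologs = {"a1": "b1", "a2": "b2", "a3": "b3"}

score = edge_conservation(edges_a, edges_b, orthologs)
print(score)
```

Reporting the number of mappable edges alongside the score helps separate low conservation from poor orthology coverage.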

Protocol 2: Differential Network Analysis for Condition-Specific Interactions

Purpose: Identify changes in network topology and connectivity under different biological conditions.

Materials

- Gene expression data from multiple conditions

- Network analysis environment (R/Bioconductor, Cytoscape)

- Differential connectivity algorithms (DiffCorr, DINGO)

- Visualization tools (Cytoscape, Gephi)

Methodology

- Condition-Specific Network Construction

    - Construct separate networks for each biological condition

    - Maintain consistent network construction parameters

    - Preserve edge weight information for comparative analysis [14]

- Differential Connectivity Analysis

    - Identify nodes with significant changes in connectivity

    - Calculate differential correlation for edge-based comparisons

    - Detect network regions with significant topological changes

- Module-Based Comparison

    - Identify conserved and condition-specific modules

    - Calculate module preservation statistics

    - Analyze functional enrichment of condition-specific modules

- Validation and Interpretation

    - Compare findings with known biological pathways

    - Integrate additional omics data for multi-layer validation

    - Perform experimental validation of key predictions

Technical Notes

- Sample size strongly affects network comparison reliability

- Batch effects can create artificial network differences

- Network construction method has less impact than analysis strategy on biological interpretation [14]
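The differential correlation step can be illustrated with a standard Fisher z-test for the difference between two Pearson correlations. This is a textbook procedure offered as a sketch; it is not necessarily the exact method used by the packages listed under Materials, and the correlation values and sample sizes are invented.

```python
from math import atanh, erfc, sqrt

def differential_correlation(r1, n1, r2, n2):
    """Two-sided Fisher z-test for a difference between two Pearson correlations.

    r1, r2: correlations of the same gene pair in conditions 1 and 2.
    n1, n2: number of samples in each condition (each must exceed 3).
    """
    z = (atanh(r1) - atanh(r2)) / sqrt(1 / (n1 - 3) + 1 / (n2 - 3))
    p = erfc(abs(z) / sqrt(2))  # two-sided tail probability of a standard normal
    return z, p

# Hypothetical gene pair: strongly co-expressed in condition 1, not in condition 2.
z_score, p_value = differential_correlation(0.8, 50, 0.1, 50)
print(round(z_score, 2), p_value)
```

The sample sizes enter the standard error directly, which makes concrete why small cohorts yield unreliable network differences.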

The Scientist's Toolkit

| Research Reagent | Primary Function | Application Notes |
|---|---|---|
| Cytoscape | Network visualization and analysis | Supports plugins for network alignment, functional enrichment, and module detection [6] |
| STRING database | Protein-protein interaction data | Provides confidence scores; integrates physical and functional interactions [15] |
| WGCNA | Weighted gene co-expression network analysis | Uses soft thresholding; identifies modules of highly correlated genes [11] |
| bioDBnet | Biological database integration | Converts between different identifier types; essential for cross-database integration [13] |
| KEGG PATHWAY | Curated pathway maps | Reference for conserved pathways; useful for validation of network alignment results [15] |
| OrthoDB | Orthology information | Provides evolutionary classifications of genes across species [11] |
| IsoRank | Network alignment algorithm | Global alignment approach; uses sequence and network similarity [12] |

FAQs: Data Processing and GRN Inference

Q1: What are the primary computational methods for inferring Gene Regulatory Networks (GRNs) from single-cell data, and how do they differ?

Several statistical and machine learning approaches are used for GRN inference, each with distinct foundations and assumptions [16]. The choice of method depends on the data type and the specific biological question.

- Correlation-based approaches operate on the "guilt by association" principle, inferring relationships between co-expressed genes or between transcription factor (TF) expression and target gene expression using measures like Pearson's correlation or mutual information. However, these methods struggle to distinguish direct from indirect regulation [16].

- Regression models treat the expression of a gene as the response variable, regressed against the expression or accessibility of multiple potential TFs and cis-regulatory elements. Penalized methods like LASSO are often used to prevent overfitting and improve network interpretability [16].

- Probabilistic models use graphical models to capture dependencies between variables. They estimate the most probable regulatory relationships that explain the observed data and can provide uncertainty estimates for predictions, which is a key advantage [16].

- Dynamical systems model gene expression as it evolves over time using differential equations. While highly interpretable, these methods require temporal data and can be less scalable for large networks [16].

- Deep learning models, such as multi-layer perceptrons or autoencoders, are versatile and can learn complex patterns from data. However, they often require large datasets, substantial computational resources, and can be less interpretable than other methods [16].
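To make the first of these families concrete, the following is a minimal sketch of correlation-based inference using only the Python standard library. The gene names, expression values, and the 0.8 threshold are hypothetical and chosen purely for illustration; none of them come from [16].

```python
import math

def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)

def correlation_edges(expr, tfs, threshold=0.8):
    """Return (tf, gene, r) for every TF-gene pair with |r| >= threshold."""
    edges = []
    for tf in tfs:
        for gene, values in expr.items():
            if gene == tf:
                continue
            r = pearson(expr[tf], values)
            if abs(r) >= threshold:
                edges.append((tf, gene, round(r, 3)))
    return edges

# Toy expression profiles across five cells (hypothetical values).
expr = {
    "TF1":   [1.0, 2.0, 3.0, 4.0, 5.0],
    "geneA": [2.0, 4.1, 6.0, 8.2, 10.0],    # imagined direct target of TF1
    "geneB": [4.1, 8.0, 12.3, 16.1, 20.2],  # imagined to follow geneA, not TF1
    "geneC": [3.0, 1.0, 4.0, 1.0, 5.0],     # unrelated
}
edges = correlation_edges(expr, tfs=["TF1"])
```

In this toy example both geneA and geneB pass the threshold even though only geneA is imagined to be a direct target, which illustrates the direct-versus-indirect limitation noted above.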

Q2: My single-cell RNA-seq data shows high mitochondrial read percentages. What does this indicate, and how should I filter it?

An elevated percentage of reads mapping to mitochondrial genes is often associated with stressed, apoptotic, or low-quality cells where cytoplasmic mRNA has leaked out [17]. However, the appropriate threshold for filtering varies by biological context.

- Diagnosis: In cell types like peripheral blood mononuclear cells (PBMCs), high mitochondrial gene expression is not expected. For such data, a threshold of 10% is sometimes used [17]. It is critical to check the distribution of mitochondrial reads across all cells to determine a suitable cutoff. Always visualize the data to distinguish a biologically relevant subpopulation from a technical artifact.

- Solution:

- Use tools like Loupe Browser or computational pipelines to plot the distribution of mitochondrial read percentages per cell barcode [17].

- Filter out cell barcodes that are extreme outliers, typically those with a much higher percentage than the majority of cells.

- Exercise caution with certain cell types, such as cardiomyocytes, where high mitochondrial gene expression may be biologically meaningful, and filtering could introduce bias [17].
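One possible way to turn "extreme outlier" into a data-driven cutoff is a median absolute deviation (MAD) rule. The sketch below is an assumption on our part rather than a method prescribed in [17]; the barcodes, percentages, and the choice of 3 MADs are hypothetical, and any cutoff should still be checked against the plotted distribution.

```python
from statistics import median

def mad_upper_cutoff(values, n_mads=3.0):
    """Upper cutoff defined as median + n_mads * median absolute deviation."""
    values = list(values)
    med = median(values)
    mad = median(abs(v - med) for v in values)
    return med + n_mads * mad

# Hypothetical per-barcode mitochondrial read percentages.
pct_mito = {
    "cell0": 2.0, "cell1": 3.0, "cell2": 2.5, "cell3": 4.0,
    "cell4": 3.5, "cell5": 2.8, "cell6": 3.2, "cell7": 35.0,
}

cutoff = mad_upper_cutoff(pct_mito.values())
flagged = [bc for bc, pct in pct_mito.items() if pct > cutoff]
kept = [bc for bc, pct in pct_mito.items() if pct <= cutoff]
```

For tissues where high mitochondrial content is expected, the flagged barcodes are candidates for inspection rather than automatic removal.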

Q3: What are common causes of low library yield in NGS preparations for RNA-seq, and how can they be addressed?

Low library yield can stem from issues at multiple steps in the preparation workflow. Systematic diagnosis is required to identify the root cause [18].

- Sample Input and Quality: Degraded RNA or contaminants like phenol, salts, or EDTA can inhibit enzymatic reactions. Quantification errors from using absorbance-based methods (e.g., NanoDrop) that overestimate usable material are also common.

- Fix: Re-purify input samples, use fluorometric quantification (e.g., Qubit), and check absorbance ratios (260/280 ~1.8, 260/230 >1.8) [18].

- Fragmentation and Ligation: Over- or under-fragmentation reduces ligation efficiency. A suboptimal adapter-to-insert molar ratio can lead to excessive adapter dimers or reduced yield.

- Fix: Optimize fragmentation parameters and titrate adapter concentrations [18].

- Amplification/PCR: Too many PCR cycles can introduce duplicates and bias, while enzyme inhibitors can cause mid-reaction failure.

- Fix: Use the minimal number of PCR cycles necessary and ensure reagents are free of inhibitors [18].

Q4: How can prior knowledge, such as motif information, be integrated into GRN inference?

Modern GRN inference methods, particularly probabilistic ones, provide a flexible framework for integrating diverse prior information. For example, the PMF-GRN method uses a probabilistic matrix factorization approach where prior hyperparameters can represent an initial guess of interactions between TFs and target genes [19]. This prior knowledge can be derived from:

- TF motif databases.

- Measurements of chromatin accessibility (e.g., from scATAC-seq).

- Direct measurements of TF binding (e.g., from ChIP-seq) [19].

This integration guides the model toward more biologically plausible networks and helps resolve the identifiability issues inherent in matrix factorization [19].
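As a rough illustration of how such evidence might be combined into a prior, the sketch below assigns each TF-gene pair a prior probability based on the strongest available evidence. This is not the PMF-GRN implementation; the function name, the evidence tiers, and all probability values are hypothetical assumptions made for the example.

```python
def build_prior(tfs, genes, motif_hits, accessible_genes, chip_hits,
                p_background=0.05, p_motif=0.3, p_motif_open=0.6, p_chip=0.9):
    """Map every (tf, gene) pair to a prior probability of interaction.

    Evidence is ranked: ChIP-seq binding > motif in accessible chromatin >
    motif only > no evidence. All probabilities are illustrative placeholders.
    """
    prior = {}
    for tf in tfs:
        for gene in genes:
            edge = (tf, gene)
            if edge in chip_hits:
                p = p_chip
            elif edge in motif_hits and gene in accessible_genes:
                p = p_motif_open
            elif edge in motif_hits:
                p = p_motif
            else:
                p = p_background
            prior[edge] = p
    return prior

# Hypothetical evidence sets.
tfs = ["TF1", "TF2"]
genes = ["geneA", "geneB", "geneC"]
motif_hits = {("TF1", "geneA"), ("TF1", "geneB"), ("TF2", "geneC")}
accessible_genes = {"geneA", "geneC"}   # genes with open promoters (e.g., scATAC-seq)
chip_hits = {("TF2", "geneC")}          # directly measured binding

prior = build_prior(tfs, genes, motif_hits, accessible_genes, chip_hits)
```

A dictionary like this could then be reshaped into the TF-by-gene matrix that a probabilistic model expects as prior hyperparameters.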

Troubleshooting Guides

Microarray Analysis Troubleshooting

| Problem | Possible Cause | Solution |

|---|---|---|

| High background noise or poor signal-to-noise ratio | Non-specific binding or hybridization issues; sample contaminants | Ensure stringent washing protocols; re-check sample purity and quality (degradation, contaminants) [20]. |

| Unexpectedly high ribosomal RNA signal | rRNA competition with mRNA during amplification steps, common with total RNA input | Deplete ribosomal RNA from the sample before the amplification steps using commercially available kits [20]. |

Bulk and Single-Cell RNA-seq Troubleshooting

| Problem | Possible Cause | Solution |

|---|---|---|

| Low Library Yield [18] | Poor input RNA quality or contaminants; inaccurate quantification; inefficient fragmentation/ligation. | Re-purify input; use fluorometric quantification (Qubit); optimize fragmentation parameters; titrate adapter ratios. |

| High Ambient RNA Contamination (Single-Cell) [17] | RNA released from lysed cells during sample preparation. | Use computational tools like SoupX or CellBender to estimate and subtract background noise [17]. |

| Presence of Adapter Dimers [18] | Over-aggressive fragmentation; suboptimal ligation conditions; inefficient size selection. | Titrate adapter-to-insert ratio; optimize bead-based cleanup parameters (e.g., bead-to-sample ratio). |

| Inaccurate Gene Expression Quantification | Read assignment uncertainty, especially for genes with multiple isoforms. | Use quantification tools like Salmon or kallisto that statistically model the uncertainty of read assignments to transcripts [21]. |

GRN Inference Benchmarking Results

The following table summarizes how the PMF-GRN method compares with other state-of-the-art tools on real and synthetic single-cell datasets, as reported in [19].

| Method | Underlying Approach | Key Features | Benchmark Performance (vs. Gold Standards) |

|---|---|---|---|

| PMF-GRN [19] | Probabilistic Matrix Factorization with Variational Inference | Provides well-calibrated uncertainty estimates; principled hyperparameter search; integrates prior knowledge. | Overall improved performance in recovering true GRN; outperformed baselines on synthetic BEELINE datasets. |

| Inferelator [19] | Regularized Regression (e.g., LASSO) | - | Lower accuracy compared to PMF-GRN in benchmark tests. |

| SCENIC [19] | Tree-Based Regression | - | Lower accuracy compared to PMF-GRN in benchmark tests. |

| CellOracle [19] | Bayesian Ridge Regression | - | Lower accuracy compared to PMF-GRN in benchmark tests. |

Experimental Protocols

Best Practices for 10x Genomics Single-Cell RNA-seq Data Analysis

This protocol outlines the standard steps for initial processing and quality control of 10x Genomics single-cell gene expression data [17].

- Raw Data Processing: Process raw FASTQ files using the Cell Ranger `multi` pipeline on the 10x Genomics Cloud or via the command line. This performs read alignment, UMI counting, cell calling, and initial clustering.

- Initial Quality Control: Review the `web_summary.html` file generated by Cell Ranger. Key metrics to check include:

- Number of cells recovered (should be close to target).

- Percentage of confidently mapped reads in cells (should be high, e.g., >90%).

- Median genes per cell (should be within expected range for the sample type).

- Barcode Rank Plot (should show a clear separation between cells and background).

- Barcode Filtering with Loupe Browser: Open the `.cloupe` file in Loupe Browser to perform manual filtering of cell barcodes.

- Filter by UMI Counts: Remove barcodes with extremely high UMI counts (potential multiplets) and very low UMI counts (potential ambient RNA).

- Filter by Genes Detected: Similarly, remove outliers with very high or low numbers of detected genes.

- Filter by Mitochondrial Reads: Set a threshold for the maximum percentage of mitochondrial UMIs allowed (e.g., 10% for PBMCs). Document all filtering thresholds for reproducibility.

- Downstream Analysis: The filtered feature-barcode matrix can then be exported for advanced analyses like differential expression, trajectory inference, or GRN inference in community-developed tools.
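The three barcode filters can also be applied programmatically once the per-barcode metrics have been exported. The sketch below is a minimal stand-alone illustration, not part of Cell Ranger or Loupe Browser; the barcodes, metric names, and threshold values are hypothetical, and keeping the thresholds in a single dictionary is simply one way to document them for reproducibility.

```python
def filter_barcodes(cells, thresholds):
    """Split barcodes into kept and removed, recording why each was removed."""
    kept, removed = [], {}
    for barcode, m in cells.items():
        reasons = []
        if not thresholds["min_umis"] <= m["umis"] <= thresholds["max_umis"]:
            reasons.append("umis")
        if not thresholds["min_genes"] <= m["genes"] <= thresholds["max_genes"]:
            reasons.append("genes")
        if m["pct_mito"] > thresholds["max_pct_mito"]:
            reasons.append("pct_mito")
        if reasons:
            removed[barcode] = reasons
        else:
            kept.append(barcode)
    return kept, removed

# Hypothetical per-barcode QC metrics.
cells = {
    "AAAC-1": {"umis": 5200, "genes": 1800, "pct_mito": 3.1},
    "AAAG-1": {"umis": 61000, "genes": 7400, "pct_mito": 2.4},  # possible multiplet
    "AACT-1": {"umis": 310, "genes": 150, "pct_mito": 1.0},     # possible ambient RNA
    "AAGT-1": {"umis": 4100, "genes": 1500, "pct_mito": 22.5},  # high mitochondrial load
}

# Thresholds kept in one place so they can be saved alongside the results.
thresholds = {
    "min_umis": 500, "max_umis": 40000,
    "min_genes": 200, "max_genes": 6000,
    "max_pct_mito": 10.0,
}

kept, removed = filter_barcodes(cells, thresholds)
```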

Protocol for Bulk RNA-seq Differential Expression Analysis with limma

This protocol describes a robust workflow for identifying differentially expressed genes from bulk RNA-seq data, a common starting point for GRN inference [21].

- Data Preparation and Quantification: Use the nf-core/rnaseq Nextflow workflow for automated, reproducible processing.

- Input: Paired-end FASTQ files, a genome FASTA file, and a GTF annotation file.

- Process: The workflow runs STAR for splice-aware genome alignment and Salmon (in alignment-based mode) for transcript-level quantification, handling read assignment uncertainty.

- Output: A gene-level count matrix for all samples.

- Differential Expression in R: Use the `limma` package in R for statistical testing.

- Import Data: Read the count matrix and a sample sheet linking samples to experimental conditions.

- Normalization and Transformation: Transform the count data using the `voom` function, which estimates the mean-variance relationship and prepares the data for linear modeling.

- Linear Modeling: Define a design matrix based on the experimental conditions and fit a linear model to the transformed expression data for each gene.

- Hypothesis Testing: Compute moderated t-statistics, F-statistics, and p-values using empirical Bayes moderation to identify significantly differentially expressed genes.
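To give a feel for what the transformation step does to the counts, the sketch below reproduces only the log2 counts-per-million formula that `voom` is documented to apply, log2((count + 0.5) / (library size + 1) × 10^6). It is written in Python for illustration and is not a substitute for limma: the precision weights, normalization factors, and model fitting are all omitted, and the sample and gene names are hypothetical.

```python
import math

def voom_log_cpm(counts):
    """counts: {sample: {gene: raw_count}} -> {sample: {gene: log2-CPM}}.

    The 0.5 and 1.0 offsets keep zero counts finite after the log transform.
    Library size here is the plain column sum (no normalization factors).
    """
    out = {}
    for sample, gene_counts in counts.items():
        lib_size = sum(gene_counts.values())
        out[sample] = {
            gene: math.log2((count + 0.5) / (lib_size + 1.0) * 1e6)
            for gene, count in gene_counts.items()
        }
    return out

# Hypothetical raw counts for two samples.
counts = {
    "control_1": {"geneA": 0, "geneB": 120, "geneC": 880},
    "treated_1": {"geneA": 15, "geneB": 400, "geneC": 585},
}
log_cpm = voom_log_cpm(counts)
```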

Visualized Workflows

GRN Inference from Single-Cell Multi-omics

Single-Cell RNA-seq QC Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment |

|---|---|

| Chromium Single Cell 3' Reagent Kits (10x Genomics) | Enables high-throughput barcoding and library preparation of single-cell transcriptomes for platforms like the Chromium [17]. |

| rRNA Depletion Kits | Removes abundant ribosomal RNA from total RNA samples before library prep for microarrays or RNA-seq, preventing competition during amplification and improving mRNA detection [20]. |

| STAR Aligner | A splice-aware aligner that accurately maps RNA-seq reads to a reference genome, a critical first step in many quantification pipelines [21]. |

| Salmon | A fast, bias-aware quantification tool that estimates transcript and gene abundance from lightweight read mapping (selective alignment) or from existing alignments, modeling uncertainty in read origin [21]. |

| PMF-GRN Software | A computational tool that uses probabilistic matrix factorization and variational inference to infer gene regulatory networks from single-cell data, providing confidence estimates for interactions [19]. |

A Methodologist's Toolkit: From Machine Learning to Single-Cell GRN Analysis

Leveraging Machine Learning and Hybrid Models for Enhanced GRN Prediction

Troubleshooting Guides and FAQs

This section addresses common challenges researchers face when implementing machine learning (ML) and hybrid models for Gene Regulatory Network (GRN) prediction, providing targeted solutions to keep your projects on track.

Common ML/GRN Prediction Errors

| Problem Symptom | Possible Cause | Solution |

|---|---|---|

| Low prediction accuracy on real biological data [22] | High complexity of transcriptional regulation; model cannot capture true TF-gene interactions. | Use network-level topological analysis to extract insights despite imperfect predictions. Focus on community structure and centrality metrics. |

| Model performs well on training data but poorly on new species | Limited availability of high-quality training data for non-model organisms [23]. | Implement transfer learning, leveraging models trained on data-rich species (e.g., Arabidopsis) for data-scarce targets [23]. |

| Inability to capture non-linear regulatory relationships | Traditional ML methods (linear regression, SVM) struggling with complex data [23]. | Employ hybrid models combining CNNs for feature extraction and ML for classification [23]. |

| High fraction of false positive TF-gene predictions | Inherent limitations of inference algorithms with real expression data [22]. | Integrate prior knowledge (e.g., motif information, protein-DNA interactions) to constrain predictions [24]. |

| Overfitting on limited training datasets | DL models requiring large, high-quality labeled datasets [23]. | Use data augmentation strategies or opt for models with fewer parameters and efficient design [25]. |

Data Quality & Preprocessing Issues

| Problem Symptom | Possible Cause | Solution |

|---|---|---|

| Poor model generalization across sequence types | Model architecture or training strategy not capturing fundamental regulatory rules [25]. | Utilize innovative training (e.g., random sequence training, masked nucleotide prediction) to improve robustness [25]. |

| Low library yield or quality during NGS prep for expression data | Poor input DNA/RNA quality or contaminants; inaccurate quantification [18]. | Re-purify input sample; use fluorometric quantification (Qubit) over UV absorbance; optimize fragmentation [18]. |

| Adapter-dimer contamination in sequencing data | Suboptimal adapter ligation conditions; inefficient purification [18]. | Titrate adapter-to-insert molar ratios; optimize bead-based cleanup parameters [18]. |

| Inefficient ligation during library prep | Poor ligase performance; improper reaction buffer or temperature [18]. | Ensure fresh ligase and buffer; maintain optimal temperature (~20°C); verify fragmentation distribution [18]. |

Advanced Model Optimization FAQs

Q: What model architectures have shown top performance in recent GRN challenges? A: In recent benchmarks, fully Convolutional Neural Networks (CNNs) and transformer models have achieved state-of-the-art results. Specifically, architectures based on EfficientNetV2 and ResNet have topped performance rankings, with one winning solution using a bin-classification approach and innovative data encoding [25].

Q: How can I improve my model's prediction of transcription factor binding and regulatory impact? A: Integrate multiple data types. Use sequence-based features (e.g., from DeepBind, DeepSEA) and incorporate chromatin accessibility data (e.g., from ATAC-seq) alongside gene expression profiles. Tools like SCENIC can use co-expression and prior motif information to refine regulon predictions [23] [24].

Q: What strategies can help with cross-species GRN prediction? A: Transfer learning is a key strategy. Train your model on a well-annotated, data-rich species (like Arabidopsis thaliana), then apply it to a less-characterized target species. This leverages evolutionary conservation and enhances performance when target species data is limited [23].

Q: My model's predictions are biologically implausible. How can I add constraints? A: Incorporate prior biological knowledge. Use databases of known TF-regulons (e.g., DoRothEA, TRRUST, RegulonDB) to guide the model. Integrating metabolic network models can also provide biochemical constraints that improve prediction accuracy [24] [23].

Experimental Protocols & Workflows

Detailed Methodology: Hybrid GRN Inference from RNA-seq Data

This protocol outlines a hybrid ML/DL approach for constructing GRNs from transcriptomic data, as utilized in recent studies achieving over 95% accuracy [23].

Data Collection and Preprocessing

- Raw Data Retrieval: Download RNA-seq datasets in FASTQ format from public repositories (e.g., NCBI SRA) using the SRA Toolkit [23].

- Quality Control and Trimming: Assess read quality with FastQC. Remove adapters and low-quality bases using Trimmomatic [23].

- Read Alignment and Quantification: Align trimmed reads to the appropriate reference genome using STAR aligner. Generate gene-level raw read counts using featureCounts or similar tools [23].

- Expression Normalization: Normalize raw count data using the weighted trimmed mean of M-values (TMM) method in the edgeR package to account for composition bias between samples [23].

Construction of Training Data

- Positive/Negative Pairs: Create a set of known transcription factor (TF)-target gene pairs (positive examples) from curated databases. Generate negative examples by randomly pairing TFs and genes not known to interact, ensuring they are not co-expressed.

- Feature Engineering: For each TF-target pair, input features can include normalized expression levels across multiple conditions, sequence motif scores, chromatin accessibility data, and evolutionary conservation scores.
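The positive/negative pair construction can be sketched as follows. This is a minimal illustration under stated assumptions, not the procedure from [23]: the function name and gene identifiers are hypothetical, and the co-expression check is represented only by an `exclude` set that the caller would have to compute separately.

```python
import random

def build_training_pairs(known_pairs, tfs, genes, n_negative, exclude=frozenset(), seed=0):
    """Return shuffled (tf, gene, label) examples; label 1 = known pair, 0 = sampled negative.

    `exclude` holds pairs that must not be used as negatives, for example
    pairs judged to be co-expressed (that judgement is made elsewhere).
    """
    rng = random.Random(seed)
    positives = set(known_pairs)
    candidates = [
        (tf, gene) for tf in tfs for gene in genes
        if tf != gene and (tf, gene) not in positives and (tf, gene) not in exclude
    ]
    negatives = rng.sample(candidates, min(n_negative, len(candidates)))
    examples = [(tf, gene, 1) for tf, gene in sorted(positives)]
    examples += [(tf, gene, 0) for tf, gene in negatives]
    rng.shuffle(examples)
    return examples

# Hypothetical curated interactions and gene universe.
known = [("TF1", "geneA"), ("TF2", "geneB")]
coexpressed = {("TF1", "geneC")}
examples = build_training_pairs(
    known, tfs=["TF1", "TF2"], genes=["geneA", "geneB", "geneC", "geneD"],
    n_negative=2, exclude=coexpressed,
)
```

Sampling as many negatives as positives keeps the classes balanced; note that randomly sampled negatives may still contain undiscovered true interactions.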

Model Training and Evaluation

- Hybrid Model Architecture: Implement a hybrid model where a Convolutional Neural Network (CNN) extracts high-level features from input data, which are then fed into a traditional machine learning classifier (e.g., Random Forest, SVM) for final prediction of regulatory interactions [23].

- Training Regimen: Train the model using the prepared positive and negative examples. Use a holdout test set for final evaluation.

- Performance Assessment: Evaluate model performance using standard metrics: Accuracy, Precision, Recall, and Area Under the Precision-Recall Curve (AUPR). Compare against traditional methods like GENIE3 or TIGRESS [23] [22].
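For reference, the metrics named above can be computed directly from labels and scores. The sketch below uses average precision as the estimate of AUPR and assumes distinct prediction scores (ties are not handled); the labels, scores, and the 0.5 decision threshold are hypothetical.

```python
def precision_recall(y_true, y_pred):
    """Precision and recall for binary labels and binary predictions."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

def average_precision(y_true, scores):
    """Average precision: mean of the precision values at each true positive's rank."""
    ranked = sorted(zip(scores, y_true), key=lambda pair: pair[0], reverse=True)
    n_pos = sum(y_true)
    if n_pos == 0:
        return 0.0
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / rank
    return total / n_pos

# Hypothetical labels (1 = true TF-target edge) and model scores.
y_true = [1, 0, 1, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.1]
y_pred = [1 if s >= 0.5 else 0 for s in scores]

precision, recall = precision_recall(y_true, y_pred)
aupr = average_precision(y_true, scores)
```

Because true regulatory edges are usually a small minority of all candidate pairs, AUPR tends to be more informative than accuracy for this task.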

Workflow for GRN Inference from Single-Cell RNA-seq Data using SCENIC

This protocol adapts the SCENIC tool for GRN inference from scRNA-seq data, enabling the identification of cell-type-specific regulons [24].

Data Preparation and Preprocessing

- Load Data: Load the pre-processed single-cell dataset (e.g., in AnnData format for Python). The dataset should already be filtered for low-quality cells and genes.

- Subset Data (Optional): For computational efficiency or to focus on a specific batch/donor, subset the data. Highly variable gene selection is recommended [24].

- File Format Conversion: Convert the gene expression matrix to a Loom file format, which is required for the PySCENIC pipeline. Include necessary row (Gene) and column (CellID, nGene, nUMI) attributes [24].

GRN Inference with PySCENIC

- GRNBoost2 for Co-expression Modules: Run the `pyscenic grn` command. This step uses GRNBoost2 to infer potential TF-target relationships based on co-expression, generating an adjacency table of TF, target, and importance weight [24].

- Regulon Prediction (cisTarget): Run the `pyscenic ctx` command. This step refines the co-expression modules using cis-regulatory motif analysis, retaining targets with motif support for the TF and thereby defining regulons of putative direct targets.

- Cellular Activity Scoring (AUCell): Run the `pyscenic aucell` command. This step scores the activity of each regulon in each individual cell, producing a regulon-by-cell matrix of AUC values that can then be thresholded into a binary (on/off) activity matrix.
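The three commands chain through their output files, which the sketch below makes explicit by assembling the argument lists in Python. All file names are hypothetical placeholders, and the flags shown reflect our understanding of the pySCENIC command-line interface; they should be checked against `pyscenic <subcommand> --help` for the installed version. The function only builds the commands and does not execute them.

```python
def pyscenic_commands(loom, tf_list, ranking_dbs, motif_annotations,
                      adjacencies="adjacencies.csv", regulons="regulons.csv",
                      aucell_out="aucell.loom", workers=4):
    """Build argument lists for the grn -> ctx -> aucell steps (nothing is run)."""
    grn = ["pyscenic", "grn", loom, tf_list,
           "-o", adjacencies, "--num_workers", str(workers)]
    ctx = ["pyscenic", "ctx", adjacencies, *ranking_dbs,
           "--annotations_fname", motif_annotations,
           "--expression_mtx_fname", loom,
           "--output", regulons, "--num_workers", str(workers)]
    aucell = ["pyscenic", "aucell", loom, regulons,
              "--output", aucell_out, "--num_workers", str(workers)]
    return [grn, ctx, aucell]

# Hypothetical input files.
commands = pyscenic_commands(
    loom="expression.loom",
    tf_list="tf_names.txt",
    ranking_dbs=["motif_rankings.feather"],
    motif_annotations="motif_annotations.tbl",
)
for cmd in commands:
    print(" ".join(cmd))
```

Each list can be passed to `subprocess.run(cmd, check=True)` once the input files exist; the adjacency table from `grn` feeds `ctx`, and the regulons from `ctx` feed `aucell`.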

Downstream Analysis and Visualization

- Integration with Clustering: Overlay the regulon activity scores onto your single-cell clustering (e.g., UMAP) to identify cell-type-specific or state-specific regulatory programs.

- Visualization: Use the binary activity matrix to visualize regulon activity across cells and conditions.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in GRN Research |

|---|---|

| STAR Aligner | Maps RNA-seq reads to a reference genome for transcript quantification, a critical first step for expression-based GRN inference [23]. |

| Trimmomatic | Performs initial quality control by removing adapter sequences and low-quality bases from raw sequencing reads [23]. |

| edgeR | A Bioconductor package used for normalizing RNA-seq count data, often using the TMM method, to enable accurate comparison between samples [23]. |

| PySCENIC | A Python-based pipeline for inferring GRNs from scRNA-seq data, combining co-expression (GRNBoost2), motif analysis (cisTarget), and activity scoring (AUCell) [24]. |

| Cytoscape | A powerful open-source platform for visualizing complex GRNs, allowing for custom layouts, analysis of network properties, and integration with expression data [26] [27]. |

| GENIE3 | A classic, high-performing machine learning algorithm (based on Random Forests) for inferring GRNs from transcriptomic data, often used as a benchmark [22]. |

| Transcription Factor Databases (DoRothEA, TRRUST) | Curated repositories of known TF-target interactions used as prior knowledge to train supervised models or validate predictions [24]. |

| 10x Genomics Xenium Platform | Provides in-situ spatial gene expression data, which can be used to infer context-specific GRNs within tissue architecture (see [28] for platform-specific troubleshooting). |

Performance Benchmarking Table

| Model/Method Type | Key Features | Reported Performance (Accuracy/AUPR) | Best Use Cases |

|---|---|---|---|

| Hybrid CNN-ML Models [23] | Combines CNN for feature learning with ML classifiers (e.g., SVM, Random Forest). | >95% accuracy on holdout test sets for Arabidopsis, poplar, maize [23]. | Large-scale transcriptomic data integration; leveraging prior knowledge. |

| Transfer Learning [23] | Applies models trained on data-rich species (Arabidopsis) to data-poor targets. | Enhanced performance in non-model species (poplar, maize) vs. training from scratch [23]. | GRN prediction in non-model organisms with limited experimental data. |

| Traditional ML (GENIE3) [22] | Random Forest-based inference; a top performer in community challenges. | AUPR ~0.3 on synthetic benchmarks; AUPR 0.02-0.12 on real E. coli data [22]. | A strong baseline method; well-supported and widely used. |

| Sequence-Based DL (DREAM Challenge Models) [25] | CNNs, Transformers trained on random DNA sequences to predict expression. | Surpassed previous state-of-the-art; approached experimental reproducibility limits for some sequence types [25]. | Predicting regulatory activity and variant effects directly from DNA sequence. |

Gene regulatory networks (GRNs) are systems of interacting genes, transcription factors, and other molecular components that govern gene expression levels within individual cells. These networks consist of nodes (representing genes and proteins) and edges (representing regulatory interactions) that collectively orchestrate cellular processes essential for development, function, and response to environmental stimuli [29]. In eukaryotic systems, transcription factors—proteins crucial for cell identity and state management—carefully control gene expression by either activating or repressing specific target genes. This regulation depends on transcription factor abundance, their chromatin-binding capability, and various post-translational modifications they undergo [30].

Single-cell RNA sequencing (scRNA-seq) has emerged as a transformative technology that allows detailed characterization of transcriptomes at individual cell resolution within heterogeneous populations. Unlike traditional bulk RNA sequencing, which provides averaged gene expression profiles across cell mixtures, scRNA-seq offers high-resolution insights into cellular diversity. However, analyzing GRNs from scRNA-seq data presents significant challenges due to data sparsity (limited information about each gene in each cell) and cellular heterogeneity (the presence of cells at different stages or states) [31]. These limitations make modeling biological variability across single-cell samples particularly difficult.

SCORPION (Single-Cell Oriented Reconstruction of PANDA Individually Optimized Gene Regulatory Networks) is a computational tool designed to address these challenges. Distributed as an R package, it reconstructs GRNs from scRNA-seq data by incorporating multiple data sources beyond gene expression alone [31]. SCORPION enables researchers to construct comparable, fully connected, weighted, and directed transcriptome-wide gene regulatory networks suitable for statistical analyses that leverage multiple samples per experimental group, a capability essential for population-level studies [30].

Technical Framework and Algorithmic Approach

Core Methodology

SCORPION operates through five iterative steps to reconstruct comparable GRNs from single-cell transcriptomic data [30]:

Data Coarse-Graining: SCORPION first addresses the sparsity of high-throughput single-cell/nuclei RNA-seq data by collapsing groups of the k most similar cells, identified in a low-dimensional representation of the expression data. This reduces the number of samples while decreasing data sparsity, enabling better capture of gene expression relationships [30] [29].

Initial Network Construction: Three distinct initial networks are constructed as described in the PANDA algorithm:

- Co-regulatory Network: Represents co-expression patterns between genes, constructed using correlation analyses over the coarse-grained transcriptomic data.

- Cooperativity Network: Accounts for known protein-protein interactions between transcription factors, with data sourced from the STRING database.

- Regulatory Network: Describes relationships between transcription factors and their target genes through transcription factor footprint motifs found in promoter regions of each gene [30] [29].

Information Flow Calculation: A modified version of Tanimoto similarity (designed for continuous values) generates:

- Availability Network (Aij): Represents information flow from a gene j to a transcription factor i, describing accumulated evidence for how strongly the transcription factor influences the gene's expression level.

- Responsibility Network (Rij): Represents information flowing from a transcription factor i to a gene j, capturing accumulated evidence for how strongly the gene is influenced by that specific transcription factor [30].

Network Refinement: The average of the availability and responsibility networks is computed, and the regulatory network is updated to include a user-defined proportion (α = 0.1 by default) of information from the other two original unrefined networks [30].

Iterative Convergence: The information flow and refinement steps are repeated until the Hamming distance between the networks from successive iterations falls below a user-defined threshold (0.001 by default). On convergence, the refined regulatory network is returned as a matrix with transcription factors in rows and target genes in columns, with values encoding the strength of the relationship between each transcription factor and gene [30].
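The information flow and refinement steps can be sketched in a few lines of Python. This is a simplified illustration and not the SCORPION or PANDA implementation: it assumes the continuous Tanimoto form x·y / sqrt(‖x‖² + ‖y‖² − |x·y|), holds the cooperativity and co-regulatory networks fixed, skips any normalization of the inputs, and measures convergence as the mean absolute change between successive regulatory matrices. The toy matrices are hypothetical.

```python
import math

def tanimoto(x, y):
    """Continuous Tanimoto similarity (assumed form, see lead-in)."""
    dot = sum(a * b for a, b in zip(x, y))
    denom = math.sqrt(sum(a * a for a in x) + sum(b * b for b in y) - abs(dot))
    return dot / denom if denom > 0 else 0.0

def column(matrix, j):
    return [row[j] for row in matrix]

def update_regulatory(W, P, C, alpha=0.1):
    """One refinement pass over the TF-by-gene regulatory matrix W."""
    new_W = []
    for i in range(len(W)):
        row = []
        for j in range(len(W[0])):
            responsibility = tanimoto(P[i], column(W, j))  # TF i -> gene j
            availability = tanimoto(W[i], column(C, j))    # gene j -> TF i
            blended = 0.5 * (responsibility + availability)
            row.append((1 - alpha) * W[i][j] + alpha * blended)
        new_W.append(row)
    return new_W

def mean_abs_change(old, new):
    diffs = [abs(a - b) for r_old, r_new in zip(old, new) for a, b in zip(r_old, r_new)]
    return sum(diffs) / len(diffs)

def refine(W, P, C, alpha=0.1, tol=0.001, max_iter=1000):
    """Repeat the update until the change drops below tol or max_iter is reached."""
    for iteration in range(1, max_iter + 1):
        new_W = update_regulatory(W, P, C, alpha)
        change = mean_abs_change(W, new_W)
        W = new_W
        if change < tol:
            break
    return W, iteration

# Toy networks: 2 TFs x 3 genes (hypothetical values).
W = [[1.0, 0.0, 0.5], [0.0, 1.0, 0.5]]                    # motif-based regulatory prior
P = [[1.0, 0.2], [0.2, 1.0]]                              # TF-TF cooperativity
C = [[1.0, 0.1, 0.6], [0.1, 1.0, 0.6], [0.6, 0.6, 1.0]]   # gene-gene co-regulation
refined, n_iter = refine(W, P, C)
```

The `max_iter` guard is included because this simplified version comes with no convergence guarantee.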

Workflow Visualization

SCORPION Computational Workflow: From single-cell data to population-level comparisons

Performance Benchmarks and Validation

Comparative Performance Analysis

SCORPION was rigorously evaluated against 12 existing GRN reconstruction techniques using BEELINE, a standardized evaluation framework for systematically benchmarking algorithms that infer GRNs from single-cell transcriptional data [30] [31]. The results demonstrated SCORPION's superior performance across multiple metrics:

Table 1: SCORPION Performance Comparison Against Competing Methods

| Evaluation Metric | SCORPION Performance | Key Competitors | Performance Advantage |

|---|---|---|---|

| Overall Precision | Highest | PPCOR, PIDC | 18.75% more precise |

| Recall Rate | Highest | PPCOR, PIDC | 18.75% more sensitive |

| Multi-Metric Ranking | First place average | 12 other methods | Consistent top performer |

| Biological Relevance | Accurate identification of TF perturbations | Multiple methods | Superior biological accuracy |

| Transcriptome-Wide Capability | Full transcriptome analysis | Limited in competitors | Comprehensive network modeling |

SCORPION generates 18.75% more precise and sensitive GRNs than other methods [30]. While PPCOR and PIDC showed similar performance in some aspects, they demonstrated limitations in evaluating all regulatory mechanisms expected in comprehensive GRNs and performed poorly in transcriptome-wide scenarios [30].

Biological Validation Studies

SCORPION was further validated using supervised experiments with real biological datasets to assess its ability to detect meaningful biological differences:

Transcription Factor Perturbation Studies: Using curated real datasets generated with 10x Genomics' high-throughput single-cell/nuclei RNA-seq technologies, SCORPION accurately identified differences in regulatory networks between wild-type cells and cells carrying transcription factor perturbations [30]. Specifically, it analyzed data from studies involving transcription factors DUX4 and Hnf4αγ, successfully detecting biologically relevant regulatory changes [31].

Colorectal Cancer Atlas Application: To demonstrate scalability to population-level analyses, researchers applied SCORPION to a single-cell RNA-seq atlas containing 200,436 cells from 47 patients, representing three different regions of colorectal tumors and adjacent healthy tissues [30] [31]. The tool successfully:

- Developed gene regulatory networks specific to cell types

- Modeled tumor progression using GRNs

- Identified differences in left versus right-sided cancer GRNs

- Detected consistent differences between intra- and intertumoral regions aligned with the chromosomal instability pathway underlying most colon cancers [31]

Independent Cohort Validation: Findings from the colorectal cancer analysis were confirmed in an independent cohort of patient-derived xenografts from left- and right-sided tumors, providing insights into regulators associated with phenotypes and differences in survival rates [30].

Technical Specifications and Resource Requirements

Computational Implementation

SCORPION is implemented as an R package and leverages several computational strategies to enhance performance:

- Memory Optimization: Employs sparse matrices by default to conserve memory and accelerate matrix multiplication [31]

- Efficient Processing: Uses fewer principal components during the desparsification stage to boost computational performance [31]

- Accessibility: Available through the CRAN repository, ensuring easy installation and use across diverse operating systems [31]

- Integration Compatibility: Compatible with the popular single-cell analysis R package Seurat for data loading and clustering, and allows potential integration with other data types (bulk RNA-seq, ATAC-seq) to enhance GRN analysis [29]

Table 2: Key Research Reagent Solutions for SCORPION Implementation

| Resource Category | Specific Solution/Reagent | Function in SCORPION Workflow |

|---|---|---|

| Sequencing Technology | 10x Genomics single-cell/nuclei RNA-seq | Generates high-throughput single-cell transcriptomic input data |

| Prior Knowledge Databases | STRING database | Provides protein-protein interaction data for cooperativity network |

| Motif Information | Transcription factor footprint motifs | Informs regulatory network using promoter region binding sites |

| Computational Environment | R statistical programming environment | Core platform for SCORPION package execution |

| Supporting Packages | Seurat R package | Facilitates single-cell data loading and clustering preprocessing |

| Validation Frameworks | BEELINE evaluation toolkit | Enables systematic performance benchmarking against ground truth |

Troubleshooting Guide and Frequently Asked Questions

Common Technical Challenges and Solutions

Q1: How does SCORPION address the critical challenge of data sparsity in single-cell RNA-seq datasets?

SCORPION employs a coarse-graining approach that collapses similar cells into "SuperCells" or "MetaCells" by identifying the most similar cells in a low-dimensional representation of the RNA-seq data. This reduces the sample size while substantially decreasing data sparsity, enabling more accurate detection of gene expression relationships that would be obscured in raw single-cell data [30] [29]. The coarse-graining step effectively creates mini pseudo-bulk profiles that retain biological variability while reducing the technical noise and dropout effects common in scRNA-seq data.
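A toy version of coarse-graining shows why pooling reduces sparsity. The sketch below greedily groups each unassigned cell with its nearest unassigned neighbours in a two-dimensional embedding and averages their counts. The greedy grouping, the cell names, and all values are assumptions made for illustration and do not reproduce SCORPION's actual procedure.

```python
import math

def coarse_grain(embedding, counts, k=3):
    """Pool each unassigned cell with its k-1 nearest unassigned neighbours.

    embedding: {cell: (x, y)} low-dimensional coordinates
    counts:    {cell: [count per gene]}
    Returns a list of (member_cells, averaged_counts) tuples.
    """
    unassigned = list(embedding)
    supercells = []
    while unassigned:
        seed = unassigned[0]
        by_distance = sorted(
            unassigned, key=lambda c: math.dist(embedding[seed], embedding[c])
        )
        members = by_distance[:k]
        n_genes = len(counts[seed])
        pooled = [
            sum(counts[c][g] for c in members) / len(members) for g in range(n_genes)
        ]
        supercells.append((members, pooled))
        unassigned = [c for c in unassigned if c not in members]
    return supercells

# Six hypothetical cells forming two clusters; half of the raw counts are zero.
embedding = {
    "c1": (0.0, 0.0), "c2": (0.1, 0.0), "c3": (0.0, 0.1),
    "c4": (5.0, 5.0), "c5": (5.1, 5.0), "c6": (5.0, 5.1),
}
counts = {
    "c1": [0, 2], "c2": [1, 0], "c3": [2, 1],
    "c4": [0, 9], "c5": [0, 0], "c6": [3, 0],
}
supercells = coarse_grain(embedding, counts, k=3)
zeros_after = sum(v == 0 for _, pooled in supercells for v in pooled)
```

In this example six of the twelve raw values are zero, while neither pooled profile contains a zero, at the cost of reducing six cells to two samples.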

Q2: What distinguishes SCORPION from correlation-based network construction methods?

Unlike methods that rely solely on correlation metrics over sparse matrices (such as WGCNA), SCORPION integrates multiple data sources through a message-passing algorithm (PANDA) that incorporates:

- Protein-protein interaction data from the STRING database

- Gene expression patterns from coarse-grained single-cell data

- Sequence motif data describing transcription factor binding potential

This multi-source integration allows SCORPION to overcome limitations of correlation-only approaches, which perform poorly on sparse single-cell data and cannot effectively distinguish direct from indirect regulatory relationships [30].

Q3: What types of biological questions is SCORPION particularly suited to address? SCORPION is specifically designed for population-level comparative analyses, making it ideal for:

- Identifying regulatory differences between experimental conditions (e.g., wild-type vs. mutant, treated vs. untreated)

- Characterizing cell-type specific regulatory networks across multiple samples

- Discovering regulatory drivers of disease progression in patient cohorts

- Analyzing transcription factor activity changes across developmental stages or disease states

- Conducting population-level statistical analyses on GRN architectures [30] [31]

Q4: How does SCORPION enable comparative analysis across multiple samples? SCORPION generates comparable GRNs across multiple samples through two key features:

- Consistent Baseline Priors: All networks reconstructed for different samples leverage the same baseline priors (protein-protein interactions, motif information)

- Sample-Specific Networks: SCORPION creates an individual GRN for each sample using coarse-grained data from that sample, enabling statistical comparison across experimental groups rather than collapsed aggregate networks [30]

This approach allows researchers to model biological variability in regulatory interactions across populations rather than assuming uniform regulatory mechanisms within experimental groups.
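Once one network per sample is available, a population-level comparison reduces to a per-edge statistical test across groups. The sketch below assumes each sample's GRN is a TF-by-gene matrix of edge weights stacked into a NumPy array, and it uses a Welch t statistic as one reasonable choice; the function name `welch_t_per_edge` is hypothetical and the source does not prescribe a specific test.

```python
import numpy as np

def welch_t_per_edge(group_a, group_b):
    """Welch t statistic for every edge between two groups of networks.

    group_a, group_b: arrays of shape (n_samples, n_tfs, n_genes),
    one edge-weight matrix per sample.
    Edges with zero variance in both groups yield NaN.
    """
    a = np.asarray(group_a, dtype=float)
    b = np.asarray(group_b, dtype=float)
    mean_diff = a.mean(axis=0) - b.mean(axis=0)
    se = np.sqrt(a.var(axis=0, ddof=1) / a.shape[0]
                 + b.var(axis=0, ddof=1) / b.shape[0])
    return mean_diff / np.where(se == 0, np.nan, se)

# Simulated example: 6 samples per group, 3 TFs, 4 genes,
# with one edge (TF 1 -> gene 2) shifted in the second group.
rng = np.random.default_rng(0)
control = rng.normal(0.0, 0.1, size=(6, 3, 4))
treated = rng.normal(0.0, 0.1, size=(6, 3, 4))
treated[:, 1, 2] += 2.0
t_stats = welch_t_per_edge(control, treated)
print(np.round(t_stats, 1))
```

In a real analysis the resulting statistics would still need p-values and multiple-testing correction, since the number of edges is typically very large.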

Q5: What computational resources are recommended for SCORPION analysis of large single-cell datasets? While SCORPION implements several optimization strategies (sparse matrices, reduced components during desparsification), users should consider:

- Memory Allocation: Large single-cell atlas datasets (e.g., 200,000+ cells) may require substantial RAM for efficient processing

- Processing Time: The iterative message-passing algorithm may require extended computation for large networks

- Parallelization: The tool is compatible with high-performance computing environments for accelerated processing

For extremely large datasets, users may need to implement strategies such as analyzing cell subsets or leveraging cloud computing resources [30] [31].

Advanced Implementation Considerations

Q6: How can researchers validate SCORPION-predicted regulatory interactions experimentally? SCORPION predictions can be validated through:

- Transcription factor perturbation experiments (knockout/overexpression) followed by scRNA-seq

- Chromatin immunoprecipitation (ChIP-seq) for transcription factor binding

- Reporter assays for regulatory element activity

- Functional validation through target gene manipulation

The tool has already been validated in supervised experiments, where it accurately identified differences between wild-type and transcription factor-perturbed cells [30].

Q7: Can SCORPION incorporate additional omics data types beyond transcriptomics? While SCORPION primarily leverages single-cell transcriptomic data, its framework allows potential integration with:

- ATAC-seq data for chromatin accessibility information

- DNA methylation data for epigenetic regulation

- Spatial transcriptomics for positional context

The message-passing algorithm can theoretically accommodate additional data types as new network layers, though the current implementation focuses on transcriptomics, protein interactions, and motif information [29].

Q8: What parameter adjustments most significantly impact SCORPION network reconstruction? Key user-defined parameters include:

- Coarse-graining level (k): Number of similar cells collapsed into SuperCells

- Convergence threshold: Hamming distance threshold for stopping iterations (default: 0.001)

- Information integration (α): Proportion of information from co-regulatory and cooperativity networks (default: 0.1)

Users should optimize these parameters based on dataset size, sparsity, and biological questions, with documentation providing guidance for different scenarios [30].

Signaling Pathway and Regulatory Logic

SCORPION Regulatory Logic: From molecular data to clinical insights

SCORPION represents a significant advancement in computational biology by enabling population-level comparisons of gene regulatory networks using single-cell transcriptomics data. Its ability to generate precise, comparable GRNs across multiple samples addresses a critical gap in single-cell bioinformatics. The tool's validated performance superiority over existing methods, combined with its scalability to large datasets (demonstrated with 200,000+ cell atlas data), positions it as a valuable resource for advancing precision medicine initiatives [29] [31].

The methodological framework established by SCORPION has broad implications for optimizing gene network comparison approaches in biomedical research. By providing statistically robust GRNs suitable for population-level analysis, SCORPION enables researchers to move beyond descriptive characterizations of cellular states toward mechanistic understanding of regulatory programs driving phenotypes. This capability is particularly valuable for identifying key regulatory factors and interactions associated with disease progression, treatment response, and clinical outcomes—ultimately supporting development of targeted therapeutic strategies based on comprehensive regulatory network analysis [30] [29] [31].

As single-cell technologies continue evolving, producing increasingly complex and high-dimensional data, tools like SCORPION will be essential for extracting biologically meaningful insights from these rich datasets. The integration of multiple data types within a unified analytical framework represents a promising direction for computational biology, and SCORPION's success in leveraging this approach for GRN reconstruction provides a template for future methodological developments in the field.

Frequently Asked Questions (FAQs)

Q1: What is the core innovation of the Gene2role method compared to previous network analysis tools? Gene2role is the first method to apply role-based graph embedding to signed Gene Regulatory Networks (GRNs). Unlike traditional methods that focus on simple topological information like gene degree, Gene2role leverages multi-hop topological information (e.g., 1-hop and 2-hop neighbors) to capture deeper structural connections. This allows for a more nuanced comparison of GRNs across different cell states or types by projecting genes from separate networks into a unified embedding space for analysis [32] [33].

Q2: What file formats are required as input for the Gene2role pipeline? Gene2role primarily accepts two types of input files, depending on your starting data:

- Network File: A TSV (Tab-Separated Values) file with three columns: `geneID1`, `geneID2`, and `edge sign` (1 or -1) [34].

- Expression Data: For constructing GRNs from scratch, you need a gene-by-cell count matrix and a cell metadata file (see Protocol 2) [34].

Q3: How does Gene2role handle the scale-free nature of GRNs in its calculations? The method uses a custom distance function called Exponential Biased Euclidean Distance (EBED) to calculate topological similarity. EBED applies a logarithmic transformation to node degrees to mitigate the effects of the power-law distribution common in scale-free networks. It then computes the Euclidean distance and applies an exponential function to preserve the original proportionality of distances [32] [33].
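The description above can be read as "log-transform the degrees, take a Euclidean distance, then exponentiate". The sketch below is one plausible reading of that description, not the published formula: it assumes each gene is summarized by a (positive degree, negative degree) pair and adds 1 before the logarithm to avoid log(0). Both choices, and the function name `ebed`, are assumptions; see [32] for the exact definition.

```python
import math

def ebed(deg_u, deg_v):
    """Exponential of the Euclidean distance between log-transformed degrees.

    deg_u, deg_v: sequences such as (positive_degree, negative_degree).
    The +1 offset is an assumption made here to handle zero degrees.
    """
    log_diffs = [math.log(a + 1) - math.log(b + 1) for a, b in zip(deg_u, deg_v)]
    return math.exp(math.sqrt(sum(d * d for d in log_diffs)))

# Identical degree profiles give the minimum value of 1.
print(ebed((3, 2), (3, 2)))
# The same absolute gap matters more for low-degree genes than for hubs.
print(ebed((1, 0), (2, 0)), ebed((100, 0), (101, 0)))
```

Under this reading, a one-dimensional comparison reduces to the ratio of the offset degrees, for example `ebed((1, 0), (3, 0))` equals (3 + 1) / (1 + 1) = 2, which is one way to interpret "preserving the original proportionality".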

Q4: What are the main output analyses I can perform with Gene2role embeddings? The embeddings generated by Gene2role enable two primary levels of downstream analysis:

- Gene-Level: Identify Differentially Topological Genes (DTGs) across cell types or states, offering a perspective beyond differential gene expression [32].

- Module-Level: Quantify the stability of gene modules between cellular states by measuring changes in the collective embeddings of genes within these modules [32].
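Both analysis levels can be sketched with simple distance computations once genes from two networks share an embedding space. The example below is illustrative only: it assumes embeddings are stored as dictionaries mapping gene IDs to vectors, uses Euclidean distance as the measure of topological change, and uses the mean distance within a module as a stability proxy. The names `rank_topological_shift` and `module_shift` are hypothetical, and the criteria actually used to call DTGs are defined in [32].

```python
import numpy as np

def _shift(emb_a, emb_b, gene):
    """Euclidean distance between one gene's embeddings in two states."""
    return float(np.linalg.norm(np.asarray(emb_a[gene], dtype=float)
                                - np.asarray(emb_b[gene], dtype=float)))

def rank_topological_shift(emb_a, emb_b):
    """Rank shared genes by how far their embedding moves between states."""
    shared = sorted(set(emb_a) & set(emb_b))
    shifts = [(gene, _shift(emb_a, emb_b, gene)) for gene in shared]
    return sorted(shifts, key=lambda item: item[1], reverse=True)

def module_shift(emb_a, emb_b, module_genes):
    """Mean embedding shift over a module; smaller means more stable."""
    genes = [g for g in module_genes if g in emb_a and g in emb_b]
    if not genes:
        raise ValueError("no module genes are present in both embeddings")
    return float(np.mean([_shift(emb_a, emb_b, g) for g in genes]))

state_a = {"G1": [0.0, 0.0], "G2": [1.0, 1.0], "G3": [2.0, 0.0]}
state_b = {"G1": [0.0, 0.1], "G2": [4.0, 5.0], "G3": [2.0, 0.0]}
print(rank_topological_shift(state_a, state_b))
print(module_shift(state_a, state_b, ["G1", "G3"]))
```

Genes at the top of the ranking are candidates for further inspection; a real analysis would add a significance threshold or a null distribution rather than relying on the raw ordering.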

Q5: My GRN was inferred from single-cell RNA-seq data. Which tool should I use in the pipeline? The Gene2role pipeline supports GRNs inferred by different methods. For single-cell RNA-seq data, you can use either:

- EEISP: Based on co-dependency and mutual exclusivity of gene expression [32] [34].

- Spearman Correlation: A co-expression-based method [32] [34].

You specify the choice via the `TaskMode` argument in the pipeline command [34].
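For intuition about the co-expression option, the following sketch builds a signed edge list from Spearman correlations using NumPy only. It is not the pipeline's own code: the correlation cutoff `threshold`, the tie handling by average ranks, and the function names are assumptions chosen for illustration.

```python
import numpy as np

def average_ranks(values):
    """Rank values from 1..n, assigning tied values their average rank."""
    values = np.asarray(values, dtype=float)
    order = np.argsort(values, kind="mergesort")
    sorted_vals = values[order]
    starts = np.flatnonzero(np.r_[True, sorted_vals[1:] != sorted_vals[:-1]])
    ends = np.r_[starts[1:], len(values)]
    ranks = np.empty(len(values), dtype=float)
    for s, e in zip(starts, ends):
        ranks[order[s:e]] = 0.5 * (s + e + 1)
    return ranks

def spearman_signed_edges(expr, gene_ids, threshold=0.5):
    """Return (geneA, geneB, sign) for gene pairs with |rho| >= threshold.

    expr: array of shape (n_genes, n_cells).
    """
    ranked = np.vstack([average_ranks(row) for row in np.asarray(expr)])
    with np.errstate(invalid="ignore", divide="ignore"):
        rho = np.corrcoef(ranked)        # Pearson on ranks = Spearman
    rho = np.nan_to_num(rho)             # constant genes get correlation 0
    edges = []
    for i in range(len(gene_ids)):
        for j in range(i + 1, len(gene_ids)):
            if abs(rho[i, j]) >= threshold:
                edges.append((gene_ids[i], gene_ids[j], 1 if rho[i, j] > 0 else -1))
    return edges

expr = np.array([[1, 2, 3, 4, 5],
                 [2, 4, 6, 8, 10],
                 [5, 4, 3, 2, 1],
                 [7, 7, 7, 7, 7]])
for edge in spearman_signed_edges(expr, ["g0", "g1", "g2", "g3"]):
    print(*edge, sep="\t")
```

The output has the same three-column shape as the signed edge list described for the network file input, which is why a correlation-based network can feed the same embedding step.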

Troubleshooting Guides

Issue 1: Pipeline Command Failures or Incorrect Arguments

Problem

Errors when executing the pipeline.py script due to incorrect parameters or misconfigured input files.

Solution

- Step 1: Verify the command structure. The basic syntax is: `python pipeline.py TaskMode CellType EmbeddingMode input []` [34].

- Step 2: Ensure you are using the correct combination of arguments. Refer to the table below for standard configurations based on the original experiments [34].

| Experiment / Data Type | TaskMode | CellType | EmbeddingMode | Key Input |
|---|---|---|---|---|
| Simulated/Curated Networks | 1 | 1 | 1 | Edgelist file [34] |
| scRNA-seq (B cell, PBMC) via EEISP | 3 | 1 | 1 | Gene X Cell matrix [34] |
| scRNA-seq (B cell, PBMC) via Spearman | 2 | 1 | 1 | Gene X Cell matrix [34] |
| Multi-omics (Ery_0 state) | 1 | 1 | 1 | Edgelist file [34] |
| Multi-cell-type Analysis (Glioblastoma) | 3 | 2 | 2 | Gene X Cell matrix [34] |

- Step 3: Run `python pipeline.py --help` for detailed information on other arguments [34].
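Argument mistakes can be caught before the pipeline starts by assembling the command programmatically. The helper below is a hypothetical convenience wrapper, not part of Gene2role: it only builds the positional command described above and checks the three mode arguments against the values that appear in the configuration table. The pipeline itself may accept other values, so treat the allowed sets as an assumption.

```python
def build_pipeline_command(task_mode, cell_type, embedding_mode, input_path,
                           script="pipeline.py"):
    """Return the argv list for: python pipeline.py TaskMode CellType EmbeddingMode input."""
    # Allowed values are taken only from the standard configurations table.
    checks = {
        "TaskMode": (task_mode, {1, 2, 3}),
        "CellType": (cell_type, {1, 2}),
        "EmbeddingMode": (embedding_mode, {1, 2}),
    }
    for name, (value, allowed) in checks.items():
        if value not in allowed:
            raise ValueError(f"{name}={value!r} is not one of {sorted(allowed)}")
    return ["python", script, str(task_mode), str(cell_type),
            str(embedding_mode), str(input_path)]

cmd = build_pipeline_command(3, 1, 1, "your_count_matrix.csv")
print(" ".join(cmd))
# To execute it, pass the list to subprocess.run(cmd, check=True).
```

Passing the command as a list avoids shell quoting problems when input paths contain spaces.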

Issue 2: Errors in Gene ID Mapping Across Multiple Networks

Problem When performing a comparative analysis across multiple GRNs (e.g., different cell types), inconsistencies in gene identifiers can cause failures.

Solution

- Step 1: After running the pipeline for multiple networks, locate the generated `index_tracker.tsv` file [34].

- Step 2: Use this file to clarify gene IDs across the GRNs. The file's columns represent cell types, and rows represent genes. The values show the replaced ID for a gene in the GRN of a specific cell type [34].

- Step 3: Cross-reference this tracker file during your downstream analysis to ensure genes are correctly matched.

Issue 3: Challenges with Interpreting the Multi-Layer Graph Construction

Problem Difficulty understanding the intermediate steps of the embedding generation, particularly the construction of the multi-layer graph.

Solution The multilayer graph encodes topological similarities at different depths ("hops") from each gene. The following diagram illustrates the logical workflow from a signed GRN to the final gene embeddings.

Issue 4: Low Contrast in Network Visualizations for Publications

Problem Visualizations created from network results or analysis diagrams have poor color contrast, making them difficult to interpret in reports or presentations.

Solution Adhere to accessibility guidelines for visual presentation. For critical informational text, ensure a contrast ratio of at least 4.5:1 for large text and 7:1 for other text against the background [35]. The color palette provided for the diagrams in this document is pre-validated for sufficient contrast. When using tools like Cytoscape [36] for further network visualization, manually check the colors of nodes, text, and edges against their backgrounds.
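Colors can be checked programmatically rather than by eye. The sketch below assumes the standard WCAG definition of contrast ratio, (L1 + 0.05) / (L2 + 0.05), where L is the relative luminance of an sRGB color; the helper names and the `meets_threshold` function are illustrative, with the 4.5:1 and 7:1 thresholds taken from the guidance above.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to its linear-light value."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(hex_color):
    """Relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)

def contrast_ratio(color_a, color_b):
    """Contrast ratio between two colors, always >= 1."""
    lighter, darker = sorted(
        (relative_luminance(color_a), relative_luminance(color_b)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)

def meets_threshold(color_a, color_b, large_text=False):
    """Apply the 4.5:1 (large text) or 7:1 (other text) threshold."""
    return contrast_ratio(color_a, color_b) >= (4.5 if large_text else 7.0)

print(round(contrast_ratio("#000000", "#FFFFFF"), 2))
print(meets_threshold("#777777", "#FFFFFF", large_text=True))
```

The same functions can be applied to node fill, label, and edge colors exported from a visualization tool before figures are finalized.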

Experimental Protocols & Key Materials

Protocol 1: Generating Embeddings from a Pre-computed GRN

This protocol is used when you already have a signed GRN in the form of an edgelist [34].

- Input Preparation: Format your GRN as a TSV file with three columns: `geneID1`, `geneID2`, and `edge sign` (1 for activation, -1 for inhibition).

- Command Execution: Run the pipeline with the following command: `python pipeline.py 1 1 1 your_edgelist.tsv`

  - `TaskMode=1`: Run SignedS2V for an edgelist file.

  - `CellType=1`: Single cell-type analysis.

  - `EmbeddingMode=1`: Single network embedding [34].

- Output: The pipeline will generate the gene embeddings for downstream analysis.
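Before running the command, it can help to confirm that the edge list really has three tab-separated columns and a valid sign on every row. The following check is a hypothetical pre-flight helper, not part of the pipeline, and it assumes the file has no header row, which the source does not specify.

```python
import csv
import io

def read_signed_edgelist(handle):
    """Parse a three-column TSV of (geneID1, geneID2, sign) and validate it.

    handle: any iterable of text lines, e.g. an open file.
    Raises ValueError on a malformed row. Assumes there is no header row.
    """
    edges = []
    for line_no, row in enumerate(csv.reader(handle, delimiter="\t"), start=1):
        if not row:
            continue  # skip blank lines
        if len(row) != 3:
            raise ValueError(f"line {line_no}: expected 3 columns, found {len(row)}")
        source, target, sign = row
        if sign not in ("1", "-1"):
            raise ValueError(f"line {line_no}: edge sign must be 1 or -1, found {sign!r}")
        edges.append((source, target, int(sign)))
    return edges

example = io.StringIO("TF1\tGeneA\t1\nTF1\tGeneB\t-1\n")
print(read_signed_edgelist(example))
```

For a file on disk, the same function can be called as `read_signed_edgelist(open("your_edgelist.tsv", newline=""))`.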

Protocol 2: Generating Embeddings from Single-Cell RNA-seq Count Data

This protocol is used when you need to infer the GRN from a gene-by-cell count matrix before generating embeddings [34].

- Input Preparation:

  - Prepare `your_count_matrix.csv` (genes as rows, cells as columns).

  - Prepare `your_cell_metadata.csv` with `orig.ident` and `celltype` columns.

- Command Execution: To infer the GRN using EEISP and generate embeddings, use: `python pipeline.py 3 1 1 your_count_matrix.csv`

  - `TaskMode=3`: Run EEISP and SignedS2V from a count matrix [34].

- Output: The pipeline will infer the GRN and subsequently produce the gene embeddings.

Research Reagent Solutions

The following table lists essential materials, datasets, and software tools used in the development and application of Gene2role.

| Item Name | Type | Function / Description | Source / Reference |

|---|---|---|---|