Navigating the Genotype-Phenotype Map: From Complex Networks to Clinical Applications in Drug Discovery

Abstract

This article provides a comprehensive overview of strategies for managing the profound complexity of genotype-phenotype mapping, a central challenge in modern biology and precision medicine. Tailored for researchers and drug development professionals, we explore the foundational principles of genetic and epigenetic interaction networks that govern phenotypic expression. The scope extends to cutting-edge methodological advances, including single-cell resolved atlases, deep mutational scanning, and high-throughput CRISPR screens, which systematically link genetic variation to phenotypic outcomes. We further address troubleshooting and optimization strategies for interpreting complex data, and present validation frameworks that translate these insights into clinically actionable knowledge, ultimately enhancing target identification and improving the success rate of therapeutic development.

Deconstructing Complexity: The Theoretical Foundations of the Genotype-Phenotype Map

The relationship between genotype and phenotype is fundamental to genetics, yet this mapping is notoriously complex. Traditional linear models, which assume additive effects of individual genes, are insufficient for capturing the intricate biological reality where nonlinear interactions and complex networks dominate. This technical support center provides practical guidance for researchers grappling with these complexities, offering troubleshooting advice, detailed protocols, and visual frameworks to advance your investigations into genotype-phenotype mapping beyond conventional linear assumptions.

Frequently Asked Questions (FAQs)

Q1: Why does my genotype-phenotype map show high-order epistasis even after accounting for additive effects?

High-order epistasis (interactions between three or more mutations) can reflect genuine biological complexity but may also emerge as a statistical artifact if the scale of your model doesn't match the underlying biological system. A linear model applied to an inherently multiplicative process will generate spurious epistatic terms [1]. To diagnose this:

- Estimate nonlinear scaling: Use power transformations to linearize your map before epistasis analysis [1]

- Validate with back-transformation: Apply the inverse transformation to verify your scaling approach [1]

- Interpret cautiously: Even after accounting for nonlinearity, significant high-order epistasis may persist, contributing 2.2-31.0% of phenotypic variation in documented cases [1]

Q2: How do genotyping errors impact genetic map construction, and how can I mitigate these effects?

Genotyping errors seriously distort genetic maps by inflating distances and disrupting marker orders. Each 1% genotyping error rate can add approximately 2 cM of inflated distance to your map [2] [3]. The impact varies by error type and marker position:

Table 1: Impact of Genotyping Errors on Map Construction

| Error Type | Effect on Map | Recommended Correction |

|---|---|---|

| Terminal marker errors | Indistinguishable from recombinations | Assume all are recombinations [2] |

| Internal marker errors | Creates two apparent recombinations | Use error-compensating likelihood models [2] |

| Systematic platform errors | Consistent bias across markers | Implement repeated genotyping (30% samples) [3] |

| Random sampling errors | Inconsistent genotypes | Apply algorithms (QTL IciMapping, Genotype-Corrector) [3] |

Q3: What computational approaches can capture nonlinear gene-gene and gene-environment interactions?

Traditional methods struggle with higher-order interactions, but several advanced frameworks show promise:

- G–P Atlas: A neural network framework using a two-tiered denoising autoencoder that first learns phenotype representations, then maps genotypes to these representations [4]

- Genetic Programming: Treats genes as computer programs that evolve through mutation and recombination to discover nonlinear mappings [5]

- Causally Cohesive Genotype–Phenotype (cGP) Models: Embeds dynamic physiological models with explicit genetic parameters to bridge mechanisms across scales [6]

Q4: How should I account for population structure in genotype-phenotype association studies?

Population stratification causes spurious associations when subgroup ancestry correlates with both genotype and phenotype. Implement a three-step control process [7]:

- Quality Control: Filter poor-quality samples and markers

- Association Testing: Include ancestry principal components as covariates

- Post-GWAS Interrogation: Apply genomic control and validate across ancestries

Use global ancestry estimation tools (STRUCTURE, ADMIXTURE) to quantify ancestral proportions, particularly in admixed populations [7].
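The genomic control step listed above comes down to a short calculation. The following is a minimal sketch in plain Python, assuming 1-d.f. chi-square association statistics: it estimates the inflation factor as the observed median statistic divided by the expected null median (about 0.4549) and rescales the statistics accordingly. The function names and the toy values are illustrative assumptions, not part of any cited tool.

```python
from statistics import median

# Median of a chi-square distribution with 1 degree of freedom.
CHI2_1DF_MEDIAN = 0.4549364

def genomic_inflation(chi2_stats):
    """Return lambda_GC = median(observed chi-square) / expected null median."""
    return median(chi2_stats) / CHI2_1DF_MEDIAN

def genomic_control(chi2_stats):
    """Divide every statistic by lambda_GC (never inflating when lambda < 1)."""
    lam = max(genomic_inflation(chi2_stats), 1.0)
    return [s / lam for s in chi2_stats]

# Toy association statistics (hypothetical values for illustration only).
stats = [0.2, 0.5, 0.9098728, 1.4, 6.0]
lam = genomic_inflation(stats)
corrected = genomic_control(stats)
print(f"lambda_GC = {lam:.2f}")
```

A value of lambda_GC well above 1 after including ancestry principal components would suggest residual stratification that deserves further investigation.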

Troubleshooting Guides

Problem: Inflated Genetic Map Distances

Symptoms: Map lengths exceed expected values based on physical maps; excessive double recombinants appear.

Diagnosis and Solutions:

- Quantify error rates: Calculate inconsistency rates between technical replicates [3]

- Classify error types: Categorize as 01, 02, or 12 errors based on parental genotype confusions [3]

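- Apply correction methods: Use error-compensating likelihood models for internal marker errors [2], or correction tools such as QTL IciMapping and Genotype-Corrector for random errors (see Table 1) [3]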

- Validate corrected maps: Compare correlation between linkage and physical maps pre- and post-correction [3]
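The first two diagnostic steps can be sketched as a small replicate comparison. The example below assumes genotypes are coded 0, 1, and 2 (two parental homozygotes and the heterozygote) and that a "01", "02", or "12" error means a discordant call between those two codes. Both the coding and the function name are assumptions made for illustration.

```python
from collections import Counter

def replicate_discordance(rep1, rep2, missing=None):
    """Compare genotype calls (coded 0, 1, 2) from two technical replicates.

    Returns the inconsistency rate over calls present in both replicates and
    a count of discordant calls per unordered genotype pair ("01", "02", "12").
    """
    compared = 0
    error_types = Counter()
    for a, b in zip(rep1, rep2):
        if a == missing or b == missing:
            continue  # skip markers missing in either replicate
        compared += 1
        if a != b:
            low, high = sorted((a, b))
            error_types[f"{low}{high}"] += 1
    rate = sum(error_types.values()) / compared if compared else 0.0
    return rate, dict(error_types)

# Hypothetical calls for ten markers in one sample genotyped twice.
rep1 = [0, 1, 2, 2, 0, 1, None, 2, 0, 1]
rep2 = [0, 1, 2, 1, 0, 0, 2, 2, 2, 1]
rate, types = replicate_discordance(rep1, rep2)
print(f"inconsistency rate: {rate:.1%}, by type: {types}")
```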

Problem: Detecting Spurious Epistasis Due to Scale Mismatch

Symptoms: High-order interaction terms are statistically significant but biologically implausible; similar maps show inconsistent epistatic patterns.

Diagnosis and Solutions:

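Test for nonlinear scaling: Fit a power transform to your genotype-phenotype map using nonlinear least-squares regression [1]:

$$P_{obs} = \frac{(P_{add} + A)^{\lambda} - 1}{\lambda \, (GM)^{\lambda - 1}} + B$$

where $P_{obs}$ is the observed phenotype, $P_{add}$ is the predicted additive phenotype, $A$ and $B$ are translation constants, $\lambda$ is the scaling parameter, and $GM$ is the geometric mean of $(P_{add} + A)$ [1].

Linearize your map: Apply the estimated parameters to transform phenotypes to a linear scale [1]:

$$P_{linear} = \left( \lambda \, (GM)^{\lambda - 1} \, (P_{obs} - B) + 1 \right)^{1/\lambda} - A$$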

Recompute epistasis: Perform high-order epistasis analysis on the linearized data using Walsh transforms [1]

Compare results: Assess whether high-order terms remain significant after scale correction
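To see why a scale mismatch produces spurious interaction terms, consider a toy map with purely multiplicative effects. The sketch below implements a plain fast Walsh-Hadamard transform and applies it to the raw phenotypes and to their logarithms. The log transform stands in here for a fitted power transform, and the phenotype values are hypothetical. In this sign convention a mutation that raises the phenotype gets a negative first-order coefficient.

```python
import math

def walsh_coefficients(phenotypes):
    """Fast Walsh-Hadamard transform of 2^L phenotypes, scaled by 1/2^L.

    Genotype i is the binary number whose bit j says whether mutation j is
    present. Coefficient k is the term among the mutations whose bits are
    set in k (k = 0 is the mean phenotype).
    """
    coeffs = list(phenotypes)
    n = len(coeffs)
    if n == 0 or n & (n - 1):
        raise ValueError("need exactly 2^L phenotypes")
    h = 1
    while h < n:
        for start in range(0, n, 2 * h):
            for i in range(start, start + h):
                x, y = coeffs[i], coeffs[i + h]
                coeffs[i], coeffs[i + h] = x + y, x - y
        h *= 2
    return [c / n for c in coeffs]

# Two mutations with multiplicative effects (x2 and x3).
# Index 0b00 = wild type, 0b01 = mutation 1, 0b10 = mutation 2, 0b11 = both.
observed = [1.0, 2.0, 3.0, 6.0]

linear_scale = walsh_coefficients(observed)
log_scale = walsh_coefficients([math.log(p) for p in observed])

print("pairwise term on the raw scale:", linear_scale[3])
print("pairwise term on the log scale:", round(log_scale[3], 12))
```

The pairwise term is nonzero on the raw scale and vanishes (up to floating-point error) on the log scale, which is the signature of an artifact of scale rather than a genuine interaction.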

Problem: Modeling Multi-Gene Interactions in Cancer Systems

Symptoms: Single-gene models fail to recapitulate tumor heterogeneity; unable to resolve polygenic drivers of cancer phenotypes.

Diagnosis and Solutions:

Implement combinatorial organoid transformation [8]:

- Use barcoded lentiviral libraries encoding cancer-associated events

- Transduce primary epithelial cells at high multiplicity of infection (10-20 copies/cell)

- Engraft in immunocompromised mice for tumorigenic selection

Resolve clonal architecture:

- Perform single-cell DNA amplicon sequencing to enumerate lentiviral barcodes

- Use laser capture microdissection for spatial histology-genotype correlation [8]

Analyze cooperative oncogenicity: Identify co-occurring genetic events across tumor histologies using the BASE47 subtype predictor and Consensus Molecular Classifier [8]
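Once barcodes have been enumerated per clone, identifying co-occurring events is essentially a counting problem. The following sketch tallies how many clones carry each pair of events. The clone IDs and event names are placeholders, and the input structure (a mapping from clone to decoded events) is an assumption about how the barcode data might be organized.

```python
from collections import Counter
from itertools import combinations

def cooccurrence_counts(clones):
    """Count how many clones carry each unordered pair of barcoded events."""
    pair_counts = Counter()
    for events in clones.values():
        for pair in combinations(sorted(set(events)), 2):
            pair_counts[pair] += 1
    return pair_counts

# Hypothetical clones: clone ID -> events decoded from lentiviral barcodes.
clones = {
    "clone_1": ["event_A", "event_B", "event_C"],
    "clone_2": ["event_A", "event_B"],
    "clone_3": ["event_B", "event_C"],
    "clone_4": ["event_A", "event_B", "event_D"],
}

counts = cooccurrence_counts(clones)
for pair, n in counts.most_common(3):
    print(pair, n)
```

Raw counts like these are only a starting point; a pair that is frequent simply because both events are common in the library would need to be compared against an appropriate null expectation.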

Experimental Protocols

Protocol 1: Nonlinear Scale Estimation in Genotype-Phenotype Maps

Purpose: Estimate and account for nonlinear scaling in genotype-phenotype maps to avoid spurious epistasis [1].

Materials:

- Genotype-phenotype data for all binary combinations of L mutations (2^L genotypes)

- Software for nonlinear least-squares regression (R, Python, or MATLAB)

- Multiple phenotype measurements per genotype (minimum 3 replicates)

Procedure:

Calculate additive predictions: For each genotype i, compute the additive phenotype prediction:

$$P_{add,i} = \sum_{j=1}^{L} \langle \Delta P_j \rangle \, x_{i,j}$$

where 〈ΔPj〉 is the average effect of mutation j across backgrounds, and xi,j indicates presence/absence of mutation j in genotype i [1]

Fit power transform: Use nonlinear regression to estimate parameters λ, A, and B:

$$P_{obs} = \frac{(P_{add} + A)^{\lambda} - 1}{\lambda \, (GM)^{\lambda - 1}} + B$$

Linearize phenotypes: Apply the back-transform to obtain scale-corrected phenotypes [1]

Proceed with epistasis analysis: Use Walsh transforms or similar approaches on linearized data
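The procedure can be sketched in plain Python as follows. This is a minimal illustration, assuming the power transform takes the geometric-mean-normalized Box-Cox form, P_obs = ((P_add + A)^λ − 1) / (λ · GM^(λ−1)) + B, with GM the geometric mean of (P_add + A). The parameter values below are chosen by hand rather than fitted; in practice they would come from a nonlinear least-squares routine. All function names are hypothetical.

```python
import math

def additive_predictions(phenotypes):
    """phenotypes maps each binary genotype tuple to its mean phenotype.

    Returns P_add for every genotype: the sum of the average effects
    <dP_j> of the mutations that genotype carries.
    """
    n_sites = len(next(iter(phenotypes)))
    avg_effect = []
    for j in range(n_sites):
        diffs = []
        for g, p in phenotypes.items():
            if g[j] == 0:  # background lacking mutation j
                with_j = g[:j] + (1,) + g[j + 1:]
                diffs.append(phenotypes[with_j] - p)
        avg_effect.append(sum(diffs) / len(diffs))
    return {g: sum(e * x for e, x in zip(avg_effect, g)) for g in phenotypes}

def geometric_mean(values):
    return math.exp(sum(math.log(v) for v in values) / len(values))

def power_transform(p_add, lam, A, B, gm):
    """Forward map from the additive scale to the observed scale (lam != 0)."""
    return ((p_add + A) ** lam - 1) / (lam * gm ** (lam - 1)) + B

def linearize(p_obs, lam, A, B, gm):
    """Back-transform from the observed scale to the linear scale."""
    return (lam * gm ** (lam - 1) * (p_obs - B) + 1) ** (1 / lam) - A

# Toy two-site map (hypothetical values).
phenotypes = {(0, 0): 1.0, (1, 0): 2.0, (0, 1): 3.0, (1, 1): 4.0}
p_add = additive_predictions(phenotypes)

lam, A, B = 2.0, 1.0, 0.0  # assumed parameter values, not fitted
gm = geometric_mean([p + A for p in p_add.values()])
p_obs = {g: power_transform(p, lam, A, B, gm) for g, p in p_add.items()}
p_lin = {g: linearize(p, lam, A, B, gm) for g, p in p_obs.items()}
print(p_lin)
```

Checking that the back-transform recovers the additive values, as it does here, is a quick sanity test of a fitted scale before moving on to the epistasis analysis.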

Troubleshooting:

- If regression fails to converge, try different initial values for λ (start with 0.5, 1, 2)

- If confidence intervals for λ include 1, the map is likely linear

- Validate by checking if epistasis patterns stabilize after transformation

Protocol 2: Combinatorial Genetic Strategy for Complex Cancer Phenotypes

Purpose: Generate diverse, clinically relevant cancer models to explore polygenic drivers of malignant transformation [8].

Materials:

- Primary mouse bladder urothelial (mBU) or prostate epithelial (mPE) cells

- Barcoded lentiviral libraries (ORFs and shRNAs) targeting cancer-associated genes

- Matrigel for organoid culture

- NSG mice for transplantation

- Single-cell DNA amplicon sequencing platform (Mission Bio Tapestri)

Procedure:

Isolate primary cells: FACS sort Lin⁻ (CD45⁻CD31⁻Ter119⁻), EpCAM⁺CD49fʰⁱᵍʰ populations [8]

Achieve high-efficiency transduction:

- Mix cells with concentrated lentivirus in cold Matrigel

- Seed as organoid droplets for polymerization

- Aim for 10-20 proviral copies per cell [8]

Recombine with inductive mesenchyme: For bladder tumors, use E16 bladder mesenchyme (EBLM); for prostate, use urogenital sinus mesenchyme (UGSM) [8]

Transplant and monitor: Graft subcutaneously in NSG mice; monitor tumor formation (2.3-16 months) [8]

Resolve clonal architecture: Perform single-cell or spatial barcode sequencing to associate genotypes with histological subtypes [8]

Validation:

- Confirm urothelial origin by GFP and GATA3/TP63/panCK staining

- Classify subtypes using BASE47 predictor and Consensus Molecular Classifier

- Project expression patterns onto TCGA cohorts to validate clinical relevance [8]

Research Reagent Solutions

Table 2: Essential Research Reagents for Nonlinear Genotype-Phenotype Mapping

| Reagent/Tool | Function | Application Examples |

|---|---|---|

| Barcoded lentiviral libraries [8] | Deliver multiple genetic perturbations trackable via barcodes | Combinatorial cancer modeling; exploring polygenic drivers |

| Denoising autoencoder frameworks [4] | Capture nonlinear relationships with data efficiency | G–P Atlas for simultaneous multi-phenotype prediction |

| Power transform algorithms [1] | Estimate and correct nonlinear scaling in phenotype data | Differentiating true biological epistasis from scale artifacts |

| Error-correcting map software [2] [3] | Compensate for genotyping errors in linkage analysis | TMAP; QTL IciMapping; Genotype-Corrector |

| Causally cohesive model platforms [6] | Embed genetic variation in physiological dynamics | Virtual Physiological Rat project; multiscale physiology |

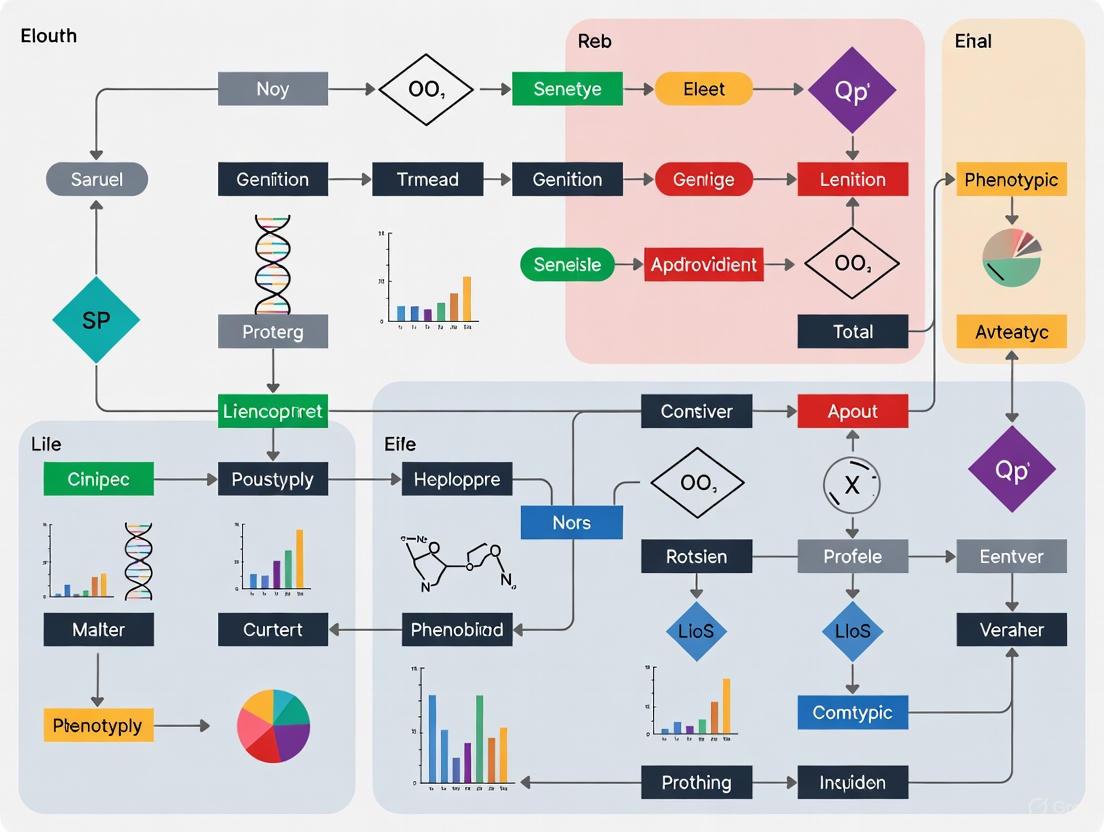

Visualizations

Diagram 1: Nonlinear Scale Correction Workflow

Diagram 2: Combinatorial Cancer Model Strategy

Diagram 3: Genotyping Error Impact and Correction

Technical Support Center: Troubleshooting Boolean Network Experiments

This support center provides solutions for common challenges encountered when constructing, simulating, and validating Boolean network models for genotype-phenotype mapping research.

Frequently Asked Questions (FAQs)

1. My model's dynamics do not match the experimental time-series data. How can I repair it?

Answer: This is a common issue where the model's logical rules are inconsistent with new data. A method using Answer Set Programming (ASP) can automatically suggest minimal repairs [9].

- Cause: The manually defined logical functions may not accurately capture all regulatory dependencies observed in new datasets.

- Solution: Use an ASP-based repair tool. The process involves:

- Encode the Problem: Formulate your existing Boolean model and the time-series data it fails to match as a logic program.

- Define Repair Operations: Specify the allowed atomic changes, such as modifying a single logical operator (e.g., changing an AND to an OR) in a regulatory function.

- Find Minimal Repairs: The ASP solver will compute the smallest set of repair operations that make the model consistent with the data. This minimizes changes to the original, expert-curated model [9].

- Protocol:

- Convert your model and data into the required input format (e.g., using bioLQM toolkit).

- Run the ASP encoding to generate repair suggestions.

- Implement the suggested repairs and re-simulate to validate against the time-series.
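The idea of a minimal repair can be illustrated without an ASP solver. The sketch below is a brute-force stand-in, not the ASP encoding from [9]: it assumes synchronous updates, rules limited to a single AND or OR over (possibly negated) inputs, and a single repair operation that swaps AND for OR. The model, the time series, and all function names are hypothetical.

```python
from itertools import combinations

def evaluate(rule, state):
    """rule = (op, inputs); op is "AND" or "OR"; "!x" negates input x."""
    op, inputs = rule
    values = [not state[i[1:]] if i.startswith("!") else state[i] for i in inputs]
    return all(values) if op == "AND" else any(values)

def step(model, state):
    """Synchronous update of every node."""
    return {node: evaluate(rule, state) for node, rule in model.items()}

def consistent(model, series):
    """True if every observed state is followed by the observed next state."""
    return all(step(model, s) == t for s, t in zip(series, series[1:]))

def minimal_repairs(model, series):
    """Swap AND<->OR in 0, 1, 2, ... rules; return the smallest working sets."""
    nodes = list(model)
    for size in range(len(nodes) + 1):
        found = []
        for flipped in combinations(nodes, size):
            candidate = dict(model)
            for n in flipped:
                op, inputs = model[n]
                candidate[n] = ("OR" if op == "AND" else "AND", inputs)
            if consistent(candidate, series):
                found.append(flipped)
        if found:
            return found
    return []

# Hypothetical three-node model and an observed time series it fails to match.
model = {"A": ("AND", ["B", "C"]), "B": ("OR", ["A"]), "C": ("OR", ["!A"])}
series = [
    {"A": 0, "B": 1, "C": 0},
    {"A": 1, "B": 0, "C": 1},
    {"A": 1, "B": 1, "C": 0},
]
repairs = minimal_repairs(model, series)
print("consistent before repair:", consistent(model, series))
print("minimal repairs:", repairs)
```

Exhaustive search of this kind scales exponentially with the number of rules, which is one reason a dedicated solver is used for realistic models.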

2. How can I identify which nodes in my network have the highest impact on its dynamic behavior (e.g., attractors)?

Answer: You can identify dynamically relevant nodes by calculating specific impact measures based on network perturbations [10].

- Cause: In large networks, exhaustive dynamic analysis is computationally infeasible. Static topological measures alone may miss key influencers.

- Solution: Perform in-silico knockout (KO) and overexpression (OE) experiments and measure changes in attractors.

- Protocol:

- For each node in your network, simulate two perturbations: KO (fix state to 0) and OE (fix state to 1).

- For each perturbation, calculate the following dynamic measures [10]:

- Gain of Attractors (Gg): Count of new attractors that emerge after perturbation.

- Loss of Attractors (Lg): Count of original attractors that disappear after perturbation.

- Minimal Hamming Distance (Dg): Measures the minimal shift in the state patterns of attractors.

- Aggregate these three measures into a single Dynamic Impact (Ig) score for each node to rank their overall importance [10].

3. What is the most effective way to infer a large-scale Boolean network model directly from transcriptomic data?

Answer: A scalable methodology involves using software like BoNesis to automatically generate ensembles of models from qualitative data specifications [11].

- Cause: Manually designing logical rules for large networks (e.g., hundreds of genes) is slow and prone to bias. Many automated methods do not scale well.

- Solution: A pipeline that transforms transcriptome data (both scRNA-seq and bulk RNA-seq) into a logical specification of expected dynamic properties, which is then used to infer compatible models.

- Protocol:

- Data Binarization: Discretize gene expression data into Boolean ON/OFF states using a tool like PROFILE [11].

- Trajectory Reconstruction: For scRNA-seq data, use trajectory inference tools (e.g., STREAM) to identify cell states and differentiation paths [11].

- Define Properties: Translate the trajectories into expected model behaviors, such as which states must be steady states and the trajectories that must connect them.

- Model Inference: Use BoNesis to search for the sparsest Boolean networks (from a prior knowledge network) that satisfy all the specified dynamic properties [11].

Troubleshooting Guides

Issue: Inconsistent Model Behavior After Perturbation

This occurs when a simulated intervention (e.g., node knockout) produces unexpected or biologically implausible results.

- Step 1: Verify the logical function of the perturbed node. Ensure the perturbation is correctly implemented by fixing the node's value and checking that it remains constant throughout the simulation.

- Step 2: Check for feedback loops. The perturbed node might be part of a critical feedback loop. Its forced state could create a conflict that propagates through the network. Analyze the network's structure to identify these loops.

- Step 3: Validate with the dynamic impact measure. Calculate the dynamic impact (Ig) of the perturbed node. A high Ig score indicates that the node is a key driver, in which case the unexpected result may be a valid model prediction worth biological investigation [10].

- Step 4: Repair the model. If the behavior is confirmed to be incorrect based on new experimental data, employ the ASP-based model repair method to fix the inconsistent logical functions [9].

Issue: Model Fails to Reach Known Phenotypic Attractors

The simulated network does not settle into the steady states corresponding to known biological phenotypes.

- Step 1: Confirm the attractor specification. Double-check that the expected phenotypic states are correctly defined as steady states in the model's specification during the inference or repair process [11].

- Step 2: Review the data binarization method. The classification of gene expression into Boolean ON/OFF states is critical. Try different binarization thresholds or methods, as this can significantly alter the inferred model dynamics [11].

- Step 3: Examine the underlying network structure. The prior knowledge network used for inference might be missing key regulatory interactions. Consider augmenting it with additional data from databases like DoRothEA [11].

- Step 4: Utilize ensemble modeling. Instead of a single model, generate an ensemble of models that are all compatible with the data. Analyze this ensemble to identify robust core nodes and functions essential for the phenotype [11].

Experimental Protocols & Data Presentation

Protocol 1: Quantifying Node-Specific Dynamic Impact

This protocol details how to rank nodes in a Boolean network based on their influence on system dynamics [10].

- Simulation Setup: Load your Boolean network model into a simulation environment like the R package BoolNet.

- Perturbation: For each node $g$ in the network: (a) create a knockout variant $N_g^{KO}$ (fix $x_g := 0$); (b) create an overexpression variant $N_g^{OE}$ (fix $x_g := 1$).

- Attractor Analysis: Identify all attractors for the original network, $A(N)$, and for each perturbed network, $A(N_g^P)$, where $P \in \{KO, OE\}$.

- Calculation: Compute the three dynamic measures for each perturbation using these formulas [10]:

  - Gain of Attractors: $G_g = \max_P \, | A_g(N_g^P) \setminus A_g(N) |$

  - Loss of Attractors: $L_g = \max_P \, | A_g(N) \setminus A_g(N_g^P) |$

  - Minimal Hamming Distance: $D_g = \max_P \left[ \frac{1}{|A(N_g^P)|} \sum_{a' \in A(N_g^P)} \min_{a \in A(N)} H_g(a, a') \right]$, where $H_g$ is the Hamming distance excluding component $g$.

- Ranking: Rank the nodes for each measure (G, L, D) and compute the final Dynamic Impact score as $I_g = \frac{1}{3} \left( rk(G_g) + rk(L_g) + rk(D_g) \right)$.
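The perturbation and attractor-comparison steps can be sketched in plain Python for a network small enough to enumerate exhaustively. This is an illustration rather than the BoolNet workflow: it assumes synchronous updates and assumes that attractors are compared after dropping the perturbed node's own component. The two-node toggle switch and all function names are hypothetical, and the Hamming-distance measure and the final ranking are omitted for brevity.

```python
from itertools import product

def attractors(rules, nodes, fixed=None):
    """Enumerate synchronous attractors; `fixed` pins nodes (KO = 0, OE = 1)."""
    fixed = fixed or {}

    def step(state):
        s = dict(zip(nodes, state))
        return tuple(fixed.get(n, int(rules[n](s))) for n in nodes)

    found = set()
    for init in product((0, 1), repeat=len(nodes)):
        state = tuple(fixed.get(n, v) for n, v in zip(nodes, init))
        seen = []
        while state not in seen:
            seen.append(state)
            state = step(state)
        # States from the first revisit onward form the attractor cycle.
        found.add(frozenset(seen[seen.index(state):]))
    return found

def drop(attractor_set, nodes, g):
    """Project every attractor onto all components except node g."""
    keep = [i for i, n in enumerate(nodes) if n != g]
    return {frozenset(tuple(s[i] for i in keep) for s in a) for a in attractor_set}

def gain_loss(rules, nodes, g):
    """Return (gain, loss), each maximized over knockout and overexpression."""
    base = drop(attractors(rules, nodes), nodes, g)
    gains, losses = [], []
    for value in (0, 1):  # 0 = knockout, 1 = overexpression
        pert = drop(attractors(rules, nodes, {g: value}), nodes, g)
        gains.append(len(pert - base))
        losses.append(len(base - pert))
    return max(gains), max(losses)

# Hypothetical toggle switch: each node represses the other.
nodes = ["A", "B"]
rules = {"A": lambda s: not s["B"], "B": lambda s: not s["A"]}

wild_type = attractors(rules, nodes)
print("wild-type attractors:", len(wild_type))
print("gain, loss for node A:", gain_loss(rules, nodes, "A"))
```

Exhaustive enumeration visits all 2^n states, so this approach is only practical for small networks; larger models need the heuristic or symbolic attractor searches provided by dedicated tools.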

Table 1: Dynamic Impact Measures for a Sample Network

This table shows a sample output from the dynamic impact analysis for a Boolean model [10].

| Node | Gain of Attractors (Gg) | Loss of Attractors (Lg) | Minimal Hamming Distance (Dg) | Dynamic Impact (Ig) Rank |

|---|---|---|---|---|

| Gene_A | 2 | 1 | 4.2 | 1 |

| Gene_B | 1 | 2 | 3.5 | 2 |

| Gene_C | 0 | 0 | 1.1 | 5 |

| Gene_D | 1 | 1 | 2.8 | 3 |

Protocol 2: Data-Driven Inference of a Boolean Network from scRNA-seq Data

This protocol outlines the steps to automatically reconstruct Boolean models from single-cell RNA sequencing data [11].

- Data Preprocessing: Perform hyper-variable gene selection on the scRNA-seq count matrix.

- Trajectory Reconstruction: Use a tool like STREAM to reconstruct the differentiation trajectory, identifying branching points and cell states.

- State Binarization: Classify the gene activity (0/1) for each cell state cluster using a method like PROFILE, aggregating results from individual cells.

- Logical Specification: Define the expected dynamical properties of the Boolean model. This includes:

- Designating leaf nodes of the trajectory as steady states.

- Specifying that there must exist trajectories between states according to the reconstructed tree.

- Model Inference: Use the software BoNesis to infer an ensemble of Boolean networks. The software will identify models that use the provided regulatory network (e.g., from DoRothEA) and satisfy all the dynamical properties from the previous step. The output is often the sparsest possible models that explain the data [11].
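The state binarization step can be illustrated with a deliberately simple rule. The sketch below is not the PROFILE method: it is a stand-in that calls a gene ON in a cluster when its cluster mean exceeds the midpoint between that gene's lowest and highest cluster means. The gene names, cluster labels, and expression values are hypothetical.

```python
from statistics import mean

def binarize_clusters(expr, clusters):
    """expr: gene -> per-cell values; clusters: per-cell cluster labels.

    Returns cluster -> {gene: 0 or 1} using a per-gene midpoint threshold
    between the lowest and highest cluster means.
    """
    labels = sorted(set(clusters))
    result = {c: {} for c in labels}
    for gene, values in expr.items():
        means = {
            c: mean(v for v, label in zip(values, clusters) if label == c)
            for c in labels
        }
        threshold = (min(means.values()) + max(means.values())) / 2
        for c in labels:
            result[c][gene] = int(means[c] > threshold)
    return result

# Hypothetical normalized expression for four cells in two trajectory states.
clusters = ["stem", "stem", "leaf", "leaf"]
expr = {
    "GeneX": [0.1, 0.2, 5.0, 6.0],
    "GeneY": [3.0, 4.0, 0.0, 0.5],
}
states = binarize_clusters(expr, clusters)
print(states)
```

The resulting Boolean vectors for the terminal clusters are the kind of input that would then be declared as steady states in the logical specification.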

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software Tools for Boolean Network Research

| Tool Name | Function | Application in Research |

|---|---|---|

| BoolNet [10] [12] | Attractor search and robustness analysis | Simulate network dynamics, identify stable states (attractors), and perform perturbation analyses. |

| BoNesis [11] | Inference of Boolean networks from specifications | Automatically generate models that are consistent with prior knowledge and observed dynamical properties. |

| bioLQM [9] | Model conversion and formatting | Translate Boolean models between different file formats (e.g., SBML-qual) for use in various software tools. |

| Answer Set Programming (ASP) Solver (e.g., clingo) [9] | Logical reasoning and combinatorial optimization | Solve complex model repair and inference problems by finding solutions that satisfy all defined constraints. |

| PROFILE [11] | Binarization of scRNA-seq data | Discretize continuous gene expression data into Boolean ON/OFF states for use in logical model inference. |


Troubleshooting Guides and FAQs for Epigenetic Research

FAQ: Core Concepts and Experimental Challenges

What are the primary epigenetic mechanisms I need to consider for genotype-phenotype mapping?

Beyond the DNA sequence, gene expression and the resulting phenotype are regulated by several key epigenetic layers. These include DNA methylation, various histone modifications, the action of non-coding RNAs, and chromatin remodeling [13] [14]. In complex genotype-phenotype research, these mechanisms can mediate the effects of environmental cues on genetic output and contribute to phenotypic heterogeneity that is not explainable by genetics alone [15] [16].

Why is my epigenetic data inconsistent between technical replicates?

Inconsistent data often stems from technical artifacts. For bisulfite-based DNA methylation sequencing, a major culprit is severe DNA degradation caused by the bisulfite conversion process itself [17]. Consider switching to more modern techniques like EM-Seq or TAPS, which are less damaging to DNA and can provide more reliable results [17]. For histone modification studies, inconsistency can arise from poor antibody specificity in ChIP-Seq protocols [17]. Alternative methods like CUT&RUN or CUT&Tag can offer higher resolution and lower background noise by performing the cleavage reaction in situ [17].

How can I account for non-genetic heterogeneity in my phenotype data?

Phenotypic heterogeneity can arise from two primary non-genetic sources: bet-hedging and phenotypic plasticity [16]. Bet-hedging describes stochastic phenotype switching within an isogenic population, while phenotypic plasticity is the deterministic change of phenotype in response to environmental signals [16]. Your experimental design should incorporate single-cell assays (e.g., single-cell CUT&Tag [17]) and controlled environmental fluctuations to distinguish between these drivers of heterogeneity.

My research aims to therapeutically reverse a pathogenic epigenetic mark. What are the main challenges?

A key challenge is achieving specificity and avoiding off-target effects [18]. While epigenetic modifications are reversible, the machinery involved (e.g., DNMTs, HDACs) often regulates many genes genome-wide. Newer approaches like CRISPR-dCas9 systems fused to epigenetic modifiers aim for locus-specific editing, but delivery and long-term safety remain significant hurdles [18].

Troubleshooting Guide: Common Experimental Issues

Problem: Poor Resolution in Histone Modification Mapping

- Potential Cause 1: Crosslinking-induced false positives in ChIP-Seq. Formaldehyde crosslinking can create artifacts by linking DNA to non-specifically bound proteins [17].

- Solution: Transition to crosslinking-free methods such as CUT&RUN or CUT&Tag. These techniques use immobilized cells and micrococcal nuclease or Tn5 tagmentation to release specific protein-DNA complexes, resulting in higher resolution and lower background [17].

- Potential Cause 2: Low antibody specificity or affinity.

- Solution: Validate antibodies rigorously using appropriate positive and negative control cell lines. Consider using tagged histone variants and affinity-based purification instead of antibodies where possible.

Problem: Incomplete Bisulfite Conversion in DNA Methylation Sequencing

- Potential Cause: Suboptimal reaction conditions or DNA quality. Incomplete conversion leads to unmodified cytosines being misinterpreted as methylated cytosines, overestimating true methylation levels [17] [13].

- Solution: Standardize reaction time, temperature, and DNA input quantity. Include controls with known methylation status (e.g., unmethylated lambda DNA) to monitor conversion efficiency. As a long-term solution, adopt bisulfite-free methods like EM-Seq or TAPS to eliminate this problem entirely [17].
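Monitoring conversion efficiency with an unmethylated spike-in is a simple ratio. The sketch below assumes you already have counts of reads showing T (converted) and C (unconverted) at cytosine positions of the unmethylated control. The function name, the counts, and the 99% acceptance threshold are assumptions for illustration and should be replaced by your own laboratory criteria.

```python
def conversion_efficiency(converted_reads, unconverted_reads):
    """Fraction of spike-in cytosines read as T (converted) rather than C."""
    total = converted_reads + unconverted_reads
    if total == 0:
        raise ValueError("no coverage on the spike-in control")
    return converted_reads / total

# Hypothetical counts at cytosines of the unmethylated control.
efficiency = conversion_efficiency(converted_reads=99_400, unconverted_reads=600)

MIN_EFFICIENCY = 0.99  # assumed acceptance threshold, not from the source
passed = efficiency >= MIN_EFFICIENCY
print(f"conversion efficiency: {efficiency:.2%}, pass: {passed}")
```

Because every unconverted cytosine in the control is by construction a false methylation call, one minus this efficiency gives a rough estimate of the background overestimation in the sample.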

Problem: High Noise in Chromatin Accessibility Data (ATAC-Seq)

- Potential Cause: Mitochondrial DNA contamination or over-digestion/under-digestion by the transposase.

- Solution: Optimize transposase concentration and incubation time. Use bioinformatic tools to filter out mitochondrial reads. Include a nuclei purification step instead of using whole cells to improve signal-to-noise ratio.
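Filtering mitochondrial reads and tracking their share of the library can be sketched as follows. The example assumes each aligned read has been reduced to its chromosome name and that the mitochondrial contig is called "chrM" or "MT", which varies by reference genome. Function names and data are hypothetical.

```python
from collections import Counter

MITO_NAMES = ("chrM", "MT")  # assumed contig names; check your reference

def mito_fraction(read_chroms, mito_names=MITO_NAMES):
    """Fraction of reads aligned to the mitochondrial contig."""
    counts = Counter(read_chroms)
    total = sum(counts.values())
    mito = sum(counts[name] for name in mito_names)
    return mito / total if total else 0.0

def drop_mito(read_chroms, mito_names=MITO_NAMES):
    """Keep only reads aligned outside the mitochondrial contig."""
    return [c for c in read_chroms if c not in mito_names]

# Hypothetical chromosome assignments for ten aligned reads.
reads = ["chr1", "chrM", "chr2", "chrM", "chrM", "chr1", "chrX", "chrM", "chr3", "chr2"]
fraction = mito_fraction(reads)
nuclear = drop_mito(reads)
print(f"mitochondrial fraction: {fraction:.0%}, nuclear reads kept: {len(nuclear)}")
```

A persistently high mitochondrial fraction points back to sample preparation, since filtering removes the reads but does not recover the sequencing depth they consumed.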

The Scientist's Toolkit: Research Reagent Solutions

The table below details key reagents and their functions in modern epigenetic research.

Table 1: Essential Reagents for Epigenetic Research

| Research Reagent / Tool | Primary Function | Key Application Examples |

|---|---|---|

| HDAC Inhibitors (e.g., Vorinostat) [18] | Inhibits histone deacetylases, leading to increased histone acetylation and a more open chromatin state. | Used to reverse repressive epigenetic marks; studied in neurodegenerative disease models and cancer [18]. |

| DNMT Inhibitors (e.g., 5-azacytidine, Decitabine) [17] [18] | Incorporated into DNA during replication, leading to irreversible binding and inhibition of DNA methyltransferases (DNMTs), causing DNA hypomethylation. | Therapeutic use in Myelodysplastic Syndromes (MDS) and Acute Myeloid Leukemia (AML); research tool to probe function of DNA methylation [17]. |

| CRISPR-dCas9 Epigenetic Editors [18] | A "catalytically dead" Cas9 fused to epigenetic writer/eraser domains (e.g., DNMT3a, TET1, p300). Enables precise, locus-specific editing of epigenetic marks without altering the DNA sequence. | Investigated for targeted reactivation of tumor suppressor genes or silencing of pathogenic genes in neurodegenerative disorders [18]. |

| Specific Antibodies (for ChIP-Seq, CUT&RUN) [17] [13] | Immunoprecipitation of DNA fragments bound by specific histone modifications (e.g., H3K27ac, H3K4me3, H3K27me3) or chromatin-associated proteins. | Genome-wide mapping of histone modification landscapes; identification of active enhancers and promoters [17]. |

| Sodium Bisulfite [17] [13] | Chemical deamination of unmethylated cytosine to uracil, while leaving 5-methylcytosine (5mC) intact. The foundation for most gold-standard DNA methylation sequencing methods. | Required for Whole-Genome Bisulfite Sequencing (WGBS) and Reduced Representation Bisulfite Sequencing (RRBS) [13]. |

The following table provides a structured comparison of key methodologies for mapping epigenetic modifications.

Table 2: Comparison of Epigenetic Modification Sequencing Methods

| Method | Target Modification(s) | Resolution | Key Advantage | Key Limitation |

|---|---|---|---|---|

| WGBS [17] [13] | 5mC, 5hmC | Base-level | Quantitative; considered the gold standard for 5mC. | Bisulfite treatment severely damages DNA [17]. |

| EM-Seq / TAPS [17] | 5mC, 5hmC | Base-level | Bisulfite-free; preserves DNA integrity. | Emerging technology; may have higher cost. |

| ChIP-Seq [17] [13] | Histone modifications, transcription factors | 200-500 bp | Well-established; wide array of validated antibodies. | Requires high input DNA; crosslinking artifacts; antibody specificity issues [17]. |

| CUT&Tag / CUT&RUN [17] | Histone modifications, transcription factors | ~20 bp (CUT&RUN) | Low background noise; works well with low cell numbers; no crosslinking. | Still relies on antibody quality. |

| ATAC-Seq [14] | Chromatin accessibility | Single-nucleotide | Simple, fast protocol; reveals open chromatin regions. | Sensitive to sample quality and mitochondrial contamination. |

Detailed Experimental Protocols

Protocol 1: CUT&RUN for Histone Modification Mapping

Principle: This antibody-targeted chromatin profiling method uses a Protein A-MNase fusion protein to cleave and release DNA bound by a specific protein of interest in situ, avoiding crosslinking [17].

Workflow:

- Cell Immobilization and Permeabilization: Bind cells or purified nuclei to concanavalin A-coated magnetic beads and permeabilize them (e.g., with digitonin) so that the antibody can enter.

- Antibody Incubation: Incubate with a primary antibody specific to the histone mark (e.g., H3K4me3).

- Protein A-MNase Binding: Add the Protein A-MNase fusion protein, which binds to the antibody.

- Targeted Cleavage: Activate MNase by adding Ca²⁺ to cleave DNA surrounding the antibody-bound nucleosomes.

- DNA Extraction: Release the cleaved DNA fragments into the supernatant and purify.

- Library Prep and Sequencing: Prepare sequencing libraries from the purified DNA fragments for high-resolution mapping [17].

CUT&RUN Workflow for Histone Marks

Protocol 2: Whole-Genome Bisulfite Sequencing (WGBS) for DNA Methylation

Principle: Sodium bisulfite converts unmethylated cytosine to uracil, which is then read as thymine during sequencing. Methylated cytosines (5mC) are resistant to conversion and are still read as cytosine. Comparing the bisulfite-converted sequence to a reference genome reveals methylation sites [17] [13].

Workflow:

- DNA Shearing: Fragment genomic DNA to the desired size for sequencing.

- Bisulfite Conversion: Treat DNA with sodium bisulfite.

- Desalting and Cleanup: Purify the bisulfite-converted DNA to remove salts and reagents.

- Library Preparation: Prepare a sequencing library from the converted DNA. Special adapters that are compatible with bisulfite-converted sequences are often used.

- Next-Generation Sequencing: Sequence the library.

- Bioinformatic Analysis: Map sequencing reads to a reference genome and calculate the methylation percentage at each cytosine position [17] [13].
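The final calculation in the workflow reduces to a per-site ratio. The sketch below assumes read counts have already been tallied at each reference cytosine, with C reads indicating protection from conversion and T reads indicating conversion. The minimum-coverage filter of 10 reads, the function name, and the counts are assumptions for illustration.

```python
def methylation_levels(site_counts, min_coverage=10):
    """site_counts: (chrom, pos) -> (C reads, T reads) at a reference cytosine.

    Returns the methylation percentage for sites meeting the coverage filter.
    """
    levels = {}
    for site, (c_reads, t_reads) in site_counts.items():
        depth = c_reads + t_reads
        if depth >= min_coverage:
            levels[site] = 100.0 * c_reads / depth
    return levels

# Hypothetical per-site counts after alignment of bisulfite-converted reads.
site_counts = {
    ("chr1", 100): (18, 2),
    ("chr1", 250): (0, 25),
    ("chr2", 40): (3, 1),  # too shallow, filtered out
}
levels = methylation_levels(site_counts)
print(levels)
```

Sites below the coverage threshold are dropped rather than reported, because a percentage computed from a handful of reads is dominated by sampling noise.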

WGBS Workflow for DNA Methylation

Traditional drug discovery, often reliant on empirical approaches and incomplete biological hypotheses, faces a fundamental challenge: the high complexity of the human genome and the non-linear relationship between genotype (genetic makeup) and phenotype (observable traits/disease) [19] [20]. This complexity leads to a high rate of failure in clinical development, primarily due to an inability to demonstrate efficacy or sufficient safety [19]. The central issue is that efficacy in treating non-clinical disease models is not always an adequate proxy for efficacy in treating human disease [20].

Genomic complexity manifests through several key mechanisms:

- Epistasis: The phenotypic effect of a mutation often depends on the genetic background in which it occurs, a phenomenon known as epistasis [21].

- Polygenic Traits: For most prevalent diseases, heritable risk is driven by a large number of common variants with small individual effect sizes, rather than single genes [20].

- Context-Dependent Effects: The high dimensionality of sequence space and the context-dependent effects of mutations make predicting phenotypic outcomes from genotypic data exceptionally difficult [21].

The table below summarizes the quantitative impact of this challenge on drug development pipelines.

Table 1: The Impact of Drug Development Challenges

| Challenge Metric | Traditional Approach | Genomics-Enhanced Approach | Data Source |

|---|---|---|---|

| Clinical Trial Attrition | High failure rates; 51% of Phase II trials (2005-2015) failed due to lack of efficacy [20] | Targets with human genetic evidence are ~2.6x more likely to reach approval [22] | Nature (2024) |

| Likelihood of Approval (LOA) | Dropped to as low as 6% (2021-2022) [19] | Returning to 10-11%, with genomics as a key driver [19] | Industry Analysis |

| Target Validation | Based on empirical approaches and often incomplete biological hypotheses [19] | Systematic prioritization within a probabilistic framework [23] [22] | Nat Rev Genet (2025) |

Visualizing the Workflow: Traditional vs. Modern Genomics-Driven Discovery

The following diagram illustrates the fundamental differences between the traditional, linear drug discovery pipeline and the modern, integrative genomics-driven approach, which is designed to manage complexity.

The Scientist's Toolkit: Essential Research Reagent Solutions

Navigating genomic complexity requires a specific set of tools and reagents. The following table details key solutions for effective genotype-phenotype mapping research.

Table 2: Key Research Reagent Solutions for Genotype-Phenotype Mapping

| Tool/Reagent | Primary Function | Application in Troubleshooting |

|---|---|---|

| Multiplex Assays of Variant Effect (MAVEs) [21] | Enables high-throughput phenotyping of thousands to millions of genetic variants in a single experiment. | Empirically characterizes genotype-phenotype maps at scale, overcoming the inability to explore vast sequence space. |

| Long-Read Sequencing (HiFi) [24] | Provides a highly accurate and comprehensive view of the genome, especially in complex "dark regions". | Diagnoses rare diseases linked to repeat expansions (e.g., ALS, Huntington's) and resolves complex structural variants. |

| gpmap-tools Python Library [21] | Infers and visualizes complex genotype-phenotype maps from MAVE data or natural sequences. | Models and accounts for high-order epistatic interactions that confound simple genetic models. |

| Open Targets Platform [22] | Integrates multiple lines of evidence (genetics, genomics, drugs) for target identification and prioritization. | Validates therapeutic targets with human genetic evidence to de-risk drug discovery projects. |

| 3D Cell Culture / Organoids (MO:BOT) [25] | Provides human-relevant, automated tissue models that standardize seeding and quality control. | Generates more predictive human safety and efficacy data, reducing reliance on non-predictive animal models. |

Troubleshooting Guides & FAQs

FAQ 1: Our target shows promise in vitro but consistently fails in human trials. How can genetics help?

The Problem: This is a classic manifestation of the genotype-phenotype gap, where model systems do not recapitulate human disease biology [20].

The Solution:

- Integrate Human Genetic Evidence: Retrospective studies show that drugs developed against targets with human genetic support are at least 2 times more likely to achieve approval [20]. A 2024 study confirms that drug mechanisms with human genetic evidence are 2.6 times more likely to reach approval [22].

- Implementation Protocol:

- Query Genetic Databases: Use the GWAS Catalog, Open Targets Platform, and biobank data (e.g., UK Biobank, Estonian Biobank) to assess genetic associations between your target and the human disease of interest [22] [24] [20].

- Perform Mendelian Randomization: Use genetic variants as instrumental variables to infer causal relationships between modulating the target and disease risk. This can mimic the effect of a therapeutic intervention in humans [22].

- Check for Safety Signals: Analyze human genetic data for links between loss-of-function variants in your target gene and adverse phenotypes. This can predict potential mechanism-based toxicity [22].
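The Mendelian randomization step above can be sketched with two standard estimators: the per-variant Wald ratio and a fixed-effect inverse-variance weighted (IVW) combination. This is a minimal illustration that assumes independent, valid instruments and uses made-up summary statistics; the function names are hypothetical, and a real analysis would add sensitivity checks for pleiotropy.

```python
import math


def wald_ratio(beta_exposure, beta_outcome):
    """Causal estimate from one variant: outcome effect per unit exposure effect."""
    return beta_outcome / beta_exposure


def ivw_estimate(variants):
    """Fixed-effect IVW estimate and standard error.

    variants: iterable of (beta_exposure, beta_outcome, se_outcome) tuples.
    """
    variants = list(variants)
    numerator = sum(bx * by / se ** 2 for bx, by, se in variants)
    denominator = sum(bx ** 2 / se ** 2 for bx, by, se in variants)
    return numerator / denominator, math.sqrt(1.0 / denominator)


# Hypothetical summary statistics: (effect on target level, effect on disease, SE)
instruments = [(0.20, 0.10, 0.05), (0.40, 0.20, 0.05), (0.10, 0.06, 0.04)]
estimate, se = ivw_estimate(instruments)
print(f"IVW estimate = {estimate:.3f} (SE {se:.3f})")
```

When every variant yields the same Wald ratio, the IVW estimate equals that ratio; disagreement between variants is itself a useful warning sign that some instruments may be invalid.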

FAQ 2: We are struggling to account for complex genetic interactions (epistasis) in our disease model.

The Problem: The effect of a mutation often depends on the genetic background (epistasis), making phenotypic outcomes difficult to predict from single-locus analyses [21].

The Solution:

- Utilize MAVE Data and Advanced Modeling: Multiplex Assays of Variant Effect (MAVEs) combined with Gaussian process models can empirically map genetic interactions [21].

- Implementation Protocol:

- Access or Generate MAVE Data: For your gene or regulatory element of interest, use existing MAVE datasets or design a new experiment to measure the fitness of thousands of sequence variants [21].

- Infer the Genotype-Phenotype Map: Use the gpmap-tools Python library to infer a model from the MAVE data. The library can handle genetic interactions of every possible order [21].

- Visualize the Fitness Landscape: Employ the visualization methods in gpmap-tools to identify high-fitness "ridges" and "valleys," revealing the complex architecture of genetic interactions that define functional sequences [21].

FAQ 3: Our clinical trials are failing due to lack of efficacy and unexpected side effects.

The Problem: This high attrition rate is often due to poor target selection and insufficient understanding of the target's role in human biology beyond the disease context [23] [19].

The Solution:

- Systematic Target Prioritization and Safety Assessment: Integrate multiple lines of evidence centered on human genetics within a probabilistic framework [23] [22].

- Implementation Protocol:

- Calculate a Genetic Priority Score: Use frameworks that integrate multiple genetic features (e.g., GWAS signals, constraint scores, molecular QTLs) into a unified score to prioritize targets with a higher probability of clinical success [22].

- Predict Side Effects: Analyze the phenotypes associated with genes encoding drug targets, as they can be predictive of clinical trial side effects. Tissue-specific genetic features are particularly informative [22].

- Leverage Cross-Population Meta-Analyses: Use frameworks that integrate data across diverse populations to identify robust drug repositioning candidates and improve generalizability [22].

Visualizing the Genotype-Phenotype Mapping Challenge

The core challenge in modern genetics is accurately modeling the pathway from a DNA sequence to a measurable trait, a relationship filled with complexity and interaction.

High-Throughput Tools for Mapping: From Single Cells to Genome-Scale Screens

Deep Mutational Scanning (DMS) is a powerful experimental approach that enables researchers to systematically quantify the functional effects of tens of thousands of genetic variants in a single, highly multiplexed experiment [26] [27]. By combining saturation mutagenesis, functional selection, and high-throughput sequencing, DMS provides high-resolution insight into sequence-function relationships, transforming our ability to understand protein behavior, interpret human genetic variation, and guide therapeutic development [26] [28]. This technology has become indispensable for managing the complexity of genotype-phenotype mapping, allowing comprehensive characterization of variant effects at scales previously unimaginable with traditional methods.

Core Methodology and Workflow

The DMS workflow consists of three principal components: construction of mutant libraries, functional screening or selection, and high-throughput sequencing analysis [28] [27]. The central concept involves creating "site-variant-function" relationships through a high-throughput framework that links genetic changes to their phenotypic consequences.

The following diagram illustrates the core DMS workflow from library construction to functional analysis:

Key Research Reagents and Solutions

Successful DMS experiments depend on carefully selected reagents and methodologies. The table below outlines essential materials and their functions in DMS workflows:

| Reagent/Method | Function in DMS | Key Applications |

|---|---|---|

| Oligo Pools with Degenerate Codons (NNK/NNS) [28] [27] | Systematic amino acid substitutions | Saturation mutagenesis for all possible amino acid changes |

| Error-Prone PCR [27] | Random mutagenesis through low-fidelity amplification | Directed evolution; exploring random mutational space |

| CRISPR-Cas Genome Editing [29] [30] | In situ mutagenesis in native genomic context | Studying variants in natural chromosomal environment |

| Yeast/Mammalian Display Systems [28] | Protein expression and phenotypic screening | Antibody engineering; cell surface receptor studies |

| DiMSum Software Pipeline [31] | Data processing and error estimation | Variant fitness calculation and quality control |

| Barcoded Sequencing Libraries [31] | Tracking variant abundance | Quantifying enrichment/depletion across conditions |

Troubleshooting Common Experimental Challenges

Library Construction Issues

Problem: Incomplete library coverage or biased mutational representation

- Root Cause: Traditional error-prone PCR exhibits mutation biases, with Taq polymerase having higher mutation rates at A/T bases than at C/G bases [27]. Oligo synthesis with NNK codons also creates an uneven amino acid distribution and includes stop codons [28].

- Solution: Implement advanced mutagenesis techniques such as:

- Trinucleotide cassette (T7 Trinuc) design: Achieves equiprobable amino acid distribution while avoiding stop codons [28].

- PFunkel mutagenesis: Combines Kunkel mutagenesis with Pfu DNA polymerase for rapid site-directed mutagenesis on double-stranded plasmid templates [28].

- SUNi (Scalable and Uniform Nicking Mutagenesis): Implements double nicking sites on templates with optimized homology arms for higher uniformity and coverage [28].

- Quality Control: Monitor editing efficiency and diversity through targeted sequencing to assess substitution/indel distribution and wild-type residues [28].
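The uneven amino acid distribution of NNK libraries can be checked directly by enumerating the codons. The sketch below uses the standard genetic code and only the Python standard library; the helper names are illustrative.

```python
from collections import Counter
from itertools import product

# Standard genetic code, codons ordered with bases T, C, A, G at each position
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    a + b + c: AMINO_ACIDS[16 * i + 4 * j + k]
    for i, a in enumerate(BASES)
    for j, b in enumerate(BASES)
    for k, c in enumerate(BASES)
}


def degenerate_codons(third_position_bases):
    """All codons with N (any base) at positions 1-2 and the given bases at position 3."""
    return ["".join(c) for c in product("ACGT", "ACGT", third_position_bases)]


def amino_acid_counts(codons):
    """Number of codons encoding each amino acid ('*' marks a stop codon)."""
    return Counter(CODON_TABLE[c] for c in codons)


nnk = amino_acid_counts(degenerate_codons("GT"))  # K = G or T
print("codons:", sum(nnk.values()), "stop codons:", nnk["*"])
for aa, n in sorted(nnk.items(), key=lambda item: (-item[1], item[0])):
    print(aa, n)
```

The enumeration shows why NNK is a compromise: it covers all 20 amino acids with 32 codons, but some residues are encoded three times as often as others and one stop codon remains.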

Problem: Low efficiency in mammalian cell systems

- Root Cause: Heterogeneous CRISPR editing accessibility due to PAM/sequence context dependence and variations in HDR efficiency [28] [30].

- Solution:

Functional Screening Problems

Problem: Low signal-to-noise ratio in phenotypic measurements

- Root Cause: Bottlenecks in the experimental workflow that restrict variant pool diversity, or system-induced biased signals that deviate from true physiological states [28] [31].

- Solution:

- Maintain large population sizes (typically >100 copies per mutant) throughout the experiment to prevent bottleneck effects [32] [31].

- Include multiple biological replicates to account for technical variability [31].

- For binding assays, consider using non-cellular display systems (e.g., PURE system) to minimize cellular confounders [28].

- Preventive Design: Calculate the 95% confidence interval for measurement error estimates a priori to determine the maximum expected precision of the experimental setup [32].
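The population-size guideline above can be turned into a quick check of each handling step. This is a minimal sketch: the step names and cell numbers are hypothetical, and the default of 100 copies per mutant simply mirrors the rule of thumb quoted in the list.

```python
def min_population(n_variants, copies_per_variant=100):
    """Smallest population that keeps the stated average copies per variant."""
    return n_variants * copies_per_variant


def find_bottlenecks(step_sizes, n_variants, copies_per_variant=100):
    """Names of workflow steps whose population falls below the minimum."""
    required = min_population(n_variants, copies_per_variant)
    return [name for name, size in step_sizes.items() if size < required]


# Hypothetical library of 20,000 variants and cell numbers carried through each step
steps = {"transformation": 5_000_000, "passage_1": 1_500_000, "dna_extraction": 3_000_000}
print(min_population(20_000))
print(find_bottlenecks(steps, n_variants=20_000))
```

Checking every step matters because a single undersized passage can remove variants that no amount of later sequencing depth will recover.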

Problem: Discrepancy between in vitro and in vivo functional effects

- Root Cause: Overexpression artifacts, lack of proper post-translational modifications, or absence of native interacting partners in simplified systems [28].

- Solution:

- Use CRISPR-mediated saturation mutagenesis in the native genomic context to preserve natural regulation and interaction networks [29].

- Employ mammalian cell systems when studying human disease variants to ensure proper cellular context [30].

- Validate key findings with orthogonal assays in physiologically relevant models [28].

Sequencing and Data Analysis Challenges

Problem: Inaccurate fitness scores due to experimental noise

- Root Cause: Multiple error sources including finite sequencing counts, batch effects in sample processing, and variability in selection experiments [31].

- Solution: Implement the DiMSum computational pipeline, which uses an interpretable error model that captures main sources of variability in DMS workflows [31].

- Implementation:

- Process raw sequencing files through DiMSum WRAP module for quality control and variant counting [31].

- Use DiMSum STEAM module for fitness score estimation and error modeling [31].

- Diagnostic Tools: Utilize the summary reports generated by DiMSum to identify common experimental pathologies and take remedial steps [31].

Problem: Inadequate experimental design for precise effect estimation

- Root Cause: Insufficient sequencing depth, too few time points, or inappropriate selection duration [32].

- Solution: Follow statistical guidelines for optimizing time-sampled deep-sequencing bulk competition experiments [32]:

- Sample more time points and extend experiment duration rather than excessively increasing sequencing depth [32].

- Even with fixed experiment duration, cluster time points at both beginning and end to increase power for detecting both strong and weak selection [32].

- For essential genes, perform competitive growth assays with multiple sampling time points to capture fitness differences [29].
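To illustrate why additional time points help, a simple way to estimate a selection coefficient from a bulk competition is the slope of the log ratio of mutant to wild-type counts over time. The sketch below is an ordinary least-squares fit and is not the estimator used in [32]; the function name, pseudocount, and counts are illustrative assumptions.

```python
import math


def selection_coefficient(times, mutant_counts, wt_counts, pseudocount=0.5):
    """Least-squares slope of log(mutant / wild type) against time.

    A negative slope means the mutant is depleted relative to wild type.
    The pseudocount guards against zero counts.
    """
    if len(times) < 2:
        raise ValueError("at least two time points are required")
    log_ratios = [
        math.log((m + pseudocount) / (w + pseudocount))
        for m, w in zip(mutant_counts, wt_counts)
    ]
    t_mean = sum(times) / len(times)
    y_mean = sum(log_ratios) / len(log_ratios)
    sxx = sum((t - t_mean) ** 2 for t in times)
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(times, log_ratios))
    return sxy / sxx


# Hypothetical read counts sampled at four time points (in generations)
times = [0, 4, 8, 12]
mutant = [1000, 690, 450, 300]
wild_type = [1000, 1010, 990, 1005]
print(round(selection_coefficient(times, mutant, wild_type), 3))
```

Because the variance of a fitted slope shrinks as the spread of the sampled times grows, placing samples at the start and end of the experiment tightens the estimate more than adding reads at a single time point.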

Frequently Asked Questions (FAQs)

Q1: How can I determine if my DMS library has sufficient coverage for meaningful results?

A: Aim for >100x average coverage per variant, and ensure that >95% of designed synonymous edits are detectable [29]. High-quality libraries typically achieve 96-97% saturation for synonymous mutations, which serve as neutral controls [29]. Utilize the hierarchical variant abundance structure to identify potential bottlenecks where specific variant subsets may be underrepresented [31].
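The two thresholds in this answer can be checked from a table of variant read counts. The sketch below assumes a dictionary that contains every designed variant (with zero counts for undetected ones) and a list of the designed synonymous variants; the names and example counts are hypothetical.

```python
def coverage_summary(counts, synonymous_ids):
    """Mean reads per designed variant and fraction of synonymous variants detected."""
    mean_coverage = sum(counts.values()) / len(counts)
    detected = sum(1 for variant in synonymous_ids if counts.get(variant, 0) > 0)
    return mean_coverage, detected / len(synonymous_ids)


def passes_library_qc(counts, synonymous_ids, min_mean=100, min_syn_fraction=0.95):
    """Apply the >100x mean coverage and >95% synonymous detection thresholds."""
    mean_coverage, syn_fraction = coverage_summary(counts, synonymous_ids)
    return mean_coverage > min_mean and syn_fraction > min_syn_fraction


# Hypothetical counts keyed by variant name
counts = {"A10A": 150, "L12L": 210, "G15G": 0, "A10V": 95, "L12P": 180}
synonymous = ["A10A", "L12L", "G15G"]
print(coverage_summary(counts, synonymous))
print(passes_library_qc(counts, synonymous))
```

Reporting both numbers is useful because a library can have high average coverage while still missing a subset of variants entirely.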

Q2: What are the key considerations when choosing between random mutagenesis and programmed allelic series?

A: Use programmed allelic series (e.g., NNK codons) when you need systematic coverage of all amino acid substitutions at specific positions, particularly for structured regions like antibody CDRs [28]. Choose random mutagenesis (error-prone PCR) when exploring a broader mutational landscape is prioritized over comprehensive site coverage, but be aware of inherent mutation biases [27]. For large-scale studies requiring uniform coverage, advanced methods like SUNi or Trinucleotide cassettes are recommended [28].

Q3: How can I optimize the statistical power of my DMS experiment during the design phase?

A: Focus on increasing the number of sampled time points and extending experiment duration, as these improvements disproportionately enhance precision compared to increasing sequencing depth alone [32]. Also, reduce the number of competing mutants if possible, as this decreases noise in fitness estimates [32]. Use interactive web tools available from statistical guides to calculate expected confidence intervals for your specific experimental parameters [32].

Q4: What strategies can help validate DMS findings and increase confidence in the results?

A: Always include biological replicates to assess reproducibility—high-quality DMS experiments typically show correlation coefficients (R²) of 0.85-0.96 between replicates [32]. Compare your results with known functional sites or previously characterized variants to ensure biological relevance [29]. For clinical applications, orthogonal validation using low-throughput functional assays for select variants is recommended [30].

Q5: How can DMS help in drug development and assessing resistance potential?

A: DMS can identify resistance-conferring mutations before clinical deployment of antimicrobials [29]. By quantifying how mutations affect both protein function and drug resistance, DMS can rank lead compounds and their targets by "resistance potential": those with fewer accessible resistance pathways are the better candidates [29]. For example, MurA was identified as a superior antimicrobial target compared to FabZ because its lower mutational flexibility limits resistance development while preserving function [29].

Advanced Applications and Future Directions

The DiMSum pipeline represents a significant advancement in DMS data analysis, providing an end-to-end solution for obtaining variant fitness estimates and diagnosing experimental issues [31]. The software is organized into two modules: WRAP for processing raw sequencing files and STEAM for estimating variant fitness scores and their associated errors [31].

As DMS methodologies continue to evolve, several emerging applications are particularly promising for genotype-phenotype mapping research:

- Variant Interpretation: Systematic classification of variants of unknown significance (VUS) in disease genes, supporting clinical decision-making [30].

- Antibiotic Development: Guidance for antibiotic development by identifying targets with low mutational flexibility that limits resistance evolution [29].

- Viral Evolution Prediction: Forecasting viral evolution pathways, as demonstrated by SARS-CoV-2 DMS studies that identified mutations later dominant in populations [27].

- Protein Structure Prediction: Enabling accurate protein structure modeling using genetic interaction scores derived from DMS experiments [27].

Future methodological improvements will likely focus on increasing the accuracy and scope of DMS, particularly through enhanced library construction techniques, more physiologically relevant screening systems, and improved computational models for extrapolating DMS results to in vivo contexts [28] [30].

Experimental Protocols & Workflows

Genome-Scale Perturbation Screens with Single-Cell Readouts

The creation of a high-resolution genotype-to-transcriptome atlas requires a method for simultaneously introducing genetic perturbations and measuring their transcriptional consequences in individual cells. The following workflow outlines the core methodology, with Perturb-seq being a primary example. [33]

Detailed Perturb-seq Protocol

This protocol is adapted from genome-scale screens performed in human cell lines. [33]

- Perturbation Modality Selection: CRISPR interference (CRISPRi) is often preferred over CRISPR knockout for its:

- Higher proportion of loss-of-function phenotypes.

- Direct measurability of knockdown efficacy via scRNA-seq.

- More homogeneous perturbation, reducing selection for unperturbed cells.

- Avoidance of DNA damage response activation that can alter transcriptional signatures.

- Library Design: Use a multiplexed library where each genetic element contains two distinct sgRNAs targeting the same gene to maximize knockdown efficacy. During oligonucleotide synthesis, overrepresent constructs targeting essential genes to maintain their representation.

- Cell Line Engineering: Engineer cell lines (e.g., K562, RPE1) to stably express the CRISPRi effector dCas9-KRAB.

- Lentiviral Transduction: Transduce the pooled sgRNA library into cells at a low Multiplicity of Infection (MOI) to ensure most cells receive a single perturbation.

- Incubation Period: Allow a sufficient period post-transduction for phenotypic manifestation (e.g., 6-8 days).

- Single-Cell RNA Sequencing: Use droplet-based 3' scRNA-seq (e.g., 10x Genomics Chromium) with direct sgRNA capture to concurrently profile transcriptomes and perturbation identities in a pooled format.

- Computational Analysis:

- Assign cells to their genetic perturbation based on captured sgRNAs.

- Exclude cells with multiple conflicting sgRNA assignments.

- Normalize expression measurements using control cells bearing non-targeting sgRNAs.

- Detect transcriptional phenotypes using conservative, non-parametric statistical tests comparing cells with a given perturbation to control cells.
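The reasoning behind the low-MOI transduction step can be sketched with a Poisson model, a common idealization in which the number of integrations per cell follows a Poisson distribution with mean equal to the MOI. The MOI values below are illustrative and not taken from the protocol in [33].

```python
import math


def poisson_pmf(k, mean):
    """Probability of exactly k integration events under a Poisson model."""
    return math.exp(-mean) * mean ** k / math.factorial(k)


def fraction_single_among_infected(moi):
    """Among cells carrying at least one sgRNA, the fraction carrying exactly one."""
    return poisson_pmf(1, moi) / (1.0 - poisson_pmf(0, moi))


for moi in (0.1, 0.3, 1.0):
    infected = 1.0 - poisson_pmf(0, moi)
    single = fraction_single_among_infected(moi)
    print(f"MOI {moi}: {infected:.1%} of cells infected, {single:.1%} of those carry one sgRNA")
```

The trade-off is visible in the output: lowering the MOI raises the share of singly perturbed cells but also reduces the fraction of cells that carry any perturbation, so more cells must be transduced to maintain library coverage.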

Yeast Knockout Collection Reengineering for scRNA-seq

In yeast, which is amenable to precise genetic engineering, a high-resolution atlas was built by reconfiguring the classic yeast knockout collection (YKOC) for single-cell profiling. [34]

- Library Redesign: The standard YKOC gene deletion cassette was reconfigured to make genotype identity traceable at the RNA level.

- Cassette Structure:

- The KanMX resistance marker was replaced with URA3.

- A shortened heterologous terminator was added.

- A unique clone barcode (5 random nucleotides) was inserted downstream of the URA3 STOP codon.

- Strain Generation: Mutants were grown and transformed individually (not pooled) to prevent competition, with positive clones selected in successive rounds in selective media.

- Perturb-seq Application: The final collection of RNA-traceable mutants was grown independently, pooled, and subjected to control or stress conditions before scRNA-seq profiling.

Troubleshooting Guides

Common Single-Cell RNA-seq Experimental Issues

Low Library Yield or Quality

Table: Troubleshooting Low Library Yield

| Cause of Failure | Mechanism of Yield Loss | Corrective Action |

|---|---|---|

| Poor Input Quality / Contaminants | Enzyme inhibition due to residual salts, phenol, or EDTA. [35] | Re-purify input sample; ensure wash buffers are fresh; target high purity (260/230 > 1.8). [35] |

| Inaccurate Quantification | Over- or under-estimating input concentration leads to suboptimal enzyme stoichiometry. [35] | Use fluorometric methods (Qubit) over UV absorbance; calibrate pipettes; use master mixes. [35] |

| Fragmentation / Ligation Inefficiency | Over- or under-fragmentation reduces adapter ligation efficiency. [35] | Optimize fragmentation parameters (time, energy); verify fragmentation profile; titrate adapter:insert molar ratio. [35] |

| Overly Aggressive Purification | Desired fragments are excluded during cleanup or size selection. [35] | Optimize bead-to-sample ratios; avoid over-drying beads. [35] |

Poor Single-Cell Sample Quality

Table: Ensuring High-Quality Single-Cell Preparations

| Quality Issue | Impact on Data | Solution & Best Practices |

|---|---|---|

| Low Cell Viability (<90%) | RNA leakage from dead cells increases background noise, obscuring true cell-specific signals. [36] | Use dead cell removal kits; enrich for live cells; handle cells gently with wide-bore tips. [36] |

| Cell Clumping & Debris | Can obstruct microfluidic chips, leading to low cell recovery; may be sequenced as doublets/multiplets. [36] | Filter samples before loading; wash samples through centrifugation to remove contaminants. [36] |

| Inaccurate Cell Counting | Missing target cell recovery goals; misrepresentation of cell populations. [36] | Use a consistent counting process with fluorescent dyes for live/dead discrimination. [36] |

Computational & Data Analysis Troubleshooting

Cell Ranger Pipeline Failures

- Preflight Failures: Occur before the pipeline runs due to invalid inputs. [37]

- Error: bcl2fastq not found on PATH.

- Solution: Ensure Illumina's bcl2fastq software is correctly installed and on your system's PATH. [37]

- In-flight Failures: Occur during execution due to external factors. [37]

- Error: Runs out of system memory or disk space.

- Solution: Check that your system meets the memory and storage requirements. Monitor available space during runs. [37]

- Resuming Failed Pipestances: If a cellranger run fails and the output directory exists, re-issuing the same command will typically resume the pipeline. If you get a "pipestance already exists and is locked" error, you can delete the _lock file in the output directory if you are sure no other instance is running. [37]
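Some of these failures can be caught before launching a run. The sketch below is a small pre-run check written with the Python standard library only; it does not call Cell Ranger itself, and the tool list and free-space threshold are illustrative assumptions rather than official requirements.

```python
import shutil


def preflight(tools=("cellranger", "bcl2fastq"), workdir=".", min_free_gb=100):
    """Return a list of human-readable problems; an empty list means the checks passed.

    min_free_gb is a placeholder; consult the Cell Ranger system
    requirements for the real storage needs of your dataset.
    """
    problems = []
    for tool in tools:
        if shutil.which(tool) is None:  # executable not found on PATH
            problems.append(f"{tool} not found on PATH")
    free_gb = shutil.disk_usage(workdir).free / 1e9
    if free_gb < min_free_gb:
        problems.append(f"only {free_gb:.0f} GB free in {workdir}, need {min_free_gb} GB")
    return problems


for problem in preflight():
    print("WARNING:", problem)
```

Running such a check from the same shell and working directory as the pipeline helps ensure that the PATH and disk seen by the check match those seen by the run.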

Challenges in Quantifying Repetitive Elements

- Problem: Standard scRNA-seq analysis overlooks transcripts from repetitive genomic elements like transposable elements (TEs) due to mapping ambiguity. [38]

- Solution: Use specialized computational tools like Stellarscope, which employs a Bayesian mixture model to probabilistically reassign multimapping reads to specific TE loci, providing locus-specific TE expression counts. [38]

Frequently Asked Questions (FAQs)

Experimental Design

Q1: Should I use single cells or single nuclei for my experiment? [36]

A: The choice depends on your experimental goals and sample type.

- Use single cells when your goal is to profile:

- Cell surface proteins (e.g., BCR/TCR sequences for immunoprofiling).

- Standard whole transcriptomes from tissues that dissociate easily.

- Use single nuclei when:

- Your tissue is difficult to dissociate into intact single cells (e.g., brain, fat).

- Your cells are too large or awkwardly shaped (e.g., neurons, cardiomyocytes, yeast). [36]

- Your analyte is nuclear (e.g., for measuring chromatin accessibility).

Q2: How many cells should I plan for a single-cell experiment? [36]

A: There is no single answer, as it depends on:

- Sample Complexity: Heterogeneous samples (e.g., tumors, complex tissues) require more cells to adequately capture rare cell types.

- Experimental Question: If targeting rare cell populations, start with more cells.

- Capture Efficiency: Account for the technology's cell recovery rate (e.g., ~65% for 10x Genomics assays). Plan your input cell load accordingly. [36]
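The capture-efficiency adjustment is simple arithmetic: divide the number of cells you want to recover by the expected recovery rate. The sketch below uses the approximate 65% figure quoted above as a default; the function name and target are illustrative.

```python
import math


def cells_to_load(target_recovered, recovery_rate=0.65):
    """Number of input cells needed to recover the target, rounded up."""
    if not 0 < recovery_rate <= 1:
        raise ValueError("recovery_rate must be in (0, 1]")
    return math.ceil(target_recovered / recovery_rate)


print(cells_to_load(10_000))
```

Recovery rates vary with sample type and handling, so the default should be replaced with a value measured or documented for your own assay.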

Q3: What defines a high-quality single-cell sample? [36]

A: A high-quality sample is:

- Clean: Free of debris, cell aggregates, and contaminants like background RNA.

- Healthy: Has high cell viability (≥90% is recommended).

- Intact: Has intact cellular or nuclear membranes, achieved through gentle handling. [36]

Data Analysis & Interpretation

Q4: What fraction of genetic perturbations typically cause a detectable transcriptional phenotype?

A: In a genome-scale Perturb-seq screen targeting ~9,900 genes in human cells, a robust computational framework detected significant global transcriptional changes in ~30% (2,987) of targeted genes. This indicates a substantial portion of genetic perturbations influence the transcriptome, underscoring the value of large-scale screening. [33]

Q5: Can single-cell data recapitulate findings from bulk RNA-seq studies?

A: Yes. Despite substantial methodological differences, large-scale scRNA-seq datasets of genetic perturbations have shown consistent correlation with previous bulk transcriptome profiling in the number of differentially expressed genes per genotype, validating the robustness of the single-cell approach. [34]

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Research Reagents and Resources

| Reagent / Resource | Function / Application | Key Features / Examples |

|---|---|---|

| CRISPRi sgRNA Library | Enables large-scale loss-of-function genetic screens. [33] | Multiplexed designs (2 sgRNAs/gene) improve knockdown efficacy; can be focused on expressed or essential genes. [33] |

| RNA-Traceable Yeast Knockout Collection (YKOC) | Allows pooled single-cell profiling of defined gene deletions in yeast. [34] | Contains URA3 marker with integrated clone and genotype barcodes in the 3'UTR, making the perturbation identity detectable in scRNA-seq data. [34] |

| 10x Genomics Chromium Platform | Partitions single cells into droplets for barcoding and reverse transcription. [39] [36] | Enables high-throughput scRNA-seq library preparation; accommodates cells up to 30 μm in diameter. [36] |

| Cell Ranger Software Suite | Processes scRNA-seq data from raw sequencing reads to a gene-cell expression matrix. [37] | Performs sample demultiplexing, barcode processing, read alignment, and UMI counting. |

| Stellarscope | Quantifies locus-specific transposable element (TE) expression from scRNA-seq data. [38] | Uses a Bayesian model to resolve multimapping reads, revealing the "repeatome" layer of the transcriptome. |

| PolyGene Model | A computational framework that uses language models to learn integrated genotype-phenotype relationships from scRNA-seq data. [40] | Helps uncover how genes interact to contribute to complex traits and can identify new gene functions and biomarkers. |

FAQs: Addressing Common CRISPR Screening Challenges

This section answers frequent, specific questions researchers encounter during CRISPR screening experiments, from data interpretation to experimental design.

Q1: How much sequencing depth is required for a CRISPR screen?

For reliable results, each sample should achieve a minimum sequencing depth of 200x. The total data volume required can be calculated as follows [41]:

Required Data Volume = Sequencing Depth × Library Coverage × Number of sgRNAs / Mapping Rate

For a typical human whole-genome knockout library, this translates to approximately 10 Gb of sequencing data per sample [41].
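The formula above can be evaluated directly. The sketch below treats library coverage and mapping rate as fractions between 0 and 1, which is an assumption about how the formula is meant to be read, and all input values (library size, coverage, mapping rate, bases per read) are illustrative rather than taken from [41].

```python
def required_reads(depth, library_coverage, n_sgrnas, mapping_rate):
    """Reads per sample: depth x library coverage x number of sgRNAs / mapping rate."""
    return depth * library_coverage * n_sgrnas / mapping_rate


def reads_to_gigabases(n_reads, bases_per_read):
    """Convert a read count to sequencing output in Gb for a given read length."""
    return n_reads * bases_per_read / 1e9


# Hypothetical inputs: 200x depth, 99% coverage, 100,000 sgRNAs, 80% mapping rate
reads = required_reads(depth=200, library_coverage=0.99, n_sgrnas=100_000, mapping_rate=0.8)
print(f"{reads:,.0f} reads")
print(f"{reads_to_gigabases(reads, bases_per_read=300):.1f} Gb at 300 bases per read pair")
```

The conversion to gigabases depends heavily on the read configuration, so the data volume for a given library should be recomputed for the sequencing run type actually used.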

Q2: Why do different sgRNAs targeting the same gene show variable performance?

Editing efficiency is highly influenced by the intrinsic properties of each sgRNA sequence. To ensure robust and reliable results, it is recommended to design at least 3–4 sgRNAs per gene. This strategy mitigates the impact of individual sgRNA performance variability and provides consistent identification of gene function [41].

Q3: What is the difference between a negative and a positive CRISPR screen?

The selection pressure applied and the goal of the screen define its type [41]:

- Negative Screening: Applies mild selection pressure, leading to the death of only a small subset of cells. The goal is to identify genes whose knockout causes cell death or reduced viability. This is observed through the depletion of corresponding sgRNAs in the surviving cell population.

- Positive Screening: Applies strong selection pressure, resulting in the death of most cells, with only a small number surviving due to resistance. The goal is to identify genes whose disruption confers a selective advantage. This is observed through the enrichment of corresponding sgRNAs in the surviving cells.
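Depletion and enrichment are usually summarized as log2 fold changes (LFCs) between conditions. The sketch below is a simplified illustration, not the algorithm of any particular screening package: it normalizes counts to reads per million, adds a pseudocount, and summarizes each gene by the median LFC of its sgRNAs. All names and counts are hypothetical.

```python
import math
from statistics import median


def sgrna_lfc(control_counts, treated_counts, pseudocount=1.0):
    """log2(treated / control) per sgRNA after reads-per-million normalization."""
    control_total = sum(control_counts.values())
    treated_total = sum(treated_counts.values())
    lfc = {}
    for guide, control in control_counts.items():
        c = control / control_total * 1e6 + pseudocount
        t = treated_counts.get(guide, 0) / treated_total * 1e6 + pseudocount
        lfc[guide] = math.log2(t / c)
    return lfc


def gene_median_lfc(lfc, guide_to_gene):
    """Median sgRNA-level LFC for each gene."""
    by_gene = {}
    for guide, value in lfc.items():
        by_gene.setdefault(guide_to_gene[guide], []).append(value)
    return {gene: median(values) for gene, values in by_gene.items()}


control = {"A_1": 100, "A_2": 100, "B_1": 100, "B_2": 100}
treated = {"A_1": 10, "A_2": 20, "B_1": 185, "B_2": 185}
genes = {"A_1": "A", "A_2": "A", "B_1": "B", "B_2": "B"}
print(gene_median_lfc(sgrna_lfc(control, treated), genes))
```

In this toy example gene A is depleted (negative LFC), as expected for a hit in a negative screen, while gene B appears enriched only because normalization redistributes the reads lost from A; this compositional effect is one reason real analyses use more careful normalization.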

Q4: How can I determine if my CRISPR screen was successful?

The most reliable method is to include well-validated positive-control genes with known effects in your screen. If the sgRNAs for these controls show significant enrichment or depletion in the expected direction, it strongly indicates effective screening conditions. In the absence of known targets, you can evaluate performance by assessing the degree of cell killing under selection pressure and examining the distribution of sgRNA abundance across conditions [41].

Q5: What should I do if no significant gene enrichment is observed?

The absence of significant hits is often due to insufficient selection pressure during the screening process, which weakens the phenotypic signal. It is recommended to increase the selection pressure and/or extend the screening duration to allow for greater enrichment of positively selected cells [41].

Troubleshooting Guides: From Data to Validation

Troubleshooting Data Analysis

A successful screen relies on robust data analysis. The table below summarizes common data issues and their solutions.

Table 1: Troubleshooting CRISPR Screening Data Analysis

| Problem | Potential Cause | Recommended Solution |

|---|---|---|

| Low sgRNA mapping rate | General sequencing quality issues. | A low mapping rate itself does not compromise reliability, as analysis uses only mapped reads. Ensure the absolute number of mapped reads is sufficient to maintain the recommended ≥200x sequencing depth [41]. |

| Unexpected positive LFC in a negative screen (or vice versa) | The gene-level LFC is calculated as the median of sgRNA-level LFCs. | This can occur when using the Robust Rank Aggregation (RRA) algorithm: extreme values from individual sgRNAs can skew the gene-level median LFC, so the unexpected sign is often a computational artifact rather than a biological effect [41]. |

| Large loss of sgRNAs from the library | Before screening: Insufficient initial library representation. After screening: Excessive selection pressure. | Re-establish the CRISPR library cell pool with adequate coverage. If post-screening, reduce the selection pressure [41]. |

| How to prioritize candidate genes? | Trade-off between comprehensive ranking and explicit cutoffs. | Prioritize RRA rank-based selection as it integrates multiple metrics. Combining LFC and p-value thresholds is common but may yield more false positives [41]. |

| Low correlation between replicates | High technical or biological variability. | If the Pearson correlation coefficient is below 0.8, avoid combined analysis. Perform pairwise comparisons and use Venn diagrams or meta-analysis to identify consistently overlapping hits [41]. |
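The replicate check in the last row can be sketched as a Pearson correlation between per-sgRNA values from two replicates, compared against the 0.8 threshold. The implementation below uses only the standard library; the function names and example values are illustrative, and in practice the inputs would typically be normalized counts or LFCs.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def can_combine(replicate_1, replicate_2, threshold=0.8):
    """True when replicate correlation reaches the threshold for combined analysis."""
    return pearson(replicate_1, replicate_2) >= threshold


# Hypothetical per-sgRNA log2 fold changes from two replicates
rep1 = [-2.1, -1.8, 0.1, 0.3, 1.9]
rep2 = [-1.9, -2.0, 0.0, 0.4, 2.2]
print(round(pearson(rep1, rep2), 3), can_combine(rep1, rep2))
```

When the check fails, analyzing replicates separately and intersecting the hit lists, as suggested in the table, avoids letting one noisy replicate dominate a pooled result.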

Troubleshooting Experimental Execution

Experimental pitfalls can occur at various stages. The following guide addresses common workflow problems.

Table 2: Troubleshooting Common Experimental Problems

| Problem | Potential Cause | Recommended Solution |

|---|---|---|

| Low editing efficiency | Inefficient gRNA design or delivery. | Verify gRNA targets a unique genomic sequence. Optimize delivery methods (electroporation, lipofection, viral vectors) for your specific cell type. Confirm Cas9/gRNA expression using a suitable promoter [42]. |

| Off-target effects | Cas9 cuts at unintended, partially complementary sites. | Design highly specific gRNAs using online prediction tools. Use high-fidelity Cas9 variants to reduce off-target cleavage [42]. |

| Cell toxicity/low survival | High concentrations of CRISPR components. | Titrate the concentration of delivered RNP or plasmid. Start with lower doses. Using Cas9 protein with a nuclear localization signal can enhance efficiency and reduce toxicity [42]. |

| Mosaicism (mixed edited/unedited cells) | Editing occurred after multiple cell divisions. | Optimize the timing of delivery for the cell cycle stage. Use inducible Cas9 systems. Isolate fully edited clonal cell lines via single-cell cloning [42]. |

| Inability to detect edits | Insensitive genotyping methods. | Use robust detection methods. The T7 Endonuclease I (T7EI) assay is a quick gel-based check, but Next-Generation Sequencing (NGS) is recommended for precise characterization of edits and off-targets [43]. |

Hit Validation Strategies

After a primary screen, candidate genes require rigorous validation to confirm their role in the observed phenotype [44].

- Deconvolution: Test individual sgRNAs that were part of a pool targeting the same gene. A true hit should be reproducible across multiple independent sgRNAs [44].

- Orthogonal Validation: Use a different technology to perturb the same gene (e.g., use RNAi to silence a gene initially identified by CRISPRko). Confirming the phenotype through an independent mechanism strongly validates the hit [44].

- Knockout Cell Lines: Create stable, clonal knockout cell lines for the candidate gene. This provides a clean background for more complex follow-up experiments, such as "rescue" experiments where the gene's function is reintroduced via a cDNA to confirm the phenotype is directly linked to the gene knockout [44].
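
The deconvolution criterion above (a true hit reproduces across multiple independent sgRNAs) can be expressed as a simple filter. This is a sketch under stated assumptions: the LFC cutoff of -1.0, the minimum of two supporting guides, and the gene and guide names are hypothetical choices for illustration, not values from [44].

```python
from collections import defaultdict

def supported_hits(sgrna_lfc, lfc_cutoff=-1.0, min_guides=2):
    """Return genes for which at least `min_guides` independent sgRNAs
    show depletion at or below `lfc_cutoff` (negative-selection screen)."""
    passing = defaultdict(int)
    for (gene, _guide), lfc in sgrna_lfc.items():
        if lfc <= lfc_cutoff:
            passing[gene] += 1
    return sorted(gene for gene, n in passing.items() if n >= min_guides)

# Hypothetical follow-up data: (gene, guide id) -> LFC from individual tests.
data = {
    ("GENE_A", "sg1"): -2.1, ("GENE_A", "sg2"): -1.8, ("GENE_A", "sg3"): -0.2,
    ("GENE_B", "sg1"): -3.0, ("GENE_B", "sg2"): 0.1, ("GENE_B", "sg3"): 0.3,
}
hits = supported_hits(data)  # GENE_B is driven by a single guide and is dropped
```

A gene supported by only one strong guide, like `GENE_B` here, is a typical candidate for an off-target artifact and is worth checking by orthogonal validation before further investment.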

Experimental Protocols for Key Applications

Protocol: Validating Edits with the T7EI Assay

The T7 Endonuclease I (T7EI) assay is a rapid, gel-based method to confirm that a genomic change has occurred near the target site [43].

- Principle: The T7EI enzyme cleaves heteroduplex DNA (hybrids of wild-type and edited strands) at mismatched base pairs.

- Workflow:

  - PCR Amplification: Amplify the target genomic region from both control and CRISPR-edited cell populations.

  - Heteroduplex Formation: Denature and reanneal the PCR products. Mixed sequences from edited and control DNA will form heteroduplexes with mismatches at the edit site.

  - T7EI Digestion: Treat the reannealed DNA with the T7EI enzyme, which cleaves at the mismatch sites.

  - Analysis: Run the digestion products on a gel. Successful editing is indicated by the presence of additional, smaller DNA fragments compared to the undigested control band.

- Note: The T7EI assay indicates a change but does not reveal the exact sequence alteration. For precise nucleotide-level information, follow up with Sanger sequencing or NGS [43].
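
Band intensities from the gel can be converted into an approximate indel frequency. The sketch below uses a widely used approximation for mismatch-cleavage assays, indel % = 100 × (1 − √(1 − f)), where f is the cleaved fraction of total band intensity. It assumes random reannealing of edited and unedited strands. The formula is not taken from [43], and the function name and intensity values are illustrative.

```python
import math

def t7ei_indel_percent(parent_band, cleaved_bands):
    """Estimate indel frequency (%) from densitometry values.

    parent_band   -- intensity of the uncleaved PCR product
    cleaved_bands -- intensities of the smaller cleavage products
    """
    cleaved = sum(cleaved_bands)
    fraction_cleaved = cleaved / (parent_band + cleaved)
    # The square root corrects for heteroduplexes being only a subset
    # of all duplexes that contain an edited strand.
    return 100.0 * (1.0 - math.sqrt(1.0 - fraction_cleaved))

# Made-up intensities: 36% of the signal is in the cleaved bands.
estimate = t7ei_indel_percent(parent_band=640, cleaved_bands=[200, 160])
```

Because the estimate depends on gel quantification and on the enzyme's sensitivity to different mismatch types, treat it as a rough screen and confirm with sequencing.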

Protocol: Confirming a Gene Knockout via NGS

Next-Generation Sequencing (NGS) is the gold standard for characterizing CRISPR edits, providing nucleotide-level resolution.

- Principle: Targeted amplicon sequencing of the genomic region of interest to read the DNA sequences of a population of cells.

- Workflow [43]:

  - Design Amplicons: Design PCR primers to amplify ~200-300 bp regions surrounding the on-target site and nominated off-target sites.

  - Library Preparation: Generate sequencing libraries from the amplicons. Systems like the rhAmpSeq CRISPR Analysis System use specialized PCR to create libraries for Illumina platforms.

  - Sequencing & Analysis: Sequence the libraries and use a dedicated analysis pipeline (e.g., rhAmpSeq CRISPR Analysis Tool) to align sequences, quantify the different insertion/deletion mutations (indels) at the target site, and assess editing at off-target sites.
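
To illustrate what indel quantification means at the read level, the sketch below classifies full-length amplicon reads by their net length change relative to the reference. This is a deliberately simplified stand-in and is not how the rhAmpSeq tool works: dedicated pipelines align each read, whereas this version only detects net length differences and misses substitutions or offsetting insertions and deletions. It assumes reads are already merged and trimmed to the primers; all names and sequences are hypothetical.

```python
from collections import Counter

def classify_reads(reads, reference):
    """Bin reads by net length change versus the reference amplicon."""
    counts = Counter()
    for read in reads:
        delta = len(read) - len(reference)
        if delta == 0:
            counts["unchanged_length"] += 1
        elif delta % 3 == 0:
            counts["in_frame_indel"] += 1      # net change is a multiple of 3 bp
        else:
            counts["frameshift_indel"] += 1    # likely disrupts the reading frame
    return counts

def indel_fraction(counts):
    """Fraction of reads with any net insertion or deletion."""
    total = sum(counts.values())
    return (counts["in_frame_indel"] + counts["frameshift_indel"]) / total

reference = "ACGTACGTACGT"
reads = [
    "ACGTACGTACGT",    # same length as reference
    "ACGTACTACGT",     # 1 bp deletion (frameshift)
    "ACGTACGGT",       # 3 bp deletion (in frame)
    "ACGTACGTTTACGT",  # 2 bp insertion (frameshift)
]
counts = classify_reads(reads, reference)
fraction = indel_fraction(counts)
```

For knockout confirmation, the frameshift fraction is usually the more informative number, since in-frame indels may leave a functional protein.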

Diagram 1: CRISPR screening and validation workflow.

The Scientist's Toolkit: Essential Reagents & Tools

Table 3: Key Research Reagent Solutions for CRISPR Screening

| Category | Item | Function & Application |

|---|---|---|

| Core Screening Components | CRISPR Library (e.g., whole-genome, focused) | A pooled collection of sgRNA constructs used to systematically perturb genes on a large scale [45]. |

| | Cas9 Nuclease (Wild-type or High-fidelity) | The enzyme that creates double-strand breaks in DNA at locations specified by the sgRNA. High-fidelity variants reduce off-target effects [42]. |

| | Delivery Vectors (Lentiviral, Lipofection reagents) | Methods to introduce CRISPR components into cells. Lentiviral transduction is common for pooled screens due to stable integration [45]. |

| Controls | Positive Control sgRNA (e.g., targeting TRAC, RELA) | A validated sgRNA with known high editing efficiency. Used to confirm that workflow conditions are optimized for successful editing [46]. |

| | Negative Control sgRNA (Non-targeting/scramble) | An sgRNA with no perfect match in the host genome. Used to establish a baseline phenotype and control for non-specific effects of the CRISPR machinery [46]. |

| | Transfection Control (e.g., GFP mRNA) | A fluorescent reporter used to visually quantify and optimize the delivery efficiency of CRISPR components into cells [46]. |

| Detection & Analysis | NGS-based Detection Kit (e.g., rhAmpSeq) | A system for targeted amplicon sequencing to precisely quantify on- and off-target editing efficiencies [43]. |

| | Analysis Software (e.g., MAGeCK) | A widely used computational tool for analyzing CRISPR screen data, incorporating algorithms like RRA for hit identification [41]. |

Diagram 2: The role of experimental controls in interpreting screening results.

G-P Atlas Technical Support Center

Troubleshooting Guides

Poor Phenotype Prediction Accuracy

Problem: The model produces inaccurate predictions for multiple phenotypes on your test dataset.

Solution:

- Re-tune Hyperparameters: Systematically adjust the size of the latent space and hidden layers. The original study found optimal performance with a latent space of between 50 and 100 dimensions, although the best value depends on the dataset [4].

- Check Data Corruption Levels: The model is trained with corrupted input data for robustness. Re-calibrate the amount of Gaussian noise and erroneous genotypes used during training if your data has different noise characteristics [4].

- Verify Phenotype Scaling: Ensure all phenotypic input data is properly normalized, as the mean squared error loss function is sensitive to variable scales [4].
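
The scaling check above can be sketched as a per-phenotype z-score transform. The example assumes phenotypes are stored column-wise (one list per trait) and that scaling statistics are computed on training data only, then reused for the test set. Both the layout and the choice of z-scoring are assumptions for illustration; the original framework may normalize differently.

```python
import statistics

def fit_scaler(train_columns):
    """Per-phenotype (mean, standard deviation), from training data only."""
    return [(statistics.fmean(col), statistics.pstdev(col))
            for col in train_columns]

def transform(columns, scaler):
    """Z-score each phenotype; constant phenotypes map to 0.0."""
    return [[(v - mu) / sd if sd > 0 else 0.0 for v in col]
            for col, (mu, sd) in zip(columns, scaler)]

# Two phenotypes measured on very different scales (made-up values).
train = [[1.0, 2.0, 3.0], [100.0, 200.0, 300.0]]
test = [[2.0], [200.0]]

scaler = fit_scaler(train)
train_scaled = transform(train, scaler)
test_scaled = transform(test, scaler)  # reuses the training statistics
```

Without this step, the second phenotype would contribute roughly 10,000 times more to a mean squared error loss than the first, simply because of its units.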

Failure to Identify Known Causal Genes

Problem: The permutation-based feature importance analysis does not highlight known causal loci in your dataset.

Solution:

- Confirm Test Set Integrity: Ensure the 20% test dataset used for variable importance calculation is properly segregated and not used in training [4].

- Validate Importance Calculation: Use the Captum library's permutation-based feature ablation as implemented in the original framework, measuring the mean squared shift in predicted phenotypes when omitting each feature [4].

- Check for Gene Interactions: The model excels at detecting non-additive interactions. If your causal genes operate through strong epistatic effects, ensure your dataset has sufficient power to detect these complex relationships [4].
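
A quick way to rule out train/test leakage is to make the 80/20 split explicit and assert that the two sets are disjoint. This is a minimal sketch: the function name and the seed are illustrative assumptions, and the sample count of 600 simply mirrors the simulated population described in this guide.

```python
import random

def train_test_split(sample_ids, test_fraction=0.2, seed=0):
    """Shuffle sample IDs reproducibly and hold out `test_fraction` of them."""
    ids = list(sample_ids)
    random.Random(seed).shuffle(ids)
    n_test = round(len(ids) * test_fraction)
    return ids[n_test:], ids[:n_test]

train_ids, test_ids = train_test_split(range(600))

# Leakage check: no individual may appear in both sets.
overlap = set(train_ids) & set(test_ids)
```

Storing the held-out IDs alongside the trained model makes it possible to repeat this check later, when the importance analysis is run.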

Extended Training Time with Large Datasets

Problem: Model training takes significantly longer than expected with large genotype-phenotype datasets.

Solution:

- Optimize Batch Size: The original implementation used a batch size of 16 [4]. Adjust based on your available GPU memory: small batches require more update steps per epoch and can slow training, while larger batches shorten each epoch but use more memory and may require re-tuning the learning rate.

- Verify Hardware Acceleration: Ensure PyTorch (v2.2.2) is configured to use available GPU resources with CUDA support enabled [4].

- Monitor Convergence: Training typically requires 250 epochs. Use early stopping if the validation loss plateaus to save computation time [4].
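
Early stopping, as suggested above, can be implemented as a small framework-independent helper that tracks the best validation loss. This is a sketch, not part of the G-P Atlas code: the class name, the patience value, and the simulated losses are all assumptions chosen for illustration.

```python
class EarlyStopping:
    """Signal a stop when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=10, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

# Simulated validation losses that plateau after epoch 4.
losses = [1.0, 0.8, 0.7, 0.69, 0.70, 0.71, 0.70, 0.72]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(losses, start=1):
    if stopper.step(loss):
        stopped_at = epoch
        break
```

In a real training loop, `step` would be called once per epoch with the loss on a validation split, and the model weights from the best epoch would be saved for later use.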

Frequently Asked Questions (FAQs)

Q: What types of genetic interactions can G-P Atlas detect that traditional methods miss? A: G-P Atlas specifically captures non-additive gene-gene interactions (epistasis) and pleiotropic effects where single genes influence multiple phenotypes. Traditional methods often assume linear, additive relationships and examine single phenotypes in isolation, missing these complex biological realities [4].

Q: How does the two-tiered architecture improve data efficiency? A: The framework first learns a compressed representation of phenotype-phenotype relationships, then maps genotypes to this latent space. By fixing the decoder weights during the second training phase, it dramatically reduces the parameters needing optimization, making it suitable for biologically realistic dataset sizes [4].

Q: What are the software and dependency requirements for implementing G-P Atlas? A: The framework is implemented in PyTorch (v2.2.2) and uses Captum for interpretability features. All code is available on GitHub, and the researchers provide both simulated and empirical datasets for validation [4].

Q: How should researchers handle missing data in their genotype-phenotype datasets? A: The denoising autoencoder architecture is specifically designed for robustness to missing and corrupted data. During training, deliberate corruption of input data helps the model learn to handle real-world data imperfections effectively [4].

Q: Can G-P Atlas incorporate environmental factors in addition to genotypes and phenotypes? A: The framework is designed to potentially include environments alongside genotypes and phenotypes, though the current implementation focuses on genotype-phenotype mapping as a foundation for these more complex integrations [4].

Table 1: G-P Atlas Hyperparameter Optimization Settings

| Hyperparameter | Options Tested | Optimal Value | Tuning Method |

|---|---|---|---|

| Latent Space Size | 25, 50, 100, 200 dimensions | Dataset-dependent | Grid Search |

| Hidden Layer Size | 128, 256, 512, 1024 nodes | Dataset-dependent | Grid Search |

| Noise Corruption | 5%, 10%, 15%, 20% | Dataset-dependent | Systematic Testing |

| Batch Size | 16, 32, 64 | 16 | Fixed |

| Training Epochs | 100, 250, 500 | 250 | Fixed |

| Learning Rate | 0.1, 0.01, 0.001 | 0.001 | Fixed |
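
The grid search over the dataset-dependent settings in the table above can be enumerated as follows. This is a sketch: the dictionary keys are hypothetical names, interpreting the noise percentages as fractions is an assumption, and `toy_score` is a stand-in for a real function that would train the model and return its validation loss.

```python
from itertools import product

# Dataset-dependent settings searched over (values from the table above).
grid = {
    "latent_dim": [25, 50, 100, 200],
    "hidden_dim": [128, 256, 512, 1024],
    "noise_fraction": [0.05, 0.10, 0.15, 0.20],
}
# Settings held at their reported optimal values.
fixed = {"batch_size": 16, "epochs": 250, "learning_rate": 0.001}

def configurations(grid, fixed):
    """Yield one dict per combination of grid values, plus the fixed settings."""
    keys = list(grid)
    for values in product(*(grid[k] for k in keys)):
        yield {**dict(zip(keys, values)), **fixed}

def select_best(configs, evaluate):
    """Return the configuration with the lowest score (e.g. validation loss)."""
    return min(configs, key=evaluate)

def toy_score(cfg):
    """Placeholder objective; replace with a real train-and-validate run."""
    return (abs(cfg["latent_dim"] - 100)
            + abs(cfg["hidden_dim"] - 512)
            + cfg["noise_fraction"])

configs = list(configurations(grid, fixed))
best = select_best(configs, toy_score)
```

With three settings of four values each, the grid contains 64 configurations, so the total cost is 64 full training runs per dataset.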

Table 2: G-P Atlas Performance on Benchmark Datasets

| Dataset | Sample Size | Phenotypes | Genomic Loci | Key Findings | Comparison to Traditional Methods |

|---|---|---|---|---|---|

| Simulated Population [4] | 600 individuals | 30 traits | 3,000 loci | Successfully identified causal genes with additive and epistatic effects | Outperformed linear models in detecting non-additive interactions |

| F1 Yeast Cross [4] | Real experimental data | Multiple traits | Genome-wide | Accurately predicted complex traits from genetic data | Provided more holistic organismal view than single-trait approaches |

Detailed Experimental Protocols

Protocol 1: Phenotype-Phenotype Autoencoder Training

Purpose: To learn efficient low-dimensional representations of phenotypic relationships.

Methodology:

- Input: Corrupted phenotypic data with added Gaussian noise [4].

- Architecture: Three-layer denoising autoencoder with leaky ReLU activation (negative slope=0.01) and batch normalization (momentum=0.8) [4].

- Training: 250 epochs using Adam optimizer (β₁=0.5, β₂=0.999), learning rate=0.001, mean squared error loss function [4].

- Output: Compressed latent representation capturing essential phenotypic covariance structure.
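
The denoising setup in this protocol pairs a corrupted input with its clean target: the network sees noisy phenotypes and is penalized for deviating from the original values. The sketch below shows only that data-side logic in plain Python. The function names and the noise level are illustrative assumptions, and the network itself is omitted.

```python
import random

def add_gaussian_noise(phenotypes, noise_sd, rng):
    """Return a corrupted copy of the phenotype matrix (rows = individuals)."""
    return [[v + rng.gauss(0.0, noise_sd) for v in row] for row in phenotypes]

def mse(pred, target):
    """Mean squared error over all entries of two equally shaped matrices."""
    diffs = [(p - t) ** 2
             for pred_row, target_row in zip(pred, target)
             for p, t in zip(pred_row, target_row)]
    return sum(diffs) / len(diffs)

rng = random.Random(0)
clean = [[0.5, 1.5], [2.0, -1.0]]  # made-up, already normalized phenotypes
noisy = add_gaussian_noise(clean, noise_sd=0.1, rng=rng)

# A denoising autoencoder is trained on (noisy, clean) pairs: it receives
# `noisy` as input and its reconstruction is scored against `clean`.
# An identity "model" that just returns its input scores as follows:
identity_loss = mse(noisy, clean)
```

A trained denoising autoencoder should score below `identity_loss`, since it is expected to remove part of the injected noise rather than copy it through.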

Protocol 2: Genotype-to-Phenotype Mapping

Purpose: To map genetic data into the learned phenotypic latent space.

Methodology:

- Input: Corrupted genotypic data with missing and erroneous genotypes [4].

- Architecture: Fixed pre-trained phenotype decoder with trainable genotype encoder mapping to latent space [4].

- Regularization: L1 norm (weight=0.8) and L2 norm (weight=0.01) on weights mapping genotypes to phenotype space [4].

- Output: Phenotype predictions from genetic data alone.
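
The regularization step combines an L1 and an L2 penalty on the genotype-encoder weights and adds them to the prediction loss. The sketch below makes that arithmetic explicit. It assumes the L2 term is the sum of squared weights and that both penalties are summed rather than averaged. The protocol above does not specify either detail, so treat these as assumptions.

```python
def elastic_net_penalty(weights, l1_weight=0.8, l2_weight=0.01):
    """Weighted L1 + L2 penalty over a weight matrix (list of rows).

    Assumption: the L2 term is the sum of squared weights.
    """
    flat = [w for row in weights for w in row]
    l1 = sum(abs(w) for w in flat)
    l2 = sum(w * w for w in flat)
    return l1_weight * l1 + l2_weight * l2

def total_loss(prediction_mse, weights):
    """Training objective: prediction error plus the weight penalty."""
    return prediction_mse + elastic_net_penalty(weights)

# Made-up 2x2 encoder weight matrix.
weights = [[1.0, -2.0], [0.0, 0.5]]
penalty = elastic_net_penalty(weights)   # 0.8 * 3.5 + 0.01 * 5.25
loss = total_loss(prediction_mse=0.1, weights=weights)
```

The comparatively large L1 weight pushes most genotype-to-latent weights toward zero, which is consistent with the expectation that only a small subset of loci affects any given trait.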

Protocol 3: Variable Importance Analysis

Purpose: To identify causal genotypes and phenotypes influencing biological variation.

Methodology:

- Implementation: Permutation-based feature ablation using Captum library [4].

- Metric: Mean squared shift in predicted phenotype distribution when omitting each feature [4].

- Testing: Calculated exclusively on the 20% holdout test dataset [4].

- Locus Identification: For multi-allelic loci, uses the largest variable importance from the set of alleles [4].
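
The logic of this protocol can be sketched without any deep-learning dependency. The code below is a generic stand-in and is not Captum's API: it permutes one feature at a time, measures the mean squared shift in predictions, and then takes the maximum over the alleles of each locus. The toy `predict` function, the feature layout, and the locus mapping are hypothetical.

```python
import random

def permutation_importance(predict, X, n_features, rng):
    """Mean squared shift in predictions when each feature is permuted."""
    baseline = [predict(row) for row in X]
    scores = []
    for j in range(n_features):
        column = [row[j] for row in X]
        rng.shuffle(column)  # break the link between feature j and the output
        permuted = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
        shifted = [predict(row) for row in permuted]
        scores.append(sum((a - b) ** 2 for a, b in zip(baseline, shifted)) / len(X))
    return scores

def locus_importance(scores, locus_to_alleles):
    """Per-locus importance: the largest score among that locus's alleles."""
    return {locus: max(scores[i] for i in idx)
            for locus, idx in locus_to_alleles.items()}

def predict(row):
    """Toy model: additive effect of feature 0, epistasis between 1 and 2,
    and no effect of feature 3."""
    return 2.0 * row[0] + row[1] * row[2]

rng = random.Random(0)
X = [[rng.randint(0, 1) for _ in range(4)] for _ in range(50)]  # held-out set
scores = permutation_importance(predict, X, n_features=4, rng=rng)
loci = locus_importance(scores, {"locus1": [0, 1], "locus2": [2, 3]})
```

Feature 3 receives an importance of exactly zero because the toy model ignores it, while features 1 and 2 only matter jointly, which is the kind of epistatic signal a single-feature linear test would miss.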

G-P Atlas Architecture Visualization

G-P Atlas Two-Tiered Architecture

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for G-P Atlas Implementation

| Tool/Resource | Function | Implementation Details |