Navigating the Computational Maze: Solving Key Challenges in Phylogenetic Comparative Methods

Abstract

Phylogenetic comparative methods (PCMs) are essential for testing evolutionary hypotheses across species, but their application is fraught with computational and statistical challenges. This article provides a comprehensive guide for researchers and biomedical professionals on overcoming these hurdles. We explore the foundational problem of statistical non-independence due to shared ancestry and the critical assumptions underlying common methods. The article then details advanced methodological applications, from ancestral state reconstruction to models of trait-dependent diversification, and offers practical solutions for troubleshooting prevalent issues like phylogenetic uncertainty and tree misspecification. Finally, we present a rigorous framework for model validation and comparative analysis to ensure robust, biologically meaningful inferences in evolutionary biology and drug discovery.

The Roots of the Problem: Understanding Core Challenges and Assumptions in PCMs

Frequently Asked Questions

What is phylogenetic autocorrelation? Phylogenetic autocorrelation (also known as Galton's Problem) is a statistical phenomenon where data points sampled from related taxa (like species or populations) are not statistically independent. Similarities between them can be due not only to independent evolution but also to shared common ancestry or cultural borrowing [1]. This non-independence violates a core assumption of standard statistical tests, which can lead to inflated false positive rates (Type I errors) and incorrect conclusions [1] [2].

Why is non-independence a problem for my analysis? Treating non-independent data as independent artificially increases your effective sample size. This, in turn, makes measures of variance appear smaller than they truly are, exaggerating the statistical significance of correlations and increasing the risk of identifying spurious relationships [1] [3]. One review found that over half of highly cited cross-national studies failed to sufficiently control for this problem [2].

What are the main sources of non-independence in biological data? The primary sources are:

- Shared Common Ancestry (Phylogenetic Non-Independence): Closely related species are likely to be similar simply because they have inherited traits from a common ancestor [3].

- Gene Flow: Exchange of migrants between populations can make their traits more similar [3].

- Spatial Proximity: Populations or cultures that are geographically close may share similar traits due to similar environmental pressures or diffusion of ideas, independent of their ancestry [2].

My dataset includes populations, not species. Do I still need to worry? Yes. If anything, the problem is more complex: analyses across populations within a species must account for both shared ancestry and gene flow, whereas analyses across species often assume gene flow is negligible [3].

Troubleshooting Guide: Identifying and Solving Non-Independence

| Symptom | Potential Diagnostic Check | Recommended Solution |

|---|---|---|

| Spurious or overly strong correlations in trait data. | Test for spatial or phylogenetic signal in your model residuals using statistics like Moran's I [1]. | Use Generalized Least Squares (GLS) with a phylogenetic variance-covariance matrix to model the expected non-independence [3]. |

| Model residuals are not independently distributed. | Examine a correlogram or variogram of residuals to detect spatial or phylogenetic structure [1]. | Apply Phylogenetically Independent Contrasts (PICs), which transform data into independent evolutionary changes at each node of the phylogeny [3]. |

| Need to incorporate both shared ancestry and gene flow. | Estimate a population pedigree or a matrix of genetic/linguistic distances between populations [3]. | Implement a Mixed Model framework (e.g., the "animal model"), which can include multiple sources of non-independence as random effects [3]. |

| Your field traditionally treats taxa as independent (e.g., some cross-cultural or cross-national research). | Conduct a sensitivity analysis: run your model with and without controls for non-independence [2]. | Include controls like spatial autoregression or cultural phylogenetic models using geographic and linguistic proximity matrices [2]. |
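The GLS solution recommended in the table can be sketched in a few lines. This is a minimal illustration, not a replacement for a dedicated package: it assumes a Brownian Motion covariance structure, and the function name `phylo_gls`, the four-taxon tree, and the trait values are all hypothetical.

```python
import numpy as np

def phylo_gls(X, y, C):
    """Generalized least squares with a phylogenetic covariance matrix C.

    X: (n, p) design matrix (include a column of ones for the intercept)
    y: (n,) response trait
    C: (n, n) expected covariance among taxa (shared branch length under BM)
    Returns coefficient estimates and their standard errors.
    """
    C_inv = np.linalg.inv(C)
    XtCi = X.T @ C_inv
    cov_unscaled = np.linalg.inv(XtCi @ X)
    beta = cov_unscaled @ (XtCi @ y)
    resid = y - X @ beta
    n, p = X.shape
    sigma2 = (resid @ C_inv @ resid) / (n - p)   # residual variance estimate
    se = np.sqrt(np.diag(sigma2 * cov_unscaled))
    return beta, se

# Hypothetical tree ((A:1,B:1):1,(C:1,D:1):1): every tip is 2 units from the
# root, and sister taxa share 1 unit of evolutionary history.
C = np.array([[2.0, 1.0, 0.0, 0.0],
              [1.0, 2.0, 0.0, 0.0],
              [0.0, 0.0, 2.0, 1.0],
              [0.0, 0.0, 1.0, 2.0]])
x = np.array([1.0, 1.2, 3.0, 3.3])   # illustrative predictor values
y = np.array([2.1, 2.3, 4.2, 4.4])   # illustrative response values
X = np.column_stack([np.ones_like(x), x])

beta, se = phylo_gls(X, y, C)
print("intercept, slope:", beta)
print("standard errors:", se)
```

Setting `C` to the identity matrix recovers ordinary least squares, which makes the sensitivity analysis in the last table row (with and without controls for non-independence) a one-line change.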

Methodological Protocols for Key Experiments

Protocol 1: Testing for Phylogenetic Signal with Moran's I

This method tests whether traits from closely related taxa are more similar than those from distantly related taxa [1].

- Inputs: A trait measurement for each taxon in your dataset and a matrix representing the phylogenetic or cultural distance between all pairs of taxa.

- Procedure:

  - Calculate Moran's I statistic, which measures spatial autocorrelation. In this context, "space" is replaced by phylogenetic or cultural distance [1].

  - Assess the statistical significance of the calculated value by comparing it to a distribution of values generated under the null hypothesis of no phylogenetic structure (e.g., via permutation tests).

- Interpretation: A significant Moran's I indicates the presence of phylogenetic autocorrelation in your trait data, confirming that standard statistical tests may be invalid [1].
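The two procedure steps above can be sketched as follows. This is a minimal illustration under stated assumptions: weights are taken as inverse pairwise distances (one common choice among several), the permutation p-value is one-sided, and the function names and example values are hypothetical.

```python
import numpy as np

def inverse_distance_weights(d):
    """Turn a pairwise distance matrix into weights 1/d with a zero diagonal."""
    d = np.asarray(d, dtype=float)
    w = np.zeros_like(d)
    off_diag = ~np.eye(len(d), dtype=bool)
    w[off_diag] = 1.0 / d[off_diag]
    return w

def morans_i(x, w):
    """Moran's I for trait vector x and weight matrix w."""
    x = np.asarray(x, dtype=float)
    z = x - x.mean()
    return (len(x) / w.sum()) * (z @ w @ z) / (z @ z)

def permutation_test(x, w, n_perm=999, seed=0):
    """One-sided permutation p-value: how often shuffled traits give I >= observed."""
    rng = np.random.default_rng(seed)
    observed = morans_i(x, w)
    null = np.array([morans_i(rng.permutation(x), w) for _ in range(n_perm)])
    p_value = (np.sum(null >= observed) + 1) / (n_perm + 1)
    return observed, p_value

# Hypothetical patristic distances for four taxa: sisters are 2 apart, others 4.
dist = np.array([[0, 2, 4, 4],
                 [2, 0, 4, 4],
                 [4, 4, 0, 2],
                 [4, 4, 2, 0]])
trait = np.array([1.0, 1.2, 3.0, 3.3])   # illustrative trait values
obs, p = permutation_test(trait, inverse_distance_weights(dist))
print("Moran's I:", obs, "permutation p-value:", p)
```

With only four taxa the permutation distribution is very coarse; the example is meant to show the mechanics, not to support any inference.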

Protocol 2: Implementing Phylogenetically Independent Contrasts (PICs)

PICs are used to remove the effect of phylogenetic relationships before testing for a correlation between two traits [3].

- Inputs: A fully resolved, bifurcating phylogeny with branch lengths and trait data for the taxa at the tips.

- Procedure:

  - For each internal node of the phylogeny, calculate a "contrast" for each trait. A contrast is the standardized difference in trait values between the two daughter lineages arising from that node [3].

  - These contrasts are phylogenetically independent data points. The number of independent contrasts for n species is n-1 [3].

  - To test for an evolutionary correlation between two traits, regress the set of contrasts for one trait against the contrasts for the other trait through the origin.

- Interpretation: A significant regression indicates a correlation between evolutionary changes in the two traits, independent of shared ancestry [3].
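The contrast calculation can be sketched as a short post-order traversal. This is a minimal illustration assuming Brownian Motion and a fully bifurcating tree; the nested-tuple tree encoding, function names, and trait values are hypothetical choices made for the example.

```python
import math

def _pic(node, traits, contrasts):
    """Post-order pass returning (trait estimate, adjusted branch length)."""
    if len(node) == 2:                      # tip: (name, branch_length)
        name, length = node
        return traits[name], length
    left, right, length = node              # internal: (left, right, branch_length)
    x1, v1 = _pic(left, traits, contrasts)
    x2, v2 = _pic(right, traits, contrasts)
    # Standardized contrast: difference divided by sqrt of summed branch lengths.
    contrasts.append((x1 - x2) / math.sqrt(v1 + v2))
    # Weighted ancestral estimate; its branch is lengthened to carry the
    # uncertainty of that estimate.
    estimate = (x1 / v1 + x2 / v2) / (1 / v1 + 1 / v2)
    return estimate, length + v1 * v2 / (v1 + v2)

def independent_contrasts(tree, traits):
    """Return the n-1 standardized contrasts for one trait."""
    contrasts = []
    _pic(tree, traits, contrasts)
    return contrasts

def slope_through_origin(cx, cy):
    """Least-squares slope of cy on cx with the intercept fixed at zero."""
    return sum(a * b for a, b in zip(cx, cy)) / sum(a * a for a in cx)

# Hypothetical tree ((A:1,B:1):1,(C:1,D:1):1) with a zero-length root branch.
tree = ((("A", 1.0), ("B", 1.0), 1.0), (("C", 1.0), ("D", 1.0), 1.0), 0.0)
trait_x = {"A": 1.0, "B": 3.0, "C": 5.0, "D": 9.0}   # illustrative values
trait_y = {"A": 2.2, "B": 5.9, "C": 9.8, "D": 18.5}  # illustrative values

cx = independent_contrasts(tree, trait_x)
cy = independent_contrasts(tree, trait_y)
print("number of contrasts:", len(cx))
print("slope through origin:", slope_through_origin(cx, cy))
```

Because both traits are traversed in the same order, the two contrast lists line up node by node, which is what the regression through the origin requires.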

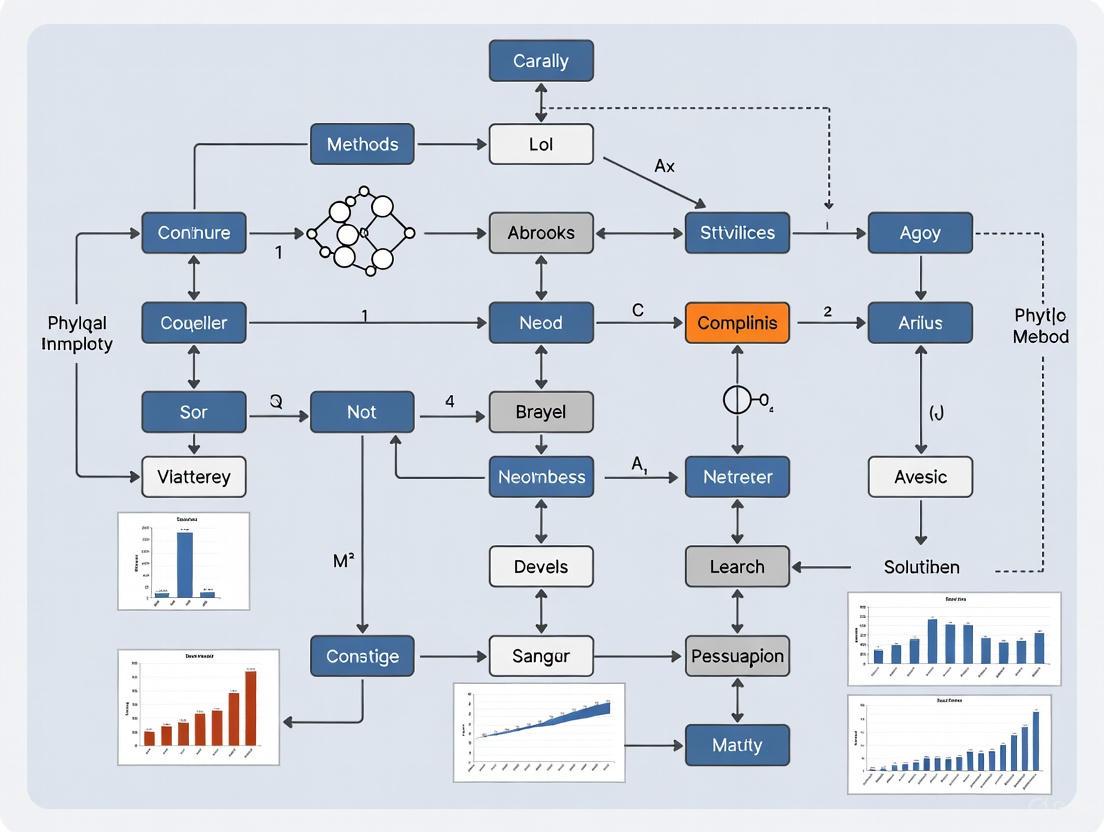

Conceptual Workflow and Logical Relationships

The following diagram outlines the logical process of diagnosing and addressing phylogenetic non-independence in a comparative analysis.

The Scientist's Toolkit: Essential Research Reagents

The table below lists key analytical "reagents" for solving the challenges of phylogenetic non-independence.

| Research Reagent | Function & Explanation |

|---|---|

| Phylogenetic Tree | The foundational scaffold that defines evolutionary relationships. It is used to calculate expected covariances between taxa [3]. |

| Variance-Covariance Matrix | A matrix derived from the phylogeny, showing the shared evolutionary history between species. It is used in GLS and mixed models to weight the data appropriately [3]. |

| Distance Matrix | A matrix of phylogenetic, genetic, or spatial distances between all pairs of populations or cultures. Used in autoregression and Moran's I calculations [1] [2]. |

| Phylogenetically Independent Contrasts (PICs) | A data transformation technique that converts tip data into independent evolutionary changes, creating statistically independent data points for regression analysis [3]. |

| Generalized Least Squares (GLS) | A regression method that incorporates the phylogenetic variance-covariance matrix, allowing for non-independent errors and providing unbiased parameter estimates [3]. |

| Phylogenetic Mixed Model | A powerful framework that partitions trait variance into a phylogenetic component (modeled as a random effect) and species-specific effects, and can incorporate other factors like gene flow [3]. |

| Moran's I | A statistical test used to diagnose the presence and strength of spatial or phylogenetic autocorrelation in model residuals or raw trait data [1]. |

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between Brownian Motion and the Ornstein-Uhlenbeck process in modeling trait evolution?

The core difference lies in mean reversion. Brownian Motion (BM) describes a random walk where trait changes are independent over time, leading to unbounded variance as time increases. In contrast, the Ornstein-Uhlenbeck (OU) process incorporates a deterministic pull that forces the trait value back towards a long-term mean (μ), making it a mean-reverting process. The OU process is the continuous-time analogue of a first-order autoregressive, AR(1), model [4].

- Brownian Motion is best for scenarios where traits evolve neutrally without constraints, akin to genetic drift [5].

- Ornstein-Uhlenbeck is preferred for traits under stabilizing selection, where the trait is pulled towards an optimal value [6].

2. When should I choose an OU model over a Brownian Motion model for my phylogenetic comparative analysis?

You should consider an OU model when your biological question involves stabilizing selection or evolutionary constraints. Key indicators include:

- Theoretical Expectation: Your trait is expected to oscillate around a physiological or functional optimum (e.g., body size, optimal pH for an enzyme).

- Data Observation: The trait variance across species does not increase indefinitely with time but appears bounded.

BM is more appropriate for neutral evolution or when the trait is expected to diverge freely without constraints [5].

3. My model estimation for the OU process fails to converge. What are the most likely causes and solutions?

Non-convergence often stems from issues with parameter identifiability or insufficient data.

- Cause 1: Poor initial parameter values. The optimization algorithm gets stuck.

- Solution: Use informed starting values. Estimate the initial mean (μ) from your data, and set the initial rate (θ) to a small positive value.

- Cause 2: A nearly flat likelihood surface, often due to a very small mean-reversion rate (θ), making the OU process hard to distinguish from BM.

- Solution: Constrain the parameter space or use a prior if employing Bayesian methods. Also, check if your data has sufficient phylogenetic signal.

- Cause 3: Inadequate computational power for evaluating the likelihood over a large tree.

- Solution: Utilize efficient algorithms designed for phylogenetic models, such as those using the pruning algorithm [7].

4. How do I interpret the key parameters (μ, θ, σ) of an OU process in a biological context?

The parameters of the OU stochastic differential equation, dX_t = θ(μ - X_t)dt + σdW_t, have direct biological interpretations [4] [7] [6]:

- μ (Long-term mean): The optimal trait value or "attraction point" towards which the trait evolves. In a phylogenetic context, different lineages can have different μ values, indicating adaptation to different niches.

- θ (Mean reversion rate): The strength of the evolutionary pull towards the optimum. A higher θ indicates stronger stabilizing selection, causing the trait to revert to the mean more quickly after a perturbation.

- σ (Volatility or diffusion parameter): The intensity of random fluctuations in the trait evolution per unit of time. It represents the unpredictable component of evolution, such as random genetic drift or environmental shocks.
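A short worked relation may help connect these parameters to time scales. Using the same symbols as the SDE above, the expected distance from the optimum decays exponentially, which gives a half-life for the pull towards μ and a limiting variance set by the balance between σ and θ. The numerical example below is purely illustrative.

```latex
\begin{align}
E[X_t] - \mu &= (X_0 - \mu)\, e^{-\theta t} \\
t_{1/2} &= \frac{\ln 2}{\theta} \\
\lim_{t \to \infty} \mathrm{Var}[X_t] &= \frac{\sigma^2}{2\theta}
\end{align}
```

For instance, θ = 0.5 per million years gives a half-life of about 1.39 million years. When the half-life is short relative to the height of the tree, ancestral trait values are forgotten quickly; when it approaches or exceeds the tree height, the process becomes hard to distinguish from BM.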

5. Can I visually represent the structure and assumptions of these stochastic processes?

Yes, the logical relationships and workflows for these models can be effectively visualized. The following diagram illustrates the conceptual path from model selection to simulation for both Brownian Motion and the Ornstein-Uhlenbeck process.

Diagram Title: Workflow for Stochastic Process Model Selection and Simulation

Troubleshooting Guides

Problem: Poor Parameter Estimation in OU Process

Symptoms:

- Highly uncertain or biologically implausible estimates for θ (mean reversion rate) or σ (volatility).

- Large confidence intervals for parameter values.

Resolution Steps:

- Verify Data Quality: Ensure your trait data has enough variation and is measured accurately. Highly noisy data can obscure the mean-reverting signal.

- Check Phylogenetic Signal: Use metrics like Blomberg's K or Pagel's λ to confirm that your trait data exhibits a phylogenetic signal consistent with the model.

- Profile the Likelihood: Examine the likelihood surface around the estimated parameters. A flat surface suggests identifiability issues.

- Consider Model Simplification: If the data is sparse, a BM model might be more appropriate. Alternatively, reduce the number of selective regimes (μ) in the OU model.

- Cross-Validation: Perform phylogenetic cross-validation to assess the model's predictive power and guard against overfitting.
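The phylogenetic signal check in the steps above can be sketched for Pagel's λ. This is a minimal illustration assuming a Brownian Motion covariance matrix `C` and a simple grid search in place of a numerical optimizer; the function names and example data are hypothetical.

```python
import numpy as np

def lambda_transform(C, lam):
    """Scale off-diagonal covariances by lam, keeping tip variances fixed."""
    C_lam = C * lam
    np.fill_diagonal(C_lam, np.diag(C))
    return C_lam

def bm_log_likelihood(y, C):
    """Log-likelihood of y under a multivariate normal with covariance
    sigma2 * C, with the root state and sigma2 set to their ML estimates."""
    n = len(y)
    C_inv = np.linalg.inv(C)
    ones = np.ones(n)
    root = (ones @ C_inv @ y) / (ones @ C_inv @ ones)
    r = y - root
    sigma2 = (r @ C_inv @ r) / n
    _, logdet = np.linalg.slogdet(C)
    return -0.5 * (n * np.log(2 * np.pi * sigma2) + logdet + n)

def fit_pagel_lambda(y, C, grid=np.linspace(0.0, 1.0, 101)):
    """Return the grid value of lambda with the highest log-likelihood."""
    ll = [bm_log_likelihood(y, lambda_transform(C, lam)) for lam in grid]
    best = int(np.argmax(ll))
    return grid[best], ll[best]

# Hypothetical tree ((A:1,B:1):1,(C:1,D:1):1) and illustrative trait values.
C = np.array([[2.0, 1.0, 0.0, 0.0],
              [1.0, 2.0, 0.0, 0.0],
              [0.0, 0.0, 2.0, 1.0],
              [0.0, 0.0, 1.0, 2.0]])
y = np.array([2.1, 2.3, 4.2, 4.4])
lam_hat, ll_hat = fit_pagel_lambda(y, C)
print("estimated lambda:", lam_hat, "log-likelihood:", ll_hat)
```

A value of λ near 0 means the trait is distributed as if the taxa were independent, while a value near 1 matches the covariance expected under BM on the given tree. With four taxa the estimate is essentially uninformative; the example only shows the mechanics.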

Prevention:

- Simulate data under your proposed OU model with known parameters to test your estimation pipeline before applying it to real data [7].

- Use a Bayesian framework with regularizing priors to stabilize estimates.

Problem: Deciding Between BM and OU Models

Symptoms:

- Similar log-likelihood values for BM and OU models fitted to the same data.

- The estimated θ parameter in the OU model is very close to zero.

Resolution Steps:

- Formal Model Comparison: Use information criteria like AICc (Akaike Information Criterion, corrected for small sample sizes) to compare the models. The model with the lower AICc score is preferred. A ΔAICc > 2 is typically considered substantive evidence.

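- Likelihood Ratio Test (LRT): Since BM is nested within OU (BM is an OU process with θ = 0), you can perform an LRT. Because θ = 0 lies on the boundary of the parameter space, the test statistic does not follow a standard Chi-square distribution under the null. A common correction treats the null distribution as a 50:50 mixture of a point mass at zero and a Chi-square distribution with one degree of freedom, which is equivalent to halving the standard p-value (or comparing the statistic with the standard critical value for a significance level of 0.1 when testing at 0.05). Simulated critical values are an alternative [6].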

- Visual Inspection of Traits: Plot the trait data against a representation of the phylogeny (e.g., a traitgram). Look for visual evidence of bounded evolution or distinct optima in different clades, which would favor the OU process.
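The formal comparison steps above can be sketched with the standard library alone. This is a minimal illustration: the log-likelihoods are made-up numbers standing in for fitted models, the parameter counts (2 for BM, 3 for a single-optimum OU) are an assumption about how the models are parameterized, and the halved p-value is one common boundary correction rather than the only option.

```python
import math

def aicc(log_lik, k, n):
    """Small-sample corrected AIC for k parameters and n taxa (needs n > k + 1)."""
    return -2.0 * log_lik + 2.0 * k + (2.0 * k * (k + 1)) / (n - k - 1)

def chi2_sf_1df(x):
    """P(chi-square with 1 df > x), via the complementary error function."""
    return math.erfc(math.sqrt(x / 2.0))

def lrt_bm_vs_ou(loglik_bm, loglik_ou):
    """Likelihood ratio test of BM (theta = 0) against OU.

    Returns the statistic, the naive 1-df p-value, and a p-value halved to
    reflect a 50:50 mixture null for a parameter on its boundary.
    """
    stat = max(0.0, 2.0 * (loglik_ou - loglik_bm))
    p_naive = chi2_sf_1df(stat)
    p_boundary = 1.0 if stat == 0.0 else 0.5 * p_naive
    return stat, p_naive, p_boundary

# Illustrative, made-up fit results for a 40-taxon dataset.
n_taxa = 40
loglik_bm, k_bm = -52.3, 2   # assumed parameters: sigma, root state
loglik_ou, k_ou = -50.1, 3   # assumed parameters: theta, sigma, mu

delta = aicc(loglik_bm, k_bm, n_taxa) - aicc(loglik_ou, k_ou, n_taxa)
stat, p_naive, p_boundary = lrt_bm_vs_ou(loglik_bm, loglik_ou)
print("delta AICc (BM minus OU):", delta)
print("LRT statistic:", stat, "naive p:", p_naive, "boundary-corrected p:", p_boundary)
```

A positive ΔAICc here favors the OU model; whether it clears the threshold of 2 mentioned above depends on the actual fits, not on these placeholder numbers.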

Prevention:

- Clearly define your biological hypotheses a priori. A hypothesis of neutral evolution predicts BM, while a hypothesis of constrained evolution predicts OU.

Experimental Protocols & Data Presentation

Protocol: Simulating an Ornstein-Uhlenbeck Process

This protocol details the steps to simulate a path of an OU process using the Euler-Maruyama discretization method, a common numerical approach [7].

Principle: The continuous-time OU process, dX_t = θ(μ - X_t)dt + σdW_t, is approximated by discretizing time into small steps of size Δt.

Procedure:

- Parameter Initialization: Define the parameters:

  - θ (mean reversion rate)

  - μ (long-term mean)

  - σ (volatility)

  - X_0 (initial value)

  - T (total time)

  - N (number of time steps)

- Calculate the step size: Δt = T / N

- Initialize Arrays: Create an array X of length N+1 to store the process values. Set X[0] = X_0.

- Iterative Simulation: For each time step i from 0 to N-1:

  - Draw a random value ΔW from a normal distribution with mean 0 and variance Δt. This simulates the Brownian motion increment, dW_t.

  - Update the process: X[i+1] = X[i] + θ * (μ - X[i]) * Δt + σ * ΔW

- Output: The array X now contains the simulated OU path at times 0, Δt, 2Δt, ..., T.
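The procedure above translates almost line for line into NumPy. This is a minimal sketch of the Euler-Maruyama scheme; the function name `simulate_ou` and the parameter values in the example are hypothetical.

```python
import numpy as np

def simulate_ou(theta, mu, sigma, x0, T, N, rng=None):
    """Simulate one OU path on [0, T] with N Euler-Maruyama steps."""
    rng = np.random.default_rng() if rng is None else rng
    dt = T / N
    X = np.empty(N + 1)
    X[0] = x0
    for i in range(N):
        # Brownian increment: mean 0, variance dt (standard deviation sqrt(dt)).
        dW = rng.normal(0.0, np.sqrt(dt))
        X[i + 1] = X[i] + theta * (mu - X[i]) * dt + sigma * dW
    return X

# Illustrative parameter values; a fixed seed makes the run reproducible.
path = simulate_ou(theta=2.0, mu=1.0, sigma=0.5, x0=0.0, T=5.0, N=5000,
                   rng=np.random.default_rng(42))
times = np.linspace(0.0, 5.0, len(path))
print("final time:", times[-1], "final value:", path[-1])
print("stationary variance sigma^2 / (2 * theta):", 0.5 ** 2 / (2 * 2.0))
```

Setting `theta=0` in the same function yields a Brownian Motion path, which is convenient when testing an estimation pipeline against both models. The scheme is an approximation whose error shrinks as Δt decreases, so the step count should be large relative to θT.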

The following table summarizes and compares the core properties of the Brownian Motion and Ornstein-Uhlenbeck processes.

Table 1: Key Properties of Brownian Motion vs. Ornstein-Uhlenbeck Process

| Property | Brownian Motion (BM) | Ornstein-Uhlenbeck (OU) Process |

|---|---|---|

| Defining SDE | dX_t = σ dW_t | dX_t = θ(μ - X_t)dt + σ dW_t [4] [7] |

| Mean | E[X_t] = X_0 (constant) | E[X_t] = X_0e^{-θt} + μ(1-e^{-θt}) (converges to μ) [4] [6] |

| Variance | Var[X_t] = σ²t (grows unbounded) | Var[X_t] = (σ²/(2θ))(1 - e^{-2θt}) (converges to σ²/(2θ)) [4] [6] |

| Stationarity | Non-stationary | Stationary (admits a stable long-term distribution) [4] |

| Primary Application in Phylogenetics | Modeling neutral evolution / genetic drift [5] | Modeling evolution under stabilizing selection [6] |

The logical dependencies of the parameters in the OU process and their influence on the model's behavior can be visualized as follows.

Diagram Title: Parameter Relationships in the Ornstein-Uhlenbeck Process

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Computational Tools for Stochastic Process Modeling

| Item / Reagent Solution | Function / Purpose | Example / Note |

|---|---|---|

| Statistical Software (R/Python) | Provides the environment for statistical analysis, model fitting, and simulation. | R packages: geiger, ouch, PCMBase. Python: NumPy, SciPy [7]. |

| Phylogenetic Tree | The historical framework representing evolutionary relationships among taxa. | Typically an input; a rooted, ultrametric tree with branch lengths proportional to time. |

| Euler-Maruyama Method | A numerical scheme for approximating solutions to Stochastic Differential Equations (SDEs). | Essential for simulating trajectories of the OU process [7]. |

| Graphviz | A tool for visualizing graph structures, useful for depicting model workflows and dependencies. | Can be used to create diagrams for presentations and publications [8] [9]. |

| Optimization Algorithm | A computational method for finding parameter values that maximize the model likelihood. | Common choices: L-BFGS-B, Nelder-Mead, or simulated annealing. |

Technical Support Center

Troubleshooting Guides

TG01: Troubleshooting Sampling Bias in Phylogenetic Datasets

- Issue Description: Inferred evolutionary patterns are skewed due to non-random, incomplete, or unrepresentative species sampling in the phylogenetic tree.

- Potential Symptoms:

- Model parameters (e.g., evolutionary rates, trait correlations) shift significantly when adding or removing taxa.

- Poor model fit despite high statistical support for parameters.

- Inconsistent results when comparing studies using different taxonomic samples.

- Diagnostic Steps:

- Conduct a Power Analysis: Use simulations to determine if your sample size has sufficient power to detect the effect sizes of interest [10].

- Perform Taxon Subsampling Tests: Systematically remove specific clades or random subsets of taxa to test the robustness of your conclusions.

- Check for Phylogenetic Signal: Use metrics like Pagel's λ or Blomberg's K to assess if the data conforms to the phylogenetic structure.

- Resolution Protocol:

- Where data is missing, consider using multiple imputation techniques designed for phylogenetic data.

- Apply sample size correction methods or use models that explicitly account for sampling effort.

- Clearly report and justify taxonomic sampling criteria in your methodology.

TG02: Addressing Confirmation Bias in Model Selection

- Issue Description: A tendency to favor complex evolutionary models or interpretations that confirm pre-existing hypotheses, while neglecting simpler or contradictory explanations.

- Potential Symptoms:

- Consistently selecting the most complex model without adequate statistical justification.

- Interpreting marginal statistical significance (e.g., p-values between 0.01 and 0.05) as strong evidence for a preferred hypothesis.

- Overlooking model diagnostics that indicate poor fit or violation of assumptions.

- Diagnostic Steps:

- Blind Analysis: If possible, conduct initial analyses without access to the grouping variable or hypothesis label.

- Implement Robust Model Testing: Use cross-validation or posterior predictive simulations in a Bayesian framework to test model adequacy.

- Systematically Report All Models: Document the performance of all candidate models tested, not just the best-performing one.

- Resolution Protocol:

- Pre-register your analysis plan, including model selection criteria, before conducting the analysis.

- Use a strict model selection framework (e.g., AICc, BIC) and favor the simplest model that adequately explains the data.

- Actively seek evidence that contradicts your initial hypothesis [10].

TG03: Correcting for Brilliance Bias in Computational Workflows

- Issue Description: Over-reliance on "default" settings or widely cited software packages without critical evaluation of their appropriateness for a specific dataset, potentially overlooking more suitable but less-known tools.

- Potential Symptoms:

- Using software without a clear understanding of its underlying assumptions.

- Consistent use of default prior distributions in Bayesian analyses without sensitivity checks.

- Dismissing newer or alternative methodological approaches.

- Diagnostic Steps:

- Software Audit: Document the rationale for selecting every software package and its specific settings.

- Sensitivity Analysis: Test how results change with different software, algorithms, or prior specifications.

- Resolution Protocol:

- Engage with the methodology literature to understand the strengths and weaknesses of different tools.

- Consult with colleagues from different sub-fields to gain perspective on alternative methods.

- Customize software settings (e.g., priors, optimization routines) based on the properties of your specific data.

Frequently Asked Questions (FAQs)

FAQ 1: Our analysis produced a surprising, strong correlation between two traits. How can we verify this is a real biological signal and not an artifact of a biased model?

- Answer: A robust verification protocol is essential.

- Test Model Assumptions: Check for violations like heteroscedasticity or non-independence of residuals.

- Control for Phylogeny: Ensure you have used a phylogenetic comparative method appropriate for your data type. A strong correlation in raw data may disappear after accounting for shared evolutionary history.

- Data Resampling: Apply a non-parametric test like a phylogenetic bootstrap to assess the stability of the correlation.

- Exclude Influential Points: Perform a leave-one-out analysis to see if the effect is driven by a single, highly influential species or clade.
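The leave-one-out check in the last step can be sketched as follows. This is a minimal illustration that refits a phylogenetic GLS slope after dropping each taxon in turn, assuming a Brownian Motion covariance matrix; the function names, the five-taxon covariance matrix, and the trait values are hypothetical.

```python
import numpy as np

def gls_slope(x, y, C):
    """Slope of y on x from GLS with covariance matrix C (intercept included)."""
    X = np.column_stack([np.ones_like(x), x])
    C_inv = np.linalg.inv(C)
    beta = np.linalg.solve(X.T @ C_inv @ X, X.T @ C_inv @ y)
    return beta[1]

def leave_one_out_slopes(x, y, C, names):
    """Refit the slope with each taxon removed; return full fit and per-taxon fits."""
    full = gls_slope(x, y, C)
    results = {}
    for i, name in enumerate(names):
        keep = np.arange(len(x)) != i
        # Dropping a tip leaves the shared history of the remaining tips
        # unchanged, so the covariance submatrix can be reused directly.
        results[name] = gls_slope(x[keep], y[keep], C[np.ix_(keep, keep)])
    return full, results

# Hypothetical covariance: two sister pairs plus one unrelated lineage E.
names = ["A", "B", "C", "D", "E"]
C = np.array([[2.0, 1.0, 0.0, 0.0, 0.0],
              [1.0, 2.0, 0.0, 0.0, 0.0],
              [0.0, 0.0, 2.0, 1.0, 0.0],
              [0.0, 0.0, 1.0, 2.0, 0.0],
              [0.0, 0.0, 0.0, 0.0, 2.0]])
x = np.array([1.0, 1.2, 3.0, 3.3, 8.0])    # illustrative predictor values
y = np.array([2.1, 2.3, 4.2, 4.4, 15.0])   # illustrative response values

full, loo = leave_one_out_slopes(x, y, C, names)
print("slope with all taxa:", full)
for name, slope in loo.items():
    print(f"slope without {name}:", slope)
```

A taxon whose removal changes the slope far more than the others is a candidate influential point and deserves a closer look before the correlation is interpreted.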

FAQ 2: What is the most common mistake you see in PCM studies that leads to misinterpretation?

- Answer: One of the most common issues is confusing correlation with causation and failing to adequately consider confounding variables. For example, a correlation between two traits might be driven by a third, unmeasured variable that influences both. Another frequent mistake is over-interpreting a single, best-fit model without considering the statistical support for alternative models or conducting proper model diagnostics to check for adequacy.

FAQ 3: How can we preemptively design our study to minimize the impact of these biases?

- Answer: Proactive study design is key to robust science.

- Pre-registration: Publicly archive your hypotheses, planned methods, and analysis plan before collecting or analyzing data.

- Power Analysis: Before data collection, conduct simulations to determine the sample size (number of species) required to reliably detect your effect of interest.

- Blind Data Collection & Coding: When possible, code traits or states without knowledge of the hypothesis being tested.

- Pilot Studies: Run preliminary analyses on a subset of data to refine your methods and identify potential pitfalls early.

The Scientist's Toolkit

Key Research Reagent Solutions for Robust PCM

| Reagent / Solution | Function in PCM Research |

|---|---|

| Akaike Information Criterion (AIC) | A model selection estimator that balances model fit and complexity, helping to avoid overfitting [10]. |

| Bayesian Posterior Predictive Checks | A method to assess the adequacy of a fitted model by comparing simulated data from the model to the observed data. |

| Phylogenetic Bootstrap | A resampling technique applied to branches or data to assess the confidence/robustness of phylogenetic trees or evolutionary inferences. |

| Sensitivity Analysis | The process of varying model assumptions, parameters, or data subsets to determine how they influence the study's conclusions. |

| Multiple Imputation Methods | Techniques for handling missing data by creating several plausible datasets, analyzing them separately, and combining the results. |

Experimental Protocols & Visualization

EP01: Protocol for a Robust PCM Analysis Workflow

This protocol outlines a systematic workflow to mitigate common biases in Phylogenetic Comparative Methods.

Pre-analysis Phase: Study Design & Power Analysis

- Define Hypotheses: Clearly state primary and alternative hypotheses.

- Pre-register Plan (Recommended): Document and timestamp your analysis plan.

- Conduct Power Analysis: Simulate data under your expected effect size and model to determine the necessary taxonomic sample size.
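A simulation-based power analysis of the kind described in this phase can be sketched as follows. This is a rough illustration under several assumptions: both traits evolve by Brownian Motion on a hypothetical balanced tree with unit branch lengths, the test is a GLS slope test, and the critical value 1.96 is a normal approximation that is somewhat liberal for small numbers of taxa. All function names are hypothetical.

```python
import numpy as np

def balanced_tree_vcv(depth):
    """BM covariance for a balanced tree with 2**depth tips and unit branches."""
    n = 2 ** depth
    C = np.empty((n, n))
    for i in range(n):
        for j in range(n):
            # Shared path length from the root = depth minus the number of
            # levels at which the two tips have diverged.
            C[i, j] = depth - (i ^ j).bit_length()
    return C

def simulate_power(C, slope, n_sim=500, crit=1.96, seed=0):
    """Fraction of simulated datasets in which the GLS slope test rejects."""
    rng = np.random.default_rng(seed)
    n = C.shape[0]
    L = np.linalg.cholesky(C)
    C_inv = np.linalg.inv(C)
    hits = 0
    for _ in range(n_sim):
        x = L @ rng.standard_normal(n)                 # predictor under BM
        y = slope * x + L @ rng.standard_normal(n)     # response with BM residuals
        X = np.column_stack([np.ones(n), x])
        XtCi = X.T @ C_inv
        cov = np.linalg.inv(XtCi @ X)
        beta = cov @ (XtCi @ y)
        r = y - X @ beta
        s2 = (r @ C_inv @ r) / (n - 2)
        t_stat = beta[1] / np.sqrt(s2 * cov[1, 1])
        hits += int(abs(t_stat) > crit)
    return hits / n_sim

for depth in (3, 4, 5):   # 8, 16, and 32 taxa
    C = balanced_tree_vcv(depth)
    print(2 ** depth, "taxa, power at slope 0.5:", simulate_power(C, 0.5))
```

In a real study the simulated tree would be replaced by the candidate phylogeny (or pruned versions of it) and the effect size by a value justified from prior work.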

Data Curation & Assembly

- Assemble Phylogeny: Source a time-calibrated phylogenetic tree for your taxon set.

- Compile Trait Data: Collect trait data from literature, databases, or direct measurement. Document all sources and potential measurement errors.

- Audit for Sampling Bias: Check if your taxon sample is representative of the broader clade's diversity.

Exploratory Data Analysis (EDA)

- Visualize Raw Data: Plot traits against each other and map them onto the phylogeny.

- Check Phylogenetic Signal: Quantify signal using metrics like Pagel's λ.

- Identify Outliers: Statistically and visually identify species that are extreme outliers for further investigation.

Model Fitting & Selection

- Define Candidate Models: Select a set of models that represent your biological hypotheses and null models.

- Fit Models: Use appropriate software (e.g., geiger, phytools, bayou in R).

- Compare Models: Use a strict criterion (AICc, BIC) to rank models. Do not dismiss models that fall within ΔAIC < 2 of the best-ranked model.

Model Diagnosis & Robustness Checks

- Check Model Diagnostics: Analyze residuals for patterns, heteroscedasticity, and influential data points.

- Perform Sensitivity Analyses:

  - Taxon Sensitivity: Re-run analysis with key clades removed.

  - Phylogenetic Uncertainty: Repeat analysis across a posterior sample of trees from a Bayesian analysis.

- Conduct Posterior Predictive Checks (if using Bayesian methods).

Interpretation & Reporting

- Report Comprehensively: Include all tested models, diagnostics, and results of sensitivity analyses.

- Acknowledge Limitations: Be transparent about sampling issues, model weaknesses, and alternative interpretations.

- Archive Code & Data: Make analysis code and data publicly available for reproducibility.

Workflow Visualization

Troubleshooting Guide: Resolving Common Computational Challenges in Phylogenetic Analysis

Issue 1: Poor Model Fit in Trait Evolution Analysis

- Problem: Your phylogenetic comparative model (e.g., an Ornstein-Uhlenbeck model) has a poor statistical fit, or the results are biologically implausible.

- Causes:

- Incorrect Model Selection: The chosen model of evolution may not reflect the actual process [11].

- Small Sample Size: Analyses with a small number of taxa (the median for OU studies is 58) are prone to incorrectly favoring complex models over simpler ones [11].

- Data Error: Even small amounts of error in datasets can cause an OU model to be incorrectly favored, not due to biological process but because it can accommodate more variance towards the tips of the tree [11].

- Solutions:

- Run Model Diagnostics: Always compare the fit of multiple evolutionary models (e.g., Brownian Motion vs. OU) using criteria like AICc [11].

- Increase Taxa Sampling: Where possible, increase the number of species in your analysis to improve statistical power.

- Check for Rate Heterogeneity: Investigate if variation in diversification rates across the tree is being misinterpreted as trait-dependent evolution [11].

Issue 2: Phylogenetic Independent Contrasts (PIC) Assumptions Violated

- Problem: The results from PIC analysis are unreliable.

- Causes: Violation of one of the three core assumptions [11]:

- The phylogeny's topology is inaccurate.

- The branch lengths of the phylogeny are incorrect.

- Traits have not evolved under a Brownian Motion model.

- Solutions:

- Test Assumptions: Use standard diagnostic plots (available in packages like caper in R) to check for relationships between standardized contrasts and node heights, or for heteroscedasticity in model residuals [11].

- Consider Alternative Methods: If assumptions are violated, consider using Phylogenetic Generalized Least Squares (PGLS), which is mathematically equivalent to PIC under Brownian Motion but can be more flexible [11].

Issue 3: Inaccurate Inference of Trait-Dependent Diversification

- Problem: A Binary State Speciation and Extinction (BiSSE) analysis indicates a trait influences diversification rates, but the result may be a false positive.

- Cause: The inference can be confounded by a single, trait-independent diversification rate shift elsewhere in the phylogeny [11].

- Solutions:

- Account for Rate Heterogeneity: Use methods that test for and incorporate background rate variation across the tree that is unrelated to the trait of interest [11].

- Simulate Data: Perform simulations on your tree to confirm that the BiSSE model can reliably detect trait-dependent diversification given your specific phylogenetic structure [11].

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between a rooted and an unrooted phylogenetic tree? A rooted tree has a designated root node representing the most recent common ancestor of all leaf nodes, indicating the direction of evolution. An unrooted tree only illustrates relationships between nodes without suggesting an evolutionary direction [12].

Q2: My analysis has limited computational power. Which tree-building method should I choose for a large dataset? For large datasets (many taxa), distance-based methods like Neighbor-Joining (NJ) are recommended. NJ uses a stepwise construction approach that is computationally faster than searching for the optimal tree across the vast space of all possible tree topologies, which grows faster than exponentially with the number of sequences [12].
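To give a sense of how quickly tree space grows, the number of distinct fully bifurcating topologies for n labeled taxa can be computed directly: (2n - 5)!! for unrooted trees and (2n - 3)!! for rooted trees. The sketch below uses hypothetical function names and plain integer arithmetic.

```python
def n_unrooted_trees(n):
    """Number of unrooted, fully bifurcating topologies for n >= 3 labeled tips."""
    if n < 3:
        raise ValueError("need at least 3 taxa")
    count = 1
    for k in range(3, 2 * n - 4, 2):   # odd factors 3, 5, ..., 2n - 5
        count *= k
    return count

def n_rooted_trees(n):
    """Number of rooted, fully bifurcating topologies for n >= 2 labeled tips."""
    if n < 2:
        raise ValueError("need at least 2 taxa")
    count = 1
    for k in range(3, 2 * n - 2, 2):   # odd factors 3, 5, ..., 2n - 3
        count *= k
    return count

for n in (5, 10, 20, 50):
    print(n, "taxa:", n_unrooted_trees(n), "unrooted,", n_rooted_trees(n), "rooted")
```

Ten taxa already allow 2,027,025 unrooted topologies, which is why exhaustive search is impractical for all but the smallest datasets and stepwise methods such as NJ remain attractive.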

Q3: When should I use a Maximum Likelihood (ML) method instead of Maximum Parsimony (MP)? Maximum Likelihood is ideal when you have a small number of distantly related sequences and can apply a specific evolutionary model. Maximum Parsimony is well-suited for data with high sequence similarity or for data types where designing an appropriate evolutionary model is difficult, such as with morphological traits or genomic rearrangements [12].

Q4: What are the key assumptions of the Phylogenetic Independent Contrasts method? The major assumptions are: (1) the topology of the phylogeny is accurate; (2) the branch lengths are correct; and (3) the traits have evolved under a Brownian motion model of evolution [11].

Q5: What is the practical difference between node-based and stem-based tree interpretations? These are two mathematical models for interpreting the same phylogenetic information [13].

- In a node-based tree, vertices (nodes) represent taxa (either sampled or inferred ancestors), and edges represent ancestry relationships [13].

- In a stem-based tree, edges represent taxa and ancestral lineages, while vertices represent speciation events [13].

The choice impacts how evolutionary concepts like monophyly are represented graphically, but both contain the same information about relationships [13].

Comparison of Common Phylogenetic Tree Construction Methods

The table below summarizes the principles, assumptions, and applications of the most common methods for inferring phylogenetic trees to help you select the appropriate one for your data and research question [12].

| Algorithm | Principle | Hypothesis & Model | Criteria for Final Tree | Best Application Scope |

|---|---|---|---|---|

| Neighbor-Joining (NJ) | Minimal evolution: minimizes the total branch length of the tree [12]. | Balanced minimum evolution (BME) branch-length estimation model [12]. | Produces a single tree. | Short sequences with small evolutionary distance and few informative sites [12]. |

| Maximum Parsimony (MP) | Maximum-parsimony criterion: minimizes the number of evolutionary steps needed to explain the data [12]. | No explicit model required [12]. | The tree with the smallest number of character state changes (e.g., base substitutions) [12]. | Sequences with high similarity; data where designing characteristic evolution models is difficult [12]. |

| Maximum Likelihood (ML) | Maximizes the likelihood of the data given the tree and an evolutionary model [12]. | Sites evolve independently; branches can have different rates [12]. | The tree with the maximum likelihood value [12]. | A small number of distantly related sequences [12]. |

| Bayesian Inference (BI) | Applies Bayes' theorem to compute the posterior probability of a tree [12]. | Uses a continuous-time Markov substitution model [12]. | The most frequently sampled tree in the Markov chain Monte Carlo (MCMC) output [12]. | A small number of sequences [12]. |

Experimental Protocol: Constructing a Phylogenetic Tree from Gene Sequences

This protocol outlines the general workflow for constructing a phylogenetic tree, starting from gene sequences, as practiced in modern research [12].

1. Sequence Collection

- Action: Collect homologous DNA or protein sequences from public databases (e.g., GenBank, EMBL, DDBJ) or through experimental methods.

- Note: Ensure sequences are appropriate for your taxonomic question.

2. Multiple Sequence Alignment

- Action: Align the collected sequences using software such as MAFFT, Clustal Omega, or MUSCLE. Accurate alignment is the foundation for inferring correct evolutionary relationships [12].

- Troubleshooting Tip: Use multiple alignment methods and compare results for consistency [12].

3. Alignment Trimming

- Action: Precisely trim the aligned sequences to remove unreliably aligned regions that could introduce noise into the phylogenetic analysis [12].

- Critical Consideration: Balance is key. Insufficient trimming leaves noise, while excessive trimming removes genuine phylogenetic signal [12].

4. Evolutionary Model Selection

- Action: Select an appropriate substitution model (e.g., JC69, K80, HKY85) for your data using model-testing programs (e.g., ModelTest, jModelTest). This is a critical step for model-based methods like ML and BI [12].

5. Phylogenetic Tree Inference

- Action: Apply your chosen algorithm (NJ, MP, ML, or BI) using specialized software. The choice depends on your data size, evolutionary distance, and computational resources (refer to the comparison table above).

6. Tree Evaluation

- Action: Assess the statistical support for the inferred tree branches. Common methods include:

- Bootstrapping (for ML and MP): Resampling the data to test tree stability.

- Posterior Probabilities (for BI): The probability that a clade is true, given the data and model.
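The bootstrapping idea in step 6 can be sketched in a few lines of Python. This is a minimal illustration, not the implementation used by any tree-building program: the alignment is assumed to be a dictionary of equal-length strings, and the tree-inference step that would run on each replicate is left out. `clade_support` assumes each replicate tree has already been reduced to a set of clades (each clade a frozenset of tip names).

```python
import random


def bootstrap_alignment(alignment: dict[str, str], rng: random.Random) -> dict[str, str]:
    """Resample alignment columns with replacement, keeping the alignment length."""
    lengths = {len(seq) for seq in alignment.values()}
    if len(lengths) != 1:
        raise ValueError("all aligned sequences must have equal length")
    n_sites = lengths.pop()
    # Draw the same column indices for every sequence so sites stay aligned.
    cols = [rng.randrange(n_sites) for _ in range(n_sites)]
    return {name: "".join(seq[c] for c in cols) for name, seq in alignment.items()}


def clade_support(replicate_clades: list[set[frozenset[str]]], clade: frozenset[str]) -> float:
    """Fraction of bootstrap replicate trees that contain the given clade."""
    return sum(clade in clades for clades in replicate_clades) / len(replicate_clades)


# Made-up toy alignment for illustration only.
toy = {"A": "ACGT", "B": "ACGA", "C": "TCGA"}
replicate = bootstrap_alignment(toy, random.Random(1))
```

In practice each replicate alignment is passed to the same inference method used for the original tree, and the support for a branch is the share of replicate trees that recover it.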

The following workflow diagram illustrates this multi-step process:

This table details key computational tools, data types, and conceptual models that are essential for research in phylogenetic comparative methods.

| Item / Resource | Type | Primary Function | Relevant Context |

|---|---|---|---|

| Homologous Sequences | Data | The raw character data (DNA, RNA, protein) used to infer evolutionary relationships [12]. | Fundamental input for any phylogenetic analysis. |

| Evolutionary Model (e.g., HKY85, GTR) | Conceptual / Mathematical Model | Describes the probabilities of character state changes over time, correcting for multiple hits and variation in rates [12]. | Critical for model-based methods (ML, BI) to compute likelihoods accurately. |

| R Statistical Language | Software Environment | A platform with extensive packages (e.g., ape, phangorn, caper) for conducting phylogenetic analyses and comparative methods [12]. | Widely used for its flexibility and the vast array of specialized PCMs. |

| Tree of Life Databases (e.g., Open Tree of Life) | Data Resource | Provide pre-computed, large-scale phylogenetic trees for use in comparative analyses [14]. | Allows researchers to focus on trait evolution without building a tree from scratch. |

| Brownian Motion (BM) Model | Conceptual / Mathematical Model | A null model of trait evolution where variance accrues linearly with time [11] [15]. | Used in PIC and as a baseline for comparing more complex models (e.g., OU). |

| Ornstein-Uhlenbeck (OU) Model | Conceptual / Mathematical Model | A model of trait evolution that includes a restraining force, often interpreted as stabilising selection towards an optimum [11]. | Used to test hypotheses about adaptive evolution and trait constraints. |

The following diagram maps the logical relationships between core concepts when interpreting a phylogenetic tree as a data structure, highlighting the differences between node-based and stem-based perspectives [13].

Effective communication between phylogenetic comparative method (PCM) developers and the researchers who use these tools is fundamental to advancing evolutionary biology. However, this communication often fails, leading to misunderstandings, implementation errors, and ultimately, barriers to scientific progress. This technical support center is designed within the broader thesis of solving PCM computational challenges, providing direct troubleshooting and methodological guidance to bridge this critical gap.

Frequently Asked Questions (FAQs)

General PCM Concepts

What are Phylogenetic Comparative Methods and when should I use them? Phylogenetic comparative methods (PCMs) use information on the historical relationships of lineages (phylogenies) to test evolutionary hypotheses [16]. They are particularly useful for assessing the generality of evolutionary phenomena by considering independent evolutionary events and for modeling evolutionary processes over very long time periods to provide macroevolutionary insights [16].

What is the difference between PGLS and Phylogenetic Independent Contrasts? Phylogenetic Independent Contrasts, introduced by Felsenstein (1985), was the first general statistical method for incorporating phylogenetic information [16]. It transforms original tip data into values that are statistically independent and identically distributed [16]. Phylogenetic Generalized Least Squares (PGLS) is a more general approach that incorporates the phylogenetic tree into the residual structure [16]. When a Brownian motion model is used, PGLS is identical to the independent contrasts estimator [16].
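As an illustration of how independent contrasts turn tip data into independent values, here is a minimal Python sketch of Felsenstein's recursion on a fully bifurcating tree. The `Node` class, the function name, and the toy tree and trait values are all hypothetical. The sketch assumes strictly positive branch lengths and is not a substitute for established implementations such as those in R.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    length: float = 0.0             # length of the branch leading to this node
    name: Optional[str] = None      # set for tips only
    left: Optional["Node"] = None
    right: Optional["Node"] = None


def contrasts(node, traits, out):
    """Post-order pass that appends standardized contrasts to `out`.

    Returns (estimated value at node, branch length inflated for estimation error).
    """
    if node.name is not None:                          # tip: use the observed value
        return traits[node.name], node.length
    x1, v1 = contrasts(node.left, traits, out)
    x2, v2 = contrasts(node.right, traits, out)
    out.append((x1 - x2) / (v1 + v2) ** 0.5)           # standardized contrast
    value = (x1 / v1 + x2 / v2) / (1 / v1 + 1 / v2)    # branch-length-weighted average
    return value, node.length + v1 * v2 / (v1 + v2)


# Hypothetical example: ((A:1,B:1):1,C:2) with made-up trait values.
tree = Node(left=Node(length=1.0, left=Node(1.0, "A"), right=Node(1.0, "B")),
            right=Node(2.0, "C"))
pics = []
root_value, _ = contrasts(tree, {"A": 1.0, "B": 3.0, "C": 5.0}, pics)
```

A tree with n tips yields n - 1 contrasts. Under Brownian motion these are independent and identically distributed, so they can be passed to ordinary regression through the origin.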

How do I interpret Pagel's λ in my model results? Pagel's λ is a model parameter that measures the phylogenetic signal in your data: it scales the covariance among species that is expected from their shared branch lengths under Brownian motion evolution [16]. A value near 1 indicates that trait covariance matches the Brownian motion expectation (strong phylogenetic signal), while a value near 0 indicates that trait values are effectively independent of the phylogeny (no phylogenetic signal).
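One way to see what λ does is to apply it to the phylogenetic variance-covariance matrix, where diagonal entries are root-to-tip distances and off-diagonal entries are the branch length two species share. The Python sketch below is illustrative only: the function name is made up, and the restriction of λ to the interval [0, 1] is a simplifying assumption.

```python
def lambda_transform(vcv, lam):
    """Scale off-diagonal (shared-history) covariances by lam and keep the diagonal."""
    if not 0.0 <= lam <= 1.0:
        raise ValueError("this sketch expects lam in [0, 1]")
    n = len(vcv)
    return [[vcv[i][j] if i == j else lam * vcv[i][j] for j in range(n)]
            for i in range(n)]


# Made-up matrix for three species; the first two share one unit of history.
vcv = [[2.0, 1.0, 0.0],
       [1.0, 2.0, 0.0],
       [0.0, 0.0, 2.0]]
star = lambda_transform(vcv, 0.0)   # no covariance: equivalent to a star phylogeny
half = lambda_transform(vcv, 0.5)   # shared history counts for half as much
```

At λ = 1 the matrix is unchanged, and at λ = 0 all covariances vanish, which corresponds to treating species as independent data points.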

Technical Implementation

My analysis is running extremely slowly with a large phylogeny. How can I improve performance? Performance issues with large phylogenies are common. Consider these optimization strategies:

- Reduce tree complexity by pruning non-essential taxa

- Utilize more efficient computational algorithms

- Increase system memory allocation

- Implement parallel processing where applicable

- Check for convergence problems that keep an optimizer or MCMC run from terminating

What should I do when I get "Likelihood calculation error" or "Matrix inversion failed" errors? These errors typically indicate issues with your data or model structure:

- Check for collinearity among predictor variables

- Verify that your phylogenetic tree is properly formatted and ultrametric

- Ensure missing data are properly handled

- Examine the variance-covariance matrix for singularity issues

- Simplify your model structure and gradually add complexity
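One of the checks above, whether the tree is ultrametric, amounts to confirming that every tip is the same distance from the root. The Python sketch below assumes a hypothetical tree representation (a dictionary mapping each internal node to a list of `(child, branch_length)` pairs) and a tolerance chosen for illustration.

```python
def root_to_tip_distances(children, root):
    """Map each tip name to its summed branch length from the root."""
    dists = {}
    stack = [(root, 0.0)]
    while stack:
        node, dist = stack.pop()
        kids = children.get(node, [])
        if not kids:                      # no children recorded: this is a tip
            dists[node] = dist
        for child, length in kids:
            stack.append((child, dist + length))
    return dists


def is_ultrametric(children, root, tol=1e-8):
    """True if all root-to-tip distances agree within a relative tolerance."""
    d = list(root_to_tip_distances(children, root).values())
    return max(d) - min(d) <= tol * max(1.0, max(d))


# Hypothetical tree ((A:1,B:1):1,C:2)
tree = {"root": [("n1", 1.0), ("C", 2.0)], "n1": [("A", 1.0), ("B", 1.0)]}
print(is_ultrametric(tree, "root"))
```

Small departures from ultrametricity often come from rounding when branch lengths are written to file, so a tolerance is usually more useful than an exact comparison.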

How do I handle missing data in my comparative analysis? Missing data in comparative analyses requires careful consideration:

- Use methods specifically designed for handling missing data

- Consider multiple imputation techniques

- Avoid complete-case analysis, which can introduce bias

- Document all missing data and handling procedures

- Validate results with different missing data approaches

Interpretation of Results

What does "non-positive definite variance-covariance matrix" mean and how do I fix it? This error indicates that your variance-covariance matrix has mathematical properties that prevent certain calculations. To address this:

- Check for highly correlated variables

- Remove redundant variables

- Verify your phylogenetic tree structure

- Ensure branch lengths are appropriate

- Consider using a ridge regularization approach
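A matrix is positive definite exactly when a Cholesky factorization succeeds, so the diagnosis and the ridge fix above can be sketched together. The Python code below is a minimal standard-library illustration. The function names and the ridge value of 0.01 are assumptions for the example, not recommendations.

```python
import math


def cholesky(matrix):
    """Return lower-triangular L with L L^T = matrix; raise if not positive definite."""
    n = len(matrix)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                pivot = matrix[i][i] - s
                if pivot <= 0.0:
                    raise ValueError("matrix is not positive definite")
                low[i][j] = math.sqrt(pivot)
            else:
                low[i][j] = (matrix[i][j] - s) / low[j][j]
    return low


def is_positive_definite(matrix):
    try:
        cholesky(matrix)
        return True
    except ValueError:
        return False


def add_ridge(matrix, eps):
    """Add eps to every diagonal entry (ridge regularization)."""
    return [[v + (eps if i == j else 0.0) for j, v in enumerate(row)]
            for i, row in enumerate(matrix)]


# Two perfectly correlated variables give a singular covariance matrix.
singular = [[1.0, 1.0], [1.0, 1.0]]
repaired = add_ridge(singular, 0.01)
```

The ridge term changes the model slightly, so it is worth reporting the value used and checking that conclusions do not depend on it.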

How do I choose between Brownian Motion, Ornstein-Uhlenbeck, and other evolutionary models? Model selection should be based on both statistical criteria and biological reasoning:

- Use information criteria for statistical comparison

- Consider the biological plausibility of each model

- Evaluate model fit through diagnostic plots

- Use simulation approaches to assess power

What constitutes strong support for one model over another in model selection? Strong model support is typically indicated by:

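- ΔAIC/AICc values greater than 2 as a minimum rule of thumb (differences of about 10 or more leave the weaker model with essentially no support)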

- Consistent results across different model selection criteria

- Biological interpretability of the selected model

- Good predictive performance on validation data
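The information-criterion comparison can be made explicit with a short Python sketch that computes AICc from a log-likelihood and converts a set of scores into Akaike weights. The sample size `n` is taken here to be the number of species, which is an assumption of this example, and the scores are made up.

```python
import math


def aicc(log_lik, k, n):
    """Small-sample corrected AIC for a model with k parameters fitted to n observations."""
    if n - k - 1 <= 0:
        raise ValueError("AICc requires n > k + 1")
    return -2.0 * log_lik + 2.0 * k + (2.0 * k * (k + 1)) / (n - k - 1)


def akaike_weights(scores):
    """Convert a dict of model -> AIC(c) score into relative model weights summing to 1."""
    best = min(scores.values())
    rel = {model: math.exp(-0.5 * (s - best)) for model, s in scores.items()}
    total = sum(rel.values())
    return {model: r / total for model, r in rel.items()}


# Made-up scores: OU is 2 units worse than BM.
weights = akaike_weights({"BM": 100.0, "OU": 102.0})
```

In this example a ΔAICc of 2 still leaves the second model with roughly a quarter of the total weight, which illustrates why a difference of 2 on its own is weak grounds for discarding a model.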

Troubleshooting Guides

Common Computational Errors

Problem: Convergence issues in Bayesian PCM analyses

Symptoms:

- Poor mixing of MCMC chains

- Low effective sample sizes

- High Gelman-Rubin statistics

Troubleshooting Steps:

- Adjust MCMC parameters: Increase chain length and thinning intervals [17]

- Modify proposal mechanisms: Adjust tuning parameters to improve acceptance rates

- Reparameterize model: Transform parameters to improve sampling efficiency

- Run multiple chains: Verify consistency across independent runs

- Simplify model: Reduce model complexity until convergence is achieved
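The Gelman-Rubin statistic mentioned in the symptoms compares variation between chains with variation within chains. The Python sketch below implements the classic form for a single parameter. It assumes at least two chains of equal length with burn-in already removed, and it is not the refined split-chain version that some modern software reports.

```python
def gelman_rubin(chains):
    """Potential scale reduction factor (R-hat) for equal-length chains of one parameter."""
    m, n = len(chains), len(chains[0])
    if m < 2 or n < 2 or any(len(c) != n for c in chains):
        raise ValueError("need at least two chains of equal length >= 2")
    means = [sum(c) / n for c in chains]
    grand = sum(means) / m
    # Between-chain variance B and mean within-chain variance W.
    between = n / (m - 1) * sum((mu - grand) ** 2 for mu in means)
    within = sum(sum((x - mu) ** 2 for x in c) / (n - 1)
                 for c, mu in zip(chains, means)) / m
    var_hat = (n - 1) / n * within + between / n   # pooled variance estimate
    return (var_hat / within) ** 0.5


# Made-up chains: the first pair agrees, the second pair is stuck in different regions.
agree = gelman_rubin([[1.0, 2.0, 3.0, 4.0], [1.0, 2.0, 3.0, 4.0]])
stuck = gelman_rubin([[1.0, 2.0, 3.0, 4.0], [11.0, 12.0, 13.0, 14.0]])
```

Values close to 1 are consistent with convergence, while values well above 1 indicate that the chains are sampling different regions and should be run longer or reparameterized.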

Visual Guide to Diagnosing Convergence Issues:

Problem: Inconsistent results across different PCM software implementations

Symptoms:

- Differing parameter estimates between software packages

- Contradictory model selection outcomes

- Discrepant statistical significance values

Troubleshooting Steps:

- Verify input data consistency: Ensure identical trees, trait data, and formatting across platforms [17]

- Check default settings: Document and align all software-specific default parameters

- Validate with simulated data: Test implementations with known simulated datasets

- Consult documentation: Review method-specific assumptions and requirements [18]

- Contact developers: Report inconsistencies through proper channels [19]

Data Preparation and Quality Control

Problem: Phylogenetic tree and trait data compatibility issues

Symptoms:

- Taxon name mismatches between tree and data

- Missing species in either dataset

- Incompatible tree formats across analysis steps

Troubleshooting Steps:

- Standardize taxonomy: Implement consistent naming conventions across all datasets [17]

- Verify tree ultrametricity: Ensure trees are properly calibrated for time-structured analyses

- Check data alignment: Use automated tools to match tree tips with trait data

- Document pruning decisions: Record all taxonomic adjustments for reproducibility

- Validate final dataset: Confirm tree and data alignment before analysis
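Matching tree tips to trait records is largely a set comparison, as the Python sketch below illustrates. The function names and the underscore-based normalization rule are assumptions for this example, and real datasets may also need synonym resolution against a taxonomic reference.

```python
def normalize(label):
    """Trim whitespace and join genus/species with underscores, e.g. 'Homo sapiens ' -> 'Homo_sapiens'."""
    return "_".join(label.strip().split())


def check_names(tip_labels, trait_data):
    """Report taxa present in only one of the two datasets."""
    tips = {normalize(t) for t in tip_labels}
    rows = {normalize(r) for r in trait_data}
    return {"tree_not_data": sorted(tips - rows),
            "data_not_tree": sorted(rows - tips)}


# Made-up example inputs.
tips = ["Homo_sapiens", "Pan_troglodytes", "Gorilla_gorilla"]
traits = {"Homo sapiens": 65.0, "Pan troglodytes ": 45.0, "Pongo abelii": 50.0}
report = check_names(tips, traits)
```

Recording the output of such a check alongside the analysis documents exactly which taxa were pruned or dropped.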

Visual Workflow for Data Integration:

Methodological Protocols

Standard PCM Analysis Workflow

Protocol 1: Phylogenetic Signal Assessment

Purpose: Quantify the degree to which traits reflect phylogenetic relationships.

Methodology:

- Data Preparation: Format trait data and phylogenetic tree for analysis

- Model Specification: Implement Pagel's λ, Blomberg's K, or related metrics

- Parameter Estimation: Calculate phylogenetic signal statistics

- Hypothesis Testing: Compare observed signal to null expectations

- Interpretation: Relate statistical results to biological processes

Expected Outcomes: Quantitative measures of phylogenetic signal with statistical significance assessments.

Common Pitfalls:

- Inappropriate null models for hypothesis testing

- Misinterpretation of statistical versus biological significance

- Inadequate sample size for reliable signal detection

Protocol 2: Comparative Model Selection Framework

Purpose: Systematically identify the best-supported evolutionary model for trait data.

Methodology:

- Candidate Models: Define biologically plausible evolutionary models

- Model Fitting: Estimate parameters for each candidate model

- Model Comparison: Calculate information criteria and likelihood ratios

- Model Averaging: Incorporate model uncertainty where appropriate

- Model Validation: Assess predictive performance and assumptions

Expected Outcomes: Ranked model support with parameter estimates and uncertainty measures.

Common Pitfalls:

- Over-reliance on automated model selection

- Ignoring model assumptions and limitations

- Failure to account for model selection uncertainty

Research Reagent Solutions

Table: Essential Computational Tools for Phylogenetic Comparative Methods

| Tool Category | Specific Implementation | Primary Function | Considerations |

|---|---|---|---|

| Phylogenetic Analysis Platforms | R (ape, phytools, geiger packages) | Comprehensive PCM implementation | Steep learning curve but extensive community support [16] |

| Bayesian MCMC Frameworks | MrBayes, BEAST2 | Bayesian phylogenetic inference | Computationally intensive, requires convergence assessment [15] |

| Specialized PCM Software | BayesTraits, COMPARE | Implementation of specific PCMs | Method-specific assumptions and limitations [16] |

| Tree Visualization | FigTree, ggtree | Phylogenetic tree visualization and annotation | Critical for data quality assessment and result interpretation |

| Data Management Tools | Custom R/Python scripts | Data formatting and workflow automation | Essential for reproducible research practices |

Communication Framework

Visualizing Developer-User Communication Pathways:

This technical support framework addresses the critical communication gaps between PCM developers and research users by providing clear, actionable guidance for common computational challenges. Through comprehensive troubleshooting guides, methodological protocols, and structured communication pathways, this resource aims to enhance methodological rigor and reproducibility in phylogenetic comparative research.

From Theory to Practice: Implementing Advanced PCMs in Evolutionary Analysis

Frequently Asked Questions (FAQs)

Q1: What are the core evolutionary models for continuous trait evolution, and when should I use each one? The three foundational models are Brownian Motion (BM), the Ornstein-Uhlenbeck (OU) process, and the Early Burst (EB) model. They represent different evolutionary philosophies and are suitable for different biological scenarios. BM models random trait drift and is often used as a null model. The OU process introduces stabilizing selection around an optimal trait value. The EB model describes rapid trait diversification following an evolutionary radiation that slows down as ecological niches fill. Your choice should be guided by your biological hypothesis: use BM for neutral drift, OU for traits under stabilizing selection, and EB for adaptive radiations.
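The difference between the three models is easiest to see in how a trait changes along a single branch of length t. The Python sketch below uses the standard transition distributions for BM and OU, and for EB it assumes a rate that changes exponentially through time, so that the rate at a given time is `sigma2_0 * exp(r * time)` with r < 0 for a slowdown. All function and parameter names are illustrative and are not taken from any package.

```python
import math
import random


def bm_step(x0, t, sigma2, rng):
    """Brownian motion: no expected change, variance sigma2 * t."""
    return x0 + rng.gauss(0.0, math.sqrt(sigma2 * t))


def ou_step(x0, t, sigma2, alpha, theta, rng):
    """Ornstein-Uhlenbeck: pulled toward the optimum theta with strength alpha > 0."""
    decay = math.exp(-alpha * t)
    mean = theta + (x0 - theta) * decay
    var = sigma2 / (2.0 * alpha) * (1.0 - decay ** 2)
    return rng.gauss(mean, math.sqrt(var))


def eb_variance(t_start, t, sigma2_0, r):
    """Early Burst: variance accumulated on a branch running from t_start to t_start + t."""
    if r == 0.0:
        return sigma2_0 * t            # reduces to Brownian motion
    return sigma2_0 * (math.exp(r * (t_start + t)) - math.exp(r * t_start)) / r


rng = random.Random(42)
x_bm = bm_step(0.0, 1.0, 1.0, rng)
x_ou = ou_step(0.0, 1.0, 1.0, 2.0, 5.0, rng)
```

Under BM the variance keeps growing with time, under OU it levels off at `sigma2 / (2 * alpha)`, and under EB with negative r later branches contribute less variance than early ones.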

Q2: My model selection results are inconclusive, especially with messy, real-world trait data. What are my options? Inconclusive results between standard models like BM and OU are common, often due to factors like trait imprecision (measurement error). You have two powerful modern options:

- Supervised Learning for Model Selection: Framing model selection as a classification problem can outperform traditional criteria like AIC. Evolutionary Discriminant Analysis (EvoDA) is one such method that uses discriminant functions to predict the best-fitting evolutionary model from trait data. It has been shown to achieve higher classification accuracy than AIC, particularly when measurement error is present [20].

- Large-Scale Simulation: Using software like TraitTrainR to perform thousands of evolutionary simulations under different models allows you to test the statistical power of your model selection procedure. This helps you determine if your data is truly insufficient to distinguish between models or if one model is genuinely better [20] [21].

Q3: How can I account for measurement error in my trait data during analysis? Ignoring measurement error can bias model selection and parameter estimates. Modern software packages are increasingly incorporating features to handle this. For instance, TraitTrainR allows users to define flexible parameter spaces that include measurement error in its simulation pipeline. When fitting models, you should ensure that your method can incorporate standard error estimates for your trait measurements, as this has been shown to improve the robustness of your conclusions [20].

Q4: The 'Early Burst' model rarely fits my data. Is the theory of adaptive radiations wrong? Not necessarily. Traditional EB models assume a uniform rate slowdown across all lineages in a clade, which may be overly simplistic. Recent research using more flexible models suggests that evolutionary rate dynamics are more complex. The Diffused Brownian Motion (DBM) model allows evolutionary rates to vary independently across lineages and time. Applications of DBM to large fossil and extant datasets have found that evolutionary rates for traits like body size can be stable over time, with long-term trends driven by a combination of sustained evolution and selective extinction of lineages, rather than a simple, clade-wide slowdown [22]. This indicates the need for more nuanced models to test adaptive landscape theory.

Q5: What software and visualization tools are available for these analyses? The field has developed robust, user-friendly software for simulation, analysis, and visualization.

- For Simulation & Analysis: TraitTrainR is an R package designed for fast, large-scale simulations under complex evolutionary models, including multi-trait evolution and measurement error [20] [21].

- For Visualization: PhyloScape is a web-based, interactive platform for visualizing phylogenetic trees. It supports multiple tree formats, offers a flexible metadata annotation system, and includes plugins for heatmaps, geographic maps, and protein structures, making it ideal for creating publication-ready figures [23].

Troubleshooting Common Experimental Issues

Problem: Inability to Distinguish Between OU and BM Models

Symptoms: Similar AICc values or inconsistent likelihood ratio test results when comparing OU and BM models.

Diagnosis: This usually reflects limited statistical power, often due to a small sample size (number of taxa) or a weak signal of selection in the data.

Solution:

- Assess Power via Simulation: Use TraitTrainR to simulate datasets under an OU process with parameters similar to your empirical data. Then, attempt to recover the OU model from these simulated datasets. This quantifies your power to detect stabilizing selection [20].

- Adopt a Supervised Learning Approach: Implement the EvoDA framework. Train a classifier on simulated data from various models (BM, OU, EB) and use it to predict the model for your empirical data. This method can be more accurate than AIC-based selection for complex and noisy data [20].

- Incorporate Measurement Error: Re-fit your models while explicitly accounting for the standard error of your trait measurements. This can prevent the underestimation of the selection strength (alpha) parameter in OU models [20].

Problem: Handling Phylogenetic Trees with Extreme Branch Length Variation

Symptoms: Poor visualization and difficulty in interpreting evolutionary relationships due to highly heterogeneous branch lengths.

Diagnosis: Standard tree visualization tools can distort trees with very long and very short branches, misrepresenting evolutionary time and relationships.

Solution:

- Use Advanced Visualization Platforms: Import your tree into PhyloScape.

- Apply Branch Length Reshaping: Utilize PhyloScape's built-in multi-classification-based branch length reshaping method. This function groups branches into multiple classes using adaptive length intervals and applies injective functions to normalize the scales, improving the interpretability without altering the underlying data [23].

Problem: Low Accuracy in Complex Trait Prediction from Genomic Data

Symptoms: Models built from genotype or gene expression data fail to accurately predict phenotypic traits.

Diagnosis: The choice of prediction method may not align with the genetic architecture of your trait (e.g., using a method that assumes all genes have an effect on a trait that is actually controlled by a few key genes).

Solution:

- Benchmark Multiple Methods: Systematically compare a suite of statistical learning methods, as their performance varies significantly. The table below summarizes methods tested for transcriptomic prediction [24].

- Incorporate Functional Annotation: Use biological knowledge to inform your models. For example, using Gene Ontology (GO) terms to group genes can improve prediction accuracy for traits like starvation resistance in Drosophila [24].

Table 1: Comparison of Statistical Learning Methods for Transcriptomic Prediction

| Method Category | Specific Method | Key Assumption | Performance Note |

|---|---|---|---|

| Dimension Reduction | Principal Component Regression (PCR) | Reduces predictors to orthogonal components [24] | Performance varies with trait architecture [24] |

| Dimension Reduction | Partial Least Squares Regression (PLSR) | Simultaneously decomposes predictors and response [24] | Performance varies with trait architecture [24] |

| Mixed Models | GBLUP | All genes have an effect drawn from a normal distribution [24] | A common baseline method [24] |

| Machine Learning | Random Forest | Can capture complex, non-linear interactions [24] | May outperform linear models for certain traits [24] |

| Variable Selection | LASSO, BayesB | Sparsity (only a small fraction of genes have an effect) [24] | Can achieve higher accuracy for some traits (e.g., starvation resistance) [24] |

Experimental Protocols & Workflows

Protocol 1: Power Analysis for Evolutionary Model Selection Using TraitTrainR

This protocol describes how to assess the statistical power of your model selection procedure through large-scale simulation.

- Parameterize Simulation: Use maximum likelihood estimates from a preliminary model fit to your empirical data (e.g., sigma² and alpha for an OU model) as starting parameters for TraitTrainR.

- Define Simulation Space: Set up a range of parameter values around your initial estimates to explore a realistic biological space. Include parameters for measurement error if available.

- Run Simulations: Use TraitTrainR to generate a large number (e.g., 1000) of simulated trait datasets across your defined parameter space under your focal model (e.g., OU).

- Model Fitting on Simulated Data: For each simulated dataset, fit all candidate models (e.g., BM, OU, EB) and perform model selection (e.g., using AICc or a custom classifier).

- Calculate Power: The power is calculated as the proportion of simulations where the true generating model (e.g., OU) was correctly identified as the best-fitting model. A low power percentage indicates your data type may be insufficient to reliably distinguish between models [20] [21].

Protocol 2: Supervised Model Selection with Evolutionary Discriminant Analysis (EvoDA)

This protocol outlines a machine learning approach to model selection.

- Generate Training Data: Simulate a large and diverse set of trait datasets using TraitTrainR. Each dataset is a "sample" with a known "label" (i.e., the model that generated it, such as BM, OU, or EB).

- Extract Summary Features: From each simulated dataset, calculate a set of summary statistics that capture the phylogenetic signal and distribution of traits (e.g., mean trait value, variance, metrics like Blomberg's K).

- Train the Classifier: Use the labeled set of summary statistics to train a discriminant analysis classifier (EvoDA). This classifier learns the patterns in the data that are characteristic of each evolutionary model.

- Classify Empirical Data: Calculate the same set of summary statistics from your empirical trait data and apply the trained EvoDA classifier to predict the most likely generating model [20].

Workflow for Power Analysis and Supervised Model Selection

The Scientist's Toolkit: Research Reagent Solutions

This table details essential software and methodological "reagents" for computational experiments in trait evolution.

Table 2: Essential Research Reagents for Modeling Trait Evolution

| Research Reagent | Type | Function & Application |

|---|---|---|

| TraitTrainR [20] [21] | R Software Package | Enables fast, large-scale evolutionary simulations under complex models (BM, OU, EB, multi-trait, measurement error) for power analysis and model testing. |

| EvoDA [20] | Methodological Framework | A supervised learning (discriminant analysis) approach for evolutionary model selection, robust to noisy data. |

| Diffused BM (DBM) Model [22] | Phylogenetic Model | A flexible model that allows evolutionary rates to vary continuously across lineages and time, testing predictions beyond standard EB models. |

| PhyloScape [23] | Web Visualization Platform | An interactive toolkit for creating, annotating, and sharing phylogenetic tree visualizations, integrated with heatmaps, maps, and other metadata. |

| Gene Ontology (GO) Annotations [24] | Biological Database | Functional annotation that can be incorporated into prediction models to group genes by biological process, improving complex trait prediction from genomic data. |

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between ancestral state reconstruction for continuous versus discrete traits, and how does this impact taxonomic delimitation?

Ancestral state reconstruction estimates phenotypic or genetic characteristics of ancestral nodes on a phylogenetic tree. The method differs significantly between data types, which directly influences how you define taxonomic boundaries.

- For Continuous Characters (e.g., body size, gene expression levels): Methods like `fastAnc` in the R package `phytools` find the state for an internal node that has the maximum probability under a specified model (e.g., Brownian motion), providing maximum likelihood estimates [25]. These are useful for delimiting taxa based on quantitative thresholds.

- For Discrete Characters (e.g., presence/absence of a morphological feature, nucleotide states): Methods like those in `phytools::ancr` or `corHMM` estimate the probability of each discrete state at an ancestral node, often under an Mk model [26]. This is critical for determining when a key diagnostic trait evolved, thereby informing the classification of clades.

FAQ 2: My ancestral state reconstruction for a discrete character is highly equivocal at key nodes. What steps can I take to improve the inference?

High uncertainty often stems from a poorly fitting model or limited data. Follow this troubleshooting protocol:

- Test Alternative Models: Do not assume a simple equal-rates (ER) model. Construct and compare custom models that reflect biological hypotheses, such as an ordered model where transitions between states must happen sequentially [26].

- Ensure Robust Model Fitting: Run multiple optimization iterations with different starting points and methods (e.g., `nlminb`, `optim`) to ensure you have found the true maximum likelihood solution [26].

- Consider Model Extensions: If uncertainty remains, explore more complex models like the hidden-rates model, which can account for unobserved factors influencing the rate of trait evolution [26].

- Validate with Alternative Methods: Cross-check your results using Bayesian inference for ancestral states or by performing a stochastic character mapping analysis, which can account for uncertainty in the reconstruction process [27].

FAQ 3: How can I account for uncertainty in the underlying phylogeny when performing ancestral state reconstruction for taxonomic delimitation?

The Trace Character Over Trees facility in Mesquite is designed specifically for this purpose [27]. It allows you to summarize ancestral state reconstructions across a series of trees (e.g., from a Bayesian posterior distribution or a set of equally parsimonious trees). For each clade in a reference tree, it reports the frequency of different ancestral states across all trees in the set that contain that clade. This provides a clear visual and quantitative measure of how sensitive your taxonomic conclusions are to phylogenetic uncertainty.

FAQ 4: What are the main software options for performing ancestral state reconstruction, and how do I choose?

The table below summarizes key software and their primary strengths.

| Software/Package | Method(s) | Key Feature / Use Case |

|---|---|---|

| phytools (R) [25] [26] | ML (continuous & discrete), Stochastic Mapping | Highly integrated within the R comparative biology ecosystem; good for visualization and custom analyses. |

| corHMM (R) [26] | ML (discrete) | Powerful and accurate for complex discrete trait models, including hidden rates models. |

| Mesquite [27] | Parsimony, ML, Bayesian | User-friendly graphical interface; excellent for exploratory analysis and visualizing results on trees. |

| DECIPHER (R) [28] | Parsimony, ML (sequence data) | Integrated with sequence alignment and tree building functions for a streamlined molecular workflow. |

FAQ 5: I am trying to use fastAnc in R, but my results seem unreliable. What could be wrong?

- Problem 1: Incorrect Data Format. The trait data must be a vector with names that exactly match the tip labels in the tree. Use `geiger::name.check()` to identify and resolve any mismatches [26].

- Problem 2: Poor Model Fit. `fastAnc` assumes a Brownian motion model. If this model is a poor fit for your data (e.g., there is strong trait covariation or a trend), the estimates will be biased. Always check the model assumptions.

- Solution: Verify data integrity and consider using the `vars=TRUE` and `CI=TRUE` options in `fastAnc` to obtain confidence intervals and assess the uncertainty of your estimates at each node [25].

Experimental Protocols

Protocol 1: Ancestral State Reconstruction for a Continuous Trait

This protocol uses the phytools package in R to estimate the ancestral states of a continuous character, such as body size.

1. Load Packages and Data

2. Verify Data-Tree Matching

3. Perform Ancestral State Reconstruction

4. Visualize the Results

Protocol 2: Ancestral State Reconstruction for a Discrete Trait

This protocol outlines the steps for reconstructing ancestral states for a discrete character using the phytools package.

1. Load and Prepare Data

2. Define and Fit a Trait Evolution Model

3. Reconstruct Ancestral States

4. Visualize the Reconstruction

Workflow Visualization

The following diagram illustrates the logical workflow and decision process for performing ancestral state reconstruction for taxonomic delimitation.

Research Reagent Solutions

The table below lists essential computational tools and their functions for ancestral state reconstruction.

| Item | Function in Analysis |
|---|---|
| R Statistical Environment | The primary platform for statistical computing and implementing most comparative phylogenetic methods [25] [26] [28]. |
| phytools R Package | A comprehensive package for phylogenetic comparative biology, offering functions for both continuous (fastAnc) and discrete (ancr) ancestral state reconstruction, as well as visualization [25] [26]. |
| ape R Package | A foundational package for reading, writing, and manipulating phylogenetic trees and comparative data [25]. |
| corHMM R Package | A powerful package for fitting complex hidden Markov models of discrete trait evolution and performing ancestral state reconstruction [26]. |
| Mesquite Software | A standalone application with a graphical user interface for phylogenetic analysis, offering parsimony, likelihood, and Bayesian methods for ancestral state reconstruction [27]. |
| DECIPHER R Package | Provides functions for sequence alignment, phylogenetic tree building, and ancestral state reconstruction (Treeline) in an integrated workflow for molecular data [28]. |
| Sequence Alignment Tool (e.g., MAFFT) | Used for aligning DNA or protein sequences before tree building, which is a critical preliminary step for accurate phylogeny estimation [29]. |

Frequently Asked Questions & Troubleshooting

Q1: My BiSSE analysis shows low statistical power. What could be the cause and how can I address this?

A: Low statistical power in BiSSE is often caused by inadequate sample size or high tip ratio bias [30]. Power is severely affected with fewer than 300 taxa and can be extremely low (<5%) with only 50 taxa, regardless of the degree of rate asymmetry [30]. Furthermore, if one character state dominates the dataset (e.g., fewer than 10% of species are in the other state), power, accuracy, and precision are significantly reduced [30].

- Solutions:

- Increase Sample Size: If possible, include more taxa in your phylogeny. Analyses with 300-500 tips show markedly improved power [30].

- Use a Reduced Parameter Model: Instead of the full 6-parameter model (λ₀, λ₁, μ₀, μ₁, q₀₁, q₁₀), constrain some parameters to be equal (e.g., μ₀=μ₁ and q₀₁=q₁₀) to create a 4-parameter model. This can substantially increase power, especially in high tip-bias scenarios [30].

- Test for Robustness: Perform robustness tests, such as comparing the difference in AIC between your best BiSSE model and a null model with the difference estimated from simulated datasets [31].
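The reduced-parameter idea can be sketched with diversitree as follows. The tree is simulated as a stand-in for real data, the generating rates are arbitrary illustrative values, and the retry loop is an assumption added so the example does not stop if a simulated lineage goes extinct:

```r
library(diversitree)

# Illustrative generating rates: lambda0, lambda1, mu0, mu1, q01, q10
pars <- c(0.1, 0.2, 0.03, 0.03, 0.01, 0.01)
set.seed(4)

# Retry until a surviving tree with both states at the tips is produced
phy <- NULL
while (is.null(phy) || length(unique(phy$tip.state)) < 2) {
  phy <- tree.bisse(pars, max.taxa = 100, x0 = 0)
}

# Full 6-parameter model
lik_full <- make.bisse(phy, phy$tip.state)
p <- starting.point.bisse(phy)
fit_full <- find.mle(lik_full, p)

# 4-parameter model: equal extinction rates and equal transition rates
lik_4 <- constrain(lik_full, mu1 ~ mu0, q10 ~ q01)
fit_4 <- find.mle(lik_4, p[argnames(lik_4)])

# Likelihood-ratio comparison of the constrained model against the full model
print(anova(fit_full, four_par = fit_4))
```

If the comparison does not favour the full model, the 4-parameter model is the more defensible basis for testing speciation-rate asymmetry.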

Q2: How can I test if my trait-dependent diversification result is a false positive?

A: State-dependent speciation and extinction (SSE) models, including BiSSE, can have a high Type I error rate, meaning they might infer a trait-dependent effect where none exists [31].

- Solutions:

- Implement the Character-Independent (CID-2) Model: Use the two-state character-independent diversification model available in the R package hisse [31]. This model assumes the evolution of your observed binary trait is independent of the diversification process, which is accounted for by an unobserved, hidden trait. Comparing the fit of your BiSSE model to a CID-2 model helps validate that the diversification signal is truly linked to your observed trait [31].

- Bayesian MCMC Analysis: For your best-fit model, perform a Markov Chain Monte Carlo (MCMC) analysis to compute 95% credible intervals for the parameters. This helps assess the uncertainty and stability of your parameter estimates [31]. A typical protocol involves running 20,000 MCMC steps after a burn-in of 2,000 steps [31].

Q3: I am getting an error when trying to include my phylogeny in a model in R. What should I check?

A: This is a common computational challenge. The error message Error: The following variables can neither be found in 'data' nor in 'data2' or issues with the isSymmetric method indicate the phylogenetic covariance matrix was not passed to the function correctly [32].

- Solutions:

- Create a Variance-Covariance Matrix: You cannot pass the phylo object directly. First, create a variance-covariance matrix from your tree using ape::vcv.phylo(your_phylo_object) [32].

- Verify Object Class: Ensure that the object you are using for the covariance matrix in functions like brm is the matrix itself, not the original phylo object. The error no applicable method for 'isSymmetric' applied to an object of class "phylo" confirms the function received the wrong object type [32].
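A short sketch of the fix, using a simulated tree and a hypothetical data frame (columns species, y, and x are illustrative names). The brm() call is left as a comment because it needs a working Stan toolchain. It follows the pattern of passing the matrix through data2, which is an assumption about how your model is specified:

```r
library(ape)

set.seed(1)
tree <- rcoal(6)      # simulated stand-in for your phylogeny
print(class(tree))    # "phylo": this is not what the model function expects

# Convert the tree to a species-by-species covariance matrix
A <- vcv.phylo(tree)
stopifnot(is.matrix(A), isSymmetric(A))

# Hypothetical data: the grouping column must use the matrix row names
dat <- data.frame(species = tree$tip.label, y = rnorm(6), x = rnorm(6))
stopifnot(all(dat$species %in% rownames(A)))

# Not run: pass the matrix (not the tree) under the same name used in gr()
# library(brms)
# fit <- brm(y ~ x + (1 | gr(species, cov = A)),
#            data = dat, data2 = list(A = A), family = gaussian())
```

The "can neither be found in 'data' nor in 'data2'" message typically means the name given to cov has no matching entry in data2.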

BiSSE Model Parameters and Estimation Accuracy

The BiSSE model estimates six core parameters. The accuracy of these estimates is highly dependent on the number of tips in the phylogeny and the underlying asymmetry in rates, which can cause a bias in the tip ratio [30].

Table 1: BiSSE Model Parameters and Estimation Notes

| Parameter | Biological Meaning | Estimation Performance Notes |
|---|---|---|
| λ₀, λ₁ | Speciation rates for state 0 and state 1. | Generally estimated with good accuracy and precision given an appropriate tree size. Precision decreases as rate asymmetry and tip bias increase [30]. |
| μ₀, μ₁ | Extinction rates for state 0 and state 1. | Estimates are often poor and lack precision, with performance worsening as the difference in extinction rates increases [30]. |
| q₀₁, q₁₀ | Transition rates between state 0 and 1. | Not estimated as accurately or precisely as speciation rates. Precision decreases with high tip bias [30]. |

Table 2: Impact of Sample Size and Tip Ratio on BiSSE Power [30]

| Condition | Impact on Hypothesis Testing Power |
|---|---|
| < 300 Taxa | Severely low power. Be extremely cautious interpreting results from small trees. |
| < 100 Taxa | Power is marginal or extremely low for all types of rate asymmetry. |
| High Tip Ratio Bias (e.g., one state has <10% of species) | Reduces power, accuracy, and precision. Can confound which rate asymmetry is causing an excess of a character state. |

Detailed Experimental Protocol: BiSSE Analysis in R

This protocol provides a step-by-step guide for setting up and running a BiSSE analysis using the diversitree package in R, including robustness checks [33] [31].

Prerequisites and Package Installation

Simulating a Tree for Analysis (Optional)

For testing and learning, you can simulate a tree and trait data under a known BiSSE model.

Fitting the BiSSE Model

With your own phylogeny my_tree and a vector of binary tip states my_tip_states (where names match tip labels), you can build and fit the model.

Testing Robustness with MCMC

Perform a Bayesian MCMC analysis for your best-fitting model to obtain 95% credible intervals for its parameters [31].
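The simulation, fitting, and MCMC steps above can be sketched together with diversitree. This is an illustration rather than the original protocol code: the generating rates are arbitrary, the exponential prior follows a common convention and is an assumption here, and the chain is kept very short so the example runs quickly (the protocol in the text uses 20,000 steps after a 2,000-step burn-in).

```r
library(diversitree)

# Simulate a tree and binary tip states under a known BiSSE model
pars <- c(0.1, 0.2, 0.03, 0.03, 0.01, 0.01)  # lambda0, lambda1, mu0, mu1, q01, q10
set.seed(1)
my_tree <- NULL
while (is.null(my_tree) || length(unique(my_tree$tip.state)) < 2) {
  my_tree <- tree.bisse(pars, max.taxa = 60, x0 = 0)
}
my_tip_states <- my_tree$tip.state  # named by tip label

# Build the likelihood function and find the maximum likelihood estimate
lik <- make.bisse(my_tree, my_tip_states)
p <- starting.point.bisse(my_tree)
fit <- find.mle(lik, p)
print(round(fit$par, 3))

# MCMC started from the ML estimate, with an exponential prior (assumption)
prior <- make.prior.exponential(1 / (2 * (p[1] - p[3])))
burn_in <- 10
n_steps <- 100  # increase substantially for a real analysis
samples <- mcmc(lik, fit$par, nsteps = burn_in + n_steps, w = 0.1,
                prior = prior, print.every = 0)

# Discard burn-in and summarize each parameter's posterior
post <- samples[-seq_len(burn_in), names(fit$par)]
print(t(apply(post, 2, quantile, probs = c(0.025, 0.5, 0.975))))
```

If the 95% interval for lambda1 minus lambda0 comfortably excludes zero in a long, well-mixed chain, that is consistent with state-dependent speciation, although it does not replace the character-independent check described in this guide.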

Running a Character-Independent Model (CID-2)

Use the hisse package to fit a model where diversification is independent of your observed trait [31].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Software and Statistical Tools for BiSSE Analysis

| Tool Name | Function / Utility | Implementation |
|---|---|---|
| diversitree R Package | A core R package for fitting a wide range of SSE models, including the BiSSE model. Used for maximum likelihood and MCMC inference [33]. | bisse_model <- make.bisse(tree, states) [33] |
| hisse R Package | Implements the HiSSE model and, crucially, the Character-Independent (CID-2) model, which is essential for testing false positives [31]. | cid2_model <- hisse(tree, states, hidden.states=TRUE, ...) [31] |
| RevBayes | A Bayesian platform for phylogenetic analysis. It can be used for more complex and customizable implementations of the BiSSE model (referred to as CDBDP) using MCMC [34]. | timetree ~ dnCDBDP( speciationRates = speciation, ... ) [34] |
| Character-Independent (CID-2) Model | A statistical model used as a robustness check to confirm that a detected diversification signal is not spurious [31]. | Implemented in the hisse package [31]. |
| MCMC Analysis | A computational algorithm used within both R and RevBayes to approximate the posterior distribution of parameters, providing credible intervals [34] [31]. | mcmc( model, parameters, nsteps=20000, ...) [31] |

BiSSE Analysis Workflow and Troubleshooting Logic

This diagram outlines the key steps in a robust BiSSE analysis and the primary troubleshooting pathways for common problems.

Phylogenetic Comparative Methods (PCMs) are a suite of statistical tools that use phylogenetic trees to understand the evolutionary processes that shape phenotypic trait data across species. By accounting for shared evolutionary history, these methods allow researchers to move beyond simple correlations to test sophisticated hypotheses about adaptation, convergence, and the mode and tempo of evolution. The core challenge they address is the non-independence of species data; because species are related in a hierarchical fashion, their traits cannot be treated as independent data points in statistical analyses. PCMs provide the framework to model this non-independence explicitly.

The fundamental component of most PCMs is the phylogenetic variance-covariance (VCV) matrix, which is derived from the phylogenetic tree. This matrix captures the expected covariance between species due to their shared evolutionary history. Under Brownian motion, each off-diagonal entry is the total branch length two species share, measured from the root to their most recent common ancestor, and each diagonal entry is a species' root-to-tip distance. The matrix is essential for statistical models, such as Phylogenetic Generalized Least Squares (PGLS), that require accounting for phylogenetic structure to produce accurate parameter estimates and avoid spurious results [35].
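As a small worked example, assuming the ape package and a hypothetical three-species tree, the VCV matrix can be read directly off the branch lengths:

```r
library(ape)

# Hypothetical tree: A and B share a 2-unit branch from the root; C is on its own
tree <- read.tree(text = "((A:1,B:1):2,C:3);")
V <- vcv(tree)  # Brownian-motion VCV matrix
print(V)

# Off-diagonal: branch length shared between the root and the common ancestor
shared_AB <- V["A", "B"]  # A and B share the internal branch of length 2
shared_AC <- V["A", "C"]  # A and C diverge at the root, so they share nothing

# Diagonal: root-to-tip distance for each species (2 + 1 for A)
var_A <- V["A", "A"]
```

Here A and B covary because two of their three units of evolutionary time were spent on the same branch, while C is independent of both.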

Frequently Asked Questions (FAQs) & Troubleshooting Guides

Q1: My model fitting yields a "singularity" or "non-positive definite" matrix error. What does this mean and how can I resolve it?

- Problem: This error typically indicates a problem with the phylogenetic variance-covariance matrix. It can be caused by:

- Polytomies: The presence of unresolved nodes (multifurcations) in the phylogenetic tree.

- Zero-Length Branches: Some branches in the tree have a length of zero, which can create dependencies in the matrix.

- Small Sample Size: The number of species (tips) is too small relative to the number of parameters in the complex model you are trying to fit.

- Troubleshooting Steps:

- Check Your Tree: Resolve polytomies if possible, either by obtaining a more resolved tree from the literature or by using software to randomly resolve them (with multiple repetitions). Ensure no branches have a length of zero.

- Simplify Your Model: Start with simpler evolutionary models (e.g., Brownian Motion) before progressing to more complex ones (e.g., multi-optima Ornstein-Uhlenbeck). Reduce the number of parameters you are estimating.

- Increase Sample Size: If possible, add more species to your dataset to increase statistical power and matrix stability.
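The tree checks above can be sketched with ape. The tree is hypothetical and built to show both problems, and replacing zero-length branches with a small positive value is a pragmatic workaround assumed here, not a recommendation from the cited sources. Results should be checked for sensitivity to that value and to the random resolution.

```r
library(ape)

# Hypothetical tree with a polytomy (A,B,C) and two zero-length sister tips (D,E)
tree <- read.tree(text = "((A:1,B:1,C:1):1,(D:0,E:0):1);")

has_polytomy <- !is.binary(tree)      # TRUE: the (A,B,C) node is unresolved
n_zero <- sum(tree$edge.length <= 0)  # number of zero-length branches

# Smallest eigenvalue of the VCV matrix: near zero means a singular matrix
min_eig <- function(phy) {
  min(eigen(vcv(phy), symmetric = TRUE, only.values = TRUE)$values)
}
eig_before <- min_eig(tree)

# Randomly resolve polytomies (repeat with different seeds in a real analysis)
set.seed(1)
fixed <- multi2di(tree, random = TRUE)

# multi2di itself adds zero-length branches, so fix lengths after resolving
fixed$edge.length[fixed$edge.length <= 0] <- 1e-6
eig_after <- min_eig(fixed)

print(c(before = eig_before, after = eig_after))
```

In this example the singularity comes from D and E, whose zero-length terminal branches give them identical rows in the matrix.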

Q2: How do I interpret the results of a model selection analysis? What do values like AICc and BIC tell me?

- Problem: Researchers often obtain a table of multiple fitted models with different scores and are unsure how to proceed.

- Solution:

- AICc (Akaike Information Criterion, corrected for small sample size) and BIC (Bayesian Information Criterion) are metrics used to compare the relative fit of different models to your data, penalizing for model complexity. A lower score indicates a better model fit.

- Model Selection: The model with the lowest AICc/BIC score is considered the best among the set tested. A common rule of thumb is that models with a ΔAICc (difference from the best model) of less than 2 have substantial support, while those with ΔAICc greater than 10 have essentially no support.

- Model Averaging: If multiple models have similar support (e.g., ΔAICc < 4), it is often prudent to perform model averaging rather than relying on a single "best" model. This provides a more robust estimate of parameters across model uncertainty.
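To make the arithmetic concrete, the sketch below computes AICc, ΔAICc, and Akaike weights in base R. The log-likelihoods, parameter counts, and sample size are hypothetical values chosen for illustration:

```r
# Hypothetical fitted models: log-likelihood and number of free parameters
fits <- data.frame(
  model  = c("BM", "OU", "EB"),
  logLik = c(-50.0, -48.5, -49.9),
  k      = c(2, 3, 3)
)
n <- 40  # number of species (sample size)

# AICc = -2 lnL + 2k + 2k(k + 1) / (n - k - 1)
fits$AICc <- with(fits, -2 * logLik + 2 * k + (2 * k * (k + 1)) / (n - k - 1))

# Difference from the best (lowest) score
fits$delta <- fits$AICc - min(fits$AICc)

# Akaike weights: relative support for each model within this candidate set
rel_lik <- exp(-0.5 * fits$delta)
fits$weight <- rel_lik / sum(rel_lik)

print(fits[order(fits$delta), ])
```

Akaike weights are the usual basis for model averaging: each model's parameter estimate is weighted by its share of the total support.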

Q3: The parameter estimates for my complex model (e.g., OU) are highly uncertain or the model fails to converge. What should I do?

- Problem: Complex models like the Ornstein-Uhlenbeck (OU) can be difficult to fit, especially with limited data.

- Troubleshooting Steps:

- Re-check Your Starting Values: Optimization algorithms are sensitive to the initial parameter guesses. Try a range of different starting values to ensure the model is converging to a true global optimum and not a local one.

- Constrain Parameters: Consider fixing certain parameters to biologically plausible values to simplify the model landscape. For example, you might fix the alpha (selection strength) parameter in an OU model to test a specific hypothesis.

- Use a Simpler Model for Starting Values: Fit a simpler model (e.g., Brownian Motion) first and use its parameter estimates as informed starting values for the more complex model.

- Validate with Simulations: Simulate data under the model you are trying to fit to ensure your analysis pipeline can accurately recover the known parameters.
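A hedged sketch of the simulation check, assuming the phytools and geiger packages. Data are simulated under Brownian motion with a known rate, and both BM and OU are then fitted with geiger's fitContinuous. The niter control sets how many optimization attempts from different starting points are tried, and the tree size and rate are arbitrary illustrative choices:

```r
library(phytools)  # also attaches ape
library(geiger)

set.seed(10)
tree <- pbtree(n = 40, scale = 1)  # simulated tree, rescaled to unit height
true_sig2 <- 0.5
x <- fastBM(tree, sig2 = true_sig2)  # trait simulated under BM with known rate

# Simple model first, then the more complex one with repeated optimization starts
fit_bm <- fitContinuous(tree, x, model = "BM")
fit_ou <- fitContinuous(tree, x, model = "OU", control = list(niter = 50))

# Can the pipeline recover the generating rate?
print(c(true = true_sig2, estimated = fit_bm$opt$sigsq))

# Because the data were generated under BM, OU should not be strongly preferred
print(c(BM = fit_bm$opt$aicc, OU = fit_ou$opt$aicc))
print(fit_ou$opt$alpha)
```

Repeating this over many simulated datasets gives a sense of how often the more complex model is wrongly preferred at your sample size.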