Navigating Ornstein-Uhlenbeck Model Biases: A Practical Guide for Biomedical Researchers Working with Small Datasets

Abstract

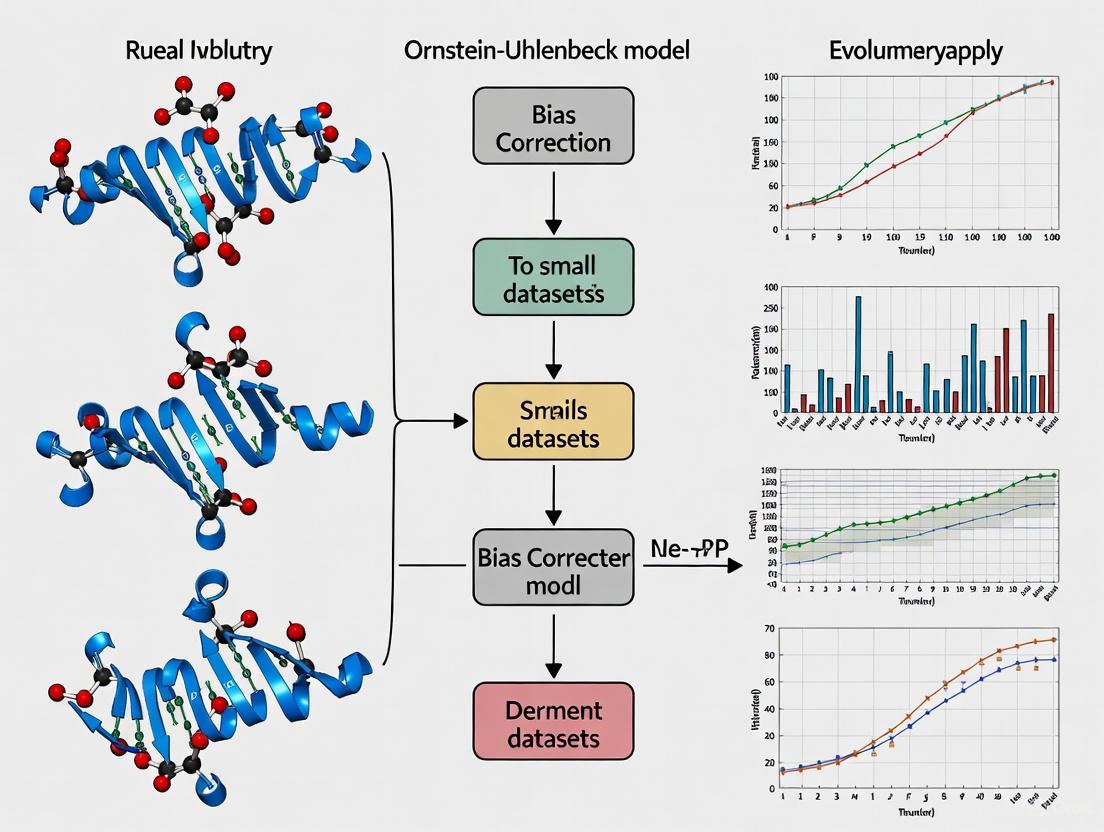

The Ornstein-Uhlenbeck (OU) model has become a cornerstone in evolutionary biology and biomedical research for analyzing trait evolution and adaptation. However, recent research reveals significant statistical biases when applying OU models to small datasets, including inflated Type I error rates, problematic parameter estimation, and sensitivity to measurement error. This article synthesizes current evidence on OU model limitations, provides practical methodological guidance for researchers and drug development professionals, and offers validation frameworks to ensure robust biological inferences. By addressing foundational concepts, methodological applications, troubleshooting strategies, and comparative validation approaches, we equip researchers with the knowledge to avoid common pitfalls and implement OU models appropriately within biological and clinical research contexts.

Understanding OU Model Fundamentals and the Small Dataset Bias Problem

Frequently Asked Questions (FAQs)

FAQ 1: What is the core mathematical principle behind the Ornstein-Uhlenbeck (OU) process?

The OU process is defined by a stochastic differential equation (SDE): dX_t = θ(μ - X_t)dt + σ dW_t [1] [2] [3]. The θ(μ - X_t)dt term is the drift that pulls the process toward its long-term mean (μ), a property known as mean-reversion [1] [3]. The σ dW_t term is the diffusion, which adds random fluctuations scaled by the volatility parameter σ via a Brownian motion (W_t) [1] [3].

FAQ 2: Why is the OU process often more suitable for biological data than a simple Brownian motion model?

Unlike Brownian motion, whose variance can grow without bound, the OU process possesses a stationary (equilibrium) distribution [1] [4] [3]. This means that over time, the process settles into a stable pattern of variation around the mean, which is often a more realistic assumption for biological traits under stabilizing selection or for modeling physiological equilibrium [4]. The stationary distribution is Gaussian with mean μ and variance σ²/(2θ) [1] [3].
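To make the step from the SDE to the stationary distribution explicit, the standard closed-form transition distribution of the OU process is sketched below in the notation of FAQ 1. The starting value X_0 is an assumed symbol that does not appear in the text above.

```latex
X_t \mid X_0 \;\sim\; \mathcal{N}\!\left( \mu + (X_0 - \mu)\,e^{-\theta t},\;
      \frac{\sigma^2}{2\theta}\left(1 - e^{-2\theta t}\right) \right)
\;\xrightarrow[t \to \infty]{}\;
\mathcal{N}\!\left( \mu,\; \frac{\sigma^2}{2\theta} \right),
\qquad \theta > 0 .
```

As t grows, the influence of X_0 decays at rate θ and the variance saturates at σ²/(2θ). Under Brownian motion (no mean-reverting drift) the variance is σ²t, which keeps growing with t.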

FAQ 3: What are the most common methods for estimating OU parameters from my data?

Several methods are commonly used, each with its own strengths [5] [6]. The table below summarizes the core estimation methods. Note that for small datasets, all these methods can produce biased estimates, particularly for the mean-reversion speed θ [6].

Table 1: Common OU Process Parameter Estimation Methods

| Method Name | Brief Description | Key Consideration |
|---|---|---|
| AR(1) / OLS Approach [5] [6] | Treats discretely sampled data as an AR(1) process: X_{t+1} = α + β X_t + ε. Parameters are derived from the OLS regression results. | Fast and simple, but estimates for θ can be significantly biased with small samples [6]. |
| Direct Maximum Likelihood [6] | Maximizes the likelihood function based on the conditional normal distribution of the process. | More computationally intensive than OLS; can produce results identical to the AR(1) approach for a pure OU process [6]. |
| Moment Estimation [6] | Matches theoretical moments of the process (e.g., variance, covariance) to their empirical counterparts. | Can help reduce the positive bias in the estimation of θ compared to the MLE/OLS estimators [6]. |

FAQ 4: I'm using small datasets. Which parameter is most notoriously difficult to estimate accurately?

The mean-reversion speed (θ) is notoriously difficult to estimate accurately from small datasets [6]. Even with a reasonably large number of observations (e.g., >10,000), estimating θ with precision can be challenging. The bias can be positive, meaning the strength of mean reversion is overestimated [6]. The half-life of mean reversion, a key derived metric, is calculated as ln(2)/θ and is therefore also strongly affected by this bias [6].
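As a small worked example of how a bias in θ carries over to the half-life, the sketch below evaluates ln(2)/θ for two values. The numbers (a true θ of 0.5 estimated as 0.8) are hypothetical and chosen only for illustration.

```python
import math


def half_life(theta: float) -> float:
    """Half-life of mean reversion, ln(2)/theta, in the time units of 1/theta."""
    if theta <= 0:
        raise ValueError("theta must be positive for a mean-reverting process")
    return math.log(2) / theta


# Hypothetical values: a true theta of 0.5 that is overestimated as 0.8.
true_hl = half_life(0.5)
biased_hl = half_life(0.8)
relative_error = (biased_hl - true_hl) / true_hl

print(f"true half-life   = {true_hl:.3f}")
print(f"biased half-life = {biased_hl:.3f}")
print(f"relative error   = {relative_error:.1%}")
```

Because the half-life is inversely proportional to θ, an overestimated θ always yields an underestimated half-life, that is, an apparently faster return to the mean than the data support.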

FAQ 5: How can I account for non-evolutionary variation within species in my phylogenetic model?

Standard OU models assume all variation is evolutionary. You can use an extended OU model that includes a separate parameter for within-species (e.g., environmental, technical, or individual genetic) variation [4]. Failure to account for this can lead to misleading inferences; for example, high within-species variation might be mistaken for very strong stabilizing selection in a standard OU model [4].

Troubleshooting Guides

Problem: Inaccurate or Biased Estimation of Mean-Reversion Speed (θ)

Potential Causes and Solutions

Cause 1: Small Sample Size. This is the primary cause of bias in estimating θ. The convergence of the estimator's distribution is slow, and a bias persists even as data frequency increases if the total time span is fixed [6].

- Solution: If possible, increase the total time span of your data rather than just the frequency of sampling. Be aware of the bias and use estimation methods designed to mitigate it, such as the adjusted moment estimator [6]. For critical applications, consider simulation-based methods like indirect inference estimation, which is unbiased for finite samples but computationally slower [6].

Cause 2: Inefficient or Biased Estimation Method. The common OLS/AR(1) method, while simple, has a known positive finite-sample bias [6].

- Solution: Use the bias-adjusted moment estimator [6]. The formula for this adjustment (assuming μ is known) is:

  θ = -log(β)/h - Var(ε) / (2 * (1 - β²) * β * h)

  where β is the AR(1) coefficient, h is the time step, and Var(ε) is the variance of the residuals. This adjustment subtracts a positive term, reducing the bias.

Cause 3: Incorrect Assumption of Long-Term Mean (μ). In pairs trading or spread modeling, μ is often assumed to be zero. An incorrect assumption can affect other parameter estimates [6].

- Solution: When possible, use estimation methods that allow μ to be unknown and estimated from data, unless there is a strong theoretical justification for fixing its value [6].

Problem: Model Fitting Produces Poorly Identified Parameters or Fails to Converge

Potential Causes and Solutions

- Cause 1: Poorly Designed Experiment or Low Signal-to-Noise Ratio. If the data does not clearly exhibit mean-reverting behavior, the model parameters, especially θ, will be uncertain.

  - Solution: Prior to data collection, conduct a power analysis via simulation to determine the sample size (time span) required to reliably detect a given strength of mean reversion. The workflow below outlines this proactive experimental design.

- Cause 2: Highly Correlated Parameters in Complex Models. When extending the basic OU model (e.g., with multiple regimes or shifts), parameters like the shift times (t_switch) and the mean levels (x2) can become highly correlated, leading to computational problems and unreliable estimates [7].

  - Solution: Reparameterize the model to reduce correlation. For example, represent a vector of switching times using a simplex to enforce ordering and boundaries, which can dramatically improve sampling efficiency [7].
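The simulation-based power analysis recommended under Cause 1 can be sketched as follows. This is one possible design, not a method taken from the cited sources: it assumes NumPy, uses the AR(1) slope as the test statistic, and calibrates the rejection threshold from paths simulated under a Brownian motion null. All function names and parameter values are illustrative.

```python
import numpy as np


def simulate_path(theta, sigma, n_steps, h, rng):
    """Exact discretization of an OU path around mean 0; theta = 0 gives Brownian motion."""
    if theta > 0:
        beta = np.exp(-theta * h)
        step_sd = sigma * np.sqrt((1 - beta**2) / (2 * theta))
    else:
        beta, step_sd = 1.0, sigma * np.sqrt(h)
    x = np.zeros(n_steps + 1)
    shocks = rng.normal(0.0, step_sd, size=n_steps)
    for k in range(n_steps):
        x[k + 1] = beta * x[k] + shocks[k]
    return x


def ar1_slope(x):
    """OLS slope of x[k+1] regressed on x[k], with an intercept."""
    return np.polyfit(x[:-1], x[1:], 1)[0]


def power_by_simulation(theta, sigma, n_steps, h, n_sim=500, level=0.05, seed=0):
    """Fraction of OU paths whose AR(1) slope falls below the null critical value."""
    rng = np.random.default_rng(seed)
    # Null distribution: slopes from Brownian motion paths of the same design.
    null = [ar1_slope(simulate_path(0.0, sigma, n_steps, h, rng)) for _ in range(n_sim)]
    critical = np.quantile(null, level)
    # Alternative: slopes from OU paths with the mean-reversion strength of interest.
    alt = [ar1_slope(simulate_path(theta, sigma, n_steps, h, rng)) for _ in range(n_sim)]
    return float(np.mean(np.array(alt) < critical))


# Same number of observations, different total time span T = n_steps * h.
power_short = power_by_simulation(theta=1.0, sigma=1.0, n_steps=50, h=0.04)  # T = 2
power_long = power_by_simulation(theta=1.0, sigma=1.0, n_steps=50, h=0.4)    # T = 20
print(f"power with T = 2:  {power_short:.2f}")
print(f"power with T = 20: {power_long:.2f}")
```

Holding the number of observations fixed while varying the step h isolates the effect of the total time span, which is the quantity the guide identifies as limiting for θ.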

Experimental Protocols & Data Presentation

Protocol: Calibrating an OU Process using OLS and AR(1) Regression

This protocol details a common two-step method for estimating OU parameters from discrete time series data [5] [8].

1. Data Preparation: Begin with a stationary time series of observations, Y(t). In biological contexts, this could be normalized gene expression levels across different species or individuals over time.

2. Regression of the Raw Series (if needed): If the data is believed to be the cumulative sum of an underlying OU process (e.g., a modeled spread), first compute the raw series: X(k) = cumsum(Y(t)) [8]. If Y(t) is already the mean-reverting variable, proceed to step 3.

3. Lagged Regression (AR(1)): Create two new time series: x_k = X[0:-1] (lagged values) and y_k = X[1:] (current values). Perform a linear regression: y_k = α + β * x_k + ε [5] [8].

4. Parameter Calculation: Derive the OU parameters from the regression results [5] [8] [6]. Let h be the time interval between observations.

   - Mean-Reversion Speed: θ = -log(β) / h
   - Long-Term Mean: μ = α / (1 - β)
   - Stationary Standard Deviation: σ_eq = sqrt( Var(ε) / (1 - β²) ), where Var(ε) is the variance of the regression residuals.
   - (Optional) Volatility Parameter: σ = σ_eq * sqrt(2 * θ)
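The lagged regression and parameter calculation can be sketched in Python as follows. This is a minimal sketch assuming NumPy and an input series that is already the mean-reverting variable X, sampled at a constant interval h. The function name `calibrate_ou_ar1` and the synthetic test series are illustrative and do not come from the cited sources.

```python
import numpy as np


def calibrate_ou_ar1(x, h):
    """Estimate OU parameters from an evenly sampled series via AR(1) regression."""
    x = np.asarray(x, dtype=float)
    x_lag, x_cur = x[:-1], x[1:]
    # np.polyfit returns coefficients from highest degree down: slope, then intercept.
    beta, alpha = np.polyfit(x_lag, x_cur, 1)
    if not 0.0 < beta < 1.0:
        raise ValueError(f"beta={beta:.4f} is outside (0, 1); no mean reversion detected")
    residuals = x_cur - (alpha + beta * x_lag)
    var_eps = residuals.var(ddof=2)  # two regression coefficients were estimated
    theta = -np.log(beta) / h
    mu = alpha / (1.0 - beta)
    sigma_eq = np.sqrt(var_eps / (1.0 - beta**2))
    sigma = sigma_eq * np.sqrt(2.0 * theta)
    return {"theta": theta, "mu": mu, "sigma_eq": sigma_eq, "sigma": sigma}


# Synthetic series with known parameters (hypothetical values) to check the recipe.
rng = np.random.default_rng(42)
true_theta, true_mu, true_sigma, h, n = 2.0, 5.0, 1.0, 0.1, 5000
b = np.exp(-true_theta * h)
step_sd = true_sigma * np.sqrt((1 - b**2) / (2 * true_theta))
x = np.empty(n)
x[0] = true_mu
for k in range(1, n):
    x[k] = true_mu + b * (x[k - 1] - true_mu) + rng.normal(0.0, step_sd)

est = calibrate_ou_ar1(x, h)
print({name: round(float(value), 3) for name, value in est.items()})
```

The check uses a long series (total span of 500 time units) so that the estimates land near the true values. Rerunning it with a much smaller n is a quick way to see the upward bias in θ discussed earlier.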

Table 2: Expected Behavior of OU Process Parameters Under Different Scenarios

| Biological/Experimental Scenario | Effect on Long-Term Mean (μ) | Effect on Mean-Reversion Speed (θ) | Effect on Stationary Variance (σ_eq) |
|---|---|---|---|
| Strong Stabilizing Selection | May shift to a new optimum | Increases (faster reversion) | Decreases |
| Relaxed Constraint/Genetic Drift | Little change | Decreases (slower reversion) | Increases |
| Increased Environmental Noise | Little change | Little change | Increases |
| Successful Drug Intervention (restoring homeostasis) | Returns to wild-type (healthy) level | Increases (faster recovery) | Decreases |

Visualization: Simulating OU Process Behavior

The following diagram illustrates the core logic of the OU process and how its parameters determine the behavior of a trajectory, which is crucial for interpreting results.
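To see how the parameters shape a trajectory, the sketch below simulates OU paths using the exact Gaussian transition of the process rather than an Euler step. It assumes NumPy; the function name `simulate_ou_exact` and all parameter values are hypothetical choices for illustration.

```python
import numpy as np


def simulate_ou_exact(theta, mu, sigma, x0, n_steps, h, rng=None):
    """Simulate an OU path on a regular grid using its exact transition distribution."""
    rng = np.random.default_rng() if rng is None else rng
    beta = np.exp(-theta * h)                                   # one-step autoregression
    step_sd = sigma * np.sqrt((1.0 - beta**2) / (2.0 * theta))  # exact one-step noise sd
    x = np.empty(n_steps + 1)
    x[0] = x0
    shocks = rng.normal(0.0, step_sd, size=n_steps)
    for k in range(n_steps):
        x[k + 1] = mu + beta * (x[k] - mu) + shocks[k]
    return x


rng = np.random.default_rng(1)
# Both paths start far from the mean (x0 = 5, mu = 0) and share the same sigma.
slow = simulate_ou_exact(theta=0.2, mu=0.0, sigma=1.0, x0=5.0, n_steps=2000, h=0.05, rng=rng)
fast = simulate_ou_exact(theta=5.0, mu=0.0, sigma=1.0, x0=5.0, n_steps=2000, h=0.05, rng=rng)

# Spread over the second half of each path, after the initial transient.
spread_slow = slow[1000:].std()
spread_fast = fast[1000:].std()
print(f"theta = 0.2: spread {spread_slow:.2f} (stationary sd {1.0 / np.sqrt(2 * 0.2):.2f})")
print(f"theta = 5.0: spread {spread_fast:.2f} (stationary sd {1.0 / np.sqrt(2 * 5.0):.2f})")
```

A larger θ pulls the path back to μ faster and, for a fixed σ, narrows the stationary spread σ/sqrt(2θ). Plotting `slow` and `fast` against time makes the contrast visible.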

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for OU Process Analysis

| Tool / Resource | Function / Purpose | Notes on Application |

|---|---|---|

| Linear Regression (OLS) | Core engine for the AR(1) calibration method. | Found in any statistical software (R, Python, Julia). Fast and easy to implement for basic calibration [5]. |

| Optimization Algorithm (e.g., L-BFGS-B) | Used for maximizing the likelihood function in direct MLE. | Necessary when moving beyond simple OLS to more complex models or when imposing parameter constraints [6]. |

| Monte Carlo Simulation | Used for assessing estimator bias, conducting power analysis, and implementing advanced fitting methods like indirect inference. | Critical for quantifying uncertainty and validating your experimental design and findings, especially with small datasets [6]. |

| Doob's Exact Simulation Method | Algorithm for generating exact (error-free) sample paths of the OU process for a given set of parameters. | Superior to the Euler discretization method. Essential for creating accurate synthetic data for testing and validation [6]. |

Frequently Asked Questions

1. What is the primary statistical pitfall of using OU models with small datasets? The primary pitfall is the positive bias in the estimation of the mean-reverting strength (α in phylogenetic notation, the same quantity written as θ in the SDE formulation earlier in this guide). Even with more than 10,000 observations, the α parameter is notoriously difficult to estimate correctly. With small datasets, this estimation bias is pronounced, leading researchers to incorrectly favor the more complex OU model over a simpler Brownian motion model. This is often revealed through likelihood ratio tests, which can be misleading with limited data [6] [9].

2. How does measurement error affect OU model inferences? Even very small amounts of measurement error or intraspecific trait variation can profoundly distort inferences from OU models. This error inflates the apparent variance in the data, which can lead to an overestimation of the strength of selection (α) and a misinterpretation of the evolutionary process [9].

3. Is fitting an OU model evidence of stabilizing selection? Not necessarily. Although the OU model is frequently interpreted as a model of 'stabilizing selection,' this can be inaccurate and misleading. The process modeled in phylogenetic comparative studies is qualitatively different from stabilizing selection within a population in the population genetics sense. The OU model's α parameter describes the strength of pull towards a central trait value across species, which is more akin to a trait tracking a moving optimum than to selection towards a static fitness peak [9].

4. What are the best practices for validating an OU model fit? It is critical to simulate datasets from your fitted OU model and compare the properties of these simulated data (e.g., distribution of α) with your empirical results. This helps diagnose estimation biases and confirms whether the model can adequately capture the patterns in your data. Furthermore, researchers should always investigate the impact of measurement error and consider its effect on their parameter estimates [9].

5. Besides small sample size, what other factors can lead to an OU model being mis-specified? An OU model may be incorrectly favored if the data is generated by a process that the model does not account for, such as the presence of true outliers/rare shifts, or trends in the evolutionary optimum. Mis-specification also occurs when researchers rely solely on statistical significance from model selection without considering the biological plausibility and the absolute performance of the model [10] [9].

Troubleshooting Guides

Guide 1: Diagnosing and Addressing OU Model Bias in Small Samples

Problem: Your analysis suggests a strong OU process, but you suspect the result is driven by a small dataset, leading to unreliable parameter estimates.

Background: The mean-reversion speed (α) and its derived half-life are key for interpreting the strength of the evolutionary pull. However, these are often overestimated with limited data [6] [9].

Investigation Protocol:

- Bias Assessment via Simulation: Quantify the estimation bias by simulating a known process.

- Step 1: Use your estimated OU parameters (α, σ, and the optimum, which phylogenetic software usually labels θ) to simulate a large number (e.g., 1000) of new datasets with the same number of tips as your original data.

- Step 2: Fit the OU model to each of these simulated datasets.

- Step 3: Compare the average of the estimated α values from the simulations to the "true" α you used to generate the data. A large difference indicates a significant bias [9].

- Compare Model Performance: Use a model selection framework to compare OU against a simpler Brownian motion (BM) model. Be cautious of likelihood ratio tests that may incorrectly favor OU; consider using sample-size corrected metrics like AICc [9].

Solution: If a significant bias is found, you should:

- Interpret the estimated α and the half-life with caution, emphasizing they are likely overestimates.

- Give more weight to the simpler BM model if the data does not provide strong, unbiased support for OU.

- Consider alternative methods like the "moment estimation approach" which can help reduce positive bias by effectively subtracting a positive term from the maximum likelihood estimator [6].
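The simulate-and-refit logic of the investigation protocol can be illustrated with a time-series analogue. This is a sketch under stated assumptions: it uses NumPy, a single evenly sampled trajectory instead of a phylogeny, and the AR(1) estimator of the mean-reversion speed. A phylogenetic analysis would instead simulate traits on the tree with the R packages named in this guide. All names and values are hypothetical.

```python
import numpy as np


def simulate_ou(strength, mu, sigma, n_obs, h, rng):
    """Exact OU simulation, started from the stationary distribution."""
    beta = np.exp(-strength * h)
    step_sd = sigma * np.sqrt((1 - beta**2) / (2 * strength))
    x = np.empty(n_obs)
    x[0] = rng.normal(mu, sigma / np.sqrt(2 * strength))
    for k in range(1, n_obs):
        x[k] = mu + beta * (x[k - 1] - mu) + rng.normal(0.0, step_sd)
    return x


def estimate_strength(x, h):
    """AR(1) estimate of the mean-reversion speed; NaN if the slope is not in (0, 1)."""
    beta = np.polyfit(x[:-1], x[1:], 1)[0]
    return -np.log(beta) / h if 0.0 < beta < 1.0 else np.nan


def strength_bias(strength, mu, sigma, n_obs, h, n_sim=1000, seed=0):
    """Mean estimate minus the generating value, plus the fraction of unusable fits."""
    rng = np.random.default_rng(seed)
    estimates = np.array(
        [estimate_strength(simulate_ou(strength, mu, sigma, n_obs, h, rng), h)
         for _ in range(n_sim)]
    )
    return float(np.nanmean(estimates) - strength), float(np.mean(np.isnan(estimates)))


# Hypothetical "fitted" values treated as the truth for the simulation.
bias, frac_dropped = strength_bias(strength=1.0, mu=0.0, sigma=1.0, n_obs=50, h=0.1)
print(f"average bias in the mean-reversion estimate: {bias:+.2f}")
print(f"fraction of simulations without a usable estimate: {frac_dropped:.3f}")
```

Fits whose AR(1) slope falls outside (0, 1) are dropped before averaging, which itself affects the reported bias, so the dropped fraction is returned alongside it.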

Guide 2: Managing the Impact of Measurement Error

Problem: Your trait data contains measurement error or intraspecific variation, and you are concerned it is skewing your OU model results.

Background: Measurement error increases the observed variance of traits, which can be misinterpreted by the model as requiring a stronger pull (higher α) to an optimum to explain the data [9].

Investigation Protocol:

- Sensitivity Analysis: Incorporate measurement error into your model explicitly.

- Step 1: Fit your OU model while including estimates of measurement error variance for each taxon, if available.

- Step 2: Compare the parameter estimates (especially α) from the model that includes measurement error with those from a model that ignores it.

- Step 3: A substantial change in α suggests your original inferences are sensitive to measurement error [9].

- Data Quality Review: Statistically identify and review outliers or taxa with unusually high reported standard errors, as these may have a disproportionate effect on the model.

Solution:

- Where possible, use the measurement error-corrected model for inference.

- If reliable estimates of measurement error are not available, explicitly state the potential for inflated α estimates as a limitation of the study.

- Follow best practices in the field to minimize and account for measurement error during data collection [9].

Table 1: Common OU Model Parameter Estimation Methods and Their Properties

| Method | Description | Advantages | Disadvantages/Caveats |

|---|---|---|---|

| AR(1) / Linear Regression | Treats discretely sampled OU data as a first-order autoregressive process [6]. | Simple and fast to implement [6] [5]. | Can produce estimates with significant positive bias, especially for small n or small true α [6] [9]. |

| Maximum Likelihood Estimation (MLE) | Directly maximizes the likelihood function of the OU process [6] [11]. | Statistically efficient; uses the exact discretization of the process [6]. | Can be computationally slower; for a pure OU process, can produce results identical to the biased AR(1) estimator [6]. |

| Moment Estimation | Uses analytical expressions for moments (e.g., variance, covariance) of the OU process to derive estimators [6]. | Can help reduce the positive bias inherent in MLE/AR(1) methods [6]. | May be less familiar to practitioners; performance can depend on accurate knowledge of the long-term mean [6]. |

Table 2: Impact of Dataset Properties on OU Model Inference

| Data Property | Impact on OU Model Inference | Recommendation |
|---|---|---|
| Small Sample Size (n) | Increases bias in α estimation; reduces power to correctly identify the generating model [9]. | Simulate to quantify bias; use corrected model selection criteria (AICc); consider simpler models. |
| High Measurement Error | Inflates trait variance, leading to overestimation of α [9]. | Perform sensitivity analysis by incorporating measurement error variance into the model. |
| Fixed Time Period (T) | Even with high-frequency data (large n), a short total evolutionary time (T) limits information, leading to persistent bias [6]. | Recognize that n and T provide different information; a long T is crucial for accurate α estimation. |

Experimental Protocols

Protocol: Simulation-Based Validation for OU Models

Purpose: To assess the reliability of OU parameter estimates and the robustness of model selection given a specific dataset (sample size, phylogeny).

Workflow Diagram:

Materials:

- Software: R with packages such as geiger, ouch, OUwie, or PMD [9].

- Computing Resource: Standard desktop or laptop is sufficient for small-to-medium datasets; high-performance computing may be needed for large-scale simulations.

Procedure:

- Model Fitting: Fit both an OU process and a Brownian Motion (BM) model to your original empirical dataset. Record the parameter estimates (e.g., α, σ²) and model selection scores (e.g., log-likelihood, AICc).

- Parameter Extraction: Use the parameter estimates from the OU model fit in Step 1 as the "true" parameters for simulation.

- Data Simulation: Using the same phylogenetic tree and "true" parameters from Step 2, simulate a large number (e.g., 1000) of new trait datasets.

- Model Re-fitting: For each simulated dataset from Step 3, re-fit both the OU and BM models.

- Output Analysis:

- Bias Assessment: Calculate the mean of the α estimates from all OU fits to the simulated data. Compare this mean to the "true" α from Step 2. The difference is the estimation bias.

- Model Selection Power: Determine the percentage of simulations where the OU model was correctly selected over BM (e.g., via AICc). A low percentage indicates low power to detect an OU process even when it is the true model [9].
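The AICc comparison used for model selection in this procedure can be computed from the log-likelihoods reported by the fitting software. The sketch below uses the standard small-sample correction. The log-likelihood values are hypothetical, and the parameter counts (2 for BM, 3 for a single-optimum OU model) are an assumption that should be checked against what your software actually estimates.

```python
def aicc(log_lik: float, k: int, n: int) -> float:
    """Small-sample corrected AIC: AIC + 2k(k + 1) / (n - k - 1)."""
    if n - k - 1 <= 0:
        raise ValueError("AICc is undefined unless n > k + 1")
    aic = 2 * k - 2 * log_lik
    return aic + (2 * k * (k + 1)) / (n - k - 1)


# Hypothetical fits to a 20-tip tree.
n_tips = 20
loglik_bm, loglik_ou = -30.0, -28.5

aicc_bm = aicc(loglik_bm, k=2, n=n_tips)  # assumed parameters: rate, root state
aicc_ou = aicc(loglik_ou, k=3, n=n_tips)  # assumed parameters: alpha, rate, optimum
delta = aicc_ou - aicc_bm                 # negative values favor OU

print(f"AICc (BM) = {aicc_bm:.2f}")
print(f"AICc (OU) = {aicc_ou:.2f}")
print(f"delta AICc (OU - BM) = {delta:.2f}")
```

Within the simulation loop, the same function can be applied to each re-fitted pair of models, and the share of replicates with a negative delta gives the selection percentage described under Model Selection Power.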

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for OU Model Analysis

| Tool / Reagent | Function in Analysis |
|---|---|
| R Statistical Environment | The primary platform for phylogenetic comparative methods and fitting evolutionary models [9]. |
| geiger / OUwie R Packages | Specialized software packages for fitting a variety of OU models, including multi-optima models, on phylogenetic trees [9]. |
| PMD R Package | A tool used for model testing and simulation, helping to assess the statistical performance of models like OU [9]. |
| Custom Simulation Scripts | Code (e.g., in R) written by the researcher to perform power and bias analyses, as described in the experimental protocols above. |
| Akaike Information Criterion (AIC/AICc) | A model selection criterion used to compare the fit of OU models to alternative models (e.g., BM) while penalizing for model complexity [9]. |

Frequently Asked Questions (FAQs)

FAQ 1: Does a small sample size directly cause Type I error inflation? No, a small sample size does not inherently increase the Type I error rate if an appropriate statistical test is used. The significance level (α) is chosen by the researcher and defines the probability of a Type I error, which is the mistake of rejecting a true null hypothesis. Well-designed tests control this rate regardless of sample size [12] [13]. The primary risk with small samples is low statistical power, which increases the likelihood of Type II errors—failing to detect a true effect [14].

FAQ 2: What is a more significant problem than Type I error in small datasets? Low statistical power is a more prevalent and critical issue in small datasets. Power is the test's ability to correctly reject a false null hypothesis. With a small sample, even if a true effect exists, the study may not have the sensitivity to detect it, leading to a false negative conclusion (Type II error) [14].

FAQ 3: How do systematic errors differ from random errors in their impact? Systematic errors (bias) are generally more problematic than random errors because they consistently skew data in one direction, leading to inaccurate conclusions and potentially false positives or negatives (Type I or II errors). Random errors primarily affect measurement precision and tend to cancel each other out with a large enough sample, but they can reduce precision in small samples [15].

FAQ 4: When analyzing clustered data from small trials, how can Type I error be controlled? When dealing with few clusters (e.g., in cluster randomized trials), specific small sample corrections must be applied to the analysis to maintain the nominal Type I error rate. For continuous outcomes, methods like a cluster-level analysis using a t-distribution, a linear mixed model with a Satterthwaite correction, or GEE with the Fay and Graubard correction can preserve Type I error with as few as six clusters. For binary outcomes, an unweighted cluster-level analysis or a generalized linear mixed model with a between-within correction can be effective [16].

Troubleshooting Guides

Issue 1: Suspected Type I Error in a Small Dataset Analysis

Problem: A statistically significant result was found in a small dataset, but you suspect it might be a false positive.

Diagnosis and Solution Steps:

- Verify Test Assumptions: Small samples often violate assumptions (e.g., normality) that many common tests rely on. Use diagnostic plots or formal tests to check assumptions and switch to robust non-parametric tests if violations are found.

- Re-run Analysis with Corrections: Apply small sample corrections relevant to your model. For general linear models, consider the Satterthwaite or Kenward-Roger corrections for degrees of freedom [16].

- Report Effect Size with Confidence Interval: Always report the effect size and its confidence interval (CI). A statistically significant result with a tiny effect size and a CI that includes negligible values may not be practically significant, even if it is statistically significant [13].

- Replicate if Possible: The strongest evidence against a result being a Type I error is its replication in a new, independent sample [13].

Issue 2: Handling Misclassification Bias (Phenotyping Error) in EHR-Based Studies

Problem: Using an imperfect algorithm to define a binary disease outcome (phenotyping) from Electronic Health Records (EHR) introduces error, biasing association estimates.

Diagnosis and Solution Steps:

- Identify the Problem: The standard naïve method that ignores misclassification produces attenuated (biased towards zero) estimates of the true association [17].

- Choose a Correction Method: If a validation subset with gold-standard outcomes is available, use methods that incorporate this data. If not, consider the Prior Knowledge-Guided Integrated Likelihood Estimation (PIE) method [17].

- Implement the PIE Method: This method uses prior knowledge (e.g., from literature or expert opinion) about the algorithm's sensitivity and specificity. It integrates over a prior distribution for these parameters in the likelihood function to reduce estimation bias without needing a dedicated validation dataset [17].

- Evaluate Performance: Studies show PIE effectively reduces bias across various settings, particularly when prior distributions are accurate. Its benefit is most pronounced in bias reduction rather than improving hypothesis testing power [17].

Issue 3: Low Statistical Power in a Pilot Study

Problem: A small pilot study failed to find a significant effect, and you need to determine if this is a true negative or a false negative due to low power.

Diagnosis and Solution Steps:

- Conduct a Post-Hoc Power Analysis: Using the observed effect size, sample size, and alpha level, calculate the statistical power of the conducted test. Power below 80% is generally considered low, making the study susceptible to Type II errors [14].

- Interpret the Result Cautiously: A non-significant result from an underpowered study is inconclusive; it does not prove the null hypothesis. Report it as such, emphasizing the need for more extensive research [14].

- Plan a Future Study: Use the effect size estimated from the pilot study to perform an a priori sample size calculation. This determines the sample size required to achieve adequate power (e.g., 80% or 90%) for a future, definitive study [14].
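An a priori sample size calculation of the kind described in the last step can be sketched with the Python standard library. This sketch assumes a two-sided, two-group comparison of means with a standardized effect size (Cohen's d) and uses the normal approximation, which gives slightly smaller numbers than an exact t-based calculation. The function name and the effect size of 0.5 are hypothetical.

```python
import math
from statistics import NormalDist


def n_per_group(effect_size_d: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate per-group n for a two-sided, two-sample comparison of means."""
    if effect_size_d <= 0:
        raise ValueError("effect_size_d must be positive")
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value for the two-sided test
    z_power = z.inv_cdf(power)          # quantile matching the target power
    return math.ceil(2 * ((z_alpha + z_power) / effect_size_d) ** 2)


# Hypothetical pilot estimate of the standardized effect size.
pilot_d = 0.5
n_80 = n_per_group(pilot_d, power=0.80)
n_90 = n_per_group(pilot_d, power=0.90)
print(f"per-group n for 80% power: {n_80}")
print(f"per-group n for 90% power: {n_90}")
```

Because the required n scales with 1/d², a pilot effect size that is itself overestimated leads to an underpowered follow-up study, so it is worth repeating the calculation for a more conservative d.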

Summarized Quantitative Data from Systematic Reviews

Table 1: Performance of Small Sample Corrections in Cluster Randomized Trials (CRTs) with Few Clusters [16]

| Outcome Type | Analytical Method | Small Sample Correction | Minimum Number of Clusters to Mostly Maintain Type I Error (~5%) | Notes |
|---|---|---|---|---|
| Continuous | Linear Mixed Model (LMM) | Satterthwaite | 6 | A reliable method for continuous outcomes. |
| Continuous | Generalized Estimating Equations (GEE) | Fay and Graubard | 6 | Preserves nominal error in many settings. |
| Continuous | Cluster-level Analysis | t-distribution (between-within df) | 6 | Unweighted or inverse-variance weighted. |
| Continuous | LMM | Kenward-Roger | >30 | Often conservative (actual Type I error < 5%) even with 30 clusters. |
| Binary | Cluster-level Analysis | t-distribution | ~10 | Can be anticonservative (Type I error > 5%) with small cluster sizes or low prevalence. |
| Binary | GLMM | Between-Within | ~10 | Can sometimes be conservative with up to 30 clusters. |
| Binary | GEE | Mancl and DeRouen | ~10 | Mostly preserves error but can be anticonservative in some situations. |

Table 2: Simulation Parameters from Systematic Review of CRT Small Sample Corrections [16]

| Parameter | Median (Range) Across Simulated Scenarios |

|---|---|

| Number of Clusters | 4 to 200 |

| Smallest Intracluster Correlation (ICC) | 0.001 (0.000 – 0.200) |

| Largest Intracluster Correlation (ICC) | 0.10 (0.05 – 0.70) |

| Lowest Outcome Prevalence | 0.25 (0.05 – 0.50) |

| Coefficient of Variation of Cluster Sizes | 1.00 (0.80 – 1.50) |

Experimental Protocols for Key Cited Experiments

Protocol 1: Evaluating the PIE Method for Misclassification Bias Correction [17]

Objective: To assess the performance of the Prior Knowledge-Guided Integrated Likelihood Estimation (PIE) method in reducing estimation bias caused by phenotyping error in EHR-based association studies.

Methodology:

- Data Generation: Synthetic data is generated for a population of n=3000 patients.

- A binary predictor \( x_i \) is generated from a Bernoulli distribution with a mean of 0.3.

- The true binary outcome \( Y_i \) is generated from a logistic regression model: \( \text{Pr}(Y_i=1) = \text{expit}(\beta_0 + \beta_1 x_i) \), where prevalence for \( x_i=0 \) varies from 5% to 50%, and the true association \( \beta_1 \) varies from 0 (for Type I error) to log(3) (for power and bias).

- The observed error-prone outcome \( S_i \) is generated based on the true outcome \( Y_i \), using fixed sensitivity (\( \alpha_1 = 0.65 \)) and specificity (\( \alpha_0 = 0.99 \)).

Comparison of Methods: The following methods are compared on the generated data:

- Gold Standard: Regression using the true, unobserved outcomes \( Y_i \).

- Naïve Method: Logistic regression ignoring misclassification, using \( S_i \) as the outcome.

- PIE Method: Maximizing the integrated likelihood, which incorporates prior distributions for sensitivity and specificity (e.g., uniform distributions centered around the true values).

Performance Metrics: Each method is evaluated across 200 simulated datasets under each setting for:

- Bias: Difference between the estimated \( \hat{\beta}_1 \) and the true \( \beta_1 \).

- Type I Error: The proportion of times the null hypothesis (\( \beta_1 = 0 \)) is incorrectly rejected.

- Power: The proportion of times the null hypothesis is correctly rejected when \( \beta_1 \neq 0 \).
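The data-generating design and the bias metric can be illustrated for the gold-standard and naïve methods with the sketch below. It assumes NumPy and one setting from the ranges above (20% prevalence at x = 0, β1 = log(3)). It does not implement the PIE method. It relies on the fact that, with a single binary predictor, the logistic-regression slope equals the sample log odds ratio, so no regression library is needed. Function names are illustrative.

```python
import numpy as np


def expit(z):
    return 1.0 / (1.0 + np.exp(-z))


def log_odds_ratio(x, outcome):
    """Logistic-regression slope for one binary predictor (sample log odds ratio)."""
    a = np.sum((x == 1) & (outcome == 1))
    b = np.sum((x == 1) & (outcome == 0))
    c = np.sum((x == 0) & (outcome == 1))
    d = np.sum((x == 0) & (outcome == 0))
    return np.log((a * d) / (b * c))


def one_replicate(rng, n=3000, p_x=0.3, prev0=0.20, beta1=np.log(3), sens=0.65, spec=0.99):
    """Return (gold-standard estimate, naive estimate) of beta1 for one dataset."""
    x = rng.binomial(1, p_x, size=n)
    beta0 = np.log(prev0 / (1 - prev0))
    y = rng.binomial(1, expit(beta0 + beta1 * x))        # true outcome
    s = np.where(y == 1,
                 rng.binomial(1, sens, size=n),          # true cases detected with prob. sens
                 rng.binomial(1, 1 - spec, size=n))      # false positives with prob. 1 - spec
    return log_odds_ratio(x, y), log_odds_ratio(x, s)


rng = np.random.default_rng(0)
estimates = np.array([one_replicate(rng) for _ in range(200)])
gold_bias, naive_bias = estimates.mean(axis=0) - np.log(3)
print(f"bias of gold-standard estimate: {gold_bias:+.3f}")
print(f"bias of naive estimate:         {naive_bias:+.3f}")
```

The naïve estimate is pulled towards zero, which is the attenuation that the correction methods in this protocol aim to remove.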

Data Bias and Error Relationships

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Methodological Tools for Bias Mitigation and Error Control

| Tool / Method | Function | Context of Use |

|---|---|---|

| Satterthwaite Correction | Approximates degrees of freedom to control Type I error in mixed models with small samples. | Analyzing continuous outcomes from CRTs or hierarchical data. |

| Fay and Graubard Correction | A small sample correction for Generalized Estimating Equations (GEE). | Analyzing correlated data (e.g., CRTs, longitudinal) with few clusters. |

| Kenward-Roger Correction | Another degrees of freedom approximation for mixed models; can be conservative with few clusters. | An alternative to Satterthwaite in linear mixed models. |

| Between-Within Correction | A method for generalized linear mixed models (GLMM) to handle binary outcomes with few clusters. | Analyzing binary outcomes in CRTs with a small number of clusters. |

| Mancl and DeRouen Correction | A bias-correction method for the variance estimator in GEEs. | Used with GEEs for binary outcomes in small samples. |

| PIE Method | Reduces bias in association estimates by integrating prior knowledge of misclassification rates. | EHR-based studies where the outcome is defined by an error-prone algorithm. |

| A Priori Power Analysis | Determines the necessary sample size to achieve a desired level of statistical power before data collection. | Planning any study to ensure it is adequately powered to detect an effect of interest. |

Troubleshooting Guides

Troubleshooting Guide 1: Addressing Biased Parameter Estimates in Small Datasets

Problem: Parameter estimates (especially for the mean-reversion parameter θ) from an Ornstein-Uhlenbeck (OU) process are significantly biased when using small datasets or low-frequency observations, leading to unreliable models of adaptation or degradation.

Explanation: For low-frequency observations in particular, the classical least squares estimators (LSEs) and quadratic variation estimators for OU processes are known to be asymptotically biased, meaning the bias does not vanish as the number of observations grows [18]. This is particularly problematic when studying evolutionary adaptation or equipment degradation, where the mean-reversion rate is a key parameter of interest.

Solution: Implement modified estimation techniques designed for small samples.

- Applicable Models: OU process defined by dXₜ = θ(μ - Xₜ)dt + σdWₜ.

- Solution Steps:

- Use Modified Least Squares Estimators (MLSEs): For low-frequency observations, employ MLSEs for drift parameters (θ, μ). These are derived heuristically using nonlinear least squares and provide asymptotically unbiased estimates [18].

- Apply Modified Quadratic Variation Estimator (MQVE): For the diffusion parameter (σ), use the MQVE based on the solution of the OU process SDE instead of the classical estimator [18].

- Consider Ergodic Estimators (EEs): Leverage the ergodic properties of the OU process. Ergodic estimators for all three parameters (θ, μ, σ) can be proposed, and their asymptotic behavior established using ergodic theory and the central limit theorem for the OU process [18].

- Validate with Simulation: Conduct Monte Carlo simulations to compare the performance of your proposed estimators (MLSE, MQVE, EE) against classical estimators, confirming the reduction in bias across sample sizes (e.g., n=100, 200, 500, 1000) [18].
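
The reason a solution-based estimator helps can be sketched in a few lines. The derivation below assumes that the classical LSE is built from the Euler discretization of the SDE; that is an assumption made here for illustration and may differ in detail from the construction in [18]. The symbols h (sampling interval) and ρ̂ (OLS slope of X at step k+1 on X at step k) are introduced for this sketch.

```latex
% Exact one-step transition implied by the explicit solution (sampling interval h)
X_{t_{k+1}} = \mu + e^{-\theta h}\,(X_{t_k} - \mu) + \varepsilon_k,
\qquad \varepsilon_k \sim N\!\left(0,\ \sigma^2\,\frac{1 - e^{-2\theta h}}{2\theta}\right)

% Euler-based least squares: regress X_{t_{k+1}} - X_{t_k} on X_{t_k}
\hat{\theta}_{\mathrm{Euler}} = \frac{1 - \hat{\rho}}{h},
\qquad \hat{\rho} \xrightarrow[n \to \infty]{} e^{-\theta h}
\;\Longrightarrow\;
\hat{\theta}_{\mathrm{Euler}} \to \frac{1 - e^{-\theta h}}{h} < \theta

% Inverting the exact transition removes this asymptotic bias
\hat{\theta}_{\mathrm{exact}} = -\frac{\ln \hat{\rho}}{h} \to \theta
```

For example, with θ = 1 and h = 1 the Euler-based estimator converges to 1 - e^{-1} ≈ 0.632 rather than 1, no matter how many observations are collected.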

Preventative Measures:

- When planning studies, use simulation-based power analysis to determine the sample size required to estimate parameters with sufficient precision.

- Clearly differentiate between single-optimum and multiple-optimum OU models in your research questions, as parameter estimation accuracy can differ substantially between them [19].

Troubleshooting Guide 2: Mitigating Overestimation of Outcome Rates and Proportions

Problem: The estimated proportion of cases in a specific category (e.g., "preventable" adverse events, patients with inadequate blood pressure control) is substantially higher than the true population proportion.

Explanation: This systematic overestimation occurs when classifying cases using a measurement of low-to-moderate reliability and the true outcome rate is low (<20%) [20]. Random measurement error in a continuous assessment, when dichotomized, leads to misclassification. Cases near the threshold can easily be pushed over the classification line due to error, inflating the estimated rate of the less common outcome.

Solution: Adjust prevalence estimates to account for measurement error.

- Applicable Models: Any study estimating a proportion or rate based on a fallible measurement tool.

- Solution Steps:

- Quantify Reliability: Determine the inter-rater or test-retest reliability of your measurement. Use metrics like the intra-class correlation coefficient (ICC) or kappa (κ) for a single rater/measurement [20].

- Formulate the Problem: Use a classical test theory framework. Assume each case has a true, unobserved rating (T) and an observed rating (X), where X = T + error, with both T and error being independent and normally distributed [20].

- Apply Statistical Correction: Use statistical methods that adjust for measurement error. This can be done without requiring an impractically large number of measurements per case [20].

- Report Adjusted Estimates: Always report the adjusted estimate alongside the naive (unadjusted) estimate and the reliability coefficient used for the adjustment.

Preventative Measures:

- Invest in developing and using highly reliable measurement protocols.

- Do not rely on majority rule with a small number of reviewers (e.g., 3) for dichotomous outcomes when reliability is low (~0.45), as this can lead to 50-100% overestimation [20].

Troubleshooting Guide 3: Interpreting Findings Beyond Simple Significance

Problem: Over-reliance on a p-value threshold (e.g., p < 0.05) to declare an effect "real" or "important," leading to misinterpretations of study results and poor decision-making.

Explanation: Statistical significance (a p-value) only indicates the improbability of the observed data under a specific null hypothesis (often "no effect"). It does not provide information on the magnitude of the effect, its clinical or practical importance, or the precision of the estimate [21] [22]. A result can be statistically significant but clinically irrelevant, and a non-significant result does not prove the null hypothesis [22] [23].

Solution: Adopt a multi-faceted approach to inference that moves beyond the "p < 0.05" dichotomy.

- Applicable Models: All inferential statistics, including comparisons of groups and model parameters.

- Solution Steps:

- Report Confidence Intervals (CIs): Always report point estimates (e.g., mean difference, risk ratio) with their confidence intervals (e.g., 95% CI). CIs provide a range of compatible values for the true effect size and indicate the precision of your estimate [22] [23].

- Incorporate Minimal Important Difference (MID): Pre-specify the smallest effect size that would be considered clinically or practically meaningful. Compare the entire confidence interval of your estimate to this MID [22] [23].

- Use the Framework of the Analysis of Credibility (AnCred): To challenge a significant finding, calculate the "Scepticism Limit" (SL). Only if prior evidence exists for effect sizes larger than the SL can the significant finding be deemed credible at the 95% level [24].

- Contextualize with Existing Evidence: Explicitly consider the certainty of the evidence, study design, data quality, and biological plausibility when drawing conclusions, rather than relying solely on a p-value [22].

Preventative Measures:

- Avoid using the terms "significant," "non-significant," or "trend towards" in the interpretation of results [22].

- Report exact p-values as continuous numbers (e.g., P = 0.07) rather than dichotomizing them [22].

Frequently Asked Questions (FAQs)

What is the fundamental problem with relying solely on statistical significance?

Statistical significance, typically indicated by a p-value below 0.05, only tells you that your observed data is unlikely under a specific null hypothesis (like "no difference"). It is a statement about the data, not the hypothesis [21]. The critical flaw is that it does not convey the size of the effect, its practical importance, or the precision of the estimate [21] [22]. A statistically significant result can be trivial in magnitude, and a non-significant result does not prove the absence of an effect, especially in small studies [21] [23].

How can measurement error lead to overestimation of a proportion or rate?

When you classify cases into categories (e.g., "preventable" vs. "not preventable") using an imperfect tool, measurement error causes misclassification. If the true rate of an outcome is low (e.g., <20%), the random error will push more cases from the large "non-event" group across the threshold into the small "event" group than it pushes in the opposite direction. This net influx artificially inflates the estimated proportion of the less common outcome. The lower the reliability of your measurement and the rarer the true outcome, the greater the overestimation will be [20].
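
The mechanism can be illustrated with a small calculation. This is a minimal sketch under assumptions that are not taken from [20]: the true score T is standard normal, the observed score is X = T + error with independent normal error, reliability is defined as Var(T)/Var(X), and the same cutoff is applied to X as would be applied to T. The function name `observed_rate` is hypothetical.

```python
from statistics import NormalDist


def observed_rate(true_rate: float, reliability: float) -> float:
    """Expected naive event rate when a cutoff defined on the true score
    T ~ N(0, 1) is applied to the error-prone score X = T + error."""
    cutoff = NormalDist().inv_cdf(1.0 - true_rate)   # cutoff on the true-score scale
    sd_observed = (1.0 / reliability) ** 0.5         # Var(X) = Var(T) / reliability
    return 1.0 - NormalDist(0.0, sd_observed).cdf(cutoff)


true_rate = 0.10
for reliability in (1.0, 0.9, 0.7, 0.45):
    naive = observed_rate(true_rate, reliability)
    print(f"reliability={reliability:.2f}  naive rate={naive:.3f}  "
          f"relative overestimation={naive / true_rate - 1:.0%}")
```

Under these assumptions the naive rate grows as reliability falls, because the noisier score has a wider distribution and more of it lies beyond the fixed cutoff.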

What are the best alternatives to using p-values and statistical significance?

The consensus is moving towards estimation and meaningful interpretation over simple dichotomization.

- Confidence Intervals: Report and focus on the point estimate and its confidence interval. The interval shows the most plausible values for the true effect and directly visualizes the precision of your measurement [22] [23].

- Effect Size and MID: Always report the effect size (e.g., Cohen's d, risk difference) and interpret it in the context of a pre-defined Minimal Important Difference (MID)—the smallest change a patient or practitioner would care about [21] [22].

- Bayesian Methods: These allow you to estimate the probability that an effect is substantial, trivial, or harmful, formally integrating existing knowledge with your new data [23] [24].

In the context of Ornstein-Uhlenbeck models, why might my parameter estimates be unreliable with small datasets?

The Classical Least Squares Estimators (LSEs) for the drift parameters of an OU process are known to be asymptotically biased when estimated from low-frequency observations [18]. This means that with small sample sizes, your estimate of the critical mean-reversion parameter (θ) may be systematically too high or too low, leading to incorrect inferences about the rate of adaptation or degradation. The solution is to use Modified LSEs (MLSEs) and Ergodic Estimators, which are designed to have better statistical properties (like being asymptotically unbiased) with the kind of data commonly available in real-world applications [18].

How can I visually assess whether an effect might be clinically significant?

Plot your estimate with its confidence interval against a reference line marking the Minimal Important Difference (MID). Then, use this simple guide based on the interval's placement relative to the MID and the "no effect" line [23]:

| Confidence Interval Placement Relative to MID | Interpretation |

|---|---|

| Entirely above positive MID | Effect is clinically beneficial |

| Entirely below negative MID | Effect is clinically harmful |

| Includes "no effect" and crosses MID | Effect is inconclusive (compatible with both benefit and no benefit) |

| Includes "no effect" but within MIDs | Effect is trivial (too small to be important) |

| Spans both positive and negative MIDs | Effect is equivocal (compatible with both benefit and harm) |
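
The table can be turned into a small helper for batch reporting. The sketch below assumes that positive values indicate benefit, that "no effect" is zero, and that the MID is symmetric around zero; the function name `classify_interval` and the fallback label for placements the table does not list are hypothetical additions.

```python
def classify_interval(lower: float, upper: float, mid: float) -> str:
    """Label a confidence interval [lower, upper] relative to +/- MID.

    Assumes positive effects are beneficial and 'no effect' is 0.
    """
    if mid <= 0 or lower > upper:
        raise ValueError("require mid > 0 and lower <= upper")
    if lower > mid:
        return "clinically beneficial"
    if upper < -mid:
        return "clinically harmful"
    if lower < -mid and upper > mid:
        return "equivocal"                 # spans both MIDs
    includes_null = lower <= 0.0 <= upper
    if includes_null and lower >= -mid and upper <= mid:
        return "trivial"                   # includes 0, stays within the MIDs
    if includes_null:
        return "inconclusive"              # includes 0 and crosses one MID
    return "not covered by the table"      # e.g. excludes 0 but crosses the MID


for ci in [(0.6, 1.2), (-1.2, -0.6), (-0.2, 0.8), (-0.2, 0.3), (-0.8, 0.9), (0.2, 0.8)]:
    print(ci, "->", classify_interval(*ci, mid=0.5))
```

The equivocal case is tested before the inconclusive one because an interval spanning both MIDs also includes zero and crosses an MID.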

Experimental Protocols & Methodologies

Protocol 1: Modified Estimation for Ornstein-Uhlenbeck Process Parameters

Objective: To accurately estimate the parameters (θ, μ, σ) of an OU process from low-frequency observational data while minimizing the bias inherent in classical estimators.

Materials:

- Low-frequency time series data {X_tk} for k = 0 to n, where t_k = k*h and h is the fixed time interval.

- Computational software (e.g., R, Python) for numerical optimization and simulation.

Methodology:

- Model Specification: Define the OU process by the stochastic differential equation dX_t = θ(μ - X_t)dt + σdW_t [18].

- Parameter Estimation:

- For Drift Parameters (θ, μ): Compute the Modified Least Squares Estimators (MLSEs). These are derived by applying the nonlinear least squares method to the explicit solution of the OU process, which is X_t = e^{-θt}X_0 + (1 - e^{-θt})μ + σ∫_0^t e^{-θ(t-s)}dW_s [18].

- For Diffusion Parameter (σ): Calculate the Modified Quadratic Variation Estimator (MQVE) based on the same solution to the SDE [18].

- Ergodic Estimation (Alternative): Leverage the ergodic theorem for OU processes to propose ergodic estimators for all three parameters. Establish their asymptotic behavior using the central limit theorem for the OU process [18].

- Validation:

- Perform Monte Carlo simulations (e.g., N=1000 repetitions) with known parameter values (e.g., μ=θ=σ²=1).

- Compare the performance (bias, mean-squared error) of your proposed MLSEs, MQVE, and EEs against the classical LSEs and quadratic variation estimators across varying sample sizes (n=100, 200, 500, 1000) [18].
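
A validation run of this kind might look like the sketch below. It is not the MLSE, MQVE, or ergodic estimator from [18]: as a stand-in, it compares an Euler-based least squares estimate of θ with an estimate obtained by inverting the exact one-step transition of the process. The function names, the sampling interval h = 1, and the number of repetitions are assumptions chosen for illustration.

```python
import numpy as np


def simulate_ou(theta, mu, sigma, h, n, rng):
    """Exact simulation of n steps with spacing h, started from the stationary law."""
    rho = np.exp(-theta * h)
    step_sd = sigma * np.sqrt((1.0 - rho**2) / (2.0 * theta))
    noise = rng.normal(0.0, step_sd, size=n)
    x = np.empty(n + 1)
    x[0] = rng.normal(mu, sigma / np.sqrt(2.0 * theta))
    for k in range(n):
        x[k + 1] = mu + rho * (x[k] - mu) + noise[k]
    return x


def theta_estimates(x, h):
    """Return (Euler-based, solution-based) estimates of theta from one path."""
    slope = np.polyfit(x[:-1], x[1:], 1)[0]        # OLS slope of X_{k+1} on X_k
    theta_euler = (1.0 - slope) / h                # from the Euler discretization
    theta_exact = -np.log(slope) / h if slope > 0 else np.nan
    return theta_euler, theta_exact


def monte_carlo_bias(theta=1.0, mu=1.0, sigma=1.0, h=1.0, n=500, reps=200, seed=0):
    """Mean estimation error of both estimators over `reps` simulated paths."""
    rng = np.random.default_rng(seed)
    estimates = np.array([
        theta_estimates(simulate_ou(theta, mu, sigma, h, n, rng), h)
        for _ in range(reps)
    ])
    return np.nanmean(estimates, axis=0) - theta


for n in (100, 200, 500, 1000):
    bias_euler, bias_exact = monte_carlo_bias(n=n)
    print(f"n={n:5d}  bias (Euler-based)={bias_euler:+.3f}  bias (solution-based)={bias_exact:+.3f}")
```

In this setup the Euler-based bias should stay roughly constant as n grows, while the solution-based bias shrinks, which is the pattern the validation step is meant to reveal.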

Protocol 2: Adjusting Proportion Estimates for Measurement Error

Objective: To obtain an accurate estimate of a population proportion (e.g., rate of preventable deaths) by adjusting for the reliability of the measurement tool.

Materials:

- Dataset with categorical classifications (e.g., "preventable"/"not preventable").

- Reliability estimate for the measurement (e.g., Inter-rater ICC or κ).

Methodology:

- Quantify Reliability: Using a subset of your data, have multiple raters assess the same cases. Calculate the inter-rater reliability (ICC for continuous underlying scores or κ for categorical classifications) for a single rater [20].

- Formulate Statistical Model: Use a classical test theory framework. Assume the observed score X is the sum of a true score T (normally distributed) and a random, independent error term [20].

- Apply Adjustment Method:

- Input the "naive" proportion estimate (from your data) and the reliability coefficient into a statistical method designed to correct for measurement error. This could involve modeling the relationship between the observed and true thresholds for classification [20].

- The output will be an adjusted, more accurate estimate of the true population proportion.

- Reporting: Report both the naive and adjusted estimates, along with the reliability coefficient, to provide a transparent view of the impact of measurement error.
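
One simple way to carry out such an adjustment is sketched below. It is an illustration under stated assumptions and not necessarily the method of [20]: the true score is normal, the error is independent and normal, reliability equals Var(T)/Var(X), and classification uses a single fixed cutoff. The function name `adjust_proportion` and the input values are hypothetical.

```python
from statistics import NormalDist


def adjust_proportion(naive_rate: float, reliability: float) -> float:
    """Back out the true-score event rate from a naive rate and a reliability."""
    std_normal = NormalDist()
    z_observed = std_normal.inv_cdf(1.0 - naive_rate)  # cutoff in SDs of the observed score
    z_true = z_observed / reliability ** 0.5           # same cutoff in SDs of the true score
    return 1.0 - std_normal.cdf(z_true)


naive, icc = 0.195, 0.45   # illustrative values, not taken from [20]
adjusted = adjust_proportion(naive, icc)
print(f"naive={naive:.3f}  reliability={icc:.2f}  adjusted={adjusted:.3f}")
```

Reporting all three printed quantities together matches the reporting step above and lets readers judge how much of the naive estimate is attributable to measurement error.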

Research Reagent Solutions

A toolkit of statistical concepts and methods essential for robust estimation and inference.

| Tool / Reagent | Function in Research |

|---|---|

| Modified Least Squares Estimators (MLSEs) | Provides asymptotically unbiased estimates of drift parameters in OU processes from low-frequency data, correcting for small-sample bias [18]. |

| Minimal Important Difference (MID) | Defines the smallest change in an outcome that patients or clinicians would identify as important, enabling the assessment of clinical/practical significance beyond statistical significance [21] [22]. |

| Confidence/Compatibility Interval | Provides a range of values that are highly compatible with the observed data, given a statistical model. It conveys the precision of an estimate and allows for more nuanced interpretation than a p-value [22] [23]. |

| Reliability Coefficient (ICC/κ) | Quantifies the consistency of a measurement tool (inter-rater or test-retest). Essential for diagnosing measurement error and adjusting prevalence estimates to avoid overestimation [20]. |

| Analysis of Credibility (AnCred) | A methodological framework that challenges significant findings by calculating the "Scepticism Limit," helping to determine if a result is credible in the context of existing knowledge [24]. |

Visual Workflows

Relationship Between Measurement Error and Estimation Problems

Framework for Interpreting Study Results Beyond Statistical Significance

Frequently Asked Questions (FAQs)

Q1: What is the core biological interpretation of the parameter alpha (α) in an OU model?

Alpha (α) is the rate of adaptation or strength of stabilizing selection [9] [25]. It quantifies how strongly a trait is pulled toward an optimal value (θ) during evolution. A higher α value indicates a faster or stronger pull, meaning a trait recovers more quickly from perturbations away from its optimum [25]. It is crucial to note that in a phylogenetic comparative context, this "stabilizing selection" is not identical to within-population stabilizing selection as defined in population genetics; it instead models a macroevolutionary pattern of trait evolution around a theoretical optimum [9].

Q2: What is the "phylogenetic half-life" (t₁/₂), and why is it a useful metric?

The phylogenetic half-life is defined as t₁/₂ = ln(2)/α [25] [19] [26]. It represents the expected time for a lineage to evolve halfway from its ancestral state toward a new optimal trait value [19]. This converts α, which is a rate with units of inverse time, into a quantity with time units (e.g., millions of years), making its biological interpretation more intuitive [25] [26]. A short half-life relative to the phylogeny's height suggests rapid adaptation, while a long half-life suggests strong phylogenetic inertia [19].

Q3: How should I interpret the optimal trait value (θ)?

The optimal trait value (θ) is the stationary mean toward which the trait evolves [25]. In a single-optimum model, all species are pulled toward one primary optimum [19]. In multi-optima models, different θ values can be assigned to different hypothesized selective regimes (e.g., different environments or niches) on the tree, allowing direct tests of adaptive hypotheses [9] [19]. The estimated θ represents the macroevolutionary "primary optimum," which is the average of local optima for species sharing a given niche [19].

Q4: My analysis on a small dataset strongly supports an OU model over a Brownian Motion (BM) model. Should I trust this result?

You should be cautious. Simulation studies have shown that Likelihood Ratio Tests frequently and incorrectly favor the more complex OU model over simpler BM models when datasets are small [9]. It is a best practice to simulate data under your fitted models and compare the simulated patterns to your empirical results to assess model adequacy [9].

Q5: Could measurement error or within-species variation affect my parameter estimates?

Yes, profoundly. Even very small amounts of measurement error or intraspecific variation can severely bias parameter estimates, particularly for the α parameter [9] [4]. Unaccounted-for within-species variation is often mistaken for strong stabilizing selection (high α) [4]. It is critical to use models that explicitly incorporate these variance components when your data contains such variation [4].

Troubleshooting Common Problems

Problem 1: Inflated Alpha (α) and Misinterpreted Stabilizing Selection

- Symptoms: Estimation of an unexpectedly high α value, leading to a strong inference of stabilizing selection.

- Potential Causes:

- Unmodeled Within-Species Variance: The most common cause is the failure to account for measurement error or individual variation within species [4]. This non-evolutionary noise is misinterpreted by the model as a strong pull toward an optimum.

- Small Dataset Size: With limited species, the OU model can be overfitted, and high α estimates may be statistically unjustified [9].

- Solutions:

- Use an Extended Model: Implement an OU model that includes a parameter for within-species (or measurement) variance [4].

- Validate with Simulations: Follow the recommendation of Cooper et al. (2016) to simulate trait data under your fitted OU model. If the simulated data does not resemble your empirical data, the parameter estimates are likely unreliable [9].

Problem 2: Inability to Distinguish Parameter Estimates (Parameter Correlation)

- Symptoms: High correlation between α and σ² in the posterior distribution (in Bayesian analyses) or difficulty converging on stable parameter estimates.

- Explanation: In the OU process, the long-term stationary variance is σ²/2α [25] [19]. This relationship means that different combinations of α and σ² can produce similar trait patterns, especially when branches on the phylogeny are long, making it difficult to estimate these parameters separately [25].

- Solutions:

- Focus on Derived Parameters: Interpret the phylogenetic half-life (t₁/₂) and the stationary variance (σ²/2α), which can be more reliably estimated [25] [19].

- Use Informed Priors (Bayesian Methods): In a Bayesian framework, use expert knowledge to set informed priors on parameters [25].

- Check for Multiple Optima: If your biological question involves different selective regimes, fitting a multi-optima model can provide more information and help break the correlation between parameters [19].

Problem 3: Over-reliance on Single-Optimum OU Models

- Symptoms: A single-optimum OU model fits better than BM, but the biological conclusion (that everything evolves toward one optimum) is uninteresting or unrealistic.

- Explanation: The main utility of OU models in comparative analysis is to test hypotheses about different selective regimes, not to fit a single global optimum [19]. A single-optimum model is often not the biologically relevant hypothesis.

- Solution:

- Formulate Multi-Optima Hypotheses: Define selective regimes based on ecology, morphology, or environment. Use OU model implementations (e.g., in OUwie, bayou, PhylogeneticEM) to test whether models with multiple, regime-specific θ values fit your data better than a single-optimum model [9] [19] [27].

Key Parameter Relationships and Diagnostics

Table 1: Key Parameters of the Ornstein-Uhlenbeck Model and Their Meaning

| Parameter | Biological Interpretation | Relationship to Other Parameters |

|---|---|---|

| Alpha (α) | Rate of adaptation; strength of pull toward the optimum [25]. | - |

| Half-Life (t₁/₂) | Time to evolve halfway to a new optimum; t₁/₂ = ln(2)/α [25] [19]. | Inversely proportional to α. |

| Optimum (θ) | The primary optimal trait value for a given selective regime [25] [19]. | - |

| Sigma² (σ²) | The instantaneous diffusion variance; rate of stochastic evolution [25]. | - |

| Stationary Variance | Long-term trait variance among species; σ²/2α [25] [19]. | Determined by both σ² and α. |
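
The two derived quantities in Table 1 are simple to compute from fitted values. The sketch below uses made-up (α, σ²) pairs and a hypothetical tree height of 1 time unit to show that very different parameter pairs can share the same stationary variance while implying different half-lives.

```python
import math


def phylogenetic_half_life(alpha: float) -> float:
    """t_1/2 = ln(2) / alpha, in the time units of the phylogeny."""
    return math.log(2.0) / alpha


def stationary_variance(alpha: float, sigma2: float) -> float:
    """Long-term trait variance sigma^2 / (2 * alpha)."""
    return sigma2 / (2.0 * alpha)


tree_height = 1.0  # hypothetical tree height, same time units as 1/alpha
for alpha, sigma2 in [(0.5, 1.0), (2.0, 4.0), (8.0, 16.0)]:  # illustrative values only
    t_half = phylogenetic_half_life(alpha)
    print(f"alpha={alpha:4.1f}  sigma2={sigma2:5.1f}  "
          f"stationary variance={stationary_variance(alpha, sigma2):.2f}  "
          f"half-life={t_half:.3f}  half-life/tree height={t_half / tree_height:.3f}")
```

Expressing the half-life as a fraction of tree height is one convenient way to judge whether a fitted α implies rapid adaptation or strong phylogenetic inertia.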

Table 2: Troubleshooting Guide for OU Model Parameter Interpretation

| Problem | Diagnostic Check | Recommended Action |

|---|---|---|

| Overfitting on small datasets | Perform a likelihood ratio test between OU and BM. | Simulate data under the fitted OU model; if the empirical likelihood falls within the simulated distribution, the result may be valid [9]. |

| Confusing noise for selection | Check if data includes individual measurements or technical replicates. | Use an OU model that includes a within-species variance parameter [4]. |

| Unidentifiable parameters | Check for high correlation between α and σ² in MCMC output [25]. | Interpret the phylogenetic half-life and stationary variance instead of the raw parameters [25]. |

Experimental Protocol: Validating OU Model Fit and Parameters via Simulation

This protocol is a critical step to avoid misinterpretation of parameters, especially with small datasets [9].

- Model Fitting: Fit your Ornstein-Uhlenbeck model(s) of interest to the empirical trait data and phylogeny. Note the maximum likelihood parameter estimates (or posterior means).

- Data Simulation: Use the estimated parameters (α, σ², θ) and the original phylogeny to simulate a large number (e.g., 1000) of new trait datasets under the OU process.

- Model Refitting: Refit the same OU model to each of the simulated datasets. For each simulation, record the parameter estimates and the maximum log-likelihood.

- Distribution Comparison:

- Create a distribution of the parameter estimates from the simulated datasets.

- Check where your original empirical parameter estimates fall within this simulated distribution. If they are extreme (e.g., in the tails), the model may be overfitted.

- Create a distribution of the likelihood scores from the simulated datasets. Check if the likelihood of your empirical data is exceptionally high compared to this distribution.

- Interpretation: If the empirical data and its parameter estimates are consistent with the data simulated under the fitted model, you can have greater confidence in your inferences. If not, the model may be an inadequate description of the evolutionary process, and your conclusions should be tempered.
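
The protocol can be prototyped without phylogenetics software, as in the sketch below. It rests on several assumptions that are not from the source: a single-optimum OU model whose root is drawn from the stationary distribution, so that tips separated by patristic distance d have covariance σ²/(2α)·exp(-αd); a hypothetical balanced ultrametric tree of height 1; and bounded maximum likelihood via SciPy. All function names are hypothetical, and the "empirical" data are simulated stand-ins. For real analyses, the packages listed in Table 3 are the appropriate tools.

```python
import numpy as np
from scipy.optimize import minimize


def balanced_tree_distances(levels=4):
    """Patristic distances between the 2**levels tips of a balanced tree of height 1."""
    n = 2 ** levels
    dist = np.zeros((n, n))
    for i in range(n):
        for j in range(n):
            k = 0
            while (i >> k) != (j >> k):
                k += 1                      # levels up to the common ancestor
            dist[i, j] = 2.0 * k / levels
    return dist


def ou_covariance(dist, alpha, sigma2):
    """Tip covariance under a stationary single-optimum OU process."""
    return sigma2 / (2.0 * alpha) * np.exp(-alpha * dist)


def neg_log_lik(params, y, dist):
    log_alpha, log_sigma2, theta = params
    cov = ou_covariance(dist, np.exp(log_alpha), np.exp(log_sigma2))
    resid = y - theta
    _, logdet = np.linalg.slogdet(cov)
    quad = resid @ np.linalg.solve(cov, resid)
    return 0.5 * (len(y) * np.log(2.0 * np.pi) + logdet + quad)


def fit_ou(y, dist):
    """Bounded ML fit; alpha is restricted to [0.01, 100] for numerical stability."""
    start = np.array([0.0, np.log(2.0 * np.var(y) + 1e-8), np.mean(y)])
    bounds = [(np.log(1e-2), np.log(1e2)), (-10.0, 10.0), (None, None)]
    res = minimize(neg_log_lik, start, args=(y, dist), method="L-BFGS-B", bounds=bounds)
    return {"alpha": float(np.exp(res.x[0])), "sigma2": float(np.exp(res.x[1])),
            "theta": float(res.x[2]), "loglik": float(-res.fun)}


def parametric_bootstrap(y, dist, n_sim=100, seed=0):
    """Fit, simulate under the fitted model, and refit each simulated dataset."""
    rng = np.random.default_rng(seed)
    fitted = fit_ou(y, dist)
    cov = ou_covariance(dist, fitted["alpha"], fitted["sigma2"])
    mean = np.full(len(y), fitted["theta"])
    sims = [fit_ou(rng.multivariate_normal(mean, cov), dist) for _ in range(n_sim)]
    return fitted, sims


dist = balanced_tree_distances(levels=4)
data_rng = np.random.default_rng(42)
y_obs = data_rng.multivariate_normal(np.full(16, 0.5), ou_covariance(dist, 2.0, 1.0))
fitted, sims = parametric_bootstrap(y_obs, dist, n_sim=50)
sim_alpha = np.array([s["alpha"] for s in sims])
sim_loglik = np.array([s["loglik"] for s in sims])
print("fitted alpha:", round(fitted["alpha"], 3))
print("share of simulated alpha estimates >= fitted alpha:", np.mean(sim_alpha >= fitted["alpha"]))
print("share of simulated log-likelihoods >= empirical:", np.mean(sim_loglik >= fitted["loglik"]))
```

The last two printed shares correspond to the distribution comparison step: values near 0 or 1 indicate that the empirical estimate or likelihood sits in the tail of what the fitted model itself would produce.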

Research Reagent Solutions: Software for OU Model Analysis

Table 3: Key Software Packages for Fitting and Interpreting OU Models

| Software/Package | Primary Function | Key Feature / Use-Case |

|---|---|---|

| RevBayes [25] | Bayesian Phylogenetic Analysis | Implements OU models with MCMC, allows estimation of phylogenetic half-life and assessment of parameter correlations. |

| OUwie [9] [27] | Hypothesis Testing | Fits OU models with multiple, user-defined selective regimes (optima). |

| phylolm [19] | Phylogenetic Regression | Fast fitting of OU models for phylogenetic generalized least squares (PGLS). |

| ShiVa [27] | Shift Detection | A newer method to detect shifts in both optimal trait value (θ) and diffusion variance (σ²). |

| PCMFit [27] | Shift Detection | Automatically detects shifts in model parameters, including diffusion variance. |

Workflow for Robust OU Model Analysis

The following diagram outlines a logical workflow for conducting a robust OU model analysis, incorporating troubleshooting steps to avoid common pitfalls.

Practical Implementation: Methodological Considerations for Biomedical Applications

Frequently Asked Questions

- FAQ: Why is the mean-reversion speed, θ, so difficult to estimate accurately? The primary challenge is that the amount of information about θ depends on the total time span of the observed data, not simply the number of data points. Even with high-frequency data (a large number of observations), if the time span is short relative to the process's half-life, you will have very little information about the speed of mean reversion, leading to high estimation variance and significant bias [6].

- FAQ: My model fit seems good, but my trading strategy performs poorly. Could parameter estimation be the cause? Yes. In pairs trading, the profitability is highly sensitive to the mean-reversion speed. Standard estimation methods, like the AR(1) approach, are known to have a positive bias in finite samples, meaning they systematically overestimate θ. This makes the process appear to mean-revert faster than it actually does, leading to overly optimistic strategy expectations and potential losses [6] [11].

- FAQ: For a small dataset, should I use a traditional method or a deep learning model? Traditional methods are generally more suitable for smaller datasets. Research shows that while a Multi-Layer Perceptron (MLP) can accurately estimate OU parameters, it requires a large dataset of observed trajectories to do so. For smaller datasets, traditional methods like maximum likelihood estimation may be more appropriate [28] [29].

- FAQ: What is the impact of "dataset bias" on my parameter estimates? Dataset bias occurs when the data used for estimation has different properties than the real-world process the model is meant to represent. For example, data collected in a noisy online setting (like Amazon Mechanical Turk) can exhibit higher decision noise compared to controlled laboratory data. If not accounted for, this can lead to a model that fits your dataset perfectly but fails to generalize or make accurate predictions on new data [30].

Troubleshooting Guides

Problem: Inaccurate or Highly Variable Estimates of Mean-Reversion Speed

This is the most common challenge when working with the Ornstein-Uhlenbeck process. The symptoms include large confidence intervals for θ, estimates that change drastically with minor data updates, or strategy performance that does not match model predictions.

Investigation & Diagnosis:

- Check Your Data's Time Span: Calculate the total time period (T) covered by your observations. The precision of the θ estimate is more dependent on T than the number of data points within that period [6].

- Quantify the Expected Bias: Be aware that the most common estimators have a known positive bias. For the AR(1) estimator with known mean, the bias can be approximated as Bias(θ̂) ≈ (1 + 3θ)/N for large N, where N is the sample size [6].

- Profile the Likelihood: Check the practical identifiability of your parameters. If the profile likelihood for θ is flat and does not exceed a confidence threshold, it indicates that the data cannot reliably identify a unique value for θ, a clear sign of practical non-identifiability [31].

Solutions:

- Prioritize Data Span over Frequency: When collecting data, a longer time series is far more valuable than a high-frequency one. A dataset spanning several years with daily data is typically better for estimating θ than a dataset spanning one month with minute-by-minute data [6] [11].

- Use a Bias-Adjusted Estimator: Consider using the moment estimation approach, which incorporates a bias-adjustment term. The formula for this adjusted estimator is θ̂_adjusted = θ̂_MLE - (1 + 3θ̂_MLE)/N, where θ̂_MLE is the maximum likelihood estimate [6].

- Employ Robust Estimation Algorithms: Do not rely on a single algorithm. Perform multiple rounds of parameter estimation using different algorithms (e.g., quasi-Newton, Nelder-Mead, genetic algorithm) and under different initial conditions. This helps verify that you have found a true global optimum and not a local one [32].

- Validate with a Hybrid Model (for decision-making data): If modeling human decisions, account for dataset-specific noise. A proven method is to use a hybrid model that adds structured decision noise to a base neural network trained on a cleaner dataset, which can significantly improve transferability between datasets [30].
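
Two of the checks above reduce to short calculations. The sketch below applies the bias-adjustment formula exactly as stated in this guide and expresses the data span in units of the process half-life, ln(2)/θ. The function names and input values are hypothetical, and the sketch assumes θ̂ is expressed per unit of the same time scale used for the total span.

```python
import math


def bias_adjusted_theta(theta_hat: float, n_obs: int) -> float:
    """theta_adjusted = theta_hat - (1 + 3 * theta_hat) / N, as stated above."""
    return theta_hat - (1.0 + 3.0 * theta_hat) / n_obs


def span_in_half_lives(theta_hat: float, total_time: float) -> float:
    """How many half-lives, ln(2) / theta, fit into the observed time span."""
    return total_time * theta_hat / math.log(2.0)


theta_hat, n_obs, total_time = 0.5, 100, 10.0   # illustrative values only
print("adjusted theta:", bias_adjusted_theta(theta_hat, n_obs))
print("span in half-lives:", round(span_in_half_lives(theta_hat, total_time), 2))
```

A span of only a few half-lives is a warning sign that the data carry little information about θ, regardless of how many observations fall inside that span.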

Problem: Model Fails to Generalize from Small Datasets

This problem occurs when a model trained on a small dataset performs well during testing but fails when applied to new, unseen data. This is often due to overfitting or dataset bias.

Investigation & Diagnosis:

- Evaluate with Repeated Nested Cross-Validation (rnCV): For small datasets, standard train/test splits have high variance. Use rnCV, which uses an inner CV loop for hyperparameter tuning and an outer CV loop for performance estimation, repeated multiple times to stabilize results [33].

- Perform a Permutation Test: To check if your model has learned true patterns or is just fitting noise, compare your model's performance against the distribution of performances from models trained on the same data with the target labels randomly permuted. This provides a p-value-like metric for the significance of your results [33].

Solutions:

- Adopt a Rigorous Evaluation Protocol: For small datasets, implement the refined evaluation approach combining rnCV and a non-parametric permutation test. This combination is almost free of biases and provides a reliable measure of whether results will generalize [33].

- Choose the Right Evaluation Metric: Avoid using accuracy for imbalanced datasets. Instead, use metrics that are more robust, such as the Matthews Correlation Coefficient (MCC), which has been shown to exhibit the lowest bias when both classes are equally important [33].
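
The permutation test and the MCC can be combined as in the sketch below. It covers only the permutation half of the protocol and is not a full rnCV implementation: there is no inner tuning loop, and a nearest-centroid classifier is used as a hypothetical stand-in for the model under evaluation. The function names and the toy data are assumptions for illustration.

```python
import numpy as np


def mcc(y_true, y_pred):
    """Matthews Correlation Coefficient for binary labels coded 0/1."""
    tp = int(np.sum((y_true == 1) & (y_pred == 1)))
    tn = int(np.sum((y_true == 0) & (y_pred == 0)))
    fp = int(np.sum((y_true == 0) & (y_pred == 1)))
    fn = int(np.sum((y_true == 1) & (y_pred == 0)))
    denom = np.sqrt(float((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn)))
    return (tp * tn - fp * fn) / denom if denom > 0 else 0.0


def cv_mcc(X, y, n_folds, rng):
    """k-fold cross-validated MCC of a nearest-centroid classifier (stand-in model)."""
    order = rng.permutation(len(y))
    preds = np.empty_like(y)
    for fold in np.array_split(order, n_folds):
        train = np.setdiff1d(order, fold)
        centroid0 = X[train][y[train] == 0].mean(axis=0)
        centroid1 = X[train][y[train] == 1].mean(axis=0)
        d0 = np.linalg.norm(X[fold] - centroid0, axis=1)
        d1 = np.linalg.norm(X[fold] - centroid1, axis=1)
        preds[fold] = (d1 < d0).astype(y.dtype)
    return mcc(y, preds)


def permutation_p_value(X, y, n_perm=200, n_folds=5, seed=0):
    """Compare the observed CV score with scores obtained after shuffling the labels."""
    rng = np.random.default_rng(seed)
    observed = cv_mcc(X, y, n_folds, rng)
    null = np.array([cv_mcc(X, rng.permutation(y), n_folds, rng) for _ in range(n_perm)])
    p_value = (1 + np.sum(null >= observed)) / (1 + n_perm)
    return observed, p_value


# Toy data: 40 samples, two features, class 1 shifted by 2 units (illustrative only).
toy_rng = np.random.default_rng(1)
y_toy = np.repeat([0, 1], 20)
X_toy = toy_rng.normal(size=(40, 2))
X_toy[y_toy == 1] += 2.0
observed, p_value = permutation_p_value(X_toy, y_toy, n_perm=100)
print(f"cross-validated MCC={observed:.2f}  permutation p-value={p_value:.3f}")
```

Because the whole cross-validation is repeated for every shuffled label vector, the null distribution reflects the same evaluation procedure as the observed score, which is the point of the test.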

Experimental Protocols & Data

Table 1: Comparison of Common OU Parameter Estimation Methods

| Method | Core Principle | Key Advantages | Key Limitations / Biases |

|---|---|---|---|

| AR(1) with OLS | Treats discretized OU process as a linear regression. | Simple, fast to compute. | Positively biased for small samples [6]. Assumes constant time increments. |

| Maximum Likelihood Estimation (MLE) | Finds parameters that maximize the likelihood of observed data. | Statistically efficient (low variance) under correct model. | Can be computationally slow. Positive bias persists in finite samples [6] [11]. |

| Moment Estimation | Matches theoretical moments of the process (mean, variance) to sample moments. | Includes a bias-adjustment term, making it more accurate for finite samples than MLE or OLS [6]. | Slightly more complex calculation than OLS. |

| Kalman Filter | Recursive filter optimal for systems with unobserved states or noisy measurements. | Handles unobserved states and measurement noise very well [28]. | More complex to implement; may be overkill for a clean, fully observed OU process. |

| Neural Network (MLP) | A deep learning model trained to map data trajectories to parameters. | Can model complex, non-linear patterns; high accuracy with large datasets [28] [29]. | Requires very large datasets; acts as a "black box"; not suitable for small data [28]. |

Table 2: Impact of Data Scarcity on Model Evaluation

| Challenge | Effect on Parameter Estimation & Model Generalization | Recommended Mitigation Strategy |

|---|---|---|

| Small Sample Size | Increases estimator variance and bias. Leads to overfitting where model fits noise in the training data. | Use repeated nested cross-validation (rnCV) [33]. Apply bias-adjusted estimators [6]. |

| Dataset Bias | Model learns spurious correlations specific to the training set, failing to generalize. | Use transfer testing between datasets [30]. Employ hybrid/generative models to account for structured noise [30]. |

| Low Practical Identifiability | The data contains insufficient information to pin down a unique parameter value, resulting in high uncertainty. | Perform profile likelihood analysis [31]. Ensure the time span of data is long enough [6]. |

Protocol: Maximum Likelihood Estimation for the OU Process

This protocol outlines the steps for estimating the parameters of a zero-mean OU process using Exact MLE [6] [11].

Objective: To estimate the mean-reversion speed (μ) and volatility (σ) of an OU process from a discrete time series dataset. Note that in this protocol μ denotes the mean-reversion speed, not the long-term mean, which is fixed at zero.

Materials: A time series of observations \( \{X_0, X_1, \ldots, X_n\} \) with constant time increments \( \Delta t \). Software capable of numerical optimization (e.g., R, Python with SciPy).

Workflow:

Discretization: Define the exact discretization of the OU process based on Doob's lemma. Given \( X_t \), the value at the next time step is normally distributed: \( X_{t+\Delta t} \mid X_t \sim N\left( X_t e^{-\mu \Delta t},\ \frac{\sigma^2}{2\mu}\left(1 - e^{-2\mu \Delta t}\right) \right) \) [6] [11]

Likelihood Function Construction: Write the conditional probability density function (PDF) for an observation \( x_i \) given \( x_{i-1} \): \( f^{OU}(x_i \mid x_{i-1}; \mu, \sigma) = \frac{1}{\sqrt{2\pi\tilde{\sigma}^2}} \exp\left(-\frac{(x_i - x_{i-1} e^{-\mu \Delta t})^2}{2 \tilde{\sigma}^2}\right) \) where \( \tilde{\sigma}^2 = \sigma^2 \frac{1 - e^{-2\mu \Delta t}}{2\mu} \) [11]

Log-Likelihood Maximization: Sum the log-likelihood over the entire time series and use a numerical optimization algorithm (e.g., L-BFGS-B) to find the parameters \( \mu \) and \( \sigma \) that maximize: \( \ell(\mu, \sigma \mid x_0, x_1, \ldots, x_n) = -\frac{n}{2} \ln(2\pi) - \frac{n}{2} \ln(\tilde{\sigma}^2) - \frac{1}{2\tilde{\sigma}^2}\sum_{i=1}^{n} \left[x_i - x_{i-1} e^{-\mu \Delta t}\right]^2 \) [6] [11]
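The three steps above can be sketched in Python with SciPy. This is a minimal illustration under the protocol's own assumptions (zero-mean process, constant time step), not a reference implementation. The names `ou_neg_loglik` and `fit_ou_mle`, the starting values, and the lower bounds are choices made for this example.

```python
import numpy as np
from scipy.optimize import minimize

def ou_neg_loglik(params, x, dt):
    """Negative conditional log-likelihood of a zero-mean OU process."""
    mu, sigma = params
    n = len(x) - 1
    # Transition variance from the exact discretization
    tilde_var = sigma**2 * (1.0 - np.exp(-2.0 * mu * dt)) / (2.0 * mu)
    resid = x[1:] - x[:-1] * np.exp(-mu * dt)
    return 0.5 * n * np.log(2.0 * np.pi * tilde_var) + 0.5 * np.sum(resid**2) / tilde_var

def fit_ou_mle(x, dt, start=(0.5, 1.0)):
    """Maximize the likelihood with L-BFGS-B, keeping mu and sigma positive."""
    return minimize(ou_neg_loglik, x0=np.asarray(start, dtype=float), args=(x, dt),
                    method="L-BFGS-B", bounds=[(1e-6, None), (1e-6, None)])

# Synthetic check: simulate from the exact transition, then refit
rng = np.random.default_rng(1)
true_mu, true_sigma, dt, n = 1.0, 0.5, 0.1, 5000
b = np.exp(-true_mu * dt)
sd = np.sqrt(true_sigma**2 * (1.0 - b**2) / (2.0 * true_mu))
x = np.zeros(n + 1)
for i in range(n):
    x[i + 1] = b * x[i] + sd * rng.standard_normal()

fit = fit_ou_mle(x, dt)
mu_hat, sigma_hat = fit.x
print(f"mu_hat={mu_hat:.3f}, sigma_hat={sigma_hat:.3f}")
```

With a series this long the estimates should sit near the simulated values. On short series the same code is expected to show the finite-sample bias in the mean-reversion speed described in Table 1.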

The following diagram illustrates the logical workflow and key decision points in this protocol:

Diagram 1: Workflow for OU Process MLE.

The Scientist's Toolkit

Research Reagent Solutions

| Item | Function in OU Parameter Estimation |

|---|---|

| Yuima R Package | A specialized R package for simulating and estimating parameters of stochastic differential equations, including the (fractional) Ornstein-Uhlenbeck process [34]. |

| Exact Simulation (Doob's Method) | A simulation method that avoids discretization error by leveraging the exact conditional distribution of the OU process, leading to more accurate benchmark datasets [6]. |

| Repeated Nested Cross-Validation (rnCV) | An evaluation method that provides a nearly unbiased estimate of model performance on small datasets, reducing the risk of over-optimistic results [33]. |

| Profile Likelihood Analysis | A technique to assess practical identifiability by examining how the likelihood function changes as a parameter is varied, revealing estimation uncertainty [31]. |

| Bias-Adjusted Moment Estimator | A calculation that adjusts the maximum likelihood estimate to reduce its inherent positive bias in finite samples, providing a more accurate estimate of the mean-reversion speed [6]. |

| Non-Parametric Permutation Test | A statistical test used to calculate the probability that a model's performance is achieved by chance, guarding against false discoveries in small datasets [33]. |
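To make the profile likelihood entry concrete, the sketch below profiles the mean-reversion speed of a zero-mean OU time series. For each candidate speed, the transition variance that maximizes the likelihood has a closed form (the mean squared one-step residual), so no optimizer is needed. The grid range, the seed, and the helper names are assumptions for illustration. The cutoff 3.841 is the 95% quantile of a chi-square distribution with one degree of freedom.

```python
import numpy as np

def profile_loglik_mu(x, dt, mu_grid):
    """Profile log-likelihood over mu, with the variance maximized out."""
    n = len(x) - 1
    prof = np.empty(len(mu_grid))
    for k, mu in enumerate(mu_grid):
        resid = x[1:] - x[:-1] * np.exp(-mu * dt)
        var_hat = np.mean(resid**2)  # closed-form maximizer for fixed mu
        prof[k] = -0.5 * n * (np.log(2.0 * np.pi * var_hat) + 1.0)
    return prof

def profile_interval(mu_grid, prof, drop=3.841):
    """Grid values whose likelihood-ratio statistic stays below the cutoff."""
    inside = 2.0 * (prof.max() - prof) <= drop
    return mu_grid[inside].min(), mu_grid[inside].max()

def simulate(mu, sigma, dt, n, rng):
    """Exact simulation of a zero-mean OU path started at zero."""
    b = np.exp(-mu * dt)
    sd = np.sqrt(sigma**2 * (1.0 - b**2) / (2.0 * mu))
    x = np.zeros(n + 1)
    for i in range(n):
        x[i + 1] = b * x[i] + sd * rng.standard_normal()
    return x

rng = np.random.default_rng(7)
mu_grid = np.linspace(0.01, 10.0, 1000)
widths = {}
for n in (50, 5000):
    x = simulate(mu=1.0, sigma=0.5, dt=0.1, n=n, rng=rng)
    lo, hi = profile_interval(mu_grid, profile_loglik_mu(x, 0.1, mu_grid))
    widths[n] = hi - lo
    print(f"n={n}: approximate 95% profile interval for mu = [{lo:.2f}, {hi:.2f}]")
```

A flat profile, or an interval that runs into the edge of the grid, is the practical signature of low identifiability: the data do not pin down the parameter.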

When analyzing the evolution of continuous traits, such as morphological characteristics or gene expression levels, researchers rely on phylogenetic comparative methods (PCMs) to identify patterns and infer underlying evolutionary processes. The Ornstein-Uhlenbeck (OU) model has become a cornerstone in this analytical toolkit, moving beyond the simple neutral evolution assumed by Brownian motion models by incorporating stabilizing selection toward an optimal trait value.

The core of the OU process is defined by the stochastic differential equation: dX(t) = -α(X(t) - θ)dt + σdW(t)

where:

- X(t) is the trait value at time t

- θ (theta) represents the primary optimum toward which the trait is pulled

- α (alpha) is the strength of selection, determining how strongly the trait reverts to the optimum

- σ (sigma) governs the rate of stochastic evolution

- dW(t) represents random perturbations following a Wiener process [9] [35]

This framework can be extended to include multiple selective regimes, allowing different branches of the phylogeny or different groups of species to evolve toward distinct optimal values [9]. Understanding the differences between single-optimum and multiple-optima implementations, along with their appropriate applications and limitations, is crucial for robust evolutionary inference.

Key Concepts: Definitions and Terminology

Core Model Parameters

Table 1: Key Parameters of the Ornstein-Uhlenbeck Model

| Parameter | Symbol | Interpretation | Biological Meaning |

|---|---|---|---|

| Optimal Trait Value | θ (theta) | The trait value that selection pulls toward | Selective optimum under stabilizing selection |

| Strength of Selection | α (alpha) | Rate of adaptation toward the optimum | Determines how quickly a trait returns to θ after perturbation |

| Stochastic Rate | σ (sigma) | Rate of random diffusion | Intensity of random perturbations (e.g., genetic drift) |

| Phylogenetic Half-Life | t₁/₂ = ln(2)/α | Time to cover half the distance to optimum | Measures the pace of adaptation; higher α = shorter half-life |

| Stationary Variance | σ²/(2α) | Long-term equilibrium variance | Balance between random perturbations and stabilizing selection |
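A short worked example may help connect the half-life and stationary variance rows. Under the SDE above, the expected trait value at time t is θ + (x₀ - θ)e^(-αt) and the variance is σ²/(2α) · (1 - e^(-2αt)). The parameter values below are arbitrary and chosen only to give round numbers.

```python
import math

def ou_conditional_moments(x0, theta, alpha, sigma, t):
    """Mean and variance of X(t) given X(0) = x0 under the OU model."""
    mean = theta + (x0 - theta) * math.exp(-alpha * t)
    var = sigma**2 / (2.0 * alpha) * (1.0 - math.exp(-2.0 * alpha * t))
    return mean, var

alpha, sigma, theta, x0 = 0.5, 1.0, 10.0, 2.0   # illustrative values only
t_half = math.log(2.0) / alpha                   # phylogenetic half-life
stationary_var = sigma**2 / (2.0 * alpha)        # long-term variance

mean_half, var_half = ou_conditional_moments(x0, theta, alpha, sigma, t_half)
# After one half-life the expected value is about 6.0, halfway from 2 to 10,
# and the variance is about 0.75, i.e. 75% of the stationary variance of 1.0.
print(t_half, mean_half, var_half, stationary_var)
```

The variance approaches its equilibrium faster than the mean approaches the optimum because it decays at rate 2α instead of α.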

Single-Optimum vs. Multiple-Optima Models

Single-Optimum OU Model: Assumes all species in the phylogeny are evolving toward the same primary optimum (θ). This model is typically used when testing for the presence of any stabilizing selection versus purely random evolution [25].

Multiple-Optima OU Model: Allows different parts of the phylogeny to evolve toward distinct optimal values (θ₁, θ₂, ..., θₙ). This approach is biologically realistic when different selective regimes are expected across habitats, ecological niches, or phylogenetic clades [9].

Frequently Asked Questions (FAQs)

Q1: How do I decide whether my dataset requires a single-optimum or multiple-optima OU model?

The choice depends on your biological question and phylogenetic context. Use a single-optimum model when testing whether a trait evolves under general stabilizing selection toward an overall optimum. Choose multiple-optima models when you have a priori hypotheses about different selective regimes operating on different clades or lineages. For example, if studying leaf size evolution across a plant phylogeny encompassing both arid and tropical environments, a multiple-optima model could test whether each environment has a distinct optimal leaf size [9]. Model selection criteria such as AICc or likelihood ratio tests can objectively compare statistical support for each model, but biological plausibility should also guide your decision.

Q2: My OU model analysis strongly supports an α value > 0. Can I interpret this as evidence of "stabilizing selection"?

This is a common point of confusion. While a significant α > 0 indicates the trait is evolving as if under stabilizing selection, caution is needed in biological interpretation. The OU process describes a pattern of constrained evolution, but this pattern can arise from multiple processes, not just stabilizing selection in the population genetics sense. The phylogenetic OU model estimates pull toward a "primary optimum" representing the mean of species optima, which is qualitatively different from selection toward a fitness optimum within a population. Alternative processes like genetic constraints, migration between populations, or even measurement error can generate similar patterns [9] [35].

Q3: Why do I get inconsistent OU parameter estimates when analyzing small datasets (< 30 species)?

Small datasets pose significant challenges for OU modeling. The α parameter is particularly prone to overestimation with limited data, and likelihood ratio tests often wrongly favor OU over simpler Brownian motion models. This occurs because small datasets lack the statistical power to reliably distinguish genuine stabilizing selection from random fluctuations. Simulation studies show that analyses of datasets with fewer than 30-40 tips have high Type I error rates, that is, they incorrectly reject Brownian motion in favor of OU models. When working with small datasets, always supplement your analysis with parametric bootstrapping or posterior predictive simulations to assess reliability [9].
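One way to set up the parametric bootstrap mentioned above is sketched below. It is a simplified illustration, not the procedure of any particular package: the tree is a hypothetical balanced 8-tip ultrametric tree of height 1, the OU model has a single optimum with the root fixed at that optimum, and all function names are invented for this example. The null distribution of the likelihood ratio statistic is built by simulating from the fitted Brownian motion model instead of relying on a chi-square approximation.

```python
import numpy as np
from scipy.optimize import minimize_scalar

def balanced_shared_times(levels=3):
    """Root-to-MRCA shared times for a hypothetical balanced tree of height 1."""
    n = 2 ** levels
    S = np.zeros((n, n))
    for i in range(n):
        for j in range(n):
            shared = 0
            for depth in range(1, levels + 1):
                if (i >> (levels - depth)) != (j >> (levels - depth)):
                    break
                shared = depth
            S[i, j] = shared / levels
    return S

def profile_loglik(y, R):
    """Gaussian log-likelihood for covariance s2 * R, mean and s2 profiled out."""
    n = len(y)
    Rinv = np.linalg.inv(R)
    ones = np.ones(n)
    mean = ones @ Rinv @ y / (ones @ Rinv @ ones)
    r = y - mean
    s2 = r @ Rinv @ r / n
    _, logdet = np.linalg.slogdet(R)
    return -0.5 * (n * np.log(2.0 * np.pi * s2) + logdet + n), mean, s2

def ou_structure(S, alpha):
    """OU covariance up to sigma^2, root fixed at the optimum, ultrametric tree."""
    T = S.max()
    return np.exp(-2.0 * alpha * (T - S)) * (1.0 - np.exp(-2.0 * alpha * S)) / (2.0 * alpha)

def lrt_ou_vs_bm(y, S, alpha_max=50.0):
    ll_bm, mean_bm, s2_bm = profile_loglik(y, S)
    neg = lambda a: -profile_loglik(y, ou_structure(S, a))[0]
    opt = minimize_scalar(neg, bounds=(1e-6, alpha_max), method="bounded")
    ll_ou = max(-opt.fun, ll_bm)  # BM is the alpha -> 0 limit of this OU model
    return 2.0 * (ll_ou - ll_bm), mean_bm, s2_bm

def bootstrap_p_value(y, S, n_boot=200, seed=0):
    """Compare the observed LRT with LRTs from data simulated under fitted BM."""
    rng = np.random.default_rng(seed)
    lrt_obs, mean_bm, s2_bm = lrt_ou_vs_bm(y, S)
    L = np.linalg.cholesky(s2_bm * S)
    lrt_null = np.array([
        lrt_ou_vs_bm(mean_bm + L @ rng.standard_normal(len(y)), S)[0]
        for _ in range(n_boot)
    ])
    p = (1 + np.sum(lrt_null >= lrt_obs)) / (n_boot + 1)
    return lrt_obs, p, lrt_null

S = balanced_shared_times()
rng = np.random.default_rng(3)
y = np.linalg.cholesky(S) @ rng.standard_normal(S.shape[0])  # data simulated under BM
lrt_obs, p_boot, lrt_null = bootstrap_p_value(y, S)
print(f"LRT={lrt_obs:.3f}, bootstrap p={p_boot:.3f}")
```

Comparing the upper quantiles of `lrt_null` with the usual chi-square cutoff gives a direct impression of how far the asymptotic test can be from its nominal error rate on a tree of this size.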

Q4: How does measurement error affect OU model parameter estimation?

Even small amounts of measurement error or intraspecific variation can profoundly distort OU parameter estimates. When trait measurements contain error, this can be misinterpreted by the model as rapid fluctuations around an optimum, leading to inflated estimates of the α parameter. This occurs because measurement error increases the apparent rate of evolution close to the optimum. To address this, either incorporate measurement error variance directly into your model or use methods that account for intraspecific variation. Always test the sensitivity of your results to potential measurement error, especially when using literature-derived trait data [9].

Q5: In a multiple-optima model, how are the different selective regimes specified?

Selective regimes are typically defined a priori based on biological hypotheses about where shifts in adaptive landscape might occur. Regimes can be specified using:

- Phylogenetic partitioning: Different clades assigned to different optima

- Ecological criteria: Species grouped by habitat type, diet, or other ecological factors

- Morphological characteristics: Groups based on distinct body plans or structures

The phylogenetic relationships are incorporated through the variance-covariance matrix, which accounts for the shared evolutionary history among species. The model then simultaneously estimates each θ while accounting for non-independence due to phylogeny [9].
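The role of the variance-covariance matrix can be illustrated with a generalized least squares (GLS) sketch. Two simplifications are assumed here and should be read as such: α and σ² are treated as known, and each tip's expected value is set equal to the optimum of its current regime, which ignores how long each lineage has actually spent in that regime. Full multiple-optima implementations weight the regime history along each lineage. The four-tip tree, trait values, and function names are hypothetical.

```python
import numpy as np

def ou_stationary_vcv(D, alpha, sigma2):
    """V_ij = sigma2 / (2 alpha) * exp(-alpha * d_ij), d_ij = patristic distance."""
    return sigma2 / (2.0 * alpha) * np.exp(-alpha * D)

def gls_optima(y, regimes, V):
    """GLS estimate of one optimum per regime, given the covariance matrix V."""
    labels = sorted(set(regimes))
    # Indicator design matrix: one column per regime (simplifying assumption)
    W = np.array([[1.0 if r == lab else 0.0 for lab in labels] for r in regimes])
    Vinv = np.linalg.inv(V)
    cov = np.linalg.inv(W.T @ Vinv @ W)
    theta_hat = cov @ W.T @ Vinv @ y
    return dict(zip(labels, theta_hat)), cov

# Hypothetical tree ((A,B),(C,D)) of height 1 with both internal nodes at 0.5
D = np.array([[0.0, 1.0, 2.0, 2.0],
              [1.0, 0.0, 2.0, 2.0],
              [2.0, 2.0, 0.0, 1.0],
              [2.0, 2.0, 1.0, 0.0]])
y = np.array([2.1, 1.9, 5.2, 4.8])
regimes = ["arid", "arid", "tropical", "tropical"]

V = ou_stationary_vcv(D, alpha=1.0, sigma2=1.0)
theta_hat, theta_cov = gls_optima(y, regimes, V)
print(theta_hat)
```

In this symmetric toy tree the GLS estimates coincide with the simple regime means (about 2.0 and 5.0). On unbalanced trees they generally differ, because closely related tips carry partly redundant information and are down-weighted.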

Troubleshooting Common Experimental Issues

Model Convergence and Identification Problems

Problem: Poor convergence of MCMC chains or unreasonably wide credible intervals for α and θ parameters.

Diagnosis: This often indicates parameter non-identifiability, frequently occurring when the phylogenetic half-life is similar to or exceeds the total tree height. When the half-life is long relative to the phylogeny, the OU process becomes statistically indistinguishable from Brownian motion.

Solutions:

- Include the phylogenetic half-life (t₁/₂ = ln(2)/α) directly in your model output to assess its relationship to tree height

- Implement joint proposals for correlated parameters (α, θ, σ²) in Bayesian MCMC sampling

- Use a fixed-effects model with fewer selective regimes if using a multiple-optima approach

- Consider model reparameterization to reduce parameter correlations [25]

Distinguishing Convergence from Migration/Interaction Effects

Problem: Similarity between closely related species might be interpreted as convergent evolution under an OU model when it actually results from migration or ecological interactions.

Diagnosis: Strong apparent "pull toward an optimum" among sympatric species or populations with known migration patterns.

Solutions:

- Incorporate migration matrices into your OU model when analyzing populations within species

- Use interaction-based OU models that explicitly model ecological dependencies

- Compare traditional OU models with models that include migration or interaction terms

- Validate results with independent evidence from population genetics or ecological studies [35]

Table 2: Troubleshooting Guide for Common OU Model Issues

| Problem | Potential Causes | Diagnostic Checks | Solution Approaches |

|---|---|---|---|

| Overestimated α | Small sample size; Measurement error | Parametric bootstrapping; Error-in-variable models | Increase sample size; Incorporate measurement error |

| Poor MCMC Convergence | Parameter correlations; Non-identifiability | Monitor trace plots; Check posterior correlations | Use multivariate moves; Reparameterize model |

| OU favored over BM | Small dataset bias; Tree structure | Simulation studies; Power analysis | Apply bias correction; Use informed priors |

| Biologically implausible θ estimates | Model misspecification; Extreme values | Check prior influence; Validate biologically | Adjust priors; Check for outliers |

Experimental Protocols and Methodologies

Standard Protocol for OU Model Fitting

Objective: Implement a phylogenetic OU model to test for stabilizing selection in a continuous trait.

Materials:

- Time-calibrated phylogeny (ultrametric tree)

- Continuous trait measurements for all tip species

- Computational environment (R + appropriate packages)

Procedure:

Data Preparation

- Check that trait data and phylogeny tip labels match

- Log-transform traits if necessary to meet normality assumptions

- Center traits if using vague priors for optimum values

Model Specification (Bayesian Implementation)

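- Define priors: θ ~ Uniform(-10, 10), α ~ Exponential(rate = root_age/(2 ln 2)), σ² ~ Loguniform(1e-3, 1). With this rate, the prior mean of α is 2 ln(2)/root_age, which corresponds to a phylogenetic half-life of half the root age.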

- Include derived parameters: t_half = ln(2)/α, p_th = 1 - (1 - exp(-2α × root_age))/(2α × root_age)

- Implement the PhyloOrnsteinUhlenbeckREML likelihood [25]
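The two derived parameters can be computed directly from α and the root age, as in the sketch below. The function name is hypothetical and the snippet is plain Python, not code for the MCMC software itself. Since Brownian motion accumulates variance σ² × root_age while the OU process accumulates σ²(1 - exp(-2α × root_age))/(2α), p_th can be read as the proportional reduction in trait variance relative to Brownian motion over the age of the tree.

```python
import math

def derived_ou_quantities(alpha, root_age):
    """Phylogenetic half-life and proportional variance reduction relative to BM."""
    t_half = math.log(2.0) / alpha
    x = 2.0 * alpha * root_age
    p_th = 1.0 - (1.0 - math.exp(-x)) / x
    return t_half, p_th

# Illustrative case: a half-life equal to the full tree height
t_half, p_th = derived_ou_quantities(alpha=math.log(2.0), root_age=1.0)
print(f"t_half={t_half:.3f}, p_th={p_th:.3f}")  # t_half is 1.0, p_th is about 0.459
```

Applying the function to each posterior sample of α gives posterior distributions for both quantities, which makes it easy to see whether the half-life is short relative to the tree height.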

MCMC Configuration

- Use mvScale moves for α and σ² parameters

- Use mvSlide moves for θ parameter

- Include mvAVMVN multivariate move for correlated parameters

- Run 2+ independent chains for ≥50,000 generations

Convergence Assessment

- Check effective sample sizes (ESS > 200)