Navigating Dissimilar Tasks in Multi-Task Optimization: Strategies for Drug Discovery

Abstract

Multi-task learning (MTL) promises improved efficiency and generalization by enabling a single model to learn multiple related tasks simultaneously. However, its application in complex fields like drug discovery is often hampered by the challenge of handling dissimilar tasks, which can lead to performance degradation due to issues like negative transfer and conflicting gradients. This article provides a comprehensive overview for researchers and drug development professionals, exploring the foundational principles of MTL challenges, reviewing advanced methodological solutions like gradient modulation and evolutionary relatedness metrics, and presenting practical troubleshooting and optimization strategies. Finally, it offers a rigorous framework for the validation and comparative analysis of MTO methods, synthesizing key takeaways and future directions to guide the effective implementation of MTL in accelerating biomedical research.

The Promise and Peril of Multi-Task Learning: Understanding the Core Challenges

Defining Multi-Task Learning and Its Value Proposition in Drug Discovery

MTL Basics & Value in Drug Discovery

Q1: What is Multi-Task Learning (MTL) and why is it used in drug discovery?

Multi-Task Learning (MTL) is a machine learning paradigm where a single model is trained to perform multiple related tasks simultaneously. By sharing representations between tasks, the model can leverage common information, often leading to improved data efficiency, faster convergence, and better generalization compared to training separate models for each task (a method known as Single-Task Learning or STL) [1].

In drug discovery, MTL is particularly valuable for predicting compound bioactivity. The primary challenge in building accurate predictive models is the frequent lack of sufficient high-quality biological activity data for any single target protein. MTL addresses this by allowing information from multiple, similar biological targets to be shared and jointly modeled. This enables knowledge transfer, where data from one task can help improve the predictions for another, thereby boosting the overall prediction accuracy and model robustness [2] [3].

Q2: What is "negative transfer" and how can it be identified in an experiment?

Negative transfer is a key challenge in MTL where sharing information between tasks actually worsens the model's performance on one or more tasks, rather than improving it. This typically occurs when the tasks are not sufficiently related or are in conflict [1].

You can identify negative transfer in your experiment by comparing the performance of your MTL model against a Single-Task Learning (STL) baseline. A clear sign of negative transfer is when the MTL model shows significantly lower performance on a task than a model trained solely on that task's data [4]. For instance, one study reported a robustness (the percentage of tasks on which MTL outperforms STL) of only 37.7% when training all 268 targets together, meaning that MTL failed to outperform STL on more than 60% of the targets [4].
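The comparison described above can be sketched in a few lines. This is a minimal illustration, assuming per-task scores (for example AUROC) are already available for both models; the target names and score values are hypothetical, and ties are counted as "MTL did not outperform STL".

```python
def robustness(mtl_scores, stl_scores):
    """Percentage of tasks on which the MTL model strictly outperforms STL."""
    tasks = mtl_scores.keys() & stl_scores.keys()
    if not tasks:
        raise ValueError("no tasks in common")
    wins = sum(1 for t in tasks if mtl_scores[t] > stl_scores[t])
    return 100.0 * wins / len(tasks)


# Hypothetical per-target AUROC values, for illustration only.
stl_auroc = {"target_a": 0.71, "target_b": 0.68, "target_c": 0.80, "target_d": 0.75}
mtl_auroc = {"target_a": 0.74, "target_b": 0.66, "target_c": 0.78, "target_d": 0.75}

score = robustness(mtl_auroc, stl_auroc)
# Tasks where MTL is strictly worse than STL are candidates for negative transfer.
negative_transfer = sorted(t for t in stl_auroc if mtl_auroc[t] < stl_auroc[t])

print(f"robustness = {score:.1f}%")
print("tasks with worse MTL performance:", negative_transfer)
```

In practice the per-task differences should also be checked for statistical significance (for example across random seeds) before a task is labeled as suffering from negative transfer.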

Q3: What are the primary optimization challenges in MTL?

MTL optimization faces several hurdles that can lead to negative transfer or imbalanced performance:

- Gradient Conflicts: The gradients of the loss functions from different tasks can point in opposing directions. Following the average gradient might then lead to poor performance for all tasks involved [1] [5].

- Imbalanced Data and Loss Scales: Tasks often have datasets of different sizes and different natural loss scales. Without intervention, tasks with larger datasets or larger loss values can dominate the optimization process, causing the model to neglect other tasks [5] [6].

- Task Dissimilarity: The benefits of MTL are most pronounced when tasks are related. Grouping unrelated or weakly related tasks is a common cause of optimization difficulties and negative transfer [4] [1].

Experimental Protocols & Methodologies

Q4: What is a proven methodological framework for applying MTL to drug-target interaction prediction?

A robust methodology for MTL in drug discovery involves task grouping, model training with knowledge distillation, and rigorous evaluation.

Table: Key Stages in an MTL Experimental Protocol for Drug-Target Interaction

| Stage | Core Action | Example from Literature |
|---|---|---|
| 1. Task Similarity Analysis | Quantify the relatedness between prediction tasks (targets). | Using the Similarity Ensemble Approach (SEA) to compute ligand-based similarity between targets. Hierarchical clustering is then applied to group targets with high similarity [4]. |
| 2. Model Training with Knowledge Distillation | Train a "student" MTL model guided by pre-trained "teacher" models. | First, train STL models for each task. Then, train an MTL model on a group of similar tasks, using the predictions of the STL models as guidance via "teacher annealing" [4]. |
| 3. Evaluation | Compare MTL performance against a strong STL baseline. | Use metrics like Area Under the ROC Curve (AUROC) and Area Under the Precision-Recall Curve (AUPRC) for each task. Calculate the average performance and the robustness (percentage of tasks where MTL outperforms STL) [4]. |

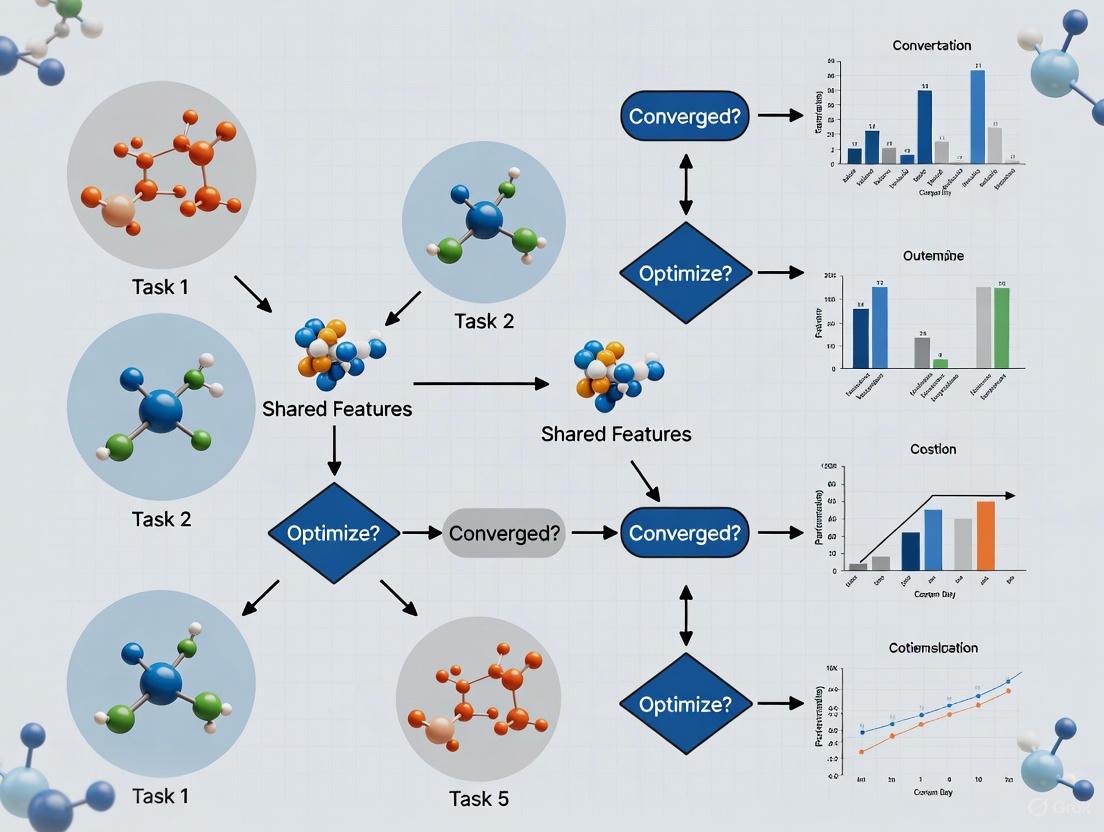

The following workflow diagram illustrates this multi-stage experimental protocol:

Q5: How can I implement a gradient conflict mitigation strategy like FetterGrad?

The FetterGrad algorithm is designed to resolve gradient conflicts in MTL by aligning the gradients of different tasks during training. The core idea is to minimize the Euclidean distance between the task gradients to keep them aligned and prevent one task from dominating the optimization [7].

Table: Steps to Implement the FetterGrad Algorithm

| Step | Action | Explanation |
|---|---|---|
| 1. | Compute task-specific gradients. | For a shared parameter \( W \), calculate the gradients \( \nabla_{W} L_i \) for each task \( i \). |
| 2. | Calculate pairwise Euclidean distances. | Compute the Euclidean distance (ED) between the gradient vectors of all task pairs. |
| 3. | Apply the FetterGrad update rule. | Modify the gradients by adding a term that minimizes the Euclidean distance between them, effectively pulling the task gradients closer together in the optimization space. |
| 4. | Update model parameters. | Use the modified, "aligned" gradients to perform a standard optimization step (e.g., SGD, Adam). |
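Steps 1 and 2 can be illustrated with a small diagnostic. The sketch below assumes the per-task gradients of the shared parameters have already been flattened into plain lists of floats; the task names and values are hypothetical. It deliberately stops before step 3, because the exact FetterGrad update rule is not reproduced in this guide.

```python
import math
from itertools import combinations


def euclidean_distance(u, v):
    """Euclidean distance between two equally sized gradient vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def pairwise_gradient_distances(task_grads):
    """Map each unordered task pair to the distance between its gradients."""
    return {
        (a, b): euclidean_distance(task_grads[a], task_grads[b])
        for a, b in combinations(sorted(task_grads), 2)
    }


# Hypothetical flattened gradients of the shared parameters, one per task.
task_grads = {
    "affinity": [3.0, 0.0, 1.0],
    "generation": [0.0, 4.0, 1.0],
    "toxicity": [3.0, 0.0, 2.0],
}

distances = pairwise_gradient_distances(task_grads)
for pair, dist in distances.items():
    print(pair, round(dist, 3))
```

Large distances flag the task pairs whose gradients an alignment term would act on most strongly.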

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools for MTL in Drug Discovery

| Research Reagent | Function & Utility | Application Context |
|---|---|---|
| SparseChem | An open-source Python package for training large-scale bioactivity and toxicity models with high computational efficiency, supporting both classification and regression [3]. | Ideal for industry-scale projects involving millions of compounds and high-dimensional features (e.g., ECFP fingerprints). |
| Pre-trained Biomedical Language Models (e.g., BioBERT, ClinicalBERT) | Transformer-based models pre-trained on vast biomedical text corpora (e.g., PubMed). They provide powerful, context-aware feature representations for biomedical NLP tasks [8]. | Fine-tuning these models within an MTL framework for joint Named Entity Recognition (NER) and Relation Extraction from scientific literature [8]. |
| Similarity Ensemble Approach (SEA) | A computational method that estimates the similarity between protein targets based on the chemical structural similarity of their known active ligands [4]. | Used for the critical step of task grouping. Targets with high ligand-set similarity are clustered together for MTL. |
| FetterGrad Algorithm | A custom optimization algorithm that mitigates gradient conflicts between tasks by minimizing the Euclidean distance between their gradients [7]. | Employed in complex MTL frameworks (e.g., predicting affinity and generating drugs) to ensure stable and balanced learning across tasks. |

Troubleshooting Common MTL Experimental Issues

Q6: My MTL model's performance is worse than single-task models. What should I do?

This is a classic symptom of negative transfer. Your action plan should be:

- Diagnose Task Relatedness: The most likely cause is that the tasks in your group are not sufficiently similar. Re-evaluate your task grouping strategy.

- Check for Optimization Imbalance: One or two tasks may be dominating the training.

  - Action: Analyze the norms of the task-specific gradients. A significant imbalance in gradient norms is a strong indicator of this issue. A straightforward mitigation strategy is to scale the task losses to balance their gradient norms [5].

- Refine Your Architecture:

  - Action: Consider using more sophisticated MTL architectures like Cross-modal Adaptive Mixture-of-Experts (CAMoE), which uses modality-specific heads to prevent interference [6], or explore soft parameter sharing instead of hard parameter sharing.
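The gradient-norm check and the loss-scaling mitigation can be sketched as follows. This is a minimal illustration, not the procedure from [5]: it assumes flattened per-task gradients are available as plain lists, uses the mean gradient norm as the common target (one possible choice), and relies on the fact that multiplying a loss by a constant multiplies its gradient by the same constant. Task names and values are hypothetical.

```python
import math


def l2_norm(vec):
    return math.sqrt(sum(x * x for x in vec))


def balancing_weights(task_grads, eps=1e-12):
    """Loss weights that rescale every task gradient to the mean gradient norm."""
    norms = {task: l2_norm(grad) for task, grad in task_grads.items()}
    target = sum(norms.values()) / len(norms)
    # eps guards against division by zero for a task with a vanishing gradient.
    return {task: target / (norm + eps) for task, norm in norms.items()}


# Hypothetical gradients: task_a dominates with norm 10, task_b has norm 0.5.
task_grads = {"task_a": [6.0, 8.0], "task_b": [0.3, 0.4]}

weights = balancing_weights(task_grads)
for task, weight in weights.items():
    scaled_norm = weight * l2_norm(task_grads[task])
    print(f"{task}: loss weight {weight:.3f}, scaled gradient norm {scaled_norm:.3f}")
```

Recomputing the weights every few hundred steps, rather than every step, is usually enough to keep the overhead of the extra backward passes small.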

Q7: How do I handle drastically different data volumes across tasks?

Data imbalance can cause a model to overfit on tasks with large datasets and underperform on tasks with small datasets. Solutions include:

- Dynamic Loss Weighting: Use methods like Uncertainty Weighting [5] or Dynamic Weight Average (DWA) [5] [1] to automatically adjust the contribution of each task's loss to the total objective.

- Adaptive Loss Masking (ALM): As used in the CAMoE framework, this technique ensures that during backpropagation, the parameters of a task-specific component are only updated by examples from its corresponding task. This prevents the gradients from a high-volume task from overwhelming a low-volume task [6].
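As an illustration of dynamic loss weighting, the sketch below follows Dynamic Weight Average as it is commonly formulated: each task's weight is a softmax over the ratio of its two most recent epoch losses, rescaled so the weights sum to the number of tasks. The temperature of 2.0 and the loss values are illustrative assumptions.

```python
import math


def dwa_weights(prev_losses, prev_prev_losses, temperature=2.0):
    """Task weights from the ratio of the last two epoch losses of each task."""
    tasks = list(prev_losses)
    # A ratio near 1 means the task has barely improved; a small ratio means fast progress.
    ratios = {t: prev_losses[t] / prev_prev_losses[t] for t in tasks}
    exps = {t: math.exp(ratios[t] / temperature) for t in tasks}
    total = sum(exps.values())
    # Rescale so the weights sum to the number of tasks.
    return {t: len(tasks) * exps[t] / total for t in tasks}


# Hypothetical average training losses from the two previous epochs.
losses_two_epochs_ago = {"large_task": 1.0, "small_task": 1.0}
losses_last_epoch = {"large_task": 0.5, "small_task": 0.9}

weights = dwa_weights(losses_last_epoch, losses_two_epochs_ago)
print(weights)  # the slower-improving small_task receives the larger weight
```

For the first two epochs, when no loss history exists yet, all weights are typically set to 1.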

Figure: Architecture of a system like CAMoE that uses expert networks and adaptive loss masking to handle multi-modal or multi-domain data.

Q8: One task is learning very fast while others stagnate. How can I balance them?

This is a specific manifestation of optimization imbalance.

- Immediate Action: Apply loss balancing techniques. The GradNorm algorithm dynamically adjusts task weights based on each task's relative training rate (how quickly its loss is decreasing) and the norm of its gradients with respect to the shared layers [5] [1].

- Alternative Approach: A recent finding suggests a strong correlation between optimization imbalance and the norm of task-specific gradients. You can implement a simple yet effective strategy: scale the losses of the stagnating tasks to increase their gradient norms, bringing them closer to the norm of the fast-learning task. This has been shown to achieve performance comparable to an exhaustive grid search for loss weights [5].

Troubleshooting Guide: FAQs on Negative Transfer & Gradients

FAQ 1: What are the root causes of performance degradation in my Multi-Task Learning model?

Performance degradation in MTL, often termed negative transfer, occurs when tasks conflict during joint optimization [9] [10]. The primary technical cause is conflicting gradients, where the gradient vectors of different tasks point in opposing directions during backpropagation, leading to inefficient and unstable updates of the model's shared parameters [11] [12]. In fairness-aware MTL, this can also manifest as bias transfer, where fairness considerations for one task adversely affect the fairness of others [9].

FAQ 2: How can I detect and measure gradient conflicts in my experiments?

You can detect gradient conflicts by analyzing the cosine similarity between task-specific gradients with respect to the shared parameters [12]. A negative cosine similarity means the two gradients conflict, and a value close to -1 indicates a strong conflict. Furthermore, monitor per-task performance metrics (e.g., loss, accuracy, fairness) throughout training; a significant and persistent drop in one task's performance compared to its single-task baseline is a strong indicator of negative transfer [9].
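The cosine-similarity check can be sketched as below. It assumes the gradients of two task losses with respect to the shared parameters have been flattened into plain lists; the variable names and values are hypothetical.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two gradient vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


# Hypothetical flattened gradients of the shared parameters for two tasks.
g_affinity = [1.0, 2.0, -1.0]
g_generation = [-1.0, -2.0, 0.5]

similarity = cosine_similarity(g_affinity, g_generation)
conflict = similarity < 0.0
print(f"cosine similarity = {similarity:.3f}, conflict = {conflict}")
```

Logging this value at regular intervals, rather than once, shows whether conflicts are persistent or confined to an early phase of training.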

FAQ 3: What are the most effective strategies to mitigate conflicting gradients?

Strategies fall into three broad categories. Gradient manipulation methods, like PCGrad, project each conflicting gradient onto the normal plane of the other to reduce interference [12]. Architectural adaptations dynamically branch the shared network, grouping related tasks to isolate conflicting ones [9] [11]. Optimization-focused approaches use specialized algorithms, like FetterGrad, to align gradients by minimizing the Euclidean distance between them [7].

FAQ 4: Does the choice of optimizer influence negative transfer?

Yes. Empirical studies show that the Adam optimizer often outperforms SGD with momentum in MTL scenarios [12]. Theoretical analyses suggest that Adam exhibits partial loss-scale invariance, making it more robust to the varying loss scales of different tasks, which are a common source of imbalance and conflict [12].

FAQ 5: How should I structure an MTL experiment to benchmark against negative transfer?

Always include a Single-Task Learning (STL) baseline for each task. For a new MTL method, compare its performance against this baseline and simple MTL baselines (e.g., uniformly weighted sum of losses) [12]. Key evaluation metrics should encompass not only accuracy but also task-specific fairness criteria to account for bias transfer [9].

Comparative Analysis of Mitigation Methods

The table below summarizes quantitative findings from recent research on methods designed to mitigate negative transfer and conflicting gradients.

| Method | Core Principle | Reported Performance | Key Advantage |
|---|---|---|---|
| FairBranch [9] | Task-group branching & fairness gradient correction | Outperforms state-of-the-art MTLs on both fairness and accuracy on ACS-PUMS dataset. | Mitigates both negative transfer and bias transfer. |
| Recon [11] | Converts high-conflict shared layers to task-specific layers | Achieves better performance with slight parameter increase; improves various SOTA methods. | Reduces conflicts from the root; architecture-agnostic. |
| FetterGrad [7] | Aligns gradients by minimizing Euclidean distance | Improved DTA prediction (e.g., CI=0.897 on KIBA) and successful novel drug generation. | Explicitly aligns gradients in a shared feature space. |
| Adam Optimizer [12] | Adaptive learning rates & partial loss-scale invariance | Shows favorable performance over SGD+momentum in various MTL experiments. | Readily available, requires no modification to loss or architecture. |

Experimental Protocols for Mitigating Gradient Conflicts

Protocol 1: Implementing Gradient Conflict Correction with PCGrad

PCGrad is a gradient manipulation technique that projects one task's gradient onto the normal plane of another's if they conflict.

- Gradient Computation: For a batch of data, compute the loss and then the gradients for the shared parameters for each task, resulting in gradient vectors \( g_1, g_2, \ldots, g_T \).

- Conflict Check & Projection: For each task \( i \), iterate through all other tasks \( j \). Calculate the cosine similarity between \( g_i \) and \( g_j \). If \( g_i \cdot g_j < 0 \) (conflict), project \( g_i \) onto the normal plane of \( g_j \): \( g_i = g_i - \frac{g_i \cdot g_j}{\|g_j\|^2} g_j \).

- Gradient Update: After processing all tasks, aggregate the potentially modified gradients (e.g., by averaging) and use this aggregated gradient to update the shared model parameters.
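The three steps above can be sketched in plain Python. The sketch assumes the per-task gradients of the shared parameters are given as flattened lists, visits the other tasks in random order (a fixed seed is used here only for reproducibility), and averages the projected gradients as the aggregation step. The example gradients are hypothetical.

```python
import random


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def pcgrad(grads, rng=None):
    """Project conflicting task gradients, then average them into one update."""
    rng = rng or random.Random(0)
    projected = []
    for i, g_i in enumerate(grads):
        g = list(g_i)  # work on a copy so the original gradients stay intact
        others = [j for j in range(len(grads)) if j != i]
        rng.shuffle(others)
        for j in others:
            g_j = grads[j]
            inner = dot(g, g_j)
            if inner < 0:  # conflict: remove the component of g along g_j
                scale = inner / dot(g_j, g_j)
                g = [a - scale * b for a, b in zip(g, g_j)]
        projected.append(g)
    n_tasks = len(projected)
    return [sum(column) / n_tasks for column in zip(*projected)]


# Two hypothetical task gradients with a negative dot product.
g1 = [1.0, 0.0]
g2 = [-1.0, 1.0]

update = pcgrad([g1, g2])
print(update)
```

Here `g1` becomes `[0.5, 0.5]` and `g2` becomes `[0.0, 1.0]` after projection, so neither projected gradient opposes the other task's original gradient.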

Protocol 2: Dynamic Network Branching with FairBranch

This protocol uses parameter similarity to group related tasks and isolate conflicting ones.

- Warm-up Training: First, train a fully shared MTL model for a few epochs to obtain initial task-specific parameters.

- Similarity Assessment: Compute a similarity metric (e.g., based on the task-specific parameters) between all pairs of tasks.

- Task Grouping: Use a clustering algorithm to group tasks based on their similarity scores.

- Model Branching: Modify the MTL architecture so that each task group has its own branch of shared parameters stemming from an early layer. The initial shared layers remain common.

- Conflict-Aware Training: Continue training the branched network. Optionally, apply fairness or accuracy gradient correction within each branch to further reduce conflicts among closely related tasks [9].

Protocol 3: Gradient Alignment with FetterGrad Algorithm

FetterGrad explicitly optimizes for gradient alignment in a shared feature space, as used in DeepDTAGen for drug discovery [7].

- Shared Feature Extraction: A shared encoder processes input data to create a common feature representation used by all tasks.

- Task-Specific Head Processing: Each task-specific head processes the shared features to produce its output.

- Gradient Computation & Alignment: Compute the gradient of each task's loss with respect to the shared parameters. The FetterGrad algorithm modifies these gradients by adding a term that minimizes the Euclidean distance (ED) between them, pulling them into closer alignment.

- Parameter Update: Update all model parameters using the aligned gradients.

Method Selection Workflow

The Scientist's Toolkit: Research Reagents & Solutions

The table below lists key computational "reagents" essential for experimenting with and mitigating negative transfer.

| Research Reagent | Function in MTL Experimentation |
|---|---|
| Gradient Conflict Score | A metric (e.g., cosine similarity) to quantify the degree of conflict between task gradients, used for diagnosis and triggering mitigation strategies [11] [12]. |
| Task-Specific Layers | Small, separate neural network modules attached to a shared backbone. They isolate task-specific processing, reducing interference in the final prediction stages [9] [13]. |
| Parameter-Efficient Fine-Tuning (PEFT) | Techniques like LoRA (Low-Rank Adaptation) that reduce computational load during fine-tuning of large models on multiple tasks, mitigating resource intensity [13]. |
| Unified Evaluation Framework | A composite score that combines task-specific metrics (e.g., accuracy, fairness, MSE) into a single benchmark for holistic model assessment [13]. |
| Dynamic Task Weighting | An algorithm that adjusts the contribution (weight) of each task's loss to the total loss during training, preventing dominant tasks from overwhelming others [12]. |

Frequently Asked Questions (FAQs)

Q1: What is negative transfer, and why does it happen in multi-task learning (MTL)?

A1: Negative transfer occurs when jointly training multiple tasks results in worse performance than training them independently. This happens because the learning process for one task interferes with and degrades the performance of another, often due to conflicting gradient directions during optimization or a fundamental dissimilarity between the tasks that prevents beneficial knowledge sharing [1] [4].

Q2: How can I measure the similarity or relatedness between two tasks before grouping them?

A2: Researchers have developed several principled metrics to estimate task relatedness:

- Task Affinity Groupings (TAG): This method updates model parameters for one task and measures the impact on the loss of other tasks to quantify their interaction [1].

- Pointwise V-Usable Information (PVI): This approach estimates the difficulty of a dataset for a given model. The hypothesis is that tasks with similar PVI estimates (similar difficulty levels) are more likely to benefit from joint training [14].

- Theoretical Metrics: Methods based on Wasserstein distance or H-divergence provide a theoretical upper bound on generalization error, offering a formal way to understand task relatedness [15].

- Domain-Specific Similarity: In bioinformatics, the Similarity Ensemble Approach (SEA) can compute similarity between target proteins based on the chemical structure of their active ligands, providing a domain-relevant relatedness metric [4].

Q3: My MTL model performs well on some tasks but poorly on others. How can I balance this?

A3: This is a common issue often caused by differences in task difficulty, dataset size, or the rate at which tasks learn. You can address it through several optimization techniques:

- Gradient Modulation: Techniques like Gradient Adversarial Training (GREAT) modify the gradients during training to reduce conflict between tasks [1].

- Loss Balancing: Adjust the weight of each task's loss function in the overall objective. This can be done manually or automatically, for instance, by weighting losses inversely proportional to their dataset sizes to prevent tasks with more data from dominating [1].

- Knowledge Distillation: Train a "teacher" single-task model for each task, then train a multi-task "student" model to mimic the teachers' predictions. Methods like teacher annealing can help the student model avoid performance degradation while still benefiting from shared representations [4].

Q4: Are there specific types of tasks that should never be trained together?

A4: There is no absolute rule, but the risk of negative transfer is high when tasks have no underlying relationship or have competing objectives. Naively grouping all available tasks into a single model often leads to worse overall performance than single-task models or smarter groupings [4] [14]. The key is to use the metrics mentioned above to identify and avoid grouping tasks with low affinity.

Troubleshooting Guides

Problem: Performance Drop in Multi-Task Learning (Negative Transfer)

Symptoms

- The multi-task model's performance on one or more tasks is significantly worse than that of a single-task model trained exclusively on that task.

- The model fails to converge on a specific task while performing well on others.

Diagnosis and Solutions

| Step | Diagnosis | Solution | Relevant Context / Metric |
|---|---|---|---|
| 1 | Confirm that negative transfer is occurring. | Compare the performance (e.g., AUROC, accuracy) of your MTL model against single-task learning (STL) baselines for each task. | A robustness score (percentage of tasks where MTL outperforms STL) below 50% indicates a problem [4]. |
| 2 | Check for task dissimilarity. | Use a task-relatedness metric (e.g., TAG, PVI, SEA) to assess the affinity between your tasks. Regroup tasks with high mutual affinity. | In drug-target interaction prediction, grouping targets by ligand-based similarity (SEA) improved mean AUROC from 0.690 to 0.719 [4]. |
| 3 | Analyze gradient conflicts. | Implement a gradient modulation strategy, such as adversarial training, to align the gradients of different tasks during the optimization process. | The GREAT method encourages gradients from different tasks to have statistically indistinguishable distributions [1]. |
| 4 | Address task imbalance. | Rebalance the loss function or adjust the data sampling strategy to ensure no single task dominates the training. | Use a dynamic temperature-based sampling strategy or weight losses inversely to dataset size [1]. |
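Step 4 mentions temperature-based sampling. A common form of it, sketched below as an assumption rather than as the exact scheme in [1], draws task \( i \) with probability proportional to \( n_i^{1/T} \), where \( n_i \) is the dataset size and \( T \) the temperature. A "dynamic" variant would change \( T \) over the course of training; the dataset sizes here are hypothetical.

```python
def sampling_probabilities(dataset_sizes, temperature=1.0):
    """Probability of drawing each task, proportional to size ** (1 / temperature)."""
    scaled = {task: size ** (1.0 / temperature) for task, size in dataset_sizes.items()}
    total = sum(scaled.values())
    return {task: value / total for task, value in scaled.items()}


# Hypothetical dataset sizes with a 100:1 imbalance.
sizes = {"target_a": 10000, "target_b": 100}

proportional = sampling_probabilities(sizes, temperature=1.0)  # follows dataset size
tempered = sampling_probabilities(sizes, temperature=2.0)      # flattens the imbalance

print(proportional)
print(tempered)
```

With \( T = 1 \) the small task is drawn about 1% of the time; with \( T = 2 \) it is drawn about 9% of the time, and larger temperatures approach uniform sampling.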

Problem: Selecting the Right Task Groupings

Symptoms

- You have a large pool of potential tasks but are unsure which subsets will work well together.

- An exhaustive search over all possible task combinations is computationally infeasible.

Diagnosis and Solutions

| Step | Diagnosis | Solution | Relevant Context / Metric |
|---|---|---|---|
| 1 | Quantify task difficulty. | Calculate the Pointwise V-Usable Information (PVI) for each task. This estimates how much usable information a dataset contains for a given model. | Tasks with statistically similar PVI distributions are considered to be of comparable difficulty and are good candidates for grouping [14]. |
| 2 | Measure inter-task affinity. | Apply the Task Affinity Groupings (TAG) method. For each task, measure how a gradient update for that task affects the loss of all other tasks. | TAG identifies pairs of tasks that have a beneficial (or harmful) relationship when trained together, avoiding random or naive grouping [1]. |
| 3 | Leverage domain knowledge. | In scientific fields, use domain-specific similarity metrics. For example, in drug discovery, use the Similarity Ensemble Approach (SEA) to group protein targets based on ligand similarity. | Clustering targets with SEA before MTL led to higher performance and reduced negative transfer [4]. |

Experimental Protocols

Protocol 1: Quantifying Task Relatedness with Task Affinity Groupings (TAG)

Objective: To systematically measure the affinity between a set of tasks to determine the optimal groupings for Multi-Task Learning.

Methodology:

- Model Setup: Use a single neural network with a shared backbone and task-specific heads.

- Affinity Measurement:
  a. For a given task \( A \), perform a single gradient descent step on a mini-batch from \( A \).
  b. Immediately evaluate the model's loss on all other tasks \( B, C, D, \ldots \) using their respective validation sets.
  c. Undo the gradient update for task \( A \) (return to the previous parameters).
  d. Repeat steps a-c for every other task.

- Analysis: The change in loss for task \( B \) when the model is updated with data from task \( A \) indicates their affinity. A significant reduction in \( B \)'s loss suggests high, positive affinity [1].
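The lookahead procedure can be sketched on a toy problem. The sketch makes several assumptions that are not part of the protocol: each task has an analytic quadratic loss around a hypothetical "center" instead of a neural network evaluated on a validation set, and affinity is scored as one minus the ratio of the loss after the step to the loss before it, so positive values mean the step helped.

```python
def loss(theta, center):
    """Toy task loss: half the squared distance from the task's optimum."""
    return 0.5 * sum((p - c) ** 2 for p, c in zip(theta, center))


def grad(theta, center):
    return [p - c for p, c in zip(theta, center)]


def affinity_matrix(theta, centers, lr=0.1):
    """Affinity of each source task onto each other task after one lookahead step."""
    base = {task: loss(theta, center) for task, center in centers.items()}
    affinities = {}
    for src, c_src in centers.items():
        # Step a: one gradient step on the source task, applied to a copy of theta.
        lookahead = [p - lr * g for p, g in zip(theta, grad(theta, c_src))]
        # Step b: evaluate every other task at the lookahead parameters.
        for dst, c_dst in centers.items():
            if dst != src:
                affinities[(src, dst)] = 1.0 - loss(lookahead, c_dst) / base[dst]
        # Step c is implicit: theta itself was never modified.
    return affinities


theta = [0.0, 0.0]  # shared parameters
centers = {"A": [1.0, 0.0], "B": [1.0, 0.2], "C": [-1.0, 0.0]}  # hypothetical task optima

affinities = affinity_matrix(theta, centers)
for pair, value in sorted(affinities.items()):
    print(pair, round(value, 3))
```

In this toy setup a step on task A lowers B's loss (positive affinity) and raises C's loss (negative affinity), which suggests grouping A with B and keeping C separate.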

Protocol 2: Multi-Task Learning with Knowledge Distillation via Teacher Annealing

Objective: To gain the benefits of MTL (e.g., data efficiency) while avoiding the performance degradation of negative transfer.

Methodology:

- Teacher Training: First, train high-performing single-task models (the "teachers") for each task individually.

- Student Training: Train a multi-task model (the "student") on all tasks simultaneously. The student's total loss is a combination of:
  a. The standard cross-entropy loss with the true labels.
  b. A distillation loss that minimizes the Kullback–Leibler (KL) divergence between the student's predictions and the teachers' predictions.

- Teacher Annealing: During training, gradually reduce the weight of the distillation loss from the teachers and increase the weight of the true label loss. This allows the student to first learn from the teachers' knowledge before relying more on the true data. This method has been reported to achieve higher average performance than both single-task learning and classic MTL [4].
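The annealed objective can be sketched for a single binary prediction. The linear schedule, the function names, and the example probabilities are assumptions made for illustration; the protocol above only requires that the weight shifts gradually from the distillation term to the true-label term.

```python
import math


def _clamp(p, eps=1e-7):
    return min(max(p, eps), 1.0 - eps)


def cross_entropy(label, student_p):
    """Binary cross-entropy between the true label (0 or 1) and the student."""
    p = _clamp(student_p)
    return -(label * math.log(p) + (1 - label) * math.log(1.0 - p))


def kl_divergence(teacher_p, student_p):
    """KL divergence from the teacher's Bernoulli prediction to the student's."""
    t, s = _clamp(teacher_p), _clamp(student_p)
    return t * math.log(t / s) + (1.0 - t) * math.log((1.0 - t) / (1.0 - s))


def annealed_loss(label, teacher_p, student_p, step, total_steps):
    """Blend distillation and true-label losses; label weight grows linearly 0 -> 1."""
    lam = step / total_steps
    return lam * cross_entropy(label, student_p) + (1.0 - lam) * kl_divergence(teacher_p, student_p)


# Hypothetical single example: true label 1, teacher predicts 0.8, student predicts 0.6.
for step in (0, 50, 100):
    print(step, round(annealed_loss(1, 0.8, 0.6, step, total_steps=100), 4))
```

At step 0 the loss is pure distillation, and at the final step it is the ordinary cross-entropy with the true label.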

Signaling Pathways & Workflows

Task Grouping Methodology

Negative Transfer and Gradient Conflict

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational and methodological "reagents" for designing robust multi-task learning experiments.

| Item | Function & Explanation | Relevant Context |
|---|---|---|
| Task Affinity Grouping (TAG) | A method to measure how training on one task affects the performance of others. It helps identify which task pairs will benefit from joint training before full-scale MTL. | Identifies beneficial task groupings and avoids negative transfer [1]. |
| Pointwise V-Usable Information (PVI) | A metric to estimate the difficulty of a dataset/task for a given model. Grouping tasks with similar PVI values (similar difficulty) can promote successful joint learning. | Provides a proxy for task relatedness based on task difficulty [14]. |
| Gradient Adversarial Training (GREAT) | An optimization technique that adds an adversarial loss term to encourage gradients from different tasks to have similar distributions, thereby reducing gradient conflict. | Mitigates negative transfer caused by conflicting gradients [1]. |
| Knowledge Distillation with Teacher Annealing | A training strategy where a multi-task "student" model is guided by predictions from single-task "teacher" models. The guidance is gradually reduced (annealed) over time. | Improves average MTL performance and prevents degradation on individual tasks [4]. |
| Similarity Ensemble Approach (SEA) | A domain-specific method (cheminformatics) to compute the similarity between protein targets based on the chemical structure of their known active ligands. | Used to cluster biologically similar targets for effective MTL in drug discovery [4]. |

In the quest to accelerate drug discovery, multi-task learning (MTL) models that simultaneously predict drug-target affinity (DTA) and generate novel drug molecules represent a paradigm shift. However, the joint optimization of these interrelated but distinct tasks is fraught with a fundamental challenge: task conflicts. From an optimization perspective, these conflicts manifest as gradient conflict, where the direction and magnitude of gradients from different tasks differ significantly. This results in the average update benefiting one task at the expense of another, a phenomenon known as negative transfer [16] [17]. In real-world applications, this means a multi-task model might become proficient at predicting binding affinity but fail to generate chemically viable, target-aware molecules, or vice-versa, ultimately limiting its utility in a drug development pipeline. This technical guide diagnoses the specific issues arising from these conflicts and provides actionable troubleshooting protocols for researchers.

Frequently Asked Questions (FAQs) and Troubleshooting Guides

FAQ: What are the primary symptoms of task conflict in my DTA and molecule generation model?

Answer: You can identify task conflicts through several clear, measurable symptoms in your model's output and training behavior:

- Performance Disparity: One task (e.g., DTA prediction) shows strong performance, while the other (e.g., molecule generation) performs poorly, worse than if it were trained independently. This is a classic sign of one task dominating the optimization [1].

- Unstable Training Loss: The loss for one or more tasks fluctuates wildly or fails to converge smoothly, indicating that gradient updates are interfering with each other [17].

- Poor Quality of Generated Molecules: Generated molecules may lack chemical validity, novelty, or fail to exhibit desired binding properties to the target, even when the DTA prediction head is accurate. This suggests the shared feature representation is biased towards the predictive task [7].

- Low Gradient Cosine Similarity: If you analyze the gradients of the two tasks with respect to the shared parameters, you will find a low or negative cosine similarity, directly quantifying the conflict [16] [17].

FAQ: My model suffers from performance disparity. How can I balance the learning of both tasks?

Problem: The DTA prediction task is highly accurate, but the generated molecules are invalid or non-novel.

Solution: This is often due to unbalanced losses or datasets. Implement a dynamic loss weighting or task scheduling strategy.

Troubleshooting Steps:

- Audit Your Data: Check the relative sizes and data distributions of your training sets for both tasks. A significant imbalance can cause the model to focus on the task with more data [1].

- Implement Dynamic Loss Weighting: Instead of using a simple average of losses, use methods that adaptively weight each task's loss based on its learning progress. For example, weight the loss inversely proportional to the training set size or based on the rate of loss improvement [1].

- Apply Task Scheduling: Rather than training on all tasks at every step, intelligently sample which task to train on. One effective strategy is to schedule tasks with a probability based on their relative performance to a target level, giving more attention to the lagging task [1].

Experimental Protocol:

- Baseline: Train your model with a simple average of the DTA prediction loss (e.g., Mean Squared Error) and the molecule generation loss (e.g., a reconstruction loss like cross-entropy for SMILES strings).

- Intervention: Implement a GradNorm or similar algorithm to dynamically adjust the weights of the two losses during training.

- Evaluation: Monitor the Validity, Novelty, and Uniqueness of generated molecules [7] alongside DTA metrics like CI (Concordance Index) and MSE (Mean Squared Error) [7]. Successful mitigation should see an improvement in the generative metrics without a significant drop in predictive performance.
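For the predictive side of this evaluation, CI and MSE can be computed as sketched below. The CI follows its usual pairwise definition: among all pairs with different true affinities, the fraction whose predictions are ordered the same way, with tied predictions counted as half. The affinity values are hypothetical, and the quadratic loop is meant for small evaluation sets only.

```python
def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs whose predicted order matches the true order."""
    concordant = 0.0
    comparable = 0
    for i in range(len(y_true)):
        for j in range(i + 1, len(y_true)):
            if y_true[i] == y_true[j]:
                continue  # pairs with equal true affinity are not comparable
            comparable += 1
            true_diff = y_true[i] - y_true[j]
            pred_diff = y_pred[i] - y_pred[j]
            if pred_diff == 0:
                concordant += 0.5  # tied predictions count as half
            elif (true_diff > 0) == (pred_diff > 0):
                concordant += 1.0
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


def mean_squared_error(y_true, y_pred):
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical true and predicted binding affinities.
y_true = [5.0, 6.2, 7.1, 8.4]
y_pred = [5.3, 6.0, 7.5, 7.2]

ci = concordance_index(y_true, y_pred)
mse = mean_squared_error(y_true, y_pred)
print(f"CI = {ci:.3f}, MSE = {mse:.3f}")
```

Five of the six comparable pairs are ordered correctly in this example, so the CI is 5/6.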

FAQ: How can I directly address conflicting gradients during training?

Problem: Analysis shows the gradients of my two tasks have a negative cosine similarity, leading to unstable and sub-optimal training.

Solution: Employ gradient modulation algorithms that directly manipulate the task gradients to make them more compatible.

Troubleshooting Steps:

- Gradient Analysis: First, instrument your training loop to compute and log the gradients for the shared parameters from both tasks at regular intervals. Calculate the cosine similarity between them to confirm the conflict [17].

- Apply a Gradient Manipulation Method: Integrate one of the following algorithms into your optimizer step:

- PCGrad: Projects the gradient of one task onto the normal plane of the gradient of another task if a conflict is detected, effectively removing the conflicting component [17].

- CAGrad: Optimizes the worst-case performance improvement across tasks, leading to a more balanced update [17].

- FetterGrad: A recently developed algorithm that explicitly minimizes the Euclidean distance between task gradients to keep them aligned [7].

- Consider Sparse Training (ST): A novel approach that updates only a sparse subset of the model's parameters during training. This reduces the dimensionality of the optimization problem and naturally limits the potential for interference between tasks. ST can be combined with the gradient methods above for enhanced performance [17].
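To make the PCGrad projection concrete, the sketch below applies it to two task gradients represented as plain Python lists. It covers the two-task case only. The function names are illustrative, and a real implementation would operate on the flattened gradients of the shared parameters inside the training loop.

```python
def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def pcgrad_two_tasks(g1, g2):
    """Combine two task gradients with a PCGrad-style projection.

    If the gradients conflict (negative dot product), each one is projected
    onto the normal plane of the other before the two are summed.
    """
    def project(g, other):
        d = dot(g, other)
        if d >= 0:
            return list(g)  # no conflict: leave the gradient unchanged
        scale = d / dot(other, other)
        return [x - scale * y for x, y in zip(g, other)]

    # Each gradient is projected against the ORIGINAL other gradient.
    p1 = project(g1, g2)
    p2 = project(g2, g1)
    return [a + b for a, b in zip(p1, p2)]

# Toy conflicting gradients over three shared parameters.
g_dta = [1.0, 0.0, 0.0]
g_gen = [-1.0, 1.0, 0.0]
update = pcgrad_two_tasks(g_dta, g_gen)
```

In this toy case the combined update has a non-negative dot product with both original gradients, so to first order it does not increase either task's loss.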

Experimental Protocol:

- Baseline: Standard training with SGD or Adam optimizer.

- Intervention: Implement PCGrad or FetterGrad on top of your base optimizer. For FetterGrad, the loss function includes a term to minimize the Euclidean distance between the task gradients [7].

- Evaluation: Track the average gradient cosine similarity throughout training. A successful method should raise this value, with fewer negative readings over time; it does not need to reach 1. Also monitor the final MSE on the DTA task and the Uniqueness of generated molecules to confirm overall improvement.

The following diagram illustrates the logical workflow for diagnosing and mitigating gradient conflicts.

Quantitative Impact of Task Conflicts and Mitigation Strategies

The table below summarizes the performance degradation caused by task conflicts and the improvements achievable with effective mitigation strategies, as demonstrated on benchmark datasets.

Table 1: Performance Impact of Task Conflicts and Mitigation on Benchmark Datasets

| Dataset | Model / Scenario | DTA Prediction (MSE ↓) | DTA Prediction (CI ↑) | Molecule Generation (Uniqueness ↑) | Key Metric for Conflict |
|---|---|---|---|---|---|
| KIBA | Single-Task Baselines [7] | ~0.150 | ~0.890 | N/A | Baseline Performance |
| KIBA | Multi-Task with Conflict [7] | 0.146 | 0.897 | Low (e.g., < 50%) | Low Gradient Similarity |
| KIBA | Multi-Task with Mitigation (e.g., FetterGrad) [7] | 0.146 | 0.897 | > 70% | Improved Gradient Alignment |
| Davis | Single-Task Baselines [7] | ~0.220 | ~0.890 | N/A | Baseline Performance |
| Davis | Multi-Task with Conflict [7] | 0.214 | 0.890 | Low | Unstable Training Loss |
| Davis | Multi-Task with Mitigation (e.g., FetterGrad) [7] | 0.214 | 0.890 | High | Stable Joint Loss Convergence |
| BindingDB | Model with Gradient Conflict [17] | High | Low | Low | Negative Cosine Similarity |
| BindingDB | Model with Sparse Training [17] | Lower | Higher | Higher | Reduced Conflict Incidence |

Advanced Protocol: Mitigating Gradient Conflict via Sparse Training

For researchers dealing with severe conflicts, especially in larger models, Sparse Training (ST) offers a proactive solution.

- Objective: To reduce the occurrence of gradient conflicts by updating only a subset of the model's parameters, thereby limiting the dimensions in which tasks can interfere.

Methodology:

- Parameter Selection: At the start of training, select a subset of the model's parameters (e.g., 50-80%) to be updated. This can be done based on magnitude (selecting the largest weights) or gradient-based criteria [17].

- Frozen Parameters: The remaining parameters are frozen (masked) and do not receive gradient updates.

- Joint Training: Train the model on both DTA prediction and molecule generation tasks simultaneously. The gradients from both tasks will only flow through and update the selected sparse subset of parameters.

- (Optional) Integration: Combine ST with a gradient manipulation method like PCGrad for a dual-pronged approach [17].

Expected Outcome: A significant reduction in the frequency and severity of gradient conflicts, leading to more stable training and improved performance on both tasks, particularly in later training stages [17].
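The methodology above can be sketched with a magnitude-based mask and a masked update. This is a toy illustration on a flat list of weights. The helper names, the plain SGD step, and the 50% keep fraction are assumptions for the example, not the exact procedure of [17].

```python
def magnitude_mask(weights, keep_fraction):
    """Return a 0/1 mask that keeps the largest-magnitude weights trainable."""
    k = max(1, round(keep_fraction * len(weights)))
    # Indices of the k largest |w|; ties are broken by position.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]), reverse=True)
    keep = set(order[:k])
    return [1.0 if i in keep else 0.0 for i in range(len(weights))]

def masked_sgd_step(weights, grad_dta, grad_gen, mask, lr=0.1):
    """One joint update in which frozen (masked-out) parameters do not move."""
    return [
        w - lr * m * (ga + gb)
        for w, ga, gb, m in zip(weights, grad_dta, grad_gen, mask)
    ]

weights = [0.9, -0.1, 0.5, 0.05]
mask = magnitude_mask(weights, keep_fraction=0.5)  # keeps indices 0 and 2
new_w = masked_sgd_step(
    weights,
    grad_dta=[1.0, 1.0, 1.0, 1.0],
    grad_gen=[-0.5, 2.0, 0.5, -3.0],
    mask=mask,
)
```

The two task gradients disagree in sign on the first and last parameters. Because the last one is frozen, that disagreement cannot affect the update.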

Table 2: Key Resources for Multi-Task Drug Discovery Experiments

| Resource Name | Type | Function in Experiment | Example/Reference |

|---|---|---|---|

| Benchmark Datasets | Data | Provides standardized data for training and fair comparison of models. | KIBA, Davis, BindingDB [7] [18] |

| ESM-2 Encoder | Software/Model | Encodes protein sequences into rich, contextualized feature representations for the DTA prediction task. | Used in DrugForm-DTA [18] |

| Chemformer | Software/Model | Encodes small molecule ligands (e.g., from SMILES strings) into feature representations for DTA input or generation tasks. | Used in DrugForm-DTA [18] |

| FetterGrad Algorithm | Software/Optimizer | An optimization algorithm designed to mitigate gradient conflicts in MTL by aligning task gradients. | Introduced in DeepDTAGen [7] |

| Sparse Training (ST) Framework | Software/Method | A training paradigm that updates only a subset of model parameters to proactively reduce gradient conflict. | [17] |

| Gradient Conflict Metrics | Analysis Tool | Quantifies the degree of conflict between tasks by calculating the cosine similarity of their gradients. | Essential for diagnosis [17] |

| Chemical Property Analyzers | Software | Validates generated molecules by calculating properties like Solubility, Drug-likeness, and Synthesizability. | Used for generative task evaluation [7] |

The following diagram maps the experimental workflow of a robust multi-task model, integrating the components and mitigation strategies discussed.

Advanced Architectures and Algorithms for Harmonizing Dissimilar Tasks

Frequently Asked Questions (FAQs)

1. What is a gradient conflict in multi-task deep learning? In multi-task deep learning (MTDL), a gradient conflict occurs when the gradients of different task-specific loss functions point in opposing directions or have significantly different magnitudes. This interference hinders the model's ability to converge effectively for all tasks simultaneously. Conflicts primarily arise from two sources [19]:

- Angle-Based Conflict: When the cosine similarity between two task gradients is negative (i.e., the angle between them is greater than 90°), their directions oppose each other. The vector sum of these gradients can result in a small net update, drastically slowing down convergence.

- Magnitude-Based Conflict: When one task's gradient has a much larger magnitude than others, it can dominate the aggregated update. This causes the model to prioritize learning one task at the expense of others, leading to biased and sub-optimal performance.

2. What are the common symptoms of gradient conflict in my experiments? Your model may be experiencing gradient conflict if you observe the following issues during training [19]:

- Unstable or Oscillating Loss: The loss values for one or more tasks fluctuate wildly without settling into a minimum.

- Biased or Degraded Performance: The model performs well on one or a subset of tasks but shows significantly worse performance on other tasks compared to training them individually.

- Slow Convergence: The overall training process takes much longer to converge than expected, or fails to converge altogether.

3. How can I detect and measure gradient conflicts? You can use the following methods to diagnose gradient conflicts [19]:

- Cosine Similarity: Compute the cosine similarity between the flattened gradient vectors of different tasks. A value close to -1 indicates a strong directional conflict.

cos(φ) = (g_i · g_j) / (||g_i|| * ||g_j||)

- Gradient Magnitude Ratio: Track the ratio of the L2 norms of the gradients for different tasks. A very high or low ratio suggests a magnitude-based conflict where one gradient is dominating.

- Visual Inspection: Plotting the loss curves for individual tasks can visually reveal if one task's loss is decreasing at the direct expense of another's.

4. What is FetterGrad and how does it resolve gradient conflicts? FetterGrad is an optimization algorithm specifically designed to mitigate gradient conflicts in multitask learning frameworks like DeepDTAGen. Its core mechanism involves aligning the gradients of different tasks during the backward pass [20] [21]. The algorithm explicitly works to minimize the Euclidean Distance (ED) between the task gradients, fostering a more cooperative and stable learning process in a shared feature space. This prevents one task from dominating and ensures that the shared model parameters are updated in a direction that is beneficial for all tasks.

5. Are there other effective techniques to manage gradient conflicts? Yes, alongside FetterGrad, several other strategies have been developed, which can be broadly categorized as follows [19]:

- Gradient Surgery Methods: Techniques like PCGrad and the newer SAM-GS (Similarity-Aware Momentum Gradient Surgery) explicitly modify conflicting gradients. PCGrad projects one task's gradient onto the normal plane of another if they conflict, while SAM-GS uses gradient similarity to adaptively modulate the optimization momentum [19].

- Loss Balancing Methods: Algorithms like Uncertainty Weighting or GradNorm dynamically adjust the weights of the individual task losses during training to balance their influence.

- Adaptive Intervention: Frameworks like AIM learn a dynamic policy during training to mediate gradient conflicts, prioritizing progress on the most challenging tasks [22].

Troubleshooting Guide: Diagnosing and Resolving Gradient Conflicts

This guide provides a step-by-step protocol to identify and address gradient conflict issues in your multi-task learning experiments.

Problem: Model performance is unstable, and one task is learning at the expense of others.

Required Monitoring: Access to task-specific loss functions and their gradients during the training process.

Step 1: Confirm the Symptoms

Monitor your training logs for the following signs [19]:

- The aggregate multi-task loss is decreasing, but the loss for one or more specific tasks is stagnating or increasing.

- High variance or oscillations in the loss curves of individual tasks.

- Final performance on a task is significantly worse than when the same model is trained on that task alone.

Step 2: Quantify the Conflict

During a training iteration, calculate the following metrics for each pair of tasks [19]:

- Extract Gradients: For a shared set of model parameters, collect the gradient vectors g_i and g_j for tasks i and j.

- Compute Cosine Similarity: Use the formula cos(φ_ij) = (g_i · g_j) / (||g_i|| * ||g_j||).

- Calculate Magnitude Ratio: Compute ratio = ||g_i|| / ||g_j||.

A consistently negative cosine similarity and/or an extreme magnitude ratio (e.g., >10:1 or <1:10) confirms a gradient conflict.
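The two diagnostics from Step 2 can be computed together. The sketch below works on flattened gradient vectors given as plain lists. The function name and the default `ratio_limit=10.0` (mirroring the 10:1 example above) are illustrative choices.

```python
import math

def conflict_metrics(g_i, g_j, ratio_limit=10.0):
    """Return cosine similarity, magnitude ratio, and conflict flags for two gradients."""
    dot = sum(a * b for a, b in zip(g_i, g_j))
    norm_i = math.sqrt(sum(a * a for a in g_i))
    norm_j = math.sqrt(sum(b * b for b in g_j))
    if norm_i == 0.0 or norm_j == 0.0:
        raise ValueError("cosine similarity is undefined for a zero gradient")
    cosine = dot / (norm_i * norm_j)
    ratio = norm_i / norm_j
    return {
        "cosine": cosine,
        "ratio": ratio,
        "angle_conflict": cosine < 0.0,
        "magnitude_conflict": ratio > ratio_limit or ratio < 1.0 / ratio_limit,
    }

# Toy gradients: opposite directions, and the second is 20x larger.
report = conflict_metrics([3.0, 4.0], [-60.0, -80.0])
```

Logging these values every few hundred steps, rather than once, shows whether a conflict is persistent or only transient.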

Step 3: Implement a Mitigation Strategy

Based on your diagnosis from Step 2, choose and implement one of the following solutions:

- If conflicts are frequent and severe: Integrate a gradient surgery method. Below is a comparative table of different techniques.

| Technique | Core Mechanism | Best For | Key Hyperparameters |

|---|---|---|---|

| FetterGrad [20] [21] | Minimizes Euclidean distance between task gradients to align them. | Multitask frameworks with a shared feature space (e.g., drug affinity prediction & generation). | Gradient alignment loss weight. |

| SAM-GS [19] | Uses gradient similarity to adaptively modulate momentum; applies conservative learning for dissimilar gradients. | General MTDL benchmarks; scenarios requiring stable and efficient learning dynamics. | Momentum decay factors, similarity thresholds. |

| AIM [22] | Learns a dynamic policy to mediate conflicts, guided by dense, differentiable regularizers. | Data-scarce regimes (e.g., multi-property molecular design); scenarios requiring interpretability. | Policy network architecture, regularizer weights. |

The following diagram illustrates the high-level logical workflow for applying these gradient surgery techniques.

Diagram 1: A generic workflow for integrating gradient surgery into multi-task learning.

- If one task consistently dominates: Employ a loss balancing method. Techniques like Uncertainty Weighting can automatically tune the loss weights based on the task's inherent uncertainty.

- If the model architecture allows: Consider task-specific modules or adversarial training to help disentangle feature representations for different tasks.

Step 4: Evaluate the Solution

After implementing a mitigation strategy, re-run your experiment and monitor the same metrics from Step 1 and Step 2.

- Success Criteria:

- Loss curves for all tasks show a stable, converging trend.

- The final performance on all tasks meets or exceeds the performance achieved with single-task training or a naive multi-task baseline.

- Measured gradient conflicts (cosine similarity, magnitude ratio) are reduced.

Experimental Protocol: Implementing FetterGrad

This protocol provides a detailed methodology for integrating the FetterGrad algorithm into a multi-task learning setup, based on its use in the DeepDTAGen framework [20] [21].

Objective: To align task gradients and mitigate conflicts during training, thereby improving convergence and performance across all tasks.

Materials/Reagents (Software):

- Framework: PyTorch or TensorFlow.

- Model: A multi-task model with a shared encoder and task-specific heads (e.g., DeepDTAGen).

- Dataset: A suitable multi-task dataset (e.g., KIBA, Davis, or BindingDB for drug discovery).

- Optimizer: A base optimizer (e.g., Adam, SGD).

Procedure:

- Model Forward Pass: Perform a standard forward pass through the network using a mini-batch of data, computing the loss for each task (ℒ_i).

- Gradient Computation: Calculate the gradients of the total loss with respect to the model's shared parameters. Alternatively, calculate and store the gradients for each task loss individually.

- Apply FetterGrad: Before the optimizer step, modify the gradients using the FetterGrad procedure. The core step involves computing a regularization term that minimizes the Euclidean distance between the task gradients. The exact implementation involves [20] [21]:

- Accessing the gradients for the affinity prediction task (g_DTA) and the drug generation task (g_Gen).

- Computing a gradient alignment loss, such as the Euclidean Distance: ℒ_align = ||g_DTA - g_Gen||².

- Using this alignment loss to adjust the raw gradients to be more congruent.

- Parameter Update: Update the model's shared parameters using the base optimizer (e.g., Adam) and the "fettered" (aligned) gradients.
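The alignment term in the procedure can be illustrated numerically. The sketch below only computes ℒ_align for two toy gradient vectors and checks a standard identity. It does not reproduce how FetterGrad turns this quantity into adjusted gradients, which is described in [20] [21]; the function names are illustrative.

```python
def alignment_loss(g_dta, g_gen):
    """Squared Euclidean distance between two flattened gradient vectors."""
    return sum((a - b) ** 2 for a, b in zip(g_dta, g_gen))

def squared_norm(g):
    """Squared L2 norm of a vector."""
    return sum(x * x for x in g)

g_dta = [1.0, 2.0, -1.0]
g_gen = [0.5, -1.0, 1.0]
l_align = alignment_loss(g_dta, g_gen)

# Identity: ||a - b||^2 = ||a||^2 + ||b||^2 - 2 a.b, so for fixed norms a
# smaller distance means a larger dot product (better-aligned gradients).
dot = sum(a * b for a, b in zip(g_dta, g_gen))
expanded = squared_norm(g_dta) + squared_norm(g_gen) - 2.0 * dot
```

The identity is why a distance-based penalty relates to the cosine-similarity diagnostics: when the gradient norms are held fixed, reducing the distance is the same as increasing the dot product.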

The workflow of the DeepDTAGen framework, which incorporates FetterGrad, is visualized below.

Diagram 2: The DeepDTAGen framework integrating FetterGrad for gradient alignment.

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key computational "reagents" and their functions for building and optimizing multi-task learning models in drug discovery.

| Research Reagent | Function in the Experiment |

|---|---|

| DeepDTAGen Framework [20] [21] | A multitask deep learning model that serves as the core architecture for simultaneously predicting Drug-Target Affinity (DTA) and generating novel, target-aware drug molecules. |

| FetterGrad Algorithm [20] [21] | An optimization algorithm that acts as a gradient "conflict mediator" by aligning the gradients from different tasks, enabling stable training of the shared model. |

| Benchmark Datasets (KIBA, Davis) [21] | Standardized datasets containing drug molecules, target proteins, and their binding affinity values. They are used for training and evaluating predictive performance. |

| Gradient Surgery Libraries (e.g., PCGrad, SAM-GS) [19] | Code implementations of various gradient manipulation techniques that can be integrated into existing training loops to resolve gradient conflicts. |

| Differentiable Optimizers (e.g., AIM) [22] | Optimization frameworks that learn to dynamically adjust the training process (e.g., via a policy network) to handle inter-task relationships and conflicts, especially in data-scarce regimes. |

FAQ: Core Concepts and Troubleshooting

Q1: What are the fundamental differences between hard and soft parameter sharing?

A: Hard and soft parameter sharing represent two primary architectural approaches for sharing knowledge between tasks in Multi-Task Learning (MTL).

Hard Parameter Sharing: This is the most common MTL approach [23] [24]. It involves sharing the exact same parameters (weights) in the early (hidden) layers between all tasks. Each task then has its own specific output layers (heads) for final predictions [25] [26]. The shared layers learn a common representation that is useful for all tasks, acting as a strong regularizer that reduces the risk of overfitting [23] [24].

Soft Parameter Sharing: In this approach, each task has its own model with its own separate parameters. Instead of sharing identical weights, soft sharing encourages the parameters of the different models to be similar through regularization constraints added to the loss function [23] [24]. For example, the L2 norm can be used to penalize the distance between the parameters of different models [23]. This allows for more flexibility, as tasks are not forced to use the exact same representation.

Q2: My model performance degrades when I add a new task with hard parameter sharing. What could be the cause?

A: This is a classic sign of negative transfer, which occurs when tasks are too dissimilar or even in conflict [27] [25]. Forcing incompatible tasks to share the same parameters in the early layers can be detrimental, as the optimal feature representation for one task may hurt the performance of another.

- Troubleshooting Steps:

- Analyze Task Relatedness: Re-evaluate the similarity of your tasks. Tasks that benefit from shared low-level features (e.g., edge detection in images for both object detection and semantic segmentation) are good candidates for hard sharing. Tasks with competing requirements are not [25].

- Switch to Soft Sharing: Consider implementing a soft parameter sharing scheme. This gives each task its own model but encourages parameter similarity, offering a balance between task-specific learning and knowledge transfer [23] [25].

- Implement a Hybrid Architecture: You can explore architectures that share some layers but keep others task-specific, providing more granular control over what is shared [26].

- Use Task Grouping: For a large number of tasks, employ a principled task grouping method to cluster similar tasks together before applying MTL, maximizing beneficial transfer while minimizing harmful interactions [27].

Q3: How do I balance the losses of different tasks during training?

A: Balancing losses is critical because tasks may have different scales, noise levels, or learning dynamics. An unbalanced loss can cause the model to focus on one task at the expense of others.

- Simple Approach: Use a weighted sum of the individual task losses, Total Loss = ∑ (w_i * Loss_i), where w_i is a manually set weight based on task importance or loss scale [26].

- Advanced Automatic Method: Implement uncertainty weighting [28] [26]. This method learns the optimal weights simultaneously with the model parameters by treating the uncertainty of each task as a learnable parameter. This automatically down-weights noisy tasks and balances the contribution of all tasks to the total loss. The loss function takes the form:

Total Loss = ∑ (1/(2*σ_i²) * Loss_i + log(σ_i)), where σ_i is the learnable uncertainty parameter for task i.
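The uncertainty-weighted loss can be evaluated as in the following sketch. It parameterizes each task by s_i = log(σ_i), a common choice that keeps σ_i positive during optimization. That parameterization and the function name are assumptions for the example, and in practice s_i would be a trainable tensor rather than a float.

```python
import math

def uncertainty_weighted_loss(losses, log_sigmas):
    """Total loss = sum_i [ Loss_i / (2 * sigma_i^2) + log(sigma_i) ].

    log_sigmas holds s_i = log(sigma_i), so 1 / (2 * sigma_i^2) = exp(-2 * s_i) / 2.
    """
    total = 0.0
    for loss, s in zip(losses, log_sigmas):
        total += loss * math.exp(-2.0 * s) / 2.0 + s
    return total

# With sigma_i = 1 (s_i = 0) every task gets weight 1/2 and no penalty term.
equal = uncertainty_weighted_loss([0.8, 2.0], [0.0, 0.0])
# Raising sigma for the second (noisier) task shrinks its weight to 1/8.
down_weighted = uncertainty_weighted_loss([0.8, 2.0], [0.0, math.log(2.0)])
```

The log(σ_i) term is what stops the optimizer from driving every σ_i to infinity to zero out the losses.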

Q4: Soft parameter sharing is computationally expensive and slow to train. How can I improve this?

A: The overhead comes from maintaining and regularizing multiple sets of parameters.

- Optimization Strategies:

- Efficient Regularization: Instead of applying regularization to all parameters, focus on regularizing only the most critical layers (e.g., earlier layers that capture general features).

- Adopt a TAPS-like Method: Use methods like Task Adaptive Parameter Sharing (TAPS), which tunes a base model to a new task by adaptively modifying a small, task-specific subset of layers. This minimizes both resource usage and competition between tasks [29].

- Leverage Routing Networks: Explore novel routing methods optimized for MTL, which learn to control the amount of weight sharing between pairs of tasks, flexibly adapting to their relatedness [30].

Implementation of Hard and Soft Sharing

The following table summarizes the key experimental setups for both hard and soft parameter sharing, based on common implementations in the literature [23] [26].

Table 1: Experimental Setup for Hard and Soft Parameter Sharing

| Component | Hard Parameter Sharing Protocol | Soft Parameter Sharing Protocol |
|---|---|---|
| Architecture | Single shared backbone network (e.g., CNN or ResNet) with multiple task-specific output heads (fully connected layers). | Separate, independent models for each task. |
| Parameter Sharing | Shared weights in early/backbone layers are identical for all tasks. | No explicitly shared weights; each model has its own parameters. |
| Loss Function | L_total = L_task1 + L_task2 + ... + L_taskN | L_total = L_task1 + L_task2 + λ * R(W_task1, W_task2), where R is a regularization term (e.g., L2 distance). |
| Key Hyperparameter | Depth/number of shared layers; architecture of task-specific heads. | Regularization strength (λ) and type of regularizer. |
| Primary Advantage | Strong implicit regularization, lower risk of overfitting, computationally efficient [23] [24]. | High flexibility; can handle less related tasks and varying data distributions [25]. |

Sample Code Snippet (Loss Function for Soft Sharing with L2 Regularization):
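A minimal sketch of such a loss, written in plain Python so it runs without a deep learning framework. It assumes two task models with matching layer shapes, represents each model as a list of flattened layers, and uses the squared L2 distance as the regularizer R. The function names and the value of λ (`lam`) are illustrative.

```python
def l2_distance_sq(params_a, params_b):
    """Squared L2 distance between two models' parameters, layer by layer."""
    return sum(
        (a - b) ** 2
        for layer_a, layer_b in zip(params_a, params_b)
        for a, b in zip(layer_a, layer_b)
    )

def soft_sharing_loss(loss_task1, loss_task2, params_task1, params_task2, lam=0.01):
    """L_total = L_task1 + L_task2 + lam * R(W_task1, W_task2)."""
    return loss_task1 + loss_task2 + lam * l2_distance_sq(params_task1, params_task2)

# Two tiny "models", each a list of flattened layers (hypothetical values).
w1 = [[0.5, -0.2], [1.0]]
w2 = [[0.4, 0.0], [0.5]]
total = soft_sharing_loss(0.30, 0.45, w1, w2, lam=0.1)
```

In a framework such as PyTorch the same sum would be taken over paired parameter tensors so that the penalty contributes gradients to both models. Restricting the penalty to selected layers is a straightforward variation.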

Quantitative Performance Comparison

The effectiveness of a parameter sharing strategy is highly context-dependent. The following table illustrates potential outcomes in different scenarios.

Table 2: Comparative Performance in Different Scenarios

| Scenario Description | Expected Outcome (Hard vs. Soft) | Key Takeaway |

|---|---|---|

| Highly related tasks (e.g., Human Parsing & Pose Estimation [28]) | Hard Sharing often outperforms or matches Soft Sharing, with higher computational efficiency. | Prefer Hard Sharing for closely related tasks to benefit from its regularization effect and efficiency. |

| Tasks with conflicting demands (e.g., Translation vs. Summarization [25]) | Soft Sharing significantly outperforms Hard Sharing, which can cause negative transfer. | Prefer Soft Sharing when tasks are dissimilar or have competing objectives. |

| Scarce data for one task (e.g., modeling taxi demand for a new vendor [23]) | MTL (Hard Sharing) dramatically outperforms Single-Task Learning (STL) on the data-poor task. | Use Hard Sharing as a powerful tool for data augmentation across tasks, overcoming data scarcity. |

Architectures and Workflows

Hard Parameter Sharing Workflow

The following diagram illustrates the data flow and architecture of a standard hard parameter sharing model.

Soft Parameter Sharing Workflow

The following diagram illustrates the interaction between separate task-specific models in a soft parameter sharing setup, coordinated via a regularization term.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for Multi-Task Learning Experiments

| Research Reagent | Function & Explanation | Example Use Case |

|---|---|---|

| Base Model Architectures (ResNet, ViT, BERT) | A pre-trained, powerful feature extractor that serves as the foundation for building MTL models. | Used as the shared backbone in hard sharing or as the starting point for each task-specific model in soft sharing/TAPS [29]. |

| Uncertainty Weighting Module | A learnable parameter (σ) that automatically balances the contribution of different task losses to the total gradient. | Crucial for stabilizing training when tasks have different loss scales or noise levels, preventing one task from dominating [28] [26]. |

| L2 / Frobenius Norm Regularizer | A penalty term added to the loss function to minimize the squared distance between the parameters of different models. | The core component for enforcing parameter similarity in soft parameter sharing implementations [23] [24]. |

| Task Adaptive Parameter Sharing (TAPS) | A method that adapts a base model to a new task by modifying only a small, sparse set of layers, solving a joint optimization problem. | Efficiently learns multiple downstream tasks with minimal parameter overhead and reduced inter-task competition [29]. |

| Cross-Task Representation Consistency (CRC) Module | A knowledge distillation technique that transfers knowledge from single-task models to corresponding tasks in an MTL network. | Enhances communication between tasks (e.g., human parsing and pose estimation) during training without adding inference-time costs [28]. |

Frequently Asked Questions

Q1: What is Instance-Based Multi-Task Learning (IBMTL) and how does it differ from other MTL approaches? Instance-Based MTL is a variant of feature-based MTL that incorporates evolutionary relatedness metrics between proteins to guide the learning process. Unlike Single-Task Learning (STL), which trains a separate model for each task, or standard Feature-Based MTL (FBMTL) which applies learning across all proteins within a group without discrimination, IBMTL specifically leverages the evolutionary relationships between proteins. This allows the model to more effectively share information between closely related tasks, which is particularly beneficial when bioactivity data for natural products is limited [31] [32].

Q2: Under what conditions does IBMTL provide the most significant performance improvements? IBMTL demonstrates the most significant improvements when applied to protein groups with well-defined evolutionary hierarchies and when tasks share meaningful biological relationships. Research has shown particularly strong performance gains in the kinase and cytochrome P450 protein groups, as these proteins are classified at more specific levels of ChEMBL's 6-level hierarchical protein classification system. The method effectively balances the trade-off between evolutionary relatedness and dataset size [31].

Q3: How do I select the appropriate evolutionary relatedness metric and classification level for my protein targets? The optimal classification level depends on your specific dataset and protein families. For kinase targets, research indicates that IBMTL performs best at the target parent level, which provides an optimal balance between biological relevance and sufficient data aggregation. We recommend experimenting across different levels of ChEMBL's protein classification hierarchy while monitoring validation performance to identify the optimal granularity for your specific application [31].

Q4: What should I do when my multi-task model shows performance degradation on certain tasks? Performance degradation typically indicates significant task conflicts. We recommend implementing the following troubleshooting steps:

- Analyze the evolutionary distances between your target proteins - tasks with excessive dissimilarity may require separate modeling approaches.

- Verify that you're using the appropriate protein classification level in ChEMBL's hierarchy.

- Consider implementing Pareto multi-task optimization approaches that explicitly handle task conflicts by finding optimal trade-off solutions [16].

- Evaluate whether certain task combinations should be trained in separate model groups.

Q5: How can I quantify and visualize the performance advantages of IBMTL compared to other methods? The performance advantage of IBMTL can be quantified using standard classification metrics (AUC, accuracy, F1-score) compared against STL and FBMTL baselines. Create comparative tables showing performance differences across protein groups, with special attention to data-scarce scenarios where IBMTL typically shows the greatest advantage. The evolutionary relationships can be visualized using phylogenetic trees or protein classification hierarchies [31].

Troubleshooting Guides

Issue: Poor Cross-Task Knowledge Transfer

Symptoms

- Model shows improved performance on some tasks but degraded performance on others

- Validation metrics fluctuate unpredictably during training

- Model fails to outperform single-task baselines

Diagnosis and Resolution

- Analyze Task Relatedness: Calculate evolutionary distances between your target proteins using sequence alignment scores or phylogenetic analysis. Tasks with distances beyond threshold levels may require separate treatment.

- Adjust MTL Architecture:

- Optimize Protein Grouping: Restrict IBMTL to proteins sharing significant evolutionary relationships (e.g., same protein family or subfamily). Overly broad groupings can introduce negative transfer.

- Hyperparameter Tuning: Adjust the balance between task-specific and shared parameters in your network architecture. Increase task-specific components for more dissimilar tasks.

Issue: Handling Limited and Imbalanced Bioactivity Data

Symptoms

- Poor generalization despite good training performance

- High variance in cross-validation results

- Model bias toward well-represented protein classes

Resolution Strategies

- Data Augmentation: Leverage evolutionary relationships to create synthetic training examples for data-scarce tasks through similarity-based sampling.

- Transfer Learning: Pre-train on evolutionarily related proteins with abundant data before fine-tuning on specific targets.

- Weighted Loss Functions: Implement class-balanced loss functions that account for both within-task and across-task imbalances.

- Curriculum Learning: Schedule training to begin with evolutionarily close protein pairs before introducing more distant relationships.

Issue: Model Instability During Multi-Task Optimization

Symptoms

- Training loss shows high volatility

- Different random seeds yield significantly different results

- Difficulty in reproducing published performance

Stabilization Techniques

- Gradient Normalization: Apply gradient clipping or normalization to prevent any single task from dominating updates.

- Consistent Evaluation Protocol: Implement rigorous k-fold cross-validation with fixed splits for reliable performance assessment.

- Multi-Seed Validation: Report performance as mean ± standard deviation across multiple random seeds.

- Early Stopping Criteria: Use validation performance on all tasks, not just aggregate metrics, to determine stopping points.
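Two of the stabilization techniques above, multi-seed reporting and per-task early stopping, can be sketched with the standard library. The stopping rule shown (stop only when no task has improved within the last `patience` epochs) is one possible reading of "all tasks", stated here as an assumption. The task names and scores are hypothetical.

```python
import statistics

def summarize_seeds(scores_by_seed):
    """Return (mean, sample standard deviation) of a metric across seeds."""
    values = list(scores_by_seed.values())
    return statistics.mean(values), statistics.stdev(values)

def should_stop(history, patience):
    """Stop only when NO task has improved in the last `patience` epochs.

    history: dict task -> list of validation scores per epoch (higher is better).
    """
    for scores in history.values():
        # Earliest epoch at which this task reached its best score.
        best_epoch = max(range(len(scores)), key=scores.__getitem__)
        if len(scores) - 1 - best_epoch < patience:
            return False  # this task improved recently, keep training
    return True

mean_auc, std_auc = summarize_seeds({0: 0.83, 1: 0.82, 2: 0.84})
history = {
    "kinase": [0.70, 0.75, 0.74, 0.74],
    "cyp450": [0.80, 0.79, 0.81, 0.80],
}
stop_now = should_stop(history, patience=2)
```

In the toy history the second task improved one epoch ago, so training continues even though the first task has plateaued.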

Experimental Protocols & Methodologies

IBMTL Implementation Workflow

The following diagram illustrates the complete IBMTL experimental workflow:

Dataset Curation Protocol

Data Sources and Preprocessing

- Primary Source: ChEMBL database for bioactivity data

- Filtering: Binary classification filtering to create balanced datasets

- Validation: Temporal or structural splitting to prevent data leakage

- Curation: Manual verification of protein target annotations and natural product structures

Evolutionary Classification Steps

- Extract protein sequences for all targets

- Perform multiple sequence alignment using ClustalOmega or MAFFT

- Construct phylogenetic trees or distance matrices

- Map to ChEMBL's 6-level hierarchical protein classification system

- Calculate pairwise evolutionary distances
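
The final step above, computing pairwise distances from an alignment, can be illustrated with the simple p-distance (fraction of differing residues). This is a sketch under stated assumptions: the sequences are hypothetical and already aligned, gaps are skipped, and no substitution model is applied. Alignment tools and phylogenetics packages offer more principled distance estimates.

```python
def p_distance(seq_a, seq_b, gap="-"):
    """Fraction of mismatched residues over columns where neither sequence has a gap."""
    if len(seq_a) != len(seq_b):
        raise ValueError("aligned sequences must have equal length")
    compared = mismatches = 0
    for a, b in zip(seq_a, seq_b):
        if a == gap or b == gap:
            continue  # ignore gapped columns
        compared += 1
        mismatches += a != b
    if compared == 0:
        raise ValueError("no ungapped columns to compare")
    return mismatches / compared

def distance_matrix(aligned):
    """Return sorted target names and the symmetric pairwise distance matrix."""
    names = sorted(aligned)
    matrix = [[p_distance(aligned[i], aligned[j]) for j in names] for i in names]
    return names, matrix

# Hypothetical aligned fragments for three targets
aligned = {"T1": "MKV-LAG", "T2": "MKVQLAG", "T3": "MRV-IAG"}
names, dist = distance_matrix(aligned)
for name, row in zip(names, dist):
    print(name, [round(d, 3) for d in row])
```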

Model Architecture and Training Specifications

Network Configuration

- Base Architecture: Shared bottom layers with task-specific heads

- Feature Dimension: 256-512 units in shared hidden layers

- Activation: ReLU or SELU non-linearities

- Regularization: Dropout (0.2-0.5) and L2 regularization (1e-5 to 1e-4)

- Instance Weighting: Evolutionary distance-based weighting in loss function
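
One way to realize the distance-based instance weighting listed above is to down-weight training examples that come from evolutionarily distant proteins. The sketch below assumes an exponential decay `exp(-d / temperature)` and a weighted binary cross-entropy. Both the functional form and the `temperature` parameter are illustrative assumptions, not the weighting scheme of a specific published IBMTL model.

```python
import math

def instance_weight(distance, temperature=0.5):
    """Assumed weighting: 1.0 for the target itself, decaying with evolutionary distance."""
    return math.exp(-distance / temperature)

def weighted_bce(probs, labels, weights, eps=1e-7):
    """Weighted binary cross-entropy, normalized by the total weight."""
    total = 0.0
    for p, y, w in zip(probs, labels, weights):
        p = min(max(p, eps), 1.0 - eps)  # avoid log(0)
        total += -w * (y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / sum(weights)

# Hypothetical batch: predictions, labels, and distance of each source protein to the target
probs = [0.9, 0.2, 0.6]
labels = [1, 0, 0]
distances = [0.0, 0.3, 1.5]
weights = [instance_weight(d) for d in distances]
print([round(w, 3) for w in weights], round(weighted_bce(probs, labels, weights), 4))
```

A smaller `temperature` restricts knowledge transfer to close relatives. A very large one recovers unweighted pooling of all instances.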

Training Parameters

- Optimizer: Adam or AdamW with learning rate 1e-4 to 1e-3

- Batch Size: 32-128 depending on dataset size

- Early Stopping: Patience of 20-50 epochs based on validation performance

- Loss Weighting: Automatic or manual balancing of task losses
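
The early-stopping setting above can be combined with per-task monitoring. The sketch below assumes one particular policy: training stops only when no task has improved its best validation score for `patience` consecutive epochs. The class name, the policy, and the toy score history are assumptions; stricter policies, such as stopping when any task degrades, are equally plausible.

```python
class MultiTaskEarlyStopping:
    """Stop when no task has improved for `patience` consecutive epochs."""

    def __init__(self, task_names, patience=20, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = {t: float("-inf") for t in task_names}
        self.stale_epochs = 0

    def step(self, val_scores):
        """Update per-task bests; return True if training should stop."""
        improved = False
        for task, score in val_scores.items():
            if score > self.best[task] + self.min_delta:
                self.best[task] = score
                improved = True
        self.stale_epochs = 0 if improved else self.stale_epochs + 1
        return self.stale_epochs >= self.patience

# Hypothetical validation AUC history for two tasks (patience shortened for the demo)
history = [
    {"kinase": 0.70, "protease": 0.60},
    {"kinase": 0.71, "protease": 0.59},
    {"kinase": 0.70, "protease": 0.60},
    {"kinase": 0.69, "protease": 0.58},
]
stopper = MultiTaskEarlyStopping(["kinase", "protease"], patience=2)
stopped_at = None
for epoch, scores in enumerate(history, start=1):
    if stopper.step(scores):
        stopped_at = epoch
        break
print(stopped_at, stopper.best)
```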

Performance Benchmarking Data

Comparative Performance Across MTL Approaches

Table 1: Performance comparison (AUC scores) across protein groups using different learning approaches

| Protein Group | Single-Task Learning | Feature-Based MTL | Instance-Based MTL | Performance Delta (Instance-Based MTL vs. Single-Task) |
|---|---|---|---|---|
| Kinase | 0.782 ± 0.024 | 0.801 ± 0.019 | 0.832 ± 0.015 | +0.050 |
| Cytochrome P450 | 0.815 ± 0.021 | 0.829 ± 0.017 | 0.856 ± 0.012 | +0.041 |
| Protease | 0.763 ± 0.028 | 0.779 ± 0.022 | 0.794 ± 0.018 | +0.031 |
| Ion Channel | 0.791 ± 0.026 | 0.802 ± 0.021 | 0.818 ± 0.016 | +0.027 |

Evolutionary Classification Level Impact

Table 2: IBMTL performance across different levels of ChEMBL protein classification hierarchy

| Classification Level | Kinase Group AUC | Data Utilization | Training Efficiency |
|---|---|---|---|
| Target Parent Level | 0.832 ± 0.015 | High | Optimal |
| Protein Family Level | 0.819 ± 0.017 | Medium-High | Good |
| Protein Superfamily Level | 0.804 ± 0.020 | Medium | Moderate |
| Broad Group Level | 0.791 ± 0.023 | Low-Medium | Less Efficient |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential resources for implementing IBMTL in protein bioactivity prediction

| Resource | Source | Application in IBMTL | Key Features |
|---|---|---|---|
| ChEMBL Database | EMBL-EBI | Bioactivity data source | Annotated bioactive molecules, drug-like properties, protein targets |
| ChEMBL Protein Classification | ChEMBL Web Resource | Evolutionary grouping | 6-level hierarchical classification system for evolutionary relatedness |
| UniProtKB | UniProt Consortium | Protein sequence data | Comprehensive protein sequence and functional information |
| Phylogenetic Analysis Tools | ClustalOmega, MAFFT | Evolutionary distance calculation | Multiple sequence alignment and evolutionary relationship inference |
| Deep Learning Frameworks | PyTorch, TensorFlow | MTL implementation | Flexible architectures for shared and task-specific components |
| Model Evaluation Suites | scikit-learn, custom scripts | Performance assessment | Comprehensive metrics for multi-task learning scenarios |

Advanced Methodological Considerations

Evolutionary Relatedness Conceptual Framework

The diagram below illustrates how evolutionary relatedness informs the IBMTL learning process:

Handling Task Conflicts in Dissimilar Protein Groups

For protein groups with significant evolutionary distances, standard MTL approaches may suffer from negative transfer. In these scenarios, we recommend:

Pareto Multi-Task Optimization

- Frame the problem as multi-objective optimization to find optimal trade-offs

- Generate diverse solutions representing different task prioritizations

- Allow researchers to select appropriate balance based on application needs

Adaptive Weighting Strategies

- Dynamically adjust task weights during training based on evolutionary distances

- Implement gradient surgery to minimize conflicting parameter updates

- Use uncertainty-based weighting to account for task difficulties

This approach aligns with recent advances in multi-task optimization research that explicitly addresses conflicts between dissimilar tasks [16].
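
The uncertainty-based weighting mentioned above is commonly written as a total loss of the form sum_i exp(-s_i) * L_i + s_i, where s_i is a learnable log-variance per task. The sketch below uses that simplified form with the task losses held fixed, which is an artificial assumption made only to show where the weights settle. In real training, the losses and the s_i values change together.

```python
import math

def uncertainty_weighted_loss(task_losses, log_vars):
    """Total loss sum_i exp(-s_i) * L_i + s_i, with s_i a learnable log-variance."""
    return sum(math.exp(-s) * loss + s for loss, s in zip(task_losses, log_vars))

def log_var_gradients(task_losses, log_vars):
    """Derivative of the total loss with respect to each s_i."""
    return [1.0 - math.exp(-s) * loss for loss, s in zip(task_losses, log_vars)]

# Hypothetical fixed losses: task 0 is harder (larger loss) than task 1.
task_losses = [2.0, 0.5]
log_vars = [0.0, 0.0]
for _ in range(500):  # plain gradient descent on s_i only
    grads = log_var_gradients(task_losses, log_vars)
    log_vars = [s - 0.1 * g for s, g in zip(log_vars, grads)]

weights = [math.exp(-s) for s in log_vars]
print([round(w, 3) for w in weights])
```

With fixed losses, each weight converges to 1 / L_i, so the task with the larger loss is automatically down-weighted. The additive s_i term prevents the trivial solution of driving all weights to zero.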

Multi-Objective and Pareto Optimization Frameworks for Balanced Trade-Offs

Frequently Asked Questions

1. What is the fundamental difference between single-objective and multi-objective optimization?

In single-objective optimization, the goal is to find a single solution that minimizes or maximizes one objective function. In multi-objective optimization (MOO), several objective functions must be optimized simultaneously. These objectives are often conflicting, meaning improving one leads to the deterioration of another. Consequently, there is no single optimal solution, but rather a set of optimal trade-off solutions known as the Pareto optimal set [33] [34].

2. What is a Pareto Optimal Solution?

A solution is called Pareto optimal (or non-dominated) if none of the objective functions can be improved in value without degrading some of the other objective values [34]. In other words, you cannot find another solution that is better in at least one objective without being worse in another. The set of all these Pareto optimal solutions forms the Pareto front when visualized in the objective function space [35].
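
The definition above translates directly into a dominance check and a non-dominated filter. The following sketch assumes all objectives are minimized; the candidate points are hypothetical error rates (for example, 1 − AUC on two tasks).

```python
def dominates(a, b):
    """True if a is no worse than b in every objective and strictly better in at least one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def pareto_front(points):
    """Keep only the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

# Hypothetical (error on task A, error on task B) for five trained models
candidates = [(0.17, 0.21), (0.20, 0.18), (0.19, 0.22), (0.25, 0.15), (0.21, 0.19)]
front = pareto_front(candidates)
print(front)
```

This quadratic-time filter is adequate for small candidate sets. Evolutionary algorithms such as NSGA-II use more efficient non-dominated sorting internally.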

3. How do I handle conflicting gradients in Multi-Task Learning (MTL), a common issue with dissimilar tasks?

In MTL, where a single model is trained to perform multiple tasks, gradient conflict is a major optimization challenge. This occurs when the gradients of different loss functions point in opposing directions, hindering convergence.

- Solution: Use an optimization algorithm designed to mitigate gradient conflict. For example, the FetterGrad algorithm keeps the gradients of both tasks aligned by minimizing the Euclidean distance between them, which reduces conflict and prevents learning from being biased toward any single task [7].
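
Before applying any conflict-resolution method, it helps to measure whether two task gradients actually conflict. A common diagnostic, sketched here with gradients as plain lists, is the cosine similarity between them: a negative value means the gradients point in opposing directions. The gradient values and function names are hypothetical, and this is a diagnostic only, not the FetterGrad algorithm.

```python
import math

def cosine_similarity(g1, g2):
    """Cosine of the angle between two gradient vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(g1, g2))
    n1 = math.sqrt(sum(a * a for a in g1))
    n2 = math.sqrt(sum(b * b for b in g2))
    if n1 == 0.0 or n2 == 0.0:
        return 0.0
    return dot / (n1 * n2)

def is_conflicting(g1, g2):
    """Two gradients conflict when they form an obtuse angle."""
    return cosine_similarity(g1, g2) < 0.0

# Hypothetical shared-parameter gradients for two tasks
g_affinity = [1.0, 0.0]
g_generation = [-1.0, 1.0]
print(round(cosine_similarity(g_affinity, g_generation), 4),
      is_conflicting(g_affinity, g_generation))
```

Logging this value during training shows whether conflicts are persistent or confined to early epochs.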

4. Which algorithm should I use for my multi-objective optimization problem?

The choice of algorithm depends on your problem's nature. A popular and effective choice for many problems is the Non-dominated Sorting Genetic Algorithm II (NSGA-II) [36]. It is a multi-objective evolutionary algorithm that finds a diverse set of solutions along the Pareto front. The following table summarizes some key algorithms and their applications:

| Algorithm Name | Type | Key Characteristics | Typical Application Context |
|---|---|---|---|
| NSGA-II [36] | Evolutionary Algorithm | Uses non-dominated sorting and crowding distance to find a diverse Pareto front. | General-purpose multi-objective optimization; well-suited for complex, non-linear problems. |
| Weighted Sum Method [33] | Mathematical Programming | Converts MOO into SOO by summing weighted objectives. Simpler but cannot find Pareto front in non-convex regions. | Problems where a clear preference between objectives is known a priori. |
| ε-Constraint Method [33] | Mathematical Programming | Keeps one objective and transforms others into constraints. | Problems where thresholds for certain objectives are known. |
| MOGA (Multi-objective Genetic Algorithm) [33] | Evolutionary Algorithm | A broad category of genetic algorithms adapted for multiple objectives. | Engine design, thermal system optimization [33] [35]. |
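
The limitation of the weighted sum method noted in the table can be seen on a toy example. The sketch below uses three hypothetical candidates, all Pareto-optimal under minimization, where the middle one lies in a non-convex region of the front. No weight setting selects it, while an ε-constraint formulation does.

```python
def weighted_sum_choice(points, w):
    """Pick the point minimizing w * f1 + (1 - w) * f2."""
    return min(points, key=lambda p: w * p[0] + (1.0 - w) * p[1])

def epsilon_constraint_choice(points, eps):
    """Minimize f1 subject to f2 <= eps; None if nothing is feasible."""
    feasible = [p for p in points if p[1] <= eps]
    return min(feasible, key=lambda p: p[0]) if feasible else None

# Hypothetical (f1, f2) values; (0.6, 0.6) sits in a non-convex part of the front
candidates = [(0.0, 1.0), (0.6, 0.6), (1.0, 0.0)]

picked_by_weights = {weighted_sum_choice(candidates, w / 10) for w in range(11)}
picked_by_epsilon = epsilon_constraint_choice(candidates, eps=0.7)
print(sorted(picked_by_weights), picked_by_epsilon)
```

For any weight, the middle candidate scores 0.6 while one of the extremes scores at most 0.5, so the weighted sum always prefers an extreme.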

5. What are common reasons for a poorly distributed Pareto front, and how can I fix it?

A poorly distributed Pareto front, where solutions are clustered in some regions and absent in others, can result from:

- Insufficient population size: The algorithm does not have enough solutions to explore the trade-off space adequately.

- Ineffective diversity maintenance: The algorithm's mechanism for promoting diversity (e.g., crowding distance in NSGA-II) is not working properly.

- Premature convergence: The algorithm gets stuck in a local Pareto front.

Troubleshooting Steps:

- Increase Population Size: Use a larger population to improve exploration [36].

- Adjust Genetic Operators: Tune crossover and mutation probabilities and distributions to enhance exploration versus exploitation [36].

- Enable Duplicate Elimination: Ensure the algorithm has a mechanism to eliminate duplicate solutions, which helps maintain diversity [36].
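
To check whether diversity maintenance is working, it can help to compute the crowding distance of a front by hand. The sketch below follows the usual NSGA-II definition: boundary points receive infinite distance, and interior points accumulate the normalized gap between their neighbours in each objective. The example front is hypothetical, and library implementations may differ in details such as tie handling.

```python
def crowding_distance(front):
    """Crowding distance for each point of a non-dominated front (list of tuples)."""
    n = len(front)
    dist = [0.0] * n
    if n == 0:
        return dist
    for m in range(len(front[0])):
        order = sorted(range(n), key=lambda i: front[i][m])
        lo, hi = front[order[0]][m], front[order[-1]][m]
        dist[order[0]] = dist[order[-1]] = float("inf")  # always keep extremes
        if hi == lo:
            continue  # objective is constant on this front
        for k in range(1, n - 1):
            i = order[k]
            gap = front[order[k + 1]][m] - front[order[k - 1]][m]
            dist[i] += gap / (hi - lo)
    return dist

# Hypothetical front with three points clustered near one end
front = [(0.0, 1.0), (0.1, 0.9), (0.2, 0.8), (1.0, 0.0)]
print(crowding_distance(front))
```

Small finite values flag solutions in crowded regions. If most of the front has small distances and a few points have very large ones, the front is poorly distributed.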

Troubleshooting Guide

| Problem | Symptom | Possible Cause | Solution |
|---|---|---|---|
| Algorithm Convergence Failure | The Pareto front shows little to no improvement over generations. | Incorrect algorithm parameters; problem is highly multi-modal with many local Pareto fronts. | Increase the number of generations or function evaluations; try a different algorithm or adjust mutation rates to escape local optima [36]. |
| Biased Pareto Front | Solutions are clustered near one objective, missing middle trade-offs. | Gradient conflict in MTL; unbalanced loss functions. | Use gradient conflict resolution techniques like FetterGrad; apply loss balancing strategies (e.g., weighting) [7]. |
| Violated Constraints | The final solutions do not satisfy all problem constraints. | Constraints are not properly handled by the optimizer; initial population is infeasible. | Implement robust constraint-handling techniques (e.g., penalty functions, feasibility rules); ensure the sampling method can generate feasible initial solutions [36]. |
| High Computational Cost | A single evaluation takes too long, making optimization infeasible. | Objective functions are computationally expensive (e.g., complex simulations). | Use surrogate models (e.g., neural networks, Gaussian processes) to approximate the expensive functions during the optimization loop. |

Experimental Protocols & Methodologies

1. Protocol for Solving a Constrained Bi-Objective Problem with Pymoo

This protocol outlines the steps to implement and solve a standard MOO problem using the Pymoo library in Python [36].

- Step 1: Problem Formulation. Ensure your problem is defined with objectives to be minimized and constraints in the form ≤ 0. For example, to maximize an objective f2(x), minimize -f2(x). Normalize constraints to similar scales.

- Step 2: Problem Implementation. Implement the problem by defining a class that inherits from `ElementwiseProblem`. Specify the number of variables, objectives, and constraints, along with variable bounds.

- Step 3: Algorithm Selection and Initialization. Choose an algorithm like NSGA-II and configure its operators.

- Step 4: Define Termination Criterion and Run Optimization. Set a termination criterion (e.g., number of generations) and run the optimization.

- Step 5: Post-Processing and Analysis. Analyze the result object (`res.X` for design variables, `res.F` for objective values) to visualize and interpret the Pareto front.
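
The formulation rules from Step 1 can be illustrated without any library. The sketch below uses a hypothetical two-variable problem with one objective to minimize, one to maximize, and two constraints. It returns objectives and constraints in the convention described above (all objectives minimized, constraints feasible when ≤ 0). In Pymoo, the same two lists would typically be assigned to `out["F"]` and `out["G"]` inside the problem's `_evaluate` method; treat that mapping as an assumption to verify against the installed version.

```python
def evaluate(x):
    """Return objectives F (all minimized) and constraints G (feasible when <= 0)."""
    x1, x2 = x
    f1 = x1 ** 2 + x2 ** 2                 # already a minimization objective
    f2_to_maximize = -(x1 - 1) ** 2 - x2 ** 2
    # Original constraint x1 + x2 >= 0.5, rewritten as 0.5 - (x1 + x2) <= 0
    # and divided by 0.5 so both constraints have a similar scale.
    g1 = (0.5 - (x1 + x2)) / 0.5
    # Original constraint x1 * x2 <= 0.2, rewritten and normalized the same way.
    g2 = (x1 * x2 - 0.2) / 0.2
    return {"F": [f1, -f2_to_maximize], "G": [g1, g2]}

def is_feasible(out):
    """A point is feasible when every normalized constraint value is <= 0."""
    return all(g <= 0.0 for g in out["G"])

inside = evaluate((0.5, 0.25))
outside = evaluate((0.0, 0.0))
print(inside, is_feasible(inside))
print(outside, is_feasible(outside))
```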

2. Protocol for Mitigating Gradient Conflict in Deep Multi-Task Learning

This protocol is based on the DeepDTAGen framework for drug discovery, which faces the challenge of jointly predicting drug-target affinity and generating new molecules [7].

- Objective: Jointly train a model on two tasks (e.g., regression and generation) with a shared feature space, while minimizing interference from conflicting gradients.

- Key Technique: Implementation of the FetterGrad algorithm or similar gradient manipulation techniques.

- Methodology:

  - Shared Encoder: Design a model with a shared encoder that learns common features from the input data (e.g., drug molecules and target proteins).

  - Task-Specific Heads: Attach separate network heads for each task (e.g., a predictor for affinity and a decoder for molecule generation).

  - Gradient Alignment: During backpropagation, calculate the gradients for each task's loss with respect to the shared parameters.

  - Gradient Modification: Apply the FetterGrad algorithm, which minimizes the Euclidean Distance (ED) between the task gradients. This adjustment aligns the gradient directions, reducing conflict and promoting cooperative learning.

  - Parameter Update: Update the model's shared and task-specific parameters using the modified gradients.
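
As an example of the "similar gradient manipulation techniques" mentioned above, the sketch below shows a PCGrad-style projection: when two task gradients conflict (negative dot product), each is projected onto the plane orthogonal to the other before they are summed. This is explicitly not the FetterGrad algorithm, whose exact update rule is not reproduced here. The gradient values are hypothetical and restricted to the shared parameters.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def project_if_conflicting(g, other):
    """Remove from g its component along `other` when the two gradients conflict."""
    d = dot(g, other)
    if d >= 0.0:
        return list(g)  # no conflict: leave the gradient unchanged
    scale = d / dot(other, other)
    return [a - scale * b for a, b in zip(g, other)]

def resolve_two_tasks(g1, g2):
    """Project each gradient against the ORIGINAL gradient of the other task, then sum."""
    g1_mod = project_if_conflicting(g1, g2)
    g2_mod = project_if_conflicting(g2, g1)
    return [a + b for a, b in zip(g1_mod, g2_mod)], g1_mod, g2_mod

# Hypothetical shared-parameter gradients (affinity prediction vs. molecule generation)
g_affinity = [1.0, 0.0]
g_generation = [-1.0, 1.0]
combined, g_aff_mod, g_gen_mod = resolve_two_tasks(g_affinity, g_generation)
print(g_aff_mod, g_gen_mod, combined)
```

After projection, neither modified gradient has a negative component along the other task's original gradient, so the shared update no longer directly undoes one task's progress.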

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in Multi-Task/Optimization Research |
|---|---|
| Pymoo Library [36] | A comprehensive multi-objective optimization framework in Python used for implementing, solving, and analyzing optimization problems. |
| NSGA-II Algorithm [36] | A widely used genetic algorithm for finding a well-distributed set of non-dominated solutions (Pareto front). |
| FetterGrad Algorithm [7] | A custom optimization algorithm designed to mitigate gradient conflicts between different tasks in a multi-task learning setup. |
| Shared Feature Encoder [7] | A neural network component that learns a common representation from input data, which is then used by multiple task-specific heads. |

Workflow Visualization

[Figure: Multi-Objective Optimization with Gradient Handling Workflow]

[Figure: Multi-Task Learning with Gradient Resolution]

Technical Support Center

Troubleshooting Guides

This section addresses common technical challenges you might encounter when setting up and running DeepDTAGen experiments. The solutions are framed within the context of multi-task learning, where optimizing for two dissimilar tasks (affinity prediction and molecule generation) is a primary research focus.