Mutation Strategies in Evolution Strategies: A Comparative Analysis for Biomedical Optimization


Abstract

This article provides a comprehensive analysis of mutation operators within Evolution Strategies (ES), a class of powerful optimization algorithms increasingly applied in complex biomedical research and drug development. We explore foundational concepts, from classical uncorrelated mutations to advanced self-adapting mechanisms, detailing their operational principles. The review systematically compares methodological implementations and their specific applications in addressing optimization challenges in domains such as pharmacokinetics and protein engineering. Furthermore, we examine common pitfalls, performance issues, and modern tuning techniques—including fuzzy logic controllers—to enhance robustness and convergence. Finally, we present a framework for the rigorous validation and comparative benchmarking of these strategies, offering researchers and scientists actionable insights for selecting and optimizing mutation operators to accelerate discovery in computational biology and medicine.

The Building Blocks of Evolution Strategies: Understanding Mutation Operators

Core Principles of Evolution Strategies versus Genetic Algorithms

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between Evolution Strategies and Genetic Algorithms?

The most fundamental difference lies in how they manage strategy parameters such as mutation strength. Evolution Strategies (ES) often use self-adaptation: the strategy parameters (e.g., mutation step sizes) are encoded within each individual and evolve alongside the solution parameters [1] [2]. This allows the algorithm to adjust its search behavior dynamically. In contrast, classic Genetic Algorithms (GAs) typically rely on fixed strategy parameters that the user sets at the beginning of the run and that remain constant throughout [1] [3].

2. Which algorithm should I use for optimizing real-valued parameters?

Evolution Strategies are typically the preferred choice for continuous optimization problems in real-valued search spaces [2] [4]. Their design, including the use of real-number representation and Gaussian mutation, is naturally suited for this domain. While Genetic Algorithms can be adapted for real-valued problems (using specific representations and operators), their classic form uses a discrete (often binary) representation [1] [5].

3. How does selection differ between ES and GAs?

ES traditionally use a deterministic selection scheme. After creating and evaluating λ offspring, the best μ individuals are selected as the parents of the next generation, either from the offspring alone ((μ, λ)-selection) or from the combined pool of parents and offspring ((μ + λ)-selection) [2]. GAs, by contrast, often use probabilistic selection methods such as roulette-wheel or tournament selection, in which fitter individuals are more likely to be chosen as parents; in roulette-wheel selection, the probability of being chosen is proportional to fitness [1] [5].
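As a minimal sketch of the two deterministic schemes, the NumPy code below assumes minimization and stores individuals as rows of an array. The function names `comma_selection` and `plus_selection` are illustrative and do not come from any particular library.

```python
import numpy as np

def comma_selection(offspring, offspring_f, mu):
    """(mu, lambda)-selection: keep the mu best offspring; parents are discarded."""
    order = np.argsort(offspring_f)[:mu]          # indices of the mu smallest f-values
    return offspring[order], offspring_f[order]

def plus_selection(parents, parents_f, offspring, offspring_f, mu):
    """(mu + lambda)-selection: keep the mu best of parents and offspring combined."""
    pool = np.vstack([parents, offspring])
    pool_f = np.concatenate([parents_f, offspring_f])
    order = np.argsort(pool_f)[:mu]
    return pool[order], pool_f[order]

def f(X):
    """Toy objective f(x) = x^2, evaluated row-wise."""
    return (X ** 2).sum(axis=1)

# 2 parents and 4 offspring in one dimension
parents = np.array([[0.1], [3.0]])
offspring = np.array([[0.5], [-0.4], [2.0], [1.0]])
best_comma, _ = comma_selection(offspring, f(offspring), mu=2)
best_plus, _ = plus_selection(parents, f(parents), offspring, f(offspring), mu=2)
print(best_comma.ravel())  # [-0.4  0.5]
print(best_plus.ravel())   # [ 0.1 -0.4]
```

Plus-selection keeps the parent at 0.1 because it beats every offspring. This is the elitism that distinguishes (μ + λ) from (μ, λ).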

4. My algorithm is converging to a sub-optimal solution. How can I prevent this?

Premature convergence is often caused by a loss of diversity in the population.

- Increase Mutation Rate/Strength: Temporarily increase the mutation operator's effect to help the population escape the local optimum [6] [7].

- Review Selection Pressure: If using a GA, ensure your selection operator is not too greedy, allowing some less-fit individuals a chance to reproduce and maintain diversity [5].

- Utilize ES Self-Adaptation: If using an ES, the self-adaptation mechanism should, in theory, automatically increase the mutation strength to explore new regions if progress stalls [2].

5. What does the notation (μ/ρ, λ)-ES mean?

This is the standard notation for describing Evolution Strategies [2]:

- μ: The number of parents in the population.

- λ: The number of offspring generated from the parents.

- ρ: The mixing number, or how many parents are used to create a single offspring through recombination.

- Comma vs. Plus:

  - (μ, λ)-ES means selection occurs only from the λ offspring.

  - (μ + λ)-ES means selection occurs from the union of the μ parents and λ offspring.
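To make the roles of μ, ρ, and λ concrete, here is a minimal sketch of offspring generation with intermediate recombination. Each offspring starts from the mean of ρ randomly chosen parents and is then perturbed with isotropic Gaussian noise. The fixed step size `sigma` and the helper name `make_offspring` are illustrative assumptions. A self-adaptive ES would also recombine and mutate the step sizes.

```python
import numpy as np

def make_offspring(parents, lam, rho, sigma, rng):
    """Create lam offspring from a (mu, n) parent array.

    Each offspring is the mean of rho distinct, randomly chosen parents
    (intermediate recombination) plus isotropic Gaussian noise.
    """
    mu, n = parents.shape
    offspring = np.empty((lam, n))
    for k in range(lam):
        idx = rng.choice(mu, size=rho, replace=False)       # choose rho parents
        centroid = parents[idx].mean(axis=0)                 # recombine by averaging
        offspring[k] = centroid + sigma * rng.standard_normal(n)  # mutate
    return offspring

rng = np.random.default_rng(0)
parents = rng.standard_normal((3, 5))                        # mu = 3 parents, n = 5
kids = make_offspring(parents, lam=21, rho=2, sigma=0.3, rng=rng)  # lambda = 21
print(kids.shape)  # (21, 5)
```

With ρ = μ, every offspring shares the same centroid, which corresponds to the (μ/μ_I, λ) configuration mentioned elsewhere in this article.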

Troubleshooting Guides

Issue 1: Poor Convergence Performance

Problem: Your evolutionary algorithm (EA) is not finding satisfactory solutions, or its fitness is improving too slowly.

Diagnosis and Resolution:

Check Parameter Tuning:

- ES: For self-adaptive ES, the learning rate τ for strategy parameters is critical. It is often recommended to set it proportional to 1/√n, where n is the problem dimension [2].

- GA: The mutation and crossover rates are key. A high mutation rate can prevent convergence, while a rate that is too low can lead to premature convergence. Adaptive mutation rates that decrease over time can help, favoring exploration first and exploitation later [7] [8].

Verify Fitness Function: Ensure your fitness function accurately reflects the problem objectives. A poorly designed fitness function can lead the search in the wrong direction.

Adjust Population Size: A population that is too small may not hold enough diversity, while one that is too large can be computationally expensive. A common heuristic in ES is to set the offspring size λ to about 7 times the parent size μ [4].

Issue 2: Handling Constraints in Real-Valued Optimization

Problem: Your algorithm is generating candidate solutions that violate problem constraints.

Diagnosis and Resolution:

- Penalty Functions: Incorporate a penalty term into the fitness function that reduces the fitness of infeasible solutions based on their constraint violation [2].

- Specialized Operators: Use mutation and recombination operators that are aware of and respect the variable boundaries. For example, when a mutated real value falls outside its allowed range [x_min, x_max], it can be reflected back into the range or set to the boundary [6].

- Repair Algorithms: Implement a procedure that takes an infeasible solution and modifies it to become feasible before fitness evaluation.
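A minimal sketch of the two boundary-handling options above, clipping to the boundary and reflecting back into [x_min, x_max], assuming simple box constraints given as NumPy arrays. The function names are illustrative.

```python
import numpy as np

def clip_to_bounds(x, lo, hi):
    """Set each out-of-range coordinate to the nearest boundary."""
    return np.clip(x, lo, hi)

def reflect_into_bounds(x, lo, hi):
    """Mirror out-of-range coordinates back into [lo, hi].

    The mod-(2 * width) mapping also handles overshoots larger than the box
    width, which correspond to several successive reflections.
    """
    width = hi - lo
    y = np.mod(x - lo, 2.0 * width)
    y = np.where(y > width, 2.0 * width - y, y)
    return lo + y

lo, hi = np.zeros(3), np.ones(3)
x = np.array([1.2, -0.3, 0.5])         # e.g. a freshly mutated offspring
print(clip_to_bounds(x, lo, hi))       # first two coordinates set to 1.0 and 0.0
print(reflect_into_bounds(x, lo, hi))  # first two coordinates mirrored to about 0.8 and 0.3
```

Clipping piles infeasible samples onto the boundary, whereas reflection keeps them spread inside the box. Which behavior is preferable depends on whether optima are expected on the boundary.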

Comparative Analysis: Key Experimental Data

Table 1: Core Algorithmic Differences

| Feature | Evolution Strategies (ES) | Genetic Algorithms (GA) |
|---|---|---|
| Primary Representation | Real-valued vectors [1] [2] | Binary strings (classic) or other discrete encodings [1] [5] |
| Core Variation Operators | Mutation as the primary operator; recombination is common [2] [9] | Crossover as the primary operator; mutation is a background operator [5] [8] |
| Mutation Type | Gaussian mutation (often with self-adapting step size) [2] [6] | Bit-flip, swap, inversion, etc. [6] [7] [5] |
| Selection Method | Deterministic ((μ, λ) or (μ + λ)) [2] | Probabilistic (e.g., roulette wheel, tournament) [1] [5] |
| Strategy Parameters | Often self-adapted [1] [2] | Typically user-defined and static [1] [3] |

Table 2: Common Mutation Operators

| Operator Name | Typical Encoding | Description | Purpose |
|---|---|---|---|
| Gaussian Mutation | Real-valued [2] [6] | Adds a random value from a Gaussian distribution to a gene. | Fine-grained local search and exploitation. |
| Bit-Flip Mutation | Binary [6] [5] | Randomly selects bits in a string and flips them (0→1, 1→0). | Introduces diversity in binary-coded populations. |
| Swap Mutation | Permutation [7] | Randomly selects two genes and swaps their positions. | Maintains diversity in combinatorial problems like scheduling. |
| Inversion Mutation | Permutation [6] [7] | Selects a substring and reverses the order of genes within it. | Creates a larger disruption to escape local optima in permutations. |
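Each of the four operators in Table 2 fits in a few lines of NumPy. The sketch below uses common textbook formulations rather than code from the cited sources. Parameter names such as the per-bit flip probability `p` are illustrative.

```python
import numpy as np

def gaussian_mutation(x, sigma, rng):
    """Real-valued: add N(0, sigma^2) noise to every gene."""
    return x + sigma * rng.standard_normal(x.shape)

def bit_flip_mutation(bits, p, rng):
    """Binary: flip each bit independently with probability p."""
    mask = rng.random(bits.shape) < p
    return np.where(mask, 1 - bits, bits)

def swap_mutation(perm, rng):
    """Permutation: exchange the genes at two distinct random positions."""
    child = perm.copy()
    i, j = rng.choice(len(perm), size=2, replace=False)
    child[i], child[j] = child[j], child[i]
    return child

def inversion_mutation(perm, rng):
    """Permutation: reverse the order of a random contiguous segment."""
    child = perm.copy()
    i, j = sorted(rng.choice(len(perm), size=2, replace=False))
    child[i:j + 1] = child[i:j + 1][::-1].copy()
    return child

rng = np.random.default_rng(1)
print(gaussian_mutation(np.array([0.5, -1.0]), 0.1, rng))
print(bit_flip_mutation(np.array([1, 0, 1, 1, 0]), 0.2, rng))
print(swap_mutation(np.arange(6), rng))
print(inversion_mutation(np.arange(6), rng))
```

Swap and inversion always return a valid permutation, which is why they are preferred over Gaussian or bit-flip mutation for ordering problems.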

Experimental Protocol: Comparing Mutation Strategies

Objective: To empirically evaluate the performance of a self-adaptive Evolution Strategy against a canonical Genetic Algorithm on a set of continuous benchmark functions.

1. Methodology

- Benchmark Functions: Select standard functions (e.g., Sphere, Rastrigin, Ackley) with known optima to test convergence, accuracy, and robustness against local optima [2].

- Algorithm Configurations:

  - ES: Implement a (μ/μ_I, λ)-σSA-ES (where μ_I denotes intermediate recombination) [2].

  - GA: Implement a real-coded GA using blend crossover (BLX-α) and Gaussian mutation.

- Performance Metrics: Record the best fitness found over generations, the number of function evaluations to reach a target fitness, and the final solution accuracy.
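For reference, the three benchmark functions named above can be written as follows. The sketch uses the usual textbook definitions; for Ackley, the common constants a = 20, b = 0.2, c = 2π are assumed. Each function has its global minimum of 0 at the origin.

```python
import numpy as np

def sphere(x):
    """Unimodal; global minimum 0 at x = 0."""
    return float(np.sum(x ** 2))

def rastrigin(x):
    """Highly multimodal (regular grid of local minima); global minimum 0 at x = 0."""
    n = x.size
    return float(10.0 * n + np.sum(x ** 2 - 10.0 * np.cos(2.0 * np.pi * x)))

def ackley(x):
    """Multimodal with a nearly flat outer region; global minimum 0 at x = 0."""
    n = x.size
    term1 = -20.0 * np.exp(-0.2 * np.sqrt(np.sum(x ** 2) / n))
    term2 = -np.exp(np.sum(np.cos(2.0 * np.pi * x)) / n)
    return float(term1 + term2 + 20.0 + np.e)

for fn in (sphere, rastrigin, ackley):
    print(fn.__name__, fn(np.zeros(5)), fn(np.full(5, 0.5)))
```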

2. Workflow Diagram

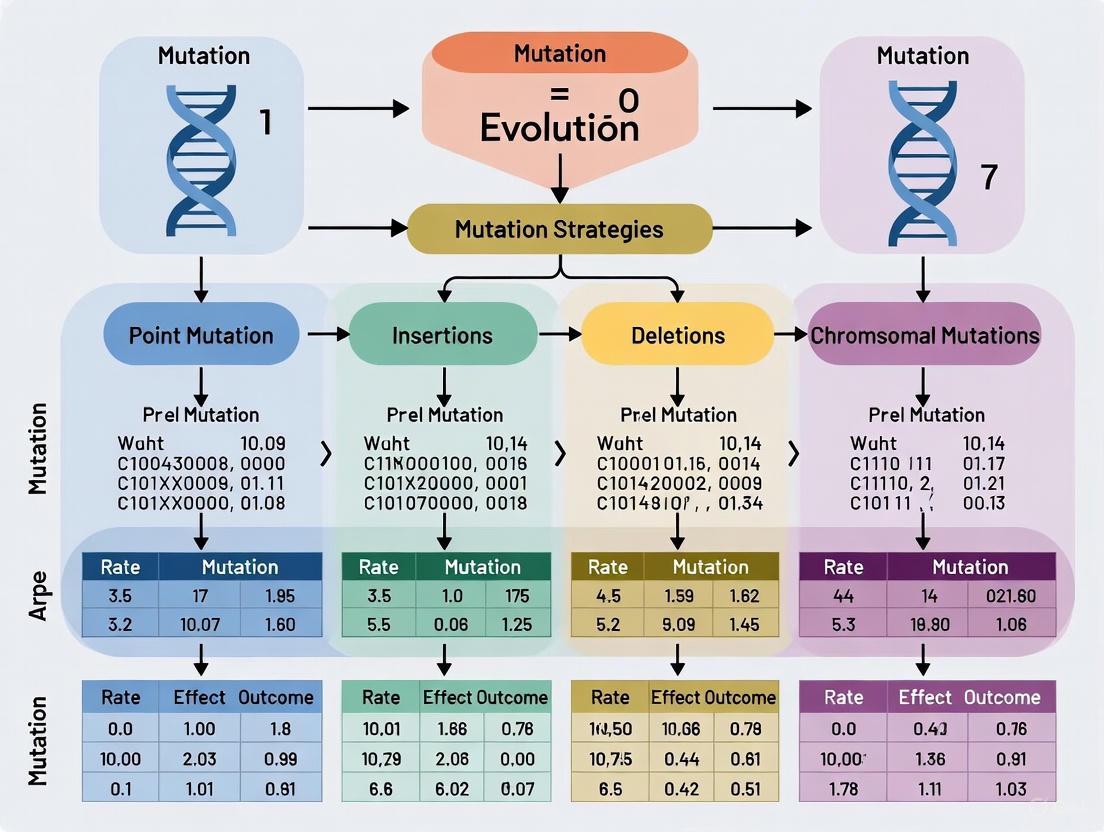

The following diagram illustrates the high-level workflow of a typical Evolution Strategy, highlighting the self-adaptation process.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Algorithmic Components

| Item | Function | Example in ES | Example in GA |
|---|---|---|---|
| Representation Schema | Defines how a candidate solution is encoded in the algorithm. | Real-valued vector (y, σ) [2]. | Binary string "1011001" [5]. |
| Variation Operator | Creates new candidate solutions from existing ones. | Gaussian mutation with self-adaptive step size [2] [6]. | Single-point or uniform crossover [5] [8]. |
| Selection Mechanism | Determines which solutions are allowed to reproduce. | Deterministic (μ, λ)-selection [2]. | Fitness-proportionate (roulette wheel) selection [5]. |
| Strategy Parameter Controller | Adjusts the algorithm's internal parameters during the run. | Log-normal self-adaptation of mutation strength σ [2]. | Predefined, static mutation probability (e.g., 0.01) [5]. |

In Evolution Strategies (ES), a subclass of evolutionary algorithms, the mutation operator is a fundamental genetic operator that introduces random variations into a population of candidate solutions, enabling exploration of the search space [10]. The self-adaptation of mutation step sizes is a defining feature of ES, allowing the algorithm to adjust the magnitude of its perturbations dynamically during the search [10]. Mutation operators are broadly categorized by how these step sizes are controlled. In the simplest form, a single step size is used for all dimensions of the search space. More advanced strategies employ uncorrelated mutations with multiple step sizes, one per coordinate, or correlated mutations, in which a full covariance matrix is adapted so that the mutation distribution can align with the topology of the objective function [10]. Understanding this taxonomy is crucial for researchers and practitioners applying ES to complex optimization problems in fields such as drug design and protein engineering, where high-dimensional, rugged search spaces must be navigated efficiently.

Core Taxonomy and Definitions

The following table outlines the core characteristics, mechanisms, and typical use cases for the different classes of mutation operators in Evolution Strategies.

| Feature | Uncorrelated Mutation (Single Step Size) | Uncorrelated Mutation (n Step Sizes) | Correlated Mutation |
|---|---|---|---|
| Core Concept | A single step size parameter controls mutation for all coordinates. | Each coordinate (dimension) has its own independently adaptable step size. | Step sizes and rotations are adapted using a covariance matrix, modeling dependencies between dimensions. |
| Number of Strategy Parameters | 1 | n | n(n+1)/2 |
| Mutation Distribution | Isotropic (spherical) | Axis-parallel ellipsoidal | General ellipsoidal (can be rotated) |
| Adaptation Mechanism | Self-adaptation or derandomized methods such as cumulative step-size adaptation (CSA), the step-size rule used within CMA-ES. | Self-adaptation, where each step size is mutated independently. | Covariance Matrix Adaptation (CMA), which learns the underlying correlation structure of the search space. |
| Advantages | Simple, low computational cost. | Can scale mutations differently for each axis; good for separable functions. | Can handle non-separable and ill-conditioned problems effectively by learning the search direction. |
| Disadvantages | Inefficient on functions that are not axis-aligned or are ill-conditioned. | Cannot handle correlations between parameters; performance degrades on non-separable problems. | Higher computational and memory complexity due to the covariance matrix update and decomposition. |
| Typical Application | Simple, low-dimensional optimization problems. | Medium-dimensional problems where parameters are roughly independent. | Complex, high-dimensional, non-separable optimization problems. |
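The three columns of the table correspond to three Gaussian mutation distributions. The sketch below samples from each one with fixed, hand-picked strategy parameters (no adaptation) and uses a Cholesky factor to draw correlated samples. The numbers are arbitrary illustrations.

```python
import numpy as np

rng = np.random.default_rng(42)
n, samples = 2, 20_000

# Single step size: isotropic N(0, sigma^2 I), 1 strategy parameter
sigma = 0.5
z_iso = sigma * rng.standard_normal((samples, n))

# n step sizes: axis-parallel N(0, diag(sigmas^2)), n strategy parameters
sigmas = np.array([2.0, 0.2])
z_axis = sigmas * rng.standard_normal((samples, n))

# Correlated: N(0, C) with a full covariance matrix (rotated ellipsoid)
C = np.array([[1.0, 0.9],
              [0.9, 1.0]])
A = np.linalg.cholesky(C)            # lower-triangular A with C = A @ A.T
z_corr = rng.standard_normal((samples, n)) @ A.T

for name, z in [("isotropic", z_iso), ("axis-parallel", z_axis), ("correlated", z_corr)]:
    print(name, np.round(np.cov(z, rowvar=False), 2).tolist())  # empirical covariance
```

Only the correlated sampler produces non-zero off-diagonal covariance. This is why the axis-parallel variant cannot follow a diagonal valley on a non-separable problem.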

Frequently Asked Questions (FAQs)

Q1: My Evolution Strategy is converging prematurely to a suboptimal solution. What could be the cause and how can I troubleshoot this?

A: Premature convergence is often a result of a poor balance between exploration and exploitation, frequently linked to the mutation operator [11].

- Check Mutation Step-Sizes: If the step-sizes have become too small too quickly, the population loses diversity and gets trapped. Inspect the log of your strategy parameters.

- Troubleshooting Action: Consider switching from a (μ + λ)-selection strategy to a (μ, λ)-strategy. The (μ, λ)-strategy, which selects parents only from the offspring, is more effective at avoiding premature convergence because it allows for the continual renewal of the population and forgets the parent information [10].

- Troubleshooting Action: Implement a mutation operator that better maintains population diversity. Operators like Non-uniform Mutation (NUM) or Power Mutation (PM) can help by providing a more dynamic search behavior, preventing the algorithm from stagnating [11].
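Non-uniform mutation is usually attributed to Michalewicz. The sketch below follows that common formulation for box-bounded real genes and assumes a shape parameter of b = 5. Power mutation is not shown, and the function name is illustrative.

```python
import numpy as np

def non_uniform_mutation(x, lb, ub, t, T, rng, b=5.0):
    """Non-uniform mutation for box-bounded real genes.

    Each gene moves towards its upper or lower bound (fair coin) by
    delta(t, y) = y * (1 - r ** ((1 - t/T) ** b)), with r ~ U(0, 1),
    so the expected step shrinks to zero as generation t approaches T.
    """
    def delta(y):
        r = rng.random(y.shape)
        return y * (1.0 - r ** ((1.0 - t / T) ** b))

    up = rng.random(x.shape) < 0.5
    return np.where(up, x + delta(ub - x), x - delta(x - lb))

rng = np.random.default_rng(0)
lb, ub = np.full(5, -5.0), np.full(5, 5.0)
x = np.zeros(5)
for t in (0, 50, 90):
    steps = [np.abs(non_uniform_mutation(x, lb, ub, t, 100, rng) - x).mean() for _ in range(1000)]
    print(t, round(float(np.mean(steps)), 4))  # average step shrinks as t grows
```

Because the step can never exceed the distance to the chosen bound, offspring always stay feasible. The shrinking step gives the exploration-to-exploitation shift described in the toolkit table.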

Q2: How do I choose between uncorrelated and correlated mutations for my specific optimization problem in drug design?

A: The choice hinges on the problem's dimensionality, complexity, and your computational budget.

- For Low-Dimensional Problems or Initial Screening: Start with an uncorrelated mutation with n step-sizes. This is computationally cheaper and can be sufficient for problems where the parameters (e.g., molecular descriptors) have minimal complex interactions [10].

- For High-Dimensional, Complex Landscapes: If you are optimizing a molecular structure where parameters are highly interdependent (a non-separable problem), correlated mutations are strongly recommended. Techniques like the Covariance Matrix Adaptation Evolution Strategy (CMA-ES) are designed to learn these interactions, significantly improving search efficiency [10]. This is analogous to structure-guided drug design, where understanding the relationship between a protein's structure and function is key to designing effective inhibitors [12].

Q3: In a real-coded Genetic Algorithm (GA), my crossover operator seems to be causing unstable solutions with high variance. What's wrong?

A: This is a common challenge where conventional crossover operators fail to maintain a proper balance between exploration and exploitation, especially in multimodal and high-dimensional problems [11].

- Troubleshooting Action: Evaluate your current crossover operator. Standard operators like Simulated Binary Crossover (SBX) or Laplace Crossover (LX) may struggle with population diversity and adaptation.

- Troubleshooting Action: Consider implementing more robust, parent-centric real-coded crossover operators. Recent research has proposed operators like Mixture-based Gumbel Crossover (MGGX) and Mixture-based Rayleigh Crossover (MRRX), which are designed to dynamically adapt and generate diverse yet high-quality offspring. Studies show that MGGX, in particular, can achieve lower mean and standard deviation values, indicating more stable and reliable performance across various benchmark functions [11].

Experimental Protocols for Mutation Analysis

Protocol: Benchmarking Mutation Operator Performance

This protocol provides a standardized methodology for comparing the efficacy of different mutation operators, such as uncorrelated versus correlated, within an Evolution Strategy.

1. Objective: To quantitatively assess and compare the performance of different mutation operators on a set of standardized optimization problems.

2. Materials (Software & Computational Environment):

- Programming Language: Python (with libraries like NumPy and SciPy) or C++.

- ES Framework: A custom implementation or a library like cma-es (for CMA-ES) in Python.

- Computing Platform: A standard workstation or high-performance computing cluster for more demanding tests.

3. Methodology:

- Step 1: Selection of Benchmark Functions. Choose a diverse set of functions with known properties:

- Unimodal Function: e.g., Sphere (to measure pure convergence speed).

- Multimodal Function: e.g., Rastrigin (to test ability to escape local optima).

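- Ill-Conditioned/Non-separable Function: e.g., Ellipsoid or Cigar (to test the need for correlated mutations). In their axis-aligned form these functions are separable and mainly test per-coordinate step sizes; applying a random rotation to the coordinates makes them non-separable.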

- Step 2: Algorithm Configuration.

- Implement the ES with the mutation operators under test (e.g., uncorrelated with one step-size, uncorrelated with n step-sizes, and correlated via CMA).

- Keep all other parameters constant: population size (μ and λ), recombination method, and initial solution. A common setting is (μ, λ)-selection with μ = λ/2 [10].

- For self-adaptation, use the standard log-normal update rule for step-sizes: σ_j' = σ_j * exp(τ * N(0,1)) [10].

- Step 3: Data Collection and Metrics.

- Run each (mutation operator, benchmark function) combination multiple times (e.g., 50 independent runs) to account for stochasticity.

- Record for each run:

- Best Fitness vs. Generation: To plot learning curves.

- Final Solution Quality: The best objective value found.

- Number of Function Evaluations to reach a target fitness.

- Step 4: Statistical Analysis.

- For each function, calculate the mean and standard deviation of the final solution quality across all runs for each operator [11].

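- Perform statistical significance tests to confirm that performance differences are not due to chance, e.g., the Wilcoxon signed-rank test for paired comparisons (such as two operators compared function by function) or the Wilcoxon rank-sum (Mann-Whitney U) test for independent runs on the same function.

- Rank the overall performance of the operators across all benchmark functions, e.g., with a multi-criteria decision-making method such as TOPSIS or a nonparametric multiple-comparison test such as the Quade test [11].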
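A sketch of the statistical step, assuming SciPy is available. All arrays are synthetic placeholders generated only to make the example run; they are not experimental results. The signed-rank test assumes paired samples (for example, two operators compared function by function), whereas the rank-sum (Mann-Whitney U) test is the usual choice for independent runs of two operators on one function.

```python
import numpy as np
from scipy import stats

# Synthetic placeholder data: final objective values of 50 independent runs per
# operator on ONE benchmark function (minimization). Not real results.
rng = np.random.default_rng(7)
runs = {
    "one_step_size": rng.lognormal(mean=-2.0, sigma=0.5, size=50),
    "n_step_sizes": rng.lognormal(mean=-3.0, sigma=0.5, size=50),
}
for name, vals in runs.items():
    print(f"{name}: mean={vals.mean():.4f}, std={vals.std(ddof=1):.4f}")

# Independent runs on one function: Wilcoxon rank-sum / Mann-Whitney U test
u = stats.mannwhitneyu(runs["one_step_size"], runs["n_step_sizes"], alternative="two-sided")
print("Mann-Whitney U p-value:", u.pvalue)

# Paired data: mean error of two operators on the same 8 benchmark functions
# (again placeholder numbers), compared with the Wilcoxon signed-rank test
op_a = np.array([1.2, 0.8, 3.4, 2.2, 0.9, 5.1, 1.7, 2.8])
op_b = np.array([1.0, 0.5, 3.0, 2.1, 0.4, 4.0, 1.1, 2.45])
w = stats.wilcoxon(op_a, op_b)
print("Wilcoxon signed-rank p-value:", w.pvalue)
```

When many operator pairs are compared, the p-values should be corrected for multiple testing, or a single omnibus test should be used before pairwise comparisons.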


Protocol: Self-Adaptation of Mutation Step-Sizes

This protocol details the core mechanism of how strategy parameters (step-sizes) are mutated in a self-adaptive Evolution Strategy.

1. Objective: To implement and observe the self-adaptation process of mutation step-sizes in an uncorrelated mutation operator with n step-sizes.

2. Methodology:

* Step 1: Representation. Each individual in the population is a tuple a = (x, σ), where x is the object variable vector (the solution) and σ is the vector of n step-sizes, one for each dimension in x.

* Step 2: Mutation of Strategy Parameters. The step-size vector σ is mutated before the solution vector x. This is a critical aspect of self-adaptation.

* For each dimension j in σ, update the step-size:

σ_j' = σ_j * exp(τ * N(0,1)) ...(Global factor)

* A more common and effective method is to also include an independent perturbation for each dimension:

σ_j' = σ_j * exp(τ * N(0,1) + τ' * N_j(0,1)) ...(Global and individual factors)

* Here, N(0,1) is a standard normal random number drawn once for the entire individual, and N_j(0,1) is a new number drawn for each dimension j. The learning rates τ and τ' are pre-defined constants.

* Step 3: Mutation of Object Variables. After the new step-sizes σ' are computed, the solution vector x is mutated.

* For each dimension j in x:

x_j' = x_j + σ_j' * N_j(0,1)

* The mutation of x uses the newly mutated step-sizes σ_j', ensuring that offspring which inherit "good" step-sizes are more likely to survive [10].
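A minimal sketch of Steps 2 and 3, embedded in a small (μ, λ) loop on the Sphere function. The naming follows the text above: τ multiplies the shared N(0,1) draw and τ' the per-coordinate N_j(0,1) draws. The learning rates use widely cited textbook choices, τ = 1/√(2n) for the global factor and τ' = 1/√(2√n) for the per-coordinate factor. These values, the lower bound `eps` on the step sizes, and the omission of recombination are all assumptions, not prescriptions from the text.

```python
import numpy as np

def self_adaptive_mutation(x, sigma, rng, eps=1e-10):
    """Uncorrelated mutation with n self-adapted step sizes (log-normal update).

    tau scales the global N(0,1) draw shared by all coordinates;
    tau_prime scales the per-coordinate N_j(0,1) draws.
    """
    n = x.size
    tau = 1.0 / np.sqrt(2.0 * n)                   # global learning rate (assumed choice)
    tau_prime = 1.0 / np.sqrt(2.0 * np.sqrt(n))    # per-coordinate learning rate (assumed)
    global_draw = rng.standard_normal()            # N(0,1): one draw for the whole individual
    local_draws = rng.standard_normal(n)           # N_j(0,1): one draw per coordinate
    # Step 2: mutate the step sizes first, then keep them above a small floor
    new_sigma = sigma * np.exp(tau * global_draw + tau_prime * local_draws)
    new_sigma = np.maximum(new_sigma, eps)
    # Step 3: mutate the object variables with the *new* step sizes
    new_x = x + new_sigma * rng.standard_normal(n)
    return new_x, new_sigma

# Small (mu, lambda) run on the Sphere function (no recombination, for brevity)
rng = np.random.default_rng(3)
n, mu, lam = 10, 5, 35
pop_x = rng.uniform(-5.0, 5.0, size=(mu, n))
pop_s = np.ones((mu, n))
for gen in range(500):
    parent_idx = rng.integers(0, mu, size=lam)     # random parent for each offspring
    kids = [self_adaptive_mutation(pop_x[i], pop_s[i], rng) for i in parent_idx]
    kid_x = np.array([k[0] for k in kids])
    kid_s = np.array([k[1] for k in kids])
    f = np.sum(kid_x ** 2, axis=1)
    best = np.argsort(f)[:mu]                      # (mu, lambda)-selection
    pop_x, pop_s = kid_x[best], kid_s[best]
print(np.sum(pop_x[0] ** 2))
```

Selection acts only on the object variables, yet the step sizes travel with the individuals that survive. This is how good σ values are propagated without ever being evaluated directly.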

Visualizing Mutation Strategies and Workflows

Mutation Strategy Decision Workflow

The diagram below outlines a logical workflow for selecting an appropriate mutation strategy based on problem characteristics.

Self-Adaptation Mechanism

This diagram illustrates the process flow for the self-adaptation of mutation step-sizes, a core concept in Evolution Strategies.

The Scientist's Toolkit: Essential Research Reagents & Algorithms

The following table details key algorithms, components, and computational "reagents" essential for experimentation with mutation operators in Evolution Strategies.

| Item Name | Type | Function / Application |
|---|---|---|
| CMA-ES Algorithm | Algorithm | A state-of-the-art Evolution Strategy that uses correlated mutations by adapting a full covariance matrix. Ideal for complex, non-separable optimization problems [10]. |
| Benchmark Function Suite | Software Tool | A collection of standard optimization problems (e.g., Sphere, Rastrigin, Cigar) used to rigorously test and compare the performance of different algorithms and operators [11]. |
| Real-Coded Genetic Algorithm (GA) | Algorithm Framework | A population-based optimization algorithm that works directly with real-valued parameters. Serves as a testbed for integrating and evaluating new crossover and mutation operators [11]. |
| MGGX / MRRX Crossover | Crossover Operator | Novel, parent-centric real-coded crossover operators designed to dynamically balance exploration and exploitation, often outperforming conventional operators like SBX in complex scenarios [11]. |
| Non-Uniform Mutation (NUM) | Mutation Operator | A mutation operator commonly used in GAs where the magnitude of mutation decreases over time, helping to shift from global exploration to local exploitation as the run progresses [11]. |
| Power Mutation (PM) | Mutation Operator | A mutation operator based on the power distribution, used to increase population diversity and help the algorithm escape local optima [11]. |
| Covariance Matrix | Data Structure | An n×n matrix at the heart of correlated mutations. It is adapted over generations to model the pairwise dependencies between decision variables, shaping the mutation distribution [10]. |
| Selection Strategies ((μ,λ) vs (μ+λ)) | Algorithmic Rule | Determines how the parent population for the next generation is formed. The (μ,λ) strategy often helps prevent premature convergence [10]. |

This technical support center provides troubleshooting guides and FAQs for researchers working with Evolution Strategies (ES). The content is framed within a broader thesis comparing mutation strategies, assisting scientists in diagnosing and resolving common issues with strategy parameters.

Frequently Asked Questions (FAQs)

1. My (μ/μ,λ)-ES is converging prematurely. How can I adjust the strategy parameters to improve exploration?

Premature convergence often indicates a loss of population diversity and insufficient exploration. The following adjustments are recommended:

- Increase the Offspring Population Size (λ): Using a larger λ relative to the parent population size (μ) promotes exploration. A common heuristic is to set λ = 7μ [4].

- Re-evaluate the Step-Size (σ) Adaptation Rule: If you are using a simple rule like the 1/5th success rule, ensure it is functioning correctly. This rule states that you should decrease the mutation step size if the success rate (the fraction of mutations that lead to an improvement) is below 1/5, and increase it if it is above [13] [4]. If the success rate stays below 1/5, the rule will keep shrinking σ; on a multimodal landscape this usually means the search is trapped, and restarting with a larger initial step size may be necessary.

- Consider a Different Mutation Strategy: Switch from a strategy that heavily exploits the best solution (e.g., "best"-based) to one that emphasizes exploration, such as "rand"-based strategies, which build mutations from randomly selected individuals [14]. These strategy names originate in Differential Evolution (e.g., DE/best/1 versus DE/rand/1).

2. My CMA-ES algorithm is running slowly on a high-dimensional problem. What steps can I take to improve its efficiency?

Performance issues in high dimensions are often related to the complexity of updating the covariance matrix.

- Algorithm Selection: For very high-dimensional problems (e.g., more than 1000 parameters), consider using a variant like the Limited-Memory CMA-ES (LM-CMA-ES), which reduces time and memory complexity by using a compressed representation of the covariance matrix [10].

- Parallelization: ES are highly amenable to parallelization. You can significantly speed up the fitness evaluation step by distributing the evaluation of offspring across multiple CPU cores [15]. The forward-pass-only nature of ES makes this more efficient than gradient-based methods that require backpropagation.

- Check Hyperparameters: Review the learning rates for the evolution paths and covariance matrix update (e.g., αcλ, αc1). The default settings are usually robust, but for specific problem landscapes, tuning may be necessary [16].

3. How do I choose between the (μ,λ) and (μ+λ) selection strategies?

The choice fundamentally trades off exploration for convergence speed and robustness.

- Use (μ,λ)-ES: This strategy selects the next generation parents only from the newly created λ offspring. It does not include the previous parents. This is better for exploration and is more robust in dynamic environments or when dealing with noisy fitness functions, as it can "forget" bad parents [10] [4].

- Use (μ+λ)-ES: This strategy selects the next generation parents from the combined pool of the μ parents and λ offspring. This is an elitist strategy that guarantees the best solution found so far is never lost. It typically leads to faster convergence but may be more prone to getting stuck in local optima [10] [4].

Heuristic: A common and robust setting is to use the (μ,λ) strategy with λ = 7μ [4].

Troubleshooting Guides

Problem: Ineffective Step-Size Adaptation

Symptoms:

- The algorithm stagnates, showing no improvement over many generations.

- The step-size (σ) collapses to zero, halting all exploration.

- Oscillating performance where the step-size repeatedly grows too large and then shrinks too small.

Diagnosis and Solutions: This problem arises when the step-size adaptation mechanism is not correctly aligned with the local fitness landscape.

Verify the 1/5th Success Rule Implementation:

- Protocol: Calculate the success rate over a sliding window of the last n iterations, where n is the problem dimensionality [13].

- Action: Adjust the step-size multiplicatively based on the measured success rate (ps):

- If ps < 1/5: Set σ = σ * c (e.g., c = 0.85)

- If ps > 1/5: Set σ = σ / c

- Rationale: This rule ensures that the step-size is reduced to fine-tune solutions when progress is hard to find, and increased to take larger steps when progress is easy [13] [4].
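A minimal (1+1)-ES with the 1/5th success rule as described, using c = 0.85. As a simplification of the sliding window, the success rate is evaluated at the end of each consecutive block of n iterations. Only strict improvements count as successes. The function name `one_plus_one_es` is illustrative.

```python
import numpy as np

def one_plus_one_es(f, x0, sigma0, iters, rng, c=0.85):
    """(1+1)-ES with the 1/5th success rule (minimization).

    The success rate is measured over blocks of n iterations (n = dimension);
    sigma is multiplied by c if it is below 1/5 and divided by c if above.
    """
    x = np.asarray(x0, dtype=float)
    fx = f(x)
    sigma, n, successes = float(sigma0), x.size, 0
    for t in range(1, iters + 1):
        y = x + sigma * rng.standard_normal(n)   # isotropic Gaussian mutation
        fy = f(y)
        if fy < fx:                              # success: offspring replaces parent
            x, fx = y, fy
            successes += 1
        if t % n == 0:                           # end of a block of n iterations
            ps = successes / n
            if ps < 0.2:
                sigma *= c                       # too few successes: smaller steps
            elif ps > 0.2:
                sigma /= c                       # many successes: larger steps
            successes = 0
    return x, fx, sigma

def sphere(x):
    return float(np.sum(x ** 2))

rng = np.random.default_rng(0)
x_best, f_best, sigma_end = one_plus_one_es(sphere, np.full(10, 3.0), 1.0, 4000, rng)
print(f_best, sigma_end)
```

On the Sphere function σ shrinks roughly in proportion to the distance to the optimum, so both f_best and sigma_end become very small.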

Inspect Evolution Paths in CMA-ES:

- Concept: In CMA-ES, the step-size is adapted using an evolution path—a weighted history of the movement of the population mean. A short path indicates cancelling steps (step-size too large), while a long, straight path indicates consistent progress (step-size could be increased) [16].

- Visualization: The following diagram illustrates the step-size adaptation logic in CMA-ES based on the evolution path length.

- Action: If the step-size is not adapting well, check the update rule for the evolution path pσ. The damping parameter dσ controls the step-size change magnitude [16].
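The sketch below shows the path-length logic for the isotropic case (C = I). It follows the update and default constants given in Hansen's CMA-ES tutorial: c_σ = (μ_eff + 2)/(n + μ_eff + 5) and d_σ = 1 + 2·max(0, √((μ_eff − 1)/(n + 1)) − 1) + c_σ. In full CMA-ES the mean shift is additionally multiplied by C^(−1/2). The value μ_eff = 3 and the function name `csa_update` are assumptions for illustration.

```python
import numpy as np

def csa_update(sigma, p_sigma, mean_shift, c_sigma, d_sigma, mu_eff):
    """One cumulative step-size adaptation (CSA) step, isotropic case (C = I).

    mean_shift is the normalized move of the mean, (m_new - m_old) / sigma.
    Returns the updated step size and evolution path.
    """
    n = p_sigma.size
    # Evolution path: exponentially fading, normalized sum of recent mean shifts
    p_sigma = (1 - c_sigma) * p_sigma + np.sqrt(c_sigma * (2 - c_sigma) * mu_eff) * mean_shift
    # Approximate expected length of an n-dimensional standard normal vector
    chi_n = np.sqrt(n) * (1 - 1 / (4 * n) + 1 / (21 * n ** 2))
    # Path longer than expected -> larger sigma; shorter -> smaller sigma
    sigma = sigma * np.exp((c_sigma / d_sigma) * (np.linalg.norm(p_sigma) / chi_n - 1))
    return sigma, p_sigma

# Default constants for n = 10 and an assumed mu_eff = 3
n, mu_eff = 10, 3.0
c_sigma = (mu_eff + 2) / (n + mu_eff + 5)
d_sigma = 1 + 2 * max(0.0, np.sqrt((mu_eff - 1) / (n + 1)) - 1) + c_sigma

shift = np.zeros(n)
shift[0] = 1.0
s_straight, p = 1.0, np.zeros(n)
for _ in range(20):                      # the mean keeps moving the same way
    s_straight, p = csa_update(s_straight, p, shift, c_sigma, d_sigma, mu_eff)
s_zigzag, p = 1.0, np.zeros(n)
for k in range(20):                      # the mean moves back and forth
    s_zigzag, p = csa_update(s_zigzag, p, shift * (-1) ** k, c_sigma, d_sigma, mu_eff)
print(s_straight, s_zigzag)              # sigma grows for straight, shrinks for zigzag
```

Consistent mean shifts add up to a long path and σ grows, while cancelling shifts keep the path short and σ shrinks. This matches the description of short and long evolution paths above.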

Problem: Poor Convergence Rate on Ill-Conditioned Problems

Symptoms:

- Slow progress along specific dimensions of the search space.

- Performance is significantly worse on non-separable, ill-conditioned functions compared to spherical functions.

Diagnosis and Solutions: The algorithm is failing to learn and exploit the structure of the fitness landscape, specifically the scaling of and correlations between variables.

- Switch to CMA-ES:

- Rationale: The Covariance Matrix Adaptation Evolution Strategy (CMA-ES) is specifically designed to address this issue. It automatically adapts a full covariance matrix of the mutation distribution, which effectively models the dependencies between variables [10] [16].

- Protocol:

- Initialization: Initialize the mean vector m, the step-size σ, and the covariance matrix C = I.

- Mutation: Generate λ offspring x_i = m + σ * y_i, where y_i ~ N(0, C).

- Selection and Recombination: Update the mean m by taking a weighted average of the best-performing offspring.

- Covariance Matrix Adaptation: Update the covariance matrix C using information from both the current generation's best offspring and the long-term evolution path [16].

- Visualization: The workflow of the CMA-ES algorithm is outlined below.

- Check for Parameter Misconfiguration:

- Population Size: A larger population size (λ) can help in building a more reliable estimate of the covariance matrix, especially in higher dimensions. Consider using an increased population size [17].

- Learning Rates: The default learning rates for the covariance matrix update (e.g., αc1 for the rank-one update) are typically well-chosen. Modifying them without deep understanding of the algorithm can be detrimental [16].
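The following is a compact, unoptimized sketch of the CMA-ES loop outlined above, written from the default parameter settings in Hansen's CMA-ES tutorial. The mean is called m in the code to avoid a clash with μ, the number of selected parents. The sketch omits restarts and most termination criteria and is meant for experimentation only; for actual studies, a maintained implementation such as pycma is preferable. The function names `cma_es` and `ellipsoid` are illustrative.

```python
import numpy as np

def cma_es(f, x0, sigma0, max_iter=2000, ftarget=1e-10, seed=0):
    """Compact CMA-ES for minimization: weighted recombination, CSA step size,
    rank-one and rank-mu covariance updates (tutorial default constants)."""
    rng = np.random.default_rng(seed)
    n = len(x0)
    lam = 4 + int(3 * np.log(n))                   # default population size
    mu = lam // 2                                   # number of selected parents
    w = np.log((lam + 1) / 2) - np.log(np.arange(1, mu + 1))
    w /= w.sum()                                    # positive, decreasing weights
    mu_eff = 1.0 / np.sum(w ** 2)
    c_sigma = (mu_eff + 2) / (n + mu_eff + 5)
    d_sigma = 1 + 2 * max(0.0, np.sqrt((mu_eff - 1) / (n + 1)) - 1) + c_sigma
    c_c = (4 + mu_eff / n) / (n + 4 + 2 * mu_eff / n)
    c_1 = 2 / ((n + 1.3) ** 2 + mu_eff)
    c_mu = min(1 - c_1, 2 * (mu_eff - 2 + 1 / mu_eff) / ((n + 2) ** 2 + mu_eff))
    chi_n = np.sqrt(n) * (1 - 1 / (4 * n) + 1 / (21 * n ** 2))  # approx. E||N(0, I)||

    m = np.array(x0, dtype=float)                   # mean of the search distribution
    sigma, C = float(sigma0), np.eye(n)
    p_sigma, p_c = np.zeros(n), np.zeros(n)
    best_x, best_f = m.copy(), f(m)
    for g in range(max_iter):
        # C = B diag(d^2) B^T, used for sampling and for C^(-1/2)
        eigvals, B = np.linalg.eigh(C)
        d = np.sqrt(np.maximum(eigvals, 1e-20))
        inv_sqrt_C = B @ np.diag(1.0 / d) @ B.T
        # Mutation: x_k = m + sigma * y_k with y_k ~ N(0, C)
        y = (rng.standard_normal((lam, n)) * d) @ B.T
        x = m + sigma * y
        fx = np.array([f(xk) for xk in x])
        order = np.argsort(fx)
        if fx[order[0]] < best_f:
            best_x, best_f = x[order[0]].copy(), float(fx[order[0]])
        if best_f < ftarget:
            break
        # Selection and recombination: weighted mean of the mu best steps
        y_sel = y[order[:mu]]
        y_w = w @ y_sel
        m = m + sigma * y_w
        # Evolution paths for step size (p_sigma) and covariance (p_c)
        p_sigma = (1 - c_sigma) * p_sigma + np.sqrt(c_sigma * (2 - c_sigma) * mu_eff) * (inv_sqrt_C @ y_w)
        h_sigma = float(np.linalg.norm(p_sigma) / np.sqrt(1 - (1 - c_sigma) ** (2 * (g + 1)))
                        < (1.4 + 2 / (n + 1)) * chi_n)
        p_c = (1 - c_c) * p_c + h_sigma * np.sqrt(c_c * (2 - c_c) * mu_eff) * y_w
        # Covariance matrix adaptation: rank-one (p_c) plus rank-mu (selected steps)
        rank_mu = (y_sel.T * w) @ y_sel
        C = ((1 - c_1 - c_mu) * C
             + c_1 * (np.outer(p_c, p_c) + (1 - h_sigma) * c_c * (2 - c_c) * C)
             + c_mu * rank_mu)
        C = (C + C.T) / 2                           # guard against round-off asymmetry
        # Cumulative step-size adaptation
        sigma *= np.exp((c_sigma / d_sigma) * (np.linalg.norm(p_sigma) / chi_n - 1))
    return best_x, best_f

def ellipsoid(x):
    """Axis-aligned ellipsoid with condition number 1e6."""
    n = len(x)
    return float(np.sum(10.0 ** (6.0 * np.arange(n) / (n - 1)) * x ** 2))

x_best, f_best = cma_es(ellipsoid, x0=np.full(5, 2.0), sigma0=1.0)
print(f_best)
```

Only λ, μ, and the weights depend on the population size. Increasing `lam` as discussed above automatically changes μ_eff and, through it, all learning rates.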

Comparative Data Tables

Table 1: Comparison of Common Mutation Strategy Parameterizations

| Strategy | Parameters Controlled | Adaptation Mechanism | Best For |
|---|---|---|---|
| Isotropic Gaussian (1+1)-ES | Single step-size (σ) | 1/5th Success Rule [13] [4] | Simple, convex problems; quick prototyping. |
| Derandomized Self-Adaptation | n step-sizes (σ₁,...,σₙ) | Log-normal self-adaptation [10] | Problems with separable variables and different sensitivities per dimension. |
| CMA-ES | Full covariance matrix (C) & step-size (σ) | Evolution paths and rank-μ/rank-one updates [10] [16] | Non-separable, ill-conditioned, and rugged problems. |

Table 2: Performance Comparison of Selection Schemes

| Selection Scheme | Convergence Speed | Robustness to Noise | Risk of Premature Convergence |
|---|---|---|---|
| (1+1) | Fast (on simple problems) | Low | High |
| (μ+λ) | Fast | Medium | Medium-High |
| (μ,λ) | Slower | High | Low |

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Algorithmic Components and Their Functions

| Component | Function | Example in ES Context |
|---|---|---|
| Mutation Strength (σ) | Controls the global scale of exploration in parameter space. A larger σ enables larger jumps [16] [4]. | The step-size in Simple Gaussian ES. |
| Covariance Matrix (C) | Encodes the shape and orientation of the mutation distribution, modeling variable dependencies and scaling [16]. | The adaptive matrix in CMA-ES that replaces the identity matrix. |
| Evolution Path | Tracks the direction of successful mutations over multiple generations, allowing for cumulative step-size adaptation [16]. | The path pσ used in CMA-ES to decide whether to increase or decrease σ. |
| Recombination Weights | Assigns different importance to selected parents when creating a new mean, typically favoring better individuals [16]. | The weights used in (μ/μ,λ)-ES to update the population mean. |
| Success Rule | A heuristic to adapt strategy parameters based on the observed frequency of successful mutations [13]. | The 1/5th rule for step-size control. |

This guide supports a broader thesis comparing mutation strategies in Evolution Strategies (ES) research. For researchers in fields like drug development, where model parameters must be finely tuned amidst noisy data, understanding and troubleshooting self-adaptation mechanisms—how an algorithm automatically controls its own mutation strength—is crucial for achieving robust performance. This resource provides targeted FAQs and experimental protocols to address specific issues encountered during implementation.

Frequently Asked Questions (FAQs) and Troubleshooting

FAQ 1: Why does my self-adaptive ES converge prematurely to a suboptimal solution?

- Problem: This is often due to mutation strength collapse, where the step-size parameter (σ) decreases too rapidly, halting exploration before finding the global optimum [18].

- Solution:

- Check Learning Parameters: The primary learning parameter τ (tau) may be too large, causing excessive selection pressure on smaller step-sizes. Try reducing τ; a rule of thumb is τ ∝ 1/√n, where n is the number of parameters [18].

- Verify Sampling Method: Log-normal sampling (σ_SAL) inherently introduces a bias towards larger step-sizes under random selection. If your population size is small, this bias can be detrimental. Consider switching to unbiased normal sampling (σ_SAN), where σ_SAN = σ * (1 + τ * N(0,1)) [18].

- Increase Population Size: A larger population (μ, λ) helps maintain genetic diversity, providing more information for the self-adaptation mechanism to correctly adjust σ [18].
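The sampling bias described above can be checked numerically. The sketch below (NumPy assumed; function names are hypothetical) draws offspring step sizes from a parent σ = 1 with both schemes and no selection, using τ = 1/√n with the proportionality constant assumed to be 1.

```python
import numpy as np


def sample_sigma_lognormal(sigma, tau, size, rng):
    """Log-normal sampling (sigma_SAL): sigma' = sigma * exp(tau * N(0,1))."""
    return sigma * np.exp(tau * rng.standard_normal(size))


def sample_sigma_normal(sigma, tau, size, rng):
    """Normal sampling (sigma_SAN): sigma' = sigma * (1 + tau * N(0,1)).

    For large tau this can produce non-positive values (tau * N(0,1) <= -1),
    which a real implementation has to guard against.
    """
    return sigma * (1.0 + tau * rng.standard_normal(size))


rng = np.random.default_rng(42)
n = 100
tau = 1.0 / np.sqrt(n)  # tau ∝ 1/sqrt(n), constant assumed to be 1
sig_ln = sample_sigma_lognormal(1.0, tau, 200_000, rng)
sig_n = sample_sigma_normal(1.0, tau, 200_000, rng)

# Under random selection the log-normal mean is inflated by exp(tau^2 / 2),
# while the normal-sampling mean stays at the parental value.
print(sig_ln.mean(), np.exp(tau**2 / 2), sig_n.mean())
```

Because E[exp(τN)] = exp(τ²/2) > 1, the log-normal mean drifts upward even when selection carries no information, which is the bias referred to above.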

FAQ 2: How do I choose between Cumulative Step-size Adaptation (CSA) and Mutative Self-Adaptation (σSA)?

- Problem: Uncertainty about which σ-control mechanism is better suited for a specific problem type.

- Solution: The choice depends on the problem landscape and computational resources. The table below summarizes the key characteristics based on recent research [18]:

Table: Comparison of σ-Control Mechanisms

| Feature | Cumulative Step-size Adaptation (CSA) | Mutative Self-Adaptation (σSA) |
|---|---|---|
| Core Principle | Uses an evolution path to adapt σ based on the consistency of successful directions [18]. | Selects σ values based on the fitness of the offspring they produce [18]. |
| Adaptation Speed | Can be slower, especially with large populations [18]. | Can achieve faster adaptation and larger progress rates [18]. |
| Typical Use Case | Well-suited for noisy optimization problems [18]. | Effective on complex, multimodal fitness landscapes. |
| Parameter Sensitivity | Requires tuning of cumulation and damping parameters [18]. | Sensitive to the learning parameter τ [18]. |
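To make the CSA column concrete, here is a minimal sketch of a (μ/μ_I, λ)-ES with cumulative step-size adaptation on the sphere model. It assumes NumPy, isotropic mutations and equal recombination weights; the cumulation constant c_σ, the damping d_σ and the approximation of E‖N(0, I)‖ follow defaults commonly used in CMA-ES implementations and should be treated as assumptions to tune, not prescriptions.

```python
import numpy as np


def csa_es(f, m0, sigma0=1.0, lam=12, mu=3, gens=300, seed=0):
    """(mu/mu_I, lambda)-ES with cumulative step-size adaptation (minimisation).

    Isotropic mutations and equal recombination weights, so mu_eff = mu.
    """
    rng = np.random.default_rng(seed)
    m = np.asarray(m0, dtype=float)
    n = m.size
    sigma = sigma0
    mu_eff = float(mu)
    c_sigma = (mu_eff + 2) / (n + mu_eff + 5)  # cumulation constant
    d_sigma = 1 + 2 * max(0.0, np.sqrt((mu_eff - 1) / (n + 1)) - 1) + c_sigma  # damping
    chi_n = np.sqrt(n) * (1 - 1 / (4 * n) + 1 / (21 * n**2))  # approx. E||N(0, I)||
    p_sigma = np.zeros(n)
    for _ in range(gens):
        z = rng.standard_normal((lam, n))
        fitness = np.array([f(m + sigma * zi) for zi in z])
        z_mean = z[np.argsort(fitness)[:mu]].mean(axis=0)  # recombine the mu best steps
        m = m + sigma * z_mean
        # Evolution path: fading memory of normalised mean steps (~N(0, I) under random selection)
        p_sigma = (1 - c_sigma) * p_sigma + np.sqrt(c_sigma * (2 - c_sigma) * mu_eff) * z_mean
        # Path longer than expected -> increase sigma; shorter -> decrease sigma
        sigma *= np.exp((c_sigma / d_sigma) * (np.linalg.norm(p_sigma) / chi_n - 1))
    return m, sigma


m_final, sigma_final = csa_es(lambda x: float(np.dot(x, x)), np.full(10, 3.0))
```

Note that σ is driven by the length of the accumulated path, not by offspring fitness directly; this is the key contrast with σSA in the table.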

FAQ 3: My algorithm's performance is highly variable between runs. What is wrong?

- Problem: High performance variance often stems from poor parameter settings or an incorrectly configured self-adaptation workflow.

- Solution:

- Re-examine the Workflow: Ensure your implementation correctly follows the self-adaptation logic. The diagram below outlines the core process for a (μ/μ_I, λ)-σSA-ES.

- Conduct Parameter Sensitivity Analysis: Systematically test different values for τ, μ, and λ on a simple benchmark function like the sphere model to understand their impact [18].

- Inspect the σ Path: Log the value of σ over generations. A healthy run should show σ adapting dynamically rather than monotonically decreasing or increasing.

Self-Adaptation Workflow in a (μ/μ_I, λ)-σSA-ES
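The loop named in the caption above can be sketched in Python as follows. This is an illustrative implementation, assuming NumPy, log-normal σ sampling, intermediate recombination of both object and strategy parameters, and τ = 1/√(2n); it also records the σ path, as recommended in FAQ 3.

```python
import numpy as np


def sigma_sa_es(f, y0, sigma0=1.0, mu=3, lam=12, gens=400, tau=None, seed=0):
    """(mu/mu_I, lambda)-sigmaSA-ES (minimisation) with one sigma per offspring.

    For each offspring l:
      sigma_l = <sigma> * exp(tau * N(0,1))      (log-normal sampling)
      y_l     = <y> + sigma_l * N(0, I)
    The mu best offspring are selected and both y and sigma are recombined
    intermediately (arithmetic mean).
    """
    rng = np.random.default_rng(seed)
    y = np.asarray(y0, dtype=float)
    n = y.size
    tau = 1.0 / np.sqrt(2 * n) if tau is None else tau  # assumed default learning parameter
    sigma = sigma0
    sigma_path = []  # logged for diagnostics (see FAQ 3)
    for _ in range(gens):
        sigmas = sigma * np.exp(tau * rng.standard_normal(lam))  # 1. mutate mutation strengths
        offspring = y + sigmas[:, None] * rng.standard_normal((lam, n))  # 2. mutate object parameters
        fitness = np.array([f(o) for o in offspring])
        best = np.argsort(fitness)[:mu]  # 3. (mu, lambda) truncation selection
        y = offspring[best].mean(axis=0)  # 4. recombine object parameters
        sigma = sigmas[best].mean()  # 5. recombine mutation strengths
        sigma_path.append(sigma)
    return y, sigma, sigma_path


y_final, sigma_final, sigma_path = sigma_sa_es(lambda x: float(np.dot(x, x)), np.full(10, 3.0))
```

Plotting `sigma_path` on a log scale is a quick way to spot the collapse described in FAQ 1.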

Experimental Protocols and Methodologies

To validate and compare the performance of different self-adaptation mechanisms, the following experimental protocols are recommended.

Protocol 1: Benchmarking on the Sphere Model

The sphere model is a standard test function to analyze the core properties of ES, defined as f(x) = Σx_i² [18].

- Algorithm Setup: Implement a (μ/μ_I, λ)-ES with both σSA and CSA.

- Parameter Normalization: Use the normalized progress rate φ* and normalized mutation strength σ* for scale-invariant analysis: φ* = φN/R and σ* = σN/R, where N is the search-space dimension and R is the parental distance to the optimizer [18].

- Measurement: Run the ES for a fixed number of generations and record:

- The progress rate φ* over time.

- The steady-state level of σ*.

- The number of generations to reach a predefined fitness threshold.

- Analysis: Compare the adaptation speed and stability of σ* between σSA and CSA. The theoretical progress rate for the sphere can be used as a baseline for validation [18].
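The normalization used in Protocol 1 is simple arithmetic on distances to the optimizer. A small helper (hypothetical name, NumPy assumed) for the sphere, where the optimizer is the origin and φ = R - R' is the reduction in distance over one generation:

```python
import numpy as np


def normalized_progress(y_parent, y_next, sigma):
    """Return (phi*, sigma*) for the sphere model with optimizer at the origin.

    phi = R - R_next is the one-generation reduction in distance to the optimizer,
    phi* = phi * N / R and sigma* = sigma * N / R, with N the dimension.
    """
    n = y_parent.size
    r = np.linalg.norm(y_parent)
    r_next = np.linalg.norm(y_next)
    return (r - r_next) * n / r, sigma * n / r


# Worked example: N = 2, R = 5, R_next = 2.5, sigma = 0.5 -> phi* = 1.0, sigma* = 0.2
phi_star, sigma_star = normalized_progress(np.array([3.0, 4.0]), np.array([1.5, 2.0]), sigma=0.5)
```

In an actual measurement, φ* would be averaged over many generations and runs once σ* has reached its steady state.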

Protocol 2: Analyzing Adaptation on a Multimodal Function

Use a function like the Rastrigin function, which has many local optima, to test the global search capabilities and avoidance of premature convergence [19].

- Setup: Initialize the population far from the global optimum.

- Observation: Monitor how the mutation strength σ changes when the population encounters a local optimum. A well-adapted ES should temporarily increase σ to escape the basin of attraction.

- Metric: Record the success rate of finding the global optimum over multiple independent runs.
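For Protocol 2, the Rastrigin function and a success-rate metric can be written as below (NumPy assumed, names illustrative). The target value of 10⁻⁸ for counting a run as successful is an assumed threshold; pick one appropriate to your study.

```python
import numpy as np


def rastrigin(x, a=10.0):
    """Rastrigin: f(x) = a*n + sum(x_i^2 - a*cos(2*pi*x_i)); global minimum 0 at x = 0."""
    x = np.asarray(x, dtype=float)
    return float(a * x.size + np.sum(x**2 - a * np.cos(2 * np.pi * x)))


def success_rate(final_values, target=1e-8):
    """Fraction of independent runs whose final best value reached the target."""
    final_values = np.asarray(final_values, dtype=float)
    return float(np.mean(final_values <= target))


print(rastrigin(np.zeros(5)))  # 0.0 at the global optimum
print(success_rate([0.0, 5e-9, 0.99, 1.99]))  # 0.5
```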

Research Reagent Solutions

The table below details the key algorithmic components required for implementing and experimenting with self-adaptive Evolution Strategies.

Table: Essential Components for Self-Adaptive ES Research

| Research Reagent | Function & Description |
|---|---|
| Mutation Sampling Scheme | Defines how offspring mutation strengths are generated. The two primary types are Log-Normal (biased) and Normal (unbiased), crucially impacting adaptation [18]. |
| Recombination Operator | The method for creating the new parental σ from selected offspring σ' values. Intermediate recombination is common in (μ/μ_I, λ)-ES [18]. |
| Learning Parameter (τ) | Controls the magnitude of changes in the mutation strength during sampling. It is a critical parameter that often requires problem-specific tuning [18]. |
| Population Size (μ, λ) | The number of parent (μ) and offspring (λ) individuals. Larger populations provide more information for reliable self-adaptation but increase computational cost [18]. |
| Fitness Benchmark Suite | A set of test functions (e.g., Sphere, Rastrigin, Schaffer) used to evaluate and compare the performance and robustness of different algorithm configurations [19] [18]. |

Implementing Mutation Strategies: From Theory to Biomedical Applications

Frequently Asked Questions

Q1: Why is my ES population converging prematurely to a suboptimal solution?

Premature convergence often occurs due to a loss of genetic diversity, frequently caused by an incorrectly calibrated mutation strength. If the mutation step size is too small, the algorithm cannot escape local optima [4]. To remedy this, ensure you are using a sufficiently large population size and adapt your mutation strength dynamically using rules like the 1/5th success rule or self-adaptation strategies where strategy parameters evolve alongside the solution parameters [4] [20].

Q2: How do I choose between the (μ, λ)-ES and (μ + λ)-ES selection strategies?

The choice impacts the algorithm's explorative character. Use the comma-selection (μ, λ)-ES for dynamic problems or when you need to maintain strong exploration pressure, as it discards parents entirely. Use the plus-selection (μ + λ)-ES for refining solutions and converging more reliably on static problems, as it allows parents to compete for survival [20]. A common heuristic is to set λ = 7μ [4].
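The difference between the two selection schemes lies only in which pool the next parents are drawn from. A minimal sketch (NumPy assumed, minimization, function names illustrative):

```python
import numpy as np


def comma_selection(offspring, offspring_fit, mu):
    """(mu, lambda): next parents are the mu best offspring; old parents are discarded."""
    order = np.argsort(offspring_fit)[:mu]
    return offspring[order], offspring_fit[order]


def plus_selection(parents, parent_fit, offspring, offspring_fit, mu):
    """(mu + lambda): parents and offspring compete; a good parent can survive indefinitely."""
    pool = np.vstack([parents, offspring])
    pool_fit = np.concatenate([parent_fit, offspring_fit])
    order = np.argsort(pool_fit)[:mu]
    return pool[order], pool_fit[order]


mu = 2
lam = 7 * mu  # the lambda = 7 mu heuristic; comma selection needs lambda >= mu
```

Because plus-selection is elitist, the best fitness can never get worse between generations, whereas comma-selection accepts temporary deterioration in exchange for the ability to leave a region.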

Q3: What is the purpose of mutating strategy parameters?

Mutating strategy parameters—like the step size in Gaussian mutation—allows the algorithm to self-adapt to the local topology of the search landscape. This co-evolution of solution and strategy parameters enables the algorithm to automatically adjust the magnitude of its mutations, balancing exploration and exploitation without manual intervention [20].

Q4: My mutation operator is generating solutions that violate constraints. How can I fix this?

For simple box constraints, a direct approach is to clamp the values to the feasible range after mutation [20]. For more complex constraints, you may need to incorporate constraint-handling techniques such as penalty functions or repair mechanisms into your fitness evaluation. The mutation operators themselves can also be designed to be aware of the value range of decision variables [6].
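Clamping and a simple static penalty can be sketched as follows (NumPy assumed; the helper names and the penalty weight are hypothetical).

```python
import numpy as np


def clamp_to_box(x, lower, upper):
    """Repair a mutated vector by clamping every coordinate into [lower, upper]."""
    return np.clip(x, lower, upper)


def penalized_fitness(f, x, violation, weight=1e3):
    """Static penalty (minimisation): f(x) + weight * violation(x).

    `violation` must return 0 for feasible x and a positive amount otherwise.
    """
    return f(x) + weight * violation(x)


rng = np.random.default_rng(0)
lower, upper = np.zeros(3), np.ones(3)
parent = np.full(3, 0.5)
child = clamp_to_box(parent + 0.8 * rng.standard_normal(3), lower, upper)  # always inside the box
```

Clamping is cheap but places a finite probability mass exactly on the boundary, so when the optimum lies near a bound, a reflection or resampling repair may behave differently and is worth comparing.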

Troubleshooting Guides

Problem: Slow or Stagnant Convergence

Possible Causes and Solutions:

- Cause: Inadequate mutation strength.

- Cause: Insufficient population diversity.

- Solution: Increase the ratio of offspring to parents (λ / μ). A larger population size (μ) can also improve exploration [4].

- Cause: The recombination operator is causing premature homogenization.

- Solution: Experiment with different recombination operators. For instance, discrete recombination (randomly selecting parameters from parents) can promote more diversity than intermediate recombination (averaging parent parameters) [4].
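The two recombination operators can be contrasted in a few lines (NumPy assumed, names illustrative):

```python
import numpy as np


def intermediate_recombination(parents):
    """Intermediate recombination: coordinate-wise mean of the selected parents."""
    return parents.mean(axis=0)


def discrete_recombination(parents, rng):
    """Discrete recombination: each coordinate is copied from a randomly chosen parent."""
    rho, n = parents.shape
    donor = rng.integers(0, rho, size=n)  # donor[j] = index of the parent supplying coordinate j
    return parents[donor, np.arange(n)]


rng = np.random.default_rng(3)
parents = np.array([[0.0, 0.0, 0.0],
                    [2.0, 4.0, 6.0]])
print(intermediate_recombination(parents))  # always the midpoint [1. 2. 3.]
print(discrete_recombination(parents, rng))  # a corner of the box spanned by the parents
```

Intermediate recombination always lands at the centroid of the parents, while discrete recombination produces children at corners of the box they span, which is why it tends to preserve more diversity.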

Problem: Algorithm is Too Noisy and Fails to Refine Solutions

Possible Causes and Solutions:

- Cause: Mutation strength is too high, causing the algorithm to behave like a random search.

- Solution: Decrease the initial mutation step size and use a plus-selection strategy, (μ + λ)-ES, to better preserve good solutions [20].

- Cause: The population size is too large for the available computational budget.

- Solution: While a larger population aids exploration, it requires more function evaluations. You may need to reduce the population size or offspring count and run the algorithm for more generations [4].

Experimental Protocols and Data Presentation

Table 1: Common Mutation Operators for Different Encodings

| Genome Type | Suitable Mutation Operators | Key Characteristics | Applicability in Drug Development |
|---|---|---|---|
| Real-Valued | Gaussian Mutation [6] [20] | Adds noise from a normal distribution; small steps are more likely. | Optimizing continuous parameters like molecular docking coordinates or chemical concentration ratios. |
| Real-Valued | Uniform Mutation [6] | Replaces a value with a random one from a uniform distribution. | Exploring a wide range of possible values, such as in initial screening phases. |
| Binary String | Bit Flip Mutation [6] | Flips individual bits (0 becomes 1, and vice versa) at random positions. | Optimizing feature selection masks in QSAR (Quantitative Structure-Activity Relationship) models. |
| Permutations | Inversion [6] | Reverses the order of a randomly selected subsequence. | Scheduling the order of laboratory experiments or synthetic steps. |
| Permutations | Insertion/Deletion/Swap [6] | Moves, deletes, or swaps elements within the sequence. | Designing peptide sequences or optimizing molecular structures represented as sequences. |
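Several of the operators in Table 1 are short enough to sketch directly. The following NumPy sketch covers Gaussian, uniform, bit-flip, inversion and swap mutation; the per-gene probability p and the function names are assumptions for illustration, and insertion/deletion are omitted.

```python
import numpy as np


def gaussian_mutation(x, sigma, rng):
    """Real-valued: add N(0, sigma^2) noise to every gene."""
    return x + sigma * rng.standard_normal(x.shape)


def uniform_mutation(x, low, high, p, rng):
    """Real-valued: with probability p per gene, replace it with a draw from U(low, high)."""
    mask = rng.random(x.shape) < p
    return np.where(mask, rng.uniform(low, high, x.shape), x)


def bit_flip_mutation(bits, p, rng):
    """Binary: flip each bit independently with probability p."""
    mask = rng.random(bits.shape) < p
    return np.where(mask, 1 - bits, bits)


def inversion_mutation(perm, rng):
    """Permutation: reverse a randomly chosen contiguous subsequence."""
    i, j = sorted(rng.choice(len(perm), size=2, replace=False))
    child = perm.copy()
    child[i:j + 1] = perm[i:j + 1][::-1]
    return child


def swap_mutation(perm, rng):
    """Permutation: exchange the elements at two randomly chosen positions."""
    i, j = rng.choice(len(perm), size=2, replace=False)
    child = perm.copy()
    child[[i, j]] = child[[j, i]]
    return child
```

The permutation operators return a rearrangement of the same elements, so every child remains a valid ordering (for example, of experiments or synthetic steps).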

Table 2: Key Strategy Parameters and Performance Heuristics

| Parameter | Description | Heuristic & Impact |
|---|---|---|
| Population Size (μ) | Number of parent solutions in each generation. | A larger μ improves exploration but increases computational cost [4]. |
| Offspring Count (λ) | Number of new solutions created each generation. | Typically λ > μ; a common setting is λ = 7μ to promote diversity [4]. |
| Mutation Strength (σ) | Standard deviation for Gaussian mutation. | Should be adapted; can be initialized as (x_max - x_min)/6 [6]. The 1/5th success rule is a classic adaptation heuristic [20]. |
| Recombination Size (ρ) | Number of parents used to create one offspring. | Often set to ρ = 2 for intermediate recombination; can be higher for discrete recombination [4]. |

Detailed Methodology: Gaussian Mutation with Self-Adaptation

This is a common and powerful protocol for continuous optimization problems [20].

- Representation: Each individual in the population is represented as a tuple (x, σ), where x is the vector of decision variables (e.g., molecular descriptors) and σ is a vector of strategy parameters (step sizes), one per dimension.

- Mutation of Strategy Parameters: First, mutate the step sizes for each offspring:

σ_i' = σ_i * exp(τ' * N(0,1) + τ * N_i(0,1))

Here, N(0,1) is a standard normal random variable sampled once for all i, and N_i(0,1) is sampled anew for each i. The learning rates are set as τ' ∝ 1/√(2n) (global) and τ ∝ 1/√(2√n) (coordinate-wise), where n is the problem dimension [20].

- Mutation of Object Variables: Then, mutate the solution itself using the new step sizes, with a fresh draw N_i(0,1) for each coordinate:

x_i' = x_i + σ_i' * N_i(0,1)

- Selection and Iteration: Evaluate the fitness of the new offspring (x', σ') and proceed with selection (e.g., (μ, λ)-selection) to form the next generation.
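A compact sketch of this protocol in Python (NumPy assumed). The proportionality constants of τ' and τ are set to 1, a small lower bound on σ is added to avoid numerical collapse, and parents are chosen uniformly at random without recombination; all of these are simplifying assumptions, and the function names are illustrative.

```python
import numpy as np


def self_adaptive_mutation(x, sigma, rng, sigma_min=1e-12):
    """Uncorrelated mutation with n step sizes.

    sigma_i' = sigma_i * exp(tau_prime * N(0,1) + tau * N_i(0,1))
    x_i'     = x_i + sigma_i' * N_i(0,1)   (fresh N_i draws)
    """
    n = x.size
    tau_prime = 1.0 / np.sqrt(2.0 * n)  # global learning rate, constant assumed 1
    tau = 1.0 / np.sqrt(2.0 * np.sqrt(n))  # coordinate-wise learning rate, constant assumed 1
    common = rng.standard_normal()  # N(0,1): one draw shared by all coordinates
    new_sigma = sigma * np.exp(tau_prime * common + tau * rng.standard_normal(n))
    new_sigma = np.maximum(new_sigma, sigma_min)  # assumed floor against step-size collapse
    new_x = x + new_sigma * rng.standard_normal(n)  # mutate with the *new* step sizes
    return new_x, new_sigma


def comma_es(f, n=5, mu=5, lam=35, gens=300, seed=0):
    """(mu, lambda)-ES built on the mutation above; parents picked uniformly, no recombination."""
    rng = np.random.default_rng(seed)
    parents = [(rng.uniform(-5.0, 5.0, n), np.ones(n)) for _ in range(mu)]
    for _ in range(gens):
        offspring = [self_adaptive_mutation(*parents[rng.integers(mu)], rng) for _ in range(lam)]
        offspring.sort(key=lambda ind: f(ind[0]))  # (mu, lambda): rank offspring only
        parents = offspring[:mu]
    return parents[0]


x_best, sigma_best = comma_es(lambda v: float(np.dot(v, v)))
```

Mutating σ before x matters: each offspring is evaluated with the step sizes it carries, so selection on x also selects useful step sizes.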

Workflow and Strategy Visualization

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for ES Experiments

| Item | Function in ES Research | Example & Notes |
|---|---|---|
| Optimization Framework | Provides the foundational algorithms and operators. | Examples: DEAP (Python), jMetal, HeuristicLab. These libraries offer implemented ES variants, mutation operators, and benchmarking tools. |
| Benchmark Problem Set | Standardized functions to validate and compare algorithm performance. | Examples: BBOB (Black-Box Optimization Benchmarking), Noisy Test Problems. Used to test convergence, robustness, and scalability of a new mutation strategy [20]. |
| Step-Size Adaptation Rule | A heuristic or mechanism to control mutation strength. | Examples: The 1/5th Success Rule, Log-Normal Self-Adaptation, CMA-ES. Critical for automating the tuning of the mutation operator [4] [20]. |
| Statistical Analysis Tool | For rigorous comparison of experimental results. | Examples: R, Python (with SciPy/statsmodels). Used to perform significance tests (e.g., Wilcoxon signed-rank test) on results from multiple independent runs. |

Model-Informed Drug Development (MIDD) is a quantitative framework that uses modeling and simulation to inform drug development decisions and regulatory evaluations. It encompasses a range of methodologies, including Quantitative Systems Pharmacology (QSP), which uses mechanistic models to simulate drug effects within biological systems [21] [22]. Within a research thesis comparing mutation strategies, these models act as sophisticated "fitness functions," where in silico simulations help identify optimal drug candidates and parameters before costly real-world experiments, mirroring how evolutionary algorithms search for optimal solutions [23].

This guide provides technical support for applying these approaches, with troubleshooting focused on common challenges in model development and validation.

Troubleshooting Guides

Problem 1: Poor Model Performance or Lack of Predictive Power

Issue: Your QSP or MIDD model fails to accurately predict experimental or clinical outcomes.

Diagnosis and Solutions:

| Diagnostic Step | Potential Root Cause | Recommended Action |
|---|---|---|
| Parameter Evaluation | Poorly constrained or inaccurate parameters [24]. | Perform global sensitivity analysis to identify most influential parameters. Focus calibration efforts on these [25]. |
| Model Structure Check | Oversimplified biology or missing key pathways [21]. | Review latest literature and omics data to ensure critical mechanisms are included. Consider a more granular semi-mechanistic approach [21]. |
| Data Integration Review | Inadequate or poor-quality data for model training/validation [24]. | Use AI-driven data imputation or synthetic data generation to fill gaps. Prioritize collecting high-quality, targeted data [24]. |
| Validation Method | Reliance on single, non-representative validation dataset [25]. | Employ a Virtual Population (VP) approach. Generate many in silico patients and confirm predictions hold across this population [25]. |

Experimental Protocol: Virtual Population (VP) Analysis for Model Validation

- Objective: To quantify the robustness of a qualitative model prediction (e.g., "Drug A is superior to Drug B").

- Methodology:

- Generate Virtual Subjects: Create a large cohort (e.g., 1,000-10,000) of in silico patients by sampling model parameters from predefined physiological distributions [25].

- Run Simulations: Execute your model for each virtual subject in the cohort for each scenario (e.g., Drug A vs. Drug B).

- Calculate Prediction Distribution: For each subject, calculate the outcome metric (e.g., % tumor shrinkage). Determine the percentage of the virtual population for which the qualitative prediction holds true (e.g., % of patients where Drug A outperforms Drug B).

- Statistical Testing: Compare this distribution against a null hypothesis (e.g., from random parameter sets or random "drugging" of proteins) using appropriate statistical tests (e.g., Chi-squared) to assess significance [25].
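The protocol can be prototyped before a real QSP model is available. In the sketch below, a hypothetical Emax response model stands in for the QSP simulation, and all parameter distributions, the exposure value and the 50/50 null hypothesis are assumptions for illustration only (the protocol itself suggests nulls built from random parameter sets or random "drugging"). The chi-squared p-value for one degree of freedom is computed as erfc(√(χ²/2)), so only the standard library and NumPy are needed.

```python
import math

import numpy as np


def hypothetical_response(conc, emax, ec50):
    """Placeholder Emax model (% tumour shrinkage) standing in for a QSP simulation."""
    return emax * conc / (ec50 + conc)


def virtual_population_analysis(n_subjects=5000, conc=2.0, seed=0):
    rng = np.random.default_rng(seed)
    # 1. Virtual subjects: log-normal inter-individual variability (assumed distributions)
    emax = rng.lognormal(mean=np.log(80.0), sigma=0.2, size=n_subjects)
    ec50_a = rng.lognormal(mean=np.log(1.0), sigma=0.5, size=n_subjects)
    ec50_b = rng.lognormal(mean=np.log(1.5), sigma=0.5, size=n_subjects)
    # 2. Simulate both scenarios (Drug A, Drug B) for every virtual subject
    resp_a = hypothetical_response(conc, emax, ec50_a)
    resp_b = hypothetical_response(conc, emax, ec50_b)
    # 3. Share of the population for which "Drug A outperforms Drug B" holds
    a_wins = int(np.sum(resp_a > resp_b))
    fraction = a_wins / n_subjects
    # 4. Chi-squared goodness of fit (1 df) against an assumed 50/50 null
    expected = n_subjects / 2
    chi2 = ((a_wins - expected) ** 2 + (n_subjects - a_wins - expected) ** 2) / expected
    p_value = math.erfc(math.sqrt(chi2 / 2))  # upper tail of chi-squared with 1 df
    return fraction, chi2, p_value


fraction, chi2, p_value = virtual_population_analysis()
print(f"A better in {fraction:.1%} of virtual subjects, chi2 = {chi2:.1f}, p = {p_value:.2e}")
```

Swapping `hypothetical_response` for calls into the real model leaves steps 1, 3 and 4 unchanged.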

Problem 2: Model is Too Slow for Practical Use

Issue: Simulations, especially large-scale virtual population or parameter exploration runs, take too long, hindering iterative development.

Diagnosis and Solutions:

| Diagnostic Step | Potential Root Cause | Recommended Action |
|---|---|---|
| Code Profiling | Inefficient algorithms or coding practices. | Use profiling tools to identify computational bottlenecks. Refactor critical sections of code. |
| Platform Assessment | Hardware limitations (e.g., local machine). | Migrate to cloud-based computational platforms designed for large-scale QSP simulations [26]. |
| Model Complexity | Unnecessarily high model resolution for the context of use (COU). | Implement a "Fit-for-Purpose" strategy. Simplify the model where possible without compromising key outputs [21]. Use declarative programming environments that optimize execution [26]. |

Issue: The model cannot effectively incorporate or reconcile data from different sources (e.g., in vitro, omics, clinical trials).

Diagnosis and Solutions:

| Diagnostic Step | Potential Root Cause | Recommended Action |
|---|---|---|
| Data Audit | Incompatible formats, scales, or missing metadata. | Implement a unified data infrastructure or use AI-powered platforms to harmonize and integrate diverse datasets [24]. |
| Workflow Review | Manual, error-prone data processing pipelines. | Adopt end-to-end QSP platforms with built-in data handling and curation tools to streamline workflows [26]. |

Frequently Asked Questions (FAQs)

Q1: What is the difference between a "top-down" (e.g., PopPK) and "bottom-up" (e.g., QSP) MIDD approach, and when should I use each?

A1: The choice is dictated by the "Question of Interest" (QOI) and available data [21] [22].

- Top-Down (e.g., PopPK, PK/PD): These are primarily data-driven. They analyze observed clinical data to describe what happens (e.g., quantifying dose-exposure-response and identifying sources of variability). Use them when you have rich clinical data and need to describe relationships and variability in a population [22].

- Bottom-Up (e.g., QSP, PBPK): These are primarily mechanism-driven. They build on prior knowledge of physiology and biology to simulate how it happens. Use them for prospective prediction in data-sparse environments (e.g., first-in-human dose), understanding biological mechanisms, or optimizing combination therapies [22].

Q2: How do I know if my QSP model is "validated," especially since it has many unidentifiable parameters?

A2: Validation for QSP differs from traditional PK/PD models. Shift focus from "parameter identifiability" to "prediction credibility" [25].

- Qualitative Prediction: Can the model reproduce known, non-fitted biological behaviors or clinical outcomes? [25]

- Virtual Population (VP) Analysis: As described in the troubleshooting guide, use VPs to generate a distribution of predictions and test the robustness of qualitative findings [25].

- Biological Plausibility: The model's structure and dynamics should be reviewed and accepted by domain experts for biological realism [25].

Q3: Our QSP models are built by a single expert, creating a bottleneck. How can we make QSP more scalable and accessible?

A3: This is a common challenge driven by model complexity and reliance on specialized coding skills [26]. Solutions include:

- Utilize Pre-validated Model Libraries: Start from existing, documented models for common therapeutic areas to reduce development time [26].

- Adopt Democratizing Platforms: Use platforms with intuitive, visual interfaces and declarative programming that reduce the coding burden for non-specialists [26].

- Implement Collaboration Tools: Use software with features that allow teams to share, edit, and compare models and scenarios, breaking down knowledge silos [26].

Q4: How is AI being used to supercharge traditional QSP modeling?

A4: AI and machine learning are being integrated to address key limitations [24]:

- Data Gap Filling: AI can generate robust synthetic data to impute missing biological parameters (e.g., target expression levels) [24].

- Automated Parameterization: ML algorithms (e.g., Bayesian inference) can automate and accelerate model parameter estimation and calibration [24].

- Enhanced Predictive Capability: AI can help build models that better capture inter-individual variability, enabling more reliable virtual trials and patient stratification [24].

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Reagent | Function in MIDD/QSP | Key Consideration |
|---|---|---|
| PBPK Platform (e.g., GastroPlus, Simcyp) | Mechanistically simulates ADME and predicts human PK, DDI, and dosing in special populations [22]. | Quality of system-specific (physiological) and drug-specific (input) parameters is critical for reliable predictions. |
| QSP Software (e.g., Certara IQ, BIOiSIM) | Provides environment to build, simulate, and analyze complex QSP models; some offer AI-enhanced features and model libraries [26] [24]. | Choose based on usability, computational speed, and availability of models relevant to your therapeutic area. |
| PopPK/PD Software (e.g., NONMEM, Monolix) | Performs nonlinear mixed-effects modeling to quantify population mean parameters and inter-individual variability [21]. | Requires expertise in model coding, diagnostics, and statistical interpretation. |
| Model-Based Meta-Analysis (MBMA) | Uses curated historical clinical trial data for indirect comparison to competitors and optimization of trial design [22]. | The quality and breadth of the underlying database are paramount. |
| Virtual Population Generator | Creates in silico cohorts of virtual patients for simulating variability and quantifying uncertainty in predictions [25]. | The method for generating the population (e.g., stochastic search, sampling) can influence results. |

Detailed Experimental Protocol: QSP for Combination Therapy Scheduling

This protocol outlines how a QSP model can be used to identify an optimal dosing schedule for a combination therapy, a common and challenging application.

- Background: Drug combinations can show schedule-dependent effects where the sequence of administration impacts overall efficacy or safety [25].

- Objective: To use a calibrated QSP model of cancer cell signaling and proliferation to simulate and compare the efficacy of different dosing sequences for Gemcitabine and Birinapant [25].

Step-by-Step Methodology:

Model Construction and Calibration:

- Develop a system of ordinary differential equations (ODEs) representing key signaling pathways (e.g., apoptosis, survival) relevant to the drugs.

- Parameterize the model using dynamic, quantitative protein-level data (e.g., from Western blots or mass spectrometry) from a representative cell line (e.g., PANC-1) treated with each drug individually [25].

Define Simulation Scenarios:

- Scenario A (Simultaneous): Administer both drugs at the same time.

- Scenario B (Sequential 1): Administer Gemcitabine, followed after a defined interval (e.g., 24h) by Birinapant.

- Scenario C (Sequential 2): Administer Birinapant, followed by Gemcitabine.

Execute Simulations and Collect Output:

- For each scenario, run the model simulation over a defined time course (e.g., 96 hours).

- The primary output is the simulated tumor cell growth curve or the final number of viable cells.

Virtual Population Analysis:

- Repeat the simulations from "Execute Simulations and Collect Output" for a large virtual population (see VP protocol above) to account for biological variability and parameter uncertainty.

- For each virtual subject, rank the scenarios by efficacy (e.g., lowest final cell count = best).

Analysis and Conclusion:

- Calculate the percentage of the virtual population for which each sequential schedule is superior to simultaneous administration.

- Perform statistical tests to confirm the significance of the optimal scheduling effect.

Core Concepts: Mutation Strategies in Evolutionary Algorithms

Evolutionary algorithms, particularly Differential Evolution (DE), are powerful population-based metaheuristics for solving complex optimization problems in high-dimensional parameter spaces. Their performance critically depends on the mutation strategy, which governs how new candidate solutions are generated by combining existing ones [27] [28].

The mutation phase creates a donor vector for each target vector in the population. Different strategies offer trade-offs between exploration (searching new areas) and exploitation (refining known good areas) [14] [29]. The most common mutation strategies are mathematically defined as follows. For a given target vector \( X_{i,G} \) at generation \( G \), the mutant vector \( V_{i,G} \) is generated using one of the strategies in Table 1 [30] [14]. The indices \( r1, r2, r3, r4, r5 \) are distinct random integers different from index \( i \). \( X_{\text{best},G} \) is the best-performing vector in the current generation, and \( F \) is a scaling factor controlling the magnitude of the differential variation [27].

Table 1: Common Differential Evolution Mutation Strategies

| Strategy Name | Mathematical Formulation | Characteristics |
|---|---|---|
| DE/rand/1 | \( V_{i,G} = X_{r1,G} + F \cdot (X_{r2,G} - X_{r3,G}) \) | High exploration, good for diverse search [30] [14]. |
| DE/best/1 | \( V_{i,G} = X_{\text{best},G} + F \cdot (X_{r1,G} - X_{r2,G}) \) | High exploitation, fast convergence [30]. |
| DE/current-to-best/1 | \( V_{i,G} = X_{i,G} + F \cdot (X_{\text{best},G} - X_{i,G}) + F \cdot (X_{r1,G} - X_{r2,G}) \) | Balances local and global search [30]. |
| DE/rand/2 | \( V_{i,G} = X_{r1,G} + F \cdot (X_{r2,G} - X_{r3,G}) + F \cdot (X_{r4,G} - X_{r5,G}) \) | Enhanced exploration with two difference vectors [30]. |
| DE/best/2 | \( V_{i,G} = X_{\text{best},G} + F \cdot (X_{r1,G} - X_{r2,G}) + F \cdot (X_{r3,G} - X_{r4,G}) \) | Enhanced exploitation with two difference vectors [30]. |
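The strategies in Table 1 can be implemented as a single dispatch function. This NumPy sketch (function name and string keys are illustrative) draws r1 to r5 as distinct indices different from i, so it requires a population of at least six vectors.

```python
import numpy as np


def de_mutant(pop, fitness, i, F, strategy, rng):
    """Return the mutant (donor) vector V_i for target index i (minimisation)."""
    NP = len(pop)
    r = rng.choice(NP - 1, size=5, replace=False)
    r[r >= i] += 1  # shift so r1..r5 are distinct and all different from i
    x_best = pop[np.argmin(fitness)]
    if strategy == "rand/1":
        return pop[r[0]] + F * (pop[r[1]] - pop[r[2]])
    if strategy == "best/1":
        return x_best + F * (pop[r[0]] - pop[r[1]])
    if strategy == "current-to-best/1":
        return pop[i] + F * (x_best - pop[i]) + F * (pop[r[0]] - pop[r[1]])
    if strategy == "rand/2":
        return pop[r[0]] + F * (pop[r[1]] - pop[r[2]]) + F * (pop[r[3]] - pop[r[4]])
    if strategy == "best/2":
        return x_best + F * (pop[r[0]] - pop[r[1]]) + F * (pop[r[2]] - pop[r[3]])
    raise ValueError(f"unknown strategy: {strategy}")
```

The base vector (random, best or current) sets the exploitation level, while the number of difference vectors sets how far the donor can move from that base.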

Enhanced Modern Strategies

Recent research focuses on strategies that dynamically adapt to the problem landscape. The DE/current-to-best/2 strategy incorporates the best solution, the current solution, and a random solution, potentially accelerating convergence [30]. Strategies like DE/Neighbor/1 and DE/Neighbor/2 use information from a randomly selected set of neighbors within the population to better balance exploration and exploitation, helping to prevent premature convergence to local optima [14].

Technical Support Center: FAQs & Troubleshooting

Q1: My optimization run is consistently converging to local optima rather than the global solution. How can I improve the population's diversity?

- Problem Diagnosis: This indicates an exploitation-exploration imbalance, likely caused by a mutation strategy that is too greedy (e.g., over-reliance on DE/best/1) or a population that has lost diversity [14] [29].

- Recommended Actions:

- Switch Mutation Strategy: Change from DE/best/1 to DE/rand/1 or a more balanced strategy like DE/current-to-best/1 [30].

- Use Enhanced Strategies: Implement a modern strategy like DE/Neighbor/2: \( V_i = X_{nbest} + F \cdot (X_{r1} - X_{r2}) + F \cdot (X_{r3} - X_{r4}) \), where \( X_{nbest} \) is the best vector in a random neighbor subset. The additional difference vector enhances exploration [14].

- Adjust Parameters: Temporarily increase the scaling factor \( F \) to encourage larger, more exploratory moves [28].

- Consider Algorithm Modification: Implement a multi-population approach where different sub-populations use different mutation strategies, allowing for parallel exploration of the search space [28].
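DE/Neighbor/2 can be sketched in the same style (NumPy assumed, name illustrative). The neighbourhood size k, excluding the target i from the neighbourhood, and drawing r1 to r4 distinct from both i and the neighbourhood best are assumptions of this sketch; the cited work may define them differently.

```python
import numpy as np


def de_neighbor2_mutant(pop, fitness, i, F, k, rng):
    """DE/Neighbor/2 donor: best of a random neighbour subset plus two difference vectors."""
    NP = len(pop)
    others = np.array([j for j in range(NP) if j != i])
    neighbors = rng.choice(others, size=k, replace=False)  # random neighbour subset of size k
    nbest = neighbors[np.argmin(fitness[neighbors])]  # X_nbest: best vector in that subset
    pool = others[others != nbest]
    r = rng.choice(pool, size=4, replace=False)  # r1..r4 distinct from i and nbest
    return pop[nbest] + F * (pop[r[0]] - pop[r[1]]) + F * (pop[r[2]] - pop[r[3]])
```

With a small k the base vector is usually not the global best, which is what keeps the strategy less greedy than DE/best/2.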

Q2: The convergence of my algorithm has become unacceptably slow in a high-dimensional parameter space (e.g., >100 dimensions). What steps can I take?

- Problem Diagnosis: High-dimensional spaces suffer from the "curse of dimensionality," where the volume of the search space grows exponentially, making it difficult to locate the optimum. Distance metrics also become less meaningful [31] [32].

- Recommended Actions:

- Employ Dimensionality Reduction: As a preprocessing step, use techniques like Principal Component Analysis to reduce the feature space while preserving most of the variance [31].

- Incorporate Local Search: Hybridize your DE algorithm with a local search method. The global search of DE finds promising regions, and the local search efficiently refines the solution within that region, improving convergence speed [30] [29].

- Adaptive Crossover: Implement a self-adaptive crossover procedure. For example, use high crossover probability in early generations for diversity and lower probability in later generations for fine-tuning, or vary it based on generation count [30].
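The adaptive crossover idea can be illustrated with binomial crossover and a generation-dependent crossover rate (NumPy assumed, names illustrative). The linear schedule from 0.9 to 0.1 below is an assumption chosen for illustration, not a recommendation from the cited sources.

```python
import numpy as np


def crossover_rate(gen, max_gen, cr_start=0.9, cr_end=0.1):
    """Assumed linear schedule: high CR early (diversity), low CR late (fine-tuning)."""
    t = gen / max(1, max_gen - 1)
    return cr_start + (cr_end - cr_start) * t


def binomial_crossover(target, mutant, cr, rng):
    """DE binomial crossover: each gene comes from the mutant with probability cr;
    one randomly chosen gene (j_rand) always does, so at least one gene is inherited."""
    n = target.size
    mask = rng.random(n) < cr
    mask[rng.integers(n)] = True
    return np.where(mask, mutant, target)


rng = np.random.default_rng(0)
trial = binomial_crossover(np.zeros(6), np.ones(6), crossover_rate(10, 100), rng)
```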

Q3: How do I select the most appropriate mutation strategy for my specific drug discovery problem?

- Problem Diagnosis: The "best" strategy is problem-dependent, governed by the No Free Lunch theorem [29]. Selection should be based on the problem's landscape and research goals.

- Decision Framework:

- For Initial Exploratory Studies (e.g., screening a vast chemical space for hits): Prioritize exploration. Use DE/rand/1 or DE/rand/2 [14].

- For Optimizing a Lead Compound (e.g., fine-tuning a small set of molecular properties): Prioritize exploitation. Use DE/best/1 or DE/current-to-best/1 [30].

- For Problems with Unknown Landscapes: Start with a balanced strategy like DE/current-to-best/1 or an adaptive algorithm that automatically selects strategies [28] [30].

- Standard Practice: Test multiple strategies on a simplified or representative version of your problem and compare convergence speed and solution quality.

Experimental Protocols & Methodologies

Protocol 1: Benchmarking Mutation Strategies

This protocol outlines a standard method for comparing the performance of different DE mutation strategies on a set of benchmark functions [28] [30].

- Select Benchmark Functions: Choose a diverse set of standard global optimization benchmark functions (e.g., from the CEC test suites). The set should include unimodal, multimodal, and hybrid composition functions [28].

- Define Algorithm Parameters:

- Population Size: 100

- Scaling Factor: 0.5

- Crossover Probability: 0.9

- Maximum Function Evaluations: 10,000 × D (where D is the dimension) [14]

- Implement Algorithms: Code each mutation strategy (DE/rand/1, DE/best/1, DE/current-to-best/1, etc.) within the same DE framework.

- Execute Independent Runs: Conduct 30-50 independent runs for each algorithm-strategy combination on each benchmark function so that performance differences can be assessed statistically.

- Data Collection & Analysis: Record the best, worst, median, and standard deviation of the final objective function values. Perform non-parametric statistical tests (e.g., Wilcoxon signed-rank test) to determine if performance differences are significant [28].
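The protocol can be prototyped with a small DE implementation. The sketch below (NumPy assumed, names illustrative) runs DE/rand/1, DE/best/1 and DE/current-to-best/1 with binomial crossover, bound clipping and greedy selection, using the protocol's population size of 100, F = 0.5 and crossover probability 0.9, but a much smaller evaluation budget and fewer runs so that it finishes quickly; the sphere function stands in for a CEC benchmark.

```python
import numpy as np


def run_de(f, dim, strategy="rand/1", NP=100, F=0.5, CR=0.9, max_fe=None,
           bounds=(-5.0, 5.0), seed=0):
    """Minimal DE/<strategy>/bin (minimisation); returns the best value found."""
    rng = np.random.default_rng(seed)
    max_fe = 10_000 * dim if max_fe is None else max_fe
    lo, hi = bounds
    pop = rng.uniform(lo, hi, (NP, dim))
    fit = np.array([f(x) for x in pop])
    fe = NP
    while fe + NP <= max_fe:
        best = pop[np.argmin(fit)]
        new_pop, new_fit = pop.copy(), fit.copy()
        for i in range(NP):
            r = rng.choice(NP - 1, size=3, replace=False)
            r[r >= i] += 1  # three distinct indices, all different from i
            if strategy == "rand/1":
                v = pop[r[0]] + F * (pop[r[1]] - pop[r[2]])
            elif strategy == "best/1":
                v = best + F * (pop[r[0]] - pop[r[1]])
            elif strategy == "current-to-best/1":
                v = pop[i] + F * (best - pop[i]) + F * (pop[r[0]] - pop[r[1]])
            else:
                raise ValueError(f"unknown strategy: {strategy}")
            mask = rng.random(dim) < CR
            mask[rng.integers(dim)] = True  # binomial crossover, j_rand always from v
            u = np.clip(np.where(mask, v, pop[i]), lo, hi)
            fu = f(u)
            if fu <= fit[i]:  # one-to-one greedy selection
                new_pop[i], new_fit[i] = u, fu
        pop, fit = new_pop, new_fit
        fe += NP
    return float(fit.min())


def summarize(values):
    v = np.asarray(values, dtype=float)
    return {"best": v.min(), "worst": v.max(), "median": float(np.median(v)),
            "std": float(v.std(ddof=1))}


def sphere(x):
    return float(np.dot(x, x))


# Small budget for illustration; the protocol above uses 10,000 x D evaluations and 30-50 runs.
strategies = ("rand/1", "best/1", "current-to-best/1")
results = {s: [run_de(sphere, dim=2, strategy=s, max_fe=4000, seed=k) for k in range(5)]
           for s in strategies}
for s in strategies:
    print(s, summarize(results[s]))
```

For the significance tests, if runs are paired (for example, by seed), the result lists of two strategies can be passed to `scipy.stats.wilcoxon`, which implements the signed-rank test for paired samples.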

Protocol 2: Tuning a DE Algorithm for a Specific Problem

This protocol describes how to optimize the parameters of a DE algorithm for a given problem, such as a quantitative structure-activity relationship model in drug discovery.

- Problem Formulation: Clearly define the objective function, decision variables, and constraints.

- Design of Experiments: To optimize the DE's own parameters (e.g., \( F \), \( Cr \), population size), use a Design of Experiments approach. This systematically explores the parameter space to find the most robust settings [30].

- Parameter Tuning: Execute the DE algorithm with different parameter combinations from the experimental design. The performance metric (e.g., mean best fitness) is the response variable.

- Validation: Select the best parameter set and perform multiple validation runs to confirm performance.

Workflow Visualization

DE Algorithm Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Evolutionary Optimization

| Tool/Reagent | Function / Purpose | Application Context |
|---|---|---|
| CEC Benchmark Suites | Standardized sets of test functions for fair and reproducible comparison of optimization algorithms. | Validating and benchmarking new mutation strategies against state-of-the-art methods [28]. |
| Parameter Tuning Software | Tools for Design of Experiments to systematically find the best algorithm parameters. | Optimizing the scaling factor and crossover rate for a specific drug discovery problem [30]. |
| Dimensionality Reduction Libraries | Software implementations of PCA, t-SNE, and autoencoders for feature reduction. | Preprocessing high-dimensional genomic or chemical data before optimization [31] [32]. |
| High-Performance Computing Cluster | Parallel computing resources to run multiple algorithm iterations or population members simultaneously. | Handling computationally expensive fitness evaluations, common in molecular docking or clinical trial simulations. |
| DE Algorithm Frameworks | Flexible software libraries that allow easy implementation and testing of custom mutation strategies. | Prototyping and deploying new DE variants like DE/current-to-best/2 or DE/Neighbor/2 [30] [14]. |

This technical support center provides a framework for applying evolution strategies (ES), specifically mutation strategies from Differential Evolution (DE), to optimize dose-finding in clinical trials. The primary challenge in modern oncology and radiopharmaceutical development is identifying the Optimal Biological Dose (OBD) that maximizes efficacy while minimizing toxicity, rather than just the Maximum Tolerated Dose (MTD) [33] [34]. Nature-inspired metaheuristics like DE offer powerful solutions for these complex optimization problems with multi-dimensional parameter spaces and constrained objectives [33] [35].

Framed within a broader thesis comparing mutation strategies, this guide demonstrates how different DE variants can navigate the "quality landscape" of dose-response relationships [36]. The following sections provide troubleshooting guides, FAQs, and detailed protocols to help researchers implement these methods effectively.

Key Concepts and Terminology

Essential Clinical Trial Concepts for Optimization Scientists

- Maximum Tolerated Dose (MTD): The highest dose of a drug that does not cause unacceptable side effects. Traditional dose-finding trials focus on identifying the MTD [37].

- Optimal Biological Dose (OBD): The dose that provides the best balance of efficacy and tolerability. For newer targeted therapies, the OBD is often below the MTD [33].

- Patient-Reported Outcomes (PROs): Data collected directly from patients about their symptoms, treatment side effects, and health-related quality of life. PROs provide a patient-centered evidence layer for dose optimization [38].

- Project Optimus: An FDA initiative advocating for improved dose optimization in oncology, emphasizing the need to identify the OBD over the MTD [37].

Differential Evolution Mutation Strategies

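DE creates a mutant vector \( v_i \) for each target vector \( x_i \) by adding one or more scaled difference vectors of other population members to a base vector [23]. In the formulas below, \( r_1, \dots, r_5 \) are mutually distinct random indices that also differ from \( i \), \( x_{best} \) is the best individual in the current population, and \( F \) is the scaling factor. The table below summarizes common mutation strategies used in DE.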

Table 1: Common Differential Evolution Mutation Strategies

| Strategy Name | Formula | Search Characteristics | Clinical Trial Analogy |
|---|---|---|---|
| DE/rand/1 | \( v_i = x_{r_1} + F \cdot (x_{r_2} - x_{r_3}) \) | Exploratory, good for diverse populations [35]. | Exploring a wide range of doses in early trial phases. |
| DE/best/1 | \( v_i = x_{best} + F \cdot (x_{r_1} - x_{r_2}) \) | Exploitative, converges quickly [35]. | Fine-tuning doses around a currently promising candidate. |
| DE/current-to-best/1 | \( v_i = x_i + F \cdot (x_{best} - x_i) + F \cdot (x_{r_1} - x_{r_2}) \) | Balanced between exploration and exploitation [35]. | Adjusting a current dose based on both the best-known dose and population diversity. |
| DE/rand/2 | \( v_i = x_{r_1} + F \cdot (x_{r_2} - x_{r_3}) + F \cdot (x_{r_4} - x_{r_5}) \) | Highly exploratory, uses more information [35]. | A more robust search in complex, multi-modal toxicity/efficacy landscapes. |
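The four formulas in Table 1 translate directly into code. The Python sketch below is illustrative rather than taken from [23] or [35]; the helper names `pick_donors`, `mutant_vector` and `DONORS_NEEDED` are hypothetical, vectors are plain lists, and the best individual is taken to be the one with the lowest objective value (minimization).

```python
import random

# Number of distinct donor indices r_1, r_2, ... each strategy needs.
DONORS_NEEDED = {
    "DE/rand/1": 3,
    "DE/best/1": 2,
    "DE/current-to-best/1": 2,
    "DE/rand/2": 5,
}


def pick_donors(NP: int, i: int, k: int, rng: random.Random) -> list:
    """Return k mutually distinct indices, all different from the target i."""
    return rng.sample([j for j in range(NP) if j != i], k)


def mutant_vector(strategy, pop, i, best, r, F):
    """Mutant v_i for one of the Table 1 strategies.

    pop  : list of vectors (lists of floats)
    i    : index of the target/current vector x_i
    best : index of x_best in pop
    r    : donor indices [r_1, r_2, ...] from pick_donors
    F    : scaling factor
    """
    xi, xb = pop[i], pop[best]
    x = [pop[k] for k in r]  # x[0] is x_{r1}, x[1] is x_{r2}, ...
    v = []
    for j in range(len(xi)):
        if strategy == "DE/rand/1":
            vj = x[0][j] + F * (x[1][j] - x[2][j])
        elif strategy == "DE/best/1":
            vj = xb[j] + F * (x[0][j] - x[1][j])
        elif strategy == "DE/current-to-best/1":
            vj = xi[j] + F * (xb[j] - xi[j]) + F * (x[0][j] - x[1][j])
        elif strategy == "DE/rand/2":
            vj = x[0][j] + F * (x[1][j] - x[2][j]) + F * (x[3][j] - x[4][j])
        else:
            raise ValueError(f"unknown strategy: {strategy}")
        v.append(vj)
    return v
```

In a full DE loop, `best` would be recomputed each generation (e.g., `min(range(NP), key=fitness.__getitem__)`), and `pick_donors(NP, i, DONORS_NEEDED[strategy], rng)` would supply fresh donor indices for every target vector before crossover and selection.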

The generalized scaling factor, \( g(F) \), is a key theoretical concept that allows for the comparison of different mutation operators by describing their relative mutation ranges [23].

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: Why should I use Differential Evolution instead of traditional optimization methods for dose-finding? Traditional methods like the 3+3 design have a low probability (around 33%) of identifying the true optimal dose [37]. DE is a population-based metaheuristic that makes few assumptions about the underlying problem, handles high-dimensional spaces effectively, and is robust to multi-modal response surfaces commonly found in dose-toxicity-efficacy relationships [35]. It can efficiently explore large design spaces where standard methods fail.

Q2: My DE algorithm gets stuck in a local optimum, leading to a suboptimal dose recommendation. How can I improve exploration? This is a common problem known as premature convergence.

- Solution A: Switch your mutation strategy. Use DE/rand/1 or DE/rand/2 instead of DE/best/1 to promote exploration over exploitation [35].

- Solution B: Use an adaptive DE variant such as JADE or SaDE. These algorithms automatically adjust the scaling factor \( F \) and crossover rate \( Cr \) during the optimization process, which can help the search escape local optima [35].

- Solution C: Increase the population size. A larger, more diverse population provides a broader basis for mutation and helps explore the search space more thoroughly [35].
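To make self-adaptation concrete, the sketch below implements the parameter-update rule of jDE (Brest et al.), a simpler self-adaptive variant than JADE or SaDE, whose adaptation schemes are more elaborate. The defaults tau1 = tau2 = 0.1, F_l = 0.1 and F_u = 0.9 are the settings commonly used with jDE; the function names are hypothetical.

```python
import random


def jde_update(F, Cr, rng, tau1=0.1, tau2=0.1, F_l=0.1, F_u=0.9):
    """jDE-style self-adaptation of one individual's (F, Cr).

    With probability tau1, F is resampled uniformly from [F_l, F_l + F_u);
    with probability tau2, Cr is resampled uniformly from [0, 1).
    Otherwise the values inherited from the parent are kept.
    """
    new_F = F_l + rng.random() * F_u if rng.random() < tau1 else F
    new_Cr = rng.random() if rng.random() < tau2 else Cr
    return new_F, new_Cr


def select(target, f_target, params_target, trial, f_trial, params_trial):
    """Greedy DE selection: the trial's (F, Cr) survive only with the trial."""
    if f_trial <= f_target:
        return trial, f_trial, params_trial
    return target, f_target, params_target
```

Each individual carries its own `(F, Cr)`; the candidate values from `jde_update` are used to build that individual's trial vector and are inherited only if the trial wins selection, so parameter values that keep producing successful offspring tend to persist.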

Q3: How do I incorporate real-world clinical constraints, like toxicity limits, into the DE optimization process? Constraints are typically handled using a penalty function.

- Method: Transform the constrained problem into an unconstrained one by adding a penalty term to the objective function. The penalty increases as violations of the constraints (e.g., exceeding a toxicity threshold) become more severe [35].

- Example Formula:

\( F(x) = f(x) + \mu \sum_{k=1}^{N} H_k(x)\, g_k^2(x) \)

Where:

- \( F(x) \) is the penalized objective function to be minimized.

- \( f(x) \) is the original objective (e.g., negative efficacy).

- \( \mu \) is a large penalty factor (e.g., \( 10^6 \)).

- \( g_k(x) \) is the k-th of the \( N \) constraint functions, with the constraint satisfied when \( g_k(x) \leq 0 \).

- \( H_k(x) \) is 1 if the k-th constraint is violated (\( g_k(x) > 0 \)) and 0 otherwise [35].
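The penalty formula maps onto a small wrapper that turns an objective and a list of constraint functions into the penalized objective. This is a minimal sketch with hypothetical names; each constraint function must follow the "satisfied when less than or equal to zero" convention defined above, and the indicator H_k is implemented as a check of whether the constraint value is positive.

```python
def make_penalized_objective(f, constraints, mu=1e6):
    """Return x -> f(x) + mu * sum_k H_k(x) * g_k(x)**2.

    f           : original objective to minimize (e.g., negative efficacy)
    constraints : functions g_k with g_k(x) <= 0 meaning "satisfied"
    mu          : penalty factor
    """
    def penalized(x):
        penalty = 0.0
        for g in constraints:
            gk = g(x)
            if gk > 0.0:  # H_k(x) = 1 only when the constraint is violated
                penalty += gk ** 2
        return f(x) + mu * penalty
    return penalized
```

For a dose-finding problem, `f` could return the negative efficacy of a dose and a single constraint could be `lambda x: tox_probability(x) - 0.33`, where `tox_probability` is a hypothetical dose-toxicity model. Feasible points are scored by `f` alone, so the penalty only reshapes the infeasible region.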

Q4: Clinical trials are expensive to simulate. How can I make the optimization process more efficient?

- Use Potential Outcomes Simulation: Instead of generating new random data for every simulation run, pre-simulate all potential outcomes for each patient at every possible dose level. You can then reuse this dataset to compare multiple DE-driven trial designs efficiently, significantly reducing Monte Carlo error and computational load [39]. One study showed this method can require 30 times fewer simulations than conventional approaches [39].

- Leverage PROs: Integrate Patient-Reported Outcomes (PROs) like the EORTC QLQ-C30 questionnaire to better predict patient fitness and outcomes. High baseline PRO scores can identify patients more likely to remain fit for trial inclusion, making the optimization process more predictive and efficient [38].
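Pre-simulating potential outcomes can be sketched as below. This is an assumption-laden illustration, not the implementation from [39]: each virtual patient receives one latent uniform draw for toxicity and one for efficacy, and has an event at a dose whenever the draw falls below the (hypothetical) true probability at that dose. Because the probabilities increase with dose, a patient who experiences toxicity at one dose also experiences it at every higher dose. Toxicity and efficacy are treated as independent, which real models may not assume.

```python
import random

# Hypothetical true outcome probabilities for five dose levels (illustration only).
P_TOX = [0.05, 0.10, 0.20, 0.35, 0.50]
P_EFF = [0.10, 0.30, 0.45, 0.50, 0.52]


def simulate_potential_outcomes(n_patients, p_tox, p_eff, seed):
    """Pre-simulate every patient's outcome at every dose level.

    Each patient gets one latent uniform for toxicity and one for efficacy;
    the event occurs at dose d if the latent value is below the probability at d.
    """
    rng = random.Random(seed)
    dataset = []
    for _ in range(n_patients):
        u_tox, u_eff = rng.random(), rng.random()
        dataset.append({
            "tox": [u_tox < p for p in p_tox],
            "eff": [u_eff < p for p in p_eff],
        })
    return dataset


def observed_rates(dataset, dose, patients):
    """Toxicity and efficacy rates if the given patients were treated at `dose`."""
    n = len(patients)
    tox = sum(dataset[k]["tox"][dose] for k in patients) / n
    eff = sum(dataset[k]["eff"][dose] for k in patients) / n
    return tox, eff
```

Every candidate design is then evaluated against the same `dataset`, so differences between designs reflect the designs themselves rather than different random draws.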

Advanced Troubleshooting: Tuning DE Parameters

Table 2: Tuning Guide for DE Parameters in Dose-Finding Contexts

| Symptom | Potential Cause | Recommended Action |
|---|---|---|
| Search stagnates, making little progress toward a candidate dose. | Scaling factor \( F \) too low; over-reliance on exploitative strategies like DE/best/1. | Increase \( F \) to a value between 0.5 and 0.9; incorporate DE/rand/1 or DE/current-to-best/1 strategies [35]. |
| Algorithm unstable, skipping over promising dose regions. | Scaling factor \( F \) too high; population size \( NP \) too small. | Reduce \( F \) to a value between 0.2 and 0.5; increase population size \( NP \) [35]. |
| Poor performance on a specific trial design, but good on others. | Fixed parameters are not suited to all problem landscapes. | Switch to a self-adaptive DE variant such as jDE or SaDE, which tune their own parameters during the run [35]. |
| Algorithm consistently violates toxicity constraints. | Inadequate penalty function strength. | Increase the penalty factor \( \mu \) in the constraint-handling function to heavily discourage infeasible solutions [35]. |

Experimental Protocols & Workflows

Core Protocol: Optimizing a Dose-Finding Trial Design using DE

This protocol outlines the steps for using DE to identify the OBD in a Phase I/II clinical trial considering both efficacy and toxicity [33].

1. Problem Formulation:

- Objective Function: Define \( f(x) \) to be minimized. This is often a composite of efficacy and toxicity, for example \( f(x) = -[\, w \cdot \text{Efficacy}(x) - (1-w) \cdot \text{ToxicityScore}(x) \,] \), where \( w \) is a weight reflecting the trade-off.

- Design Variables (x): Typically the dose level(s) to be tested.

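- Constraints (g(x)): Define limits based on safety, e.g., \( \Pr(\text{dose-limiting toxicity at } x) \leq 0.33 \), which can be written as \( g(x) = \Pr(\text{dose-limiting toxicity at } x) - 0.33 \leq 0 \) to match the penalty-function convention.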

2. Algorithm Selection and Setup:

- Select a DE variant (e.g., standard DE/rand/1 for exploration, SaDE for adaptive performance).

- Set initial parameters: Population Size (\( NP \)), Scaling Factor (\( F \)), Crossover Rate (\( Cr \)).

- Define the termination criterion (e.g., max number of generations, convergence threshold).

3. Potential Outcome Simulation (Pre-Trial):

- Simulate a population of virtual patients.

- For each patient and each possible dose level, pre-calculate their potential outcomes (efficacy response and toxicity event) based on assumed statistical models (e.g., logistic or continuation-ratio models) [33] [39]. This creates a fixed, reusable dataset.

4. Optimization Execution:

- Initialization: Randomly generate an initial population of candidate dose regimens.

- Loop until termination:

- Mutation: For each candidate in the population, generate a mutant vector using the chosen strategy (e.g., DE/rand/1).

- Crossover: Create a trial vector by mixing parameters from the target and mutant vectors.

- Evaluation (Simulation): Using the pre-simulated potential outcomes, evaluate the performance (objective function ( f(x) )) of each trial vector. This simulates a clinical trial using that dose regimen.

- Selection: Compare the trial vector to its parent target vector. The one with the better objective value survives to the next generation.

5. Recommendation:

- After termination, the highest-performing candidate in the population is selected as the recommended OBD for subsequent real-world trials.
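The five protocol steps can be combined into one small Python sketch. Everything problem-specific here is hypothetical: the normalized dose range [0, 1], the logistic dose-toxicity and dose-efficacy curves, the 500 virtual patients, the weight w = 0.5, and the toxicity limit 0.33. Potential outcomes are represented by fixed latent uniforms per patient (step 3), the objective adds a quadratic penalty for exceeding the toxicity limit (step 1 combined with the penalty approach from Q3), and the optimizer is DE/rand/1 with greedy selection (step 4).

```python
import math
import random


# Hypothetical dose-response curves on a normalized dose x in [0, 1].
def p_tox(x):
    return 1.0 / (1.0 + math.exp(-10.0 * (x - 0.7)))


def p_eff(x):
    return 0.6 / (1.0 + math.exp(-12.0 * (x - 0.3)))


# Step 3: potential outcomes as fixed (toxicity, efficacy) latent uniforms per patient.
_rng = random.Random(2024)
PATIENTS = [(_rng.random(), _rng.random()) for _ in range(500)]


def trial_rates(x):
    """Simulated toxicity and efficacy rates of a trial run at dose x."""
    pt, pe = p_tox(x), p_eff(x)
    tox = sum(u_t < pt for u_t, _ in PATIENTS) / len(PATIENTS)
    eff = sum(u_e < pe for _, u_e in PATIENTS) / len(PATIENTS)
    return tox, eff


def objective(x, w=0.5, tox_limit=0.33, mu=1e6):
    """Step 1: f(x) = -[w*Eff - (1-w)*Tox] plus a penalty when Tox > tox_limit."""
    tox, eff = trial_rates(x)
    violation = max(0.0, tox - tox_limit)
    return -(w * eff - (1.0 - w) * tox) + mu * violation ** 2


def de_dose_search(NP=20, F=0.5, generations=50, seed=0):
    """Steps 2, 4 and 5: DE/rand/1 over one dose variable, then recommend the best."""
    rng = random.Random(seed)
    pop = [rng.random() for _ in range(NP)]  # initial candidate doses
    fit = [objective(x) for x in pop]
    for _ in range(generations):
        new_pop, new_fit = pop[:], fit[:]
        for i in range(NP):
            r1, r2, r3 = rng.sample([j for j in range(NP) if j != i], 3)
            mutant = min(max(pop[r1] + F * (pop[r2] - pop[r3]), 0.0), 1.0)
            # With a single variable, binomial crossover always keeps the forced
            # j_rand gene, so the trial vector equals the mutant and Cr has no effect.
            trial = mutant
            f_trial = objective(trial)
            if f_trial <= fit[i]:  # selection against the parent target
                new_pop[i], new_fit[i] = trial, f_trial
        pop, fit = new_pop, new_fit
    best = min(range(NP), key=fit.__getitem__)
    return pop[best], fit[best]
```

Because the latent uniforms are fixed, repeated calls to `objective` at the same dose return the same value, which keeps the selection step from being driven by simulation noise. With a single design variable the crossover step is trivial; multi-variable regimens (for example, dose and schedule) would restore the usual binomial crossover controlled by \( Cr \).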

The workflow for this protocol is as follows:

Protocol Validation: Comparing DE to Traditional Designs

To validate the performance of your DE-driven design, conduct a head-to-head comparison against established methods.

- Objective: Compare the accuracy of OBD identification between your DE design and the Continual Reassessment Method (CRM) or a modified Toxicity Probability Interval (mTPI-2) design [39].

- Method: Use the same pre-simulated potential outcomes dataset for both designs. This eliminates random variation as a confounding factor [39].

- Metrics: Run 10,000 simulated trials for each design. Record the percentage of trials where each design correctly identifies the true OBD. A robust DE design should match or exceed the performance of established methods [39].
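The validation metric can be computed with a small harness that runs any design on the same list of pre-simulated trials and reports the percentage of correct OBD selections. This sketch does not implement CRM or mTPI-2; `fixed_cohort_design` is a hypothetical toy comparator, and the dose-level probabilities, weight, and toxicity limit are illustrative assumptions. A DE-derived design and the established comparators would be plugged in as functions with the same signature.

```python
import random

# Hypothetical true probabilities for five dose levels (illustration only).
P_TOX = [0.05, 0.10, 0.20, 0.35, 0.50]
P_EFF = [0.10, 0.30, 0.45, 0.50, 0.52]


def obd_from_rates(tox, eff, w=0.5, tox_limit=0.33):
    """Dose maximizing w*eff - (1-w)*tox among doses with tox <= tox_limit."""
    admissible = [d for d in range(len(tox)) if tox[d] <= tox_limit]
    if not admissible:
        return None  # no acceptable dose: counts as an incorrect selection
    return max(admissible, key=lambda d: w * eff[d] - (1 - w) * tox[d])


def simulate_trial_datasets(n_trials, n_patients, seed):
    """One potential-outcomes dataset of (u_tox, u_eff) latent uniforms per trial."""
    rng = random.Random(seed)
    return [[(rng.random(), rng.random()) for _ in range(n_patients)]
            for _ in range(n_trials)]


def fixed_cohort_design(dataset, n_per_dose=12):
    """Toy comparator: n_per_dose new patients per dose, then the empirical OBD.

    The dataset must hold at least len(P_TOX) * n_per_dose patients.
    """
    tox, eff = [], []
    for d in range(len(P_TOX)):
        cohort = dataset[d * n_per_dose:(d + 1) * n_per_dose]
        tox.append(sum(u_t < P_TOX[d] for u_t, _ in cohort) / n_per_dose)
        eff.append(sum(u_e < P_EFF[d] for _, u_e in cohort) / n_per_dose)
    return obd_from_rates(tox, eff)


def percent_correct_selection(design, datasets, true_dose):
    """Share (%) of simulated trials in which `design` recommends `true_dose`."""
    hits = sum(design(ds) == true_dose for ds in datasets)
    return 100.0 * hits / len(datasets)
```

Passing one `datasets` list to every design reproduces the paired comparison described above; at the protocol's scale the list would come from `simulate_trial_datasets(10_000, n_patients, seed)`.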

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational and Clinical Tools for Optimization

| Tool / Reagent | Type | Function / Application | Source / Example |
|---|---|---|---|
| Potential Outcomes Dataset | Data | A pre-simulated set of patient responses for all doses; enables efficient and fair design comparisons [39]. | Generated in-house using statistical software (R, Python). |
| EORTC QLQ-C30 | Clinical Questionnaire | A validated PRO instrument to measure global health status and role functioning; helps predict patient fitness for trials [38]. | European Organisation for Research and Treatment of Cancer. |
| escalation R Package | Software | An R package that facilitates the implementation of adaptive dose-finding designs, including the potential outcomes approach [39]. | Comprehensive R Archive Network (CRAN). |
| Continuation-Ratio Model | Statistical Model | A model for ordinal outcomes (e.g., no efficacy/partial response/complete response); used to define the OBD in Phase I/II trials [33]. | Statistical literature on dose-finding. |
| Penalty Function | Algorithmic Component | Transforms a constrained optimization problem (dose with toxicity limits) into an unconstrained one for the DE algorithm [35]. | Custom-coded within the DE evaluation function. |

Visualizing Algorithmic and Clinical Pathways

Integration of DE Optimization in the Clinical Trial Pipeline

This diagram illustrates how a Differential Evolution optimizer is integrated into the broader clinical trial development process, from pre-clinical research to regulatory submission.

Optimizing Mutation Performance: Overcoming Pitfalls and Enhancing Robustness

Frequently Asked Questions (FAQs)