Multi-Task Optimization vs Multi-Objective Optimization: A Comprehensive Guide for Biomedical Research

Abstract

This article provides a comprehensive comparison of Multi-Task Optimization (MTO) and Multi-Objective Optimization (MOO) for researchers and professionals in drug development and biomedical sciences. We clarify the foundational definitions, distinct goals, and problem formulations of both paradigms. The content explores key algorithmic methodologies, including evolutionary computation and gradient-based approaches, and their practical applications in areas like quantitative structure-activity relationship (QSAR) modeling and anti-breast cancer drug candidate selection. We address critical challenges such as negative transfer and objective conflict, offering troubleshooting and optimization strategies. Finally, we present a framework for the validation and comparative analysis of these methods, synthesizing key takeaways and future directions for optimizing biomedical research pipelines.

Demystifying the Concepts: Core Principles of MOO and MTO

In both scientific research and industrial application, optimization challenges rarely involve a single, solitary goal. Multi-objective optimization is a mathematical framework for making decisions when multiple, conflicting objectives must be satisfied simultaneously [1] [2]. Unlike single-objective optimization, which seeks a single best solution, MOO identifies a set of optimal trade-offs, acknowledging that improving one aspect of a system often comes at the expense of another [3]. This approach is also known as Pareto optimization, multicriteria optimization, or vector optimization [1] [4].

This framework is indispensable in fields like drug development, where a candidate molecule must be optimized for potency, metabolic stability, and safety simultaneously [5]. Similarly, in engineering design, one might need to minimize weight while maximizing strength and minimizing cost [6]. The core challenge MOO addresses is the absence of a single solution that optimizes all objectives at once; instead, it provides a suite of solutions representing the best possible compromises [1] [3].

Core Principles: Conflict and Pareto Optimality

The Nature of Conflicting Objectives

The very foundation of MOO is the existence of conflicting objectives. In a sustainable design problem, for example, minimizing cost and minimizing environmental impact are goals that typically pull in opposite directions [4]. In finance, maximizing a portfolio's expected return directly conflicts with the objective of minimizing its risk [1]. Without conflict, the problem could be trivially reduced to a single-objective one, as all goals could be achieved perfectly by a single solution.

The Pareto Principle and Pareto Front

The concept of Pareto optimality, named after economist Vilfredo Pareto, provides the formal mechanism for defining "optimality" in a multi-objective context [4]. A solution is considered Pareto optimal or non-dominated if it is impossible to improve one objective without degrading at least one other objective [1] [3] [4].

The collection of all Pareto optimal solutions in the objective space is known as the Pareto front [1] [6]. This front visualizes the trade-off relationship between the objectives. For a two-objective problem, it typically appears as a curve, showing how much of one objective must be sacrificed to gain an improvement in the other [6]. Solutions that lie behind this front (in the dominated region of the objective space) are considered inferior or "dominated," because a solution on the front exists that is at least as good in all objectives and strictly better in at least one [4].
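The dominance definition above translates directly into code. The following minimal Python sketch assumes every objective is minimized (an objective to be maximized can simply be negated) and filters a handful of made-up candidate scores down to the non-dominated subset with a brute-force O(N²) comparison. It illustrates the definition, not an efficient optimizer.

```python
from typing import List, Sequence

def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if `a` Pareto-dominates `b`, assuming every objective is minimized."""
    no_worse = all(x <= y for x, y in zip(a, b))
    strictly_better = any(x < y for x, y in zip(a, b))
    return no_worse and strictly_better

def pareto_front(points: List[Sequence[float]]) -> List[Sequence[float]]:
    """Keep the points that no other point dominates (brute force, O(N^2))."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

# Hypothetical candidates scored as (toxicity, 1 - efficacy); lower is better for both.
candidates = [(0.2, 0.9), (0.4, 0.5), (0.7, 0.2), (0.5, 0.6), (0.9, 0.9)]
print(pareto_front(candidates))  # [(0.2, 0.9), (0.4, 0.5), (0.7, 0.2)]
```

The last two candidates drop out because (0.4, 0.5) is at least as good in both objectives and strictly better in at least one.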

Table 1: Key Terminology in Multi-Objective Optimization

| Term | Mathematical/Symbolic Definition | Practical Interpretation |
|---|---|---|
| Objective Functions | $\vec{f}(\vec{x}) = [f_1(\vec{x}), f_2(\vec{x}), \ldots, f_k(\vec{x})]$ [2] | The multiple criteria (e.g., cost, efficacy, safety) to be optimized. |
| Decision Variables | $\vec{x} = (x_1, x_2, \ldots, x_n)$ [1] | The adjustable parameters of the system (e.g., drug formulation components). |
| Constraints | $g_j(\vec{x}) \leq 0, \quad h_l(\vec{x}) = 0$ [3] | Limitations that define feasible solutions (e.g., budget, regulatory limits). |
| Pareto Dominance | $f_m(\vec{x}^*) \leq f_m(\vec{x}) \ \forall m$, and $f_{m'}(\vec{x}^*) < f_{m'}(\vec{x})$ for at least one $m'$ [2] | Solution $\vec{x}^*$ is as good as $\vec{x}$ in all objectives and strictly better in at least one (assuming all objectives are minimized). |
| Pareto Optimal Set | $\{ \vec{x}^* \in X \mid \nexists\, \vec{x} \in X : \vec{x} \text{ dominates } \vec{x}^* \}$ [1] | The complete set of non-dominated, best-compromise solutions. |
| Pareto Front | $\{ \vec{f}(\vec{x}^*) \mid \vec{x}^* \text{ is Pareto optimal} \}$ [1] | The visualization of trade-offs between objectives. |

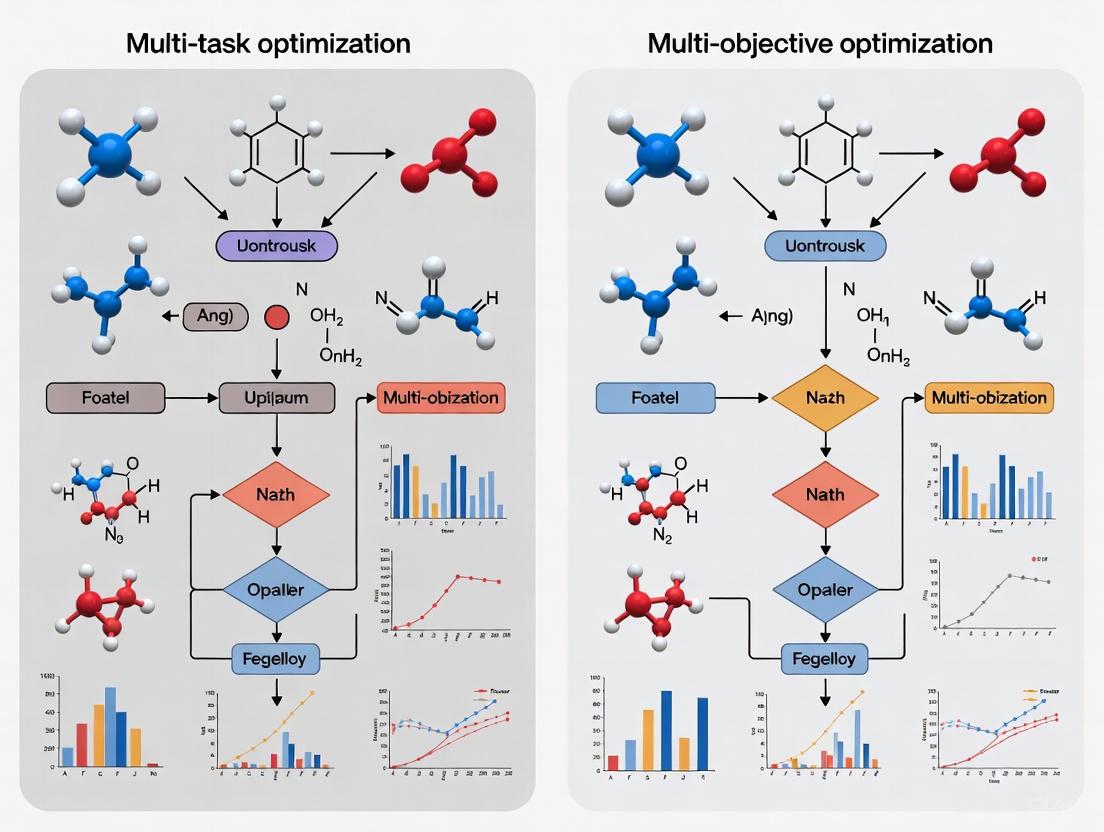

Figure 1: The Logical Workflow of a Multi-Objective Optimization Process

Multi-Objective vs. Multi-Task Optimization: A Critical Distinction

While the terms sound similar, multi-objective optimization (MOO) and multi-task optimization (MTO) represent distinct research paradigms, a distinction crucial to the broader thesis of this guide.

- Multi-Objective Optimization (MOO) focuses on solving a single problem characterized by multiple, conflicting objectives [1]. The goal is to find the Pareto front of trade-off solutions for that one problem. For example, optimizing a single drug formulation for both efficacy and safety is an MOO problem.

- Multi-Task Optimization (MTO), particularly Evolutionary Multi-Task Optimization (EMTO), is an emerging field that focuses on solving multiple optimization problems (tasks) concurrently [7]. The core principle is that there is some form of correlation between tasks, and the useful knowledge (e.g., patterns, parameter configurations) gained from solving one task can be transferred to help solve other, related tasks more efficiently than solving them in isolation [7]. The key risk in MTO is "negative transfer," which occurs when knowledge from unrelated tasks is transferred, thereby harming optimization performance [7].

These concepts can intersect in a framework called Multi-Objective Multi-Task Optimization (MOMTO), where multiple tasks, each with multiple objectives, are solved simultaneously. A recent algorithm in this area, the multi-objective multi-task evolutionary algorithm based on source task transfer (MOMFEA-STT), establishes online parameter sharing models between a historical "source task" and a current "target task" to enable adaptive knowledge transfer and improve overall performance [7].

Methodologies and Algorithmic Solutions

A variety of algorithms have been developed to approximate the Pareto front for complex problems.

Classical Scalarization Methods

Classical approaches convert the MOO problem into a single-objective problem.

- Weighted Sum Method: This method aggregates all objectives into a single function using a weight vector: $F = w_1 f_1 + w_2 f_2 + \dots + w_k f_k$ [3] [4]. While simple, its major limitation is the inability to find solutions on non-convex portions of the Pareto front [4].

- ε-Constraint Method: This method optimizes one primary objective while treating all others as constraints bound by user-defined ε values [2] [4]. By systematically varying ε, a representative Pareto front can be generated, and this method can handle non-convex fronts.
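The difference between the two scalarizations shows up even on a toy problem. The Python sketch below uses three hypothetical, pre-evaluated candidates with both objectives minimized; candidate C is Pareto optimal but sits on a non-convex part of the front. Sweeping the weight never selects C, while an ε bound on f2 does. In a real study, each weight or ε value would drive a full single-objective optimization rather than a scan over a fixed list.

```python
from typing import Dict, Optional, Tuple

# Hypothetical pre-evaluated candidates: label -> (f1, f2), both minimized.
# C is non-dominated but lies on a non-convex part of the Pareto front.
CANDIDATES: Dict[str, Tuple[float, float]] = {
    "A": (0.0, 1.0),
    "B": (1.0, 0.0),
    "C": (0.6, 0.6),
}

def weighted_sum_best(cands: Dict[str, Tuple[float, float]], w1: float) -> str:
    """Minimize F = w1*f1 + (1 - w1)*f2 over the candidates."""
    w2 = 1.0 - w1
    return min(cands, key=lambda k: w1 * cands[k][0] + w2 * cands[k][1])

def epsilon_constraint_best(cands: Dict[str, Tuple[float, float]], eps: float) -> Optional[str]:
    """Minimize f1 subject to f2 <= eps; return None if no candidate is feasible."""
    feasible = [k for k, (_, f2) in cands.items() if f2 <= eps]
    return min(feasible, key=lambda k: cands[k][0]) if feasible else None

# Sweep the weight from 0 to 1, and the bound eps over a few values.
ws_found = {weighted_sum_best(CANDIDATES, i / 20) for i in range(21)}
eps_found = {epsilon_constraint_best(CANDIDATES, eps) for eps in (0.0, 0.7, 1.0)}
print(sorted(ws_found))   # ['A', 'B']
print(sorted(eps_found))  # ['A', 'B', 'C']
```

For any weight w1, C scores 0.6 while the better of A and B scores min(w1, 1 - w1) ≤ 0.5, which is why the weighted-sum sweep cannot reach it.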

Evolutionary and Metaheuristic Algorithms

For complex, non-linear, or non-convex problems, population-based evolutionary algorithms (EAs) are highly effective, as they can generate multiple Pareto optimal solutions in a single run.

- NSGA-II (Non-dominated Sorting Genetic Algorithm II): A highly popular algorithm that uses a non-dominated sorting approach to rank solutions and a crowding distance metric to ensure diversity along the Pareto front [2] [6] [4]. It is widely used in engineering and scientific domains.

- MOEA/D (Multi-Objective Evolutionary Algorithm based on Decomposition): This algorithm decomposes a MOO problem into several single-objective subproblems and optimizes them simultaneously [3].

- MOAHA (Multi-Objective Artificial Hummingbird Algorithm): A more recent metaheuristic algorithm inspired by the flight patterns and foraging behaviors of hummingbirds [8].
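The two ranking ingredients of NSGA-II named above can be sketched in plain Python: non-dominated sorting, which peels a population into successive fronts, and crowding distance, which favours solutions in sparsely populated regions of a front. This is a minimal sketch assuming minimization; it leaves out the selection, crossover, and mutation loop of the full algorithm, and the five objective vectors are made up.

```python
from typing import Dict, List, Sequence

def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def non_dominated_sort(points: List[Sequence[float]]) -> List[List[int]]:
    """Return fronts as lists of indices; front 0 is the non-dominated set."""
    n = len(points)
    dominated = [[] for _ in range(n)]  # indices that each point dominates
    count = [0] * n                     # how many points dominate each point
    fronts: List[List[int]] = [[]]
    for p in range(n):
        for q in range(n):
            if dominates(points[p], points[q]):
                dominated[p].append(q)
            elif dominates(points[q], points[p]):
                count[p] += 1
        if count[p] == 0:
            fronts[0].append(p)
    i = 0
    while fronts[i]:
        nxt = []
        for p in fronts[i]:
            for q in dominated[p]:
                count[q] -= 1
                if count[q] == 0:  # all dominators already placed in earlier fronts
                    nxt.append(q)
        fronts.append(nxt)
        i += 1
    return fronts[:-1]  # drop the trailing empty front

def crowding_distance(points: List[Sequence[float]], front: List[int]) -> Dict[int, float]:
    """Crowding distance of each index in one front (larger means less crowded)."""
    if len(front) <= 2:
        return {i: float("inf") for i in front}
    dist = {i: 0.0 for i in front}
    for k in range(len(points[front[0]])):
        ordered = sorted(front, key=lambda i: points[i][k])
        lo, hi = points[ordered[0]][k], points[ordered[-1]][k]
        dist[ordered[0]] = dist[ordered[-1]] = float("inf")  # always keep boundary points
        if hi == lo:
            continue
        for j in range(1, len(ordered) - 1):
            gap = points[ordered[j + 1]][k] - points[ordered[j - 1]][k]
            dist[ordered[j]] += gap / (hi - lo)  # normalized side length of the cuboid
    return dist

points = [(1, 5), (2, 3), (4, 1), (3, 4), (5, 5)]
fronts = non_dominated_sort(points)
print(fronts)                                # [[0, 1, 2], [3], [4]]
print(crowding_distance(points, fronts[0]))  # {0: inf, 1: 2.0, 2: inf}
```

In NSGA-II, individuals are compared first by front index and, within the same front, by larger crowding distance.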

Table 2: Comparison of Primary Multi-Objective Optimization Algorithms

| Algorithm | Type | Core Mechanism | Key Advantages | Common Application Contexts |
|---|---|---|---|---|
| Weighted Sum [3] [4] | Scalarization | Linear aggregation of objectives with weights. | Conceptual simplicity, computational efficiency. | Problems with a known, convex Pareto front. |
| ε-Constraint [2] [4] | Scalarization | Optimizes one objective, constrains others. | Can find solutions on non-convex Pareto fronts. | When a clear primary objective exists. |
| NSGA-II [2] [6] | Evolutionary | Non-dominated sorting & crowding distance. | Good convergence and diversity; widely validated. | Chemical engineering [8], shape optimization [6]. |
| MOEA/D [3] | Evolutionary | Decomposition into single-objective subproblems. | High efficiency for many-objective problems. | Complex engineering and scheduling problems. |
| MOAHA [8] | Metaheuristic | Models hummingbird foraging strategies. | Potentially superior exploration/exploitation balance. | Emerging applications in formulation science [8]. |

Figure 2: A Taxonomy of Primary MOO Solution Methodologies

Experimental Protocols and Performance Benchmarking

Evaluating the performance of different MOO algorithms requires standardized metrics and benchmark problems. Common metrics include Generational Distance (GD), which measures convergence by the distance from the obtained front to the true Pareto front, and Inverted Generational Distance (IGD), which assesses both convergence and diversity [2]. Spacing (SP) measures how evenly the solutions are distributed along the front [2].
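The three metrics can be written down in a few lines once a reference front is available. This Python sketch assumes a sampled true (reference) front, uses the plain-mean form of GD and IGD (some papers aggregate with a root mean square instead), and computes Spacing in Schott's form, the standard deviation of nearest-neighbour L1 distances, which needs at least two points. The point sets are hypothetical.

```python
import math
from typing import List, Sequence

Point = Sequence[float]

def generational_distance(obtained: List[Point], reference: List[Point]) -> float:
    """Mean distance from each obtained point to its nearest reference point (convergence)."""
    return sum(min(math.dist(a, r) for r in reference) for a in obtained) / len(obtained)

def inverted_generational_distance(obtained: List[Point], reference: List[Point]) -> float:
    """Mean distance from each reference point to its nearest obtained point (convergence + coverage)."""
    return sum(min(math.dist(r, a) for a in obtained) for r in reference) / len(reference)

def spacing(obtained: List[Point]) -> float:
    """Schott-style spacing: std. dev. of nearest-neighbour L1 distances (0 = perfectly even)."""
    d = [
        min(sum(abs(x - y) for x, y in zip(p, q)) for j, q in enumerate(obtained) if j != i)
        for i, p in enumerate(obtained)
    ]
    mean = sum(d) / len(d)
    return math.sqrt(sum((mean - di) ** 2 for di in d) / (len(d) - 1))

reference = [(0.0, 1.0), (0.5, 0.5), (1.0, 0.0)]  # hypothetical sampled true front
obtained = [(0.0, 1.0), (1.0, 0.0)]               # on the front, but misses the middle
print(generational_distance(obtained, reference))                     # 0.0
print(round(inverted_generational_distance(obtained, reference), 4))  # 0.2357
print(spacing(reference))                                             # 0.0
```

Here the obtained set lies exactly on the front, so GD is zero, but it misses the middle of the front, which IGD penalizes.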

Case Study: Formulation of Polycaprolactone Microspheres (PCL-MS)

A recent study provides a robust experimental protocol for applying MOO in a pharmaceutical context, optimizing a drug delivery system [8].

- Objective: To produce PCL-MS fillers with optimal particle size (Y1) and a narrow particle size distribution (Y2) for tissue filling [8].
- Design of Experiments (DOE): A Box-Behnken design was employed to investigate the effects of three factors: PCL concentration (X1), polyvinyl alcohol (PVA) concentration (X2), and water-oil ratio (X3) [8].
- Modeling & Optimization: Mathematical models were developed from the DOE data to predict Y1 and Y2. Multi-objective optimization was then performed using both NSGA-II and MOAHA to find the Pareto-optimal set of preparation schemes [8].
- Validation: The optimal schemes predicted by the algorithms were prepared experimentally. Results showed no statistically significant difference (P > 0.05) between predicted and measured values, with deviations under 5%, confirming the validity and reliability of the MOO approach [8].
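The modeling step can be sketched as fitting a second-order response-surface polynomial to a Box-Behnken design. This minimal Python sketch assumes coded factor levels (-1, 0, +1) for X1, X2, and X3, three center runs, and the usual full quadratic model; the study's actual run count, model terms, and coefficients are not reproduced here, so the coefficients below are made up purely to show that the design supports estimating all ten terms.

```python
import itertools
import numpy as np

def box_behnken_3(n_center: int = 3) -> np.ndarray:
    """Coded Box-Behnken design for 3 factors (levels -1, 0, +1).

    Each pair of factors takes the four (+/-1, +/-1) combinations while the
    third factor sits at 0; center runs at (0, 0, 0) are appended.
    """
    runs = []
    for i, j in itertools.combinations(range(3), 2):
        for a, b in itertools.product((-1.0, 1.0), repeat=2):
            row = [0.0, 0.0, 0.0]
            row[i], row[j] = a, b
            runs.append(row)
    runs.extend([[0.0, 0.0, 0.0]] * n_center)
    return np.array(runs)

def quadratic_terms(X: np.ndarray) -> np.ndarray:
    """Full second-order model: 1, x1, x2, x3, x1x2, x1x3, x2x3, x1^2, x2^2, x3^2."""
    x1, x2, x3 = X.T
    return np.column_stack([np.ones(len(X)), x1, x2, x3,
                            x1 * x2, x1 * x3, x2 * x3, x1**2, x2**2, x3**2])

X = box_behnken_3()  # 12 edge runs + 3 center runs = 15 runs
print(X.shape)       # (15, 3)

# Hypothetical "true" coefficients, used only to synthesize a noise-free response;
# in practice y1 would be the measured particle sizes from the experimental runs.
beta_true = np.array([50.0, 8.0, -5.0, 3.0, 1.5, 0.0, -2.0, 4.0, 2.5, 1.0])
y1 = quadratic_terms(X) @ beta_true
beta_hat, *_ = np.linalg.lstsq(quadratic_terms(X), y1, rcond=None)
print(np.allclose(beta_hat, beta_true))  # True
```

The center runs matter: without them, every edge run has x1² + x2² + x3² = 2, so the intercept and the pure quadratic terms become collinear. The fitted polynomials for Y1 and Y2 would then serve as the objective functions handed to NSGA-II or MOAHA.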

Table 3: Experimental Results for PCL-MS Optimization using NSGA-II and MOAHA [8]

| Optimization Algorithm | Selected Scheme | Predicted Particle Size (Y1) | Measured Particle Size (Y1) | Deviation (Y1) | Predicted Distribution (Y2) | Measured Distribution (Y2) | Deviation (Y2) |
|---|---|---|---|---|---|---|---|
| NSGA-II | Scheme 12 | Value A1 | Value A2 | < 5% | Value A3 | Value A4 | < 5% |
| NSGA-II | Scheme 21 | Value B1 | Value B2 | < 5% | Value B3 | Value B4 | < 5% |
| MOAHA | Scheme 3 | Value C1 | Value C2 | < 5% | Value C3 | Value C4 | < 5% |

Note: The original paper [8] confirms that multiple schemes from each algorithm were experimentally validated, and all met target requirements with deviations under 5%, demonstrating the practical efficacy of both NSGA-II and MOAHA.

For researchers embarking on MOO, particularly in applied fields like drug development, a specific toolkit is required.

Table 4: Essential Research Reagent Solutions for MOO in Formulation Science

| Item / Solution | Function in MOO Workflow | Exemplification from PCL-MS Study [8] |
|---|---|---|
| Polymer Material | The primary material constituting the product being optimized. | Polycaprolactone (PCL): The biodegradable polymer forming the microsphere matrix. |
| Stabilizing Agent | Aids in the formation and stability of the formulation. | Polyvinyl Alcohol (PVA): Acts as a stabilizer in the emulsion process to control particle size and distribution. |
| Solvent System | The liquid medium in which the formulation is prepared. | A specific water-oil ratio (WOR): The solvent environment critical to the emulsion and particle formation. |
| Box-Behnken Design (BBD) | A Response Surface Methodology (RSM) design to efficiently explore factor effects with fewer experimental runs. | Used to systematically vary PCL concentration (X1), PVA concentration (X2), and WOR (X3) to build predictive models. |
| NSGA-II Code/Software | The computational intelligence algorithm for finding the Pareto front. | Used to multi-objectively optimize the models for particle size (Y1) and distribution width (Y2). |
| MOAHA Code/Software | An alternative metaheuristic algorithm for Pareto front approximation. | Used alongside NSGA-II to provide a comparative optimization approach and validate results. |

Multi-objective optimization, grounded in the Pareto Principle, is an essential framework for tackling the complex, conflicting objectives inherent in modern scientific research and industrial design. The distinction between multi-objective and multi-task optimization is critical, with the former handling multiple goals for a single problem and the latter tackling multiple related problems at once. As evidenced by its successful application in drug delivery system design [8], MOO provides a rigorous, data-driven pathway to optimal compromise. The continued development of sophisticated algorithms like NSGA-II, MOEA/D, and MOAHA, and the emergence of hybrid fields like multi-objective multi-task optimization [7], promise to further enhance our ability to make balanced decisions in the face of complex trade-offs.

Multi-Task Optimization (MTO) represents an emerging paradigm in computational optimization that fundamentally challenges traditional single-task approaches. At its core, MTO investigates how to simultaneously solve multiple optimization problems (tasks) by exploiting their inherent correlations and dependencies [7]. The foundational principle is that useful knowledge—including patterns, features, parameter configurations, and optimization strategies—obtained while solving one task can be beneficially transferred to accelerate and improve the solution of other related tasks [7]. This approach stands in contrast to conventional optimization methods that treat each problem in isolation, instead creating pathways for synergistic skill transfer between tasks that enables more efficient exploration of complex search spaces [9].

The significance of MTO extends across numerous domains where interrelated optimization challenges exist simultaneously. In drug discovery, MTO frameworks can predict drug-target interactions while simultaneously generating novel drug candidates [10]. In engineering design, MTO efficiently handles multifaceted problems like car side-impact design that involve multiple competing requirements [9] [11]. Power systems benefit from MTO through optimized configuration and layout of transmission grids that improve stability, reliability, and transmission efficiency [7]. The breadth of these applications demonstrates MTO's transformative potential in tackling complex, interconnected optimization challenges prevalent in real-world scenarios.

Core Principles: Knowledge Transfer in MTO

Fundamental Concepts and Terminology

Multi-Task Optimization operates on several key concepts that define its operational framework:

- Tasks: Individual optimization problems to be solved concurrently. In an MTO problem with $K$ tasks, each task $T_i$ ($i = 1, 2, \ldots, K$) has its own objective function $f_i(x)$ and search space $\Omega_i$ [9].

- Knowledge Transfer: The mechanism by which information gained from solving one task is applied to another related task. This may include transferring solutions, strategy parameters, or landscape characteristics [7] [12].

- Negative Transfer: A critical challenge in MTO where blind knowledge transfer between unrelated or dissimilar tasks degrades optimization performance rather than improving it [7].

- Source and Target Tasks: In knowledge transfer, the source task provides useful knowledge, while the target task receives and utilizes this knowledge to enhance its own optimization process [7].

The mathematical formulation of an MTO problem seeks the set of optimal solutions $\{x_1^*, x_2^*, \ldots, x_K^*\}$, where each solution is given by [12]:

$$x_j^* = \arg\min_{x \in R_j} f_j(x), \quad j = 1, 2, \ldots, K$$

This formulation highlights the dual challenge of MTO: handling heterogeneous landscape properties of the objective functions $\{f_j\}$ across sub-tasks while navigating potentially misaligned feasible decision-variable regions $\{R_j\}$ [12].

Key Differences Between Multi-Task and Multi-Objective Optimization

While both MTO and Multi-Objective Optimization (MOO) handle multiple functions, they address fundamentally different problem structures, as summarized in the table below.

Table 1: Comparison between Multi-Task and Multi-Objective Optimization

| Aspect | Multi-Task Optimization (MTO) | Multi-Objective Optimization (MOO) |
|---|---|---|
| Core Problem | Solving multiple distinct optimization problems simultaneously | Optimizing a single problem with multiple conflicting objectives |
| Solution Approach | Knowledge transfer between tasks | Finding trade-off solutions (Pareto front) |
| Nature of Functions | Potentially unrelated tasks with different domains | Conflicting objectives of a single problem |
| Primary Challenge | Avoiding negative transfer between unrelated tasks | Balancing competing objectives without a single optimal solution |
| Typical Output | Multiple distinct solutions (one per task) | Set of non-dominated solutions representing trade-offs |

A critical distinction lies in their fundamental nature: MOO typically deals with conflicting objectives within a single problem, where improving one objective often degrades another, necessitating trade-off analysis through Pareto optimality [11]. In contrast, MTO addresses multiple distinct problems that may share common structures or solution characteristics, focusing on knowledge transfer rather than trade-off management [7] [9]. Recent research has also identified scenarios with "aligned" objectives where gradient-based methods can simultaneously improve multiple objectives without conflicts, further blurring traditional boundaries between these domains [13].
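Whether two objectives or tasks are aligned or in conflict can be checked locally from their gradients: a negative dot product (cosine) means that, to first order, a step helping one objective hurts the other. The sketch below uses PCGrad-style gradient projection ("gradient surgery"), a published conflict-resolution technique swapped in here for illustration; it is not the FetterGrad algorithm used by DeepDTAGen, and the gradient vectors are hypothetical.

```python
import numpy as np

def cosine(g1: np.ndarray, g2: np.ndarray) -> float:
    """Cosine similarity between two gradients (< 0 means they conflict)."""
    return float(g1 @ g2 / (np.linalg.norm(g1) * np.linalg.norm(g2)))

def pcgrad_pair(g1: np.ndarray, g2: np.ndarray) -> np.ndarray:
    """PCGrad-style combination of two task gradients.

    Aligned gradients are summed unchanged. Conflicting ones are each projected
    onto the normal plane of the other before summing, removing the component
    that would increase the other task's loss.
    """
    dot = float(g1 @ g2)
    if dot >= 0:
        return g1 + g2
    g1_proj = g1 - dot / float(g2 @ g2) * g2
    g2_proj = g2 - dot / float(g1 @ g1) * g1
    return g1_proj + g2_proj

g_a = np.array([1.0, 0.0])
g_b = np.array([-1.0, 1.0])      # conflicts with g_a
print(round(cosine(g_a, g_b), 4))  # -0.7071
print(pcgrad_pair(g_a, g_b))       # [0.5 1.5]
```

The combined direction has a non-negative dot product with both original gradients, so neither objective is worsened to first order; for aligned gradients the method reduces to ordinary summation.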

Experimental Methodologies in MTO Research

Algorithmic Frameworks and Implementations

MTO research has produced diverse algorithmic frameworks, broadly categorized into implicit and explicit knowledge transfer mechanisms:

Implicit Transfer Approaches: Early MTO algorithms like the Multifactorial Evolutionary Algorithm (MFEA) maintained a single unified population for all tasks, where each individual was indexed by its most specialized task [12]. Knowledge transfer occurred organically during reproduction and selection operations without explicit control mechanisms.
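The implicit transfer in MFEA can be illustrated with a heavily simplified Python sketch: one population in a unified [0, 1]^D space, each individual tagged with a skill factor (the task it is evaluated on), and assortative mating in which parents from different tasks cross over only with a random mating probability (RMP). The two tasks, RMP = 0.3, the mutation scale, and the per-task truncation selection are assumptions made for brevity; the original MFEA instead ranks the whole population with a scalar fitness derived from factorial ranks.

```python
import random
from typing import Callable, List, Tuple

D = 5      # dimensionality of the unified search space [0, 1]^D
RMP = 0.3  # random mating probability for cross-task crossover (hypothetical value)

def task_a(x: List[float]) -> float:
    """Hypothetical task A: optimum at 0.30 in every coordinate."""
    return sum((xi - 0.30) ** 2 for xi in x)

def task_b(x: List[float]) -> float:
    """Hypothetical task B: related optimum at 0.35 in every coordinate."""
    return sum((xi - 0.35) ** 2 for xi in x)

TASKS: List[Callable[[List[float]], float]] = [task_a, task_b]
Individual = Tuple[List[float], int]  # (genes, skill factor)

def mfea(pop_size: int = 40, generations: int = 100, seed: int = 0) -> List[List[float]]:
    rng = random.Random(seed)
    k = len(TASKS)
    pop: List[Individual] = [([rng.random() for _ in range(D)], i % k) for i in range(pop_size)]

    def cost(ind: Individual) -> float:
        genes, skill = ind
        return TASKS[skill](genes)  # each individual is evaluated only on its own task

    for _ in range(generations):
        offspring: List[Individual] = []
        while len(offspring) < pop_size:
            (g1, s1), (g2, s2) = rng.sample(pop, 2)
            if s1 == s2 or rng.random() < RMP:
                # Assortative mating: uniform crossover. Cross-task pairs
                # exchange genetic material here, which is the implicit transfer.
                child = [a if rng.random() < 0.5 else b for a, b in zip(g1, g2)]
                skill = rng.choice((s1, s2))  # child imitates one parent's task
            else:
                # Otherwise: Gaussian mutation of the first parent within its task.
                child = [min(1.0, max(0.0, g + rng.gauss(0.0, 0.05))) for g in g1]
                skill = s1
            offspring.append((child, skill))
        merged = pop + offspring
        # Simplification: elitist truncation per task instead of MFEA's scalar fitness.
        pop = []
        for t in range(k):
            group = sorted((ind for ind in merged if ind[1] == t), key=cost)
            pop.extend(group[: pop_size // k])
    return [min((ind for ind in pop if ind[1] == t), key=cost)[0] for t in range(k)]

best_a, best_b = mfea()
print(task_a(best_a), task_b(best_b))
```

Raising RMP increases the amount of cross-task gene exchange; for unrelated tasks, a high RMP is exactly the setting in which negative transfer can appear.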

Explicit Transfer Approaches: More recent MTO frameworks deploy separate optimization processes for each task with explicitly controlled knowledge transfer. These methods directly address the three fundamental questions of "where to transfer" (identifying source-target task pairs), "what to transfer" (determining the specific knowledge to share), and "how to transfer" (designing the exchange mechanism) [12]. The MOMFEA-STT algorithm exemplifies this approach by establishing online parameter sharing models between historical and target tasks, dynamically identifying task relationships to adjust cross-task knowledge transfer intensity [7].
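A minimal, hypothetical sketch of explicit transfer with adaptive intensity follows. It is not MOMFEA-STT: it uses a single-objective target task, a fixed source task assumed to be already optimized ("where"), a noisy copy of the source elite as the transferred knowledge ("what"), and direct injection into the target's offspring pool ("how"). A simple success rule, under which the migrant must beat the target's own best offspring, raises or lowers the transfer probability, so an unrelated source gradually loses influence.

```python
import random
from typing import Callable, List, Sequence, Tuple

Vector = List[float]

def sphere_at(center: Sequence[float]) -> Callable[[Vector], float]:
    """Hypothetical task: squared distance to `center` (minimized)."""
    return lambda x: sum((xi - c) ** 2 for xi, c in zip(x, center))

def evolve_target(target_f: Callable[[Vector], float], source_elite: Sequence[float],
                  dim: int = 3, pop_size: int = 10, gens: int = 60,
                  rate: float = 0.5, step: float = 0.05, seed: int = 1) -> Tuple[Vector, float]:
    rng = random.Random(seed)
    pop = [[rng.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(pop_size)]
    for _ in range(gens):
        # Target task's own search: Gaussian mutation of every parent.
        offspring = [[g + rng.gauss(0.0, 0.1) for g in ind] for ind in pop]
        own_best = min(target_f(o) for o in offspring)
        if rng.random() < rate:
            # "What": a noisy copy of the source task's elite solution.
            migrant = [g + rng.gauss(0.0, 0.01) for g in source_elite]
            # Adapt intensity: reward transfer only if it beats the target's own best offspring.
            helped = target_f(migrant) < own_best
            rate = min(0.95, rate + step) if helped else max(0.05, rate - step)
            offspring.append(migrant)  # "how": direct injection into the offspring pool
        # (mu + lambda) truncation selection on the target objective.
        pop = sorted(pop + offspring, key=target_f)[:pop_size]
    return min(pop, key=target_f), rate

target = sphere_at([0.5, 0.5, 0.5])
_, rate_related = evolve_target(target, source_elite=[0.5, 0.5, 0.5])
_, rate_unrelated = evolve_target(target, source_elite=[10.0, 10.0, 10.0])
print(rate_related, rate_unrelated)
```

With a related source the transfer probability climbs toward its cap, while with an unrelated source every migrant loses and the probability decays toward its floor.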

The advancement of explicit transfer mechanisms has led to more sophisticated MTO implementations across different computational paradigms:

Table 2: Representative MTO Algorithms and Their Characteristics

| Algorithm | Type | Key Mechanism | Application Domains |
|---|---|---|---|
| MOMFEA-STT [7] | Evolutionary | Source task transfer with spiral search | General benchmark problems |
| MTSO [9] | Swarm Intelligence | Knowledge transfer probability & elite selection | High-dimensional functions, engineering design |
| DeepDTAGen [10] | Deep Learning | Multitask learning with FetterGrad algorithm | Drug-target affinity prediction, drug generation |
| MetaMTO [12] | Reinforcement Learning | Multi-role RL system for transfer decisions | Generalizable across problem distributions |

Benchmark Problems and Evaluation Metrics

Rigorous evaluation of MTO algorithms employs specialized benchmark problems and quantitative metrics. Common benchmark suites include multi-task versions of standard optimization functions that create controlled environments with known task relationships and optimal solutions [7] [12]. These benchmarks systematically vary key characteristics such as task similarity, landscape modality, and dimensional mismatch to comprehensively assess algorithm performance.

For quantitative evaluation, researchers employ multiple metrics:

- Convergence Performance: Measures solution quality against known optima, often using metrics like Mean Squared Error (MSE) for regression-based tasks [10].

- Transfer Effectiveness: Evaluates how successfully knowledge transfer improves optimization, potentially measured through transfer success rate [12].

- Computational Efficiency: Assesses resource requirements in terms of function evaluations or computation time to reach target solution quality [9].

Experimental protocols typically involve comparative studies against established baseline algorithms, with statistical significance testing to validate performance improvements. For example, MOMFEA-STT was evaluated against NSGA-II, MOMFEA, and MOMFEA-II on multi-task optimization benchmarks [7], while DeepDTAGen was compared to KronRLS, SimBoost, DeepDTA, and GraphDTA on drug-target affinity prediction benchmarks [10].

Performance Comparison: MTO Algorithms and Alternatives

Quantitative Performance Analysis

Comprehensive experimental studies provide quantitative evidence of MTO performance advantages across diverse application domains. The following table summarizes key results from recent algorithm evaluations:

Table 3: Performance Comparison of MTO Algorithms on Benchmark Problems

| Algorithm | Benchmark | Performance Metrics | Comparison to Alternatives |
|---|---|---|---|
| MOMFEA-STT [7] | Multi-objective multi-task benchmarks | Outperformed NSGA-II, MOMFEA, and MOMFEA-II | Superior convergence and solution quality |
| MTSO [9] | Five-task and 10-task planar kinematic arm control | Most accurate solutions | Better performance than advanced MTO algorithms |
| DeepDTAGen [10] | KIBA dataset (DTA prediction) | MSE: 0.146, CI: 0.897, r²m: 0.765 | Outperformed GraphDTA by 11.35% in r²m |
| DeepDTAGen [10] | Davis dataset (DTA prediction) | MSE: 0.214, CI: 0.890, r²m: 0.705 | Surpassed SSM-DTA by 2.4% in r²m |
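Two of the regression metrics in the table can be reproduced on toy data with a short Python sketch: mean squared error (MSE), and the concordance index (CI), the fraction of pairs with different true affinities whose predicted affinities are ordered the same way, with tied predictions counted as half-concordant. The affinity values are made up, and r²m is omitted for brevity.

```python
from itertools import combinations
from typing import Sequence

def mse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def concordance_index(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Fraction of comparable pairs ranked in the correct order (ties in prediction = 0.5)."""
    num, den = 0.0, 0
    for i, j in combinations(range(len(y_true)), 2):
        if y_true[i] == y_true[j]:
            continue  # pairs with equal true affinity are not comparable
        den += 1
        diff_true = y_true[i] - y_true[j]
        diff_pred = y_pred[i] - y_pred[j]
        if diff_pred == 0:
            num += 0.5
        elif (diff_true > 0) == (diff_pred > 0):
            num += 1.0
    return num / den

# Hypothetical binding affinities and model predictions.
y_true = [5.0, 6.0, 7.0, 8.0]
y_pred = [5.2, 5.9, 7.4, 7.1]
print(round(mse(y_true, y_pred), 4))                # 0.255
print(round(concordance_index(y_true, y_pred), 4))  # 0.8333
```

Only the last pair is mis-ordered (7.4 predicted above 7.1 although 7.0 < 8.0), giving CI = 5/6; CI rewards correct ranking, while MSE penalizes absolute error.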

In drug discovery applications, DeepDTAGen demonstrates particularly impressive performance, achieving state-of-the-art results in drug-target affinity prediction while simultaneously generating novel target-aware drug candidates [10]. The framework's FetterGrad algorithm effectively addresses gradient conflicts in multitask learning, enabling robust knowledge sharing between predictive and generative tasks. For generative performance, DeepDTAGen produced molecules with high validity (proportion of chemically valid molecules), novelty (proportion not present in training data), and uniqueness (proportion of unique molecules) [10].
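The three generative metrics reduce to simple set arithmetic once validity can be checked. In this Python sketch, validity is computed over all samples, while uniqueness and novelty are computed over valid samples; these denominators are assumptions, since conventions differ between papers. A real pipeline would parse and canonicalize SMILES with a chemistry toolkit (for example, RDKit's `Chem.MolFromSmiles` returns `None` for unparseable input). The bracket-balance check `toy_is_valid` below is a hypothetical stand-in, not a chemical validity test.

```python
from typing import Callable, Dict, Iterable, List

def generation_metrics(generated: List[str], training: Iterable[str],
                       is_valid: Callable[[str], bool]) -> Dict[str, float]:
    """Validity over all samples; uniqueness and novelty over valid samples (assumed conventions)."""
    valid = [s for s in generated if is_valid(s)]
    unique = set(valid)
    train = set(training)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(unique - train) / len(unique) if unique else 0.0,
    }

def toy_is_valid(smiles: str) -> bool:
    """Hypothetical placeholder: only checks that parentheses are balanced in count."""
    return bool(smiles) and smiles.count("(") == smiles.count(")")

generated = ["CCO", "CCO", "c1ccccc1", "CC(O", "CCN"]  # made-up model samples
training = ["CCO"]                                      # made-up training set
print(generation_metrics(generated, training, toy_is_valid))
# {'validity': 0.8, 'uniqueness': 0.75, 'novelty': 0.6666666666666666}
```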

Application-Specific Performance Advantages

The performance benefits of MTO approaches manifest differently across application domains:

In engineering design, the Multi-Task Snake Optimization (MTSO) algorithm achieved superior performance on real-world problems including the multitask robot gripper problem and car side-impact design problem [9]. The algorithm's knowledge transfer mechanism, controlled by transfer probability and elite individual selection, enabled more efficient exploration of complex design spaces compared to single-task alternatives.

In drug discovery, DeepDTAGen provides a unified framework that simultaneously predicts drug-target binding affinities and generates novel drug candidates [10]. This dual capability demonstrates how MTO can address interconnected aspects of a complex pipeline within a single framework, potentially accelerating early-stage drug discovery by generating target-aware compounds with higher potential for clinical success.

Beyond these domains, MTO has shown significant promise in power system optimization where it can optimize transmission grid configuration and layout to improve stability, reliability, and efficiency [7], and in water resources engineering where it can simultaneously address interconnected tasks like reservoir scheduling and irrigation planning to develop more comprehensive management strategies [7].

Computational Frameworks and Algorithms

Implementing effective MTO requires specialized computational frameworks and algorithms:

- Evolutionary Computation: Multifactorial Evolutionary Algorithm (MFEA) and its variants form the foundation of evolutionary approaches to MTO, using unified representation and assortative mating to enable implicit knowledge transfer [7] [12].

- Swarm Intelligence: Snake Optimization, Particle Swarm Optimization, and other swarm intelligence methods have been adapted for MTO, leveraging population-based search with explicit knowledge transfer mechanisms [9].

- Deep Learning: Multitask neural architectures like DeepDTAGen handle complex MTO problems in domains such as drug discovery, using shared representations and specialized gradient handling [10].

- Reinforcement Learning: Frameworks like MetaMTO employ RL to automatically learn transfer policies, addressing the "where, what, and how" of knowledge transfer through trained agents [12].

Robust MTO research requires carefully designed experimental resources:

- Benchmark Problems: Standardized multitask benchmark suites enable fair algorithm comparison, with tasks of varying similarity, landscape characteristics, and dimensionality [7] [12].

- Evaluation Metrics: Domain-specific and general-purpose metrics assess algorithm performance, including solution quality, convergence speed, transfer effectiveness, and computational efficiency [7] [10].

- Specialized Software: Domain-specific simulators and evaluation tools, such as chemical validity checkers for drug design or engineering simulators for design optimization, enable realistic performance assessment [10] [9].

The following diagram illustrates the core knowledge transfer process in explicit MTO frameworks:

Future Directions and Research Challenges

Despite significant advances, MTO research faces several important challenges that guide future directions:

- Negative Transfer Mitigation: Preventing performance degradation from transferring knowledge between unrelated tasks remains a fundamental challenge. Approaches include improved similarity measures [7], transferability assessment [12], and learned transfer policies [12].

- Scalability to Many Tasks: Most current MTO algorithms handle relatively few simultaneous tasks (typically 2-10), but real-world scenarios may involve dozens or hundreds of related problems. Scaling MTO to many tasks requires efficient similarity assessment and selective transfer mechanisms [9] [12].

- Theoretical Foundations: While empirical results demonstrate MTO's effectiveness, stronger theoretical foundations are needed regarding convergence guarantees, knowledge transfer boundaries, and optimal resource allocation across tasks [7] [12].

- Asymmetric Transfer: Current approaches often assume symmetric knowledge benefits between tasks, but real-world transfer is frequently asymmetric. Developing frameworks that recognize and exploit these asymmetries could significantly improve performance [7].

The integration of MTO with emerging artificial intelligence techniques represents a particularly promising direction. Learning-based approaches like MetaMTO demonstrate how reinforcement learning can automate knowledge transfer decisions [12], while advances in multi-task deep learning continue to expand MTO's applicability to complex domains like drug discovery [10]. As these trends continue, MTO is poised to become an increasingly essential tool for tackling complex, interconnected optimization challenges across science and engineering.

In the field of optimization, Multi-Objective Optimization (MOO) and Multi-Task Optimization (MTO) represent two distinct paradigms designed to address complex problems with multiple, often competing, goals. While both approaches manage multiple criteria simultaneously, their fundamental objectives and solution structures differ significantly. MOO focuses on finding a set of solutions that represent optimal trade-offs between conflicting objectives within a single task. In contrast, MTO aims to simultaneously solve multiple distinct optimization tasks, leveraging potential synergies and shared information between them to find the best solution for each individual task [14] [1].

This distinction is crucial for researchers and practitioners, particularly in fields like drug development where decisions often involve balancing multiple competing factors such as efficacy, toxicity, and cost. Understanding the philosophical and methodological differences between these approaches enables professionals to select the appropriate framework for their specific problem domain, ensuring more efficient and effective optimization outcomes.

Core Philosophical Differences: Trade-offs vs. Collective Learning

The Nature of Solutions

The most fundamental distinction between MOO and MTO lies in their conceptualization of what constitutes a "solution." In Multi-Objective Optimization, a single globally optimal solution that simultaneously minimizes or maximizes all objectives rarely exists. Instead, MOO identifies a set of Pareto-optimal solutions where improvement in one objective necessitates deterioration in at least one other [3] [1]. This collection of compromise solutions forms what is known as the Pareto front, which represents the best possible trade-offs among competing objectives. When solving a multi-objective optimization problem, the result is not a single answer but a family of alternatives that reveal the inherent conflicts between objectives [1].

In Multi-Task Optimization, the goal is to find multiple global optima, specifically the best possible solution for each individual task. While these tasks are solved simultaneously, each maintains its own distinct solution. MTO operates on the premise that related tasks may contain complementary information that can accelerate the optimization process for all tasks when properly leveraged [14]. The paradigm effectively transforms multiple optimization problems into a single multi-task scenario where knowledge transfer between tasks enhances overall optimization performance.

Knowledge Transfer Mechanisms

The handling of information and knowledge between objectives or tasks differs substantially between the two approaches. In MOO, knowledge is inherently shared through the solution representation itself, as each point on the Pareto front embodies a specific trade-off among all objectives. However, there is typically no explicit knowledge transfer mechanism between different regions of the Pareto front.

MTO explicitly designs knowledge transfer mechanisms between tasks through techniques such as shared representations, implicit genetic transfer in multifactorial evolutionary algorithms, and adaptive resource allocation [14] [15]. This cross-task knowledge sharing allows MTO to accelerate convergence and improve solution quality by leveraging commonalities between tasks. The effectiveness of these transfer mechanisms significantly influences MTO performance, with poor transfer potentially leading to negative interference between tasks [14].
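A minimal sketch of MFEA-style assortative mating shows where implicit genetic transfer happens. It assumes each individual is a pair of genes in a unified [0, 1] space plus a skill factor (its assigned task), and a random mating probability `rmp`. The blend crossover and clipped Gaussian mutation are simple stand-ins for the operators used in published MFEA variants, and all function names are hypothetical.

```python
import random

def crossover(p1, p2, rng):
    """Blend crossover on unified [0, 1] genes (a simple stand-in for SBX).
    Children are convex combinations of the parents, so they stay in [0, 1]."""
    alpha = rng.random()
    c1 = [alpha * a + (1 - alpha) * b for a, b in zip(p1, p2)]
    c2 = [(1 - alpha) * a + alpha * b for a, b in zip(p1, p2)]
    return c1, c2

def mutate(p, rng, sigma=0.05):
    """Gaussian mutation clipped to the unified space [0, 1]."""
    return [min(1.0, max(0.0, g + rng.gauss(0.0, sigma))) for g in p]

def assortative_mating(parent1, parent2, rmp, rng):
    """Each parent is (genes, skill_factor). Returns two (genes, skill_factor) offspring."""
    (g1, s1), (g2, s2) = parent1, parent2
    if s1 == s2 or rng.random() < rmp:
        # Same task, or a cross-task pairing allowed by rmp: crossover mixes genetic
        # material, which is the implicit knowledge transfer between tasks.
        c1, c2 = crossover(g1, g2, rng)
        # Vertical cultural transmission: each child imitates a randomly chosen parent's task.
        return (c1, rng.choice([s1, s2])), (c2, rng.choice([s1, s2]))
    # No cross-task exchange: each parent is mutated and keeps its own task.
    return (mutate(g1, rng), s1), (mutate(g2, rng), s2)

rng = random.Random(0)
p1 = ([0.1, 0.9, 0.5], 0)  # hypothetical individual specialised on task 0
p2 = ([0.7, 0.2, 0.4], 1)  # hypothetical individual specialised on task 1
children = assortative_mating(p1, p2, rmp=0.3, rng=rng)
print(children)
```

Raising `rmp` makes cross-task crossover more frequent, which is the knob that trades the benefits of transfer against the risk of negative interference.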

Methodological Approaches and Algorithms

Algorithmic Frameworks for MOO

Multi-Objective Optimization employs several distinct algorithmic approaches, each with particular strengths and limitations:

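Mathematical Programming Methods: These include techniques like the weighted sum method and epsilon-constraint method, which transform the MOO problem into a single-objective problem or a series of such problems [3]. While straightforward, they have known limitations: the weighted sum method cannot reach solutions on non-convex portions of the Pareto front (the epsilon-constraint method can, but is sensitive to the chosen bounds), and both typically require multiple runs, one per weight vector or constraint bound, to approximate the full Pareto set.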

Pareto-Based Evolutionary Algorithms: Methods such as NSGA-II (Non-dominated Sorting Genetic Algorithm II) and MOEA/D (Multi-Objective Evolutionary Algorithm Based on Decomposition) evolve a population of solutions toward the Pareto front in a single run [3]. These algorithms explicitly maintain diversity along the Pareto front while pushing the population toward optimality.

Metaheuristics and AI-Based Approaches: More recently, reinforcement learning and other AI techniques have been applied to MOO problems, particularly for adapting search strategies in response to evolving optimization landscapes [3].
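The weighted sum limitation can be seen on a three-point example. In this sketch (hypothetical points, both objectives minimized), point B is Pareto optimal but lies on a non-convex part of the front, so no weight selects it, whereas the epsilon-constraint method recovers it by bounding the second objective.

```python
# Candidate objective vectors (f1, f2), both minimized. B is Pareto optimal
# (neither A nor C dominates it) but lies above the line joining A and C.
candidates = {
    "A": (0.0, 1.0),
    "B": (0.6, 0.6),
    "C": (1.0, 0.0),
}

def weighted_sum_choice(w):
    """Minimize w * f1 + (1 - w) * f2 over the candidates."""
    return min(candidates, key=lambda k: w * candidates[k][0] + (1 - w) * candidates[k][1])

def epsilon_constraint_choice(eps):
    """Minimize f1 subject to f2 <= eps; None if no candidate is feasible."""
    feasible = [k for k, (_, f2) in candidates.items() if f2 <= eps]
    return min(feasible, key=lambda k: candidates[k][0]) if feasible else None

# Sweep 101 weights from 0 to 1, and three epsilon bounds.
ws_found = {weighted_sum_choice(i / 100) for i in range(101)}
eps_found = {epsilon_constraint_choice(e) for e in (1.0, 0.6, 0.0)}
print(sorted(ws_found))   # ['A', 'C']
print(sorted(eps_found))  # ['A', 'B', 'C']
```

For any weight, B scores 0.6 while the better of A and C scores at most 0.5, which is why the weighted sum never returns it.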

Algorithmic Frameworks for MTO

Multi-Task Optimization has developed specialized algorithms to facilitate knowledge transfer:

Multifactorial Evolutionary Algorithm (MFEA): This pioneering MTO approach enables implicit knowledge transfer through a unified representation and assortative mating, allowing genetic material to be exchanged between solutions from different tasks [14].

Cross-Domain and Asynchronous MTO: Advanced MTO variants handle tasks with different characteristics (cross-domain) or inconsistent arrival times (asynchronous), requiring more sophisticated transfer mechanisms [14].

Reinforcement Learning-Enhanced MTO: Recent approaches like QLMTMMEA use Q-learning to adaptively select optimal auxiliary tasks during evolution, dynamically balancing convergence and diversity across tasks [15].
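The source does not give QLMTMMEA's state, action, or reward definitions, so the following is only a generic tabular Q-learning sketch of adaptive auxiliary-task selection. It assumes the state is the auxiliary task chosen last, the action is the next auxiliary task, and the reward is a hypothetical, fixed "helpfulness" score per task; in a real run the reward would be measured from the evolving population.

```python
import random

# Hypothetical improvement the target task gains when auxiliary task a is used.
HELPFULNESS = [0.1, 0.5, 0.2]

def q_learning_task_selection(reward_fn, n_tasks, steps=3000, alpha=0.1,
                              gamma=0.5, epsilon=0.2, seed=0):
    """Tabular Q-learning: state = previously chosen auxiliary task,
    action = next auxiliary task to transfer knowledge from."""
    rng = random.Random(seed)
    q = [[0.0] * n_tasks for _ in range(n_tasks)]  # q[state][action]
    state = 0
    for _ in range(steps):
        # Epsilon-greedy selection keeps occasionally trying other tasks.
        if rng.random() < epsilon:
            action = rng.randrange(n_tasks)
        else:
            action = max(range(n_tasks), key=lambda a: q[state][a])
        reward = reward_fn(action)
        next_state = action
        # Standard Q-learning update toward reward + discounted best next value.
        td_target = reward + gamma * max(q[next_state])
        q[state][action] += alpha * (td_target - q[state][action])
        state = next_state
    return q

q = q_learning_task_selection(lambda a: HELPFULNESS[a], n_tasks=3)
greedy = [max(range(3), key=lambda a: q[s][a]) for s in range(3)]
print(greedy)
```

Continued exploration is what would let such a selector adapt if an auxiliary task's usefulness changed during the run.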

The following diagram illustrates the structural differences between MOO and MTO frameworks:

Experimental Comparison and Performance Metrics

Evaluation Metrics and Methodologies

Evaluating MOO and MTO algorithms requires distinct metrics aligned with their different objectives. For MOO, quality assessment typically involves metrics that measure:

- Convergence: How close the obtained solutions are to the true Pareto front

- Diversity: How well the solutions spread across the Pareto front

- Coverage: The extent to which the solution set represents the entire Pareto front
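Hypervolume is a common way to capture convergence, diversity, and coverage in one number: it measures the region of objective space dominated by the solution set and bounded by a reference point. Here is a minimal two-objective sketch, assuming minimization and a user-chosen reference point `ref`:

```python
def hypervolume_2d(points, ref):
    """Area dominated by 2-objective minimization points and bounded by `ref`.
    Points that do not strictly improve on `ref` in both objectives are ignored."""
    pts = sorted(p for p in points if p[0] < ref[0] and p[1] < ref[1])
    area = 0.0
    best_f2 = ref[1]
    # Sweep in increasing f1; each point that lowers the best f2 so far adds a
    # new horizontal strip from its f1 to ref[0]. Dominated points add nothing.
    for f1, f2 in pts:
        if f2 < best_f2:
            area += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return area

print(hypervolume_2d([(1, 3), (2, 2), (3, 1)], ref=(4, 4)))  # 6.0
```

A dominated point such as (2.5, 2.5) leaves the value unchanged, while a new non-dominated point increases it, which is why hypervolume rewards both closeness to and spread along the front.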

For MTO, evaluation focuses on different aspects:

- Convergence Speed: How quickly the algorithm finds optimal solutions for each task

- Transfer Effectiveness: The degree to which knowledge sharing improves performance across tasks

- Negative Transfer Avoidance: The algorithm's ability to prevent harmful interference between unrelated tasks

Quantitative Performance Comparison

The table below contrasts MOO and MTO approaches along several qualitative dimensions:

Table 1: Performance Comparison of MOO vs. MTO Approaches

| Metric | MOO Algorithms | MTO Algorithms | Comparison Context |
|---|---|---|---|
| Solution Approach | Pareto-optimal trade-offs | Multiple global optima | Fundamental difference in output |
| Knowledge Transfer | Implicit through solution representation | Explicit cross-task transfer | MTO explicitly designs transfer mechanisms [14] |
| Diversity Focus | Objective space diversity | Decision space diversity | MTO maintains diversity for multiple optima [15] |
| Computational Efficiency | Moderate to high computational cost | Potentially reduced cost through transfer | MTO can accelerate convergence via knowledge sharing [14] |
| Typical Applications | Engineering design, portfolio optimization | Feature selection, vehicle routing, NAS | Different domains based on problem structure [14] |

Recent experimental studies on complex multimodal multi-objective problems demonstrate that MTO-inspired approaches like QLMTMMEA can outperform traditional MOO algorithms in maintaining decision space diversity while achieving competitive convergence [15]. In one study, QLMTMMEA was compared against seven state-of-the-art multimodal multi-objective evolutionary algorithms on 34 complex benchmark problems, showing competitive performance in balancing convergence and diversity [15].

The Researcher's Toolkit: Essential Methods and Reagents

Table 2: Essential Computational Tools for MOO and MTO Research

| Tool Category | Specific Methods/Algorithms | Function in Optimization | Applicable Paradigm |
|---|---|---|---|
| Evolutionary Algorithms | NSGA-II, MOEA/D, SPEA2 | Population-based Pareto front approximation | Primarily MOO |
| Multitask Frameworks | MFEA, MO-MFEA, MOMFEA | Implicit knowledge transfer between tasks | Primarily MTO |
| Reinforcement Learning | Q-learning, Policy Gradients | Adaptive task selection and resource allocation | Both (MTO focus) |
| Niching Techniques | Crowding, Fitness Sharing | Maintaining diversity in decision/objective space | Both (MMO focus) |
| Benchmark Problems | ZDT, DTLZ, CEC competitions | Standardized performance evaluation | Both |
| Performance Metrics | Hypervolume, IGD, Spacing | Quantifying solution quality and diversity | Both (with different emphasis) |
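IGD and Spacing from the table can be sketched in the same spirit. IGD assumes a reference front is available (true for benchmark suites such as ZDT and DTLZ, rarely for real-world problems), whereas Spacing inspects only the obtained front. Function names and toy points are illustrative.

```python
import math

def igd(obtained, reference_front):
    """Inverted Generational Distance: mean Euclidean distance from each
    reference-front point to its nearest obtained solution (lower is better)."""
    return sum(min(math.dist(r, p) for p in obtained) for r in reference_front) / len(reference_front)

def spacing(front):
    """Schott's spacing: standard deviation of each solution's nearest-neighbour
    Manhattan distance within the front (0 means perfectly even spacing).
    Requires at least two solutions."""
    n = len(front)
    d = [
        min(sum(abs(a - b) for a, b in zip(front[i], front[j])) for j in range(n) if j != i)
        for i in range(n)
    ]
    mean = sum(d) / n
    return math.sqrt(sum((x - mean) ** 2 for x in d) / (n - 1))

reference = [(0.0, 1.0), (0.5, 0.5), (1.0, 0.0)]
obtained = [(0.0, 1.0), (1.0, 0.0)]  # misses the middle of the front
print(igd(obtained, reference))      # penalized for the gap in coverage
print(spacing(reference))            # 0.0
```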

Application Contexts: When to Use Each Approach

Typical MOO Application Domains

Multi-Objective Optimization finds natural application in domains characterized by inherent trade-offs between competing objectives:

- Engineering Design: Balancing performance, cost, and reliability in product design [1]

- Financial Portfolio Optimization: Maximizing return while minimizing risk [3] [1]

- Drug Development: Optimizing efficacy while minimizing toxicity and side effects [3]

- Healthcare Planning: Balancing treatment effectiveness, cost, and accessibility

- Environmental Policy: Negotiating economic growth against environmental impact

In these domains, decision-makers benefit from understanding the trade-off landscape provided by the Pareto front, enabling informed choices based on contextual priorities and constraints.

Typical MTO Application Domains

Multi-Task Optimization proves particularly valuable in scenarios involving multiple related optimization problems:

- Feature Selection: Simultaneously optimizing feature sets for multiple related datasets [14]

- Neural Architecture Search (NAS): Finding optimal architectures for multiple related learning tasks [14]

- Capacitated Vehicle Routing: Solving multiple routing scenarios with shared constraints [14]

- Computational Offloading: Optimizing resource allocation across multiple devices and tasks [14]

- Cross-Domain Optimization: Solving problems with different characteristics but underlying similarities

The following diagram illustrates the knowledge transfer process in MTO:

Multi-Objective Optimization and Multi-Task Optimization offer distinct yet complementary approaches to managing complexity in optimization problems. MOO excels at revealing fundamental trade-offs between conflicting objectives within a single problem, providing decision-makers with a comprehensive view of their options. In contrast, MTO leverages relationships between multiple distinct problems to accelerate optimization and improve solution quality through knowledge transfer.

For researchers and drug development professionals, understanding these contrasting paradigms enables more informed selection of appropriate methodologies for specific problem contexts. MOO proves most valuable when exploring trade-offs is essential to the decision-making process, while MTO offers advantages when multiple related optimization problems must be solved simultaneously. As both fields evolve, hybrid approaches that combine elements of both paradigms may offer promising directions for addressing increasingly complex optimization challenges in scientific research and industrial applications.

In the evolving landscape of computational optimization, two sophisticated paradigms have emerged as powerful frameworks for addressing complex problems: Multi-Objective Optimization (MOO) and Multi-Task Optimization (MTO). While both approaches manage multiple competing elements, their underlying mathematical formulations, operational mechanisms, and application domains differ significantly. MOO focuses on finding optimal trade-offs between conflicting objectives within a single problem through vector optimization, while MTO facilitates knowledge transfer across distinct but related problems via cross-task search. Within the context of drug discovery and development, where researchers must balance molecular properties while satisfying multiple constraints, understanding these distinctions becomes critically important. This guide provides a comprehensive technical comparison of these approaches, examining their mathematical foundations, experimental performance, and practical implementation in scientific research.

Mathematical Foundations: Formulations and Mechanisms

Vector Optimization in Multi-Objective Optimization

Multi-Objective Optimization addresses problems with multiple conflicting objectives that must be simultaneously optimized. The mathematical formulation centers on finding a set of solutions that represent optimal trade-offs between these competing goals. Formally, an MOO problem can be defined as minimizing (or maximizing) an objective vector function:

\[ \min_{\mathbf{x} \in D} \mathbf{f}(\mathbf{x}) = [f_1(\mathbf{x}), f_2(\mathbf{x}), \ldots, f_m(\mathbf{x})]^T \]

where \( D \subseteq \mathbb{R}^n \) represents the design space and \( \mathbf{x} \) is the decision vector. The image of the feasible set under the objective function mapping is \( T \subseteq \mathbb{R}^m \). A solution \( \mathbf{x}^* \) is considered Pareto optimal if no other solution exists that improves one objective without worsening at least one other. The collection of all Pareto optimal solutions forms the Pareto front, which represents the set of optimal trade-offs [16].

The Pareto dominance relation is fundamental to this approach: for two objective vectors \( \mathbf{s} \) and \( \mathbf{t} \) in the target space \( T \), \( \mathbf{t} \) dominates \( \mathbf{s} \), written \( \mathbf{t} \prec \mathbf{s} \), if \( t_i \leq s_i \) for all objectives \( i \), with at least one strict inequality. The Pareto front \( PF \) is then defined as:

\[ PF(\mathbf{f}) := \{ \mathbf{f}(\mathbf{x}) \mid \mathbf{x} \in D \text{ and } \nexists\, \mathbf{x}' \in D \text{ such that } \mathbf{f}(\mathbf{x}') \prec \mathbf{f}(\mathbf{x}) \} \]

This vector optimization approach enables decision-makers to understand the fundamental trade-offs between objectives before selecting a final solution [16].
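For a finite set of objective vectors, the dominance relation and the Pareto front definition translate directly into code. This is a minimal sketch under minimization; the function names are illustrative.

```python
def dominates(t, s):
    """True if objective vector t Pareto-dominates s under minimization:
    t_i <= s_i for every objective i, with strict inequality for at least one."""
    return all(a <= b for a, b in zip(t, s)) and any(a < b for a, b in zip(t, s))

def pareto_front(vectors):
    """Non-dominated subset of a finite set of objective vectors (O(n^2) scan).
    A vector never dominates itself, so no self-exclusion is needed."""
    return [v for v in vectors if not any(dominates(u, v) for u in vectors)]

points = [(1, 4), (2, 2), (3, 3), (4, 1)]
print(pareto_front(points))  # [(1, 4), (2, 2), (4, 1)]; (3, 3) is dominated by (2, 2)
```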

Cross-Task Search in Multi-Task Optimization

Multi-Task Optimization employs a fundamentally different approach by simultaneously solving multiple optimization tasks (often with different objective functions) through knowledge transfer. The mathematical formulation for an MTO problem containing K optimization tasks can be represented as:

\[ \{\mathbf{x}_1^*, \ldots, \mathbf{x}_K^*\} = \arg\min_{\mathbf{x}_k \in \Omega} \{f_1(\mathbf{x}_1), \ldots, f_K(\mathbf{x}_K)\}, \quad k = 1, \ldots, K \]

where \( \mathbf{x}_k^* \) is the optimal solution for task \( f_k(\mathbf{x}_k) \) and \( \Omega \) is the D-dimensional search space [14]. The core mechanism enabling MTO is knowledge transfer between tasks, where useful patterns, features, or optimization strategies discovered while solving one task are applied to accelerate the optimization of other related tasks.

This cross-task search operates on the principle that related tasks often share common structures or underlying patterns that can be leveraged to improve optimization efficiency. The Multifactorial Evolutionary Algorithm (MFEA) was among the first to formalize this approach by maintaining a unified population of solutions that can be evaluated across different tasks, with implicit genetic transfer occurring through specialized crossover operations [14] [7].
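The unified population can be sketched as follows, assuming (as is common in MFEA descriptions) that every individual carries genes in \( [0, 1]^D \), with D the largest task dimensionality, which are linearly decoded into each task's own bounds. The two benchmark tasks, their bounds, and the helper names are hypothetical; the skill factor is the task on which an individual achieves its best factorial rank.

```python
import math

def decode(genes, lower, upper):
    """Map unified [0, 1] genes into a task's box bounds. zip() stops at the
    task's own dimensionality, so a d_k-dimensional task uses the first d_k genes."""
    return [lo + g * (hi - lo) for g, lo, hi in zip(genes, lower, upper)]

def sphere(x):
    return sum(v * v for v in x)

def rastrigin(x):
    return 10 * len(x) + sum(v * v - 10 * math.cos(2 * math.pi * v) for v in x)

# Two hypothetical tasks of different dimensionality sharing one unified space (D = 3).
TASKS = [
    (sphere, [-5.0] * 3, [5.0] * 3),
    (rastrigin, [-5.12] * 2, [5.12] * 2),
]

def skill_factors(population):
    """Rank every individual on every task (factorial rank) and assign each one the
    task on which it ranks best (its skill factor); ties go to the lower task index."""
    n = len(population)
    ranks = []
    for f, lo, hi in TASKS:
        costs = [f(decode(ind, lo, hi)) for ind in population]
        rank = [0] * n
        for r, i in enumerate(sorted(range(n), key=lambda i: costs[i])):
            rank[i] = r
        ranks.append(rank)
    return [min(range(len(TASKS)), key=lambda k: ranks[k][i]) for i in range(n)]

population = [[0.5, 0.5, 0.0], [0.55, 0.55, 0.5]]
print(skill_factors(population))  # [1, 0]
```

Because both tasks read the same gene vector, crossover between individuals with different skill factors moves genetic material from one task's search to the other's.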

Comparative Formulations

Figure 1: Fundamental differences between MOO and MTO in mathematical formulations and optimization mechanisms.

Algorithmic Approaches and Experimental Performance

Representative Algorithms and Their Characteristics

The methodological diversity in both MOO and MTO has led to the development of numerous specialized algorithms, each with distinct operational characteristics and performance profiles.

Table 1: Key Algorithm Characteristics in MOO and MTO

| Algorithm | Type | Core Mechanism | Key Features | Limitations |
|---|---|---|---|---|
| Weighted Sum | MOO | Scalarization | Converts MOO to SOO via linear combination | Cannot find solutions in non-convex regions [16] |
| ε-Constraint | MOO | Constraint-based | Optimizes one objective, treats others as constraints | Sensitivity to ε values [16] |
| NSGA-II | MOO | Evolutionary | Non-dominated sorting, crowding distance | Convergence issues in many-objective problems [7] |
| CMOMO | MOO (Constrained) | Two-stage evolutionary | Dynamic constraint handling, latent space optimization | Complex implementation [17] |
| MFEA | MTO | Evolutionary | Implicit genetic transfer, unified representation | Assumes task relatedness [14] [7] |
| MOMFEA-STT | MTO (Multi-objective) | Source task transfer | Online similarity recognition, spiral search mutation | Computationally intensive [7] |
| Rep-MTL | MTO | Representation-level | Task saliency, entropy-based penalization | Limited to specific architecture types [18] |
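Alongside non-dominated sorting, NSGA-II's other feature listed in the table is the crowding distance, which breaks ties among solutions of the same front in favour of those in less crowded regions. A minimal sketch for the objective vectors of one front:

```python
import math

def crowding_distance(front):
    """NSGA-II-style crowding distance for the objective vectors of one front.

    In each objective, the two boundary solutions get infinity; every other
    solution accumulates the normalized gap between its two neighbours.
    Larger values mean the solution sits in a less crowded region.
    """
    n = len(front)
    if n == 0:
        return []
    dist = [0.0] * n
    for obj in range(len(front[0])):
        order = sorted(range(n), key=lambda i: front[i][obj])
        lo, hi = front[order[0]][obj], front[order[-1]][obj]
        dist[order[0]] = dist[order[-1]] = math.inf
        if hi == lo:  # every solution shares this objective value: no spread to measure
            continue
        for pos in range(1, n - 1):
            gap = front[order[pos + 1]][obj] - front[order[pos - 1]][obj]
            dist[order[pos]] += gap / (hi - lo)
    return dist

front = [(0.0, 1.0), (0.2, 0.5), (0.5, 0.3), (1.0, 0.0)]
print(crowding_distance(front))  # boundary points are inf; interior points get finite values
```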

Experimental Performance Comparison

Recent experimental studies provide quantitative insights into the performance of various MOO and MTO approaches across different problem domains and benchmark tasks.

Table 2: Experimental Performance Metrics Across Domains

| Algorithm | Domain | Performance Metrics | Comparison Baseline | Key Findings |
|---|---|---|---|---|
| MOMFEA-STT [7] | Multi-task optimization benchmarks | Hypervolume Indicator, Generational Distance | NSGA-II, MOMFEA, MOMFEA-II | Outperformed comparison algorithms in comprehensive solving efficiency; superior convergence characteristics |
| CMOMO [17] | Molecular optimization | Success rate, Property optimization scores | QMO, Molfinder, MOMO | Two-fold improvement in success rate for GSK3 optimization task; generated molecules with favorable bioactivity and drug-likeness |
| Rep-MTL [18] | Multi-task learning benchmarks | Task-specific accuracy, Efficiency metrics | Loss scaling, Gradient manipulation methods | Achieved competitive performance gains with favorable efficiency without optimizer/architecture modifications |
| AutoScale [19] | Autonomous driving | Gradient magnitude similarity, Condition number | Prior MTOs, Linear scalarization | Performance close to searched weight performance across different datasets |
| Uncertainty Weighting [20] | Computer vision | Task balancing, Overall accuracy | Single-task models, Equal weighting | Mitigated imbalance but required careful parameter tuning |

Experimental Protocols and Methodologies

To ensure reproducibility and proper implementation of these optimization approaches, researchers should adhere to standardized experimental protocols:

MOO Experimental Protocol:

- Problem Formulation: Clearly define all objective functions, decision variables, and constraints

- Algorithm Selection: Choose appropriate MOO method based on problem characteristics (convexity, number of objectives, etc.)

- Parameter Tuning: Calibrate algorithm-specific parameters (population size, mutation rates, etc.)

- Performance Assessment: Evaluate using established metrics (hypervolume, spacing, generational distance)

- Pareto Front Analysis: Examine solution diversity and convergence properties

MTO Experimental Protocol:

- Task Relationship Analysis: Assess potential for knowledge transfer between tasks

- Transfer Mechanism Design: Implement appropriate knowledge representation and transfer strategy

- Similarity Quantification: Deploy task similarity measures to guide transfer intensity

- Negative Transfer Prevention: Incorporate safeguards against detrimental knowledge transfer

- Cross-Task Performance Validation: Evaluate performance improvements across all tasks

Domain Applications: Drug Discovery Case Studies

Constrained Molecular Optimization with CMOMO

In pharmaceutical development, the CMOMO framework addresses the critical challenge of constrained molecular multi-property optimization through a sophisticated two-stage approach. The mathematical formulation treats this as a constrained multi-objective optimization problem:

\[ \begin{aligned} \min_{\mathbf{m} \in M} & \quad \mathbf{f}(\mathbf{m}) = [f_1(\mathbf{m}), f_2(\mathbf{m}), \ldots, f_p(\mathbf{m})]^T \\ \text{subject to} & \quad g_j(\mathbf{m}) \leq 0, \quad j = 1, \ldots, q \\ & \quad h_k(\mathbf{m}) = 0, \quad k = 1, \ldots, r \end{aligned} \]

where \( \mathbf{m} \) represents a molecule in chemical space \( M \), \( f_i \) are the properties to be optimized (e.g., bioactivity, drug-likeness), and \( g_j \), \( h_k \) represent inequality and equality constraints respectively (e.g., ring size restrictions, structural alerts) [17].

The constraint violation (CV) for a molecule is quantified as:

\[ CV(\mathbf{m}) = \sum_{j=1}^{q} \max(0, g_j(\mathbf{m})) + \sum_{k=1}^{r} |h_k(\mathbf{m})| \]

CMOMO's dynamic constraint handling strategy initially explores the unconstrained objective space before progressively incorporating constraints, effectively balancing property optimization with constraint satisfaction [17].
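The CV formula, and one way to switch from an unconstrained to a constrained comparison, can be sketched as follows. CMOMO's exact staging schedule is not described here, so the `better` comparator uses Deb's feasibility rule as a stand-in, and the two constraints on precomputed descriptors are hypothetical examples of the ring-size and structural-alert constraints mentioned above.

```python
def constraint_violation(m, ineq, eq):
    """CV(m) = sum_j max(0, g_j(m)) + sum_k |h_k(m)|; CV == 0 means m is feasible."""
    return sum(max(0.0, g(m)) for g in ineq) + sum(abs(h(m)) for h in eq)

# Hypothetical constraints on precomputed molecular descriptors (not CMOMO's actual set).
INEQ = [lambda m: m["max_ring_size"] - 6]  # g(m) <= 0: no ring larger than 6 atoms
EQ = [lambda m: m["n_structural_alerts"]]  # h(m) = 0: no structural alerts

def better(a, b, constrained):
    """Compare candidates a, b given as (objective, cv), objective minimized.
    Unconstrained stage: objective only. Constrained stage: Deb-style feasibility
    rule, where lower CV wins and equal CV (e.g. both feasible) falls back to the objective."""
    if not constrained or a[1] == b[1]:
        return a[0] < b[0]
    return a[1] < b[1]

mol_a = {"max_ring_size": 7, "n_structural_alerts": 1}
mol_b = {"max_ring_size": 6, "n_structural_alerts": 0}
cv_a = constraint_violation(mol_a, INEQ, EQ)  # 1 + 1 = 2
cv_b = constraint_violation(mol_b, INEQ, EQ)  # 0: feasible
print(better((0.2, cv_a), (0.5, cv_b), constrained=False))  # True: a has the better objective
print(better((0.2, cv_a), (0.5, cv_b), constrained=True))   # False: b is feasible
```

Running the first stage with `constrained=False` lets promising but infeasible candidates survive early, which mirrors the idea of exploring the unconstrained objective space before enforcing constraints.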

Multi-Task Optimization in Pharmaceutical Research

MTO approaches have demonstrated significant potential in drug discovery by enabling simultaneous optimization across multiple related tasks, such as predicting activity against different protein targets or optimizing for both potency and metabolic stability. The MOMFEA-STT algorithm exemplifies this approach through its source task transfer strategy, which establishes parameter sharing models between historical tasks (source tasks) and current target tasks [7].

The algorithm dynamically identifies task correlations using a similarity calculation method that compares static characteristics of source problems with the dynamic evolution trend of target tasks. This enables adaptive knowledge transfer intensity, maximizing the benefits of cross-task optimization while minimizing negative transfer. The spiral search mutation operator further enhances global search capability, preventing premature convergence in complex molecular search spaces [7].

Figure 2: CMOMO's two-stage workflow for constrained molecular optimization, demonstrating the transition from unconstrained property optimization to constrained satisfaction.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents and Computational Tools for Optimization Studies

| Tool/Reagent | Function | Application Context | Implementation Considerations |
|---|---|---|---|
| Latent Vector Fragmentation (VFER) [17] | Evolutionary reproduction in continuous latent space | Molecular optimization using deep generative models | Enhances exploration efficiency in chemical space |
| Bank Library [17] | Repository of high-property molecules similar to lead compound | Initial population generation in molecular optimization | Requires careful similarity metrics and diversity preservation |
| Pre-trained Encoder-Decoder [17] | Maps molecules between discrete chemical and continuous latent spaces | Deep molecular optimization frameworks | Quality depends on pre-training data comprehensiveness |
| Task Saliency Maps [18] | Quantifies representation-level task interactions | Multi-task learning architectures | Requires specialized visualization and interpretation tools |
| Pareto Reflective Functions [16] | Preserves Pareto optimality during function composition | Problem-tailored MOO algorithm construction | Must satisfy specific mathematical properties for correctness |
| Spiral Search Mutation [7] | Enhances global exploration in evolutionary algorithms | Multi-task optimization with complex search spaces | Balances exploration-exploitation tradeoffs |
| Constraint Violation Aggregation [17] | Quantifies degree of constraint satisfaction | Constrained multi-objective optimization | Enables graduated approach to constraint handling |

Future Directions and Research Trends

The evolving landscape of multi-objective and multi-task optimization reveals several promising research directions. For MOO, emerging trends include more sophisticated constraint-handling techniques for high-dimensional problems and the integration of surrogate modeling to reduce the cost of expensive function evaluations [21] [17]. For MTO, current research focuses on cross-domain asynchronous optimization, where tasks with different types and arrival times are efficiently handled, and on more robust similarity measures to prevent negative transfer [14].

A significant convergence point lies in multi-objective multi-task optimization (MOMTO), which combines the Pareto optimality concepts from MOO with the knowledge transfer mechanisms of MTO. This hybrid approach is particularly relevant for complex scientific domains like drug discovery, where researchers must simultaneously optimize multiple molecular properties across related but distinct biological targets or optimization scenarios [14] [7].

The integration of these optimization paradigms with foundation models represents another frontier, where pre-trained models provide powerful initialization but still require specialized optimization strategies to handle multi-objective and multi-task scenarios effectively [20] [22]. As noted in recent analyses, even powerful Vision Foundation Models do not inherently resolve optimization imbalance in multi-task learning, highlighting the continued importance of specialized optimization research [20].

The comparison between Multi-Objective Optimization and Multi-Task Optimization reveals distinct strengths and application domains that researchers must consider when selecting an appropriate framework. MOO excels at revealing fundamental trade-offs between competing objectives within a single problem, making it invaluable for decision-making in complex design spaces. MTO leverages relationships between distinct tasks to accelerate optimization through knowledge transfer, particularly beneficial when facing multiple related optimization problems.

In pharmaceutical research and drug development, where researchers must balance multiple molecular properties while satisfying stringent constraints, both approaches offer complementary benefits. Constrained MOO methods like CMOMO provide robust frameworks for molecular optimization with explicit constraint handling, while MTO approaches enable knowledge transfer across related molecular optimization tasks. The emerging class of multi-objective multi-task optimization algorithms represents a promising synthesis of these paradigms, offering the potential for simultaneous trade-off analysis and cross-task knowledge transfer in complex drug discovery pipelines.

As optimization challenges in scientific research continue to grow in complexity and scale, the continued development and refinement of both MOO and MTO methodologies will remain essential for addressing the multifaceted optimization problems that characterize modern computational science and engineering.

In scientific and industrial research, efficiently managing multiple competing goals is a fundamental challenge. This has given rise to two distinct yet sometimes confused computational paradigms: Multi-Objective Optimization (MOO) and Multi-Task Optimization (MTO). While both frameworks handle multiple criteria, their core philosophies, applications, and methodological tools differ significantly.

Multi-Objective Optimization seeks to find the best possible trade-offs between conflicting objectives for a single primary process or product. The solution is not a single point but a set of optimal compromises, known as the Pareto front [23]. In contrast, Multi-Task Optimization aims to improve the learning efficiency and performance of a model by simultaneously solving multiple distinct but related problems, leveraging shared representations and knowledge across tasks.

This guide explores the interconnection and divergence between MTO and MOO, framing them within a broader research context. It provides experimental data and protocols from key applications, notably the Methanol-to-Olefins (MTO) process as a domain for MOO, and offers a toolkit for researchers, particularly in drug development and chemical engineering. The shared acronym is a coincidence: in the catalyst examples below, "MTO process" always refers to the chemical reaction, not to Multi-Task Optimization.

Core Concepts: MTO and MOO Defined

Multi-Objective Optimization (MOO) in Action

MOO is prevalent in engineering and design, where a single system must balance competing performance metrics. A classic example is catalyst design for the Methanol-to-Olefins (MTO) process, which aims to convert methanol into high-value light olefins like ethylene and propylene.

- Conflicting Objectives: The goal is to maximize both light olefin selectivity (product quality) and catalyst lifetime (operational efficiency) [24] [25]. These objectives often conflict; for instance, strategies that boost short-term yield might accelerate catalyst deactivation via coking.

- The MOO Solution: MOO algorithms do not yield a single "best" catalyst formulation but a Pareto-optimal set of candidates. Each candidate represents a unique trade-off, such as high selectivity with moderate lifetime versus longer lifetime with adequate selectivity, allowing engineers to choose based on broader economic or operational contexts [23].

Multi-Task Optimization (MTO) in Principle

MTO is a cornerstone of advanced machine learning, where the focus is on developing a single model that competently performs several tasks.

- Leveraging Commonalities: In drug discovery, a model might simultaneously learn to predict compound efficacy against a target and assess its potential toxicity [26] [27]. By sharing knowledge between these tasks, MTO can lead to more robust and generalizable models than those trained on each task in isolation.

- The Outcome: The result is a unified model that performs well across multiple, predefined tasks, optimizing a shared internal representation.

The diagram below illustrates the core structural differences between these two paradigms.

Experimental Data and Performance Comparison

MOO in Catalyst Design: Quantitative Trade-offs

The application of MOO in developing SAPO-34 catalysts for the MTO process reveals clear performance trade-offs. The following table summarizes experimental data for catalysts optimized for different points on the Pareto front, showing how enhanced lifetime often comes at the cost of initial selectivity, although some modifications (e.g., sequential acid-base etching) improve both relative to the parent catalyst [24] [25].

Table 1: MOO Performance Trade-offs in MTO Catalyst Design

| Catalyst Type | Modification Strategy | Light Olefin Selectivity (%) | Catalyst Lifetime (min) | Key Trade-off Characterization |
|---|---|---|---|---|
| SP34-P (Reference) | None (Parent catalyst) | ~84 | 360 | Baseline performance [25]. |
| SP-Ce | CeO₂ Doping | 83.9 | 600 | Significant lifetime extension with minimal selectivity loss [24]. |
| SP34-A | Acid Etching | High (increased adsorption) | < 360 (reduced) | High selectivity potential but rapid deactivation due to coking [25]. |
| SP34-B | Base Etching | Moderate | > 360 | Improved longevity and reduced coking, but lower peak selectivity [25]. |
| SP34-AB | Sequential Acid-Base Etching | 88.8 | 586 | Excellent balance: high selectivity and greatly extended lifetime [25]. |

MOO Algorithm Performance: TAMOPSO vs. Alternatives

Evaluating MOO requires assessing the performance of the algorithms themselves. The TAMOPSO algorithm, which incorporates a task allocation and archive-guided mutation strategy, demonstrates how advanced MOO methods can efficiently navigate complex trade-offs. The table below compares its performance against other algorithms on standard test problems [23].

Table 2: Multi-Objective Optimization Algorithm Performance Comparison

| Algorithm Name | Key Mechanism | Reported Performance on Standard Test Problems | Strengths |
|---|---|---|---|
| TAMOPSO | Task allocation, Adaptive Lévy flight mutation, Archive-guided search | Outperformed 10 existing algorithms on several standard tests [23]. | Balanced convergence and diversity; efficient search in complex spaces [23]. |
| MOAGDE | Adaptive guided differential evolution | Effective performance, but may be outperformed by TAMOPSO on specific problems [23]. | Good convergence properties [23]. |
| MOCPSO | Shift density estimation (SDE) for population division | Good performance, but partitioning standard can be less dynamic [23]. | Maintains population diversity [23]. |
| DTDP-EAMO | Two-stage multi-population adaptive mutation | High-quality solutions, promotes information exchange [23]. | Effective at avoiding local optima [23]. |

Detailed Experimental Protocols

Protocol 1: MOO of a CeO₂-Doped SAPO-34 Catalyst

This protocol details the synthesis and testing of a catalyst where MOO is applied to balance olefin selectivity and catalyst longevity through metal oxide doping [24].

1. Catalyst Synthesis:

- Prepare a synthesis gel with molar composition 1Al₂O₃:1P₂O₅:0.6SiO₂:1.25TEAOH:1.25Mor:70H₂O.

- Dissolve aluminum iso-propoxide in deionized water. Add tetraethylammonium hydroxide (TEAOH) and morpholine (Mor) dropwise.

- Introduce tetraethyl orthosilicate (TEOS) and stir for 3 hours at 60°C.

- Add phosphoric acid dropwise and stir for 2 hours. Age the mixture at room temperature for 24 hours.

- For Ce-doping, add cerium nitrate hexahydrate (0.05 ratio to Al₂O₃) and aqueous ammonium carbonate simultaneously to the gel.

- Subject the gel to hydrothermal treatment in an autoclave at 180°C for 24 hours.

- Recover the solid product via centrifugation, wash with water, dry at 100°C for 12 hours, and calcine at 550°C for 5 hours.

2. Catalytic Testing & MOO Evaluation:

- Conduct the MTO reaction in a fixed-bed reactor under standardized conditions (e.g., 425°C, methanol weight hourly space velocity of 1.5 h⁻¹).

- Use online gas chromatography (GC) to analyze product stream composition at regular intervals.

- Measure Objective 1 (Selectivity): Calculate the total percentage of ethylene and propylene in the hydrocarbon products at a defined time-on-stream (e.g., 30 minutes).

- Measure Objective 2 (Lifetime): Determine the total reaction time until methanol conversion drops below a specific threshold (e.g., 99%).

- Repeat the synthesis and testing process for catalysts with varying doping levels and types (e.g., different metal oxides) to build a dataset of performance trade-offs.

- Input the selectivity-lifetime data pairs into an MOO algorithm (e.g., TAMOPSO) to identify the Pareto-optimal set of catalyst synthesis parameters.
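For a finite set of measured catalysts, identifying the Pareto-optimal set reduces to a non-dominated filter with both objectives maximized; a search algorithm such as TAMOPSO becomes necessary when continuous synthesis parameters have to be explored, for example through a fitted model of selectivity and lifetime (an assumption, not a step stated in the protocol). The sketch applies the filter to the three Table 1 catalysts that report numeric values for both objectives. Treating "~84" as 84.0 is an assumption, and because the entries come from different studies [24] [25], the comparison is only illustrative.

```python
# (light olefin selectivity %, lifetime min) from Table 1; "~84" taken as 84.0 (assumption).
CATALYSTS = {
    "SP34-P": (84.0, 360.0),
    "SP-Ce": (83.9, 600.0),
    "SP34-AB": (88.8, 586.0),
}

def dominates_max(a, b):
    """a dominates b when both objectives are maximized: a is no worse in every
    objective and strictly better in at least one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

pareto = sorted(
    name for name, obj in CATALYSTS.items()
    if not any(dominates_max(other, obj) for other in CATALYSTS.values())
)
print(pareto)  # ['SP-Ce', 'SP34-AB']
```

SP34-AB dominates the parent catalyst on both objectives, while SP-Ce and SP34-AB remain as the two trade-off options: slightly longer lifetime versus noticeably higher selectivity.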

The workflow for this catalytic MOO process is summarized below.

Protocol 2: MTO for a Multi-Omics Drug Discovery Pipeline

This protocol outlines an MTO approach for building a predictive model in drug discovery that simultaneously learns from multiple data types and tasks [26] [27].

1. Data Collection and Task Definition:

- Genomics: Collect whole-genome sequencing data from patient cohorts to identify genetic variations.

- Transcriptomics: Obtain RNA-seq data from tissue samples to measure gene expression levels.

- Proteomics: Acquire mass spectrometry data to quantify protein abundance.

- Define Task 1: Predict drug efficacy (e.g., IC50 values) based on multi-omics profiles.

- Define Task 2: Predict drug toxicity (e.g., binary classification of hepatotoxicity).

2. Model Training via MTO:

- Input Layer: Design a model architecture with separate input branches for each omics data type (genomic, transcriptomic, proteomic).

- Shared Encoder: The input branches feed into a shared deep learning encoder (e.g., a multi-layer perceptron). This shared layer is critical for MTO as it learns a unified representation of the underlying biology common to both efficacy and toxicity prediction.

- Task-Specific Heads: The output of the shared encoder connects to two separate task-specific output layers (heads)—one for regression (efficacy prediction) and one for classification (toxicity prediction).

- Joint Loss Function: The model is trained by minimizing a joint loss function, \( L_{\text{total}} = \alpha L_{\text{efficacy}} + \beta L_{\text{toxicity}} \), where \( \alpha \) and \( \beta \) are hyperparameters that balance the focus between the two tasks.
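The architecture and weighted joint loss described above can be illustrated with a dependency-free Python sketch of the forward pass. Layer sizes, ReLU activations, a sigmoid toxicity output, binary cross-entropy as the toxicity loss, mean squared error as the efficacy loss, and all names (`MultiTaskNet`, `joint_loss`) are assumptions. Training is omitted: a real pipeline would use an autodiff framework to backpropagate the joint loss through both heads, the shared encoder, and the input branches.

```python
import math
import random


def dense(x, layer):
    """Affine map y = W x + b, with W stored as a list of rows."""
    weights, bias = layer
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(v):
    return [max(0.0, a) for a in v]


def init_layer(n_in, n_out, rng):
    return ([[rng.gauss(0.0, 0.1) for _ in range(n_in)] for _ in range(n_out)], [0.0] * n_out)


class MultiTaskNet:
    def __init__(self, omics_dims, branch_dim=8, shared_dim=16, seed=0):
        rng = random.Random(seed)
        self.order = sorted(omics_dims)  # fixed concatenation order of the branches
        self.branches = {k: init_layer(omics_dims[k], branch_dim, rng) for k in self.order}
        self.shared = init_layer(branch_dim * len(self.order), shared_dim, rng)
        self.efficacy_head = init_layer(shared_dim, 1, rng)  # regression output
        self.toxicity_head = init_layer(shared_dim, 1, rng)  # classification logit

    def forward(self, sample):
        h = []
        for k in self.order:  # one input branch per omics layer
            h.extend(relu(dense(sample[k], self.branches[k])))
        z = relu(dense(h, self.shared))  # shared representation used by both tasks
        efficacy = dense(z, self.efficacy_head)[0]
        logit = dense(z, self.toxicity_head)[0]
        return efficacy, 1.0 / (1.0 + math.exp(-logit))


def joint_loss(model, batch, alpha=1.0, beta=1.0, eps=1e-7):
    """alpha * mean squared error (efficacy) + beta * binary cross-entropy (toxicity)."""
    mse = bce = 0.0
    for sample, y_eff, y_tox in batch:
        pred_eff, p_tox = model.forward(sample)
        mse += (pred_eff - y_eff) ** 2
        p = min(max(p_tox, eps), 1.0 - eps)  # clip to keep log() finite
        bce -= y_tox * math.log(p) + (1 - y_tox) * math.log(1 - p)
    return alpha * mse / len(batch) + beta * bce / len(batch)


# Hypothetical toy batch: random omics profiles, efficacy targets, and toxicity labels.
rng = random.Random(1)
dims = {"genomics": 5, "transcriptomics": 6, "proteomics": 4}
batch = [({k: [rng.random() for _ in range(d)] for k, d in dims.items()},
          rng.uniform(4.0, 9.0), rng.randint(0, 1)) for _ in range(4)]
model = MultiTaskNet(dims)
print(joint_loss(model, batch, alpha=1.0, beta=0.5))
```

Because the shared encoder feeds both heads, gradients of either loss term would update the same shared weights, which is the mechanism through which the two tasks exchange information.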

3. Model Validation:

- Evaluate the final unified model on a held-out test set for both tasks, reporting performance metrics (e.g., Mean Squared Error for efficacy, AUC-ROC for toxicity) for each task simultaneously.
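Both per-task metrics can be computed without external libraries, as in the sketch below; in practice, `sklearn.metrics.mean_squared_error` and `sklearn.metrics.roc_auc_score` provide the same quantities. The function names and the example predictions are illustrative only.

```python
def mean_squared_error(y_true, y_pred):
    """Average squared difference between observed and predicted efficacy values."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def roc_auc(labels, scores):
    """AUC-ROC as the probability that a random positive outscores a random negative.

    Ties count as one half; this equals the normalized Mann-Whitney U statistic.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC-ROC needs at least one positive and one negative example")
    wins = sum(1.0 if sp > sn else 0.5 if sp == sn else 0.0 for sp in pos for sn in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical held-out predictions from the same multi-task model.
print(mean_squared_error([6.1, 7.4, 5.2], [6.0, 7.0, 5.5]))
print(roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))  # → 0.75
```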

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagent Solutions for Featured Experiments

| Item | Function / Application | Field |
|---|---|---|
| SAPO-34 Catalyst | Microporous catalyst whose shape selectivity gives high light-olefin yields in the methanol-to-olefins (MTO) process [24] [25]. | Chemical Engineering, MOO |
| Cerium Nitrate Hexahydrate | Precursor for CeO₂ doping of SAPO-34, used to modify acidity and suppress coke formation, thereby extending catalyst lifetime [24]. | Chemical Engineering, MOO |
| Tetraethylammonium Hydroxide (TEAOH) | Organic template agent used in the hydrothermal synthesis of the SAPO-34 zeolite framework [24] [25]. | Chemical Engineering, MOO |
| Multi-Omics Datasets (Genomics, Transcriptomics, Proteomics) | Integrated biological data used to build predictive models that learn from multiple layers of molecular information simultaneously [26] [27]. | Drug Discovery, MTO |
| Laser-Capture Microdissection | Technique for isolating specific cell populations (e.g., parvalbumin interneurons) prior to RNA-seq for precise target identification [26]. | Drug Discovery, MTO |
| Adeno-Associated Virus (AAV) Vector | Gene delivery tool whose safety profile (e.g., genotoxicity via integration) is assessed using multi-omics methods in late-stage drug development [26]. | Drug Discovery, MTO |

Interconnection and Divergence: A Synthesis

The relationship between MTO and MOO is not one of opposition but of complementary application to different types of problems. Their interconnection lies in their shared goal of handling multiple, simultaneous criteria. However, their fundamental divergence is clear:

- MOO is best suited for designing and refining a single product or process where inherent conflicts exist. The outcome is a set of optimal compromises, and the "best" choice is often determined by external business or operational constraints.

- MTO is a powerful machine learning strategy for building systems that can perform several related tasks competently. The outcome is a more robust and generalizable model that benefits from knowledge sharing.

In practice, these paradigms can even be nested. For instance, an MTO model for drug discovery (predicting efficacy and toxicity) could itself be tuned using MOO principles to find the best hyperparameters that balance its performance across both tasks. Understanding this fundamental relationship allows researchers and developers to select the right computational framework for their specific challenge, ultimately driving more efficient and effective optimization in science and industry.
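The nesting described above can be sketched by treating each task-weighting setting as one candidate and keeping only those whose validation scores are Pareto-optimal, with MSE minimized and AUC-ROC maximized. The scores below are invented placeholders, not results, and `pareto_configs` is a hypothetical helper; a full MOO tuner, such as an evolutionary search, would also propose new settings instead of only filtering a fixed grid.

```python
def dominates(a, b):
    """True if a is no worse than b in every objective and strictly better in one (minimization)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_configs(results):
    """results: list of (config, val_mse, val_auc); keep configs with non-dominated (mse, -auc)."""
    objs = [(mse, -auc) for _, mse, auc in results]  # negate AUC so both are minimized
    return [cfg for i, (cfg, _, _) in enumerate(results)
            if not any(dominates(objs[j], objs[i]) for j in range(len(objs)) if j != i)]


# Placeholder validation scores for four (alpha, beta) loss weightings.
results = [
    ({"alpha": 0.2, "beta": 0.8}, 0.90, 0.86),
    ({"alpha": 0.5, "beta": 0.5}, 0.70, 0.84),
    ({"alpha": 0.8, "beta": 0.2}, 0.60, 0.78),
    ({"alpha": 0.5, "beta": 0.8}, 0.75, 0.83),  # worse than (0.5, 0.5) on both tasks
]
for cfg in pareto_configs(results):
    print(cfg)
```

The surviving settings trade efficacy accuracy against toxicity discrimination; choosing among them is left to external priorities, exactly as in the catalyst example.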

Algorithms in Action: Methodologies and Biomedical Applications

Multi-objective optimization (MOO) is essential in numerous engineering and scientific applications that require two or more conflicting objectives to be handled concurrently [28]. Problems with more than three objectives are often classified as "many-objective" problems, which pose distinct challenges for optimization algorithms [29]. The Non-dominated Sorting Genetic Algorithm (NSGA) family of evolutionary algorithms, particularly NSGA-II and NSGA-III, has become the most widely used approach to industrial multi-objective optimization problems owing to its simplicity and efficiency [29].

This guide compares the performance of NSGA-II and NSGA-III, with particular emphasis on reference-point based methods for many-objective optimization problems. Within the broader comparison of multi-task and multi-objective optimization, we examine how these algorithms balance convergence and diversity across problem domains ranging from chemical engineering to drug discovery.

Algorithmic Fundamentals and Key Differences

NSGA-II: Crowding Distance Approach

NSGA-II achieves convergence through non-dominated sorting, in which solutions are ranked into successive fronts according to Pareto dominance [29]. To maintain diversity, it uses the crowding distance (CD) metric, which measures how densely other solutions surround a given point in objective space. For each objective, the solutions of a front are sorted and every interior solution accumulates the normalized distance between its two neighbours; for a two-objective problem, this equals half the perimeter of the rectangle (the two-dimensional cuboid) spanned by a solution's nearest neighbours in normalized objective space [29]. Boundary solutions, those holding the minimum or maximum value of any objective, are assigned an infinite crowding distance so that they are always preferred, which promotes spread across the Pareto front.
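A minimal Python sketch of the crowding-distance computation, following the standard NSGA-II formulation: for each objective the front is sorted, the two boundary solutions receive an infinite distance, and every interior solution adds the range-normalized gap between its neighbours. The function name is illustrative.

```python
def crowding_distance(front):
    """Crowding distance of each objective vector in one non-dominated front."""
    n = len(front)
    if n == 0:
        return []
    dist = [0.0] * n
    for k in range(len(front[0])):
        order = sorted(range(n), key=lambda i: front[i][k])
        f_min, f_max = front[order[0]][k], front[order[-1]][k]
        # boundary solutions get an infinite distance so they are always preferred
        dist[order[0]] = dist[order[-1]] = float("inf")
        if f_max == f_min:
            continue  # all solutions share this objective value; no spread to measure
        for pos in range(1, n - 1):
            gap = front[order[pos + 1]][k] - front[order[pos - 1]][k]
            dist[order[pos]] += gap / (f_max - f_min)  # normalized side length
    return dist


front = [(0.0, 1.0), (0.25, 0.5), (0.5, 0.25), (1.0, 0.0)]  # two minimized objectives
print(crowding_distance(front))  # → [inf, 1.25, 1.25, inf]
```

When a front must be truncated, solutions with larger crowding distance (sparser neighbourhoods) are kept first.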

NSGA-III: Reference-Point Based Diversity Management

NSGA-III uses the same non-dominated sorting procedure as NSGA-II to drive convergence but takes a fundamentally different approach to maintaining diversity [29]. Instead of crowding distance, it relies on structured reference points on a normalized hyperplane to preserve diversity in many-objective spaces [29]. The objective values of a front are normalized, and equally spaced reference points are placed on the normalized hyperplane. Each solution is then associated with its closest reference point, measured as the perpendicular distance to the line joining the origin and that point, and a niche-preserving selection procedure ensures that as many reference points as possible are represented in the final solution set [29].
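The reference-point machinery can be sketched in Python as follows. `das_dennis` enumerates the C(H+M−1, M−1) equally spaced points on the unit simplex for M objectives and H divisions, and `associate` attaches each solution to the reference line with the smallest perpendicular distance, from which niche counts follow. As a simplifying assumption, `normalize` only subtracts the ideal point and divides by each objective's range, whereas NSGA-III proper normalizes using the intercepts of a hyperplane through extreme points. The niche-preserving selection step itself is omitted, and all function names are illustrative.

```python
import math
from itertools import combinations


def das_dennis(n_obj, divisions):
    """Equally spaced points on the unit simplex (stars-and-bars enumeration)."""
    points = []
    slots = divisions + n_obj - 1
    for bars in combinations(range(slots), n_obj - 1):
        coords, prev = [], -1
        for b in bars:
            coords.append(b - prev - 1)  # stars between consecutive bars
            prev = b
        coords.append(slots - 1 - prev)  # stars after the last bar
        points.append([c / divisions for c in coords])
    return points


def normalize(objs):
    """Simplified normalization (an assumption): subtract the ideal point, divide by the range."""
    m = len(objs[0])
    lo = [min(o[k] for o in objs) for k in range(m)]
    span = [(max(o[k] for o in objs) - lo[k]) or 1.0 for k in range(m)]
    return [[(o[k] - lo[k]) / span[k] for k in range(m)] for o in objs]


def perpendicular_distance(point, ref):
    """Distance from `point` to the line through the origin and `ref`."""
    t = sum(p * r for p, r in zip(point, ref)) / sum(r * r for r in ref)
    return math.sqrt(sum((p - t * r) ** 2 for p, r in zip(point, ref)))


def associate(objs, refs):
    """For each solution: (index of the closest reference line, perpendicular distance)."""
    result = []
    for p in normalize(objs):
        d = [perpendicular_distance(p, r) for r in refs]
        j = min(range(len(refs)), key=d.__getitem__)
        result.append((j, d[j]))
    return result


def niche_counts(assoc, n_refs):
    """Number of solutions attached to each reference point."""
    counts = [0] * n_refs
    for j, _ in assoc:
        counts[j] += 1
    return counts


refs = das_dennis(2, 2)  # [[0.0, 1.0], [0.5, 0.5], [1.0, 0.0]]
front = [(0.0, 1.0), (0.5, 0.5), (1.0, 0.0), (0.2, 0.9)]
print(niche_counts(associate(front, refs), len(refs)))  # → [2, 1, 1]
```

During selection, NSGA-III favours solutions attached to reference points with the lowest niche counts, which is how it spreads the population across the front.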

Theoretical Framework for Multi-Task vs. Multi-Objective Optimization

It is crucial to distinguish between multi-task optimization (MTO) and multi-objective optimization (MOO) within algorithmic research. While MOO deals with finding trade-offs between conflicting objectives for a single problem, multi-task optimization uses knowledge transfer between related tasks to solve multiple problems simultaneously [30]. As articulated in recent research, "Multitask optimization uses the knowledge transfer between tasks to deal with multiple related tasks simultaneously, which obtains better optimization performance" [30]. This distinction becomes particularly important in complex domains like drug discovery, where researchers might need to optimize multiple molecular properties (MOO) while simultaneously addressing related but distinct design problems (MTO).
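To make the contrast concrete, the toy Python sketch below solves two related single-objective tasks at once, using hypothetical sphere functions with shifted optima. Each task keeps its own elitist population; with probability `p_transfer`, an offspring for one task is mutated from a parent drawn from another task's population, which serves as the knowledge-transfer channel. This is a deliberately simplified explicit-transfer scheme chosen for illustration, not MFEA, and every name and parameter value is an assumption. An MOO solver would instead evaluate one population on the vector (f₁(x), f₂(x)) and return trade-offs rather than one optimum per task.

```python
import random


def sphere(shift):
    """Hypothetical task: minimize sum((x_i - shift)^2); optimum at x_i = shift."""
    return lambda x: sum((xi - shift) ** 2 for xi in x)


def multitask_ea(tasks, dim=5, pop=10, gens=100, sigma=0.3, p_transfer=0.3, seed=0):
    """One elitist population per task; some offspring are bred from another task's population."""
    rng = random.Random(seed)
    pops = [[[rng.uniform(-5.0, 5.0) for _ in range(dim)] for _ in range(pop)] for _ in tasks]
    history = [[min(map(f, pops[t])) for t, f in enumerate(tasks)]]
    transfers = 0
    for _gen in range(gens):
        for t, f in enumerate(tasks):
            offspring = []
            for _child in range(pop):
                src = t
                if len(tasks) > 1 and rng.random() < p_transfer:
                    src = rng.choice([s for s in range(len(tasks)) if s != t])  # cross-task transfer
                    transfers += 1
                parent = rng.choice(pops[src])
                offspring.append([xi + rng.gauss(0.0, sigma) for xi in parent])  # Gaussian mutation
            # (mu + lambda) survival judged only on this task's own objective
            pops[t] = sorted(pops[t] + offspring, key=f)[:pop]
        history.append([f(pops[t][0]) for t, f in enumerate(tasks)])
    return pops, history, transfers


tasks = [sphere(0.0), sphere(0.5)]  # related tasks with nearby optima
pops, history, transfers = multitask_ea(tasks)
print("best per task:", history[-1], "transfers:", transfers)
```

Because survival is judged per task, a transferred solution persists only if it helps the receiving task, which is a crude guard against negative transfer.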

Performance Comparison: Experimental Data

Direct Algorithm Comparison in Chemical Engineering

A comprehensive study comparing NSGA-II and NSGA-III for optimizing an adiabatic styrene reactor provides insightful performance metrics across three objectives: productivity, yield, and selectivity [29]. The results demonstrated that NSGA-III provides a more diverse range of optimal operating conditions than NSGA-II while maintaining comparable convergence quality [29].

Table 1: Performance Comparison in Styrene Reactor Optimization

| Algorithm | Diversity | Convergence | Computational Efficiency |
|---|---|---|---|
| NSGA-II | Limited spread across all objectives | Good convergence to Pareto front | Standard |
| NSGA-III | Superior diversity and distribution | Comparable convergence | Similar to NSGA-II |

Medical Device Design Applications

In the optimization of a novel scissor-type thrombolytic micro-actuator for medical applications, researchers employed NSGA-III for multi-objective optimization of tip amplitude and stirring force [31]. After optimization, the maximum tip amplitude and maximum stirring force of the micro-actuator improved by 61.33% and 80.19%, respectively, demonstrating the practical efficacy of reference-point based methods in complex engineering design problems [31].

Many-Objective Problem Performance

While both algorithms follow the same procedure to achieve the first goal of convergence, their approaches to maintaining diversity differ significantly, making NSGA-III particularly advantageous for many-objective problems [29]. Research indicates that "NSGA-III is reported to be more efficient for many-objective (more than two) optimization problems" [29]. The reference-point based approach in NSGA-III provides more diverse alternatives than NSGA-II, especially as the number of objectives increases beyond three [29].

Experimental Protocols and Methodologies

Standard Implementation Framework

The experimental protocols for comparing NSGA-II and NSGA-III typically follow a structured methodology:

- Problem Formulation: Clearly define objective functions, decision variables, and constraints [29]

- Algorithm Initialization: Set population size, termination criteria, and algorithm-specific parameters

- Reference Point Generation (for NSGA-III): Create structured reference points on normalized hyper-planes [29]

- Evolutionary Operations: Apply selection, crossover, and mutation operators

- Non-dominated Sorting: Rank solutions into Pareto fronts based on domination relationships [29]

- Diversity Preservation: Apply crowding distance (NSGA-II) or reference-point association (NSGA-III)

- Population Update: Select solutions for the next generation based on rank and diversity metrics
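The non-dominated sorting, diversity preservation, and population update steps combine into the environmental selection used by NSGA-II, sketched below in plain Python. Parents and offspring are pooled, sorted into fronts by fast non-dominated sorting, and whole fronts are accepted until the next one no longer fits; that last front is truncated by descending crowding distance. All objectives are assumed to be minimized, and function names are illustrative. NSGA-III would replace the crowding-distance truncation with reference-point niching.

```python
def dominates(a, b):
    """Pareto dominance for minimization."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def non_dominated_sort(objs):
    """Fast non-dominated sorting: fronts as lists of indices, best front first."""
    n = len(objs)
    dominated_set = [[] for _ in range(n)]  # indices that solution i dominates
    dom_count = [0] * n                     # number of solutions dominating i
    fronts = [[]]
    for i in range(n):
        for j in range(n):
            if dominates(objs[i], objs[j]):
                dominated_set[i].append(j)
            elif dominates(objs[j], objs[i]):
                dom_count[i] += 1
        if dom_count[i] == 0:
            fronts[0].append(i)
    while fronts[-1]:
        nxt = []
        for i in fronts[-1]:
            for j in dominated_set[i]:
                dom_count[j] -= 1
                if dom_count[j] == 0:  # all dominators already placed
                    nxt.append(j)
        fronts.append(nxt)
    return fronts[:-1]


def crowding(objs, idx):
    """Crowding distance for the solutions listed in `idx`."""
    dist = {i: 0.0 for i in idx}
    for k in range(len(objs[idx[0]])):
        order = sorted(idx, key=lambda i: objs[i][k])
        lo, hi = objs[order[0]][k], objs[order[-1]][k]
        dist[order[0]] = dist[order[-1]] = float("inf")
        if hi > lo:
            for a, i, b in zip(order, order[1:], order[2:]):
                dist[i] += (objs[b][k] - objs[a][k]) / (hi - lo)
    return dist


def select_next_population(objs, size):
    """Fill by front rank, then truncate the last partial front by crowding distance."""
    chosen = []
    for front in non_dominated_sort(objs):
        if len(chosen) + len(front) <= size:
            chosen.extend(front)
        else:
            d = crowding(objs, front)
            chosen.extend(sorted(front, key=lambda i: -d[i])[: size - len(chosen)])
            break
    return chosen


pooled = [(1, 5), (2, 3), (3, 2), (5, 1), (2, 4), (4, 4), (6, 6)]  # parents + offspring
print(non_dominated_sort(pooled))         # → [[0, 1, 2, 3], [4], [5], [6]]
print(select_next_population(pooled, 3))  # → [0, 3, 1]
```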

Performance Evaluation Metrics

Researchers typically employ multiple metrics to evaluate algorithm performance:

- Convergence Metrics: Measure proximity to true Pareto-optimal solutions

- Diversity Metrics: Assess spread and distribution across objective spaces [29]

- Hypervolume Indicators: Calculate the volume of objective space dominated by the solution set and bounded by a reference point

- Statistical Testing: Perform multiple independent runs with statistical significance testing
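For two minimized objectives, the hypervolume indicator can be computed exactly with a single sweep, as in the Python sketch below: points are sorted by the first objective and each non-dominated point adds one rectangular strip. The function name and example points are illustrative; three or more objectives require dedicated algorithms or a library implementation.

```python
def hypervolume_2d(points, ref):
    """Area dominated by `points` and bounded by `ref` (both objectives minimized)."""
    # Only points that strictly dominate the reference point contribute.
    pts = sorted(p for p in points if p[0] < ref[0] and p[1] < ref[1])
    area, prev_f2 = 0.0, ref[1]
    for f1, f2 in pts:  # sweep in increasing f1
        if f2 < prev_f2:  # otherwise the point is dominated by one already counted
            area += (ref[0] - f1) * (prev_f2 - f2)  # new horizontal strip
            prev_f2 = f2
    return area


approx_front = [(1.0, 3.0), (2.0, 2.0), (3.0, 1.0)]
print(hypervolume_2d(approx_front, ref=(4.0, 4.0)))  # → 6.0
```

A larger hypervolume rewards both convergence and spread, so the same reference point must be used when comparing algorithms.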

Visualization of Algorithm Structures and Workflows

NSGA-II vs. NSGA-III Algorithmic Flow

Multi-Task vs. Multi-Objective Optimization Relationships

Table 2: Multi-Objective Optimization Research Toolkit

| Tool/Resource | Function | Application Context |
|---|---|---|
| Reference Point Generation | Creates structured points on normalized hyper-planes | NSGA-III initialization for many-objective problems |
| Non-dominated Sorting | Ranks solutions into Pareto fronts based on domination | Common to both NSGA-II and NSGA-III |
| Crowding Distance Calculator | Measures solution density in objective space | NSGA-II diversity preservation |
| Normalization Procedures | Scales objectives to comparable ranges | Critical for reference-point approaches |
| Evolutionary Operators | Selection, crossover, and mutation mechanisms | Population evolution in both algorithms |
| Performance Metrics | Hypervolume, spread, spacing indicators | Algorithm evaluation and comparison |

The comparative analysis of NSGA-II and NSGA-III reveals a nuanced performance landscape where each algorithm excels in different contexts. NSGA-II remains a robust, efficient choice for problems with two or three objectives, where its crowding distance approach provides adequate diversity maintenance with straightforward implementation. In contrast, NSGA-III demonstrates superior performance in many-objective problems (typically more than three objectives), where its reference-point based method maintains better diversity across expanding objective spaces [29].

Within the broader context of multi-task versus multi-objective optimization research, NSGA algorithms represent sophisticated approaches to handling multiple objectives within single problems, while emerging multi-task optimization frameworks leverage knowledge transfer between related tasks [30]. For researchers and drug development professionals, the selection between NSGA-II and NSGA-III should be guided by problem dimensionality, diversity requirements, and computational constraints, with NSGA-III offering particular advantages for complex many-objective molecular design challenges prevalent in modern drug discovery pipelines.

In evolutionary computation, Multi-Task Optimization (MTO) and Multi-Objective Optimization (MOO) represent distinct paradigms for solving complex problems. While MOO focuses on optimizing multiple competing objectives within a single problem, MTO aims to solve multiple optimization tasks simultaneously by leveraging potential synergies and shared knowledge between them [7]. This guide focuses on two fundamental MTO approaches: Evolutionary Multi-tasking (EMT) and the Multi-Factorial Evolutionary Algorithm (MFEA).