Multifactorial Evolutionary Algorithm: A Complete Guide to Theory, Applications, and Optimization in Drug Discovery

Abstract

This comprehensive guide explores multifactorial evolutionary algorithms (MFEAs), an emerging paradigm in evolutionary computation that simultaneously solves multiple optimization tasks through implicit knowledge transfer. Targeting researchers, scientists, and drug development professionals, the article examines MFEA foundations in multifactorial optimization, detailed methodological implementations, advanced troubleshooting strategies for negative transfer avoidance, and rigorous validation approaches. With special emphasis on biomedical applications, particularly de novo drug design and multi-objective molecular optimization, this resource provides both theoretical understanding and practical insights for leveraging MFEAs in complex research optimization scenarios.

Understanding Multifactorial Evolutionary Algorithms: Core Principles and Evolutionary Foundations

Defining Multifactorial Optimization and Evolutionary Multitasking

Multifactorial Optimization (MFO) represents a paradigm shift in evolutionary computation, enabling multiple distinct optimization tasks to be solved simultaneously within a single algorithmic run. Unlike traditional single-objective or multi-objective optimization, which addresses a single problem, MFO tackles a set of K different optimization problems, termed tasks, concurrently [1]. Each task has its own search space, objective function(s), and constraints. The fundamental goal of MFO is to find a set of solutions, one optimal solution for each of the K component tasks, by leveraging potential synergies and commonalities between them [2] [1].

Evolutionary Multitasking is the computational embodiment of the MFO concept, implemented through specialized evolutionary algorithms (EAs). It refers to the process of conducting evolutionary search across multiple optimization problems simultaneously. The key innovation in evolutionary multitasking is the transfer of knowledge or genetic material between tasks, a mechanism that allows an algorithm to use information discovered while solving one task to accelerate progress on another related task [1]. This paradigm is inspired by bio-cultural models of multifactorial inheritance, in which offspring traits are shaped by both genetic and cultural factors; MFEA borrows two mechanisms from these models, assortative mating and vertical cultural transmission [1].

The Multifactorial Evolutionary Algorithm (MFEA) was the first algorithm to realize this evolutionary multitasking paradigm [1]. MFEA creates a unified search space where individuals encoded in a common representation can be decoded and evaluated for different tasks. The algorithm utilizes two primary mechanisms to enable knowledge transfer: (1) assortative mating, which lets individuals with the same skill factor (the task they perform best on) mate freely while permitting cross-task reproduction only with a controlled probability, and (2) vertical cultural transmission, in which each offspring inherits (imitates) the skill factor of one of its parents [2] [1].

Fundamental Principles and Mechanisms

Core Definitions in MFO

In a formal MFO environment with K tasks, the i-th task (T_i) is an optimization problem with search space Ω_i and objective function f_i: Ω_i → R. For a population of individuals P = {p_1, p_2, ..., p_N}, the following properties are defined for each individual [1]:

- Factorial Cost ($\Psi_i^j$): The objective value $f_j(p_i)$ of individual $p_i$ on task $T_j$.

- Factorial Rank ($r_i^j$): The index of individual $p_i$ when the population is sorted in ascending order of $\Psi^j$ (for minimization problems).

- Scalar Fitness ($\varphi_i$): Defined as $\varphi_i = 1 / \min_{j \in \{1,\dots,K\}} r_i^j$, representing the overall performance of an individual across all tasks.

- Skill Factor ($\tau_i$): The index of the task on which the individual performs best, formally $\tau_i = \arg\min_{j \in \{1,\dots,K\}} r_i^j$.

These definitions enable meaningful comparison and selection of individuals in a multitasking environment, where each individual may excel at different tasks.
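The four definitions can be made concrete with a short sketch. The Python below computes factorial ranks, scalar fitness, and skill factors from a matrix of factorial costs. The function name `factorial_properties` and the example costs are hypothetical, and ties in the argmin are broken toward the lower task index for simplicity (an assumption; implementations may break ties randomly).

```python
def factorial_properties(costs):
    """Compute factorial ranks, scalar fitness and skill factors.

    costs[i][j] is the factorial cost of individual i on task j (minimization).
    Returns (ranks, fitness, skill), with ranks starting at 1.
    """
    n, k_tasks = len(costs), len(costs[0])
    ranks = [[0] * k_tasks for _ in range(n)]
    for j in range(k_tasks):
        # Sort individuals by their cost on task j; position + 1 is the factorial rank.
        order = sorted(range(n), key=lambda i: costs[i][j])
        for r, i in enumerate(order, start=1):
            ranks[i][j] = r
    # Skill factor: task with the best (lowest) rank; ties go to the lower task index.
    skill = [min(range(k_tasks), key=lambda j: ranks[i][j]) for i in range(n)]
    # Scalar fitness: 1 / best rank across all tasks.
    fitness = [1.0 / ranks[i][skill[i]] for i in range(n)]
    return ranks, fitness, skill


costs = [[1.0, 50.0], [3.0, 10.0], [2.0, 30.0]]
ranks, fitness, skill = factorial_properties(costs)
print(ranks)    # [[1, 3], [3, 1], [2, 2]]
print(skill)    # [0, 1, 0]
print(fitness)  # [1.0, 1.0, 0.5]
```

In the example the two tasks have costs on very different scales (1 to 3 versus 10 to 50), yet ranks make them comparable: individuals 0 and 1 each rank first on one task and both receive a scalar fitness of 1.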

The Knowledge Transfer Mechanism

The transfer of knowledge between tasks is controlled primarily through a parameter called random mating probability (rmp) [1]. The rmp determines the probability that two individuals with different skill factors will mate and produce offspring. When rmp is high, cross-task reproduction occurs frequently, promoting knowledge transfer. When rmp is low, individuals primarily mate with others having the same skill factor, limiting knowledge transfer.
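A minimal sketch of the rmp rule follows, assuming two tasks with equally sized subpopulations and uniformly random parent pairing (both assumptions; the function names are hypothetical). It estimates how often a random parent pair ends in cross-task crossover, showing that rmp scales the amount of transfer roughly linearly.

```python
import random


def should_crossover(tau_a, tau_b, rmp, rng):
    """Assortative mating rule: same skill factor -> always cross over;
    different skill factors -> cross over only with probability rmp."""
    return tau_a == tau_b or rng.random() < rmp


def inter_task_crossover_rate(rmp, skills, trials=20000, seed=0):
    """Fraction of random parent pairs that produce a cross-task crossover."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        tau_a, tau_b = rng.sample(skills, 2)
        if tau_a != tau_b and should_crossover(tau_a, tau_b, rmp, rng):
            hits += 1
    return hits / trials


skills = [0] * 50 + [1] * 50  # 50 individuals specialized in each of two tasks
for rmp in (0.0, 0.3, 1.0):
    print(rmp, round(inter_task_crossover_rate(rmp, skills), 3))
```

With this population a random pair has different skill factors with probability 50/99 (about 0.505), so the printed rates should be close to 0.505 × rmp.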

A significant challenge in evolutionary multitasking is managing negative transfer—when the transfer of genetic material between unrelated tasks deteriorates optimization performance [1]. To address this, advanced MFEAs incorporate adaptive transfer strategies that dynamically adjust the rmp based on online estimation of inter-task relatedness, or use prediction models to identify promising individuals for knowledge transfer [1].
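As an illustration of adaptive transfer, the sketch below uses a simple success-history rule. It is a hypothetical scheme chosen for brevity, not MFEA-II's RMP-matrix estimation or any other specific published method: rmp rises when cross-task offspring survive selection more often than within-task offspring and falls otherwise, and it is clamped to [lo, hi] so transfer never switches off entirely.

```python
def update_rmp(rmp, cross_survived, cross_total, within_survived, within_total,
               lr=0.1, lo=0.05, hi=0.95):
    """Hypothetical success-history update for a scalar rmp.

    cross_*  : offspring produced by inter-task crossover (survived / produced)
    within_* : offspring produced within a single task (survived / produced)
    """
    if cross_total == 0 or within_total == 0:
        return rmp  # not enough evidence this generation; keep rmp unchanged
    cross_rate = cross_survived / cross_total
    within_rate = within_survived / within_total
    # Move rmp toward more transfer when cross-task offspring do relatively well.
    rmp += lr * (cross_rate - within_rate)
    return min(hi, max(lo, rmp))


rmp = 0.3
rmp = update_rmp(rmp, cross_survived=8, cross_total=10, within_survived=4, within_total=10)
print(round(rmp, 3))  # 0.34
rmp = update_rmp(rmp, cross_survived=0, cross_total=10, within_survived=5, within_total=10)
print(round(rmp, 3))  # 0.29
```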

Table 1: Key Characteristics of Multifactorial Optimization

| Characteristic | Description | Significance |
|---|---|---|
| Unified Search Space | A common encoding allows individuals to be evaluated across different tasks [1]. | Enables direct comparison and knowledge transfer between tasks. |
| Skill Factor | Identifies the task an individual performs best on [1]. | Guides assortative mating and cultural transmission. |
| Implicit Transfer | Knowledge is transferred through crossover of encoded solutions [1]. | No explicit mapping required; transfer occurs naturally. |
| Cultural Transmission | Offspring inherit the skill factor of a parent [1]. | Maintains population diversity across tasks. |

Experimental Protocols and Methodologies

Standardized Benchmark Problems

Research in MFO relies on standardized benchmark problems to evaluate algorithm performance. Commonly used benchmarks include [1]:

- CEC2017 MFO Benchmark Problems: A set of problems specifically designed for multifactorial optimization, featuring tasks with varying degrees of relatedness.

- WCCI20-MTSO and WCCI20-MaTSO Benchmark Problems: Benchmark suites from the 2020 IEEE World Congress on Computational Intelligence, covering single-objective multitask (MTSO) and many-task (MaTSO) optimization scenarios.

These benchmarks typically include problems where tasks share global optima, have overlapping basins of attraction, or are completely unrelated, allowing researchers to test both the convergence speed and the robustness of transfer mechanisms.

Performance Evaluation Metrics

The performance of MFEAs is typically evaluated using the following metrics [1]:

- Convergence Speed: The number of generations or function evaluations required to reach a satisfactory solution for each task.

- Solution Accuracy: The precision of the obtained solutions compared to known global optima.

- Transfer Effectiveness: The success rate of knowledge transfer, often measured by comparing performance with and without transfer mechanisms.
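Two of these metrics reduce to simple computations on logged results. The sketch below assumes per-generation best-cost curves and per-run final best costs are available (a hypothetical data layout, with hypothetical function names). It reports convergence speed as the first generation that reaches a target cost, and transfer effectiveness as the relative reduction in mean final cost when transfer is enabled.

```python
from statistics import fmean


def generations_to_target(curve, target):
    """First generation whose best-so-far cost is <= target, or None if never reached."""
    for generation, best in enumerate(curve):
        if best <= target:
            return generation
    return None


def transfer_gain(with_transfer, without_transfer):
    """Relative reduction in mean final best cost (minimization) due to transfer.

    Positive values suggest positive transfer; negative values suggest negative transfer.
    """
    baseline = fmean(without_transfer)
    if baseline == 0:
        raise ValueError("baseline mean cost is zero; relative gain is undefined")
    return (baseline - fmean(with_transfer)) / abs(baseline)


curve = [10.0, 5.0, 2.0, 1.0, 0.5]
print(generations_to_target(curve, 2.0))        # 2
print(transfer_gain([0.5, 1.5], [2.0, 2.0]))    # 0.5
```

In a real study, each input would be aggregated over many independent runs and accompanied by a significance test rather than read from single values.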

Table 2: Advanced Multifactorial Evolutionary Algorithms and Their Core Methodologies

| Algorithm | Core Methodology | Key Innovation | Reported Advantage |
|---|---|---|---|
| MFEA [1] | Cultural transmission with fixed rmp parameter. | Pioneering framework for evolutionary multitasking. | Foundation for all subsequent MFEAs. |
| MFEA-II [1] | Online transfer parameter estimation. | Replaces scalar rmp with an adaptive RMP matrix. | Captures non-uniform inter-task synergies; reduces negative transfer. |
| EMT-ADT [1] | Adaptive transfer strategy based on decision tree. | Uses supervised learning to predict an individual's transfer ability. | Improves probability of positive transfer; enhances solution precision. |
| EMTO-HKT [1] | Hybrid knowledge transfer strategy. | Combines individual-level and population-level learning. | Adapts to different degrees of task relatedness. |
| MPUSMs-IMFEOA [2] | Multidimensional preference user surrogate models. | Applies MFEA to interactive evolutionary algorithms for recommendation. | Improves recommendation diversity and novelty by 54.02% and 2.69%, respectively [2]. |

Protocol for the EMT-ADT Algorithm

The Evolutionary Multitasking Optimization with Adaptive Transfer Strategy Based on Decision Tree (EMT-ADT) exemplifies a modern MFEA methodology [1]:

- Initialization: Generate a unified population of individuals and initialize the adaptive parameters.

- Skill Factor Assignment: Evaluate each individual on all tasks and assign skill factors based on factorial ranks.

- Decision Tree Construction:

- Define an evaluation indicator to quantify the transfer ability of each individual.

- Use the Gini coefficient to construct a decision tree that predicts the transfer ability of candidate individuals.

- Assortative Mating and Cultural Transmission:

- Select parents based on their scalar fitness.

- Apply crossover between individuals with the same skill factor or with different skill factors based on the rmp.

- Use the decision tree to select promising positive-transfer individuals for knowledge transfer.

- Offspring Evaluation: Decode and evaluate offspring on their inherited skill factor's task (vertical cultural transmission).

- Population Update: Combine parent and offspring populations and select survivors based on scalar fitness.

- Parameter Adaptation: Dynamically update the rmp or transfer strategy based on the success history of cross-task transfers.
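The decision-tree step can be illustrated with a single Gini-impurity split (a depth-one tree, or stump). The sketch assumes each past cross-task transfer has already been scored with a scalar transfer-ability indicator and labeled 1 (positive transfer) or 0 (negative transfer); the indicator values, labels, and function names are hypothetical, and EMT-ADT's actual features and tree depth may differ. A full tree would apply the same split search recursively to each side.

```python
def gini(labels):
    """Gini impurity of a list of 0/1 labels."""
    n = len(labels)
    if n == 0:
        return 0.0
    p = sum(labels) / n
    return 1.0 - p * p - (1.0 - p) * (1.0 - p)


def best_split(values, labels):
    """Threshold on one feature that minimizes weighted Gini impurity.

    Returns (threshold, impurity); threshold is None if no split beats the parent node.
    """
    pairs = sorted(zip(values, labels))
    n = len(pairs)
    best_threshold, best_impurity = None, gini(labels)
    for i in range(1, n):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # cannot split between equal feature values
        left = [label for _, label in pairs[:i]]
        right = [label for _, label in pairs[i:]]
        impurity = (len(left) * gini(left) + len(right) * gini(right)) / n
        if impurity < best_impurity:
            best_threshold = (pairs[i - 1][0] + pairs[i][0]) / 2
            best_impurity = impurity
    return best_threshold, best_impurity


# Hypothetical transfer-ability scores of past transfers and their outcomes.
scores = [0.10, 0.20, 0.30, 0.70, 0.80, 0.90]
outcome = [0, 0, 0, 1, 1, 1]
threshold, impurity = best_split(scores, outcome)
print(threshold, impurity)
# Candidates scoring above the threshold would be predicted as positive-transfer individuals.
```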

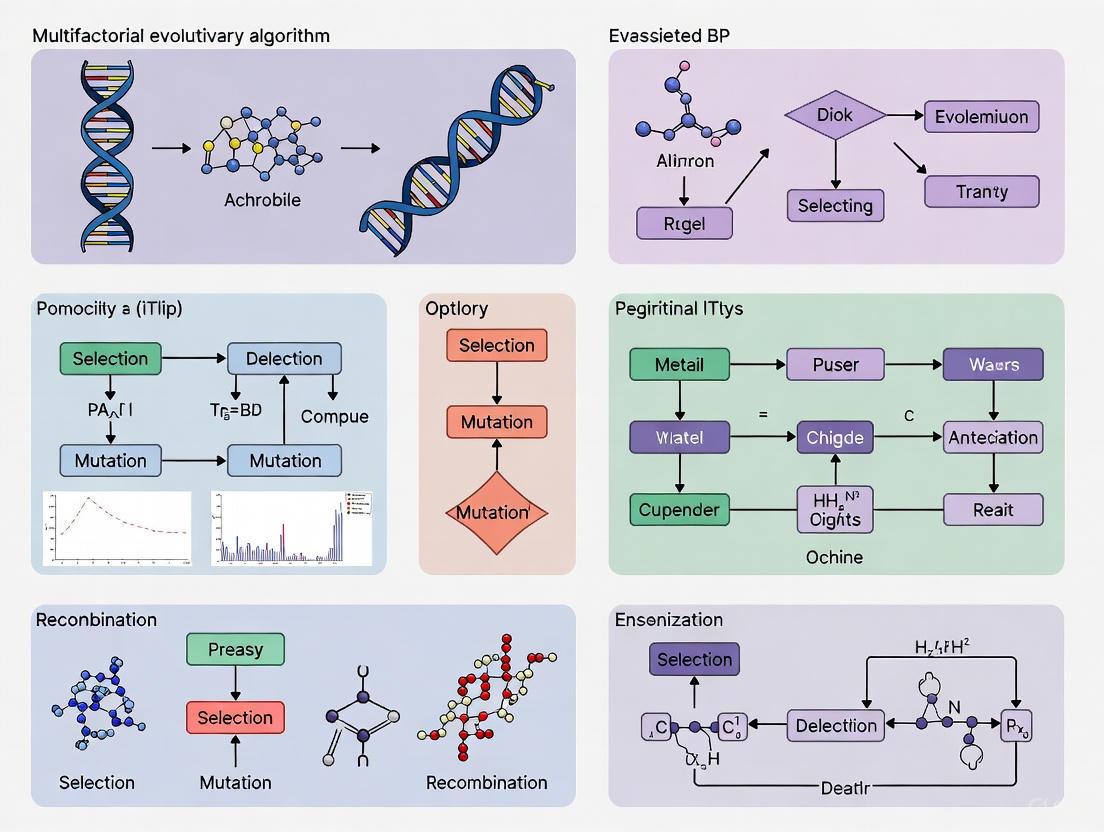

Visualization of Core Workflows

High-Level MFEA Workflow

The following diagram illustrates the generalized workflow of a Multifactorial Evolutionary Algorithm, showing how multiple tasks are optimized concurrently through a unified population and shared genetic material.

Knowledge Transfer and Negative Transfer Mitigation

This diagram details the critical process of knowledge transfer and the modern strategies used to mitigate negative transfer, which is a central challenge in evolutionary multitasking.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Computational Tools for MFO Research

| Research Reagent | Function | Example Implementation/Usage |
|---|---|---|
| Benchmark Problems | Standardized test functions to evaluate and compare algorithm performance. | CEC2017 MFO, WCCI20-MTSO, WCCI20-MaTSO [1]. |
| Unified Encoding | A representation scheme that allows a solution to be decoded for multiple tasks. | Random keys, direct representation, or problem-specific unified encodings [1]. |
| Skill Factor Calculator | Computational module that identifies the best-performing task for each individual. | Algorithm implementing factorial cost and rank calculations [1]. |
| Transfer Ability Predictor | Model to quantify and predict the usefulness of an individual for cross-task knowledge transfer. | Decision tree (EMT-ADT) [1] or other supervised learning models. |
| RMP Adaptation Mechanism | Component to dynamically adjust the random mating probability based on inter-task relatedness. | RMP matrix (MFEA-II) [1] or success-history based adaptation. |
| Domain Adaptation Technique | Methods to transform search spaces to improve inter-task correlation. | Linearized Domain Adaptation (LDA) [1] or autoencoders. |
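For permutation problems such as the TSP variants discussed later in this guide, a random-key unified encoding is one common choice. The sketch below assumes each gene lies in [0, 1] and that a task with n cities reads only the first n genes; the visiting order is the ascending order of those keys. The function name is hypothetical.

```python
def decode_random_keys(keys, n_cities):
    """Decode the first n_cities random keys into a tour (ascending key order)."""
    task_keys = keys[:n_cities]
    return sorted(range(n_cities), key=lambda city: task_keys[city])


# One unified individual, decoded for two hypothetical tasks of different size.
individual = [0.7, 0.1, 0.5, 0.3, 0.9]
print(decode_random_keys(individual, 4))  # [1, 3, 2, 0]
print(decode_random_keys(individual, 5))  # [1, 3, 2, 0, 4]
```

Because every real-valued vector decodes to a valid tour, standard real-valued crossover and mutation can operate in the unified space without a repair step.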

The Multifactorial Evolutionary Algorithm (MFEA) is a pioneering algorithm in the field of Evolutionary Multitasking (EM) and Multifactorial Optimization (MFO) [3]. Unlike traditional evolutionary paradigms that solve a single optimization problem in isolation, MFEA is designed to solve multiple, self-contained optimization tasks simultaneously within a single, unified search process [4]. This approach is inspired by the biological concept of multifactorial inheritance, where an individual's traits are influenced by multiple hereditary factors [5]. The core innovation of MFEA lies in its ability to exploit potential synergies and complementarities between different tasks through the transfer of genetic material, often leading to accelerated convergence and improved solution quality for the tasks involved [6] [3]. The algorithm's efficacy hinges on three fundamental concepts: Factorial Cost, Factorial Rank, and Skill Factor, which together enable the implicit transfer of knowledge and effective management of multiple search spaces [4].

Foundational Concepts and Definitions

Within the MFEA framework, every individual in the population is encoded in a unified search space and can be decoded into a task-specific solution for any of the K optimization tasks being addressed [3]. To manage this multitasking environment, each individual is assigned several key properties.

Table 1: Core Properties of an Individual in MFEA

| Property | Symbol | Description |
|---|---|---|
| Factorial Cost | Ψₖᵖ | The objective value of individual p when evaluated on a specific task Tₖ [4] [3]. |
| Factorial Rank | rₖᵖ | The rank of individual p within the population sorted by performance on task Tₖ [4] [3]. |
| Scalar Fitness | φᵖ | A unified fitness measure derived from the individual's best factorial rank across all tasks [4]. |
| Skill Factor | τᵖ | The single task on which an individual performs the best, defining its specialization [4]. |

Factorial Cost

The Factorial Cost is the most direct measure of an individual's performance on a given task. For an individual p and a task Tₖ, its factorial cost, denoted as Ψₖᵖ, is simply the value returned by the objective function fₖ of that task [3]. Each individual in the population possesses a vector of K factorial costs, {Ψ₁ᵖ, Ψ₂ᵖ, ..., Ψ_Kᵖ}, representing its performance across all concurrent tasks. In a minimization scenario, a lower factorial cost indicates better performance for that particular task.

Factorial Rank

The Factorial Rank provides a relative, task-specific performance measure. For each task Tₖ, the entire population is sorted in ascending order of their factorial cost (for minimization problems). The position index of an individual p in this sorted list is its factorial rank, rₖᵖ [4] [3]. An individual ranked 1 is the best-performing individual for that task. The factorial rank is crucial because it allows for a standardized comparison of individuals across different tasks, which may have objective functions on vastly different scales.

Skill Factor

The Skill Factor is the task identifier on which an individual exhibits its best performance, effectively defining its specialization [4]. It is the task on which the individual attains its best (lowest) factorial rank. The same rank defines the scalar fitness, φᵖ = 1 / minₖ { rₖᵖ } [4], so an individual's skill factor τᵖ is the task k that attains this minₖ { rₖᵖ }. The skill factor also governs evaluation: offspring are evaluated only on the skill-factor task they inherit rather than on all K tasks, which significantly reduces computational cost [4].
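Selective evaluation can be sketched as follows. The helper assumes that an offspring produced by crossover receives both parents' skill factors as candidates (one is imitated at random) and that an offspring produced by mutation receives only its single parent's; unevaluated factorial costs are stored as +inf. The function name and the two toy tasks are hypothetical.

```python
import math
import random


def evaluate_offspring(child, parent_skills, tasks, rng):
    """Selective imitation: the child adopts the skill factor of one parent
    (chosen uniformly when two parents are given) and is evaluated only on
    that task. Costs on the other tasks stay at +inf (unevaluated)."""
    tau = rng.choice(parent_skills)
    costs = [math.inf] * len(tasks)
    costs[tau] = tasks[tau](child)
    return tau, costs


calls = [0, 0]  # how many times each toy task was evaluated


def sphere(x):
    calls[0] += 1
    return sum(v * v for v in x)


def shifted_sphere(x):
    calls[1] += 1
    return sum((v - 1.0) ** 2 for v in x)


rng = random.Random(0)
tau, costs = evaluate_offspring([1.0, 1.0], [1], [sphere, shifted_sphere], rng)
print(tau, costs, calls)  # 1 [inf, 0.0] [0, 1]
```

The call counter shows that only one of the K = 2 objective functions ran for this offspring.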

The MFEA Workflow and Algorithmic Structure

The MFEA process integrates these core concepts into a cohesive evolutionary workflow, as outlined in Algorithm 1 [4]. The algorithm begins by initializing a population and evaluating each individual on all tasks to determine their initial skill factors. The main loop then consists of generating offspring through genetic operators, selectively evaluating them, and creating the next generation.

Diagram 1: MFEA High-Level Workflow

Knowledge Transfer via Assortative Mating

A critical mechanism in MFEA is assortative mating, which controls knowledge transfer between tasks. During reproduction, two parents p1 and p2 are selected at random from the population. If they share the same skill factor (τᵖ¹ = τᵖ²), crossover is always applied. If their skill factors differ (τᵖ¹ ≠ τᵖ²), inter-task crossover is applied only with probability rmp (random mating probability), that is, when a uniform random number falls below rmp; otherwise crossover is skipped and each parent is mutated to produce an offspring [4]. A larger rmp permits more exchange between tasks, while a smaller rmp limits the interference that unrelated tasks can cause; because a single fixed rmp applies to every task pair, however, it cannot by itself distinguish related tasks from unrelated ones.

Vertical Cultural Transmission

Vertical cultural transmission in MFEA governs how offspring acquire a skill factor: each offspring imitates the skill factor of one of its parents (chosen at random when two parents contributed) and is evaluated only on that task. Selection then proceeds elitistically: the current population and the offspring population are combined into an intermediate pool, the scalar fitness φ of every individual in this pool is recalculated, and the best individuals are selected to form the next generation [4]. This ensures that high-performing individuals, and the beneficial genetic material they carry, are propagated, thereby driving the population towards improved solutions for all tasks.
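Putting the workflow together, the following is a compact, self-contained sketch of the MFEA loop on two hypothetical toy tasks with different dimensionalities. It uses the unified space [0, 1]^D with linear decoding, the rmp mating rule, selective imitation, and elitist selection on scalar fitness. For brevity it substitutes arithmetic crossover and clipped Gaussian mutation for the SBX and polynomial mutation operators commonly paired with MFEA, and it restricts each individual's skill factor to tasks on which it was actually evaluated; these are simplifications, not a reference implementation.

```python
import math
import random

# Two hypothetical toy tasks (minimization) with different dimensionalities.
TASKS = [
    {"dim": 2, "lo": -5.0, "hi": 5.0, "f": lambda x: sum(v * v for v in x)},
    {"dim": 3, "lo": -5.0, "hi": 5.0, "f": lambda x: sum((v - 1.0) ** 2 for v in x)},
]
K = len(TASKS)
D = max(t["dim"] for t in TASKS)  # dimensionality of the unified space [0, 1]^D


def evaluate(y, k):
    """Decode the first dim genes of y into task k's box, then evaluate task k."""
    t = TASKS[k]
    x = [t["lo"] + g * (t["hi"] - t["lo"]) for g in y[: t["dim"]]]
    return t["f"](x)


def mutate(y, rng, sigma=0.05):
    """Gaussian mutation clipped to [0, 1] (stand-in for polynomial mutation)."""
    return [min(1.0, max(0.0, g + rng.gauss(0.0, sigma))) for g in y]


def make_child(y, skill):
    """Selective imitation: evaluate the child only on its inherited skill factor."""
    cost = [math.inf] * K
    cost[skill] = evaluate(y, skill)
    return {"y": y, "cost": cost, "rank": [0] * K}


def update_rank_fitness(pool):
    """Recompute factorial ranks, skill factors and scalar fitness for a pool."""
    for k in range(K):
        order = sorted(range(len(pool)), key=lambda i: pool[i]["cost"][k])
        for r, i in enumerate(order, start=1):
            pool[i]["rank"][k] = r
    for ind in pool:
        # Simplification: only tasks with a real (finite) cost are eligible.
        evaluated = [k for k in range(K) if math.isfinite(ind["cost"][k])]
        ind["skill"] = min(evaluated, key=lambda k: ind["rank"][k])
        ind["fitness"] = 1.0 / ind["rank"][ind["skill"]]


def best_costs(pop):
    return [min(ind["cost"][k] for ind in pop) for k in range(K)]


def mfea(pop_size=40, generations=100, rmp=0.3, seed=1):
    rng = random.Random(seed)
    pop = []
    for _ in range(pop_size):  # the initial population is evaluated on every task
        y = [rng.random() for _ in range(D)]
        pop.append({"y": y, "cost": [evaluate(y, k) for k in range(K)], "rank": [0] * K})
    update_rank_fitness(pop)
    initial = best_costs(pop)
    for _ in range(generations):
        offspring = []
        while len(offspring) < pop_size:
            a, b = rng.sample(pop, 2)
            if a["skill"] == b["skill"] or rng.random() < rmp:  # assortative mating
                w = rng.random()  # arithmetic crossover (stand-in for SBX)
                for c in ([w * u + (1 - w) * v for u, v in zip(a["y"], b["y"])],
                          [(1 - w) * u + w * v for u, v in zip(a["y"], b["y"])]):
                    tau = rng.choice([a["skill"], b["skill"]])  # imitate either parent
                    offspring.append(make_child(mutate(c, rng, 0.02), tau))
            else:  # no crossover: each parent is mutated and keeps its skill factor
                offspring.append(make_child(mutate(a["y"], rng), a["skill"]))
                offspring.append(make_child(mutate(b["y"], rng), b["skill"]))
        pool = pop + offspring
        update_rank_fitness(pool)
        pool.sort(key=lambda ind: ind["fitness"], reverse=True)  # elitist selection
        pop = pool[:pop_size]
    return initial, best_costs(pop)


initial, final = mfea()
print("initial best cost per task:", [round(v, 4) for v in initial])
print("final best cost per task:  ", [round(v, 4) for v in final])
```

Because the rank-1 individual on each task receives φ = 1 (the maximum) and at most K individuals can share that value, elitist selection keeps the best solution found so far for every task whenever the population size is at least K, so the final best cost per task can be no worse than the initial one.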

Experimental Protocols and Methodologies

To empirically validate the performance of MFEA and its core concepts, researchers typically follow a structured experimental protocol involving benchmark problems and performance metrics.

Table 2: Typical Experimental Setup for MFEA Validation

| Component | Description | Example from Literature |
|---|---|---|
| Benchmark Problems | A set of known optimization problems (e.g., TSP, TRP) used to create multitasking environments [6]. | Traveling Salesman Problem (TSP) and Traveling Repairman Problem (TRP) with Time Windows [6]. |
| Performance Metrics | Quantitative measures to evaluate algorithm performance and efficiency. | Average Best Cost: The mean of the best objective values found over multiple runs. Performance Ranking: Ranking algorithms based on solution quality and computation time [7]. |
| Comparison Baselines | Standard algorithms used for performance comparison. | Independent runs of single-task optimizers like Genetic Algorithm (GA) and Particle Swarm Optimization (PSO) [7]. |
| Statistical and Ranking Analysis | Methods to compare results across runs and to aggregate multiple criteria into an overall ranking. | Multi-criteria decision-making methods like TOPSIS for overall ranking [7]. |
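TOPSIS ranks alternatives by their relative closeness to an ideal point. The sketch below follows the standard textbook form (vector normalization, then weighted Euclidean distances to the ideal and anti-ideal solutions). The algorithm labels, criteria values, and equal weights in the example are hypothetical, and the code assumes no criterion column is all zeros.

```python
import math


def topsis(matrix, weights, benefit):
    """Closeness coefficient of each alternative (row); higher is better.

    matrix[i][j]: score of alternative i on criterion j
    weights[j]  : importance of criterion j
    benefit[j]  : True if larger is better for criterion j, False if smaller is better
    """
    n_crit = len(weights)
    # Vector-normalize each criterion column, then apply the weights.
    norms = [math.sqrt(sum(row[j] ** 2 for row in matrix)) for j in range(n_crit)]
    v = [[weights[j] * row[j] / norms[j] for j in range(n_crit)] for row in matrix]
    columns = list(zip(*v))
    ideal = [max(c) if benefit[j] else min(c) for j, c in enumerate(columns)]
    anti = [min(c) if benefit[j] else max(c) for j, c in enumerate(columns)]
    scores = []
    for row in v:
        d_plus, d_minus = math.dist(row, ideal), math.dist(row, anti)
        total = d_plus + d_minus
        scores.append(d_minus / total if total > 0 else 0.5)  # 0.5: all alternatives tie
    return scores


# Hypothetical results: (mean best cost, runtime in seconds); both are minimized.
results = {"alg_A": [10.0, 5.0], "alg_B": [12.0, 3.0], "alg_C": [20.0, 10.0]}
scores = topsis(list(results.values()), weights=[0.5, 0.5], benefit=[False, False])
for name, s in sorted(zip(results, scores), key=lambda t: -t[1]):
    print(f"{name}: {s:.3f}")
```

Here alg_C is worst on both criteria, so it coincides with the anti-ideal point and scores exactly 0.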

Sample Protocol: Evaluating MFEA on TSPTW and TRPTW

- Problem Instantiation: Select specific benchmark instances for the Traveling Salesman Problem with Time Windows (TSPTW) and the Traveling Repairman Problem with Time Windows (TRPTW) [6].

- Algorithm Configuration: Initialize the MFEA parameters, including population size, number of generations, crossover and mutation rates, and the random mating probability (rmp).

- Execution: Run the MFEA simultaneously on both tasks. In parallel, run traditional single-task optimizers (e.g., GA) independently on each problem.

- Data Collection: Record the best factorial cost found for each task at every generation. For single-task optimizers, record the best cost over evaluations.

- Analysis: Compare the convergence speed and final solution quality of MFEA against the single-task solvers. Analyze the genetic transferability by examining how individuals with different skill factors influence each other's search process [6].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key "Research Reagents" in Multifactorial Evolutionary Algorithm Research

| Item / Concept | Function / Role in the MFEA "Experiment" |
|---|---|
| Unified Search Space (Y) | A normalized representation (e.g., [0,1]^D) into which all task solutions are encoded. Allows for a common search domain and application of standard genetic operators [4]. |
| Random Mating Probability (rmp) | A key control parameter that regulates the rate of inter-task crossover, thereby balancing exploration and the risk of negative knowledge transfer [4]. |
| Scalar Fitness (φ) | Acts as a universal selector, enabling the comparison and selection of individuals from different tasks based on their relative performance, thus guiding the overall evolution [4]. |
| Benchmark Suites | Standardized sets of optimization problems (e.g., CEC competitions) used to rigorously test and compare the performance of different MFEA variants under controlled conditions [8]. |
| Skill Factor (τ) | A labeling mechanism that reduces computational cost by limiting expensive fitness evaluations and also identifies the specialist role of each individual in the population [4]. |

Advanced MFEA Variants and Future Directions

The basic MFEA framework has spawned numerous advanced variants designed to enhance its performance and robustness. Recent research has introduced methods for online transfer parameter estimation (MFEA-II) to automatically adapt the degree of knowledge transfer between tasks, moving beyond the fixed rmp and mitigating negative transfer [7]. Other variants, like the Mutagenic MFEA (M-MFEA), incorporate biological principles such as trait segregation to guide genetic exchanges without manually predefined parameters [9]. Furthermore, the integration of MFEA with local search techniques, such as the Randomized Variable Neighborhood Search (RVNS), has been shown to better balance exploration and exploitation, leading to superior results on complex combinatorial problems like the TSPTW and TRPTW [6].

Diagram 2: Evolution of MFEA Variants

These advancements frame the core concepts of factorial cost, rank, and skill factor not as static definitions, but as the foundation of a dynamic and rapidly evolving research paradigm aimed at solving complex, real-world optimization problems in areas such as drug development, industrial planning, and system reliability more efficiently [9] [7] [10]. The ongoing research focuses on making knowledge transfer in evolutionary multitasking more adaptive, explainable, and effective.

Multifactorial Evolutionary Algorithm (MFEA) represents a pioneering computational paradigm within the broader field of evolutionary multitasking optimization (EMTO). This innovative framework addresses multiple optimization tasks simultaneously within a single unified search process, mimicking human cognitive ability to leverage knowledge across related problems. Unlike traditional evolutionary algorithms that solve tasks in isolation, MFEA capitalizes on implicit genetic transfer and cross-task synergies to accelerate convergence and improve solution quality across all tasks. The fundamental premise is that related optimization tasks often contain complementary information, and transferring this knowledge can enhance overall search efficiency [11] [12]. This capability makes MFEA particularly valuable for complex real-world domains like pharmaceutical research, where related drug development problems can benefit from shared insights.

The MFEA framework introduces two foundational mechanisms: a unified search space that enables cross-task operations, and cultural transmission principles that facilitate knowledge transfer. Together, these mechanisms address a limitation of traditional evolutionary approaches, which treat each optimization task independently and therefore cannot exploit inter-task correlations, often at the cost of computational efficiency [1]. Because it is designed explicitly for multitasking environments, MFEA can improve several tasks at once through controlled genetic exchange, something a single-task method cannot do by construction, which gives it significant potential in data-driven scientific fields.

Core Architecture of MFEA

Foundational Principles and Definitions

The MFEA architecture builds upon several formally defined concepts that enable its multitasking capability. In a multitasking environment with K distinct optimization tasks, each task $T_j$ possesses its own search space $X_j$ and objective function $f_j$. The algorithm maintains a single population of individuals, each with specific properties defined relative to all tasks [1]:

- Factorial Cost ($\Psi_i^j$): The objective value of individual $p_i$ on task $T_j$, representing its raw performance on that specific task.

- Factorial Rank ($r_i^j$): The performance ranking of individual $p_i$ within the population when sorted in ascending order of factorial cost for task $T_j$.

- Scalar Fitness ($\varphi_i$): A unified fitness measure defined as $\varphi_i = 1 / \min_{j \in \{1,\dots,K\}} r_i^j$, enabling cross-task comparison.

- Skill Factor ($\tau_i$): The task index where individual $p_i$ achieves its best factorial rank, identifying its specialized domain expertise.

These properties collectively enable MFEA to maintain a unified population while preserving task-specific specialization. The scalar fitness provides a common ground for selection pressure, while skill factors ensure that individuals contribute most effectively to tasks where they demonstrate superior performance [1].

The Unified Search Space

The unified search space represents a fundamental innovation in MFEA, serving as a common representation framework that encodes solutions for all tasks. This unified representation enables genetic operations across individuals specialized for different tasks, facilitating the implicit knowledge transfer that drives MFEA's efficiency gains. Through careful design of encoding and decoding mechanisms, disparate search spaces for different tasks are mapped to a shared representation space where crossover and mutation operations can occur without losing task-specific semantics [13].
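The most common continuous unified encoding can be sketched as a linear map. This assumes, as in the original MFEA, that the unified dimensionality D equals the largest task dimensionality and that a task with d_k variables reads the first d_k genes; the bounds, dimensions, and function names below are hypothetical.

```python
import random


def decode(y, lo, hi, dim):
    """Map the first `dim` genes of a unified vector y in [0, 1]^D into the box [lo, hi]^dim."""
    return [lo + g * (hi - lo) for g in y[:dim]]


def encode(x, lo, hi, D, rng):
    """Inverse map for a task solution x; genes the task does not use are filled randomly."""
    y = [(v - lo) / (hi - lo) for v in x]
    return y + [rng.random() for _ in range(D - len(y))]


rng = random.Random(0)
D = 5  # unified dimensionality = max(3, 5)
y = encode([1.0, -2.5, 0.0], lo=-5.0, hi=5.0, D=D, rng=rng)  # a task A solution (3-D, [-5, 5])
print(decode(y, -5.0, 5.0, 3))    # back to task A's space
print(decode(y, 0.0, 100.0, 5))   # the same individual read as a task B solution (5-D, [0, 100])
```

The same unified vector therefore has a meaning for both tasks, which is what allows crossover between individuals specialized in different tasks.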

This unified approach differs significantly from traditional multi-population methods, as it maintains a single population where each individual carries a skill factor denoting its specialized task. The unified space enables assortative mating, the controlled exchange of genetic material between individuals from different tasks, which serves as the primary mechanism for knowledge transfer. The efficiency of this approach stems from its ability to leverage genetic complementarity across tasks while maintaining population diversity; one cited study reports convergence three to four orders of magnitude faster than traditional iterative methods on related tasks [14].

Cultural Transmission Mechanisms

Theoretical Foundation

Cultural transmission in MFEA implements knowledge transfer through biologically-inspired mechanisms operating on the unified population. Drawing inspiration from modern cultural evolution theory, these mechanisms enable the flow of valuable genetic information between tasks, mimicking how humans acquire and transfer knowledge across related domains [12]. The cultural transmission framework incorporates two primary evolutionary engines:

Vertical Cultural Transmission: This mechanism passes cultural traits from parent to offspring during reproduction; in MFEA, each offspring inherits the skill factor of one of its parents, preserving specialized knowledge within task lineages. It ensures that offspring initially specialize in the same tasks as their parents, maintaining expertise continuity while allowing for potential skill factor changes through subsequent operations.

Horizontal Cultural Transmission: This complementary mechanism enables knowledge acquisition across different task specialties through cross-task mating and information exchange. It introduces genetic diversity and allows promising solution features discovered in one task to propagate to other tasks, potentially accelerating convergence across the entire multitasking environment [12].

These cultural transmission principles address a fundamental challenge in evolutionary computation: balancing exploitation of known good solutions with exploration of new regions in the search space. By strategically controlling the flow of genetic information, MFEA navigates this trade-off more effectively than single-task approaches.

Assortative Mating and Knowledge Transfer

Assortative mating implements cultural transmission through controlled reproduction within the unified population. The process is governed by a key parameter – the random mating probability (rmp) – which determines the likelihood of cross-task reproduction versus within-task mating [1]. The assortative mating process follows these steps:

- Parent Selection: Two parents are selected from the unified population, each with their respective skill factors.

- Mating Decision: If both parents share the same skill factor, crossover proceeds unconditionally. If they have different skill factors, a uniform random number is generated; if it exceeds the rmp threshold, crossover is skipped and each parent instead produces an offspring by mutation alone.

- Offspring Generation: Successful mating produces offspring through genetic operators, with offspring potentially inheriting genetic material from parents specialized in different tasks.

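- Skill Factor Assignment: In the basic MFEA, each offspring imitates the skill factor of one parent (chosen at random when the parents differ) and is evaluated only on that task; some variants instead reassign skill factors dynamically, for example with a learned model [16].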

This controlled mating strategy enables MFEA to regulate knowledge transfer intensity between tasks. High rmp values encourage extensive cross-task genetic exchange, beneficial for highly similar tasks, while lower rmp values restrict transfer, reducing negative interference between dissimilar tasks [1]. This parameter can be fixed based on domain knowledge or adaptively tuned during evolution based on measured task relatedness.

Advanced Knowledge Transfer Strategies

Addressing Negative Transfer

A significant challenge in MFEA is negative knowledge transfer, which occurs when genetic exchange between dissimilar tasks degrades performance rather than enhancing it. This phenomenon is particularly problematic when tasks have low inter-task similarity, as transferred solutions may disrupt rather than accelerate convergence [11] [15]. Recent research has developed sophisticated strategies to mitigate this issue:

Adaptive Gaussian-Mixture-Model-Based Knowledge Transfer (MFDE-AMKT): This approach uses Gaussian distributions to model subpopulation distributions for each task, creating a Gaussian Mixture Model (GMM) for comprehensive knowledge transfer. The mixture weights and mean vectors are adaptively adjusted based on evolutionary trends, with similarity measured through probability density overlap on each dimension for fine-grained assessment [11].
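The per-dimension similarity idea can be illustrated with Python's standard-library `statistics.NormalDist`, whose `overlap` method returns the overlapping area of two normal densities. The sketch fits one normal per dimension to each task's subpopulation and averages the overlaps. This is an assumed, simplified reading of MFDE-AMKT's similarity measure rather than its published formula, and it omits the mixture weighting and adaptive updates; the function name and data are hypothetical.

```python
from statistics import NormalDist, fmean


def per_dimension_overlap(pop_a, pop_b, min_sigma=1e-9):
    """Overlap (0..1) of Gaussian fits to two subpopulations, one value per dimension.

    pop_a, pop_b: lists of individuals (equal-length lists of genes in the unified space).
    """
    overlaps = []
    for col_a, col_b in zip(zip(*pop_a), zip(*pop_b)):
        fit_a = NormalDist.from_samples(col_a)
        fit_b = NormalDist.from_samples(col_b)
        # Guard against zero spread, for which overlap() is undefined.
        fit_a = NormalDist(fit_a.mean, max(fit_a.stdev, min_sigma))
        fit_b = NormalDist(fit_b.mean, max(fit_b.stdev, min_sigma))
        overlaps.append(fit_a.overlap(fit_b))
    return overlaps


task1 = [[0.10, 0.50], [0.20, 0.60], [0.30, 0.40]]
task2 = [[0.12, 0.90], [0.22, 0.95], [0.28, 0.85]]
sims = per_dimension_overlap(task1, task2)
print([round(s, 3) for s in sims], round(fmean(sims), 3))
```

In the example the two subpopulations agree closely in the first dimension and barely overlap in the second, so a fine-grained measure would favor transferring information along the first dimension.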

Evolutionary Trend Alignment in Subdomains (SETA-MFEA): This method decomposes tasks into subdomains with simpler fitness landscapes, then establishes precise inter-subdomain mappings by determining and aligning evolutionary trends of corresponding subpopulations. This enables more accurate knowledge transfer than treating tasks as indivisible domains [15].

Decision Tree-Based Adaptive Transfer (EMT-ADT): This innovative approach defines evaluation indicators to quantify individual transfer ability, then constructs decision trees to predict positive-transfer individuals, selectively enabling knowledge transfer from promising candidates only [1].

Residual Learning for Enhanced Crossover

Recent advances have introduced deep learning techniques to enhance MFEA's crossover operations. The MFEA-RL (Residual Learning) method employs a Very Deep Super-Resolution (VDSR) model to transform low-dimensional individuals into high-dimensional residual representations, enabling better modeling of complex variable interactions [16]. This approach addresses limitations of traditional crossover operators in handling high-dimensional, nonlinear task relationships.

The MFEA-RL framework incorporates three key innovations:

- High-Dimensional Representation: A VDSR model generates D×D high-dimensional representations from 1×D individuals, explicitly modeling complex inter-variable relationships.

- ResNet-Based Skill Factor Assignment: Dynamic skill factor assignment using residual networks that adapt to task relationships.

- Random Mapping Crossover: Extraction and mapping of single rows from high-dimensional data back to original space, replacing traditional simulated binary crossover [16].

This neural-enhanced crossover operator demonstrates MFEA's continuing evolution, incorporating modern deep learning to overcome limitations in traditional evolutionary operators, particularly for high-dimensional optimization problems common in pharmaceutical applications.

Quantitative Performance Analysis

Benchmarking Results

The performance of MFEA and its variants has been extensively evaluated on standardized benchmark problems. The following table summarizes key quantitative results from comparative studies:

Table 1: Performance Comparison of MFEA Variants on Standard Benchmark Problems

| Algorithm | Key Innovation | Convergence Speed | Solution Quality | Negative Transfer Resistance |
|---|---|---|---|---|
| MFEA [1] | Basic cultural transmission | Baseline | Baseline | Low |
| MFEA-II [15] | Online similarity learning | 1.5-2× faster than MFEA | Moderate improvement (5-15%) | Moderate |
| MFDE-AMKT [11] | Adaptive Gaussian mixture model | 2-3× faster than MFEA | Significant improvement (15-30%) | High |
| SETA-MFEA [15] | Subdomain evolutionary trend alignment | 2.5-3.5× faster than MFEA | Significant improvement (20-35%) | High |
| CT-EMT-MOES [12] | Cultural transmission theory | 2-2.8× faster than MFEA | Moderate improvement (10-20%) | Moderate |
| MFEA-RL [16] | Residual learning crossover | 3-4× faster than MFEA | Best improvement (25-40%) | High |

These results show a consistent trend. Newer MFEA variants with more refined knowledge transfer mechanisms report both faster convergence and better solution quality than the original MFEA, and MFEA-RL shows the largest reported gains. Separately, speedups of 3-4 orders of magnitude over traditional iterative methods have been reported on appropriate problem classes [14].

Pharmaceutical Application Performance

MFEA has also shown value on complex applied optimization problems. The following table summarizes reported performance metrics for pharmaceutical applications and for a related network-optimization problem (inter-domain path computation):

Table 2: MFEA Performance in Pharmaceutical Applications

| Application Domain | Algorithm | Key Metric Improvement | Computational Efficiency |
|---|---|---|---|
| Inter-domain path computation [13] | NDE-MFEA | 25-40% better solution quality | 30-50% faster convergence |
| Drug design optimization [17] | Not specified | 15-25% improved binding affinity | 2-3× reduction in screening time |
| Protein stability prediction [17] | Not specified | 20-30% accuracy improvement | Enabled high-throughput in silico screening |

These results highlight MFEA's practical value in computationally intensive pharmaceutical domains, where its ability to simultaneously optimize multiple related objectives can significantly accelerate research timelines and improve outcomes.

Experimental Protocols and Methodologies

Standardized Evaluation Framework

Rigorous evaluation of MFEA implementations follows established experimental protocols using benchmark problems designed specifically for multitasking optimization. Two benchmark suites provide standardized testing environments [16]: the IEEE Congress on Evolutionary Computation 2017 Multitasking Single Objective suite (CEC2017-MTSO) and the IEEE World Congress on Computational Intelligence 2020 Multitasking Single Objective suite (WCCI2020-MTSO). These benchmarks include tasks with varying degrees of inter-task similarity, landscape modality, and variable interaction, so that algorithm performance can be assessed comprehensively.

Standard experimental procedure includes:

- Population Initialization: A unified population is initialized with random individuals encoded in the unified search space.

- Skill Factor Assignment: Each individual is evaluated on all tasks and assigned a skill factor based on best performance.

- Evolutionary Cycles: The population undergoes repeated generations of selection, assortative mating, cultural transmission, and evaluation.

- Performance Tracking: Algorithm performance is measured using metrics like convergence speed, solution quality, and computational efficiency across all tasks.

Experiments typically run for a fixed number of generations or until convergence criteria are met, with multiple independent runs to ensure statistical significance. Performance is compared against baseline algorithms including single-task evolutionary algorithms, basic MFEA, and state-of-the-art multitasking approaches [11] [15].

Workflow Visualization

The following diagram illustrates the standard MFEA experimental workflow:

Essential Research Components

Successful implementation of MFEA in pharmaceutical research requires specific computational tools and methodological components. The following table details key "research reagents" – essential algorithms, models, and frameworks – that constitute the MFEA toolkit:

Table 3: Essential Research Components for MFEA Implementation

| Component | Function | Example Implementations |
|---|---|---|
| Unified Encoding Scheme | Represents solutions for all tasks in a common space | Node-depth encoding [13], Random keys, Direct representation |
| Similarity Measurement | Quantifies inter-task relatedness to guide transfer | Wasserstein distance [11], Probability density overlap [11], Evolutionary trend consistency [15] |
| Adaptive Transfer Controller | Dynamically regulates knowledge transfer intensity | Decision trees [1], Gaussian mixture models [11], Online similarity learning [15] |
| Domain Adaptation | Enhances transfer between dissimilar tasks | Linearized domain adaptation [15], Affine transformation [16], Subspace alignment [15] |
| Crossover Operators | Facilitates genetic exchange between tasks | Residual learning crossover [16], Simulated binary crossover, Partially mapped crossover |

Implementation Framework

The following diagram illustrates the architectural relationships between these components in a modern MFEA implementation:

Pharmaceutical Applications and Case Studies

MFEA has demonstrated significant potential in pharmaceutical research and development, where multiple related optimization problems frequently occur. In drug discovery, MFEA can simultaneously optimize multiple molecular properties such as binding affinity, solubility, and synthetic accessibility, overcoming the limitations of sequential optimization approaches [17]. For protein therapeutic development, researchers have used stability prediction models enhanced by deep learning to guide optimization, with MFEA enabling simultaneous consideration of multiple stability metrics and functional constraints [17].

In pharmacokinetic modeling, MFEA has been applied to optimize multiple model parameters simultaneously using experimental data, significantly reducing model calibration time while improving predictive accuracy [17]. The algorithm's ability to transfer knowledge between related compound classes allows it to leverage structural similarities, making particularly efficient use of limited experimental data – a common challenge in early-stage drug development.

Another promising application involves drug delivery system optimization, where MFEA can concurrently address multiple design objectives including release profile, stability, and manufacturing efficiency [17]. For complex delivery systems like nanoparticles or antibody-drug conjugates, this simultaneous optimization approach can identify design solutions that would likely be missed by traditional sequential methods, potentially accelerating development timelines while improving therapeutic outcomes.

The MFEA framework represents a significant advancement in evolutionary computation, with particular relevance for data-intensive fields like pharmaceutical research. Future development directions include increased integration with deep learning architectures, automated hyperparameter optimization, and enhanced negative transfer prevention through more sophisticated similarity metrics. As pharmaceutical problems grow in complexity, MFEA's ability to leverage relatedness between tasks will become increasingly valuable for accelerating discovery and optimization processes.

The continued evolution of MFEA will likely focus on explainable knowledge transfer – developing methods to interpret and justify cross-task genetic exchanges – which is particularly important in regulated pharmaceutical applications. Additionally, federated multitasking approaches that enable knowledge transfer across distributed datasets without sharing proprietary information could address significant industry concerns while preserving MFEA's efficiency benefits.

In conclusion, MFEA's unified search space and cultural transmission mechanisms provide a powerful framework for addressing complex multitasking optimization problems. Its demonstrated success across diverse domains, combined with ongoing methodological innovations, positions MFEA as a transformative computational approach with substantial potential to accelerate pharmaceutical research and development through more efficient knowledge leveraging across related optimization challenges.

The field of optimization is witnessing a significant paradigm shift, moving from algorithms designed for single, isolated problems towards those capable of addressing multiple tasks simultaneously. This transition mirrors a broader trend in Artificial Intelligence (AI), where the ability to handle several coexisting data flows and modeling tasks has become paramount [18]. Evolutionary Multitask Optimization (EMTO) has emerged as a prominent research area within this landscape, focusing on the development of solvers that can leverage knowledge acquired from one problem to enhance the solution of other, related or unrelated, problems [18]. This paradigm is part of the broader Transfer Optimization field, which also includes sequential transfer and multiform multitasking, but multitasking is currently the most prominent due to the central role played by Evolutionary Computation in its development [18]. The conceptual foundation of this field is the exploitation of synergies between concurrent tasks, aiming to achieve benefits such as accelerated convergence, more robust search, and a reduced need for computational resources.

Theoretical Foundations of Evolutionary Multitasking

Fundamental Concepts and Definitions

At its core, a multitasking environment involves optimizing K distinct tasks {T_k}_{k=1}^K, each defined over its own search space Ω_k [18]. The goal is not merely to find a good solution for each task in isolation, but to find a set of solutions {x_k}_{k=1}^K that jointly optimize all tasks, potentially by exploiting the commonalities between them. In the Evolutionary Multitasking paradigm, this is typically achieved through a unified population of individuals that evolves to address all tasks simultaneously. Knowledge transfer is the central mechanism that enables this synergistic search. It involves the exchange of genetic information or learned patterns between individuals solving different tasks. The effectiveness of a multitasking algorithm hinges on its ability to promote positive transfer—where knowledge from one task aids another—while minimizing negative transfer (or inter-task confusion), where the exchange of information hampers convergence [18].

The Multifactorial Evolutionary Algorithm (MFEA), often considered a canonical algorithm in this domain, embodies these principles by maintaining a single population where each individual is associated with a specific task but can potentially mate with individuals from other tasks based on a random mating probability [9]. This creates opportunities for genetic material from well-adapted individuals in one task to influence the search process in another.

The Biological Inspiration: From Single- to Multi-Task Phenotypes

The intellectual roots of EMTO are deeply embedded in biological principles. Traditional Evolutionary Algorithms (EAs) draw inspiration from Darwinian evolution, mimicking processes such as selection, crossover, and mutation to evolve a population of candidate solutions towards an optimum for a single task. EMTO extends this biological metaphor to encompass more complex phenomena observed in nature.

One key inspiration is the concept of multifactorial inheritance, where an individual's overall traits (phenotype) are determined by multiple genetic and environmental factors [9]. In EMTO, this translates to a single individual's genetic material (chromosome) possessing the latent potential to express solutions to multiple tasks. The algorithm's role is to unravel this potential effectively.

A more recent biological inspiration is trait segregation, a well-recognized phenomenon in biological evolution where genetic information is naturally segregated and expressed as dominant or recessive traits [9]. This principle has been leveraged to guide evolutionary exchanges in populations without relying on manually predefined parameters. For instance, the Mutagenic Multifactorial Evolutionary Algorithm based on Trait Segregation (M-MFEA) defines whether an individual's traits are dominant or recessive within a unified search space [9]. This allows for a more natural and spontaneous guidance of evolution, as individuals interact and transfer genetic information based on their expressed traits, leading to enhanced information transfer within and across tasks.

Table 1: Core Concepts in Evolutionary Multitask Optimization

| Concept | Description | Biological Analogy |
|---|---|---|
| Multitask Environment | A scenario comprising multiple optimization tasks to be solved concurrently. | Multiple selective pressures in a single ecosystem. |
| Unified Search Space | A generalized space that encapsulates the search spaces of all individual tasks. | A common gene pool for a population facing multiple environmental challenges. |
| Knowledge Transfer | The exchange of information (e.g., genetic material, learned models) between tasks. | Horizontal gene transfer or cultural learning between species. |
| Factorial Cost | A vector representing an individual's performance across all tasks. | An organism's fitness across different environmental niches. |
| Skill Factor | The task on which an individual performs best. | An organism's primary specialization or adaptation. |
| Random Mating Probability | A parameter controlling the likelihood of cross-task reproduction. | Biological mechanisms that influence reproductive isolation. |
| Trait Segregation | The natural emergence of dominant and recessive traits guiding genetic exchange. | Mendelian inheritance of dominant and recessive alleles. |

Key Algorithmic Frameworks and Methodologies

The Multifactorial Evolutionary Algorithm (MFEA)

The MFEA establishes a foundational framework for Evolutionary Multitasking. Its operational workflow can be visualized as a continuous cycle of evaluation, selection, and reproduction that facilitates cross-task knowledge exchange, as shown in the diagram below.

The key steps of the MFEA are:

- Initialization: A single, unified population of individuals is initialized. Each individual possesses a chromosome representation that can be decoded into a solution for any of the K tasks.

- Factorial Cost Evaluation: Each individual is evaluated for every task in the environment. The result is a factorial cost vector, representing the individual's performance across all tasks. The best performing task for an individual is designated as its skill factor.

- Selection and Mating: Parents are drawn from the current population; in the original MFEA they are chosen uniformly at random. A critical parameter, the Random Mating Probability (RMP), governs whether two parents with different skill factors undergo crossover. If the parents share the same skill factor, crossover is always applied (within-task crossover). If their skill factors differ, crossover is applied only when a uniform random number falls below the RMP; this cross-task crossover is what enables direct knowledge transfer. Otherwise, each parent is mutated separately to produce an offspring.

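- Genetic Operations: Crossover and mutation are applied to generate offspring. Under vertical cultural transmission, an offspring produced by crossover imitates the skill factor of one of its two parents, chosen at random, so it inherits the shared skill factor whenever both parents have the same one. An offspring produced by mutation inherits its single parent's skill factor. Each offspring is then evaluated only on the task given by its skill factor.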

- Iteration: Steps 2-4 are repeated until a termination condition is met, yielding a set of highly adapted solutions for each task.
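The steps above can be condensed into a minimal, runnable sketch under the following assumptions:

- There are two hypothetical tasks: a 10-D Sphere on [-100, 100] and a 20-D Rastrigin on [-5, 5].
- Both are encoded as random keys in a unified [0, 1]^D space.
- The operators are simplified stand-ins. Uniform crossover plus clipped Gaussian mutation replace the simulated binary crossover and polynomial mutation typically used in MFEA implementations.

All function names and parameter defaults are illustrative and are not taken from a reference implementation.

```python
import math
import random

INF = float("inf")


def sphere(x):
    return sum(v * v for v in x)


def rastrigin(x):
    return 10 * len(x) + sum(v * v - 10 * math.cos(2 * math.pi * v) for v in x)


# Hypothetical tasks: (objective, lower bound, upper bound, task dimension).
TASKS = [(sphere, -100.0, 100.0, 10), (rastrigin, -5.0, 5.0, 20)]
D = max(dim for _, _, _, dim in TASKS)  # unified dimension = largest task dimension


def cost(k, genes):
    """Decode the first `dim` unified keys into task k's box and evaluate."""
    f, lo, hi, dim = TASKS[k]
    return f([lo + (hi - lo) * g for g in genes[:dim]])


def assign_ranks(pop):
    """Factorial ranks per task, scalar fitness 1/min rank, and skill factor."""
    for ind in pop:
        ind["rank"] = [INF] * len(TASKS)
    for k in range(len(TASKS)):
        evaluated = [ind for ind in pop if ind["cost"][k] < INF]
        for r, ind in enumerate(sorted(evaluated, key=lambda i: i["cost"][k]), start=1):
            ind["rank"][k] = r
    for ind in pop:
        best = min(ind["rank"])
        ind["fitness"] = 1.0 / best
        ind["skill"] = ind["rank"].index(best)


def crossover(a, b):
    """Uniform crossover on unified keys (simplified stand-in for SBX)."""
    return [x if random.random() < 0.5 else y for x, y in zip(a, b)]


def mutate(genes, sigma=0.05):
    """Gaussian mutation clipped to [0, 1] (stand-in for polynomial mutation)."""
    return [min(1.0, max(0.0, g + random.gauss(0.0, sigma))) for g in genes]


def mfea(pop_size=40, generations=100, rmp=0.3, seed=0):
    random.seed(seed)
    K = len(TASKS)
    pop = []
    for _ in range(pop_size):
        genes = [random.random() for _ in range(D)]
        # Initialization: every individual is evaluated on every task.
        pop.append({"genes": genes, "cost": [cost(k, genes) for k in range(K)]})
    assign_ranks(pop)
    for _ in range(generations):
        offspring = []
        while len(offspring) < pop_size:
            pa, pb = random.sample(pop, 2)
            if pa["skill"] == pb["skill"] or random.random() < rmp:
                # Crossover (cross-task when skills differ); each child imitates a random parent.
                kids = [(mutate(crossover(pa["genes"], pb["genes"])),
                         random.choice((pa, pb))["skill"]) for _ in range(2)]
            else:
                # No crossover: each parent is mutated and the child keeps its skill factor.
                kids = [(mutate(p["genes"]), p["skill"]) for p in (pa, pb)]
            for genes, skill in kids:
                c = [INF] * K
                c[skill] = cost(skill, genes)  # selective evaluation on one task only
                offspring.append({"genes": genes, "cost": c})
        merged = pop + offspring
        assign_ranks(merged)
        # Elitist survival: keep the pop_size individuals with highest scalar fitness.
        pop = sorted(merged, key=lambda i: i["fitness"], reverse=True)[:pop_size]
    # Best cost found for each task.
    return [min(ind["cost"][k] for ind in pop) for k in range(K)]
```

Survival is elitist on scalar fitness. The best individual for each task has factorial rank 1 and therefore scalar fitness 1, so it is always retained. As a result, the best cost per task never worsens from one generation to the next.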

Advanced Paradigms: M-MFEA and Trait Segregation

Recent research has focused on overcoming the limitations of manually set parameters like RMP. The Mutagenic Multifactorial Evolutionary Algorithm based on Trait Segregation (M-MFEA) is a notable advancement inspired directly by biological trait segregation [9]. This algorithm introduces several key innovations:

- Trait Expression Mechanism: Individuals are characterized by having either dominant or recessive traits within the unified multitasking search space. This trait expression naturally guides the evolutionary process without requiring a predefined RMP.

- Mutagenic Genetic Information Interaction: A specialized strategy is designed to enhance information transfer both within and across tasks. Individuals spontaneously guide evolution according to their trait expressions, promoting more effective and organic knowledge sharing.

- Adaptive Mutagenic Gene Inheritance: This mechanism drives continuous task convergence by dynamically controlling how genetic information is passed on to offspring, based on the expressed traits of the parents.

Table 2: Comparison of Key Multitasking Evolutionary Algorithms

| Algorithm | Core Mechanism | Key Parameters | Advantages | Limitations/Challenges |
|---|---|---|---|---|
| MFEA [18] | Unified population, implicit genetic transfer | Random Mating Probability (RMP) | Foundational framework, relatively simple to implement. | Performance sensitive to RMP setting; risk of negative transfer. |
| MFEA-II [9] | Online transfer parameter estimation | Online learned parameters | Reduces reliance on pre-set parameters; more adaptive. | Increased computational overhead for parameter estimation. |
| M-MFEA [9] | Trait segregation and mutagenic inheritance | Trait expression (dominant/recessive) | Eliminates need for manual RMP; natural, biologically-inspired guidance. | Complexity in defining and managing trait expressions. |

Experimental Protocols and Validation

Benchmarking and Performance Evaluation

Validating the performance of multitasking algorithms requires rigorous experimentation on standardized benchmarks and real-world problems. Common benchmark suites often comprise multiple optimization functions (e.g., sphere, Rastrigin, Rosenbrock) grouped into different multitasking scenarios. These scenarios are carefully designed to have known inter-task correlations, allowing researchers to assess an algorithm's ability to exploit synergies [18].
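One way to quantify a known inter-task correlation is to sample random points in the unified space, decode each point into both tasks, and compute Spearman's rank correlation between the two tasks' objective values. This is similar in spirit to how the CEC 2017 multitasking benchmarks grade task pairs by similarity, but the sample size and other details below are assumptions. The function names are hypothetical. Ties are not rank-averaged, which is acceptable for continuous objectives.

```python
import math
import random


def ranks(values):
    """Ranks 1..n by ascending value (ties are not averaged)."""
    order = sorted(range(len(values)), key=values.__getitem__)
    r = [0.0] * len(values)
    for pos, idx in enumerate(order, start=1):
        r[idx] = float(pos)
    return r


def spearman(a, b):
    """Spearman's rho as the Pearson correlation of the two rank vectors."""
    ra, rb = ranks(a), ranks(b)
    n = len(ra)
    ma, mb = sum(ra) / n, sum(rb) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(ra, rb))
    var_a = sum((x - ma) ** 2 for x in ra)
    var_b = sum((y - mb) ** 2 for y in rb)
    return cov / math.sqrt(var_a * var_b)


def decode(u, lo, hi):
    """Map unified keys in [0, 1] into a task's box [lo, hi]."""
    return [lo + (hi - lo) * g for g in u]


def sphere(x, shift=0.0):
    return sum((v - shift) ** 2 for v in x)


def task_similarity(f1, box1, f2, box2, dim=10, samples=1000, seed=0):
    """Spearman correlation of two tasks' costs on shared random unified points."""
    rng = random.Random(seed)
    c1, c2 = [], []
    for _ in range(samples):
        u = [rng.random() for _ in range(dim)]
        c1.append(f1(decode(u, *box1)))
        c2.append(f2(decode(u, *box2)))
    return spearman(c1, c2)
```

Two identical tasks give a correlation of exactly 1. Consider instead a Sphere centered at 0 and a copy shifted to 20, both on the same [-100, 100] box, via `lambda x: sphere(x, 20.0)`. That pair gives a clearly positive but imperfect correlation.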

The evaluation methodology must fairly compare the performance of multitasking algorithms against two baselines: (1) solving each task in isolation using a competitive single-task optimization algorithm, and (2) other state-of-the-art multitasking algorithms. Key performance metrics include:

- Convergence Speed: The number of generations or function evaluations required to reach a satisfactory solution for all tasks.

- Solution Quality: The average and best fitness values achieved for each task upon convergence.

- Statistical Significance: Results are typically reported over multiple independent runs, and statistical tests (e.g., Wilcoxon signed-rank test) are used to confirm the significance of performance differences.
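The sketch below shows what the test computes: a self-contained two-sided Wilcoxon signed-rank test on paired per-run results. An example input is the final best cost of two algorithms over the same 30 seeds. It uses the large-sample normal approximation with average ranks for tied absolute differences, but without tie or continuity correction. For publication-grade analysis, an established implementation such as `scipy.stats.wilcoxon` is the safer choice. The function name is hypothetical.

```python
import math


def wilcoxon_signed_rank(a, b):
    """Two-sided Wilcoxon signed-rank test via the normal approximation
    (no tie or continuity correction). Returns (W_plus, W_minus, z, p)."""
    diffs = [x - y for x, y in zip(a, b) if x != y]  # zero differences are dropped
    n = len(diffs)
    if n == 0:
        return 0.0, 0.0, 0.0, 1.0
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg = (i + j) / 2 + 1  # average rank for a block of tied |d|
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    mean = n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (min(w_plus, w_minus) - mean) / sd
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value
    return w_plus, w_minus, z, p
```

Suppose `a` holds the candidate algorithm's minimization costs. A small p-value together with `W_minus > W_plus` then indicates that `a` tends to reach lower costs than `b`.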

A critical, yet often overlooked, aspect is the computational effort required for knowledge transfer. A comprehensive evaluation should account not only for fitness improvements but also for the overhead introduced by the transfer mechanisms [18].

Detailed Protocol: Validating M-MFEA on Benchmarks and an Industrial Problem

The validation protocol for the M-MFEA algorithm provides a concrete example of a modern experimental methodology [9]. The following diagram illustrates the structured workflow from problem definition to result analysis.

Protocol Steps:

Problem Definition:

- Benchmark Suites: Select a diverse set of benchmark problems from established suites. These should include tasks with varying degrees of relatedness to test the algorithm's robustness in different transfer scenarios.

- Industrial Problem: Employ a real-world problem to demonstrate practical applicability. In the case of M-MFEA, this was an industrial planar kinematic arm control problem, which presents a complex, high-dimensional optimization challenge relevant to intelligent manufacturing [9].

Algorithm Configuration:

- Configure the M-MFEA by defining its core components: the trait segregation mechanism, the mutagenic genetic information interaction strategy, and the adaptive gene inheritance mechanism.

- Set population size, maximum number of generations, and other standard evolutionary algorithm parameters. Note that M-MFEA does not require manual setting of RMP.

Execution and Data Collection:

- Execute the M-MFEA and all competitor algorithms (e.g., MFEA, MFEA-II) for a fixed number of independent runs (e.g., 30 runs) to account for stochasticity.

- In each run, record the best fitness for each task at every generation to create convergence profiles.

Performance Analysis:

- Convergence Accuracy: Analyze the final solution quality achieved for all tasks.

- Convergence Speed: Compare the number of function evaluations required to reach a pre-defined accuracy threshold.

- Statistical Testing: Perform non-parametric statistical tests (e.g., Wilcoxon signed-rank test) on the results to confirm that any performance differences are statistically significant.

- Knowledge Transfer Quality: Investigate the algorithm's behavior to quantify the occurrence of positive and negative transfer, validating the effectiveness of the trait segregation mechanism.
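The convergence-speed and accuracy analyses can be computed directly from logged convergence profiles. This sketch assumes that each run records (cumulative function evaluations, best-so-far cost) pairs for one task at the same checkpoints across runs. The helper names are illustrative, and so is the choice to report a success rate plus the median evaluations of successful runs. Neither is part of the M-MFEA protocol in [9].

```python
import statistics


def mean_profile(runs):
    """Average best-so-far cost at each checkpoint (checkpoints aligned across runs)."""
    return [(cps[0][0], statistics.fmean(best for _, best in cps)) for cps in zip(*runs)]


def evals_to_threshold(profile, threshold):
    """First cumulative evaluation count with best-so-far <= threshold, else None."""
    for evals, best in profile:
        if best <= threshold:
            return evals
    return None


def convergence_speed(runs, threshold):
    """Success rate and median evaluations-to-threshold over successful runs."""
    hits = [e for e in (evals_to_threshold(p, threshold) for p in runs) if e is not None]
    rate = len(hits) / len(runs)
    return rate, (statistics.median(hits) if hits else None)
```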

The Scientist's Toolkit: Research Reagent Solutions

For researchers embarking on experimental work in Evolutionary Multitasking, a suite of "research reagents" is essential. The following table details key components and their functions in a typical experimental setup.

Table 3: Essential Research Reagents for Evolutionary Multitasking Experiments

| Research Reagent / Tool | Function / Purpose | Exemplars / Notes |
|---|---|---|
| Benchmark Suites | Standardized set of problems for fair algorithm comparison and validation. | Compositions of classic functions (e.g., Sphere, Rastrigin, Ackley) with known inter-task relationships. |
| Real-World Test Problems | Validate algorithmic performance and practicality in complex, applied scenarios. | Industrial planar kinematic arm control [9], aluminum electrolysis process optimization [9], wind turbine blade design [9]. |
| Performance Metrics | Quantify algorithm effectiveness, efficiency, and robustness. | Average convergence curve, best objective value, hypervolume indicator, performance gain over single-task solvers. |
| Statistical Testing Framework | Provide rigorous, statistically sound validation of experimental results. | Wilcoxon signed-rank test, Friedman test with post-hoc analysis for multiple algorithm comparison. |
| Software Libraries & Platforms | Provide implementations of core algorithms and utilities for rapid prototyping and testing. | Platforms like MATLAB, Python (with libraries like DEAP, PyGMO), and custom software. |

Applications in Drug Development and Complex Domains

The principles of evolutionary multitasking show significant promise for addressing complex challenges in drug development and related fields. Direct applications in pharmaceutical research are still emerging, but the underlying methodologies are well suited to problems involving multiple, interrelated optimization tasks. Potential application areas include:

- Multi-Objective Molecular Design: Simultaneously optimizing a drug candidate for multiple properties, such as binding affinity, solubility, and synthetic accessibility, can be naturally framed as a multitask optimization problem. Knowledge about chemical structures that favor one property could inform the search for structures that satisfy another.

- Polypharmacology and Target Interaction Prediction: Optimizing a single compound to interact with multiple biological targets (polypharmacology) while minimizing off-target effects is a quintessential multitask challenge. EMTO could help explore the complex chemical space to find molecules with a desired multi-target profile.

- Genotype-Environment Interaction Analysis: Understanding how genetic factors interact with environmental variables to influence disease risk or drug response involves analyzing multiple, correlated tasks [9]. Multitasking algorithms could help unravel these complex interactions more efficiently than single-task approaches.

- Process Optimization in Pharmaceutical Manufacturing: As seen in other industrial sectors like aluminum electrolysis [9], EMTO can optimize multiple, interdependent operational parameters in a manufacturing process to simultaneously maximize yield, purity, and energy efficiency.

The ability of algorithms like M-MFEA to perform adaptive knowledge transfer is particularly valuable in these domains, where the relationships between tasks (e.g., between different ADMET properties) may not be known a priori and must be learned during the optimization process [9].

Future Directions and Open Challenges

Despite its promising advances, Evolutionary Multitask Optimization faces several fundamental questions and open challenges that require community effort to resolve [18]. Key future research directions include:

- Plausibility and Practical Applicability: There is an urgent need to demonstrate that the simultaneous optimization of several related problems occurs naturally in real-world applications and that EMTO provides a tangible profit over solving problems in isolation with powerful single-task algorithms [18].

- Algorithmic Novelty and Terminology: The field must strive for genuine innovation, ensuring that new algorithms are not merely minor variations or renamings of existing methods. Establishing clear and consistent terminology is crucial [18].

- Fair and Comprehensive Evaluation: Research studies must adopt more rigorous evaluation methodologies that go beyond simple fitness comparisons. This includes reporting computational effort, clearly demonstrating the benefit over single-task baselines, and using benchmarks that reflect realistic scenarios [18].

- Scalability and Many-Task Optimization: Extending these paradigms to handle a large number of tasks (so-called "many-task" optimization) presents significant scalability challenges. Efficient knowledge extraction and transfer in such high-task environments is an active area of research [9].

- Hybrid and Surrogate-Assisted Models: Integrating multitasking algorithms with surrogate models (e.g., for expensive finite-element simulations in photonics [19] or molecular dynamics) and other AI paradigms like deep learning can greatly enhance their applicability to computationally demanding real-world problems.

Multifactorial Evolutionary Algorithms (MFEAs) represent a paradigm shift in evolutionary computation, moving from single-task optimization to a concurrent multitasking environment. This framework, known as Evolutionary Multitasking Optimization (EMTO), allows multiple optimization tasks to be solved simultaneously by leveraging potential synergies and complementarities between them [20] [21]. The core principle underpinning this approach is that useful knowledge gained while solving one task may contain valuable information that can accelerate the search process or improve the solution quality of other, related tasks [22].

The success of EMTO hinges critically on the effective management of knowledge transfer between tasks. Within this context, three fundamental concepts emerge as cornerstones of the field: positive transfer, negative transfer, and random mating probability (RMP). These interconnected mechanisms govern how information flows between tasks and ultimately determine whether multitasking provides a net benefit over traditional single-task optimization approaches. This technical guide provides an in-depth examination of these critical terminologies, their interrelationships, and their practical implications for researchers implementing multifactorial evolutionary algorithms.

Foundational Concepts in Multifactorial Evolution

Before examining the core terminology, it is useful to recall the basic framework of multifactorial optimization. In a typical multitask optimization (MTO) scenario, K distinct optimization tasks are solved simultaneously [20] [23]. Each task Tᵢ has its own search space Xᵢ and objective function fᵢ: Xᵢ → ℝ. The goal of MTO is to find a set of optimal solutions {x₁, x₂, ..., x_K} such that each xᵢ minimizes its corresponding fᵢ over Xᵢ [20].

The Multifactorial Evolutionary Algorithm (MFEA), introduced by Gupta et al., was the pioneering algorithm to implement this paradigm through implicit genetic transfer [20]. In MFEA, individuals in a unified population are assigned a skill factor (τᵢ), which indicates the task on which the individual performs best [1] [21]. Knowledge transfer occurs primarily through crossover operations between parents with different skill factors, governed by a key control parameter called random mating probability [20].

Table 1: Key Definitions in Multifactorial Evolutionary Algorithms

| Term | Definition | Significance |
|---|---|---|
| Skill Factor | The task on which an individual performs best [1] [21] | Determines an individual's specialized task and influences mating selection |
| Factorial Rank | The performance index of an individual on a specific task when the population is sorted by factorial cost [1] [21] | Used to compute scalar fitness and skill factors |
| Scalar Fitness | A unified measure of an individual's overall performance across all tasks, calculated as φᵢ = 1 / minⱼ rᵢⱼ [1] [21] | Enables cross-task comparison and selection |
| Assortative Mating | A mating strategy where individuals with similar skill factors are more likely to mate, unless the random mating probability condition is met [20] | Balances knowledge transfer with task specialization |
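The definitions of factorial rank, scalar fitness, and skill factor can be checked on a small worked example. The sketch takes a matrix of factorial costs, where rows are individuals, columns are tasks, and lower is better. It assigns factorial ranks per task, then derives scalar fitness φᵢ = 1 / minⱼ rᵢⱼ and, as the skill factor, the task with the best rank. The function name is hypothetical. Ties between equal ranks resolve toward the lower task index, which is an arbitrary choice.

```python
def multifactorial_properties(costs):
    """costs[i][j]: factorial cost of individual i on task j (lower is better).
    Returns (factorial ranks, scalar fitness, skill factors)."""
    n, k = len(costs), len(costs[0])
    ranks = [[0] * k for _ in range(n)]
    for j in range(k):
        order = sorted(range(n), key=lambda i: costs[i][j])
        for r, i in enumerate(order, start=1):
            ranks[i][j] = r  # 1 = best on task j
    fitness = [1.0 / min(row) for row in ranks]
    skills = [row.index(min(row)) for row in ranks]  # ties -> lowest task index
    return ranks, fitness, skills


costs = [[1.0, 9.0], [4.0, 2.0], [3.0, 5.0], [8.0, 1.0]]
ranks, fitness, skills = multifactorial_properties(costs)
# ranks   -> [[1, 4], [3, 2], [2, 3], [4, 1]]
# fitness -> [1.0, 0.5, 0.5, 1.0]
# skills  -> [0, 1, 0, 1]
```

Individuals 0 and 3 are each the best on one task, so both have scalar fitness 1.0. Individuals 1 and 2 both receive fitness 0.5 and are assigned to tasks 1 and 0, respectively.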

Critical Terminology Deep Dive

Positive Transfer

Positive transfer occurs when knowledge exchange between optimization tasks leads to improved performance in one or more tasks—either through accelerated convergence, better solution quality, or enhanced population diversity [20] [22]. This beneficial effect emerges when tasks share complementary features or similar fitness landscapes, allowing progress in one task to inform and guide the search in another.

The mechanism can be visualized as a scenario where the global optimum of one task (G1) shares decision space characteristics with the global optimum of another task (G2) [20]. When genetic material from individuals near G1 is transferred to the population of task T2, it pulls the search toward regions containing G2, thereby accelerating discovery of the optimal solution.

Table 2: Methodologies for Enhancing Positive Transfer

| Methodology | Underlying Principle | Implementation Example |
|---|---|---|
| Domain Adaptation | Aligns search spaces of different tasks to facilitate more effective knowledge transfer [20] [1] | MDS-based Linear Domain Adaptation (LDA) creates low-dimensional subspaces for each task and learns mapping relationships between them [20] |
| Elite Knowledge Transfer | Leverages high-quality solutions to guide the evolution of other tasks [24] | Gaussian distribution models constructed from current populations and elite individuals generate offspring for knowledge transfer [24] |
| Similarity-Based Transfer | Dynamically identifies task relatedness to adjust transfer intensity [22] | Source Task Transfer (STT) strategy matches static features of historical tasks with dynamic evolution trends of target tasks [22] |
| Multi-Knowledge Fusion | Combines multiple knowledge types and transfer mechanisms [1] | Hybrid knowledge transfer strategies employ both individual-level and population-level learning based on task relatedness [1] |
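The elite knowledge transfer row can be sketched under simplifying assumptions. Solutions live in a unified [0, 1]^D space, and the model is a diagonal Gaussian fitted only to the best source-task individuals. Transfer candidates for the target task are sampled from this model and clipped to the box. The method cited in [24] also builds models from the current populations, so this is a reduced illustration. The function name is hypothetical, as is the `min_std` floor, which keeps sampling from collapsing when elites coincide.

```python
import random
import statistics


def elite_gaussian_transfer(source_pop, source_costs, n_elite, n_offspring,
                            rng=None, min_std=1e-3):
    """Sample transfer candidates from a diagonal Gaussian fitted to the
    n_elite lowest-cost source individuals (unified [0, 1]^D keys)."""
    rng = rng or random.Random()
    ranked = sorted(zip(source_costs, source_pop), key=lambda pair: pair[0])
    elites = [ind for _, ind in ranked[:n_elite]]
    columns = [[e[d] for e in elites] for d in range(len(elites[0]))]
    mus = [statistics.fmean(col) for col in columns]
    # Floor the spread so identical elites still yield a (narrow) distribution.
    sds = [max(min_std, statistics.pstdev(col)) for col in columns]
    return [[min(1.0, max(0.0, rng.gauss(mu, sd))) for mu, sd in zip(mus, sds)]
            for _ in range(n_offspring)]
```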

Negative Transfer

Negative transfer is the detrimental counterpart of positive transfer: it occurs when knowledge exchange between tasks impedes optimization performance, typically by misleading the search or promoting convergence to suboptimal solutions [20] [22] [1]. It poses a significant challenge in EMTO because indiscriminate knowledge sharing can degrade performance below what independent optimization of each task would achieve.

The risk of negative transfer is particularly pronounced under two conditions: (1) when attempting knowledge transfer between high-dimensional tasks with differing dimensionalities, where learning robust mappings from limited population data becomes challenging [20]; and (2) when transferring knowledge between dissimilar or unrelated tasks, which can easily lead to premature convergence [20]. A classic example of this mechanism occurs when the global optimum of Task 1 (G1) is located in a decision space region corresponding to a local optimum for Task 2 (L2), and vice versa [20]. Transferring genetic material from high-performing individuals of Task 1 (near G1) to Task 2 then pulls the search for Task 2 away from its true global optimum (G2) and traps it in the basin of L2 [20].

Figure 1: Negative Transfer: Causes, Mechanisms, and Consequences
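The G1/G2/L2 scenario can be reproduced with a deliberately tiny toy problem. The sketch below is hypothetical: `task2_objective` is an invented one-dimensional Task 2 landscape with its global optimum G2 at x = 0.8 and a weaker local optimum L2 at x = 0.2, and the greedy `hill_climb` helper stands in for Task 2's search after it receives a transferred solution. Whether transfer helps or hurts depends only on which basin the transferred point (Task 1's optimum G1) lands in.

```python
def task2_objective(x: float) -> float:
    """Hypothetical Task 2 landscape (minimization).

    Global optimum G2 at x = 0.8 (value 0.0); local optimum L2 at x = 0.2 (value 0.05).
    The two basins meet near x = 0.458.
    """
    return min((x - 0.8) ** 2, (x - 0.2) ** 2 + 0.05)


def hill_climb(f, x: float, step: float = 0.01, max_iters: int = 1000) -> float:
    """Greedy local search: move to the better neighbour until no neighbour improves."""
    for _ in range(max_iters):
        best = min((x - step, x, x + step), key=f)
        if best == x:  # no neighbour improves at this step size
            break
        x = best
    return x


# Positive transfer: G1 sits at 0.75, inside the basin of G2.
print(round(hill_climb(task2_objective, 0.75), 2))  # climbs to G2 near 0.8
# Negative transfer: G1 sits at 0.2, exactly on the local optimum L2.
print(round(hill_climb(task2_objective, 0.2), 2))   # stays trapped at L2
```

The same transferred-solution mechanism produces both outcomes; only the geometric relationship between the two tasks' optima differs, which is why transfer control matters.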

Random Mating Probability (RMP)

Random mating probability (RMP) is a crucial control parameter in MFEA that directly governs how often cross-task mating, and hence knowledge transfer, occurs [20] [1]. When two randomly selected parents have different skill factors, the RMP is the probability that they undergo crossover, thereby exchanging knowledge between their respective tasks.

In the basic MFEA, RMP is a single scalar value, typically set between 0.1 and 0.5 based on empirical studies [20]. Parents that share a skill factor always undergo crossover. For parents with different skill factors, a random number is drawn during mating selection: if it is less than the RMP value, crossover occurs despite the skill-factor mismatch; otherwise, the pair does not cross over and each parent produces offspring by mutation alone. This is what makes the mating assortative, favoring parents with the same skill factor [20]. This simple mechanism is the primary gateway for knowledge transfer in multifactorial evolution.
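The RMP-gated mating rule can be sketched as follows. This is a minimal illustration, not a reference implementation: uniform crossover and clipped Gaussian mutation are swapped in for the operators a particular MFEA would use (such as SBX and polynomial mutation), and the names `Individual`, `uniform_crossover`, `gaussian_mutation`, and `assortative_mating` are hypothetical. Crossover offspring imitate the skill factor of a randomly chosen parent, so a cross-task child may end up evaluated on either parent's task.

```python
import random
from dataclasses import dataclass


@dataclass
class Individual:
    genes: list        # position in the unified search space [0, 1]^D
    skill_factor: int  # index of the task this individual is evaluated on


def uniform_crossover(a, b, rng):
    """Swap each gene between the two parents with probability 0.5."""
    c1, c2 = [], []
    for x, y in zip(a, b):
        if rng.random() < 0.5:
            x, y = y, x
        c1.append(x)
        c2.append(y)
    return c1, c2


def gaussian_mutation(genes, rng, sigma=0.05):
    """Perturb each gene and clip it back into [0, 1]."""
    return [min(1.0, max(0.0, g + rng.gauss(0.0, sigma))) for g in genes]


def assortative_mating(p1, p2, rmp, rng):
    """Return two offspring; cross-task crossover happens only with probability rmp."""
    if p1.skill_factor == p2.skill_factor or rng.random() < rmp:
        g1, g2 = uniform_crossover(p1.genes, p2.genes, rng)
        # Each child imitates the skill factor of a randomly chosen parent.
        parents = (p1.skill_factor, p2.skill_factor)
        return [Individual(g1, rng.choice(parents)), Individual(g2, rng.choice(parents))]
    # Cross-task mating rejected: no transfer, each parent is mutated on its own task.
    return [
        Individual(gaussian_mutation(p1.genes, rng), p1.skill_factor),
        Individual(gaussian_mutation(p2.genes, rng), p2.skill_factor),
    ]


rng = random.Random(42)
a = Individual([0.1, 0.2, 0.3], skill_factor=0)
b = Individual([0.9, 0.8, 0.7], skill_factor=1)
print(assortative_mating(a, b, rmp=0.3, rng=rng))
```

Setting `rmp=0.0` in this sketch disables cross-task crossover entirely, while `rmp=1.0` makes mating ignore skill factors.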

Advanced RMP Strategies and Interrelationships

Evolution Beyond Fixed RMP

While the basic MFEA employs a fixed RMP value, recent research has demonstrated that adaptive RMP strategies can significantly enhance algorithmic performance by dynamically adjusting transfer intensity based on online assessments of task relatedness and transfer effectiveness [23] [1].

One prominent approach replaces the scalar RMP with an RMP matrix that captures non-uniform inter-task synergies across different task pairs [1]. In MFEA-II, this matrix is continuously learned and adapted during the search process, allowing the algorithm to automatically identify which task pairs benefit from knowledge sharing and which should be isolated to prevent negative transfer [1].

Alternative adaptive strategies adjust RMP based on the success rate of cross-task transfers. For instance, some algorithms compare the success rate of individuals generated through knowledge transfer versus those generated within the same task, using this information to adaptively adjust the RMP value to promote positive transfer [23]. Other approaches employ more sophisticated mechanisms, such as ResNet-based dynamic skill factor assignment, which integrates high-dimensional residual information and task relationship learning to optimize individual adaptability across tasks [16].
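A per-pair RMP matrix with a success-based update can be sketched as below. The update rule is hypothetical and written only for illustration; it is not the MFEA-II learning procedure or the exact rule of any cited algorithm. After each generation, the entry for a task pair is nudged up when cross-task offspring beat their parent more often than within-task offspring, and nudged down otherwise. The class name `AdaptiveRMP`, the initial value, step size, and bounds are all assumptions.

```python
class AdaptiveRMP:
    """Symmetric RMP matrix with a hypothetical success-based update rule."""

    def __init__(self, n_tasks, initial=0.3, step=0.05, lower=0.05, upper=0.95):
        self.step, self.lower, self.upper = step, lower, upper
        # Diagonal entries are unused: same-task parents always mate.
        self.matrix = [[1.0 if i == j else initial for j in range(n_tasks)]
                       for i in range(n_tasks)]

    def get(self, i, j):
        return self.matrix[i][j]

    def update(self, i, j, cross_success_rate, within_success_rate):
        """Raise rmp[i][j] if transfer outperformed within-task search, else lower it."""
        if i == j:
            return
        delta = self.step if cross_success_rate > within_success_rate else -self.step
        # Clip so that no pair is ever fully isolated or fully merged.
        value = min(self.upper, max(self.lower, self.matrix[i][j] + delta))
        self.matrix[i][j] = self.matrix[j][i] = value


rmp = AdaptiveRMP(n_tasks=3)
rmp.update(0, 1, cross_success_rate=0.40, within_success_rate=0.25)  # transfer helped
rmp.update(0, 2, cross_success_rate=0.05, within_success_rate=0.30)  # transfer hurt
print(rmp.get(0, 1), rmp.get(0, 2))  # roughly 0.35 and 0.25
```

Keeping a lower bound above zero is a design choice in this sketch: it lets a pair that was once unhelpful keep being probed occasionally, at the cost of some residual negative transfer.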

Table 3: Comparative Analysis of RMP Strategies

| RMP Strategy | Mechanism | Advantages | Limitations |
|---|---|---|---|
| Fixed RMP | Uses a single, predetermined value for all task pairs [20] | Simple implementation, computationally efficient | Cannot adapt to varying task relatedness, high risk of negative transfer |
| Matrix RMP | Employs a matrix to capture different transfer intensities between each task pair [1] | Captures non-uniform inter-task synergies, reduces negative transfer | Increased complexity, requires sufficient population data for estimation |
| Success-Based Adaptive RMP | Adjusts RMP based on online measurement of transfer success rates [23] | Responsive to actual transfer effectiveness, promotes positive transfer | Success rate metrics may be noisy, delayed response to landscape changes |
| Prediction-Based RMP | Uses machine learning models (e.g., decision trees) to predict beneficial transfers [1] | Potentially more precise transfer control, can anticipate beneficial exchanges | High computational overhead, requires careful feature engineering |

The Interplay of Positive Transfer, Negative Transfer, and RMP

The relationship between positive transfer, negative transfer, and RMP forms the fundamental dynamic that governs knowledge exchange in EMTO. These three elements exist in a delicate balance where the RMP parameter serves as the primary regulator between the beneficial and detrimental effects of knowledge transfer.

When RMP is set too low, the algorithm restricts knowledge exchange between tasks, potentially missing opportunities for positive transfer and effectively reducing the optimization to parallel single-task evolution [20]. Conversely, when RMP is set too high, excessive cross-task mating increases the risk of negative transfer, particularly between unrelated or competing tasks [20] [1]. The optimal RMP setting therefore depends critically on the degree of relatedness between tasks and the complementarity of their fitness landscapes.

Figure 2: RMP Configuration Workflow for Balancing Knowledge Transfer

Advanced EMTO algorithms address this challenge through several sophisticated approaches:

Online similarity detection: Algorithms like MOMFEA-STT establish parameter sharing models between historical and target tasks, automatically identifying association degrees between different tasks to adjust cross-task knowledge transfer intensity [22].

Transfer ability prediction: The EMT-ADT algorithm defines an evaluation indicator that quantifies the transfer ability of each individual and trains a decision tree to predict it, so that only individuals predicted to transfer positively take part in knowledge exchange [1].

Domain adaptation: Techniques like MDS-based linear domain adaptation create low-dimensional subspaces for each task and learn mapping relationships between these subspaces, enhancing the potential for positive transfer even between tasks with differing dimensionalities [20].

Experimental Protocols and Assessment Methodologies

Standardized Benchmarking Approaches

Rigorous experimental evaluation is essential for assessing the effectiveness of knowledge transfer strategies in EMTO. Researchers have developed standardized benchmarking protocols to enable fair comparisons between algorithms.

For single-objective multitask optimization, the CEC2017 MFO benchmark problems provide a comprehensive test suite featuring tasks with varying degrees of relatedness, different dimensionalities, and diverse fitness landscape characteristics [1]. The WCCI20-MTSO and WCCI20-MaTSO benchmark problems, which are multitask and many-task single-objective suites respectively, offer further specialized testing environments [22] [1].

Performance assessment typically employs two complementary metrics: (1) convergence speed, measured by the number of function evaluations required to reach a target solution quality, and (2) solution accuracy, evaluated by the best objective value achieved within a fixed computational budget [20] [1]. For comprehensive assessment, these metrics are computed separately for each component task and aggregated to provide an overall performance measure.
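Both metrics can be read off a best-so-far convergence trace recorded separately for each task. The sketch below assumes minimization and a trace stored as `(function_evaluations, best_so_far)` pairs; the function names, traces, target, and budget values are hypothetical, and the aggregation across tasks is left open because the appropriate scheme depends on the study.

```python
def evaluations_to_target(trace, target):
    """Convergence speed: first evaluation count at which best-so-far <= target.

    `trace` is a list of (function_evaluations, best_so_far) pairs sorted by
    evaluations, for a minimization task. Returns None if the target is never hit.
    """
    for evaluations, best in trace:
        if best <= target:
            return evaluations
    return None


def best_within_budget(trace, budget):
    """Solution accuracy: best objective value found using at most `budget` evaluations."""
    values = [best for evaluations, best in trace if evaluations <= budget]
    return min(values) if values else None


# Hypothetical best-so-far traces for two component tasks.
traces = {
    "task_1": [(1000, 12.0), (2000, 3.5), (3000, 0.8), (4000, 0.05)],
    "task_2": [(1000, 40.0), (2000, 25.0), (3000, 18.0), (4000, 17.5)],
}
for task, trace in traces.items():
    print(task, evaluations_to_target(trace, target=1.0), best_within_budget(trace, budget=4000))
```

Here `task_2` never reaches the target, which shows why reporting both metrics is useful: speed alone would hide the accuracy it did reach within the budget.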

Quantifying Knowledge Transfer Effects

Measuring the actual occurrence and impact of knowledge transfer requires specialized experimental designs:

Ablation studies: Researchers implement algorithm variants with specific transfer mechanisms disabled (e.g., setting RMP=0) to isolate the contribution of knowledge transfer to overall performance [20].

Success rate monitoring: Tracking the proportion of cross-task generated offspring that outperform their parents provides a direct measure of positive transfer effectiveness [23].

Population diversity metrics: Measuring genotypic and phenotypic diversity within task-specific subpopulations helps assess whether knowledge transfer is enhancing exploration or causing premature convergence [20].
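Two of these diagnostics are simple enough to sketch directly. The functions below are illustrative assumptions rather than the exact metrics used in the cited studies: the success rate counts cross-task offspring that beat their parent (minimization), and genotypic diversity is taken as the mean pairwise Euclidean distance within one task's subpopulation. An ablation then amounts to comparing these numbers, and the performance metrics, between runs with transfer enabled and with RMP set to 0.

```python
import math
from itertools import combinations


def transfer_success_rate(records):
    """Fraction of cross-task offspring that beat their parent (minimization).

    `records` holds (offspring_cost, parent_cost) pairs for offspring produced
    by cross-task crossover only.
    """
    records = list(records)
    if not records:
        return 0.0
    return sum(child < parent for child, parent in records) / len(records)


def mean_pairwise_distance(subpopulation):
    """Genotypic diversity: average Euclidean distance between all pairs of genomes."""
    pairs = list(combinations(subpopulation, 2))
    if not pairs:
        return 0.0
    return sum(math.dist(a, b) for a, b in pairs) / len(pairs)


print(transfer_success_rate([(0.4, 0.9), (1.2, 1.0), (0.3, 0.5), (2.0, 2.0)]))  # 0.5
print(mean_pairwise_distance([(0.0, 0.0), (0.3, 0.4), (0.6, 0.8)]))
```

A falling diversity value while the success rate stays low is one pattern that may indicate transfer is driving premature convergence rather than useful exploration.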

Table 4: Research Reagent Solutions for EMTO Experiments

| Research Reagent | Function | Example Implementation |
|---|---|---|
| CEC2017-MTSO Benchmark | Standardized test problems for single-objective MTO [1] | Provides a controlled environment with known task relatedness for algorithm comparison |
| WCCI20-MTSO/MaTSO Benchmark | Test suites for multitask and many-task single-objective optimization [22] [1] | Enables evaluation of algorithms on more demanding multitasking scenarios, including many-task settings |
| Skill Factor Assignment | Mechanism for identifying an individual's specialized task [1] [21] | τᵢ = argminⱼ rᵢⱼ, where rᵢⱼ is the factorial rank of individual i on task j |
| Factorial Cost Calculation | Unified evaluation metric across tasks [21] | Ψⱼⁱ = γδⱼⁱ + Fⱼⁱ, where Fⱼⁱ is the objective value, δⱼⁱ the constraint violation, and γ a large penalty multiplier |
| Scalar Fitness Computation | Cross-task performance measure for selection [1] [21] | φᵢ = 1 / minⱼ rᵢⱼ, enabling comparison of individuals across different tasks |
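The three formulas in Table 4 can be sketched in a few lines. This is a minimal illustration under stated assumptions: `costs[i][j]` already holds the factorial cost of individual i on task j, ties in factorial rank are broken by index (an arbitrary choice), and the default penalty multiplier `gamma=1e6` is a placeholder rather than a value taken from the source.

```python
def factorial_cost(objective, violation, gamma=1e6):
    """Psi = gamma * delta + F: penalize constraint violation delta by a large multiplier."""
    return gamma * violation + objective


def factorial_ranks(costs):
    """costs[i][j] is the factorial cost of individual i on task j (lower is better).

    Returns ranks[i][j], the 1-based position of individual i when the population
    is sorted by cost on task j. Ties keep index order (Python's sort is stable).
    """
    n, k = len(costs), len(costs[0])
    ranks = [[0] * k for _ in range(n)]
    for j in range(k):
        order = sorted(range(n), key=lambda i: costs[i][j])
        for position, i in enumerate(order, start=1):
            ranks[i][j] = position
    return ranks


def skill_factors_and_scalar_fitness(costs):
    """tau_i = argmin_j r_ij and phi_i = 1 / min_j r_ij for every individual i."""
    ranks = factorial_ranks(costs)
    skill = [min(range(len(row)), key=row.__getitem__) for row in ranks]
    phi = [1.0 / min(row) for row in ranks]
    return skill, phi


costs = [[1.0, 5.0],
         [2.0, 1.0],
         [3.0, 2.0]]
print(skill_factors_and_scalar_fitness(costs))  # ([0, 1, 1], [1.0, 1.0, 0.5])
```

Because φ depends only on ranks, individuals specialized in tasks with very different objective scales can still be compared during selection.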

Positive transfer, negative transfer, and random mating probability are three interconnected pillars of the theoretical and practical framework of evolutionary multitasking optimization. How well an RMP strategy manages knowledge transfer largely determines whether an EMTO implementation outperforms independent single-task optimization or falls short of the expected benefits of multitasking.

Future research directions in this domain include the development of more sophisticated transferability assessment mechanisms, potentially leveraging deep learning architectures like the VDSR model used in MFEA-RL for generating high-dimensional residual representations of individuals [16]. Additionally, the application of EMTO to complex real-world problems in drug development and personalized medicine presents promising opportunities for demonstrating the practical value of controlled knowledge transfer [2] [25].

As the field progresses, the balanced orchestration of positive and negative transfer through adaptive RMP mechanisms will continue to be essential for unlocking the full potential of evolutionary multitasking optimization across scientific and engineering domains.

MFEA Implementation Strategies and Real-World Applications in Biomedical Research

Multifactorial Evolutionary Algorithms (MFEAs) are an evolutionary computation paradigm that solves multiple optimization tasks simultaneously in a single run. The approach belongs to the broader field of Evolutionary Multitasking (EMT), which uses the implicit parallelism of population-based search to exploit synergies between different optimization tasks [26]. Unlike traditional evolutionary algorithms that solve a single problem, MFEAs handle multiple tasks concurrently, allowing knowledge transfer and genetic exchange between individuals that specialize in different tasks [9]. The key insight behind MFEAs is that the search for optimal solutions to one task may yield information that helps solve related tasks, which can accelerate convergence and improve solution quality.

MFEAs have been applied successfully across diverse domains, particularly in complex industrial scenarios where multiple interrelated optimization problems must be addressed simultaneously [9]. Architecturally, a classic MFEA evolves a single unified population in which each individual is tagged with a skill factor; individuals sharing a skill factor form implicit task-specific subpopulations, and controlled genetic exchange between them occurs through mechanisms such as assortative mating. This multifactorial approach has proven especially valuable in data-rich environments where optimization tasks share common characteristics or underlying structures, allowing the algorithm to find solutions that would be hard to reach if each task were optimized in isolation [25].

Core Components of MFEA Architecture

Population Initialization Strategies

Population initialization in Multifactorial Evolutionary Algorithms establishes the foundation for effective evolutionary search across multiple tasks. Unlike single-task evolutionary algorithms, MFEA requires initialization that accounts for the characteristics of all tasks involved. The process typically begins with the creation of a unified search space that encompasses the solution domains of all tasks; in the classic MFEA this is the normalized hypercube [0, 1]^D, where D is the largest dimensionality among the tasks, which allows seamless knowledge transfer during evolution [9].

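Unified Genomic Representation: MFEAs employ a normalized encoding scheme that maps candidate solutions from different tasks into a common representational space [25]. Because every individual lives in the same space, individuals specializing in different tasks can be compared and recombined directly. In the classic MFEA, a solution for a task with D_k variables is decoded from the first D_k genes of an individual by scaling each gene linearly from [0, 1] onto that task's bounds, so genes shared between tasks carry transferable information while the remaining genes are ignored for that task.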

Diversity-Aware Sampling: Effective initialization strategies prioritize population diversity to prevent premature convergence. This calls for sampling techniques that cover each task's search space adequately while keeping individuals evenly distributed across tasks. Reported results indicate that populations initialized with 30 to 50 individuals per task provide sufficient diversity without excessive computational overhead [25].
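The unified representation and a diversity-aware initialization can be sketched together. The source does not name a sampling technique, so Latin hypercube sampling is swapped in here as one common choice, and assigning skill factors round-robin to balance tasks is also an assumption (the classic MFEA instead evaluates the initial population on every task and assigns skill factors by factorial rank). All function names are hypothetical.

```python
import random


def unified_dimension(task_dimensions):
    """Dimensionality of the unified space: the largest task dimensionality."""
    return max(task_dimensions)


def decode(genes, lower, upper):
    """Map a unified genome in [0, 1]^D onto one task's box constraints.

    zip stops at len(lower), so only the first D_k genes are used for this task.
    """
    return [lo + g * (hi - lo) for g, lo, hi in zip(genes, lower, upper)]


def latin_hypercube(n, dim, rng):
    """n points in [0, 1]^dim with exactly one point per 1/n-wide stratum per dimension."""
    columns = []
    for _ in range(dim):
        column = [(s + rng.random()) / n for s in range(n)]
        rng.shuffle(column)
        columns.append(column)
    return [[columns[d][i] for d in range(dim)] for i in range(n)]


def initialize_population(per_task, task_dimensions, seed=0):
    """Return (genome, skill_factor) pairs with an equal number of individuals per task."""
    rng = random.Random(seed)
    n_tasks = len(task_dimensions)
    dim = unified_dimension(task_dimensions)
    genomes = latin_hypercube(per_task * n_tasks, dim, rng)
    # Round-robin assignment keeps the tasks balanced.
    return [(genome, index % n_tasks) for index, genome in enumerate(genomes)]


# Two hypothetical tasks with 2 and 3 variables share a 3-D unified space.
population = initialize_population(per_task=30, task_dimensions=[2, 3])
genome, skill = population[0]
print(len(population), len(genome))                                       # 60 3
print(decode([0.5, 0.25, 1.0], lower=[-5.0, -5.0], upper=[5.0, 5.0]))     # [0.0, -2.5]
```

Latin hypercube sampling guarantees that every one of the 60 strata along each axis holds exactly one individual, which spreads the initial population more evenly than independent uniform draws of the same size.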