Modern Differential Evolution Algorithms: A Comparative Analysis of Mechanisms and Applications in Drug Discovery

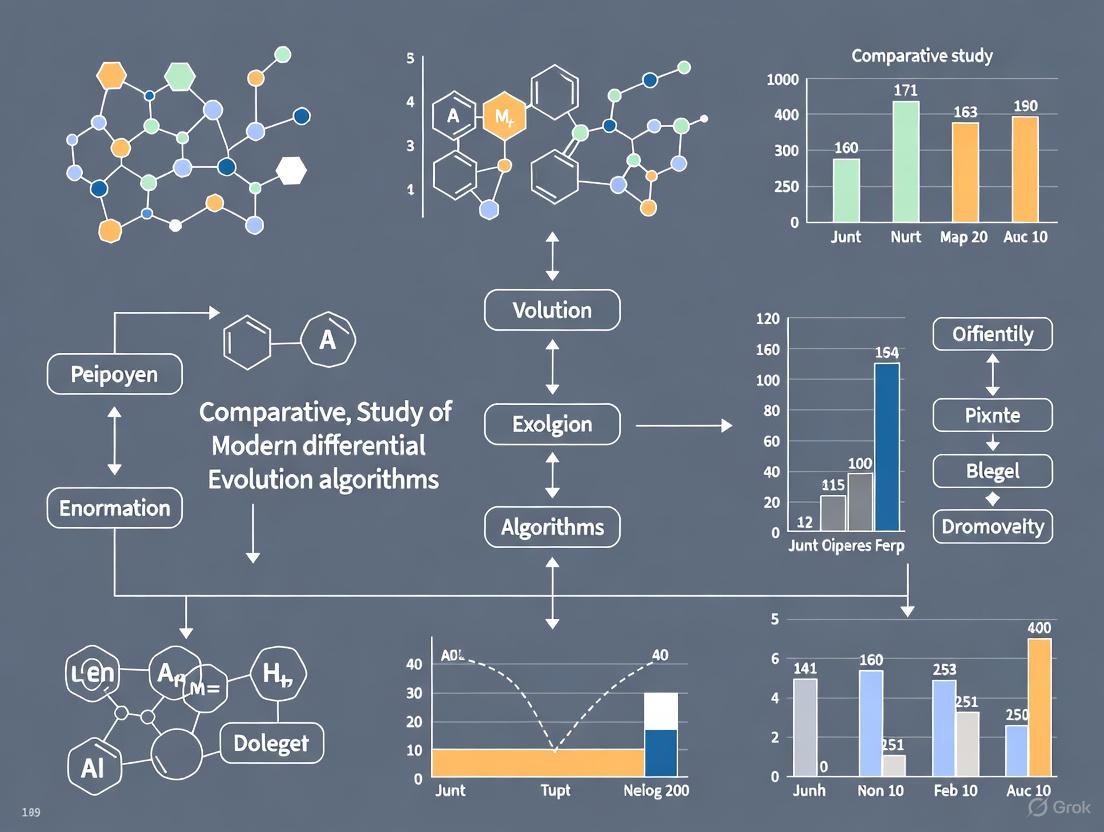

This article provides a comprehensive comparative analysis of modern Differential Evolution (DE) algorithms, focusing on their enhanced mechanisms and transformative applications in drug discovery and development.

Modern Differential Evolution Algorithms: A Comparative Analysis of Mechanisms and Applications in Drug Discovery

Abstract

This article provides a comprehensive comparative analysis of modern Differential Evolution (DE) algorithms, focusing on their enhanced mechanisms and transformative applications in drug discovery and development. We explore foundational concepts and the evolution of DE from its inception to current state-of-the-art variants. The methodological section examines sophisticated adaptation strategies including reinforcement learning, successful-history-based parameter control, and hybridization techniques. For practitioners, we analyze troubleshooting approaches for common challenges like parameter sensitivity and premature convergence. The validation framework presents rigorous comparative assessments on standard benchmarks and real-world drug discovery applications, particularly in drug-target binding affinity prediction and molecular optimization. This synthesis enables researchers and drug development professionals to select appropriate DE variants to accelerate and optimize their computational workflows.

The Evolutionary Journey of Differential Evolution: From Basic Principles to Modern Frameworks

Differential Evolution (DE) stands as a cornerstone of modern evolutionary computation, representing a significant leap in global optimization techniques for complex, real-valued functions. Since its introduction in the mid-1990s, DE has distinguished itself through straightforward vector operations and exceptional performance across diverse problem domains including engineering design, machine learning, and chemometrics [1] [2]. This guide examines DE's historical development and core evolutionary mechanics, providing researchers with a foundational understanding of its operational principles and comparative performance characteristics. The algorithm's robustness stems from its minimal assumptions about the optimized problem, enabling effective application to non-differentiable, noisy, and multimodal objective functions where traditional gradient-based methods falter [3] [2]. By maintaining its relevance through continuous innovation, DE serves as both a powerful standalone tool and a benchmark for emerging metaheuristics.

Historical Foundations and Development

Differential Evolution emerged in 1995 through the work of Rainer Storn and Kenneth Price, who sought a practical heuristic for optimizing non-linear and non-differentiable continuous space functions [3] [2]. Their seminal 1997 publication, "Differential Evolution – A Simple and Efficient Heuristic for Global Optimization over Continuous Spaces," formally established DE as a competitive evolutionary algorithm characterized by simple structure, strong robustness, and high convergence efficiency [4]. This foundation triggered decades of research and refinement, positioning DE as a preferred alternative to both traditional methods and earlier evolutionary approaches like Genetic Algorithms (GAs).

A key historical differentiator was DE's inherent suitability for continuous spaces compared to GA's primary focus on discrete domains [1]. Current optimization literature acknowledges that DE often produces more stable and superior solutions than GA, leveraging vector operations and random number generators to achieve competitive speeds while overcoming the limitations of simpler methods [1]. The algorithm's development trajectory has been marked by significant community engagement, including annual competitions at the Congress on Evolutionary Computation (CEC) where DE-based algorithms consistently demonstrate prominent performance [5] [6].

Table: Historical Milestones in Differential Evolution Development

| Year | Development Milestone | Key Contributors | Significance |
|---|---|---|---|
| 1995 | Initial Introduction | Storn and Price | First description of DE as a heuristic approach [3] |
| 1997 | Formal Publication | Storn and Price | Established DE in scientific literature [3] [4] |
| Early 2000s | Multi-objective Extensions | Various researchers | Application of DE to multi-objective problems (MODE) [7] |
| 2005 | Comprehensive Book | Price, Storn, Lampinen | "Differential Evolution: A Practical Approach to Global Optimization" [3] |
| 2010s-Present | Parameter Adaptation & Hybrid Variants | Research Community | Emergence of self-adaptive, reinforcement learning-enhanced, and multi-population DE [4] [8] |

Core Evolutionary Principles

The algorithmic framework of Differential Evolution operates on population-based evolutionary principles, iteratively improving candidate solutions through specific genetic operations. DE maintains a population of candidate solutions, referred to as agents, which are moved through the search space using mathematical formulae derived from existing population vectors [3]. The core procedure cycles through three principal operations (mutation, crossover, and selection) over successive generations until a termination criterion is satisfied [2] [4]. This process treats the optimization problem as a black box, requiring only a measure of solution quality and no gradient information [3].

Population Initialization

The algorithm begins by initializing a population of NP candidate solutions, often called agents or target vectors. Each individual in this population is represented as a D-dimensional vector $x_{i,g} = (x_{1,i,g}, x_{2,i,g}, \ldots, x_{D,i,g})$, where $i$ represents the individual index and $g$ denotes the generation number [5] [6]. Initial parameter values are typically generated randomly within user-specified bounds according to:

$$x_{j,i,0} = rand_{i,j}[0,1] \cdot (x_{j}^{U} - x_{j}^{L}) + x_{j}^{L}$$

where $x_{j}^{U}$ and $x_{j}^{L}$ represent the upper and lower bounds of the j-th parameter, and $rand_{i,j}[0,1]$ is a uniformly distributed random variable [4]. For enhanced performance, modern variants may employ quasi-random sequences like the Halton sequence to improve the ergodicity and uniform coverage of the initial solution set [4].
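The initialization rule above can be sketched in a few lines of standard-library Python. The function name `init_population` and the example bounds are illustrative assumptions, not part of any cited implementation.

```python
import random

def init_population(np_size, lower, upper, rng=None):
    """Uniform random initialization inside per-dimension bounds.

    Hypothetical helper for illustration: each component is
    rand[0,1] * (upper_j - lower_j) + lower_j.
    """
    rng = rng or random.Random()
    return [
        [rng.random() * (u - l) + l for l, u in zip(lower, upper)]
        for _ in range(np_size)
    ]

# Example: NP = 5 individuals in a 2-dimensional box (bounds are made up).
population = init_population(5, lower=[-5.0, 0.0], upper=[5.0, 1.0],
                             rng=random.Random(0))
```

Passing an explicit `random.Random` instance keeps runs reproducible, which matters when results are later compared across seeds.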

Mutation (Difference Vector Generation)

The mutation operation generates a mutant vector $v_{i,g+1}$ for each target vector in the current population by adding the scaled difference of two or more randomly selected population vectors to a third base vector [3] [2]. The most common strategy, DE/rand/1, follows:

$$v_{i,g+1} = x_{r1,g} + F \cdot (x_{r2,g} - x_{r3,g})$$

where the indices $r1, r2, r3$ are mutually distinct integers randomly selected from the population and different from the target index $i$ [5] [6]. The scaling factor $F$, typically chosen from [0, 2], controls the amplification of differential variations [3] [4]. This disturbance through vector differences enables exploration of the search space while maintaining solution structure.
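A minimal sketch of DE/rand/1 follows, assuming the population is a list of equal-length lists of floats and contains at least four individuals. The name `mutate_rand_1` is hypothetical.

```python
import random

def mutate_rand_1(population, i, F, rng=None):
    """DE/rand/1: base vector plus one scaled difference vector."""
    rng = rng or random.Random()
    # r1, r2, r3 are mutually distinct and all different from the target index i.
    candidates = [k for k in range(len(population)) if k != i]
    r1, r2, r3 = rng.sample(candidates, 3)
    x1, x2, x3 = population[r1], population[r2], population[r3]
    return [a + F * (b - c) for a, b, c in zip(x1, x2, x3)]

pop = [[0.0, 0.0], [1.0, 2.0], [3.0, 1.0], [-2.0, 4.0]]
mutant = mutate_rand_1(pop, i=0, F=0.5, rng=random.Random(1))
```

Because `random.sample` draws without replacement, the three indices cannot coincide, which is the distinctness requirement stated above.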

Crossover

Following mutation, the crossover operation creates a trial vector $u_{i,g+1}$ by mixing parameters of the mutant vector $v_{i,g+1}$ with those of the target vector $x_{i,g}$ [3]. The predominant binomial crossover is defined as:

$$u_{j,i,g+1} = \begin{cases} v_{j,i,g+1} & \text{if } rand(j) \leq CR \text{ or } j = j_{rand} \\ x_{j,i,g} & \text{otherwise} \end{cases}$$

where $rand(j)$ is a uniform random number generator output, $CR$ is the crossover probability in [0,1], and $j_{rand}$ is a randomly chosen dimension ensuring the trial vector inherits at least one component from the mutant vector [5] [6]. This recombination mechanism introduces new genetic material while preserving beneficial traits from the parent.
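The binomial crossover rule translates almost directly into code. The sketch below assumes list-based vectors; `binomial_crossover` is an illustrative name.

```python
import random

def binomial_crossover(target, mutant, CR, rng=None):
    """Take each component from the mutant with probability CR."""
    rng = rng or random.Random()
    D = len(target)
    # j_rand forces at least one mutant component into the trial vector.
    j_rand = rng.randrange(D)
    return [
        mutant[j] if (rng.random() <= CR or j == j_rand) else target[j]
        for j in range(D)
    ]

trial = binomial_crossover([0.0] * 5, [1.0] * 5, CR=0.9, rng=random.Random(3))
```

With CR close to 1 the trial vector is dominated by the mutant, and with CR close to 0 it differs from the target in little more than the forced component `j_rand`.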

Selection

The final operation employs greedy selection to determine whether the target or trial vector survives to the next generation. The fitness of the trial vector $u_{i,g+1}$ is compared against its corresponding target vector $x_{i,g}$:

$$x_{i,g+1} = \begin{cases} u_{i,g+1} & \text{if } f(u_{i,g+1}) \leq f(x_{i,g}) \\ x_{i,g} & \text{otherwise} \end{cases}$$

For minimization problems, if the trial vector yields an equal or lower objective value, it replaces the target vector; otherwise, the target vector is retained [5] [6] [2]. This deterministic selection pressure steadily drives the population toward improved regions of the search space over successive generations.
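As a small illustration of the one-to-one greedy rule, the sketch below uses a sphere objective as a stand-in test function. Both `sphere` and `greedy_select` are assumptions made for the example.

```python
def sphere(x):
    """Simple minimization objective with optimum f(0) = 0."""
    return sum(v * v for v in x)

def greedy_select(target, trial, f):
    """Keep the trial if it is at least as good as the target (minimization)."""
    return trial if f(trial) <= f(target) else target

survivor = greedy_select([1.0, 1.0], [0.5, 0.5], sphere)
```

Accepting ties lets the population drift across flat regions of the landscape. A practical implementation would cache fitness values rather than re-evaluating the target each generation.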

Diagram: Differential Evolution Algorithm Workflow

Experimental Protocols and Performance Assessment

Robust experimental protocols are essential for evaluating DE variants and comparing their performance against alternative optimization algorithms. Standardized methodology involves testing on benchmark suites with defined dimensions, multiple independent runs to account for stochasticity, and statistical analysis to validate performance differences [5] [6].

Benchmark Functions and Testing Environments

Comparative studies typically employ established benchmark suites such as those from the CEC Special Session and Competition on Single Objective Real-Parameter Numerical Optimization [5] [6]. These suites encompass diverse function types: unimodal functions (testing convergence speed), multimodal functions (assessing ability to escape local optima), hybrid functions (combining different characteristics), and composition functions (creating complex landscapes) [5]. Comprehensive evaluation tests algorithms across increasing dimensions (e.g., 10D, 30D, 50D, 100D) to assess scalability [5] [4]. Each algorithm is typically run 25-51 times per function with different random seeds, and performance is measured using error from known optimum or best-found solution quality after fixed function evaluations [5].
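The protocol of repeated, seeded runs with error measured against a known optimum might be organized as sketched below. The two test functions are the standard sphere and Rastrigin definitions, not the shifted and rotated CEC versions, and `random_search` is only a placeholder optimizer so that the harness runs on its own. All names are illustrative.

```python
import math
import random
import statistics

def sphere(x):
    """Unimodal test function, optimum f(0) = 0."""
    return sum(v * v for v in x)

def rastrigin(x):
    """Multimodal test function, optimum f(0) = 0."""
    return 10 * len(x) + sum(v * v - 10 * math.cos(2 * math.pi * v) for v in x)

def random_search(f, dim, budget, rng):
    """Placeholder optimizer: best of `budget` uniform samples in [-5.12, 5.12]^dim."""
    return min(f([rng.uniform(-5.12, 5.12) for _ in range(dim)])
               for _ in range(budget))

def evaluate(optimizer, f, f_opt, dim, runs=25, budget=1000):
    """Run the optimizer once per seed and summarize the error to the optimum."""
    errors = [optimizer(f, dim, budget, random.Random(seed)) - f_opt
              for seed in range(runs)]
    return statistics.mean(errors), statistics.stdev(errors)

mean_err, std_err = evaluate(random_search, rastrigin, 0.0, dim=5, budget=500)
```

Any DE variant exposing the same `(f, dim, budget, rng)` signature could be dropped in for `random_search`, which keeps the evaluation budget and seeds identical across the algorithms being compared.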

Statistical Validation Methods

Non-parametric statistical tests are preferred for comparing DE performance due to their fewer assumptions about data distribution [5] [6]. Standard practice includes:

- Wilcoxon Signed-Rank Test: Used for pairwise algorithm comparisons based on mean performance across multiple runs and functions [5] [6]. It ranks absolute differences in performance, making it more powerful than simple sign tests.

- Friedman Test with Nemenyi Post-hoc Analysis: Employed for multiple algorithm comparisons, this method ranks algorithms for each problem and then compares average ranks [5] [6]. The critical difference (CD) from the Nemenyi test determines whether rank differences are statistically significant.

- Mann-Whitney U Test: Also called the Wilcoxon rank-sum test, used to determine if one algorithm tends to produce better results than another [5].

These tests typically employ a significance level of α=0.05, and researchers report p-values indicating the strength of evidence against the null hypothesis of equal performance [5].
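The ranking step behind the Friedman test and the Nemenyi critical difference can be sketched without external libraries. The error table below is made-up illustrative data, and the critical value `q_alpha` is an assumption that should be looked up in a published Studentized-range table for the chosen number of algorithms and significance level.

```python
import math

def average_ranks(results):
    """results[p][a] is the error of algorithm a on problem p (lower is better)."""
    n_problems, k = len(results), len(results[0])
    totals = [0.0] * k
    for row in results:
        order = sorted(range(k), key=lambda a: row[a])
        pos = 0
        while pos < k:
            end = pos
            while end + 1 < k and row[order[end + 1]] == row[order[pos]]:
                end += 1
            shared = (pos + end) / 2 + 1  # tied entries share the mean rank
            for a in order[pos:end + 1]:
                totals[a] += shared
            pos = end + 1
    return [t / n_problems for t in totals]

def friedman_statistic(avg_ranks, n_problems):
    """Friedman chi-square statistic computed from average ranks."""
    k = len(avg_ranks)
    return (12 * n_problems / (k * (k + 1))
            * (sum(r * r for r in avg_ranks) - k * (k + 1) ** 2 / 4))

def nemenyi_cd(k, n_problems, q_alpha):
    """Critical difference between average ranks for the Nemenyi test."""
    return q_alpha * math.sqrt(k * (k + 1) / (6 * n_problems))

# Made-up errors for 3 algorithms on 4 problems, for illustration only.
errors = [[1.0, 2.0, 3.0], [1.0, 3.0, 2.0], [1.0, 2.0, 3.0], [2.0, 1.0, 3.0]]
ranks = average_ranks(errors)
chi2 = friedman_statistic(ranks, n_problems=len(errors))
cd = nemenyi_cd(k=3, n_problems=len(errors), q_alpha=2.343)  # assumed table value
```

Two algorithms are considered significantly different when their average ranks differ by more than `cd`. For real studies, a maintained statistics package is a safer choice than hand-rolled code.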

Table: Experimental Comparison of Modern DE Variants (CEC'24 Benchmark)

| Algorithm | Key Mechanism | Unimodal Performance | Multimodal Performance | Hybrid Performance | Composite Performance |
|---|---|---|---|---|---|
| RLDE [4] | Reinforcement learning-based parameter adaptation | Fast convergence | Good local escape | Strong | Strong |
| EBJADE [8] | Multi-population with elites regeneration | Moderate | Excellent diversity | Strong | Moderate |
| MODE-FDGM [7] | Directional generation for multi-objective | N/A (Multi-objective) | N/A (Multi-objective) | N/A (Multi-objective) | N/A (Multi-objective) |
| Classic DE [3] | Rand/1/bin strategy | Slow convergence | Premature convergence | Weak | Weak |

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Components for Differential Evolution Research

| Research Component | Function/Purpose | Example Implementations |
|---|---|---|
| Benchmark Suites | Standardized test functions for algorithm validation | CEC2014, CEC2017, CEC2024 test suites [5] [8] |
| Statistical Test Packages | Mathematical libraries for performance comparison | Wilcoxon signed-rank, Friedman test, Mann-Whitney U test [5] [6] |
| Mutation Strategies | Mechanisms for generating new search directions | DE/rand/1, DE/best/1, DE/current-to-ord/1 [3] [8] |
| Parameter Control Methods | Adaptive adjustment of F and CR parameters | Reinforcement learning adaptation [4], JADE's parameter adaptation [8] |
| Population Management | Techniques for maintaining diversity and quality | Multi-population approaches [8], elite regeneration [8] |

Differential Evolution has maintained remarkable relevance since its inception nearly three decades ago, evolving from Storn and Price's fundamental formulation to sophisticated modern variants incorporating adaptive parameter control, multi-population approaches, and hybrid mechanisms [4] [8]. The algorithm's enduring value stems from its conceptual simplicity, minimal parameter requirements, and proven effectiveness across diverse optimization landscapes. Contemporary research directions continue to enhance DE's capabilities, particularly in addressing parameter sensitivity through reinforcement learning [4], improving exploitation via directional mutation strategies [7] [8], and maintaining diversity through multi-population architectures [8]. For researchers and practitioners, understanding DE's historical foundations and core evolutionary principles provides essential context for selecting, implementing, and advancing optimization methodologies suited to increasingly complex scientific and engineering challenges.

Differential Evolution (DE) is a population-based stochastic optimizer for continuous spaces that has demonstrated significant robustness and effectiveness in solving complex real-world problems. Since its introduction, the core mechanics of DE have remained a subject of intense research and refinement. The canonical algorithm operates through a simple yet powerful cycle of population initialization, mutation, crossover, and selection operations. Despite its conceptual simplicity, the specific implementation of these components dramatically influences optimization performance, leading to numerous algorithmic variants. This guide provides a systematic comparison of these fundamental mechanics as implemented in modern DE algorithms, examining their impact on performance through experimental data and highlighting key advancements that address DE's inherent challenges with parameter sensitivity and premature convergence.

The Core Operational Framework of Differential Evolution

The DE algorithm follows an evolutionary cycle where a population of candidate solutions is iteratively improved. The workflow begins with initializing a population of vectors within the specified parameter bounds, then cyclically applies mutation to create donor vectors, crossover to produce trial vectors, and selection to determine which vectors survive to the next generation. This process continues until a termination criterion is met. The diagram below illustrates this fundamental operational workflow.

Detailed Breakdown of Fundamental Operations

Population Initialization

The initial population in DE serves as the starting point for the search process. Traditional DE uses simple random initialization, where each parameter of every vector is randomly assigned a value within its predefined lower and upper bounds [4]. Formally, for a population of NP individuals in a D-dimensional space, the j-th dimension of the i-th individual is initialized as:

$$x_{ij}(0) = rand_{ij}(0,1)\times(x_{ij}^{U} - x_{ij}^{L}) + x_{ij}^{L}$$

where $rand_{ij}(0,1)$ is a uniformly distributed random number in [0,1], and $x_{ij}^{U}$ and $x_{ij}^{L}$ represent the upper and lower bounds of the j-th dimension, respectively [4].

Modern variants have introduced more sophisticated initialization schemes to improve initial population diversity. The Halton sequence method has been employed in algorithms like RLDE to achieve more uniform coverage of the search space, enhancing the ergodicity of the initial solution set and potentially improving convergence performance [4].
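To make the idea of Halton-based initialization concrete, the sketch below generates a low-discrepancy population with the standard radical-inverse construction, using one prime base per dimension. It is a generic Halton generator under stated assumptions, not RLDE's actual initialization code, and the helper names are hypothetical.

```python
def radical_inverse(index, base):
    """Reflect the base-`base` digits of `index` about the radix point."""
    result, frac = 0.0, 1.0 / base
    while index > 0:
        result += (index % base) * frac
        index //= base
        frac /= base
    return result

# One prime base per dimension; this short list limits the sketch to 10 dimensions.
PRIMES = [2, 3, 5, 7, 11, 13, 17, 19, 23, 29]

def halton_population(np_size, lower, upper):
    """Map Halton points from the unit cube onto the search box."""
    if len(lower) > len(PRIMES):
        raise ValueError("extend PRIMES for higher-dimensional problems")
    return [
        [l + radical_inverse(i, PRIMES[j]) * (u - l)
         for j, (l, u) in enumerate(zip(lower, upper))]
        for i in range(1, np_size + 1)  # skip index 0, which maps to the corner
    ]

population = halton_population(8, lower=[-5.0, -5.0], upper=[5.0, 5.0])
```

Unlike pseudo-random sampling, consecutive Halton points fill gaps left by earlier points, so even a small population covers the box fairly evenly. In high dimensions the plain sequence shows correlations between coordinates, which is why scrambled variants are often preferred.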

Mutation Strategies

Mutation is the distinctive operation in DE that generates donor vectors by combining differences between population individuals. The choice of mutation strategy significantly influences the search behavior, balancing exploration and exploitation. The table below compares common and advanced mutation strategies used in modern DE variants.

Table 1: Comparison of DE Mutation Strategies

| Strategy Name | Mathematical Formulation | Search Characteristics | Modern Implementations |
|---|---|---|---|
| DE/rand/1 | $v_i = x_{r1} + F\cdot(x_{r2} - x_{r3})$ | High diversity, good exploration | Foundational strategy |
| DE/best/1 | $v_i = x_{best} + F\cdot(x_{r1} - x_{r2})$ | Fast convergence, exploitation | Often modified in modern variants |
| DE/current-to-pbest/1 | $v_i = x_i + F\cdot(x_{pbest} - x_i) + F\cdot(x_{r1} - x_{r2})$ | Balanced exploration-exploitation | JADE, L-SHADE |
| DE/current-to-pbest with enforced spacing | $v_i = x_i + F\cdot(x_{pbest} - x_i) + F\cdot(x_{r1} - x_{r2})$ with minimal distance enforcement | Enhanced exploration of different basins | Recent 2024 improvement [9] |

Recent research has focused on enhancing mutation strategies to overcome local optima. The CM-DE algorithm incorporates a perturbation strategy into the crossover operation based on the t-distribution probability density function, using information from outstanding individuals to guide the search direction [10]. Another 2024 advancement introduces a pbest selection mechanism that enforces minimal distance between the selected pbest individual and other better individuals, increasing the likelihood of exploring different attraction basins in the search space [9].
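The DE/current-to-pbest/1 formula from the table can be sketched as follows. This simplified version draws both difference vectors from the current population and omits the optional external archive that JADE may use for the second one. The function name and the default `p` are illustrative assumptions.

```python
import random

def mutate_current_to_pbest_1(population, fitness, i, F, p=0.1, rng=None):
    """v_i = x_i + F*(x_pbest - x_i) + F*(x_r1 - x_r2), for minimization."""
    rng = rng or random.Random()
    n = len(population)
    # x_pbest is drawn uniformly from the best 100*p percent (at least one individual).
    n_top = max(1, round(p * n))
    ranked = sorted(range(n), key=lambda k: fitness[k])
    pbest = population[rng.choice(ranked[:n_top])]
    r1, r2 = rng.sample([k for k in range(n) if k != i], 2)
    xi = population[i]
    return [x + F * (pb - x) + F * (a - b)
            for x, pb, a, b in zip(xi, pbest, population[r1], population[r2])]

pop = [[0.0, 0.0], [1.0, 2.0], [3.0, 1.0], [-2.0, 4.0], [0.5, 0.5]]
fit = [sum(v * v for v in x) for x in pop]
mutant = mutate_current_to_pbest_1(pop, fit, i=3, F=0.5, rng=random.Random(7))
```

Larger values of `p` widen the pool of guiding individuals and soften the pull toward the current best, which is the lever this strategy offers for balancing exploration and exploitation.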

Crossover Operations

Crossover combines coordinates from the target vector and donor vector to create a trial vector. The binomial crossover is most common, defined as:

$$u_{ij} = \begin{cases} v_{ij} & \text{if } rand(j) \leq CR \text{ or } j = j_{rand} \\ x_{ij} & \text{otherwise} \end{cases}$$

where $rand(j)$ is a uniform random number in [0,1], CR is the crossover rate controlling the probability of parameter inheritance from the donor vector, and $j_{rand}$ ensures at least one parameter comes from the donor vector [4].

Advanced crossover mechanisms include the CM-DE approach that constructs a new crossover operation between the mutant vector and target vector based on the t-distribution probability density function, introducing beneficial perturbations to enhance population diversity [10].

Selection Methods

DE employs greedy selection where the trial vector competes directly against its target vector predecessor:

$$x_i(t+1) = \begin{cases} u_i(t+1) & \text{if } f(u_i(t+1)) < f(x_i(t)) \\ x_i(t) & \text{otherwise} \end{cases}$$

This deterministic selection pressure drives the population toward better regions of the search space [4].

Modern enhancements include archive-based selection mechanisms that store successful solutions and reuse them when the population shows signs of stagnation. The External Selection Mechanism (ESM) employs a crowding entropy diversity measurement in the fitness landscape combined with fitness rank to maintain archive diversity and quality [11]. Similarly, RLDE implements a ranking mechanism where all solutions are sorted by fitness after evolution, applying different strategies to different groups to retain better solutions and improve poorer ones [4].

Advanced Mechanisms in Modern DE Variants

Parameter Adaptation Techniques

Control parameters F (scale factor) and CR (crossover rate) significantly impact DE performance. Modern variants have moved from fixed parameters to sophisticated adaptation mechanisms:

- CM-DE uses a dual-phase approach for F: improved wavelet basis function in early evolution and Cauchy distribution in later stages, while CR follows a normal distribution [10]

- RLDE establishes a dynamic parameter adjustment mechanism using a policy gradient network within a reinforcement learning framework for online adaptive optimization of F and CR [4]

- ISDE implements a jump-out mechanism based on deep reinforcement learning to control mutation intensity, with neural networks trained by a double deep Q-network algorithm using continuous evolutionary data [12]
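The mechanisms listed above are specific to their respective papers and are not reproduced here. As a simpler point of reference, the sketch below shows the well-known JADE-style scheme, in which F is sampled from a Cauchy distribution and CR from a normal distribution around running means that are pulled toward recently successful values. Function names and the example inputs are assumptions.

```python
import math
import random

def sample_cr(mu_cr, rng):
    """CR ~ Normal(mu_cr, 0.1), truncated to [0, 1]."""
    return min(1.0, max(0.0, rng.gauss(mu_cr, 0.1)))

def sample_f(mu_f, rng):
    """F ~ Cauchy(mu_f, 0.1): resample if non-positive, cap at 1."""
    while True:
        f = mu_f + 0.1 * math.tan(math.pi * (rng.random() - 0.5))
        if f > 0:
            return min(f, 1.0)

def update_means(mu_f, mu_cr, successful_f, successful_cr, c=0.1):
    """Move the means toward parameter values that produced surviving trials."""
    if successful_f:
        # The Lehmer mean weights larger F values more heavily.
        lehmer = sum(f * f for f in successful_f) / sum(successful_f)
        mu_f = (1 - c) * mu_f + c * lehmer
    if successful_cr:
        mu_cr = (1 - c) * mu_cr + c * sum(successful_cr) / len(successful_cr)
    return mu_f, mu_cr

rng = random.Random(0)
F_i, CR_i = sample_f(0.5, rng), sample_cr(0.5, rng)
mu_f, mu_cr = update_means(0.5, 0.5, [0.5, 1.0], [0.2, 0.4])
```

Success-history and reinforcement-learning approaches can be read as richer replacements for `update_means`: they keep the same feedback loop but learn the mapping from search state to parameter values in a more elaborate way.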

Diversity Maintenance

Maintaining population diversity is crucial for preventing premature convergence:

- CM-DE calculates the covariance matrix of the population, using variance as an indicator of individual similarity. When diversity drops below a threshold, a competition mechanism perturbs stagnant individuals using t-distribution or Cauchy distribution [10]

- ISDE introduces a Population Range Indicator (PRI) to describe individual differences, with a diversity maintenance mechanism that schedules individuals based on PRI values [12]

- ESM employs information entropy rather than distance metrics to maintain archive diversity, storing successful solutions and using them when stagnation is detected [11]
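A deliberately simplified diversity check, loosely in the spirit of the variance-based indicator described for CM-DE, is sketched below. It averages the per-dimension population variance (the diagonal of the covariance matrix) and compares it to a threshold. The threshold value and function names are assumptions, and the published algorithms use more elaborate rules.

```python
import statistics

def mean_dimension_variance(population):
    """Average of the per-dimension variances across the population."""
    return statistics.mean(statistics.pvariance(dim) for dim in zip(*population))

def has_stagnated(population, threshold=1e-8):
    """Flag a population whose individuals have become nearly identical."""
    return mean_dimension_variance(population) < threshold

spread_out = [[0.0, 0.0], [2.0, 2.0]]
collapsed = [[1.0, 1.0], [1.0, 1.0], [1.0, 1.0]]
flags = (has_stagnated(spread_out), has_stagnated(collapsed))
```

When such a check fires, a diversity mechanism would typically perturb or re-initialize part of the population rather than restart the whole search.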

Hybridization with Machine Learning

Reinforcement learning has been successfully integrated into DE for enhanced decision-making:

- RLDE uses a policy gradient network to adjust control parameters, where the DE population evolution process serves as the interaction environment for RL [4]

- ISDE incorporates a deep reinforcement learning-based jump-out mechanism where neural networks control mutation intensity based on historical evolutionary information [12]

Experimental Comparison of Modern DE Variants

Benchmark Protocols

Modern DE variants are typically evaluated using standardized benchmark suites from the Congress on Evolutionary Computation (CEC). The CEC2017 and CEC2024 test suites are commonly employed, containing unimodal, multimodal, hybrid, and composition functions that test various algorithm capabilities [10] [5]. Statistical comparison methods include the Wilcoxon signed-rank test for pairwise comparisons, the Friedman test for multiple comparisons, and the Mann-Whitney U-score test, with performance evaluation across dimensions of 10D, 30D, 50D, and 100D [5].

Performance Comparison

The table below summarizes experimental results from recent studies comparing modern DE variants:

Table 2: Performance Comparison of Modern DE Algorithms on CEC2017 Benchmark (50D)

| Algorithm | Key Mechanisms | Performance vs. Baseline Algorithms | Strengths |
|---|---|---|---|
| CM-DE | Covariance matrix diversity measure, parameter adaptation with wavelet and Cauchy distribution | 22/30 better than LSHADE, 20/30 better than jSO, 24/30 better than LPalmDE, 23/30 better than PaDE, 28/30 better than LSHADE-cnEpSin [10] | Excellent solution accuracy, effective diversity maintenance |
| RLDE | Reinforcement learning parameter control, Halton sequence initialization, differentiated mutation | Significant enhancement in global optimization performance across 26 test functions in 10D, 30D, 50D [4] | Strong convergence performance, effective parameter adaptation |
| ISDE | Population Range Indicator, deep RL jump-out mechanism, adaptive optimization operator | Superior comprehensive performance on CEC2017 with reasonable computational complexity [12] | Self-learning capability, effective local optima escape |
| ESM-enhanced DE | External selection mechanism, crowding entropy diversity, successful solution archive | Improved accuracy of DE and its variants simultaneously without increased computational complexity [11] | Universal applicability, effective stagnation recovery |

The Researcher's Toolkit: Essential Components for Modern DE

Table 3: Key Research Reagents and Computational Tools for DE Experimentation

| Component | Function | Example Implementations |
|---|---|---|
| CEC Benchmark Suites | Standardized performance evaluation | CEC2013, CEC2014, CEC2017, CEC2024 test functions [10] [5] |
| Statistical Testing Frameworks | Rigorous algorithm comparison | Wilcoxon signed-rank test, Friedman test, Mann-Whitney U-score test [5] |
| Diversity Metrics | Population diversity quantification | Population Range Indicator (PRI), covariance matrix variance, crowding entropy [10] [12] [11] |
| Parameter Adaptation Mechanisms | Dynamic parameter control | Wavelet-Cauchy adaptation, reinforcement learning policy networks, histogram-based adaptation [10] [4] |
| Archive Systems | Preservation and reuse of promising solutions | External Selection Mechanism (ESM), successful solution archives [11] |
| Hybridization Frameworks | Integration of machine learning with evolutionary algorithms | Deep Q-networks for jump-out control, policy gradient for parameter adaptation [4] [12] |

The fundamental mechanics of Differential Evolution have evolved significantly from the canonical algorithm through systematic enhancements to initialization, mutation, crossover, and selection operations. Modern DE variants demonstrate that adaptive parameter control, diversity preservation mechanisms, and strategic hybridization with machine learning techniques substantially improve performance on complex optimization problems. The experimental results consistently show that algorithms incorporating covariance matrix diversity measures (CM-DE), reinforcement learning-based parameter adaptation (RLDE, ISDE), and external selection mechanisms (ESM) outperform established DE variants across diverse benchmark functions. Future development will likely focus on more sophisticated learning mechanisms, improved balance between exploration and exploitation, and specialized operators for high-dimensional and constrained optimization problems. For researchers working with DE algorithms, the current evidence suggests prioritizing variants with adaptive mechanisms and diversity preservation features for the most challenging optimization scenarios.

Differential Evolution (DE), first described by Storn and Price in 1995 and formally published in 1997, has emerged as one of the most versatile and robust population-based metaheuristics for solving complex optimization problems across scientific and engineering domains. DE borrows the evolutionary vocabulary of mutation, crossover, and selection, but unlike many nature-inspired algorithms it does not model a specific biological process. Instead, it relies on straightforward vector arithmetic to navigate continuous search spaces, which makes it particularly valuable for real-valued function optimization. Its simplicity, effectiveness, and minimal parameter requirements have contributed to its widespread adoption and continued development over nearly three decades.

The methodological evolution of DE represents a fascinating case study in algorithmic refinement, characterized by successive enhancements to its core components: initialization, mutation, crossover, and selection operations. From the classical "DE/rand/1" strategy to contemporary hybrids integrating machine learning, DE has demonstrated remarkable adaptability to increasingly complex optimization landscapes. This evolution has been particularly crucial for addressing modern challenges in fields such as drug discovery, structural engineering, and aerial robotics, where optimization problems often exhibit high dimensionality, multimodality, and complex constraints. The algorithm's journey reflects a broader trend in computational intelligence toward more adaptive, self-configuring, and problem-aware optimization techniques.

This article provides a comprehensive analysis of DE's algorithmic progression, focusing on the mechanisms that underpin performance improvements in contemporary variants. By examining experimental results across benchmark functions and real-world applications, we aim to equip researchers and practitioners with the insights needed to select appropriate DE variants for their specific optimization challenges.

Historical Trajectory: From Foundational Principles to Modern Frameworks

The Original DE Algorithm: Foundational Concepts

The classical DE algorithm operates through a simple yet powerful sequence of operations: initialization, mutation, crossover, and selection. Initially developed for continuous optimization problems, DE maintains a population of candidate solutions that evolve over generations through differential mutation and crossover operations. The basic "DE/rand/1" mutation strategy creates a donor vector for each target vector by adding the scaled difference between two randomly selected population vectors to a third vector, embodying the algorithm's fundamental principle of leveraging vector differences for exploration.

The original DE formulation requires only three control parameters: population size (NP), scaling factor (F), and crossover rate (CR), contributing to its reputation as an accessible and easily implementable optimization technique. Despite its parametric simplicity, DE has demonstrated remarkable performance across diverse optimization landscapes, establishing itself as a competitive alternative to more established evolutionary algorithms. Its greedy selection mechanism, where offspring replace parents only if they exhibit superior fitness, facilitates rapid convergence toward promising regions of the search space while maintaining exploratory potential through stochastic differential operations.
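Putting the pieces together, a compact DE/rand/1/bin loop driven by the three control parameters NP, F, and CR might look like the sketch below. The default values, the clipping of out-of-bounds components, and the sphere objective are illustrative assumptions rather than recommendations from the cited sources. Ties are accepted in selection; a strict `<` would implement the "superior fitness only" reading.

```python
import random

def differential_evolution(f, lower, upper, NP=20, F=0.5, CR=0.9,
                           generations=200, seed=0):
    """Minimal DE/rand/1/bin for minimization; returns (best_vector, best_value)."""
    rng = random.Random(seed)
    D = len(lower)
    pop = [[rng.uniform(l, u) for l, u in zip(lower, upper)] for _ in range(NP)]
    fit = [f(x) for x in pop]
    for _ in range(generations):
        next_pop, next_fit = [], []
        for i in range(NP):
            r1, r2, r3 = rng.sample([k for k in range(NP) if k != i], 3)
            j_rand = rng.randrange(D)
            trial = []
            for j in range(D):
                if rng.random() <= CR or j == j_rand:
                    v = pop[r1][j] + F * (pop[r2][j] - pop[r3][j])
                    v = min(max(v, lower[j]), upper[j])  # clip to bounds (assumption)
                else:
                    v = pop[i][j]
                trial.append(v)
            f_trial = f(trial)
            # One-to-one greedy selection; the old generation stays intact until the swap.
            if f_trial <= fit[i]:
                next_pop.append(trial)
                next_fit.append(f_trial)
            else:
                next_pop.append(pop[i])
                next_fit.append(fit[i])
        pop, fit = next_pop, next_fit
    best = min(range(NP), key=lambda k: fit[k])
    return pop[best], fit[best]

def sphere(x):
    return sum(v * v for v in x)

best_x, best_f = differential_evolution(sphere, [-5.0] * 3, [5.0] * 3)
```

The whole algorithm fits in roughly thirty lines, which illustrates why DE is regarded as easy to implement. Most of the variants discussed in this article change only how `F`, `CR`, the donor vector, or the population itself are produced.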

Key Transitions in DE Research

DE research has evolved through several distinct phases, each marked by conceptual advances addressing specific algorithmic limitations:

Parameter Adaptation Era: Early enhancements focused on overcoming the sensitivity of DE performance to parameter settings, leading to self-adaptive and adaptive mechanisms that dynamically adjust F and CR during the optimization process. Variants like jDE and SaDE represented significant milestones in this direction, reducing the need for manual parameter tuning while improving robustness across problem domains.

Strategy Ensemble Approaches: Recognizing that no single mutation strategy performs optimally across all problems, researchers developed ensemble methods that combine multiple mutation and parameter selection strategies within a unified framework. EPSDE and CoDE exemplify this approach, leveraging the complementary strengths of different strategies to enhance overall performance.

Hybridization Trends: More recently, DE has been successfully hybridized with other optimization techniques, including vortex search, reinforcement learning, and local search methods, creating algorithms that balance DE's exploratory strength with enhanced exploitation capabilities. These hybrids often employ sophisticated population structures and adaptive mechanisms to dynamically balance exploration and exploitation throughout the search process.
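As a concrete instance of the self-adaptive idea from the parameter adaptation era, the jDE rule attaches its own F and CR to every individual and occasionally regenerates them before producing a trial vector. The sketch below uses the commonly cited defaults (regeneration probabilities of 0.1 and an F range starting at 0.1), which should be checked against the original jDE paper before being relied on. The function name is hypothetical.

```python
import random

def jde_update(F_i, CR_i, rng, tau1=0.1, tau2=0.1, F_l=0.1, F_u=0.9):
    """Return the (F, CR) pair that individual i uses for its next trial vector."""
    # With probability tau1, draw a fresh F in [F_l, F_l + F_u); otherwise inherit it.
    new_F = F_l + rng.random() * F_u if rng.random() < tau1 else F_i
    # With probability tau2, draw a fresh CR in [0, 1); otherwise inherit it.
    new_CR = rng.random() if rng.random() < tau2 else CR_i
    return new_F, new_CR

rng = random.Random(0)
F_new, CR_new = jde_update(0.5, 0.9, rng)
```

The new values are kept only if the resulting trial vector survives selection, so parameter settings that produce improvements propagate through the population without manual tuning.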

Contemporary DE Variants: A Landscape of Specialized Approaches

The DE research landscape has diversified significantly, with contemporary variants targeting specific optimization challenges through specialized mechanisms. The table below summarizes prominent DE variants and their distinctive features:

Table 1: Classification of Contemporary Differential Evolution Variants

| Variant Category | Representative Algorithms | Core Innovation | Target Problem Type |
|---|---|---|---|
| Parameter-Adaptive DE | jDE, SaDE, JADE | Self-adaptive control parameters (F, CR) | General-purpose optimization |
| Ensemble DE | EPSDE, CoDE, EDEV | Multiple mutation strategies and parameter sets | Complex multimodal problems |
| Hybrid DE | DE/VS, DE-BP, DEGSA | Integration with complementary algorithms | Engineering design problems |
| Multi-objective DE | MODE-FDGM, ε-MyDE, DEMO | Pareto dominance and diversity preservation | Multi-objective optimization |
| Multimodal DE | Niching DE, Crowding DE | Niche formation and maintenance | Multimodal optimization |
| Reinforcement Learning-enhanced DE | RLDE, RL-DE | Policy gradient networks for parameter control | High-dimensional problems |

Hybrid DE Variants: Synergistic Optimization

Hybrid DE algorithms represent one of the most extensively studied areas in evolutionary computation, combining DE with complementary optimization techniques to harness their synergistic strengths. The recently proposed DE/VS hybrid algorithm integrates DE with Vortex Search (VS) to address the critical challenge of balancing exploration and exploitation in complex search spaces. This framework introduces a hierarchical subpopulation structure and dynamic population size adjustment to maintain an effective trade-off between these competing objectives. While DE provides robust exploration capabilities, it often struggles with precise exploitation, whereas VS excels at local refinement but lacks global exploration, often leading to premature convergence. By combining these approaches, DE/VS achieves superior performance across diverse benchmark functions and engineering problems [13].

Another significant hybrid approach, the COASaDE Optimizer, merges the Crayfish Optimization Algorithm (COA) with Self-adaptive Differential Evolution (SaDE) to solve complex optimization and engineering design problems. Experimental results using benchmark functions and engineering challenges confirm the hybrid optimizer's superior performance, robustness, and efficiency compared to other state-of-the-art algorithms. Similarly, the MBDE algorithm combines DE with Particle Swarm Optimization (PSO), demonstrating remarkable solution quality, convergence rate, efficiency, and efficacy when tackling complex continuous optimization problems [13].

Multi-objective DE Variants: Expanding to Pareto Optimization

Multi-objective DE variants extend the algorithm's capabilities to problems with multiple conflicting objectives, which are prevalent in science and engineering applications. The recently proposed MODE-FDGM algorithm incorporates a directional generation mechanism that leverages current and past information to rapidly build feasible solutions, boosting both speed and quality in exploring Pareto non-dominated space. This approach includes an update mechanism that combines crowding distance evaluation with historical information to enhance diversity and improve the ability to escape local optima [7].

Another innovative multi-objective approach, Archive-based Parameter-free Multi-objective Rao-Differential Evolution (APMORD), embeds the parameter-free Rao-1 mutation into a differential evolution framework and pairs it with an elite archive plus dynamic population-size control. This method eliminates manual hyper-parameter tuning while achieving faster convergence and a well-spread Pareto front. These advances address the perennial challenge in multi-objective optimization of balancing convergence with diversity maintenance throughout the search process [7].

Reinforcement Learning-Enhanced DE: Toward Intelligent Adaptation

The integration of reinforcement learning (RL) with DE represents a cutting-edge approach to addressing the algorithm's parameter sensitivity and premature convergence issues. The RLDE algorithm establishes a dynamic parameter adjustment mechanism based on a policy gradient network, adapting the scaling factor and crossover probability online through an RL framework. It also classifies the population according to individual fitness values and applies a differentiated mutation strategy to each class, which is reported to significantly enhance global optimization performance while maintaining computational efficiency [4].

Another RL-enhanced approach, RL-DE, adaptively adjusts the mutation scalar based on the evolution environment, demonstrating improved performance across standard test functions and engineering applications such as UAV task assignment. By framing the population evolution process as the interaction environment for RL, these integrated approaches compensate for DE's inherent limitations through real-time adaptation to the evolving search landscape [4] [14].

Experimental Comparison: Performance Across Variants and Dimensions

Methodological Framework for Comparative Analysis

Robust evaluation of DE variants requires carefully designed experimental methodologies employing appropriate statistical tests to ensure reliable conclusions. Recent comparative studies have utilized non-parametric statistical tests including the Wilcoxon signed-rank test for pairwise comparisons, the Friedman test for multiple comparisons, and the Mann-Whitney U-score test to determine performance rankings. These tests are preferred over parametric alternatives due to their fewer restrictions and suitability for comparing stochastic optimization algorithms whose performance data often violate normality assumptions [5].

Comprehensive evaluation typically involves testing algorithms across standard benchmark functions from the CEC Special Session and Competition on Single Objective Real Parameter Numerical Optimization, with problems categorized as unimodal, multimodal, hybrid, and composition functions. Performance is assessed across multiple dimensions (commonly 10D, 30D, 50D, and 100D) to evaluate scalability, with multiple independent runs conducted for each algorithm-function-dimension combination to account for random variation. Solution quality is measured primarily through error values (difference from known optimum), while convergence speed may be evaluated through generations or function evaluations to specified thresholds [5].
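As a rough illustration of how the per-function results from such a protocol are condensed into the mean ranks that the Friedman test operates on, the sketch below ranks algorithms on each benchmark function by error (1 = best, ties share the average rank) and averages those ranks. The algorithm names and error values are made up for the example, and the helper names `average_ranks` and `mean_ranks` are hypothetical rather than taken from any cited study.

```python
def average_ranks(values):
    """Rank values ascending (1 = smallest); tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        shared = (pos + end) / 2 + 1  # average of the 1-based positions pos+1..end+1
        for k in range(pos, end + 1):
            ranks[order[k]] = shared
        pos = end + 1
    return ranks


def mean_ranks(errors):
    """errors[name] holds one mean error per benchmark function for that algorithm."""
    names = list(errors)
    n_problems = len(errors[names[0]])
    totals = {name: 0.0 for name in names}
    for p in range(n_problems):
        ranks = average_ranks([errors[name][p] for name in names])
        for name, rank in zip(names, ranks):
            totals[name] += rank
    return {name: totals[name] / n_problems for name in names}


# Made-up mean errors for three placeholder algorithms on four functions.
demo_errors = {
    "alg_a": [1e-8, 2.0, 0.5, 10.0],
    "alg_b": [1e-6, 1.0, 0.5, 12.0],
    "alg_c": [1e-3, 3.0, 0.9, 11.0],
}
demo_mean_ranks = mean_ranks(demo_errors)
```

Lower mean ranks indicate better performance. Whether the differences are statistically significant still has to be checked with the Friedman test and a post-hoc procedure, which this sketch does not implement.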

Table 2: Performance Comparison of DE Variants Across Problem Dimensions (Mean Rank)

| Algorithm | 10D | 30D | 50D | 100D | Overall Rank |

|---|---|---|---|---|---|

| RLDE | 2.1 | 1.8 | 1.5 | 1.3 | 1.7 |

| DE/VS | 2.3 | 2.1 | 2.3 | 2.5 | 2.3 |

| MODE-FDGM | 2.8 | 2.5 | 2.1 | 1.9 | 2.3 |

| TDE | 2.5 | 2.8 | 3.1 | 3.4 | 2.9 |

| JADE | 3.2 | 3.5 | 3.3 | 3.1 | 3.3 |

| CoDE | 3.8 | 4.2 | 4.5 | 4.8 | 4.3 |

Note: Lower ranks indicate better performance. Data derived from comparative studies in [5] [4].

Performance Analysis Across Problem Types

Experimental results demonstrate that contemporary DE variants exhibit specialized performance profiles across different problem types. The RLDE algorithm consistently achieves top performance across dimensions, particularly excelling in higher-dimensional problems (50D and 100D) where its reinforcement learning-based parameter adaptation provides significant advantages. The DE/VS hybrid shows strong performance across all dimensions, with particularly good results in 10D and 30D problems, while MODE-FDGM demonstrates improving relative performance as dimensionality increases, suggesting its directional generation mechanism effectively navigates complex high-dimensional search spaces [5] [13] [4].

The TDE algorithm, which employs three mutation operators categorized by their characteristics, is competitive at low dimensionality but loses ground as dimensionality grows (mean rank 2.5 at 10D versus 3.4 at 100D). Its approach of randomly selecting mutation strategies from three categories during evolution allows it to adapt to different problem landscapes without requiring explicit mechanism selection. Among established algorithms, JADE holds a steady mean rank across dimensions (3.1 to 3.5), benefiting from its adaptive parameter control and optional external archive, while CoDE ranks last and degrades further in higher dimensions, possibly due to its fixed strategy pool [14].

Application-Specific Performance: Case Studies in Engineering and Drug Discovery

Structural Engineering Optimization

In constrained structural optimization problems, particularly weight minimization of truss structures subject to stress and displacement constraints, DE variants have demonstrated exceptional performance. A comparative study evaluating standard DE, composite DE (CoDE), adaptive DE with optional external archive (JADE), and self-adaptive DE (jDE and SaDE) on 2D and 3D benchmark truss structures revealed that DE is among the most reliable algorithms, showing robustness, excellent performance, and scalability for such problems. The self-adaptive variants (jDE and SaDE) particularly excelled in handling the high nonlinearity and complex constraint landscapes characteristic of structural optimization problems, effectively balancing exploration and exploitation throughout the search process [15].

Constraint handling in these engineering applications is typically managed through penalty functions, with the constrained problem transformed to an unconstrained one through the addition of penalty terms that increase objective function values when constraints are violated. The effectiveness of different DE variants in navigating these transformed fitness landscapes contributes significantly to their relative performance, with adaptive and self-adaptive approaches demonstrating advantages in dynamically balancing constraint satisfaction and objective optimization [15].
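The penalty transformation described above can be sketched as a small wrapper. This is a minimal illustration that assumes a static quadratic penalty and constraints written in the form g(x) <= 0. The penalty weight, the toy "weight" objective, and the toy "stress" limit are all invented for the example and do not come from the cited study.

```python
def penalized_objective(objective, constraints, penalty_weight=1e6):
    """Wrap a constrained minimisation problem as an unconstrained one.

    Each constraint g is treated as satisfied when g(x) <= 0 (an assumption
    of this sketch); violations are squared and added to the objective.
    """
    def wrapped(x):
        violation = sum(max(0.0, g(x)) ** 2 for g in constraints)
        return objective(x) + penalty_weight * violation
    return wrapped


# Toy stand-in for a sizing problem: minimise x0 + x1 ("weight")
# subject to a made-up limit 1/x0 + 1/x1 <= 4 ("stress").
def weight(x):
    return x[0] + x[1]


def stress_limit(x):
    return 1.0 / x[0] + 1.0 / x[1] - 4.0


fitness = penalized_objective(weight, [stress_limit])
feasible_value = fitness([1.0, 1.0])        # constraint satisfied: no penalty
infeasible_value = fitness([0.25, 0.25])    # constraint violated: penalty dominates
```

Any DE variant can then minimise `fitness` directly. The choice of penalty weight matters in practice: too small and infeasible designs win, too large and the landscape becomes hard to search near the constraint boundary.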

Drug-Target Binding Affinity Prediction

In pharmaceutical applications, DE has proven valuable for optimizing complex deep learning models used in drug discovery. A recent study employed DE for hyperparameter optimization of a Convolution Self-Attention Network with Attention-based Bidirectional Long Short-Term Memory Network (CSAN-BiLSTM-Att) to predict drug-target binding affinities. The DE-optimized model achieved a concordance index of 0.898 and a mean square error of 0.228 on the DAVIS dataset, and 0.971 concordance with 0.014 mean square error on the KIBA dataset, outperforming previous approaches and demonstrating DE's effectiveness in tuning complex machine learning architectures [16].
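For readers unfamiliar with the two metrics reported here, the sketch below computes a concordance index and a mean square error on a handful of made-up affinity values. It uses one common pairwise definition of the concordance index (pairs with equal true values are skipped, tied predictions count as half); whether the cited study uses exactly this variant is an assumption.

```python
def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs whose predicted order matches the true order."""
    concordant = 0.0
    comparable = 0
    n = len(y_true)
    for i in range(n):
        for j in range(i + 1, n):
            if y_true[i] == y_true[j]:
                continue  # pairs with equal true values are not comparable
            comparable += 1
            true_diff = y_true[i] - y_true[j]
            pred_diff = y_pred[i] - y_pred[j]
            if pred_diff == 0:
                concordant += 0.5  # tied prediction counts as half
            elif (true_diff > 0) == (pred_diff > 0):
                concordant += 1.0
    return concordant / comparable


def mean_squared_error(y_true, y_pred):
    """Average squared difference between true and predicted values."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


# Made-up binding affinities, for illustration only.
true_affinity = [5.0, 6.0, 7.0, 8.0]
pred_affinity = [5.5, 5.0, 7.5, 8.5]
ci = concordance_index(true_affinity, pred_affinity)
mse = mean_squared_error(true_affinity, pred_affinity)
```

In a DE-based hyperparameter search, a validation-set value of one of these metrics would typically serve as the fitness that DE minimises (MSE) or maximises (concordance index).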

This application highlights DE's utility beyond direct engineering optimization, showcasing its capability to enhance predictive model performance in computational drug discovery. By optimizing hyperparameters of deep learning models, DE contributes to more accurate prediction of drug-target interactions, potentially reducing the time and financial resources required for experimental drug discovery while maintaining high prediction accuracy [16].

Table 3: Research Reagent Solutions for Differential Evolution Applications

| Tool/Resource | Function | Application Context |

|---|---|---|

| CEC Benchmark Functions | Standardized performance evaluation | Algorithm comparison and validation |

| Wilcoxon Signed-Rank Test | Pairwise statistical comparison | Experimental results analysis |

| Friedman Test | Multiple algorithm comparison | Ranking-based performance assessment |

| Halton Sequence | Quasi-random population initialization | Enhancing initial population diversity |

| Policy Gradient Networks | Reinforcement learning-based parameter control | Adaptive parameter adjustment in RLDE |

| Niching Techniques | Multi-modal optimization | Maintaining multiple solution subpopulations |

| Crowding Distance Metrics | Diversity preservation | Multi-objective optimization |

| Archive Mechanisms | Elite solution preservation | External storage of non-dominated solutions |
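Of the resources listed in the table, quasi-random initialization is the simplest to illustrate. The following is a minimal sketch of a Halton-sequence population generator, assuming one prime base per dimension and a box-shaped search space; the function names and the default list of bases are choices made for this example, not part of RLDE or any cited implementation.

```python
def radical_inverse(index, base):
    """Reflect the base-`base` digits of index about the radix point."""
    result, scale = 0.0, 1.0 / base
    while index > 0:
        result += (index % base) * scale
        index //= base
        scale /= base
    return result


def halton_population(pop_size, bounds, bases=(2, 3, 5, 7, 11, 13)):
    """Map the first pop_size Halton points onto the box given by bounds."""
    if len(bounds) > len(bases):
        raise ValueError("supply one prime base per dimension")
    return [
        [lo + radical_inverse(n, bases[d]) * (hi - lo)
         for d, (lo, hi) in enumerate(bounds)]
        for n in range(1, pop_size + 1)
    ]


# Four points in a 2-D box; the first coordinate follows 1/2, 1/4, 3/4, 1/8.
halton_points = halton_population(4, [(0.0, 1.0), (-5.0, 5.0)])
```

Compared with uniform random sampling, the points cover the box more evenly for small populations, which is the motivation for using them to seed the initial population.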

The algorithmic evolution of Differential Evolution has transformed it from a simple, elegant optimizer to a sophisticated, adaptive optimization framework capable of tackling increasingly complex challenges across diverse domains. Contemporary research trends indicate several promising directions for future development, including tighter integration with machine learning techniques for enhanced adaptability, improved theoretical foundations explaining DE's empirical success, and specialized variants targeting emerging application domains such as large-scale machine learning and complex systems design.

The continued progression toward parameter-free or self-adaptive approaches reduces the need for manual algorithm configuration, making powerful optimization accessible to non-specialists while maintaining competitive performance. Additionally, the growing emphasis on multimodal optimization capabilities addresses the practical need for diverse solution sets in many real-world applications where multiple alternatives offer valuable flexibility for decision-makers. As DE continues to evolve, its core principles of differential mutation and selection maintain their relevance, providing a stable foundation upon which increasingly sophisticated enhancements continue to be built.

DE Algorithm Workflow with Adaptive Strategy Selection

Differential Evolution (DE), introduced by Storn and Price in the mid-1990s, represents a simple yet powerful evolutionary algorithm for solving complex optimization problems across continuous spaces [17]. Its operational framework, built upon population initialization, mutation, crossover, and selection, has demonstrated remarkable versatility in handling non-differentiable, nonlinear, and multimodal objective functions common in scientific and engineering domains [4] [5]. However, the performance of the classical DE algorithm exhibits significant sensitivity to the setting of its control parameters—specifically the scaling factor (F) and crossover rate (CR)—and the selection of mutation strategies [18]. This limitation prompted extensive research into adaptive mechanisms, leading to the development of sophisticated DE variants that dynamically adjust their parameters and strategies during the optimization process.

Among the plethora of DE enhancements, the algorithms originating from the JADE framework have demonstrated particularly impressive performance, with many securing top positions in prestigious IEEE Congress on Evolutionary Computation (CEC) competitions [19] [20]. This review provides a comprehensive taxonomic analysis of these modern DE variants—JADE, SHADE, and L-SHADE—elucidating their architectural relationships, mechanistic improvements, and comparative performance across benchmark functions and real-world applications. By examining their evolutionary pathways and experimental validations, we aim to establish a clear understanding of how these algorithms have advanced the state-of-the-art in evolutionary computation.

Algorithmic Architectures and Evolutionary Pathways

JADE: Establishing the Adaptive Foundation

JADE (Adaptive Differential Evolution with Optional External Archive), introduced by Zhang and Sanderson in 2009, represented a paradigm shift in parameter adaptation within DE algorithms [19]. Its architecture introduced two fundamental innovations that distinguished it from prior DE variants:

Current-to-pbest/1 Mutation Strategy: This strategy incorporates information from high-quality solutions to guide the search process more efficiently:

\[ V_i^G = X_i^G + F_i \cdot (X_{pbest}^G - X_i^G) + F_i \cdot (X_{r1}^G - X_{r2}^G) \]

where \(X_{pbest}^G\) is randomly chosen from the top 100p% individuals in the current population (with p ∈ (0,1]), and \(X_{r2}^G\) is selected from a union of the current population and an external archive [19] [18]. This approach balances exploration and exploitation more effectively than classical strategies like DE/rand/1 or DE/best/1.

Adaptive Parameter Control: JADE implements a self-adaptive mechanism for updating the crossover rate (CR) and scaling factor (F) based on previously successful values. The control parameters are sampled from probability distributions whose locations are updated according to the learning memory of successful configurations in previous generations [19].

The external archive in JADE maintains recently discarded inferior solutions, providing additional diversity in the mutation process and helping prevent premature convergence [19]. These architectural innovations established JADE as one of the "important variants of DE" and created a foundation for subsequent developments.
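The current-to-pbest/1 mutation with an external archive can be sketched as follows. This is a minimal illustration under stated assumptions: minimisation, vectors stored as plain Python lists, and a default p of 0.1. The function name, its signature, and the way distinct indices are enforced are choices made for this example rather than details taken from the JADE paper.

```python
import random


def current_to_pbest_1(population, fitness, archive, i, F, p=0.1, rng=random):
    """Build one mutant vector for individual i (minimisation assumed)."""
    n = len(population)
    # X_pbest: a random member of the best 100p% of the current population.
    n_top = max(1, int(round(p * n)))
    ranked = sorted(range(n), key=lambda k: fitness[k])
    pbest = population[rng.choice(ranked[:n_top])]
    # X_r1: drawn from the current population, different from i.
    r1 = rng.choice([k for k in range(n) if k != i])
    # X_r2: drawn from population plus archive, excluding individuals i and r1.
    pool = [population[k] for k in range(n) if k not in (i, r1)] + list(archive)
    x_r2 = rng.choice(pool)
    x_i, x_r1 = population[i], population[r1]
    return [
        xi + F * (pb - xi) + F * (a - b)
        for xi, pb, a, b in zip(x_i, pbest, x_r1, x_r2)
    ]


# Small demonstration on a made-up 2-D population with a one-entry archive.
demo_rng = random.Random(0)
demo_population = [[demo_rng.uniform(-5, 5) for _ in range(2)] for _ in range(6)]
demo_fitness = [sum(v * v for v in x) for x in demo_population]
demo_archive = [[0.5, -0.5]]
mutant = current_to_pbest_1(demo_population, demo_fitness, demo_archive,
                            i=0, F=0.5, rng=demo_rng)
```

Small values of p make the search greedier because `pbest` is drawn from fewer elite individuals, while the archive entries widen the set of difference vectors available to the second term.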

SHADE: Enhancing Memory for Parameter Adaptation

SHADE (Success-History Based Adaptive Differential Evolution) extends JADE's adaptive framework by incorporating a historical memory of successful control parameters [19]. This enhancement addresses limitations in JADE's adaptation mechanism by providing more robust and stable parameter control:

Historical Memory Architecture: SHADE maintains a memory pool \(M_{CR}\) and \(M_F\) containing the mean values of successful CR and F parameters from previous generations. This historical perspective enables more informed parameter adaptation compared to JADE's immediate feedback approach [19].

Dynamic Memory Utilization: During each generation, individuals draw their control parameters from the historical memory rather than relying solely on recent successful values. The memory components are updated at the end of each generation based on the current successful parameter values, with a progressive weighting scheme that prioritizes more significant improvements [19].

The retention of successful parameter history allows SHADE to better accommodate the varying optimization landscape requirements throughout the evolutionary process, demonstrating superior performance consistency across diverse problem types [19].
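One way to picture the success-history memory is the sketch below. It follows the scheme as commonly described for SHADE: a normal draw for CR, a Cauchy draw for F, an improvement-weighted Lehmer mean for F, and an improvement-weighted arithmetic mean for CR. The memory size, the 0.1 spread, the initial value of 0.5, and the class and method names are assumptions for illustration, not values confirmed by this article.

```python
import math
import random


class SuccessHistory:
    """Circular memory of F and CR values that produced improvements."""

    def __init__(self, size=5, initial=0.5):
        self.m_f = [initial] * size
        self.m_cr = [initial] * size
        self.slot = 0  # next memory entry to overwrite

    def sample(self, rng=random):
        """Draw (F, CR) for one individual from a randomly chosen memory entry."""
        r = rng.randrange(len(self.m_f))
        cr = min(1.0, max(0.0, rng.gauss(self.m_cr[r], 0.1)))
        f = 0.0
        while f <= 0.0:  # redraw non-positive F values
            # Cauchy sample around m_f[r] via the inverse CDF.
            f = self.m_f[r] + 0.1 * math.tan(math.pi * (rng.random() - 0.5))
        return min(f, 1.0), cr

    def update(self, successful_f, successful_cr, improvements):
        """Overwrite one entry with improvement-weighted means of this generation."""
        total = sum(improvements)
        if not successful_f or total <= 0.0:
            return  # nothing succeeded: leave the memory unchanged
        w = [d / total for d in improvements]
        # Weighted Lehmer mean for F, weighted arithmetic mean for CR.
        self.m_f[self.slot] = (
            sum(wk * f * f for wk, f in zip(w, successful_f))
            / sum(wk * f for wk, f in zip(w, successful_f))
        )
        self.m_cr[self.slot] = sum(wk * c for wk, c in zip(w, successful_cr))
        self.slot = (self.slot + 1) % len(self.m_f)


# Two successful trials; the second improved fitness three times as much.
history = SuccessHistory(size=3)
history.update([0.4, 0.8], [0.2, 0.9], [1.0, 3.0])
sampled_f, sampled_cr = history.sample(random.Random(0))
```

Because the weights are proportional to the fitness improvement each trial achieved, the parameter pair behind the larger improvement pulls the stored means toward itself, which is the weighting idea described above.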

L-SHADE: Integrating Population Size Adaptation

L-SHADE (Linear Population Size Reduction SHADE) further extends the SHADE framework by incorporating dynamic population size reduction, representing the third major evolutionary step in this DE lineage [19]:

Linear Population Reduction: L-SHADE systematically decreases the population size according to a linear schedule throughout the optimization process. This approach allocates more computational resources to exploration in early generations while focusing on refinement during later stages [19] [18].

Integrated Architecture: L-SHADE combines the historical memory mechanism of SHADE with population size reduction, creating a more comprehensive adaptive system. The algorithm initialization begins with a larger population that gradually shrinks according to:

\[ NP_{next} = \mathrm{round}\left( \frac{NP_{min} - NP_{init}}{max\_nfes} \cdot nfe + NP_{init} \right) \]

where \(NP_{init}\) and \(NP_{min}\) represent the initial and minimum population sizes, \(max\_nfes\) is the maximum number of function evaluations, and \(nfe\) is the current number of function evaluations [19].

The linear reduction strategy aligns with the intuitive principle that diverse exploration benefits from larger populations, while intensive exploitation can proceed effectively with smaller populations [18].
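The population schedule can be checked numerically with a few lines of code. The first function below implements the reduction formula as stated above; the second, which drops the worst individuals when the population has to shrink, is an assumption about how the reduction is carried out, and both function names are hypothetical.

```python
def next_population_size(nfe, max_nfes, np_init, np_min):
    """Linear population size schedule: np_init at nfe = 0, np_min at nfe = max_nfes."""
    return round((np_min - np_init) / max_nfes * nfe + np_init)


def shrink_population(population, fitness, target_size):
    """Keep the target_size best individuals (minimisation assumed)."""
    keep = sorted(range(len(population)), key=lambda k: fitness[k])[:target_size]
    return [population[k] for k in keep], [fitness[k] for k in keep]


# Example budget: 10,000 evaluations, shrinking from 100 individuals to 4.
schedule = [
    next_population_size(nfe, 10_000, 100, 4)
    for nfe in (0, 2_500, 5_000, 10_000)
]

# Shrinking a toy population of labelled individuals to its two best members.
kept, kept_fitness = shrink_population(["a", "b", "c", "d"], [3.0, 1.0, 4.0, 2.0], 2)
```

With these example settings the schedule passes through 100, 76, 52, and 4 individuals at 0%, 25%, 50%, and 100% of the evaluation budget.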

Table 1: Core Architectural Components Across DE Variants

| Algorithm | Mutation Strategy | Parameter Adaptation | Population Size | External Archive |

|---|---|---|---|---|

| JADE | Current-to-pbest/1 | Adaptive based on successful values | Fixed | Yes (optional) |

| SHADE | Current-to-pbest/1 | Success-history based parameter adaptation | Fixed | Yes |

| L-SHADE | Current-to-pbest/1 | Success-history based parameter adaptation | Linear reduction | Yes |

Architectural Relationships and Evolutionary Trajectory

The relationship between JADE, SHADE, and L-SHADE represents a logical progression in addressing DE's adaptive challenges. This evolutionary pathway can be visualized as a cumulative enhancement process, where each variant retains the successful features of its predecessor while introducing new adaptive mechanisms:

Diagram 1: Evolutionary pathway of JADE-based algorithms showing cumulative enhancements.

This architectural genealogy highlights how each successive variant addresses limitations in its predecessor while maintaining the core adaptive philosophy. The historical memory in SHADE provides more stable parameter control compared to JADE's immediate adaptation, while L-SHADE's population size reduction further optimizes resource allocation throughout the search process [19].

Experimental Analysis and Performance Comparison

Benchmark Evaluation Protocols

The comparative performance assessment of modern DE variants typically employs standardized benchmark suites and rigorous experimental methodologies. The most widely adopted evaluation frameworks include:

IEEE CEC Benchmark Functions: The specialized sessions and competitions on single objective real-parameter numerical optimization provide comprehensive testbeds comprising unimodal, multimodal, hybrid, and composition functions [5]. These benchmarks are specifically designed to evaluate algorithm performance across diverse optimization landscapes with varying characteristics and difficulties.

Real-World Engineering Problems: Practical applications from mechanical engineering, aerospace design, and energy systems offer validation in realistic scenarios with constraints and complex objective functions [20]. These problems often feature non-convex, high-dimensional search spaces with multiple constraints.

Statistical Validation Methods: Non-parametric statistical tests, including the Wilcoxon signed-rank test for pairwise comparisons and the Friedman test for multiple algorithm comparisons, provide rigorous performance validation [20] [5]. These methods account for the stochastic nature of evolutionary algorithms and ensure statistically significant conclusions.

The experimental protocol typically involves multiple independent runs of each algorithm on each test problem, with performance measured according to solution accuracy (error from known optimum), convergence speed, and reliability [19] [5].

Comparative Performance Analysis

Comprehensive studies evaluating multiple DE variants across benchmark problems and real-world applications reveal distinct performance patterns across the JADE/SHADE lineage:

Table 2: Competition Performance of DE Variants in IEEE CEC Events

| Algorithm | CEC 2013 | CEC 2014 | CEC 2015 | CEC 2016 | CEC 2017 |

|---|---|---|---|---|---|

| SHADE | 4th place | - | - | - | - |

| L-SHADE | - | 1st place | - | - | - |

| SPS-L-SHADE-EIG | - | - | 1st place | - | - |

| L-SHADE-EpSin | - | - | - | Joint-winner | - |

| jSO | - | - | - | - | Top 4 |

| L-SHADE-cnEpSin | - | - | - | - | Top 4 |

| L-SHADE-SPACMA | - | - | - | - | Top 4 |

Performance analysis across these competitions demonstrates the consistent dominance of algorithms from the SHADE lineage, particularly those incorporating linear population size reduction (L-SHADE) and specialized enhancements [19]. The success of these variants in competitive environments underscores the effectiveness of their adaptive architectures.

Table 3: Performance Comparison on CEC2014 Benchmark Functions (50-D)

| Algorithm | Ranking (Avg) | Performance Profile | Remarks |

|---|---|---|---|

| L-SHADE | 1 | Best overall performance | Excellent balance |

| SHADE | 4 | Competitive | Robust across problems |

| JADE | 7 | Moderate | Outperformed by newer variants |

| SaDE | 10 | Lower ranking | Less adaptive |

Recent large-scale comparative studies examining 22 JADE/SHADE-based variants revealed that while L-SHADE generally performs well, no single variant dominates across all problem types and computational budgets [19]. Algorithm performance shows significant dependence on the available number of function evaluations (NFE), with different variants excelling under different NFE conditions [19].

Real-World Engineering Applications

The practical efficacy of modern DE variants extends beyond artificial benchmarks to challenging real-world engineering problems:

Mechanical Design Optimization: Studies comparing DE variants on constrained mechanical engineering design problems from the IEEE CEC 2020 non-convex constrained optimization suite show that SHADE and ELSHADE-SPACMA perform strongly on such complex design problems [20].

Unmanned Aerial Vehicle (UAV) Task Assignment: Recent improved DE variants incorporating reinforcement learning mechanisms have demonstrated significant engineering value in UAV mission planning scenarios, outperforming other heuristic optimization algorithms across multiple performance indicators [4].

Multimodal Optimization: Advanced DE variants incorporating niching techniques, ecological niche radius concepts, and dual-mutation strategies have shown promising results in identifying multiple optimal solutions for complex multimodal problems, providing decision-makers with diverse alternatives in applications such as pedestrian detection, electromagnetic machine design, and protein structure prediction [21].

The specialization of DE variants for specific problem characteristics highlights the importance of selecting algorithms aligned with application domain requirements rather than relying on a single universally superior approach.

Benchmark Problem Suites

IEEE CEC Test Suites: Annual competition problems providing diverse, challenging benchmark functions with known optimal values for controlled algorithm evaluation [5].

CEC 2011 Real-World Problems: A collection of 22 practical optimization problems of varying dimensionality derived from real-world applications, offering validation in realistic scenarios [19].

Statistical Analysis Frameworks

Wilcoxon Signed-Rank Test: Non-parametric statistical method for pairwise algorithm comparison, appropriate for non-normally distributed performance data [20] [5].

Friedman Test with Nemenyi Post-Hoc Analysis: Multiple comparison procedure for ranking algorithms across multiple problems, identifying statistically significant performance differences [5].

Mann-Whitney U-Score Test: Additional non-parametric test used in recent CEC competitions to determine performance winners based on aggregated rankings [5].

MATLAB Codes: Reference implementations of advanced DE variants are often available from the original authors (e.g., MDE-pBX, HCLPSO, AMALGAM) [19].

Parameter Adaptation Modules: Reusable components for implementing historical memory mechanisms, population size reduction schedules, and mutation strategy selection [18].

The taxonomic analysis of JADE, SHADE, and L-SHADE reveals a clear evolutionary trajectory in differential evolution, characterized by increasingly sophisticated adaptive mechanisms. The architectural relationships between these variants demonstrate a cumulative enhancement approach, where each generation retains successful features of its predecessors while addressing specific limitations through new adaptive components.

The consistent performance of these algorithms in competitive evaluations and real-world applications confirms the effectiveness of their underlying adaptive philosophies. However, the "no free lunch" theorem remains evident in the performance variability across different problem types and experimental conditions [19] [20]. Future research directions likely include deeper integration of machine learning techniques for adaptive control [4], specialized mechanisms for high-dimensional and multi-objective problems [7], and improved theoretical understanding of convergence properties in adaptive DE variants.

For researchers and practitioners selecting among these algorithms, the choice should be guided by problem characteristics, computational budget, and specific performance requirements rather than seeking a universally superior variant. The rich architectural ecosystem of modern DE variants provides specialized tools for diverse optimization scenarios, with the JADE/SHADE/L-SHADE lineage representing particularly robust and effective solutions for many challenging optimization problems.

Differential Evolution (DE), a population-based metaheuristic optimization algorithm, has established itself as a powerful tool for solving complex optimization problems in continuous spaces. Since its introduction by Storn and Price in the mid-1990s, DE has gained widespread adoption across numerous scientific and engineering disciplines due to its simplicity, robust performance, and minimal requirement for problem-specific customization [3]. Unlike traditional gradient-based methods, DE does not require the optimization problem to be differentiable, making it particularly suitable for real-world problems where the objective function may be noisy, non-differentiable, or poorly understood [3].

In the field of computational biology, researchers increasingly face optimization challenges characterized by high-dimensional parameter spaces, non-linear relationships, and complex, multi-modal landscapes. Conventional optimization techniques often struggle with these complexities, creating an opportunity for evolutionary algorithms like DE to provide effective solutions. This review assesses the historical trajectory and quantifiable impact of DE in computational biology, specifically focusing on its applications in critical areas such as molecular modeling, drug design, and biological system optimization. Through comparative performance analysis and detailed examination of experimental methodologies, we aim to provide researchers with a comprehensive understanding of DE's capabilities and implementation requirements in biological computing contexts.

Fundamental Principles of Differential Evolution

The DE algorithm operates through a straightforward yet powerful cycle of genetic operations applied to a population of candidate solutions. The core process involves four primary stages: initialization, mutation, crossover, and selection [3] [4]. The algorithm begins by generating an initial population of candidate solutions uniformly distributed across the search space. For each generation, the algorithm creates new candidate solutions by combining existing ones according to a mutation strategy, then applies crossover to increase diversity, and finally selects the fittest solutions for the next generation [3].

The classic DE/rand/1 mutation strategy can be formalized as:

\[ v_{i}(g+1) = x_{r1}(g) + F \cdot (x_{r2}(g) - x_{r3}(g)) \]

where \(v_{i}(g+1)\) is the newly generated mutant vector, \(x_{r1}, x_{r2}, x_{r3}\) are three distinct randomly selected parent vectors from the population, and \(F\) is the scaling factor that controls the amplification of differential variations [5] [4]. The crossover operation then generates trial vectors by mixing parameters of mutant and target vectors, controlled by the crossover rate (\(CR\)) parameter [3]. The selection process finally determines whether the trial vector replaces the target vector in the next generation based on their relative fitness [4].
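The four stages can be put together in a compact sketch. The code below is a minimal DE/rand/1 loop with binomial crossover, written for minimisation over box bounds. The default parameter values, the clipping of mutants to the bounds, the fixed generation count, and the function name are assumptions made for this illustration rather than recommendations from the cited works.

```python
import random


def de_rand_1_bin(objective, bounds, pop_size=20, F=0.5, CR=0.9,
                  generations=200, seed=0):
    """Minimise objective over box bounds with DE/rand/1 and binomial crossover."""
    rng = random.Random(seed)
    dim = len(bounds)
    # Initialization: uniform random points inside the bounds.
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    fit = [objective(x) for x in pop]
    for _ in range(generations):
        new_pop, new_fit = [], []
        for i in range(pop_size):
            # Mutation: three distinct parents, all different from target i.
            r1, r2, r3 = rng.sample([k for k in range(pop_size) if k != i], 3)
            mutant = [pop[r1][d] + F * (pop[r2][d] - pop[r3][d]) for d in range(dim)]
            # Keep the mutant inside the box (simple clipping; an assumption).
            mutant = [min(max(v, lo), hi) for v, (lo, hi) in zip(mutant, bounds)]
            # Binomial crossover: at least one component comes from the mutant.
            j_rand = rng.randrange(dim)
            trial = [
                mutant[d] if (rng.random() < CR or d == j_rand) else pop[i][d]
                for d in range(dim)
            ]
            # Selection: the trial replaces the target if it is no worse.
            trial_fit = objective(trial)
            if trial_fit <= fit[i]:
                new_pop.append(trial)
                new_fit.append(trial_fit)
            else:
                new_pop.append(pop[i])
                new_fit.append(fit[i])
        pop, fit = new_pop, new_fit
    best = min(range(pop_size), key=lambda k: fit[k])
    return pop[best], fit[best]


def sphere(x):
    """Simple convex test function with its minimum of 0 at the origin."""
    return sum(v * v for v in x)


best_x, best_f = de_rand_1_bin(sphere, [(-5.0, 5.0)] * 3)
```

The loop needs only function values, never gradients, which is why the same code applies unchanged to noisy or non-differentiable objectives.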

DE's performance is highly dependent on the appropriate selection of its control parameters (\(NP\), \(F\), and \(CR\)) and mutation strategies [3]. This sensitivity has motivated the development of numerous adaptive and self-adaptive DE variants that dynamically adjust these parameters during the optimization process [22] [23] [4].

Evolution of Differential Evolution Algorithms

Historical Development

Since its introduction, DE has undergone substantial refinements to enhance its performance across diverse problem domains. The original DE algorithm proposed by Storn and Price employed a simple yet effective framework that quickly attracted attention from the optimization community [24]. Early research efforts focused primarily on parameter tuning, as researchers recognized that the performance of DE is highly sensitive to the settings of its control parameters (\(NP\), \(F\), and \(CR\)) [22].

As optimization problems became increasingly complex and diverse, researchers identified limitations in fixed parameter configurations and began developing adaptive parameter methods [22]. This led to the creation of DE variants with self-adapting mechanisms that dynamically adjust parameters based on feedback from the optimization process. Notable examples include SaDE (Self-adaptive Differential Evolution), JADE (Adaptive Differential Evolution with Optional External Archive), and SHADE (Success-History Based Adaptive Differential Evolution) [25]. These adaptive approaches demonstrated significant performance improvements across various benchmark problems and real-world applications.

Key Algorithmic Variants

Table 1: Notable Differential Evolution Variants and Their Core Innovations

| Variant | Year | Key Innovations | Primary Applications |
|---|---|---|---|
| ESADE [22] | 2024 | Evolutionary scale adaptation using successful search feedback | General global optimization, engineering design |
| HCDE [23] | 2025 | Hierarchical parameter control, entropy-based diversity measurement | Complex multimodal problems, structural optimization |
| MHDE [25] | 2024 | Multiple hybridization, Weibull distribution for CR, population reduction | Frame structure design, engineering problems |
| RLDE [4] | 2025 | Reinforcement learning for parameter adaptation, Halton sequence initialization | UAV task assignment, high-dimensional problems |
| Multi-modal DE [21] | 2025 | Niching techniques, archive-based methods, specialized mutation | Multi-peak optimization, protein structure prediction |

Recent advancements in DE have focused on several strategic directions. The ESADE algorithm introduces an evolutionary scale adaptation mechanism that measures successful evolutionary scales between target and trial vectors, using this information to guide subsequent search processes [22]. The HCDE algorithm employs a hierarchical control strategy that dynamically adjusts scaling factor (F) and crossover rate (CR) using logistic and Cauchy distributions, enabling adaptive trade-offs between exploration and exploitation [23]. The MHDE variant incorporates multiple hybridizations with other algorithms and uses a Weibull distribution for the crossover rate, allowing extensive exploration in initial stages and focused exploitation in later stages [25].

For multi-modal optimization problems common in biological systems, specialized DE variants have incorporated niching techniques to identify and maintain multiple optimal solutions simultaneously [21]. These approaches enable the population to divide into subpopulations that independently evolve toward different optima, making them particularly valuable for exploring alternative solutions in complex biological landscapes.
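One simple niching technique is crowding, where a trial vector competes with the most similar member of the population rather than with its own parent, so that distinct optima keep their own representatives. The sketch below shows only that replacement rule and assumes minimization; the source does not state which niching scheme the cited variants use, so crowding is chosen here purely for illustration and the function name is hypothetical.

```python
import numpy as np

def crowding_replace(population, fitness, trial, trial_fitness):
    """Let `trial` replace its nearest neighbour if it is at least as good.

    population: array of shape (NP, n); fitness: array of shape (NP,).
    Both arrays are updated in place. Returns the index of the neighbour.
    """
    distances = np.linalg.norm(population - trial, axis=1)
    nearest = int(np.argmin(distances))
    if trial_fitness <= fitness[nearest]:
        population[nearest] = trial
        fitness[nearest] = trial_fitness
    return nearest
```

Because a good trial can only displace a nearby individual, solutions clustered around one optimum do not overwrite those around another.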

Applications in Computational Biology

Molecular Structure Prediction and Optimization

DE has demonstrated significant utility in addressing the complex optimization challenges associated with molecular structure prediction. The three-dimensional configuration of biological molecules directly determines their function and interactions, making accurate structure prediction a critical objective in computational biology. Traditional optimization techniques often struggle with the high-dimensional, non-convex energy landscapes characteristic of molecular systems.

In protein structure prediction, DE-based approaches have been employed to navigate the complex conformational space efficiently. The algorithm explores possible backbone and side-chain arrangements to identify low-energy configurations that correspond to stable native structures. Multi-modal DE variants are particularly valuable in this context, as they can identify alternative folding patterns that might represent metastable states with functional significance [21]. The effectiveness of DE in handling non-differentiable energy functions and discrete conformational changes has established it as a competitive approach in this domain.

Drug Design and Molecular Docking

The drug discovery process presents numerous optimization challenges that align well with DE's capabilities. In molecular docking studies, DE algorithms optimize the position, orientation, and conformation of a small molecule (ligand) within a target protein's binding site to predict binding mode and affinity. Because DE is population-based, and especially when niching variants are used, it can explore multiple binding poses simultaneously, providing researchers with a diverse set of plausible interaction models for further investigation.
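To make the docking search space concrete, a rigid-body pose can be encoded as a six-element real vector (three translations and a three-component rotation vector) that DE manipulates directly. The sketch below decodes such a vector into ligand coordinates; torsional flexibility and the scoring function are omitted, and the encoding and function name are illustrative assumptions rather than the scheme of any particular docking program.

```python
import numpy as np
from scipy.spatial.transform import Rotation

def apply_pose(ligand_coords, pose):
    """Place a rigid ligand according to pose = [tx, ty, tz, rx, ry, rz].

    ligand_coords: array of shape (n_atoms, 3).
    The rotation vector (rx, ry, rz), in radians, is applied about the
    ligand centroid, then the translation (tx, ty, tz) is added.
    """
    pose = np.asarray(pose, dtype=float)
    translation, rotvec = pose[:3], pose[3:6]
    centroid = ligand_coords.mean(axis=0)
    rotated = Rotation.from_rotvec(rotvec).apply(ligand_coords - centroid)
    return rotated + centroid + translation
```

A docking objective would then score the returned coordinates against the receptor, and each DE individual would be one such pose vector, extended with torsion angles for flexible ligands.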

Recent applications have extended to de novo drug design, where DE optimizes molecular structures toward desired pharmacological properties. In these implementations, DE operates on structural representations or molecular descriptors, efficiently navigating the vast chemical space to identify promising candidate structures. With suitable encodings or mixed-variable extensions, DE can handle continuous, ordinal, and categorical variables together, which makes it well suited to molecular optimization problems that combine structural and physicochemical parameters.

Biological Network Modeling and Parameter Estimation

Reconstructing biological networks from experimental data represents another area where DE has made substantial contributions. Systems biology models typically involve numerous parameters that must be estimated from often noisy and incomplete experimental measurements. DE efficiently explores high-dimensional parameter spaces to identify values that minimize the discrepancy between model predictions and observed data.
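As a small illustration of this kind of fit, the sketch below estimates two parameters of a toy first-order decay model from noisy synthetic data using SciPy's `differential_evolution`. The model, the parameter bounds, and the noise level are all assumptions chosen for the example; a real systems biology model would typically require numerical integration of ODEs inside the objective.

```python
import numpy as np
from scipy.optimize import differential_evolution

def model(params, t):
    """Toy first-order decay: y(t) = a * exp(-k * t)."""
    a, k = params
    return a * np.exp(-k * t)

def sum_squared_error(params, t, observed):
    """Discrepancy between model prediction and observed data."""
    return float(np.sum((model(params, t) - observed) ** 2))

# Synthetic "measurements": known parameters plus a little Gaussian noise.
t = np.linspace(0.0, 5.0, 20)
true_params = (2.0, 0.7)
noise_rng = np.random.default_rng(1)
observed = model(true_params, t) + noise_rng.normal(0.0, 0.01, size=t.size)

result = differential_evolution(
    sum_squared_error,
    bounds=[(0.1, 5.0), (0.01, 3.0)],  # assumed plausible ranges for a and k
    args=(t, observed),
    seed=1,
    tol=1e-8,
)
estimated_a, estimated_k = result.x
```

The only problem-specific pieces are the objective and the bounds, which is why the same pattern carries over to larger models with more parameters.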

The robustness of DE against local optima is particularly valuable in this context, as biological network models frequently exhibit multi-modal parameter distributions where different combinations yield similar system behaviors. By identifying multiple plausible parameter sets, DE enables researchers to assess system robustness and identify critical parameters that strongly influence network behavior. This capability has proven valuable in metabolic engineering, where DE assists in optimizing pathway manipulation strategies for enhanced production of target compounds.

Comparative Performance Analysis

Benchmarking Methodology

Objective assessment of DE performance in biological contexts requires standardized evaluation protocols. The IEEE CEC (Congress on Evolutionary Computation) benchmark suites have emerged as the standard for comparative analysis of optimization algorithms [5] [25]. These test suites include diverse function types (unimodal, multimodal, hybrid, and composition) that mimic various challenges present in real-world optimization landscapes.

Statistical validation typically employs non-parametric tests, including the Wilcoxon signed-rank test for pairwise comparisons and the Friedman test for multiple algorithm comparisons [5] [6]. These methods are preferred over parametric alternatives because they do not assume normal distribution of performance data, which is rarely observed in stochastic optimization algorithms [5]. Recent competitions have also incorporated the Mann-Whitney U-score test to provide additional statistical validation [5] [6].
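These three tests are available in `scipy.stats`. The sketch below applies them to synthetic data in one common arrangement: Wilcoxon and Friedman compare algorithms across benchmark functions, while Mann-Whitney compares independent runs of two algorithms on a single function. All numbers are generated for illustration and do not come from the cited studies.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)

# Hypothetical mean errors on 12 benchmark functions (rows) for three
# algorithms (columns); algorithm A is constructed to be best everywhere.
base = rng.lognormal(mean=-2.0, sigma=0.5, size=12)
mean_errors = np.column_stack([base, 2.0 * base, 4.0 * base])

# Pairwise comparison of A and B, paired by benchmark function.
wilcoxon_result = stats.wilcoxon(mean_errors[:, 0], mean_errors[:, 1])

# Joint comparison of all three algorithms across the same functions.
friedman_result = stats.friedmanchisquare(*mean_errors.T)

# Hypothetical final errors from 25 independent runs on one function.
runs_a = rng.lognormal(mean=-2.0, sigma=0.3, size=25)
runs_b = rng.lognormal(mean=-1.0, sigma=0.3, size=25)
mann_whitney_result = stats.mannwhitneyu(runs_a, runs_b, alternative="two-sided")

for name, res in [("Wilcoxon", wilcoxon_result),
                  ("Friedman", friedman_result),
                  ("Mann-Whitney", mann_whitney_result)]:
    print(f"{name}: statistic={res.statistic:.3f}, p={res.pvalue:.3g}")
```

When many pairwise comparisons follow a significant Friedman result, a multiple-comparison correction is usually applied to the pairwise p-values.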

Performance is typically evaluated using the solution error (SE), defined as $f(x) - f(x^{*})$, where $x$ is the best solution found and $x^{*}$ is the known global optimum [22]. Convergence speed, success rate, and algorithm robustness across multiple runs provide complementary performance metrics.

Quantitative Performance Comparison

Table 2: Performance Comparison of DE Variants on Benchmark Problems

| Algorithm | Unimodal Functions (Rank) | Multimodal Functions (Rank) | Hybrid Functions (Rank) | Composite Functions (Rank) | Overall Rank |
|---|---|---|---|---|---|
| ESADE [22] | 2.1 | 1.8 | 1.9 | 2.3 | 2.0 |
| HCDE [23] | 1.9 | 2.1 | 1.7 | 2.0 | 1.9 |
| MHDE [25] | 2.3 | 2.4 | 2.2 | 2.5 | 2.4 |
| RLDE [4] | 2.0 | 1.9 | 2.1 | 1.8 | 2.0 |
| Classic DE | 3.5 | 3.8 | 3.9 | 4.2 | 3.9 |

Modern DE variants consistently outperform the classic DE algorithm across all function categories, with the most significant improvements observed on composite and hybrid functions that most closely resemble real-world optimization landscapes [22] [23] [25]. The HCDE algorithm demonstrates particularly strong performance on hybrid functions, benefiting from its hierarchical parameter control and entropy-based diversity maintenance [23]. The ESADE and RLDE variants show excellent performance on multimodal problems, suggesting strong exploration capabilities valuable for biological applications where identifying multiple solutions is often critical [22] [4].
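Rank-based summaries of this kind are usually obtained by ranking the algorithms on each benchmark function by solution error and then averaging the ranks per algorithm. The sketch below shows that computation on a small made-up error matrix; the values are purely illustrative and are not the data behind Table 2.

```python
import numpy as np
from scipy.stats import rankdata

def average_ranks(errors):
    """Average rank of each algorithm over all benchmark functions.

    errors: array of shape (n_functions, n_algorithms) holding solution
    errors, where lower is better. Tied algorithms share the mean rank.
    """
    ranks = rankdata(errors, method="average", axis=1)
    return ranks.mean(axis=0)

# Hypothetical errors for three algorithms on three functions.
example_errors = np.array([
    [0.1, 0.2, 0.3],
    [0.3, 0.1, 0.2],
    [0.1, 0.1, 0.5],   # the first two algorithms tie on this function
])
example_ranks = average_ranks(example_errors)
```

A lower average rank indicates better relative performance, but it carries no information about the size of the error differences, which is why it is reported alongside statistical tests.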

When applied to real-world problems, these performance advantages translate to practical benefits. In the CEC2020 real-world optimization competition, the HCDE algorithm demonstrated "outstanding performance" on 57 real-world problems, outperforming strategies focused solely on diversity maintenance [23]. Similarly, the MHDE algorithm showed excellent performance in structural optimization problems, minimizing weight while satisfying complex engineering constraints [25].

Experimental Protocols and Methodologies

Standard Implementation Framework

Implementing DE for computational biology applications requires careful attention to experimental design. A standardized workflow ensures reproducible and comparable results across studies. The following protocol outlines the key steps for implementing DE in biological optimization contexts:

Problem Formulation: Clearly define the objective function, decision variables, and constraints specific to the biological problem. In molecular docking, this includes specifying the search space encompassing the binding site and the scoring function to evaluate binding affinity.

Algorithm Selection: Choose an appropriate DE variant based on problem characteristics. For multi-modal biological landscapes, ESADE or multi-modal DE variants are often suitable [22] [21]. For high-dimensional parameter estimation, HCDE or RLDE may provide better performance [23] [4].

Parameter Configuration: Set the population size (NP), scaling factor (F), and crossover rate (CR). Adaptive variants automate this process, but initial values should follow established guidelines (NP = 10n, CR = 0.9, F = 0.8, where n is the problem dimensionality) [3].

Termination Criteria: Define appropriate stopping conditions, which may include maximum function evaluations, convergence thresholds, or computation time limits based on the specific biological application.

Validation Procedure: Implement statistical validation methods, typically including multiple independent runs and non-parametric statistical tests to ensure result significance [5] [6].
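The core of this protocol can be sketched as a classic DE/rand/1/bin loop using the guideline settings quoted above (NP = 10n, F = 0.8, CR = 0.9) and a budget of function evaluations as the termination criterion. This is a minimal illustration under those assumptions, with a sphere function standing in for a real biological objective; the function names and the bound-clipping rule are choices made for the example.

```python
import numpy as np

def de_rand_1_bin(objective, bounds, f=0.8, cr=0.9, max_evals=15000, seed=0):
    """Minimise `objective` over box `bounds` with classic DE/rand/1/bin."""
    rng = np.random.default_rng(seed)
    bounds = np.asarray(bounds, dtype=float)
    lower, upper = bounds[:, 0], bounds[:, 1]
    n = len(bounds)
    pop_size = 10 * n                                  # guideline NP = 10n
    population = lower + rng.random((pop_size, n)) * (upper - lower)
    fitness = np.array([objective(x) for x in population])
    evals = pop_size

    while evals < max_evals:
        new_population = population.copy()
        new_fitness = fitness.copy()
        for i in range(pop_size):
            # Mutation: three distinct individuals, all different from i.
            candidates = [j for j in range(pop_size) if j != i]
            r1, r2, r3 = rng.choice(candidates, size=3, replace=False)
            mutant = population[r1] + f * (population[r2] - population[r3])
            mutant = np.clip(mutant, lower, upper)     # keep inside the box
            # Binomial crossover with one guaranteed mutant component.
            cross = rng.random(n) < cr
            cross[rng.integers(n)] = True
            trial = np.where(cross, mutant, population[i])
            # Greedy one-to-one selection.
            trial_fitness = objective(trial)
            evals += 1
            if trial_fitness <= fitness[i]:
                new_population[i] = trial
                new_fitness[i] = trial_fitness
            if evals >= max_evals:
                break
        population, fitness = new_population, new_fitness

    best = int(np.argmin(fitness))
    return population[best], float(fitness[best])

def sphere(x):
    """Stand-in objective with its global minimum of 0 at the origin."""
    return float(np.sum(np.asarray(x) ** 2))

best_x, best_value = de_rand_1_bin(sphere, [(-5.0, 5.0)] * 3)
```

For the validation step, the same call would be repeated with different `seed` values to collect independent runs for the statistical tests.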

Figure 1: Differential Evolution Experimental Workflow

Specialized Methodologies for Biological Applications

Biological optimization problems often require specialized methodological adaptations. For protein structure prediction, the experimental protocol typically includes:

Representation Scheme: Implement a suitable coordinate system (Cartesian, internal coordinates, or hybrid representation) that efficiently encodes molecular configurations.

Energy Function: Define a biologically plausible scoring function that incorporates force field parameters, statistical potentials, or knowledge-based terms.

Constraint Handling: Incorporate structural constraints (bond lengths, angles, chirality) using penalty functions or specialized operators that maintain feasible solutions [3].

Multi-objective Formulation: For complex biological optimization, implement multi-objective DE variants to simultaneously optimize conflicting objectives such as stability, specificity, and synthesizability.
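The penalty-function approach to constraint handling mentioned above can be sketched as a wrapper that adds a quadratic penalty whenever a constraint of the form g(x) <= 0 is violated. The example below is an assumption-laden illustration: the bond-length window of 1.4 to 1.6 Å, the penalty weight, and the toy energy function are hypothetical and serve only to show the mechanism.

```python
import math

def penalized(objective, constraints, weight=1e3):
    """Wrap `objective` with a quadratic penalty for each violated g(x) <= 0."""
    def wrapped(x):
        violation = sum(max(0.0, g(x)) ** 2 for g in constraints)
        return objective(x) + weight * violation
    return wrapped

def bond_length(x):
    """Distance between two atoms stored as x = [x1, y1, z1, x2, y2, z2]."""
    return math.dist(x[:3], x[3:6])

# Hypothetical constraint: keep the bond length between 1.4 and 1.6 angstroms.
constraints = [
    lambda x: bond_length(x) - 1.6,   # violated when the bond is too long
    lambda x: 1.4 - bond_length(x),   # violated when the bond is too short
]

def toy_energy(x):
    """Placeholder energy; a real study would call a force field here."""
    return sum(v * v for v in x)

constrained_energy = penalized(toy_energy, constraints)
feasible_value = constrained_energy([0.0, 0.0, 0.0, 1.5, 0.0, 0.0])
infeasible_value = constrained_energy([0.0, 0.0, 0.0, 2.0, 0.0, 0.0])
```

The wrapped function can be passed to any DE implementation unchanged; the weight trades off how strictly infeasible geometries are rejected against how freely the search can cross infeasible regions.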

For biological network parameter estimation, the protocol includes additional specialized steps:

Experimental Data Integration: Incorporate time-series or steady-state experimental measurements as optimization targets.

Uncertainty Quantification: Implement methods to assess parameter identifiability and estimate confidence intervals for optimized parameters.

Model Selection: Employ multi-modal DE capabilities to identify alternative model structures that fit experimental data.

These specialized methodologies leverage DE's flexibility while addressing the unique challenges of biological optimization problems.

Research Reagent Solutions

Table 3: Essential Computational Tools for DE Implementation in Biology

| Tool Category | Specific Solutions | Function | Implementation Considerations |
|---|---|---|---|
| Optimization Frameworks | DEAP, Platypus, jMetal | Provide reusable components for DE implementation | Support for parallel evaluation, statistical analysis |
| Benchmark Suites | IEEE CEC test suites, BBOB | Algorithm performance assessment | Standardized comparison across methods |
| Biological Modeling | Rosetta, AutoDock, COPASI | Domain-specific modeling environments | Integration of DE with biological simulators |
| Statistical Analysis | R, Python SciPy | Non-parametric statistical testing | Wilcoxon, Friedman, Mann-Whitney tests |
| Visualization Tools | Matplotlib, Gnuplot, PyMOL | Results representation and analysis | Convergence plots, molecular visualization |

Successful implementation of DE in computational biology requires appropriate computational tools and frameworks. DEAP (Distributed Evolutionary Algorithms in Python) provides a comprehensive framework for implementing DE variants with support for parallel evaluation and statistical analysis [1]. Specialized biological modeling environments such as Rosetta for protein structure prediction and AutoDock for molecular docking ship with their own stochastic search engines (AutoDock's default is a Lamarckian genetic algorithm), and their energy and scoring functions can serve as objective functions for DE [21].

For performance assessment, the IEEE CEC benchmark suites provide standardized test problems that help researchers evaluate algorithm capabilities before application to biological problems [5] [25]. Statistical analysis packages in R or Python enable rigorous performance comparison using non-parametric tests, which are essential for validating results from stochastic optimization algorithms [5] [6].

Differential Evolution has established itself as a valuable optimization methodology in computational biology, demonstrating consistent performance across diverse applications including molecular structure prediction, drug design, and biological network optimization. The algorithm's simplicity, robustness, and ability to handle non-differentiable, multi-modal problems align well with the challenges characteristic of biological systems.

The continuous evolution of DE variants has addressed initial limitations related to parameter sensitivity and premature convergence. Modern implementations featuring adaptive parameter control, hierarchical structures, and hybrid strategies have significantly enhanced performance on complex optimization landscapes. The benchmarking results summarized above indicate that contemporary DE variants consistently outperform the classic algorithm, particularly on hybrid and composite functions, which are the benchmark categories closest to real-world optimization problems.