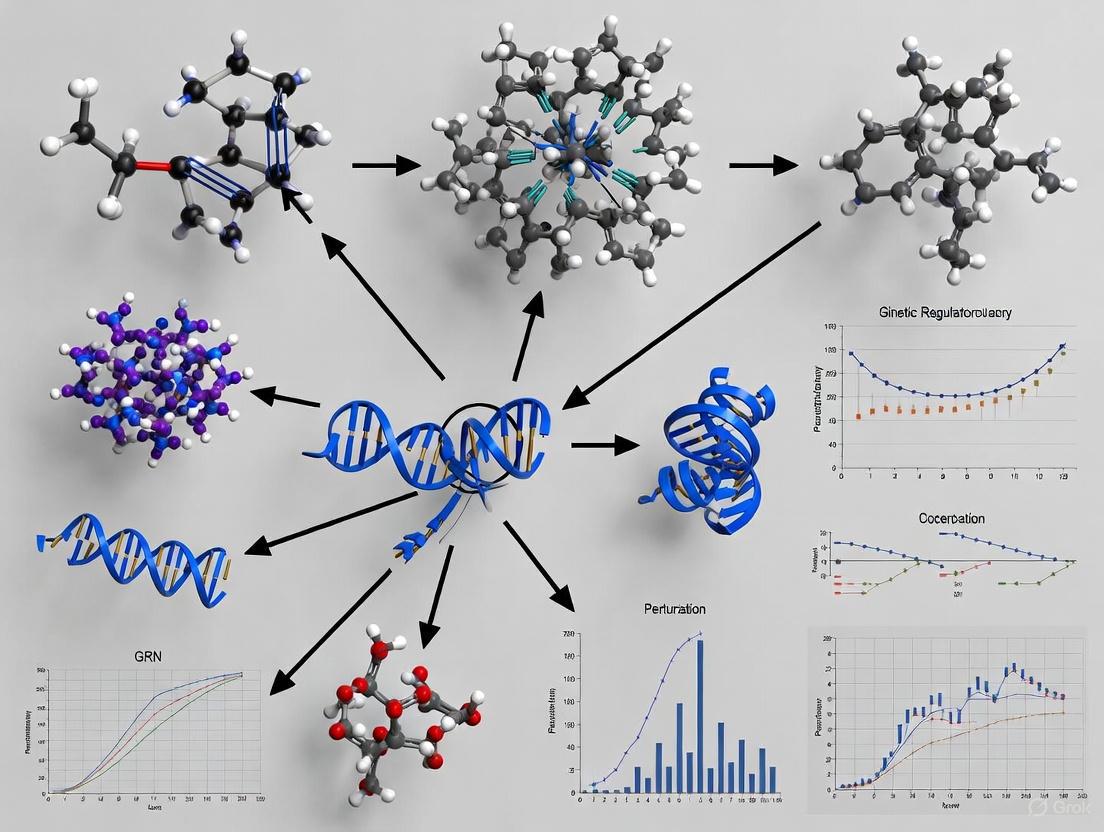

Modeling Gene Regulation: Computational Strategies for Simulating GRN Structure and Perturbation Effects


Abstract

This article provides a comprehensive overview of cutting-edge computational models for simulating Gene Regulatory Network (GRN) structure and predicting the effects of genetic perturbations. It explores the foundational principles of realistic GRN generation, from small-world topologies to power-law degree distributions. The review systematically compares machine learning and deep learning methodologies for GRN inference and expression forecasting, examining their applications in drug discovery and disease modeling. It further addresses critical challenges in model benchmarking, scalability, and optimization, synthesizing insights from recent large-scale validation studies. Designed for researchers, scientists, and drug development professionals, this synthesis of current computational approaches aims to bridge the gap between theoretical innovation and practical application in functional genomics.

Decoding GRN Architecture: From Biological Principles to Computational Representation

Essential Structural Properties of Biological Gene Regulatory Networks

Gene Regulatory Networks (GRNs) represent the complex, recursive sets of regulatory interactions between genes and their products that control cellular decision-making, development, and homeostasis [1]. The architectural features of these networks—their topological properties, modular organization, and dynamic control mechanisms—determine their functional behavior and response to perturbations. Within the broader context of computational models for simulating GRN structure and perturbation effects, understanding these essential structural properties is paramount for predicting cellular behavior in development, disease, and therapeutic intervention. This Application Note details the fundamental structural properties of GRNs, quantitative metrics for their characterization, and experimental protocols for their investigation, providing researchers with a framework for analyzing these complex systems.

Essential Topological Properties of GRNs

Computational analyses across multiple species and biological contexts have consistently identified three topological features as most relevant for defining GRN structure and function: the average nearest neighbor degree (Knn), PageRank, and node degree [2]. These properties are evolutionarily conserved and represent primary cell features that distinguish regulators from targets while determining subsystem essentiality.

Table 1: Essential Topological Properties of Gene Regulatory Networks

| Topological Feature | Mathematical Definition | Biological Interpretation | Role in Subsystem Control |

|---|---|---|---|

| Knn (Average Nearest Neighbor Degree) | Average degree of a node's neighbors [2] | Measures connectivity association; low Knn indicates hubs connected to low-degree nodes [2] | Low Knn: Specialized subsystems; Intermediate Knn: Life-essential subsystems [2] |

| PageRank | Probability a random signal traversing the network will visit a node [2] | Measures influence and importance in signal propagation [2] | High PageRank: Life-essential subsystems (increases robustness) [2] |

| Degree | Number of connections a node has [2] | Identifies hubs; regulators typically have high degree [2] | High Degree: Life-essential subsystems [2] |

| Sparsity | Typical gene regulated by small number of transcription factors [3] [4] | Enables manageable analysis; most perturbations have limited effects [3] | Network-wide property enabling functional organization |

| Modularity | Organization into densely connected groups [3] [5] | Groups genes by function; corresponds to hierarchical organization [3] | Facilitates coordinated response to perturbations |

Life-essential subsystems are primarily governed by transcription factors with intermediate Knn and high PageRank or degree, whereas specialized subsystems are typically regulated by TFs with low Knn [2]. The high PageRank of essential subsystem components ensures robustness against random perturbations by increasing the probability that regulatory signals successfully propagate to their target genes [2].
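The three features in Table 1 can be computed directly from an edge list. The following is a minimal sketch, not the pipeline used in [2]: it assumes edges point from regulator to target, treats the neighborhood as undirected when computing degree and Knn, and uses a conventional damping factor of 0.85 for PageRank. The function name `topological_features` and the toy gene names are illustrative.

```python
from collections import defaultdict

def topological_features(edges, damping=0.85, iters=100):
    """Return (degree, knn, pagerank) dicts for a directed regulator -> target edge list."""
    nodes = sorted({n for e in edges for n in e})
    out_nb = defaultdict(set)   # regulator -> set of targets
    nbrs = defaultdict(set)     # undirected neighborhood (assumption of this sketch)
    for src, dst in edges:
        out_nb[src].add(dst)
        nbrs[src].add(dst)
        nbrs[dst].add(src)

    # Degree: number of distinct neighbors
    degree = {n: len(nbrs[n]) for n in nodes}
    # Knn: average degree of a node's neighbors
    knn = {n: sum(degree[m] for m in nbrs[n]) / degree[n] if degree[n] else 0.0
           for n in nodes}

    # PageRank by power iteration; nodes without targets spread their rank uniformly
    n = len(nodes)
    rank = {v: 1.0 / n for v in nodes}
    for _ in range(iters):
        dangling = sum(rank[v] for v in nodes if not out_nb[v])
        new = {v: (1 - damping) / n + damping * dangling / n for v in nodes}
        for v in nodes:
            for t in out_nb[v]:
                new[t] += damping * rank[v] / len(out_nb[v])
        rank = new
    return degree, knn, rank

# Toy network: one hub regulator and one specialized regulator
toy_edges = [("TF1", "g1"), ("TF1", "g2"), ("TF1", "g3"), ("TF2", "g3")]
degree, knn, rank = topological_features(toy_edges)
```

In the toy network the hub `TF1` has a lower Knn than its targets because its neighbors are low-degree nodes, which matches the interpretation given in Table 1.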

Quantitative Structural Analysis of GRNs

Machine Learning Classification of Network Components

Decision tree models based on Knn, PageRank, and degree can distinguish regulators from target genes with an average accuracy of 84.91% (ROC average: 86.86%) [2]. The classification rules follow these patterns:

- Nodes in the lowest Knn bins ("A", "B") are classified as regulators, and nodes in the highest Knn bins ("D" to "F") are classified as targets

- For intermediate Knn values ("C"), PageRank becomes the deciding factor

- When both Knn and PageRank yield intermediate values, degree provides the final classification [2]

This demonstrates that these three features alone contain sufficient information to reliably distinguish network components, highlighting their fundamental nature in GRN architecture.

Distribution of Perturbation Effects

Large-scale perturbation studies reveal that GRN sparsity limits the effects of most interventions. In a genome-scale Perturb-seq study in K562 cells examining 5,247 perturbations that targeted genes with measured expression:

- Only 41% of perturbations targeting a primary transcript significantly affected the expression of any other gene [3] [4]

- Only 3.1% of ordered gene pairs showed at least a one-directional perturbation effect [3]

- Just 2.4% of these pairs exhibited bidirectional effects [3]

Table 2: Perturbation Effect Distributions in GRNs

| Perturbation Metric | Value | Biological Significance |

|---|---|---|

| Perturbations with significant trans-effects | 41% | Most genes do not act as regulatory hubs |

| Gene pairs with one-directional effects | 3.1% | Sparse connectivity between specific gene pairs |

| Gene pairs with bidirectional effects | 2.4% of affected pairs | Feedback loops are present but not universal |

| Power-law fit (R²) | ≈1.0 | Strong scale-free topology across species [2] |
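The summary statistics above can be reproduced from a table of significant effects. The sketch below is illustrative only: it assumes the data have already been reduced to a mapping from each perturbed gene to the set of genes it significantly affects, and that every gene of interest is both perturbed and measured. The function name and the toy input are hypothetical.

```python
def perturbation_effect_summary(effects):
    """Summarize sparsity of perturbation effects.

    effects: dict {perturbed_gene: set of genes whose expression changed significantly}.
    Only effects on other perturbed genes are counted, so pairs are well defined.
    """
    genes = list(effects)
    n = len(genes)
    # Drop self-effects and effects on genes outside the perturbed set
    trans = {g: {t for t in effects[g] if t != g and t in effects} for g in genes}

    with_effect = sum(1 for g in genes if trans[g])
    ordered_pairs = sum(len(targets) for targets in trans.values())
    # An ordered pair (g, t) is bidirectional when t also affects g
    bidirectional = sum(1 for g in genes for t in trans[g] if g in trans[t])

    return {
        "frac_perturbations_with_trans_effect": with_effect / n,
        "frac_ordered_pairs_with_effect": ordered_pairs / (n * (n - 1)),
        "frac_affected_pairs_bidirectional":
            bidirectional / ordered_pairs if ordered_pairs else 0.0,
    }

# Toy input: A affects B and C, B affects A, C and D affect nothing
summary = perturbation_effect_summary({"A": {"B", "C"}, "B": {"A"}, "C": set(), "D": set()})
```

The three returned fractions correspond, in order, to the 41%, 3.1%, and 2.4% figures reported for the K562 data set.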

Experimental Protocols for GRN Structure Analysis

Protocol 1: Mapping TF-TF Interactions via CAP-SELEX

Purpose: To identify cooperative binding between transcription factors and the DNA sequences mediating these interactions.

Background: Transcription factor cooperativity significantly expands the regulatory lexicon beyond what individual TFs can achieve. The CAP-SELEX method enables high-throughput mapping of these interactions [6].

Reagents and Equipment:

- Human TF library (conserved across mammals)

- 384-well microplates

- Escherichia coli expression system

- High-throughput sequencer

- CAP-SELEX buffer components

Procedure:

- Clone and Express TFs: Express 242 human TFs enriched for mammalian conservation in E. coli [6]

- Form TF Pairs: Combine TFs into 58,754 pairwise combinations in 384-well format [6]

- CAP-SELEX Cycling:

  - Incubate TF pairs with random DNA library

  - Perform three consecutive affinity purification cycles

  - Extract and purify bound DNA ligands [6]

- Sequencing and Analysis:

  - Sequence selected DNA ligands via high-throughput sequencing

  - Apply mutual information algorithm to identify preferred spacing/orientation

  - Use k-mer enrichment analysis to detect novel composite motifs [6]

Expected Results: The screen of 58,754 TF pairs identified 2,198 specific interactions (1,329 with spacing/orientation preferences; 1,131 with novel composite motifs), representing 18% to 47% of all human TF-TF motifs [6].

Protocol 2: Network Comparison Using ALPACA

Purpose: To identify differentially structured modules between phenotypic states without predefined gene sets.

Background: ALPACA (ALtered Partitions Across Community Architectures) detects condition-specific network modules by comparing community structures between two phenotypic states, overcoming limitations of simple edge-based differential networks [5].

Reagents and Equipment:

- Gene expression datasets from two conditions

- Computational environment (R/Python)

- ALPACA software package

Procedure:

- Network Inference:

- ALPACA Analysis:

  - Input baseline and perturbed networks

  - Optimize the differential modularity function: \[ \Delta Q = \frac{1}{2m}\sum_{i,j}\left(B_{ij} - \frac{d_i^B d_j^B}{2m^B}\right)\delta(C_i, C_j) \] where \(B_{ij} = A_{ij}^P - A_{ij}^B\) represents the edge weight difference between perturbed and baseline networks [5]

  - Identify modules that maximize \(\Delta Q\)

- Validation:

  - Perform functional enrichment analysis on differential modules

  - Compare with differential expression results

  - Validate key findings with orthogonal methods (e.g., CRISPR perturbations)

Expected Results: In ovarian cancer subtypes, ALPACA identified angiogenic-specific modules enriched for blood vessel development genes; in breast tissue, it detected female-specific modules enriched for estrogen receptor and ERK signaling pathways [5].

Visualization of GRN Structural Properties

[Figure: CAP-SELEX Workflow for TF-TF Interaction Mapping]

[Figure: Network Perturbation Analysis Framework]

Research Reagent Solutions

Table 3: Essential Research Reagents for GRN Structural Analysis

| Reagent/Resource | Specifications | Experimental Function | Example Applications |

|---|---|---|---|

| CAP-SELEX Platform | 384-well format; 58,754 TF pairs screened [6] | High-throughput mapping of cooperative TF-DNA binding | Identify novel composite motifs; resolve binding specificity paradox [6] |

| Morpholino Antisense Oligonucleotides (MASOs) | Stable base-pairing; low embryonic toxicity [8] | Block mRNA translation or splicing in perturbation studies | Sea urchin GRN mapping; functional linkage validation [8] |

| NanoString nCounter | 50-500 gene multiplexing; mRNA barcoding [8] | Direct mRNA quantification without amplification | Regulatory gene expression profiling; perturbation response measurement [8] |

| GRLGRN Software | Graph transformer network; implicit link extraction [7] | Infer regulatory relationships from scRNA-seq data | GRN reconstruction from single-cell data; hub gene identification [7] |

| ALPACA Algorithm | Differential modularity optimization [5] | Detect condition-specific network modules | Identify disease-specific network structure; compare phenotypic states [5] |

| Perturb-seq Data | 5,530 genes; 1.99M cells; 11,258 perturbations [3] | Genome-scale knockout effects measurement | GRN topology-function relationships; perturbation effect distribution [3] |

The essential structural properties of Gene Regulatory Networks—particularly Knn, PageRank, and degree—form the architectural basis for cellular control systems. These evolutionarily conserved properties determine subsystem essentiality, with high PageRank and intermediate Knn characterizing life-essential subsystems, while low Knn associates with specialized functions. The experimental and computational frameworks presented here enable researchers to quantitatively analyze these properties and their functional consequences. As GRN research advances, integrating these structural principles with dynamical models will be essential for predicting cellular behavior across development, homeostasis, and disease, ultimately informing therapeutic strategies that target regulatory network architecture.

Sparsity, Hierarchy, and Modularity in Network Organization

The architecture of biological networks is not random but is governed by fundamental organizational principles that enable robust and evolvable system functions. In the context of Gene Regulatory Networks (GRNs), which control core developmental and biological processes underlying complex traits, three structural properties are particularly critical: sparsity, hierarchy, and modularity [3]. These principles provide a framework for understanding how complex regulatory interactions can be efficiently encoded within the genome and how perturbations, such as gene knockouts, propagate through the system. The study of these properties has been revolutionized by single-cell sequencing assays and CRISPR-based molecular perturbation approaches like Perturb-seq, which provide high-resolution data on regulatory interactions and their functional consequences [3] [9]. Computational models that accurately incorporate these architectural features are essential for interpreting experimental data, predicting perturbation outcomes, and ultimately understanding the genetic basis of human disease and development.

The integration of these principles is particularly relevant for research on computational models simulating GRN structure and perturbation effects. As noted in recent research, "key properties of GRNs, like hierarchical structure, modular organization, and sparsity, provide both challenges and opportunities" for inferring the architecture of gene regulation [3]. This application note provides detailed protocols for analyzing these organizational principles, with specific emphasis on their relevance to perturbation effect distributions in GRN research.

Quantitative Characterization of Network Properties

Defining and Measuring Core Properties

Sparsity describes the property wherein each gene is directly regulated by only a small subset of all possible regulators, resulting in networks with limited connectivity. Empirical evidence from a genome-scale Perturb-seq study in K562 cells demonstrates this principle: only 41% of perturbations targeting a primary transcript had significant effects on the expression of any other gene, indicating that most genes do not function as influential regulators [3]. Quantitatively, sparsity can be measured as the fraction of possible connections that are actually present in the network, typically resulting in values significantly less than 1.

Hierarchy refers to the organization of regulatory relationships into ordered levels, with genes at higher levels controlling the activity of those below. This layered control structure enables coordinated regulation of complex biological processes. In directed graphs representing GRNs, hierarchy manifests as an ordering of nodes where edges tend to flow from higher to lower levels, with feedback loops creating exceptions to strict hierarchical arrangements [3].
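One simple way to make this layered ordering concrete is to peel a directed graph level by level, which also exposes the nodes that cannot be ordered because they sit on or downstream of feedback loops. The sketch below uses Kahn's topological-sort procedure as an illustration; it is not the hierarchy score of any cited study, and the function name is hypothetical.

```python
from collections import defaultdict, deque

def hierarchy_levels(edges):
    """Assign hierarchy levels to genes in a directed regulator -> target graph.

    Level 0 holds genes with no regulators; every other ordered gene sits one level
    below its deepest regulator. Returns (levels, unordered), where `unordered` is
    the set of genes on or downstream of a feedback loop.
    """
    nodes = {n for e in edges for n in e}
    succ = defaultdict(set)
    indeg = {n: 0 for n in nodes}
    for src, dst in set(edges):
        succ[src].add(dst)
        indeg[dst] += 1

    level = {n: 0 for n in nodes if indeg[n] == 0}
    queue = deque(level)
    while queue:
        u = queue.popleft()
        for v in succ[u]:
            level[v] = max(level.get(v, 0), level[u] + 1)
            indeg[v] -= 1
            if indeg[v] == 0:          # all regulators of v have been placed
                queue.append(v)

    unordered = {n for n in nodes if indeg[n] > 0}
    for n in unordered:                # discard provisional levels of unordered genes
        level.pop(n, None)
    return level, unordered

# A master regulator M above A and B, with a feedback loop between C and D
levels, unordered = hierarchy_levels(
    [("M", "A"), ("M", "B"), ("A", "C"), ("B", "C"), ("C", "D"), ("D", "C")]
)
```

A fully hierarchical network returns an empty `unordered` set; the size of that set gives a rough sense of how much feedback departs from a strict hierarchy.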

Modularity quantifies the extent to which a network is organized into functionally specialized communities or modules, with dense connections within modules and sparser connections between them [10]. Formally, modularity (Q) is defined as the fraction of edges that fall within given groups minus the expected fraction if edges were distributed at random. For a network partitioned into c communities, modularity is calculated as:

\[ Q = \sum_{i=1}^{c} \left( e_{ii} - a_i^2 \right) \]

where \( e_{ii} \) is the fraction of edges within community i, and \( a_i \) is the fraction of edges attached to vertices in community i [10]. Biological networks, including GRNs and brain functional networks, consistently exhibit high modularity, reflecting their functional specialization [11] [10].
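As a worked illustration of the modularity definition, the sketch below evaluates Q for a given partition of an undirected, unweighted edge list. It computes the score of a partition that is supplied by the caller and does not search for the best partition; the function name and the toy graph are illustrative.

```python
from collections import defaultdict

def modularity(edges, community):
    """Q = sum_i (e_ii - a_i^2) for an undirected edge list and a node -> community map."""
    m = len(edges)
    within = defaultdict(float)    # e_ii: fraction of edges inside community i
    attached = defaultdict(float)  # a_i: fraction of edge ends attached to community i
    for u, v in edges:
        cu, cv = community[u], community[v]
        if cu == cv:
            within[cu] += 1 / m
        attached[cu] += 0.5 / m
        attached[cv] += 0.5 / m
    return sum(within[c] - attached[c] ** 2 for c in attached)

# Two triangles joined by a single bridge edge
toy_edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
two_modules = {0: "a", 1: "a", 2: "a", 3: "b", 4: "b", 5: "b"}
q_split = modularity(toy_edges, two_modules)                  # 2 * (3/7 - 0.25)
q_single = modularity(toy_edges, {n: "all" for n in range(6)})  # one module gives Q = 0
```

For the two-triangle example each community holds 3 of 7 edges and half of all edge ends, so Q = 2 × (3/7 − 1/4) ≈ 0.357, which falls inside the 0.3 to 0.7 range cited for biological networks.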

Table 1: Key Metrics for Quantifying Network Architectural Principles

| Property | Quantitative Metric | Typical Range in Biological Networks | Measurement Approach |

|---|---|---|---|

| Sparsity | Connection density | ~0.01-0.05 | Fraction of possible edges that actually exist |

| Hierarchy | Hierarchy score | Varies by system | Directed acyclic graph analysis; feedback loop identification |

| Modularity | Modularity index (Q) | 0.3-0.7 [10] | Community detection algorithms [10] |

| Feedback | Reciprocal connection percentage | ~2.4% of regulatory pairs [3] | Identification of bidirectional edges |

Empirical Evidence from Biological Systems

Quantitative studies of large-scale networks provide compelling evidence for these organizational principles across biological systems. Analysis of human brain functional networks using fMRI has revealed a hierarchical modular organization with a mutual information similarity of I = 0.63 between subjects, indicating a consistent architectural pattern across individuals [11]. The largest modules identified at the highest hierarchical level included medial occipital, lateral occipital, central, parieto-frontal, and fronto-temporal systems, with association cortical areas containing critical connector nodes and hubs that facilitate inter-modular connectivity [11].

In GRNs, analysis of perturbation effects from a genome-scale Perturb-seq study demonstrated that only 3.1% of ordered gene pairs showed at least a one-directional perturbation effect, with 2.4% of these pairs exhibiting bidirectional regulation [3]. This sparsity in functional connections highlights the selective nature of regulatory interactions and underscores why assumptions of dense connectivity are biologically unrealistic when modeling GRNs.

Table 2: Empirical Measurements from Experimental Studies

| Study System | Sparsity Indicator | Modularity Findings | Hierarchical Organization |

|---|---|---|---|

| GRN (K562 cells) | 41% of gene perturbations affect other genes [3] | | Hierarchical structure observed [3] |

| Human Brain Network | | Five major modular systems [11] | Multiple hierarchical levels with distinct subsystems [11] |

| Chromosome Organization | | Topologically Associating Domains (TADs) [12] | Hierarchical folding from territories to point interactions [12] |

Experimental Protocols for Network Analysis

Protocol 1: Inferring Hierarchical Modular Structure from Functional Connectivity Data

Purpose: To identify hierarchical modular organization in biological networks using functional connectivity data, with application to brain networks or GRNs.

Materials and Reagents:

- Functional connectivity data (e.g., fMRI BOLD signals, gene expression correlations)

- High-resolution parcellation template (region-based or gene-based)

- Computing environment with necessary algorithms (e.g., Blondel et al. algorithm)

- Preprocessing tools for motion correction and registration (for neuroimaging)

- Wavelet filtering package (e.g., Brainwaver R package for fMRI)

Procedure:

- Data Acquisition: Acquire whole-brain functional MRI data using a 3T scanner with the following parameters: repetition time = 2000 ms; echo time = 30 ms; flip angle = 78°; slice thickness = 3 mm plus 0.75 mm interslice gap; 32 slices; image matrix size = 64 × 64 [11]. For gene expression data, perform single-cell RNA sequencing with sufficient coverage (recommended: >50,000 reads per cell).

- Data Preprocessing: Correct images for motion and register to standard space using an affine transform. Extract time series using a high-resolution regional parcellation (1,808 regional nodes for brain networks) [11]. Apply inclusion criteria that each region must be at least 50% overlapping with the target mask (grey matter for brain, expressed genes for GRNs).

- Wavelet Filtering: Filter mean time series of each region using wavelet transformation to isolate frequency-dependent functional connectivity (scale-dependent for brain networks: 0.25-0.015 Hz) [11].

- Connectivity Matrix Construction: Calculate wavelet correlation coefficients between each pair of nodes to generate an association matrix representing functional connectivity.

- Hierarchical Modular Decomposition: Apply a computationally efficient community detection algorithm (e.g., Blondel et al.) to identify nested modular structure at several hierarchical levels [11].

- Similarity Quantification: Use normalized mutual information (0 ≤ I ≤ 1) to estimate similarity of community structure between different subjects or conditions [11].

- Hub Identification: Identify connector nodes and hubs based on their participation in inter-modular connectivity, with particular attention to association areas in neural networks or key transcription factors in GRNs.
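The similarity quantification step can be sketched as follows. The normalization used here, 2·MI divided by the sum of the two partition entropies, is one common choice and is an assumption of this example; the exact variant used in [11] may differ. The function name is illustrative.

```python
from collections import Counter
from math import log

def normalized_mutual_information(part_a, part_b):
    """NMI between two partitions given as dicts node -> module label (same node set)."""
    nodes = list(part_a)
    n = len(nodes)
    count_a = Counter(part_a[v] for v in nodes)
    count_b = Counter(part_b[v] for v in nodes)
    joint = Counter((part_a[v], part_b[v]) for v in nodes)

    # Mutual information between the two module assignments
    mi = sum(c / n * log(c * n / (count_a[a] * count_b[b]))
             for (a, b), c in joint.items())
    # Entropy of each partition
    h_a = -sum(c / n * log(c / n) for c in count_a.values())
    h_b = -sum(c / n * log(c / n) for c in count_b.values())
    if h_a + h_b == 0:
        return 1.0   # both partitions place every node in a single module
    return 2 * mi / (h_a + h_b)

# Same grouping under different labels versus an unrelated grouping
subject_1 = {1: "x", 2: "x", 3: "y", 4: "y"}
subject_2 = {1: "m", 2: "m", 3: "n", 4: "n"}
unrelated = {1: "p", 2: "q", 3: "p", 4: "q"}
same = normalized_mutual_information(subject_1, subject_2)
different = normalized_mutual_information(subject_1, unrelated)
```

Because the measure depends only on how nodes are grouped, two subjects with identical modules but different module labels score 1, while statistically independent partitions score 0.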

Troubleshooting Tips:

- If computational time is prohibitive for large networks, consider using a fast, greedy modularity optimization algorithm.

- For single-cell RNA-seq data with low coverage, cluster related cells into pseudobulk or meta-cells to improve signal-to-noise ratio [9].

Protocol 2: Mapping Perturbation Effects in Gene Regulatory Networks

Purpose: To systematically characterize the distribution of perturbation effects in GRNs and relate these effects to network architectural principles.

Materials and Reagents:

- CRISPR-based perturbation system (e.g., Perturb-seq)

- Single-cell RNA sequencing platform

- 5,530 gene transcript panel (adapt as needed for system)

- Cell culture system (e.g., K562 erythroid progenitor cell line)

- Computational resources for network simulation and analysis

Procedure:

- Perturbation Library Design: Design a CRISPR-based perturbation library targeting 9,866 unique genes with 11,258 total perturbations [3].

- Experimental Processing: Transduce cells with perturbation constructs and prepare single-cell suspensions. Perform single-cell RNA sequencing on 1,989,578 cells to capture expression profiles of 5,530 gene transcripts [3].

- Data Filtering: Subset data to 5,247 perturbations that target genes whose expression is also measured in the data [3].

- Effect Significance Testing: For each perturbation, test for significant effects on the expression of other genes using the Anderson-Darling test with an FDR-corrected threshold of p < 0.05 [3].

- Network Property Calculation:

  - Sparsity: Calculate the percentage of perturbations that significantly affect other genes (expected ~41%) [3].

  - Reciprocal Regulation: Identify pairs of genes with bidirectional regulation (expected ~2.4% of regulating pairs) [3].

  - Hierarchy: Construct directed graphs from perturbation effects and test for hierarchical organization using directed acyclic graph algorithms.

  - Modularity: Apply community detection algorithms to identify functional modules enriched for specific biological processes.

- Network Simulation: Generate realistic network structures using algorithms based on small-world network theory that incorporate sparsity, hierarchy, and modularity [3].

- Model Fitting: Model gene expression regulation using stochastic differential equations formulated to accommodate molecular perturbations [3].

- Effect Distribution Analysis: Characterize the distribution of perturbation effects within and across the simulated GRNs, comparing to experimental observations.
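The model-fitting and effect-analysis steps can be illustrated with a small stochastic simulation. The sketch below is not the model of [3]: it assumes linear regulation with first-order decay, additive Gaussian noise, Euler-Maruyama integration, and a knockdown represented as a fractional loss of production. All parameter names and values are hypothetical.

```python
import math
import random

def simulate_expression(W, basal, knockdown=None, decay=1.0, noise=0.05,
                        dt=0.01, steps=5000, seed=0):
    """Euler-Maruyama integration of dx_i = (production_i - decay * x_i) dt + noise dW_i.

    W[i][j] is the effect of gene j on gene i. `knockdown` maps a gene index to the
    fraction of its production removed (1.0 = full knockout). Returns the expression
    of each gene averaged over the second half of the run.
    """
    rng = random.Random(seed)
    n = len(basal)
    knockdown = knockdown or {}
    x = list(basal)
    total = [0.0] * n
    for step in range(steps):
        new_x = []
        for i in range(n):
            drive = basal[i] + sum(W[i][j] * x[j] for j in range(n))
            production = max(drive, 0.0) * (1.0 - knockdown.get(i, 0.0))
            dx = ((production - decay * x[i]) * dt
                  + noise * math.sqrt(dt) * rng.gauss(0, 1))
            new_x.append(max(x[i] + dx, 0.0))   # expression cannot go negative
        x = new_x
        if step >= steps // 2:
            total = [t + v for t, v in zip(total, x)]
    return [t / (steps - steps // 2) for t in total]

# Three-gene cascade: gene 0 activates gene 1, which activates gene 2
W = [[0.0, 0.0, 0.0], [0.8, 0.0, 0.0], [0.0, 0.8, 0.0]]
basal = [1.0, 0.2, 0.2]
wild_type = simulate_expression(W, basal)
knockout = simulate_expression(W, basal, knockdown={0: 1.0})
effect = [k - w for k, w in zip(knockout, wild_type)]  # per-gene perturbation effect
```

Comparing `knockout` with `wild_type` gives one row of a simulated perturbation-effect matrix; repeating this for every gene yields the effect distribution that the protocol compares with experimental observations.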

Validation Steps:

- Confirm that a subset of simulated networks recapitulates features observed in the experimental Perturb-seq data.

- Validate key predicted regulatory relationships using orthogonal experimental approaches (e.g., chromatin immunoprecipitation).

Computational Modeling and Visualization

Graphviz Diagrams for Network Architecture and Workflows

[Figure: Network Architecture Principles and Perturbation Effects]

[Figure: Hierarchical Modular Analysis Workflow]

Table 3: Essential Research Reagents and Computational Tools

| Item | Function/Application | Specifications/Alternatives |

|---|---|---|

| CRISPR Perturbation Library | Targeted gene knockout for perturbation studies | 11,258 perturbations targeting 9,866 genes [3] |

| Single-cell RNA Sequencing Platform | Measuring transcriptome-wide gene expression | 5,530 gene transcripts in 1,989,578 cells [3] |

| ATAC-seq Reagents | Mapping accessible chromatin regions | Identifies active cis-regulatory elements [9] |

| ChIP-seq Antibodies | Profiling transcription factor binding and histone modifications | e.g., H3K27ac for active enhancers [9] |

| Community Detection Algorithm | Identifying modular structure in networks | Blondel et al. algorithm for hierarchical decomposition [11] |

| Modularity Maximization Software | Quantifying community structure | Algorithms optimizing Q metric [10] |

| Graph Visualization Tools | Creating accessible network diagrams | ColorBrewer for color-blind friendly palettes [13] [14] |

| Stochastic Differential Equation Solvers | Modeling gene expression dynamics | Accommodates molecular perturbations [3] |

The architectural principles of sparsity, hierarchy, and modularity provide a powerful framework for understanding the organization and function of gene regulatory networks. These properties are not merely structural features but have profound implications for how networks respond to perturbations, evolve new functions, and can be effectively modeled computationally. The protocols and analyses detailed in this application note provide researchers with practical methodologies for quantifying these properties and relating them to perturbation effects.

Understanding these organizational principles has significant implications for drug development and therapeutic targeting. The hierarchical organization of GRNs suggests that targeting master regulators high in the hierarchy may produce more dramatic phenotypic effects, while the modular structure indicates that perturbations might be contained within functional units, potentially reducing off-target effects. Furthermore, the sparsity of biological networks explains why most genetic perturbations have limited effects, helping to identify critical leverage points for therapeutic intervention. As computational models continue to incorporate these principles with increasing sophistication, they will enhance our ability to predict the system-level consequences of genetic and pharmacological interventions, ultimately accelerating the development of targeted therapies for complex diseases.

The Role of Directed Edges and Feedback Loops in Dynamic Systems

Gene Regulatory Networks (GRNs) are collections of molecular regulators that interact to govern gene expression levels, determining cellular function [15]. The structure of these networks—characterized by directed edges and feedback loops—is a critical determinant of their dynamic behavior. Directed edges represent the causal influence of one molecular species (e.g., a transcription factor) on another (e.g., its target gene), while feedback loops form when these influences form cyclic pathways [16] [17]. Understanding this relationship between network topology and dynamics is a central concern of systems biology, particularly in the context of computational models for simulating GRN structure and perturbation effects [16] [3].

Empirical GRNs exhibit specific structural properties that shape their function: they are sparse, with most genes having few direct regulators; they possess hierarchical organization with asymmetric degree distributions; they contain significant modularity; and they feature an inherent global directionality that drastically reduces the fraction of feedback loops compared to randomized networks [3] [18] [17]. This structural framework provides both constraints and opportunities for understanding how perturbations propagate through biological systems and offers insights for therapeutic intervention in disease states.

Theoretical Foundations of Feedback Loops

Classification and Functional Impact

Feedback loops in GRNs are categorized based on their structural and functional characteristics. The table below summarizes the primary types of feedback loops and their functional implications in dynamic systems.

Table 1: Classification and Functions of Feedback Loops in Gene Regulatory Networks

| Loop Type | Structural Definition | Key Dynamical Role | Biological Examples |

|---|---|---|---|

| Negative Feedback | Closed path with odd number of inhibitory interactions | Enables periodic oscillations, maintains homeostasis [16] | Circadian rhythms, stress response systems |

| Positive Feedback | Closed path with even number of inhibitory interactions | Enables multistationarity, differentiation, bistable switches [16] | Cell fate decisions, epigenetic memory |

| Feed-forward Loop | Three-node motif where X regulates Y and Z, and Y regulates Z | Creates temporal programs, filters noise [15] | Galactose utilization in E. coli [15] |

Quantifying Feedback Loops: The Distance-to-Positive-Feedback Metric

The "distance-to-positive-feedback" metric quantifies how many independent negative feedback loops exist in a network—a property inherent in the network topology [16]. In essence, this measure captures the minimum number of sign-flips (changing activations to inhibitions or vice versa) required to eliminate all negative feedback loops, rendering the network sign-consistent [16]. Through computational studies using Boolean networks, this distance measure correlates strongly with network dynamics: as the number of independent negative feedback loops increases, the number of limit cycles tends to decrease while their length increases [16]. These mathematical insights provide a framework for predicting network behavior from topological features.
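For very small networks the sign-flip count can be found by brute force. The sketch below rests on a stated assumption: it labels each node +1 or -1 and counts edges whose sign disagrees with the product of the labels at their ends, which measures sign-consistency of the underlying undirected graph. For strongly connected networks this is taken here to coincide with the distance to positive feedback; for other networks it may overestimate, because it also penalizes inconsistent loops that are not directed cycles. The function name is hypothetical and the search is exponential in the number of nodes.

```python
from itertools import product

def sign_consistency_distance(nodes, signed_edges):
    """Minimum number of edge signs to flip so the network becomes sign-consistent.

    signed_edges: list of (source, target, sign), sign = +1 (activation) or -1 (inhibition).
    An edge is consistent with a node labeling when sign == label[source] * label[target].
    """
    nodes = list(nodes)
    best = len(signed_edges)
    # Fix the first node's label to +1; flipping all labels gives the same count
    for rest in product((1, -1), repeat=len(nodes) - 1):
        label = dict(zip(nodes, (1,) + rest))
        violations = sum(1 for u, v, s in signed_edges if s != label[u] * label[v])
        best = min(best, violations)
    return best

# Two-gene negative feedback loop: A activates B, B inhibits A
negative_loop = sign_consistency_distance("AB", [("A", "B", 1), ("B", "A", -1)])
# Toggle switch (mutual inhibition) is a positive feedback loop
toggle = sign_consistency_distance("AB", [("A", "B", -1), ("B", "A", -1)])
```

A single negative loop needs one flip, whereas the toggle switch, whose two inhibitions multiply to a positive loop sign, needs none.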

Computational Modeling Approaches

Boolean Network Models for GRN Analysis

Boolean network models represent a simplification where each molecular species is either active (1) or inactive (0), with time proceeding in discrete steps [16]. This approach is particularly valuable when detailed kinetic information is lacking, focusing attention on the essential logical structure of regulatory relationships.

Key Protocol: Implementing Boolean Network Simulations

- Network Definition: Represent the GRN as a directed graph G(V,E) where vertices V represent genes/proteins and directed edges E represent regulatory interactions.

- State Assignment: For each node \(v_i \in V\), assign a binary state value \(s_i(t) \in \{0,1\}\) at time t.

- Update Rules: Define Boolean functions \(f_i\) for each node specifying how its state depends on its regulators.

- Dynamics Simulation: Update states synchronously or asynchronously across discrete time steps.

- Attractor Identification: Identify fixed points (steady states) and limit cycles (oscillatory states) through state-space enumeration.
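The steps above can be sketched for a small network as follows. This example assumes synchronous updates and exhaustive enumeration of all 2^n states, so it is only practical for a handful of nodes. The function name and the toggle-switch rules are illustrative.

```python
from itertools import product

def find_attractors(update_rules):
    """Enumerate attractors of a synchronous Boolean network.

    update_rules: list of functions, one per node, each taking the full state tuple
    and returning 0 or 1. Returns a list of attractors, each a list of states
    (length 1 for a fixed point, longer for a limit cycle).
    """
    n = len(update_rules)

    def step(state):
        return tuple(int(f(state)) for f in update_rules)

    attractors, seen = [], set()
    for start in product((0, 1), repeat=n):
        path, index, state = [], {}, start
        # Follow the trajectory until it revisits itself or joins a known basin
        while state not in index and state not in seen:
            index[state] = len(path)
            path.append(state)
            state = step(state)
        if state in index:                   # this trajectory closed a new cycle
            attractors.append(path[index[state]:])
        seen.update(path)
    return attractors

# Toggle switch: each gene is active only when the other is inactive
toggle_rules = [lambda s: not s[1], lambda s: not s[0]]
attractors = find_attractors(toggle_rules)
fixed_points = [a[0] for a in attractors if len(a) == 1]
limit_cycles = [a for a in attractors if len(a) > 1]
```

Under synchronous updating the toggle switch has two fixed points, (0, 1) and (1, 0), plus a two-state cycle between (0, 0) and (1, 1); that cycle is an artifact of updating both genes at once and would not persist under asynchronous updates.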

For monotone Boolean networks (those without negative feedback loops), all trajectories must settle into equilibria or periodic orbits, with theoretical bounds on cycle lengths established by Sperner's Theorem [16].

Generating Realistic GRN Structures

A novel network generation algorithm creates synthetic GRNs with properties matching empirical observations [3] [18]. This algorithm produces directed scale-free networks with specified modularity through a growth process with preferential attachment:

Key Protocol: Network Generation Algorithm

- Initialization: Begin with a small initial graph of connected nodes.

- Growth Process: Iteratively add nodes or directed edges until reaching the target network size.

- Preferential Attachment: When adding edges between existing nodes, select:

  - Targets with probability proportional to (existing in-degree + δin)

  - Sources with probability proportional to (existing out-degree + δout)

- Modularity Control: Assign nodes to groups and bias edge creation using a within-group affinity parameter w.

- Parameter Effects: The sparsity parameter p controls mean regulators per gene (~1/p), while δin and δout control the coefficient of variation of degree distributions [18].

Table 2: Parameters for Realistic GRN Generation

| Parameter | Symbol | Effect on Network Structure | Biological Interpretation |

|---|---|---|---|

| Sparsity | p | Controls mean number of regulators per gene (~1/p) [18] | Determines network connectivity density |

| Modularity | w | Adjusts fraction of within-group edges: ~w/(w+k-1) for k groups [18] | Controls functional specialization |

| In-degree Bias | δin | Controls CV of in-degree distribution (heavy-tailedness) [18] | Creates variation in susceptibility to regulation |

| Out-degree Bias | δout | Controls CV of out-degree distribution (heavy-tailedness) [18] | Creates variation in regulatory influence |

| Group Number | k | Determines number of functional modules [18] | Corresponds to distinct biological programs |
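The growth process and its parameters can be sketched as below. This is a simplified illustration and not the published algorithm of [3] [18]. It follows the standard directed preferential-attachment scheme, in which targets are drawn in proportion to in-degree + δin and sources in proportion to out-degree + δout. It further assumes that a new gene is equally likely to enter as a regulator or as a target, that group labels are assigned uniformly at random, and that the within-group affinity w applies only to edges between existing genes. All names are hypothetical.

```python
import random

def generate_grn(n_genes, p=0.3, k=3, w=5.0, delta_in=1.0, delta_out=1.0, seed=0):
    """Grow a directed network; returns (sorted edge list, group label per gene)."""
    rng = random.Random(seed)
    group = [rng.randrange(k), rng.randrange(k)]
    edges = {(0, 1)}                      # initial graph: gene 0 regulates gene 1
    in_deg, out_deg = [0, 1], [1, 0]

    def pick(weights):
        return rng.choices(range(len(weights)), weights=weights)[0]

    while len(group) < n_genes:
        if rng.random() < p:
            # Growth step: add a gene attached by a single edge
            new = len(group)
            group.append(rng.randrange(k))
            in_deg.append(0)
            out_deg.append(0)
            if rng.random() < 0.5:
                src, dst = new, pick([d + delta_in for d in in_deg[:new]])
            else:
                src, dst = pick([d + delta_out for d in out_deg[:new]]), new
        else:
            # Edge step: connect existing genes, favoring targets in the source's group
            src = pick([d + delta_out for d in out_deg])
            dst = pick([(d + delta_in) * (w if group[j] == group[src] else 1.0)
                        for j, d in enumerate(in_deg)])
        if src != dst and (src, dst) not in edges:
            edges.add((src, dst))
            out_deg[src] += 1
            in_deg[dst] += 1
    return sorted(edges), group

edges, group = generate_grn(200, p=0.25, k=4, w=20.0, seed=1)
within_fraction = sum(group[s] == group[t] for s, t in edges) / len(edges)
mean_regulators = len(edges) / len(group)
```

Because one edge is attempted per step and a gene is added with probability p, the mean number of regulators per gene is roughly 1/p, and raising w pushes `within_fraction` toward 1.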

Experimental Analysis of Feedback Loops

Quantifying Inherent Directionality and Feedback Suppression

Empirical evidence demonstrates that biological networks contain significantly fewer feedback loops than randomized networks with identical connectivity [17]. This suppression stems from an inherent global directionality present in empirical networks, where nodes can be ordered along a hierarchy such that links preferentially point from lower to higher levels [17].

A probabilistic model quantifies this directionality using a single parameter γ, representing the probability that any given link points along the inherent direction [17]. The model predicts that the fraction F(k) of structural loops of length k that are also feedback loops follows:

F(k) ≈ (1/2)^(k-1) × [1 + (2γ - 1)^k]

This equation demonstrates that as the inherent directionality strengthens (γ > 0.5), the fraction of feedback loops decreases exponentially with loop length k [17]. Empirical studies of biological networks consistently reveal γ values significantly greater than 0.5, explaining the observed suppression of feedback loops [17].
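The single-parameter model can also be explored by direct enumeration. The sketch below is a hypothetical illustration, not the procedure of [17]: it assumes that the k nodes of a loop occupy distinct hierarchy ranks in a uniformly random order, that each link independently points from lower to higher rank with probability γ, and that a feedback loop requires all k links to circulate in the same sense. The function name `feedback_fraction` is an assumption, and because the code enumerates all k! rank orderings it is only practical for small k.

```python
from itertools import permutations
from math import factorial

def feedback_fraction(k, gamma):
    """Probability that a structural loop of length k is a feedback loop,
    averaged over all orderings of hierarchy ranks around the loop."""
    total = 0.0
    for ranks in permutations(range(k)):
        # Links that run "uphill" in rank when the loop is traversed in order
        up = sum(ranks[i] < ranks[(i + 1) % k] for i in range(k))
        down = k - up
        # All links follow the traversal: uphill links obey the hierarchy,
        # downhill links oppose it.
        same_sense = gamma ** up * (1 - gamma) ** down
        # All links oppose the traversal: the roles are swapped.
        opposite_sense = gamma ** down * (1 - gamma) ** up
        total += same_sense + opposite_sense
    return total / factorial(k)

for gamma in (0.5, 0.75, 0.9, 1.0):
    print(gamma, [round(feedback_fraction(k, gamma), 4) for k in (3, 4, 5)])
```

Under these assumptions the result equals (1/2)^(k-1) at γ = 0.5 and shrinks toward zero as γ approaches 1, which matches the qualitative statement that stronger directionality suppresses feedback loops.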

Loop Enumeration Methodology

Key Protocol: Counting Feedback Loops in Empirical Networks

- Network Preparation: Compile directed network representation from experimental data.

- Loop Identification: Employ breadth-first search algorithms to enumerate structural loops of increasing length k.

- Feedback Classification: For each structural loop, determine whether it constitutes a feedback loop, i.e., whether all of its edges are oriented so that one can return to the starting node by following edge directions.

- Randomization Controls: Compare against:

  - Directionality Randomization (DR): Preserves links, randomizes directions

  - Configuration Randomization (CR): Randomizes links and directions while preserving node degrees

- Statistical Analysis: Compute fraction F(k) of feedback loops for each loop length k.

Computational constraints limit exhaustive enumeration to loop lengths k ≤ 12 for most empirical networks, though analytical estimations exist for larger networks [17].
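The counting protocol can be sketched as follows for small networks. This is an illustrative sketch rather than the code used in [17]: it uses a depth-first search instead of the breadth-first search named in the protocol, it only considers loops of length 3 and above, and the function names `loop_stats` and `randomize_directions` are assumptions. Each simple loop of the undirected skeleton is counted once (anchored at its smallest node) and then classified by edge orientation.

```python
import random
from collections import defaultdict

def loop_stats(edges, k_max=5):
    """Return {k: (n_structural, n_feedback)} for simple loops of length 3..k_max."""
    directed = set(edges)
    nbrs = defaultdict(set)
    for u, v in directed:
        nbrs[u].add(v)
        nbrs[v].add(u)
    stats = defaultdict(lambda: [0, 0])

    def extend(path):
        start, last = path[0], path[-1]
        for nxt in nbrs[last]:
            # Close the loop; path[1] < last keeps one of the two traversal senses.
            if nxt == start and len(path) >= 3 and path[1] < last:
                cycle = path + [start]
                steps = list(zip(cycle, cycle[1:]))
                forward = all(step in directed for step in steps)
                backward = all((b, a) in directed for a, b in steps)
                stats[len(path)][0] += 1
                stats[len(path)][1] += int(forward or backward)
            # Only visit nodes larger than the start so each loop is found once.
            elif nxt > start and nxt not in path and len(path) < k_max:
                extend(path + [nxt])

    for node in sorted(nbrs):
        extend([node])
    return {k: tuple(counts) for k, counts in sorted(stats.items())}

def randomize_directions(edges, rng):
    """Directionality Randomization (DR): keep every link, flip it with probability 1/2."""
    return [(v, u) if rng.random() < 0.5 else (u, v) for u, v in edges]

toy = [(0, 1), (1, 2), (2, 3), (3, 0), (0, 2)]   # hypothetical 4-node example
observed = loop_stats(toy)
control = loop_stats(randomize_directions(toy, random.Random(0)))
```

The fraction F(k) is then `n_feedback / n_structural` for each k, and comparing `observed` against many DR replicates gives the null expectation. The recursion is exponential in k, which is consistent with the practical limit on loop length noted above.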

Visualization of Network Properties

Feedback Loop Structures and Motifs

Network Motifs and Feedback Structures: This diagram illustrates three fundamental network motifs that govern dynamics in gene regulatory networks: negative feedback (enabling oscillations), positive feedback (enabling bistability), and feed-forward loops (creating temporal programs).

GRN Perturbation Analysis Workflow

GRN Perturbation Analysis Pipeline: This workflow diagram outlines the systematic approach for analyzing perturbation effects in gene regulatory networks, from initial network modeling through to therapeutic target identification.

Research Reagent Solutions

Table 3: Essential Research Tools for GRN Perturbation Studies

| Reagent/Resource | Primary Function | Application in GRN Research |

|---|---|---|

| Perturb-seq [3] [19] | CRISPR-based screening with single-cell RNA sequencing | Enables large-scale mapping of perturbation effects at single-cell resolution |

| Boolean Network Simulation Tools [16] | Discrete dynamic modeling of GRNs | Investigates logical structure of regulatory networks without detailed kinetic parameters |

| Loop Enumeration Algorithms [17] | Exhaustive counting of structural and feedback loops | Quantifies feedback loop statistics in empirical networks |

| Network Generation Algorithms [3] [18] | Creates synthetic GRNs with realistic properties | Produces benchmark networks for testing inference methods and hypotheses |

| Directionality Quantification Metrics [17] | Measures inherent hierarchy in directed networks | Quantifies the parameter γ representing network feedforwardness |

Discussion and Research Implications

The structural properties of GRNs—particularly their inherent directionality and controlled feedback loop abundance—have significant implications for both basic research and therapeutic development. The relative scarcity of feedback loops in biological networks compared to random networks suggests evolutionary constraints that favor dynamical stability [17]. This insight guides the prioritization of network motifs for therapeutic targeting, as perturbations to naturally rare feedback structures may produce more dramatic and potentially pathological effects.

For drug development professionals, understanding these principles enables more strategic intervention in disease-associated networks. The distance-to-positive-feedback metric [16] offers a quantitative measure of network stability that could predict which regulatory sub-networks are most susceptible to therapeutic perturbation. Similarly, the recognition that most genes have limited pleiotropy and operate within regulatory modules [15] suggests targeted therapies may achieve efficacy with reduced off-target effects by focusing on specific network modules rather than individual genes.

Future research directions should focus on integrating high-resolution perturbation data [3] [19] with dynamical models to map the complex relationship between network topology, feedback regulation, and therapeutic response. The tools and protocols outlined here provide a foundation for systematically exploring these relationships across different disease contexts and therapeutic modalities.

Power-Law Distributions and Master Regulators in Network Topology

The architecture of Gene Regulatory Networks (GRNs) is not random; it is characterized by specific topological features that are crucial for their stability, function, and dynamic response to perturbations. Among the most significant of these features are scale-free (power-law) degree distributions and the presence of master regulators. A scale-free topology is defined by a connectivity distribution where a few nodes (hubs) possess a vastly greater number of connections than the average node, following a power law, \( P(k) \sim k^{-\gamma} \) [20]. Master regulators are a specific class of hubs, often transcription factors, that exert disproportionate control over downstream gene expression programs [20] [21]. These architectural principles are foundational for understanding how complex cellular phenotypes are controlled and for designing targeted therapeutic interventions. Computational models that accurately incorporate these features are essential for simulating GRN structure and predicting the effects of genetic perturbations in research and drug development.

Key Architectural Principles and Biological Significance

Scale-Free Topology and the Hubs

In a scale-free network, the probability that a node has connections to \(k\) other nodes (its degree) follows a power-law distribution. This results in a system that is highly heterogeneous, with most nodes having few connections and a critical few, the hubs, having a very high number of links [20]. This topology has profound implications for network dynamics. Research shows that networks with outgoing scale-free degree distributions (SFO), where a handful of nodes are outgoing hubs, have a markedly higher probability of converging to stable fixed-point attractors compared to their transposed counterparts with incoming hubs (SFI) [20]. This suggests that outgoing hubs have an organizing role, potentially suppressing chaotic dynamics and driving the network toward stability, much like an external control signal in a feedback system [20].

The Bow-Tie Architecture

Another non-random feature observed across GRNs of diverse species, from prokaryotes to multicellular organisms, is the bow-tie architecture [21]. This structure consists of a central, densely interconnected core, the Largest Strong Component (LSC), flanked by "in" and "out" layers. The in layer contains nodes that regulate the core but are not regulated by it, while the out layer contains nodes that are regulated by the core but do not feed back into it. The size of the core relative to the entire network has been observed to increase with biological complexity and is linked to fundamental dynamical properties like robustness, controllability, and evolvability [21]. This architecture creates a hierarchical flow of information, with the core processing signals from the in layer and distributing control to the out layer.
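A bow-tie decomposition of a small directed network can be sketched with plain reachability searches. The function names `reachable` and `bow_tie` and the toy edge list are assumptions made for illustration; the sketch finds strongly connected components by intersecting forward and backward reachable sets, which is simple but slower than a linear-time algorithm such as Tarjan's, and it assumes a non-empty edge list.

```python
from collections import defaultdict

def reachable(adj, start):
    """All nodes reachable from `start` (including itself) along `adj`."""
    seen, stack = {start}, [start]
    while stack:
        for nxt in adj[stack.pop()]:
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen

def bow_tie(edges):
    """Split nodes into in / core / out / other relative to the largest strong component."""
    fwd, bwd, nodes = defaultdict(set), defaultdict(set), set()
    for u, v in edges:
        fwd[u].add(v)
        bwd[v].add(u)
        nodes.update((u, v))

    core, unassigned = set(), set(nodes)
    while unassigned:
        v = next(iter(unassigned))
        # Strong component of v: nodes that v can reach and that can reach v.
        component = reachable(fwd, v) & reachable(bwd, v)
        unassigned -= component
        if len(component) > len(core):
            core = component

    anchor = next(iter(core))
    out_layer = reachable(fwd, anchor) - core    # regulated by the core, no feedback
    in_layer = reachable(bwd, anchor) - core     # regulate the core, not regulated by it
    other = nodes - core - in_layer - out_layer  # disconnected from the core
    return {"in": in_layer, "core": core, "out": out_layer, "other": other}

# Hypothetical example: a regulates the b<->c core, which regulates d; x->y is detached.
layers = bow_tie([("a", "b"), ("b", "c"), ("c", "b"), ("c", "d"), ("x", "y")])
```

The ratio `len(layers["core"]) / number of nodes` gives the relative core size discussed above. When two components tie for largest, this sketch keeps whichever it encounters first.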

The Functional Role of Master Regulators

Master regulators typically reside in the "in" layer or the core of the bow-tie architecture. They are characterized by their high out-degree, allowing them to coordinate the expression of vast sets of target genes. A key insight from computational studies is that these outgoing hubs are instrumental in stabilizing network dynamics. By lumping the most connected outgoing hubs into a single effective node, one can approximate a complex heterogeneous network as a simpler, more tractable system where this "lumped hub" provides a feedback signal to the rest of the network (the bulk) [20]. This approximation has been shown to accurately preserve the convergence statistics of the original network, highlighting the outsized influence of a small number of nodes.

Table 1: Key Architectural Features of Biological GRNs

| Architectural Feature | Description | Biological/Functional Implication |

|---|---|---|

| Scale-Free (Power-Law) Topology | A network where the node connectivity distribution follows a power law, leading to few highly connected hubs. | Heterogeneous structure that is robust to random failures but fragile to targeted hub attacks [20]. |

| Bow-Tie Architecture | A structure with a central core (LSC) where every node can reach every other, and input/output layers. | Facilitates robustness, controllability, and evolvability; core size correlates with species complexity [21]. |

| Master Regulators (Outgoing Hubs) | Nodes with a disproportionately high number of outgoing connections to target genes. | Drives the network towards stable states and coordinates large-scale gene expression programs [20]. |

| Sparsity | The average node is connected to a small fraction of the total nodes in the network. | Limits the propagation of perturbations and makes the network more tractable for inference [3]. |

Computational Analysis of Network Topology and Dynamics

Modeling Framework for GRN Dynamics

To investigate the relationship between topology and dynamics, researchers often employ a Boolean dynamic model. In this framework, the activity of each node (e.g., a gene) is represented as a binary variable: expressed (+1) or repressed (-1). The system's state evolves according to the equation: \[ s_i(t+1) = \text{sign}\left(\sum_{j=1}^{N} W_{ij} s_j(t)\right) \] where \(W\) is the weighted connectivity matrix, often decomposed into a topology matrix \(T\) and an interaction strength matrix \(J\) (\(W = T \circ J\)) [20]. The topology matrix \(T\) is the adjacency matrix of a directed graph whose edges can be sampled from specific distributions, such as a scale-free distribution for outgoing connections \(P_{out}(k)\) [20]. This model is particularly useful for studying the probability of a network converging to a fixed-point attractor, a fundamental dynamical property linked to network stability.

Effect of Hub Direction on Network Convergence

A critical finding from simulation-based studies is the marked difference between networks with outgoing hubs (SFO) and those with incoming hubs (SFI). When simulating Boolean dynamics, networks with a scale-free out-degree distribution (SFO) maintain a considerable probability of convergence to a fixed point even in large networks (e.g., N=5000). In contrast, the convergence probability for networks with a scale-free in-degree distribution (SFI) decreases rapidly and becomes negligible for networks larger than N=1000 [20]. This demonstrates that the directionality of hub connections is a primary determinant of network dynamics, with outgoing hubs exerting a stabilizing, organizing influence.

Table 2: Quantitative Comparison of SFO vs. SFI Network Dynamics

| Network Ensemble Type | Description | Convergence Probability to Fixed Point (as a function of network size N) |

|---|---|---|

| SFO (Scale-Free Out) | Power-law distribution of outgoing connections, narrow distribution of incoming connections. | High convergence probability that remains considerable for large N (tested up to N=5000) [20]. |

| SFI (Scale-Free In) | Power-law distribution of incoming connections, narrow distribution of outgoing connections (transpose of SFO). | Convergence probability decreases rapidly with N and is negligible for N > 1000 [20]. |

Network Topology and System Stability

The stability of GRNs—their ability to return to a steady state after perturbation—is also heavily influenced by global topology. Studies coupling mRNA and protein dynamics have shown that networks with a lower fraction of interactions targeting transcription factors (TFs) themselves (i.e., fewer TF-TF interactions) are significantly more stable [22]. Furthermore, the biological GRN of E. coli was found to be significantly more stable than its randomized counterparts, suggesting that evolutionary pressures have selected for topologies that inherently promote stability [22]. This can be understood through the lens of the bow-tie architecture, where a core rich in TF-TF interactions might be more prone to instability if not properly structured.

Experimental and Computational Protocols

Protocol 1: Simulating Boolean Dynamics on a Scale-Free Network

This protocol outlines the steps to generate a scale-free network and simulate its Boolean dynamics to assess convergence, a key metric for stability [20].

Research Reagent Solutions:

- Computational Environment: A scientific computing platform (e.g., Python with NumPy/SciPy, MATLAB, R).

- Network Generation Tool: A software library capable of generating random graphs from a specified degree distribution (e.g., `networkx` in Python).

Methodology:

- Network Generation: Construct a directed scale-free network with \(N\) nodes.

  - Define the outgoing degree distribution \(P_{out}(k) \sim k^{-\gamma}\) (e.g., using the configuration model).

  - Define a narrow incoming degree distribution \(P_{in}(k)\) (e.g., Poisson distribution).

  - This creates an SFO network ensemble. To create an SFI ensemble, simply transpose the adjacency matrix.

- Define Interaction Strengths: Create a matrix \(J\) of interaction strengths, where each element \(J_{ij}\) is typically drawn from an i.i.d. Gaussian distribution with a mean of 0 and a standard deviation of 1.

- Form the Connectivity Matrix: Calculate the final weight matrix as the Hadamard (element-wise) product of the topology matrix \(T\) and the strength matrix \(J\): \(W = T \circ J\).

- Initialize and Iterate:

  - Initialize the state vector \(s(t=0)\) with random values of +1 or -1.

  - Update the state of all nodes synchronously using the rule: \(s(t+1) = \text{sign}(W \cdot s(t))\).

  - Repeat the update for a predefined number of time steps or until a fixed point is detected (i.e., \(s(t+1) = s(t)\)).

- Analysis: Over many random network instantiations and initial conditions, calculate the fraction of simulations that converge to a fixed point. Compare the convergence probabilities between SFO and SFI ensembles.
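The steps of this protocol can be sketched in standard-library Python for small networks. This is a simplified illustration, not the simulation code of [20]: instead of the configuration model, each node draws a power-law out-degree and picks that many targets uniformly at random (which yields a narrow in-degree distribution), sign(0) is taken as +1, and the function names and default values are assumptions. The weight matrix is stored sparsely as one dictionary per target node.

```python
import random

def sfo_network(n, gamma, rng):
    """Return w, where w[i][j] is the weight of the edge from regulator j to target i."""
    degrees = list(range(1, n))
    weights = [k ** -gamma for k in degrees]          # P_out(k) ~ k^(-gamma)
    w = [dict() for _ in range(n)]
    for j in range(n):
        k_out = rng.choices(degrees, weights=weights)[0]
        targets = rng.sample([i for i in range(n) if i != j], k_out)
        for i in targets:
            w[i][j] = rng.gauss(0.0, 1.0)             # J_ij ~ N(0, 1) on existing edges
    return w

def transpose(w):
    """Swap the roles of regulators and targets (SFO <-> SFI)."""
    wt = [dict() for _ in w]
    for i, row in enumerate(w):
        for j, weight in row.items():
            wt[j][i] = weight
    return wt

def converges(w, rng, t_max=200):
    """Synchronous sign dynamics from a random state; True if a fixed point is reached."""
    s = [rng.choice((-1, 1)) for _ in w]
    for _ in range(t_max):
        nxt = [1 if sum(x * s[j] for j, x in row.items()) >= 0 else -1 for row in w]
        if nxt == s:
            return True
        s = nxt
    return False

def convergence_probability(n, gamma=2.2, trials=50, incoming_hubs=False, seed=0):
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        w = sfo_network(n, gamma, rng)
        if incoming_hubs:
            w = transpose(w)
        hits += converges(w, rng)
    return hits / trials

p_sfo = convergence_probability(100, trials=20)
p_sfi = convergence_probability(100, trials=20, incoming_hubs=True)
```

Sweeping `n` and plotting `p_sfo` against `p_sfi` reproduces the kind of comparison described in the Analysis step. The network sizes quoted in the text (N in the thousands) would call for a vectorized implementation, for example with NumPy or SciPy sparse matrices.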

Figure 1: Workflow for Boolean dynamics simulation on a scale-free network.

Protocol 2: Analyzing Perturbation Effects on an EMT GRN

This protocol describes a combined Boolean and ODE-based approach to systematically identify nodes whose perturbation can induce a major state transition, such as the Epithelial-Mesenchymal Transition (EMT), in a GRN [23].

Research Reagent Solutions:

- GRN Model: A well-studied network model, such as the 26-node, 100-edge EMT GRN [23].

- Boolean Modeling Software: A custom or published software package for simulating Boolean networks with noise (e.g., in MATLAB or Python).

- ODE Modeling Tool: A tool like RACIPE (Random Circuit Perturbation) that generates an ensemble of ODE models from a network topology.

Methodology:

- Network Preparation and Baseline State Identification:

  - Obtain the EMT GRN topology.

  - For the Boolean model, run simulations from random initial conditions until a low-frustration stable state is reached. Classify these states as Epithelial (E) or Mesenchymal (M) based on known marker genes.

- Define Perturbation:

  - Select a node (or a pair of nodes) for clamping. For a pro-EMT perturbation, typically clamp a mesenchymal node to its "ON" (1) state or an epithelial node to its "OFF" (0) state.

- Apply Transient Perturbation with Noise:

  - Start from a stable E state.

  - Clamp the selected node(s) to their target values for a duration \(t_c\).

  - During this signaling period, introduce transcriptional noise, which can be modeled as a pseudo-temperature \(T\) in Boolean models or stochasticity in ODEs.

- Monitor Transition:

  - After the perturbation period \(t_c\), release the clamps and any applied noise.

  - Continue the simulation until a new stable state is reached.

  - Score the transition as successful if the final state is an M-type state.

- Analysis: Repeat the process for all nodes and various noise levels and signal durations. Rank nodes by their efficacy in inducing the state transition (e.g., the percentage of successful runs). Effective master regulators will show high transition probabilities even at lower noise levels.
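The clamp-and-release procedure can be illustrated on a deliberately tiny circuit. Everything in this sketch is hypothetical: the four-node network (two epithelial and two mesenchymal nodes, activation within a team and inhibition across teams) stands in for the 26-node EMT GRN of [23], states are coded as +1/-1 rather than 1/0, updates are asynchronous, and noise enters through a Glauber-style rule with pseudo-temperature `temperature`.

```python
import math
import random

NODES = ["E1", "E2", "M1", "M2"]                      # hypothetical toy circuit
TEAM = {"E1": "E", "E2": "E", "M1": "M", "M2": "M"}
# J[target][source]: +1 within a team (activation), -1 across teams (inhibition)
J = {t: {s: (1 if TEAM[s] == TEAM[t] else -1) for s in NODES if s != t} for t in NODES}

def sweep(state, clamps, temperature, rng):
    """One asynchronous sweep: every unclamped node is updated once, in random order."""
    state.update(clamps)
    for node in rng.sample(NODES, len(NODES)):
        if node in clamps:
            continue
        field = sum(weight * state[src] for src, weight in J[node].items())
        if temperature > 0:
            # P(ON) = 1 / (1 + exp(-2 field / T)), written with tanh to avoid overflow
            p_on = 0.5 * (1.0 + math.tanh(field / temperature))
            state[node] = 1 if rng.random() < p_on else -1
        elif field != 0:
            state[node] = 1 if field > 0 else -1

def induces_transition(clamps, t_c=10, temperature=0.0, t_relax=20, seed=0):
    """Clamp nodes for t_c sweeps (with noise), release, and test for the M state."""
    rng = random.Random(seed)
    state = {"E1": 1, "E2": 1, "M1": -1, "M2": -1}   # stable epithelial state
    for _ in range(t_c):
        sweep(state, clamps, temperature, rng)
    for _ in range(t_relax):                           # clamps and noise released
        sweep(state, {}, 0.0, rng)
    return state == {"E1": -1, "E2": -1, "M1": 1, "M2": 1}

single = induces_transition({"M1": 1})                # one mesenchymal node clamped ON
pair = induces_transition({"M1": 1, "M2": 1})         # both mesenchymal nodes clamped ON
```

In this toy circuit a single clamp fails without noise while the pair clamp succeeds. Repeating `induces_transition` over many seeds, temperatures, and values of `t_c` gives the success percentages used to rank nodes in the Analysis step.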

Figure 2: Workflow for analyzing perturbation-induced state transitions.

Essential Research Reagents and Tools

Table 3: The Scientist's Toolkit for GRN Topology and Perturbation Research

| Tool/Reagent Category | Specific Examples | Function and Application |

|---|---|---|

| Network Generation & Analysis | Configuration Model, Preferential Attachment Algorithms, networkx library (Python) | Generate random graphs with specified degree distributions (e.g., scale-free) for in silico studies [20] [3]. |

| Dynamical Modeling Software | Custom Boolean/ODE simulators, RACIPE, BoolNet (R) | Simulate the temporal evolution of network states, test for attractors, and model the effects of perturbations [20] [23]. |

| Perturbation Data | Genome-scale CRISPR screens (e.g., Perturb-seq), knockout studies | Provide empirical data on the effects of systematically disrupting individual nodes, used for model validation [3]. |

| Validated Network Models | Context-specific GRNs (e.g., 26-node EMT network [23]), databases like RegulonDB | Serve as biologically grounded testbeds for developing and benchmarking computational methods. |

| Bow-Tie Decomposition Algorithms | Strongly Connected Component algorithms (e.g., Tarjan's) | Decompose a directed network into its bow-tie components (IN, CORE, OUT) for architectural analysis [21]. |

# Application Notes

Gene regulatory networks (GRNs) are fundamental to understanding the complex biological processes underlying human traits and diseases. The core challenge in computational biology is inferring the architecture of gene regulation with precision. Realistic simulation of GRN structure is therefore critical for interpreting experimental data, designing perturbation studies, and ultimately advancing drug discovery efforts. This document provides detailed application notes and protocols for generating biologically realistic GRN structures using a novel algorithm grounded in small-world network theory, followed by methodologies for simulating their dynamics and perturbation effects [3] [19].

Key properties of biological GRNs that must be captured by any realistic generating algorithm include sparsity, hierarchical organization, modularity, and the presence of feedback loops. Furthermore, biological networks exhibit a power-law degree distribution (scale-free property) and the small-world property, where most nodes are connected by short paths [3]. The algorithm described herein is specifically designed to incorporate these properties, enabling the generation of synthetic networks that recapitulate features observed in large-scale experimental studies, such as genome-scale Perturb-seq screens [3].

# 2. Key Structural Properties of Biological GRNs

The proposed generating algorithm aims to recapitulate several key structural properties consistently observed in empirical biological network data. The quantitative characteristics of these properties, derived from a foundational Perturb-seq study in an erythroid progenitor cell line (K562), are summarized in the table below [3].

Table 1: Key Quantitative Properties of Biological GRNs from Experimental Data

| Network Property | Experimental Observation | Biological Significance |

|---|---|---|

| Sparsity | Only 41% of gene perturbations significantly affect other genes' expression [3]. | Indicates that the typical gene is directly regulated by a limited number of regulators, simplifying network inference. |

| Directed Edges & Feedback | 3.1% of tested gene pairs show a one-directional perturbation effect; 2.4% of these show evidence of bidirectional regulation [3]. | Confirms that regulatory relationships are causal and directional, with feedback loops being a common functional motif. |

| Scale-Free Topology | The in- and out-degree distribution of nodes follows an approximate power-law [3]. | Implies the presence of a few highly connected "hub" genes and many genes with few connections, impacting network robustness. |

| Small-World Property | Most nodes in the network are connected by short paths [3]. | Ensures efficient information flow and can amplify the effects of perturbations through the network. |

| Modular Organization | Networks exhibit group-like structure and enrichment for structural motifs [3]. | Reflects functional specialization, allowing for the coordinated regulation of biological processes. |

The novel algorithm for generating realistic GRN structures is inspired by established models from network theory, particularly those that produce scale-free and small-world topologies [3]. The algorithm focuses on creating directed graphs that embody the properties detailed in Table 1.

The core workflow of the algorithm involves several key stages: network initialization, preferential attachment to achieve a scale-free structure, and rewiring to introduce the small-world property and modular organization. The subsequent simulation of network dynamics allows for the analysis of perturbation effects. This workflow is depicted in the following diagram.

GRN Generation and Simulation Workflow

The algorithm's parameters allow researchers to control the final network's properties, such as its sparsity level and the strength of its modular groups. Networks generated with this method have been shown to produce distributions of knockout effects that mirror those found in real-world, genome-scale perturbation studies [3].

# Protocols

# Protocol 1: Generating the GRN Structure

This protocol details the steps for creating a directed graph structure that embodies the key properties of biological GRNs.

# Materials and Reagents

Table 2: Research Reagent Solutions for GRN Simulation

| Item Name | Function / Description | Example / Source |

|---|---|---|

| GRiNS Python Library | A parameter-agnostic library for simulating GRN dynamics, integrating RACIPE and Boolean Ising models with GPU acceleration [24]. | GRiNS Documentation |

| Custom Network Generation Scripts | Implements the novel small-world, scale-free generating algorithm [3]. | Provided GitHub Repository |

| RACIPE Framework | A parameter-agnostic ODE-based modeling framework that samples kinetic parameters over predefined ranges to identify possible network steady states [24]. | Integrated within GRiNS |

| Boolean Ising Formalism | A coarse-grained, binary simulation model for large networks where ODEs are computationally prohibitive [24]. | Integrated within GRiNS |

# Procedure

- Network Initialization: Begin with a small, connected directed graph of `N_init` nodes.

- Growth and Preferential Attachment: Iteratively add new nodes to the network. For each new node, create a fixed number of directed edges (`m`) to existing nodes. The probability of connecting to an existing node `i` should be proportional to its current out-degree (to create a scale-free out-degree distribution) and/or in-degree (to create a scale-free in-degree distribution). This step is crucial for generating a scale-free topology [3].

- Rewiring for Small-World Property and Modularity: With a low probability `p`, rewire the target of each directed edge to a different node. To enforce modularity, bias this rewiring process to prefer nodes within the same pre-defined or emerging functional module [3]. This step introduces shortcuts and enhances the small-world property.

- Assign Edge Signs: For each directed edge, assign a sign: `+1` for activation/induction and `-1` for inhibition/repression. The distribution of signs can be based on biological prior knowledge.

- Validation: Calculate key network metrics (e.g., degree distribution, path length, clustering coefficient) to verify the generated network exhibits the desired scale-free and small-world properties.
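The procedure above can be sketched as a single function. This is one possible reading of the steps, not the referenced repository's code: the seed graph is a directed ring, each new gene receives `m` regulators chosen with probability proportional to (out-degree + 1), rewired targets are always drawn from the regulator's own module, modules are assigned at random, and signs are drawn with a fixed activation probability. All names and defaults are assumptions, and `n_init` is assumed to be at least 2.

```python
import random

def grow_and_rewire(n, n_init=3, m=2, p_rewire=0.1, n_modules=3, p_activation=0.7, seed=0):
    """Return (signs, module): signs maps (regulator, target) -> +1 / -1."""
    rng = random.Random(seed)
    module = [rng.randrange(n_modules) for _ in range(n)]
    edges = {(i, (i + 1) % n_init) for i in range(n_init)}     # seed: directed ring
    out_deg = [0] * n
    for src, _ in edges:
        out_deg[src] += 1

    # Growth: each new gene receives m distinct regulators, preferring high out-degree.
    for new in range(n_init, n):
        regulators = set()
        while len(regulators) < min(m, new):
            weights = [out_deg[v] + 1 for v in range(new)]
            regulators.add(rng.choices(range(new), weights=weights)[0])
        for reg in regulators:
            edges.add((reg, new))
            out_deg[reg] += 1

    # Rewiring: with probability p_rewire, move an edge's target into the regulator's module.
    for src, dst in sorted(edges):
        if rng.random() < p_rewire:
            candidates = [
                v for v in range(n)
                if module[v] == module[src] and v != src and (src, v) not in edges
            ]
            if candidates:
                edges.remove((src, dst))
                edges.add((src, rng.choice(candidates)))

    # Signs: +1 activation, -1 repression.
    signs = {edge: (1 if rng.random() < p_activation else -1) for edge in sorted(edges)}
    return signs, module

signs, module = grow_and_rewire(50)
out_degree = [sum(1 for src, _ in signs if src == v) for v in range(50)]
```

For the Validation step, `out_degree` can be inspected for a heavy tail, and path lengths or clustering coefficients can be computed with a graph library of choice. Rewiring here preserves the total edge count, so the network has `n_init + m * (n - n_init)` edges whenever `m <= n_init`.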

# Protocol 2: Simulating Network Dynamics and Perturbations

This protocol describes how to simulate gene expression dynamics on the generated network structure and to model the effects of gene knockouts.

# Materials and Reagents

- The GRN structure generated in Protocol 1.

- GRiNS Python library [24].

- Stochastic differential equation (SDE) solver.

# Procedure

- Formulate the Dynamical Model: Convert the generated GRN structure into a system of coupled Ordinary Differential Equations (ODEs) or Stochastic Differential Equations (SDEs) to model gene expression dynamics. A common approach is the RACIPE framework, which uses a modified Hill function for regulation [3] [24].

  - For a target gene \(T\) regulated by activators \(P_i\) and inhibitors \(N_j\), the ODE is:

    \[ \frac{dT}{dt} = G_T \prod_i H^S\left(P_i, P_{i,T}^{0}, n_{P_i T}, \lambda_{P_i T}\right) \prod_j H^S\left(N_j, N_{j,T}^{0}, n_{N_j T}, \lambda_{N_j T}\right) - k_T T \]

    where \(H^S\) is the shifted Hill function, \(G_T\) is the maximum production rate, and \(k_T\) is the degradation rate. For a regulator \(B\) acting on a target \(A\), \(B_A^0\) is the threshold parameter, \(n_{BA}\) is the Hill coefficient, and \(\lambda_{BA}\) is the fold change [24].

- Parameter Sampling (RACIPE): For a network with \(N\) nodes and \(E\) edges, sample the kinetic parameters (\(2N + 3E\) parameters in total). Sample the production and degradation rates (\(G_T\), \(k_T\)) of each node and, for each edge, the threshold (\(B_A^0\)), Hill coefficient (\(n_{BA}\)), and fold change (\(\lambda_{BA}\)) from broad, biologically plausible ranges [24]. The Boolean Ising model can be used as a less computationally intensive alternative for very large networks [24].

- Simulate Steady States: For each sampled parameter set, simulate the ODE/SDE system from multiple random initial conditions until it reaches a steady state. This ensemble of simulations captures the possible behaviors of the network.

- Model Gene Knockouts: To simulate a knockout of gene \(X\), set its production rate \(G_X\) to zero and re-simulate the network dynamics. Compare the steady-state expression levels of all genes in the knockout condition to their levels in the wild-type (unperturbed) simulations [3].

- Analyze Perturbation Effects: Quantify the effect of a knockout as the log2-fold change in expression of each gene. Aggregate these effects across multiple knockouts and parameter sets to generate a distribution of perturbation effects for analysis.
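The knockout procedure can be illustrated with a short, self-contained simulation. This sketch does not use the GRiNS API: it integrates the ODE as written above with a forward-Euler scheme, uses one fixed, hypothetical parameter set instead of RACIPE sampling, and starts every gene at its unregulated level G/k. The three-gene cascade (A represses B, B activates C), all parameter values, the pseudocount `eps`, and the function names are assumptions. The shifted Hill function is taken as H^S = H^- + λ(1 - H^-) with H^- = 1 / (1 + (x / threshold)^n).

```python
import math

def shifted_hill(x, threshold, n, fold):
    """Shifted Hill function: equals 1 at x = 0 and approaches `fold` for large x."""
    h_minus = 1.0 / (1.0 + (x / threshold) ** n)
    return h_minus + fold * (1.0 - h_minus)

def steady_state(genes, edges, g, k, dt=0.01, steps=5000):
    """Forward-Euler integration of dT/dt = G_T * prod(H^S) - k_T * T."""
    x = {gene: g[gene] / k[gene] for gene in genes}
    for _ in range(steps):
        rate = {}
        for target in genes:
            production = g[target]
            for (reg, tgt), (threshold, n, fold) in edges.items():
                if tgt == target:
                    production *= shifted_hill(x[reg], threshold, n, fold)
            rate[target] = production - k[target] * x[target]
        for gene in genes:
            x[gene] += dt * rate[gene]
    return x

def knockout_log2fc(genes, edges, g, k, target, eps=1e-6):
    """log2 fold change of every other gene when G_target is set to zero."""
    wild_type = steady_state(genes, edges, g, k)
    mutant = steady_state(genes, edges, {**g, target: 0.0}, k)
    return {
        gene: math.log2((mutant[gene] + eps) / (wild_type[gene] + eps))
        for gene in genes if gene != target
    }

# Hypothetical cascade: A -| B (fold < 1 is repression), B -> C (fold > 1 is activation)
genes = ["A", "B", "C"]
edges = {("A", "B"): (5.0, 2, 0.1), ("B", "C"): (5.0, 2, 5.0)}
g = {gene: 10.0 for gene in genes}
k = {gene: 1.0 for gene in genes}
effects = knockout_log2fc(genes, edges, g, k, "A")
```

Knocking out A relieves the repression of B, and the increase propagates to C, so both entries of `effects` are positive. In a RACIPE-style analysis this calculation would be repeated over many sampled parameter sets and initial conditions, and the resulting fold changes pooled into a distribution.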

The logical flow of the simulation and analysis process, including the critical step of converting a network topology into a dynamical system, is illustrated below.

GRN Dynamics and Perturbation Simulation

# Protocol 3: Analysis of Perturbation Effects and Network Inference

This protocol covers the analysis of simulated perturbation data to gain biological insights and evaluate network inference strategies.

# Procedure

- Characterize Effect Distributions: Analyze the global distribution of knockout effects. Note that in realistic networks, due to sparsity and structure, most knockouts will have minimal effects, while a few will have large, cascading consequences [3].

- Identify Key Regulators: Genes whose knockout causes widespread and significant expression changes are likely hub genes in the network topology.

- Evaluate Inference Avenues: Use the simulated perturbation data and the known ground-truth network to benchmark different network inference algorithms. A key finding is that while perturbation data are critical for discovering specific regulatory interactions, data from unperturbed cells alone may be sufficient to reveal broad regulatory programs and modules [3].
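A minimal benchmarking loop for the last step might look like the sketch below. The thresholding rule in `infer_edges` is a deliberately naive stand-in for a real inference algorithm, and the ground-truth edges and knockout effects are made-up numbers used only to show the bookkeeping; the function names are assumptions.

```python
def infer_edges(ko_effects, threshold=1.0):
    """Naive inference: call X -> Y whenever knocking out X shifts Y by >= threshold (log2)."""
    return {
        (knocked_out, gene)
        for knocked_out, effects in ko_effects.items()
        for gene, log2fc in effects.items()
        if abs(log2fc) >= threshold
    }

def precision_recall(predicted, truth):
    """Score a set of predicted directed edges against the known network."""
    true_positives = len(predicted & truth)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(truth) if truth else 0.0
    return precision, recall

# Hypothetical ground truth (A -> B -> C) and simulated knockout effects
truth = {("A", "B"), ("B", "C")}
ko_effects = {
    "A": {"B": 1.8, "C": 1.1},    # the effect on C is indirect (via B)
    "B": {"A": 0.0, "C": -2.0},
    "C": {"A": 0.0, "B": 0.0},
}
predicted = infer_edges(ko_effects)
precision, recall = precision_recall(predicted, truth)
```

Here the indirect effect of A on C is called as an edge, so recall is perfect while precision drops to 2/3. This is the kind of error that separating direct from cascading effects is meant to address when benchmarking inference methods against a known ground truth.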

Gene Regulatory Networks (GRNs) represent the complex causal relationships and control circuitry that govern cellular responses to internal and external cues [25]. Understanding their structure and function is a central goal in systems biology and essential for interpreting the effects of molecular perturbations in disease and therapeutic development [3]. Computational models form the backbone of this research endeavor, enabling scientists to simulate, analyze, and predict GRN behavior across different conditions and perturbation regimes.

The landscape of GRN modeling is characterized by a fundamental trade-off between biological detail and computational scalability. On one end, Boolean networks offer intuitive, parameter-free models ideal for qualitative analysis and large-scale networks [26] [25]. On the opposite extreme, stochastic differential equations provide mechanistically realistic, continuous representations of system dynamics, albeit with significant parameterization requirements [27]. Intermediate approaches like probabilistic Boolean networks and ordinary differential equation (ODE) models bridge these extremes, incorporating stochasticity or continuous values while maintaining varying degrees of scalability [28] [23].

This article provides a comprehensive overview of these foundational mathematical frameworks, with particular emphasis on their application to simulating GRN structure and perturbation effects. We present standardized protocols for model implementation, quantitative comparisons of computational characteristics, and visualization of key signaling pathways to equip researchers with practical tools for selecting and applying appropriate modeling paradigms to their specific research questions in drug development and systems biology.

Mathematical Frameworks for GRN Modeling

Boolean Networks (BNs)

Boolean networks represent one of the simplest yet most powerful approaches for qualitative GRN modeling. In this discrete formalism, genes are represented as binary-valued nodes (ON/OFF or 1/0), and regulatory relationships are described through logical transfer functions using Boolean operators (AND, OR, NOT) [29]. The system evolves through discrete time steps, with all nodes updated either synchronously or asynchronously [26].

A Boolean network is formally defined as a directed graph G(V,F) where:

- V = {X₁, X₂, ..., Xₙ} represents the set of binary-valued nodes (genes)

- F = (f₁, f₂, ..., fₙ) represents the list of Boolean functions for each node

- Each fᵢ: {0,1}ⁿ → {0,1} determines the next state of node Xᵢ based on the current states of its regulators [28]

The dynamics of Boolean networks lead to attractor states (steady states or cycles), which correspond to functional cellular states such as proliferation, apoptosis, or differentiation [29]. For example, in a Boolean model of the Epithelial-Mesenchymal Transition (EMT) network, the epithelial (E) and mesenchymal (M) states emerge as low-frustration attractors with specific gene expression configurations [23].
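The definition above translates directly into code for small networks. The sketch below is an illustration under simple assumptions: updates are synchronous, the state space is enumerated exhaustively (so it scales as 2ⁿ), and the two-gene toggle switch and the function name `find_attractors` are hypothetical examples rather than part of any cited model.

```python
from itertools import product

def find_attractors(functions):
    """functions[i](state) -> 0 or 1 gives the next value of node i.
    Returns the set of attractors under synchronous update, each as a tuple of states."""
    n = len(functions)

    def step(state):
        return tuple(f(state) for f in functions)

    attractors = set()
    for state in product((0, 1), repeat=n):
        seen = []
        while state not in seen:          # follow the trajectory until it revisits a state
            seen.append(state)
            state = step(state)
        cycle = seen[seen.index(state):]  # the revisited part is the attractor
        start = cycle.index(min(cycle))   # rotate to a canonical starting state
        attractors.add(tuple(cycle[start:] + cycle[:start]))
    return attractors

# Hypothetical toggle switch: each gene is ON only when the other is OFF
toggle = [lambda s: 1 - s[1], lambda s: 1 - s[0]]
attractors = find_attractors(toggle)
```

For the toggle switch this yields two steady states, (0, 1) and (1, 0), plus a two-state cycle between (0, 0) and (1, 1). That cycle is a known artifact of synchronous updating and does not appear as a stable cycle under asynchronous schemes.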

Table 1: Boolean Network Implementation Considerations

| Aspect | Options | Biological Interpretation |

|---|---|---|

| Update Scheme | Synchronous | All genes update simultaneously; artificial but deterministic |

| | Asynchronous | Genes update in random order; captures stochastic timing |

| | Rank-ordered | Updates follow biological hierarchy; incorporates known sequences |

| Node States | Binary (0,1) | Gene expressed/not expressed; simplest representation |

| | Multi-valued | Captures intermediate expression levels; increased complexity |

| Attractor Analysis | Steady states | Correspond to stable cell types or states |

| | Cycles | Correspond to oscillatory behaviors (e.g., circadian rhythms) |

Probabilistic Boolean Networks (PBNs)

Probabilistic Boolean networks extend the Boolean framework to account for the inherent stochasticity of genetic and molecular interactions [28]. In a PBN, each gene can have multiple possible Boolean functions with associated probabilities, reflecting uncertainty in regulatory mechanisms or environmental influences [27].

Formally, a PBN consists of:

- The same node set V = {X₁, X₂, ..., Xₙ} as Boolean networks

- For each node Xᵢ, a set of possible Boolean functions: Fᵢ = {f₁⁽ⁱ⁾, f₂⁽ⁱ⁾, ..., fₗ₍ᵢ₎⁽ⁱ⁾}

- Each function fⱼ⁽ⁱ⁾ has selection probability cⱼ⁽ⁱ⁾ with Σⱼ cⱼ⁽ⁱ⁾ = 1

- The network has N = Πᵢ₌₁ⁿ l(i) possible Boolean network realizations [28]

PBNs are particularly valuable for modeling cell-to-cell variability and probabilistic fate decisions in development and disease. The stochasticity in PBNs can be implemented at different levels, including random selection of update functions or probabilistic application of deterministic rules [27].
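As an illustration of the formal definition, the sketch below builds the exact state-transition matrix of a tiny PBN by enumerating all N realizations. The two-gene network, its candidate functions, and their probabilities are hypothetical, and the code assumes that each node selects its function independently of the others.

```python
from itertools import product

# Hypothetical 2-gene PBN. Each node lists (function, selection probability)
# pairs; the probabilities for a node sum to 1.
PBN = [
    [(lambda s: s[1], 0.7), (lambda s: int(not s[1]), 0.3)],  # candidates for X1
    [(lambda s: s[0], 1.0)],                                  # single rule for X2
]

def n_realizations(pbn):
    """N = product over nodes of the number of candidate functions l(i)."""
    n = 1
    for candidates in pbn:
        n *= len(candidates)
    return n

def transition_matrix(pbn):
    """Exact transition probabilities, assuming independent function selection."""
    states = list(product((0, 1), repeat=len(pbn)))
    index = {s: i for i, s in enumerate(states)}
    matrix = [[0.0] * len(states) for _ in states]
    # One realization = one function choice per node
    for choice in product(*pbn):
        prob = 1.0
        for _, c in choice:
            prob *= c
        for s in states:
            nxt = tuple(f(s) for f, _ in choice)
            matrix[index[s]][index[nxt]] += prob
    return states, matrix

STATES, P = transition_matrix(PBN)
print("realizations:", n_realizations(PBN))
for s, row in zip(STATES, P):
    print(s, [round(p, 2) for p in row])
```

The triple loop (realizations, states, nodes) makes the cost grow with both N and 2ⁿ, which is why exact matrices are feasible only for small networks and sampling is used otherwise.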

Ordinary Differential Equations (ODEs)

ODE models provide a continuous, quantitative framework for modeling GRN dynamics by describing the rate of change of molecular concentrations as functions of current concentrations [23] [26]. Unlike logical models, ODEs capture graded, dose-dependent responses and can incorporate detailed kinetic parameters.

A typical ODE model for GRNs takes the form:

dXᵢ/dt = (synthesis term) − (degradation term)

Where the synthesis term is typically a function of the concentrations of regulatory molecules, often modeled using Hill kinetics to capture cooperative binding effects [23]. For example, in EMT modeling, ODE-based approaches have been implemented using tools like RACIPE (Random Circuit Perturbation) to generate ensembles of models with randomized parameters that nonetheless converge to biologically observed E and M states [23].
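The graded, dose-dependent behavior described above can be seen in a single-gene example. The sketch below assumes one activator, Hill-type synthesis, and first-order degradation; the parameter values (alpha, gamma, K, n) are arbitrary illustrative choices rather than values from the cited studies.

```python
def hill_activation(x, K, n):
    """Fraction of maximal synthesis at activator concentration x."""
    return x**n / (K**n + x**n)

def steady_state(activator, alpha=10.0, gamma=1.0, K=1.0, n=2):
    """Solve 0 = alpha * H(activator) - gamma * X for the target level X."""
    return alpha * hill_activation(activator, K, n) / gamma

DOSES = [0.25, 0.5, 1.0, 2.0, 4.0]
for n in (1, 4):
    levels = [round(steady_state(a, n=n), 2) for a in DOSES]
    print(f"Hill coefficient {n}: {levels}")
```

A larger Hill coefficient leaves the half-maximal point at K unchanged but steepens the response around it, which is how cooperative binding turns a graded input into a near switch-like output.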

Table 2: Comparison of GRN Modeling Frameworks

| Framework | State Representation | Stochasticity | Computational Complexity | Best-Suited Applications |

|---|---|---|---|---|

| Boolean Networks | Discrete (binary) | Deterministic or asynchronous update | O(2ⁿ) for state space | Qualitative analysis, large networks, attractor identification |

| Probabilistic BNs | Discrete (binary) | Intrinsic (function selection) | O(nN2ⁿ) for transition matrix | Modeling uncertainty, heterogeneous cell populations |

| Stochastic BNs | Discrete (binary) | Intrinsic (propensity-based) | Similar to BNs but with Monte Carlo sampling | Cell population simulations, noise-driven transitions |

| ODE Models | Continuous | Deterministic | Depends on solver and stiffness | Quantitative prediction, kinetic analysis, dose-response |

| Stochastic DEs | Continuous | Intrinsic (chemical noise) | High (exact); moderate (approximate) | Small systems, molecular noise effects, low copy numbers |

Stochastic Differential Equations (SDEs) and the Chemical Master Equation

At the most detailed level of description, GRN dynamics can be modeled using the chemical master equation or its approximations, which explicitly account for the discrete, random nature of biochemical reactions [26]. The Gillespie algorithm provides an exact stochastic simulation method that generates trajectories consistent with the master equation by calculating the time until the next reaction and which reaction occurs [27].
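A minimal sketch of the Gillespie algorithm is shown here for the simplest possible case, constitutive synthesis and first-order degradation of one transcript. The reaction scheme, rate constants, and function names are assumptions made for illustration.

```python
import random

def gillespie_birth_death(k_syn, k_deg, x0, t_end, rng):
    """Exact SSA for: 0 -> mRNA (rate k_syn), mRNA -> 0 (rate k_deg * x)."""
    t, x = 0.0, x0
    times, counts = [t], [x]
    while True:
        a_syn = k_syn          # propensity of synthesis
        a_deg = k_deg * x      # propensity of degradation
        a_total = a_syn + a_deg
        # Waiting time to the next reaction is exponential with rate a_total
        t += rng.expovariate(a_total)
        if t > t_end:
            break
        # Pick which reaction fires, in proportion to its propensity
        if rng.random() * a_total < a_syn:
            x += 1
        else:
            x -= 1
        times.append(t)
        counts.append(x)
    return times, counts

def time_average(times, counts, t_end):
    """Average copy number weighted by the time spent at each value."""
    total = 0.0
    for i, x in enumerate(counts):
        t_next = times[i + 1] if i + 1 < len(times) else t_end
        total += x * (t_next - times[i])
    return total / t_end

TIMES, COUNTS = gillespie_birth_death(20.0, 1.0, 0, 500.0, random.Random(1))
MEAN_COPIES = time_average(TIMES, COUNTS, 500.0)
print("time-averaged copy number:", round(MEAN_COPIES, 2))
```

For this scheme the long-run mean copy number is k_syn / k_deg, so the time average gives a quick sanity check on the simulation.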

Stochastic differential equations bridge the gap between deterministic ODEs and discrete stochastic simulation by adding noise terms to differential equations:

dX(t) = μ(X(t))dt + σ(X(t))dW(t)

Where μ(X(t)) represents the deterministic drift (typically matching ODE models), and σ(X(t))dW(t) represents stochastic fluctuations, with W(t) being a Wiener process [27]. SDEs are particularly useful for modeling the effects of intrinsic noise in gene expression, which arises from the random timing of biochemical events and can significantly impact cellular decision-making in processes like EMT [23].
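The SDE above can be integrated numerically with the Euler-Maruyama scheme, sketched below for a single gene. The drift (constant synthesis minus first-order degradation) and the square-root noise amplitude are assumptions chosen for illustration, as are the parameter values and the clamp that keeps concentrations non-negative.

```python
import math
import random

ALPHA, GAMMA = 20.0, 1.0  # hypothetical synthesis and degradation rates

def drift(x):
    """Deterministic part mu(x), identical to the ODE right-hand side."""
    return ALPHA - GAMMA * x

def noise(x):
    """Assumed noise amplitude sigma(x); grows with the reaction rates."""
    return math.sqrt(ALPHA + GAMMA * x)

def euler_maruyama(mu, sigma, x0, dt, n_steps, rng):
    """Integrate dX = mu(X) dt + sigma(X) dW with a fixed time step."""
    x = x0
    path = [x]
    for _ in range(n_steps):
        dW = rng.gauss(0.0, math.sqrt(dt))  # Wiener increment ~ N(0, dt)
        x = x + mu(x) * dt + sigma(x) * dW
        x = max(x, 0.0)                     # keep the concentration non-negative
        path.append(x)
    return path

PATH = euler_maruyama(drift, noise, 0.0, 0.01, 50000, random.Random(2))
LATE = PATH[len(PATH) // 2:]
LATE_MEAN = sum(LATE) / len(LATE)
print("mean of second half of trajectory:", round(LATE_MEAN, 2))
```

Setting the noise amplitude to zero reduces the scheme to the forward Euler method for the corresponding ODE, which is a convenient way to check an implementation.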

Experimental Protocols and Implementation

Protocol 1: Implementing a Stochastic Boolean Network for Perturbation Analysis

This protocol outlines the steps for implementing a Stochastic Boolean Network (SBN) to simulate GRN dynamics and perturbation responses, based on the approach described in [28].

Materials and Reagents

- Computational environment: MATLAB or Python with BooleanNet library [29]

- Network structure: Prior knowledge of regulatory interactions (e.g., from databases like DoRothEA) [30]

- Initial states: Experimentally determined or hypothesized gene expression patterns

Procedure

Network Definition:

- Define the set of genes/nodes V = {X₁, X₂, ..., Xₙ}

- For each node, define its possible update functions Fᵢ = {f₁⁽ⁱ⁾, f₂⁽ⁱ⁾, ..., fₗ₍ᵢ₎⁽ⁱ⁾} based on regulatory inputs

- Assign selection probabilities cⱼ⁽ⁱ⁾ to each function based on experimental data or theoretical considerations

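Stochastic Implementation:

- At each time step, select one update function for each node according to its selection probability cⱼ⁽ⁱ⁾ and apply it to the current network state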

Perturbation Simulation:

- To simulate gene knockout, clamp the target gene state to 0 (OFF)

- To simulate overexpression, clamp the target gene state to 1 (ON)

- Run multiple stochastic simulations to account for random variations

Analysis:

- Identify attractor states reached from different initial conditions

- Calculate transition probabilities between states

- Compute steady-state distributions to identify stable phenotypes
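The procedure above can be sketched in plain Python as follows. This is one possible reading of the protocol, not the implementation from [28] and not the BooleanNet API: the three-gene network, its candidate functions and probabilities, and all function names are hypothetical.

```python
import random
from collections import Counter

# Hypothetical network: each gene maps to (update function, probability) pairs.
NETWORK = {
    "A": [(lambda s: 1 - s["B"], 0.9), (lambda s: s["A"], 0.1)],
    "B": [(lambda s: 1 - s["A"], 0.9), (lambda s: s["B"], 0.1)],
    "C": [(lambda s: s["A"], 1.0)],
}

def pick(candidates, rng):
    """Draw one update function according to its selection probability."""
    funcs, probs = zip(*candidates)
    return rng.choices(funcs, weights=probs, k=1)[0]

def simulate(initial, n_steps, rng, clamp=None):
    """Run one trajectory; clamp maps gene -> fixed value (0 = knockout, 1 = overexpression)."""
    clamp = clamp or {}
    state = {**initial, **clamp}
    for _ in range(n_steps):
        nxt = {gene: pick(cands, rng)(state) for gene, cands in NETWORK.items()}
        nxt.update(clamp)  # the perturbation overrides the regulatory rule
        state = nxt
    return tuple(state[g] for g in sorted(NETWORK))

def endpoint_distribution(n_runs, n_steps, seed, clamp=None):
    """Fraction of runs ending in each state, from random initial conditions."""
    rng = random.Random(seed)
    counts = Counter()
    for _ in range(n_runs):
        initial = {g: rng.randint(0, 1) for g in NETWORK}
        counts[simulate(initial, n_steps, rng, clamp)] += 1
    return {state: c / n_runs for state, c in counts.items()}

WILD_TYPE = endpoint_distribution(2000, 50, seed=0)
KNOCKOUT_A = endpoint_distribution(2000, 50, seed=0, clamp={"A": 0})
print("wild type  (A, B, C):", WILD_TYPE)
print("A knockout (A, B, C):", KNOCKOUT_A)
```

In this toy network the unperturbed simulations split between two stable states, while clamping A to 0 collapses the population onto the state with B active, which is the kind of shift in the steady-state distribution the Analysis step looks for.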

Troubleshooting

- If the network exhibits excessive stochasticity, review function probabilities and consider incorporating more specific biological constraints

- If simulations fail to reach biologically relevant attractors, verify network topology and update rules against experimental data

Protocol 2: ODE Modeling of GRN Perturbation Responses

This protocol describes the implementation of an ODE-based model for simulating continuous GRN dynamics and perturbation responses, based on approaches used in EMT studies [23].

Materials and Reagents

- Software tools: RACIPE for ensemble modeling [23], or custom implementations in MATLAB, Python, or R

- Kinetic parameters: Experimentally measured or estimated from literature

- Perturbation data: Gene expression changes in response to perturbations

Procedure

Model Formulation:

- For each gene, formulate an ODE describing its expression dynamics: dXᵢ/dt = αᵢ · F(regulators) − γᵢ · Xᵢ, where αᵢ is the production rate, γᵢ is the degradation rate, and F(regulators) is a function capturing regulatory inputs

- Use Hill functions to model regulatory interactions: for activators, F(activators) = Πⱼ Xⱼ^nⱼ / (Kⱼ^nⱼ + Xⱼ^nⱼ); for repressors, F(repressors) = Πⱼ Kⱼ^nⱼ / (Kⱼ^nⱼ + Xⱼ^nⱼ), where Kⱼ is the half-saturation threshold and nⱼ is the Hill coefficient of regulator j

Parameter Estimation:

- If using RACIPE, generate an ensemble of models with randomized parameters within physiological ranges [23]

- For specific models, estimate parameters from time-series expression data using optimization algorithms

Perturbation Implementation:

- Simulate gene knockout by setting production rate to near zero

- Simulate overexpression by increasing production rate or decreasing degradation rate

- For pharmacological inhibition, modify the relevant kinetic parameters

Analysis:

- Identify steady states by solving dX/dt = 0

- Perform stability analysis through Jacobian matrix evaluation

- Simulate temporal dynamics using numerical integrators (e.g., Runge-Kutta methods)
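A compact sketch of this procedure is given below for a two-gene mutual-repression circuit (a toggle switch), using only the Python standard library. The circuit, parameter values, and function names are illustrative assumptions; this is not RACIPE, and a production model would normally use an established ODE solver.

```python
def hill_repression(x, K=1.0, n=4):
    """Repressive Hill term: close to 1 when x << K, close to 0 when x >> K."""
    return K**n / (K**n + x**n)

def toggle(state, alpha=(4.0, 4.0), gamma=(1.0, 1.0)):
    """Mutual repression: dX_i/dt = alpha_i * F(other gene) - gamma_i * X_i."""
    x, y = state
    return (alpha[0] * hill_repression(y) - gamma[0] * x,
            alpha[1] * hill_repression(x) - gamma[1] * y)

def toggle_x_knockout(state):
    """Knockout of gene X, modeled as a near-zero production rate."""
    return toggle(state, alpha=(1e-6, 4.0))

def rk4(f, state, dt, n_steps):
    """Classical fourth-order Runge-Kutta integration with a fixed step."""
    for _ in range(n_steps):
        k1 = f(state)
        k2 = f(tuple(s + 0.5 * dt * k for s, k in zip(state, k1)))
        k3 = f(tuple(s + 0.5 * dt * k for s, k in zip(state, k2)))
        k4 = f(tuple(s + dt * k for s, k in zip(state, k3)))
        state = tuple(s + dt / 6.0 * (a + 2 * b + 2 * c + d)
                      for s, a, b, c, d in zip(state, k1, k2, k3, k4))
    return state

def jacobian_2x2(f, state, eps=1e-6):
    """Central finite-difference Jacobian of a two-variable system."""
    J = [[0.0, 0.0], [0.0, 0.0]]
    for j in range(2):
        up, dn = list(state), list(state)
        up[j] += eps
        dn[j] -= eps
        f_up, f_dn = f(tuple(up)), f(tuple(dn))
        for i in range(2):
            J[i][j] = (f_up[i] - f_dn[i]) / (2 * eps)
    return J

def is_stable(J):
    """2x2 case: both eigenvalues have negative real part iff trace < 0 and det > 0."""
    trace = J[0][0] + J[1][1]
    det = J[0][0] * J[1][1] - J[0][1] * J[1][0]
    return trace < 0 and det > 0

HIGH_X = rk4(toggle, (3.0, 0.1), 0.01, 5000)   # start near the X-high state
HIGH_Y = rk4(toggle, (0.1, 3.0), 0.01, 5000)   # start near the Y-high state
KO_X = rk4(toggle_x_knockout, (3.0, 0.1), 0.01, 5000)
print("steady states:", HIGH_X, HIGH_Y)
print("X-high state stable:", is_stable(jacobian_2x2(toggle, HIGH_X)))
print("after X knockout:", KO_X)
```

Integrating to long times from several initial conditions finds only the stable steady states. Unstable ones, such as the symmetric state of this circuit, require solving dX/dt = 0 directly, after which the same Jacobian test classifies them.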

Troubleshooting

- If the model fails to exhibit multistability (multiple steady states), review regulatory logic and consider adding cooperative interactions

- If parameter estimation fails to converge, consider simplifying the model structure or increasing the quality of experimental data for fitting

Figure 1: GRN Modeling Workflow. The process begins with experimental data and prior knowledge, proceeds through network inference and model formulation, and iterates through simulation, validation, and refinement to generate predictions.

Table 3: Essential Research Reagents and Computational Tools for GRN Modeling

| Resource | Type | Function | Example Applications |

|---|---|---|---|

| BooleanNet [29] | Software library | Simulates Boolean network models with synchronous, asynchronous, and hybrid updates | Implementation and analysis of discrete GRN models |

| BoNesis [30] | Software framework | Infers Boolean networks from transcriptomic data and prior knowledge | Data-driven Boolean model construction from scRNA-seq or bulk RNA-seq |

| RACIPE [23] | Computational method | Generates ensemble ODE models with randomized parameters | Exploring parameter-independent GRN behaviors |

| GPO-VAE [31] | Deep learning model | Predicts perturbation responses with GRN-based explainability | Predicting cellular responses to genetic perturbations |

| DoRothEA [30] | Database | Provides prior knowledge on TF-target regulatory interactions | Network structure initialization for model inference |

| Perturb-seq Data [3] | Experimental resource | Single-cell RNA sequencing following genetic perturbations | Model validation and parameter estimation |

Signaling Pathways and Network Visualization

Figure 2: GRN Regulatory Logic. This diagram illustrates common regulatory motifs in GRNs, including activation, repression, feedback loops, and combinatorial logic (AND, OR, NOT gates) that determine gene expression dynamics.

The diverse mathematical frameworks for GRN simulation, from Boolean networks to stochastic differential equations, offer complementary strengths for investigating gene regulatory structure and perturbation effects. Boolean approaches require no kinetic parameters and represent regulatory logic intuitively, so they scale to larger networks than kinetic models and are well suited to initial network characterization and qualitative hypothesis testing. ODE models enable quantitative predictions of dose-response relationships and temporal dynamics, while stochastic frameworks capture the inherent noise and variability of biological systems that often drive cell fate decisions.

The choice of modeling framework should be guided by specific research questions, data availability, and the desired level of biological detail. For perturbation studies in drug development, a multi-faceted approach that combines Boolean models for rapid screening of intervention points with more detailed ODE or stochastic models for promising targets may offer the most efficient path to therapeutic insights. As computational methods continue to advance, particularly through the integration of machine learning with mechanistic modeling [31] [25], the field moves closer to predictive digital twins of cellular regulation that can transform both basic research and therapeutic development.

AI-Driven GRN Inference: From Machine Learning to Transformers in Network Modeling

Application Notes

Gene Regulatory Networks (GRNs) represent the complex web of interactions where transcription factors (TFs) regulate the expression of their target genes. Supervised learning approaches leverage known TF-target interactions to train models that can accurately predict novel regulatory relationships on a genome-wide scale. Unlike unsupervised methods, these techniques require labeled training data, typically derived from experimental sources such as chromatin immunoprecipitation sequencing (ChIP-seq) or DNA affinity purification sequencing (DAP-seq) [32]. The primary challenge in this domain involves handling high-dimensional genomic data where the number of genes (features) vastly exceeds the number of available biological samples, necessitating robust algorithms resistant to overfitting [33].

Recent advances demonstrate that hybrid models combining deep learning with traditional machine learning consistently outperform individual approaches, achieving over 95% accuracy on holdout test datasets for plant species including Arabidopsis thaliana, poplar, and maize [32]. Furthermore, transfer learning strategies enable knowledge transfer from data-rich model organisms to less-characterized species, significantly enhancing prediction performance despite limited training data [32].

Performance Comparison of Algorithmic Approaches

The table below summarizes the key strengths, limitations, and optimal use cases for major supervised learning algorithms in GRN target prediction.

Table 1: Comparison of Supervised Learning Approaches for GRN Target Prediction

| Algorithm | Key Strengths | Major Limitations | Representative Tools/Methods |

|---|---|---|---|

| Random Forest | Handles high-dimensional data well; robust to noise; provides feature importance scores [33]. | Limited ability to capture complex non-linear relationships compared to deep learning [32]. | GENIE3, iRafNet [33] |

| Support Vector Machine (SVM) | Effective in high-dimensional spaces; memory efficient due to support vectors [32]. | Performance can degrade with very large datasets; less intuitive for feature importance [32]. | TGPred [32] |

| Neural Networks/Deep Learning | Excels at capturing hierarchical and non-linear regulatory relationships [32]. | Requires very large training datasets; prone to overfitting with limited data [32]. | DeepBind, DeeperBind, DeepSEA [32] |

| Hybrid Models (CNN + ML) | Highest reported accuracy (>95%); combines feature learning of DL with classification power of ML [32]. | Complex architecture; computationally intensive to train [32]. | CNN + Machine Learning hybrids [32] |