Model Selection in Phylogenetic Comparative Methods: A Guide for Robust Evolutionary Analysis in Biomedical Research

Abstract

This article provides a comprehensive guide to model selection in phylogenetic comparative methods (PCMs), tailored for researchers and drug development professionals. It covers the foundational principles of PCMs, emphasizing why proper model selection is critical for valid evolutionary inferences in biological and biomedical datasets. The content explores key methodological approaches and their specific applications, including drug target identification and understanding pathogen evolution. A significant focus is given to troubleshooting common pitfalls, such as tree misspecification, and optimizing analyses with advanced techniques like robust regression. Finally, the guide offers a framework for validating model fit and compares the predictive performance of different approaches, synthesizing key takeaways to enhance the rigor and reliability of evolutionary analyses in biomedical research.

Why Model Selection Matters: The Foundations of Phylogenetic Comparative Analysis

Defining Phylogeny Analysis and Core Evolutionary Concepts

Frequently Asked Questions (FAQs)

Q: What is the fundamental difference between phylogenetic analysis and evolutionary biology? A: Evolutionary biology is the broader field that studies the mechanisms of evolution (natural selection, mutation, genetic drift, and gene flow) and how they generate diversity over time [1]. Phylogenetic analysis is a specific methodology within this field that focuses on inferring evolutionary relationships among species or genes, typically visualized through phylogenetic trees [2] [3]. While evolutionary biology seeks to understand the processes of change, phylogenetics aims to reconstruct the historical patterns of descent from common ancestors [4].

Q: My model selection analysis suggests different best-fit models depending on whether I use AIC or BIC. Which criterion should I trust? A: Research indicates that while different criteria (AIC, AICc, BIC, and the decision-theoretic criterion DT) may select different models, they generally lead to very similar phylogenetic inferences regarding tree topology and ancestral sequence reconstruction [5]. AIC tends to favor more complex models, while BIC prefers simpler ones [5]. For many applications, particularly topology reconstruction, the choice between these criteria is not crucial. Some studies even suggest that skipping model selection entirely and using the complex GTR+I+G model directly produces results similar to those obtained through formal model selection [5].
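The disagreement between criteria can be made concrete. The minimal Python sketch below computes AIC, AICc, and BIC from a model's maximized log-likelihood (log_lik), its number of free parameters (k), and the sample size (n). It assumes n is the number of alignment sites. The two models, their log-likelihoods, and their parameter counts are hypothetical placeholders, not output from any real program.

```python
import math


def information_criteria(log_lik, k, n):
    """Return AIC, AICc and BIC for one fitted model."""
    aic = 2 * k - 2 * log_lik
    # Small-sample correction; only defined when n > k + 1.
    aicc = aic + (2 * k * (k + 1)) / (n - k - 1)
    # BIC's penalty grows with ln(n), so it favours simpler models on large data.
    bic = k * math.log(n) - 2 * log_lik
    return {"AIC": aic, "AICc": aicc, "BIC": bic}


def best_model(models, n, criterion):
    """models maps a name to (log_lik, k); the lowest score wins."""
    return min(models, key=lambda name: information_criteria(*models[name], n)[criterion])


# Hypothetical fits: the richer model gains 10 log-likelihood units for 5 extra parameters.
models = {"HKY+G": (-5010.0, 5), "GTR+I+G": (-5000.0, 10)}
for criterion in ("AIC", "AICc", "BIC"):
    print(criterion, best_model(models, n=1000, criterion=criterion))
```

With these numbers, AIC and AICc favour GTR+I+G while BIC favours HKY+G. This mirrors the tendencies described above.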

Q: What are the practical implications of using rooted versus unrooted phylogenetic trees? A: Rooted trees provide directionality to evolutionary relationships by specifying a common ancestor, allowing researchers to understand the sequence of evolutionary events and the direction of character state transformations [2] [6]. Unrooted trees only show relationships among taxa without indicating ancestry or evolutionary direction [2] [3]. Rooted trees are essential for understanding evolutionary history, while unrooted trees are useful when the position of the common ancestor is unknown or uncertain.

Q: How does poor taxon sampling affect phylogenetic accuracy? A: Inadequate taxon sampling can lead to incorrect phylogenetic inferences. A classic example is long-branch attraction, in which distantly related, rapidly evolving (long) branches are incorrectly grouped together because they share homoplastic changes [3]. Research comparing sampling strategies suggests that, for a given total number of nucleotide sites, sampling fewer taxa with more sites (genes) per taxon often yields higher accuracy and better bootstrap replicability than sampling more taxa with fewer sites per taxon [3].

Q: What are the key differences between distance-based and character-based phylogenetic methods? A: The table below summarizes the core differences:

| Feature | Distance-Based Methods | Character-Based Methods |
|---|---|---|
| Basis | Total evolutionary changes between sequence pairs [6] | Individual character state changes (nucleotides/amino acids) across all sequences [6] |
| Computational Demand | Lower; suitable for large datasets [6] | Higher; computationally intensive [6] |
| Evolutionary Models | Treats genetic changes equally [6] | Incorporates complex evolutionary models with different rates [6] |
| Common Methods | Neighbor-joining, UPGMA [6] | Maximum likelihood, Bayesian inference, maximum parsimony [3] [6] |
| Output Trees | Single tree proposed [6] | Multiple trees evaluated and ranked [6] |
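To illustrate the "Basis" row: a distance-based method first collapses each pair of aligned sequences into a single number. The sketch below computes the uncorrected p-distance and the Jukes-Cantor (JC69) corrected distance, d = -3/4 ln(1 - 4p/3), which treats all substitutions as equally likely. The function names and the gap rule (skip any site containing '-') are illustrative choices, not taken from a specific program.

```python
import math


def p_distance(seq1, seq2):
    """Proportion of differing sites, ignoring positions with a gap ('-')."""
    pairs = [(a, b) for a, b in zip(seq1, seq2) if a != "-" and b != "-"]
    diffs = sum(1 for a, b in pairs if a != b)
    return diffs / len(pairs)


def jukes_cantor(seq1, seq2):
    """JC69-corrected distance: d = -3/4 * ln(1 - 4p/3)."""
    p = p_distance(seq1, seq2)
    if p >= 0.75:
        raise ValueError("p >= 0.75: JC69 distance is undefined (saturation)")
    return -0.75 * math.log(1 - 4 * p / 3)


# One difference in ten sites: p = 0.1, corrected distance is about 0.107.
print(jukes_cantor("ACGTACGTAC", "ACGTACGTAA"))
```

A full analysis would compute this for every pair of sequences and pass the resulting matrix to neighbor-joining or UPGMA.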

Troubleshooting Common Experimental Issues

Problem: Inconsistent Tree Topologies Across Different Analysis Methods

Solution: This discrepancy often arises from methodological differences rather than biological reality. Follow this systematic troubleshooting protocol:

Assess Dataset Quality: Check alignment quality and remove ambiguous regions. Verify that missing data does not exceed 20% of the matrix.

Evaluate Branch Support: Calculate bootstrap values (≥70% generally considered reliable) or posterior probabilities (≥0.95 considered significant) for all nodes [6]. Poorly supported nodes indicate areas of uncertainty.

Test Model Adequacy: If using model-based methods, ensure the evolutionary model adequately fits your data. Compare results under different models to identify sensitive relationships.

Check for Systematic Errors: Assess whether compositional heterogeneity, heterotachy, or among-site rate variation might be affecting your results.

Utilize Multiple Methods: Consistent results across different methods (e.g., maximum likelihood and Bayesian inference) provide stronger evidence for phylogenetic hypotheses.
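The "Evaluate Branch Support" step amounts to counting how often each clade appears across bootstrap trees. The Python sketch below assumes your tree software has already parsed the bootstrap trees into sets of clades, with each clade stored as a frozenset of tip labels. It reports percentage support and flags clades below the 70% threshold mentioned above. The example replicates are hypothetical.

```python
from collections import Counter


def clade_support(bootstrap_trees):
    """Percentage of bootstrap trees that contain each clade.

    bootstrap_trees: list of trees, each a set of clades (frozensets of tip labels)."""
    counts = Counter(clade for tree in bootstrap_trees for clade in tree)
    return {clade: 100.0 * c / len(bootstrap_trees) for clade, c in counts.items()}


def weak_clades(support, threshold=70.0):
    """Clades (as sorted label lists) whose support is below the threshold."""
    return sorted((sorted(clade), s) for clade, s in support.items() if s < threshold)


# Hypothetical: 10 bootstrap replicates for four taxa.
trees = [{frozenset("AB"), frozenset("CD")}] * 8 + [{frozenset("AC"), frozenset("BD")}] * 2
support = clade_support(trees)
print(weak_clades(support))  # [(['A', 'C'], 20.0), (['B', 'D'], 20.0)]
```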

Experimental Protocol: Model Selection Using Stepping-Stone Sampling

Based on current best practices [7], follow this protocol for accurate model selection in Bayesian phylogenetics:

Prepare Power Posteriors: Set up path sampling/stepping-stone sampling in BEAST with 50-100 path steps, each with a chain length of at least 250,000 iterations.

Configure XML Specification:

Calculate Marginal Likelihoods: Use the collected samples to compute marginal likelihoods using both path sampling and stepping-stone sampling.

Compare Models: Calculate Bayes factors to compare model fit. A Bayes factor >10 (equivalently, a natural-log Bayes factor above ln(10) ≈ 2.3) provides strong evidence for one model over another [7].
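The arithmetic behind the last two steps can be sketched in Python. The stepping-stone estimator multiplies ratios across consecutive powers β. Each ratio r_k = E[L^(β_k - β_(k-1))] is estimated from log-likelihood samples drawn at the previous power β_(k-1). The log Bayes factor is then the difference of two log marginal likelihoods. BEAST performs these calculations itself; the function names here are hypothetical. The code only shows the underlying computation and works on the log scale to avoid overflow.

```python
import math


def log_mean_exp(values):
    """Numerically stable log(mean(exp(values)))."""
    top = max(values)
    return top + math.log(sum(math.exp(v - top) for v in values) / len(values))


def stepping_stone_log_ml(betas, loglik_samples):
    """Stepping-stone estimate of the log marginal likelihood.

    betas: increasing powers from 0.0 (prior) to 1.0 (posterior).
    loglik_samples[k]: log-likelihoods sampled from the power posterior at betas[k];
    the samples at the final power (1.0) are not needed."""
    log_ml = 0.0
    for k in range(1, len(betas)):
        step = betas[k] - betas[k - 1]
        # log r_k, estimated from draws taken at the previous power.
        log_ml += log_mean_exp([step * ll for ll in loglik_samples[k - 1]])
    return log_ml


def log_bayes_factor(log_ml_1, log_ml_2):
    """ln BF of model 1 over model 2; BF > 10 means ln BF > ln(10), about 2.30."""
    return log_ml_1 - log_ml_2


# Hypothetical log marginal likelihoods for two competing models.
ln_bf = log_bayes_factor(-1234.5, -1238.0)
print(f"ln BF = {ln_bf:.2f}, BF = {math.exp(ln_bf):.1f}")
```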

Problem: Low Bootstrap Support in Critical Nodes

Solution: Low support values indicate uncertainty in phylogenetic relationships. Address this through:

Increase Gene/Locus Sampling: Add more independent genetic markers, particularly those with appropriate evolutionary rates for your phylogenetic depth.

Improve Taxon Sampling: Strategically add taxa to break up long branches, especially in poorly supported regions of the tree.

Check for Model Misspecification: Test whether more parameter-rich models improve likelihood scores and support values.

Explore Dataset Conflicts: Use partition analyses to identify conflicting phylogenetic signals that might be causing uncertainty.

Experimental Protocols

Protocol 1: Phylogenetic Tree Construction Workflow

Quantitative Performance Metrics of Model Selection Criteria

| Criterion | Model Selection Tendency | Computational Demand | Topology Accuracy | Recommended Use Cases |
|---|---|---|---|---|
| AIC | More complex models [5] | Moderate | ~50% [5] | Exploratory analysis, dataset exploration |
| AICc | Complex models (small samples) | Moderate | Similar to AIC | Small datasets (n/K < 40) |
| BIC | Simpler models [5] | Moderate | ~50% [5] | Conservative model selection |
| Bayes Factors | Model with highest marginal likelihood | High | High with adequate sampling [7] | Bayesian frameworks, model comparison |
| hLRT/dLRT | Nested model comparison | Low-Moderate | ~50% [5] | Hierarchical model testing |

Protocol 2: Assessing Morphological Correlates of Migration in Evolutionary Studies

Adapted from the Catharus thrush study [8], this protocol enables quantitative analysis of functional morphology in an evolutionary context:

Sample Selection: Obtain comprehensive taxonomic and geographic sampling. The Catharus study used 2,578 adult study skins of known sex [8].

Character Measurement:

- Record wing length, tarsometatarsus length, tail length, and body mass

- Calculate "volancy" (θ) as the mass-equated ratio of wing to tarsometatarsus length [8]

Phylogenetic ANOVA: Use simulation-based approaches to test whether mean morphological values differ among evolutionary strategies (e.g., migratory vs. sedentary) while accounting for phylogenetic non-independence [8].

Ancestral State Reconstruction: Model evolutionary transitions using maximum likelihood or Bayesian methods to infer historical character states at critical nodes.

Correlation Analysis: Test for negative relationships between investment in different morphological modules (e.g., wing vs. leg length) using phylogenetic generalized least squares.
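The simulation-based phylogenetic ANOVA in the Phylogenetic ANOVA step can be sketched as follows. First compute an ordinary F statistic for the observed data. Then build a null distribution by simulating Brownian motion on the tree and recomputing F for each simulated dataset. The four-taxon tree, trait values, and group labels are hypothetical. Because F is unchanged when all values are rescaled, the BM rate is simply fixed at 1 here. In R, phytools::phylANOVA provides a ready-made implementation.

```python
import math
import random

# Hypothetical tree: a node is (tip_label, branch_length) or ([children], branch_length).
TREE = ([([("A", 1.0), ("B", 1.0)], 1.0), ([("C", 1.0), ("D", 1.0)], 1.0)], 0.0)


def simulate_bm(node, rng, start=0.0, out=None):
    """Simulate unit-rate Brownian motion from the root; returns {tip: value}."""
    out = {} if out is None else out
    label, length = node
    value = start + rng.gauss(0.0, math.sqrt(length))
    if isinstance(label, str):
        out[label] = value
    else:
        for child in label:
            simulate_bm(child, rng, value, out)
    return out


def f_statistic(values, groups):
    """One-way ANOVA F for {tip: value} and {tip: group}."""
    by_group = {}
    for tip, group in groups.items():
        by_group.setdefault(group, []).append(values[tip])
    n, k = len(groups), len(by_group)
    grand = sum(values[tip] for tip in groups) / n
    means = {g: sum(v) / len(v) for g, v in by_group.items()}
    ss_between = sum(len(v) * (means[g] - grand) ** 2 for g, v in by_group.items())
    ss_within = sum((x - means[g]) ** 2 for g, v in by_group.items() for x in v)
    return (ss_between / (k - 1)) / (ss_within / (n - k))


def phylo_anova(values, groups, tree, nsim=1000, seed=1):
    """Return the observed F and its simulation-based P-value."""
    rng = random.Random(seed)
    f_obs = f_statistic(values, groups)
    hits = sum(f_statistic(simulate_bm(tree, rng), groups) >= f_obs for _ in range(nsim))
    return f_obs, (hits + 1) / (nsim + 1)


values = {"A": 1.0, "B": 1.2, "C": 3.0, "D": 3.1}
groups = {"A": "migratory", "B": "migratory", "C": "sedentary", "D": "sedentary"}
f_obs, p = phylo_anova(values, groups, TREE)
print(f"F = {f_obs:.1f}, phylogenetic P = {p:.3f}")
```

Here the two groups coincide with the two clades. The simulated null distribution of F is therefore wider than the standard F distribution, and the phylogenetic P-value is larger than a conventional ANOVA would report.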

Research Reagent Solutions

| Reagent/Material | Function in Phylogenetic Analysis | Application Notes |
|---|---|---|
| Ultra-Conserved Elements (UCEs) | Genomic markers for phylogenomic studies [8] | Provide hundreds to thousands of loci; Catharus study used 1,238 UCEs with 2.1 million characters [8] |
| Museum Specimens | Source of morphological and historical DNA data [8] | Enable comprehensive taxonomic sampling; critical for measuring functional morphology |
| BEAST Software Package | Bayesian evolutionary analysis sampling trees [7] | Implements path sampling, stepping-stone sampling for model selection [7] |
| Geneious Prime | Integrated bioinformatics platform [6] | Provides built-in neighbor-joining, UPGMA; plugin support for character-based methods |
| jModelTest | Statistical selection of nucleotide substitution models | Used in 41% of phylogenetic studies for AIC-based model selection [5] |

The Critical Role of PCMs in Evolutionary Biology and Drug Discovery

FAQs and Troubleshooting Guides

Model Selection and Data Analysis

Q1: My phylogenetic comparative analysis detected correlated evolution between two traits, but I suspect it might be a false positive. What could be wrong?

A: Your suspicion may be justified, especially if your analysis involves traits with few evolutionary changes. A common cause is a small evolutionary sample size, meaning the effective number of independent character state changes on your phylogeny rather than the number of species [9]. Models like Pagel's Discrete can erroneously support correlated evolution in these scenarios [9].

- Troubleshooting Steps:

- Check Evolutionary Sample Size: Calculate the number of independent transitions for each trait on your phylogeny. If a trait has evolved only once, it is invalid to statistically test for correlated evolution with another trait [9].

- Assess Phylogenetic Imbalance: Use metrics like the phylogenetic imbalance ratio to evaluate if your tree and trait data are suitable for the model you've chosen [9].

- Try Alternative Models: Test your hypothesis with multiple models (e.g., Threshold, GLMM). Models that assume an underlying continuous distribution, such as the threshold model's continuous liability, can be less prone to this error [9].

- Seek Consilience: Corroborate your statistical findings with evidence from other fields like biogeography or developmental biology [9].
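One way to approximate the evolutionary sample size of a discrete trait is to count the minimum number of state changes the tree requires. The sketch below uses Fitch parsimony on a bifurcating tree written as nested tuples of tip labels. The tree and trait codings are hypothetical. A parsimony count is only a lower bound; likelihood-based stochastic mapping is an alternative.

```python
def fitch(node, states):
    """Fitch parsimony: return (candidate state set, minimum number of changes).

    node: a tip label (str) or a 2-tuple (left, right) of child nodes.
    states: {tip label: discrete character state}."""
    if isinstance(node, str):
        return {states[node]}, 0
    left, right = node
    left_set, left_cost = fitch(left, states)
    right_set, right_cost = fitch(right, states)
    shared = left_set & right_set
    if shared:
        return shared, left_cost + right_cost
    # No overlap: one state change is needed on one of the two child branches.
    return left_set | right_set, left_cost + right_cost + 1


def min_transitions(tree, states):
    return fitch(tree, states)[1]


tree = (("A", "B"), ("C", "D"))  # hypothetical four-species tree
print(min_transitions(tree, {"A": 1, "B": 1, "C": 0, "D": 0}))  # 1 change
print(min_transitions(tree, {"A": 1, "B": 0, "C": 1, "D": 0}))  # 2 changes
```

A count of 1, as in the first call, is the single-origin situation in which [9] cautions against testing for correlated evolution.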

Q2: How do I choose between different Phylogenetic Comparative Models (PCMs) for my dataset?

A: Model selection should be guided by your biological question, data type, and the evolutionary processes you wish to test.

- Decision Workflow:

- Define Your Question: Are you testing for trait correlations, estimating ancestral states, or modeling diversification rates? [10]

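- Identify Your Data Type: Determine whether your traits are continuous or discrete, since this narrows the set of applicable models.

- Check for Phylogenetic Signal: Determine whether closely related species resemble each other in the trait more than species drawn at random from the tree (e.g., using Pagel's λ) [10].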

- Compare Model Fit: Use information criteria (e.g., AIC) to compare the fit of different models to your data. Be wary of overfitting, especially with complex models on small datasets [11].

The table below summarizes key models and their applications.

| Model Name | Data Type | Primary Application | Key Considerations |
|---|---|---|---|
| Independent Contrasts (PIC) [10] | Continuous | Trait correlations, allometry | Equivalent to PGLS under a Brownian motion model. |
| PGLS [10] | Continuous | Trait correlations, accounting for phylogeny | Flexible; allows testing of different evolutionary models (BM, OU, Pagel's λ). |
| Pagel's Discrete [9] | Discrete | Correlated evolution of binary traits | Can produce false positives when evolutionary sample size is small [9]. |
| Threshold Model [9] | Discrete | Evolution of binary traits | Assumes an underlying continuous liability; can be more robust than Pagel's Discrete in some cases [9]. |

Q3: What are the common pitfalls when applying PCMs to genomic data in drug discovery?

A: Applying PCMs to genomics for target discovery introduces specific challenges.

- Primary Pitfalls:

- Non-Independence of Lineages: Genomes, genes, and species are products of shared evolutionary history. Treating them as independent data points is one of the most common and critical mistakes [12].

- Small Evolutionary Sample Size: If a gene of interest has a conserved function and has changed in only one lineage, it is statistically challenging to link it to a phenotype that also evolved once [9].

- Over-reliance on Genomics: Genomic data alone may not predict drug efficacy due to complex biological layers (e.g., pharmacokinetics, pharmacogenomics, microbiome interactions) [13]. True "personalized medicine" requires integrating multiple biomarker layers [13].

- Insufficient Evidence in Agnostic Studies: Tumor-agnostic drug approvals based on genomic biomarkers alone sometimes rely on trial endpoints that are surrogates for true clinical benefit. Drawing firm conclusions can be difficult without proper control groups [13].

Experimental Design and Data Quality

Q4: My phylogenetic independent contrasts analysis failed. What are the potential reasons?

A: The analysis may not have "failed" in a technical sense, but the results might be uninterpretable or erroneous due to data issues.

- Troubleshooting Checklist:

- Are branch lengths present and correct? Independent contrasts require a fully resolved (bifurcating) tree with meaningful branch lengths [10]; the tree does not need to be ultrametric.

- Does the trait data contain minimal variation? If there is little to no variation across species, the contrasts will be near zero, and correlations cannot be computed meaningfully.

- Have you checked for outliers? A single species with an extreme trait value can disproportionately influence the contrasts and the resulting correlation.

- Is the assumption of Brownian motion evolution reasonable? Use diagnostic plots (e.g., of absolute contrasts versus their standard deviations) to check the model's fit [10].
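The diagnostic in the last item relies on Felsenstein's independent contrasts and the expected standard deviation of each contrast, which is the square root of the summed, adjusted branch lengths. The sketch below computes both. The tree format, in which a node is (tip_label, branch_length) or ([left, right], branch_length), and the example values are assumptions for illustration. In R, ape::pic performs this calculation.

```python
import math


def contrasts(node, traits, out):
    """Felsenstein's independent contrasts on a bifurcating tree.

    Appends (standardized_contrast, expected_sd) to `out` for every internal
    node and returns (estimated node value, adjusted branch length).
    Zero-length branches make the divisions below fail."""
    label, length = node
    if isinstance(label, str):
        return traits[label], length
    left, right = label
    x1, v1 = contrasts(left, traits, out)
    x2, v2 = contrasts(right, traits, out)
    sd = math.sqrt(v1 + v2)  # expected SD of the raw contrast under BM
    out.append(((x1 - x2) / sd, sd))
    node_value = (x1 / v1 + x2 / v2) / (1 / v1 + 1 / v2)  # branch-length-weighted average
    return node_value, length + v1 * v2 / (v1 + v2)


# Hypothetical tree ((A:1,B:1):1,C:2) and trait values.
tree = ([([("A", 1.0), ("B", 1.0)], 1.0), ("C", 2.0)], 0.0)
out = []
contrasts(tree, {"A": 1.0, "B": 3.0, "C": 5.0}, out)
for contrast, sd in out:
    print(f"contrast = {contrast:.3f}, expected SD = {sd:.3f}")
```

On a real dataset with many contrasts, plot or correlate the absolute contrasts against these SDs. A clear trend suggests problems with the branch lengths or with the Brownian motion assumption.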

Essential Experimental Protocols

Protocol 1: Conducting a PGLS Analysis to Test for a Trait Correlation

This protocol tests the relationship between two continuous traits while accounting for phylogenetic non-independence.

1. Prerequisites:

- Data: A phylogeny of the study species and a dataset of trait values for each species.

- Software: R with packages ape, nlme, and geiger.

2. Workflow:

3. Step-by-Step Instructions:

- Step 1: Input and Validate Data. Load your tree and trait data. Ensure trait data is named correctly to match tree tip labels. Check for missing data.

- Step 2: Model Evolutionary Process. Choose a model for the residual structure V. Start with a Brownian motion (BM) model or a more flexible model like Pagel's λ [10] [12].

- Step 3: Fit the PGLS Model. Using the gls() function in R, specify the regression formula (e.g., trait_y ~ trait_x) and the correlation structure defined by the phylogeny and your chosen evolutionary model.

- Step 4: Check Model Diagnostics. Examine a plot of residuals versus fitted values to check for homoscedasticity. Check a Q-Q plot of residuals to assess normality.

- Step 5: Interpret Results. Examine the p-value and slope of the regression. A significant p-value indicates a relationship between the traits after accounting for phylogeny.
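The coefficients that Step 3 produces can be computed directly. The sketch below calculates the generalized least squares estimate β = (XᵀV⁻¹X)⁻¹XᵀV⁻¹y for the model y ~ x. V is the Brownian-motion covariance matrix of a hypothetical four-species tree, and the trait data are made up. This plain-Python version illustrates the estimator only; it is not a replacement for gls() and omits standard errors and P-values.

```python
def solve(a, b):
    """Solve a·x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("singular covariance matrix (zero-length branches?)")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def dot(u, w):
    return sum(a * b for a, b in zip(u, w))


def pgls(x, y, v):
    """Intercept and slope of y ~ x by GLS with covariance matrix v."""
    ones = [1.0] * len(y)
    v1, vx, vy = solve(v, ones), solve(v, x), solve(v, y)  # V^-1 times each column
    # 2x2 normal equations (X' V^-1 X) beta = X' V^-1 y, with X = [1, x].
    a11, a12, a22 = dot(ones, v1), dot(ones, vx), dot(x, vx)
    b1, b2 = dot(ones, vy), dot(x, vy)
    det = a11 * a22 - a12 * a12
    return (a22 * b1 - a12 * b2) / det, (a11 * b2 - a12 * b1) / det


# BM covariance for the hypothetical tree ((A:1,B:1):1,(C:1,D:1):1).
V = [[2.0, 1.0, 0.0, 0.0], [1.0, 2.0, 0.0, 0.0], [0.0, 0.0, 2.0, 1.0], [0.0, 0.0, 1.0, 2.0]]
x = [1.0, 2.0, 3.0, 4.0]
y = [1.5, 4.0, 6.5, 9.5]
intercept, slope = pgls(x, y, V)
print(f"intercept = {intercept:.3f}, slope = {slope:.3f}")
```

With an identity matrix in place of V, the same function returns the ordinary least squares fit. The phylogenetic correction enters only through V.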

Protocol 2: Designing a Robust Study for Evolutionary Hypothesis Testing

This protocol outlines the planning stages to ensure your PCM study is sound.

1. Prerequisites:

- A clear evolutionary hypothesis.

- Knowledge of the phylogenetic relationships of the taxa in question.

2. Workflow:

3. Step-by-Step Instructions:

- Step 1: Define an A Priori Hypothesis. Your hypothesis should be developed before data collection and analysis to avoid post-hoc storytelling [9].

- Step 2: Maximize Evolutionary Sample Size. Design your study to include lineages with independent evolutionary transitions in your traits of interest. This is more critical than simply maximizing the number of species [9].

- Step 3: Select and Assess Model Suitability. Choose a PCM that fits your data type and question. Evaluate the suitability of your tree and data for the model using diagnostic tools [9].

- Step 4: Analyze Data. Run your chosen analyses, comparing multiple models if appropriate.

- Step 5: Seek Consilience. Do not rely solely on statistical output. Actively look for evidence from development, paleontology, or ecology that supports or refutes your hypothesis [9].

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential resources for conducting phylogenetic comparative research.

| Tool / Resource | Function / Description | Example Use Case |
|---|---|---|
| Phylogenetic Tree | The historical hypothesis of relationships among lineages. The foundational scaffold for all PCMs. | Sourced from published studies or constructed from molecular data (e.g., GenBank sequences). |
| Trait Database | Curated dataset of phenotypic or ecological traits for the species in the phylogeny. | Testing for correlations between life-history traits (e.g., brain & body size) [10]. |
| Comparative Genomics Database | Databases of genomic sequences and annotations across multiple species. | Identifying genetic changes associated with convergent evolution of traits [12]. |
| R Statistical Environment | Open-source software for statistical computing and graphics. | The primary platform for implementing most PCMs. |
| R packages: ape, phytools, caper | Specialized R libraries for phylogenetic analysis and PCMs. | Reading tree files, calculating independent contrasts, running PGLS, and modeling trait evolution. |
| Consilience Evidence | Data from disparate fields like developmental biology, biogeography, or the fossil record [9]. | Providing independent support for hypotheses generated by statistical PCMs. |

FAQs: Understanding Core Evolutionary Models

What is the fundamental difference between Brownian Motion (BM) and Ornstein-Uhlenbeck (OU) models?

BM models trait evolution as a random walk, where variance increases linearly with time, and closely related species are expected to have more similar trait values. In contrast, the OU model adds a stabilizing parameter (α) that pulls the trait value toward a theoretical optimum (θ), making it useful for modeling processes like stabilizing selection or adaptive tracking [14].

When should I choose an OU model over a BM model for my analysis?

An OU model may be appropriate when you have an a priori hypothesis that a trait is under stabilizing selection or is tracking a fluctuating optimum. However, use caution: the OU model is frequently and incorrectly favored over simpler models in likelihood ratio tests, especially with small datasets. It is critical to simulate fitted models and compare empirical results to avoid misinterpretation [14].
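The likelihood ratio test mentioned above compares twice the log-likelihood difference with a chi-square distribution. The sketch below shows that calculation. It assumes the OU model has exactly one more free parameter (α) than BM and uses the identity that the upper tail of a χ²₁ variable at s equals erfc(√(s/2)). Because α = 0 lies on the boundary of the parameter space, and small trees make the asymptotics unreliable, treat this P-value only as a rough guide. The simulation check described in this section is preferable. The log-likelihoods in the example are hypothetical.

```python
import math


def lrt_ou_vs_bm(loglik_bm, loglik_ou):
    """LRT statistic and naive chi-square(1) P-value for OU (one extra parameter) vs BM."""
    stat = max(2.0 * (loglik_ou - loglik_bm), 0.0)
    p_value = math.erfc(math.sqrt(stat / 2.0))  # P(chi2_1 > stat)
    return stat, p_value


stat, p = lrt_ou_vs_bm(loglik_bm=-50.0, loglik_ou=-48.0)
print(f"LR = {stat:.2f}, naive P = {p:.4f}")  # LR = 4.00, naive P = 0.0455
```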

How do I interpret the α parameter in the OU model?

The parameter α measures the strength of selection pulling a trait toward the optimum θ. A larger α indicates a stronger pull. It is sometimes called a "rubber band" parameter [15]. However, note that α in a phylogenetic context estimates the pull toward a primary optimum across species and is not a direct measure of stabilizing selection within a population [14]. The phylogenetic half-life, calculated as ln(2)/α, is often a more intuitive measure, representing the time expected for a trait to evolve halfway to the optimum from its ancestral state [15].

My model parameters (e.g., α and σ²) are highly correlated in the MCMC output. Is this a problem?

Yes, this is a known and common challenge. Parameters of the OU model can be correlated because traits evolving under an OU process tend toward a stationary distribution whose long-term variance depends on both σ² and α (variance = σ²/(2α)) [15]. This can make it difficult to estimate the parameters separately. Using moves that propose parameters from a multivariate normal distribution with a learned covariance structure during MCMC can help improve estimation [15].
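The two quantities discussed in the last two answers can be computed directly. The short sketch below evaluates the phylogenetic half-life ln(2)/α and the stationary variance σ²/(2α) for two hypothetical parameter pairs. Both pairs share the same σ²/α ratio, so they imply identical stationary variances despite very different half-lives. This is one way to see why α and σ² tend to be correlated in the posterior.

```python
import math


def half_life(alpha):
    """Phylogenetic half-life: time to cover half the distance to the optimum."""
    return math.log(2.0) / alpha


def stationary_variance(sigma2, alpha):
    """Long-run OU variance, sigma^2 / (2 * alpha)."""
    return sigma2 / (2.0 * alpha)


for sigma2, alpha in [(0.5, 0.25), (2.0, 1.0)]:  # hypothetical (sigma^2, alpha) pairs
    print(f"sigma2={sigma2}, alpha={alpha}: half-life={half_life(alpha):.3f}, "
          f"stationary variance={stationary_variance(sigma2, alpha):.3f}")
```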

Troubleshooting Guides

Problem: OU Model is Over-Fitted or Incorrectly Favored in Model Selection

Symptoms

- An OU model is selected over a simpler BM model using likelihood ratio tests, even with a small dataset (e.g., fewer than 20-30 species).

- High uncertainty in parameter estimates, particularly for α.

Solutions

- Prioritize Simulation: Always simulate data under your fitted OU model and compare the properties of the simulated data to your empirical data. This helps validate whether the model adequately captures the evolutionary pattern [14].

- Account for Measurement Error: Even small amounts of intraspecific trait variation or measurement error can profoundly bias parameter estimates in OU models. Incorporate measurement error into your models where possible [14] [16].

- Consider Alternative Methods: For challenging tasks, newer methods like Evolutionary Discriminant Analysis (EvoDA) can offer improved performance over conventional AIC-based approaches, especially when traits are subject to measurement error [16].
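The "Prioritize Simulation" advice can be implemented with a small simulator. The sketch below draws trait values down a tree under a single-optimum OU process, starting the root at θ. Along each branch it uses the exact OU transition, with mean θ + (x₀ - θ)e^(-αt) and variance σ²(1 - e^(-2αt))/(2α). The tree format and parameter values are assumptions. To compare each simulated dataset against the empirical fit you would refit it with your own fitting routine, which is not shown.

```python
import math
import random
import statistics


def simulate_ou(node, alpha, theta, sigma2, rng, start=None, out=None):
    """Simulate a single-optimum OU trait down a tree; returns {tip: value}.

    node: (tip_label, branch_length) or ([children], branch_length).
    The root starts at theta unless `start` is given; alpha must be > 0."""
    out = {} if out is None else out
    label, length = node
    x0 = theta if start is None else start
    decay = math.exp(-alpha * length)
    mean = theta + (x0 - theta) * decay
    var = sigma2 * (1.0 - decay ** 2) / (2.0 * alpha)
    value = rng.gauss(mean, math.sqrt(var))
    if isinstance(label, str):
        out[label] = value
    else:
        for child in label:
            simulate_ou(child, alpha, theta, sigma2, rng, value, out)
    return out


# Hypothetical fitted values and tree; compare a summary statistic with simulations.
tree = ([([("A", 1.0), ("B", 1.0)], 1.0), ([("C", 1.0), ("D", 1.0)], 1.0)], 0.0)
rng = random.Random(42)
sim_vars = [statistics.pvariance(list(simulate_ou(tree, 0.5, 3.0, 1.0, rng).values()))
            for _ in range(200)]
print(f"median simulated tip variance: {statistics.median(sim_vars):.3f}")
```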

Problem: Poor MCMC Convergence for OU Model Parameters

Symptoms

- Low effective sample sizes (ESS) for parameters like α, θ, and σ² in Bayesian analyses.

- Visible correlation between parameters in trace plots.

Solutions

- Use Efficient Moves: In addition to standard moves (e.g., mvScale), implement a multivariate move such as the adaptive multivariate normal Metropolis-Hastings move (mvAVMVN). This move learns the covariance structure of the parameters during the MCMC and can propose more efficient joint updates [15].

- Reparameterize: Instead of interpreting α directly, monitor derived parameters such as the phylogenetic half-life (t_half = ln(2)/α) or the percent decrease in trait variance due to selection (p_th). These can be more stable and interpretable [15].

- Use Informed Priors: Use biologically informed priors where possible. For example, one can set the prior for α with an expectation that the phylogenetic half-life is about half the age of the root [15].

Experimental Protocols & Data Analysis

Protocol: Fitting a Simple OU Model in a Bayesian Framework

This protocol outlines the steps for implementing a Bayesian OU model with a single optimum, as exemplified in RevBayes [15].

1. Read and Prepare the Data

- Read in the time-calibrated phylogeny.

- Read in the continuous character data.

- Exclude all traits not being analyzed and include only the focal trait.

2. Specify the Model Parameters

- Rate parameter (σ²): Draw from a loguniform prior (e.g., dnLoguniform(1e-3, 1)). This prior is uniform on the log scale, representing ignorance about the order of magnitude.

- Adaptation parameter (α): Draw from an exponential prior. A biologically meaningful approach is to set the rate of this prior (RevBayes' dnExponential is parameterized by its rate) to root_age / 2.0 / ln(2.0). This gives α a prior mean of 2 ln(2) / root_age, which encodes an expectation that the phylogenetic half-life is half the tree's age.

- Optimum (θ): Draw from a vague uniform prior (e.g., dnUniform(-10, 10)).
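RevBayes' dnExponential takes a rate rather than a mean, so the value root_age / 2.0 / ln(2.0) is worth checking with a little arithmetic. Its reciprocal is the prior mean of α, 2 ln(2) / root_age. Plugging that mean into t½ = ln(2)/α returns half the root age. The Python sketch below confirms this for a hypothetical root age of 40 time units.

```python
import math

root_age = 40.0  # hypothetical tree height, in the tree's time units

rate = root_age / 2.0 / math.log(2.0)      # value passed to the exponential prior
prior_mean_alpha = 1.0 / rate              # mean of an exponential = 1 / rate
implied_half_life = math.log(2.0) / prior_mean_alpha

print(f"prior mean alpha = {prior_mean_alpha:.4f}, implied half-life = {implied_half_life:.1f}")
```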

3. Define the OU Process and Run MCMC

- Draw the character data from a phylogenetic OU distribution (e.g., dnPhyloOrnsteinUhlenbeckREML), specifying the tree, α, θ, and σ². Assume the root state began at θ.

- Clamp the observed data to this stochastic node.

- Set up monitors to record the states of the chain (e.g., mnModel, mnScreen).

- Configure the MCMC with the model, monitors, and move specifications (e.g., mvScale, mvSlide, mvAVMVN).

- Run the MCMC for a sufficient number of generations (e.g., 50,000).

Parameter Table for Core Evolutionary Models

Table 1: Key parameters for the Brownian Motion and Ornstein-Uhlenbeck models.

| Model | Parameters | Biological Interpretation |
|---|---|---|
| Brownian Motion (BM) | σ² (sigma squared) | The instantaneous rate of drift; defines the increase in variance per unit time [14]. |
| Ornstein-Uhlenbeck (OU) | σ² (sigma squared) | The stochastic rate of evolution (drift) [15]. |
| | α (alpha) | The strength of the pull toward the optimum [14] [15]. |
| | θ (theta) | The optimal trait value [15]. |
| | t₁/₂ (phylogenetic half-life) | The expected time for a trait to cover half the distance from the root state to θ (derived: ln(2)/α) [15]. |

Model Selection and Advanced Workflow

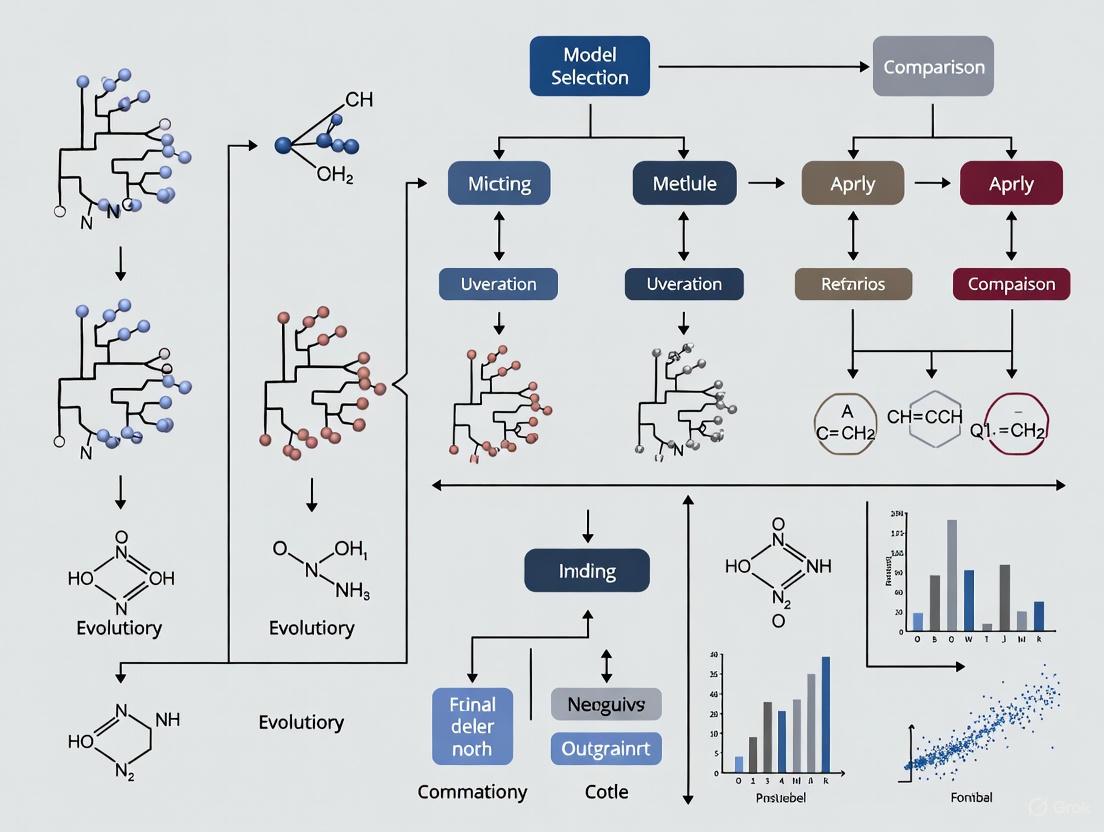

Selecting the right model is a critical step. Diagram 1 outlines the workflow, emphasizing the caution required when selecting the OU model.

Diagram 1: Model selection workflow for trait evolution models, highlighting the critical steps for validating an OU model.

The Scientist's Toolkit

Table 2: Essential software and statistical reagents for analyzing trait evolution.

| Research Reagent | Function / Use Case | Key Features |
|---|---|---|
| R Package: GEIGER | Fitting and comparing diverse models of trait evolution [14]. | Implements BM, OU, Early-Burst, and other models. |
| R Package: OUwie | Fitting OU models with multiple selective regimes (optima) [14]. | Allows different clades to have distinct θ values. |
| R Package: ouch | Fitting OU models to phylogenetic data [14]. | Implements the original Hansen (1997) method. |
| RevBayes Software | Bayesian inference of phylogenetic models, including OU [15]. | Flexible model specification, MCMC analysis, and graphical model representation. |
| EvoDA Methods | Supervised learning approach to predict evolutionary models [16]. | Can improve model selection accuracy, especially with measurement error. |
| AIC / AICc / BIC | Information criteria for model selection, balancing fit and complexity [16]. | Standard for conventional model comparison. |

FAQs: Understanding Phylogenetic Non-Independence

Q1: What is phylogenetic pseudo-replication, and why is it a problem? Phylogenetic pseudo-replication occurs when species are treated as independent data points in statistical analyses despite sharing evolutionary history. This violates the fundamental assumption of independence in most standard statistical tests, potentially leading to spurious correlations and inflated Type I error rates. For example, a trait might appear correlated across species not due to a functional relationship but simply because the species share a recent common ancestor.

Q2: How can I determine if my comparative data requires phylogenetic correction? Your data likely requires phylogenetic correction if the traits you are studying have a phylogenetic signal—meaning that closely related species resemble each other more than they resemble species drawn at random from your tree. You can test for phylogenetic signal using metrics such as Pagel's λ or Blomberg's K. A significant phylogenetic signal indicates that standard statistical tests may be inappropriate.

Q3: What are the most common methods for accounting for phylogeny in comparative analyses? Common methods include:

- Phylogenetic Generalized Least Squares (PGLS): A standard linear model that incorporates the phylogenetic covariance matrix to correct for non-independence.

- Phylogenetic Independent Contrasts (PIC): Calculates contrasts between nodes/species under a Brownian motion model of evolution.

- Phylogenetic Mixed Models: A framework that can partition variance into phylogenetic and species-specific components.

- Stochastic Character Mapping: Used to reconstruct the history of discrete character evolution on a phylogeny [17].

Q4: My analysis yielded different results when I included a phylogeny. Which result should I trust? In general, the analysis that accounts for phylogeny is more statistically robust because it does not violate the assumption of data independence. The difference in results suggests that the non-phylogenetic finding may have been driven by shared evolutionary history rather than a true functional relationship. Report the phylogenetic analysis and discuss the implications of the difference.

Q5: Is model selection always necessary for phylogenetic comparative methods? Recent research suggests that for some phylogeny-inference tasks, such as topology and ancestral sequence reconstruction, the choice of substitution-model selection criterion (AIC, BIC, etc.) has minimal impact. Using a complex general model like GTR+I+G can yield very similar results, potentially saving time [5]. However, for parameters sensitive to model assumptions, proper model selection remains crucial.

Troubleshooting Common Experimental Issues

Problem: Inconsistent results when using different phylogenetic trees.

- Potential Cause: Uncertainty in the underlying tree topology or branch lengths is being propagated into your comparative analysis.

- Solution: Do not rely on a single point-estimate tree. Instead, repeat your analysis across a posterior distribution of trees (e.g., from a Bayesian analysis) and summarize the results (e.g., the mean and 95% credible interval of your parameter of interest) to account for phylogenetic uncertainty.
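Once the model has been fitted to each tree, summarizing across a posterior sample of trees is mostly bookkeeping. The sketch below takes one estimate per tree (for instance a PGLS slope; the per-tree fitting is not shown). It returns the mean and a central 95% interval using Python's statistics module. The example estimates are made-up numbers.

```python
import statistics


def summarize_across_trees(estimates):
    """Mean and central 95% interval of a parameter estimated once per tree."""
    # 40 quantile groups give cut points at 2.5%, 5%, ..., 97.5%.
    cuts = statistics.quantiles(estimates, n=40, method="inclusive")
    return statistics.fmean(estimates), cuts[0], cuts[-1]


# Hypothetical slopes, one per tree sampled from a Bayesian posterior.
slopes = [0.61, 0.58, 0.66, 0.70, 0.55, 0.63, 0.59, 0.64, 0.68, 0.60]
mean, lower, upper = summarize_across_trees(slopes)
print(f"mean = {mean:.3f}, 95% interval = [{lower:.3f}, {upper:.3f}]")
```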

Problem: Software error when running a PGLS model.

- Potential Cause 1: Mismatch between species names in your trait data and the tip labels on the phylogeny.

- Solution: Use functions in R packages like ape or geiger to check that all species in your dataset are present in the tree and that the names match exactly in spelling and case.

- Potential Cause 2: The phylogenetic covariance matrix is singular (non-invertible), often due to polytomies or zero-length branches.

- Solution: Resolve polytomies if possible, or add a very small amount of branch length to zero-length branches to make the matrix invertible.
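Both software errors above can be caught before fitting. The sketch below uses a hypothetical tree format in which a node is (tip_label, branch_length) or ([children], branch_length). It lists names that appear in only one of the two sources, using an exact, case-sensitive comparison. It also returns a copy of the tree in which zero-length branches below the root are replaced by a small epsilon. The epsilon of 1e-6 is an arbitrary illustrative choice; in R, the functions mentioned above serve the same purpose.

```python
def name_mismatches(tip_labels, data_species):
    """Return (in tree only, in data only); comparison is exact and case-sensitive."""
    tips, data = set(tip_labels), set(data_species)
    return sorted(tips - data), sorted(data - tips)


def fix_zero_branches(node, epsilon=1e-6, is_root=True):
    """Copy of the tree with every non-root branch of length <= 0 set to epsilon."""
    label, length = node
    if not is_root and length <= 0.0:
        length = epsilon
    if isinstance(label, str):
        return (label, length)
    return ([fix_zero_branches(child, epsilon, False) for child in label], length)


print(name_mismatches(["Homo_sapiens", "Pan_troglodytes"], ["homo_sapiens", "Pan_troglodytes"]))
# (['Homo_sapiens'], ['homo_sapiens'])
```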

Problem: Poor visualization of a large phylogeny where extreme trait values make branches hard to see.

- Potential Cause: Using a default color palette where the highest or lowest values are too close to white, causing branches to "vanish" [18].

- Solution: Use a custom color palette that excludes the extreme, near-white ends of the spectrum. For example, in R's phytools::plotBranchbyTrait, you can define a custom function to truncate the color range [18].

Experimental Protocols & Data Presentation

Protocol 1: Testing for Phylogenetic Signal

Objective: To quantify the degree to which a trait's evolution follows a Brownian motion model along a given phylogeny.

Materials:

- Trait Data: A vector of continuous trait values for each species.

- Phylogeny: A time-calibrated tree of the studied species in Newick format [19].

- Software: R with packages phytools [17] and ape.

Methodology:

- Data Preparation: Ensure your trait data and phylogeny are correctly matched using geiger::name.check.

- Compute Blomberg's K: Use the phytools::phylosig function (method = "K", the default).

- Compute Pagel's λ: Use the phytools::phylosig function with method = "lambda".

- Interpretation: A K-value of 1 suggests evolution under Brownian motion. K < 1 indicates that closely related species are less similar than expected under Brownian motion, and K > 1 indicates that they are more similar than expected (strong phylogenetic signal). For λ, a value of 0 indicates no phylogenetic signal, and 1 indicates a signal consistent with Brownian motion. Consult the significance test (P-value), which phylosig reports when called with test = TRUE.
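The sketch below shows what is estimated when phylosig is run with method = "lambda". It fits Pagel's λ by a simple grid search. λ multiplies the off-diagonal (shared-history) entries of the Brownian-motion covariance matrix V. For each λ, the Gaussian log-likelihood is maximized analytically over the root state and σ². The covariance matrix and trait values are hypothetical. The grid search stands in for the numerical optimizer a real package would use, and no significance test is included.

```python
import math


def cholesky(a):
    """Lower-triangular L with a = L L^T (a must be positive definite)."""
    n = len(a)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = a[i][j] - sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                if s <= 0.0:
                    raise ValueError("covariance matrix is not positive definite")
                low[i][i] = math.sqrt(s)
            else:
                low[i][j] = s / low[j][j]
    return low


def chol_solve(low, b):
    """Solve (L L^T) x = b by forward then back substitution."""
    n = len(low)
    z = [0.0] * n
    for i in range(n):
        z[i] = (b[i] - sum(low[i][k] * z[k] for k in range(i))) / low[i][i]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (z[i] - sum(low[k][i] * x[k] for k in range(i + 1, n))) / low[i][i]
    return x


def lambda_transform(v, lam):
    """Pagel's lambda: scale the off-diagonal covariances by lam."""
    n = len(v)
    return [[v[i][j] if i == j else lam * v[i][j] for j in range(n)] for i in range(n)]


def bm_loglik(x, v):
    """Gaussian log-likelihood with the ML root state and ML sigma^2 plugged in."""
    n = len(x)
    low = cholesky(v)
    root = sum(chol_solve(low, x)) / sum(chol_solve(low, [1.0] * n))  # GLS mean
    resid = [xi - root for xi in x]
    sigma2 = sum(r * q for r, q in zip(resid, chol_solve(low, resid))) / n
    log_det = 2.0 * sum(math.log(low[i][i]) for i in range(n))
    return -0.5 * (n * math.log(2.0 * math.pi * sigma2) + log_det + n)


def fit_lambda(x, v, steps=100):
    """Grid-search ML estimate of lambda on [0, 1]."""
    grid = [i / steps for i in range(steps + 1)]
    return max(grid, key=lambda lam: bm_loglik(x, lambda_transform(v, lam)))


# Hypothetical: two pairs of close relatives with similar trait values.
V = [[2.0, 1.9, 0.0, 0.0], [1.9, 2.0, 0.0, 0.0], [0.0, 0.0, 2.0, 1.9], [0.0, 0.0, 1.9, 2.0]]
traits = [1.0, 1.1, 5.0, 5.1]
print("lambda =", fit_lambda(traits, V))
```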

Protocol 2: Performing a Phylogenetic Generalized Least Squares (PGLS) Analysis

Objective: To test for a correlation between two continuous traits while accounting for phylogenetic non-independence.

Materials:

- Data: Two continuous traits measured across the same set of species.

- Phylogeny: A time-calibrated tree of the studied species.

- Software: R with the packages nlme and ape.

Methodology:

- Model Formulation: Define the linear model (e.g., Trait1 ~ Trait2).

- Build Correlation Structure: Create a phylogenetic correlation structure from your tree, assuming a Brownian motion model (for example, with ape::corBrownian).

- Run PGLS: Use the gls function, specifying the correlation structure.

- Output Examination: Summarize the model to obtain the intercept, slope, their standard errors, and P-values for the coefficients. Note that summary() of a gls fit does not report an R-squared value.
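PGLS with a fixed Brownian-motion correlation structure is generalized least squares: β̂ = (XᵀV⁻¹X)⁻¹XᵀV⁻¹y, where V is the phylogenetic covariance matrix. The pure-Python sketch below computes that estimator and the coefficient standard errors for one predictor. It assumes V is known and fixed, as with corBrownian, and it is not a substitute for nlme::gls. The tree and trait values are hypothetical.

```python
def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(A)
    M = [list(row) + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        if abs(M[piv][col]) < 1e-12:
            raise ValueError("matrix is singular")
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - s) / M[i][i]
    return x


def pgls(V, x, y):
    """GLS fit of y = b0 + b1 * x with error covariance proportional to V."""
    n = len(y)
    cols = [[1.0] * n, [float(v) for v in x]]     # design matrix columns: intercept, slope
    vinv_cols = [solve(V, c) for c in cols]        # V^-1 X, column by column
    vinv_y = solve(V, y)
    xtvx = [[sum(a * b for a, b in zip(ci, vj)) for vj in vinv_cols] for ci in cols]
    xtvy = [sum(a * b for a, b in zip(ci, vinv_y)) for ci in cols]
    beta = solve(xtvx, xtvy)                       # (X'V^-1X) beta = X'V^-1 y
    resid = [yi - beta[0] - beta[1] * xi for xi, yi in zip(cols[1], y)]
    sigma2 = sum(r * v for r, v in zip(resid, solve(V, resid))) / (n - 2)
    sigma2 = max(sigma2, 0.0)                      # guard against tiny negative round-off
    inv_diag = [solve(xtvx, e)[k] for k, e in enumerate(([1.0, 0.0], [0.0, 1.0]))]
    se = [(sigma2 * d) ** 0.5 for d in inv_diag]
    return beta, se


# Hypothetical tree ((A:1,B:1):1,(C:1,D:1):1) expressed as a BM covariance matrix
V = [[2, 1, 0, 0], [1, 2, 0, 0], [0, 0, 2, 1], [0, 0, 1, 2]]
x = [0.5, 1.0, 2.0, 2.5]      # hypothetical predictor (Trait2)
y = [3.4, 5.1, 7.8, 9.6]      # hypothetical response (Trait1)
beta, se = pgls(V, x, y)
print("intercept, slope:", [round(b, 3) for b in beta])
print("standard errors:", [round(s, 3) for s in se])
```

With V set to the identity matrix, the same function returns ordinary least-squares estimates. This is a quick way to see how much the phylogeny changes the fit.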

Quantitative Data on Model Selection Criteria

Table 1: Comparison of Model Selection Criteria Performance in Phylogenetic Inference [5]. The table shows that while different criteria select different models, their impact on final topological inference is minimal.

| Criterion | Full Name | Model Selection Tendency | Topology Recovery Accuracy |
|---|---|---|---|
| AIC | Akaike Information Criterion | More complex models | ~50-51% |
| AICc | Corrected AIC | More complex models | ~50-51% |
| BIC | Bayesian Information Criterion | Simpler models | ~50-51% |
| DT | Decision-theory Criterion | Simpler models | ~50-51% |
| dLRT | Dynamic Likelihood Ratio Test | Varies by dataset | ~50-51% |
| BF | Bayes Factor | Best-fitting model | ~50-51% |
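The different tendencies in Table 1 follow from the penalty terms:

- AIC = −2 ln L + 2k
- AICc = AIC + 2k(k + 1)/(n − k − 1)
- BIC = −2 ln L + k ln n

Here k is the number of free parameters and n the sample size. Because ln n > 2 once n ≥ 8, BIC charges more per extra parameter than AIC. The sketch below applies the three formulas to two hypothetical fits.

```python
import math


def information_criteria(lnl, k, n):
    """Return AIC, AICc and BIC for log-likelihood lnl, k parameters, sample size n."""
    aic = -2.0 * lnl + 2.0 * k
    aicc = aic + (2.0 * k * (k + 1)) / (n - k - 1)
    bic = -2.0 * lnl + k * math.log(n)
    return {"AIC": aic, "AICc": aicc, "BIC": bic}


# Hypothetical fits: model B adds one parameter and gains 1.5 log-likelihood units
models = {"A": (-100.0, 2), "B": (-98.5, 3)}
n = 50
scores = {name: information_criteria(lnl, k, n) for name, (lnl, k) in models.items()}
for crit in ("AIC", "AICc", "BIC"):
    best = min(scores, key=lambda m: scores[m][crit])
    print(crit, best)
```

Here AIC and AICc prefer the richer model B, while BIC prefers the simpler model A. This matches the tendencies listed in the table.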

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Software Tools for Phylogenetic Comparative Methods

| Tool Name | Function/Brief Explanation | Application Context |
|---|---|---|
| R Statistical Environment | An open-source programming language and environment for statistical computing and graphics. | The primary platform for implementing most phylogenetic comparative methods [17]. |
| ape Package | A foundational R package for reading, writing, and manipulating phylogenetic trees. | Basic tree handling, plotting, and foundational comparative analyses [17]. |
| phytools Package | A comprehensive R package with hundreds of functions for phylogenetic analysis. | Fitting models of trait evolution, ancestral state reconstruction, and tree visualization [17]. |
| ggtree Package | An R package for visualizing and annotating phylogenetic trees using the ggplot2 syntax. | Creating highly customizable and publication-quality tree figures with complex data integration [20]. |
| BEAST 2 | A software package for Bayesian evolutionary analysis sampling trees. | Used for phylogenetic tree inference, divergence dating, and model selection via path sampling/stepping-stone sampling [7]. |
| Newick Format | A standard format for representing phylogenetic trees using parentheses and commas [19]. | The universal format for storing and exchanging tree data between different software applications. |

Visualization of Workflows and Relationships

Phylogenetic Comparative Method Workflow

Consequences of Ignoring Phylogeny

Establishing a Robust Hypothesis-Testing Framework with PCMs

This technical support center provides troubleshooting guides and FAQs for researchers using Phylogenetic Comparative Methods (PCMs) in evolutionary biology and medicine.

Troubleshooting Guides

Why is my phylogenetic model failing to converge?

Problem: The Markov Chain Monte Carlo (MCMC) sampler does not converge, leading to unreliable parameter estimates.

Diagnosis: This is often caused by poorly chosen starting values, an overly complex model for the data, or insufficient MCMC iterations [21].

Solution:

- Simplify your model: Begin with a simple Brownian motion (BM) model before progressing to more complex models like the Ornstein-Uhlenbeck (OU) [21].

- Adjust starting values: Manually set biologically plausible starting values for parameters instead of relying on random generation [21].

- Increase iterations: Substantially increase the number of MCMC generations and ensure the effective sample size (ESS) for all parameters is greater than 200 [21].

- Check priors: Use weakly informative priors to constrain parameters to plausible ranges without overly influencing the posterior [21].
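ESS is computed from the chain's autocorrelation. The sketch below uses one simple estimator, ESS = N / (1 + 2 Σ ρₖ), and stops the sum at the first non-positive autocorrelation. This is an approximation and will not match every program's output exactly. Both chains are simulated with a fixed seed and are hypothetical.

```python
import random


def effective_sample_size(chain):
    """Approximate ESS = N / (1 + 2 * sum of positive-lag autocorrelations),
    stopping at the first non-positive autocorrelation estimate."""
    n = len(chain)
    mean = sum(chain) / n
    dev = [c - mean for c in chain]
    var = sum(d * d for d in dev) / n
    if var == 0:
        raise ValueError("chain has zero variance")
    rho_sum = 0.0
    for lag in range(1, n // 2):
        rho = sum(dev[i] * dev[i + lag] for i in range(n - lag)) / (n * var)
        if rho <= 0:
            break
        rho_sum += rho
    return n / (1 + 2 * rho_sum)


random.seed(1)
N = 5000
iid = [random.gauss(0, 1) for _ in range(N)]       # well-mixing chain
sticky = [0.0]
for _ in range(N - 1):                             # AR(1) chain, strong autocorrelation (phi = 0.9)
    sticky.append(0.9 * sticky[-1] + random.gauss(0, 1))

print(round(effective_sample_size(iid)), round(effective_sample_size(sticky)))
```

The strongly autocorrelated chain yields an ESS far below its 5,000 samples. This is the situation in which more MCMC generations are needed to pass the ESS > 200 guideline.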

How do I choose the best evolutionary model for my trait data?

Problem: It is unclear which model of trait evolution (e.g., BM, OU, Trend) best fits the dataset.

Diagnosis: Model selection is a core part of PCMs. Using an incorrect model can lead to false conclusions about evolutionary processes [21].

Solution:

- Fit multiple models: Simultaneously fit a set of candidate models to your data [21].

- Compare using AICc: Use the Akaike Information Criterion corrected for small sample sizes (AICc) to rank the models. The model with the lowest AICc score is the best-supported model within the candidate set [21].

- Calculate Akaike weights: Convert AICc scores to Akaike weights, which are often interpreted as the probability that each model is the best among the set considered [21].
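Akaike weights follow from the score differences Δᵢ = AICcᵢ − min AICc, using wᵢ = exp(−Δᵢ/2) / Σⱼ exp(−Δⱼ/2). A minimal sketch with hypothetical AICc scores for three candidate models:

```python
import math


def akaike_weights(scores):
    """Convert AICc (or AIC) scores to Akaike weights."""
    best = min(scores.values())
    rel = {m: math.exp(-0.5 * (s - best)) for m, s in scores.items()}  # exp(-delta/2)
    total = sum(rel.values())
    return {m: r / total for m, r in rel.items()}


# Hypothetical AICc scores for three candidate models
aicc = {"BM": 210.4, "OU": 208.1, "Trend": 212.9}
weights = akaike_weights(aicc)
for model, w in sorted(weights.items(), key=lambda kv: -kv[1]):
    print(f"{model}: delta={aicc[model] - min(aicc.values()):.1f}, weight={w:.3f}")
```

In this example the OU model carries about 71% of the weight and BM about 22%. Support is shared, so it would be premature to report OU alone as "the" model.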

Table 1: Common Models of Continuous Trait Evolution

| Model Name | Key Parameter(s) | Biological Interpretation | Best For |
|---|---|---|---|
| Brownian Motion (BM) | Rate (σ²) | Neutral evolution / genetic drift; trait variance increases randomly over time [21]. | Null hypothesis; traits under random walk [21]. |
| Ornstein-Uhlenbeck (OU) | α (strength of selection), θ (optimum) | Stabilizing selection towards a specific optimum trait value [21]. | Traits under constraints or adaptation to a niche [21]. |
| Trend | Drift (μ) | Directional change in trait mean over time [21]. | Traits under consistent directional selection [21]. |
| White Noise | None beyond the variance (σ²) | No phylogenetic signal; trait values are independent of evolutionary history [21]. | Testing for the presence of any phylogenetic signal [21]. |
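The table's interpretations can be made concrete with each model's expectations over time t:

- BM: the expected variance across replicate lineages grows as σ²t.
- Single-optimum OU, started from a fixed root value: the variance is σ²/(2α)(1 − e^(−2αt)), which levels off at σ²/(2α).
- Trend (the drift model in the table): the mean shifts by μt, while the variance grows as under BM.

The sketch below evaluates these expressions for hypothetical parameter values.

```python
import math


def bm_variance(sigma2, t):
    """Expected variance under Brownian motion after time t."""
    return sigma2 * t


def ou_variance(sigma2, alpha, t):
    """Expected OU variance from a fixed root value; plateaus at sigma2 / (2 * alpha)."""
    return sigma2 / (2 * alpha) * (1 - math.exp(-2 * alpha * t))


def trend_mean(z0, mu, t):
    """Expected trait mean under a drift (Trend) model."""
    return z0 + mu * t


sigma2, alpha, mu, z0 = 1.0, 0.5, 0.2, 0.0   # hypothetical parameter values
for t in (0.5, 1, 2, 5, 10):
    print(f"t={t:>4}: BM var={bm_variance(sigma2, t):6.3f}  "
          f"OU var={ou_variance(sigma2, alpha, t):6.3f}  "
          f"Trend mean={trend_mean(z0, mu, t):5.2f}")
```

As α approaches 0, the OU variance approaches the BM variance. This is why OU nests BM, and why the two are hard to distinguish when α is small.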

My analysis shows a weak phylogenetic signal. What does this mean?

Problem: Pagel's lambda (λ) is estimated to be close to 0, indicating little influence of phylogeny on trait variation.

Diagnosis: A low lambda suggests that closely related species are not more similar in their trait values than distantly related species. This could be due to measurement error, high levels of convergent evolution, or a trait evolving very rapidly [21].

Solution:

- Verify data quality: Check for errors in trait measurement or data entry.

- Confirm phylogeny: Ensure the phylogenetic tree is well-supported and appropriate for your taxonomic group.

- Interpret biologically: A low signal is a valid result. It suggests that other factors (e.g., environmental pressures) may be more important than shared ancestry in shaping the trait [21].

Frequently Asked Questions (FAQs)

What is the difference between an ECM and a PCM?

ECM usually refers to an Engine Control Module, an automotive component that manages engine functions; that meaning is unrelated to this guide. Here, PCM stands for Phylogenetic Comparative Methods, the statistical tools used to test evolutionary hypotheses across a phylogeny. The core component discussed in methodological papers is the phylogenetic variance-covariance (VCV) matrix, which encodes the expected trait covariances among species based on their shared evolutionary history [21].
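Under Brownian motion with rate σ², the VCV entries are defined by path lengths on the tree:

- Cᵢⱼ is σ² times the length of the path species i and j share from the root to their most recent common ancestor.
- Cᵢᵢ is σ² times the root-to-tip length of species i.

The sketch below builds C from a tree stored as nested (label, branch_length, children) tuples. That format is a hypothetical representation chosen for brevity. In R, ape::vcv() performs this step on a phylo object.

```python
def tip_paths(node, path=(), depth=0.0, out=None):
    """Map each tip label to its root-to-tip path of (node, cumulative depth) pairs."""
    if out is None:
        out = {}
    label, length, children = node
    depth += length
    path = path + ((node, depth),)
    if not children:
        out[label] = path
    for child in children:
        tip_paths(child, path, depth, out)
    return out


def shared_depth(path_a, path_b):
    """Depth of the deepest node present on both paths (their MRCA)."""
    depth = 0.0
    for (node_a, d), (node_b, _) in zip(path_a, path_b):
        if node_a is not node_b:
            break
        depth = d
    return depth


def bm_vcv(tree, sigma2=1.0):
    """Brownian-motion VCV matrix and the tip order used for its rows/columns."""
    paths = tip_paths(tree)
    labels = list(paths)
    C = [[sigma2 * shared_depth(paths[a], paths[b]) for b in labels] for a in labels]
    return labels, C


# Hypothetical ultrametric tree ((A:1,B:1):1,(C:1,D:1):1); root branch length 0
tree = (None, 0.0, [
    (None, 1.0, [("A", 1.0, []), ("B", 1.0, [])]),
    (None, 1.0, [("C", 1.0, []), ("D", 1.0, [])]),
])
labels, C = bm_vcv(tree)
print(labels)
for row in C:
    print(row)
```

Sister species A and B share one unit of history (covariance 1.0). Species in different clades share none (covariance 0.0).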

How can I test if my PCM analysis is statistically valid?

Answer: Validity is ensured through several diagnostic checks [21]:

- Model Convergence: For Bayesian methods, ensure MCMC chains have converged (trace plots, ESS > 200).

- Model Fit: Use metrics like AICc to confirm your chosen model fits the data better than a null model.

- Residual Diagnostics: Check the residuals of your model (e.g., in a PGLS) for homoscedasticity and normality.

- Phylogenetic Signal: Test if your residual variation is independent of phylogeny.

What should I do if my model parameters are inconsistent with biological reality?

Answer: This often points to model misspecification or data issues [21].

- Re-examine your tree: Check for inaccurate branch lengths or topology.

- Check for outliers: Identify if a single species or clade is driving the unusual parameter estimates.

- Consider alternative models: The model you are using may be too simple or complex. Explore other models in the candidate set.

- Consult literature: Compare your estimates with previously published values for similar traits and taxa.

How do I handle missing data in my trait dataset?

Answer: Most modern PCM software (e.g., phytools in R, BayesTraits) can handle missing data. The data is typically treated as a parameter to be estimated by the model. It is crucial to ensure that the data is "Missing At Random" (MAR) and that the amount of missing data is not excessive, as this can increase uncertainty in parameter estimates [21].

Experimental Protocols

Protocol 1: Fitting and Comparing Models of Trait Evolution

Purpose: To infer the mode of evolution for a continuous trait using a set of competitive models [21].

Materials: Phylogenetic tree in Newick format; trait data file (e.g., CSV).

Methodology:

- Data Preparation: Import the tree and trait data into R. Prune the tree and data to ensure matching taxa.

- Model Fitting:

- Fit a Brownian Motion (BM) model.

- Fit an Ornstein-Uhlenbeck (OU) model with a single optimum.

- Fit a Trend model.

- (Optional) Fit more complex OU models with multiple optima.

- Model Comparison: Extract the AICc score for each fitted model. Calculate Akaike weights to determine the best-supported model.

- Parameter Estimation: Report the parameter estimates (e.g., σ², α, λ) for the best-fitting model.
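The fitting and comparison steps can be illustrated with the two models that have closed-form maximum-likelihood estimates. For BM with covariance σ²C:

- the ML root state is â = 1ᵀC⁻¹x / 1ᵀC⁻¹1;
- the ML rate is σ̂² = (x − â1)ᵀC⁻¹(x − â1)/n;
- the log-likelihood is ln L = −(n/2)[ln(2πσ̂²) + 1] − ½ ln|C|.

White noise is the special case C = I. This pure-Python sketch fits both models to a hypothetical tree and dataset, then ranks them by AICc with k = 2 parameters each. OU fits need numerical optimization of α, so they are left to R packages here.

```python
import math


def solve_with_logdet(A, b):
    """Gaussian elimination with partial pivoting; returns (x, log|det A|)."""
    n = len(A)
    M = [list(map(float, row)) + [float(b[i])] for i, row in enumerate(A)]
    logdet = 0.0
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        if abs(M[piv][col]) < 1e-12:
            raise ValueError("matrix is singular")
        M[col], M[piv] = M[piv], M[col]
        logdet += math.log(abs(M[col][col]))
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x, logdet


def fit_bm(C, x):
    """ML fit of Brownian motion with covariance sigma2 * C; returns (lnL, k)."""
    n = len(x)
    cinv_1, logdet = solve_with_logdet(C, [1.0] * n)
    cinv_x, _ = solve_with_logdet(C, x)
    a_hat = sum(cinv_x) / sum(cinv_1)              # ML root state
    r = [xi - a_hat for xi in x]
    cinv_r, _ = solve_with_logdet(C, r)
    sigma2 = sum(ri * ci for ri, ci in zip(r, cinv_r)) / n
    lnl = -0.5 * n * (math.log(2 * math.pi * sigma2) + 1) - 0.5 * logdet
    return lnl, 2                                   # parameters: root state and sigma2


def aicc(lnl, k, n):
    return -2 * lnl + 2 * k + 2 * k * (k + 1) / (n - k - 1)


C = [[2, 1, 0, 0], [1, 2, 0, 0], [0, 0, 2, 1], [0, 0, 1, 2]]   # hypothetical tree
identity = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
x = [1.0, 1.2, 4.8, 5.0]                                       # hypothetical trait values
fits = {"BM": fit_bm(C, x), "White noise": fit_bm(identity, x)}
scores = {m: aicc(lnl, k, len(x)) for m, (lnl, k) in fits.items()}
for m in sorted(scores, key=scores.get):
    print(f"{m}: lnL={fits[m][0]:.3f}, AICc={scores[m]:.3f}")
```

Real analyses need far more than four species. With n = 4 the AICc correction is very large, which is one reason small datasets give unstable model rankings.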

Protocol 2: Testing for Phylogenetic Signal

Purpose: To quantify the degree to which shared evolutionary history explains trait similarity among species [21].

Materials: Phylogenetic tree; continuous trait data.

Methodology:

- Calculate Pagel's Lambda: Use the phylosig function in the phytools R package (method = "lambda") to estimate Pagel's λ.

- Hypothesis Testing: Perform a likelihood ratio test to compare the model where λ is estimated to a model where λ is fixed at 0 (no phylogenetic signal).

- Interpretation: A λ not significantly different from 0 suggests a lack of phylogenetic signal. A λ of 1 indicates trait evolution consistent with a Brownian motion model.
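Pagel's λ rescales the BM covariance matrix: off-diagonal entries are multiplied by λ and the diagonal is left unchanged. λ = 0 therefore removes phylogenetic covariance, and λ = 1 recovers BM.

The likelihood ratio statistic 2[ln L(λ̂) − ln L(λ = 0)] is compared with a χ² distribution with one degree of freedom. phylosig's test = TRUE option reports such a test. Because λ = 0 lies on the boundary of the parameter space, the χ²₁ reference is conservative.

The sketch shows both pieces. The log-likelihood values are hypothetical placeholders.

```python
import math


def lambda_transform(C, lam):
    """Multiply off-diagonal entries of C by lam; keep the diagonal.

    This sketch only accepts lam in [0, 1]."""
    if not 0.0 <= lam <= 1.0:
        raise ValueError("this sketch only accepts lambda in [0, 1]")
    n = len(C)
    return [[C[i][j] if i == j else lam * C[i][j] for j in range(n)] for i in range(n)]


def lrt_chi2_df1(lnl_alt, lnl_null):
    """Likelihood ratio statistic and p-value from a chi-square with 1 df."""
    stat = max(2.0 * (lnl_alt - lnl_null), 0.0)
    p = math.erfc(math.sqrt(stat / 2.0))  # P(chi2_1 > stat)
    return stat, p


C = [[2, 1, 0, 0], [1, 2, 0, 0], [0, 0, 2, 1], [0, 0, 1, 2]]  # hypothetical tree
print(lambda_transform(C, 0.5))

# Hypothetical log-likelihoods: lambda estimated vs lambda fixed at 0
stat, p = lrt_chi2_df1(lnl_alt=-40.2, lnl_null=-43.9)
print(f"LR = {stat:.2f}, p = {p:.4f}")
```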

Visualizations

PCM Analysis Workflow

Evolutionary Model Relationships

Research Reagent Solutions

Table 2: Essential Computational Tools for PCM Research

| Tool / Reagent | Function | Application in PCMs |
|---|---|---|
| R Statistical Environment | Software platform for statistical computing and graphics [21]. | The primary environment for implementing most PCMs. |
| phytools R Package | An R package for phylogenetic comparative biology [21]. | Fitting evolutionary models, visualizing trait evolution, and conducting phylogenetic analyses. |
| ape R Package | Core R package for manipulating and analyzing phylogenetic trees [21]. | Reading, writing, and manipulating phylogenetic trees; building phylogenetic variance-covariance matrices. |
| Phylogenetic Variance-Covariance (VCV) Matrix | A matrix describing expected trait covariances based on shared evolutionary history [21]. | The foundational mathematical structure used in PGLS and other PCMs to account for non-independence of species. |
| Bayesian Software (e.g., RevBayes, BEAST) | Software for Bayesian evolutionary analysis [21]. | Fitting complex evolutionary models, dating phylogenies, and performing hypothesis testing in a Bayesian framework. |

A Practical Toolkit: Key Methodologies and Their Biomedical Applications

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: My Phylogenetic Independent Contrasts (PIC) analysis yields significant results, but the model diagnostics look strange. What are the most common assumptions I might have violated?

Phylogenetic Independent Contrasts rely on several key assumptions. Violations can lead to misleading results. The three major assumptions are:

- Accurate Phylogenetic Topology: The tree's branching order is correct.

- Correct Branch Lengths: The branch lengths in the phylogeny are accurate and proportional to time or evolutionary change.

- Brownian Motion Trait Evolution: Traits evolve according to a Brownian motion model, where variance accrues linearly with time [22].

Troubleshooting Steps: Use the diagnostic plots available in standard packages such as caper in R. Look for relationships between the absolute values of the standardized contrasts and their standard deviations or node heights. A significant relationship suggests that model assumptions have been violated [22].
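At its core, this diagnostic is a correlation between the absolute values of the standardized contrasts and their standard deviations (the square roots of their expected variances). This minimal sketch computes the Pearson correlation in pure Python. The contrast values are hypothetical and were constructed so that larger standard deviations go with larger contrasts. A formal significance test is not included.

```python
import math


def pearson_r(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    sa = math.sqrt(sum((x - ma) ** 2 for x in a))
    sb = math.sqrt(sum((y - mb) ** 2 for y in b))
    return cov / (sa * sb)


def contrast_diagnostic(std_contrasts, contrast_sds):
    """Correlation of |standardized contrast| with its standard deviation.

    Values far from zero suggest the branch lengths (or the BM assumption)
    do not standardize the contrasts adequately."""
    return pearson_r([abs(c) for c in std_contrasts], contrast_sds)


# Hypothetical contrasts and their standard deviations
contrasts = [0.4, -1.1, 0.9, -2.3, 1.8, -0.2]
sds = [1.4, 1.9, 1.7, 2.6, 2.4, 1.2]
print(round(contrast_diagnostic(contrasts, sds), 3))
```

A strong positive correlation like this one is the pattern the diagnostic is designed to flag.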

Q2: I've found that an Ornstein-Uhlenbeck (OU) model fits my trait data better than a Brownian Motion model. Can I confidently conclude this is evidence of stabilising selection or niche conservatism?

While an OU model is often interpreted as evidence for stabilising selection, you must exercise caution. Several well-known caveats exist:

- Small Sample Sizes: OU models are frequently and incorrectly favoured over simpler models in small datasets (the median number of taxa in OU studies is 58) [22].

- Measurement Error: Even tiny amounts of error in your data can cause an OU model to be favoured because it can accommodate more variance towards the tips of the phylogeny, not due to a meaningful biological process [22].

- Biological Interpretation: The literature clearly states that a simple explanation of clade-wide stabilising selection is unlikely to be the sole reason for an OU model fit [22]. Other factors should be investigated before making a strong biological inference.

Q3: My trait-dependent diversification analysis (e.g., using BiSSE) suggests a trait influences speciation rates. What major pitfall should I check for in my analysis and results?

A significant result can be misleading. It is crucial to rule out the possibility that the detected pattern is caused by a single diversification rate shift in the tree that is unrelated to your trait of interest. Simulations have shown that such rate heterogeneity can create a strong spurious correlation between a trait and diversification rate, making the finding biologically meaningless [22]. Always check for underlying rate shifts in your phylogeny that are not associated with the trait.

Experimental Protocols for Core PCMs

Protocol 1: Conducting a Phylogenetic Generalized Least Squares (PGLS) Analysis

PGLS is a standard method for testing relationships between traits while accounting for phylogenetic non-independence.

- Data Preparation: Compile a dataset of trait values for each species and a phylogenetic tree with branch lengths.

- Model Selection: Choose an evolutionary model for the residual structure (covariance matrix V). Common choices include:

- Brownian Motion (BM): Assumes trait variance increases linearly with time.

- Ornstein-Uhlenbeck (OU): Adds a parameter for pull towards a trait optimum.

- Pagel's λ: A scaling parameter, usually between 0 and 1, that multiplies the off-diagonal elements of V and thereby sets the strength of phylogenetic correlation [10].

- Model Fitting: Use a PGLS implementation (e.g., the gls function in the R package nlme with a defined correlation structure) to fit the regression model Y ~ X, incorporating the phylogenetic covariance matrix V derived from your chosen evolutionary model [10].

- Parameter Estimation: The PGLS algorithm co-estimates the parameters of the regression (slope, intercept) and the parameters of the evolutionary model (e.g., λ, α) [10].

- Diagnostic Checking: Examine the model residuals to check for homoscedasticity and normality, and to ensure the chosen evolutionary model is appropriate.
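The three choices for V can all be written in terms of the BM shared-path matrix C:

- BM: V = σ²C.
- λ: the off-diagonal entries of C are scaled by λ.
- OU: one common parameterization assumes a stationary root and makes covariance decay with the patristic distance dᵢⱼ = Cᵢᵢ + Cⱼⱼ − 2Cᵢⱼ, giving Vᵢⱼ = σ²/(2α) e^(−α dᵢⱼ).

The pure-Python sketch below builds all three from a hypothetical tree. OU parameterizations differ between packages (for example, in how the root state is treated), so treat this as illustrative rather than as the exact structure any one package uses.

```python
import math


def patristic(C, i, j):
    """Tip-to-tip path length recovered from the shared-path matrix."""
    return C[i][i] + C[j][j] - 2 * C[i][j]


def v_bm(C, sigma2=1.0):
    return [[sigma2 * c for c in row] for row in C]


def v_lambda(C, lam, sigma2=1.0):
    n = len(C)
    return [[sigma2 * (C[i][j] if i == j else lam * C[i][j]) for j in range(n)]
            for i in range(n)]


def v_ou(C, alpha, sigma2=1.0):
    """OU covariance with a stationary root: sigma2/(2*alpha) * exp(-alpha * d_ij)."""
    n = len(C)
    scale = sigma2 / (2 * alpha)
    return [[scale * math.exp(-alpha * patristic(C, i, j)) for j in range(n)]
            for i in range(n)]


C = [[2, 1, 0, 0], [1, 2, 0, 0], [0, 0, 2, 1], [0, 0, 1, 2]]  # hypothetical tree
for name, V in (("BM", v_bm(C)), ("lambda=0.5", v_lambda(C, 0.5)), ("OU alpha=1", v_ou(C, 1.0))):
    print(name)
    for row in V:
        print("  " + "  ".join(f"{v:.3f}" for v in row))
```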

Protocol 2: Implementing Phylogenetic Independent Contrasts

This method transforms species data into statistically independent values.

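- Calculate Contrasts: Start at the tips of the phylogeny. For each pair of sister nodes, calculate the difference (contrast) between their values. The expected variance of each contrast is the sum of the two descendant branch lengths. The value assigned to the parent node is the average of the two descendant values, weighted by the inverse of their branch lengths, and the parent's branch is lengthened to account for the uncertainty in that estimate [10].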

- Standardize Contrasts: Divide each raw contrast by its standard deviation (which is a function of the branch lengths) [10].

- Check Assumptions: Ensure there is no relationship between the standardized contrasts and their standard deviations or node heights. The basal node value can be interpreted as a phylogenetically weighted estimate of the ancestral state or the grand mean [10] [22].

- Statistical Analysis: The standardized contrasts are now independent and can be used in standard statistical analyses, such as regression through the origin [10].
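The contrast algorithm can be sketched recursively. For sister values x₁ and x₂ with (possibly lengthened) branch lengths v₁ and v₂:

- the standardized contrast is (x₁ − x₂)/√(v₁ + v₂);
- the parent value is (x₁/v₁ + x₂/v₂)/(1/v₁ + 1/v₂);
- the parent's branch is lengthened by v₁v₂/(v₁ + v₂).

A regression through the origin then has slope Σ cₓc_y / Σ cₓ². This follows the independent-contrasts procedure as it is usually described. The nested-tuple tree format and the example data are hypothetical. In R, ape::pic() computes contrasts for a phylo object.

```python
import math


def contrasts(node, traits, out):
    """Post-order pass over (label, branch_length, children) tuples.

    Appends standardized contrasts to out; returns (node value, adjusted branch length).
    Zero-length branches would cause division by zero and must be fixed first."""
    label, length, children = node
    if not children:
        return float(traits[label]), length
    if len(children) != 2:
        raise ValueError("contrasts need a fully bifurcating tree")
    (x1, v1), (x2, v2) = (contrasts(child, traits, out) for child in children)
    out.append((x1 - x2) / math.sqrt(v1 + v2))
    x_parent = (x1 / v1 + x2 / v2) / (1 / v1 + 1 / v2)
    return x_parent, length + v1 * v2 / (v1 + v2)


def slope_through_origin(cx, cy):
    return sum(a * b for a, b in zip(cx, cy)) / sum(a * a for a in cx)


# Hypothetical tree ((A:1,B:1):1,(C:1,D:1):1) and trait values
tree = (None, 0.0, [
    (None, 1.0, [("A", 1.0, []), ("B", 1.0, [])]),
    (None, 1.0, [("C", 1.0, []), ("D", 1.0, [])]),
])
x = {"A": 1.0, "B": 2.0, "C": 4.0, "D": 6.0}
y = {"A": 2.5, "B": 3.9, "C": 8.2, "D": 11.6}

cx, cy = [], []
root_x, _ = contrasts(tree, x, cx)   # same traversal order for both traits
contrasts(tree, y, cy)
print("contrasts for x:", [round(c, 3) for c in cx])
print("root estimate for x:", root_x)
print("slope through origin:", round(slope_through_origin(cx, cy), 3))
```

A tree with n species yields n − 1 contrasts. Both traits must be traversed in the same child order so that the signs of paired contrasts agree.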

Model Selection Workflow & Logical Relationships

The following diagram outlines a logical workflow for selecting and applying core Phylogenetic Comparative Methods.

PCM Model Selection Workflow

Research Reagent Solutions

The following table details key computational tools and conceptual models essential for conducting research in Phylogenetic Comparative Methods.

| Research Reagent | Type | Primary Function |
|---|---|---|
| Phylogenetic Tree | Data Structure | The historical hypothesis of relationships used to account for non-independence among species [10]. |
| R Statistical Environment | Software Platform | The primary software environment for implementing a wide array of PCMs [22]. |
| caper R package | Software Tool | Implements Phylogenetic Independent Contrasts and includes standard diagnostic checks for model assumptions [22]. |
| Brownian Motion (BM) Model | Evolutionary Model | A null model of trait evolution where variance accrues linearly with time [10] [22]. |
| Ornstein-Uhlenbeck (OU) Model | Evolutionary Model | A model that adds a parameter for pull towards a trait optimum, often used to model stabilizing selection [22]. |
| Phylogenetic Generalized Least Squares (PGLS) | Statistical Framework | A general regression framework that incorporates phylogenetic information into the error structure [10]. |

Software Installation and Configuration

This section addresses common setup issues for the primary phylogenetic software platforms.

MEGA

Q: MEGA does not render correctly on my Linux system with a dark theme. How can I fix this? A: This is a known issue with MEGA on Linux related to the GTK2 widget toolkit [23] [24]. You can resolve it by:

- Switching your entire desktop to a light theme.

- Launching only MEGA with a light theme, by starting it from a terminal with the commands given in the MEGA documentation [23]. This launches MEGA using the Adwaita (light) theme without affecting other applications.

Q: Is my macOS system compatible with MEGA? A: Compatibility depends on your macOS version and hardware [23]:

- macOS 10.15 (Catalina) and later: You must use MEGAX 10.1.4 or later, as Apple dropped support for 32-bit applications. MEGA7 will not run.

- macOS with ARM-based M-series chips: You must use MEGA12 or later for native support. Earlier versions are not optimized for this architecture.

- macOS 10.13-10.14: It is recommended to use MEGAX 10.0.0 or later.

Q: I see a floating blue box in MEGA's Tree Explorer that I cannot remove. What should I do? A: This display issue can be resolved by restoring MEGA's default settings. Close MEGA and delete its settings folder [24]:

- Windows: Navigate to %localappdata%, then go to MEGA\MEGA_buildnumber\Private and delete the Ini folder.

- Linux: Navigate to ~/.config/MEGA/MEGA_buildnumber/Private and delete the Ini directory.

- macOS (MEGA12+): Right-click MEGA in your Applications folder, select "Show Package Contents", then navigate to Contents/Resources/Private and delete the Ini folder.

IQ-TREE

Q: What is the best way to get help with IQ-TREE? A: The developers recommend this structured approach [25]:

- Read the IQ-TREE documentation and this FAQ.

- Search the IQ-TREE Google group and GitHub discussions for existing answers.

- If the problem persists, post a question to the IQ-TREE group with a minimally reproducible example, including your command, input files, and output logs [26].

Q: How many CPU cores should I use for my IQ-TREE analysis?

A: For the best performance, use the -nt AUTO option, which automatically determines the optimal number of threads for your data and computer [25]. Note that parallel efficiency is higher for longer alignments. You can set an upper limit with -ntmax.

R Packages (ape, phytools)

Q: How do I read a phylogenetic tree into R?

A: The ape package provides core functions for reading trees [27] [28]. The function you use depends on the file format:

- Newick format: Use read.tree("path/to/myfile.tre").

- NEXUS format: Use read.nexus("path/to/myfile.nex").

This creates a phylo object, which is the standard for storing phylogenies in R.
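To show what read.tree() has to handle, here is a deliberately minimal Newick parser in Python. It is a sketch that assumes unquoted labels without comments or NHX annotations, and it returns nested (label, branch_length, children) tuples. Real files should be read with ape's read.tree() or read.nexus().

```python
def parse_newick(text):
    """Parse a simple Newick string into (label, branch_length, children) tuples.

    Assumes unquoted labels without ':,();' characters and no [comments]."""
    text = text.strip()
    if not text.endswith(";"):
        raise ValueError("Newick string must end with ';'")
    pos = 0

    def read_until(stops):
        nonlocal pos
        start = pos
        while text[pos] not in stops:
            pos += 1
        return text[start:pos].strip()

    def parse_node():
        nonlocal pos
        children = []
        if text[pos] == "(":
            pos += 1                          # consume '('
            children.append(parse_node())
            while text[pos] == ",":
                pos += 1                      # consume ','
                children.append(parse_node())
            if text[pos] != ")":
                raise ValueError(f"expected ')' at position {pos}")
            pos += 1                          # consume ')'
        label = read_until(":,);") or None    # internal nodes may be unlabelled
        length = 0.0
        if text[pos] == ":":
            pos += 1                          # consume ':'
            length = float(read_until(",);"))
        return (label, length, children)

    tree = parse_node()
    if text[pos] != ";":
        raise ValueError(f"unexpected text at position {pos}")
    return tree


def tip_labels(node):
    """Tip labels in left-to-right order."""
    label, _, children = node
    return [label] if not children else [t for c in children for t in tip_labels(c)]


tree = parse_newick("((A:1,B:1):1,(C:1,D:1):1);")
print(tip_labels(tree))   # ['A', 'B', 'C', 'D']
print(tree[2][0][:2])     # (None, 1.0): the first clade is unlabelled with length 1
```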

Q: My trait data and tree tip labels do not match. How do I align them?

A: The species data in your data frame must be in the same order as the tip labels in the tree object. Assuming your data frame mydata has species names as row names, reorder its rows by indexing it with the tree's tip labels (tree$tip.label) [28].
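The same reordering can be sketched language-neutrally in Python:

- rows keyed by species name are returned in tip-label order;
- a missing species raises an error, instead of silently producing misaligned data;
- rows for species absent from the tree are dropped.

The helper name and example data are hypothetical.

```python
def reorder_to_tips(rows, tip_labels):
    """Return (species, row) pairs in tip-label order; fail loudly on gaps."""
    missing = [t for t in tip_labels if t not in rows]
    if missing:
        raise KeyError(f"no trait data for: {missing}")
    return [(t, rows[t]) for t in tip_labels]


# Hypothetical trait rows stored in a different order from the tree
rows = {"C": {"mass": 4.0}, "A": {"mass": 1.0}, "B": {"mass": 2.0}}
for species, row in reorder_to_tips(rows, ["A", "B", "C"]):
    print(species, row["mass"])
```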

Data Handling and Analysis

This section covers common questions related to preparing data and executing analyses.

MEGA

Q: When I open a FASTA file, only the first part of the sequence name is displayed. Why?

A: By default, the Alignment Explorer shows sequence names only up to the first whitespace. To view full names, click Display -> Show Full Sequence Names [23].

Q: Why do my Maximum Likelihood analyses on different computers yield slightly different results with the same data and settings? A: This is expected. Likelihood calculations use floating-point arithmetic, which is highly sensitive to tiny precision differences arising from variations in CPU architectures, operating systems, or compilers [23].

IQ-TREE

Q: How does IQ-TREE handle gaps, missing data, and ambiguous characters?

A: IQ-TREE treats gaps (-) and missing characters (?, N) as unknown, meaning they contain no information [25]. Ambiguous characters (e.g., R for A/G in DNA) are supported according to IUPAC nomenclature; the likelihood is equally distributed among the possible character states.

Q: Can I mix different data types (e.g., DNA and protein) in one analysis? A: Yes, using a partitioned analysis with a NEXUS partition file. Each data type can be specified from separate alignment files [25].

Q: How should I interpret ultrafast bootstrap (UFBoot) support values?

A: UFBoot support values are less biased than standard bootstrap. A clade with 95% UFBoot support has approximately a 95% probability of being true [25]. For single genes, it is recommended to also perform the SH-aLRT test (-alrt 1000). A clade with SH-aLRT ≥ 80% and UFBoot ≥ 95% is considered highly supported.

R Packages (ape, phytools)

Q: How can I test for phylogenetic signal in a continuous trait?

A: Use Pagel's λ (lambda) with the phylosig function from phytools [28]. Lambda ranges from 0 (no signal) to 1 (strong signal, consistent with Brownian motion evolution).

Q: How do I perform a phylogenetic regression using Independent Contrasts?

A: Use the pic() function from ape to compute phylogenetically independent contrasts (PICs) for your traits, then fit a linear model through the origin [28].

Results Interpretation and Visualization

This section helps with understanding output and creating publication-quality figures.

IQ-TREE

Q: What is the purpose of the composition test run at the start of an analysis? A: The composition chi-square test checks for significant deviations in character composition (e.g., nucleotide, amino acid) of each sequence from the alignment-wide average [25]. A "failed" sequence may indicate potential issues, but it is an explorative tool. If your tree shows an unexpected topology, this test might help identify problematic sequences.

R Packages (ape, phytools)

Q: How can I visualize the evolution of a continuous trait on a tree?

A: The contMap function in phytools maps a continuous trait onto the tree branches using a color gradient [29].

Q: How can I plot a tree with trait data at the tips?

A: phytools offers several functions [29] [28]:

- dotTree: Plots dots of varying size next to the tips.

- plotTree.barplot: Plots bars next to the tips.

- phylo.heatmap: Creates a heatmap of multiple traits next to the tree.

Comparative Methods in R

This section focuses on implementing phylogenetic comparative methods.

Q: How do I fit a phylogenetic generalized least squares (PGLS) model?

A: Use the gls function from the nlme package with a phylogenetic correlation structure [28]. The underlying matrix, which defines the expected species covariances under a Brownian motion model, can be computed with ape::vcv(); for gls, the same Brownian structure is usually supplied through ape::corBrownian().

Q: How can I plot a phylogenetic tree in a "fan" style?

A: Set type = "fan" in the plot.phylo function from ape, or use the type argument of phytools plotting functions such as plotTree [29].

Essential Research Reagent Solutions

The table below lists key software "reagents" essential for phylogenetic comparative analysis.

| Tool/Platform | Primary Function | Key Use-Case in Comparative Methods |
|---|---|---|
| MEGA | User-friendly GUI for sequence alignment, model testing, and tree building [23] | Building initial phylogenetic trees from molecular data for downstream comparative analyses. |
| IQ-TREE | Efficient maximum likelihood phylogeny inference with model finding [25] | Robust, model-based tree inference for large datasets; uses ModelFinder for best-fit model selection. |
| R ape package | Core infrastructure for reading, writing, and manipulating phylogenetic trees [27] [28] | Foundational operations: reading trees, calculating independent contrasts, phylogenetic correlations. |
| R phytools package | Visualization and methods for phylogenetic comparative biology [29] [28] | Advanced plotting (trait evolution, morphospaces), phylogenetic signal, stochastic character mapping. |
| R nlme package | Fitting linear mixed-effects models [28] | Implementing Phylogenetic Generalized Least Squares (PGLS) regression to account for phylogeny. |

Workflow and Logical Diagrams

Phylogenetic Analysis and Model Selection Workflow

The following diagram outlines a standard workflow for molecular phylogenetics and subsequent comparative analysis.

Phylogenetic Comparative Methods Logic

This diagram illustrates the logical structure of a phylogenetic comparative analysis, showing how different R packages contribute to the process.

Frequently Asked Questions (FAQs)

Q1: What is the primary advantage of using phylogenetic comparative methods (PCMs) for drug target identification over genetics-only approaches? PCMs allow researchers to model trait evolution and identify evolutionarily conserved biological pathways critical for host survival. This helps prioritize targets that are less likely to mutate, thereby reducing the risk of drug resistance—a common problem when targeting rapidly evolving viral or bacterial proteins. Furthermore, methods based on the Ornstein-Uhlenbeck process can model adaptation on a phenotypic adaptive landscape that itself evolves, capturing long-term trait evolution more realistically than other approaches [30].

Q2: My multi-omics data shows a promising target, but phylogenetic analysis indicates it is not evolutionarily conserved. Should I still pursue it? Proceed with caution. While a lack of conservation does not automatically rule out a target, it raises a significant risk flag regarding potential functional redundancy, high mutation rate, or undesirable off-target effects in homologous human proteins. It is recommended to use a multi-modal AI approach to integrate this phylogenetic signal with other data layers (e.g., structural biology, single-cell omics) to assess the target's role in disease mechanisms more comprehensively [31].

Q3: How can I integrate 3D genomic data to improve the identification of conserved regulatory elements? Non-coding variants found in genome-wide association studies (GWAS) often influence gene regulation over long genomic distances. By using 3D multi-omics data, which layers genome folding data with other molecular readouts, you can map physical interactions between regulatory regions and their target genes. This moves beyond simple linear association and helps identify conserved regulatory networks, pinpointing which genes matter, in which cell types, and in which contexts [32].

Q4: What is the role of AI in analyzing phylogenetic and comparative data for target discovery? Artificial intelligence, particularly large language models (LLMs) and multimodal AI systems, can support this work. Specialized LLMs can be trained on molecular string representations (such as SMILES strings for small molecules or FASTA sequences for proteins) to predict protein-ligand binding or identify conserved domains. Multimodal AI can combine diverse data sources (including phylogenetic trees, molecular structures, multi-omics profiles, and biomedical literature) using knowledge graphs to enable cross-modal reasoning and prioritize high-confidence, evolutionarily informed drug targets [33] [31].

Troubleshooting Guides

Issue 1: Poor Correlation Between Evolutionarily Conserved Genes and Disease Association

Problem: Your analysis identifies evolutionarily conserved genes, but they do not appear to have a strong association with the disease pathology in human multi-omics datasets.

Solution:

- Action 1: Refine Your Conservation Metric. Instead of using simple sequence conservation, employ a Phylogenetic Comparative Method (PCM) like the Adaptation-Inertia Framework. This method models a changing adaptive landscape and is more powerful for testing evolutionary hypotheses by capturing how traits evolve in response to a shifting fitness landscape [30].

- Action 2: Integrate Cellular Context. Use AI-powered single-cell omics analysis. Bulk omics data can mask cell-type-specific effects. Single-cell RNA sequencing can resolve cellular heterogeneity and identify if a conserved target is dysregulated in a specific, disease-relevant cell subpopulation, which might be missed in bulk data [31].

- Action 3: Validate Functional Relevance. Implement an AI-enhanced perturbation omics framework. Use CRISPR-based screens to systematically knock down conserved genes in relevant cell models and measure molecular responses. This provides causal evidence for the target's role in disease-related pathways [31].

Issue 2: High Computational Complexity in Analyzing Multi-Omics Data with Phylogenetic Models

Problem: Integrating large, complex phylogenetic and multi-omics datasets is computationally prohibitive, leading to long processing times and model instability.

Solution:

- Action 1: Leverage Optimized AI Frameworks. Adopt a deep learning framework like optSAE + HSAPSO, which integrates a stacked autoencoder for robust feature extraction with a hierarchically self-adaptive particle swarm optimization algorithm. This combination has been shown to achieve high accuracy (95.52%) while significantly reducing computational complexity and improving stability [34].

- Action 2: Utilize Available Databases and Tools. Conduct your analysis using established platforms. Rely on curated omics databases (e.g., Cancer Cell Line Encyclopedia), structure databases (e.g., Protein Data Bank), and knowledge bases (e.g., DrugBank, Guide to Pharmacology) to access pre-processed, high-quality data, which reduces computational overhead during the initial data integration and modeling phases [31].

- Action 3: Employ Hybrid AI Models. For specific tasks, use a hybrid LM/LLM method. These architectures leverage the strengths of large language models alongside dedicated computational modules like graph neural networks, which can be more efficient for specific geometric reasoning tasks involved in analyzing evolutionary relationships [33].

Data Presentation

Table 1: Comparison of Key Methodologies for Identifying Conserved Drug Targets

| Method Category | Key Technique | Data Inputs | Primary Output | Key Advantage |
|---|---|---|---|---|
| Phylogenetic Comparative Methods | Adaptation-Inertia Framework (OU process) [30] | Trait data across species, phylogeny | Models of trait evolution, identification of stable targets | Models a changing adaptive landscape for more realistic long-term evolution |
| 3D Multi-omics Integration | Genome folding profiling (e.g., Hi-C) [32] | GWAS variants, 3D genome structure, gene expression | Causal gene-regulatory networks for diseases | Links non-coding variants to their target genes via 3D structure, revealing context |
| AI & Deep Learning | Optimized Stacked Autoencoder (optSAE + HSAPSO) [34] | Drug and protein features from DrugBank, Swiss-Prot | Druggable target classification | High accuracy (95.5%), low computational complexity, and high stability |
| Multimodal AI Systems | Knowledge graphs + LLMs [33] [31] | Molecular structures, omics profiles, literature | Prioritized list of high-confidence drug targets | Cross-modal reasoning integrating diverse data for robust target discovery |

Table 2: Essential Research Reagent Solutions

| Research Reagent | Function & Application in Target Identification |
|---|---|
| CETSA (Cellular Thermal Shift Assay) | Validates direct drug-target engagement in intact cells and tissues, confirming binding and mechanistic activity in a physiologically relevant context [35]. |
| Single-Cell Multi-omics Kits | Enables resolution of genomic, transcriptomic, or proteomic profiles at the single-cell level for deciphering cellular heterogeneity and identifying cell-type-specific targets [31]. |
| Perturbation Omics Tools (e.g., CRISPR libraries) | Provides a causal reasoning foundation by introducing systematic gene perturbations and measuring global molecular responses to reveal functional targets [31]. |
| AI-Curated Knowledge Bases | Databases (e.g., DrugBank, Guide to Pharmacology) provide structured biological and chemical data for training AI models and validating potential targets [31]. |

Experimental Protocols

Protocol 1: Workflow for Identifying Evolutionarily Conserved Drug Targets via PCMs and AI

Objective: To systematically identify and prioritize evolutionarily conserved drug targets for a specific disease by integrating phylogenetic comparative methods with multimodal AI.

Step-by-Step Methodology:

Data Curation and Phylogenetic Tree Construction

- Gather genomic and phenotypic data for a broad panel of species relevant to the disease (e.g., mammalian species for a human disease).

- Construct a robust phylogenetic tree using sequence data from conserved genes.

Trait Evolution Modeling

- Apply the Adaptation-Inertia Framework, an Ornstein-Uhlenbeck (OU)-based PCM, to model the evolution of disease-relevant traits [30].

- Use multivariate extensions of these methods to test hypotheses about correlated evolution between traits and environmental factors.
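In OU-based models such as the adaptation-inertia framework, the parameter α sets how strongly a trait is pulled toward an optimum θ, and it is often reported as the phylogenetic half-life ln(2)/α: a half-life that is short relative to tree height indicates rapid adaptation, and a long one indicates strong phylogenetic inertia. The minimal sketch below is illustrative only; it simulates a single lineage (not a whole tree) with the exact OU transition density, and all function names and parameter values are hypothetical.

```python
import math
import random


def phylogenetic_half_life(alpha: float) -> float:
    """Time for the expected distance to the optimum to halve under OU: ln(2) / alpha."""
    if alpha <= 0:
        raise ValueError("alpha must be positive")
    return math.log(2) / alpha


def simulate_ou(x0, theta, alpha, sigma, t_total, n_steps, rng=None):
    """Simulate one OU lineage using the exact transition density.

    Each step of length dt draws the next value from a normal distribution with
    mean theta + (x - theta) * exp(-alpha * dt) and
    variance sigma^2 / (2 * alpha) * (1 - exp(-2 * alpha * dt)).
    """
    if alpha <= 0:
        raise ValueError("alpha must be positive")
    rng = rng or random.Random()
    dt = t_total / n_steps
    decay = math.exp(-alpha * dt)  # deterministic pull toward theta per step
    step_sd = math.sqrt(sigma ** 2 / (2 * alpha) * (1 - math.exp(-2 * alpha * dt)))
    path = [x0]
    x = x0
    for _ in range(n_steps):
        x = theta + (x - theta) * decay + rng.gauss(0.0, step_sd)
        path.append(x)
    return path


# Hypothetical example: trait starts at 10, optimum at 2, half-life of about 1.39 time units
print(phylogenetic_half_life(0.5))
print(simulate_ou(x0=10.0, theta=2.0, alpha=0.5, sigma=1.0, t_total=4.0, n_steps=8, rng=random.Random(1)))
```

With sigma set to 0, the path reduces to the deterministic expectation θ + (x0 − θ)e^(−αt), which is a convenient sanity check before fitting OU models to real data.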

Identification of Conserved Genomic Elements

- Cross-reference the results of the PCM analysis with human GWAS data to identify conserved genomic regions associated with the disease.

- For non-coding variants, utilize 3D multi-omics data (e.g., from platforms like Enhanced Genomics) to map long-range physical interactions between regulatory regions and the genes they control, thereby pinpointing causal genes [32].
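The cross-referencing step amounts to an interval-overlap query between candidate conserved regions and GWAS variant positions. The sketch below is a minimal illustration, assuming both inputs share one genome build and one coordinate convention, with regions treated as half-open [start, end) intervals; the function names and example identifiers are hypothetical.

```python
from bisect import bisect_right
from collections import defaultdict


def merge_intervals(intervals):
    """Merge overlapping or touching half-open intervals on one chromosome."""
    merged = []
    for start, end in sorted(intervals):
        if merged and start <= merged[-1][1]:
            merged[-1][1] = max(merged[-1][1], end)
        else:
            merged.append([start, end])
    return merged


def hits_in_regions(regions, hits):
    """Return IDs of variants that fall inside any conserved region.

    regions: iterable of (chrom, start, end), half-open [start, end)
    hits: iterable of (variant_id, chrom, pos)
    """
    by_chrom = defaultdict(list)
    for chrom, start, end in regions:
        by_chrom[chrom].append((start, end))
    index = {}
    for chrom, intervals in by_chrom.items():
        merged = merge_intervals(intervals)
        index[chrom] = ([s for s, _ in merged], [e for _, e in merged])
    overlapping = []
    for variant_id, chrom, pos in hits:
        if chrom not in index:
            continue
        starts, ends = index[chrom]
        i = bisect_right(starts, pos) - 1  # last merged interval starting at or before pos
        if i >= 0 and pos < ends[i]:
            overlapping.append(variant_id)
    return overlapping
```

Merging first keeps each chromosome's intervals sorted and non-overlapping, so a single binary search per variant is enough.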

Multimodal AI-Based Prioritization

- Input the candidate genes into a multimodal AI system. This system should integrate:

- Omics Data: Bulk and single-cell transcriptomics to confirm expression in relevant cell types [31].

- Structural Data: AI-predicted protein structures (from AlphaFold) to assess druggability of potential binding sites [31].

- Literature & Knowledge: Use LLMs to mine existing biomedical literature and knowledge graphs for known associations [33].

- Employ a framework like optSAE + HSAPSO for efficient and accurate classification and prioritization of the final candidate targets [34].


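Experimental Validation

- Experimentally confirm target engagement and mechanism of action for the top-ranked candidates, for example with the CETSA and perturbation-omics workflow described in Protocol 2.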

Protocol 2: Validating Target Engagement and Mechanism of Action

Objective: To confirm direct binding of a drug candidate to its identified evolutionarily conserved target within a complex cellular environment and understand the downstream effects.

Step-by-Step Methodology:

Cellular Model Preparation

- Culture disease-relevant cell lines and set up three treatment groups: vehicle (DMSO), drug candidate, and an inactive analog as a negative control.

CETSA (Cellular Thermal Shift Assay) Execution

- Drug Treatment: Treat intact cells with the compound of interest across a range of doses.

- Heat Denaturation: Heat aliquots of the treated cells across a temperature gradient to induce thermal denaturation of proteins.

- Cell Lysis and Protein Solubilization: Lyse cells and separate soluble (folded) protein from insoluble (aggregated) protein.

- Target Protein Quantification: Use high-resolution mass spectrometry (as in Mazur et al., 2024) to quantify the amount of the soluble target protein remaining at each temperature [35].

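- Data Analysis: Plot the soluble fraction of the target protein against temperature. A rightward shift in the melting curve (a higher apparent melting temperature, i.e., increased thermal stability) in the drug-treated sample compared to the control indicates direct target engagement.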

Mechanistic Profiling via Perturbation Omics

- Following target engagement confirmation, use the same cell model with and without drug treatment.

- Perform single-cell RNA sequencing to profile the full transcriptomic response.

- Use AI tools to analyze the data, infer gene regulatory networks, and identify downstream pathways that are significantly altered, thereby confirming the expected mechanism of action [31].

Frequently Asked Questions (FAQs)

Q1: My phylogenetic analysis shows conflicting signals between different genes in the same pathogen. What could be the cause and how can I resolve it? Conflicting signals between gene trees (incongruence) are common in pathogen evolution because of processes such as horizontal gene transfer (HGT) or recombination [36]. To resolve this:

- Confirm Incongruence: Use statistical tests like the Shimodaira–Hasegawa test to determine if the differences in tree likelihoods are significant.

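- Model Selection: Employ models that can accommodate different evolutionary histories across the genome. Partitioned analyses of concatenated alignments let substitution models and rates differ among loci, while multispecies coalescent models explicitly allow individual gene trees to differ from the species tree.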

- Identify Recombination: Use tools like Gubbins or RDP4 to detect and mask recombinant regions in your alignment before re-inferring the phylogeny.

Q2: How do I choose the right evolutionary model for my dataset of antimicrobial resistance (AMR) genes? Selecting the correct model is critical for accurate phylogenetic inference [37] [10].

- Start with Model Selection: Use software like ModelTest-NG or jModelTest2 for nucleotide data, or ProtTest for amino acid data. These tools compute the likelihood of your sequence alignment under each candidate substitution model.

- Use a Selection Criterion: Base your choice on the Bayesian Information Criterion (BIC) or the corrected Akaike Information Criterion (AICc), which balance model fit against complexity.

- Consider Your Biological Question: For dating analyses, a relaxed molecular clock model is often appropriate. For tracing phenotype evolution, a Brownian motion or Ornstein-Uhlenbeck model may be used in subsequent comparative analyses [10].
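The selection criteria themselves are simple functions of each model's maximized log-likelihood ln L, its number of free parameters k, and the sample size n: AIC = 2k − 2 ln L, AICc = AIC + 2k(k + 1)/(n − k − 1), and BIC = k ln n − 2 ln L. The sketch below recomputes them from a table of fits, assuming n is the alignment length (a common convention) and that k counts every estimated parameter, typically including branch lengths. The log-likelihoods in the example are hypothetical, not real results.

```python
import math


def information_criteria(log_likelihood, n_params, n_sites):
    """AIC, AICc and BIC for one fitted substitution model (lower is better)."""
    aic = 2 * n_params - 2 * log_likelihood
    denom = n_sites - n_params - 1
    # The AICc correction is undefined when n_params >= n_sites - 1
    aicc = aic + 2 * n_params * (n_params + 1) / denom if denom > 0 else math.inf
    bic = n_params * math.log(n_sites) - 2 * log_likelihood
    return {"AIC": aic, "AICc": aicc, "BIC": bic}


def rank_models(fits, n_sites, criterion="BIC"):
    """Rank models by `criterion` and report each model's difference from the best.

    fits: dict mapping model name -> (log_likelihood, n_params)
    """
    scores = {name: information_criteria(lnl, k, n_sites)[criterion]
              for name, (lnl, k) in fits.items()}
    best = min(scores.values())
    return sorted(((name, score, score - best) for name, score in scores.items()),
                  key=lambda item: item[1])


# Hypothetical fits for a 20-taxon, 1000-site alignment (37 branch lengths + model parameters)
fits = {"JC": (-5000.0, 37), "HKY+G": (-4900.0, 42), "GTR+G": (-4895.0, 46)}
for name, score, delta in rank_models(fits, n_sites=1000, criterion="BIC"):
    print(f"{name}\t{score:.2f}\t{delta:.2f}")
```

With these illustrative numbers BIC prefers HKY+G while AIC prefers GTR+G, which shows why it is worth stating which criterion was used.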

Q3: What is the best way to visualize and annotate a large phylogenetic tree with AMR and metadata information? For large trees (e.g., >50 strains), effective annotation is key to analysis [38].

- Use Interactive Tools: Web tools like Context-Aware Phylogenetic Trees (CAPT) allow you to link the phylogenetic tree view with an icicle plot of taxonomic data, enabling interactive exploration [36].

- Custom Annotation Files: For software like FigTree, you can create or modify NEXUS format tree files to include color annotations for traits like serotype, isolation source, or AMR profile using custom scripts [38].

- Define Color Schemes: Create a tab-delimited file specifying trait values and their corresponding hex color codes to ensure consistency and preserve logical ordering (e.g., for age groups or resistance levels) [39].
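A small script can combine the two ideas: read a tab-delimited color scheme and write a NEXUS file whose tip labels carry color comments. This sketch assumes FigTree's `[&!color=#RRGGBB]` comment convention and a FigTree version that accepts hex codes (older versions may store colors as integers, so compare against a tree you have colored and saved manually). It also assumes taxon names need no quoting. The function names and example values are hypothetical.

```python
import re

HEX_COLOR = re.compile(r"^#[0-9A-Fa-f]{6}$")


def read_color_scheme(lines):
    """Parse 'trait_value<TAB>#RRGGBB' lines into a dict that preserves file order."""
    scheme = {}
    for line_number, line in enumerate(lines, start=1):
        if not line.strip():
            continue  # skip blank lines
        value, color = (field.strip() for field in line.rstrip("\n").split("\t"))
        if not HEX_COLOR.match(color):
            raise ValueError(f"line {line_number}: invalid hex color {color!r}")
        scheme[value] = color
    return scheme


def figtree_nexus(newick, taxon_traits, scheme):
    """Return a NEXUS string with [&!color=...] comments on tip labels.

    taxon_traits maps each tip name to its trait value; tips whose trait
    has no entry in the scheme are written without a color.
    """
    newick = newick.strip()
    if not newick.endswith(";"):
        newick += ";"
    lines = ["#NEXUS", "begin taxa;", f"\tdimensions ntax={len(taxon_traits)};", "\ttaxlabels"]
    for taxon, trait in taxon_traits.items():
        color = scheme.get(trait)
        lines.append(f"\t\t{taxon}[&!color={color}]" if color else f"\t\t{taxon}")
    lines += [";", "end;", "", "begin trees;", f"\ttree tree_1 = [&R] {newick}", "end;", ""]
    return "\n".join(lines)


scheme = read_color_scheme(["resistant\t#CC0000\n", "susceptible\t#0000CC\n"])
print(figtree_nexus("((A:0.1,B:0.2):0.05,C:0.3)", {"A": "resistant", "B": "susceptible", "C": "unknown"}, scheme))
```

Keeping the scheme in a file, rather than in the script, makes it easy to reuse identical colors across figures.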

Q4: How can I test for a correlation between a specific genetic mutation and a phenotype like antimicrobial resistance? Phylogenetic comparative methods (PCMs) are designed for this, as they control for shared evolutionary history [10].

- Phylogenetic Generalized Least Squares (PGLS): This is the most common PCM for testing relationships between continuous traits while accounting for phylogenetic non-independence. It incorporates the phylogenetic relationship into the error structure of a linear model [10].

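- For Discrete Traits: To test for correlated evolution of two binary traits (e.g., presence of a mutation and resistance to an antibiotic), use Pagel's (1994) method, which compares a model in which the traits evolve independently with one in which they evolve dependently (implemented, for example, in BayesTraits and in the phytools function fitPagel). Phylogenetic ANOVA is suited to a categorical predictor with a continuous response, and Pagel's λ measures phylogenetic signal rather than correlation between traits [10].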
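At its core, PGLS is generalized least squares with the phylogenetic covariance matrix V as the error structure: β = (XᵀV⁻¹X)⁻¹XᵀV⁻¹y. Under Brownian motion, V[i, j] is the shared branch length from the root to the common ancestor of taxa i and j (in R, `ape::vcv` computes it from a tree). The minimal numpy sketch below takes V as given, so building it from a tree is left out; the predictor could, for instance, be a 0/1 mutation indicator with a continuous response such as log-transformed MIC. The function names and the four-taxon tree are illustrative assumptions.

```python
import numpy as np


def pgls(X, y, V):
    """Fit a PGLS regression by generalized least squares.

    X: n x p design matrix (include a column of ones for the intercept).
    y: length-n response vector.
    V: n x n phylogenetic covariance matrix, in the same taxon order as y.
    Returns (coefficients, standard_errors).
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    V_inv = np.linalg.inv(np.asarray(V, dtype=float))
    XtVi = X.T @ V_inv
    # Solve the normal equations rather than inverting X^T V^-1 X for beta
    beta = np.linalg.solve(XtVi @ X, XtVi @ y)
    resid = y - X @ beta
    n, p = X.shape
    # GLS residual variance; clamp tiny negative round-off to zero
    sigma2 = max(float(resid @ V_inv @ resid) / (n - p), 0.0)
    se = np.sqrt(np.diag(sigma2 * np.linalg.inv(XtVi @ X)))
    return beta, se


def lambda_transform(V, lam):
    """Pagel's lambda: scale off-diagonal covariances by lam, keep the diagonal."""
    V = np.asarray(V, dtype=float)
    out = V * lam
    np.fill_diagonal(out, np.diag(V))
    return out


# Hypothetical ultrametric tree ((A:1,B:1):1,(C:1,D:1):1) under Brownian motion
V = np.array([[2, 1, 0, 0], [1, 2, 0, 0], [0, 0, 2, 1], [0, 0, 1, 2]], dtype=float)
X = np.column_stack([np.ones(4), [0, 1, 0, 1]])  # intercept + 0/1 mutation indicator
y = np.array([1.2, 2.1, 0.9, 2.4])               # hypothetical continuous phenotype
print(pgls(X, y, V))
```

Setting λ = 0 removes phylogenetic covariance entirely, and with V equal to the identity matrix PGLS reduces to ordinary least squares, which is a useful check of any implementation.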

Troubleshooting Guides

Problem: Poor Resolution in Phylogenetic Tree (Low Bootstrap Values)

Low support values indicate uncertainty in the inferred relationships.

| Potential Cause | Diagnostic Steps | Solution |
|---|---|---|
| Insufficient Phylogenetic Signal | Check for low sequence divergence or a high number of parsimony-uninformative sites in the alignment. | Increase the number of informative sites by including more genes (e.g., whole genome sequencing) or longer gene sequences. |
| Model Misspecification | Run a model selection test to see if a more complex model (e.g., with gamma-distributed rate variation) is warranted. | Re-run the analysis with the best-fit evolutionary model as identified by software like ModelTest-NG. |
| Recombination | Use recombination detection software (e.g., Gubbins). | Mask recombinant regions in the alignment before phylogenetic inference. |
| Alignment Errors | Visually inspect the alignment for poorly aligned regions. | Re-align sequences and trim unreliable regions using tools like Gblocks or TrimAl. |
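The signal check in the first row can be scripted directly. A column is parsimony-informative when at least two different character states each occur in at least two sequences. The sketch below counts variable and informative columns, assuming an in-memory list of equal-length aligned sequences; the `ignore` default treats gaps, `?` and `N` as missing, which suits nucleotide data (for proteins, pass something like `"-?X"`, since N is asparagine). Function names are hypothetical.

```python
from collections import Counter


def site_summary(alignment, ignore="-?N"):
    """Count total, variable and parsimony-informative columns in an alignment."""
    seqs = [s.upper() for s in alignment]
    length = len(seqs[0])
    if any(len(s) != length for s in seqs):
        raise ValueError("sequences must be aligned to the same length")
    ignored = set(ignore.upper())
    variable = informative = 0
    for column in zip(*seqs):
        counts = Counter(c for c in column if c not in ignored)
        if len(counts) > 1:
            variable += 1
            # informative: at least two states, each seen in at least two sequences
            if sum(1 for n in counts.values() if n >= 2) >= 2:
                informative += 1
    return {"sites": length, "variable": variable, "parsimony_informative": informative}


print(site_summary(["AACGT", "AACGA", "ATCCA", "ATCCT", "GACGN"]))
```

Singleton variants (a state seen in only one sequence) count as variable but not informative, which is why a divergent but singleton-heavy alignment can still yield poorly supported nodes.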

Problem: Inconsistent Taxonomic Classification from Phylogenomic Data

Traditional taxonomy and phylogeny-based taxonomy can conflict [36].

| Issue | Explanation | Resolution |
|---|---|---|
| Misplaced Species | A species appears in a clade inconsistent with its established taxonomic rank. | Use interactive visualization tools like CAPT [36] to explore the congruence between the phylogenetic tree and taxonomic hierarchy. This helps validate updated, phylogeny-based taxonomies. |
| Polyphyletic Groups | Organisms from the same genus or species appear in multiple distant clades on the tree. | This often indicates that the current taxonomy does not reflect evolutionary history. It may be necessary to consider reclassification based on the genomic evidence. |
| Weak Support for Key Nodes | Low bootstrap values at nodes that define major taxonomic groups. | This may be due to the limitations of single-gene methods like 16S rRNA sequencing. Employ whole-genome methods like Average Nucleotide Identity (ANI) for higher resolution at the species level [36]. |

Experimental Protocols & Workflows

Protocol 1: Building a Phylogenomic Tree for AMR Surveillance

This protocol outlines a standard workflow for tracing the evolution of resistant pathogens.

1. Data Collection and Preparation

- Input: Whole Genome Sequencing (WGS) data from bacterial isolates.

- Quality Control: Use FastQC to assess read quality. Trim adapters and low-quality bases with Trimmomatic.

- Assembly: Assemble genomes using a tool like SPAdes. Check assembly quality with QUAST.
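QUAST reports contiguity statistics such as N50, the largest length L for which contigs of length at least L together contain half of the assembly. The sketch below recomputes N50 from a FASTA file's lines as a quick cross-check. It is not QUAST's implementation; QUAST applies a minimum contig length by default, so pass a matching `min_length` if you want comparable numbers. Function names are hypothetical.

```python
def fasta_lengths(lines):
    """Return the sequence length of each record in FASTA-formatted lines."""
    lengths, current = [], None
    for line in lines:
        line = line.strip()
        if line.startswith(">"):
            if current is not None:
                lengths.append(current)
            current = 0
        elif current is not None:
            current += len(line)
    if current is not None:
        lengths.append(current)
    return lengths


def n50(contig_lengths, min_length=0):
    """Largest L such that contigs of length >= L hold at least half the total length."""
    lengths = sorted((n for n in contig_lengths if n >= min_length), reverse=True)
    if not lengths:
        raise ValueError("no contigs pass the length filter")
    half = sum(lengths) / 2
    running = 0
    for length in lengths:
        running += length
        if running >= half:
            return length


# Usage (hypothetical file name):
# with open("isolate1_contigs.fasta") as handle:
#     print(n50(fasta_lengths(handle), min_length=500))
```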

2. Gene Calling and Annotation

- Identify AMR Genes: Annotate assemblies using Prokka and specifically screen for known AMR genes with ABRicate against databases like CARD or ResFinder.

- Identify Core Genes: Use a tool like Roary to identify the core genome (genes present in all or most isolates).
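Both outputs of this step can be summarized as per-isolate gene sets. The sketch below assumes ABRicate's tab-separated output contains `#FILE`, `GENE`, `%COVERAGE` and `%IDENTITY` columns (check the header of your version), applies illustrative 80% coverage and identity cutoffs, and calls core genes with a 99% presence threshold, mirroring the cutoff Roary uses for its core category. Isolates with no AMR hits simply do not appear in the ABRicate result. Function names and thresholds are assumptions.

```python
import csv
from collections import Counter, defaultdict


def amr_presence(abricate_lines, min_coverage=80.0, min_identity=80.0):
    """Map each input file (isolate) to the set of AMR genes passing the cutoffs."""
    presence = defaultdict(set)
    for row in csv.DictReader(abricate_lines, delimiter="\t"):
        if float(row["%COVERAGE"]) >= min_coverage and float(row["%IDENTITY"]) >= min_identity:
            presence[row["#FILE"]].add(row["GENE"])
    return dict(presence)


def core_genes(gene_sets, threshold=0.99):
    """Genes present in at least `threshold` of isolates.

    gene_sets: dict mapping isolate -> set of genes it carries
    """
    n_isolates = len(gene_sets)
    counts = Counter(gene for genes in gene_sets.values() for gene in genes)
    return sorted(gene for gene, count in counts.items() if count / n_isolates >= threshold)


# Usage (hypothetical file name):
# with open("abricate_results.tsv") as handle:
#     amr = amr_presence(handle)
```

The resulting presence/absence sets can later serve as discrete traits in comparative analyses on the finished tree.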

3. Multiple Sequence Alignment

- Concatenate Core Genes: Extract and concatenate the core gene sequences.

- Align: Perform a multiple sequence alignment of the core genome using MAFFT or Clustal Omega.
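The concatenation step needs every isolate's sequence to be built from the same genes in the same order. The sketch below, assuming per-gene sequences are already loaded into a nested dict, restricts to genes present in all listed isolates, concatenates them in sorted gene order, and writes FASTA ready for alignment. Names and the input structure are hypothetical. A common alternative is to align each gene separately and then concatenate the alignments, which also preserves gene boundaries for partitioned model selection.

```python
def concatenate_core_genes(gene_sequences, isolates):
    """Concatenate strict-core genes per isolate in a fixed (sorted) gene order.

    gene_sequences: dict mapping gene -> dict mapping isolate -> sequence
    Returns (gene_order, dict mapping isolate -> concatenated sequence).
    """
    core = sorted(gene for gene, seqs in gene_sequences.items()
                  if all(isolate in seqs for isolate in isolates))
    concatenated = {isolate: "".join(gene_sequences[gene][isolate] for gene in core)
                    for isolate in isolates}
    return core, concatenated


def to_fasta(records, width=60):
    """Format a dict of name -> sequence as FASTA text with wrapped lines."""
    lines = []
    for name, seq in records.items():
        lines.append(f">{name}")
        lines.extend(seq[i:i + width] for i in range(0, len(seq), width))
    return "\n".join(lines) + "\n"
```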

4. Phylogenetic Inference

- Model Selection: Use ModelTest-NG on the alignment to determine the best-fit nucleotide substitution model.

- Tree Building: Infer the tree using Maximum Likelihood (e.g., RAxML-NG or IQ-TREE) or Bayesian methods (e.g., MrBayes or BEAST2). For dating, BEAST2 with a relaxed molecular clock is recommended.

- Support Assessment: Calculate branch support using 1000 bootstrap replicates for ML or posterior probabilities for Bayesian methods.
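ML programs generate bootstrap replicates internally, but the underlying idea (Felsenstein's nonparametric bootstrap) is short: resample alignment columns with replacement, rebuild the tree from each pseudo-replicate, and report how often each clade recurs. The sketch below covers only resampling and support counting, with tree inference omitted; representing each replicate tree as a set of clades (frozensets of taxon names) is an assumption made for illustration.

```python
import random


def bootstrap_replicate(alignment, rng):
    """One pseudo-replicate: resample alignment columns with replacement."""
    names = list(alignment)
    length = len(alignment[names[0]])
    if any(len(alignment[name]) != length for name in names):
        raise ValueError("sequences must be aligned to the same length")
    columns = [rng.randrange(length) for _ in range(length)]
    return {name: "".join(alignment[name][c] for c in columns) for name in names}


def bootstrap_replicates(alignment, n_replicates=1000, seed=1):
    """Generate reproducible pseudo-replicates from a fixed random seed."""
    rng = random.Random(seed)
    return [bootstrap_replicate(alignment, rng) for _ in range(n_replicates)]


def clade_support(reference_clades, replicate_clade_sets):
    """Fraction of replicate trees that contain each clade of the reference tree."""
    n = len(replicate_clade_sets)
    return {clade: sum(clade in clades for clades in replicate_clade_sets) / n
            for clade in reference_clades}
```

Fixing the seed makes the replicate set reproducible, which helps when support values need to be regenerated for a revision.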

5. Visualization and Analysis

- Annotate the Tree: Use tools like FigTree or the CAPT web tool to color branches by metadata such as resistance profile, isolation location, or date [36] [38].

- Comparative Analysis: Use the resulting tree in PCMs to test hypotheses, for example, on the association between certain lineages and the acquisition of resistance genes.

Workflow for Phylogenomic Analysis of AMR