Machine Learning for GRN Reconstruction: From Foundational Concepts to Advanced Applications in Biomedicine


Abstract

This article provides a comprehensive overview of machine learning (ML) approaches for reconstructing Gene Regulatory Networks (GRNs) from gene expression data. It explores the foundational principles of GRN inference, detailing the evolution from classical statistical methods to modern deep learning and hybrid models. The review systematically compares supervised, unsupervised, and contrastive learning paradigms, highlighting their application to both bulk and single-cell RNA-seq data. It further addresses critical challenges in model optimization, data integration, and computational efficiency, offering practical troubleshooting guidance. Finally, the article establishes a framework for the validation and comparative analysis of GRN inference methods, discussing their profound implications for drug discovery and personalized medicine.

Understanding Gene Regulatory Networks and the Rise of Machine Learning

A Gene Regulatory Network (GRN) is a collection of molecular regulators that interact with each other and with other substances in the cell to govern the gene expression levels of mRNA and proteins, which in turn determine cellular function [1]. GRNs play a central role in morphogenesis (the creation of body structures) and are fundamental to evolutionary developmental biology (evo-devo) [1]. Conceptually, GRNs can be visualized as intricate maps where nodes represent biological entities (e.g., genes, proteins), and edges represent the regulatory interactions between them. The regulatory logic is encoded in the nature of these edges, determining the dynamic behavior and output of the network. The reconstruction of these networks is a primary challenge in modern biology, essential for understanding cellular decision-making, development, and disease [2] [3].

Network Components: Nodes, Edges, and Regulatory Logic

Nodes

In a GRN, a node can represent various molecular entities [1]:

- Genes: Specifically, their expression states (active/silent) or expression levels.

- Transcription Factors (TFs): Proteins that bind to specific DNA sequences to activate or repress gene transcription.

- Messenger RNAs (mRNAs): The intermediate molecules carrying genetic information from DNA to the protein-making machinery.

- Protein/Protein Complexes: Such as complexes of transcription factors that work together.

- Cellular Processes: Broader functional outcomes.

Edges

Edges represent the functional interactions between nodes. These can be [1]:

- Inductive (Activatory): Represented by arrows (→) or a plus sign (+). An increase in the concentration or activity of the source node leads to an increase in the target node.

- Inhibitory: Represented by blunt arrows (⊣), filled circles (•), or a minus sign (-). An increase in the source node leads to a decrease in the target node.

These interactions can be direct, such as a TF binding to a gene's promoter, or indirect, through intermediate molecules or processes [1].

Regulatory Logic

The regulatory logic defines how a node integrates its inputs to determine its output state. In computational models, this is often represented by Boolean functions (AND, OR, NOT) or more complex differential equations [1]. A critical feature arising from this logic is the feedback loop, which creates cyclic chains of dependencies and is responsible for key network behaviors like stability, oscillation, and cellular memory [1] [4].
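As a minimal sketch of Boolean regulatory logic, consider a hypothetical three-gene network (the genes A, B, C and their wiring are illustrative assumptions, not taken from a real organism). A activates B, A AND B together activate C, and C represses A, which closes a negative feedback loop.

```python
def step(state):
    """Synchronously update a toy three-gene Boolean network (hypothetical wiring)."""
    a, b, c = state["A"], state["B"], state["C"]
    return {
        "A": not c,    # NOT logic: C represses A, closing a negative feedback loop
        "B": a,        # A activates B
        "C": a and b,  # AND logic: C is on only when both A and B are on
    }


def simulate(state, n_steps):
    """Return the list of states visited, starting with the initial state."""
    trajectory = [state]
    for _ in range(n_steps):
        state = step(state)
        trajectory.append(state)
    return trajectory


start = {"A": True, "B": False, "C": False}
trajectory = simulate(start, 10)
for t, s in enumerate(trajectory):
    print(t, "".join("1" if s[g] else "0" for g in "ABC"))
```

Under this wiring the network never settles: it cycles through 100, 110, 111, 011, 000 and returns to its starting state after five updates, which is the kind of oscillation a negative feedback loop can produce.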

Table 1: Core Components of a Gene Regulatory Network

| Component | Description | Biological Example |

|---|---|---|

| Node (Regulator) | A molecular entity that influences another. | A transcription factor (e.g., MYB46). |

| Node (Target) | A molecular entity being influenced. | A structural gene in a biosynthesis pathway. |

| Activatory Edge | An interaction that promotes activation. | TF binding to a promoter and recruiting RNA polymerase. |

| Inhibitory Edge | An interaction that promotes repression. | TF binding to a promoter and blocking RNA polymerase. |

| AND Logic | Multiple regulators are required to activate a target. | TF A AND TF B must be present to turn on Gene C. |

| OR Logic | Any one of multiple regulators can activate a target. | TF X OR TF Y can turn on Gene Z. |

| Feedback Loop | An output feeds back to influence its own regulation. | A protein represses the transcription factor that activates its own gene. |

Machine Learning Approaches for GRN Reconstruction

The inference of GRNs from high-throughput expression data is a central problem in systems biology. Machine learning (ML) methods have emerged as powerful tools for this task, offering scalability and the ability to capture complex, non-linear relationships that traditional statistical methods might miss [5].

Methodological Foundations

ML-based GRN inference methods can be broadly categorized based on their underlying algorithmic principles [3]:

- Correlation-based approaches (e.g., Pearson's correlation, Spearman's correlation) measure association but cannot easily distinguish direct from indirect regulation.

- Regression models (e.g., LASSO) model a target gene's expression as a function of potential TFs, promoting sparsity to identify key regulators.

- Tree-based methods (e.g., Random Forests, as in GENIE3) use ensemble learning to rank the importance of TFs for each target gene.

- Deep learning models (e.g., Convolutional Neural Networks, Autoencoders) learn hierarchical representations from data, integrating multi-omic inputs for more accurate predictions [5] [3].
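The first point in the list above, that correlation cannot easily separate direct from indirect regulation, can be seen in a small simulation. The sketch below assumes a hypothetical chain A → B → C with Gaussian noise (all names and noise levels are illustrative). A and C are strongly correlated although A never acts on C directly, and the partial correlation given B removes most of that signal.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def partial_corr(x, y, z):
    """Correlation of x and y after removing the linear effect of z."""
    rxy, rxz, ryz = pearson(x, y), pearson(x, z), pearson(y, z)
    return (rxy - rxz * ryz) / math.sqrt((1 - rxz ** 2) * (1 - ryz ** 2))


rng = random.Random(0)
n_samples = 2000
tf_a = [rng.gauss(0, 1) for _ in range(n_samples)]
gene_b = [a + rng.gauss(0, 0.5) for a in tf_a]    # A -> B (direct)
gene_c = [b + rng.gauss(0, 0.5) for b in gene_b]  # B -> C (direct); A -> C only via B

r_ac = pearson(tf_a, gene_c)
r_ac_given_b = partial_corr(tf_a, gene_c, gene_b)
print(f"corr(A, C) = {r_ac:.2f}, partial corr(A, C | B) = {r_ac_given_b:.2f}")
```

Partial correlation is only one simple way to discount indirect paths; methods such as ARACNE address the same problem with mutual information.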

Recent advances include hybrid models that combine deep learning with traditional ML. For example, using a Convolutional Neural Network (CNN) to extract features from expression data followed by a machine learning classifier has been shown to consistently outperform traditional methods, achieving over 95% accuracy in benchmark tests on plant data [5]. Transfer learning is another powerful strategy, where a model trained on a data-rich species (like Arabidopsis thaliana) is adapted to infer GRNs in a less-characterized species (like poplar or maize), effectively addressing the challenge of limited training data in non-model organisms [5].

Table 2: Machine Learning Approaches for GRN Inference from Expression Data

| Method Category | Key Principle | Representative Algorithm(s) | Advantages | Limitations |

|---|---|---|---|---|

| Correlation-based | Measures co-expression or co-accessibility. | Pearson/Spearman Correlation, ARACNE, CLR | Simple, intuitive, fast to compute. | Cannot infer causality; prone to false positives from indirect regulation. |

| Regression-based | Models gene expression as a function of TFs. | LASSO, TIGRESS | More robust to correlated inputs; provides directional insights. | Assumes linear relationships; performance depends on penalty parameter selection. |

| Tree-based | Uses ensemble learning to rank regulator importance. | GENIE3, Random Forests | Captures non-linearities; no prior assumptions on data distribution. | Computationally intensive for large networks; less interpretable than linear models. |

| Deep Learning | Uses neural networks to learn complex hierarchical patterns. | CNNs, Autoencoders, DeepBind | High accuracy; can integrate multi-omic data seamlessly. | Requires large datasets; computationally expensive; "black box" nature. |

| Hybrid Models | Combines deep feature extraction with ML classifiers. | CNN + Machine Learning Classifier | High performance and accuracy; leverages strengths of both approaches. | Complex model architecture and training pipeline. |

Experimental Protocols for GRN Validation

Computational predictions require experimental validation. The following are key protocols for confirming TF-target interactions.

Chromatin Immunoprecipitation followed by Sequencing (ChIP-seq/ChIP-chip)

ChIP-seq is a gold-standard method for identifying genome-wide binding sites of a protein of interest, such as a transcription factor [2] [3].

Detailed Protocol:

- Cross-linking: Formaldehyde is added to cells to cross-link proteins to DNA.

- Cell Lysis and Chromatin Shearing: Cells are lysed, and chromatin is fragmented into ~200-500 bp pieces by sonication.

- Immunoprecipitation: An antibody specific to the TF of interest is used to pull down the TF and its bound DNA fragments.

- Reversal of Cross-linking and Purification: The protein-DNA cross-links are reversed, and the enriched DNA is purified.

- Library Preparation and Sequencing: A sequencing library is constructed from the purified DNA and sequenced on a high-throughput platform.

- Data Analysis: Sequence reads are aligned to a reference genome, and peaks of enriched signal, representing potential TF binding sites, are called.

The related ChIP-chip technique uses a DNA microarray instead of sequencing to identify bound fragments and was one of the first high-throughput methods applied to map TF binding sites in yeast [2].

Yeast One-Hybrid (Y1H) Assay

Y1H is a genetic system used to detect interactions between a "prey" protein (a TF) and a "bait" DNA sequence [5].

Detailed Protocol:

- Bait Strain Construction: A DNA fragment of interest (e.g., a putative promoter) is cloned upstream of a reporter gene (e.g., HIS3 or LacZ) in a yeast strain.

- Prey Library Transformation: A library of TFs, fused to a transcriptional activation domain (AD), is introduced into the bait strain.

- Selection: Yeast are grown on selective media (e.g., lacking histidine). Growth indicates that the TF-AD fusion has bound the bait DNA and activated the reporter gene.

- Confirmation: Positive interactions are typically confirmed using a secondary reporter, such as a β-galactosidase (LacZ) assay.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Resources for GRN Research

| Reagent / Resource | Function in GRN Research | Example / Specification |

|---|---|---|

| scRNA-seq Kit | Profiling gene expression at single-cell resolution to identify cell types/states. | 10x Genomics Single Cell Gene Expression Solution |

| scATAC-seq Kit | Mapping open chromatin regions at single-cell resolution to identify accessible CREs. | 10x Genomics Single Cell ATAC Solution |

| ChIP-grade Antibody | Specific immunoprecipitation of a transcription factor for ChIP-seq. | Validated antibodies with high specificity (e.g., from Abcam, Cell Signaling). |

| Yeast One-Hybrid System | Testing physical interaction between a TF and a specific DNA sequence. | Clontech Matchmaker Gold Y1H System |

| DAP-seq Service | In vitro method for identifying TF binding sites using purified TF and genomic DNA. | Commercial service providers or custom protocols. |

| Reference Genome | Essential baseline for mapping and interpreting all sequencing-based data. | Species-specific assembly (e.g., TAIR for Arabidopsis, GRCm39 for mouse). |

| TF Binding Motif Database | In silico prediction of potential TF binding sites for hypothesis generation. | JASPAR, CIS-BP |

| GRN Inference Software | Computational tool for reconstructing networks from omics data. | GENIE3, DeepGRN, SCENIC |

The field of genomics has undergone a profound transformation, moving from population-averaged transcriptomic measurements to high-resolution, multi-layered molecular profiling at single-cell resolution. This data revolution is fundamentally reshaping our ability to decipher gene regulatory networks (GRNs)—the complex blueprints of interactions between transcription factors (TFs), cis-regulatory elements (CREs), and their target genes that govern cellular identity and function [3] [6]. GRNs represent the cornerstone of cellular processes, orchestrating everything from development to disease progression, and their accurate reconstruction is paramount for advancing biological understanding and therapeutic development [3] [7].

The evolution from bulk to single-cell multi-omics technologies has addressed a critical limitation of traditional approaches: the inability to capture cellular heterogeneity. Bulk sequencing methods, while valuable, provided only averaged signals across cell populations, masking the distinct regulatory programs of individual cells [3]. The advent of single-cell RNA sequencing (scRNA-seq) revealed this previously hidden heterogeneity, and subsequent technologies like single-cell ATAC-seq (scATAC-seq) further enabled the profiling of chromatin accessibility at a single-cell level [3] [8]. The latest innovation—single-cell multi-omics—allows for the simultaneous measurement of multiple molecular layers, such as RNA expression and chromatin accessibility, from the same cell [3] [7]. This progression, summarized in Table 1, has generated data of unprecedented richness and complexity, creating both an opportunity and an imperative for advanced computational methods.

Machine learning (ML) has emerged as the essential tool kit for interpreting this data deluge. The scale, dimensionality, and sparsity of single-cell multi-omic data surpass the capabilities of traditional statistical methods [8] [9]. ML approaches, ranging from random forests to deep learning architectures, provide the computational power needed to uncover subtle, nonlinear patterns and reconstruct accurate, context-specific GRNs that illuminate the regulatory logic underpinning cell types and states [5] [10]. This application note details the experimental and computational protocols leveraging this data revolution to reconstruct GRNs, framed within the broader thesis that machine learning is indispensable for translating multi-omic data into biological insight.

Experimental and Computational Foundations

Key Technological Advances and Data Generation

The reconstruction of GRNs from single-cell multi-omics data relies on a foundation of sophisticated sequencing technologies and carefully curated research reagents. The following section outlines the core platforms and materials that enable this research.

Table 1: Evolution of Transcriptomic and Multi-omic Data Types for GRN Inference

| Data Type | Key Characteristics | Advantages for GRN Inference | Limitations |

|---|---|---|---|

| Bulk RNA-seq | Population-averaged gene expression measurements [3]. | Established analysis pipelines; lower cost per sample [3]. | Obscures cellular heterogeneity; cannot resolve cell-type-specific regulation [3]. |

| Single-cell RNA-seq (scRNA-seq) | Gene expression profiling of individual cells [3] [8]. | Reveals cellular heterogeneity; enables identification of rare cell populations [3] [8]. | High technical noise and "dropout" events (false zeros) [10]. |

| Single-cell ATAC-seq (scATAC-seq) | Profiling of chromatin accessibility in individual cells [3]. | Identifies accessible cis-regulatory elements (CREs); infers potential TF binding sites [3]. | Data is inherently sparse and noisy; indirect measure of TF binding. |

| Single-cell Multi-omics | Simultaneous measurement of multiple modalities (e.g., RNA + ATAC) from the same cell [3] [7]. | Directly links regulatory element activity to gene expression in a single cell; provides a more causal view of regulation [3] [7]. | Technically complex; higher cost; data integration challenges. |

The Scientist's Toolkit: Essential Research Reagents and Platforms

Successful GRN inference projects depend on a suite of wet-lab and computational reagents.

Table 2: Key Research Reagent Solutions for Single-Cell Multi-omics

| Reagent / Platform | Function | Application in GRN Studies |

|---|---|---|

| 10x Genomics Multiome | A commercial platform for simultaneous scRNA-seq and scATAC-seq from the same nucleus [3]. | Generating paired gene expression and chromatin accessibility data for methods like cRegulon [7]. |

| SHARE-Seq | An alternative high-throughput method for jointly profiling chromatin accessibility and gene expression [3]. | Mapping gene regulatory landscapes across complex tissues. |

| Illumina NovaSeq X | High-throughput sequencing platform [11]. | Generating the massive sequencing depth required for large-scale single-cell projects. |

| Oxford Nanopore Technologies | Sequencing technology known for long read lengths and portability [11]. | Resolving complex genomic regions and enabling real-time sequencing. |

| Lifebit AI Platform | A commercial cloud-based platform for genomic data analysis [9]. | Providing scalable computing and AI tools for analyzing large multi-omic datasets. |

Core Methodologies for GRN Inference

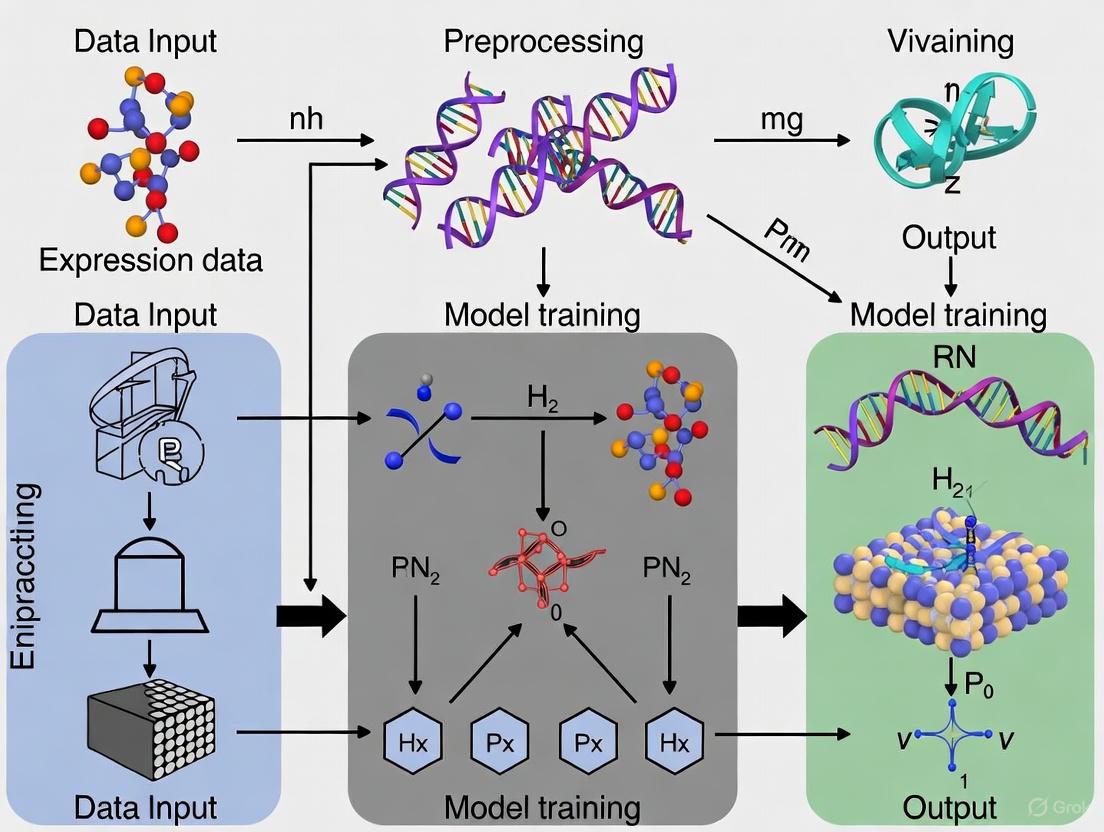

The reconstruction of GRNs from single-cell multi-omics data employs a diverse set of machine learning methodologies, each with distinct mathematical foundations and strengths. The following workflow diagram illustrates the logical relationships and progression from raw data to a validated GRN.

Foundational Machine Learning Approaches

GRN inference methods can be categorized based on their underlying statistical and algorithmic principles [3] [6].

- Correlation-based Approaches: These methods, including Pearson's correlation, Spearman's correlation, and mutual information, operate on the principle of "guilt by association." They identify genes that are co-expressed or show coordinated activity, suggesting they may be co-regulated [3]. While simple and intuitive, a key limitation is that correlation does not imply causation; these methods struggle to distinguish direct regulatory interactions from indirect ones and cannot infer directionality [3].

- Regression Models: These models treat the expression of a target gene as the response variable, which is regressed against the expression or accessibility of potential regulators (TFs, CREs). Penalized regression methods like LASSO are particularly valuable as they introduce sparsity, shrinking the coefficients of irrelevant predictors to zero and thus simplifying the inferred network [3]. The sign and magnitude of the coefficients can be interpreted as the direction and strength of the regulatory interaction.

- Dynamical Systems: This class of methods, which includes tools like SCODE [10], uses differential equations to model how gene expression changes over time. They are powerful for capturing the dynamic nature of regulatory processes, such as those occurring during development or disease progression. However, they often require precise temporal data and can be computationally intensive for large networks [3].

- Deep Learning Models: Neural network architectures have become increasingly prominent. Autoencoders, for example, can learn compressed representations of gene expression data, and the model's structure can be designed to reflect regulatory relationships [3] [10]. Convolutional Neural Networks (CNNs) are applied to genomic sequences to identify regulatory motifs, while Recurrent Neural Networks (RNNs) can model sequential dependencies in time-series data [5] [9]. As shown in benchmark studies, hybrid models that combine CNNs with traditional ML classifiers have demonstrated top performance, achieving over 95% accuracy in predicting TF-target relationships in plant species [5].
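To make the regression bullet above concrete, the following sketch fits a LASSO model for one target gene by cyclic coordinate descent. It is a plain standard-library illustration under stated assumptions (simulated, roughly centred expression values, no intercept, an arbitrary penalty of 0.1, and hypothetical variable names), not the implementation used by any published GRN tool.

```python
import random


def soft_threshold(value, penalty):
    """Shrink value towards zero by penalty; values within the penalty become exactly 0."""
    if value > penalty:
        return value - penalty
    if value < -penalty:
        return value + penalty
    return 0.0


def lasso_coordinate_descent(X, y, penalty, n_iter=100):
    """Minimise (1/2n) * ||y - Xw||^2 + penalty * ||w||_1 by cyclic coordinate descent."""
    n, p = len(X), len(X[0])
    w = [0.0] * p
    residual = list(y)  # residual = y - Xw, and w starts at zero
    col_sq = [sum(X[i][j] ** 2 for i in range(n)) / n for j in range(p)]
    for _ in range(n_iter):
        for j in range(p):
            # Covariance of column j with the residual that excludes column j's own term
            rho = sum(X[i][j] * (residual[i] + w[j] * X[i][j]) for i in range(n)) / n
            new_w = soft_threshold(rho, penalty) / col_sq[j]
            delta = new_w - w[j]
            if delta != 0.0:
                for i in range(n):
                    residual[i] -= delta * X[i][j]
                w[j] = new_w
    return w


rng = random.Random(0)
n_cells, n_tfs = 200, 5
tf_expr = [[rng.gauss(0, 1) for _ in range(n_tfs)] for _ in range(n_cells)]
# Simulated target: activated by TF 0, repressed by TF 2, unaffected by the rest
target = [2.0 * row[0] - 1.5 * row[2] + rng.gauss(0, 0.1) for row in tf_expr]

weights = lasso_coordinate_descent(tf_expr, target, penalty=0.1)
print([round(w, 2) for w in weights])
```

The coefficients of the three irrelevant TFs are shrunk to exactly zero, while the signs of the two retained coefficients match the simulated activation and repression. The retained coefficients are also shrunk slightly towards zero, which is the usual price of the L1 penalty.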

Advanced Protocol: GRN Inference with DAZZLE

Purpose: To infer a robust and stable Gene Regulatory Network from scRNA-seq data that is resilient to technical noise, particularly dropout events.

Background: DAZZLE (Dropout Augmentation for Zero-inflated Learning Enhancement) is an autoencoder-based model that introduces a novel regularization strategy called Dropout Augmentation (DA) to mitigate the confounding effects of zero-inflation in single-cell data [10].

Materials:

- Input Data: A preprocessed scRNA-seq count matrix (cells x genes).

- Software: DAZZLE source code (available at https://github.com/TuftsBCB/dazzle).

- Computational Environment: Python with dependencies including PyTorch.

Procedure:

- Data Preprocessing: Transform the raw count matrix ( x ) using the relation ( x' = \log(x + 1) ) to stabilize variance and avoid undefined log(0) operations [10].

- Dropout Augmentation (DA): This is the core innovative step. During model training, artificially set a small, random subset of non-zero expression values in the input matrix to zero. This simulates additional dropout events, teaching the model to be robust to this specific type of noise [10].

- Model Training:

- The DA-augmented data is fed into a variational autoencoder (VAE) where the gene-gene interaction network is represented by a learnable adjacency matrix ( A ) [10].

- The model is trained to reconstruct the original (non-augmented) input from the augmented input. The reconstruction loss is used to optimize both the VAE's parameters and the adjacency matrix ( A ) [10].

- Network Inference: After training, the adjacency matrix ( A ) is extracted. The non-zero values in ( A ) represent the predicted regulatory interactions, with their magnitudes indicating the interaction strengths [10].
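The preprocessing and augmentation steps of the procedure above can be sketched in a few lines. This is an illustration only, not DAZZLE's code: the function names, the simulated count matrix, and the augmentation rate of 0.1 are assumptions made for the example (the source specifies only "a small, random subset").

```python
import math
import random


def log_transform(counts):
    """Apply x' = log(x + 1) to every entry of a cells x genes count matrix."""
    return [[math.log(x + 1) for x in row] for row in counts]


def dropout_augment(matrix, rate, rng):
    """Return a copy in which a random fraction `rate` of the non-zero entries is set to zero."""
    augmented = [row[:] for row in matrix]
    nonzero = [(i, j) for i, row in enumerate(matrix) for j, v in enumerate(row) if v != 0]
    n_drop = int(round(rate * len(nonzero)))
    for i, j in rng.sample(nonzero, n_drop):
        augmented[i][j] = 0.0
    return augmented


def count_nonzero(matrix):
    return sum(1 for row in matrix for v in row if v != 0)


rng = random.Random(0)
# Simulated counts for 50 cells x 20 genes, already containing many zeros
counts = [[rng.choice([0, 0, 1, 3, 7]) for _ in range(20)] for _ in range(50)]

expr = log_transform(counts)
augmented = dropout_augment(expr, rate=0.1, rng=rng)

n_nonzero_before = count_nonzero(expr)
n_nonzero_after = count_nonzero(augmented)
print(n_nonzero_before, n_nonzero_after)
```

In a training loop the augmentation would be redrawn repeatedly, so the model sees a different simulated dropout pattern each time rather than memorising one.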

Validation: The performance and stability of DAZZLE can be benchmarked against other methods (e.g., GENIE3, DeepSEM) using curated gold-standard networks from resources like the DREAM Challenges or BEELINE [10].

Advanced Protocol: Inferring Combinatorial Modules with cRegulon

Purpose: To identify combinatorial regulatory modules (cRegulons), that is, sets of transcription factors that work together to co-regulate common target genes, from paired scRNA-seq and scATAC-seq data.

Background: Many key cellular processes are controlled not by single TFs, but by combinations of TFs acting in concert. The cRegulon method moves beyond single-TF analysis to model this combinatorial regulation, providing a more accurate representation of the underlying regulatory units defining cell identity [7].

Materials:

- Input Data: Paired single-cell multi-omics data (e.g., from 10x Multiome or SHARE-seq), where both RNA expression and chromatin accessibility are measured from the same cell [7].

- Software: cRegulon algorithm.

Procedure:

- Data Preprocessing and GRN Construction:

- Perform standard quality control, normalization, and clustering on the multi-omics data.

- For each cell cluster, construct an initial GRN linking TFs, their potential cis-regulatory elements (from scATAC-seq), and target genes (from scRNA-seq) [7].

- Quantifying Combinatorial Effects:

- For every pair of TFs within a cluster-specific GRN, calculate a "combinatorial effect" score. This score integrates the co-regulation effect of the TF pair on shared targets and their activity specificity within the cluster [7].

- Matrix Decomposition for Module Identification:

- Assemble a matrix ( C ) containing all pairwise combinatorial effects.

- Assume ( C ) can be approximated by a mixture of rank-1 matrices, where each rank-1 component corresponds to a module of co-regulating TFs (a cRegulon) [7].

- Solve the optimization model to deconvolve ( C ) and output the final set of cRegulons. Each cRegulon is defined by its TF module, associated regulatory elements, and co-regulated target genes [7].
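The idea that a rank-1 component of ( C ) corresponds to a TF module can be illustrated with power iteration on a toy matrix. This is a swapped-in, much simpler technique than the cRegulon optimization model, which deconvolves a mixture of such components. The scores in the matrix, the loading cutoff of 0.3, and the inclusion of Gata1 and Tal1 as a second group are all hypothetical.

```python
import math


def power_iteration(C, n_iter=200):
    """Dominant eigenpair (lam, v) of a symmetric matrix C; lam * v v^T is a rank-1 term."""
    n = len(C)
    v = [1.0 / math.sqrt(n)] * n
    for _ in range(n_iter):
        w = [sum(C[i][j] * v[j] for j in range(n)) for i in range(n)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    lam = sum(v[i] * sum(C[i][j] * v[j] for j in range(n)) for i in range(n))
    return lam, v


tfs = ["Sox2", "Nanog", "Pou5f1", "Gata1", "Tal1"]
# Hypothetical pairwise combinatorial-effect scores: one strong 3-TF block, one weaker 2-TF block
C = [
    [1.00, 0.90, 0.90, 0.05, 0.05],
    [0.90, 1.00, 0.90, 0.05, 0.05],
    [0.90, 0.90, 1.00, 0.05, 0.05],
    [0.05, 0.05, 0.05, 1.00, 0.60],
    [0.05, 0.05, 0.05, 0.60, 1.00],
]

lam, loadings = power_iteration(C)
module = [tf for tf, weight in zip(tfs, loadings) if weight > 0.3]  # illustrative cutoff
print(round(lam, 2), module)
```

The leading component concentrates its weight on the three pluripotency factors, so thresholding the loadings recovers them as one module. Subtracting `lam * v v^T` from ( C ) and repeating would expose the second block.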

Validation: cRegulon's performance can be tested on in-silico simulated data with known ground truth and on mixed cell line data, where it should successfully recover known TF partnerships, such as the Sox2, Nanog, and Pou5f1 module in pluripotent stem cells [7].

Analysis and Validation of Inferred GRNs

Once a GRN is inferred, rigorous computational and experimental validation is essential to confirm its biological relevance.

Computational Validation:

- Benchmarking: Compare the inferred network against curated gold-standard networks from databases like DREAM Challenges. Standard metrics include Precision, Recall, and the Area Under the Precision-Recall Curve (AUPRC) [10].

- Functional Enrichment Analysis: Perform Gene Ontology (GO) or pathway enrichment analysis on the sets of genes co-regulated by key TFs (regulons). A biologically meaningful regulon should show enrichment for processes relevant to the cell type or condition studied [7].

- Stability Analysis: Assess the robustness of the inference method by applying it to different subsets of the data or by adding small amounts of noise. Stable methods like DAZZLE should produce highly similar networks across these perturbations [10].
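The benchmarking metrics listed above can be computed directly from a ranked edge list and a gold-standard edge set. The sketch below uses average precision as a step-wise estimate of AUPRC; the edges and gene names are made up for illustration.

```python
def precision_recall_curve(ranked_edges, gold_edges):
    """Walk down a ranked edge list; return (precision, recall) after each prediction."""
    gold = set(gold_edges)
    points, hits = [], 0
    for k, edge in enumerate(ranked_edges, start=1):
        if edge in gold:
            hits += 1
        points.append((hits / k, hits / len(gold)))
    return points


def average_precision(ranked_edges, gold_edges):
    """Mean of the precision values at each rank where a gold-standard edge is recovered."""
    gold = set(gold_edges)
    hits, total = 0, 0.0
    for k, edge in enumerate(ranked_edges, start=1):
        if edge in gold:
            hits += 1
            total += hits / k
    return total / len(gold)


# Hypothetical predictions, strongest first, as (regulator, target) pairs
ranked = [("TF1", "G1"), ("TF1", "G2"), ("TF2", "G1"), ("TF2", "G3"), ("TF3", "G2")]
gold = {("TF1", "G1"), ("TF2", "G1"), ("TF3", "G2")}

curve = precision_recall_curve(ranked, gold)
ap = average_precision(ranked, gold)
print(curve[-1], round(ap, 3))
```

Because true edges are rare in a GRN, precision-recall based scores are usually more informative than accuracy, which a predictor could inflate simply by calling almost every edge absent.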

Experimental Validation:

- CRISPR-based Perturbations: Knock out or overexpress a predicted key TF and use scRNA-seq to measure the expression changes in its predicted target genes. Successful validation is achieved if the observed changes align with the predictions of the GRN [11] [9].

- Chromatin Immunoprecipitation (ChIP-seq): Validate physical binding of a predicted TF to the promoter or enhancer regions of its target genes, providing direct evidence for the regulatory interaction [5].

The revolution from bulk transcriptomics to single-cell multi-omics has provided the resolution necessary to dissect the intricate regulatory networks that define cellular identity and function. This application note has detailed the experimental and computational protocols that leverage this data, with a specific focus on advanced machine learning methods like DAZZLE and cRegulon. These tools are at the forefront of addressing the unique challenges of single-cell data, such as noise and sparsity, while unlocking the potential to model complex biological phenomena like combinatorial regulation.

The integration of sophisticated ML with multi-layered genomic data is no longer a niche pursuit but a central paradigm in biology. As the field progresses, the continued development and application of these protocols will be crucial for translating the vast and complex data generated by modern genomics into actionable insights for basic research and therapeutic development. The future of GRN inference lies in further refining these models, improving their interpretability and generalizability, and seamlessly integrating them with experimental workflows to accelerate discovery.

Gene Regulatory Network (GRN) inference is a cornerstone of systems biology, aiming to reconstruct the complex web of causal interactions between genes that controls cellular mechanisms, development, and disease progression [12] [13]. The advent of single-cell RNA sequencing (scRNA-seq) has revolutionized this field by providing transcriptomic profiles at individual cell resolution, enabling the dissection of regulatory dynamics across heterogeneous cell populations [12]. However, this opportunity comes with significant computational challenges. This application note details the core obstacles—data noise, sparsity selection, and causal ambiguity—within the context of machine learning approaches for GRN reconstruction, and provides detailed protocols for implementing cutting-edge solutions.

Core Challenges and Modern Solutions

The inference of accurate GRNs from scRNA-seq data is hampered by several intrinsic issues. Table 1 summarizes the primary challenges and corresponding innovative solutions developed in the field.

Table 1: Core Challenges in GRN Inference and Modern Computational Solutions

| Challenge | Impact on GRN Inference | Modern Solution | Key Reference |

|---|---|---|---|

| Data Noise & Dropout | High levels of zero-inflation (57-92% zeros) obscure true gene relationships and cause overfitting. | Dropout Augmentation (DA); Diffusion Models (RegDiffusion) | [10] [14] |

| Sparsity Selection | Arbitrary cutoffs produce biologically implausible networks, leading to false positives/negatives. | Topology-based metrics; GRN Information Criterion (GRNIC) | [15] [16] |

| Causal Ambiguity | Correlation does not imply causation; confounders and reverse causation obscure true regulatory direction. | Instrumental Variables (2SPLS); Structural Equation Models (SEM) | [13] [10] |

Overcoming Data Noise and Dropout with Augmentation and Diffusion

The prevalence of "dropout" events in scRNA-seq data—where transcripts are erroneously not captured—creates a zero-inflated count profile that can mislead traditional inference algorithms [10]. Rather than merely imputing these missing values, a more robust approach is to build model resilience against this noise.

Protocol 2.1.1: Implementing Dropout Augmentation with DAZZLE

This protocol stabilizes the training of autoencoder-based GRN models, such as DeepSEM, by making them robust to dropout noise [10].

Input Data Preparation:

- Input: Raw scRNA-seq count matrix.

- Transformation: Apply the transformation ( x' = \log(x + 1) ) to all raw counts ( x ) to reduce variance and avoid undefined log(0) values.

- Output: A transformed gene expression matrix ( X ), where rows are cells and columns are genes.

Dropout Augmentation:

- During each training epoch, synthetically zero out a random, small subset of non-zero values in ( X ). This simulates additional dropout events.

- This process regularizes the model, preventing it from overfitting to the specific dropout pattern present in the original data and forcing it to learn more generalizable regulatory relationships.

Model Training with DAZZLE Framework:

- DAZZLE uses a Structural Equation Model (SEM) framework within a variational autoencoder.

- The model's key feature is a parameterized adjacency matrix ( A ), which represents the GRN and is used in both the encoder and decoder.

- The model is trained to reconstruct the augmented input data ( X ) while simultaneously learning the sparse matrix ( A ). A closed-form prior and a modified sparsity control strategy enhance stability compared to its predecessor, DeepSEM.

Output:

- The trained, sparse adjacency matrix ( A ) is the inferred GRN, where entries indicate the strength and direction of regulatory interactions.
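Turning a learned adjacency matrix into a ranked edge list is a small post-processing step. The sketch below assumes that row ( i ), column ( j ) of ( A ) holds the effect of gene ( i ) on gene ( j ); that orientation, the toy values, and the gene names are assumptions for illustration and should be checked against the convention of the tool actually used.

```python
def top_edges(adjacency, genes, k):
    """Rank candidate edges by absolute weight, skipping self-loops; return the k strongest."""
    edges = [
        (genes[i], genes[j], adjacency[i][j])
        for i in range(len(genes))
        for j in range(len(genes))
        if i != j and adjacency[i][j] != 0.0
    ]
    edges.sort(key=lambda edge: abs(edge[2]), reverse=True)
    return edges[:k]


genes = ["g1", "g2", "g3"]
# Toy adjacency matrix: positive weights read as activation, negative as repression
A = [
    [0.0, 0.8, 0.0],
    [0.0, 0.0, -0.5],
    [0.1, 0.0, 0.0],
]

strongest = top_edges(A, genes, k=2)
print(strongest)
```

Sorting on the absolute value keeps strong repressive edges near the top of the list instead of pushing them to the bottom.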

An alternative to the autoencoder-based DAZZLE is the diffusion-based model, RegDiffusion. The workflow, illustrated below, uses a forward process of iterative noising and a reverse process to recover the underlying GRN structure, demonstrating high speed and stability [14].

Resolving Sparsity Selection with Topology-Based Metrics

A major shortcoming of many GRN methods is the lack of guidance for selecting the optimal network sparsity, often relying on arbitrarily set hyperparameters [15]. Since biological GRNs are known to be sparse and exhibit scale-free topology, this property can be leveraged to automate sparsity selection.

Protocol 2.2.1: Optimal Sparsity Selection Using Scale-Free Topology

This protocol uses the "goodness of fit" metric to find the GRN from a candidate set that best approximates a scale-free structure [15].

Generate Candidate GRNs:

- Use any GRN inference method (e.g., LASSO, GENIE3) to generate a series of networks ( \{\hat{A}_1, \hat{A}_2, \ldots, \hat{A}_G\} ) across a range of hyperparameters ( \{\lambda_1, \ldots, \lambda_G\} ) that control sparsity.

Calculate Out-Degree Distribution:

- For each candidate network ( \hat{A}_g ), compute the out-degree ( c_i^{(g)} ) for each gene ( i ) (the number of non-zero regulatory outputs).

- Count the frequency ( x_d^{(g)} ) of each out-degree ( d ).

Compute Goodness of Fit Metric (( Q_g )):

- For each network, calculate the Maximum Likelihood estimator ( \alpha_{ML}^{(g)} ) for the power law distribution.

- Calculate the goodness of fit statistic: [ Q_g = \sum_{d=1}^{n} \frac{\left(x_d^{(g)} - n^{(g)} p_X^{(g)}(d)\right)^2}{n^{(g)} p_X^{(g)}(d)} ] where ( p_X^{(g)}(d) ) is the probability of out-degree ( d ) under the fitted power law.

Select Optimal GRN:

- Identify the candidate network that minimizes the goodness of fit statistic: [ \hat{A}_{optimal} = \arg\min_{\hat{A}_g} Q_g ]

- This network has an out-degree distribution closest to a scale-free topology and is selected as the final, optimally sparse GRN.
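Protocol 2.2.1 can be sketched end to end on toy networks. Several details below are simplifying assumptions rather than the method of [15]: the power law is truncated at the number of genes, the exponent is found by a grid search over 1.01 to 5.00 instead of a closed-form estimator, only regulators with non-zero out-degree are counted, and rows of the adjacency matrix are taken to be regulators.

```python
import math
from collections import Counter


def out_degree_counts(adjacency):
    """Frequency x_d of each non-zero out-degree d (rows are regulators, an assumed convention)."""
    degrees = [sum(1 for w in row if w != 0) for row in adjacency]
    return Counter(d for d in degrees if d > 0)


def power_law_pmf(alpha, d_max):
    """p(d) proportional to d^(-alpha) on d = 1..d_max; index d - 1 holds p(d)."""
    weights = [d ** -alpha for d in range(1, d_max + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def fit_alpha(counts, d_max):
    """Grid-search maximum-likelihood exponent of the truncated power law."""
    best_alpha, best_ll = None, -math.inf
    for step in range(101, 501):
        alpha = step / 100.0
        pmf = power_law_pmf(alpha, d_max)
        ll = sum(x * math.log(pmf[d - 1]) for d, x in counts.items())
        if ll > best_ll:
            best_alpha, best_ll = alpha, ll
    return best_alpha


def goodness_of_fit(adjacency):
    """Chi-square style statistic Q between observed and fitted out-degree frequencies."""
    counts = out_degree_counts(adjacency)
    n = sum(counts.values())
    if n == 0:
        return math.inf  # an empty network cannot be scored
    d_max = len(adjacency)
    pmf = power_law_pmf(fit_alpha(counts, d_max), d_max)
    return sum((counts.get(d, 0) - n * pmf[d - 1]) ** 2 / (n * pmf[d - 1])
               for d in range(1, d_max + 1))


def toy_network(out_degrees):
    """Build a 0/1 adjacency matrix whose rows have the requested out-degrees (no self-loops)."""
    n = len(out_degrees)
    net = [[0.0] * n for _ in range(n)]
    for i, deg in enumerate(out_degrees):
        for j in [t for t in range(n) if t != i][:deg]:
            net[i][j] = 1.0
    return net


candidates = {
    "heavy_tailed": toy_network([6, 3, 2, 2, 1, 1, 1, 1, 1, 1]),
    "uniform": toy_network([3] * 10),
}
scores = {name: goodness_of_fit(net) for name, net in candidates.items()}
best = min(scores, key=scores.get)
print(scores, best)
```

The candidate with a few hubs and many low-degree regulators scores a much lower ( Q ) than the one in which every regulator has the same out-degree, so it is the one selected.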

Disentangling Causal Ambiguity with Instrumental Variables

Methods based solely on co-expression can identify association but fail to establish causation due to unmeasured confounders and reverse causality [13]. The SIGNET software package overcomes this by leveraging genotypic data as natural instrumental variables in a Mendelian randomization framework.

Protocol 2.3.1: Causal GRN Inference with SIGNET

This protocol constructs a transcriptome-wide, causal GRN from paired transcriptomic and genotypic data [13].

Data Preprocessing:

- Transcriptomic Data: Filter low-read genes, normalize (e.g., using VST or TMM), and correct for confounders (e.g., race, gender, population stratification via principal components).

- Genotypic Data: Perform quality control (e.g., with PLINK), remove variants/samples with high missing rates, and impute missing SNPs (e.g., with IMPUTE2).

Identify Instrumental Variables (IVs):

- For each gene, identify its cis-acting genotypic variants (SNPs within its genetic region).

- Test these variants for significant association with the gene's expression at a prescribed significance level (default 0.05). These significant variants serve as instrumental variables for their host gene.

Causal Inference with 2-Stage Penalized Least Squares (2SPLS):

- SIGNET uses the 2SPLS algorithm, which employs the IVs identified in the previous step to infer causal relationships between all genes.

- The method constructs directed cyclic graphs (DCGs), allowing it to capture reciprocal regulations and feedback loops, which are biologically critical.

- The computation is distributed across two stages of parallel computing, making transcriptome-wide inference feasible.

Bootstrap Aggregation and Visualization:

- Bootstrap the original dataset and run SIGNET on each bootstrap sample.

- Aggregate results across all bootstraps to build a consensus GRN with confidence scores for each regulatory edge.

- Use SIGNET's interactive Shiny-based interface to visualize the network, identify hub genes, and explore subnetworks.
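
The role of the instrumental variables can be illustrated with a plain two-stage least squares estimate for a single regulator-target pair. This is a deliberately simplified sketch: it omits the penalization and the transcriptome-wide parallelization of 2SPLS, it is not SIGNET's code, and the simulated data, effect sizes, and function names are all hypothetical.

```python
import numpy as np

def ols_slope(x, y):
    """Slope of a simple least-squares regression of y on x (with intercept)."""
    xc, yc = x - x.mean(), y - y.mean()
    return float(xc @ yc / (xc @ xc))

def two_stage_least_squares(snp, regulator, target):
    """Estimate the effect of regulator on target using a cis-SNP as instrument."""
    # Stage 1: predict regulator expression from its instrument.
    b1 = ols_slope(snp, regulator)
    fitted = regulator.mean() + b1 * (snp - snp.mean())
    # Stage 2: regress the target on the genotype-predicted regulator.
    return ols_slope(fitted, target)

# Simulated example: an unmeasured confounder drives both genes.
rng = np.random.default_rng(0)
n = 5000
snp = rng.binomial(2, 0.3, size=n).astype(float)    # genotype coded 0/1/2
confounder = rng.normal(size=n)
regulator = 1.0 * snp + confounder + rng.normal(size=n)
target = 0.8 * regulator + confounder + rng.normal(size=n)

naive = ols_slope(regulator, target)                 # biased by the confounder
causal = two_stage_least_squares(snp, regulator, target)
print(f"naive OLS: {naive:.2f}, 2SLS: {causal:.2f}, simulated effect: 0.80")
```

Because the genotype is independent of the confounder in this simulation, the second-stage slope recovers the simulated effect more closely than the naive regression, which is the property the protocol relies on.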

The following diagram summarizes the integrated SIGNET workflow for causal GRN inference from raw data to a validated network.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software Tools for GRN Inference

| Tool Name | Type | Primary Function | Key Application |

|---|---|---|---|

| DAZZLE [10] | Software Package (R/Python) | Stable GRN inference using Dropout Augmentation and autoencoders. | Handling high dropout noise in scRNA-seq data. |

| RegDiffusion [14] | Software Package (Python) | Fast GRN inference using diffusion probabilistic models. | Rapid inference on large datasets (>15,000 genes in minutes). |

| SIGNET [13] | Software Platform (R) | Causal GRN inference using instrumental variables (2SPLS). | Establishing causality in transcriptome-wide networks. |

| SPA [16] | Algorithm | Selects optimal GRN sparsity using a GRN Information Criterion (GRNIC). | Determining the single best network sparsity post-inference. |

The path to accurate Gene Regulatory Network inference is paved with the challenges of noisy, sparse data and causal ambiguity. This application note has detailed how modern machine learning approaches—including dropout augmentation, diffusion models, topology-based sparsity selection, and causal inference with instrumental variables—provide robust, experimentally applicable solutions. By implementing these protocols, researchers can move closer to reconstructing faithful models of gene regulation, thereby accelerating discoveries in fundamental biology and therapeutic development.

The reconstruction of Gene Regulatory Networks (GRNs) is a fundamental challenge in systems biology, crucial for understanding cellular control, disease mechanisms, and therapeutic target discovery [17]. GRNs model the complex regulatory interactions between transcription factors (TFs) and their target genes [18]. Over the past decades, the computational methods for inferring these networks from gene expression data have evolved significantly. This evolution has progressed from early methods based on simple correlation metrics to sophisticated modern paradigms leveraging artificial intelligence (AI) and machine learning (ML), each generation offering increased scale, accuracy, and biological relevance [17] [18] [19].

This application note details the key methodologies in this evolutionary trajectory, providing structured comparisons, experimental protocols, and visual workflows to guide researchers in selecting and implementing these approaches for GRN reconstruction.

From Correlation to Regression: Foundational Methods

The earliest computational approaches for GRN inference relied on measuring the co-expression of genes across multiple samples to infer associations.

Weighted Gene Co-expression Network Analysis (WGCNA)

WGCNA is a systems biology method designed to analyze complex data patterns in large sample sets. It constructs a weighted network where genes (nodes) are connected by edges whose thickness represents the strength of their co-expression correlation, raised to a user-defined power (a "soft threshold") to emphasize strong connections [20]. The process involves four main steps [20]:

- Network Construction: A matrix of all pairwise correlations between genes is transformed into an adjacency matrix.

- Module Detection: Using hierarchical clustering, genes with highly correlated expression patterns are grouped into modules, each representing a functionally related gene set.

- Trait Correlation: Module eigengenes (the first principal component of a module) are calculated and correlated with external sample traits (e.g., disease status) to identify biologically relevant modules.

- Hub Gene Identification: Within significant modules, the most highly connected genes (hub genes) are identified as potential key regulators or drivers of phenotypes [20].

Table 1: Key Characteristics of Foundational GRN Inference Methods

| Method | Underlying Principle | Key Output | Key Advantages | Key Limitations |

|---|---|---|---|---|

| WGCNA [20] | Weighted correlation and hierarchical clustering | Clusters (modules) of co-expressed genes; association with traits | Identifies functionally related gene groups; integrates trait data | Infers undirected networks; limited power to identify specific regulators |

| GENIE3 [19] | Tree-based ensemble (Random Forests/Extra-Trees) | Ranked list of potential regulatory links (TF → target) | Infers directed networks; handles non-linear relationships; won DREAM4 challenge | Computationally intensive for very large datasets |

Regression-Based and Tree-Based Methods

Moving beyond simple correlation, regression-based methods formulated GRN inference as a problem of predicting a target gene's expression based on the expression of potential TFs.

GENIE3 (GEne Network Inference with Ensemble of trees) is a leading algorithm from this class. It decomposes the network inference problem into p different regression problems, one for each gene [19]. For each target gene, the expression pattern is predicted from the expression patterns of all other genes using a tree-based ensemble method, such as Random Forests or Extra-Trees. The importance of each potential regulator in predicting the target gene's expression is computed, and these importance scores are aggregated across all genes to produce a ranked list of putative regulatory interactions [19].

The following workflow diagram illustrates the core steps of the GENIE3 algorithm:

The Rise of Machine and Deep Learning

The advent of more complex ML and DL models addressed several limitations of earlier methods, particularly their ability to model non-linear and hierarchical regulatory relationships.

Kernel Methods and Boosting

KBoost is an example of an advanced ML method that uses Kernel PCA regression (KPCR) and gradient boosting. KPCR is a non-parametric technique that maps TF expression data into a high-dimensional feature space using a kernel function, allowing it to capture complex, non-linear relationships without requiring a predefined model form [18]. KBoost employs a boosting framework to iteratively combine weak KPCR models, each built from the expression profile of a single TF, to create a strong predictor for each target gene. The frequency with which a TF is selected in the models is used to infer its regulatory role, and this process can be enhanced by incorporating prior knowledge from other sources, such as ChIP-seq data [18].

Hybrid and Transfer Learning Approaches

More recently, hybrid models that combine the strengths of DL and traditional ML have shown superior performance. For instance, one study integrated Convolutional Neural Networks (CNNs) with machine learning classifiers, achieving over 95% accuracy in predicting TF-target relationships in plant species [5]. These hybrid approaches typically use CNNs to automatically learn informative feature representations from raw input data (e.g., expression profiles), which are then fed into a standard ML classifier (e.g., SVM, Random Forest) for final prediction.

A critical challenge in supervised GRN inference is the scarcity of labeled training data (known TF-target pairs), especially for non-model organisms. Transfer learning has emerged as a powerful strategy to overcome this. It involves pre-training a model on a data-rich source species (e.g., Arabidopsis thaliana) and then fine-tuning it on a target species with limited data (e.g., poplar or maize) [5]. This allows the model to leverage conserved regulatory principles across species, significantly enhancing performance in data-scarce scenarios [5].

Table 2: Advanced AI-Driven Approaches for GRN Inference

| Method Category | Example | Core Mechanism | Application Context |

|---|---|---|---|

| Kernel Methods & Boosting | KBoost [18] | Kernel PCA Regression + Bayesian Model Averaging | Fast, accurate reconstruction on standard hardware; handles large cohorts (>2000 samples) |

| Hybrid Models (ML/DL) | CNN-ML Hybrids [5] | Feature extraction with CNN + classification with ML | High-accuracy prediction of TF-target pairs; outperforms traditional ML/DL alone |

| Transfer Learning | Cross-Species Inference [5] | Model pre-training on data-rich species + fine-tuning on target species | GRN inference for non-model or data-scarce species |

| Foundation Models | GeneCompass [21] | Transformer model pre-trained on >120M single-cell transcriptomes | Cross-species understanding; multiple downstream tasks (e.g., perturbation simulation) |

| Few-Shot Meta-Learning | Meta-TGLink [22] | Graph Neural Networks + Model-Agnostic Meta-Learning (MAML) | Inferring GRNs with very few known regulatory interactions (few-shot learning) |

Cutting-Edge AI: Foundation Models and Few-Shot Learning

The current frontier of GRN inference involves large-scale foundation models and techniques that can learn from minimal data.

Cross-Species Foundation Models

GeneCompass is a knowledge-informed, cross-species foundation model pre-trained on a massive corpus of over 120 million human and mouse single-cell transcriptomes [21]. It integrates four types of prior biological knowledge—GRN information, promoter sequences, gene family annotation, and gene co-expression relationships—into its learning process. Using a Transformer architecture, it is trained via masked language modeling to recover the identities and expression values of randomly masked genes in a cell [21]. This self-supervised pre-training allows GeneCompass to develop a deep, contextual understanding of gene regulation, which can then be fine-tuned for specific downstream tasks with high accuracy, including predicting key factors in cell fate transitions [21].

Few-Shot Learning with Graph Meta-Learning

Meta-TGLink addresses the critical problem of inferring GRNs when known regulatory interactions are extremely scarce. It formulates GRN inference as a few-shot link prediction task on a graph [22]. The model employs a structure-enhanced Graph Neural Network (GNN) that alternates between Transformer layers and GNN layers to capture both relational and positional information of genes in the network. It is trained using a meta-learning framework (specifically, Model-Agnostic Meta-Learning or MAML), where the model learns from a variety of tasks, each with a small support set (a few known links). This training enables Meta-TGLink to quickly adapt and make accurate predictions for new target cell lines or TFs with only a handful of known examples, dramatically reducing the reliance on large labeled datasets [22].

The architecture and workflow of a modern few-shot learning model like Meta-TGLink can be visualized as follows:

Experimental Protocols

Protocol 1: Implementing a Standard WGCNA Analysis

Application: Identifying co-expression modules and their association with sample traits from RNA-seq data.

Reagents & Tools:

- Input Data: Normalized gene expression matrix (e.g., TMM, FPKM, or TPM).

- Software: R statistical environment with the `WGCNA` package installed.

Procedure:

- Data Preprocessing and Input: Prepare a matrix where rows are genes and columns are samples (WGCNA functions expect samples in rows, so transpose the matrix before analysis). Remove genes with low expression or low variance. The `WGCNA` package can be used for this step.

- Network Construction:

  - Choose a "soft-thresholding power" (β) using the `pickSoftThreshold` function to ensure the network approximates a scale-free topology.

  - Calculate the pairwise correlations between all genes and transform them into an adjacency matrix.

  - Convert the adjacency matrix into a Topological Overlap Matrix (TOM) to measure network interconnectedness.

- Module Detection:

  - Perform hierarchical clustering on the TOM-based dissimilarity matrix (1-TOM).

  - Use the `cutreeDynamic` function to identify modules (branches of the dendrogram), each assigned a unique color.

- Module-Trait Association:

  - Calculate the module eigengene (ME) for each module.

  - Correlate MEs with external sample traits (clinical data, treatment groups). High correlations indicate modules relevant to the trait of interest.

- Hub Gene Identification:

  - Calculate module membership (kME) as the correlation between a gene's expression and its module's eigengene.

  - Identify genes with high kME and high intramodular connectivity as hub genes for further validation.
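
The core computations of this protocol can be sketched in Python with NumPy and SciPy. This is an illustrative re-implementation, not the WGCNA R package: the function names are hypothetical, the soft-thresholding power is fixed rather than chosen with `pickSoftThreshold`, and a fixed number of clusters stands in for dynamic tree cutting. The input is assumed to be a samples-by-genes matrix.

```python
import numpy as np
from scipy.cluster.hierarchy import linkage, fcluster
from scipy.spatial.distance import squareform

def soft_adjacency(expr, beta=6):
    """Unsigned adjacency |cor|^beta from a samples x genes matrix."""
    cor = np.corrcoef(expr, rowvar=False)
    return np.abs(cor) ** beta

def topological_overlap(adj):
    """Topological Overlap Matrix computed from a weighted adjacency matrix."""
    a = adj.copy()
    np.fill_diagonal(a, 0.0)
    k = a.sum(axis=1)                    # connectivity of each gene
    shared = a @ a                       # weighted count of shared neighbours
    tom = (shared + a) / (np.minimum.outer(k, k) + 1.0 - a)
    np.fill_diagonal(tom, 1.0)
    return tom

def detect_modules(tom, n_modules=2):
    """Average-linkage clustering on 1 - TOM, cut into a fixed number of modules."""
    dist = squareform(1.0 - tom, checks=False)
    tree = linkage(dist, method="average")
    return fcluster(tree, t=n_modules, criterion="maxclust")

def module_eigengene(expr, labels, module):
    """First left singular vector of the standardized expression of one module."""
    sub = expr[:, labels == module]
    sub = (sub - sub.mean(axis=0)) / sub.std(axis=0)
    u, _, _ = np.linalg.svd(sub, full_matrices=False)
    return u[:, 0]

# Toy data: two groups of three genes, each driven by its own latent factor.
rng = np.random.default_rng(1)
f1, f2 = rng.normal(size=(2, 40))
expr = np.column_stack(
    [f1 + 0.1 * rng.normal(size=40) for _ in range(3)]
    + [f2 + 0.1 * rng.normal(size=40) for _ in range(3)]
)
tom = topological_overlap(soft_adjacency(expr, beta=6))
labels = detect_modules(tom, n_modules=2)
eigengene = module_eigengene(expr, labels, labels[0])
```

Correlating `eigengene` with a trait vector, or with each gene's expression to obtain kME, follows directly with `np.corrcoef`.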

Protocol 2: Inferring a GRN using a Hybrid ML/DL and Transfer Learning Approach

Application: Predicting TF-target interactions in a non-model species with limited data.

Reagents & Tools:

- Source Species Data: Large, well-annotated transcriptomic compendium (e.g., Arabidopsis thaliana RNA-seq data from SRA).

- Target Species Data: Smaller transcriptomic dataset from the species of interest (e.g., poplar).

- Validation Data: Curated list of known TF-target pairs (e.g., from public databases or literature).

- Software: Python with deep learning (e.g., TensorFlow, PyTorch) and machine learning libraries (e.g., scikit-learn).

Procedure:

- Data Collection and Preprocessing:

- Download raw sequencing data (FASTQ files) from the Sequence Read Archive (SRA) for both source and target species.

- Perform quality control (FastQC), adapter trimming (Trimmomatic), and alignment to the respective reference genomes (STAR).

- Generate raw read counts and normalize them using a method like TMM from `edgeR` to create compendium datasets.

- Model Pre-training on Source Species:

- Construct a hybrid model (e.g., a CNN for feature extraction followed by an ML classifier like SVM or Random Forest).

- Train the model on the source species compendium, using known TF-target pairs as positive examples and randomly selected non-pairs as negative examples.

- Transfer Learning to Target Species:

- Remove the final classification layer of the pre-trained model.

- Replace the input layer with a target-species-specific one if necessary.

- Fine-tune the model on the (much smaller) target species training dataset, using a low learning rate to adapt the pre-trained weights without overwriting them.

- Model Evaluation and Inference:

- Evaluate the fine-tuned model's performance on a held-out test set from the target species using metrics like AUROC and AUPRC.

- Use the trained model to predict novel TF-target interactions across the entire target species transcriptome.
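
The pre-train, fine-tune, and evaluate steps of this protocol can be illustrated with a much smaller stand-in model. In the sketch below a plain logistic regression replaces the CNN-based hybrid, each row is assumed to be a feature vector for one TF-gene pair, and the "source" and "target" species are simulated with related but not identical weight vectors. All names and data are hypothetical; only the low-learning-rate fine-tuning pattern and the AUROC/AUPRC evaluation carry over to the real setting.

```python
import numpy as np
from sklearn.metrics import roc_auc_score, average_precision_score

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train_logreg(X, y, w_init=None, lr=0.1, steps=300):
    """Gradient-descent logistic regression; w_init lets training resume from given weights."""
    w = np.zeros(X.shape[1]) if w_init is None else w_init.copy()
    for _ in range(steps):
        w -= lr * X.T @ (sigmoid(X @ w) - y) / len(y)
    return w

def simulate(w_true, n, rng):
    """Simulated TF-gene pair features with binary interaction labels."""
    X = rng.normal(size=(n, w_true.size))
    y = (X @ w_true + 0.5 * rng.normal(size=n) > 0).astype(float)
    return X, y

rng = np.random.default_rng(0)
w_source = rng.normal(size=10)
w_target = w_source + 0.3 * rng.normal(size=10)    # partially conserved regulatory signal

X_src, y_src = simulate(w_source, 2000, rng)       # data-rich source species
X_tr, y_tr = simulate(w_target, 30, rng)           # small target training set
X_te, y_te = simulate(w_target, 500, rng)          # held-out target test set

w_pre = train_logreg(X_src, y_src)                                      # pre-training
w_fine = train_logreg(X_tr, y_tr, w_init=w_pre, lr=0.01, steps=50)      # gentle fine-tuning

scores = X_te @ w_fine
auroc = roc_auc_score(y_te, scores)
auprc = average_precision_score(y_te, scores)
print(f"AUROC = {auroc:.3f}, AUPRC = {auprc:.3f}")
```

The small learning rate and step count in the fine-tuning call keep the weights close to the pre-trained solution, which is the same intent as the low learning rate recommended above.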

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Computational GRN Inference

| Item Name | Function/Application | Key Features & Considerations |

|---|---|---|

| Normalized Transcriptomic Compendium | Primary input data for all inference methods. | Large sample size (N >100) increases power. Normalization (e.g., TMM, TPM) is critical for cross-dataset comparison. Sourced from SRA, GEO. |

| Curated Gold Standard Interactions | Training data for supervised methods; validation for all methods. | Quality and context-relevance are crucial. Sourced from literature or databases (KEGG, ChIP-Atlas, I2D) [17]. |

| Prior Biological Knowledge | Enhances model accuracy and biological plausibility. | Includes promoter sequences, gene families, known GRNs, co-expression data [21]. Integrated as model priors or input features. |

| WGCNA R Package | Implement WGCNA for co-expression network analysis. | User-friendly functions for entire workflow; requires careful parameter selection (e.g., soft-thresholding power) [20]. |

| Tree-Based Ensemble Algorithms (GENIE3) | Infer directed GRNs from expression data. | Handles non-linearities; provides ranked list of interactions. Implemented in R (GENIE3 package) [19]. |

| Deep Learning Frameworks (PyTorch/TensorFlow) | Build and train custom hybrid, foundation, or meta-learning models. | Flexibility for model architecture design; requires significant computational resources (GPUs) and coding expertise [5] [22] [21]. |

| Pre-trained Foundation Models (GeneCompass) | Leverage large-scale models for downstream GRN tasks. | State-of-the-art performance via fine-tuning; requires understanding of transfer learning techniques [21]. |

A Taxonomy of Machine Learning Methods for GRN Inference

In the broader context of machine learning approaches for Gene Regulatory Network (GRN) reconstruction, supervised methods leverage known molecular interactions to infer new regulatory relationships from gene expression data [23] [24]. Unlike unsupervised methods that identify patterns without labeled examples, supervised learning frames GRN inference as a classification or regression problem, where algorithms learn from experimentally validated gene regulations [24]. This approach often yields higher accuracy by incorporating prior biological knowledge [25].

Within this paradigm, three significant methods are GENIE3, SIRENE, and DeepSEM. GENIE3, despite often being categorized alongside unsupervised techniques in benchmarks, uses a supervised regression strategy to predict gene targets [23] [26]. SIRENE is a classic supervised classification model that explicitly trains on known interactions [27] [24]. DeepSEM represents a more recent advancement, employing neural networks within a semi-supervised or unsupervised structural equation model framework to infer GRNs [23] [28] [29]. This article details the application and protocols for utilizing these methods to predict known interactions.

The following table summarizes the core characteristics of GENIE3, SIRENE, and DeepSEM, highlighting their key methodologies and typical applications.

Table 1: Overview of Supervised GRN Inference Methods

| Method | Learning Paradigm | Core Technology | Input Data Type | Key Principle |

|---|---|---|---|---|

| GENIE3 [23] [26] | Supervised Regression | Random Forest / Tree-based Ensemble | Bulk & Single-cell RNA-seq | Decomposes GRN inference into predicting each gene's expression as a function of all potential regulators. |

| SIRENE [23] [27] [24] | Supervised Classification | Support Vector Machine (SVM) | Bulk RNA-seq | Decomposes GRN inference into local binary classification problems to separate target from non-target genes for each TF. |

| DeepSEM [23] [28] [29] | (Semi-/Unsupervised) | Variational Autoencoder (VAE) & Structural Equation Model (SEM) | Single-cell RNA-seq | Uses a neural network to parameterize the adjacency matrix and learns the GRN structure by reconstructing gene expression data. |

The workflow for applying these methods typically involves data preparation, model training, and network inference, as illustrated below.

Diagram 1: General Workflow for GRN Inference

Detailed Methodologies and Experimental Protocols

GENIE3 (Random Forest-Based Regression)

Principle: GENIE3 formulates GRN inference as a supervised regression problem. It decomposes the task into predicting the expression level of each gene in turn, based on the expression levels of all other potential regulator genes (or a pre-defined set of Transcription Factors). The method uses a tree-based ensemble, such as Random Forest, to learn these non-linear relationships [23] [26].

Experimental Protocol:

Input Data Preparation:

- Obtain a gene expression matrix (bulk or single-cell RNA-seq) with dimensions `(n_cells, n_genes)`.

- Preprocess the data: Apply the log-transformation `log(x+1)` to stabilize variance [30]. Filter for highly variable genes if working with large datasets.

- Provide an optional list of known Transcription Factors (TFs) to limit the set of potential regulators.

Model Training and GRN Inference:

- For each target gene `g_i` in the set of all genes `G`:

  - Set the expression profile of `g_i` as the target response variable `Y`.

  - Set the expression profiles of all potential regulators (or all other genes) as the feature matrix `X`.

  - Train a Random Forest regression model to predict `Y` from `X`.

  - Extract the variable importance score (e.g., mean decrease in impurity) for every feature (regulator) in the model. This score represents the strength of the potential regulatory link.

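Output and Interpretation:

- Aggregate the importance scores from all per-gene models into a single ranked list of putative regulatory links (regulator → target); higher-ranked links have stronger support.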
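
The GENIE3 protocol above can be sketched with scikit-learn's `RandomForestRegressor`. This is an illustrative approximation rather than the GENIE3 package itself: the function names are hypothetical, and scikit-learn normalizes impurity importances to sum to one within each model, so the scores may not match those of the original implementation.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

def genie3_sketch(expr, regulators=None, n_trees=100, seed=0):
    """Return scores[r, t]: importance of regulator r for predicting target t.

    expr is a (n_cells, n_genes) matrix; regulators is an optional list of
    column indices allowed to act as regulators (default: all genes).
    """
    n_genes = expr.shape[1]
    regulators = list(range(n_genes)) if regulators is None else list(regulators)
    scores = np.zeros((n_genes, n_genes))
    for target in range(n_genes):
        feats = [r for r in regulators if r != target]   # never predict a gene from itself
        if not feats:
            continue
        model = RandomForestRegressor(
            n_estimators=n_trees, max_features="sqrt", random_state=seed
        )
        model.fit(expr[:, feats], expr[:, target])
        scores[feats, target] = model.feature_importances_
    return scores

def rank_links(scores):
    """Flatten the score matrix into (regulator, target, score) tuples, best first."""
    n = scores.shape[0]
    links = [(r, t, float(scores[r, t])) for r in range(n) for t in range(n) if r != t]
    return sorted(links, key=lambda link: link[2], reverse=True)

# Toy data: gene 1 is driven by gene 0; genes 2 and 3 are independent noise.
rng = np.random.default_rng(0)
expr = rng.normal(size=(200, 4))
expr[:, 1] = 2.0 * expr[:, 0] + 0.1 * rng.normal(size=200)
scores = genie3_sketch(expr, n_trees=50)
top_links = rank_links(scores)[:3]
```

Note that expression data alone cannot orient the 0-1 link in this toy example; supplying a TF list through `regulators` is one way to restrict the direction of candidate edges.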

SIRENE (Supervised Classification with SVM)

Principle: SIRENE is a purely supervised method that frames GRN inference as a set of binary classification problems. For each Transcription Factor (TF), it builds a classifier to distinguish its known target genes from non-target genes based on global expression profiles [27] [24].

Experimental Protocol:

Input Data Preparation:

- Obtain a compendium of gene expression data.

- Acquire a set of known, experimentally validated regulatory interactions. For a given TF, these serve as the positive training examples.

- Generate negative training examples. Due to the lack of confirmed non-interactions, SIRENE uses a cross-validation scheme on the set of genes not known to be targets of the TF, treating a subset as non-targets [27] [24].

Model Training:

- For each TF in the network:

  - Construct a feature vector for every gene from the global expression profile.

  - Train a Support Vector Machine (SVM) classifier using the positive (targets) and negative (non-targets) examples.

  - The trained model learns a decision boundary that separates targets from non-targets in the expression feature space.

Prediction and Output:

- Apply the trained TF-specific classifier to all genes not in the training set (or to all genes for a full network reconstruction).

- The classifier's output (e.g., decision function score or probability) for each `(TF, gene)` pair indicates the likelihood of a regulatory interaction [27].
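
A local model for a single TF can be sketched with scikit-learn's `SVC`. The sketch below is an assumption-laden illustration, not the SIRENE code: the fold-based handling of unlabeled genes is one simple reading of the cross-validation scheme described above, the kernel choice is arbitrary, and the function name and toy data are hypothetical.

```python
import numpy as np
from sklearn.svm import SVC

def sirene_scores_for_tf(expr_by_gene, known_targets, n_folds=3, seed=0):
    """Score every gene not known to be a target of one TF.

    expr_by_gene is a (n_genes, n_conditions) matrix of expression profiles.
    Unlabeled genes are split into folds; each fold is scored by an SVM trained
    on the known targets (positives) and the other folds (treated as negatives).
    """
    n_genes = expr_by_gene.shape[0]
    pos = np.array(sorted(known_targets))
    unknown = np.setdiff1d(np.arange(n_genes), pos)
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(unknown), n_folds)
    scores = np.full(n_genes, np.nan)            # known targets are left unscored
    for k, held_out in enumerate(folds):
        neg = np.concatenate([f for j, f in enumerate(folds) if j != k])
        X = np.vstack([expr_by_gene[pos], expr_by_gene[neg]])
        y = np.concatenate([np.ones(len(pos)), np.zeros(len(neg))])
        clf = SVC(kernel="rbf", gamma="scale").fit(X, y)
        scores[held_out] = clf.decision_function(expr_by_gene[held_out])
    return scores

# Toy data: genes 0-9 share one expression pattern (true targets), genes 10-39 are random.
rng = np.random.default_rng(0)
pattern = rng.normal(size=20)
targets = pattern + 0.1 * rng.normal(size=(10, 20))
others = rng.normal(size=(30, 20))
profiles = np.vstack([targets, others])
scores = sirene_scores_for_tf(profiles, known_targets=range(7))   # genes 7-9 are hidden targets
```

Repeating this for every TF and collecting the scores yields the `(TF, gene)` ranking described in the prediction step.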

DeepSEM (Neural Network-Based Structural Modeling)

Principle: DeepSEM uses a Variational Autoencoder (VAE) integrated with a Structural Equation Model (SEM). It is often categorized as unsupervised or semi-supervised as it does not require a ground truth network for training. Instead, it learns the GRN adjacency matrix W as a set of parameters within a neural network by trying to reconstruct the input gene expression data X [23] [28] [29]. The relationship is modeled as X = XW^T + Z, where Z is a latent variable.

Experimental Protocol:

Input Data Preparation:

- Use a single-cell RNA-seq expression matrix, preprocessed with `log(x+1)` transformation.

- The matrix is formatted with cells as rows and genes as columns.

Model Architecture and Training:

- Encoder: The encoder network `q(Z|X)` takes the gene expression data `X` and maps it to a distribution over the latent variables `Z`.

- Structural Layer: The learnable parameter matrix `W` (the adjacency matrix) is used in the structural equation. A sparsity constraint (L1 regularization) is applied to `W` to promote a sparse network.

- Decoder: The decoder network `p(X|Z)` reconstructs the expression data from the latent variables `Z` and the structural model.

- Training: The model is trained to minimize a loss function that combines the reconstruction error and the Kullback–Leibler divergence between the latent distribution and a prior, often with an L1 sparsity term on `W`: `L = −E_Z [log p(X|Z)] + β KL(q(Z|X)||p(Z)) + α ||W||_1` [28].

GRN Inference:

- After training, the weights of the `W` matrix are extracted. The absolute value of each entry `W_ij` represents the inferred regulatory strength of gene `j` (regulator) on gene `i` (target) [28].
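
The structural layer and its sparsity penalty can be illustrated in isolation with a linear SEM fitted by proximal gradient descent. This sketch is not DeepSEM: it drops the encoder, decoder, and KL term entirely and keeps only the relation X ≈ XW^T with an L1 penalty on `W` and a zero diagonal. The function names, the optimizer, and the toy data are assumptions made for illustration.

```python
import numpy as np

def soft_threshold(M, t):
    """Proximal operator of the L1 norm."""
    return np.sign(M) * np.maximum(np.abs(M) - t, 0.0)

def sparse_linear_sem(X, alpha=0.05, steps=500):
    """Minimize (1/2n) * ||X - X W^T||_F^2 + alpha * ||W||_1 with diag(W) = 0.

    X is a (n_cells, n_genes) matrix; W[i, j] is the weight of regulator j on target i.
    """
    n, g = X.shape
    X = (X - X.mean(axis=0)) / X.std(axis=0)      # standardize each gene
    W = np.zeros((g, g))
    lr = n / np.linalg.norm(X, 2) ** 2            # 1 / Lipschitz constant of the smooth term
    for _ in range(steps):
        resid = X - X @ W.T
        grad = -(resid.T @ X) / n
        W = soft_threshold(W - lr * grad, lr * alpha)
        np.fill_diagonal(W, 0.0)                  # a gene may not regulate itself
    return W

# Toy data: gene 1 depends on gene 0; gene 2 is independent.
rng = np.random.default_rng(0)
g0 = rng.normal(size=1000)
g1 = 0.9 * g0 + 0.3 * rng.normal(size=1000)
g2 = rng.normal(size=1000)
W = sparse_linear_sem(np.column_stack([g0, g1, g2]))
edge_strength = np.abs(W)                         # read out as in the GRN inference step
```

As in the full model, larger `alpha` pushes more entries of `W` to exactly zero, which is how the L1 term controls network sparsity.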

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Implementing GRN Inference Methods

| Resource / Reagent | Function / Description | Example Use Case |

|---|---|---|

| scRNA-seq Data (e.g., from 10X Genomics, Smart-seq2) | Provides the input gene expression matrix at single-cell resolution, capturing cellular heterogeneity. | Essential for all methods, particularly DeepSEM which is designed for single-cell data [30] [31]. |

| Bulk RNA-seq / Microarray Data | Provides the input gene expression matrix from pooled cell populations. | Standard input for GENIE3 and SIRENE on bulk tissue samples [23] [24]. |

| Ground Truth Networks (e.g., from ChIP-seq, eCLIP, STRING) | Provides experimentally validated interactions for training supervised models (SIRENE) and benchmarking inferred networks. | Used as positive examples in SIRENE [27]; used for performance evaluation in benchmarks [29]. |

| Transcription Factor List | A curated list of genes known to function as TFs to constrain the search space for regulators. | Provided as input to GENIE3 to limit potential regulators [23]. |

| Computational Framework (e.g., R, Python, GPU acceleration) | The software and hardware environment required to run computationally intensive model training and inference. | DeepSEM requires PyTorch and GPU resources for efficient training [28]. |

Performance Comparison and Practical Considerations

Benchmarking studies on real single-cell RNA-seq datasets provide practical insights into the performance of these methods. The table below summarizes typical comparative findings.

Table 3: Performance Comparison on scRNA-seq Data

| Method | Reported Performance | Advantages | Limitations & Challenges |

|---|---|---|---|

| GENIE3 | Competitive performance in benchmarks; winner of DREAM challenges [26] [32]. | High scalability and explainability; handles non-linear relationships well [32]. | Cannot distinguish between activation and inhibition; may introduce discontinuities in modeling [32]. |

| SIRENE | Retrieved ~6x more known regulations than other state-of-the-art methods in an E. coli benchmark [27]. | Conceptual simplicity and computational efficiency for each local model [27]. | Requires high-quality known interactions for training; performance depends on negative sample selection [24]. |

| DeepSEM | Shows better performance than most methods on BEELINE benchmarks; runs significantly faster than many [30]. | Models complex, non-linear relationships; end-to-end deep learning framework [23] [30]. | Can be unstable and overfit to dropout noise in single-cell data; quality may degrade after convergence [30] [29]. |

A critical consideration for single-cell data is the "dropout" problem, where an excess of zero values in the expression matrix can hamper inference. Methods like DAZZLE, an extension of the DeepSEM concept, have been developed to address this by using Dropout Augmentation (DA) as a model regularization technique, which improves robustness and stability [30].

The logical relationships and data flow within the DeepSEM architecture are captured in the following diagram.

Diagram 2: DeepSEM Model Architecture

Gene Regulatory Networks (GRNs) are complex computational representations of the interactions between genes and their regulators, such as transcription factors (TFs), which collectively control cellular processes, development, and responses to environmental cues [5] [33] [3]. Reverse engineering, or "deconvoluting," these networks from high-throughput gene expression data is a fundamental challenge in computational biology, crucial for understanding normal cell physiology and complex pathologic phenotypes [34] [35]. Unlike supervised methods that require known regulatory interactions for training, unsupervised learning approaches infer networks directly from the statistical patterns within expression data alone, making them widely applicable, especially in less-characterized biological contexts.

This application note details three influential unsupervised methodologies for GRN inference: ARACNE (Algorithm for the Reconstruction of Accurate Cellular Networks), CLR (Context Likelihood of Relatedness), and GRN-VAE (Gene Regulatory Network-Variational Autoencoder). ARACNE and CLR represent classical information-theoretic methods, while GRN-VAE exemplifies modern deep learning applications. We provide a comparative analysis, detailed experimental protocols, and practical visualization tools to guide researchers and drug development professionals in implementing these methods for their GRN reconstruction projects.

Comparative Analysis of Methods

The following table summarizes the key characteristics, strengths, and weaknesses of ARACNE, CLR, and GRN-VAE, providing a high-level overview to guide method selection.

Table 1: Comparative Overview of ARACNE, CLR, and GRN-VAE

| Feature | ARACNE | CLR | GRN-VAE |

|---|---|---|---|

| Underlying Principle | Information Theory & Mutual Information | Information Theory with Z-score contextualization | Deep Learning & Neural Networks |

| Core Function | Estimates MI, then removes indirect edges using DPI | Calculates MI, then infers network by comparing to background distribution | Uses a graph-aware autoencoder to learn a parameterized adjacency matrix |

| Key Strength | Effectively eliminates a majority of spurious indirect interactions [34] | More robust than pure correlation against false positives from highly expressed genes [5] | Can capture complex, non-linear hierarchical relationships in data [30] [3] |

| Primary Limitation | Asymptotically exact only if network loops are negligible [34] | May still infer some indirect relationships | Can be computationally intensive and requires large datasets for effective training [5] [3] |

| Typical Data Input | Static bulk or single-cell expression profiles [34] [5] | Static bulk or single-cell expression profiles [5] | Single-cell RNA-seq data [30] |

| Scalability | Scalable to mammalian-scale networks [34] | Scalable to mammalian-scale networks | High, but performance is hardware-dependent (benefits from GPUs) [30] |

Detailed Methodologies and Protocols

ARACNE (Algorithm for the Reconstruction of Accurate Cellular Networks)

ARACNE is an information-theoretic algorithm designed to identify direct regulatory interactions by eliminating the majority of indirect connections inferred by co-expression methods [34] [35]. Its theoretical foundation rests on modeling the joint probability distribution (JPD) of gene expressions using a Markov Random Field framework, where a statistical interaction is considered direct if and only if the corresponding potential in the JPD expansion is non-zero [34].

Experimental Protocol

Table 2: Key Research Reagents and Computational Tools for ARACNE

| Item Name | Function/Description | Example/Format |

|---|---|---|

| Gene Expression Matrix | Primary input data. Rows represent samples/cells, columns represent genes. | Normalized count matrix (e.g., TMM, TPM) |

| Transcription Factor List | A list of gene identifiers annotated as TFs. Used to constrain the DPI application. | Text file with one gene ID per line |

| MI Threshold | Used to filter out statistically non-significant MI values. | Can be a pre-defined value or derived from a p-value via bootstrapping |

| DPI Tolerance (ε) | A small value to account for MI estimation errors when applying the DPI. | Typical value: 0.05-0.15 |

Workflow Steps:

- Input Data Preprocessing: Provide a normalized gene expression matrix. Optionally, provide a list of known transcription factors (TFs).

- Mutual Information Estimation: For all gene pairs (i, j), compute the Mutual Information, MI(i, j), which measures the degree of dependency between their expression profiles. ARACNE typically uses adaptive partitioning to estimate MI [36].

- Statistical Thresholding: Remove all edges for which MI(i, j) < I0, where the threshold I0 can be defined by the user or determined empirically from a desired p-value using a null distribution of MI generated via bootstrapping [34] [36].

- Data Processing Inequality (DPI): For every candidate triplet of genes (i, j, k), the DPI is applied. The least significant edge in the triplet is removed if MI(i, j) ≤ min[MI(i, k), MI(j, k)] - ε, where ε is a small tolerance value. This step eliminates the weakest edge in triangles, which likely represents an indirect interaction [34]. If a TF list is provided, DPI is applied such that a connection between a TF and its target is not removed by an intermediate gene that is not a TF [36].

- Network Output: The algorithm produces an adjacency matrix file, which can be used for network visualization and analysis in tools like Cytoscape [36].
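The workflow steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not the reference ARACNE implementation: the histogram-based MI estimator stands in for adaptive partitioning, the TF-list constraint is omitted, and the function names (`mutual_information`, `mi_matrix`, `aracne_prune`) and the default `bins` and `eps` values are assumptions made for the example. The DPI rule is written with the subtractive tolerance exactly as stated in the text.

```python
import numpy as np

def mutual_information(x, y, bins=8):
    """Plug-in MI estimate (in nats) from a 2D histogram.

    Assumption: equal-width binning, used here as a simple stand-in
    for the adaptive partitioning estimator mentioned in the text.
    """
    joint, _, _ = np.histogram2d(x, y, bins=bins)
    pxy = joint / joint.sum()
    px = pxy.sum(axis=1, keepdims=True)   # marginal of x, shape (bins, 1)
    py = pxy.sum(axis=0, keepdims=True)   # marginal of y, shape (1, bins)
    nz = pxy > 0                          # skip empty cells to avoid log(0)
    return float(np.sum(pxy[nz] * np.log(pxy[nz] / (px @ py)[nz])))

def mi_matrix(expr, bins=8):
    """expr: samples x genes. Returns a symmetric genes x genes MI matrix."""
    n_genes = expr.shape[1]
    mi = np.zeros((n_genes, n_genes))
    for i in range(n_genes):
        for j in range(i + 1, n_genes):
            mi[i, j] = mi[j, i] = mutual_information(expr[:, i], expr[:, j], bins)
    return mi

def aracne_prune(mi, threshold, eps=0.1):
    """Threshold the MI matrix, then apply the DPI to every closed triplet."""
    adj = np.where(mi >= threshold, mi, 0.0)
    np.fill_diagonal(adj, 0.0)
    remove = np.zeros(adj.shape, dtype=bool)
    n = adj.shape[0]
    for i in range(n):
        for j in range(i + 1, n):
            if adj[i, j] == 0:
                continue
            for k in range(n):
                # Only triplets in which all three edges survived thresholding.
                if k in (i, j) or adj[i, k] == 0 or adj[j, k] == 0:
                    continue
                if adj[i, j] <= min(adj[i, k], adj[j, k]) - eps:
                    remove[i, j] = remove[j, i] = True
                    break
    # Edges are marked first and removed together, so the result does not
    # depend on the order in which triplets are visited.
    adj[remove] = 0.0
    return adj
```

Calling `aracne_prune(mi_matrix(expr), threshold=I0)` on a samples-by-genes array `expr` returns a weighted, symmetric adjacency matrix. The triple loop is cubic in the number of genes, so this sketch is only practical for small gene sets.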

Figure 1: ARACNE algorithm workflow.

CLR (Context Likelihood of Relatedness)

The CLR algorithm is an extension of basic mutual information methods. It aims to reduce false positives by accounting for the background distribution of MI for each gene. CLR is an established method that is routinely included in benchmarks, and its core principle is well documented [5] [30]; the workflow below is therefore presented in generalized form rather than as a step-by-step protocol.

Core Principle: CLR calculates a Z-score for the MI between each gene pair (i, j) relative to the empirical distribution of MI values for gene i and gene j individually. This step contextualizes the MI score, making the method more robust to inherent variations in the connectivity and expression levels of different genes.

Generalized Workflow:

- Mutual Information Matrix: Compute the full pairwise MI matrix from the gene expression data, similar to ARACNE's first step.

- Background Distribution Estimation: For each gene i, define a background distribution of its MI values with all other genes in the network.

- Z-score Calculation: For each gene pair (i, j), calculate the combined Z-score z_ij = sqrt(z_i² + z_j²), where z_i is the Z-score of MI(i, j) within the distribution of gene i's MIs, and z_j is the Z-score of MI(i, j) within the distribution of gene j's MIs.

- Network Inference: The final network is derived by thresholding this matrix of Z-scores, which represents the context-adjusted strength of the regulatory relationship.
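The generalized workflow above can be illustrated with a short NumPy sketch that starts from a precomputed MI matrix. The function name `clr_scores` is hypothetical, and clipping negative Z-scores to zero is an assumption borrowed from common CLR implementations rather than something stated in the text.

```python
import numpy as np

def clr_scores(mi):
    """Context-adjusted scores from a symmetric genes x genes MI matrix."""
    mi = np.asarray(mi, dtype=float)
    off_diag = ~np.eye(mi.shape[0], dtype=bool)
    # Background distribution of gene i: its MI with every other gene.
    background = np.where(off_diag, mi, np.nan)
    mu = np.nanmean(background, axis=1)
    sigma = np.nanstd(background, axis=1)
    sigma = np.where(sigma > 0, sigma, 1.0)    # guard against zero variance
    # z[i, j] is the Z-score of MI(i, j) within gene i's background.
    z = (mi - mu[:, None]) / sigma[:, None]
    z = np.maximum(z, 0.0)                     # assumption: clip negatives to 0
    # z.T[i, j] is the Z-score of the same pair within gene j's background.
    scores = np.sqrt(z ** 2 + z.T ** 2)
    np.fill_diagonal(scores, 0.0)
    return scores
```

Thresholding the returned matrix, for example keeping entries above a chosen cutoff, gives the final network described in the last workflow step.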

GRN-VAE and the DAZZLE Framework

GRN-VAE refers to a class of methods that use Variational Autoencoders to infer GRNs. These are deep generative models that learn a low-dimensional representation of the expression data while simultaneously inferring the underlying network structure. DAZZLE is a robust and stabilized variant of a VAE-based GRN inference method, specifically designed to handle the zero-inflated nature of single-cell RNA-seq (scRNA-seq) data [30].

Experimental Protocol

Table 3: Key Research Reagents and Computational Tools for GRN-VAE/DAZZLE

| Item Name | Function/Description | Example/Format |

|---|---|---|

| scRNA-seq Count Matrix | Primary input data. Rows represent cells, columns represent genes. | Raw or log-normalized (log(x+1)) count matrix |

| Graphics Processing Unit (GPU) | Accelerates the training of the deep learning model. | NVIDIA CUDA-enabled GPU |

| Dropout Augmentation (DA) | A regularization technique that adds synthetic dropout noise during training to improve model robustness [30]. | A defined probability of setting random expression values to zero |

| Sparsity Constraint | A loss term that encourages the inferred adjacency matrix to be sparse, reflecting biological reality. | L1-penalty on the adjacency matrix weights |

Workflow Steps (DAZZLE Implementation):

- Input Data Preprocessing: Transform the raw scRNA-seq count matrix X using log(X + 1) to reduce variance and avoid taking the log of zero. The matrix is formatted with rows as cells and columns as genes [30].

- Model Initialization: Initialize the VAE model, which includes an encoder network, a decoder network, and a randomly initialized, parameterized adjacency matrix A that represents the GRN to be learned.

- Dropout Augmentation (DA): During each training iteration, augment the input data by setting a small, random subset of the non-zero expression values to zero. This simulates additional dropout noise and regularizes the model, preventing overfitting to the specific dropout pattern in the original data [30].

- Model Training (Autoencoding): The model is trained to reconstruct its input. The encoder maps the input expression data to a latent representation Z. The decoder uses this representation Z and the adjacency matrix A to reconstruct the expression data. The training objective is to minimize the reconstruction error while applying a sparsity constraint on A.

- Adjacency Matrix Extraction: After training convergence, the weights of the optimized adjacency matrix A are retrieved. The absolute values of these weights represent the confidence or strength of the directed regulatory interactions between genes [30].

- Network Output: The finalized adjacency matrix is thresholded to obtain a binary or weighted GRN for downstream analysis.
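The data-handling parts of this workflow can be sketched without a deep learning framework. The example below is not the DAZZLE code base: it covers only the log transform, dropout augmentation, the sparsity-penalized objective, and adjacency extraction, and leaves out the encoder and decoder networks entirely. All function names and the mean-squared-error reconstruction term are assumptions made for illustration.

```python
import numpy as np

def preprocess(counts):
    """log(X + 1) transform of a cells x genes count matrix."""
    return np.log1p(counts)

def dropout_augment(x, rate, rng):
    """Return a copy of x with a random fraction of non-zero entries zeroed.

    Intended to be called once per training iteration, so the model sees
    a different synthetic dropout pattern each time.
    """
    x_aug = x.copy()
    drop = (x_aug != 0) & (rng.random(x_aug.shape) < rate)
    x_aug[drop] = 0.0
    return x_aug

def sparse_reconstruction_loss(x, x_hat, adjacency, l1_weight):
    """Reconstruction error plus an L1 penalty on the adjacency weights.

    Assumption: mean squared error stands in for the model's actual
    reconstruction term; x_hat would come from the decoder.
    """
    mse = np.mean((x - x_hat) ** 2)
    return float(mse + l1_weight * np.abs(adjacency).sum())

def extract_network(adjacency, threshold):
    """Absolute weights as edge strengths; self-loops and weak edges dropped."""
    weights = np.abs(np.asarray(adjacency, dtype=float))
    np.fill_diagonal(weights, 0.0)
    return np.where(weights >= threshold, weights, 0.0)
```

In a training loop, `dropout_augment` would be applied to each batch before it is passed to the encoder, while the loss is still computed against the unaugmented batch or the augmented one depending on the design; the text does not specify which, so that choice is left open here.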

Figure 2: GRN-VAE/DAZZLE algorithm workflow.

Performance Benchmarks and Applications

Performance benchmarking of GRN inference methods is often conducted on synthetic networks where the true interactions are known, using metrics like the Area Under the Precision-Recall Curve (AUPRC) [30] [37].
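As a concrete illustration of this metric, the sketch below scores a predicted adjacency matrix against a known synthetic network. It uses average precision, a common estimator of AUPRC, computed over all directed off-diagonal gene pairs; the function name `auprc` and the choice to exclude self-loops are assumptions for the example.

```python
import numpy as np

def auprc(true_adj, score_adj):
    """Average precision of predicted edge scores against a known network."""
    true_adj = np.asarray(true_adj)
    score_adj = np.asarray(score_adj, dtype=float)
    mask = ~np.eye(true_adj.shape[0], dtype=bool)   # ignore self-loops
    labels = true_adj[mask].astype(bool)
    scores = score_adj[mask]
    if not labels.any():
        raise ValueError("the reference network contains no edges")
    # Rank candidate edges from highest to lowest score.
    order = np.argsort(-scores, kind="stable")
    labels = labels[order]
    true_positives = np.cumsum(labels)
    precision = true_positives / np.arange(1, labels.size + 1)
    # Mean of the precision values at the rank of each true edge.
    return float(precision[labels].sum() / labels.sum())
```

Tied scores are ranked in their original order here, so methods that output many identical scores should be compared with some care.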

Table 4: Example Performance Benchmarks on Synthetic Data

| Method Category | Example Method | Reported Performance (qualitative) | Notes / Context |

|---|---|---|---|

| Information-Theoretic | ARACNE | Low error rates on synthetic benchmarks [34] | Outperformed Relevance Networks and Bayesian Networks on its original synthetic dataset [34]. |

| Deep Learning (VAE-based) | DeepSEM | Performance degrades after overfitting [30] | Served as a baseline for DAZZLE development. |

| Deep Learning (VAE-based) | DAZZLE | Superior and more stable than DeepSEM [30] | Improved robustness and stability due to Dropout Augmentation. |

| Deep Learning (Diffusion-based) | DigNet | Superior AUPRC vs. 13 other methods [37] | Example of a state-of-the-art method outperforming established tools. |

In practical applications, these methods have proven valuable in biological discovery. For instance, ARACNE was successfully used to infer validated transcriptional targets of the c-MYC proto-oncogene in human B cells, demonstrating its utility in identifying potential therapeutic targets in cancer [34] [35] [36]. Similarly, advanced models like DAZZLE have been applied to elucidate expression dynamics in complex systems, such as microglial cells across the mouse lifespan [30].

Unsupervised learning methods for GRN reconstruction are powerful tools for the de novo discovery of regulatory interactions. ARACNE remains a robust, information-theoretic choice for identifying direct interactions, particularly when a list of potential transcription factors is available. CLR offers a solid alternative that improves upon simple correlation or MI by accounting for network context. For researchers working with large-scale single-cell data and seeking to capture complex, non-linear relationships, modern deep learning approaches like GRN-VAE and its advanced derivatives such as DAZZLE represent the cutting edge, albeit with higher computational resource requirements.

Method selection should be guided by the specific biological question, data type (bulk vs. single-cell), and available computational resources. As the field progresses, the integration of these methods with multi-omic data and the development of more robust, scalable algorithms will further enhance our ability to unravel the complex wiring of the cell.

Gene Regulatory Network (GRN) reconstruction is a fundamental challenge in computational biology, essential for understanding the complex interactions that control cellular functions, development, and disease mechanisms [38]. The advent of high-throughput sequencing technologies has generated vast amounts of gene expression data, creating an urgent need for sophisticated computational methods capable of deciphering the intricate regulatory relationships between transcription factors (TFs) and their target genes [39]. Traditional statistical and machine learning approaches often struggle to capture the nonlinear, high-dimensional, and hierarchical nature of these relationships.

Deep learning architectures have emerged as powerful tools for GRN inference, offering significant advantages in processing complex biological data [5]. This application note provides a comprehensive overview of four key deep learning architectures—Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs), Graph Neural Networks (GNNs), and Transformers—in the context of GRN modeling. We present detailed protocols, performance comparisons, and practical implementation guidelines to assist researchers in selecting and applying these methods effectively.

Core Architectural Applications

Convolutional Neural Networks (CNNs): Applied to extract spatial features from gene expression data. Some methods, such as CNNC, transform expression profiles into image-like histograms for processing, while others use 1D-CNNs to capture patterns directly from expression vectors [39] [40]. CNNs excel at identifying local regulatory patterns and motifs in the data.

Recurrent Neural Networks (RNNs): Primarily utilized for analyzing time-series gene expression data. RNNs, including Long Short-Term Memory (LSTM) networks, model temporal dependencies and dynamic regulatory processes, capturing how gene expression changes over time and responds to perturbations [5].

Graph Neural Networks (GNNs): Directly model GRNs as graph structures, where nodes represent genes and edges represent regulatory interactions. GNNs use message-passing mechanisms to aggregate information from neighboring nodes, learning gene embeddings that incorporate network topology [39] [41] [38]. Variants like Graph Convolutional Networks (GCNs) and Graph Attention Networks (GATs) are particularly prevalent.

Transformers: Increasingly applied to GRN inference through Graph Transformer models. These models use self-attention mechanisms to capture global dependencies between all genes in a network, overcoming limitations of local message-passing in GNNs and effectively modeling long-range regulatory interactions [42] [40] [38].
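The contrast between local message passing and global self-attention can be made concrete with a small NumPy sketch. It shows one graph-convolution step with symmetric normalization and one single-head self-attention step over gene embeddings. Neither function is taken from any of the cited methods; the names, the ReLU activation, and the assumption of a symmetric, non-negative adjacency matrix are illustrative choices.

```python
import numpy as np

def gcn_layer(adj, features, weight):
    """One message-passing step: ReLU(D^-1/2 (A + I) D^-1/2 H W).

    adj: genes x genes adjacency (assumed symmetric and non-negative).
    features: genes x d_in gene embeddings H; weight: d_in x d_out matrix W.
    """
    a_hat = adj + np.eye(adj.shape[0])            # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    norm = a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    # Each gene aggregates only from itself and its direct neighbors.
    return np.maximum(norm @ features @ weight, 0.0)

def self_attention(features, w_q, w_k, w_v):
    """Single-head scaled dot-product attention across all genes."""
    q, k, v = features @ w_q, features @ w_k, features @ w_v
    logits = q @ k.T / np.sqrt(k.shape[1])
    logits = logits - logits.max(axis=1, keepdims=True)   # numerical stability
    attn = np.exp(logits)
    attn = attn / attn.sum(axis=1, keepdims=True)
    # Every gene attends to every other gene, regardless of graph distance.
    return attn @ v, attn
```

In the graph-convolution step, changing the embedding of one gene affects only that gene and its direct neighbors after a single layer, whereas the attention weights in `self_attention` connect every pair of genes in one step. This is the sense in which attention captures global dependencies.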

Performance Comparison

Table 1: Comparative performance of deep learning architectures in GRN inference

| Architecture | Representative Methods | Key Strengths | Common Datasets | Reported Performance |

|---|---|---|---|---|

| CNN | CNNC, DeepDRIM, CNNGRN | Captures local spatial features; Handles image-like data representations [40] [38] | DREAM5, BEELINE | Effective for histogram-based representations but may introduce noise [40] |

| RNN | LSTM-based models | Models temporal dynamics; Captures time-delayed regulations [5] | Time-series expression data | Suitable for developmental processes and time-course experiments |

| GNN | GCNG, GNNLink, scSGL, AutoGRN | Incorporates network topology; Learns from graph-structured data [41] [40] [38] | DREAM5, BEELINE benchmarks | GNNLink: AUROC improvement (~7.3%) and AUPRC improvement (~30.7%) reported over baselines [38] |

| Transformer | GT-GRN, AttentionGRN, GRLGRN | Captures global dependencies; Mitigates over-smoothing [42] [40] [38] | BEELINE (hESC, hHEP, mDC, mESC) | AttentionGRN: State-of-the-art performance across 88 datasets [40] |

Experimental Protocols and Methodologies

Data Preprocessing and Feature Extraction

Protocol 1: Standardized scRNA-seq Data Processing

- Data Collection: Retrieve raw sequencing data in FASTQ format from public repositories such as the Sequence Read Archive (SRA) [5].

- Quality Control: Process raw reads using Trimmomatic (v0.38) to remove adapter sequences and low-quality bases. Assess quality with FastQC [5].

- Alignment and Quantification: Align trimmed reads to the appropriate reference genome using STAR (v2.7.3a). Generate gene-level raw read counts with CoverageBed [5].