Machine Learning for Gene Regulatory Network Reconstruction: A Comparative Analysis of Methods, Challenges, and Future Directions

Abstract

The reconstruction of Gene Regulatory Networks (GRNs) is fundamental for understanding cellular identity, disease mechanisms, and therapeutic target discovery. This article provides a comprehensive comparative analysis of machine learning (ML) approaches for GRN inference, tailored for researchers, scientists, and drug development professionals. We explore the foundational principles of GRNs and the evolution of data from bulk to single-cell multi-omics technologies. The review systematically contrasts a wide array of methodologies, from traditional statistical models to advanced deep learning and hybrid frameworks, addressing key computational challenges and optimization strategies. Furthermore, we critically examine validation techniques and performance benchmarks, synthesizing insights into the relative strengths and practical applications of different ML approaches. This analysis aims to serve as a guide for selecting appropriate methods and to illuminate promising future research avenues at the intersection of computational biology and biomedicine.

GRN Foundations and the Data Revolution: From Bulk to Single-Cell Multi-Omics

Gene Regulatory Networks (GRNs) are foundational to understanding cellular identity and function. They are interpretable graph models that represent the complex web of causal interactions between transcription factors (TFs) and their target genes, a process fundamentally directed by cis-regulatory elements (CREs) and reflected in cellular dynamics [1] [2]. The reconstruction of these networks is a central challenge in systems biology, vital for elucidating the mechanisms of cell fate decisions, development, and disease etiology [1]. Recent advances in single-cell multi-omics technologies have revolutionized this field, enabling the inference of GRNs at unprecedented resolution and facilitating a new era of comparative analysis for machine learning approaches in GRN reconstruction [1] [3].

Methodological Foundations of GRN Inference

The computational inference of GRNs relies on diverse mathematical and statistical principles to move from correlative observations to causal predictions. These methodologies can be broadly categorized as follows:

- Correlation and Information Theory-Based Approaches: These early methods operate on the "guilt-by-association" principle, inferring potential regulatory relationships through measures like Pearson's correlation or mutual information. While computationally efficient, they struggle to distinguish direct from indirect interactions [1] [4].

- Regression Models: These methods model gene expression as a response variable predicted by the expression or accessibility of TFs and CREs. Penalized regression techniques, such as LASSO, are often employed to handle the high dimensionality of omics data and prevent overfitting by shrinking less important coefficients to zero [1] [5].

- Machine Learning and Deep Learning Approaches: This category includes a wide range of algorithms from tree-based ensembles like Random Forest (GENIE3) to sophisticated deep learning models. More recently, hybrid models that combine, for example, convolutional neural networks (CNNs) with traditional machine learning, have shown superior performance by leveraging the feature extraction power of deep learning alongside the interpretability of classical algorithms [6] [4].

- Probabilistic and Dynamical Systems Models: Probabilistic models, such as Dynamic Bayesian Networks, aim to model the dependence between variables, while dynamical systems approaches use differential equations to model the evolution of gene expression over time. These methods are particularly powerful for capturing the temporal dynamics inherent in biological processes [1] [4].
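The limitation noted for correlation-based methods, that they cannot separate direct from indirect interactions, can be illustrated with a small simulation. The sketch below is a toy example rather than any published tool: the cascade `tf_a -> gene_b -> gene_c`, the noise level, and the use of a first-order partial correlation as a remedy are all assumptions made for illustration.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def partial_corr(x, y, z):
    """Correlation of x and y after removing the linear effect of z."""
    r_xy, r_xz, r_yz = pearson(x, y), pearson(x, z), pearson(y, z)
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))


def simulate_chain(n_samples=500, noise=0.3, seed=0):
    """Toy cascade tf_a -> gene_b -> gene_c (hypothetical gene names)."""
    rng = random.Random(seed)
    tf_a = [rng.gauss(0, 1) for _ in range(n_samples)]
    gene_b = [a + noise * rng.gauss(0, 1) for a in tf_a]
    gene_c = [b + noise * rng.gauss(0, 1) for b in gene_b]
    return tf_a, gene_b, gene_c


tf_a, gene_b, gene_c = simulate_chain()
# tf_a has no direct effect on gene_c, yet the two are strongly correlated.
print("corr(tf_a, gene_c):", round(pearson(tf_a, gene_c), 3))
# Conditioning on the intermediate gene removes most of that association.
print("partial corr given gene_b:", round(partial_corr(tf_a, gene_c, gene_b), 3))
```

A plain correlation ranking would report `tf_a -> gene_c` as a strong edge. Conditioning on the intermediate gene is one simple way to expose it as indirect, which is the kind of correction that regression and probabilistic models build in more systematically.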

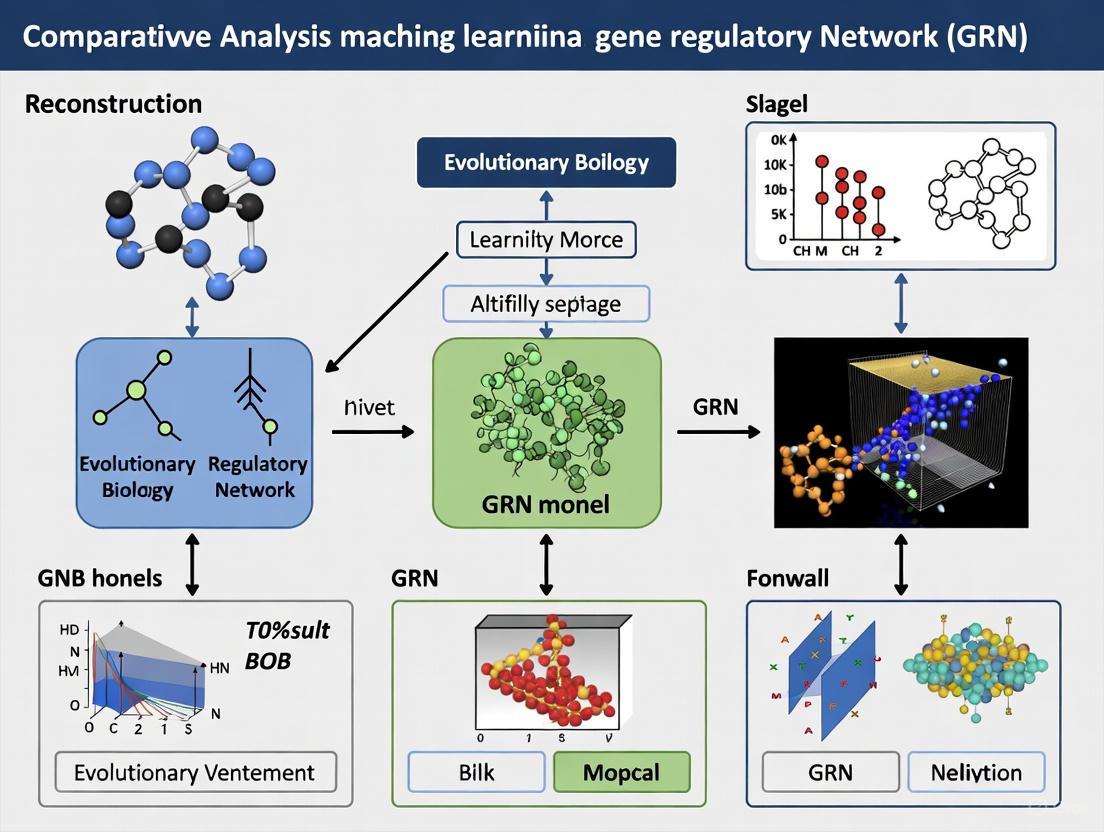

The following diagram illustrates the logical workflow and relationship between these key methodological families in GRN inference.

Diagram: A workflow of GRN inference methodologies, showing how different computational approaches are applied to omics data to reconstruct gene regulatory networks.

Comparative Analysis of Machine Learning Approaches

The performance of GRN inference methods is rigorously evaluated based on their accuracy, scalability, and ability to identify true TF-target relationships. The table below summarizes a comparative analysis of selected methods, highlighting their core algorithms and applications.

Table 1: Comparison of Selected GRN Inference Tools and Methods

| Tool/Method | Core Algorithm | Data Input | Key Features | Applications |
|---|---|---|---|---|
| SCENIC/pySCENIC [3] [2] | GENIE3 (Random Forest) + RcisTarget | scRNA-seq | Infers co-expression modules and refines them with TF motif analysis to identify regulons. | Cell identity regulation; widely used for single-cell GRN mapping. |
| TGPred [6] | Hybrid CNN + Machine Learning | Bulk RNA-seq | Hybrid model integrating deep feature extraction with ML classification; suitable for static data. | Identifying regulators in plant lignin biosynthesis pathways. |
| Inferelator [1] [4] | Sparse Regression | Time-series RNA-seq, ATAC-seq | Infers environmental gene regulatory influence networks (EGRINs) from dynamic data. | Modeling plant responses to environmental stresses like heat and drought. |
| DIRECT-NET [3] | Non-linear Modeling | scATAC-seq (paired or integrated) | Infers GRNs from scATAC-seq data alone, capturing non-linear relationships. | Cell type-specific network inference from epigenomic data. |
| GENIST [4] | Dynamic Bayesian Network | Time-series scRNA-seq | Models temporal dynamics and causal relationships in time-series data. | Inferring GRNs in Arabidopsis root stem cells. |

Recent benchmark results underscore the performance gains of advanced methodologies. In a 2025 study, a hybrid model combining Convolutional Neural Networks (CNNs) with traditional machine learning was benchmarked against other methods for predicting TF-target relationships in Arabidopsis thaliana, poplar, and maize [6]. The hybrid approach reported the highest accuracy of the method categories compared, as summarized in Table 2.

Table 2: Performance Comparison of GRN Inference Methods on Plant Transcriptomic Data [6]

| Method Category | Example Method | Reported Accuracy | Key Strengths | Limitations |
|---|---|---|---|---|
| Traditional ML | GENIE3 (Random Forest) | ~70-85% (varies by dataset) | Good interpretability, robust to noise. | Struggles with high-dimensional, non-linear data. |
| Statistical | LASSO Regression | ~65-80% (varies by dataset) | Computational efficiency, provides sparse solutions. | Assumes linear relationships; can be unstable with correlated features. |
| Deep Learning | CNN-based Model | ~85-92% | Captures complex, non-linear hierarchical relationships. | High computational demand; requires very large datasets. |
| Hybrid (ML+DL) | CNN + ML Ensemble | >95% [6] | Combines high accuracy of DL with interpretability of ML; effective on imbalanced data. | Model complexity; can be challenging to implement and optimize. |

Experimental Protocols and Validation

Validating computationally inferred GRNs is a critical step that relies on integrating multiple lines of experimental evidence. A standard workflow for a comprehensive GRN study, as employed in platforms like scGRN, involves several key stages [2]:

- Data Acquisition and Preprocessing: Single-cell RNA-seq (scRNA-seq) and single-cell ATAC-seq (scATAC-seq) data are collected from public repositories like NCBI GEO. Quality control is performed using tools like Seurat and Signac to remove low-quality cells. Gene expression matrices are normalized, and chromatin accessibility matrices are transformed using the TF-IDF method [2].

- Cell Clustering and Annotation: Cells are clustered based on their expression or accessibility profiles. Cell types are then annotated using automated tools like SingleR, which compares clusters to reference datasets [2].

- GRN Inference: The pySCENIC pipeline is a commonly used protocol. It involves two major steps:

  - Co-expression Module Identification: Using GRNBoost2 or GENIE3, potential TF-target relationships are identified based on co-expression patterns within the scRNA-seq data.

  - Regulon Pruning with Motif Analysis: The initial co-expression modules are refined using RcisTarget, which scans the DNA sequence around a gene's transcription start site (e.g., within a 10 kb window) for enriched TF binding motifs. Modules are retained as regulons of direct targets when the motif enrichment reaches a Normalized Enrichment Score (NES) > 3.0 [2].

- Experimental Validation:

  - Yeast One-Hybrid (Y1H) Assay: Used to physically confirm the binding of a predicted TF to the promoter region of its target gene [6] [4].

  - Chromatin Immunoprecipitation Sequencing (ChIP-seq): Provides genome-wide, high-resolution mapping of TF binding sites, offering strong evidence for direct regulatory interactions [6] [4].

  - Functional Assays in Mutants: Analyzing gene expression changes in TF knockout or knock-down mutants (e.g., via RNA-seq) can confirm the regulatory influence of the TF on its predicted target genes [4].
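The NES cutoff used in the regulon pruning step can be sketched as follows. This is a minimal illustration, not the RcisTarget or pySCENIC code: it assumes that the NES of a motif is its recovery AUC standardized against the AUC distribution of all motifs, and the motif names and AUC values are hypothetical.

```python
import statistics


def normalized_enrichment_scores(auc_by_motif):
    """Assumed definition: NES = (AUC - mean AUC) / sd of AUC over all motifs."""
    values = list(auc_by_motif.values())
    mean_auc = statistics.mean(values)
    sd_auc = statistics.stdev(values)
    return {motif: (auc - mean_auc) / sd_auc for motif, auc in auc_by_motif.items()}


def prune_motifs(auc_by_motif, nes_threshold=3.0):
    """Return the motifs whose NES exceeds the threshold, sorted by name."""
    nes = normalized_enrichment_scores(auc_by_motif)
    return sorted(motif for motif, score in nes.items() if score > nes_threshold)


# Hypothetical AUC values: 19 background motifs and one strongly enriched motif.
example_auc = {f"background_motif_{i}": 0.020 + 0.001 * (i % 5) for i in range(19)}
example_auc["motif_for_TF_X"] = 0.10

print(prune_motifs(example_auc))
```

Because the score is relative to the whole motif collection, the same AUC can pass or fail the cutoff depending on how many motifs are scored and how variable their AUCs are. That is one reason the threshold is best treated as a convention, not a universal constant.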

The following diagram illustrates this integrated computational and experimental workflow.

Diagram: The standard GRN inference and validation workflow, from raw data processing to computational inference and final experimental verification.

The Scientist's Toolkit: Research Reagent Solutions

The reconstruction and validation of GRNs rely on a suite of key reagents and computational resources. The following table details essential tools for researchers in this field.

Table 3: Key Research Reagents and Resources for GRN Studies

| Resource / Reagent | Type | Function in GRN Research | Example |
|---|---|---|---|
| Cis-Regulatory Element Databases | Data Resource | Provide annotations of promoter, enhancer, and other regulatory regions for motif enrichment analysis. | RcisTarget databases (e.g., for 500 bp upstream or 10 kb around TSS) [2]. |
| TF Motif Annotations | Data Resource | Collections of known DNA binding specificities for TFs, used to link open chromatin regions to potential regulators. | Motif collections from JASPAR or TRANSFAC used by tools like RcisTarget [2]. |
| Validated TF-Target Interactions | Data Resource | Curated databases of known regulatory interactions used for training supervised models and benchmarking. | TRRUST (literature-curated), hTFtarget (integrates ChIP-seq) [2]. |
| GRN Platform | Software/Web Resource | Integrative platforms that catalog pre-computed GRNs and provide online analysis tools. | scGRN (hosts cell type-specific networks), GRAND (sample-specific networks) [2]. |
| Yeast One-Hybrid System | Experimental Reagent | A high-throughput method to experimentally validate physical binding of a TF to a specific DNA sequence in vivo. | Used to confirm TF-promoter interactions predicted by tools like TGPred [6]. |
| ChIP-seq Antibodies | Experimental Reagent | Antibodies specific to TFs or histone modifications for immunoprecipitation in ChIP-seq assays. | Critical for generating genome-wide maps of TF binding sites for validation [6]. |

Future Directions and Challenges

The field of GRN inference is rapidly evolving, with several promising and necessary future directions emerging:

- Cross-Species Transfer Learning: A significant challenge in non-model species is the lack of large, curated datasets for training. Transfer learning, where a model trained on a data-rich species (e.g., Arabidopsis) is applied to a data-scarce species (e.g., poplar or maize), has shown great promise in improving prediction accuracy and feasibility [6].

- Integration of Multi-Omic Data: While current methods leverage transcriptome and chromatin accessibility, future approaches will more deeply integrate additional data layers, such as chromatin conformation (Hi-C) and protein-protein interactions, to build more comprehensive and accurate models of regulation [1] [7].

- Improving Interpretability and Scalability: As deep learning models become more complex, ensuring they remain interpretable to biologists is crucial. Furthermore, methods must continue to scale efficiently to handle the growing size of single-cell and multi-omics datasets [5] [6].

- From Static to Dynamic Networks: There is a growing emphasis on inferring GRNs that capture dynamic changes across time, such as during disease progression or developmental processes, moving beyond static snapshots of regulation [4] [8].

In conclusion, the reconstruction of Gene Regulatory Networks has been profoundly advanced by machine learning and single-cell multi-omics technologies. The comparative analysis reveals that no single method is universally superior; the choice depends on the biological question, data type, and required scalability. Hybrid and transfer learning approaches are among the most promising, combining strong reported performance with cross-species applicability. As these tools mature, they are expected to yield deeper insights into regulatory logic and to accelerate discovery in basic biology and drug development.

The reconstruction of Gene Regulatory Networks (GRNs) is a cornerstone of modern systems biology, essential for elucidating the molecular mechanisms that control cellular functions, responses, and diseases. The accuracy of these models is profoundly influenced by the quality and nature of the transcriptomic data from which they are inferred. Over the past two decades, the technologies for generating gene expression data have evolved dramatically, progressing from hybridization-based microarrays to sequencing-based RNA-seq, and more recently, to the single-cell revolution. This evolution has expanded the scope and resolution of biological questions we can address, while simultaneously introducing new computational challenges and opportunities for machine learning. This guide compares three key technologies, namely microarrays, RNA-seq, and single-cell RNA-seq (scRNA-seq), focusing on their experimental protocols, data characteristics, and their implications for GRN reconstruction, particularly within the framework of machine learning approaches.

Technology Comparison: Microarray vs. RNA-seq

The transition from microarrays to RNA-seq represents a significant leap in transcriptomic profiling capabilities. The table below summarizes a direct comparative study of these platforms.

Table 1: Quantitative comparison of Microarray and RNA-seq performance in a concentration-response study [9].

| Feature | Microarray (PrimeView) | RNA-seq (Illumina) | Impact on GRN Studies |
|---|---|---|---|
| Detection Principle | Hybridization to predefined probes | Sequencing and counting of aligned reads | RNA-seq can identify novel TFs and isoforms not present on arrays |
| Dynamic Range | Limited (~10³), signal saturation at high end | Wide (>10⁵), digital read counts [10] | RNA-seq provides more accurate expression levels for highly expressed TFs |
| Sensitivity / Specificity | Lower sensitivity for low-abundance transcripts | Higher sensitivity and specificity [10] | Better detection of weakly expressed regulatory genes |
| Differentially Expressed Genes (DEGs) | Fewer DEGs identified | Larger numbers of DEGs with wider dynamic ranges [9] | Potentially more candidate genes for GRN inference |
| Transcript Coverage | Limited to known, predefined transcripts | Can detect novel transcripts, splice variants, non-coding RNAs [9] [10] | Enables construction of more comprehensive networks including non-coding regulators |
| Final Output (tPoD) | Equivalent pathway identification and tPoD values | Equivalent pathway identification and tPoD values [9] | For some traditional outputs, the platforms can yield similar conclusions |

Despite RNA-seq's technical advantages in dynamic range and novel transcript detection, a 2025 comparative study on cannabinoids found that both platforms revealed similar overall gene expression patterns and, crucially, identified equivalent functional pathways and transcriptomic points of departure (tPoD) through gene set enrichment analysis (GSEA) [9]. This suggests that for traditional applications like mechanistic pathway identification, microarray data, with its lower cost, smaller data size, and well-established analysis pipelines, remains a viable choice [9].

Detailed Experimental Protocols

Understanding the foundational experimental protocols is critical for evaluating data quality and its suitability for GRN inference.

Microarray Protocol (GeneChip PrimeView) [9]

- Sample Input: 100 ng of total RNA.

- cDNA Synthesis: Single-stranded cDNA is generated using reverse transcriptase and a T7-linked oligo(dT) primer, followed by conversion to double-stranded cDNA.

- IVT and Labeling: Complementary RNA (cRNA) is synthesized via in vitro transcription (IVT) with biotinylated UTP and CTP.

- Fragmentation and Hybridization: 12 µg of biotin-labeled cRNA is fragmented and hybridized onto the microarray chip for 16 hours.

- Staining and Scanning: The chip is stained and washed on a fluidics station before being scanned to produce image files.

- Data Processing: Image files are processed into cell intensity files (CEL), and the Robust Multi-chip Average (RMA) algorithm is used for background adjustment, quantile normalization, and summarization of probe-level data.
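The quantile normalization step of RMA mentioned in the data processing stage can be sketched in a few lines. This is a simplified illustration, not the RMA implementation: it omits background adjustment and probe summarization, does not average tied values, and the intensity values are made up.

```python
def quantile_normalize(columns):
    """Quantile-normalize a list of equal-length sample columns.

    Each column holds the intensities of one array. After normalization all
    columns share the same distribution; within-column ranks are preserved.
    Ties are not averaged in this simplified sketch.
    """
    n_probes = len(columns[0])
    sorted_cols = [sorted(col) for col in columns]
    # Reference distribution: mean of the k-th smallest value across arrays.
    reference = [
        sum(col[k] for col in sorted_cols) / len(columns) for k in range(n_probes)
    ]
    normalized = []
    for col in columns:
        order = sorted(range(n_probes), key=lambda i: col[i])
        new_col = [0.0] * n_probes
        for rank, probe_index in enumerate(order):
            new_col[probe_index] = reference[rank]
        normalized.append(new_col)
    return normalized


# Three hypothetical arrays measuring the same four probes.
samples = [[5.0, 2.0, 3.0, 4.0], [4.0, 1.0, 6.0, 2.0], [3.0, 4.0, 6.0, 8.0]]
normalized = quantile_normalize(samples)
for column in normalized:
    print([round(value, 3) for value in column])
```

Forcing every array onto the same distribution removes array-wide intensity shifts, which matters for GRN inference because such shifts would otherwise appear as spurious co-expression between all genes.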

RNA-seq Protocol (Illumina Stranded mRNA Prep) [9]

- Sample Input: 100 ng of total RNA.

- Poly-A Selection: Messenger RNA (mRNA) with polyA tails is purified from total RNA using oligo(dT) magnetic beads.

- Library Preparation: The purified mRNA is fragmented. Sequencing adapters are ligated to the fragments in a process that includes a strand-marking step.

- Sequencing: The library is sequenced on an Illumina platform, generating millions of short sequencing reads.

- Data Processing: Reads are aligned to a reference genome, and gene-level expression is quantified by counting the number of reads aligned to each gene.
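The read-counting step at the end of this protocol can be illustrated with a toy counter. The sketch assumes reads are already aligned and represented as `(chromosome, start, end)` tuples; the gene coordinates are hypothetical, strand is ignored, and reads that match no gene or more than one gene are skipped, which is one common but not universal convention.

```python
def count_reads_per_gene(read_alignments, gene_intervals):
    """Count reads falling entirely within exactly one gene interval.

    read_alignments: iterable of (chrom, start, end) tuples.
    gene_intervals: dict mapping gene name -> (chrom, start, end).
    """
    counts = {gene: 0 for gene in gene_intervals}
    for chrom, start, end in read_alignments:
        hits = [
            gene
            for gene, (g_chrom, g_start, g_end) in gene_intervals.items()
            if g_chrom == chrom and start >= g_start and end <= g_end
        ]
        if len(hits) == 1:  # skip unassigned and ambiguous reads
            counts[hits[0]] += 1
    return counts


# Hypothetical annotation with two overlapping genes on chr1.
toy_genes = {
    "TF1": ("chr1", 100, 500),
    "GENE2": ("chr1", 400, 900),
    "GENE3": ("chr2", 100, 300),
}
toy_reads = [
    ("chr1", 120, 170),  # TF1
    ("chr1", 420, 470),  # overlaps TF1 and GENE2: ambiguous, skipped
    ("chr1", 600, 650),  # GENE2
    ("chr2", 150, 200),  # GENE3
    ("chr3", 1, 50),     # no annotated gene
    ("chr1", 110, 160),  # TF1
]
print(count_reads_per_gene(toy_reads, toy_genes))
```

The linear scan over genes is only suitable for a toy example; production counters use interval indexes and handle strand and multi-mapping reads explicitly.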

The following diagram illustrates the key procedural differences between these two foundational workflows.

The Single-Cell Revolution

The development of single-cell RNA sequencing (scRNA-seq) marked a paradigm shift, moving from bulk tissue analysis, which measures average gene expression across thousands of cells, to profiling the transcriptomes of individual cells. The approach was first demonstrated in 2009 [11] and has since matured, allowing researchers to resolve the heterogeneity and complexity of tissues and organs at single-cell resolution.

scRNA-seq Experimental Workflow and Key Challenges

The core workflow for high-throughput scRNA-seq involves several critical steps [11]:

- Single-Cell Isolation: Cells are dissociated from tissue and captured individually using methods like fluorescence-activated cell sorting (FACS), microfluidics, or droplet-based systems (e.g., 10x Genomics).

- Cell Lysis and Reverse Transcription: Each cell is lysed, and its mRNA is reverse-transcribed into cDNA. A critical step is the use of Unique Molecular Identifiers (UMIs), which are short random barcodes added to each mRNA molecule to correct for amplification bias and enable absolute mRNA counting [11].

- cDNA Amplification and Library Preparation: The cDNA is amplified, typically via PCR, and sequencing libraries are constructed.

- Sequencing and Data Generation: The libraries are sequenced, producing a digital gene expression matrix for thousands of individual cells.
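The role of UMIs in the workflow above can be made concrete with a short sketch of UMI-based counting. It assumes reads have already been assigned to a cell barcode and a gene, and it collapses only exact UMI matches; the barcodes, gene names, and UMIs are hypothetical, and real pipelines also correct sequencing errors within UMIs.

```python
from collections import defaultdict


def umi_count_matrix(reads):
    """Collapse PCR duplicates and count unique UMIs per cell and gene.

    reads: iterable of (cell_barcode, gene, umi) tuples.
    Returns a nested dict {cell_barcode: {gene: n_unique_umis}}.
    """
    umis_seen = defaultdict(set)
    for cell, gene, umi in reads:
        umis_seen[(cell, gene)].add(umi)
    matrix = defaultdict(dict)
    for (cell, gene), umis in umis_seen.items():
        matrix[cell][gene] = len(umis)
    return dict(matrix)


toy_umi_reads = [
    ("AAAC", "TF1", "ACGT"),
    ("AAAC", "TF1", "ACGT"),  # PCR duplicate of the read above
    ("AAAC", "TF1", "TTGA"),  # a second molecule of the same gene
    ("CCGT", "TF1", "ACGT"),  # same UMI in another cell counts separately
]
print(umi_count_matrix(toy_umi_reads))
```

Three reads for `TF1` in cell `AAAC` collapse to two molecules, which is the amplification-bias correction described above.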

A major challenge in scRNA-seq is the "dropout" phenomenon, where a gene is observed at a low or moderate expression level in one cell but is not detected in another cell of the same type. These technical zeros complicate the distinction between true lack of expression and technical failure, posing a significant hurdle for accurate GRN inference [12]. Furthermore, tissue dissociation can induce artificial transcriptional stress responses, potentially altering the biological state being measured [11]. An alternative method, single-nucleus RNA-seq (snRNA-seq), sequences nuclear RNA and can be advantageous for tissues that are difficult to dissociate, such as brain tissue [11].

Impact on Gene Regulatory Network Inference

The type of transcriptomic data available directly shapes the choice and performance of computational methods for GRN inference. The characteristics of bulk versus single-cell data introduce distinct challenges and opportunities.

Table 2: Comparison of GRN inference challenges across sequencing technologies.

| Aspect | Bulk RNA-seq / Microarray | Single-Cell RNA-seq (scRNA-seq) |
|---|---|---|
| Primary Data | Population-average gene expression | Gene expression matrix for thousands of individual cells |
| Key Inferential Challenge | Disentangling correlated expression in a mixed signal | Distinguishing true regulatory relationships from technical noise (dropouts) and biological variation [12] |
| Common Inference Methods | GENIE3 (Random Forest), TIGRESS, mutual information (ARACNE, CLR) [6] [12] | PIDC (Information theory), LEAP (Correlation), PPCOR [12] |
| Role of Machine Learning | Traditional ML (SVM, Decision Trees) and ensemble methods | Deep learning (CNNs, RNNs) and hybrid models to capture non-linear, hierarchical relationships [6] |
| Data Preprocessing | Standard normalization (e.g., TMM, RMA) | Critical and complex: normalization, dropout imputation, and feature selection are highly influential [12] [13] |

Machine Learning Approaches for GRN Reconstruction

Machine learning (ML), deep learning (DL), and hybrid approaches have emerged as powerful tools for large-scale GRN prediction, overcoming the low-throughput limitations of experimental methods like ChIP-seq and yeast one-hybrid assays [6].

- Traditional ML and Statistical Methods: Methods like GENIE3 (using random forests) and those based on mutual information (e.g., ARACNE, CLR) are well-established for static bulk data [6] [12]. However, they can struggle with the high-dimensionality and noise of scRNA-seq data and may fail to capture complex non-linear relationships.

- Deep Learning (DL) Models: Architectures like Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) excel at learning hierarchical and temporal dependencies from complex data. Tools such as DeepBind and DeepSEA use CNNs to predict regulatory relationships from DNA sequence data [6].

- Hybrid and Transfer Learning: A promising approach is the use of hybrid models that combine the feature-learning power of DL with the classification strength of traditional ML. For example, combining CNNs with ML models has consistently outperformed traditional methods in GRN prediction for plants, achieving over 95% accuracy in holdout tests [6]. Furthermore, transfer learning allows knowledge gained from a data-rich species (like Arabidopsis thaliana) to be applied to infer GRNs in less-characterized species (like poplar or maize), effectively addressing the challenge of limited training data in non-model organisms [6].

A critical step in scRNA-seq analysis for GRN inference is feature selection. Benchmarking studies have shown that the method used to select a subset of informative genes (features) before integration significantly impacts the performance of downstream tasks, including the ability to map new query cells and detect rare populations [13]. Highly variable feature selection is a common and effective practice for producing high-quality integrations and robust reference atlases [13].
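A minimal sketch of highly variable feature selection is shown below. It ranks genes by their variance-to-mean ratio, which is a deliberately simple stand-in and an assumption of this example; widely used toolkits apply more elaborate variance-stabilizing procedures. The gene names and expression values are hypothetical.

```python
import statistics


def highly_variable_genes(expression_by_gene, n_top=2):
    """Rank genes by variance-to-mean ratio and keep the n_top highest.

    expression_by_gene: dict mapping gene -> list of per-cell expression values.
    Genes with zero mean expression are ignored.
    """
    dispersion = {}
    for gene, values in expression_by_gene.items():
        mean_expr = statistics.mean(values)
        if mean_expr > 0:
            dispersion[gene] = statistics.pvariance(values) / mean_expr
    return sorted(dispersion, key=dispersion.get, reverse=True)[:n_top]


toy_expression = {
    "housekeeping": [5, 5, 5, 5],  # expressed but constant across cells
    "marker_a": [0, 0, 9, 10],     # on in a subset of cells
    "marker_b": [0, 8, 0, 7],
    "silent": [0, 0, 0, 0],        # never detected
}
print(highly_variable_genes(toy_expression))
```

Constant and undetected genes carry no information about differences between cells, so dropping them reduces noise and dimensionality before integration or network inference.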

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key reagents, technologies, and computational tools for sequencing-based research.

| Item / Technology | Function / Application | Relevance to GRN Studies |
|---|---|---|
| iPSC-derived Hepatocytes (iCell 2.0) | A consistent and human-relevant in vitro cell model for toxicogenomic and transcriptomic studies [9]. | Provides a standardized cellular system for studying chemical-induced perturbations in gene regulatory pathways. |
| Unique Molecular Identifiers (UMIs) | Short random barcodes added to each mRNA molecule during reverse transcription in scRNA-seq [11]. | Enables accurate quantification of transcript counts by correcting for PCR amplification bias, crucial for reliable expression input for GRNs. |
| 10x Genomics Platform | A widely used droplet-based system for high-throughput single-cell RNA sequencing [11]. | Allows for the profiling of gene expression in thousands of individual cells, providing the raw data for cell-type-specific GRN inference. |
| STAR Aligner | A popular software for accurate and fast alignment of RNA-seq reads to a reference genome [6]. | A critical preprocessing step to generate the count data used for all downstream GRN inference analyses. |
| GENIE3 | A random forest-based algorithm for inferring GRNs from bulk gene expression data [12]. | A benchmark method in the field for predicting target genes of transcription factors. |
| Convolutional Neural Networks (CNNs) | A class of deep learning models effective for processing structured data, such as sequence motifs in DNA [6]. | Used in tools like DeepBind to predict TF binding sites, providing prior knowledge for network construction. |
| Compass Framework | A resource and software (CompassR) for comparative analysis of gene regulation across tissues using single-cell multi-omics data [14]. | Enables the identification of tissue-specific and conserved CRE-gene linkages, validating and refining inferred GRNs. |

Single-cell multi-omics technologies represent a revolutionary advancement in biological research, enabling the simultaneous measurement of multiple molecular layers within individual cells. These platforms, particularly SHARE-seq (Simultaneous High-throughput ATAC and RNA Expression sequencing) and 10x Multiome, allow researchers to capture both gene expression and chromatin accessibility from the same cell, providing unprecedented insights into cellular identity and regulatory mechanisms [15] [16]. The ability to co-profile the transcriptome and epigenome within individual cells has transformed our understanding of gene regulatory networks (GRNs), cellular heterogeneity, and developmental trajectories in complex biological systems.

These technologies address a fundamental challenge in single-cell biology: understanding the precise relationship between chromatin accessibility and gene expression patterns across diverse cell types and states. While single-modality approaches (scRNA-seq or scATAC-seq alone) can identify cell populations, they often produce discordant results regarding cell type/state assignment [17]. Multi-omic technologies resolve these inconsistencies by directly linking regulatory elements with their transcriptional outputs in the same cell, enabling more accurate cell type annotation and revealing novel cell states that show modality-specific features [17] [18].

For researchers investigating gene regulatory networks, single-cell multi-omic data provides the essential foundation for computational methods that connect transcription factors, cis-regulatory elements, and target genes. This technological capability is particularly valuable for studying dynamic biological processes such as development, differentiation, and disease progression, where understanding the temporal relationship between chromatin remodeling and gene expression changes is crucial for deciphering underlying regulatory principles [15] [18].

Technical Platform Comparison: SHARE-seq vs. 10x Multiome

SHARE-seq is a highly scalable approach for measuring both chromatin accessibility and gene expression in the same single cell, applicable to diverse tissues [15]. The method utilizes a two-step combinatorial indexing strategy that begins with fixing and permeabilizing cells or nuclei. In the first indexing step, transposase complexes tag accessible chromatin regions with adaptor sequences while also reverse-priming cDNA synthesis from mRNA transcripts. The second indexing occurs during PCR amplification, creating uniquely barcoded libraries for both ATAC and RNA from the same cell [15]. This platform can profile tens of thousands of cells in a single experiment, making it suitable for comprehensive tissue atlases and developmental studies.

The 10x Multiome platform from 10x Genomics employs a different technical approach based on microfluidic partitioning of nuclei into Gel Bead-In Emulsions (GEMs) [16] [18]. Each GEM contains a single nucleus along with two types of gel beads: one for ATAC sequencing and another for RNA sequencing. The ATAC bead contains Tn5 transposase pre-loaded with adapters, while the RNA bead carries oligonucleotides with poly(dT) sequences for mRNA capture along with cell barcodes and unique molecular identifiers (UMIs) [18]. This simultaneous capture of both modalities within the same partition ensures that both libraries originate from the same nucleus, enabling direct correlation between chromatin accessibility and gene expression patterns.

Performance Characteristics and Data Output

When comparing these platforms, several key performance metrics emerge from published benchmarks and technical documentation:

Table 1: Performance Comparison Between SHARE-seq and 10x Multiome

| Performance Metric | SHARE-seq | 10x Multiome |
|---|---|---|
| Cell Throughput | Tens of thousands of cells per experiment [15] | Thousands to tens of thousands of cells per run [18] |
| RNA Sequencing Sensitivity | High sensitivity for transcript detection | Slightly lower than standalone snRNA-seq but comparable for cell typing [18] |
| ATAC Sequencing Sensitivity | Comprehensive chromatin accessibility profiling | Lower unique fragment peaks compared to standalone scATAC-seq [18] |
| Multiplexing Capacity | High (combinatorial indexing) | Moderate (single sample per run typically) |
| Technical Complexity | Higher (two-step indexing) | Lower (integrated commercial workflow) |
| Data Integration | Requires computational alignment of dual indices | Built-in cellular barcode matching |

A systematic benchmark study on peripheral blood mononuclear cells revealed that 10x Multiome produced approximately half the unique fragment peaks compared to the most advanced 10x Single Cell ATAC protocol, indicating reduced sensitivity for chromatin accessibility profiling [18]. However, the quality of the gene expression profiles in 10x Multiome is broadly comparable to standalone single-nucleus RNA sequencing, with only slightly lower sensitivity as measured by median genes and UMIs per nucleus [18].

For SHARE-seq, the original publication demonstrated the technology's capability by generating 34,774 joint profiles from mouse skin, successfully identifying cis-regulatory interactions and defining domains of regulatory chromatin (DORCs) that significantly overlap with super-enhancers [15]. The high scalability of SHARE-seq makes it particularly suitable for comprehensive atlas-building projects requiring massive cell numbers.

Analytical Frameworks for Multi-Omic Data Integration

Computational Methods for Multi-Omic Data Alignment

The distinct feature spaces of different omics modalities (e.g., accessible chromatin regions in scATAC-seq versus genes in scRNA-seq) present a major computational challenge for integration [19]. Several computational approaches have been developed to address this challenge:

Anchor-based alignment methods: Tools like Seurat v3 employ canonical correlation analysis (CCA) combined with mutual nearest neighbors (MNN) to identify cross-modal anchors for data integration [17] [20]. MOJITOO effectively infers shared representations across multiple modalities using CCA [20].

Matrix factorization-based methods: Techniques like iNMF (integrative Non-negative Matrix Factorization) extend NMF to multi-omics data, enabling more precise identification of cell clusters [20]. Mowgli integrates iNMF with optimal transport to capture inter-omics relationships and improve fusion quality [20].

Deep learning models: Frameworks like GLUE (Graph-Linked Unified Embedding) use variational autoencoders to map heterogeneous omics data into a unified latent space [19]. GLUE employs a knowledge-based guidance graph that explicitly models cross-layer regulatory interactions, bridging different omics-specific feature spaces in a biologically intuitive manner [19]. MultiVI assumes a negative binomial distribution for RNA-seq data and a Bernoulli distribution for ATAC-seq data, aligning embeddings through a symmetric Kullback-Leibler divergence loss [17].

Enhanced contrastive learning: Recently developed methods like scECDA employ independently designed autoencoders that autonomously learn feature distributions of each omics dataset while incorporating enhanced contrastive learning and differential attention mechanisms to reduce noise interference during data integration [20].
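The anchor-based alignment idea described above can be illustrated with a minimal sketch of mutual nearest neighbor (MNN) matching. It assumes both modalities have already been projected into a shared low-dimensional space (for example by CCA); the coordinates, function names, and the choice of Euclidean distance are hypothetical and are not taken from Seurat's implementation.

```python
import math

def nearest(query, reference, k):
    """Indices of the k reference points closest to `query` (Euclidean distance)."""
    order = sorted(range(len(reference)), key=lambda j: math.dist(query, reference[j]))
    return set(order[:k])

def mutual_nearest_neighbors(rna, atac, k=1):
    """Return (i, j) pairs where rna[i] and atac[j] appear in each other's k-NN lists."""
    rna_to_atac = [nearest(p, atac, k) for p in rna]
    atac_to_rna = [nearest(q, rna, k) for q in atac]
    return sorted(
        (i, j)
        for i, neighbors in enumerate(rna_to_atac)
        for j in neighbors
        if i in atac_to_rna[j]
    )

# Toy shared-space embeddings (hypothetical values standing in for CCA output).
rna_cells = [(0.0, 0.0), (1.0, 1.0), (3.0, 3.0)]
atac_cells = [(0.1, 0.0), (1.1, 0.9), (9.0, 9.0)]

anchors = mutual_nearest_neighbors(rna_cells, atac_cells, k=1)
print(anchors)
```

In this toy case the third RNA cell is closest to the second ATAC cell, but that ATAC cell prefers a different RNA cell, so the pair is not mutual and is not used as an anchor.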

A comprehensive benchmarking study evaluating nine integration methods found that Seurat v4 was the best currently available platform for integrating scRNA-seq, snATAC-seq, and multiome data, even in the presence of complex batch effects [17]. The study emphasized that an adequate number of nuclei in the multiome dataset is crucial for achieving accurate cell type annotation, with the number of cells being more important than sequencing depth for this purpose [17].

Gene Regulatory Network Inference from Multi-Omic Data

The integration of transcriptomics with epigenomics data at single-cell resolution has become the new standard for mechanistic network inference [16]. Several methodological approaches have been developed for GRN reconstruction from multi-omic data:

Regression models: SCARlink uses regularized Poisson regression on tile-level accessibility data to predict single-cell gene expression and link enhancers to target genes [21]. Unlike pairwise correlation approaches, SCARlink models all regulatory effects at a gene locus jointly, avoiding limitations of peak calling and pairwise gene-peak correlations [21].

Spatial association approaches: scSAGRN incorporates spatial association to compute correlations between gene expression and chromatin openness data, connecting distal cis-regulatory elements to genes and inferring GRNs [22]. This method combines neighborhood information obtained by weighted nearest neighbor (WNN) with spatial association to measure relationships between modalities.

Multi-omic regression: Methods like those implemented in SCENIC+ use multiple regression approaches to predict gene expression levels based on transcription factor expression and regulatory region accessibility to identify enhancer-driven GRNs [22].

Probabilistic models: Approaches based on probabilistic matrix decomposition and variational inference can infer GRNs with uncertainty estimation through systematic model selection and parameter optimization [22].

Table 2: Computational Methods for Multi-Omic Integration and GRN Inference

| Method | Core Approach | Key Features | Performance Highlights |

|---|---|---|---|

| SCARlink | Regularized Poisson regression | Joint modeling of regulatory effects; non-negative coefficients for enhancer identification | Outperformed ArchR gene score; 11×-15× enrichment in fine-mapped eQTLs [21] |

| GLUE | Graph-linked variational autoencoder | Knowledge-based guidance graph; adversarial alignment | Superior performance in benchmarks; enables triple-omics integration [19] |

| scSAGRN | Spatial association with WNN | Identifies activating/repressive TFs; links distal CREs to genes | Superior TF recovery and peak-gene linkage prediction [22] |

| Seurat v4 | Weighted nearest neighbors (WNN) | Supervised projection of single-modality data | Best overall in benchmarking; robust to batch effects [17] |

| scECDA | Enhanced contrastive learning | Differential attention mechanism; automatic feature fusion | Higher accuracy in cell clustering across diverse datasets [20] |

Benchmarking evaluations demonstrate that SCARlink significantly outperformed existing gene scoring methods for imputing gene expression from chromatin accessibility across high-coverage multiome datasets, while providing comparable or improved performance on low-coverage datasets [21]. The method identified cell-type-specific enhancers validated by promoter capture Hi-C and showed 11× to 15× enrichment in fine-mapped eQTLs and 5× to 12× enrichment in fine-mapped GWAS variants [21].

Experimental Design and Protocol Considerations

Sample Preparation and Library Construction

The successful application of single-cell multi-omics technologies requires careful experimental design and protocol optimization. For both SHARE-seq and 10x Multiome, nuclei isolation is a critical step, as it is mandatory for the tagmentation process in scATAC-seq [18]. This requirement contrasts with scRNA-seq, which can be performed on both whole cells and nuclei. Researchers must consider this constraint when designing experiments where the whole-cell transcriptome might be essential for capturing certain RNA species.

For SHARE-seq experiments, the protocol involves:

- Cell fixation and permeabilization to maintain cellular integrity while allowing reagent access

- Simultaneous tagmentation of accessible chromatin and reverse transcription of mRNA

- Cell indexing through two rounds of combinatorial barcoding

- Library preparation and sequencing [15]

The 10x Multiome workflow follows these key steps:

- Nuclei isolation from fresh or frozen tissue

- Adjustment of nuclei concentration to maximize GEM recovery

- Simultaneous partitioning of nuclei into GEMs for both ATAC and RNA capture

- Post-GEM cleanup and library construction

- Sequencing on Illumina platforms [18]

Because 10x Multiome profiles nuclei rather than whole cells, its transcriptome representation may differ from that of whole-cell approaches. Researchers who require whole-cell transcriptome information can instead divide each sample and run a standalone whole-cell scRNA-seq experiment alongside a standalone ATAC-seq experiment [18].

Quality Control and Data Preprocessing

Robust quality control is essential for generating reliable multi-omic data. Key quality metrics include:

- Cell calling: Distinguishing true cells from background using barcode ranking plots that show characteristic "cliff-and-knee" shapes [23]

- Sequencing depth: Ensuring sufficient read coverage for both modalities

- Mitochondrial read percentage: Filtering cells with high mitochondrial content (typically >10% for PBMCs) which may indicate poor cell quality [23]

- Doublet detection: Identifying multiplets using computational tools

- Feature counts: Assessing genes per cell and fragments per cell for RNA and ATAC respectively
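The metrics above can be combined into a simple per-cell filter. The following is a minimal sketch: only the 10% mitochondrial cutoff comes from the text, while the minimum gene and fragment thresholds, the field names, and the example barcodes are hypothetical and would need tuning per dataset.

```python
def passes_qc(cell, max_mito_pct=10.0, min_genes=200, min_fragments=1000):
    """Return True if a cell passes the RNA and ATAC quality thresholds.

    The gene and fragment cutoffs are illustrative assumptions, not recommended values.
    """
    if cell["total_counts"] == 0:
        return False
    mito_pct = 100.0 * cell["mito_counts"] / cell["total_counts"]
    return (
        mito_pct <= max_mito_pct
        and cell["n_genes"] >= min_genes
        and cell["n_fragments"] >= min_fragments
    )

# Hypothetical per-cell summaries (RNA counts plus ATAC fragment counts).
cells = [
    {"barcode": "AAAC", "total_counts": 5000, "mito_counts": 150, "n_genes": 1800, "n_fragments": 12000},
    {"barcode": "CCCG", "total_counts": 4000, "mito_counts": 1000, "n_genes": 1500, "n_fragments": 9000},
    {"barcode": "GGGT", "total_counts": 300, "mito_counts": 6, "n_genes": 120, "n_fragments": 400},
]

kept = [c["barcode"] for c in cells if passes_qc(c)]
print(kept)
```

Here the second cell is dropped for its 25% mitochondrial fraction and the third for low feature counts in both modalities.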

For data preprocessing, standard pipelines include:

- Read alignment: Using platform-specific pipelines such as Cell Ranger for 10x data

- Peak calling: Identifying accessible chromatin regions from scATAC-seq data

- Count matrix generation: Creating cell-by-feature matrices for both modalities

- Normalization: Applying appropriate normalization methods to address technical variability

- Feature selection: Identifying highly variable genes and differentially accessible regions [16] [23]

The high dimensionality and sparsity of single-cell multi-omics data necessitate careful dimensionality reduction. Methods include linear approaches like principal component analysis and non-linear methods such as autoencoders, which aim to consolidate information from high-dimensional space into fewer dimensions while preserving biological information [16].

Research Reagent Solutions and Essential Materials

Successful single-cell multi-omics experiments require specific reagents and computational tools. The following table outlines essential components for establishing a multi-omics workflow:

Table 3: Essential Research Reagents and Computational Tools for Single-Cell Multi-Omics

| Category | Item | Function | Implementation Examples |

|---|---|---|---|

| Wet Lab Reagents | Nuclei Isolation Kits | Release intact nuclei from tissue/cells | 10x Nuclei Isolation Kits |

| Wet Lab Reagents | Transposase Enzymes | Tagment accessible chromatin | Tn5 transposase loaded with adapters |

| Wet Lab Reagents | Reverse Transcriptase | Synthesize cDNA from mRNA | Moloney murine leukemia virus (MMLV) RT |

| Wet Lab Reagents | Barcoded Beads | Cell/index labeling | 10x Barcoded Gel Beads |

| Computational Tools | Alignment Pipelines | Process raw sequencing data | Cell Ranger (10x), SHARE-seq pipeline |

| Computational Tools | Integration Methods | Combine multi-omic datasets | Seurat v4, GLUE, SCARlink, scSAGRN |

| Computational Tools | GRN Inference Tools | Reconstruct regulatory networks | SCENIC+, FigR, TRIPOD |

| Computational Tools | Visualization Software | Explore and present results | Loupe Browser, UCSC Genome Browser |

Visualization of Multi-Omic Data Integration and Analysis

The conceptual workflow for integrating single-cell multi-omic data to infer gene regulatory networks synthesizes the computational approaches discussed throughout this guide. Raw multi-omic data undergoes preprocessing before being integrated using one of the computational approaches described above. The integrated data then serves as input for GRN inference methods that ultimately generate biological insights about regulatory mechanisms.

Single-cell multi-omics technologies have fundamentally transformed our ability to decipher gene regulatory networks with unprecedented resolution. Both SHARE-seq and 10x Multiome offer powerful approaches for simultaneous profiling of chromatin accessibility and gene expression, each with distinct advantages depending on research goals. SHARE-seq provides higher scalability and flexibility through combinatorial indexing, while 10x Multiome offers a more standardized commercial workflow at the cost of reduced ATAC sensitivity compared to standalone assays [15] [18].

The computational landscape for analyzing multi-omic data has evolved rapidly, with methods like GLUE, SCARlink, and scSAGRN reporting strong benchmark performance for data integration and GRN inference [19] [21] [22]. These tools enable researchers to move beyond correlation and identify putative causal relationships between regulatory elements and gene expression.

Future developments in single-cell multi-omics will likely focus on integrating additional omics layers, improving scalability for massive datasets, adding spatial context through spatial transcriptomics and spatial ATAC-seq, and developing more sophisticated computational models that incorporate temporal dynamics and causal inference [16]. As these technologies and analytical methods continue to mature, they are expected to yield deeper insights into the regulatory principles governing cellular identity, function, and dysfunction in disease.

Gene Regulatory Network (GRN) reconstruction is a fundamental challenge in computational biology, essential for understanding cellular mechanisms and advancing drug discovery [24] [25]. The accuracy of inferred networks is profoundly influenced by the type of data used. Time-series, perturbation, and multi-omics datasets provide complementary views of the regulatory machinery, capturing dynamic, causal, and cross-layer interactions, respectively [26] [27]. This guide provides a comparative analysis of these key data sources, detailing their experimental protocols, performance characteristics, and appropriate computational methods to guide researchers in selecting optimal datasets for their GRN inference projects.

Data Source Comparison at a Glance

The table below summarizes the core characteristics, strengths, and challenges of the three primary data types used for GRN inference.

Table 1: Comparative Overview of Key Data Sources for GRN Inference

| Data Source | Core Principle | Key Strengths | Primary Challenges | Example Experimental Platforms |

|---|---|---|---|---|

| Time-Series Data | Measuring molecular levels at multiple time points after a perturbation [28] [24]. | Captures temporal order of events, enabling inference of causality and dynamics [28] [24]. | Requires careful time-point selection; computationally intensive for large systems [24]. | Bulk RNA-seq, single-cell RNA-seq (scRNA-seq) |

| Perturbation Data | Measuring system response after targeted experimental disruption of specific genes [29] [27]. | Provides direct evidence for causal relationships; gold standard for validation [27]. | High cost and experimental complexity; scalability can be limited [27] [30]. | CRISPR-KO/CRISPRi, siRNA/shRNA knockdown |

| Multi-Omics Data | Integrating simultaneous measurements from multiple molecular layers (e.g., transcriptome, metabolome) [26] [31]. | Reveals system-wide, cross-layer regulatory mechanisms; holistic view [26]. | High sample heterogeneity; data integration complexity; timescale separation between layers [26]. | scRNA-seq + Bulk Metabolomics, ATAC-seq |

Time-Series Transcriptomics Data

Experimental Protocols and Data Generation

Time-series transcriptomics experiments involve profiling gene expression (via bulk or single-cell RNA-seq) across multiple time points following an environmental stimulus, drug application, or genetic perturbation [24]. Key steps include:

- System Perturbation: A synchronized cell population is subjected to a stimulus.

- Sample Collection: Aliquots of cells or tissues are collected at pre-defined, closely spaced time points to capture expression dynamics.

- RNA Sequencing: RNA is extracted from each sample and prepared for bulk or single-cell RNA-seq.

- Data Processing: Sequence reads are aligned, quantified, and normalized to create a gene expression matrix across time.

Performance and Inference Applications

Time-series data is powerful for establishing the temporal order of regulatory events, a prerequisite for causal inference [28]. It allows researchers to move beyond correlations and model the dynamics of the system.

Specialized computational methods have been developed to leverage this temporal information, which can be broadly categorized as model-free (e.g., using mutual information, random forests) or model-based (e.g., using Ordinary Differential Equations (ODEs) or Bayesian frameworks) [24]. The DREAM project benchmarks have shown that a high-confidence consensus network, inferred by integrating results from multiple methods, often provides the most accurate and robust reconstruction [24].
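One simple way to build such a consensus is to average each edge's rank across methods. The sketch below assumes rank averaging as the aggregation rule; the method names, edge scores, and the handling of edges a method did not score are hypothetical.

```python
def consensus_ranking(method_scores):
    """Rank edges by their mean rank across methods (lower mean rank = higher confidence).

    `method_scores` maps a method name to {edge: confidence score}.
    Edges missing from a method receive the worst possible rank (an assumption).
    """
    edges = sorted(set().union(*(scores.keys() for scores in method_scores.values())))
    mean_rank = {edge: 0.0 for edge in edges}
    for scores in method_scores.values():
        ranked = sorted(scores, key=scores.get, reverse=True)
        position = {edge: rank for rank, edge in enumerate(ranked, start=1)}
        for edge in edges:
            mean_rank[edge] += position.get(edge, len(edges)) / len(method_scores)
    return sorted(edges, key=lambda edge: (mean_rank[edge], edge))

# Hypothetical confidence scores from three inference methods.
scores = {
    "mutual_information": {("A", "B"): 0.9, ("A", "C"): 0.2, ("B", "C"): 0.5},
    "random_forest": {("A", "B"): 0.7, ("A", "C"): 0.1, ("B", "C"): 0.8},
    "ode_model": {("A", "B"): 0.6, ("A", "C"): 0.5, ("B", "C"): 0.3},
}

consensus = consensus_ranking(scores)
print(consensus)
```

Working on ranks rather than raw scores avoids having to calibrate confidence values that different methods report on different scales.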

[Figure: general workflow for inferring GRNs from time-series transcriptomic data.]

Perturbation Data

Experimental Protocols and Data Generation

Perturbation-based studies directly intervene on genes to observe the downstream effects on the network. CRISPR-based technologies are now the standard for this due to their high precision and scalability [27] [30]. A typical workflow is:

- Perturbation Design: Design single-guide RNAs (sgRNAs) to knock out (CRISPR-KO) or knock down (CRISPRi) a set of target genes, often focusing on transcription factors (TFs) [29] [27].

- Cell Transduction: Deliver sgRNAs and Cas9 machinery into cells (e.g., via lentiviral transduction or as ribonucleoproteins in primary cells) [29].

- Perturbation & Sequencing: After a period allowing for gene expression changes, perform single-cell or bulk RNA-seq on both perturbed and control cells.

- Quality Control: Validate editing efficiency and check for expected expression changes in the targeted genes [30].

Large-scale benchmarks like CausalBench use such datasets, containing hundreds of thousands of single-cell profiles from thousands of perturbations in cell lines like K562 and RPE1 [27].

Performance and Inference Applications

Perturbation data provides the strongest evidence for causal relationships between genes, moving beyond prediction to establish directionality [27]. The performance of inference methods on this data is typically evaluated using metrics that measure the trade-off between precision and recall, such as the F1 score, as well as causal-effect specific metrics like the mean Wasserstein distance and False Omission Rate (FOR) [27].
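As a rough illustration of these metrics, the sketch below computes precision, recall, F1, and the false omission rate from a predicted and a reference edge set using standard confusion-matrix definitions. The benchmark's own evaluation may define these quantities differently (for instance, by testing omitted edges statistically), and the gene names and edges are hypothetical.

```python
from itertools import permutations

def edge_metrics(predicted, true_edges, candidates):
    """Confusion-matrix metrics for directed edge prediction."""
    predicted, true_edges, candidates = set(predicted), set(true_edges), set(candidates)
    tp = len(predicted & true_edges)
    fp = len(predicted - true_edges)
    fn = len(true_edges - predicted)
    tn = len(candidates - predicted - true_edges)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    # False omission rate: fraction of edges the method left out that are actually true.
    false_omission_rate = fn / (fn + tn) if fn + tn else 0.0
    return {"precision": precision, "recall": recall, "f1": f1, "for": false_omission_rate}

genes = ["A", "B", "C", "D"]
candidates = list(permutations(genes, 2))       # all 12 possible directed edges
true_edges = [("A", "B"), ("B", "C"), ("C", "D")]
predicted = [("A", "B"), ("B", "C"), ("A", "D")]

metrics = edge_metrics(predicted, true_edges, candidates)
print(metrics)
```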

A key finding from recent benchmarks is that methods leveraging interventional data (e.g., GIES, DCDI, LLCB) do not always outperform those using only observational data, highlighting the challenge of fully utilizing perturbation information [27]. Methods like Linear Latent Causal Bayes (LLCB) are specifically designed for perturbation data, using a Bayesian framework to deconvolve direct effects from total perturbation effects and estimate potentially cyclic regulatory graphs [29].

Table 2: Selected Methods for Inference from Perturbation Data and Their Performance

| Method Name | Type | Key Feature | Reported Performance (CausalBench) |

|---|---|---|---|

| LLCB (Linear Latent Causal Bayes) [29] | Interventional, Bayesian | Estimates direct effects and allows for cyclic graphs. | High accuracy in identifying direct, causal edges from CRISPR-KO data. |

| GIES (Greedy Interventional Equivalence Search) [27] | Interventional, Score-based | Extension of GES for interventional data. | Does not consistently outperform its observational counterpart (GES). |

| DCDI (Differentiable Causal Discovery from Interventional Data) [27] | Interventional, Optimization-based | Uses continuous optimization with acyclicity constraints. | Performance varies; challenges in scalability and utilization of interventional data. |

| Mean Difference [27] | Interventional | Top-performing method from the CausalBench challenge. | High performance on statistical evaluation (mean Wasserstein distance). |

| Guanlab [27] | Interventional | Top-performing method from the CausalBench challenge. | High performance on biological evaluation (F1 score). |

[Figure: logical flow of a perturbation-based GRN inference experiment, from design to network analysis.]

Multi-Omics Data

Experimental Protocols and Data Generation

Multi-omics studies collect data from two or more molecular layers from the same biological sample. A common and powerful combination in GRN inference integrates single-cell transcriptomics with bulk metabolomics [26]. The protocol involves:

- Sample Preparation: Treat and collect samples at multiple time points.

- Parallel Assaying: For each sample, perform:

- Single-cell RNA-seq: To profile gene expression at cellular resolution.

- Bulk Metabolomics: Using mass spectrometry to quantify metabolite concentrations.

- Data Integration: Align the datasets from different modalities for joint analysis, which presents a significant computational challenge [26] [31].

Performance and Inference Applications

Integrating multi-omics data allows for the inference of a more comprehensive network that includes cross-layer interactions (e.g., a metabolite regulating a gene) in addition to intra-layer interactions (e.g., TF-target gene) [26]. A major challenge is the separation of timescales between molecular layers; for instance, metabolic reactions occur on the order of seconds, while transcriptional changes take hours [26].

Methods like MINIE (Multi-omIc Network Inference from timE-series data) are specifically designed to address this. MINIE uses a Differential-Algebraic Equation (DAE) model, where slow transcriptomic dynamics are modeled with differential equations and fast metabolic dynamics are modeled with algebraic constraints, providing a more biologically realistic and computationally stable framework than standard ODEs [26]. Benchmarking shows that purpose-built multi-omic methods like MINIE can outperform single-omic methods, successfully identifying high-confidence interactions in complex diseases like Parkinson's [26].
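The idea of pairing a differential equation for the slow layer with an algebraic constraint for the fast layer can be shown with a toy one-gene, one-metabolite system. This is only a sketch of the general DAE structure: the equations, parameter names, and values are hypothetical and are not MINIE's actual model.

```python
def simulate_dae(basal=1.0, decay=1.0, feedback=0.5, ratio=1.0, dt=0.01, steps=2000):
    """Euler integration of a toy semi-explicit DAE (all parameters hypothetical).

    Slow layer (differential): dg/dt = basal - decay * g + feedback * m
    Fast layer (algebraic):    0 = ratio * g - m
    """
    g = 0.0
    for _ in range(steps):
        m = ratio * g  # fast metabolite is assumed to equilibrate instantly
        g += dt * (basal - decay * g + feedback * m)
    return g, ratio * g

gene_level, metabolite_level = simulate_dae()
print(round(gene_level, 3), round(metabolite_level, 3))
```

Because the metabolite is obtained from the constraint at every step, no small time step is needed to resolve its fast dynamics. With these toy parameters the gene level approaches basal / (decay - feedback * ratio) = 2.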

[Figure: the MINIE pipeline, which integrates these concepts into a two-step inference process.]

The Scientist's Toolkit: Research Reagent Solutions

This table details key experimental and computational resources essential for working with the featured data sources.

Table 3: Essential Research Reagents and Tools for GRN Inference Studies

| Category | Item | Function & Application |

|---|---|---|

| Perturbation Tools | CRISPR-Cas9 RNP [29] | Enables efficient, arrayed gene knockout in primary cells (e.g., CD4+ T cells) for perturbation studies. |

| Perturbation Tools | CRISPRi [27] | CRISPR interference for targeted gene knockdown, used in large-scale single-cell perturbation screens. |

| Omics Technologies | Single-cell RNA-seq (scRNA-seq) [26] [27] | Profiles genome-wide gene expression at single-cell resolution, capturing cellular heterogeneity. |

| Omics Technologies | Bulk Metabolomics [26] | Quantifies metabolite concentrations, often integrated with transcriptomics for multi-omic networks. |

| Omics Technologies | ChIP-seq / DAP-seq [6] | Identifies in vivo or in vitro DNA binding sites of TFs, providing prior knowledge for network inference. |

| Computational Tools | CausalBench Suite [27] | A benchmark suite for evaluating network inference methods on real-world, large-scale single-cell perturbation data. |

| Computational Tools | PEREGGRN Engine [30] | A benchmarking platform for evaluating expression forecasting methods on diverse perturbation transcriptomics datasets. |

| Computational Tools | Prior Knowledge Databases [26] | Curated databases of human metabolic reactions and regulatory interactions used to constrain inference models. |

Gene Regulatory Network (GRN) reconstruction is a fundamental challenge in computational biology, essential for understanding cellular processes, disease mechanisms, and developmental biology. The core challenge in accurate GRN inference lies in distinguishing direct regulatory interactions from indirect correlations that arise from shared regulators or downstream effects. Indirect correlations can create numerous false positives in inferred networks, as standard correlation measures cannot differentiate whether gene A regulates gene B directly, or if both are co-regulated by a hidden factor C [32] [1].

Advances in machine learning have produced diverse methodological approaches to tackle this challenge, each with distinct theoretical foundations, data requirements, and performance characteristics. This guide provides a comparative analysis of these methodologies, evaluating their effectiveness in discriminating true causal regulatory relationships from spurious correlations through controlled benchmarks and experimental validation.

Methodological Approaches for Direct Network Inference

GRN inference methods employ different mathematical frameworks to address the problem of indirect effects. The table below summarizes major algorithmic categories and their mechanisms for identifying direct regulation:

Table 1: Methodological Approaches for Direct Network Inference

| Method Category | Core Mechanism | Key Strengths | Inherent Limitations |

|---|---|---|---|

| Regression-Based | Models gene expression as multivariate function of potential regulators | Captures multivariate effects; Provides directional inference | Struggles with highly correlated predictors |

| Information Theory | Uses mutual information to detect statistical dependencies | Detects non-linear relationships; Minimal assumptions | Cannot infer directionality without modifications |

| Time-Series Analysis | Leverages temporal precedence to infer causality | Naturally handles dynamics; Stronger causal inference | Requires dense time-course data |

| Network Deconvolution | Mathematically separates direct from indirect paths | Explicitly models indirect effects as network paths | Assumes linear propagation of effects |

| Deep Learning | Uses neural networks to learn complex regulatory patterns | Captures hierarchical and non-linear relationships | High computational cost; Limited interpretability |

Regression-Based Methods

Regression approaches address the multivariate nature of gene regulation by modeling each gene's expression as a function of all potential regulators simultaneously. Methods like Random LASSO (used in DiffGRN) and GENIE3 follow this per-gene formulation [33] [34]. Random LASSO employs regularization to produce sparse networks in which only the most likely direct regulators retain non-zero coefficients. The LASSO (Least Absolute Shrinkage and Selection Operator) penalty shrinks coefficients toward zero, effectively filtering out weak associations that may represent indirect effects. GENIE3 instead relies on random forest ensembles and ranks candidate regulators by feature importance rather than by penalized coefficients.
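The sparsity effect of the LASSO penalty can be seen in a minimal coordinate-descent sketch. This is plain LASSO on centered toy data, not the Random LASSO procedure used in DiffGRN; the expression values, penalty, and function names are hypothetical.

```python
def soft_threshold(value, penalty):
    """Shrink `value` toward zero by `penalty`, returning exactly zero inside the band."""
    if value > penalty:
        return value - penalty
    if value < -penalty:
        return value + penalty
    return 0.0

def lasso(columns, y, penalty, sweeps=50):
    """Coordinate descent for (1/2n)||y - Xb||^2 + penalty * ||b||_1.

    `columns` holds one centered expression vector per candidate regulator.
    """
    n = len(y)
    beta = [0.0] * len(columns)
    for _ in range(sweeps):
        for j, xj in enumerate(columns):
            # Residual of the target after removing every regulator except j.
            residual = [
                y[i] - sum(beta[k] * columns[k][i] for k in range(len(columns)) if k != j)
                for i in range(n)
            ]
            rho = sum(xj[i] * residual[i] for i in range(n)) / n
            norm = sum(v * v for v in xj) / n
            beta[j] = soft_threshold(rho, penalty) / norm
    return beta

# Hypothetical centered expression: the target depends on tf_a only.
tf_a = [-2.0, -1.0, 0.0, 0.0, 1.0, 2.0]
tf_b = [1.0, -1.0, 1.0, -1.0, 1.0, -1.0]   # weakly correlated with tf_a
target = [1.5 * v for v in tf_a]

coefficients = lasso([tf_a, tf_b], target, penalty=0.5)
print(coefficients)
```

The weakly associated regulator receives a coefficient of exactly zero, while the true regulator keeps a shrunken but non-zero coefficient, which is the behavior that yields sparse networks.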

Information-Theoretic Methods

Information-theoretic approaches like ARACNE and CLR use mutual information to detect statistical dependencies between genes. ARACNE implements the Data Processing Inequality principle to prune edges that likely represent indirect interactions, under the assumption that information weakens as it propagates through intermediary nodes [34]. These methods excel at detecting non-linear relationships but typically infer undirected networks without inherent directionality.
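The Data Processing Inequality pruning step can be sketched as follows: within every fully connected gene triplet, the edge with the lowest mutual information is treated as indirect and removed. This is a simplified illustration assuming precomputed mutual information values; the gene names, values, and tolerance handling are hypothetical rather than ARACNE's exact implementation.

```python
from itertools import combinations

def apply_dpi(mi, tolerance=0.0):
    """Return the edges retained after DPI pruning.

    `mi` maps frozenset({gene1, gene2}) to a mutual information estimate.
    """
    genes = sorted({gene for pair in mi for gene in pair})
    removed = set()
    for a, b, c in combinations(genes, 3):
        triplet = [frozenset((a, b)), frozenset((a, c)), frozenset((b, c))]
        if not all(edge in mi for edge in triplet):
            continue  # DPI only applies to fully connected triplets
        weakest = min(triplet, key=lambda edge: mi[edge])
        others = [edge for edge in triplet if edge != weakest]
        # Remove the weakest edge only if it is clearly below both other edges.
        if all(mi[weakest] < mi[edge] * (1 - tolerance) for edge in others):
            removed.add(weakest)
    return {edge for edge in mi if edge not in removed}

# Hypothetical chain A -> B -> C: the A-C dependency is indirect and weaker.
mutual_information = {
    frozenset(("A", "B")): 0.8,
    frozenset(("B", "C")): 0.7,
    frozenset(("A", "C")): 0.3,
}

retained = apply_dpi(mutual_information)
print(sorted(tuple(sorted(edge)) for edge in retained))
```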

Time-Series Approaches

Time-lagged methods leverage the fundamental causal principle that causes must precede effects. The Time-lagged Ordered Lasso incorporates monotonicity constraints, assuming that regulatory influence decreases with increasing temporal distance [35]. This approach naturally handles the dynamics of gene regulation while reducing false positives from coincidental correlations.

Network Deconvolution

Network Deconvolution (ND) frames the challenge as a mathematical decomposition problem where the observed correlation network is represented as the sum of direct interactions and indirect effects [36]. By modeling indirect effects as products of direct interactions along network paths, ND can "deconvolve" the observed network to recover the underlying direct network. Time-delayed ND extends this approach by incorporating cross-correlation to identify probable time lags before applying deconvolution [36].
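Under this model the observed matrix is G_obs = G_dir + G_dir^2 + G_dir^3 + ..., which inverts to G_dir = G_obs (I + G_obs)^-1. The sketch below evaluates both sides with truncated power series in pure Python instead of the eigendecomposition used in the published algorithm, and it assumes the matrices are scaled so that both series converge (spectral radius below 1). The three-gene network is hypothetical.

```python
def matmul(a, b):
    """Multiply two square matrices given as nested lists."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)] for i in range(n)]

def power_series(g, sign, terms=200):
    """Return sum over k = 1..terms of sign**(k + 1) * g**k."""
    n = len(g)
    total = [[0.0] * n for _ in range(n)]
    term = [row[:] for row in g]
    coefficient = 1.0
    for _ in range(terms):
        for i in range(n):
            for j in range(n):
                total[i][j] += coefficient * term[i][j]
        term = matmul(term, g)
        coefficient *= sign
    return total

def observe(g_dir):
    """Direct effects plus all indirect paths: G + G^2 + G^3 + ..."""
    return power_series(g_dir, 1.0)

def deconvolve(g_obs):
    """Recover direct effects: G_obs - G_obs^2 + G_obs^3 - ... = G_obs (I + G_obs)^-1."""
    return power_series(g_obs, -1.0)

# Hypothetical chain A - B - C with no direct A - C interaction.
direct = [
    [0.0, 0.3, 0.0],
    [0.3, 0.0, 0.3],
    [0.0, 0.3, 0.0],
]

observed = observe(direct)
recovered = deconvolve(observed)
print(round(observed[0][2], 4), round(recovered[0][2], 4))
```

The observed matrix contains a spurious A - C association created by the path through B, and deconvolution returns it to approximately zero while restoring the direct A - B weight.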

Deep Learning Architectures

Modern deep learning methods like GRN-VAE (Variational Autoencoder) and graph neural networks learn complex, non-linear regulatory relationships from large-scale omics data [34] [6]. These approaches can integrate multiple data modalities and capture hierarchical dependencies but require substantial computational resources and training data.

Performance Comparison Across Methodologies

Quantitative evaluation of GRN inference methods presents challenges due to the limited availability of completely known ground-truth networks. Performance assessments typically use benchmark networks from model organisms or simulation studies.

Table 2: Performance Comparison on Benchmark Datasets

| Method | Category | Sensitivity | Specificity | F-Score | Data Requirements |

|---|---|---|---|---|---|

| Time-delayed ND | Network Deconvolution | 0.79 | 0.85 | 0.82 | Time-series data |

| DiffGRN | Regression-Based | N/A | Outperformed DINGO | N/A | Bulk RNA-seq |

| Ordered Lasso | Time-Series | Accurate on DREAM challenges | N/A | N/A | Time-course data |

| GENIE3 | Ensemble Regression | Moderate accuracy | Moderate accuracy | Moderate accuracy | Bulk/single-cell |

| DeepSEM | Deep Learning | High with sufficient data | High with sufficient data | High with sufficient data | Large datasets |

In simulation studies, the DiffGRN framework demonstrated superior performance compared to correlation-based methods like DINGO, particularly in capturing multivariate effects and causal relationships [33]. Similarly, Time-delayed ND showed significantly higher sensitivity without sacrificing specificity compared to methods that ignore temporal dynamics [36].

Hybrid approaches that combine multiple methodologies have shown promising results. For example, models integrating convolutional neural networks with traditional machine learning achieved over 95% accuracy in holdout tests for Arabidopsis thaliana, poplar, and maize datasets [6].

Experimental Protocols for Method Validation

Differential Network Analysis with DiffGRN

The DiffGRN protocol implements a statistically rigorous framework for identifying differential regulatory interactions between conditions (e.g., disease vs. healthy) [33]:

Network Inference: For each condition, infer group-specific GRNs using Random LASSO, which performs two bootstrap aggregations to select stable regulatory relationships while handling high-dimensional data.

Significance Testing: Compute differential scores for each regulatory interaction using a specialized statistical test that accounts for the distribution of LASSO coefficients.

Multiple Testing Correction: Apply false discovery rate (FDR) control to identify significantly differential interactions while bounding the expected proportion of false positives among them.

This approach successfully identified clinically relevant differential regulations in asthma, including ADAM12 and RELB, which were corroborated by biological literature [33].
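The multiple testing step can be illustrated with the Benjamini-Hochberg procedure, a common way to control the false discovery rate. The source does not state which FDR procedure DiffGRN uses, so this choice is an assumption, and the p-values are hypothetical. A Bonferroni correction is included only to contrast FDR control with stricter family-wise error control.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return a list of booleans marking p-values significant under BH FDR control."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    cutoff_rank = 0
    for rank, i in enumerate(order, start=1):
        if p_values[i] <= alpha * rank / m:
            cutoff_rank = rank  # largest rank whose p-value passes its threshold
    significant = [False] * m
    for rank, i in enumerate(order, start=1):
        if rank <= cutoff_rank:
            significant[i] = True
    return significant

def bonferroni(p_values, alpha=0.05):
    """Family-wise error control: every test must pass alpha / m."""
    return [p <= alpha / len(p_values) for p in p_values]

# Hypothetical p-values for five candidate differential interactions.
p_values = [0.001, 0.045, 0.025, 0.2, 0.012]

fdr_calls = benjamini_hochberg(p_values)
fwer_calls = bonferroni(p_values)
print(fdr_calls)
print(fwer_calls)
```

On this toy input the FDR procedure reports three interactions while Bonferroni reports one, which shows why the two error criteria should not be treated as interchangeable.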

Time-Delayed Network Inference Protocol

Time-delayed GRN inference incorporates the natural dynamics of gene regulation through a two-stage process [36]:

Lag Identification: For each potential regulator-target pair, compute cross-correlation across multiple time lags to identify the lag that maximizes dependence.

Direct Interaction Testing: Apply Network Deconvolution to the time-aligned data to distinguish direct regulatory relationships from indirect correlations.

This protocol has been validated on experimentally determined yeast cell cycle networks, successfully reconstructing known interactions in the nine-gene cell cycle network and the five-gene IRMA network [36].
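The lag identification stage can be sketched by scanning candidate lags and keeping the one with the largest absolute Pearson correlation between the regulator and the shifted target. The time series, the maximum lag, and the use of Pearson correlation as the dependence measure are assumptions made for illustration.

```python
from statistics import mean

def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / (vx * vy) ** 0.5 if vx and vy else 0.0

def best_lag(regulator, target, max_lag):
    """Return the lag maximizing |corr(regulator[t], target[t + lag])| and all scores."""
    scores = {}
    for lag in range(max_lag + 1):
        x = regulator[: len(regulator) - lag]
        y = target[lag:]
        scores[lag] = abs(pearson(x, y))
    return max(scores, key=scores.get), scores

# Hypothetical expression series: the target follows the regulator two steps later.
regulator = [0, 1, 3, 2, 0, 1, 4, 2, 0, 1]
target = [1, 0, 0, 1, 3, 2, 0, 1, 4, 2]

lag, scores = best_lag(regulator, target, max_lag=3)
print(lag)
```

The selected lag would then be used to time-align each regulator-target pair before the deconvolution stage is applied.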

Semi-Supervised Network Refinement

For contexts with partial prior knowledge, semi-supervised approaches enhance de novo inference:

Prior Knowledge Integration: Embed known regulatory interactions from databases like KEGG or REACTOME as constraints in the inference algorithm.

Novel Interaction Discovery: Use regularized regression to identify additional interactions that explain expression patterns not captured by prior knowledge.

This approach has been successfully implemented with the Time-lagged Ordered Lasso, improving accuracy on benchmark datasets like the HeLa cell cycle data [35].

Research Reagent Solutions for GRN Inference

Successful implementation of GRN inference methods requires appropriate computational tools and data resources. The table below outlines essential research reagents for experimental studies:

Table 3: Essential Research Reagents for GRN Inference Studies

| Reagent / Resource | Type | Function in GRN Inference | Example Implementations |

|---|---|---|---|

| Bulk RNA-seq Data | Data Input | Provides transcriptome-wide expression measurements for expression-based inference methods | GENIE3, ARACNE, CLR |

| Single-cell Multi-omics | Data Input | Enables cell-type specific network inference; Combines expression and chromatin accessibility | GRN-VAE, DeepMAPS |

| DREAM Challenge Networks | Benchmark | Provides gold-standard networks for method validation | Yeast cell cycle, IRMA network |

| MSigDB | Prior Knowledge | Curated gene sets for incorporating biological knowledge | GSEA, pathway-informed methods |

| GENIE3 | Algorithm | Random forest-based ensemble method for GRN inference | Python/R implementations |

| Time-lagged Ordered Lasso | Algorithm | Regularized regression with temporal constraints | R package (github.com/pn51/laggedOrderedLassoNetwork) |

| GRN-VAE | Algorithm | Variational autoencoder for single-cell GRN inference | https://github.com/HantaoShu/DeepSEM |

Integration of Prior Knowledge and Multi-Omic Data

Incorporating biological prior knowledge significantly enhances the accuracy of GRN inference. Methods that integrate pathway information from databases like KEGG and REACTOME demonstrate improved performance even when pathway knowledge is partially incomplete or inaccurate [32]. Similarly, combining multiple data modalities—such as paired scRNA-seq and scATAC-seq data—provides complementary evidence that helps distinguish direct regulatory relationships.

Transfer learning approaches leverage well-annotated model organisms to improve inference in less-characterized species. For example, models trained on Arabidopsis thaliana have successfully predicted regulatory relationships in poplar and maize, addressing the challenge of limited training data in non-model species [6].

Distinguishing direct regulation from indirect correlation remains the central challenge in GRN reconstruction, with no single method universally superior across all experimental contexts. Regression-based approaches like DiffGRN offer strong performance in capturing multivariate effects, while time-aware methods like Time-lagged Ordered Lasso provide more natural handling of regulatory dynamics. Network deconvolution approaches mathematically address the core challenge of indirect effects, and emerging deep learning methods show promise in capturing complex regulatory patterns.

The choice of methodology should be guided by data availability, biological context, and specific research objectives. For bulk transcriptomic data, regression-based methods often provide the best balance of performance and interpretability. When temporal data is available, time-lagged methods leverage crucial causal information. In single-cell multi-omic contexts, specialized deep learning architectures can exploit the full richness of modern sequencing data. Future methodological development will likely focus on hybrid approaches that combine the strengths of multiple paradigms while improving scalability and accessibility for diverse research applications.

A Landscape of ML Methods: From Correlation to Deep Architectures

Gene regulatory network (GRN) reconstruction is fundamental for understanding cellular mechanisms, disease pathogenesis, and drug development [37] [38]. Classical computational approaches for inferring regulatory relationships from gene expression data often rely on correlation, mutual information (MI), and regression models [39] [40]. These methods aim to elucidate the complex causal interactions between transcription factors (TFs) and their target genes. While newer methods leverage graph neural networks and large foundation models [37] [41], the classical approaches remain widely used due to their interpretability and well-understood statistical properties. This guide provides a comparative analysis of these foundational methods, focusing on their performance, optimal applications, and implementation protocols within GRN research.

Performance Comparison

The table below summarizes the key characteristics and comparative performance of correlation, mutual information, and regression-based models as established in empirical studies and benchmarks.

Table 1: Performance Comparison of Classical GRN Reconstruction Approaches

| Approach | Key Strengths | Key Limitations | Reported Accuracy/Performance | Optimal Use Case |

|---|---|---|---|---|

| Correlation (e.g., Biweight Midcorrelation) | Fast calculation; straightforward statistical testing; can distinguish positive/negative relationships; outperforms MI in gene ontology enrichment when coupled with topological overlap matrix (TOM) transformation [39]. | Primarily captures linear or monotonic relationships [39] [42]. | Superior to MI in elucidating gene pairwise relationships and leading to more significantly enriched co-expression modules [39]. | Standard co-expression analysis in stationary data; preferred over MI for linear/monotonic relationships [39]. |

| Mutual Information (MI) | Measures non-linear and non-monotonic statistical associations; information-theoretic interpretation [39] [42]. | Non-trivial to estimate for quantitative variables; computationally intensive permutation tests; can be inferior to correlation in practice [39]. | Often exhibits a close relationship with correlation, suggesting limited added value in many datasets [39]. Performance varies with the type of non-linear relationship (e.g., perfect for quadratic relationships but worse on others) [43]. | Detecting complex, non-linear relationships where correlation fails; requires careful validation [39] [43]. |

| Regression Models (e.g., Linear Regression, Dynamic Bayesian Networks) | Explicit model of relationship; ability to include covariates; statistical inference on parameters; can model causality in time-series data [39] [40]. | Model misspecification risk; may require significant data for robust parameter estimation [40]. | Linear Gaussian dynamic Bayesian networks and variable selection based on F-statistics identified as suitable methods from time-series data [40]. | Time-series expression data to identify causal relations; incorporating prior knowledge [40]. |

| Polynomial/Spline Regression | Attractive alternative to MI for capturing non-linear relationships between quantitative variables [39]. | Can be computationally intensive. | Proposed as a powerful alternative that can safely replace MI networks [39]. | Capturing predefined non-linear relationships more effectively than linear models or MI [39]. |

Table 2: Data Requirements and Experimental Design Impact

| Factor | Impact on Reconstruction | Recommendation |

|---|---|---|

| Data Type (Time-Series vs. Static) | Time-series data enables identification of causal relations without active perturbation [40]. | Use time-series data for causal inference [40]. |

| Perturbation Type (Knock-Outs) | Gene knock-out experiments are optimal for revealing underlying network structure [40]. | Prioritize TF knock-out time series experiments [40]. |

| Data Size & Noise | High dimensionality, few replicates, and observational noise (20-30% in microarrays) limit reconstruction accuracy [40]. | Ensure sufficient data size relative to noise levels [40]. |

| Prior Knowledge | Incorporation of prior knowledge (e.g., from ChIP experiments) can improve predictions, especially with small expression data sets [40]. | Integrate prior knowledge in a Bayesian learning framework when data is limited [40]. |

| Hidden Variables (e.g., TF activity) | Unobserved processes (e.g., protein-protein interactions) induce dependencies indistinguishable from direct transcriptional regulation based on gene expression alone [40]. | Be cautious in interpretation; use additional data modalities to constrain models [40]. |

Experimental Protocols

1. Protocol for Correlation-Based Network Reconstruction (e.g., WGCNA)

This protocol is adapted from methods used in large-scale comparative studies [39].

- Input Data: A gene expression matrix (genes as rows, samples as columns). Data should be pre-processed and normalized.

- Association Measure Calculation: Compute a pairwise correlation matrix for all genes. The biweight midcorrelation (bicor) is recommended as a robust measure [39].

- Network Construction (Adjacency): Transform the correlation matrix into an adjacency matrix. For a signed network, use: ( A_{ij} = \left( \frac{1 + \mathrm{cor}(x_i, x_j)}{2} \right)^{\beta} ) where ( \beta ) is a soft-thresholding power that emphasizes stronger correlations [39].

- Topological Overlap Matrix (TOM) Transformation: Calculate the TOM to transform the adjacency matrix. This step considers not only direct connections between two genes but also their shared neighbors, leading to more robust modules [39].

- Module Detection: Use hierarchical clustering on the TOM-based dissimilarity matrix to identify modules (clusters) of co-expressed genes.

- Validation: Assess the biological significance of modules via enrichment analysis of gene ontology (GO) terms [39].
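
The adjacency and TOM steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not the WGCNA implementation: Pearson correlation stands in for bicor, the power `beta=6` and the toy two-module data are assumptions, the function names are hypothetical, and the TOM uses the commonly cited form ( TOM_{ij} = (l_{ij} + a_{ij}) / (\min(k_i, k_j) + 1 - a_{ij}) ), where ( l_{ij} = \sum_u a_{iu} a_{uj} ) and ( k_i = \sum_u a_{iu} ).

```python
import numpy as np

def signed_adjacency(expr, beta=6):
    """expr: genes x samples. Returns ((1 + cor) / 2) ** beta with a zero diagonal."""
    cor = np.clip(np.corrcoef(expr), -1.0, 1.0)  # Pearson, used here in place of bicor
    adj = ((1.0 + cor) / 2.0) ** beta
    np.fill_diagonal(adj, 0.0)                   # no self-connections
    return adj

def topological_overlap(adj):
    """Topological overlap from an adjacency matrix with zero diagonal."""
    shared = adj @ adj                           # l_ij: connection strength via shared neighbors
    k = adj.sum(axis=1)                          # connectivity of each gene
    tom = (shared + adj) / (np.minimum.outer(k, k) + 1.0 - adj)
    np.fill_diagonal(tom, 1.0)
    return tom

# Toy data (assumption): two modules of three genes following sin(t) and cos(t).
t = np.linspace(0.0, 2.0 * np.pi, 40)
rng = np.random.default_rng(0)
expr = np.vstack([
    np.sin(t) + 0.1 * rng.normal(size=(3, 40)),
    np.cos(t) + 0.1 * rng.normal(size=(3, 40)),
])

adj = signed_adjacency(expr)
tom = topological_overlap(adj)
dissimilarity = 1.0 - tom                        # input for hierarchical clustering
print(np.round(tom, 2))
```

The `dissimilarity` matrix is what the module detection step would cluster, for example with a hierarchical clustering routine such as `scipy.cluster.hierarchy.linkage` applied to its condensed form.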

2. Protocol for Mutual Information-Based Network Reconstruction (e.g., ARACNE)

This protocol outlines the core steps for MI-based inference [39].

- Input Data: A gene expression matrix. For discrete MI, data may need to be discretized (a non-trivial step that can impact results).

- MI Estimation: For each pair of genes, estimate the mutual information. For discrete data, use: ( MI(dx, dy) = \sum_{r=1}^{R_x} \sum_{c=1}^{R_y} p(l_{dx}^r, l_{dy}^c) \log \frac{p(l_{dx}^r, l_{dy}^c)}{p(l_{dx}^r)\, p(l_{dy}^c)} ) where ( p ) represents the frequency of discrete levels [39]. For continuous data, use methods like kernel density estimation.

- Network Construction (Adjacency): The MI matrix can be used directly as an adjacency matrix or rescaled to the [0, 1] range. A common subsequent step applies the Data Processing Inequality (DPI) to prune indirect interactions.

- Validation: Compare the resulting network topology and inferred relationships to known regulatory interactions or functional enrichment.
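
The MI estimation and DPI pruning steps above can be sketched as follows. This is an illustrative sketch under stated assumptions rather than the ARACNE code: equal-frequency binning into five levels is one possible discretization, the plug-in estimator follows the discrete formula above (in nats), the `tol` parameter and all function names are hypothetical, and the toy data simulate a chain X → Y → Z in which the X–Z association is indirect.

```python
import numpy as np

def discretize(x, n_bins=5):
    """Equal-frequency binning into integer levels 0..n_bins-1 (an assumed choice)."""
    edges = np.quantile(x, np.linspace(0.0, 1.0, n_bins + 1)[1:-1])
    return np.digitize(x, edges)

def mutual_information(dx, dy):
    """Plug-in MI estimate (nats) from two integer-coded vectors."""
    joint = np.zeros((dx.max() + 1, dy.max() + 1))
    np.add.at(joint, (dx, dy), 1.0)              # contingency table of level pairs
    joint /= joint.sum()
    px = joint.sum(axis=1, keepdims=True)        # marginal frequencies of dx levels
    py = joint.sum(axis=0, keepdims=True)        # marginal frequencies of dy levels
    nz = joint > 0                               # skip empty cells (0 * log 0 = 0)
    return float(np.sum(joint[nz] * np.log(joint[nz] / (px @ py)[nz])))

def mi_matrix(expr, n_bins=5):
    """Pairwise MI for a genes x samples matrix."""
    levels = [discretize(row, n_bins) for row in expr]
    n = len(levels)
    mi = np.zeros((n, n))
    for i in range(n):
        for j in range(i + 1, n):
            mi[i, j] = mi[j, i] = mutual_information(levels[i], levels[j])
    return mi

def dpi_prune(mi, tol=0.0):
    """Drop edge (i, j) if some gene k gives MI(i,j) < min(MI(i,k), MI(j,k)) * (1 - tol)."""
    n = mi.shape[0]
    keep = mi > 0
    for i in range(n):
        for j in range(i + 1, n):
            for k in range(n):
                if k not in (i, j) and mi[i, j] < min(mi[i, k], mi[j, k]) * (1.0 - tol):
                    keep[i, j] = keep[j, i] = False
                    break
    return np.where(keep, mi, 0.0)

# Toy chain X -> Y -> Z (assumption): the X-Z dependence is purely indirect.
rng = np.random.default_rng(0)
x = rng.normal(size=2000)
y = x + 0.5 * rng.normal(size=2000)
z = y + 0.5 * rng.normal(size=2000)

mi = mi_matrix(np.vstack([x, y, z]))
pruned = dpi_prune(mi)
print(np.round(mi, 3))
print(np.round(pruned, 3))
```

The pruning pass compares each edge against the original MI values, so the outcome does not depend on the order in which triplets are visited.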

3. Protocol for Regression-Based Network Reconstruction (e.g., Inferelator)

This protocol is based on regularized regression, as used for GRN reconstruction from diverse data types [38] [40].

- Input Data: Gene expression data (time-series or static) and prior information on potential regulators (e.g., list of TFs).

- Regulator Selection: For each target gene, select a set of potential transcriptional regulators from the prior information.

- Model Fitting: Solve a regression problem for each target gene ( i ): ( \frac{d y_i}{d t} = \beta_0 + \sum_j \beta_j y_j ) where ( y_j ) are the expression levels of potential regulators. Use regularization (e.g., LASSO, ridge regression) to avoid overfitting and promote sparse solutions, which is crucial given the high dimensionality [38] [40].

- Network Output: The non-zero ( \beta_j ) coefficients define the regulatory interactions in the network, with the sign indicating activation or repression.

- Validation: Benchmark the accuracy of the inferred network against a gold standard, using metrics like area under the ROC curve [40].
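
The model-fitting and network-output steps above can be illustrated with a small NumPy sketch. It is not the Inferelator: the LASSO is solved with a plain proximal-gradient (ISTA) loop, the derivative is approximated by finite differences, and the simulated time series, the penalty `lam=0.1`, and all function names are assumptions made for illustration.

```python
import numpy as np

def soft_threshold(v, t):
    """Proximal operator of the L1 penalty."""
    return np.sign(v) * np.maximum(np.abs(v) - t, 0.0)

def lasso_ista(X, r, lam, n_iter=2000):
    """Minimize (1 / 2n) * ||r - b0 - X @ beta||^2 + lam * ||beta||_1 by ISTA."""
    n = X.shape[0]
    x_mean, r_mean = X.mean(axis=0), r.mean()
    Xc, rc = X - x_mean, r - r_mean              # centering absorbs the intercept
    step = n / np.linalg.norm(Xc, 2) ** 2        # 1 / Lipschitz constant of the gradient
    beta = np.zeros(X.shape[1])
    for _ in range(n_iter):
        grad = -Xc.T @ (rc - Xc @ beta) / n
        beta = soft_threshold(beta - step * grad, step * lam)
    return beta, r_mean - x_mean @ beta

# Simulated time series (assumption): 4 candidate TFs, of which 2 regulate the target.
rng = np.random.default_rng(0)
n_steps, dt = 200, 0.1
tfs = rng.normal(size=(n_steps, 4))              # TF expression at each time point
beta_true = np.array([0.8, -0.6, 0.0, 0.0])
rate = 0.2 + tfs @ beta_true                     # dy/dt = beta_0 + sum_j beta_j * y_j
target = np.concatenate([[0.0], np.cumsum(rate[:-1] * dt)])  # Euler integration
target += rng.normal(scale=0.001, size=n_steps)  # small measurement noise

dydt = np.diff(target) / dt                      # finite-difference derivative
beta, intercept = lasso_ista(tfs[:-1], dydt, lam=0.1)

# Non-zero coefficients define edges; the sign gives activation or repression.
edges = {j: ("activation" if b > 0 else "repression")
         for j, b in enumerate(beta) if abs(b) > 1e-8}
print(np.round(beta, 3), round(float(intercept), 3), edges)
```

The L1 penalty shrinks the recovered coefficients toward zero, so their magnitudes underestimate the true effects even when the selected regulators and signs are correct; refitting without the penalty on the selected regulators is one common way to reduce that bias.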

Research Reagent Solutions

The table below details key computational tools and data resources essential for implementing the classical GRN reconstruction approaches discussed.

Table 3: Key Research Reagents and Computational Tools

| Reagent / Tool | Type | Primary Function in GRN Research | Relevant Classical Approach |

|---|---|---|---|

| WGCNA (Weighted Gene Co-expression Network Analysis) | R Software Package | Provides a comprehensive framework for constructing correlation-based co-expression networks, including TOM transformation and module detection [39]. | Correlation |

| ARACNE (Algorithm for the Reconstruction of Accurate Cellular Networks) | Software Tool | Uses mutual information and the Data Processing Inequality (DPI) to reconstruct gene regulatory networks [39]. | Mutual Information |

| Inferelator | Computational Framework | Uses regression with regularization to infer regulatory relationships from gene expression data (time-series and static) and prior information [38]. | Regression Models |

| scRNA-seq Data | Experimental Data | Single-cell RNA sequencing data providing gene expression measurements at the resolution of individual cells. The high resolution enables the discovery of cell-type-specific networks [37] [38]. | All Approaches |

| Prior Knowledge Networks (e.g., from ChIP-seq) | Data Resource | Experimentally derived information on transcription factor binding sites or known interactions. Used to constrain and improve computational predictions [40]. | All Approaches (especially Regression) |

| Gene Knock-Out (KO) Perturbation Data | Experimental Data | Gene expression data from experiments where specific genes (especially TFs) have been knocked out. Considered an optimal experiment for revealing network structure [40]. | All Approaches |

Gene Regulatory Network (GRN) reconstruction is a fundamental challenge in computational biology, aiming to unravel the complex interactions where genes and their products regulate the expression of other genes [44] [34]. These networks are crucial for understanding cellular functions, organism development, and the molecular basis of diseases [8]. Among the diverse computational approaches developed, probabilistic models (specifically Bayesian Networks) and dynamical systems models (often based on Differential Equations) represent two powerful but philosophically distinct paradigms [1]. Bayesian Networks model GRNs as directed graphs where edges represent probabilistic dependencies, inferring the most likely network structure that explains observed gene expression data [44] [45]. In contrast, Differential Equation models formulate GRNs as systems of equations that describe the continuous dynamics of gene expression changes over time, capturing the kinetic parameters of regulatory interactions [1]. This guide provides a comparative analysis of these approaches, examining their theoretical foundations, performance characteristics, and practical implementation requirements to assist researchers in selecting appropriate methodologies for specific research contexts.

Theoretical Foundations and Methodologies

Bayesian Network Models