Learning Classifier Systems: An Interpretable AI Revolution for Drug Discovery and Biomedical Research

Abstract

This article provides a comprehensive overview of Learning Classifier Systems (LCS), a powerful class of evolutionary rule-based machine learning algorithms. Tailored for researchers, scientists, and drug development professionals, it explores the core principles of LCS, contrasting Michigan and Pittsburgh architectures, and their unique synergy of rule-based systems, reinforcement learning, and evolutionary computation. The scope extends to practical methodologies, real-world applications in bioinformatics and clinical data mining, strategies for troubleshooting and optimization, and a comparative analysis with traditional machine learning models. By demystifying how LCS generates human-interpretable rules for complex problems like detecting epistasis and genetic heterogeneity, this article positions LCS as a cornerstone for explainable AI in the future of biomedicine.

What Are Learning Classifier Systems? Core Principles and Architectural Styles

Learning Classifier Systems (LCS) represent a paradigm of rule-based machine learning methods that integrate a discovery component, typically a genetic algorithm from evolutionary computation, with a learning component capable of performing supervised, reinforcement, or unsupervised learning [1]. This hybrid architecture allows LCS to identify sets of context-dependent rules that collectively store and apply knowledge in a piecewise manner to solve complex problems across diverse domains including behavior modeling, classification, data mining, regression, function approximation, and game strategy [1]. The founding principles behind LCS originated from early attempts to model complex adaptive systems using rule-based agents to form artificial cognitive systems, establishing LCS as a significant branch of artificial intelligence research with particular relevance for applications requiring interpretable models, such as biomedical research and drug development [2] [3].

The LCS framework distinguishes itself through its unique combination of three powerful computational techniques: the expressiveness of rule-based systems, the adaptive capability of machine learning, and the global optimization power of evolutionary computation [4]. This integration enables LCS algorithms to distribute learned patterns over a collaborative population of individually interpretable IF-THEN rules, allowing them to flexibly describe complex and diverse problem spaces while maintaining human-understandable solutions [3]. For researchers and professionals in drug development, this transparency is particularly valuable in high-stakes decision-making processes where understanding the rationale behind predictions is as crucial as the predictions themselves.

Core Architectural Components

Fundamental Elements of LCS Architecture

The architecture of a Learning Classifier System comprises several interacting components that can be modified or exchanged to suit specific problem domains [1]. At its core, every LCS operates through the coordinated functioning of these essential elements:

Rule Population: The foundation of any LCS is a population of classifiers, where each classifier consists of a condition (IF part) and an action (THEN part) [1]. For binary data, conditions typically use a ternary representation in which each position is 0, 1, or the 'don't care' symbol (#), allowing the system to generalize relationships between features and target endpoints [1]. The 'don't care' symbol serves as a wild card, so a single rule can match multiple environmental states.

Performance Component: This element is responsible for processing incoming environmental information, matching relevant classifiers from the population based on their conditions, and forming a match set [M] containing all classifiers whose conditions are satisfied by the current input [1]. The performance component then selects an action based on the predictions of the matching classifiers, executing it in the environment.

Credit Assignment Component: A critical challenge in any rule-based system is determining which rules deserve credit for successful outcomes. LCS addresses this through reinforcement learning techniques that distribute rewards to classifiers based on their contributions to system performance [4]. In supervised learning implementations, parameter updates reflect the accuracy of each classifier's prediction relative to the known outcome [1].

Rule Discovery Component: To innovate new rules and explore the search space of possible solutions, LCS employs evolutionary computation methods, typically genetic algorithms [1]. This component selects parent classifiers based on fitness, applies genetic operators (crossover and mutation) to create offspring rules, and introduces these new candidate solutions into the population [4].
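As a rough illustration of how the performance component might turn a match set into a single prediction, the sketch below uses a fitness-weighted vote. The voting scheme, the tuple layout `(action, fitness, numerosity)`, and the function name `vote` are assumptions made for this example; the source only states that an action is selected from the predictions of the matching classifiers.

```python
from collections import defaultdict


def vote(match_set):
    """Return the action with the largest fitness-weighted support.

    match_set: iterable of (action, fitness, numerosity) tuples
    (a hypothetical layout chosen for this sketch).
    """
    votes = defaultdict(float)
    for action, fitness, numerosity in match_set:
        # Each copy of a rule contributes its fitness to the action it advocates.
        votes[action] += fitness * numerosity
    if not votes:
        raise ValueError("empty match set: covering would be needed first")
    return max(votes, key=votes.get)


# Two rules advocate action 1, one rule advocates action 0.
example_match_set = [(1, 0.9, 2), (0, 0.5, 3), (1, 0.1, 1)]
chosen = vote(example_match_set)
```

Weighting by numerosity means that a rule the population has kept many copies of carries proportionally more influence, which is one common design choice rather than a requirement.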

Michigan vs. Pittsburgh Approaches

LCS implementations primarily follow one of two architectural styles, each with distinct characteristics and advantages:

Table: Comparison of Michigan and Pittsburgh LCS Approaches

| Feature | Michigan-Style | Pittsburgh-Style |
|---|---|---|
| Learning Approach | Incremental learning | Batch learning |
| Population Entity | Individual rules | Sets of rules |
| Evaluation Unit | Single rules | Complete rule sets |
| Genetic Algorithm | Operates on single rules | Operates on rule sets |
| Fitness Assignment | To individual rules | To complete rule sets |
| Primary Application | Online learning | Offline learning |

Michigan-style systems, such as the well-known XCS algorithm, employ an incremental learning approach where each rule has its own fitness parameters, and the genetic algorithm operates on individual rules within the population [1]. These systems start with an empty population and use a covering mechanism to introduce new rules as needed when no existing rules match current environmental inputs [1]. This approach is particularly effective for online learning scenarios where data arrives sequentially.

In contrast, Pittsburgh-style systems maintain a population of complete rule sets rather than individual rules, applying batch learning where each rule set is evaluated over much or all of the training data in each iteration [1]. These systems evolve complete solutions through genetic operations on rule sets, making them particularly suitable for offline learning problems where comprehensive model evaluation is feasible.

The LCS Algorithm: A Step-by-Step Workflow

Detailed Operational Cycle

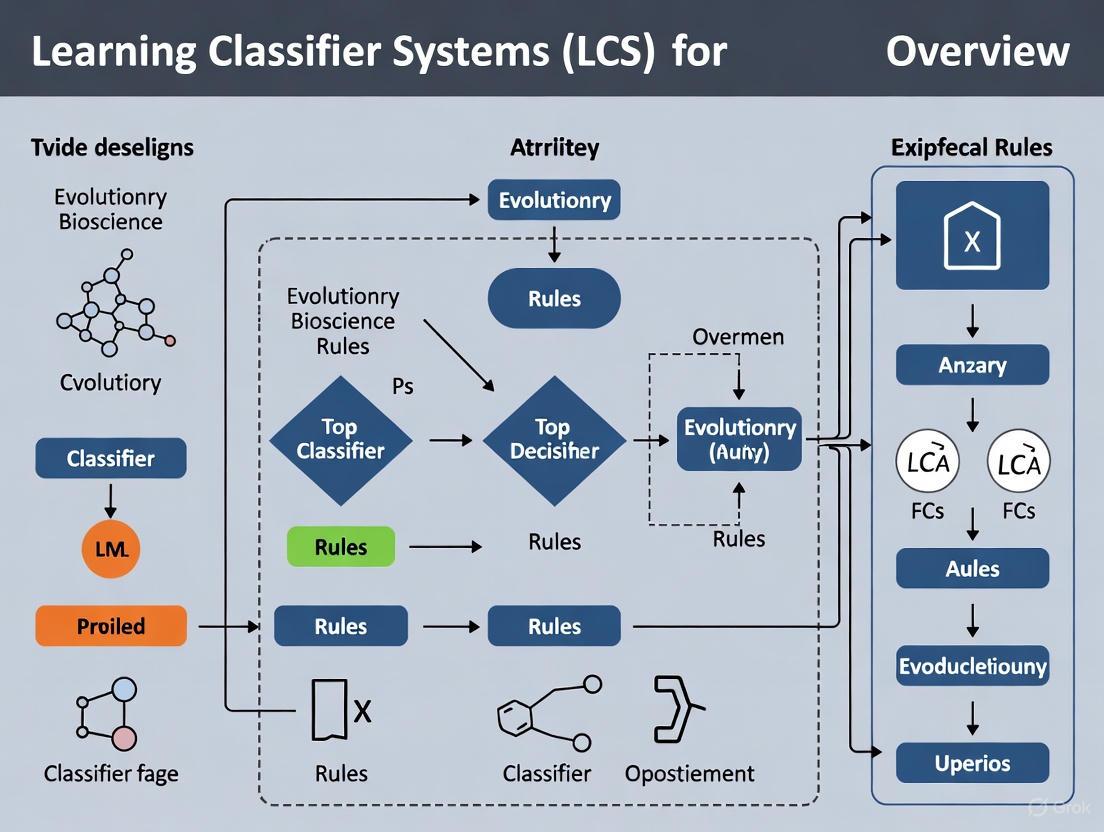

The learning process in a Michigan-style LCS follows a systematic, iterative cycle that integrates machine learning with evolutionary computation. The following diagram illustrates the complete workflow of a generic Learning Classifier System:

The LCS algorithm operates through the following detailed sequence:

- Environment Interaction: The cycle begins when the system receives a training instance from the environment, consisting of feature values (state) and a target endpoint (e.g., class or dependent variable) [1].

- Match Set Formation: The system compares the current state against all rules in the population [P], selecting those whose conditions match the current input into match set [M]. A rule matches when all specified feature values in its condition equal corresponding values in the training instance [1].

- Covering Check: If [M] is empty, the covering mechanism creates a new rule that matches the current instance and proposes the correct action (in supervised learning). This ensures at least one relevant rule exists for every encountered situation [1].

- Correct Set Formation: For supervised learning, [M] is divided into correct set [C] (rules proposing the right action) and incorrect set [I] (rules with wrong predictions) [1].

- Parameter Updates: The system updates rule parameters, primarily accuracy and fitness, based on performance. Rule accuracy is calculated as the proportion of correct predictions out of all matches, representing a "local accuracy" measure [1].

- Subsumption: An explicit generalization mechanism merges classifiers covering redundant problem spaces. A more general classifier can subsume a more specific one if it's equally accurate and covers all situations of the subsumed rule [1].

- Rule Discovery: A genetic algorithm selects parent classifiers from [C] based on fitness, applies crossover and mutation to create offspring, and inserts them into the population [1].

- Population Management: The system deletes classifiers to maintain population size limits, with selection probability inversely proportional to fitness [1].
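The first five steps of the cycle above (matching, covering, correct set formation, and parameter updates) can be sketched in a few lines of Python. This is a minimal sketch for binary features and supervised learning, not a full LCS: subsumption, the genetic algorithm, and deletion are left out, and the names `Classifier`, `cover`, `learn_one`, and the wildcard probability `p_hash` are hypothetical.

```python
import random
from dataclasses import dataclass


@dataclass
class Classifier:
    condition: str          # ternary string over {'0', '1', '#'}
    action: int             # predicted class
    match_count: int = 0    # times the rule appeared in [M]
    correct_count: int = 0  # times the rule appeared in [C]

    @property
    def accuracy(self) -> float:
        # Local accuracy: correct predictions / matches.
        return self.correct_count / self.match_count if self.match_count else 0.0


def matches(condition: str, state: str) -> bool:
    # A rule matches when every specified position equals the instance value.
    return all(c == '#' or c == s for c, s in zip(condition, state))


def cover(state: str, action: int, rng: random.Random, p_hash: float = 0.5) -> Classifier:
    # Copy the instance, generalizing each position to '#' with probability p_hash.
    condition = ''.join('#' if rng.random() < p_hash else s for s in state)
    return Classifier(condition, action)


def learn_one(population, state, target, rng):
    """Run one supervised learning step and return ([M], [C])."""
    match_set = [cl for cl in population if matches(cl.condition, state)]
    if not match_set:  # covering check
        new_rule = cover(state, target, rng)
        population.append(new_rule)
        match_set = [new_rule]
    correct_set = [cl for cl in match_set if cl.action == target]
    for cl in match_set:  # parameter updates
        cl.match_count += 1
        if cl.action == target:
            cl.correct_count += 1
    return match_set, correct_set


rng = random.Random(0)
population = []
training_data = [("0101", 1), ("0111", 1), ("1000", 0)]
for state, target in training_data * 3:
    learn_one(population, state, target, rng)
```

Covering is triggered here only when [M] is empty, following the description above; some implementations instead trigger it when [C] is empty.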

Key Mechanisms in Detail

Credit Assignment through Reinforcement Learning: In reinforcement learning scenarios, LCS employs algorithms like the bucket brigade or Q-learning to distribute credit across sequences of rules that lead to rewards, solving the temporal credit assignment problem [4]. The bucket brigade algorithm creates a market economy where rules bid for the right to act and pay each other for privileges, while successful rules eventually receive external rewards.
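For the Q-learning variant, one commonly used form of the update in XCS-style systems is shown below as a worked formula. The symbols are assumptions introduced here and do not appear in the source: $r_{t-1}$ is the reward received after the previous action, $\gamma$ the discount factor, $\beta$ the learning rate, $PA_t(a)$ the system's current payoff prediction for action $a$, and $[A]_{t-1}$ the set of rules that advocated the previous action.

```latex
P = r_{t-1} + \gamma \max_{a} PA_t(a),
\qquad
p_j \leftarrow p_j + \beta \,(P - p_j) \quad \text{for each rule } j \in [A]_{t-1}
```

Each rule that took part in the previous decision moves its payoff prediction $p_j$ a small step toward the discounted target $P$, which is how reward is passed backward along a chain of rules.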

Genetic Algorithm for Rule Discovery: The genetic algorithm in LCS typically uses tournament selection for parent choice, followed by crossover and mutation operators tailored to the rule representation [1]. This approach enables the system to explore new rule combinations while exploiting previously successful building blocks.
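A minimal sketch of these three operators on ternary conditions follows. The function names, the use of uniform crossover, and the mutation scheme are illustrative assumptions; the source only states that tournament selection, crossover, and mutation are used. Note that supervised LCS implementations usually restrict mutation so the offspring still matches the current training instance, which this sketch does not enforce.

```python
import random

ALPHABET = "01#"


def tournament_select(rules, fitness, k, rng):
    """Pick k distinct rules at random and return the fittest of them."""
    contenders = rng.sample(rules, k)
    return max(contenders, key=fitness)


def uniform_crossover(a, b, rng):
    """Swap each position between the two parents with probability 0.5."""
    child1, child2 = [], []
    for x, y in zip(a, b):
        if rng.random() < 0.5:
            x, y = y, x
        child1.append(x)
        child2.append(y)
    return ''.join(child1), ''.join(child2)


def mutate(condition, rate, rng):
    """Replace each symbol with a different ternary symbol with probability `rate`."""
    out = []
    for c in condition:
        if rng.random() < rate:
            c = rng.choice([s for s in ALPHABET if s != c])
        out.append(c)
    return ''.join(out)


rng = random.Random(42)
fitness_table = {"1#0#": 0.9, "0##1": 0.4, "##11": 0.6, "10#0": 0.2}
rules = list(fitness_table)
parent1 = tournament_select(rules, fitness_table.get, 2, rng)
parent2 = tournament_select(rules, fitness_table.get, 2, rng)
child1, child2 = uniform_crossover(parent1, parent2, rng)
child1 = mutate(child1, 0.05, rng)
```

A larger tournament size `k` increases selection pressure toward the fittest rules, while a smaller one preserves more diversity.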

Generalization Mechanisms: Beyond subsumption, LCS promotes generalization through the 'don't care' symbol (#) in rule conditions and fitness sharing mechanisms that prevent over-specialized rules from dominating the population [1]. These techniques allow the system to develop compact, general rules that cover broad problem areas.

Experimental Evaluation and Performance Metrics

Quantitative Performance Analysis

Rigorous evaluation of LCS algorithms involves multiple performance dimensions, including predictive accuracy, rule set comprehensibility, and risk estimation capability. The following table summarizes quantitative findings from comparative studies between LCS and other machine learning approaches:

Table: Experimental Performance Comparison of LCS vs. Other Algorithms

| Algorithm | Classification Accuracy | Risk Estimation Accuracy | Rule Parsimony | Hypothesis Generation Utility |
|---|---|---|---|---|
| LCS (EpiCS) | Significantly lower than C4.5 (P<0.05) [5] | Significantly more accurate than Logistic Regression (P<0.05) [5] | Less parsimonious than C4.5 [5] | Potentially more useful for hypothesis generation [5] |
| C4.5 | Superior to EpiCS (P<0.05) [5] | Not evaluated in study | More parsimonious than EpiCS [5] | Less useful for hypothesis generation [5] |
| Logistic Regression | Not primary focus in study | Less accurate than EpiCS (P<0.05) [5] | Not applicable | Limited utility for hypothesis generation |

The experimental data suggest a useful trade-off: in the cited study, the LCS (EpiCS) classified less accurately than the decision-tree learner C4.5, but it estimated risk more accurately than logistic regression and appeared more useful for knowledge discovery and hypothesis generation [5]. This performance profile makes LCS attractive for domains like biomedical research and drug development, where understanding complex relationships and estimating risk precisely can matter more than raw classification accuracy.

Experimental Methodology and Protocols

To ensure reproducible evaluation of LCS algorithms, researchers follow standardized experimental protocols:

Data Preparation and Partitioning: Studies typically employ k-fold cross-validation (commonly 10-fold) with stratified sampling to maintain class distribution across folds. For the EpiCS evaluation in epidemiologic surveillance, data from a large national child automobile passenger protection program was utilized [5].
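A stratified split can be sketched without any external library, as below. The function name `stratified_folds` and the round-robin assignment are choices made for this illustration and are not taken from the cited study; in practice a library routine such as scikit-learn's `StratifiedKFold` would normally be used.

```python
import random
from collections import defaultdict


def stratified_folds(labels, k, seed=0):
    """Split instance indices into k folds that preserve class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, y in enumerate(labels):
        by_class[y].append(idx)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal the shuffled indices of each class round-robin across the folds.
        for pos, idx in enumerate(indices):
            folds[pos % k].append(idx)
    return folds


labels = [0] * 20 + [1] * 10
folds = stratified_folds(labels, k=10)
for test_fold in folds:
    held_out = set(test_fold)
    train_idx = [i for i in range(len(labels)) if i not in held_out]
    # Train the LCS on train_idx and evaluate it on test_fold here.
```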

Parameter Configuration: LCS algorithms require careful parameter tuning, including population size (typically ranging from hundreds to thousands of classifiers), learning rates (usually small values for gradual updates), and genetic algorithm parameters (crossover rate, mutation rate, tournament size) [4].

Performance Assessment: Comprehensive evaluation includes multiple metrics: classification accuracy (proportion of correct predictions), area under ROC curve for risk estimation, rule set complexity (number of rules and conditions), and computational efficiency (training time and memory usage) [5].
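Two of these metrics are simple enough to compute by hand, which can help when checking a library's output. The sketch below computes classification accuracy and the area under the ROC curve via its rank interpretation (the probability that a randomly chosen positive instance receives a higher score than a randomly chosen negative one). The function names are illustrative, and the pairwise AUC computation is quadratic, so it is meant for small examples only.

```python
def accuracy(y_true, y_pred):
    """Proportion of predictions that equal the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def roc_auc(y_true, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative instance")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


y_true = [0, 0, 1, 1]
risk_scores = [0.1, 0.4, 0.35, 0.8]
auc = roc_auc(y_true, risk_scores)  # 3 of the 4 pairs are ordered correctly: 0.75
```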

Statistical Validation: Studies employ appropriate statistical tests (e.g., t-tests for accuracy comparisons) to determine significance of performance differences, with P<0.05 typically considered statistically significant [5].

Advanced Research Directions and Hybrid Approaches

Integration with Modern AI Paradigms

Current LCS research explores innovative integrations with contemporary artificial intelligence approaches:

Explainable AI (XAI): Evolutionary Rule-based Machine Learning (ERL), including LCS, inherently provides interpretable decisions through human-readable rules, making it naturally aligned with XAI objectives [2]. This characteristic has garnered significant attention as the machine learning community increasingly prioritizes model transparency.

Large Language Models (LLMs): Emerging research investigates hybridization between LLMs and evolutionary computation, exploring how LLMs can generate rules for EC, provide natural language explanations, and enhance interpretability [2] [6]. These approaches potentially combine the pattern recognition power of LLMs with the transparent reasoning of LCS.

Fuzzy Rule-Based Systems: Recent extensions incorporate fuzzy logic into LCS, creating Learning Fuzzy-Classifier Systems (LFCS) that handle uncertainty and vague data more effectively while maintaining interpretability [2].

The Researcher's Toolkit: Essential Components for LCS Implementation

Successful implementation of Learning Classifier Systems requires specific computational components and methodological approaches:

Table: Essential Research Reagents for LCS Implementation

| Component | Function | Implementation Examples |
|---|---|---|
| Rule Representation | Encodes condition-action relationships | Ternary representation (0,1,#), real-valued intervals, fuzzy predicates [1] |
| Matching Algorithm | Identifies rules relevant to current input | Ternary matching, efficient set formation [1] |
| Credit Assignment | Distributes reinforcement to rules | Q-learning, bucket brigade, accuracy-based updates [1] [4] |
| Rule Discovery | Generates new candidate rules | Genetic algorithm with tournament selection, crossover, mutation [1] |
| Generalization Mechanism | Promotes broad, applicable rules | Subsumption, specificity-based fitness, don't care propagation [1] |
| Population Management | Maintains diverse, compact rule sets | Roulette wheel deletion, niche-based preservation [1] |

Applications in Biomedical and Healthcare Domains

The unique characteristics of Learning Classifier Systems make them particularly suitable for healthcare and biomedical applications where interpretability and risk estimation are crucial:

Epidemiologic Surveillance: As demonstrated by EpiCS, LCS can effectively analyze large-scale public health data to identify risk patterns and generate hypotheses about disease factors [5]. The accurate risk estimation capability of LCS supports evidence-based public health decision-making.

Integrated Disease Risk Assessment: Research initiatives like Project CLEAR (Cardiovascular disease risk and Lung cancer screening for Early Assessment of Risk) highlight the growing recognition that patients undergoing screening for one condition (e.g., lung cancer) often face elevated risks for other conditions (e.g., atherosclerotic cardiovascular disease) [7]. LCS can potentially model these complex risk interactions through interpretable rules.

Clinical Decision Support: The transparent rule structures generated by LCS facilitate implementation in clinical settings where understanding the rationale behind recommendations is essential for physician adoption [2]. This contrasts with black-box models that may offer higher accuracy but limited explainability.

Biomedical Data Mining: In bioinformatics and personalized medicine applications, LCS can identify complex biomarker interactions and genotype-phenotype relationships while providing human-interpretable patterns that support scientific discovery [2] [3].

The future development of LCS algorithms continues to enhance their applicability to biomedical challenges, with ongoing research focusing on handling high-dimensional data, incorporating domain knowledge, and improving scalability while maintaining the interpretability advantages that distinguish this unique machine learning paradigm.

Learning Classifier Systems (LCSs) represent a paradigm of rule-based machine learning methods that combine a discovery component, typically a genetic algorithm, with a learning component performing supervised, reinforcement, or unsupervised learning [1]. Since their inception, research has diverged into two distinct architectural philosophies: Michigan-style and Pittsburgh-style LCSs [8] [9]. This divergence represents a fundamental split in how these systems represent, evolve, and evaluate potential solutions to machine learning problems. Understanding the characteristics, advantages, and limitations of each architecture is crucial for researchers and practitioners, particularly in complex domains like drug development where interpretability, accuracy, and the ability to model heterogeneous biological relationships are paramount.

This technical guide examines both architectures in depth, detailing their operational methodologies, performance characteristics, and implementation considerations. The aim is to give scientists the foundation needed to select and implement the appropriate architecture for their specific research challenges.

Architectural Fundamentals

Core Conceptual Differences

The Michigan-style LCS is characterized by a population of individual rules, where the genetic algorithm operates within or between these rules, and the evolved solution is represented by the entire rule population [8] [9]. In this approach, often described as a "collaborative" system, each rule is a potential part of the overall solution, and the system learns by gradually improving these individual components through competitive selection and genetic operations. The population is typically initialized empty, with rules introduced incrementally via a covering mechanism that generates rules matching current training instances [1].

In contrast, the Pittsburgh-style LCS maintains a population of rule-sets, where each individual in the population represents a complete candidate solution to the learning problem [10] [9]. The genetic algorithm operates between these complete rule-sets, evaluating and evolving entire solutions rather than their constituent parts. This architecture aligns more closely with traditional genetic algorithms, where each individual is a self-contained solution competing based on its global performance.
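To make the difference in evaluation unit concrete, the sketch below scores one Pittsburgh-style individual, that is, a complete rule set, over a whole training set. Treating the rule set as an ordered decision list with a default action is an assumption made for this example (it is one common choice, not something the source specifies), as are the names `predict` and `rule_set_fitness`.

```python
def predict(rule_set, state, default=0):
    """Return the action of the first rule whose condition matches `state`."""
    for condition, action in rule_set:
        if all(c == '#' or c == s for c, s in zip(condition, state)):
            return action
    return default  # no rule matched


def rule_set_fitness(rule_set, data, default=0):
    """Fitness of a whole rule set: accuracy over all training instances."""
    correct = sum(predict(rule_set, state, default) == target for state, target in data)
    return correct / len(data)


# Toy training data for logical OR over two binary features.
or_data = [("00", 0), ("01", 1), ("10", 1), ("11", 1)]

# A Pittsburgh population: each individual is a complete candidate solution.
pitt_population = [
    [("1#", 1), ("#1", 1)],  # solves OR together with the default action 0
    [("##", 1)],             # always predicts 1
]
fitnesses = [rule_set_fitness(individual, or_data) for individual in pitt_population]
```

In a Michigan-style system, by contrast, the list of rules itself would be the population, and each rule would carry its own fitness.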

Table: Fundamental Architectural Differences

| Characteristic | Michigan-Style | Pittsburgh-Style |
|---|---|---|
| Solution Representation | Population of individual rules | Population of complete rule-sets |
| Genetic Algorithm Operation | Within/between individual rules | Between complete rule-sets |
| Primary Learning Focus | Cooperating rule discovery | Competitive solution optimization |
| Population Initialization | Typically empty, uses covering | Pre-initialized with complete solutions |
| Solution Interpretation | Entire population forms the model | Individual rule-sets form potential models |
| Typical Learning Mode | Incremental (online) | Batch (offline) |

System Workflows

The following diagrams illustrate the fundamental operational workflows for both Michigan-style and Pittsburgh-style LCS architectures, highlighting their distinct approaches to rule management and evolution.

Figure: Michigan-Style LCS Workflow

Figure: Pittsburgh-Style LCS Workflow

Michigan-Style LCS: Deep Dive

Algorithmic Components and Processes

Michigan-style systems employ sophisticated mechanisms for rule management and evaluation. The matching process is particularly critical, where every rule in the population [P] is compared to the current training instance to identify contextually relevant rules, which are moved to a match set [M] [1]. In supervised learning, [M] is subsequently divided into correct set [C] (rules proposing the correct action) and incorrect set [I] (rules proposing incorrect actions) [1]. The system employs a covering mechanism that randomly generates rules matching the current training instance when no existing rules match, ensuring the system can adapt to new patterns in the data [1].

Credit assignment occurs through parameter updates to rules in [M], where rule accuracy is calculated as the number of times the rule was correct divided by the number of times it matched any instance [1]. Rule fitness is typically calculated as a function of this accuracy. Subsumption serves as an explicit generalization mechanism that merges classifiers covering redundant problem spaces when one classifier is more general, equally accurate, and covers all the problem space of another [1]. The rule discovery mechanism employs a highly elitist genetic algorithm that selects parent classifiers based on fitness (typically from [C]), applies crossover and mutation to generate offspring, and maintains population size through a deletion mechanism that selects classifiers for removal inversely proportional to fitness [1].

Knowledge Discovery and Rule Interpretation

A significant challenge in Michigan-style systems is knowledge discovery from the potentially large population of rules. Traditional approaches involve sorting rules by metrics like numerosity (number of rule copies in the population) and manually inspecting those with highest values to identify key solution components [9]. However, in complex, noisy domains, this approach has limitations. As noted in research, "Without prior knowledge of the problem complexity or structure, achieving the ideal balance between accuracy and generalization may be impractical or even impossible" [9].

Advanced strategies include rule compaction or condensation to reduce population size, and clustering-based approaches where rules are grouped by similarity and aggregate rules are generated representing common cluster characteristics [9]. More recent approaches shift focus from individual rule inspection to a global, population-wide perspective, combining visualizations with statistical evaluation to identify predictive attributes and reliable rule generalizations, particularly in noisy domains like genetic association studies [9].
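Both perspectives can be illustrated on a toy population. The sketch below first ranks rules by numerosity (the traditional approach) and then computes a simple population-wide statistic: how often each attribute is specified rather than wild-carded, weighted by numerosity. This specificity tally is only one illustrative example of a population-wide summary; it is not claimed to be the exact statistic used in [9], and the tuple layout `(condition, action, numerosity)` is hypothetical.

```python
def rank_by_numerosity(rules, top_n):
    """Return the top_n rules with the most copies in the population."""
    return sorted(rules, key=lambda r: r[2], reverse=True)[:top_n]


def attribute_specificity(rules, n_features):
    """Count, per attribute, how many rule copies specify it (i.e. not '#')."""
    counts = [0] * n_features
    for condition, _action, numerosity in rules:
        for i, symbol in enumerate(condition):
            if symbol != '#':
                counts[i] += numerosity
    return counts


toy_rules = [("1#0", 1, 5), ("##0", 0, 2), ("1##", 1, 1)]
top_rule = rank_by_numerosity(toy_rules, top_n=1)
specificity = attribute_specificity(toy_rules, n_features=3)
```

In this toy population the second attribute is never specified, which would hint that the rules do not find it predictive.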

Pittsburgh-Style LCS: Deep Dive

Algorithmic Framework and Search Methodologies

Pittsburgh-style LCSs employ fundamentally different search dynamics, treating each rule-set as an individual in an evolutionary algorithm. These systems face the challenge that "standard crossover operators in GAs do not guarantee an effective evolutionary search in many sophisticated problems that contain strong interactions between features" [10]. This limitation has driven research into advanced recombination strategies.

Recent innovations include integrating Estimation of Distribution Algorithms (EDAs) like the Bayesian Optimization Algorithm (BOA) to improve rule structure exploration effectiveness and efficiency [10]. In this approach, classifiers are generated and recombined at two levels: at the lower level, single rules are produced by sampling Bayesian networks characterizing global statistical information from promising rules; at the higher level, classifiers are recombined by rule-wise uniform crossover operators that preserve rule semantics within each classifier [10].
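The higher-level operator can be sketched as below: whole rules are exchanged between two parent rule-sets, and no rule is ever cut in the middle, which is what keeps each rule's semantics intact. This covers only the rule-wise uniform crossover; the lower-level Bayesian-network sampling is not shown. The function name, the swap probability `p_swap`, and the handling of parents with different lengths are assumptions for this example.

```python
import random


def rulewise_uniform_crossover(parent_a, parent_b, rng, p_swap=0.5):
    """Exchange whole rules between two rule sets, position by position."""
    child_a, child_b = list(parent_a), list(parent_b)
    # Positions beyond the shorter parent are left with their original owner.
    for i in range(min(len(child_a), len(child_b))):
        if rng.random() < p_swap:
            child_a[i], child_b[i] = child_b[i], child_a[i]
    return child_a, child_b


rng = random.Random(7)
parent_a = [("1#", 1), ("#1", 1), ("00", 0)]
parent_b = [("0#", 0), ("11", 1)]
child_a, child_b = rulewise_uniform_crossover(parent_a, parent_b, rng)
```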

This hybrid approach enables more effective identification of building blocks (BBs): low-order, highly fit schemata contained in the global optimum. In problems with strong interactions between BBs, traditional GA crossover operators frequently disrupt important feature combinations, which leads to poor performance [10]. The EDA approach explicitly models and preserves these structures.

Performance and Scalability Considerations

Pittsburgh-style systems typically evolve more compact solutions compared to Michigan-style approaches, which facilitates knowledge discovery and interpretability [9]. However, studies have indicated that Pittsburgh-style systems may struggle to reliably learn precisely generalized rules, potentially indicating over-fitting tendencies [9].

Computational performance remains a challenge for Pittsburgh-style systems. As noted in recent research, "Similar gains have been more difficult to achieve in the Pittsburgh-style approach, where each individual represents a complete rule set and is evaluated holistically. The structural mismatch between symbolic rules and GPU-optimized numerical formats is more severe, making it difficult to parallelize the evaluations" [11]. This has limited the scalability of Pittsburgh-style systems compared to their Michigan-style counterparts, though recent tensor-based representation approaches show promise for addressing these limitations [11].

Comparative Analysis and Performance

Quantitative Performance Comparison

Table: Performance Characteristics Across Problem Domains

| Performance Metric | Michigan-Style | Pittsburgh-Style | Contextual Notes |
|---|---|---|---|
| Solution Compactness | Larger rule populations | Smaller, more compact rule-sets | Pittsburgh-style explicitly optimizes rule-set size |
| Optimal Generalization | Can over-fit at rule level | Struggles with precise rule generalization | Balancing accuracy/generality challenging in noise |
| Knowledge Discovery | Requires population-wide analysis | Direct rule-set inspection possible | Pittsburgh-style more immediately interpretable |
| Computational Efficiency | More amenable to parallelization | Holistic evaluation limits parallelization | GPU acceleration more challenging for Pittsburgh |
| Heterogeneity Handling | Excellent through distributed solution | Requires explicit representation | Michigan-style naturally models heterogeneous spaces |
| Convergence Speed | Faster initial learning | May require more generations | Michigan-style incremental learning advantage |

Implementation Considerations for Scientific Research

When implementing LCS architectures for research applications, particularly in domains like drug development, several practical considerations emerge. The choice between Michigan and Pittsburgh approaches should be guided by research goals: Michigan-style systems are preferable for exploring complex, heterogeneous problem spaces where the complete underlying model is unknown, while Pittsburgh-style systems may be better when seeking compact, interpretable models for well-defined subproblems [9].

For bioinformatics applications such as genetic association studies, both architectures have demonstrated capabilities in detecting epistasis (interaction between attributes) and heterogeneity (independent predictors of the same phenotype) [9]. However, their differing approaches lead to distinct analytical strategies: Michigan-style systems require population-wide analysis to identify reliable patterns, while Pittsburgh-style systems enable direct inspection of evolved rule-sets [9].

Recent advancements in computational approaches are addressing scalability limitations for both architectures. For Michigan-style systems, GPU acceleration has shown promising results by parallelizing rule evaluation and evolution processes [11]. For Pittsburgh-style systems, tensor-based rule representations in frameworks like PyTorch enable more efficient evaluation and even gradient-based optimization of rule coefficients while maintaining logical structures [11].

Experimental Protocols and Methodologies

Standardized Evaluation Frameworks

Experimental evaluation of LCS algorithms typically employs both artificial and real-world binary classification problems. Artificial problems enable controlled assessment of specific capabilities, while real-world datasets validate practical utility [10].

Common artificial problems include:

- Multiplexer Problems: A standard benchmark in LCS research in which k address bits select one of 2^k data bits, giving a Boolean input of length k + 2^k [10]

- Count Ones Problems: Tests the ability to count specific attributes or patterns [10]

- Parity Problems: Challenges the system to identify odd/even relationships [10]

- Ladder Problems: Examines performance on structured, hierarchical dependencies [10]

Real-world validation typically employs benchmark datasets from repositories like the UCI Machine Learning Repository, with specific adaptations for domain-specific applications [10] [11]. In bioinformatics, specialized datasets simulating genetic associations with embedded epistasis and heterogeneity provide targeted evaluation of capabilities relevant to complex disease modeling [9].

Research Reagent Solutions

Table: Essential Components for LCS Implementation

| Component | Function | Implementation Examples |
|---|---|---|
| Rule Representation | Encodes condition-action relationships | Ternary representation (0,1,#) for binary data; real-valued representations for continuous data |
| Fitness Metric | Guides evolutionary search | Accuracy-based; strength-based; multi-objective combinations |
| Genetic Operators | Generates new rule candidates | Crossover (single/multi-point); mutation (bit-flip, condition modification) |
| Selection Mechanism | Chooses parents for reproduction | Tournament selection; fitness-proportionate selection; elitist selection |
| Covering Mechanism | Introduces new rules matching current context | Random rule generation constrained to match current instance |
| Subsumption Mechanism | Reduces redundancy through rule merging | Generalization-based absorption of more specific rules |
| Population Management | Maintains computational efficiency | Roulette wheel deletion; crowding mechanisms; niche formation |

The Michigan and Pittsburgh architectures represent complementary approaches to rule-based evolutionary machine learning, each with distinct strengths and limitations. Michigan-style systems excel in modeling complex, heterogeneous problem spaces through distributed knowledge representation and incremental learning, making them suitable for exploratory analysis in domains like complex disease genetics. Pittsburgh-style systems offer more immediately interpretable solutions through compact rule-sets and may demonstrate advantages in solution optimization for well-structured problems.

Future research directions include hybrid approaches that leverage the strengths of both architectures, improved scalability through advanced computational techniques like GPU acceleration and tensor-based representations, and enhanced knowledge discovery methodologies that enable more reliable pattern identification in noisy, high-dimensional data. As these advancements mature, LCS architectures will continue to provide valuable tools for researchers and drug development professionals tackling increasingly complex biological systems.

Learning Classifier Systems (LCS) represent a unique paradigm of rule-based machine learning that combines a discovery component, typically a genetic algorithm, with a learning component capable of supervised, reinforcement, or unsupervised learning [1]. This whitepaper provides an in-depth technical examination of the four core components that constitute an LCS: the rule system, population mechanism, matching process, and the genetic algorithm for rule discovery. These components work in concert to identify sets of context-dependent rules that collectively store and apply knowledge in a piecewise manner, enabling LCS to solve complex prediction problems including classification, regression, and behavior modeling [1]. Understanding these core elements is essential for researchers aiming to apply LCS to challenging domains such as bioinformatics and drug development, where their ability to model complex, nonlinear relationships offers significant advantages.

The Core Components

Rules/Classifiers

In an LCS, a rule (often referred to as a classifier when associated with its parameters) forms the fundamental building block of knowledge representation. Rules typically take the form of an {IF condition THEN action} expression, representing a context-dependent relationship between observed state values and a prediction [1]. Unlike complete models in other machine learning approaches, an individual LCS rule functions as a "local-model" that is only applicable when its specific condition is satisfied.

The condition portion of a rule is commonly represented using a ternary system (0, 1, #) for binary data, where the 'don't care' symbol (#) serves as a wild card, allowing rules to generalize across feature spaces [1]. For example, the rule (#1###0 ~ 1) would match any instance where the second feature equals 1 AND the sixth feature equals 0, regardless of other feature values, and would then predict class 1.
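The ternary matching rule described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation; the function name `matches` and the string encoding of conditions and instances are assumptions made for the example.

```python
WILDCARD = "#"  # the 'don't care' symbol from the ternary alphabet


def matches(condition: str, instance: str) -> bool:
    """Return True if every specified (non-#) position equals the instance value."""
    if len(condition) != len(instance):
        raise ValueError("condition and instance must have the same length")
    return all(c == WILDCARD or c == x for c, x in zip(condition, instance))


# The rule (#1###0 ~ 1) from the text: second feature = 1 AND sixth feature = 0.
rule_condition, rule_action = "#1###0", "1"
print(matches(rule_condition, "010010"))  # second feature is 1, sixth is 0
print(matches(rule_condition, "000010"))  # second feature is 0, so no match
```

Because wildcard positions are skipped, the number of `#` symbols directly controls how much of the feature space a rule covers.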

Each classifier maintains a set of parameters that track its experience and effectiveness, creating a comprehensive profile that guides the system's evolutionary process. The table below summarizes these key parameters.

Table: Key Parameters Associated with a Classifier

| Parameter | Description |
|---|---|
| Condition | The context in which the rule is applicable (e.g., ternary string) [1]. |
| Action | The prediction or behavior the rule recommends [1]. |
| Fitness | A measure of the rule's usefulness, often based on accuracy [1]. |
| Numerosity | The number of copies of this rule that exist in the population [1]. |
| Accuracy/Error | The rule's local accuracy, calculated only over instances it matches [1]. |
| Age | Tracks how long the rule has existed in the population [1]. |
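One way to hold these parameters together is a small record type. The sketch below is illustrative only: the field names (`match_count`, `correct_count`, `birth_iteration`) are assumptions chosen for this example, and real LCS implementations typically track additional bookkeeping.

```python
from dataclasses import dataclass


@dataclass
class Classifier:
    """A rule plus the parameters listed in the table (field names are hypothetical)."""
    condition: str            # e.g. a ternary string such as "#1###0"
    action: str               # the predicted class or recommended behavior
    fitness: float = 0.0      # usefulness, often derived from accuracy
    numerosity: int = 1       # number of virtual copies in the population
    match_count: int = 0      # how many instances the rule has matched
    correct_count: int = 0    # how many of those it predicted correctly
    birth_iteration: int = 0  # iteration at which the rule was created

    @property
    def accuracy(self) -> float:
        """Local accuracy: computed only over instances the rule matched."""
        return self.correct_count / self.match_count if self.match_count else 0.0

    def age(self, current_iteration: int) -> int:
        """How long the rule has existed in the population."""
        return current_iteration - self.birth_iteration


rule = Classifier("#1###0", "1", match_count=4, correct_count=3, birth_iteration=10)
print(rule.accuracy, rule.age(current_iteration=25))
```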

Population

The population [P] is the container that holds the complete set of classifiers (rules with their parameters) throughout the learning process [1]. In the common Michigan-style LCS architecture, the population has a user-defined maximum size and starts empty—unlike many evolutionary algorithms that require random initialization [1].

The population is dynamic, with new classifiers introduced via a covering mechanism and a genetic algorithm, while poorly performing classifiers are systematically removed to maintain the population size limit [1]. The entire trained population collectively forms the final prediction model, representing a diverse set of coordinated local patterns rather than a single global solution [1].
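To make the idea of a population acting as one collective model concrete, the sketch below combines matching rules through a weighted vote. The weighting by fitness times numerosity is an assumption for illustration (a common choice, but not stated in the text), as are the tuple layout and the function name `predict`.

```python
from collections import defaultdict


def predict(population, instance):
    """Combine all matching rules into one prediction via a weighted vote.

    Each rule is a tuple (condition, action, fitness, numerosity).
    Returns None when no rule in the population matches the instance.
    """
    votes = defaultdict(float)
    for condition, action, fitness, numerosity in population:
        if all(c == "#" or c == x for c, x in zip(condition, instance)):
            votes[action] += fitness * numerosity  # assumed voting weight
    if not votes:
        return None
    return max(votes, key=votes.get)


population = [
    ("#1###0", "1", 0.9, 3),
    ("0#####", "0", 0.6, 2),
    ("1####1", "0", 0.8, 1),
]
print(predict(population, "010010"))  # two rules match; the stronger vote wins
```

No single rule needs to cover the whole input space; each contributes only where its condition applies.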

Matching

The matching process is a critical, often computationally intensive component of the LCS learning cycle where the system identifies which rules in the population are contextually relevant to a given training instance [1]. The process follows these steps:

- Input: A single training instance is drawn from the environment [1].

- Comparison: Every classifier in the population [P] is compared to the instance [1].

- Match Set Formation: Rules whose conditions are satisfied by the instance are moved to the match set [M]. A rule matches if all specified feature values (0 or 1, not #) in its condition equal the corresponding feature values in the training instance [1].

- Correct/Action Set Formation: For supervised learning, [M] is divided into a correct set [C] (rules proposing the correct action) and an incorrect set [I] (rules proposing incorrect actions). In reinforcement learning, an action set [A] is formed instead [1].

If the match set is empty, a covering mechanism generates a new rule that matches the current instance, ensuring the system can explore all relevant parts of the problem space [1].
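The matching steps and the covering fallback can be sketched as follows for the supervised case. This is a simplified illustration under stated assumptions: rules are plain `(condition, action)` tuples, and the wildcard probability `p_wildcard` is a hypothetical tuning parameter.

```python
import random


def matches(condition, instance):
    """A rule matches when every non-# position equals the instance value."""
    return all(c == "#" or c == x for c, x in zip(condition, instance))


def cover(instance, label, p_wildcard=0.5, rng=random):
    """Create a rule that is guaranteed to match the instance.

    Each position is either copied from the instance or generalized to '#'.
    """
    condition = "".join("#" if rng.random() < p_wildcard else x for x in instance)
    return (condition, label)


def form_sets(population, instance, label, rng=random):
    """Build [M], [C], and [I] for one training instance (supervised learning)."""
    match_set = [rule for rule in population if matches(rule[0], instance)]
    if not match_set:  # covering is triggered by an empty match set
        new_rule = cover(instance, label, rng=rng)
        population.append(new_rule)
        match_set = [new_rule]
    correct_set = [rule for rule in match_set if rule[1] == label]
    incorrect_set = [rule for rule in match_set if rule[1] != label]
    return match_set, correct_set, incorrect_set


population = [("#1###0", "1"), ("0#####", "0")]
m, c, i = form_sets(population, "010010", "1")
print(len(m), c, i)
```

Because covering copies values from the instance itself, a covered rule can never fail to match the instance that triggered it.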

The Genetic Algorithm

The Genetic Algorithm (GA) serves as the primary rule discovery mechanism in LCS, introducing innovation and maintaining diversity within the population [1] [12]. Operating as a highly elitist component, the GA typically selects parent classifiers from the correct set [C] based on fitness, favoring rules that have demonstrated high accuracy.

The genetic algorithm employs selection, crossover, and mutation operators to generate new offspring rules from parent classifiers [1]. This GA is applied in a niche-specific manner, meaning it operates on rules that match similar environmental contexts, which helps to preserve useful specialized rules [1]. Following rule discovery, a deletion mechanism maintains the population size by removing classifiers, with probability inversely proportional to fitness, ensuring the system retains its most effective rules [1].
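A hedged sketch of the three GA operators is given below. The specific choices here are assumptions for illustration: tournament selection over a fraction of [C], uniform crossover, and a mutation operator that only toggles a position between `#` and the current instance's value so that offspring stay inside the same niche. Rules are represented as dictionaries with hypothetical keys.

```python
import random


def tournament_select(correct_set, rng, fraction=0.5):
    """Pick the fittest rule among a random subset of the correct set [C]."""
    k = max(1, int(len(correct_set) * fraction))
    contenders = rng.sample(correct_set, k)
    return max(contenders, key=lambda rule: rule["fitness"])


def uniform_crossover(cond_a, cond_b, rng):
    """Swap each position between the two parents with probability 0.5."""
    child_a, child_b = [], []
    for a, b in zip(cond_a, cond_b):
        if rng.random() < 0.5:
            a, b = b, a
        child_a.append(a)
        child_b.append(b)
    return "".join(child_a), "".join(child_b)


def mutate(condition, instance, rng, rate=0.05):
    """Toggle positions between '#' and the instance value.

    Offspring therefore still match the instance that defined the niche.
    """
    out = []
    for c, x in zip(condition, instance):
        if rng.random() < rate:
            c = x if c == "#" else "#"
        out.append(c)
    return "".join(out)


rng = random.Random(42)
instance = "010010"
correct_set = [
    {"condition": "#1###0", "action": "1", "fitness": 0.9},
    {"condition": "01####", "action": "1", "fitness": 0.4},
]
parent_a = tournament_select(correct_set, rng)
parent_b = tournament_select(correct_set, rng)
child_a, child_b = uniform_crossover(parent_a["condition"], parent_b["condition"], rng)
print(mutate(child_a, instance, rng), mutate(child_b, instance, rng))
```

Since both parents come from [C], every specified position in either parent already equals the instance value, so crossover cannot produce a child that fails to match the instance.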

LCS Learning Cycle: An Integrated Workflow

The core components interact through a structured learning cycle that processes one training instance at a time. The following diagram illustrates this integrated workflow and the sequential interaction between the rules/population, matching, and genetic algorithm components.

Experimental Protocol & Implementation

Key Experiments and Validation

LCS algorithms have been validated through various experimental studies. The following table summarizes key quantitative findings from selected implementations.

Table: Experimental Performance of LCS in Selected Studies

| Study / System | Application Domain | Key Comparative Finding | Performance Metric |
|---|---|---|---|
| EpiCS [5] | Epidemiologic Surveillance, Knowledge Discovery | Induced rules were less parsimonious than C4.5 but more useful for hypothesis generation. | Rule Utility |
| EpiCS [5] | Epidemiologic Surveillance, Risk Estimation | Risk estimates were significantly more accurate than those from logistic regression. | Risk Estimate Accuracy (P<0.05) |
| XCS for RBN Control [13] | Boolean Network Control | Successfully evolved control rules to drive networks to a target attractor from any state. | Control Success Rate |

Research Reagent Solutions

The following table details essential computational "reagents" and materials required for implementing and experimenting with LCS.

Table: Essential Research Reagents for LCS Implementation

| Reagent / Tool | Function / Purpose | Implementation Example |
|---|---|---|
| Ternary Rule Representation [1] | Encodes conditions using {0, 1, #} for generalization. | Rule: (#1###0 ~ 1) |
| Covering Mechanism [1] | Initializes new rules matching current input when match set is empty. | Creates rule (#0#0## ~ 0) for instance (001001 ~ 0) |
| Accuracy-Based Fitness [1] [13] | Determines classifier selection probability for GA. | Fitness = (Correct Matches) / (Total Matches) |
| Genetic Algorithm (Niche) [1] | Discovers new rules via selection, crossover, mutation in correct set. | Tournament selection from [C] |
| Subsumption Mechanism [1] | Generalizes population by merging specific rules into more general, accurate ones. | Rule (1#0#1 ~ 1) subsumes (11001 ~ 1) |
| Parameter Update Rules [1] | Adjusts fitness, accuracy, and experience of classifiers after each match. | Update rule accuracy based on performance in [C] vs. [M] |
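The subsumption example in the table can be checked with a short sketch. The generality test follows directly from the ternary representation; the accuracy and experience thresholds (`min_accuracy`, `min_experience`) and the dictionary keys are assumptions introduced for illustration.

```python
def is_more_general(general: str, specific: str) -> bool:
    """True if `general` covers everything `specific` covers and has more wildcards."""
    if len(general) != len(specific):
        return False
    covers = all(g == "#" or g == s for g, s in zip(general, specific))
    return covers and general.count("#") > specific.count("#")


def subsumes(general_rule, specific_rule, min_accuracy=0.99, min_experience=20):
    """Decide whether one rule may absorb another.

    Rules are dicts with keys: condition, action, accuracy, experience.
    The subsumer must be accurate, sufficiently experienced, propose the
    same action, and be strictly more general (thresholds are assumed values).
    """
    return (
        general_rule["action"] == specific_rule["action"]
        and general_rule["accuracy"] >= min_accuracy
        and general_rule["experience"] >= min_experience
        and is_more_general(general_rule["condition"], specific_rule["condition"])
    )


general = {"condition": "1#0#1", "action": "1", "accuracy": 1.0, "experience": 50}
specific = {"condition": "11001", "action": "1", "accuracy": 1.0, "experience": 5}
print(subsumes(general, specific))
```

When subsumption succeeds, a typical design removes the specific rule and increments the numerosity of the general one, which keeps the population compact without losing coverage.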

Implementation Considerations

Successfully deploying an LCS requires careful attention to several implementation factors. Parameter tuning is critical, as the system's performance is highly dependent on the appropriate configuration of the genetic algorithm, learning rates, and population size [13]. Furthermore, the selection of a fitness metric—whether strength-based or, more commonly in modern systems, accuracy-based—profoundly influences the pressure toward optimal rule sets [1]. Finally, the choice between Michigan-style and Pittsburgh-style architecture represents a fundamental design decision, with the former evolving individual rules within a single population and the latter evolving entire rule sets as individuals [1].

Learning Classifier Systems (LCSs) represent a paradigm of rule-based machine learning methods that strategically combine a discovery component, typically a genetic algorithm, with a learning component that can perform supervised, reinforcement, or unsupervised learning [1]. This unique architecture allows LCSs to identify sets of context-dependent rules that collectively store and apply knowledge in a piecewise manner to solve complex prediction problems, including classification, regression, behavior modeling, and data mining [1]. The founding principles behind LCSs originated from attempts to model complex adaptive systems, using rule-based agents to form an artificial cognitive system [1]. Unlike many conventional machine learning approaches that seek a single optimal model, LCSs evolve a cooperative set of rules that work in concert to solve tasks, creating an adaptive system that learns through interaction with data and environment [8]. This distributed approach to knowledge representation enables LCSs to decompose complex solution spaces into smaller, more manageable parts, making them particularly valuable for heterogeneous problems common in scientific and industrial domains such as drug development [1] [8].

The LCS landscape is primarily divided into two major architectural styles: Michigan-style and Pittsburgh-style systems [1] [8]. Michigan-style systems, the more traditional approach, maintain a population of individual rules that compete and cooperate, with learning occurring iteratively, one training instance at a time [8]. In contrast, Pittsburgh-style systems evolve entire rule sets as individuals in the population, typically employing batch learning where rule sets are evaluated over much or all of the training data in each iteration [1]. This fundamental distinction in architecture leads to significant differences in how these systems scale, adapt, and ultimately function, making each suitable for different problem domains and application requirements within drug development and biomedical research.

Core Algorithmic Framework and Mechanisms

Architectural Components and Workflow

The LCS algorithm consists of several interconnected components that function together as an adaptive machine. A generic Michigan-style LCS with supervised learning operates through a sophisticated workflow where each component plays a critical role in the system's overall learning capability [1]. The process begins when the environment provides a training instance containing features and a known endpoint or class. This instance is passed to the population of classifiers [P], where the matching process identifies all rules whose conditions align with the current input state [1]. These matching rules form the match set [M], which is subsequently divided based on whether each rule proposes the correct or incorrect action, forming the correct set [C] and incorrect set [I] respectively [1]. If no rules match the current instance, a covering mechanism generates a new rule that matches the input and specifies the correct action, ensuring the system can continuously expand its knowledge base [1].

Following these initial steps, the system performs parameter updates through credit assignment, adjusting rule accuracy, error, and fitness based on their performance against the training instance [1]. The subsumption mechanism then generalizes the knowledge representation by merging classifiers that cover redundant areas of the problem space, with more general and accurate classifiers subsuming more specific ones [1]. The genetic algorithm introduces innovation by selecting parent classifiers from [C] based on fitness, applying crossover and mutation to create new offspring rules that are added back to the population [1]. Finally, a deletion mechanism maintains the population size by removing classifiers with poor performance, completing one learning cycle [1]. This intricate process enables the LCS to continuously adapt its rule population to better model the underlying patterns in the data.

The Rule Representation and Matching Process

At the heart of every LCS is its rule representation, which typically follows an {IF:THEN} structure, where the condition specifies a context and the action represents a prediction, classification, or behavior [1]. Rules can be represented using various schemas to handle different data types, with ternary representations (0, 1, #) being traditional for binary data, where the 'don't care' symbol (#) serves as a wild card, enabling strategic generalization [1]. For example, a rule represented as (#1###0 ~ 1) would match any instance where the second feature equals 1 AND the sixth feature equals 0, while generalizing over all other features [1]. This representation balances specificity with generality, allowing rules to cover broader areas of the problem space without sacrificing predictive accuracy.

The matching process is computationally critical and involves comparing each rule in the population against the current training instance to determine contextual relevance [1]. A rule matches an instance only if all specified feature values in its condition exactly correspond to the respective feature values in the instance [1]. The resulting match set [M] contains all rules whose conditions are satisfied by the current input, regardless of whether their proposed actions are correct [1]. Matching therefore activates only the subset of knowledge relevant to the current context, so that parameter updates and rule discovery concentrate on the rules that apply to each instance, a particular advantage when handling the high-dimensional data common in drug discovery pipelines.

Credit Assignment and Rule Discovery Mechanisms

Credit assignment in LCSs operates by updating rule parameters based on experiential feedback, with rule accuracy typically calculated as the proportion of times a rule was correct when matched [1]. This "local accuracy" represents the rule's performance within its specific domain of applicability rather than across the entire problem space [1]. Rule fitness, commonly derived as a function of accuracy, determines reproductive opportunity within the genetic algorithm, creating evolutionary pressure toward more accurate and general rules [1]. Modern accuracy-based fitness systems represent a significant advancement over earlier strength-based approaches, driving the evolution of maximally general yet accurate rules rather than simply those that trigger frequently [14].
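The accuracy and fitness bookkeeping described above can be sketched as a single update step. The specific fitness function used here, accuracy raised to a power `nu`, is an assumption for illustration (one common way to make fitness a function of accuracy), and the dictionary keys are hypothetical.

```python
def update_rule(rule, in_correct_set, nu=10):
    """Update one rule in the match set after seeing a training instance.

    rule: dict with keys match_count, correct_count, accuracy, fitness.
    in_correct_set: True if the rule proposed the correct action.
    nu: assumed exponent that sharpens the pressure toward accurate rules.
    """
    rule["match_count"] += 1
    if in_correct_set:
        rule["correct_count"] += 1
    # Local accuracy: proportion of times the rule was correct when matched.
    rule["accuracy"] = rule["correct_count"] / rule["match_count"]
    # Fitness as a function of accuracy (assumed form).
    rule["fitness"] = rule["accuracy"] ** nu
    return rule


rule = {"match_count": 0, "correct_count": 0, "accuracy": 0.0, "fitness": 0.0}
for outcome in (True, True, True, False):
    update_rule(rule, outcome)
print(rule["accuracy"], rule["fitness"])
```

With a large exponent, a rule at 75% accuracy receives far less than 75% of the fitness of a perfectly accurate rule, which is what steers the GA toward accurate rules rather than frequently triggered ones.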

Rule discovery primarily occurs through a highly elitist genetic algorithm that selects parent classifiers based on fitness, typically from the correct set [C], and applies crossover and mutation to generate new offspring rules [1]. This evolutionary component enables the system to explore the rule space beyond what is possible through covering alone, discovering novel patterns and relationships that might be missed by other machine learning approaches [1]. The deletion mechanism complements this discovery process by removing poorly performing classifiers from the population, with selection probability typically inversely proportional to fitness [1]. Together, these mechanisms create a continuous cycle of innovation and refinement that allows the LCS to adapt its knowledge representation to complex, evolving problem domains, including those with heterogeneous patterns and non-uniform data distributions frequently encountered in biomedical research.
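Deletion with probability inversely proportional to fitness can be sketched as roulette-wheel selection over inverse-fitness weights. This is a simplified illustration: the `epsilon` guard against division by zero and the choice to ignore numerosity are assumptions made to keep the example short.

```python
import random


def deletion_weights(population, epsilon=1e-6):
    """Weight each rule by the inverse of its fitness (low fitness, high weight)."""
    return [1.0 / (rule["fitness"] + epsilon) for rule in population]


def enforce_population_limit(population, max_size, rng):
    """Remove rules one at a time until the population fits the size limit."""
    while len(population) > max_size:
        weights = deletion_weights(population)
        victim = rng.choices(range(len(population)), weights=weights, k=1)[0]
        population.pop(victim)
    return population


rng = random.Random(7)
population = [
    {"condition": "#1###0", "fitness": 0.90},
    {"condition": "0#####", "fitness": 0.10},
    {"condition": "1####1", "fitness": 0.50},
    {"condition": "##0###", "fitness": 0.05},
]
survivors = enforce_population_limit(population, max_size=2, rng=rng)
print([rule["condition"] for rule in survivors])
```

The selection is stochastic, so a fit rule can occasionally be deleted; the weighting only makes that outcome unlikely.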

Comparative Advantages in Research Applications

Interpretability and Explainable AI

The interpretability of LCSs represents one of their most significant advantages for scientific domains like drug development, where understanding the reasoning behind predictions is often as important as the predictions themselves. Unlike black-box models such as deep neural networks, LCSs generate human-readable IF-THEN rules that directly illustrate the relationships between input features and outcomes [8] [15]. This innate comprehensibility aligns with the growing emphasis on Explainable AI (XAI) in healthcare and pharmaceutical research, where regulatory requirements and safety concerns demand transparent decision-making processes [15]. The evolved rule sets not only make predictions but also provide immediately interpretable insights into the underlying patterns in the data, enabling researchers to validate models against existing scientific knowledge and potentially discover novel biological relationships [15].

This explicitness allows LCSs to function as both predictive and descriptive models, offering researchers actionable insights beyond mere classification [5]. For instance, in epidemiologic surveillance, rules induced by LCSs were found to be potentially more useful for hypothesis generation compared to more parsimonious but less informative rules from other algorithms [5]. The transparency of individual rules facilitates domain expert validation, a critical requirement in drug development where understanding mechanism of action is essential. Furthermore, the ability to trace specific predictions back to explicit rules builds trust in the model's outputs and supports regulatory compliance by providing auditable decision trails [15].

Model-Free Learning and Adaptivity

LCSs are model-free, meaning they do not make strong a priori assumptions about data distributions, functional relationships, or problem structure [8]. This flexibility enables them to effectively capture diverse pattern types including linear, epistatic, and heterogeneous associations without requiring researchers to specify the underlying model form [8]. The model-free nature is particularly advantageous in drug discovery applications where the true relationship between chemical structures, biological targets, and therapeutic effects is often complex and poorly understood, making parametric assumptions potentially misleading.

The adaptive capabilities of LCSs allow them to continuously learn from new data without requiring complete retraining [8]. This incremental learning support is invaluable in research environments where data evolves over time, such as when new experimental results become available or when patient data streams in continuously [8]. Unlike batch learning algorithms that must process entire datasets when new information arrives, LCSs can seamlessly incorporate new instances while preserving existing knowledge, making them exceptionally suited for dynamic research environments [1] [8]. This adaptivity extends to changing dataset environments, where parts of the solution can evolve without starting from scratch, significantly reducing computational costs during longitudinal studies and iterative experimental designs common in pharmaceutical research [8].

Performance Characteristics and Scalability

LCSs demonstrate particular strength in heterogeneous problem domains where different patterns exist in different subsets of the data, a common scenario in biomedical datasets with diverse patient subgroups or compound classes [8]. By decomposing complex problems into smaller, locally accurate rules, LCSs can effectively handle such heterogeneity without requiring explicit segmentation of the dataset [8]. Additionally, their inherent resistance to noise and natural handling of missing data make them robust to the imperfect data quality often encountered in real-world research settings [8].

Despite these advantages, LCS algorithms face certain scalability challenges, particularly with very high-dimensional data [8]. Most implementations to date have demonstrated limited scalability compared to some other machine learning approaches, and they can be computationally demanding, sometimes requiring longer convergence times [8]. However, ongoing research in optimizations and parallel implementations, including GPU acceleration and improved matching algorithms, is actively addressing these limitations [15]. For many research applications in drug development, particularly those with moderate-dimensional data where interpretability is paramount, the benefits of LCSs often outweigh their computational demands [8] [14].

Table 1: Comparative Analysis of LCS Against Other Machine Learning Approaches

| Characteristic | LCS | Deep Learning | Decision Trees | Logistic Regression |
|---|---|---|---|---|
| Interpretability | High (Human-readable rules) | Low (Black-box) | Medium (Tree structure) | Medium (Coefficients) |
| Model Assumptions | Model-free | Architecture-dependent | Non-parametric | Linear relationship |
| Handling Heterogeneity | Excellent | Moderate | Good | Poor |
| Incremental Learning | Supported | Limited | Limited | Supported |
| Feature Selection | Automatic (via rule conditions) | Automatic (implicit) | Automatic | Manual/Regularization |
| Noise Tolerance | High | Medium | Low | Low |

Experimental Validation and Performance Metrics

Methodology for Validating LCS Performance

Rigorous experimental validation is essential when implementing LCS algorithms for research applications. The foundational methodology involves comparing LCS performance against established algorithms using appropriate validation frameworks and statistical testing [5]. In a notable epidemiologic surveillance study, EpiCS (an LCS implementation) was systematically evaluated against C4.5 decision trees and logistic regression to assess classification accuracy and risk estimation capability [5]. The experimental protocol should employ stratified k-fold cross-validation or hold-out validation sets to ensure unbiased performance estimation, with particular attention to class imbalance through techniques like stratified sampling or balanced accuracy metrics [5].

For drug development applications, the validation framework must include both predictive performance metrics and interpretability assessments. Predictive performance can be evaluated using standard metrics including accuracy, precision, recall, F1-score, and area under the ROC curve (AUC-ROC) [5]. Additionally, since LCSs often provide probability estimates or risk scores, calibration metrics such as Brier score and reliability diagrams should be incorporated [5]. The interpretability and utility of induced rules require different evaluation approaches, potentially involving domain expert ratings, rule simplicity measures (such as condition length and number of rules), and novel metrics that assess the clinical or biological plausibility of discovered patterns [5].
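Two of the metrics mentioned above, the Brier score for calibration and balanced accuracy for imbalanced classes, are simple enough to compute directly. The sketch below uses only the standard library and assumes binary labels coded as 0 and 1; the function names are chosen for this example.

```python
def brier_score(y_true, y_prob):
    """Mean squared difference between predicted probability and the 0/1 outcome."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)


def balanced_accuracy(y_true, y_pred):
    """Average of sensitivity and specificity for binary labels (0 or 1)."""
    tp = sum(1 for y, p in zip(y_true, y_pred) if y == 1 and p == 1)
    tn = sum(1 for y, p in zip(y_true, y_pred) if y == 0 and p == 0)
    positives = sum(1 for y in y_true if y == 1)
    negatives = len(y_true) - positives
    sensitivity = tp / positives
    specificity = tn / negatives
    return (sensitivity + specificity) / 2


y_true = [1, 1, 0, 0, 0, 0]
y_pred = [1, 0, 0, 0, 0, 1]
y_prob = [0.9, 0.4, 0.2, 0.1, 0.3, 0.6]
print(balanced_accuracy(y_true, y_pred), brier_score(y_true, y_prob))
```

A lower Brier score indicates better-calibrated risk estimates, while balanced accuracy avoids rewarding a model that simply predicts the majority class.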

Quantitative Performance in Research Domains

Empirical studies demonstrate that LCS algorithms achieve competitive performance across diverse domains, with particular strengths in certain types of learning tasks. In the epidemiologic surveillance domain, while C4.5 demonstrated superior classification performance (P<0.05), EpiCS derived risk estimates that were significantly more accurate than those from logistic regression (P<0.05) [5]. This ability to produce accurate probability estimates makes LCSs valuable for applications like drug safety assessment and patient risk stratification where quantifying uncertainty is as important as classification itself [5].

The performance characteristics of LCS algorithms continue to evolve with recent advancements. Modern LCS implementations have demonstrated improved capacity to handle larger and more complex datasets through techniques including accuracy-based fitness evaluation, enhanced generalization mechanisms, and optimized rule representations [14]. While comprehensive benchmarks against contemporary machine learning methods in drug discovery domains remain somewhat limited in the literature, existing studies suggest that LCSs achieve particularly strong performance in problems exhibiting heterogeneous patterns, epistatic interactions, and modular substructures—characteristics common to many biological and chemical datasets [8] [5].

Table 2: Quantitative Performance of LCS in Research Applications

| Application Domain | Comparison Algorithms | Key Performance Findings | Statistical Significance |
|---|---|---|---|
| Epidemiologic Surveillance | C4.5, Logistic Regression | Rules less parsimonious but more useful for hypothesis generation; superior risk estimation | P<0.05 for risk estimation superiority |
| Knowledge Discovery | Various rule-based systems | Improved hypothesis generation capability; effective pattern discovery in heterogeneous data | Not explicitly reported |
| General Classification | Decision Trees, SVMs, Neural Networks | Competitive accuracy with enhanced interpretability; strong performance on heterogeneous problems | Varies by study and dataset |

Implementation Guide for Research Applications

The LCS Research Toolkit

Implementing LCS algorithms effectively requires both conceptual understanding and practical tools. The research toolkit for LCS applications includes several key components, from algorithmic implementations to specialized resources for specific research domains. While comprehensive production-ready LCS libraries are less abundant than for some other machine learning approaches, accessible implementations are emerging, including Python-based algorithms paired with introductory educational materials [8]. For drug development professionals, these computational resources should be complemented by domain-specific knowledge bases and validation frameworks to ensure biological relevance and experimental rigor.

Table 3: Essential Components of the LCS Research Toolkit

| Toolkit Component | Representative Examples | Function in Research Application |
|---|---|---|
| Algorithm Implementations | Python-coded LCS algorithms [8] | Core machine learning functionality for pattern discovery and prediction |
| Data Preprocessing Tools | Standard scientific computing libraries (e.g., NumPy, Pandas) | Handle missing data, normalization, and feature encoding for biological/chemical data |
| Visualization Packages | Rule visualization tools, graph libraries | Interpret and communicate discovered patterns to multidisciplinary teams |
| Validation Frameworks | Cross-validation implementations, statistical testing packages | Rigorously assess predictive performance and rule quality |
| Domain Knowledge Bases | Chemical databases, biological pathway resources | Validate and contextualize discovered rules within existing scientific knowledge |

Experimental Protocol for Drug Development Applications

Implementing LCS algorithms in drug development requires a structured experimental protocol to ensure scientifically valid results. The following methodology provides a framework for applying LCS to typical drug discovery problems such as compound activity prediction, toxicity assessment, or patient stratification:

Data Preparation Phase: Begin by curating and preprocessing the research dataset, which may include chemical structures, biological assay results, genomic profiles, or clinical records. Represent chemical compounds using appropriate descriptors such as molecular fingerprints, physicochemical properties, or structural fragments. Encode biological data using relevant features including gene expression levels, protein interactions, or pathway activities. Handle missing values using appropriate imputation techniques or exploit the LCS's native ability to manage incomplete data through generalization. Normalize continuous features and encode categorical variables as needed for the chosen rule representation [1] [8].

Model Training and Validation Phase: Split the dataset into training, validation, and test sets using stratified sampling to maintain class distribution, particularly important for imbalanced problems like rare adverse event prediction. Initialize LCS parameters including population size, learning rate, mutation and crossover rates, covering probability, and subsumption thresholds based on domain requirements. Execute the LCS learning cycle iteratively over training instances, monitoring performance on the validation set to guide parameter tuning and prevent overfitting. Employ techniques such as early stopping if performance plateaus. Finally, evaluate the trained model on the held-out test set using comprehensive metrics including accuracy, sensitivity, specificity, and AUC-ROC, complemented by rule quality assessments [1] [5].
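The stratified split described in this phase can be sketched without external libraries. The 60/20/20 proportions, the fixed seed, and the function name `stratified_split` are assumptions for illustration; in practice a library routine would usually be preferred.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.6, 0.2, 0.2), seed=0):
    """Split instance indices into train/validation/test, preserving class ratios.

    labels: sequence of class labels, one per instance.
    fractions: assumed train/validation/test proportions (test gets the remainder).
    """
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)  # randomize within each class separately
        n_train = int(round(fractions[0] * len(indices)))
        n_val = int(round(fractions[1] * len(indices)))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]
    return train, val, test


# Imbalanced toy labels: 80 negatives, 20 positives (e.g. rare adverse events).
labels = [0] * 80 + [1] * 20
train, val, test = stratified_split(labels)
print(len(train), len(val), len(test))
print(sum(labels[i] for i in train), sum(labels[i] for i in test))
```

Splitting each class separately guarantees that the rare class appears in all three partitions in the same proportion as in the full dataset.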

Knowledge Extraction and Interpretation Phase: Extract the final rule population and analyze rule conditions to identify key molecular features, structural patterns, or biological markers associated with the target property. Calculate rule-specific metrics including accuracy, coverage, and generality to prioritize the most reliable and broadly applicable patterns. Validate discovered rules against existing domain knowledge and literature to assess biological plausibility. Conduct experimental design based on rule insights to plan subsequent compound synthesis, biological testing, or clinical validation studies [8] [5].
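The rule-specific metrics named in this phase can be computed directly from a rule and a dataset. In the sketch below, coverage is taken as the fraction of instances a rule matches and generality as the fraction of wildcard positions in its condition; these definitions, the ternary encoding, and the function name are assumptions for illustration.

```python
def rule_metrics(condition, action, instances, labels):
    """Compute accuracy, coverage, and generality for one ternary rule."""
    matched_labels = [
        y for x, y in zip(instances, labels)
        if all(c == "#" or c == v for c, v in zip(condition, x))
    ]
    coverage = len(matched_labels) / len(instances)
    accuracy = (
        sum(1 for y in matched_labels if y == action) / len(matched_labels)
        if matched_labels else 0.0
    )
    generality = condition.count("#") / len(condition)
    return {"accuracy": accuracy, "coverage": coverage, "generality": generality}


instances = ["010010", "110110", "000010", "010011"]
labels = ["1", "1", "0", "0"]
print(rule_metrics("#1###0", "1", instances, labels))
```

Sorting the final population by accuracy first and coverage second is one simple way to surface the rules most worth reviewing with domain experts.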

Future Directions and Research Opportunities

The LCS field continues to evolve with several promising research directions that enhance their applicability to drug development and scientific discovery. Recent advances focus on optimizing rule selection, improving scalability, and integrating novel search methods to extract meaningful, human-readable knowledge from large and dynamic datasets [14]. The integration of novelty search mechanisms with rule-based learning has demonstrated promising improvements in balancing prediction error and model complexity, ultimately yielding more robust and generalized classifier sets [14]. This approach prioritizes behavioral diversity over direct optimization, facilitating the discovery of innovative solutions in complex search spaces—a particularly valuable capability for novel drug design where chemical space exploration is essential [14].

Another significant frontier involves hybrid approaches that combine LCS with other machine learning paradigms to leverage complementary strengths. Integration with deep learning architectures, representation learning techniques, and transfer learning frameworks presents opportunities to enhance LCS capabilities while maintaining interpretability [15]. Similarly, incorporating partial parametric model knowledge within reinforcement learning frameworks has shown that blending model-based insights with data-driven updates can dramatically improve performance in continuous control settings, particularly in environments characterized by uncertainty and noise [14]. For pharmaceutical applications, this could translate to more effective optimization of compound properties or clinical trial designs using hybrid knowledge-driven and data-driven approaches.

The emerging emphasis on explainable AI (XAI) in healthcare and regulatory science positions LCS as a foundational technology for developing interpretable yet powerful machine learning systems [15]. Future research will likely focus on enhancing the comprehensibility of evolved rule sets through advanced visualization, interactive knowledge exploration, and integration with formal knowledge representation systems [15]. As the field progresses, standardized benchmarking protocols, shared repositories for rule set evaluation, and methodological guidelines specific to drug development applications will be crucial for advancing LCS from research tools to validated components of the pharmaceutical development pipeline [15] [14].

The increasing complexity of artificial intelligence (AI) models has created a significant transparency problem, often referred to as the "black box" issue, where AI systems produce outputs without revealing the reasoning behind their decisions [16]. This opacity becomes critically problematic when AI systems influence high-stakes domains such as healthcare, finance, and autonomous systems, where understanding AI decision-making processes is a fundamental requirement for trust, accountability, and ethical deployment [16]. Explainable AI (XAI) has therefore emerged as a crucial field of study, with the XAI market projected to grow from $9.77 billion in 2025 to $20.74 billion by 2029, demonstrating its rapidly increasing importance [17].

Within this landscape, Learning Classifier Systems (LCS) represent a family of rule-based machine learning algorithms that offer inherent interpretability [2]. By combining reinforcement learning with evolutionary computation, LCS algorithms evolve a set of human-readable condition-action rules to solve complex problems [14]. This paper explores the foundational relationship between LCS, evolutionary rule-based learning, and XAI, providing a comprehensive technical guide for researchers and scientists interested in developing transparent, adaptive AI systems.

Foundations of Learning Classifier Systems (LCS)

Core Architecture and Algorithmic Components

Learning Classifier Systems are multifaceted, rule-based machine learning algorithms that draw their inspiration from evolutionary biology and artificial intelligence [18]. The LCS architecture integrates several key components:

- Rule-Based Foundation: LCS utilizes a population of conditional rules, typically expressed in IF:THEN format, which collectively form the system's knowledge base [19].

- Evolutionary Computation: A genetic algorithm acts on the rule population, evolving new rules through mechanisms of selection, crossover, and mutation [5] [18].

- Reinforcement Learning: The system employs a credit assignment mechanism (such as a bucket brigade algorithm or Q-learning) to distribute rewards to rules based on their contribution to solving the problem [14].
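The three components above can be sketched in a few lines of Python. This is a minimal illustration rather than any published LCS implementation: it assumes binary features, a ternary condition alphabet in which `#` means "don't care", a Widrow-Hoff style reward update with an assumed learning rate `beta`, and a deliberately simplified selection step that picks the two rules with the highest reward prediction. All names (`Rule`, `match_set`, `assign_credit`, `crossover`, `mutate`) are hypothetical.

```python
import random
from dataclasses import dataclass

WILDCARD = "#"  # "don't care" symbol (assumed ternary alphabet: 0, 1, #)


@dataclass
class Rule:
    condition: str            # one symbol per binary feature, e.g. "1#0"
    action: int               # class label or action proposed by the rule
    prediction: float = 0.0   # running estimate of the expected reward

    def matches(self, state: str) -> bool:
        """A rule matches when every non-wildcard symbol equals the input."""
        return len(self.condition) == len(state) and all(
            c == WILDCARD or c == s for c, s in zip(self.condition, state)
        )


def match_set(population, state):
    """Rule-based foundation: collect every rule whose IF part fits the input."""
    return [rule for rule in population if rule.matches(state)]


def assign_credit(rules, reward, beta=0.2):
    """Credit assignment: move each prediction a fraction beta toward the reward."""
    for rule in rules:
        rule.prediction += beta * (reward - rule.prediction)


def crossover(a: str, b: str, rng: random.Random) -> str:
    """Uniform crossover: each position is copied from one of the two parents."""
    return "".join(rng.choice(pair) for pair in zip(a, b))


def mutate(condition: str, rng: random.Random, rate: float = 0.1) -> str:
    """With probability `rate`, replace a symbol with a random one from 0, 1, #."""
    return "".join(
        rng.choice("01" + WILDCARD) if rng.random() < rate else ch
        for ch in condition
    )


rng = random.Random(0)
population = [Rule("1#0", 1), Rule("##1", 0), Rule("10#", 1)]

# One learning step on the input "100", assuming action 1 earned a reward of 1000.
matched = match_set(population, "100")
assign_credit([r for r in matched if r.action == 1], reward=1000.0)

# Simplified rule discovery: breed the two rules with the highest prediction.
parents = sorted(population, key=lambda r: r.prediction, reverse=True)[:2]
child_condition = mutate(
    crossover(parents[0].condition, parents[1].condition, rng), rng
)
population.append(Rule(child_condition, parents[0].action))
```

Real systems typically add further bookkeeping, such as rule accuracy, numerosity, and deletion pressure, which this sketch omits for brevity.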

The fundamental goal of LCS is not to identify a single best model, but to create a cooperative set of rules that together solve the task at hand [19]. This distributed approach to problem-solving allows LCS to effectively describe complex and diverse problem spaces found in behavior modeling, function approximation, classification, and data mining [19].

Michigan-Style vs. Pittsburgh-Style LCS

Two major genres of LCS algorithms exist, differing primarily in how they employ evolutionary computation:

Table: Comparison of Michigan-style and Pittsburgh-style LCS

| Feature | Michigan-Style LCS | Pittsburgh-Style LCS |
|---|---|---|
| Evolutionary Unit | Individual rules within a population | Multiple complete rule-sets as competing individuals |
| Population | Single, collaborative rule population | Multiple rule-sets that compete |
| Learning Approach | Iterative, one instance at a time | Batch-wise evaluation on full dataset |
| Primary Strength | Adaptive to changing environments | Direct optimization of entire rule-sets |
| Interpretability | Individual rules are interpretable | Rule-sets are interpretable as a whole |

Michigan-style systems, the more traditional architecture, distribute learned patterns over a competing yet collaborative population of individually interpretable IF:THEN rules [19]. These systems apply iterative learning, meaning rules are evaluated and evolved one training instance at a time rather than being evaluated on the training dataset as a whole [19]. This makes them efficient and naturally well-suited to learning different problem niches found in multi-class, latent-class, or heterogeneous problem domains [19]. Pittsburgh-style systems, by contrast, treat each complete rule-set as a competing individual and evaluate it batch-wise on the full dataset, which optimizes the rule-set directly as a whole.
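The difference between the two evaluation styles can be made concrete with a small sketch. The code below is illustrative only and rests on several assumptions not taken from the source: rules use a ternary condition string with `#` as a wildcard, a Michigan-style rule tracks simple match and correct counts, and a Pittsburgh-style rule-set is read as an ordered decision list with a default class. The function names `michigan_step` and `pittsburgh_fitness` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Rule:
    condition: str   # ternary string, "#" is a wildcard (assumed encoding)
    action: int
    matched: int = 0
    correct: int = 0

    def matches(self, state: str) -> bool:
        return all(c in ("#", s) for c, s in zip(self.condition, state))

    @property
    def accuracy(self) -> float:
        return self.correct / self.matched if self.matched else 0.0


def michigan_step(population, state, label):
    """Michigan-style: update individual rule statistics from ONE instance."""
    for rule in population:
        if rule.matches(state):
            rule.matched += 1
            rule.correct += int(rule.action == label)


def pittsburgh_fitness(rule_set, dataset, default=0):
    """Pittsburgh-style: score a WHOLE rule-set on the full dataset at once.

    The rule-set is treated as an ordered decision list: the first matching
    rule decides, and `default` is used when no rule matches.
    """
    hits = 0
    for state, label in dataset:
        predicted = next((r.action for r in rule_set if r.matches(state)), default)
        hits += int(predicted == label)
    return hits / len(dataset)


# Toy XOR dataset: the label is 1 when exactly one of the two bits is set.
dataset = [("00", 0), ("01", 1), ("10", 1), ("11", 0)]

# Michigan: one shared population, updated instance by instance.
population = [Rule("01", 1), Rule("10", 1), Rule("#1", 1)]
for state, label in dataset:
    michigan_step(population, state, label)
rule_accuracies = [rule.accuracy for rule in population]

# Pittsburgh: two competing rule-sets, each scored as a single individual.
fitness_a = pittsburgh_fitness([Rule("01", 1), Rule("10", 1)], dataset)
fitness_b = pittsburgh_fitness([Rule("#1", 1)], dataset)
```

In the Michigan case the unit of selection is a single rule, so the over-general rule `#1` is penalized individually. In the Pittsburgh case the genetic algorithm only sees the two set-level fitness values.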

LCS as a Form of Evolutionary Rule-Based Machine Learning

Evolutionary Rule-based Machine Learning (ERL) represents a collection of machine learning techniques that leverage the strengths of various metaheuristics to find an optimal set of rules to solve a problem [2]. LCS is a prominent example of ERL, with deep connections to other methodologies including Ant-Miner, artificial immune systems, and fuzzy rule-based systems [2]. These methods have been developed using diverse learning paradigms, including supervised learning, unsupervised learning, and reinforcement learning [2].

The hallmark characteristic of ERL models is their innate comprehensibility, which encompasses traits like explainability, transparency, and interpretability [2]. This property has garnered significant attention within the machine learning community, in line with the broader interest in Explainable AI [2]. The 28th International Workshop on Evolutionary Rule-based Machine Learning (IWERL) in 2025 continues to serve as a cornerstone for this research community, highlighting modern implementations of ERL systems for real-world applications and demonstrating the effectiveness of ERL in creating flexible and explainable AI systems [2].

Recent advancements in ERL have focused on optimizing rule selection, enhancing scalability, and integrating novel search methods to extract meaningful, human-readable knowledge from large and dynamic datasets [14]. For instance, work on integrating novelty search mechanisms with rule-based learning has demonstrated promising improvements in balancing prediction error and model complexity, ultimately yielding more robust and generalized classifier sets [14].

The Explainable AI (XAI) Revolution and LCS

The Black Box Problem and XAI Fundamentals

The "black box" problem refers to the lack of transparency and interpretability in AI decision-making processes, particularly in complex deep learning models [16] [17]. This opacity makes it difficult to understand how models arrive at their predictions or recommendations, creating significant challenges in critical applications [17]. Explainable AI aims to address this problem by developing methods and techniques that make AI systems more transparent and interpretable [16].

Two fundamental concepts in XAI are transparency and interpretability [17]. Transparency refers to the ability to understand how a model works, including its architecture, algorithms, and training data, akin to looking at a car's engine to see all the parts and understand how they work together [17]. Interpretability, however, is about understanding why a model makes specific decisions, similar to understanding why a car's navigation system took a specific route [17].

LCS as an Inherently Explainable AI Approach

LCS algorithms align closely with XAI objectives because interpretability is native to the model rather than added afterward [2]. Unlike post-hoc explanation methods (such as SHAP or LIME) that attempt to explain black-box models after training, LCS generates explanations as an integral part of its operation [2] [19]. The key characteristics that make LCS an explainable AI approach include:

- Human-Readable Rules: The rules evolved by LCS are typically expressed in IF:THEN format that is directly interpretable by humans [2] [19].

- Transparent Decision Process: The entire process of matching conditions, selecting rules, and executing actions can be traced and understood [19].

- Model Comprehensibility: The evolved rule sets provide insight into the underlying structure of the problem domain [14].

The interpretability provided by LCS and other evolutionary rule-based systems represents an important step toward XAI, and is particularly valuable in real-world applications such as defense, biomedical research, and legal systems where understanding the decision process is critical [2].
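A traceable decision process of this kind can be sketched as follows. The example is a hedged illustration, not the prediction routine of any specific LCS: it assumes rules stored as feature-value dictionaries, a fitness-weighted vote among matching rules, and invented feature names (`snp_a`, `snp_b`, `age`) and fitness values chosen purely for demonstration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Rule:
    condition: dict   # feature -> required value; unlisted features are ignored
    action: str       # predicted class
    fitness: float    # rule quality used as its voting weight (assumed)


def matches(rule: Rule, instance: dict) -> bool:
    """A rule fires when every feature it specifies has the required value."""
    return all(instance.get(f) == v for f, v in rule.condition.items())


def explain_prediction(population, instance):
    """Return the winning class plus a readable trace of every rule that fired."""
    votes = defaultdict(float)
    fired = []
    for rule in population:
        if matches(rule, instance):
            votes[rule.action] += rule.fitness
            fired.append(rule)
    if not votes:
        return None, []   # no rule covers this instance
    winner = max(votes, key=votes.get)
    trace = [
        "IF " + " AND ".join(f"{f}={v}" for f, v in r.condition.items())
        + f" THEN {r.action} (fitness {r.fitness:.2f})"
        for r in fired
    ]
    return winner, trace


# Hypothetical rule population and patient record, for illustration only.
population = [
    Rule({"snp_a": 1, "snp_b": 0}, "case", 0.9),
    Rule({"snp_a": 1}, "control", 0.4),
    Rule({"snp_b": 1}, "control", 0.8),
]
winner, trace = explain_prediction(
    population, {"snp_a": 1, "snp_b": 0, "age": 54}
)
for line in trace:
    print(line)
```

Because the trace lists exactly the rules that contributed to the vote, the explanation is the model's own reasoning rather than an approximation fitted after training.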

Experimental Framework and Methodologies

General LCS Experimental Protocol

Implementing and evaluating Learning Classifier Systems requires a structured experimental approach. The following workflow outlines a standard methodology for LCS experimentation:

Figure: LCS Experimental Methodology Workflow

This methodology emphasizes the iterative nature of LCS, where rules are continuously evaluated and evolved to adapt to the problem space. The process involves distinct phases of problem definition, system configuration, evolutionary learning, validation, and interpretation [19].

Key Research Reagents and Computational Tools

Implementing LCS requires specific computational tools and frameworks. The following table details essential "research reagents" for LCS experimentation:

Table: Essential Research Reagents for LCS Experimentation

| Tool Category | Specific Examples | Function and Application |
|---|---|---|
| XAI Explanation Libraries | SHAP, LIME, IBM AI Explainability 360 | Provide post-hoc explanations for model validation and comparison with innate LCS interpretability [16] [17]. |
| Evolutionary Computation Frameworks | DEAP, ECJ, OpenAI ES | Offer foundational evolutionary algorithms that can be adapted for LCS implementations [2]. |
| Specialized LCS Implementations | EpiCS, XCS, ExSTraCS | Domain-specific LCS implementations with optimized parameters for particular problem domains [5] [14]. |
| Rule Visualization Tools | RuleViz, TreeMap, custom visualization suites | Enable visualization of evolved rule sets, population dynamics, and knowledge structures [2] [19]. |
| Benchmark Datasets | UCI Repository, synthetic datasets with known properties | Provide standardized testing environments for evaluating LCS performance and interpretability [5] [19]. |

These computational "reagents" form the essential toolkit for researchers developing and evaluating LCS algorithms. The growing emphasis on explainability has driven increased integration between traditional LCS implementations and broader XAI toolkits [17].

Case Study: LCS for Epidemiological Surveillance

A seminal application of LCS in a high-stakes domain is the EpiCS system, designed for knowledge discovery in epidemiologic surveillance [5]. This case study illustrates the practical implementation and evaluation of LCS for a real-world problem with significant societal implications.

Experimental Protocol: EpiCS Implementation

The EpiCS implementation followed a rigorous experimental design:

- Data Source: Utilized data from a large, national child automobile passenger protection program [5].

- System Architecture: Employed a Michigan-style LCS architecture integrating a rule-based system with reinforcement learning and genetic algorithm-based rule discovery [5].

- Comparative Framework: Compared performance against C4.5 (a decision tree algorithm) and logistic regression to evaluate classification accuracy and risk estimation capability [5].

- Evaluation Metrics: Assessed both rule parsimony and classification performance for comprehensive evaluation [5].
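Rule parsimony, one of the two evaluation criteria listed above, can be quantified in several ways. The sketch below shows one plausible choice and is not the metric used in the EpiCS study: it assumes ternary rule conditions with `#` as a wildcard and summarizes a rule set by its size and by the mean fraction of specified (non-wildcard) positions. The function names and the example conditions are hypothetical.

```python
def specificity(condition: str) -> float:
    """Fraction of positions in a condition that are not the '#' wildcard."""
    return sum(ch != "#" for ch in condition) / len(condition)


def parsimony_summary(conditions):
    """Summarize a rule set: fewer and more general rules are more parsimonious."""
    return {
        "n_rules": len(conditions),
        "mean_specificity": sum(specificity(c) for c in conditions) / len(conditions),
    }


# Hypothetical evolved rule conditions over four binary features.
summary = parsimony_summary(["1##0", "#1##", "10#1"])
print(summary)
```

A lower rule count and lower mean specificity indicate a more compact, more general rule set, which can then be weighed against classification performance.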

The experimental results demonstrated key characteristics of LCS approaches:

- Classification Performance: C4.5 achieved superior classification performance compared to EpiCS (P<0.05) [5].

- Risk Estimation: EpiCS derived significantly more accurate risk estimates than logistic regression (P<0.05) [5].

- Rule Utility: While rules induced by EpiCS were less parsimonious than those induced by C4.5, they were potentially more useful to investigators in hypothesis generation [5].

Interpretation of EpiCS Findings

The EpiCS case study highlights several important aspects of LCS in practice:

- Transparency vs. Accuracy Trade-off: The slightly lower classification accuracy of EpiCS compared to C4.5 may be offset by its superior interpretability and hypothesis generation capability in domains like epidemiology where understanding underlying relationships is crucial [5].

- Domain-Specific Strengths: The superior risk estimation performance demonstrates that LCS may be particularly valuable in applications where estimating likelihoods and uncertainties is more important than simple classification [5].

- Knowledge Discovery: The rules generated by EpiCS, while less parsimonious, provided researchers with actionable insights and hypotheses for further investigation, illustrating the knowledge discovery potential of LCS [5].

Quantitative Analysis of Recent XAI Applications with Evolutionary Components

Recent research has demonstrated the powerful synergy between XAI methodologies and geostatistical approaches, with evolutionary components enhancing interpretability. The following table summarizes quantitative findings from a 2025 study on air pollution control that integrated XAI with geostatistical analysis:

Table: XAI-Geostatistics Integration for Air Pollution Analysis (2025)

| Analysis Dimension | Key Finding | XAI Methodology | Interpretation Value |
|---|---|---|---|
| Temporal Variability | July showed least spatial variability in PM2.5; December showed highest | Predictor importance heatmaps with spatio-temporal dependencies | Revealed seasonal shifts in key pollution drivers [20]. |
| Predictor Importance | Hour of day was key predictor in July (13.04%); Atmospheric pressure dominant in December (13.84%) | Multiple ML model interpretation with feature importance analysis | Identified temporal dynamics in factor significance [20]. |
| Cluster Analysis | 4-9 distinct clusters with significant spatial variability in predictor importance | Transition matrix analysis of spatio-temporal clusters | Highlighted stable and dynamic pollution patterns [20]. |
| Policy Impact | Framework enables spatially targeted pollution control strategies | Transparent XAI framework for urban management | Supports sustainable city planning and mitigation efforts [20]. |

This integrated approach demonstrates how evolutionary rule-based systems combined with XAI can uncover complex spatio-temporal patterns that would be difficult to detect with traditional analytical methods. The study specifically highlighted how XAI reveals significant seasonal shifts in PM2.5 clusters and key pollution drivers, enabling more targeted and effective environmental interventions [20].

Future Research Directions and Challenges

Current Limitations and Research Gaps

Despite their strengths, LCS algorithms face several significant challenges that represent opportunities for future research: