Key Challenges and Advanced Solutions in Predicting Bidirectional Regulation and Feedback Loops


Abstract

This article explores the central challenges in modeling and predicting bidirectional regulation and feedback loops, dynamic systems fundamental to biology, from cellular decision-making to organism-level physiology. Tailored for researchers, scientists, and drug development professionals, it synthesizes foundational concepts, cutting-edge computational methodologies, common troubleshooting strategies, and validation frameworks. By integrating insights from circadian biology, gene regulatory networks, and neuroendocrine interactions, this review provides a comprehensive guide for navigating the complexities of these systems to advance predictive biology and therapeutic intervention.

Deconstructing Complexity: The Core Principles of Bidirectional Systems

Defining Bidirectional Regulation and Feedback Loops in Biological Systems

FAQ: Core Concepts and Research Challenges

What is a Bidirectional Feedback Loop in biological systems? A Bidirectional Feedback Loop describes a cyclical relationship in which two components of a system mutually influence each other. The output from one component becomes the input for the other, and vice versa. This two-way exchange is essential for maintaining dynamic equilibrium and facilitating adaptive change in complex biological systems [1].

Why is predicting the behavior of these loops a major research challenge? Predicting the behavior of these loops is difficult because they often involve non-linear dynamics and are embedded within larger, interconnected networks. A change in one component can propagate through the loop in unpredictable ways, leading to outcomes that are not apparent when studying the components in isolation. Furthermore, these loops can be either reinforcing (positive feedback, accelerating change) or balancing (negative feedback, stabilizing the system), and the net effect depends on their interaction [2]. For instance, in Parkinson's disease research, mitochondrial dysfunction and neuroinflammation engage in a "damaging interlinked bidirectional and self-perpetuating cycle," where it is challenging to isolate a primary cause [3].
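To make the reinforcing versus balancing distinction concrete, the sketch below uses a toy linear model of two components, A and B, that each decay on their own and act on each other. The model, the function names, and the numbers are illustrative assumptions and are not taken from the cited studies. For this toy system the eigenvalues are -decay ± sqrt(loop gain), where the loop gain is the product of the two coupling strengths, so the sign and size of that product decide whether a perturbation runs away, rings down, or simply relaxes.

```python
import cmath

def loop_eigenvalues(a_on_b, b_on_a, decay=1.0):
    """Eigenvalues of the toy loop
         dA/dt = -decay*A + b_on_a*B
         dB/dt = -decay*B + a_on_b*A
    The Jacobian [[-decay, b_on_a], [a_on_b, -decay]] has eigenvalues
    -decay +/- sqrt(a_on_b * b_on_a)."""
    root = cmath.sqrt(a_on_b * b_on_a)  # complex when the loop gain is negative
    return (-decay + root, -decay - root)

def classify(a_on_b, b_on_a, decay=1.0):
    """Label the response of the toy loop to a small perturbation."""
    lam1, lam2 = loop_eigenvalues(a_on_b, b_on_a, decay)
    if max(lam1.real, lam2.real) > 0:
        return "runaway"             # reinforcing loop outpaces decay
    if abs(lam1.imag) > 1e-12:
        return "damped oscillation"  # balancing loop: overshoot, then settle
    return "monotonic return"        # weak coupling: perturbation just decays

if __name__ == "__main__":
    for a_on_b, b_on_a in [(0.5, 0.5), (2.0, 1.0), (1.0, -1.0)]:
        print(a_on_b, b_on_a, classify(a_on_b, b_on_a))
```

Real loops are non-linear, so this only describes behavior close to a steady state; it is meant as intuition rather than as a predictive model.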

What are some key experimental challenges in validating these loops? Key challenges include:

- Distinguishing Causality from Correlation: Observing that two components change together is not enough to prove they regulate each other.

- System Identification: Accurately determining all the components and connection strengths within a feedback loop. As one methodological study notes, for a model to be identified, it is necessary to instrument both variables in the loop [4].

- Context-Dependent Behavior: The loop's function can change under different physiological conditions or disease states.

FAQ: Experimental Troubleshooting and Methodologies

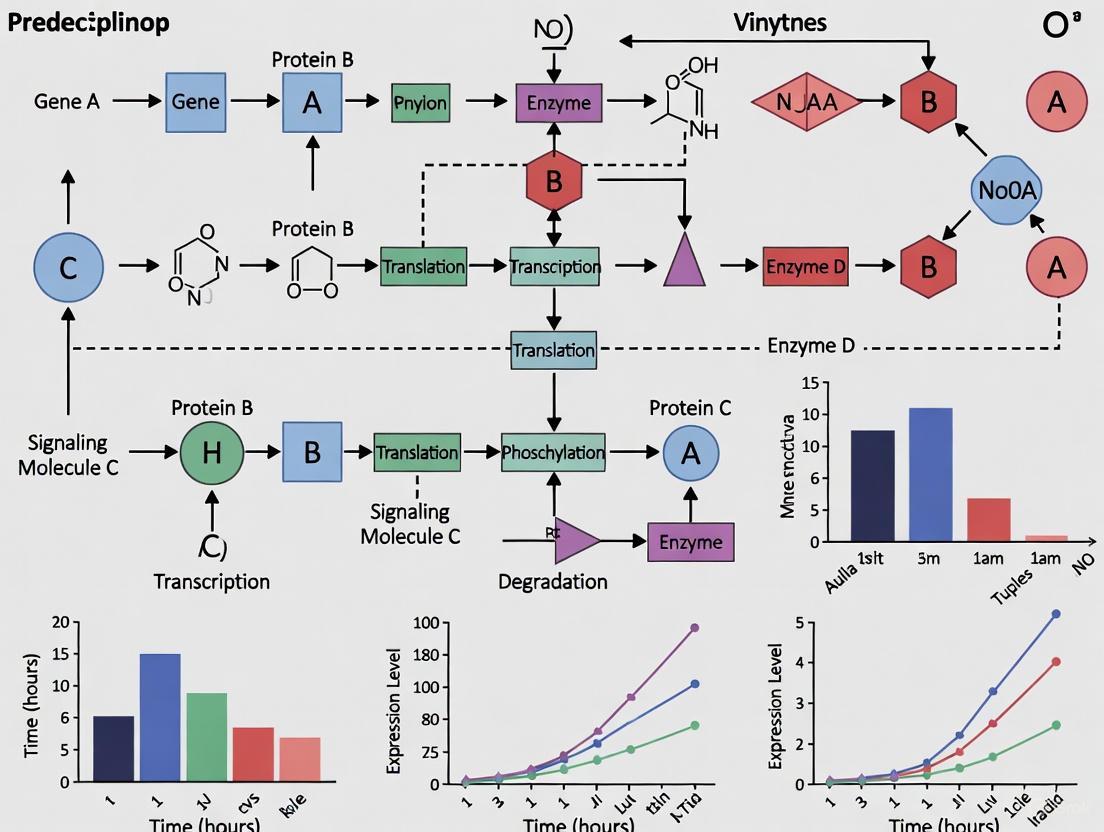

How can I experimentally dissect a bidirectional regulatory mechanism? A robust approach involves a combination of genetic, biochemical, and computational methods to perturb each component and observe the effects on the other. The diagram below outlines a generalized experimental workflow for this validation.

We observed a correlation between two components (A and B), but subsequent perturbation of A did not affect B as expected. What could be wrong? This is a common issue. Consider these possibilities:

- Presence of Compensatory Mechanisms: The system may have redundant pathways that compensate for the loss of A, masking its effect on B.

- Insufficient Perturbation: The intervention (e.g., knockdown efficiency) may not have been strong enough to exceed a critical threshold needed to affect B.

- Temporal Dynamics: The effect may be time-sensitive. You might have measured the outcome too early or too late.

- Context Specificity: The regulation might only occur under specific conditions (e.g., stress, specific cell type, or cell cycle phase) not met in your experiment.

Our data suggest a feedback loop, but we cannot distinguish between direct and indirect regulation. How can we resolve this? To establish a direct molecular interaction, you need to move from cellular phenotyping to biochemical and biophysical assays.

- For Protein-Protein Interactions: Use co-immunoprecipitation (Co-IP) or proximity ligation assays (PLA) to confirm physical binding.

- For Kinase-Substrate Relationships: Conduct in vitro kinase assays with purified proteins to prove direct phosphorylation.

- For Transcriptional Regulation: Use Chromatin Immunoprecipitation (ChIP) assays to test if a transcription factor directly binds to the promoter of its target gene.

Case Study: The DYRK2-USP28 Feedback Loop

A 2025 study uncovered a novel bidirectional feedback loop between the kinase DYRK2 and the deubiquitinase USP28, which controls cancer homeostasis and the DNA damage response [5]. This loop is an excellent example of the challenges and methodologies discussed.

Detailed Experimental Workflow from the DYRK2/USP28 Study:

- Initial Correlation and Loss-of-Function: The researchers first manipulated DYRK2 levels and observed an inverse change in USP28 protein levels, but not in its mRNA, suggesting post-translational regulation. DYRK2 overexpression lowered USP28 protein, whereas siRNA depletion or genetic deletion of DYRK2 increased it [5].

- Establishing Direct Regulation: They demonstrated that DYRK2 phosphorylates USP28 and promotes its ubiquitination and degradation by the proteasome. Critically, they reported that this degradation was independent of DYRK2's kinase activity and of its known E3 ligase partner, FBXW7 [5].

- Testing Bidirectionality: The researchers then reversed the experiment, showing that USP28, in its role as a deubiquitinase, stabilizes DYRK2 by removing its ubiquitin chains. This action also enhanced DYRK2's kinase activity [5].

- Mapping the Interaction Domain: To pinpoint the mechanism, they identified a specific region on DYRK2 (residues 521–541, particularly T525) that was crucial for USP28-mediated stabilization [5].

- Functional Consequences: Finally, they connected this reciprocal regulation to a cellular outcome: the USP28-DYRK2-p53 axis influenced apoptotic responses to DNA damage, underscoring the loop's biological significance [5].

The following diagram illustrates the core mechanism of this bidirectional loop.

Quantitative Data from the DYRK2/USP28 Study

Table: Key quantitative observations from the DYRK2-USP28 feedback loop study [5].

| Experimental Manipulation | Effect on DYRK2 | Effect on USP28 | Key Method Used |

|---|---|---|---|

| DYRK2 Overexpression | --- | Dose-dependent decrease in protein | Western Blot |

| DYRK2 Depletion (siRNA) | --- | Increase in protein | Western Blot |

| DYRK2 Genetic Deletion (CRISPR) | --- | Increase in protein | Western Blot |

| USP28 Depletion | Decrease in protein and kinase activity | --- | Western Blot / Kinase Assay |

| Co-expression of DYRK2 & USP28 | Protein stabilized, activity enhanced | Targeted for degradation | Co-Immunoprecipitation |

Research Reagent Solutions for Studying Feedback Loops

Table: Essential reagents and their applications for investigating bidirectional regulation, as exemplified by the DYRK2/USP28 study [5].

| Research Reagent | Function in the Experiment | Specific Example from Case Study |

|---|---|---|

| siRNA / shRNA | Gene knockdown to assess component necessity. | DYRK2-specific siRNA used to confirm its role in regulating USP28 stability. |

| CRISPR/Cas9 | Complete gene knockout for phenotypic analysis. | DYRK2–/– cell lines (MDA-MB-468) used to validate USP28 upregulation. |

| Site-Directed Mutagenesis Kits | Generate point mutants to dissect functional domains. | Used to create catalytic mutant USP28C171A and DYRK2 domain mutants (e.g., T525). |

| Plasmids for Ectopic Expression | Overexpress wild-type or mutant proteins. | Plasmids for DYRK2, USP28, and USP25 used for dose-response and specificity tests. |

| Specific Antibodies | Detect proteins, modifications, and interactions. | Antibodies for WB and Co-IP to monitor protein levels, phosphorylation, and binding. |

| Proteasome Inhibitors | Block protein degradation, test for stability regulation. | MG132 used to confirm USP28 degradation occurs via the proteasome. |

The Scientist's Toolkit: Key Experimental Approaches

Beyond specific reagents, several core methodologies are fundamental for probing bidirectional loops.

- Computational Modeling: Tools such as bifurcation analysis and spectral analysis are crucial for understanding how feedback loops generate and regulate dynamic behaviors, such as the gamma oscillations in the Wilson-Cowan neural model [6]. Structural Equation Modeling (SEM) can also be used to model bidirectional relationships statistically [4].

- Genetic Perturbation: CRISPR-Cas9 and RNAi are indispensable for testing necessity and sufficiency within a proposed loop.

- Biochemical Assays: Co-immunoprecipitation, in vitro kinase assays, and ubiquitination assays are required to move from correlation to direct mechanistic evidence.

- High-Content Live-Cell Imaging: This allows for real-time observation of the dynamic interplay between components, such as the translocation of proteins in response to signals within the loop.

In conclusion, researching bidirectional regulation requires a multidisciplinary strategy that integrates precise genetic and biochemical perturbations with computational modeling. The inherent complexity of these systems means that predictions are challenging, but a rigorous, stepwise experimental approach can successfully map these critical regulatory networks and uncover their profound impact on health and disease.

FAQs: Navigating Complex Bidirectional Systems

FAQ 1: What are the core challenges in experimentally distinguishing bidirectional feedback from unidirectional causation?

A primary challenge is the difficulty in isolating and independently manipulating each half of the feedback loop. In a bidirectional system, an intervention on one component (A) inevitably affects the other (B), which then feeds back to influence A, creating a confounding cycle. Standard causal inference methods can be misled by this reciprocal relationship. Advanced methods, such as Mendelian Randomization with bidirectional instruments or Structural Equation Modeling (SEM) that explicitly include feedback loops, are required to model these relationships accurately. Furthermore, these systems often exhibit non-linear dynamics and time-lagged effects, making real-time measurement and interpretation complex [7].

FAQ 2: Within the circadian-microbiota axis, what are specific examples of bidirectional feedback, and what technical issues arise when studying them?

A canonical example is the bidirectional relationship between host clock genes and the gut microbiome. The host's central circadian clock (e.g., via CLOCK/BMAL1 complexes) regulates gut physiology and, consequently, the microbial environment. In return, microbial metabolites, such as short-chain fatty acids, can signal to the host and influence the expression and amplitude of circadian clock genes [8]. Technically, this creates several issues:

- Confounding Rhythms: It is challenging to determine whether an observed change in the microbiome is a cause or a consequence of the host's circadian rhythm. Disentangling this requires carefully timed sample collection and the use of animal models with genetic disruptions of specific clock genes (e.g., Bmal1 knockout) [9].

- Synchronization: Maintaining consistent circadian conditions for animals in a facility while performing experiments is difficult. Factors like light pollution, feeding times, and researcher activity can inadvertently disrupt rhythms and introduce variability [8].

FAQ 3: When an experiment involving a suspected feedback loop yields a null or unexpected result, what is the first set of controls to verify?

The first step is to run a comprehensive set of controls to rule out technical failure:

- Positive Controls: Use a known activator/stimulus of each individual pathway to confirm that the experimental system is responsive.

- Negative Controls: Include treatments with inhibitors or neutral agents to establish a baseline.

- Experimental Controls: Verify that all equipment is calibrated and reagents are fresh and viable. For example, in cell-based assays, check for mycoplasma contamination or incorrect cell culture conditions, which can globally disrupt cellular signaling [10] [11]. Documenting all aspects of the protocol is crucial for identifying where the process may have failed [12].

Troubleshooting Guides

Guide: Unexpected Results in Feedback Loop Experiments

This guide provides a systematic approach for when experimental results do not align with your hypothesis regarding a bidirectional regulation.

| Troubleshooting Step | Key Actions | Specific Checks for Bidirectional Systems |

|---|---|---|

| 1. Verify the Result | Repeat the experiment. Check for simple human error (e.g., miscalculations, mislabeled samples) [12] [10]. | Repeat the experiment, but with more frequent time-point measurements to capture potential oscillatory dynamics. |

| 2. Interrogate Assumptions | Re-examine your initial hypothesis and experimental design [11]. | Question whether the timing of your intervention or measurement was optimal to detect the feedback. Could the feedback be context-dependent (e.g., only active under stress)? |

| 3. Scrutinize Methods & Reagents | Check equipment calibration, reagent integrity, storage conditions, and sample quality [11] [10]. | Pay special attention to the stability of key metabolites or signaling molecules. For circadian studies, ensure strict control of light and other timing cues. |

| 4. Validate Critical Controls | Ensure all controls (positive, negative, experimental) performed as expected [10]. | Your positive controls should independently activate each arm of the suspected feedback loop to prove each pathway is functional in your setup. |

| 5. Isolate Variables Systematically | Change only one variable at a time to identify the root cause [10]. | Design experiments that chemically or genetically inhibit one arm of the loop to observe the effect on the other arm in isolation. |

The following workflow diagram outlines the logical sequence for applying these troubleshooting steps:

Guide: Troubleshooting a Mouse Model of Circadian-Microbiota Interaction

This guide addresses specific issues when studying the interplay between circadian rhythms and gut microbiota in vivo.

| Problem | Potential Cause | Solution Experiment |

|---|---|---|

| No rhythmic variation in microbial metabolites detected in fecal samples. | Mouse facility is not on a strict light-dark cycle; ad libitum feeding masks rhythmicity. | Implement a controlled light-dark cycle (e.g., 12h:12h) and restrict feeding to the active (dark) phase. Collect fecal samples at multiple time points over 24-48 hours [8]. |

| High variability in microbiota composition between genetically identical mice in the same cohort. | Lack of synchronization in circadian rhythms; contamination; low n-number. | Ensure all mice are synchronized to the same light-dark cycle for at least two weeks prior to experiment. Use single-housed mice or control for coprophagia. Increase sample size [8]. |

| Clock gene knockout mouse does not show expected microbial dysbiosis. | Compensation by other clock genes; the effect is tissue-specific; diet is not permissive. | Verify the knockout phenotype in the relevant tissue (e.g., intestine). Test the effect under different dietary challenges (e.g., high-fat diet) [9]. |

| Failure to recapitulate a host phenotype via fecal microbiota transplant (FMT). | Recipient's endogenous circadian rhythm is resisting colonization or influencing the outcome. | Use antibiotic-treated or germ-free recipients. Consider using recipient mice with a disrupted circadian clock (e.g., SCN-lesioned or Bmal1-KO) to reduce host-driven confounding [8]. |
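For the first row of the table above, one common way to test whether a time series of metabolite measurements is rhythmic is a single-component cosinor fit. This technique is not named in the cited sources and is offered here only as a sketch. The closed-form formulas below assume equally spaced samples that cover a whole number of periods with more than two samples per period (for example, every 4 h over 48 h); the function name and the synthetic data are hypothetical.

```python
import math

def cosinor_fit(times_h, values, period_h=24.0):
    """Fit y ~ mesor + amplitude * cos(2*pi*(t - acrophase)/period).

    Closed-form estimates that are only valid for equally spaced samples
    spanning whole periods; use general least squares otherwise."""
    n = len(values)
    w = 2.0 * math.pi / period_h
    mesor = sum(values) / n  # rhythm-adjusted mean
    c = 2.0 / n * sum(v * math.cos(w * t) for t, v in zip(times_h, values))
    s = 2.0 / n * sum(v * math.sin(w * t) for t, v in zip(times_h, values))
    amplitude = math.hypot(c, s)
    acrophase_h = (math.atan2(s, c) / w) % period_h  # time of peak, in hours
    return mesor, amplitude, acrophase_h

if __name__ == "__main__":
    # Synthetic, noise-free example: samples every 4 h for 48 h, peak at hour 14.
    times = [4.0 * k for k in range(12)]
    values = [10.0 + 3.0 * math.cos(2.0 * math.pi * (t - 14.0) / 24.0) for t in times]
    print(cosinor_fit(times, values))
```

An amplitude close to zero relative to measurement noise is consistent with a lost or masked rhythm, but a formal test would also need replicate animals and a noise model, which this sketch omits.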

Key Signaling Pathways and Experimental Workflows

The Core Circadian-Microbiota Bidirectional Feedback Loop

This diagram illustrates the fundamental two-way communication between the host's circadian clock and the gut microbiome, a canonical example of a bidirectional system.

Workflow for Isolating Bidirectional Causality

This experimental workflow outlines a methodological approach to distinguish causal direction in a suspected feedback loop, using genetic tools for validation.

Research Reagent Solutions

This table details essential materials and their functions for studying complex biological systems like the circadian-microbiota axis.

| Reagent / Material | Function in Experiment | Example Application |

|---|---|---|

| Antibody for BMAL1 | Immunodetection of core clock protein; used in Western Blot (WB) or Immunohistochemistry (IHC). | Verify knockout efficiency or oscillation of clock protein in tissue samples [9]. |

| Fecal DNA Isolation Kit | Isolate high-quality microbial DNA from fecal samples for 16S rRNA sequencing. | Analyze circadian-driven changes in gut microbiota composition and diversity [8]. |

| Enzyme-Linked Immunosorbent Assay (ELISA) for Cytokines | Quantify specific inflammatory proteins in serum or tissue homogenates. | Measure immune response outputs linked to microbiota or circadian disruption [3] [9]. |

| Short-Chain Fatty Acid (SCFA) Standard Mix | Chromatography standard for quantifying microbial metabolites (e.g., butyrate, acetate). | Link changes in microbiota to functional metabolic outputs in the host [8]. |

| PER2::LUCIFERASE Reporter Cell Line | Real-time, bioluminescent monitoring of circadian clock gene expression dynamics. | Study the direct effect of microbial metabolites on cellular circadian rhythms in vitro [9]. |

Troubleshooting Guide: Common Experimental Challenges in Feedback Loop Research

FAQ: My model predicts multistability, but my experimental system consistently converges to a single state. What could be wrong? This common issue often stems from insufficient network characterization. Your model might be missing critical regulatory interactions. Follow this diagnostic protocol:

- Step 1: Verify that all autoregulations (self-activations) are included in your network model. Networks with autoregulated nodes are more likely to exhibit multiple steady states [13].

- Step 2: Check the network topology. Hub-style networks (where multiple toggle switches connect to a central node) naturally have a more restricted state space and are often only mono- or bistable, whereas serial-chain topologies more readily achieve higher-order multistability [13].

- Step 3: Experimentally, ensure that the cells are given sufficient time to settle into a state and that your measurement technique is not inadvertently selecting for a single, more robust phenotype.

FAQ: What is the most effective way to reprogram a cell to a specific, non-extremal fate? Reprogramming to intermediate stable states is more complex than driving a system to its maximum or minimum state.

- Theoretical Guideline: For a desired stable steady state, the input space is guaranteed to contain a reprogramming input, but it is not located at the extremes. A finite-time search procedure is required [14].

- Pruning the Search Space: Leverage the structure of the monotone system to eliminate input choices that are guaranteed not to work. Inputs that intuitively up-regulate factors higher in the target state and down-regulate lower ones can be ineffective [14].

- Practical Protocol: Use the table of "Research Reagent Solutions" below to design a combinatorial perturbation screen, focusing on the input space recommended by theoretical pruning.

FAQ: How does network topology influence the emergent cell fates? The structure of the interconnected feedback loops is a primary determinant of the possible stable states.

- Key Finding: Topologically distinct networks with identical numbers of nodes or feedback loops can have dramatically different steady-state distributions. This highlights that network structure, not just component count, governs dynamics [13].

- Operational Principle: A "tug of war" exists between different network families. Serial and cyclic interconnected feedback loops tend to exhibit multiple alternative states, while hub networks have a state space restricted to mono- and bistability [13].

Experimental Protocols & Data

Protocol 1: Identifying Stable Steady States in a Multistable System using RACIPE

This protocol uses the RAndom CIrcuit Perturbation (RACIPE) method to analyze a network's steady states without relying on a single parameter set [13].

- Network Input: Define the network topology (e.g., "Gene A activates Gene B, Gene B represses Gene A").

- Parameter Sampling: The algorithm generates a large number of parameter sets (e.g., production/degradation rates, Hill coefficients) from a physiologically relevant range.

- ODE Simulation: For each parameter set, numerically solve the corresponding Ordinary Differential Equations (ODEs) from multiple initial conditions to find the stable steady states the system can reach.

- State Analysis: Cluster the resulting steady states to determine the number and nature of distinct phenotypes (e.g., (High, Low) or (Low, High) for a two-gene system).
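The four steps above can be sketched in a few lines for a two-gene mutual-repression circuit. This is a simplified illustration in the spirit of RACIPE, not the RACIPE software itself: the Hill-type equations, the parameter ranges, the three starting points, and the clustering tolerance are all assumptions chosen to keep the example small.

```python
import random
from collections import Counter

def hill_repression(x, threshold, n):
    """Fraction of maximal expression remaining when a repressor is at level x."""
    return 1.0 / (1.0 + (x / threshold) ** n)

def sample_params(rng):
    """Draw one random parameter set (ranges are illustrative assumptions)."""
    p = {}
    for gene in ("a", "b"):
        p["g_" + gene] = rng.uniform(1.0, 10.0)   # maximal production rate
        p["k_" + gene] = rng.uniform(0.2, 1.0)    # degradation rate
        p["thr_" + gene] = rng.uniform(0.5, 5.0)  # level at which this gene half-represses the other
        p["n_" + gene] = rng.randint(1, 4)        # Hill coefficient
    return p

def simulate(p, a, b, dt=0.02, t_end=50.0):
    """Forward-Euler integration of the toggle-switch ODEs to (near) steady state."""
    for _ in range(int(t_end / dt)):
        da = p["g_a"] * hill_repression(b, p["thr_b"], p["n_b"]) - p["k_a"] * a
        db = p["g_b"] * hill_repression(a, p["thr_a"], p["n_a"]) - p["k_b"] * b
        a, b = a + dt * da, b + dt * db
    return a, b

def distinct_steady_states(p, rel_tol=0.05):
    """Simulate from several starting points and merge end points that coincide."""
    max_a, max_b = p["g_a"] / p["k_a"], p["g_b"] / p["k_b"]
    starts = [(max_a, 0.0), (0.0, max_b), (0.6 * max_a, 0.3 * max_b)]
    found = []
    for a0, b0 in starts:
        a, b = simulate(p, a0, b0)
        is_new = all(abs(a - fa) > rel_tol * max_a or abs(b - fb) > rel_tol * max_b
                     for fa, fb in found)
        if is_new:
            found.append((a, b))
    return found

def survey(n_models=50, seed=0):
    """Count how many random parameter sets yield 1, 2, ... distinct end states."""
    rng = random.Random(seed)
    return Counter(len(distinct_steady_states(sample_params(rng)))
                   for _ in range(n_models))
```

Calling `survey()` returns a tally of monostable versus bistable parameter sets for this topology. A production analysis would use many more initial conditions, a stiff-capable ODE solver, and proper clustering of the end states.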

Protocol 2: Reprogramming a Toggle Switch via Transient Input Stimulation

This protocol details how to force a transition from one stable state to another [14].

- Culture Preparation: Maintain cells in a known baseline stable state (e.g., State S1: (X^ON, Y^OFF)).

- Input Application: Apply a constant, saturating external input w. To drive the system to the (X^OFF, Y^ON) state, apply a positive input to node Y and/or a negative input (enhanced degradation) to node X. The input can be modeled as q(x_i, w_i) = u_i - v_i * x_i in the ODEs [14].

- Transient Exposure: Maintain the input for a sufficient duration for the system's state to be shifted beyond the basin of attraction of the initial state.

- Input Withdrawal & Validation: Remove the input and allow the system to settle into its new natural stable state. Verify the final state (e.g., via fluorescence if X and Y are fluorescent proteins).
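The protocol can be rehearsed in silico before committing to an experiment. The sketch below uses a deliberately simplified mutual-repression model (no leaky expression or self-activation terms) with the input entering as q(x_i, w_i) = u_i - v_i * x_i. All numerical values, including the input strengths and pulse durations, are illustrative assumptions rather than values from [14].

```python
def hill_repression(x, threshold=2.0, n=3):
    """Fraction of maximal expression remaining at repressor level x."""
    return 1.0 / (1.0 + (x / threshold) ** n)

def run(x, y, duration, u=(0.0, 0.0), v=(0.0, 0.0),
        alpha=10.0, gamma=1.0, dt=0.01):
    """Forward-Euler integration of the toggle switch with inputs
    q_i = u_i - v_i * x_i, where u is over-expression and v is
    enhanced degradation, given as (node X, node Y) pairs."""
    for _ in range(int(round(duration / dt))):
        dx = alpha * hill_repression(y) - gamma * x + u[0] - v[0] * x
        dy = alpha * hill_repression(x) - gamma * y + u[1] - v[1] * y
        x, y = x + dt * dx, y + dt * dy
    return x, y

def reprogram(pulse_duration, relax_time=50.0):
    """Settle in (X ON, Y OFF), apply the input for pulse_duration,
    withdraw it, and return the state the system relaxes to."""
    x, y = run(10.0, 0.0, relax_time)                             # baseline state S1
    x, y = run(x, y, pulse_duration, u=(0.0, 10.0), v=(5.0, 0.0)) # push Y up, X down
    return run(x, y, relax_time)                                  # input withdrawn

if __name__ == "__main__":
    for duration in (0.1, 20.0):
        x, y = reprogram(duration)
        print(f"pulse {duration:>5}: X={x:.2f}, Y={y:.2f}")
```

With these assumed values, the short pulse leaves the system in its original basin of attraction, while the long pulse carries it across to the (X OFF, Y ON) state, which is the behavior the Transient Exposure step relies on.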

Table 1: Key Parameters for a Mutual Antagonism Network Motif

This table summarizes the parameters and their functions for the ODE model described in Eq. (1) and (2) [14].

| Parameter | Description | Role in Model |
|---|---|---|
| β₁, β₂ | Leaky expression rate constants | Set the baseline production rate of the proteins. |
| α₁, α₂ | Activation rate constants | Determine the maximum expression level when fully activated. |
| γ₁, γ₂ | Decay rate constants | Set the rate of protein degradation/dilution. |
| k₁, k₂, k₃, k₄ | Apparent dissociation constants | Represent the concentrations at which activation/repression is half-maximal. |
| n₁, n₂, n₃, n₄ | Hill coefficients | Control the steepness (non-linearity) of the regulatory response. |
| u_i | Positive stimulation input | Represents over-expression of protein x_i [14]. |
| v_i | Negative stimulation input | Represents enhanced degradation of protein x_i [14]. |

The Scientist's Toolkit

Table 2: Research Reagent Solutions for Feedback Loop Studies

| Reagent / Material | Function in Experiment |

|---|---|

| Inducible Gene Expression Systems | Used to implement the positive stimulation input u_i for controlled over-expression of specific transcription factors [14]. |

| Degron Tagging Systems | Used to implement the negative stimulation input v_i for targeted and enhanced degradation of specific proteins [14]. |

| Live-Cell Fluorescence Microscopy | Essential for tracking the dynamics of multiple network nodes (e.g., X and Y in a toggle switch) in real-time in individual cells. |

| Ordinary Differential Equation (ODE) Solvers | Software tools (e.g., in MATLAB, Python) used to simulate the mathematical models (like Eq. (1)) and predict system dynamics and steady states [14] [13]. |

| RACIPE Algorithm | A robust computational tool to characterize the possible stable states of a regulatory network across thousands of parameter sets, independent of precise kinetic data [13]. |

Network Topology and Reprogramming Visualizations

FAQs: Understanding Core Concepts and Challenges

FAQ 1: What makes a system 'non-linear,' and why does this complicate the prediction of bidirectional regulation? In a non-linear system, the output is not directly proportional to the input. Small changes in one variable can lead to disproportionately large or unexpected changes in another. In the context of bidirectional regulation, this means that the effect of one element regulating another can change dramatically depending on the system's current state. For instance, in neural networks, the non-linear activation functions of neurons are essential for complex computations but can degrade the system's memory capacity, creating a fundamental trade-off between non-linear processing and the ability to retain information over time [15]. This makes it difficult to predict the net outcome of two components regulating each other.

FAQ 2: How do time delays inherent in biological systems impact the study of feedback loops? Time delays, such as those in axonal signal propagation or biochemical reactions, introduce a disconnect between an action and its effect. In computational models, introducing distance-based inter-neuron delays has been shown to increase memory capacity, but also creates a trade-off with non-linear processing power [15]. From a methodological perspective, these delays mean that the measured effect of one variable on another (a cross-lagged effect) is not instantaneous. Failing to account for the correct time interval in longitudinal studies can lead to misinterpretation of the strength and even the direction of these bidirectional relationships [16].

FAQ 3: Why is context-dependency a major challenge in drug development? A system's response to a stimulus or drug is often highly dependent on its initial state or context. For example, in the Wilson-Cowan model of neural oscillations, the background input to the network has a substantial impact on its response and can determine whether theta oscillation modulates gamma oscillation [6]. This means that a therapeutic intervention could have a beneficial effect in one physiological context (e.g., a healthy state) and a negligible or adverse effect in another (e.g., a disease state), making drug efficacy and safety difficult to predict across diverse patient populations.

FAQ 4: What is the difference between a cross-lagged effect and a feedback effect? In longitudinal studies, a cross-lagged effect typically refers to the predictive influence of one variable (Variable A) on another (Variable B) at a subsequent time point, and vice-versa. A feedback effect, however, represents the overall dynamic interplay between the two variables as a whole. It quantifies the combined, reciprocal influence they have on each other over time. Focusing only on individual cross-lagged effects may miss the bigger picture of the system's dynamic behavior [16].

Troubleshooting Guides

Guide 1: Troubleshooting Unpredictable Outcomes in Computational Models of Bidirectional Regulation

Problem: Your computational model (e.g., a Wilson-Cowan model or Echo State Network) produces unstable, chaotic, or unpredictable outcomes, making it difficult to study the feedback loops of interest.

| Possible Cause | Diagnostic Checks | Corrective Actions |

|---|---|---|

| Overly Strong Non-Linearity | Analyze the model's information processing capacity for different degrees of non-linearity [15]. | For Echo State Networks (ESNs), consider using a mixture of linear and non-linear neurons, or implement Distance-Based Delay Networks (DDNs) to improve the memory-non-linearity trade-off [15]. |

| Incorrect Time-Scale Parameters | Perform a bifurcation analysis to see how model dynamics change with parameters like time constants (τ) or self-feedback strength [6]. | Adjust the decay rate (a) in ESNs or the time constants (τE, τI) in the Wilson-Cowan model to align network timescales with task requirements [6] [15]. |

| Unbalanced Feedback Strength | Systematically vary the excitatory (WEE) and inhibitory (WII) self-feedback strengths and observe the system's output using spectral analysis [6]. | Tune the self-feedback strengths. Increasing excitatory self-feedback can promote oscillation generation, while increasing inhibitory self-feedback can raise oscillation frequency [6]. |

Experimental Protocol: Bifurcation Analysis for Parameter Tuning

- Select a Key Parameter: Choose a parameter suspected of causing instability (e.g., self-feedback strength WEE or WII in the Wilson-Cowan model, or the spectral radius of the weight matrix in an ESN).

- Define a Range: Set a realistic and sufficiently wide range of values for this parameter.

- Simulate and Record: For each parameter value, simulate the model from multiple initial conditions and record the steady-state outputs (e.g., firing rates rE and rI).

- Plot the Bifurcation Diagram: Plot the steady states of a key variable (e.g., rE) against the parameter values. This visualization will reveal regions of stability, instability, and bifurcation points where the system dynamics change qualitatively [6].
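A brute-force version of this protocol can be sketched as a parameter sweep. The code below uses a common simplified form of the Wilson-Cowan equations (logistic response functions, no refractory terms) and records the minimum and maximum of rE after a transient, which is a numerical stand-in for a bifurcation diagram. The weights, gains, thresholds, and drive are placeholder values and are not the parameters used in [6].

```python
import math

def sigmoid(x, gain, threshold):
    """Logistic population response function with values in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-gain * (x - threshold)))

def simulate_wc(w_ee, r_e, r_i, w_ei=12.0, w_ie=15.0, w_ii=3.0, drive_e=1.25,
                tau_e=1.0, tau_i=1.0, dt=0.01, t_end=100.0, t_discard=50.0):
    """Integrate the E-I rate model and return (min, max) of rE after
    the initial transient has been discarded."""
    lo, hi = float("inf"), float("-inf")
    for k in range(int(t_end / dt)):
        in_e = w_ee * r_e - w_ei * r_i + drive_e   # net input to E population
        in_i = w_ie * r_e - w_ii * r_i             # net input to I population
        d_e = (-r_e + sigmoid(in_e, 1.3, 4.0)) / tau_e
        d_i = (-r_i + sigmoid(in_i, 2.0, 3.7)) / tau_i
        r_e, r_i = r_e + dt * d_e, r_i + dt * d_i
        if k * dt >= t_discard:
            lo, hi = min(lo, r_e), max(hi, r_e)
    return lo, hi

def bifurcation_scan(w_ee_values, initial_conditions, **sim_kwargs):
    """Sweep W_EE; one row (w_ee, start, min rE, max rE) per initial condition."""
    rows = []
    for w_ee in w_ee_values:
        for r_e0, r_i0 in initial_conditions:
            lo, hi = simulate_wc(w_ee, r_e0, r_i0, **sim_kwargs)
            rows.append((w_ee, (r_e0, r_i0), lo, hi))
    return rows

if __name__ == "__main__":
    starts = [(0.1, 0.1), (0.9, 0.1)]
    for w_ee, start, lo, hi in bifurcation_scan([8.0, 12.0, 16.0, 20.0], starts):
        print(f"W_EE={w_ee:5.1f} start={start} rE in [{lo:.3f}, {hi:.3f}]")
```

When reading the output, a clear gap between the minimum and maximum suggests a sustained oscillation, while different ranges for different starting points at the same parameter value suggest coexisting attractors. Dedicated continuation software gives more reliable diagrams, including unstable branches that simulation cannot reach.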

Guide 2: Troubleshooting Experimental Data Analysis in Longitudinal Feedback Studies

Problem: Analysis of intensive longitudinal data (e.g., from daily diaries or ecological momentary assessment) fails to reveal clear bidirectional relationships, or the results are inconsistent with theory.

| Possible Cause | Diagnostic Checks | Corrective Actions |

|---|---|---|

| Incorrect Time Interval | Test the sensitivity of your results by analyzing the data using different time intervals (e.g., one-day lag vs. two-day lag) [16]. | Use the parameter transformation method to translate cross-lagged effects to a theoretically meaningful time interval, or use models that explicitly account for continuous time [16]. |

| Focusing Only on Cross-Lagged Effects | Check if your statistical model (e.g., a Dynamic Structural Equation Model) allows for the calculation of the overall feedback effect, which represents the dynamic interplay between two variables [16]. | Shift focus from individual cross-lagged paths to the estimated feedback effect. This provides a single metric for the overall bidirectional relation, which can be more powerful for testing theories [16]. |

| Unmodeled Individual Differences | Test for heterogeneity in your cross-lagged models. | Use techniques that allow for person-specific feedback effects, which can reveal how bidirectional relations vary across individuals and correlate with other traits [16]. |

Experimental Protocol: Estimating Feedback Effects with DSEM

- Model Specification: Build a bivariate Dynamic Structural Equation Model (DSEM) that includes the auto-regressive and cross-lagged paths for your two variables of interest.

- Model Estimation: Fit the model to your intensive longitudinal data.

- Calculate Feedback Effects: Compute the feedback effect using the estimated cross-lagged coefficients. This provides a quantitative measure of the overall bidirectional relation.

- Establish Benchmarks: Compare the magnitude of your obtained feedback effects to empirically established benchmarks to aid interpretation [16].
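A full DSEM is multilevel and usually fitted with specialized software, but its core, the auto-regressive and cross-lagged paths, can be illustrated with a single-subject first-order vector autoregression. The sketch below simulates two variables, estimates the lag-1 coefficient matrix by least squares, and shows how the implied cross-lagged effects change when the same process is viewed at a two-step interval. The coefficient values are hypothetical, and the specific feedback-effect formula from [16] is not reproduced here.

```python
import random

def mat_mul(a, b):
    """Product of two 2x2 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def simulate_var1(phi, n, seed=0):
    """Simulate x_t = phi @ x_{t-1} + noise with unit-variance Gaussian noise.
    phi[0][1] is the cross-lagged effect of variable 2 on variable 1."""
    rng = random.Random(seed)
    x, series = [0.0, 0.0], []
    for _ in range(n):
        x = [phi[0][0] * x[0] + phi[0][1] * x[1] + rng.gauss(0.0, 1.0),
             phi[1][0] * x[0] + phi[1][1] * x[1] + rng.gauss(0.0, 1.0)]
        series.append(x)
    return series

def estimate_var1(series):
    """Least-squares estimate of phi (no intercept; assumes zero-mean data):
    phi_hat = (sum x_t x_{t-1}^T) (sum x_{t-1} x_{t-1}^T)^{-1}."""
    s_xy = [[0.0, 0.0], [0.0, 0.0]]
    s_xx = [[0.0, 0.0], [0.0, 0.0]]
    for prev, cur in zip(series, series[1:]):
        for i in range(2):
            for j in range(2):
                s_xy[i][j] += cur[i] * prev[j]
                s_xx[i][j] += prev[i] * prev[j]
    det = s_xx[0][0] * s_xx[1][1] - s_xx[0][1] * s_xx[1][0]
    inv = [[s_xx[1][1] / det, -s_xx[0][1] / det],
           [-s_xx[1][0] / det, s_xx[0][0] / det]]
    return mat_mul(s_xy, inv)

if __name__ == "__main__":
    true_phi = [[0.5, 0.2], [0.1, 0.4]]     # hypothetical lag-1 coefficients
    phi_hat = estimate_var1(simulate_var1(true_phi, 5000, seed=1))
    print("estimated lag-1 matrix:", phi_hat)
    print("implied lag-2 matrix:  ", mat_mul(phi_hat, phi_hat))
```

Because the lag-2 matrix is the square of the lag-1 matrix in this discrete-time model, the apparent size of each cross-lagged path depends on the chosen interval, which is why the sensitivity check on time intervals in the table above matters.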

Research Reagent Solutions

Table: Key Components for Modeling Neural Feedback Loops

| Item | Function in Research |

|---|---|

| Wilson-Cowan Model | A mesoscopic firing rate model used to emulate the interaction between excitatory (E) and inhibitory (I) neural populations and to study the generation of oscillations like gamma rhythms [6]. |

| Excitatory Self-Feedback Strength (WEE) | A parameter in the Wilson-Cowan model that controls the strength of the feedback from the excitatory population onto itself. Increasing WEE promotes the generation of gamma oscillations but decreases their frequency [6]. |

| Inhibitory Self-Feedback Strength (WII) | A parameter in the Wilson-Cowan model that controls the strength of the feedback from the inhibitory population onto itself. Increasing WII is not conducive to generating gamma oscillations but facilitates an increase in oscillation frequency [6]. |

| Echo State Network (ESN) | A type of recurrent neural network with fixed, randomly initialized weights used as a reservoir for temporal pattern learning tasks. It exemplifies the trade-off between linear memory capacity and non-linear processing [15]. |

| Distance-Based Delay Network (DDN) | A class of ESN that incorporates brain-inspired, variable inter-neuron delays proportional to distance. DDNs achieve a better trade-off between linear memory and non-linear processing over larger time spans than conventional ESNs [15]. |
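To make the roles of WEE and WII concrete, the following is a minimal forward-Euler sketch of a two-population firing-rate model in the Wilson-Cowan style. The simplified equations (no refractory term), the sigmoid gains and thresholds, and all default parameter values are illustrative assumptions, not the settings used in [6]; whether a given parameter set oscillates has to be checked from the returned trace.

```python
import math

def sigmoid(x, gain=1.0, threshold=0.0):
    """Logistic response function mapping net input to a rate in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-gain * (x - threshold)))

def simulate_wilson_cowan(w_ee=16.0, w_ei=12.0, w_ie=15.0, w_ii=3.0,
                          p_e=1.25, p_i=0.0, tau_e=1.0, tau_i=1.0,
                          dt=0.01, steps=5000, e0=0.1, i0=0.05):
    """Integrate E and I population rates with forward Euler.

    w_ee: excitatory self-feedback (WEE); w_ii: inhibitory self-feedback (WII);
    w_ei: inhibition onto E; w_ie: excitation onto I; p_e, p_i: external drive.
    """
    e, i = e0, i0
    trace = []
    for _ in range(steps):
        de = (-e + sigmoid(w_ee * e - w_ei * i + p_e, 1.3, 4.0)) / tau_e
        di = (-i + sigmoid(w_ie * e - w_ii * i + p_i, 2.0, 3.7)) / tau_i
        e, i = e + dt * de, i + dt * di
        trace.append((e, i))
    return trace

# Compare two assumed values of the excitatory self-feedback strength.
for w_ee in (10.0, 16.0):
    tail = [e for e, _ in simulate_wilson_cowan(w_ee=w_ee)[-1000:]]
    print(f"w_ee={w_ee}: late E range = {max(tail) - min(tail):.4f}")
```

A late-time range near zero indicates a fixed point, while a persistent non-zero range indicates sustained oscillation for that parameter set.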

System Visualization Diagrams

Wilson-Cowan Model Feedback

Cross-Lagged Effects Model

Reservoir Computing Trade-Off

Technical Support Center: Experimental Research Guidance

This resource provides technical support for researchers investigating bidirectional feedback loops and their dysregulation in chronic diseases. The following guides address common experimental challenges.

Frequently Asked Questions & Troubleshooting Guides

Q1: How can I resolve inconsistent causal estimates in my Mendelian Randomization (MR) study of bidirectional relationships?

- Problem: When modeling exposure and outcome variables that reciprocally influence each other, traditional instrumental variable (IV) estimators yield unstable or conflicting causal estimates.

- Solution: Implement a Structural Equation Modeling (SEM) framework.

- Diagnostic Check: Run MR analyses "both ways" (exposure on outcome and outcome on exposure). Inconsistent results from Wald ratio or Two-Stage Least Squares (2SLS) estimators indicate potential bidirectional relationships [7].

- Recommended Action: Model the relationship using a SEM with an explicit bidirectional linear feedback loop. This approach uses genetic variants as instruments for both the exposure (y₁) and outcome (y₂) variables within a single model, defined by: y = By + Γx + ζ [7].

- Note: While both traditional IV and SEM estimators are statistically consistent, in finite samples, SEM power is less sensitive to residual correlation between variables and improves with instruments that explain more residual variance in the outcome [7].

Q2: What steps should I take when my experimental model shows escalating proinflammatory cycles, such as in Parkinson's disease research?

- Problem: An experimental system exhibits a self-perpetuating cycle of microglial activation, mitochondrial impairment, and elevated reactive oxygen species (ROS), leading to irreversible neuronal damage.

- Solution: Target the core bidirectional feedback loop.

- Step 1 - System Assessment: Confirm the presence of key cycle markers: elevated oxidative stress, impaired cellular energy (ATP) production, and chronic microglial activation releasing proinflammatory cytokines [3].

- Step 2 - Intervention Point: Design interventions that simultaneously target multiple points in the cycle. For example, consider compounds that improve mitochondrial quality control while also suppressing microglial-mediated proinflammatory immune responses [3].

- Step 3 - Protocol Adjustment: Shift experimental focus from studying linear events to investigating the damage along the "continuum" of this reinforcing cycle. Measure how an intervention changes the cycle's trajectory rather than just a single endpoint [3].

Q3: How can I quantify and model "emotional dysregulation" as a feedback loop in psychosomatic chronic disease studies?

- Problem: Emotion dysregulation (ED) is a multifaceted construct that is difficult to quantify as a destabilizing factor in disease systems.

- Solution: Apply a method inspired by Chaos Theory to compute an "instability coefficient" (Δ).

- Procedure: Use the Difficulties in Emotion Regulation Scale (DERS). The coefficient Δ is the Euclidean distance between vectors composed of similar or reversed items from the test. This measures the instability in a subject's evaluation of their own emotional state, acting as a proxy for system vulnerability [17].

- Interpretation: High Δ values indicate high emotional vulnerability and are significantly associated with chronic disease conditions (e.g., breast cancer, blood cancer). This metric can predict ED and Negative Affect (NA), framing the emotional and somatic systems as two complex dynamical systems in interaction [17].

Experimental Protocols & Methodologies

Protocol 1: Modeling Bidirectional Feedback Loops using Structural Equation Modeling (SEM)

This protocol is for estimating reciprocal causal effects between two variables [7].

- Variable Instrumentation: Select two strong genetic instruments (x₁, x₂) for your two endogenous variables (y₁, y₂), respectively.

- Model Specification: Define the SEM using LISREL matrix notation:

  - B = [ 0, β₁₂; β₂₁, 0 ] (coefficient matrix for reciprocal effects)

  - Γ = [ γ₁₁, 0; 0, γ₂₂ ] (coefficient matrix for SNP effects)

  - Ψ = [ ψ₁₁, ψ₂₁; ψ₂₁, ψ₂₂ ] (covariance matrix of residual errors)

- Model Fitting: Fit the model using maximum likelihood estimation.

- Validation: Compare causal parameter estimates (β₁₂, β₂₁) with those from traditional bidirectional Wald estimator analyses to check for consistency [7].
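The link between the SEM parameters and the Wald ratios used in the validation step can be made explicit by solving the structural equation for y. The derivation below is a sketch that assumes β₁₂β₂₁ ≠ 1 and that the instruments x₁ and x₂ are uncorrelated with each other and with the residuals ζ; these assumptions are standard for instrumental variables but are stated here rather than taken from [7].

```latex
\begin{aligned}
y &= By + \Gamma x + \zeta
\;\Longrightarrow\;
y = (I - B)^{-1}(\Gamma x + \zeta),
\qquad
(I - B)^{-1} = \frac{1}{1 - \beta_{12}\beta_{21}}
\begin{pmatrix} 1 & \beta_{12} \\ \beta_{21} & 1 \end{pmatrix}, \\[4pt]
y_1 &= \frac{\gamma_{11}x_1 + \beta_{12}\gamma_{22}x_2 + \zeta_1 + \beta_{12}\zeta_2}{1 - \beta_{12}\beta_{21}}, \\
y_2 &= \frac{\beta_{21}\gamma_{11}x_1 + \gamma_{22}x_2 + \beta_{21}\zeta_1 + \zeta_2}{1 - \beta_{12}\beta_{21}}, \\[4pt]
\frac{\operatorname{cov}(x_1, y_2)}{\operatorname{cov}(x_1, y_1)} &= \beta_{21},
\qquad
\frac{\operatorname{cov}(x_2, y_1)}{\operatorname{cov}(x_2, y_2)} = \beta_{12}.
\end{aligned}
```

The common factor 1/(1 − β₁₂β₂₁) cancels in each ratio, which is why the Wald estimator recovers the structural coefficient even though each variable feeds back on the other.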

Protocol 2: Assessing System Instability in Emotion Dysregulation

This protocol details the calculation of the instability coefficient (Δ) for psychosomatic research [17].

- Administration: Administer the Difficulties in Emotion Regulation Scale (DERS) to participants.

- Data Preparation: For each participant, create two vectors from the item responses. Vector A uses the scores from a set of items, and Vector B uses the scores from their corresponding similar or reversed items.

- Calculation: Compute the Euclidean distance between the two vectors for each participant. This value is the instability coefficient, Δ.

- Analysis: Use statistical tests (e.g., t-tests) to compare mean Δ values between clinical and healthy control groups. Regression analysis can test Δ's power to predict ED and NA.
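The calculation step can be sketched in a few lines. The item pairing, the 1-to-5 response range, and the decision to reverse-score flagged items before taking the distance are all assumptions made for illustration; the actual item pairs and scoring rules should be taken from [17]. The function name `instability_coefficient` is hypothetical.

```python
import math

def instability_coefficient(items_a, items_b, reversed_flags,
                            scale_min=1, scale_max=5):
    """Euclidean distance between two paired item vectors.

    items_a, items_b: responses to paired items (same length).
    reversed_flags:   True where the item in items_b is worded in reverse,
                      so it is re-scored onto the direction of items_a
                      (an assumption, not confirmed by the source).
    """
    aligned_b = [
        (scale_min + scale_max - b) if rev else b
        for b, rev in zip(items_b, reversed_flags)
    ]
    return math.dist(items_a, aligned_b)

# Hypothetical participant: three item pairs, the last one reverse-worded.
delta = instability_coefficient([4, 2, 5], [4, 3, 1], [False, False, True])
print(delta)
```

A participant who answers paired items consistently gets Δ = 0; larger values indicate less stable self-evaluation across equivalent items.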

Research Reagent Solutions

Table 1: Essential Materials for Feedback Loop Research

| Item | Function in Research |

|---|---|

| Genetic Variants (e.g., SNPs) | Serve as instrumental variables (x) in Mendelian Randomization studies to model causal pathways and bidirectionality for exposure and outcome variables [7]. |

| Difficulties in Emotion Regulation Scale (DERS) | A standardized questionnaire to assess difficulties in emotion regulation; its items are used to compute the instability coefficient (Δ) reflecting system vulnerability [17]. |

| Proinflammatory Cytokine Assays | Quantify levels of specific cytokines (e.g., IL-1β, TNF-α) to experimentally measure the state of microglial activation and neuroinflammation in feedback loops [3]. |

| Mitochondrial Respiration Assays | Measure oxygen consumption rates to assess mitochondrial function, OXPHOS activity, and ATP production, key parameters in the mitochondrial-neuroinflammatory feedback cycle [3]. |

| Reactive Oxygen Species (ROS) Detection Kits | Used to quantify levels of neurotoxic ROS, a critical component in the damaging feedback loop involving mitochondrial impairment and neuronal loss [3]. |

Table 2: Key Quantitative Findings from Literature

| Parameter / Relationship | Quantitative Value / Finding | Context / Condition |

|---|---|---|

| Dopaminergic Neuron Loss at PD Diagnosis | 60-80% loss [3] | Substantia Nigra pars compacta (SNpC) in Parkinson's disease patients at clinical diagnosis. |

| Global Prevalence of PD | ~3% of population >65 years [3] | Rises to 5% in people over 85 years of age. |

| Wald Estimator vs. SEM | Both yield consistent causal estimates [7] | In bidirectional feedback models with a single exposure and outcome variable. |

Signaling Pathways and Experimental Workflows

Neuroinflammatory-Mitochondrial Feedback Cycle in PD [3]

SEM Workflow for Bidirectional Analysis [7]

Computational Arsenal: From ODEs to Deep Learning for Loop Prediction

Frequently Asked Questions (FAQs) and Troubleshooting

FAQ: What is the primary challenge in modeling biological systems with traditional ODEs? A key challenge is accurately representing bidirectional feedback loops, where two system components, like a cellular process and its regulator, influence each other mutually. This creates a cyclical relationship that is difficult to model with simple, linear approaches and can lead to unstable or inaccurate predictions if not properly accounted for in the model structure [1].

FAQ: Why is my ODE model for a biological network failing to converge or producing unrealistic results? This is a common issue when modeling reciprocal causality. A model might be misspecified if it treats a relationship as one-way (A affects B) when it is, in fact, a two-way, bidirectional loop (A affects B and B affects A). For instance, in neuroscience, microglial activation and neuronal mitochondrial impairment form a damaging, self-perpetuating cycle that escalates neurodegeneration. Modeling them as separate, linear events fails to capture the core pathology [3]. Ensure your model's causal pathways are justified by empirical evidence and that both directions of influence are tested.

FAQ: How can I differentiate between a unidirectional and a bidirectional relationship using experimental data? Statistical methods like Mendelian Randomization (MR) with instrumental variables can be used. To identify a bidirectional loop, you must run the analysis in both directions [7]. For example:

- Use genetic instrument x1 to estimate the causal effect of variable y1 on y2.

- Use a different genetic instrument x2 to estimate the causal effect of y2 on y1.

A statistically significant effect in both directions provides evidence for a bidirectional feedback loop. Consistency of these estimators relies on having strong instruments for both variables [7].

FAQ: My computational model of a feedback loop is sensitive to initial conditions. Is this normal? Yes, systems with strong bidirectional feedback are often highly sensitive to initial conditions and parameter values. This is an inherent property of nonlinear, interconnected systems. To troubleshoot, perform a sensitivity analysis to identify which parameters have the greatest effect on your model's output. This will help you focus experimental efforts on measuring the most critical parameters more precisely.
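A local sensitivity analysis of this kind can be sketched with finite differences. The two-variable mutual-activation model below, its saturating rate law, and the parameter names (`k_ab`, `k_ba`, `d_a`, `d_b`) are hypothetical stand-ins for whatever feedback model is being debugged; the sketch perturbs each parameter by 1% and reports the normalized change in the final value of A.

```python
def simulate(params, a0=0.1, b0=0.1, dt=0.01, steps=2000):
    """Forward-Euler integration of a toy mutual-activation loop; returns final A."""
    a, b = a0, b0
    for _ in range(steps):
        da = params["k_ab"] * b / (1.0 + b) - params["d_a"] * a  # B activates A
        db = params["k_ba"] * a / (1.0 + a) - params["d_b"] * b  # A activates B
        a, b = a + dt * da, b + dt * db
    return a

def local_sensitivities(params, rel_step=0.01):
    """Normalized sensitivity (dA/A) / (dp/p) for each parameter."""
    base = simulate(params)
    result = {}
    for name, value in params.items():
        perturbed = dict(params, **{name: value * (1.0 + rel_step)})
        result[name] = ((simulate(perturbed) - base) / base) / rel_step
    return result

# Illustrative (assumed) parameter values.
params = {"k_ab": 2.0, "k_ba": 2.0, "d_a": 1.0, "d_b": 1.0}
for name, s in sorted(local_sensitivities(params).items(),
                      key=lambda kv: -abs(kv[1])):
    print(f"{name}: {s:+.3f}")
```

Parameters with the largest absolute sensitivity are the ones worth measuring most precisely. A local analysis only describes behavior near the chosen operating point, so it should be repeated at several parameter sets for strongly nonlinear loops.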

Experimental Protocols for Analyzing Bidirectional Regulation

Protocol 1: Structural Equation Modeling (SEM) for Bidirectional Feedback

Application: This protocol is used for quantifying the strength of bidirectional causal effects between two observed variables (e.g., a specific protein and a disease biomarker) using instrumental variables.

Methodology:

- Instrument Selection: Identify at least one strong instrumental variable for each of the two endogenous variables (e.g., genetic variants for a protein and a biomarker). The instruments must be independent of confounding factors [7].

- Model Specification: Construct a structural equation model representing the bidirectional relationship. The core equation is:

  y = By + Γx + ζ

  Where:

  - y is the vector of your two observed variables.

  - B is the matrix containing the bidirectional path coefficients (β12, β21) you want to estimate.

  - x is the vector of your instrumental variables.

  - Γ is the matrix of effects from the instruments to the variables.

  - ζ is the vector of residual errors [7].

- Model Fitting: Fit the specified model to your observational data using maximum likelihood estimation.

- Validation: Test the model's goodness-of-fit. Compare the power of the SEM approach against traditional Two-Stage Least Squares (2SLS) methods, as their relative performance can depend on instrument strength and residual correlation [7].

Protocol 2: Physics-Informed Neural Networks (PINNs) for ODE Solutions

Application: Use this data-driven method to find solutions to ODEs that define a physical or biological system, especially when a closed-form analytical solution is unknown [19].

Methodology:

- Problem Definition: Define the ODE and its initial condition. For example: ODE: y′(x) = −2x·y(x), Initial Condition: y(0) = 1 [19].

- Network Architecture: Define a fully-connected neural network (e.g., input layer, hidden layers with tanh or sigmoid activation, output layer) that takes the independent variable x as input and outputs the approximate solution y_θ(x) [19].

- Custom Loss Function: Create a loss function that incorporates the physical law (the ODE itself) and the initial condition. The loss L is a weighted sum of:

  - ODE Loss: ||y_θ' + 2xy_θ||² (penalizes deviation from the ODE)

  - Initial Condition Loss: k * ||y_θ(0) - 1||² (penalizes deviation from the initial condition) [19].

- Training: Train the network by minimizing the custom loss function using gradient-based optimizers (e.g., SGD with momentum). Gradients of the network output with respect to its input (y_θ') are computed via automatic differentiation [19].
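Written out, the loss for this example takes the following form. The collocation points x_i and their number N are assumptions introduced here (the protocol does not say how the ODE residual is sampled). The example ODE is separable, so its exact solution is available as a reference for checking a trained network.

```latex
\mathcal{L}(\theta)
= \frac{1}{N}\sum_{i=1}^{N}\bigl(y_\theta'(x_i) + 2x_i\,y_\theta(x_i)\bigr)^2
+ k\,\bigl(y_\theta(0) - 1\bigr)^2,
\qquad
\frac{dy}{y} = -2x\,dx
\;\Longrightarrow\;
\ln y = -x^2 + C
\;\Longrightarrow\;
y(x) = e^{-x^2} \quad (C = 0 \text{ from } y(0) = 1).
```

Substituting y(x) = e^{-x²} makes both terms vanish, so the distance between y_θ and e^{-x²} on held-out points gives a direct accuracy check for this toy problem.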

Research Reagent Solutions

The table below lists key computational tools and their functions for researching ODEs and network analysis.

| Research Reagent | Function & Application |

|---|---|

| Structural Equation Modeling (SEM) Software | Used to specify and fit models with bidirectional feedback loops and latent variables, providing estimates for reciprocal path coefficients [7]. |

| Automatic Differentiation Libraries | Enable the computation of exact derivatives, which is essential for training PINNs and solving ODEs with gradient-based optimization [19] [20]. |

| Neural Network Frameworks | Provide the building blocks for creating and training Physics-Informed Neural Networks (PINNs) and Neural ODEs to learn dynamics from data [19] [21]. |

| Adaptive ODE Solvers | Numerical algorithms used as a layer within Neural ODEs to integrate the system's dynamics forward in time [21]. |

Workflow and Pathway Visualizations

Diagram: Bidirectional Feedback Loop in a Neurodegenerative Context

Diagram: Workflow for a Physics-Informed Neural Network (PINN)

Frequently Asked Questions (FAQs)

Q1: My model fails to learn long-term dependencies in time-series biological data. What is the cause and how can I address it? This is typically the vanishing gradient problem, a fundamental limitation of basic Recurrent Neural Networks (RNNs) [22] [23]. As the sequence length increases, the gradients used to update network weights during backpropagation can become infinitesimally small, preventing the model from learning from earlier time steps [23].

- Solution: Transition to more advanced architectures designed to handle long-term dependencies.

- Use LSTM Networks: LSTMs introduce a gating mechanism (input, forget, and output gates) and a cell state that acts as a "conveyor belt," allowing information to flow unchanged over many time steps. This design mitigates the vanishing gradient problem [22] [23].

- Consider GRUs: Gated Recurrent Units (GRUs) offer a simplified alternative to LSTMs by combining the input and forget gates into a single update gate. They are computationally efficient while still effectively capturing long-range dependencies [22] [24].

- Implement Transformers: Transformer models use a self-attention mechanism to weigh the importance of all previous time steps simultaneously, effectively capturing long-range dependencies without the issue of vanishing gradients [22] [23].

Q2: How can I effectively model bidirectional feedback loops, such as those in neurodegenerative disease progression? Standard RNNs process sequences in one direction (forward). To model bidirectional relationships, you need architectures that can integrate information from both past and future states.

- Solution:

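- Bidirectional LSTM (BiLSTM): A BiLSTM consists of two LSTMs processing the sequence in opposite directions (one forward, one backward). The outputs from both directions are combined, providing the network with full context for every time point [24]. Here "bidirectional" refers to the direction in which the sequence is read, not to the direction of causation: reciprocal influence between two variables is captured by supplying both as inputs, and because the backward pass reads future observations, a BiLSTM suits retrospective analysis of complete trajectories rather than real-time forecasting.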

- Structural Equation Modeling (SEM) for Causal Inference: For analyzing causal relationships in bidirectional loops (e.g., between genetic variants and disease phenotypes), SEMs with feedback loops can be employed within a Mendelian Randomization framework. These models can explicitly represent and estimate reciprocal causal effects [7].

Q3: My training process is extremely slow. How can I speed up model training on large temporal datasets? The sequential nature of RNNs, LSTMs, and GRUs prevents parallel processing, creating a major bottleneck [22].

- Solution: Leverage the parallel processing capabilities of the Transformer architecture. Unlike RNNs, Transformers process all time steps in a sequence simultaneously using self-attention, leading to significantly faster training times on hardware like GPUs [22] [23]. For very long sequences, consider efficient Transformer variants designed to reduce the computational load of the self-attention mechanism.

Q4: What are the best practices for preparing temporal data for these models? Proper feature engineering is critical for performance [23].

- Lag Features: Include values from previous time steps as explicit input features to help the model recognize short-term patterns.

- Rolling Averages & Volatility Measures: Provide moving averages to help the model capture trend-based signals and stability over windows of time.

- Cyclical Encoding: Transform time-based features (e.g., hour of the day, seasonal cycles) into sine and cosine pairs. This helps the model interpret cyclical patterns correctly [23].

- Differencing: Compute differences between consecutive observations to help stabilize the mean of a time series, making it easier to model.

- Normalization: Standardize the data to a common scale to ensure stable and efficient gradient updates during training [23].
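The five transformations above can be sketched with the standard library alone. The helper names and the toy series are hypothetical; in practice a data-frame library would usually do this work. Positions without enough history are marked `None`.

```python
import math
from statistics import mean, pstdev

def lag(series, k):
    """Value k >= 1 steps back; None where history is unavailable."""
    return [None] * k + list(series[:-k])

def rolling_mean(series, window):
    """Trailing moving average over `window` observations."""
    return [None if t + 1 < window else mean(series[t + 1 - window:t + 1])
            for t in range(len(series))]

def cyclical(value, period=24.0):
    """Encode a cyclical feature (e.g., hour of day) as a (sin, cos) pair."""
    angle = 2.0 * math.pi * value / period
    return math.sin(angle), math.cos(angle)

def difference(series):
    """First differences between consecutive observations."""
    return [None] + [b - a for a, b in zip(series, series[1:])]

def zscore(series):
    """Standardize to zero mean and unit (population) standard deviation."""
    mu, sigma = mean(series), pstdev(series)
    return [(v - mu) / sigma for v in series]

# Toy hourly measurements (assumed values).
signal = [2.0, 2.5, 3.1, 2.8, 3.6, 4.0]
print(lag(signal, 1))
print(rolling_mean(signal, 3))
print(difference(signal))
print([cyclical(h) for h in (0, 6, 12, 18)])
print(zscore(signal))
```

To avoid information leakage, the mean and standard deviation used for normalization should be computed on the training portion only and then applied to validation and test data.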

Architecture Comparison and Selection Guide

The table below summarizes the key characteristics of different deep learning models for temporal data to guide your selection [22].

| Parameter | RNN (Recurrent Neural Network) | LSTM (Long Short-Term Memory) | GRU (Gated Recurrent Unit) | Transformers |

|---|---|---|---|---|

| Core Architecture | Simple loops for recurrence | Memory cells with input, forget, and output gates | Combines gates into update and reset gates; fewer parameters | Attention-based mechanism without recurrence |

| Handling Long Sequences | Struggles with long-term dependencies | Excels at capturing long-term dependencies | Better than RNNs, slightly less effective than LSTMs | Excellent, uses global context |

| Training Time | Fast but less accurate | Slower due to complex gates | Faster than LSTMs, slower than RNNs | Fast training via parallelism, but high computational cost |

| Parallelization | Limited; sequential processing | Limited; sequential processing | Limited; sequential processing | High; processes entire sequence at once |

| Primary Use Cases | Simple sequence modeling | Time-series forecasting, text generation, tasks needing long-term memory | Similar to LSTM, preferred for computational efficiency | NLP (translation, summarization), LLMs, complex temporal tasks |

Experimental Protocol: Modeling a Biological Feedback Loop

This protocol outlines the steps to model a bidirectional feedback loop, such as the escalating cycle between neuroinflammation and mitochondrial dysfunction in Parkinson's disease [3].

1. Hypothesis Definition

- Define the Loop: Formally state the bidirectional relationship to be modeled. Example: "Microglial activation (Variable A) and neuronal mitochondrial impairment (Variable B) engage in a positive feedback loop, where each exacerbates the other over time [3]."

2. Data Preparation and Feature Engineering

- Data Collection: Gather longitudinal time-series data for Variables A and B.

- Feature Engineering:

- Create Lagged Features: For each variable, create time-lagged versions (e.g., value at t-1, t-2) to serve as input features.

- Encode Cyclical Time: If applicable, encode time of day or experimental phase using sine/cosine transformations [23].

- Normalize Data: Apply standardization (e.g., Z-score normalization) to all features.

3. Model Selection and Implementation

- Architecture Choice: Select a Bidirectional LSTM (BiLSTM) to capture the influence of both past and future states on the current interaction [24].

- Model Design:

  - Input Layer: For predicting Variable A at time t, the inputs would include lagged values of both A and B.

  - BiLSTM Layer(s): One or more layers to process the sequence from both directions.

  - Output Layer: A dense layer to produce the prediction.

4. Model Training and Evaluation

- Training: Use backpropagation through time (BPTT) with a suitable optimizer (e.g., Adam) and loss function (e.g., Mean Squared Error).

- Validation: Hold out a portion of the temporal data for validation to monitor for overfitting.

- Causal Inference Analysis (Optional): To statistically test the hypothesized bidirectional causality, use a Structural Equation Model (SEM) with a feedback loop in a Mendelian Randomization framework, instrumenting both variables with genetic variants [7].

Research Reagent Solutions

The table below lists essential computational "reagents" for experiments in this field.

| Research Reagent | Function / Explanation |

|---|---|

| Lagged Variables | Created from historical data, these are the primary input features that allow the model to learn temporal dependencies and feedback dynamics. |

| Positional Encodings | Essential for Transformer models, these inject information about the relative or absolute position of time steps in a sequence since Transformers lack inherent recurrence [23]. |

| Genetic Instruments | In Mendelian Randomization, these are genetic variants (e.g., SNPs) used as instrumental variables to infer causal relationships in the presence of bidirectional feedback, helping to control for confounding [7]. |

| Sine/Cosine Encoders | Software functions that transform cyclical time features (e.g., time of day) into a continuous, meaningful representation for the model, preventing it from misinterpreting cyclic patterns [23]. |
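As one concrete instance of the positional encodings listed in the table, the sketch below builds the fixed sinusoidal encoding introduced with the original Transformer architecture. It is only one option (learned encodings are also common), and the function name `sinusoidal_positions` is hypothetical.

```python
import math

def sinusoidal_positions(n_steps, d_model):
    """Return an n_steps x d_model table of fixed sinusoidal encodings.

    Even columns use sine, odd columns use cosine, and each sin/cos pair
    shares a wavelength that grows geometrically with the column index.
    """
    table = []
    for pos in range(n_steps):
        row = []
        for j in range(d_model):
            angle = pos / (10000.0 ** ((2 * (j // 2)) / d_model))
            row.append(math.sin(angle) if j % 2 == 0 else math.cos(angle))
        table.append(row)
    return table

# Encode 4 time steps into 4 dimensions and add the rows to the step features.
for row in sinusoidal_positions(4, 4):
    print([round(v, 3) for v in row])
```

For irregularly sampled biological data, the integer position can be replaced by the actual measurement time, which is a design choice rather than part of the original formulation.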

Workflow and Architecture Diagrams

Modeling Bidirectional Feedback Loop

RNN LSTM GRU Internal Gates

Troubleshooting Guide: Common Hybrid Modeling Challenges

Problem 1: Model Failure in Simulating Bidirectional Feedback

- Symptoms: Model predictions diverge to infinity or settle to unrealistic, unchanging values when simulating feedback loops between variables (e.g., between hormones and neural signals) [25].

- Diagnosis: This often indicates a miscalibration in the strength of reciprocal causal paths (β12 and β21), leading to an unstable system [7].

- Solution: Re-estimate the bidirectional path strengths using an instrumental variables approach. Ensure both variables in the loop are instrumented by strong, uncorrelated exogenous variables (e.g., genetic variants) for model identification [7].
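Before re-estimating, it can help to check whether the current path estimates already imply an unstable loop. The sketch below assumes the loop is simulated by repeatedly applying the reciprocal-effects matrix B = [[0, β12], [β21, 0]], in which case the iteration converges only if the spectral radius of B, equal to sqrt(|β12·β21|), is below 1. The function name `loop_diagnostics` and the example values are hypothetical.

```python
import math

def loop_diagnostics(beta12, beta21):
    """Summarize stability of a two-variable linear feedback loop."""
    gain = beta12 * beta21  # product of the two reciprocal paths
    return {
        "loop_gain": gain,
        # Eigenvalues of [[0, b12], [b21, 0]] are +/- sqrt(b12 * b21).
        "spectral_radius": math.sqrt(abs(gain)),
        # The static solution y = (I - B)^-1 (...) needs 1 - b12*b21 != 0.
        "reduced_form_exists": not math.isclose(gain, 1.0),
        # Repeated application of B decays only if the radius is below 1.
        "iteration_converges": abs(gain) < 1.0,
    }

for b12, b21 in [(0.5, 0.4), (2.0, 0.8), (-0.9, 0.9)]:
    print((b12, b21), loop_diagnostics(b12, b21))
```

A loop gain at or above 1 in magnitude reproduces the divergence described in the symptoms, which points to the path estimates rather than the numerical solver as the source of the problem.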

Problem 2: "Black Box" AI Predictions Lacking Mechanistic Insight

- Symptoms: The AI component of the hybrid model makes accurate predictions, but researchers cannot understand the biological rationale behind them, limiting trust and clinical applicability [26] [27].

- Diagnosis: This is a fundamental limitation of purely data-driven deep learning models. The model lacks interpretability by design [26].

- Solution: Implement a hybrid framework where the AI's predictions are used to constrain or inform parameters within an interpretable, mechanistic model based on differential equations. This combines predictive power with physiological insight [25] [26].

Problem 3: Poor Generalization to New Patient Data or Conditions

- Symptoms: A model trained on one dataset performs poorly when applied to data from a different cohort or under different experimental conditions [28].

- Diagnosis: The model may be overfitting to noise or specific patterns in the original training data, and lacks the underlying physiological principles that generalize across contexts [25].

- Solution: Integrate domain knowledge directly into the model architecture. Use the mechanistic component to encode known biological relationships (e.g., hormone secretion patterns, feedback loops), making the model more robust to distribution shifts in the data [25].

Problem 4: High Computational Cost of Mechanistic Model Simulations

- Symptoms: Running simulations with complex mechanistic models (e.g., QSP models with many "virtual patients") is prohibitively slow, hindering iterative development and validation [28] [26].

- Diagnosis: Mechanistic models are often computationally expensive due to their complexity and the need to simulate many interacting components [26].

- Solution: Train an AI-based surrogate model (e.g., a deep neural network) to emulate the input-output behavior of the mechanistic model. The surrogate runs much faster and can be used for rapid prototyping and sensitivity analysis [26].

Frequently Asked Questions (FAQs)

What is the key advantage of hybrid modeling over purely AI-driven or mechanistic approaches?

Hybrid modeling combines the predictive power of AI with the interpretability of mechanistic models. AI excels at finding complex patterns in large datasets, while mechanistic models provide a causal, biologically grounded framework. Hybrid approaches leverage the strengths of both, leading to more robust, generalizable, and trustworthy models for complex biological systems like those involving bidirectional regulation [26] [25].

How can I ensure my hybrid model of a feedback loop is correctly identified?

For a model of bidirectional feedback between two variables (e.g., Y1 and Y2) to be identifiable, you must instrument both variables. Each variable needs its own set of exogenous instrumental variables (e.g., genetic variants for Y1 and Y2) that directly affect one variable but not the other. Without this, the reciprocal causal paths cannot be uniquely estimated, and the model parameters will be unreliable [7].

Can generative AI be used in hybrid modeling beyond analyzing scientific literature?

Yes. Generative AI can be trained directly on raw biological data (e.g., from single-cell experiments or perturbation screens) to learn the "language" of biological systems. These models can then generate hypotheses about new cell states or predict the outcomes of future experiments in silico, which can be rigorously tested within a mechanistic framework. This helps overcome the biases present in language models trained only on existing literature [27].

My model struggles with parameter estimation from sparse clinical data. What can I do?

This is a common challenge. A hybrid approach can help by using AI to integrate multiple, disparate datasets (e.g., multi-omics, clinical biomarkers, in vitro data) to inform parameter estimation. Furthermore, AI and machine learning frameworks can assist in screening and prioritizing which covariates to include in population models, making the estimation process more efficient and less reliant on single, sparse data sources [28] [25].

Experimental Protocol: Analyzing a Bidirectional Feedback Loop

This protocol outlines the steps for using a Structural Equation Modeling (SEM) framework to estimate parameters in a bidirectional feedback loop, as applied in Mendelian randomization studies [7].

Objective

To consistently estimate the reciprocal causal effects (β21 and β12) between two endogenous variables, Y1 and Y2, in the presence of latent confounding.

Materials & Prerequisites

- Dataset: Observational data containing measurements for Y1, Y2, and their respective candidate instrumental variables (X1, X2).

- Software: A statistical software package capable of fitting SEMs (e.g., LISREL, Mplus, R with the lavaan package).

- Instruments: Two or more genetic instrumental variables, with at least one for each of Y1 and Y2.

Step-by-Step Methodology

Model Specification:

- Formally specify the SEM using matrix notation: y = By + Γx + ζ

- Where:

  - y is the vector of endogenous variables [Y1, Y2].

  - B is the matrix of reciprocal effects [[0, β12], [β21, 0]].

  - x is the vector of instruments [X1, X2].

  - Γ is the matrix of instrument effects (diagonal matrix with γ11 and γ22).

  - ζ is the vector of disturbances [ζ1, ζ2], with a covariance matrix Ψ that accounts for latent confounding [7].

Model Identification Check:

- Verify that the model satisfies the order condition for identification. A key requirement is that each variable in the feedback loop is instrumented by at least one exogenous variable [7].

Parameter Estimation:

- Input the specified model matrices and observed data (Y1, Y2, X1, X2) into the SEM software.

- Use Maximum Likelihood (ML) estimation to fit the model and obtain estimates for β21, β12, γ11, γ22, and ψ12.

Validation with Instrumental Variables Estimators:

- Perform two separate Wald estimator/Two-Stage Least Squares (2SLS) analyses as a consistency check:

  - Estimate β21 by using X1 as an instrument for Y1 on Y2: β21* = cov(X1, Y2) / cov(X1, Y1).

  - Estimate β12 by using X2 as an instrument for Y2 on Y1: β12* = cov(X2, Y1) / cov(X2, Y2) [7].

- Compare the SEM estimates (β21, β12) with the Wald/2SLS estimates (β21*, β12*). Because both estimators are consistent, the two sets of estimates should converge in large samples; close agreement provides a validation of the SEM results [7].
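The Wald consistency check can be rehearsed on simulated data before it is applied to a real cohort. In the sketch below, data are generated by solving the two structural equations (Y1 = β12·Y2 + γ11·X1 + ζ1 and Y2 = β21·Y1 + γ22·X2 + ζ2) for Y1 and Y2, with a shared latent confounder in the disturbances. The function names, the effect sizes, and the unit-variance Gaussian instruments are illustrative assumptions; real genetic instruments are discrete and typically much weaker.

```python
import random

def cov(u, v):
    """Sample covariance of two equal-length sequences."""
    mu, mv = sum(u) / len(u), sum(v) / len(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / (len(u) - 1)

def simulate_feedback(beta12, beta21, g11, g22, n, seed=0):
    """Draw (X1, X2, Y1, Y2) from a linear feedback loop with confounding."""
    rng = random.Random(seed)
    denom = 1.0 - beta12 * beta21  # must be non-zero for a solution to exist
    x1s, x2s, y1s, y2s = [], [], [], []
    for _ in range(n):
        x1, x2 = rng.gauss(0, 1), rng.gauss(0, 1)   # independent instruments
        u = rng.gauss(0, 1)                          # latent confounder
        z1, z2 = u + rng.gauss(0, 1), u + rng.gauss(0, 1)
        y1 = (g11 * x1 + beta12 * g22 * x2 + z1 + beta12 * z2) / denom
        y2 = (beta21 * g11 * x1 + g22 * x2 + beta21 * z1 + z2) / denom
        x1s.append(x1); x2s.append(x2); y1s.append(y1); y2s.append(y2)
    return x1s, x2s, y1s, y2s

def wald(instrument, exposure, outcome):
    """Wald ratio: cov(instrument, outcome) / cov(instrument, exposure)."""
    return cov(instrument, outcome) / cov(instrument, exposure)

x1, x2, y1, y2 = simulate_feedback(beta12=-0.2, beta21=0.3,
                                   g11=1.0, g22=1.0, n=20000, seed=1)
print("beta21* (Y1 -> Y2):", round(wald(x1, y1, y2), 3))
print("beta12* (Y2 -> Y1):", round(wald(x2, y2, y1), 3))
```

Lowering `g11` or `g22` in this simulation shows how weak instruments inflate the variability of the ratio, which is the situation in which comparing against the SEM estimates is most informative.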

The Scientist's Toolkit: Research Reagent Solutions

| Item/Reagent | Function in Hybrid Modeling / Experimentation |

|---|---|

| Instrumental Variables (e.g., Genetic Variants) | Used to establish causal direction and identify parameters in bidirectional feedback loops within SEMs, helping to control for unmeasured confounding [7]. |

| D1 Receptor Agonists (e.g., SKF38393) | Pharmacological tools used to activate the dopamine D1 receptor pathway (Gαs-coupled), which increases cAMP and facilitates LTP, useful for probing bidirectional metaplasticity [29]. |

| Group II mGluR Antagonists (e.g., LY341495) | Pharmacological blockers of mGluR2/3 receptors (Gαi/o-coupled), used to unmask LTP at intermediate stimulation frequencies by removing inhibitory presynaptic signaling [29]. |

| Adenylate Cyclase (AC) Activators/Forskolin | Directly stimulates the production of cAMP, a key second messenger, used to test the role of the AC–cAMP–PKA signaling cascade in synaptic plasticity [29]. |

| DREADDs (Designer Receptors Exclusively Activated by Designer Drugs) | Chemogenetic tools that allow for cell type-specific and temporally precise control of neuronal signaling, enabling the dissection of presynaptic vs. postsynaptic contributions to plasticity [29]. |

| Hormone Interaction Dynamics Network (HIDN) | A graph-based neural architecture used in computational modeling to encapsulate the spatiotemporal interdependencies among endocrine glands, hormones, and EEG signal fluctuations [25]. |

| Adaptive Hormonal Regulation Strategy (AHRS) | A computational strategy that dynamically optimizes therapeutic interventions in a model using real-time feedback and patient-specific parameters [25]. |

Model Performance Data

Table 1. Comparison of Modeling Approaches for Simulating Neuroendocrine Feedback [25]

| Modeling Approach | Key Strength | Key Limitation | Relative Predictive Accuracy for Hormone Dynamics |
|---|---|---|---|
| Symbolic AI / Differential Equations | High interpretability, mechanistic insight | Oversimplification, poor handling of biological variability | ~65% |
| Data-Driven Machine Learning | Good pattern recognition from large datasets | "Black box," poor temporal dependency capture | ~78% |
| Proposed Hybrid Framework (HIDN + AHRS) | Balances interpretability & accuracy, robust | Complex implementation, high computational demand | ~92% |

Table 2. Relative Power of SEM vs. Wald/2SLS in Finite Samples for Bidirectional Effects [7]

| Experimental Condition | Recommended Method | Rationale |
|---|---|---|
| Strong instruments for the "outcome" variable (explain more residual variance) | SEM | Power of SEM improves relative to Wald/2SLS as instruments explain more residual variance in the "outcome" variable. |
| High residual correlation between exposure and outcome variables | Wald/2SLS | Power of Wald/2SLS improves relative to SEM as the magnitude of the residual correlation increases. |
| Low residual correlation between variables | SEM | Power of Wald/2SLS deteriorates relative to SEM as the residual correlation decreases. |

Workflow and Pathway Visualizations

Diagram 1: Hybrid Model Integration Workflow

Diagram 2: Bidirectional Feedback SEM

Diagram 3: mGluR2/3 & D1 Receptor Interaction

Frequently Asked Questions (FAQs) and Troubleshooting Guides

FAQ 1: How can I overcome the "scale overlap" problem when connecting cellular and network models?

The Challenge: A common issue arises from the structural overlap between biological scales. For instance, a pyramidal cell's apical dendrite (a subcellular structure) can span hundreds of microns, physically crossing multiple laminae of a cortical network. This makes it difficult to create clean, encapsulated models for each scale [30].

Troubleshooting Guide:

- Problem: Model encapsulation fails because a single biological structure operates across multiple spatial scales.

- Solution: Consider using a practical approximation, such as treating the neuron in the network model as a point neuron. Acknowledge that this is a trade-off that sacrifices some biophysical detail for greater conceptual clarity and computational tractability [30].

- Advanced Solution: Explore multi-algorithmic approaches. For the subcellular component, use a computation-intensive method like a multi-compartment model. For the network-level integration, switch to a simplified model that captures the essential input-output function of the neuron [30].
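One way to picture the point-neuron approximation is a leaky integrate-and-fire (LIF) unit, which collapses the entire dendritic tree into a single membrane equation. The sketch below is a minimal illustration only; the choice of LIF, the parameter values, and the function name `simulate_lif` are assumptions and are not prescribed by [30].

```python
import numpy as np

def simulate_lif(i_input, dt=1e-4, tau_m=0.02, r_m=1e7,
                 v_rest=-0.070, v_thresh=-0.050, v_reset=-0.065):
    """Forward-Euler integration of tau_m * dV/dt = -(V - v_rest) + r_m * I.
    Units: seconds, volts, ohms, amperes (all values are illustrative)."""
    v = np.full(len(i_input), v_rest)
    spike_times = []
    for t in range(1, len(i_input)):
        dv = (-(v[t - 1] - v_rest) + r_m * i_input[t - 1]) * dt / tau_m
        v[t] = v[t - 1] + dv
        if v[t] >= v_thresh:          # threshold crossing: record and reset
            spike_times.append(t * dt)
            v[t] = v_reset
    return v, spike_times

current = np.full(5000, 3e-9)         # 0.5 s of constant 3 nA input
v, spikes = simulate_lif(current)
print(f"{len(spikes)} spikes in 0.5 s")
```

A unit like this keeps only the input-output behavior of the cell, which is the trade-off described above: dendritic geometry is lost, but thousands of such units can be simulated cheaply inside a network model.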

FAQ 2: Why do my multi-scale models lack interpretability, and how can I improve this?

The Challenge: Many deep learning models for biological data are "black boxes." They may perform well at tasks like cell-type identification but provide little insight into the biological mechanisms behind their decisions, such as the key pathways or interactions distinguishing different cell states [31].

Troubleshooting Guide:

- Problem: My model makes accurate predictions but offers no biological insight.

- Solution: Integrate structured biological knowledge directly into the model architecture. For example, the Cell Decoder framework uses protein-protein interaction networks, gene-pathway maps, and pathway-hierarchy relationships to build an interpretable graph neural network [31].

- Solution: Employ post-hoc interpretability modules. Techniques like hierarchical Gradient-weighted Class Activation Mapping (Grad-CAM) can be applied to a fitted model to identify which pathways and biological processes were most crucial for its predictions, providing a multi-view biological characterization [31].

FAQ 3: What strategies can I use to integrate data from vastly different temporal and spatial scales?

The Challenge: Simulation methods are often limited to specific spatiotemporal scales. For example, Molecular Dynamics (MD) simulations resolve atomic-level detail but only over nanoseconds, whereas network models must capture seconds to minutes of system-level behavior [32].

Troubleshooting Guide:

- Problem: It's unclear how to link high-detail, short-scale simulations with lower-detail, long-scale models.

- Solution: Implement a Markov State Model (MSM) pipeline. Atomic-scale MSMs can be built from MD simulations to identify key conformational states and their dynamics. The output conformations can then feed into Brownian Dynamics (BD) simulations to calculate association rate constants, which in turn can parameterize protein-scale MSMs for integration into whole-cell models [32].

- Solution: Utilize milestoning. This technique couples the MD and BD scales to provide reaction probabilities and forward rate constants for molecular association events, parameters that are critical yet often inaccessible by experiment alone [32].

FAQ 4: How can I validate my multi-scale model when experimental data for all scales is incomplete?

The Challenge: Comprehensive experimental data for every level of a multi-scale model is often unavailable. Furthermore, similar pathophysiological drivers (e.g., neuroinflammation) can lead to diverse clinical phenotypes, making direct validation difficult [33] [30].

Troubleshooting Guide:

- Problem: My model cannot be fully validated against a complete experimental dataset.

- Solution: Focus on cross-validation with available empirical data. Use the data you have at specific scales (e.g., protein structures, cellular electrophysiology, network imaging) to validate corresponding model components [34].

- Solution: Leverage AI and machine learning. Train algorithms on rich, multi-modal datasets (genetic, imaging, electrophysiological) to identify predictive patterns. The model's output, such as a predicted disease trajectory, can then be tested against future clinical observations for validation [33].

- Solution: Pursue model-driven discovery. Use your model to generate a novel, testable prediction about system behavior or a missing mechanistic link. Designing an experiment to confirm this prediction serves as powerful validation [34].

Essential Research Reagent Solutions

The table below details key computational tools and resources used in advanced multi-scale modeling research.

Table 1: Key Research Reagents and Computational Tools for Multi-Scale Modeling

| Item Name | Function in Multi-Scale Modeling | Key Application Notes |
|---|---|---|
| Cell Decoder [31] | An interpretable deep learning model for cell-type identification that integrates multi-scale biological prior knowledge. | Embeds protein-protein interactions and pathway hierarchies into a graph neural network; provides multi-view interpretability via Grad-CAM. |
| The Virtual Brain [33] | A computational framework for simulating large-scale brain network dynamics. | Enables personalized digital brain twins by linking empirical data to mechanistic models of brain dynamics. |
| Finite Element (FE) Models [30] | Used for simulating physical phenomena like mechanical stress in traumatic brain injury or electrical signal spread in neurostimulation. | The same numerical technique is applied with vastly different physical parameters (mechanical vs. electrical) depending on the clinical scenario. |
| Markov State Models (MSMs) [32] | Provide a robust representation of the free energy landscape and kinetics of molecular and protein-scale systems. | Used at both atomic and protein scales to bridge MD/BD simulations with cellular network models. |
| Molecular Dynamics (MD) [32] | Simulates atomistic movements and forces to explore protein conformational ensembles and dynamics. | Relies on empirical force fields (e.g., CHARMM, AMBER); computational cost limits the accessible time and spatial scales. |
| Brownian Dynamics (BD) [32] | Calculates diffusion-limited association rate constants (kon) for protein-protein and protein-ligand interactions. | Complements MD by simulating microscopic events over larger systems and timescales using simplifying assumptions. |

Experimental Protocols for Key Methodologies

Protocol 1: Building a Multi-Scale Model for Protein Kinase A (PKA) Activation

This protocol outlines a strategy for bridging from atomic-scale simulations to cell-scale signaling networks, using PKA as a case study [32].

Atomic-Scale Conformational Sampling:

- Objective: Elucidate the key conformational states of the PKA regulatory and catalytic subunits.

- Method: Perform Molecular Dynamics (MD) simulations. Use high-resolution crystal structures as a starting point and run simulations using a force field like CHARMM or AMBER to explore the conformational ensemble.

- Output: A set of protein conformations representing different states.

State Discretization and Kinetics:

- Objective: Identify metastable states and their transition kinetics from the MD simulation data.

- Method: Construct an atomic-scale Markov State Model (MSM). Cluster the MD trajectories into discrete states and calculate the transition probabilities between these states.

- Output: An MSM that provides kinetic and thermodynamic parameters for the conformational changes.
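Once the trajectories have been clustered into discrete state labels, the core of the MSM step can be sketched in a few lines. The example below uses plain NumPy on a synthetic three-state label sequence; the function names, the lag time, and the toy transition matrix are assumptions, and in practice a dedicated MSM package with reversible estimators and validation tests would typically be preferred.

```python
import numpy as np

def estimate_msm(dtraj, n_states, lag=1):
    """Row-normalized transition matrix from a discrete trajectory at a given lag."""
    counts = np.zeros((n_states, n_states))
    for i, j in zip(dtraj[:-lag], dtraj[lag:]):
        counts[i, j] += 1
    row_sums = counts.sum(axis=1, keepdims=True)
    if np.any(row_sums == 0):
        raise ValueError("At least one state has no outgoing transitions.")
    return counts / row_sums

def stationary_distribution(T):
    """Left eigenvector of T for the largest eigenvalue, normalized to sum to 1."""
    evals, evecs = np.linalg.eig(T.T)
    pi = np.abs(evecs[:, np.argmax(evals.real)].real)
    return pi / pi.sum()

# Synthetic "clustered MD" trajectory drawn from a known toy transition matrix
rng = np.random.default_rng(1)
T_true = np.array([[0.95, 0.05, 0.00],
                   [0.10, 0.80, 0.10],
                   [0.00, 0.05, 0.95]])
dtraj = [0]
for _ in range(20_000):
    dtraj.append(rng.choice(3, p=T_true[dtraj[-1]]))
dtraj = np.array(dtraj)

T_hat = estimate_msm(dtraj, n_states=3, lag=1)
pi_hat = stationary_distribution(T_hat)
print(np.round(T_hat, 3), np.round(pi_hat, 3))
```

The transition matrix supplies the kinetic information (state-to-state probabilities per lag time), while the stationary distribution gives the equilibrium populations from which relative free energies can be derived.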

Determining Association Rates:

- Objective: Calculate the rate constant (kon) for cAMP binding to the PKA regulatory subunit.

- Method: Run Brownian Dynamics (BD) simulations. Use conformations from the MSM as input for BD to model the diffusion and association of cAMP.

- Output: Diffusion-limited association rate constants.
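As a reference point for BD-derived values, the classical Smoluchowski expression gives the diffusion-limited rate for two uniformly reactive spheres, k_on = 4π(D_A + D_B)R, where R is the encounter distance. The helper below converts that expression to M⁻¹ s⁻¹. The function name and the numerical inputs are illustrative assumptions, not measured values for cAMP or PKA, and BD results can differ from this estimate because they account for molecular shape and electrostatics.

```python
import math

AVOGADRO = 6.02214076e23  # 1/mol

def smoluchowski_kon(d_a, d_b, contact_radius):
    """Diffusion-limited association rate for two uniformly reactive spheres.
    d_a, d_b: diffusion coefficients in m^2/s; contact_radius in m.
    Returns k_on in M^-1 s^-1."""
    k_per_pair = 4.0 * math.pi * (d_a + d_b) * contact_radius  # m^3/s
    return k_per_pair * AVOGADRO * 1e3                         # m^3 -> L, per mole

# Illustrative inputs only
k_on = smoluchowski_kon(d_a=4e-10, d_b=1e-10, contact_radius=1e-9)
print(f"k_on ~ {k_on:.2e} M^-1 s^-1")
```

For these inputs the estimate is on the order of 10^9 M⁻¹ s⁻¹, which is the usual magnitude quoted for diffusion-limited association.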

Integrating Parameters into a Protein-Scale Model:

- Objective: Create a mechanistic model of PKA holoenzyme activation.

- Method: Build a protein-scale MSM. Incorporate the rate constants and conformational states derived from the atomic-scale models into a model that describes the activation cycle of PKA in response to cAMP.

- Output: A predictive model of PKA activation that can be incorporated into larger whole-cell models.

Protocol 2: Interpretable Cell-Type Identification with Cell Decoder

This protocol details the use of the Cell Decoder framework for robust and interpretable cell-type identification from single-cell transcriptomic data [31].

Input Data Preparation:

- Biological Data: Collect single-cell transcriptomics data (gene expression matrix).

- Prior Knowledge: Gather curated biological domain knowledge, including Protein-Protein Interaction (PPI) networks, gene-pathway mapping relationships, and pathway-hierarchy relationships.

Multi-Scale Graph Construction:

- Construct a hierarchical graph structure with the following layers:

- Gene-gene graph based on PPI networks.

- Gene-pathway graph based on mapping relationships.

- Pathway-Biological Process (BP) graph based on hierarchy information.

- Use gene expressions as initial features for the gene nodes.

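The layered graph described above can be pictured with three small matrices: a gene-gene adjacency matrix, a gene-to-pathway membership matrix, and a pathway-to-BP membership matrix. The sketch below is a toy illustration in NumPy and is not the Cell Decoder implementation; all gene, pathway, and process names, the edge lists, and the mean-aggregation step are hypothetical.

```python
import numpy as np

genes = ["G1", "G2", "G3", "G4"]          # hypothetical identifiers
pathways = ["P1", "P2"]
processes = ["BP1"]

ppi_edges = [("G1", "G2"), ("G2", "G3"), ("G3", "G4")]
gene_to_pathway = {"G1": ["P1"], "G2": ["P1"], "G3": ["P2"], "G4": ["P2"]}
pathway_to_bp = {"P1": ["BP1"], "P2": ["BP1"]}

def adjacency(edges, nodes):
    """Symmetric adjacency matrix for an undirected intra-scale graph."""
    idx = {n: i for i, n in enumerate(nodes)}
    a = np.zeros((len(nodes), len(nodes)))
    for u, v in edges:
        a[idx[u], idx[v]] = a[idx[v], idx[u]] = 1.0
    return a

def membership(mapping, children, parents):
    """Binary child-by-parent matrix linking one scale to the next."""
    c = {n: i for i, n in enumerate(children)}
    p = {n: i for i, n in enumerate(parents)}
    m = np.zeros((len(children), len(parents)))
    for child, parent_list in mapping.items():
        for parent in parent_list:
            m[c[child], p[parent]] = 1.0
    return m

def mean_aggregate(features, m):
    """Average child features into each parent node (one inter-scale step)."""
    return (m.T @ features) / m.sum(axis=0)[:, None]

a_gg = adjacency(ppi_edges, genes)                      # gene-gene layer
m_gp = membership(gene_to_pathway, genes, pathways)     # gene-pathway layer
m_pb = membership(pathway_to_bp, pathways, processes)   # pathway-BP layer

expr = np.array([[1.0], [3.0], [2.0], [6.0]])  # one expression value per gene
pathway_feat = mean_aggregate(expr, m_gp)
bp_feat = mean_aggregate(pathway_feat, m_pb)
print(pathway_feat.ravel(), bp_feat.ravel())
```

A trained graph neural network would replace the fixed averaging with learned message-passing functions, but the data layout (expression values entering at the gene nodes and flowing upward through the membership matrices) follows the same pattern.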

Model Training and Optimization:

- Train the Cell Decoder graph neural network end-to-end. The model performs both intra-scale and inter-scale message passing to integrate information.

- Utilize the AutoML module to automatically search for the best model design, including hyperparameters and architecture modifications, tailored to your specific dataset.

Interpretation and Analysis:

- Apply post-hoc interpretability modules. Use hierarchical Grad-CAM analysis on the trained model to identify the pathways and biological processes most critical for predicting different cell types.

- Output: Cell-type predictions accompanied by a multi-view biological characterization explaining the model's decisions.

Workflow and Relationship Diagrams

Multi-Scale Model Integration Workflow

Diagram Title: Information Flow Across Biological Scales in Multi-Scale Modeling

Multi-Scale Model Validation Framework

Diagram Title: Iterative Cycle for Multi-Scale Model Development and Validation

Frequently Asked Questions & Troubleshooting Guides

This technical support resource addresses common challenges researchers face when implementing the hybrid framework for modeling hormone-EEG signal interactions, with a particular focus on the complexities of bidirectional regulation and feedback loops.

FAQ 1: Our model is failing to capture the non-linear dynamics between hormonal cycles and EEG rhythms. What could be the cause?

This is often due to a mismatch between the temporal scales of your data or an oversimplified model architecture.

- Potential Cause A: Inadequate Data Alignment. Hormonal data (e.g., cortisol levels) typically change over hours or days, whereas EEG signals fluctuate on a millisecond timescale. Models struggle when these multi-scale time series are not properly aligned or preprocessed [25].

- Potential Cause B: Model Architecture Limitations. Standard Recurrent Neural Networks (RNNs) may fail to capture long-term dependencies. The Hormone Interaction Dynamics Network (HIDN) is specifically designed to handle these spatiotemporal interdependencies [25].
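One simple way to address Potential Cause A is to reduce the EEG to slowly varying features (for example, windowed band power) and interpolate the sparse hormone samples onto the same time grid. The sketch below uses synthetic data; the sampling rate, window length, alpha-band limits, linear interpolation, and function names are assumptions for illustration and are not part of the HIDN framework in [25].

```python
import numpy as np

def windowed_band_power(eeg, fs, win_s, band):
    """Mean spectral power inside `band` (Hz) for consecutive non-overlapping windows."""
    n_win = int(win_s * fs)
    n_windows = len(eeg) // n_win
    segments = eeg[: n_windows * n_win].reshape(n_windows, n_win)
    freqs = np.fft.rfftfreq(n_win, d=1.0 / fs)
    mask = (freqs >= band[0]) & (freqs <= band[1])
    spectrum = np.abs(np.fft.rfft(segments, axis=1)) ** 2 / n_win
    centers = (np.arange(n_windows) + 0.5) * win_s   # window midpoints in seconds
    return centers, spectrum[:, mask].mean(axis=1)

def align_hormone(hormone_t, hormone_v, target_t):
    """Linearly interpolate sparse hormone samples onto the EEG feature grid."""
    return np.interp(target_t, hormone_t, hormone_v)

# Synthetic example: 10 minutes of EEG at 250 Hz, three hormone samples
fs = 250.0
t = np.arange(0, 600, 1.0 / fs)
rng = np.random.default_rng(0)
eeg = np.sin(2 * np.pi * 10 * t) + 0.5 * rng.normal(size=t.size)
hormone_t = np.array([0.0, 300.0, 600.0])   # seconds
hormone_v = np.array([10.0, 14.0, 12.0])    # arbitrary concentration units

centers, alpha_power = windowed_band_power(eeg, fs, win_s=30.0, band=(8.0, 12.0))
cortisol = align_hormone(hormone_t, hormone_v, centers)
features = np.column_stack([centers, alpha_power, cortisol])
print(features.shape)
```

Each row of `features` then holds one time point with both modalities on a shared clock, which is the form most sequence models expect. Linear interpolation is a crude choice for pulsatile hormones, so the interpolation scheme should be matched to the known dynamics of the hormone being modeled.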