Integrating Prior Knowledge in GRN Inference: Advanced Strategies for Enhanced Accuracy and Biological Relevance

Abstract

Accurately inferring Gene Regulatory Networks (GRNs) from single-cell RNA-sequencing data remains a significant challenge due to data sparsity and noise. This article provides a comprehensive guide for researchers and drug development professionals on the strategic integration of prior biological knowledge to overcome these limitations. We explore the foundational rationale for using priors, categorize current computational methodologies from graph neural networks to transformer models, and address key troubleshooting and optimization challenges. We also critically assess validation frameworks and compare the performance of leading tools, offering a practical resource for selecting and applying these methods to uncover robust regulatory mechanisms for therapeutic discovery.

Why Prior Knowledge is a Game-Changer for GRN Inference

FAQs: Understanding Data Sparsity and Its Impacts

What causes the high sparsity and noise in my scRNA-seq data?

Sparsity in scRNA-seq data arises from a combination of biological and technical factors. Biologically, a gene may be truly inactive in a cell, resulting in a biological zero. Technically, a transcript may be present but not detected due to limitations in sequencing depth or efficiency, resulting in a technical zero or "dropout" [1]. Modern datasets are becoming progressively sparser as studies sequence more cells with shallower coverage, making this a fundamental characteristic of contemporary scRNA-seq data [1].

How do dropouts impact my downstream analysis?

Dropouts directly challenge the core assumption of clustering—that similar cells are close in expression space. Research shows that while cluster homogeneity (cells in a cluster being the same type) often remains stable, cluster stability (consistent co-clustering of cell pairs) decreases significantly with higher dropout rates [2]. This means that identifying subtle sub-populations within known cell types becomes increasingly unreliable.

Can I trust the cell types identified from my sparse data?

Analysis confirms that cell type identification based on binarized data (where only gene detection is considered) performs comparably to methods using full count data [1]. This suggests that for classification tasks, the simple presence or absence of gene expression often provides sufficient signal, and the precise count values may not add critical information for distinguishing major cell types.

Is my data too sparse for Gene Regulatory Network (GRN) inference?

Sparsity poses significant challenges for GRN inference, but strategies exist to overcome them. The key is integrating prior knowledge to constrain the solution space. This can include known regulatory interactions from databases, transcription factor binding data, or chromatin accessibility information from multi-omics experiments [3]. Algorithms that incorporate such priors demonstrate enhanced reliability in recovering true regulatory relationships from sparse data.

Troubleshooting Guides

Problem: Unstable Clustering Results

Symptoms: Cell assignments change dramatically with slight parameter adjustments; difficulty reproducing sub-populations.

Solutions:

- Preprocessing: Implement rigorous quality control to remove low-quality cells that exacerbate sparsity issues [4].

- Algorithm Selection: Consider methods designed for sparse data. Newer deep learning approaches like sc-INDC learn noise-invariant representations specifically to overcome these challenges [5].

- Validation: Use multiple clustering methods and compare results. Be cautious when interpreting fine-grained clusters from very sparse data [2].

Experimental Protocol: Evaluating Cluster Stability

- Apply your clustering pipeline to the full dataset

- Randomly subsample 90% of cells and re-cluster

- Repeat subsampling 10 times

- Calculate concordance of cluster assignments using Adjusted Rand Index

- Low concordance scores (<0.7) indicate instability likely due to sparsity
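The subsampling protocol above can be sketched in plain Python. This is a minimal illustration, not a reference implementation: `cluster_fn` is a hypothetical placeholder for whatever clustering pipeline you actually run, and `toy_cluster` is a made-up stand-in so the example executes on its own.

```python
import random
from collections import Counter
from math import comb


def adjusted_rand_index(labels_a, labels_b):
    """Adjusted Rand Index between two labelings of the same items."""
    n = len(labels_a)
    pair_counts = Counter(zip(labels_a, labels_b))
    sum_cells = sum(comb(c, 2) for c in pair_counts.values())
    sum_a = sum(comb(c, 2) for c in Counter(labels_a).values())
    sum_b = sum(comb(c, 2) for c in Counter(labels_b).values())
    expected = sum_a * sum_b / comb(n, 2)
    max_index = (sum_a + sum_b) / 2
    if max_index == expected:  # degenerate case, e.g. a single cluster
        return 1.0
    return (sum_cells - expected) / (max_index - expected)


def cluster_stability(cells, cluster_fn, fraction=0.9, n_repeats=10, seed=0):
    """Mean ARI between full-data clusters and clusters from random subsamples."""
    rng = random.Random(seed)
    full_labels = cluster_fn(cells)
    n_keep = int(round(fraction * len(cells)))
    scores = []
    for _ in range(n_repeats):
        idx = sorted(rng.sample(range(len(cells)), n_keep))
        sub_labels = cluster_fn([cells[i] for i in idx])
        scores.append(adjusted_rand_index([full_labels[i] for i in idx], sub_labels))
    return sum(scores) / len(scores)


# Hypothetical stand-in for a real pipeline: split cells on one marker value.
def toy_cluster(cells):
    return [int(c[0] > 5) for c in cells]


toy_cells = [(random.Random(i).uniform(0, 10),) for i in range(200)]
stability = cluster_stability(toy_cells, toy_cluster)
print(f"mean ARI = {stability:.2f}")
```

Because the toy clustering rule is deterministic per cell, the score here is perfectly stable; a real pipeline on sparse data would typically score lower, and values below the 0.7 guideline would flag instability.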

Problem: Poor Integration of Multiple Datasets

Symptoms: Batch effects dominate biological variation; cells cluster by sample rather than cell type.

Solutions:

- Binary Representation: For very sparse datasets, try data integration using binarized expression (0 for undetected, 1 for detected). Studies show this can improve dataset mixing compared to count-based methods [1].

- Structure-Preserving Methods: Use visualization tools like Deep Visualization (DV) that explicitly preserve data geometry while correcting batch effects [6].

- Multi-level Correction: For complex batch effects, consider hierarchical correction methods that handle multiple technical factors simultaneously.
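As a small illustration of the binary representation mentioned above, the sketch below converts a count matrix to detected/undetected values and reports the detection rate. The matrix and function names are invented for the example; a real analysis would operate on a sparse matrix from your single-cell toolkit.

```python
def binarize(counts):
    """Map a cells x genes count matrix to 0 (undetected) / 1 (detected)."""
    return [[1 if value > 0 else 0 for value in row] for row in counts]


def detection_rate(counts):
    """Fraction of non-zero entries in the matrix."""
    binary = binarize(counts)
    total = sum(len(row) for row in binary)
    return sum(map(sum, binary)) / total


# Toy counts: 3 cells x 4 genes (made-up values).
toy_counts = [
    [0, 3, 0, 1],
    [5, 0, 0, 0],
    [0, 0, 2, 7],
]
binary_counts = binarize(toy_counts)
print(binary_counts)
print(f"detection rate = {detection_rate(toy_counts):.2f}")
```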

Problem: Weak Signal in GRN Inference

Symptoms: Inferred networks lack known biological pathways; poor reproducibility across similar datasets.

Solutions:

- Incorporate Prior Knowledge: Use curated databases of known interactions to constrain possible networks [3].

- Leverage Multi-omics: Integrate scATAC-seq data to identify accessible regulatory regions that likely contain functional TF binding sites [3].

- Pseudo-bulk Analysis: Aggregate cells by type or condition to reduce sparsity before network inference [1].
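Pseudo-bulk aggregation, as suggested in the last item, amounts to summing counts over cells that share a label. The following is a minimal sketch under the assumption that cell type labels are already available; the labels and counts are made up.

```python
from collections import defaultdict


def pseudobulk(counts, cell_labels):
    """Sum per-gene counts over all cells sharing a label."""
    n_genes = len(counts[0])
    totals = defaultdict(lambda: [0] * n_genes)
    for row, label in zip(counts, cell_labels):
        for g, value in enumerate(row):
            totals[label][g] += value
    return dict(totals)


# Toy data: 4 cells x 3 genes with hypothetical annotations.
toy_counts = [
    [0, 2, 0],
    [1, 0, 0],
    [0, 0, 4],
    [0, 1, 3],
]
toy_labels = ["T cell", "T cell", "B cell", "B cell"]
bulk = pseudobulk(toy_counts, toy_labels)
print(bulk)
```

Summing trades single-cell resolution for fewer zeros, so the aggregated profiles suit cell-type-level networks rather than within-type heterogeneity.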

Experimental Protocol: GRN Inference with Prior Knowledge

- Data Preparation: Quality-controlled scRNA-seq matrix (cells × genes)

- Prior Knowledge Curation: Collect known TF-target interactions from dedicated databases

- Algorithm Selection: Choose methods capable of incorporating graph-based priors

- Network Inference: Run inference with priors as constraints

- Validation: Compare inferred networks to held-out experimental data or perform functional enrichment

Quantitative Analysis of Sparsity Trends

Dataset Sparsity Over Time

Table 1: Increasing sparsity in modern scRNA-seq datasets (2015-2021)

| Year | Average Number of Cells | Average Detection Rate | Correlation (Cells vs. Detection) |
|---|---|---|---|
| 2015 | 704 | Higher | Strong negative correlation |
| 2020 | 58,654 | Lower | (r = -0.47) |

Data aggregated from 56 published datasets shows a clear trend: as the number of cells per dataset has increased exponentially, detection rates have significantly decreased [1]. This creates progressively sparser datasets where zeros dominate the expression matrix.

Performance of Binary vs. Count-Based Analysis

Table 2: Comparative analysis performance on sparse data

| Analysis Task | Binary Representation | Count-Based | Notes |
|---|---|---|---|
| Cell Type ID | Median F1: 0.93 | Comparable | Based on 22 annotated datasets [1] |
| Data Integration | LISI: 1.18 | LISI: 1.12 | Higher LISI = better mixing [1] |
| Computational Load | ~50x reduction | Baseline | Same hardware resources [1] |
| Pseudobulk DE | Spearman r ≥0.99 | Baseline | Correlation of profiles [1] |

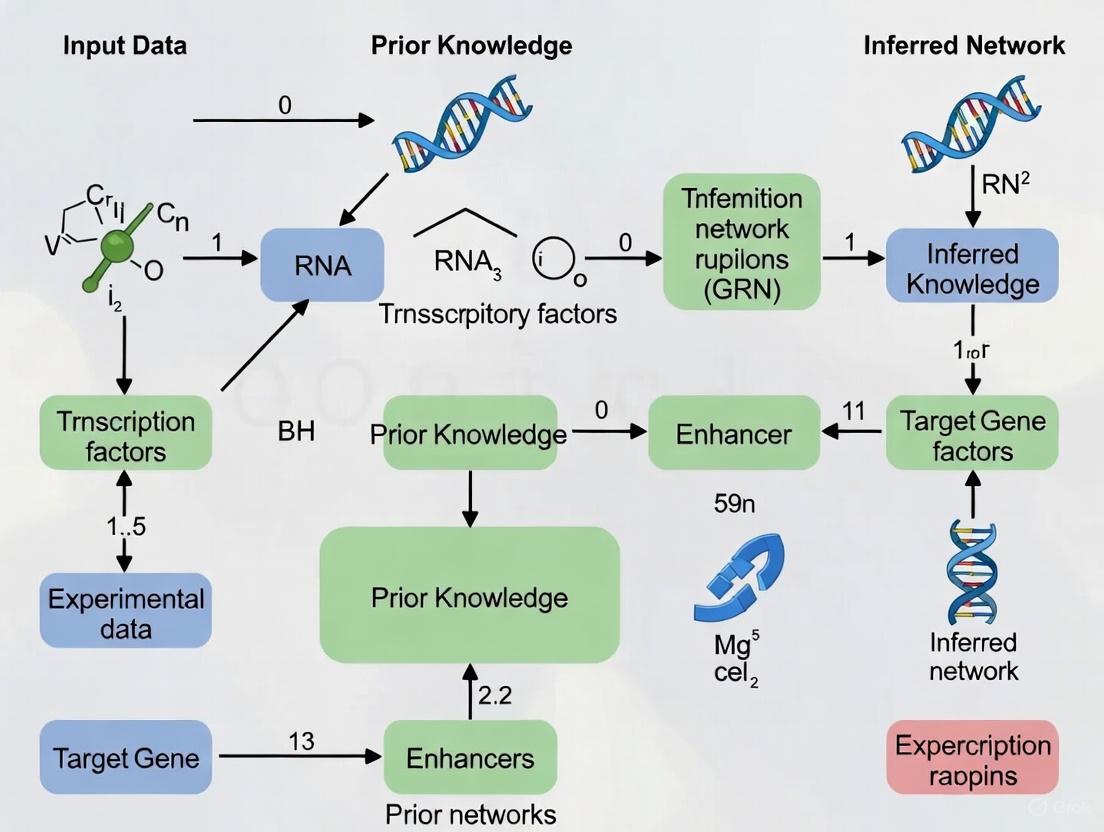

Visualizing Analytical Workflows

Sparsity-Robust scRNA-seq Analysis Pipeline

GRN Inference with Prior Knowledge Integration

Research Reagent Solutions

Table 3: Essential reagents and computational tools for sparse data analysis

| Resource | Type | Function/Purpose | Sparsity Consideration |
|---|---|---|---|
| 10X Chromium | Hardware | Single-cell partitioning | Adjust cell loading to optimize doublet rates and data quality [7] |
| UMI Barcodes | Reagent | Molecular counting | Distinguish biological zeros from technical dropouts [8] |
| TotalSeq Antibodies | Reagent | CITE-seq protein detection | Multi-modal data provides additional validation for cell identity [7] |
| scBFA | Algorithm | Binary dimensionality reduction | Specifically designed for sparse, binary data [1] |
| Harmony | Algorithm | Data integration | Effective batch correction for combining sparse datasets [1] |
| DoubletFinder | Algorithm | Doublet detection | Critical for sparse data where doublets create artifactual populations [4] |

Performance Benchmarking of GRN Inference Methods

The table below summarizes the performance of various Gene Regulatory Network (GRN) inference methods that integrate prior knowledge, based on benchmark evaluations from the BEELINE framework and other studies [9] [10].

| Method Name | Core Approach | Type of Prior Knowledge Used | Reported Performance (EPR/AUPR) | Key Strengths |
|---|---|---|---|---|
| KEGNI [9] | Graph Autoencoder + Knowledge Graph Embedding | Cell type-specific knowledge graphs from KEGG & CellMarker | Superior performance in 12/21 benchmarks; consistently outperforms random predictors | Modular design; effectively captures nonlinear dependencies from scRNA-seq data |
| GRNPT [11] | Transformer + LLM Embeddings + Temporal Convolutional Network | Gene embeddings from biological text (NCBI); ChIP-seq data for training | Outperforms supervised/unsupervised methods, even with only 10% training data | Exceptional generalizability to unseen cell types and regulators |
| KINDLE [12] | Knowledge Distillation (Teacher-Student model) | Prior knowledge used only in teacher model training | State-of-the-art on four benchmark datasets | Infers GRNs from expression data alone after distillation; enables novel discovery |
| SCENIC+ [9] | Co-expression (GENIE3) + Regulatory Potential | RcisTarget for motif analysis; scATAC-seq data | Improved precision over base co-expression methods | Prunes false positives using cis-regulatory information |
| LINGER [9] | Not Specified | scATAC-seq data; putative TF targets from ChIP-seq | Evaluated on PBMC data from 10x Genomics | Leverages multi-omics data for inference |

Experimental Protocols for Key Methods

KEGNI Protocol [9]

- Input Data Preparation: Provide a cell type-annotated scRNA-seq dataset.

- Base Graph Construction: Construct an initial k-nearest neighbors (k-NN) graph using Euclidean distances computed from gene expression profiles. Genes are nodes, and expression levels are node features.

- Knowledge Graph Construction:

- Model Training (Multi-task Learning):

- MAE Component: A Masked Graph Autoencoder is trained to reconstruct randomly masked gene expression features in the base graph.

- KGE Component: A Knowledge Graph Embedding model uses contrastive learning on the cell type-specific knowledge graph.

- Embeddings for genes common to both the expression data and knowledge graph are shared and jointly optimized.

- GRN Inference: The trained KEGNI model predicts regulatory interactions, resulting in a cell type-specific GRN.

GRNPT Protocol [11]

- Input Data Preparation: Process a scRNA-seq dataset and reconstruct a cell trajectory (pseudotime).

- Feature Extraction - Temporal Dynamics:

- Order gene expression data according to the cell trajectory.

- Train a Temporal Convolutional Network (TCN) autoencoder on this ordered data to capture temporal co-regulation patterns.

- Feature Extraction - Biological Knowledge:

- Obtain gene embedding vectors (1536-dimensional) using GenePT, which processes text from the NCBI database through a GPT-3.5 model [11].

- Feature Integration & Model Training:

- Integrate TCN features and GenePT embeddings using an attention layer.

- Train a Transformer model using known regulatory pairs (e.g., from ChIP-seq data) and randomly generated negative pairs.

- GRN Prediction: Use the trained Transformer decoder to reconstruct the GRN.

KINDLE Protocol [12]

- Teacher Model Training: Train a model that integrates prior knowledge with temporal gene expression dynamics.

- Knowledge Distillation: Transfer the knowledge encoded in the teacher model to a student model.

- Student Model Deployment: The student model can perform accurate GRN inference using only gene expression data, without requiring direct access to the original prior knowledge.

Frequently Asked Questions (FAQs)

Q1: What are the main sources of prior knowledge for constructing a GRN?

Prior knowledge can be sourced from both experimental data and curated databases. Key sources include:

- Curated Databases: TRRUST, RegNetwork, KEGG PATHWAY, and STRING provide known gene-gene and protein-protein interactions [9].

- Genomic Datasets: Chromatin Immunoprecipitation followed by sequencing (ChIP-seq) data provides direct evidence of Transcription Factor (TF)-DNA binding and is often used as a ground truth for training supervised models [9] [11].

- Cell Type Markers: Databases like CellMarker 2.0 help refine knowledge graphs for specific cellular contexts [9].

- Biological Text: Large Language Models (LLMs) can process text from resources like the NCBI database to generate informative gene embeddings [11].

Q2: How can I validate the accuracy of my inferred GRN?

Standard practice involves benchmarking against known ground truth networks and using established evaluation frameworks.

- Ground Truths: Use cell type-specific ChIP-seq networks, literature-curated networks, or functional interaction networks from databases like STRING [9] [10].

- Evaluation Framework: The BEELINE framework provides a standardized way to assess GRN inference methods [9] [10].

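- Key Metrics: Evaluate using metrics like the Early Precision Ratio (EPR) and the Area Under the Precision-Recall Curve (AUPR) [9]. Early precision is the fraction of true positives among the top-k predictions, where k is the number of edges in the ground-truth network; EPR divides this value by the early precision expected from a random predictor.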
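To make these metrics concrete, here is a minimal sketch that scores a ranked edge list against a ground-truth set. It follows the common BEELINE-style convention of taking k as the number of ground-truth edges and dividing by the density of the candidate edge space to obtain the ratio. The step-wise average precision is one standard estimator of AUPR. All edge names are invented.

```python
def early_precision(ranked_edges, true_edges):
    """Fraction of true edges among the top-k predictions, k = len(true_edges)."""
    k = len(true_edges)
    return sum(edge in true_edges for edge in ranked_edges[:k]) / k


def early_precision_ratio(ranked_edges, true_edges, n_possible_edges):
    """Early precision divided by the expected precision of a random predictor."""
    random_precision = len(true_edges) / n_possible_edges
    return early_precision(ranked_edges, true_edges) / random_precision


def average_precision(ranked_edges, true_edges):
    """Step-wise estimate of the area under the precision-recall curve."""
    hits, total = 0, 0.0
    for rank, edge in enumerate(ranked_edges, start=1):
        if edge in true_edges:
            hits += 1
            total += hits / rank
    return total / len(true_edges)


# Toy example: 2 TFs x 2 targets, so 4 candidate edges in total.
ranked = [("A", "x"), ("A", "y"), ("B", "x"), ("B", "y")]
truth = {("A", "x"), ("B", "x")}

ep = early_precision(ranked, truth)
epr = early_precision_ratio(ranked, truth, n_possible_edges=4)
aupr = average_precision(ranked, truth)
print(ep, epr, round(aupr, 3))
```

An EPR of 1 means the method is no better than random ranking at the top of the list, which is why benchmark tables usually report the ratio rather than raw early precision.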

Q3: My GRN has many false positives. How can I improve precision?

Strategies to reduce false positives include:

- Integration of Epigenetic Data: Use scATAC-seq data or motif information (e.g., with RcisTarget) to prune edges that lack supporting cis-regulatory evidence [9].

- Leverage Prior Knowledge: Incorporate high-quality, context-specific knowledge graphs to guide the inference and restrict the solution space to biologically plausible interactions [9] [12].

- Utilize Advanced Architectures: Methods like KEGNI and GRNPT use deep learning to capture complex, nonlinear relationships, moving beyond simple correlation which can be prone to false positives [9] [11].

Q4: Can a model trained on one cell type be applied to another?

This depends on the method's generalizability. Traditional methods often struggle with this, but newer approaches like GRNPT are specifically designed to generalize effectively to unseen cell types and even predict regulatory relationships for unseen regulators [11].

Q5: Is prior knowledge always beneficial for GRN inference?

While prior knowledge generally enhances accuracy, its effectiveness is contingent on precision. Imprecise or low-quality prior information can mislead the model. Furthermore, heavy reliance on prior knowledge may limit the potential for novel biological discovery. Frameworks like KINDLE aim to decouple inference from prior dependencies, using knowledge only during training to create a model that can make novel predictions from data alone [12].

Research Reagent Solutions

| Reagent / Resource | Function in GRN Inference | Example Use Case |
|---|---|---|
| scRNA-seq Data | Provides single-cell resolution gene expression profiles, the foundational data for inferring co-expression and regulatory relationships. | Input for all benchmarked methods (KEGNI, GRNPT, etc.) to learn gene-gene relationships [9] [11]. |
| scATAC-seq Data | Identifies regions of open chromatin, giving clues about active regulatory elements and potential TF binding sites. | Used by methods like FigR and SCENIC+ to validate and prune predicted regulatory links [9]. |
| ChIP-seq Data | Serves as a source of high-confidence, direct TF-DNA binding information, often used as ground truth for training and validation. | Forms the positive regulatory pairs for training supervised models like GRNPT [11]. |
| KEGG Database | A curated repository of pathway maps that provides known molecular interaction and reaction networks. | Used by KEGNI to construct its initial, general biological knowledge graph [9]. |
| CellMarker Database | A resource of cell type-specific marker genes, useful for contextualizing analysis. | Employed by KEGNI to refine its KEGG-derived knowledge graph for a specific cell type [9]. |

Workflow Visualization

KEGNI Inference Architecture

GRNPT Knowledge Integration

Frequently Asked Questions (FAQs)

Q1: What are the primary differences between TRRUST, KEGG, and RegNetwork, and when should I use each one?

A1: These databases serve complementary roles. TRRUST is ideal for obtaining a high-confidence, literature-curated set of transcription factor (TF)-target interactions, complete with mode-of-regulation (activation/repression) annotations [13] [14]. KEGG provides manually drawn pathway maps that place genes within the context of broader molecular interaction and reaction networks, which is essential for interpreting the functional consequences of regulatory events [15] [16]. RegNetwork offers a more comprehensive, integrated network by combining both transcriptional (TF-target) and post-transcriptional (miRNA-target) regulatory interactions sourced from numerous other databases [17]. Your choice depends on the research question, as summarized in the table below.

Q2: I have constructed a regulon using TRRUST, but my downstream analysis does not seem biologically coherent. What could be wrong?

A2: A common issue is the lack of cellular context. TRRUST and other general knowledge bases contain interactions aggregated from many different cell types and experimental conditions [18]. A regulon active in one cell line may be entirely inactive in another. To troubleshoot:

- Filter by Evidence: Check if the interactions in your regulon are supported by ChIP-Seq or other binding data in your cell type of interest. Databases like ChIP-Atlas or GTRD can be used for this [18].

- Integrate Expression Data: Ensure the TF and its putative target genes are expressed in your specific cellular context. You can use RNA-Seq data from sources like ENCODE to filter the regulon [18].

- Validate Experimentally: If possible, use TF knockout experiments to benchmark your regulon's accuracy, as described in benchmarking studies [18].

Q3: When performing KEGG pathway analysis on my differentially expressed genes, some pathway boxes are multicolored (e.g., red and green). How should I interpret this?

A3: Multicolored boxes typically represent a gene family or an enzyme complex composed of multiple subunits [16]. The different colors indicate that not all the genes belonging to that functional unit are regulated in the same direction. For example, one subunit of a complex might be encoded by an up-regulated gene (red), while another subunit is encoded by a down-regulated gene (green). This suggests a complex regulatory mechanism affecting the same pathway or protein complex [16].

Q4: How can I incorporate cell type-specific markers to improve my Gene Regulatory Network (GRN) inference?

A4: Cell type-specific markers are crucial for contextualizing prior knowledge.

- Define Cellular Context: Use established markers to confirm the cell type identity of your samples before applying broad knowledge bases like TRRUST or RegNetwork. This ensures the regulatory rules you are applying are biologically relevant [18].

- Guide Data Integration: In single-cell RNA-seq studies, markers help in annotating cell clusters. Once clusters are defined, you can infer cell-type-specific GRNs by integrating prior knowledge filtered for expression within that specific cluster [19] [18].

- Avoid Over-Correction: When integrating single-cell datasets from multiple batches to build GRNs, use advanced batch correction tools like scCobra that minimize the risk of "over-correction," which can erase subtle, biologically meaningful differences between cell types [19].

Database Comparison and Selection Guide

The table below summarizes the key quantitative and functional attributes of TRRUST, KEGG, and RegNetwork to guide your selection.

Table 1: Comparison of Key Knowledge Databases for GRN Inference

| Feature | TRRUST | KEGG | RegNetwork |
|---|---|---|---|
| Primary Focus | TF-target regulatory interactions [13] | Biological pathways & molecular networks [15] [16] | Integrated transcriptional & post-transcriptional network [17] |
| Core Content | Literature-curated TF-target pairs | Manually drawn pathway maps | TF-target & miRNA-target interactions |
| # of Human TF-Target Interactions | ~8,444 (v2) [14] | Not primarily TF-focused | Comprehensive (compiled from 25+ sources) [17] |
| Mode-of-Regulation | Yes (Activation/Repression) [13] | Implied by pathway logic | Varies by source |
| Unique Strength | High-confidence, small-scale experimental data [13] | Visual integration of genes/metabolites in pathways [16] | Combines TF and miRNA regulation [17] |
| Best Used For | Benchmarking GRN algorithms; studying specific TFs | Functional interpretation of gene lists; pathway analysis | Building comprehensive, multi-layer regulatory networks |

Experimental Protocols for GRN Research

Protocol 1: Constructing a Cell Type-Specific Regulon

This protocol outlines a method for defining regulons that capture cell-specific aspects of both TF binding and gene expression [18].

- Data Acquisition:

- Mapping TF-Target Interactions:

- Select a Mapping Strategy: Choose a methodology to link TF binding sites to target genes. Common strategies include:

- S2Mb: Links a peak to the TSS of the highest expressed transcript within a ±1 Mb window. (Captures distal enhancers but may have more false positives).

- S100Kb: Links a peak to the TSS of the highest expressed transcript within a ±50 kb window [18].

- Annotate TSS: Use a tool like `bedtools closest` to annotate peaks with TSS coordinates, followed by distance filtering per your chosen strategy [18].

- Filtering for Active Regulation:

- Filter the mapped interactions to include only target genes where the corresponding transcript is expressed. A common threshold is to retain only the top 50% of expressed transcripts to eliminate noise [18].

- Functional Characterization (Optional):

- Annotate the resulting regulon using ATAC-Seq or DNase-Seq data to confirm open chromatin at the promoter/enhancer.

- Perform motif analysis on the ChIP-Seq peaks to validate direct binding potential [18].
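The mapping and filtering logic of Protocol 1 can be sketched in a few lines. This is a simplified in-memory stand-in for the `bedtools closest` step, not a replacement for it: the record layout (`chrom`, `tss`, `tpm`, `gene`), the function names, and all coordinates and expression values are invented for illustration.

```python
def map_peaks_to_targets(peaks, transcripts, window):
    """Link each peak to the highest-expressed transcript with a TSS within +/- window bp."""
    links = {}
    for chrom, summit in peaks:
        candidates = [
            t for t in transcripts
            if t["chrom"] == chrom and abs(t["tss"] - summit) <= window
        ]
        if candidates:
            links[(chrom, summit)] = max(candidates, key=lambda t: t["tpm"])["gene"]
    return links


def filter_by_expression(links, transcripts, top_fraction=0.5):
    """Keep links whose target ranks in the top fraction of transcripts by expression."""
    ranked = sorted(transcripts, key=lambda t: t["tpm"], reverse=True)
    expressed = {t["gene"] for t in ranked[: int(len(ranked) * top_fraction)]}
    return {peak: gene for peak, gene in links.items() if gene in expressed}


# Made-up transcripts and ChIP-Seq peak summits.
transcripts = [
    {"gene": "G1", "chrom": "chr1", "tss": 10_000, "tpm": 50.0},
    {"gene": "G2", "chrom": "chr1", "tss": 40_000, "tpm": 5.0},
    {"gene": "G3", "chrom": "chr1", "tss": 900_000, "tpm": 80.0},
    {"gene": "G4", "chrom": "chr2", "tss": 20_000, "tpm": 0.5},
]
peaks = [("chr1", 30_000), ("chr2", 25_000)]

s100kb = filter_by_expression(map_peaks_to_targets(peaks, transcripts, 50_000), transcripts)
s2mb = filter_by_expression(map_peaks_to_targets(peaks, transcripts, 1_000_000), transcripts)
print(s100kb)
print(s2mb)
```

In this toy case the wider window reassigns the chr1 peak from the nearby gene G1 to the distal but more highly expressed G3, which illustrates why the ±1 Mb strategy captures distal regulation at the cost of more potential false positives.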

Protocol 2: Benchmarking Inferred GRNs Against Prior Knowledge

This protocol uses TRRUST as a gold-standard to evaluate computationally inferred networks [13].

- GRN Inference: Run your chosen GRN inference algorithm (e.g., GENIE3, SCENIC, GRNPT) on your gene expression dataset to generate a ranked list of potential TF-target links [20].

- Retrieve Gold-Standard Network: Download the set of known human TF-target interactions from TRRUST [13] [14].

- Performance Evaluation:

- Calculate the enrichment of TRRUST interactions within the top-ranked predictions of your inferred network. A significant enrichment indicates that your model is recovering biologically valid relationships [13].

- Generate a Receiver Operating Characteristic (ROC) or Precision-Recall (PR) curve, treating TRRUST as the positive set.
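One simple way to quantify the enrichment step is a hypergeometric test on the overlap between the top-k predictions and the gold-standard set. The sketch below assumes the gold-standard edges have already been restricted to TFs and genes present in your dataset, and that `universe_size` (a hypothetical parameter name) counts all candidate TF-target pairs that were scored. The edges are made up.

```python
from math import comb


def hypergeom_enrichment(ranked_edges, gold_edges, universe_size, top_k):
    """Return the overlap and P(X >= overlap) for top_k edges drawn at random."""
    overlap = len(set(ranked_edges[:top_k]) & gold_edges)
    n_gold = len(gold_edges)
    tail = sum(
        comb(n_gold, x) * comb(universe_size - n_gold, top_k - x)
        for x in range(overlap, min(n_gold, top_k) + 1)
    )
    return overlap, tail / comb(universe_size, top_k)


# Toy setting: 4 TFs x 5 targets = 20 candidate edges, 4 of them in the gold standard.
ranked = [("TF1", "g1"), ("TF1", "g2"), ("TF2", "g3"), ("TF3", "g1"), ("TF4", "g5")]
gold = {("TF1", "g1"), ("TF1", "g2"), ("TF2", "g3"), ("TF4", "g4")}

overlap, p_value = hypergeom_enrichment(ranked, gold, universe_size=20, top_k=4)
print(overlap, round(p_value, 4))
```

Because curated databases such as TRRUST are incomplete, predictions absent from the gold standard are not necessarily wrong, so enrichment is better read as a sanity check than as an absolute accuracy measure.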

Workflow and Pathway Visualizations

The following diagrams, generated with Graphviz, illustrate core concepts and methodologies.

Diagram 1: GRN Inference Knowledge Integration

Diagram 2: Cell Type-Specific Regulon Construction

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for GRN Knowledge Integration

| Item / Resource | Function / Description | Key Example / Source |
|---|---|---|
| Literature-Curated Database | Provides high-confidence, experimentally validated TF-target interactions for benchmarking. | TRRUST [13] [14] |
| Integrated Regulatory Network | Offers a comprehensive prior network combining TFs and miRNAs. | RegNetwork [17] |
| Pathway Database | Enables functional interpretation and visualization of gene lists in a biological context. | KEGG PATHWAY [15] [16] |
| ChIP-Seq Data Repository | Source of genome-wide TF binding data for specific cell types. | ReMap, ChIP-Atlas [18] |
| Gene Expression Repository | Provides transcriptome data to filter interactions for active genes in a cell type. | ENCODE [18] |
| Batch Correction Tool | Integrates single-cell datasets from different studies while preserving biological variation. | scCobra [19] |
| Advanced GRN Inference Tool | Infers regulatory networks by integrating prior knowledge with expression data. | GRNPT (Transformer-based) [20] |

Frequently Asked Questions (FAQs)

Q1: What are the main types of prior knowledge I can use to improve my GRN inference?

You can leverage several types of prior knowledge to make the GRN inference problem more tractable. These are often categorized as follows [3]:

- Multi-omics Data: This includes experimental data like chromatin accessibility (from ATAC-seq), maps of physical DNA contacts (from Hi-C), and transcription factor binding sites (from ChIP-seq). Integrating this data provides direct evidence of potential regulatory interactions.

- Curated Databases: Existing knowledge from literature-curated databases of known regulatory interactions between specific gene pairs can be used to constrain or guide the inference.

- Topological Priors: General graph structures or network properties known from previous studies can serve as a prior, encouraging the inferred network to have biologically plausible architectures.

Q2: My GRN inference results have too many false positives. What strategies can I use to control the False Discovery Rate (FDR)?

Controlling the FDR in GRN inference is challenging due to indirect effects, nonlinear relationships, and unmeasured confounding variables. One advanced statistical framework to address this is the model-X knockoffs method [21]. This framework can control the FDR while accounting for:

- Indirect regulation: Helping to distinguish direct from indirect regulatory effects.

- Nonlinear dose-response: Capturing complex, non-linear relationships between variables.

- User-provided covariates: Allowing you to include known covariates to account for some confounding factors.

However, a major remaining driver of FDR is unmeasured confounding, which must be considered when interpreting results [21].
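For intuition about how knockoff-based FDR control selects edges, the sketch below shows only the final thresholding step (the "knockoff+" rule). It assumes that knockoff variables have already been generated and that a statistic W is available for each candidate edge, where large positive values favor the real regulator over its knockoff. Constructing valid knockoffs is the difficult part and is not shown. The W values are invented.

```python
def knockoff_threshold(w_stats, fdr=0.1, offset=1):
    """Smallest t with (offset + #{W <= -t}) / max(1, #{W >= t}) <= fdr."""
    for t in sorted({abs(w) for w in w_stats if w != 0}):
        negatives = sum(w <= -t for w in w_stats)
        positives = sum(w >= t for w in w_stats)
        if (offset + negatives) / max(1, positives) <= fdr:
            return t
    return float("inf")  # nothing can be selected at this FDR level


def select_edges(edges, w_stats, fdr=0.1):
    """Keep edges whose statistic reaches the data-dependent threshold."""
    threshold = knockoff_threshold(w_stats, fdr)
    return [edge for edge, w in zip(edges, w_stats) if w >= threshold]


# Made-up statistics for 14 candidate regulators of one target gene.
w = [5.0, 4.2, 3.9, 3.1, 2.8, 2.5, 2.2, 1.9, 1.5, 1.2, -0.4, 0.3, -0.2, 0.1]
candidate_edges = [f"TF{i}" for i in range(len(w))]

threshold = knockoff_threshold(w, fdr=0.2)
selected = select_edges(candidate_edges, w, fdr=0.2)
print(threshold, len(selected))
```

The count of negative statistics acts as an estimate of how many false positives sit above the threshold, which is what allows the rule to bound the FDR without p-values.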

Q3: How do I represent prior knowledge in a standardized way for different algorithms?

A highly flexible and recommended approach is to represent your prior knowledge as a graph structure [3]. In this representation:

- Nodes represent biological entities (e.g., transcription factors, target genes, regulatory elements).

- Edges represent the prior knowledge about interactions or potential interactions between them.

Using a graph-based prior allows you to utilize diverse sources of knowledge in a unified format that can be incorporated by many modern GRN inference algorithms.

Troubleshooting Guides

Issue: High False Discovery Rate in Inferred Network

A high rate of false positives undermines the reliability of your inferred GRN for downstream analysis and experimental validation.

Diagnosis:

- Potential Cause 1: The inference is based solely on transcriptomic data, which is insufficient to distinguish direct causation from indirect correlation and is susceptible to unmeasured confounding variables [21].

- Potential Cause 2: The algorithm used does not incorporate strong enough constraints or prior knowledge to limit spurious connections.

- Potential Cause 3: The chosen method does not implement robust statistical control for FDR under realistic biological conditions (e.g., nonlinear effects).

Resolution:

- Integrate Prior Knowledge: Incorporate additional biological evidence to constrain the solution space. Use a graph-based prior representing known interactions from databases or multi-omics experiments [3].

- Use FDR-Controlling Methods: Employ inference algorithms that implement rigorous statistical frameworks for FDR control, such as the model-X knockoffs, which are designed to handle the complexities of biological data [21].

- Benchmark Your Workflow: Use standardized benchmarking frameworks to evaluate the performance of your chosen algorithm, especially its ability to control FDR, on datasets similar to yours [3].

Issue: Poor Algorithm Performance on Noisy Single-Cell Data

The inherent technical noise and high sparsity (dropouts) in single-cell RNA sequencing (scRNA-seq) data lead to unreliable and poorly reproducible GRNs.

Diagnosis:

- Potential Cause: The GRN inference algorithm is treating the noisy scRNA-seq data as a primary signal without sufficient regularization or integration of supporting evidence.

Resolution:

- Select a Robust Algorithm: Choose an algorithm specifically designed to handle the noise and sparsity of scRNA-seq data and, crucially, one that has the capability to incorporate flexible prior knowledge [3].

- Leverage Multi-omics Priors: If available, use prior knowledge from single-cell multi-omics datasets. For example, pairing scRNA-seq with scATAC-seq data can provide direct evidence on which transcription factors have accessible binding sites in which cells, greatly enhancing inference reliability [3].

- Validate Reproducibility: Assess the reproducibility of your inferred GRNs on independent datasets collected under the same biological conditions to ensure your results are not an artifact of the specific dataset's noise profile [3].
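A simple reproducibility check is the Jaccard overlap between the top-ranked edges inferred from two independent datasets. The sketch below assumes each network is available as a mapping from edge to score; the edges, scores, and function names are invented for illustration.

```python
def top_edges(edge_scores, k):
    """Return the k highest-scoring edges as a set."""
    return set(sorted(edge_scores, key=edge_scores.get, reverse=True)[:k])


def jaccard_reproducibility(scores_a, scores_b, k):
    """Jaccard index between the top-k edge sets of two inferred networks."""
    top_a, top_b = top_edges(scores_a, k), top_edges(scores_b, k)
    return len(top_a & top_b) / len(top_a | top_b)


# Made-up edge scores from two datasets of the same biological condition.
scores_a = {("TF1", "g1"): 0.9, ("TF1", "g2"): 0.8, ("TF2", "g1"): 0.3, ("TF2", "g3"): 0.1}
scores_b = {("TF1", "g1"): 0.7, ("TF2", "g3"): 0.6, ("TF1", "g2"): 0.2, ("TF2", "g1"): 0.1}

reproducibility = jaccard_reproducibility(scores_a, scores_b, k=2)
print(round(reproducibility, 3))
```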

Experimental Protocols & Data

Detailed Methodology: Incorporating a Graph Prior in GRN Inference

This protocol outlines the steps for integrating prior knowledge represented as a graph to infer a more accurate Gene Regulatory Network from scRNA-seq data [3].

1. Prior Knowledge Acquisition and Curation:

- Objective: Collect known regulatory interactions relevant to your biological context.

- Procedure:

- Extract known Transcription Factor-Target Gene (TF-TG) interactions from curated databases (e.g., ENCODE, ChIP-Atlas).

- From multi-omics experiments (e.g., ATAC-seq), identify regions of open chromatin and predict potential TF binding sites to form TF-Regulatory Element (TF-RE) prior knowledge.

- Output: A list of putative regulatory interactions.

2. Graph Prior Construction:

- Objective: Represent the prior knowledge in a standardized graph format.

- Procedure:

- Create a graph where nodes are genes (and optionally, regulatory elements).

- For each known or putative interaction from Step 1, add a directed edge from the TF to the TG (or from the TF to the RE, and from the RE to the TG for eGRNs).

- Weights can be assigned to edges to represent confidence or strength of the prior evidence.

- Output: A prior knowledge graph (e.g., in a simple TSV or GraphML format).

3. Integration into GRN Inference Algorithm:

- Objective: Use the graph prior to guide the network inference from your scRNA-seq data.

- Procedure:

- Select a GRN inference algorithm that can accept graph-based priors (e.g., methods classified as "prior-informed").

- Input your scRNA-seq expression matrix and the graph prior constructed in Step 2.

- The algorithm will use the prior to initialize, constrain, or regularize the inference process, penalizing solutions that deviate strongly from the known biology while still learning from the data.
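To show what "regularizing with a prior" can mean in practice, here is a minimal sketch of one generic approach: a lasso regression per target gene in which regulators supported by the prior receive a smaller penalty. This is an illustrative technique, not the algorithm of any specific tool named in this guide; the parameter names (`lam`, `strength`) and the toy data are assumptions.

```python
def soft_threshold(value, penalty):
    """Shrink value toward zero by penalty, returning 0.0 inside the dead zone."""
    if value > penalty:
        return value - penalty
    if value < -penalty:
        return value + penalty
    return 0.0


def prior_weighted_lasso(X, y, prior, lam=0.4, strength=0.75, n_iter=100):
    """Coordinate descent for a lasso with per-regulator penalties.

    X: samples x regulators expression, y: target-gene expression,
    prior: confidence in [0, 1] that each regulator targets this gene.
    """
    n, p = len(X), len(X[0])
    penalties = [lam * (1.0 - strength * prior[j]) for j in range(p)]
    coef = [0.0] * p
    for _ in range(n_iter):
        for j in range(p):
            scale = sum(X[i][j] ** 2 for i in range(n)) / n
            if scale == 0:
                continue  # regulator never varies; leave its coefficient at zero
            # Correlation of regulator j with the residual excluding its own contribution.
            rho = sum(
                X[i][j] * (y[i] - sum(X[i][k] * coef[k] for k in range(p) if k != j))
                for i in range(n)
            ) / n
            coef[j] = soft_threshold(rho, penalties[j]) / scale
    return coef


# Toy data: two orthogonal regulators that contribute equally to the target.
X = [[1, 1], [-1, 1], [1, -1], [-1, -1]]
y = [0.6, 0.0, 0.0, -0.6]

with_prior = prior_weighted_lasso(X, y, prior=[1.0, 0.0])
without_prior = prior_weighted_lasso(X, y, prior=[0.0, 0.0])
print(with_prior, without_prior)
```

In this toy case the uniform penalty suppresses both weak regulators, while the prior-supported one survives once its penalty is relaxed. The same mechanism means an incorrect prior can promote a spurious edge, so `strength` should reflect how much the prior is trusted.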

4. Validation and Benchmarking:

- Objective: Assess the improvement gained by integrating the prior.

- Procedure:

- Compare the prior-informed network against a network inferred without the prior.

- Use a benchmarking framework with held-out gold standard interactions (if available) to calculate performance metrics like precision and recall.

- Perform functional enrichment analysis on the target genes of key TFs in the inferred network to check for biological relevance.

Quantitative Data on GRN Inference Challenges

The table below summarizes key findings from benchmarking studies that highlight the core challenges in GRN inference, which strategic prior knowledge integration aims to solve [3].

| Challenge | Key Finding | Implication for Research |

|---|---|---|

| Overall Performance | Highly variable and overall poor performance across algorithms and datasets. | No single algorithm performs best in all contexts; careful selection and validation are required. |

| Reproducibility | Poor reproducibility of inferred GRNs from independent datasets under the same biological condition. | Inferred networks may be unstable and specific to a single dataset's noise profile. |

| Comparison to Baseline | Advanced methods cannot consistently outperform simple linear correlation. | Highlights the fundamental difficulty of the problem and the limitations of transcriptome-only data. |

| Topological Bias | Available algorithms introduce inherent topological biases into their inferred GRNs. | The inferred network structure may be influenced as much by the algorithm's bias as by the underlying biology. |

Research Reagent Solutions

The table below lists essential computational "reagents" and resources for conducting prior-informed GRN inference studies.

| Item / Resource | Function / Description |

|---|---|

| scRNA-seq Data | The primary data measuring gene expression heterogeneity at single-cell resolution, used as the main input for inference [3]. |

| Prior Knowledge Databases | Curated repositories of known TF-TG interactions (e.g., from ChIP-seq experiments) used to build constraint graphs [3]. |

| Multi-omics Data (e.g., scATAC-seq) | Provides complementary evidence on chromatin accessibility, helping to identify potential regulatory regions and constrain possible TF-target relationships [3]. |

| Model-X Knockoffs Framework | A statistical framework used to control the False Discovery Rate (FDR) in the inferred network, accounting for confounding factors [21]. |

| Graph Representation | A flexible data structure (nodes and edges) used to standardize the incorporation of diverse prior knowledge sources into the inference process [3]. |

| Benchmarking Framework | A standardized set of metrics and gold-standard data to fairly evaluate and compare the performance of different GRN inference algorithms [3]. |

Strategic Workflow Visualization

The following diagram illustrates the logical workflow and strategic advantage of integrating prior knowledge to constrain the solution space in GRN inference.

[Diagram: GRN Inference with Prior Knowledge]

The next diagram provides a more detailed view of the "Constrained GRN Inference" process, showing how different types of prior knowledge are integrated.

[Diagram: How Priors Guide Inference]

Frequently Asked Questions (FAQs)

FAQ 1: What are the main advantages of using a graph structure as prior knowledge for GRN inference? Using a graph structure as a prior helps overcome the high false positive rates common in methods that rely solely on gene co-expression from scRNA-seq data. It incorporates established biological knowledge, which guides the inference model towards more biologically plausible regulatory relationships, enhances the accuracy of the predicted network, and helps in identifying key driver genes within specific cellular contexts [9].

FAQ 2: My scRNA-seq data is unpaired with epigenetic data (like scATAC-seq). Can I still use these graph-based methods? Yes. Frameworks like KEGNI and GRLGRN are specifically designed to work with scRNA-seq data and integrate prior knowledge from existing databases, reducing the dependency on paired multi-omics data. This avoids the potential introduction of noise that can occur when integrating unpaired datasets from different sources [9] [22].

FAQ 3: How is "prior knowledge" transformed into a graph format for these models? Prior knowledge is typically compiled from established biological databases such as KEGG PATHWAY, TRRUST, or RegNetwork. In these graphs, genes are represented as nodes, and known regulatory interactions (e.g., TF-target relationships) are represented as edges. This graph can be further refined to be cell type-specific by filtering for relevant markers from databases like CellMarker [9].
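
One way to picture the cell type-specific refinement is as a filter over a generic edge list. The sketch below is an assumption about how such filtering could work, not the procedure of any particular framework: `filter_by_markers` is a hypothetical helper, the edges and markers are placeholders, and whether an edge needs one or both endpoints among the markers is a design choice exposed as the `require_both` flag.

```python
def filter_by_markers(edges, markers, require_both=False):
    """Keep edges that touch marker genes.

    require_both=False keeps an edge if either endpoint is a marker;
    require_both=True keeps it only if both endpoints are markers.
    """
    marker_set = set(markers)
    kept = []
    for source, target in edges:
        hits = (source in marker_set) + (target in marker_set)
        if hits == 2 or (hits == 1 and not require_both):
            kept.append((source, target))
    return kept


# Hypothetical pathway edges and marker genes (placeholders, not database content).
pathway_edges = [("G1", "G2"), ("G2", "G3"), ("G3", "G4"), ("G5", "G6")]
cell_type_markers = ["G2", "G3"]

loose_graph = filter_by_markers(pathway_edges, cell_type_markers)
strict_graph = filter_by_markers(pathway_edges, cell_type_markers, require_both=True)
print(loose_graph)
print(strict_graph)
```

The looser setting retains more context around each marker at the cost of a larger, less specific graph.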

FAQ 4: What is an "implicit link," and how does extracting them improve GRN inference? Explicit links are the direct connections found in a prior knowledge graph. Implicit links are latent, higher-order dependencies between genes that are not directly connected in the prior graph but can be inferred through the network's topology. Methods like GRLGRN use graph transformer networks to extract these implicit links, allowing the model to uncover potential regulatory relationships that are not immediately obvious from the explicit prior knowledge alone [22].

FAQ 5: How can I assess the performance of a GRN inference method on my own data? Performance is typically evaluated by comparing the inferred network against a ground-truth network using metrics like Early Precision Ratio (EPR), Area Under the Precision-Recall Curve (AUPR), and Area Under the Receiver Operating Characteristic Curve (AUROC). The BEELINE framework provides standardized benchmark datasets and procedures for this purpose, which allows for a fair comparison of different algorithms [9] [22].
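
For intuition about the precision-recall metric, the following sketch computes average precision, one common estimator of the area under the precision-recall curve, for a ranked edge list. It is a simplified illustration on toy data and may differ in detail from the evaluation scripts shipped with BEELINE; `average_precision` is a hypothetical helper.

```python
def average_precision(ranked_edges, gold_edges):
    """Average precision of a ranked edge list (highest-confidence edge first).

    Sums the precision at each rank where a gold edge is recovered and
    divides by the total number of gold edges.
    """
    gold = set(gold_edges)
    hits = 0
    precision_sum = 0.0
    for rank, edge in enumerate(ranked_edges, start=1):
        if edge in gold:
            hits += 1
            precision_sum += hits / rank
    return precision_sum / len(gold) if gold else 0.0


# Toy ranking: gold edges are recovered at ranks 1 and 3.
ranked = [("TF_A", "G1"), ("TF_B", "G1"), ("TF_A", "G2"), ("TF_B", "G2")]
gold = {("TF_A", "G1"), ("TF_A", "G2")}

ap = average_precision(ranked, gold)
print(round(ap, 4))
```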

Troubleshooting Guides

Issue 1: Low Accuracy and High False Positives in Inferred GRN

Problem: The inferred gene regulatory network contains many regulatory edges that are not biologically valid.

Solution:

- Action 1: Integrate High-Quality Prior Knowledge. Construct a cell type-specific knowledge graph to guide the inference.

- Protocol: Use the following steps to build a knowledge graph for the KEGNI framework [9]:

- Source Prior Data: Download known gene interactions from the KEGG PATHWAY database [9].

- Refine with Cell Markers: Obtain cell type-specific marker genes from the CellMarker 2.0 database [9].

- Filter the Graph: Select only those nodes and edges from the KEGG graph that are associated with the identified cell type markers.

- Formalize the Graph: Represent genes as nodes and their known regulatory interactions as edges to form the final knowledge graph.


- Action 2: Employ a Self-Supervised Learning Strategy. Use a framework that learns gene representations directly from expression data.

- Protocol: Implement the Masked Graph Autoencoder (MAE) component of KEGNI [9]:

- Construct Base Graph: Create a k-nearest neighbors (k-NN) graph from the gene expression profiles.

- Mask and Reconstruct: Randomly mask the expression features of a subset of genes (nodes) in the graph.

- Train the Model: Use a graph autoencoder to learn gene embeddings by trying to reconstruct the masked features. This forces the model to learn robust relationships between genes.
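
The masking step can be illustrated without any deep learning library. The sketch below only shows how a subset of gene nodes could be masked and how a reconstruction error restricted to the masked nodes could be scored; the autoencoder itself is omitted. The function names, the zero mask value, and the toy feature vectors are assumptions for illustration.

```python
import random


def mask_node_features(features, mask_ratio, mask_value=0.0, seed=0):
    """Replace the feature vectors of a random subset of genes with mask_value.

    features: dict mapping gene -> list of expression features.
    Returns the masked copy and the sorted list of masked genes.
    """
    rng = random.Random(seed)
    genes = sorted(features)
    n_masked = max(1, round(mask_ratio * len(genes)))
    masked_genes = set(rng.sample(genes, n_masked))
    masked = {
        gene: [mask_value] * len(values) if gene in masked_genes else list(values)
        for gene, values in features.items()
    }
    return masked, sorted(masked_genes)


def masked_mse(original, reconstructed, masked_genes):
    """Mean squared error computed only over the masked genes."""
    errors = [
        (o - r) ** 2
        for gene in masked_genes
        for o, r in zip(original[gene], reconstructed[gene])
    ]
    return sum(errors) / len(errors)


# Toy gene features (placeholder values).
features = {"G1": [1.0, 2.0], "G2": [0.5, 0.0], "G3": [3.0, 1.0],
            "G4": [0.0, 4.0], "G5": [2.0, 2.0]}
masked, masked_genes = mask_node_features(features, mask_ratio=0.4)
print(masked_genes)
# Error if the model simply returned the masked input unchanged.
print(masked_mse(features, masked, masked_genes))
```

Scoring only the masked genes is what makes the task self-supervised: the model must infer the hidden features from neighboring genes in the graph.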


Issue 2: Model Fails to Learn Beyond Explicit Prior Knowledge

Problem: The inference model is overly reliant on the input prior graph and fails to discover novel regulatory relationships.

Solution:

- Action: Use a Model that Discovers Implicit Links. Implement a framework like GRLGRN that uses advanced graph learning to find hidden connections [22].

- Protocol: Apply the graph representation learning approach from GRLGRN:

- Extract Implicit Links: Use a graph transformer network to analyze the prior GRN. This layer processes multiple derived graphs (e.g., TF-to-target, target-to-TF, TF-to-TF) to capture complex topological patterns [22].

- Generate Implicit Adjacency Matrix: Combine the outputs to form a new adjacency matrix that includes both explicit and inferred implicit links.

- Refine Features with Attention: Process the gene features through a Convolutional Block Attention Module (CBAM) to highlight the most informative features for predicting regulatory dependencies [22].


Issue 3: Inconsistent Performance Across Different Cell Types or Datasets

Problem: The GRN inference method works well on one dataset but performs poorly on another.

Solution:

- Action: Perform a Rigorous Benchmarking. Systematically evaluate the method using standardized benchmarks.

- Protocol: Use the BEELINE framework to assess performance [9] [22]:

- Obtain Benchmark Data: Download one of the seven standard scRNA-seq datasets (e.g., from human ESCs or mouse dendritic cells) provided by BEELINE.

- Select Ground-Truth Networks: Choose one or more of the provided ground-truth networks for evaluation, such as cell type-specific ChIP-seq networks or the STRING functional interaction network.

- Run Inference and Evaluate: Execute your GRN inference method and use the BEELINE evaluation scripts to calculate performance metrics like EPR and AUPRC. Compare the results against other established methods.


Performance Benchmarking Data

The following tables summarize the quantitative performance of modern graph-based methods against established algorithms on benchmark datasets.

Table 1: Performance Comparison on BEELINE Benchmark (scRNA-seq data) [9]

| Method | Key Principle | Best Performance (Number of Benchmarks) | Key Metric |

|---|---|---|---|

| KEGNI | Knowledge graph + Graph Autoencoder | 12 | Early Precision Ratio (EPR) |

| MAE (KEGNI component) | Self-supervised feature reconstruction | 4 | Early Precision Ratio (EPR) |

| GENIE3 | Tree-based ensemble | 4 | Early Precision Ratio (EPR) |

| PIDC | Information theory | 1 | Early Precision Ratio (EPR) |

| GRNBoost2 | Gradient boosting on regulators | Not top performer | Early Precision Ratio (EPR) |

Table 2: Performance of GRLGRN on Seven Cell Line Datasets [22]

| Evaluation Metric | Performance Result | Comparison to Other Models |

|---|---|---|

| AUROC (Area Under the ROC Curve) | Best performance on 78.6% of datasets | Average improvement of 7.3% |

| AUPRC (Area Under the Precision-Recall Curve) | Best performance on 80.9% of datasets | Average improvement of 30.7% |

Experimental Protocols

Protocol 1: GRN Inference with the KEGNI Framework

Purpose: To infer a cell type-specific Gene Regulatory Network from scRNA-seq data by integrating prior knowledge with a graph autoencoder [9].


Procedure:

- Input Data Preparation: Provide a cell type-annotated scRNA-seq count matrix as input.

- Base Graph Construction: Construct an initial k-Nearest Neighbors (k-NN) graph where nodes are genes and edges are based on Euclidean distances between their expression profiles.

- Knowledge Graph Construction: Build a separate knowledge graph by extracting gene-gene interactions from the KEGG PATHWAY database and refining them with cell-type markers from CellMarker 2.0.

- Model Training:

- The MAE model takes the k-NN graph, randomly masks a portion of gene expression features, and uses a graph autoencoder to reconstruct them.

- The KGE model takes the cell type-specific knowledge graph and learns node embeddings via contrastive learning.

- A multi-task learning approach jointly optimizes the objectives of both models, sharing embeddings for genes present in both inputs.

- Output: The framework outputs a ranked list of predicted regulatory interactions for the cell type.
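
The base graph construction in this procedure can be sketched as a brute-force k-NN search over gene expression profiles. This is a minimal illustration on toy data, assuming each gene is represented by its expression vector across cells; `knn_gene_graph` is a hypothetical helper, and a real dataset would call for a vectorized or approximate nearest-neighbor search.

```python
import math


def knn_gene_graph(expression, k):
    """Connect each gene to its k nearest genes by Euclidean distance.

    expression: dict mapping gene -> expression profile across cells.
    Returns a dict mapping gene -> list of its k nearest neighbor genes.
    """
    genes = sorted(expression)
    neighbors = {}
    for gene in genes:
        distances = sorted(
            (math.dist(expression[gene], expression[other]), other)
            for other in genes
            if other != gene
        )
        neighbors[gene] = [other for _, other in distances[:k]]
    return neighbors


# Toy profiles: G1/G2 and G3/G4 form two tight pairs (placeholder values).
expression = {
    "G1": [1.0, 0.0, 2.0],
    "G2": [1.1, 0.0, 2.1],
    "G3": [5.0, 4.0, 0.0],
    "G4": [5.2, 4.1, 0.0],
}
knn_graph = knn_gene_graph(expression, k=1)
print(knn_graph)
```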

Protocol 2: GRN Inference with the GRLGRN Framework

Purpose: To infer GRNs by extracting implicit links from a prior GRN using a graph transformer network, thereby capturing latent regulatory dependencies [22].


Procedure:

- Input: A prior GRN (adjacency matrix) and a matrix of single-cell gene expression profiles.

- Implicit Link Extraction: The prior GRN is processed by a graph transformer layer. This layer creates multiple derived graphs (e.g., TF→target, target→TF, TF→TF) and uses an attention mechanism to learn a new composite adjacency matrix that includes implicit links.

- Gene Embedding: The implicit link adjacency matrix and the gene expression profile matrix are fed into a Graph Convolutional Network (GCN) to generate low-dimensional embeddings for each gene.

- Feature Enhancement: The gene embeddings are further refined using a Convolutional Block Attention Module (CBAM) to emphasize important features.

- Output and Training: The refined embeddings are used by an output module to predict regulatory relationships. The model is trained with a loss function that includes a graph contrastive learning regularization term to prevent over-smoothing.
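
To make the idea of an implicit link concrete, the sketch below composes a prior adjacency matrix with itself and reports gene pairs joined by a two-hop path but no direct edge. This is a deliberately simplified stand-in: a graph transformer network learns attention-weighted combinations of several derived graphs, whereas this example uses a single fixed, unweighted matrix product. All names and the toy network are hypothetical.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def two_hop_implicit_links(genes, adjacency):
    """Return (source, target) pairs linked by a two-hop path but no direct edge."""
    two_hop = matmul(adjacency, adjacency)
    links = []
    for i, source in enumerate(genes):
        for j, target in enumerate(genes):
            if i != j and two_hop[i][j] > 0 and adjacency[i][j] == 0:
                links.append((source, target))
    return links


# Toy prior GRN: TF1 -> TF2, TF2 -> G1, TF2 -> G2 (rows are sources).
genes = ["TF1", "TF2", "G1", "G2"]
prior_adjacency = [
    [0, 1, 0, 0],
    [0, 0, 1, 1],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]
implicit = two_hop_implicit_links(genes, prior_adjacency)
print(implicit)
```

Here TF1 has no direct edge to G1 or G2 in the prior, yet both are reachable through TF2, which is the kind of latent dependency the explicit graph alone does not expose.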

Table 3: Key Resources for GRN Inference with Graph Priors

| Resource Name | Type | Function in GRN Inference |

|---|---|---|

| KEGG PATHWAY [9] | Database | Provides a comprehensive collection of known molecular interaction networks and pathways used to build prior knowledge graphs. |

| TRRUST [9] | Database | A curated database of transcriptional regulatory networks, useful for sourcing TF-target relationships for the prior graph. |

| CellMarker 2.0 [9] | Database | Provides cell type-specific marker genes, enabling the refinement of a general knowledge graph into a cell type-specific one. |

| BEELINE [9] [22] | Software Framework | A standardized benchmarking framework for evaluating GRN inference algorithms on common scRNA-seq datasets with ground-truth networks. |

| STRING [22] | Database | A database of known and predicted protein-protein interactions, often used as a ground-truth network for functional evaluation. |

| ChIP-seq Data [22] | Ground-Truth Data | Experimentally derived transcription factor binding sites used as a high-confidence ground-truth network for performance evaluation. |

A Practical Guide to Modern GRN Inference Methods and Tools

Frequently Asked Questions (FAQs)

Q1: What is the primary innovation of the KEGNI framework compared to previous GRN inference methods? KEGNI (Knowledge graph-Enhanced Gene regulatory Network Inference) introduces an integrated approach that combines a Masked Graph Autoencoder (MAE) for learning gene relationships from single-cell RNA sequencing (scRNA-seq) data with a Knowledge Graph Embedding (KGE) model that incorporates structured prior biological knowledge. This combination allows KEGNI to effectively capture complex, non-linear gene regulatory relationships while reducing false positives that commonly occur in co-expression-based methods [9] [23].

Q2: What types of input data does KEGNI require? KEGNI requires scRNA-seq data as its primary input. Additionally, it can incorporate a cell type-specific knowledge graph constructed from biological pathway databases like KEGG PATHWAY and cell type markers from databases such as CellMarker 2.0. The framework is also compatible with paired scRNA-seq and scATAC-seq data, though it performs well with scRNA-seq data alone [9].

Q3: How does KEGNI handle cell type-specificity in GRN inference? KEGNI constructs cell type-specific knowledge graphs by integrating KEGG pathway information with relevant cell type markers identified from the CellMarker 2.0 database. This ensures the inferred networks are context-specific to the biological conditions being studied [9].

Q4: What is the role of the masked autoencoder in KEGNI's architecture? The Masked Graph Autoencoder (MAE) in KEGNI employs a self-supervised learning strategy where it randomly masks a subset of node features (gene expressions) and learns to reconstruct them. This process enables the model to capture meaningful gene regulatory relationships from scRNA-seq data without relying solely on direct correlation patterns [9].

Q5: How does KEGNI's performance compare to other GRN inference methods? According to benchmarks using the BEELINE framework, KEGNI demonstrates superior performance compared to multiple established methods including PIDC, GENIE3, GRNBoost2, scGeneRAI, AttentionGRN, SCODE, PPCOR, and SINCERITIES. It consistently outperformed random predictors across all benchmarks and achieved the best performance in 12 out of 21 benchmarks [9].

Troubleshooting Guides

Issue 1: Poor GRN Inference Performance

Symptoms:

- Low early precision ratio (EPR) scores compared to benchmarks

- High false positive rates in predicted regulatory edges

- Inability to identify known driver genes

Potential Causes and Solutions:

| Cause | Solution |

|---|---|

| Insufficient data quality | Ensure scRNA-seq data is properly normalized and preprocessed. Remove low-quality cells and genes with minimal expression. |

| Suboptimal hyperparameters | Adjust the number of neighbors (k) in the k-NN algorithm used for base graph construction. Typical values range from 5 to 20 [9]. |

| Inadequate knowledge graph | Verify the cell type-specific knowledge graph includes relevant pathways and markers. Expand knowledge sources if necessary. |

| Improper masking ratio | Adjust the feature masking ratio in the graph autoencoder. KEGNI's default parameters typically provide stable performance [9]. |

Issue 2: Computational Performance and Scalability

Symptoms:

- Long training times

- Memory constraints with large datasets

- Difficulty handling datasets with many genes

Optimization Strategies:

| Strategy | Implementation |

|---|---|

| Feature selection | Use the 500-1000 most variable genes as input rather than all detected genes [9]. |

| Graph sparsification | Adjust k-NN parameters to create sparser base graphs while maintaining biological relevance. |

| Modular execution | Run the MAE component independently first, then integrate with KGE if computational resources are limited [9]. |

| Batch processing | For very large datasets, process genes in batches or by chromosomal regions. |
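
The feature selection strategy in the table can be sketched as a simple variance ranking. This is an illustrative simplification on toy data: `top_variable_genes` is a hypothetical helper that ranks by plain population variance, whereas scRNA-seq toolkits usually select highly variable genes with dispersion-based statistics computed on normalized counts.

```python
import statistics


def top_variable_genes(expression, n_top):
    """Return the n_top genes with the largest variance across cells.

    expression: dict mapping gene -> expression values across cells.
    Ties are broken alphabetically so the result is deterministic.
    """
    variances = {
        gene: statistics.pvariance(values) for gene, values in expression.items()
    }
    ranked = sorted(variances, key=lambda gene: (-variances[gene], gene))
    return ranked[:n_top]


# Toy expression values (placeholders).
expression = {
    "A": [0.0, 0.0, 0.0, 0.0],
    "B": [0.0, 10.0, 0.0, 10.0],
    "C": [1.0, 2.0, 1.0, 2.0],
    "D": [0.0, 5.0, 0.0, 5.0],
}
selected = top_variable_genes(expression, n_top=2)
print(selected)
```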

Issue 3: Knowledge Graph Integration Problems

Symptoms:

- Knowledge graph edges have minimal overlap with inferred regulations

- Poor integration of scRNA-seq data with prior knowledge

- Contradictory predictions between data-driven and knowledge-driven components

Resolution Approaches:

| Approach | Description |

|---|---|

| Knowledge graph validation | Ensure the knowledge graph is cell type-specific by incorporating appropriate markers from CellMarker 2.0 [9]. |

| Balance coefficient adjustment | Tune the balancing coefficient (λ) between MAE loss and KGE loss during multi-task learning [9]. |

| Edge filtering | Apply post-processing with tools like RcisTarget (KEGNI*) to prune potentially false positive edges while maintaining coverage [9]. |

Experimental Protocols and Methodologies

KEGNI Workflow Implementation

Diagram Title: KEGNI Framework Workflow

Graph Autoencoder Architecture

Diagram Title: KEGNI Graph Autoencoder Architecture

Performance Benchmarking Protocol

Objective: Evaluate KEGNI's performance against established GRN inference methods using the BEELINE framework [9].

Methodology:

- Dataset Preparation: Utilize 7 scRNA-seq datasets (5 mouse and 2 human cell lines) from BEELINE

- Ground Truth Definition: Collect three distinct ground-truth networks:

- Cell type-specific ChIP-seq networks

- Non-specific ChIP-seq networks

- Functional interaction networks from STRING database

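- Evaluation Metric: Calculate the Early Precision Ratio (EPR): the fraction of true positives among the top-k predicted edges, divided by the fraction expected from a random predictor (in BEELINE, k is the number of edges in the ground-truth network)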

- Comparison Methods: Include PIDC, GENIE3, GRNBoost2, scGeneRAI, AttentionGRN, SCODE, PPCOR, and SINCERITIES

- Statistical Analysis: Perform 10 independent runs and report median performance values
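
As a hedged illustration of the evaluation metric, the sketch below computes EPR for a ranked edge list, taking k as the number of ground-truth edges and the random baseline as the ground-truth network density. The function name, the `n_possible_edges` parameter, and the toy data are assumptions; published numbers should come from the benchmark's own evaluation scripts.

```python
def early_precision_ratio(ranked_edges, gold_edges, n_possible_edges):
    """Early precision of the top-k edges divided by the random expectation.

    k is the number of gold edges; a random predictor is expected to reach a
    precision equal to the network density, k / n_possible_edges.
    """
    gold = set(gold_edges)
    k = len(gold)
    top_k = ranked_edges[:k]
    early_precision = sum(edge in gold for edge in top_k) / k
    random_precision = k / n_possible_edges
    return early_precision / random_precision


# Toy example: 4 gold edges out of 20 possible TF-gene pairs.
gold = {("TF_A", "G1"), ("TF_A", "G2"), ("TF_B", "G1"), ("TF_B", "G3")}
ranked = [
    ("TF_A", "G1"),  # gold
    ("TF_A", "G4"),
    ("TF_A", "G2"),  # gold
    ("TF_B", "G5"),
    ("TF_B", "G1"),  # gold, but outside the top k
    ("TF_B", "G3"),  # gold, but outside the top k
]
epr = early_precision_ratio(ranked, gold, n_possible_edges=20)
print(epr)
```

An EPR of 1 corresponds to random performance, so values above 1 indicate that the top of the ranking is enriched for true edges.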

Implementation Details:

- Construct cell type-specific knowledge graphs for each dataset

- Ensure minimal overlap (<3%) between knowledge graph edges and ground truths

- Use default KEGNI parameters unless specified otherwise
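
A leakage check of the kind listed above could look like the following sketch. How the overlap is normalized is an assumption here: the example reports the share of knowledge graph edges that also appear in the ground truth and treats edges as directed. The `overlap_fraction` helper and the synthetic edges are hypothetical.

```python
def overlap_fraction(knowledge_edges, ground_truth_edges):
    """Fraction of knowledge-graph edges that also occur in the ground truth."""
    knowledge = set(knowledge_edges)
    if not knowledge:
        return 0.0
    return len(knowledge & set(ground_truth_edges)) / len(knowledge)


# Synthetic example: 100 knowledge-graph edges, 2 of which are in the ground truth.
knowledge_edges = [(f"K{i}", f"T{i}") for i in range(100)]
ground_truth_edges = [("K0", "T0"), ("K1", "T1"), ("X", "Y")]

overlap = overlap_fraction(knowledge_edges, ground_truth_edges)
print(f"overlap: {overlap:.1%}")
```

Keeping this fraction low guards against a prior that simply restates the edges used for scoring.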

Performance Data and Comparison

Benchmark Results Across Methods

Table 1: Early Precision Ratio (EPR) Performance Comparison Across GRN Inference Methods [9]

| Method | Average EPR | Performance Range | Consistency Score | Key Strengths |

|---|---|---|---|---|

| KEGNI | 2.85 | 1.92-3.75 | High | Best overall performance, robust across cell types |

| MAE (KEGNI component) | 2.42 | 1.65-3.20 | High | Effective without external knowledge |

| GENIE3 | 1.95 | 0.85-2.95 | Medium | Top performer in 4 benchmarks |

| PIDC | 1.78 | 0.72-2.65 | Medium | Best in 1 benchmark |

| GRNBoost2 | 1.82 | 0.80-2.70 | Medium | Good with large datasets |

| scGeneRAI | 1.88 | 0.78-2.82 | Medium | Interpretable predictions |

| AttentionGRN | 1.91 | 0.82-2.88 | Medium | Captures complex dependencies |

Hyperparameter Sensitivity Analysis

Table 2: KEGNI Hyperparameter Optimization Guidelines [9]

| Parameter | Default Value | Recommended Range | Effect on Performance | Stability Assessment |

|---|---|---|---|---|

| k-NN neighbors | 10 | 5-20 | Moderate impact | Stable within range |

| Masking ratio | 0.3 | 0.2-0.5 | Low to moderate impact | Very stable |

| λ (MAE-KGE balance) | 0.7 | 0.5-0.9 | High impact | Optimal at 0.6-0.8 |

| Embedding dimension | 128 | 64-256 | Low impact | Very stable |

| Training epochs | 300 | 200-500 | Moderate impact | Stable after 250 |

Multi-Modal Data Integration Performance

Table 3: KEGNI Performance with Different Data Modalities [9]

| Data Input | AUPR Score | EPR Score | Recall | Best Use Cases |

|---|---|---|---|---|

| scRNA-seq only | 0.285 | 2.85 | 0.324 | Standard GRN inference |

| scRNA-seq + KEGG | 0.312 | 3.15 | 0.358 | Pathway-informed analysis |

| scRNA-seq + scATAC-seq | 0.295 | 2.95 | 0.341 | Chromatin accessibility contexts |

| All integrated data | 0.328 | 3.28 | 0.372 | Comprehensive regulatory mapping |

Research Reagent Solutions

Table 4: Essential Research Resources for KEGNI Implementation [9]

| Resource | Type | Function in KEGNI | Availability |

|---|---|---|---|

| KEGG PATHWAY | Database | Provides prior knowledge for knowledge graph construction | https://www.genome.jp/kegg/ |

| CellMarker 2.0 | Database | Supplies cell type-specific markers for context refinement | http://bio-bigdata.hrbmu.edu.cn/CellMarker/ |

| STRING DB | Database | Functional protein associations for validation | https://string-db.org/ |

| BEELINE | Benchmark | Framework for performance evaluation and comparison | https://github.com/Murali-group/Beeline |

| Graph Autoencoder | Algorithm | Learns gene representations from expression data | KEGNI implementation |

| RcisTarget | Tool | Post-hoc pruning of predicted edges to reduce false positives | https://bioconductor.org/packages/RcisTarget |

Gene Regulatory Network (GRN) inference is a fundamental process in computational biology that aims to reconstruct the regulatory rules governing gene expression from experimental data [20]. The advent of single-cell RNA sequencing (scRNA-seq) has provided unprecedented resolution for observing cell-to-cell variability, but the inherent noise, sparsity, and technical confounding factors in this data present significant challenges for accurate GRN inference [3]. Traditional methods often struggle with generalization across diverse cell types and accounting for unseen regulators [20].

A promising strategy to overcome these limitations is the integration of prior knowledge into the inference process [3]. This can include known regulatory interactions from curated databases, experimental multi-omics data (such as chromatin accessibility), or other biological constraints that help narrow the solution space. GRNPT (Gene Regulatory Network inference using Transformer) represents a novel framework that leverages this strategy by integrating large language model (LLM) embeddings from publicly accessible biological data with a temporal convolutional network (TCN) autoencoder to capture regulatory patterns from scRNA-seq trajectories [20] [24]. By combining the ability of LLMs to distill biological knowledge with deep learning methodologies that capture complex patterns in gene expression data, GRNPT overcomes limitations of traditional methods and enables more accurate understanding of gene regulatory dynamics [20].

Frequently Asked Questions (FAQs)

General GRNPT Questions

What is GRNPT and how does it differ from traditional GRN inference methods? GRNPT is a Transformer-based framework that integrates LLM embeddings from biological data and a TCN autoencoder to capture regulatory patterns from scRNA-seq data [20] [24]. Unlike traditional methods that rely solely on expression data, GRNPT incorporates prior biological knowledge through LLM embeddings, which significantly improves its performance and generalizability, especially when training data is limited [20].

What types of prior knowledge does GRNPT incorporate? GRNPT primarily incorporates prior knowledge through LLM embeddings trained on publicly accessible biological data [20]. This can include known regulatory interactions from curated databases, transcription factor binding information, and other functional genomic data that provides context for regulatory relationships.

In what scenarios does GRNPT demonstrate the most significant improvements? GRNPT shows particularly strong performance when training data is limited and in its ability to generalize to previously unseen cell types and regulators [20] [24]. This makes it valuable for studying rare cell types or conditions where comprehensive training data may not be available.

Technical Implementation

What are the key computational components of GRNPT? The GRNPT framework consists of two main components: (1) LLM embeddings that distill biological knowledge from text and sequence data, and (2) a TCN autoencoder that captures regulatory patterns from scRNA-seq trajectories [20]. The Transformer architecture enables the model to effectively integrate these different types of information.

How does GRNPT handle the high dimensionality and sparsity of scRNA-seq data? GRNPT uses a TCN autoencoder specifically designed to capture temporal patterns in scRNA-seq trajectories, which helps address data sparsity by learning meaningful representations of the gene expression dynamics [20]. The integration of prior knowledge through LLM embeddings further regularizes the solution space.

Can GRNPT predict regulatory relationships for novel transcription factors? Yes, one of GRNPT's notable capabilities is its ability to accurately predict regulatory relationships involving previously unseen regulators [20], demonstrating exceptional generalizability beyond the specific examples present in its training data.

Practical Application

What input data formats does GRNPT require? GRNPT requires scRNA-seq trajectory data as primary input, along with access to biological databases or pre-trained embeddings for prior knowledge integration [20]. The specific data preprocessing requirements would depend on the implementation details.

How can researchers validate GRNPT predictions experimentally? Predictions from GRNPT can be validated using standard experimental techniques for verifying gene regulatory interactions, including CRISPR perturbations, chromatin immunoprecipitation (ChIP), and reporter assays. The high accuracy demonstrated by GRNPT across diverse cell types provides confidence in its predictions [20].

Troubleshooting Guides

Data Preparation Issues

Problem: Inconsistent results when using different scRNA-seq datasets

| Possible Cause | Solution | Verification Method |

|---|---|---|

| High technical variability between datasets | Apply robust normalization and batch correction techniques | Check for consistent performance after normalization |

| Differences in gene coverage | Ensure consistent gene sets across comparisons | Verify gene overlap between datasets |

| Variable data sparsity patterns | Implement imputation methods designed for scRNA-seq | Compare results before and after imputation |

Problem: Poor integration of prior knowledge sources

| Symptom | Diagnostic Check | Resolution |

|---|---|---|

| Model fails to leverage known regulatory interactions | Verify format and completeness of prior knowledge database | Curate specific, high-confidence interactions from multiple sources |

| Conflicting information between knowledge sources | Assess consistency across different databases | Implement confidence-weighted integration of different sources |

| Mismatch between prior knowledge and expression data | Check for tissue/cell type specificity of prior knowledge | Use context-specific prior knowledge where available |
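
The confidence-weighted integration suggested in the table can be sketched as a weighted average over sources. The scheme below is one possible choice, not a prescribed method: each source gets a trust weight, and an edge missing from a source counts as zero support from that source. The source names, weights, and confidences are invented.

```python
def integrate_sources(sources, source_weights):
    """Combine per-source edge confidences into one weighted score per edge.

    sources: dict mapping source name -> {edge: confidence in [0, 1]}.
    source_weights: dict mapping source name -> trust weight.
    An edge absent from a source contributes zero for that source.
    """
    total_weight = sum(source_weights[name] for name in sources)
    combined = {}
    for name, edges in sources.items():
        for edge, confidence in edges.items():
            combined[edge] = combined.get(edge, 0.0) + source_weights[name] * confidence
    return {edge: score / total_weight for edge, score in combined.items()}


# Hypothetical sources that partly disagree about which edges exist.
sources = {
    "db_a": {("TF_A", "G1"): 1.0, ("TF_A", "G2"): 0.5},
    "db_b": {("TF_A", "G1"): 0.5},
}
source_weights = {"db_a": 2.0, "db_b": 1.0}

prior_scores = integrate_sources(sources, source_weights)
for edge, score in sorted(prior_scores.items()):
    print(edge, round(score, 3))
```

Edges supported by several trusted sources end up with higher prior weight, while edges reported by a single source are kept but down-weighted rather than discarded.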

Model Performance Issues

Problem: Limited generalizability to unseen cell types

- Check training data diversity: Ensure training encompasses multiple cell types

- Validate prior knowledge relevance: Confirm biological priors are applicable to target cell type

- Adjust regularization parameters: Increase regularization to prevent overfitting to training cell types

- Progressive evaluation: Test on increasingly distant cell types from training set

Problem: High computational resource requirements

| Component | Resource-Intensive Aspect | Optimization Strategy |

|---|---|---|

| LLM Embeddings | Loading large pre-trained models | Use distilled versions of models; cache embeddings |

| TCN Autoencoder | Processing long scRNA-seq trajectories | Implement strategic downsampling; use efficient convolution |

| Transformer Integration | Attention mechanism computation | Employ efficient attention variants; reduce sequence length |

Interpretation Challenges

Problem: Difficulties in interpreting model predictions

- Visualize attention patterns: Examine which parts of input sequence most influence predictions

- Ablation studies: Systematically remove prior knowledge components to assess contribution

- Compare with known biology: Check if predictions align with established regulatory relationships

- Generate confidence scores: Implement uncertainty quantification for predictions

Experimental Protocols

GRNPT Implementation Workflow

Step 1: Data Preparation and Preprocessing

- Collect scRNA-seq trajectory data representing the biological system of interest

- Perform quality control, normalization, and imputation for missing values

- Format expression matrices for temporal analysis, preserving cell state transitions

Step 2: Prior Knowledge Acquisition

- Extract biological knowledge from publicly available databases (e.g., transcription factor databases, regulatory interaction databases)

- Generate LLM embeddings using pre-trained biological language models

- Align prior knowledge with genes present in expression data

Step 3: Model Configuration

- Initialize TCN autoencoder with architecture appropriate for sequence length in scRNA-seq trajectories

- Configure Transformer components for integrating expression patterns and biological embeddings

- Set hyperparameters based on dataset size and complexity

Step 4: Model Training and Validation

- Implement cross-validation strategy appropriate for temporal data

- Monitor training to ensure proper integration of prior knowledge without overfitting

- Validate intermediate predictions against held-out data

Step 5: Network Inference and Interpretation

- Extract regulatory relationships from trained model

- Apply statistical thresholds for edge inclusion in final network

- Interpret results in biological context of studied system

Validation Experiment Design

Protocol for Experimental Validation of GRNPT Predictions

Objective: Confirm accuracy of novel regulatory relationships predicted by GRNPT using orthogonal experimental methods.

Materials:

- Cell line or primary cells relevant to biological context

- Reagents for CRISPR-based perturbation (see Research Reagent Solutions table)

- qPCR or RNA-seq supplies for measuring expression changes

- Antibodies for chromatin immunoprecipitation if applicable

Procedure:

- Select high-confidence novel predictions from GRNPT output

- Design guide RNAs targeting predicted transcription factors

- Implement CRISPR-based knockout or inhibition of selected regulators

- Measure expression changes in predicted target genes using qPCR or RNA-seq

- Compare observed regulatory effects with GRNPT predictions

- For direct binding predictions, perform ChIP-seq for transcription factors where antibodies are available

Expected Results: Successful validation should show concordance between GRNPT predictions and experimental observations, with statistically significant effects on target gene expression following perturbation of predicted regulators.
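Concordance between predictions and perturbation results can be summarized with a simple sign test. The sketch below assumes each prediction is coded as +1 (activator) or -1 (repressor) and that knocking out an activator should lower its target's expression (negative log2 fold change), and vice versa. The data are invented, and the test ignores per-gene significance, so treat it as a first-pass summary only.

```python
from math import comb


def knockout_concordance(predicted_effects, observed_log2fc):
    """Count direction-consistent targets and return a one-sided binomial p-value."""
    # After knockout, an activator's target should go down and a repressor's target up,
    # so a concordant pair has predicted effect and observed change of opposite sign.
    hits = sum(1 for s, fc in zip(predicted_effects, observed_log2fc) if s * fc < 0)
    n = len(predicted_effects)
    # Probability of at least `hits` concordant pairs if directions were random (p = 0.5).
    p_value = sum(comb(n, k) for k in range(hits, n + 1)) / 2 ** n
    return hits, n, p_value


# Hypothetical predictions (+1 activation, -1 repression) and post-knockout log2 fold changes.
predicted = [+1, +1, -1, +1, -1, +1, +1, -1]
observed = [-1.2, -0.8, 0.9, -0.3, 1.5, 0.4, -2.0, 0.7]

hits, n, p_value = knockout_concordance(predicted, observed)
print(f"{hits}/{n} concordant, one-sided p = {p_value:.4f}")
```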

Research Reagent Solutions

| Reagent/Category | Function in GRNPT Workflow | Example Applications |
|---|---|---|
| scRNA-seq Platforms | Generate primary input data for GRN inference | 10x Genomics, Smart-seq2 for trajectory data |
| Biological Databases | Source of prior knowledge for LLM embeddings | ENCODE, JASPAR, TRRUST, RegNetwork |
| Pre-trained LLMs | Provide biological context embeddings | ProtTrans, DNABERT, other biologically-trained transformers |
| Perturbation Tools | Experimental validation of predictions | CRISPR-Cas9, siRNA, small molecule inhibitors |
| Validation Assays | Confirm regulatory relationships | qPCR, RNA-seq, ChIP-seq, reporter assays |
| Computational Frameworks | Implementation of GRNPT architecture | PyTorch, TensorFlow with transformer extensions |

Performance Metrics and Benchmarks

Quantitative Comparison of GRN Inference Methods

Table: Performance Comparison of GRNPT Against Other Methods [20]

| Method | AUPRC | Generalization to Unseen Cell Types | Performance with Limited Data |
|---|---|---|---|
| GRNPT | 0.89 | Excellent | High |
| Supervised Methods | 0.72-0.81 | Variable | Poor to Moderate |
| Unsupervised Methods | 0.65-0.78 | Limited | Moderate |
| Correlation-based | 0.58-0.70 | Poor | Poor |
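For readers who want to compute AUPRC on their own predictions, a common estimator is average precision: the mean of the precision values at the rank of each true edge. The sketch below is a generic implementation on invented scores and labels. It does not reproduce the table values, and it does not treat tied scores specially.

```python
def average_precision(scores, labels):
    """Average precision over a ranked edge list (labels: 1 = true edge, 0 = false edge)."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    true_positives = 0
    precision_sum = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            true_positives += 1
            precision_sum += true_positives / rank
    if true_positives == 0:
        raise ValueError("at least one positive label is required")
    return precision_sum / true_positives


# Hypothetical edge scores and ground-truth labels.
scores = [0.9, 0.8, 0.7, 0.6, 0.5]
labels = [1, 0, 1, 0, 0]

ap = average_precision(scores, labels)
baseline = sum(labels) / len(labels)  # expected precision of a random ranking
print(f"average precision = {ap:.3f}, random baseline = {baseline:.3f}")
```

Because true regulatory edges are rare, comparing against the random baseline (the fraction of positives) is usually more informative than reading the raw value alone.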

Implementation Requirements

Table: Technical Specifications for GRNPT Deployment

| Component | Minimum Requirements | Recommended Specifications |
|---|---|---|
| Memory | 16 GB RAM | 32+ GB RAM |
| Storage | 100 GB free space | 500 GB+ free space |
| GPU | Not required | NVIDIA GPU with 8+ GB VRAM |
| Biological Data | scRNA-seq dataset | Multiple scRNA-seq datasets with trajectories |
| Prior Knowledge | Basic TF databases | Comprehensive multi-omics databases |

Troubleshooting Guide: Common Issues in Hybrid GRN Inference

Q1: My hybrid model is overfitting on limited training data for a non-model plant species. How can I improve its generalization?

A: Employ a transfer learning strategy. Leverage knowledge from a data-rich source species to improve performance in a target species with limited data [25].

- Diagnosis: Overfitting typically occurs when a model has too many parameters relative to the amount of training data. In GRN inference for non-model species, the number of experimentally validated regulatory pairs is often small [25].

- Solution Protocol:

- Select a Source Model: Choose a pre-trained hybrid model (e.g., CNN-ML) that was trained on a well-characterized, data-rich species like Arabidopsis thaliana [25].

- Prepare Target Data: Preprocess your target species transcriptomic data (e.g., poplar or maize). This involves raw read alignment, gene-level count quantification, and normalization using a method like TMM [25].

- Model Transfer: Apply the source model to the preprocessed target species data. The model will use the features learned from Arabidopsis to infer regulatory relationships in the new species.

- Fine-Tuning (Optional): If enough experimentally validated regulatory pairs exist for the target species, fine-tune the transferred model on them to adjust its parameters.
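The transfer-then-fine-tune logic can be illustrated with a deliberately small stand-in model. The sketch below swaps the CNN-ML hybrid for a plain logistic regression trained by gradient descent, purely to show the pattern: train on source-species pairs, then continue training from those weights on a few target-species pairs with a smaller learning rate. The feature names and all numbers are hypothetical.

```python
from math import exp, log


def sigmoid(z):
    return 1.0 / (1.0 + exp(-z))


def log_loss(weights, features, labels):
    """Mean binary cross-entropy of a logistic model."""
    total = 0.0
    for x, y in zip(features, labels):
        p = sigmoid(sum(w * xi for w, xi in zip(weights, x)))
        total -= y * log(p) + (1 - y) * log(1 - p)
    return total / len(labels)


def gradient_descent(weights, features, labels, lr, epochs):
    """Full-batch gradient descent starting from the given weights."""
    weights = list(weights)
    for _ in range(epochs):
        grad = [0.0] * len(weights)
        for x, y in zip(features, labels):
            err = sigmoid(sum(w * xi for w, xi in zip(weights, x))) - y
            for j, xi in enumerate(x):
                grad[j] += err * xi / len(labels)
        weights = [w - lr * g for w, g in zip(weights, grad)]
    return weights


# Hypothetical TF-target pair features: [bias, co-expression, motif score]; label 1 = regulatory.
source_x = [[1, 0.9, 0.8], [1, 0.8, 0.6], [1, 0.7, 0.9],
            [1, 0.2, 0.1], [1, 0.1, 0.3], [1, 0.3, 0.2]]
source_y = [1, 1, 1, 0, 0, 0]
target_x = [[1, 0.6, 0.9], [1, 0.7, 0.4], [1, 0.4, 0.2], [1, 0.2, 0.5]]
target_y = [1, 1, 0, 0]

# Train on the data-rich source species.
source_weights = gradient_descent([0.0, 0.0, 0.0], source_x, source_y, lr=0.5, epochs=200)
# Fine-tune: start from the source weights, few epochs, small learning rate.
tuned_weights = gradient_descent(source_weights, target_x, target_y, lr=0.05, epochs=20)

print("target loss before fine-tuning:", log_loss(source_weights, target_x, target_y))
print("target loss after fine-tuning: ", log_loss(tuned_weights, target_x, target_y))
```

Keeping the fine-tuning learning rate and epoch count small limits how far the model drifts from what it learned on the source species, which matters when the target labels are few.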

Q2: The predictions from my hybrid model lack interpretability. How can I identify the most important transcription factors?

A: Utilize the ranking capability inherent in well-designed hybrid models. These models can prioritize key regulators in their candidate lists [25].

- Diagnosis: Deep learning components can sometimes act as "black boxes." However, hybrid models that integrate ML can be designed for higher interpretability.

- Solution Protocol:

- Examine Model Output: Analyze the ordered list of candidate regulator-target pairs generated by your model.

- Identify Top Candidates: Prioritize the transcription factors that appear at the top of the ranked list. In the cited plant studies, hybrid models placed known master regulators (e.g., MYB46, MYB83) and upstream regulators (e.g., VND, NST, SND families) at the top of these lists with high precision [25].

- Biological Validation: Focus experimental validation efforts (e.g., ChIP-seq, Y1H) on these top-ranked transcription factors to confirm their regulatory roles efficiently.
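The ranking steps above can be sketched as follows, assuming the model emits `(TF, target, score)` triples. Summarizing each TF by how many of its pairs land in the top-scoring predictions is one possible aggregation and an assumption of this example. The scores and target names are invented.

```python
from collections import defaultdict


def rank_regulators(scored_pairs, top_k=100):
    """Rank TFs by how many of their pairs fall among the top_k highest-scoring predictions."""
    top = sorted(scored_pairs, key=lambda pair: pair[2], reverse=True)[:top_k]
    counts = defaultdict(int)
    for tf, _target, _score in top:
        counts[tf] += 1
    # Sort by count (descending), then by name for a stable order.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical model output; TF_X and the target names are placeholders.
scored_pairs = [
    ("MYB46", "target1", 0.95),
    ("MYB46", "target2", 0.90),
    ("MYB83", "target1", 0.85),
    ("TF_X", "target3", 0.40),
    ("MYB46", "target3", 0.80),
    ("MYB83", "target4", 0.30),
]

ranking = rank_regulators(scored_pairs, top_k=4)
print(ranking)
```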

Experimental Protocol: Implementing a Hybrid CNN-ML Model for GRN Inference

This protocol details the methodology for constructing a Gene Regulatory Network (GRN) using a hybrid approach that combines Convolutional Neural Networks (CNN) with traditional Machine Learning (ML), as validated in recent plant studies [25].

1. Data Collection & Preprocessing

- Objective: To acquire and normalize large-scale transcriptomic data for model training and testing.

- Steps:

- Retrieve Data: Download raw RNA-seq datasets in FASTQ format from public repositories like the NCBI Sequence Read Archive (SRA) [25].

- Quality Control: Use tools like FastQC to assess the quality of raw sequencing reads [25].

- Trim Adaptors: Remove adaptor sequences and low-quality bases using Trimmomatic [25].

- Alignment & Quantification: Map the trimmed reads to a reference genome using STAR and obtain gene-level raw read counts with CoverageBed [25].

- Normalization: Normalize the raw count data using the weighted trimmed mean of M-values (TMM) method in the edgeR package [25].

- Key Materials:

- Computational Resources: High-performance computing cluster.

- Software: SRA-Toolkit, FastQC, Trimmomatic, STAR, CoverageBed, edgeR [25].
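To give a feel for what TMM normalization does, here is a simplified sketch of the scaling-factor calculation for one sample against a reference sample. It is not a replacement for edgeR's `calcNormFactors`: this version trims only on M-values (log ratios), uses an unweighted mean, and omits edgeR's A-value trimming, precision weights, and final rescaling of factors. The counts are invented.

```python
from math import log2


def simple_tmm_factor(sample, reference, trim=0.3):
    """Simplified TMM scaling factor of `sample` relative to `reference`."""
    lib_sample, lib_reference = sum(sample), sum(reference)
    # M-values: log2 ratio of library-size-scaled counts, for genes detected in both samples.
    m_values = sorted(
        log2((s / lib_sample) / (r / lib_reference))
        for s, r in zip(sample, reference)
        if s > 0 and r > 0
    )
    if not m_values:
        raise ValueError("no genes are detected in both samples")
    # Drop the most extreme log ratios on each side, then average the rest.
    cut = int(len(m_values) * trim)
    kept = m_values[cut : len(m_values) - cut]
    return 2 ** (sum(kept) / len(kept))


# Hypothetical counts: the sample matches the reference except for one very highly expressed gene.
reference = [10, 20, 30, 40, 100]
sample = [10, 20, 30, 40, 1000]

factor = simple_tmm_factor(sample, reference)
effective_library_size = sum(sample) * factor
print(f"scaling factor = {factor:.4f}, effective library size = {effective_library_size:.1f}")
```

In this toy case the single dominant gene inflates the raw library size, and the trimmed mean recovers an effective library size close to that of the reference, which is the composition bias that TMM is designed to correct.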

2. Model Architecture & Training

- Objective: To design and train a hybrid model that outperforms traditional ML or DL methods alone.

- Steps:

- Feature Extraction: Use a Convolutional Neural Network (CNN) to learn high-level, non-linear features from the preprocessed gene expression data. The CNN acts as a powerful feature extractor from complex omics data [25].

- Regulatory Prediction: Feed the features extracted by the CNN into a traditional machine learning classifier (e.g., Support Vector Machine, Random Forest) for the final prediction of regulatory relationships (TF-target pairs) [25].

- Model Validation: Evaluate the model on a holdout test dataset. The hybrid CNN-ML model has been shown to achieve over 95% accuracy on such datasets [25].

3. Cross-Species Inference via Transfer Learning

- Objective: To apply a model trained on a data-rich species to a species with limited data.

- Steps:

- Source Model: Start with a hybrid CNN-ML model that has been fully trained and validated on a species like Arabidopsis thaliana [25].

- Target Application: Directly apply this model to the normalized expression data of the target species (e.g., poplar, maize) to infer its GRN [25].

- Performance: This strategy enhances model performance in data-scarce species and demonstrates the feasibility of knowledge transfer [25].

Performance Data of GRN Inference Methods

The following table summarizes the quantitative performance of different computational approaches for GRN inference, highlighting the effectiveness of hybrid and transfer learning models.

Table 1: Comparative Performance of GRN Inference Methods

| Method Type | Key Examples | Reported Accuracy | Key Advantages | Key Challenges |
|---|---|---|---|---|
| Hybrid CNN-ML | CNN combined with ML classifiers [25] | >95% (holdout test) [25] | High accuracy; identifies more known TFs; better ranking of master regulators [25] | Requires large, high-quality labeled datasets [25] |
| Deep Learning (DL) | DeepBind, DeeperBind, DeepSEA [25] | Not reported | Captures non-linear, hierarchical relationships [25] | Can be a "black box"; high computational demand [25] |
| Traditional Machine Learning | GENIE3, TIGRESS, SVM [25] | Not reported | More interpretable than some DL models [25] | May struggle with high-dimensional, noisy data [25] |
| Graph Representation Learning | GRLGRN [26] | 7.3% avg. improvement in AUROC; 30.7% avg. improvement in AUPRC vs. benchmarks [26] | Leverages prior GRN topology; uses attention mechanisms [26] | Designed for single-cell data; complexity can be high [26] |

Workflow Diagram: Hybrid GRN Inference with Transfer Learning

Workflow for Cross-Species GRN Inference

Research Reagent Solutions

Table 2: Essential Materials and Tools for Hybrid GRN Research

| Item Name | Function/Brief Explanation | Example/Note |

|---|---|---|

| Transcriptomic Data | Provides the gene expression profiles used to infer regulatory relationships. | SRA public database (e.g., Arabidopsis, poplar, maize datasets) [25]. |

| Reference Genomes | Essential for aligning RNA-seq reads and assigning them to specific genes. | Species-specific genomes (e.g., TAIR for Arabidopsis, Phytozome for poplar) [25]. |

| Preprocessing Tools | Software for quality control, read trimming, alignment, and expression quantification. | Trimmomatic, FastQC, STAR, CoverageBed [25]. |

| Normalization Algorithm | Corrects for technical variation in sequencing depth and composition across samples. | Weighted Trimmed Mean of M-values (TMM) in edgeR [25]. |

| Hybrid Model Framework | The core computational architecture that combines CNN for feature learning and ML for classification. | Custom implementations in Python (e.g., using TensorFlow/PyTorch and scikit-learn) [25]. |

| Validation Databases | Sources of experimentally validated regulatory interactions for model training and testing. | STRING, cell type-specific ChIP-seq, non-specific ChIP-seq databases [26]. |

Troubleshooting Guides

Common scATAC-seq Data Issues and Solutions

| Problem | Possible Causes | Diagnostic Checks | Recommended Solutions |
|---|---|---|---|
| Low TSS Enrichment Score [27] | Poor signal-to-noise ratio; Uneven fragmentation; Cell type-specific effects. | Check TSS enrichment score (below 6 is a warning) [27]. | Optimize cell viability; Review library preparation protocol to avoid over-tagmentation [27]. |
| Unstable Peak Calling [27] | Improper tool assumptions; High noise levels; Inefficient mitochondrial read removal. | Verify fragment size distribution for nucleosome pattern (~50 bp, ~200 bp, ~400 bp) [27]. | Use Genrich with proper mitochondrial filtering; Consider HMMRATAC for cleaner nucleosome patterns [27]. |
| High Data Sparsity [27] [28] | Low sequencing depth per cell; Inefficient Tn5 tagmentation. | Confirm over 90% zeros in the count matrix [28]. | Apply TF-IDF normalization [27]; Use cluster-wise peak calling to retain rare cell type signals [27]. |
| Poor Replicate Agreement [27] | Variable antibody efficiency (for CUT&Tag); Sample preparation differences; PCR bias. | Check correlation metrics between replicates. | Standardize sample prep protocols; Merge replicates before peak calling to strengthen signal [27]. |
| Inaccurate Differential Analysis [27] [29] | Strong batch effects; Inappropriate peak definition; Low replicate number. | Compare results with bulk ATAC-seq or scRNA-seq if available [29]. | Use methods that support multi-factor testing (e.g., PACS [30]); Increase number of biological replicates. |
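The TF-IDF normalization recommended for sparse data can be sketched as below. This uses one common formulation, log(1 + TF × IDF × scale), where TF is a peak's count divided by the cell's total and IDF is the number of cells divided by the peak's total across cells. Other variants exist, and the scale factor of 10,000 and the tiny count matrix are assumptions for illustration.

```python
from math import log1p


def tfidf(counts, scale=1e4):
    """TF-IDF transform of a cells-by-peaks count matrix given as a list of lists."""
    n_cells = len(counts)
    n_peaks = len(counts[0])
    cell_totals = [sum(cell) for cell in counts]
    peak_totals = [sum(cell[j] for cell in counts) for j in range(n_peaks)]
    result = []
    for cell, total in zip(counts, cell_totals):
        row = []
        for j, value in enumerate(cell):
            if value == 0 or total == 0:
                row.append(0.0)  # zeros stay zero, so sparsity is preserved
                continue
            tf = value / total               # share of this cell's reads in the peak
            idf = n_cells / peak_totals[j]   # rarer peaks get a larger weight
            row.append(log1p(tf * idf * scale))
        result.append(row)
    return result


# Hypothetical matrix: 3 cells x 4 peaks. Peak 0 is open in every cell, peak 2 in only one.
counts = [
    [1, 0, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 2],
]

normalized = tfidf(counts)
for row in normalized:
    print([round(value, 2) for value in row])
```

The transform down-weights peaks that are accessible in nearly every cell and up-weights rarer, more informative peaks, while correcting for differences in per-cell sequencing depth.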

Integration with scRNA-seq Data

| Issue | Challenge | Solution |
|---|---|---|
| False Correlation [27] | Gene activity scores (from scATAC-seq) may not directly predict expression. | Avoid blind trust in activity scores; Validate with multi-omic datasets where possible. |
| Modality Misalignment [31] | Fundamental differences between chromatin accessibility and transcriptional data. | Use integration frameworks like scAttG, which leverage sequence features via deep learning [31]. |
| Joint Embedding Noise [27] | Gene activity matrix or motif scores can be noisy. | Employ specialized integration tools within established packages (e.g., Signac [32]). |

Frequently Asked Questions (FAQs)

General Concepts

Q1: Why is integrating prior knowledge particularly important for analyzing scATAC-seq data?

scATAC-seq data is inherently very sparse and high-dimensional, with over 90% of values in the count matrix being zeros [28]. This sparsity, combined with technical variations like differing sequencing depths, makes it difficult for models to learn robust patterns from data alone. Incorporating prior biological knowledge, such as known transcription factor binding motifs or gene annotations, helps guide the analysis, improves model generalizability, and enhances the interpretability of the results [33] [34].