Inferring Gene Regulatory Networks with S-system Differential Equations: From Stochastic Modeling to Clinical Applications

Abstract

This article provides a comprehensive overview of S-system differential equation models for gene regulatory network (GRN) inference, tailored for researchers and drug development professionals. It explores the foundational principles of S-systems, highlighting their ability to model both production and degradation phases of gene regulation. The content delves into advanced methodological extensions, including stochastic and time-delayed models for handling noisy, real-world biological data. It further examines computational optimization strategies and troubleshooting techniques for large-scale network inference. Finally, the article presents a comparative analysis of S-system performance against other state-of-the-art methods and discusses its validation through applications in cancer research and understanding disease mechanisms, underscoring its potential in biomedical and clinical research.

S-system Fundamentals: Mastering the Mathematical Framework for GRN Dynamics

The S-system formalism is a canonical, non-linear ordinary differential equation (ODE) model within Biochemical Systems Theory (BST) that provides a powerful framework for modeling and analyzing the dynamics of biological networks, including Gene Regulatory Networks (GRNs) [1] [2]. Its name derives from its ability to represent synergistic and saturable system behavior, which is prevalent in biochemical systems. The core structure of an S-system is characterized by a set of coupled ODEs, where the rate of change of each system component is represented as the difference between two aggregated processes: a production term and a degradation term [1]. This formalism is particularly valued in systems biology and GRN inference for its high representational detail, as it can explicitly capture the direction, nature (activation/inhibition), and intensity of regulatory interactions between genes and other biochemical species [3].

The primary application of S-system models lies in the reverse engineering of GRNs from temporal gene expression data [3]. By leveraging its structured form, researchers can translate the challenge of network inference into a parameter estimation problem for the S-system's numerous kinetic parameters. The flexibility of S-system models allows them to simulate and analyze complex system behaviors, including the shift from one steady state to another, a common scenario in biological stress responses or phenotypic changes [1]. Furthermore, the model's mathematical structure offers a significant analytical advantage: steady states can be computed analytically, because a logarithmic transformation of the variables turns the non-linear steady-state equations into a system of linear equations [1]. This property is central to many analyses of biological design and operating principles.

Mathematical Deconstruction of the S-system Equation

The General Equation Form

The time-dependent change of a system variable \( X_i \) (where \( i = 1, \ldots, n \) for \( n \) dependent variables) is governed by the canonical S-system equation [1]:

\[ \dot{X}_i = \alpha_i \prod_{j=1}^{n+m} X_j^{g_{ij}} - \beta_i \prod_{j=1}^{n+m} X_j^{h_{ij}} \]

Here, \( \dot{X}_i = dX_i/dt \) represents the rate of change of the concentration of \( X_i \).

The following table defines the core parameters of the equation:

| Parameter | Symbol | Definition & Biological Interpretation |
|---|---|---|
| Rate Constant | \( \alpha_i \) | A non-negative constant scaling the production rate of \( X_i \). |
| Rate Constant | \( \beta_i \) | A non-negative constant scaling the degradation/consumption rate of \( X_i \). |
| Kinetic Order | \( g_{ij} \) | A real-valued exponent quantifying the effect of \( X_j \) on the production of \( X_i \). A positive \( g_{ij} \) indicates activation, a negative value indicates inhibition, and zero indicates no effect. |
| Kinetic Order | \( h_{ij} \) | A real-valued exponent quantifying the effect of \( X_j \) on the degradation of \( X_i \). A positive \( h_{ij} \) means that \( X_j \) accelerates the degradation of \( X_i \) (a net repressive effect on \( X_i \)), a negative value means that it slows degradation, and zero indicates no effect. |
| System Variable | \( X_j \) | The concentration of a system component. The index \( j = 1, \ldots, n \) corresponds to dependent variables, and \( j = n+1, \ldots, n+m \) corresponds to independent variables (external inputs or fixed conditions). |
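To make the equation and the parameter roles concrete, the following minimal Python sketch (assuming NumPy and SciPy are available) evaluates the S-system right-hand side and integrates it with `scipy.integrate.solve_ivp`. The two-gene network, its parameter values, and the function name `s_system_rhs` are hypothetical and chosen only for illustration. The products are evaluated in log space as \( \exp(\sum_j g_{ij} \ln X_j) \), which is algebraically identical to the power-law product for positive concentrations.

```python
import numpy as np
from scipy.integrate import solve_ivp


def s_system_rhs(t, x, alpha, beta, G, H, x_ind):
    """dX_i/dt = alpha_i * prod_j X_j**g_ij - beta_i * prod_j X_j**h_ij.

    x: the n dependent variables; x_ind: the m independent variables (held constant);
    G, H: kinetic-order matrices of shape (n, n + m).
    """
    # Clip tiny negative values the integrator may produce before taking logs.
    x_all = np.concatenate([np.maximum(x, 1e-12), x_ind])
    log_x = np.log(x_all)
    # prod_j X_j**e_ij equals exp(sum_j e_ij * ln X_j) for positive X.
    production = alpha * np.exp(G @ log_x)
    degradation = beta * np.exp(H @ log_x)
    return production - degradation


# Hypothetical 2-gene network with one constant input X3:
# X2 represses production of X1, X3 activates it; X1 activates production of X2;
# both genes are degraded with first-order kinetics (h_ii = 1).
alpha = np.array([2.0, 1.5])
beta = np.array([1.0, 1.0])
G = np.array([[0.0, -0.5, 1.0],
              [0.8, 0.0, 0.0]])
H = np.array([[1.0, 0.0, 0.0],
              [0.0, 1.0, 0.0]])
x_ind = np.array([1.0])

t_eval = np.linspace(0.0, 20.0, 41)
sol = solve_ivp(s_system_rhs, (0.0, 20.0), [0.5, 0.5], t_eval=t_eval,
                args=(alpha, beta, G, H, x_ind), rtol=1e-8, atol=1e-10)
print(sol.y[:, -1])  # approaches the steady state for these parameters
```

Because independent variables enter only through the fixed vector `x_ind`, the effect of changing an external input can be explored by re-running the integration with a different `x_ind`.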

Steady-State Analysis

A fundamental strength of the S-system formalism is the tractability of its steady-state analysis. The steady state of the system is defined by the condition where all net fluxes vanish, i.e., \( \dot{X}_i = 0 \) for all dependent variables. This leads to [1]:

\[ \alpha_i \prod_{j=1}^{n+m} X_j^{g_{ij}} = \beta_i \prod_{j=1}^{n+m} X_j^{h_{ij}} \]

By taking logarithms of both sides, this non-linear equation becomes linear in the logarithms of the variables [1]. Defining \( y_j = \ln X_j \) gives \( \ln\alpha_i + \sum_j g_{ij} y_j = \ln\beta_i + \sum_j h_{ij} y_j \), i.e., \( \sum_j (g_{ij} - h_{ij}) y_j = \ln(\beta_i/\alpha_i) \). Splitting the sum into dependent and independent variables, the steady-state equations become:

\[ \mathbf{A}_D \mathbf{y}_D + \mathbf{A}_I \mathbf{y}_I = \mathbf{b} \]

where \( \mathbf{A}_D \) and \( \mathbf{A}_I \) are matrices of kinetic-order differences \( a_{ij} = g_{ij} - h_{ij} \) for dependent and independent variables, respectively, \( \mathbf{y}_D \) is the vector of log-dependent variables, \( \mathbf{y}_I \) is the vector of log-independent variables, and \( \mathbf{b} \) is a vector with components \( b_i = \ln(\beta_i / \alpha_i) \) [1]. This linearized form enables the use of powerful matrix algebra and linear programming techniques to explore the admissible steady-state operating space of a biological system.
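A short NumPy sketch of the log-linear steady-state calculation follows. It assumes a hypothetical two-gene system with one constant input and a non-singular \( \mathbf{A}_D \), solves \( \mathbf{A}_D \mathbf{y}_D = \mathbf{b} - \mathbf{A}_I \mathbf{y}_I \), and then checks that the production and degradation fluxes balance at the result.

```python
import numpy as np

# Hypothetical 2-gene S-system with one constant input; G and H have shape (n, n + m),
# with the first n columns referring to dependent variables.
n = 2
alpha = np.array([2.0, 1.5])
beta = np.array([1.0, 1.0])
G = np.array([[0.0, -0.5, 1.0],
              [0.8, 0.0, 0.0]])
H = np.array([[1.0, 0.0, 0.0],
              [0.0, 1.0, 0.0]])
x_ind = np.array([1.0])

A = G - H                        # a_ij = g_ij - h_ij
A_D, A_I = A[:, :n], A[:, n:]    # dependent and independent blocks
b = np.log(beta / alpha)         # b_i = ln(beta_i / alpha_i)
y_I = np.log(x_ind)

# Solve A_D y_D = b - A_I y_I for the log steady state, then undo the log.
y_D = np.linalg.solve(A_D, b - A_I @ y_I)
x_ss = np.exp(y_D)

# Check: production and degradation fluxes balance at x_ss.
x_all = np.concatenate([x_ss, x_ind])
production = alpha * np.prod(x_all ** G, axis=1)
degradation = beta * np.prod(x_all ** H, axis=1)
print(x_ss)
```

If \( \mathbf{A}_D \) is exactly singular, `np.linalg.solve` raises `LinAlgError`, signalling that the chosen parameters do not define a unique steady state.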

Protocols for S-system-Based GRN Inference

This section provides a detailed experimental and computational workflow for applying S-system models to infer gene regulatory networks from gene expression data.

Protocol 1: Network Inference via Parameter Optimization

Objective: To reconstruct a GRN topology and dynamics by estimating the kinetic parameters of an S-system model from time-course gene expression data.

Materials & Reagents:

- Hardware: A high-performance computing workstation or cluster.

- Software: A numerical computing environment (e.g., MATLAB, Python with SciPy) or specialized optimization software.

- Data: Quantitative, time-course gene expression data (e.g., from RNA-seq or microarrays). The data should ideally have sufficient temporal resolution to capture dynamics.

Procedure:

Problem Formulation:

- Let \( N \) be the number of genes in the network. The goal is to estimate all parameters \( \alpha_i, \beta_i, \{g_{i1}, \ldots, g_{iN}\}, \{h_{i1}, \ldots, h_{iN}\} \) for \( i = 1, \ldots, N \).

- This results in a total of \( 2N + 2N^2 \) parameters to estimate (already 220 parameters for \( N = 10 \)), highlighting the curse of dimensionality that occurs as \( N \) increases [3].

Define the Objective Function:

- The core of the optimization is to minimize the difference between the model's prediction and the experimental data. This is typically done by minimizing the Sum of Squared Errors (SSE) or Mean Squared Error (MSE) [3].

- For a gene \( i \), the error is \( E_i = \sum_{t=1}^{T} [X_{i,\mathrm{exp}}(t) - X_{i,\mathrm{model}}(t)]^2 \), where \( T \) is the number of time points, and \( X_{i,\mathrm{exp}}(t) \) and \( X_{i,\mathrm{model}}(t) \) are the experimental and model-predicted concentrations of gene \( i \) at time \( t \), respectively.

Implement Optimization with Regularization:

- Due to the large number of parameters, standard optimization algorithms can converge slowly or to poor local minima. Incorporate a sparsity-promoting penalty term (e.g., L1 regularization) into the objective function to favor biologically plausible, sparse network structures [3].

- Utilize evolutionary algorithms (e.g., Genetic Algorithms, Differential Evolution) which are effective for high-dimensional, non-convex optimization landscapes [2] [3].

- To address the issue of contradictory kinetic orders (\( g_{ij} \) and \( h_{ij} \) both being significantly non-zero), implement a dynamic penalty term that explicitly penalizes candidate solutions with such invalid interactions, guiding the optimization toward valid regulatory structures [3].
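The objective described in this protocol can be sketched as follows, assuming trajectories are stored as arrays of shape (genes, time points) and kinetic orders as matrices `G` and `H`. The SSE term follows the per-gene error formula given above and the L1 term is a standard sparsity penalty. The contradiction penalty is a hypothetical, magnitude-aware stand-in, since its exact form in [3] is not specified here; the weights `lam` and `mu` and the threshold `tol` are illustrative.

```python
import numpy as np


def sse(x_exp, x_model):
    """Per-gene error E_i = sum_t (X_i,exp(t) - X_i,model(t))**2; arrays are (genes, time points)."""
    return np.sum((x_exp - x_model) ** 2, axis=1)


def l1_penalty(G, H):
    """L1 norm of all kinetic orders, which favours sparse networks."""
    return np.abs(G).sum() + np.abs(H).sum()


def contradiction_penalty(G, H, tol=0.1):
    """Hypothetical magnitude-aware penalty for pairs where both |g_ij| and |h_ij| exceed tol.

    Each offending pair contributes min(|g_ij|, |h_ij|). Self-interactions (i == j) are
    exempt here (an assumption), because first-order self-degradation usually makes h_ii nonzero.
    """
    offending = (np.abs(G) > tol) & (np.abs(H) > tol)
    offending &= ~np.eye(G.shape[0], G.shape[1], dtype=bool)
    return np.minimum(np.abs(G), np.abs(H))[offending].sum()


def penalized_objective(x_exp, x_model, G, H, lam=0.01, mu=1.0, tol=0.1):
    """Total SSE + lam * L1 sparsity penalty + mu * contradiction penalty."""
    return (sse(x_exp, x_model).sum()
            + lam * l1_penalty(G, H)
            + mu * contradiction_penalty(G, H, tol))


# Toy example: gene 2 affects both production (g_12 = 0.6) and degradation (h_12 = 0.4) of gene 1.
G = np.array([[0.0, 0.6], [0.8, 0.0]])
H = np.array([[1.0, 0.4], [0.0, 1.0]])
x_exp = np.array([[1.0, 2.0, 3.0], [0.5, 0.5, 0.5]])
x_model = np.array([[1.0, 2.5, 2.0], [0.5, 0.5, 0.5]])
total = penalized_objective(x_exp, x_model, G, H)
print(total)
```

In an evolutionary algorithm (for example SciPy's `differential_evolution`), `x_model` would be regenerated by simulating each candidate parameter vector before this function is evaluated.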

Model Validation:

- Perform cross-validation by holding out a subset of the time-series data during parameter estimation and testing the model's predictive accuracy on the held-out data.

- Compare the inferred network topology against known, validated interactions from databases to calculate accuracy metrics like Precision, Recall, and F-score [3].
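Given a thresholded inferred network, the topology metrics can be computed as in the sketch below. It assumes adjacency matrices in which entry (i, j) is set when gene j regulates gene i (matching the \( g_{ij} \), \( h_{ij} \) indexing), ignores the sign of the interaction, and excludes self-loops; these are illustrative choices rather than part of the cited protocol. An inferred edge could, for example, be declared when \( |g_{ij}| \) or \( |h_{ij}| \) exceeds a chosen threshold.

```python
import numpy as np


def edge_metrics(true_adj, pred_adj, ignore_self_loops=True):
    """Precision, recall and F-score of predicted edges; adj[i, j] is True if gene j regulates gene i."""
    true_adj = np.asarray(true_adj, dtype=bool)
    pred_adj = np.asarray(pred_adj, dtype=bool)
    if ignore_self_loops:
        off_diag = ~np.eye(true_adj.shape[0], dtype=bool)
        true_adj, pred_adj = true_adj[off_diag], pred_adj[off_diag]
    tp = np.sum(true_adj & pred_adj)
    fp = np.sum(~true_adj & pred_adj)
    fn = np.sum(true_adj & ~pred_adj)
    precision = tp / (tp + fp) if tp + fp > 0 else 0.0
    recall = tp / (tp + fn) if tp + fn > 0 else 0.0
    f_score = (2 * precision * recall / (precision + recall)
               if precision + recall > 0 else 0.0)
    return precision, recall, f_score


# Toy 3-gene example (1-based gene numbers): reference edges 2->1, 3->2, 1->3.
true_adj = np.zeros((3, 3), dtype=bool)
true_adj[0, 1] = true_adj[1, 2] = true_adj[2, 0] = True
# Prediction recovers 2->1 and 3->2 but reports 3->1 instead of 1->3.
pred_adj = np.zeros((3, 3), dtype=bool)
pred_adj[0, 1] = pred_adj[1, 2] = pred_adj[0, 2] = True
precision, recall, f_score = edge_metrics(true_adj, pred_adj)
print(precision, recall, f_score)
```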

Protocol 2: Steady-State Perturbation Analysis

Objective: To identify all admissible strategies (combinations of independent variable changes) that enable a biological system to transition from a normal steady state to a target, perturbed steady state [1].

Materials & Reagents:

- Software: A linear algebra package (e.g., in MATLAB, R, or Python with NumPy/SciPy) capable of matrix inversion and linear programming.

- Data: A validated S-system model for the biological network of interest, including all kinetic orders and rate constants.

Procedure:

Linearize at Steady State:

- Starting from the dynamic model for \( \dot{X}_i \), derive the steady-state equation in its log-linear form: \( \mathbf{A}_D \mathbf{y}_D + \mathbf{A}_I \mathbf{y}_I = \mathbf{b} \) [1].

Define the Perturbation:

- Specify the target steady state \( \mathbf{y}_D^* \) for a subset of dependent variables. This represents the new biological state (e.g., a stress response phenotype).

Characterize the Solution Space:

- The log-linear steady-state equation from the linearization step defines a linear system in which the independent variables \( \mathbf{y}_I \) (e.g., external stimuli, gene knock-downs) act as the control knobs.

- Method A (Matrix Inversion): If the system is square and non-singular in the unknown variables, solve it directly by matrix inversion.

- Method B (Regression/Pseudo-inverse): If the system is over-determined, use regression to find a best-fit solution. If under-determined, use the Moore-Penrose pseudo-inverse to find the solution with the minimum norm [1].

- Method C (Linear Programming): Use Linear Programming (LP) or Mixed-Integer Linear Programming (MILP) to find a solution that minimizes a cost function (e.g., the total absolute change in independent variables), subject to the linear steady-state constraint and bounds on variable values [1].
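Subtracting the baseline steady-state equation from the target one removes \( \mathbf{b} \) (the rate constants are unchanged) and gives \( \mathbf{A}_D \Delta\mathbf{y}_D + \mathbf{A}_I \Delta\mathbf{y}_I = \mathbf{0} \), so the required input changes satisfy \( \mathbf{A}_I \Delta\mathbf{y}_I = -\mathbf{A}_D \Delta\mathbf{y}_D^* \). The sketch below assumes that every dependent variable has a target and uses hypothetical matrices with more inputs than equations (an under-determined case). It contrasts Method B (minimum-norm pseudo-inverse) with one possible Method C formulation that minimizes the total absolute log-change using `scipy.optimize.linprog`, via the standard split \( \Delta\mathbf{y}_I = \mathbf{p} - \mathbf{q} \) with \( \mathbf{p}, \mathbf{q} \ge 0 \). The bound `max_abs_change` is an illustrative assumption.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical log-linear steady-state matrices: 2 dependent variables, 3 independent inputs.
A_D = np.array([[-1.0, -0.5],
                [0.8, -1.0]])
A_I = np.array([[1.0, 0.0, 0.5],
                [0.0, 1.0, 0.5]])

# Target: double X1 (log-change ln 2) while keeping X2 unchanged.
dy_D_target = np.array([np.log(2.0), 0.0])
rhs = -A_D @ dy_D_target          # A_I @ dy_I must equal this vector

# Method B: minimum-Euclidean-norm solution of the under-determined system.
dy_I_pinv = np.linalg.pinv(A_I) @ rhs

# Method C: minimise sum_k |dy_I,k| subject to A_I dy_I = rhs, with dy_I = p - q and p, q >= 0.
m = A_I.shape[1]
max_abs_change = 2.0              # hypothetical bound on each log-change
res = linprog(c=np.ones(2 * m),
              A_eq=np.hstack([A_I, -A_I]), b_eq=rhs,
              bounds=[(0.0, max_abs_change)] * (2 * m),
              method="highs")
dy_I_lp = res.x[:m] - res.x[m:]   # recover dy_I = p - q

print("pseudo-inverse:", dy_I_pinv)
print("L1-minimal (LP):", dy_I_lp, "fold changes:", np.exp(dy_I_lp))
```

The pseudo-inverse spreads the change over all three inputs, whereas the L1 objective tends to favour strategies that adjust as few inputs as possible; here it leaves the third input unchanged.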

Interpretation:

- The solution(s) reveal the necessary adjustments in independent variables to achieve the target state. Comparing the observed biological response (e.g., from transcriptomics) to the computed solution space can yield insights into the cell's evolved operating principles [1].

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources and computational tools essential for conducting S-system-based GRN research.

| Category / Item | Function & Application in S-system Research |
|---|---|
| Computational Tools | |
| Optimization Suites | Software platforms (e.g., MATLAB Optimization Toolbox, Python's SciPy, R) are used to implement evolutionary algorithms and other global optimization methods for estimating S-system parameters [3]. |
| High-Performance Computing (HPC) Cluster | Essential for handling the computational burden of parameter estimation in large networks (e.g., >20 genes), because the number of parameters grows quadratically with network size (2N + 2N² for N genes) [3]. |
| Specialized GRN Software | Tools like the dyngen simulator can generate realistic synthetic single-cell RNA-seq data for benchmarking S-system inference methods against known ground-truth networks [4]. |
| Data Sources | |
| Temporal Expression Data | Time-course gene expression data (from microarrays or RNA-seq) serves as the primary input for fitting the dynamic S-system model. |
| Prior Knowledge Databases | Structured databases of known gene interactions (e.g., from KEGG, Reactome) and text-mined information from literature (e.g., using BioBERT-based tools like PRESS) can be used to constrain the model, drastically reducing the solution space and improving inference accuracy [5]. |
| Conceptual Frameworks | |
| Biochemical Systems Theory (BST) | The overarching theoretical framework that provides the foundation for the S-system formalism and its application to biological network analysis [1]. |
| Method of Controlled Mathematical Comparisons (MCMC) | A rigorous methodology used to compare the performance of different network structures or operating strategies against objective criteria of functional effectiveness (e.g., robustness, response time) [1]. |

Advanced Applications and Considerations

Integration of Prior Knowledge

A significant challenge in S-system modeling is the high computational cost of parameter estimation. The PRESS (Prior Knowledge Enhanced S-system) methodology addresses this by automating the integration of biologically relevant prior knowledge extracted from published literature using a BioBERT-based natural language processing (NLP) framework [5]. This approach identifies regulatory genes and their potential interactions based on co-occurrence in literature, which is then incorporated into the optimization process as constraints or priors. This reduces the number of parameters to be estimated, leading to substantial reductions in computational cost and simultaneous improvements in prediction accuracy [5].

Handling Invalid Kinetic Orders

A common issue in S-system optimization is the convergence of the independently estimated kinetic orders \( g_{ij} \) and \( h_{ij} \) to values that imply biologically contradictory regulation (e.g., both strong activation and inhibition between the same gene pair). Advanced strategies to mitigate this include [3]:

- Combined Parameter (\( w_{ij} \)): Using a single derived parameter \( w_{ij} \) that combines \( g_{ij} \) and \( h_{ij} \) to represent the net regulatory effect.

- Dynamic Penalty Term: Incorporating a novel, magnitude-aware penalty term into the fitness function that explicitly penalizes candidate solutions based on the number and severity of such invalid interactions, systematically guiding the optimization toward valid networks.

Performance and Evaluation

When applying S-system models for GRN inference, it is critical to evaluate performance using standardized metrics. Benchmarking studies on both simulated and real-world data (e.g., ovarian cancer transcriptomics) have shown that prediction accuracy is highly dependent on data features, network size, and topology [6]. Standard evaluation metrics include the Area Under the Receiver Operating Characteristic Curve (AUC), which allows for a threshold-independent comparison of methods, as well as the F-score, which provides a single measure of accuracy balancing precision and recall [6].
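The AUC can be computed without choosing a threshold by ranking candidate edges by a confidence score. The sketch below uses the Mann-Whitney formulation: the AUC equals the probability that a randomly chosen true edge scores higher than a randomly chosen non-edge, with ties counted as one half. Using \( |g_{ij}| + |h_{ij}| \) as the edge score is an illustrative assumption, and the scores and labels are made up.

```python
import numpy as np


def auroc(scores, labels):
    """Area under the ROC curve via the Mann-Whitney statistic (ties count as 1/2)."""
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=bool)
    pos, neg = scores[labels], scores[~labels]
    if pos.size == 0 or neg.size == 0:
        raise ValueError("need at least one true edge and one non-edge")
    # Compare every (true edge, non-edge) pair of scores.
    wins = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return (wins + 0.5 * ties) / (pos.size * neg.size)


# Made-up edge scores (e.g. |g_ij| + |h_ij| per candidate edge) and gold-standard labels.
edge_scores = [0.9, 0.4, 0.3, 0.2, 0.7]
is_true_edge = [1, 1, 0, 0, 0]
auc = auroc(edge_scores, is_true_edge)
print(auc)
```

An AUC of 0.5 corresponds to a random ranking and 1.0 to a perfect separation of true edges from non-edges.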

Gene Regulatory Networks (GRNs) represent the complex interplay of genes, proteins, and other molecules that control cellular functions and responses. The reverse engineering of these networks from experimental data, particularly time-series gene expression data, remains a central challenge in computational systems biology [7] [8]. Among the various mathematical frameworks developed for this purpose, S-system models offer a particularly powerful approach due to their ability to capture the rich, nonlinear dynamics inherent in biological systems [9].

S-system models belong to the class of continuous, ordinary differential equation (ODE) models that provide a quantitative and accurate means to predict system behavior [10]. Their canonical power-law representation strikes an effective balance between biological relevance and mathematical flexibility, making them suitable for representing a wide range of biochemical processes [11] [9]. Unlike simpler linear models or qualitative Boolean networks, S-systems can model the precise kinetic relationships and feedback loops that characterize real biological networks, enabling researchers to move beyond mere topological inference toward truly predictive computational models [10] [8].

Mathematical Foundation of S-system Models

Formal S-system Structure

The S-system formalism models the dynamics of a biochemical network through a set of coupled, nonlinear ordinary differential equations. For a network comprising n molecular species (typically genes or proteins) and m external inputs, the rate of change of each component Xᵢ is described by:

\[ \frac{dX_i}{dt} = \alpha_i \prod_{j=1}^{n+m} X_j^{g_{ij}} - \beta_i \prod_{j=1}^{n+m} X_j^{h_{ij}}, \quad i = 1, 2, \ldots, n \]

Where:

- (X_i) represents the concentration or expression level of the i-th component

- (\alpha_i) is the rate constant for the production term

- (\beta_i) is the rate constant for the degradation term

- (g_{ij}) are the kinetic orders for production

- (h_{ij}) are the kinetic orders for degradation

- (X(0) = X_0) specifies the initial conditions [9] [8]

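The power-law terms capture the aggregated effect of all processes influencing the synthesis and degradation of each component. The kinetic orders (\( g_{ij} \) and \( h_{ij} \)) quantify the strength and direction of influence. For production, positive values of \( g_{ij} \) indicate activating effects, negative values represent inhibitory effects, and values of zero indicate no direct influence; for degradation, a positive \( h_{ij} \) means that \( X_j \) accelerates the removal of \( X_i \) and therefore acts on it repressively [9].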

Advantages of the S-system Formulation

The S-system formalism provides several critical advantages for modeling biological networks:

Universality: The power-law representation can capture a wide range of nonlinear behaviors, giving considerable flexibility in modeling diverse biological phenomena [11]

Biological Interpretability: Unlike black-box models, S-system parameters have direct biological interpretations—kinetic orders quantify regulatory strengths, while rate constants determine reaction velocities [8]

Structured Representation: The explicit separation of production and degradation terms aligns with fundamental biochemical principles [9]

Steady-State Analytics: The structure enables direct analytical solution at steady-state conditions, facilitating system analysis [11]

Comparative Analysis of GRN Modeling Approaches

Table 1: Comparison of GRN Modeling Frameworks

| Model Type | Mathematical Basis | Strengths | Limitations | Representative Methods |
|---|---|---|---|---|
| S-system | Nonlinear ODEs with power-law terms | High biological accuracy; Parameter interpretability; Handles nonlinearity | Computationally intensive; Parameter estimation challenge | MONET [8]; Unified Approach [9] |
| Boolean Networks | Logical rules; Binary states | Conceptual simplicity; Scalability to large networks | Limited quantitative precision; Oversimplification of dynamics | Boolean Network Models [7] |
| Bayesian Networks | Probabilistic graphs; Conditional dependencies | Handles uncertainty; Robust to noise | Primarily static relationships; Limited temporal dynamics | Bayesian Networks [7] |
| Linear ODEs | Linear differential equations | Computational efficiency; Simple parameter estimation | Poor capture of nonlinear biological phenomena | SCODE [10]; GRISLI [10] |
| Neural Network Models | Artificial neural networks; Universal approximators | Handles complex patterns; No predefined structure required | Black-box nature; Limited interpretability | MLP-based ODEs [10]; UDEs [12] |
| Universal Differential Equations (UDEs) | Hybrid mechanistic-ANN models | Combines prior knowledge with data-driven flexibility | Training challenges; Balancing interpretability | UDEs [12] [13] |

Computational Methodologies for S-system Inference

Unified Two-Step Inference Protocol

Wang et al. (2010) developed a unified approach for S-system inference that addresses both large-scale network discovery and detailed parameter estimation [9]:

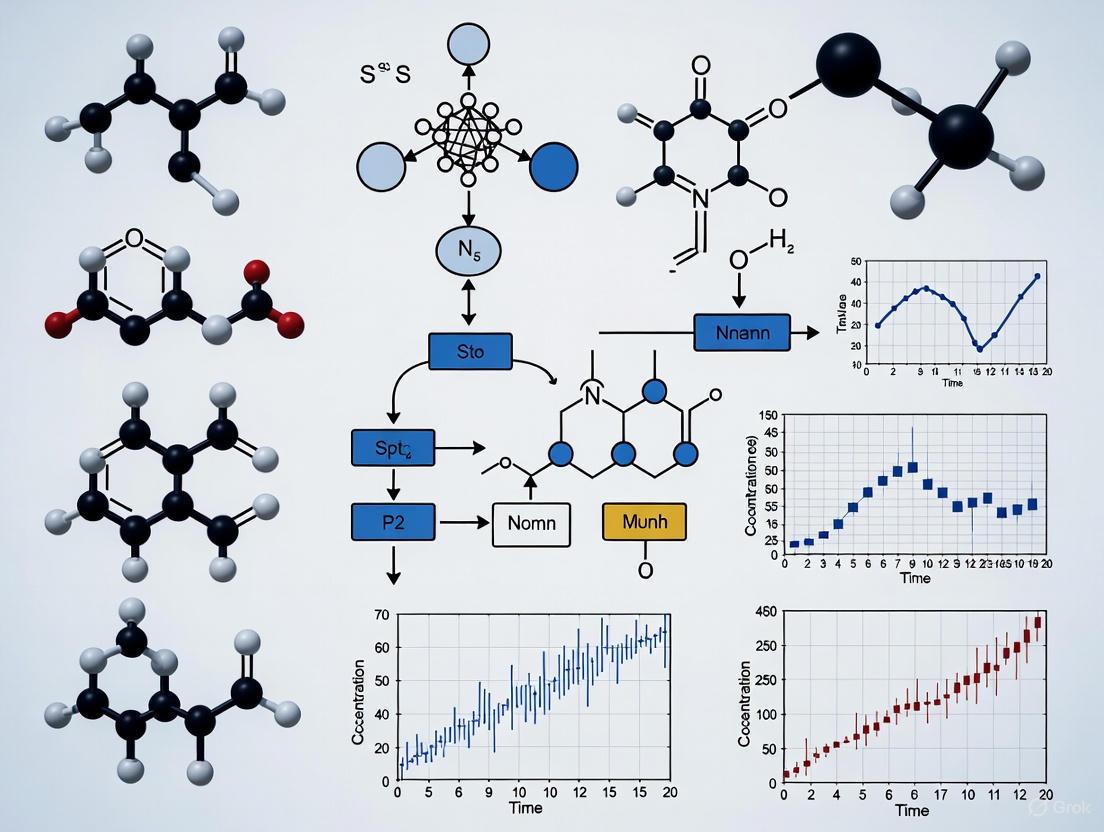

Figure 1: Unified S-system Inference Workflow

Protocol: Simplified S-system for Large-scale Network Inference

Data Preparation

Structure Inference

- Employ simplified S-system model with reduced parameter set

- Use rapid parameter estimation techniques to identify major regulatory interactions

- Focus on determining network topology rather than precise kinetic parameters [9]

Gene Subset Selection

- Based on initial large-scale analysis, select biologically relevant gene subsets for detailed modeling

- Typical subset size: 3-10 genes for detailed S-system analysis [9]

Protocol: Two-Step Detailed Parameter Estimation

Parameter Range Estimation

- Apply genetic programming combined with recursive least squares estimation

- Determine plausible ranges for kinetic parameters (α, β, g, h)

- Objective: Constrain the search space for precise estimation [9]

Precise Parameter Optimization

- Apply multi-dimensional optimization algorithms to kinetic parameters

- Recommended algorithms: Downhill simplex or modified Powell algorithm

- Optimization criterion: Minimize mean squared error between simulated and experimental data [9]
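The second, refinement step can be sketched with `scipy.optimize.minimize` using the bounded Powell method, which SciPy documents as a modification of Powell's method (SciPy also offers `method="Nelder-Mead"` for the downhill simplex). The example is a hypothetical one-gene S-system fitted to noise-free synthetic data generated from \( dX/dt = 2 - X \); the parameter bounds stand in for the ranges that the first step (genetic programming with recursive least squares, not shown) would provide. Clipping \( X \) and returning a large error when integration fails are safeguards added for this sketch.

```python
import numpy as np
from scipy.integrate import solve_ivp
from scipy.optimize import minimize

t_obs = np.linspace(0.0, 5.0, 11)
x0 = 0.5
# Noise-free synthetic data from alpha=2, beta=1, g=0, h=1, i.e. dX/dt = 2 - X.
x_obs = 2.0 - 1.5 * np.exp(-t_obs)


def simulate(params):
    """Integrate dX/dt = alpha * X**g - beta * X**h for one gene; returns None on failure."""
    alpha, beta, g, h = params

    def rhs(t, x):
        xc = np.maximum(x, 1e-12)  # keep the power terms defined
        return alpha * xc ** g - beta * xc ** h

    sol = solve_ivp(rhs, (t_obs[0], t_obs[-1]), [x0], t_eval=t_obs, rtol=1e-7, atol=1e-9)
    if not sol.success or sol.y.shape[1] != t_obs.size:
        return None
    return sol.y[0]


def mse(params):
    """Mean squared error between simulated and observed data (large value if integration fails)."""
    x_sim = simulate(params)
    if x_sim is None or not np.all(np.isfinite(x_sim)):
        return 1e6
    return float(np.mean((x_sim - x_obs) ** 2))


# Hypothetical ranges from step 1: alpha, beta in [0.5, 5], g in [-1, 1], h in [0, 2].
bounds = [(0.5, 5.0), (0.5, 5.0), (-1.0, 1.0), (0.0, 2.0)]
start = np.array([1.5, 0.8, 0.1, 0.8])
initial_mse = mse(start)
res = minimize(mse, start, method="Powell", bounds=bounds,
               options={"xtol": 1e-6, "ftol": 1e-10, "maxfev": 2000})
print(initial_mse, res.fun, res.x)
```

The same pattern extends to small subnetworks by flattening \( \alpha \), \( \beta \), \( G \) and \( H \) into one parameter vector, at the cost of many more function evaluations.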

Multi-Objective Optimization with MONET

The MONET framework represents a state-of-the-art approach for S-system inference using multi-objective optimization [8]:

Figure 2: MONET Multi-Objective Optimization

Protocol: MONET Implementation for S-system Inference

Problem Formulation

- Define the multi-objective optimization problem with two competing objectives:

- Mean Squared Error (MSE): Quantifies difference between simulated and experimental data

- Topology Regularization (TR): Promotes sparse network structures typical of biological systems [8]

Algorithm Configuration

- Algorithm: Multi-Objective Cellular Genetic Algorithm (MOCell)

- Population size: 100 individuals

- Termination: 25,000 function evaluations

- Parameter representation: Direct encoding of S-system parameters (α, β, g, h) [8]

Solution Selection

- Output: Pareto front of non-dominated solutions

- Expert evaluation: Biologists select final network based on biological plausibility and accuracy trade-offs [8]
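Extracting the non-dominated set from a population of candidate networks can be sketched as below, with both objectives minimized. The candidate values are made up, and the topology term is represented here by a hypothetical count of nonzero kinetic orders rather than MONET's exact TR definition.

```python
import numpy as np


def pareto_front(objectives):
    """Indices of non-dominated rows when every objective is minimised."""
    obj = np.asarray(objectives, dtype=float)
    front = []
    for i, oi in enumerate(obj):
        # Row k dominates row i if it is no worse in every objective and better in at least one.
        dominates_i = np.all(obj <= oi, axis=1) & np.any(obj < oi, axis=1)
        if not dominates_i.any():
            front.append(i)
    return front


# Made-up candidates: (MSE, topology term), e.g. a count of nonzero kinetic orders.
candidates = [(0.10, 4), (0.05, 6), (0.20, 3), (0.06, 7), (0.12, 5), (0.02, 10)]
front = pareto_front(candidates)
print(front)
```

Candidates 3 and 4 are dropped because another network is both more accurate and sparser; the remaining ones form the trade-off curve that would be presented for expert selection.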

Bayesian Estimation Methods

Bayesian approaches provide robust parameter estimation for S-system models, particularly handling measurement noise and uncertainty [11] [14]:

Protocol: Variational Bayesian Filter (VBF) for S-system Estimation

State-Space Formulation

- Represent the S-system in state-space form with process and measurement equations

- Include both state variables (biological concentrations) and model parameters in the estimation [11]

Filter Implementation

- Apply Variational Bayesian Filter (VBF) for joint state and parameter estimation

- VBF advantages: Optimal sampling distribution utilizing observed data; improved accuracy over particle filters [11]

Performance Comparison

Experimental Validation and Applications

Benchmark Performance Evaluation

Table 2: S-system Performance on Benchmark Networks

| Network/Dataset | Network Size | Inference Method | Performance Metrics | Key Findings |
|---|---|---|---|---|
| DREAM3 Challenge | 10-100 genes | MONET (Multi-objective) | Competitive accuracy vs. state-of-the-art methods | Robust to noise; Provides trade-off solutions [8] |
| IRMA In Vivo | 5 genes | MONET (Multi-objective) | Accurate topology identification | Successfully captures biological behavior [8] |
| Synthetic 50-Gene | 50 genes | Unified Two-Step Approach | Identifies major interactions | Applicable to larger networks [9] |
| Yeast Protein Synthesis | Small subnetworks | Unified Two-Step Approach | Effective parameter estimation | Validated on real biological data [9] |
| Cad System in E. coli | 4 state variables | Variational Bayesian Filter | Low RMSE for state estimation | Effective for noisy measurements [11] |

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools

| Reagent/Tool | Type | Function/Purpose | Example Sources/Platforms |
|---|---|---|---|
| scRNA-seq Data | Experimental Data | Single-cell resolution gene expression measurements; Input for GRN inference [7] | 10X Genomics; Smart-seq2 |
| Time-Series Expression Data | Experimental Data | Temporal dynamics for inferring causal relationships [9] [8] | Microarrays; RNA-seq |
| DREAM3 Datasets | Benchmark Data | Standardized evaluation of GRN inference methods [8] | DREAM Challenges |
| IRMA Network | Benchmark Data | In vivo validated network for yeast [8] | Cantone et al. 2009 |
| jMetal Framework | Software Library | Multi-objective optimization algorithms [8] | jMetal (http://jmetal.sourceforge.net/) |
| SciML Ecosystem | Software Tools | Differential equation solutions and UDE implementation [12] | Julia SciML Framework |
| TF-TF Interaction Databases | Prior Knowledge | Biological constraints for inference algorithms [15] | Public PPI Databases |

Integration with Emerging Methodologies

Universal Differential Equations (UDEs)

Universal Differential Equations represent a promising hybrid approach that combines mechanistic S-system models with data-driven neural networks [12] [13]:

Protocol: UDE Implementation for Enhanced S-system Modeling

Model Formulation

- Define known mechanistic components using S-system formalism

- Represent unknown or overly complex components with neural networks

- Example: Replace ATP usage and degradation terms with ANN in glycolysis model [12]

Training Pipeline

- Apply multi-start optimization strategy for parameter estimation

- Use specialized solvers (e.g., Tsit5 for non-stiff dynamics, KenCarp4 for stiff biological systems)

- Implement regularization (weight decay) to prevent overfitting and maintain interpretability [12]

Performance Optimization

- Address challenges: Noise, sparse data, and stiff dynamics

- Apply log-transformation for parameters spanning multiple orders of magnitude

- Use maximum likelihood estimation for parameter identification [12]

Symbolic Regression with Biological Priors

LogicSR represents an advanced framework integrating Boolean logic with symbolic regression for GRN inference [15]:

Key Integration Points with S-system Modeling:

- Incorporates prior biological knowledge to guide network inference

- Uses Monte Carlo Tree Search (MCTS) for efficient exploration of regulatory rules

- Balances model accuracy with biological interpretability [15]

S-system models continue to provide a powerful framework for capturing the nonlinear dynamics of gene regulatory networks, bridging the gap between quantitative accuracy and biological interpretability. The ongoing development of computational methods—from multi-objective optimization to hybrid mechanistic-machine learning approaches—addresses the historical challenges of parameter estimation and scalability.

Future research directions should focus on enhanced integration with single-cell multi-omics data, improved handling of stochastic cellular processes, and development of more efficient optimization algorithms capable of handling genome-scale networks. Furthermore, the incorporation of additional biological constraints and prior knowledge will be essential for improving inference accuracy while maintaining biological plausibility.

As systems biology continues to generate increasingly complex and high-dimensional datasets, S-system models, particularly when integrated with complementary approaches like UDEs and symbolic regression, will remain indispensable tools for deciphering the complex regulatory logic underlying cellular function and dysfunction.

Within the broader thesis on S-system differential equation models for Gene Regulatory Network (GRN) inference, the biological interpretation of model parameters is paramount. S-system models, a key class of continuous models in GRN research, are characterized by their power-law formalism, where rate constants and kinetic orders are the fundamental parameters requiring precise biological explanation [7]. Understanding the dynamics of biochemical reactions, which are central to GRN behavior, depends entirely on knowledge of their rate constants [16]. These parameters are not merely abstract numbers; they encode the regulatory logic and kinetic properties of the network. The kinetic orders in an S-system model quantitatively represent the strength and direction (activation or inhibition) of the regulatory influence that one gene or protein exerts on another. Meanwhile, the rate constants encapsulate the inherent turnover rate of each component. The challenge of accurately inferring these parameters from often-noisy experimental data, such as that from scRNA-Seq technology, is a significant focus in modern Systems Biology [7]. Properly interpreting these values allows researchers to move beyond network topology to a functional, predictive understanding of the system, which is crucial for applications like targeted drug development.

Theoretical Foundation: From Biochemical Reactions to S-system Parameters

First-Order and Second-Order Rate Constants

The parameters in complex S-system models are rooted in the rate constants of elementary biochemical reactions. Virtually all biochemical processes can be described by two basic reaction types [16]:

- Second-Order Association Reactions: For a reversible, bimolecular binding reaction in which molecule A binds molecule B to form complex AB (A + B → AB), the forward reaction is a second-order process. Its rate is rate = k+ [A][B], where k+ is the second-order association rate constant with units of M⁻¹s⁻¹. This constant is characteristic of the reaction under specific conditions and scales with the probability of a successful molecular collision and binding event [16].

- First-Order Dissociation and Isomerization Reactions: The reverse reaction, the dissociation of complex AB (AB → A + B), is a first-order reaction. Its rate is rate = k- [AB], where k- is the first-order dissociation rate constant with units of s⁻¹. This constant represents the probability that the complex will spontaneously dissociate in a unit of time. Similarly, conformational changes (isomerization), such as a molecule A transitioning to an active form A*, are also first-order reactions with rate constants describing the probability of that transition per unit time [16].

Table 1: Fundamental Rate Constants and Their Biological Meanings

| Parameter Type | Reaction Type | Units | Biological Interpretation |
|---|---|---|---|
| Association Rate Constant (k+) | Second-Order | M⁻¹s⁻¹ | Efficiency of molecular collision, binding, and complex formation. |
| Dissociation Rate Constant (k-) | First-Order | s⁻¹ | Stability of a molecular complex; probability of spontaneous dissociation. |
| Isomerization Rate Constant | First-Order | s⁻¹ | Rate of conformational change between functional states of a molecule. |

Relating Rate Constants to S-system Formalism

In the S-system formalism, these elementary reactions are synthesized into an aggregated model for each network component. The rate constant in an S-system equation (often denoted as α_i or β_i) is a composite parameter that is functionally related to the underlying association and dissociation rate constants, reflecting the aggregated turnover rate of the species. The kinetic orders (often denoted as g_ij or h_ij), which are exponents in the power-law expression, are similarly aggregated and relate to the sensitivity of a synthesis or degradation rate to a change in concentration of an influencing variable. The power-law approximation allows the complex web of elementary reactions to be represented in a mathematically tractable form that is highly suited for inference from time-course data [16] [7].
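The sensitivity interpretation of a kinetic order can be checked numerically. In BST the kinetic order of a rate \( v \) with respect to a variable \( S \) is the log-log slope \( g = \partial \ln v / \partial \ln S \) at an operating point \( S_0 \), and the matching rate constant makes the power law \( \alpha S^{g} \) agree with \( v \) there. The sketch assumes a hypothetical Michaelis-Menten rate \( v = V_{max} S / (K_M + S) \), for which \( g = K_M / (K_M + S_0) \).

```python
import numpy as np

V_MAX, K_M = 10.0, 2.0  # hypothetical Michaelis-Menten constants


def rate(s):
    """Michaelis-Menten rate v(S) = V_max * S / (K_M + S)."""
    return V_MAX * s / (K_M + s)


s0 = 2.0  # operating point (here S_0 = K_M)

# Kinetic order = d ln v / d ln S at s0, via a central finite difference in log space.
eps = 1e-6
g = (np.log(rate(s0 * np.exp(eps))) - np.log(rate(s0 * np.exp(-eps)))) / (2 * eps)
g_exact = K_M / (K_M + s0)  # analytic log-log slope, 0.5 at S_0 = K_M

# Rate constant chosen so that the power law matches v exactly at s0.
alpha = rate(s0) / s0 ** g


def power_law(s):
    """Local S-system-style approximation v ~ alpha * S**g."""
    return alpha * s ** g


for s in (1.0, 2.0, 2.2, 4.0):
    print(s, rate(s), power_law(s))
```

The approximation is exact at \( S_0 \) and degrades as \( S \) moves away from it (about 0.1% error at \( S = 2.2 \), about 6% at \( S = 4 \)), which is why inferred kinetic orders are best read as local sensitivities around the observed operating range.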

Experimental Protocols for Parameter Inference and Validation

Protocol 1: Transient-State Kinetics for Measuring Fundamental Rate Constants

The following protocol, adapted from Pollard (2010), describes how to measure the basic rate constants that underpin the aggregated parameters in an S-system model [16].

1. Objective: To determine the association (k+) and dissociation (k-) rate constants for a bimolecular binding reaction (e.g., a transcription factor binding to DNA).

2. Key Reagent Solutions:

- Purified Reactants: Highly purified preparations of the interacting molecules (e.g., transcription factor and DNA promoter sequence).

- Stopped-Flow Apparatus: An instrument that rapidly mixes small volumes of reactants and monitors the time course of the reaction.

- Signal Detection System: A method to track complex formation in real-time, such as fluorescence anisotropy, fluorescence resonance energy transfer (FRET), or a change in intrinsic protein fluorescence.

3. Methodology:

a. Experiment Setup: Prepare solutions of molecules A and B at concentrations typically 5-10 times higher than the expected equilibrium dissociation constant (Kd).

b. Rapid Mixing: Use the stopped-flow apparatus to rapidly mix the two solutions and initiate the reaction.

c. Data Collection: Record the signal corresponding to the formation of the complex AB over time, from a few milliseconds to several minutes, depending on the reaction speed.

d. Data Analysis: The time course of the signal (Signal(t)) will typically follow a single exponential approach to equilibrium: Signal(t) = Signal∞ + (Signal0 - Signal∞) * e^(-k_obs * t), where k_obs is the observed rate constant.

e. Determining Constants: Repeat the experiment at several concentrations of B, keeping [A] fixed and B in large excess over A so that the reaction is pseudo-first-order. Under these conditions k_obs = k+ [B] + k-, so a plot of k_obs versus [B] yields a straight line with a slope equal to the association rate constant k+ and a y-intercept equal to the dissociation rate constant k-.

4. Relating to S-system: The measured k+ and k- for key regulatory interactions provide prior knowledge that can constrain and validate the aggregated rate constants and kinetic orders inferred for the larger S-system model.
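The analysis in steps d and e can be sketched in a few lines. This is a minimal illustration under stated assumptions: the plateau Signal∞ is taken as known so each trace can be log-linearized (real, noisy traces are usually fitted by nonlinear least squares instead), and the rate constants and concentrations are hypothetical values used only to generate noise-free synthetic traces.

```python
import math

def fit_line(xs, ys):
    """Ordinary least-squares fit ys = slope * xs + intercept."""
    n = len(xs)
    x_mean, y_mean = sum(xs) / n, sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean

def k_obs_from_trace(times, signal, signal_inf):
    """Estimate k_obs from a single-exponential approach to equilibrium.

    Assumes the plateau signal_inf is known, so that
    ln|Signal(t) - Signal_inf| = ln|Signal_0 - Signal_inf| - k_obs * t
    is linear in t; k_obs is minus the fitted slope.
    """
    ys = [math.log(abs(s - signal_inf)) for s in signal]
    slope, _ = fit_line(times, ys)
    return -slope

def rate_constants(b_concs, k_obs_values):
    """Secondary plot k_obs = k_on * [B] + k_off (B in large excess over A)."""
    k_on, k_off = fit_line(b_concs, k_obs_values)
    return k_on, k_off

if __name__ == "__main__":
    # Synthetic, noise-free traces from hypothetical constants.
    k_on_true, k_off_true = 1.0e6, 0.5          # M^-1 s^-1 and s^-1
    b_concs = [1e-6, 2e-6, 3e-6, 4e-6, 5e-6]   # molar
    times = [0.01 * i for i in range(1, 100)]  # seconds
    k_obs_values = []
    for b in b_concs:
        k = k_on_true * b + k_off_true
        trace = [1.0 - math.exp(-k * t) for t in times]   # Signal_0 = 0, Signal_inf = 1
        k_obs_values.append(k_obs_from_trace(times, trace, signal_inf=1.0))
    print(rate_constants(b_concs, k_obs_values))
```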

Protocol 2: Bayesian Inference of S-system Parameters from Gene Expression Data

This protocol outlines a modern approach to inferring the parameters of an S-system model from noisy high-throughput data, such as scRNA-Seq, using a Bayesian framework to quantify uncertainty [7] [17].

1. Objective: To infer the posterior distributions of rate constants and kinetic orders in an S-system model from gene expression data under perturbation.

2. Key Reagent Solutions:

- Gene Expression Data Matrix: A matrix of gene expression levels (M genes x N experiments or time points) from scRNA-Seq or a similar technology.

- Perturbation Matrix: A design matrix encoding known genetic perturbations (e.g., knockouts, knockdowns) applied during the experiments.

- Computational Toolbox: Software such as GRNbenchmark or a custom implementation of a Bayesian inference algorithm like BiGSM (Bayesian inference of GRN via Sparse Modelling) [17].

3. Methodology:

a. Data Pre-processing: Perform quality control, normalization, and, where needed, smoothing or imputation to address the high dropout rate characteristic of scRNA-Seq data [7].

b. Model Specification: Formulate the S-system differential equations for the network. Place sparse prior distributions (e.g., Laplace or spike-and-slab priors) on the kinetic orders to reflect the biological knowledge that GRNs are typically sparse, with each gene regulated by only a few others [17].

c. Posterior Inference: Use numerical methods (e.g., Markov chain Monte Carlo (MCMC) or variational inference) to compute the joint posterior distribution of all model parameters (rate constants and kinetic orders). This step combines the observed expression data with the prior distributions.

d. Posterior Analysis: Analyze the resulting posterior distributions. The mean or median of a parameter's posterior provides a point estimate, while its variance quantifies the confidence in that estimate. A kinetic order whose posterior is tightly concentrated away from zero provides strong evidence for a specific regulatory link.

4. Outcome: A fully probabilistic S-system model where every parameter is associated with a measure of statistical confidence, allowing for more robust biological interpretations and predictions.
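Steps b through d can be illustrated on the smallest possible case. This is a minimal sketch, not BiGSM: it assumes a single decoupled production term that has already been log-linearized (log-production = log α_i + g_ij log X_j plus Gaussian noise), a flat prior on log α_i, a sparsity-promoting Laplace prior on g_ij, and a random-walk Metropolis sampler written in pure Python. All names and values are hypothetical.

```python
import math
import random

def log_posterior(theta, lx, ly, sigma=0.1, laplace_b=1.0):
    """Unnormalized log posterior for ly = log_alpha + g * lx + N(0, sigma**2),
    with a flat prior on log_alpha and a Laplace(0, laplace_b) prior on g."""
    log_alpha, g = theta
    sse = sum((y - log_alpha - g * x) ** 2 for x, y in zip(lx, ly))
    return -sse / (2.0 * sigma**2) - abs(g) / laplace_b

def metropolis(lx, ly, n_iter=20000, step=0.05, seed=0):
    """Random-walk Metropolis sampler over theta = (log_alpha, g)."""
    rng = random.Random(seed)
    theta = (0.0, 0.0)
    current = log_posterior(theta, lx, ly)
    samples = []
    for _ in range(n_iter):
        proposal = (theta[0] + rng.gauss(0.0, step), theta[1] + rng.gauss(0.0, step))
        candidate = log_posterior(proposal, lx, ly)
        # Accept with probability min(1, exp(candidate - current)).
        if math.log(1.0 - rng.random()) < candidate - current:
            theta, current = proposal, candidate
        samples.append(theta)
    return samples

def summarize(samples, burn_in=5000):
    """Posterior mean and standard deviation of each parameter after burn-in."""
    kept = samples[burn_in:]
    out = []
    for k in range(2):
        vals = [s[k] for s in kept]
        m = sum(vals) / len(vals)
        sd = math.sqrt(sum((v - m) ** 2 for v in vals) / (len(vals) - 1))
        out.append((m, sd))
    return out

if __name__ == "__main__":
    lx = [-0.7 + 1.4 * k / 19 for k in range(20)]  # hypothetical log regulator levels
    ly = [0.5 + 0.8 * x for x in lx]               # noise-free synthetic data, g = 0.8
    (la_mean, la_sd), (g_mean, g_sd) = summarize(metropolis(lx, ly))
    print(f"g = {g_mean:.3f} +/- {g_sd:.3f}")
```

A posterior for g that is narrow and far from zero, as here, is the situation described in step d as strong evidence for a regulatory link.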

Figure 1: A Bayesian workflow for inferring and validating S-system parameters from gene expression data.

Biological Interpretation of Inferred Parameters

A Guide to Interpreting Rate Constants and Kinetic Orders

Once inferred, the model parameters must be translated into biological understanding. The following table provides a guide for this critical interpretation phase.

Table 2: Biological Interpretation of S-system Parameters

| S-system Parameter | Mathematical Role | Biological Interpretation | Example Inference |
|---|---|---|---|
| Rate Constant (α_i) | Aggregated rate of synthesis of species X_i. | Represents the maximum potential production rate of a gene's product, influenced by the promoter's strength and the abundance of RNA polymerase. | A high value suggests strong basal transcription. |
| Kinetic Order (g_ij) | Exponent of variable X_j in the synthesis term of X_i. | Strength and type of regulatory influence of species j on the production of species i. A positive value indicates activation; a negative value indicates inhibition. | g_ij = +0.8 suggests a strong transcriptional activator; g_ij = -0.5 suggests a weaker repressor. |
| Rate Constant (β_i) | Aggregated rate of degradation/consumption of species X_i. | Represents the baseline turnover rate of the species, influenced by protease activity, dilution from cell growth, and spontaneous degradation. | A high value indicates an unstable protein or mRNA with a short half-life. |
| Kinetic Order (h_ij) | Exponent of variable X_j in the degradation term of X_i. | Sensitivity of the degradation rate of species i to the concentration of species j. Often positive, representing enhanced degradation. | A positive h_ij could indicate that a protease (j) targets the protein (i) for breakdown. |

The Scientist's Toolkit: Key Reagents for GRN Inference

Table 3: Essential Research Reagent Solutions for GRN Inference Experiments

| Reagent / Resource | Function in Experimentation | Application Context |
|---|---|---|
| scRNA-Seq Kit | Provides high-resolution gene expression data at the single-cell level, capturing cellular heterogeneity. | The primary data source for inferring GRN dynamics and parameter estimation [7]. |
| CRISPR-Cas9 Knockout Library | Enables systematic perturbation of genes across the network to observe downstream effects, crucial for establishing causality. | Used to generate the perturbation matrix required for robust inference by methods like BiGSM [17]. |
| Unique Molecular Identifiers (UMIs) | Molecular tags that correct for amplification bias in sequencing, providing more accurate quantitative expression counts. | Essential for quality control and pre-processing of scRNA-Seq data to improve parameter inference accuracy [7]. |
| Bayesian Inference Software (e.g., BiGSM) | A computational tool that infers model parameters and provides confidence estimates (posterior distributions) for each prediction. | Used to translate gene expression and perturbation data into a probabilistic S-system model [17]. |

Visualization of a Canonical Regulatory Motif

The following diagram illustrates a simple two-gene regulatory network, a fundamental building block of larger GRNs, and how its dynamics are described by an S-system model. The parameters are annotated with their biological meanings.

Figure 2: A two-gene network where Protein A represses Gene B, modeled with S-system parameters.

Gene Regulatory Network (GRN) inference research, particularly within the S-system differential equation framework, has provided powerful methodologies for reconstructing cellular networks. However, traditional deterministic models often overlook two fundamental biological realities: the inherent stochasticity of molecular interactions and the substantial time delays in transcriptional and translational processes. These omissions limit the predictive power and biological relevance of inferred networks. The intrinsic noise within biochemical systems arises from random fluctuations in gene expression, leading to significant cell-to-cell variability even in clonal populations [18]. Simultaneously, time delays occur naturally in processes such as transcription, translation, and protein transport, creating non-instantaneous relationships between regulatory signals and their functional outcomes [19] [20]. This application note establishes why incorporating these elements is no longer optional but essential for advancing GRN research, particularly for drug development professionals seeking to translate network models into therapeutic insights.

The transition from ordinary differential equations (ODEs) to more biologically realistic frameworks represents a paradigm shift in systems biology. While ODE-based S-system models provide valuable insights through their power-law formalism, they assume instantaneous reactions and deterministic behavior, conditions rarely met in vivo. Time-delayed differential equations (DDEs) and stochastic simulation algorithms (SSAs) address these limitations by explicitly incorporating delayed interactions and random fluctuations [19] [21]. For research focused on therapeutic interventions, such as targeting drug-resistant cancer networks, these advanced modeling frameworks can more accurately predict system responses to perturbations, potentially identifying novel treatment strategies that would be overlooked by traditional approaches [21].

The Biological Imperative: Stochasticity and Time Delays in Cellular Systems

The Ubiquity of Stochastic Effects in Gene Regulation

Biological systems are inherently noisy, with stochasticity playing a constitutive role in cellular processes rather than representing mere measurement error. This randomness manifests profoundly in gene expression, where low copy numbers of DNA, mRNA, and proteins lead to significant fluctuations that can drive phenotypic switching and probabilistic cell fate decisions [18]. These stochastic effects create cell-to-cell variability within genetically identical populations, enabling bet-hedging strategies in microbial communities and contributing to fractional killing in cancer therapy [18].

Mathematical frameworks such as the Chemical Master Equation (CME) and stochastic simulation algorithms provide principled approaches to capture these fluctuations. The "bursty" nature of transcription and translation—where proteins are produced in intermittent bursts rather than continuously—generates distributions of molecular counts that deterministic models cannot represent accurately [21]. For drug development professionals, this stochasticity has direct implications for understanding therapeutic resistance and heterogeneous treatment responses observed in clinical settings.
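The difference between continuous and bursty production can be checked numerically with the Gillespie direct method, which samples trajectories whose statistics follow the CME. A minimal sketch with hypothetical rates: constitutive production adds one molecule per event, bursty production adds a geometrically distributed number of molecules (mean b) per event, and both use first-order decay. For these simple models, theory predicts a Fano factor (variance/mean) of 1 for constitutive expression and 1 + b for bursty expression at the same mean.

```python
import math
import random

def geometric(rng, mean):
    """Sample a burst size from {0, 1, 2, ...} with the given mean (inverse CDF)."""
    q = mean / (1.0 + mean)  # probability of adding one more molecule
    return int(math.floor(math.log(1.0 - rng.random()) / math.log(q)))

def simulate_expression(event_rate, mean_burst=None, gamma=1.0, t_end=2000.0, seed=0):
    """Gillespie direct method for production events at rate `event_rate`
    (one molecule each if mean_burst is None, else a geometric burst) and
    first-order decay at rate gamma * n. Returns time-weighted (mean, Fano)."""
    rng = random.Random(seed)
    t, n = 0.0, 0
    s1 = s2 = 0.0  # time integrals of n and n**2
    while True:
        a_prod, a_decay = event_rate, gamma * n
        a_total = a_prod + a_decay
        dt = -math.log(1.0 - rng.random()) / a_total  # exponential waiting time
        held = min(dt, t_end - t)                      # time spent in state n
        s1 += n * held
        s2 += n * n * held
        if t + dt >= t_end:
            break
        t += dt
        if rng.random() * a_total < a_prod:            # choose which reaction fires
            n += 1 if mean_burst is None else geometric(rng, mean_burst)
        else:
            n -= 1
    mean = s1 / t_end
    return mean, (s2 / t_end - mean ** 2) / mean

if __name__ == "__main__":
    print("constitutive:", simulate_expression(10.0))
    print("bursty:      ", simulate_expression(2.0, mean_burst=5.0))
```

Both settings give a mean near 10 molecules, but the bursty case shows a Fano factor several times larger, the kind of distributional difference a deterministic model cannot represent.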

Time Delays as Fundamental Components of Regulatory Logic

Time delays are not incidental artifacts but fundamental features of biological regulation with functional consequences. Transcriptional delays arise from the sequential processes of transcription factor binding, chromatin remodeling, transcription initiation, elongation, and mRNA processing. Translational delays occur during protein synthesis, folding, and post-translational modifications. These delays collectively create significant temporal displacements between regulatory signals and their functional outcomes [19].

From a modeling perspective, these delays fundamentally alter system dynamics. While ODEs assume instantaneous interactions, Delay Differential Equations (DDEs) explicitly incorporate history dependence: the rate of change at time t depends on the state at earlier times, such as x(t - τ). This temporal structure enables behavior that the corresponding ODE cannot produce; for example, a single-gene negative autoregulatory loop, which settles to a steady state when modeled as an ODE, can generate sustained oscillations once the delay is sufficiently long [19] [20]. For core biological motifs like negative feedback, feedforward loops, and cascades, delayed interactions are not merely complications but essential design features that govern dynamics and functional capabilities [20].
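Delay-induced oscillation can be reproduced with a few lines of forward-Euler integration that keep the trajectory as a history buffer. A minimal sketch, assuming a hypothetical negative-autoregulation model with a Hill-type repression term (chosen for illustration, not taken from the cited works) and a constant history before t = 0. With τ = 0 the model is an ODE and relaxes to its steady state; with a long enough delay it oscillates.

```python
def simulate_delayed_repression(tau, beta=10.0, K=1.0, n=4.0, gamma=1.0,
                                x0=0.5, dt=0.01, t_end=100.0):
    """Forward-Euler integration of the delayed negative-autoregulation DDE
        dx/dt = beta / (1 + (x(t - tau) / K)**n) - gamma * x(t)
    with constant history x(t) = x0 for t <= 0. Returns the sampled trajectory."""
    lag = int(round(tau / dt))          # delay expressed in time steps
    steps = int(round(t_end / dt))
    xs = [x0]                           # stored history enables x(t - tau) lookups
    for i in range(steps):
        x_delayed = xs[i - lag] if i >= lag else x0
        dxdt = beta / (1.0 + (x_delayed / K) ** n) - gamma * xs[i]
        xs.append(xs[i] + dt * dxdt)
    return xs

def late_amplitude(xs, frac=0.2):
    """Peak-to-trough range over the final fraction of a trajectory."""
    tail = xs[int(len(xs) * (1.0 - frac)):]
    return max(tail) - min(tail)

if __name__ == "__main__":
    for tau in (0.0, 2.0):
        print(tau, round(late_amplitude(simulate_delayed_repression(tau)), 4))
```

Storing the full trajectory is the simplest way to look up x(t - τ); it is also the "history storage" cost that makes DDE solvers more expensive than ODE solvers.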

Table 1: Comparative Analysis of Modeling Frameworks for GRN Inference

| Model Feature | Traditional ODE/S-system | Time-Delayed (DDE) Framework | Stochastic Framework |
|---|---|---|---|
| Temporal Dynamics | Instantaneous reactions | Explicit time delays incorporated | Markovian processes with random timing |
| Mathematical Representation | dx/dt = f(x(t)) | dx/dt = f(x(t), x(t-τ)) | Probability distributions over states (e.g., the Chemical Master Equation) |
| Noise Handling | Deterministic | Deterministic with history dependence | Intrinsic stochasticity |
| System Outcomes | Fixed points, ODE-driven oscillations | Delay-induced oscillations, history-dependent states | Multimodal distributions, switching behaviors |
| Computational Complexity | Moderate | High (requires history storage) | Very high (multiple simulations needed) |
| Biological Accuracy | Limited for delayed systems | High for transcriptional/translational processes | High for low-copy number situations |

Methodological Approaches and Experimental Protocols

Protocol 1: Implementing Stochastic Simulation for GRN Inference

Principle: Stochastic Simulation Algorithms (SSAs) generate statistically exact sample trajectories of the stochastic time evolution of biochemical systems, capturing the inherent noise in gene regulatory processes [21].

Materials:

- High-performance computing environment (R, Python, or MATLAB)

- DelaySSA software package (available for R, Python, and MATLAB)

- Single-cell RNA-seq time series data

- Known reaction network topology

Procedure:

- System Specification: Define the set of molecular species and their initial quantities. For S-system models, convert power-law equations to reaction-like formalism.

- Reaction Definition: Enumerate all biochemical reactions with their propensity functions. For delayed reactions, specify both the initiation and completion events.

- Delay Parameterization: Assign delay times (τ) for transcription, translation, and other delayed processes based on experimental measurements or literature values.

- Simulation Execution: Run the stochastic simulator using the Next Reaction Method or Delay Direct Method to generate temporal trajectories.

- Ensemble Analysis: Perform multiple independent simulations (typically 1,000-10,000 runs) to characterize the probability distribution of system states.

- Model Validation: Compare the stationary distribution and temporal correlations with experimental single-cell data.

Technical Notes: The DelaySSA package supports two types of delayed reactions: (1) those with immediate reactant consumption and delayed product generation, and (2) those with delayed changes to both reactants and products [21]. For GRN inference, type (1) better represents transcription where transcription factors bind immediately but mRNA production occurs after a delay.
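A type (1) delayed reaction can be illustrated with a small hand-written simulator; this is not the DelaySSA package, whose API is not reproduced here. The minimal sketch assumes a hypothetical scheme: transcription initiation fires at a constant rate and its mRNA product appears only after a fixed delay τ, while mRNA decay is non-delayed. Scheduled completions are kept in a priority queue; when a completion falls before the next tentative reaction time, the completion is applied and the tentative time is discarded, which is valid because exponential waiting times are memoryless.

```python
import heapq
import math
import random

def delayed_ssa(k_init=10.0, gamma=1.0, tau=5.0, t_end=500.0, seed=2):
    """Delayed SSA for a hypothetical two-reaction scheme:
        initiation (rate k_init): schedules one mRNA to appear at t + tau
        decay (rate gamma * n):   removes one mRNA immediately
    Returns the event times and the mRNA count after each event."""
    rng = random.Random(seed)
    t, n = 0.0, 0
    pending = []                      # min-heap of scheduled completion times
    times, counts = [0.0], [0]
    while t < t_end:
        a_total = k_init + gamma * n
        dt = -math.log(1.0 - rng.random()) / a_total
        if pending and pending[0] <= t + dt:
            # A delayed product arrives first: apply it and discard dt.
            t = heapq.heappop(pending)
            n += 1
        else:
            t += dt
            if rng.random() * a_total < k_init:
                heapq.heappush(pending, t + tau)   # product generation is delayed
            else:
                n -= 1
        times.append(t)
        counts.append(n)
    return times, counts

def time_average(times, counts, t_start, t_stop):
    """Time-weighted mean of the piecewise-constant trajectory on [t_start, t_stop]."""
    total = 0.0
    for i in range(len(times) - 1):
        lo, hi = max(times[i], t_start), min(times[i + 1], t_stop)
        if hi > lo:
            total += counts[i] * (hi - lo)
    return total / (t_stop - t_start)

if __name__ == "__main__":
    times, counts = delayed_ssa()
    print("mean mRNA on [50, 500]:", time_average(times, counts, 50.0, 500.0))
```

No mRNA exists before t = τ, after which the count fluctuates around k_init / gamma; ensemble analysis would repeat this with many seeds.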

Protocol 2: Inferring Time-Delayed Regulatory Networks from Time-Series Data

Principle: Dynamic Bayesian Networks (DBNs) with explicit time-delay modeling can reconstruct causal relationships in GRNs while accounting for non-instantaneous regulation [22].

Materials:

- Time-series gene expression data (microarray or RNA-seq)

- Computational resources for structure learning

- DBNCS algorithm implementation or similar tools

Procedure:

- Data Preprocessing: Normalize expression data and address missing values using appropriate imputation methods.

- Search Space Construction: Apply the CMI2NI (conditional mutual inclusive information-based network inference) algorithm to identify potential regulatory relationships, reducing the search space for network reconstruction.

- Redundancy Reduction: Implement recursive optimization to remove spurious connections and minimize false positives.

- Network Decomposition: Partition the potential network into cliques (highly interconnected subgraphs) to reduce computational complexity.

- Directionality Inference: Apply DBN structure learning to establish causal directions within cliques, identifying regulator-target pairs.

- Delay Estimation: Optimize time-delay parameters for each regulatory interaction using maximum likelihood estimation or Bayesian methods.

- Network Validation: Evaluate reconstructed networks against benchmark datasets (e.g., DREAM challenges) or known biological networks (e.g., SOS DNA repair in E. coli) [22].

Technical Notes: The DBNCS hybrid learning method combines constraint-based and score-based approaches, achieving higher accuracy than either method alone [22]. For S-system models, the inferred time-delayed network can provide essential structural constraints for parameter estimation.
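The delay-estimation step can be illustrated for a single regulator-target pair. This is a minimal sketch, not the DBNCS procedure: it assumes the target responds linearly to the lagged regulator, target(t) = a + b · regulator(t - d) plus i.i.d. Gaussian noise, in which case the maximum-likelihood lag (with the same samples used for every candidate) is the one that minimizes the residual sum of squares. The data are hypothetical.

```python
import math

def fit_sse(xs, ys):
    """Residual sum of squares of an OLS fit ys ~ a + b * xs."""
    n = len(xs)
    x_mean, y_mean = sum(xs) / n, sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    b = sxy / sxx
    a = y_mean - b * x_mean
    return sum((y - a - b * x) ** 2 for x, y in zip(xs, ys))

def ml_delay(regulator, target, max_lag):
    """Return the lag d in [0, max_lag] that minimizes the SSE of
    target[t] ~ a + b * regulator[t - d], using t = max_lag, ..., end for every d."""
    best_lag, best_sse = None, float("inf")
    ys = target[max_lag:]
    for d in range(max_lag + 1):
        xs = [regulator[t - d] for t in range(max_lag, len(target))]
        sse = fit_sse(xs, ys)
        if sse < best_sse:
            best_lag, best_sse = d, sse
    return best_lag

if __name__ == "__main__":
    # Hypothetical regulator profile; the target follows it with a lag of 3 samples.
    reg = [math.sin(0.3 * t) + 0.5 * math.sin(0.77 * t) for t in range(60)]
    tgt = [1.0] * 3 + [1.0 + 2.0 * reg[t - 3] for t in range(3, 60)]
    print(ml_delay(reg, tgt, max_lag=6))  # 3
```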

Protocol 3: Neural Network-Based Differential Equations for GRN Reconstruction

Principle: Multilayer perceptrons can approximate complex, nonlinear regulatory functions in differential equations, enabling inference of regulatory relationships from expression data [10].

Materials:

- Time-series gene expression data

- Machine learning framework (PyTorch or TensorFlow)

- Custom implementation of NN-based differential equation solver

Procedure:

- Network Architecture Design: Construct a fully connected neural network with a four-layer structure to represent the regulatory function for each gene.

- Model Training: Train the neural network to predict gene expression at time t+1 from expression at time t, using the loss between predictions and actual measurements.

- Regulatory Relationship Identification: Apply link-knockout techniques by systematically setting gene expressions to zero and observing the effect on synthesis rates of other genes.

- Network Pruning: Eliminate redundant regulatory connections through iterative knockout experiments and sensitivity analysis.

- Functionality Validation: Test whether the reconstructed network maintains expected biological functions (e.g., adaptation, oscillation) under perturbation.

- Model Transfer: Convert the neural network representation to a Hill function-based model for biological interpretation [10].

Technical Notes: This approach effectively handles nonlinear and non-monotonic regulatory relationships that challenge traditional linear models. The method has been successfully applied to reconstruct the core topology of the Xenopus Brachyury expression network [10].
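The link-knockout step only needs the learned regulatory function as a callable. A minimal sketch, assuming a hypothetical closed-form function that stands in for a trained network (training is not shown): each input gene is set to zero in turn, and the mean signed change in the predicted synthesis rate is recorded. Averaging signed changes is a simplification that can mask non-monotonic regulators, which is one reason the protocol pairs knockouts with sensitivity analysis.

```python
def link_knockout(rate_fn, states, tol=1e-9):
    """Knock out each input gene j (set x_j = 0) and record the mean signed
    change rate(x) - rate(x with x_j = 0) over the given expression states.
    Positive values suggest activation, negative values repression."""
    n_genes = len(states[0])
    effects = {}
    for j in range(n_genes):
        total = 0.0
        for x in states:
            knocked = list(x)
            knocked[j] = 0.0
            total += rate_fn(x) - rate_fn(knocked)
        mean_effect = total / len(states)
        if abs(mean_effect) > tol:   # links below tol are pruned
            effects[j] = mean_effect
    return effects

def toy_synthesis_rate(x):
    """Hypothetical stand-in for a trained network's output for one target:
    gene 0 activates, gene 2 represses, gene 1 has no influence."""
    return 2.0 * x[0] ** 2 / (1.0 + x[0] ** 2) / (1.0 + x[2] ** 2)

if __name__ == "__main__":
    states = [[0.5, 1.0, 0.5], [1.0, 2.0, 1.0], [2.0, 0.5, 1.5]]
    print(link_knockout(toy_synthesis_rate, states))
```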

Visualization of Integrated Stochastic and Time-Delayed GRN Framework

Diagram 1: Integrated framework for next-generation GRN modeling

Research Reagent Solutions: Essential Tools for Advanced GRN Modeling

Table 2: Key Computational Tools for Stochastic and Time-Delayed GRN Inference

| Tool/Platform | Function | Application Context | Key Features |
|---|---|---|---|
| DelaySSA [21] | Stochastic simulation with delays | Modeling transcriptional/translational delays | R/Python/MATLAB implementation; Handles multiple delay types |
| DBNCS [22] | Dynamic Bayesian network learning | Inferring time-delayed regulatory relationships | Hybrid learning; Reduced false positive rate |
| NN-DE [10] | Neural network-based differential equations | Reconstructing nonlinear regulatory networks | Handles non-monotonic regulation; Link knockout analysis |
| GENIE3 [23] | Random forest-based network inference | Large-scale GRN reconstruction from expression data | Tree-ensemble regression for each target gene; High accuracy in benchmarks |
| GRN-VAE [23] | Variational autoencoder for GRNs | Single-cell GRN inference from sparse data | Deep learning architecture; Handles cellular heterogeneity |
| BiRGRN [23] | Bidirectional recurrent neural network | Modeling feedback in regulatory networks | Captures temporal dependencies; Handles cyclic regulation |

The integration of stochasticity and time delays represents a critical evolution in GRN inference that moves S-system frameworks closer to biological reality. For researchers and drug development professionals, these advanced modeling approaches offer more accurate predictions of cellular behaviors under perturbation, potentially revealing novel therapeutic vulnerabilities, especially in complex diseases like cancer. The protocols and tools outlined here provide practical pathways for implementing these sophisticated modeling frameworks, bridging the gap between theoretical systems biology and translational medicine. As single-cell technologies continue to reveal the breathtaking complexity of cellular heterogeneity, embracing these biologically realistic modeling paradigms will be essential for unlocking the therapeutic potential of gene regulatory networks.

In the field of systems biology, computational models for reconstructing Gene Regulatory Networks (GRNs) from experimental data exist on a spectrum ranging from purely empirical to fully mechanistic. At one extreme lie black-box models, such as artificial neural networks or random forests, which establish input-output relationships through parameters devoid of physical meaning [24]. While these models offer flexibility and can achieve high predictive accuracy, their internal workings remain uninterpretable, providing limited insight into the biological mechanisms they represent [24] [25]. At the other extreme reside fully mechanistic models, often based on detailed chemical kinetics like Hill functions; these models are constructed from first principles and their parameters directly correspond to physical biological quantities, such as binding affinities or reaction rates [10]. Although highly interpretable, these models are often computationally expensive and require substantial prior knowledge, making them difficult to scale and infer from data [26] [27].

S-system models, a formalism within Biochemical Systems Theory (BST), occupy a crucial middle ground in this modeling spectrum [27]. S-systems represent the dynamics of biological networks using power-law approximations derived from Taylor series expansion in logarithmic space. This structure provides a canonical nonlinear representation of network interactions. Unlike black-box models, the parameters of an S-system—rate constants (α, β) and kinetic orders (g, h)—carry distinct biological interpretations related to reaction rates and interaction strengths, respectively [27]. Simultaneously, S-systems avoid the complexity of fully detailed mechanistic models by approximating the aggregate effect of multiple processes, offering a favorable balance between biological realism, mathematical tractability, and capacity for inference from data [28] [27].

S-system Fundamentals and Formal Definition

An S-system models the dynamics of a biological network with M components (e.g., genes, proteins, metabolites) using a set of coupled ordinary differential equations (ODEs). The rate of change of each component \( X_i \) is given by:

\[ \dot{X}_i = \alpha_i \prod_{j=1}^{M} X_j^{g_{ij}} - \beta_i \prod_{j=1}^{M} X_j^{h_{ij}}, \quad i = 1, 2, \ldots, M. \]

Here, \( \dot{X}_i \) denotes the time derivative of the concentration of component \( X_i \). The rate constants \( \alpha_i \) and \( \beta_i \) are non-negative values representing the production and degradation rate constants, respectively. The kinetic orders \( g_{ij} \) and \( h_{ij} \) are real-valued exponents that quantify the strength and direction of the influence of component \( X_j \) on the production or degradation of \( X_i \) [27]. A positive kinetic order (e.g., \( g_{ij} > 0 \)) indicates an activating influence, a negative value (e.g., \( g_{ij} < 0 \)) signifies a repressing influence, and a value of zero implies no direct interaction. The network structure is thus uniquely mapped onto the pattern of non-zero kinetic orders, while the dynamics are determined by their magnitudes and the rate constants [27].
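The definition translates directly into a vectorized right-hand side in which row i of the kinetic-order matrices G and H holds the g_ij and h_ij. A minimal NumPy sketch, assuming hypothetical parameters for a two-gene motif in which A is produced constitutively and represses B (g_BA < 0); forward Euler is used for brevity, and an adaptive solver such as SciPy's `solve_ivp` would be the usual choice in practice.

```python
import numpy as np

def s_system_rhs(x, alpha, beta, G, H):
    """dX_i/dt = alpha_i * prod_j X_j**G[i, j] - beta_i * prod_j X_j**H[i, j]."""
    production = alpha * np.prod(x[np.newaxis, :] ** G, axis=1)
    degradation = beta * np.prod(x[np.newaxis, :] ** H, axis=1)
    return production - degradation

def simulate(x0, alpha, beta, G, H, dt=0.01, t_end=20.0):
    """Forward-Euler integration (adequate for this small, non-stiff example)."""
    x = np.asarray(x0, dtype=float)
    for _ in range(int(round(t_end / dt))):
        x = x + dt * s_system_rhs(x, alpha, beta, G, H)
    return x

# Hypothetical two-gene motif: gene A (index 0) is constitutive; A represses B (index 1).
alpha = np.array([2.0, 1.0])
beta = np.array([1.0, 1.0])
G = np.array([[0.0, 0.0],
              [-0.5, 0.0]])   # g_BA = -0.5: repression of B's synthesis by A
H = np.array([[1.0, 0.0],
              [0.0, 1.0]])    # first-order degradation of each gene product
print(simulate([1.0, 1.0], alpha, beta, G, H))  # approaches (2, 2**-0.5)
```

The steady state follows from setting each equation to zero: A* = α_A / β_A = 2 and B* = (α_B / β_B) (A*)^(-0.5) ≈ 0.707.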

Table 1: Interpretation of S-system Parameters

| Parameter | Biological Interpretation | Typical Range | Inference Challenge |
|---|---|---|---|
| Rate Constant (\( \alpha_i \)) | Aggregate rate of synthesis for component \( X_i \) | \( \alpha_i \geq 0 \) | High correlation with kinetic orders |
| Rate Constant (\( \beta_i \)) | Aggregate rate of degradation for component \( X_i \) | \( \beta_i \geq 0 \) | High correlation with kinetic orders |
| Kinetic Order (\( g_{ij} \)) | Influence of \( X_j \) on the synthesis of \( X_i \) (positive: activation; negative: repression) | \( g_{ij} \in \mathbb{R} \) (often \( -3 \) to \( 3 \)) | Determines network topology |
| Kinetic Order (\( h_{ij} \)) | Influence of \( X_j \) on the degradation of \( X_i \) (positive: accelerated degradation) | \( h_{ij} \in \mathbb{R} \) (often \( -3 \) to \( 3 \)) | Determines network topology |

The following diagram illustrates the canonical structure of an S-system model and its mapping to a biological network topology.

Diagram 1: The S-system modeling framework. The power-law differential equations are parameterized to map onto the topology and dynamics of a biological network.

Application Note 1: GRN Inference from Time-Series Data

Protocol: Reverse Engineering with Alternating Regression (AR)

A significant advantage of the S-system formalism is the ability to decouple its differential equations for parameter estimation. This protocol outlines the AR method for inferring S-system parameters from time-series gene expression data [27].

Primary Objective: To estimate the parameter set \( \Theta_i = (\alpha_i, \beta_i, g_{i1}, \ldots, g_{iM}, h_{i1}, \ldots, h_{iM}) \) for each gene \( i \) from transcriptomic data.

Materials and Reagents:

- Time-Series Expression Data: Typically from RNA-sequencing (bulk or single-cell) or microarrays. Single-cell RNA-Seq (scRNA-Seq) data requires careful pre-processing to handle dropout events and biological noise [7].

- Computing Environment: Software with robust regression and optimization libraries (e.g., Python with Scikit-learn and SciPy, or MATLAB).

Procedure:

Data Pre-processing and Derivative Estimation:

- Standardize the data by normalizing gene expression counts.

- Estimate the derivative \( \dot{X}_i \) at each time point \( t_k \) using finite differences or more sophisticated smoothing techniques [27]: \( \dot{X}_i(t_k) \approx \frac{X_i(t_{k+1}) - X_i(t_k)}{t_{k+1} - t_k} \).

Logarithmic Transformation:

- Transform the S-system equation for a single gene into a linear-in-parameters form by taking logarithms of the production and degradation terms separately. This is the core step that enables the use of linear regression.

Iterative Alternating Regression:

- Initialize all parameters, for example, by setting kinetic orders to small random values.

- Step A - Production Term: Assume the degradation term is known. The equation can be rearranged as \( \dot{X}_i + \beta_i \prod_j X_j^{h_{ij}} = \alpha_i \prod_j X_j^{g_{ij}} \). Taking logarithms gives \( \log(\dot{X}_i + \beta_i \prod_j X_j^{h_{ij}}) = \log(\alpha_i) + \sum_j g_{ij} \log X_j \), a linear model in which \( \log(\alpha_i) \) and \( g_{ij} \) can be estimated by multiple linear regression over the time points where the left-hand side is positive.

- Step B - Degradation Term: Now assume the production term (just estimated) is known. Rearrange as \( \alpha_i \prod_j X_j^{g_{ij}} - \dot{X}_i = \beta_i \prod_j X_j^{h_{ij}} \). Again, taking logarithms allows \( \log(\beta_i) \) and \( h_{ij} \) to be estimated by linear regression.

- Iterate Steps A and B until the parameter values converge or a maximum number of iterations is reached.
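The alternating scheme can be sketched in NumPy for a single gene. The helper names are hypothetical, and the code follows the steps as written in this protocol rather than any published AR implementation: forward differences supply the slopes, each step is an ordinary least-squares fit in log space, and only time points with a positive left-hand side enter each regression.

```python
import numpy as np

def forward_difference(t, X):
    """Slopes (X[k+1] - X[k]) / (t[k+1] - t[k]) paired with the states X[k]."""
    t, X = np.asarray(t, float), np.asarray(X, float)
    slopes = np.diff(X, axis=0) / np.diff(t)[:, np.newaxis]
    return X[:-1], slopes

def _log_linear_fit(log_X, y):
    """Least squares y ~ c + log_X @ k; returns (exp(c), k)."""
    design = np.column_stack([np.ones(len(y)), log_X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return float(np.exp(coef[0])), coef[1:]

def regress_production(X, dx, beta, h):
    """Step A: with (beta, h) fixed, log(dx + beta*prod X**h) = log(alpha) + log(X) @ g."""
    log_X = np.log(X)
    lhs = dx + beta * np.exp(log_X @ h)
    ok = lhs > 0                     # logarithms need positive arguments
    return _log_linear_fit(log_X[ok], np.log(lhs[ok]))

def regress_degradation(X, dx, alpha, g):
    """Step B: with (alpha, g) fixed, log(alpha*prod X**g - dx) = log(beta) + log(X) @ h."""
    log_X = np.log(X)
    lhs = alpha * np.exp(log_X @ g) - dx
    ok = lhs > 0
    return _log_linear_fit(log_X[ok], np.log(lhs[ok]))

def alternating_regression(X, dx, beta0=1.0, h0=None, n_iter=200, tol=1e-8):
    """Alternate steps A and B for one gene; convergence is not guaranteed."""
    beta = beta0
    h = np.zeros(X.shape[1]) if h0 is None else np.asarray(h0, float)
    alpha, g = regress_production(X, dx, beta, h)
    for _ in range(n_iter):
        beta_new, h_new = regress_degradation(X, dx, alpha, g)
        alpha, g = regress_production(X, dx, beta_new, h_new)
        change = abs(beta_new - beta) + float(np.abs(h_new - h).sum())
        beta, h = beta_new, h_new
        if change < tol:
            break
    return alpha, g, beta, h

# Usage with a time course (rows = time points, columns = genes), for gene 0:
# states, slopes = forward_difference(t, X_timecourse)
# alpha, g, beta, h = alternating_regression(states, slopes[:, 0])
```

Each half-step is exact when the other term is known exactly, which is what makes the decoupled regressions cheap; whether the alternation as a whole converges depends on the data, as noted under Troubleshooting.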

Troubleshooting and Validation:

- Non-Convergence: The AR method may not converge for some systems. If this occurs, consider a more robust alternative, such as eigenvector optimization or a hybrid strategy that pairs a global search with a local method like sequential quadratic programming (SQP) [27].

- Validation: Use a portion of the data (a test set) withheld from training to validate the predictive capability of the inferred model. Compare the simulated dynamics from the inferred S-system against the actual, held-out data.

Performance and Quantitative Benchmarks

S-system inference algorithms have been quantitatively evaluated using benchmark datasets from competitions like the DREAM Challenges, where the underlying "gold standard" network is known [28] [26]. Performance is typically measured using precision-recall (PR) curves and receiver operating characteristic (ROC) curves, with a general consensus that PR curves are more informative for the typically sparse networks in biology [28].
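Both summary statistics can be computed directly from a ranked list of candidate edges and a gold standard. A minimal sketch, assuming binary gold-standard labels and one score per candidate edge; average precision is used here as the AUPR estimate, and official DREAM scoring scripts may interpolate the PR curve differently, so values need not match them exactly.

```python
def auroc(scores, labels):
    """Probability that a random true edge outranks a random non-edge (ties count 1/2)."""
    pos = [s for s, y in zip(scores, labels) if y]
    neg = [s for s, y in zip(scores, labels) if not y]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))

def average_precision(scores, labels):
    """Mean precision at the rank of each true edge (ranks by decreasing score)."""
    order = sorted(range(len(scores)), key=lambda k: -scores[k])
    hits, precisions = 0, []
    for rank, k in enumerate(order, start=1):
        if labels[k]:
            hits += 1
            precisions.append(hits / rank)
    return sum(precisions) / len(precisions)

# Five hypothetical candidate edges ranked by an inference method (1 = in gold standard).
scores = [0.9, 0.8, 0.7, 0.6, 0.5]
labels = [1, 0, 1, 0, 0]
print(auroc(scores, labels), average_precision(scores, labels))  # both 5/6
```

With few true edges among many candidates, AUROC is dominated by the large number of easily ranked non-edges, which is why the PR-based measure is usually the more informative one for sparse networks.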

Table 2: Quantitative Performance of S-system Models on DREAM5 Benchmarks

| Network (Size) | Best AUPR | Best AUROC | Inference Method | Comparative Performance |
|---|---|---|---|---|
| In silico (50 genes) | 0.39 | 0.78 | Hybrid Eigenvector + SQP [27] | Outperformed several correlation and regression methods |
| E. coli (~100 TFs) | 0.16 | 0.71 | Alternating Regression [27] | Performance comparable to top DREAM5 submissions (e.g., GENIE3) |
| S. cerevisiae (~100 TFs) | 0.12 | 0.65 | Alternating Regression [27] | Performance comparable to top DREAM5 submissions |

Key Insight: Despite their constrained, interpretable structure, S-system models can perform comparably to sophisticated machine learning methods such as GENIE3 (a random forest-based algorithm) on some inference tasks [28] [27]. This supports their use as a structurally transparent and interpretable alternative to complex black-box models.

Application Note 2: Hybrid Optimization for Large-Scale Parameter Estimation

Directly estimating all parameters of a large S-system model is a high-dimensional, non-convex optimization problem that is computationally challenging. This protocol describes a hybrid optimization strategy that combines metaheuristics with gradient-based methods for efficient parameter estimation [29] [27].

Primary Objective: To efficiently and robustly approximate the global optimum of the S-system parameters in models with more than 10 components.

Materials and Reagents:

- Time-Series Data: High-quality, densely sampled time-series data is critical.

- High-Performance Computing (HPC) Cluster: The computational cost can be significant for large networks.

Procedure:

Decoupling and Problem Decomposition:

- Leverage the structure of the S-system to decouple the full model, allowing the parameters of each gene or metabolite to be estimated separately. This reduces a single problem with \( O(M^2) \) parameters to \( M \) problems with \( O(M) \) parameters each [27].

Global Exploration Phase:

- Use a metaheuristic algorithm such as a Genetic Algorithm (GA) or Differential Evolution (DE) to explore the global parameter space for each decoupled equation.

- The objective function is the sum of squared errors between the measured and estimated derivatives (or gene expression levels at the next time point).

- Run the global optimizer for a fixed number of generations or until convergence stalls.

Local Refinement Phase:

- Take the best solution found by the global optimizer and use it to initialize a local, gradient-based optimizer such as Sequential Quadratic Programming (SQP).

- The local optimizer fine-tunes the parameters to achieve a high-precision solution.

Constraint Integration:

- Incorporate known biological constraints (e.g., certain kinetic orders must be zero, or a specific interaction is known to be activating) during the optimization process. This brings prior knowledge into each decoupled subproblem and drastically reduces the feasible search space, improving convergence and biological plausibility [27].
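A compact version of this two-phase strategy can be written with SciPy, using `differential_evolution` for the global phase and `minimize(method="SLSQP")` (an SQP implementation) for the local phase. This is a sketch under stated assumptions: one decoupled equation with a single regulator X_j and self-dependent degradation, hypothetical bounds, and the derivative-matching objective described above.

```python
import numpy as np
from scipy.optimize import differential_evolution, minimize

# Hypothetical parameter bounds for (alpha, g, beta, h).
BOUNDS = [(0.01, 10.0), (-3.0, 3.0), (0.01, 10.0), (-3.0, 3.0)]

def make_sse(x_self, x_reg, slopes):
    """Objective for one decoupled equation dX_i/dt = alpha * X_j**g - beta * X_i**h:
    sum of squared errors between measured and predicted slopes."""
    def sse(p):
        alpha, g, beta, h = p
        predicted = alpha * x_reg ** g - beta * x_self ** h
        return float(np.sum((slopes - predicted) ** 2))
    return sse

def hybrid_fit(sse, bounds=BOUNDS, seed=0):
    """Global exploration with differential evolution, then SQP refinement."""
    de = differential_evolution(sse, bounds, seed=seed, maxiter=300,
                                tol=1e-10, polish=False)
    local = minimize(sse, de.x, method="SLSQP", bounds=bounds)
    return de, local

if __name__ == "__main__":
    rng = np.random.default_rng(1)
    x_self, x_reg = rng.uniform(0.5, 2.0, size=(2, 40))
    slopes = 2.0 * x_reg ** 0.5 - 1.0 * x_self ** 1.0   # true (alpha, g, beta, h) = (2, 0.5, 1, 1)
    de, local = hybrid_fit(make_sse(x_self, x_reg, slopes))
    print("DE SSE:", de.fun, "after SLSQP:", local.fun, "params:", local.x)
```

Constraints of the kind listed above map naturally onto this setup: a kinetic order known to be zero can be dropped from the parameter vector, and a known activating interaction can be enforced by tightening its bounds (e.g., (0, 3) for g).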

Workflow Diagram: The following chart outlines the stages of this hybrid optimization protocol.

Diagram 2: Hybrid optimization workflow for S-system parameter estimation, combining global and local search strategies.

The Scientist's Toolkit: Research Reagents and Computational Solutions

Table 3: Essential Resources for S-system-based GRN Research

| Resource Category | Specific Tool / Reagent | Function and Application Notes |
|---|---|---|
| Experimental Data Generation | scRNA-Seq Platform (e.g., 10x Genomics) | Provides single-cell resolution expression data for network inference. Requires UMI (Unique Molecular Identifier) counting to address technical noise [7]. |
| Experimental Data Generation | Metabolic or Transcriptional Perturbations (e.g., inhibitors, gene knockouts) | Generates necessary system perturbations to expose network interactions. Critical for causal inference [28] [26]. |
| Data Pre-processing | Weighted Gene Co-expression Network Analysis (WGCNA) [28] | An R package for identifying co-expressed gene modules. Useful for pre-filtering genes and forming hypotheses about network structure prior to S-system modeling. |
| Benchmarking & Evaluation | DREAM Challenge Gold Standard Datasets [28] [26] | Publicly available datasets with known network structures. Essential for objectively validating and benchmarking new inference algorithms. |
| Computational Modeling | GENIE3 [28] [7] | A state-of-the-art, random forest-based (black-box) inference algorithm. Serves as a performance benchmark for S-system models. |
| Computational Modeling | Alternating Regression (AR) Code [27] | A fast, deterministic algorithm for S-system parameter estimation. A good first choice for systems where it converges. |
| Computational Modeling | Hybrid Optimizer (GA/SQP) [27] | A more robust but computationally expensive alternative to AR for challenging parameter estimation problems. |

S-system models offer a powerful and mathematically grounded framework that effectively bridges the gap between uninterpretable black-box models and intractable fully mechanistic models. Their power-law formalism provides a canonical representation of biological nonlinearity, while their parameters retain a direct, biochemically relevant interpretation. As demonstrated in the protocols and benchmarks, S-systems are amenable to inference from modern high-throughput data, particularly when combined with sophisticated hybrid optimization strategies. For researchers and drug development professionals, S-systems represent a versatile tool for generating testable, mechanistic hypotheses about the structure and dynamics of complex biological networks, from small-scale functional modules to genome-scale regulatory systems.

Advanced S-system Methodologies: From Time Delays to Parallel Computing

S-system models, a power-law formalism within biochemical systems theory, provide a highly effective framework for modeling the dynamics of gene regulatory networks (GRNs). They consist of coupled ordinary differential equations in which the synthesis and degradation terms are each represented as a product of power-law functions. However, traditional deterministic S-system models cannot capture the inherent stochasticity, or biological noise, that is ubiquitous in cellular processes. Stochastic S-system (SSS) models bridge this gap by combining the power-law formalism with stochastic simulation algorithms. This enables researchers to model the random fluctuations in molecular species (e.g., mRNAs, proteins) that arise from the discrete and probabilistic nature of biochemical reactions.

Within the broader thesis on GRN inference, incorporating biological noise via SSS models is not merely a technical refinement; it is a conceptual necessity. Single-cell technologies have revolutionized systems biology by revealing substantial cell-to-cell heterogeneity in gene expression, even in clonal populations. Rigorously probing the biology of living cells requires a unified mathematical framework that accounts for single-molecule biological stochasticity alongside the technical variation of genomics assays [30]. The SSS framework moves beyond deterministic averages, allowing in silico experiments to explore how noise influences network stability, emergence of bistability, and cellular decision-making processes critical to drug development, such as mechanisms of drug resistance in cancer.

Theoretical Foundations of Noise in Biological Systems

Classifying Biological Noise

In stochastic modeling, biological noise is categorized into two primary types, each with distinct origins and implications for GRN dynamics. The table below summarizes their core characteristics.

Table 1: Classification of Biological Noise in Gene Regulatory Networks

| Noise Type | Source / Origin | Modeling Impact on GRNs | Example in Gene Expression |
|---|---|---|---|
| Intrinsic Noise | Discreteness of molecular species and randomness of reaction events [31]. | Inherent to the system; leads to heterogeneity even in genetically identical cells under identical conditions. | Stochastic binding/unbinding of transcription factors to DNA, leading to bursty transcription [30]. |
| Extrinsic Noise | Temporal or state-dependent fluctuations in external cellular factors [31]. | Arises from cell-to-cell variations in global factors; affects multiple network components simultaneously. | Cell-to-cell differences in ribosome concentration, causing correlated fluctuations in the translation rates of all mRNAs. |

The Stochastic Simulation Algorithm (SSA) Framework

The core engine for simulating discrete stochastic dynamics is the Stochastic Simulation Algorithm (SSA), also known as the Gillespie algorithm. The SSA directly simulates the time evolution of a molecular network by calculating the precise timing and sequence of individual reaction events. The algorithm is characterized by its Markovian property, meaning the future state depends only on the present state, not on the past [21]. This makes it excellent for capturing intrinsic noise. The propensity of each reaction is calculated based on the current state of the system, and a series of random numbers determines the next reaction to fire and the time at which it occurs.

For SSS models, the power-law rate laws of traditional S-systems are translated into the propensity functions that drive the SSA. This translation moves the system from a continuous, deterministic trajectory to a discrete, stochastic trajectory that more accurately reflects the noisy reality of intracellular processes. Recent research has focused on extending the SSA to handle non-Markovian processes with delays, which are critical for modeling transcription and translation where the initiation of a reaction and its completion are separated by a significant time lag [21].
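Here is a minimal sketch of this translation. It assumes unit stoichiometry, in which each synthesis event adds one molecule of \( X_i \) and each degradation event removes one. It also assumes parameters that are already rescaled to molecule-count units, so volume scaling between concentrations and counts is not handled. A zero count raised to a negative kinetic order is undefined. The sketch therefore evaluates such factors at one molecule, which is a hypothetical safeguard rather than a standard rule.

```python
import numpy as np


def power_law(rates, x, orders):
    """rates_i * prod_j x_j ** orders_ij, evaluated on molecule counts x."""
    # Hypothetical safeguard: a zero count with a negative order is evaluated at one molecule.
    base = np.where((x == 0) & (orders < 0), 1.0, x)
    return rates * np.prod(base ** orders, axis=1)


def sss_propensities(x, alpha, beta, G, H):
    """Propensities of the 2M channels: synthesis (0 -> X_i) first, then degradation (X_i -> 0)."""
    return np.concatenate([power_law(alpha, x, G), power_law(beta, x, H)])


def sss_stoichiometry(M):
    """Assumed unit stoichiometry: each firing changes one species by +1 or -1."""
    return np.vstack([np.eye(M, dtype=int), -np.eye(M, dtype=int)])


# Hypothetical 2-gene parameters, assumed to be expressed in molecule-count units.
alpha, beta = np.array([2.0, 1.5]), np.array([1.0, 1.0])
G = np.array([[0.0, -0.5], [0.5, 0.0]])
H = np.array([[0.8, 0.0], [0.0, 0.6]])
x = np.array([10.0, 0.0])
a = sss_propensities(x, alpha, beta, G, H)
nu = sss_stoichiometry(2)
print(a, nu)
```

For the state \( (X_1, X_2) = (10, 0) \), the degradation propensity of \( X_2 \) is zero, as expected for an absent species. The synthesis propensity of \( X_1 \), which carries \( X_2^{-0.5} \), falls back on the safeguard.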

Protocols for Stochastic S-system Modeling

Protocol 1: Implementing a Basic SSS Model Simulation

This protocol details the steps to implement a basic stochastic S-system simulation using the SSA, converting a deterministic S-system model into a stochastic framework.

Workflow Overview: Basic SSS Model Simulation

Materials and Reagents

- Computing Environment: A computer with R, Python, or MATLAB installed.

- Software Package: The DelaySSA software suite (available for R, Python, and MATLAB) [21].

- Model Definition: A well-defined deterministic S-system model for your GRN of interest.

Step-by-Step Procedure

- Define the S-system Model: Begin with your deterministic S-system model, which for a species \( X_i \) is typically written as \( \frac{dX_i}{dt} = \alpha_i \prod_{j=1}^{N} X_j^{g_{ij}} - \beta_i \prod_{j=1}^{N} X_j^{h_{ij}} \), where \( \alpha_i \) and \( \beta_i \) are rate constants, and \( g_{ij} \) and \( h_{ij} \) are kinetic orders.

- Formulate Reaction Channels: Decompose the S-system equation for each species into a synthesis reaction and a degradation reaction. For example, the term \( \alpha_i \prod_{j} X_j^{g_{ij}} \) becomes the propensity of a synthesis reaction, and \( \beta_i \prod_{j} X_j^{h_{ij}} \) becomes the propensity of a degradation reaction.

- Initialize the System: Set the initial molecular counts for all species \( X_1, X_2, \ldots, X_N \) and the initial simulation time \( t = 0 \).

- Implement the SSA Loop: Use the DelaySSA package or a custom SSA implementation to run the following iterative process until the desired simulation end time is reached:

  - a. Calculate the propensity \( a_\mu \) for each reaction \( \mu \).
  - b. Generate two random numbers \( r_1 \) and \( r_2 \) from a uniform distribution between 0 and 1.
  - c. Calculate the time until the next reaction, \( \tau = \frac{1}{a_0} \ln\left(\frac{1}{r_1}\right) \), where \( a_0 = \sum_{\nu} a_\nu \) is the sum of all propensities.
  - d. Determine which reaction occurs next by finding the smallest integer \( \mu \) satisfying \( \sum_{\nu=1}^{\mu} a_\nu > r_2 a_0 \).
  - e. Update the molecular counts according to the stoichiometry of reaction \( \mu \).
  - f. Advance the time, \( t \leftarrow t + \tau \).
  - g. Record the state of the system.

- Analysis: Perform multiple independent simulations to analyze the distribution of possible system trajectories and to calculate statistics such as means, variances, and Fano factors (variance divided by mean).
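Steps a to g can be sketched as a plain Gillespie direct method in Python. This is a custom implementation, not the DelaySSA API. The two-gene network, its parameters, the zero-count safeguard, and the choice of 100 runs are assumptions made for illustration. The final lines implement the Analysis step: they collect end-point counts from independent runs and compute their means and Fano factors.

```python
import numpy as np

# Hypothetical 2-gene S-system in molecule-count units (illustrative parameters only).
ALPHA, BETA = np.array([10.0, 5.0]), np.array([1.0, 1.0])
G = np.array([[0.0, -0.5], [0.5, 0.0]])  # X2 represses X1 synthesis; X1 activates X2 synthesis
H = np.eye(2)                            # first-order degradation of each species
NU = np.vstack([np.eye(2), -np.eye(2)])  # rows: synthesis of X1, X2, then degradation of X1, X2


def power_law(rates, x, orders):
    # Assumed safeguard: a zero count with a negative order is evaluated at one molecule.
    base = np.where((x == 0) & (orders < 0), 1.0, x)
    return rates * np.prod(base ** orders, axis=1)


def ssa(x0, t_end, rng):
    """Gillespie direct method following steps a-g of the protocol."""
    x, t = np.array(x0, dtype=float), 0.0
    times, states = [t], [x.copy()]
    while True:
        a = np.concatenate([power_law(ALPHA, x, G), power_law(BETA, x, H)])  # a. propensities
        cum = np.cumsum(a)
        a0 = cum[-1]
        if a0 <= 0:
            break                                  # nothing can fire
        u, r2 = rng.random(2)                      # b. two uniforms in [0, 1)
        r1 = 1.0 - u                               # shift to (0, 1] so the log stays finite
        tau = np.log(1.0 / r1) / a0                # c. waiting time
        if t + tau > t_end:
            break
        # d. smallest mu with cum[mu] > r2 * a0 (min() guards against round-off at the end)
        mu = min(int(np.searchsorted(cum, r2 * a0, side="right")), len(a) - 1)
        x += NU[mu]                                # e. update counts
        t += tau                                   # f. advance time
        times.append(t)
        states.append(x.copy())                    # g. record state
    return np.array(times), np.array(states)


rng = np.random.default_rng(1)
finals = np.array([ssa([5, 5], 10.0, rng)[1][-1] for _ in range(100)])
mean, var = finals.mean(axis=0), finals.var(axis=0, ddof=1)
fano = var / mean                                  # equals 1 for a Poisson distribution
print(mean, fano)
```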

Protocol 2: Incorporating Extrinsic Noise and Delays

This protocol extends the basic SSS model to include extrinsic noise and explicit time delays, which are essential for modeling complex biological processes like gene expression feedback.

Workflow Overview: Advanced SSS with Delays and Extrinsic Noise

Materials and Reagents

- Computing Environment: As in Protocol 1.

- Software Package: The DelaySSA package, which implements algorithms for SSA with delays [21].

- Knowledge of System: Prior knowledge or hypotheses about which reactions involve significant time delays (e.g., transcription, translation) and which parameters are subject to extrinsic fluctuations.

Step-by-Step Procedure

- Identify Delayed Reactions: Pinpoint reactions in your network where a significant time lag exists between the initiation and completion of the reaction. Transcription and translation are classic examples.

- Configure Delay Type: In the DelaySSA framework, specify the type of each delayed reaction [21]:

  - Type I (Consuming): Reactants are consumed immediately upon reaction initiation, and products are added after the delay \( \tau \).

  - Type II (Non-Consuming): Both reactants and products are updated only after the delay \( \tau \). This is often used to model processes where the initiating molecule is not consumed.

- Model Extrinsic Noise: Introduce extrinsic noise by allowing key rate constants (e.g., \( \alpha_i \), \( \beta_i \)) to fluctuate over time or vary from cell to cell. This can be achieved by modeling these parameters as stochastic processes themselves (e.g., an Ornstein-Uhlenbeck process) that run concurrently with the main SSA.

- Simulate with Delayed SSA (DSSA): Use the DelaySSA package to simulate the system. The DSSA maintains a queue of future events in which delayed completions are scheduled, allowing the algorithm to handle non-Markovian processes.

- Validate with Single-Cell Data: Compare the simulation outputs, such as the distributions of mRNA and protein counts, to experimental data from single-cell RNA sequencing (scRNA-seq) or flow cytometry. Integrating these models with scRNA-seq data is a key area of current research [30].
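The event-queue idea behind the DSSA can be sketched in a few lines of Python. This is an illustrative re-implementation, not the DelaySSA package. The three-reaction network is hypothetical: constant production of A, a delayed Type I conversion of A to B, and first-order decay of B. Pending completions sit in a heap ordered by completion time. If the next completion falls before the next sampled reaction, it is applied first and the sampled waiting time is discarded. This is valid because waiting times are exponential and therefore memoryless.

```python
import heapq

import numpy as np

# Hypothetical toy network over species [A, B]; each propensity is rate * prod_j x_j ** order_j.
RATES = np.array([5.0, 1.0, 0.5])
ORDERS = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]])  # R0: 0 -> A, R1: A -> B, R2: B -> 0
NU_REACT = np.array([[0, 0], [-1, 0], [0, -1]])         # reactant part of each reaction
NU_PROD = np.array([[1, 0], [0, 1], [0, 0]])            # product part of each reaction
DELAY = np.array([0.0, 2.0, 0.0])                       # only R1 is delayed
DELAY_TYPE = [None, "I", None]                          # "I" = consuming, "II" = non-consuming


def delayed_ssa(x0, t_end, rng):
    """Delay SSA sketch: pending completions live in a heap keyed by completion time."""
    x, t, pending = np.array(x0, dtype=float), 0.0, []
    history = [(t, x.copy())]
    while True:
        a = RATES * np.prod(x ** ORDERS, axis=1)
        cum = np.cumsum(a)
        tau = np.log(1.0 / (1.0 - rng.random())) / cum[-1] if cum[-1] > 0 else np.inf
        next_done = pending[0][0] if pending else np.inf
        if min(t + tau, next_done) > t_end:
            break
        if next_done <= t + tau:
            # A scheduled completion comes first: apply it and discard tau (memoryless).
            t, mu = heapq.heappop(pending)
            x += NU_PROD[mu] if DELAY_TYPE[mu] == "I" else NU_REACT[mu] + NU_PROD[mu]
        else:
            t += tau
            mu = min(int(np.searchsorted(cum, rng.random() * cum[-1], side="right")), len(a) - 1)
            if DELAY[mu] == 0:
                x += NU_REACT[mu] + NU_PROD[mu]  # ordinary, instantaneous reaction
            else:
                if DELAY_TYPE[mu] == "I":
                    x += NU_REACT[mu]            # Type I: reactants leave at initiation
                heapq.heappush(pending, (t + DELAY[mu], mu))
        history.append((t, x.copy()))
    return history, pending


history, pending = delayed_ssa([0, 0], 20.0, np.random.default_rng(0))
print(len(history), len(pending))
```

Because A leaves at initiation while B appears only two time units later, B stays at zero for the first two time units of every run. Setting an entry of `DELAY_TYPE` to `"II"` would defer both updates. That only makes sense for reactants that are not actually used up, as the protocol notes.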

Table 2: Essential Computational Tools for Stochastic S-system Modeling

| Tool / Resource | Function / Purpose | Key Features / Application Notes |
|---|---|---|
| DelaySSA Software Suite [21] | An easy-to-use implementation of SSA and Delayed SSA. | Available in R, Python, and MATLAB; facilitates accurate modeling of biological systems with delays. |
| R Statistical Programming [32] | A primary environment for statistical computing, data analysis, and network visualization. | Heavily used in bioinformatics; integrates with tools for network goal analysis and stochastic simulation. |
| GRIT (Gene Regulation Inference by Transport) [33] | Infers dynamic GRNs from single-cell data using optimal transport theory. | Used to fit differential equation models to data; can provide a target GRN structure for SSS modeling. |
| Generating Functions Framework [30] | A unifying mathematical framework for modeling stochasticity in single-cell omics data. | Provides a method to integrate models of transcription, splicing, and technical noise for rigorous simulation. |
| Virtual Masking Augmentation (VMA) [34] | A graph representation method for structure-sensitive graphs (e.g., protein interaction networks). | Useful for analyzing and representing the inferred GRN structure without altering its original topology. |

Application Note: Modeling a Bistable Gene Regulatory Network in Cancer

Background: A key challenge in cancer drug development is lineage plasticity, in which cancer cells transition between states to evade therapy. A prime example is the adeno-to-squamous transition (AST) in lung cancer, which confers resistance to targeted therapies. The underlying GRN is hypothesized to be bistable, so that both the adenocarcinoma and the squamous state are stable.

SSS Modeling Approach:

- Network Definition: An S-system model is constructed based on known key transcription factors and regulators (e.g., SOX2, NKX2-1) driving AST.

- Stochastic Simulation: The model is simulated using the SSS framework with Delayed SSA to account for the slow, multi-step process of gene expression and phenotypic transition.

- Therapeutic Intervention: A potential therapeutic intervention, such as a SOX2 degrader, is modeled as a delayed degradation reaction targeting the SOX2 protein.

Results and Impact:

Simulations with DelaySSA successfully reproduced the bistable behavior of the AST network. By simulating the administration of a SOX2 degrader, researchers observed that the intervention could effectively block the AST transition and reprogram cells back to the adenocarcinoma state [21]. Furthermore, approximating Waddington's epigenetic landscape from the stochastic simulations allows a qualitative analysis of the stability of each cellular state (the depth of its valley) and of the barrier separating the two states. This application demonstrates how SSS models can move beyond mere description to become predictive tools for evaluating novel therapeutic strategies against adaptive drug resistance.
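One common way to approximate such a landscape is the quasi-potential \( U(x) = -\ln P(x) \), where \( P \) is the steady-state distribution estimated from many stochastic runs. The Python sketch below assumes a one-dimensional marker and replaces real simulation output with synthetic bimodal samples. The bin count, the `split` value placed between the two states, and the barrier definition are illustrative choices, not necessarily the procedure used in [21].

```python
import numpy as np


def quasi_potential(samples, bins=40, eps=1e-12):
    """U(x) = -ln P(x), with P(x) estimated by a normalized histogram of samples."""
    density, edges = np.histogram(samples, bins=bins, density=True)
    centers = 0.5 * (edges[:-1] + edges[1:])
    return centers, -np.log(density + eps)  # eps keeps empty bins finite (they read as very high U)


def barrier_height(centers, U, split):
    """Highest U between the two basin minima, minus the higher (shallower) minimum."""
    left, right = centers < split, centers >= split
    i = np.flatnonzero(left)[np.argmin(U[left])]
    j = np.flatnonzero(right)[np.argmin(U[right])]
    return float(U[i:j + 1].max() - max(U[i], U[j]))


# Synthetic stand-in for end-point levels of one marker pooled over many stochastic runs.
rng = np.random.default_rng(0)
samples = np.concatenate([rng.normal(30.0, 8.0, 10000), rng.normal(70.0, 8.0, 10000)])
centers, U = quasi_potential(samples)
print(barrier_height(centers, U, split=50.0))
```

Sparsely populated bins between the states make the barrier estimate noisy. In practice, more simulation runs or a smoother density estimate would be needed before comparing states quantitatively.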