Hypergraph Variational Autoencoders: A Next-Generation Framework for Gene Regulatory Network Inference from Single-Cell Data

Abstract

This article explores the transformative potential of Hypergraph Variational Autoencoders (HyperG-VAE) in inferring Gene Regulatory Networks (GRNs) from single-cell RNA sequencing (scRNA-seq) data. Aimed at researchers and drug development professionals, we first establish the foundational challenges of scRNA-seq analysis and GRN inference. We then detail the innovative architecture of HyperG-VAE, which synergistically models cellular heterogeneity and gene modules via dual encoders. The article provides crucial insights for troubleshooting data sparsity and optimizing model performance. Finally, we present comprehensive validation against state-of-the-art methods and discuss its profound implications for identifying disease biomarkers and accelerating therapeutic discovery.

The GRN Inference Challenge: Why Single-Cell Data Demands a New Approach

The Critical Role of Gene Regulatory Networks in Cellular Function and Disease

Gene Regulatory Networks (GRNs) describe the interactions between transcription factors (TFs) and the genes whose expression they control [1]. These networks are collections of molecular regulators that interact with each other to determine gene activation and silencing in specific cellular contexts. They form the basis for understanding how cells perform diverse functions, how they respond to environmental changes, and how noncoding genetic variants cause disease [2]. Genes do not regulate one another directly; rather, regulator genes encode proteins that carry out the regulation. These proteins, called transcription factors, bind to specific DNA sequences and increase or decrease the transcription of a gene, thereby controlling the level of that gene's expression [1].

GRNs let researchers describe and predict dependencies between molecules, which in turn supports the identification of potential drug targets for diseases such as cancer [1]. Because gene regulation is dynamic, GRN relations often change over time rather than remaining constant. Yet many networks available in databases and the literature are static, representing either a snapshot of regulatory relations at a single time point or the union of successive regulatory events over time [3]. This static representation limits our ability to understand temporal aspects of gene regulation, such as the order and pace of interactions [3].

Technological Advances in GRN Analysis

Single-Cell RNA Sequencing Revolution

The advent of single-cell RNA sequencing (scRNA-seq) has provided unprecedented resolution for analyzing gene regulatory networks at the single-cell level [1]. First demonstrated in 2009 by Tang et al., who sequenced the transcriptomes of single blastomeres and oocytes, scRNA-seq now enables researchers to profile hundreds of thousands of individual cells in a single study [4] [5]. It gives a more detailed and accurate view of cellular diversity than traditional bulk RNA sequencing, which reflects only the average gene expression across a sample [5]. Profiling gene expression at single-cell resolution has become one of the most direct ways to probe cell identity, state, function, and response. It allows researchers to classify, characterize, and distinguish each cell at the transcriptome level, including rare but functionally important cell populations [4].

The standard scRNA-seq protocol includes several critical steps: sample acquisition, single-cell isolation, lysis, reverse transcription (conversion of RNA into complementary DNA or cDNA), cDNA amplification, library construction, sequencing, and data analysis [5]. Among these, single-cell isolation and capture presents particular challenges, with common techniques including limiting dilution, fluorescence-activated cell sorting (FACS), magnetic-activated cell sorting, microfluidic systems, and laser microdissection [4]. Microfluidics has emerged as a particularly popular approach due to its low sample consumption, precise fluid control, and reduced operating costs [5]. Droplet-based microfluidics (microdroplets) currently represents the most popular high-throughput platform, where single cells are isolated in nanoliter droplets containing lysis buffer and barcoded beads using microfluidic and reverse emulsion devices [5].

scRNA-seq Methodologies

scRNA-seq technologies have diversified into two primary categories: full-length transcript sequencing approaches and 3'/5'-end transcript sequencing approaches (tag-based methods) [5]. Full-length protocols such as Smart-seq2, Quartz-seq, and MATQ-seq provide comprehensive transcript coverage, offering advantages for isoform usage analysis, allelic expression detection, and identification of RNA editing markers [4] [5]. Tag-based methods including CEL-seq2, MARS-seq2, Drop-seq, inDrop, and 10x Genomics focus on either the 3' or 5' end of transcripts, with the main advantage of compatibility with unique molecular identifiers (UMIs) that reduce overall costs and improve gene-level quantification [4] [5].

Table 1: Comparison of Major scRNA-seq Platforms

| Platform/Method | Amplification Method | Read Coverage | Throughput | Key Applications |
|---|---|---|---|---|
| Smart-seq2 | PCR-based | Full-length | Low-medium | Isoform analysis, mutation detection |
| CEL-seq2 | IVT-based | 3'-end | Medium-high | Gene expression quantification |
| 10x Genomics | PCR-based | 3'-end | High (up to 10,000 cells) | Large-scale cell atlas projects |
| Drop-seq | PCR-based | 3'-end | High | Transcriptomic screening |
| MARS-seq2 | IVT-based | 3'-end | High (8,000-10,000 cells/run) | High-throughput profiling |

A key innovation in scRNA-seq has been the introduction of unique molecular identifiers (UMIs), which barcode each individual mRNA molecule within a cell during the reverse transcription step [4]. Because reads that share a UMI can be collapsed into a single molecule, this approach corrects for PCR amplification bias and makes scRNA-seq counts considerably more quantitative [4]. The development of these technologies has dramatically reduced costs while increasing automation and throughput, making single-cell analysis increasingly accessible to research communities worldwide [4].
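To make the UMI idea concrete, the following minimal sketch collapses reads into molecule counts. The read tuples, barcodes, and the function name `umi_counts` are hypothetical and chosen only for illustration; production pipelines additionally correct sequencing errors in barcodes and UMIs, which is omitted here.

```python
from collections import defaultdict

def umi_counts(reads):
    """Collapse reads to molecule counts per (cell, gene).

    `reads` is an iterable of (cell_barcode, gene, umi) tuples; reads that
    share all three fields are treated as PCR copies of one molecule.
    """
    molecules = defaultdict(set)
    for cell, gene, umi in reads:
        molecules[(cell, gene)].add(umi)
    return {key: len(umis) for key, umis in molecules.items()}

reads = [
    ("CELL1", "GATA1", "AACG"),
    ("CELL1", "GATA1", "AACG"),  # PCR duplicate of the read above
    ("CELL1", "GATA1", "TTGC"),
    ("CELL2", "GATA1", "AACG"),  # same UMI, but a different cell
]
counts = umi_counts(reads)
```

Counting distinct UMIs rather than reads is what removes the amplification bias: a molecule amplified many times still contributes a single count.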

Challenges in GRN Inference from scRNA-seq Data

Technical and Biological Limitations

Despite the revolutionary potential of scRNA-seq for GRN inference, several significant challenges persist. A primary issue is the prevalence of "dropout" events, where transcripts with low or moderate expression levels in a cell are erroneously not captured by the sequencing technology, resulting in zero-inflated count data [1] [6]. In various datasets examined, 57 to 92 percent of observed counts are zeros, creating substantial obstacles for computational analysis [6]. Dropouts make it difficult to distinguish and properly model the sources of zeros, complicating the inference of accurate regulatory relationships [1].

Additional technical challenges include cellular diversity, inter-cell variation in sequencing depth, and cell-cycle effects that introduce biological variation [6]. The dissociation process itself can induce artificial transcriptional stress responses: stress gene expression triggered by tissue dissociation at 37°C leads to technical errors and inaccurate cell type identification [4]. This has led to recommendations to perform tissue dissociation at 4°C to minimize gene expression changes induced by the isolation procedure [4]. Single-nucleus RNA sequencing (snRNA-seq) has emerged as an alternative that avoids many problems related to tissue preservation and cell isolation, particularly for tissues that do not easily separate into single-cell suspensions, such as brain tissue [4]. However, snRNA-seq captures only transcripts in the nucleus, potentially missing important biological processes related to mRNA processing, RNA stability, and metabolism [4].

Computational and Methodological Hurdles

From a computational perspective, GRN inference methods face significant obstacles. Recent studies have shown that many current methods for GRN inference specifically using scRNA-seq technology perform similarly to random predictors [1]. The lack of adequate pre-processing of gene expression data, including selection steps for subsets of genes of interest, smoothing, and discretization of gene expression, significantly affects the performance of inference approaches [1]. Furthermore, the absence of knowledge about ground-truth networks and the non-standardization of appropriate metrics to measure the quality of inferred networks make comparing algorithm performance particularly challenging [1].

The fundamental challenge remains that learning complex regulatory mechanisms from limited independent data points presents a daunting task [2]. Although single-cell data offers a large number of cells, most are not independent, limiting the statistical power for inference. Additionally, incorporating prior knowledge such as TF-motif matching into non-linear models presents technical difficulties that have not been fully resolved [2].

Hypergraph Variational Autoencoder for GRN Inference

Theoretical Foundation and Architecture

The hypergraph variational autoencoder (HyperG-VAE) represents a Bayesian deep generative model that leverages hypergraph representation to address the challenges of modeling single-cell RNA sequencing data [7] [8]. This innovative approach was developed specifically to overcome the limitations of existing GRN inference methods that struggle to simultaneously address both cellular heterogeneity and gene modules [7]. HyperG-VAE enhances scRNA-seq representation by reducing sparsity through its hypergraph modeling framework, enabling more accurate capture of the complex relationships in GRNs [7].
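As a rough illustration of what a hypergraph view of an expression matrix can look like, the sketch below builds a binary incidence matrix. It assumes that each cell acts as a hyperedge connecting the genes detected in it; this encoding, the detection threshold, and the function name `incidence_matrix` are assumptions made for illustration and are not taken from the text above.

```python
import numpy as np

def incidence_matrix(expr, threshold=0.0):
    """Binary gene-by-cell incidence matrix H.

    expr: cells x genes expression matrix.
    H[g, c] = 1 when gene g is detected (expression > threshold) in cell c,
    i.e. node g belongs to hyperedge c.
    """
    return (np.asarray(expr).T > threshold).astype(int)

# Toy matrix: 2 cells (rows) x 3 genes (columns).
expr = np.array([[3, 0, 1],
                 [0, 2, 0]])
H = incidence_matrix(expr)
node_degree = H.sum(axis=1)  # number of cells in which each gene is detected
edge_degree = H.sum(axis=0)  # number of genes detected in each cell
```

Under this assumed encoding, a single hyperedge relates all genes detected in one cell at once, rather than only pairs of genes.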

The model architecture features two key components: a cell encoder incorporating a structural equation model to account for cellular heterogeneity and construct GRNs, and a gene encoder utilizing hypergraph self-attention to identify gene modules [7] [8]. The synergistic optimization of these encoders through a decoder improves multiple aspects of scRNA-seq analysis, including GRN inference, single-cell clustering, and data visualization [7]. This architecture allows HyperG-VAE to capture latent correlations among genes and cells while reducing the impact of sparsity, which helps address the dropout problem that affects scRNA-seq data analysis [7].
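The role of a structural equation model (SEM) in tying expression to a gene-gene adjacency matrix can be sketched with a linear SEM of the form X = XW + Z. This is a simplified, assumed form in the style of earlier SEM-based VAE methods; the actual HyperG-VAE parameterization may differ, and the weights below are hypothetical.

```python
import numpy as np

def sem_encode(X, W):
    """Map expression X (cells x genes) to noise terms Z = X (I - W)."""
    return X @ (np.eye(W.shape[0]) - W)

def sem_decode(Z, W):
    """Invert the SEM: X = Z (I - W)^{-1}."""
    return Z @ np.linalg.inv(np.eye(W.shape[0]) - W)

# W[i, j] is the hypothetical regulatory weight of gene i on gene j.
W = np.array([[0.0, 0.8, 0.0],
              [0.0, 0.0, -0.5],
              [0.0, 0.0, 0.0]])
X = np.array([[1.0, 2.0, 0.5],
              [0.0, 1.0, 3.0]])
Z = sem_encode(X, W)      # what remains after removing regulatory effects
X_rec = sem_decode(Z, W)  # reconstructs X exactly in this linear setting
```

In a trained model, W would be learned rather than fixed, and its entries are what is later read out as the inferred GRN.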

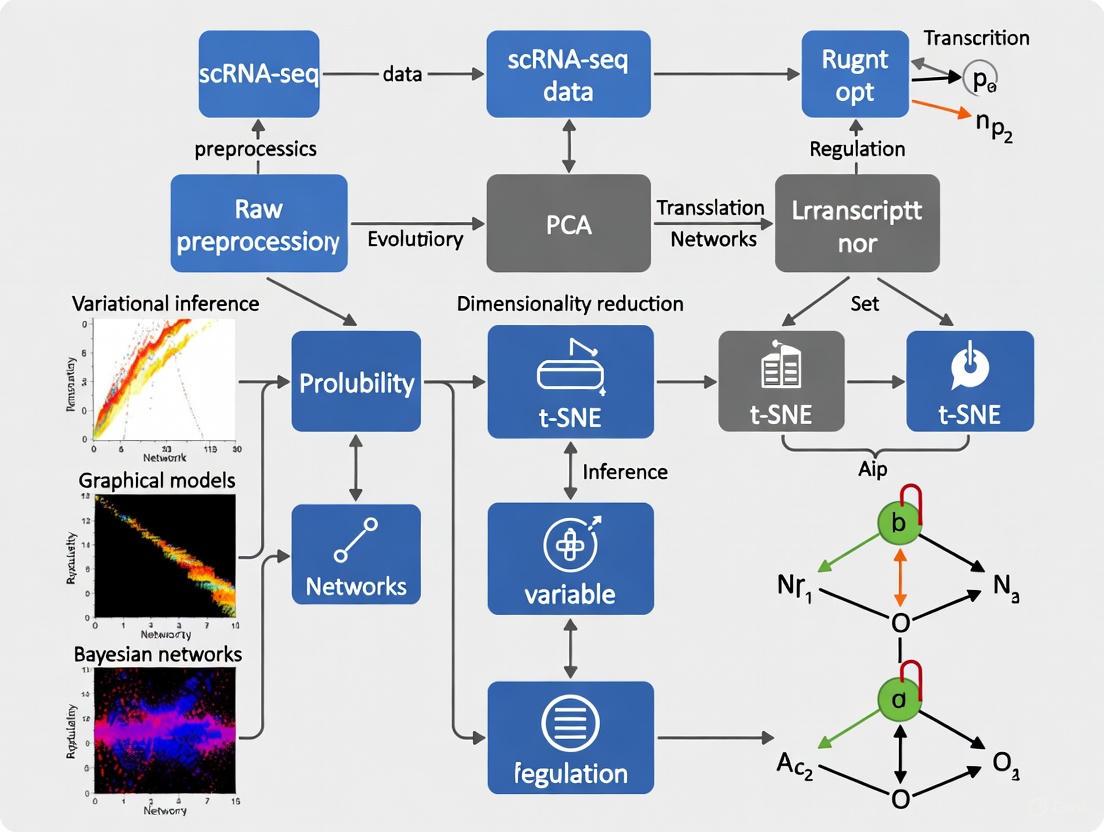

Diagram 1: HyperG-VAE Architecture for GRN Inference

Experimental Protocol for HyperG-VAE Implementation

Data Preprocessing and Quality Control

The implementation of HyperG-VAE begins with comprehensive data preprocessing. Start with the raw gene expression matrix from scRNA-seq data, where rows represent cells and columns represent genes. Transform raw counts using the relation log(x+1) to reduce variance and avoid taking the logarithm of zero [6]. Perform quality control checks to remove low-quality cells and genes, including filtering based on mitochondrial gene percentage, number of genes detected per cell, and total counts per cell. Normalize the data using standard scRNA-seq preprocessing pipelines to account for sequencing depth variation between cells.
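The depth normalization and log(x+1) steps can be sketched as follows. The target of 10,000 counts per cell and the choice to normalize before the log transform are assumptions that follow common practice; the function name `normalize_log1p` is hypothetical.

```python
import numpy as np

def normalize_log1p(counts, target_sum=1e4):
    """Scale each cell to `target_sum` total counts, then apply log(x + 1)."""
    counts = np.asarray(counts, dtype=float)
    depth = counts.sum(axis=1, keepdims=True)
    depth[depth == 0] = 1.0  # keep all-zero cells at zero instead of dividing by zero
    return np.log1p(counts / depth * target_sum)

# Two cells with the same composition but a tenfold difference in depth.
raw = np.array([[10, 0, 90],
                [1, 0, 9]])
X = normalize_log1p(raw)
```

After normalization the two toy cells have identical profiles, which is the intended effect of correcting for sequencing depth; zeros remain zeros because log(0 + 1) = 0.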

Model Training and Optimization

Configure the HyperG-VAE architecture with the cell encoder and gene encoder components. The cell encoder should implement a structural equation model to account for cellular heterogeneity, while the gene encoder employs hypergraph self-attention mechanisms to identify gene modules. Initialize model parameters following Bayesian deep learning principles. Train the model using synergistic optimization of both encoders through the decoder component. Utilize benchmark validation datasets to optimize hyperparameters and monitor training progress. Implement early stopping based on reconstruction loss and validation performance to prevent overfitting.

GRN Inference and Validation

After training, extract the GRN from the learned parameters of the structural equation model in the cell encoder. Apply sparsity constraints to eliminate weak connections and focus on high-confidence regulatory interactions. Validate the inferred GRN using gene set enrichment analysis of overlapping genes in predicted GRNs [8]. Compare the results with existing gold-standard networks or experimental validation data where available. Perform downstream analyses including single-cell clustering, data visualization, and lineage tracing to assess biological relevance.
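A minimal sketch of the edge-extraction step is shown below, assuming the learned SEM weights are available as a square matrix whose entry (i, j) scores the regulation of gene j by gene i. Keeping the k strongest edges is one simple sparsity rule among several; the gene names, weights, and function name `top_edges` are hypothetical.

```python
import numpy as np

def top_edges(W, genes, k):
    """Return the k strongest off-diagonal edges as (regulator, target, weight)."""
    W = np.asarray(W, dtype=float)
    strength = np.abs(W)
    np.fill_diagonal(strength, 0.0)  # ignore self-loops
    order = np.argsort(strength, axis=None)[::-1][:k]
    rows, cols = np.unravel_index(order, W.shape)
    return [(genes[i], genes[j], float(W[i, j])) for i, j in zip(rows, cols)]

genes = ["TF_A", "TF_B", "GENE_C"]
W = np.array([[0.9, 0.7, 0.05],
              [0.0, 0.0, -0.6],
              [0.01, 0.0, 0.0]])
edges = top_edges(W, genes, k=2)
```

Ranking by absolute weight keeps strong repressive edges (negative weights) alongside activating ones, while the sign is preserved in the output.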

Performance Benchmarks and Applications

In the benchmark evaluations reported by its authors, HyperG-VAE outperforms existing methods at predicting GRNs and identifying key regulators, with notably strong results on B cell development data from bone marrow [7] [8]. The method uncovers gene regulation patterns and remains robust in downstream analyses across these benchmarks [7].

Table 2: Performance Comparison of GRN Inference Methods

| Method | Theoretical Approach | Key Strengths | Limitations |
|---|---|---|---|
| HyperG-VAE | Hypergraph variational autoencoder | Captures cellular heterogeneity and gene modules; reduces data sparsity | Computational complexity |
| LINGER | Lifelong learning with external data | 4-7x relative accuracy increase; uses atlas-scale external data | Requires substantial external data resources |
| DAZZLE | Dropout augmentation | Improved robustness to zero-inflation; enhanced stability | Limited to specific data types |
| GENIE3 | Random forest | Established performance; works well on diverse data | Originally designed for bulk data |
| PIDC | Partial information decomposition | Models cellular heterogeneity effectively | Performance varies across cell types |
| SCENIC | Co-expression + TF motif analysis | Identifies key transcription factors and regulons | Multi-step process potentially accumulating errors |

In practical applications, HyperG-VAE has proven particularly valuable for understanding cellular development and disease mechanisms. The model's ability to refine GRN inference through gene set enrichment analysis of overlapping genes confirms the gene encoder's role in improving regulatory network prediction [8]. This capability enables more accurate identification of disease-associated regulatory changes and potential therapeutic targets.

Advanced GRN Inference Methodologies

Integration of Multi-Omics Data

Recent advances in GRN inference have emphasized the integration of multiple data types to improve accuracy. LINGER (Lifelong neural network for gene regulation) represents a cutting-edge approach that infers GRNs from single-cell multiome data, incorporating both gene expression and chromatin accessibility information [2]. This method leverages atlas-scale external bulk data across diverse cellular contexts and prior knowledge of transcription factor motifs as manifold regularization [2]. The integration of these diverse data sources enables a fourfold to sevenfold relative increase in accuracy over existing methods, addressing the critical challenge that current GRN inference approaches perform only marginally better than random predictions [2].

The LINGER framework implements lifelong learning, incorporating knowledge from previous tasks to learn new tasks more efficiently with limited data [2]. The methodology involves three key steps: training on external bulk data, refining on single-cell data using elastic weight consolidation (EWC) loss with bulk data parameters as prior, and extracting regulatory information using interpretable AI techniques [2]. This approach generates comprehensive GRNs containing three types of interactions: trans-regulation (TF-TG), cis-regulation (RE-TG), and TF-binding (TF-RE) [2].
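The refinement step can be illustrated with the standard elastic weight consolidation penalty, which adds a quadratic term that keeps parameters close to their bulk-trained values in proportion to their estimated importance. The sketch below uses the generic EWC form with a diagonal Fisher information estimate; the exact weighting used in LINGER may differ, and all values are hypothetical.

```python
import numpy as np

def ewc_loss(task_loss, theta, theta_bulk, fisher, lam=1.0):
    """Task loss plus the EWC penalty (lam / 2) * sum_i F_i (theta_i - theta_bulk_i)^2."""
    theta, theta_bulk, fisher = map(np.asarray, (theta, theta_bulk, fisher))
    penalty = 0.5 * lam * np.sum(fisher * (theta - theta_bulk) ** 2)
    return task_loss + penalty

theta_bulk = np.array([1.0, -2.0])  # parameters learned on external bulk data
fisher = np.array([4.0, 0.0])       # per-parameter importance (diagonal Fisher)
theta = np.array([1.5, 3.0])        # parameters after a single-cell update
loss = ewc_loss(task_loss=0.2, theta=theta, theta_bulk=theta_bulk,
                fisher=fisher, lam=2.0)
```

In this toy case the second parameter moves far from its bulk value at no cost because its importance is zero, while the smaller shift in the first parameter is penalized.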

Addressing Technical Noise with DAZZLE

The DAZZLE (Dropout Augmentation for Zero-inflated Learning Enhancement) model introduces a novel perspective on addressing the dropout problem in scRNA-seq data through dropout augmentation (DA) rather than imputation [6]. This approach regularizes models by augmenting data with synthetic dropout events, counter-intuitively improving robustness against actual dropout noise in the data [6]. Based on the same VAE-based GRN learning framework as DeepSEM, DAZZLE incorporates dropout augmentation alongside optimized adjacency matrix sparsity control strategies, simplified model structures, and closed-form priors [6].

The theoretical foundation for dropout augmentation rests on established machine learning principles, where adding noise to input data during training improves model robustness and performance [6]. This approach aligns with Bishop's demonstration that adding noise equates to Tikhonov regularization and Hinton's introduction of random "dropout" on input or model parameters to enhance training performance [6]. Empirical validation demonstrates that DAZZLE exhibits superior model stability and robustness compared to existing approaches in benchmark experiments [6].
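Dropout augmentation can be sketched as a function that zeroes a random fraction of entries each time a training batch is drawn. The augmentation rate, the uniform sampling over all entries, and the function name `dropout_augment` are assumptions for illustration and do not reproduce DAZZLE's exact procedure.

```python
import numpy as np

def dropout_augment(X, rate, rng):
    """Return a copy of X with a random fraction `rate` of entries set to zero,
    plus the boolean mask of the entries that were zeroed."""
    mask = rng.random(X.shape) < rate
    return np.where(mask, 0.0, X), mask

rng = np.random.default_rng(0)
X = np.ones((200, 50))
X_aug, mask = dropout_augment(X, rate=0.1, rng=rng)
```

Drawing a fresh mask at every iteration means the model never sees the same corrupted input twice, which is what gives the augmentation its regularizing effect.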

Diagram 2: Advanced GRN Inference Workflow

Research Reagent Solutions for GRN Studies

Table 3: Essential Research Reagents and Platforms for scRNA-seq Studies

| Reagent/Platform | Function | Application Context |
|---|---|---|
| 10x Genomics Chromium | Droplet-based single cell partitioning | High-throughput scRNA-seq library preparation |
| Smart-seq2 | Full-length transcript amplification | Full-length scRNA-seq with high sensitivity |
| Unique Molecular Identifiers (UMIs) | Barcoding individual mRNA molecules | Correcting PCR amplification bias |
| CEL-seq2 | Linear amplification via IVT | 3'-end counting with improved RT efficiency |
| MARS-seq2 | Automated high-throughput processing | Large-scale scRNA-seq studies |
| Fluorescence-Activated Cell Sorting (FACS) | Single-cell isolation | Precise selection of specific cell populations |
| Microfluidic Devices | Single-cell capture and processing | Low-volume, high-efficiency processing |

Future Directions and Clinical Applications

The future of GRN research points toward increasingly integrative and dynamic approaches. HyperG-VAE demonstrates potential for extending GRN modeling to temporal and multimodal single-cell omics, enabling more comprehensive understanding of regulatory dynamics [7] [8]. Similarly, methods like LINGER highlight the value of incorporating external data resources through lifelong learning paradigms to overcome the limitations of small sample sizes in single-cell studies [2]. These approaches will be essential for translating GRN inferences into clinically actionable insights.

In cancer research, scRNA-seq technologies have been increasingly employed to explore tumor heterogeneity and the tumor microenvironment, enhancing our understanding of tumorigenesis and evolution [5]. The ability to characterize subtle changes in tumor biology by identifying distinct cell subpopulations, dissecting the tumor microenvironment, and characterizing cellular genomic mutations positions GRN analysis as a crucial tool for advancing precision oncology [5]. As these methodologies continue to mature, they offer promising avenues for identifying novel therapeutic targets and developing more effective treatment strategies for complex diseases.

The critical role of Gene Regulatory Networks in understanding cellular function and disease mechanisms continues to drive methodological innovations. From hypergraph generative models to multi-omics integration approaches, the field is rapidly advancing toward more accurate, robust, and biologically meaningful inference of regulatory relationships. These developments promise to unlock deeper insights into the fundamental principles governing gene expression and their disruption in disease states, ultimately enabling new approaches to therapeutic intervention and personalized medicine.

Limitations of Bulk Sequencing and Traditional GRN Inference Methods

Gene regulatory networks (GRNs) are fundamental to understanding cellular identity, response to stimuli, and the mechanistic underpinnings of disease. They represent the complex interactions between transcription factors (TFs), cis-regulatory elements (CREs), and their target genes. The accurate inference of these networks is a central challenge in computational biology. Historically, this task relied on data from bulk sequencing technologies and a suite of traditional inference methods. However, these approaches have inherent limitations that obscure the dynamic and heterogeneous nature of gene regulation within complex tissues. This section details these limitations, providing a structured comparison and experimental context, framed within the advancement towards methods like hypergraph variational autoencoders for analyzing single-cell RNA-sequencing (scRNA-seq) data.

Core Limitations of Bulk Sequencing and Traditional Methods

The primary shortcoming of bulk sequencing is its fundamental nature: it measures the average gene expression across thousands to millions of cells in a sample. This averaging process masks critical biological variability and confounds network inference in several key ways.

- Conflation of Cellular Heterogeneity: Bulk data represents a composite signal from potentially diverse cell types and states present in a tissue. GRNs inferred from such data are, at best, an average network that does not accurately represent the regulatory architecture of any specific cell type. At worst, they are biologically misleading, containing false positive and false negative edges that would not exist in a cell-type-specific network [9].

- Inability to Model Dynamic Processes: Many biological processes of great interest, such as cell differentiation, development, and disease progression, are dynamic. Bulk sequencing provides static snapshots, making it impossible to resolve the temporal sequence of regulatory events that drive these transitions [10].

- Loss of Correlations from Single-Cell Data: Regulatory relationships are not always linear and can be obscured when data is averaged. Single-cell data can reveal gene-gene correlations that are invisible in bulk data due to the conflation of distinct cell populations [11].
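The effect of averaging over mixed populations can be shown with a small deterministic example. The two cell types, their expression values, and the mixing proportions below are hypothetical; the point is only that a correlation seen across bulk samples can have the opposite sign from the correlation within every cell type.

```python
import numpy as np

# Two hypothetical cell types; within each, genes A and B rise together.
# Columns: gene A, gene B.
type1 = np.array([[1.0, 1.0], [2.0, 2.0], [3.0, 3.0]])
type2 = np.array([[11.0, -9.0], [12.0, -8.0], [13.0, -7.0]])

within1 = np.corrcoef(type1[:, 0], type1[:, 1])[0, 1]
within2 = np.corrcoef(type2[:, 0], type2[:, 1])[0, 1]

# Each bulk sample is a weighted average of the two type means,
# with a different (hypothetical) cell-type proportion per sample.
proportions = np.array([0.1, 0.3, 0.5, 0.7, 0.9])
bulk = (np.outer(proportions, type1.mean(axis=0))
        + np.outer(1 - proportions, type2.mean(axis=0)))
bulk_corr = np.corrcoef(bulk[:, 0], bulk[:, 1])[0, 1]
```

Here the within-type correlations are both positive, while the bulk correlation is negative because it reflects only the shifting cell-type composition between samples.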

Table 1: Key Limitations of Bulk Sequencing for GRN Inference

| Limitation | Impact on GRN Inference | Experimental Consequence |
|---|---|---|
| Cellular Averaging | Produces confounded, non-cell-type-specific networks that may not reflect biology of any individual cell type [9]. | High rates of false positives and false negatives; inability to identify cell-type-specific driver TFs. |
| Static Snapshot | Cannot infer the directionality or causality of regulatory interactions over time [10]. | Fails to model dynamic processes like differentiation and cell fate decisions. |
| Masked Heterogeneity | Obscures unique GRNs of rare cell subpopulations that may have critical biological functions [11]. | Key regulatory networks in rare cell types (e.g., stem cells, rare immune cells) are missed. |

Limitations of Traditional GRN Inference Methodologies

Traditional computational methods designed for bulk data struggle to overcome these inherent data limitations and introduce their own set of challenges.

- Inability to Handle Single-Cell Data Characteristics: Methods developed for bulk data are not equipped to handle the high sparsity (dropout events) and noise characteristic of scRNA-seq data. Applying them directly to single-cell data leads to poor performance and inaccurate networks [12].

- Limited Incorporation of Prior Knowledge: Many traditional methods operate on gene expression data alone. While newer approaches are beginning to integrate multi-omic data, the seamless incorporation of diverse prior knowledge (e.g., TF motifs, chromatin accessibility, protein-protein interactions) remains a challenge. This limits their ability to distinguish direct from indirect regulation [13].

- Network Resolution and Directionality: Co-expression methods based on correlation cannot easily distinguish the regulator from the target or resolve causal relationships, often inferring undirected networks. Furthermore, they typically infer a single, population-level network [2] [11].

Table 2: Performance Comparison of Selected GRN Inference Methods

| Method Category | Example Methods | Key Limitations | Reported Accuracy (Example) |
|---|---|---|---|
| Co-expression/Correlation | WGCNA [12], PIDC [10] | Infers undirected edges; cannot distinguish causality; highly sensitive to data sparsity [11]. | AUC only marginally better than random prediction on benchmark data [2]. |
| Regression-Based | GENIE3 [9] [12], Elastic Net | Performance degrades with high-dimensional predictors; struggles with correlated TFs; not designed for single-cell dropouts [2] [6]. | GENIE3 performs well on simulated data without dropouts, but poorly on data with dropouts [12]. |
| Bulk-Data Integrative | PECA [2] | Limited by the cellular heterogeneity present in the input bulk data, which reduces inference accuracy [2]. | Outperformed by single-cell multiome methods (e.g., LINGER showed 4-7x relative increase in accuracy) [2]. |

Experimental Protocols for Benchmarking GRN Inference

To quantitatively evaluate the limitations of traditional methods and the performance of novel algorithms, standardized benchmarking protocols are essential. The following outlines a core experimental workflow.

Protocol 1: In Silico Benchmarking with Synthetic Data

Objective: To assess GRN inference accuracy against a known ground truth network under controlled conditions, including simulated technical noise like dropouts.

- Data Generation: Use a software tool like GeneNetWeaver (GNW) to generate gold-standard network structures and corresponding synthetic gene expression data [12].

- Simulate Single-Cell Characteristics: Mimic the dropout phenomenon by randomly setting values in the synthetic expression matrix to zero based on a Bernoulli distribution, parameterized by a dropout probability p [12].

- Network Inference: Run the traditional and novel GRN inference methods on the synthetic dataset.

- Performance Evaluation:
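The text does not list the evaluation metrics for this protocol. One common choice, consistent with the AUC figures quoted in the method comparison, is the area under the ROC curve computed over all candidate edges. The sketch below is an assumed illustration of that metric, with hypothetical scores and ground truth, and is not the protocol's prescribed procedure.

```python
import numpy as np

def auroc(scores, truth):
    """AUROC of edge scores against a binary ground-truth adjacency matrix,
    computed as the probability that a true edge outranks a non-edge
    (ties count one half). Diagonal entries are excluded."""
    scores, truth = np.asarray(scores, float), np.asarray(truth, bool)
    off_diag = ~np.eye(truth.shape[0], dtype=bool)
    pos = scores[off_diag & truth]
    neg = scores[off_diag & ~truth]
    wins = ((pos[:, None] > neg[None, :]).sum()
            + 0.5 * (pos[:, None] == neg[None, :]).sum())
    return wins / (pos.size * neg.size)

truth = np.array([[0, 1, 0],
                  [0, 0, 1],
                  [0, 0, 0]])
scores = np.array([[0.0, 0.9, 0.2],
                   [0.1, 0.0, 0.4],
                   [0.3, 0.0, 0.0]])
value = auroc(scores, truth)
```

A value of 0.5 corresponds to random ranking, which is the baseline against which "marginally better than random" results are judged.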

Protocol 2: Validation with Experimental Ground Truths

Objective: To validate inferred GRNs against experimentally derived regulatory interactions.

- Ground Truth Collection: Collect high-confidence TF-target interactions from independent experimental data, such as:

  - ChIP-seq Data: Systematically curated ChIP-seq datasets for specific TFs in relevant cell types provide direct evidence of TF binding [2].

  - eQTL Data: Expression Quantitative Trait Loci from studies like GTEx or eQTLGen link genetic variants to gene expression, providing evidence for cis-regulatory relationships [2].

- GRN Inference: Apply GRN methods to a real single-cell or bulk dataset (e.g., a public PBMC multiome dataset [2]).

- Validation Analysis:

Diagram 1: Traditional GRN inference workflow from bulk data, highlighting core limitations.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for GRN Inference Research

| Resource / Reagent | Function in GRN Research | Example & Notes |
|---|---|---|
| 10x Genomics Multiome | Simultaneously profiles gene expression (RNA) and chromatin accessibility (ATAC) in the same single cell. | Provides paired data for methods like LINGER [2]. Enables linking TFs to REs and TGs. |
| ChIP-seq Antibodies | Protein-specific antibodies for Chromatin Immunoprecipitation to map TF binding sites. | Critical for generating experimental ground truth data for validation [2]. Quality is antibody-dependent. |
| Cis-Target Databases | Databases of conserved TF binding motifs (e.g., JASPAR, CIS-BP). | Provides prior knowledge on TF-RE binding potential for methods like SCENIC+ and LINGER [2] [13]. |
| Benchmarking Software | Tools to generate synthetic data and evaluate performance. | GeneNetWeaver (GNW) for simulation; BEELINE framework for standardized benchmarking [12] [14]. |
| Curated Interaction Databases | Databases of known TF-target interactions from literature and experiments. | Used as prior knowledge or for validation (e.g., from sources like ENCODE [2] [13]). |

The limitations of bulk sequencing and traditional GRN inference methods are fundamental and multi-faceted, stemming from the data's inherent lack of resolution and the methods' inability to model cellular heterogeneity and dynamic regulation. The transition to single-cell technologies has exposed these shortcomings, driving the development of a new generation of computational approaches. These novel methods, including the hypergraph variational autoencoders central to this article, are designed to leverage the resolution of scRNA-seq data, integrate multi-omic priors, and explicitly model the complex, cell-type-specific nature of gene regulation, thereby promising more accurate and biologically insightful GRNs.

Single-cell RNA sequencing (scRNA-seq) has fundamentally transformed biological research by enabling the examination of gene expression at the resolution of individual cells. This technological revolution provides an unprecedented window into cellular heterogeneity, allowing researchers to decipher the complex composition of tissues, uncover novel cell subtypes, and trace developmental trajectories that were previously obscured in bulk sequencing approaches [15] [16]. The ability to profile thousands of cells simultaneously has catalyzed major initiatives such as the Human Cell Atlas, which aims to map every cell type in the human body [16].

Despite these remarkable opportunities, the analysis of scRNA-seq data presents substantial computational challenges that must be addressed to fully realize its potential. The limited starting material per cell leads to technical artifacts including amplification bias, dropout events, and high levels of technical noise [15] [16]. Furthermore, the high-dimensional nature of single-cell data, often encompassing hundreds of thousands of cells measured across thousands of genes, demands specialized statistical and computational methods [15] [16]. This article explores these opportunities and hurdles, with a specific focus on the application of hypergraph variational autoencoders for gene regulatory network inference, and provides detailed protocols for researchers navigating this complex landscape.

The Computational Landscape of Single-Cell Analysis

Key Challenges in scRNA-seq Data Analysis

The journey from raw sequencing data to biological insights in scRNA-seq experiments is paved with numerous technical and analytical obstacles that can significantly impact result interpretation.

Technical and Biological Variability: scRNA-seq data suffers from multiple sources of noise and bias. The low RNA input from individual cells can result in incomplete reverse transcription and amplification, leading to inadequate coverage [15]. Dropout events, where transcripts fail to be captured or amplified in a single cell, create false-negative signals that are particularly problematic for detecting lowly expressed genes and rare cell populations [15]. Additionally, batch effects arising from technical variations between sequencing runs can confound biological interpretations if not properly addressed [15] [17].

Data Sparsity and Dimensionality: Single-cell datasets are characterized by their high dimensionality and sparsity, with excess zeros resulting from both biological and technical factors [18]. This sparsity poses significant challenges for downstream analyses, including cell type identification and gene regulatory network inference [19]. The curse of dimensionality further complicates these analyses, necessitating specialized dimensionality reduction techniques before meaningful patterns can be extracted [17].

Cell Type Identification and Annotation: Accurately identifying and annotating cell types remains a formidable challenge in scRNA-seq analysis. While unsupervised clustering is commonly used, methods struggle with rare cell types and continuous biological processes such as differentiation [17]. The process is further complicated when chemical exposures or disease states alter the expression of canonical marker genes, potentially leading to misannotation [17].

Table 1: Key Computational Challenges in scRNA-seq Analysis

| Challenge Category | Specific Challenges | Potential Impacts |

|---|---|---|

| Technical Variability | Amplification bias, dropout events, batch effects, ambient RNA contamination | False negatives/positives, reduced statistical power, confounded results |

| Data Characteristics | High dimensionality, sparsity, noise, missing data | Reduced accuracy in clustering and trajectory inference |

| Biological Complexity | Cellular heterogeneity, rare cell populations, continuous biological processes | Difficulty identifying cell types and states, missing biologically relevant populations |

| Integration Challenges | Modality-specific technical effects, weak feature correlations across modalities | Inability to leverage complementary multi-omics information |

Analytical Best Practices

Establishing robust analytical workflows is crucial for generating reliable insights from scRNA-seq data. Key considerations include:

Quality Control and Normalization: Rigorous quality control measures are essential for filtering out low-quality cells and genes. Standard practices include filtering cells expressing fewer than 200 or more than 2500 genes, and removing cells with high mitochondrial gene content (typically >5-20%), which may indicate compromised cell viability [17]. Normalization methods such as the pooling approach implemented in scran effectively account for differences in sequencing depth and library size between cells [17].
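The thresholds above translate into a simple boolean filter over cells. The following is a minimal NumPy sketch, not the Scanpy or Seurat implementation; the function name `qc_filter`, its parameters, and the toy matrix are hypothetical, and the mitochondrial cutoff defaults to the upper end of the quoted range.

```python
import numpy as np

def qc_filter(counts, mito_gene_mask, min_genes=200, max_genes=2500, max_mito_frac=0.20):
    """Return a boolean mask of cells (rows) that pass basic QC.

    counts: (n_cells, n_genes) array of raw counts.
    mito_gene_mask: boolean array of length n_genes marking mitochondrial genes.
    """
    counts = np.asarray(counts, dtype=float)
    mito_gene_mask = np.asarray(mito_gene_mask, dtype=bool)
    genes_detected = (counts > 0).sum(axis=1)      # genes with at least one count
    total_counts = counts.sum(axis=1)
    mito_counts = counts[:, mito_gene_mask].sum(axis=1)
    # Cells with zero total counts get a mitochondrial fraction of 0
    mito_frac = np.divide(mito_counts, total_counts,
                          out=np.zeros_like(total_counts), where=total_counts > 0)
    return ((genes_detected >= min_genes)
            & (genes_detected <= max_genes)
            & (mito_frac <= max_mito_frac))

# Toy example: thresholds are scaled down to fit a 3-cell, 4-gene matrix
toy_counts = np.array([[5, 0, 0, 0],
                       [3, 2, 1, 0],
                       [1, 1, 1, 9]])
toy_mito = np.array([False, False, False, True])
keep = qc_filter(toy_counts, toy_mito, min_genes=2, max_genes=3)
filtered = toy_counts[keep]
```

In practice the thresholds should be tuned per dataset, since appropriate gene-count and mitochondrial cutoffs vary with tissue and protocol.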

Batch Effect Correction: When integrating datasets across multiple samples or experimental conditions, batch correction is critical. Methods such as Harmony, Scanorama, and scVI have demonstrated excellent performance in removing technical variation while preserving biological signals [17] [20]. The choice of method depends on dataset size and complexity, with scVI particularly suited for large, complex datasets [17].

Dimensionality Reduction and Visualization: Following quality control and normalization, dimensionality reduction techniques such as principal component analysis (PCA) are applied to reduce computational complexity [15]. Non-linear methods like UMAP (Uniform Manifold Approximation and Projection) then enable effective visualization of cell clusters in two or three dimensions [17].
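As an illustration of the linear step, PCA can be computed from a singular value decomposition of the centered expression matrix. This is a minimal sketch assuming a dense, already normalized cells-by-genes matrix; `pca_svd` is a hypothetical helper, and toolkits such as Scanpy and Seurat use their own optimized routines.

```python
import numpy as np

def pca_svd(X, n_components=2):
    """Project cells (rows of X) onto the top principal components.

    Returns the component scores and the fraction of variance each explains.
    """
    X = np.asarray(X, dtype=float)
    Xc = X - X.mean(axis=0)                      # center each gene
    U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    scores = U[:, :n_components] * S[:n_components]
    explained = S ** 2 / (X.shape[0] - 1)        # variance along each component
    ratio = explained / explained.sum()
    return scores, ratio[:n_components]

# Toy example: 50 cells, 10 genes of random data
rng = np.random.default_rng(0)
X = rng.normal(size=(50, 10))
scores, ratio = pca_svd(X, n_components=2)
```

The resulting scores are what a nearest-neighbor graph or a UMAP embedding would typically be computed from.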

Hypergraph Variational Autoencoders for GRN Inference

Theoretical Framework

Gene regulatory networks (GRNs) represent the complex interplay between transcription factors and their target genes, defining cellular identity and function [19]. Inferring accurate GRNs from scRNA-seq data has been challenging due to data sparsity, noise, and cellular heterogeneity. The hypergraph variational autoencoder (HyperG-VAE) represents a significant advancement in addressing these challenges by modeling scRNA-seq data as a hypergraph, where cells are represented as hyperedges connecting the genes they express [19].

This innovative framework simultaneously captures cellular heterogeneity and gene modules through dual encoders—a cell encoder that models cell-specific regulatory mechanisms using a structural equation model, and a gene encoder that identifies gene modules through hypergraph self-attention mechanisms [19]. The joint optimization of these encoders enables the model to elucidate gene regulatory mechanisms within gene modules across various cell clusters, significantly enhancing its ability to delineate complex gene regulatory interactions [19].
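For readers unfamiliar with structural equation models, the following sketches the linear SEM commonly used in SEM-based GRN autoencoders such as DeepSEM. Whether HyperG-VAE uses exactly this parameterization is an assumption here, and the symbols X, W, and Z are introduced for illustration only.

```latex
% X \in \mathbb{R}^{n \times m}: expression of n genes across m cells
% W \in \mathbb{R}^{n \times n}: weighted GRN adjacency, W_{ij} = effect of gene i on gene j
% Z \in \mathbb{R}^{n \times m}: latent noise term
\begin{aligned}
X &= W^{\top} X + Z \\
Z &= (I - W^{\top})\, X          && \text{(encoder direction)} \\
X &= (I - W^{\top})^{-1} Z       && \text{(decoder direction, requires } I - W^{\top} \text{ invertible)}
\end{aligned}
```

Under this form, the learned matrix W is read out as the inferred network, which is why the GRN is obtained from the SEM component after training.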

Performance Benchmarks

HyperG-VAE has demonstrated superior performance in GRN inference compared to existing state-of-the-art methods. Comprehensive benchmarks conducted using the BEELINE framework across seven scRNA-seq datasets (including two human cell lines and five mouse cell lines) showed that HyperG-VAE outperforms methods such as DeepSEM, GENIE3, and PIDC across multiple evaluation metrics, including the early precision ratio (EPR), which measures the enrichment of true positives among top predictions, and the area under the precision-recall curve (AUPRC) [19].
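To make the AUPRC metric concrete, the sketch below computes average precision, a standard step-wise estimate of the area under the precision-recall curve, for a ranked list of predicted edges. It is a generic illustration, not BEELINE's evaluation code; the function name and the toy edges are hypothetical.

```python
def average_precision(scores, truth):
    """Average precision of scored edges against a set of true edges.

    scores: dict mapping (regulator, target) -> confidence score.
    truth: set of (regulator, target) edges regarded as correct.
    """
    ranked = sorted(scores, key=scores.get, reverse=True)
    n_pos = sum(1 for edge in ranked if edge in truth)
    if n_pos == 0:
        return 0.0
    hits, precision_sum = 0, 0.0
    for rank, edge in enumerate(ranked, start=1):
        if edge in truth:
            hits += 1
            precision_sum += hits / rank   # precision at each recalled true edge
    return precision_sum / n_pos

# Toy example with three candidate edges, two of which are true
scores = {("TF1", "G1"): 0.9, ("TF1", "G2"): 0.8, ("TF2", "G1"): 0.1}
truth = {("TF1", "G1"), ("TF2", "G1")}
ap = average_precision(scores, truth)
```

Because true regulatory edges are rare relative to all possible gene pairs, precision-recall summaries of this kind are generally more informative than accuracy for GRN benchmarks.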

The model's effectiveness stems from its ability to overcome data sparsity by capturing latent correlations among genes and cells, thereby enhancing the imputation of contact maps and providing more robust GRN predictions [19]. Additionally, HyperG-VAE has shown excellent performance in downstream analyses including cell clustering, gene clustering, and lineage tracing, demonstrating its utility as a comprehensive framework for single-cell transcriptomic analysis [19].

Table 2: Comparison of GRN Inference Methods

| Method | Theoretical Approach | Key Advantages | Limitations |

|---|---|---|---|

| HyperG-VAE | Hypergraph-based variational autoencoder | Captures both cellular heterogeneity and gene modules; handles data sparsity effectively | Computational complexity; steep learning curve for implementation |

| DeepSEM | Structural equation modeling with deep learning | Models nonlinear relationships between TFs and target genes | Limited ability to capture gene module information |

| GENIE3 | Tree-based ensemble method | High accuracy in benchmark studies; handles large datasets | Computationally intensive for very large networks |

| PIDC | Information-theoretic approach | Effective at detecting conditional dependencies | Sensitivity to data sparsity and noise |

Experimental Protocols and Workflows

Protocol 1: Standard scRNA-seq Analysis Workflow

Objective: To process raw scRNA-seq data from FASTQ files to cell type identification and differential expression analysis.

Materials and Reagents:

- Raw sequencing data in FASTQ format

- Reference genome appropriate for the sample species

- High-performance computing cluster with sufficient memory and storage

Procedure:

Data Preprocessing: Use Cell Ranger (10x Genomics) to align sequencing reads to the reference genome and generate gene expression matrices [20]. This tool employs the STAR aligner and accounts for cell barcodes and unique molecular identifiers (UMIs) to accurately quantify gene expression.

Quality Control: Filter cells based on the following criteria using Scanpy or Seurat [17] [20]:

- Remove cells with fewer than 200 detected genes

- Remove cells with more than 2500 detected genes (potential doublets)

- Exclude cells with >5-20% mitochondrial reads

Apply additional doublet detection using DoubletFinder if sequencing depth is high [17].

Normalization: Normalize counts using the scran pooling-based method [17]. Log-transform the normalized counts using log(x+1) to stabilize variance [17].

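Feature Selection: Identify highly variable genes using the Seurat FindVariableFeatures function or the Scanpy pp.highly_variable_genes function [20].

Dimensionality Reduction: Apply PCA to the highly variable genes, then build the nearest-neighbor graph used for clustering and compute a UMAP embedding for visualization [15] [17].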

Clustering: Use the Leiden algorithm to identify cell clusters in the nearest-neighbor graph [17] [20].

Cell Type Annotation:

- Identify marker genes for each cluster using differential expression tests

- Reference cell type markers from curated databases (PanglaoDB)

- Manually annotate clusters based on canonical markers [17]

Differential Expression: Perform differential expression analysis between conditions using appropriate methods (e.g., MAST, Wilcoxon test) that account for the characteristics of single-cell data [17].

Troubleshooting Tips:

- If clusters appear driven by batch effects rather than biology, apply batch correction methods such as Harmony or Scanorama before clustering [17] [20]

- If rare cell populations are missed, consider density-based clustering methods like GiniClust [17]

- If ambient RNA contamination is suspected, apply SoupX to correct counts [17]

Protocol 2: HyperG-VAE for GRN Inference

Objective: To infer gene regulatory networks from scRNA-seq data using HyperG-VAE

Materials and Reagents:

- Processed scRNA-seq count matrix

- List of transcription factors and target genes of interest

- High-performance computing environment with GPU acceleration recommended

Procedure:

Data Preparation:

- Format the scRNA-seq data as a hypergraph incidence matrix where cells are hyperedges and genes are nodes [19]

- Preprocess the count matrix using standard normalization and log transformation

- Select highly variable genes and transcription factors for inclusion in the model

Model Configuration:

- Initialize the HyperG-VAE architecture with cell and gene encoders

- Configure the structural equation model in the cell encoder to learn GRN interactions

- Set up the hypergraph self-attention mechanism in the gene encoder to identify gene modules [19]

Model Training:

- Train the model using variational inference to optimize the evidence lower bound (ELBO)

- Employ a joint optimization strategy for both cell and gene encoders

- Implement early stopping based on reconstruction loss to prevent overfitting [19]

GRN Inference:

- Extract the learned causal interaction matrix from the structural equation layer

- Apply thresholding to identify significant regulatory interactions

- Validate network edges using established biological databases (e.g., STRING, ChIP-seq data) [19]

Downstream Analysis:

- Perform gene set enrichment analysis on identified gene modules

- Visualize the GRN using network visualization tools (e.g., Cytoscape)

- Correlate regulatory interactions with cell type-specific functions [19]

Validation and Interpretation:

- Compare inferred GRNs with ground truth networks from databases such as STRING or cell-type-specific ChIP-seq data [19]

- Assess biological relevance through enrichment analysis of target genes for transcription factor binding motifs

- Validate key predictions using orthogonal data or experimental approaches
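The thresholding step in the GRN inference stage can be sketched as ranking edges by absolute weight and keeping the strongest ones. This is a minimal NumPy illustration under stated assumptions: rows of the weight matrix are taken to be regulators and columns targets, self-loops are ignored, and `top_k_edges` and its arguments are hypothetical names rather than part of any HyperG-VAE release.

```python
import numpy as np

def top_k_edges(weights, gene_names, k, tf_names=None):
    """Return the k strongest (regulator, target, |weight|) edges.

    weights: (n_genes, n_genes) learned interaction matrix (rows = regulators, assumed).
    tf_names: optional collection restricting which genes may act as regulators.
    """
    W = np.abs(np.asarray(weights, dtype=float))
    np.fill_diagonal(W, 0.0)                      # ignore self-regulation
    tf_set = set(tf_names) if tf_names is not None else None
    candidates = []
    for i, src in enumerate(gene_names):
        if tf_set is not None and src not in tf_set:
            continue
        for j, tgt in enumerate(gene_names):
            if i != j:
                candidates.append((src, tgt, float(W[i, j])))
    candidates.sort(key=lambda edge: edge[2], reverse=True)
    return candidates[:k]

# Toy example with three genes
W_toy = [[0.0, 0.9, -0.2],
         [0.1, 0.0, 0.5],
         [-0.7, 0.3, 0.0]]
genes = ["G1", "G2", "G3"]
edges = top_k_edges(W_toy, genes, k=2)
tf_edges = top_k_edges(W_toy, genes, k=2, tf_names={"G1", "G2"})
```

Restricting regulators to the supplied transcription factor list is one way to use the TF list named in the materials; a fixed weight cutoff could be used instead of a top-k rule.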

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Single-Cell Analysis

| Tool/Reagent | Type | Primary Function | Application Notes |

|---|---|---|---|

| 10x Genomics Chromium | Wet-bench platform | Single-cell partitioning and barcoding | Supports RNA-seq, ATAC-seq, and multiome assays; industry standard for droplet-based scRNA-seq [21] |

| Cell Ranger | Software pipeline | Processing raw sequencing data to count matrices | Optimized for 10x Genomics data; uses STAR aligner; generates standardized output compatible with downstream tools [20] |

| Seurat | R toolkit | Comprehensive scRNA-seq analysis | Excellent for data integration and multimodal analysis; strong visualization capabilities [17] [20] |

| Scanpy | Python toolkit | Scalable scRNA-seq analysis | Handles millions of cells efficiently; integrates with scVI-tools and machine learning ecosystems [20] |

| scVI-tools | Python package | Deep generative modeling for scRNA-seq | Superior batch correction and imputation; based on variational autoencoders [20] |

| Harmony | Algorithm | Batch effect correction | Efficient integration of datasets across batches, conditions, and technologies [20] |

| CellBender | Computational tool | Ambient RNA removal | Uses deep learning to distinguish real cell signals from background noise [20] |

| HyperG-VAE | Deep learning framework | GRN inference from scRNA-seq data | Models data as hypergraph; simultaneously captures cellular heterogeneity and gene modules [19] |

Workflow Visualization

Single-Cell RNA-seq Analysis Workflow

HyperG-VAE Architecture for GRN Inference

The single-cell revolution has provided unprecedented opportunities to explore cellular heterogeneity and gene regulatory mechanisms at single-cell resolution. However, realizing the full potential of these technologies requires addressing significant computational hurdles, including data sparsity, technical noise, and the complexity of biological systems. The development of advanced computational methods such as HyperG-VAE represents a promising approach to overcoming these challenges, particularly for inferring gene regulatory networks from sparse single-cell data.

As single-cell technologies continue to evolve, generating increasingly large and complex multimodal datasets, the development of robust, scalable, and interpretable computational methods will be crucial. Future directions in the field include the integration of self-supervised learning strategies, transformer-based architectures, and federated learning frameworks to enhance the robustness and reproducibility of single-cell analyses [22]. By combining cutting-edge experimental technologies with advanced computational approaches, researchers will continue to unlock the secrets of cellular function and dysfunction, with profound implications for basic biology and therapeutic development.

Addressing Data Sparsity and Cellular Heterogeneity in scRNA-seq

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling transcriptomic profiling at the resolution of individual cells, revealing unprecedented insights into cellular heterogeneity, developmental trajectories, and disease mechanisms [23] [24]. This technological advancement has displaced the long-standing paradigm that cells of the same tissue origin are homogeneous, instead demonstrating that even genetically identical cells cultured in the same conditions exhibit significant variations in gene expression [24]. However, the high-dimensional nature of scRNA-seq data presents two fundamental analytical challenges: data sparsity and cellular heterogeneity.

Data sparsity in scRNA-seq arises primarily from technical limitations, including so-called "dropout events" where lowly expressed genes fail to be detected, resulting in an excess of zero counts in the expression matrix [25] [26]. This sparsity obstructs reliable detection of expressed genes and introduces substantial noise into downstream analyses. Simultaneously, cellular heterogeneity—the natural biological variation between individual cells—manifests as diverse gene expression patterns across cell types, states, and transient developmental stages [24] [27]. While uncovering this heterogeneity is a primary goal of scRNA-seq studies, it complicates analysis by creating complex, multi-modal distributions in the data.

Within the context of gene regulatory network (GRN) inference, these challenges are particularly pronounced. Accurate GRN reconstruction requires detecting subtle, coordinated expression changes between transcription factors and their target genes—signals that are often obscured by technical noise and biological variability [25] [26]. This application note establishes experimental protocols and analytical frameworks designed to address these intertwined challenges, with special emphasis on hypergraph variational autoencoder (HyperG-VAE) approaches that synergistically model cellular heterogeneity while constructing reliable GRNs from sparse single-cell data [8].

Methodological Approaches and Experimental Protocols

Hypergraph Variational Autoencoders for Integrated Analysis

The HyperG-VAE framework represents a Bayesian deep generative model that leverages hypergraph representations to simultaneously address data sparsity and cellular heterogeneity in scRNA-seq data [8]. The model architecture consists of two complementary encoders: a cell encoder that incorporates a structural equation model to account for cellular heterogeneity and construct GRNs, and a gene encoder that utilizes hypergraph self-attention to identify coherent gene modules [8]. These components are synergistically optimized via a shared decoder, enabling simultaneous improvement in GRN inference, single-cell clustering, and data visualization.

The protocol for implementing HyperG-VAE begins with standard scRNA-seq preprocessing: removal of low-quality cells and genes, normalization, and selection of highly variable genes [23]. Following this, the hypergraph structure is constructed by modeling genes as nodes and incorporating biological prior knowledge about gene interactions where available. The model is then trained using a combined loss function that includes reconstruction loss, Kullback-Leibler divergence for the variational approximation, and regulatory constraints that promote biologically plausible network structures [8] [26].

Table 1: Key Components of the HyperG-VAE Framework

| Component | Architecture | Function | Biological Interpretation |

|---|---|---|---|

| Cell Encoder | Structural Equation Model | Accounts for cellular heterogeneity | Captures cell-to-cell variation in GRN structure |

| Gene Encoder | Hypergraph Self-Attention | Identifies gene modules | Discovers functionally coordinated gene groups |

| Shared Decoder | Neural Network | Reconstructs expression data | Ensures biological fidelity of representations |

| Optimization | Combined Loss Function | Joint training of encoders and decoder | Balances reconstruction accuracy with regulatory constraints |

Comparative Analysis of GRN Inference Methods

Multiple deep learning approaches have been developed to address the intertwined challenges of sparsity and heterogeneity in GRN inference. The SIGRN (Soft Introspective Variational Autoencoder) method introduces an adversarial mechanism within a VAE framework to improve the quality of generated data, which subsequently enhances GRN inference accuracy [26]. Unlike standard VAEs that often reconstruct low-quality data, SIGRN employs a "soft" introspective adversarial approach that avoids training additional neural networks or adding excessive parameters [26].

The f-DyGRN (f-divergence-based dynamic gene regulatory network) method addresses a different aspect—temporal dynamics—by inferring time-varying regulatory networks from time-series scRNA-seq data [25]. This approach integrates a first-order Granger causality model with regularization techniques and partial correlation analysis to reconstruct dynamic GRNs, employing a moving window strategy to capture changes in gene interactions over time [25].
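The core idea of a first-order Granger model with a moving window can be sketched with a ridge-regularized vector autoregression. This is a simplified stand-in, not the f-DyGRN implementation: it omits the method's specific regularization and partial correlation analysis, and the function names, the ridge penalty `lam`, and the simulated data are all assumptions.

```python
import numpy as np

def var1_ridge(X, lam=0.1):
    """Fit X[t] ~ X[t-1] @ A by ridge regression.

    X: (T, n_genes) time-ordered expression.
    Returns A, where A[i, j] is the influence of gene i at t-1 on gene j at t.
    """
    X = np.asarray(X, dtype=float)
    past, future = X[:-1], X[1:]
    n = X.shape[1]
    return np.linalg.solve(past.T @ past + lam * np.eye(n), past.T @ future)

def moving_window_networks(X, window, step=1, lam=0.1):
    """Fit one first-order model per window to obtain a time-varying network."""
    X = np.asarray(X, dtype=float)
    starts = range(0, X.shape[0] - window + 1, step)
    return [var1_ridge(X[s:s + window], lam) for s in starts]

# Simulated example: gene 0 drives gene 1, but not the reverse
rng = np.random.default_rng(1)
A_true = np.array([[0.5, 0.4],
                   [0.0, 0.5]])
X = np.zeros((500, 2))
for t in range(1, 500):
    X[t] = X[t - 1] @ A_true + rng.normal(scale=1.0, size=2)
A_hat = var1_ridge(X)
networks = moving_window_networks(X, window=100, step=100)
```

Comparing the per-window matrices shows how estimated interactions change over time, which is the purpose of the moving window strategy.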

Table 2: Performance Comparison of GRN Inference Methods on Benchmark Datasets

| Method | Architecture | AUC Score | Early Precision Ratio | Scalability | Key Advantage |

|---|---|---|---|---|---|

| HyperG-VAE | Hypergraph VAE | 0.81-0.89 | 7.2-11.5 | High | Integrates gene modules and cell heterogeneity |

| SIGRN | Introspective VAE | 0.79-0.87 | 6.8-10.9 | Medium | Improved data generation without extra parameters |

| f-DyGRN | Dynamic Network | 0.76-0.84 | N/A | Medium | Captures time-varying regulatory relationships |

| scGraphformer | Transformer GNN | 0.83-0.91 | N/A | High | Learns cell-cell relationships without predefined graphs |

| DeepSEM | SEM + Neural Networks | 0.72-0.81 | 5.3-8.7 | High | Stable performance across datasets |

Experimental Workflow for GRN Inference

Diagram 1: Integrated Computational Workflow for GRN Inference. This workflow illustrates the parallel processing of data sparsity and cellular heterogeneity challenges before integrated model application.

Research Reagent Solutions and Experimental Materials

Essential Computational Tools and Frameworks

Successful implementation of the protocols described in this application note requires specific computational tools and frameworks. The HyperG-VAE model is implemented in Python using PyTorch, with specific dependencies including Scanpy for single-cell data preprocessing, and specialized libraries for hypergraph operations [8] [28]. The SIGRN method similarly relies on PyTorch and incorporates the "soft" introspective adversarial training approach, which necessitates GPU acceleration for efficient training [26].

For benchmarking GRN inference performance, the BEELINE framework provides standardized evaluation metrics and benchmark datasets, enabling fair comparison across different methods [26]. Essential evaluation metrics include the Area Under the Receiver Operating Characteristic Curve (AUC) and the Early Precision Ratio (EPR). Early precision is the fraction of true positives among the top-k predicted edges, where k is the number of edges in the ground-truth network; EPR divides this value by the early precision expected from a random predictor [26].
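A small worked example helps fix the EPR calculation. The sketch below takes the random-predictor baseline to be the density of the ground-truth network over all directed gene pairs without self-loops, which is an assumption about the candidate edge set; the function name and toy edges are hypothetical, and this is not BEELINE's own code.

```python
def early_precision_ratio(ranked_edges, truth, n_genes):
    """Early precision of the top-k edges divided by the random baseline.

    ranked_edges: list of (regulator, target) edges, strongest first.
    truth: set of ground-truth edges; k is its size.
    n_genes: number of genes, used to count possible directed edges.
    """
    k = len(truth)
    if k == 0:
        raise ValueError("ground-truth network has no edges")
    early_precision = sum(edge in truth for edge in ranked_edges[:k]) / k
    n_possible = n_genes * (n_genes - 1)          # directed pairs, no self-loops
    density = k / n_possible                      # expected precision of a random predictor
    return early_precision / density

# Toy example: 4 genes, 3 true edges, 2 of them in the top 3 predictions
ranked = [("A", "B"), ("B", "C"), ("A", "C"), ("C", "D")]
truth_edges = {("A", "B"), ("A", "C"), ("C", "D")}
epr = early_precision_ratio(ranked, truth_edges, n_genes=4)
```

A value of 1 corresponds to random performance under this baseline, and larger values indicate enrichment of true edges among the top predictions.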

Table 3: Essential Research Reagents and Computational Tools

| Tool/Reagent | Specifications | Application | Protocol Reference |

|---|---|---|---|

| PyTorch Framework | Version 1.9.0+ with CUDA support | Deep learning model implementation | HyperG-VAE, SIGRN protocols |

| Scanpy | Version 1.9.1+ | Single-cell data preprocessing | Data normalization and HVG selection |

| BEELINE Benchmarks | Standardized evaluation framework | Performance assessment | AUC and EPR calculation |

| 10X Genomics Chromium | Droplet-based single-cell isolation | scRNA-seq library preparation | Cell encapsulation and barcoding |

| Fluidigm C1 System | Microfluidic cell capture | Single-cell isolation | Integrated library preparation |

Technical Protocols and Implementation Guidelines

Comprehensive scRNA-seq Data Preprocessing Protocol

Addressing data sparsity effectively begins with careful data preprocessing. The following protocol outlines the critical steps for preparing scRNA-seq data for GRN inference:

Quality Control and Filtering: Remove low-quality cells using thresholds tailored to your experimental system (typically <500-1,000 genes detected per cell or >10-20% mitochondrial content). Filter out genes expressed in fewer than 1% of cells to reduce noise [26] [27].

Normalization: Normalize gene expression counts using the 'normalize_per_cell' function to set total counts per cell to 10,000-20,000, followed by log2 transformation. Apply Z-score normalization across genes to standardize expression values [26].

Highly Variable Gene (HVG) Selection: Select 500-1,000 highly variable genes using the Seurat or Scanpy package. Include all transcription factors in the HVG list regardless of variability to ensure regulatory elements are represented [23] [26].

Data Augmentation: For particularly sparse datasets, consider applying data augmentation techniques such as scGFT (Generative Fourier Transformer), which synthesizes single cells that exhibit natural gene expression profiles present within authentic datasets without requiring pre-training [29].
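The normalization step of this protocol can be written out directly. The following is a minimal NumPy sketch of per-cell scaling, log2 transformation, and per-gene Z-scoring; it is not the Scanpy implementation, the pseudocount of 1 is an assumption, and `normalize_log_zscore` is a hypothetical name.

```python
import numpy as np

def normalize_log_zscore(counts, target_sum=1e4):
    """Scale each cell to target_sum counts, apply log2(x + 1), then z-score each gene.

    counts: (n_cells, n_genes) array of raw counts.
    """
    counts = np.asarray(counts, dtype=float)
    totals = counts.sum(axis=1, keepdims=True)
    totals[totals == 0] = 1.0                     # leave empty cells as all zeros
    scaled = counts / totals * target_sum
    logged = np.log2(scaled + 1.0)
    mean = logged.mean(axis=0)
    std = logged.std(axis=0)
    std[std == 0] = 1.0                           # constant genes map to zero
    return (logged - mean) / std

# Toy example: 3 cells, 3 genes (the last gene is never detected)
toy = np.array([[1, 3, 0],
                [2, 2, 0],
                [10, 0, 0]])
Z = normalize_log_zscore(toy)
```

Each gene then has zero mean and unit variance across cells, so that highly expressed genes do not dominate the reconstruction loss.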

HyperG-VAE Implementation Protocol

Implementing HyperG-VAE for GRN inference involves both standard deep learning practices and specialized configurations for biological data:

Data Configuration: Format preprocessed scRNA-seq data into a cell-by-gene matrix with dimensions (n_cells × n_genes). Split data into training (80%) and validation (20%) sets, ensuring all cell types are represented in both sets.

Hypergraph Construction: Construct hypergraph structure where genes represent nodes. Incorporate prior biological knowledge by connecting genes that share known protein-protein interactions, pathway affiliations, or regulatory relationships.

Model Training: Train the model using a combined loss function:

- Reconstruction loss (mean squared error between input and reconstructed expression)

- KL divergence between latent distribution and standard normal

- Regulatory loss encouraging sparsity in inferred networks

Utilize the Adam optimizer with an initial learning rate of 0.001 and a batch size of 128.

GRN Extraction: After training, extract the regulatory network from the cell encoder's structural equation model component. Apply a threshold to the interaction weights to obtain a binary adjacency matrix representing the final GRN.
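The three loss terms listed in the training step can be combined as shown below. This is a forward-only NumPy sketch for illustration; an actual implementation would use an automatic differentiation framework such as PyTorch. The weights `beta` and `lam`, the use of an L1 penalty as the sparsity term, and all names are assumptions rather than the published HyperG-VAE objective.

```python
import numpy as np

def combined_loss(x, x_recon, mu, logvar, W, beta=1.0, lam=0.01):
    """Reconstruction + KL + sparsity loss for a VAE with a learned network W.

    x, x_recon: (n_cells, n_genes) input and reconstructed expression.
    mu, logvar: (n_cells, latent_dim) parameters of the Gaussian posterior.
    W: (n_genes, n_genes) interaction weights.
    """
    recon = np.mean((x - x_recon) ** 2)                           # mean squared error
    # KL divergence between N(mu, exp(logvar)) and N(0, I), averaged over cells
    kl = -0.5 * np.mean(np.sum(1 + logvar - mu ** 2 - np.exp(logvar), axis=1))
    sparsity = np.sum(np.abs(W))                                  # L1 penalty on the network
    total = recon + beta * kl + lam * sparsity
    return total, {"recon": recon, "kl": kl, "sparsity": sparsity}

# Toy example: perfect reconstruction, one latent unit shifted from the prior
x = np.zeros((1, 3))
mu = np.array([[1.0, 0.0]])
logvar = np.zeros((1, 2))
W = np.array([[0.0, 2.0],
              [-1.0, 0.0]])
total, parts = combined_loss(x, x, mu, logvar, W, beta=1.0, lam=0.1)
```

Raising `lam` pushes more interaction weights toward zero, which trades reconstruction accuracy for a sparser inferred network.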

Model Validation and Benchmarking Protocol

Rigorous validation is essential for assessing GRN inference performance:

Evaluation Metrics: Calculate AUC and EPR using the BEELINE framework [26]. Compare against known ground truth networks from databases like STRING or ChIP-seq datasets [26].

Biological Validation: Perform gene set enrichment analysis on highly connected genes in the inferred network to assess functional coherence [8]. Validate key regulatory relationships using external datasets or through experimental collaboration where possible.

Stability Assessment: Conduct multiple training runs with different random seeds to evaluate consistency in inferred networks. For HyperG-VAE, examine the reproducibility of identified gene modules across runs.
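One simple way to quantify consistency across seeds is the mean pairwise Jaccard index of the inferred edge sets. The sketch below is an illustrative choice of stability measure, not one prescribed by the cited methods; the function names and toy edge sets are hypothetical.

```python
from itertools import combinations

def jaccard(a, b):
    """Jaccard index of two edge sets (1.0 if both are empty)."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def mean_pairwise_jaccard(edge_sets):
    """Average Jaccard index over all pairs of runs."""
    pairs = list(combinations(edge_sets, 2))
    if not pairs:
        raise ValueError("need at least two runs to assess stability")
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)

# Toy example: edge sets from three training runs with different seeds
runs = [
    {("A", "B"), ("B", "C"), ("C", "D")},
    {("B", "C"), ("C", "D"), ("D", "A")},
    {("A", "B"), ("B", "C"), ("C", "D")},
]
stability = mean_pairwise_jaccard(runs)
```

The same measure can be applied to gene module memberships by treating each module as a set of genes.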

This application note has detailed protocols for addressing the dual challenges of data sparsity and cellular heterogeneity in scRNA-seq data, with particular emphasis on GRN inference using hypergraph variational autoencoder approaches. The integrated workflow enables researchers to transform sparse, heterogeneous single-cell data into biologically interpretable gene regulatory networks, facilitating discoveries in developmental biology, disease mechanisms, and therapeutic development.

The comparative analysis demonstrates that methods like HyperG-VAE, SIGRN, and f-DyGRN each offer distinct advantages depending on the specific research context and data characteristics. As single-cell technologies continue to evolve, producing increasingly complex multimodal datasets, the integration of these approaches with emerging experimental techniques will further enhance our ability to decipher the regulatory logic underlying cellular function and dysfunction.

Deconstructing HyperG-VAE: A Dual-Encoder Architecture for Enhanced GRN Inference

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the characterization of gene expression at the resolution of individual cells. However, the analysis of scRNA-seq data presents significant challenges due to its high dimensionality, sparsity, and complex cellular heterogeneity. Traditional network-based approaches, such as co-expression networks, have been widely adopted but have inherent limitations: they lose higher-order information, represent data inefficiently by converting sparse datasets into fully connected networks, and overestimate co-expression because of zero inflation [30].

Hypergraph representations offer a powerful alternative framework that naturally captures the multi-way relationships inherent in scRNA-seq data. In this paradigm, nodes represent cells and hyperedges represent genes, with each hyperedge connecting all cells where its corresponding gene is actively expressed [30]. This is the dual of the convention in which cells are hyperedges and genes are nodes; the two views correspond to transposed incidence matrices and carry the same information. Either conceptualization preserves the complete information contained within the original expression matrix while providing a mathematical structure capable of modeling complex, overlapping biological relationships that traditional pairwise networks cannot capture.

Hypergraph Formalism for scRNA-seq Data

Mathematical Representation

In formal terms, a hypergraph is defined as a pair H = (V, E), where V is a set of vertices (cells) and E is a set of hyperedges (genes). For scRNA-seq data with m cells and n genes, the hypergraph structure is encoded through an incidence matrix M ∈ {0,1}^(m×n), where M_ij = 1 if gene j is expressed in cell i (i.e., the expression value H^V_ij > 0), and M_ij = 0 otherwise [19]. This representation directly captures the relationship between cells and their expressed genes without requiring the data reduction inherent in graph projections.

Comparative Advantages Over Traditional Methods

Table 1: Comparison of scRNA-seq Data Representation Methods

| Representation Type | Mathematical Structure | Preserves Higher-Order Information | Handles Data Sparsity | Computational Efficiency |

|---|---|---|---|---|

| Hypergraph | Incidence matrix M ∈ {0,1}^(m×n) | Yes | Excellent | Moderate |

| Co-expression Network | Adjacency matrix A ∈ R^(n×n) | No | Poor | High |

| Dimensionality Reduction | Projection P ∈ R^(m×k) | Partial | Moderate | High |

The hypergraph framework offers distinct advantages for scRNA-seq analysis. Unlike co-expression networks that force data into pairwise interactions, hyperedges can connect multiple cells through shared gene expression patterns, naturally capturing the complex modular organization of transcriptional programs [30] [19]. This approach also better handles the characteristic sparsity of scRNA-seq data by maintaining the original expression relationships without creating artificially dense network structures.
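The densification argument can be checked numerically. The sketch below compares the density of a sparse incidence matrix with that of its pairwise gene-gene projection, using co-detection in at least one cell as a simple stand-in for a co-expression network; the simulated 10% detection rate and the helper name `densities` are assumptions for illustration.

```python
import numpy as np

def densities(M):
    """Return (incidence density, projected gene-gene network density).

    M: (n_cells, n_genes) binary incidence matrix.
    """
    M = np.asarray(M, dtype=int)
    n_genes = M.shape[1]
    incidence_density = M.mean()
    co = M.T @ M                                  # cells in which both genes are detected
    np.fill_diagonal(co, 0)                       # drop self-pairs
    projection_density = np.count_nonzero(co) / (n_genes * (n_genes - 1))
    return incidence_density, projection_density

# Simulated sparse data: 200 cells, 50 genes, about 10% non-zero entries
rng = np.random.default_rng(0)
M = (rng.random((200, 50)) < 0.10).astype(int)
inc_d, proj_d = densities(M)
print(f"incidence density: {inc_d:.2f}, projected gene-gene density: {proj_d:.2f}")
```

Even when only a small fraction of the incidence matrix is non-zero, most gene pairs share at least one cell, so the pairwise projection becomes far denser than the data it was derived from.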

Implementation in GRN Inference: The HyperG-VAE Framework

The hypergraph variational autoencoder (HyperG-VAE) represents a cutting-edge implementation of hypergraph representations for Gene Regulatory Network (GRN) inference from scRNA-seq data [19]. This Bayesian deep generative model specifically addresses the dual challenges of cellular heterogeneity and gene module identification through a synergistic architecture featuring two specialized encoders:

- Cell Encoder: Incorporates a structural equation model (SEM) to account for cellular heterogeneity and construct GRNs from gene co-expression space

- Gene Encoder: Utilizes hypergraph self-attention to identify gene modules with consistent expression profiles across cells

These encoders undergo joint optimization through a hypergraph decoder that reconstructs the original topology of the hypergraph using the learned latent embeddings of genes and cells [19]. The resulting framework enables simultaneous inference of GRNs, cell clustering, gene clustering, and characterization of interactions between gene modules and cellular heterogeneity.

Experimental Protocol for HyperG-VAE Implementation

Protocol 1: Hypergraph Construction from scRNA-seq Data

Data Preprocessing

- Begin with raw count matrix from scRNA-seq experiment

- Perform quality control to remove low-quality cells and genes

- Normalize using standard scRNA-seq methods (e.g., SCTransform)

Incidence Matrix Formation

- Let X ∈ R^(m×n) be the normalized expression matrix with m cells and n genes

- Construct incidence matrix M ∈ {0,1}^(m×n) where:

- M_ij = 1 if X_ij > τ (expression threshold)

- M_ij = 0 otherwise

- Optimal threshold τ can be determined by sensitivity analysis

Hypergraph Initialization

- Initialize hypergraph H with cells as vertices and genes as hyperedges

- Implement using hypergraph libraries (e.g., XGI in Python) [31]
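The incidence matrix formation step of this protocol can be sketched in a few lines of NumPy, with cells as vertices and genes as hyperedges. The helper names, the threshold value, and the toy data are hypothetical, and the sketch builds a plain dictionary rather than calling any particular hypergraph library.

```python
import numpy as np

def incidence_matrix(X, tau=0.0):
    """Binary incidence matrix: M[i, j] = 1 if gene j exceeds tau in cell i."""
    return (np.asarray(X, dtype=float) > tau).astype(np.int8)

def hyperedge_members(M, gene_names, cell_names):
    """Map each gene (hyperedge) to the list of cells (vertices) it connects."""
    return {gene: [cell_names[i] for i in np.flatnonzero(M[:, j])]
            for j, gene in enumerate(gene_names)}

# Toy example: 3 cells, 2 genes, expression threshold tau = 0.5
X = np.array([[0.0, 1.2],
              [0.3, 0.0],
              [2.0, 0.8]])
M = incidence_matrix(X, tau=0.5)
members = hyperedge_members(M, ["geneA", "geneB"], ["c1", "c2", "c3"])
```

Repeating the construction over a range of τ values and tracking how hyperedge sizes change is one way to carry out the sensitivity analysis mentioned above.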

Protocol 2: HyperG-VAE Training and GRN Inference

Model Configuration

- Initialize cell encoder with structural equation model (SEM) layers

- Initialize gene encoder with hypergraph self-attention mechanisms

- Configure decoder to reconstruct hypergraph topology

Training Procedure

- Train model using variational evidence lower bound (ELBO) objective

- Employ mini-batch optimization for large-scale datasets

- Monitor reconstruction loss and regularization terms

GRN Extraction

- Extract learned causal interaction matrix from SEM layer in cell encoder

- Apply thresholding to obtain binary regulatory interactions

- Validate against ground truth networks (e.g., STRING, ChIP-seq)

Performance Benchmarking

Table 2: Performance Comparison of GRN Inference Methods on Benchmark Datasets

| Method | AUPRC (STRING) | AUPRC (ChIP-seq) | EPR (LOF/GOF) | Computational Time |

|---|---|---|---|---|

| HyperG-VAE | 0.317 | 0.285 | 0.462 | Medium |

| DeepSEM | 0.289 | 0.251 | 0.381 | Low |

| GENIE3 | 0.274 | 0.238 | 0.395 | High |

| PIDC | 0.263 | 0.229 | 0.342 | Medium |

| GRNBOOST2 | 0.281 | 0.247 | 0.401 | High |

Performance metrics demonstrate that HyperG-VAE surpasses established methods in GRN inference across multiple benchmark datasets and evaluation metrics, including the Area Under the Precision-Recall Curve (AUPRC) and the Early Precision Ratio (EPR), which measures the enrichment of true positives among top predictions [19]. The improvement is particularly significant when analyzing datasets with weak data modularity, where traditional methods struggle to capture complex regulatory relationships.

Advanced Clustering Methodologies Using Hypergraph Random Walks

Dual-Importance Preference Algorithms

Building upon hypergraph representations, novel clustering methodologies have been developed specifically for scRNA-seq data analysis. The Dual-Importance Preference Hypergraph Walk (DIPHW) algorithm leverages random walks on hypergraphs to identify cell clusters with superior performance compared to graph-based approaches [30]. This method accounts for both:

- Gene Importance: The relative expression strength across cells

- Cell Importance: The significance of specific cells within hyperedges

A more advanced implementation, CoMem-DIPHW, further integrates the gene coexpression network, cell coexpression network, and the cell-gene expression hypergraph from single-cell abundance counts data for embedding computation [30]. This approach simultaneously captures local-level information from single-cell gene expression and global-level information from pairwise similarity in coexpression networks.

Experimental Protocol for Hypergraph-Based Clustering

Protocol 3: Hypergraph Random Walk Clustering

Hypergraph Construction

- Follow Protocol 1 to create hypergraph from scRNA-seq data

- Weight hyperedges by gene expression variance

- Weight nodes by cell quality metrics

Random Walk Implementation

- Configure random walk parameters (restart probability, walk length)

- Implement dual-importance preference for transition probabilities

- Simulate multiple random walks from each cell node
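
As an illustration of the random-walk step, the sketch below builds a cell-to-cell transition matrix for a two-stage walk on the hypergraph: from a cell to one of its genes (hyperedges), then from that gene to one of its cells. Weighting both stages by expression is a simplified stand-in for a dual-importance preference. It is an assumption made for this example and not the published DIPHW transition rule; the function names and the `restart` parameter are likewise hypothetical.

```python
import numpy as np

def two_step_transition(X):
    """Cell-to-cell transition matrix for a cell -> gene -> cell walk.

    X is an (m cells x n genes) non-negative expression matrix.
    Step 1: a cell picks a gene with probability proportional to its expression in that cell.
    Step 2: the gene picks a cell with probability proportional to its expression there.
    """
    X = np.asarray(X, dtype=float)
    row_sums = X.sum(axis=1, keepdims=True)
    col_sums = X.sum(axis=0, keepdims=True)
    if np.any(row_sums == 0) or np.any(col_sums == 0):
        raise ValueError("every cell and every gene needs nonzero total expression")
    cell_to_gene = X / row_sums       # (m x n), rows sum to 1
    gene_to_cell = (X / col_sums).T   # (n x m), rows sum to 1
    return cell_to_gene @ gene_to_cell  # (m x m), rows sum to 1

def simulate_walk(P, start, length, rng, restart=0.0):
    """Sample one walk over cells; with probability `restart`, jump back to the start cell."""
    path = [start]
    for _ in range(length):
        if rng.random() < restart:
            path.append(start)
        else:
            path.append(int(rng.choice(P.shape[0], p=P[path[-1]])))
    return path

# Toy expression matrix: 3 cells x 3 genes.
X = np.array([
    [2.0, 1.0, 0.0],
    [1.0, 0.0, 3.0],
    [0.0, 2.0, 1.0],
])
P = two_step_transition(X)
rng = np.random.default_rng(0)
path = simulate_walk(P, start=0, length=5, rng=rng, restart=0.1)
```

Collapsing the two stages into a single matrix keeps the simulation simple; a fuller implementation would keep the gene step explicit so that gene-level weights can be adjusted independently.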

Embedding Generation

- Construct feature vectors from walk visit frequencies

- Apply dimensionality reduction (PCA, UMAP) to embeddings

- Perform clustering (Louvain, Leiden) on reduced space
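
The first two embedding steps can be sketched with NumPy alone. The walks below are hard-coded toy data, PCA is done through a plain SVD, and the helper names are hypothetical. The clustering step is left out because Louvain and Leiden require additional graph libraries.

```python
import numpy as np

def visit_frequency_embedding(walks, n_cells):
    """Row c holds the normalized visit counts of all walks that start at cell c."""
    counts = np.zeros((n_cells, n_cells))
    for walk in walks:
        start = walk[0]
        for visited in walk[1:]:
            counts[start, visited] += 1
    totals = counts.sum(axis=1, keepdims=True)
    totals[totals == 0] = 1.0  # leave rows without walks as zeros
    return counts / totals

def pca_reduce(E, n_components):
    """Project rows of E onto their top principal components via SVD."""
    centered = E - E.mean(axis=0, keepdims=True)
    U, S, _ = np.linalg.svd(centered, full_matrices=False)
    return U[:, :n_components] * S[:n_components]

# Toy walks over 4 cells: cells 0 and 1 visit each other, as do cells 2 and 3.
walks = [[0, 1, 0, 1], [1, 0, 1, 1], [2, 3, 3, 2], [3, 2, 3, 2]]
E = visit_frequency_embedding(walks, n_cells=4)
Z = pca_reduce(E, n_components=2)
```

Cells whose walks visit the same neighbors end up with similar rows in `E`, and therefore close together in the reduced space `Z`, which is what the subsequent clustering step relies on.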

Validation

- Compare with ground truth annotations if available

- Assess cluster stability through bootstrapping

- Evaluate biological coherence via pathway enrichment

Visualization Techniques for scRNA-seq Hypergraphs

Multi-Modal Visualization Approaches

Effective visualization of hypergraphs is essential for interpretation and analysis. Multiple complementary techniques have been developed to address the unique challenges of visualizing high-order relationships:

- Barycenter Layout: Positions nodes using Fruchterman-Reingold force-directed algorithm on an augmented graph projection with phantom nodes (barycenters) for each hyperedge [31]

- Multilayer Visualization: Displays hyperedges of different orders in separate layers, particularly effective for 3D visualization [31]

- Convex Hull Representation: Draws hyperedges as convex hulls encompassing their constituent nodes, with options for filled or outline-only rendering [31]

- Bipartite Projection: Visualizes the hypergraph as a bipartite graph with two node classes (cells and genes) [31]
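
The augmented projection behind the barycenter layout, which is also the structure shown by the bipartite view, can be illustrated without any plotting library. In the sketch below each hyperedge becomes one phantom node connected to all of its members. The function name `star_expansion` and the toy gene and cell labels are assumptions for the example, and the code does not reproduce how any particular library builds its layout internally.

```python
def star_expansion(hyperedges):
    """Return (nodes, phantom_nodes, edges) of the augmented graph.

    hyperedges maps a hyperedge id to an iterable of member node ids.
    Each hyperedge becomes one phantom node linked to every member.
    """
    nodes = sorted({v for members in hyperedges.values() for v in members})
    phantom_nodes = [("phantom", e) for e in hyperedges]
    edges = [
        (("phantom", e), v)
        for e, members in hyperedges.items()
        for v in sorted(members)
    ]
    return nodes, phantom_nodes, edges

# Genes as hyperedges, cells as nodes.
hyperedges = {
    "GeneA": {"cell1", "cell2", "cell3"},
    "GeneB": {"cell2", "cell4"},
}
nodes, phantom_nodes, edges = star_expansion(hyperedges)
```

A standard force-directed layout can then be run on `edges`; hiding the phantom nodes afterwards leaves each hyperedge's members grouped around a common center.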

Experimental Protocol for Hypergraph Visualization

Protocol 4: Visualizing scRNA-seq Hypergraphs with XGI

Environment Setup

- Install Python hypergraph library (XGI)

- Import necessary dependencies: matplotlib, numpy, xgi

Basic Visualization

- Load or create hypergraph object

- Compute layout using barycenter_spring_layout()

- Generate plot with xgi.draw() function

- Customize node size, colors, and labels

Advanced Visualization Options

- Implement convex hull visualization with hull=True parameter

- Create multilayer visualizations for hyperedges of different orders

- Generate bipartite representations using draw_bipartite()

Customization for Publication

- Map node colors to cell properties (e.g., cluster identity)

- Map hyperedge colors to gene properties (e.g., expression level)

- Adjust font sizes and figure dimensions for publication standards

- Export in appropriate formats (PDF, SVG, PNG)

Table 3: Essential Research Reagents and Computational Tools for Hypergraph Analysis of scRNA-seq Data

| Resource Name | Type | Primary Function | Application Context |
|---|---|---|---|
| XGI Library | Python library | Hypergraph construction, analysis, and visualization | General hypergraph manipulation and basic visualization [31] |
| HyperG-VAE | Deep learning model | GRN inference from scRNA-seq data | Bayesian deep generative modeling for regulatory network construction [19] |
| DIPHW/CoMem-DIPHW | Clustering algorithm | Cell clustering using hypergraph random walks | Identification of cell types and states in complex scRNA-seq datasets [30] |
| Seurat | R toolkit | Single-cell data analysis and integration | Data preprocessing, basic analysis, and conversion to hypergraph formats [32] |
| scViewer | R/Shiny application | Interactive exploration of scRNA-seq data | Visualization of gene expression, co-expression, and differential expression [33] |

Integration with Downstream Analytical Frameworks

Compatibility with Established scRNA-seq Workflows

Hypergraph representations demonstrate strong compatibility with established scRNA-seq analysis workflows, enabling seamless integration into existing research pipelines. The processed Seurat object format serves as an effective bridge between conventional single-cell analysis and hypergraph approaches [33]. Conversion functions allow transformation between popular formats (e.g., Scanpy's AnnData) and hypergraph-compatible structures, ensuring interoperability across computational environments [32].

Applications in Disease Modeling and Drug Development

The enhanced analytical capabilities of hypergraph representations have significant implications for disease modeling and drug development. In Alzheimer's disease research, hypergraph-based analysis has revealed cell-type-specific regulatory patterns in prefrontal cortical samples, identifying potential therapeutic targets [33]. Similarly, in B cell development studies, HyperG-VAE has successfully uncovered key gene regulation patterns and demonstrated robustness in downstream analyses, including lineage tracing and identification of regulatory mechanisms [19].

Hypergraph representations provide a powerful mathematical framework for analyzing the complex, high-dimensional data generated by scRNA-seq technologies. By faithfully capturing the multi-way relationships between genes and cells, these approaches address fundamental limitations of traditional network-based methods while enabling new insights into cellular heterogeneity and gene regulatory mechanisms. The integration of hypergraph representations with deep learning architectures, as exemplified by HyperG-VAE, represents a significant advancement in computational biology with broad applications across basic research, disease modeling, and therapeutic development.

Future directions include extending hypergraph methods to temporal and multimodal single-cell omics data, incorporating spatial transcriptomic information, and developing more scalable algorithms for increasingly large single-cell datasets [19]. As these methods mature, hypergraph-based approaches are likely to play a growing role in single-cell data analysis and in the study of complex cellular systems.

Application Note

This application note details the implementation and use of the Cell Encoder, a core component of the hypergraph variational autoencoder (HyperG-VAE) framework designed for Gene Regulatory Network (GRN) inference from single-cell RNA sequencing (scRNA-seq) data. The Cell Encoder specifically addresses the challenge of capturing cellular heterogeneity by employing a Structural Equation Model (SEM) to infer cell-specific gene regulatory mechanisms within a hypergraph representation of scRNA-seq data [19] [8].

Scientific Background and Principle

Inferring GRNs from scRNA-seq data is crucial for understanding the complex interactions between transcription factors (TFs) and target genes that define cellular functions and responses. A significant challenge in this field is simultaneously accounting for cellular heterogeneity and gene module information. Traditional methods often focus on one aspect while overlooking the other, or struggle with the noise and sparsity inherent in scRNA-seq data [19].

The HyperG-VAE model tackles this by representing scRNA-seq data as a hypergraph, where individual cells are modeled as hyperedges and the genes expressed within them as nodes [19]. Within this architecture, the Cell Encoder leverages a Structural Equation Model to generate cell representations (H^E) in the form of hypergraph duality. This approach facilitates the embedding of high-order relations and enables GRN construction through a learnable causal interaction matrix within the structural equation layer. This design allows the Cell Encoder to adeptly capture the gene regulation process in a cell-specific manner, thereby elucidating a clearer landscape of cellular heterogeneity [19].

Table 1: Core Components of the HyperG-VAE Framework and Their Functions

| Component Name | Type | Primary Function in GRN Inference |
|---|---|---|
| Cell Encoder | Structural Equation Model (SEM) | Generates cell representations (H^E); infers cell-specific GRNs by capturing cellular heterogeneity [19]. |
| Gene Encoder | Hypergraph Self-Attention | Processes observed gene representations (H^V); identifies gene modules with consistent expression profiles [19]. |
| Hypergraph Decoder | Generative Model | Reconstructs the original hypergraph topology using learned latent embeddings of genes and cells [19]. |
| Structural Equation Layer | Learnable Causal Matrix | Realizes GRN construction within the cell encoder by modeling causal interactions between genes [19]. |

Performance and Validation

The HyperG-VAE framework, and by extension its Cell Encoder, has been rigorously benchmarked against state-of-the-art methods like DeepSEM, GENIE3, and PIDC [19]. Evaluations were conducted on seven scRNA-seq datasets, including human cell lines and mouse cell lines, using ground-truth data from sources such as STRING, ChIP-seq, and loss-/gain-of-function networks [19].

Performance was assessed using two metrics: the Early Precision Ratio (EPR), which evaluates the enrichment of true positives among the top K predicted edges relative to random predictions, and the Area Under the Precision-Recall Curve (AUPRC), which accounts for class imbalance [19]. In these benchmarks, HyperG-VAE outperformed the baseline methods in predicting GRNs and recovered key gene regulation patterns [19].
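
Both metrics can be computed from a ranked list of predicted edges and a set of ground-truth edges. The sketch below is a plain-Python illustration under stated assumptions: K is set to the number of ground-truth edges and random precision is taken as the ground-truth edge density, a convention commonly used in GRN benchmarking, while AUPRC is approximated by step-wise average precision. The function names and toy edges are hypothetical.

```python
def early_precision_ratio(ranked_edges, true_edges, n_possible_edges):
    """Early precision over the top-k predictions divided by random-chance precision.

    k is the number of ground-truth edges; random precision is the edge density.
    """
    true_edges = set(true_edges)
    k = len(true_edges)
    hits = sum(1 for edge in ranked_edges[:k] if edge in true_edges)
    early_precision = hits / k
    random_precision = k / n_possible_edges
    return early_precision / random_precision

def average_precision(ranked_edges, true_edges):
    """Step-wise estimate of the area under the precision-recall curve."""
    true_edges = set(true_edges)
    hits, total = 0, 0.0
    for rank, edge in enumerate(ranked_edges, start=1):
        if edge in true_edges:
            hits += 1
            total += hits / rank
    return total / len(true_edges)

# Toy example: 3 genes, so 6 possible directed edges without self-loops.
ranked = [("A", "B"), ("A", "C"), ("B", "C"), ("C", "A")]  # best prediction first
truth = {("A", "B"), ("B", "C")}

epr = early_precision_ratio(ranked, truth, n_possible_edges=6)
ap = average_precision(ranked, truth)
```

An EPR above 1 means the top-ranked predictions contain more true edges than a random ranking would be expected to yield.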

Table 2: Key Benchmarking Results of HyperG-VAE Against Baselines

| Evaluation Metric | Description | HyperG-VAE Performance |
|---|---|---|
| EPR (Early Precision Ratio) | Assesses true positive enrichment in top predictions [19]. | Surpassed all seven state-of-the-art baseline algorithms in benchmarks [19]. |
| AUPRC (Area Under Precision-Recall Curve) | Measures performance under class imbalance [19]. | Achieved higher accuracy than benchmarks including DeepSEM and PIDC [19]. |
| Downstream Analysis | Cell clustering, data visualization, lineage tracing [19]. | Excelled in uncovering regulatory patterns in B cell development data [19]. |

Protocol

This protocol provides a step-by-step procedure for implementing the HyperG-VAE framework, with a focus on the Cell Encoder module, to infer GRNs from a given scRNA-seq expression matrix.

Experimental Workflow

The following diagram illustrates the complete workflow of the HyperG-VAE, from data input to GRN inference.

Step-by-Step Procedures

Step 1: Hypergraph Construction from scRNA-seq Data

Objective: To transform the raw scRNA-seq expression matrix into a hypergraph structure that serves as the input for HyperG-VAE.

Procedure:

- Input Data: Begin with a scRNA-seq expression matrix H^V ∈ R^(m×n), where m is the number of cells and n is the number of genes.

- Define Hypergraph:

  - Consider each cell as a hyperedge.

  - Consider each gene as a node.

- Construct Incidence Matrix (M): Create an incidence matrix M ∈ {0,1}^(m×n) that defines the hypergraph structure.

  - For each cell (hyperedge) i and gene (node) j: M_ij = 1 if gene j is expressed in cell i (i.e., H^V_ij > 0); M_ij = 0 otherwise [19].

Output: A hypergraph defined by the incidence matrix M, ready for processing by the dual encoders.

Step 2: Configure and Execute the Cell Encoder with SEM

Objective: To leverage the Cell Encoder for generating latent cell representations and inferring the initial GRN via the Structural Equation Model.

Procedure:

- Model Input: Feed the hypergraph structure (incidence matrix M) and gene expression data (H^V) into the Cell Encoder.

- Structural Equation Layer:

  - The encoder utilizes a structural equation layer to model gene-gene interactions. This layer contains a learnable causal interaction matrix that infers regulatory relationships between transcription factors and target genes [19].

  - The SEM accounts for cellular heterogeneity by learning cell-specific parameters, allowing the model to capture variations in gene regulation across different cell states or types [19].

- Generate Representations: The encoder outputs stochastic latent representations for cells, denoted as H^E.

Output: Latent cell embeddings (H^E) and an initial GRN inferred from the structural equation layer.
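
To illustrate the role of the causal interaction matrix, the sketch below implements a purely linear structural equation model of the form X = XW + Z, where W[i, j] is the effect of gene i on gene j. This linear form is an assumption borrowed from SEM-based GRN models in general; the actual layer sits inside a neural network and its exact parameterization in HyperG-VAE may differ. The random `W`, the toy data, and the function names are placeholders.

```python
import numpy as np

def sem_encode(X, W):
    """Recover latent noise Z from expression X under the linear SEM X = X @ W + Z."""
    n = W.shape[0]
    return X @ (np.eye(n) - W)

def sem_decode(Z, W):
    """Regenerate expression from latent noise: X = Z @ (I - W)^-1."""
    n = W.shape[0]
    return Z @ np.linalg.inv(np.eye(n) - W)

rng = np.random.default_rng(0)
n_genes = 4

# Placeholder interaction matrix; in a trained model W would be learned.
W = 0.1 * rng.normal(size=(n_genes, n_genes))
np.fill_diagonal(W, 0.0)  # no self-regulation

X = rng.normal(size=(5, n_genes))  # 5 cells x 4 genes
Z = sem_encode(X, W)
X_rec = sem_decode(Z, W)
```

Because encoding and decoding share the same `W`, training the reconstruction objective shapes `W` directly, which is why the matrix can be read out as the inferred GRN after training.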