Genetic Algorithm vs Differential Evolution: A Beginner's Guide for Research and Drug Development

Abstract

This guide provides a clear, comparative exploration of Genetic Algorithms (GA) and Differential Evolution (DE) for researchers and professionals in drug development and the chemical sciences. It covers the foundational principles of both evolutionary algorithms, detailing their unique methodologies and operators. The content extends to practical implementation strategies, troubleshooting common issues like premature convergence, and a validation of their performance based on recent scientific benchmarks. By synthesizing theoretical knowledge with application-focused insights, this article serves as a primer for selecting and optimizing these powerful algorithms for complex optimization tasks in biomedical research.

Understanding the Core: Biological Metaphors and Algorithmic Principles

Evolutionary Algorithms (EAs) are a class of population-based, metaheuristic optimization algorithms inspired by the principles of biological evolution, such as natural selection, reproduction, and mutation [1]. They belong to the broader field of computational intelligence and are designed to solve complex optimization problems for which traditional, exact solution methods are ineffective or unknown [1]. By simulating a process of "survival of the fittest," EAs iteratively generate improved solutions to a problem, making them particularly powerful for navigating high-dimensional, non-differentiable, and multimodal search spaces commonly encountered in scientific and engineering disciplines, including drug development [2] [3].

The core principle of an EA is to maintain a population of candidate solutions. Each candidate is evaluated for its "fitness"—a measure of how well it solves the problem. High-fitness candidates are more likely to be selected as parents to produce offspring for the next generation. This reproduction process involves genetic operators like crossover (recombining parts of parent solutions) and mutation (introducing random small changes). Over successive generations, the population evolves toward better regions of the search space [4] [5]. This tutorial provides an in-depth introduction to EAs, with a specific focus on comparing two prominent types: Genetic Algorithms (GAs) and Differential Evolution (DE), framed for beginners and practitioners in research-intensive fields.

The Core Mechanisms of Evolutionary Algorithms

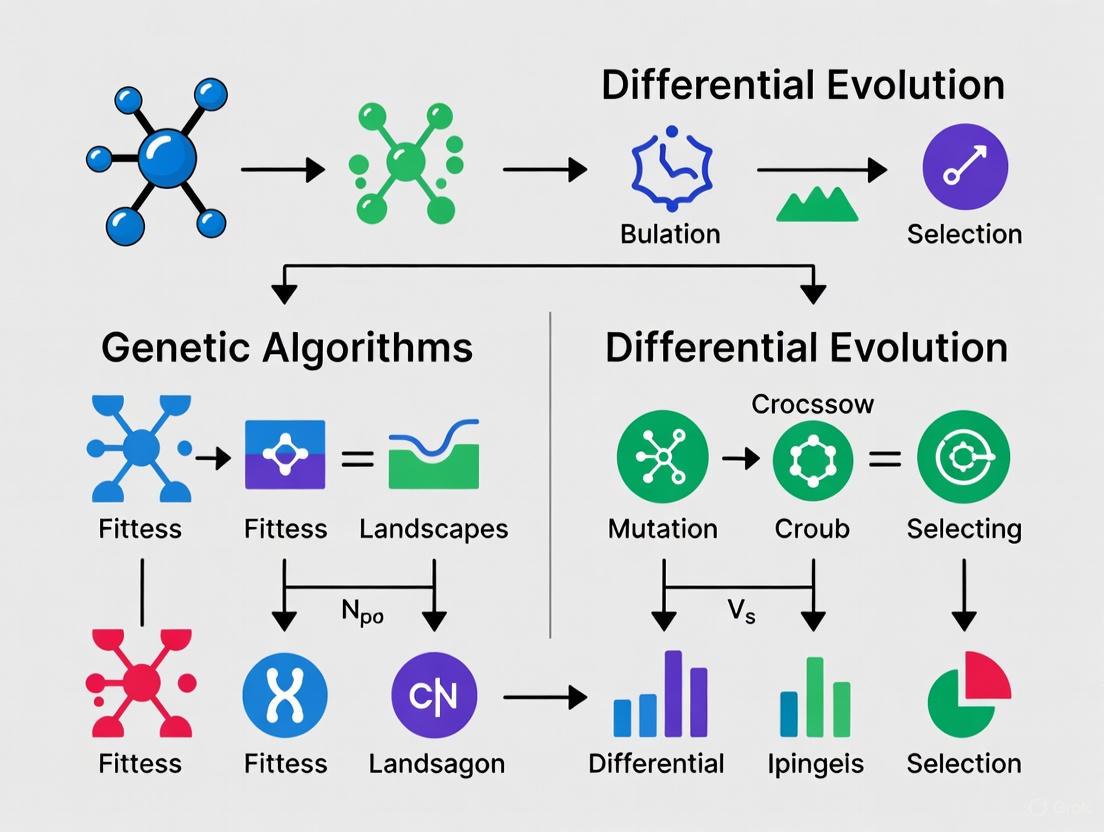

All Evolutionary Algorithms share a common generic structure, though they differ in their specific implementation details [1]. The following diagram illustrates the typical workflow of an EA.

Key Components and Operators

The operation of an EA relies on several key components:

- Population: A set of multiple candidate solutions (individuals). Unlike traditional methods that improve a single solution, the population-based approach allows EAs to explore multiple areas of the search space simultaneously, reducing the risk of becoming trapped in local optima [4].

- Fitness Function: A function that assigns a quality score to each candidate solution based on its performance on the optimization problem. The fitness function guides the search process by determining which solutions are "better" and thus more likely to reproduce [4] [5].

- Selection: The process of choosing parent solutions from the current population based on their fitness. Fit individuals have a higher probability of being selected, mimicking natural selection. Common strategies include tournament selection (selecting the best from a random subset) and roulette wheel selection (probability proportional to fitness) [5].

- Crossover (Recombination): An operator that combines the genetic information of two or more parents to create one or more offspring. This allows the exchange of promising solution features between individuals. A simple example is the one-point crossover, where two binary strings are split at a random point and segments are swapped [4] [5].

- Mutation: An operator that introduces small, random changes to an offspring's genetic representation. Mutation is crucial for maintaining population diversity and exploring new regions of the search space, thereby preventing premature convergence to suboptimal solutions. It typically occurs with a low probability [4] [5].

Table 1: Core Components of an Evolutionary Algorithm

| Component | Description | Role in the Algorithm |
|---|---|---|
| Population | A collection of candidate solutions (individuals). | Enables parallel exploration of the search space. |
| Fitness Function | A problem-specific function measuring solution quality. | Guides the search by selecting the fittest individuals. |
| Selection | Process for choosing parents based on fitness. | Promotes the propagation of good genetic material. |
| Crossover | Operator combining parts of two or more parents. | Exploits and recombines existing good solutions. |
| Mutation | Operator introducing random changes to an offspring. | Explores new areas and maintains population diversity. |
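The two selection strategies named above can be sketched in a few lines of Python. This is an illustrative sketch rather than a reference implementation: the function names, the default tournament size `k=3`, and the assumption that fitness is maximized and non-negative are choices made here, not taken from the cited sources.

```python
import random

def tournament_select(population, fitnesses, k=3, rng=random):
    """Draw k distinct individuals at random and return the fittest one."""
    contenders = rng.sample(range(len(population)), k)
    winner = max(contenders, key=lambda i: fitnesses[i])
    return population[winner]

def roulette_select(population, fitnesses, rng=random):
    """Return one individual with probability proportional to its fitness.

    Assumes every fitness value is non-negative and at least one is positive.
    """
    return rng.choices(population, weights=fitnesses, k=1)[0]

if __name__ == "__main__":
    rng = random.Random(0)
    pop = ["a", "b", "c", "d"]
    fit = [1.0, 2.0, 3.0, 10.0]
    print(tournament_select(pop, fit, k=2, rng=rng))
    print(roulette_select(pop, fit, rng=rng))
```

Tournament selection only compares fitness values, so it also works when fitness can be negative; roulette wheel selection needs non-negative weights, which is why rescaling is often applied first.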

A Deep Dive into Genetic Algorithms (GAs)

Genetic Algorithms (GAs), developed by John Holland and his colleagues in the 1970s, are one of the most popular and historically significant types of Evolutionary Algorithms [5]. They closely adhere to the metaphor of genetic reproduction, where solutions are encoded as chromosomes composed of genes [6].

Representation and Operators in GAs

In the canonical GA, solutions are represented using a fixed-length encoding, traditionally binary strings, though integer and real-valued representations are also common depending on the problem [6] [4]. For example, in a knapsack problem, a binary chromosome might represent whether an item is included (1) or not (0) [4].

The power of a GA comes from its genetic operators:

- Crossover: This is the primary search operator in GA. It creates new offspring by recombining genetic material from two parents. Methods include one-point, two-point, and uniform crossover [4].

- Mutation: This is a secondary operator that acts as a background operator. It randomly alters one or more gene values in a chromosome (e.g., flipping a bit in a binary string) to introduce novelty [5].
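As a rough illustration of these operators on binary chromosomes, the sketch below shows one-point crossover, uniform crossover, and bit-flip mutation. Chromosomes are assumed to be Python lists of 0s and 1s with at least two genes; the function names and the default mutation rate are illustrative choices.

```python
import random

def one_point_crossover(p1, p2, rng=random):
    """Split both parents at one random interior point and swap the tails."""
    point = rng.randrange(1, len(p1))
    return p1[:point] + p2[point:], p2[:point] + p1[point:]

def uniform_crossover(p1, p2, rng=random):
    """Decide gene by gene, with probability 0.5, whether to swap the parents' genes."""
    c1, c2 = [], []
    for g1, g2 in zip(p1, p2):
        if rng.random() < 0.5:
            g1, g2 = g2, g1
        c1.append(g1)
        c2.append(g2)
    return c1, c2

def bit_flip_mutation(chromosome, rate=0.01, rng=random):
    """Flip each bit independently with probability `rate`."""
    return [1 - g if rng.random() < rate else g for g in chromosome]

if __name__ == "__main__":
    rng = random.Random(42)
    a, b = [0] * 8, [1] * 8
    print(one_point_crossover(a, b, rng))
    print(uniform_crossover(a, b, rng))
    print(bit_flip_mutation(a, rate=0.25, rng=rng))
```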

Experimental Protocol: Implementing a Simple GA

The following Python pseudocode outlines the basic structure of a Genetic Algorithm, which can be applied to problems like feature selection or parameter tuning [4] [5].
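A minimal sketch of that structure is given here, under several assumptions: chromosomes are binary lists, parents are chosen by binary tournament, and the toy objective is OneMax (maximize the number of 1s). The function names, default rates, and the choice of test problem are illustrative and not taken from the cited sources.

```python
import random

def one_max(chromosome):
    """Toy fitness: number of 1s in the chromosome (to be maximized)."""
    return sum(chromosome)

def run_ga(fitness, n_genes, pop_size=30, generations=60,
           crossover_rate=0.9, mutation_rate=0.02, seed=0):
    rng = random.Random(seed)
    # 1. Initialize a random binary population.
    population = [[rng.randint(0, 1) for _ in range(n_genes)]
                  for _ in range(pop_size)]
    best = max(population, key=fitness)

    for _ in range(generations):
        # 2. Evaluate fitness once per generation.
        scores = [fitness(ind) for ind in population]

        def pick_parent():
            # 3. Selection: binary tournament between two random individuals.
            a, b = rng.sample(range(pop_size), 2)
            return population[a] if scores[a] >= scores[b] else population[b]

        offspring = []
        while len(offspring) < pop_size:
            p1, p2 = pick_parent(), pick_parent()
            # 4. Crossover: one-point, applied with probability crossover_rate.
            if rng.random() < crossover_rate:
                cut = rng.randrange(1, n_genes)
                c1, c2 = p1[:cut] + p2[cut:], p2[:cut] + p1[cut:]
            else:
                c1, c2 = p1[:], p2[:]
            # 5. Mutation: independent bit flips with probability mutation_rate.
            for child in (c1, c2):
                offspring.append([1 - g if rng.random() < mutation_rate else g
                                  for g in child])
        population = offspring[:pop_size]
        # Track the best individual seen so far across all generations.
        best = max(population + [best], key=fitness)
    return best

if __name__ == "__main__":
    solution = run_ga(one_max, n_genes=20)
    print(solution, one_max(solution))
```

For feature selection, the same loop could be reused with a fitness function that scores a model trained on the features whose bits are set to 1.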

Table 2: Key "Reagent Solutions" for a Genetic Algorithm Experiment

| Reagent (Component) | Function | Example / Notes |
|---|---|---|
| Population | The set of candidate solutions to be evolved. | Initialized randomly or using a heuristic. Size is critical for diversity. |
| Fitness Function | Defines the objective and measures solution quality. | Must be carefully designed to accurately reflect the problem's goals. |
| Selection Operator | Guides the search by selecting fitter parents. | Tournament or Roulette Wheel selection are common choices. |
| Crossover Operator | Recombines parents to create new offspring. | One-point or uniform crossover for binary representations. |
| Mutation Operator | Introduces diversity and prevents premature convergence. | Bit-flip for binary, Gaussian noise for real-valued representations. |

A Deep Dive into Differential Evolution (DE)

Differential Evolution (DE), introduced by Storn and Price in 1997, is a powerful EA specifically designed for optimizing real-valued functions in continuous search spaces [2] [3]. While it shares the common EA framework of population, mutation, crossover, and selection, its mutation strategy is unique and gives the algorithm its name.

The Distinctive Mutation Strategy of DE

The key differentiator of DE is how it performs mutation. Instead of drawing perturbations from a fixed probability distribution, DE generates a mutant vector for each individual in the population (called the target vector) by adding a scaled difference between two or more randomly selected population vectors to a third base vector [2] [3]. In the most common strategy, "DE/rand/1/bin", the mutation step is mathematically expressed as:

$$ \text{mutant vector} = x_{r1} + F \cdot (x_{r2} - x_{r3}) $$

Here, $x_{r1}$, $x_{r2}$, and $x_{r3}$ are three distinct randomly chosen population vectors, and $F$ is a scaling factor that controls the amplification of the differential variation $(x_{r2} - x_{r3})$ [2] [3]. This use of vector differences allows DE to automatically adapt the step size and orientation of the search based on the current distribution of the population.
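The mutation step can be sketched as follows, assuming individuals are plain Python lists of floats and the population holds at least four vectors. The function name is illustrative, and excluding the target index from the random draw is a common convention that is assumed here rather than stated above.

```python
import random

def de_rand_1_mutant(population, target_idx, F=0.8, rng=random):
    """Build a mutant as x_r1 + F * (x_r2 - x_r3) from three distinct vectors."""
    # Assumption: r1, r2, r3 are also different from the target index.
    candidates = [i for i in range(len(population)) if i != target_idx]
    r1, r2, r3 = rng.sample(candidates, 3)
    x_r1, x_r2, x_r3 = population[r1], population[r2], population[r3]
    return [a + F * (b - c) for a, b, c in zip(x_r1, x_r2, x_r3)]

if __name__ == "__main__":
    rng = random.Random(1)
    pop = [[0.0, 0.0], [1.0, 2.0], [3.0, 1.0], [2.0, 4.0], [5.0, 5.0]]
    print(de_rand_1_mutant(pop, target_idx=0, F=0.5, rng=rng))
```

Because the step is built from differences between existing individuals, it shrinks automatically as the population contracts around a promising region.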

Crossover and Selection in DE

After mutation, a crossover operation is performed between the mutant vector and the original target vector to create a trial vector. This is typically binomial crossover, where each parameter of the trial vector is inherited either from the mutant vector or the target vector with a certain probability, controlled by the crossover rate ($Cr$) [2]. Finally, a greedy selection occurs: the trial vector replaces the target vector in the next generation only if it has a better or equal fitness [2] [3]. The following diagram visualizes the core process of creating a new candidate in DE.
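In code, binomial crossover and greedy selection might look like the sketch below. It assumes minimization, and it forces one randomly chosen position (`j_rand`) to come from the mutant so that the trial vector always differs from the target; that detail is a widely used convention not spelled out in the description above. Function names are illustrative.

```python
import random

def binomial_crossover(target, mutant, Cr=0.9, rng=random):
    """Take each gene from the mutant with probability Cr, else from the target."""
    n = len(target)
    j_rand = rng.randrange(n)  # assumed convention: at least one mutant gene
    return [mutant[j] if (rng.random() < Cr or j == j_rand) else target[j]
            for j in range(n)]

def greedy_select(target, trial, objective):
    """Keep the trial only if it is at least as good as the target (minimization)."""
    return trial if objective(trial) <= objective(target) else target

if __name__ == "__main__":
    rng = random.Random(3)
    sphere = lambda v: sum(x * x for x in v)
    target, mutant = [2.0, 2.0, 2.0], [0.5, 3.0, 0.1]
    trial = binomial_crossover(target, mutant, Cr=0.5, rng=rng)
    print(trial, greedy_select(target, trial, sphere))
```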

Experimental Protocol: Implementing a Simple DE

The following Python pseudocode implements the "DE/rand/1/bin" strategy, which is the classic and one of the most widely used variants of Differential Evolution [2] [3].
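A compact sketch of "DE/rand/1/bin" for minimization is shown here, using only the Python standard library. The function name, the default values of `F` and `Cr`, the clipping of mutant values to the bounds, and the sphere test function are assumptions made for illustration.

```python
import random

def differential_evolution(objective, bounds, pop_size=20, F=0.8, Cr=0.9,
                           generations=100, seed=0):
    rng = random.Random(seed)
    dim = len(bounds)
    # Initialize the population uniformly inside the bounds.
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    costs = [objective(x) for x in pop]

    for _ in range(generations):
        new_pop, new_costs = [], []
        for i in range(pop_size):
            # Mutation: three distinct vectors, none of them the target i.
            r1, r2, r3 = rng.sample([j for j in range(pop_size) if j != i], 3)
            j_rand = rng.randrange(dim)  # position always taken from the mutant
            trial = []
            for d, (lo, hi) in enumerate(bounds):
                if rng.random() < Cr or d == j_rand:
                    # Binomial crossover picks the mutant component here.
                    v = pop[r1][d] + F * (pop[r2][d] - pop[r3][d])
                    v = min(max(v, lo), hi)  # assumption: clip to the bounds
                else:
                    v = pop[i][d]
                trial.append(v)
            # Greedy selection: the trial replaces the target if not worse.
            trial_cost = objective(trial)
            if trial_cost <= costs[i]:
                new_pop.append(trial)
                new_costs.append(trial_cost)
            else:
                new_pop.append(pop[i])
                new_costs.append(costs[i])
        pop, costs = new_pop, new_costs

    best = min(range(pop_size), key=costs.__getitem__)
    return pop[best], costs[best]

def sphere(x):
    """Toy objective with its minimum of 0 at the origin."""
    return sum(v * v for v in x)

if __name__ == "__main__":
    x_best, f_best = differential_evolution(sphere, [(-5.0, 5.0)] * 3)
    print(x_best, f_best)
```

For production work, a maintained implementation such as SciPy's `scipy.optimize.differential_evolution` is usually preferable to a hand-written loop.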

Table 3: Key "Reagent Solutions" for a Differential Evolution Experiment

| Reagent (Component) | Function | Example / Notes |
|---|---|---|
| Population (Vectors) | Set of real-valued candidate solutions. | Each individual is a vector in a continuous space. |
| Scaling Factor (F) | Controls the amplification of differential variation. | Typically in [0, 2]. A smaller F promotes convergence. |
| Crossover Rate (Cr) | Controls the mixing of mutant and target vectors. | Typically in [0, 1]. A higher Cr exploits the mutant more. |
| Mutation Strategy | Defines how the mutant vector is created. | "DE/rand/1" uses random base vector and one difference. |

Comparative Analysis: Genetic Algorithms vs. Differential Evolution

Understanding the differences between GAs and DE is crucial for selecting the right tool for a given optimization problem. The table below summarizes their key distinctions.

Table 4: A Comparative Summary of Genetic Algorithms (GAs) and Differential Evolution (DE)

| Feature | Genetic Algorithm (GA) | Differential Evolution (DE) |
|---|---|---|
| Primary Metaphor | Genetic reproduction (chromosomes, genes) [6]. | Directional information and vector differences [3]. |
| Representation | Traditionally binary strings, but other encodings are possible [4]. | Primarily real-valued vectors [6] [2]. |
| Core Search Driver | Crossover is the primary, disruptive search operator [4]. | Mutation based on vector differences is the primary driver [3]. |
| Key Parameters | Population size, crossover probability, mutation probability [5]. | Population size, scaling factor (F), crossover rate (Cr) [2]. |
| Operator Sequence | Selection → Crossover → Mutation [5]. | Mutation → Crossover → Selection [7]. |
| Typical Application Scope | Well-suited for discrete and combinatorial problems (e.g., scheduling) [4]. | Excels in continuous, numerical optimization (e.g., function fitting) [6] [2]. |
| Strengths | Easily parallelizable, good for escaping local optima in combinatorial spaces [4]. | Fewer control parameters, often more effective/efficient for continuous problems [6] [2]. |

Guidance for Algorithm Selection

The choice between GA and DE depends heavily on the nature of the problem:

- Use Genetic Algorithms when dealing with discrete or combinatorial optimization problems, such as the traveling salesman problem, feature selection, or scheduling [4]. Their representation and crossover operators are naturally suited for these domains.

- Use Differential Evolution when the problem involves optimization in a continuous, real-valued parameter space, especially if the function is non-differentiable, nonlinear, or multimodal [2] [3]. DE's ability to use directional information from the population often leads to faster and more robust convergence on such problems [6].

- Consider DE's simplicity: DE requires very few control parameters to steer the minimization (typically just F and Cr, in addition to the population size), which makes it easier and more practical to use for many real-world applications [2].

Advanced Concepts and Current Trends

Theoretical Foundations

Two fundamental theoretical concepts provide context for understanding EAs:

- The No Free Lunch Theorem: This theorem states that all optimization algorithms are equally effective when averaged over all possible problems [1]. This means there is no single EA that is universally best. The strong performance of GAs and DE on specific problem classes is precisely because they exploit the structure of those problems. Therefore, problem-specific knowledge should be incorporated into the EA design (e.g., in the representation or fitness function) for best results [1].

- Convergence: For EAs that employ elitism (always keeping the best solution found so far) and whose variation operators can reach any point of the search space with nonzero probability, it is possible to prove that the algorithm will converge to the global optimum, given infinite time [1]. However, this proof does not specify the speed of convergence, which is a key practical concern.

Variants and Recent Developments

The fields of GA and DE are actively evolving. Numerous advanced variants have been developed to enhance performance:

- DE Strategy Variants: There are multiple strategies for creating the mutant vector in DE, following a naming convention of DE/x/y/z [2] [7]. For example, DE/best/1/bin uses the best solution in the population as the base vector, promoting faster convergence (though with a higher risk of getting stuck in a local optimum), while DE/rand/1/bin uses a random base vector, favoring exploration [2].

- Self-Adaptation: Advanced versions of DE and Evolution Strategies (ES) can self-adapt their parameters (like F and Cr in DE) during the run, freeing the user from the burden of finding robust static parameter values [7].

- Hybrid Algorithms (Memetic Algorithms): EAs are often hybridized with local search techniques to refine solutions. These hybrids are known as Memetic Algorithms and often demonstrate superior performance by combining the global exploration of EA with the local exploitation of a dedicated local search method [1].
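To make the DE/x/y/z naming concrete, the sketch below builds a DE/best/1 mutant, where the base vector is the current best individual instead of a random one. It assumes minimization, a population of at least four list-valued individuals, and precomputed costs; the function name is illustrative.

```python
import random

def de_best_1_mutant(population, costs, target_idx, F=0.8, rng=random):
    """DE/best/1: x_best + F * (x_r1 - x_r2), with r1 != r2."""
    best_idx = min(range(len(population)), key=lambda i: costs[i])
    # Assumption: the difference vectors exclude both the target and the best.
    candidates = [i for i in range(len(population))
                  if i not in (target_idx, best_idx)]
    r1, r2 = rng.sample(candidates, 2)
    x_best, x_r1, x_r2 = population[best_idx], population[r1], population[r2]
    return [b + F * (u - v) for b, u, v in zip(x_best, x_r1, x_r2)]

if __name__ == "__main__":
    rng = random.Random(7)
    pop = [[0.1, 0.2], [2.0, 2.0], [3.0, 1.0], [1.0, 4.0], [5.0, 5.0]]
    costs = [sum(v * v for v in x) for x in pop]
    print(de_best_1_mutant(pop, costs, target_idx=1, rng=rng))
```

Every mutant is centered on the same best point, which explains both the faster convergence and the higher risk of stagnating in a local optimum.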

Evolutionary Algorithms, particularly Genetic Algorithms and Differential Evolution, provide powerful and flexible frameworks for solving complex optimization problems that are intractable for traditional methods. GAs, with their strong biological metaphor and suitability for combinatorial problems, and DE, with its unique differential mutation strategy for continuous spaces, are two cornerstone techniques in this domain.

The key takeaway is that algorithm selection is problem-dependent. For researchers and scientists in fields like drug development, where problems can range from discrete molecular design (potentially suited for GAs) to continuous parameter tuning in pharmacokinetic models (potentially suited for DE), understanding the core mechanisms, strengths, and weaknesses of each algorithm is the first step toward harnessing their power. By following the structured methodologies and experimental protocols outlined in this guide, practitioners can effectively apply these population-based optimizers to push the boundaries of their research.

The Genetic Algorithm (GA) is a powerful metaheuristic optimization algorithm inspired by the principles of Darwin's theory of natural selection [8]. As a problem-independent optimization method, GA belongs to a broader class of evolutionary computing algorithms designed to find solutions to NP-hard problems where traditional methods struggle with the exponential growth of possible solutions [8]. By modeling the evolutionary processes of selection, inheritance, and genetic variation, GAs can efficiently explore complex solution spaces to find near-optimal solutions for a wide range of optimization challenges. The fundamental analogy draws parallels between biological evolution and computational optimization: cells operate similarly to Turing machines, with genetic programs providing the operational blueprint [8]. This biological foundation enables GAs to tackle complex optimization problems across diverse fields, from computational biology to drug development.

Core Components and Operational Mechanics

Foundational Elements and Workflow

The genetic algorithm operates through an iterative process that mimics natural evolution, requiring only two essential components: a population of candidate solutions and a fitness function to evaluate them [8]. The algorithm creates conditions of natural selection where individuals with advantageous traits are more likely to survive and reproduce, progressively improving the population's overall fitness across generations [8]. This process incorporates the key biological principles of fitness, inheritance, and natural variation through recombination and mutation [8].

Table 1: Core Components of a Genetic Algorithm

| Component | Description | Biological Analogy |
|---|---|---|
| Population | Set of candidate solutions (genes, chromosomes) | Population of organisms in an ecosystem |
| Fitness Function | Function that quantifies solution quality | Environmental selection pressures |
| Selection | Process choosing individuals for reproduction | Natural selection based on fitness |
| Crossover | Combining genetic material from two parents | Sexual reproduction and recombination |
| Mutation | Random alterations to genetic material | Genetic mutations introducing variation |

The GA workflow follows a generational approach that begins with population initialization and proceeds through repeated cycles of fitness evaluation, selection, and genetic operations until termination conditions are met. The initial population, or first generation (F1), consists of a set of genes, chromosomes, or other units of interest, commonly represented as bit arrays where different permutations of 0s and 1s represent different genes [8]. Through successive generations, the algorithm preserves and combines beneficial traits while introducing controlled variations, creating an evolutionary trajectory toward improved solutions.

Detailed Operational Workflow

The genetic algorithm implements a structured workflow that transforms an initial population of candidate solutions through successive generations:

Population Initialization: The algorithm begins by creating an initial population of candidate solutions, typically represented as binary strings, real-valued vectors, or other appropriate data structures. For a binary representation, different permutations of 0s and 1s represent different genes or chromosomes [8]. The population size is a critical parameter that affects both diversity and computational efficiency, with larger populations providing more genetic diversity but requiring more computational resources per generation.

Fitness Evaluation: Each candidate solution in the population is evaluated using a problem-specific fitness function that quantifies how well it solves the target problem. The fitness function serves as the environmental pressure in the evolutionary process, assigning higher values to better solutions. In a common approach, beneficial traits might be represented by 1s and neutral traits by 0s, with the fitness score equivalent to the sum of these binary values [8]. The design of an effective fitness function is crucial, as it directly guides the evolutionary process toward desired solutions.

Selection Operation: The selection process mimics natural selection by choosing which individuals from the current population will contribute genetic material to the next generation. Individuals with higher fitness scores have a greater probability of being selected, similar to how organisms with advantageous traits are more likely to survive and reproduce in nature [8]. However, most implementations also preserve a small proportion of less-fit individuals to maintain genetic diversity and prevent premature convergence.

Genetic Operators: The crossover (recombination) operator combines genetic information from two parent solutions to create offspring. In a simple single-point crossover, a random point is selected along the parent chromosomes, and segments are exchanged to create new offspring [8]. The mutation operator introduces random changes by altering small portions of the genetic material, such as flipping bits in a binary representation with a low probability [8]. These operators balance the exploitation of good solutions with the exploration of new possibilities in the search space.

Termination Check: The algorithm iteratively repeats the evaluation-selection-variation cycle until a termination condition is met. Common termination criteria include reaching a predetermined number of generations, achieving a satisfactory fitness level, or detecting population convergence where successive generations no longer produce significant improvements [9].
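The three termination criteria can be combined in a small helper such as the one sketched below. It assumes fitness is maximized and that the caller records the best fitness of each generation in a list; the function name, the `patience` window, and the tolerance are illustrative choices.

```python
def should_stop(best_history, max_generations=200, target_fitness=None,
                patience=20, tol=1e-9):
    """Return the reason to stop, or None if the run should continue.

    best_history holds the best fitness of each generation so far (maximization).
    """
    # Criterion 1: generation budget exhausted.
    if len(best_history) >= max_generations:
        return "max_generations"
    # Criterion 2: a satisfactory fitness level has been reached.
    if (target_fitness is not None and best_history
            and best_history[-1] >= target_fitness):
        return "target_reached"
    # Criterion 3: no meaningful improvement over the last `patience` generations.
    if (len(best_history) > patience
            and best_history[-1] - best_history[-1 - patience] <= tol):
        return "stagnation"
    return None

if __name__ == "__main__":
    print(should_stop([1.0, 2.0, 3.0]))
    print(should_stop([5.0] * 30, patience=20))
```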

Advanced Modifications and Specialized Variants

Enhanced GA Variants for Complex Problems

Basic genetic algorithms can be enhanced with specialized techniques to address specific challenges and improve performance across diverse problem domains:

Elitism: This commonly used approach ensures that the best solutions are preserved unchanged across generations by automatically carrying the highest-fitness individuals from one generation to the next [8]. Elitism guarantees that the best fitness in the population never decreases between generations and prevents the loss of valuable genetic information [8]. By maintaining the best performers without modification, elitism often accelerates convergence while keeping the best solution quality monotonically non-decreasing.

Speciation: Speciation heuristics discourage crossover between highly similar individuals, promoting genetic diversity within the population [8]. Without speciation, populations often suffer from premature convergence where suboptimal solutions dominate early in the evolutionary process [8]. By encouraging mating between diverse individuals, speciation maintains a broader exploration of the solution space and helps escape local optima.

Adaptive Genetic Algorithms (AGAs): These self-tuning variants dynamically adjust mutation and crossover rates throughout the evolutionary process to maintain population diversity while preserving convergence properties [8]. Though computationally more expensive, AGAs often produce more realistic and robust results, particularly for problems with complex fitness landscapes [8]. Research has demonstrated that AGAs offer "the highest accuracy and the best performance on most unimodal and multimodal test functions" compared to other optimization approaches [8].

Interactive Genetic Algorithms (IGAs): When fitness cannot be quantitatively defined, IGAs incorporate human judgment into the selection process by having users evaluate candidate solutions subjectively [8]. While valuable for domains like fashion design or creative arts, IGAs face scalability challenges due to human fatigue and introduce subjective biases [8]. The interactive approach shifts the fitness computation burden to users but enables optimization in domains with qualitative evaluation criteria.

Table 2: Advanced GA Variants and Their Applications

| Variant | Key Mechanism | Advantages | Typical Applications |
|---|---|---|---|
| Elitist GA | Preserves best solutions unchanged | Prevents loss of good solutions; Monotonic improvement | General optimization; Engineering design |
| Speciated GA | Restricts mating between similar individuals | Maintains diversity; Prevents premature convergence | Multimodal optimization; Neural network training |
| Adaptive GA | Dynamically adjusts genetic parameters | Self-tuning; Robust performance | Complex landscapes; Real-world problems |
| Interactive GA | Incorporates human evaluation | Handles qualitative fitness criteria | Creative design; Subjective optimization |
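Of these variants, elitism is the simplest to add to an existing GA. The sketch below shows one possible replacement step, assuming fitness is maximized and that the offspring list is at least as long as the number of slots to fill; the function name and the policy of dropping the weakest offspring to make room are illustrative choices.

```python
def next_generation_with_elitism(population, offspring, fitness, n_elite=1):
    """Carry the n_elite fittest parents over unchanged, then fill with offspring."""
    elites = sorted(population, key=fitness, reverse=True)[:n_elite]
    # Keep the fittest offspring so the population size stays constant.
    n_slots = len(population) - n_elite
    survivors = sorted(offspring, key=fitness, reverse=True)[:n_slots]
    return elites + survivors

if __name__ == "__main__":
    parents = [[1, 1, 1], [0, 0, 0], [1, 0, 0]]
    children = [[0, 0, 1], [0, 1, 1], [0, 0, 0]]
    print(next_generation_with_elitism(parents, children, fitness=sum))
```

Since the elite individuals bypass crossover and mutation, the best fitness in the new generation can never be lower than in the previous one.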

Genetic Algorithm vs. Differential Evolution: A Comparative Analysis

Fundamental Differences and Performance Comparisons

While both belong to the broader class of evolutionary algorithms, Genetic Algorithms and Differential Evolution (DE) exhibit distinct characteristics that make them suitable for different problem types. DE has gained recognition as a powerful alternative to GAs, particularly for continuous optimization problems [10].

Representation and Operation: The primary difference between GAs and DE lies in their representation and search mechanisms. GAs traditionally operate on discrete representations, often using binary encoding, while DE is designed specifically for continuous parameter spaces [10]. DE builds its mutation from straightforward vector arithmetic rather than the probability-based perturbations typical of GAs, creating new candidate solutions by adding the weighted difference between two population vectors to a third vector [2].

Performance Characteristics: Experimental comparisons between GAs and DE have demonstrated DE's superiority in many numerical optimization scenarios. One comprehensive study comparing state-of-the-art multiobjective algorithms found that "on the majority of problems, the algorithms based on differential evolution perform significantly better than the corresponding genetic algorithms with regard to applied quality indicators" [11]. This suggests that DE explores the decision space more efficiently than GAs for numerical multiobjective optimization [11]. However, GAs maintain advantages for problems with discrete or mixed variable types and in domains where their representation more naturally matches the problem structure.

Parameter Sensitivity and Control: DE requires fewer control parameters than most GA variants, typically needing only three parameters: population size, mutation scale factor, and crossover rate [2]. This makes DE easier to implement and tune for practitioners [2]. The canonical DE has been shown to work well for complex problems, including solving multi-objective problems, finding clustering weights, and training neural networks [10].

Table 3: Genetic Algorithm vs. Differential Evolution Comparison

| Characteristic | Genetic Algorithm (GA) | Differential Evolution (DE) |
|---|---|---|
| Search Space | Primarily discrete | Continuous |
| Core Operations | Selection, crossover, mutation | Difference-vector mutation (vector addition and subtraction), crossover, greedy selection |
| Parameter Count | More parameters required | Fewer parameters (typically 3) |
| Convergence | May be slower on numerical problems | Faster convergence for continuous spaces |
| Exploration Mechanism | Probability-based mutation | Directional information via difference vectors |
| Implementation Complexity | Moderate | Simple vector operations |

Mutation and Crossover Strategies

The mutation and crossover mechanisms represent a fundamental distinction between GAs and DE. In GAs, mutation typically occurs through random alterations based on probability distributions, such as bit flips in binary representations [8]. In contrast, DE mutation utilizes directional information from the population by calculating weighted differences between solution vectors [7]. This difference-based approach allows DE to efficiently adapt step sizes and directions according to the distribution of solutions in the search space.

Crossover also differs significantly between the two approaches. In GAs, crossover typically occurs at defined points in the chromosome, exchanging segments between parent solutions [8]. DE employs a more granular approach where each variable undergoes independent crossover decisions, increasing diversity in the offspring generation [7]. Additionally, DE performs mutation before crossover, reversing the traditional order used in most GA implementations [7].

Experimental Protocols and Research Applications

Implementation Framework and Research Reagents

Implementing genetic algorithms for research requires both computational frameworks and problem-specific components that serve as the "research reagents" for experimental optimization:

Table 4: Essential Research Reagents for Genetic Algorithm Experiments

| Reagent | Function | Implementation Example |
|---|---|---|
| Population Initialization | Generates initial candidate solutions | Random sampling from defined search space |
| Fitness Function | Evaluates solution quality | Problem-specific objective function |
| Selection Operator | Chooses parents for reproduction | Tournament selection, roulette wheel |
| Crossover Operator | Combines parent solutions | Single-point, multi-point, uniform crossover |
| Mutation Operator | Introduces genetic diversity | Bit flip, random reset, swap mutation |
| Termination Criterion | Determines algorithm completion | Generation count, fitness threshold, convergence |

Experimental Protocol for Phylogenetic Tree Construction: One significant biological application of GAs involves constructing phylogenetic trees from evolutionary data. The Hill et al. team applied GAs to this challenging combinatorial problem where the number of possible trees grows exponentially with taxa [8]. Their implementation followed this methodology:

Representation: Encode potential phylogenetic trees as chromosomes, with each gene representing a topological feature or branch arrangement.

Fitness Evaluation: Calculate tree fitness based on maximum parsimony or maximum likelihood criteria, measuring how well the tree explains the observed biological sequences.

Genetic Operations: Apply specialized crossover operators that combine subtree elements from parent trees and mutation operators that rearrange branches or alter topological features.

Performance: Their Phanto program demonstrated that GAs could "infer the phylogeny of 92 taxa with 533 amino acids, including gaps in a little over half an hour using a regular desktop PC" [8].

Experimental Protocol for Protein Folding Prediction: The Wong et al. team applied GAs to the protein folding prediction problem using the HP Lattice model (Hydrophobic-Polar Lattice) to simplify protein structures [8]. Their approach:

Representation: Encode protein conformations as chromosomes representing the spatial arrangement of amino acids.

Fitness Function: Define fitness as the negative of the energy required to fold the protein into that shape, implementing the HP model to capture hydrophobicity interactions between amino acid residues.

Optimization: Use the iterative nature of GAs to find configurations that minimize the folding energy, exploring the vast conformational space efficiently.
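To illustrate what such a fitness function could look like, the sketch below scores a conformation on a 2D square lattice. It is not the cited team's implementation. It assumes the common HP convention of an energy of -1 for every pair of hydrophobic (H) residues that are lattice neighbors but not consecutive in the chain, so the fitness (negative energy) equals the number of such contacts. Encoding a conformation as a string of moves (`U`, `D`, `L`, `R`) and rejecting self-overlapping walks are also assumptions.

```python
MOVES = {"U": (0, 1), "D": (0, -1), "L": (-1, 0), "R": (1, 0)}

def hp_fitness(sequence, moves):
    """Count non-consecutive H-H lattice contacts (fitness = -energy).

    sequence: string over {'H', 'P'}; moves: string of len(sequence) - 1 steps.
    """
    # Place the residues on the lattice by following the moves from the origin.
    positions = [(0, 0)]
    for m in moves:
        dx, dy = MOVES[m]
        x, y = positions[-1]
        positions.append((x + dx, y + dy))
    # Two residues on the same site: not a valid fold.
    if len(set(positions)) != len(positions):
        return float("-inf")
    index_at = {p: i for i, p in enumerate(positions)}
    contacts = 0
    for i, (x, y) in enumerate(positions):
        if sequence[i] != "H":
            continue
        # Look only right and up so that each pair is counted once.
        for dx, dy in ((1, 0), (0, 1)):
            j = index_at.get((x + dx, y + dy))
            if j is not None and sequence[j] == "H" and abs(i - j) > 1:
                contacts += 1
    return contacts

if __name__ == "__main__":
    print(hp_fitness("HPPH", "RUL"))  # folded into a square: 1 contact
    print(hp_fitness("HPPH", "RRR"))  # fully extended chain: 0 contacts
```

A GA would then evolve the move strings, with crossover and mutation acting on the individual moves.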

Chemical and Pharmaceutical Applications

Genetic algorithms have demonstrated significant utility in chemical optimization and drug development, where they help navigate complex experimental parameter spaces. The recently developed Paddy algorithm, an evolutionary approach inspired by plant reproduction behavior, has shown robust performance across various chemical optimization tasks [9]. In benchmark studies comparing multiple optimization approaches, evolutionary methods like Paddy demonstrated "robust versatility by maintaining strong performance across all optimization benchmarks" [9].

Chemical optimization applications typically follow this experimental protocol:

Parameter Encoding: Represent experimental conditions (temperature, concentration, pH, etc.) as chromosomes with each gene corresponding to a specific parameter.

Fitness Evaluation: Measure experimental outcomes (reaction yield, purity, biological activity) quantitatively as fitness scores.

Iterative Optimization: Evolve experimental conditions across generations, with high-performing parameter sets receiving greater representation in subsequent experimental rounds.

Validation: Confirm optimized conditions through replication and out-of-sample testing to prevent overfitting to specific experimental conditions.
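As a rough illustration of the encoding and iteration steps in this protocol, the sketch below represents each set of experimental conditions as a dictionary of genes and produces one new generation from measured scores. The parameter names, bounds, operator choices (binary tournament, uniform crossover, Gaussian mutation, one elite), and rates are all hypothetical and would need to be adapted to the actual chemistry.

```python
import random

# Hypothetical bounds; real ranges depend on the system being optimized.
BOUNDS = {
    "temperature_C": (20.0, 120.0),
    "concentration_M": (0.05, 2.0),
    "pH": (2.0, 12.0),
}


def random_chromosome(rng):
    """One candidate set of conditions, one gene per parameter."""
    return {name: rng.uniform(lo, hi) for name, (lo, hi) in BOUNDS.items()}


def tournament(population, scores, rng, k=2):
    """Return the best of k randomly chosen individuals (higher score wins)."""
    contenders = rng.sample(range(len(population)), k)
    return population[max(contenders, key=lambda i: scores[i])]


def uniform_crossover(a, b, rng):
    """Each gene is inherited from either parent with equal probability."""
    return {name: (a[name] if rng.random() < 0.5 else b[name]) for name in BOUNDS}


def mutate(chrom, rng, rate=0.2, scale=0.1):
    """Gaussian perturbation of some genes, clipped back into bounds."""
    child = dict(chrom)
    for name, (lo, hi) in BOUNDS.items():
        if rng.random() < rate:
            value = child[name] + rng.gauss(0.0, scale * (hi - lo))
            child[name] = min(hi, max(lo, value))
    return child


def next_generation(population, scores, rng):
    """Keep the best individual unchanged, then fill up with offspring."""
    best = max(range(len(population)), key=lambda i: scores[i])
    children = [dict(population[best])]
    while len(children) < len(population):
        p1 = tournament(population, scores, rng)
        p2 = tournament(population, scores, rng)
        children.append(mutate(uniform_crossover(p1, p2, rng), rng))
    return children
```

In a laboratory setting the `scores` list would hold the measured outcomes (for example yield) for the current round of experiments, and `next_generation` would propose the conditions for the next round.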

This approach has been successfully applied to diverse chemical challenges including synthetic methodology development, chromatography condition optimization, catalyst design, and drug formulation [9]. The ability of GAs to efficiently explore high-dimensional parameter spaces makes them particularly valuable for complex chemical systems where traditional one-variable-at-a-time approaches are impractical.

Genetic Algorithms provide a powerful and biologically-inspired methodology for solving complex optimization problems across diverse domains. Their representation of candidate solutions as evolving populations subject to selection pressures enables efficient exploration of challenging search spaces. For researchers and drug development professionals, GAs offer particular value for problems with discrete variables, complex constraints, or where the problem structure naturally aligns with chromosomal representations.

The choice between Genetic Algorithms and Differential Evolution should be guided by problem characteristics and research objectives. DE generally outperforms GA for continuous numerical optimization, particularly when leveraging its efficient vector operations and directional search capabilities [11]. However, GAs maintain advantages for discrete optimization, problems requiring specialized representations, and domains where their operators can be customized to incorporate domain knowledge. Emerging evolutionary approaches like the Paddy algorithm demonstrate continued innovation in this field, combining elements from both paradigms to address complex chemical optimization challenges [9].

As optimization needs evolve with increasing problem complexity and data availability, both Genetic Algorithms and Differential Evolution will continue to serve as valuable tools in the researcher's toolkit. Understanding their complementary strengths enables practitioners to select the most appropriate approach for their specific optimization challenges, whether in computational biology, drug development, or other scientific domains requiring robust optimization methodologies.

Differential Evolution (DE) is a powerful evolutionary algorithm (EA) first introduced by Rainer Storn and Kenneth Price in the mid-1990s for optimizing problems defined over continuous domains [12]. As a population-based metaheuristic, DE belongs to the broader class of evolutionary computation but distinguishes itself through a unique differential mutation strategy that efficiently explores complex search spaces [13] [14]. Unlike traditional genetic algorithms (GAs) that were originally designed for discrete spaces and rely heavily on crossover operations, DE operates directly on real-valued vectors using vector differences to drive the mutation process, making it particularly effective for numerical optimization problems [10] [11].

The fundamental distinction between DE and GA lies in their approach to candidate improvement. While GAs typically use genotype representations (often binary or integer strings) and apply crossover as the primary search operator, DE utilizes a differential mutation strategy that calculates weighted differences between population vectors to create new trial vectors [6] [12]. This approach enables DE to automatically adapt the step size and orientation of its search based on the current population distribution, allowing for more effective navigation of complex fitness landscapes, especially in continuous domains [11] [15]. For researchers and drug development professionals, this translates to superior performance in optimizing complex, multimodal objective functions commonly encountered in chemometrics, molecular modeling, and binding affinity prediction [16] [10].

Core Algorithmic Mechanics

Fundamental Operations and Workflow

DE maintains a population of candidate solutions, referred to as agents or vectors, which evolve over generations through the sequential application of mutation, crossover, and selection operations [13] [12]. The algorithm treats the optimization problem as a black box, requiring only the ability to evaluate candidate solutions against a fitness function, without needing gradient information [13]. This property makes DE particularly valuable for optimizing non-differentiable, noisy, or otherwise irregular functions common in scientific applications [10] [15].

The complete DE workflow integrates these core operations into an iterative process that progressively refines the population toward optimal solutions. This systematic procedure enables DE to efficiently balance exploration of the search space with exploitation of promising regions.

Figure 1: Differential Evolution Algorithm Workflow. The process iterates through mutation, crossover, and selection operations until termination criteria are met.

Mutation: The Differential Advantage

The mutation operation constitutes DE's distinctive feature, employing vector differences to explore the search space [13]. For each target vector in the population, a donor vector is created by perturbing a base vector with the scaled difference of two other randomly selected population vectors [10]. The most common mutation strategy, known as DE/rand/1, follows this formula:

Donor Vector = Xᵣ₀ + F × (Xᵣ₁ - Xᵣ₂)

where Xᵣ₀, Xᵣ₁, and Xᵣ₂ are three distinct randomly selected vectors from the population, and F is the mutation scale factor typically chosen from the range [0, 2] [13] [10]. The differential component (Xᵣ₁ - Xᵣ₂) enables the algorithm to automatically adapt step sizes based on the distribution of solutions in the current population [15]. This self-adjusting mechanism allows DE to perform large jumps in early generations when the population is dispersed, and finer adjustments as the population converges toward promising regions [6].
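The DE/rand/1 formula can be written in a few lines. This is a minimal sketch using plain Python lists. Excluding the target vector from the random draw is a common convention and is assumed here, and the function name is illustrative.

```python
import random


def de_rand_1(population, target_index, F, rng):
    """Build a donor vector: X_r0 + F * (X_r1 - X_r2).

    The three indices are distinct from each other and, by assumption,
    from the target index, so the population needs at least 4 members.
    """
    candidates = [i for i in range(len(population)) if i != target_index]
    r0, r1, r2 = rng.sample(candidates, 3)
    base, a, b = population[r0], population[r1], population[r2]
    return [x0 + F * (x1 - x2) for x0, x1, x2 in zip(base, a, b)]


rng = random.Random(42)
population = [[0.0, 0.0], [1.0, 1.0], [2.0, 2.0], [3.0, 3.0]]
donor = de_rand_1(population, target_index=0, F=0.8, rng=rng)
print(donor)
```

Because the step is a difference between population members, its size shrinks automatically as the population contracts, which is the self-scaling behavior described above.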

Crossover: Incorporating Genetic Diversity

Following mutation, DE employs a crossover operation to increase population diversity by combining components of the donor vector with the target vector [12]. The most common approach is binomial crossover, which constructs a trial vector by selecting elements from either the donor or target vector based on a crossover probability (CR) parameter [13] [10]. The crossover operation ensures that at least one dimension is inherited from the donor vector while preserving some genetic material from the target vector, maintaining a balance between exploration and exploitation [15].
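A minimal sketch of binomial crossover follows. The guaranteed donor component is implemented with a randomly chosen index, here called `j_rand`; the function and variable names are illustrative.

```python
import random


def binomial_crossover(target, donor, CR, rng):
    """Mix target and donor component by component.

    Each component comes from the donor with probability CR; position
    j_rand always comes from the donor, so the trial differs from the
    target in at least one dimension (unless the values coincide).
    """
    j_rand = rng.randrange(len(target))
    return [
        d if (rng.random() < CR or j == j_rand) else t
        for j, (t, d) in enumerate(zip(target, donor))
    ]


rng = random.Random(0)
trial = binomial_crossover([0, 0, 0, 0, 0], [1, 1, 1, 1, 1], CR=0.9, rng=rng)
print(trial)
```

With CR close to 1 the trial vector is almost entirely the donor; with CR close to 0 it changes roughly one coordinate at a time.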

Selection: Greedy Survival Competition

DE implements a straightforward yet effective selection mechanism where each trial vector competes directly against its corresponding target vector [10]. If the trial vector demonstrates superior or equal fitness compared to the target vector, it replaces the target in the next generation; otherwise, the target vector survives [12]. Because a vector is only ever replaced by one that is at least as fit, no population member's fitness can worsen between generations, so the best fitness in the population never deteriorates, while the one-to-one replacement helps preserve diversity across the population [13].
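Putting mutation, crossover, and greedy selection together gives a compact loop. The sketch below minimizes an objective with DE/rand/1/bin. Clipping trial components to the bounds and the default settings are assumptions made for the example, not part of the description above.

```python
import random


def differential_evolution(objective, bounds, NP=20, F=0.8, CR=0.9,
                           generations=100, seed=0):
    """Minimize `objective` over box `bounds` with DE/rand/1/bin."""
    rng = random.Random(seed)
    D = len(bounds)
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(NP)]
    cost = [objective(x) for x in pop]
    for _ in range(generations):
        new_pop, new_cost = list(pop), list(cost)
        for i in range(NP):
            # Mutation: three distinct vectors, none equal to the target.
            r0, r1, r2 = rng.sample([k for k in range(NP) if k != i], 3)
            j_rand = rng.randrange(D)
            trial = []
            for j in range(D):
                # Binomial crossover between donor and target components.
                if rng.random() < CR or j == j_rand:
                    v = pop[r0][j] + F * (pop[r1][j] - pop[r2][j])
                    lo, hi = bounds[j]
                    v = min(hi, max(lo, v))  # assumption: clip to bounds
                else:
                    v = pop[i][j]
                trial.append(v)
            # Greedy one-to-one selection: trial replaces target if not worse.
            trial_cost = objective(trial)
            if trial_cost <= cost[i]:
                new_pop[i], new_cost[i] = trial, trial_cost
        pop, cost = new_pop, new_cost
    best = min(range(NP), key=lambda i: cost[i])
    return pop[best], cost[best]


def sphere(x):
    """Simple convex test function with its minimum of 0 at the origin."""
    return sum(v * v for v in x)


best_x, best_cost = differential_evolution(sphere, [(-5.0, 5.0)] * 3,
                                           generations=300)
print(best_x, best_cost)
```

The `<=` in the selection step lets trial vectors with equal fitness replace their targets, which helps the population drift across flat regions of the landscape.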

DE vs. Genetic Algorithms: A Comparative Analysis

While both DE and Genetic Algorithms belong to the broader evolutionary computation family, they differ significantly in their representation, operations, and performance characteristics [6] [11]. Understanding these distinctions is crucial for selecting the appropriate algorithm for specific optimization challenges.

Table 1: Differential Evolution vs. Genetic Algorithms Comparison

| Characteristic | Differential Evolution | Genetic Algorithms |

|---|---|---|

| Representation | Real-valued vectors [12] | Typically binary or integer strings [12] |

| Primary Search Operator | Differential mutation [6] | Crossover/recombination [6] |

| Mutation Strategy | Uses vector differences [13] | Typically bit-flips or random perturbations [6] |

| Parameter Adaptation | Self-adapting step sizes through vector differences [15] | Fixed mutation rates [6] |

| Convergence Speed | Generally faster for continuous problems [11] | Slower for continuous, high-dimensional problems [11] |

| Typical Applications | Continuous parameter optimization, chemometrics, engineering design [10] | Discrete/combinatorial optimization, scheduling [10] |

Experimental studies have demonstrated DE's superiority over GAs for many numerical optimization problems. Research comparing multiobjective variants showed that "on the majority of problems, the algorithms based on differential evolution perform significantly better than the corresponding genetic algorithms with regard to applied quality indicators" [11]. This performance advantage stems from DE's ability to automatically adapt its search step size based on population distribution, whereas GAs typically rely on fixed mutation rates that require careful tuning [6].

Implementation Guidelines

Parameter Configuration

DE performance depends critically on appropriate parameter selection. The three primary control parameters—population size (NP), mutation factor (F), and crossover rate (CR)—should be chosen based on problem characteristics [13] [10].

Table 2: DE Parameter Settings and Guidelines

| Parameter | Symbol | Typical Range | Recommended Starting Value | Impact |

|---|---|---|---|---|

| Population Size | NP | [5×D, 10×D] where D is dimension [10] | 10×D [13] | Larger values increase diversity but slow convergence |

| Mutation Factor | F | [0.4, 1.0] [15] | 0.8 [13] | Lower values emphasize exploitation, higher values exploration |

| Crossover Rate | CR | [0.7, 0.95] for non-separable problems [15] | 0.9 [13] | Higher values preserve more donor vector information |

For problems with strong multimodality or periodic terms, lower CR values (0.2-0.5) may produce better results by promoting search along coordinate axes [15]. The mutation factor F can also be dynamically adjusted using dithering, which randomizes F within a specified range (e.g., [0.3, 1.0]) for each mutant vector to enhance exploration [15].

Constraint Handling

DE was originally designed for unconstrained optimization, but it can be extended to constrained problems through penalty methods, in which the objective function is modified to include a penalty term for constraint violations: f̃(x) = f(x) + ρ×CV(x), where CV(x) measures the constraint violation and ρ is a penalty coefficient [13]. Alternative approaches include projecting infeasible solutions onto the feasible set or using specialized repair operations [10].
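The penalty formulation and the projection alternative can be sketched as follows. The sketch assumes inequality constraints written as g(x) ≤ 0, measures CV(x) as the sum of positive violations, and uses an arbitrary default for ρ; all three are illustrative choices.

```python
def penalized(objective, constraints, rho=1000.0):
    """Wrap an objective as f(x) + rho * CV(x).

    constraints: list of functions g where g(x) <= 0 means "satisfied".
    CV(x) is taken here as the sum of the positive parts of g(x).
    """
    def wrapped(x):
        violation = sum(max(0.0, g(x)) for g in constraints)
        return objective(x) + rho * violation
    return wrapped


def project_to_box(x, bounds):
    """Projection alternative for simple bound constraints: clip each value."""
    return [min(hi, max(lo, v)) for v, (lo, hi) in zip(x, bounds)]


def f(x):
    return x[0] ** 2 + x[1] ** 2


# Hypothetical constraint x[0] >= 1, rewritten as 1 - x[0] <= 0.
f_pen = penalized(f, [lambda x: 1.0 - x[0]])
print(f_pen([2.0, 0.0]), f_pen([0.0, 0.0]))
print(project_to_box([7.0, -3.0], [(-5.0, 5.0), (-1.0, 1.0)]))
```

The penalty coefficient usually needs tuning: too small and infeasible points win, too large and the search near the constraint boundary becomes difficult.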

Advanced DE Variants and Recent Advances

The success of basic DE has spurred development of numerous enhanced variants targeting specific problem classes and performance limitations [14] [17]. For multimodal optimization problems (MMOPs) where identifying multiple optimal solutions is valuable, DE has been integrated with niching techniques that maintain subpopulations around different optima [17]. These approaches enable DE to locate and maintain multiple global and local optima in a single run, providing decision-makers with alternative solutions for scenarios where practical constraints may preclude implementing the theoretical global optimum [17].

Recent advancements include:

- Adaptive DE variants that dynamically adjust control parameters F and CR based on performance feedback [14]

- Hybrid DE algorithms that combine DE with local search techniques (memetic algorithms) or machine learning models for improved exploitation [17]

- Multi-objective DE for problems with competing objectives using Pareto-based selection [11]

- DE with surrogate models that reduce computational cost for expensive objective functions [14]

Application in Drug Discovery and Development

DE has demonstrated significant utility in drug discovery, particularly in predicting drug-target interactions (DTIs) and binding affinities—critical tasks for drug repositioning and discovery [16]. Traditional experimental approaches for investigating DTIs are time-consuming and require substantial financial resources, making computational approaches highly valuable [16].

In a recent implementation, researchers proposed a deep learning approach for predicting binding affinities between drugs and targets using a Convolution Self-Attention Network with Attention-based Bidirectional Long Short-Term Memory Network (CSAN-BiLSTM-Att) [16]. Due to the model's complexity, proper hyperparameter tuning was essential, for which DE was employed to select optimal hyperparameters [16]. The DE-optimized model achieved a concordance index of 0.898 and a mean square error of 0.228 on the DAVIS dataset, and 0.971 concordance with 0.014 mean square error on the KIBA dataset, outperforming previous approaches [16].

Table 3: Experimental Results of DE-Optimized Model for Drug-Target Binding Affinity Prediction

| Dataset | Concordance Index (CI) | Mean Square Error (MSE) | Performance Context |

|---|---|---|---|

| DAVIS | 0.898 | 0.228 | Outperformed previous approaches [16] |

| KIBA | 0.971 | 0.014 | Superior to existing methods [16] |

The experimental workflow for this application illustrates DE's role in optimizing complex computational models:

Figure 2: Drug-Target Binding Affinity Prediction Using DE Optimization. DE efficiently tunes hyperparameters in complex deep learning models for drug discovery applications.
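How a real-valued DE vector is mapped onto network hyperparameters is not detailed here. One common pattern, sketched below with entirely hypothetical hyperparameter names and ranges, is to let DE search the unit hypercube and decode each coordinate into a continuous, integer, or categorical setting before training and scoring the model.

```python
def decode_hyperparameters(vector):
    """Map a vector in [0, 1]^4 to a hypothetical hyperparameter dictionary.

    None of these names or ranges come from the cited study; they only
    illustrate how continuous DE genes can drive mixed-type settings.
    """
    u = [min(1.0, max(0.0, v)) for v in vector]  # guard against overshoot
    optimizers = ["sgd", "adam", "rmsprop"]
    return {
        # Log-uniform scale between 1e-5 and 1e-1.
        "learning_rate": 10 ** (-5 + 4 * u[0]),
        # Integer between 16 and 128.
        "num_filters": 16 + int(round(u[1] * (128 - 16))),
        # Continuous value between 0.0 and 0.5.
        "dropout": 0.5 * u[2],
        # Categorical choice via equal-width bins.
        "optimizer": optimizers[min(int(u[3] * len(optimizers)), 2)],
    }


print(decode_hyperparameters([0.5, 0.5, 0.5, 0.5]))
```

The DE objective would then train the model with the decoded settings and return a validation error to minimize, which is typically the expensive part of the procedure.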

Experimental Protocols and Research Reagents

Computational Research Toolkit

Implementing DE for optimization problems requires specific computational tools and parameters. The following table details key components of the DE research toolkit:

Table 4: Differential Evolution Research Reagent Solutions

| Component | Type/Value | Function/Purpose |

|---|---|---|

| Population Size | 5×D to 10×D (D = dimensions) | Maintains genetic diversity while balancing computational cost [10] |

| Mutation Scheme | DE/rand/1 or DE/best/1 | Determines how donor vectors are created [13] |

| Mutation Factor (F) | 0.4-1.0 | Controls amplification of differential variation [15] |

| Crossover Rate (CR) | 0.7-0.9 for non-separable problems | Controls inheritance from donor versus target vector [15] |

| Termination Criteria | Maximum generations or fitness threshold | Determines when optimization process stops [12] |

| Constraint Handling | Penalty functions or projection methods | Manages feasible solution search spaces [13] |

Benchmarking Protocol

To validate DE performance against alternative optimizers like Genetic Algorithms, follow this experimental protocol:

Problem Selection: Choose benchmark problems with varying characteristics (unimodal, multimodal, separable, non-separable) and real-world applications like drug-target binding affinity prediction [16] [11]

Algorithm Configuration:

- Implement DE with population size NP = 10×D, F = 0.8, CR = 0.9

- Implement GA with comparable population size, binary tournament selection, simulated binary crossover, and polynomial mutation [11]

Performance Metrics:

- Record number of function evaluations to reach target fitness

- Measure final solution quality

- Compute statistical significance of results across multiple independent runs [11]

Statistical Analysis:

- Perform Wilcoxon signed-rank tests to determine significant differences

- Calculate mean and standard deviation of performance metrics [11]
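The run-and-summarize part of this protocol might look like the sketch below. The optimizer shown is a plain random search used only as a stand-in so the example runs on its own; in practice the DE and GA implementations under test would be passed in instead. All function names are illustrative.

```python
import random
import statistics


def run_trials(optimizer, objective, n_runs=10, base_seed=0):
    """Run independent seeded trials and summarize the final best values."""
    results = [optimizer(objective, seed=base_seed + r) for r in range(n_runs)]
    return {
        "runs": results,
        "mean": statistics.mean(results),
        "std": statistics.stdev(results),
        "best": min(results),
    }


def random_search(objective, seed, dim=3, evaluations=500):
    """Stand-in optimizer: best objective value over uniform random samples."""
    rng = random.Random(seed)
    return min(
        objective([rng.uniform(-5.0, 5.0) for _ in range(dim)])
        for _ in range(evaluations)
    )


def sphere(x):
    return sum(v * v for v in x)


summary = run_trials(random_search, sphere, n_runs=5)
print(summary["mean"], summary["std"], summary["best"])
```

Giving every algorithm the same seeds and evaluation budget keeps the comparison fair. If SciPy is available, paired results across benchmark problems can be passed to `scipy.stats.wilcoxon` for the signed-rank test.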

Differential Evolution represents a significant advancement in evolutionary computation, particularly for continuous optimization problems encountered in scientific research and drug development. Its distinctive approach of using vector differences to guide the search process enables efficient exploration of complex fitness landscapes without requiring gradient information or differentiable objective functions. For researchers investigating drug-target interactions, DE provides a powerful tool for optimizing complex computational models, as demonstrated by its successful application in predicting binding affinities with superior accuracy.

When compared to Genetic Algorithms, DE typically demonstrates faster convergence and better performance on continuous numerical optimization problems, while GAs may remain preferable for discrete or combinatorial problems [11]. The choice between these evolutionary approaches should be guided by problem characteristics—with DE being particularly well-suited for high-dimensional, continuous, multimodal problems common in drug discovery and chemometrics.

Future DE research directions include further development of adaptive parameter control mechanisms, hybridization with machine learning techniques for surrogate modeling, and enhanced constraint handling approaches for complex real-world optimization challenges [14] [17]. As computational power grows and optimization problems increase in complexity, DE's balance of simplicity, efficiency, and effectiveness positions it as an increasingly valuable tool in the researcher's toolkit.

In both natural evolution and computational optimization, four fundamental concepts form the cornerstone of understanding: genotype, phenotype, population, and fitness. These terms originate from evolutionary biology but have been adapted into powerful computational paradigms that drive modern optimization techniques. For researchers in drug development and related fields, understanding these concepts is crucial for leveraging evolutionary algorithms to solve complex problems, from molecular design to experimental parameter optimization. This guide provides an in-depth technical breakdown of these core concepts, framing them within the context of comparing two prominent evolutionary algorithms: Genetic Algorithms (GAs) and Differential Evolution (DE). The precise mathematical formulation of these concepts enables their transformation from biological principles into robust computational tools that can navigate high-dimensional search spaces common in pharmaceutical research and development.

Terminology Breakdown

Genotype

- Biological Definition: In genetics, a genotype constitutes the complete set of genes inherited from parents [18]. For a specific trait, it refers to the pair of alleles an organism possesses (e.g., RR, RW, or WW for seed shape in Mendel's pea plants) [18].

- Computational Representation: In evolutionary computation, a genotype (or chromosome) is the encoded representation of a candidate solution within the algorithm's search space [19]. Unlike the discrete alleles in biology, computational genotypes often use real-valued or integer representations, especially in DE and related techniques applied to continuous parameter optimization [3].

- Algorithm Context: The representation of the genotype is a primary differentiator between GAs and DE. GAs traditionally use bitstrings (sequences of 1s and 0s) to represent solutions, requiring problem-specific encoding and decoding steps [19]. In contrast, DE typically operates directly on real-valued vectors, making it particularly suitable for numerical optimization problems in chemical systems and drug development where parameters are continuous [9] [3].

Table: Genotype Representations in Evolutionary Algorithms

| Algorithm | Typical Genotype Representation | Application Context |

|---|---|---|

| Genetic Algorithm (GA) | Bitstring (Binary or Gray-code) [19] | Discrete or encoded parameter spaces; subset selection [19] |

| Differential Evolution (DE) | Real-valued vector [3] | Continuous parameter optimization; chemical system tuning [9] |

| Paddy Field Algorithm (PFA) | Real-valued parameters (x = {x1, x2, ..., xn}) [9] | High-dimensional chemical optimization tasks [9] |

Phenotype

- Biological Definition: The phenotype is the observable characteristic or trait of an organism (e.g., round or wrinkled seeds), which results from the expression of the genotype in conjunction with environmental influences [18].

- Computational Representation: In optimization, the phenotype is the actual candidate solution decoded from the genotype and evaluated by the objective function (or fitness function) [19]. For a real-valued genotype, the phenotype might be the vector itself, interpreted as a set of system parameters. For a binary-coded genotype, it must be decoded into meaningful parameters before evaluation [19].

- Algorithm Context: The mapping from genotype to phenotype is straightforward in DE due to its real-valued representation, reducing computational overhead [3]. In GAs, this mapping can be complex, involving scaling and transformation from a bitstring to real values or other data structures, which adds to the computational cost but provides flexibility for non-numeric problems [19].
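The genotype-to-phenotype mapping for a binary-coded GA can be made concrete with a short decoder. This sketch assumes a fixed-length bit list scaled linearly onto an interval, plus an optional Gray-to-binary step; the function names are illustrative.

```python
def gray_to_binary(bits):
    """Convert a Gray-coded bit list to standard binary (most significant first)."""
    out = [bits[0]]
    for b in bits[1:]:
        out.append(out[-1] ^ b)
    return out


def decode_bits(bits, lo, hi):
    """Map a binary genotype onto a real-valued phenotype in [lo, hi]."""
    as_int = int("".join(str(b) for b in bits), 2)
    return lo + (hi - lo) * as_int / (2 ** len(bits) - 1)


genotype = [1, 0, 0, 0]
print(decode_bits(genotype, 0.0, 15.0))                 # binary 1000 -> 8.0
print(decode_bits(gray_to_binary([0, 1, 1, 0]), 0.0, 15.0))  # Gray 0110 -> 4.0
```

The number of bits fixes the resolution: with n bits the interval is divided into 2^n - 1 steps. In DE no such decoding is needed because the vector components are already the parameter values.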

Population

- Biological Definition: A biological population is a group of interbreeding individuals of the same species living in a shared geographical area [18]. Population genetics studies the genetic composition of such groups and how it changes over time [18].

- Computational Representation: In evolutionary algorithms, the population is the set of all candidate solutions (genotypes) being maintained and evolved during the optimization process [3] [19]. The population size is a critical algorithmic parameter balancing exploration of the search space and computational expense.

- Algorithm Context: Both GAs and DE are population-based optimizers. DE's population comprises real-valued vectors (agents), and its mutation strategy specifically uses vector differences within this population to explore the search space [3] [20]. GAs maintain a population of bitstrings, and diversity is managed through selection, crossover, and mutation operators [19]. The Paddy algorithm introduces a density-based reinforcement concept, where the spatial distribution of the population influences the propagation of new solutions [9].

Fitness

- Biological Definition: Fitness is a quantitative measure of an organism's ability to survive, reproduce, and propagate its genes to subsequent generations [21] [22]. It is a propensity rather than a guarantee—a genotype with high fitness has a high expected number of offspring [21] [22].

- Computational Representation: The fitness is the output value of the objective function being optimized. It numerically evaluates the quality of a candidate solution (phenotype) [19]. The algorithm's goal is to find the solution that minimizes or maximizes this function.

- Mathematical Formulation: In population genetics, the change in genotype frequency due to natural selection is driven by relative fitness (denoted w), which is normalized against the fitness of other genotypes in the population [21] [22]. This is distinct from absolute fitness (W), which is the expected number of offspring or the actual growth rate of a genotype [21]. The change in allele frequency per generation is Δp = p(w - w̄)/w̄, where p is the frequency, w is the relative fitness of the allele, and w̄ is the mean relative fitness of the population [21] [22].

- Algorithm Context: In both GAs and DE, it is the relative fitness of solutions within the population that guides selection. DE uses a direct comparison between parent and trial vectors, inherently implementing a relative fitness competition [3]. GAs often use selection mechanisms explicitly based on relative fitness, such as fitness-proportional selection or ranking [19].
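A short numerical example may make the allele-frequency formula easier to read. The sketch assumes the simplest case of two competing types, so the mean fitness is w̄ = p·w + (1 - p)·w_other; the function name and the example values are illustrative.

```python
def delta_p(p, w, w_other):
    """Change in frequency of a type with relative fitness w.

    Assumes two competing types with frequencies p and 1 - p, so the
    mean relative fitness is w_bar = p * w + (1 - p) * w_other.
    """
    w_bar = p * w + (1.0 - p) * w_other
    return p * (w - w_bar) / w_bar


# A type at frequency 0.5 with fitness 1.0 against a competitor with 0.8:
# w_bar = 0.9, so the frequency rises by 0.5 * 0.1 / 0.9, about 0.056.
print(delta_p(0.5, 1.0, 0.8))
```

When both types have the same fitness the change is zero, and the fitter type always gains frequency, which mirrors how fitter solutions gain representation in a GA population.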

Table: Key Fitness Metrics and Their Computational Analogues

| Fitness Metric | Biological Definition | Computational Role in Optimization |

|---|---|---|

| Absolute Fitness (W) | The expected number of offspring of a genotype [21] [22]. | The raw output value of the objective function for a candidate solution. |

| Relative Fitness (w) | Absolute fitness normalized by the fitness of a reference genotype (often the fittest) [21] [22]. | A normalized quality measure used for selection probabilities (e.g., in GA selection) [19]. |

| Selection Coefficient (s) | A measure of the reduction in fitness of a genotype relative to the fittest (w₂ = 1 - s) [22]. | Implicitly determines the replacement probability of a parent solution by a new candidate. |
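The role of relative fitness in GA selection can be sketched with fitness-proportional (roulette-wheel) selection. The sketch assumes a maximization problem with non-negative fitness values that are not all zero; the function names are illustrative.

```python
import random


def selection_probabilities(fitnesses):
    """Normalize raw (absolute) fitness values into selection probabilities."""
    total = sum(fitnesses)
    return [f / total for f in fitnesses]


def roulette_select(population, fitnesses, rng):
    """Pick one individual with probability proportional to its fitness."""
    return rng.choices(population, weights=fitnesses, k=1)[0]


rng = random.Random(0)
population = ["a", "b", "c"]
fitnesses = [1.0, 3.0, 4.0]
print(selection_probabilities(fitnesses))   # [0.125, 0.375, 0.5]
print(roulette_select(population, fitnesses, rng))
```

For minimization problems or negative objective values, the raw outputs have to be transformed first (for example by ranking), which is one reason tournament and rank-based selection are often preferred.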

Genetic Algorithms vs Differential Evolution: A Comparative Analysis

Understanding the core terminology enables a clear comparison of how Genetic Algorithms and Differential Evolution operationalize these concepts differently. The table below summarizes the key distinctions.

Table: Comparative Analysis of Genetic Algorithms and Differential Evolution

| Feature | Genetic Algorithm (GA) | Differential Evolution (DE) |

|---|---|---|

| Genotype Representation | Discrete (Bitstrings) [19] | Continuous (Real-valued vectors) [3] |

| Primary Exploration Mechanism | Crossover (Recombination) & Mutation [19] | Differential Mutation & Crossover [3] |

| Mutation Operator | Bit-flip with low probability [19] | Weighted vector difference: v_i = x_r1 + F*(x_r2 - x_r3) [3] [20] |

| Crossover Operator | n-point or uniform (e.g., 1-point, 2-point) [19] | Binomial or Exponential [3] |

| Selection Strategy | Fitness-proportional, tournament [19] | Greedy one-to-one replacement (trial vs. target) [3] |

| Typical Application Domains | Combinatorial problems, subset selection, sequencing [19] | Continuous parameter optimization, chemical system tuning [9] [3] |
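For contrast with DE's vector arithmetic, the two classic GA operators from the table can be sketched on bitstrings. This is a minimal illustration using lists of 0s and 1s; the function names and the example mutation rate are assumptions.

```python
import random


def one_point_crossover(a, b, rng):
    """Swap the tails of two equal-length parents at a random cut point."""
    cut = rng.randrange(1, len(a))  # cut strictly inside the string
    return a[:cut] + b[cut:], b[:cut] + a[cut:]


def bit_flip(bits, rate, rng):
    """Flip each bit independently with a (usually small) probability."""
    return [1 - b if rng.random() < rate else b for b in bits]


rng = random.Random(7)
parent_a = [0, 0, 0, 0, 0, 0]
parent_b = [1, 1, 1, 1, 1, 1]
child_1, child_2 = one_point_crossover(parent_a, parent_b, rng)
print(child_1, child_2)
print(bit_flip(child_1, rate=0.05, rng=rng))
```

Here the step size of the search does not depend on how spread out the population is, unlike the difference-vector mutation in DE.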

The workflow diagrams for each algorithm illustrate how these components interact.

Genetic Algorithm Workflow

Differential Evolution Workflow

Experimental Protocols and Applications

Benchmarking Evolutionary Algorithms

Robust benchmarking is essential for evaluating algorithm performance. The following methodology, derived from the Paddy algorithm study [9], provides a framework for comparing GA, DE, and other optimizers:

- Define Benchmark Problems: Select a diverse set of optimization tasks:

- Mathematical Functions: Bimodal distributions, irregular sinusoidal functions to test convergence and ability to avoid local optima [9].

- Chemical Hyperparameter Optimization: Tuning an Artificial Neural Network for tasks like solvent classification for reaction components [9].

- Targeted Molecule Generation: Optimizing input vectors for a decoder network (e.g., Junction-Tree Variational Autoencoder) to design molecules with desired properties [9].

- Configure Algorithms: Set population size, iteration limits, and algorithm-specific parameters (e.g., DE's mutation factor F and crossover rate CR, GA's crossover and mutation rates) [9] [3]. Use identical computational resources.

- Execute and Measure: For each benchmark and algorithm, run multiple independent trials to account for stochasticity. Track:

- Convergence Speed: Number of function evaluations or iterations to reach a solution threshold.

- Solution Quality: Best fitness value found.

- Robustness: Consistency of performance across different trials and problem types.

- Computational Runtime [9].

- Analyze Results: Compare performance metrics across algorithms. Advanced analysis may involve assessing performance on high-dimensional problems to evaluate scalability and the "curse of dimensionality" [3].

Application in Drug Discovery

Evolutionary algorithms are increasingly applied in pharmaceutical research. The "fitness function" in this context is a computational proxy for a desired drug profile. Key applications include:

- Molecular Generation and Optimization: AI-driven generative models can create novel molecular structures. Evolutionary algorithms like DE can optimize the input vectors of these models to steer generation toward regions of chemical space with high fitness, such as strong target binding affinity and low toxicity [9] [23].

- Chemical System Optimization: Algorithms can efficiently navigate complex experimental parameter spaces (e.g., reaction conditions, chromatographic settings) to maximize yield or purity, directly integrating with automated laboratory systems [9].

- Neural Network Training: DE can be used as a global optimizer to find the weights of a neural network, potentially avoiding local minima that trap traditional gradient-based methods, though at the cost of increased computational time [24].

The Scientist's Toolkit

Table: Essential Reagents and Resources for Evolutionary Algorithm Experiments

| Tool/Reagent | Function/Purpose | Example/Notes |

|---|---|---|

| Optimization Software Libraries | Provide implemented algorithms, saving development time. | Hyperopt (Tree of Parzen Estimators) [9], Ax/Botorch (Bayesian Optimization) [9], EvoTorch (Evolutionary Algorithms) [9]. |

| Paddy Package | Implements the Paddy Field Algorithm for chemical optimization [9]. | Python library for robust optimization of chemical systems; emphasizes avoiding local minima [9]. |

| Fitness (Objective) Function | Encodes the problem to be solved; evaluates solution quality. | Defined by the researcher. Examples: molecular binding affinity score, chemical reaction yield, neural network training error [9] [24]. |

| Computational Environment | Hardware and software for running resource-intensive optimizations. | Systems supporting parallel processing can significantly speed up population evaluation [20] [24]. |

| Validation Framework | Prevents overfitting and ensures solutions generalize. | Crucial in drug discovery. Uses out-of-sample data and robust statistical tests to validate optimized solutions [20] [23]. |

The precise definitions of genotype, phenotype, population, and fitness create a universal framework for understanding and applying evolutionary computation. For drug development professionals, the choice between Genetic Algorithms and Differential Evolution hinges on the problem structure: GAs offer flexibility for discrete and combinatorial problems, while DE excels at optimizing continuous parameters common in chemical and pharmacological models. Mastery of these core concepts enables the effective deployment of these powerful optimization strategies, accelerating research in molecular design, experimental planning, and the development of robust AI models for the pharmaceutical industry.

Evolutionary Algorithms (EAs) represent a sophisticated class of metaheuristic optimization methods that emulate the processes of biological evolution to solve complex computational problems. By utilizing mechanisms inspired by Darwinian natural selection—such as selection, mutation, and crossover—these algorithms effectively tackle a wide variety of optimization challenges that are difficult for traditional methodologies. The fundamental principle underlying evolutionary computation is the simulation of natural selection's "survival of the fittest" paradigm, where a population of candidate solutions evolves over generations toward increasingly optimal configurations [25] [10].

Within the broader family of evolutionary computing, Genetic Algorithms (GA) and Differential Evolution (DE) have emerged as two powerful approaches with distinct characteristics and applications. While both algorithms belong to the same evolutionary computation family and share conceptual foundations, they employ different strategies for manipulating candidate solutions and exploring search spaces. Genetic Algorithms, developed by John Henry Holland in the 1970s, operate on binary or discrete representations of solutions, applying genetic operators inspired by biological genetics [26] [5]. In contrast, Differential Evolution, introduced by Rainer Storn and Kenneth Price in the mid-1990s (first as a 1995 technical report, then as a 1997 journal article), specializes in continuous optimization problems and employs unique vector-based mutation strategies that differentiate it from traditional GAs [10] [27].

The biological inspiration for these algorithms extends beyond mere metaphor—it provides a robust framework for solving complex, multi-modal, and non-differentiable optimization problems that challenge conventional mathematical approaches. By mimicking nature's billion-year-old optimization process, researchers have developed computational tools capable of navigating high-dimensional search spaces, avoiding local optima, and discovering innovative solutions across diverse domains including drug discovery, engineering design, and artificial intelligence [10] [28].

Biological Foundations and Computational Analogies

The design of evolutionary algorithms draws upon well-established biological principles, creating direct analogies between natural processes and computational mechanisms. Understanding these biological foundations is essential for appreciating the operational principles of both Genetic Algorithms and Differential Evolution.

Natural Selection and Fitness Landscapes

In biological evolution, natural selection acts as the primary mechanism driving species adaptation to their environment. Organisms with traits better suited to their environment tend to survive and reproduce more successfully, passing these advantageous traits to subsequent generations. Similarly, in both GA and DE, a fitness function evaluates candidate solutions, preferentially selecting better-performing individuals to contribute to future generations [26]. The fitness function in evolutionary computation corresponds to the environmental pressures in nature, creating a computational "fitness landscape" where algorithms seek peaks of optimal performance [5].

Genetic Inheritance and Representation

Biological genetics provides the inspiration for how solutions are represented and modified in evolutionary algorithms. In nature, genetic information is encoded in DNA, which undergoes recombination during sexual reproduction and occasional mutations. Similarly, evolutionary algorithms maintain a population of candidate solutions, each represented as a chromosome whose genes encode specific solution parameters [26]. The representation varies between algorithms: traditional GAs often use binary strings, while DE typically works with real-valued vectors. Both retain the same genetic metaphor [10] [27].
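To make the difference in representation concrete, the sketch below decodes a binary chromosome into a real parameter and contrasts it with a real-valued vector that stores the parameters directly. The function name `decode_binary`, the 8-bit length, and the range [0, 10] are illustrative assumptions, not part of any particular library.

```python
def decode_binary(bits, low, high):
    """Map a list of 0/1 genes to a real number in [low, high]."""
    # Read the bit list as an unsigned integer, most significant bit first.
    as_int = 0
    for bit in bits:
        as_int = as_int * 2 + bit
    max_int = 2 ** len(bits) - 1
    # Rescale the integer linearly onto the requested interval.
    return low + (high - low) * as_int / max_int


# GA-style chromosome: 8 binary genes encoding one parameter in [0, 10].
ga_chromosome = [1, 0, 0, 0, 0, 0, 0, 0]

# DE-style individual: the parameter values are stored as-is.
de_vector = [5.02, -1.3]

print(decode_binary(ga_chromosome, 0.0, 10.0))
print(de_vector)
```

With a binary encoding the resolution is fixed by the number of bits (here 255 steps across the interval), whereas the real-valued vector has no such grid.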

Evolutionary Operators

Biological evolution operates through several key mechanisms that have direct computational counterparts:

- Mutation: In nature, random changes in DNA sequences introduce novel traits. In evolutionary computation, mutation operators randomly alter portions of candidate solutions, maintaining population diversity and exploring new regions of the search space [26] [5].

- Crossover (Recombination): Sexual reproduction combines genetic material from parents to produce offspring. Similarly, algorithmic crossover operations combine elements from multiple parent solutions to create new candidate solutions [26].

- Selection: Nature's survival-of-the-fittest principle is implemented through selection operators that determine which solutions get to reproduce based on their fitness [5].

The following table summarizes the biological analogies in GA and DE:

Table 1: Biological Analogies in Evolutionary Algorithms

| Biological Concept | Computational Implementation | Role in Optimization |
|---|---|---|
| DNA/Genome | Chromosome (Binary, Real-valued) | Solution representation |
| Gene | Parameter/variable | Decision variable |
| Population | Set of candidate solutions | Diversity maintenance |
| Fitness | Objective function value | Solution quality measure |
| Mutation | Random perturbation | Diversity introduction |
| Crossover | Solution recombination | Exploitation of good traits |
| Natural Selection | Selection operators | Fitness-based propagation |

Genetic Algorithms: Mechanisms and Methodologies

Algorithmic Framework and Core Components

Genetic Algorithms operate through an iterative process that mirrors biological evolution, starting with an initial population of candidate solutions and progressively improving them through the application of genetic operators. The algorithm maintains a population of individuals, each representing a potential solution to the optimization problem at hand. These individuals undergo evaluation, selection, and reproduction cycles that emulate natural evolutionary processes [26] [5].

The standard Genetic Algorithm workflow consists of several well-defined phases. Initialization creates the first generation of candidate solutions, typically through random generation within the problem's search space. The fitness evaluation phase assesses each solution's quality using a problem-specific fitness function. Selection identifies promising solutions based on their fitness, giving them higher probability of contributing offspring to the next generation. Crossover combines genetic material from selected parents to produce new solutions, while mutation introduces random changes to maintain diversity. Finally, replacement forms the new population for the next generation [26].
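The phases described above can be sketched as a short Python program. This is a minimal illustration, not a reference implementation: the toy OneMax fitness function (count the 1-bits), the binary tournament, the single-point crossover, and all parameter defaults are assumptions chosen to keep the example small.

```python
import random


def one_max(chromosome):
    """Toy fitness function: count the 1-bits (higher is better)."""
    return sum(chromosome)


def tournament(population, rng):
    """Selection: the fitter of two randomly drawn individuals wins."""
    a, b = rng.sample(population, 2)
    return a if one_max(a) >= one_max(b) else b


def run_ga(n_genes=20, pop_size=30, generations=60,
           crossover_rate=0.9, mutation_rate=0.02, seed=0):
    rng = random.Random(seed)
    # Initialization: random binary chromosomes.
    population = [[rng.randint(0, 1) for _ in range(n_genes)]
                  for _ in range(pop_size)]
    best = max(population, key=one_max)
    for _ in range(generations):
        offspring = []
        while len(offspring) < pop_size:
            # Selection (fitness is evaluated inside the tournament).
            parent1 = tournament(population, rng)
            parent2 = tournament(population, rng)
            # Crossover: single cut point, applied with some probability.
            if rng.random() < crossover_rate:
                cut = rng.randint(1, n_genes - 1)
                child = parent1[:cut] + parent2[cut:]
            else:
                child = parent1[:]
            # Mutation: flip each bit with a small probability.
            child = [1 - g if rng.random() < mutation_rate else g
                     for g in child]
            offspring.append(child)
        # Replacement: the offspring become the next generation.
        population = offspring
        # Remember the best individual seen so far.
        best = max(population + [best], key=one_max)
    return best


best = run_ga()
print(one_max(best), "of", len(best), "bits set")
```

Swapping `one_max` for a problem-specific fitness function is the only change needed to reuse the loop on another binary-encoded problem.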

Diagram 1: Genetic Algorithm Workflow

Genetic Representation and Operators

A fundamental aspect of Genetic Algorithms is the chromosomal representation of solutions. While traditional GAs often employ binary strings, modern implementations may use various representations including real-valued vectors, permutations, or tree structures depending on the problem domain [26]. The representation choice significantly impacts algorithm performance and should align with the problem structure.

Selection operators determine which solutions proceed to the reproduction phase. Common selection strategies include:

- Fitness Proportionate Selection: Solutions are selected with probability proportional to their fitness scores [5].

- Tournament Selection: Small random subsets of the population compete, with the fittest advancing [27].

- Rank-Based Selection: Solutions are selected based on their fitness rank rather than absolute values [5].
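The three selection strategies listed above might be written as follows. The function names are hypothetical, fitness is assumed to be non-negative and maximized, and the rank weights (1 for the worst up to n for the best) are one simple choice among several.

```python
import random


def roulette_select(population, fitnesses, rng):
    """Fitness-proportionate selection (assumes non-negative fitnesses)."""
    total = sum(fitnesses)
    if total == 0:
        return rng.choice(population)
    threshold = rng.uniform(0, total)
    running = 0.0
    for individual, fit in zip(population, fitnesses):
        running += fit
        if running > threshold:
            return individual
    return population[-1]  # Guard against floating-point round-off.


def tournament_select(population, fitnesses, rng, k=3):
    """Draw k distinct individuals at random and return the fittest."""
    contenders = rng.sample(range(len(population)), k)
    winner = max(contenders, key=lambda i: fitnesses[i])
    return population[winner]


def rank_select(population, fitnesses, rng):
    """Select with probability proportional to fitness rank, not raw value."""
    order = sorted(range(len(population)), key=lambda i: fitnesses[i])
    weights = [0] * len(population)
    for rank, idx in enumerate(order, start=1):
        weights[idx] = rank  # worst gets 1, best gets n
    return rng.choices(population, weights=weights, k=1)[0]


rng = random.Random(42)
population = ["a", "b", "c", "d"]
fitnesses = [1.0, 2.0, 3.0, 10.0]
print(roulette_select(population, fitnesses, rng),
      tournament_select(population, fitnesses, rng),
      rank_select(population, fitnesses, rng))
```

Rank-based selection is less sensitive to a single outlier such as the fitness of 10.0 here, because only the ordering matters.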

Crossover operators combine genetic information from parent solutions. Standard approaches include:

- Single-Point Crossover: A single crossover point is selected, and tails are swapped [26].

- Multi-Point Crossover: Multiple crossover points divide the chromosome into alternating segments [27].

- Uniform Crossover: Each gene is randomly selected from either parent with equal probability [26].
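As a rough sketch, the crossover operators above can be written for list-based chromosomes like this. The function names are illustrative, two-point crossover stands in for the general multi-point case, and the two-point version assumes chromosomes of length three or more.

```python
import random


def single_point_crossover(p1, p2, rng):
    """Swap the tails of two parents after one random cut point."""
    cut = rng.randint(1, len(p1) - 1)
    return p1[:cut] + p2[cut:], p2[:cut] + p1[cut:]


def two_point_crossover(p1, p2, rng):
    """Swap the segment between two random cut points (needs len >= 3)."""
    a, b = sorted(rng.sample(range(1, len(p1)), 2))
    return (p1[:a] + p2[a:b] + p1[b:],
            p2[:a] + p1[a:b] + p2[b:])


def uniform_crossover(p1, p2, rng):
    """Pick each gene from either parent with equal probability."""
    c1, c2 = [], []
    for g1, g2 in zip(p1, p2):
        if rng.random() < 0.5:
            c1.append(g1)
            c2.append(g2)
        else:
            c1.append(g2)
            c2.append(g1)
    return c1, c2


rng = random.Random(3)
zeros, ones = [0] * 8, [1] * 8
print(single_point_crossover(zeros, ones, rng))
print(two_point_crossover(zeros, ones, rng))
print(uniform_crossover(zeros, ones, rng))
```

Using all-zero and all-one parents makes it easy to see which parent each gene of a child came from.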

Mutation operators introduce random perturbations to maintain population diversity. The mutation rate is typically kept low to prevent the algorithm from degenerating into random search [26] [5].
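Two common mutation operators are sketched below: bit-flip mutation for binary chromosomes and Gaussian mutation for real-valued ones. The function names and the example rates are assumptions for illustration. A per-gene rate of about one over the chromosome length is a widely used rule of thumb, not a requirement.

```python
import random


def bit_flip_mutation(chromosome, rate, rng):
    """Flip each binary gene independently with probability `rate`."""
    return [1 - gene if rng.random() < rate else gene
            for gene in chromosome]


def gaussian_mutation(vector, rate, sigma, rng):
    """Add zero-mean Gaussian noise to each real gene with probability `rate`."""
    return [x + rng.gauss(0.0, sigma) if rng.random() < rate else x
            for x in vector]


rng = random.Random(5)
chromosome = [0, 1, 1, 0, 1, 0, 0, 1, 1, 0]
# A rate of 1/len(chromosome) flips one gene per chromosome on average.
print(bit_flip_mutation(chromosome, 1 / len(chromosome), rng))
print(gaussian_mutation([0.5, -1.2, 3.0], rate=0.3, sigma=0.1, rng=rng))
```

Raising the rate toward 0.5 for binary genes makes each offspring nearly independent of its parents, which is the random-search behaviour the text warns about.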

Implementation Considerations

Successful implementation of Genetic Algorithms requires careful attention to several parameters that control the evolutionary process. The population size balances diversity and computational cost—larger populations explore more space but require more evaluations per generation [26]. Crossover and mutation rates determine the balance between exploiting existing good solutions and exploring new regions of the search space. Elitism strategies preserve the best solutions from each generation, ensuring that high-quality genetic material is not lost [27].

Termination criteria for GAs may include reaching a maximum number of generations, achieving a satisfactory fitness level, or detecting convergence when successive generations no longer produce significant improvements [26]. Proper parameter tuning is essential for obtaining good performance, though optimal settings are often problem-dependent [26] [27].
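The three termination criteria can be combined in a single check, sketched below for a maximization problem. The function name `should_stop`, the `patience` window, and the `min_improvement` threshold are hypothetical choices that would need tuning for a real problem.

```python
def should_stop(best_history, max_generations, target_fitness=None,
                patience=20, min_improvement=1e-8):
    """Decide whether to stop, given the best fitness of each generation so far.

    Assumes maximization and that best_history never decreases (elitism).
    """
    generation = len(best_history)
    # Criterion 1: generation budget exhausted.
    if generation >= max_generations:
        return True
    # Criterion 2: a satisfactory fitness level has been reached.
    if (target_fitness is not None and best_history
            and best_history[-1] >= target_fitness):
        return True
    # Criterion 3: no meaningful improvement over the last `patience` generations.
    if generation > patience:
        gain = best_history[-1] - best_history[-1 - patience]
        if gain < min_improvement:
            return True
    return False


print(should_stop([1.0, 2.0, 3.0], max_generations=100))   # still improving
print(should_stop([3.0] * 30, max_generations=100))        # stagnated
```

Calling such a check once per generation keeps the stopping logic separate from the evolutionary loop itself.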

Differential Evolution: Advanced Evolutionary Approach

Algorithmic Foundations and Vector Operations

Differential Evolution is an evolutionary algorithm designed specifically for continuous optimization problems. While it shares the general evolutionary framework with Genetic Algorithms, DE uses distinct vector-based operations that make it particularly effective in real-valued search spaces. The algorithm begins by initializing a population of candidate solutions, represented as real-valued vectors, within the specified bounds of the optimization problem [10] [2].

The hallmark of Differential Evolution is its unique mutation strategy, which generates new parameter vectors by adding the scaled difference between two population vectors to a third vector. This approach enables DE to automatically adapt the step size and orientation of the search based on the current population distribution. The standard mutation strategy, denoted as DE/rand/1, follows the formula:

\[ \text{mutant} = x_{r1} + F \cdot (x_{r2} - x_{r3}) \]

where \(x_{r1}\), \(x_{r2}\), and \(x_{r3}\) are three distinct randomly selected population vectors, and \(F\) is the scaling factor controlling the amplification of the differential variation [10] [2].
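A minimal sketch of the DE/rand/1 mutation follows, using plain Python lists as vectors. The function name `de_rand_1` is illustrative. Excluding the target vector's own index from the random draw is a common convention and is an assumption here, since the text only requires the three vectors to be distinct from one another.

```python
import random


def de_rand_1(population, target_index, F, rng):
    """Return the mutant x_r1 + F * (x_r2 - x_r3) for one target vector."""
    # Draw three distinct indices, none equal to the target (assumed convention).
    candidates = [i for i in range(len(population)) if i != target_index]
    r1, r2, r3 = rng.sample(candidates, 3)
    x1, x2, x3 = population[r1], population[r2], population[r3]
    # Apply the formula component by component.
    return [a + F * (b - c) for a, b, c in zip(x1, x2, x3)]


rng = random.Random(0)
population = [[0.0, 0.0], [1.0, 2.0], [3.0, 1.0], [5.0, 5.0], [-2.0, 4.0]]
print(de_rand_1(population, target_index=0, F=0.8, rng=rng))
```

The population must contain at least four vectors so that three distinct partners remain once the target is excluded.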

Following mutation, DE employs a crossover operation that combines parameters from the mutant vector with those of a target vector to produce a trial vector. Unlike the typical crossover in GAs, DE commonly uses binomial crossover where each parameter is independently selected from either the mutant or target vector based on a crossover probability [10]. The final selection step is deterministic—the trial vector replaces the target vector in the next generation only if it yields an equal or better fitness value [2].
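Putting mutation, binomial crossover, and greedy selection together gives a complete loop, sketched below for minimization. The sphere test function, the default parameter values, the forced index `j_rand` (which guarantees the trial takes at least one gene from the mutant), and clipping to the bounds are all assumptions of this sketch and not prescriptions from the text.

```python
import random


def sphere(x):
    """Toy objective to minimize: the sum of squares, with optimum at the origin."""
    return sum(v * v for v in x)


def differential_evolution(objective, bounds, NP=20, F=0.8, CR=0.9,
                           generations=100, seed=0):
    rng = random.Random(seed)
    dim = len(bounds)
    # Initialization: uniform random vectors inside the bounds.
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(NP)]
    cost = [objective(x) for x in pop]
    for _ in range(generations):
        new_pop, new_cost = pop[:], cost[:]
        for i in range(NP):
            # Mutation (DE/rand/1) using three partners other than the target.
            r1, r2, r3 = rng.sample([j for j in range(NP) if j != i], 3)
            mutant = [pop[r1][d] + F * (pop[r2][d] - pop[r3][d])
                      for d in range(dim)]
            # Binomial crossover: each gene comes from the mutant with
            # probability CR; index j_rand always comes from the mutant.
            j_rand = rng.randrange(dim)
            trial = [mutant[d] if (rng.random() < CR or d == j_rand)
                     else pop[i][d] for d in range(dim)]
            # Clip to the bounds (one of several possible repair rules).
            trial = [min(max(t, lo), hi) for t, (lo, hi) in zip(trial, bounds)]
            # Greedy selection: keep the trial only if it is equal or better.
            trial_cost = objective(trial)
            if trial_cost <= cost[i]:
                new_pop[i], new_cost[i] = trial, trial_cost
        pop, cost = new_pop, new_cost
    best = min(range(NP), key=lambda i: cost[i])
    return pop[best], cost[best]


best_x, best_f = differential_evolution(sphere, [(-5.0, 5.0)] * 3)
print(best_x, best_f)
```

Because a target is replaced only by an equal or better trial, the best cost in the population never gets worse from one generation to the next.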

Diagram 2: Differential Evolution Workflow

Mutation Strategies and Parameter Control

Differential Evolution features several mutation strategies that influence its exploration-exploitation balance. Common strategies include:

- DE/rand/1: Uses three randomly selected vectors, promoting exploration.

- DE/best/1: Incorporates the best solution in the population to guide toward promising regions.

- DE/current-to-best/1: Combines the current vector with the best solution to balance exploration and exploitation.

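The naming convention DE/x/y/z specifies the vector to be mutated (x), the number of difference vectors used (y), and the crossover type (z), for example bin for binomial or exp for exponential crossover. The strategy names listed above omit the z field [2].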
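The three strategies differ only in the base vector and the terms added to it, which the sketch below makes explicit for a minimization problem. The function names are hypothetical. Using the same factor F for both terms of current-to-best/1 is a common simplification assumed here; some formulations use a separate weight for the pull toward the best vector.

```python
import random


def _pick(n, exclude, k, rng):
    """Draw k distinct indices from range(n), skipping those in `exclude`."""
    return rng.sample([j for j in range(n) if j not in exclude], k)


def mutate(pop, cost, i, strategy, F, rng):
    """Build a mutant for target i with the named strategy (minimization)."""
    n, dim = len(pop), len(pop[0])
    best = min(range(n), key=lambda j: cost[j])
    if strategy == "rand/1":
        # Random base vector plus one scaled difference.
        r1, r2, r3 = _pick(n, {i}, 3, rng)
        return [pop[r1][d] + F * (pop[r2][d] - pop[r3][d]) for d in range(dim)]
    if strategy == "best/1":
        # Best vector as the base, plus one scaled difference.
        r1, r2 = _pick(n, {i}, 2, rng)
        return [pop[best][d] + F * (pop[r1][d] - pop[r2][d]) for d in range(dim)]
    if strategy == "current-to-best/1":
        # Current vector pulled toward the best, plus one scaled difference.
        r1, r2 = _pick(n, {i}, 2, rng)
        return [pop[i][d] + F * (pop[best][d] - pop[i][d])
                + F * (pop[r1][d] - pop[r2][d]) for d in range(dim)]
    raise ValueError("unknown strategy: " + strategy)


rng = random.Random(0)
pop = [[4.0, 4.0], [1.0, 2.0], [3.0, 1.0], [0.1, 0.2], [-2.0, 4.0]]
cost = [sum(v * v for v in x) for x in pop]
for name in ("rand/1", "best/1", "current-to-best/1"):
    print(name, mutate(pop, cost, 0, name, 0.8, rng))
```

Strategies built on the best vector tend to converge faster but lose diversity sooner, which is the exploration-exploitation trade-off noted above.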

DE requires only three control parameters, making it simpler to implement than many other evolutionary algorithms:

- Population Size (NP): Typically set to 5 to 10 times the problem dimension [10].

- Scaling Factor (F): Controls mutation strength; usually chosen in [0, 1], with [0, 2] as the wider range over which it is defined [2].

- Crossover Rate (CR): Determines parameter inheritance probability, typically in [0.3, 1] [10].

These parameters significantly impact DE performance, and extensive research has focused on adaptive parameter control mechanisms to enhance its robustness across diverse problem types [10].

Comparative Analysis: Genetic Algorithm vs Differential Evolution

Structural and Operational Differences

While both Genetic Algorithms and Differential Evolution belong to the evolutionary computation family and share common biological inspiration, they exhibit fundamental differences in their operational mechanisms and philosophical approaches to optimization. Understanding these distinctions is crucial for selecting the appropriate algorithm for specific problem domains and applications.

The primary distinction lies in their solution representation and operational domains. Traditional Genetic Algorithms often employ binary or discrete representations, making them suitable for combinatorial optimization problems. In contrast, Differential Evolution operates directly on real-valued vectors, positioning it as a preferred choice for continuous optimization problems [10] [27]. This fundamental difference in representation leads to variations in their genetic operators and search mechanisms.

Another significant difference concerns the application order of genetic operators. In Genetic Algorithms, selection typically occurs before recombination and mutation, emphasizing the propagation of high-fitness individuals. Differential Evolution reverses this sequence—mutation is the primary operator, followed by crossover and selection. This operational reversal contributes to DE's explorative capabilities in continuous spaces [27].

The mutation strategies employed by the two algorithms further highlight their philosophical differences. GAs typically use small, random perturbations to maintain population diversity. DE's mutation is more directional, using differences between population vectors to guide the search. This makes DE particularly effective for correlated parameter optimization, where the relationship between variables can be exploited through vector operations [10] [2].
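One consequence of building mutations from vector differences is that the typical step length follows the spread of the population. The sketch below estimates the mean length of F times a random difference vector for a widely spread and a tightly clustered population. The helper name, the population sizes, and the ranges are illustrative assumptions.

```python
import math
import random


def mean_step_length(population, F, rng, samples=1000):
    """Estimate the average length of F * (x_a - x_b) over random pairs."""
    total = 0.0
    for _ in range(samples):
        a, b = rng.sample(population, 2)
        total += F * math.dist(a, b)
    return total / samples


rng = random.Random(0)
# Early in a run: individuals scattered over the whole search range.
wide = [[rng.uniform(-5, 5) for _ in range(2)] for _ in range(20)]
# Late in a run: individuals clustered around a promising region.
narrow = [[rng.uniform(-0.05, 0.05) for _ in range(2)] for _ in range(20)]

print("wide population step:  ", mean_step_length(wide, 0.8, rng))
print("narrow population step:", mean_step_length(narrow, 0.8, rng))
```

A GA mutation with a fixed perturbation size has no such built-in scaling, so its step size has to be tuned or adapted separately.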

Table 2: Algorithmic Comparison Between GA and DE

| Characteristic | Genetic Algorithm (GA) | Differential Evolution (DE) |
|---|---|---|
| Solution Representation | Binary, Discrete, Real-valued | Primarily Real-valued Vectors |
| Primary Operators | Selection, Crossover, Mutation | Mutation, Crossover, Selection |
| Mutation Strategy | Random perturbations | Scaled vector differences |
| Crossover Type | Single/Multi-point, Uniform | Binomial, Exponential |
| Selection Method | Probabilistic (Fitness-based) | Deterministic (Greedy) |
| Parameter Control | Multiple parameters required | Fewer parameters (F, CR, NP) |
| Problem Domain | Discrete, Combinatorial | Continuous, Real-valued |
| Convergence Behavior | May converge prematurely | Generally better global convergence |

Performance Analysis and Application Suitability

Empirical studies comparing GA and DE reveal distinct performance characteristics across various problem domains. Research indicates that DE often demonstrates superior performance on continuous optimization problems, particularly those with real-valued parameters and nonlinear objective functions [10] [27]. The vector-based operations of DE enable efficient navigation of continuous search spaces, often resulting in faster convergence to near-optimal solutions.