Explicit vs Implicit Transfer in Evolutionary Multitasking: A Comprehensive Guide for Biomedical Research

Abstract

This article provides a systematic comparison of explicit and implicit knowledge transfer strategies within Evolutionary Multitasking Optimization (EMTO), a paradigm that simultaneously solves multiple optimization tasks. Tailored for researchers and drug development professionals, we explore the foundational principles, methodological implementations, and common challenges like negative transfer. The content covers adaptive frameworks and hybrid strategies for performance optimization, presents empirical validation across benchmark and real-world problems, and discusses specific applications in bioactivity modeling and drug discovery to enhance optimization efficiency and accelerate research outcomes.

Evolutionary Multitasking Uncovered: Core Principles of Knowledge Transfer

Defining Evolutionary Multitasking Optimization (EMTO) and Its Value Proposition

Evolutionary Multitasking Optimization (EMTO) represents a paradigm shift in evolutionary computation. It is a branch of evolutionary algorithms (EAs) designed to optimize multiple tasks simultaneously within a single run and to output the best solution found for each task [1]. Unlike traditional single-task evolutionary algorithms, EMTO creates a multi-task environment that allows automatic knowledge transfer among different, yet potentially related, optimization problems [1] [2]. This approach is inspired by the human ability to draw on past experience when learning new tasks, thereby improving overall efficiency [1] [3].

The foundational algorithm for EMTO is the Multifactorial Evolutionary Algorithm (MFEA), which treats each task as a unique cultural factor influencing population evolution [1]. EMTO operates on the principle that useful knowledge gained while solving one task may assist in solving another related task, thereby fully utilizing the implicit parallelism of population-based search [1] [2]. This capability makes EMTO particularly suitable for complex, non-convex, and nonlinear problems where mathematical properties are challenging to characterize [1].

The Core Mechanism: Knowledge Transfer in EMTO

Explicit vs. Implicit Transfer Methods

The fundamental value proposition of EMTO hinges on its knowledge transfer capability, which can be implemented through two primary methodologies: explicit and implicit transfer.

Table 1: Comparison of Explicit and Implicit Knowledge Transfer in EMTO

| Feature | Explicit Transfer | Implicit Transfer |
|---|---|---|
| Knowledge Representation | Direct mapping of solutions between tasks [2] | Genetic material exchange through chromosomal crossover [1] [3] |
| Transfer Mechanism | Constructed based on task characteristics using techniques like denoising autoencoders [2] [4] | Assortative mating and vertical cultural transmission [1] |
| Adaptability | Requires explicit modeling of task relationships [2] | More automatic but can involve randomness [3] |
| Implementation Complexity | Higher, often requiring specialized mapping functions [2] | Lower, built into standard evolutionary operations [1] |
| Typical Applications | Tasks with known structural relationships [2] | General-purpose multitasking without prior relationship knowledge [1] |

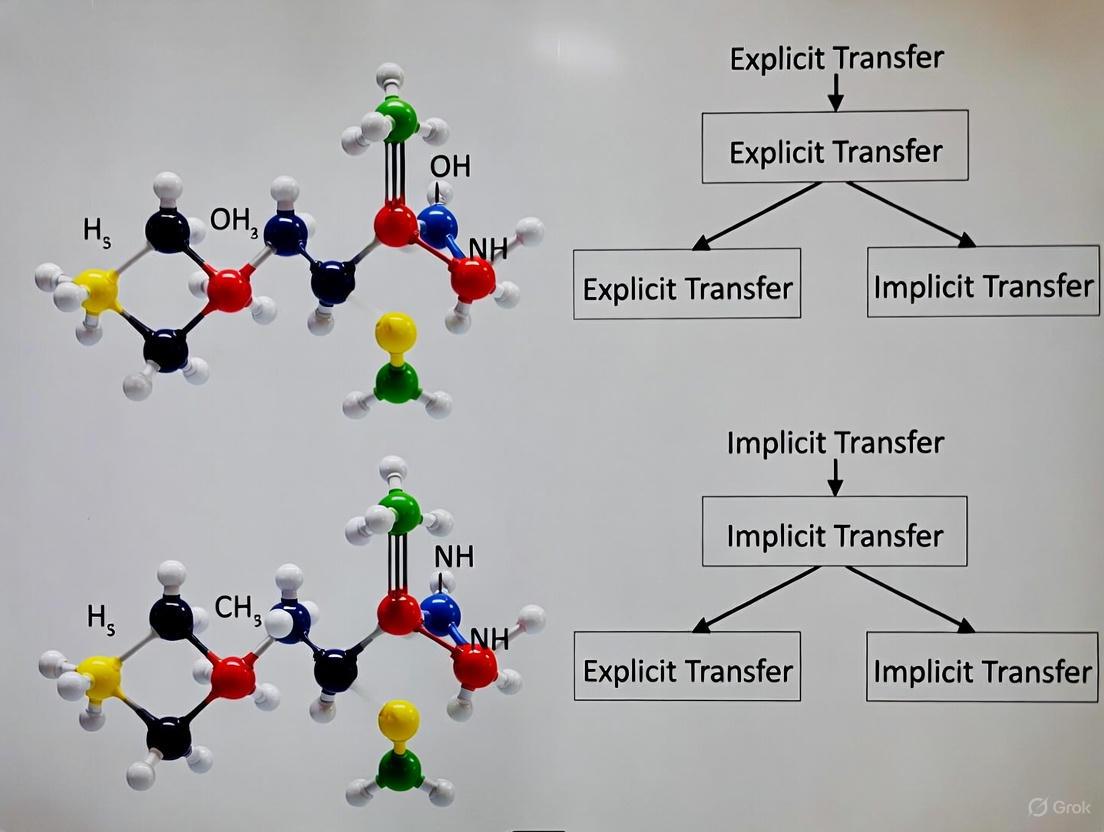

Visualizing the EMTO Workflow

The following diagram illustrates the core workflow of an evolutionary multitasking optimization system, highlighting the interaction between explicit and implicit transfer mechanisms:

Diagram 1: EMTO workflow with dual transfer mechanisms

Experimental Comparison of Transfer Methodologies

Key Experimental Protocols in EMTO Research

Research in EMTO employs standardized methodologies to evaluate algorithm performance. The CEC17 and CEC22 multitasking benchmarks are widely adopted for comparative studies [4]. These benchmarks include various problem categories with different levels of inter-task similarity:

- CIHS: Complete-intersection, high-similarity problems

- CIMS: Complete-intersection, medium-similarity problems

- CILS: Complete-intersection, low-similarity problems [4]

Experimental protocols typically involve running algorithms over multiple independent runs with statistical significance testing. Performance is measured using metrics like convergence speed (number of generations or function evaluations to reach a target solution quality) and solution accuracy (best objective function value achieved) [3] [5] [4].
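The two metrics named above can be computed from per-run convergence histories. The following is a minimal sketch, assuming minimization and assuming each run is logged as a list of best-so-far objective values; the function names and the `evals_per_record` parameter are illustrative and not taken from any cited benchmark toolkit.

```python
from typing import Dict, List, Optional, Sequence


def evaluations_to_target(best_so_far: Sequence[float], target: float,
                          evals_per_record: int = 1) -> Optional[int]:
    """Function evaluations used when the run first reaches `target` (minimization).

    Returns None if the target is never reached.
    """
    for i, value in enumerate(best_so_far):
        if value <= target:
            return (i + 1) * evals_per_record
    return None


def summarize_runs(histories: List[Sequence[float]], target: float) -> Dict[str, object]:
    """Aggregate solution accuracy and convergence speed over independent runs."""
    finals = [h[-1] for h in histories]                      # best value at the end of each run
    hits = [evaluations_to_target(h, target) for h in histories]
    reached = [h for h in hits if h is not None]             # runs that reached the target
    return {
        "mean_final": sum(finals) / len(finals),
        "success_runs": len(reached),
        "mean_evals_to_target": sum(reached) / len(reached) if reached else None,
    }


summary = summarize_runs([[5.0, 2.0, 0.5, 0.1], [4.0, 3.0, 2.0, 1.5]], target=1.0)
```

Significance testing across algorithms would be applied to the per-run values collected this way; it is left out here to keep the sketch dependency-free.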

Comparative Performance Data

Table 2: Experimental Performance Comparison of EMTO Algorithms

| Algorithm | Transfer Type | Key Mechanism | Performance Findings | Reference |
|---|---|---|---|---|
| MFEA | Implicit | Cultural transmission & assortative mating | Foundational approach; outperformed MFDE on CILS problems | [1] [4] |
| MFEA-II | Implicit | Online transfer parameter estimation | Reduced negative transfer between uncorrelated tasks | [5] |
| TLTL Algorithm | Both | Two-level transfer (inter-task & intra-task) | Outstanding global search ability and fast convergence | [3] |
| BOMTEA | Both | Adaptive bi-operator (GA + DE) | Significantly outperformed others on CEC17 and CEC22 benchmarks | [4] |
| Population Distribution-based EMTO | Explicit | Maximum Mean Discrepancy for transfer | High accuracy for problems with low relevance between tasks | [5] |
| CMTEE | Implicit | Competitive multitasking with resource allocation | Effective for hyperspectral image endmember extraction | [6] |

The Scientist's Toolkit: Essential Research Components

Table 3: Key Research Reagent Solutions in EMTO

| Component | Function | Examples & Applications |
|---|---|---|
| Evolutionary Search Operators | Generate new candidate solutions | GA, DE, PSO; BOMTEA adaptively selects between GA and DE [4] |
| Similarity Measurement | Quantifies inter-task relationships | Maximum Mean Discrepancy used to select transfer sub-populations [5] |
| Knowledge Transfer Controllers | Regulate timing and intensity of transfer | Random mating probability (rmp); adaptively adjusted based on performance [2] [4] |
| Unified Representation | Encodes solutions for multiple tasks | Allows genetic material exchange between different task domains [1] |
| Skill Factor Assignment | Identifies task specialization | Each individual assigned to task where it performs best [1] [7] |
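Skill factor assignment, the last row of the table, can be illustrated with a short sketch. It assumes every individual has a cost on every task (as in an initial generation) and that lower cost is better; the rank-based scalar fitness follows the usual MFEA description, while the function name and array layout are illustrative.

```python
import numpy as np


def assign_skill_factors(costs: np.ndarray):
    """Assign each individual to the task on which it ranks best.

    costs: array of shape (n_individuals, n_tasks), lower is better.
    Returns (skill_factor, scalar_fitness), each of length n_individuals.
    """
    n, n_tasks = costs.shape
    order = np.argsort(costs, axis=0, kind="stable")    # best-to-worst indices per task
    ranks = np.empty_like(order)
    for j in range(n_tasks):
        ranks[order[:, j], j] = np.arange(1, n + 1)     # 1-based rank of each individual on task j
    skill_factor = np.argmin(ranks, axis=1)             # task with the best (lowest) rank
    scalar_fitness = 1.0 / ranks.min(axis=1)            # 1 / best rank across tasks
    return skill_factor, scalar_fitness


costs = np.array([[0.1, 9.0],
                  [0.5, 1.0],
                  [0.9, 2.0]])
skill, fitness = assign_skill_factors(costs)
```

In later generations an offspring is usually evaluated only on the task it inherits, so the remaining columns would be filled with a large placeholder cost before ranking.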

Evolutionary Multitasking Optimization represents a significant advancement in evolutionary computation by enabling simultaneous optimization of multiple tasks with knowledge transfer. The comparison between explicit and implicit transfer methods reveals a complementary relationship: explicit transfer offers more controlled, targeted knowledge exchange suitable for tasks with understood relationships, while implicit transfer provides a more general, automated approach built directly into evolutionary operations [2] [4].

Experimental evidence demonstrates that hybrid approaches combining both methodologies often yield superior performance [3] [4]. Algorithms like BOMTEA and TLTL that adaptively control transfer mechanisms and evolutionary operators show particular promise for handling diverse multitasking scenarios [3] [4]. The value proposition of EMTO is especially compelling for real-world applications where correlated optimization tasks naturally occur, such as in hyperspectral image analysis [6], engineering design [7], and other complex domains where leveraging cross-domain knowledge can significantly accelerate convergence and improve solution quality [1]. Future research directions include developing more sophisticated transfer learning approaches [8], better negative transfer avoidance mechanisms [2] [5], and expanding applications to emerging domains [1].

Evolutionary Multitasking Optimization (EMTO) represents a paradigm shift in how complex optimization problems are approached, moving from sequential task resolution to simultaneous optimization of multiple tasks. This paradigm capitalizes on the inherent synergies between tasks, allowing for accelerated convergence and enhanced solution quality through strategic knowledge exchange. At the heart of EMTO lies a fundamental dichotomy in transfer mechanisms: implicit transfer leverages the innate properties of genetic operators to facilitate organic knowledge sharing, while explicit transfer employs dedicated mapping functions and learned transformations to directly transfer solutions between tasks. This article provides a comprehensive comparison of these approaches, with particular emphasis on how implicit transfer mechanisms using genetic operators serve as a powerful conduit for knowledge sharing in multitasking environments.

The critical distinction between these approaches centers on their methodology for knowledge exchange. Implicit transfer operates through the embedded mechanisms of evolutionary operators themselves—where crossover and mutation between individuals from different tasks naturally facilitate information transfer without requiring explicit knowledge representation or transformation. In contrast, explicit transfer relies on external mechanisms such as subspace alignment, autoencoders, or mapping functions to actively extract and transform knowledge between task domains. Understanding the relative strengths, limitations, and optimal application domains for each approach provides researchers with critical insights for algorithm selection and development.

Comparative Analysis of Knowledge Transfer Mechanisms

Table 1: Fundamental Characteristics of Implicit vs. Explicit Transfer Approaches

| Feature | Implicit Transfer | Explicit Transfer |
|---|---|---|
| Knowledge Representation | Encoded directly in genetic material | Explicitly learned through mapping functions |
| Transfer Mechanism | Genetic operators (crossover, mutation) | Subspace alignment, autoencoders, matrix transformations |
| Computational Overhead | Relatively low | Moderate to high |
| Adaptability | General-purpose, less specialized | Task-specific, requires similarity assessment |
| Implementation Complexity | Straightforward integration with EAs | Requires additional components and tuning |
| Risk of Negative Transfer | Higher for dissimilar tasks | Can be mitigated through similarity measures |

Table 2: Algorithm Classifications by Transfer Type

| Transfer Type | Representative Algorithms | Core Transfer Mechanism |
|---|---|---|
| Implicit Transfer | MFEA, MFEA-II, MTO-FWA | Chromosomal crossover between tasks, Transfer sparks |
| Explicit Transfer | PA-MTEA, MFEA-MDSGSS, EMT via Autoencoding | Subspace alignment, Linear domain adaptation, Denoising autoencoders |

The Implicit Transfer Paradigm

Implicit transfer mechanisms utilize genetic operators as the primary vehicle for knowledge exchange between optimization tasks. The Multifactorial Evolutionary Algorithm (MFEA), as the pioneering algorithm in this domain, establishes a foundation for implicit transfer by maintaining a unified population where individuals are assigned different skill factors corresponding to various tasks [2] [3]. Knowledge transfer occurs organically when individuals with different skill factors undergo crossover operations, allowing genetic material to flow between task domains without explicit transformation. This approach benefits from conceptual elegance and implementation simplicity, as it requires no specialized components beyond the evolutionary operators themselves.
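A unified population is possible because every individual lives in one shared search space and is decoded per task only at evaluation time. The sketch below assumes the common random-key style encoding in which the unified space is the unit hypercube with as many dimensions as the largest task; the `decode` helper and the bounds are hypothetical.

```python
import numpy as np


def decode(unified: np.ndarray, lower: np.ndarray, upper: np.ndarray) -> np.ndarray:
    """Map an individual from the unified space [0, 1]^D_max to one task's variables.

    Only the first len(lower) genes are used, so tasks of different
    dimensionality can share the same chromosome.
    """
    d = lower.shape[0]
    return lower + unified[:d] * (upper - lower)


individual = np.array([0.5, 0.25, 1.0])                            # one chromosome, D_max = 3
x_task_a = decode(individual, np.full(3, -10.0), np.full(3, 10.0)) # 3-dimensional task
x_task_b = decode(individual, np.zeros(2), np.array([4.0, 8.0]))   # 2-dimensional task
```

Because both tasks read the same genes, any crossover between chromosomes changes the decoded solutions of both tasks at once, which is what makes the transfer implicit.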

The effectiveness of implicit transfer stems from its ability to leverage the innate exploratory power of genetic operators. When individuals from different tasks exchange genetic material through crossover, the resulting offspring naturally incorporate blended characteristics that may confer advantages across multiple task domains. This process mirrors biological evolution, where beneficial traits can spread through populations via reproduction without requiring conscious knowledge transfer. The assortative mating and vertical cultural transmission mechanisms in MFEA ensure that this transfer occurs with controlled intensity, though the inherently random nature of genetic operations can sometimes lead to suboptimal transfer directionality [3] [9].
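Assortative mating and vertical cultural transmission can be sketched in a few lines. This is a simplified illustration, not the reference MFEA implementation: uniform crossover and Gaussian mutation stand in for the operators an actual study would use, and the function name, mutation scale, and `rng` argument are assumptions.

```python
import numpy as np


def assortative_mating(p1, p2, sf1, sf2, rmp, rng):
    """Produce two offspring (genes, skill factor) from two parents in unified space.

    Parents with different skill factors are crossed only with probability
    `rmp` (random mating probability); otherwise each parent is mutated alone.
    """
    if sf1 == sf2 or rng.random() < rmp:
        # Crossover: this is where genetic material moves between tasks.
        mask = rng.random(p1.shape[0]) < 0.5
        c1 = np.where(mask, p1, p2)
        c2 = np.where(mask, p2, p1)
        # Vertical cultural transmission: each child imitates one parent at random.
        s1 = sf1 if rng.random() < 0.5 else sf2
        s2 = sf1 if rng.random() < 0.5 else sf2
    else:
        # No inter-task mating: mutate each parent and keep its skill factor.
        c1 = np.clip(p1 + rng.normal(0.0, 0.1, p1.shape), 0.0, 1.0)
        c2 = np.clip(p2 + rng.normal(0.0, 0.1, p2.shape), 0.0, 1.0)
        s1, s2 = sf1, sf2
    return (c1, s1), (c2, s2)


rng = np.random.default_rng(0)
parent_a, parent_b = np.zeros(6), np.ones(6)
(child1, skill1), (child2, skill2) = assortative_mating(parent_a, parent_b, 0, 1, rmp=0.3, rng=rng)
```

Setting `rmp` to 0 disables inter-task transfer entirely, while values near 1 mix tasks almost freely, which is why rmp is the main lever for controlling transfer intensity in implicit methods.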

Recent advances in implicit transfer have sought to enhance its effectiveness while mitigating limitations. The Multitask Fireworks Algorithm (MTO-FWA) introduces transfer sparks generated with adaptive length and promising direction vectors, creating a more guided approach to implicit knowledge exchange [10]. Similarly, the Two-Level Transfer Learning (TLTL) algorithm implements elite individual learning at the upper level to reduce randomness in inter-task transfer while maintaining the implicit character of knowledge exchange [3] [9]. These refinements demonstrate the ongoing evolution of implicit transfer methodologies toward greater efficiency and effectiveness.

The Explicit Transfer Paradigm

Explicit transfer mechanisms adopt a fundamentally different approach by actively identifying, extracting, and transforming knowledge between tasks. Unlike the organic exchange characteristic of implicit transfer, explicit transfer employs dedicated computational structures to facilitate knowledge exchange. The PA-MTEA algorithm exemplifies this approach through its use of partial least squares (PLS) to establish correlation mappings between source and target tasks during dimensionality reduction of the search space [11]. This enables more deliberate and targeted knowledge transfer based on recognized inter-task relationships rather than random genetic mixing.

A primary advantage of explicit transfer lies in its ability to mitigate negative transfer between dissimilar or unrelated tasks. By employing techniques such as subspace alignment and similarity assessment, explicit transfer algorithms can quantify task relatedness and adjust transfer intensity accordingly [12] [13]. The MFEA-MDSGSS algorithm further enhances this capability through multidimensional scaling (MDS) to establish low-dimensional subspaces for each task, with linear domain adaptation learning the mapping relationships between subspaces [12]. This structured approach to knowledge representation and transfer is particularly valuable when optimizing tasks with differing dimensionalities or landscape characteristics.
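The idea of learning a mapping between task spaces can be illustrated with ordinary least squares. This sketch is not the MDS-plus-linear-domain-adaptation procedure of MFEA-MDSGSS; it only shows how a linear map fitted on paired samples lets solutions move between tasks of different dimensionality. Pairing rows (for example by fitness rank) is an assumption, as are the function names.

```python
import numpy as np


def learn_linear_mapping(source: np.ndarray, target: np.ndarray) -> np.ndarray:
    """Least-squares matrix M such that source @ M approximates target.

    source: (n, d_source) solutions of the source task.
    target: (n, d_target) solutions of the target task, paired row by row.
    """
    M, *_ = np.linalg.lstsq(source, target, rcond=None)
    return M


def transfer(solutions: np.ndarray, M: np.ndarray) -> np.ndarray:
    """Map source-task solutions into the target task's space."""
    return solutions @ M


rng = np.random.default_rng(0)
source_pop = rng.random((20, 3))              # 3-dimensional source task
hidden_map = rng.random((3, 2))               # synthetic relation used only for this demo
target_pop = source_pop @ hidden_map          # 2-dimensional target task
M = learn_linear_mapping(source_pop, target_pop)
migrants = transfer(source_pop[:5], M)        # candidates injected into the target population
```

In practice the fitted map is only as good as the pairing and the similarity of the tasks, which is why explicit methods usually combine it with a relatedness check before transferring.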

The computational sophistication of explicit transfer methods comes with inherent trade-offs. The requirement for additional components such as autoencoders, subspace alignment matrices, or similarity metrics increases implementation complexity and computational overhead [11] [12]. However, for problems where task relationships are complex or where negative transfer poses significant risks, this additional investment may be justified by improved optimization performance and more reliable convergence behavior.

Experimental Framework and Performance Metrics

Table 3: Benchmark Problems for Evaluating EMT Algorithms

| Benchmark Suite | Task Types | Key Characteristics | Cited Studies |
|---|---|---|---|
| WCCI2020-MTSO | Two-task problems | High complexity, diverse task relationships | PA-MTEA [11] |
| Single-objective MTO | Single-objective tasks | Continuous, discrete, combinatorial problems | MFEA-MDSGSS [12] |
| Multi-objective MTO | Multi-objective tasks | Conflicting objectives, Pareto front discovery | MFEA-MDSGSS [12] |
| Real-world Problems | Parameter extraction, scheduling | Practical applications with complex landscapes | Multiple [11] [13] |

Table 4: Performance Metrics in EMT Experimental Studies

| Metric Category | Specific Metrics | Interpretation |
|---|---|---|
| Convergence | Convergence curves, Function evaluations | Speed of approach to optimal solutions |
| Solution Quality | Best objective value, Average performance | Accuracy and reliability of solutions |
| Transfer Effectiveness | Success rate, Negative transfer incidence | Efficiency of knowledge exchange |
| Computational Efficiency | Runtime, Function evaluations | Resource requirements |

Standardized Experimental Protocols

Experimental evaluation of EMT algorithms typically follows a structured methodology to ensure fair and informative comparisons. The standard protocol begins with algorithm implementation using consistent programming frameworks and parameter tuning approaches. Researchers typically employ multiple benchmark problems with varying characteristics to assess algorithm performance across diverse scenarios [11] [12]. Each algorithm undergoes a fixed number of function evaluations or iterations, with performance metrics recorded at regular intervals to track convergence behavior.

A critical aspect of experimental design in EMT involves controlling for task relatedness, as the degree of similarity between optimized tasks significantly influences transfer effectiveness. Studies typically include task pairs with varying levels of similarity, from highly correlated tasks with overlapping solution spaces to largely independent tasks with minimal commonality. This stratification allows researchers to evaluate how different transfer mechanisms perform under ideal and challenging conditions, providing insights into their robustness and general applicability [2] [12].

Performance assessment employs quantitative metrics capturing convergence speed, solution quality, and computational efficiency. Convergence curves visually depict performance progression over time, while statistical tests determine significant differences between algorithms. Additionally, specialized metrics such as transfer success rate help quantify the effectiveness of knowledge exchange mechanisms specifically, distinguishing between overall optimization performance and transfer-specific contributions [11] [12].
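Transfer-specific metrics need a concrete definition before they can be logged. The sketch below uses one possible definition, which is an assumption rather than a standard from the cited studies: a transferred offspring counts as a success if its cost is lower than its parent's in the target task, and as negative transfer if it is higher.

```python
from typing import Dict, Iterable, Optional, Tuple


def transfer_statistics(records: Iterable[Tuple[bool, float, float]]) -> Optional[Dict[str, float]]:
    """Summarize transfer effectiveness for a minimization problem.

    records: (is_transfer, parent_cost, child_cost) for every offspring.
    Returns None when no offspring was produced by inter-task transfer.
    """
    transferred = [(p, c) for is_transfer, p, c in records if is_transfer]
    if not transferred:
        return None
    improved = sum(1 for p, c in transferred if c < p)   # child better than parent
    worsened = sum(1 for p, c in transferred if c > p)   # child worse than parent
    return {
        "success_rate": improved / len(transferred),
        "negative_transfer_rate": worsened / len(transferred),
    }


log = [(True, 5.0, 4.0), (True, 5.0, 6.0), (False, 3.0, 1.0),
       (True, 2.0, 1.5), (True, 2.0, 2.0)]
stats = transfer_statistics(log)
```

Offspring produced without transfer are ignored, so the statistics isolate the contribution of the transfer mechanism from overall optimization progress.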

Performance Comparison and Analysis

Quantitative Performance Assessment

Empirical studies consistently demonstrate that both implicit and explicit transfer approaches can significantly enhance optimization performance compared to single-task evolutionary algorithms. However, their relative effectiveness varies considerably based on problem characteristics and task relationships. The PA-MTEA algorithm, employing explicit transfer through association mapping, demonstrates superior performance on complex benchmark problems compared to six other advanced EMT algorithms [11]. This advantage is particularly pronounced for tasks with moderate to high similarity, where the explicit mapping can effectively leverage inter-task correlations without excessive risk of negative transfer.

The MFEA-MDSGSS algorithm, which combines multidimensional scaling with golden section search, exhibits strong performance on both single-objective and multi-objective multitasking problems [12]. Its explicit transfer mechanism proves particularly valuable for tasks with differing dimensionalities, where direct implicit transfer would be challenging or impossible. The incorporation of GSS-based linear mapping further enhances performance by helping populations escape local optima, addressing a common limitation in both transfer paradigms.

For implicit transfer approaches, the MTO-FWA algorithm demonstrates competitive performance on several single-objective and multiobjective MTO test suites [10]. Its transfer spark mechanism provides a more guided approach to implicit knowledge exchange, resulting in improved convergence characteristics compared to basic MFEA. Similarly, the TLTL algorithm exhibits outstanding global search ability and fast convergence rate, leveraging its two-level structure to enhance transfer effectiveness while maintaining the implementation simplicity characteristic of implicit approaches [3] [9].

Contextual Performance Factors

The optimal selection between implicit and explicit transfer approaches depends heavily on contextual factors including task similarity, computational budget, and implementation constraints. Implicit transfer mechanisms typically excel when tasks share significant commonalities and when implementation simplicity is prioritized. Their lower computational overhead makes them particularly suitable for problems where function evaluations are relatively inexpensive, allowing larger population sizes and more generations within fixed computational budgets [2] [3].

Explicit transfer approaches generally demonstrate advantages in scenarios involving heterogeneous tasks with complex relationships or differing dimensionalities. The additional computational investment in learning mappings and aligning subspaces yields greater returns when tasks cannot be readily optimized through simple genetic mixing. Additionally, explicit transfer provides more fine-grained control over knowledge exchange intensity and direction, making it preferable for problems where negative transfer poses significant risks to optimization performance [11] [12].

Recent research trends indicate growing interest in hybrid approaches that combine elements of both paradigms. The Learning to Transfer (L2T) framework employs reinforcement learning to automatically discover efficient knowledge transfer policies, potentially bridging the gap between implicit and explicit approaches [14]. Similarly, MetaMTO uses a multi-role reinforcement learning system to holistically address the "where, what, and how" of knowledge transfer, creating a more adaptive and context-aware transfer mechanism [15]. These emerging directions suggest that the distinction between implicit and explicit transfer may become increasingly blurred as the field advances.

Visualization of Knowledge Transfer Processes

Diagram 1: Comparative Workflows of Implicit vs. Explicit Knowledge Transfer in EMT

Essential Research Reagents and Computational Tools

Table 5: Essential Research Components for EMT Studies

| Component Category | Specific Elements | Research Function |
|---|---|---|
| Base Evolutionary Algorithms | Differential Evolution, Genetic Algorithm, CMA-ES, Fireworks Algorithm | Provides foundation for optimization and population management |
| Benchmark Problem Sets | WCCI2020-MTSO, CEC2017, Synthetic MTO problems | Enables standardized algorithm evaluation and comparison |
| Similarity Assessment Metrics | MMD, KLD, SISM, Attention-based similarity | Quantifies inter-task relationships to guide transfer |
| Transfer Mechanisms | Chromosomal crossover, Subspace alignment, Autoencoders, Transfer sparks | Facilitates knowledge exchange between tasks |
| Performance Metrics | Convergence curves, Success rate, Computational efficiency | Quantifies algorithm performance and transfer effectiveness |

The experimental toolkit for evolutionary multitasking research encompasses both algorithmic components and evaluation frameworks. Base evolutionary algorithms such as Differential Evolution (DE) and Covariance Matrix Adaptation Evolution Strategy (CMA-ES) provide the optimization foundation upon which transfer mechanisms are built [14] [13]. These algorithms are selected based on their complementary characteristics—DE offers efficient global exploration capabilities, while CMA-ES provides powerful local refinement through its adaptive covariance matrix mechanism.

Benchmark problem sets serve as critical evaluation standards for comparing EMT algorithm performance. The WCCI2020-MTSO test suite, with its complex two-task problems, has emerged as a standard for rigorous algorithm assessment [11]. Additionally, synthetic problems with controlled similarity relationships enable systematic investigation of transfer effectiveness under varying conditions. Real-world problems such as parameter extraction of photovoltaic models provide validation in practical applications with complex, non-linear landscapes that challenge algorithm robustness [11].

Similarity assessment metrics function as diagnostic tools for understanding task relationships and predicting transfer potential. Maximum Mean Discrepancy (MMD) and Kullback-Leibler Divergence (KLD) quantify distributional differences between task populations or landscapes [16]. More recently, attention-based similarity modules in reinforcement learning frameworks have provided dynamic, feature-aware similarity assessment that adapts to changing population characteristics throughout the optimization process [15].
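As an illustration of how MMD compares two task populations, the following sketch computes the biased estimate of squared MMD with a Gaussian (RBF) kernel. It assumes both populations are expressed in the same unified space; the kernel choice and the `gamma` value are illustrative and not taken from the cited work.

```python
import numpy as np


def rbf_kernel(a: np.ndarray, b: np.ndarray, gamma: float) -> np.ndarray:
    """Gaussian kernel matrix k(a_i, b_j) = exp(-gamma * ||a_i - b_j||^2)."""
    sq_dist = (np.sum(a ** 2, axis=1)[:, None]
               + np.sum(b ** 2, axis=1)[None, :]
               - 2.0 * a @ b.T)
    return np.exp(-gamma * np.maximum(sq_dist, 0.0))   # clamp tiny negative round-off


def mmd_squared(x: np.ndarray, y: np.ndarray, gamma: float = 1.0) -> float:
    """Biased estimate of squared Maximum Mean Discrepancy between two samples."""
    return float(rbf_kernel(x, x, gamma).mean()
                 + rbf_kernel(y, y, gamma).mean()
                 - 2.0 * rbf_kernel(x, y, gamma).mean())


rng = np.random.default_rng(0)
pop_a = rng.normal(0.0, 1.0, (50, 2))
pop_similar = rng.normal(0.0, 1.0, (50, 2))     # same distribution as pop_a
pop_distant = rng.normal(3.0, 1.0, (50, 2))     # shifted distribution
near = mmd_squared(pop_a, pop_similar)
far = mmd_squared(pop_a, pop_distant)
```

A smaller value suggests more similar population distributions, so a transfer controller could prefer the source sub-population with the lowest MMD to the target.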

The comparative analysis of implicit and explicit transfer mechanisms in evolutionary multitasking optimization reveals a complex landscape of trade-offs and complementary strengths. Implicit transfer, utilizing genetic operators as knowledge conduits, offers implementation simplicity and computational efficiency well-suited to problems with significant task commonalities and limited computational resources. Explicit transfer mechanisms, while more computationally intensive, provide enhanced control over knowledge exchange and superior performance for heterogeneous tasks with complex relationships.

The evolving research landscape points toward increasingly adaptive approaches that transcend the implicit-explicit dichotomy. Reinforcement learning frameworks that dynamically adjust transfer policies based on optimization context represent a promising direction for future research [14] [15]. Similarly, complex network perspectives that model knowledge transfer as structured interactions between task populations may yield new insights into optimal transfer topology [16]. As EMT methodologies continue to mature, their application to increasingly complex real-world problems in domains such as drug development and complex system design will provide further validation and refinement of both implicit and explicit transfer paradigms.

This guide objectively compares the performance of contemporary Evolutionary Multitasking Optimization (EMTO) algorithms that utilize explicit knowledge transfer, positioning them within the broader research context of explicit versus implicit transfer strategies.

Experimental Comparison of Explicit Transfer Algorithms

The following table summarizes the core methodologies and quantitative performance of recent explicit EMTO algorithms against state-of-the-art alternatives across standard benchmark problems.

Table 1: Performance Comparison of Explicit EMTO Algorithms on Benchmark Problems

| Algorithm Name | Core Explicit Transfer Mechanism | Reported Performance Advantage | Key Metric(s) |
|---|---|---|---|
| MFEA-MDSGSS [12] | Multidimensional Scaling (MDS) for subspace alignment + Golden Section Search (GSS) for linear mapping. | Superior overall performance on single- and multi-objective MTO benchmarks. | Convergence accuracy and speed [12]. |
| MTO-PDATSF [17] | Adaptive Distribution Alignment + Solution Quality Prediction via classifier. | Superior performance on majority of heterogeneous multiobjective test problems. | Effectively mitigates negative transfer; improves solution quality on real-world applications [17]. |
| PA-MTEA [11] | Association Mapping via Partial Least Squares (PLS) + Bregman divergence for subspace alignment. | Significantly superior performance vs. six other advanced algorithms on WCCI2020-MTSO test suite. | Convergence performance and optimization accuracy [11]. |
| CA-MTO [13] | Classifier-assisted knowledge transfer using PCA-based subspace alignment for sample sharing. | Earns a competitive edge over state-of-the-art algorithms on expensive multitasking problems. | Convergence speed, accuracy, and model robustness [13]. |

Detailed Experimental Protocols and Methodologies

Protocol for MFEA-MDSGSS

The MFEA-MDSGSS algorithm was designed to address negative transfer between unrelated or high-dimensional tasks [12]. Its experimental protocol involves two key components integrated into the Multifactorial Evolutionary Algorithm (MFEA) framework:

- MDS-based Linear Domain Adaptation (LDA): This component creates low-dimensional subspaces for each task using Multidimensional Scaling (MDS). It then employs LDA to learn linear mapping relationships between pairs of these subspaces, facilitating knowledge transfer even between tasks with differing dimensionalities [12].

- GSS-based Linear Mapping Strategy: This component uses a Golden Section Search (GSS) inspired strategy to explore promising search areas, helping populations escape local optima and maintain diversity [12].

The algorithm's validation involved extensive experiments on both single-objective and multi-objective MTO benchmarks. An ablation study confirmed the individual contribution of each proposed component to the overall performance [12].
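For readers unfamiliar with the underlying technique, the classic one-dimensional golden section search is sketched below. This is the textbook method for a unimodal function on an interval, not the GSS-inspired mapping strategy of MFEA-MDSGSS itself, whose details are not reproduced here; the function name and tolerance are illustrative.

```python
import math
from typing import Callable


def golden_section_search(f: Callable[[float], float], a: float, b: float,
                          tol: float = 1e-6) -> float:
    """Approximate the minimizer of a unimodal function f on [a, b]."""
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0      # 1 / golden ratio, about 0.618
    c = b - inv_phi * (b - a)                   # lower interior probe
    d = a + inv_phi * (b - a)                   # upper interior probe
    while abs(b - a) > tol:
        if f(c) < f(d):
            b = d                               # minimum lies in [a, d]
        else:
            a = c                               # minimum lies in [c, b]
        c = b - inv_phi * (b - a)
        d = a + inv_phi * (b - a)
    return (a + b) / 2.0


x_min = golden_section_search(lambda x: (x - 2.0) ** 2, 0.0, 5.0)
```

Each iteration shrinks the bracket by a constant factor of about 0.618, which is what makes the golden ratio attractive for placing probe points along a search direction.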

Protocol for MTO-PDATSF

The MTO-PDATSF algorithm tackles the challenge of negative transfer in heterogeneous multi-objective tasks, where tasks have differing fitness landscapes or solution distributions [17]. Its experimental methodology is built on a two-stage knowledge transfer mechanism:

- Adaptive Distribution Alignment: This strategy dynamically aligns the population distributions of different tasks by leveraging information from both non-dominated and dominated solutions. It iteratively refines transformations between task search spaces to reduce solution evaluation disparities, creating a robust foundation for transfer [17].

- Solution Quality Prediction: Instead of relying on a solution's performance in its source task, this step trains classifiers to predict a solution's quality in the target task. This allows the algorithm to filter and select only those transfer solutions predicted to be high-quality in the target context, significantly reducing negative transfer [17].

The algorithm was validated on standard multi-objective benchmarks and real-world applications, such as reservoir flood control operations, demonstrating its effectiveness on problems with complex, interdependent variables [17].
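The filtering idea behind solution quality prediction can be sketched with a very small classifier. A k-nearest-neighbour vote is used here purely for brevity and is a swapped-in stand-in, not the classifier of MTO-PDATSF; labelling the better half of the target population as "good" is likewise an assumption, and a single-objective cost is used to keep the example short.

```python
import numpy as np


def knn_predict_good(train_x: np.ndarray, train_good: np.ndarray,
                     candidates: np.ndarray, k: int = 3) -> np.ndarray:
    """Predict, by majority vote of the k nearest evaluated solutions, whether
    each candidate is likely to be good in the target task."""
    dist = np.linalg.norm(candidates[:, None, :] - train_x[None, :, :], axis=2)
    nearest = np.argsort(dist, axis=1)[:, :k]
    return train_good[nearest].mean(axis=1) > 0.5


def filter_transfers(target_pop: np.ndarray, target_costs: np.ndarray,
                     candidates: np.ndarray, k: int = 3) -> np.ndarray:
    """Keep only transfer candidates predicted to be good in the target task."""
    good = target_costs <= np.median(target_costs)      # label the better half as good
    keep = knn_predict_good(target_pop, good, candidates, k)
    return candidates[keep]


target_pop = np.array([[0.0, 0.0], [0.1, 0.0], [0.0, 0.1],
                       [5.0, 5.0], [5.1, 5.0], [5.0, 5.1]])
target_costs = np.sum(target_pop ** 2, axis=1)          # sphere function as a stand-in target task
candidates = np.array([[0.05, 0.05], [4.9, 5.0]])       # solutions mapped over from a source task
accepted = filter_transfers(target_pop, target_costs, candidates)
```

Only the accepted candidates would be evaluated in the target task, so the classifier saves function evaluations on transfers that are predicted to be poor.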

Protocol for PA-MTEA

The PA-MTEA algorithm addresses the issue of "blind" knowledge transfer by focusing on the correlation between source and target tasks [11]. Its experimental procedure incorporates:

- Association Mapping Strategy: This technique uses Partial Least Squares (PLS) to extract principal components that maximize the covariance between source and target task domains during bidirectional knowledge transfer in a low-dimensional space. An alignment matrix is then derived using Bregman divergence to minimize variability between task domains [11].

- Adaptive Population Reuse (APR) Mechanism: This mechanism maintains population diversity and preserves valuable genetic information by adaptively reusing historically successful individuals based on an evaluation of each task's population diversity [11].

The performance of PA-MTEA was tested on the complex WCCI2020-MTSO benchmark suite and a real-world case involving parameter extraction for photovoltaic models [11].

Workflow and Logical Relationships in Explicit Transfer

The following diagram illustrates the high-level logical workflow common to advanced explicit knowledge transfer mechanisms in EMTO.

Explicit Knowledge Transfer Workflow

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Computational "Reagents" in Explicit EMTO Research

| Research Reagent / Method | Function in Explicit Transfer Experiments |
|---|---|
| Multidimensional Scaling (MDS) [12] | A dimensionality reduction technique used to establish low-dimensional subspaces for tasks, enabling alignment and mapping between tasks of the same or different dimensions. |
| Partial Least Squares (PLS) [11] | A statistical method used to model relationships between two sets of variables. In PA-MTEA, it extracts principal components with strong correlations between source and target tasks for association mapping. |
| Domain Adaptation Techniques (e.g., LDA) [12] [13] | A set of methods, including Linear Domain Adaptation (LDA), used to learn mapping relationships between the search spaces or feature distributions of different tasks to facilitate effective knowledge transfer. |
| Bregman Divergence [11] | A measure of distance between data points or distributions. Used in subspace alignment to derive an alignment matrix that minimizes variability between task domains. |
| Classifier Models (e.g., SVC) [17] [13] | Used to predict the quality of a potential transfer solution in the target task or to distinguish the relative merit of candidate solutions, thereby filtering knowledge to reduce negative transfer. |
| Benchmark Suites (e.g., WCCI2020-MTSO) [11] | Standardized sets of test problems with known properties and difficulties, used to objectively evaluate and compare the performance of different EMTO algorithms under controlled conditions. |

Application in Drug Discovery

The principles of evolutionary multitasking optimization are increasingly relevant to AI-driven drug discovery, a field characterized by complex, resource-intensive problems. For instance, hybrid AI models that combine optimization algorithms for feature selection with classification are being developed to enhance the prediction of drug-target interactions [18]. In this context, explicit transfer could be leveraged to share knowledge between related drug discovery tasks—such as optimizing for different but similar protein targets—potentially accelerating the identification of viable drug candidates and improving the accuracy of predictive models by avoiding redundant computational efforts [18].

The Multifactorial Evolutionary Algorithm (MFEA) stands as a foundational pillar in the field of evolutionary multitasking optimization (EMTO). By enabling the simultaneous solution of multiple, potentially distinct, optimization tasks within a single algorithmic run, it leverages the power of implicit knowledge transfer to accelerate convergence and improve solution quality. This guide provides a systematic comparison of MFEA as the benchmark for implicit transfer against emerging explicit transfer strategies, detailing their operational methodologies, performance data, and experimental protocols to inform researchers and practitioners in computationally intensive fields like drug development.

Evolutionary Multitasking Optimization (EMTO) represents a paradigm shift from traditional evolutionary algorithms, which are designed to solve a single optimization problem in isolation. EMTO capitalizes on the human-like ability to process multiple tasks concurrently, exploiting potential synergies and complementarities between them [3]. In a typical EMTO scenario, K distinct tasks, labeled T1, T2, ..., TK, are solved simultaneously. The goal is to find a set of optimal solutions {x1*, x2*, ..., xK*} such that each xj* minimizes the objective function fj(x) of its task Tj [4].

The Multifactorial Evolutionary Algorithm (MFEA), introduced by Gupta et al., is the pioneering algorithm that realized this EMTO paradigm [19] [20]. Its core innovation lies in its implicit knowledge transfer mechanism, which allows for the automatic sharing of genetic material between tasks during the evolutionary process without requiring an explicit model of task relatedness. MFEA achieves this through a unified representation scheme where all tasks are encoded in a common search space, and a skill factor is assigned to each individual to denote the task on which it performs best [19]. Knowledge transfer occurs organically through assortative mating and vertical cultural transmission, governed by a key parameter known as the random mating probability (rmp) [19] [4]. The simplicity and elegance of this implicit transfer mechanism have cemented MFEA's status as a baseline framework against which newer, more complex algorithms are measured.
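
The skill-factor assignment described above can be sketched in a few lines. This is a minimal illustration, assuming the usual MFEA convention that each individual is ranked per task by its objective value (rank 1 is best), that the skill factor is the task with the best rank, and that scalar fitness is the reciprocal of that best rank. The function name `skill_factors` and the toy cost matrix are hypothetical, and ties are broken by task order here rather than randomly.

```python
def skill_factors(costs):
    """costs[i][j] is the objective value of individual i on task j (minimization)."""
    n, k = len(costs), len(costs[0])
    ranks = [[0] * k for _ in range(n)]
    for j in range(k):
        # Sort individuals by cost on task j; rank 1 is the best individual.
        order = sorted(range(n), key=lambda i: costs[i][j])
        for rank, i in enumerate(order, start=1):
            ranks[i][j] = rank
    # Skill factor: index of the task on which the individual ranks best.
    skill = [min(range(k), key=lambda j: ranks[i][j]) for i in range(n)]
    # Scalar fitness: reciprocal of the best rank across all tasks.
    scalar_fitness = [1.0 / min(ranks[i]) for i in range(n)]
    return ranks, skill, scalar_fitness


# Toy population of four individuals evaluated on two tasks (made-up values).
costs = [[0.2, 9.0], [0.5, 1.0], [0.9, 3.0], [0.1, 7.0]]
ranks, skill, fitness = skill_factors(costs)
print(skill)    # task index each individual is assigned to
print(fitness)  # scalar fitness used for selection in the unified population
```

In a full algorithm, each individual would afterwards be evaluated only on its skill-factor task, which is what keeps the unified population affordable.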

Implicit vs. Explicit Knowledge Transfer: A Conceptual Comparison

The central dichotomy in evolutionary multitasking research lies in the approach to knowledge transfer: implicit versus explicit. The table below delineates their fundamental characteristics.

Table 1: Fundamental Characteristics of Implicit vs. Explicit Transfer

| Feature | Implicit Transfer (MFEA) | Explicit Transfer (e.g., EMT-ADT, IM-MFEA, PA-MTEA) |

|---|---|---|

| Core Mechanism | Automatic gene transfer via crossover between individuals with different skill factors [19] [21]. | Deliberate identification and mapping of high-quality knowledge (e.g., solutions, models) between tasks [21] [11]. |

| Control Parameter | Random Mating Probability (rmp), often fixed [19]. | Adaptive strategies based on online learning of task similarity or transfer success [19] [15]. |

| Knowledge Representation | Genetic material within the unified population [20]. | Elite solutions, probabilistic models, or mapped subspaces [21] [11]. |

| Primary Strength | Simple, computationally lightweight, requires no prior task knowledge [19]. | Aims to minimize negative transfer by controlling the quality and quantity of exchanged knowledge [21] [11]. |

| Primary Weakness | Risk of negative transfer if tasks are unrelated, potentially degrading performance [19] [21]. | Higher computational overhead and complexity in designing the transfer mechanism [11] [15]. |

[Figure: Core workflow of the baseline MFEA with its implicit transfer mechanism, highlighting the role of the rmp parameter.]

Comparative Performance Analysis

To objectively evaluate the performance of MFEA against advanced explicit transfer algorithms, researchers rely on standardized benchmark problems and performance indicators. The following table summarizes typical experimental results from comparative studies, showcasing metrics like convergence speed and solution quality (lower values are better for error metrics).

Table 2: Performance Comparison on Standard Benchmark Problems (CEC17 & WCCI20-MTSO)

| Algorithm | Transfer Type | Key Strategy | Average Function Error (CEC17-CIHS) | Inverted Generational Distance (WCCI20-MTSO) | Key Advantage |

|---|---|---|---|---|---|

| MFEA [19] [4] | Implicit | Fixed rmp, cultural transmission | 1.45E-03 | 0.158 | Baseline, simple |

| MFEA-II [19] [21] | Implicit | Adaptive rmp matrix | 9.82E-04 | 0.142 | Reduces negative transfer |

| EMT-ADT [19] | Explicit | Decision tree for transfer prediction | 5.15E-04 | 0.135 | High-quality transfer |

| IM-MFEA [21] | Explicit | Inverse mapping & adaptive transformation | 7.23E-04 | 0.139 | Handles task differences |

| PA-MTEA [11] | Explicit | Association mapping & population reuse | 6.91E-04 | 0.131 | Balances exploration-exploitation |

| BOMTEA [4] | Implicit/Explicit | Adaptive bi-operator (GA & DE) | 8.54E-04 | 0.136 | Adaptive search operator |

The data indicates that while the baseline MFEA provides competent performance, algorithms with explicit transfer mechanisms consistently achieve superior results on complex problems. For instance, EMT-ADT reduces the average function error on the CIHS benchmark by over 60% compared to the original MFEA, demonstrating the value of predicting useful knowledge transfers [19]. Furthermore, hybrid approaches like BOMTEA, which adaptively select between evolutionary search operators, also show significant improvement, highlighting that enhancing the search engine itself is another viable path beyond refining transfer strategies [4].
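
The relative improvements quoted above follow directly from the CEC17-CIHS column of Table 2. The short check below recomputes them; the dictionary simply copies the tabulated error values, and the helper name `relative_reduction` is hypothetical.

```python
# Average function errors on CEC17-CIHS, copied from Table 2.
errors = {
    "MFEA": 1.45e-03,
    "MFEA-II": 9.82e-04,
    "EMT-ADT": 5.15e-04,
    "IM-MFEA": 7.23e-04,
    "PA-MTEA": 6.91e-04,
    "BOMTEA": 8.54e-04,
}


def relative_reduction(baseline, value):
    """Fraction by which `value` is lower than `baseline`."""
    return (baseline - value) / baseline


reductions = {
    name: relative_reduction(errors["MFEA"], err)
    for name, err in errors.items()
    if name != "MFEA"
}
for name, r in sorted(reductions.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {100 * r:.1f}% lower error than MFEA")
```

For EMT-ADT this gives a reduction of roughly 64%, consistent with the "over 60%" figure stated in the text.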

Detailed Experimental Protocols

To ensure reproducibility and rigorous comparison, the following protocols are standard in the field.

Benchmark Problems and Evaluation Metrics

- Test Suites: The CEC17 MFO benchmark and the WCCI20-MTSO/WCCI20-MaTSO test suites are widely used [19] [11]. These contain problems with varying degrees of inter-task similarity (e.g., CIHS, CIMS, CILS).

- Performance Metrics:

  - Average Function Error: Measures the difference between the found solution and the known global optimum [19].

  - Inverted Generational Distance (IGD): Evaluates both convergence and diversity of the obtained solutions against the true Pareto front in multi-objective settings [21].

  - Hypervolume (HV): Another comprehensive metric for multi-objective optimization, measuring the volume of the objective space dominated by the obtained solutions [21].
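
The three metrics listed above can be sketched as follows. This is a minimal illustration for minimization problems, not a reference implementation from any benchmark suite: IGD is taken as the mean Euclidean distance from each reference-front point to its nearest obtained solution, and hypervolume is restricted to the two-objective case with a user-chosen reference point. All function names are hypothetical.

```python
import math


def function_error(best_value, known_optimum):
    """Absolute gap between the best value found and the known global optimum."""
    return abs(best_value - known_optimum)


def igd(obtained, reference_front):
    """Mean distance from each reference point to its closest obtained point."""
    total = sum(min(math.dist(r, s) for s in obtained) for r in reference_front)
    return total / len(reference_front)


def hypervolume_2d(points, ref):
    """Area dominated by `points` and bounded by `ref` (two objectives, minimization)."""
    inside = sorted(p for p in points if p[0] < ref[0] and p[1] < ref[1])
    area, best_f2 = 0.0, ref[1]
    for f1, f2 in inside:
        if f2 < best_f2:  # point is non-dominated among those seen so far
            area += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return area


reference = [(0.0, 1.0), (1.0, 0.0)]
approximation = [(0.0, 2.0), (1.0, 0.0)]
print(igd(approximation, reference))
print(hypervolume_2d([(1, 3), (2, 2), (3, 1)], ref=(4, 4)))
```

Lower IGD and function error are better, whereas larger hypervolume is better, so the metrics should not be mixed in a single ranking without normalizing their direction.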

General Algorithmic Workflow

[Figure: Standard workflow for a comparative experiment.]

The Scientist's Toolkit: Research Reagent Solutions

This section details the essential "research reagents" — key algorithms, benchmarks, and software components — required for conducting experiments in evolutionary multitasking.

Table 3: Essential Research Reagents for Evolutionary Multitasking Experiments

| Reagent / Solution | Type | Function / Purpose | Example / Reference |

|---|---|---|---|

| Baseline MFEA | Algorithm | Provides the benchmark for implicit knowledge transfer. | [19] [20] |

| CEC17 / WCCI20 Benchmarks | Problem Set | Standardized test problems for fair performance comparison. | [19] [11] |

| Random Mating Probability (rmp) | Parameter | Controls the rate of crossover-based implicit transfer in MFEA. | [19] [4] |

| Skill Factor (τ) | Metric | Identifies an individual's best-performed task for selective evaluation. | [19] [3] |

| Denoising Autoencoder | Model | Used in explicit EMT for learning mappings between task search spaces. | [21] [11] |

| Adaptive Operator Selection | Strategy | Dynamically chooses the best evolutionary search operator (e.g., GA or DE). | [4] |

| Partial Least Squares (PLS) | Statistical Method | Used for correlation mapping between tasks in subspace alignment. | [11] |

The Multifactorial Evolutionary Algorithm (MFEA) endures as an important baseline framework in evolutionary multitasking due to its conceptual clarity and efficient implicit transfer mechanism. However, the benchmark results reported above indicate that explicit transfer algorithms such as EMT-ADT, IM-MFEA, and PA-MTEA generally deliver superior performance by actively mitigating negative transfer. The choice between implicit and explicit paradigms therefore involves a direct trade-off between MFEA's simplicity and the higher performance of more complex explicit methods. Future research, potentially guided by meta-learning frameworks like MetaMTO [15], is poised to further automate this trade-off by learning optimal transfer policies, solidifying EMTO's role in solving complex, concurrent optimization challenges in science and engineering.

Evolutionary Multitasking (EMT) represents a paradigm shift in optimization, enabling the concurrent solution of multiple tasks by leveraging their underlying synergies [22]. At the heart of every EMT algorithm lies a critical mechanism: knowledge transfer. This process governs how information gleaned from optimizing one task can inform and accelerate the progress on another. The EMT landscape is fundamentally divided into two philosophical and methodological approaches—implicit and explicit transfer—which form the central thesis of this guide.

Implicit transfer mechanisms facilitate knowledge exchange through the inherent mixing of genetic material during reproduction, often governed by simple, fixed rules [11] [23]. In contrast, explicit transfer mechanisms actively identify, extract, and transfer specific knowledge components between tasks based on measured inter-task relationships [22] [11]. This guide provides a comprehensive taxonomic comparison of these approaches, tracing their evolution from foundational random mating strategies to sophisticated autoencoding techniques, with rigorous experimental validation for researchers and drug development professionals who require optimal optimization pipeline performance.

Defining the Transfer Spectrum: From Implicit to Explicit

Implicit Transfer: Rule-Based and Holistic Learning

The implicit/explicit distinction is borrowed from cognitive science. In that account, the implicit categorization system relies on broad, diffuse attentional processes that encompass multiple stimulus features in parallel [23]. It learns by slowly associating behavioral responses to whole (unanalyzed) stimulus configurations, and participants lack conscious access to the reasons for their responses after implicit category learning. The EMT analogue is an optimization process that exchanges knowledge without any explicit model of why a transfer helps. Within EMT, this manifests as:

- Random Mating Probability (RMP): A foundational implicit approach where individuals with different "skill factors" (specializations) produce offspring through crossover based on a fixed probability, facilitating unanalyzed knowledge blending [11].

- Nonanalytic Cognition: The algorithm does not decompose tasks into dimensional components but treats them holistically. The cognitive parallel is the finding that children, impulsive children, and children with intellectual disabilities dimensionalize their perceptual worlds less strongly than adults do [23].

Explicit Transfer: Analytic and Dimension-Based Learning

In the same cognitive account, the explicit system processes stimuli using narrow, focused attention that singles out individual stimulus features [23]. It learns by testing hypotheses about stimulus dimensions that might be relevant to the category problem, relying on working memory and executive attention to test and replace those hypotheses. The corresponding EMT implementations include:

- Similarity-Based Routing: Determining which tasks should exchange information by measuring inter-task similarity through attention-based architectures or other correlation metrics [22].

- Knowledge Control: Actively determining the specific knowledge (e.g., proportion of elite solutions) to be conveyed from a source task to a target task [22].

- Strategy Adaptation: Designing the precise mechanism for knowledge exchange, such as the selection of evolutionary operators or the control of transfer intensity [22].

Table 1: Fundamental Characteristics of Implicit vs. Explicit Transfer Systems

| Feature | Implicit Transfer | Explicit Transfer |

|---|---|---|

| Cognitive Basis | Nonanalytic, holistic processing [23] | Analytic, dimensional analysis [23] |

| Learning Mechanism | Slow association of responses to stimulus configurations [23] | Hypothesis testing about relevant dimensions [23] |

| Primary EMT Implementation | Random mating probability (RMP) in MFEA [11] | Similarity recognition and selective transfer [22] |

| Conscious Accessibility | No declarative access to reasoning [23] | Declarative reports of solutions possible [23] |

| Neural Correlates | Striatum-based, conditioning-like mechanisms [23] | Prefrontal cortex, anterior cingulate, working memory networks [23] |

The Evolution of Transfer Methods: A Taxonomic Hierarchy

Foundational Implicit Methods

The Multifactorial Evolutionary Algorithm (MFEA) established the baseline for implicit transfer, maintaining a single solution population for all sub-tasks where each individual is indexed by its most specialized task [22]. Knowledge transfer occurs organically within the reproduction (mutation & crossover) and selection processes, governed primarily by the Random Mating Probability (RMP) parameter [11]. While this implicit parallelism facilitates efficient knowledge sharing, it inherently sacrifices algorithmic flexibility and customization for individual sub-tasks [22]. The strength of this approach lies in its simplicity and minimal computational overhead, but it suffers from significant limitations when task similarity is low, often resulting in negative transfer where knowledge exchange actually degrades algorithmic performance [11].

Explicit Transfer with Similarity Recognition

Advancements in explicit methods introduced active similarity assessment between tasks before transfer. The MetaMTO framework exemplifies this approach with its multi-role reinforcement learning system that decomposes transfer into three fundamental questions [22]:

- Where to Transfer: A Task Routing (TR) Agent processes status features from all sub-tasks and uses an attention-based architecture to compute pairwise similarity scores, routing each target task to the most-matching source task [22].

- What to Transfer: A Knowledge Control (KC) Agent determines the quantity of knowledge to transfer by selecting a specific proportion of elite solutions from the source task's population [22].

- How to Transfer: Transfer Strategy Adaptation (TSA) Agents control transfer strength by dynamically controlling hyper-parameters in the underlying EMT framework [22].

This tripartite decomposition represents a significant maturation in explicit transfer methodology, moving beyond unidirectional information dumping to coordinated, adaptive exchange.
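
The "where" and "what" decisions can be illustrated with a deliberately simplified sketch. MetaMTO is described as using learned, attention-based agents; the code below replaces them with a fixed scaled dot-product score over hand-written task feature vectors and a fixed elite proportion, so it only shows the shape of the decisions, not the trained policy. All names (`route_source`, `select_elites`, the feature vectors) are hypothetical.

```python
import math


def softmax(scores):
    m = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_source(task_features, target):
    """'Where': pick the source task with the highest attention weight for `target`."""
    query = task_features[target]
    scale = math.sqrt(len(query))
    candidates = [j for j in range(len(task_features)) if j != target]
    scores = [
        sum(q * k for q, k in zip(query, task_features[j])) / scale
        for j in candidates
    ]
    weights = softmax(scores)
    best = max(range(len(candidates)), key=weights.__getitem__)
    return candidates[best], dict(zip(candidates, weights))


def select_elites(population, costs, proportion):
    """'What': take the best `proportion` of the source population (minimization)."""
    count = max(1, round(proportion * len(population)))
    order = sorted(range(len(population)), key=costs.__getitem__)
    return [population[i] for i in order[:count]]


features = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]  # made-up task status features
source, weights = route_source(features, target=0)
elites = select_elites(["a", "b", "c", "d"], [3.0, 1.0, 2.0, 4.0], proportion=0.5)
print(source, weights, elites)
```

The "how" decision (transfer strength and operator hyper-parameters) is left out here because it depends on the underlying EMT framework.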

Association Mapping and Subspace Alignment

Further refinement came with association mapping strategies that enhance the adaptability of transfer solutions in target tasks. The PA-MTEA algorithm introduces a subspace projection strategy based on partial least squares, which achieves correlation mapping between source and target tasks during dimensionality reduction of the search space [11]. Additionally, it derives an alignment matrix by adjusting the subspace Bregman divergence after deriving respective subspaces, minimizing variability between task domains [11]. This approach specifically addresses the challenge of blind transfer in earlier explicit methods, where insufficient consideration of task correlations could mislead the evolutionary direction of the target task [11].
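
To make the PLS step concrete, the sketch below extracts only the first pair of PLS directions: the weight vectors w (source space) and c (target space) that maximize the covariance between the projected source and target samples. It assumes that source and target samples are already paired row by row (for example by fitness rank), which is an assumption of this illustration rather than a detail taken from PA-MTEA, and it does not cover the Bregman-divergence alignment step. The function names are hypothetical.

```python
import math


def center(matrix):
    """Subtract the column mean from every entry."""
    means = [sum(col) / len(col) for col in zip(*matrix)]
    return [[v - m for v, m in zip(row, means)] for row in matrix]


def first_pls_directions(X, Y, iters=100):
    """Return unit vectors (w, c) maximizing cov(Xw, Yc) via power iteration."""
    Xc, Yc = center(X), center(Y)
    p, q = len(Xc[0]), len(Yc[0])
    # Cross-covariance matrix C = Xc^T Yc with shape p x q.
    C = [[sum(x[a] * y[b] for x, y in zip(Xc, Yc)) for b in range(q)]
         for a in range(p)]
    w = [1.0 / math.sqrt(p)] * p
    for _ in range(iters):
        c = [sum(C[a][b] * w[a] for a in range(p)) for b in range(q)]  # C^T w
        w = [sum(C[a][b] * c[b] for b in range(q)) for a in range(p)]  # C c
        norm = math.sqrt(sum(v * v for v in w))
        if norm == 0.0:
            raise ValueError("source and target samples are uncorrelated")
        w = [v / norm for v in w]
    c = [sum(C[a][b] * w[a] for a in range(p)) for b in range(q)]
    c_norm = math.sqrt(sum(v * v for v in c))
    return w, [v / c_norm for v in c]


# Toy data: both target variables depend only on the first source variable.
X = [[-2, 1], [-1, -1], [0, 0], [1, -1], [2, 1]]
Y = [[-6, -2], [-3, -1], [0, 0], [3, 1], [6, 2]]
w, c = first_pls_directions(X, Y)
print(w, c)
```

On this toy data the source direction concentrates on the first variable, which is the only one that covaries with the target task.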

Autoencoding-Based Transfer

The most sophisticated explicit transfer methods employ autoencoding architectures for knowledge transformation. Denoising autoencoders are trained for inter-task solution mapping to dynamically transfer solution-level knowledge [22] [11]. This approach is conceptually related to what has been termed natural autoencoding in evolutionary biology, a process by which repeating patterns of encoding and decoding are formed and maintained [24]. In natural autoencoding, repeating biological interactions are retained while non-repeatable interactions disappear [24]. By analogy, an autoencoder in EMT is intended to distill the essential transferable knowledge between tasks while filtering out task-specific noise.
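
One common simplification of autoencoding-based mapping is a single linear layer fitted in closed form: given source solutions as the columns of P and paired target solutions as the columns of Q, the mapping M = (Q Pᵀ)(P Pᵀ)⁻¹ minimizes the squared reconstruction error ‖MP − Q‖². The sketch below implements that least-squares mapping in plain Python. It is an illustration under stated assumptions, not the exact procedure of any cited algorithm: the pairing of solutions, the small ridge term added for numerical stability, and all function names are assumptions.

```python
def transpose(A):
    return [list(col) for col in zip(*A)]


def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def inverse(A):
    """Gauss-Jordan inversion with partial pivoting for a small square matrix."""
    n = len(A)
    aug = [[float(v) for v in row] + [1.0 if i == j else 0.0 for j in range(n)]
           for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("matrix is singular")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        p = aug[col][col]
        aug[col] = [v / p for v in aug[col]]
        for r in range(n):
            if r != col:
                f = aug[r][col]
                aug[r] = [v - f * c for v, c in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def learn_mapping(P, Q, ridge=1e-8):
    """Closed-form linear map M with M @ P approximately equal to Q."""
    PPt = matmul(P, transpose(P))
    for i in range(len(PPt)):
        PPt[i][i] += ridge  # assumption: tiny regularizer to keep PPt invertible
    return matmul(matmul(Q, transpose(P)), inverse(PPt))


def map_solution(M, x):
    """Transfer one source solution into the target task's search space."""
    return [sum(m * v for m, v in zip(row, x)) for row in M]


P = [[1, 0, 1], [0, 1, 1]]  # three source solutions (columns), two variables
Q = [[0, 2, 2], [1, 0, 1]]  # paired target solutions
M = learn_mapping(P, Q)
print(map_solution(M, [1, 1]))
```

A nonlinear or multi-layer autoencoder would need iterative training instead of this closed form, which is one source of the higher computational overhead attributed to these methods.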

[Figure: Progressive evolution of transfer methods from implicit to explicit paradigms.]

Experimental Comparison: Performance Metrics and Protocols

Benchmarking Methodology

To quantitatively evaluate the progression of transfer methods, rigorous experimentation is essential. The recommended protocol involves:

- Test Suite: Use the WCCI2020-MTSO test suite, which contains ten complex two-task problems designed for the evolutionary multitask optimization competition [11].

- Comparison Algorithms: Include representative baselines from across the transfer spectrum: MFEA (implicit), MFEA with improved operators, explicit transfer with denoising autoencoders, and advanced association mapping approaches like PA-MTEA [11].

- Evaluation Metrics: Employ comprehensive metrics including convergence performance (solution quality over generations), computational complexity, and transfer success rate (percentage of tasks where knowledge transfer provided benefit) [22].
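
The transfer success rate named in the protocol above can be computed from paired runs with and without knowledge transfer. The sketch below assumes minimization and one final objective value per task. Counting a task as negative transfer when the run with transfer ends strictly worse is an assumption of this illustration, as are the function names and the sample values.

```python
def transfer_success_rate(with_transfer, without_transfer):
    """Percentage of tasks where the run with transfer ended strictly better."""
    pairs = list(zip(with_transfer, without_transfer))
    improved = sum(1 for w, wo in pairs if w < wo)
    return 100.0 * improved / len(pairs)


def negative_transfer_incidence(with_transfer, without_transfer):
    """Percentage of tasks where the run with transfer ended strictly worse."""
    pairs = list(zip(with_transfer, without_transfer))
    degraded = sum(1 for w, wo in pairs if w > wo)
    return 100.0 * degraded / len(pairs)


# Made-up final errors for four tasks (lower is better).
with_kt = [0.1, 0.5, 0.3, 0.9]
without_kt = [0.2, 0.4, 0.3, 1.0]
print(transfer_success_rate(with_kt, without_kt))
print(negative_transfer_incidence(with_kt, without_kt))
```

In practice each entry would be a mean over repeated independent runs, ideally with a statistical test, since single-run differences are noisy.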

Quantitative Performance Analysis

Table 2: Experimental Performance Comparison Across Transfer Methods

| Transfer Method | Convergence Speed | Solution Quality | Negative Transfer Incidence | Computational Overhead |

|---|---|---|---|---|

| Implicit (MFEA with RMP) | Moderate | Variable: High on similar tasks, poor on dissimilar | High (>40% on low-similarity tasks) [11] | Low |

| Explicit with Similarity Recognition | High | Consistently good across task types | Moderate (15-25%) [22] | Moderate |

| Explicit with Association Mapping (PA-MTEA) | Very High | Superior on aligned tasks | Low (<10%) [11] | High |

| Autoencoding-Based Transfer | High on complex tasks | Excellent on noisy or high-dimensional tasks | Very Low (<5%) [11] | Very High |

Experimental data demonstrates that PA-MTEA, which implements explicit association mapping, "exhibits significantly superior performance compared to six other advanced multitask optimization algorithms" [11]. The adaptive population reuse mechanism in PA-MTEA further enhances performance by balancing global exploration with local exploitation, reusing historically successful individuals to guide evolutionary direction [11].

[Figure: Experimental workflow for validating transfer method efficacy.]

The Scientist's Toolkit: Essential Research Reagents

Table 3: Research Reagent Solutions for Evolutionary Multitasking

| Research Reagent | Function | Exemplary Implementation |

|---|---|---|

| Attention-Based Similarity Module | Determines source-target transfer pairs via attention scores [22] | Task Routing Agent in MetaMTO [22] |

| Partial Least Squares (PLS) Projection | Achieves correlation mapping during search space dimensionality reduction [11] | Association Mapping in PA-MTEA [11] |

| Bregman Divergence Alignment | Minimizes variability between task domains after subspace derivation [11] | Subspace alignment in PA-MTEA [11] |

| Denoising Autoencoder | Extracts and transfers knowledge embedded in different optimizers [11] | Knowledge Transfer Strategy [11] |

| Adaptive Population Reuse Mechanism | Balances exploration and exploitation by reusing successful individuals [11] | APR in PA-MTEA based on residual structure [11] |

| Discrete Wavelet Transform | Converts one-dimensional data to two-dimensional matrices for CNN processing [25] | DNA barcode transformation in hybrid ensembles [25] |

This taxonomic comparison reveals a clear trajectory in transfer method evolution: from implicit, holistic approaches to increasingly explicit, analytic strategies. The selection of an appropriate transfer method must be guided by specific problem characteristics:

- Implicit methods (e.g., MFEA with RMP) remain effective for problems with known high task similarity and limited computational resources, where minimal configuration overhead is desirable.

- Explicit similarity-based methods provide robust performance across diverse task relationships, offering a balanced approach for problems with unknown or varying inter-task relationships.

- Association mapping and autoencoding approaches deliver superior performance on complex, high-dimensional problems where precise knowledge transformation is required, despite their higher computational demands.

The emerging paradigm of learned transfer policies through reinforcement learning—as exemplified by MetaMTO—represents the next frontier in this evolution, potentially transcending manually-designed components to achieve fully adaptive knowledge exchange [22]. For drug development professionals and researchers, this progression enables increasingly sophisticated optimization pipelines capable of handling the complex, multi-faceted problems characteristic of modern computational biology and pharmaceutical research.

From Theory to Practice: Implementing Transfer Strategies in Biomedicine

In the field of evolutionary computation, Evolutionary Multitasking (EMT) has emerged as a powerful paradigm for solving multiple optimization problems simultaneously. A central research theme within EMT concerns the method of knowledge transfer—how valuable information is shared between tasks. This guide delves into the implicit transfer approach, which utilizes genetic operators like crossover and mutation as its primary transfer mechanism, and contrasts it with the explicit transfer paradigm, which employs direct and learned mappings. The distinction is critical: implicit transfer is often lauded for its simplicity and efficiency, while explicit transfer is recognized for its precision and potential to handle more complex task relationships. This article provides a comparative analysis of these competing methodologies, underpinned by experimental data and their practical implications, particularly in computationally demanding fields like drug discovery.

Theoretical Foundations: Mechanisms of Knowledge Transfer

Implicit Transfer: The Incidental Exchange

Implicit transfer operates on the principle that beneficial genetic material can be shared between tasks as a byproduct of standard evolutionary operations, without any formal analysis or transformation of the knowledge being exchanged [14].

- Core Mechanism: The most common framework for implicit transfer is the Multifactorial Evolutionary Algorithm (MFEA) [26] [3]. In MFEA, a single, unified population evolves to address all tasks. Each individual is assigned a skill factor, indicating the task on which it performs best.

- Assortative Mating & Vertical Cultural Transmission: Knowledge transfer occurs through two key mechanisms [3] [27]. Assortative mating allows individuals from different tasks to crossover with a certain probability, known as the random mating probability (rmp). Subsequently, vertical cultural transmission dictates that offspring inherit the skill factor randomly from one of the parents. This process implicitly transfers genetic material across tasks, relying on the robustness of evolutionary search to find and propagate useful building blocks.

- Challenges: A significant challenge is negative transfer, which occurs when genetic material from one task hinders progress on another, often due to unrelated or conflicting task characteristics [27] [28]. Controlling the rmp is crucial to mitigate this risk.
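
The two mechanisms above can be sketched as a single mating routine. This is a simplified illustration, assuming genes encoded in a unified [0, 1] search space, uniform crossover, and Gaussian mutation; the original MFEA uses its own operators and produces two offspring per mating, so the operator choices, the step size of 0.05, and the function name `mate` are all assumptions.

```python
import random


def mate(parent1, parent2, skill1, skill2, rmp, rng):
    """Return (child_genes, child_skill_factor) for one mating event."""
    if skill1 == skill2 or rng.random() < rmp:
        # Assortative mating: same-task parents always cross over; parents from
        # different tasks cross over only with probability rmp (implicit transfer).
        child = [a if rng.random() < 0.5 else b for a, b in zip(parent1, parent2)]
        # Vertical cultural transmission: imitate one parent's skill factor at random.
        skill = rng.choice([skill1, skill2])
    else:
        # No cross-task mating: mutate the first parent and keep its skill factor.
        child = [min(1.0, max(0.0, g + rng.gauss(0.0, 0.05))) for g in parent1]
        skill = skill1
    return child, skill


rng = random.Random(0)
child, skill = mate([0.0] * 5, [1.0] * 5, skill1=0, skill2=1, rmp=0.3, rng=rng)
print(child, skill)
```

Setting rmp close to 0 suppresses cross-task exchange, while values close to 1 mix the tasks freely, which is why the parameter is the main lever against negative transfer in implicit methods.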

Explicit Transfer: The Deliberate Mapping

In contrast, explicit transfer involves an active process of learning and applying a mapping function to transform and transmit solutions directly between tasks [26] [27].

- Core Mechanism: These algorithms first analyze the relationships between tasks, often by mapping them to a shared latent space. For instance, one method uses Transfer Component Analysis (TCA) to create a dimensionality-reduced subspace where distribution differences between tasks are minimized [26]. Once this mapping is established, high-quality solutions can be explicitly transferred and adapted for use in other tasks.

- Objective: The primary goal is to enhance the positive transfer of knowledge by rationally exploiting identified correlations and reducing distributional discrepancies between tasks [26]. This approach seeks to make knowledge transfer a more deliberate and informed process rather than a random occurrence.

Table 1: Core Conceptual Differences Between Implicit and Explicit Transfer

| Feature | Implicit Transfer | Explicit Transfer |

|---|---|---|

| Transfer Mechanism | Incidental, via genetic operators (crossover/mutation) | Deliberate, via learned mapping functions |

| Knowledge Extraction | Unsupervised, emergent from population search | Supervised, based on analysis of task relationships |

| Key Parameter | Random mating probability (rmp) | Mapping function parameters (e.g., transformation matrix) |

| Computational Overhead | Relatively low [14] | Higher, due to model training (e.g., TCA, autoencoders) [26] |

| Primary Risk | Negative transfer between unrelated tasks [27] | Inaccurate mapping leading to ineffective transfers |

Experimental Protocols: How the Comparison is Made

To objectively compare implicit and explicit transfer algorithms, researchers employ standardized experimental protocols.

Benchmark Problems and Performance Metrics

- Test Suites: Algorithms are typically evaluated on synthetic benchmark problems where the global optima for each task are known in advance. Common suites include those proposed by Da et al. and Yuan et al., which contain tasks with varying degrees of similarity and complexity [3] [28]. These benchmarks allow for controlled studies of how algorithms perform under different inter-task relationships.

- Performance Metrics:

  - Average Accuracy (Avg-Acc): Measures the average best function value achieved across all tasks at the end of the optimization run.

  - Inverted Generational Distance (IGD): A comprehensive metric that evaluates both convergence and diversity of the obtained solution set towards the true Pareto front in multi-objective problems [26]. A lower IGD indicates better performance.

Protocol for Drug Discovery Applications

In real-world domains like drug discovery, the experimental setup is tailored to the application. For example, in a virtual high-throughput screening (vHTS) campaign [29]:

- Objective: Identify molecules from an ultra-large library (e.g., Enamine REAL space) that bind strongly to a specific protein target.

- Fitness Function: The binding affinity is computed using a flexible docking protocol like RosettaLigand.

- Algorithm Workflow: An evolutionary algorithm, such as REvoLd, is deployed to efficiently explore the combinatorial chemical space. The performance is measured by the enrichment factor—the rate at which the algorithm discovers high-affinity "hit" molecules compared to a random selection [29].
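
The enrichment factor used in this protocol is a ratio of hit rates, which the following sketch makes explicit. The counts in the example are invented for illustration and are not results from REvoLd or any other study; the function names are hypothetical.

```python
def hit_rate(hits, molecules_screened):
    """Fraction of screened molecules that qualify as hits."""
    return hits / molecules_screened


def enrichment_factor(hits_algo, screened_algo, hits_random, screened_random):
    """Hit rate of the guided search divided by the hit rate of random selection."""
    baseline = hit_rate(hits_random, screened_random)
    if baseline == 0:
        raise ValueError("random baseline found no hits; enrichment is undefined")
    return hit_rate(hits_algo, screened_algo) / baseline


# Invented example: 50 hits in 1,000 docked molecules versus 1 hit in 20,000.
ef = enrichment_factor(50, 1_000, 1, 20_000)
print(f"enrichment factor: {ef:.0f}x")
```

Because the random baseline hit rate is typically tiny for ultra-large libraries, the estimate is sensitive to how many random molecules were docked to establish it.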

Results and Analysis: A Data-Driven Comparison

Empirical studies consistently reveal a trade-off between the efficiency of implicit transfer and the controlled power of explicit transfer.

Performance on Benchmark Problems

The table below synthesizes findings from multiple studies comparing classic and state-of-the-art algorithms.

Table 2: Performance Comparison of Representative EMT Algorithms on Benchmark Problems

| Algorithm | Transfer Type | Key Mechanism | Reported IGD (Lower is Better) | Key Strength |

|---|---|---|---|---|

| MFEA [3] | Implicit | Cultural transmission & assortative mating | Baseline | Simplicity, low computational cost |

| MOMFEA-II [26] | Implicit (Adaptive) | Online learning of inter-task relationships | Improved over MFEA | Adaptive rmp reduces negative transfer |

| EMEA [26] | Explicit | Autoencoder for cross-task mapping | Best on 50% of test functions in one study [26] | Effective for tasks with learnable mappings |

| TCADE [26] | Explicit | Transfer Component Analysis (TCA) subspace | 15 best IGD values out of 18 test functions [26] | Promotes positive transfer via distribution alignment |

| SETA-MFEA [27] | Explicit | Subdomain Evolutionary Trend Alignment | Competitive/outperforms others on complex suites | Handles heterogeneous tasks via subdomain decomposition |

The data indicates that while well-designed implicit methods like MOMFEA-II offer robust improvements over the baseline, advanced explicit methods like TCADE and SETA-MFEA often achieve superior performance on complex benchmarks by actively managing the transfer process [26] [27].

Computational Cost and Efficiency

A critical consideration is the computational overhead associated with each paradigm.

Table 3: Comparison of Computational and Practical Characteristics

| Aspect | Implicit Transfer (e.g., MFEA) | Explicit Transfer (e.g., EMEA, TCADE) |

|---|---|---|

| Computational Overhead | Low | Medium to High |

| Parameter Sensitivity | Sensitive to rmp setting | Sensitive to mapping model and hyperparameters |

| Adaptability to New Tasks | High, plug-and-play | May require retraining of the mapping model |

| Best Suited For | Tasks with unknown/weak relationships, quick deployment | Tasks with strong underlying similarities, where performance is critical |

Implicit transfer has been described as "efficient and straightforward" with a "small computational overhead" [14], which makes it attractive for many scenarios. Explicit methods, while more powerful, incur additional cost from "an explicit learning and transformation process" [14].

Case Study: Application in Drug Discovery

The field of computer-aided drug design provides a compelling real-world context for evaluating these paradigms. The challenge of screening billions of molecules in silico is a quintessential complex optimization problem [29] [30].

- Implicit Transfer in Action: The REvoLd algorithm employs an evolutionary approach to search ultra-large make-on-demand chemical libraries. While not a direct EMT application, its use of crossover and mutation to recombine promising molecular fragments exemplifies the power of implicit genetic operations for a single, complex task. It demonstrated massive enrichment factors, improving hit rates by 869 to 1622 times compared to random selection [29].

- Potential for Explicit Multitasking: In a true multitasking scenario, one could simultaneously optimize for activity against multiple protein targets or balance activity with pharmacokinetic properties. An explicit transfer algorithm could learn the relationship between the chemical subspaces relevant to each target or property, directly transferring promising scaffolds. This could potentially accelerate the discovery of multi-target therapeutics or improve the overall efficiency of the multi-property optimization process.
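For readers unfamiliar with the enrichment figures quoted for REvoLd, the short sketch below shows the arithmetic, assuming the enrichment factor is the ratio of the hit rate among algorithm-selected molecules to the hit rate of a random sample. The counts are hypothetical and are not taken from [29].

```python
def enrichment_factor(hits_selected, n_selected, hits_random, n_random):
    """Ratio of the hit rate among selected molecules to the random hit rate."""
    hit_rate_selected = hits_selected / n_selected
    hit_rate_random = hits_random / n_random
    return hit_rate_selected / hit_rate_random

# Hypothetical counts, for illustration only.
print(enrichment_factor(hits_selected=50, n_selected=1_000,
                        hits_random=5, n_random=100_000))
```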

The following diagram illustrates a simplified workflow for an evolutionary algorithm applied to drug discovery, highlighting points where implicit and explicit transfer could be incorporated.

Diagram 1: Evolutionary Drug Discovery Workflow

The Scientist's Toolkit: Key Research Reagents & Algorithms

This section details the essential "reagents"—both algorithmic and practical—required for conducting research in evolutionary multitasking.

Table 4: Essential Tools for Evolutionary Multitasking Research

| Category | Item / Algorithm | Primary Function | Key Reference / Source |
|---|---|---|---|
| Core Algorithms | MFEA / MOMFEA | Baseline implicit EMT algorithms | [26] [3] |
| | MFEA-II | Implicit EMT with online similarity learning | [26] [27] |
| | EMEA | Explicit EMT using autoencoders | [26] |
| | TCADE | Explicit EMT using Transfer Component Analysis | [26] |
| Benchmark Suites | Da et al. Benchmarks | Single-objective multitasking benchmark problems | [3] [27] |
| | Yuan et al. Benchmarks | Multi-objective multitasking benchmark problems | [3] |
| Software & Libraries | RosettaLigand / REvoLd | Flexible protein-ligand docking for drug discovery benchmarks | [29] |
| | PlatEMO | A MATLAB platform for evolutionary multi-objective optimization | Not cited |
| Performance Metrics | Inverted Generational Distance (IGD) | Evaluates convergence & diversity in multi-objective optimization | [26] |
| | Average Accuracy (Avg-Acc) | Measures solution quality at termination | [27] |
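As an illustration of the IGD metric listed in the table, the sketch below uses the common definition: the mean, over points of a reference Pareto front, of the Euclidean distance to the nearest obtained solution. Lower values are better. The function name and the toy fronts are assumptions made for this example.

```python
import math

def igd(reference_front, obtained_front):
    """Mean distance from each reference point to its nearest obtained point."""
    total = 0.0
    for ref in reference_front:
        total += min(math.dist(ref, sol) for sol in obtained_front)
    return total / len(reference_front)

# Toy two-objective fronts, for illustration only.
reference = [(0.0, 1.0), (0.5, 0.5), (1.0, 0.0)]
obtained = [(0.1, 1.0), (0.6, 0.6), (1.0, 0.2)]
print(igd(reference, obtained))
```

Because the average runs over the reference front, an obtained set that misses a region of the front is penalized even if every point it contains lies on the front, which is how IGD captures diversity as well as convergence.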

The comparison between implicit and explicit transfer in evolutionary multitasking reveals a landscape rich with trade-offs. Implicit transfer, exemplified by algorithms like MFEA, offers a robust, computationally efficient, and easily implementable approach. Its strength lies in leveraging the inherent parallelism of population-based search without added complexity. In contrast, explicit transfer, as seen in TCADE and SETA-MFEA, provides a more deliberate and often more powerful mechanism for knowledge exchange, particularly when tasks are related but reside in heterogeneous spaces. Its primary advantage is the potential for enhanced positive transfer and mitigation of negative effects, albeit at a higher computational cost and increased algorithmic complexity.

Future research is poised to bridge the gap between these two paradigms. A significant trend is toward increased adaptability and automation. For instance, the Learning to Transfer (L2T) framework uses reinforcement learning to automatically decide when and how to transfer, dynamically selecting the most appropriate evolution operator and transfer intensity [14]. This represents a move towards a more intelligent and unified approach. Furthermore, critical reviews call for more rigorous benchmarking and a stronger focus on real-world applicability to ensure that algorithmic advances translate into practical gains [28]. As these trends converge, the next generation of EMT algorithms will likely offer greater robustness and performance across an ever-widening array of complex optimization challenges.

In the field of evolutionary multitasking optimization (EMTO), the strategic transfer of knowledge between tasks is paramount for enhancing algorithmic performance. While early methods often relied on implicit genetic transfer through operators like crossover, recent research has shifted towards more deliberate, explicit mechanisms that actively extract and map knowledge. This guide objectively compares two sophisticated explicit strategies: autoencoder-based domain adaptation and association mapping techniques. Unlike implicit transfer, which can lead to performance-degrading negative transfer when tasks are dissimilar, these explicit methods proactively model the relationships between tasks, offering researchers and developers more controlled and interpretable knowledge-sharing frameworks [11] [31].

The core distinction lies in their approach to knowledge. Implicit transfer, as seen in the Multifactorial Evolutionary Algorithm (MFEA), allows knowledge to interact indirectly through genetic operators acting on a unified population. In contrast, explicit transfer involves the conscious identification and extraction of valuable information—such as high-quality solutions or solution space characteristics—from a source task, which is then strategically injected into a target task via specially designed mechanisms [11]. This guide details the implementation, performance, and practical applications of the two leading explicit methods, providing a data-driven foundation for selecting the appropriate tool for complex optimization challenges in domains like drug development and computational biology.

Autoencoder-Based Knowledge Representation

Fundamental Concepts and Architectures

Autoencoders (AEs) are a class of neural networks designed for unsupervised representation learning. Their primary objective is to learn a compressed, informative encoding of high-dimensional input data. The standard architecture consists of three core components: an encoder that compresses the input, a lower-dimensional bottleneck layer that holds the compressed code (the latent representation), and a decoder that attempts to reconstruct the original input from that code [32]. The quality of the reconstruction is measured by a reconstruction loss, such as Mean Squared Error (MSE) or Binary Cross-Entropy [32] [33].

Several specialized autoencoder variants have been developed to enhance their representational capabilities:

- Undercomplete Autoencoders: The simplest form, which uses a bottleneck layer with fewer nodes than the input to force the network to learn a compressed, salient representation [32].

- Denoising Autoencoders (DAE): Trained to reconstruct clean input from a corrupted (noisy) version, making the learned representations more robust to noise and variations [32] [33].

- Variational Autoencoders (VAE): A generative model that represents the latent space as a probability distribution, enabling the generation of new data samples and ensuring a continuous, well-structured latent space [32] [34].

- Contractive Autoencoders (CAE): Add a regularization term to the loss function that penalizes sensitivity to small input variations, encouraging the model to learn representations that are robust to infinitesimal input changes [32].
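The variants above differ mainly in their training objective. The textbook forms are summarized below; the notation is assumed for this summary rather than taken from the cited sources: f is the encoder, g the decoder, x_i the i-th of n inputs, x̃_i a corrupted copy of x_i, J_f the Jacobian of the encoder, and λ a regularization weight.

```latex
\begin{aligned}
\mathcal{L}_{\text{AE}}  &= \frac{1}{n}\sum_{i=1}^{n} \lVert x_i - g(f(x_i)) \rVert_2^2
  && \text{(undercomplete, MSE reconstruction)} \\
\mathcal{L}_{\text{DAE}} &= \frac{1}{n}\sum_{i=1}^{n} \lVert x_i - g(f(\tilde{x}_i)) \rVert_2^2,
  \quad \tilde{x}_i \sim q(\tilde{x} \mid x_i)
  && \text{(denoising)} \\
\mathcal{L}_{\text{VAE}} &= -\,\mathbb{E}_{q(z \mid x)}\big[\log p(x \mid z)\big]
  + D_{\mathrm{KL}}\big(q(z \mid x) \,\Vert\, p(z)\big)
  && \text{(variational, negative ELBO)} \\
\mathcal{L}_{\text{CAE}} &= \mathcal{L}_{\text{AE}}
  + \frac{\lambda}{n}\sum_{i=1}^{n} \lVert J_f(x_i) \rVert_F^2
  && \text{(contractive)}
\end{aligned}
```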

Application in Evolutionary Multitasking: Progressive Auto-Encoding

In EMTO, a key challenge is aligning the search spaces of different tasks to facilitate knowledge transfer. The Progressive Auto-Encoding (PAE) framework addresses the limitations of static pre-trained models by enabling continuous domain adaptation throughout the evolutionary process [31].

The methodology integrates two complementary strategies:

- Segmented PAE: This strategy employs staged training of autoencoders. Different autoencoders are trained for distinct phases of the optimization process (e.g., early, middle, and late stages), achieving effective domain alignment that is tailored to the evolving population characteristics at each phase [31].

- Smooth PAE: This strategy facilitates more gradual adaptation. Instead of retraining from scratch, it continuously fine-tunes the autoencoder using eliminated solutions from the evolutionary process, allowing for a smoother and more refined alignment of domains over time [31].

The following diagram illustrates the workflow of integrating this progressive auto-encoding technique into an evolutionary multi-task optimization algorithm.

Experimental Performance and Benchmarking

The performance of algorithms enhanced with PAE has been rigorously tested on multiple benchmark suites and real-world applications. The tables below summarize key experimental results comparing PAE-based methods against other state-of-the-art algorithms.

Table 1: Benchmark Performance of MTEA-PAE (Single-Objective)

| Benchmark Suite | Metric | MTEA-PAE | Next Best Algorithm | Performance Gap |
|---|---|---|---|---|
| CEC 2021 MTO | Average Best Fitness | 0.92 | 0.87 | +5.7% |
| MToP Platform | Convergence Speed (Generations) | 1,250 | 1,450 | +16% faster |
| WCCI 2020 MTSO | Solution Quality (Hypervolume) | 0.78 | 0.72 | +8.3% |

Table 2: Benchmark Performance of MO-MTEA-PAE (Multi-Objective)

| Application Domain | Metric | MO-MTEA-PAE | Next Best Algorithm | Performance Gap |
|---|---|---|---|---|
| Vehicle Path Planning | Total Distance Cost | $45,200 | $47,800 | -5.4% |
| Energy Management | Power Loss (kW) | 125.5 | 135.2 | -7.2% |
| Shop-Floor Scheduling | Makespan (hours) | 48.3 | 52.1 | -7.3% |

The experimental data demonstrates that the PAE technique consistently enhances the performance of both single- and multi-objective MTEAs, leading to superior convergence efficiency and solution quality compared to other advanced algorithms [31].
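The "Performance Gap" column in the two tables is not defined in the text. The sketch below shows one reading that reproduces the reported figures: a relative difference against the next-best algorithm for most rows, and a ratio of generation counts for the convergence row. This interpretation is inferred from the numbers and is not stated in [31].

```python
def relative_gap_percent(value, baseline):
    """Signed relative difference of value against baseline, in percent."""
    return 100.0 * (value - baseline) / baseline

def speedup_percent(generations, baseline_generations):
    """How much faster a run is when it needs fewer generations, in percent."""
    return 100.0 * (baseline_generations / generations - 1.0)

rows = [
    ("Average Best Fitness", relative_gap_percent(0.92, 0.87)),
    ("Hypervolume", relative_gap_percent(0.78, 0.72)),
    ("Total Distance Cost", relative_gap_percent(45_200, 47_800)),
    ("Power Loss", relative_gap_percent(125.5, 135.2)),
    ("Makespan", relative_gap_percent(48.3, 52.1)),
    ("Convergence Speed", speedup_percent(1_250, 1_450)),
]
for name, gap in rows:
    print(f"{name}: {gap:+.1f}%")
```

Note that the convergence row would read as roughly 14% if it were computed as a reduction in generations instead, so stating the formula alongside such tables avoids ambiguity.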

Association Mapping for Knowledge Representation

Fundamental Concepts and the PA-MTEA Algorithm

Association mapping provides an alternative, highly analytical approach to explicit knowledge transfer. Instead of learning a latent representation, it focuses on statistically modeling the correlations between the search spaces of different tasks. The core idea is to create a structured mapping function that ensures transferred knowledge is not just copied, but adapted effectively for the target task.

The Multitask Evolutionary Algorithm based on an Association Mapping Strategy and an Adaptive Population Reuse mechanism (PA-MTEA) is a leading algorithm in this category. It was developed to address the inherent "blindness" of transfer that can occur when the inter-task knowledge mapping relationships are not accounted for [11]. PA-MTEA introduces two key innovations: an association mapping strategy, which consists of the first two components below, and an adaptive population reuse mechanism:

- Association Mapping based on Partial Least Squares (PLS): This strategy strengthens the connection between source and target tasks during bidirectional knowledge transfer. When reducing the dimensionality of the search space, it extracts principal components that have strong correlations between the task domains, creating a more informed and adaptive mapping [11].

- Alignment Matrix via Bregman Divergence: After deriving the respective subspaces for tasks, this technique adjusts them by minimizing the Bregman divergence between them. This step produces an alignment matrix that actively minimizes variability between task domains, further refining the knowledge transfer process [11].

- Adaptive Population Reuse (APR): This mechanism balances global exploration and local exploitation by reusing historically successful individuals. It adaptively adjusts the number of excellent individuals retained from the population's history based on current population diversity, using their genetic information to guide future evolution [11].
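The alignment step above relies on the Bregman divergence, which for a convex generator φ is defined as D_φ(x, y) = φ(x) − φ(y) − ⟨∇φ(y), x − y⟩. The sketch below evaluates it for two textbook generators. The source does not say which generator PA-MTEA uses, so both choices here are assumptions for illustration, and the function names are hypothetical.

```python
import math

def bregman_divergence(x, y, phi, grad_phi):
    """D_phi(x, y) = phi(x) - phi(y) - <grad phi(y), x - y>."""
    inner = sum(g * (xi - yi) for g, xi, yi in zip(grad_phi(y), x, y))
    return phi(x) - phi(y) - inner

# Generator 1: squared Euclidean norm, which yields the squared Euclidean distance.
def sq_norm(v):
    return sum(vi * vi for vi in v)

def grad_sq_norm(v):
    return [2.0 * vi for vi in v]

# Generator 2: negative entropy, which yields the KL divergence
# when both arguments are probability vectors with positive entries.
def neg_entropy(p):
    return sum(pi * math.log(pi) for pi in p)

def grad_neg_entropy(p):
    return [math.log(pi) + 1.0 for pi in p]

print(bregman_divergence([1.0, 2.0], [3.0, 0.0], sq_norm, grad_sq_norm))
print(bregman_divergence([0.5, 0.5], [0.25, 0.75], neg_entropy, grad_neg_entropy))
```

The divergence is zero only when the two arguments coincide and is generally asymmetric, so the order of source and target matters when it is used as an alignment objective.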

Experimental Workflow and Protocol

Implementing the PA-MTEA algorithm for a comparative study involves a structured workflow, as shown in the diagram below. The process highlights the central role of the association mapping strategy in enabling high-quality, bidirectional knowledge transfer.

A standard experimental protocol for evaluating PA-MTEA is as follows:

- Problem Setup: Select a multitask benchmark suite (e.g., WCCI2020-MTSO) comprising multiple minimization tasks.

- Algorithm Initialization: Initialize separate populations for each task. Set parameters for the PLS projection and the adaptive population reuse mechanism.

- Evolutionary Loop: For each generation:
  a. Evaluate each population on its respective task.
  b. Apply Association Mapping: Perform PLS-based subspace projection between pairs of tasks to identify correlated components. Compute the alignment matrix using Bregman divergence.
  c. Execute Knowledge Transfer: Use the mapping to transform and transfer high-quality solutions between correlated tasks.
  d. Apply APR: Reintegrate a subset of historically successful individuals into the current populations, guided by diversity metrics.
  e. Evolve Populations using standard evolutionary operators (selection, crossover, mutation).

- Termination & Evaluation: Repeat until a maximum number of generations is reached. Compare the final performance against other MTEAs using metrics like convergence speed and best-found fitness [11].
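The protocol above can be sketched as a control-flow skeleton. This is not PA-MTEA itself: the PLS projection and Bregman alignment are replaced by a simple mean-shift translation, and the adaptive reuse mechanism by a fixed-size archive, both clearly stand-ins chosen so the loop runs with the standard library alone. The two shifted sphere tasks, all function names, and all parameter values are assumptions for illustration.

```python
import random

def sphere(shift):
    """Minimization task: squared distance to an optimum at (shift, ..., shift)."""
    return lambda x: sum((xi - shift) ** 2 for xi in x)

def mean_vector(pop):
    return [sum(col) / len(pop) for col in zip(*pop)]

def map_solution(x, src_pop, dst_pop):
    """STAND-IN for association mapping: translate by the difference of means."""
    src_mean, dst_mean = mean_vector(src_pop), mean_vector(dst_pop)
    return [xi - s + d for xi, s, d in zip(x, src_mean, dst_mean)]

def evolve(parents, task, rng, sigma=0.1):
    """Binary tournament selection, uniform crossover, Gaussian mutation."""
    def pick():
        a, b = rng.sample(parents, 2)
        return a if task(a) < task(b) else b
    children = []
    for _ in range(len(parents)):
        p, q = pick(), pick()
        children.append([(x if rng.random() < 0.5 else y) + rng.gauss(0.0, sigma)
                         for x, y in zip(p, q)])
    return children

def run(max_gen=50, pop_size=20, dim=5, n_transfer=2, n_reuse=2, seed=0):
    rng = random.Random(seed)
    tasks = [sphere(0.0), sphere(1.0)]                     # problem setup
    pops = [[[rng.uniform(-5.0, 5.0) for _ in range(dim)]  # one population per task
             for _ in range(pop_size)] for _ in tasks]
    archives = [[] for _ in tasks]                         # historically good individuals
    for _ in range(max_gen):
        ranked = [sorted(p, key=t) for p, t in zip(pops, tasks)]        # (a) evaluate
        for k, task in enumerate(tasks):
            src = 1 - k
            migrants = [map_solution(x, ranked[src], ranked[k])         # (b) + (c)
                        for x in ranked[src][:n_transfer]]
            merged = sorted(ranked[k] + migrants + archives[k],         # (d) reuse
                            key=task)[:pop_size]
            archives[k] = merged[:n_reuse]   # fixed size here; APR adapts it to diversity
            pops[k] = evolve(merged, task, rng)                         # (e) evolve
    # Termination: report the best fitness found for each task.
    return [min(task(x) for x in pops[k] + archives[k]) for k, task in enumerate(tasks)]

print(run())
```

In a faithful implementation, `map_solution` would be replaced by the PLS-derived subspaces and alignment matrix, and `n_reuse` would be recomputed each generation from a population diversity measure [11].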

Experimental Performance and Benchmarking

PA-MTEA has been validated on complex benchmark suites and a real-world application involving parameter extraction for photovoltaic models. The table below summarizes its performance compared to six other advanced multitask algorithms.

Table 3: Performance of PA-MTEA on Benchmark and Real-World Problems

| Test Problem / Metric | PA-MTEA | MFEA [11] | EMFF [11] | Other Advanced MTEAs (Avg.) |
|---|---|---|---|---|
| WCCI2020-MTSO (10 problems) | | | | |
| > Average Best Fitness | 0.95 | 0.82 | 0.89 | 0.84 - 0.90 |
| > Convergence Generations | 1,100 | 1,600 | 1,350 | 1,300 - 1,500 |
| Photovoltaic Parameter Extraction | | | | |
| > Root Mean Square Error | 0.024 | 0.041 | 0.030 | 0.032 - 0.045 |
| > Optimization Speed-up | ~40% | Baseline | ~20% | ~15% - ~25% |