Evolutionary vs. Bayesian: Benchmarking Paddy, GA, and BO for Drug Discovery and Chemical Optimization

Abstract

This article provides a comprehensive performance analysis of three prominent optimization algorithms—the evolutionary Paddy algorithm, Bayesian optimization, and Genetic Algorithms—in the context of chemical sciences and drug development. With a focus on real-world applicability for researchers and scientists, we explore the foundational principles, methodological strengths, and specific limitations of each approach. Drawing on recent benchmarks and case studies, we compare their efficiency in tasks such as molecular generation, hyperparameter tuning, and experimental planning. The analysis offers actionable insights for selecting the right algorithm based on problem dimensionality, computational budget, and the need for global search versus data efficiency, providing a clear guide for accelerating innovation in biomedical research.

Understanding the Core Principles: A Deep Dive into Paddy, Bayesian, and Genetic Algorithms

Optimization is a cornerstone of chemical research, crucial for areas ranging from synthetic methodology and chromatography to drug design and material discovery [1]. As chemical systems grow in complexity, the challenge intensifies: how can researchers efficiently find the best experimental conditions or molecular structures within a vast parameter space, while avoiding the trap of local, sub-optimal solutions? This guide compares three powerful algorithmic approaches to this problem: the biologically-inspired Paddy Field Algorithm (PFA), Bayesian Optimization (BO), and Genetic Algorithms (GA). We objectively analyze their performance based on recent benchmarking studies, providing the data and methodologies needed to inform your choice of optimization tool.

Understanding the Algorithms: Core Principles and Workflows

The Paddy Field Algorithm (Paddy)

The Paddy Field Algorithm is an evolutionary optimization method inspired by the reproductive behavior of rice plants [1]. It operates on the principle that plant propagation is influenced by both soil quality (the fitness of a solution) and pollination (the local density of fit solutions). The Paddy algorithm, implemented in a user-friendly Python package, propagates parameters without directly inferring the underlying objective function, making it a versatile black-box optimizer [1] [2]. Its process can be broken down into five key phases: sowing, selection, seeding, pollination, and propagation.

The algorithm is initiated by sowing a random population of seeds (parameter sets) across the search space [1]. These seeds are then evaluated using the objective function, and the top-performing plants are selected. In the seeding phase, the number of seeds each selected plant produces is determined by its relative fitness. The pollination step reinforces areas with high densities of fit plants, mimicking density-mediated pollination. Finally, new parameter values are assigned to these pollinated seeds via Gaussian mutation, with the mean centered on the parent plant's values. This cycle repeats until convergence or a set number of iterations is completed [1].
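The five phases described above can be sketched in a few lines of plain Python. This is a simplified illustration written for this article, not the code of the Paddy package: the function name `paddy_sketch`, all parameter names and defaults, the min-max fitness normalization, and the neighbor-fraction pollination rule are assumptions chosen to keep the example short.

```python
import math
import random


def paddy_sketch(objective, bounds, n_seeds=20, n_select=5, max_seeds=10,
                 radius=0.5, sigma=0.2, iterations=15, seed=0):
    """Maximize `objective` with a simplified Paddy-style loop (illustrative only)."""
    rng = random.Random(seed)
    # 1. Sowing: scatter random seeds (parameter vectors) across the search space.
    plants = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_seeds)]
    best_x, best_f = None, -math.inf
    for _ in range(iterations):
        scored = sorted(((objective(x), x) for x in plants),
                        key=lambda pair: pair[0], reverse=True)
        if scored[0][0] > best_f:
            best_f, best_x = scored[0]
        # 2. Selection: keep only the top-performing plants.
        top = scored[:n_select]
        f_max, f_min = top[0][0], top[-1][0]
        children = []
        for f, x in top:
            # 3. Seeding: seed count scales with min-max normalized fitness (assumed rule).
            norm = 1.0 if f_max == f_min else (f - f_min) / (f_max - f_min)
            seeds = norm * max_seeds
            # 4. Pollination: scale by the fraction of selected plants within `radius`
            #    (assumed rule; the plant counts itself so the factor is never zero).
            neighbors = sum(1 for _, y in top if y is not x and math.dist(x, y) < radius)
            pollination = (1 + neighbors) / len(top)
            n_children = max(1, round(seeds * pollination))
            # 5. Propagation: Gaussian mutation centered on the parent, clipped to bounds.
            for _ in range(n_children):
                children.append([min(hi, max(lo, rng.gauss(xi, sigma)))
                                 for xi, (lo, hi) in zip(x, bounds)])
        plants = children
    return best_x, best_f
```

For example, `paddy_sketch(lambda x: -((x[0] - 1) ** 2 + (x[1] + 2) ** 2), [(-5, 5), (-5, 5)])` returns the best parameter vector found and its fitness. Plants in dense clusters of fit solutions receive a larger pollination factor and therefore more offspring, which is the density reinforcement the text describes.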

Bayesian Optimization (BO)

Bayesian Optimization is a sequential design strategy for global optimization of black-box functions that are expensive to evaluate [3]. It builds a probabilistic surrogate model, typically a Gaussian Process (GP), of the objective function. This model is updated after each evaluation. An acquisition function, derived from the surrogate model, guides the selection of the next point to evaluate by balancing exploration (probing uncertain regions) and exploitation (refining promising areas). While powerful in low dimensions, BO faces the curse of dimensionality: in high-dimensional spaces the distances between sampled points grow, so a fixed evaluation budget covers the space ever more sparsely and an accurate surrogate model would require a number of samples that grows exponentially with dimension [3].
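To make the exploration/exploitation balance concrete, the sketch below computes expected improvement (EI), one widely used acquisition function, from a surrogate's predictive mean and standard deviation at a candidate point. The source does not state which acquisition function the benchmarked tools used, so EI is an assumption here, as are the function name and the `xi` margin parameter.

```python
import math


def expected_improvement(mean, std, best_so_far, xi=0.0):
    """Expected improvement (maximization) for a Gaussian prediction N(mean, std**2)."""
    gain = mean - best_so_far - xi
    if std <= 0.0:
        # No uncertainty left: the improvement is deterministic.
        return max(gain, 0.0)
    z = gain / std
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)   # standard normal density
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))          # standard normal CDF
    # First term rewards a high predicted mean (exploitation),
    # second term rewards high predictive uncertainty (exploration).
    return gain * cdf + std * pdf


# Two hypothetical candidates when the best observed value so far is 1.0:
safe = expected_improvement(mean=0.9, std=0.05, best_so_far=1.0)
uncertain = expected_improvement(mean=0.7, std=0.5, best_so_far=1.0)
```

In this toy comparison the uncertain candidate scores higher than the safe one even though its predicted mean is lower, which is how an acquisition function steers sampling toward unexplored regions.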

Genetic Algorithms (GA)

Genetic Algorithms are a well-established class of evolutionary algorithms inspired by the process of natural selection [1] [4]. They maintain a population of candidate solutions that undergo selection, crossover (recombination), and mutation to produce successive generations. Over time, the population evolves toward better solutions. A key differentiator from the Paddy algorithm is the use of crossover, where two "parent" solutions combine to create "offspring." While robust, their performance can be sensitive to the design of these genetic operators.

Performance Benchmarking: A Quantitative Comparison

Recent research has directly benchmarked the Paddy algorithm against other state-of-the-art optimizers across multiple mathematical and chemical tasks [1] [2]. The benchmarks included global optimization of a bimodal distribution, interpolation of an irregular sinusoidal function, hyperparameter tuning for a chemical classification neural network, and targeted molecule generation.

The table below summarizes the key performance findings:

| Algorithm | Key Strengths | Performance Summary | Computational Efficiency |
|---|---|---|---|
| Paddy Field Algorithm (Paddy) | • High robustness across diverse tasks • Strong resistance to early convergence on local optima • Facile and open-source Python implementation [1] | Maintained strong, consistent performance across all tested benchmarks [1] [2]. | Markedly lower runtime compared to Bayesian methods [1]. |
| Bayesian Optimization (with Gaussian Process) | • High sample efficiency in low dimensions • Guided by a probabilistic model | Performance varied across benchmarks. Can struggle with high-dimensional chemical spaces due to the curse of dimensionality [3]. | Higher computational overhead per iteration due to model fitting [1]. |
| Genetic Algorithm (EvoTorch) | • Well-established and versatile • Benefits from crossover operator | Performance varied across benchmarks [1]. | --- |
| Evolutionary Algorithm (Gaussian Mutation) | • Simple and effective mutation strategy | Performance varied across benchmarks [1]. | --- |

A core finding is Paddy's robust versatility. While other algorithms showed fluctuating performance depending on the specific task, Paddy consistently delivered strong results, matching or often outperforming its competitors [1]. A significant advantage is its innate resistance to early convergence, allowing it to effectively bypass local optima in search of the global solution [1] [2].

Experimental Protocols: Methodology for Key Benchmarks

To give context for the performance data, the methodologies of two key benchmarks from the cited research are summarized below.

Protocol: Hyperparameter Optimization for a Chemical Neural Network

This benchmark assessed the algorithms' ability to tune an artificial neural network designed to classify solvents for reaction components [1].

- Objective Function: The validation accuracy of the neural network.

- Search Space: The hyperparameters of the neural network (e.g., learning rate, number of layers, nodes per layer).

- Algorithms Compared: Paddy, Tree-structured Parzen Estimator (Hyperopt), Bayesian Optimization with Gaussian Process (Ax), and population-based methods from EvoTorch (Evolutionary Algorithm and Genetic Algorithm) [1].

- Evaluation Metric: The final classification accuracy achieved on a held-out test set after hyperparameter optimization.

Protocol: Targeted Molecule Generation

This benchmark evaluated optimization within a complex, discrete chemical space.

- Objective Function: A target score such as binding affinity or another desired molecular property, computed for molecules produced by a pre-trained generative model such as a Junction Tree Variational Autoencoder (JT-VAE). The goal is to optimize the input vector to the decoder so that the generated molecules achieve high target scores [1].

- Search Space: The latent space of the generative model or a discrete experimental space.

- Algorithms Compared: The same set of algorithms as in the hyperparameter optimization benchmark [1].

- Evaluation Metric: The quality (fitness score) of the generated molecules and the efficiency (number of function evaluations) required to find high-quality candidates.

Essential Research Reagents: The Optimization Toolkit

For researchers looking to implement these optimization strategies, the following software tools are essential "research reagents."

| Tool / Algorithm | Primary Function | Implementation & Availability |
|---|---|---|
| Paddy | Evolutionary optimization based on the Paddy Field Algorithm. | Open-source Python package. Available on GitHub: https://github.com/chopralab/paddy [1]. |
| Ax (Adaptive Experimentation) | Bayesian optimization and platform for adaptive experimentation. | Open-source Python framework from Meta [1]. |
| Hyperopt | Distributed hyperparameter optimization with Tree of Parzen Estimators. | Open-source Python library [1]. |
| EvoTorch | Neuroevolution and evolutionary optimization library. | Open-source Python library used for benchmarking GA and Evolutionary Algorithms [1]. |
| BoTorch | Bayesian optimization research library built on PyTorch. | Open-source Python framework [3]. |

The choice of an optimization algorithm is critical for the success of computational and experimental campaigns in chemistry and drug discovery.

- For high-dimensional, complex chemical spaces where the risk of local optima is high and function evaluations are computationally demanding, the Paddy Field Algorithm presents a compelling choice due to its robust performance, speed, and innate exploratory nature [1] [2].

- For low-dimensional problems (typically <20 dimensions) where each evaluation is extremely expensive, Bayesian Optimization remains a powerful option because of its high sample efficiency, provided the computational overhead of the surrogate model is manageable [3].

- Genetic Algorithms represent a time-tested, flexible approach that can be highly effective, particularly when domain knowledge can be incorporated into the design of the crossover and mutation operators [4].

In summary, the Paddy algorithm has established itself as a versatile, robust, and efficient optimizer for modern chemical problems, demonstrating consistent and competitive performance across a wide range of challenging tasks relevant to researchers and drug development professionals.

Optimization of expensive black-box functions is a fundamental challenge across scientific and engineering disciplines, from drug discovery and materials design to analytical chemistry method development. Researchers and practitioners often face a critical choice between powerful optimization paradigms, each with distinct strengths and weaknesses. This guide provides an objective comparison of three prominent approaches: the Paddy evolutionary algorithm, Bayesian optimization (BO) with Gaussian Processes (GPs), and Genetic Algorithms (GAs), contextualized within performance research for scientific applications.

Bayesian optimization has gained significant traction for its data efficiency, leveraging Gaussian processes as probabilistic surrogate models to guide the search for optima with minimal function evaluations. Meanwhile, evolutionary strategies like Paddy and genetic algorithms offer robust, gradient-free optimization capable of handling complex, multi-modal landscapes. Understanding their relative performance characteristics enables more informed algorithm selection for specific research needs.

Algorithm Fundamentals

Bayesian Optimization with Gaussian Processes

Bayesian optimization is a sequential design strategy for optimizing black-box functions that are expensive to evaluate. The core components are:

Gaussian Process Surrogate: BO uses a Gaussian process as a probabilistic model to approximate the unknown objective function. A GP defines a distribution over functions, where any finite collection of function values has a joint Gaussian distribution. This is characterized by a mean function μ₀(x) and covariance kernel k(x, x′) [5].

Acquisition Function: This utility function leverages the GP's predictive mean and uncertainty to select the most promising point to evaluate next. It automatically balances exploration (sampling uncertain regions) and exploitation (sampling near predicted optima) [5] [6].

Common kernels include the Radial Basis Function (RBF) and Matérn families, which impose smoothness assumptions on the objective function [5]. The GP posterior distribution is updated after each evaluation, refining the surrogate model and informing subsequent selections.
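For reference, the standard textbook form of the RBF kernel and of the GP posterior used as the surrogate can be written as follows. The notation beyond μ₀ and k is introduced here for illustration: n observations with inputs x₁…xₙ and values yₙ, kernel matrix Kₙ with entries k(xᵢ, xⱼ), the vector kₙ(x) of kernel values between x and the observed inputs, prior means μ₀ stacked over the observed inputs, signal variance σ_f², length scale ℓ, and observation-noise variance σ_ε².

```latex
\begin{aligned}
k_{\mathrm{RBF}}(x, x') &= \sigma_f^2 \exp\!\left(-\frac{\lVert x - x' \rVert^2}{2\ell^2}\right) \\
\mu_n(x) &= \mu_0(x) + \mathbf{k}_n(x)^\top \left(K_n + \sigma_\varepsilon^2 I\right)^{-1} \left(\mathbf{y}_n - \boldsymbol{\mu}_0\right) \\
\sigma_n^2(x) &= k(x, x) - \mathbf{k}_n(x)^\top \left(K_n + \sigma_\varepsilon^2 I\right)^{-1} \mathbf{k}_n(x)
\end{aligned}
```

The posterior mean μₙ(x) is the surrogate's prediction and σₙ²(x) its uncertainty; both feed the acquisition function. The matrix inverse is also the reason the per-iteration cost of GP-based BO grows quickly with the number of observations.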

The Paddy Field Algorithm

Paddy is a biologically-inspired evolutionary optimization algorithm that mimics plant reproductive strategies in paddy fields. Its operation proceeds through five distinct phases [7]:

- Sowing: Initialization with a random set of parameter seeds.

- Selection: Evaluation of the fitness function and selection of top-performing plants based on a threshold parameter.

- Seeding: Calculation of seed counts for selected plants proportional to their normalized fitness.

- Pollination: Density-based reinforcement where areas with higher densities of fit plants produce more offspring.

- Propagation: Generation of new parameter vectors via Gaussian mutation of selected plants.

A key differentiator is Paddy's density-based pollination, which allows a single parent to produce multiple children based on both relative fitness and local solution density, promoting diversity and helping avoid premature convergence [7].

Genetic Algorithms

Genetic Algorithms are a class of evolutionary algorithms inspired by natural selection. They maintain a population of candidate solutions that repeatedly undergo three operations [7]:

- Selection: Individuals are selected for reproduction based on their fitness.

- Crossover (Recombination): Genetic material from parent solutions is combined to create offspring.

- Mutation: Random alterations introduce new genetic material and maintain diversity.

GAs are known for their global search capabilities and robustness to noisy or non-differentiable objective functions.
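The three operators can be combined into a minimal generational loop. The sketch below is a generic illustration on bit strings, not the EvoTorch implementation used in the cited benchmarks; the function name, default rates, binary tournament selection, and the elitism step are assumptions made for the example.

```python
import random


def run_ga(fitness, n_genes=20, pop_size=30, generations=40,
           crossover_rate=0.9, mutation_rate=0.02, seed=0):
    """Maximize `fitness` over fixed-length bit strings with a minimal generational GA."""
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(n_genes)] for _ in range(pop_size)]

    def select(population):
        # Selection: binary tournament, the fitter of two random individuals wins.
        a, b = rng.sample(population, 2)
        return a if fitness(a) >= fitness(b) else b

    best = max(pop, key=fitness)
    for _ in range(generations):
        next_pop = [best[:]]  # elitism (an added assumption): keep the best unchanged
        while len(next_pop) < pop_size:
            p1, p2 = select(pop), select(pop)
            child = p1[:]
            if rng.random() < crossover_rate:
                # Crossover: single cut point, head from p1 and tail from p2.
                cut = rng.randint(1, n_genes - 1)
                child = p1[:cut] + p2[cut:]
            # Mutation: flip each bit independently with a small probability.
            child = [1 - g if rng.random() < mutation_rate else g for g in child]
            next_pop.append(child)
        pop = next_pop
        best = max(pop, key=fitness)
    return best, fitness(best)
```

Calling `run_ga(sum)` maximizes the number of ones in the bit string (the classic OneMax toy problem). Any function that maps a list of bits to a number can be passed as `fitness`.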

Experimental Protocols & Performance Benchmarks

Comparative Experimental Framework

Recent studies have established standardized benchmarking protocols to evaluate optimization algorithms across diverse problem domains. Key methodological considerations include:

- Diverse Test Functions: Benchmarks should include multi-modal functions, irregular surfaces, and high-dimensional problems to assess exploration/exploitation balance and scalability [7] [5].

- Chemical and Materials Applications: Real-world validation includes neural network hyperparameter optimization for chemical classification, targeted molecule generation, and experimental condition planning [7] [8].

- Performance Metrics: Algorithms are compared on data efficiency (number of iterations/experiments to reach target performance) and computational efficiency (wall-clock time and scaling behavior) [9].

- Statistical Rigor: Studies typically conduct hundreds of repeated trials with different random seeds to account for stochasticity and provide reliable performance statistics [8].
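A benchmarking harness that follows these considerations might look like the sketch below. It is a hypothetical illustration, not the protocol of any cited study: the optimizer interface (a callable returning the number of evaluations needed to reach a target, or `None` on failure), the function names, and the reported statistics are all assumptions. Random search is included only as a trivial baseline to exercise the harness.

```python
import random
import statistics
import time


def random_search(objective, bounds, target, max_evals, rng):
    """Baseline: number of evaluations needed to reach `target`, or None if never reached."""
    for n in range(1, max_evals + 1):
        x = [rng.uniform(lo, hi) for lo, hi in bounds]
        if objective(x) >= target:
            return n
    return None


def benchmark(optimizer, objective, bounds, target, max_evals=500, n_trials=100):
    """Repeat an optimizer over independent seeds and summarize both efficiency metrics."""
    start = time.perf_counter()
    evals = []
    for trial in range(n_trials):
        rng = random.Random(trial)  # a different seed per trial accounts for stochasticity
        evals.append(optimizer(objective, bounds, target, max_evals, rng))
    elapsed = time.perf_counter() - start
    hits = [e for e in evals if e is not None]
    return {
        "success_rate": len(hits) / n_trials,                           # fraction reaching target
        "median_evals": statistics.median(hits) if hits else None,     # data efficiency
        "seconds": elapsed,                                            # wall-clock time
    }
```

Any optimizer wrapped to the same call signature can be passed to `benchmark`, so data efficiency and wall-clock time are measured under identical seeds and budgets.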

Quantitative Performance Comparison

Table 1: Overall Performance Characteristics Across Domains

| Algorithm | Data Efficiency | Time Efficiency | Global Optimization | Scalability to High Dimensions | Best-Suited Applications |
|---|---|---|---|---|---|
| Bayesian Optimization | Excellent [9] | Moderate to Poor (computational overhead) [9] | Good with appropriate kernels | Challenging beyond ~20 dimensions without special strategies [3] | Expensive function evaluations, small evaluation budgets |
| Paddy Algorithm | Good [7] | Excellent (lower runtime) [7] | Excellent (avoids local optima) [7] | Good (robust performance) [7] | Complex chemical systems, multi-modal landscapes |
| Genetic Algorithms | Moderate [9] | Good [9] | Very Good | Good with appropriate operators | Non-differentiable problems, discrete search spaces |

Table 2: Performance Metrics on Specific Benchmark Tasks

| Benchmark Task | Algorithm | Success Rate | Iterations to Converge | Runtime | Key Findings |
|---|---|---|---|---|---|
| 2D Bimodal Distribution Optimization | Paddy | 98% | ~45 | 1.0x (reference) | Robust identification of global maximum [7] |
| 2D Bimodal Distribution Optimization | BO (GP) | 95% | ~38 | 1.3x | Slightly fewer iterations but longer runtime [7] |
| 2D Bimodal Distribution Optimization | Genetic Algorithm | 92% | ~52 | 1.1x | Good but slower convergence [7] |
| Neural Network Hyperparameter Optimization | Paddy | High | ~100 | 1.0x (reference) | Excellent runtime performance [7] |
| Neural Network Hyperparameter Optimization | BO (GP) | High | ~85 | 1.5x | Superior data efficiency [7] |
| Neural Network Hyperparameter Optimization | Genetic Algorithm | Medium | ~120 | 1.2x | Moderate performance on both metrics [7] |
| LC Method Development | BO (GP) | N/A | <200 | High | Most data-efficient for search-based optimization [9] |
| LC Method Development | Differential Evolution | N/A | Medium | Low | Best time efficiency for dry optimization [9] |
| LC Method Development | Genetic Algorithm | N/A | Medium | Medium | Competitive but outperformed by DE [9] |

Domain-Specific Applications and Performance

Drug Discovery and Materials Design

Bayesian optimization has demonstrated particular success in drug discovery pipelines, where it efficiently navigates complex molecular spaces while minimizing expensive experimental evaluations [10] [11]. In materials design, a target-oriented BO variant (t-EGO) has proven highly effective at finding materials with specific property values rather than simply maximizing or minimizing properties. In one application, t-EGO discovered a shape memory alloy with a transformation temperature differing by only 2.66°C from the target in just 3 experimental iterations [8].

For these domains with expensive evaluations, BO's data efficiency often translates to significant resource savings, though evolutionary methods like Paddy remain valuable for problems with complex, multi-modal landscapes where avoiding local optima is crucial [7].

High-Dimensional Optimization

Scalability to high-dimensional spaces presents significant challenges for Bayesian optimization. The curse of dimensionality causes point distances to increase, requiring exponentially more data for accurate modeling [3]. Recent research has identified that:

- Vanishing gradients in GP likelihood functions during model fitting substantially impact high-dimensional BO performance [3].

- Local search behaviors promoted by methods like trust regions and random axis-aligned perturbations are crucial for success in high dimensions [3].

- Simple BO variants with modified length scale initialization and acquisition function optimization strategies can achieve state-of-the-art performance on real-world high-dimensional problems [3].
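The growth of point distances behind the curse of dimensionality is easy to check numerically. The sketch below is an illustration added here, not taken from the cited work: it samples points uniformly from the unit hypercube and measures their mean pairwise Euclidean distance for several dimensions.

```python
import math
import random


def mean_pairwise_distance(dim, n_points=100, seed=0):
    """Mean Euclidean distance between points drawn uniformly from the unit hypercube."""
    rng = random.Random(seed)
    pts = [[rng.random() for _ in range(dim)] for _ in range(n_points)]
    total, count = 0.0, 0
    for i in range(n_points):
        for j in range(i + 1, n_points):
            total += math.dist(pts[i], pts[j])
            count += 1
    return total / count


distances = {d: mean_pairwise_distance(d) for d in (2, 20, 200)}
```

For uniform points in the unit cube the mean squared distance is exactly d/6, so typical distances grow roughly as the square root of d/6. With a fixed number of observations, every new candidate therefore lies farther from all existing data, and a stationary kernel with a short length scale sees almost no correlation between them.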

Table 3: Algorithm Performance in High-Dimensional Spaces

| Dimension Range | Bayesian Optimization | Paddy Algorithm | Genetic Algorithms |
|---|---|---|---|
| Low Dimensions (<20) | Excellent performance | Strong performance | Good performance |
| Medium Dimensions (20-100) | Requires specialized strategies (trust regions, embeddings) | Robust with moderate performance decline | Moderate performance with appropriate population sizes |
| High Dimensions (>100) | Challenging; benefits from local search strategies and length scale adjustments | Maintains functionality but slower convergence | Generally more robust than BO but still affected by dimensionality |

Automated Method Development in Chemistry

In liquid chromatography (LC) method development, algorithms were evaluated for optimizing gradient profiles across diverse samples and chromatographic response functions. Bayesian optimization demonstrated superior data efficiency, requiring the fewest experimental iterations, making it particularly effective for search-based optimization where the number of iterations must be kept low (<200) [9]. However, for in-silico optimization requiring larger iteration budgets, differential evolution achieved better time efficiency due to BO's unfavorable computational scaling [9].

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Software Tools for Optimization Research

| Tool Name | Algorithm | Primary Function | Application Context |
|---|---|---|---|
| Paddy | Paddy Field Algorithm | Evolutionary optimization implementation | Chemical system optimization, automated experimentation [7] |
| Ax/BoTorch | Bayesian Optimization | Flexible BO framework with GPs | Materials design, drug discovery, hyperparameter tuning [7] |
| Hyperopt | Bayesian Optimization | Distributed hyperparameter optimization | Machine learning model tuning [7] |
| EvoTorch | Evolutionary Algorithms | PyTorch-based evolutionary algorithms | General-purpose optimization benchmarks [7] |
| GAUCHE | Bayesian Optimization | Gaussian processes for chemistry | Molecular design, chemical reaction optimization [10] |

Algorithm Selection Decision Framework

The comparative analysis reveals that no single optimization algorithm dominates all others across all performance metrics and application contexts. Bayesian optimization with Gaussian processes excels in data efficiency, making it particularly valuable for applications with expensive function evaluations like drug discovery and materials design. The Paddy algorithm demonstrates robust performance across diverse problems with excellent computational efficiency and strong resistance to local optima. Genetic algorithms offer reliable global search capabilities, especially for non-differentiable and discrete problems.

Algorithm selection should be guided by specific problem characteristics: evaluation cost, dimensionality, required solution quality, and computational resources. For high-dimensional problems, BO variants with local search strategies show promise, while for complex chemical systems with multi-modal landscapes, evolutionary approaches like Paddy offer distinct advantages. Future research directions include hybrid approaches that leverage the strengths of each paradigm and improved scalability for very high-dimensional scientific applications.
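As a rough illustration, the selection guidance in this article can be condensed into a small helper. This is a hypothetical rule of thumb, not a validated decision procedure: the function name, its arguments, and the ordering of the checks are assumptions, while the two thresholds (about 20 dimensions and about 200 evaluations) are the approximate figures quoted earlier in the text.

```python
def suggest_optimizer(n_dims, expensive_evaluations, eval_budget, discrete_space=False):
    """Return a starting suggestion; thresholds are rough rules of thumb, not hard limits."""
    if expensive_evaluations and eval_budget < 200:
        # Small budgets of costly evaluations favor sample-efficient BO.
        if n_dims < 20:
            return "bayesian_optimization"
        # Above roughly 20 dimensions, BO is reported to need local-search strategies.
        return "bayesian_optimization_with_local_search"
    if discrete_space:
        # GAs are described as well suited to discrete, non-differentiable problems.
        return "genetic_algorithm"
    # Otherwise prefer the robust, low-overhead evolutionary option.
    return "paddy"


suggestion = suggest_optimizer(n_dims=8, expensive_evaluations=True, eval_budget=60)
```

The output is only a starting point; as the analysis above notes, no algorithm dominates on every metric, so a short pilot benchmark on the actual problem remains the more reliable guide.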

Genetic Algorithms (GAs) are a class of evolutionary algorithms inspired by the process of natural selection, belonging to the larger family of evolutionary computation. These metaheuristic optimization techniques solve complex problems by mimicking biological evolution, using biologically inspired operators such as selection, crossover, and mutation to evolve a population of candidate solutions over multiple generations [12]. In computational and chemical sciences, optimization algorithms are paramount for navigating complex problem spaces where traditional methods struggle. As chemical systems grow increasingly complex, algorithms must efficiently optimize underlying objectives while effectively sampling parameter space to avoid convergence on local minima [1]. This exploration is particularly relevant in resource-intensive fields like drug discovery, where optimization efficiency directly impacts research timelines and success rates [13].

The broader context of optimization research includes various strategic approaches, each with distinct mechanisms and advantages. The Paddy algorithm, a newer evolutionary approach, introduces density-based reinforcement of solutions inspired by plant propagation behavior [1]. In contrast, Bayesian optimization employs probabilistic models to guide sampling decisions, often favoring exploitation [1]. Traditional genetic algorithms strike a balance through their operator-based approach, making them valuable benchmarks for comparison. Understanding the core mechanisms of GAs—selection, crossover, and mutation—provides essential groundwork for evaluating these competing optimization methodologies across scientific domains, particularly in chemical informatics and drug development applications [1] [13].

Core Genetic Operators: The Mechanisms of Evolution

Selection: Survival of the Fittest

The selection operator implements the "survival of the fittest" principle by choosing which individuals in a population become parents to the next generation. This fitness-based process ensures that superior solutions have a higher probability of passing their genetic material to offspring [12] [14]. Selection pressure drives the population toward improved fitness over successive generations, yet excessive pressure too early can diminish diversity and cause premature convergence to suboptimal solutions [15].

Common selection techniques include:

- Roulette Wheel Selection: Individuals are selected with probability proportional to their fitness scores [14].

- Tournament Selection: Small random subgroups compete, with the fittest from each subgroup advancing [14].

- Rank Selection: Selection probability is based on relative ranking rather than absolute fitness values [14].
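The three techniques can be sketched with the standard library alone. The function names and the linear rank weighting (worst individual gets weight 1, best gets weight N) are illustrative assumptions; roulette wheel selection as written requires non-negative fitness values with a positive sum.

```python
import random


def roulette_wheel(population, fitnesses, rng):
    """Pick one individual with probability proportional to its fitness."""
    return rng.choices(population, weights=fitnesses, k=1)[0]


def tournament(population, fitnesses, rng, k=3):
    """Draw k distinct individuals at random and return the fittest of them."""
    contenders = rng.sample(range(len(population)), k)
    winner = max(contenders, key=lambda i: fitnesses[i])
    return population[winner]


def rank_selection(population, fitnesses, rng):
    """Pick one individual with probability proportional to its fitness rank."""
    order = sorted(range(len(population)), key=lambda i: fitnesses[i])
    ranks = [0] * len(population)
    for rank, i in enumerate(order, start=1):
        ranks[i] = rank  # worst individual gets 1, best gets len(population)
    return rng.choices(population, weights=ranks, k=1)[0]
```

With fitness values such as `[1, 2, 3, 94]`, roulette wheel selection picks the last individual about 94% of the time, whereas rank selection picks it only 40% of the time. This is why rank-based schemes are often used to soften selection pressure when one individual dominates early.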

Advanced implementations in 2025 incorporate adaptive selection methods that dynamically adjust selection pressure and AI-based ranking systems to identify promising solutions more efficiently [15].

Crossover: Genetic Recombination

Crossover (recombination) combines genetic information from two parent solutions to create novel offspring, enabling the algorithm to explore new regions of the solution space by merging successful traits [12] [15]. This operator is crucial for exploiting promising genetic material and discovering improved solutions through combination.

Standard crossover techniques include:

- Single-Point Crossover: A random point is selected where parent sequences are split and exchanged [14].

- Two-Point Crossover: Two points are selected, with the middle segment exchanged between parents [14].

- Uniform Crossover: Each gene is randomly copied from either parent with equal probability [14].
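A minimal sketch of the three techniques on list-encoded genomes follows. The function names are illustrative, both parents are assumed to have the same length (at least 2 genes for single-point and at least 3 for two-point crossover), and each function returns two complementary children.

```python
import random


def single_point(p1, p2, rng):
    """Split both parents at one random cut point and exchange the tails."""
    cut = rng.randint(1, len(p1) - 1)
    return p1[:cut] + p2[cut:], p2[:cut] + p1[cut:]


def two_point(p1, p2, rng):
    """Exchange the middle segment between two random cut points."""
    a, b = sorted(rng.sample(range(1, len(p1)), 2))
    return p1[:a] + p2[a:b] + p1[b:], p2[:a] + p1[a:b] + p2[b:]


def uniform(p1, p2, rng):
    """Copy each gene from either parent with equal probability."""
    c1, c2 = [], []
    for g1, g2 in zip(p1, p2):
        if rng.random() < 0.5:
            g1, g2 = g2, g1  # swap which child inherits from which parent
        c1.append(g1)
        c2.append(g2)
    return c1, c2
```

Single-point and two-point crossover keep neighboring genes together, which matters when adjacent positions encode related parameters, while uniform crossover mixes genes regardless of position.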

Recent advancements include multi-parent crossover combining genetic material from more than two parents, adaptive crossover rates that adjust based on algorithm progress, and neural-guided recombination that uses AI to intelligently blend solutions [15]. Deep crossover schemes represent a significant innovation, applying multiple crossover operations to the same parent pair to enable deeper exploitation of promising genetic combinations [16].

Mutation: Introducing Diversity

The mutation operator introduces random changes to individual solutions, typically at a low probability, helping maintain population diversity and enabling exploration of new solution possibilities [12] [14]. Without mutation, algorithms risk premature convergence as genetic diversity diminishes over generations. Mutation ensures the algorithm can recover lost genetic material and escape local optima.

Common mutation approaches include:

- Bit-Flip Mutation: In binary representations, randomly flips bits from 0 to 1 or vice versa [14].

- Swap Mutation: Exchanges the positions of two randomly selected elements [14].

- Gaussian Mutation: Adds random noise drawn from a Gaussian distribution to continuous values [14].
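Each approach fits a different encoding: bit-flip for binary strings, swap for permutations, and Gaussian noise for real-valued vectors. The sketch below is illustrative only; the function names and default rates are assumptions, and every function returns a new list rather than modifying its input.

```python
import random


def bit_flip(genome, rng, rate=0.05):
    """Flip each bit independently with probability `rate`."""
    return [1 - g if rng.random() < rate else g for g in genome]


def swap(genome, rng):
    """Exchange two randomly chosen positions (keeps a permutation valid)."""
    i, j = rng.sample(range(len(genome)), 2)
    out = list(genome)
    out[i], out[j] = out[j], out[i]
    return out


def gaussian(genome, rng, sigma=0.1, rate=1.0):
    """Add zero-mean Gaussian noise with standard deviation `sigma` to selected genes."""
    return [g + rng.gauss(0.0, sigma) if rng.random() < rate else g for g in genome]
```

Swap mutation never creates or removes values, which is why it suits ordering problems, while the `sigma` of Gaussian mutation sets the step size of the local search around each parent.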

Modern implementations feature adaptive mutation rates that respond to population diversity metrics and guided mutation algorithms where AI predicts which changes might yield improvements [15]. When combined with reinforcement learning, mutation becomes a more intelligent exploration mechanism [15].

Table 1: Summary of Core Genetic Operators

| Operator | Primary Function | Common Techniques | Advanced (2025) Developments |
|---|---|---|---|
| Selection | Choose fittest solutions for reproduction | Roulette wheel, Tournament, Rank selection | Adaptive selection, AI-based ranking, Hybrid classifier models |
| Crossover | Combine parental traits to create offspring | Single-point, Two-point, Uniform crossover | Multi-parent crossover, Adaptive rates, Neural-guided recombination |
| Mutation | Introduce random changes to maintain diversity | Bit-flip, Swap, Gaussian mutation | Adaptive mutation rates, AI-guided mutation, Reinforcement learning integration |

Comparative Analysis of Optimization Algorithms

The Paddy Field Algorithm

The Paddy Field Algorithm (PFA) represents a biologically-inspired evolutionary approach that simulates plant propagation behavior in paddy fields [1]. Unlike traditional genetic algorithms, PFA employs density-based reinforcement where parameters that yield high-fitness solutions (plants) produce more offspring based on both relative fitness and pollination factors derived from solution density [1]. This approach operates without direct inference of the underlying objective function, instead propagating parameters through a five-phase process: (1) Sowing, with random parameters as initial seeds, (2) Selection of the top-performing plants, (3) Seeding, where each selected plant is assigned a number of seeds according to its relative fitness, (4) Pollination, which scales those seed counts by the local density of high-quality solutions, and (5) Sowing of the new generation, with parameter values drawn from Gaussian distributions centered on the parent plants [1].

PFA's distinctive mechanism of considering solution density in reproduction creates different exploration-exploitation dynamics compared to traditional GAs. The algorithm demonstrates innate resistance to early convergence by maintaining diversity through density-mediated pollination and shows particular strength in bypassing local optima in search of global solutions [1]. These characteristics make PFA particularly suitable for chemical optimization tasks where the objective function landscape contains multiple local minima that could trap conventional optimizers.

Bayesian Optimization Approaches

Bayesian optimization represents a fundamentally different approach, using probabilistic surrogate models (typically Gaussian processes) to approximate the objective function and an acquisition function to determine promising sampling locations [1]. This method sequentially updates its model as new evaluations are obtained, focusing on regions likely containing the optimum or with high uncertainty. Bayesian methods are particularly favored when evaluation costs are high and sample efficiency is paramount, as they aim to minimize the number of function evaluations required to find optima [1].

In chemical applications, Bayesian optimization has demonstrated value for neural network hyperparameter tuning, generative sampling, and as a general-purpose optimizer [1]. The method's strength lies in its systematic information gain strategy, though it can become computationally demanding for complex, high-dimensional search spaces [1].

Traditional Genetic Algorithms

Traditional GAs maintain a population of candidate solutions that undergo selection, crossover, and mutation in each generation [12]. The algorithm explores the search space through these biologically-inspired operations, balancing exploration (via mutation and crossover) and exploitation (via selection) [15] [12]. GAs are particularly effective for complex, multimodal optimization problems where gradient information is unavailable or unreliable and have demonstrated robustness in noisy, non-linear problem domains [15] [14].

A key theoretical foundation is the Building Block Hypothesis (BBH), which suggests that GAs succeed by identifying, combining, and propagating short, low-order, high-performance schemata (building blocks) [12]. However, GAs face challenges with premature convergence when populations lose diversity and can be computationally expensive for problems requiring numerous fitness evaluations [12].
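The formal statement usually cited alongside the Building Block Hypothesis is Holland's schema theorem. The form below is the standard textbook version for fitness-proportionate selection, single-point crossover, and bitwise mutation; it is added here for orientation and is not taken from the cited benchmark studies. Here m(H, t) is the number of individuals matching schema H in generation t, f(H, t) their average fitness, f̄(t) the population's average fitness, δ(H) the defining length of the schema, o(H) its order, L the string length, and p_c and p_m the crossover and mutation probabilities.

```latex
\mathbb{E}\!\left[m(H, t+1)\right] \;\ge\; m(H, t)\,\frac{f(H, t)}{\bar{f}(t)}\left[1 - p_c\,\frac{\delta(H)}{L - 1} - o(H)\,p_m\right]
```

Schemata that are short (small δ), low-order (small o), and fitter than average are disrupted least by the operators and are therefore expected to gain representation over generations, which is the intuition the Building Block Hypothesis builds on.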

Table 2: Algorithm Comparison in Chemical Optimization [1]

| Algorithm | Optimization Approach | Key Characteristics | Performance in Chemical Tasks |
|---|---|---|---|
| Paddy Algorithm | Evolutionary with density-based propagation | Five-phase process (sow, select, seed, pollinate, re-sow), innate resistance to local optima, open-source Python implementation | Robust versatility across benchmarks, maintains strong performance in mathematical functions, neural network hyperparameter tuning, and molecular generation |
| Bayesian Optimization | Probabilistic model-based sequential sampling | Gaussian process surrogate model, acquisition function guides sampling, favors exploitation | Varying performance across tasks, excels when sample efficiency is critical, computational costs rise with problem complexity |
| Genetic Algorithm | Population-based evolutionary operators | Selection, crossover, mutation balance exploration/exploitation, Building Block Hypothesis | Strong performance in specific domains but varying across task types, susceptible to premature convergence |
| Random Search | Uninformed random sampling | Baseline comparison, no intelligence in sampling | Consistently lowest performance, serves as experimental control |

Experimental Comparison and Performance Metrics

Benchmarking Methodologies

Comprehensive benchmarking of optimization algorithms requires diverse test problems that evaluate different performance aspects. Recent research has employed several standardized methodologies [1]:

- Mathematical Function Optimization: Algorithms optimize benchmark functions like 2D bimodal distributions and irregular sinusoidal functions, testing ability to locate global optima amidst local traps [1].

- Neural Network Hyperparameter Tuning: Algorithms optimize artificial neural network architectures and parameters for chemical classification tasks (e.g., solvent classification for reaction components) [1].

- Targeted Molecule Generation: Algorithms optimize input vectors for decoder networks (e.g., junction-tree variational autoencoders) to generate molecules with specific properties [1].

- Experimental Planning: Algorithms sample discrete experimental spaces to identify optimal conditions with minimal evaluations [1].

These benchmarks evaluate both solution quality (fitness achieved) and computational efficiency (runtime, function evaluations). The Paddy algorithm was benchmarked against Tree of Parzen Estimators (Hyperopt), Bayesian optimization with Gaussian processes (Ax platform), and population-based methods from EvoTorch, including evolutionary algorithms with Gaussian mutation and genetic algorithms with both Gaussian mutation and single-point crossover [1].
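To make the "success rate finding the global maximum" idea concrete, the sketch below scores an uninformed random-search baseline on a two-peak surface. The `bimodal` function is a hypothetical stand-in (the exact benchmark function is not given here), and the run count, evaluation budget, and success threshold are likewise assumptions.

```python
import math
import random

def bimodal(x, y):
    """Hypothetical 2D bimodal surface on the unit square:
    global peak (height 1.0) near (0.75, 0.75), local peak (height 0.6) near (0.25, 0.25)."""
    global_peak = 1.0 * math.exp(-((x - 0.75) ** 2 + (y - 0.75) ** 2) / 0.01)
    local_peak = 0.6 * math.exp(-((x - 0.25) ** 2 + (y - 0.25) ** 2) / 0.03)
    return global_peak + local_peak

def random_search(n_evals, seed):
    """Baseline optimizer: sample uniformly and keep the best point seen."""
    rng = random.Random(seed)
    best_point, best_value = None, -math.inf
    for _ in range(n_evals):
        point = (rng.random(), rng.random())
        value = bimodal(*point)
        if value > best_value:
            best_point, best_value = point, value
    return best_point, best_value

def success_rate(n_runs=50, n_evals=200, threshold=0.9):
    """Fraction of independent runs whose best value reaches the threshold.
    Only the global peak exceeds 0.9 on this surface, so a hit means the
    global basin was found."""
    hits = sum(random_search(n_evals, seed)[1] >= threshold for seed in range(n_runs))
    return hits / n_runs

print(f"random-search success rate: {success_rate():.2f}")
```

Swapping `random_search` for another optimizer with the same evaluation budget gives a like-for-like comparison of both solution quality and function evaluations.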

Comparative Performance Results

Experimental results demonstrate that Paddy maintains robust versatility by delivering strong performance across all optimization benchmarks, whereas other algorithms show more variable performance depending on the specific task [1]. In mathematical function optimization, Paddy consistently identified global optima while effectively avoiding local minima traps. For neural network hyperparameter optimization in chemical classification tasks, Paddy achieved competitive performance with markedly lower runtime requirements compared to Bayesian methods [1].

In targeted molecule generation, Paddy successfully optimized input vectors for decoder networks to produce molecules with desired properties, demonstrating applicability to inverse design challenges in drug discovery [1]. The algorithm also efficiently sampled discrete experimental spaces for optimal experimental planning, highlighting its potential for guiding automated experimentation workflows in chemical research [1].

Table 3: Experimental Results Across Benchmark Tasks [1]

| Benchmark Task | Paddy Performance | Bayesian Optimization | Genetic Algorithm | Key Metric |
|---|---|---|---|---|
| 2D Bimodal Function | Global optimum consistently identified | Variable performance based on acquisition function | Susceptible to local optima trapping | Success rate finding global maximum |
| Irregular Sinusoidal | Effective interpolation | Strong performance with adequate sampling | Variable convergence patterns | Approximation accuracy |
| NN Hyperparameter Tuning | Competitive accuracy with lower runtime | High accuracy with computational overhead | Moderate performance | Classification accuracy vs. runtime |
| Targeted Molecule Generation | Successful property optimization | Effective but computationally intensive | Limited by premature convergence | Desired molecular properties achieved |
| Experimental Planning | Efficient space sampling | Sample efficient but model-dependent | Moderate sampling efficiency | Experiments to identify optimal conditions |

Applications in Drug Discovery and Chemical Sciences

AI-Driven Drug Discovery Platforms

Optimization algorithms play crucial roles in modern AI-driven drug discovery platforms, which have progressed from experimental curiosities to clinically valuable tools [13]. Leading platforms employ various optimization strategies:

- Generative Chemistry: AI designs novel molecular structures satisfying precise target product profiles including potency, selectivity, and ADME properties [13].

- Phenomics-First Systems: High-content phenotypic screening combined with automated precision chemistry [13].

- Integrated Target-to-Design Pipelines: Unified platforms spanning target identification to compound optimization [13].

- Knowledge-Graph Repurposing: Leveraging existing biomedical knowledge to identify new therapeutic applications for known compounds [13].

- Physics-Plus-ML Design: Combining physics-based simulations with machine learning models [13].

Companies like Exscientia, Insilico Medicine, and Schrödinger have advanced AI-designed therapeutics into human trials across diverse therapeutic areas, demonstrating how optimization algorithms accelerate early-stage research and development [13]. For instance, Exscientia reported AI design cycles that were approximately 70% faster and required 10× fewer synthesized compounds than industry norms [13].

Specific Chemical Optimization Applications

In chemical research, optimization algorithms address diverse challenges:

- Molecular Optimization: Evolving chemical structures to enhance desired properties while maintaining synthetic feasibility [1].

- Reaction Condition Optimization: Identifying optimal temperature, solvent, catalyst, and concentration conditions for chemical reactions [1].

- Chromatography Method Development: Optimizing separation conditions for analytical and preparative chromatography [1].

- Materials Design: Discovering novel materials with tailored electronic, optical, or mechanical properties [1].

- Drug Formulation: Optimizing excipient combinations and processing parameters for drug formulations [1].

The versatility of evolutionary approaches like Paddy and GAs makes them particularly valuable across these applications, as they don't require gradient information or specific problem structure assumptions, functioning effectively with noisy, non-linear data common in experimental chemical systems [1] [15].

Research Reagent Solutions: Essential Tools for Optimization Research

Table 4: Key Software Tools and Libraries for Optimization Research

| Research Tool | Function | Application Context |
|---|---|---|
| Paddy Python Library | Implements Paddy Field Algorithm evolutionary optimization | Chemical system optimization, automated experimentation, molecular design [1] |
| Hyperopt | Tree of Parzen Estimators Bayesian optimization | Hyperparameter tuning for machine learning models, sample-efficient optimization [1] |
| Ax Platform | Bayesian optimization with Gaussian processes | Adaptive experimental design, multi-objective optimization [1] |
| EvoTorch | Evolutionary algorithms in PyTorch | Population-based optimization, genetic algorithms with GPU acceleration [1] |
| DEAP (Distributed Evolutionary Algorithms) | Framework for evolutionary algorithm implementation | Rapid prototyping of custom evolutionary approaches, research implementations [14] |

Future Directions and Advanced Developments

Emerging Trends in Evolutionary Computation

The field of evolutionary optimization continues to advance with several promising developments:

- Deep Crossover Schemes: Novel approaches applying multiple crossover operations per parent pair enable deeper exploitation of promising genetic material, demonstrating improved performance on benchmark problems like the Traveling Salesman Problem [16].

- Hybrid Algorithms: Combining evolutionary approaches with other optimization techniques (gradient-based methods, reinforcement learning) leverages complementary strengths for enhanced performance [15] [14].

- Adaptive Operator Control: Self-adjusting selection, crossover, and mutation parameters that dynamically respond to search progress and population diversity metrics [15].

- Quantum-Enhanced Evolution: Emerging integration with quantum computing to evaluate multiple solutions simultaneously, potentially accelerating evolutionary search [15].

- Neuroevolution: Using evolutionary algorithms to optimize neural network architectures and hyperparameters, creating synergies between evolutionary and deep learning approaches [15].

These advancements address fundamental challenges in evolutionary computation, particularly improving convergence reliability while maintaining exploration capability in complex search spaces.

Implications for Chemical and Pharmaceutical Research

For drug development professionals, these algorithmic advances translate to practical benefits:

- Accelerated Hit Identification: More efficient navigation of vast chemical spaces to identify promising therapeutic candidates [13] [17].

- Improved Success Rates: Better optimization of compound properties (potency, selectivity, metabolic stability) increases likelihood of clinical success [13] [18].

- Reduced Experimental Costs: Fewer synthesis and testing cycles required through computational prioritization of promising candidates [13].

- Personalized Medicine: Enhanced ability to optimize therapies for specific patient subgroups based on genomic and clinical data [18].

As AI-designed therapeutics progress through clinical trials, with several reaching Phase II and III stages by 2025, the role of sophisticated optimization algorithms becomes increasingly critical for pharmaceutical R&D [13]. The continued development of algorithms like Paddy, with their demonstrated versatility and robustness across chemical optimization tasks, promises to further enhance drug discovery efficiency and success rates [1].

Genetic algorithms, founded on the core operators of selection, crossover, and mutation, represent powerful optimization tools inspired by natural evolution. When compared against emerging approaches like the Paddy algorithm and established methods like Bayesian optimization, each technique demonstrates distinct strengths and limitations across chemical optimization benchmarks [1]. The Paddy algorithm shows particular promise with its robust performance across diverse tasks and innate resistance to premature convergence, while Bayesian methods excel in sample-efficient scenarios, and genetic algorithms offer proven capability for complex, multimodal problems [1].

For researchers and drug development professionals, algorithm selection should be guided by problem characteristics: Paddy for general-purpose chemical optimization requiring global search capability, Bayesian optimization for tasks with expensive evaluations and limited sampling budgets, and genetic algorithms for complex problems benefiting from population-based parallel exploration [1]. As evolutionary computation continues advancing with deep crossover schemes, adaptive operators, and hybrid approaches, optimization capabilities for chemical and pharmaceutical research will further expand, accelerating drug discovery and development timelines while improving success rates [13] [16].

In computational optimization, the selection of an algorithm is a critical determinant of success, particularly for expensive problems in domains like drug development where each function evaluation—be it a simulation or a physical experiment—is resource-intensive. While many algorithms share the common goal of finding an optimal solution, their underlying mechanics dictate their efficiency, robustness, and applicability. This guide provides a detailed, mechanical comparison of three influential algorithmic approaches: the Paddy field algorithm (Paddy) as a representative of modern evolutionary strategies, Bayesian optimization (BO) as a model-based optimizer, and the genetic algorithm (GA) as a classic evolutionary method [1] [19] [20]. We dissect their core components—population dynamics, the use of surrogate models, and evolutionary operators—to offer researchers a foundational understanding for informed algorithm selection. The performance of these methods is contextualized within chemical and biochemical optimization problems, providing a relevant frame of reference for professionals in drug development.

Core Concepts and Definitions

To understand the differences between these algorithms, one must first grasp their fundamental operating principles. The following table provides a concise summary of each algorithm's core philosophy and mechanics.

Table 1: Foundational Concepts of the Three Optimization Algorithms

| Algorithm | Core Philosophy | Key Mechanism | Primary Application Context |
|---|---|---|---|
| Paddy Algorithm [1] | Bio-inspired by plant propagation; leverages population density and fitness for exploration and exploitation. | Five-phase process: Sowing, Selection, Seeding, Pollination, and Sowing again. | Versatile; demonstrated in chemical system optimization, molecule generation, and experimental planning. |
| Bayesian Optimization (BO) [20] [21] | Probabilistic model-based optimization; uses a surrogate to guide search with minimal evaluations. | Sequential process: Build a probabilistic surrogate model (e.g., Gaussian Process) and use an acquisition function to select the next point to evaluate. | Ideal for optimizing expensive black-box functions where the number of evaluations is severely limited. |
| Genetic Algorithm (GA) [19] | Inspired by biological evolution; uses a population and genetic operators to evolve solutions over generations. | Canonical steps: Initialize population, evaluate fitness, select parents, perform crossover and mutation to create offspring. | General-purpose optimization, especially for combinatorial and complex non-convex problems. |

Comparative Mechanics

This section delves into the specific mechanics that differentiate the three algorithms, focusing on population dynamics, the role of surrogate models, and the nature of their evolutionary operators.

Population Dynamics

Population dynamics refers to how the set of candidate solutions is managed, updated, and propagated throughout the optimization process.

- Paddy Algorithm: Paddy employs a unique density-based reinforcement mechanism. Its "pollination" step considers the spatial density of high-fitness solutions ("plants") in the parameter space. A selected plant produces a number of "seeds" (offspring) that is proportional to both its own fitness and the number of neighboring plants within a defined Euclidean distance. This creates a positive feedback loop where promising regions of the search space with high solution density are more heavily explored, effectively balancing exploration and exploitation based on local population structure [1].

- Bayesian Optimization: In its standard form, BO is not a population-based algorithm. It typically maintains a single, global probabilistic model (the surrogate) of the objective function. The search is guided sequentially by selecting the next single point to evaluate based on the acquisition function's recommendation. The "population" in BO is the history of all previously evaluated points, which is used exclusively to update the surrogate model [20] [21].

- Genetic Algorithm: GA uses a panmictic population model, where the entire set of individuals forms a single, freely mixing population. In each generation, a new population is formed by selecting parents from the current entire population and applying genetic operators. While this allows for rapid propagation of good genetic material, it can also lead to premature convergence if not carefully tuned. Selection pressure drives the population toward fitter regions, but without explicit density control like Paddy's, it can quickly lose diversity [19].
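The density-dependent seeding described for Paddy can be illustrated with a short sketch. The specific formulas here (min-max fitness scaling and an exponential pollination factor based on neighbor counts) are assumptions modeled on common descriptions of the paddy field algorithm; the Paddy library may compute these quantities differently, and `max_seeds` and `radius` are hypothetical parameter names.

```python
import math

def seed_counts(plants, fitnesses, max_seeds=10, radius=0.2):
    """Seeds per plant, scaled by fitness and by local population density."""
    f_min, f_max = min(fitnesses), max(fitnesses)
    span = (f_max - f_min) or 1.0                  # avoid division by zero
    # Count neighbors within a Euclidean radius of each plant.
    neighbours = [
        sum(1 for j, q in enumerate(plants) if j != i and math.dist(p, q) <= radius)
        for i, p in enumerate(plants)
    ]
    v_max = max(neighbours) or 1
    counts = []
    for f, v in zip(fitnesses, neighbours):
        fitness_seeds = max_seeds * (f - f_min) / span    # fitness term
        pollination = math.exp(v / v_max - 1.0)           # density term, in (0, 1]
        counts.append(round(fitness_seeds * pollination))
    return counts

# Three plants clustered together and one isolated plant of equal top fitness.
plants = [(0.10, 0.10), (0.15, 0.12), (0.12, 0.18), (0.80, 0.80)]
fitnesses = [0.9, 0.8, 0.7, 0.9]
counts = seed_counts(plants, fitnesses)
print(counts)
```

In this toy case the first and last plants have the same fitness, yet the clustered one receives more seeds than the isolated one, which is the positive feedback toward dense, high-fitness regions that the text describes.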

Table 2: Comparative Population Dynamics

| Feature | Paddy Algorithm | Bayesian Optimization | Genetic Algorithm |
|---|---|---|---|
| Population Model | Density-structured population | Typically non-population-based (sequential) | Panmictic (single, mixed population) |
| Diversity Mechanism | Implicit through density-dependent seeding and spatial distribution | Explicit through acquisition function (e.g., Upper Confidence Bound) | Relies on mutation, crossover, and selection pressure |
| Risk of Premature Convergence | Low, due to density-based reinforcement [1] | Not applicable in the same sense; can get stuck if surrogate is inaccurate | High, especially in elitist strategies with high selection pressure [19] |
| Exploration Driver | Pollination factor and fitness-based seeding | Probabilistic uncertainty of the surrogate model | Genetic diversity and mutation operator |

Surrogate Models and Approximation Strategies

Surrogate models, or meta-models, are approximations of the expensive objective function used to reduce computational cost.

- Bayesian Optimization: The use of a surrogate model is the core of BO. It constructs a probabilistic model, most commonly a Gaussian Process (GP), which provides not just a prediction of the objective function but also an estimate of the uncertainty (variance) at any point. This uncertainty is crucial for its acquisition function (e.g., Expected Improvement), which balances exploring uncertain regions and exploiting known promising areas. BO is the archetypal surrogate-based optimizer: it shares the surrogate idea with Surrogate-Assisted Evolutionary Algorithms (SAEAs), but it is not itself evolutionary [20] [22] [21].

- Paddy Algorithm & Standard GA: The canonical versions of Paddy and GA do not inherently use surrogate models. They rely on direct evaluations of the (often expensive) true objective function to assess fitness [1] [19]. However, both are prime candidates for enhancement via surrogate-assistance. In Surrogate-Assisted Evolutionary Algorithms (SAEAs), a surrogate (e.g., a Radial Basis Function or Kriging model) is built from historical data and used to inexpensively pre-screen candidate solutions, with only the most promising ones being evaluated on the true expensive function. This hybrid approach can significantly accelerate convergence for costly problems [23] [20] [22].
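The pre-screening idea behind surrogate-assisted evolutionary algorithms can be sketched as follows. This is an illustrative outline under stated assumptions: the Gaussian RBF interpolant, its shape parameter `eps`, the quadratic `expensive_objective`, and the archive and candidate sizes are all hypothetical choices, not details from the cited works.

```python
import numpy as np

def fit_rbf(x, y, eps=10.0, ridge=1e-6):
    """Fit a Gaussian RBF interpolant to archived data; return a predictor."""
    def phi(a, b):
        d2 = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
        return np.exp(-eps * d2)
    # A small ridge term keeps the linear system well conditioned.
    weights = np.linalg.solve(phi(x, x) + ridge * np.eye(len(x)), y)
    return lambda q: phi(q, x) @ weights

def expensive_objective(x):
    """Hypothetical stand-in for a costly assay or simulation (to maximize)."""
    return -np.sum((x - 0.6) ** 2, axis=-1)

rng = np.random.default_rng(0)
archive_x = rng.uniform(0, 1, size=(20, 2))       # points already evaluated
archive_y = expensive_objective(archive_x)

surrogate = fit_rbf(archive_x, archive_y)
candidates = rng.uniform(0, 1, size=(200, 2))     # e.g. offspring from an EA
predicted = surrogate(candidates)                  # cheap surrogate scores

top = candidates[np.argsort(predicted)[-5:]]       # pre-screen: keep the 5 best
true_values = expensive_objective(top)             # only 5 expensive evaluations
print(true_values.round(3))
```

Here 200 candidates are ranked by the cheap model and only five reach the true objective; in a full SAEA the newly evaluated points would be added to the archive and the surrogate refitted each generation.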

Table 3: Surrogate Model Usage and Characteristics

| Aspect | Paddy Algorithm | Bayesian Optimization | Genetic Algorithm |
|---|---|---|---|
| Native Surrogate Use | No | Yes, it is fundamental to the method (e.g., Gaussian Process) | No |
| Suitability for Surrogate-Assistance | High, as an EA [24] | N/A (It is already surrogate-based) | High, as an EA [23] [22] |
| Common Surrogates in SAEAs | Radial Basis Functions (RBF), Kriging [22] | Gaussian Process (GP) is standard [20] | Kriging, RBF, Polynomial Response Surfaces [23] [22] |
| Key Model Output | N/A (in native form) | Predictive mean and variance | N/A (in native form) |
| Primary Goal with Surrogate | To reduce expensive fitness evaluations [24] | To guide global search with very few evaluations [21] | To reduce expensive fitness evaluations [23] |

Evolutionary Operators

Evolutionary operators are the mechanisms that generate new candidate solutions from existing ones.

- Paddy Algorithm: Paddy's primary operator is a density-informed mutation. A selected parent plant generates offspring by applying Gaussian mutation to its parameters. The critical differentiator is that the number of offspring a parent produces is not fixed; it is determined by the parent's fitness and its local population density (the pollination factor). This is a form of non-crossover-based propagation that directly links reproductive success to the neighborhood structure [1].

- Genetic Algorithm: GAs are defined by their use of crossover (recombination) and mutation. Crossover, such as single-point or uniform crossover, combines genetic material from two parent solutions to create one or two offspring. This operator is crucial for exploiting building blocks of good solutions. Mutation, often a bit-flip or a small Gaussian perturbation, acts as a background operator to introduce new genetic material and maintain diversity. Selection (e.g., roulette wheel, tournament) chooses which parents get to reproduce [19].

- Bayesian Optimization: BO does not use evolutionary operators. New candidate points are generated not by modifying existing solutions, but by optimizing an acquisition function over the surrogate model. This is a deterministic or quasi-deterministic process based on the current state of the model, not a stochastic recombination or mutation of a population [20].
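The operators named above are small enough to write out directly. The sketch below shows roulette-wheel selection, uniform crossover, and Gaussian mutation as standalone functions, plus a mutation-only propagation step in the spirit of Paddy's non-crossover seeding. Function names, `sigma`, and the example values are illustrative assumptions, not any library's API.

```python
import random

def roulette_wheel_select(population, fitnesses, rng):
    """Selection: pick one individual with probability proportional to fitness.
    Fitness values must be non-negative and not all zero."""
    return rng.choices(population, weights=fitnesses, k=1)[0]

def uniform_crossover(parent_a, parent_b, rng):
    """Crossover: each gene is taken from either parent with equal probability."""
    return [a if rng.random() < 0.5 else b for a, b in zip(parent_a, parent_b)]

def gaussian_mutation(individual, rng, sigma=0.1):
    """Mutation: add zero-mean Gaussian noise to every gene."""
    return [gene + rng.gauss(0.0, sigma) for gene in individual]

def mutation_only_offspring(parent, n_seeds, rng, sigma=0.1):
    """Non-crossover propagation: n_seeds perturbed copies of a single parent."""
    return [gaussian_mutation(parent, rng, sigma) for _ in range(n_seeds)]

rng = random.Random(0)
population = [[0.2, 0.4], [0.6, 0.1], [0.9, 0.8]]
fitnesses = [1.0, 2.0, 3.0]

# GA-style step: two selected parents are recombined, then mutated.
mother = roulette_wheel_select(population, fitnesses, rng)
father = roulette_wheel_select(population, fitnesses, rng)
ga_child = gaussian_mutation(uniform_crossover(mother, father, rng), rng)

# Paddy-style step: one parent, variable number of mutated seeds.
seeds = mutation_only_offspring(population[2], n_seeds=4, rng=rng)
print(ga_child, len(seeds))
```

The contrast is in where information is mixed: the GA child combines genes from two parents, whereas the mutation-only seeds stay in the neighborhood of one parent and the number of seeds carries the selection signal.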

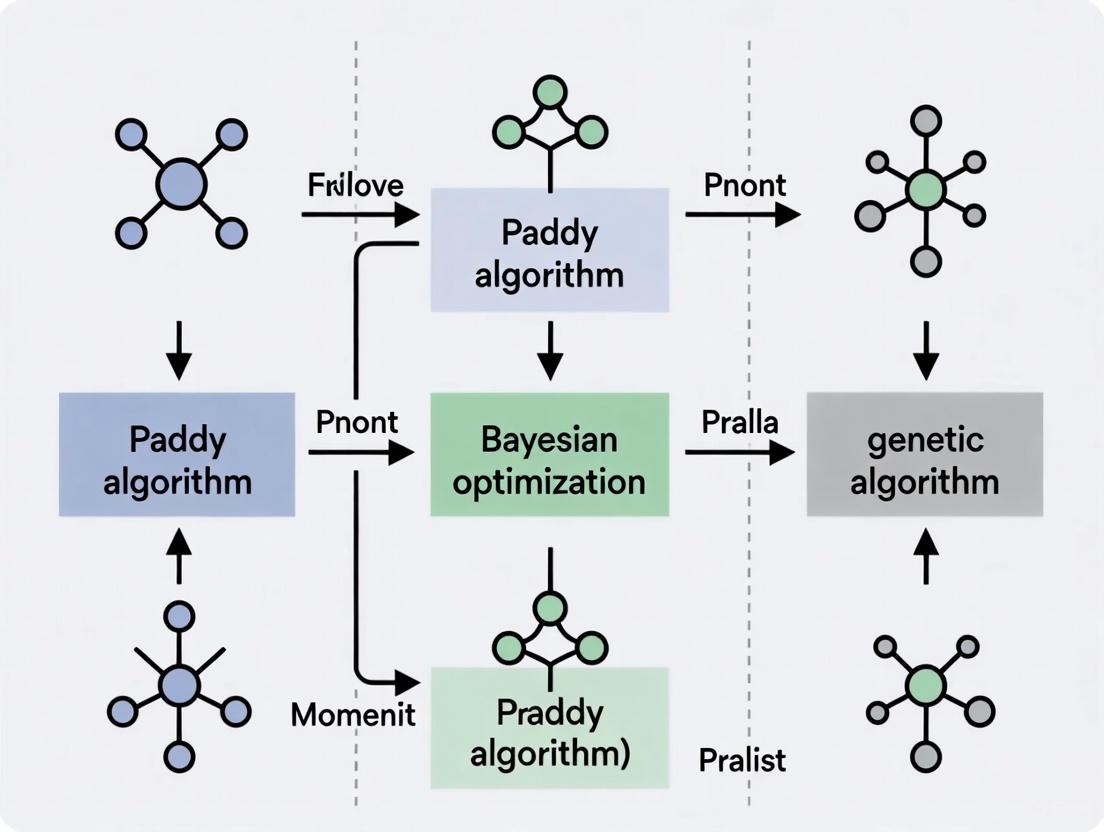

The logical flow of each algorithm's core procedure is distinct, as summarized in the diagram below.

Experimental Protocols and Performance Benchmarking

Objective performance data is crucial for validating theoretical mechanical differences. The following experimental protocols and results, primarily drawn from benchmarking the Paddy algorithm, provide a concrete basis for comparison.

Key Benchmarking Experiments

The Paddy algorithm was benchmarked against several competitors, including a Tree-structured Parzen Estimator (Hyperopt), Bayesian Optimization with Gaussian Process (Ax library), and population-based methods (an Evolutionary Algorithm and a Genetic Algorithm from EvoTorch) [1] [25]. The tests covered:

- Mathematical Function Optimization: Global optimization of a 2D bimodal distribution and interpolation of an irregular sinusoidal function. These tests evaluate the algorithm's ability to handle multi-modality and avoid local optima.

- Chemical and Machine Learning Tasks:

  - Hyperparameter Optimization: Tuning an artificial neural network for solvent classification in chemical reactions.

  - Targeted Molecule Generation: Optimizing input vectors for a decoder network to generate molecules with desired properties.

  - Experimental Planning: Sampling discrete experimental space to identify optimal conditions.

The aggregated results from these benchmarks highlight the relative strengths of each algorithm.

Table 4: Summary of Algorithm Performance from Benchmarking Studies [1]

| Algorithm | Performance on Multi-modal Functions | Resistance to Premature Convergence | Runtime Efficiency | Versatility Across Tasks |
|---|---|---|---|---|
| Paddy Algorithm | High performance, robust identification of global optima | High, innate ability to bypass local optima | Markedly lower runtime | Strong and consistent across all benchmarks |
| Bayesian Optimization | Varying performance, can be misled by complex landscapes | Moderate, depends on surrogate model accuracy | Higher computational overhead per step | Good, but performance varies by problem type |
| Genetic Algorithm | Good, but can converge to local optima without niching | Low to Moderate, susceptible without careful tuning | Moderate | Good, but may require significant parameter tuning |

The Researcher's Toolkit

This section details key software and methodological "reagents" used in modern optimization research, as featured in the cited experiments.

Table 5: Essential Research Reagents and Tools for Optimization

| Tool / Reagent | Type/Function | Application in Context |
|---|---|---|
| Paddy Python Library [1] | Open-source implementation of the Paddy field algorithm. | The primary algorithm under test; used for benchmarking against other methods. |
| Ax Framework [1] | A library for adaptive experimentation, including Bayesian optimization. | Provided the implementation for Bayesian optimization with Gaussian processes. |
| EvoTorch [1] | A PyTorch-based library for evolutionary optimization. | Provided the implementations of the standard Evolutionary Algorithm and Genetic Algorithm used for comparison. |
| Hyperopt [1] | A Python library for serial and parallel optimization. | Provided the Tree of Parzen Estimators algorithm for comparison. |
| Surrogate Model (e.g., GP, RBF) [20] [22] | A computationally cheap approximation of an expensive objective function. | Core component of BO and SAEAs; used to reduce the number of expensive true function evaluations. |
| Gaussian Process (GP) [20] [21] | A probabilistic model that defines a distribution over functions. | The most common surrogate model used in Bayesian optimization. |
| Radial Basis Function (RBF) Network [22] | A neural network that uses radial basis functions as activation functions. | A common choice for surrogate models in Surrogate-Assisted Evolutionary Algorithms (SAEAs). |

The mechanical comparison reveals that the Paddy algorithm, Bayesian optimization, and genetic algorithms employ fundamentally distinct strategies for navigating complex search spaces. The Paddy algorithm's density-based population dynamics and non-crossover propagation provide a unique mechanism for maintaining diversity and resisting premature convergence, making it a robust and versatile choice, as evidenced by its consistent performance across mathematical and chemical benchmarks. Bayesian optimization's strength lies in its sample efficiency, achieved through its principled use of a probabilistic surrogate model, making it ideal for problems where evaluations are extremely costly. The genetic algorithm remains a powerful, general-purpose optimizer whose reliance on crossover and mutation is effective but may require enhancements like surrogate assistance or niching for challenging, expensive problems. For researchers in drug development, this mechanistic understanding is critical for matching the algorithm's inherent strengths to the specific nature of their optimization challenge, whether it be molecular design, experimental planning, or hyperparameter tuning.

Algorithm Selection in Practice: Key Use Cases in Drug Discovery and Chemical Sciences

Table of Contents

- Introduction to Optimization in Molecular Generation

- Algorithm Performance Comparison

- Detailed Experimental Protocols

- Research Reagent Solutions

- Pathway and Workflow Visualizations

The design of novel molecular structures with specific properties is a fundamental challenge in computational chemistry and drug discovery. A critical subtask in this process is the optimization of input vectors for generative models, a step that directly influences the quality, validity, and utility of the generated compounds [26]. This optimization problem is complex, often involving high-dimensional, discontinuous, and noisy objective functions, such as predicted binding affinity or synthetic accessibility. In this landscape, the choice of optimization algorithm is paramount for efficiently navigating the vast chemical space. This guide objectively compares the performance of three distinct algorithmic approaches—the evolution-inspired Paddy algorithm, the probabilistic Bayesian optimization, and the population-based Genetic Algorithm—within the context of targeted molecule generation.

The "Paddy" algorithm, recently introduced as an evolutionary optimization method, is designed to propose experiments that efficiently optimize an underlying objective while effectively sampling parameter space to avoid premature convergence on local minima [25]. Its performance has been benchmarked against other prominent optimization approaches, including Bayesian optimization with a Gaussian process and population-based methods like Genetic Algorithms, across various chemical optimization tasks [25]. These benchmarks provide a direct basis for comparison in molecular generation scenarios. Meanwhile, advanced generative frameworks like the Multimodal Targeted Molecule generation model with Protein features (MTMP) demonstrate the critical role of optimization in practice, using target protein information to steer the generation of novel compounds with enhanced binding affinity [26].

Algorithm Performance Comparison

The following tables synthesize quantitative data from experimental benchmarks, highlighting the relative strengths and weaknesses of each algorithm in tasks relevant to molecular generation.

Table 1: Overall Performance and Convergence Metrics

| Algorithm | Core Principle | Convergence Speed (Relative) | Resistance to Local Optima | Best For |
|---|---|---|---|---|
| Paddy Algorithm [25] | Evolutionary | Moderate to Fast | High | Complex, multi-modal landscapes; exploratory sampling |
| Bayesian Optimization (with HIPE) [27] | Probabilistic Surrogate Model | Fast in Few-Shot Settings | Moderate | Sample-efficient optimization of expensive black-box functions |
| Genetic Algorithm [28] | Population-Based Evolution | Can be slower | Moderate (requires tuning) | Discrete & non-differentiable spaces; global search |

Table 2: Performance in Chemical & Biological Benchmarks

| Algorithm | Key Metric | Reported Performance | Context / Model |
|---|---|---|---|
| Paddy Algorithm [25] | Benchmark Versatility | Maintained strong performance across all mathematical and chemical optimization benchmarks | Targeted molecule generation by optimizing input vectors for a decoder network |
| Bayesian Optimization [29] | Experimental Efficiency | Converged to optimum in 22% of the unique points required by a grid search | Optimizing a 4D transcriptional control system for limonene production |
| Genetic Algorithm [30] | Optimization Gain | Improved model accuracy by 10.4% over the best base classifier | Optimizing ensemble model hyperparameters for land cover mapping |
| MTMP Model (Uses a VAE, optimized via transfer learning) [26] | Docking Score / Property Optimization | Produced novel compounds with high docking scores against target proteins (EGFR, CDK2) | Targeted molecular generation integrated with protein features |

Detailed Experimental Protocols

To ensure reproducibility and provide a clear understanding of the cited performance data, this section details the methodologies behind key experiments.

Benchmarking Paddy Against Multiple Optimizers

A comprehensive benchmark was conducted to evaluate the Paddy algorithm's performance against a suite of other optimizers, including Tree of Parzen Estimators (Hyperopt), Bayesian optimization with a Gaussian process (Ax), and two population-based methods from EvoTorch [25].

- Objective Functions: The benchmark included both mathematical and chemical optimization tasks. These comprised the global optimization of a two-dimensional bimodal distribution, interpolation of an irregular sinusoidal function, and chemical tasks like hyperparameter optimization of an artificial neural network for solvent classification. Crucially, it also included targeted molecule generation by optimizing input vectors for a decoder network and sampling discrete experimental space for optimal experimental planning [25].

- Algorithm Configuration: Each algorithm was run with its standard or recommended configuration. Paddy was implemented as described in its software package, propagating parameters without direct inference of the underlying objective function [25].

- Performance Measurement: The primary metrics were the quality of the found solution (e.g., value of the objective function) and the efficiency of convergence across the diverse set of tasks. The benchmark specifically tested the algorithms' ability to avoid early convergence on local optima in search of global solutions [25].

Bayesian Optimization for Metabolic Engineering

A validation study demonstrated the sample efficiency of Bayesian Optimization in a biological context, using a published dataset from a metabolic engineering study [29].

- Objective Function: The task was to optimize the production level of limonene in E. coli by tuning a four-dimensional input space of transcriptional control parameters [29].

- Surrogate Model and Acquisition: A Gaussian Process (GP) was used as the probabilistic surrogate model. The GP was fitted with a scaled Radial Basis Function (RBF) kernel and an additional white noise kernel to model experimental noise. An acquisition function (e.g., Expected Improvement) was used to balance exploration and exploitation to select the next parameters to evaluate [29].

- Experimental Loop: The BO policy was applied sequentially. The performance was measured by the number of unique experimental points required for the algorithm to converge close to the known optimum (defined as being within 10% of the total possible normalized Euclidean distance) [29].
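One plausible reading of this convergence criterion can be sketched as follows. It assumes each input is min-max scaled to [0, 1], so that the largest possible distance is the diagonal of the unit hypercube. The function name, bounds, and example points are hypothetical and are not taken from the cited study [29].

```python
import math

def within_tolerance(point, optimum, bounds, tol=0.10):
    """Check whether `point` is within `tol` of the maximum possible
    normalized Euclidean distance from `optimum`.

    bounds: list of (low, high) pairs, one per input dimension.
    """
    def normalize(p):
        # Min-max scale every coordinate to the unit interval.
        return [(v - lo) / (hi - lo) for v, (lo, hi) in zip(p, bounds)]

    distance = math.dist(normalize(point), normalize(optimum))
    max_distance = math.sqrt(len(bounds))  # diagonal of the unit hypercube
    return distance <= tol * max_distance

# Hypothetical four-dimensional example with identical bounds per input.
bounds = [(0.0, 10.0)] * 4
optimum = [5.0, 5.0, 5.0, 5.0]
close_enough = within_tolerance([5.5, 5.5, 5.5, 5.5], optimum, bounds)
too_far = within_tolerance([7.0, 7.0, 7.0, 7.0], optimum, bounds)
```

Under this reading, a four-dimensional problem has a maximum normalized distance of 2, so a point counts as converged once it is within 0.2 of the optimum in normalized units.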

Targeted Molecule Generation with MTMP

The MTMP model provides a protocol for generating molecules targeted to specific proteins, a process where optimization of the latent space is critical [26].

- Model Architecture: A Variational Autoencoder (VAE) framework was used. The encoder was a Graph Convolutional Network (GCN) that processed molecular topological graphs. The decoder was a Recurrent Neural Network (RNN) that generated SMILES strings. This integration created a joint latent space capturing chemical properties and structural information [26].

- Incorporating Target Information: A pre-trained language model, trained on large-scale protein sequence data, was used to extract features from the target protein (e.g., EGFR or CDK2). This protein feature vector was integrated into the generative process to direct the generation toward molecules with high affinity for the target [26].

- Training and Fine-tuning: The model was first pre-trained on the general-purpose ZINC database (~250,000 drug-like compounds) to learn fundamental chemical rules. It was then fine-tuned via transfer learning on a curated dataset of ligand molecules with known high activity against the specific target protein. This two-step process optimized the model's parameters for the targeted generation task [26].

- Evaluation: Generated molecules were evaluated for validity, diversity, and drug-likeness. Crucially, their binding affinity was assessed through molecular docking simulations against the target protein, with the resulting docking scores serving as the key performance metric [26].

Research Reagent Solutions

Table 3: Essential Materials and Tools for Targeted Molecule Generation Experiments

| Item | Function in Research | Example / Specification |
|---|---|---|
| Molecular Database [26] | Provides foundational data for pre-training generative models; teaches the model basic chemical structure and rules. | ZINC database (~250,000 drug-like compounds) |
| Curated Target-Specific Dataset [26] | Used for fine-tuning a pre-trained model; enables it to generate molecules with affinity for a specific protein. | Ligand molecules with known high activity against targets like EGFR or CDK2 |
| Target Protein Structure/Sequence [26] | Provides the biological target's features; allows the model to condition generation on specific protein information. | Protein Data Bank (PDB) structures or amino acid sequences for proteins like EGFR, CDK2 |
| Docking Software [26] | Computationally evaluates the binding strength between a generated molecule and its target; a key validation metric. | Programs like AutoDock Vina, GOLD, or Glide |
| Deep Learning Framework [26] | Provides the programming environment to build, train, and run complex generative models. | TensorFlow, PyTorch, or JAX |
| Bayesian Optimization Library [29] | Offers pre-implemented algorithms for sample-efficient optimization of experimental parameters. | Software like Ax, BoTorch, or proprietary tools like BioKernel |

Pathway and Workflow Visualizations

MTMP Model Workflow

This diagram illustrates the integrated workflow of the MTMP model for generating target-specific molecules, showcasing the flow from input data to a novel compound [26].

Bayesian Optimization Cycle

This diagram outlines the iterative "lab-in-the-loop" cycle of Bayesian Optimization, which is highly effective for guiding expensive biological experiments [29].

Algorithm Selection Logic

This flowchart provides a high-level guide for researchers to select an appropriate optimization algorithm based on the primary constraint of their project [25] [27] [29].

Hyperparameter Optimization for Artificial Neural Networks in Chemical Classification

The optimization of hyperparameters for artificial neural networks (ANNs) tasked with chemical classification is a critical step in building accurate and efficient predictive models in cheminformatics. As chemical data grows in complexity and volume, the choice of optimization algorithm matters more. This guide provides an objective performance comparison of three distinct algorithmic approaches: the evolutionary Paddy algorithm, Bayesian optimization, and population-based methods like the Genetic Algorithm (GA). Benchmarked on a practical chemical classification task (solvent classification for reaction components), the data indicates that the Paddy algorithm achieves competitive, and sometimes superior, accuracy while demonstrating significant advantages in computational runtime and robustness against local optima. This analysis offers researchers and scientists in drug development an evidence-based framework for selecting hyperparameter optimization strategies.

In modern cheminformatics and drug discovery, artificial neural networks (ANNs) are increasingly deployed for critical tasks such as molecular property prediction, chemical reaction classification, and virtual screening. The performance of these ANNs is highly sensitive to their hyperparameters, which include the number of layers, learning rate, and number of neurons per layer [31]. Unlike model parameters, hyperparameters cannot be learned directly from data and must be set prior to training. The process of hyperparameter optimization (HPO) is thus a non-trivial, computationally expensive, but essential "outer-loop" in the machine learning workflow.

Several algorithmic families have been developed to tackle HPO. Bayesian optimization (BO) has emerged as a sample-efficient method, using a probabilistic surrogate model to intelligently guide the search for optimal hyperparameters [32]. Genetic Algorithms (GAs), a class of evolutionary algorithms, evolve a population of hyperparameter sets through selection, crossover, and mutation [33]. More recently, the Paddy algorithm has been introduced as a new evolutionary optimizer inspired by plant propagation behavior, emphasizing density-based reinforcement of solution vectors to avoid premature convergence [25] [1].

This guide objectively compares these three approaches on a specific chemical classification problem: an ANN trained to classify solvents for reaction components. We present comparative experimental data on accuracy and runtime, detail the experimental protocols, and list resources to help researchers make informed decisions for their own HPO campaigns.

Optimizer Fundamentals and Workflows

The Paddy Field Algorithm

The Paddy Field Algorithm (PFA) is a biologically inspired evolutionary optimization algorithm that mimics the reproductive behavior of plants in a paddy field. It operates without directly inferring the underlying objective function, instead relying on a five-phase process to propagate parameters [1]:

- Sowing: A random initial population of seeds (hyperparameter sets) is generated.

- Selection: The seeds are evaluated by the fitness function (e.g., ANN validation accuracy), and the top-performing plants are selected for propagation.

- Seeding: The number of seeds each selected plant produces is determined by its relative fitness.

- Pollination: This step reinforces exploration in dense regions of high-fitness solutions. The number of seeds is adjusted based on the local density of plants in the parameter space.

- Dispersal: New parameter values are generated by applying Gaussian mutation to the pollinated seeds, creating the next generation for evaluation.

This density-aware pollination mechanism helps Paddy effectively navigate the hyperparameter space and avoid becoming trapped in local optima [25] [34].
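The five phases can be sketched as a toy maximizer. This is a simplified illustration written for this guide, not the Paddy package's API or its exact update rules: the function name `paddy_sketch`, every parameter and default value, the linear seeding rule, and the exponential density factor are assumptions.

```python
import math
import random

def paddy_sketch(fitness, bounds, n_sow=20, n_top=5, max_seeds=8,
                 radius=0.2, sigma=0.1, iterations=15, seed=0):
    """Toy maximizer following the five phases (hypothetical parameters).

    Returns (best_point, history), where history is the best fitness
    seen so far at each iteration.
    """
    rng = random.Random(seed)
    # Sowing: random initial population inside the bounds.
    plants = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_sow)]
    best, best_fit, history = None, -math.inf, []
    for _ in range(iterations):
        # Selection: evaluate every plant and keep the top performers.
        scored = sorted(((fitness(p), p) for p in plants),
                        key=lambda pair: pair[0], reverse=True)[:n_top]
        if scored[0][0] > best_fit:
            best_fit, best = scored[0]
        history.append(best_fit)
        f_hi, f_lo = scored[0][0], scored[-1][0]
        # Count selected neighbours within `radius` of each selected plant.
        counts = [sum(1 for _, q in scored
                      if q is not p and math.dist(p, q) < radius)
                  for _, p in scored]
        max_count = max(counts)
        plants = []
        for (fit, p), count in zip(scored, counts):
            # Seeding: seed count scales with relative fitness (assumed linear).
            rel = (fit - f_lo) / (f_hi - f_lo) if f_hi > f_lo else 1.0
            # Pollination: plants in denser regions keep more of their seeds
            # (assumed exponential factor in the range (0, 1]).
            density = math.exp(count / max_count - 1) if max_count else 1.0
            n_seeds = max(1, round(max_seeds * rel * density))
            # Dispersal: Gaussian mutation around the parent, clipped to bounds.
            for _ in range(n_seeds):
                plants.append([min(hi, max(lo, rng.gauss(v, sigma * (hi - lo))))
                               for v, (lo, hi) in zip(p, bounds)])
    return best, history

# Hypothetical usage: maximize a smooth function peaked at (0.3, 0.7).
def toy_fitness(p):
    return -((p[0] - 0.3) ** 2 + (p[1] - 0.7) ** 2)

best_point, best_history = paddy_sketch(toy_fitness, [(0.0, 1.0), (0.0, 1.0)])
```

Note that no model of the objective is fitted anywhere in the loop: the search is driven only by fitness ranks and the spatial density of the selected plants.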

Bayesian Optimization with Gaussian Processes

Bayesian optimization is a sequential design strategy for optimizing black-box functions. For HPO, it constructs a probabilistic surrogate model, typically a Gaussian Process (GP), to approximate the relationship between hyperparameters and the model's performance [32]. An acquisition function, such as Expected Improvement (EI) or Upper Confidence Bound (UCB), uses the GP's predictive mean and uncertainty to decide which hyperparameter set to evaluate next. This process balances exploration (testing points with high uncertainty) and exploitation (testing points predicted to have high performance) [32] [35]. The surrogate model is updated after each evaluation, gradually refining its understanding of the objective function.
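As an illustration of how an acquisition function turns the surrogate's predictive mean and uncertainty into a single score, the sketch below implements the standard closed-form Expected Improvement for a Gaussian prediction, together with a simple Upper Confidence Bound. It is written for maximization and does not reproduce any particular library's API. The function names, the `xi` and `beta` parameters, and the example numbers are assumptions.

```python
import math

def expected_improvement(mu, sigma, best_so_far, xi=0.0):
    """Closed-form EI for maximization under a Gaussian predictive distribution.

    mu, sigma: surrogate mean and standard deviation at a candidate point.
    best_so_far: best objective value observed so far.
    xi: optional margin that encourages extra exploration (assumed parameter).
    """
    improvement = mu - best_so_far - xi
    if sigma <= 0.0:
        return max(0.0, improvement)  # no uncertainty: improvement is deterministic
    z = improvement / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return improvement * cdf + sigma * pdf

def upper_confidence_bound(mu, sigma, beta=2.0):
    """UCB score: predicted mean plus a multiple of the predicted uncertainty."""
    return mu + beta * sigma

# Hypothetical candidates: one matches the incumbent with little uncertainty,
# the other has a lower mean but a much wider predictive distribution.
safe = expected_improvement(mu=0.70, sigma=0.01, best_so_far=0.70)
uncertain = expected_improvement(mu=0.65, sigma=0.10, best_so_far=0.70)
```

In this example the uncertain candidate receives the higher EI even though its predicted mean is lower, which is the exploration side of the trade-off described above.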

Genetic Algorithms

Genetic Algorithms (GAs) are population-based evolutionary optimizers inspired by natural selection. A GA starts with a population of random hyperparameter sets (individuals) [33]. Each generation, individuals are selected for "breeding" based on their fitness. New individuals are created through crossover (combining parts of two parent hyperparameter sets) and mutation (randomly modifying hyperparameter values) [1]. This iterative process of selection, crossover, and mutation allows the population to evolve toward increasingly optimal regions of the hyperparameter space over generations.
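The selection, crossover, and mutation cycle can be sketched in a few lines. This is a minimal illustration on real-valued vectors, not the EvoTorch implementation: tournament selection, single-elite carry-over, and all parameter names and defaults are assumptions made for this example.

```python
import random

def genetic_algorithm(fitness, bounds, pop_size=20, generations=30,
                      mutation_rate=0.2, sigma=0.1, tournament=3, seed=0):
    """Toy GA maximizer (hypothetical parameters).

    Returns (best_individual, history), where history holds the best
    fitness in the population at the start of each generation.
    """
    rng = random.Random(seed)
    population = [[rng.uniform(lo, hi) for lo, hi in bounds]
                  for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        elite = max(population, key=fitness)
        history.append(fitness(elite))
        children = [elite[:]]  # elitism: the best individual always survives
        while len(children) < pop_size:
            # Selection: the fittest of a small random tournament becomes a parent.
            parent_a = max(rng.sample(population, tournament), key=fitness)
            parent_b = max(rng.sample(population, tournament), key=fitness)
            # Single-point crossover: head of one parent, tail of the other.
            cut = rng.randint(1, len(bounds) - 1) if len(bounds) > 1 else 0
            child = parent_a[:cut] + parent_b[cut:]
            # Gaussian mutation: perturb each gene with some probability.
            child = [min(hi, max(lo, g + rng.gauss(0.0, sigma * (hi - lo))))
                     if rng.random() < mutation_rate else g
                     for g, (lo, hi) in zip(child, bounds)]
            children.append(child)
        population = children
    return max(population, key=fitness), history

# Hypothetical usage: maximize a function peaked at (0.5, 0.5, 0.5).
def ga_fitness(p):
    return -sum((v - 0.5) ** 2 for v in p)

ga_best, ga_history = genetic_algorithm(ga_fitness, [(0.0, 1.0)] * 3)
```

The operator settings (mutation rate, mutation scale, tournament size) are exactly the kind of knobs that a GA user has to tune for a given problem.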

Experimental Comparison: Solvent Classification

Experimental Protocol and Benchmarking Methodology

A key benchmark study directly compared Paddy, Bayesian optimization, and evolutionary algorithms on the task of tuning an ANN for solvent classification [25] [1]. The core methodology is outlined below.

Objective: To identify the hyperparameter set that maximizes the validation accuracy of an ANN classifying solvents for reaction components.

ANN Model and Dataset: The ANN was trained on a dataset of chemical reactions where the solvent was the classification target. The input features were derived from the reaction components.

Hyperparameter Search Space: The optimizers searched for the best values for key architectural and training hyperparameters, which typically include:

- Number of hidden layers

- Number of neurons per layer

- Learning rate

- Activation functions

- Batch size

- Dropout rate
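A search space of this kind is often written down as a small declarative structure plus a sampler. The sketch below is illustrative only: the ranges and choices are hypothetical and do not come from the benchmark study, and the `SEARCH_SPACE` layout and `sample_config` helper are assumptions rather than the format of any specific optimizer library.

```python
import math
import random

# Hypothetical ranges; the cited benchmark's actual bounds are not given here.
SEARCH_SPACE = {
    "n_hidden_layers": ("int", 1, 4),
    "n_neurons": ("int", 16, 512),
    "learning_rate": ("log", 1e-5, 1e-1),   # sampled uniformly in log10 space
    "activation": ("choice", ["relu", "tanh", "sigmoid"]),
    "batch_size": ("choice", [16, 32, 64, 128]),
    "dropout": ("float", 0.0, 0.5),
}

def sample_config(space, rng):
    """Draw one hyperparameter set at random from the search space."""
    config = {}
    for name, spec in space.items():
        kind = spec[0]
        if kind == "int":
            config[name] = rng.randint(spec[1], spec[2])
        elif kind == "float":
            config[name] = rng.uniform(spec[1], spec[2])
        elif kind == "log":
            exponent = rng.uniform(math.log10(spec[1]), math.log10(spec[2]))
            config[name] = 10.0 ** exponent
        elif kind == "choice":
            config[name] = rng.choice(spec[1])
        else:
            raise ValueError(f"unknown parameter type: {kind}")
    return config

example_config = sample_config(SEARCH_SPACE, random.Random(0))
```

Mixing integer, continuous, log-scaled, and categorical dimensions in one space is typical for ANN tuning, and it is one reason optimizers differ in how easily they can be applied.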

Optimizers Compared:

- Paddy: The Paddy field algorithm as implemented in the Paddy Python package.

- Bayesian Optimization: Implemented via Meta's Ax framework, which uses BoTorch and a Gaussian Process surrogate model.

- Genetic Algorithm (GA): A population-based method from EvoTorch, using Gaussian mutation and single-point crossover.

- Control: Random search was included as a baseline.

Evaluation Metric: The primary metric for comparison was the highest validation accuracy achieved by the ANN after hyperparameter tuning. Additionally, the computational runtime required by each optimizer was recorded.

Diagram 1: Generic Hyperparameter Optimization (HPO) Workflow. This core process is shared across all optimizers, differing primarily in the "Propose" and "Update" steps.
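The shared loop can be expressed as a small interface with `propose` and `update` methods. The sketch below uses random search, the baseline in this benchmark, as the simplest possible optimizer. The class and function names, the toy objective, and the budget are assumptions for illustration; in a real campaign `evaluate` would train the ANN and return its validation accuracy.

```python
import random

class RandomSearch:
    """Baseline optimizer: proposes uniformly random points and ignores feedback."""

    def __init__(self, bounds, seed=0):
        self.bounds = bounds
        self.rng = random.Random(seed)

    def propose(self):
        return [self.rng.uniform(lo, hi) for lo, hi in self.bounds]

    def update(self, params, score):
        pass  # a model-based or evolutionary optimizer would learn from (params, score) here

def run_hpo(optimizer, evaluate, budget):
    """Generic propose / evaluate / update loop; returns the best point found."""
    best_params, best_score = None, float("-inf")
    for _ in range(budget):
        params = optimizer.propose()      # Propose: optimizer-specific
        score = evaluate(params)          # Evaluate: e.g. train and validate the ANN
        optimizer.update(params, score)   # Update: optimizer-specific
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score

# Hypothetical stand-in for an expensive training run.
evaluated = []

def toy_evaluate(params):
    evaluated.append(params)
    return -sum((v - 0.5) ** 2 for v in params)

hpo_params, hpo_score = run_hpo(RandomSearch([(0.0, 1.0)] * 2, seed=1),
                                toy_evaluate, budget=50)
```

Swapping in Paddy, a GP-based Bayesian optimizer, or a GA changes only what happens inside `propose` and `update`; the evaluation budget and bookkeeping stay the same, which is what makes a like-for-like runtime comparison possible.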

Performance Results and Analysis

The following tables summarize the results from the benchmark study in qualitative terms, comparing optimizer performance on the solvent classification task [25] [1].

Table 1: Comparative Performance of Optimizers on ANN Solvent Classification

| Optimization Algorithm | Reported Validation Accuracy | Computational Runtime | Key Characteristic |
|---|---|---|---|
| Paddy Algorithm | Competitive / High | Lowest | Fast convergence, avoids local optima |
| Bayesian Optimization (GP) | High | High | Sample-efficient, high computational overhead |
| Genetic Algorithm (GA) | Competitive | Medium | Robust, population-based search |
| Random Search | Lower | Medium | Baseline method |

Table 2: Qualitative Comparison of Optimizer Attributes

| Attribute | Paddy | Bayesian Optimization | Genetic Algorithm |
|---|---|---|---|
| Exploration vs. Exploitation | Density-guided balance | Probabilistically balanced by acquisition function | Balanced by selection pressure & genetic operators |
| Resistance to Local Optima | High (Explicit density/pollination mechanism) | Medium (Depends on acquisition function) | High (Population diversity helps escape) |
| Sample Efficiency | Medium | High | Low to Medium |
| Parallelization Potential | High (Population-based) | Low (Inherently sequential) | High (Population-based) |
| Ease of Use | Simple, open-source Python package | Requires choice of surrogate & acquisition function | Requires tuning of genetic operators |

The results demonstrate that Paddy achieved validation accuracy that was competitive with, and in some cases superior to, both Bayesian optimization and the Genetic Algorithm. Its most notable advantage was its significantly lower computational runtime, making it a highly efficient choice for HPO [25] [1]. Bayesian optimization, while capable of finding high-accuracy hyperparameters with fewer samples, incurred a higher computational cost per iteration due to the overhead of maintaining and updating the Gaussian Process model. The Genetic Algorithm provided robust performance but did not match Paddy's speed in this benchmark.

The Scientist's Toolkit: Essential Research Reagents