Evolutionary Rates Modeling (EvoRates): From Genomic Clocks to Predictive Drug Development in 2025


Abstract

This article provides a comprehensive overview of the latest methodologies, challenges, and applications in evolutionary rates modeling (EvoRates) for a scientific audience. It explores the foundational principles of rate estimation across genomic, phenotypic, and protein evolution, highlighting persistent challenges like rate-time scaling. The piece details cutting-edge tools, including Bayesian software and AI-powered simulations, for applications in phylogenetic inference and forecasting viral evolution. It further discusses strategies for overcoming model misspecification and optimization, and covers rigorous validation through empirical benchmarks and comparative analyses. Finally, it synthesizes key takeaways on the transformative potential of integrating evolutionary models with biomedical research to accelerate therapeutic discovery.

The Foundations of Evolutionary Rate Variation: From Genomes to Phenotypes

Evolutionary rates provide a crucial window into the tempo and mode of biological evolution across different scales of biological organization. This application note details the core methodologies for estimating three fundamental types of evolutionary rates: genomic (dN/dS), phenotypic, and protein stability metrics. We provide standardized protocols for the key experimental and computational workflows, visual summaries of the analytical pipelines, and a curated list of essential research reagents. Designed for researchers and drug development scientists, this guide facilitates the selection and implementation of appropriate rate metrics for evolutionary studies, with particular emphasis on integration within modern evorates research frameworks.

Quantifying evolutionary rates is fundamental to understanding how natural selection shapes biological diversity across genomic, structural, and phenotypic levels [1] [2]. The ratio of non-synonymous to synonymous substitutions (dN/dS) serves as a canonical measure of selective pressure at the molecular sequence level [3] [4]. At the protein structure level, biophysical models quantify evolutionary rates in terms of thermodynamic constraints, such as changes in folding stability (ΔΔG) and stress energy (ΔΔG*) [1]. For macroscopic traits, phenotypic evolutionary rates capture the pace of morphological change, with methods like evorates modeling how these rates themselves evolve across phylogenies [5] [2]. Understanding the interrelationships and appropriate applications of these metrics is essential for a cohesive research program in evolutionary systems biology.

Metric Comparison and Data Presentation

The table below summarizes the core characteristics, applications, and key methodological considerations for the three primary evolutionary rate metrics.

Table 1: Comparative Overview of Key Evolutionary Rate Metrics

| Metric | Basis of Calculation | Primary Application | Key Interpretation | Common Software/Tools |

|---|---|---|---|---|

| dN/dS (ω) | Ratio of substitution rates at non-synonymous (dN) and synonymous (dS) codon sites [3] [4]. | Identifying genes and specific sites under positive or purifying selection [3]. | ω > 1: positive selection; ω ≈ 1: neutral evolution; ω < 1: purifying selection | PAML (CODEML) [3] [4] |

| Protein Stability (ΔΔG) | Estimated change in free energy (ΔΔG) of protein folding caused by a mutation, derived from biophysical models [1]. | Understanding structural constraints on protein evolution; predicting site-specific evolutionary rates [1]. | More positive ΔΔG (mutant minus wild-type folding free energy): greater destabilization; higher evolutionary rate at less constrained sites | Custom stability models [1] |

| Phenotypic Rate (σ²) | Rate of trait change per unit time under models like Brownian Motion (BM); can be heterogeneous across a tree [5]. | Quantifying the pace of morphological evolution; testing for adaptive radiation and stasis [5] [2]. | Higher σ²: faster phenotypic divergence; varying σ²: support for complex evolutionary scenarios | evorates [5], RRmorph [2] |

Table 2: Data Requirements and Output for Evolutionary Rate Analyses

| Metric | Required Input Data | Typical Output | Critical Assumptions & Caveats |

|---|---|---|---|

| dN/dS | Codon-aligned sequences from at least two species; a phylogenetic tree [3]. | dN/dS value per gene, branch, or site; tests for positive selection. | Assumes synonymous mutations are neutral; sensitive to recombination and codon usage bias; interpretation differs between diverged lineages and population samples [4]. |

| Protein Stability | Protein 3D structure or high-quality model; multiple sequence alignment [1]. | Site-specific evolutionary rate predictions; correlation with empirical rates. | Assumes additivity of mutational effects on stability; model performance varies among proteins [1]. |

| Phenotypic Rate | Phenotypic trait measurements (e.g., morphology); a dated phylogeny [5] [2]. | Branch-wise or clade-specific rate estimates; visual rate maps on 3D shapes. | Quality of rate estimates depends on accurate phylogeny and trait data; can be biased by phylo-temporal clustering in ancient DNA [6]. |

Experimental Protocols

Protocol 1: Estimating Genome-Wide dN/dS Using CODEML

I. Objective: To calculate the non-synonymous to synonymous substitution rate ratio (dN/dS) for all protein-coding genes in a genome using orthologous sequences from at least two species, identifying genes under positive or purifying selection [3].

II. Materials and Reagents

- Genomic Data: Assembled genomes or transcriptomes for the target species.

- Computing Infrastructure: Unix/Linux server or high-performance computing cluster.

- Software:

- Ortholog Assignment: OrthoFinder, OMA, or BLASTP for identifying orthologous gene sets.

- Sequence Alignment: MAFFT, PRANK, or MUSCLE. PRANK is recommended for its codon-awareness.

- Phylogenetic Analysis: PAML package (specifically the CODEML program) [3].

III. Procedure

- Ortholog Assignment: Identify one-to-one orthologs between your target species using a tool like OrthoFinder.

- Codon Alignment: Align the amino acid sequences of each ortholog set. Then, back-translate the alignment to the corresponding codon-aligned nucleotide sequences using `pal2nal` or a similar tool. This preserves the codon structure.

- Phylogeny Construction: Generate a species tree. This can be derived from a concatenation of highly conserved genes or sourced from the literature. The tree must be in Newick format.

- CODEML Configuration File Setup: Create a control file (e.g., `codeml.ctl`). Key parameters to set include:

  - `seqfile = [your_codon_alignment.phy]`

  - `treefile = [your_species_tree.tre]`

  - `outfile = results.out`

  - `model = 0` (`0` for a single ω across all branches, which is the setting used with site models; `2` for branch models; consult the PAML manual for your hypothesis)

  - `NSsites = 0 1 2` (`0` for one ω ratio for all sites, `1` for the nearly neutral model, `2` for the positive selection model; listing several values fits each model in turn)

  - `fix_omega = 0` (`0` to estimate ω, `1` to fix it at a pre-specified value)

- Execution: Run CODEML from the command line: `codeml codeml.ctl`

- Results Interpretation: The output file contains dN, dS, and ω (dN/dS) values. To test for positive selection, compare models that allow for sites with ω > 1 (e.g., `NSsites = 2`) to a null model that does not (e.g., `NSsites = 1`) using a Likelihood Ratio Test (LRT).
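The likelihood ratio test in the final step can be sketched in a few lines of Python. This is a minimal illustration, not part of PAML: the function names and the log-likelihood values are hypothetical, and the chi-square tail probability uses the closed form that holds only for even degrees of freedom (the `NSsites = 1` versus `NSsites = 2` comparison has 2).

```python
import math


def chi2_sf_even_df(x, df):
    """Upper-tail probability of a chi-square variable, closed form for even df."""
    if df <= 0 or df % 2 != 0:
        raise ValueError("closed form requires a positive, even df")
    if x <= 0:
        return 1.0
    half = x / 2.0
    term, total = 1.0, 1.0
    # sf(x; 2k) = exp(-x/2) * sum_{i=0}^{k-1} (x/2)^i / i!
    for i in range(1, df // 2):
        term *= half / i
        total += term
    return math.exp(-half) * total


def lrt_pvalue(lnl_null, lnl_alt, df=2):
    """Return the LRT statistic 2*(lnL_alt - lnL_null) and its chi-square p-value."""
    stat = 2.0 * (lnl_alt - lnl_null)
    return stat, chi2_sf_even_df(stat, df)


# Hypothetical log-likelihoods copied by hand from two CODEML runs (made-up values).
stat, p = lrt_pvalue(lnl_null=-1000.0, lnl_alt=-995.0, df=2)
print(f"2*deltaLnL = {stat:.2f}, p = {p:.4g}")
```

A small p-value favors the model that allows sites with ω > 1; when many genes are tested, the p-values would normally be corrected for multiple testing.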

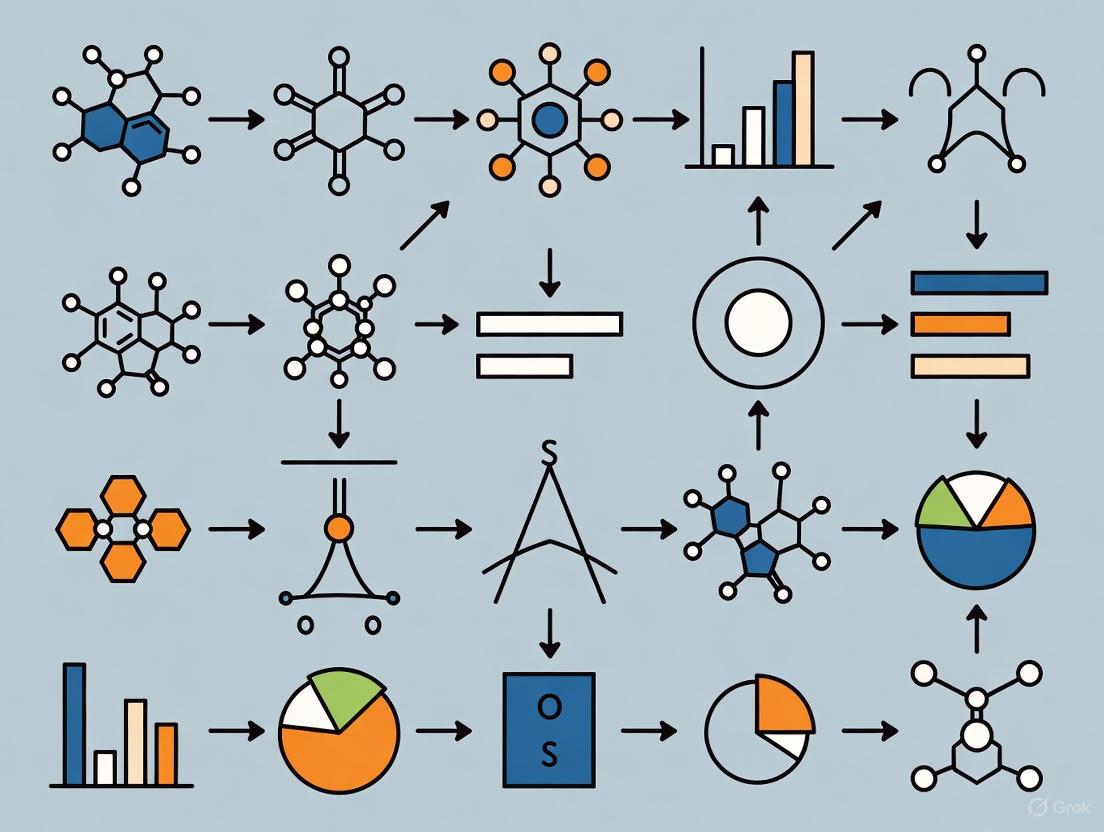

IV. Diagram: dN/dS Estimation Workflow

Protocol 2: Modeling Protein Evolutionary Rates from Stability Constraints

I. Objective: To predict site-specific evolutionary rates in proteins based on the thermodynamic stability changes (ΔΔG) caused by mutations [1].

II. Materials and Reagents

- Protein Structure: Experimentally determined (e.g., from PDB) or high-quality predicted 3D structure.

- Stability Prediction Tools: FoldX, Rosetta, or other ΔΔG prediction algorithms.

- Sequence Data: A deep multiple sequence alignment of the protein homologs.

- Computing Environment: Python or R for implementing the stability model and calculating empirical rates.

III. Procedure

- Empirical Rate Calculation: From the multiple sequence alignment, infer site-specific evolutionary rates using a method like Rate4Site or from a phylogenetic model.

- Stability Change (ΔΔG) Calculation:

- Use the wild-type protein structure as a reference.

- For each site in the protein, perform in silico mutagenesis to all other 19 amino acids.

- Use a tool like FoldX to calculate the ΔΔG for each mutation, representing the change in folding free energy.

- Modeling Evolutionary Rates:

- Implement a stability model where the fitness of a protein is 1 if its stability (ΔG) is below a threshold and 0 otherwise [1].

- The fixation probability of a mutation is determined by whether it keeps the protein stable, given a background distribution of protein stabilities [1].

- The predicted substitution rate for a site is a function of the ΔΔG values for all possible mutations at that site and their corresponding fixation probabilities.

- Model Validation: Correlate the predicted site-specific rates from the stability model with the empirically derived rates from Step 1. A high correlation (e.g., up to ~0.75) indicates that stability constraints explain a large portion of the observed rate variation [1].
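The modeling and validation steps above can be sketched as follows. This is a simplified illustration under stated assumptions, not the published model of [1]: the background stability distribution is represented by a short list of made-up ΔG values, a mutation is treated as fixable whenever the mutant stays below the stability threshold, and all function names and numbers are hypothetical. In practice each site would carry 19 ΔΔG values.

```python
import math


def fixation_probability(ddg, dg_background, dg_threshold=0.0):
    """Fraction of background stabilities that stay below the threshold after adding ddg."""
    stable = sum(1 for dg in dg_background if dg + ddg < dg_threshold)
    return stable / len(dg_background)


def predicted_site_rate(ddg_values, dg_background, dg_threshold=0.0):
    """Relative rate for a site: mean fixation probability over its mutations."""
    probs = [fixation_probability(d, dg_background, dg_threshold) for d in ddg_values]
    return sum(probs) / len(probs)


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical inputs (kcal/mol); only three mutations per site to keep the example short.
dg_background = [-5.0, -3.0, -1.0]
site_ddg = {
    "site_1": [0.0, 2.0, 4.0],
    "site_2": [0.0, 0.5, 0.5],
    "site_3": [4.0, 6.0, 6.0],
}
predicted = [predicted_site_rate(v, dg_background) for v in site_ddg.values()]
empirical = [0.6, 1.0, 0.1]  # made-up values standing in for empirically inferred rates
print(predicted, pearson(predicted, empirical))
```

Sites whose mutations are mostly tolerated (small ΔΔG) receive a high predicted rate, which is the qualitative pattern the validation step tests against empirical rates.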

IV. Diagram: Protein Stability Rate Model

Protocol 3: Mapping Phenotypic Evolutionary Rates on 3D Structures with RRmorph

I. Objective: To estimate and visualize heterogeneous evolutionary rates directly on 3D phenotypic structures (e.g., skulls, endocasts) to identify morphological regions that have evolved at an accelerated pace [2].

II. Materials and Reagents

- 3D Morphological Data: Digital meshes (e.g., .ply, .stl files) of the anatomical structure of interest for all taxa.

- Landmark & Semilandmark Data: Cartesian coordinates of biologically homologous points collected on all 3D specimens.

- Phylogenetic Tree: A time-calibrated phylogeny of the studied taxa.

- Software: R with the RRphylo and RRmorph packages [2].

III. Procedure

- Data Preparation: Place all 3D mesh files in a single directory. Prepare a table of landmark coordinates and a matching phylogenetic tree.

- Shape Alignment & Analysis:

- In R, use Generalized Procrustes Analysis (GPA) to align the landmark data, removing the effects of translation, rotation, and scale.

- Perform a Principal Components Analysis (PCA) on the aligned coordinates to reduce dimensionality. The PC scores represent the major axes of shape variation.

- Evolutionary Rate Estimation with RRphylo:

- Use the `RRphylo` function on the PC scores and the phylogeny to compute phylogenetic ridge regression slopes. These slopes represent the evolutionary rates for each branch in the tree for each PC axis [2].

- Rate Mapping with RRmorph:

- Use the `rate.map` function from the `RRmorph` package. This function translates the multivariate evolutionary rates from the PC space back into the morphology of a reference 3D mesh.

- The output is a color-coded 3D model where the color intensity on the mesh surface reflects the magnitude of the local evolutionary rate.

- Visualization & Interpretation: Visualize the output mesh in `RRmorph` or export it for viewing in external 3D software. Regions with hot colors (e.g., red) indicate areas that have experienced high rates of shape evolution.
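To make the shape alignment step more concrete, the sketch below shows how translation and scale are removed from a single landmark configuration. It is a partial illustration only: the rotation step of Generalized Procrustes Analysis is deliberately omitted, the protocol itself runs in R, and the function name and coordinates are hypothetical.

```python
import math


def center_and_scale(landmarks):
    """Translate a configuration to its centroid and scale it to unit centroid size.

    landmarks: list of [x, y, z] coordinates for one specimen.
    Returns the standardized configuration and the original centroid size.
    """
    k = len(landmarks)
    dims = len(landmarks[0])
    centroid = [sum(p[d] for p in landmarks) / k for d in range(dims)]
    centered = [[p[d] - centroid[d] for d in range(dims)] for p in landmarks]
    # Centroid size: square root of the summed squared distances to the centroid.
    centroid_size = math.sqrt(sum(c ** 2 for p in centered for c in p))
    scaled = [[c / centroid_size for c in p] for p in centered]
    return scaled, centroid_size


# Made-up landmark coordinates for a single specimen.
specimen = [[0.0, 0.0, 0.0], [2.0, 0.0, 0.0], [1.0, 3.0, 0.0], [1.0, 1.0, 4.0]]
shape, size = center_and_scale(specimen)
print(size, shape[0])
```

After this step every specimen has its centroid at the origin and unit centroid size, so the remaining differences reflect orientation and shape; orientation is then removed by the rotation step before PCA.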

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Tools for Evolutionary Rate Analyses

| Item Name | Supplier / Source | Function in Research |

|---|---|---|

| PAML (Phylogenetic Analysis by Maximum Likelihood) | http://abacus.gene.ucl.ac.uk/software/paml.html | A software package for phylogenetic analysis of nucleotide or amino acid sequences using maximum likelihood. Its CODEML program is the standard for dN/dS calculation [3]. |

| RRmorph / RRphylo R Packages | CRAN (https://cran.r-project.org/) | RRphylo performs phylogenetic ridge regression to estimate phenotypic evolutionary rates on a tree. RRmorph maps these rates directly onto 3D morphological meshes [2]. |

| FoldX | http://foldx.org/ | A widely used software for the quantitative estimation of protein stability changes (ΔΔG) upon mutation, a key input for stability-based evolutionary models [1]. |

| evorates | Available as an R package with a Bayesian implementation | A Bayesian method for modeling phenotypic trait evolution rates as gradually and stochastically changing (evolving) across a phylogeny, rather than shifting infrequently [5]. |

| Time-Structured Sequence Data | Public repositories (e.g., SRA, ENA) or ancient DNA labs | DNA sequences from modern and ancient samples of known age are required for calibrating substitution rates in Bayesian, least-squares, and root-to-tip regression methods [6]. |

| Curated Protein Structure (PDB) | Protein Data Bank (https://www.rcsb.org/) | An experimentally determined (e.g., X-ray crystallography, Cryo-EM) 3D protein structure is essential for calculating biophysical parameters like ΔΔG and ΔΔG* for stability and stress models [1]. |

Concluding Remarks

The parallel and integrated analysis of genomic, structural, and phenotypic evolutionary rates offers a powerful, multi-faceted perspective on the mechanisms of adaptation. While dN/dS reveals selection on the gene sequence, stability metrics explain the biophysical constraints that shape this signal, and phenotypic rates capture the macroevolutionary outcomes. Each metric operates on different assumptions and data types, making the choice of method dependent on the biological question and available data. The ongoing development of sophisticated models, such as evorates for phenotypic traits and increasingly accurate biophysical models for proteins, continues to enhance our ability to infer evolutionary processes from molecular to morphological levels.

Application Notes

Understanding the drivers of molecular evolutionary rate variation is critical for models in evolutionary biology (evorates). Recent research on birds, the most species-rich tetrapod lineage, demonstrates that life-history traits, particularly generation length and clutch size, are dominant factors explaining genome-wide mutation rates across lineages [7]. This relationship provides a framework for predicting molecular evolution and has significant implications for translating preclinical findings in drug development.

Key Quantitative Relationships

Table 1: Summary of Trait Associations with Genomic Evolutionary Rates [7]

| Trait | Molecular Rate Metric | Relationship | Effect Size/Credible Interval |

|---|---|---|---|

| Clutch Size | dN (non-synonymous) | Positive | CI: 1.19 - 8.57 |

| Clutch Size | dS (synonymous) | Positive | CI: 4.20 - 10.50 |

| Clutch Size | Intergenic Regions | Positive | CI: 3.57 - 10.03 |

| Generation Length | dN, dS, ω, Intergenic | Negative (most important variable) | Maximal importance score in random forest analysis |

| Tarsus Length | dN, Intergenic | Negative | dN CI: -9.88 to -1.13; Intergenic CI: -8.06 to -0.13 |

Implications for Drug Discovery

The principles of evolutionary rate variation directly impact preclinical drug discovery. Assumptions of functional equivalence between animal models and humans require robust evolutionary analysis [8]. Key considerations include:

- Orthology Assessment: Confirming a functional equivalent of the human drug target exists in the model species is paramount [8].

- Selection Analysis: Analyzing molecular selective pressure (dN/dS ratios) helps identify potential functional shifts in drug targets between species [8].

- Lineage-Specific Changes: Genes can be lost or undergo positive selection in specific lineages. For example, the serotonin receptor subunits HTR3C, D, and E are absent in rat and mouse but present in humans, dogs, and rabbits [8]. This discrepancy is critical for research on drugs targeting this receptor for conditions like irritable bowel syndrome.
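The orthology check described above can be sketched as a simple presence/absence lookup. The HTR3C, HTR3D, and HTR3E pattern follows the example in the text; the table layout, the function name, and the idea of storing presence calls in a dictionary are assumptions made for illustration.

```python
# Presence (True) or absence (False) of each target's ortholog per species.
# The HTR3C/D/E pattern follows the example in the text [8]; the layout is hypothetical.
ORTHOLOG_PRESENCE = {
    "HTR3C": {"human": True, "dog": True, "rabbit": True, "rat": False, "mouse": False},
    "HTR3D": {"human": True, "dog": True, "rabbit": True, "rat": False, "mouse": False},
    "HTR3E": {"human": True, "dog": True, "rabbit": True, "rat": False, "mouse": False},
}


def candidate_model_species(target, presence=ORTHOLOG_PRESENCE, reference="human"):
    """List non-reference species that retain an ortholog of the drug target."""
    species = presence[target]
    if not species.get(reference, False):
        raise ValueError(f"{target} is not present in the reference species")
    return sorted(s for s, present in species.items() if present and s != reference)


for gene in ORTHOLOG_PRESENCE:
    print(gene, candidate_model_species(gene))
```

Presence of an ortholog is only the first filter; a dN/dS analysis of the retained orthologs would still be needed to flag possible functional shifts.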

Experimental Protocols

Protocol 1: Assessing Drivers of Genome-Wide Evolutionary Rates

Objective: To identify life-history and ecological traits that correlate with and predict genomic evolutionary rates across species [7].

Materials:

- Whole-genome sequence data for all taxa in the phylogeny.

- A robust, time-calibrated phylogeny of the study species.

- Curated species-level data for relevant traits (e.g., clutch size, generation length, body mass, tarsus length).

Procedure:

- Data Collection: Compile trait data from published datasets (e.g., BIRDBASE for avian studies [9]) and the primary literature.

- Rate Calculation: Calculate molecular evolutionary rates (dN, dS, ω, intergenic region rates) for each branch in the phylogeny using codon substitution models.

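- Modeling & Analysis: Model each rate metric as a function of the trait data while accounting for phylogeny and covariates, and rank the relative importance of traits (e.g., with a random forest analysis) [7].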

- Interpretation: A positive association between clutch size and dS or intergenic rates, after accounting for covariates, is interpreted as evidence for a role in driving mutation rates [7].

Protocol 2: Detecting Lineage-Specific Evolutionary Rate Shifts

Objective: To identify specific genes and lineages that have experienced significant shifts in evolutionary rates, indicative of functional diversification [10].

Materials:

- Multiple sequence alignments for genes of interest.

- High-performance computing resources for phylogenetic analysis.

Procedure:

- Gene Tree Construction: Generate a codon-aware phylogenetic tree from the multiple sequence alignment.

- Rate Decomposition: Apply a method like Evolutionary Rate Decomposition (ERD) or RAte Shift EstimatoR (RASER) to decompose site-specific evolutionary rates across the phylogeny [7] [10].

- Identify Axes of Variation: Use principal component analysis (PCA) on the rate estimates to identify major axes of evolutionary rate variation [7].

- Shift Detection: The RASER algorithm, for instance, uses a likelihood framework and empirical Bayesian inference to detect sites with a high posterior probability of a rate shift and to pinpoint the lineage where the shift occurred, without requiring prior specification of the lineages [10].

- Functional Correlation: Correlate identified rate-shifting sites and lineages with known biological traits (e.g., drug resistance, ecological diversification) [10].

Visualizations

Diagram 1: Evolutionary Rate Analysis Workflow

Diagram 2: Reproduction-Survival Trade-off Analysis

The Scientist's Toolkit

Table 2: Essential Research Reagents & Resources

| Item | Function/Description | Example/Application |

|---|---|---|

| BIRDBASE Dataset | A global avian trait dataset compiling 78 traits for 11,589 bird species; enables large-scale comparative analyses of life-history, ecology, and morphology [9]. | Sourcing species-level data for clutch size, body mass, and other traits for regression analysis [7]. |

| Whole-Genome Sequences | High-quality, de novo assembled genomes for multiple species across a phylogeny; the foundational data for calculating molecular evolutionary rates [7]. | Used by the B10K consortium to analyze 218 bird genomes from nearly all extant families [7]. |

| RAte Shift EstimatoR (RASER) | A likelihood-based method that uses empirical Bayesian inference to detect site-specific evolutionary rate shifts across a phylogeny without pre-specifying lineages of interest [10]. | Identifying genes and lineages in HIV-1 subtypes where functional diversification has occurred [10]. |

| Animal Model (Statistical) | A hierarchical mixed model used in quantitative genetics to estimate additive genetic variance (G-matrix) and evolvability in wild populations [11]. | Estimating multivariate evolutionary potential of life-history traits in great tit populations across Europe [11]. |

| dN/dS (ω) Models | Codon-substitution models that estimate the ratio of non-synonymous to synonymous substitution rates; used to infer selection pressures [7] [8]. | An ω > 1 indicates positive selection; used to identify potential functional shifts in drug targets between species [8]. |

The Persistent Rate-Time Scaling Problem in Phenotypic Evolution

The analysis of phenotypic evolutionary rates is fundamentally complicated by a persistent and negative correlation between estimated rates and the time scale over which they are measured. This rate-time scaling problem presents a significant challenge for comparative evolutionary biology, as it impedes meaningful comparisons of evolutionary rates across lineages that have diversified over different time intervals. The causes of this correlation are deeply debated; while some attribute it to an inherent biological reality, others suspect model misspecification or statistical artifacts [12]. This Application Note addresses this persistent problem by framing it within the context of modern evolutionary rates modeling (evorates) research, providing researchers with both the theoretical background and practical protocols to identify, quantify, and mitigate scaling issues in their analyses.

The implications of the rate-time scaling problem extend across evolutionary biology, from comparative phylogenetic studies to analyses of phenotypic time series. For researchers in drug development, understanding the true pace of phenotypic evolution is crucial for predicting pathogen resistance, modeling host-pathogen coevolution, and interpreting experimental evolution results. The evorates framework, which models trait evolution rates as gradually changing across a phylogeny, offers a powerful approach to unify and expand on previous research by simultaneously estimating general trends and lineage-specific rate variation [5]. This Note provides detailed methodologies for implementing these approaches, with structured data presentation and visualization tools to enhance analytical rigor.

Theoretical Foundation: From Brownian Motion to Evolving Rates

Conventional Models and Their Limitations

Traditional models of phenotypic trait evolution have predominantly relied on Brownian Motion (BM) as a null model, which assumes a constant rate of evolution throughout a clade's history. While extensions to this model allow for rate shifts between predefined macroevolutionary regimes, they typically assume that rates change infrequently and abruptly [5]. Similarly, Early Burst/Late Burst (EB/LB) models incorporate simple exponential decreases or increases in rates over time but assume a perfect correspondence between time and rates across all lineages [5]. These assumptions become problematic given the vast number of intrinsic and extrinsic factors hypothesized to affect trait evolution rates, many of which vary continuously rather than discretely [5].

The fundamental limitation of these conventional approaches is their potential for severe underfitting of observed data. As noted in recent research, "if rates are instead affected by many factors, mostly with subtle effects, we would expect trait evolution rates to constantly shift in small increments over time within a given lineage, resulting in gradually changing rates over time and phylogenies" [5]. This oversimplification collapses heterogeneous evolutionary processes into homogeneous regimes, potentially generating spurious links between trait evolution rates and explanatory variables.

The evorates Framework: A Process-Based Solution

The evorates framework addresses these limitations by modeling trait evolution rates as gradually and stochastically changing across a phylogeny, essentially allowing rates to "evolve" via a geometric Brownian motion (GBM) process [5]. This approach incorporates two key parameters:

- Rate variance: Controls how quickly rates diverge among independently evolving lineages

- Trend: Determines whether rates tend to decrease or increase over time

When rate variance is zero, the model simplifies to conventional EB/LB models, with negative trends corresponding to EBs, no trend to BM, and positive trends to LBs. However, by incorporating stochastic rate variation, evorates can more accurately detect general trends while accounting for lineages exhibiting anomalously high or low rates [5]. This flexibility enables researchers to infer both how and where rates vary across a phylogeny, providing a more nuanced understanding of evolutionary dynamics.
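The behavior of the two parameters can be illustrated with a short simulation along a single lineage. This is a minimal sketch, not the evorates implementation: it assumes the trend and rate variance act on the log of the rate, discretizes time into equal steps, and uses hypothetical function and parameter names.

```python
import math
import random


def simulate_rates(total_time, n_steps, rate0=1.0, trend=0.0, rate_variance=0.0, rng=None):
    """Simulate a rate trajectory whose logarithm follows Brownian motion with drift."""
    rng = rng or random.Random(0)
    dt = total_time / n_steps
    log_rate = math.log(rate0)
    rates = [rate0]
    for _ in range(n_steps):
        # Deterministic trend plus a stochastic increment scaled by the rate variance.
        log_rate += trend * dt + math.sqrt(rate_variance * dt) * rng.gauss(0.0, 1.0)
        rates.append(math.exp(log_rate))
    return rates


def simulate_trait(rates, total_time, trait0=0.0, rng=None):
    """Evolve a trait by Brownian motion whose variance per unit time is the current rate."""
    rng = rng or random.Random(1)
    dt = total_time / (len(rates) - 1)
    trait = trait0
    for rate in rates[:-1]:
        trait += math.sqrt(rate * dt) * rng.gauss(0.0, 1.0)
    return trait


# Zero rate variance and a negative trend give a deterministic, early-burst-like decay.
early_burst = simulate_rates(2.0, 100, trend=-0.5, rate_variance=0.0)
# Adding rate variance lets the rate wander around that trend.
evolving = simulate_rates(2.0, 100, trend=-0.5, rate_variance=0.3)
print(early_burst[-1], evolving[-1], simulate_trait(evolving, 2.0))
```

With `rate_variance=0.0` the rate at time t is simply `rate0 * exp(trend * t)`, matching the EB/LB special case described above; a nonzero rate variance produces lineage-specific departures from that curve.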

Table 1: Core Parameters in the evorates Framework

| Parameter | Mathematical Interpretation | Biological Interpretation | Relationship to Rate-Time Scaling |

|---|---|---|---|

| Rate Variance | Controls the magnitude of stochastic rate changes across branches | Reflects the degree of lineage-specific adaptation in response to numerous subtle factors | High variance exacerbates scaling issues in conventional models |

| Trend Parameter | Determines the deterministic component of rate change over time | Captures overarching patterns like adaptive radiations (EB) or character displacement (LB) | Directly addresses the time-dependent component of rate variation |

| Branchwise Rates | Estimated average trait evolution rates along each branch | Identifies lineages with anomalously high or low evolutionary rates | Enables detection of outliers that distort rate-time relationships |

Quantitative Manifestations of the Rate-Time Scaling Problem

Empirical Evidence from Evolutionary Time Series

Recent analyses of 643 empirical time series confirm that the negative correlation between evolutionary rates and time intervals persists even after accounting for model misspecification, sampling error, and model identifiability issues [12]. This finding suggests that the rate-time correlation "requires an explanation grounded in evolutionary explanations, and that common models used in phylogenetic comparative studies and phenotypic time series analyses often fail to accurately describe trait evolution in empirical data" [12]. The persistent nature of this correlation across diverse datasets underscores its fundamental importance for the field.

Simulation studies demonstrate that rates of evolution estimated as a parameter in the unbiased random walk (Brownian motion) model theoretically lack rate-time scaling when data has been generated using this model, even when time series are made incomplete and biased [12]. This indicates that it should be possible to estimate rates that are not time-correlated from empirical data, yet in practice, "making meaningful comparisons of estimated rates between clades and lineages covering different time intervals remains a challenge" [12].

Comparative Performance of Evolutionary Rate Models

Table 2: Model Performance in Addressing Rate-Time Scaling

| Model Type | Theoretical Basis | Handling of Rate Heterogeneity | Susceptibility to Rate-Time Artifacts | Best Use Cases |

|---|---|---|---|---|

| Brownian Motion | Constant rate throughout phylogeny | None | High | Null model development; traits evolving by neutral drift or under randomly fluctuating selection |

| Early/Late Burst | Exponential decrease/increase in rates over time | Deterministic trend only | Moderate to High | Adaptive radiations; character displacement studies |

| Regime-Shift Models | Discrete rate shifts at specific nodes | Infrequent, large-effect changes | Moderate | Traits influenced by major ecological shifts |

| evorates Framework | Geometric Brownian Motion of rates | Continuous, stochastic variation | Low | Complex evolutionary scenarios with multiple influencing factors |

Experimental Protocols for Rate Estimation

Protocol 1: Baseline Assessment of Rate-Time Scaling

Purpose: To quantify the presence and magnitude of rate-time scaling in empirical datasets.

Materials and Reagents:

- Phylogenetic tree with branch lengths proportional to time

- Phenotypic trait measurements for tip taxa

- Computational environment with R and appropriate packages

Methodology:

- Data Preparation: Format comparative data as a univariate continuous trait associated with tips of a rooted, ultrametric phylogeny. The current implementation allows for missing data and multiple trait values per tip [5].

- Model Fitting: Fit a series of nested models beginning with simple Brownian Motion and progressing to more complex EB/LB models.

- Rate Estimation: Calculate branch-specific rates across multiple time scales using a sliding window approach.

- Scaling Assessment: Perform correlation analysis between estimated rates and measurement intervals.

Expected Outcomes: This protocol will generate a quantitative assessment of rate-time scaling in the dataset, providing a baseline for evaluating the effectiveness of more sophisticated modeling approaches.
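The scaling assessment step can be sketched as a log-log regression of estimated rates on the time intervals over which they were measured. This is one simple way to summarize the relationship, assumed here for illustration; the function name and the data are hypothetical.

```python
import math


def loglog_slope(intervals, rates):
    """Ordinary least-squares slope of log(rate) on log(interval)."""
    x = [math.log(t) for t in intervals]
    y = [math.log(r) for r in rates]
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / sxx


# Made-up example: rates that fall in proportion to the measurement interval.
intervals = [1.0, 10.0, 100.0, 1000.0]
rates = [1.0 / t for t in intervals]
print(loglog_slope(intervals, rates))
```

A slope near zero indicates rates that are comparable across time scales, whereas a clearly negative slope is the signature of rate-time scaling.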

Protocol 2: Implementing the evorates Framework

Purpose: To estimate phenotypic evolutionary rates while accounting for both general trends and stochastic rate variation.

Materials and Reagents:

- Phylogenetic tree with branch lengths proportional to time

- Phenotypic trait measurements

- Bayesian inference software with evorates implementation

Methodology:

- Prior Specification: Set priors for the rate variance and trend parameters based on biological expectations.

- Bayesian Inference: Run Markov Chain Monte Carlo (MCMC) sampling to approximate the posterior distribution of parameters.

- Convergence Assessment: Monitor MCMC chains for convergence using standard diagnostics.

- Posterior Analysis: Extract branchwise rate estimates and identify lineages with anomalously high or low rates.

Expected Outcomes: The protocol generates posterior distributions for trend parameters and branch-specific rates, enabling identification of both general patterns and lineage-specific deviations.
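One widely used convergence diagnostic is the Gelman-Rubin potential scale reduction factor, which compares between-chain and within-chain variance. The sketch below implements the classic form for a single parameter; it is offered as an illustration of the convergence step, not as the diagnostic any particular package reports, and the chains are made-up numbers.

```python
import math


def gelman_rubin(chains):
    """Classic potential scale reduction factor for one parameter.

    chains: list of equal-length lists of post-warmup samples, one list per chain.
    """
    m = len(chains)
    n = len(chains[0])
    means = [sum(c) / n for c in chains]
    grand_mean = sum(means) / m
    # Between-chain variance B and mean within-chain variance W.
    between = n / (m - 1) * sum((mu - grand_mean) ** 2 for mu in means)
    within = sum(
        sum((x - mu) ** 2 for x in c) / (n - 1) for c, mu in zip(chains, means)
    ) / m
    pooled = (n - 1) / n * within + between / n
    return math.sqrt(pooled / within)


# Made-up chains: the first pair explores the same region, the second pair does not.
agreeing = [[0.0, 1.0, 0.0, 1.0], [0.0, 1.0, 0.0, 1.0]]
disagreeing = [[0.0, 1.0, 0.0, 1.0], [10.0, 11.0, 10.0, 11.0]]
print(gelman_rubin(agreeing), gelman_rubin(disagreeing))
```

Values close to 1 suggest the chains are sampling the same distribution; values well above 1 indicate that sampling should continue or the model should be re-examined.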

Protocol 3: Validation via Simulation

Purpose: To validate inference accuracy under known evolutionary scenarios.

Materials and Reagents:

- Simulation framework capable of generating trait data under specified evolutionary models

- Parameter sets covering biologically realistic values

Methodology:

- Data Simulation: Generate trait data across phylogenies of varying sizes under different evolutionary scenarios.

- Model Inference: Apply the evorates framework to simulated datasets.

- Accuracy Assessment: Compare inferred parameters to known true values.

- Performance Quantification: Calculate statistical power to detect trends and rate variation.

Expected Outcomes: This protocol quantifies the accuracy and reliability of evorates inference across different evolutionary scenarios and phylogenetic tree sizes.
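The accuracy and power steps can be summarized with a few simple statistics across simulation replicates. The sketch below assumes each replicate yields a point estimate and a credible interval for the trend parameter; the function names and all numbers are hypothetical.

```python
import math


def bias(estimates, truth):
    """Mean signed error of the estimates relative to the simulated value."""
    return sum(e - truth for e in estimates) / len(estimates)


def rmse(estimates, truth):
    """Root-mean-square error of the estimates relative to the simulated value."""
    return math.sqrt(sum((e - truth) ** 2 for e in estimates) / len(estimates))


def detection_rate(intervals):
    """Fraction of replicates whose credible interval excludes zero."""
    return sum(1 for low, high in intervals if low > 0 or high < 0) / len(intervals)


# Made-up results from four replicates simulated with a true trend of -0.5.
true_trend = -0.5
trend_estimates = [-0.4, -0.6, -0.5, -0.5]
trend_intervals = [(-0.9, -0.1), (-0.8, 0.2), (-1.0, -0.3), (-0.2, 0.4)]
print(bias(trend_estimates, true_trend))
print(rmse(trend_estimates, true_trend))
print(detection_rate(trend_intervals))
```

When the simulated trend is nonzero the detection rate estimates statistical power; when it is zero the same quantity estimates the false-positive rate.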

Research Reagent Solutions

Table 3: Essential Computational Tools for Evolutionary Rate Analysis

| Research Reagent | Type/Category | Function in Analysis | Implementation Considerations |

|---|---|---|---|

| evorates R Package | Bayesian inference tool | Estimates gradually changing trait evolution rates across phylogenies | Requires basic familiarity with Bayesian statistics and MCMC diagnostics |

| Phylogenetic Data | Biological data structure | Provides evolutionary relationships and temporal framework | Branch lengths must be proportional to time; supports missing data |

| Phenotypic Measurements | Continuous trait data | Raw material for evolutionary rate estimation | Both univariate and multivariate approaches possible |

| CMA-ES Algorithm | Evolutionary optimization algorithm | Efficiently navigates parameter space for complex models | Particularly effective for GMA and linear-logarithmic kinetics [13] |

| High-Performance Computing | Computational infrastructure | Enables Bayesian inference for large datasets | Parallel processing significantly reduces computation time |

Visualizing Methodological Approaches

Workflow for Addressing Rate-Time Scaling

Comparative Model Structures

Application to Cetacean Body Size Evolution

A compelling empirical application of the evorates framework comes from the analysis of body size evolution in cetaceans (whales and dolphins). Previous research had suggested a general slowdown in body size evolution over time, but the evorates analysis provided a more nuanced understanding by "recovering substantial support for an overall slowdown in body size evolution over time with recent bursts among some oceanic dolphins and relative stasis among beaked whales of the genus Mesoplodon" [5]. This application demonstrates how the framework can simultaneously detect overarching trends while identifying lineage-specific exceptions that might otherwise obscure general patterns.

The cetacean case study illustrates the practical utility of these methods for unifying and expanding on previous research. By applying the protocols outlined in this Note, researchers can similarly decompose complex evolutionary patterns into their constituent components, distinguishing general trends from lineage-specific adaptations and thereby generating more accurate interpretations of evolutionary processes.

A central challenge in evolutionary biology is discerning the relative roles of natural selection and neutral evolution in shaping genomic and phenotypic diversity. The neutral theory, introduced by Motoo Kimura, posits that the majority of evolutionary changes at the molecular level are due to the random fixation of selectively neutral mutations through genetic drift [14]. In contrast, selectionist frameworks argue that natural selection is the dominant force driving adaptations [15]. The nearly neutral theory offers a bridge, emphasizing the role of slightly deleterious mutations whose fate is determined by the interplay between selection and drift, a balance heavily influenced by effective population size (Nₑ) [16] [17]. For researchers modeling evolutionary rates (evorates), accurately quantifying these forces is paramount. This application note provides a structured overview of the theoretical frameworks, quantitative predictions, and essential protocols for dissecting their contributions, with direct applications in comparative genomics and drug development.

Theoretical Frameworks and Their Quantitative Predictions

The following table summarizes the core tenets and predictions of the major evolutionary theories.

Table 1: Key Evolutionary Theories and Their Predictions

| Theory | Core Principle | Predicted Relationship with Population Size | Primary Molecular Signature |

|---|---|---|---|

| Strict Neutral Theory [14] | The vast majority of fixed molecular changes are selectively neutral. | Substitution rate = mutation rate; independent of population size. | Higher evolutionary rates in non-coding regions and synonymous sites compared to non-synonymous sites. |

| Nearly Neutral Theory [16] [17] | A substantial fraction of mutations are slightly deleterious; their fate is determined by selection-drift balance. | Negative correlation between evolutionary rate and population size; weaker selection in smaller populations. | Proportion of nearly neutral mutations inversely correlates with Nₑ; measures like dN/dS are sensitive to Nₑ. |

| Selectionist Theory [15] | Natural selection is the main driver of evolutionary change, both for adaptations and constraints. | Evolutionary rate depends on environmental shifts and selective pressures, not solely on population size. | Signature of positive selection (e.g., dN/dS > 1) or pervasive purifying selection (e.g., dN/dS < 1). |

| Balanced Mutation Theory (Static Regime) [16] | Evolution is a nearly neutral process with a balance between slightly deleterious and compensatory advantageous substitutions. | Negative relationship between molecular evolutionary rate and population size. | Population phenotype remains at a suboptimum fitness equilibrium. |

The Fisher Geometrical Model (FGM) provides a biologically interpretable framework for many of these theories. It models phenotypes in a multi-dimensional space, where mutations are random vectors and fitness is determined by the distance to an optimum phenotype [16]. This framework naturally generates distributions of selection coefficients, linking them to parameters like mutation size and organismal complexity, rather than assuming them a priori.
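The way FGM turns geometry into selection coefficients can be illustrated with a minimal sketch. It assumes a Gaussian fitness function centered on an optimum at the origin and mutations of fixed size with a uniformly random direction; these choices, and all function names, are illustrative assumptions rather than the formulation used in [16].

```python
import math
import random


def fitness(phenotype):
    """Gaussian fitness: 1 at the optimum (origin), decaying with squared distance."""
    return math.exp(-0.5 * sum(z * z for z in phenotype))


def random_mutation(n_traits, size, rng):
    """Vector of fixed length `size` pointing in a uniformly random direction."""
    direction = [rng.gauss(0.0, 1.0) for _ in range(n_traits)]
    norm = math.sqrt(sum(d * d for d in direction))
    return [size * d / norm for d in direction]


def selection_coefficient(phenotype, mutation):
    """Log fitness ratio of mutant to wild type (positive means beneficial)."""
    mutant = [z + m for z, m in zip(phenotype, mutation)]
    return math.log(fitness(mutant) / fitness(phenotype))


def fraction_beneficial(n_traits, distance, size, n_mutations=5000, seed=1):
    """Monte Carlo estimate of the share of mutations that move closer to the optimum."""
    rng = random.Random(seed)
    wild_type = [distance] + [0.0] * (n_traits - 1)
    hits = sum(
        selection_coefficient(wild_type, random_mutation(n_traits, size, rng)) > 0
        for _ in range(n_mutations)
    )
    return hits / n_mutations


if __name__ == "__main__":
    for n in (2, 10, 50):
        print(n, fraction_beneficial(n, distance=1.0, size=0.1))
```

In this toy setting the share of beneficial mutations falls as the number of traits grows, which is the sense in which organismal complexity shapes the distribution of selection coefficients.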

Quantitative Data and Empirical Correlates

Empirical studies have identified specific life-history and genomic traits that correlate with evolutionary rates, informing predictions. A 2025 study on avian genomics provides a clear example of how these factors are quantified.

Table 2: Correlates of Molecular Evolutionary Rates in Birds (Family-Level Analysis) [7]

| Trait | Correlation with Intergenic Rate (proxy for mutation rate) | Correlation with dN/dS (ω) | Proposed Evolutionary Driver |

|---|---|---|---|

| Clutch Size | Significant Positive Association | No Significant Association | Larger clutch size increases viable genomic replications per generation, elevating mutation opportunity. |

| Generation Length | Significant Negative Association (from random forest analysis) | No Significant Association | Longer generations are associated with more DNA repair cycles and reduced mutation rates. |

| Body Mass | Not Significant (in multivariate models) | No Significant Association | Effects are indirect, mediated through correlations with life-history traits like clutch size. |

| Tarsus Length | Significant Negative Association (in species-level analysis) | No Significant Association | Shorter tarsi associated with aerial lifestyles; potential link to oxidative stress during flight. |

A critical prediction of the nearly neutral theory is that the effectiveness of selection depends on the product Nₑs (effective population size × selection coefficient). This leads to the expectation that the proportion of effectively neutral mutations is higher in species with small Nₑ [14]. For instance, in hominids (small Nₑ), about 30% of nonsynonymous mutations are effectively neutral, whereas in Drosophila (large Nₑ), this proportion is less than 16% [14]. Furthermore, natural selection constrains neutral diversity more strongly in large populations, explaining the long-standing paradox that observed levels of genetic diversity across species are much more uniform than expected under a strict neutral model given the vast range of census population sizes [18].
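The dependence on Nₑs can be made concrete with Kimura's diffusion approximation for the fixation probability of a new mutation. The sketch below assumes genic selection, a diploid population, and a census size equal to Nₑ; the function name and the example parameter values are illustrative and are not taken from [14].

```python
import math


def relative_fixation_rate(ne, s):
    """Fixation probability of a new mutation divided by the neutral value 1/(2*Ne).

    Uses P_fix = (1 - exp(-2s)) / (1 - exp(-4*Ne*s)), assuming census size = Ne.
    Values near 1 mean the mutation behaves as effectively neutral.
    """
    if s == 0:
        return 1.0
    p_fix = math.expm1(-2.0 * s) / math.expm1(-4.0 * ne * s)
    return 2.0 * ne * p_fix


if __name__ == "__main__":
    s = -1e-5  # a slightly deleterious mutation (illustrative value)
    for ne in (1e4, 1e5, 1e6):
        print(f"Ne={ne:.0e}  Ne*s={ne * s:+.2f}  relative rate={relative_fixation_rate(ne, s):.3g}")
```

With the same selection coefficient, the mutation fixes at close to the neutral rate when Nₑ is small and is almost never fixed when Nₑ is large, which is the qualitative pattern behind the hominid versus Drosophila contrast.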

Experimental Protocols and Analytical Workflows

Protocol: Phylogenetic Analysis of Evolutionary Rates Using the Ornstein-Uhlenbeck (OU) Model

The OU process is a powerful tool for modeling the evolution of continuous traits, such as gene expression levels, under stabilizing selection [19].

1. Research Reagent Solutions:

- Input Data: A compiled RNA-seq or expression array dataset across multiple species and tissues. For example, a dataset of 10,899 one-to-one orthologous genes from 17 mammalian species and seven tissues [19].

- Software Tools: Phylogenetic analysis software capable of fitting OU models (e.g., geiger or OUwie in R).

- Phylogenetic Tree: A time-calibrated species tree representing the evolutionary relationships among the studied species.

2. Procedure:

a. Data Preparation and Orthology Mapping: Map sequencing reads to reference genomes and quantify expression levels for each orthologous gene in each species and tissue. Normalize expression data to account for technical variation [19].

b. Model Fitting: For each gene and tissue, fit the OU model to the expression data using the phylogenetic tree. The OU model is described by the stochastic differential equation dXₜ = α(θ - Xₜ)dt + σdWₜ, where:

- Xₜ is the trait value at time t.

- θ is the optimal trait value.

- α is the strength of selection pulling the trait toward θ.

- σ is the intensity of the random diffusion term, and dWₜ is the increment of a Brownian motion (Wiener process).

c. Parameter Estimation and Interpretation: Estimate the parameters α, σ, and θ for each gene. A significant α > 0 indicates evolution under stabilizing selection. The stationary variance of the process, σ²/(2α), quantifies how constrained a gene's expression is in a given tissue [19]: smaller values indicate tighter constraint.

d. Hypothesis Testing: Compare the fit of the OU model to a pure drift model (Brownian motion, the α = 0 limit) using likelihood ratio tests or the Akaike Information Criterion (AIC) to determine whether stabilizing selection is a significant force.
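The behavior of the OU parameters can be checked with a small simulation. This is a minimal sketch that simulates a single lineage through time using the exact Gaussian transition of the OU process; it does not fit the model on a phylogeny (packages such as geiger or OUwie do that), and the function names and parameter values are illustrative assumptions.

```python
import math
import random


def ou_stationary_variance(alpha, sigma):
    """Stationary variance of the OU process: sigma^2 / (2 * alpha)."""
    return sigma ** 2 / (2.0 * alpha)


def simulate_ou(x0, theta, alpha, sigma, dt, n_steps, rng):
    """Simulate dX = alpha * (theta - X) dt + sigma dW with the exact transition density."""
    decay = math.exp(-alpha * dt)
    step_sd = math.sqrt(ou_stationary_variance(alpha, sigma) * (1.0 - decay ** 2))
    path = [x0]
    for _ in range(n_steps):
        mean = theta + (path[-1] - theta) * decay
        path.append(rng.gauss(mean, step_sd))
    return path


def aic(log_likelihood, n_params):
    """Akaike Information Criterion; lower values indicate a better trade-off."""
    return 2.0 * n_params - 2.0 * log_likelihood


if __name__ == "__main__":
    path = simulate_ou(x0=3.0, theta=0.0, alpha=2.0, sigma=1.0,
                       dt=0.1, n_steps=20000, rng=random.Random(0))
    tail = path[1000:]  # discard the approach to the optimum
    mean = sum(tail) / len(tail)
    sample_var = sum((v - mean) ** 2 for v in tail) / (len(tail) - 1)
    print("expected:", ou_stationary_variance(2.0, 1.0), "simulated:", sample_var)
```

For the model comparison in step d, `aic` would be applied to the maximized log-likelihoods of the OU and Brownian motion fits, with the OU model carrying one more free parameter (α) than Brownian motion.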

Protocol: Evolutionary Rate Decomposition for Genome-Wide Analysis

This protocol identifies lineage- and gene-specific drivers of evolutionary rate variation from whole-genome data [7].

1. Research Reagent Solutions:

- Input Data: Whole-genome sequences for a phylogenetically broad set of species (e.g., 218 bird families from the B10K consortium) [7].

- Software Tools: Genome alignment tools (e.g., CACTUS, LASTZ), phylogenetic inference software (e.g., IQ-TREE, RAxML), and custom scripts for evolutionary rate decomposition.

- Species Traits: A comprehensive table of life-history, morphological, and ecological traits for the studied species.

2. Procedure:

a. Sequence Alignment and Phylogeny Estimation: Generate a whole-genome alignment for all orthologous genes and infer a robust, time-calibrated phylogenetic tree.

b. Calculate Substitution Rates: For each branch in the tree and each gene, estimate the nonsynonymous (dN) and synonymous (dS) substitution rates, and their ratio (ω or dN/dS).

c. Perform Evolutionary Rate Decomposition: Conduct a principal component analysis (PCA) on the matrix of evolutionary rates (e.g., dN, dS, or ω) across all branches and genes. This identifies the major independent axes of rate variation [7].

d. Identify Influential Taxa and Genes: For each principal component (axis of variation), identify the specific phylogenetic branches (lineages) and genomic loci (genes) that contribute most strongly to the rate variation.

e. Correlate with Species Traits: Regress the identified axes of rate variation, or the rates of key lineages, against the table of species traits to uncover the ecological and life-history drivers of molecular evolution (e.g., clutch size, generation length) [7].
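Steps c and d can be sketched on a toy branch-by-gene rate matrix. The example below is a dependency-free illustration that extracts only the first principal component by power iteration; the custom scripts used in [7] are not reproduced here, and the matrix values and function names are hypothetical.

```python
import math


def center_columns(matrix):
    """Subtract each gene's (column's) mean rate across branches."""
    n_rows = len(matrix)
    means = [sum(col) / n_rows for col in zip(*matrix)]
    return [[v - m for v, m in zip(row, means)] for row in matrix]


def covariance(centered):
    """Gene-by-gene sample covariance matrix of the centered rates."""
    n_rows = len(centered)
    cols = list(zip(*centered))
    return [[sum(a * b for a, b in zip(ci, cj)) / (n_rows - 1) for cj in cols]
            for ci in cols]


def leading_component(cov, n_iter=500):
    """Power iteration for the dominant eigenvector of a symmetric PSD matrix."""
    vec = [1.0 / math.sqrt(len(cov))] * len(cov)
    for _ in range(n_iter):
        nxt = [sum(c * v for c, v in zip(row, vec)) for row in cov]
        norm = math.sqrt(sum(x * x for x in nxt))
        vec = [x / norm for x in nxt]
    eigenvalue = sum(v * sum(c * w for c, w in zip(row, vec))
                     for v, row in zip(vec, cov))
    return eigenvalue, vec


def first_axis(rates):
    """Return (variance explained, gene loadings, branch scores) for the first axis."""
    centered = center_columns(rates)
    eigenvalue, loadings = leading_component(covariance(centered))
    scores = [sum(x * l for x, l in zip(row, loadings)) for row in centered]
    return eigenvalue, loadings, scores


if __name__ == "__main__":
    # Rows are branches, columns are genes; the last branch is fast for genes 0 and 1.
    rates = [[0.10, 0.20, 0.15],
             [0.12, 0.22, 0.15],
             [0.11, 0.21, 0.16],
             [0.50, 0.60, 0.15]]
    eigenvalue, loadings, scores = first_axis(rates)
    print("loadings:", loadings)
    print("scores:", scores)
```

Genes with the largest absolute loadings and branches with the largest absolute scores are the candidates flagged in step d; a full analysis would use an established PCA implementation and examine several components.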

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Resources for Evolutionary Rate Studies

| Item | Function/Description | Example/Application |

|---|---|---|

| One-to-One Ortholog Sets | Curated sets of genes that are orthologous across all species in the study, minimizing errors from gene duplication and loss. | Ensembl-annotated mammalian one-to-one orthologs (e.g., 10,899 genes) [19]. |

| Time-Calibrated Phylogenies | Species trees with branch lengths proportional to time, essential for modeling evolutionary processes over time. | Family-level bird phylogeny from the B10K consortium [7]. |

| Poisson Random Field (PRF) Models | A population genetics framework for modeling the allele frequency spectrum (AFS), used to infer parameters of selection and demography. | Modeling the non-stationary AFS after a population size change to derive time-dependent measures of selection [17]. |

| Structurally Constrained Substitution (SCS) Models | Protein evolution models that incorporate structural and functional constraints, providing greater realism and accuracy than empirical matrices. | Phylogenetic inference incorporating protein stability constraints to detect adaptation [20]. |

| Autoregressive-Moving-Average (ARMA) Models | A time-series approach for modeling phylogenetic rates of continuous trait evolution when rates are correlated along a tree. | Modeling the evolution of primate body mass or plant genome size where evolutionary rates are serially correlated [21]. |

Advanced Methodologies: Bayesian Inference, AI, and Forecasting Models

BEAST X (Bayesian Evolutionary Analysis Sampling Trees) is a cross-platform program for Bayesian evolutionary analysis of molecular sequences using Markov chain Monte Carlo (MCMC) methods. It infers rooted, time-measured phylogenies under either strict or relaxed molecular clock models, which lets researchers test evolutionary hypotheses without conditioning on a single tree topology. Within evolutionary rates modeling (evorates) research, BEAST X reconstructs phylogenetic relationships while simultaneously estimating rate heterogeneity across lineages and through time, a capability that is central to understanding the tempo and mode of evolutionary processes.

The software achieves this through its sophisticated implementation of MCMC algorithms that average over tree space, weighting each tree proportionally to its posterior probability. This approach provides a robust statistical foundation for evolutionary inference, allowing researchers to quantify uncertainty in their estimates of evolutionary rates and divergence times. Within the evorates research framework, this capability is particularly valuable for investigating how evolutionary processes vary across different lineages, environments, and temporal scales, offering insights into the fundamental drivers of biological diversification.

BEAST X stands as the direct successor to the BEAST v1 series, with its version 10.5.0 superseding the previous v1.10.4 release. The rename to BEAST X accompanies a shift to semantic versioning, making v10.5.0 the 10th major and 5th minor release of the original BEAST project [22]. This transition represents not merely a version update but a substantial re-engineering of the platform, offering enhanced performance, improved stability, and a more modular architecture that facilitates future extensions and specialized analytical packages.

Computational Framework and Performance Optimization

System Architecture and Dependencies

The computational backbone of BEAST X relies on a sophisticated integration of Java-based architecture with native acceleration libraries. As a cross-platform application developed in Java, BEAST X maintains compatibility across diverse operating systems while leveraging the BEAGLE library (Broad-platform Evolutionary Analysis General Likelihood Evaluator) for high-performance computation of phylogenetic likelihoods [23]. This combination enables the software to deliver cutting-edge Bayesian phylogenetic inference while maintaining accessibility across different computing environments.

The performance-critical components of BEAST X benefit significantly from BEAGLE integration, which provides:

- Hardware acceleration: Through explicit support for multi-core processors (CPU), vector instructions (SSE/AVX), and graphics processing units (GPU)

- Computational efficiency: By optimizing the calculation of phylogenetic likelihoods across trees and evolutionary models

- Parallel processing: Enabling simultaneous calculation of multiple independent Markov chains or partitioned analyses

For evolutionary rates modeling, this computational efficiency translates directly into the ability to handle more complex models and larger datasets within feasible timeframes, thereby expanding the scope of questions that can be addressed in evorates research.

Hardware Configuration Guidelines

Selecting appropriate hardware configurations is crucial for efficient evolutionary rates modeling with BEAST X. The table below summarizes recommended hardware configurations based on dataset scale and analytical complexity:

Table 1: Hardware Recommendations for BEAST X Analyses

| Analysis Scale | CPU Cores | RAM | Storage | Acceleration | Typical Runtime |

|---|---|---|---|---|---|

| Standard Dataset (≤100 taxa) | 8+ | 16 GB+ | SSD | BEAGLE-CPU/SSE | 4-8 hours |

| Genome-scale Data | 32+ | 64 GB+ | NVMe | BEAGLE-GPU | 2-5 days |

| Phylodynamic Analyses | 16+ | 32 GB+ | SSD | BEAGLE-CPU/SSE | 1-3 days |

| Complex Evolutionary Models | 24+ | 48 GB+ | NVMe | BEAGLE-GPU | 3-7 days |

The relationship between computational resources and analytical performance demonstrates non-linear scaling characteristics. Empirical benchmarks reveal that doubling CPU core count typically yields a 1.5-1.8x speed improvement rather than 2x, due to parallelization overhead and memory bandwidth limitations [23]. Similarly, GPU acceleration provides the greatest benefits for analyses involving large taxon sets and complex substitution models, where computational demands outweigh data transfer overhead.

Performance Optimization Strategies

Maximizing computational efficiency in BEAST X requires careful configuration of both hardware resources and software parameters. The following strategies have demonstrated significant improvements in runtime for evolutionary rates modeling:

Memory Allocation Optimization: BEAST X's Java foundation necessitates careful memory management, particularly for large datasets. The software typically benefits from allocating approximately 50-75% of available system RAM to the Java Virtual Machine (JVM), with specific parameters adjustable through command-line options [24]. For a system with 64GB RAM, optimal settings often include -Xmx48g (maximum memory) and -Xms24g (initial memory), with the G1 garbage collector (-XX:+UseG1GC) providing superior performance for large heaps.
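The arithmetic behind these settings can be written as a small helper. This is a sketch only: the function name and the default fractions (75% of RAM for the maximum heap, half of that for the initial heap) are assumptions chosen to reproduce the 64 GB example, and how the resulting options are passed to the JVM depends on the launcher in use.

```python
def jvm_memory_flags(total_ram_gb, max_fraction=0.75, initial_fraction=0.375):
    """Build standard JVM heap options from the system RAM in gigabytes.

    -Xmx sets the maximum heap, -Xms the initial heap, and -XX:+UseG1GC
    selects the G1 garbage collector.
    """
    if total_ram_gb <= 0:
        raise ValueError("total_ram_gb must be positive")
    max_heap = max(1, int(total_ram_gb * max_fraction))
    initial_heap = max(1, int(total_ram_gb * initial_fraction))
    return [f"-Xmx{max_heap}g", f"-Xms{initial_heap}g", "-XX:+UseG1GC"]


if __name__ == "__main__":
    print(" ".join(jvm_memory_flags(64)))
```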

Thread Configuration: BEAST X automatically detects available processor cores and typically configures itself to use all available threads. However, explicit thread configuration through the -threads parameter can sometimes improve performance, particularly when running multiple simultaneous analyses or on systems with simultaneous multithreading (SMT) [23]. For evolutionary rates modeling involving partitioned data, allocating one thread per partition is often the best starting configuration.

BEAGLE Resource Utilization: The BEAGLE library supports sophisticated resource management through its -beagle_order and -beagle_instances flags. These parameters control how computational resources are allocated across different analysis components, with particular importance for partitioned analyses and complex evolutionary models [23]. For multi-gene datasets in evorates research, assigning specific CPU/GPU resources to distinct partitions can reduce runtime by 20-40% compared to automatic resource allocation.

Table 2: Performance Scaling with Thread Count in BEAST X

| Thread Count | Runtime for 1M Generations | Speedup Factor | CPU Utilization | Recommended Use Cases |

|---|---|---|---|---|

| 1 | 180 minutes | 1.0x | ~100% | Testing, very small datasets |

| 4 | 52 minutes | 3.5x | ~380% | Standard single-gene analyses |

| 8 | 31 minutes | 5.8x | ~720% | Multi-gene analyses, simple models |

| 16 | 19 minutes | 9.5x | ~1500% | Genome-scale data, complex models |
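The speedup column in Table 2 follows directly from the runtimes, and dividing by the thread count gives the parallel efficiency. The short sketch below recomputes both from the tabulated values; the function and variable names are illustrative.

```python
def scaling_summary(runtimes_min):
    """Return {threads: (speedup, parallel_efficiency)} relative to the 1-thread run."""
    baseline = runtimes_min[1]
    summary = {}
    for threads, runtime in sorted(runtimes_min.items()):
        speedup = baseline / runtime
        summary[threads] = (speedup, speedup / threads)
    return summary


# Runtimes for 1M generations, in minutes, as listed in Table 2.
TABLE_2_RUNTIMES = {1: 180.0, 4: 52.0, 8: 31.0, 16: 19.0}

if __name__ == "__main__":
    for threads, (speedup, efficiency) in scaling_summary(TABLE_2_RUNTIMES).items():
        print(f"{threads:>2} threads: speedup {speedup:.1f}x, efficiency {efficiency:.0%}")
```

Efficiency declines steadily as threads are added, which is the diminishing-returns pattern described above for doubling the core count.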

Experimental Protocols for Evolutionary Rates Modeling

Installation and Configuration Protocol

Software Acquisition and Base Installation

- Download the latest BEAST X release (v10.5.0-beta5 as of July 2024) from the official GitHub repository [22]

- Verify Java compatibility (JRE 1.8.0_202 or later required) using java -version

- Extract the archive to the target installation directory (e.g., /opt/beast_x for system-wide installation or ~/beast_x for user-specific installation)

- Add the BEAST X binary directory to the system PATH environment variable

- Validate installation by executing beast -version to confirm successful deployment

BEAGLE Library Installation and Configuration

The BEAGLE library substantially accelerates likelihood calculations and is essential for practical evolutionary rates modeling:

- Install build dependencies (GCC 7.3+, CMake 3.6+)

- Clone the BEAGLE source repository:

- Configure compilation with CPU and GPU support:

- Compile and install:

- Update library path and verify installation:

The verification command should list the available computational resources, including the CPU and GPU devices accessible for acceleration [23].

Environment Configuration for High-Performance Computing

For production environments, particularly in HPC contexts, several additional configuration steps optimize stability and performance:

- Create a dedicated environment configuration file (/etc/profile.d/beast_x.sh)

- Adjust system limits for extended analyses by editing /etc/security/limits.conf

- For cluster deployments, create a submission script (e.g., beast_job.slurm) that loads the environment before execution [25]

Input Data Preparation and XML Configuration

Sequence Data Preparation and Formatting

BEAST X accepts sequence data in multiple formats (FASTA, NEXUS, Phylip), with specific preparation requirements for evolutionary rates modeling:

- Sequence Alignment: Use MAFFT, MUSCLE, or ClustalΩ for multiple sequence alignment, ensuring proper handling of coding sequences when analyzing protein-coding genes

- Data Partitioning: For partitioned analyses, define subsets corresponding to different genes, codon positions, or evolutionary regimes

- Temporal Calibration: Incorporate sample dates (for tip-dating analyses) or fossil calibrations (for node-dating) using appropriate formatting

- Metadata Verification: Ensure sequence names and dates follow consistent formatting to prevent parsing errors

XML Configuration Generation

While BEAST X configuration files can be authored manually, the BEAUti graphical interface provides a more accessible approach:

- Launch BEAUti by running beauti

- Import sequence data through the "File > Import Data" menu

- Define appropriate site models for each partition, selecting substitution models (e.g., GTR, HKY) and rate heterogeneity models (e.g., Gamma, Invariant sites)

- Configure the clock model based on evolutionary rates research questions:

  - Strict Clock: Assumes constant evolutionary rates across lineages

  - Relaxed Clock Log Normal: Allows uncorrelated rate variation among lineages, with branch rates drawn from a log-normal distribution

  - Relaxed Clock Exponential: Allows uncorrelated rate variation among lineages, with branch rates drawn from an exponential distribution

- Set up the tree prior according to the biological context:

  - Coalescent models: For population-level data

  - Birth-Death models: For species-level trees

  - Yule process: Simple speciation model

- Configure MCMC parameters:

  - Chain length (typically 10^7-10^9 generations)

  - Sampling frequency (every 1000-10000 generations)

  - Log parameters to monitor convergence (posterior, likelihood, prior)

- Save the configuration as an XML file for execution

Advanced Configuration for Evolutionary Rates Models

For specialized evolutionary rates research, additional XML configuration is often necessary:

- Implement epoch models for detecting rate shifts through time

- Configure random local clocks to identify lineage-specific rate variation

- Set up Bayesian model averaging to marginalize over competing models

- Define hyperpriors for hierarchical models when appropriate

Execution and Monitoring Protocol

Analysis Execution

Execute the configured analysis using the appropriate command-line invocation:

For extended analyses on HPC systems, implement through job schedulers:

Run Monitoring and Convergence Assessment

Effective monitoring is essential for ensuring analytical reliability:

- Run Progress Monitoring: Use tail to monitor log files in real time

- Convergence Diagnostics: Assess MCMC convergence using:

  - Effective Sample Size (ESS): All parameters should have ESS > 200

  - Potential Scale Reduction Factor (PSRF): Should approach 1.0 for all parameters when independent replicate chains are compared

  - Trace Inspection: Visual examination of parameter traces for stationarity

- Run Completion Assessment: Verify successful completion by checking for:

  - Final "Thank you for using BEAST" message in log file

  - Complete tree files with expected number of samples

  - Properly formatted log files without parsing errors
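The two numerical diagnostics in the list above can be sketched in a few lines. The ESS estimator here truncates the autocorrelation sum at the first non-positive lag, which is a simple rule and not necessarily the algorithm used by Tracer; the PSRF follows the standard Gelman-Rubin construction for replicate chains. Function names and the synthetic AR(1) chains are illustrative assumptions.

```python
import math
import random


def effective_sample_size(samples):
    """Estimate ESS as N / (1 + 2 * sum of leading positive autocorrelations)."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [v - mean for v in samples]
    variance = sum(c * c for c in centered) / n
    rho_sum = 0.0
    for lag in range(1, n // 2):
        cov = sum(centered[i] * centered[i + lag] for i in range(n - lag)) / n
        rho = cov / variance
        if rho <= 0.0:  # stop once the correlation is lost in noise
            break
        rho_sum += rho
    return n / (1.0 + 2.0 * rho_sum)


def potential_scale_reduction(chains):
    """Gelman-Rubin PSRF for equal-length replicate chains of one parameter."""
    m, n = len(chains), len(chains[0])
    means = [sum(c) / n for c in chains]
    grand = sum(means) / m
    within = sum(sum((v - mu) ** 2 for v in c) / (n - 1)
                 for c, mu in zip(chains, means)) / m
    between_over_n = sum((mu - grand) ** 2 for mu in means) / (m - 1)
    pooled = (n - 1) / n * within + between_over_n
    return math.sqrt(pooled / within)


def ar1_chain(n, phi, rng):
    """Synthetic trace with lag-1 autocorrelation phi (a stand-in for MCMC output)."""
    x, out = 0.0, []
    for _ in range(n):
        x = phi * x + rng.gauss(0.0, 1.0)
        out.append(x)
    return out


if __name__ == "__main__":
    rng = random.Random(42)
    well_mixed = ar1_chain(2000, 0.0, rng)
    sticky = ar1_chain(2000, 0.9, rng)
    print("ESS well mixed:", round(effective_sample_size(well_mixed)))
    print("ESS sticky:", round(effective_sample_size(sticky)))
    print("PSRF:", potential_scale_reduction([well_mixed, ar1_chain(2000, 0.0, rng)]))
```

A strongly autocorrelated trace yields far fewer effective samples than its nominal length, which is why the ESS threshold rather than the raw number of logged states is the practical criterion.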

Troubleshooting Common Runtime Issues

- Memory Allocation Errors: Increase JVM heap size with the -Xmx parameter

- BEAGLE Library Failures: Verify library path and hardware compatibility

- Numerical Instabilities: Adjust model parameterization or use simpler models

- Failure to Converge: Extend chain length, adjust operators, or modify priors

Visualization of the BEAST X Analytical Workflow

The following diagram illustrates the complete analytical workflow for evolutionary rates modeling using BEAST X, from data preparation through to interpretation:

Diagram 1: BEAST X Analytical Workflow for Evolutionary Rates Modeling

The workflow demonstrates the iterative nature of Bayesian evolutionary analysis, where convergence assessment may necessitate additional MCMC iterations or model adjustments. This cyclical process continues until all diagnostic criteria are satisfied, ensuring robust inference of evolutionary parameters.

Essential Research Reagent Solutions

The following table catalogues the essential computational "reagents" required for effective evolutionary rates modeling with BEAST X, including software components, libraries, and configuration specifications:

Table 3: Essential Research Reagent Solutions for BEAST X Evolutionary Rates Modeling

| Reagent Category | Specific Solution | Version/Configuration | Primary Function | Usage Notes |

|---|---|---|---|---|

| Core Software | BEAST X | v10.5.0-beta5+ | Bayesian phylogenetic inference | Latest available release recommended [22] |

| Acceleration Library | BEAGLE | 4.0.0+ with CUDA support | Likelihood computation acceleration | Requires compatible NVIDIA GPU for GPU acceleration [23] |

| Java Environment | Java Runtime Environment | 1.8.0_202+ | Execution environment | Later versions may offer performance improvements [24] |

| Sequence Alignment | MAFFT/MUSCLE | Latest | Multiple sequence alignment | Critical for input data quality |

| Model Selection | ModelFinder (IQ-TREE) | 2.0+ | Substitution model selection | Identifies optimal substitution models [26] |

| Convergence Assessment | Tracer | 1.7+ | MCMC diagnostics | Verifies ESS values and run convergence [24] |

| Tree Visualization | FigTree/IcyTree | Latest | Tree visualization and annotation | Enables visualization of time-scaled trees |

| Batch Processing | Custom SLURM scripts | N/A | HPC job management | Facilitates large-scale analyses [25] |

These research reagents collectively form the essential toolkit for conducting state-of-the-art evolutionary rates modeling with BEAST X. Proper integration and configuration of these components establishes the foundation for efficient, reproducible, and biologically meaningful inference of evolutionary dynamics from molecular sequence data.

For researchers focusing specifically on evolutionary rates modeling, additional specialized packages may enhance analytical capabilities, including the evorates package for explicit evolutionary rate inference and BEASTlabs for implementing novel clock models [27]. The expanding ecosystem of BEAST X packages continues to broaden the software's applicability to diverse research questions in evolutionary biology.

Structurally Constrained Substitution (SCS) Models for Protein Evolution Forecasting

Structurally Constrained Substitution (SCS) models represent a significant advancement in protein evolution modeling by incorporating biophysical constraints on protein folding stability into evolutionary predictions. Unlike traditional empirical substitution models that rely primarily on sequence patterns, SCS models define fitness based on the structural stability of proteins, providing a more mechanistic framework for forecasting evolutionary trajectories [28] [20]. These models operate on the fundamental principle that natural selection acts to maintain protein structural integrity, with the folding free energy (ΔG) of a protein serving as a key determinant of evolutionary fitness [29]. The integration of structural information allows SCS models to capture site-dependent evolutionary constraints that reflect the three-dimensional organization of proteins, where amino acids distant in sequence but proximate in structure may co-evolve [28] [30].

Within the broader context of evolutionary rates modeling, SCS models provide a biophysically grounded framework for understanding variation in substitution rates across protein sites. Research has demonstrated that local structural environments, quantified through metrics such as local packing density, correlate strongly with site-specific evolutionary rates, outperforming traditional measures like relative solvent accessibility [31]. This structural perspective enables more accurate forecasting of protein evolution, particularly in scenarios where selection pressures on folding stability dominate, such as in viral proteins subjected to immune pressure [28]. The development of SCS models represents a convergence of molecular evolution, structural biology, and population genetics, offering researchers a powerful tool for predicting evolutionary outcomes with applications ranging from drug design to understanding fundamental evolutionary processes.

Key Principles and Mechanisms

Theoretical Foundations

SCS models are grounded in population genetics theory and protein biophysics, connecting molecular evolution to structural constraints. The core innovation lies in modeling fitness as a function of protein folding stability, typically calculated from the folding free energy (ΔG) using the Boltzmann distribution:

f(A) = 1 / (1 + e^(ΔG/kT)) [29]

where f(A) represents the fitness of protein variant A, ΔG is the folding free energy, k is Boltzmann's constant, and T is temperature. This formulation captures the probability that a protein remains correctly folded, with lower (more negative) ΔG values corresponding to higher fitness [29]. SCS models account for both stability against unfolding and against misfolding, addressing the important evolutionary constraint that overly hydrophobic sequences may aggregate rather than fold properly [30].
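The fitness function can be evaluated directly. The sketch below assumes ΔG is expressed in kcal/mol and uses the gas constant in kcal/(mol·K) in place of k so that units match; the function name, the default temperature, and the example ΔG values are illustrative assumptions.

```python
import math

# Boltzmann constant on a per-mole basis (gas constant), in kcal/(mol*K).
GAS_CONSTANT_KCAL = 0.0019872041


def folding_fitness(delta_g, temperature_k=298.15):
    """Fraction of molecules in the folded state: 1 / (1 + exp(dG / kT)).

    delta_g is the folding free energy in kcal/mol; more negative means more stable.
    """
    kt = GAS_CONSTANT_KCAL * temperature_k
    return 1.0 / (1.0 + math.exp(delta_g / kt))


if __name__ == "__main__":
    for dg in (-8.0, -5.0, -1.0, 0.0, 2.0):
        print(f"dG = {dg:+.1f} kcal/mol -> fitness {folding_fitness(dg):.4f}")
```

Because the function saturates near 1 for strongly negative ΔG, further stabilization of an already stable protein yields almost no fitness gain, whereas mutations that push ΔG toward zero are penalized sharply.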

The most recent advances in SCS modeling include Stability Constrained (Stab-CPE) and Structure Constrained (Str-CPE) models, collectively termed Structure and Stability Constrained Protein Evolution (SSCPE) models [32]. While Stab-CPE models define fitness based on the probability of finding a protein in its native folded state, Str-CPE models incorporate the structural deformation produced by mutations, predicted using linearly forced elastic network models [32]. These novel Str-CPE models demonstrate increased stringency, predicting lower sequence entropy and substitution rates while providing better fit to empirical sequence data and improved site-specific conservation patterns [32].

Model Integration with Evolutionary Processes

SCS models have been integrated into comprehensive evolutionary frameworks that unite several key processes:

Site-dependent constraints: SCS models incorporate the structural context of individual amino acid positions, recognizing that buried residues with many contacts experience different selective pressures than solvent-exposed residues [31].

Fitness-dependent birth-death processes: In forecasting applications, the fitness calculated from structural stability determines birth and death rates in population models, where high-fitness variants have increased birth rates and reduced death rates [28] [29].

Phylogenetic history integration: Unlike earlier structure-aware models that operated outside phylogenetic contexts, modern SCS implementations evolve sequences along phylogenetic trees or ancestral recombination graphs, accounting for shared evolutionary histories [30].

Table 1: Key Parameters in SCS Model Formulations

| Parameter | Description | Biological Significance |

|---|---|---|

| ΔG | Folding free energy | Determines protein stability and fitness; more negative values indicate higher stability [29] |

| Contact Matrix (Cij) | Binary matrix indicating residue proximity (<4.5Å) | Defines protein topology and residue interactions [30] |

| Interaction Energy (U(a,b)) | Free energy gained when amino acids a and b contact | Determines energetic contributions of specific amino acid pairs [30] |

| Sequence Entropy | Measure of variability at a site | Indicates evolutionary constraint; lower entropy suggests stronger selection [32] |

| Branch Length | Expected substitutions per site | Represents evolutionary time in phylogenetic frameworks [30] |
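The contact matrix and interaction energies in Table 1 combine into a native-state contact energy, one ingredient of ΔG. The following sketch is a deliberate simplification: it uses one representative coordinate per residue, counts sequence neighbors as contacts, and uses made-up pairwise energies; none of these choices are taken from [30]. It requires Python 3.8 or later for math.dist.

```python
import math


def contact_matrix(coords, cutoff=4.5):
    """Binary matrix C[i][j] = 1 when residues i and j lie closer than `cutoff` angstroms."""
    n = len(coords)
    return [[1 if i != j and math.dist(coords[i], coords[j]) < cutoff else 0
             for j in range(n)] for i in range(n)]


def contact_energy(sequence, contacts, pair_energy):
    """Sum U(a_i, a_j) over all contacting residue pairs i < j."""
    total = 0.0
    n = len(sequence)
    for i in range(n):
        for j in range(i + 1, n):
            if contacts[i][j]:
                pair = tuple(sorted((sequence[i], sequence[j])))
                total += pair_energy.get(pair, 0.0)  # unlisted pairs contribute 0
    return total


if __name__ == "__main__":
    # Hypothetical four-residue square, 3.8 angstroms per side.
    coords = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (3.8, 3.8, 0.0), (0.0, 3.8, 0.0)]
    # Hypothetical energies keyed by alphabetically sorted residue pairs.
    energies = {("I", "L"): -1.0, ("D", "K"): -0.5}
    contacts = contact_matrix(coords)
    print(contact_energy("ILKD", contacts, energies))
```

Evaluating the same function for a mutated sequence on the unchanged contact matrix gives the energy change attributable to a substitution, which is how structure constrains individual sites in this kind of model.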

Forecasting Methodology and Workflow

Integrated Birth-Death-Substitution Framework

The forecasting of protein evolution using SCS models employs an integrated approach that simultaneously models population dynamics and molecular evolution, overcoming limitations of traditional methods that simulated these processes separately [28]. The framework combines:

- Forward-time birth-death processes that simulate evolutionary trajectories based on the fitness of protein variants

- Structurally constrained substitution models that govern sequence evolution along phylogenetic branches

- Fitness-dependent rates where birth and death probabilities at each node depend on the folding stability of the protein variant [28] [29]

This integrated approach generates more biologically realistic simulations by allowing molecular evolution to influence evolutionary history and vice versa, creating a feedback loop between sequence change and population dynamics [28].
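
One step of such a fitness-dependent birth-death process could be sketched as follows. The mapping from fitness to rates (birth rate proportional to fitness, death rate proportional to one minus fitness) is an assumption made for illustration, and `rates_from_fitness` and `next_event` are hypothetical names, not functions from ProteinEvolver.

```python
import random

def rates_from_fitness(fitness, base_rate=1.0):
    """Hypothetical mapping: fitter variants are born more often and die less often."""
    return base_rate * fitness, base_rate * (1.0 - fitness)

def next_event(fitnesses, rng):
    """Draw the next event among all living lineages (one Gillespie step).

    Returns (waiting_time, lineage_index, "birth" or "death").
    """
    events, weights = [], []
    for idx, f in enumerate(fitnesses):
        birth, death = rates_from_fitness(f)
        events += [(idx, "birth"), (idx, "death")]
        weights += [birth, death]
    total = sum(weights)
    waiting_time = rng.expovariate(total)   # exponential with the total rate
    idx, kind = rng.choices(events, weights=weights)[0]
    return waiting_time, idx, kind

rng = random.Random(42)
# Three lineages whose fitness values are made up for illustration.
print(next_event([0.9, 0.5, 0.1], rng))
```

After a birth event, the two descendant lineages would each evolve under the SCS model and receive their own fitness values before the next event is drawn, which is what couples the population process to sequence change.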

Computational Implementation

The forecasting workflow has been implemented in ProteinEvolver, a computational framework specifically designed for simulating protein evolution under structural constraints. The key components of this implementation include:

- Input requirements: Initial protein sequence and structure, population parameters, and evolutionary model specifications

- Simulation engine: Efficient algorithms for forward-time simulation of birth-death processes with structural constraints

- Output generation: Forecasted protein variants with associated stability measures and phylogenetic relationships [28] [30]

For deep phylogenetic applications, the SSCPE models have been implemented in SSCPE.pl, a Perl program that uses RAxML-NG to infer phylogenies under SCS models given a multiple sequence alignment and matching protein structures [32].

Experimental Protocols

Protocol 1: Forecasting Protein Evolution Using Integrated Birth-Death-SCS Models

Purpose: To forecast future protein variants under selection for folding stability using integrated birth-death population genetics and structurally constrained substitution models.

Materials and Reagents:

- Initial protein sequence and three-dimensional structure

- ProteinEvolver software framework (available from https://github.com/MiguelArenas/proteinevolver)

- Computational resources for structural energy calculations

Procedure:

- Initialization: Assign the starting protein sequence and structure to the root node of the simulation.

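- Fitness Calculation: Compute the fitness of the protein variant as the fraction of molecules in the folded state, obtained from the Boltzmann distribution applied to the folding free energy: f(A) = 1 / (1 + e^(ΔG/kT)), where k is the Boltzmann constant and T is the temperature [29].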

- Birth-Death Process: For each node, determine birth and death rates based on the calculated fitness. High-fitness variants receive higher birth rates and lower death rates.

- Phylogenetic Branching: Generate descendant nodes according to the birth-death process, creating a forward-time phylogenetic history.

- Sequence Evolution: Along each branch, simulate protein evolution using SCS models:

  a. Determine the number of substitutions based on branch length and protein length.

  b. Introduce mutations according to the instantaneous rate matrix.

  c. Compute the folding free energy of the mutated protein structure using contact-based energy functions: E(A,C) = Σ Cij U(Ai,Aj), where Cij is the contact matrix and U(a,b) is the amino acid interaction matrix [30].

  d. Accept or reject the mutation based on fixation probability derived from fitness changes.

  e. Repeat until the target number of substitutions is completed.

- Iteration: Continue the process for the desired evolutionary timeframe.

- Output Analysis: Collect forecasted protein variants, their sequences, structures, and stability measures.
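
The fitness calculation and the accept/reject step of the procedure can be sketched in Python as below. The fitness function follows the formula given in the procedure. The fixation probability uses the Moran-process formula as a stand-in, since the text does not specify which expression is used; the value of kT and the effective population size `n_eff` are likewise illustrative assumptions.

```python
import math
import random

KT = 0.593  # kcal/mol at roughly 298 K (assumed units and temperature)

def fitness(delta_g, kt=KT):
    """Folded fraction f = 1 / (1 + exp(dG / kT)); more negative dG gives higher fitness."""
    return 1.0 / (1.0 + math.exp(delta_g / kt))

def fixation_probability(f_old, f_new, n_eff):
    """Moran-process fixation probability of a single mutant (an assumed choice).

    p = (1 - f_old/f_new) / (1 - (f_old/f_new)**n_eff), and 1/n_eff when neutral.
    """
    log_ratio = math.log(f_old / f_new)
    if log_ratio == 0.0:
        return 1.0 / n_eff
    exponent = n_eff * log_ratio
    if exponent > 700.0:        # strongly deleterious: avoid overflow in exp
        return 0.0
    return math.expm1(log_ratio) / math.expm1(exponent)

def accept_mutation(dg_old, dg_new, n_eff, rng):
    """Accept the proposed mutation with its fixation probability."""
    p = fixation_probability(fitness(dg_old), fitness(dg_new), n_eff)
    return rng.random() < p

rng = random.Random(1)
# Made-up free energies (kcal/mol): the mutation stabilizes the fold slightly.
print(fitness(-5.0), fitness(-5.5), accept_mutation(-5.0, -5.5, 100, rng))
```

Because a neutral mutation fixes with probability 1/n_eff, most proposals are rejected in this sketch, so a practical simulator would keep proposing mutations until the target number of accepted substitutions is reached.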

Troubleshooting Tips:

- If simulations show excessive stability loss, adjust selection strength parameters.

- For computational efficiency with large proteins, consider using mean-field approximations of SCS models.

- Validate forecasts against known evolutionary trajectories where possible.

Protocol 2: Phylogenetic Inference Using SSCPE Models

Purpose: To infer phylogenetic relationships using Structure and Stability Constrained Protein Evolution (SSCPE) models for improved accuracy, particularly with distantly related sequences.

Materials and Reagents:

- Multiple sequence alignment (MSA) of protein families

- Known protein structures matching sequences in the MSA

- SSCPE.pl software (Perl implementation using RAxML-NG)

Procedure:

- Data Preparation: Compile a multiple sequence alignment and identify corresponding protein structures. If experimental structures are unavailable, generate homology models.

- Model Selection: Choose an appropriate SSCPE model based on data characteristics:

  - Str-CPE models for scenarios where structural deformation is the primary constraint

  - Combined Str-CPE/Stab-CPE models for increased stringency

- Parameter Configuration: Set up SSCPE parameters including:

  - Structural deformation coefficients

  - Stability thresholds

  - Population genetic parameters

- Phylogenetic Inference: Execute SSCPE.pl to infer phylogenetic trees under the selected structural constraints.

- Model Comparison: Compare results with traditional empirical models using likelihood values and conservation pattern analysis.

- Validation: Assess phylogenetic accuracy using structural distance metrics as reference [32].

Interpretation Guidelines:

- Higher likelihood values indicate better model fit to empirical data.

- For distantly related proteins, closer agreement between phylogenies inferred under SSCPE models and structure-based reference trees suggests improved deep phylogeny reconstruction.

- Compare site-specific conservation patterns between models and empirical observations.
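
Site-specific conservation can be summarized with the per-column sequence entropy listed in Table 1. The sketch below computes Shannon entropy in bits for each alignment column; the choice of base-2 logarithms, the decision to ignore gap characters, and the toy alignment are assumptions for illustration and are not taken from SSCPE.pl.

```python
import math
from collections import Counter

def site_entropies(alignment, gap_chars="-."):
    """Shannon entropy (bits) of each column of an alignment.

    `alignment` is a list of equal-length sequences; gaps are ignored.
    Lower entropy indicates a more conserved site.
    """
    length = len(alignment[0])
    if any(len(seq) != length for seq in alignment):
        raise ValueError("all aligned sequences must have the same length")
    entropies = []
    for col in range(length):
        counts = Counter(seq[col] for seq in alignment if seq[col] not in gap_chars)
        total = sum(counts.values())
        if total == 0:              # column made only of gaps
            entropies.append(0.0)
            continue
        h = sum(-(c / total) * math.log2(c / total) for c in counts.values())
        entropies.append(h)
    return entropies

# Toy alignment, made up for illustration.
msa = ["MKV", "MRV", "MKL", "MRI"]
print(site_entropies(msa))
```

The same function can be applied to an empirical alignment and to sequences simulated under each candidate model, so that the per-site entropy profiles can be compared directly.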

Performance and Validation

Quantitative Assessment of Forecasting Accuracy

The forecasting performance of SCS models has been evaluated through applications to monitored viral proteins, providing quantitative measures of prediction accuracy:

Table 2: Forecasting Performance of SCS Models on Viral Proteins

| Prediction Target | Accuracy Level | Notes on Error Sources |
|---|---|---|
| Folding Stability (ΔG) | Acceptable errors | More reliable than sequence prediction [28] |
| Protein Sequences | Larger errors | Expected due to stochastic evolutionary processes [28] |
| Site-specific Conservation | Improved with Str-CPE | Novel Str-CPE models better predict observed conservation [32] |
| Sequence Entropy | Higher stringency with SSCPE | Combined models predict lower, more realistic entropy [32] |

Evaluation studies indicate that forecasting accuracy depends strongly on the evolutionary scenario, with greater predictability in systems under strong selection pressures on protein stability [28]. The errors in sequence prediction reflect the inherent stochasticity of evolutionary processes, while stability predictions benefit from the physical constraints built into SCS models.

Comparative Performance with Alternative Models

SCS models demonstrate several advantages over traditional empirical substitution models:

- Improved site-specific amino acid distributions: Sequences simulated under SCS models show amino acid distributions closer to those observed in real protein families than sequences simulated under empirical models [30].

- Enhanced folding stability: Proteins evolved in silico under SCS models are predicted to have significantly higher folding stability than those generated with traditional models [30].

- Better fit to empirical data: Novel Str-CPE models provide higher likelihood to multiple sequence alignments that include known structures [32].

- Superior deep phylogeny inference: For distantly related proteins, phylogenies inferred under SSCPE models are more similar to reference trees based on structural distances than phylogenies inferred under traditional empirical models [32].

The performance advantages are particularly pronounced in scenarios involving distant evolutionary relationships and when protein stability represents a major selective constraint.

Research Reagent Solutions

Table 3: Essential Research Tools and Resources for SCS Modeling

| Resource | Type | Function and Application | Availability |
|---|---|---|---|
| ProteinEvolver | Software Framework | Simulates protein evolution under structural constraints along phylogenetic histories | Freely available from https://github.com/MiguelArenas/proteinevolver [28] |
| SSCPE.pl | Perl Program | Implements Structure and Stability Constrained models for phylogenetic inference | Uses RAxML-NG for phylogeny reconstruction [32] |
| Contact Matrices | Structural Descriptor | Defines residue proximity in protein structures; enables energy calculations | Derived from PDB structures using distance cutoffs (< 4.5 Å) [30] |
| Amino Acid Interaction Matrix | Energy Parameters | Provides pairwise interaction energies between amino acids; used in stability calculations | Parameterized from empirical folding data [30] |
| Empirical Substitution Models | Baseline Comparison | Traditional sequence-based models for benchmarking SCS performance | Available in standard phylogenetic packages [20] |

Applications in Evolutionary Rate Modeling

SCS models provide unique insights into evolutionary rate variation through their mechanistic representation of structural constraints. Key applications in evolutionary rates modeling include:

Interpretation of rate heterogeneity: SCS models establish a direct connection between local structural environments and site-specific evolutionary rates, explaining why residues with intermediate numbers of contacts may experience different selective constraints than either highly exposed or deeply buried residues [31].

Prediction of substitution patterns: By modeling fitness through folding stability, SCS models can predict site-specific substitution rates without relying on empirical fitting to sequence data, providing a mechanistic null model for identifying additional selective pressures [31].

Ancestral sequence reconstruction: SCS models improve the accuracy of ancestral protein reconstructions by incorporating structural constraints, yielding ancestors with more realistic stability profiles than those obtained with empirical models [33].

Identification of functional constraints: When combined with models of enzymatic activity, SCS models can disentangle structural versus functional constraints on protein evolution, identifying sites where functional requirements override folding stability as the dominant selective pressure [20].

The integration of SCS models into evolutionary rates research provides a biophysical foundation for interpreting patterns of molecular evolution, moving beyond descriptive analyses toward mechanistic predictions of evolutionary constraints.

Integrating Birth-Death Population Models with Evolutionary Predictions

Forecasting evolutionary trajectories is a growing field with critical applications in understanding pathogen evolution and developing therapeutic strategies. Traditional population genetics methods often simulate evolutionary history and molecular evolution as separate processes, which can introduce biological inconsistencies. This application note details a methodology that integrates birth-death population models with structurally constrained substitution (SCS) models to directly couple evolutionary dynamics with molecular changes, enabling more realistic predictions of protein evolution. This approach is particularly valuable within evolutionary rates modeling (evorates) research for projecting future evolutionary paths under selective constraints.

Theoretical Framework and Key Concepts

Birth-Death Population Models

Birth-death models are continuous-time Markov processes used to study how the number of individuals (or lineages) in a population changes through time. In macroevolution, these models describe diversification through speciation (birth) and extinction (death) events [34].

Core Mathematical Formulation:

- Each lineage speciates at a constant rate λ (birth rate) and goes extinct at a constant rate μ (death rate).

- The waiting time between events follows an exponential distribution.

- With N(t) lineages alive at time t, the waiting time to the next event is exponential with parameter N(t)(λ + μ).

- The probability that an event is speciation is λ/(λ + μ), and extinction is μ/(λ + μ) [34].

- The net diversification rate is defined as r = λ - μ [34].
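
The formulation above translates directly into a Gillespie-style simulation of the number of lineages through time. The following Python sketch assumes constant rates; the function name, the `max_lineages` safety cap, and the example parameter values are illustrative choices and not part of any cited software.

```python
import random

def simulate_birth_death(lam, mu, n0=1, t_max=10.0, rng=None, max_lineages=10_000):
    """Simulate N(t) under a constant-rate birth-death process.

    Returns a list of (time, number_of_lineages) pairs, one per event.
    """
    if lam + mu <= 0:
        raise ValueError("lam + mu must be positive")
    rng = rng or random.Random()
    t, n = 0.0, n0
    history = [(t, n)]
    while 0 < n < max_lineages:
        # Waiting time to the next event is exponential with rate N(t) * (lam + mu).
        t += rng.expovariate(n * (lam + mu))
        if t > t_max:
            break
        # The event is a speciation with probability lam / (lam + mu), else an extinction.
        if rng.random() < lam / (lam + mu):
            n += 1
        else:
            n -= 1
        history.append((t, n))
    return history

# Example with made-up rates: net diversification r = lam - mu = 0.3.
trajectory = simulate_birth_death(lam=0.5, mu=0.2, n0=5, t_max=5.0,
                                  rng=random.Random(7))
print(trajectory[-1])
```

Averaged over many replicates, the expected number of lineages grows as n0·e^(rt) with r = λ - μ, which gives a simple sanity check for the simulation.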

Structurally Constrained Substitution (SCS) Models

SCS models of protein evolution incorporate protein structural stability as a selective constraint, often providing more accurate evolutionary inferences than traditional empirical substitution models. These models account for the fact that amino acids far apart in the sequence may be close in the three-dimensional structure and interact, affecting their co-evolution [28] [29] [35].

Integrated Forecasting Approach

The integrated method simulates forward-in-time evolutionary history using a birth-death process where the fitness of a protein variant at a node directly influences its subsequent birth or death rates. This process is coupled with SCS models that govern protein evolution along the resulting phylogenetic branches, creating a cohesive framework where evolutionary history and molecular evolution mutually influence each other [28] [29].

Quantitative Parameters and Data