Evolutionary Optimization of Well Placement Using Convolutional Neural Networks: A Hybrid Framework for Reservoir Management

Abstract

This article explores the integration of Convolutional Neural Networks (CNNs) with evolutionary optimization algorithms to solve the complex, high-dimensional problem of well placement optimization in reservoir management. It first covers foundational concepts, detailing how CNNs preserve the spatial features of reservoir properties to predict productivity and how evolutionary algorithms such as Particle Swarm Optimization (PSO) and Genetic Algorithms (GA) efficiently explore the solution space. It then discusses methodological implementations, including multi-modal CNN (M-CNN) architectures and theory-guided CNNs (TgCNNs) that incorporate physical laws. Finally, it addresses common optimization challenges such as overfitting and computational cost, and presents validation case studies reporting significant improvements in cumulative oil production and large reductions in computational expense compared with traditional simulation-based approaches.

The Well Placement Challenge: Foundations of CNN and Evolutionary Algorithm Integration

Defining the Well Placement Optimization Problem in Geoenergy

Well placement optimization is a critical process in geoenergy applications, including hydrocarbon recovery, geothermal energy, and geologic carbon sequestration. The core objective is to determine the optimal number, type, location, and trajectory of energy wells to maximize a specific economic or environmental objective function while satisfying complex geological and engineering constraints [1] [2]. This problem represents a highly nonlinear, computationally expensive, and often multimodal challenge, where decision variables can include both integer parameters (well locations and types) and continuous parameters (well controls) [3] [2].

The fundamental mathematical formulation aims to find the well configuration $\mathbf{x}$ that maximizes an objective function, typically Net Present Value (NPV) or cumulative production, subject to nonlinear constraints:

$$
\begin{aligned}
\max_{\mathbf{x}} \quad & f(\mathbf{x}) \\
\text{subject to} \quad & g_i(\mathbf{x}) \leq 0, \quad i = 1, \dots, m \\
& \mathbf{x} \in X
\end{aligned}
$$

where $f(\mathbf{x})$ is the objective function evaluated through reservoir simulation, $g_i(\mathbf{x})$ represent nonlinear constraints (e.g., bottomhole pressure limits, inter-well distances), and $X$ defines the feasible space for decision variables [2]. The computational expense arises because each function evaluation requires a full numerical reservoir simulation, which can take hours or even days for complex geological models [3] [4].
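To make the formulation concrete, the following minimal sketch encodes a candidate configuration as a list of grid cells and expresses two of the constraints in the form g_i(x) <= 0. The grid size, the minimum spacing, and the `toy_objective` function are illustrative assumptions; in a real workflow the objective would come from a reservoir simulator.

```python
import math

def min_interwell_distance(wells):
    """Smallest Euclidean distance between any two wells (needs >= 2 wells)."""
    return min(
        math.dist(a, b)
        for i, a in enumerate(wells)
        for b in wells[i + 1:]
    )

def constraint_violations(wells, d_min, grid_shape):
    """Return the g_i(x) values; a value <= 0 means the constraint holds."""
    nx, ny = grid_shape
    g = [d_min - min_interwell_distance(wells)]   # spacing: d_min - d <= 0
    for i, j in wells:
        # Boundary: every well must lie inside the grid.
        g.append(max(-i, i - (nx - 1), -j, j - (ny - 1)))
    return g

def is_feasible(wells, d_min, grid_shape):
    return all(g <= 0 for g in constraint_violations(wells, d_min, grid_shape))

def toy_objective(wells, sweet_spot=(10, 10)):
    """Hypothetical stand-in for f(x); not a reservoir simulation."""
    return sum(1.0 / (1.0 + math.dist(w, sweet_spot)) for w in wells)

x_ok = [(2, 3), (12, 9)]
x_bad = [(2, 3), (3, 3)]   # wells only one cell apart

print(is_feasible(x_ok, 4.0, (20, 20)), is_feasible(x_bad, 4.0, (20, 20)))
```

Keeping constraints as signed values rather than booleans lets the same function feed a penalty or Augmented Lagrangian scheme later.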

Current Methodologies and Limitations

Traditional Optimization Approaches

Traditional approaches to well placement optimization have primarily relied on expert judgment and numerical simulation. While valuable, these methods are inherently limited by subjectivity, time-intensive processes, and difficulty in achieving globally optimal solutions [4].

Table 1: Comparison of Traditional Well Placement Optimization Methods

| Method | Key Features | Limitations | Typical Applications |
|---|---|---|---|
| Expert Judgment | Qualitative assessment of reservoir characteristics; Rule-based systems | Subjective; Difficult to generalize; Experience-dependent | Sidetrack well planning; Mature field redevelopment |
| Numerical Simulation | Physics-based modeling; Scenario analysis | Computationally prohibitive for full optimization; Manual intervention required | Greenfield development; Constrained well placement |

Evolutionary Optimization Algorithms

Evolutionary algorithms have emerged as powerful derivative-free methods for handling the complex, non-convex nature of well placement problems. These population-based stochastic optimizers are particularly effective for avoiding local optima [3] [1].

Table 2: Evolutionary Algorithms in Well Placement Optimization

| Algorithm | Key Mechanism | Advantages | Reported Performance |
|---|---|---|---|
| Genetic Algorithm (GA) | Selection, crossover, mutation operators; Chromosome representation of solutions | Robust global search; Handles discrete variables | 8.09% improvement in cumulative oil production compared to original schemes [1] |
| Differential Evolution (DE) | Vector differences for mutation; Binomial crossover | Effective balance of exploration/exploitation; Fewer control parameters | Superior performance in sidetrack well optimization; Effective constraint handling [4] |
| Covariance Matrix Adaptation Evolution Strategy (CMA-ES) | Adaptive covariance matrix; Step size control | Powerful derivative-free continuous optimization; Reduced simulation calls | Higher NPV with significant reduction in reservoir simulations compared to GA [5] |
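As an illustration of the GA mechanics listed in the table (selection, crossover, mutation on a chromosome of well locations), here is a minimal sketch. The grid size, the number of wells, and `toy_fitness` are assumptions made for the example; the fitness is a placeholder for simulated production and no spacing constraint is enforced.

```python
import random

GRID = (20, 20)      # hypothetical 20 x 20 areal grid
N_WELLS = 2

def toy_fitness(ind):
    """Placeholder for simulated cumulative production (higher is better)."""
    return -sum((i - 14) ** 2 + (j - 6) ** 2 for i, j in ind)

def random_individual(rng):
    return [(rng.randrange(GRID[0]), rng.randrange(GRID[1]))
            for _ in range(N_WELLS)]

def tournament(pop, rng, k=3):
    """Tournament selection: best of k randomly drawn individuals."""
    return max(rng.sample(pop, k), key=toy_fitness)

def crossover(a, b, rng):
    """Uniform crossover: each well is inherited from one of the parents."""
    return [wa if rng.random() < 0.5 else wb for wa, wb in zip(a, b)]

def mutate(ind, rng, rate=0.2):
    """Shift a well by one cell in each direction, clamped to the grid."""
    out = []
    for i, j in ind:
        if rng.random() < rate:
            i = min(max(i + rng.choice((-1, 1)), 0), GRID[0] - 1)
            j = min(max(j + rng.choice((-1, 1)), 0), GRID[1] - 1)
        out.append((i, j))
    return out

def run_ga(pop_size=30, generations=60, seed=0):
    rng = random.Random(seed)
    pop = [random_individual(rng) for _ in range(pop_size)]
    for _ in range(generations):
        elite = max(pop, key=toy_fitness)            # elitism keeps the best
        children = [
            mutate(crossover(tournament(pop, rng), tournament(pop, rng), rng), rng)
            for _ in range(pop_size - 1)
        ]
        pop = [elite] + children
    return max(pop, key=toy_fitness)

best = run_ga()
print(best, toy_fitness(best))
```

Because the elite individual is always carried over, the best fitness never decreases between generations. Libraries such as DEAP or PyGAD provide production-grade versions of these operators.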

Surrogate-Assisted Evolutionary Approaches

The Role of Machine Learning Surrogates

To address the computational bottleneck of numerical simulations, machine learning-based surrogate modeling has become integral to modern well placement optimization. These surrogates create computationally inexpensive approximations of the objective function landscape, dramatically reducing the number of required simulation runs [3] [4].

The generalized data-driven evolutionary algorithm (GDDE) demonstrates this approach by combining classification and regression surrogates. This methodology reduces simulation runs to approximately 20% of those required by conventional differential evolution algorithms [3]. Key surrogate modeling techniques include:

- Radial Basis Functions (RBF): Used for local approximation of the objective function landscape

- Probabilistic Neural Networks (PNN): Employed as classifiers to identify promising candidate solutions

- Random Forest Models: Provide robust predictive accuracy for sidetrack well performance (RMSE of 0.0283, R² of 0.8059) [4]

- Polynomial Response Surfaces: Enhance local surrogate accuracy through hyperparameter optimization [3]
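A surrogate of the first kind can be sketched in a few lines. The example below fits a Gaussian RBF interpolant with NumPy to samples of a cheap analytic function that stands in for the simulator. The shape factor, the small ridge term, and the test function are all assumptions chosen for illustration, not values from the cited studies.

```python
import numpy as np

def fit_rbf(X, y, shape=1.0, ridge=1e-8):
    """Solve for Gaussian RBF weights so the surrogate (nearly) interpolates (X, y)."""
    d2 = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    Phi = np.exp(-shape * d2)
    # A tiny ridge term keeps the linear system well conditioned.
    return np.linalg.solve(Phi + ridge * np.eye(len(X)), y)

def predict_rbf(X_train, weights, X_new, shape=1.0):
    d2 = ((X_new[:, None, :] - X_train[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-shape * d2) @ weights

def expensive_objective(X):
    """Toy stand-in for a reservoir simulation response."""
    return np.sin(3 * X[:, 0]) + np.cos(2 * X[:, 1])

rng = np.random.default_rng(0)
X_train = rng.uniform(0, 1, size=(40, 2))
y_train = expensive_objective(X_train)
w = fit_rbf(X_train, y_train, shape=5.0)

X_test = rng.uniform(0, 1, size=(200, 2))
pred = predict_rbf(X_train, w, X_test, shape=5.0)
rmse = float(np.sqrt(np.mean((pred - expensive_objective(X_test)) ** 2)))
print(f"hold-out RMSE: {rmse:.4f}")
```

The shape factor controls how far each sample's influence extends, which is why the text singles it out as the hyperparameter to tune.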

Experimental Protocol: Surrogate-Assisted Evolutionary Optimization

Objective: To optimize well placement configurations using machine learning surrogates to reduce computational expense while maintaining solution quality.

Materials and Computational Requirements:

- Reservoir simulation software (e.g., Eclipse, CMG)

- Machine learning library (scikit-learn, TensorFlow, or PyTorch)

- High-performance computing cluster with multiple nodes

- Geological model and historical production data

Procedure:

Initial Sampling Phase:

Surrogate Model Training:

- Train multiple surrogate model types: Random Forest, RBF networks, and neural networks

- Optimize hyperparameters via cross-validation:

  - Random Forest: Number of trees (100-500), maximum depth (10-30)

  - RBF: Shape factor optimization using polynomial response surfaces [3]

  - Neural Networks: Architecture tuning, learning rate (0.001-0.1)

- Select best-performing model based on validation set RMSE and R² metrics

Evolutionary Optimization Loop:

- Initialize population of 50-100 candidate solutions

- For each generation (100-300 iterations):

  - Pre-screen candidates using surrogate predictions

  - Select most promising individuals using tournament selection

  - Apply evolutionary operators (crossover rate: 0.8-0.9, mutation rate: 0.1-0.2)

  - Evaluate top 10-20% promising candidates using numerical simulator

  - Update surrogate model with new simulation results

  - Apply constraint handling techniques (adaptive penalization or Augmented Lagrangian Method) [2]

Termination and Validation:

- Stop when improvement falls below threshold (1-5%) for 20 consecutive generations

- Validate final optimal configuration with full numerical simulation

- Compare performance against baseline approaches
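The loop in this protocol can be sketched as follows. This is a simplified illustration, not the cited GDDE method: the surrogate is a quadratic response surface fitted by least squares, candidates are Gaussian perturbations of the incumbent rather than full crossover and mutation, and `simulate` is a toy quadratic function, so the surrogate fits it almost exactly. All names and parameter values are assumptions.

```python
import numpy as np

sim_calls = 0

def simulate(x):
    """Toy stand-in for an expensive reservoir simulation (higher is better)."""
    global sim_calls
    sim_calls += 1
    return -((x[0] - 0.7) ** 2 + 2.0 * (x[1] - 0.3) ** 2)

def features(X):
    """Quadratic response-surface basis: 1, x, y, x^2, y^2, xy."""
    x, y = X[:, 0], X[:, 1]
    return np.column_stack([np.ones_like(x), x, y, x * x, y * y, x * y])

def fit_surrogate(X, f):
    coef, *_ = np.linalg.lstsq(features(X), f, rcond=None)
    return coef

def optimize(generations=30, pop_size=50, top_frac=0.2, seed=1):
    rng = np.random.default_rng(seed)
    X = rng.uniform(0.0, 1.0, size=(10, 2))            # initial sample
    f = np.array([simulate(x) for x in X])
    n_top = int(top_frac * pop_size)
    for _ in range(generations):
        coef = fit_surrogate(X, f)                      # retrain on all data so far
        incumbent = X[np.argmax(f)]
        cands = np.clip(
            incumbent + rng.normal(0.0, 0.1, size=(pop_size, 2)), 0.0, 1.0)
        scores = features(cands) @ coef                 # cheap pre-screening
        top = cands[np.argsort(scores)[::-1][:n_top]]   # most promising candidates
        f_top = np.array([simulate(x) for x in top])    # expensive evaluation
        X, f = np.vstack([X, top]), np.concatenate([f, f_top])
    best = int(np.argmax(f))
    return X[best], float(f[best])

best_x, best_f = optimize()
print(best_x, best_f, sim_calls)
```

With these settings only the top 20% of each generation reaches the simulator, so the run uses 310 simulator calls instead of the 1,510 that evaluating every candidate would require.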

Advanced Constraint Handling Techniques

Real-world well placement problems involve numerous nonlinear constraints, including:

- Minimum inter-well distance requirements (e.g., 500-1000 feet)

- Well-to-boundary distance limitations

- Injection bottomhole pressure constraints

- Field gas production rate limits [2]

The Augmented Lagrangian Method (ALM) combined with Iterative Latin Hypercube Sampling (ILHS) has demonstrated superior performance for handling these complex constraints. This approach incorporates constraint violations directly into the objective function through penalty terms, effectively transforming constrained problems into unconstrained ones [2]. ALM-ILHS tends to minimize constraint violations more effectively than filter methods, while maintaining competitive objective function values.
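The penalty mechanism can be illustrated with the textbook Powell-Hestenes-Rockafellar form of the augmented Lagrangian for inequality constraints. The sketch below is an assumption-laden toy: it uses a one-dimensional problem and replaces the ILHS inner optimizer with a brute-force grid search, so it shows the multiplier update rather than the cited ALM-ILHS implementation.

```python
def augmented_lagrangian(F, gs, x, lam, mu):
    """PHR augmented Lagrangian for: minimize F(x) subject to g_i(x) <= 0."""
    penalty = sum(max(0.0, l + mu * g(x)) ** 2 - l ** 2
                  for l, g in zip(lam, gs))
    return F(x) + penalty / (2.0 * mu)

def solve_alm(F, gs, grid, mu=10.0, outer_iters=8):
    lam = [0.0] * len(gs)
    x = grid[0]
    for _ in range(outer_iters):
        # Inner step: unconstrained minimization (grid search in this sketch).
        x = min(grid, key=lambda z: augmented_lagrangian(F, gs, z, lam, mu))
        # Outer step: multiplier update; stays non-negative for inequalities.
        lam = [max(0.0, l + mu * g(x)) for l, g in zip(lam, gs)]
    return x, lam

# Toy problem: maximize f(x) = -(x - 2)^2 subject to x <= 1,
# written as minimize F = -f with g(x) = x - 1 <= 0.
F = lambda x: (x - 2.0) ** 2
g = lambda x: x - 1.0
grid = [-3.0 + 8.0 * k / 10000 for k in range(10001)]

x_star, lam_star = solve_alm(F, [g], grid)
print(x_star, lam_star)
```

The iterates approach the constrained optimum x = 1 with multiplier 2, which matches the KKT condition F'(1) + λ = 0 for this toy problem.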

Integration with Convolutional Neural Network Research

CNN research offers significant opportunities for enhancing well placement optimization. Although deep learning applications in geoenergy are still emerging, several promising directions parallel developments in drug discovery and computer vision:

Spatial Feature Extraction

CNNs can process 2D and 3D reservoir models as spatial inputs, automatically extracting features related to geological structures, fluid flow pathways, and heterogeneity patterns. This approach mirrors successful applications in drug discovery where CNNs process molecular structures and protein-ligand complexes [6].
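The basic operation behind this feature extraction is a small filter sliding over the property grid. The sketch below applies a fixed Sobel filter to a hypothetical 8 x 8 log-permeability map containing a vertical channel. In a trained CNN the filter weights would be learned rather than hand-picked; the map and filter here are assumptions for illustration.

```python
import numpy as np

def conv2d_valid(field, kernel):
    """Cross-correlation without padding (what CNN 'convolution' layers compute)."""
    kh, kw = kernel.shape
    h, w = field.shape
    out = np.empty((h - kh + 1, w - kw + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            out[i, j] = np.sum(field[i:i + kh, j:j + kw] * kernel)
    return out

# Hypothetical log-permeability map: high-permeability channel in columns 3-4.
log_perm = np.zeros((8, 8))
log_perm[:, 3:5] = 2.0

# Horizontal-gradient (Sobel) filter: responds to vertical channel edges.
sobel_x = np.array([[-1.0, 0.0, 1.0],
                    [-2.0, 0.0, 2.0],
                    [-1.0, 0.0, 1.0]])

edges = conv2d_valid(log_perm, sobel_x)
relu = np.maximum(edges, 0.0)   # ReLU keeps only low-to-high transitions

print(edges[0])   # [ 0.  8.  8. -8. -8.  0.]
```

The positive responses mark the left edge of the channel and the negative ones the right edge, so the feature map localizes the channel without any flattening of the grid.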

Transfer Learning and Pre-training

Similar to the Gnina framework in drug discovery, which uses pre-trained CNNs for molecular scoring [6], geoenergy applications can develop CNN architectures pre-trained on diverse reservoir models. These models can then be fine-tuned for specific optimization problems, reducing data requirements and improving convergence.

Research Reagent Solutions

Table 3: Essential Computational Tools for CNN-Enhanced Well Placement Optimization

| Tool/Category | Function | Geoenergy Application Example |
|---|---|---|
| Deep Learning Frameworks (TensorFlow, PyTorch) | CNN model implementation and training | Spatial feature extraction from reservoir models |
| Reservoir Simulation Software (Eclipse, CMG) | Physics-based objective function evaluation | Ground truth data generation for surrogate training |
| Evolutionary Algorithm Libraries (DEAP, PyGAD) | Population-based optimization | Global search for optimal well configurations |
| Geological Modeling Platforms (Petrel, RMS) | Reservoir characterization and visualization | Input data preparation and constraint definition |
| High-Performance Computing Clusters | Parallel simulation execution | Accelerated objective function evaluation |

Well placement optimization in geoenergy is a computationally demanding problem that benefits significantly from surrogate-assisted evolutionary approaches. Current methodologies combine machine learning surrogates with evolutionary algorithms to reduce computational expense while maintaining solution quality.

Future research directions should focus on integrating convolutional neural networks for spatial reservoir analysis, developing transfer learning frameworks across different geological settings, and creating end-to-end optimization systems that seamlessly integrate geological modeling, simulation, and optimization. These advances, inspired by parallel developments in drug discovery and artificial intelligence, will enable more efficient and effective geoenergy resource development.

Limitations of Traditional Simulation-Based and Standalone Evolutionary Methods

In the domain of geoenergy science and engineering, well placement optimization is a critical multi-million-dollar process for determining optimal well locations and configurations to maximize economic value while considering geological, engineering, economic, and environmental constraints [7]. This complex procedure has traditionally relied on two fundamental methodological pillars: simulation-based training and evolutionary optimization algorithms. Simulation-based training provides a controlled environment for mimicking real-world scenarios, offering benefits such as enhanced skill development, increased knowledge retention, and improved decision-making [8]. Concurrently, evolutionary algorithms (EAs)—including Genetic Algorithms (GA), Particle Swarm Optimization (PSO), and Differential Evolution (DE)—have been widely adopted as powerful population-based search methods for generating high-quality solutions with stable convergence characteristics [9] [7].

However, despite their individual strengths, both approaches present significant limitations when deployed in isolation. Traditional simulation-based methods often face constraints in scalability, realism, and computational efficiency, while standalone evolutionary algorithms struggle with convergence speed, parameter sensitivity, and computational demands, particularly when integrated with computationally intensive full-physics reservoir simulations [7]. This document examines these limitations through a detailed analytical framework and presents hybrid methodologies that integrate convolutional neural networks (CNNs) with evolutionary optimization to overcome these challenges, with specific application to well placement optimization in subsurface reservoir management.

Analytical Framework: Core Limitations

The table below systematically outlines the principal limitations associated with traditional simulation-based and standalone evolutionary methods, providing a structured comparison of their constraints and impacts.

Table 1: Core Limitations of Traditional Methodologies

| Methodology Category | Specific Limitation | Impact on Well Placement Optimization | Quantitative Evidence |
|---|---|---|---|
| Simulation-Based Training | High Computational Cost | Implementation hindered by expenses related to travel, accommodation, and time away from work [8] | Limited application scope and scalability [8] |
| Simulation-Based Training | Limited Real-World Transfer | Classroom lectures and textbook readings alone cannot adequately prepare individuals for field complexities [8] | Reduced practical applicability in diverse geological formations [8] |
| Simulation-Based Training | Assessment Challenges | Inconsistent assessment impacts and limited evaluation frameworks [10] | Difficulty validating model performance against real-world benchmarks [10] |
| Standalone Evolutionary Algorithms | Computational Intensity | High computational cost from exhaustive reservoir simulation runs for objective function evaluations [7] | Implementation hindered despite powerful search capabilities [7] |
| Standalone Evolutionary Algorithms | Search Stagnation | Stagnation of search performance in later generations during evolutionary process [7] | Premature convergence and suboptimal well placement solutions [7] |
| Standalone Evolutionary Algorithms | Parameter Sensitivity | Heavy reliance on domain knowledge for algorithm configuration, selection, and customized design [9] | Critical barrier to transferring optimization technology from theory to practice [9] |
| Integrated Simulation-Evolutionary Approaches | Extrapolation Limitations | Predictability deteriorates for extrapolation in nonlinear, complex problems beyond learning data range [7] | Limited capability in identifying highly productive reservoir regions beyond training data [7] |
| Integrated Simulation-Evolutionary Approaches | Data Acquisition Burden | High computational cost of reservoir simulations associated with acquisition of learning data [7] | Generalization difficulty with small ratio of available data volume to domain volume [7] |

Experimental Protocols: Hybrid CNN-Evolutionary Framework

Protocol 1: Multi-Modal CNN (M-CNN) Integrated with Particle Swarm Optimization

Objective: To overcome computational limitations of standalone evolutionary methods by creating an efficient proxy model for well placement optimization that maintains high predictive accuracy while dramatically reducing computational costs [7].

Workflow:

- PSO-Driven Data Generation: Execute full-physics reservoir simulations for diverse well placement scenarios using PSO to generate representative learning data correlating well locations with cumulative oil production [7].

- Spatial Feature Processing: Construct input datasets comprising near-wellbore spatial properties (porosity, permeability, pressure, and saturation) preserving spatial relationships through convolutional operations [7].

- Multi-Modal Architecture Configuration:

  - Implement convolutional layers with ReLU activation for spatial feature extraction

  - Incorporate auxiliary data (well distances, reservoir boundaries) as a 1D array in the fully connected layers

  - Design the output layer to predict oil productivity at candidate well locations [7]

- Iterative Learning Scheme: Enhance proxy model suitability by adding qualified scenarios to learning data and re-training M-CNN to mitigate extrapolation problems [7].

- Validation Framework: Compare M-CNN predictions with full-physics reservoir simulation results for qualified well placement scenarios to validate model consistency [7].
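The spatial feature processing step in this workflow amounts to cutting a fixed-size window of property maps around each candidate well. The sketch below shows one way to do this with NumPy. The window size, the channel order, and the zero-padding at the grid edge are assumptions for illustration and are not taken from [7].

```python
import numpy as np

def near_wellbore_patch(props, well_ij, half_width=2):
    """Return a (channels, 2h+1, 2h+1) window centred on the well cell.

    `props` has shape (channels, nx, ny); cells outside the grid are zero-padded.
    """
    h = half_width
    padded = np.pad(props, ((0, 0), (h, h), (h, h)), mode="constant")
    i, j = well_ij
    # After padding, original cell (i, j) sits at (i + h, j + h).
    return padded[:, i:i + 2 * h + 1, j:j + 2 * h + 1]

rng = np.random.default_rng(0)
nx, ny = 30, 30
# Assumed channel order: porosity, permeability, pressure, saturation.
props = rng.random((4, nx, ny))

patch = near_wellbore_patch(props, well_ij=(10, 12))
corner = near_wellbore_patch(props, well_ij=(0, 0))

print(patch.shape)   # (4, 5, 5)
```

Each patch keeps its 2D layout, which is what lets the convolutional layers exploit spatial relationships that a flattened input vector would lose.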

Validation Metrics:

- Prediction accuracy within 3% relative error margin compared to full-physics simulations

- Computational cost reduction to approximately 11.18% of traditional reservoir simulation requirements

- Field cumulative oil production improvement of 47.40% compared to original configurations [7]

Protocol 2: Evolutionary Optimization of CNN Architecture

Objective: To address limitations in traditional CNN design through automated architecture optimization using evolutionary algorithms, enhancing feature extraction capabilities for complex geological data patterns [11] [12].

Workflow:

- Evolutionary Algorithm Initialization: Deploy genetic algorithms with selection, crossover, and mutation operators to explore CNN architecture search space [12].

- Hyperparameter Optimization: Utilize evolutionary operators (natural selection, version combination, random weight mutations) to optimize CNN hyperparameters including filter sizes, layer depth, and connectivity patterns [13] [11].

- Fault Diagnosis Integration: Apply evolutionary ensemble CNN with diverse feature extractors (Fourier, Wavelet transforms) for comprehensive geological fault diagnosis [11].

- Performance Evaluation: Assess optimized CNN architectures using accuracy, convergence speed, and generalization capabilities on benchmark geological datasets [13] [11].

- Cross-Validation: Implement k-fold cross-validation to ensure robust performance across diverse reservoir conditions and geological formations [12].

Implementation Considerations:

- Balance exploration and exploitation in evolutionary search to prevent premature convergence

- Incorporate domain knowledge constraints to ensure geologically plausible architectures

- Optimize computational efficiency through parallel evaluation of candidate architectures [11] [12]
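A minimal sketch of this kind of architecture search follows. Each genome is a dictionary of CNN hyperparameters, and `placeholder_fitness` is a hypothetical score standing in for the expensive step of training the encoded network and measuring validation accuracy. The search space and all parameter values are assumptions for illustration.

```python
import random

SEARCH_SPACE = {
    "n_conv_layers": [1, 2, 3, 4],
    "filter_size": [3, 5, 7],
    "n_filters": [8, 16, 32, 64],
}

def random_genome(rng):
    return {k: rng.choice(v) for k, v in SEARCH_SPACE.items()}

def crossover(a, b, rng):
    """Each hyperparameter is inherited from one of the two parents."""
    return {k: (a[k] if rng.random() < 0.5 else b[k]) for k in SEARCH_SPACE}

def mutate(genome, rng, rate=0.3):
    """Resample each hyperparameter with probability `rate`."""
    return {k: (rng.choice(SEARCH_SPACE[k]) if rng.random() < rate else v)
            for k, v in genome.items()}

def placeholder_fitness(genome):
    """Hypothetical score. In practice: train the CNN this genome encodes
    and return its validation accuracy (e.g., averaged over k folds)."""
    return (-abs(genome["n_conv_layers"] - 3)
            - abs(genome["filter_size"] - 5) / 2
            - abs(genome["n_filters"] - 32) / 16)

def evolve(pop_size=12, generations=15, seed=0):
    rng = random.Random(seed)
    pop = [random_genome(rng) for _ in range(pop_size)]
    for _ in range(generations):
        pop.sort(key=placeholder_fitness, reverse=True)
        parents = pop[: pop_size // 2]                  # truncation selection
        children = [mutate(crossover(*rng.sample(parents, 2), rng), rng)
                    for _ in range(pop_size - len(parents))]
        pop = parents + children                        # parents survive
    return max(pop, key=placeholder_fitness)

best_arch = evolve()
print(best_arch)
```

Since every fitness call would be a full training run in practice, the candidate evaluations within a generation are the natural unit to parallelize.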

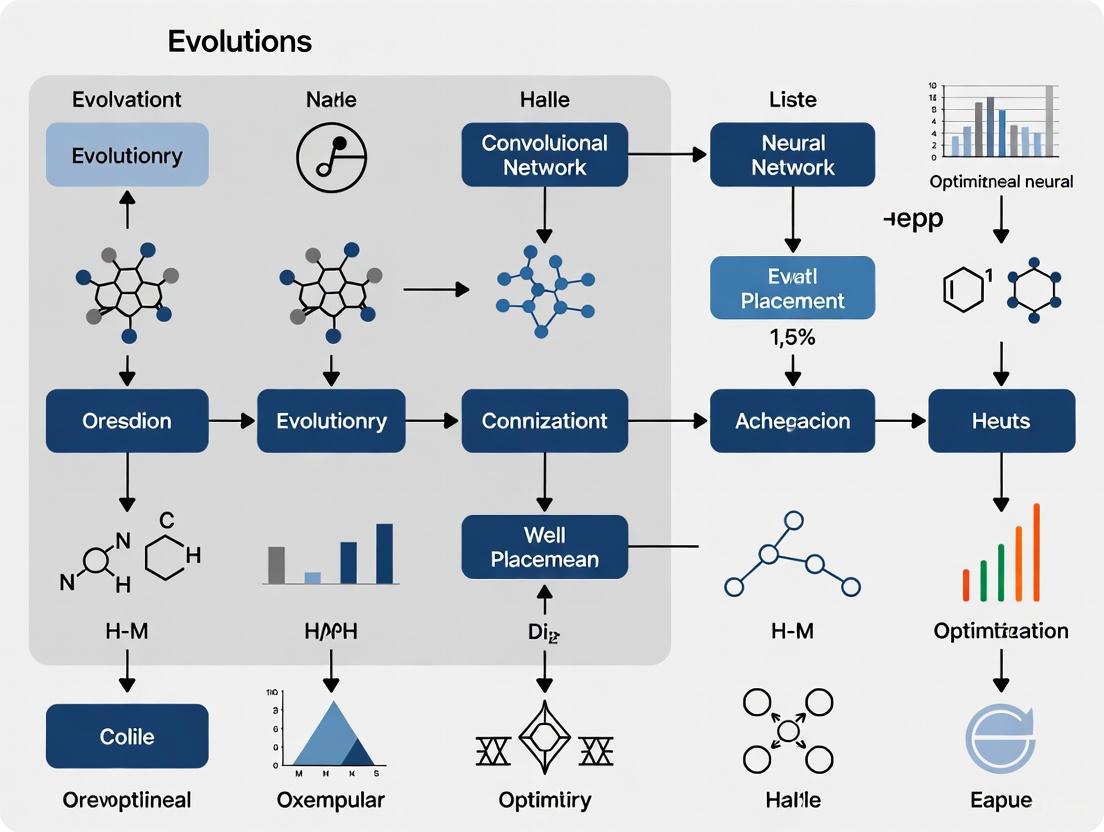

Visualization: Workflow Integration Diagrams

Hybrid Optimization Framework

(Hybrid CNN-Evolutionary Workflow for Well Placement)

Evolutionary CNN Architecture Optimization

(Evolutionary CNN Architecture Search Process)

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Components for Hybrid Optimization Framework

| Research Component | Function | Implementation Example |
|---|---|---|
| Multi-Modal CNN (M-CNN) | Learns correlation between near-wellbore spatial properties and cumulative oil production | Input: porosity, permeability, pressure, saturation; Output: oil productivity prediction [7] |
| Particle Swarm Optimization (PSO) | Provides learning data through full-physics reservoir simulation of well placement scenarios | Generates high-quality solutions with stable convergence for dataset creation [7] |
| Evolutionary Algorithms | Optimizes CNN architecture and hyperparameters through selection, crossover, and mutation | Discovers effective network configurations beyond human design intuition [11] [12] |
| Iterative Learning Framework | Mitigates extrapolation problems by continuously enhancing training data with qualified scenarios | Improves proxy model predictability for complex, nonlinear reservoir behavior [7] |
| Full-Physics Reservoir Simulator | Provides ground truth data for training and validation of proxy models | Benchmark for evaluating M-CNN prediction accuracy (target: <3% relative error) [7] |
| Spatially-Extended Labeling | Ensures label information accessibility across all spatial positions in convolutional layers | Enables effective CNN training on datasets with complex, fine geological features [14] |

Integrating convolutional neural networks with evolutionary optimization addresses the main limitations of traditional simulation-based and standalone evolutionary methods for well placement optimization. With M-CNNs serving as efficient proxy models and evolutionary algorithms handling architecture optimization and search guidance, the reported gains are substantial in both computational efficiency (costs reduced to approximately 11.18% of those of traditional methods) and solution quality (a 47.40% improvement in field cumulative oil production) [7]. The experimental protocols and workflow diagrams presented provide actionable methodologies for implementing this hybrid approach, and the research components listed above are the building blocks of an effective optimization system. The framework balances predictive accuracy with computational practicality, supporting more effective reservoir management decisions in complex geological environments.

Convolutional Neural Networks (CNNs) are a class of deep learning models specifically designed to process grid-structured data, making them exceptionally well-suited for extracting spatial features from reservoir models. Unlike traditional Artificial Neural Networks (ANNs) that flatten input data into one dimension, CNNs preserve and leverage the spatial relationships within multi-dimensional data through convolutional operations [15] [7]. This capability is crucial for reservoir characterization, as it allows the network to identify critical spatial patterns in petrophysical properties—such as permeability and porosity—that correlate with hydrocarbon productivity [15] [16].

The fundamental advantage of CNNs in reservoir feature extraction lies in their hierarchical architecture. Through successive convolutional layers, CNNs automatically learn to detect features from simple edges and textures in initial layers to complex geological patterns like channel bodies and facies distributions in deeper layers [16]. This automated feature extraction eliminates the need for manual feature engineering and enables the network to capture nonlinear relationships between spatial reservoir properties and production outcomes that might be missed by traditional methods [15] [17].

Key Applications in Reservoir Characterization

Well Placement Optimization

CNNs have demonstrated significant value in well placement optimization by acting as efficient surrogate models that correlate near-wellbore spatial properties with production outcomes. Research shows that CNNs can input spatial data including both static properties (permeability, porosity) and dynamic properties (pressure, saturation) around candidate well locations and output accurate predictions of cumulative oil production [15] [7]. This approach has achieved remarkable consistency with full-physics reservoir simulation results, with prediction accuracy within 3% relative error margin while reducing computational costs to just 11.18% of traditional simulation requirements [7]. When coupled with robust optimization frameworks, CNNs identify well locations that maximize the expectation of cumulative oil production across equiprobable geological realizations, effectively handling geological uncertainty [15].
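The robust-optimization criterion mentioned here, maximizing the expected cumulative production over equiprobable realizations, reduces to a simple average of surrogate predictions. The sketch below uses a hypothetical lookup table in place of CNN predictions; the candidate names and production values are invented for illustration.

```python
def expected_production(candidate, realizations, predict):
    """Mean predicted production over equiprobable geological realizations."""
    return sum(predict(candidate, r) for r in realizations) / len(realizations)

def robust_best(candidates, realizations, predict):
    return max(candidates,
               key=lambda c: expected_production(c, realizations, predict))

# Hypothetical surrogate outputs: production[realization][candidate].
production = {
    "real_1": {"A": 100.0, "B": 140.0, "C": 90.0},
    "real_2": {"A": 110.0, "B": 60.0, "C": 95.0},
    "real_3": {"A": 105.0, "B": 70.0, "C": 100.0},
}
predict = lambda cand, real: production[real][cand]

best = robust_best(["A", "B", "C"], list(production), predict)
print(best)   # A
```

Candidate B is the best choice in the first realization alone, but A has the highest mean (105 versus 90 and 95), which is the sense in which the robust solution hedges against geological uncertainty.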

Reservoir Channel Characterization

CNNs provide powerful capabilities for quantitatively identifying geological features, particularly in fluvial reservoirs. In one application, CNNs were used to determine the width of single channels within underwater distributary channels at the delta front edge, a key parameter in designing well programs [16]. The method established candidate models with channel widths of 100, 130, 160, 190, 220, and 250 meters based on target simulation and human-computer interactions. The CNN identified a width of 160 meters as having the highest matching rate with the conditional data, which corresponded to the actual situation in the study area [16]. This application demonstrates the CNN's capability to address a traditional challenge: characterizing the continuously bending, oscillating morphology of channel systems.

Table 1: Quantitative Performance of CNN Applications in Reservoir Characterization

| Application Area | Key Metric | Performance | Reference |
|---|---|---|---|
| Well Placement Optimization | Prediction accuracy | Within 3% relative error | [7] |
| Well Placement Optimization | Computational cost reduction | Reduced to 11.18% of full simulation | [7] |
| Well Placement Optimization | Production improvement | 47.40% improvement in field cumulative oil production | [7] |
| Reservoir Channel Characterization | Channel width identification accuracy | Highest matching rate with conditional data | [16] |

Experimental Protocols and Methodologies

Protocol 1: CNN Development for Well Placement Optimization

Purpose: To develop a CNN surrogate model for predicting cumulative oil production based on near-wellbore spatial properties to optimize well placements [15] [7].

Workflow:

- Data Preparation: Collect spatial reservoir data including static properties (permeability, porosity) and dynamic properties (pressure, oil saturation) from reservoir simulation models. Format data as multi-dimensional grids preserving spatial relationships [7].

- Training Data Generation: Use an evolutionary optimization algorithm like Particle Swarm Optimization (PSO) to generate well-placing scenarios. Run full-physics reservoir simulations for these scenarios to obtain cumulative oil production values as training labels [7].

- Network Architecture Design: Construct a Multi-Modal CNN (M-CNN) with:

  - Convolutional layers for spatial feature extraction

  - Pooling layers for dimensionality reduction

  - Fully connected layers for regression

  - Auxiliary inputs (e.g., distances to boundaries) introduced at the fully connected stage [7]

- Network Training: Train the M-CNN using generated data with iterative learning. Add qualified scenarios to learning data and re-train to improve prediction for hydrocarbon-prolific regions [7].

- Validation: Compare CNN predictions with full-physics reservoir simulation results for qualified well-placing scenarios to validate model consistency [15].
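The architecture in this protocol can be sketched as a single forward pass in NumPy: convolution and ReLU, pooling, then fusion of the auxiliary 1D inputs at the fully connected stage. The layer sizes, the random untrained weights, and the auxiliary values are assumptions for illustration; a real model would be built and trained in a framework such as PyTorch or TensorFlow.

```python
import numpy as np

def conv2d(x, kernels):
    """x: (C, H, W); kernels: (F, C, k, k). Valid cross-correlation."""
    F, C, k, _ = kernels.shape
    H, W = x.shape[1] - k + 1, x.shape[2] - k + 1
    out = np.zeros((F, H, W))
    for i in range(H):
        for j in range(W):
            window = x[:, i:i + k, j:j + k]
            out[:, i, j] = np.tensordot(kernels, window,
                                        axes=([1, 2, 3], [0, 1, 2]))
    return out

def max_pool2(x):
    """2 x 2 max pooling, stride 2 (odd trailing rows/columns are dropped)."""
    F, H, W = x.shape
    x = x[:, : H // 2 * 2, : W // 2 * 2]
    return x.reshape(F, H // 2, 2, W // 2, 2).max(axis=(2, 4))

def mcnn_forward(patch, aux, params):
    feat = np.maximum(conv2d(patch, params["kernels"]), 0.0)  # conv + ReLU
    feat = max_pool2(feat).ravel()                            # pool + flatten
    merged = np.concatenate([feat, aux])                      # fuse auxiliary inputs
    hidden = np.maximum(params["W1"] @ merged + params["b1"], 0.0)
    return float(params["W2"] @ hidden + params["b2"])        # scalar output

rng = np.random.default_rng(0)
patch = rng.random((4, 9, 9))   # porosity, permeability, pressure, saturation
aux = np.array([0.3, 0.8])      # e.g., scaled distances (hypothetical values)
n_feat = 8 * 3 * 3 + aux.size   # 8 filters; 9 -> 7 after conv -> 3 after pooling
params = {
    "kernels": rng.normal(0, 0.1, (8, 4, 3, 3)),
    "W1": rng.normal(0, 0.1, (16, n_feat)), "b1": np.zeros(16),
    "W2": rng.normal(0, 0.1, 16), "b2": 0.0,
}

prediction = mcnn_forward(patch, aux, params)
print(prediction)
```

Concatenating the auxiliary vector after flattening is what makes the network multi-modal: gridded properties pass through the convolutional branch, while scalar descriptors bypass it.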

Protocol 2: Theory-Guided CNN (TgCNN) for Subsurface Flow

Purpose: To develop a physics-constrained CNN surrogate for subsurface flows with position-varying well locations, enhancing accuracy and generalizability [17].

Workflow:

- Problem Formulation: Define the governing equations for subsurface flow (e.g., pressure equation) and boundary conditions.

- Network Architecture: Design a standard CNN architecture with convolutional, pooling, and fully-connected layers.

- Theory-Guided Loss Function: Incorporate physical constraints by adding the residual of governing equations and boundary/initial conditions to the standard data-driven loss function [17].

- Training Process: Train the TgCNN using limited simulation data while minimizing the combined data mismatch and physical constraint violation.

- Extrapolation Testing: Validate the model's performance for scenarios with different well numbers than those used in training [17].

- Optimization Integration: Combine the trained TgCNN surrogate with a genetic algorithm for well placement optimization.
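The theory-guided loss in this workflow adds a physics residual to the usual data mismatch. The sketch below illustrates the idea under strong simplifying assumptions that are not taken from [17]: steady-state flow, homogeneous permeability, no wells, and a unit grid, so the governing equation reduces to Laplace's equation and its residual can be computed with a 5-point stencil.

```python
import numpy as np

def laplacian_residual(p):
    """5-point finite-difference residual of d2p/dx2 + d2p/dy2 = 0
    on interior cells (unit grid spacing)."""
    return (p[2:, 1:-1] + p[:-2, 1:-1] + p[1:-1, 2:] + p[1:-1, :-2]
            - 4.0 * p[1:-1, 1:-1])

def theory_guided_loss(p_pred, p_obs, obs_mask, physics_weight=1.0):
    """Data mismatch at observed cells plus weighted PDE residual."""
    data_term = np.mean((p_pred[obs_mask] - p_obs[obs_mask]) ** 2)
    physics_term = np.mean(laplacian_residual(p_pred) ** 2)
    return data_term + physics_weight * physics_term, data_term, physics_term

n = 16
x = np.linspace(0.0, 1.0, n)
p_true = np.tile(1.0 - x, (n, 1))        # linear pressure drop: satisfies the PDE

obs_mask = np.zeros((n, n), dtype=bool)
obs_mask[::5, ::5] = True                # sparse "simulation data"

rng = np.random.default_rng(0)
p_noisy = p_true + 0.05 * rng.standard_normal((n, n))

loss_true, _, phys_true = theory_guided_loss(p_true, p_true, obs_mask)
loss_noisy, _, phys_noisy = theory_guided_loss(p_noisy, p_true, obs_mask)
print(loss_true, loss_noisy)
```

The physics term is evaluated on every interior cell, not only where data exist, which is how the constraint compensates for limited simulation data during training.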

Protocol 3: Reservoir Channel Width Quantification

Purpose: To apply CNNs for quantitatively identifying channel width in fluvial reservoirs [16].

Workflow:

- Candidate Model Generation: Generate multiple candidate models with different channel widths (e.g., 100, 130, 160, 190, 220, 250 meters) using multi-point geostatistical methods [16].

- Training Data Preparation: Create labeled datasets of channel models with known widths.

- Transfer Learning Implementation: Utilize pre-trained CNN models (e.g., Inception-Resnet-v2) and apply transfer learning by fine-tuning on the reservoir channel dataset [16].

- Model Selection: Use the trained CNN to select the model that best matches conditioned data from multiple candidate models.

- Validation: Compare identified channel width with sedimentological knowledge and actual field conditions.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Tools for CNN Reservoir Feature Extraction

| Tool/Category | Specific Examples | Function/Purpose | Application Context |
|---|---|---|---|
| CNN Architectures | Multi-Modal CNN (M-CNN), Theory-Guided CNN (TgCNN), Inception-Resnet-v2 | Extracts spatial features from reservoir models, preserves spatial relationships | Well placement optimization, channel characterization [7] [17] [16] |
| Optimization Algorithms | Particle Swarm Optimization (PSO), Genetic Algorithm (GA), Modified Dung Beetle Algorithm | Optimizes well placement parameters, generates training scenarios | Evolutionary well placement, parameter optimization [18] [7] [19] |
| Reservoir Simulation Tools | Commercial reservoir simulators (e.g., Eclipse, CMG) | Generates training data, validates CNN predictions | Full-physics simulation for ground truth data [15] [7] |
| Physical Constraints | Governing equations, Boundary conditions, Initial conditions | Incorporates physics knowledge into CNN training | Theory-guided neural networks [17] |
| Data Processing Frameworks | Python, PyTorch, TensorFlow | Implements CNN architectures, manages training workflows | General model development and experimentation [16] |

Integration with Evolutionary Optimization Frameworks

The combination of CNNs with evolutionary optimization algorithms creates a powerful framework for solving complex well placement problems. In this integrated approach, CNNs serve as efficient surrogate models that dramatically reduce the computational cost of evaluating candidate solutions, while evolutionary algorithms like Particle Swarm Optimization (PSO) and Genetic Algorithms (GA) provide robust global search capabilities [7] [19].

Research indicates that this hybrid approach can substantially improve optimization efficiency. One study reported that pairing a Theory-Guided CNN surrogate with a genetic algorithm improved optimization efficiency "significantly compared with running the simulators repeatedly" [17]. Another implementation showed that the integrated framework reduced computational costs to just 11.18% of those associated with full-physics reservoir simulations while achieving a 47.40% improvement in field cumulative oil production compared to the original configuration [7].

The integration typically follows an iterative process: the evolutionary algorithm generates candidate well placements, the CNN surrogate rapidly evaluates their performance, and the results are used to guide the search toward promising regions of the solution space. This approach is particularly valuable for handling geological uncertainty, as the CNN can be trained on multiple geological realizations and the optimization can identify robust solutions that perform well across uncertainty scenarios [15] [17].
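This iterative process can be sketched with a basic global-best PSO that queries a surrogate instead of a simulator. In the example below, `surrogate` is a hypothetical analytic function standing in for a trained CNN proxy, and the inertia and acceleration coefficients are common textbook defaults rather than values from the cited studies.

```python
import random

def surrogate(x):
    """Stand-in for a trained CNN proxy: predicted production at x = (i, j)."""
    return -((x[0] - 12.0) ** 2 + (x[1] - 7.0) ** 2)

def pso(score, bounds, n_particles=20, iters=50, w=0.7, c1=1.5, c2=1.5, seed=0):
    rng = random.Random(seed)
    dim = len(bounds)
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_val = [score(p) for p in pos]
    g = max(range(n_particles), key=lambda k: pbest_val[k])
    gbest, gbest_val = pbest[g][:], pbest_val[g]
    for _ in range(iters):
        for k in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Inertia + pull toward personal best + pull toward global best.
                vel[k][d] = (w * vel[k][d]
                             + c1 * r1 * (pbest[k][d] - pos[k][d])
                             + c2 * r2 * (gbest[d] - pos[k][d]))
                lo, hi = bounds[d]
                pos[k][d] = min(max(pos[k][d] + vel[k][d], lo), hi)
            val = score(pos[k])                  # cheap surrogate evaluation
            if val > pbest_val[k]:
                pbest[k], pbest_val[k] = pos[k][:], val
                if val > gbest_val:
                    gbest, gbest_val = pos[k][:], val
    return gbest, gbest_val

best_pos, best_val = pso(surrogate, bounds=[(0.0, 19.0), (0.0, 19.0)])
best_cell = tuple(round(c) for c in best_pos)    # snap to the nearest grid cell
print(best_cell, best_val)
```

The run makes roughly a thousand surrogate calls, which is affordable only because each call is cheap. In practice the final candidate would still be verified with a full-physics simulation.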

Table 3: Performance Comparison of Optimization Algorithms in Well Placement

| Optimization Method | Key Advantages | Limitations | Reported Performance |

|---|---|---|---|

| Genetic Algorithm (GA) | Extensive search capabilities, handles discrete variables | High computational cost, may stagnate in later generations | Widely adopted in commercial software [7] [19] |

| Particle Swarm Optimization (PSO) | Memory retention, collaborative search | Single main operator may limit flexibility | Effective in joint optimization of well placement and control [19] |

| Integrated Algorithm (GA-PSO) | Combines strengths of GA and PSO, avoids local optima | Complex implementation | Outperforms both GA and PSO individually [19] |

| Modified Dung Beetle Optimizer (IDDBO) | Rapid convergence, handles discrete nonlinear problems | Recently developed, limited track record | Excellent performance in solving discrete WPO problems [18] |

Advanced Architectures and Future Directions

Multi-Modal and Theory-Guided Networks

Recent advances in CNN architectures for reservoir characterization include Multi-Modal CNNs (M-CNNs) that integrate data from multiple sources and Theory-Guided CNNs (TgCNNs) that incorporate physical principles directly into the learning process [7] [17]. M-CNNs enhance feature extraction by fusing different types of reservoir data (e.g., static and dynamic properties) and incorporating auxiliary information such as well distances at the fully connected stage [7]. This approach has shown close agreement with full-physics simulation results, with predictions falling within a 3% relative error margin [7].

TgCNNs address a fundamental limitation of purely data-driven models by incorporating physical constraints directly into the training process through the loss function [17]. This theory-guided approach achieves better accuracy and generalizability, even when trained with limited data, and demonstrates satisfactory extrapolation performance for scenarios with different well numbers than those encountered during training [17].
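
As a minimal sketch of the idea, the loss below adds a physics residual to a data-mismatch term. It assumes a 1D, steady-state, single-phase flow equation d/dx(k dp/dx) = 0 on a uniform grid as a stand-in for the governing equations. The function name, the weight `lam`, and the finite-difference discretization are illustrative assumptions, not the formulation of [17].

```python
def theory_guided_loss(p_pred, p_obs, perm, lam=1.0):
    """Data mismatch plus weighted residual of d/dx(k dp/dx) = 0 (assumed PDE).

    p_pred: predicted pressures per cell
    p_obs:  observed pressures, or None where no observation exists
    perm:   permeability per cell
    """
    # Data term: mean squared error over cells that have an observation.
    pairs = [(p, o) for p, o in zip(p_pred, p_obs) if o is not None]
    data_loss = sum((p - o) ** 2 for p, o in pairs) / len(pairs)

    # Physics term: flux balance at each interior cell (unit grid spacing).
    residuals = []
    for i in range(1, len(p_pred) - 1):
        # Harmonic averages give the interface permeabilities.
        k_right = 2 * perm[i] * perm[i + 1] / (perm[i] + perm[i + 1])
        k_left = 2 * perm[i] * perm[i - 1] / (perm[i] + perm[i - 1])
        flux_in = k_left * (p_pred[i] - p_pred[i - 1])
        flux_out = k_right * (p_pred[i + 1] - p_pred[i])
        residuals.append(flux_out - flux_in)
    physics_loss = sum(r ** 2 for r in residuals) / len(residuals)

    return data_loss + lam * physics_loss
```

A prediction that honors the sparse observations but violates the flux balance is still penalized, which is how the physics term can compensate for limited training data.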

Emerging Trends and Research Needs

Future research directions in CNN applications for reservoir feature extraction include:

- Improved Handling of Geological Uncertainty: Developing methods that more effectively account for uncertainty in geological models during the optimization process [17].

- Integration with Graph Neural Networks: Exploring hybrid architectures that combine CNNs with Graph Neural Networks (GNNs) for better handling of complex spatial relationships in reservoir systems [20].

- Enhanced Multi-Scale Feature Extraction: Developing architectures that can simultaneously capture features at different scales, from small-scale heterogeneities to field-wide trends.

- Transfer Learning Applications: Expanding the use of transfer learning to enable effective model application across different reservoirs and geological settings with limited training data [16].

Evolutionary algorithms (EAs) are powerful optimization tools inspired by natural selection and population genetics. In complex fields like reservoir management and drug discovery, these algorithms excel at navigating high-dimensional search spaces where traditional methods struggle. This article provides a detailed overview of three prominent evolutionary algorithms—Particle Swarm Optimization (PSO), Genetic Algorithm (GA), and Differential Evolution (DE)—focusing on their application to well placement optimization and their integration with modern deep learning techniques.

The challenge of determining optimal well locations in oil and gas fields is computationally intensive due to the large number of reservoir simulations required. Similarly, in drug discovery, searching ultra-large chemical libraries demands efficient global optimization strategies. Evolutionary algorithms address these challenges by using population-based stochastic search procedures that iteratively evolve solutions toward global optima [21] [22] [23].

Algorithmic Foundations and Performance Comparison

Core Algorithm Mechanisms

Particle Swarm Optimization (PSO) is a stochastic optimization procedure that uses a population of solutions, called particles, which move through the search space. Particle positions are updated iteratively according to particle fitness (objective function value) and position relative to other particles. Each particle adjusts its trajectory based on its own experience and the experience of neighboring particles [22].

Genetic Algorithm (GA) is a computational model that mimics Darwinian natural selection and genetic inheritance. GA starts from a randomly generated population representing potential solutions. Following a "survival of the fittest" strategy, relatively superior individuals are selected as parents, and the genetic operators of selection, crossover, and mutation then produce new generations of solutions [1].

Differential Evolution (DE) is a stochastic optimization algorithm that uses a population of solutions which evolve through generations to reach the global optimum. DE creates new candidate solutions by combining existing solutions according to a specific formula, then keeps whichever candidate solution has the best score or fitness on the optimization problem [24] [23].

Performance Comparison in Well Placement Applications

Table 1: Comparative Performance of Evolutionary Algorithms in Well Placement Optimization

| Algorithm | Performance Advantages | Computational Efficiency | Key Applications |

|---|---|---|---|

| PSO | Outperforms GA in determining well type and location, yields higher NPV values [22] | Requires significant computational time to reach optimal solutions [21] | Vertical, deviated, and dual-lateral wells; optimization over multiple reservoir realizations [22] |

| GA | Effective when combined with helper methods like Productivity Potential Maps (PPMs) [1] | Sensitive to initial values; performance improves with quality initialization [1] | Well placement optimization using reservoir simulators; combined with neural networks [1] [17] |

| DE | Outperforms GA in well placement applications; effective for global optimization [23] | Finds high-quality solutions with acceptable function evaluations [24] [23] | Determination of optimal well locations in complex reservoir models [23] |

| Sparrow Search Algorithm (SSA) | Consistently outperforms PSO, yielding significantly higher NPV values with faster convergence [21] | Computational cost higher than PSO; runtime management strategies required [21] | Simultaneous optimization of well location and flow rate in heterogeneous reservoirs [21] |

Table 2: Advanced Hybrid Approaches and Recent Enhancements

| Algorithm | Enhancement | Performance Improvement |

|---|---|---|

| Modified PSO (MPSO) | Introduction of "inertia decrement" variable in particle motion equation [21] | Better performance in determining drilling locations; improved exploration scenarios [21] |

| GA with PPMs | Productivity Potential Maps guide initial population generation [1] | COP increased by 8.09% compared to standard GA; 20.95% improvement over original well schemes [1] |

| GA with Theory-Guided CNN | Physical constraints incorporated through residual of governing equations in loss function [17] | Better accuracy and generalizability even with limited data; efficient optimization [17] |

| Hybrid Self-Adaptive Direct Search | Combines PSO with Mesh Adaptive Direct Search (MADS) [21] | Superior results for handling nonlinear constraints through penalty methods [21] |

Experimental Protocols and Methodologies

Standard Protocol for Well Placement Optimization

Objective Function Configuration:

- The primary objective function is typically Net Present Value (NPV) or Cumulative Oil Production (COP) [21] [1]

- For hydrocarbon reservoirs, NPV incorporates oil production rates, water injection costs, and drilling expenses [21]

- Constraints are handled using penalty methods or map cleaning techniques to ensure feasible well placements [21]
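
The objective configuration above can be sketched as a discounted cash-flow calculation. The yearly time step, the parameter names, and the way the penalty is subtracted are assumptions chosen for illustration; the cited studies may define revenues, costs, and penalties differently.

```python
def npv(oil_rates, water_inj_rates, oil_price, water_cost,
        drilling_cost, discount_rate, penalty=0.0):
    """Net present value over yearly periods, minus an optional constraint penalty.

    oil_rates / water_inj_rates: produced oil and injected water per year
    oil_price / water_cost:      revenue and cost per unit volume
    drilling_cost:               upfront expense at time zero
    """
    value = -drilling_cost
    periods = zip(oil_rates, water_inj_rates)
    for year, (q_oil, q_winj) in enumerate(periods, start=1):
        cash_flow = q_oil * oil_price - q_winj * water_cost
        value += cash_flow / (1.0 + discount_rate) ** year
    return value - penalty


# Toy usage with made-up numbers.
example_npv = npv([100.0, 100.0], [0.0, 0.0], oil_price=10.0,
                  water_cost=1.0, drilling_cost=500.0, discount_rate=0.0)
```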

Decision Variables:

- Key variables include well locations, types (production/injection), flow rates, and well trajectories [21]

- The dimension of the search space is reduced to a reasonable order to manage computational complexity [21]

- Well location variables are typically represented as grid blocks or continuous coordinates in the reservoir model [21] [22]

Reservoir Simulation Integration:

- Each function evaluation requires a full reservoir simulation run [22]

- Commercial simulators or research tools like MATLAB Reservoir Simulation Toolbox (MRST) are employed [24]

- Simulation results are used to calculate the objective function value for each candidate solution [22]

PSO Implementation Protocol

Parameter Configuration:

- Population size: Typically 25-50 particles [21]

- Iterations: 50-100 generations, with runtime as a practical termination condition [21]

- Velocity parameters: Cognitive and social components balanced for exploration vs. exploitation [22]

Algorithm Steps:

- Initialize particle positions randomly within the search space

- Evaluate fitness of each particle using reservoir simulator

- Update personal best and global best positions

- Update particle velocities and positions

- Repeat the evaluation and update steps above until the termination criteria are met

- Return global best solution as optimal well placement [22]
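
The steps above can be sketched as a small global-best PSO. The inertia weight `w`, the coefficients `c1` and `c2`, the clamping of positions to the bounds, and the quadratic `toy_fitness` (a hypothetical stand-in for a reservoir simulator or proxy) are all assumptions for illustration.

```python
import random


def pso(fitness, bounds, n_particles=30, n_iters=50,
        w=0.7, c1=1.5, c2=1.5, seed=0):
    """Maximize `fitness` inside the box `bounds` with a global-best PSO."""
    rng = random.Random(seed)
    dim = len(bounds)
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_fit = [fitness(p) for p in pos]
    g = max(range(n_particles), key=lambda i: pbest_fit[i])
    gbest, gbest_fit = pbest[g][:], pbest_fit[g]

    for _ in range(n_iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Inertia + cognitive (own best) + social (swarm best) terms.
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                lo, hi = bounds[d]
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lo), hi)
            f = fitness(pos[i])
            if f > pbest_fit[i]:
                pbest[i], pbest_fit[i] = pos[i][:], f
                if f > gbest_fit:
                    gbest, gbest_fit = pos[i][:], f
    return gbest, gbest_fit


def toy_fitness(xy):
    # Hypothetical objective with a single peak at (30, 40).
    return -((xy[0] - 30.0) ** 2 + (xy[1] - 40.0) ** 2)


best_xy, best_fit = pso(toy_fitness, bounds=[(0.0, 60.0), (0.0, 60.0)])
```

In a real study each `fitness` call would be a simulation or surrogate prediction, and grid-block locations would require rounding the continuous positions.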

Enhancement Strategies:

- Quality maps guide particles toward promising reservoir regions [21]

- Adaptive parameter tuning maintains balance between exploration and exploitation [21]

- Runtime management strategies control computational expenses [21]

GA Implementation Protocol

Initialization:

- Standard approach: Random population generation [1]

- Enhanced approach: Productivity Potential Maps (PPMs) guide initial well locations [1]

- Population size: Problem-dependent, typically 50-100 individuals [1]

Genetic Operations:

- Selection: Roulette Wheel Selection (RWS) proportional to fitness [1]

- Crossover: Single-point crossover with demarcation point for variable transformation [1]

- Mutation: Small step size or probability perturbation of offspring [1]
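
The three operators can be sketched as follows for individuals encoded as lists of integer grid indices. The encoding, the one-block mutation step, and the function names are assumptions; the "demarcation point" handling described in [1] is not reproduced here.

```python
import random


def roulette_select(population, fitnesses, rng):
    """Pick one individual with probability proportional to non-negative fitness."""
    pick = rng.uniform(0.0, sum(fitnesses))
    running = 0.0
    for individual, fit in zip(population, fitnesses):
        running += fit
        if running > pick:
            return individual
    return population[-1]  # guards against floating-point edge cases


def single_point_crossover(parent_a, parent_b, rng):
    """Swap the tails of two parents at one random cut point."""
    point = rng.randint(1, len(parent_a) - 1)
    child_a = parent_a[:point] + parent_b[point:]
    child_b = parent_b[:point] + parent_a[point:]
    return child_a, child_b


def mutate(individual, grid_size, rate, rng):
    """With probability `rate` per gene, shift it one grid block, clamped to the grid."""
    mutated = []
    for gene in individual:
        if rng.random() < rate:
            gene = min(max(gene + rng.choice((-1, 1)), 0), grid_size - 1)
        mutated.append(gene)
    return mutated
```

Roulette wheel selection needs non-negative fitness values, so objectives such as NPV that can be negative are usually shifted or ranked first.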

Termination Criteria:

- Fixed number of generations (typically 100-200) [21]

- Runtime limitations (e.g., 10-15 hours for specific problems) [21]

- Convergence thresholds based on fitness improvement [1]

DE Implementation Protocol

Parameter Settings:

- Population size: Varies with problem dimension and complexity [23]

- Mutation factor: Typically between 0.5 and 1.0 [23]

- Crossover probability: Usually 0.7-0.9 [23]

Algorithm Specifics:

- Mutation strategy: Difference vectors created from population members [23]

- Crossover: Binomial or exponential recombination [23]

- Selection: Greedy approach where offspring replace parents if better [23]
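
A single generation of the common DE/rand/1/bin variant illustrates these three steps. The choice of variant, the defaults `F=0.8` and `CR=0.9` (picked from within the ranges quoted above), and the toy objective are assumptions; trial vectors are not clamped to any bounds in this sketch.

```python
import random


def de_generation(population, fitness, F=0.8, CR=0.9, rng=None):
    """Run one DE/rand/1/bin generation (maximization) and return the new population."""
    if rng is None:
        rng = random.Random(0)
    dim = len(population[0])
    new_population = []
    for i, target in enumerate(population):
        # Mutation: base vector plus a scaled difference of two other members.
        others = [p for j, p in enumerate(population) if j != i]
        a, b, c = rng.sample(others, 3)
        # Binomial crossover; j_rand guarantees at least one mutant gene.
        j_rand = rng.randrange(dim)
        trial = [
            a[d] + F * (b[d] - c[d])
            if (rng.random() < CR or d == j_rand) else target[d]
            for d in range(dim)
        ]
        # Greedy selection: the trial replaces the target only if it is better.
        new_population.append(trial if fitness(trial) > fitness(target) else target)
    return new_population


def toy_fitness(xy):
    # Hypothetical objective with a single peak at (30, 40).
    return -((xy[0] - 30.0) ** 2 + (xy[1] - 40.0) ** 2)


rng = random.Random(0)
population = [[rng.uniform(0, 60), rng.uniform(0, 60)] for _ in range(12)]
for _ in range(40):
    population = de_generation(population, toy_fitness, rng=rng)
```

Because selection is greedy, no member's fitness can get worse from one generation to the next.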

Performance Considerations:

- Multiple runs recommended due to stochastic nature [23]

- Parameter tuning essential for optimal performance [24]

- Benchmarking against PSO and GA provides performance validation [23]

Integration with Convolutional Neural Networks

CNN for Surrogate Modeling

Architecture and Training:

- CNNs input near-wellbore permeability and output cumulative oil production [15]

- Training uses multiple geological realizations to capture uncertainty [15]

- Feature extraction capabilities superior to standard ANNs for spatial data [15]

Theory-Guided CNN (TgCNN):

- Physical laws (e.g., governing equations) incorporated in training process [17]

- Residual of governing equations added to loss function [17]

- Achieves better accuracy and generalizability with limited data [17]

Performance Advantages:

- CNN outperforms ANN in well placement prediction accuracy [15] [25]

- Significant computational cost reduction compared to full simulation [15]

- Effective handling of geological uncertainty through robust optimization [15]

Hybrid Optimization Framework

Surrogate-Assisted Evolutionary Algorithms:

- Trained CNN surrogate replaces expensive reservoir simulations [17]

- Evolutionary algorithms use surrogate predictions for fitness evaluation [17]

- Enables more generations and larger populations within feasible computational time [17]

Workflow Integration:

- Train CNN on limited set of full reservoir simulations

- Integrate trained CNN as surrogate model with EA optimizer

- Perform extensive optimization using surrogate-evaluated fitness

- Validate promising candidates with full reservoir simulation

- Update surrogate model if necessary [17]
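
The workflow above can be sketched end to end with placeholders. Everything here is a stand-in and labeled as such: `simulator` is a cheap toy function rather than a reservoir simulator, `NearestNeighbourSurrogate` replaces the trained CNN, and plain random search replaces the evolutionary optimizer. Only the control flow (train, search on the surrogate, validate, update) reflects the listed steps.

```python
import random


def simulator(xy):
    """Hypothetical stand-in for an expensive full-physics simulation."""
    return -((xy[0] - 30.0) ** 2 + (xy[1] - 40.0) ** 2)


class NearestNeighbourSurrogate:
    """Placeholder for the CNN: returns the label of the closest training sample."""

    def __init__(self):
        self.samples = []

    def fit(self, samples):
        self.samples = list(samples)

    def predict(self, xy):
        def sq_dist(sample):
            (x, y), _ = sample
            return (x - xy[0]) ** 2 + (y - xy[1]) ** 2
        return min(self.samples, key=sq_dist)[1]


def surrogate_assisted_search(n_initial=20, n_rounds=5, n_candidates=500, seed=0):
    rng = random.Random(seed)

    def sample():
        return (rng.uniform(0.0, 60.0), rng.uniform(0.0, 60.0))

    # Train on a limited set of full simulations.
    data = [(xy, simulator(xy)) for xy in (sample() for _ in range(n_initial))]
    n_sim_calls = n_initial
    surrogate = NearestNeighbourSurrogate()
    for _ in range(n_rounds):
        surrogate.fit(data)  # (re)train the surrogate
        # Cheap search on the surrogate (random search stands in for the EA).
        candidates = [sample() for _ in range(n_candidates)]
        best = max(candidates, key=surrogate.predict)
        # Validate the promising candidate with the simulator, then update the data.
        data.append((best, simulator(best)))
        n_sim_calls += 1
    best_xy, best_value = max(data, key=lambda s: s[1])
    return best_xy, best_value, n_sim_calls


best_xy, best_value, n_sim_calls = surrogate_assisted_search()
```

Here 2,500 candidates are screened with only 25 simulator calls, which is the source of the savings this workflow aims for.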

Experimental Results:

- TgCNN surrogate combined with GA achieves satisfactory extrapolation performance [17]

- Joint optimization of well number and placement feasible with surrogate models [17]

- Optimization efficiency significantly improved compared to simulator-only approaches [17]

Visualization of Algorithm Workflows

Figure: Evolutionary Algorithm Workflows for Well Placement Optimization

The Researcher's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Tool/Resource | Function | Application Context |

|---|---|---|

| Reservoir Simulators | Full-physics simulation of fluid flow in porous media | Objective function evaluation for well placement candidates [15] [22] |

| Productivity Potential Maps (PPMs) | Guide initial well locations based on reservoir quality | GA initialization; reduces optimization time [1] |

| Theory-Guided CNN | Surrogate model incorporating physical constraints | Efficient optimization with limited simulation data [17] |

| Quality Maps | Identify high-potential areas of the reservoir | Enhance algorithm performance; guide exploration [21] |

| MATLAB Reservoir Simulation Toolbox (MRST) | Open-source reservoir modeling and simulation | Benchmark testing and algorithm development [24] |

| Differential Evolution Framework | Global optimization algorithm implementation | Well placement optimization benchmarked against PSO and GA [23] |

| Robust Optimization Framework | Handles geological uncertainty through multiple realizations | CNN integration for reliable well placement [15] |

Evolutionary algorithms represent powerful optimization tools for complex problems like well placement in hydrocarbon reservoirs. PSO, GA, and DE each offer distinct advantages, with PSO generally outperforming GA in well placement applications, while DE shows promising results in comparative studies. The integration of these algorithms with deep learning approaches, particularly convolutional neural networks as surrogate models, creates a robust framework for addressing computational challenges in reservoir optimization.

Future research directions include enhanced hybrid algorithms that combine the strengths of multiple optimization techniques, improved surrogate modeling with physical constraints, and more efficient handling of geological uncertainties. These advancements will further solidify the role of evolutionary algorithms as essential tools in reservoir management and optimization.

The Synergistic Potential of a Hybrid CNN-Evolutionary Framework

Application Notes

The integration of Convolutional Neural Networks (CNNs) with evolutionary optimization algorithms creates a powerful hybrid framework for solving complex, high-dimensional optimization problems. This synergy is particularly effective in domains characterized by vast search spaces, computationally expensive simulations, and complex, spatially-distributed data.

The core principle of this framework is to use a CNN as a surrogate model (or proxy) that approximates the objective function, which an evolutionary algorithm then queries to navigate the solution space efficiently. This addresses a critical bottleneck: traditional evolutionary algorithms require thousands of evaluations to converge, which becomes prohibitive when each evaluation involves a slow, full-physics simulation [7] [26]. Replacing most simulator calls with a fast, data-driven CNN proxy makes each evaluation far cheaper; reported end-to-end savings include a reduction in computational cost to 11.18% of full-physics simulation [7] and a 70-85% time reduction [27].

Quantitative Performance of Hybrid Frameworks

The table below summarizes the demonstrated performance of various hybrid CNN-Evolutionary frameworks across different applications, primarily in geoenergy.

Table 1: Quantitative Performance of Hybrid CNN-Evolutionary Frameworks

| Application / Study Focus | Key Hybrid Components | Reported Performance Metrics |

|---|---|---|

| Sequential Oil Well Placement [7] | Multi-Modal CNN (M-CNN) + Particle Swarm Optimization (PSO) | Prediction accuracy within 3% relative error vs. simulator; computational cost reduced to 11.18% of full-physics simulations; 47.40% improvement in field cumulative oil production |

| Horizontal Well Placement [26] | Adaptive Constraint-Guided EA + Dual Surrogate Models | Effective management of complex constraints and discrete variables; superior performance in identifying optimal placements that maximize economic returns |

| Sidetrack Well Placement [4] | Random Forest Proxy + Differential Evolution (DE) | Model MSE of 0.0008 (R²: 0.8059); successful field validation: reduced water cut, 82.7 tons incremental oil |

| Well Placement under Geological Uncertainty [27] | Multi-input Deep Learning Proxy + PSO | R² of 0.89 and 0.73 for sequential production periods; achieved 96% of the optimal solution with 70-85% time reduction |

Key Advantages and Synergistic Effects

The hybrid framework delivers superior results through several synergistic mechanisms:

- Computational Efficiency: The CNN proxy model dramatically reduces the time required for each objective function evaluation. This allows the evolutionary algorithm to perform thousands of evaluations that would be infeasible with a full simulator [7] [27].

- Handling Spatial Complexity: CNNs excel at processing and extracting features from spatially-distributed data, such as reservoir permeability or porosity maps. This allows the proxy to learn the complex underlying physical relationships between reservoir characteristics and well performance [7] [27].

- Balanced Global Search: Evolutionary algorithms like PSO, DE, and GA provide a robust global search capability, preventing the optimization from becoming trapped in local optima. The surrogate model guides this search intelligently, focusing computational effort on promising regions of the solution space [26].

- Addressing Data Scarcity with Physics-Informed Learning: Incorporating physical laws into the CNN's loss function, as in Physics-Informed Neural Networks (PINNs), can enhance data efficiency and model generalizability. Evolutionary algorithms are particularly suited for optimizing the complex loss landscapes of PINNs, a promising area known as physics-informed neuroevolution [28].

Experimental Protocols

This section provides a detailed, step-by-step methodology for implementing a hybrid CNN-Evolutionary framework, using the optimization of sequential well placements as a canonical example [7] [27].

The following diagram illustrates the integrated workflow of the hybrid framework, showing the interaction between data generation, CNN proxy training, and evolutionary optimization.

Protocol 1: Synthetic Dataset Generation for Proxy Training

Objective: To create a high-quality, representative dataset for training a robust CNN proxy model.

Materials:

- Reservoir Simulation Software: A full-physics simulator (e.g., Eclipse, CMG, AD-GPRS).

- Geological Model: A representative reservoir model (e.g., UNISIM-I-D, Egg, or PUNQ-S3) [7] [27].

- Sampling Script: Code for parameter space exploration (e.g., in Python or MATLAB).

Procedure:

- Parameter Space Definition:

- Identify all optimization variables (e.g., well locations in x,y coordinates, well type, control parameters) and static reservoir properties (e.g., multiple permeability/porosity realizations for geological uncertainty) [27].

- Design of Experiments (DoE):

- Use Latin Hypercube Sampling (LHS) or Orthogonal Arrays to efficiently sample the multi-dimensional parameter space. This ensures maximum coverage with a minimum number of simulation runs [4].

- Generate N (typically thousands) unique well placement scenarios.

- Numerical Simulation:

- For each scenario from the DoE, run a full-physics reservoir simulation to calculate the objective function (e.g., cumulative oil production, Net Present Value) over the desired time horizon.

- For sequential well placement: This may involve simulating the drilling and production of wells at different time intervals [27].

- Dataset Curation:

- Compile the inputs and outputs into a structured dataset.

- Inputs (Features): For each well placement scenario, this includes spatial maps (e.g., permeability, porosity, pressure, saturation) cropped or centered around the wellbore, and auxiliary data (e.g., well-to-well distances) [7].

- Output (Label): The corresponding objective function value from the simulation (e.g., cumulative oil production).
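
The Design of Experiments step in this procedure can be sketched with a basic Latin Hypercube sampler. The function name and the two-variable (x, y) example are assumptions; production work would more likely use a library implementation and would also sample well type and control variables.

```python
import random


def latin_hypercube(n_samples, bounds, seed=0):
    """Draw n_samples points so that each variable hits every one of its
    n_samples equal-width strata exactly once."""
    rng = random.Random(seed)
    columns = []
    for lo, hi in bounds:
        # One uniformly random point inside each stratum of the unit interval.
        unit = [(i + rng.random()) / n_samples for i in range(n_samples)]
        # Shuffle so strata are paired randomly across variables.
        rng.shuffle(unit)
        columns.append([lo + u * (hi - lo) for u in unit])
    return [tuple(col[i] for col in columns) for i in range(n_samples)]


# Ten candidate (x, y) well locations on a hypothetical 10 x 10 area.
scenarios = latin_hypercube(10, [(0.0, 10.0), (0.0, 10.0)])
```

Each scenario would then be passed to the simulator to produce its label.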

Protocol 2: Development and Training of the CNN Proxy Model

Objective: To train a CNN model that accurately maps reservoir characteristics and well locations to production performance.

Materials:

- Machine Learning Framework: TensorFlow, PyTorch, or Keras.

- Computing Hardware: GPU-accelerated workstation or cluster.

Procedure:

- Architecture Selection:

- Employ a Multi-Modal CNN (M-CNN) capable of processing multiple input channels (e.g., separate porosity, permeability, pressure maps) [7].

- The architecture should include:

- Convolutional Layers: For feature extraction from spatial maps.

- Pooling Layers: For dimensionality reduction.

- Flatten Layer: To transition from spatial features to a vector.

- Fully Connected (Dense) Layers: To integrate flattened spatial features with auxiliary 1D inputs (e.g., well distances) and perform final regression [7].

- Data Preprocessing:

- Normalize all input data (e.g., scale spatial maps and auxiliary data to a [0,1] range).

- Split the dataset into training (e.g., 70%), validation (e.g., 15%), and test (e.g., 15%) sets.

- Model Training:

- Use the Mean Squared Error (MSE) or Mean Absolute Error (MAE) as the loss function.

- Employ the Adam optimizer for efficient gradient descent.

- Implement early stopping based on validation loss to prevent overfitting.

- Model Validation:
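
The data preprocessing step of this procedure (scaling to [0,1] and a 70/15/15 split) can be sketched as below. Fitting the scaler on the training portion only is a common practice assumed here rather than something the source specifies, and the helper names are illustrative.

```python
import random


def split_dataset(samples, train_frac=0.70, val_frac=0.15, seed=0):
    """Shuffle and split into training, validation, and test subsets."""
    rng = random.Random(seed)
    shuffled = list(samples)
    rng.shuffle(shuffled)
    n_train = round(train_frac * len(shuffled))
    n_val = round(val_frac * len(shuffled))
    return (shuffled[:n_train],
            shuffled[n_train:n_train + n_val],
            shuffled[n_train + n_val:])


def fit_min_max(values):
    """Return a function that maps the fitted range of `values` onto [0, 1]."""
    lo, hi = min(values), max(values)
    span = (hi - lo) or 1.0  # avoid division by zero for constant inputs

    def scale(v):
        return (v - lo) / span

    return scale


# Toy usage: 100 scalar labels standing in for simulated production values.
train, val, test = split_dataset([float(i) for i in range(100)])
scale = fit_min_max(train)          # statistics come from the training set only
train_scaled = [scale(v) for v in train]
```

Validation or test values outside the training range will fall slightly outside [0, 1] under this scheme.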

Protocol 3: Evolutionary Optimization using the CNN Proxy

Objective: To find the global optimum well placement by leveraging the trained CNN proxy within an evolutionary algorithm.

Materials:

- Trained CNN Proxy Model (from Protocol 2).

- Evolutionary Algorithm Library: DEAP, PyGMO, or custom-coded PSO/GA.

Procedure:

- Algorithm Selection:

- Initialization:

- Randomly generate an initial population of candidate solutions (i.e., vectors representing well placement coordinates).

- Fitness Evaluation:

- For each candidate in the population, generate the required input data (spatial maps based on well locations) and use the trained CNN proxy to predict its fitness (e.g., cumulative production). This replaces the reservoir simulator.

- Evolutionary Loop:

- Selection: Preferentially select high-fitness candidates to be parents for the next generation.

- Crossover/Mutation: Create new offspring by combining traits of parents (crossover) and introducing random variations (mutation).

- Replacement: Form a new population from the best parents and offspring.

- Repeat the fitness evaluation and evolutionary loop steps for a predefined number of generations or until convergence (i.e., no significant improvement in the best fitness).

- Constraint Handling:

- Integrate an Adaptive Constraint-Guided mechanism to handle operational constraints (e.g., minimum well spacing, reservoir boundaries). This can involve penalty functions or feasibility rules that guide the search toward feasible regions [26].
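
A static penalty function is the simplest form of the constraint handling mentioned above. The sketch below covers only minimum well spacing and grid boundaries with a fixed `penalty_weight`; it is an assumed simplification and not the adaptive mechanism of [26].

```python
import math


def penalized_fitness(wells, raw_fitness, grid_shape, min_spacing,
                      penalty_weight=1.0):
    """Subtract a penalty proportional to total constraint violation (maximization).

    wells:       list of (x, y) well locations
    grid_shape:  (nx, ny); valid coordinates are 0 .. nx-1 and 0 .. ny-1
    min_spacing: minimum allowed distance between any two wells
    """
    nx, ny = grid_shape
    violation = 0.0
    # Boundary constraint: distance by which each well lies outside the grid.
    for x, y in wells:
        violation += max(0.0, -x) + max(0.0, x - (nx - 1))
        violation += max(0.0, -y) + max(0.0, y - (ny - 1))
    # Spacing constraint: shortfall for every pair of wells that is too close.
    for i in range(len(wells)):
        for j in range(i + 1, len(wells)):
            violation += max(0.0, min_spacing - math.dist(wells[i], wells[j]))
    return raw_fitness - penalty_weight * violation
```

An adaptive scheme would typically adjust `penalty_weight` during the run, for example by raising it while most of the population is infeasible.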

Protocol 4: Validation and Iterative Learning

Objective: To verify the optimization results and enhance the proxy model's accuracy.

Procedure:

- Final Validation:

- Select the top K (e.g., 5-10) best-performing well placement scenarios identified by the evolutionary optimizer.

- Run full-physics simulations for these top scenarios to obtain ground-truth performance values [7].

- Iterative Proxy Refinement:

- Compare the CNN proxy's predictions with the full-physics simulation results for the top candidates.

- If discrepancies are high, add these new, high-quality data points to the original training dataset.

- Re-train the CNN proxy with this augmented dataset. This "iterative learning" scheme specifically improves the proxy's accuracy in the most promising regions of the solution space, mitigating extrapolation errors [7].
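
The validation and refinement steps can be sketched as one helper that decides which simulated candidates to add to the training set. The relative-error threshold `rel_tol` and the callback names `proxy_predict` and `run_simulation` are hypothetical; the source only states that points are added when discrepancies are high.

```python
def refine_training_set(training_set, candidates, proxy_predict, run_simulation,
                        top_k=5, rel_tol=0.03):
    """Simulate the proxy's top-k candidates and keep those it mispredicted.

    Returns the augmented training set and the list of newly added samples.
    """
    ranked = sorted(candidates, key=proxy_predict, reverse=True)[:top_k]
    added = []
    for scenario in ranked:
        truth = run_simulation(scenario)       # ground truth from the simulator
        predicted = proxy_predict(scenario)
        rel_error = abs(predicted - truth) / max(abs(truth), 1e-12)
        if rel_error > rel_tol:
            added.append((scenario, truth))
    return training_set + added, added


# Toy usage: the "proxy" underestimates scenarios above 8 by about 9%.
new_set, added = refine_training_set(
    training_set=[],
    candidates=list(range(1, 11)),
    proxy_predict=lambda s: float(s),
    run_simulation=lambda s: s * 1.1 if s > 8 else float(s),
    top_k=3,
)
```

The proxy would then be retrained on `new_set` before the next optimization round.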

The Scientist's Toolkit: Research Reagent Solutions

The successful implementation of the hybrid CNN-Evolutionary framework relies on a suite of computational "reagents." The table below details these essential components and their functions.

Table 2: Essential Research Reagents for the Hybrid Framework

| Category | Reagent / Tool | Function & Description |

|---|---|---|

| Data Generation | Full-Physics Reservoir Simulator (e.g., Eclipse, CMG) | Generates high-fidelity training data by solving complex physical equations for fluid flow in porous media. |

| Data Generation | Latin Hypercube Sampling (LHS) | An advanced statistical method for generating a near-random sample of parameter values, ensuring comprehensive exploration of the input space for simulation. |

| Proxy Model Development | Multi-Modal CNN (M-CNN) | A deep learning architecture designed to process and fuse multiple types of input data (e.g., various spatial property maps) to learn complex, non-linear relationships. |

| Proxy Model Development | Physics-Informed Neural Network (PINN) | A type of neural network that incorporates physical laws (e.g., PDEs) directly into its loss function, improving data efficiency and physical consistency [28]. |

| Evolutionary Optimization | Differential Evolution (DE) | A population-based metaheuristic known for its robustness and effectiveness in continuous optimization problems, often used for well placement [4]. |

| Evolutionary Optimization | Particle Swarm Optimization (PSO) | An evolutionary algorithm inspired by social behavior, effective for navigating high-dimensional search spaces and commonly integrated with CNN proxies [7] [27]. |

| Evolutionary Optimization | Adaptive Constraint Mechanism (e.g., ACIM, ECTCR) | Algorithms that dynamically manage complex constraints during optimization, ensuring solutions are feasible and practical for field deployment [26]. |

Building the Hybrid Model: Architectures and Workflow Implementation

Multi-Modal CNN (M-CNN) Design for Integrating Static and Dynamic Reservoir Data

The optimization of well placement is a critical, high-value challenge in geoenergy science and engineering, requiring the determination of optimal well locations to maximize economic value while considering geological, engineering, and economic constraints [7]. This complex process has traditionally relied on computationally intensive reservoir simulations, often employing evolutionary optimization algorithms like Particle Swarm Optimization (PSO) or Genetic Algorithms (GA). While powerful, these population-based methods suffer from prohibitive computational costs due to the need for exhaustive simulation runs [7]. Recent advances in deep learning have introduced Convolutional Neural Networks (CNNs) as partial or full substitutes for expensive reservoir simulators. Unlike traditional Artificial Neural Networks (ANNs), CNNs preserve spatial features of large-scale reservoir data, making them particularly suited for processing the multi-dimensional nature of reservoir property distributions [7].

Multi-Modal CNN (M-CNN) architectures represent a significant evolution beyond standard CNNs by enabling the fusion of data from multiple sources or modalities. In reservoir modeling, this capability is crucial for integrating both static geological data (e.g., porosity, permeability) and dynamic reservoir data (e.g., pressure, saturation) that characterize different aspects of reservoir behavior [7] [29]. Drawing inspiration from this concept, researchers have developed workflows that integrate M-CNNs with evolutionary optimization to enhance solution quality for well placement problems while mitigating computational costs and extrapolation effects [7]. This integration addresses fundamental challenges in applying machine learning to well placement, particularly the difficulty in maximizing oil productivity when searching for productive regions beyond the range of the initial learning data [7].

Theoretical Foundation of M-CNN for Reservoir Data

Convolutional Neural Network Fundamentals

Convolutional Neural Networks are specifically designed to process data with a grid-like topology, such as images, making them exceptionally suitable for reservoir property maps and seismic data. The core operation in CNNs is the convolution operation, where a filter (or kernel) is passed over the input data to produce feature maps that preserve spatial relationships [30] [31]. For reservoir applications, key CNN components include:

Convolutional Layers: These layers apply filters to extract spatial features from input data. The operation involves element-wise multiplication of the filter with overlapping regions of the input, followed by summation to create feature maps. The operation can be represented as:

\[ (f * h)[m,n] = \sum_{j}\sum_{k} h[j,k] \cdot f[m-j,\,n-k] \]

where \(f\) represents the input image and \(h\) represents the filter kernel [30].
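
The formula can be checked with a direct, dependency-free implementation. This sketch computes the "full" discrete convolution and treats the input as zero outside its borders; both choices, and the function name, are assumptions made for illustration.

```python
def conv2d_full(f, h):
    """Full 2D discrete convolution: out[m][n] = sum_j sum_k h[j][k] * f[m-j][n-k]."""
    M, N = len(f), len(f[0])
    J, K = len(h), len(h[0])
    out = [[0.0] * (N + K - 1) for _ in range(M + J - 1)]
    for m in range(M + J - 1):
        for n in range(N + K - 1):
            total = 0.0
            for j in range(J):
                for k in range(K):
                    # Terms that fall outside the input are treated as zero.
                    if 0 <= m - j < M and 0 <= n - k < N:
                        total += h[j][k] * f[m - j][n - k]
            out[m][n] = total
    return out


# A kernel with its single 1 in the second column shifts the input right by one.
shifted = conv2d_full([[1, 2], [3, 4]], [[0, 1]])
```

Deep learning frameworks generally implement the unflipped variant (cross-correlation) in their convolutional layers; since the kernel weights are learned, the distinction does not affect what the network can represent.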

Padding: To prevent spatial dimensionality reduction and information loss at image borders, padding adds extra pixels (typically zeros) around the input. "Same" padding ensures the output maintains the same spatial dimensions as the input, while "Valid" padding uses no padding [30] [31].

- Strided Convolution: The stride parameter controls how much the filter shifts between computations. Increasing stride reduces spatial dimensions of feature maps, providing a mechanism to control computational complexity [30] [31].

- Multi-Channel Convolution: Reservoir data typically includes multiple channels representing different properties. CNNs handle this with filters whose depth matches the number of input channels, so each filter processes all reservoir properties at a given location simultaneously [30] [31].
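
The effect of padding and stride on feature-map size follows the standard relation out = floor((n + 2p - k) / s) + 1 for input size n, kernel size k, padding p, and stride s. The helper names below are illustrative.

```python
def conv_output_size(n, kernel, stride=1, padding=0):
    """Spatial size of a convolution output along one dimension."""
    return (n + 2 * padding - kernel) // stride + 1


def same_padding(kernel):
    """Padding that preserves the input size for stride 1 and an odd kernel."""
    return (kernel - 1) // 2


# A 64-cell-wide property map with a 3x3 kernel:
valid_size = conv_output_size(64, 3)                              # no padding
same_size = conv_output_size(64, 3, padding=same_padding(3))      # "same" padding
strided_size = conv_output_size(64, 3, stride=2, padding=1)       # stride 2
```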

Multi-Modal Learning Architecture

Multi-modal CNNs enhance standard architectures by incorporating and fusing information from different data types. For reservoir applications, this typically involves:

- Visual Data Pathway: A CNN branch processes spatial reservoir property maps (e.g., porosity, permeability, pressure, saturation distributions) using convolutional layers to extract hierarchical spatial features [29].

- Tabular Data Pathway: An Artificial Neural Network (ANN) branch processes traditional well data, completion parameters, and engineering measurements [29].

- Fusion Module: A dedicated network component combines features from both modalities and models their interactions, learning cross-modal relationships that enhance predictive performance [29].

This architecture specifically addresses the limitation of conventional methods that rely solely on discrete well data, which cannot capture the spatial geological context along well laterals or across the reservoir field [29].

M-CNN Architecture for Reservoir Integration

Input Data Modalities

The M-CNN architecture for reservoir data integration processes two distinct categories of input data:

Static Reservoir Data (Constant over time):

- Porosity distribution maps

- Permeability distribution maps

- Geological facies models

- Seismic attributes

- Reservoir structural framework

Dynamic Reservoir Data (Time-varying):

- Pressure distribution over time

- Fluid saturation maps (oil, gas, water)

- Production history data

- Temperature distributions

- Tracer concentration data [7] [32] [33]

Network Architecture Components

The complete M-CNN architecture consists of the following interconnected components:

- Spatial Feature Extraction Branch: Composed of multiple convolutional layers with 3×3 kernels, followed by batch normalization and ReLU activation functions. This branch processes 2D or 3D reservoir property maps, extracting hierarchical spatial features [7] [34].

- Tabular Data Processing Branch: A fully connected network that processes well-based parameters, completion designs, and operational constraints [29].

- Auxiliary Data Integration: Well distance constraints and boundary conditions are incorporated as a 1D array at the fully connected stage of the CNN to account for inter-well relationships and operational constraints [7].

- Feature Fusion Module: Implements cross-modal attention mechanisms or concatenation operations to combine features from spatial and tabular pathways, enabling the model to learn interactions between different data types [29].

- Output Head: A final fully connected layer that maps the fused features to prediction targets, typically cumulative oil production or other key performance indicators [7].
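A forward pass through these components can be sketched in NumPy as follows. This is a minimal sketch with untrained random weights, assuming concatenation as the fusion operation, a single convolutional layer, and global average pooling; batch normalization is omitted for brevity, and all layer sizes and parameter names are hypothetical rather than taken from [7] or [29].

```python
import numpy as np

rng = np.random.default_rng(0)

def relu(z):
    return np.maximum(z, 0.0)

def conv3x3(x, w):
    """Unpadded 3x3 convolution. x: (C, H, W), w: (F, C, 3, 3)."""
    # windows has shape (C, H-2, W-2, 3, 3)
    windows = np.lib.stride_tricks.sliding_window_view(x, (3, 3), axis=(1, 2))
    return np.einsum("chwij,fcij->fhw", windows, w)

def spatial_branch(maps, w_conv):
    """Convolution + ReLU, then global average pooling to a feature vector."""
    feat = relu(conv3x3(maps, w_conv))
    return feat.mean(axis=(1, 2))

def tabular_branch(tab, w, b):
    """One fully connected layer for well-based parameters."""
    return relu(w @ tab + b)

def mcnn_forward(maps, tab, aux, params):
    spatial = spatial_branch(maps, params["w_conv"])
    tabular = tabular_branch(tab, params["w_tab"], params["b_tab"])
    # Fusion by concatenation; the auxiliary 1D array (well distances,
    # boundary information) joins at the fully connected stage.
    fused = np.concatenate([spatial, tabular, aux])
    hidden = relu(params["w_fuse"] @ fused + params["b_fuse"])
    # Output head: one scalar, e.g. predicted cumulative oil production.
    return float(params["w_out"] @ hidden + params["b_out"])

# Hypothetical sizes: 4 map channels, 12 tabular features, 4 auxiliary values.
params = {
    "w_conv": rng.standard_normal((16, 4, 3, 3)) * 0.1,
    "w_tab": rng.standard_normal((8, 12)) * 0.1,
    "b_tab": np.zeros(8),
    "w_fuse": rng.standard_normal((16, 16 + 8 + 4)) * 0.1,
    "b_fuse": np.zeros(16),
    "w_out": rng.standard_normal(16) * 0.1,
    "b_out": 0.0,
}
maps = rng.random((4, 32, 32))
tab = rng.random(12)
aux = rng.random(4)
prediction = mcnn_forward(maps, tab, aux, params)
```

An attention-based fusion module would replace the `np.concatenate` step; concatenation is used here only because it is the simpler of the two options named above.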

Evolutionary Optimization Integration

The M-CNN is integrated with Particle Swarm Optimization (PSO) in a hybrid workflow where:

- PSO generates initial well placement scenarios, which are evaluated with full-physics reservoir simulation to produce the training data

- The M-CNN learns correlations between near-wellbore spatial properties and cumulative oil production

- Iterative learning enhances the proxy model by adding qualified scenarios to training data and retraining [7]
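The scenario-generation role of PSO can be sketched with a basic global-best variant. This is a generic textbook PSO rather than the specific implementation in [7]; the objective `toy_productivity` is a hypothetical stand-in for a simulator or proxy evaluation, and the inertia and acceleration coefficients are commonly used defaults, not values from the source.

```python
import numpy as np

def pso_maximize(objective, lower, upper, n_particles=30, n_iter=100,
                 inertia=0.7, c1=1.5, c2=1.5, seed=0):
    """Global-best particle swarm optimization (maximization)."""
    rng = np.random.default_rng(seed)
    lower, upper = np.asarray(lower, float), np.asarray(upper, float)
    dim = lower.size
    pos = rng.uniform(lower, upper, size=(n_particles, dim))
    vel = np.zeros_like(pos)
    pbest_pos = pos.copy()
    pbest_val = np.array([objective(p) for p in pos])
    g = np.argmax(pbest_val)
    gbest_pos, gbest_val = pbest_pos[g].copy(), pbest_val[g]
    for _ in range(n_iter):
        r1 = rng.random((n_particles, dim))
        r2 = rng.random((n_particles, dim))
        # Velocity blends momentum, pull to personal best, pull to global best.
        vel = (inertia * vel
               + c1 * r1 * (pbest_pos - pos)
               + c2 * r2 * (gbest_pos - pos))
        pos = np.clip(pos + vel, lower, upper)  # keep wells inside the grid
        vals = np.array([objective(p) for p in pos])
        improved = vals > pbest_val
        pbest_pos[improved] = pos[improved]
        pbest_val[improved] = vals[improved]
        g = np.argmax(pbest_val)
        if pbest_val[g] > gbest_val:
            gbest_pos, gbest_val = pbest_pos[g].copy(), pbest_val[g]
    return gbest_pos, gbest_val

def toy_productivity(xy):
    """Hypothetical objective with a single sweet spot at grid cell (40, 25)."""
    i, j = np.rint(xy)  # wells sit on integer grid cells
    return -((i - 40.0) ** 2 + (j - 25.0) ** 2)

best_xy, best_val = pso_maximize(toy_productivity, lower=[0, 0], upper=[63, 63])
best_cell = tuple(int(v) for v in np.rint(best_xy))
```

In the hybrid workflow, every position visited by the swarm is a candidate scenario; pairing those positions with their simulated outcomes yields the initial training set for the proxy.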

Table 1: M-CNN Input Data Specifications

| Data Type | Spatial Dimensions | Channels/Features | Preprocessing Requirements |
|---|---|---|---|
| Static Properties (Porosity, Permeability) | 64×64 to 256×256 | 2-3 (multiple properties) | Normalization to [0,1] range |
| Dynamic Properties (Pressure, Saturation) | Same as static | 2-4 (multiple time steps) | Time-windowing, normalization |
| Well Tabular Data | N/A | 10-20 features (completion, operational) | Standardization, feature selection |
| Auxiliary Constraints | N/A | 4-8 (distances, boundaries) | Distance normalization |

Application Notes: Well Placement Optimization

Implementation Workflow

The sequential well placement optimization using M-CNN follows a structured workflow:

- Initial Data Generation: PSO generates diverse well placement scenarios evaluated through full-physics reservoir simulations to create initial training data [7].

- M-CNN Training: The network learns correlations between spatial reservoir properties around well locations and corresponding production outcomes [7].

- Productivity Prediction: The trained M-CNN predicts oil productivity at every candidate well location in the reservoir [7].

- Scenario Qualification: The model selects highly productive well placement scenarios based on prediction thresholds [7].

- Iterative Refinement: Qualified scenarios are added to the training dataset, and the M-CNN is retrained to improve prediction accuracy, particularly for high-productivity regions [7].

- Validation: Final well placements are validated against full-physics reservoir simulations to ensure consistency [7].

Quantitative Performance

Recent implementations demonstrate significant performance improvements:

Table 2: M-CNN Performance Metrics for Well Placement Optimization

| Performance Metric | Traditional Methods | M-CNN Approach | Improvement |
|---|---|---|---|
| Prediction Accuracy | N/A (Baseline) | Within 3% relative error | High consistency with simulations |
| Computational Cost | 100% (Full simulations) | 11.18% of full-physics simulations | 88.82% reduction |
| Field Production | Baseline configuration | 47.40% improvement in cumulative production | Significant enhancement |
| Model Reliability | Extrapolation issues | Improved extrapolation via iterative learning | Better generalization |

Additional studies show that incorporating geological maps through a multimodal architecture raises prediction accuracy from R² = 0.74 (tabular data alone) to R² = 0.83 [29]. Notably, incorporating porosity maps alone already raises R² to 0.816, highlighting the value of spatial geological context [29].
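For readers who want to reproduce these kinds of metrics on their own data, the quantities reported above can be computed as in the following sketch. The function names are illustrative, and the formulas are the standard definitions of the coefficient of determination and relative error rather than anything specific to [7] or [29].

```python
import numpy as np

def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true = np.asarray(y_true, float)
    y_pred = np.asarray(y_pred, float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return 1.0 - ss_res / ss_tot

def relative_error(simulated, predicted):
    """Absolute proxy error relative to the full-physics simulation value."""
    simulated = np.asarray(simulated, float)
    predicted = np.asarray(predicted, float)
    return np.abs(predicted - simulated) / np.abs(simulated)

def cost_reduction_percent(cost_percent_of_full):
    """Reduction implied by running only a fraction of the full simulations."""
    return 100.0 - cost_percent_of_full

# A proxy cost of 11.18% of the full-physics budget corresponds to the
# 88.82% reduction listed in Table 2.
reduction = cost_reduction_percent(11.18)
```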

Experimental Protocols

M-CNN Training Protocol

Objective: Train M-CNN to predict cumulative oil production based on static and dynamic reservoir properties around well locations.

Materials and Data Requirements:

- Reservoir simulation model (e.g., UNISIM-I-D benchmark)

- Historical production data (where available)

- Static property models (porosity, permeability distributions)

- Dynamic simulation results (pressure, saturation over time)

Procedure:

Data Preparation:

- Extract spatial patches (e.g., 64×64 grid blocks) centered at candidate well locations

- Normalize static and dynamic properties to zero mean and unit variance

- Partition data into training (70%), validation (15%), and test (15%) sets
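The data preparation steps can be sketched as follows. The helper names, the edge-replication padding for wells near the grid boundary, and the choice to compute normalization statistics from the training split only are assumptions made for this illustration; the source does not specify them.

```python
import numpy as np

def extract_patch(prop_maps, center, size=64):
    """Cut a size x size window centered on a well cell.

    prop_maps: (channels, ny, nx); center: (row, col).
    Cells outside the grid are filled by replicating the edge (assumption).
    """
    half = size // 2
    padded = np.pad(prop_maps, ((0, 0), (half, half), (half, half)), mode="edge")
    r, c = center
    # After padding, original cell (r, c) sits at (r + half, c + half).
    return padded[:, r:r + size, c:c + size]

def split_indices(n, train=0.70, val=0.15, seed=0):
    """Shuffle sample indices into training, validation, and test sets."""
    order = np.random.default_rng(seed).permutation(n)
    n_train = int(round(train * n))
    n_val = int(round(val * n))
    return order[:n_train], order[n_train:n_train + n_val], order[n_train + n_val:]

def standardize(train, *others):
    """Zero mean and unit variance per channel, using training statistics."""
    mean = train.mean(axis=(0, 2, 3), keepdims=True)
    std = train.std(axis=(0, 2, 3), keepdims=True) + 1e-8
    return tuple((a - mean) / std for a in (train, *others))

# Hypothetical model: 2 property maps on a 100 x 120 grid, 100 candidate wells.
rng = np.random.default_rng(0)
maps = rng.random((2, 100, 120))
rows = rng.integers(0, 100, size=100)
cols = rng.integers(0, 120, size=100)
patches = np.stack([extract_patch(maps, (r, c)) for r, c in zip(rows, cols)])

train_idx, val_idx, test_idx = split_indices(len(patches))
x_train, x_val, x_test = standardize(
    patches[train_idx], patches[val_idx], patches[test_idx]
)
```

Computing the mean and standard deviation from the training split alone keeps information from the validation and test sets out of the preprocessing step.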

Model Configuration:

- Initialize CNN branch with 3 convolutional layers (32, 64, 128 filters)

- Configure ANN branch with 2 hidden layers (64, 32 neurons)

- Implement fusion module with concatenation followed by 2 fully connected layers

- Set optimization hyperparameters: learning rate (0.001), batch size (32), epochs (200)

Training Execution:

- Implement early stopping with patience of 20 epochs based on validation loss

- Apply learning rate reduction on plateau (factor=0.5, patience=10)

- Monitor for overfitting using training/validation loss curves
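The interplay between early stopping (patience 20) and learning rate reduction on plateau (factor 0.5, patience 10) can be sketched independently of any deep learning framework. The `PlateauController` class and the synthetic loss curve below are hypothetical; frameworks such as Keras and PyTorch provide their own callbacks and schedulers, whose exact bookkeeping may differ from this simplified version.

```python
class PlateauController:
    """Tracks validation loss to decide on learning rate cuts and stopping."""

    def __init__(self, lr=1e-3, factor=0.5, lr_patience=10, stop_patience=20):
        self.lr = lr
        self.factor = factor
        self.lr_patience = lr_patience
        self.stop_patience = stop_patience
        self.best = float("inf")
        self.stale = 0     # epochs since the best validation loss
        self.stale_lr = 0  # epochs since the best loss or the last rate cut

    def step(self, val_loss):
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best:
            self.best = val_loss
            self.stale = 0
            self.stale_lr = 0
        else:
            self.stale += 1
            self.stale_lr += 1
            if self.stale_lr >= self.lr_patience:
                self.lr *= self.factor  # reduce learning rate on plateau
                self.stale_lr = 0
        return self.stale >= self.stop_patience

# Synthetic validation curve: improves for 30 epochs, then plateaus higher,
# which mimics the onset of overfitting.
losses = [1.0 / (epoch + 1) for epoch in range(30)] + [0.05] * 170

controller = PlateauController()
stopped_at = None
for epoch, loss in enumerate(losses):
    if controller.step(loss):
        stopped_at = epoch
        break
```

In practice the model weights from the epoch with the best validation loss would be restored after stopping, so the plateau epochs cost training time but do not degrade the final proxy.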

Model Validation:

Iterative Learning Protocol

Objective: Improve M-CNN prediction accuracy for high-productivity regions through targeted data augmentation.

Procedure:

- Initial Model Training: Train initial M-CNN using PSO-generated well placement scenarios

- Candidate Location Screening: Use trained M-CNN to predict productivity at all candidate well locations

- Scenario Qualification: Select top 10-15% of predictions as highly productive scenarios

- Simulation Validation: Run full-physics simulations on qualified scenarios

- Data Augmentation: Add validated high-productivity scenarios to training dataset

- Model Retraining: Retrain M-CNN with augmented dataset

- Convergence Check: Repeat the cycle from Candidate Location Screening through Model Retraining until prediction accuracy stabilizes (typically 3-5 cycles) [7]
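The loop structure of this protocol can be sketched as below. To keep the example short and runnable, a quadratic least-squares model is swapped in for the M-CNN, random sampling replaces the PSO-generated initial scenarios, and `simulate` is a hypothetical analytic stand-in for a full-physics run; only the screen, qualify, simulate, augment, retrain cycle mirrors the procedure above.

```python
import numpy as np

rng = np.random.default_rng(0)

# Candidate well cells on a hypothetical 20 x 20 grid.
cells = np.array([(i, j) for i in range(20) for j in range(20)], dtype=float)

def simulate(xy):
    """Stand-in for full-physics simulation: smooth map with one sweet spot."""
    return np.exp(-((xy[:, 0] - 14) ** 2 + (xy[:, 1] - 6) ** 2) / 30.0)

def features(xy):
    x, y = xy[:, 0] / 20.0, xy[:, 1] / 20.0
    return np.column_stack([np.ones_like(x), x, y, x * y, x ** 2, y ** 2])

def fit(xy, target):
    """Least-squares proxy (used here in place of the M-CNN)."""
    coef, *_ = np.linalg.lstsq(features(xy), target, rcond=None)
    return coef

def predict(coef, xy):
    return features(xy) @ coef

# Initial training set (random here; PSO-generated in the source workflow).
known = set(rng.choice(len(cells), size=30, replace=False).tolist())
history = []
for cycle in range(4):
    idx = np.array(sorted(known))
    coef = fit(cells[idx], simulate(cells[idx]))   # train or retrain the proxy
    pred = predict(coef, cells)                    # screen every candidate
    n_top = int(0.10 * len(cells))                 # qualify the top 10%
    qualified = np.argsort(pred)[::-1][:n_top]
    # Validate qualified scenarios with the simulator and record proxy error.
    top_err = float(np.mean(np.abs(pred[qualified] - simulate(cells[qualified]))))
    # Augment the training data with newly simulated scenarios.
    known.update(int(q) for q in qualified)
    history.append((len(known), top_err))
```

Tracking `top_err` across cycles gives a simple convergence signal: the loop can stop once the error on qualified scenarios no longer changes appreciably between retraining rounds.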

Visualization and Workflow Diagrams

[Figure: M-CNN for Well Placement Optimization Workflow]

[Figure: Multi-Modal CNN Architecture]

The Scientist's Toolkit

Table 3: Essential Software and Computational Tools

| Tool/Category | Specific Examples | Function in M-CNN Research |
|---|---|---|
| Reservoir Simulation Software | SLB's MEPO, CMG's CMOST-AI, CMG-GEM, PETREL | Generate training data, validate predictions, provide full-physics reference solutions |
| Deep Learning Frameworks | TensorFlow, PyTorch, Keras | Implement M-CNN architectures, manage training workflows |
| Optimization Algorithms | Particle Swarm Optimization (PSO), Genetic Algorithms (GA), NSGA-II | Generate initial scenarios, multi-objective optimization |
| Data Visualization & Analysis | CoViz 4D, MATLAB, Python (Matplotlib, Seaborn) | Preprocess reservoir data, visualize spatial properties, analyze results |
| Geological Modeling Tools | PETREL, RMS | Construct static reservoir models, generate property distributions |
| High-Performance Computing | GPU clusters (NVIDIA), cloud computing platforms | Accelerate CNN training and reservoir simulations |

Integrating multi-modal CNNs with evolutionary optimization balances computational efficiency against prediction accuracy in well placement. By processing static and dynamic reservoir data through dedicated network pathways, M-CNNs capture spatial relationships that methods based only on discrete well data overlook. In the reported case study, the hybrid M-CNN and PSO framework required approximately 11% of the full-physics simulation cost, improved cumulative field production by over 47%, and kept prediction errors within 3% [7]. The iterative learning component further improves robustness by refining predictions for high-productivity regions with each retraining cycle. As reservoir development moves into more complex geological settings under tighter economic constraints, M-CNN approaches offer a computationally feasible route to optimizing hydrocarbon recovery. Future research should extend these architectures to more diverse data modalities, improve uncertainty quantification, and adapt them to increasingly complex reservoir systems.

Theory-Guided Convolutional Neural Networks (TgCNNs) represent a paradigm shift in scientific deep learning, moving beyond purely data-driven models by incorporating physical laws and domain knowledge directly into the learning process. Within the context of evolutionary optimization for well placement, TgCNNs have emerged as powerful surrogate models that combine the spatial feature extraction capabilities of CNNs with the reliability of physics-based simulation [35] [17].

The fundamental principle of TgCNNs involves augmenting the traditional data-driven loss function with additional theory-guided constraints derived from governing equations, physical boundaries, and engineering principles. This hybrid approach ensures that model predictions remain physically consistent while maintaining the computational efficiency of neural networks [35]. For well placement optimization—a computationally expensive process requiring numerous reservoir simulations—TgCNNs offer a compelling solution by providing rapid and physically plausible evaluations of candidate well configurations [26] [17].

Fundamental Mechanisms of TgCNNs

Core Architecture and Physical Constraints

TgCNNs build upon standard convolutional neural networks but introduce critical modifications to embed physical knowledge. The architecture typically processes spatial inputs such as permeability and porosity fields through convolutional layers to preserve spatial relationships [7] [35]. The theory-guidance is implemented through a composite loss function that penalizes violations of physical laws:

Loss = Loss_data + λ_physics * Loss_physics + λ_BC * Loss_BC + λ_IC * Loss_IC

where Loss_data represents the traditional data mismatch, while the additional terms enforce physical constraints, boundary conditions (BC), and initial conditions (IC), weighted by their respective coefficients (λ) [35].
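The structure of this composite loss can be illustrated with a deliberately simplified case. The sketch below assumes steady, source-free, single-phase flow with homogeneous permeability, so the physics term reduces to a finite-difference Laplace residual and the initial-condition term is inactive (λ_IC = 0). The function names, grid size, and fixed-pressure boundaries are hypothetical and are not the formulation used in [35].

```python
import numpy as np

def laplace_residual(p):
    """5-point finite-difference residual of the steady pressure equation
    on interior cells (homogeneous permeability, no wells)."""
    return (p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]
            - 4.0 * p[1:-1, 1:-1])

def tg_loss(p_pred, p_obs, obs_mask, p_left, p_right,
            lam_physics=1.0, lam_bc=1.0, lam_ic=0.0, loss_ic=0.0):
    """Loss = Loss_data + lam_physics*Loss_physics + lam_BC*Loss_BC + lam_IC*Loss_IC."""
    loss_data = np.mean((p_pred[obs_mask] - p_obs[obs_mask]) ** 2)
    loss_physics = np.mean(laplace_residual(p_pred) ** 2)
    # Fixed-pressure boundaries on the left and right edges of the grid.
    loss_bc = (np.mean((p_pred[:, 0] - p_left) ** 2)
               + np.mean((p_pred[:, -1] - p_right) ** 2))
    total = (loss_data + lam_physics * loss_physics
             + lam_bc * loss_bc + lam_ic * loss_ic)
    parts = {"data": loss_data, "physics": loss_physics,
             "bc": loss_bc, "ic": loss_ic}
    return total, parts

# Exact solution for this setup: pressure falls linearly from 1 to 0.
p_exact = np.tile(np.linspace(1.0, 0.0, 16), (16, 1))
obs_mask = np.zeros((16, 16), dtype=bool)
obs_mask[4, 4] = obs_mask[10, 12] = True  # two hypothetical observation cells

rng = np.random.default_rng(0)
p_noisy = p_exact + 0.05 * rng.standard_normal(p_exact.shape)

total_exact, parts_exact = tg_loss(p_exact, p_exact, obs_mask, 1.0, 0.0)
total_noisy, parts_noisy = tg_loss(p_noisy, p_exact, obs_mask, 1.0, 0.0)
```

A prediction can match the two observation cells closely and still incur a large physics penalty, which is how the additional terms steer the network toward physically consistent fields when data are sparse.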

For subsurface flow problems, the physics loss typically derives from discretized governing equations. For two-phase oil-water flow, the mass balance equation provides the foundational physical constraint.

Theory-Guided Formulations for Subsurface Flow

In reservoir engineering applications, TgCNNs commonly incorporate the governing equations for multiphase flow in porous media. The semi-discretized form of the mass balance equation for oil-water flow provides the physical foundation for the loss function [36]:

where ϕ represents porosity, S_α the phase saturation, P_α the phase pressure, K the permeability tensor, k_r,α the relative permeability, and q_α the source/sink term representing wells [36]. The TgCNN learns to satisfy this governing equation while simultaneously fitting available simulation or observational data.
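For orientation, a standard continuous form of the phase mass balance combined with Darcy's law is sketched below using the symbols defined above. It is a generic textbook form under stated assumptions: the phase viscosity μ_α is an added symbol not defined in the source, the fluids are taken as incompressible, and gravity and capillary pressure are neglected. The semi-discretized expression used in [36] may differ in detail.

```latex
\frac{\partial \left( \phi S_\alpha \right)}{\partial t}
  = \nabla \cdot \left( \frac{k_{r,\alpha}}{\mu_\alpha}\, \mathbf{K}\, \nabla P_\alpha \right)
  + q_\alpha ,
\qquad \alpha \in \{o, w\},
\qquad S_o + S_w = 1
```

Under these assumptions, a physics loss would penalize the squared mismatch between the left-hand and right-hand sides, evaluated on the network's predicted pressure and saturation fields at each grid cell and time step.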

Quantitative Performance Assessment

TgCNN Performance Metrics in Well Placement Optimization

Table 1: Performance metrics of Theory-Guided CNNs in well placement optimization

| Model Type | Application Context | Prediction Accuracy | Computational Efficiency | Key Advantages |
|---|---|---|---|---|
| TgCNN [17] | Well placement optimization | High accuracy with limited data | Significant improvement over numerical simulators | Better generalizability, physical consistency |
| M-CNN with PSO [7] [37] | Sequential well placement | Within 3% relative error | 11.18% of full-physics simulation cost | 47.4% improvement in cumulative production |
| Physics-Informed CNN (PICNN) [36] | Porous media flow with time-varying controls | Comparable to numerical methods | Highly efficient as surrogate model | Handles heterogeneous properties naturally |
| Adaptive Constraint-Guided EBS (ACG-EBS) [26] | Horizontal well placement | Maximizes NPV considering economic factors | Balances exploration and exploitation | Handles complex constraints and discreteness |

Comparison with Alternative Approaches

Table 2: Comparison of TgCNN with other neural network architectures in subsurface applications

| Architecture | Physical Knowledge Incorporation | Data Requirements | Interpretability | Limitations |

|---|---|---|---|---|

| TgCNN [35] [17] | Governing equations, boundary conditions | Low to moderate | Moderate through physical consistency | Complex implementation |

| Standard CNN [7] | None, purely data-driven | High | Low | Physically implausible predictions possible |