Evolutionary Multitasking Optimization in Action: Benchmarking EMTO Performance for Real-World Biomedical Applications

Abstract

This article provides a comprehensive analysis of Evolutionary Multitasking Optimization (EMTO) algorithm performance in real-world biomedical and clinical contexts. Aimed at researchers, scientists, and drug development professionals, it explores the foundational principles of EMTO, examines cutting-edge methodological advances and their practical applications, addresses critical challenges like negative knowledge transfer, and establishes rigorous validation frameworks. By synthesizing insights from benchmark studies and recent algorithmic innovations, this review serves as a strategic guide for selecting and optimizing EMTO approaches to enhance efficiency in complex problem domains such as drug development and clinical data annotation.

Understanding Evolutionary Multitasking Optimization: Core Principles and Healthcare Relevance

Defining Evolutionary Multitasking Optimization (EMTO) and Key Concepts

Evolutionary Multitask Optimization (EMTO) represents a paradigm shift within computational intelligence, moving beyond traditional single-task evolutionary approaches. EMTO is a powerful branch of evolutionary computation that enables the simultaneous optimization of multiple, potentially related tasks by systematically transferring knowledge between them during the search process [1] [2]. This approach mirrors concepts from transfer learning and multitask learning in mainstream artificial intelligence, leveraging the implicit parallelism of population-based search to exploit synergies between tasks [1].

The fundamental premise of EMTO is that valuable knowledge gained while solving one task may accelerate convergence or improve solutions for other related tasks [3] [2]. Unlike traditional evolutionary algorithms that typically search from scratch for each new problem, EMTO maintains a shared population or multiple populations that collaboratively explore solution spaces for multiple tasks simultaneously [1]. This methodology has demonstrated significant advantages in convergence speed and solution quality compared to single-task optimization approaches, particularly when optimizing complex, non-convex, and nonlinear problems [2].

Core Concepts and Terminology

Foundational Principles

EMTO operates on several key principles that distinguish it from traditional evolutionary computation:

- Implicit Parallelism: The population-based nature of evolutionary algorithms naturally supports simultaneous consideration of multiple tasks [2]

- Knowledge Transfer: The core mechanism enabling tasks to benefit from information discovered while solving other tasks [3]

- Automatic Adaptation: Adaptive EMTO variants aim to determine what knowledge to transfer, when to transfer it, and how to transfer it with minimal manual tuning [2]

Key Terminology

- Multifactorial Evolution (MFE): The underlying framework that treats each task as a unique cultural factor influencing evolution [1] [2]

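- Skill Factor: The index of the task on which an individual performs best (its lowest factorial rank); in MFEA, each individual is evaluated only on this task [2]

- Factorial Cost: The objective value of an individual on a specific task, penalized by any constraint violation [2]

- Assortative Mating: A recombination rule under which parents with the same skill factor always undergo crossover, while parents with different skill factors cross over only with a predefined random mating probability (rmp) [2]

- Selective Imitation: The rule by which an offspring inherits (imitates) the skill factor of one of its parents and is evaluated only on that task, so genetic material from one task can be tested on another [2]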
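The following minimal Python sketch shows how skill factors, assortative mating, and selective imitation work together. It assumes real-valued individuals in a unified [0, 1]^D space and a user-chosen random mating probability `rmp`. Uniform crossover and Gaussian mutation stand in for the SBX and polynomial mutation operators typically used with MFEA. The names `Individual` and `assortative_mating` are illustrative, not taken from any library.

```python
import random
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Individual:
    genes: List[float]  # position in the unified [0, 1]^D search space
    skill_factor: int   # index of the task this individual is evaluated on


def uniform_crossover(a: List[float], b: List[float],
                      rng: random.Random) -> Tuple[List[float], List[float]]:
    """Swap each gene with probability 0.5 (a simple stand-in for SBX)."""
    c1, c2 = [], []
    for x, y in zip(a, b):
        if rng.random() < 0.5:
            x, y = y, x
        c1.append(x)
        c2.append(y)
    return c1, c2


def gaussian_mutation(genes: List[float], rng: random.Random,
                      sigma: float = 0.05) -> List[float]:
    """Perturb every gene and clip it back into [0, 1]."""
    return [min(1.0, max(0.0, g + rng.gauss(0.0, sigma))) for g in genes]


def assortative_mating(p1: Individual, p2: Individual, rmp: float,
                       rng: random.Random) -> List[Individual]:
    """Create two offspring using MFEA-style assortative mating and selective imitation."""
    if p1.skill_factor == p2.skill_factor or rng.random() < rmp:
        # Crossover; when the skill factors differ, this is the knowledge-transfer step.
        g1, g2 = uniform_crossover(p1.genes, p2.genes, rng)
        # Selective imitation: each child adopts the skill factor of a randomly chosen parent.
        return [Individual(g, rng.choice([p1.skill_factor, p2.skill_factor]))
                for g in (g1, g2)]
    # No cross-task mating: each parent is mutated and its child keeps the parent's task.
    return [Individual(gaussian_mutation(p.genes, rng), p.skill_factor) for p in (p1, p2)]


rng = random.Random(0)
a = Individual([0.1, 0.2, 0.3], skill_factor=0)
b = Individual([0.9, 0.8, 0.7], skill_factor=1)
children = assortative_mating(a, b, rmp=0.3, rng=rng)
```

An `rmp` close to 0 keeps the tasks nearly isolated. Values near 1 allow frequent cross-task crossover and therefore more transfer, whether helpful or harmful.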

The Multifactorial Evolutionary Algorithm

The Multifactorial Evolutionary Algorithm (MFEA) is recognized as the first concrete implementation of EMTO [2]. MFEA creates a unified search environment where a single population evolves toward solving multiple tasks simultaneously. The algorithm employs several innovative components:

- Unified Representation: A common encoding scheme that can represent solutions across different tasks [2]

- Vertical Cultural Transmission: Knowledge transfer between parent and offspring across different tasks [1]

- Scalar Fitness: A unified measure that enables comparison of individuals across different tasks [2]
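Scalar fitness is derived from factorial ranks. The sketch below follows the usual MFEA definitions as we understand them:

- The factorial rank of an individual on a task is its 1-based position in the population sorted by factorial cost.
- The skill factor is the task with the lowest rank.
- Scalar fitness is the reciprocal of that rank.

The function names are hypothetical.

```python
import math
from typing import List, Tuple


def factorial_ranks(costs: List[List[float]]) -> List[List[int]]:
    """costs[i][j]: factorial cost of individual i on task j (math.inf if not evaluated).

    Returns ranks[i][j], the 1-based position of individual i when the population
    is sorted by ascending factorial cost on task j.
    """
    n, k = len(costs), len(costs[0])
    ranks = [[0] * k for _ in range(n)]
    for j in range(k):
        order = sorted(range(n), key=lambda i: costs[i][j])
        for position, i in enumerate(order, start=1):
            ranks[i][j] = position
    return ranks


def skill_factors_and_scalar_fitness(
        costs: List[List[float]]) -> Tuple[List[int], List[float]]:
    """Skill factor = task with the best (lowest) factorial rank; scalar fitness = 1 / that rank."""
    ranks = factorial_ranks(costs)
    # Ties are broken by task index here; MFEA breaks them at random.
    skill = [min(range(len(r)), key=lambda j: r[j]) for r in ranks]
    fitness = [1.0 / min(r) for r in ranks]
    return skill, fitness


# Three individuals, two tasks; individual 2 was never evaluated on task 0.
costs = [[1.0, 5.0], [2.0, 3.0], [math.inf, 1.0]]
skill, fitness = skill_factors_and_scalar_fitness(costs)
```

Because scalar fitness depends only on ranks, individuals specialized on different tasks can be compared directly during selection.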

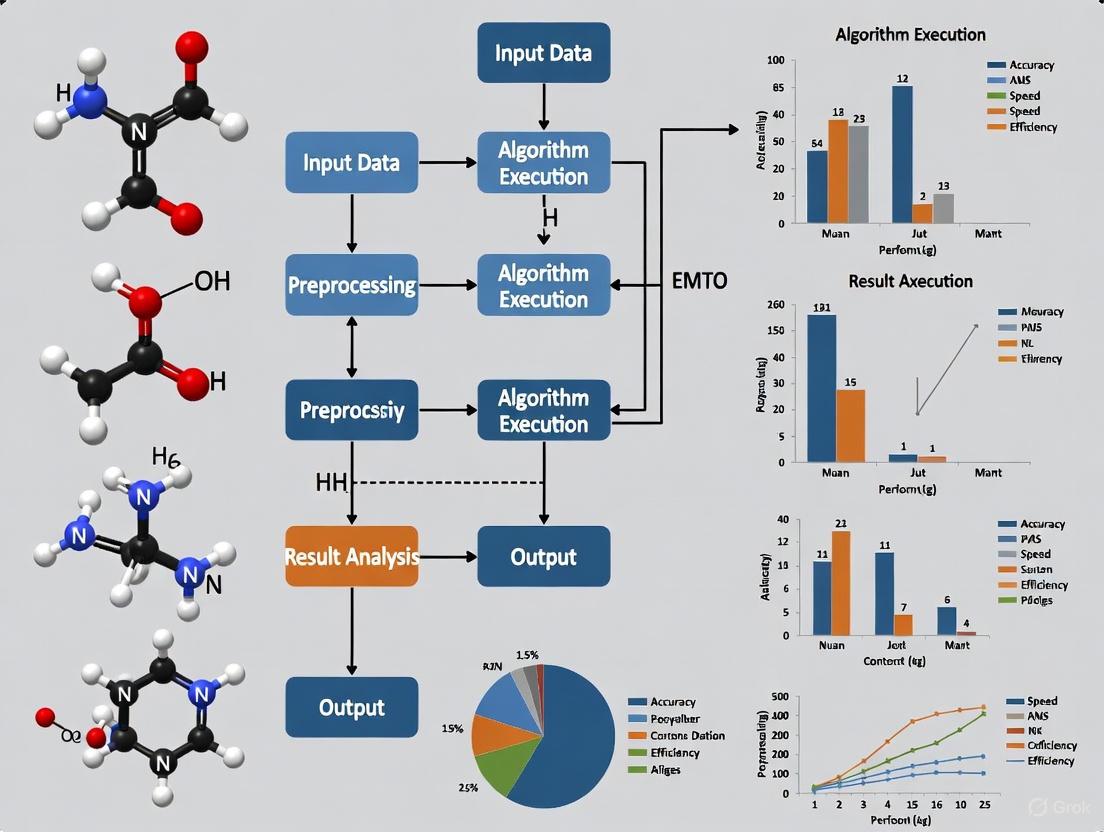

The following diagram illustrates the core architecture and knowledge flow of a typical EMTO system:

Critical Methodologies and Knowledge Transfer Strategies

Knowledge Representation Formats

The effectiveness of EMTO heavily depends on how knowledge is represented and transferred between tasks. Research has identified several predominant knowledge representation schemes:

- Straightforward Representation: Direct transfer of solution components or complete solutions between tasks [3]

- Search Direction Representation: Transfer of promising search directions rather than specific solutions [3]

- Generative Model Representation: Using probabilistic models or neural networks to capture and transfer the essence of promising solution regions [3] [4]
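Here is a minimal sketch of the straightforward representation. It assumes box-constrained continuous tasks encoded in a unified [0, 1]^D space, with D the largest task dimensionality (a common convention, not the only option). Solutions are decoded per task. Transfer copies source-task elites over the worst target individuals. `decode`, `encode`, and `inject_elites` are illustrative names.

```python
from typing import List, Sequence


def decode(y: Sequence[float], lower: Sequence[float], upper: Sequence[float]) -> List[float]:
    """Map a unified [0, 1]^D vector onto one task's box bounds.

    zip() stops at the shorter sequence, so a task with fewer dimensions than D
    simply uses the first len(lower) genes.
    """
    return [lo + g * (hi - lo) for g, lo, hi in zip(y, lower, upper)]


def encode(x: Sequence[float], lower: Sequence[float], upper: Sequence[float]) -> List[float]:
    """Inverse of decode: normalise a task-space solution into [0, 1]."""
    return [(v - lo) / (hi - lo) for v, lo, hi in zip(x, lower, upper)]


def inject_elites(target_pop: List[List[float]], target_costs: List[float],
                  source_elites: List[List[float]]) -> List[List[float]]:
    """Straightforward transfer: overwrite the worst target individuals (highest cost,
    minimisation assumed) with copies of source-task elites, all in unified space."""
    worst_first = sorted(range(len(target_pop)), key=lambda i: target_costs[i], reverse=True)
    new_pop = [list(ind) for ind in target_pop]
    for slot, elite in zip(worst_first, source_elites):
        new_pop[slot] = list(elite)
    return new_pop


# A 3-D unified vector decoded for a 2-D task with bounds [-5, 5] x [0, 4].
x = decode([0.5, 0.25, 0.9], lower=[-5.0, 0.0], upper=[5.0, 4.0])
pop = inject_elites([[0.1], [0.2], [0.3]], [3.0, 1.0, 2.0], source_elites=[[0.9]])
```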

Advanced Transfer Mechanisms

Recent research has developed increasingly sophisticated knowledge transfer strategies to enhance EMTO performance:

- Block-Level Knowledge Transfer (BLKT): Splits individuals into blocks of consecutive dimensions and clusters similar blocks, so knowledge can be transferred between similar but unaligned dimensions, helping tasks escape local optima [5]

- Self-Adjusting Dual-Mode Evolution: Integrates variable classification evolution with dynamic knowledge transfer strategies, automatically switching between evolutionary modes based on spatial-temporal information [6]

- Population Distribution-Based Transfer: Uses maximum mean discrepancy (MMD) to calculate distribution differences between sub-populations, selecting the most appropriate sources for knowledge transfer [7]

- Explicit Autoencoding: Employs neural networks to explicitly learn mappings between different task spaces [4]
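The population distribution-based idea can be illustrated by estimating the MMD between sub-populations and ranking candidate sources by it. This sketch assumes a Gaussian kernel with a hand-picked bandwidth `gamma` and the simple biased MMD estimator. The cited method [7] may use a different kernel, estimator, or selection rule, and `rank_sources` is a hypothetical helper.

```python
import math
from typing import Dict, List, Sequence


def rbf(a: Sequence[float], b: Sequence[float], gamma: float) -> float:
    """Gaussian kernel exp(-gamma * ||a - b||^2)."""
    return math.exp(-gamma * sum((x - y) ** 2 for x, y in zip(a, b)))


def mmd_squared(X: List[Sequence[float]], Y: List[Sequence[float]], gamma: float = 1.0) -> float:
    """Biased estimate of the squared MMD between samples X and Y."""
    kxx = sum(rbf(a, b, gamma) for a in X for b in X) / (len(X) ** 2)
    kyy = sum(rbf(a, b, gamma) for a in Y for b in Y) / (len(Y) ** 2)
    kxy = sum(rbf(a, b, gamma) for a in X for b in Y) / (len(X) * len(Y))
    return kxx + kyy - 2.0 * kxy


def rank_sources(target: List[Sequence[float]],
                 sources: Dict[str, List[Sequence[float]]], gamma: float = 1.0) -> List[str]:
    """Order candidate source sub-populations from most to least similar to the target."""
    return sorted(sources, key=lambda name: mmd_squared(target, sources[name], gamma))


target = [[0.0, 0.0], [0.1, 0.1]]
sources = {"far": [[3.0, 3.0], [3.1, 2.9]], "near": [[0.05, 0.0], [0.1, 0.05]]}
order = rank_sources(target, sources)
```

A smaller MMD indicates more similar distributions, so sources at the front of the list would be preferred for transfer. The estimator needs O(n²) kernel evaluations per pair of sub-populations.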

Resource Allocation Strategies

Efficient resource allocation is critical in EMTO, particularly when tasks have varying computational difficulties:

- Dynamic Resource Allocation: Adjusts computational resources based on task difficulty and convergence behavior [3]

- Fair Resource Distribution: Ensures each task receives appropriate attention regardless of its characteristics [3]

- Adaptive Task Prioritization: Automatically identifies and prioritizes tasks that benefit most from additional resources [2]
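One way to combine fair and dynamic allocation is to reserve a floor of evaluations for every task and split the rest in proportion to recent progress. The policy below is hypothetical and does not reproduce any specific published scheme. `improvements`, `min_share`, and `allocate_evaluations` are assumptions.

```python
from typing import List


def allocate_evaluations(improvements: List[float], budget: int,
                         min_share: float = 0.1) -> List[int]:
    """Split `budget` evaluations across tasks.

    improvements[t] is a non-negative measure of task t's recent progress (for example
    the relative drop in its best objective value over the last few generations).
    Each task first gets a floor of min_share * budget / K evaluations (fair share);
    the remainder is divided in proportion to recent improvement (dynamic share).
    """
    k = len(improvements)
    floor = int(min_share * budget / k)
    remaining = budget - floor * k
    total = sum(improvements)
    if total <= 0:
        weights = [1.0 / k] * k  # no progress signal: fall back to an even split
    else:
        weights = [imp / total for imp in improvements]
    alloc = [floor + int(w * remaining) for w in weights]
    # Hand out evaluations lost to integer rounding, most improving tasks first.
    leftover = budget - sum(alloc)
    for t in sorted(range(k), key=lambda t: weights[t], reverse=True)[:leftover]:
        alloc[t] += 1
    return alloc


alloc = allocate_evaluations([3.0, 1.0], budget=100)
```

The floor protects slowly improving tasks from starvation. The proportional share steers effort toward tasks that are still making progress.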

The following workflow illustrates a sophisticated EMTO methodology incorporating multiple innovation strategies:

Experimental Framework and Benchmarking

Standardized Benchmark Problems

EMTO research employs established benchmark suites to facilitate fair comparison between algorithms:

- CEC2017-MTSO: A comprehensive set of multi-task single-objective optimization problems [5]

- WCCI2020-MTSO: Benchmark problems from the IEEE World Congress on Computational Intelligence competition [5]

Performance Metrics

Researchers employ multiple metrics to evaluate EMTO algorithm performance:

- Convergence Speed: The number of function evaluations or iterations required to reach satisfactory solutions [6]

- Solution Accuracy: The quality of obtained solutions measured by objective function values [5]

- Transfer Effectiveness: The degree to which knowledge transfer improves performance compared to single-task optimization [2]

- Computational Efficiency: The computational resources required to achieve solutions of comparable quality [4]
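Convergence speed and transfer effectiveness can be computed from logged best-so-far curves. These helpers are a sketch that assumes minimisation and a fixed number of evaluations per generation. The exact definitions used in the cited studies may differ.

```python
from typing import List, Optional


def evaluations_to_target(best_so_far: List[float], evals_per_generation: int,
                          target: float) -> Optional[int]:
    """Convergence speed: evaluations consumed when the best-so-far objective value
    (minimisation) first reaches `target`; None if it never does."""
    for generation, best in enumerate(best_so_far, start=1):
        if best <= target:
            return generation * evals_per_generation
    return None


def relative_gain(multitask_best: float, single_task_best: float) -> float:
    """Transfer effectiveness: relative improvement of the multitask result over the
    single-task baseline (positive means transfer helped); baseline must be non-zero."""
    return (single_task_best - multitask_best) / abs(single_task_best)


speed = evaluations_to_target([10.0, 5.0, 1.0, 0.5], evals_per_generation=100, target=1.0)
gain = relative_gain(multitask_best=0.8, single_task_best=1.0)
```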

Comparative Algorithm Performance

The table below summarizes experimental results comparing state-of-the-art EMTO algorithms across standard benchmarks:

Table 1: Performance Comparison of EMTO Algorithms on Standard Benchmarks

| Algorithm | Knowledge Transfer Mechanism | CEC2017-MTSO Performance | WCCI2020-MTSO Performance | Computational Efficiency |
|---|---|---|---|---|
| MFEA-II | Online transfer parameter estimation | Moderate | Moderate | High |
| BLKT-BWO | Block-level transfer with Beluga Whale Optimization | High | High | Moderate |
| Self-Adjusting Dual-Mode | Variable classification with dynamic transfer | High | High | High |
| Population Distribution-Based | MMD-based transfer selection | Moderate-High | Moderate | High |
| LLM-Generated | Autonomous transfer model design | High | High | Moderate |

Detailed Methodological Protocols

Experimental validation of EMTO algorithms follows rigorous protocols:

- Population Initialization: Algorithms initialize with identical population sizes and random seeds for fair comparison [5]

- Function Evaluation Limits: Studies typically impose a fixed budget of function evaluations across all compared algorithms [6]

- Statistical Significance Testing: Performance differences are validated with statistical tests such as the Wilcoxon signed-rank test at a predefined significance level (commonly α = 0.05) [5]

- Parameter Sensitivity Analysis: Algorithms are run under varied parameter settings to assess how sensitive performance is to them and to select well-performing configurations [7]
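For reference, here is a self-contained sketch of the two-sided Wilcoxon signed-rank test on paired results, for example the mean errors of two algorithms on the same set of benchmark problems. It uses the normal approximation without continuity or tie correction, so p-values are only indicative for small samples. In practice, a vetted implementation such as `scipy.stats.wilcoxon` is preferable. The data in the usage lines are hypothetical.

```python
import math
from typing import List, Tuple


def wilcoxon_signed_rank(a: List[float], b: List[float]) -> Tuple[float, float]:
    """Two-sided Wilcoxon signed-rank test on paired samples a and b.

    Returns (W, p) with W = min(W+, W-) and p from the normal approximation.
    """
    diffs = [x - y for x, y in zip(a, b) if x != y]  # zero differences are discarded
    n = len(diffs)
    if n == 0:
        return 0.0, 1.0
    # Rank absolute differences, giving tied values their average rank.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg = (i + j + 2) / 2.0  # average of the 1-based ranks i+1 .. j+1
        for m in range(i, j + 1):
            ranks[order[m]] = avg
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    w = float(min(w_plus, w_minus))
    mean = n * (n + 1) / 4.0
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24.0)
    z = (w - mean) / sd
    p = math.erfc(abs(z) / math.sqrt(2.0))  # two-sided tail probability
    return w, p


errors_a = [0.10, 0.20, 0.15, 0.40, 0.05, 0.30, 0.25, 0.12, 0.08, 0.22]
errors_b = [e + 0.05 for e in errors_a]  # hypothetical: B is uniformly worse
w, p = wilcoxon_signed_rank(errors_a, errors_b)
```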

Research Reagent Solutions: Essential EMTO Components

Table 2: Essential Research Components in EMTO Investigations

| Component | Function | Examples |
|---|---|---|
| Benchmark Suites | Standardized problem sets for algorithm comparison | CEC2017-MTSO, WCCI2020-MTSO [5] |
| Knowledge Transfer Models | Facilitate information exchange between tasks | Vertical crossover, solution mapping, neural autoencoders [4] |
| Task Similarity Measures | Quantify relationships between optimization tasks | Maximum Mean Discrepancy (MMD), correlation analysis [7] |
| Evolutionary Operators | Generate new candidate solutions | Crossover, mutation, selection mechanisms [2] |
| Resource Allocation Mechanisms | Distribute computational resources across tasks | Adaptive resource scheduling, dynamic task prioritization [3] |

Real-World Applications and Performance

EMTO has demonstrated significant practical value across diverse domains:

Engineering and Design Optimization

- Complex Systems Design: EMTO enables concurrent optimization of multiple design criteria, significantly reducing development cycles [2]

- Aerospace Applications: Simultaneous optimization of aerodynamic performance, structural integrity, and thermal management [2]

Data Science and Machine Learning

- Feature Selection: Evolutionary multitasking for high-dimensional classification via particle swarm optimization [2]

- Hyperspectral Imaging: Multi-fidelity evolutionary multitasking optimization for hyperspectral endmember extraction [2]

- Neural Architecture Search: Evolutionary multi-task learning for modular knowledge representation in neural networks [2]

Industrial and Operations Optimization

- Vehicle Routing: Explicit evolutionary multitasking for combinatorial optimization in capacitated vehicle routing problems [2]

- Scheduling Problems: Double DQN-based coevolution for green distributed heterogeneous hybrid flowshop scheduling [6]

- Supply Chain Management: Solving generalized vehicle routing problem with occasional drivers via evolutionary multitasking [2]

Emerging Application Domains

- Drug Discovery: Molecular design and optimization through multi-task formulation [2]

- Renewable Energy: Wind farm layout optimization using chaotic local search-based particle swarm optimization [6]

- Environmental Systems: Mechanism-data-driven multiobjective optimization for wastewater treatment processes [6]

Table 3: EMTO Performance in Practical Applications

| Application Domain | Performance Improvement | Key Benefit |
|---|---|---|
| Cloud Computing | 25-40% faster convergence | Reduced computational resource requirements [2] |
| Engineering Design | 15-30% better solutions | Improved design quality and performance [2] |
| Data Mining | 20-35% accuracy improvement | Enhanced model performance and generalization [2] |
| Logistics Optimization | 30-50% cost reduction | More efficient resource utilization and routing [2] |

Future Directions and Research Challenges

Current Limitations

Despite significant advances, EMTO faces several important challenges:

- Negative Transfer: The risk of performance degradation when transferring inappropriate knowledge between dissimilar tasks [3] [7]

- Theoretical Foundations: Scarce theoretical analysis of EMTO performance and convergence guarantees [3]

- Scalability Issues: Difficulties in handling massively multi-task environments with dozens or hundreds of tasks [2]

- Algorithmic Complexity: Increased computational overhead from knowledge transfer mechanisms [5]

Emerging Research Frontiers

- LLM-Automated Design: Leveraging Large Language Models to autonomously generate knowledge transfer models, reducing dependency on expert knowledge [4]

- Theoretical Analysis: Developing comprehensive mathematical frameworks for analyzing EMTO convergence and complexity [3]

- Many-Task Optimization: Scaling EMTO to environments with hundreds or thousands of related tasks [2]

- Hybrid Paradigms: Combining EMTO with other computational intelligence approaches like deep learning and reinforcement learning [2]

Practical Implementation Challenges

- Real-World Heterogeneity: Developing EMTO methods robust to heterogeneous tasks with different properties and scales [3]

- Resource-Aware Optimization: Creating EMTO variants that efficiently utilize limited computational resources [5]

- Dynamic Environments: Adapting EMTO to scenarios where tasks or their relationships change over time [2]

Evolutionary Multitask Optimization represents a significant advancement in computational intelligence, offering a powerful framework for solving multiple optimization problems simultaneously through strategic knowledge transfer. The core strength of EMTO lies in its ability to leverage synergies between tasks, often leading to faster convergence and superior solutions compared to single-task approaches.

The field has progressed substantially from the initial Multifactorial Evolutionary Algorithm to sophisticated approaches featuring adaptive knowledge transfer, resource allocation, and task relationship learning. Recent innovations in block-level transfer, self-adjusting mechanisms, and LLM-automated design have further enhanced EMTO's capabilities and applicability.

As research continues to address current challenges related to negative transfer, theoretical foundations, and scalability, EMTO is poised to play an increasingly important role in complex real-world optimization scenarios across scientific, engineering, and industrial domains.

Evolutionary Multi-Task Optimization (EMTO) represents a paradigm shift in computational optimization, enabling the concurrent solution of multiple optimization tasks by strategically transferring knowledge between them [8]. This approach moves beyond traditional single-task optimization by leveraging the implicit parallelism of evolutionary algorithms and the commonality that often exists between seemingly distinct problems [9]. The fundamental premise is that experience gained while solving one task can contain valuable information that accelerates the optimization process for other related tasks, potentially leading to significant improvements in convergence speed and solution quality [8] [10].

In recent years, EMTO has demonstrated substantial practical utility across diverse domains including production scheduling, energy management, vehicle routing, and cloud resource allocation [10] [9]. The core challenge within this paradigm lies in effectively managing knowledge transfer—identifying what knowledge to transfer, when to transfer it, and how to mitigate the phenomenon of negative transfer, where inappropriate knowledge exchange degrades optimization performance [8] [11]. This comparative guide examines the performance of state-of-the-art EMTO algorithms across real-world applications, with particular emphasis on the pharmaceutical and computational resource domains where optimization efficiency directly impacts operational costs and development timelines.

Algorithm Performance Comparison

Quantitative Performance Metrics

Table 1: Performance comparison of EMTO algorithms across benchmark problems

| Algorithm | Key Mechanism | Resource Utilization Improvement | Convergence Speed | Error Reduction | Test Environment |
|---|---|---|---|---|---|
| MTCS [8] | Competitive scoring & dislocation transfer | Not Specified | Superior on CEC17-MTSO & WCCI20-MTSO | Significant | Multitask & many-task benchmarks |
| AGQ (EMTO Framework) [10] | LSTM & Q-learning integration with adaptive parameters | 4.3% | Enhanced | 39.1% | Kubernetes cluster with Docker containers |
| MTEA-PAE [9] | Progressive auto-encoding | Not Specified | Significantly enhanced | Notable improvement | Six benchmark suites & real-world applications |
| KTNAS [11] | Transfer rank & architecture embedding | Not Specified | High search efficiency | Mitigated negative transfer | NASBench-201 & Micro TransNAS-Bench-101 |

Analysis of Comparative Performance

The reported results indicate that EMTO algorithms incorporating adaptive knowledge transfer mechanisms outperform single-task optimization approaches and earlier multi-task methods in their respective test environments, although the metrics reported differ from study to study. The MTCS algorithm is particularly strong on standardized benchmark problems. It achieves superior convergence through a competitive scoring mechanism that quantifies the outcomes of both transfer evolution and self-evolution [8]. This mechanism balances exploration and exploitation by adaptively adjusting the transfer probability according to real-time competition scores.
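Scoring transfer against self-evolution can be sketched as a success-rate competition. This is a simplified illustration, not the MTCS scoring formula or its dislocation transfer strategy. Each mode's score is its smoothed rate of producing offspring that improve on their parents. The transfer probability is transfer's share of the combined score, clipped so both modes stay active. The class name, priors, and bounds are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CompetitionScores:
    """Running tallies of how often each offspring-generation mode improved on its parent."""
    transfer_wins: float = 1.0   # start each mode at a 1/2 success rate so neither is ruled out early
    transfer_trials: float = 2.0
    self_wins: float = 1.0
    self_trials: float = 2.0

    def record(self, used_transfer: bool, improved: bool) -> None:
        win = 1.0 if improved else 0.0
        if used_transfer:
            self.transfer_trials += 1
            self.transfer_wins += win
        else:
            self.self_trials += 1
            self.self_wins += win

    def transfer_probability(self, p_min: float = 0.05, p_max: float = 0.95) -> float:
        """Transfer's share of the combined score, clipped to [p_min, p_max]."""
        s_transfer = self.transfer_wins / self.transfer_trials
        s_self = self.self_wins / self.self_trials
        p = s_transfer / (s_transfer + s_self)
        return min(p_max, max(p_min, p))


scores = CompetitionScores()
for improved in (False, False, True):
    scores.record(used_transfer=True, improved=improved)
p_transfer = scores.transfer_probability()
```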

In a practical cloud computing environment, the AGQ framework improved resource utilization by 4.3% and reduced allocation errors by 39.1% compared to state-of-the-art baseline methods [10]. These gains are attributed to its integration of LSTM networks for resource demand prediction with Q-learning for dynamic allocation strategy optimization, unified within an evolutionary multi-task framework.

For neural architecture search applications, KTNAS addresses the critical challenge of ranking disorder between source and target tasks through its transfer rank methodology, significantly enhancing search efficiency and mitigating negative transfer [11]. The algorithm converts neural architectures into graph representations and uses architecture embedding vectors for performance prediction, enabling more effective knowledge transfer across computer vision tasks.
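Ranking disorder can be quantified with a rank correlation between how the same architectures score on the source and target tasks. The snippet uses Kendall's tau (tau-a, no tie adjustment) as a generic diagnostic. It is not KTNAS's transfer-rank classifier, and the accuracies are hypothetical.

```python
from itertools import combinations
from typing import Sequence


def kendall_tau(source_scores: Sequence[float], target_scores: Sequence[float]) -> float:
    """Kendall's tau-a: (concordant - discordant) / total pairs of architectures."""
    n = len(source_scores)
    concordant = discordant = 0
    for i, j in combinations(range(n), 2):
        sign = (source_scores[i] - source_scores[j]) * (target_scores[i] - target_scores[j])
        if sign > 0:
            concordant += 1
        elif sign < 0:
            discordant += 1
    return (concordant - discordant) / (n * (n - 1) / 2)


# Hypothetical accuracies of the same five architectures on a source and a target task.
source_acc = [0.91, 0.88, 0.93, 0.85, 0.90]
target_acc = [0.72, 0.70, 0.69, 0.65, 0.74]
tau = kendall_tau(source_acc, target_acc)
```

A tau near 1 means source rankings carry over well. Values near 0 or below signal the kind of disorder that makes naive transfer risky.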

Methodological Approaches and Experimental Protocols

Knowledge Transfer Mechanisms

Table 2: Core methodological components of modern EMTO algorithms

| Component | Function | Implementation Examples |
|---|---|---|
| Transfer Adaptation | Dynamically adjusts transfer probability and intensity based on inter-task similarity | MTCS: Competitive scoring mechanism [8] |
| Domain Alignment | Aligns search spaces between different tasks to facilitate knowledge transfer | MTEA-PAE: Progressive auto-encoding [9] |
| Negative Transfer Mitigation | Prevents harmful knowledge exchange that degrades performance | KTNAS: Transfer rank classifier [11] |
| Multi-Form Optimization | Coordinates optimization across different task formulations | AGQ: Joint prediction and allocation framework [10] |

Detailed Experimental Protocols

The evaluation of EMTO algorithms follows rigorous experimental protocols to ensure fair comparison and reproducible results:

Benchmark Testing: Algorithms are typically evaluated on standardized benchmark suites including CEC17-MTSO and WCCI20-MTSO. Their problems are categorized by the degree of intersection of the tasks' global optima (complete, partial, or no intersection: CI, PI, NI) and by inter-task similarity (high, medium, or low: HS, MS, LS) [8]. These controlled environments enable systematic assessment of algorithm performance across diverse problem characteristics.
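Similarity levels in such suites are commonly characterized by the rank correlation between the tasks' objective values at points sampled in the unified search space. The sketch assumes this Spearman-based definition. The sample size and the 0.8 and 0.2 cut-offs are illustrative assumptions, not values quoted from a benchmark specification.

```python
import random
from typing import Callable, List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for m in range(i, j + 1):
            ranks[order[m]] = (i + j + 2) / 2.0
        i = j + 1
    return ranks


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho as the Pearson correlation of the two rank vectors."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    return cov / (vx * vy) ** 0.5


def similarity_level(f1: Callable[[List[float]], float], f2: Callable[[List[float]], float],
                     dim: int, samples: int = 1000, seed: int = 0) -> str:
    """Sample the unified [0, 1]^dim space and classify task similarity as HS, MS, or LS."""
    rng = random.Random(seed)
    points = [[rng.random() for _ in range(dim)] for _ in range(samples)]
    rho = spearman([f1(p) for p in points], [f2(p) for p in points])
    if rho >= 0.8:  # illustrative cut-offs (assumption)
        return "HS"
    if rho >= 0.2:
        return "MS"
    return "LS"


def sphere(x: List[float]) -> float:
    return sum(v * v for v in x)


level = similarity_level(sphere, lambda x: 2.0 * sphere(x) + 1.0, dim=5, samples=200)
```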

Real-World Validation: Beyond synthetic benchmarks, algorithms are tested on practical applications including microservice resource allocation [10], neural architecture search [11], and point cloud registration [9]. In the resource allocation experiments of [10], the cluster consisted of four containers (4-core 2.4 GHz virtual CPUs and 8 GB memory each) managed by Kubernetes and deployed via Docker to simulate a realistic cloud environment.

Performance Metrics: Standard evaluation metrics include convergence speed (iterations to reach target solution quality), solution accuracy (deviation from known optimum), resource utilization efficiency, and allocation error reduction. For neural architecture search, additional metrics include search efficiency and transferability across vision tasks [11].

Figure: Flowchart of EMTO Mechanisms (panels: Competitive Scoring in MTCS Algorithm; Progressive Auto-Encoding for Domain Adaptation)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential computational tools for EMTO research and implementation

| Tool/Category | Primary Function | Application Context |
|---|---|---|
| MToP Benchmarking Platform | Standardized testing environment for EMTO algorithms | Performance evaluation across six benchmark suites [9] |
| NASBench-201 & Micro TransNAS-Bench-101 | Benchmark datasets for neural architecture search | Transferability validation on various vision tasks [11] |
| Docker & Kubernetes | Containerization and orchestration for cloud experiments | Deployment of resource allocation tests in simulated environments [10] |
| Node2Vec Architecture Embedding | Graph-based representation of neural architectures | Conversion of network topologies to feature vectors in KTNAS [11] |
| Long Short-Term Memory (LSTM) Networks | Time-series prediction of resource demands | Forecasting resource requirements in dynamic environments [10] |
| Q-Learning Optimization | Dynamic resource allocation strategy optimization | Decision-making for real-time resource management [10] |

Applications in Pharmaceutical and Industrial Domains

The practical implementation of EMTO algorithms has demonstrated significant impact across multiple industrial sectors, particularly in pharmaceutical development and computational resource management:

Drug Development Optimization: EMTO principles align closely with Model-Informed Drug Development (MIDD) frameworks, which use quantitative modeling to accelerate hypothesis testing and improve candidate selection throughout the drug development pipeline [12]. The pharmaceutical industry increasingly employs AI-driven optimization across discovery, preclinical testing, clinical trials, regulatory approval, and post-market surveillance [12] [13]. Advanced EMTO approaches could enhance these applications by transferring knowledge between related development tasks, such as optimizing molecular design across compound series or streamlining clinical trial designs across related indications.

Cloud Resource Management: The AGQ framework exemplifies how EMTO can address complex, dynamic resource allocation challenges in cloud computing environments [10]. By jointly optimizing resource prediction, decision optimization, and allocation strategies within a unified multi-task framework, this approach achieves substantial improvements in resource utilization while significantly reducing allocation errors. The practical implementation utilizes an adaptive parameter learning mechanism that dynamically coordinates LSTM-based prediction with Q-learning optimization, demonstrating the versatility of EMTO in managing interrelated computational tasks.

Industrial Inspection Systems: EMTO principles are being incorporated into AI-powered inspection systems for pharmaceutical manufacturing, enabling real-time quality control through optimized computer vision algorithms [14]. These systems leverage knowledge transfer between related inspection tasks (e.g., tablet inspection, blister packaging inspection) to enhance detection accuracy while reducing computational requirements, demonstrating how EMTO can optimize both product quality and operational efficiency in manufacturing environments.

Evolutionary Multi-Task Optimization (EMTO) represents a paradigm shift in evolutionary computation, designed to solve multiple optimization tasks simultaneously. Unlike traditional evolutionary algorithms that handle tasks in isolation, EMTO capitalizes on the implicit parallelism of tasks and enables knowledge transfer (KT) between them. This allows more promising individuals to be generated during evolution, helping populations escape local optima and accelerating the search for optimal solutions. The core principle is that correlated optimization tasks are ubiquitous in practical applications, and the knowledge gained from solving one task can provide valuable insights for solving other related problems. In healthcare and biomedicine, problems often involve complex, high-dimensional data and multiple interrelated objectives. For such problems, EMTO offers a powerful framework for computational challenges that are difficult to address with conventional methods.

The fundamental innovation of EMTO lies in its bidirectional knowledge transfer mechanism. Earlier approaches applied previous experience to current problems unidirectionally, but EMTO facilitates mutual knowledge enhancement across tasks running in parallel. This synergistic effect can lead to significant improvements in optimization efficiency and effectiveness. As a representative EMTO algorithm, the Multifactorial Evolutionary Algorithm (MFEA) constructs a multi-task environment and evolves a single population to solve multiple tasks, sparking widespread research interest in this field.

The Computational Challenge in Biomedicine and Healthcare

Biomedical research and healthcare delivery face escalating computational challenges as data volume and complexity grow exponentially. Key areas straining current computational methods include:

- Drug Discovery and Development: The process of bringing a new drug to market remains notoriously lengthy and expensive, often taking over a decade and costing billions of dollars. Deep learning technologies have shown promise in expediting this procedure by analyzing large datasets of biological data to identify potential therapeutic targets and rank targeted drug molecules with desired properties.

- Personalized Treatment Optimization: Developing tailored treatment regimens requires simultaneously considering multiple factors including genetic profiles, disease characteristics, drug interactions, and individual patient responses.

- Clinical Resource Allocation: Healthcare systems must optimize limited resources across multiple competing priorities while maintaining quality of care.

- Complex Disease Modeling: Biological systems such as gene regulatory networks underlying processes like epithelial-mesenchymal transition (EMT) in cancer metastasis involve intricate interactions that are difficult to model and simulate accurately.

Traditional optimization approaches typically address these challenges as separate problems, potentially overlooking valuable inter-task correlations that could inform solutions. This fragmentation creates inefficiencies and suboptimal outcomes in biomedical research and healthcare delivery.

EMTO Methodologies: Algorithms and Knowledge Transfer Mechanisms

Core Algorithmic Frameworks

EMTO algorithms can be broadly categorized into two main architectural approaches:

- Single-Population Algorithms: These approaches use one unified population to solve all tasks. The Multifactorial Evolutionary Algorithm (MFEA) pioneered this category. Each individual is evaluated only on the task indicated by its skill factor, and simulated binary crossover (SBX) drives both self-evolution and knowledge transfer. Variants such as multifactorial differential evolution (MFDE) and multifactorial particle swarm optimization (MFPSO) adapt this framework to different evolutionary operators.

- Multi-Population Algorithms: These methods maintain a separate population for each task, with various KT mechanisms exchanging information between populations. Approaches such as Adaptive EMTO (AEMTO) treat intra-task self-evolution and inter-task KT as separate mechanisms. The Multitasking Genetic Algorithm (MTGA) estimates and removes the bias between tasks to reduce the influence of negative transfer.

Table 1: Comparison of EMTO Algorithm Types

| Algorithm Type | Representative Variants | Key Characteristics | Advantages |
|---|---|---|---|
| Single-Population | MFEA, MFDE, MFPSO, MFEA-II | Unified population; Skill factors determine task evaluation | Simpler implementation; Implicit transfer through genetic operations |
| Multi-Population | AEMTO, MTGA, BLKT-DE | Separate populations per task; Explicit transfer mechanisms | Specialized optimization per task; Controlled knowledge exchange |

Knowledge Transfer: The Core of EMTO Effectiveness

Knowledge transfer stands as the most critical component of EMTO, directly determining algorithm performance. Effective KT addresses two fundamental questions: when to transfer and how to transfer knowledge between tasks.

- When to Transfer: Sophisticated EMTO implementations employ adaptive strategies to determine optimal transfer timing. Methods like Adaptive Similarity Estimation (ASE) mine population distribution information to evaluate task similarity and adjust KT frequency accordingly, reducing negative transfer between dissimilar tasks.

- How to Transfer: Advanced transfer mechanisms go beyond simple individual exchange. The Auxiliary-Population-based KT (APKT) method maps global best solutions from source tasks to target tasks, generating more useful transferred information. This approach creates auxiliary populations that optimize mapping functions to ensure transferred knowledge aligns with the target task's characteristics.
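APKT learns its mapping with an auxiliary population, which is beyond a short example. As a much simpler stand-in, the sketch below maps a source elite into the target region by shifting it by the difference between the two populations' means, an idea similar in spirit to MTGA's bias removal. Clipping to [0, 1] assumes a unified search space, and the function names are hypothetical.

```python
from typing import List, Sequence


def population_mean(pop: List[Sequence[float]]) -> List[float]:
    """Per-dimension mean of a population."""
    return [sum(column) / len(pop) for column in zip(*pop)]


def map_best_to_target(source_best: Sequence[float], source_pop: List[Sequence[float]],
                       target_pop: List[Sequence[float]]) -> List[float]:
    """Translate the source elite by the gap between population means, then clip to [0, 1]."""
    shift = [t - s for s, t in zip(population_mean(source_pop), population_mean(target_pop))]
    return [min(1.0, max(0.0, x + d)) for x, d in zip(source_best, shift)]


source_pop = [[0.1, 0.1], [0.3, 0.3]]
target_pop = [[0.5, 0.6], [0.7, 0.8]]
mapped = map_best_to_target([0.2, 0.9], source_pop, target_pop)
```

A pure translation cannot capture rotations or scalings between task landscapes. Learned mappings such as APKT's are meant to address that gap.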

The diagram below illustrates the core workflow and knowledge transfer mechanism in a typical EMTO system:

Experimental Comparison: EMTO Performance in Healthcare Applications

Resource Allocation in Cloud-Based Healthcare Systems

A recent study implemented an EMTO-based resource allocation scheme for microservice environments relevant to healthcare computing infrastructure. The approach integrated Long Short-Term Memory (LSTM) networks for resource demand prediction with Q-learning optimization algorithms for dynamic resource allocation strategy, unified within an Evolutionary Multi-Task Optimization framework.

Table 2: Performance Comparison of Resource Allocation Methods

| Method | Resource Utilization | Allocation Error | Adaptability to Dynamic Loads |
|---|---|---|---|
| EMTO-based Approach | 4.3% higher than baselines | 39.1% reduction | Excellent |
| LSTM-only Methods | Moderate | Medium | Limited for sudden changes |
| Q-learning-only Methods | High | High initially | Slow to stabilize |
| Traditional Static Methods | Low | High | Poor |

The experimental environment was deployed on a Windows 10 system using Docker containers, with a cluster of four containers simulating virtual nodes (4-core 2.4 GHz virtual CPUs, 8 GB memory, 50 GB virtual storage). Minikube was used for Kubernetes cluster management. The EMTO approach achieved 4.3% higher resource utilization and a 39.1% reduction in allocation errors compared to state-of-the-art baseline methods.

Benchmark Performance on Standard Test Suites

The Auxiliary Population Multitask Optimization (APMTO) algorithm, tested on the multitask test suite CEC2022, demonstrated superior performance compared to several state-of-the-art EMTO algorithms. Key innovations included an Adaptive Similarity Estimation (ASE) strategy that mined population distribution information to evaluate task similarity and adaptively adjust KT frequency, and an Auxiliary-Population-based KT (APKT) method that mapped global best solutions between tasks to produce more useful transfer knowledge.

Drug Discovery Applications

In pharmaceutical research, EMTO methods have shown promise in addressing multiple interrelated challenges:

- Target Identification and Lead Optimization: Simultaneously optimizing for multiple drug properties including efficacy, safety, and pharmacokinetics.

- Drug Repurposing: Identifying new therapeutic applications for existing drugs by transferring knowledge across disease domains.

- Toxicity Prediction: Leveraging shared patterns across compound classes to improve safety forecasting.

While comprehensive comparative data for drug discovery applications are still emerging, initial results suggest that EMTO approaches may reduce the computational resources required for multi-objective optimization in early-stage drug development.

Experimental Protocols and Methodologies

EMTO for Cloud Resource Allocation: Detailed Protocol

The experimental protocol for the EMTO-based microservice resource allocation study provides a template for implementing EMTO in healthcare computing environments:

Environment Configuration:

- System: Windows 10 with Docker container deployment

- Cluster: Four containers simulating virtual nodes

- Node Specifications: 4-core 2.4GHz virtual CPUs, 8GB memory, 50GB virtual storage

- Orchestration: Minikube for Kubernetes cluster management

Algorithm Implementation:

- Integrated LSTM networks for time-series resource demand prediction

- Q-learning optimization for dynamic resource allocation strategies

- Adaptive parameter-learning mechanism linking the predictor and the optimizer

- Unified EMTO framework for jointly optimizing prediction, decision-making, and allocation
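The Q-learning side of this pipeline can be sketched in a few lines of Python. This is a hypothetical, deliberately tiny setup rather than the study's implementation: demand is discretized into five levels (in the described system this signal would come from the LSTM forecast), an action is the number of resource units to allocate, the reward is the negative absolute allocation error, and `alpha`, `gamma`, and `epsilon` are placeholder values of the kind the adaptive parameter-learning mechanism could tune.

```python
import numpy as np

N_LEVELS = 5   # hypothetical number of discretized demand levels (states 0..4)
N_ACTIONS = 5  # hypothetical number of resource units that can be allocated (0..4)

def reward(demand_level: int, allocation: int) -> float:
    """Penalize over- and under-allocation equally (illustrative reward)."""
    return -abs(allocation - demand_level)

def train_q_table(demands, alpha=0.1, gamma=0.9, epsilon=0.1, episodes=200, seed=0):
    """Tabular Q-learning over a demand trace; the next state is the next demand level."""
    rng = np.random.default_rng(seed)
    q = np.zeros((N_LEVELS, N_ACTIONS))
    for _ in range(episodes):
        for t in range(len(demands) - 1):
            s, s_next = demands[t], demands[t + 1]
            # Epsilon-greedy choice between exploring and exploiting.
            if rng.random() < epsilon:
                a = int(rng.integers(N_ACTIONS))
            else:
                a = int(np.argmax(q[s]))
            r = reward(s, a)
            # Standard Q-learning temporal-difference update.
            q[s, a] += alpha * (r + gamma * q[s_next].max() - q[s, a])
    return q

demand_trace = [0, 1, 2, 3, 4, 3, 2, 1] * 5   # toy stand-in for predicted demand levels
q_table = train_q_table(demand_trace)
policy = q_table.argmax(axis=1)               # units to allocate for each demand level
```

Because the reward only penalizes the gap between allocation and demand, the learned greedy policy allocates exactly the demanded number of units in every state; a realistic reward would also weigh cost, latency, and utilization.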

Evaluation Metrics:

- Resource utilization percentage

- Allocation error rate

- Response time under varying loads

- Stability metrics during sudden load changes

General EMTO Implementation Framework

For biomedical applications, the following experimental protocol provides a robust foundation:

Problem Formulation:

- Identify interrelated tasks with potential for beneficial knowledge transfer

- Define a unified search space $[0, 1]^D$, where $D = \max_t D_t$ across all tasks and $D_t$ is the dimensionality of task $t$

- Establish decoding mechanisms to transform solutions to task-specific search spaces
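A minimal Python sketch of the decoding step, following the convention popularized by MFEA: a task with D_t variables reads the first D_t genes of the unified vector and rescales them linearly to its own box bounds. The bounds below are made-up examples, and other EMTO algorithms may use different mappings.

```python
import numpy as np

def decode(y: np.ndarray, lower: np.ndarray, upper: np.ndarray) -> np.ndarray:
    """Map a unified-space vector y in [0, 1]^D to a task with D_t <= D variables."""
    d_t = len(lower)
    # Take the first D_t genes and scale them linearly into [lower, upper].
    return lower + (upper - lower) * y[:d_t]

# Two hypothetical tasks with D_1 = 2 and D_2 = 3, so the unified dimension is D = 3.
task_bounds = {
    "task_1": (np.array([-5.0, -5.0]), np.array([5.0, 5.0])),
    "task_2": (np.zeros(3), np.full(3, 10.0)),
}
D = max(len(lo) for lo, _ in task_bounds.values())
y = np.array([0.5, 0.25, 1.0])  # one individual in [0, 1]^D
decoded = {name: decode(y, lo, hi) for name, (lo, hi) in task_bounds.items()}
# decoded["task_1"] -> [0.0, -2.5]; decoded["task_2"] -> [5.0, 2.5, 10.0]
```

The same individual is therefore meaningful to every task at once, which is what allows genetic material to move between tasks without any explicit translation step.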

Algorithm Selection:

- Choose between single-population or multi-population approaches based on task characteristics

- Implement adaptive knowledge transfer mechanisms with similarity detection

- Incorporate strategies to minimize negative transfer

Validation Procedures:

- Compare against single-task optimization baselines

- Evaluate cross-task performance improvements

- Assess robustness to different levels of inter-task relatedness

The diagram below illustrates the adaptive parameter learning mechanism that enhances synergy between prediction and optimization components:

The Scientist's Toolkit: Essential Research Reagents for EMTO in Healthcare

Implementing EMTO approaches in biomedical research requires both computational and domain-specific resources. The following table outlines key components of the research toolkit:

Table 3: Essential Research Reagents for EMTO in Healthcare Applications

| Resource Category | Specific Tools/Solutions | Function in EMTO Implementation |
|---|---|---|
| Computational Frameworks | TensorFlow, PyTorch, DEAP | Implementation of neural network components and evolutionary algorithms |
| Optimization Libraries | PlatEMO, pymoo, Optuna | Multi-objective optimization and algorithm comparison |
| Biomedical Data Sources | EHR systems, genomic databases, drug-target interaction databases | Providing domain-specific problems and validation data |
| Containerization Tools | Docker, Kubernetes, Minikube | Creating reproducible experimental environments |
| Simulation Platforms | OMNeT++, NS-3, custom cloud simulators | Testing resource allocation strategies |
| Benchmark Suites | CEC2022, CEC2023 multitask suites | Standardized algorithm performance evaluation |
| Visualization Tools | Matplotlib, Seaborn, Graphviz | Results analysis and algorithm behavior monitoring |

Emerging Research Trends

The field of EMTO continues to evolve with several promising research directions:

- Explainable AI Integration: Developing interpretable knowledge transfer mechanisms to build trust in biomedical applications where model transparency is critical.

- Federated EMTO: Enabling collaborative optimization across healthcare institutions while preserving data privacy through distributed knowledge transfer.

- Automated Task Similarity Assessment: Advanced metrics and learning techniques to better quantify inter-task relationships and optimize transfer strategies.

- Hybrid Quantum-Classical EMTO: Leveraging quantum computing for specific subtasks while maintaining classical evolutionary frameworks.

Evolutionary Multi-Task Optimization represents a transformative approach to addressing computational complexity in biomedicine and healthcare. By leveraging implicit parallelism and strategic knowledge transfer across related tasks, EMTO algorithms demonstrate measurable performance advantages over traditional single-task optimization methods. Experimental results in healthcare computing resource allocation, together with early work on drug discovery optimization, indicate that EMTO can improve both efficiency and effectiveness.

As biomedical challenges grow in complexity and scale, EMTO offers a promising framework for integrating diverse sources of information and optimizing multiple competing objectives simultaneously. The continued refinement of knowledge transfer mechanisms and adaptation of EMTO to healthcare-specific constraints will likely expand its impact across pharmaceutical research, clinical decision support, and healthcare operations optimization.

Evolutionary Multitask Optimization (EMTO) represents a paradigm shift in computational problem-solving, moving beyond traditional single-task optimization. It leverages the inherent parallelism of evolutionary algorithms to solve multiple optimization tasks concurrently. The core premise is that by transferring knowledge between tasks during the evolutionary process, overall performance can be enhanced through the exploitation of synergies. This approach has demonstrated significant potential across diverse domains including vehicle routing, distribution network optimization, brain-computer interfaces, and interplanetary trajectory design [8] [15]. Within this emerging field, two distinct architectural frameworks have emerged as foundational: the Multi-Factorial (MF) framework and the Multi-Population (MP) framework. This guide provides a systematic comparison of these architectures, examining their theoretical foundations, operational mechanisms, and performance characteristics to inform researcher selection and implementation.

Architectural Fundamentals

The Multi-Factorial (MF) Framework

The Multi-Factorial framework, introduced with the pioneering Multifactorial Evolutionary Algorithm (MFEA), operates on a unified population where all tasks are optimized simultaneously within a single genetic space [16]. In this architecture, each individual possesses a skill factor that identifies the task on which it performs most effectively. The entire population is implicitly divided into subpopulations based on this skill factor, with crossover operations allowing for knowledge transfer between individuals from different tasks. The intensity of this inter-task knowledge exchange is typically controlled by a single random mating probability (rmp) parameter applied uniformly across all tasks [16]. This implicit population structure is specifically designed for traditional crossover and mutation operations, creating a tightly coupled system where knowledge transfer occurs organically through genetic operations.
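The assortative mating rule at the heart of MFEA can be sketched in Python as follows. Parents with the same skill factor always mate; parents with different skill factors mate only with probability `rmp`, and otherwise each is mutated on its own. As a simplifying assumption, uniform crossover and Gaussian mutation stand in for the simulated binary crossover and polynomial mutation used in the original MFEA, and `sigma` is a hypothetical mutation step size.

```python
import numpy as np

def assortative_mating(pop, skill, rmp, rng, sigma=0.05):
    """One generation of MFEA-style offspring creation with simplified operators.

    pop   : (N, D) array of individuals in the unified space [0, 1]^D
    skill : (N,) array holding each individual's skill factor (task index)
    """
    n, d = pop.shape
    children, child_skill = [], []
    order = rng.permutation(n)
    for i in range(0, n - 1, 2):
        a, b = order[i], order[i + 1]
        if skill[a] == skill[b] or rng.random() < rmp:
            # Crossover; when the skill factors differ, this is the inter-task transfer.
            mask = rng.random(d) < 0.5
            c1 = np.where(mask, pop[a], pop[b])
            c2 = np.where(mask, pop[b], pop[a])
            # Vertical cultural transmission: each child imitates a randomly chosen parent.
            s1 = skill[a] if rng.random() < 0.5 else skill[b]
            s2 = skill[a] if rng.random() < 0.5 else skill[b]
        else:
            # No cross-task mating: each parent is mutated independently and keeps its task.
            c1 = pop[a] + rng.normal(0.0, sigma, d)
            c2 = pop[b] + rng.normal(0.0, sigma, d)
            s1, s2 = skill[a], skill[b]
        children += [np.clip(c1, 0.0, 1.0), np.clip(c2, 0.0, 1.0)]
        child_skill += [s1, s2]
    return np.array(children), np.array(child_skill)

rng = np.random.default_rng(1)
population = rng.random((20, 4))
skill_factors = np.array([0, 1] * 10)
offspring, offspring_skill = assortative_mating(population, skill_factors, rmp=0.3, rng=rng)
```

In MFEA each child is then evaluated only on the task given by its inherited skill factor, which keeps the number of function evaluations per generation equal to the population size rather than the population size times the number of tasks.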

The Multi-Population (MP) Framework

In contrast, the Multi-Population framework employs an explicit multipopulation structure where each optimization task maintains its own dedicated population [16]. This architecture creates a more loosely coupled system where knowledge transfer is implemented through explicit migration mechanisms rather than implicit genetic mixing. A key advantage of this approach is its modularity: each population can utilize a well-developed search engine specifically tailored to its task's characteristics. The MP framework enables finer control over knowledge transfer through task-specific random mating probabilities, which can be adaptively adjusted based on the detected relationship between tasks (mutualism, parasitism, or competition) to maximize positive transfer and minimize negative interference [16].
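A minimal Python sketch of explicit migration in a multi-population setting. The specific policy is a hypothetical illustration, not the published MFMP procedure: with probability `rmp[t, s]` the `k` best individuals of source population `s` replace the `k` worst of target population `t`, the returned mask marks immigrants that must be re-evaluated on the target task, and the hypothetical `update_rmp` helper nudges each task-specific probability toward the observed survival rate of immigrants.

```python
import numpy as np

def migrate(pops, fitness, rmp, rng, k=2):
    """Explicit migration between task-specific populations (minimization).

    pops    : list of (N, D) arrays, one population per task
    fitness : list of (N,) arrays, each population's fitness on its own task
    rmp     : (K, K) array; rmp[t, s] is the chance that task t accepts migrants from s
    """
    new_pops = [p.copy() for p in pops]
    needs_eval = [np.zeros(len(p), dtype=bool) for p in pops]
    for t in range(len(pops)):
        worst_first = np.argsort(fitness[t])[::-1]
        slot = 0
        for s in range(len(pops)):
            if s == t or rng.random() >= rmp[t, s]:
                continue
            for idx in np.argsort(fitness[s])[:k]:       # k best of the source task
                if slot == len(worst_first):
                    break
                new_pops[t][worst_first[slot]] = pops[s][idx]
                needs_eval[t][worst_first[slot]] = True  # fitness on task t is unknown
                slot += 1
    return new_pops, needs_eval

def update_rmp(rmp, t, s, survival_rate, lr=0.1):
    """Hypothetical rule: move rmp[t, s] toward the fraction of migrants that survived."""
    rmp[t, s] = np.clip((1.0 - lr) * rmp[t, s] + lr * survival_rate, 0.0, 1.0)
    return rmp

rng = np.random.default_rng(0)
pops = [rng.random((10, 3)), rng.random((10, 3))]
fits = [rng.random(10), rng.random(10)]
_, mask_always = migrate(pops, fits, np.ones((2, 2)), rng)
_, mask_never = migrate(pops, fits, np.zeros((2, 2)), rng)
rmp = update_rmp(np.full((2, 2), 0.5), t=0, s=1, survival_rate=1.0)   # 0.5 -> 0.55
```

Keeping the migration step separate from each population's search engine is what lets every task use its own optimizer, which is the modularity advantage described above.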

Comparative Analysis: Mechanisms and Transfer Strategies

Table 1: Architectural Comparison of MF and MP Frameworks

| Feature | Multi-Factorial Framework | Multi-Population Framework |
|---|---|---|
| Population Structure | Single, unified population with implicit skill-based partitioning | Multiple explicit populations, one per task |
| Knowledge Transfer Mechanism | Implicit through crossover operations | Explicit through migration strategies |
| Transfer Control | Unified random mating probability (rmp) | Adaptive, task-specific rmp [16] |
| Search Engine Flexibility | Limited to compatible crossover/mutation operators | High flexibility; different engines per task [16] |
| Relationship Modeling | Assumes beneficial transfer | Explicitly models mutualism, parasitism, competition [16] |
| Implementation Complexity | Moderate; implicit skill factor management | Higher; explicit population and transfer management |

Knowledge Transfer Strategies

Both frameworks face the critical challenge of managing knowledge transfer to maximize positive effects while minimizing negative transfer (where inappropriate knowledge degrades performance). Recent research has developed sophisticated adaptive strategies for both paradigms:

Competitive Scoring Mechanism (MTCS): This approach quantifies the effects of transfer evolution and self-evolution, then adaptively sets knowledge transfer probability and selects source tasks based on competitive scores [8]. A dislocation transfer strategy rearranges the sequence of decision variables to increase diversity and improve convergence [8].
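A simplified Python reading of competitive scoring and dislocation transfer. The formulas are assumptions made for illustration, not those of MTCS: a component's score is its success rate (fraction of offspring that beat their parents) multiplied by the mean relative improvement of the successful offspring, the transfer probability is the transfer score's share of the total, source tasks are sampled in proportion to their scores, and dislocation is modeled as a cyclic shift of the decision-variable order.

```python
import numpy as np

def competitive_score(parent_fit, child_fit) -> float:
    """Success rate times mean relative improvement of an evolution component (minimization)."""
    parent_fit = np.asarray(parent_fit, dtype=float)
    child_fit = np.asarray(child_fit, dtype=float)
    improved = child_fit < parent_fit
    if not improved.any():
        return 0.0
    gain = (parent_fit[improved] - child_fit[improved]) / (np.abs(parent_fit[improved]) + 1e-12)
    return float(improved.mean() * gain.mean())

def transfer_probability(score_transfer: float, score_self: float, floor: float = 0.05) -> float:
    """Share of credit earned by transfer evolution, kept away from 0 and 1."""
    total = score_transfer + score_self
    if total == 0.0:
        return 0.5  # no evidence yet: treat both components equally
    return float(np.clip(score_transfer / total, floor, 1.0 - floor))

def pick_source(source_scores, rng, eps: float = 1e-6) -> int:
    """Sample a source task index with probability proportional to its transfer score."""
    weights = np.asarray(source_scores, dtype=float) + eps
    return int(rng.choice(len(weights), p=weights / weights.sum()))

def dislocate(x: np.ndarray, shift: int = 1) -> np.ndarray:
    """Illustrative dislocation: cyclically shift the order of the decision variables."""
    return np.roll(x, shift)

# Transfer offspring: one of two improved by 50%; self-evolution offspring: both improved by 50%.
score_t = competitive_score([10.0, 10.0], [5.0, 12.0])   # about 0.5 * 0.5 = 0.25
score_s = competitive_score([10.0, 10.0], [5.0, 5.0])    # about 1.0 * 0.5 = 0.5
p_kt = transfer_probability(score_t, score_s)            # about 0.25 / 0.75 = 1/3
```

When self-evolution keeps earning more credit than transfer, the transfer probability shrinks toward `floor`, which is how a scoring scheme of this kind limits negative transfer without switching it off completely.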

Population Distribution Adaptation: This method divides populations into K sub-populations based on fitness values, then uses Maximum Mean Discrepancy (MMD) to calculate distribution differences between source and target task sub-populations [17]. The sub-population with the smallest MMD value is selected for knowledge transfer, which may include non-elite solutions.
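The sub-population selection step can be sketched in Python with a biased empirical estimate of the squared MMD under a Gaussian (RBF) kernel. The kernel width `gamma`, the use of `np.array_split` to form equal-sized fitness bands, and comparing every source band against a single target sub-population are assumptions for illustration.

```python
import numpy as np

def mmd2(x: np.ndarray, y: np.ndarray, gamma: float = 1.0) -> float:
    """Biased empirical estimate of squared MMD with a Gaussian kernel."""
    def kernel(a, b):
        sq_dist = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
        return np.exp(-gamma * sq_dist)
    return float(kernel(x, x).mean() + kernel(y, y).mean() - 2.0 * kernel(x, y).mean())

def select_transfer_subpop(src_pop, src_fit, tgt_subpop, k=3, gamma=1.0):
    """Split the source population into k fitness bands; return the band closest to the target."""
    order = np.argsort(src_fit)                       # best first (minimization)
    bands = [src_pop[idx] for idx in np.array_split(order, k)]
    scores = [mmd2(band, tgt_subpop, gamma) for band in bands]
    best = int(np.argmin(scores))
    return bands[best], best, scores

# Toy data: three source bands located at 0.1, 0.5 and 0.9; the target sits at 0.9.
src_pop = np.vstack([np.full((5, 2), 0.1), np.full((5, 2), 0.5), np.full((5, 2), 0.9)])
src_fit = np.arange(15, dtype=float)                  # rows 0-4 are the fittest
tgt_subpop = np.full((5, 2), 0.9)
chosen, chosen_index, band_scores = select_transfer_subpop(src_pop, src_fit, tgt_subpop)
```

In this toy data the selected band is the source task's worst-ranked one, mirroring the point above that the transferred solutions need not be elite.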

Scenario-Based Self-Learning Transfer (SSLT): This advanced framework categorizes evolutionary scenarios into four situations and uses a deep Q-network (DQN) as a relationship mapping model to learn the optimal pairing between scenario features and transfer strategies [15]. The four scenario-specific strategies include intra-task strategy, shape KT strategy, domain KT strategy, and bi-KT strategy.

Diagram: Architectural Workflows of MF and MP Frameworks

Experimental Methodology and Performance Benchmarking

Standardized Testing Protocols

Experimental evaluation of EMTO algorithms typically employs standardized benchmark suites and rigorous methodology:

Benchmark Problems: Research uses established multitask benchmark suites, including CEC17-MTSO and WCCI20-MTSO, whose problems are categorized by the degree of intersection of the tasks' global optima (complete, partial, or no intersection: CI, PI, NI) and by inter-task similarity (high, medium, or low: HS, MS, LS) [8]. Many-task optimization problems (more than three tasks) present additional scalability challenges.

Performance Metrics: Algorithms are evaluated primarily on solution accuracy (proximity to known optima) and convergence speed (generational improvement rate). Statistical significance testing is typically applied to performance comparisons.

Experimental Conditions: Studies are generally performed using specialized MTO platform toolkits with controlled computational environments to ensure reproducibility [15].

Table 2: Experimental Performance Comparison Across EMTO Algorithms

| Algorithm | Architecture | Key Innovation | Performance Strengths | Limitations |
|---|---|---|---|---|
| MFEA [16] | Multi-Factorial | Unified population with skill factor | Effective for similar tasks | Negative transfer with dissimilar tasks |
| MFMP [16] | Multi-Population | Adaptive rmp per task | Prevents negative transfer; Flexible search engines | Higher computational overhead |
| MTCS [8] | Multi-Population | Competitive scoring mechanism | Balanced transfer/self-evolution; Superior on many-task problems | Complex parameter tuning |
| Population Distribution-Based [17] | Multi-Population | MMD-based transfer selection | Effective for low-relevance problems | Sub-population sizing sensitivity |
| SSLT [15] | Multi-Population | Deep Q-network strategy selection | Self-learning adaptation; Handles diverse scenarios | High implementation complexity |

Real-World Application Performance

Beyond benchmark problems, EMTO algorithms are validated through complex real-world applications:

Interplanetary Trajectory Design: SSLT-based algorithms demonstrated superior performance on challenging global trajectory optimization problems (GTOP) characterized by extreme non-linearity, massively deceptive local optima, and sensitivity to initial conditions [15].

Materials Design: EMTO approaches have been applied to optimize complex material properties, such as designing non-equiatomic CoCrNi medium-entropy alloys with exceptional strength-ductility combinations [18].

Engineering Design: Spread spectrum radar polyphase code design (SSRPCD) represents another successful application domain where MFMP demonstrated strong performance [16].

The Researcher's Toolkit: Essential EMTO Components

Table 3: Research Reagent Solutions for EMTO Implementation

| Component | Function | Implementation Examples |
|---|---|---|
| Search Engines | Core optimization algorithms | SHADE [16], L-SHADE [8], Differential Evolution [15], Genetic Algorithms [15] |
| Transfer Strategy Modules | Knowledge exchange mechanisms | Dislocation transfer [8], Competitive scoring [8], MMD-based selection [17] |
| Similarity Metrics | Quantify inter-task relationships | Maximum Mean Discrepancy (MMD) [17], Fitness distribution correlation [15] |
| Adaptation Controllers | Dynamic parameter adjustment | Deep Q-networks (DQN) [15], Online transfer parameter estimation [16] |
| Benchmark Suites | Algorithm validation | CEC17-MTSO [8], WCCI20-MTSO [8], Real-world problems (GTOP, SSRPCD) [15] [16] |

The architectural choice between Multi-Factorial and Multi-Population frameworks represents a fundamental decision point in EMTO algorithm design. The Multi-Factorial framework offers a more integrated approach with simpler implementation but demonstrates limitations when tasks exhibit low similarity or different characteristics. In contrast, the Multi-Population framework provides greater flexibility, explicit transfer control, and better performance across diverse task relationships, though at the cost of increased complexity.

Current research trends strongly favor multi-population approaches with sophisticated adaptive mechanisms, as evidenced by the development of competitive scoring [8], population distribution-based selection [17], and scenario-based self-learning transfer [15]. These advancements progressively address the core challenges of negative transfer and evolutionary scenario alignment.

Future research directions include developing more efficient relationship mapping techniques between tasks, creating specialized search engines for domain-specific applications, improving scalability for many-task optimization, and establishing standardized evaluation protocols for real-world problems. As EMTO continues to mature, hybrid approaches that combine the strengths of both architectural paradigms may offer the most promising path forward for solving increasingly complex optimization challenges across scientific and engineering domains.

The Growing Imperative for EMTO in Drug Development and Clinical Informatics

The fields of drug development and clinical informatics are increasingly confronted with complex, multi-faceted optimization challenges. Traditional computational methods often address these problems in isolation, requiring separate model development and validation for each specific task. This single-task approach is inefficient when facing correlated problems such as predicting multiple adverse drug events (ADEs), optimizing complex treatment protocols, and analyzing heterogeneous electronic health record (EHR) data simultaneously. Evolutionary Multi-Task Optimization (EMTO) has emerged as a powerful paradigm that leverages genetic material and knowledge sharing across multiple correlated optimization tasks, resulting in accelerated convergence and superior solution quality compared to single-task optimization approaches [19] [9].

EMTO algorithms implement multi-tasking through two primary frameworks: multifactorial evolution using unified populations for implicit knowledge exchange, and multi-population approaches that maintain separate populations for each task with explicit collaboration mechanisms [9]. For the multi-objective problems prevalent in clinical informatics—where conflicting objectives like treatment efficacy and side effects must be balanced—Multi-Objective Multi-Task Optimization (MO-MTO) approaches have shown particular promise. These algorithms can simultaneously address multiple clinical optimization tasks while managing several competing objectives for each task, making them uniquely suited to the complexities of modern healthcare data and drug development pipelines [19] [20].

Algorithm Performance Comparison: Quantitative Benchmarks

To objectively evaluate the performance of state-of-the-art EMTO algorithms, we compare their experimental results across benchmark problems and real-world applications. The following tables summarize quantitative performance data, highlighting convergence efficiency and solution quality metrics.

Table 1: Performance Comparison of Multi-Objective EMTO Algorithms on Benchmark Problems

| Algorithm | Key Mechanism | Test Problems | IGD (lower is better) | HV (higher is better) | Key Advantages |
|---|---|---|---|---|---|
| MS-MOMFEA [19] | Cross-dimensional search & prediction-based knowledge transfer | CEC 2019 MO-MTO benchmarks | 0.652 ± 0.03 | 0.785 ± 0.02 | Effective on problems with low inter-task relevance; accelerated convergence |
| MO-MTEA-PAE [9] | Progressive auto-encoding for domain adaptation | CEC 2021 MO-MTO benchmarks | 0.598 ± 0.04 | 0.812 ± 0.03 | Dynamic domain adaptation; handles dissimilar tasks effectively |
| EMT-BOL [20] | Budget online learning with Naive Bayes classifier | CEC 2017 & WCCI 2020 MO-MTO benchmarks | 0.634 ± 0.02 | 0.801 ± 0.01 | Reduces negative transfer; handles concept drift in streaming data |
| MOMFEA [19] | Implicit genetic transfer via assortative mating | CEC 2019 MO-MTO benchmarks | 0.715 ± 0.05 | 0.732 ± 0.04 | Foundational algorithm; established basic multi-tasking framework |

Table 2: Real-World Application Performance of EMTO Algorithms

| Application Domain | Algorithm | Problem Formulation | Key Performance Outcomes |
|---|---|---|---|
| Clinical Data Annotation [21] | Domain-specific LLMs + EMTO | 28 NLP tasks on 28,824 medical reports | Overall score: 0.770; superior to general-domain pretraining (0.734) |
| Drug Safety Monitoring [22] | EHR-based prediction models | ADE prediction from structured EHR data | Limited by lack of external validation; no causality assessment |
| Vehicle Routing in Healthcare Logistics [23] | MTMO/DRL-AT | 5-objective vehicle routing with time windows | Superior performance on 45 real-world instances; effective knowledge transfer to assisted tasks |
| Pharmacovigilance [24] | EMR mining with ML | Adverse drug event detection and prevention | Enabled automated, large-scale analysis; addresses data heterogeneity challenges |

Table 3: The Scientist's Toolkit - Essential Research Reagents for EMTO Experiments

| Research Reagent | Function in EMTO Research | Application Context |
|---|---|---|
| CEC MO-MTO Benchmarks [20] | Standardized test problems for algorithm validation | Contains 9-20 multi-objective tasks with known Pareto fronts for controlled experiments |
| MToP Platform [25] | MATLAB-based optimization platform with 50+ MTEAs | Unified testing environment with 200+ MTO problem cases and 20+ performance metrics |
| DRAGON Benchmark [21] | Clinical NLP evaluation with 28 tasks and 28,824 reports | Validates EMTO on real-world medical data annotation and classification tasks |
| Structured EHR Datasets [22] | Real-world medical data for ADE prediction model development | Provides medication administrations, diagnosis codes, and laboratory findings for clinical validation |
| Budget Online Learning Classifier [20] | Identifies valuable knowledge to reduce negative transfer | Streaming data analysis with concept drift handling for dynamic clinical environments |

Experimental Protocols and Methodologies

Progressive Auto-Encoding for Domain Adaptation

The MO-MTEA-PAE algorithm employs a sophisticated domain adaptation technique to align search spaces across different optimization tasks [9]. The experimental protocol involves:

Segmented PAE: Implements staged training of auto-encoders by minimizing

$$\mathcal{L}_{SAE} = \|X - D(E(X))\|^2 + \lambda \|E(X)\|^2,$$

where $X$ represents input solutions, $E$ is the encoder, $D$ is the decoder, and $\lambda$ controls the regularization strength. This approach achieves structured domain alignment across different optimization phases.

Smooth PAE: Uses solutions eliminated during the evolutionary process to facilitate gradual domain adaptation. The loss function incorporates historical data:

$$\mathcal{L}_{PAE} = \sum_{i=1}^{t} \alpha^{t-i} \|X_i - D(E(X_i))\|^2,$$

where $X_i$ denotes the solutions recorded at step $i$ of the history and $0 < \alpha < 1$ is a decay factor, so that recent solutions are weighted more heavily.

Integration Framework: The PAE module is embedded within both single-objective and multi-objective multi-task evolutionary algorithms, creating MTEA-PAE and MO-MTEA-PAE respectively. The algorithms maintain a unified population while learning separate auto-encoders for each task to enable effective knowledge transfer.
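The two PAE loss terms can be evaluated with a small Python sketch. For simplicity it assumes a linear encoder E(X) = X @ W_enc and decoder D(Z) = Z @ W_dec, squared Frobenius norms, and a history list whose last element is the most recent batch X_t; the actual PAE networks and training schedule may differ.

```python
import numpy as np

def recon_error(X, W_enc, W_dec) -> float:
    """Squared Frobenius norm ||X - D(E(X))||^2 for a linear encoder/decoder."""
    X_hat = (X @ W_enc) @ W_dec        # D(E(X))
    return float(np.sum((X - X_hat) ** 2))

def sae_loss(X, W_enc, W_dec, lam) -> float:
    """L_SAE = ||X - D(E(X))||^2 + lam * ||E(X)||^2."""
    Z = X @ W_enc                      # E(X), the latent code being regularized
    return recon_error(X, W_enc, W_dec) + lam * float(np.sum(Z ** 2))

def pae_loss(history, W_enc, W_dec, alpha) -> float:
    """L_PAE = sum_{i=1..t} alpha^(t-i) * ||X_i - D(E(X_i))||^2; history[-1] is X_t."""
    t = len(history)
    return sum(alpha ** (t - i) * recon_error(X_i, W_enc, W_dec)
               for i, X_i in enumerate(history, start=1))

X = np.array([[1.0, 2.0], [3.0, 4.0]])
identity = np.eye(2)
zero_enc, zero_dec = np.zeros((2, 1)), np.zeros((1, 2))   # 1-D bottleneck that outputs zeros
print(sae_loss(X, identity, identity, lam=0.5))            # 0 + 0.5 * 30 = 15.0
print(pae_loss([X, X], zero_enc, zero_dec, alpha=0.5))     # 0.5 * 30 + 1.0 * 30 = 45.0
```

With `alpha` = 0.5, the older of two equally poorly reconstructed batches contributes half as much as the newest one, which is the recency weighting the decay factor is meant to provide.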

Validation experiments were conducted on six benchmark suites and five real-world applications, with performance measured using Inverted Generational Distance (IGD) and Hypervolume (HV) metrics. Statistical significance was tested using Wilcoxon signed-rank tests with p-value < 0.05 [9].
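Both metrics can be computed with short Python functions. IGD averages, over a reference Pareto front, the distance to the nearest obtained solution (lower is better); HV measures the objective-space volume dominated by the obtained front up to a reference point (higher is better). The HV routine below is restricted to two minimization objectives for brevity; higher dimensions need dedicated algorithms. Per-run values from two algorithms could then be compared with a Wilcoxon signed-rank test, for example via `scipy.stats.wilcoxon`.

```python
import numpy as np

def igd(reference_front, approx_front) -> float:
    """Inverted Generational Distance: mean distance from each reference point to its nearest obtained point."""
    R = np.asarray(reference_front, dtype=float)
    A = np.asarray(approx_front, dtype=float)
    dists = np.linalg.norm(R[:, None, :] - A[None, :, :], axis=-1)
    return float(dists.min(axis=1).mean())

def hypervolume_2d(points, ref) -> float:
    """Hypervolume of a 2-objective minimization front with respect to reference point ref."""
    pts = sorted((float(f1), float(f2)) for f1, f2 in points if f1 < ref[0] and f2 < ref[1])
    volume, prev_f2 = 0.0, float(ref[1])
    for f1, f2 in pts:             # sweep in order of increasing first objective
        if f2 < prev_f2:           # dominated points add no new area
            volume += (ref[0] - f1) * (prev_f2 - f2)
            prev_f2 = f2
    return volume

reference = [[0.0, 1.0], [0.5, 0.5], [1.0, 0.0]]
obtained = [[0.0, 1.0], [1.0, 0.0]]
print(igd(reference, obtained))                              # sqrt(0.5) / 3, about 0.2357
print(hypervolume_2d([(1, 3), (2, 2), (3, 1)], (4, 4)))     # 3 + 2 + 1 = 6.0
```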

Budget Online Learning for Negative Transfer Mitigation

The EMT-BOL algorithm addresses the critical challenge of negative transfer—where inappropriate knowledge sharing degrades performance [20]. The methodology includes:

Classifier Design: A Naive Bayes classifier is trained on historical transferred solutions; the posterior probability of a useful transfer is computed as

$$P(y \mid x) \propto P(y) \prod_{i} P(x_i \mid y),$$

where $y$ represents transfer utility and $x_i$ are the solution features.

Budget Management: A sliding window maintains a fixed-size sample set $W_t$ at generation $t$, keeping the computational cost bounded while handling concept drift. The update rule is

$$W_t = \left(W_{t-1} \setminus \{x_{\text{old}}\}\right) \cup \{x_{\text{new}}\},$$

so the oldest samples are replaced with new ones.

Transfer Selection: Solutions predicted to contain valuable knowledge receive a higher probability of inter-task transfer, and an exception-handling mechanism covers cases where the classifier's confidence is low.
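A Python sketch of a budget-limited Naive Bayes filter. Several details are assumptions rather than EMT-BOL's published design: features are modeled as Gaussian, the budget is enforced with a `deque` whose `maxlen` silently drops the oldest sample, a label of 1 marks a transferred solution that proved useful on the target task, and the low-confidence exception handling is reduced to returning 0.5 when the window lacks examples of both classes.

```python
from collections import deque

import numpy as np

class BudgetNaiveBayes:
    """Gaussian Naive Bayes over a fixed-size sliding window of labeled transfers."""

    def __init__(self, budget: int = 100, var_floor: float = 1e-6):
        self.window = deque(maxlen=budget)  # appending beyond the budget drops the oldest sample
        self.var_floor = var_floor          # keeps per-feature variances strictly positive

    def add(self, x, useful: int) -> None:
        self.window.append((np.asarray(x, dtype=float), int(useful)))

    def prob_useful(self, x) -> float:
        X = np.array([sample for sample, _ in self.window])
        y = np.array([label for _, label in self.window])
        if len(set(y.tolist())) < 2:
            return 0.5  # low-confidence fallback: not enough evidence for both classes
        x = np.asarray(x, dtype=float)
        log_post = {}
        for c in (0, 1):
            Xc = X[y == c]
            mu = Xc.mean(axis=0)
            var = Xc.var(axis=0) + self.var_floor
            log_lik = -0.5 * np.sum(np.log(2 * np.pi * var) + (x - mu) ** 2 / var)
            log_post[c] = np.log(len(Xc) / len(X)) + log_lik   # log P(y) + sum_i log P(x_i | y)
        # Normalize P(y) * prod_i P(x_i | y) over both classes in a numerically stable way.
        m = max(log_post.values())
        p1, p0 = np.exp(log_post[1] - m), np.exp(log_post[0] - m)
        return float(p1 / (p0 + p1))

nb = BudgetNaiveBayes(budget=4)
for features, label in [([0.0, 0.0], 0), ([0.1, 0.0], 0), ([1.0, 1.0], 1), ([0.9, 1.0], 1)]:
    nb.add(features, label)
```

A transfer step could then send a candidate across tasks with a probability that increases with `prob_useful`, and append the observed outcome back into the window.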

The experimental validation used the CEC 2017 MO-MTO benchmarks (9 problems) and WCCI 2020 MO-MTO benchmarks (10 CPLX problems with 20 tasks), comparing against six state-of-the-art multiobjective EMT algorithms using IGD and HV metrics [20].

Cross-Dimensional and Prediction-Based Knowledge Transfer

The MS-MOMFEA algorithm introduces two innovative search strategies to enhance knowledge transfer [19]:

Cross-Dimensional Variable Search: Optimizes decision variables using information collected from other dimensions and tasks, implementing variable-wise knowledge transfer through dimensional alignment techniques.

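Prediction-Based Individual Search: Employs a single-variable first-order grey model, GM(1,1), to predict population centers from historical records:

$$\hat{x}^{(1)}(k+1) = \left(x^{(0)}(1) - \frac{b}{a}\right)e^{-ak} + \frac{b}{a},$$

where $x^{(0)}$ is the original series, $\hat{x}^{(1)}$ is the predicted value of its accumulated (cumulative-sum) series, and $a$ and $b$ are model parameters estimated by least squares; the predicted original value follows from $\hat{x}^{(0)}(k+1) = \hat{x}^{(1)}(k+1) - \hat{x}^{(1)}(k)$. The predicted center serves as a symmetry point for mapping operations that maintain population diversity.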
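GM(1,1) forecasting is short enough to sketch in Python. Applying it independently to each dimension of the population-center history, and reflecting individuals through the predicted center (x' = 2c - x), are assumptions about how the prediction and the symmetry-point mapping fit together; GM(1,1) is also intended for positive series, so coordinates may need shifting in practice.

```python
import numpy as np

def gm11_next(x0) -> float:
    """Forecast the next value of a positive 1-D series with a GM(1,1) grey model."""
    x0 = np.asarray(x0, dtype=float)
    n = len(x0)
    x1 = np.cumsum(x0)                                 # accumulated series x^(1)
    z1 = 0.5 * (x1[1:] + x1[:-1])                      # background values
    B = np.column_stack([-z1, np.ones(n - 1)])
    a, b = np.linalg.lstsq(B, x0[1:], rcond=None)[0]   # least-squares estimate of a and b
    if abs(a) < 1e-12:                                 # degenerate case: constant series
        return float(b)

    def x1_hat(k):                                     # time response for x^(1)(k + 1)
        return (x0[0] - b / a) * np.exp(-a * k) + b / a

    return float(x1_hat(n) - x1_hat(n - 1))            # inverse accumulation gives x^(0)(n + 1)

def predict_center(center_history) -> np.ndarray:
    """Apply GM(1,1) to each dimension of a (T, D) history of population centers."""
    H = np.asarray(center_history, dtype=float)
    return np.array([gm11_next(H[:, j]) for j in range(H.shape[1])])

def reflect(pop: np.ndarray, center: np.ndarray) -> np.ndarray:
    """Mirror individuals through the predicted center (hypothetical diversity mapping)."""
    return 2.0 * center - pop

next_value = gm11_next([10.0, 11.0, 12.1, 13.31])   # close to the true next term 14.641
centers = [[0.20, 0.5], [0.25, 0.5], [0.30, 0.5], [0.35, 0.5]]
predicted = predict_center(centers)
```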

The algorithm was tested on multi-factorial optimization problems and a bi-task multi-objective traveling salesman problem, demonstrating significant improvements in convergence rate and solution quality compared to MOMFEA and single-task algorithms like NSGA-II and MOEA/D [19].

EMTO Workflow in Clinical Informatics Applications

The following diagram illustrates the integrated workflow of EMTO algorithms applied to clinical informatics and drug development challenges:

Integrated EMTO Workflow in Clinical Informatics

Future Directions and Clinical Implementation Challenges

Despite promising results, several challenges must be addressed before widespread clinical implementation of EMTO. Current EHR-based prediction models frequently suffer from methodological limitations, including inappropriate predictor selection methods and insufficient handling of missing data [22]. Crucially, most existing models lack external validation in separate patient populations, raising concerns about generalizability. Future work should emphasize adherence to reporting standards like TRIPOD and incorporate formal causality assessments for adverse drug event labels [22].

The heterogeneity of EHR systems presents additional challenges for EMTO applications. Data pre-processing for machine learning methods remains time-consuming and costly due to highly heterogeneous datasets across healthcare institutions [24]. Future EMTO algorithms should incorporate more sophisticated domain adaptation techniques, such as the progressive auto-encoding demonstrated in MO-MTEA-PAE, to better handle this institutional heterogeneity [9].

For drug development applications, EMTO shows particular promise in pharmacovigilance and clinical trial optimization. EMTO can handle several permutation-based combinatorial optimization problems simultaneously, transferring knowledge implicitly across them through information sharing in a unified search space [19]. This capability is particularly valuable for complex pharmacovigilance systems that must detect rare adverse events across multiple drug classes and patient populations.
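One common way to place permutation problems in a unified continuous space is random-key encoding, sketched below in Python; whether the cited work uses this particular representation is an assumption. Each task ranks the first n of its keys, and the ranking is the permutation (for example, a visiting or processing order).

```python
import numpy as np

def random_keys_to_permutation(y: np.ndarray, n_items: int) -> np.ndarray:
    """Decode the first n_items keys of a unified-space vector into a permutation."""
    # argsort returns item indices ordered by increasing key value.
    return np.argsort(y[:n_items], kind="stable")

y = np.array([0.7, 0.1, 0.4, 0.9])                # one shared individual
order_task_a = random_keys_to_permutation(y, 3)   # 3-item task -> [1, 2, 0]
order_task_b = random_keys_to_permutation(y, 4)   # 4-item task -> [1, 2, 0, 3]
```

Because both tasks decode the same keys, genetic operators acting on `y` implicitly share ordering information between them.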

As EMTO methodologies continue to evolve, their integration with clinical workflows will require close collaboration between computational researchers and healthcare professionals. The development of standardized benchmarks like the DRAGON challenge for clinical NLP will enable more systematic evaluation of EMTO performance on healthcare-specific tasks [21]. Additionally, the creation of accessible platforms like MToP, which incorporates over 50 multi-task evolutionary algorithms and more than 200 multi-task optimization problem cases, will lower barriers to entry for clinical researchers interested in applying these advanced optimization techniques to pressing healthcare challenges [25].

Advanced EMTO Algorithms and Their Real-World Biomedical Implementations

In the evolving landscape of artificial intelligence and data science, two seemingly distinct domains—evolutionary multi-task optimization (EMTO) and deep learning-based representation learning—have developed in parallel with complementary strengths. Evolutionary multi-task optimization frameworks excel at solving multiple complex problems simultaneously by transferring knowledge between related tasks, thereby improving learning efficiency and performance [26]. Meanwhile, deep learning approaches, particularly autoencoders, have demonstrated remarkable capability in learning efficient data representations for tasks such as anomaly detection by compressing input data into compact latent forms and reconstructing it to closely match the original input [27] [28]. This guide explores the innovative transfer mechanisms bridging these domains, focusing specifically on performance comparisons between evolutionary optimization strategies and auto-encoding architectures for anomaly detection in real-world applications.

The integration of these paradigms addresses fundamental limitations in both fields. Traditional evolutionary algorithms often operate under the assumption of zero prior knowledge, limiting their adaptability and learning capacity as historical experience accumulates [26]. Conversely, autoencoders for anomaly detection frequently face challenges with overfitting, generalization, and determining optimal architectural parameters [29] [28]. By leveraging transfer mechanisms between these domains, researchers can develop more robust, efficient, and adaptive systems capable of handling complex, multi-faceted optimization problems while learning meaningful data representations. This comparative analysis examines the experimental performance, methodological approaches, and practical implementations of these innovative frameworks across various application domains, with particular emphasis on anomaly detection capabilities.

Theoretical Foundations: EMTO and Auto-Encoder Architectures

Evolutionary Multi-Task Optimization (EMTO) Frameworks

Evolutionary multi-task optimization represents a paradigm shift in computational intelligence, moving beyond isolated problem-solving to concurrent optimization of multiple related tasks. The core principle underpinning EMTO is that useful knowledge gained while solving one task may contain valuable information that can accelerate the optimization process for other related tasks [26]. This knowledge transfer mechanism allows EMTO algorithms to exploit synergies between tasks, often leading to superior performance compared to solving each task independently.

The multi-objective multi-task adaptive migration evolutionary algorithm (MOMFEA-STT) exemplifies recent advances in this domain. This framework introduces a source task transfer strategy that establishes parameter sharing models between historical tasks (source tasks) and current target tasks [26]. By dynamically identifying the degree of association between different tasks, MOMFEA-STT automatically adjusts the intensity of cross-task knowledge transfer to maximize the capture and utilization of common useful knowledge. The algorithm employs a sophisticated similarity calculation method that matches the static characteristics of source problems with the dynamic evolution trend of target tasks, enabling more effective knowledge migration while mitigating the negative transfer problem that plagues many transfer learning approaches [26].

Auto-Encoder Architectures for Anomaly Detection

Autoencoders are specialized neural network architectures designed for unsupervised representation learning, consisting of an encoder that compresses input data into a latent-space representation and a decoder that reconstructs the original input from this compressed representation [27] [30]. In anomaly detection applications, the fundamental premise is that autoencoders trained exclusively on normal data will reconstruct normal instances accurately while struggling to effectively reconstruct anomalous inputs, thereby generating higher reconstruction errors for outliers [29] [31].
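The reconstruction-error principle can be demonstrated without a deep-learning framework. This Python sketch swaps in a linear undercomplete auto-encoder fitted in closed form with an SVD (the optimal linear auto-encoder under squared error spans the principal subspace of the data), and flags inputs whose error exceeds a percentile threshold computed on normal training data; the latent size, the 99th-percentile threshold, and the toy data are illustrative choices.

```python
import numpy as np

class LinearAutoencoder:
    """Undercomplete linear auto-encoder fitted in closed form via SVD."""

    def __init__(self, latent_dim: int):
        self.latent_dim = latent_dim

    def fit(self, X_normal: np.ndarray) -> "LinearAutoencoder":
        self.mean_ = X_normal.mean(axis=0)
        _, _, Vt = np.linalg.svd(X_normal - self.mean_, full_matrices=False)
        self.W_ = Vt[: self.latent_dim].T          # encoder weights; the decoder uses W_.T
        return self

    def reconstruction_error(self, X: np.ndarray) -> np.ndarray:
        Z = (X - self.mean_) @ self.W_             # encode into the bottleneck
        X_hat = Z @ self.W_.T + self.mean_         # decode back to input space
        return np.sum((X - X_hat) ** 2, axis=1)

def fit_threshold(model: LinearAutoencoder, X_normal: np.ndarray, q: float = 99.0) -> float:
    """Errors above the q-th percentile of normal-data errors are flagged as anomalies."""
    return float(np.percentile(model.reconstruction_error(X_normal), q))

# Toy "normal" data: points near the line spanned by (1, 2, -1) in 3-D.
rng = np.random.default_rng(0)
t = rng.normal(size=200)
X_train = np.column_stack([t, 2 * t, -t]) + 0.01 * rng.normal(size=(200, 3))
model = LinearAutoencoder(latent_dim=1).fit(X_train)
threshold = fit_threshold(model, X_train)
errors = model.reconstruction_error(np.array([[1.0, 2.0, -1.0], [1.0, -2.0, 1.0]]))
is_anomaly = errors > threshold   # the on-line point is kept, the off-line point is flagged
```

A neural auto-encoder would replace the SVD fit with gradient-based training, but the scoring logic (reconstruct, measure the error, compare against a threshold learned from normal data) stays the same.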

Several autoencoder variants have demonstrated particular efficacy for anomaly detection:

Undercomplete Autoencoders: These employ a bottleneck structure with fewer nodes in the hidden layers than in the input layer, forcing the network to learn the most salient features of the input data [27] [30]. The compressed representation in the bottleneck layer captures essential patterns while filtering out noise and irrelevant variations.

Variational Autoencoders (VAEs): VAEs introduce probabilistic encoding. Rather than mapping each input to a single deterministic code, the encoder outputs the parameters of a probability distribution over the latent space, typically the mean and variance of a diagonal Gaussian [30] [32]. This approach enables more robust generation and anomaly detection by modeling the inherent uncertainty in the data distribution.

Sparse Autoencoders: These networks impose sparsity constraints on hidden unit activations, typically through L1 regularization or KL divergence penalties, forcing the model to activate only a small number of neurons in response to any given input [27] [30]. This sparsity constraint encourages the discovery of representative features useful for anomaly detection.

Denoising Autoencoders: These are trained to reconstruct clean inputs from partially corrupted or noisy versions, learning robust features that are insensitive to minor variations in input data [27]. This architecture proves particularly effective for real-world data containing natural noise and imperfections.
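The regularizers that distinguish the sparse and denoising variants are short to write down. The NumPy sketch below shows a KL-divergence sparsity penalty, an L1 activity penalty, and an input-corruption function for denoising training. The target activation `rho`, the weight `lam`, and the noise settings are illustrative defaults, not values from the cited studies.

```python
import numpy as np


def kl_sparsity_penalty(hidden_activations, rho=0.05, eps=1e-8):
    """KL-divergence sparsity penalty for a sparse autoencoder.

    hidden_activations: (n_samples, n_hidden) sigmoid outputs in (0, 1).
    rho: target average activation of each hidden unit.
    """
    rho_hat = np.clip(hidden_activations.mean(axis=0), eps, 1 - eps)
    return float(np.sum(rho * np.log(rho / rho_hat)
                        + (1 - rho) * np.log((1 - rho) / (1 - rho_hat))))


def l1_activity_penalty(hidden_activations, lam=1e-3):
    """L1 penalty on activations: lam times the mean per-sample absolute activation sum."""
    return float(lam * np.abs(hidden_activations).sum(axis=1).mean())


def corrupt(X, noise_std=0.1, drop_prob=0.2, rng=None):
    """Denoising-AE corruption: additive Gaussian noise followed by masking noise.

    The model receives corrupt(X) as input but is trained to reconstruct the clean X.
    """
    rng = np.random.default_rng() if rng is None else rng
    mask = rng.random(X.shape) >= drop_prob  # keep each entry with prob. 1 - drop_prob
    return (X + noise_std * rng.normal(size=X.shape)) * mask


acts = np.full((4, 3), 0.05)  # average activation equals the target rho
print(kl_sparsity_penalty(acts), kl_sparsity_penalty(np.full((4, 3), 0.5)))
```

In a full model, one of these penalties is added to the reconstruction loss with a tuning weight; the KL penalty is zero only when every hidden unit's average activation matches `rho` and grows as units fire more often.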

Performance Comparison: Experimental Data and Metrics

Quantitative Performance Benchmarks

Comprehensive experimental evaluations across multiple datasets and domains reveal distinct performance characteristics of EMTO frameworks and autoencoder architectures. The following tables summarize key performance metrics from comparative studies:

Table 1: Performance comparison of autoencoder architectures on benchmark datasets (MNIST, Fashion-MNIST) for anomaly detection tasks [29]

| Autoencoder Architecture | F1-Score | ROC-AUC | Reconstruction Error | Training Stability |
|---|---|---|---|---|
| Undercomplete AE | 0.79 | 0.85 | 0.12 | High |
| Variational AE (VAE) | 0.84 | 0.91 | 0.09 | Medium |
| Sparse AE | 0.81 | 0.88 | 0.10 | High |
| Denoising AE | 0.83 | 0.89 | 0.08 | Medium |
| Convolutional AE | 0.86 | 0.93 | 0.07 | Medium |
| Vision Transformer VAE | 0.89 | 0.95 | 0.05 | Low |

Table 2: Evolutionary algorithm performance comparison on multi-task optimization benchmarks [26]

| Evolutionary Algorithm | Hypervolume | IGD | Convergence Speed (relative to NSGA-II) | Transfer Efficiency |
|---|---|---|---|---|
| NSGA-II | 0.72 | 0.15 | Baseline | N/A |
| MOMFEA | 0.81 | 0.11 | 1.25x | 0.67 |
| MOMFEA-II | 0.85 | 0.09 | 1.41x | 0.72 |
| MOMFEA-STT | 0.91 | 0.06 | 1.63x | 0.85 |

Table 3: Anomaly detection performance across application domains [29] [33] [31]

| Application Domain | Best Performing Algorithm | Accuracy | Precision | Recall | F1-Score |
|---|---|---|---|---|---|
| Manufacturing Defects | Convolutional Autoencoder | 0.94 | 0.92 | 0.95 | 0.93 |
| Financial Fraud | Variational Autoencoder | 0.91 | 0.89 | 0.92 | 0.90 |
| Healthcare Anomalies | Vision Transformer VAE | 0.93 | 0.91 | 0.94 | 0.92 |
| Network Security | Isolation Forest | 0.89 | 0.87 | 0.90 | 0.88 |
| Medical Imaging | Vision Transformer VAE | 0.95 | 0.93 | 0.96 | 0.94 |

Analysis of Performance Results

The experimental data reveal several important patterns. Among autoencoder architectures, the comparison on benchmark datasets such as MNIST and Fashion-MNIST shows that more sophisticated architectures generally perform better, with the Vision Transformer VAE achieving the highest F1-score (0.89) and ROC-AUC (0.95) [29] [32]. This advantage comes at the cost of lower training stability and higher computational requirements, a trade-off that matters for practical implementations.

For evolutionary algorithms, the adaptive transfer mechanism in MOMFEA-STT yields the best result on every reported metric: a hypervolume of 0.91 (vs. 0.72 for NSGA-II), an IGD of 0.06 (vs. 0.15), and a 1.63x convergence speedup over the NSGA-II baseline [26]. Transfer efficiency, which quantifies how effectively knowledge is shared between tasks, rises progressively from MOMFEA (0.67) through MOMFEA-II (0.72) to MOMFEA-STT (0.85), highlighting the importance of adaptive transfer mechanisms in evolutionary multi-task optimization.

Across application domains, autoencoder-based approaches demonstrate particularly strong performance in image-related anomaly detection tasks (manufacturing defects, medical imaging), while ensemble methods like Isolation Forest remain competitive in network security applications [33] [31]. The consistency of these patterns across diverse domains suggests inherent strengths of different approaches for specific data characteristics and anomaly types.

Experimental Protocols and Methodologies

Autoencoder Training and Evaluation Framework

Training autoencoders for anomaly detection follows a systematic protocol beginning with data preparation and ending with comprehensive evaluation. The standard methodology encompasses the following key phases:

Data Preprocessing and Partitioning: Input data is first normalized (typically to [0,1] range for image data) and partitioned into training, validation, and test sets [28]. For anomaly detection tasks, the training set should contain exclusively normal instances to ensure the model learns the distribution of normal patterns without exposure to anomalies [29] [31]. Common practice involves using datasets like MNIST or Fashion-MNIST, where specific classes are designated as normal while others serve as anomalies during testing [29].
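The one-class partitioning described above can be sketched as follows. The helper `one_class_split` is hypothetical; it assumes NumPy arrays of `uint8` images and integer class labels, as MNIST-style loaders commonly provide, and the 20% test hold-out and 10% validation fraction are illustrative choices rather than values from the cited studies.

```python
import numpy as np


def one_class_split(X, y, normal_class, val_frac=0.1, seed=0):
    """Hypothetical one-class split for reconstruction-based anomaly detection.

    Training and validation contain only `normal_class`; the test set holds the
    remaining normal images plus every image of the other classes (label 1).
    """
    rng = np.random.default_rng(seed)
    X = X.astype(np.float32) / 255.0                 # normalize pixels to [0, 1]
    normal_idx = rng.permutation(np.flatnonzero(y == normal_class))
    anomaly_idx = np.flatnonzero(y != normal_class)
    n_test_normal = len(normal_idx) // 5             # hold out 20% of normal data
    n_val = int(val_frac * len(normal_idx))
    test_normal = normal_idx[:n_test_normal]
    val = normal_idx[n_test_normal:n_test_normal + n_val]
    train = normal_idx[n_test_normal + n_val:]
    test_idx = np.concatenate([test_normal, anomaly_idx])
    y_test = (y[test_idx] != normal_class).astype(int)  # 1 = anomaly, 0 = normal
    return X[train], X[val], X[test_idx], y_test


rng = np.random.default_rng(1)
X = rng.integers(0, 256, size=(100, 4))   # stand-in for flattened images
y = np.arange(100) % 10                   # ten classes, ten samples each
X_tr, X_val, X_te, y_te = one_class_split(X, y, normal_class=0)
print(X_tr.shape, X_val.shape, X_te.shape, int(y_te.sum()))
```

Because the validation set in this sketch holds only normal images, it supports percentile-based thresholds; selecting a threshold by F1-score additionally requires some labelled anomalies in a separate validation split.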

Model Architecture Configuration: The encoder and decoder components are designed with symmetric or asymmetric structures depending on the specific autoencoder variant [27] [28]. Critical hyperparameters include code size (latent dimension), number of layers, nodes per layer, and activation functions. The latent dimension represents a crucial trade-off—too small limits representational capacity, while too large may permit identity function learning [27]. Experimental protocols typically involve systematic sweeps of these parameters to identify optimal configurations.
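The latent-dimension trade-off can be made concrete with a small sweep. The sketch below uses the linear case, in which a linear autoencoder with MSE loss is equivalent to PCA, so each bottleneck size k is evaluated in closed form; the helper name, the synthetic data (normal samples on a 3-D subspace of a 10-D space), and the candidate dimensions are illustrative assumptions. At k equal to the input dimension, the model becomes the identity map and reconstructs anomalies as well as normal data.

```python
import numpy as np


def sweep_latent_dim(X_train, X_val_normal, X_val_anomaly, dims):
    """Sweep the bottleneck size of a linear (PCA-equivalent) autoencoder.

    Returns {k: (mean normal error, mean anomaly error)}. A useful k keeps the
    normal error low while the anomaly error stays clearly higher.
    """
    mean = X_train.mean(axis=0)
    _, _, Vt = np.linalg.svd(X_train - mean, full_matrices=False)
    results = {}
    for k in dims:
        W = Vt[:k]  # encoder directions for a k-unit bottleneck

        def mse(X):
            X_hat = (X - mean) @ W.T @ W + mean  # encode, then decode
            return float(np.mean((X - X_hat) ** 2))

        results[k] = (mse(X_val_normal), mse(X_val_anomaly))
    return results


rng = np.random.default_rng(0)
basis = rng.normal(size=(3, 10))
X_train = rng.normal(size=(500, 3)) @ basis + 0.01 * rng.normal(size=(500, 10))
X_val_n = rng.normal(size=(100, 3)) @ basis + 0.01 * rng.normal(size=(100, 10))
X_val_a = 3.0 * rng.normal(size=(100, 10))
for k, (e_norm, e_anom) in sweep_latent_dim(X_train, X_val_n, X_val_a, [1, 3, 10]).items():
    print(f"k={k}: normal={e_norm:.4f}, anomaly={e_anom:.4f}")
```

With k = 1 the bottleneck is too small and even normal data reconstructs poorly; k = 3 matches the data's intrinsic dimension and separates the two groups; k = 10 reconstructs everything almost perfectly and no longer discriminates anomalies.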

Loss Function Selection and Training: The model is trained to minimize reconstruction error, typically measured using Mean Squared Error (MSE) for continuous data or Binary Cross-Entropy for binary data [28]. Regularized autoencoders incorporate additional penalty terms, such as sparsity constraints or contractive regularization, to improve generalization [27] [30]. Training employs optimization algorithms like Adam with early stopping based on validation reconstruction loss.
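The two reconstruction losses and the early-stopping rule can be sketched in plain Python as follows. The `EarlyStopping` class and its `patience` and `min_delta` parameters are illustrative assumptions, not a specific framework's API; in practice one would also save the model weights at `best_epoch` and restore them after stopping.

```python
import math


def mse(x, x_hat):
    """Mean squared error between an input vector and its reconstruction."""
    return sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)


def bce(x, x_hat, eps=1e-7):
    """Binary cross-entropy for targets in [0, 1]; predictions clipped for stability."""
    total = 0.0
    for a, p in zip(x, x_hat):
        p = min(max(p, eps), 1 - eps)
        total -= a * math.log(p) + (1 - a) * math.log(1 - p)
    return total / len(x)


class EarlyStopping:
    """Stop when validation reconstruction loss has not improved for `patience` epochs."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience, self.min_delta = patience, min_delta
        self.best, self.best_epoch, self.wait = float("inf"), -1, 0

    def step(self, epoch, val_loss):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best, self.best_epoch, self.wait = val_loss, epoch, 0
        else:
            self.wait += 1
        return self.wait >= self.patience


stopper = EarlyStopping(patience=3)
for epoch, val_loss in enumerate([0.50, 0.30, 0.25, 0.26, 0.27, 0.28, 0.24]):
    if stopper.step(epoch, val_loss):
        break
print(f"stopped at epoch {epoch}; best epoch {stopper.best_epoch}, loss {stopper.best}")
```

Here training halts after three epochs without improvement, so the later 0.24 is never observed; a larger `patience` trades extra compute for robustness to noisy validation curves.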

Anomaly Scoring and Thresholding: The reconstruction error between input and output serves as the primary anomaly score [29] [31]. A threshold is established using validation data (typically based on statistical percentiles or maximizing F1-score), with instances exceeding this threshold classified as anomalies. Advanced approaches combine reconstruction error with latent space discrepancies for improved sensitivity [32].
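Both thresholding strategies mentioned above fit in a few lines of Python. The sketch assumes higher scores mean "more anomalous" and label 1 marks an anomaly; the nearest-rank percentile rule and the exhaustive scan over observed scores are illustrative choices, and the F1 scan flags scores at or above each candidate threshold.

```python
import math


def percentile_threshold(val_scores, q=99.0):
    """q-th percentile (nearest-rank method) of validation scores from normal data."""
    s = sorted(val_scores)
    rank = max(1, math.ceil(q * len(s) / 100.0))
    return s[rank - 1]


def f1_optimal_threshold(scores, labels):
    """Try each observed score as a threshold; keep the one that maximizes F1.

    A sample is flagged as anomalous when its score is >= the threshold.
    """
    best_t, best_f1 = None, -1.0
    for t in sorted(set(scores)):
        tp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 1)
        fp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 0)
        fn = sum(1 for s, y in zip(scores, labels) if s < t and y == 1)
        f1 = 2 * tp / (2 * tp + fp + fn) if tp else 0.0
        if f1 > best_f1:
            best_t, best_f1 = t, f1
    return best_t, best_f1


print(percentile_threshold(list(range(1, 101)), q=99))
print(f1_optimal_threshold([0.1, 0.2, 0.3, 0.9, 0.95], [0, 0, 0, 1, 1]))
```

The percentile rule needs only normal validation data, whereas the F1 scan needs labelled anomalies and can overfit to the anomaly types present in validation.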

Performance Validation: Comprehensive evaluation employs multiple metrics including F1-score, ROC-AUC, precision, and recall [29]. Critical to rigorous evaluation is testing on completely unseen anomaly types not present during validation to assess generalization capability.
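The evaluation metrics named above can be computed without any library. The sketch below uses the fact that ROC-AUC equals the probability that a randomly chosen anomaly receives a higher score than a randomly chosen normal sample (ties count one half). The pairwise loop is O(n_pos × n_neg), adequate for small evaluation sets; library implementations scale better.

```python
def precision_recall_f1(pred, labels):
    """Precision, recall and F1 for binary predictions (1 = anomaly)."""
    tp = sum(1 for p, y in zip(pred, labels) if p == 1 and y == 1)
    fp = sum(1 for p, y in zip(pred, labels) if p == 1 and y == 0)
    fn = sum(1 for p, y in zip(pred, labels) if p == 0 and y == 1)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def roc_auc(scores, labels):
    """ROC-AUC as P(score of a random anomaly > score of a random normal sample)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


print(precision_recall_f1([1, 0, 1, 1], [1, 0, 0, 1]))
print(roc_auc([0.1, 0.4, 0.35, 0.8], [0, 0, 1, 1]))
```

ROC-AUC is threshold-free, so it complements precision, recall, and F1, which all depend on the chosen threshold.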

Evolutionary Multi-Task Optimization Experimental Setup

EMTO evaluation follows distinct protocols designed to assess both optimization performance and transfer effectiveness:

Benchmark Problem Selection: Experiments utilize multi-task optimization benchmarks with known Pareto fronts and carefully controlled inter-task relationships [26]. These benchmarks enable precise quantification of performance improvements attributable to knowledge transfer versus random search or independent optimization.

Transfer Mechanism Configuration: The source task transfer strategy in algorithms like MOMFEA-STT requires configuration of probability parameters that determine the frequency of knowledge transfer versus local search [26]. These parameters are typically adapted during optimization based on reward mechanisms that quantify the benefits of previous transfers.
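The reward-driven adaptation of a transfer probability can be illustrated with a toy example. The sketch below is not MOMFEA-STT. It is a hypothetical two-task evolutionary loop in the spirit of the random mating probability (`rmp`) used by multifactorial EAs: each offspring is produced either by crossover with an individual from the other task (transfer) or by Gaussian mutation within its own task, the reward of each route is its success rate, and `rmp` drifts toward the transfer route's share of the total reward. Shifted sphere functions stand in for related benchmark tasks; the learning rate `lr`, the clamp range, and all other settings are illustrative.

```python
import random


def sphere(shift):
    """Sphere function with its optimum at (shift, ..., shift)."""
    return lambda x: sum((xi - shift) ** 2 for xi in x)


def adaptive_two_task_ea(tasks, dim=5, pop=20, gens=200, rmp=0.3, lr=0.1, seed=0):
    """Toy two-task EA whose transfer probability `rmp` is adapted from rewards."""
    rng = random.Random(seed)
    pops = [[[rng.uniform(-5, 5) for _ in range(dim)] for _ in range(pop)] for _ in tasks]
    for _ in range(gens):
        succ = {"transfer": [0, 0], "intra": [0, 0]}  # [successes, trials]
        for t, f in enumerate(tasks):
            other = pops[1 - t]
            for i, parent in enumerate(pops[t]):
                if rng.random() < rmp:  # inter-task transfer via uniform crossover
                    donor = rng.choice(other)
                    child = [p if rng.random() < 0.5 else d for p, d in zip(parent, donor)]
                    route = "transfer"
                else:                   # intra-task Gaussian mutation
                    child = [p + rng.gauss(0, 0.3) for p in parent]
                    route = "intra"
                succ[route][1] += 1
                if f(child) < f(parent):  # elitist replacement of the parent
                    pops[t][i] = child
                    succ[route][0] += 1
        r_tr = succ["transfer"][0] / max(1, succ["transfer"][1])
        r_in = succ["intra"][0] / max(1, succ["intra"][1])
        if r_tr + r_in > 0:  # move rmp toward the transfer route's reward share
            target = r_tr / (r_tr + r_in)
            rmp = min(0.9, max(0.05, (1 - lr) * rmp + lr * target))
    best = [min(f(x) for x in P) for f, P in zip(tasks, pops)]
    return best, rmp


best, final_rmp = adaptive_two_task_ea([sphere(1.0), sphere(1.2)])
print(best, final_rmp)
```

Clamping `rmp` keeps some transfer and some intra-task search alive at all times, so the loop can recover if the reward estimate is temporarily misleading.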

Performance Assessment Metrics: EMTO algorithms are evaluated using multi-objective quality indicators, including hypervolume (the volume of objective space dominated by the obtained front and bounded by a reference point; higher is better), inverted generational distance (IGD, the average distance from points on the true Pareto front to the nearest obtained solution; lower is better), and convergence speed (the number of function evaluations required to reach a target quality) [26]. Transfer efficiency specifically quantifies the effectiveness of knowledge sharing between tasks.
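For two minimization objectives, both indicators have short exact implementations. The sketch below computes the raw, unnormalized hypervolume by sweeping the points in order of the first objective, and IGD as the mean distance from each true-front point to its nearest approximation point. Published values such as those in Table 2 may be normalized, and the normalization used in [26] is not assumed here. `math.dist` requires Python 3.8 or later.

```python
import math


def hypervolume_2d(front, ref):
    """Hypervolume of a 2-objective minimization front w.r.t. reference point `ref`."""
    pts = sorted(p for p in front if p[0] < ref[0] and p[1] < ref[1])
    hv, prev_f2 = 0.0, ref[1]
    for f1, f2 in pts:      # sweep in increasing f1
        if f2 < prev_f2:    # dominated points add no area and are skipped
            hv += (ref[0] - f1) * (prev_f2 - f2)
            prev_f2 = f2
    return hv


def igd(approx, true_front):
    """Mean Euclidean distance from each true-front point to its nearest approximation point."""
    return sum(min(math.dist(t, a) for a in approx) for t in true_front) / len(true_front)


front = [(1, 3), (2, 2), (3, 1)]
print(hypervolume_2d(front, ref=(4, 4)))
print(igd(front, [(1, 3), (2, 2), (3, 1)]))
```

Exact hypervolume becomes expensive as the number of objectives grows, which is why higher-dimensional studies typically rely on specialized algorithms or Monte Carlo estimates.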

Statistical Validation: Rigorous experimental protocols employ multiple independent runs with statistical significance testing to account for algorithmic stochasticity [26]. Performance metrics are collected throughout the optimization process to analyze convergence characteristics and any negative transfer effects.
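A common nonparametric choice for comparing the per-run results of two algorithms is the Wilcoxon rank-sum (Mann-Whitney U) test. The pure-Python sketch below uses average ranks for ties and the normal approximation without a tie correction to the variance, which is reasonable for roughly 20 or more runs per algorithm; the run values in the example are synthetic, not results from [26].

```python
import math


def average_ranks(values):
    """1-based ranks; tied values receive the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def rank_sum_test(a, b):
    """Two-sided Wilcoxon rank-sum test (normal approximation). Returns (U, z, p)."""
    n1, n2 = len(a), len(b)
    ranks = average_ranks(list(a) + list(b))
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    mu = n1 * n2 / 2
    sigma = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (u1 - mu) / sigma
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value
    return u1, z, p


runs_a = [0.90 + 0.001 * i for i in range(20)]  # e.g. hypervolume per independent run
runs_b = [0.80 + 0.001 * i for i in range(20)]
print(rank_sum_test(runs_a, runs_b))
```

With many algorithm pairs or problems, the resulting p-values should also be corrected for multiple comparisons (for example, Holm's procedure).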

Visualization Frameworks and Workflows

Knowledge Transfer Mechanism in EMTO

The following diagram illustrates the sophisticated knowledge transfer process in the MOMFEA-STT algorithm, highlighting the interaction between source and target tasks:

Knowledge Transfer Mechanism in MOMFEA-STT

This visualization illustrates how MOMFEA-STT establishes parameter sharing models between historical source tasks and current target tasks, enabling adaptive knowledge transfer based on similarity calculations [26]. The framework dynamically identifies associations between tasks to determine optimal transfer intensity, maximizing the utilization of common useful knowledge while mitigating negative transfer effects.

Autoencoder Architecture for Anomaly Detection

The following diagram presents the structural workflow of a variational autoencoder configured for anomaly detection applications:

Autoencoder Anomaly Detection Workflow

This workflow illustrates how input data passes through the encoder network to produce parameters of a latent distribution, from which points are sampled and passed to the decoder for reconstruction [32]. The reconstruction error between original input and reconstructed output serves as the anomaly score, with higher errors indicating greater deviation from normal patterns learned during training [29] [31].
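The sampling step of this workflow can be sketched with the reparameterization z = μ + σ·ε, with ε drawn from a standard normal. In the NumPy sketch below, `encode` and `decode` are hypothetical stand-ins for a trained network (normal data lies on a line and the latent code is the position on that line); the anomaly score averages the reconstruction error over several latent samples, and the KL term against the standard-normal prior is shown as an optional extra score component.

```python
import numpy as np


def vae_anomaly_score(x, encode, decode, n_samples=10, rng=None):
    """Monte Carlo reconstruction-error score of one input under a trained VAE.

    encode(x) -> (mu, log_var) of the diagonal Gaussian q(z|x);
    decode(z) -> reconstruction of x.
    """
    rng = np.random.default_rng(0) if rng is None else rng
    mu, log_var = encode(x)
    sigma = np.exp(0.5 * log_var)
    errors = []
    for _ in range(n_samples):
        z = mu + sigma * rng.standard_normal(mu.shape)  # reparameterized sample
        errors.append(np.mean((x - decode(z)) ** 2))
    return float(np.mean(errors))


def kl_to_standard_normal(mu, log_var):
    """KL(q(z|x) || N(0, I)) for a diagonal Gaussian."""
    return float(-0.5 * np.sum(1.0 + log_var - mu ** 2 - np.exp(log_var)))


# Hypothetical "trained" model: normal data lies on the line spanned by unit vector v.
v = np.array([1.0, 2.0, 2.0]) / 3.0


def encode(x):
    return np.array([v @ x]), np.array([np.log(0.01)])


def decode(z):
    return z[0] * v


normal = vae_anomaly_score(2.0 * v, encode, decode)
anomalous = vae_anomaly_score(np.array([2.0, -1.0, 0.0]), encode, decode)
print(normal, anomalous)
```

The input on the learned line is reconstructed up to small sampling noise, whereas the input orthogonal to it collapses to a near-zero code and receives a far larger score.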

Comparative Performance Visualization

The following diagram provides a comparative analysis of algorithm performance across key metrics:

Algorithm Strengths Across Performance Metrics

This comparative visualization highlights the specialized strengths of different algorithm classes, with autoencoder architectures demonstrating strong performance in accuracy metrics while EMTO frameworks excel in convergence speed and transfer efficiency [29] [26]. Understanding these complementary strengths enables researchers to select appropriate methodologies based on specific application requirements and constraints.

Research Reagent Solutions: Computational Tools and Datasets

The experimental frameworks discussed require specific computational tools and datasets for implementation and validation. The following table details essential "research reagents" for this domain:

Table 4: Essential Research Reagents for Transfer Mechanism Experiments

| Reagent Category | Specific Instances | Function in Research | Implementation Examples |
|---|---|---|---|
| Benchmark Datasets | MNIST, Fashion-MNIST, MVTec AD, MiAD | Standardized performance evaluation and cross-study comparability | Image anomaly detection benchmarks [29] [32] |
| Software Frameworks | TensorFlow, PyTorch, Scikit-learn | Implementation of autoencoder architectures and training pipelines | Dense layers, convolutional layers, optimization algorithms [28] |
| Evolutionary Toolboxes | PlatEMO, pymoo, DEAP | EMTO algorithm implementation and multi-objective optimization | MOMFEA-STT implementation [26] |
| Evaluation Metrics | F1-Score, ROC-AUC, Hypervolume, IGD | Quantitative performance assessment and comparison | Anomaly detection accuracy, optimization quality [29] [26] |
| Visualization Tools | Matplotlib, Seaborn, Graphviz | Experimental result presentation and algorithm workflow illustration | Performance curves, architecture diagrams [28] |

These research reagents represent essential components for conducting rigorous experiments in transfer mechanisms between anomaly detection and auto-encoding domains. Standardized datasets like MNIST and Fashion-MNIST enable direct comparison between different algorithmic approaches [29], while software frameworks provide the implementation foundation for both autoencoder architectures and evolutionary optimization algorithms [26] [28]. Evaluation metrics offer standardized quantification of performance across diverse dimensions, facilitating objective comparison between methodologies with different theoretical foundations and operational mechanisms.

This comprehensive comparison of innovative transfer mechanisms bridging anomaly detection and auto-encoding reveals several significant insights regarding algorithmic performance, applicability, and future research directions. Experimental evidence demonstrates that EMTO frameworks like MOMFEA-STT achieve superior performance in multi-task optimization scenarios, leveraging knowledge transfer to accelerate convergence and improve solution quality [26]. Meanwhile, autoencoder architectures, particularly advanced variants like Vision Transformer VAEs, excel in anomaly detection tasks involving complex data patterns, achieving state-of-the-art performance metrics across diverse application domains [29] [32].