Evolutionary Multitasking Optimization: A Complete Framework Guide from Foundations to Biomedical Applications

Abstract

This comprehensive guide explores the fundamental framework of evolutionary multitasking optimization (EMTO), an emerging paradigm that simultaneously solves multiple optimization tasks through intelligent knowledge transfer. Covering foundational principles, methodological implementations, troubleshooting strategies, and validation techniques, this article provides researchers, scientists, and drug development professionals with essential knowledge for applying EMTO to complex biomedical challenges. The content synthesizes the latest research advances in implicit and explicit knowledge transfer, negative transfer mitigation, and domain adaptation methods, offering practical insights for accelerating optimization in clinical research and pharmaceutical development.

Understanding Evolutionary Multitasking: Core Principles and Mathematical Foundations

Defining Evolutionary Multitasking Optimization (EMTO) and Its Significance

Evolutionary Multitasking Optimization (EMTO) represents a paradigm shift in evolutionary computation that enables the simultaneous optimization of multiple tasks while strategically leveraging knowledge transfer between them. This innovative approach draws inspiration from multitask learning and transfer learning, utilizing the implicit parallelism of population-based search to solve several correlated optimization problems concurrently rather than in isolation [1] [2]. The fundamental premise underpinning EMTO is that useful knowledge exists across different optimization tasks, and the experience gained while solving one task may provide valuable insights that accelerate and improve the optimization process for other related tasks [2].

Unlike traditional evolutionary algorithms (EAs) that typically address single optimization problems without leveraging cross-domain knowledge, EMTO creates an environment where multiple tasks co-evolve within a shared search framework. This paradigm has demonstrated particular strength in handling complex, non-convex, and nonlinear problems where mathematical properties may be poorly understood or difficult to exploit [1]. The ability to automatically transfer knowledge among different but related problems allows EMTO to potentially achieve faster convergence and discover superior solutions compared to single-task optimization approaches [1] [3].

Core Principles and Methodological Framework

Fundamental Concepts and Terminology

At its core, EMTO operates on the principle that if common useful knowledge exists across tasks, then the information gained while solving one task may assist in solving another related task [2]. This bidirectional knowledge transfer differentiates EMTO from sequential transfer learning approaches, where experience is applied unidirectionally from previous problems to current ones [2].

The multifactorial evolutionary algorithm (MFEA) stands as the pioneering implementation of EMTO, establishing several foundational concepts [1] [3]. MFEA creates a multi-task environment where a single population evolves to address multiple tasks simultaneously, with each task treated as a unique "cultural factor" influencing the population's development [1]. Key mechanisms in MFEA include:

- Skill factors: Assignments that divide the population into non-overlapping task groups, with each group focusing on a specific optimization task [1]

- Assortative mating: Allows individuals from different tasks to generate offspring with a specified random mating probability, facilitating knowledge transfer [1] [3]

- Vertical cultural transmission: Randomly assigns each offspring to tasks associated with its parents, maintaining task-specific search trajectories [3]
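The interplay of skill factors, assortative mating, and vertical cultural transmission can be sketched in a few lines of Python. This is a minimal illustration rather than the reference MFEA implementation: the function name `assortative_mating`, the uniform crossover, and the Gaussian mutation are placeholder choices made for brevity, and individuals are assumed to be encoded as lists of floats in a unified [0, 1] search space.

```python
import random

def assortative_mating(parent_a, parent_b, rmp, rng):
    """Return two offspring as (genes, skill_factor) tuples.

    Each parent is a (genes, skill_factor) tuple. Parents with different
    skill factors only recombine with probability `rmp`.
    """
    genes_a, skill_a = parent_a
    genes_b, skill_b = parent_b

    if skill_a == skill_b or rng.random() < rmp:
        # Crossover (placeholder operator: uniform crossover).
        mask = [rng.random() < 0.5 for _ in genes_a]
        child_1 = [a if m else b for a, b, m in zip(genes_a, genes_b, mask)]
        child_2 = [b if m else a for a, b, m in zip(genes_a, genes_b, mask)]
        # Vertical cultural transmission: each child imitates the skill
        # factor of a randomly chosen parent.
        return [
            (child_1, rng.choice([skill_a, skill_b])),
            (child_2, rng.choice([skill_a, skill_b])),
        ]

    # No cross-task mating: mutate each parent (placeholder operator:
    # clamped Gaussian noise); the child keeps that parent's skill factor.
    def mutate(genes):
        return [min(1.0, max(0.0, g + rng.gauss(0.0, 0.1))) for g in genes]

    return [(mutate(genes_a), skill_a), (mutate(genes_b), skill_b)]
```

Because a child produced by cross-task crossover is evaluated only on the task it imitates, genetic material from one task enters the other task's group without any explicit mapping, which is why this form of transfer is usually called implicit.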

Algorithmic Workflow and Knowledge Transfer Mechanisms

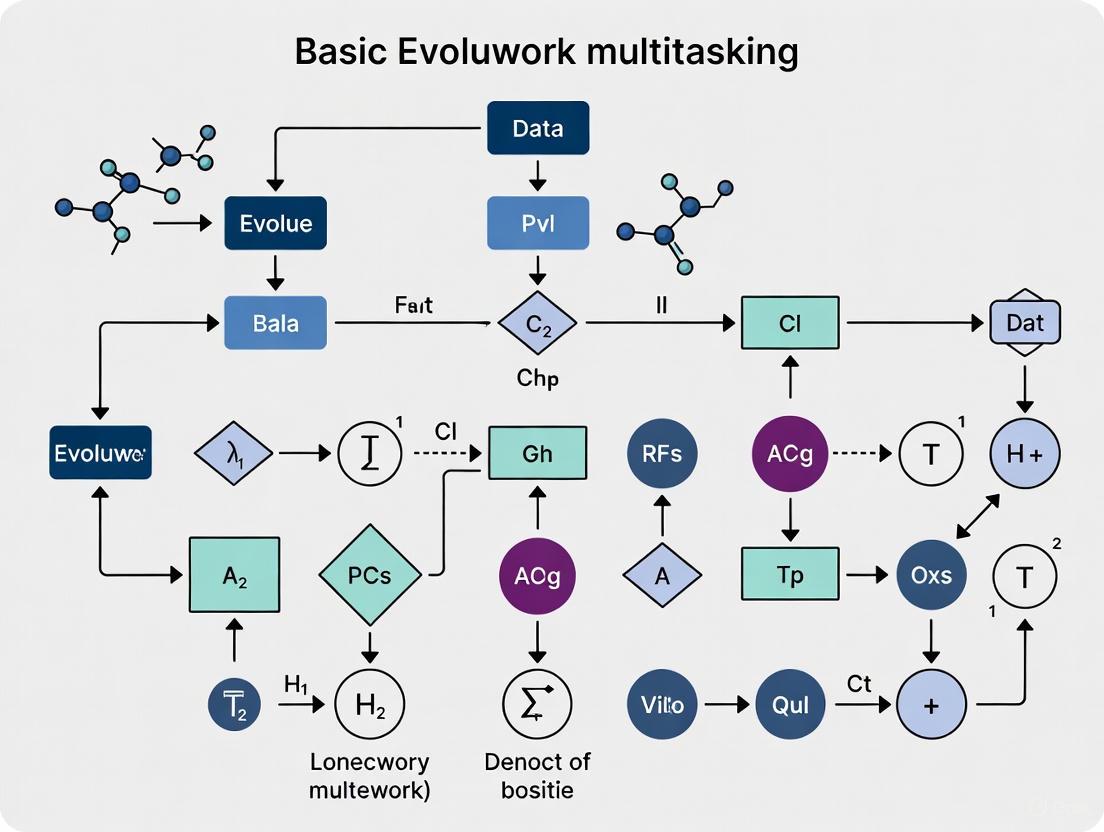

The EMTO process integrates knowledge transfer as a critical component within the evolutionary optimization cycle. The diagram below illustrates the core workflow and knowledge transfer mechanisms in a typical EMTO implementation:

Figure 1: Evolutionary Multitasking Optimization (EMTO) Core Workflow. This diagram illustrates the parallel optimization of multiple tasks with bidirectional knowledge transfer.

Effective knowledge transfer represents the most crucial element in EMTO, with research focusing primarily on two fundamental questions: when to transfer knowledge and how to transfer it effectively [2]. The "when" aspect involves determining the optimal timing and task pairings for knowledge exchange to maximize positive transfer while minimizing negative interference. The "how" aspect focuses on the mechanisms and representations used for transferring knowledge between tasks [2].

Advanced knowledge transfer methodologies have evolved beyond the basic implicit transfer in early EMTO implementations. Current approaches include:

- Explicit mapping techniques: Construct direct inter-task mappings based on task characteristics to transfer useful knowledge [2]

- Domain adaptation methods: Treat each task as a separate domain and enhance inter-task similarity through transformation mappings [3]

- Subdomain evolutionary trend alignment (SETA): Decomposes tasks into subdomains and aligns evolutionary trends between corresponding subpopulations [3]

- Large Language Model (LLM)-based transfer: Automatically designs knowledge transfer models using LLM capabilities [4]

Significance and Performance Advantages

Theoretical and Practical Benefits

EMTO offers several significant advantages over traditional single-task evolutionary algorithms. The most prominent benefit is enhanced convergence speed achieved through the exploitation of synergies between related tasks [1]. Theoretical analyses have demonstrated that EMTO can outperform traditional single-task optimization in terms of convergence velocity when solving optimization problems with interrelated characteristics [1].

The implicit parallelism of population-based search in EMTO allows for more efficient utilization of computational resources when addressing multiple related problems simultaneously [1] [2]. Rather than allocating separate resources to each optimization task, EMTO creates a unified search environment where evaluation efforts contribute to solving all tasks through knowledge transfer.

EMTO demonstrates particular strength in handling complex real-world problems with poorly understood mathematical properties, as it relies on global search capabilities rather than problem-specific mathematical characteristics [1]. This makes it particularly suitable for complex, non-convex, and nonlinear problems that challenge traditional optimization approaches.

Quantitative Performance Comparison

The table below summarizes key performance advantages of EMTO established through empirical studies:

Table 1: EMTO Performance Advantages Based on Empirical Studies

| Performance Metric | Reported Improvement | Application Context | Key Contributing Factors |
|---|---|---|---|
| Convergence Speed | Significant improvement over single-task EA [1] | General optimization problems | Exploitation of inter-task synergies |
| Solution Quality (IGD) | Up to 15.7% improvement [5] | Cascade reservoir scheduling | Dual-task structure with dynamic knowledge transfer |
| Hypervolume (HV) | Up to 12.6% increase [5] | Cascade reservoir scheduling | Effective balancing of multiple competing objectives |
| Adaptability | Strong performance across varying conditions [5] | Complex hydrological constraints | Knowledge transfer between constrained and unconstrained task formulations |

Experimental Protocols and Evaluation Methodologies

Standardized Benchmarking Approaches

Rigorous evaluation of EMTO algorithms typically employs multiple benchmark suites designed to test performance across various problem characteristics. Commonly used benchmarks include:

- Single objective multitasking/many-tasking benchmark suites: Well-established test problems that evaluate fundamental EMTO capabilities [3]

- Constrained many-objective optimization problems (CMaOPs): Complex problems with multiple constraints, representative of real-world challenges like reservoir system scheduling [5]

Experimental protocols generally compare proposed EMTO algorithms against multiple baseline approaches, including:

- Traditional single-task evolutionary algorithms optimized independently for each task [3]

- Classic EMTO algorithms such as MFEA [3]

- State-of-the-art EMTO algorithms representing recent advancements [3]

Performance Metrics and Assessment Criteria

Comprehensive evaluation of EMTO performance employs multiple quantitative metrics:

- Inverted Generational Distance (IGD): Measures convergence and diversity as the average distance from each point on the true (reference) Pareto front to its nearest obtained solution; lower values are better [5]

- Hypervolume (HV): Assesses the volume of objective space dominated by the obtained solutions relative to a reference point, providing a combined measure of convergence and diversity; higher values are better [5]

- Statistical significance tests: Determine whether performance differences between algorithms are statistically significant [3]

The evaluation typically examines both search efficiency (convergence speed) and effectiveness (solution quality) across multiple independent runs to account for algorithmic stochasticity [3] [4].
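To make the two Pareto-based metrics concrete, the sketch below computes IGD and a two-objective hypervolume for minimization problems. The function names are illustrative, the hypervolume routine handles only the two-objective case, and the reference front and reference point are assumed to be supplied by the experimenter.

```python
import math

def igd(reference_front, obtained):
    """Mean Euclidean distance from each reference point to its nearest
    obtained solution (lower is better)."""
    total = sum(min(math.dist(r, s) for s in obtained) for r in reference_front)
    return total / len(reference_front)

def hypervolume_2d(points, ref):
    """Area dominated by `points` and bounded by the reference point `ref`
    for a two-objective minimization problem (higher is better)."""
    # Only points that are strictly better than `ref` in both objectives count.
    candidates = sorted(p for p in points if p[0] < ref[0] and p[1] < ref[1])
    area = 0.0
    best_f2 = ref[1]
    for f1, f2 in candidates:
        if f2 < best_f2:
            # Add the horizontal slab newly dominated by this point.
            area += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return area
```

For example, the front `[(1, 3), (2, 2), (3, 1)]` with reference point `(4, 4)` dominates three slabs of area 3, 2, and 1, giving a hypervolume of 6.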

The Researcher's Toolkit: Essential Components for EMTO Implementation

Algorithmic Components and Research Reagents

Successful implementation of EMTO requires several key components, which function as "research reagents" in experimental setups:

Table 2: Essential Research Components for EMTO Implementation

| Component | Function | Examples/Alternatives |
|---|---|---|
| Base Optimizer | Provides fundamental search mechanism | Genetic Algorithm (GA), Particle Swarm Optimization (PSO), Differential Evolution (DE) [3] |
| Knowledge Transfer Mechanism | Facilitates exchange of information between tasks | Vertical crossover, solution mapping, domain adaptation, SETA, LLM-generated transfer models [3] [4] [2] |
| Similarity Measurement | Quantifies inter-task relationships for transfer control | Fitness ranking correlation, evolutionary direction consistency, distribution overlap [3] [2] |
| Benchmark Problems | Enable standardized algorithm evaluation | Multitasking benchmark suites, constrained many-objective optimization problems [3] [5] |
| Performance Metrics | Quantify algorithm effectiveness | IGD, Hypervolume, convergence rate analysis [5] |
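As one illustration of the "fitness ranking correlation" entry in the table, the sketch below computes a Spearman rank correlation between the fitness values that two tasks assign to the same set of candidate solutions. This is an assumed, simplified reading of that similarity measure: the helper names are hypothetical, ties are not handled, and the candidates are presumed to live in a shared (for example, normalized) search space.

```python
def ranks(values):
    """Rank positions (0 = smallest). Ties are broken by input order."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, index in enumerate(order):
        result[index] = rank
    return result

def spearman(fitness_a, fitness_b):
    """Spearman rank correlation for tie-free data, in [-1, 1]."""
    n = len(fitness_a)
    rank_a, rank_b = ranks(fitness_a), ranks(fitness_b)
    d_squared = sum((x - y) ** 2 for x, y in zip(rank_a, rank_b))
    return 1 - 6 * d_squared / (n * (n * n - 1))
```

A value near 1 suggests that solutions which are good for one task also tend to be good for the other, which is the situation in which transfer is most likely to help; values near 0 or below are a warning sign for negative transfer.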

Advanced Knowledge Transfer Strategies

Recent advancements in knowledge transfer strategies have significantly enhanced EMTO capabilities. The following diagram illustrates the taxonomy of knowledge transfer methods in EMTO:

Figure 2: Knowledge Transfer Methods Taxonomy in EMTO. This diagram categorizes approaches based on transfer timing and mechanism.

The SETA-MFEA algorithm represents a significant advancement in handling dissimilar tasks through adaptive domain decomposition [3]. This approach:

- Decomposes each task into multiple subdomains using density-based clustering (Affinity Propagation Clustering)

- Establishes precise inter-subdomain mappings by determining and aligning evolutionary trends

- Enables various subpopulations to share consistent evolutionary trends through SETA-based inter-subdomain crossovers [3]

Another emerging approach leverages Large Language Models to autonomously design knowledge transfer models [4]. This LLM-based framework:

- Searches for high-performing knowledge transfer models by optimizing both transfer effectiveness and efficiency

- Employs few-shot chain-of-thought prompting to enhance model quality

- Generates transfer models that outperform existing hand-crafted methods in certain scenarios [4]

Applications in Drug Development and Regulatory Science

Although the sources cited here do not report direct applications of EMTO in drug development, the methodology offers significant potential for addressing complex challenges in pharmaceutical research and regulatory science. The principles of multi-task optimization align well with several drug development challenges:

- Biomarker development: EMTO could optimize multiple biomarker qualification tasks simultaneously, potentially accelerating the establishment of evidentiary standards and qualification pathways [6]

- Clinical trial design and analysis: The capability to balance multiple endpoints and integrate data longitudinally over time aligns with specialized statistical techniques needed in clinical trials [6]

- Postmarket safety evaluation: EMTO's ability to handle multiple objectives could enhance safety signal detection and evaluation in complex real-world data environments [6]

- Pediatric drug development: Formulation optimization balancing multiple patient-centric parameters could benefit from EMTO approaches [7]

The application of EMTO to reservoir scheduling optimization demonstrates its capability to handle complex many-objective problems with multiple constraints [5], suggesting similar potential for pharmaceutical manufacturing process optimization and quality by design (QbD) implementations in drug development [7].

Evolutionary Multitasking Optimization represents a significant advancement in evolutionary computation that leverages knowledge transfer across multiple simultaneous optimization tasks. Its ability to exploit synergies between related problems enables improved convergence speed and solution quality compared to traditional single-task approaches. As research in EMTO continues to evolve, key future directions include:

- Developing more sophisticated similarity measures and transfer mechanisms to further reduce negative transfer [1] [2]

- Enhancing scalability for many-tasking environments with numerous simultaneous optimization tasks [1]

- Expanding application domains, particularly in complex pharmaceutical and healthcare optimization challenges [6] [7]

- Integrating emerging artificial intelligence approaches, including LLMs, for autonomous algorithm design [4]

For drug development professionals and researchers, EMTO offers a powerful framework for addressing multifaceted optimization challenges that involve balancing multiple competing objectives—a common scenario in pharmaceutical research, development, and regulatory decision-making.

Mathematical Formulation of Multitasking Optimization Problems (MTOPs)

Multitasking Optimization (MTO) represents a paradigm shift in computational problem-solving that aims to simultaneously optimize multiple tasks, leveraging their potential inter-relationships to achieve better performance than optimizing each task in isolation [8]. The fundamental premise of MTO is that in real-world scenarios, many optimization problems possess intrinsic connections, and harnessing the information between these interrelated tasks can lead to more efficient and effective solutions [8]. This approach stands in contrast to traditional single-task optimization by exploiting the inherent parallelism of population-based algorithms to solve a collection of tasks concurrently, thereby creating pathways for knowledge transfer between them [8].

Within the broader framework of evolutionary multitasking research, MTO addresses the challenge of optimizing multiple tasks simultaneously under the assumption that similarities exist between problems, whether in shared optimal domains, landscape trends, or other characteristics [8]. This approach has found applications across diverse domains including high-dimensional function optimization, large-scale multi-objective optimization, constraint optimization, engineering design, vehicle routing, power system scheduling, and drug development [8]. For researchers in drug development, MTO offers promising methodologies for addressing complex, multi-faceted problems where multiple biological targets, compound properties, or efficacy parameters must be optimized simultaneously.

Mathematical Formulation

The mathematical foundation of Multitask Optimization Problems establishes a formal structure for concurrent problem-solving. In a scenario requiring simultaneous optimization of K tasks, where each task represents a minimization problem, MTO can be formally defined as follows [8]:

Let \( T_i \) (where \( i = 1, 2, \ldots, K \)) denote the i-th task. A Multitask Optimization Problem is then defined by:

\[ \{x_1^*, x_2^*, \ldots, x_K^*\} = \arg\min \{f_1(x_1), f_2(x_2), \ldots, f_K(x_K)\} \]

\[ x_i \in \Omega_i, \quad i = 1, 2, \ldots, K \]

Where:

- \( f_i \) represents the fitness function of task \( T_i \)

- \( x_i \) denotes the solution of task \( T_i \)

- \( \Omega_i \) represents the search space for task \( T_i \)

- \( x_i^* \) is the optimal solution for task \( T_i \)

This formulation emphasizes that MTO seeks to find optimal solutions for all K tasks simultaneously, rather than sequentially. The key challenge lies in effectively exploring the product space \( \Omega_1 \times \Omega_2 \times \cdots \times \Omega_K \) while leveraging potential synergies between tasks.
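The formulation can be made concrete with a small Python sketch in which each task carries its own objective and its own box-shaped search space, and the result is one solution per task. The `Task` class, the toy objectives, and the use of plain random search are all illustrative assumptions; the random search here is a no-transfer baseline, not a multitasking algorithm.

```python
import random
from dataclasses import dataclass
from typing import Callable, List, Tuple

@dataclass
class Task:
    objective: Callable[[List[float]], float]  # f_i, to be minimized
    bounds: List[Tuple[float, float]]          # box search space Omega_i

def random_search(task, evaluations, rng):
    """Baseline: sample Omega_i uniformly and keep the best point found."""
    best_x, best_f = None, float("inf")
    for _ in range(evaluations):
        x = [rng.uniform(lo, hi) for lo, hi in task.bounds]
        f = task.objective(x)
        if f < best_f:
            best_x, best_f = x, f
    return best_x, best_f

# Two toy tasks (K = 2) with different dimensionalities and bounds.
tasks = [
    Task(lambda x: sum(v * v for v in x), [(-5.0, 5.0)] * 2),
    Task(lambda x: sum((v - 1.0) ** 2 for v in x), [(-10.0, 10.0)] * 3),
]

rng = random.Random(42)
# One (x_i*, f_i(x_i*)) pair per task: the set {x_1*, ..., x_K*}.
solutions = [random_search(task, 2000, rng) for task in tasks]
```

Note that the tasks do not need to share a dimensionality or bounds; a multitasking algorithm adds value over this baseline only through the knowledge it moves between the per-task searches.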

Relationship to Multi-Objective Optimization

It is crucial to distinguish Multitasking Optimization from Multi-Objective Optimization, which addresses problems with multiple conflicting objectives within a single task [9]. While Multi-Objective Optimization deals with vector-valued objective functions and seeks Pareto-optimal solutions for one problem with multiple criteria [9], Multitasking Optimization simultaneously handles multiple distinct tasks, each potentially with their own single or multiple objectives [8] [10]. The table below clarifies these distinctions:

Table 1: Comparison of Optimization Paradigms

| Feature | Single-Task Optimization | Multi-Objective Optimization | Multitask Optimization |
|---|---|---|---|
| Number of Tasks | One | One | Multiple (K ≥ 2) |
| Number of Objectives | Single | Multiple | Each task can have single or multiple objectives |
| Solution Approach | Find global optimum | Find Pareto-optimal front | Find optima for all tasks simultaneously |
| Knowledge Transfer | Not applicable | Not applicable | Explicit or implicit transfer between tasks |
| Typical Applications | Standard engineering problems | Design with conflicting criteria | Complex systems with related subproblems |

Algorithmic Frameworks and Experimental Protocols

Multitask Snake Optimization (MTSO) Algorithm

The Multitask Snake Optimization algorithm represents a recent advancement in MTO, building upon the bio-inspired Snake Optimization algorithm [8]. The MTSO operates through two distinct phases:

Phase 1: Independent Optimization

- Assign separate sub-populations to each task

- Independently optimize each task using the standard SO algorithm

- Select top 20% of elite individuals based on fitness

- Store elite individuals in a dedicated repository
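The elite-selection step of Phase 1 can be sketched as follows, assuming minimization. The function name and the choice to round the elite count to the nearest integer (with at least one elite) are assumptions; the source specifies only the 20% fraction.

```python
def select_elites(population, fitness, fraction=0.2):
    """Return the best `fraction` of individuals, best first (minimization)."""
    # Rounding rule is an assumption; at least one elite is always kept.
    n_elite = max(1, round(fraction * len(population)))
    order = sorted(range(len(population)), key=lambda i: fitness[i])
    return [population[i] for i in order[:n_elite]]
```

In a multitask setting this would be called once per task sub-population, with the returned individuals copied into that task's elite repository.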

Phase 2: Knowledge Transfer

- Generate random numbers \( r_1 \) and \( r_2 \)

- Determine the transfer mechanism by comparing them with the thresholds RMP and \( R_1 \):

  - If \( r_1 < \text{RMP} \) and \( r_2 < R_1 \): Inter-task knowledge transfer

  - If \( r_1 < \text{RMP} \) and \( r_2 \geq R_1 \): Self-knowledge transfer via random perturbation

  - If \( r_1 \geq \text{RMP} \): Reverse learning through lens imaging strategy

The algorithm employs normalization before knowledge transfer to standardize individuals across different search spaces [8]:

\[ X_{ij}^* = \frac{X_{ij} - Lb_j}{Ub_j - Lb_j} \]

Where \( X_{ij}^* \) and \( X_{ij} \) are the normalized and original j-th dimension of the i-th individual, and \( Lb_j \) and \( Ub_j \) are the lower and upper bounds of the j-th dimension, respectively [8].
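The normalization step maps each dimension of an individual to [0, 1] using that task's bounds. A minimal sketch follows; `denormalize` is the inverse mapping and is an assumption added here to show how a normalized individual could be placed into another task's search space, since the source states only the forward formula.

```python
def normalize(x, lower, upper):
    """Map each dimension of x from [lower_j, upper_j] to [0, 1]."""
    return [(xj - lb) / (ub - lb) for xj, lb, ub in zip(x, lower, upper)]

def denormalize(x_norm, lower, upper):
    """Inverse mapping from [0, 1] back to [lower_j, upper_j]."""
    return [lb + xn * (ub - lb) for xn, lb, ub in zip(x_norm, lower, upper)]

# An individual from a source task with bounds [-10, 10] x [0, 10] ...
unit = normalize([0.0, 5.0], [-10.0, 0.0], [10.0, 10.0])
# ... expressed in a target task with bounds [0, 4] x [0, 2].
in_target = denormalize(unit, [0.0, 0.0], [4.0, 2.0])
```

Here the source individual sits at the midpoint of both of its dimensions, so it maps to the midpoint of the target task's box.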

Table 2: MTSO Parameter Configuration

| Parameter | Symbol | Value | Description |
|---|---|---|---|
| Knowledge Transfer Probability | RMP | 0.5 | Controls likelihood of cross-task transfer |
| Elite Selection Probability | R1 | 0.95 | Probability of selecting elite individuals for transfer |
| Elite Fraction | - | 0.2 | Top 20% of individuals considered elite |
| Normalization | - | Required | Pre-processing step before knowledge transfer |
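The Phase 2 branching rule, combined with the parameter values in the table, can be written as a small decision function. The function and label names are illustrative, and the sketch assumes that the two random numbers are drawn independently and uniformly from [0, 1).

```python
import random

def choose_transfer(r1, r2, rmp=0.5, elite_threshold=0.95):
    """Pick the Phase 2 mechanism from two random numbers in [0, 1).

    `rmp` is the knowledge transfer probability (RMP) and
    `elite_threshold` is the elite selection probability (R1).
    """
    if r1 < rmp:
        if r2 < elite_threshold:
            return "inter_task"          # transfer from another task's elites
        return "self_perturbation"       # random perturbation within the task
    return "lens_imaging"                # reverse learning

rng = random.Random(1)
decision = choose_transfer(rng.random(), rng.random())
```

Under the uniformity assumption and the default values, inter-task transfer is chosen with probability 0.5 × 0.95 = 0.475, self-knowledge transfer with probability 0.5 × 0.05 = 0.025, and lens-imaging reverse learning with probability 0.5.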

Knowledge Transfer Mechanisms

Effective knowledge transfer constitutes the core of successful multitask optimization. The MTSO algorithm implements several transfer mechanisms:

Inter-Task Knowledge Transfer

- Selects a random source task different from the target task

- Transfers knowledge from elite individuals of source to target task

- Facilitates cross-task learning and convergence acceleration

Self-Knowledge Transfer

- Applies random perturbation to worst-performing individuals

- Maintains diversity within task sub-populations

- Prevents premature convergence

Reverse Learning through Lens Imaging

- Generates reverse individuals of target task population

- Evaluates and selects best-performing individuals

- Enhances exploration of search space
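The source does not state the formula MTSO uses for its lens imaging strategy. As a hedged illustration, the sketch below uses a formulation that appears widely in the lens-imaging opposition-based learning literature, in which a scaling factor k controls how strongly the reverse point is contracted toward the center of the search interval. The function name and the default value of k are assumptions.

```python
def lens_imaging_opposite(x, lower, upper, k=1.0):
    """Reverse individual under a commonly used lens-imaging rule:

        x*_j = (lb_j + ub_j) / 2 + (lb_j + ub_j) / (2k) - x_j / k

    With k = 1 this reduces to classic opposition, lb_j + ub_j - x_j.
    This is an assumed formulation, not necessarily the one used by MTSO.
    """
    return [
        (lb + ub) / 2 + (lb + ub) / (2 * k) - xj / k
        for xj, lb, ub in zip(x, lower, upper)
    ]
```

A typical use would generate the reverse individual for each member of the target population, evaluate both, and keep whichever has the better fitness.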

Learning to Transfer (L2T) Framework

Recent research has introduced the Learning to Transfer framework to address adaptability challenges in implicit Evolutionary Multitasking [11]. This novel approach formulates knowledge transfer as a sequence of strategic decisions made by a learning agent within the EMT process [11]. Key components include:

- Action Formulation: Deciding when and how to transfer knowledge

- State Representation: Informative features capturing evolutionary states

- Reward Formulation: Balancing convergence and transfer efficiency gains

- Actor-Critic Network: Learned via proximal policy optimization

The L2T framework demonstrates marked improvement in adaptability and performance across a wide spectrum of unseen MTOPs, particularly for problems with diverse inter-task relationships, function classes, and task distributions [11].

Visualization of Multitask Optimization Framework

Figure: MTOP Architecture and Knowledge Transfer Workflow. Core architecture and workflow of a typical multitask optimization algorithm.

Figure: Knowledge Transfer Decision Process in MTSO. Flowchart of the knowledge transfer decision process within the MTSO algorithm.

Successful implementation of multitasking optimization requires specific computational tools and resources. The following table outlines essential components for experimental work in this field:

Table 3: Essential Research Reagents and Computational Resources

| Resource Category | Specific Tool/Platform | Purpose/Function | Implementation Notes |
|---|---|---|---|
| Algorithm Frameworks | Multifactorial Evolutionary Algorithm (MFEA) | Foundational MTO framework | Base implementation for comparative studies [10] |
| | Multi-Task Snake Optimization (MTSO) | Bio-inspired MTO algorithm | Recent approach with promising performance [8] |
| | Learning to Transfer (L2T) | Adaptive knowledge transfer | RL-based framework for transfer policy learning [11] |
| Benchmark Problems | Synthetic test functions | Algorithm validation | Controlled environments with known optima [8] |
| | Planar Kinematic Arm Control | Real-world validation | Five-task and 10-task versions available [8] |
| | Robot Gripper Design | Engineering application | Multitask design optimization [8] |
| | Car Side-Impact Design | Safety engineering problem | Constrained multitask optimization [8] |
| Software Libraries | MATLAB/Python optimization tools | Algorithm implementation | Flexible environments for MTO development [10] |
| | GPU acceleration frameworks | Large-scale MTO | Essential for high-dimensional problems [10] |
| Evaluation Metrics | Convergence speed | Performance measurement | Iterations to reach target solution quality [8] |
| | Solution accuracy | Quality assessment | Deviation from known optima [8] |
| | Transfer efficiency | Knowledge utility | Improvement attributable to cross-task transfer [11] |

Application Protocols for Drug Development

For researchers in pharmaceutical sciences, implementing multitasking optimization requires specific methodological considerations:

Protocol for Multi-Objective Drug Design Optimization

Objective: Simultaneously optimize multiple drug properties including efficacy, selectivity, and pharmacokinetic parameters.

Experimental Setup:

- Task Definition: Define each optimization target as a separate task (e.g., Task 1: binding affinity; Task 2: selectivity ratio; Task 3: metabolic stability)

- Representation: Encode molecular structures as numerical representations suitable for evolutionary algorithms

- Fitness Functions: Define objective functions for each task incorporating experimental data and predictive models

- Algorithm Configuration: Implement MTSO with domain-specific knowledge transfer mechanisms

Execution Parameters:

- Population size: 100-200 individuals per task

- Knowledge transfer probability (RMP): 0.3-0.7 depending on task relatedness

- Termination criterion: Convergence stability or maximum iterations (1000-5000)

- Evaluation: Cross-validation with holdout molecular sets
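One way to keep these execution parameters explicit and checkable is a small configuration object. The class and field names below are hypothetical and do not belong to any existing library; the accepted ranges are simply the ones listed in the protocol above.

```python
from dataclasses import dataclass
from typing import List

@dataclass(frozen=True)
class ProtocolConfig:
    population_per_task: int = 100   # protocol range: 100-200
    rmp: float = 0.5                 # protocol range: 0.3-0.7
    max_iterations: int = 1000       # protocol range: 1000-5000

    def out_of_range(self) -> List[str]:
        """Names of the fields that fall outside the protocol's ranges."""
        issues = []
        if not 100 <= self.population_per_task <= 200:
            issues.append("population_per_task")
        if not 0.3 <= self.rmp <= 0.7:
            issues.append("rmp")
        if not 1000 <= self.max_iterations <= 5000:
            issues.append("max_iterations")
        return issues
```

Reporting out-of-range fields instead of raising an error leaves room for deliberate deviations, for example a lower RMP when the tasks are known to be only weakly related.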

Validation:

- Compare against single-task optimization baselines

- Statistical significance testing of performance differences

- Analysis of transferred solutions for chemical feasibility

Protocol for Experimental Parameter Optimization

Objective: Optimize multiple experimental parameters simultaneously in high-throughput screening or process optimization.

Implementation Guidelines:

- Task Formulation: Define each experimental outcome as a separate optimization task

- Parameter Encoding: Represent experimental conditions in search space compatible with optimization algorithm

- Constraint Handling: Incorporate laboratory feasibility constraints directly in search space definition

- Knowledge Transfer: Enable sharing of promising parameter combinations between related experimental setups

Data Collection:

- Standardized recording of all experimental parameters and outcomes

- Explicit documentation of reagent identifiers and equipment specifications [12]

- Comprehensive metadata following FAIR principles [12]

Quality Control:

- Implementation of negative and positive controls within experimental design

- Replication of promising parameter sets identified through knowledge transfer

- Blind validation of optimized parameters by independent researchers

The mathematical formulation of Multitasking Optimization Problems provides a powerful framework for addressing complex, interconnected optimization challenges in drug development and scientific research. By enabling simultaneous optimization of multiple tasks with controlled knowledge transfer, MTO approaches like the Multitask Snake Optimization algorithm and Learning to Transfer framework offer significant advantages over traditional sequential optimization methods. The experimental protocols and visualization methodologies presented in this work provide researchers with practical tools for implementing these advanced optimization techniques in their scientific workflows, potentially accelerating discovery and development processes across pharmaceutical and biotechnology domains.

The Evolutionary Multitasking Paradigm vs. Traditional Evolutionary Algorithms

Evolutionary Algorithms (EAs) are population-based metaheuristic optimization methods inspired by natural processes of species reproduction and evolution [3] [13]. They perform global optimization without relying on problem mathematical properties, making them suitable for complex, non-convex, and nonlinear problems [1]. Traditional EAs are typically limited to handling a single optimization task at a time [3].

Evolutionary Multitasking Optimization (EMTO) represents a paradigm shift that enables simultaneous optimization of multiple tasks while leveraging potential complementarities through inter-task knowledge transfer [1] [14]. This approach mimics human ability to apply knowledge from previous experiences to new related problems, potentially accelerating convergence and improving solution quality across all optimized tasks [1].

This technical guide provides a comprehensive analysis of both paradigms, focusing on their fundamental mechanisms, methodological implementations, and practical applications in research and industrial contexts.

Fundamental Principles and Comparative Analysis

Core Architecture and Operational Mechanisms

Traditional Evolutionary Algorithms follow a well-established iterative process [13]:

- Randomly generate initial population

- Evaluate fitness of each individual

- Select parent individuals based on fitness

- Create offspring through recombination and mutation

- Select individuals for next generation

- Repeat until termination criteria met
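The six steps above map directly onto a short single-task loop. The sketch below is a generic illustration, not a specific published algorithm: binary tournament selection, averaging recombination with Gaussian mutation, and elitist survivor selection are placeholder operator choices, and `sphere` is a toy objective.

```python
import random

def sphere(x):
    """Toy objective with its minimum of 0 at the origin."""
    return sum(v * v for v in x)

def evolve(objective, dim, bounds, pop_size=30, generations=100, seed=0):
    rng = random.Random(seed)
    lo, hi = bounds
    # Step 1: randomly generate the initial population.
    pop = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(pop_size)]
    for _ in range(generations):  # Step 6: repeat until the budget is spent.
        # Step 2: evaluate the fitness of each individual.
        fit = [objective(ind) for ind in pop]

        # Step 3: select parents by binary tournament.
        def tournament():
            a, b = rng.randrange(pop_size), rng.randrange(pop_size)
            return pop[a] if fit[a] <= fit[b] else pop[b]

        # Step 4: create offspring through recombination and mutation.
        offspring = []
        while len(offspring) < pop_size:
            p1, p2 = tournament(), tournament()
            child = [(a + b) / 2 + rng.gauss(0.0, 0.1) for a, b in zip(p1, p2)]
            offspring.append([min(hi, max(lo, v)) for v in child])

        # Step 5: keep the best of parents plus offspring (elitist survival).
        pop = sorted(pop + offspring, key=objective)[:pop_size]
    return pop[0], objective(pop[0])

best_x, best_f = evolve(sphere, dim=3, bounds=(-5.0, 5.0))
```

Everything in this loop concerns a single fitness landscape; a multitasking variant would run several such searches together and add a transfer step between them.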

This single-task approach evolves populations within isolated fitness landscapes, potentially overlooking valuable synergies when solving multiple related problems [1].

Evolutionary Multitasking introduces cross-task optimization through several implementations. The Multifactorial Evolutionary Algorithm (MFEA), a pioneering EMTO approach, creates a multi-task environment where a single population evolves to solve multiple tasks simultaneously [3] [1]. Each task influences evolution as a unique cultural factor, with knowledge transfer occurring through assortative mating and selective imitation mechanisms [1].

Multi-population based EMT establishes separate populations for each task, facilitating knowledge transfer through elite individual migration [3]. Both architectures aim to exploit implicit parallelism in population-based search to enable complementary knowledge exchange [1].

Comparative Framework: Single-Task vs. Multitasking Approaches

Table 1: Comparative analysis of traditional EA vs. evolutionary multitasking paradigms

| Aspect | Traditional Evolutionary Algorithms | Evolutionary Multitasking |
|---|---|---|
| Optimization Scope | Single task per run | Multiple tasks simultaneously |
| Knowledge Transfer | None between independent runs | Implicit or explicit transfer through cross-task operators |
| Population Structure | Single population | Single unified or multiple interacting populations |
| Algorithmic Foundation | Biological evolution principles | Evolution + transfer learning/multitask learning |
| Key Mechanisms | Selection, recombination, mutation | Skill factor, assortative mating, selective imitation, domain adaptation |
| Solution Output | Best solution for one problem | Best solutions for all optimized tasks |
| Theoretical Basis | Population genetics, optimization theory | Evolutionary computation, transfer learning |
| Implementation Examples | Genetic Algorithms, Evolution Strategy, Genetic Programming | MFEA, MFPSO, EMFF, SETA-MFEA |

Table 2: Knowledge transfer mechanisms in evolutionary multitasking

| Transfer Type | Method | Implementation | Benefits |
|---|---|---|---|
| Implicit | Assortative mating | Cross-task reproduction based on random mating probability | Simple implementation, automatic transfer |
| Explicit | Domain adaptation | Learning transformation mappings between task search spaces | Enhanced performance on dissimilar tasks |
| Individual-based | Linearized Domain Adaptation (LDA) | Fitness-ranked sample pairing with linear transformation | Precise point-to-point transfer |
| Distribution-based | Subspace alignment | Principal Component Analysis with Bregman divergence minimization | Captures population-level characteristics |
| Trend-based | Subdomain Evolutionary Trend Alignment (SETA) | Aligns evolutionary directions of corresponding subpopulations | Consistent evolutionary trajectory across tasks |

| Trend-based | Subdomain Evolutionary Trend Alignment (SETA) | Aligns evolutionary directions of corresponding subpopulations | Consistent evolutionary trajectory across tasks |

Advanced Methodologies in Evolutionary Multitasking

Domain Adaptation Techniques for Heterogeneous Tasks

A significant challenge in EMTO emerges when optimized tasks share little similarity, potentially leading to negative knowledge transfer that degrades performance [3]. Domain adaptation methods address this by actively enhancing inter-task similarity through transformation mappings.

The Subdomain Evolutionary Trend Alignment (SETA) method combines the following components [3]:

- Adaptive task decomposition using Affinity Propagation Clustering

- Subdomain formation with relatively simple fitness landscapes

- Evolutionary trend alignment through inter-subdomain mapping

- SETA-based knowledge transfer enabling intra- and inter-task crossovers

This methodology enables precise knowledge transfer even for dissimilar tasks by operating at subdomain level rather than treating tasks as indivisible domains [3].

Explicit Evolutionary Frameworks with Feature Fusion

The Explicit Evolutionary Framework with Multitasking Feature Fusion (EMFF) addresses limitations in existing explicit EMT algorithms that often overlook inter-task correlation of feature information [15]. EMFF incorporates:

- Multitasking feature fusion mechanism that explores potential connections among feature information from different tasks

- Transfer Individual Derivation (TID) strategy to ensure rapid evolution of critical knowledge

- Comprehensive components designed for industrial applications like aluminum electrolysis process optimization [15]

Task Relevance Evaluation in Feature Selection

For high-dimensional feature selection problems, task relevance evaluation represents a crucial advancement beyond traditional feature relevance approaches [16]. This methodology includes:

- Multi-task generation strategy using Relief-F algorithm for feature weight evaluation and A-Res algorithm for sampling subtasks

- Task relevance metric based on average crossover ratio, formulated as the heaviest k-subgraph problem

- Guiding vector-based knowledge transfer with adaptive convergence factor to balance exploration and exploitation

- Optimal task crossover ratio determination (experimentally established at approximately 0.25) [16]
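The multi-task generation step can be illustrated with A-Res, the weighted reservoir sampling scheme of Efraimidis and Spirakis, in which each item receives the key u^(1/w) and the k largest keys are kept. The sketch below is a minimal illustration under stated assumptions, not the procedure of [16]: the feature weights are hard-coded hypothetical values standing in for Relief-F output, non-positive weights are simply excluded, and the names `a_res_sample` and `generate_subtasks` are invented for this example.

```python
import heapq
import random


def a_res_sample(weights, k, rng):
    """Sample k distinct indices without replacement, favoring large weights.

    A-Res gives each item the key u ** (1 / w), with u drawn uniformly,
    and keeps the k items with the largest keys.
    """
    heap = []  # min-heap of (key, index); the smallest key is evicted first
    for idx, w in enumerate(weights):
        if w <= 0:
            continue  # assumption: features with non-positive weight are never sampled
        key = rng.random() ** (1.0 / w)
        if len(heap) < k:
            heapq.heappush(heap, (key, idx))
        elif key > heap[0][0]:
            heapq.heapreplace(heap, (key, idx))
    return sorted(idx for _, idx in heap)


def generate_subtasks(weights, n_tasks, subset_size, seed=0):
    """Build n_tasks feature subsets, each one an independent A-Res draw."""
    rng = random.Random(seed)
    return [a_res_sample(weights, subset_size, rng) for _ in range(n_tasks)]


# Hypothetical Relief-F weights for 8 features (illustrative values only).
relief_weights = [0.90, 0.05, 0.40, 0.00, 0.75, 0.10, 0.60, 0.02]
subtasks = generate_subtasks(relief_weights, n_tasks=3, subset_size=4)
```

Each sub-task is a feature subset biased toward highly weighted features, while the randomness keeps the sub-tasks different enough for transfer between them to be informative.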

Experimental Protocols and Benchmarking

Standardized Evaluation Methodologies

Comprehensive performance validation of EMT algorithms employs established experimental protocols [3] [16]:

Benchmark Suites:

- Single objective multitasking/many-tasking benchmark suites (e.g., from Da et al., 2016 and Feng et al., 2020)

- High-dimensional feature selection datasets (21 benchmark datasets for classification)

- Real-world applications (aluminum electrolysis, hyperspectral image unmixing)

Performance Metrics:

- Convergence speed and solution quality across all tasks

- Computational efficiency and scalability

- Effectiveness of knowledge transfer (positive vs. negative transfer)

- Robustness to task heterogeneity and dimensionality

Comparative Baselines:

- Single-task evolutionary algorithms optimized independently

- Classic EMT algorithms (MFEA, MFPSO)

- State-of-the-art EMT algorithms (various recent proposals)

Workflow for Evolutionary Multitasking Experiments

EMT Algorithm Component Architecture

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential research reagents for evolutionary multitasking experiments

| Research Reagent | Function/Purpose | Implementation Examples |
|---|---|---|
| Benchmark Suites | Algorithm performance validation and comparison | Single objective multitasking benchmarks, many-tasking problems [3] |
| Fitness Functions | Quality assessment of candidate solutions | Problem-specific objective functions, multi-task fitness formulations [13] |
| Knowledge Transfer Operators | Enable cross-task genetic material exchange | Inter-task crossover, mapping-based transfer, trend alignment [3] |
| Domain Adaptation Techniques | Enhance similarity between heterogeneous tasks | Linearized Domain Adaptation (LDA), subspace alignment, SETA [3] |
| Task Similarity Metrics | Quantify inter-task relationships for transfer control | Fitness-based ranking correlation, evolutionary direction consistency [16] |
| Resource Allocation Mechanisms | Distribute computational resources among tasks | Adaptive selection pressure, population sizing per task [1] |

Applications and Performance Analysis

Real-World Implementation Domains

Evolutionary multitasking has demonstrated significant success across diverse application domains:

Industrial Process Optimization:

- Aluminum electrolysis cell parameter optimization using EMFF framework [15]

- Complex scheduling and engineering design problems [1]

Data Science and Machine Learning:

- High-dimensional feature selection for classification [16]

- Sparse reconstruction and hyperspectral image unmixing [17]

Complex Systems:

Performance Analysis and Comparative Results

Experimental studies demonstrate EMTO's capabilities:

Convergence Acceleration: EMTO algorithms typically converge faster than single-task EAs, particularly when tasks share beneficial complementarities [1]. The SETA-MFEA algorithm shows competitive performance against single-task EAs and six classic/state-of-the-art EMT algorithms across multiple benchmarks [3].

Solution Quality Enhancement: High-dimensional feature selection with task relevance evaluation outperforms various state-of-the-art FS methods across 21 high-dimensional datasets [16]. Knowledge transfer through appropriate mechanisms improves solution quality beyond single-task optimization.

Heterogeneous Task Optimization: Advanced domain adaptation techniques like SETA enable effective knowledge transfer even for dissimilar tasks through subdomain alignment and evolutionary trend consistency [3].

The evolutionary multitasking paradigm represents a significant advancement beyond traditional evolutionary algorithms by enabling simultaneous optimization of multiple tasks with complementary knowledge transfer. While traditional EAs remain effective for isolated problems, EMTO provides powerful mechanisms for exploiting synergies across related tasks, particularly in complex, high-dimensional optimization scenarios.

Methodological innovations in domain adaptation, task relevance evaluation, and explicit feature fusion continue to expand EMTO's applicability to increasingly heterogeneous tasks. The experimental protocols, benchmarking methodologies, and research reagents outlined in this guide provide foundational resources for researchers and practitioners implementing these advanced optimization techniques.

As computational capabilities advance and theoretical understanding deepens, evolutionary multitasking is positioned to address increasingly complex real-world optimization challenges across scientific and industrial domains.

Evolutionary Multitasking (EMT) represents a paradigm shift in evolutionary computation, enabling the simultaneous solution of multiple optimization tasks by harnessing their underlying synergies [19]. Inspired by the human brain's ability to process concurrent tasks, EMT moves beyond traditional single-task optimization to exploit potential complementarities between problems, often leading to accelerated convergence and improved solution quality [20] [21]. Within this framework, three fundamental mechanisms form the basic architecture of multitasking evolutionary algorithms: Skill Factors enable individuals to specialize in specific tasks, Random Mating Probability (RMP) governs the frequency of cross-task genetic exchange, and Cultural Transmission provides a structured approach to knowledge transfer across tasks [19] [22] [23]. This technical guide examines these core concepts within the broader thesis that effective evolutionary multitasking research requires sophisticated mechanisms for managing task specialization, genetic exchange, and knowledge transfer to balance exploration and exploitation while mitigating negative transfer between unrelated tasks.

Conceptual Foundations

Skill Factors: Mechanism for Task Specialization

In evolutionary multitasking environments, Skill Factors provide a crucial mechanism for assigning and tracking task specialization within a unified population. Formally, the Skill Factor (τ) of an individual is defined as the specific optimization task on which the individual demonstrates best performance, determined through factorial rank comparisons [19] [21]. This concept enables the implementation of implicit genetic transfer while maintaining population diversity across tasks.

The computational determination of Skill Factors follows a rigorous four-step process:

- Factorial Cost Calculation: For each individual pi and task Tj, compute αij = γδij + Fij, where Fij is the objective value, δij is the constraint violation, and γ is a large penalizing multiplier [19] [21].

- Factorial Ranking: Individuals are sorted in ascending order based on factorial cost for each task, with the index in this sorted list representing the factorial rank rij [19] [21].

- Skill Factor Assignment: Each individual is assigned τi = argmin{rij}, identifying the task where it performs most effectively [19] [21].

- Scalar Fitness Assignment: Compute a unified fitness measure βi = max{1/ri1,…,1/riK} to enable cross-task performance comparisons [19] [21].

This mechanism allows a single population to maintain specialized subgroups for different tasks while operating within a unified search space, creating opportunities for beneficial genetic exchange between individuals specializing in different tasks [23].
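The four steps above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation from [19] [21]: the function names, the penalty value `gamma = 1e6`, and the deterministic tie-breaking (ties fall to the lower index) are assumptions made for the example.

```python
def factorial_costs(objectives, violations, gamma=1e6):
    """alpha[i][j] = gamma * delta[i][j] + F[i][j] for individual i on task j."""
    return [[gamma * d + f for f, d in zip(f_row, d_row)]
            for f_row, d_row in zip(objectives, violations)]


def factorial_ranks(costs):
    """rank[i][j] is the 1-based position of individual i when task j is sorted ascending."""
    n, k = len(costs), len(costs[0])
    ranks = [[0] * k for _ in range(n)]
    for j in range(k):
        order = sorted(range(n), key=lambda i: costs[i][j])
        for position, i in enumerate(order, start=1):
            ranks[i][j] = position
    return ranks


def skill_factors_and_fitness(ranks):
    """tau[i] = argmin_j rank[i][j]; beta[i] = max_j 1 / rank[i][j]."""
    tau = [min(range(len(r)), key=lambda j: r[j]) for r in ranks]
    beta = [max(1.0 / rij for rij in r) for r in ranks]
    return tau, beta


# Three individuals, two minimization tasks (illustrative numbers).
F = [[1.0, 9.0], [4.0, 2.0], [3.0, 5.0]]
delta = [[0.0, 0.0], [0.0, 0.0], [0.0, 0.1]]  # the last individual violates a constraint on task 1
alpha = factorial_costs(F, delta)
r = factorial_ranks(alpha)
tau, beta = skill_factors_and_fitness(r)
# r == [[1, 2], [3, 1], [2, 3]], tau == [0, 1, 0], beta == [1.0, 1.0, 0.5]
```

The third individual shows why the scalar fitness is useful: it is not the best on either task, so its fitness of 0.5 places it below both specialists regardless of which task they specialize in.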

Random Mating Probability (RMP): Controlling Genetic Exchange

Random Mating Probability represents a crucial control parameter in multifactorial evolutionary algorithms, directly governing the frequency of cross-task genetic interactions [23]. The RMP determines whether individuals with different skill factors should undergo crossover, thereby facilitating knowledge transfer between optimization tasks [23].

In implementation, when two parent candidates with different skill factors are selected for reproduction, a random number is generated and compared against the RMP threshold. If the random number is less than RMP, crossover occurs between the parents, allowing genetic material to be exchanged between tasks [23]. Otherwise, each parent undergoes mutation separately to produce offspring that maintain the same skill factor [23].
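The RMP decision described above can be sketched as follows. The operators (uniform crossover, Gaussian mutation on a unified [0, 1] encoding) and all function names are assumptions chosen for brevity; the sketch also assumes, as is usual in MFEA-style algorithms, that parents sharing a skill factor always undergo crossover.

```python
import random


def uniform_crossover(a, b, rng):
    """Swap each gene between the two parents with probability 0.5."""
    c1, c2 = list(a), list(b)
    for g in range(len(a)):
        if rng.random() < 0.5:
            c1[g], c2[g] = c2[g], c1[g]
    return c1, c2


def gaussian_mutation(x, rng, sigma=0.1):
    """Perturb every gene and clip it to the unified range [0, 1]."""
    return [min(1.0, max(0.0, g + rng.gauss(0.0, sigma))) for g in x]


def assortative_mating(p1, p2, rmp, rng):
    """Return two (genes, skill_factor) offspring from two (genes, skill_factor) parents."""
    (x1, tau1), (x2, tau2) = p1, p2
    if tau1 == tau2 or rng.random() < rmp:
        c1, c2 = uniform_crossover(x1, x2, rng)
        # Each child imitates the skill factor of one parent chosen at random.
        return [(c1, rng.choice([tau1, tau2])), (c2, rng.choice([tau1, tau2]))]
    # No cross-task exchange: each parent is mutated and its child keeps its skill factor.
    return [(gaussian_mutation(x1, rng), tau1), (gaussian_mutation(x2, rng), tau2)]


rng = random.Random(1)
parent_a = ([0.2, 0.8, 0.5], 0)
parent_b = ([0.9, 0.1, 0.4], 1)
children = assortative_mating(parent_a, parent_b, rmp=0.3, rng=rng)
```

Setting `rmp=0.0` disables cross-task crossover entirely for parents with different skill factors, while `rmp=1.0` forces it for every such pair, which makes the two extremes convenient baselines when studying negative transfer.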

Recent research has evolved from fixed RMP values toward adaptive mechanisms that dynamically adjust transfer probabilities based on online performance feedback [22] [23]. For instance, the MFEA-II algorithm incorporates online transfer parameter estimation to mitigate negative transfer between unrelated tasks [23], while the Cultural Transmission-based EMT algorithm employs an adaptive information transfer strategy that adjusts probability based on dominant relationships between offspring and parents [22].

Table 1: RMP Configuration Strategies in Evolutionary Multitasking Algorithms

| Algorithm | RMP Type | Control Mechanism | Advantages |
|---|---|---|---|
| MFEA [23] | Fixed | Constant value (e.g., 0.3) | Implementation simplicity |
| MFEA-II [23] | Adaptive | Online transfer parameter estimation | Reduces negative transfer |
| MTSO [8] | Fixed | Constant value (0.5) with elite selection probability | Balanced exploration-exploitation |
| CT-EMT-MOES [22] | Adaptive | Adjusts based on parent-offspring dominance | Promotes positive transfer |

Cultural Transmission: Knowledge Transfer Mechanism

Cultural Transmission in evolutionary multitasking refers to the vertical transfer of genetic and cultural traits from parents to offspring during the evolutionary process [19] [22]. This mechanism enables the propagation of valuable problem-solving knowledge across tasks and generations, serving as the primary vehicle for implementing transfer learning in multitasking environments [21].

The Cultural Transmission framework operates through two primary mechanisms:

- Assortative Mating: Governs the conditions under which individuals with different skill factors can reproduce, typically controlled by the RMP parameter [23].

- Vertical Cultural Transmission: Determines how offspring inherit cultural traits (skill factors) from parents, with implementations ranging from random inheritance to elite-guided strategies [19] [22].

Advanced implementations have expanded this concept through dual-transfer mechanisms. For example, the Two-Level Transfer Learning algorithm implements both inter-task knowledge transfer (through chromosome crossover and elite individual learning) and intra-task knowledge transfer (through information transfer of decision variables) [19] [21]. Similarly, the Cultural Transmission-based Multi-Objective Evolution Strategy employs elite-guided variation to transfer current Pareto front information to all individuals and horizontal cultural transmission to efficiently share information between source and target tasks [22].

Experimental Framework & Methodologies

Baseline Algorithm: Multifactorial Evolutionary Algorithm

The Multifactorial Evolutionary Algorithm provides the foundational framework for implementing Skill Factors, RMP, and Cultural Transmission in evolutionary multitasking [19] [23]. The complete experimental workflow can be visualized as follows:

MFEA Experimental Workflow

The core MFEA procedure implements Skill Factors, RMP, and Cultural Transmission through the following algorithmic structure:

MFEA Algorithmic Structure
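A minimal sketch of an MFEA-style generational loop is given below. It is an illustration under several assumptions, not the published algorithm: the two toy sphere tasks, arithmetic crossover followed by Gaussian mutation, ranking offspring only within their own skill-factor group, and every function name are choices made for this example.

```python
import random


def task_a(x):
    """Toy task 0: sphere with its minimum at 0.2 in every dimension."""
    return sum((g - 0.2) ** 2 for g in x)


def task_b(x):
    """Toy task 1: sphere with its minimum at 0.8 in every dimension."""
    return sum((g - 0.8) ** 2 for g in x)


TASKS = [task_a, task_b]


def initialize(n, dim, rng):
    """Evaluate every individual on every task once, then assign skill factors."""
    genes = [[rng.random() for _ in range(dim)] for _ in range(n)]
    costs = [[task(x) for task in TASKS] for x in genes]
    ranks = [[0] * len(TASKS) for _ in range(n)]
    for j in range(len(TASKS)):
        order = sorted(range(n), key=lambda i: costs[i][j])
        for position, i in enumerate(order, start=1):
            ranks[i][j] = position
    pop = []
    for i in range(n):
        tau = min(range(len(TASKS)), key=lambda j: ranks[i][j])
        pop.append({"genes": genes[i], "tau": tau, "cost": costs[i][tau], "fitness": 0.0})
    return pop


def assign_scalar_fitness(pop):
    """Scalar fitness = 1 / rank among individuals sharing the same skill factor."""
    for j in range(len(TASKS)):
        group = sorted((ind for ind in pop if ind["tau"] == j), key=lambda ind: ind["cost"])
        for position, ind in enumerate(group, start=1):
            ind["fitness"] = 1.0 / position


def mutate(genes, rng, sigma=0.05):
    return [min(1.0, max(0.0, g + rng.gauss(0.0, sigma))) for g in genes]


def reproduce(p1, p2, rmp, rng):
    """Assortative mating, then random inheritance of one parent's skill factor."""
    if p1["tau"] == p2["tau"] or rng.random() < rmp:
        w = rng.random()  # arithmetic crossover weight
        g1 = [w * a + (1 - w) * b for a, b in zip(p1["genes"], p2["genes"])]
        g2 = [(1 - w) * a + w * b for a, b in zip(p1["genes"], p2["genes"])]
        taus = [rng.choice([p1["tau"], p2["tau"]]) for _ in range(2)]
        return [(mutate(g1, rng), taus[0]), (mutate(g2, rng), taus[1])]
    return [(mutate(p1["genes"], rng), p1["tau"]), (mutate(p2["genes"], rng), p2["tau"])]


def evolve(n=40, dim=5, rmp=0.3, generations=60, seed=0):
    rng = random.Random(seed)
    pop = initialize(n, dim, rng)
    for _ in range(generations):
        rng.shuffle(pop)
        offspring = []
        for p1, p2 in zip(pop[0::2], pop[1::2]):
            for genes, tau in reproduce(p1, p2, rmp, rng):
                # Selective evaluation: a child is scored only on its inherited task.
                offspring.append({"genes": genes, "tau": tau,
                                  "cost": TASKS[tau](genes), "fitness": 0.0})
        pop = pop + offspring
        assign_scalar_fitness(pop)
        # Elitist survival: keep the n individuals with the highest scalar fitness.
        pop = sorted(pop, key=lambda ind: ind["fitness"], reverse=True)[:n]
    return pop


final_population = evolve()
best_cost_per_task = [min(ind["cost"] for ind in final_population if ind["tau"] == j)
                      for j in range(len(TASKS))]
```

Because the best individual of each skill-factor group always has scalar fitness 1.0, the elitist survival step never discards it, so the best cost found for each task cannot get worse from one generation to the next.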

Advanced Implementation: Two-Level Transfer Learning

The Two-Level Transfer Learning algorithm enhances the basic MFEA framework by implementing a sophisticated dual-transfer mechanism [19] [21]. This approach addresses the limitation of random inter-task transfer by incorporating structured knowledge exchange:

Table 2: Two-Level Transfer Learning Architecture

| Level | Transfer Type | Mechanism | Function |
|---|---|---|---|
| Upper-Level | Inter-task Transfer | Chromosome crossover with elite individual learning | Reduces randomness in knowledge exchange |
| Lower-Level | Intra-task Transfer | Information transfer of decision variables across dimensions | Accelerates convergence within tasks |

The algorithm operates through a probabilistic decision process controlled by inter-task transfer learning probability (tp). If a generated random value exceeds tp, the algorithm executes four steps for inter-task transfer: (1) parent selection, (2) offspring generation through crossover operators, (3) elite individual knowledge transfer to reduce randomness, and (4) selection of high-fitness individuals for the next generation [21]. When the random value is less than tp, the algorithm performs local search based on intra-task knowledge transfer, executing one-dimensional search using information from other dimensions according to individual fitness and elite selection operators [21].

Knowledge Transfer Control Strategies

Effective management of knowledge transfer is critical for preventing negative transfer between unrelated tasks. The Cultural Transmission-based Multi-Objective Evolution Strategy addresses this through an adaptive information transfer strategy that dynamically adjusts transfer probability based on dominant relationships between offspring and parents [22]. This approach enables the algorithm to reasonably allocate evolutionary resources between intra-task and inter-task optimization, significantly enhancing positive transfer while minimizing negative interference [22].

The Bi-Operator Evolutionary Multitasking Algorithm further advances transfer control by adaptively selecting between different evolutionary search operators (genetic algorithms and differential evolution) based on their performance on various problem types [23]. This bi-operator strategy demonstrates that no single search operator is optimal for all multitasking environments, and adaptive selection can significantly improve overall performance [23].

Research Reagent Solutions

Table 3: Essential Computational Tools for Evolutionary Multitasking Research

| Research Tool | Function | Implementation Example |
|---|---|---|
| Unified Encoding Scheme | Represents solutions for different tasks in a common search space | Random-key representation [19], Permutation-based representation [19] |
| Skill Factor Tracking | Assigns and maintains task specialization for each individual | Factorial rank calculations [19] [21], Scalar fitness assignment [19] [21] |
| RMP Controller | Manages frequency of cross-task genetic exchanges | Fixed probability (0.3-0.5) [8] [23], Adaptive mechanisms [22] [23] |
| Cultural Transmission Operator | Facilitates knowledge transfer between parents and offspring | Assortative mating [23], Vertical cultural transmission [19] [22] |
| Benchmark Problems | Standardized tests for algorithm performance validation | CEC17 Multitasking Benchmark [23], CEC22 Multitasking Benchmark [23] |

Skill Factors, Random Mating Probability, and Cultural Transmission form the fundamental trinity of mechanisms enabling effective evolutionary multitasking. Through sophisticated implementations such as the Multifactorial Evolutionary Algorithm, Two-Level Transfer Learning, and Cultural Transmission-based Evolution Strategies, these concepts provide a robust framework for simultaneous optimization of multiple tasks. The continued refinement of adaptive RMP controllers, bidirectional transfer mechanisms, and specialized cultural transmission operators represents the cutting edge of evolutionary multitasking research, offering promising pathways for enhanced optimization performance across diverse applications from neural engineering to drug development.

Evolutionary Algorithms (EAs) have long been established as powerful meta-heuristics for solving complex optimization problems across scientific and engineering domains. Traditional EAs, however, typically address problems in isolation, without leveraging potential complementarities between related tasks. This limitation prompted researchers to explore a novel paradigm inspired by human cognitive processes—specifically, our innate ability to conduct multiple tasks simultaneously while transferring knowledge between them. This conceptual shift led to the development of Evolutionary Multitasking Optimization (EMTO), an emerging branch of evolutionary computation that aims to solve multiple optimization problems concurrently through a single search process [14]. The fundamental rationale behind EMTO is that by dynamically exploiting existing complementarities among problems, tasks can assist one another through the exchange of valuable knowledge, potentially leading to accelerated convergence and superior solutions [14] [1].

Within this paradigm, the Multifactorial Evolutionary Algorithm (MFEA) introduced by Gupta et al. stands as a landmark contribution, establishing the first formal framework for evolutionary multitasking [1] [24]. MFEA created a multi-task environment where a single population evolves to solve multiple tasks simultaneously, treating each task as a unique cultural factor influencing evolution [1]. This groundbreaking work ignited significant research interest, leading to numerous enhancements, extensions, and applications across diverse fields. The historical development from the basic MFEA to modern frameworks represents a journey of addressing fundamental challenges in knowledge transfer while expanding the algorithmic capabilities to tackle increasingly complex real-world problems. This technical guide examines this evolutionary trajectory within the broader context of establishing a comprehensive framework for evolutionary multitasking research.

The Foundation: Multifactorial Evolutionary Algorithm (MFEA)

Core Principles and Algorithmic Framework

The MFEA represents a pioneering approach in evolutionary multitasking, introducing a biologically-inspired framework based on concepts of multifactorial inheritance [1] [25]. At its core, MFEA maintains a single unified population of individuals that evolves to address multiple optimization tasks concurrently. Each individual in the population is assigned a skill factor that indicates the task on which it performs best, effectively creating implicit task-specific groupings within the overall population [1] [21].

The algorithm's knowledge transfer mechanism operates through two key algorithmic modules: assortative mating and selective imitation [1]. Assortative mating allows individuals with different skill factors to reproduce with a specified probability, facilitating the transfer of genetic material across tasks. Through vertical cultural transmission, offspring inherit genetic traits from both parents while randomly acquiring a skill factor from one parent, which then determines the task on which they are evaluated [1] [21]. This implicit transfer mechanism enables MFEA to exploit potential synergies between tasks without requiring explicit similarity measures or complex mapping functions.

Table 1: Key Definitions in MFEA Framework

| Term | Mathematical Definition | Interpretation |
|---|---|---|
| Factorial Cost | αij = γδij + Fij | Performance of individual i on task j (objective value Fij plus penalized constraint violation δij) |
| Factorial Rank | rij | Ranking of individual i relative to others on task j |
| Skill Factor | τi = argminj{rij} | Task where individual i performs best |
| Scalar Fitness | βi = 1/ri,τi = maxj{1/rij} | Unified fitness derived from the individual's best factorial rank across all tasks |

The multifactorial environment established by MFEA enables the algorithm to efficiently allocate computational resources toward promising individuals capable of performing well across multiple tasks [21]. The scalar fitness measure provides a unified metric for comparing individuals specialized in different tasks, thereby driving selection pressure toward individuals demonstrating superior performance in their respective domains [21].

Algorithmic Workflow and Knowledge Transfer

The MFEA operationalizes evolutionary multitasking through a carefully designed workflow that balances task specialization with cross-task knowledge exchange. The following diagram illustrates the core architecture and process flow of the basic MFEA:

Diagram 1: MFEA Core Architecture - The fundamental workflow of the Multifactorial Evolutionary Algorithm showing key components and knowledge transfer mechanisms.

The initial MFEA implementation relies on a fixed, probabilistic knowledge transfer mechanism governed by the random mating probability (rmp) parameter [26]. This parameter controls the likelihood of cross-task reproduction, with higher values promoting more extensive knowledge sharing but potentially increasing the risk of negative transfer—whereby inappropriate information exchange between dissimilar tasks degrades optimization performance [26]. Despite this limitation, MFEA demonstrated remarkable efficiency gains over traditional single-task evolutionary algorithms across various benchmark problems and real-world applications, establishing evolutionary multitasking as a viable and promising research direction [1] [14].

Limitations of the Basic MFEA and Initial Enhancements

Identified Challenges and Research Gaps

While the basic MFEA introduced a groundbreaking framework for evolutionary multitasking, several significant limitations emerged through theoretical analysis and empirical studies. The algorithm's simplistic transfer mechanism, which utilized a single random mating probability (rmp) value for all task pairs, proved particularly problematic [26]. This one-size-fits-all approach failed to account for varying degrees of similarity between different task pairs, often resulting in negative transfer when dissimilar tasks exchanged genetic material [26]. Theoretical analyses further revealed that the basic MFEA lacked formal convergence guarantees, with the population dynamics and long-term behavior remaining poorly understood [27].

Additionally, the original framework exhibited strong randomness in its knowledge transfer process, leading to slow convergence speeds in many application scenarios [21]. The implicit nature of knowledge transfer also made it difficult to control or direct the exchange of useful information, limiting the algorithm's ability to adapt to problem-specific characteristics. As research in evolutionary multitasking expanded, these limitations stimulated numerous enhancement efforts aimed at developing more sophisticated and effective transfer mechanisms.

Early Algorithmic Enhancements

Initial improvements to the basic MFEA focused on introducing more structured approaches to knowledge transfer while maintaining the algorithm's core framework. The Two-Level Transfer Learning (TLTL) algorithm represented one such enhancement, incorporating both inter-task and intra-task knowledge transfer mechanisms [21]. At the upper level, TLTL implemented inter-task transfer through chromosome crossover and elite individual learning, reducing randomness by exploiting inter-task commonalities and similarities [21]. At the lower level, the algorithm introduced intra-task knowledge transfer based on information transfer of decision variables for across-dimension optimization [21].

Table 2: Early MFEA Enhancements and Their Contributions

| Algorithm | Key Innovation | Addressed Limitation |
|---|---|---|
| TLTL [21] | Two-level transfer learning with inter-task and intra-task knowledge exchange | Reduced randomness in basic MFEA |
| P-MFEA [21] | Permutation-based unified representation for combinatorial problems | Limited applicability to specific problem types |
| MFEA with PSO/DE [21] | Hybridization with other evolutionary paradigms | Improved search efficiency for continuous optimization |
| Linearized Domain Adaptation [21] | Linear transformation of task search spaces to raise inter-task correlation before transfer | Uncontrolled knowledge transfer between dissimilar tasks |

Another significant direction involved domain adaptation strategies that transform task search spaces so that transferred solutions are more likely to be useful. Bali et al. proposed a linearized domain adaptation strategy specifically designed to address the issue of negative transfer between uncorrelated tasks [21]. These early enhancements demonstrated that incorporating problem-specific knowledge and more controlled transfer mechanisms could substantially improve upon the basic MFEA's performance, paving the way for more sophisticated frameworks.

Modern Frameworks and Advanced Approaches

MFEA-II: Online Transfer Parameter Estimation

A significant advancement in evolutionary multitasking emerged with the development of MFEA-II, which introduced online transfer parameter estimation to address the critical limitation of using a single rmp value [26]. Unlike the basic MFEA that applies a uniform transfer probability across all task pairs, MFEA-II employs a dynamically estimated similarity matrix that captures pairwise task relationships [26]. This innovation enables the algorithm to customize transfer intensity based on measured complementarities between specific task pairs, thereby promoting beneficial knowledge exchange while minimizing negative transfer.

The online estimation process continuously evaluates transfer effectiveness throughout the evolutionary process, allowing the algorithm to adapt its knowledge sharing strategy as search progresses. This adaptive capability proves particularly valuable in many-tasking environments (with more than three tasks), where task relationships may exhibit complex patterns that are difficult to pre-specify [26]. Empirical studies demonstrated that MFEA-II achieves significantly improved solution quality and faster convergence compared to the basic MFEA, especially as the number of simultaneous tasks increases [26].
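The structural change from a scalar rmp to one value per task pair can be sketched as a small lookup table. The update rule below is a deliberately simple, hypothetical feedback heuristic (raise the pair's value when cross-task offspring survive selection, lower it otherwise); it is not the online estimator used by MFEA-II [26], and the class name, step size, and bounds are assumptions for illustration.

```python
import random


class PairwiseRMP:
    """Symmetric K x K table of transfer probabilities, one per task pair."""

    def __init__(self, n_tasks, initial=0.3, step=0.05, low=0.05, high=0.95):
        self.rmp = [[1.0 if i == j else initial for j in range(n_tasks)]
                    for i in range(n_tasks)]
        self.step, self.low, self.high = step, low, high

    def get(self, i, j):
        return self.rmp[i][j]

    def update(self, i, j, offspring_survived):
        """Hypothetical rule: reward pairs whose cross-task offspring survive selection."""
        if i == j:
            return  # same-task mating is not a transfer event
        delta = self.step if offspring_survived else -self.step
        value = min(self.high, max(self.low, self.rmp[i][j] + delta))
        self.rmp[i][j] = self.rmp[j][i] = value  # keep the table symmetric


table = PairwiseRMP(n_tasks=3)
table.update(0, 1, offspring_survived=True)   # tasks 0 and 1 helped each other
table.update(0, 2, offspring_survived=False)  # transfer between 0 and 2 was wasted

# During mating, the pair-specific value replaces the single global rmp.
rng = random.Random(0)
allow_transfer = rng.random() < table.get(0, 1)
```

With more than two tasks, this lets closely related pairs exchange material often while unrelated pairs are throttled independently, which a single global value cannot express.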

Reinforcement Learning for Transfer Control

Recent research has explored the integration of Reinforcement Learning (RL) to automate knowledge transfer decisions in evolutionary multitasking. The MetaMTO framework represents a cutting-edge approach in this direction, formulating a multi-role RL system to comprehensively address the fundamental questions of knowledge transfer [24]:

- Where to Transfer: A Task Routing (TR) agent with an attention-based similarity recognition module determines source-target transfer pairs via attention scores [24]

- What to Transfer: A Knowledge Control (KC) agent determines the proportion of elite solutions to transfer between tasks [24]

- How to Transfer: A group of Transfer Strategy Adaptation (TSA) agents dynamically control hyper-parameters in the underlying EMT framework [24]

This holistic approach enables fully automated control over knowledge transfer, significantly reducing the reliance on human expertise while adapting to problem-specific characteristics [24]. The framework is trained end-to-end over an augmented multitask problem distribution, resulting in a generalizable meta-policy that can effectively address novel problem instances [24].

Theoretical Foundations: MFEA with Diffusion Gradient Descent

The development of MFEA based on Diffusion Gradient Descent (MFEA-DGD) represents a crucial advancement in establishing theoretical foundations for evolutionary multitasking [27]. This approach provides formal convergence guarantees for the first time, addressing a significant gap in the theoretical understanding of MFEA [27]. The mathematical framework demonstrates that the local convexity of some tasks can help other tasks escape from local optima through knowledge transfer, offering theoretical explanations for the empirical performance benefits observed in multitasking environments [27].

The MFEA-DGD endows the evolution population with a dynamic equation similar to DGD, ensuring convergence while maintaining the benefits of knowledge transfer [27]. This theoretical grounding enables more rigorous algorithm design and provides insights into the conditions under which evolutionary multitasking delivers significant advantages over single-task approaches.

Experimental Protocols and Performance Analysis

Methodological Framework for EMT Evaluation

Rigorous experimental evaluation has been essential to advancing evolutionary multitasking research. Standard evaluation methodologies typically involve comparing EMT algorithms against both single-task evolutionary algorithms and other multitasking approaches across diverse problem sets. The experimental protocol generally follows these key steps:

- Problem Selection: Constructing multitask problem instances that include both synthetic benchmark functions and real-world applications with varying degrees of inter-task relatedness [26]

- Algorithm Configuration: Implementing compared algorithms with careful parameter tuning, typically using population sizes between 30 and 100 individuals and 150 to 500 generations depending on problem complexity [28] [26]

- Performance Metrics: Evaluating algorithms on multiple criteria, including solution quality and computational efficiency

- Statistical Validation: Conducting multiple independent runs with statistical significance tests (e.g., ANOVA) to ensure result reliability [26]
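For the statistical validation step, the one-way ANOVA F statistic can be computed directly from the final objective values of the independent runs. The sketch below uses made-up results for three hypothetical algorithms and stops at the F statistic; obtaining a p-value requires the F distribution, for which a statistics library or a critical-value table would normally be used.

```python
def one_way_anova_f(groups):
    """Return the one-way ANOVA F statistic for k groups of run results."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    means = [sum(g) / len(g) for g in groups]
    # Variation explained by differences between algorithm means.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    # Residual variation of runs around their own algorithm mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    return (ss_between / (k - 1)) / (ss_within / (n_total - k))


# Illustrative final costs from three independent runs per algorithm (not real data).
runs = {
    "single_task_ea": [5.0, 6.0, 7.0],
    "basic_mfea": [2.0, 3.0, 4.0],
    "adaptive_emt": [1.0, 2.0, 3.0],
}
f_statistic = one_way_anova_f(list(runs.values()))
```

A large F value indicates that the differences between algorithm means are large relative to run-to-run noise; in practice far more than three runs per algorithm are needed for the test to be meaningful.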

Quantitative Performance Comparison

Comprehensive experimental studies have demonstrated the performance advantages of modern EMT frameworks over both single-task optimizers and the basic MFEA. The following table summarizes key quantitative results from empirical evaluations:

Table 3: Performance Comparison of EMT Algorithms

| Algorithm | Solution Quality | Computational Efficiency | Remarks |
|---|---|---|---|
| Basic MFEA | Moderate improvement over single-task | 40-50% faster than single-task | Suffers from negative transfer |
| MFEA-II | 5-15% improvement over basic MFEA | 40-60% faster than single-task | Effective in many-tasking |
| MFEA-DGD | Competitive with state-of-the-art | Faster convergence reported | Theoretical convergence proofs |
| MetaMTO (RL) | State-of-the-art performance | Adaptive transfer reduces wasted evaluations | Generalizable to novel problems |

Specific application results further illustrate these advantages. In Reliability Redundancy Allocation Problems (RRAPs), MFEA-II demonstrated 53.43% faster computation times compared to Genetic Algorithms and 62.70% faster than Particle Swarm Optimization when solving multiple tasks simultaneously [26]. In personalized recommendation systems, multifactorial evolutionary approaches improved individual diversity by 54.02% with only about 5% reduction in Hit Ratio and Average Precision [29].

The Researcher's Toolkit: Essential Components for EMT

Algorithmic Components and Their Functions

Implementing effective evolutionary multitasking algorithms requires careful integration of several key components. The following toolkit outlines essential elements and their functions:

Table 4: Research Reagent Solutions for Evolutionary Multitasking

| Component | Function | Implementation Considerations |
|---|---|---|
| Unified Encoding | Represents solutions for multiple tasks in a common search space | Balance between expressiveness and complexity |
| Skill Factor Assignment | Identifies specialized task for each individual | Based on factorial rank calculations |
| Assortative Mating | Enables cross-task reproduction | Controlled by rmp or similarity matrix |
| Selective Imitation | Facilitates cultural transmission | Vertical inheritance from parents to offspring |
| Online Similarity Estimation | Measures inter-task relationships dynamically | Critical for preventing negative transfer |
| Multi-Role Policy Networks | Automates transfer decisions using RL | Requires pre-training on problem distribution |
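Two of the components in the table, skill factor assignment and assortative mating, can be sketched in a few lines. This is a minimal illustration under the usual MFEA conventions (lower factorial cost is better, ranks are 1-based, scalar fitness is the reciprocal of the best rank). The function names are hypothetical and are not taken from any particular library.

```python
import random


def factorial_ranks(costs):
    """costs[i][j] is the factorial cost of individual i on task j.

    Returns ranks[i][j], the 1-based rank of individual i on task j.
    Ties are broken by population index because sorted() is stable.
    """
    n, k = len(costs), len(costs[0])
    ranks = [[0] * k for _ in range(n)]
    for j in range(k):
        order = sorted(range(n), key=lambda i: costs[i][j])
        for position, i in enumerate(order, start=1):
            ranks[i][j] = position
    return ranks


def skill_factors_and_fitness(ranks):
    """Skill factor = task with the best rank; fitness = 1 / best rank."""
    skill = [min(range(len(r)), key=lambda j: r[j]) for r in ranks]
    fitness = [1.0 / min(r) for r in ranks]
    return skill, fitness


def should_crossover(skill_a, skill_b, rmp, rng):
    """Assortative mating rule: same-task parents always recombine;
    cross-task parents recombine with probability rmp."""
    return skill_a == skill_b or rng.random() < rmp


# Toy costs for 3 individuals on 2 tasks (illustrative values only).
toy_costs = [[0.1, 9.0], [0.5, 1.0], [0.9, 5.0]]
toy_ranks = factorial_ranks(toy_costs)
toy_skill, toy_fitness = skill_factors_and_fitness(toy_ranks)
print(toy_ranks, toy_skill, toy_fitness)
print(should_crossover(toy_skill[0], toy_skill[1], rmp=0.3, rng=random.Random(0)))
```

Variants that replace the scalar `rmp` with a learned similarity matrix would look up a per-pair probability in `should_crossover` instead of using a single constant.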

Advanced Architectural Framework

Modern evolutionary multitasking frameworks have evolved considerably from the basic MFEA architecture. The following diagram illustrates the sophisticated components and information flows in advanced systems such as MetaMTO:

Diagram 2: Modern EMT Architecture - Advanced evolutionary multitasking framework with automated transfer control components, showing coordinated information flow between specialized agents.

The historical development from the basic Multifactorial Evolutionary Algorithm to modern frameworks represents a remarkable evolution from a simple yet powerful concept to sophisticated algorithmic systems with theoretical foundations and practical effectiveness. This journey has transformed evolutionary multitasking from a niche idea into a robust optimization paradigm capable of addressing complex real-world problems across diverse domains including engineering design, brain-computer interfaces, recommendation systems, and logistics [28] [30] [25].

The trajectory of development has consistently focused on addressing three fundamental challenges in knowledge transfer: determining where to transfer (task pairing), what to transfer (knowledge content), and how to transfer (mechanism design) [24]. Modern approaches have made significant strides in each of these areas through online similarity estimation, reinforcement learning, and theoretical convergence analysis [26] [24] [27]. The resulting frameworks demonstrate substantially improved performance over both single-task optimization and early multitasking approaches, particularly as the number of simultaneous tasks increases [26].

Future research directions in evolutionary multitasking include developing more scalable architectures for many-tasking environments with dozens or hundreds of tasks, enhancing theoretical understanding of multitask landscapes and transfer dynamics, exploring cross-paradigm integration with other computational intelligence approaches, and expanding application domains to emerging challenges in scientific discovery and engineering innovation [1] [14]. As these research directions mature, evolutionary multitasking is poised to become an increasingly essential tool for addressing the complex, interconnected optimization problems that characterize contemporary scientific and engineering challenges.

Evolutionary Multitasking (EMT) leverages the implicit parallelism of population-based search algorithms to solve multiple optimization tasks simultaneously, exploiting potential synergies and complementarities between them. Transferring knowledge across tasks often leads to accelerated convergence and superior solution quality compared with solving the tasks in isolation. The core principle is parallel task optimization: when tasks are related, the combined gain can exceed the sum of what independent runs would achieve, which is the synergistic effect examined here. This is particularly important in data-intensive fields such as drug development, where evaluating complex objective functions (for example, molecular docking simulations or pharmacokinetic property predictions) is computationally expensive. By facilitating efficient resource utilization and intelligent inter-task knowledge transfer, parallel task optimization provides a basic framework for tackling the multifaceted optimization problems common in modern scientific research.

Fundamental Mechanisms of Parallel Performance Enhancement

The performance enhancements observed in parallel computing environments are driven by several core mechanisms that ensure computational workloads are distributed and executed efficiently. These foundational strategies are critical for realizing the synergistic effects in evolutionary multitasking systems.

Intelligent Task Scheduling: Traditional schedulers rely on static rules, which can lead to significant processor idle time under variable workloads. AI-driven schedulers dynamically assign work by learning from historical execution data, such as task runtimes and resource usage patterns. This allows the system to predict optimal task-to-core mappings, balancing the load and reducing bottlenecks in heterogeneous computing environments. Studies demonstrate that such data-driven schedulers can achieve a 14.3% reduction in energy consumption while maintaining performance levels, and significantly reduce average job waiting times [31].

Adaptive Load Balancing: In large-scale parallel systems, workloads can shift unpredictably, causing some processors to become overloaded while others remain idle. Adaptive load balancing, often powered by reinforcement learning agents, continuously monitors system performance and redistributes tasks in real time based on current load metrics, sometimes migrating jobs or threads between nodes. This dynamic reallocation has been shown to outperform static methods like round-robin, achieving 20–30% higher throughput when traffic patterns change rapidly [31].

Predictive Modeling of Performance Hotspots: Performance bottlenecks, such as memory contention or I/O latency, can severely throttle parallel applications. Machine learning models can forecast these hotspots by analyzing code patterns or real-time system counters. This proactive insight allows the system to take preventive actions, such as adjusting data layout or prefetch strategies before a bottleneck occurs. For instance, the NeuSight model can predict GPU kernel execution times on new hardware with an error of only 2.3%, enabling pre-emptive tuning to avoid stalls [31].
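The contrast between static and load-aware assignment described above can be made concrete with a toy comparison. The sketch below is not the reinforcement-learning balancer from the cited work. It substitutes a much simpler greedy "least-loaded worker" rule for the adaptive policy, and the task durations are invented for illustration.

```python
import heapq


def round_robin_makespan(durations, n_workers):
    """Static rule: task i always goes to worker i mod n_workers."""
    loads = [0.0] * n_workers
    for idx, duration in enumerate(durations):
        loads[idx % n_workers] += duration
    return max(loads)


def least_loaded_makespan(durations, n_workers):
    """Load-aware rule: each task goes to the currently least-loaded worker."""
    heap = [(0.0, worker) for worker in range(n_workers)]
    heapq.heapify(heap)
    for duration in durations:
        load, worker = heapq.heappop(heap)
        heapq.heappush(heap, (load + duration, worker))
    return max(load for load, _ in heap)


# Alternating long and short tasks (hypothetical durations).
toy_durations = [8, 1, 8, 1, 8, 1]
print(round_robin_makespan(toy_durations, 2))
print(least_loaded_makespan(toy_durations, 2))
```

On this input the static rule sends every long task to the same worker, while the load-aware rule spreads them out. The greedy rule is not guaranteed to win on every workload, which is one motivation for the learned policies discussed in the text.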

AI-Driven Techniques for Parallel Optimization

The integration of Artificial Intelligence (AI) has revolutionized parallel optimization, moving beyond static heuristics to create dynamic, self-optimizing systems. These techniques automate complex decisions, leading to substantial gains in performance and efficiency.

Table 1: AI-Driven Techniques for Parallel Optimization

| Optimization Technique | Key Function | Demonstrated Benefit | Research Context |
|---|---|---|---|
| Automated Code Parallelization | Automatically refactors sequential code to generate parallel versions (e.g., multi-threaded or GPU-kernel code) [31]. | Produced parallel code that ran ~3% faster than standard LLM-based generators on benchmarks [31]. | AUTOPARLLM system using GNNs and LLMs [31]. |
| Data Partitioning Optimization | Uses ML to learn optimal data block sizes and distribution strategies to balance workload and minimize communication overhead [31]. | ML model efficiently determined suitable data splits, improving throughput of data-parallel tasks [31]. | BLEST-ML system for distributed computing environments [31]. |
| Hardware-Aware Kernel Tuning | Employs RL or evolutionary search to find optimal kernel parameters (e.g., tile sizes) for specific CPU/GPU architectures [31]. | Achieved an average 1.8× speedup over untuned baseline kernels [31]. | METR system automated GPU kernel search [31]. |
| Energy Efficiency Optimization | Optimizes job scheduling, DVFS, and processor allocation to minimize power consumption without sacrificing performance [31]. | Early results show "potential" to match traditional policies while focusing on energy goals [31]. | RL-based scheduler (InEPS) for heterogeneous clusters [31]. |

Quantitative Analysis of Performance Gains

The theoretical advantages of parallel task optimization are substantiated by empirical data from recent studies. The quantitative benefits can be categorized into performance acceleration and resource efficiency metrics, which are crucial for evaluating the synergistic effect in practical research settings.

Table 2: Quantitative Performance Gains from Parallel Optimization Techniques

| Metric Category | Specific Metric | Improvement | Source Technique |
|---|---|---|---|
| Speed & Throughput | Kernel Execution Speed | 1.8× average speedup [31] | Hardware-Aware Kernel Tuning |
| Speed & Throughput | System Throughput | 20-30% higher throughput under variable loads [31] | Adaptive Load Balancing |
| Efficiency & Resource Use | Energy Consumption | 14.3% lower energy use under same performance [31] | Intelligent Task Scheduling |
| Efficiency & Resource Use | Job Waiting Time | Significant reduction in average waiting time [31] | Intelligent Task Scheduling |
| Computational Accuracy | Performance Prediction Error | Reduced to 2.3% error vs. >100% for baseline [31] | Predictive Hotspot Modeling |

These quantitative gains demonstrate that the synergistic effect of parallel optimization is not merely theoretical. The performance enhancements are measurable and significant, directly impacting the time-to-solution and operational costs for large-scale computational research.
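To relate the reported speedup and throughput figures to time-to-solution, a short worked conversion may help. It assumes a fixed workload and that higher throughput translates directly into less time per unit of work. Both are simplifying assumptions that the cited study does not state, and the symbols $S$, $T_{\text{baseline}}$, and $T_{\text{optimized}}$ are introduced here for illustration only.

```latex
\[
S = \frac{T_{\text{baseline}}}{T_{\text{optimized}}}
\quad\Longrightarrow\quad
\frac{T_{\text{baseline}} - T_{\text{optimized}}}{T_{\text{baseline}}} = 1 - \frac{1}{S}
\]
\[
S = 1.8:\qquad 1 - \frac{1}{1.8} \approx 0.444
\]
\[
S = 1.2 \text{ and } S = 1.3:\qquad
1 - \frac{1}{1.2} \approx 0.167, \qquad 1 - \frac{1}{1.3} \approx 0.231
\]
```

Under these assumptions, a 1.8× kernel speedup corresponds to roughly 44% less execution time, and a 20–30% throughput gain to roughly 17–23% less time for the same amount of work.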

Experimental Protocols for Evolutionary Multitasking

To empirically validate the performance enhancements from parallel task optimization, researchers can implement the following detailed experimental protocol. This methodology is designed to quantify the synergistic effects within an evolutionary multitasking framework.

Experimental Setup and Workflow

The experimental workflow involves a comparative analysis between traditional single-task optimization and multi-task optimization within a parallel computing environment. The key is to control for variables to isolate the effect of parallel task optimization.

Key Performance Indicators (KPIs) and Measurement

The following diagram illustrates the logical relationships between the core components of a parallel evolutionary multitasking system and the key performance indicators used to evaluate its efficacy. This structure enables the measurement of synergistic effects.

Research Reagent Solutions: Essential Computational Materials

Implementing parallel task optimization experiments requires both hardware and software "research reagents." The following table details these essential materials and their functions in the experimental framework.

Table 3: Essential Research Reagents for Parallel Optimization Experiments

| Category | Item | Function in Research | Exemplars / Specifications |
|---|---|---|---|
| Hardware Infrastructure | High-Performance Computing (HPC) Cluster | Provides the physical parallel computing environment for executing multiple tasks simultaneously [31]. | Heterogeneous clusters with CPUs and GPUs. |
| Software Frameworks | Evolutionary Algorithm Toolkit | Provides the foundational algorithms for implementing single-task and multi-task optimization [31]. | Custom implementations or platforms like PlatEMO. |
| Software Frameworks | Parallel Computing Platform | Manages low-level parallel execution, task distribution, and inter-process communication [31]. | MPI, OpenMP, CUDA, or Apache Spark. |
| AI & Optimization Libraries | Machine Learning Library | Enables the implementation of intelligent schedulers, predictive models, and adaptive balancers [31]. | TensorFlow, PyTorch, or Scikit-learn. |
| AI & Optimization Libraries | Auto-Tuning Framework | Automates the process of hardware-aware kernel optimization [31]. | METR system or OpenTuner. |
| Benchmarking Tools | Performance Profiling Suite | Measures key metrics like execution time, CPU/GPU utilization, power consumption, and memory bandwidth [31]. | NVIDIA Nsight, Intel VTune, or Linux perf. |
| Benchmarking Tools | Optimization Benchmark Problems | Standardized test functions and real-world problems to evaluate and compare algorithm performance [31]. | NAS Parallel Benchmarks, Rodinia, or CEC competition problems. |

Application in Drug Development and Research