Evolutionary Multitasking on GPU: A Parallel Computing Framework for Accelerated Biomedical Discovery and Drug Development


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on implementing evolutionary multitasking (EMT) algorithms on GPU architectures. It explores the foundational principles of GPU parallel computing and EMT, details methodological strategies for designing GPU-accelerated frameworks like GEAMT, and offers practical troubleshooting for performance bottlenecks and non-determinism. Through validation case studies in genetic analysis and comparative performance analysis, we demonstrate how GPU-based EMT significantly enhances search accuracy, accelerates complex optimization in high-dimensional spaces, and provides a scalable path for tackling large-scale problems in genomics and personalized medicine.

The Foundations of Evolutionary Multitasking and GPU Parallelism

Evolutionary Multitasking (EMT) represents a paradigm shift in evolutionary computation. It enables the simultaneous solution of multiple optimization tasks by leveraging their implicit parallelism to facilitate cross-task knowledge transfer. The core principle involves using a single population to solve multiple tasks concurrently, where the evolutionary process automatically extracts and transfers beneficial genetic material between tasks. This approach aims to enhance population diversity and accelerate convergence speed by preventing premature stagnation on individual tasks.

Integrating EMT with GPU-based computing frameworks addresses the substantial computational cost of evolving populations across multiple tasks. Reported parallel implementations show their largest efficiency gains on high-dimensional problems and in scenarios involving thousands of asynchronously arriving tasks. This combination suits complex real-world applications, including drug development and genomic analysis, where multiple related optimization problems must be solved within constrained computational resources [1] [2].

Theoretical Foundations of Evolutionary Multitasking

Core Principles and Definitions

EMT operates on the fundamental premise that valuable information discovered while solving one task may provide useful insights for solving other related tasks. Formally, a multitask optimization problem comprises K distinct tasks, where the goal is to find optimal solutions \( \{x_1^*, x_2^*, \ldots, x_K^*\} \) such that for each task \( T_j \), the solution satisfies:

\[ x_j^* = \underset{x \in \Omega_j}{\mathrm{argmin}} \; f_j(x), \quad j = 1, 2, \ldots, K \]

Here, \( f_j \) and \( \Omega_j \) denote the objective function and feasible region of task \( T_j \), respectively. The key innovation of EMT lies in its ability to exploit potential synergies between these tasks, even when they exhibit heterogeneous landscape properties or have misaligned feasible decision variable regions [3].

Knowledge Transfer Mechanisms

The efficacy of EMT hinges on sophisticated knowledge transfer mechanisms that determine where, what, and how to transfer information between tasks:

- Where to Transfer: Identifying which tasks should exchange information, typically achieved by measuring inter-task similarity through attention-based architectures that compute pairwise similarity scores between task populations [3].

- What to Transfer: Determining the specific knowledge to convey, such as selecting the proportion of elite solutions to transfer from a source task to a target task [3].

- How to Transfer: Designing the precise exchange mechanism, including evolutionary operator selection and transfer intensity control through adaptive strategy agents [3].
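
The "what" and "how" decisions above can be made concrete with a small sketch. The Python below is illustrative only and is not the mechanism of [3]: the function name `transfer_elites`, the fixed `proportion`, and the replace-the-worst policy are assumptions chosen for brevity, and both tasks are assumed to be minimization problems over the same unified representation.

```python
from typing import Callable, List, Sequence

Individual = List[float]


def transfer_elites(
    source: Sequence[Individual],
    target: Sequence[Individual],
    source_fitness: Callable[[Individual], float],
    target_fitness: Callable[[Individual], float],
    proportion: float = 0.2,  # assumed fixed here; [3] adapts it
) -> List[Individual]:
    """Copy the best `proportion` of `source` into `target` (minimization).

    The worst target individuals are dropped, so the target size is unchanged.
    """
    n_transfer = max(1, int(len(source) * proportion))
    n_transfer = min(n_transfer, len(target))
    # "What to transfer": the elite individuals of the source task.
    elites = sorted(source, key=source_fitness)[:n_transfer]
    # "How to transfer": replace the worst individuals of the target task.
    survivors = sorted(target, key=target_fitness)[: len(target) - n_transfer]
    return survivors + [list(e) for e in elites]
```

In an adaptive scheme, `proportion` would be chosen per generation from transfer success rather than held constant.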

Table: Knowledge Transfer Decision Points in EMT

| Decision Point | Challenge | Advanced Solution |
|---|---|---|
| Task Selection | Identifying similar tasks for beneficial transfer | Attention-based similarity recognition modules [3] |
| Content Selection | Determining what knowledge to transfer | Adaptive selection of elite solution proportions [3] |
| Transfer Mechanism | Controlling how knowledge is incorporated | Dynamic hyper-parameter control and strategy adaptation [3] |
| Negative Transfer Prevention | Avoiding detrimental knowledge exchange | Population distribution analysis using Maximum Mean Discrepancy [4] |
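
To illustrate the last row of the table, the sketch below computes the standard biased estimate of the squared Maximum Mean Discrepancy between two task populations. The Gaussian kernel, the `bandwidth` value, and the function names are assumptions made for this example; [4] may use a different kernel or estimator.

```python
import math
from typing import Sequence

Vector = Sequence[float]


def gaussian_kernel(a: Vector, b: Vector, bandwidth: float = 1.0) -> float:
    """Gaussian (RBF) kernel between two individuals."""
    sq_dist = sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return math.exp(-sq_dist / (2.0 * bandwidth ** 2))


def mmd_squared(pop_a: Sequence[Vector], pop_b: Sequence[Vector],
                bandwidth: float = 1.0) -> float:
    """Biased estimate of squared MMD between two populations."""

    def mean_kernel(p: Sequence[Vector], q: Sequence[Vector]) -> float:
        total = sum(gaussian_kernel(x, y, bandwidth) for x in p for y in q)
        return total / (len(p) * len(q))

    return (mean_kernel(pop_a, pop_a)
            + mean_kernel(pop_b, pop_b)
            - 2.0 * mean_kernel(pop_a, pop_b))
```

A value near zero suggests the two populations occupy similar regions of the unified space, so transfer is less likely to be harmful; a large value is a signal to reduce or skip transfer between that pair.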

GPU-Accelerated EMT Frameworks

Architectural Foundations

The parallel nature of evolutionary algorithms makes them exceptionally suitable for GPU implementation. GPU-based EMT frameworks exploit this inherent parallelism through:

- Massive Parallelization: Evaluating thousands of individuals across multiple tasks simultaneously by leveraging thousands of GPU cores [1].

- Concurrent Multi-Island Mechanism: Enabling parallel EMT algorithms to efficiently solve high-dimensional problems through distributed sub-populations [1].

- Multi-Stream Multi-Thread (MSMT) Mechanism: Handling thousands of asynchronously arriving tasks with minimal overhead through dedicated processing streams [1] [5].

Implementation Protocols

Protocol: Implementing a GPU-Based EMT Framework

Environment Setup

- Configure CUDA environment (version 11.0 or higher)

- Ensure GPU with compute capability 7.0 or higher (e.g., NVIDIA V100, A100)

- Allocate device memory for population matrices and fitness evaluation buffers

Population Initialization

- Initialize unified population representation across all tasks

- Map diverse task search spaces to normalized unified space using affine transformations: \[ x' = \frac{x - L_k}{U_k - L_k} \] where \( L_k \) and \( U_k \) represent lower and upper bounds for task \( k \) [6]
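
The affine mapping into the unified space, and its inverse for decoding before fitness evaluation, can be sketched as follows. The function names `to_unified` and `from_unified` are hypothetical, and per-dimension bounds are assumed.

```python
from typing import List, Sequence


def to_unified(x: Sequence[float], lower: Sequence[float],
               upper: Sequence[float]) -> List[float]:
    """Map a task-specific solution into [0, 1] per dimension."""
    if any(hi <= lo for lo, hi in zip(lower, upper)):
        raise ValueError("each upper bound must exceed its lower bound")
    return [(xi - lo) / (hi - lo) for xi, lo, hi in zip(x, lower, upper)]


def from_unified(x_unit: Sequence[float], lower: Sequence[float],
                 upper: Sequence[float]) -> List[float]:
    """Inverse mapping: decode a unified solution for one task."""
    return [lo + xu * (hi - lo) for xu, lo, hi in zip(x_unit, lower, upper)]
```

Decoding with each task's own bounds lets a single unified individual be evaluated on any task.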

Parallel Fitness Evaluation

- Implement kernel functions for simultaneous fitness evaluation across tasks

- Utilize shared memory for frequently accessed data patterns

- Employ asynchronous memory transfers to overlap computation and data movement

Knowledge Transfer Operations

Experimental results demonstrate that such GPU implementations can significantly reduce search time while maintaining solution quality, particularly for high-dimensional problems where traditional EMT algorithms face computational bottlenecks [1] [5].

Application Notes for Biomedical Research

SNP Interaction Detection

EMT has shown remarkable success in genome-wide association studies (GWAS) for detecting epistatic interactions between single nucleotide polymorphisms (SNPs). The following protocol outlines the implementation of GPU-Powered Evolutionary Auxiliary Multitasking for this application:

Protocol: GPU-Powered SNP Interaction Detection

Task Formulation

- Main Task: Explore the entire SNP search space to identify significant interactions

- Auxiliary Tasks: 3-5 low-dimensional tasks focusing on distinct SNP subspaces to enhance local optimization capabilities [2]

Implementation Framework

- Algorithm: GEAMT (GPU-powered Evolutionary Auxiliary Multitasking)

- Platform: Multi-GPU implementation (minimum 2 GPUs with 16GB memory each)

- Data Structure: Sparse matrix representation for efficient SNP pattern storage

Iteration Process

- Step 1: All auxiliary tasks transfer high-quality SNP patterns to the main task via an information transfer mechanism

- Step 2: Apply auxiliary task update strategy based on feature regrouping to switch search subspaces

- Step 3: Evaluate Pareto-optimal solutions from the main task for significant SNP interactions

- Step 4: Update task relationships based on transfer success metrics [2]

Validation

- Compare detected interactions against synthetic datasets with known ground truth

- Apply statistical significance testing with multiple test correction

- Validate on real-world datasets with established biological pathways

This approach demonstrates notable scalability and efficiency on both synthetic and real-world datasets, significantly enhancing search accuracy while accelerating the discovery process [2].

High-Dimensional Feature Selection

High-dimensional feature selection presents a combinatorial challenge well-suited to EMT approaches. The following workflow illustrates the process for high-dimensional biomedical data:

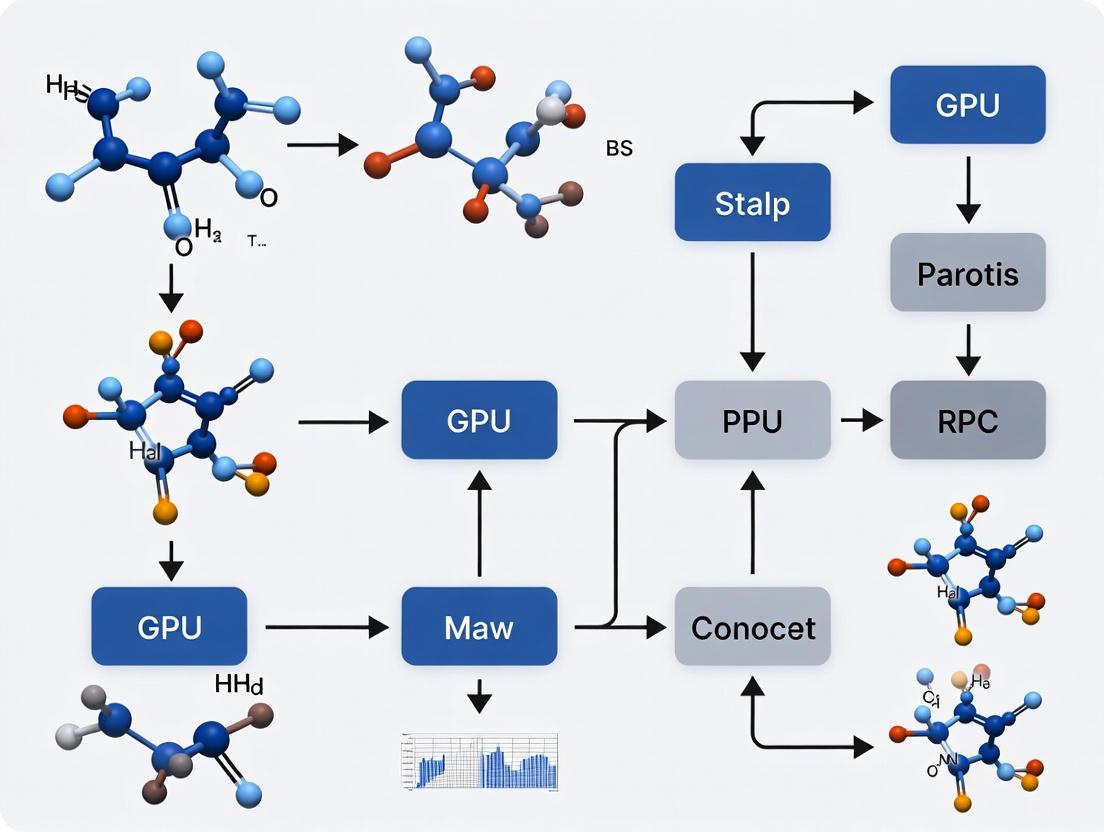

Diagram Title: EMT Feature Selection Workflow

Protocol: Task Relevance Evaluation for Feature Selection

Multi-Task Generation

- Apply Relief-F algorithm to evaluate feature weights and importance scores

- Utilize Algorithm with Reservoir (A-Res) sampling to generate diverse feature selection subtasks

- Define subtasks focusing on different feature subspaces based on weight distributions [7]
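
One way to picture the subtask generation step is weighted reservoir sampling over feature weights. The sketch below implements the A-Res scheme (each item gets the key u^(1/w) for a uniform random u, and the k largest keys are kept). The weights stand in for Relief-F scores and are made up; how [7] combines the two is not detailed here, so treat the function `a_res_sample` as an assumption.

```python
import heapq
import random
from typing import List, Sequence


def a_res_sample(weights: Sequence[float], k: int,
                 rng: random.Random) -> List[int]:
    """Draw k distinct feature indices, favouring larger weights (A-Res)."""
    heap: list = []  # min-heap of (key, index); smallest key is evicted first
    for idx, w in enumerate(weights):
        if w <= 0:
            continue  # features with non-positive weight are never sampled
        key = rng.random() ** (1.0 / w)
        if len(heap) < k:
            heapq.heappush(heap, (key, idx))
        elif key > heap[0][0]:
            heapq.heapreplace(heap, (key, idx))
    return sorted(idx for _, idx in heap)


# Hypothetical Relief-F style weights for five features.
example_weights = [0.9, 0.1, 0.5, 0.0, 0.7]
subtask_features = a_res_sample(example_weights, 3, random.Random(0))
```

Calling the sampler repeatedly with different seeds yields different, weight-biased feature subsets, each of which can define one subtask.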

Task Relevance Evaluation

- Calculate average crossover ratio between all subtask pairs

- Formulate optimal subtask selection as heaviest k-subgraph problem

- Apply branch-and-bound method to identify most relevant task groupings [7]
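
The heaviest k-subgraph formulation can be illustrated on a toy instance. Note that this sketch swaps in exhaustive enumeration for the branch-and-bound method named above, which is only practical for a handful of subtasks; the relevance matrix is invented for the example.

```python
from itertools import combinations
from typing import Sequence, Tuple


def heaviest_k_subgraph(
    relevance: Sequence[Sequence[float]], k: int
) -> Tuple[Tuple[int, ...], float]:
    """Return the k subtasks whose pairwise relevance sum is largest.

    Exhaustive search: cost grows as C(n, k), so use only for small n.
    """
    best_nodes: Tuple[int, ...] = ()
    best_weight = float("-inf")
    for nodes in combinations(range(len(relevance)), k):
        total = sum(relevance[i][j] for i, j in combinations(nodes, 2))
        if total > best_weight:
            best_nodes, best_weight = nodes, total
    return best_nodes, best_weight


# Hypothetical symmetric relevance scores between four subtasks.
relevance = [
    [0.0, 0.9, 0.8, 0.1],
    [0.9, 0.0, 0.7, 0.2],
    [0.8, 0.7, 0.0, 0.3],
    [0.1, 0.2, 0.3, 0.0],
]
selected, weight = heaviest_k_subgraph(relevance, 3)
```

Here subtasks 0, 1 and 2 are selected because their three pairwise scores sum to more than any other triple.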

Knowledge Transfer Implementation

- Implement guiding vector-based transfer strategy

- Adapt convergence factor dynamically during optimization: \[ CF = CF_{max} - (CF_{max} - CF_{min}) \times \frac{iter}{iter_{max}} \]

- Balance exploration and exploitation based on transfer success history [7]
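
The linear convergence-factor schedule in the list above translates directly into code. The bounds used in the example call (0.9 and 0.1) are placeholders, not values reported in [7].

```python
def convergence_factor(iteration: int, max_iterations: int,
                       cf_max: float, cf_min: float) -> float:
    """Linearly decay the convergence factor from cf_max to cf_min."""
    if max_iterations <= 0:
        raise ValueError("max_iterations must be positive")
    return cf_max - (cf_max - cf_min) * iteration / max_iterations


# Placeholder bounds: start exploratory (0.9), end exploitative (0.1).
schedule = [convergence_factor(t, 100, 0.9, 0.1) for t in (0, 50, 100)]
```

The factor equals `cf_max` at the first iteration and reaches `cf_min` at `max_iterations`, shifting the search from exploration toward exploitation.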

Experimental Configuration

- Datasets: 21 high-dimensional biomedical datasets

- Optimal Parameters: Task crossover ratio ≈ 0.25

- Validation: 10-fold cross-validation with multiple classification models

Extensive simulations confirm that this EMT-based feature selection framework consistently outperforms various state-of-the-art methods in high-dimensional classification scenarios prevalent in biomedical research [7].

The Scientist's Toolkit

Table: Essential Research Reagents and Computational Resources for EMT Implementation

| Resource Category | Specific Tools | Function/Purpose |
|---|---|---|
| Software Platforms | MToP (MATLAB) [6] | Comprehensive EMT benchmarking with 50+ algorithms, 200+ problem cases |
| | PlatEMO [6] | Multi-objective optimization and performance comparison |
| | EvoX [6] | Distributed GPU-accelerated evolutionary computation |
| GPU Frameworks | CUDA Toolkit [1] [2] | Parallel implementation of population evaluation and knowledge transfer |
| | Multi-Stream Multi-Thread [1] | Handling asynchronous task arrival in real-time systems |
| Algorithmic Components | Attention-based Similarity [3] | Identifying related tasks for knowledge transfer |
| | Maximum Mean Discrepancy [4] | Measuring distribution differences between task populations |
| | Guiding Vector Transfer [7] | Adaptive knowledge incorporation based on convergence factors |
| Biomedical Applications | GEAMT for SNP Detection [2] | Identifying epistatic interactions in GWAS datasets |
| | EMTRE for Feature Selection [7] | High-dimensional biomarker selection with task relevance evaluation |

Advanced Protocols and Experimental Designs

Reinforcement Learning for Transfer Policy Optimization

The integration of Reinforcement Learning with EMT represents a cutting-edge approach for automating knowledge transfer decisions:

Protocol: Multi-Role Reinforcement Learning for EMT

Agent Architecture Design

- Task Routing Agent: Incorporates attention-based similarity recognition to determine source-target transfer pairs via attention scores

- Knowledge Control Agent: Determines optimal proportion of elite solutions to transfer between tasks

- Strategy Adaptation Agents: Dynamically control hyper-parameters in the underlying EMT framework [3]

Training Methodology

Implementation Framework

- Pre-train all network modules end-to-end over augmented multitask problem distribution

- Utilize actor-critic network structure for policy approximation

- Deploy learned policy across various evolutionary algorithms for enhanced generalization [3]

This approach demonstrates state-of-the-art performance against both human-crafted and learning-assisted baselines, providing insightful interpretations of learned transfer policies [3] [8].

Cross-Domain Knowledge Transfer

The following diagram illustrates the information flow in cross-domain evolutionary multitasking:

Diagram Title: Cross-Domain EMT Architecture

Evolutionary Multitasking represents a significant advancement in evolutionary computation, particularly through its integration with GPU-based parallelization frameworks. The protocols and application notes presented herein provide researchers with practical methodologies for implementing EMT in biomedical contexts, with specific emphasis on enhancing population diversity and convergence characteristics. The future of EMT research points toward increasingly autonomous systems capable of learning transfer policies adaptively, with promising applications in drug development, personalized medicine, and complex genomic analysis. As GPU technologies continue to evolve and reinforcement learning methodologies mature, EMT is poised to become an indispensable tool for tackling the multidimensional optimization challenges inherent in modern biomedical research.

The architecture of Graphics Processing Units (GPUs) is fundamentally designed for massive parallelism, making them indispensable for accelerating large-scale scientific computing and evolutionary multitasking research. Unlike traditional Central Processing Units (CPUs) optimized for sequential tasks, GPUs comprise thousands of smaller cores that execute many operations concurrently [9]. This paradigm is particularly effective in research domains like drug development, where tasks such as molecular docking simulations, genomic analysis, and protein folding can be decomposed into thousands of independent subtasks. Framing this within evolutionary multitasking research allows for the simultaneous optimization of multiple drug candidates or the exploration of vast chemical spaces by leveraging the GPU's ability to manage numerous parallel threads efficiently [10].

At its core, NVIDIA's CUDA platform enables this by exposing a hierarchical parallel architecture. Understanding the interaction between its fundamental components—CUDA Cores for computation, a multi-tiered Memory Hierarchy for data access, and Thread Blocks for organizing parallel threads—is critical for researchers to effectively harness GPU capabilities and achieve significant speedups over CPU-based implementations [11] [12].

Core Architectural Components

CUDA Cores: The Fundamental Compute Units

CUDA Cores are the basic arithmetic processing units within an NVIDIA GPU. Each core is capable of performing scalar floating-point and integer operations [12]. These cores are not autonomous like CPU cores; instead, they are organized into much larger groupings to achieve high throughput. A useful analogy is to think of a GPU as a factory: each CUDA core is an individual worker handling basic math, while a Streaming Multiprocessor (SM) is a shop floor containing many such workers, and the entire GPU is the complete factory with many SMs operating in parallel [12].

The real computational power comes from the sheer number of these cores. For example, a modern GPU like the RTX 5090 contains 21,760 CUDA cores [12]. These cores operate on the principle of Single Instruction, Multiple Threads (SIMT), where a group of 32 threads (called a warp) executes the same instruction simultaneously on different data elements. This model is exceptionally efficient for the data-parallel workloads common in scientific simulation and machine learning [12].

Specialized Cores: Tensor Cores and RT Cores

Modern GPUs also incorporate specialized cores to accelerate specific workloads. Tensor Cores are dedicated to performing matrix multiply-and-accumulate operations at high speed, often using mixed precision (e.g., FP16 input with FP32 accumulation) [11]. They are pivotal for deep learning training and inference, which are increasingly common in drug discovery for tasks like predictive toxicology and generative chemistry. RT Cores accelerate ray-tracing operations, which, while common in graphics, can also be repurposed for certain scientific simulations involving wave propagation or geometric analysis [12].

Table 1: Core Types in a Modern GPU and Their Primary Functions

| Core Type | Primary Function | Key Architectural Trait | Benefit to Research Workloads |
|---|---|---|---|
| CUDA Core | Scalar FP32/INT arithmetic | Thousands of cores for massive parallelism | General-purpose computation (e.g., fitness evaluation in evolutionary algorithms) |
| Tensor Core | Matrix math & accumulation | Operates on small matrix blocks (e.g., 4x4) | Dramatically accelerates deep learning and linear algebra operations |
| RT Core | Ray tracing & bounding volume hierarchy (BVH) traversal | Hardware-accelerated intersection testing | Speeds up rendering and specific geometric calculations |

The Memory Hierarchy

Feeding data to thousands of concurrent threads requires a sophisticated memory hierarchy designed to balance bandwidth, latency, and capacity. The hierarchy is structured to keep the CUDA cores busy by minimizing the time spent waiting for data [11].

- Registers and Local Memory: The fastest memory is assigned to registers, which are dedicated to each thread. When registers are exhausted, data spills into local memory, which is a reserved region of much slower off-chip DRAM [10].

- Shared Memory and L1 Cache: Each SM contains a small, ultra-fast, software-managed shared memory and a hardware-managed L1 cache. Shared memory is a powerful tool for performance optimization, allowing threads within the same block to communicate and collaboratively reuse loaded data, thereby reducing redundant accesses to slower memory [11] [10].

- L2 Cache and Global Memory: All SMs share a large L2 cache, which acts as a buffer for the global memory (or VRAM). Global memory is the GPU's main, high-bandwidth DRAM, with capacities ranging from 16 GB to 80 GB in data-center GPUs like the A100 [11] [13]. While it offers high bandwidth, its access latency is also high. Technologies like High Bandwidth Memory (HBM2) are used in high-end GPUs to provide exceptional bandwidth, exceeding 1.5 TB/s in the A100 [13].

- Constant and Texture Memory: These are specialized, read-only memory types that are cached for efficient access patterns, beneficial for data that is read repeatedly without modification [14].

Table 2: GPU Memory Hierarchy and Characteristics (Examples from NVIDIA A100 and RTX A4000)

| Memory Type | Location | Bandwidth & Speed | Key Characteristics & Purpose |
|---|---|---|---|
| Registers | SM | Fastest (single cycle) | Dedicated per-thread storage. |
| Shared Memory / L1 Cache | SM | Very High | Low-latency, shared by threads in a block for collaboration [11]. |
| L2 Cache | GPU (shared) | High | Unified cache for all SMs; buffers global memory accesses [11]. |
| Global Memory (HBM2) | GPU (e.g., A100) | ~1555 GB/s [13] | High-bandwidth, high-capacity, high-latency main memory. |
| Global Memory (GDDR6) | GPU (e.g., A4000) | ~448 GB/s [13] | High-bandwidth memory for consumer/professional cards. |

Diagram 1: The layered memory hierarchy in a GPU, from fast per-thread registers to high-capacity global memory.

Threads, Warps, and Blocks: The Execution Model

The Thread Block Hierarchy

The CUDA parallel execution model is built on a two-level hierarchy of threads [11]:

- Threads are grouped into thread blocks (or blocks). Threads within the same block reside on the same SM and can communicate efficiently via shared memory and synchronize their execution.

- A grid of these thread blocks is launched to execute a kernel function (the parallel task).

To fully utilize a GPU with multiple SMs, the application must launch many more thread blocks than there are SMs. This ensures that when some SMs complete their assigned blocks, they can immediately start processing new ones, keeping the entire GPU occupied [11]. A set of thread blocks running concurrently is called a wave. It is most efficient to have several full waves; if the last wave has only a few blocks, the GPU will be underutilized during that "tail" period, known as the tail effect [11].
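
The wave and tail-effect arithmetic can be worked through with a short helper. This is a simplified model that assumes every block takes the same time and that a fixed number of blocks fits on each SM; the 108-SM figure in the example corresponds to an A100 and is an assumption added here, not a value from the text.

```python
from typing import Tuple


def wave_stats(num_blocks: int, num_sms: int,
               blocks_per_sm: int) -> Tuple[int, int, float]:
    """Return (total waves, blocks in the partial tail wave, slot utilization).

    Utilization is the fraction of block slots doing useful work over all
    waves, assuming equal-duration blocks.
    """
    if min(num_blocks, num_sms, blocks_per_sm) <= 0:
        raise ValueError("all arguments must be positive")
    wave_size = num_sms * blocks_per_sm  # blocks that can run concurrently
    full_waves, tail_blocks = divmod(num_blocks, wave_size)
    total_waves = full_waves + (1 if tail_blocks else 0)
    utilization = num_blocks / (total_waves * wave_size)
    return total_waves, tail_blocks, utilization


# 120 blocks on 108 SMs, one block per SM: the second wave runs only 12 blocks.
waves, tail, util = wave_stats(120, 108, 1)
```

With these inputs the launch needs two waves but fills only about 56% of the available block slots, which is the tail effect in miniature. Launching many more blocks than one wave shrinks the relative cost of the partial last wave.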

Warps and Latency Hiding

Within an SM, threads from one or more resident blocks are grouped into warps of 32 threads each [12]. The SM executes instructions for entire warps at a time in a SIMT fashion. If all 32 threads in a warp follow the same execution path, the hardware operates at peak efficiency. However, if threads within a warp diverge (e.g., via a conditional branch where some threads take the if path and others the else), the warp must serially execute each divergent path, disabling the threads not on the current path. This is called thread divergence and severely impacts performance [15].

GPUs hide the high latency of memory operations through massive parallelism. When a warp stalls because it is waiting for data from memory, the SM's hardware scheduler immediately switches to another warp that is ready to execute. This technique, known as latency hiding, ensures that the SM's computational units are kept busy. Effective latency hiding requires a high number of active warps per SM, a metric referred to as occupancy [11] [14]. If there are insufficient active warps, the SM has no other work to switch to, and the execution units sit idle, a state often revealed by profiling tools showing "long scoreboard" stalls [14] [15].
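
Theoretical occupancy can be estimated from a kernel's resource usage. The sketch below is a simplified model: the per-SM limits are assumed values in the style of a compute capability 7.0 device (64 warps, 32 blocks, 65,536 registers, 96 KB shared memory), and it ignores allocation granularity, so results may differ from the vendor's occupancy calculator.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SMLimits:
    """Per-SM resource limits (assumed, roughly compute capability 7.0)."""
    max_warps: int = 64
    max_blocks: int = 32
    registers: int = 65536
    shared_mem_bytes: int = 98304
    warp_size: int = 32


def theoretical_occupancy(threads_per_block: int, regs_per_thread: int,
                          smem_per_block: int,
                          limits: SMLimits = SMLimits()) -> float:
    """Fraction of the SM's warp slots that can be resident at once."""
    warps_per_block = -(-threads_per_block // limits.warp_size)  # ceiling
    by_warps = limits.max_warps // warps_per_block
    regs_per_block = regs_per_thread * warps_per_block * limits.warp_size
    by_regs = limits.registers // regs_per_block if regs_per_block else limits.max_blocks
    by_smem = limits.shared_mem_bytes // smem_per_block if smem_per_block else limits.max_blocks
    # The scarcest resource decides how many blocks fit on one SM.
    resident_blocks = min(limits.max_blocks, by_warps, by_regs, by_smem)
    return resident_blocks * warps_per_block / limits.max_warps
```

Under these assumed limits, a 256-thread block using 32 registers per thread reaches full occupancy, while doubling register use to 64 per thread halves it. That is the trade-off behind the advice to trim register and shared memory usage when a kernel is latency-bound.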

Diagram 2: The organization of threads into blocks and warps, which are scheduled across SMs.

Performance Analysis and Optimization

The Roofline Model: Arithmetic Intensity and Bandwidth

A powerful conceptual model for understanding GPU performance is the Roofline Model. It posits that the performance of any kernel is limited by one of two factors: memory bandwidth or compute bandwidth [11].

The key metric is Arithmetic Intensity (AI), defined as the number of operations performed per byte of data accessed from memory (FLOPs/Byte) [11]. This algorithmic characteristic is then compared to the GPU's ops:byte ratio, which is its peak compute throughput divided by its peak memory bandwidth.

- Memory-Bound Kernels: If a kernel's AI is lower than the GPU's ops:byte ratio, its performance is limited by how quickly data can be moved from memory. Optimizations focus on improving memory access patterns or reducing data movement [11].

- Compute-Bound Kernels: If a kernel's AI is higher than the ops:byte ratio, performance is limited by the raw math speed of the CUDA or Tensor Cores. Optimizations focus on improving computational efficiency [11].
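
The roofline comparison above reduces to two small functions. The hardware figures in the example (about 125 TFLOP/s peak Tensor Core throughput and about 900 GB/s memory bandwidth, in the range of a V100) are assumptions added for illustration and should be replaced with the specifications of the target GPU.

```python
def attainable_flops(arithmetic_intensity: float, peak_flops: float,
                     peak_bandwidth: float) -> float:
    """Roofline bound: limited by compute or by memory traffic."""
    return min(peak_flops, arithmetic_intensity * peak_bandwidth)


def classify_kernel(arithmetic_intensity: float, peak_flops: float,
                    peak_bandwidth: float) -> str:
    """Compare a kernel's AI with the GPU's ops:byte ratio."""
    ops_per_byte = peak_flops / peak_bandwidth
    if arithmetic_intensity < ops_per_byte:
        return "memory-bound"
    return "compute-bound"


# Assumed V100-like peaks: 125e12 FLOP/s and 900e9 B/s (ratio of about 139).
PEAK_FLOPS = 125e12
PEAK_BANDWIDTH = 900e9
low_ai = classify_kernel(0.25, PEAK_FLOPS, PEAK_BANDWIDTH)
high_ai = classify_kernel(315.0, PEAK_FLOPS, PEAK_BANDWIDTH)
```

With these peaks, a kernel at 0.25 FLOPs/Byte is capped at roughly 225 GFLOP/s by memory traffic alone, far below the compute peak.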

Table 3: Performance Limits of Common Deep Learning Operations (Example: NVIDIA V100 GPU)

| Operation | Arithmetic Intensity (FLOPS/B) | Performance Limitation |
|---|---|---|
| ReLU Activation | 0.25 | Heavily Memory-Bound |
| Layer Normalization | < 10 | Memory-Bound |
| Max Pooling (3x3 window) | 2.25 | Memory-Bound |
| Linear Layer (Batch=1) | 1 | Memory-Bound |
| Linear Layer (Batch=512) | 315 | Compute-Bound |
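
The linear-layer and ReLU rows can be reproduced with a few lines of arithmetic. The table does not state the layer shape or data type; the sketch assumes 1024 inputs, 4096 outputs, and 2-byte (FP16) elements, under which the computed intensities round to the tabulated values.

```python
def linear_layer_intensity(batch: int, in_features: int, out_features: int,
                           bytes_per_element: int = 2) -> float:
    """FLOPs per byte for a dense layer computed as one matrix multiply."""
    # One multiply and one add per (batch, input, output) triple.
    flops = 2 * batch * in_features * out_features
    # Read activations and weights once, write outputs once.
    elements = (batch * in_features
                + in_features * out_features
                + batch * out_features)
    return flops / (bytes_per_element * elements)


def relu_intensity(bytes_per_element: int = 2) -> float:
    """One operation per element, with one read and one write."""
    return 1 / (2 * bytes_per_element)


# Assumed shape: 1024 inputs, 4096 outputs, FP16.
small_batch = linear_layer_intensity(1, 1024, 4096)
large_batch = linear_layer_intensity(512, 1024, 4096)
```

At batch size 1 the weight matrix dominates memory traffic and is used only once, so intensity stays near 1; at batch size 512 each weight is reused across the batch, which lifts intensity to about 315.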

Experimental Protocol: GPU Kernel Performance Profiling

Objective: To analyze and optimize a custom GPU kernel, identifying whether it is memory-bound or compute-bound and applying targeted optimizations.

Materials:

- Hardware: An NVIDIA GPU (e.g., A100, V100, or consumer-grade RTX).

- Software: NVIDIA CUDA Toolkit, NVIDIA Nsight Compute and Nsight Systems profilers.

Methodology:

Baseline Profiling:

- Implement the kernel in CUDA C++.

- Compile with `nvcc` using flags `-arch=sm_xx` (specifying the target GPU compute capability) and `-O3`.

- Run the kernel under NVIDIA Nsight Compute to collect initial metrics.

- Key Metrics to Record:

  - `sm__throughput.avg.pct_of_peak_sustained_elapsed`: SM throughput utilization (% of peak).

  - `dram__throughput.avg.pct_of_peak_sustained_elapsed`: Memory bandwidth utilization.

  - `smsp__thread_inst_executed_per_inst_executed.ratio`: Average number of threads executed per instruction (measure of thread divergence).

  - Top "warp stall reason" from the `warp_stall_breakdown` section (e.g., "Long Scoreboard" indicates memory latency waits) [14] [15].

Performance Limitation Analysis:

- Memory-Bound Identification: High memory bandwidth utilization coupled with low SM utilization and "Long Scoreboard" as the top stall reason [14] [15].

- Compute-Bound Identification: High SM utilization with lower memory bandwidth utilization.

- Latency-Bound Identification: Low utilization of both compute and memory bandwidth, often with high "Long Scoreboard" stalls, indicating insufficient parallelism to hide latency [14].
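
The three cases above can be turned into a rough triage helper that takes the two utilization percentages recorded during baseline profiling. The 60% threshold is an arbitrary assumption for illustration, not a value from the cited sources, and real diagnosis should also consider the stall reasons.

```python
def classify_bottleneck(sm_pct: float, dram_pct: float,
                        threshold: float = 60.0) -> str:
    """Rough triage from SM and DRAM throughput (% of peak).

    `threshold` is an assumed cut-off separating "high" from "low" use.
    """
    if sm_pct < threshold and dram_pct < threshold:
        # Neither resource is saturated: too little parallelism to hide latency.
        return "latency-bound"
    if sm_pct >= dram_pct:
        return "compute-bound"
    return "memory-bound"


# Example readings (hypothetical): low SM use, high DRAM use.
verdict = classify_bottleneck(sm_pct=20.0, dram_pct=85.0)
```

The label only selects which group of optimizations to try first; re-profiling after each change remains the real test.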

Targeted Optimization:

- For Memory-Bound Kernels:

  - Optimize Memory Access Patterns: Ensure coalesced global memory accesses (consecutive threads accessing consecutive memory addresses).

  - Utilize Shared Memory: Load frequently accessed data from global memory into shared memory to promote data reuse across threads in a block [10].

- For Latency-Bound Kernels:

  - Increase Occupancy: Adjust the number of threads per block and reduce register/shared memory usage to allow more concurrent warps per SM [10].

- For All Kernels:

  - Minimize Thread Divergence: Restructure code and data to ensure threads within a warp follow the same execution path. Using `__syncwarp()` can help reconverge threads after a conditional block [15].

  - Use Tensor Cores: Where possible, formulate operations as matrix multiplications to leverage Tensor Core acceleration [11] [10].

Validation:

- Re-profile the optimized kernel and compare metrics against the baseline.

- Verify numerical correctness of the output.

The Scientist's Toolkit: Essential GPU Programming Reagents

Table 4: Key Tools and Libraries for GPU-Accelerated Research

| Tool / Library | Category | Primary Function in Research |
|---|---|---|
| NVIDIA CUDA Toolkit | Development Environment | Core compiler (nvcc), debugger (cuda-gdb), and fundamental libraries (cuBLAS, cuFFT, Thrust) for CUDA C++ development [12]. |
| NVIDIA Nsight Compute | Profiling Tool | Detailed instruction-level performance profiling to identify bottlenecks in GPU kernels [15]. |
| cuBLAS / cuDNN | Accelerated Library | Highly optimized implementations of BLAS linear algebra routines and deep neural network primitives for machine learning workloads. |
| OpenCL | Programming Framework | An open, cross-platform standard for parallel programming across GPUs, CPUs, and other accelerators [10]. |
| NVIDIA Occupancy Calculator | Utility | Spreadsheet-based tool to calculate theoretical occupancy for a kernel given its resource usage (threads, registers, shared memory) [10]. |

The massively parallel architecture of GPUs, built upon a foundation of thousands of CUDA Cores, a sophisticated Memory Hierarchy, and an efficient Thread Block execution model, provides unprecedented computational power for evolutionary multitasking and drug development research. Successfully leveraging this architecture requires more than just porting code to the GPU; it demands a deep understanding of the performance implications of algorithm design and implementation. By applying structured experimental protocols for profiling and optimization, and by utilizing the Roofline Model to classify performance limitations, researchers can systematically overcome bottlenecks and fully exploit the potential of GPU-accelerated computing to solve complex, data-intensive problems in biomedical science.

Evolutionary Multitasking (EMT) represents a paradigm shift in evolutionary computation. It enables the simultaneous optimization of multiple tasks by leveraging implicit parallelism and knowledge transfer between related problems, mimicking the human brain's ability to process interconnected tasks [16]. A Multitask Optimization (MTO) problem involves finding solutions for K tasks concurrently, formally defined as finding optimal solutions that minimize a set of objective functions across all tasks [17]. The core principle of EMT is to exploit synergies between tasks, where knowledge gained while solving one problem can accelerate convergence and improve solution quality for other related tasks [16] [18].

The recent explosion of computational demands in fields like drug discovery and AI has exposed the limitations of traditional CPU-based computing architectures. While CPUs excel at sequential processing, they struggle with the massive parallelism required for modern evolutionary computation. This has led to an inflection point where GPU multitasking has become essential to improve hardware utilization and reduce computational costs [19]. GPUs, with their thousands of simpler cores running concurrent threads, offer significantly greater performance per watt than CPUs—a critical advantage as energy consumption becomes the key design criterion for large computing facilities [20].

The Computational Synergy Between EMT and GPU Architecture

Fundamental Alignment of Computational Paradigms

The synergy between EMT and GPU computing stems from their shared foundation in data-parallel processing. Evolutionary algorithms are inherently parallel, as they evaluate and evolve entire populations of candidate solutions simultaneously. Similarly, GPU architectures are designed for Single Instruction, Multiple Data (SIMD) style execution, which NVIDIA implements as Single Instruction, Multiple Threads (SIMT): the same instruction executes across thousands of data points concurrently [20]. This close alignment lets GPUs process entire generations of evolutionary populations in parallel, which can substantially accelerate the optimization process.

The computational characteristics of population-based optimization map exceptionally well to GPU architecture. Fitness evaluation, often the most computationally intensive component, can be distributed across GPU streaming multiprocessors, while the memory hierarchy of GPUs efficiently handles the large-scale data access patterns required for maintaining and processing populations [19]. This marriage of technologies is particularly effective for MTO problems, where multiple optimization tasks must evolve concurrently while exchanging knowledge through transfer mechanisms.

Quantitative Performance Advantages

Table 1: Key Performance Advantages of GPU-Accelerated EMT over CPU Implementation

| Performance Metric | CPU-Based EMT | GPU-Accelerated EMT | Improvement Factor |
|---|---|---|---|
| Population Processing | Sequential batch evaluation | Massive parallel evaluation | 10-100x speedup [21] |
| Memory Bandwidth | Limited by CPU memory subsystem | High-bandwidth dedicated memory | >20x increase [19] |
| Energy Efficiency | High power per operation | Superior performance per watt | Significant improvement [20] |
| Task Scaling | Linear cost increase with tasks | Minimal overhead for additional tasks | Near-constant time for many tasks [21] |
| Hardware Utilization | Often low (<10% for inference) | High utilization via multitasking | >80% utilization achievable [19] |

GPU-Accelerated EMT: Implementation Frameworks and Platforms

Emerging GPU Multitasking Frameworks

The transition from GPU singletasking to multitasking represents a fundamental shift in computational paradigms. Traditional GPU usage allocated entire devices to single tasks, leading to significant underutilization, especially with diverse AI workloads and dynamic request patterns [19]. Modern approaches now embrace a resource management layer that functions as an operating system for GPU multitasking, enabling fast resource partitioning, efficient memory virtualization, and cooperative scheduling across applications.

Industry and academic efforts have produced several frameworks for GPU multitasking. NVIDIA MIG (Multi-Instance GPU) technology allows physical partitioning of GPUs into isolated instances, while time-sharing approaches like NVIDIA MPS enable concurrent execution of multiple tasks [19]. However, current solutions face limitations in achieving both high utilization and performance guarantees, prompting research into more advanced scheduling and memory management techniques. The emerging openvgpu project represents a promising open-source initiative building a comprehensive GPU resource management layer to address these challenges [19].

Specialized EMT Software Platforms

Table 2: Key Platforms for Implementing GPU-Accelerated Evolutionary Multitasking

| Platform Name | Primary Features | GPU Support | Target Applications |

|---|---|---|---|

| MTO-Platform (MToP) [17] | >40 MTEAs, 150+ MTO problems, 10+ metrics | Comprehensive | Single/multi-objective, constrained, many-task optimization |

| openvgpu [19] | GPU resource management layer, memory virtualization | Native | Large-scale LLM inference, diverse AI workloads |

| PlatEMO | Multi-objective evolutionary algorithms | Limited | Traditional multi-objective optimization |

| EvoX | Distributed GPU acceleration | Native | Reinforcement learning, complex optimization |

The MTO-Platform (MToP) represents a significant advancement for the EMT community, providing the first open-source MATLAB platform specifically designed for evolutionary multitasking research [17]. MToP incorporates over 40 multitask evolutionary algorithms (MTEAs) and more than 150 MTO problem cases with real-world applications, along with over 10 performance metrics. The platform features a user-friendly graphical interface for results analysis, data export, and visualization, while its modular design allows researchers to extend functionality for emerging problem domains [17].

Experimental Protocols for GPU-Accelerated EMT

Protocol 1: Implementing Large-Scale EMT Using GPU Paradigm

Purpose: To implement and evaluate a large-scale evolutionary multitasking system capable of handling hundreds of optimization tasks simultaneously using GPU acceleration.

Materials and Reagents:

- Computing Hardware: NVIDIA data center GPU (e.g., A100, B300) with minimum 40GB memory

- Software Dependencies: CUDA Toolkit 11.0+, MATLAB R2020a+ with Parallel Computing Toolbox

- Framework: MTO-Platform (MToP) with custom GPU extensions

- Benchmark Problems: WCCI2020 test suites for many-task optimization [16]

Procedure:

- Environment Setup: Install and configure MTO-Platform with GPU support enabled. Verify CUDA installation and MATLAB GPU computing compatibility.

- Population Initialization: Implement unified search space initialization for all tasks using GPU-accelerated random number generation.

- GPU-Accelerated Evaluation: Implement a fitness evaluation kernel that processes multiple tasks concurrently.

- Knowledge Transfer Mechanism: Implement implicit knowledge transfer through random mating between tasks using GPU-accelerated crossover operations [21].

- Performance Monitoring: Track GPU utilization, memory usage, and speedup factors compared to the CPU implementation.
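
The initialization, evaluation, and random-mating steps of this procedure can be sketched on the CPU as follows. This is a minimal illustration in plain Python, not the MToP implementation: the two toy tasks, the `TASKS` table, the `rmp` (random mating probability) parameter, and all function names are assumptions made for the example. Each loop iteration in `evaluate` is independent, which is the property a GPU kernel would exploit.

```python
import random

def sphere(x):
    return sum(v * v for v in x)

def shifted_sphere(x):
    return sum((v - 1.0) ** 2 for v in x)

# Hypothetical task table: (objective, lower bound, upper bound, dimensionality).
TASKS = [(sphere, -5.0, 5.0, 10), (shifted_sphere, -2.0, 2.0, 6)]
MAX_DIM = max(task[3] for task in TASKS)

def init_population(pop_size, rng):
    """Random keys in [0, 1)^MAX_DIM plus one skill factor (task index) each."""
    genes = [[rng.random() for _ in range(MAX_DIM)] for _ in range(pop_size)]
    skill = [i % len(TASKS) for i in range(pop_size)]
    return genes, skill

def evaluate(genes, skill):
    """Score every individual on its own task; iterations are independent,
    so on a GPU each one could map to a thread or thread block."""
    fitness = []
    for keys, task_id in zip(genes, skill):
        objective, low, high, dim = TASKS[task_id]
        decoded = [low + k * (high - low) for k in keys[:dim]]
        fitness.append(objective(decoded))
    return fitness

def random_mating(genes, skill, rmp, rng):
    """Uniform crossover; parents from different tasks mate with probability rmp."""
    n = len(genes)
    children, child_skill = [], []
    for _ in range(n):
        a, b = rng.randrange(n), rng.randrange(n)
        if skill[a] == skill[b] or rng.random() < rmp:
            child = [x if rng.random() < 0.5 else y for x, y in zip(genes[a], genes[b])]
            inherited = skill[a] if rng.random() < 0.5 else skill[b]
        else:
            # No transfer: perturb parent a and clip back into the unit box.
            child = [min(1.0, max(0.0, x + rng.gauss(0.0, 0.05))) for x in genes[a]]
            inherited = skill[a]
        children.append(child)
        child_skill.append(inherited)
    return children, child_skill
```

Storing all individuals as keys in a shared unit box lets tasks with different dimensionalities and bounds exchange genetic material directly; each task decodes only the leading genes it needs.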

Troubleshooting Tips:

- For memory bottlenecks, reduce population size or implement memory-efficient representations

- For low GPU utilization, increase task batch sizes or optimize kernel launch parameters

- Use NVIDIA Nsight Systems for performance profiling and bottleneck identification

Protocol 2: DDQN-Guided Evolutionary Multitasking for Constrained Problems

Purpose: To implement a dual-population constrained multi-objective evolutionary algorithm guided by Double Deep Q-Networks (DDQN) for complex optimization problems such as autonomous ship berthing, with extensions to drug discovery applications.

Materials and Reagents:

- Deep Learning Framework: PyTorch 1.9+ or TensorFlow 2.5+ with GPU support

- Reinforcement Learning: DDQN implementation with experience replay

- Evolutionary Algorithm Base: Custom implementation of EMCMO (Evolutionary Multitasking Constrained Multi-objective Optimization) [22]

- Constraint Handling: Adaptive constraint tolerance parameters

Procedure:

- Dual-Population Initialization: Create two distinct populations on GPU—one for exploration and one for exploitation—with different initialization strategies.

- DDQN Operator Selection Network: Define the state representation (current population diversity, convergence metrics, and constraint violation statistics), the action space (the available evolutionary operators: SBX, DE, PM, and knowledge transfer), and the reward function (based on Pareto front improvement and constraint satisfaction).

- GPU-Accelerated Training Loop: Train the DDQN on the GPU alongside the evolutionary loop, drawing training batches from the experience replay buffer.

- Knowledge Transfer Mechanism: Implement adaptive knowledge transfer between populations based on similarity measures using maximum mean discrepancy (MMD) [16].

- Constraint Handling: Apply adaptive penalty functions and feasibility rules to maintain feasible solutions across tasks.
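
The core of the operator-selection step can be sketched without a deep learning framework. The example below shows the Double DQN target rule, in which the online network chooses the next action and the target network scores it, together with epsilon-greedy selection over the four operators named in the procedure. Plain lists stand in for network outputs; the function names and the epsilon-greedy policy are assumptions for illustration, not details taken from the cited method.

```python
import random

OPERATORS = ["SBX", "DE", "PM", "knowledge_transfer"]  # action space from the protocol

def select_operator(q_values, epsilon, rng):
    """Epsilon-greedy choice: explore with probability epsilon, else take the best Q."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda a: q_values[a])

def ddqn_target(reward, q_online_next, q_target_next, gamma, done):
    """Double DQN target: the online net picks the action, the target net scores it."""
    if done:
        return reward
    best_action = max(range(len(q_online_next)), key=lambda a: q_online_next[a])
    return reward + gamma * q_target_next[best_action]

# Example: the online net prefers action 1 ("DE"), so the target net's value
# for action 1 is used, even though its own maximum is at action 2.
target = ddqn_target(1.0, [0.2, 0.9, 0.1, 0.4], [0.5, 0.3, 0.8, 0.1], 0.9, False)
```

Decoupling action selection from action evaluation is what distinguishes Double DQN from plain DQN, which would take the maximum of the target network's values and tend to overestimate.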

Validation Metrics:

- Hypervolume indicator for multi-objective performance

- Constraint violation rate across generations

- Knowledge transfer effectiveness ratio

- Speedup factor compared to single-task optimization
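
Two of these metrics are simple enough to sketch directly. The example below computes the hypervolume indicator for the two-objective minimization case and a per-generation constraint violation rate. The restriction to two objectives, the choice of reference point, and the function names are assumptions for illustration; problems with more objectives need a dedicated hypervolume algorithm.

```python
def hypervolume_2d(points, ref):
    """Area dominated by `points` and bounded by `ref` (both objectives minimized)."""
    inside = sorted(p for p in points if p[0] < ref[0] and p[1] < ref[1])
    volume, best_f2 = 0.0, ref[1]
    for f1, f2 in inside:
        if f2 < best_f2:  # skip points dominated by an earlier one
            volume += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return volume

def violation_rate(violations):
    """Fraction of individuals whose total constraint violation is positive."""
    return sum(1 for v in violations if v > 0.0) / len(violations)

front = [(1.0, 3.0), (2.0, 2.0), (3.0, 1.0), (2.5, 2.5)]  # last point is dominated
area = hypervolume_2d(front, ref=(4.0, 4.0))
```

A larger hypervolume indicates a front that is both closer to the ideal point and better spread, so tracking it per generation gives one scalar convergence curve per task.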

Table 3: Key Research Reagent Solutions for GPU-Accelerated Evolutionary Multitasking

| Resource Category | Specific Tools/Platforms | Function in EMT Research |

|---|---|---|

| GPU Computing Platforms | NVIDIA CUDA, AMD ROCm, openvgpu | Provide low-level acceleration and resource management for parallel task execution [19] |

| EMT Software Frameworks | MTO-Platform (MToP), PlatEMO | Offer implemented algorithms, benchmark problems, and performance metrics for experimental comparison [17] |

| Benchmark Problem Sets | WCCI2020 Test Suites, CEC Competition Problems | Enable standardized performance evaluation and comparison between different MTEAs [16] |

| Performance Analysis Tools | NVIDIA Nsight Systems, Hypervolume Calculator | Facilitate profiling of GPU utilization and quantitative assessment of optimization results [21] |

| Knowledge Transfer Mechanisms | Affine Transformation, Autoencoding, Subspace Alignment | Enable effective information exchange between optimization tasks to accelerate convergence [18] [17] |

Workflow Visualization and Decision Pathways

GPU-Accelerated EMT Implementation Workflow

GPU Architecture for Parallel Task Processing

The synergy between Evolutionary Multitasking and GPU computing represents a transformative advancement in optimization capabilities. By aligning the inherent parallelism of population-based evolutionary algorithms with the massive parallel architecture of GPUs, researchers can achieve order-of-magnitude improvements in computational efficiency and solution quality. The protocols and frameworks presented in this work provide a foundation for implementing GPU-accelerated EMT across diverse domains, from drug discovery to complex engineering design.

Future research directions should focus on several key areas: (1) developing more sophisticated knowledge transfer mechanisms that automatically learn task relationships during optimization; (2) creating dynamic resource allocation strategies that adapt computational effort based on task complexity and inter-task synergies; and (3) advancing multi-objective many-task optimization algorithms capable of handling numerous conflicting objectives across multiple tasks simultaneously [16] [18]. As GPU architectures continue to evolve toward increasingly parallel designs, and as EMT methodologies mature, this powerful combination will unlock new frontiers in our ability to solve previously intractable optimization problems across scientific and engineering domains.

Within evolutionary multitasking research, GPU-based parallel implementation is pivotal for accelerating scientific discovery, particularly in data-intensive fields like drug development. These frameworks allow researchers to exploit the massive parallel architecture of GPUs, transforming computationally prohibitive tasks into tractable problems [23]. This document provides application notes and experimental protocols for the three dominant GPU programming models—CUDA, OpenCL, and Vulkan Compute Shaders—framed within the context of a broader thesis on evolutionary multitasking. It is tailored for an audience of researchers, scientists, and drug development professionals who require practical guidance on selecting and implementing these technologies to accelerate molecular dynamics, virtual screening, and multiscale modeling simulations [24].

Comparative Analysis of GPU Programming Models

The table below summarizes the core characteristics of the three key GPU programming models, providing a high-level overview for researchers to make an informed initial selection.

Table 1: Comparative Overview of CUDA, OpenCL, and Vulkan Compute Shaders

| Feature | CUDA | OpenCL | Vulkan Compute Shaders |

|---|---|---|---|

| Primary Purpose | General-purpose computing on NVIDIA GPUs [25] | Cross-platform parallel computing [26] [27] | Cross-platform graphics & compute [26] |

| Provider & Type | NVIDIA, Proprietary [28] | Khronos Group, Open Standard [26] | Khronos Group, Open Standard [26] |

| Key Strength | Mature ecosystem, high performance on NVIDIA hardware, extensive AI/library support [25] [29] | Hardware vendor independence, runs on CPUs/GPUs/other accelerators [25] | Low-overhead, fine-grained control, ideal for graphics-integrated workloads [26] |

| Programming Language | C/C++, Fortran, Python (via CuPy, etc.) [28] | C-based language [26] | GLSL or HLSL compiled to SPIR-V (for compute shaders) [30] |

| Memory Model | Unified Memory, Shared Memory, Constant Memory [28] | Global, Local, Private, Constant Memory [26] | Fine-grained control over memory allocation and barriers [26] |

| Performance | Typically highest on NVIDIA GPUs due to deep hardware optimization [25] | High, but can be less optimized than CUDA on NVIDIA hardware [26] | High, with low driver overhead; comparable to others for well-tuned code [30] |

| Portability | Limited to NVIDIA GPUs [25] | High (across NVIDIA, AMD, Intel, ARM, etc.) [26] [25] | High (Windows, Linux, Android) [26] |

| Maturity & Ecosystem | Very mature, vast library ecosystem (cuDNN, cuBLAS, cuFFT), excellent tools [25] [28] | Mature standard, but library ecosystem less extensive than CUDA [26] | Growing adoption, younger ecosystem focused on graphics and mobile [26] |

| Ease of Use | Straightforward API, comprehensive documentation, large community [25] | More complex to code due to need for explicit hardware management [25] | Complex API, requires explicit management of synchronization and memory [26] |

| Ideal For | AI/ML, HPC, scientific simulations in NVIDIA-dominated environments [25] [24] | Platform-independent projects, edge devices, heterogeneous hardware clusters [26] [25] | Cross-platform applications, real-time processing, mobile, graphics-compute hybrid tasks [26] [30] |

Model-Specific Application Notes

CUDA (Compute Unified Device Architecture)

CUDA is a proprietary parallel computing platform and API that enables developers to use NVIDIA GPUs for general-purpose processing. Its key advantage for scientific workloads lies in its tight integration with NVIDIA hardware, allowing for top performance in complex simulations and the training of large language models [25]. The model is based on a hierarchy of threads, blocks, and grids, which maps efficiently to the GPU's physical architecture, enabling the management of thousands of concurrent threads [31].
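
The thread, block, and grid hierarchy is easiest to see in the index arithmetic used by a one-dimensional launch. The sketch below emulates that arithmetic in plain Python; the function names and the default of 256 threads per block are illustrative choices, and no GPU code is executed.

```python
def launch_config(n_items, threads_per_block=256):
    """Number of blocks needed so that every item gets one thread (ceiling division)."""
    blocks = (n_items + threads_per_block - 1) // threads_per_block
    return blocks, threads_per_block

def global_indices(n_items, threads_per_block=256):
    """Enumerate (block, thread, global index) the way a 1D CUDA grid would."""
    blocks, tpb = launch_config(n_items, threads_per_block)
    for block in range(blocks):
        for thread in range(tpb):
            gid = block * tpb + thread  # blockIdx.x * blockDim.x + threadIdx.x
            if gid < n_items:           # bounds guard for the partial last block
                yield block, thread, gid
```

For a population of 1000 individuals, `launch_config(1000)` gives 4 blocks of 256 threads, and the bounds guard keeps the 24 surplus threads in the last block idle.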

For evolutionary multitasking research, CUDA provides a mature ecosystem of optimized libraries. Leveraging libraries like cuBLAS for linear algebra, cuFFT for Fast Fourier Transforms, and cuRAND for random number generation can drastically reduce development time and maximize performance [28]. In drug development, this translates to faster molecular dynamics simulations using packages like GROMACS and AMBER, which have mature CUDA-accelerated paths [24].

OpenCL (Open Computing Language)

OpenCL is an open, royalty-free standard for cross-platform parallel programming across diverse processors, including GPUs, CPUs, and FPGAs [26] [27]. Its primary strength is hardware vendor independence, making it suitable for projects that require long-term platform flexibility or must run in heterogeneous data centers with mixed GPU types [25]. The programming model involves defining a context containing devices and organizing work-items into work-groups [26].

For scientific workloads, OpenCL is a robust choice when targeting non-NVIDIA hardware, such as AMD GPUs or edge devices based on ARM processors where CUDA is unavailable [25]. Its cross-platform nature is valuable in collaborative environments where standardized code is necessary. However, achieving peak performance comparable to CUDA on NVIDIA hardware often requires more effort, as the open standard may not leverage architecture-specific optimizations [26] [25].

Vulkan Compute Shaders

Vulkan is a low-overhead, cross-platform API for graphics and compute, maintained by the Khronos Group [26]. Unlike CUDA and OpenCL, which are purely for compute, Vulkan's compute shader capability is part of a broader graphics and compute framework. Its design emphasizes explicit control over GPU resources and synchronization, minimizing driver overhead and allowing developers to achieve highly predictable performance [26] [30].

In scientific computing, Vulkan Compute is particularly well-suited for hybrid workloads that intertwine computation and visualization. For instance, a real-time simulation rendering a dynamic molecular model could use the same Vulkan context for simulation and display, avoiding costly data transfers between separate compute and graphics APIs. While the API is more complex and its general-purpose computing ecosystem is less mature than CUDA's, it offers powerful, low-level control for specialized applications on Windows, Linux, and Android platforms [26].

Experimental Protocols for Scientific Workloads

Protocol: Molecular Dynamics Simulation with Mixed Precision

Objective: To accelerate a molecular dynamics (MD) simulation, such as protein-ligand binding, by leveraging mixed-precision arithmetic on consumer or workstation GPUs [24].

Background: MD simulations are central to drug development, but their computational cost is high. Modern GPUs offer significant speedups for mixed-precision calculations, where most of the computation is done in single-precision (FP32) while critical accumulations use double-precision (FP64) to maintain accuracy [24].
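
The effect of the accumulator's precision can be illustrated with a toy sum. The sketch below emulates FP32 rounding with the standard `struct` module and compares a pure FP32 accumulation against a mixed scheme that stores terms in FP32 but accumulates in FP64. The workload (100,000 equal contributions of 0.1) and the function names are assumptions chosen for illustration; real MD codes apply the same idea to force and energy sums.

```python
import struct

def to_fp32(x):
    """Round a Python float (FP64) to the nearest FP32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]

def sum_fp32(values):
    """Terms and accumulator both held in FP32."""
    acc = 0.0
    for v in values:
        acc = to_fp32(acc + to_fp32(v))
    return acc

def sum_mixed(values):
    """Terms stored in FP32, accumulator kept in FP64."""
    acc = 0.0
    for v in values:
        acc += to_fp32(v)
    return acc

contributions = [0.1] * 100_000
error_fp32 = abs(sum_fp32(contributions) - 10_000.0)
error_mixed = abs(sum_mixed(contributions) - 10_000.0)
```

In this example the FP32 accumulator drifts by more than one unit, while the mixed scheme stays within about 1e-4 of the exact total, which is why accumulations are the usual place to spend double precision.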

Table 2: Research Reagent Solutions for MD Simulation

| Item | Function/Description | Example Solutions |

|---|---|---|

| GPU Hardware | Provides parallel processing cores for acceleration. | NVIDIA GeForce RTX 4090/5090, Data Center GPUs (A100/H100) for full FP64 [24]. |

| MD Software | Software package with GPU acceleration support. | GROMACS, AMBER, NAMD, LAMMPS [24]. |

| Containerized Environment | Ensures reproducibility by packaging software and dependencies. | Docker or Singularity image with a pinned version of CUDA and the MD software [24]. |

| Precision Configuration | Build options and runtime flags that control numerical precision and GPU offload. | In GROMACS, a mixed-precision build (the default) run with offload flags such as -nb gpu -pme gpu -update gpu [24]. |

Methodology:

- Environment Setup: Pull a pre-configured container (e.g., from NVIDIA NGC) containing your chosen MD software and a specific CUDA version. Pin all versions, including the driver, for reproducibility [24].

- System Preparation: Prepare your molecular system (e.g., protein, ligand, solvation box) and parameter files (.mdp, .prm).

- Precision Configuration: Enable mixed-precision GPU acceleration using software-specific settings. For GROMACS, which is built in mixed precision by default, this typically involves flags like -nb gpu -pme gpu -update gpu to offload short-range non-bonded forces, Particle Mesh Ewald (PME), and coordinate updates to the GPU [24].

- Execution & Monitoring: Launch the simulation. Monitor performance metrics, notably nanoseconds simulated per day (ns/day), and track GPU utilization using tools like nvidia-smi [24].

- Validation: Validate the results against a known, short benchmark run performed in full double-precision to ensure accuracy has not been compromised.

Workflow Diagram:

Protocol: Virtual Screening via High-Throughput Docking

Objective: To screen large libraries of chemical compounds (ligands) against a target protein to identify potential drug candidates using GPU-accelerated docking software.

Background: Docking simulations predict how a small molecule binds to a protein target. This is an embarrassingly parallel task, as each ligand can be docked independently, making it ideal for GPU acceleration that scales with the number of available cores [24].

Methodology:

- Target Preparation: Prepare the protein structure file (e.g., PDB), ensuring correct protonation states and removing water molecules if necessary.

- Ligand Library Preparation: Curate a library of 3D ligand structures in an appropriate format. This can be sourced from public databases like ZINC.

- GPU Software Selection: Choose a GPU-accelerated docking tool such as AutoDock-GPU or Vina-GPU [24].

- Batch Configuration: Configure the docking software for high-throughput batch processing. Define the search space (grid box) on the protein and set docking parameters.

- Execution & Scoring: Launch the job. The GPU will process thousands of ligands concurrently. The output is a ranked list of ligands based on predicted binding affinity (scoring function).

- Post-Processing: Analyze top-ranking hits for binding pose and interaction quality, often leading to further synthesis or experimental testing.
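
Because each ligand is scored independently, the batch step reduces to a parallel map followed by a sort. The sketch below shows that structure with a thread pool and a placeholder scoring function. `toy_score`, the ligand tuples, and the descriptor values are hypothetical stand-ins; a real pipeline would call the docking tool here instead.

```python
from concurrent.futures import ThreadPoolExecutor

def toy_score(ligand):
    """Placeholder scoring function (hypothetical): lower is better,
    mirroring the sign convention of predicted binding energies."""
    name, descriptor = ligand
    return name, -sum(descriptor) / len(descriptor)

def screen(ligands, top_k=3, workers=4):
    """Score all ligands independently, then return the top_k best-ranked."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        scored = list(pool.map(toy_score, ligands))
    return sorted(scored, key=lambda item: item[1])[:top_k]

library = [("lig_a", [1, 2, 3]), ("lig_b", [4, 5, 6]), ("lig_c", [0, 0, 1])]
hits = screen(library, top_k=2)
```

Since no ligand depends on another, throughput scales with the number of workers until I/O or memory becomes the limit, which is the property that makes the task a good fit for GPUs.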

Workflow Diagram:

Protocol: Performance Benchmarking Across Models

Objective: To quantitatively compare the performance of CUDA, OpenCL, and Vulkan Compute Shaders for a specific, well-defined scientific kernel (e.g., a custom n-body simulation or matrix multiplication) within an evolutionary multitasking framework.

Background: Selecting the right model requires empirical evidence. This protocol outlines a standardized benchmarking process to guide researchers in evaluating the performance of different GPU programming models for their specific workload [24].

Methodology:

- Kernel Selection: Choose a computationally intensive kernel representative of your larger application.

- Implementation: Implement the same kernel algorithm in CUDA, OpenCL, and Vulkan Compute. Use the best practices for each model (e.g., optimal memory access patterns, shared memory usage in CUDA).

- Hardware Setup: Use a controlled test environment with a fixed GPU, driver version, and operating system.

- Metric Collection: Execute each implementation and collect key metrics: execution time (ms), throughput (GFLOPS), and GPU utilization. Use profiling tools like NVIDIA Nsight Compute for CUDA to identify bottlenecks [31] [25].

- Data Analysis: Compare the results, normalizing for any differences in code optimization effort. The goal is to identify the model that delivers the highest performance and efficiency for the given kernel and hardware.
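
The metric collection step can be sketched as a small timing harness. The example below runs warm-up iterations, reports the median of several timed runs, and converts a matrix-multiplication time into GFLOPS using the conventional count of 2n³ operations. The function names are illustrative, and `naive_matmul` is only a CPU stand-in for the kernel under test.

```python
import statistics
import time

def benchmark_ms(fn, *args, warmup=2, repeats=5):
    """Median wall-clock time in milliseconds after a few warm-up calls."""
    for _ in range(warmup):
        fn(*args)
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn(*args)
        samples.append((time.perf_counter() - start) * 1e3)
    return statistics.median(samples)

def matmul_gflops(n, elapsed_ms):
    """Throughput of an n x n dense matmul, counted as 2 * n^3 operations."""
    return 2 * n ** 3 / (elapsed_ms * 1e-3) / 1e9

def naive_matmul(a, b):
    """CPU stand-in for the kernel under test."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]
```

When timing real GPU kernels from the host, the device must be synchronized before the timer is stopped, because kernel launches return before the work has finished.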

Workflow Diagram:

Choosing the correct GPU programming model is a critical strategic decision for a research team. The following decision tree synthesizes the protocols and analysis above into an actionable guide.

Decision Framework Diagram:
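
One possible reading of the selection criteria in the comparison table can be written as a small function. The three boolean inputs and the order of the checks are assumptions for illustration and are not a transcription of the decision tree; a team would also weigh factors such as existing code and library needs.

```python
def choose_gpu_model(nvidia_only, needs_graphics_interop, needs_vendor_portability):
    """Suggest a programming model from three coarse project requirements."""
    if needs_graphics_interop:
        # Compute and rendering share one context, avoiding cross-API transfers.
        return "Vulkan Compute"
    if needs_vendor_portability or not nvidia_only:
        # Mixed or non-NVIDIA hardware rules out a proprietary stack.
        return "OpenCL"
    # NVIDIA-only environment with no portability constraint.
    return "CUDA"

recommendation = choose_gpu_model(
    nvidia_only=True, needs_graphics_interop=False, needs_vendor_portability=False
)
```

The benchmarking protocol remains the tie-breaker: where two models both satisfy the requirements, measured performance on the target kernel should decide.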

In conclusion, the integration of GPU programming models into evolutionary multitasking research represents a paradigm shift in computational science. CUDA stands out for pure performance in NVIDIA-dominated environments, OpenCL provides essential flexibility for heterogeneous and edge computing, and Vulkan Compute offers specialized power for hybrid visualization-compute tasks. By applying the structured protocols and decision framework outlined in this document, researchers and drug development professionals can systematically harness these technologies, thereby accelerating the pace of scientific discovery and innovation.

In evolutionary computation, parallelism is not merely an implementation detail but a fundamental strategy for managing the immense computational costs associated with population-based optimization. As evolutionary algorithms (EAs) typically evaluate thousands of candidate solutions across numerous generations, efficient distribution of this workload across computing resources becomes critical, particularly for expensive optimization problems (EOPs) where single fitness evaluations may require substantial execution time [32]. The emergence of graphics processing units (GPUs) as computational workhorses has further accelerated this trend, offering thousands of execution cores that can significantly reduce processing time for parallelizable workloads [20].

Within this context, two complementary paradigms dominate: data parallelism, which distributes data elements across computing cores that perform identical operations, and task parallelism, which executes different computational functions concurrently across multiple cores [33] [34]. Understanding the distinction, implementation requirements, and appropriate application domains for each strategy is essential for researchers designing efficient evolutionary computation systems, particularly in scientific domains like drug development where optimization problems frequently involve computationally expensive simulations [2] [32].

The following sections provide a comprehensive examination of these parallelization strategies, their implementation in evolutionary computation frameworks, experimental protocols for benchmarking, and practical guidance for researchers developing GPU-accelerated evolutionary algorithms.

Conceptual Foundations: Data and Task Parallelism

Core Definitions and Distinctions

Data parallelism occurs when the same operation is applied concurrently to different elements of a dataset. In evolutionary computation, this manifests most clearly in parallel fitness evaluation, where the same fitness function is applied simultaneously to multiple individuals in a population [34] [35]. This approach is inherently synchronous, as all computational units typically complete their operations before the algorithm proceeds to the next evolutionary step such as selection or variation [33].

Task parallelism involves the concurrent execution of different operations, which may be applied to the same or different datasets [33]. In evolutionary computation, this might involve simultaneously running different evolutionary algorithms on subpopulations, applying different variation operators to different individuals, or conducting multiple components of a complex fitness evaluation in parallel [34]. This approach is typically asynchronous, with different tasks completing at different times according to their specific computational requirements [33].

Table 1: Fundamental Characteristics of Data and Task Parallelism

| Characteristic | Data Parallelism | Task Parallelism |

|---|---|---|

| Computational Pattern | Same operation on different data subsets | Different operations on same or different data |

| Execution Model | Synchronous | Asynchronous |

| Speedup Potential | Proportional to input size/data volume | Proportional to number of independent tasks |

| Load Balancing | Automatic with uniform operations | Requires careful scheduling |

| Implementation Complexity | Lower | Higher |
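
The structural difference between the two paradigms can be shown with a thread pool. In the sketch below, `data_parallel` maps one fitness function over every individual, while `task_parallel` submits three different functions that run concurrently. The function names and the three example tasks are assumptions for illustration; Python threads show the program structure only and do not give a real speedup for CPU-bound work.

```python
from concurrent.futures import ThreadPoolExecutor

def sphere(x):
    return sum(v * v for v in x)

def data_parallel(population, workers=4):
    """Same operation, different data: one fitness function over all individuals."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(sphere, population))

def task_parallel(population, workers=4):
    """Different operations, run concurrently: each task is submitted on its own."""
    tasks = {
        "best_fitness": lambda: min(sphere(x) for x in population),
        "gene_mean": lambda: sum(map(sum, population)) / sum(map(len, population)),
        "population_size": lambda: len(population),
    }
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = {name: pool.submit(fn) for name, fn in tasks.items()}
        return {name: future.result() for name, future in futures.items()}

population = [[1, 2], [0, 0], [3, 4]]
fitness = data_parallel(population)
summary = task_parallel(population)
```

The data-parallel call returns results in a fixed order once all evaluations finish, whereas the task-parallel futures may complete in any order and are collected by name.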

Hardware Execution Models

GPUs implement data parallelism through a Single Instruction, Multiple Data (SIMD) or Single Instruction, Multiple Threads (SIMT) architecture, where thousands of threads execute the same instruction sequence on different data elements [34]. This architecture provides extremely high computational density for parallelizable operations but suffers performance penalties when threads within a warp (a group of 32 threads in CUDA architectures) diverge in their execution paths [34].
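
A simplified cost model makes the divergence penalty concrete: if the threads of one warp take several different branches, the warp executes those branches one after another. The sketch below counts distinct branches per 32-thread warp. The model ignores branch length and hardware details, and the function name is an illustrative assumption.

```python
WARP_SIZE = 32

def serialized_passes(branch_ids):
    """Per warp, count how many distinct branches its threads take.
    In this simplified model each distinct branch costs one pass."""
    passes = []
    for start in range(0, len(branch_ids), WARP_SIZE):
        warp = branch_ids[start:start + WARP_SIZE]
        passes.append(len(set(warp)))
    return passes

# 64 individuals choosing between two operators.
interleaved = [i % 2 for i in range(64)]  # neighbors alternate operators
grouped = sorted(interleaved)             # individuals sorted by operator
```

With interleaved assignment every warp needs two passes, while sorting individuals by operator (or by task) first brings each warp down to one pass.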

Task parallelism on GPUs presents greater implementation challenges, as different kernels (GPU functions) must be scheduled to execute concurrently, or a single kernel must handle divergent execution paths across thread warps [34]. Modern GPU programming models like CUDA and OpenCL provide increasing support for task-parallel execution through features like dynamic parallelism and streams, but efficient implementation requires careful attention to resource contention and load balancing [34] [20].

Parallelism in Evolutionary Computation: Implementation Frameworks

GPU-Accelerated Evolutionary Frameworks

The EvoRL framework represents a cutting-edge approach to integrating evolutionary computation with reinforcement learning through comprehensive GPU acceleration [36]. This end-to-end framework executes the entire training pipeline on GPUs, including environment simulations and evolutionary operations, employing hierarchical parallelism that operates across three dimensions: parallel environments, parallel agents, and parallel training [36]. This architecture specifically addresses the computational bottlenecks that have traditionally limited evolutionary algorithm research by enabling efficient training of large populations on a single machine.

The framework implements both major evolutionary reinforcement learning (EvoRL) paradigms: Evolution-guided RL (e.g., ERL, CEM-RL) and Population-Based AutoRL (e.g., PBT) [36]. Its modular design allows researchers to replace and customize components while maintaining high computational efficiency through vectorization and compilation techniques that optimize performance across the training pipeline [36]. This approach demonstrates how modern evolutionary computation frameworks can leverage both data and task parallelism in an integrated hierarchy.

Specialized Evolutionary Multitasking Implementations

Evolutionary multitasking (EMT) represents a sophisticated application of task parallelism where multiple optimization tasks are solved simultaneously through knowledge transfer [2]. The GPU-powered Evolutionary Auxiliary Multitasking (GEAMT) algorithm exemplifies this approach for SNP interaction detection in genomic studies [2]. GEAMT constructs a main task alongside several low-dimensional auxiliary tasks that collaboratively explore the search space, with the main task exploring the entire space while auxiliary tasks focus on distinct subspaces to enhance local optimization capabilities [2].

In each iteration, GEAMT's auxiliary tasks transfer high-quality information to the main task via a specialized information transfer mechanism, followed by an auxiliary task update strategy based on feature regrouping that switches the search subspaces of the auxiliary tasks [2]. This implementation, distributed across multiple GPUs, demonstrates how task parallelism can enhance both optimization performance and computational efficiency in evolutionary computation [2].

Table 2: Parallelism in Evolutionary Algorithm Frameworks

| Framework/Algorithm | Primary Parallelism Type | Application Domain | Key Features |

|---|---|---|---|

| EvoRL [36] | Hierarchical (Data + Task) | Evolutionary Reinforcement Learning | End-to-end GPU execution, Vectorized environments, Modular architecture |

| GEAMT [2] | Task Parallelism | SNP Interaction Detection | Evolutionary multitasking, Cross-task knowledge transfer, Multiple GPU implementation |

| SADEs [32] | Data Parallelism | Expensive Optimization Problems | Surrogate-assisted evolution, Parallel fitness evaluation, Population distribution |

Surrogate-Assisted Evolution and Parallelism

For expensive optimization problems where fitness evaluations require substantial computational resources, surrogate-assisted differential evolution (SADE) algorithms leverage parallelism to maintain search efficiency despite limited function evaluations [32]. These approaches typically employ data parallelism for concurrent surrogate model evaluations or task parallelism for managing multiple surrogate models with different fidelities or domains [32].

The parallel and distributed implementation of differential evolution is particularly natural since each individual can be evaluated independently, with the only stage requiring interaction being offspring generation [32]. This inherent parallelizability makes DE-based algorithms well-suited to modern high-performance computing environments, including multi-GPU systems [32].
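
The separation between offspring generation and evaluation can be seen in a single generation of the classic DE/rand/1/bin scheme. The sketch below is a plain-Python illustration without a surrogate model; the parameter names `scale` and `cr`, the sphere objective, and the function names are assumptions for the example.

```python
import random

def sphere(x):
    return sum(v * v for v in x)

def de_generation(pop, fitness, objective, scale, cr, rng):
    """One DE/rand/1/bin generation; returns the new population and fitness."""
    n, d = len(pop), len(pop[0])
    trials = []
    for i in range(n):
        # Offspring generation is the only step that reads other individuals.
        a, b, c = rng.sample([j for j in range(n) if j != i], 3)
        forced = rng.randrange(d)  # guarantees at least one mutated gene
        trial = list(pop[i])
        for k in range(d):
            if k == forced or rng.random() < cr:
                trial[k] = pop[a][k] + scale * (pop[b][k] - pop[c][k])
        trials.append(trial)
    # Each evaluation is independent, so this loop is the parallelizable part.
    trial_fitness = [objective(t) for t in trials]
    new_pop, new_fit = [], []
    for i in range(n):
        keep_trial = trial_fitness[i] <= fitness[i]
        new_pop.append(trials[i] if keep_trial else pop[i])
        new_fit.append(trial_fitness[i] if keep_trial else fitness[i])
    return new_pop, new_fit
```

In an expensive-optimization setting, the list comprehension that evaluates the trials is where a parallel map, a GPU kernel, or a surrogate model would be substituted.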

Experimental Protocols for Parallel Evolutionary Algorithms

Benchmarking Methodology for Parallel Performance

Objective: Quantitatively evaluate the performance of data-parallel versus task-parallel implementations of evolutionary algorithms on GPU architectures, measuring speedup, scalability, and solution quality.

Experimental Setup:

- Hardware Configuration: NVIDIA A100 GPU (or comparable accelerator), Multi-core CPU host system, High-speed interconnects (PCIe 4.0+)

- Software Environment: CUDA 11.0+ or OpenCL 2.0+, Python 3.8+ with numerical libraries (NumPy, CuPy), Evolutionary computation framework (EvoRL [36] or custom implementation)

- Benchmark Problems:

Implementation Protocol for Data-Parallel Evolutionary Algorithm:

- Population Initialization: Generate initial population of N individuals, storing genotypes in GPU memory as 2D array (N × D) where D is problem dimensionality.

- Parallel Fitness Evaluation:

- Implement fitness function as GPU kernel using vectorization techniques [36]

- Launch one thread per individual or one thread block per individual depending on fitness function complexity

- Utilize shared memory for intermediate calculations when evaluating complex functions

- Data-Parallel Selection: Implement tournament selection on GPU by randomly selecting candidates from population and performing parallel comparisons.

- Vectorized Variation Operators: Apply mutation and crossover operations simultaneously across all individuals in population using GPU broadcasting capabilities.

- Performance Metrics: Measure execution time, speedup relative to sequential implementation, population scalability, and solution quality convergence.
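
The selection and variation steps of this protocol share one property: every output slot can be computed without looking at the others. The sketch below shows k-way tournament selection and element-wise Gaussian mutation in plain Python. The function names, the minimization convention, and the clipping bounds are assumptions for illustration; on a GPU, each slot or gene would map to a thread.

```python
import random

def tournament_select(fitness, k, rng):
    """Pick len(fitness) parents; every slot runs its own k-way tournament,
    so slots are independent of one another."""
    n = len(fitness)
    winners = []
    for _ in range(n):
        contenders = [rng.randrange(n) for _ in range(k)]
        winners.append(min(contenders, key=lambda i: fitness[i]))  # minimization
    return winners

def gaussian_mutation(population, sigma, low, high, rng):
    """Element-wise mutation: the same operation applied to every gene."""
    return [[min(high, max(low, g + rng.gauss(0.0, sigma))) for g in ind]
            for ind in population]
```

A GPU version would need a per-thread random number stream in place of the single shared `rng` used here.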

Implementation Protocol for Task-Parallel Evolutionary Algorithm:

- Population Division: Partition population into K subpopulations based on task parallelism strategy.

- Heterogeneous Task Definition:

- Assign different evolutionary strategies to different subpopulations (e.g., differential evolution, particle swarm optimization, covariance matrix adaptation) [2]

- Implement each strategy as separate GPU kernel with optimized parameters for specific task

- Asynchronous Execution:

- Utilize CUDA streams or similar mechanism for concurrent kernel execution [34]

- Implement task scheduler to manage GPU resource allocation across different evolutionary strategies

- Knowledge Transfer Mechanism:

- Performance Metrics: Measure task utilization efficiency, load balancing, inter-task communication overhead, and diversity maintenance.
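
The task-parallel protocol can be sketched as an island model in which each subpopulation runs a different strategy as its own concurrent task. In the example below, a thread pool stands in for CUDA streams, and the strategies differ only in step size. The ring migration used for knowledge transfer, the elitist Gaussian-step strategies, and all function names are assumptions for illustration, not the transfer mechanism of any cited algorithm.

```python
import random
from concurrent.futures import ThreadPoolExecutor

def sphere(x):
    return sum(v * v for v in x)

def make_strategy(sigma):
    """Return an elitist Gaussian-step strategy with the given step size."""
    def step(subpop, seed):
        rng = random.Random(seed)
        out = []
        for x in subpop:
            child = [v + rng.gauss(0.0, sigma) for v in x]
            out.append(child if sphere(child) < sphere(x) else x)
        return out
    return step

def evolve_islands(islands, strategies, seeds):
    """Submit one task per island; tasks may finish in any order."""
    with ThreadPoolExecutor(max_workers=len(islands)) as pool:
        futures = [pool.submit(strategy, sub, seed)
                   for strategy, sub, seed in zip(strategies, islands, seeds)]
        return [future.result() for future in futures]

def migrate(islands):
    """Ring migration: island k's worst member is replaced by island k-1's best."""
    bests = [min(sub, key=sphere) for sub in islands]
    for k, sub in enumerate(islands):
        worst = max(range(len(sub)), key=lambda i: sphere(sub[i]))
        sub[worst] = list(bests[k - 1])
    return islands
```

Calling `migrate(evolve_islands(...))` once per generation alternates independent search with a small amount of inter-task communication, which is the overhead the performance metrics above are meant to capture.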

Evaluation Metrics and Analysis Methods

Computational Efficiency Metrics:

- Speedup Ratio: \( S = T_{\text{serial}} / T_{\text{parallel}} \), where \( T \) is execution time

- Parallel Efficiency: \( E = S / P \), where \( P \) is the number of parallel processing units

- Scalability Profile: Performance measurement while increasing population size and problem dimensionality

- Memory Bandwidth Utilization: Percentage of theoretical peak memory bandwidth achieved
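
The efficiency metrics above are direct ratios, as the following sketch shows. The timings, unit count, and bandwidth figures in the example are made-up values for illustration only, not measurements.

```python
def speedup(t_serial, t_parallel):
    """S = T_serial / T_parallel."""
    return t_serial / t_parallel

def parallel_efficiency(t_serial, t_parallel, units):
    """E = S / P, where P is the number of parallel processing units."""
    return speedup(t_serial, t_parallel) / units

def bandwidth_utilization(achieved_gb_s, peak_gb_s):
    """Achieved memory bandwidth as a percentage of the theoretical peak."""
    return 100.0 * achieved_gb_s / peak_gb_s

# Illustrative numbers: 120 s serial, 4 s parallel, 64 processing units.
s = speedup(120.0, 4.0)
e = parallel_efficiency(120.0, 4.0, 64)
```

An efficiency well below 1 is normal on GPUs when P counts individual cores, so it is most useful for comparing configurations against each other rather than as an absolute score.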

Algorithmic Performance Metrics:

- Convergence Rate: Generations or function evaluations required to reach target solution quality

- Solution Quality: Best fitness achieved and consistency across multiple runs

- Population Diversity: Genotypic and phenotypic diversity measures throughout evolution

Statistical Analysis:

- Perform minimum of 30 independent runs per configuration

- Apply Wilcoxon signed-rank test for statistical significance (α = 0.05)

- Calculate effect sizes using Cohen's d for performance differences
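
The effect size calculation can be sketched with the standard library alone. The version below uses the pooled standard deviation for two independent samples; the function name and sample values are illustrative. The Wilcoxon signed-rank test itself assumes paired observations (for example, runs that share a random seed) and is available as `scipy.stats.wilcoxon`, which is not used here to keep the example dependency-free.

```python
import statistics

def cohens_d(sample_a, sample_b):
    """Cohen's d with a pooled standard deviation (independent samples)."""
    n_a, n_b = len(sample_a), len(sample_b)
    var_a = statistics.variance(sample_a)  # sample variance (n - 1 denominator)
    var_b = statistics.variance(sample_b)
    pooled_sd = (((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2)) ** 0.5
    return (statistics.mean(sample_a) - statistics.mean(sample_b)) / pooled_sd

# Toy example: final best fitness from two configurations.
d = cohens_d([2.0, 4.0, 6.0], [1.0, 3.0, 5.0])
```

Reporting the effect size alongside the p-value shows whether a statistically significant difference is also large enough to matter in practice.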

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Tools for GPU-Accelerated Evolutionary Computation

| Tool/Category | Function | Representative Examples |

|---|---|---|

| GPU Programming Frameworks | Provides abstraction for GPU kernel development and execution | CUDA, OpenCL, HIP, SYCL |

| Evolutionary Computation Frameworks | Implements core evolutionary algorithms, with GPU support in some frameworks | EvoRL [36], DEAP (Distributed Evolutionary Algorithms in Python) |

| Performance Profiling Tools | Analyzes computational efficiency and identifies bottlenecks | NVIDIA Nsight Systems, AMD ROCProfiler, Intel VTune |

| Benchmark Problem Suites | Standardized evaluation of algorithm performance | CEC Benchmark Problems [32], OpenAI Gym (for RL) [36] |

| Surrogate Modeling Libraries | Approximates expensive fitness functions | Scikit-learn, TensorFlow, PyTorch |

| Visualization Tools | Analyzes algorithm behavior and population dynamics | Matplotlib, Plotly, Custom DOT visualization scripts |

Comparative Analysis and Implementation Guidelines

Strategic Selection Framework

Choosing between data parallelism and task parallelism requires careful analysis of the specific evolutionary algorithm characteristics and computational resources. The following guidelines support informed decision-making:

Select Data Parallelism When:

- The algorithm employs a homogeneous population with uniform genetic operators

- Fitness evaluation is computationally expensive but uniformly structured across individuals

- The problem exhibits regular data access patterns amenable to vectorization

- The primary goal is scaling to very large population sizes

- Implementation simplicity and development time are concerns

Select Task Parallelism When:

- The algorithm inherently involves multiple distinct strategies or operators

- Fitness evaluation has irregular structure or varying computational costs across individuals

- The implementation involves heterogeneous models or multi-fidelity optimization

- The search explores multiple regions of the search space with different strategies simultaneously

- The algorithm would benefit from knowledge transfer between different optimization approaches

Hybrid Approaches often yield optimal performance by applying data parallelism within subpopulations and task parallelism across different algorithmic strategies [36]. The EvoRL framework's hierarchical parallelism demonstrates this integrated approach, achieving superior scalability while maintaining algorithmic flexibility [36].

Performance Optimization Considerations

Memory Access Patterns: Data-parallel implementations must prioritize coalesced memory access where threads within the same warp access contiguous memory locations to maximize memory bandwidth utilization [34]. This often requires restructuring population data from Array of Structures (AoS) to Structure of Arrays (SoA) layout.
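The difference between the two layouts can be seen from the flat memory offsets alone. The sketch below is a plain-Python model, not GPU code: it assumes thread i of a 32-thread warp reads the same gene of individual i, and reports the distance in elements between the offsets touched by neighboring threads. All function names are hypothetical.

```python
def aos_index(individual, gene, num_genes):
    """Flat offset when each individual's genes are stored together (AoS)."""
    return individual * num_genes + gene


def soa_index(individual, gene, pop_size):
    """Flat offset when each gene has its own contiguous array (SoA)."""
    return gene * pop_size + individual


def warp_strides(index_fn, size_arg, gene=0, warp=32):
    """Set of offset gaps between neighboring threads reading one gene."""
    offsets = [index_fn(t, gene, size_arg) for t in range(warp)]
    return {b - a for a, b in zip(offsets, offsets[1:])}


def aos_to_soa(flat, pop_size, num_genes):
    """Copy a flat AoS population buffer into SoA order."""
    out = [0.0] * len(flat)
    for i in range(pop_size):
        for g in range(num_genes):
            out[soa_index(i, g, pop_size)] = flat[aos_index(i, g, num_genes)]
    return out


# Hypothetical sizes: 1024 individuals with 100 genes each.
print(warp_strides(aos_index, 100))    # gap of 100 elements: scattered reads
print(warp_strides(soa_index, 1024))   # gap of 1 element: contiguous reads
```

A gap of one element means the warp's reads fall in one contiguous run that the hardware can coalesce, which is the property the SoA layout is chosen for.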

Load Balancing: Task-parallel implementations require careful attention to load balancing, particularly when tasks have heterogeneous computational requirements [33]. Dynamic scheduling approaches may be necessary to ensure all processing units remain utilized.
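To make the benefit of dynamic scheduling concrete, the sketch below compares a static round-robin assignment with a simple dynamic policy in which each worker pulls the next task as soon as it becomes idle. It is a CPU-side simulation with hypothetical task costs, not a GPU scheduler, and the function names are assumptions made for illustration.

```python
import heapq


def static_makespan(costs, workers):
    """Finish time when task i is pre-assigned to worker i % workers."""
    loads = [0.0] * workers
    for i, cost in enumerate(costs):
        loads[i % workers] += cost
    return max(loads)


def dynamic_makespan(costs, workers):
    """Finish time when the earliest-idle worker takes the next queued task."""
    free_at = [0.0] * workers          # min-heap of times workers become idle
    heapq.heapify(free_at)
    for cost in costs:
        start = heapq.heappop(free_at)
        heapq.heappush(free_at, start + cost)
    return max(free_at)


# Hypothetical mix of expensive (8) and cheap (1) fitness evaluations.
costs = [8, 1, 8, 1, 8, 1, 8, 1]
print(static_makespan(costs, 2))    # one worker receives every expensive task
print(dynamic_makespan(costs, 2))   # work is spread evenly across both workers
```

With these costs the static assignment finishes at time 32 while the dynamic policy finishes at 18, which is also the ideal of total work divided by worker count.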

Resource Contention: Concurrent execution in task-parallel systems can lead to contention for shared resources like memory bandwidth and cache space [34]. Profiling tools are essential for identifying and resolving these bottlenecks.

Data parallelism and task parallelism represent complementary strategies for distributing evolutionary computation workloads across modern GPU architectures. Data parallelism excels in scenarios requiring uniform operations across large populations, while task parallelism provides flexibility for heterogeneous algorithms and multifaceted optimization problems. The emerging trend toward hierarchical parallelism – as exemplified by frameworks like EvoRL [36] – demonstrates how integrating both approaches can yield superior performance and scalability.

For researchers in drug development and scientific computing, the strategic application of these parallelization strategies can dramatically reduce computation time for evolutionary algorithms applied to expensive optimization problems [2] [32]. As GPU architectures continue to evolve, emphasizing increased parallelism and specialized processing capabilities, the effective utilization of both data and task parallelism will become increasingly critical for advancing evolutionary computation research and applications.

Evolutionary Algorithms (EAs) face significant computational barriers when applied to complex, high-dimensional problems in domains such as drug discovery and genomics. The transition from single-task optimization to Evolutionary Multitasking (EMT) exacerbates these computational demands, requiring innovative approaches to parallelization. Modern Graphics Processing Units (GPUs) address this through their massively parallel architecture, featuring thousands of cores capable of evaluating thousands of candidate solutions simultaneously [37]. This capability aligns well with the population-based nature of EAs, allowing the cooperative execution of multiple optimization tasks that leverage cross-task knowledge transfer [2]. GPU-accelerated evolutionary toolkits such as EvoJAX and PyGAD are reported to compress weeks of computation into hours, reducing experimentation costs and accelerating time-to-insight for research scientists [38].

Within the specific context of biomedical research, these advancements are particularly impactful for tackling problems such as SNP interaction detection in Genome-Wide Association Studies (GWAS) [2]. The computational intensity of exploring complex genetic interactions across millions of SNPs presents an ideal use case for GPU-accelerated EMT. This document provides detailed application notes and experimental protocols to guide researchers in implementing EMT on GPU architectures, with specific emphasis on overcoming traditional bottlenecks in computational biology and drug development.

The GPU Advantage for Evolutionary Computation

Hardware Architecture and Parallel Processing

GPU architecture is fundamentally designed for parallel processing, featuring thousands of computational cores that excel at executing identical operations on multiple data streams simultaneously [37]. This Single Instruction, Multiple Data (SIMD) style of execution, which NVIDIA hardware exposes as Single Instruction, Multiple Threads (SIMT), is well suited to evolutionary computation, where fitness evaluation, mutation, and crossover operations can be performed in parallel across entire populations. Unlike traditional Central Processing Units (CPUs) optimized for sequential processing, GPUs provide the high-throughput computing necessary to make EMT feasible for real-world scientific problems [37].

The hardware structure of modern data-center GPUs includes on the order of a hundred or more Streaming Multiprocessors (SMs), each able to keep on the order of a couple of thousand threads resident at once [19]. This multi-threaded architecture, combined with a multi-tiered memory hierarchy including L1/L2 caches and global memory, enables efficient management of the substantial memory requirements inherent to evolutionary multitasking, where multiple populations and task parameters must be maintained simultaneously [19].

Overcoming the Single-Tasking Paradigm

Traditional GPU usage has followed a single-tasking paradigm, where one task exclusively utilizes the entire device [19]. This approach proves increasingly inefficient for evolutionary computation, where individual model evaluations may not fully saturate modern GPU resources, particularly for smaller problems or during specific algorithm phases. The growing GPU-to-model size ratio means that small to medium-sized fitness evaluations cannot fully utilize a GPU's capacity, leading to wasted resources [19].

GPU multitasking addresses this inefficiency by enabling concurrent execution of multiple evolutionary tasks on a single device. Research indicates that data centers often experience GPU utilization as low as 10% for inference workloads [19], suggesting similar inefficiencies may affect evolutionary computation. Emerging GPU resource management frameworks, such as the open-source openvgpu project, aim to provide the necessary resource management layer for efficient multitasking, enabling fast resource partitioning and efficient memory virtualization [19].

Table 1: Comparative Analysis of GPU Multitasking Technologies for Evolutionary Computation

| Technology | Target Resources | Performance Guarantee | Fault Isolation | Large-scale Deployment |
|---|---|---|---|---|
| MIG [19] | Compute (Spatial), Memory | Yes | Yes | No |
| MPS [19] | Compute (Spatial) | Yes | No | No |
| Orion [19] | Compute (Temporal, Spatial) | No | No | No |
| REEF [19] | Compute (Temporal, Spatial) | No | No | No |
| LithOS [19] | Compute (Temporal, Spatial) | Yes | No | No |
| Ideal System | Compute (Temporal, Spatial), Memory | Yes | Yes | Yes |

Framework Ecosystem for GPU-Accelerated Evolutionary Computation

Core Frameworks and Their Specializations

The growing demand for GPU-accelerated AI has spurred development of specialized frameworks that facilitate efficient computation. While no single framework dominates evolutionary computation exclusively, several general-purpose GPU frameworks provide essential infrastructure:

PyTorch: Serves as a versatile "workhorse" framework with strong GPU acceleration through libraries like cuDNN and cuBLAS [39] [37]. Its dynamic computation graph is particularly valuable for experimental EMT algorithms requiring flexible architectures.

JAX: Is gaining adoption among advanced practitioners for its functional programming style and automatic differentiation capabilities [39] [37]. Its NumPy-like syntax makes it well suited to scientific computing applications, including evolutionary algorithm research.

TensorFlow: Remains relevant for production deployments, offering mature tooling and robust multi-GPU support [39] [37]. Its graph execution mode can benefit large-scale evolutionary optimization with fixed evaluation pipelines.

Specialized evolutionary computation frameworks building on these platforms are emerging, with EvoJAX representing a prominent example of GPU-accelerated evolutionary toolkits that deliver significant speedups [38].

Scaling Frameworks for Large-Scale Evolution

Training increasingly complex evolutionary models requires specialized frameworks for efficient resource utilization:

DeepSpeed: Provides optimizations like ZeRO (Zero Redundancy Optimizer) to enable massive model training on limited GPU memory [39]. This is particularly relevant for evolutionary algorithms employing large neural networks as solution representations.

Megatron-LM: Offers tensor and pipeline parallelism tailored for trillion-parameter models [39], enabling evolutionary approaches to optimize extremely large parameter spaces.

Ray: Functions as a widely used framework for distributed training and serving, offering abstractions for task scheduling and parallelization [39]. This capability is essential for distributed EMT implementations across multiple GPU nodes.

Table 2: Characteristics of GPU-Accelerated Frameworks for Evolutionary Computation

| Framework | Primary Strengths | GPU Support | Optimal Use Cases in EMT |
|---|---|---|---|
| PyTorch [39] [37] | Dynamic computation graphs, rich ecosystem | cuDNN, CUDA, Multi-GPU | Research prototyping, flexible algorithm design |
| JAX [39] [37] | Functional programming, automatic differentiation | XLA compiler | Scientific computing, gradient-enhanced evolution |
| TensorFlow [39] [37] | Production-ready, mature tooling | NVIDIA CUDA, Multi-GPU | Large-scale deployment, fixed pipeline evolution |
| Ray [39] | Distributed computing abstractions | Multi-node, multi-GPU | Distributed EMT, scalable population evaluation |

Application Protocol: GPU-Powered Evolutionary Auxiliary Multitasking for SNP Detection

The following protocol details the implementation of a GPU-Powered Evolutionary Auxiliary Multitasking (GEAMT) algorithm, specifically designed for detecting Single Nucleotide Polymorphism (SNP) interactions in Genome-Wide Association Studies (GWAS) [2]. This approach addresses key limitations of traditional EA methods in high-dimensional GWAS datasets, including premature convergence and prohibitive computational demands [2].

SNP interaction detection is a challenging combinatorial problem in which evaluating all possible SNP combinations is computationally infeasible. The EMT paradigm enhances population diversity and convergence speed through collaborative, cross-task knowledge sharing [2]. Distributing the algorithm across multiple GPUs is reported to improve scalability and efficiency, enhancing search accuracy while accelerating the discovery process [2].
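A short calculation shows why exhaustive search is out of reach. The sketch below counts the distinct k-way SNP combinations with the standard binomial coefficient; the figure of one million SNPs is an assumed, illustrative dataset size rather than a value taken from the cited study.

```python
import math


def interaction_count(num_snps: int, order: int) -> int:
    """Number of distinct SNP combinations of the given interaction order."""
    return math.comb(num_snps, order)


# Assumed dataset size for illustration only.
num_snps = 1_000_000
for order in (2, 3):
    print(f"{order}-way combinations: {interaction_count(num_snps, order):,}")
```

Pairwise interactions alone number roughly 5 × 10^11, and three-way interactions roughly 1.7 × 10^17, which is why a guided search such as an evolutionary algorithm is used instead of enumeration.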

Required Materials and Reagents

Table 3: Research Reagent Solutions for GPU-Accelerated Evolutionary Multitasking

| Item | Function | Implementation Example |

|---|---|---|

| High-Performance GPU Cluster | Provides parallel processing capability for population evaluation | NVIDIA B300 (288 GB memory) or equivalent [19] |

| GPU Multitasking Framework | Enables concurrent execution of multiple evolutionary tasks | openvgpu resource manager [19] |

| Evolutionary Computation Backend | Core evolutionary algorithm operations | EvoJAX or PyGAD [38] |

| Deep Learning Framework | Neural network support for solution representation | PyTorch or JAX [39] [37] |