Evolutionary Multitasking for Large-Scale Combinatorial Optimization: Methods and Applications in Biomedical Research

This article explores the emerging paradigm of Evolutionary Multitasking Optimization (EMTO) for solving complex, large-scale combinatorial problems, with a special focus on applications in biomedicine and drug discovery.


Abstract

This article explores the emerging paradigm of Evolutionary Multitasking Optimization (EMTO) for solving complex, large-scale combinatorial problems, with a special focus on applications in biomedicine and drug discovery. We first establish the foundational principles of EMTO, contrasting it with traditional single-task evolutionary algorithms. The discussion then progresses to advanced methodological frameworks, including explicit and implicit knowledge transfer mechanisms, and their implementation for problems like personalized drug target recognition. Subsequently, we address key challenges such as negative transfer and scalability, presenting state-of-the-art optimization strategies. Finally, the article provides a comparative analysis of EMTO performance against conventional methods, validating its efficacy through real-world case studies in cancer genomics. This comprehensive overview is tailored for researchers, scientists, and drug development professionals seeking to leverage concurrent optimization for accelerated biomedical discovery.

The Foundations of Evolutionary Multitasking: From Single-Task to Concurrent Optimization

Defining Evolutionary Multitasking Optimization (EMTO) and Its Core Principles

Definition and Foundational Concepts

Evolutionary Multitasking Optimization (EMTO) represents a paradigm shift in evolutionary computation. It is a branch of evolutionary algorithms (EAs) designed to optimize multiple tasks simultaneously within a single run, outputting the best solution for each task [1]. EMTO leverages the implicit parallelism of population-based search to create a multi-task environment where a single population evolves towards solving multiple distinct, yet potentially related, optimization problems concurrently [1].

The core inspiration for EMTO stems from the principles of multitask learning and transfer learning in machine learning [1]. It operates on the fundamental premise that useful knowledge gained while solving one task may contain valuable information that can accelerate the optimization process or lead to better solutions for another related task. By automatically transferring this knowledge among different optimization problems, EMTO can often achieve superior performance compared to traditional single-task evolutionary algorithms, particularly in convergence speed [1]. This approach is especially powerful for tackling complex, non-convex, and nonlinear problems where traditional mathematical optimization techniques may struggle [1].

The first concrete implementation of this concept was the Multifactorial Evolutionary Algorithm (MFEA), which treats each task as a unique "cultural factor" influencing the population's evolution [1]. In MFEA, knowledge transfer is facilitated through algorithmic modules like assortative mating and selective imitation, allowing individuals from different task groups to exchange genetic information [1].

Table 1: Key Characteristics of EMTO versus Traditional Evolutionary Algorithms

| Feature | Evolutionary Multitasking Optimization (EMTO) | Traditional Single-Task EA |
|---|---|---|
| Problem Scope | Optimizes multiple tasks concurrently within a single run | Optimizes a single task per run |
| Knowledge Utilization | Automatically transfers knowledge between related tasks | No explicit knowledge transfer between independent runs |
| Search Mechanism | Implicit parallelism through shared population evolution | Population evolves towards a single objective |
| Primary Advantage | Improved convergence speed; leverages inter-task synergies | Simplicity; focused search on one problem |
| Typical Applications | Complex systems with interrelated components; multi-domain problems | Isolated optimization problems |

Core Principles and Methodologies

The efficacy of EMTO hinges on several core principles and the methodologies designed to implement them effectively.

Knowledge Transfer

Knowledge transfer is the cornerstone of EMTO, enabling the exchange of useful genetic material between tasks. The fundamental idea is that when two tasks share useful structure, information gained while solving one of them may help solve the other [1]. EMTO makes full use of the implicit parallelism of population-based search to achieve this. However, a key challenge is negative transfer, which occurs when knowledge from one task hinders progress on another, often due to low inter-task relevance [2]. Advanced EMTO algorithms employ strategies to identify valuable knowledge for transfer, such as using population distribution information to select sub-populations with the smallest distribution difference to the target task's elite solutions, thereby mitigating negative transfer [2].

Factorial Inheritance and Assortative Mating

In MFEA and related algorithms, factorial inheritance allows offspring to inherit genetic material from parents working on different tasks. This is governed by assortative mating rules, which determine the conditions under which individuals from different task groups can crossover. Typically, crossover between parents from different tasks is permitted with a defined probability (random mating probability), encouraging the exchange of diverse genetic material [1]. This mechanism is crucial for creating a multi-task environment where a single population can evolve towards solving multiple tasks simultaneously.

Skill Factor and Selective Imitation

The skill factor is a scalar value assigned to each individual, denoting the specific task on which that individual performs best [1]. The population is dynamically divided into non-overlapping task groups based on these skill factors. Selective imitation then determines which task an offspring is associated with: in the original MFEA, an offspring imitates the skill factor of one of its parents and is evaluated on that task, which lets successful traits cross task boundaries without the cost of evaluating every offspring on every task [1].
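The mating rules described above can be illustrated with a minimal sketch. It assumes list-encoded binary genomes, and the helper names (`assortative_mating`, `uniform_crossover`, `bit_flip`) are hypothetical; the rule that a crossover child imitates the skill factor of a randomly chosen parent follows the original MFEA convention and is an assumption of this sketch rather than a requirement of every EMTO variant.

```python
import random

def assortative_mating(pop, skill, rmp, crossover, mutate, rng):
    """One round of MFEA-style assortative mating (illustrative sketch).

    pop   : list of genomes
    skill : list of task indices, one per genome
    rmp   : random mating probability for cross-task pairs
    Returns a list of (child_genome, imitated_skill_factor) tuples.
    """
    order = list(range(len(pop)))
    rng.shuffle(order)
    offspring = []
    for a, b in zip(order[0::2], order[1::2]):
        same_task = skill[a] == skill[b]
        if same_task or rng.random() < rmp:
            # Crossover; each child imitates the skill factor of a random parent.
            for child in crossover(pop[a], pop[b], rng):
                offspring.append((child, rng.choice([skill[a], skill[b]])))
        else:
            # No cross-task mating: each parent is mutated and keeps its task.
            offspring.append((mutate(pop[a], rng), skill[a]))
            offspring.append((mutate(pop[b], rng), skill[b]))
    return offspring

def uniform_crossover(p1, p2, rng):
    """Swap genes position-wise with probability 0.5."""
    mask = [rng.random() < 0.5 for _ in p1]
    c1 = [x if m else y for x, y, m in zip(p1, p2, mask)]
    c2 = [y if m else x for x, y, m in zip(p1, p2, mask)]
    return c1, c2

def bit_flip(p, rng, rate=0.1):
    """Flip each bit independently with the given rate."""
    return [1 - g if rng.random() < rate else g for g in p]
```

With `rmp = 0` no genetic material crosses task boundaries, so the scheme degenerates into independent single-task searches that merely share a population container; raising `rmp` increases the intensity of inter-task transfer.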

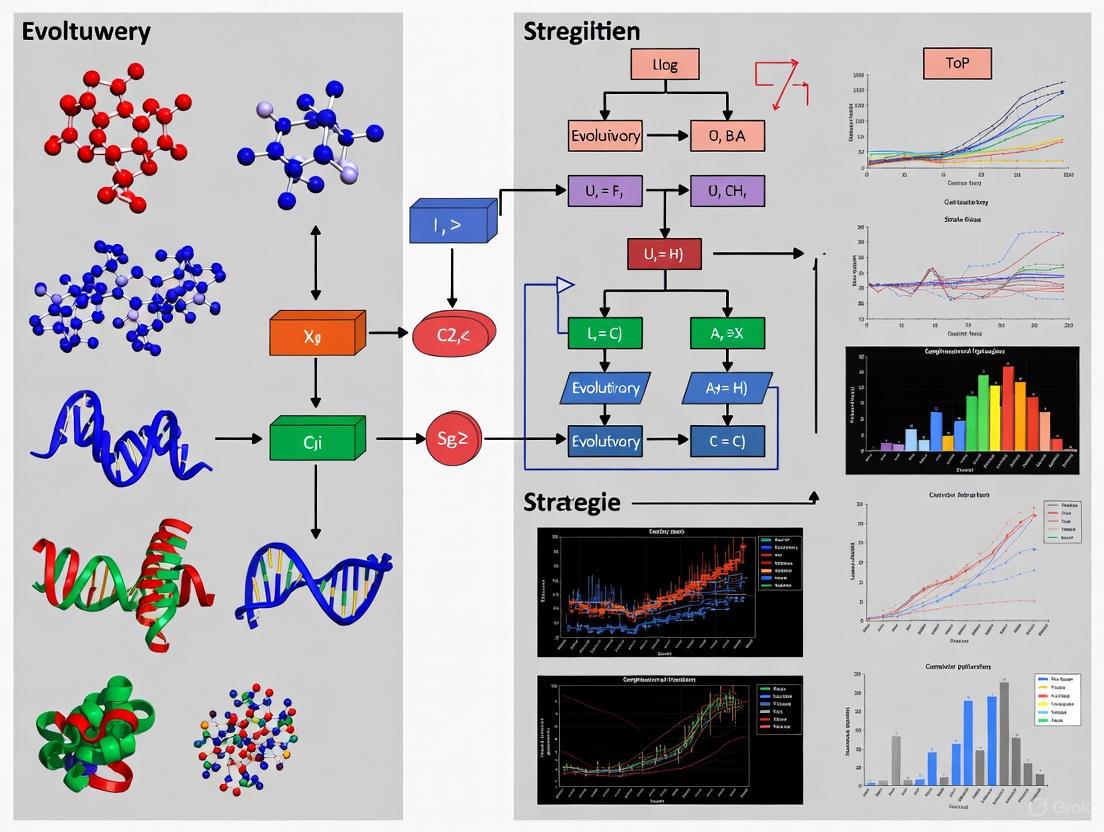

Diagram 1: High-level workflow of a typical Evolutionary Multitasking Optimization algorithm, illustrating the interplay between its core principles.

Quantitative Data and Optimization Strategies

The performance of EMTO is heavily influenced by the choice and implementation of various optimization strategies. Research has systematically categorized these strategies to enhance algorithm efficiency, particularly focusing on improving the knowledge transfer process.

Table 2: Classification of Key Optimization Strategies in EMTO [1]

| Strategy Category | Sub-category | Core Objective | Impact on EMTO Performance |
|---|---|---|---|
| Knowledge Transfer | Transfer Solution | Directly transfer elite solutions between tasks | High potential, but risk of negative transfer if tasks are unrelated |
| | Transfer Model | Build a probabilistic model of a source task and use it to generate solutions in a target task | More robust transfer; can capture underlying problem structure |
| | Transfer Parameter | Share algorithmic parameters or search distribution information | Efficient for tasks with similar landscape characteristics |
| Resource Allocation | Dynamic Resource Allocation | Assign more computational resources to harder or more promising tasks | Improves overall efficiency and convergence |
| Algorithmic Integration | Hybrid EMTO | Combine EMTO with other optimization paradigms (e.g., surrogate models) | Reduces computational cost; enhances solution quality in complex problems |
| | Multi-objective EMTO | Solve multiple tasks, each having multiple objectives | Expands applicability to real-world problems with several conflicting goals |

A significant advancement is the development of adaptive algorithms that use population distribution information. For instance, one method divides each task's population into K sub-populations and uses the Maximum Mean Discrepancy (MMD) metric to calculate distribution differences [2]. The sub-population from a source task with the smallest MMD value to the target task's elite sub-population is selected for transfer. This allows transferred individuals to be potentially useful solutions, not necessarily just the elite ones, which is particularly effective for problems with low inter-task relevance [2]. Furthermore, incorporating an improved randomized interaction probability helps to adaptively adjust the intensity of inter-task interactions, fine-tuning the balance between exploration and exploitation [2].
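The MMD-based selection step can be sketched as follows. This is a simplified illustration, not the implementation from [2]: it assumes real-valued genomes, an RBF kernel with a hand-picked `gamma`, a biased MMD estimator, and equal-sized fitness-ordered sub-populations; the function names are hypothetical.

```python
import math

def rbf(x, y, gamma):
    """Gaussian (RBF) kernel between two real-valued vectors."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))

def mmd2(xs, ys, gamma=1.0):
    """Biased estimate of the squared MMD between two samples."""
    def mean_k(a, b):
        return sum(rbf(p, q, gamma) for p in a for q in b) / (len(a) * len(b))
    return mean_k(xs, xs) + mean_k(ys, ys) - 2.0 * mean_k(xs, ys)

def pick_source_subpop(source_pop, source_fit, target_elite, k, gamma=1.0):
    """Split the source population into k fitness-ordered groups (minimization)
    and return the group whose distribution is closest to target_elite.

    Individuals left over when len(source_pop) is not divisible by k are ignored.
    """
    ranked = [x for _, x in sorted(zip(source_fit, source_pop), key=lambda t: t[0])]
    size = len(ranked) // k
    groups = [ranked[i * size:(i + 1) * size] for i in range(k)]
    return min(groups, key=lambda g: mmd2(g, target_elite, gamma))
```

Note that the selected group need not be the source task's best-fitness group: in the usage below, a group of mediocre source individuals would be chosen if it happens to sit closest to the target task's elite region.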

Application Notes and Protocols for Drug Discovery

EMTO has demonstrated significant applicability in various real-world domains, with computer-aided drug design (CADD) being a prominent area. The process of de novo drug design is inherently a multi-objective and multi-task challenge, making it a suitable candidate for EMTO methodologies [3].

Protocol: Multi-Task Optimization for De Novo Drug Design

This protocol outlines the application of an EMTO framework to design novel drug candidates with multiple desired properties.

Objective: To simultaneously generate and optimize novel molecular structures against multiple objectives, such as maximizing drug-likeness (QED), minimizing synthetic accessibility (SA) score, and optimizing binding affinity scores for one or more target proteins.

Research Reagent Solutions:

Table 3: Essential Research Reagents and Computational Tools for EMTO in Drug Design

| Item/Tool Name | Function/Description | Application in Protocol |
|---|---|---|
| SELFIES String Representation | A molecular string representation guaranteeing 100% syntactic validity [4]. | Genotypic encoding of molecules within the evolutionary algorithm. Prevents invalid offspring. |
| GuacaMol Benchmark Suite | A benchmark platform for de novo drug design providing multi-objective tasks [4]. | Source of standardized objective functions (e.g., similarity, isomerism) for evaluation. |
| Quantitative Estimate of Drug-likeness (QED) | A metric quantifying the overall drug-likeness of a compound [4]. | An objective function to be maximized. |
| Synthetic Accessibility (SA) Score | A score estimating the ease of synthesizing a molecule [4]. | An objective function to be minimized. |
| NSGA-II / NSGA-III / MOEA/D | Multi-objective evolutionary algorithms (MOEAs) for handling multiple conflicting objectives [4] [3]. | Core optimization engines within the EMTO framework to manage intra-task objectives. |

Methodology:

Problem Formulation:

- Define Tasks (T1, T2,... Tn): Each task represents a unique drug design scenario. For example, T1 could be "Design inhibitors for Protein A," and T2 could be "Design inhibitors for Protein B," where A and B are related targets.

- Define Multi-objective Functions for Each Task: For each task, specify 2-4 objective functions. For a typical task, this might include:

- Objective 1: Maximize QED.

- Objective 2: Minimize SA Score.

- Objective 3: Maximize predicted binding affinity (e.g., via a docking score or ML-based predictor).

Algorithm Initialization:

- Representation: Initialize a unified population of individuals, where each individual's genome is a SELFIES string representing a molecule [4].

- Parameters: Set EMTO parameters, including population size, random mating probability (rmp), number of generations, and crossover/mutation rates.

Evolutionary Loop (for N generations):

- Evaluation: Evaluate each individual on all tasks defined in the Problem Formulation step. For a molecule in T1, this means calculating its QED, SA Score, and binding affinity for Protein A.

- Skill Factor Assignment: For each individual, assign a skill factor corresponding to the task on which it achieves the best scalarized fitness (e.g., based on non-dominated ranking if using NSGA-II as the underlying MOEA) [1].

- Grouping: Group the population by their assigned skill factors.

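- Assortative Mating & Crossover: Select parent pairs from the population. Parents with the same skill factor undergo crossover directly, while parents with different skill factors undergo crossover only with probability rmp, which is the channel for inter-task knowledge transfer [1].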

- Selective Imitation & Mutation: Offspring are evaluated on both parents' tasks. An offspring may inherit the skill factor of a parent from a different task if it performs better on that task. Apply mutation operators valid for SELFIES strings.

- Environmental Selection: Form the new population for the next generation by selecting the best individuals from the combined pool of parents and offspring, maintaining diversity.

Output:

- Upon convergence, the algorithm outputs the non-dominated Pareto set of optimal molecular solutions for each defined task [3]. These solutions represent the best trade-offs between the conflicting objectives for each drug design task.
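Extracting the non-dominated set for one task can be sketched as below. The sketch assumes every objective has been converted to minimization (for example, QED is negated), and the numeric values in the usage example are made up purely for illustration.

```python
def dominates(a, b):
    """True if a is no worse than b on every objective and strictly better
    on at least one (all objectives are minimized)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def pareto_front(points):
    """Return the points that are not dominated by any other point."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

# Hypothetical (-QED, SA score) pairs for four candidate molecules.
candidates = [(-0.8, 3.0), (-0.6, 2.0), (-0.5, 4.0), (-0.8, 2.5)]
front = pareto_front(candidates)
```

This quadratic-time filter is adequate for small final populations; MOEAs such as NSGA-II use faster non-dominated sorting internally.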

Diagram 2: Experimental workflow for applying EMTO to de novo drug design.

Analysis and Validation

The success of the EMTO run should be evaluated using several metrics:

- Hypervolume Indicator: Measures the volume of objective space covered by the obtained Pareto front, indicating overall performance.

- Inter-task Similarity Analysis: Post-hoc analysis of the transferred solutions can reveal hidden relationships between the drug design tasks (e.g., between Protein A and B).

- Novelty and Diversity: The structural novelty of the generated molecules compared to known databases should be assessed. Studies have shown that MOEAs can discover novel and promising candidates not present in conventional databases [4].

- Expert Validation: Top-ranking molecules from the Pareto sets should undergo further in silico validation (e.g., molecular dynamics simulations) and, ultimately, in vitro testing to confirm bioactivity.
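For two objectives the hypervolume indicator reduces to an area and can be computed with a simple sweep. The sketch below assumes both objectives are minimized and that a reference point `ref`, worse than every solution of interest, has been chosen by the user; fronts with three or more objectives need a dedicated algorithm.

```python
def hypervolume_2d(front, ref):
    """Area dominated by a 2-objective minimization front and bounded by ref."""
    # Keep only points that strictly improve on the reference point.
    pts = sorted(p for p in front if p[0] < ref[0] and p[1] < ref[1])
    hv, best_y = 0.0, ref[1]
    for x, y in pts:
        if y < best_y:
            # Add the horizontal slab this point contributes beyond earlier points.
            hv += (ref[0] - x) * (best_y - y)
            best_y = y
    return hv
```

For the front `[(1, 3), (2, 2), (3, 1)]` with reference point `(4, 4)`, the three slabs contribute areas 3, 2, and 1, for a hypervolume of 6. A larger value indicates a front that is closer to the ideal point, more spread out, or both.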

Contrasting EMTO with Traditional Single-Task Evolutionary Algorithms

Evolutionary Algorithms (EAs) are population-based optimization techniques inspired by natural evolution that have successfully solved complex optimization problems across various domains. Traditional EAs typically follow a single-task optimization (STO) paradigm, focusing on solving one problem at a time in isolation. However, this approach fails to leverage potential synergies when multiple related problems need to be solved simultaneously. Evolutionary Multi-Task Optimization (EMTO) has emerged as a novel paradigm that enables the simultaneous optimization of multiple tasks while facilitating knowledge transfer between them [1].

EMTO represents a shift from traditional isolated optimization approaches toward a more integrated framework. By exploiting the implicit parallelism of population-based search, EMTO creates a multi-task environment where valuable knowledge gained while solving one task can be transferred to assist in solving other related tasks [1] [5]. This paradigm is particularly valuable for large-scale combinatorial optimization problems where related tasks often share common structures or characteristics that can be exploited to enhance search efficiency and solution quality.

The foundational algorithm in EMTO is the Multifactorial Evolutionary Algorithm (MFEA), which treats each task as a unique cultural factor influencing population evolution [1]. MFEA uses skill factors to divide the population into non-overlapping task groups and achieves knowledge transfer through assortative mating and selective imitation mechanisms [1]. Since its introduction, numerous EMTO variants have been developed with enhanced knowledge transfer capabilities across diverse application domains.

Fundamental Differences Between STO and EMTO

Core Architectural Differences

The fundamental distinction between single-task and multi-task evolutionary approaches lies in their architectural design and operational methodology. STO employs dedicated algorithms and populations for each optimization problem, while EMTO utilizes a shared infrastructure across multiple tasks.

Table 1: Architectural Comparison Between STO and EMTO

| Feature | Single-Task Optimization (STO) | Evolutionary Multi-Task Optimization (EMTO) |
|---|---|---|
| Population Structure | Separate populations for each task | Single unified population or explicitly defined sub-populations |
| Search Process | Independent searches for each task | Concurrent searches with inter-task interactions |
| Knowledge Utilization | No knowledge transfer between tasks | Systematic knowledge transfer through specialized operators |
| Algorithmic Focus | Task-specific optimization | Cross-task synergy exploitation |
| Resource Allocation | Fixed resources per task | Dynamic resource allocation based on task relatedness |

Knowledge Transfer Mechanisms

The core innovation of EMTO lies in its ability to facilitate knowledge transfer between tasks, which is absent in traditional STO. This transfer occurs through specifically designed mechanisms that allow genetic or cultural material to move between task domains [5]. Effective knowledge transfer can significantly accelerate convergence and improve solution quality for complex problems with complementary fitness landscapes.

However, knowledge transfer introduces the challenge of negative transfer—where inappropriate knowledge exchange deteriorates optimization performance [5] [2]. Advanced EMTO approaches address this through transfer control mechanisms that dynamically adjust transfer intensity based on task relatedness measures [2]. For instance, some algorithms use Maximum Mean Discrepancy (MMD) to calculate distribution differences between sub-populations and selectively transfer individuals from the most similar distributions [2].

EMTO Application Notes for Large-Scale Combinatorial Optimization

Problem Formulation and Suitability

EMTO is particularly advantageous for large-scale combinatorial optimization problems exhibiting one or more of the following characteristics:

- Multiple related variants of a core problem need simultaneous solution

- Complementary search spaces where progress in one area can inform others

- Shared substructures or building blocks across different problem instances

- Computational budget constraints that favor efficient parallel optimization

A prominent example is the Multi-Objective Vehicle Routing Problem with Time Windows (MOVRPTW), which involves optimizing five conflicting objectives: minimizing the number of vehicles, total travel distance, longest route travel time, total waiting time, and total delay time [6]. EMTO approaches can construct assisted tasks (e.g., two-objective VRPTW) that share knowledge with the main MOVRPTW task, significantly enhancing optimization efficiency [6].

Performance Advantages in Combinatorial Domains

Research has demonstrated that EMTO can outperform STO in various combinatorial optimization domains:

- Enhanced convergence speed through cross-task genetic transfers [1] [7]

- Improved solution quality by leveraging complementary search progress [8] [6]

- Superior global exploration by escaping local optima through inter-task jumps [8]

- Efficient resource utilization through implicit parallelization of search efforts [1]

In the MOVRPTW domain, the MTMO/DRL-AT algorithm combining EMTO with Deep Reinforcement Learning has shown superior performance compared to traditional approaches, effectively leveraging knowledge transfer between main and assisted tasks [6].

Experimental Protocols and Benchmarking

Standardized Test Suites

The EMTO community has developed specialized test suites for rigorous algorithmic comparison. For the upcoming CEC 2025 Competition on Evolutionary Multi-task Optimization, two primary test suites have been established [7]:

Table 2: EMTO Benchmark Test Suites

| Test Suite | Problem Type | Number of Tasks | Number of Problems | Evaluation Metric |
|---|---|---|---|---|
| MTSOO | Single-Objective | 2 and 50 tasks | 19 total | Best Function Error Value (BFEV) |
| MTMOO | Multi-Objective | 2 and 50 tasks | 19 total | Inverted Generational Distance (IGD) |

Standard Experimental Protocol

For comprehensive evaluation, researchers should adhere to the following standardized protocol [7]:

Execution Parameters:

- 30 independent runs per algorithm with different random seeds

- Maximum 200,000 function evaluations for 2-task problems

- Maximum 5,000,000 function evaluations for 50-task problems

- Identical parameter settings across all benchmark problems

Data Collection:

- Record Best Function Error Values (BFEV) for single-objective problems at predefined evaluation checkpoints

- Record Inverted Generational Distance (IGD) values for multi-objective problems

- For 2-task problems: 100 checkpoints at k*maxFEs/100 (k=1,...,100)

- For 50-task problems: 1000 checkpoints at k*maxFEs/1000 (k=1,...,1000)
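As a reminder of what is being recorded for multi-objective problems, IGD averages, over a reference Pareto front, the distance from each reference point to its nearest obtained solution. The following is a minimal sketch using the Euclidean distance; the reference front is assumed to be supplied by the benchmark, and `math.dist` requires Python 3.8 or later.

```python
import math

def igd(reference_front, obtained):
    """Inverted Generational Distance: mean distance from each reference
    point to the closest point in the obtained approximation set."""
    total = sum(min(math.dist(r, s) for s in obtained) for r in reference_front)
    return total / len(reference_front)
```

Lower values are better, and a value of zero means every reference point is matched exactly by some obtained solution.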

Performance Assessment:

- Calculate median performance over 30 runs at each checkpoint

- Evaluate performance across varying computational budgets

- Assess optimization trajectory smoothness and convergence speed
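The checkpoint schedule and the per-checkpoint median can be computed as in the sketch below. The function names are hypothetical, and the layout of `runs` (one list of metric values per run, aligned by checkpoint) is an assumption about how results were logged.

```python
from statistics import median

def checkpoints(max_fes, n_points):
    """Evaluation counts k * max_fes / n_points for k = 1..n_points."""
    return [k * max_fes // n_points for k in range(1, n_points + 1)]

def median_curve(runs):
    """runs[r][c] is the metric (BFEV or IGD) of run r at checkpoint c.
    Returns the median over runs at each checkpoint."""
    return [median(column) for column in zip(*runs)]

two_task = checkpoints(200_000, 100)       # 2000, 4000, ..., 200000
fifty_task = checkpoints(5_000_000, 1000)  # 5000, 10000, ..., 5000000
```

Reporting the median rather than the mean makes the convergence curve less sensitive to a small number of runs that stagnate or diverge.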

Advanced EMTO Methodologies

Data-Driven Multi-Task Optimization

The Data-Driven Multi-Task Optimization (DDMTO) framework represents a significant advancement in EMTO methodology. DDMTO utilizes machine learning models to smooth rugged fitness landscapes, creating an easier auxiliary task that assists in optimizing the original complex problem [8]. The framework operates through the following mechanism:

- Fitness Landscape Smoothing: A machine learning model (e.g., neural network) is trained to approximate and smooth the original rugged fitness landscape

- Multi-Task Formulation: The original problem (difficult task) and smoothed landscape (easy task) are optimized simultaneously

- Controlled Knowledge Transfer: A specialized transfer operator facilitates knowledge flow from the easy to the difficult task while preventing negative transfer

This approach has demonstrated significant performance improvements in complex solution spaces without increasing computational costs [8].
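The landscape-smoothing idea can be illustrated on a toy problem. The sketch below is not the DDMTO implementation from [8]: it substitutes a simple kernel regressor for the neural network, uses the 1-D Rastrigin function as an assumed rugged landscape, and all names and the bandwidth value are hypothetical.

```python
import math

def rugged(x):
    """1-D Rastrigin function, used here only as a stand-in rugged landscape."""
    return x * x + 10.0 * (1.0 - math.cos(2.0 * math.pi * x))

def fit_smoother(samples, bandwidth):
    """Return a Nadaraya-Watson kernel regressor fitted to (x, f(x)) samples."""
    def smooth(x):
        weights = [math.exp(-((x - xi) / bandwidth) ** 2) for xi, _ in samples]
        return sum(w * yi for w, (_, yi) in zip(weights, samples)) / sum(weights)
    return smooth

def count_local_minima(f, grid):
    """Count strict interior local minima of f on a 1-D grid."""
    values = [f(x) for x in grid]
    return sum(1 for i in range(1, len(values) - 1)
               if values[i] < values[i - 1] and values[i] < values[i + 1])

grid = [-5.0 + 0.05 * i for i in range(201)]
samples = [(x, rugged(x)) for x in grid]
easy_task = fit_smoother(samples, bandwidth=1.0)  # smoothed auxiliary task
```

The pair (`rugged`, `easy_task`) would then be handed to a multitasking solver as the difficult and easy tasks. The smoothed function keeps the global bowl shape while suppressing most of the local minima, which is what makes solutions found on it useful starting material for the original problem.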

Adaptive Knowledge Transfer Based on Population Distribution

Advanced EMTO algorithms address the negative transfer problem through adaptive mechanisms based on population distribution analysis [2]:

- Sub-population Division: Each task population is divided into K sub-populations based on fitness values

- Distribution Similarity Measurement: Maximum Mean Discrepancy (MMD) calculates distribution differences between sub-populations

- Selective Transfer: The sub-population with smallest MMD to the target task's best solution region is selected for knowledge transfer

- Dynamic Interaction Control: Improved randomized interaction probability adjusts inter-task interaction intensity

This methodology has proven particularly effective for problems with low inter-task relevance, where traditional elite-solution transfer approaches often fail [2].

Integration with Other Computational Paradigms

EMTO demonstrates strong compatibility with other advanced computational intelligence techniques:

- Deep Reinforcement Learning Integration: Combining EMTO with DRL-based attention models for combinatorial optimization problems like MOVRPTW [6]

- Large Language Model Assistance: Using LLMs to autonomously generate knowledge transfer models tailored to specific optimization tasks [9]

- Surrogate Modeling: Employing surrogate models to reduce computational cost in expensive optimization problems [8]

Research Reagent Solutions

Table 3: Essential Research Components for EMTO Implementation

| Component | Function | Examples/Alternatives |
|---|---|---|
| Base Evolutionary Algorithm | Provides core optimization mechanics | Genetic Algorithm, Differential Evolution, Particle Swarm Optimization |
| Knowledge Transfer Mechanism | Facilitates cross-task information exchange | Assortative mating, Selective imitation, Explicit mapping-based transfer |
| Task Relatedness Measure | Quantifies similarity between tasks for transfer control | Maximum Mean Discrepancy (MMD), Transfer Rank, Similarity metric learning |
| Benchmark Test Suites | Standardized performance evaluation | MTSOO, MTMOO, CEC competition problems |
| Performance Metrics | Quantifies algorithmic effectiveness | BFEV, IGD, Hypervolume, Convergence curves |
| Resource Allocation Strategy | Dynamically distributes computational resources | Adaptive resource sharing, Online transfer parameter estimation |

Evolutionary Multi-Task Optimization represents a paradigm shift from traditional single-task evolutionary approaches, offering significant advantages for large-scale combinatorial optimization problems. By enabling synergistic knowledge transfer between related tasks, EMTO achieves superior convergence speed, solution quality, and computational efficiency compared to isolated optimization approaches. The ongoing development of adaptive knowledge transfer mechanisms, integration with machine learning techniques, and establishment of standardized benchmarking protocols continues to advance EMTO capabilities. For researchers tackling complex combinatorial optimization challenges with inherent task relatedness, EMTO provides a powerful framework that transcends the limitations of conventional single-task evolutionary computation.

Evolutionary Multitasking (EMT) is an advanced paradigm in evolutionary computation that enables the simultaneous optimization of multiple tasks by strategically exploiting their underlying synergies [5]. Unlike traditional Evolutionary Algorithms (EAs) that solve problems in isolation, EMT creates a multi-task environment where knowledge transfer accelerates the search process for all component tasks [5]. This approach mirrors human cognitive abilities to process multiple related tasks simultaneously, leveraging implicit parallelism and knowledge transfer to achieve superior performance compared to single-task optimization [10]. The fundamental rationale for multitasking stems from the observation that real-world problems rarely exist in isolation, with many optimization tasks sharing commonalities that can be exploited through carefully designed transfer mechanisms [11].

The mathematical foundation of EMT addresses a scenario with K distinct minimization tasks, where the j-th task Tj aims to find the optimal solution xj* that minimizes the objective function Fj(x) within the feasible space Xj [5]. Through implicit parallelism and knowledge synergy, EMT searches all task spaces concurrently, often achieving performance improvements that are difficult to obtain through independent optimization efforts [12]. This paradigm has demonstrated particular value in complex real-world applications including high-dimensional feature selection, vehicle path planning, shop-floor scheduling optimization, and parameter extraction of photovoltaic models [13].
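Written out, the K-task formulation described above reads as follows (the `\operatorname*` macro assumes the amsmath package):

```latex
\[
  x_j^{*} \;=\; \operatorname*{arg\,min}_{x \in X_j} F_j(x),
  \qquad j = 1, 2, \dots, K .
\]
```

The multitasking algorithm returns the whole set of per-task optima in one run, rather than a single solution that compromises between tasks.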

Theoretical Foundations and Mechanisms

Key Concepts and Terminology

EMT operates through several specialized mechanisms that distinguish it from traditional evolutionary approaches:

Implicit Parallelism: EMT harnesses the inherent parallelism of population-based search, where a single population evolves to address multiple tasks simultaneously [5]. This contrasts with explicit parallelization techniques, instead leveraging the multi-task environment's natural capacity to explore multiple search spaces concurrently [12].

Knowledge Transfer: The core mechanism enabling synergy between tasks, knowledge transfer involves extracting valuable information from one task's search process and applying it to accelerate convergence in other related tasks [5]. This transfer can occur either implicitly through genetic operators or explicitly through designed mapping strategies [13].

Skill Factor: Each individual in the population is assigned a skill factor (τi) representing the specific task on which it demonstrates best performance, enabling specialized selection and reproduction strategies [10].

Scalar Fitness: In multitasking environments, individuals receive a unified fitness measure (βi) that enables direct comparison across different tasks, facilitating selection operations in the unified search space [10].
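How the skill factor and scalar fitness are derived from raw objective values can be sketched as below. The sketch follows the original MFEA convention, in which individuals are ranked per task (the factorial rank), the skill factor is the task with the best rank, and the scalar fitness is the reciprocal of that best rank; the function name and data layout are assumptions.

```python
def skill_and_scalar_fitness(costs):
    """costs[i][j] is the objective value of individual i on task j (minimized).

    Returns (skill, scalar) where skill[i] is the index of the task on which
    individual i ranks best and scalar[i] = 1 / (best rank of individual i).
    """
    n, k = len(costs), len(costs[0])
    ranks = [[0] * k for _ in range(n)]
    for j in range(k):
        # Rank 1 goes to the individual with the lowest cost on task j.
        order = sorted(range(n), key=lambda i: costs[i][j])
        for r, i in enumerate(order, start=1):
            ranks[i][j] = r
    skill = [min(range(k), key=lambda j: ranks[i][j]) for i in range(n)]
    scalar = [1.0 / min(ranks[i]) for i in range(n)]
    return skill, scalar

# Three individuals, two tasks (illustrative numbers only).
skill, scalar = skill_and_scalar_fitness([[1.0, 9.0], [2.0, 3.0], [5.0, 4.0]])
```

Because ranks are unitless, the scalar fitness allows selection across tasks whose objective values live on entirely different scales.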

Algorithmic Frameworks and Transfer Modalities

EMT algorithms primarily fall into two categories based on their knowledge transfer mechanisms:

Implicit Knowledge Transfer approaches, exemplified by the Multifactorial Evolutionary Algorithm (MFEA), facilitate knowledge exchange primarily through genetic operators within a unified population [13]. In MFEA, individuals with different skill factors may produce offspring through crossover, enabling automatic knowledge transfer when random mating probability conditions are met [13]. While this approach provides seamless integration, its effectiveness heavily depends on task relatedness, with potential performance degradation when task similarity is low [13].

Explicit Knowledge Transfer algorithms actively identify and extract transferable knowledge from source tasks, such as high-quality solutions or solution space characteristics [13]. These methods employ specifically designed mechanisms—including denoising autoencoders, subspace alignment techniques, and similarity measures—to govern inter-task information exchange [13] [11]. This paradigm offers greater control over transfer quality but requires careful design to minimize negative transfer [5].

Table 1: Comparative Analysis of EMT Algorithm Classes

| Feature | Implicit Transfer Algorithms | Explicit Transfer Algorithms |
|---|---|---|
| Knowledge Representation | Genetic material within unified population | Extracted patterns, mappings, or elite solutions |
| Transfer Mechanism | Crossover between individuals with different skill factors | Designed mapping functions and similarity measures |
| Key Parameters | Random mating probability (RMP) | Similarity thresholds, transfer proportions |
| Advantages | Simple implementation, seamless integration | Controlled transfer, adaptability to task relationships |
| Limitations | Potential negative transfer, limited control | Computational overhead, design complexity |
| Representative Algorithms | MFEA [10] | MFEA-II, PA-MTEA, LDA-MFEA [13] [11] |

Quantitative Performance Analysis

Experimental evaluations across diverse benchmark problems demonstrate EMT's consistent performance advantages over traditional single-task optimization approaches. The following table synthesizes key quantitative findings from empirical studies:

Table 2: Quantitative Performance Metrics of EMT Algorithms

| Algorithm | Benchmark Problems | Key Performance Metrics | Comparative Advantage |
|---|---|---|---|
| PA-MTEA [13] | WCCI2020-MTSO test suite, Photovoltaic parameter extraction | Significant superiority over 6 advanced MTO algorithms | Cross-task association mapping enhances convergence |
| TLTL Algorithm [10] | Various MTO problems | Outstanding global search ability, fast convergence rate | 2-level transfer improves efficiency and effectiveness |
| CA-MTO [11] | Expensive multitasking problems | Strong robustness and scalability, competitive edge over state-of-the-art | Classifier assistance reduces fitness evaluations |
| MetaMTO [14] | Augmented multitask problem distribution | State-of-the-art performance against human-crafted and learning-assisted baselines | Holistic control of where, what, and how to transfer |

Experimental Protocols and Methodologies

General EMT Experimental Framework

Establishing a robust experimental protocol is essential for valid EMT research. The following protocol outlines standardized procedures for conducting and evaluating EMT experiments:

Phase 1: Problem Formulation and Benchmark Selection

- Select appropriate benchmark suites that represent target problem characteristics (e.g., WCCI2020-MTSO for complex two-task problems) [13]

- Clearly define each task's search space, objective function, and constraints

- For real-world applications, ensure tasks possess potential synergies that justify a multitasking approach [11]

Phase 2: Algorithm Configuration and Parameter Setting

- Implement baseline algorithms (MFEA, MFEA-II, etc.) for comparative analysis [13] [11]

- Set population size based on problem complexity and task count

- Configure knowledge transfer parameters (RMP for implicit methods, similarity thresholds for explicit methods)

- Define termination criteria (maximum generations, fitness evaluations, or convergence thresholds)

Phase 3: Experimental Execution and Data Collection

- Execute multiple independent runs with different random seeds so that performance differences can be tested for statistical significance

- Record convergence trajectories for each task separately

- Monitor knowledge transfer events and their impact on population quality

- Track computational resource consumption

Phase 4: Performance Assessment and Analysis

- Calculate performance metrics (best fitness, convergence speed, success rate)

- Apply statistical tests (Wilcoxon signed-rank, t-tests) to validate significance of differences

- Analyze transfer effectiveness and potential negative transfer occurrences

- Generate comparative visualizations (convergence plots, task similarity matrices)
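The statistical step in Phase 4 can be sketched with a small, dependency-free Wilcoxon signed-rank test. This is a minimal illustration using the normal approximation without tie or continuity corrections; the function name and the two error lists are illustrative placeholders, not results from any study. For real analyses a vetted statistics library is preferable.

```python
import math

def wilcoxon_signed_rank(a, b):
    """Two-sided Wilcoxon signed-rank test on paired samples (normal approximation)."""
    diffs = [x - y for x, y in zip(a, b) if x != y]  # zero differences are dropped
    n = len(diffs)
    if n == 0:
        return 0.0, 1.0
    # Rank absolute differences; tied values share their average rank.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    w = min(w_plus, w_minus)
    mean = n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (w - mean) / sd
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value
    return w, p

# Placeholder final errors from 10 paired runs (illustrative values only).
emt_errors = [0.10, 0.12, 0.09, 0.11, 0.13, 0.08, 0.10, 0.12, 0.11, 0.09]
single_task_errors = [0.15, 0.14, 0.16, 0.13, 0.18, 0.12, 0.17, 0.15, 0.14, 0.16]
w, p = wilcoxon_signed_rank(emt_errors, single_task_errors)
```

A small p-value here only says the paired differences are unlikely under the null hypothesis; effect sizes and convergence plots are still needed to judge practical relevance.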

Specialized Protocol for Expensive Optimization Problems

For computationally expensive problems where fitness evaluations constitute the primary resource bottleneck, the following modified protocol is recommended:

Surrogate Integration Protocol

- Implement classifier-assisted approaches (e.g., SVC-CMA-ES) to reduce fitness evaluations [11]

- Establish knowledge transfer strategy using domain adaptation techniques

- Create PCA-based subspace alignment to enrich training samples across tasks [11]

- Validate surrogate predictions periodically with actual fitness evaluations
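As a rough illustration of classifier-assisted pre-screening, the sketch below swaps the SVC mentioned above for a 1-nearest-neighbour classifier so that it stays dependency-free. The `sphere` objective, the median-based labelling, and all names are assumptions made for this example and are not taken from CA-MTO.

```python
import random
import statistics

def sphere(x):
    """Stand-in for an expensive objective (minimisation)."""
    return sum(v * v for v in x)

def nearest_label(candidate, archive):
    """Return the label of the evaluated solution closest to `candidate`."""
    def sq_dist(item):
        return sum((c - a) ** 2 for c, a in zip(candidate, item[0]))
    return min(archive, key=sq_dist)[1]

rng = random.Random(0)
dim = 5
evaluated = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(40)]
fitness = [sphere(x) for x in evaluated]
threshold = statistics.median(fitness)
# Label 1 = promising (better than the median), 0 = not promising.
archive = [(x, int(f < threshold)) for x, f in zip(evaluated, fitness)]

# Only candidates predicted to be promising would receive a true evaluation.
candidates = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(100)]
selected = [c for c in candidates if nearest_label(c, archive) == 1]
true_evals_spent = len(selected)

# Periodic validation: fraction of selected candidates that really beat the threshold.
hit_rate = sum(sphere(c) < threshold for c in selected) / max(1, len(selected))
```

Tracking `hit_rate` over time is one simple way to decide when the classifier should be retrained on fresh evaluations.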

Resource Allocation Framework

- Balance computational budget across tasks based on complexity and convergence behavior

- Implement adaptive resource sharing mechanisms that direct evaluations to the most promising regions

- Use ensemble techniques to improve surrogate model reliability

Protocol for Knowledge Transfer Effectiveness Analysis

To specifically evaluate knowledge transfer quality and mitigate negative transfer:

Transfer Mapping Protocol

- For explicit transfer methods: Implement cross-task association mapping using partial least squares (PLS) or PCA-based subspace alignment [13] [11]

- Calculate alignment matrices using Bregman divergence minimization to reduce inter-task variability [13]

- Deploy adaptive population reuse mechanisms with residual structures to preserve valuable genetic material [13]

Similarity Assessment Framework

- Quantify inter-task relationships using attention-based similarity recognition modules [14]

- Dynamically adjust transfer proportions based on similarity measures

- Implement negative transfer detection mechanisms with fallback strategies

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Computational Resources for EMT Research

| Tool Category | Specific Tools/Platforms | Function in EMT Research |

|---|---|---|

| Programming Languages | Python with NumPy, Pandas, scikit-learn [15] | Algorithm implementation, statistical analysis, and machine learning integration |

| EMT Frameworks | MFEA, MFEA-II, PA-MTEA [13] [11] | Baseline implementations and experimental comparisons |

| Benchmark Suites | WCCI2020-MTSO [13], CEC2017 [14] | Standardized problem sets for algorithm validation |

| Cloud Platforms | Amazon Redshift, Google BigQuery [15] | Scalable data processing and experimental analysis |

| Visualization Tools | Matplotlib, Seaborn [15] | Convergence plotting and results presentation |

| Containerization | Docker, Kubernetes [15] | Reproducible experimental environments |

Knowledge Transfer Implementation Framework

The implementation of effective knowledge transfer requires addressing three fundamental questions, as illustrated in Figure 2. Modern approaches, including the MetaMTO framework, employ specialized agents for each decision point [14]:

Task Routing (Where): Determines optimal source-target transfer pairs using attention-based similarity recognition modules that process status features from all sub-tasks [14].

Knowledge Control (What): Governs the quantity and quality of transferred knowledge by selecting specific proportions of elite solutions from source task populations [14].

Strategy Adaptation (How): Controls transfer mechanisms and hyper-parameters within the underlying EMT framework, including operator selection and transfer intensity [14].

For explicit transfer implementation, the following specialized protocols are recommended:

Subspace Alignment Protocol

- Apply Partial Least Squares (PLS) to extract principal components with strong inter-task correlations during bidirectional knowledge transfer [13]

- Construct low-dimensional subspaces for each task using PCA on current populations [11]

- Derive alignment matrices using Bregman divergence minimization to reduce inter-task variability [13]

- Validate subspace consistency through reconstruction error analysis
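For orientation, the alignment step can be written in the form used by classical subspace alignment from the domain adaptation literature. The notation below (P_s, P_t, M, z) is assumed for this sketch, and the text above does not state whether the cited algorithms use exactly this objective. The squared Frobenius norm is one instance of a Bregman matrix divergence, and because the PCA bases are orthonormal the minimiser has a closed form.

```latex
% P_s, P_t \in \mathbb{R}^{D \times d}: orthonormal PCA bases (top-d eigenvectors)
% of the source-task and target-task populations (assumed notation).
M^{*} \;=\; \arg\min_{M \in \mathbb{R}^{d \times d}}
        \left\lVert P_s M - P_t \right\rVert_F^{2}
      \;=\; P_s^{\top} P_t ,
\qquad
z_{s} \;=\; x_s \, P_s \, M^{*} , \quad z_{t} \;=\; x_t \, P_t .
```

Here \(z_s\) and \(z_t\) are the coordinates of a source individual \(x_s\) and a target individual \(x_t\) (row vectors) in the aligned low-dimensional space, where they can be compared or recombined.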

Adaptive Population Reuse Mechanism

- Implement diversity-based evaluation for each task's population

- Adaptively adjust the number of elite individuals retained in reused population history

- Incorporate genetic information from historical successes into current task populations

- Balance exploration-exploitation tradeoffs through residual structures [13]

Evolutionary Multitasking represents a paradigm shift in optimization methodology, moving from isolated problem-solving to synergistic multi-task environments. The theoretical foundations and experimental protocols presented in this document provide researchers with guidelines for implementing and validating EMT approaches. The results summarized above indicate that, by exploiting implicit parallelism and cross-task knowledge transfer, EMT algorithms often outperform single-task baselines across diverse problem domains, particularly on complex, large-scale combinatorial optimization problems, provided that negative transfer is kept under control.

Future research directions include deeper integration of transfer learning methodologies from machine learning, development of more sophisticated negative transfer detection and mitigation strategies, and expansion of EMT applications to emerging domains including drug development, personalized medicine, and complex systems design. The continued refinement of knowledge transfer mechanisms, particularly through learning-based approaches like the multi-role reinforcement learning system in MetaMTO [14], promises to further enhance EMT's capabilities and applicability to increasingly complex real-world optimization scenarios.

Conceptual Foundations & Quantitative Definitions

This section details the core concepts of Skill Factor, Factorial Cost, and Cultural Transmission within Evolutionary Multitasking (EMT). These concepts are fundamental to the operation of Multifactorial Evolutionary Algorithms (MFEAs), enabling them to solve multiple optimization tasks concurrently by leveraging potential synergies [16] [17].

Table 1: Core Conceptual Definitions in Evolutionary Multitasking

| Concept | Formal Definition | Role in Evolutionary Multitasking |

|---|---|---|

| Skill Factor [16] [17] | The skill factor \(\tau_i\) of an individual \(p_i\) is the specific task on which the individual achieves its best performance (i.e., its lowest factorial rank): \(\tau_i = \arg\min_j \{r_{ij}\}\). | Determines an individual's specialized task, guiding selective evaluation and facilitating implicit knowledge transfer. |

| Factorial Cost [17] | The factorial cost \(\alpha_{ij}\) of an individual \(p_i\) on task \(T_j\) is defined as \(\alpha_{ij} = \gamma \delta_{ij} + F_{ij}\), where \(F_{ij}\) is the raw objective value, \(\delta_{ij}\) is the constraint violation, and \(\gamma\) is a large penalizing multiplier. | Provides a unified measure to evaluate and compare individuals across different optimization tasks, handling both objective and constraints. |

| Factorial Rank [16] [17] | The factorial rank \(r_{ij}\) of an individual \(p_i\) on task \(T_j\) is its index position when the entire population is sorted in ascending order of factorial cost for that task. | Used to compute scalar fitness and determine the skill factor of an individual, enabling cross-task comparison. |

| Scalar Fitness [16] | The scalar fitness \(\beta_i\) of an individual \(p_i\) is derived from its factorial ranks across all tasks: \(\beta_i = 1 / \min_j \{r_{ij}\}\). This represents the inverse of the individual's best rank. | A single, unified fitness value that allows for the selection of individuals from different tasks within a single, unified population. |
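The four definitions in Table 1 can be computed together from a matrix of objective values and constraint violations. The sketch below is a minimal illustration; the function name, the value of `gamma`, and the example numbers are assumptions, and ties in rank are broken by index order here rather than randomly.

```python
def multifactorial_properties(objectives, violations, gamma=1e6):
    """objectives[i][j], violations[i][j]: individual i on task j (minimisation)."""
    n, k = len(objectives), len(objectives[0])
    # Factorial cost: penalised objective value.
    cost = [[gamma * violations[i][j] + objectives[i][j] for j in range(k)]
            for i in range(n)]
    # Factorial rank: 1-based position when sorted by cost on each task.
    rank = [[0] * k for _ in range(n)]
    for j in range(k):
        order = sorted(range(n), key=lambda i: cost[i][j])
        for position, i in enumerate(order, start=1):
            rank[i][j] = position
    # Skill factor: task with the best (lowest) rank; scalar fitness: 1 / best rank.
    skill = [min(range(k), key=lambda j: rank[i][j]) for i in range(n)]
    scalar = [1.0 / min(rank[i]) for i in range(n)]
    return cost, rank, skill, scalar

# Four individuals, two tasks; the last individual violates a constraint on task 0.
objectives = [[3.0, 9.0], [1.0, 7.0], [2.0, 1.0], [5.0, 4.0]]
violations = [[0.0, 0.0], [0.0, 0.0], [0.0, 0.0], [0.5, 0.0]]
cost, rank, skill, scalar = multifactorial_properties(objectives, violations)
# rank   -> [[3, 4], [1, 3], [2, 1], [4, 2]]
# skill  -> [0, 0, 1, 1]
```

Note how the penalty pushes the infeasible individual to the last rank on task 0 even though its raw objective value is finite.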

The concept of Cultural Transmission in EMT is inspired by theories from social evolution [18]. It refers to the process of transferring knowledge or genetic material between tasks or across generations within a population. This can be realized through various mechanisms:

- Vertical Cultural Transmission: Offspring inherit knowledge directly from their parents, a mechanism foundational to the original MFEA [17].

- Horizontal Cultural Transmission: An individual (or population) learns from peers working on different but simultaneous tasks. This is a form of inter-task knowledge transfer [18].

- Elite-Guided Variation: A specific operator where information from the current Pareto front is transferred to all individuals, guiding the population toward rapid convergence [18].

Benchmarking & Performance Evaluation Data

Standardized benchmarks and evaluation protocols are crucial for advancing EMT research. The CEC 2025 competition provides well-established test suites for this purpose [7].

Table 2: Standard Benchmark Test Suites for Evolutionary Multitasking (CEC 2025)

| Test Suite | Problem Types | Number of Tasks per Problem | Maximum Function Evaluations (maxFEs) | Performance Metric |

|---|---|---|---|---|

| Multi-Task Single-Objective Optimization (MTSOO) [7] | Nine complex problems; Ten 50-task problems | 2 (complex problems); 50 (many-task problems) | 200,000 (2-task); 5,000,000 (50-task) | Best Function Error Value (BFEV) |

| Multi-Task Multi-Objective Optimization (MTMOO) [7] | Nine complex problems; Ten 50-task problems | 2 (complex problems); 50 (many-task problems) | 200,000 (2-task); 5,000,000 (50-task) | Inverted Generational Distance (IGD) |

Experimental Protocol for Performance Evaluation [7]:

- Runs: Execute 30 independent runs per benchmark problem using different random seeds.

- Data Recording: For each run, record the algorithm's performance (BFEV for MTSOO, IGD for MTMOO) at predefined intervals (e.g., 100 checkpoints for 2-task problems, 1000 for 50-task problems).

- Parameter Settings: Algorithm parameters must remain identical for all problems within a test suite. Calibrating parameters specifically for individual problems is prohibited to ensure fair comparison.

- Overall Ranking: The final ranking of algorithms is based on their median performance across all 30 runs for each task and across all computational budgets.
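The recording and aggregation steps above can be sketched as follows. Evenly spaced checkpoints and the helper names are assumptions of this example; the competition documentation remains the authority on the exact recording rules.

```python
import statistics

def checkpoint_budgets(max_fes, n_checkpoints):
    """Evenly spaced evaluation counts at which performance is recorded."""
    step = max_fes // n_checkpoints
    return [step * (c + 1) for c in range(n_checkpoints)]

def median_curve(runs):
    """runs[r][c] = metric of run r at checkpoint c; returns per-checkpoint medians."""
    return [statistics.median(column) for column in zip(*runs)]

two_task_budgets = checkpoint_budgets(200_000, 100)      # 2000, 4000, ..., 200000
many_task_budgets = checkpoint_budgets(5_000_000, 1000)  # 5000, 10000, ..., 5000000

# Three toy runs recorded at three checkpoints (placeholder numbers).
curve = median_curve([[3, 2, 1], [5, 4, 1], [4, 3, 2]])
```

Storing one row per run and one column per checkpoint keeps the median-based ranking a single pass over the columns.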

Experimental Protocols for EMT Algorithms

Core MFEA Workflow Protocol

The following protocol outlines the standard procedure for a Multifactorial Evolutionary Algorithm (MFEA), which implicitly handles knowledge transfer through chromosomal crossover and skill factor inheritance [16] [17].

Protocol Steps:

- Initialization: Generate an initial population of individuals randomly within a unified search space [16]. Each individual is encoded in a normalized space that accommodates all tasks [16].

- Evaluation and Skill Factor Assignment: Evaluate each individual on every optimization task. Then, calculate the factorial cost, factorial rank, and subsequently assign a skill factor and scalar fitness to each individual based on Table 1 [16] [17].

- Assortative Mating and Crossover: Create offspring by applying crossover and mutation. A key feature is assortative mating: if two selected parents have the same skill factor, crossover occurs normally. If they have different skill factors, crossover still occurs with a probability defined by the random mating probability (rmp) parameter, facilitating implicit knowledge transfer between tasks [16] [17].

- Selective Evaluation: To conserve computational resources, each offspring is evaluated only on the task corresponding to its inherited skill factor (typically that of a randomly chosen parent) [16].

- Selection: The current parent population and offspring population are combined. The best individuals, based on their scalar fitness, are selected to form the population for the next generation [16].

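- Termination and Output: Steps 3-5 repeat, with factorial ranks, skill factors, and scalar fitness recomputed over the combined population each generation, until a termination criterion (e.g., maximum function evaluations) is met. The best solution for each task is then output [16].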
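The protocol above can be sketched in a few dozen lines of plain Python. This is a deliberately simplified illustration, not the reference MFEA: the two toy sphere tasks, uniform crossover, Gaussian mutation, and all parameter values are assumptions chosen to keep the example short.

```python
import random

def task_a(x):  # sphere centred at 0.5
    return sum((v - 0.5) ** 2 for v in x)

def task_b(x):  # sphere centred at 0.6 (a related task)
    return sum((v - 0.6) ** 2 for v in x)

TASKS = [task_a, task_b]
INF = float("inf")

def assign_fitness(pop):
    """Compute factorial ranks, then skill factor and scalar fitness."""
    for ind in pop:
        ind["rank"] = [0] * len(TASKS)
    for j in range(len(TASKS)):
        for pos, ind in enumerate(sorted(pop, key=lambda p: p["cost"][j]), 1):
            ind["rank"][j] = pos
    for ind in pop:
        best = min(ind["rank"])
        ind["skill"] = ind["rank"].index(best)
        ind["fitness"] = 1.0 / best

def make_child(genes, skill):
    """Selective evaluation: unevaluated tasks keep an infinite cost and rank last."""
    cost = [INF] * len(TASKS)
    cost[skill] = TASKS[skill](genes)
    return {"genes": genes, "cost": cost}

def mutate(genes, rng, sigma=0.05):
    return [min(1.0, max(0.0, g + rng.gauss(0, sigma))) for g in genes]

def mfea(dim=5, pop_size=40, generations=60, rmp=0.3, seed=1):
    rng = random.Random(seed)
    pop = []
    for _ in range(pop_size):
        genes = [rng.random() for _ in range(dim)]  # unified space [0, 1]^dim
        pop.append({"genes": genes, "cost": [t(genes) for t in TASKS]})
    assign_fitness(pop)
    for _ in range(generations):
        offspring = []
        while len(offspring) < pop_size:
            p1, p2 = rng.sample(pop, 2)
            if p1["skill"] == p2["skill"] or rng.random() < rmp:
                # Assortative mating: uniform crossover, then light mutation.
                mask = [rng.random() < 0.5 for _ in range(dim)]
                c1 = [a if m else b for a, b, m in zip(p1["genes"], p2["genes"], mask)]
                c2 = [b if m else a for a, b, m in zip(p1["genes"], p2["genes"], mask)]
                for genes in (c1, c2):
                    # Vertical cultural transmission: imitate a random parent's skill.
                    skill = rng.choice([p1["skill"], p2["skill"]])
                    offspring.append(make_child(mutate(genes, rng), skill))
            else:
                # No cross-task mating: each parent yields a mutant on its own task.
                for parent in (p1, p2):
                    offspring.append(make_child(mutate(parent["genes"], rng), parent["skill"]))
        pop = pop + offspring
        assign_fitness(pop)
        pop = sorted(pop, key=lambda p: p["fitness"], reverse=True)[:pop_size]
    return [min(p["cost"][j] for p in pop) for j in range(len(TASKS))]

best_costs = mfea()  # best cost found for each task
```

Because selection uses scalar fitness, the best individual of every task always survives, so the per-task best cost never gets worse from one generation to the next.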

Advanced Protocol: Cultural Transmission-based Evolution Strategy

This protocol describes a more advanced algorithm, CT-EMT-MOES, which explicitly manages knowledge transfer to mitigate negative transfer (where interaction between tasks harms performance) [18].

Protocol Steps:

- Multi-Population Initialization: Instead of a single unified population, initialize separate sub-populations for each task [18] [19].

- Independent Evolution: Each sub-population evolves independently using a chosen evolutionary strategy.

- Adaptive Information Transfer Strategy: An adaptive mechanism determines the probability of information transfer ((tp)), adjusting it based on the dominance relationship between offspring and their parents to rationally allocate evolutionary resources and avoid negative transfer [18].

- Dual Transfer Mechanism:

  - Elite-Guided Variation (Within-Task): If the transfer probability is below a threshold, this operator is activated. It transfers information from the current Pareto-optimal solutions (elites) to all individuals within the same task, promoting rapid convergence [18].

  - Horizontal Cultural Transmission (Between-Task): If the transfer probability is above the threshold, this operator is activated. It efficiently transfers information from a source task to a target task, bringing in richer diversity [18].

- Continuation: The process repeats from Step 2 until a termination criterion is met.
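The dominance check and operator switch described above might look roughly like this. The update rule in `update_tp` (fixed step, clamping bounds) is a hypothetical stand-in, since the exact adaptation formula of CT-EMT-MOES is not given here; only the Pareto-dominance test itself is standard.

```python
def dominates(a, b):
    """True if objective vector `a` Pareto-dominates `b` (minimisation)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def update_tp(tp, parent_obj, child_obj, step=0.05, lo=0.05, hi=0.95):
    """Hypothetical rule: raise tp when the offspring dominates its parent,
    lower it when the parent dominates, leave it unchanged otherwise."""
    if dominates(child_obj, parent_obj):
        tp += step
    elif dominates(parent_obj, child_obj):
        tp -= step
    return min(hi, max(lo, tp))

def choose_operator(tp, threshold=0.5):
    """Switch between the two transfer operators of the dual mechanism."""
    if tp > threshold:
        return "horizontal_transmission"
    return "elite_guided_variation"
```

Clamping `tp` away from 0 and 1 keeps both operators reachable, which is one simple safeguard against locking the search into a single transfer mode.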

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Resources for Evolutionary Multitasking Research

| Item Name | Type / Category | Function and Application Note |

|---|---|---|

| CEC 2025 Test Suites [7] | Benchmark Problems | Provides standardized single- and multi-objective problems for rigorous and comparable performance evaluation of EMT algorithms. |

| Random Mating Probability (rmp) [16] [17] | Algorithm Parameter | Controls the probability of crossover between individuals from different tasks. A key parameter for managing implicit knowledge transfer. |

| Multi-Factorial Evolutionary Algorithm (MFEA) [16] [17] | Base Algorithm | The foundational algorithm framework for EMT, implementing skill factor, factorial cost, and implicit transfer via assortative mating. |

| Complex Network Analysis [19] | Analysis Framework | A framework for modeling and analyzing knowledge transfer behaviors in many-task optimization, where nodes are tasks and edges are transfer relationships. |

| Two-Level Transfer Learning (TLTL) [17] | Advanced Algorithm | An MFEA variant that implements inter-task (upper-level) and intra-task, across-dimension (lower-level) transfer learning to accelerate convergence. |

| Adaptive Information Transfer Strategy [18] | Algorithm Component | A mechanism to dynamically adjust the probability of information transfer between tasks based on search progress, helping to mitigate negative transfer. |

The Multifactorial Evolutionary Algorithm (MFEA) is a pioneering computational framework in the field of evolutionary multitasking optimization (EMT). It was designed to solve multiple optimization tasks simultaneously within a single run of an evolutionary algorithm by leveraging implicit knowledge transfer between tasks [20]. This paradigm marks a significant departure from traditional evolutionary algorithms, which typically handle one problem at a time. The MFEA is particularly suited for complex, large-scale optimization scenarios commonly encountered in scientific and engineering domains, including drug development, where it can exploit potential synergies between related tasks to accelerate the discovery process and improve solution quality.

Core Principles of MFEA

The MFEA creates a multitasking environment where a unified population of individuals evolves to address multiple distinct optimization tasks concurrently. The algorithm's foundation rests on two key biologically inspired mechanisms: assortative mating and vertical cultural transmission [20]. These mechanisms facilitate the transfer of genetic material and knowledge across different tasks.

In MFEA, each individual in the population possesses a skill factor, which denotes the specific task on which that individual performs best [20]. The algorithm uses a prescribed parameter called the random mating probability (rmp) to control the rate of cross-task reproduction, thereby managing the degree of knowledge transfer [20]. A scalar fitness value, defined as the reciprocal of the best factorial rank of an individual across all tasks, allows for the effective comparison and selection of individuals operating in different task spaces [20].

MFEA in Combinatorial Optimization

The foundational MFEA, initially developed for continuous optimization, has been successfully adapted for combinatorial problems. The dMFEA-II is an adaptive multifactorial evolutionary algorithm specifically designed for permutation-based discrete optimization problems, such as the Traveling Salesman Problem (TSP) and the Capacitated Vehicle Routing Problem (CVRP) [21]. This adaptation required the reformulation of concepts like parent-centric interactions to make them suited for discrete search spaces without losing the benefits of the original MFEA-II [21].

More recently, the MFEA paradigm has been extended to operate across different domains in what is termed Multi-Domain Evolutionary Optimization (MDEO). This framework is particularly powerful for combinatorial problems in complex networks (e.g., social, biological, transportation networks) that share common structural properties like power-law distribution and community structure [22]. MDEO uses measures of graph similarity and network alignment to manage the transfer of solutions between different domains, demonstrating efficacy on challenging combinatorial problems like adversarial link perturbation [22].

Table 1: Key MFEA Variants for Combinatorial Optimization

| Algorithm/Variant | Problem Type | Key Features | Sample Applications |

|---|---|---|---|

| dMFEA-II [21] | Permutation-based Discrete | Adaptive strategy for discrete spaces; Reformulated parent-centric interactions. | Traveling Salesman Problem (TSP); Capacitated Vehicle Routing Problem (CVRP). |

| MDEO [22] | Network-structured Combinatorial | Cross-domain knowledge transfer; Community-level graph similarity measurement. | Adversarial link perturbation; Critical node mining. |

| A-CMFEA [23] | Constrained Optimization | Archiving strategy for infeasible solutions; Adaptive rmp; Mutation for constraint violation. | Constrained optimization problems with multiple tasks. |

Application Notes and Experimental Protocols

Workflow for a Standard MFEA

The following diagram illustrates the standard workflow of a Multifactorial Evolutionary Algorithm.

Protocol: Constrained Multitasking Optimization with A-CMFEA

Aim: To solve constrained multitasking optimization problems using the Adaptive Archive-based MFEA (A-CMFEA) [23].

Materials/Reagents:

Table 2: Research Reagent Solutions for MFEA Experiments

| Component | Type/Function | Example/Description |

|---|---|---|

| Unified Population | Data Structure | A single matrix representing all candidate solutions for all tasks. |

| Skill Factor (τ) | Algorithmic Parameter | An index identifying the task an individual performs best on [20]. |

| Random Mating Probability (rmp) | Control Parameter | A scalar or matrix controlling cross-task reproduction rate [20] [23]. |

| Archive | Data Structure | Stores promising infeasible solutions to exploit useful information for convergence [23]. |

| Feasibility Priority Rule | Constraint Handler | Compares two solutions based on constraint violation and objective value [23]. |
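A feasibility priority rule is commonly written as the three-case comparison below (often attributed to Deb's feasibility rules). Treating a violation of exactly zero as feasible and the tuple layout are assumptions of this sketch; A-CMFEA may handle ties or tolerances differently.

```python
def feasibility_better(a, b):
    """Return True if solution `a` is preferred over `b`.

    Each solution is a tuple (objective, constraint_violation); both are minimised.
    """
    obj_a, cv_a = a
    obj_b, cv_b = b
    if cv_a == 0 and cv_b == 0:   # both feasible: compare objectives
        return obj_a < obj_b
    if cv_a == 0 or cv_b == 0:    # exactly one feasible: it wins
        return cv_a == 0
    return cv_a < cv_b            # both infeasible: smaller violation wins
```

For example, a feasible solution with objective 5 is preferred over an infeasible one with objective 1, regardless of how small the violation is.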

Procedure:

- Initialization: Generate a random initial population P. Set the initial random mating probability rmp. Initialize an empty archive.

- Evaluation and Assignment: For each individual in P, evaluate its factorial cost on every task. Assign a skill factor to each individual based on its best performance.

- Offspring Generation: For each generation, create offspring through:

  - Assortative Mating: Select two parents. If their skill factors are the same, perform within-task crossover. If their skill factors differ, perform cross-task crossover only when a random number is less than rmp.

  - Mutation: Apply a mutation operator to introduce variability.

- Archiving Strategy: Evaluate generated offspring. If an offspring is infeasible but has a better objective function value than its parent, store it in the archive.

- Adaptive rmp Adjustment: Calculate the success rates of individuals generated through within-task and cross-task knowledge transfer. Adapt the value of rmp based on a comparison of these success rates to promote positive transfer [23].

- Mutation for Constraint Handling: Select a random individual from the population and mutate it to create a mutant. Identify the individual in the population with the largest constraint violation. If the mutant has a better objective value, replace that individual with the mutant.

- Selection: Combine the current population, offspring, and individuals from the archive. Select the best individuals to form the population for the next generation, using the scalar fitness and constraint handling techniques.

- Termination: Repeat steps 3-7 until a termination criterion (e.g., maximum number of generations) is met.
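The archiving and adaptive rmp steps of this procedure can be illustrated with two small helpers. Both are hypothetical simplifications: the archive size cap, the fixed adjustment step, and the rmp bounds are assumptions, and the precise rules used by A-CMFEA are not reproduced here.

```python
def update_archive(archive, parent, child, max_size=20):
    """Keep infeasible offspring whose objective beats the parent's."""
    if child["violation"] > 0 and child["objective"] < parent["objective"]:
        archive.append(child)
        archive.sort(key=lambda s: s["violation"])
        del archive[max_size:]  # assumed cap: drop the most-violating entries
    return archive

def adapt_rmp(rmp, cross_success, cross_total, within_success, within_total,
              step=0.05, lo=0.05, hi=0.95):
    """Shift rmp toward whichever mating mode produced successful offspring more often."""
    cross_rate = cross_success / cross_total if cross_total else 0.0
    within_rate = within_success / within_total if within_total else 0.0
    if cross_rate > within_rate:
        rmp += step
    elif cross_rate < within_rate:
        rmp -= step
    return min(hi, max(lo, rmp))
```

What counts as a "success" (for instance, an offspring surviving selection) has to be defined by the surrounding algorithm; the helper only compares the two rates.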

Protocol: Knowledge Transfer Prediction with Decision Tree (EMT-ADT)

Aim: To enhance positive knowledge transfer in MFEA by using a decision tree to predict the transferability of individuals [20].

Procedure:

- Define Transfer Ability: For each individual, define a quantitative metric (transfer ability) that measures the amount of useful knowledge it contains for other tasks.

- Construct Decision Tree: Use individuals with high transfer ability to train a decision tree model. The model uses characteristics of the individuals and their performance across tasks as features.

- Integration into MFEA: During the evolution process, for each candidate individual proposed for transfer, use the trained decision tree to predict its transfer ability.

- Selective Transfer: Only allow individuals predicted to have high transfer ability to participate in cross-task reproduction, thereby reducing negative transfer and improving overall algorithm performance [20].
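To make the idea concrete without external libraries, the sketch below replaces the full decision tree with a one-level tree (a decision stump). The two features (normalised rank on the individual's own task and distance to the target task's best solution) and the labels are hypothetical placeholders, not the transfer-ability metric defined in EMT-ADT.

```python
def train_stump(samples, labels):
    """Fit a depth-1 decision tree by exhaustive search over splits."""
    best = None
    for f in range(len(samples[0])):
        for threshold in sorted({s[f] for s in samples}):
            for below_label in (0, 1):
                preds = [below_label if s[f] <= threshold else 1 - below_label
                         for s in samples]
                errors = sum(p != y for p, y in zip(preds, labels))
                if best is None or errors < best[0]:
                    best = (errors, f, threshold, below_label)
    _, f, threshold, below_label = best
    # The returned predictor outputs 1 for "high transfer ability", 0 otherwise.
    return lambda s: below_label if s[f] <= threshold else 1 - below_label

# Hypothetical training data: (normalised own-task rank, distance to target best).
samples = [(0.1, 0.2), (0.2, 0.9), (0.3, 0.1), (0.7, 0.3), (0.8, 0.8), (0.9, 0.4)]
labels = [1, 1, 1, 0, 0, 0]
predict = train_stump(samples, labels)

# Selective transfer: only candidates predicted as 1 join cross-task reproduction.
candidates = [(0.25, 0.5), (0.75, 0.1), (0.05, 0.7)]
allowed = [c for c in candidates if predict(c) == 1]
```

A deeper tree would follow the same pattern recursively; the stump is enough to show how a learned predicate can gate which individuals are allowed to transfer.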

Advanced Adaptations and Future Directions

The MFEA framework continues to evolve. Recent research explores many-task optimization (MaTO), where the number of tasks exceeds three, and many-objective many-task optimization, which deals with multiple tasks each having three or more objectives [24]. Algorithms like MOMaTO-RP use reference-point-based non-dominated sorting to maintain population diversity in high-dimensional objective spaces [24].

A cutting-edge direction involves using Reinforcement Learning (RL) to fully automate knowledge transfer decisions. The MetaMTO framework uses a multi-role RL system to dynamically learn policies for determining where to transfer (task routing), what to transfer (knowledge control), and how to transfer (strategy adaptation) [25]. This meta-learning approach aims to create more generalizable and robust multitasking optimizers, reducing the reliance on human expertise for algorithm design.

Algorithmic Frameworks and Biomedical Applications: Implementing EMTO for Complex Problems

Evolutionary Multitasking (EMT) represents a paradigm shift in evolutionary computation, enabling the simultaneous solution of multiple optimization problems by leveraging their underlying synergies. Within this framework, two distinct learning mechanisms have emerged: explicit and implicit knowledge transfer. Explicit EMT involves the deliberate, conscious transfer of solutions or strategies between tasks, often through structured operator designs and centralized knowledge repositories. In contrast, implicit EMT facilitates automatic, unconscious knowledge exchange through population structures and evolutionary dynamics without direct intervention [26].

The growing complexity of large-scale combinatorial optimization problems, particularly in domains like drug development where multiple molecular properties must be simultaneously optimized, has intensified the need for sophisticated EMT approaches. This article establishes a comprehensive framework for understanding and implementing explicit and implicit EMT strategies, with particular emphasis on their application to computational challenges in pharmaceutical research and development.

Theoretical Foundation

Explicit EMT: Centralized Learning Mechanisms

Explicit EMT operates on principles analogous to explicit learning in human sensorimotor systems, where conscious strategies are deployed to address novel challenges [27] [28]. In computational terms, this translates to:

- Deliberate knowledge extraction: Systematic identification of high-quality solutions or solution fragments from source tasks

- Structured knowledge representation: Encoding of transferred knowledge in interpretable formats (e.g., rules, models, or patterns)

- Targeted knowledge injection: Purposeful integration of transferred knowledge into the search process of target tasks

Centralized learning systems in explicit EMT typically maintain a global repository or model that accumulates knowledge across tasks, enabling the transfer of complex solution structures and heuristic rules. This approach mirrors the fast learning process observed in psychological studies, where explicit strategies enable rapid initial performance improvements [28].

Implicit EMT: Emergent Adaptation Mechanisms

Implicit EMT functions through decentralized, emergent phenomena similar to implicit learning in neural systems, where adaptation occurs gradually through repeated exposure to task regularities [27]. Key characteristics include:

- Automatic knowledge exchange: Unconscious transfer mediated through shared population structures

- Subsymbolic representation: Knowledge encoded in population distributions rather than explicit rules

- Procedural knowledge accumulation: Gradual improvement through repeated task exposure without conscious awareness

This approach corresponds to the slow learning process in psychological models, where implicit adaptation gradually reshapes the underlying solution landscape [28]. The implicit system operates through continuous, automatic adjustments to the search strategy based on experiential regularities across tasks.

Table 1: Comparative Characteristics of Explicit and Implicit EMT

| Feature | Explicit EMT | Implicit EMT |

|---|---|---|

| Knowledge Awareness | Deliberate, conscious transfer | Automatic, unconscious transfer |

| Learning Speed | Fast initial improvement | Slow, gradual adaptation |

| Knowledge Representation | Structured rules, models, patterns | Population distributions, solution features |

| Transfer Mechanism | Centralized repository, targeted injection | Shared population, assortative mating |

| Implementation Overhead | High (requires knowledge extraction) | Low (emerges from evolutionary operators) |

| Optimal Application Domain | Highly similar tasks, structured knowledge | Moderately similar tasks, procedural knowledge |

Centralized Learning Systems for Explicit EMT

Architectural Framework

Centralized learning systems provide the structural foundation for explicit knowledge transfer in EMT. The core components include:

- Knowledge Repository: A global database storing elite solutions, building blocks, and problem characteristics across all tasks

- Similarity Assessment Module: Algorithms for quantifying inter-task relationships to guide transfer decisions

- Transfer Controller: Mechanism for determining when, what, and how much knowledge to transfer between tasks

- Adaptation Engine: Components for modifying transferred knowledge to enhance compatibility with target tasks

This architecture enables the systematic accumulation and deployment of knowledge across multiple optimization tasks, creating a form of "evolutionary memory" that preserves useful solution characteristics.

Knowledge Representation Strategies

Effective explicit EMT requires sophisticated knowledge representation schemes:

- Solution Fragments: Partial solutions or building blocks that capture useful substructures

- Model-Based Representations: Probabilistic models (e.g., Bayesian networks) that encode solution distributions

- Rule-Based Systems: If-then rules capturing heuristics and patterns derived from successful solutions

- Feature-Based Encodings: Representations that emphasize salient problem characteristics rather than complete solutions

The choice of representation significantly impacts transfer effectiveness and computational efficiency, with different schemes suited to particular problem domains and similarity relationships.

Adaptive Operator Strategies for Implicit EMT

Subdomain Evolutionary Trend Alignment

The SETA-MFEA (Subdomain Evolutionary Trend Alignment in Multifactorial Evolutionary Algorithm) represents a significant advancement in implicit EMT [26]. This approach addresses the challenge of negative transfer between dissimilar tasks through several key innovations:

- Adaptive Task Decomposition: Using adaptive clustering, for example Affinity Propagation Clustering (APC), which does not require a preset number of clusters, to decompose complex tasks into simpler subdomains

- Evolutionary Trend Alignment: Establishing mappings between subdomains by aligning their evolutionary trajectories

- Inter-Subdomain Knowledge Transfer: Facilitating knowledge exchange through SETA-based crossover operations

This methodology enables precise knowledge transfer at the subdomain level, overcoming limitations of whole-task transfer approaches that often prove ineffective for heterogeneous tasks with dissimilar fitness landscapes [26].

Multifactorial Evolutionary Framework

The Multifactorial Evolutionary Algorithm (MFEA) provides the foundational framework for implicit EMT implementation [7] [26]. Key components include:

- Unified Representation: Encoding solutions for all tasks within a common search space

- Skill Factor Assignment: Associating each individual with a specific optimization task

- Assortative Mating: Preferential mating between individuals working on the same task, with controlled inter-task crossover

- Vertical Cultural Transmission: Inheriting task assignment from parents during reproduction

This framework naturally facilitates implicit knowledge transfer through shared population structures and genetic operators, without requiring explicit knowledge representation or transfer decisions.
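For continuous tasks, a unified representation is often realised by keeping every individual in [0, 1]^D, where D is the largest task dimension, and decoding to task-specific bounds only at evaluation time. The helper below is a minimal sketch of that decoding; the function name and the example bounds are illustrative assumptions.

```python
def decode(unified, lower, upper):
    """Map the first len(lower) unified genes from [0, 1] to a task's own bounds."""
    return [lo + g * (hi - lo) for g, lo, hi in zip(unified, lower, upper)]

# One individual in a 4-dimensional unified space.
individual = [0.0, 0.5, 1.0, 0.25]

# Task A is 2-dimensional on [-5, 5]; task B is 4-dimensional on [0, 10].
x_task_a = decode(individual, [-5.0, -5.0], [5.0, 5.0])  # uses the first two genes
x_task_b = decode(individual, [0.0] * 4, [10.0] * 4)     # uses all four genes
```

Because both tasks read from the same gene vector, crossover between individuals with different skill factors automatically mixes information from both task spaces.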

Table 2: Performance Comparison of EMT Algorithms on Benchmark Problems

| Algorithm | Multi-task Single-objective Problems (Avg. BFEV) | Multi-task Multi-objective Problems (Avg. IGD) | Negative Transfer Susceptibility | Computational Overhead |
|---|---|---|---|---|
| MFEA | 0.154 | 0.082 | High | Low |
| MFEA-II | 0.121 | 0.065 | Medium | Medium |
| SETA-MFEA | 0.089 | 0.043 | Low | High |
| Single-task EA | 0.195 | 0.101 | N/A | N/A |

BFEV: Best Function Error Value; IGD: Inverted Generational Distance

Experimental Protocols and Validation

Benchmarking Methodology

Comprehensive evaluation of EMT algorithms requires standardized testing protocols:

- Test Suites: Utilization of established benchmark problems including multi-task single-objective optimization (MTSOO) and multi-task multi-objective optimization (MTMOO) problems [7]

- Experimental Settings: 30 independent runs per algorithm with different random seeds, with maximum function evaluations (maxFEs) set to 200,000 for 2-task problems and 5,000,000 for 50-task problems [7]

- Performance Metrics: Best function error value (BFEV) for single-objective problems and inverted generational distance (IGD) for multi-objective problems

- Statistical Validation: Appropriate statistical tests (e.g., Wilcoxon signed-rank test) to confirm significance of performance differences

These protocols ensure fair comparison and robust evaluation of EMT algorithm performance across diverse problem characteristics.
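The two performance metrics named above can be computed as in the sketch below. It assumes minimization, a known global optimum for BFEV, and a sampled reference Pareto front for IGD. The function names are illustrative and do not come from any benchmark toolkit.

```python
import math


def bfev(best_found, known_optimum):
    """Best function error value for a minimization task.

    Assumes the global optimum value is known, as it is for synthetic
    benchmark functions.
    """
    return best_found - known_optimum


def igd(reference_front, obtained_front):
    """Inverted generational distance.

    Averages, over every point of the reference Pareto front, the Euclidean
    distance to the closest obtained solution in objective space.
    Lower is better; 0 means every reference point is matched exactly.
    """
    total = 0.0
    for r in reference_front:
        total += min(math.dist(r, s) for s in obtained_front)
    return total / len(reference_front)


def mean_over_runs(values):
    # Average of a metric across independent runs (e.g. 30 seeds).
    return sum(values) / len(values)
```

For example, `igd([(0.0, 0.0)], [(3.0, 4.0)])` evaluates to `5.0`, the distance from the single reference point to the single obtained solution.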

Implementation Protocol for SETA-MFEA

The following protocol details the implementation of the advanced SETA-MFEA algorithm:

Initialization Phase

- Create a unified population of individuals encoded in a unified search space

- Initialize skill factors for each individual randomly across all tasks

- Set algorithm parameters: random mating probability (rmp = 0.3), clustering threshold (δ = 0.15)

Subdomain Decomposition (Each Generation)

- For each task, identify corresponding subpopulation using skill factors

- Apply Affinity Propagation Clustering to decompose each subpopulation into k subdomains

- Characterize each subdomain by its centroid and fitness distribution

Evolutionary Trend Alignment

- Calculate evolutionary direction for each subdomain using population movements over previous 5-10 generations

- Compute similarity matrix between all subdomain pairs using trend consistency measures

- Establish mappings between subdomains with consistent evolutionary trends
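One way to picture the alignment step is sketched below. The source does not specify the trend consistency measure, so this sketch makes three assumptions: a subdomain's evolutionary direction is the displacement of its centroid over the recorded generations, consistency is cosine similarity, and the cut-off `min_similarity` is a hypothetical parameter. The actual SETA formulation may differ.

```python
import math


def centroid(points):
    # Mean position of a subdomain's individuals in the unified space.
    n = len(points)
    return [sum(p[d] for p in points) / n for d in range(len(points[0]))]


def trend(centroid_history):
    """Evolutionary direction: displacement from the oldest to the newest
    recorded centroid (e.g. a window of the last 5 to 10 generations)."""
    first, last = centroid_history[0], centroid_history[-1]
    return [b - a for a, b in zip(first, last)]


def cosine(u, v):
    nu = math.sqrt(sum(x * x for x in u))
    nv = math.sqrt(sum(x * x for x in v))
    if nu == 0.0 or nv == 0.0:
        return 0.0  # a stalled subdomain has no direction to compare
    return sum(x * y for x, y in zip(u, v)) / (nu * nv)


def align_subdomains(trends_a, trends_b, min_similarity=0.5):
    """Map each subdomain of task A to its most similar subdomain of task B.

    Pairs whose best similarity falls below min_similarity stay unmapped,
    so no transfer is attempted between them.
    """
    mapping = {}
    for i, ta in enumerate(trends_a):
        sims = [cosine(ta, tb) for tb in trends_b]
        j = max(range(len(sims)), key=sims.__getitem__)
        if sims[j] >= min_similarity:
            mapping[i] = j
    return mapping
```

Leaving dissimilar subdomains unmapped is the design choice that limits negative transfer in this sketch: transfer is only permitted where the search is already moving in a similar direction.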

Knowledge Transfer Phase

- For each individual, select mating partner using modified assortative mating:

  - With probability rmp, select partner from different task but aligned subdomain

  - With probability 1-rmp, select partner from same task

- Perform crossover using SETA-based alignment for inter-subdomain mating

- Apply mutation operators with task-specific parameter settings
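The partner-selection rule in this phase can be sketched as below. The data layout is an assumption made for illustration: each individual is a dict with `task` and `subdomain` keys, and `mapping` takes a `(task, subdomain)` pair to its aligned pair in another task. The fallback to a same-task partner when no aligned partner exists is also an assumption.

```python
import random


def select_partner(idx, population, mapping, rmp, rng):
    """Return (partner_index, is_cross_task) for the individual at idx.

    With probability rmp, try to pick a partner from the aligned subdomain
    of a different task; otherwise pick a partner from the same task.
    """
    me = population[idx]
    if rng.random() < rmp:
        target = mapping.get((me["task"], me["subdomain"]))
        if target is not None:
            candidates = [i for i, ind in enumerate(population)
                          if (ind["task"], ind["subdomain"]) == target]
            if candidates:
                return rng.choice(candidates), True
    # Same-task mating (also the fallback when no aligned partner exists).
    same = [i for i, ind in enumerate(population)
            if ind["task"] == me["task"] and i != idx]
    return (rng.choice(same) if same else idx), False
```

A caller would apply the alignment-based crossover when the second return value is `True` and an ordinary within-task crossover otherwise.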

Selection and Update

- Evaluate offspring on assigned tasks

- Update subpopulation through elitist selection

- Update evolutionary trend records for each subdomain

This protocol enables precise knowledge transfer while minimizing negative transfer between dissimilar task subdomains [26].

Applications in Drug Development and Combinatorial Optimization

Pharmaceutical Optimization Scenarios

EMT approaches offer significant potential for addressing complex optimization challenges in pharmaceutical research:

- Multi-property Molecular Optimization: Simultaneous optimization of potency, selectivity, and ADMET properties

- Cross-target Drug Design: Leveraging similarities between related protein targets to accelerate inhibitor discovery

- Multi-scale Formulation Optimization: Concurrent optimization of molecular structure and formulation parameters

These applications typically involve heterogeneous tasks with varying degrees of similarity, requiring careful selection of explicit or implicit EMT strategies based on task relationships.

Large-scale Combinatorial Optimization

In large-scale combinatorial problems relevant to drug discovery, EMT provides several advantages:

- Synergistic Search: Leveraging common substructures or patterns across related problems

- Accelerated Convergence: Transferring high-quality building blocks between tasks to reduce evaluation burden

- Robust Solution Quality: Avoiding local optima through diverse knowledge sources

Experimental results demonstrate that EMT algorithms can reduce computational effort by 30-50% compared to single-task approaches while maintaining or improving solution quality [26].

The Scientist's Toolkit

Research Reagent Solutions

Table 3: Essential Computational Tools for EMT Research

| Tool/Resource | Function | Application Context |
|---|---|---|
| MTO Benchmark Suites | Standardized test problems for algorithm validation | Performance comparison and capability assessment [7] |
| Domain Adaptation Libraries | Implementation of LDA, SETA, and other transfer mappings | Enhancing cross-task similarity for heterogeneous tasks [26] |
| Multifactorial Evolutionary Framework | Foundational implementation of MFEA, MFPSO, and MFDE | Core EMT algorithm development and extension [26] |
| Fitness Landscape Analysis Tools | Characterization of problem difficulty and task similarity | Informed transfer strategy selection and parameter tuning |
| Parallel Computing Infrastructure | Distributed evaluation of multiple tasks and populations | Scalable EMT for computationally expensive problems |

Visualizations

[Figure: Explicit and Implicit EMT Architectures]

[Figure: SETA-MFEA Algorithm Workflow]

The integration of explicit and implicit EMT strategies represents a promising direction for addressing complex large-scale combinatorial optimization problems in drug development and related fields. Explicit EMT with centralized learning mechanisms provides structured, interpretable knowledge transfer, which suits tasks whose similarities are clear and whose shared knowledge can be represented directly. Implicit EMT with adaptive operator strategies offers robust, emergent transfer for heterogeneous tasks whose relationships are complex or poorly understood.

Future research directions include hybrid explicit-implicit frameworks that dynamically select transfer strategies based on task characteristics, more sophisticated knowledge representation schemes for complex solution structures, and enhanced scalability for many-task optimization scenarios. As EMT methodologies continue to mature, they hold significant potential for accelerating discovery processes in pharmaceutical research and other domains requiring concurrent optimization of multiple, interrelated objectives.

The application of evolutionary computation to combinatorial optimization represents a cornerstone of modern computational intelligence research, particularly within complex domains such as drug discovery. The paradigm of evolutionary multitasking has emerged as a powerful framework for addressing multiple optimization problems simultaneously, harnessing the synergies between tasks to accelerate convergence and improve solution quality [7]. Within pharmaceutical research, the combinatorial explosion of possible molecular configurations presents a characteristically high-dimensional challenge that traditional optimization methods struggle to address efficiently. This application note delineates structured methodologies and protocols for applying evolutionary multitasking approaches to large-scale combinatorial problems, with specific emphasis on drug discovery applications where discrete variables and complex constraint landscapes dominate the optimization terrain. By integrating advanced constraint-handling techniques with visualization approaches for combinatorial spaces, researchers can navigate these complex search domains more effectively, ultimately reducing drug development timelines and improving success rates in identifying viable therapeutic candidates [29] [30].

Core Computational Challenges in Combinatorial Drug Optimization

High-Dimensionality in Chemical Space

Drug discovery involves navigating ultra-large chemical spaces that can contain billions of potential compounds. Recent advances in "make-on-demand" virtual libraries have expanded accessible chemical space dramatically, with suppliers like Enamine offering approximately 65 billion novel compounds and OTAVA providing around 55 billion [30]. This combinatorial explosion creates significant challenges for traditional screening methods, because exhaustively evaluating every compound, whether experimentally or computationally, is infeasible. The high-dimensional nature of molecular descriptor data compounds this challenge and calls for dimensionality reduction techniques to enable effective analysis and optimization.

Discrete Variable Representation

Combinatorial optimization in drug discovery inherently involves discrete variables representing molecular structures, scaffold configurations, and substitution patterns. Unlike continuous optimization problems, combinatorial spaces lack natural ordering and continuity, making neighborhood definitions and variation operators more complex [31]. The fundamental challenge lies in defining effective neighborhood structures and variation operators that can efficiently explore these discrete spaces while maintaining chemical feasibility and meaningful molecular transformations.

Constraint Handling in Biochemical Optimization

Drug optimization problems typically incorporate numerous complex constraints: physicochemical property limits; absorption, distribution, metabolism, excretion, and toxicity (ADMET) requirements; and synthetic accessibility considerations. Effectively handling these constraints is critical for generating practically viable solutions. Constraint-handling techniques must balance maintaining feasibility against searching for optimality, particularly because the global optimum often lies near the boundary of the feasible region [32] [33].

Table 1: Classification of Constraint-Handling Techniques for Combinatorial Optimization

| Technique Category | Key Characteristics | Advantages | Limitations |
|---|---|---|---|
| Penalty Function Methods | Uses penalty factors to incorporate constraints into fitness function | Simple implementation, wide applicability | Requires careful parameter tuning, may converge prematurely |
| Feasibility Preference Methods | Prioritizes feasible solutions over infeasible ones | Effective boundary search, good convergence | May overlook useful infeasible solutions |
| Multi-objective Optimization Methods | Treats constraints as additional objectives | Automatic balance of constraints/objectives | Increased computational complexity |
| Hybrid Methods | Combines multiple constraint-handling approaches | Adaptable to different problem phases | Complex implementation, parameter sensitivity |
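The first two categories in the table can be contrasted on a toy discrete problem. The sketch below assumes minimization and constraints written as functions `g` with `g(x) <= 0` when satisfied. The knapsack-style data, the `penalty` value, and all function names are illustrative assumptions. The feasibility-preference comparison follows the widely used feasibility rule attributed to Deb.

```python
def total_violation(x, constraints):
    # Sum of positive parts: 0.0 exactly when every constraint is satisfied.
    return sum(max(0.0, g(x)) for g in constraints)


def penalized_fitness(x, objective, constraints, penalty=10.0):
    """Penalty function method: fold constraint violation into the fitness."""
    return objective(x) + penalty * total_violation(x, constraints)


def feasibility_better(a, b, objective, constraints):
    """Feasibility preference: True if a should be preferred over b.

    Feasible beats infeasible; two feasible solutions compare by objective;
    two infeasible solutions compare by total violation.
    """
    va, vb = total_violation(a, constraints), total_violation(b, constraints)
    if va == 0.0 and vb == 0.0:
        return objective(a) < objective(b)
    if va == 0.0 or vb == 0.0:
        return va == 0.0
    return va < vb


# Toy problem (illustrative): choose items to maximize value under a weight cap.
VALUES, WEIGHTS, CAPACITY = [6, 5, 4], [4, 3, 2], 5


def objective(x):
    return -sum(v * b for v, b in zip(VALUES, x))  # negate to minimize


def weight_limit(x):
    return sum(w * b for w, b in zip(WEIGHTS, x)) - CAPACITY
```

With these definitions, the selection `(1, 1, 1)` has the best raw objective but violates the weight cap by 4 units, so both methods rank the feasible `(0, 1, 1)` ahead of it. A smaller `penalty` could reverse that ordering under the penalty method, which illustrates the tuning sensitivity noted in the table.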

Evolutionary Multitasking Protocols for Combinatorial Spaces

Framework Configuration

Evolutionary multitasking provides a powerful mechanism for leveraging inter-task synergies when solving multiple combinatorial optimization problems simultaneously. The protocol exploits the fact that genetic material evolved for one task may prove effective for another, facilitating knowledge transfer across optimization landscapes [7]. The multi-factorial evolutionary algorithm (MFEA) serves as the foundational framework, maintaining a unified population that searches across multiple tasks concurrently while enabling implicit genetic transfer through specialized crossover operations.

Implementation Protocol:

- Initialize unified population with random feasible solutions

- Assign skill factors to individuals based on task performance

- Implement assortative mating that preferentially crosses individuals with same skill factor

- Apply vertical cultural transmission to offspring to inherit skill factor

- Execute selection based on scalar fitness encompassing all tasks

- Enable implicit genetic transfer through cross-task crossover events
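The skill-factor assignment and scalar-fitness steps can be made concrete with the rank-based definitions used in the MFEA literature: an individual's factorial rank on a task is its position when the population is sorted by that task's objective, its skill factor is the task on which it ranks best, and its scalar fitness is the reciprocal of that best rank. The sketch below assumes minimization and that every individual has a cost on every task; the function names are illustrative.

```python
def factorial_ranks(costs):
    """costs[i][t] is the objective value of individual i on task t.

    Returns ranks[i][t], the 1-based position of individual i when the
    population is sorted by ascending cost on task t.
    """
    n, k = len(costs), len(costs[0])
    ranks = [[0] * k for _ in range(n)]
    for t in range(k):
        order = sorted(range(n), key=lambda i: costs[i][t])
        for r, i in enumerate(order, start=1):
            ranks[i][t] = r
    return ranks


def skill_and_scalar_fitness(ranks):
    """Skill factor = task with the best (lowest) rank;
    scalar fitness = 1 / best rank, so higher is better."""
    skill = [min(range(len(r)), key=r.__getitem__) for r in ranks]
    scalar = [1.0 / min(r) for r in ranks]
    return skill, scalar
```

Because scalar fitness depends only on ranks, individuals specializing in different tasks become directly comparable during selection even when the tasks' objective values sit on very different scales. In later generations, implementations typically evaluate each offspring only on its inherited task and treat its cost on the other tasks as infinite.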

Chemical Space Decomposition Protocol

Managing ultra-large chemical spaces requires strategic problem decomposition to make optimization tractable. This protocol employs a classification-collaboration approach where the original problem with multiple constraints is decomposed into smaller subproblems [32].

Experimental Workflow:

- Constraint Classification: Randomly classify constraints into K distinct classes

- Problem Decomposition: Decompose original problem into K corresponding subproblems

- Subpopulation Initialization: Generate K subpopulations, each assigned to a subproblem

- Collaborative Evolution: Implement random and directed learning stages for inter-subpopulation interaction

- Solution Reconstruction: Aggregate solutions from subproblems to reconstruct complete solutions
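The first two workflow steps can be sketched as follows. The round-robin split after shuffling, the `g(x) <= 0` constraint convention, and all function names are assumptions for illustration. The collaborative learning stages described in [32] are not reproduced here.

```python
import random


def classify_constraints(num_constraints, k, rng):
    """Randomly partition constraint indices into k classes of near-equal size."""
    indices = list(range(num_constraints))
    rng.shuffle(indices)
    return [sorted(indices[c::k]) for c in range(k)]


def subproblem_violation(x, constraints, constraint_class):
    # A subproblem only "sees" the constraints assigned to its class.
    return sum(max(0.0, constraints[i](x)) for i in constraint_class)


def is_feasible_for_original(x, constraints, classes):
    """A solution is feasible for the original problem exactly when it is
    feasible for every subproblem, because the classes partition the
    full constraint set."""
    return all(subproblem_violation(x, constraints, cls) == 0.0
               for cls in classes)
```

Each subpopulation would then evolve against `subproblem_violation` for its own class, which is easier to satisfy than the full constraint set, while `is_feasible_for_original` checks candidates during solution reconstruction.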

Table 2: Evolutionary Multitasking Benchmark Problems for Combinatorial Optimization

| Problem Type | Component Tasks | Variable Types | Key Constraints | Evaluation Metric |
|---|---|---|---|---|
| Multi-Task Single-Objective (MTSOO) | 2-50 single-objective tasks | Discrete, combinatorial | Linear, nonlinear, equality, inequality | Best Function Error Value (BFEV) |
| Multi-Task Multi-Objective (MTMOO) | 2-50 multi-objective tasks | Discrete, combinatorial | Linear, nonlinear, equality, inequality | Inverted Generational Distance (IGD) |
| Drug Scaffold Optimization | Multiple molecular scaffolds | Discrete structural variables | ADMET, synthetic accessibility | Binding affinity, similarity metrics |