Evolutionary Multi-Task Optimization in Biomedicine: A Comprehensive Survey of Algorithms, Applications, and Future Directions

Abstract

This comprehensive literature review surveys the rapidly evolving field of Evolutionary Multi-Task Optimization (EMTO) with specific focus on applications in biomedical and clinical research contexts. The review systematically examines foundational EMTO principles, including multi-factorial and multi-population frameworks, then progresses to methodological innovations such as progressive auto-encoding and adaptive knowledge transfer mechanisms. It addresses critical implementation challenges including negative transfer prevention and computational efficiency, while validating EMTO performance through benchmark studies and real-world applications in drug development, clinical trial optimization, and healthcare resource allocation. This survey synthesizes current research trends and identifies promising future directions for EMTO methodologies in addressing complex optimization problems in biomedical research and pharmaceutical development.

Foundations of Evolutionary Multi-Task Optimization: Principles, Frameworks, and Theoretical Underpinnings

Evolutionary Algorithms (EAs) have demonstrated remarkable success as powerful global optimization techniques by simulating the process of natural evolution [1]. Traditional EAs are designed for single-task optimization, focusing on solving one optimization problem at a time, whether single-objective, multi-objective, multi-modal, or dynamic in nature [1]. Despite their widespread application, single-task EAs have inherent limitations, chiefly their tendency to rely on greedy search without leveraging prior knowledge gained from solving similar problems [1]. Each problem is in effect solved in isolation, without exploiting synergies or commonalities that might exist between related tasks, which leads to computational inefficiency when similar problems must be solved repeatedly [2].

The fundamental shift toward multi-task optimization emerges from the recognition that "everything is interconnected, and humans can enhance their current task efficiency by leveraging past experiences" [1]. This conceptual breakthrough led researchers to explore how historical processing experience could be advantageously incorporated when solving present tasks, mirroring human problem-solving capabilities [1].

The Emergence of Evolutionary Multi-Task Optimization

Evolutionary Multi-task Optimization (EMTO) represents a paradigm shift in evolutionary computation: multiple tasks are optimized simultaneously within a single run, and the algorithm outputs the best solution found for each task [1]. Drawing inspiration from multitask learning and transfer learning, EMTO uses EAs as the underlying search engine to solve multiple related tasks concurrently [1].

The core principle underpinning EMTO is that "if some common useful knowledge exists in solving a task, then the useful knowledge gained in the process of solving this task may help to solve another task that is related to it" [1]. This knowledge transfer mechanism allows EMTO to fully utilize the implicit parallelism of population-based search, enabling mutual enhancement across tasks during the optimization process [2]. Unlike traditional sequential transfer approaches where knowledge transfer is unidirectional, EMTO facilitates bidirectional knowledge transfer, allowing knowledge to flow among different tasks simultaneously to promote mutual enhancement [2].

The first groundbreaking implementation of EMTO was the Multifactorial Evolutionary Algorithm (MFEA), which created a multi-task environment where a single population evolves toward solving multiple tasks simultaneously [1]. In MFEA, each task is treated as a unique "cultural factor" influencing the population's evolution, with skill factors used to divide the population into non-overlapping task groups [1]. Knowledge transfer in MFEA is achieved through two algorithmic modules—assortative mating and selective imitation—which work in combination to enable knowledge transfer across different task groups [1].

Table 1: Comparison Between Single-Task EA and Evolutionary Multi-Task Optimization

| Feature | Single-Task EA | Evolutionary Multi-Task Optimization |
|---|---|---|
| Scope | Optimizes one problem at a time | Optimizes multiple tasks simultaneously |
| Knowledge Utilization | No knowledge transfer between tasks | Implicit knowledge transfer across tasks |
| Search Approach | Greedy search without prior knowledge | Leverages common useful knowledge across tasks |
| Parallelism | Limited to single task | Utilizes implicit parallelism of population-based search |
| Algorithmic Structure | Separate optimization for each task | Single population evolving for multiple tasks |
| Transfer Mechanism | No transfer or unidirectional sequential transfer | Bidirectional knowledge transfer |

The Core EMTO Framework and Knowledge Transfer Mechanisms

Fundamental Architecture

The EMTO framework introduces a multi-task environment where a single population evolves to address multiple optimization tasks concurrently [1]. Unlike traditional EAs that require separate optimization runs for each task, EMTO maintains a unified population that collectively searches across all task domains. This architecture enables the algorithm to "transfer knowledge across different tasks to improve performance in solving each task independently" [2]. The critical contribution of EMTO lies in its introduction of this multi-task environment and the implementation of systematic knowledge transfer across tasks during evolution [2].

The population in EMTO is typically divided using skill factors that assign individuals to specific tasks, creating non-overlapping task groups within the broader population [1]. This organizational structure allows each subgroup to focus on its respective task while remaining part of a larger evolutionary process that facilitates cross-task knowledge exchange. According to [1], theoretical analysis supports this approach, showing that EMTO can converge faster than traditional single-task optimization.

Knowledge Transfer: The Core Challenge

The performance of EMTO depends heavily on effective knowledge transfer (KT) between tasks [2]. The transfer process aims to leverage commonalities and relationships between tasks to accelerate convergence and improve solution quality. However, this process introduces the significant challenge of negative transfer, which occurs when "performing KT between tasks with low correlation can even deteriorate the optimization performance as compared to optimizing each task separately" [2].

Research to mitigate negative transfer primarily focuses on two aspects: "the first is to determine suitable tasks for performing knowledge transfer, and the second is to improve the way of eliciting more useful knowledge in the knowledge transfer process" [2]. Existing approaches address these challenges through various methods, including measuring similarity between tasks, dynamically adjusting inter-task knowledge transfer probabilities based on correlation, and monitoring the amount of positively transferred knowledge during evolution [2].
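The idea of adjusting the transfer probability from monitored transfer success can be illustrated with a small sketch. The update rule below (an exponential moving average of the observed success rate, clamped to a range) is an assumption chosen for simplicity and is not taken from [2]; the function name, `learning_rate`, `floor`, and `ceiling` are likewise hypothetical.

```python
def update_transfer_probability(rmp: float, successes: int, attempts: int,
                                learning_rate: float = 0.2,
                                floor: float = 0.05,
                                ceiling: float = 0.95) -> float:
    """Nudge the inter-task transfer probability toward the observed success rate.

    successes: cross-task offspring that survived selection this generation.
    attempts:  cross-task offspring that were created this generation.
    The moving-average rule and the clamping bounds are illustrative assumptions.
    """
    if attempts <= 0:
        # No transfer was attempted, so there is no evidence to learn from.
        return rmp
    success_rate = successes / attempts
    updated = (1.0 - learning_rate) * rmp + learning_rate * success_rate
    # Keep a small floor so transfer can recover if tasks become related later.
    return min(ceiling, max(floor, updated))


# Example: 8 of 10 transferred offspring survived, so the probability rises.
new_rmp = update_transfer_probability(0.3, successes=8, attempts=10)
```

Keeping a nonzero floor is a design choice: it prevents the algorithm from permanently switching transfer off after a few unlucky generations.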

Table 2: Knowledge Transfer Methods in Evolutionary Multi-Task Optimization

| Method Category | Key Mechanism | Representative Techniques |
|---|---|---|
| Implicit Transfer | Improves selection or crossover methods of transfer individuals | Assortative mating, selective imitation [1] |
| Explicit Transfer | Directly constructs inter-task mappings based on task characteristics | Explicit knowledge representations, solution translation [2] |
| Similarity-Based | Measures correlation between tasks to determine transfer suitability | Task similarity metrics, correlation analysis [2] |
| Adaptive Probability | Dynamically adjusts transfer probability based on evolutionary progress | Success history monitoring, positive transfer measurement [2] |

Key Algorithms and Methodological Approaches

The Multifactorial Evolutionary Algorithm (MFEA)

As the pioneering EMTO algorithm, MFEA establishes the foundational framework for subsequent developments in the field [1]. MFEA introduces several innovative concepts that enable effective multi-task optimization:

- Multifactorial Environment: MFEA creates an environment where multiple optimization tasks (termed "cultural factors") simultaneously influence population evolution [1].

- Skill Factor: Each individual in the population is assigned a skill factor representing its assigned task, dividing the population into non-overlapping task groups [1].

- Assortative Mating: This mechanism allows individuals from different tasks to mate with a specified probability, facilitating cross-task knowledge exchange [1].

- Selective Imitation: Each offspring imitates the skill factor of one of its parents and is evaluated only on that task, so genetic material produced by cross-task mating is tested directly on a task it may benefit [1].

The algorithmic foundation of MFEA has demonstrated significant performance improvements, particularly in convergence speed, when solving related optimization problems compared to traditional single-task approaches [1].
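The assortative mating and selective imitation steps listed above can be sketched as follows. This is a minimal illustration, not the reference implementation: uniform crossover and Gaussian mutation stand in for whatever operators a given MFEA variant uses, and the names `assortative_mating`, `rmp` (the probability that parents from different tasks may mate), and `sigma` are assumptions made for the example.

```python
import random
from typing import List, Tuple

Individual = Tuple[List[float], int]  # (genes in [0, 1]^D, skill factor)


def clip01(value: float) -> float:
    """Keep a gene inside the unified [0, 1] range."""
    return min(1.0, max(0.0, value))


def assortative_mating(p1: Individual, p2: Individual, rmp: float,
                       rng: random.Random, sigma: float = 0.1
                       ) -> Tuple[Individual, Individual]:
    genes1, tau1 = p1
    genes2, tau2 = p2
    if tau1 == tau2 or rng.random() < rmp:
        # Same task, or cross-task mating permitted by rmp: recombine.
        # Uniform crossover is a stand-in operator (assumption).
        mask = [rng.random() < 0.5 for _ in genes1]
        c1 = [a if m else b for a, b, m in zip(genes1, genes2, mask)]
        c2 = [b if m else a for a, b, m in zip(genes1, genes2, mask)]
        # Selective imitation: each child copies the skill factor of a
        # randomly chosen parent and would be evaluated on that task only.
        return (c1, rng.choice([tau1, tau2])), (c2, rng.choice([tau1, tau2]))
    # Cross-task mating rejected: mutate each parent on its own, and each
    # child keeps the skill factor of the parent it came from.
    m1 = [clip01(g + rng.gauss(0.0, sigma)) for g in genes1]
    m2 = [clip01(g + rng.gauss(0.0, sigma)) for g in genes2]
    return (m1, tau1), (m2, tau2)


rng = random.Random(0)
parent_a = ([0.1, 0.2, 0.3], 0)
parent_b = ([0.7, 0.8, 0.9], 1)
child_1, child_2 = assortative_mating(parent_a, parent_b, rmp=0.3, rng=rng)
```

With `rmp=0.0` the two task groups never exchange genes, and with `rmp=1.0` every pairing recombines, which makes the parameter a direct dial for the amount of implicit transfer.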

Advanced Knowledge Transfer Strategies

Subsequent research has developed more sophisticated knowledge transfer strategies to enhance EMTO performance and mitigate negative transfer:

- Adaptive Transfer Methods: These approaches dynamically adjust transfer probabilities based on ongoing assessment of transfer success, increasing transfer between highly correlated tasks while reducing potentially detrimental transfers [2].

- Explicit Mapping Techniques: Unlike implicit transfer methods that rely on genetic operations, explicit methods construct direct mappings between task solution spaces based on task characteristics [2].

- Transfer Learning Integration: Recent works explore integrating transfer learning methodologies from machine learning into EMTO frameworks to improve knowledge extraction and application across tasks [2].

These advanced strategies address the fundamental questions of knowledge transfer: "when KT should be performed and how KT can be performed" [2], which represent the core challenges in EMTO design.

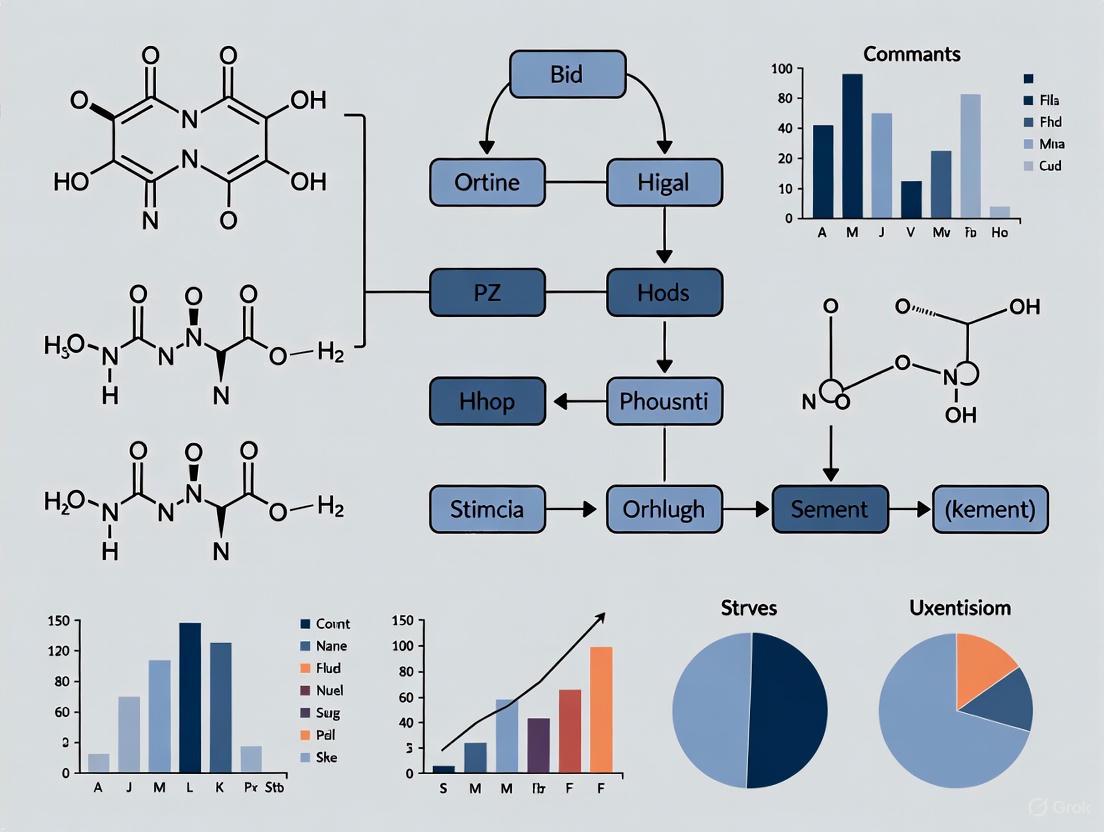

Diagram 1: EMTO Framework with Knowledge Transfer Mechanism. This diagram illustrates the unified population structure divided into task groups with bidirectional knowledge transfer enabling simultaneous optimization of multiple tasks.

Research Reagents and Experimental Tools

Table 3: Essential Methodological Components for EMTO Research and Implementation

| Component | Function | Implementation Examples |
|---|---|---|
| Population Structure | Maintains diversity across tasks while enabling knowledge transfer | Unified population with skill factors, explicit multipopulation frameworks [1] |
| Knowledge Transfer Mechanism | Facilitates exchange of useful information between tasks | Assortative mating, selective imitation, explicit solution mapping [1] [2] |
| Similarity Measurement | Quantifies inter-task relationships to guide transfer | Correlation analysis, transfer success history, topological mapping [2] |
| Adaptive Control | Dynamically adjusts transfer parameters based on performance | Probability adjustment, success-based transfer regulation [2] |
| Evaluation Framework | Assesses algorithm performance and transfer effectiveness | Convergence speed analysis, solution quality metrics, negative transfer quantification [1] [2] |

Applications and Future Research Directions

EMTO has demonstrated significant applicability across diverse domains, particularly benefiting problems where related optimization tasks share common underlying structures. Notable application areas include cloud computing resource optimization, complex engineering design problems, and machine learning parameter optimization [1]. The ability to simultaneously address multiple related tasks while leveraging cross-domain knowledge makes EMTO particularly valuable for real-world problems characterized by interconnected optimization challenges.

Future research directions for EMTO focus on addressing current limitations and expanding methodological capabilities. Promising areas include developing more sophisticated similarity measures for automatic task relationship detection, creating advanced transfer mechanisms to minimize negative transfer, and exploring synergies with other optimization paradigms such as multi-objective and constrained optimization [1]. Additionally, research continues into expanding the application domains of EMTO and developing theoretical foundations to better understand knowledge transfer dynamics in evolutionary computation [2].

As EMTO continues to evolve, it represents a paradigm shift in how evolutionary algorithms approach complex, interconnected optimization problems, moving beyond isolated problem-solving toward integrated multi-task frameworks that mirror the interconnected nature of real-world challenges.

Evolutionary Multi-Task Optimization (EMTO) represents a paradigm shift in computational intelligence, moving beyond traditional evolutionary algorithms that typically solve problems in isolation. Inspired by human capability to simultaneously manage multiple related activities and drawing concepts from multitask learning and transfer learning in artificial intelligence, EMTO aims to optimize multiple self-contained tasks concurrently within a single algorithmic run [1] [3]. The fundamental premise is that if common useful knowledge exists across tasks, then the transfer of this knowledge during the optimization process may accelerate convergence and improve solution quality for all tasks involved [1]. This approach leverages the implicit parallelism of population-based search, making it particularly suitable for complex, non-convex, and nonlinear problems that characterize many real-world applications in fields such as drug development, cloud computing, and engineering design [1].

The EMTO field has seen substantial growth since the introduction of the Multi-Factorial Evolutionary Algorithm (MFEA) in 2016, with a steady increase in publications and applications between 2017 and 2022 [1]. As an emerging branch of evolutionary computation, EMTO provides a novel framework for dealing with the growing complexity of modern optimization problems where tasks seldom exist in isolation. This technical guide examines the two predominant architectural frameworks—multi-factorial and multi-population approaches—that have emerged as foundational implementations within the EMTO paradigm, providing researchers with in-depth analysis, comparative evaluation, and practical implementation guidelines.

Multi-Factorial Evolutionary Architecture

Core Principles and Mechanism

The Multi-Factorial Evolutionary Algorithm (MFEA) represents the pioneering architecture in EMTO, introducing a unified representation scheme where all solutions reside within a normalized search space regardless of their associated tasks [4]. This innovative framework treats multiple optimization tasks as different "cultural factors" influencing the evolution of a single population, thereby creating what researchers term a multi-task environment [1]. The algorithm's cornerstone is its ability to encode solutions from different tasks into a unified representation space Y, typically normalized to [0, 1]^D, where D corresponds to the maximum dimensionality among all tasks [4]. This normalization enables direct genetic exchange between solutions belonging to different tasks, facilitating implicit knowledge transfer.
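As a minimal sketch of how a point in the unified space might be mapped back to a task, the function below takes the first d_k coordinates of a vector in [0, 1]^D and rescales them linearly to that task's bounds. Box constraints, the "first d_k coordinates" convention, and the names `decode`, `lower`, and `upper` are assumptions for illustration rather than details given in [4].

```python
from typing import List, Sequence


def decode(y: Sequence[float], lower: Sequence[float],
           upper: Sequence[float]) -> List[float]:
    """Map a unified vector y in [0, 1]^D to a task with len(lower) variables.

    Assumes the task reads the first d_k coordinates of y and has simple
    box bounds; both are illustrative conventions.
    """
    if len(lower) != len(upper):
        raise ValueError("lower and upper must have the same length")
    if len(y) < len(lower):
        raise ValueError("unified vector is shorter than the task dimensionality")
    # zip stops at the shortest input, so unused trailing genes are ignored.
    return [lo + yi * (hi - lo) for yi, lo, hi in zip(y, lower, upper)]


# The unified space has D = 3, but this task only needs 2 variables.
y = [0.5, 0.25, 0.9]
x = decode(y, lower=[-10.0, 0.0], upper=[10.0, 4.0])  # [0.0, 1.0]
```

Because every task decodes from the same vector, crossover between any two individuals is always well defined, even when their tasks have different dimensionalities.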

MFEA introduces several key properties for comparing individuals across different optimization tasks. The factorial cost refers to an individual's fitness value for a specific task, while the factorial rank represents its relative performance position within the population for that task [4]. These metrics enable the calculation of scalar fitness, a unified measure that allows direct comparison of individuals across different tasks, and the skill factor, which identifies the specific task at which an individual excels most [4]. Through these mechanisms, MFEA naturally allocates more resources to individuals demonstrating strong performance across tasks while maintaining population diversity.
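These four properties can be computed from a matrix of factorial costs, as in the sketch below. It uses the definitions commonly given for MFEA (scalar fitness as the reciprocal of an individual's best factorial rank, skill factor as the task where that best rank occurs); the function name and the tie-breaking by population index are assumptions for illustration.

```python
from typing import List, Tuple


def mfea_properties(costs: List[List[float]]
                    ) -> Tuple[List[List[int]], List[float], List[int]]:
    """costs[i][j] is the factorial cost of individual i on task j (lower is better).

    Returns (factorial ranks, scalar fitness, skill factors).
    """
    n, k = len(costs), len(costs[0])
    ranks = [[0] * k for _ in range(n)]
    for j in range(k):
        # sorted() is stable, so ties are broken by population index (assumption).
        order = sorted(range(n), key=lambda i: costs[i][j])
        for position, i in enumerate(order, start=1):
            ranks[i][j] = position
    # Scalar fitness: reciprocal of the best (smallest) rank over all tasks.
    scalar_fitness = [1.0 / min(r) for r in ranks]
    # Skill factor: index of the task on which the individual ranks best.
    skill_factor = [r.index(min(r)) for r in ranks]
    return ranks, scalar_fitness, skill_factor


costs = [
    [0.2, 9.0],  # best on task 0
    [0.5, 1.0],  # best on task 1
    [0.9, 3.0],  # second on task 1, last on task 0
]
ranks, phi, tau = mfea_properties(costs)
# ranks == [[1, 3], [2, 1], [3, 2]], phi == [1.0, 1.0, 0.5], tau == [0, 1, 1]
```

Under selective evaluation an individual has a real cost on only one task; a common convention, assumed here rather than taken from the source, is to store an infinite cost for the unevaluated tasks so that they never win the ranking.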

Algorithmic Workflow and Knowledge Transfer

The MFEA workflow begins with population initialization, where individuals are randomly generated and encoded into the unified search space. Unlike traditional evolutionary approaches, MFEA employs a distinctive selective evaluation strategy where offspring are evaluated only for a single task—typically the same task as one parent or determined probabilistically [4]. This approach significantly reduces computational overhead, a crucial advantage when dealing with computationally expensive functions common in real-world applications like drug discovery and protein folding.

Knowledge transfer in MFEA occurs primarily through assortative mating and selective imitation [1]. The random mating probability (rmp) parameter serves as the main control mechanism: it determines how likely it is that two parents associated with different tasks are allowed to mate, whereas parents associated with the same task can always mate [4]. A well-chosen rmp promotes beneficial genetic exchange while limiting negative transfer, in which inappropriate knowledge sharing degrades performance. The following diagram illustrates the core MFEA workflow:

Strengths and Limitations

The multi-factorial architecture offers several distinct advantages. Its unified representation enables seamless knowledge transfer across tasks with different dimensionalities and search space characteristics [4]. The implicit genetic transfer through assortative mating allows the algorithm to automatically discover and exploit synergies between tasks without requiring explicit similarity measures [1]. Furthermore, the skill factor mechanism naturally specializes individuals toward specific tasks while maintaining a shared genetic pool, creating an effective balance between specialization and knowledge sharing.

However, MFEA faces challenges when tasks exhibit significant dissimilarity, potentially leading to negative transfer where inappropriate genetic material degrades performance [5]. The algorithm also relies heavily on proper parameter tuning, particularly the random mating probability (rmp), which significantly impacts knowledge transfer effectiveness [4]. Additionally, as task heterogeneity increases, the unified representation may struggle to simultaneously accommodate diverse task requirements, potentially limiting performance for complex, dissimilar task combinations.

Multi-Population Evolutionary Architecture

Fundamental Framework and Design

The multi-population architecture presents an alternative EMTO approach that maintains separate, explicit populations for each optimization task while enabling controlled knowledge transfer between them [5] [4]. This framework aligns more closely with traditional island models in evolutionary computation but incorporates specialized mechanisms for cross-task knowledge exchange. Unlike MFEA's implicit transfer through unified representation, multi-population methods employ explicit collaboration strategies where transfer is deliberately managed between distinct populations [5].

In this architecture, each subpopulation evolves semi-independently, focusing on its specific task while periodically engaging in knowledge exchange with other subpopulations [4]. This approach naturally accommodates task heterogeneity, as each population can employ task-specific representations, operators, and parameters. The multi-population model is particularly advantageous when dealing with large numbers of tasks or when tasks demonstrate limited similarity, as it minimizes destructive interference while preserving beneficial transfer opportunities [5].

Inter-Population Knowledge Exchange

Knowledge transfer in multi-population EMTO occurs through explicit across-population reproduction operators that facilitate information exchange between subpopulations [4]. A core feature of this model is that parents and their offspring belong to the same subpopulation, maintaining population stability while incorporating genetic material from other tasks. This stands in contrast to MFEA's unified population where individuals may change task affiliations across generations.
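A minimal sketch of across-population reproduction is shown below. It assumes that both subpopulations share a common [0, 1]^D encoding, that uniform crossover is used, and that survivors are chosen by elitist truncation; none of these choices, nor the names `reproduce_with_transfer` and `transfer_rate`, come from [4]. The point it illustrates is that offspring always stay in the target subpopulation, even when one parent is borrowed from another task.

```python
import random
from typing import Callable, List

Vector = List[float]


def reproduce_with_transfer(target_pop: List[Vector], source_pop: List[Vector],
                            objective: Callable[[Vector], float],
                            transfer_rate: float,
                            rng: random.Random) -> List[Vector]:
    """One generation for target_pop; offspring never leave this subpopulation."""
    offspring = []
    for parent in target_pop:
        if rng.random() < transfer_rate:
            mate = rng.choice(source_pop)  # explicit across-population mate
        else:
            mate = rng.choice(target_pop)  # ordinary within-population mate
        child = [a if rng.random() < 0.5 else b for a, b in zip(parent, mate)]
        offspring.append(child)
    # Elitist truncation on the target task only (assumed selection scheme).
    merged = sorted(target_pop + offspring, key=objective)
    return merged[:len(target_pop)]


def sphere(x: Vector) -> float:
    """Toy target task with its optimum at 0.5 in every dimension."""
    return sum((v - 0.5) ** 2 for v in x)


rng = random.Random(1)
pop_a = [[rng.random() for _ in range(4)] for _ in range(10)]
pop_b = [[0.5 + 0.01 * rng.random() for _ in range(4)] for _ in range(10)]
best_before = min(map(sphere, pop_a))
pop_a = reproduce_with_transfer(pop_a, pop_b, sphere, transfer_rate=0.5, rng=rng)
```

Because selection is applied only against the target task's objective, borrowed genes survive only when they actually help that task, which is one simple way to limit negative transfer.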

The diagram below illustrates the multi-population architecture with explicit knowledge transfer mechanisms:

Advanced multi-population implementations incorporate adaptive transfer strategies that dynamically adjust the frequency and intensity of knowledge exchange based on detected task relatedness [5]. Some frameworks employ domain adaptation techniques such as progressive auto-encoding (PAE) to align search spaces and facilitate more effective knowledge transfer between populations [5]. These methods continuously adapt domain representations throughout the evolutionary process, addressing the limitation of static alignment in dynamic optimization landscapes.

Advantages and Implementation Challenges

The multi-population architecture offers significant advantages for specific problem classes. Its explicit population separation naturally minimizes negative transfer between highly dissimilar tasks, making it more robust for heterogeneous task groups [5]. The framework provides greater flexibility in employing task-specific representations and operators, which is particularly valuable when tasks have substantially different characteristics or search space dimensions. Furthermore, the architecture readily supports asynchronous evolution, allowing different populations to evolve at varying paces according to their complexity.

Implementation challenges include determining the optimal transfer policy—including what knowledge to transfer, when to transfer, and between which populations [1]. The architecture also introduces additional computational overhead from maintaining multiple populations and managing transfer mechanisms. Designing effective similarity measures to guide transfer decisions remains non-trivial, particularly when task relationships are complex or non-linear [5]. Without careful design, multi-population approaches may miss synergistic opportunities that MFEA's unified representation naturally exploits.

Comparative Analysis: Architectural Trade-offs

Structural and Operational Differences

The multi-factorial and multi-population architectures present fundamentally different approaches to organizing evolutionary search in multi-task environments. The table below summarizes their core structural and operational characteristics:

Table 1: Structural Comparison of Multi-Factorial vs. Multi-Population Architectures

| Characteristic | Multi-Factorial Architecture | Multi-Population Architecture |
|---|---|---|
| Population Structure | Single unified population | Multiple explicit subpopulations |
| Knowledge Representation | Unified encoding space | Task-specific representations |
| Knowledge Transfer Mechanism | Implicit through assortative mating | Explicit cross-population operators |
| Task Specialization | Skill factor assignment | Dedicated subpopulations |
| Evaluation Strategy | Selective evaluation | Typically full evaluation |
| Transfer Control | Random mating probability (rmp) | Explicit transfer policy |
| Theoretical Foundation | Multi-factorial inheritance | Island models with migration |

The multi-factorial approach emphasizes implicit parallelism through its unified representation, where a single population simultaneously addresses all tasks with individuals naturally specializing through the skill factor mechanism [1] [4]. In contrast, the multi-population framework employs explicit parallelism with separate populations focusing on specific tasks, coordinating through deliberate exchange protocols [5]. This fundamental distinction leads to different strengths, limitations, and suitability for various problem classes.

Performance Considerations

Table 2: Performance and Application Considerations

| Factor | Multi-Factorial Architecture | Multi-Population Architecture |
|---|---|---|
| Computational Overhead | Lower (selective evaluation) | Higher (multiple populations) |
| Scalability to Many Tasks | Limited by population size | More scalable with proper grouping |
| Handling Task Heterogeneity | Prone to negative transfer | More robust through separation |
| Implementation Complexity | Moderate | Higher (transfer policy design) |
| Knowledge Discovery | Automatic synergy discovery | Requires explicit similarity measures |
| Parameter Sensitivity | Highly sensitive to rmp | Sensitive to transfer policy |

Performance differences between the architectures depend strongly on task relatedness. For highly similar tasks with complementary search landscapes, MFEA's implicit transfer often produces superior convergence through automatic knowledge exchange [1]. As task dissimilarity increases, multi-population approaches typically demonstrate greater robustness by minimizing negative transfer [5]. The balance of computational efficiency depends on evaluation cost: MFEA reduces evaluations through selective assessment, while multi-population methods may require more evaluations but can converge faster per task.

Recent hybrid approaches attempt to combine strengths from both architectures. For instance, multi-population MFEA implementations reframe the original algorithm as an explicit multi-population model while preserving its core transfer mechanisms [4]. Similarly, progressive auto-encoding techniques enhance both architectures by enabling continuous domain adaptation throughout evolution, improving cross-task knowledge transfer effectiveness [5].

Experimental Framework and Assessment Methodologies

Benchmarking Standards and Metrics

Rigorous evaluation of EMTO architectures requires comprehensive benchmarking across diverse problem suites. Researchers have developed specialized multi-task optimization benchmarks that systematically vary task relatedness, landscape morphology, and dimensionality [5] [1]. The CEC 2021 competition on evolutionary multi-task optimization established standardized benchmark problems that enable direct comparison between different algorithmic approaches [5]. These benchmarks typically include tasks with known global optima, allowing precise measurement of solution quality and convergence speed.

Key performance metrics include:

- Convergence Speed: Generations or function evaluations required to reach satisfactory solutions

- Solution Accuracy: Deviation from known global optima across all tasks

- Task Similarity Sensitivity: Performance consistency across varying degrees of task relatedness

- Negative Transfer Resistance: Ability to maintain performance when tasks contain conflicting information

- Scalability: Performance preservation with increasing task numbers and dimensionality

Experimental protocols typically compare EMTO approaches against single-task evolutionary algorithms and between different multi-task architectures to isolate the benefits of knowledge transfer [5]. Statistical significance testing ensures observed differences reflect algorithmic capabilities rather than random variation.
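One simple way to quantify the benefit or harm of transfer when comparing against a single-task baseline is a relative gain per task, sketched below. This particular metric and the name `transfer_gain` are assumptions for illustration and are not a standard prescribed by the benchmarks in [5]; a real study would also apply a significance test across runs.

```python
from statistics import mean
from typing import Sequence


def transfer_gain(single_task_errors: Sequence[float],
                  multi_task_errors: Sequence[float]) -> float:
    """Relative improvement of the multi-task mean error over the single-task one.

    Inputs are final errors (distance to the known optimum) over repeated runs
    on the same task. A positive value suggests positive transfer and a
    negative value suggests negative transfer.
    """
    base = mean(single_task_errors)
    multi = mean(multi_task_errors)
    if base == 0:
        # The baseline already reaches the optimum, so transfer cannot help.
        return 0.0 if multi == 0 else float("-inf")
    return (base - multi) / base


helpful = transfer_gain([2.0, 2.0], [1.0, 1.0])   # 0.5: error halved
harmful = transfer_gain([1.0, 1.0], [1.5, 1.5])   # -0.5: negative transfer
```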

Advanced Assessment Techniques

Beyond conventional metrics, researchers employ advanced assessment methodologies to understand architectural behaviors. Theoretical analysis establishes convergence properties and computational complexity bounds for different architectures [4]. Process-oriented analysis examines how knowledge transfer unfolds during evolution, measuring transfer quantity and quality throughout the search process [1]. These methodologies help explain why certain architectures perform better on specific problem classes.

For real-world applications where ground truth is often unavailable, researchers employ internal metrics that assess population diversity, fitness distribution, and transfer acceptance rates. These indicators provide insight into algorithmic behavior without requiring known optima. Additionally, sensitivity analysis examines how architectural performance varies with key parameters such as population size, transfer rates, and selection pressure [4].
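Two of the internal indicators mentioned above can be computed without knowing any optimum, as in this sketch. Mean pairwise Euclidean distance is used here as the diversity measure, which is one common choice among several; the function names are hypothetical.

```python
from itertools import combinations
from math import dist
from typing import List, Sequence


def mean_pairwise_distance(population: List[Sequence[float]]) -> float:
    """Average Euclidean distance over all pairs; 0.0 for fewer than two members."""
    pairs = list(combinations(population, 2))
    if not pairs:
        return 0.0
    return sum(dist(a, b) for a, b in pairs) / len(pairs)


def transfer_acceptance_rate(accepted: int, transferred: int) -> float:
    """Fraction of transferred solutions that survived selection in the target task."""
    return accepted / transferred if transferred else 0.0


diversity = mean_pairwise_distance([[0.0, 0.0], [3.0, 4.0]])  # 5.0
acceptance = transfer_acceptance_rate(accepted=3, transferred=12)  # 0.25
```

A falling diversity value together with a low acceptance rate is a plausible warning sign that transfer is either unhelpful or arriving after the population has already converged.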

Implementation Toolkit for Researchers

Implementing and experimenting with EMTO architectures requires specific computational resources and algorithmic components. The following table outlines essential elements for constructing EMTO research frameworks:

Table 3: Research Reagent Solutions for EMTO Implementation

| Component | Function | Implementation Notes |
|---|---|---|
| Unified Encoding Scheme | Representing diverse tasks in common space | Random-key representation in [0, 1]^D [4] |
| Skill Factor Calculator | Identifying individual task specialization | Factorial rank computation [4] |
| Assortative Mating Operator | Controlling cross-task reproduction | rmp-based mating selection [1] |
| Across-Population Crossover | Explicit knowledge transfer | Prevents population drift [4] |
| Domain Adaptation Module | Aligning disparate search spaces | Progressive auto-encoding [5] |
| Task Similarity Analyzer | Quantifying inter-task relationships | Informs transfer policy design [1] |
| Multi-Task Benchmark Suite | Algorithm validation | CEC 2021 competition problems [5] |

These components serve as building blocks for constructing both multi-factorial and multi-population architectures. Researchers can mix and match elements to create hybrid approaches that address specific application requirements.

Practical Implementation Guidelines

Successful implementation requires careful attention to several practical considerations. Population sizing must balance sufficient genetic diversity with computational efficiency—typically larger populations benefit complex, multimodal problems but increase computational costs [4]. Transfer policy design should incorporate adaptive mechanisms that respond to detected task relatedness rather than relying on fixed parameters [5].

For multi-factorial implementations, the random mating probability requires careful tuning: values between 0.3 and 0.5 typically work well for moderately similar tasks, while lower values may be preferable for highly dissimilar tasks [4]. Multi-population implementations must establish appropriate migration policies that determine transfer frequency, selection criteria for emigrants, and replacement strategies for immigrants.
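The three parts of a migration policy (frequency, emigrant selection, immigrant replacement) can be made concrete with a small sketch. The specific choices below, a fixed interval, best-first emigrants, and worst-first replacement, are assumptions picked for simplicity; the names `should_migrate` and `migrate` are hypothetical, and both populations are assumed to share one encoding.

```python
from typing import Callable, List

Vector = List[float]


def should_migrate(generation: int, interval: int) -> bool:
    """Transfer frequency: migrate every `interval` generations, never at generation 0."""
    return interval > 0 and generation > 0 and generation % interval == 0


def migrate(source_pop: List[Vector], source_objective: Callable[[Vector], float],
            target_pop: List[Vector], target_objective: Callable[[Vector], float],
            n_migrants: int) -> List[Vector]:
    """Return a new target population of the same size after migration."""
    n = min(n_migrants, len(source_pop), len(target_pop))
    # Emigrant selection: the n best individuals on the source task.
    emigrants = sorted(source_pop, key=source_objective)[:n]
    # Replacement: drop the n worst individuals on the target task.
    survivors = sorted(target_pop, key=target_objective)[:len(target_pop) - n]
    # Copy emigrants so later edits in one population do not affect the other.
    return survivors + [list(e) for e in emigrants]


source = [[0.0], [1.0], [2.0]]
target = [[5.0], [6.0], [7.0]]
new_target = migrate(source, lambda x: abs(x[0]),
                     target, lambda x: abs(x[0] - 5.0), n_migrants=1)
# new_target == [[5.0], [6.0], [0.0]]
```

An adaptive variant could replace the fixed `interval` and `n_migrants` with values driven by the observed survival of earlier immigrants.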

Recent advances in progressive domain adaptation suggest that static alignment approaches should be replaced with continuous adaptation mechanisms that evolve with the population [5]. Segmented auto-encoding provides staged alignment across optimization phases, while smooth auto-encoding enables gradual refinement using eliminated solutions [5]. These techniques enhance both architectural frameworks by improving cross-task knowledge transfer effectiveness.

The multi-factorial and multi-population architectures represent complementary approaches to evolutionary multi-task optimization, each with distinct strengths, limitations, and application domains. The multi-factorial framework excels when tasks demonstrate significant similarity and knowledge can be seamlessly transferred through unified representation. The multi-population approach offers greater robustness for heterogeneous task collections by explicitly managing knowledge exchange between specialized populations.

Future research directions include developing more sophisticated task similarity assessment techniques that dynamically quantify relationships during optimization rather than relying on static measures [1]. Theoretical foundations require further development to better understand convergence properties and knowledge transfer dynamics across different architectural frameworks [4]. Hybrid architectures that adaptively switch between or combine elements of both approaches show significant promise for addressing diverse problem characteristics within single frameworks [5].

For drug development professionals and research scientists, architectural selection should be guided by problem characteristics—particularly task relatedness, computational budget, and solution quality requirements. As EMTO methodologies continue evolving, they offer increasingly powerful approaches for addressing complex optimization challenges where tasks contain valuable complementary information that can be exploited through intelligent transfer mechanisms.

Knowledge Transfer Mechanisms in Evolutionary Computation

Evolutionary multi-task optimization (EMTO) represents an emerging paradigm in evolutionary computation that leverages the implicit parallelism of population-based search to solve multiple optimization tasks simultaneously [2]. Unlike traditional evolutionary algorithms (EAs) that handle tasks in isolation, EMTO creates a multi-task environment where knowledge discovered while solving one task can transfer to other related tasks, potentially accelerating convergence and improving solution quality for all tasks involved [2]. The fundamental premise is that correlated optimization tasks frequently share common knowledge or skills, and harnessing these complementarities can lead to performance gains that surpass independent optimization approaches [2] [6].

The critical component enabling these benefits is the knowledge transfer (KT) mechanism: the systematic process by which useful information is exchanged between tasks during evolutionary search [2]. Effective KT design addresses three fundamental questions: "where to transfer" (identifying suitable source-target task pairs), "what to transfer" (determining the knowledge content), and "how to transfer" (implementing the exchange mechanism) [7]. When properly implemented, KT facilitates mutual enhancement across tasks, but poorly matched transfer can lead to negative transfer, where cross-task interference degrades performance compared to independent optimization [2]. This section examines KT mechanisms within EMTO, covering foundational principles, practical methodologies, and emerging trends to inform algorithm design and application, particularly in complex domains like drug development.

Theoretical Foundations of Knowledge Transfer

Fundamental Principles and Challenges

Knowledge transfer in evolutionary computation operates on the principle that optimization tasks, even those with heterogeneous landscapes, often possess latent synergies that can be exploited through structured information exchange [7]. The mathematical formulation of a multitask optimization problem involves finding optimal solutions {x₁, x₂, ..., x_K} for K tasks, where each solution xⱼ minimizes its respective objective function fⱼ(x) within the feasible region Rⱼ [7]. The heterogeneous landscape properties and potentially misaligned feasible regions across tasks present significant algorithmic challenges that KT mechanisms must carefully address [7].
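The formulation described in this paragraph can be written compactly as below. The star superscript for the task-wise optima is notation introduced here for illustration; the symbols K, f_j and R_j follow the text.

```latex
\[
x_j^{*} \;=\; \arg\min_{x \in R_j} f_j(x), \qquad j = 1, 2, \dots, K,
\]
\[
\text{and the multitask solver returns the set } \{x_1^{*}, x_2^{*}, \dots, x_K^{*}\}.
\]
```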

The most significant challenge in KT design is mitigating negative transfer, which occurs when knowledge exchange between dissimilar tasks interferes with the convergence process [2]. Research has demonstrated that performing KT between tasks with low correlation can deteriorate optimization performance compared to optimizing each task separately [2]. Contemporary approaches address this challenge through two primary avenues: determining suitable tasks for knowledge exchange based on similarity measures, and improving how useful knowledge is elicited during the transfer process [2].

Taxonomy of Knowledge Transfer Methods

The design space for KT mechanisms can be systematically categorized according to several key dimensions:

Table: Taxonomy of Knowledge Transfer Methods in EMTO

| Design Dimension | Approach Categories | Key Characteristics |

|---|---|---|

| Transfer Timing | Online Adaptive | Transfer parameters adjusted dynamically during evolution based on performance feedback or similarity measures [2] |

| Transfer Timing | Fixed Schedule | Transfer occurs at predetermined intervals or generations regardless of search state [2] |

| Knowledge Content | Solution-Level | Direct transfer of complete solutions or genetic materials between task populations [2] [7] |

| Knowledge Content | Model-Level | Transfer of surrogate models, distribution models, or landscape characteristics [6] |

| Transfer Mechanism | Implicit | KT occurs seamlessly through shared genetic operations like crossover and selection [2] [7] |

| Transfer Mechanism | Explicit | Dedicated transfer operators with explicit mapping functions between task search spaces [7] |

Key Algorithmic Frameworks and Experimental Protocols

Multifactorial Evolutionary Algorithm (MFEA) Framework

As a pioneering EMTO algorithm, MFEA establishes the foundational framework for implicit knowledge transfer [2] [7]. MFEA maintains a unified population of individuals, each encoded in a unified search space and tagged with a skill factor indicating its most specialized task [2] [7]. Knowledge transfer occurs inherently during assortative mating, where individuals from different tasks may undergo crossover, and during vertical cultural transmission, where each offspring is evaluated on only a subset of tasks [2]. The implicit nature of this transfer mechanism reduces computational overhead but offers limited control over transfer direction and content [7].

Experimental Protocol for MFEA Baseline:

- Population Initialization: Generate a unified population of size N with random initialization in unified search space

- Skill Factor Assignment: Evaluate each individual on all tasks and assign skill factor based on best performance

- Evolutionary Cycle: While termination criteria are not met:

  - Assortative Mating: Select parents considering inter-task mating probability (rmp)

  - Crossover & Mutation: Apply genetic operators to produce offspring

  - Vertical Cultural Transmission: Evaluate each offspring on selected tasks only (typically the task inherited from one of its parents)

  - Selection: Implement environmental selection to maintain population size

- Solution Decoding: Decode unified representation to task-specific solutions for each optimization task

The critical parameter controlling knowledge transfer in MFEA is the random mating probability (rmp), which determines the likelihood of cross-task reproduction [2]. Traditional MFEA uses a fixed rmp value, while advanced variants adapt this parameter based on online measurements of inter-task similarity or transfer success [2].
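The protocol above can be sketched as a compact loop. This is a simplified illustration under stated assumptions rather than the reference MFEA implementation: all tasks are minimized over a unified space [0, 1]^dim, uniform crossover and Gaussian mutation stand in for the usual operators, mutation is applied to every child, and the function name `mfea` and its parameters are hypothetical.

```python
import numpy as np

def mfea(tasks, dim, pop_size=40, generations=60, rmp=0.3, sigma=0.05, seed=0):
    """Minimal MFEA-style loop; `tasks` is a list of callables f(x) -> float."""
    rng = np.random.default_rng(seed)
    K = len(tasks)

    def scalar_fitness(costs, skill):
        # Scalar fitness = 1 / (rank of the individual on its own skill task).
        fit = np.empty(len(skill))
        for k in range(K):
            idx = np.flatnonzero(skill == k)
            ranked = idx[np.argsort(costs[idx, k])]
            fit[ranked] = 1.0 / np.arange(1, len(idx) + 1)
        return fit

    # Initialization: evaluate everyone on every task, then assign skill factors.
    pop = rng.random((pop_size, dim))
    costs = np.array([[f(x) for f in tasks] for x in pop])
    ranks = costs.argsort(axis=0).argsort(axis=0)
    skill = (ranks + 0.5 * rng.random(ranks.shape)).argmin(axis=1)  # random tie-break

    for _ in range(generations):
        order = rng.permutation(pop_size)
        kids, kid_skill = [], []
        for a, b in zip(order[0::2], order[1::2]):
            if skill[a] == skill[b] or rng.random() < rmp:
                # Assortative mating: uniform crossover, possibly across tasks.
                mask = rng.random(dim) < 0.5
                pair = [np.where(mask, pop[a], pop[b]), np.where(mask, pop[b], pop[a])]
                # Vertical cultural transmission: each child imitates a random parent.
                pair_skill = rng.choice([skill[a], skill[b]], size=2)
            else:
                # No crossover: each child stays on its own parent's task.
                pair, pair_skill = [pop[a], pop[b]], [skill[a], skill[b]]
            for x, s in zip(pair, pair_skill):
                kids.append(np.clip(x + rng.normal(0.0, sigma, dim), 0.0, 1.0))
                kid_skill.append(int(s))

        # Evaluate each child on its inherited task only; other costs stay at +inf.
        kid_costs = np.full((len(kids), K), np.inf)
        for i, (x, s) in enumerate(zip(kids, kid_skill)):
            kid_costs[i, s] = tasks[s](x)

        # Elitist environmental selection on scalar fitness.
        pop = np.vstack([pop, np.array(kids)])
        costs = np.vstack([costs, kid_costs])
        skill = np.concatenate([skill, np.array(kid_skill)])
        keep = np.argsort(-scalar_fitness(costs, skill), kind="stable")[:pop_size]
        pop, costs, skill = pop[keep], costs[keep], skill[keep]

    best = [int(np.argmin(costs[:, k])) for k in range(K)]
    return [(pop[i], float(costs[i, k])) for k, i in enumerate(best)]
```

Setting `rmp=0` removes cross-task crossover, so the same function can serve as a rough no-transfer baseline for the same tasks.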

Explicit Knowledge Transfer with Domain Adaptation

For tasks with heterogeneous search spaces or substantially different landscape characteristics, explicit transfer mechanisms often outperform implicit approaches [6] [7]. These methods employ dedicated transformation techniques to bridge disparate task representations before knowledge exchange. Notable implementations include:

Linear Domain Adaptation (LDA-MFEA): This algorithm introduces a linear transformation strategy to map tasks into a higher-order representation space where knowledge can transfer efficiently [6]. The transformation matrix is learned through correlation analysis between task populations.

Subspace Alignment Methods: These approaches establish low-dimensional subspaces for each task using principal component analysis (PCA) on current populations, then learn an alignment matrix to minimize subspace inconsistency [6]. This enables effective knowledge transfer even for tasks with different dimensionalities.

Experimental Protocol for Explicit Transfer with PCA Subspace Alignment:

- Task-Specific Subspace Construction: For each task Tᵢ, apply PCA on its current population Pᵢ to derive principal components PCᵢ

- Subspace Alignment: For each source-target task pair (Tᵢ, Tⱼ), compute alignment matrix Aᵢⱼ to minimize discrepancy between PCᵢ and PCⱼ

- Knowledge Extraction: Select elite solutions from source task Tᵢ and transform them using alignment matrix: x' = Aᵢⱼ × x

- Knowledge Injection: Incorporate transformed solutions into target task Tⱼ's population, replacing inferior individuals

- Transfer Evaluation: Monitor improvement in target task performance to assess transfer effectiveness and potentially adjust future alignment matrices
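The subspace construction, alignment, and extraction steps of this protocol can be sketched with NumPy as follows. The sketch assumes both tasks are encoded in a unified space of equal dimensionality and uses the closed-form alignment matrix A = Pₛᵀ Pₜ, which minimizes the Frobenius distance between the aligned source basis and the target basis. The function names are hypothetical, and the single product x' = Aᵢⱼ × x in the text is expanded here into project, align, and map-back steps.

```python
import numpy as np

def pca_basis(pop, d):
    """Return (mean, basis); basis columns are the top-d principal directions of `pop`."""
    mean = pop.mean(axis=0)
    # Right singular vectors of the centered population are the principal axes.
    _, _, vt = np.linalg.svd(pop - mean, full_matrices=False)
    return mean, vt[:d].T

def align_and_transfer(elites, source_pop, target_pop, d):
    """Map source-task elites into the target task's search space."""
    mu_s, P_s = pca_basis(source_pop, d)
    mu_t, P_t = pca_basis(target_pop, d)
    A = P_s.T @ P_t                    # d x d alignment matrix
    z = (elites - mu_s) @ P_s          # elite coordinates in the source subspace
    return mu_t + z @ A @ P_t.T        # aligned, then mapped back to full space
```

The transformed elites would then replace inferior individuals in the target population and be re-evaluated on the target task, as in the injection and evaluation steps.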

Advanced Knowledge Transfer Methodologies

Classifier-Assisted Evolutionary Multitasking

For computationally expensive optimization problems where fitness evaluations constitute the primary computational bottleneck, classifier-assisted EMTO provides an effective alternative to traditional regression-based surrogate models [6]. This approach replaces expensive fitness evaluations with a classification mechanism that distinguishes the relative quality of candidate solutions, significantly reducing computational requirements while maintaining evolutionary direction.

The classifier-assisted multitasking optimization (CA-MTO) algorithm integrates a support vector classifier (SVC) with covariance matrix adaptation evolution strategy (CMA-ES) and enhances classifier accuracy through cross-task knowledge transfer [6]. The SVC prescreens parent solutions from the current population with low computational cost, while the knowledge transfer strategy enriches training samples for each task-oriented classifier by sharing high-quality solutions among different tasks using PCA-based subspace alignment [6].

Experimental Protocol for Classifier-Assisted EMTO:

- Initial Sampling: For each task, generate initial population and evaluate a small set of solutions using expensive fitness function

- Classifier Training: Train task-specific SVCs using initial evaluated solutions with binary labels indicating solution quality

- Evolutionary Cycle with Knowledge Transfer: While computational budget not exhausted:

  - Candidate Generation: Generate new candidate solutions using CMA-ES variation operators

  - Classifier Prescreening: Use SVC to predict solution quality and select promising candidates

  - Selective Evaluation: Evaluate only top-ranked candidates using expensive fitness function

  - Cross-Task Knowledge Transfer: Periodically share high-quality solutions across tasks using subspace alignment

  - Classifier Retraining: Update SVCs with newly evaluated solutions and transferred knowledge

- Solution Refinement: Conduct local search around best-found solutions using actual fitness evaluations
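The training and prescreening steps of this protocol can be illustrated with a small dependency-light sketch. A k-nearest-neighbour vote stands in for the SVC used by CA-MTO, purely to keep the example self-contained. The labelling rule (best 30% of evaluated solutions count as "good") and all function names are assumptions, not details from [6].

```python
import numpy as np

def label_by_quality(fitness, top_fraction=0.3):
    """Binary labels: 1 for the best `top_fraction` of evaluated solutions (minimization)."""
    cutoff = np.quantile(fitness, top_fraction)
    return (fitness <= cutoff).astype(int)

def knn_score(X_train, y_train, X_new, k=5):
    """Fraction of 'good' labels among the k nearest already-evaluated solutions."""
    dist = np.linalg.norm(X_new[:, None, :] - X_train[None, :, :], axis=2)
    nearest = np.argsort(dist, axis=1)[:, :k]
    return y_train[nearest].mean(axis=1)

def prescreen(X_train, fitness, candidates, n_keep, k=5):
    """Return the n_keep candidates the classifier rates as most promising.

    Only these would be passed to the expensive fitness function.
    """
    scores = knn_score(X_train, label_by_quality(fitness), candidates, k)
    return candidates[np.argsort(-scores, kind="stable")[:n_keep]]
```

In a multitask setting, solutions transferred from other tasks would be appended to `X_train` and `fitness` before retraining, which is how the protocol enriches each task's training set.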

Reinforcement Learning for Adaptive Transfer Control

Recent advances automate KT policy design through reinforcement learning (RL), addressing the "no-free-lunch" limitations of fixed transfer mechanisms [7]. The MetaMTO framework implements a multi-role RL system where specialized agents collaboratively determine optimal transfer decisions throughout the evolutionary process [7].

Table: Multi-Role RL Agents for Knowledge Transfer Control

| Agent Type | Decision Role | Technical Implementation | Output |

|---|---|---|---|

| Task Routing (TR) Agent | "Where to transfer" | Attention-based similarity recognition module processing task status features | Source-target transfer pairs based on attention scores [7] |

| Knowledge Control (KC) Agent | "What to transfer" | Neural network processing source-target pair characteristics | Proportion of elite solutions to transfer from source task [7] |

| Transfer Strategy Adaptation (TSA) Agent Group | "How to transfer" | Multiple networks controlling algorithm hyperparameters | Transfer strength and specific operator parameters [7] |

Experimental Protocol for RL-Based Transfer Control:

- State Representation: Extract features from all sub-tasks including population distribution statistics, fitness trends, and diversity measures

- Policy Network Architecture: Implement specialized network modules for each transfer decision type (routing, control, adaptation)

- Reward Design: Define balanced reward function considering both global convergence performance and transfer success rate

- Meta-Training: Pre-train network modules end-to-end over augmented multitask problem distribution

- Online Execution: Deploy trained policy to control knowledge transfer throughout EMTO process

- Policy Refinement: Fine-tune policy based on performance feedback on specific problem domains

Research Reagents and Experimental Tools

Implementing and experimenting with knowledge transfer mechanisms requires specific computational tools and algorithmic components. The following table details essential research reagents for EMTO experimentation:

Table: Research Reagent Solutions for EMTO Experimentation

| Resource Category | Specific Tools/Components | Function in EMTO Research |

|---|---|---|

| Optimization Frameworks | PlatEMO, DEAP, PyGMO | Provide foundational evolutionary algorithm components and benchmark problem suites [2] |

| Multitask Benchmark Problems | CEC2017 Multitask Benchmark Suite, Custom Composite Problems | Enable controlled experimentation with known task relationships and difficulty levels [7] |

| Surrogate Modeling Libraries | scikit-learn (SVC, GP), TensorFlow/PyTorch (Neural Networks) | Implement classifier-assisted and surrogate-based transfer mechanisms [6] |

| Reinforcement Learning Platforms | OpenAI Gym, Stable Baselines3, Custom MTO Environments | Facilitate development and training of RL-based transfer control policies [7] |

| Domain Adaptation Tools | PCA implementations, Transfer Learning Toolkits | Enable explicit knowledge transfer across heterogeneous tasks [6] |

| Performance Metrics | Multitask Performance Gain, Transfer Success Rate, Convergence Plots | Quantify effectiveness of knowledge transfer mechanisms [2] [7] |

Implementation Considerations for Drug Development Applications

When applying EMTO with knowledge transfer to drug development problems such as molecular optimization or binding affinity prediction, researchers should consider several domain-specific adaptations:

- Representation Alignment: Molecular representations (SMILES, graphs, descriptors) often have different dimensionalities and semantics across related tasks, requiring careful design of mapping functions for effective knowledge transfer [6]

- Transfer Validation: In critical pharmaceutical applications, implement rigorous validation protocols to ensure transferred knowledge improves rather than degrades optimization performance, potentially using domain-specific validation metrics [2]

- Cost-Aware Evaluation: Balance computational budget allocation between actual fitness evaluations (e.g., molecular dynamics simulations) and surrogate-assisted optimization, considering the significant cost disparity [6]

Knowledge transfer mechanisms represent the cornerstone of evolutionary multi-task optimization, enabling performance gains through synergistic problem-solving. This section has examined the theoretical foundations, algorithmic frameworks, and experimental methodologies for implementing effective knowledge transfer in evolutionary computation. From implicit genetic transfer in MFEA to explicit domain adaptation and learning-driven control strategies, the EMTO landscape offers diverse approaches suitable for different problem characteristics and domain requirements.

For researchers in drug development and related computationally intensive fields, classifier-assisted approaches with structured knowledge transfer provide particularly promising avenues for tackling expensive optimization problems with limited fitness evaluations. Meanwhile, the emerging paradigm of reinforcement learning-based transfer control offers automated policy design that adapts to problem characteristics, potentially overcoming limitations of fixed transfer mechanisms. As EMTO research continues to evolve, the refinement of knowledge transfer methodologies is likely to expand the applicability and effectiveness of evolutionary approaches for complex multi-task optimization scenarios.

Taxonomy of Multi-Task Optimization Problems (MTOPs) in Scientific Domains

Multi-Task Optimization Problems (MTOPs) represent a paradigm shift in computational problem-solving, moving beyond traditional single-task approaches to harness the synergies between multiple, related optimization tasks. Within evolutionary computation, this is realized through Evolutionary Multi-Task Optimization (EMTO), a branch of evolutionary algorithms (EAs) designed to optimize multiple tasks simultaneously within a single run and to output the best solution for each task [1]. EMTO leverages the implicit parallelism of population-based search and is particularly suited for complex, non-convex, and nonlinear problems [1]. The fundamental premise is that knowledge gained while solving one task may contain valuable information that can help solve another related task, thereby improving learning efficiency, accelerating convergence, and enhancing overall optimization performance [1] [8]. This section synthesizes current research to present a structured taxonomy of MTOPs, their solution methodologies, and their applications, particularly within scientific domains such as drug discovery.

A Hierarchical Taxonomy of Multi-Task Optimization Problems

The landscape of MTOPs can be categorized based on several key characteristics, including the relationship between tasks, the optimization goal, and the nature of the tasks themselves. The following taxonomy provides a framework for understanding the different classes of MTOPs.

Classification by Task Relationship and Objective

Table 1: Taxonomy of MTOPs Based on Task Relationship and Primary Objective

| Category | Definition | Key Characteristics | Typical Algorithms |

|---|---|---|---|

| Collaborative MTOP | All tasks are optimized simultaneously, with knowledge transfer intended to mutually improve performance on every task [1] [8]. | The goal is to find the best solution for each task. Tasks are often related or similar. | Multifactorial Evolutionary Algorithm (MFEA) [1], MFEA-II [9] |

| Competitive MTOP | A primary task is not pre-defined; it is the task with the best optimal value. Tasks "compete" for computational resources to be identified and optimized as the primary task [10]. | The primary task is unknown a priori. The algorithm must identify and optimize the best task. | Competitive Multi-Task Bayesian Optimization (CMTBO) [10] |

| Source-Assisted MTOP | A single primary task is the focus of optimization, while auxiliary tasks provide cheaper or more abundant information to accelerate the search on the primary task [10]. | Clear distinction between a single primary task and supporting auxiliary tasks. | Multi-Task Bayesian Optimization (MTBO) [10] |

Classification by Task Scale and Domain

- Evolutionary Multi-Task Optimization (EMTO): The foundational paradigm for using evolutionary algorithms to solve multiple tasks concurrently. It often deals with a modest number of tasks and leverages genetic operators for knowledge transfer [1].

- Evolutionary Many-Task Optimization (EMaTO): A specialization of EMTO that focuses on scenarios involving a larger number of tasks (typically three or more) [11]. The increase in task count introduces heightened challenges in managing knowledge transfer and avoiding negative transfer [11] [9].

- Multi-Objective Multi-Task Optimization: Combines the challenges of MTOPs with those of Multi-Objective Optimization Problems (MOOPs), where each task itself has multiple, often conflicting, objectives to be optimized [12] [13]. This is highly relevant in drug design, where a molecule must be optimized for potency, safety, and synthesizability simultaneously [12].

- Multimodal Multiobjective Optimization: A further complex class where, for a given multi-objective problem, multiple distinct solutions in the decision space (modes) can correspond to the same objective space value [13]. In drug discovery, this could mean identifying different sets of drug targets (different gene configurations) that offer equivalent therapeutic efficacy and safety profiles [13].

Core Methodologies and Algorithmic Frameworks for MTOPs

Solving MTOPs requires sophisticated mechanisms to handle knowledge transfer.

[Figure: core workflow of a general EMTO system.]

Foundational Algorithm: The Multifactorial Evolutionary Algorithm (MFEA)

The MFEA is a pioneering algorithm in EMTO, often considered a benchmark [1]. Its core components are:

- Unified Search Space: All tasks are mapped to a single, unified search space, allowing a single population of individuals to evolve.

- Skill Factor: Each individual in the population is assigned a skill factor (τ), which identifies the single task on which that individual performs best.

- Assortative Mating: Mating between individuals is biased. If two randomly selected parents have the same skill factor, crossover always occurs. If their skill factors differ, crossover occurs only with a predefined random mating probability (rmp). This parameter controls inter-task knowledge transfer.

- Selective Imitation (Vertical Cultural Transmission): Offspring inherit the skill factor of one of their parents, chosen at random when the parents' skill factors differ, and are evaluated only on that task to conserve computational resources [1].

Key Optimization Strategies in EMTO

The performance of EMTO hinges on effectively addressing the "what," "who," and "when" of knowledge transfer [1] [9].

- Knowledge Transfer Probability: This determines the frequency of cross-task interactions. Early algorithms like MFEA used a fixed probability (rmp), but modern approaches like MFEA-II and MGAD use adaptive strategies that dynamically adjust transfer probability based on feedback from the evolutionary process, balancing task self-evolution and knowledge transfer [9].

- Transfer Source Selection: This involves identifying which tasks are sufficiently similar to benefit from knowledge sharing. Methods include:

  - Population Distribution Similarity: Using metrics like Maximum Mean Discrepancy (MMD) or Kullback-Leibler Divergence (KLD) to measure similarity between the populations of different tasks [9].

  - Evolutionary Trend Similarity: Newer methods, such as in the MGAD algorithm, also incorporate Grey Relational Analysis (GRA) to assess the similarity of evolutionary trends, not just static population states [9].

- Knowledge Transfer Mechanisms: This defines the content and method of transfer.

  - Explicit Transfer: Direct transfer of elite individuals or solution blocks from one task's population to another [11].

  - Implicit Transfer: Using genetic crossover between individuals from different tasks to exchange information [11].

  - Mapped Transfer: Employing models like denoising autoencoders to map and transfer knowledge between the search spaces of different tasks [11]. Advanced methods like anomaly detection are also used to filter out potentially harmful individuals before transfer [9].
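As an illustration of population distribution similarity for transfer source selection, the sketch below computes a biased estimate of squared MMD with an RBF kernel and picks the candidate source whose population is closest to the target's. The kernel choice, the bandwidth `gamma`, and the function names are assumptions for illustration; the cited algorithms may estimate similarity differently.

```python
import numpy as np

def rbf_kernel(X, Y, gamma):
    """Pairwise RBF kernel values exp(-gamma * ||x - y||^2)."""
    sq = np.sum(X ** 2, axis=1)[:, None] + np.sum(Y ** 2, axis=1)[None, :] - 2.0 * X @ Y.T
    return np.exp(-gamma * np.maximum(sq, 0.0))

def mmd2(X, Y, gamma=1.0):
    """Biased estimate of squared MMD between two populations (rows are individuals)."""
    return (rbf_kernel(X, X, gamma).mean()
            + rbf_kernel(Y, Y, gamma).mean()
            - 2.0 * rbf_kernel(X, Y, gamma).mean())

def pick_source(target_pop, candidate_pops, gamma=1.0):
    """Index of the candidate population most similar to the target (smallest MMD)."""
    return int(np.argmin([mmd2(target_pop, P, gamma) for P in candidate_pops]))
```

Populations are assumed to be encoded in the same unified space. A threshold on the MMD value could additionally be used to skip transfer altogether when no candidate is close enough.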

Experimental Protocols and Evaluation in Scientific Domains

Evaluating EMTO algorithms requires specific protocols and benchmarks to measure convergence speed, solution quality, and robustness against negative transfer.

Protocol for Benchmarking EMTO Algorithms

A standard experimental protocol involves:

- Benchmark Selection: Use standardized multi-task benchmark suites that contain groups of related test functions (e.g., CEC17 benchmarks for EMTO).

- Algorithm Comparison: Compare the proposed EMTO algorithm against single-task evolutionary algorithms and other state-of-the-art EMTO algorithms.

- Performance Metrics:

  - Convergence Speed: Measure the number of function evaluations or generations required to reach a predefined solution quality.

  - Solution Accuracy: Record the best objective value found for each task upon termination.

  - Success Rate: The percentage of independent runs where the algorithm finds a satisfactory solution.

- Negative Transfer Analysis: Deliberately include weakly related or unrelated tasks in the problem set to evaluate the algorithm's ability to mitigate negative transfer.
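The performance metrics in this protocol are simple to compute from per-run histories of objective values. The helpers below are a minimal sketch for minimization problems. The function names are hypothetical, and `transfer_gain` is one plain way to flag negative transfer by comparing against a single-task baseline, not a metric defined in the cited works.

```python
from typing import Optional, Sequence

def evaluations_to_target(history: Sequence[float], target: float) -> Optional[int]:
    """Convergence speed: evaluations needed until the best-so-far value reaches `target`.

    `history` holds the objective value of each evaluation in order; returns None
    if the target is never reached.
    """
    best = float("inf")
    for n, value in enumerate(history, start=1):
        best = min(best, value)
        if best <= target:
            return n
    return None

def solution_accuracy(history: Sequence[float]) -> float:
    """Best objective value found by the end of the run."""
    return min(history)

def success_rate(runs: Sequence[Sequence[float]], target: float) -> float:
    """Fraction of independent runs that reach `target`."""
    hits = sum(evaluations_to_target(h, target) is not None for h in runs)
    return hits / len(runs)

def transfer_gain(single_task_best: float, multitask_best: float) -> float:
    """Positive when multitasking found a better (lower) value; negative suggests negative transfer."""
    return single_task_best - multitask_best
```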

Detailed Methodology: Drug-Target Affinity Prediction and Generation

The DeepDTAGen framework is a prime example of a multitask deep learning application in drug discovery, which shares conceptual parallels with EMTO [14]. Its experimental methodology is detailed below.

- Objective: To simultaneously predict drug-target binding affinities (a regression task) and generate novel, target-aware drug molecules (a generation task) within a unified model [14].

- Datasets: Use of real-world biochemical datasets such as KIBA, Davis, and BindingDB, which contain quantitative measurements of drug-target interactions.

- Model Architecture:

  - A shared feature encoder learns a common latent representation from input drug molecules (represented as SMILES strings or graphs) and target proteins (represented as amino acid sequences).

  - Two task-specific "heads" branch from the shared encoder: a regression head for affinity prediction and a transformer-based decoder for generating new drug SMILES strings conditioned on the target protein.

- Optimization Challenge: The gradients from the two tasks may conflict, leading to suboptimal performance. This is a common issue in multi-task learning.

- Novel Optimizer: The FetterGrad algorithm was developed to mitigate gradient conflict. It works by minimizing the Euclidean distance between the gradients of the two tasks, keeping them aligned during training and preventing one task from dominating the learning process [14].

- Evaluation:

  - For DTA Prediction: Mean Squared Error (MSE), Concordance Index (CI), and the modified squared correlation coefficient (r²m).

  - For Drug Generation: Validity (chemical correctness of generated molecules), Novelty (unseen in training data), Uniqueness (diversity of generated set), and binding ability to the target.
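Two of the affinity-prediction metrics listed above, MSE and CI, can be computed as in the following sketch. It uses the common pairwise definition of the concordance index, in which pairs with equal true affinities are skipped and tied predictions count as half. This definition is assumed here rather than taken from [14], and the function names are illustrative.

```python
from itertools import combinations
from typing import Sequence

def mse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean squared error between measured and predicted affinities."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def concordance_index(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Fraction of comparable pairs whose predicted order matches the true order."""
    concordant, comparable = 0.0, 0
    for (t1, p1), (t2, p2) in combinations(zip(y_true, y_pred), 2):
        if t1 == t2:
            continue  # pairs with equal true affinity are not comparable
        comparable += 1
        if p1 == p2:
            concordant += 0.5  # tied predictions count as half
        elif (p1 > p2) == (t1 > t2):
            concordant += 1.0
    if comparable == 0:
        raise ValueError("concordance index is undefined without comparable pairs")
    return concordant / comparable
```

The pairwise loop is quadratic in the number of samples, which is fine for a sketch but slow on large test sets.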

Table 2: Key Research Reagents and Computational Tools in MTOP and Drug Discovery

| Item / Resource | Type | Function in Research | Example Use Case |

|---|---|---|---|

| Benchmark Suites (e.g., CEC17) | Software/Dataset | Provides standardized sets of test functions for fair and reproducible comparison of EMTO algorithms. | Algorithm development and performance validation [1]. |

| Multi-factorial Evolutionary Algorithm (MFEA) | Algorithm | A foundational EMTO algorithm that creates a multi-task environment for a single population to solve multiple tasks. | Baseline for comparing new EMTO methods [1]. |

| FetterGrad Optimizer | Algorithm | A gradient optimization algorithm designed for multitask learning that mitigates conflicts between gradients of different tasks. | Training deep multi-task models like DeepDTAGen [14]. |

| KIBA, Davis, BindingDB | Biochemical Dataset | Publicly available datasets containing quantitative drug-target interaction data. | Training and evaluating predictive models in computational drug discovery [14]. |

| Baishenglai (BSL) Platform | Software Platform | An open-access, deep learning-powered platform that integrates seven core drug discovery tasks in a unified framework. | End-to-end virtual drug screening and design [15]. |

| Structural Network Control Theory | Mathematical Framework | Provides principles for controlling the state transitions of complex networks (e.g., molecular interaction networks). | Identifying personalized drug targets (PDTs) in precision medicine [13]. |

Application in Scientific Domains: A Focus on Drug Discovery

The principles of multi-task optimization are driving innovation in several scientific fields, with particularly impactful applications in drug discovery.

- De Novo Drug Design as a Many-Objective Problem: Designing a new drug molecule from scratch is inherently a many-objective optimization problem (involving more than three objectives). Key objectives often include maximizing potency against a target, structural novelty, and a favorable pharmacokinetic profile, while minimizing synthesis cost, toxicity, and side effects [12]. Evolutionary many-objective optimization algorithms are directly applicable to this challenge.

- Predictive and Generative Modeling: As exemplified by DeepDTAGen, multitask frameworks can combine predictive tasks (e.g., forecasting binding affinity) with generative tasks (e.g., creating new molecular structures). This allows for a closed-loop design process where predictions guide the generation of better candidates [14].

- Personalized Drug Target Identification: In precision medicine, multiobjective optimization is used to identify Personalized Drug Targets (PDTs). For example, the MMONCP framework formulates this as a multimodal multiobjective problem, aiming to find a set of driver genes that minimizes the number of nodes needed to control a patient-specific molecular network while maximizing the information from prior-known drug targets. Crucially, it can find multiple, functionally different sets of genes (modes) that are equivalent in their objective values, providing diverse treatment options [13].

- Platform Integration: Comprehensive platforms like Baishenglai (BSL) demonstrate the industrial application of these concepts. BSL integrates seven core tasks—molecular generation, optimization, property prediction, drug-target affinity prediction, drug-drug interaction prediction, drug-cell response prediction, and retrosynthesis analysis—into a single, modular framework powered by multi-task learning and other advanced AI technologies [15].

The taxonomy of Multi-Task Optimization Problems reveals a rich and complex landscape, spanning from collaborative to competitive paradigms and from few-task to many-task scales. The core algorithmic challenge lies in designing intelligent knowledge transfer mechanisms that dynamically determine what knowledge to share, between which tasks, and when. The experimental protocols and toolkits are maturing, enabling rigorous evaluation and advancement of the field. As evidenced by the transformative applications in drug discovery, from de novo molecular design to personalized medicine, MTOPs provide a powerful and efficient computational framework for tackling the multifaceted optimization challenges that are ubiquitous in modern science and engineering. Integrating EMTO with other paradigms like multi-objective optimization and machine learning continues to be a fertile ground for future research that will further expand its capabilities and applications.

Historical Development and Key Milestones in EMTO Research

Evolutionary Multitask Optimization (EMTO) represents a paradigm shift in evolutionary computation. It is a population-based approach that introduces a novel framework for solving multiple optimization tasks simultaneously, rather than in isolation [1]. The core principle of EMTO is that real-world optimization problems often possess underlying relationships or commonalities. By leveraging the implicit parallelism of population-based search, EMTO aims to exploit these relationships, allowing for the transfer of knowledge between tasks during the evolutionary process. This knowledge transfer can significantly accelerate convergence and improve the quality of solutions for one or all of the constituent tasks, a phenomenon known as positive transfer [9] [1].

The foundational concept draws inspiration from fields like multitask learning and transfer learning in machine learning [1]. EMTO operates on the key insight that the evolutionary process for solving one task may discover valuable genetic material or search directions that are beneficial for a different, but related, task. This is a departure from traditional Evolutionary Algorithms (EAs), which treat each problem as independent, potentially leading to inefficiency when dealing with sets of interconnected problems. The ability to handle multiple tasks concurrently makes EMTO particularly suitable for complex, real-world problems where designers must navigate numerous competing objectives or scenarios at once.

Historical Development and Key Milestones

The journey of EMTO from a conceptual framework to a mature research field is marked by several key innovations and milestones. Its development reflects a growing understanding of how to manage and facilitate knowledge transfer within an evolutionary context.

The Foundational Era: Establishing the Core Framework

The seminal work that formally established EMTO as a distinct research area was the introduction of the Multifactorial Evolutionary Algorithm (MFEA) by Gupta et al. in 2016 [1]. Often referred to as MFEA-I, this algorithm provided the foundational architecture for subsequent EMTO research. MFEA introduced the concept of a unified search space, where a single population of individuals is evolved to address multiple tasks concurrently. Each task is treated as a unique "cultural factor" influencing the population's evolution.

Key innovations of the original MFEA include:

- Skill Factor: A tag assigned to each individual, indicating the task on which it performs best.

- Assortative Mating: A mating strategy that encourages crossover between parents working on the same task, but allows for cross-task crossover with a defined probability, facilitating knowledge transfer.

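- Selective Imitation: A mechanism by which each offspring imitates the skill factor of one of its parents and is evaluated only on that task. Because the parents may belong to different tasks, offspring can inherit genetic material across tasks and absorb transferred knowledge without the cost of being evaluated on every task [1].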

MFEA demonstrated that EMTO could not only solve multiple tasks at once but could also outperform single-task EAs in terms of convergence speed due to the beneficial effects of knowledge transfer [1].
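The three components listed above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the reference MFEA implementation: individuals are lists of floats in a unified [0, 1] search space, uniform crossover and Gaussian mutation stand in for the operators used in the original algorithm, ties in factorial rank go to the lowest task index, and all function names are hypothetical.

```python
import random


def factorial_ranks(costs):
    """costs[i][j] is the cost of individual i on task j (lower is better).
    Returns ranks[i][j], the 1-based rank of individual i on task j."""
    n, k = len(costs), len(costs[0])
    ranks = [[0] * k for _ in range(n)]
    for j in range(k):
        order = sorted(range(n), key=lambda i: costs[i][j])
        for position, i in enumerate(order, start=1):
            ranks[i][j] = position
    return ranks


def skill_factors(ranks):
    """Skill factor: the task on which an individual ranks best."""
    return [min(range(len(r)), key=lambda j: r[j]) for r in ranks]


def scalar_fitness(ranks):
    """Scalar fitness: reciprocal of the individual's best rank over all tasks."""
    return [1.0 / min(r) for r in ranks]


def assortative_mating(p1, p2, skill1, skill2, rmp, rng):
    """Return (children, child_skills) for one pair of parents."""
    if skill1 == skill2 or rng.random() < rmp:
        # Same task, or cross-task mating allowed with probability rmp:
        # recombine the parents (uniform crossover as a stand-in).
        mask = [rng.random() < 0.5 for _ in p1]
        c1 = [a if m else b for a, b, m in zip(p1, p2, mask)]
        c2 = [b if m else a for a, b, m in zip(p1, p2, mask)]
        # Selective imitation: each child copies one parent's skill factor
        # and would be evaluated on that task only.
        skills = [rng.choice((skill1, skill2)) for _ in range(2)]
        return [c1, c2], skills

    # Otherwise each parent is mutated on its own and keeps its task.
    def mutate(x):
        return [min(1.0, max(0.0, g + rng.gauss(0.0, 0.05))) for g in x]

    return [mutate(p1), mutate(p2)], [skill1, skill2]
```

Setting `rmp` to 0 disables cross-task crossover entirely, while values near 1 mix the tasks freely. That single fixed parameter is the one later adaptive variants replace.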

The Adaptive Era: Refining Knowledge Transfer

While MFEA proved the concept's viability, it relied on a fixed probability for cross-task mating, which was a significant limitation. This led to a second wave of research focused on adaptive mechanisms that control knowledge transfer more intelligently and prevent negative transfer, in which the transfer of unhelpful knowledge hinders performance.

Key advancements in this era include:

- MFEA-II (2020): This algorithm replaced the fixed knowledge transfer probability with an adaptive, online learning mechanism. It expands the parameter into a random mating probability (RMP) matrix, which is continuously updated based on feedback from the success of previous transfers, allowing the algorithm to learn which task pairings benefit from interaction [9] [1].

- Explicit EMT Algorithms (EEMTA): These algorithms incorporated feedback-based credit assignment methods to select promising sources for knowledge transfer, moving beyond random selection [9].

- Focus on Transfer Source Selection: Researchers began using more sophisticated similarity measures, such as Kullback-Leibler (KL) Divergence and Maximum Mean Discrepancy (MMD), to quantitatively assess the similarity between task populations and select the most appropriate partners for knowledge transfer [9].

The Modern Era: Scalability and Complex Integration

The current era of EMTO research is characterized by tackling the challenges of scalability and integration. As the number of tasks increases, managing knowledge transfer becomes sharply harder, since the number of candidate source-target pairs alone grows quadratically with the task count. This has given rise to the subfield of Evolutionary Many-Task Optimization (EMaTO) [9].

Contemporary research directions include:

- Anomaly Detection for Transfer: Novel algorithms like MGAD use anomaly detection techniques to identify and transfer only the most valuable individuals from source tasks, thereby reducing the risk of negative transfer in many-task settings [9].

- Dynamic Weighting and Multi-Source Transfer: Modern frameworks employ dynamic weighting strategies to efficiently combine knowledge from multiple sources, rather than relying on single-source transfers [16].

- Hybrid and Specialized Frameworks: There is a growing trend of combining EMTO principles with other optimization paradigms, such as surrogate modeling for expensive problems, and designing domain-specific EMTO algorithms for areas like cloud computing and engineering design [1]. The integration of EMTO with multi-objective optimization has also been a significant focus, leading to powerful algorithms for handling complex, real-world problems with multiple conflicting objectives [16] [1].

Table 1: Key Milestones in EMTO Development

| Time Period | Core Paradigm | Key Algorithm(s) | Primary Contribution |
|---|---|---|---|
| ~2016 | Foundational | MFEA (MFEA-I) | Established the core EMTO framework with unified search space, skill factor, and assortative mating. |
| ~2017-2020 | Adaptive EMTO | MFEA-II, EEMTA | Introduced adaptive control of knowledge transfer probability and feedback-based transfer source selection. |
| ~2020-Present | Scalable & Hybrid EMTO | MGAD, BLKT-DE, Various Hybrids | Addresses many-task optimization (EMaTO), uses anomaly detection, block-level transfer, and integrates with other paradigms like multi-objective optimization. |

Core Methodologies and Experimental Protocols

A deep understanding of EMTO requires familiarity with its core methodologies and how they are evaluated. The following workflow and experimental components are standard in the field.

Standard Experimental Workflow and Algorithmic Components

The diagram below illustrates a generalized workflow for a modern EMTO algorithm, incorporating adaptive knowledge transfer.

Diagram: Generalized EMTO Workflow with Adaptive Knowledge Transfer.

Detailed Methodologies for Key EMTO Components

Knowledge Transfer Probability Control

A critical design element in EMTO is controlling the frequency of knowledge transfer between tasks. Early algorithms like MFEA used a fixed probability, but modern approaches employ dynamic strategies [9].

Enhanced Adaptive Knowledge Transfer Probability Strategy:

- Objective: To dynamically balance task self-evolution and knowledge transfer based on the tasks' current states and historical performance.

- Methodology: The algorithm maintains a record of success from previous knowledge transfer events. The transfer probability for a task is adjusted online, increasing if recent transfers led to improved offspring (positive transfer) and decreasing if they led to inferior offspring (negative transfer). This feedback loop allows the algorithm to learn optimal interaction patterns [9].
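The feedback loop described above can be sketched as a small controller. This is an illustrative rule under stated assumptions, not the update used by any specific published algorithm: a transfer counts as successful when the transferred offspring survives selection, the baseline is the survival rate of offspring produced by within-task evolution, and the class name, step size, and bounds are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TransferController:
    """Per-task knowledge transfer probability, adjusted from recent feedback."""
    prob: float = 0.3    # current transfer probability (assumed initial value)
    step: float = 0.05   # how far to move per update (assumed)
    p_min: float = 0.05  # lower bound keeps some transfer alive for exploration
    p_max: float = 0.95  # upper bound keeps some self-evolution alive

    def update(self, transfer_successes, transfer_trials,
               self_successes, self_trials):
        """Raise prob if transferred offspring succeeded more often than
        self-evolved offspring in the last window, lower it if less often."""
        if transfer_trials == 0 or self_trials == 0:
            return self.prob  # no evidence in this window: leave unchanged
        transfer_rate = transfer_successes / transfer_trials
        self_rate = self_successes / self_trials
        if transfer_rate > self_rate:
            self.prob += self.step   # evidence of positive transfer
        elif transfer_rate < self_rate:
            self.prob -= self.step   # evidence of negative transfer
        self.prob = min(self.p_max, max(self.p_min, self.prob))
        return self.prob
```

Keeping the probability strictly inside (0, 1) is a deliberate choice in this sketch: a task that stopped transferring altogether could never gather the evidence needed to resume.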

Transfer Source Selection Mechanism

Selecting the right task from which to draw knowledge is paramount. Modern algorithms use sophisticated similarity measures.

Predicated Source Task Selection with MMD and GRA:

- Objective: To accurately select the most promising source tasks for knowledge transfer by considering both current state and evolutionary trends.

- Methodology:

  - Population Distribution Similarity (MMD): The Maximum Mean Discrepancy (MMD) metric is used to measure the similarity between the probability distributions of the candidate source population and the target task population. A lower MMD indicates higher distributional similarity [9].

  - Evolutionary Trend Similarity (GRA): Grey Relational Analysis (GRA) is employed to assess the similarity in the evolutionary trajectories of tasks. This considers the direction and pace of convergence, ensuring that knowledge is imported from a task that is not only similar but also evolving in a beneficial and compatible direction [9].

  - Source Ranking: Tasks are ranked based on a composite score from MMD and GRA, and the top-ranked tasks are selected as transfer sources.
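A minimal sketch of this selection step follows. Several details are assumptions, since the text does not specify them: MMD is estimated with an RBF kernel of fixed width `gamma`, evolutionary trends are represented as normalized best-cost traces of equal length, the grey relational coefficient uses the conventional distinguishing coefficient `rho = 0.5`, and the composite score is an equal-weight combination. All function names are hypothetical.

```python
import math


def rbf(x, y, gamma):
    """RBF kernel between two real-valued vectors."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))


def mmd2(pop_a, pop_b, gamma=1.0):
    """Biased estimate of the squared MMD between two populations."""
    def mean_kernel(p, q):
        return sum(rbf(x, y, gamma) for x in p for y in q) / (len(p) * len(q))
    return (mean_kernel(pop_a, pop_a) + mean_kernel(pop_b, pop_b)
            - 2 * mean_kernel(pop_a, pop_b))


def grey_relational_grades(reference, candidates, rho=0.5):
    """Grey relational grade of each candidate sequence to the reference."""
    deltas = [[abs(r - c) for r, c in zip(reference, seq)] for seq in candidates]
    flat = [d for row in deltas for d in row]
    d_min, d_max = min(flat), max(flat)
    if d_max == 0:
        return [1.0] * len(candidates)  # every candidate matches exactly
    return [
        sum((d_min + rho * d_max) / (d + rho * d_max) for d in row) / len(row)
        for row in deltas
    ]


def rank_sources(target_pop, target_trend, source_pops, source_trends,
                 weight=0.5, top_k=1):
    """Rank candidate source tasks; returns (best indices, all scores)."""
    mmds = [mmd2(target_pop, p) for p in source_pops]
    grades = grey_relational_grades(target_trend, source_trends)
    worst = max(max(mmds), 1e-12)  # normalizer; guards against division by zero
    scores = [weight * (1 - m / worst) + (1 - weight) * g
              for m, g in zip(mmds, grades)]
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return order[:top_k], scores
```

Normalizing MMD by the worst candidate puts both terms on a comparable 0 to 1 scale before they are mixed. Other combinations, such as rank aggregation, would serve equally well as a composite.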

Knowledge Transfer Execution

Once a source is selected, the mechanism of transfer must be designed to maximize positive effects.

Anomaly Detection-Based Knowledge Transfer Strategy:

- Objective: To mitigate negative transfer by identifying and transferring only the most valuable individuals from the source task.

- Methodology: Instead of transferring random or elite individuals, this strategy treats individuals that are exceptionally high-performing for their own task as potential "anomalies" in the context of the target task. These high-quality, anomalous individuals are then selectively transferred to the target task's population. This is often coupled with local distribution estimation or probabilistic model building to ensure the transferred knowledge is effectively integrated without disrupting the target population's diversity [9].

Benchmarking and Performance Evaluation

The performance of EMTO algorithms is rigorously tested against established benchmarks and peer algorithms.

Standard Experimental Protocol:

- Benchmark Selection: Researchers use standardized multitask benchmark problem sets. These often consist of well-known single-objective and multi-objective functions (e.g., ZDT, DTLZ, CEC competitions) grouped into related task pairs or suites [16] [1].

- Performance Metrics: The primary metrics for comparison include:

  - Convergence Speed: The number of function evaluations or generations required to reach a predefined solution quality.

  - Optimization Accuracy: The final value of the objective function(s) achieved.

  - Statistical Significance: Results are typically averaged over multiple independent runs, and performance differences are validated using statistical tests like the Wilcoxon rank-sum test [16].

- Comparative Analysis: The proposed algorithm is compared against several state-of-the-art EMTO algorithms (e.g., MFEA, MFEA-II, EMaO) and traditional single-task EAs to demonstrate its competitive advantage [9] [16].
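The metrics in this protocol can be computed with a few lines of standard-library Python. The sketch below is illustrative only: it assumes minimization, one best-so-far cost per generation, and a fixed number of evaluations per generation. The rank-sum test uses the normal approximation without tie correction, which is reasonable for the run counts typical of such studies but is not an exact test. The function names are hypothetical.

```python
import math
from statistics import NormalDist


def evaluations_to_target(best_so_far, target, evals_per_generation):
    """Convergence speed: evaluations used when the best cost first reaches
    the target, or None if the run never reaches it."""
    for generation, cost in enumerate(best_so_far, start=1):
        if cost <= target:
            return generation * evals_per_generation
    return None


def rank_sum_test(a, b):
    """Two-sided Wilcoxon rank-sum test (normal approximation).
    Returns (z, p); a negative z means sample a tends to be smaller."""
    pooled = sorted((v, idx) for idx, v in enumerate(list(a) + list(b)))
    ranks = [0.0] * len(pooled)
    i = 0
    while i < len(pooled):
        j = i
        while j + 1 < len(pooled) and pooled[j + 1][0] == pooled[i][0]:
            j += 1
        average_rank = (i + j) / 2 + 1  # tied values share their mean rank
        for k in range(i, j + 1):
            ranks[pooled[k][1]] = average_rank
        i = j + 1
    n1, n2 = len(a), len(b)
    w = sum(ranks[:n1])                      # rank sum of the first sample
    mu = n1 * (n1 + n2 + 1) / 2              # its mean under the null
    sigma = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (w - mu) / sigma
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p
```

In a typical comparison, `a` and `b` would hold the final objective values of two algorithms over the same number of independent runs, and a small `p` with negative `z` would support the claim that the first algorithm reaches lower costs.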

Table 2: Key Research Reagents and Tools in EMTO

| Tool / Component | Type | Primary Function in EMTO Research |
|---|---|---|
| Multitask Benchmark Suites | Software/Data | Provides standardized test problems for fair and reproducible comparison of EMTO algorithms. |
| Multi-factorial Evolutionary Algorithm (MFEA) | Algorithm | The foundational reference algorithm against which new EMTO methods are benchmarked. |
| Random Mating Probability (RMP) Matrix | Algorithmic Component | An adaptive mechanism for controlling the probability of knowledge transfer between specific task pairs. |
| Maximum Mean Discrepancy (MMD) | Statistical Metric | Quantifies the similarity between the distributions of two task populations to guide transfer source selection. |
| Anomaly Detection Mechanisms | Algorithmic Component | Identifies high-performing, transferable individuals from a source task population to reduce negative transfer. |

Current Research Focus and Future Directions

EMTO is a rapidly evolving field. Current research is focused on addressing its inherent challenges and expanding its applicability.

The main challenges identified by researchers include:

- Negative Transfer: The risk that unhelpful or disruptive knowledge will be transferred between tasks remains a central concern, especially as the number of tasks grows [9] [1].

- Scalability in EMaTO: Effectively managing knowledge transfer and computational resource allocation when dealing with a large number of tasks (e.g., tens or hundreds) is a key research frontier [9].

- Theoretical Foundations: While empirical success is well-documented, a stronger theoretical understanding of EMTO, including convergence guarantees and the dynamics of knowledge transfer, is still under development [1].

Promising future research directions, as outlined in recent surveys, are:

- Automated and Knowledge-Aware Transfer: Developing more intelligent systems that can automatically infer the type and amount of knowledge to transfer, perhaps drawing from a "knowledge repository" accumulated from solving previous tasks [1].

- Resource Allocation in EMaTO: Designing strategies to dynamically allocate more computational resources to the most complex or promising tasks within a many-task problem [1].

- Real-World Applications: Expanding the application of EMTO to new, complex domains such as large-scale personalized medicine, drug discovery, robotics, and complex system-on-chip design [9] [1]. The recent application to a planar robotic arm control problem is an example of this trend [9].

- Algorithmic Robustness: Enhancing the ability of EMTO algorithms to handle disparate task domains, different landscape modalities, and uncertainties in the problem definition [1].

Evolutionary Transfer Optimization represents a paradigm shift in evolutionary computation. It moves beyond the traditional approach of solving single, isolated optimization problems towards a framework that leverages knowledge gained from one problem to enhance performance on other related, or sometimes seemingly unrelated, problems [2]. This approach is inspired by human cognitive processes, where experience accumulated from previous tasks accelerates learning and problem-solving in new situations. Within the broader Evolutionary Multitask Optimization (EMTO) survey literature, understanding these transfer principles is fundamental, as they form the engine that drives the performance gains in multitask environments [3]. The ability to effectively extract and transfer knowledge is what enables EMTO algorithms to outperform their single-task counterparts, making the study of these principles a cornerstone of modern evolutionary computation research [17].

Core Principles of Knowledge Transfer

The efficacy of Evolutionary Transfer Optimization hinges on several core principles that govern how knowledge is conceptualized, extracted, and shared across different optimization tasks.

Information, Insights, and Synthesis Knowledge