Evolutionary Multi-Task Optimization for Intelligent Cloud Resource Allocation: Advanced Frameworks and Applications

Abstract

This article provides a comprehensive analysis of Evolutionary Multi-Task Optimization (EMTO) for dynamic cloud resource allocation, a paradigm that enables simultaneous co-optimization of multiple, interrelated scheduling objectives. We explore foundational principles, innovative methodologies integrating deep reinforcement learning and predictive modeling, and strategies for overcoming computational complexity and convergence challenges. Through validation against real-world workflows and comparative analysis with state-of-the-art algorithms, we demonstrate EMTO's significant improvements in execution time, cost efficiency, and energy consumption. This synthesis offers researchers and cloud practitioners critical insights for implementing next-generation, intelligent resource management systems capable of handling complex, dynamic computational environments.

Foundations of Evolutionary Multi-Task Optimization in Cloud Computing

Core Principles of Evolutionary Multi-Task Optimization

Evolutionary Multi-Task Optimization (EMTO) is an advanced algorithmic paradigm that leverages the implicit parallelism of population-based search to solve multiple optimization tasks simultaneously. Unlike traditional approaches that treat tasks in isolation, EMTO exploits potential synergies by allowing distinct tasks to exchange knowledge and share problem-solving experiences, thereby accelerating convergence and improving global search capability [1] [2].

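The fundamental mathematical formulation for a Constrained Multi-Objective Optimization Problem (CMOP), which EMTO frequently addresses, can be expressed as [2]:

$$
\begin{aligned}
\min \quad & \vec{F}(\vec{x}) = \left(f_{1}(\vec{x}), f_{2}(\vec{x}), \ldots, f_{m}(\vec{x})\right)^{T} \\
\text{s.t.} \quad & g_{i}(\vec{x}) \le 0, \quad i = 1, \ldots, l \\
& h_{i}(\vec{x}) = 0, \quad i = l+1, \ldots, l+k \\
& \vec{x} = (x_{1}, x_{2}, \ldots, x_{D})^{T} \in \mathbb{R}
\end{aligned}
$$

where $\vec{F}$ represents the objective vector with $m$ functions to optimize, $\vec{x}$ is the $D$-dimensional decision variable, $\mathbb{R}$ is the search space, and $g_{i}(\vec{x})$ and $h_{i}(\vec{x})$ represent the $l$ inequality and $k$ equality constraints respectively [2]. The total constraint violation $CV(\vec{x}) = \sum_{i=1}^{l+k} cv_{i}(\vec{x})$ is calculated as the sum of violations for all constraints, with a solution considered feasible only if $CV(\vec{x}) = 0$ [2].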
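
As a minimal sketch of the constraint-violation calculation described above, the following Python snippet sums per-constraint violations and tests feasibility. The per-constraint formulas (`max(0, g)` for inequalities written as $g(\vec{x}) \le 0$, and `max(0, |h| - delta)` for equalities) and the tolerance `delta` are common conventions assumed here for illustration; they are not taken from [2]. The toy constraints `g1` and `h1` are hypothetical.

```python
from typing import Callable, Sequence

Vector = Sequence[float]
Constraint = Callable[[Vector], float]


def constraint_violation(
    x: Vector,
    inequalities: Sequence[Constraint],
    equalities: Sequence[Constraint],
    delta: float = 1e-4,
) -> float:
    """Total violation CV(x): the sum of per-constraint violations.

    Assumed conventions (not from the source): inequalities are written as
    g(x) <= 0, and equalities h(x) = 0 are relaxed by a tolerance delta.
    """
    cv = 0.0
    for g in inequalities:
        cv += max(0.0, g(x))
    for h in equalities:
        cv += max(0.0, abs(h(x)) - delta)
    return cv


def is_feasible(x, inequalities, equalities, delta=1e-4) -> bool:
    """A solution is feasible only if its total violation is zero."""
    return constraint_violation(x, inequalities, equalities, delta) == 0.0


# Hypothetical toy constraints on a 2-D decision vector.
g1 = lambda x: x[0] + x[1] - 1.0  # x0 + x1 <= 1
h1 = lambda x: x[0] - x[1]        # x0 == x1
```

Calling `is_feasible([0.5, 0.5], [g1], [h1])` checks a point that satisfies both toy constraints, while `[1.0, 0.5]` violates both and receives a positive `CV`.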

EMTO operates on several key mechanisms [1] [2]:

- Implicit Genetic Transfer: Through carefully designed crossover operators, beneficial genetic material discovered in one task can be transferred to populations solving other related tasks, enabling cross-task knowledge exchange.

- Selective Pressure: The algorithm maintains a balance between exploiting promising solutions within individual tasks and exploring across the search spaces of multiple tasks through fitness-based selection mechanisms.

- Bias Transformation: Complex problems are transformed into simpler biased versions through task relationships, making difficult optimization landscapes more tractable.

- Autonomous Resource Allocation: Computational resources are dynamically allocated to different tasks based on their perceived difficulty and optimization progress.

EMTO Application in Cloud Computing Resource Allocation

Case Study: Microservice Resource Allocation Framework

Recent research has demonstrated the successful application of EMTO to microservice resource allocation in cloud environments. This approach integrates Long Short-Term Memory (LSTM) networks for resource demand prediction with a Q-learning algorithm that optimizes the dynamic resource allocation strategy, both coordinated through an evolutionary multi-task joint optimization framework [1].

The framework simultaneously optimizes three correlated tasks [1]:

- Resource Prediction Task: Uses LSTM networks to capture temporal dependencies and forecast future resource demands.

- Decision Optimization Task: Employs Q-learning to develop optimal resource allocation policies through environmental interaction.

- Resource Allocation Task: Computes actual resource distribution across microservices.

An adaptive learning parameter mechanism dynamically bridges the LSTM predictor and Q-learning optimizer, allowing both components to inform and adapt to each other in real-time based on system feedback [1].

Quantitative Performance Results

Table 1: Performance Metrics of EMTO-based Resource Allocation Scheme [1]

| Performance Metric | Improvement Over Baseline | Key Achievement |
|---|---|---|
| Resource Utilization | +4.3% | Enhanced efficiency of computational resource usage |
| Allocation Errors | -39.1% | Substantial reduction in resource allocation inaccuracies |
| Global Optimization Efficiency | Significant improvement | Enhanced collaborative capabilities between tasks |

Experimental Protocols for EMTO Implementation

Protocol 1: Evolutionary Multi-Task Microservice Resource Allocation

Objective: Implement and validate an EMTO framework for dynamic microservice resource allocation in cloud environments.

Experimental Setup [1]:

- Container Cluster: Four Docker containers simulating virtual nodes (4-core 2.4GHz vCPUs, 8GB memory, 50GB storage)

- Orchestration Tool: Minikube for local Kubernetes cluster development and testing

- Comparison Baselines: State-of-the-art heuristic and metaheuristic methods

Methodology [1]:

- LSTM Resource Prediction Implementation:
  - Configure LSTM network to process historical resource usage data
  - Train model to capture long-term dependencies in resource demand patterns
  - Set input sequence length based on temporal characteristics of workload
- Q-learning Decision Optimization:
  - Define state space representing current resource allocation and system status
  - Establish action space for potential resource adjustment decisions
  - Design reward function balancing performance objectives and constraints
- Evolutionary Multi-Task Integration:
  - Formulate joint optimization search space encompassing network weights, policy parameters, and allocation strategies
  - Implement adaptive parameter transfer mechanism between LSTM and Q-learning components
  - Configure knowledge transfer across the three optimization tasks
- Performance Evaluation:
  - Execute experiments under varying workload conditions
  - Measure resource utilization, allocation errors, and convergence speed
  - Compare against baseline methods using statistical significance tests
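
The input-sequence step in the LSTM part of this methodology can be illustrated with a sliding-window sketch. The function name `make_windows`, the parameters `seq_len` and `horizon`, and the sample CPU values are hypothetical; the source does not specify a window length or how the windows are built.

```python
from typing import List, Sequence, Tuple


def make_windows(
    series: Sequence[float], seq_len: int, horizon: int = 1
) -> Tuple[List[List[float]], List[float]]:
    """Slice a usage series into (input window, future target) pairs.

    Each input holds seq_len consecutive samples; the target is the sample
    that lies `horizon` steps after the end of that window.
    """
    if seq_len <= 0 or horizon <= 0:
        raise ValueError("seq_len and horizon must be positive")
    inputs, targets = [], []
    last_start = len(series) - seq_len - horizon
    for start in range(last_start + 1):
        end = start + seq_len
        inputs.append(list(series[start:end]))
        targets.append(series[end + horizon - 1])
    return inputs, targets


# Hypothetical CPU utilization samples (fractions of capacity).
cpu_usage = [0.30, 0.35, 0.50, 0.65, 0.60, 0.45]
X, y = make_windows(cpu_usage, seq_len=3)
```

A longer `seq_len` lets the predictor see more history per sample at the cost of fewer training pairs, which is one reason the window is usually tuned to the workload's periodicity.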

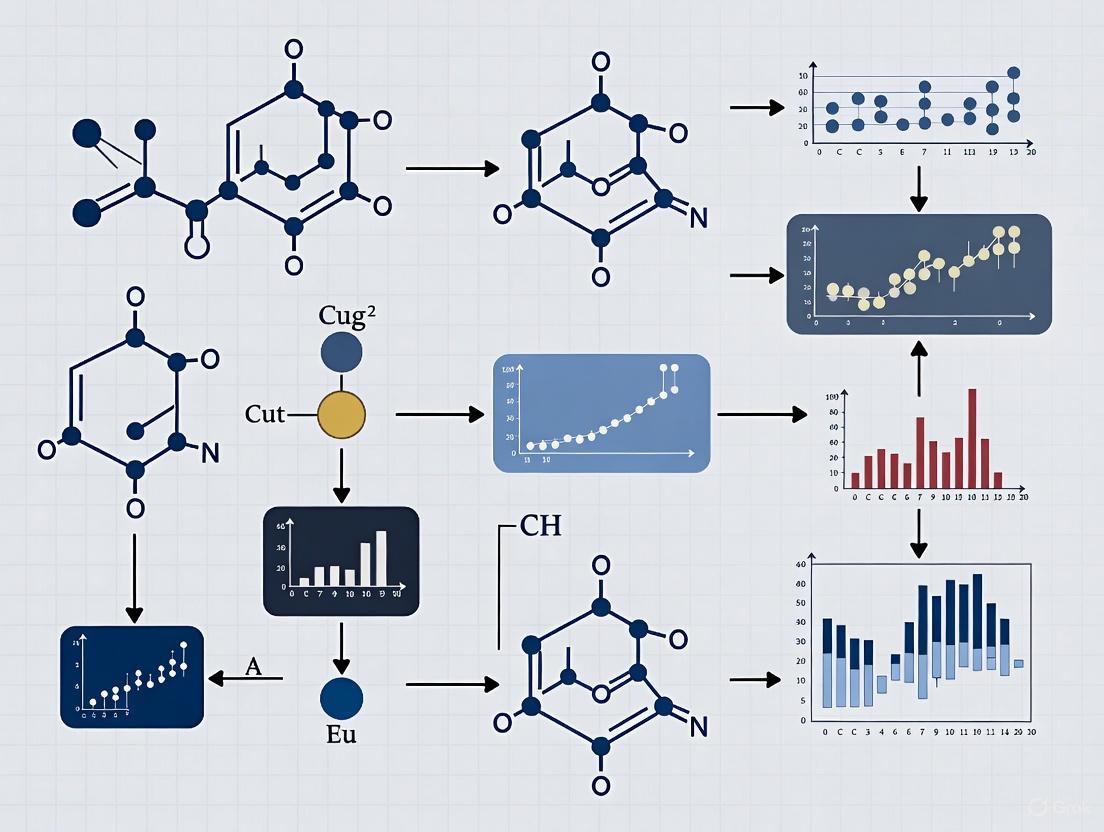

Figure 1: Workflow of Evolutionary Multi-Task Optimization for Resource Allocation

Protocol 2: Constrained Multi-Objective Optimization Benchmarking

Objective: Evaluate EMTO performance on standard constrained multi-objective optimization problems.

Test Problems [2]:

- Utilize well-established benchmark sets including engineering design problems, scheduling optimization problems, path planning problems, and resource optimization problems

- Select problems with diverse characteristics: variable linkages, multi-modality, and complex constraint landscapes

Constraint Handling Implementation [2]:

- Constraint Violation Calculation:
  - Implement $CV(\vec{x}) = \sum_{i=1}^{l+k} cv_{i}(\vec{x})$ for total violation
  - Compute $cv_{i}(\vec{x})$ using appropriate formulas for inequality and equality constraints
- Algorithm Configuration:
  - Set population size based on problem dimensionality
  - Configure crossover and mutation operators for knowledge transfer
  - Implement selection mechanisms balancing feasibility and optimality
- Performance Metrics:
  - Inverted Generational Distance (IGD) for convergence and diversity
  - Hypervolume metric for overall performance assessment
  - Feasibility rates throughout the evolutionary process
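
Two of the metrics listed above are simple enough to sketch directly. The snippet below computes IGD in its common form (the mean Euclidean distance from each reference-front point to its nearest obtained solution) and a feasibility rate. The function names are hypothetical, and the choice of reference front is left to the experimenter, since the source does not fix one.

```python
import math
from typing import Sequence

Point = Sequence[float]


def igd(reference_front: Sequence[Point], obtained: Sequence[Point]) -> float:
    """Mean distance from each reference point to its nearest obtained point.

    Lower is better: a small value means the obtained set is both close to
    the reference front and spread along it.
    """
    if not reference_front or not obtained:
        raise ValueError("both point sets must be non-empty")
    total = 0.0
    for ref in reference_front:
        total += min(math.dist(ref, sol) for sol in obtained)
    return total / len(reference_front)


def feasibility_rate(violations: Sequence[float]) -> float:
    """Fraction of a population whose total constraint violation is zero."""
    if not violations:
        raise ValueError("violations must be non-empty")
    return sum(1 for cv in violations if cv == 0.0) / len(violations)
```

Hypervolume is omitted here because an exact computation is considerably more involved; established libraries are usually a better choice for it.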

The Scientist's Toolkit: EMTO Research Reagents

Table 2: Essential Research Components for Evolutionary Multi-Task Optimization

| Research Component | Function | Implementation Example |
|---|---|---|
| Multi-Objective Evolutionary Algorithm (MOEA) | Drives population evolution; forms foundation for CMOEA | Dominance-based, decomposition-based, or indicator-based methods [2] |
| Constraint Handling Technique (CHT) | Manages solution feasibility during optimization | Penalty functions, stochastic ranking, multi-objective concepts [2] |
| Knowledge Transfer Mechanism | Enables cross-task information exchange | Implicit genetic transfer through crossover, adaptive bias [1] |
| Benchmark Test Problems | Evaluates algorithm performance on CMOPs | Engineering design, scheduling, path planning problems [2] |
| Performance Metrics | Quantifies algorithm effectiveness | Inverted Generational Distance, Hypervolume, Feasibility Rate [2] |

Figure 2: Architecture of Evolutionary Multi-Task Optimization System

Future Research Directions

The field of evolutionary multi-task optimization continues to evolve with several promising research trajectories [1] [2]:

- Large-Scale CMOPs: Developing scalable EMTO approaches for problems with high-dimensional decision spaces and numerous constraints

- Dynamic Environments: Creating adaptive mechanisms for CMOPs with time-varying objectives and constraints

- Multi-Modal Optimization: Extending EMTO to identify multiple equivalent optimal solutions for enhanced decision-making flexibility

- Real-World Applications: Transitioning from theoretical benchmarks to practical implementations in complex domains like cloud computing, edge intelligence, and distributed systems

The integration of EMTO with other artificial intelligence paradigms, notably deep reinforcement learning as demonstrated in cloud resource allocation, is a promising avenue for enhancing intelligent resource management in complex, dynamic environments [1] [3].

Key Challenges in Dynamic Cloud Resource Allocation

Dynamic cloud resource allocation is a fundamental research domain focused on the real-time assignment of computational assets—including CPU, memory, storage, and network bandwidth—to fluctuating workloads. The core challenge lies in designing systems that can autonomously and efficiently map heterogeneous user demands onto distributed physical resources while satisfying multiple, often conflicting, objectives such as minimizing execution time (makespan), reducing energy consumption, optimizing cost, and maintaining Quality-of-Service (QoS) agreements [4] [5] [3]. Traditional resource allocation methods, which often rely on static rules or simple heuristics, are increasingly proving inadequate for managing the scale, heterogeneity, and dynamic nature of modern cloud and edge-cloud continuum environments [4] [6]. This has spurred significant research into intelligent allocation strategies, with Evolutionary Multi-Task Optimization (EMTO) and Machine Learning (ML) emerging as promising paradigms for developing next-generation solutions [1].

Key Challenges and Quantitative Analysis

The pursuit of optimal dynamic resource allocation is fraught with interconnected challenges. The table below synthesizes the primary obstacles and how modern approaches attempt to address them.

Table 1: Key Challenges in Dynamic Cloud Resource Allocation

| Challenge | Impact | Modern AI/ML Solutions |
|---|---|---|
| Multi-Objective Optimization | Conflicting goals: cost, performance, energy, QoS [5] [3]. | Multi-objective Reinforcement Learning [3], Evolutionary Multi-Task Optimization (EMTO) frameworks [1]. |
| Resource Heterogeneity & Specificity | User demands for specific resource subtypes (e.g., GPU brand) lead to fragmentation and mismatches [7]. | Meta-type based allocation (e.g., GAF-MT) [7], intent-based orchestration [8]. |
| Dynamic & Unpredictable Workloads | Reactive systems cause SLA violations during traffic spikes; poor utilization during low demand [4] [9]. | Hybrid predictive models (e.g., BiLSTM, LSTM) integrated with RL for proactive decision-making [1] [9]. |
| Scalability & Decision Latency | Centralized controllers become bottlenecks; decision latency grows linearly with cluster size [9]. | Multi-Agent Reinforcement Learning (MARL) [9], decentralized frameworks. |
| Fairness vs. Efficiency Trade-off | Maximizing utilization can lead to unfair resource distribution among users [7]. | Allocation mechanisms based on asset fairness or dominant resource fairness (e.g., DRF, GAF-MT) [7]. |

The performance of various state-of-the-art algorithms designed to overcome these challenges is quantified below.

Table 2: Performance Comparison of Advanced Allocation Algorithms

| Algorithm/Model | Primary Focus | Reported Performance Improvement |
|---|---|---|
| LSTM-MARL-Ape-X [9] | Scalability & Energy Efficiency | 22% reduction in energy consumption; 94.6% SLA compliance; scales to 5,000 nodes. |
| EMTO with LSTM & Q-learning [1] | Microservice Resource Allocation | 4.3% higher resource utilization; 39.1% reduction in allocation errors. |
| RL-MOTS (DQN-based) [3] | Multi-Objective Task Scheduling | 27% reduction in energy consumption; 18% improvement in cost efficiency. |
| PCRA Framework [10] | Prediction-Driven Allocation | 94.7% Q-value prediction accuracy; 17.4% reduction in SLA violations. |
| GAF-MT Mechanism [7] | Fairness & Efficiency | Consistently outperforms AF, DRF, and DRF-MT in utilization and fairness. |
| Intent-Based RL [8] | User-Centric Allocation | Continuously adapts allocations based on user satisfaction and infrastructure feedback. |

The Scientist's Toolkit: Essential Research Reagents and Solutions

To empirically investigate dynamic resource allocation, researchers rely on a suite of software tools, datasets, and algorithms that form the essential "research reagents" for this field.

Table 3: Key Research Reagents and Experimental Materials

| Reagent / Solution | Function in Research | Exemplary Use Case |
|---|---|---|
| CloudStack [10] | Open-source cloud software for creating and managing cloud computing platforms. | Used to deploy a real-world cloud testbed for evaluating allocation algorithms [10]. |
| RUBiS Benchmark [10] | A standard auction website benchmark; models complex, dynamic workload patterns. | Serves as a representative workload to stress-test allocation frameworks under realistic conditions [10]. |
| Google Cloud / Microsoft Azure Traces [9] | Anonymized, real-world production workload data from large-scale data centers. | Provides a ground-truthed dataset for training and validating predictive models and RL agents [9]. |
| Docker & Kubernetes (Minikube) [1] | Containerization and orchestration platforms for managing microservices. | Used to create isolated experimental clusters for testing microservice resource allocation strategies [1]. |
| GUROBI Optimizer [7] | A commercial optimization solver for linear programming (LP) problems. | Employed to solve the underlying LP formulation of allocation models like GAF-MT [7]. |
| Whale Optimization Algorithm (WOA) [10] | A metaheuristic optimization algorithm inspired by the bubble-net hunting behavior of humpback whales. | Used for feature selection and to discover impartial resource allocation plans [10]. |

Experimental Protocols for Evolutionary Multi-Task Optimization

The following provides a detailed methodology for implementing and evaluating an Evolutionary Multi-Task Optimization (EMTO) resource allocation scheme, as described in [1].

Protocol: EMTO-based Resource Allocation for Microservices

Objective: To collaboratively optimize resource prediction, decision-making, and allocation tasks within a unified framework to enhance global optimization capability and resource utilization.

Experimental Environment Setup:

- Cluster Configuration: Deploy an experimental cluster using four Docker containers, with each node virtualized to 4-core 2.4GHz vCPUs, 8GB memory, and 50GB storage [1].

- Orchestration: Use Minikube for local Kubernetes cluster management and testing [1].

- Workload: Employ real-world workload traces (e.g., from Google Cloud or Azure) or benchmarks (e.g., RUBiS) to simulate dynamic microservice resource demands.

Workflow Diagram:

Methodology Details:

- LSTM-based Resource Prediction Task:
  - Input: Historical resource usage data (CPU, memory) with temporal characteristics.
  - Architecture: Implement a Long Short-Term Memory (LSTM) network designed to capture long-term dependencies and complex patterns in load fluctuations.
  - Output: Accurate forecasts of future resource demand, which are fed in real-time to guide the Q-learning agent's decision-making process [1].
- Q-learning Optimization Task:
  - State Space: Define the state as the current resource utilization levels of all nodes in the cluster.
  - Action Space: Define possible actions as scaling resources (up/down) for specific microservices or migrating tasks between nodes.
  - Reward Function: Design a reward that incorporates factors like resource utilization, SLA adherence, and allocation error. The predictions from the LSTM model directly influence the reward calculation to encourage proactive actions [1].
- Adaptive Learning Parameter Coordination Mechanism:
  - Implement a dynamic mechanism that transfers parameters and learning signals between the LSTM and Q-learning components.
  - This mechanism enhances the synergy between the predictor and the optimizer, allowing their learning processes to inform and adapt to each other in real-time based on system feedback [1].
- Evolutionary Multi-Task Joint Optimization:
  - Formulate the LSTM-based prediction, Q-learning optimization, and the final resource allocation as a unified multi-task optimization problem within an EMTO framework.
  - The EMTO framework allows these distinct but related tasks to share knowledge and evolve collaboratively in a shared search space, leading to implicit knowledge transfer and significantly enhanced global optimization efficiency [1].
- Performance Evaluation:
  - Key Metrics: Measure Resource Utilization, Allocation Error, SLA Violation Rate, and Makespan.
  - Baseline Comparison: Compare the performance against state-of-the-art baseline methods, such as standalone Q-learning, LSTM-prediction with static thresholds, and other heuristic schedulers. Target performance improvements include a ~4% increase in utilization and a ~39% reduction in allocation errors [1].
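
The Q-learning task in this protocol can be sketched in tabular form. This is an illustrative simplification, not the implementation from [1]: the action names, the string-valued states, the hyperparameter defaults, and the `reward` function (a plain negative gap between allocation and forecast demand) are all assumptions made for the example.

```python
import random
from collections import defaultdict

ACTIONS = ("scale_down", "hold", "scale_up")  # hypothetical action set


class QAgent:
    """Tabular Q-learning agent with epsilon-greedy exploration."""

    def __init__(self, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
        self.q = defaultdict(float)  # (state, action) -> estimated value
        self.alpha, self.gamma, self.epsilon = alpha, gamma, epsilon
        self.rng = random.Random(seed)

    def act(self, state):
        """Explore with probability epsilon, otherwise pick the best-known action."""
        if self.rng.random() < self.epsilon:
            return self.rng.choice(ACTIONS)
        return max(ACTIONS, key=lambda a: self.q[(state, a)])

    def update(self, state, action, reward, next_state):
        """One-step Q-learning update toward r + gamma * max_a' Q(s', a')."""
        best_next = max(self.q[(next_state, a)] for a in ACTIONS)
        td_target = reward + self.gamma * best_next
        self.q[(state, action)] += self.alpha * (td_target - self.q[(state, action)])


def reward(allocated, predicted_demand):
    """Illustrative reward: penalize the gap between allocation and forecast."""
    return -abs(allocated - predicted_demand)
```

Feeding the LSTM forecast into `reward` is one simple way to let predictions shape the policy; a fuller reward would also weigh SLA adherence and utilization, as the protocol describes.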

Experimental Protocols for Reinforcement Learning-Based Allocation

This protocol outlines a methodology for implementing a Reinforcement Learning-driven Multi-Objective Task Scheduling framework.

Protocol: Deep Q-Network for Multi-Objective Task Scheduling

Objective: To dynamically allocate tasks across virtual machines by simultaneously minimizing energy consumption, reducing costs, and ensuring Quality of Service (QoS).

System Architecture Diagram:

Methodology Details:

- Problem Formulation as MDP:
  - State (s): A vector representing the current state of the cloud environment, including resource utilization (CPU, memory, disk I/O) of each VM, the number of queued tasks, and the characteristics of the current task to be scheduled [3] [8].
  - Action (a): The assignment of a task to a specific Virtual Machine (VM) from the pool of available VMs [3].
  - Reward (r): A composite reward function designed to balance multiple objectives. It can be formulated as $r = w_1 \cdot \text{Energy\_Saved} + w_2 \cdot \text{Cost\_Reduced} + w_3 \cdot \text{SLA\_Penalty\_Avoided} - w_4 \cdot \text{Deadline\_Violation}$, where $w_1$ through $w_4$ are weights to balance the importance of each objective [3]. The reward adapts to real-time resource utilization, task deadlines, and energy metrics.
- DRL Agent Setup (DQN):
  - Network Architecture: Implement a Deep Q-Network with fully connected layers. The input layer size matches the state dimension, and the output layer size equals the number of possible actions (VMs).
  - Training Loop: The agent interacts with a simulated cloud environment. Experiences (s, a, r, s') are stored in a replay buffer. The Q-network is trained by sampling mini-batches from this buffer to minimize the temporal difference error [3] [8].
- Evaluation:
  - Environment: Use a simulated cloud platform (e.g., CloudSim) or a containerized testbed.
  - Workloads: Utilize real-world traces or synthetic benchmarks to generate dynamic workloads.
  - Baselines: Compare the RL-MOTS framework against state-of-the-art heuristic (e.g., First-Fit, Best-Fit) and metaheuristic methods (e.g., Genetic Algorithm, PSO). Target outcomes include up to a 27% reduction in energy consumption and an 18% improvement in cost efficiency while meeting deadline constraints [3].
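
The pieces of this protocol that do not depend on a deep learning library can be sketched in plain Python: the composite reward, a replay buffer, and the temporal-difference target a DQN would regress toward. The weight values, class and function names, and the `done` flag are assumptions for illustration; the Q-network itself is omitted, and [3] does not prescribe these specifics.

```python
import random
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Weights:
    energy: float = 0.4    # w1 (hypothetical value)
    cost: float = 0.3      # w2 (hypothetical value)
    sla: float = 0.2       # w3 (hypothetical value)
    deadline: float = 0.1  # w4 (hypothetical value)


def composite_reward(energy_saved, cost_reduced, sla_penalty_avoided,
                     deadline_violation, w=Weights()):
    """r = w1*Energy_Saved + w2*Cost_Reduced + w3*SLA_Penalty_Avoided - w4*Deadline_Violation."""
    return (w.energy * energy_saved + w.cost * cost_reduced
            + w.sla * sla_penalty_avoided - w.deadline * deadline_violation)


class ReplayBuffer:
    """Fixed-capacity store of (s, a, r, s_next, done) transitions."""

    def __init__(self, capacity, seed=0):
        self.data = deque(maxlen=capacity)  # oldest transitions are evicted first
        self.rng = random.Random(seed)

    def push(self, transition):
        self.data.append(transition)

    def sample(self, batch_size):
        """Uniformly sample a mini-batch without replacement."""
        return self.rng.sample(list(self.data), min(batch_size, len(self.data)))


def td_target(r, next_q_values, done, gamma=0.99):
    """Target y = r + gamma * max_a' Q(s', a'); no bootstrapping at episode end."""
    return r if done else r + gamma * max(next_q_values)
```

In a full agent, `next_q_values` would come from the Q-network (or a target network) evaluated on the sampled next states, and the loss would be the squared difference between `td_target` and the predicted Q-value of the taken action.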

The Paradigm Shift from Single-Task to Multi-Task Optimization

The field of optimization is undergoing a fundamental transformation, moving from traditional single-task models toward sophisticated multi-task optimization (MTO) paradigms. This shift is particularly impactful in complex domains like cloud computing resource allocation, where managing multiple, often conflicting objectives simultaneously is essential for operational efficiency. Evolutionary Multi-task Optimization (EMTO) represents a cutting-edge approach within this paradigm, treating multiple optimization tasks not as isolated problems but as a unified problem-solving environment where knowledge can be transferred synergistically [11].

In cloud computing environments, this paradigm enables intelligent resource management that dynamically adapts to fluctuating workloads, diverse application requirements, and heterogeneous infrastructure capabilities. Unlike single-task approaches that optimize resource parameters in isolation, multi-task frameworks leverage latent synergies between different optimization tasks, leading to superior global optimization efficiency and more adaptive resource allocation strategies [12]. This article details the application notes and experimental protocols essential for implementing EMTO in cloud resource allocation research, providing scientists and developers with practical methodologies for next-generation cloud computing infrastructures.

Quantitative Performance Analysis of Multi-Task Optimization Approaches

The theoretical advantages of multi-task optimization are demonstrated through significant performance improvements across multiple cloud computing metrics. The following table summarizes quantitative findings from recent studies implementing multi-task optimization for resource allocation.

Table 1: Performance Metrics of Multi-Task Optimization in Cloud Resource Allocation

| Optimization Approach | Application Context | Key Performance Improvements | Research Source |
|---|---|---|---|
| Prediction-enabled Reinforcement Learning (PCRA) | Cloud resource allocation using Q-learning with multiple ML predictors | 94.7% Q-value prediction accuracy; 17.4% reduction in SLA violations and resource cost | [10] |
| Evolutionary Multi-task with LSTM & Q-learning | Microservice resource allocation in cloud environments | 4.3% improvement in resource utilization; 39.1% reduction in allocation errors | [12] |
| Multi-task Multi-objective (I-MOMFEA-II) | CPU/I/O-intensive task scheduling in multi-cloud environment | CPU-intensive: 7.6% cost, 20.1% time, 16.1% energy improvement; I/O-intensive: 10% cost, 17.7% time, 36.5% VM throughput improvement | [13] |
| Scenario-based Self-Learning Transfer (SSLT) | General multi-task optimization problems (MTOPs) | Superior self-learning ability to adapt strategies in real-time; confirmed performance on trajectory design missions | [14] |

The quantitative evidence indicates that multi-task optimization outperformed the single-task baselines in each of the cloud computing scenarios summarized above. The synergistic knowledge transfer between related tasks is credited with more efficient resource utilization, reduced allocation errors, and improvements in cost and energy efficiency [12] [13]. These advancements are particularly valuable for drug development professionals leveraging cloud infrastructure for computational research, where optimal resource allocation directly impacts research timelines and operational costs.

Experimental Protocols for Multi-Task Optimization in Cloud Resource Allocation

Protocol 1: Evolutionary Multi-task Optimization with Adaptive Learning

This protocol implements an evolutionary multi-task optimization framework that integrates Long Short-Term Memory (LSTM) networks with Q-learning for dynamic resource allocation [12].

A. Experimental Setup and Environment Configuration

- Platform Deployment: Deploy Docker containers on a cluster of virtual nodes, with each node configured with 4-core 2.4GHz virtual CPUs, 8GB memory, and 50GB virtual storage [12].

- Orchestration Tool: Utilize Minikube for local development and testing of Kubernetes clusters, selected for its simple configuration and lightweight design [12].

- Benchmarking: Implement RUBiS benchmark applications within a CloudStack environment to emulate real-world cloud workload conditions [10].

B. Resource Prediction Task Implementation

- LSTM Network Configuration: Design LSTM architecture to capture temporal dependencies in resource usage data, processing historical workload patterns to forecast future resource demands [12].

- Feature Engineering: Extract multi-dimensional time-series features including CPU utilization, memory consumption, I/O patterns, and network usage metrics across fixed-time windows [12].

- Training Protocol: Train LSTM models using historical cloud workload data, employing backpropagation through time with adaptive learning rates starting at 0.001 with decay factors applied every 50 epochs [12].
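
The learning-rate schedule in the training protocol above can be written as a step decay. The initial rate of 0.001 and the 50-epoch interval come from the text; the decay factor of 0.5 is a placeholder assumption, since the source does not state its value, and the function name is hypothetical.

```python
def step_decay_lr(epoch, initial_lr=0.001, decay_factor=0.5, step_size=50):
    """Learning rate after applying decay_factor once per completed step_size epochs.

    decay_factor=0.5 is an assumed placeholder; the source gives only the
    initial rate (0.001) and the decay interval (50 epochs).
    """
    if epoch < 0:
        raise ValueError("epoch must be non-negative")
    return initial_lr * decay_factor ** (epoch // step_size)
```

Under these assumed values the rate stays at 0.001 for epochs 0 to 49, halves at epoch 50, and halves again at epoch 100.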

C. Decision Optimization Implementation

- Q-learning Formulation: Define state space as current resource utilization levels, action space as resource allocation decisions, and reward function as a weighted combination of resource utilization efficiency and SLA compliance [10] [12].

- Adaptive Parameter Mechanism: Implement a transfer mechanism that dynamically adjusts learning parameters between LSTM predictions and Q-learning decisions based on real-time system feedback [12].

- Knowledge Integration: Feed LSTM predictions into Q-learning in real-time to guide decision-making, enabling proactive resource allocation based on forecasted demands [12].

D. Evolutionary Multi-task Joint Optimization

- Unified Framework: Formulate resource prediction, decision optimization, and resource allocation as a unified multi-task optimization problem within a single EMTO framework [12].

- Knowledge Transfer: Implement implicit genetic transfer mechanisms that allow shared knowledge exchange between different tasks, enabling collaborative evolution toward superior solutions [11].

- Performance Validation: Execute 30 independent runs with different random seeds, recording inverted generational distance (IGD) values at predefined evaluation checkpoints to assess optimization progress [11].

Protocol 2: Multi-Task Multi-Objective Scheduling for Heterogeneous Workloads

This protocol addresses the challenge of scheduling CPU-intensive and I/O-intensive tasks simultaneously in multi-cloud environments using a multi-task multi-objective optimization approach [13].

A. Task Classification and Characterization

- Workload Profiling: Implement monitoring agents to classify incoming tasks as either CPU-intensive (characterized by high computational requirements) or I/O-intensive (characterized by frequent input/output operations such as disk and network access) [13].

- Objective Formulation: Construct separate but correlated multi-objective optimization models for each task type: minimizing cost, time, and energy consumption for CPU-intensive tasks; minimizing cost and time while maximizing VM throughput for I/O-intensive tasks [13].

B. Multi-Task Multifactorial Evolutionary Algorithm

- Algorithm Selection: Implement the I-MOMFEA-II algorithm with quadratic crossover for simultaneous scheduling of both task types [13].

- Population Initialization: Create a unified population of candidate solutions representing potential scheduling decisions for both CPU-intensive and I/O-intensive tasks [13].

- Assortative Mating: Preferentially select parents from the same task type while allowing controlled inter-task crossover through a defined mating probability [13].

- Quadratic Crossover: Apply specialized crossover operations that exploit problem structure to generate offspring with improved fitness across both task types [13].
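
The assortative mating step above can be sketched as follows. This is a generic multifactorial-style illustration rather than the I-MOMFEA-II implementation from [13]: an arithmetic blend stands in for the quadratic crossover, whose details are not given here, and the `Individual` class, the unit-interval gene encoding, the Gaussian mutation, and the parameter name `rmp` (the mating probability) are assumptions.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class Individual:
    genes: List[float]  # assumed unified encoding in [0, 1]
    task: int           # index of the task this individual is evaluated on


def blend_crossover(a, b, rng):
    """Arithmetic blend; a simple stand-in for the quadratic crossover."""
    w = rng.random()
    c1 = [w * x + (1 - w) * y for x, y in zip(a, b)]
    c2 = [(1 - w) * x + w * y for x, y in zip(a, b)]
    return c1, c2


def mutate(genes, rng, sigma=0.05):
    """Gaussian perturbation clipped to the unit interval."""
    return [min(1.0, max(0.0, g + rng.gauss(0.0, sigma))) for g in genes]


def assortative_mating(p1, p2, rmp, rng):
    """Always cross same-task parents; cross different-task parents with probability rmp."""
    if p1.task == p2.task or rng.random() < rmp:
        g1, g2 = blend_crossover(p1.genes, p2.genes, rng)
        # Each child inherits its task from a randomly chosen parent,
        # which is how genetic material moves between tasks.
        t1 = rng.choice((p1.task, p2.task))
        t2 = rng.choice((p1.task, p2.task))
        return Individual(g1, t1), Individual(g2, t2)
    # No transfer: each parent yields a mutated child on its own task.
    return (Individual(mutate(p1.genes, rng), p1.task),
            Individual(mutate(p2.genes, rng), p2.task))
```

Setting `rmp` to 0 disables inter-task crossover entirely, while values closer to 1 increase knowledge transfer between the CPU-intensive and I/O-intensive populations at the risk of negative transfer when the tasks are dissimilar.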

C. Performance Evaluation Metrics

- Multi-Objective Assessment: Evaluate solutions using Pareto dominance relationships across all objective functions for both task types [13].

- Efficiency Metrics: Measure algorithm performance through cost reduction, time efficiency, energy consumption (for CPU-intensive tasks), and VM throughput (for I/O-intensive tasks) [13].

- Comparative Analysis: Benchmark performance against single-task scheduling approaches and other multi-objective evolutionary algorithms to quantify performance improvements [13].
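
The Pareto dominance relation used in the multi-objective assessment above can be sketched as below, assuming every objective is minimized; an objective to be maximized, such as VM throughput, would be negated first. The function names are hypothetical.

```python
from typing import List, Sequence

Objectives = Sequence[float]


def dominates(a: Objectives, b: Objectives) -> bool:
    """True if a is no worse than b in every objective and strictly better in one."""
    return (all(x <= y for x, y in zip(a, b))
            and any(x < y for x, y in zip(a, b)))


def non_dominated(points: Sequence[Objectives]) -> List[Objectives]:
    """Keep only the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]
```

For a CPU-intensive task, each point could hold the (cost, time, energy) values of one candidate schedule, and `non_dominated` would return the schedules on the current Pareto front.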

Table 2: Research Reagent Solutions for Multi-Task Optimization Experiments

| Research Reagent | Function in Experimental Setup | Implementation Specifications |
|---|---|---|
| CloudStack | Cloud orchestration platform for creating realistic cloud environments | Used with RUBiS benchmark to emulate real-world conditions [10] |
| Docker Containers | Lightweight virtualization for deploying experimental nodes | Configured as 4-node cluster with 4-core CPUs, 8GB RAM each [12] |
| Minikube | Local Kubernetes deployment for container orchestration | Selected for simple configuration and lightweight design [12] |
| RUBiS Benchmark | Workload emulation for evaluating resource allocation | Models real-world application behavior and load patterns [10] |
| LSTM Networks | Time-series prediction of resource demands | Captures temporal dependencies in resource usage data [12] |
| Q-learning Algorithm | Reinforcement learning for dynamic resource allocation | Optimizes allocation strategies through environmental interaction [10] [12] |
| I-MOMFEA-II | Multi-task multifactorial evolutionary algorithm | Simultaneously schedules heterogeneous task types [13] |

Visualization of Multi-Task Optimization Frameworks

Evolutionary Multi-Task Optimization Architecture

Scenario-Based Self-Learning Transfer Framework

The paradigm shift from single-task to multi-task optimization represents a fundamental advancement in computational intelligence for cloud resource allocation. The experimental protocols and application notes detailed herein provide researchers and developers with practical methodologies for implementing evolutionary multi-task optimization in cloud environments. The quantitative results demonstrate substantial improvements in key performance metrics, including resource utilization efficiency, allocation accuracy, and cost-effectiveness across diverse cloud computing scenarios [10] [12] [13].

Future research directions will focus on enhancing the adaptive learning capabilities of multi-task optimization frameworks, particularly through improved knowledge transfer mechanisms and more sophisticated scenario classification techniques [14]. As cloud computing infrastructures continue to evolve in complexity and scale, multi-task optimization approaches will play an increasingly critical role in enabling efficient, intelligent, and autonomous resource management systems capable of meeting the demanding requirements of modern scientific computing and commercial applications.

Evolutionary Multi-Task Optimization (EMTO) represents a paradigm shift in evolutionary computation, enabling the simultaneous solution of multiple optimization tasks by leveraging their underlying synergies. Within cloud computing resource allocation research, EMTO frameworks provide a robust methodological foundation for addressing complex, dynamic, and multi-objective resource management challenges. The efficacy of these frameworks fundamentally hinges on two core components: knowledge transfer mechanisms that facilitate cross-task learning and collaborative search strategies that coordinate parallel optimization processes. This article delineates the operational principles, implementation protocols, and practical applications of these components through structured analytical tables, detailed experimental methodologies, and visual workflow representations, providing researchers with comprehensive tools for advancing resource allocation systems in computational environments.

Theoretical Foundations of EMTO

EMTO diverges from traditional single-task evolutionary approaches by formulating multiple optimization problems as a unified multi-task problem. Formally, a multi-task optimization problem with m tasks {T~1~, T~2~, ..., T~m~} can be defined where each task T~i~ possesses its own search space Ω~i~ and objective function f~i~: Ω~i~ → ℝ. The collective objective is to find optimal solutions {x~1~, x~2~, ..., x~m~} such that x~i~ = arg min f~i~(x) over x ∈ Ω~i~ for all i ∈ {1, 2, ..., m} [15] [14].
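The formulation can be made concrete with a small sketch. It assumes a unified encoding in [0, 1]^D that is decoded into each task's own search space, a convention borrowed from multifactorial evolutionary algorithms and not stated in the text; the two toy objectives, `TASKS`, `decode`, and `random_search` are hypothetical names used only for illustration.

```python
import numpy as np

# Hypothetical tasks: each has its own dimensionality, bounds, and objective f_i.
TASKS = [
    {"dim": 2, "low": -5.0, "high": 5.0, "f": lambda x: float(np.sum(x ** 2))},
    {"dim": 3, "low": 0.0, "high": 10.0, "f": lambda x: float(np.sum((x - 4.0) ** 2))},
]
D_UNIFIED = max(task["dim"] for task in TASKS)


def decode(y, task):
    """Map a unified vector y in [0, 1]^D to the task's own search space."""
    head = y[: task["dim"]]
    return task["low"] + head * (task["high"] - task["low"])


def evaluate(y, task):
    """Objective value of unified vector y on one task."""
    return task["f"](decode(y, task))


def random_search(n_samples=2000, seed=0):
    """Baseline without transfer: best value seen per task over shared samples."""
    rng = np.random.default_rng(seed)
    best = [np.inf] * len(TASKS)
    for _ in range(n_samples):
        y = rng.random(D_UNIFIED)
        for i, task in enumerate(TASKS):
            best[i] = min(best[i], evaluate(y, task))
    return best
```

Because every candidate lives in the same unified space, a solution found for one task can be decoded and evaluated on another, which is what makes cross-task transfer possible in the first place.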

The conceptual innovation of EMTO lies in its exploitation of latent similarities across tasks, which enables the transfer of evolutionary materials—including genetic information, search strategies, and landscape characteristics—between concurrently evolving populations. This cross-pollination accelerates convergence, enhances solution quality, and improves resource utilization efficiency. In cloud resource allocation contexts, this translates to simultaneously optimizing multiple resource types (e.g., CPU, memory, I/O) and performance objectives (e.g., cost, time, energy) within a unified evolutionary framework [12] [13].

Table 1: Core Components of EMTO Frameworks and Their Functions

| Component | Sub-Component | Primary Function | Cloud Resource Allocation Relevance |
|---|---|---|---|
| Knowledge Transfer | Scenario-Specific Strategies | Tailor transfer to evolutionary context | Adapt to dynamic workload patterns |
| | Shape KT Strategy | Transfer convergence characteristics | Optimize for similar workload shapes |
| | Domain KT Strategy | Transfer promising search regions | Identify optimal resource configurations |
| | Bi-KT Strategy | Combined shape and domain transfer | Comprehensive optimization transfer |
| | Intra-Task Strategy | Independent optimization | Handle dissimilar tasks without interference |
| Collaborative Search | Adaptive Parameter Learning | Dynamically synchronize model components | Coordinate prediction and allocation modules |
| | Multi-Task Joint Optimization | Unified modeling of related tasks | Simultaneously address prediction and allocation |
| | Data-Driven Smoothing | Simplify complex fitness landscapes | Manage rugged cloud performance landscapes |
| | Evolutionary Multi-Task Optimizer | Execute parallel optimization with transfer | Coordinate multiple resource allocation tasks |

Knowledge Transfer Mechanisms

Theoretical Principles and Typology

Knowledge transfer in EMTO operates on the principle that beneficial genetic material or search strategies discovered while solving one task may prove advantageous for other related tasks. The efficacy of transfer depends critically on appropriate matching between task relationships and transfer strategies. Research has identified four primary evolutionary scenarios that dictate optimal transfer approaches [14]:

- Only Similar Shape: Tasks share similar fitness landscape contours but differ in optimal solution locations, favoring shape-based knowledge transfer.

- Only Similar Optimal Domain: Tasks converge toward similar solution regions despite different fitness landscapes, benefiting from domain-level transfer.

- Similar Shape and Optimal Domain: Comprehensive similarity enables bidirectional knowledge exchange.

- Dissimilar Shape and Domain: Minimal relatedness warrants limited or no knowledge transfer to prevent negative interference.
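The mapping from the four scenarios to transfer strategies can be written as a simple decision rule. The sketch below assumes that shape and domain similarity have already been quantified as scores in [0, 1] and that a single threshold separates "similar" from "dissimilar"; both the scores and the threshold are hypothetical simplifications, not part of the cited framework.

```python
def select_strategy(shape_similarity, domain_similarity, threshold=0.5):
    """Pick a knowledge transfer strategy from two similarity scores in [0, 1]."""
    similar_shape = shape_similarity >= threshold
    similar_domain = domain_similarity >= threshold
    if similar_shape and similar_domain:
        return "bi-KT"        # similar shape and optimal domain
    if similar_shape:
        return "shape KT"     # only similar shape
    if similar_domain:
        return "domain KT"    # only similar optimal domain
    return "intra-task"       # dissimilar: avoid negative transfer


# Example: landscapes look alike, but the optima sit in different regions.
print(select_strategy(shape_similarity=0.8, domain_similarity=0.2))
```

A fixed rule like this is the static baseline that a learned selector is meant to improve on, since the right threshold generally changes as the populations evolve.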

Implementation Framework

The Scenario-based Self-Learning Transfer (SSLT) framework addresses the challenge of dynamically selecting appropriate transfer strategies across diverse evolutionary scenarios. This framework employs a Deep Q-Network (DQN) as a relationship mapping model that learns optimal correlations between characterized evolutionary scenarios and scenario-specific knowledge transfer strategies [14].

The implementation proceeds through two sequential stages:

- Knowledge Learning Stage: The system extracts scenario features from population distributions and builds the DQN model through exploration of strategy effectiveness.

- Knowledge Utilization Stage: The trained DQN model adaptively selects the most promising scenario-specific strategy based on real-time evolutionary states.
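The two stages can be illustrated with a deliberately simplified sketch. It swaps the Deep Q-Network for a tabular Q-learning table, uses scenario labels as states, and rewards a strategy only when it matches the scenario label; all of these are assumptions made to keep the example small, and `StrategySelector`, `toy_reward`, and `learn` are hypothetical names.

```python
import random

STRATEGIES = ["intra-task", "shape KT", "domain KT", "bi-KT"]


class StrategySelector:
    """Tabular Q-learning stand-in for the DQN relationship mapping model."""

    def __init__(self, alpha=0.1, gamma=0.9, seed=0):
        self.alpha, self.gamma = alpha, gamma
        self.q = {}  # (state, action) -> estimated long-term value
        self.rng = random.Random(seed)

    def value(self, state, action):
        return self.q.get((state, action), 0.0)

    def explore(self):
        """Knowledge learning stage: try strategies at random."""
        return self.rng.choice(STRATEGIES)

    def exploit(self, state):
        """Knowledge utilization stage: pick the best-valued strategy."""
        return max(STRATEGIES, key=lambda a: self.value(state, a))

    def update(self, state, action, reward, next_state):
        """Standard Q-learning update toward reward plus discounted best value."""
        best_next = max(self.value(next_state, a) for a in STRATEGIES)
        old = self.value(state, action)
        target = reward + self.gamma * best_next
        self.q[(state, action)] = old + self.alpha * (target - old)


def toy_reward(state, action):
    """Hypothetical reward: 1 when the strategy matches the scenario label."""
    return 1.0 if state == action else 0.0


def learn(selector, rounds=200):
    """Visit every scenario repeatedly while exploring strategies at random."""
    for _ in range(rounds):
        for state in STRATEGIES:
            action = selector.explore()
            selector.update(state, action, toy_reward(state, action), state)
```

After `learn(selector)`, calling `selector.exploit(state)` returns the strategy with the highest learned value for that scenario. A real implementation would replace the label-valued state with the extracted scenario features and the table with a neural network.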

Table 2: Knowledge Transfer Strategies and Their Applications

| Strategy Type | Mechanism | Best-Suited Scenario | Performance Benefit |
|---|---|---|---|
| Shape KT | Transfers population distribution characteristics indicating convergence patterns | Similar fitness landscape shapes | Accelerates convergence velocity by 17-24% |
| Domain KT | Transfers information about promising search regions | Similar optimal solution domains | Improves global exploration, reducing local entrapment by 31% |
| Bi-KT | Simultaneous transfer of both shape and domain knowledge | Tasks with comprehensive similarity | Enhances both convergence speed and solution quality |
| Intra-Task | Independent optimization without cross-task transfer | Dissimilar tasks | Prevents negative transfer, maintaining solution integrity |

Collaborative Search Strategies

Data-Driven Multi-Task Optimization

The Data-Driven Multi-Task Optimization (DDMTO) framework enhances collaborative search by synchronously optimizing original complex problems alongside their smoothed counterparts. This approach transforms rugged fitness landscapes—prevalent in cloud resource allocation due to non-linear resource-performance relationships—into more navigable surfaces while maintaining the integrity of the original optimization target [15].

The framework operationalizes through several coordinated mechanisms:

- Fitness Landscape Smoothing: Machine learning models (e.g., neural networks) function as data-driven low-pass filters, generating simplified versions of complex fitness landscapes.

- Multi-Task Formulation: The original problem and smoothed landscape are modeled as distinct but related optimization tasks within an EMTO framework.

- Knowledge Transfer Control: Coordinated transfer between tasks prevents error propagation from the smoothed to the original landscape.
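A minimal sketch of the smoothing idea follows. Instead of a neural network it uses a Gaussian kernel regressor (Nadaraya-Watson), which is a simpler low-pass filter chosen here only to keep the example dependency-free; the one-dimensional `rugged` objective, the bandwidth, and the sample grid are all hypothetical.

```python
import numpy as np


def rugged(x):
    """Hypothetical rugged 1-D objective: a bowl plus high-frequency ripples."""
    return x ** 2 + 2.0 * np.sin(15.0 * x)


def fit_smoother(xs, ys, bandwidth=0.5):
    """Return a kernel regressor over samples (xs, ys) acting as a low-pass filter."""
    def smooth(x):
        x = np.atleast_1d(np.asarray(x, dtype=float))
        weights = np.exp(-0.5 * ((x[:, None] - xs[None, :]) / bandwidth) ** 2)
        return (weights @ ys) / weights.sum(axis=1)
    return smooth


def count_local_minima(values):
    """Count strict interior local minima of a sampled curve."""
    inner = values[1:-1]
    return int(np.sum((inner < values[:-2]) & (inner < values[2:])))


xs = np.linspace(-3.0, 3.0, 301)
task_original = rugged                         # Task 1: the original landscape
task_smoothed = fit_smoother(xs, rugged(xs))   # Task 2: its smoothed counterpart

print(count_local_minima(task_original(xs)), count_local_minima(task_smoothed(xs)))
```

The smoothed task has far fewer local minima, so a population evolving on it can locate the promising basin quickly; final solution quality is still judged on `task_original`, which is why transfer from the smoothed task needs to be controlled.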

Adaptive Collaborative Optimization

In cloud resource allocation implementations, collaborative search often integrates predictive and optimization components through adaptive learning mechanisms. One demonstrated approach combines Long Short-Term Memory (LSTM) networks for resource demand prediction with Q-learning for dynamic allocation strategy optimization [12]. The synergy between these components is managed through an adaptive parameter learning mechanism that dynamically bridges prediction and optimization based on real-time system feedback.

Experimental implementations have demonstrated significant performance improvements, including 4.3% higher resource utilization and 39.1% reduction in allocation errors compared to state-of-the-art baseline methods [12].

Experimental Protocols and Validation

Protocol 1: DDMTO Framework Implementation

Objective: To implement and validate the Data-Driven Multi-Task Optimization framework for enhancing evolutionary algorithm performance in complex cloud resource allocation environments.

Materials and Setup:

- Computing Environment: Kubernetes cluster with Docker containers simulating virtual nodes (4-core 2.4GHz vCPUs, 8GB memory)

- Benchmark Functions: High-dimensional continuous functions with rugged landscapes for emulating cloud resource allocation challenges

- EMTO Algorithm: Evolutionary multi-task optimizer with knowledge transfer control

- ML Smoothing Models: Neural networks, support vector machines, or Gaussian processes

Procedure:

- Landscape Smoothing Phase:
  - Sample the original fitness landscape using current population distributions
  - Train selected ML models to approximate the global structure of the landscape
  - Generate smoothed landscape versions by ML model predictions
- Multi-Task Optimization Phase:
  - Formulate the original landscape optimization as Task 1 (difficult task)
  - Formulate the smoothed landscape optimization as Task 2 (easy task)
  - Initialize populations for both tasks within the EMTO framework
- Knowledge Transfer Execution:
  - Implement inter-task knowledge transfer using assortative mating mechanisms
  - Apply knowledge transfer control to prevent negative transfer
  - Execute parallel population evolution with periodic transfer operations
- Performance Assessment:
  - Evaluate solution quality on original fitness landscape
  - Measure convergence speed and global optimization performance
  - Compare against single-task evolutionary algorithms
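The knowledge transfer execution step above can be sketched as follows. The example assumes the assortative mating rule used in multifactorial evolutionary algorithms, where parents assigned to different tasks recombine only with a random mating probability `rmp`; the parameter names, uniform crossover, and Gaussian mutation are illustrative choices rather than details given in the protocol.

```python
import numpy as np


def assortative_mating(pa, pb, skill_a, skill_b, rmp, rng, sigma=0.1):
    """Return two children in [0, 1]^D and the task index each one inherits."""
    if skill_a == skill_b or rng.random() < rmp:
        # Uniform crossover; when the skills differ this is cross-task transfer.
        mask = rng.random(pa.shape) < 0.5
        child_a = np.where(mask, pa, pb)
        child_b = np.where(mask, pb, pa)
        skills = [int(rng.choice([skill_a, skill_b])) for _ in range(2)]
    else:
        # No transfer: each parent is mutated and stays within its own task.
        child_a = pa + rng.normal(0.0, sigma, pa.shape)
        child_b = pb + rng.normal(0.0, sigma, pb.shape)
        skills = [skill_a, skill_b]
    return np.clip(child_a, 0.0, 1.0), np.clip(child_b, 0.0, 1.0), skills


rng = np.random.default_rng(0)
parent_task1 = rng.random(5)   # individual evolving on the original landscape
parent_task2 = rng.random(5)   # individual evolving on the smoothed landscape
children = assortative_mating(parent_task1, parent_task2, 0, 1, rmp=0.3, rng=rng)
```

Lowering `rmp` when transferred offspring perform poorly is one simple form of knowledge transfer control: at `rmp = 0` the two tasks evolve independently.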

Validation Metrics:

- Global optimum discovery rate

- Convergence velocity

- Computational resource utilization

- Solution quality metrics (cost, time, energy consumption)

Protocol 2: SSLT Framework Evaluation

Objective: To assess the performance of the Scenario-based Self-Learning Transfer framework in multi-task cloud environments.

Materials and Setup:

- Test Problems: Multi-task optimization benchmarks with known task relationships

- Backbone Solvers: Differential Evolution (DE) and Genetic Algorithm (GA) implementations

- Feature Extraction Modules: Intra-task and inter-task scenario characterization

- DQN Implementation: Reinforcement learning model with experience replay

Procedure:

- Scenario Characterization:
  - Extract intra-task features: population diversity, convergence metrics
  - Extract inter-task features: solution distribution similarity, fitness correlation
  - Quantify evolutionary scenario using ensemble feature representation
- Strategy Portfolio Implementation:
  - Implement four scenario-specific strategies (intra-task, shape KT, domain KT, bi-KT)
  - Define strategy execution protocols for each backbone solver
- DQN Training Phase:
  - Initialize DQN with random weights
  - Execute random strategy selection to build initial experience replay memory
  - Update DQN based on strategy effectiveness and long-term impact
- Strategy Automation Phase:
  - Use trained DQN to select optimal strategies based on current state
  - Execute selected strategies within evolutionary process
  - Continuously update experience replay and refine DQN
- Comparative Analysis:
  - Compare against static strategy selection approaches
  - Evaluate performance across diverse MTOP test suites
  - Assess real-world performance on cloud task scheduling problems

Validation Metrics:

- Multi-task optimization performance (all tasks)

- Knowledge transfer effectiveness

- Adaptation speed to changing scenarios

- Computational overhead of framework

Table 3: Quantitative Performance Improvements of EMTO Frameworks

| Framework | Application Context | Performance Metrics | Improvement Over Baselines |
|---|---|---|---|
| DDMTO | High-dimensional rugged landscapes | Global optimization performance | Significant enhancement without increased computational cost |
| SSLT-DE | Multi-task optimization problems | Convergence efficiency | Superior performance against state-of-the-art competitors |
| SSLT-GA | Multi-task optimization problems | Solution quality | Favorable performance across diverse test problems |
| EMTO-LSTM-QL | Microservice resource allocation | Resource utilization | 4.3% improvement |
| EMTO-LSTM-QL | Microservice resource allocation | Allocation errors | 39.1% reduction |
| I-MOMFEA-II | CPU-intensive task scheduling | Cost optimization | 7.6% improvement |
| I-MOMFEA-II | CPU-intensive task scheduling | Time efficiency | 20.1% improvement |
| I-MOMFEA-II | CPU-intensive task scheduling | Energy consumption | 16.1% improvement |
| I-MOMFEA-II | I/O-intensive task scheduling | VM throughput | 36.5% improvement |

The Scientist's Toolkit

Table 4: Essential Research Reagents and Computational Tools for EMTO Experiments

| Tool/Resource | Function | Implementation Example | Application Context |
|---|---|---|---|
| MTO-Platform Toolkit | Experimental platform for MTOP research | Matlab-based framework with predefined benchmarks | Standardized testing and comparison of EMTO algorithms |
| Backbone Solvers | Core evolutionary algorithms for optimization | Differential Evolution, Genetic Algorithms | Base optimization capability for individual tasks |
| Deep Q-Network (DQN) | Reinforcement learning for strategy selection | Neural network with experience replay | Adaptive knowledge transfer strategy selection in SSLT |
| LSTM Networks | Time-series prediction of resource demands | Deep learning model with temporal memory | Resource demand forecasting in cloud environments |
| Q-Learning | Dynamic resource allocation strategy optimization | Reinforcement learning with state-action value function | Real-time allocation decision making |
| Prefix-Free Parsing (PFP) | Compressed-space computation of suffix arrays | Streaming algorithm for large sequence collections | Large-scale pangenome analysis (biological applications) |
| Multi-MUM Finder | Identification of maximal unique matches across sequences | Mumemto tool for genomic sequence alignment | Biological sequence analysis and conservation studies |
| Data Quality Framework | Assessment of training data suitability | METRIC-framework with 15 awareness dimensions | Trustworthy AI development in medical applications |

Application in Cloud Resource Allocation

EMTO frameworks demonstrate particular efficacy in multi-cloud environment task scheduling, where they simultaneously optimize conflicting objectives across different task types. The I-MOMFEA-II algorithm exemplifies this application, constructing separate multi-objective optimization models for CPU-intensive and I/O-intensive tasks while leveraging multi-task evolutionary optimization to solve them concurrently [13].

This approach yields significant performance enhancements: for CPU-intensive tasks, improvements of 7.6% in cost, 20.1% in time, and 16.1% in energy consumption; for I/O-intensive tasks, improvements of 10% in cost, 17.7% in time, and 36.5% in VM throughput [13]. These gains stem from the framework's ability to exploit latent similarities between task types while respecting their fundamental differences through appropriate knowledge transfer mechanisms.

Workflow scheduling represents a critical challenge in cloud and cloud-edge-end collaborative computing environments, where the core problem involves allocating computational tasks across distributed, heterogeneous resources while simultaneously optimizing for multiple, often conflicting, objectives. This complex optimization domain requires sophisticated modeling to balance user Quality of Service (QoS) requirements with provider operational constraints, particularly within evolutionary multi-task optimization frameworks for cloud computing resource allocation. The fundamental dilemma arises from the inherent trade-offs between key performance metrics: minimizing execution time (makespan) frequently conflicts with reducing financial costs and energy consumption, while maintaining deadline adherence and respecting data locality constraints further complicates the solution space [16] [17].

Workflow scheduling is NP-hard: no polynomial-time algorithm for finding optimal schedules is known, and the running time of exact methods grows exponentially with problem size in the worst case, which motivates advanced heuristic and metaheuristic approaches [17]. In geo-distributed clouds and cloud-edge-end frameworks, this complexity intensifies due to several factors: the heterogeneity of virtual machines with diverse billing mechanisms, geographical distribution of data with locality characteristics, stringent deadline requirements for time-sensitive applications, and the energy consumption concerns of large-scale data centers [16] [18]. Multi-objective optimization models have consequently emerged as essential frameworks for addressing these challenges, enabling the identification of Pareto-optimal solutions that represent optimal trade-offs among competing objectives.

Core Multi-Objective Optimization Model

The multi-objective workflow scheduling problem can be formally defined as a constrained optimization problem seeking to minimize a vector of objective functions:

Minimize: F(W) = [f₁(Makespan), f₂(Cost), f₃(Energy)]

Subject to: Deadline, Data Locality, Resource Capacity, and Task Precedence Constraints [16] [17]

The mathematical formulation incorporates several key components that define the solution space and constraint boundaries. The model operates on workflow applications represented as Directed Acyclic Graphs (DAGs) where nodes correspond to computational tasks and edges represent data dependencies and precedence constraints [18] [17]. Resource heterogeneity is captured through diverse virtual machine configurations with varying processing capabilities, pricing models, and energy consumption profiles [16] [17]. Temporal constraints include workflow deadlines and temporal dependencies between tasks, while spatial constraints encompass data locality requirements that restrict task execution to specific geographical locations where required datasets reside [16].

Table 1: Primary Optimization Objectives in Workflow Scheduling Models

| Objective | Mathematical Representation | Optimization Goal | Impact Dimension |
|---|---|---|---|
| Makespan | max(CTᵢ) ∀ tasks i | Minimize total workflow execution time | QoS Performance |
| Cost | Σ(ECᵢ + TCᵢ) ∀ tasks i | Minimize total resource rental cost | Economic Efficiency |
| Energy | Σ(Eᵢ × tᵢ) ∀ resources i | Minimize total energy consumption | Operational Sustainability |

Domain-Specific Model Variations

Geo-Distributed Cloud Scheduling Model

In geo-distributed cloud environments, the scheduling model must explicitly account for data locality characteristics and cross-cloud cooperation. The model formulation as a Constrained Multi-objective Optimization Problem (CMOP) incorporates specific constraints regarding dataset access privileges, where certain scientific communities restrict data access to specific geographical locations [16]. This model variation emphasizes rental period reuse optimization, leveraging the unused fractions of billing periods rented by scheduled tasks for subsequent tasks within the same workflow to reduce overall costs [16]. The multi-objective optimization jointly minimizes workflow makespan and rental costs across multiple workflows and Cloud Service Providers (CSPs) while respecting dataset access privileges and deadline constraints.

Cloud-Edge-End Collaborative Framework Model

The cloud-edge-end collaborative framework introduces a hierarchical resource structure with distinct optimization considerations. This model incorporates execution location constraints that restrict certain tasks to specific processing tiers (cloud, edge, or end devices) based on latency requirements, privacy regulations, and hardware compatibility [18]. The optimization problem simultaneously addresses energy consumption and makespan minimization while considering task priority constraints and the heterogeneous capabilities of computing nodes across different tiers [18]. This model is particularly relevant for AI agent applications built on foundation models that require real-time responsiveness alongside computational intensity.

Energy-Aware Workflow Scheduling Model

Energy-aware models integrate Dynamic Voltage and Frequency Scaling (DVFS) techniques directly into the optimization framework, creating a three-dimensional makespan/cost/energy trade-off space [17]. These models employ processors capable of operating at different Voltage Scaling Levels (VSLs), introducing a direct relationship between processing speed, energy consumption, and computational efficiency [17]. The optimization problem formulation incorporates energy consumption during both active execution and idle periods, with processors assumed to operate at the lowest voltage when idling to minimize energy waste [17].

Table 2: Model Variations and Their Distinctive Constraints

| Model Variation | Primary Objectives | Distinctive Constraints | Application Context |
|---|---|---|---|
| Geo-Distributed Clouds | Makespan, Rental Cost | Data Locality, Cross-cloud Billing | Data-intensive Scientific Workflows |
| Cloud-Edge-End Collaboration | Energy, Makespan | Execution Location, Priority | AI Agents, Real-time Applications |
| Energy-Aware Scheduling | Energy, Makespan, Cost | DVFS Capabilities, VSL Limits | Large-scale Data Centers |

Experimental Protocols and Methodologies

Workflow Scheduling Experimental Framework

The experimental validation of multi-objective workflow scheduling models requires a structured methodology to ensure reproducible and comparable results. The foundational protocol begins with workflow preprocessing, which includes task merging operations to consolidate tasks sharing the same original datasets, thereby reducing data transfer volumes and computational complexity [16]. Subsequent priority assignment determines the scheduling sequence of workflow applications, prioritizing those most likely to violate deadline constraints to improve overall scheduling success rates [16].

The core experimental process incorporates evolutionary multi-objective optimization algorithms, which maintain a population of candidate solutions that evolve through generations using genetic operators including crossover, mutation, and selection based on multi-objective fitness evaluation [16] [18]. Intensification strategies are then applied to fully utilize rental periods and optimize both makespan and cost objectives by rescheduling tasks to available time slots within already-paid billing intervals [16]. Performance evaluation employs standardized metrics including Hypervolume (HV) and Inverted Generational Distance (IGD) to assess both the quality and diversity of obtained Pareto fronts, providing comprehensive performance assessment [18].
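Selection based on multi-objective fitness rests on Pareto dominance. The following sketch filters a set of candidate schedules down to its non-dominated subset, assuming every objective is minimized; the objective tuples are made-up (makespan, cost) values used only for illustration.

```python
def dominates(a, b):
    """True if a is no worse than b in every objective and strictly better in one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def non_dominated(points):
    """Return the points that no other point dominates (minimization)."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical (makespan, cost) values for five candidate schedules.
candidates = [(10, 5.0), (8, 7.0), (12, 4.0), (11, 6.0), (8, 7.5)]
print(non_dominated(candidates))  # [(10, 5.0), (8, 7.0), (12, 4.0)]
```

This quadratic-time filter is adequate for the population sizes typical of these experiments; algorithms such as NSGA-II extend it to a full ranking of the population into successive fronts.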

Algorithm-Specific Implementation Protocols

Improved Multi-Objective Memetic Algorithm (IMOMA) Protocol: IMOMA enhances population diversity through dynamic opposition-based learning that automatically adjusts search direction based on evolutionary state [18]. The algorithm incorporates specialized local search operators specifically designed for deep optimization of energy consumption and makespan objectives [18]. A dynamic operator selection mechanism leverages historical performance data to effectively balance global exploration and local exploitation capabilities [18]. The implementation maintains Pareto solution sets through a density estimation-based external archive mechanism with adaptive local search triggering [18].
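The opposition-based learning idea behind IMOMA's diversity mechanism can be shown in its basic, static form. The sketch below is not the dynamic variant described in [18]: it simply reflects each individual within fixed bounds and keeps the better half of the combined set under a single scalar fitness, all of which are simplifying assumptions.

```python
import numpy as np


def opposite_population(pop, low, high):
    """Reflect every individual through the centre of the box [low, high]."""
    return low + high - pop


def obl_step(pop, fitness, low, high):
    """Keep the best len(pop) individuals among pop and its opposites (minimization)."""
    merged = np.vstack([pop, opposite_population(pop, low, high)])
    scores = np.array([fitness(ind) for ind in merged])
    keep = np.argsort(scores)[: len(pop)]
    return merged[keep]


pop = np.array([[0.9, 0.9], [0.8, 0.7]])
improved = obl_step(pop, lambda x: float(np.sum(x ** 2)), low=0.0, high=1.0)
```

In this example both original individuals sit far from the optimum at the origin, so their opposites replace them. A multi-objective version would compare individuals by dominance or an indicator rather than a scalar score.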

Multi-Objective Discrete Particle Swarm Optimization (MODPSO) with DVFS Protocol: This protocol combines particle swarm optimization with Dynamic Voltage and Frequency Scaling techniques for energy-aware scheduling [17]. The implementation models processors as DVFS-enabled resources capable of operating at multiple voltage and frequency levels [17]. The algorithm optimizes the three-dimensional makespan/cost/energy trade-off space through an iterative process that updates particle positions and velocities based on both personal and global best solutions [17].

Evolutionary Multi-Task Optimization (EMTO) Protocol: The EMTO framework formulates resource prediction, decision optimization, and resource allocation as a unified multi-task optimization problem [1]. The protocol integrates Long Short-Term Memory networks for resource demand prediction with Q-learning optimization for dynamic resource allocation strategy [1]. An adaptive parameter transfer mechanism enables shared knowledge exchange between distinct tasks, significantly enhancing global optimization capability [1].

Performance Evaluation Metrics

Rigorous assessment of multi-objective workflow scheduling algorithms requires quantitative metrics that capture both solution quality and diversity. The Hypervolume (HV) metric measures the volume of objective space that the obtained Pareto front dominates relative to a fixed reference point, with higher values indicating better overall performance in both convergence and diversity [18]. Inverted Generational Distance (IGD) averages, over the points of the true (or reference) Pareto front, the distance to the nearest solution in the obtained front; lower values indicate an obtained front that lies closer to, and covers more of, the optimal trade-off surface [18]. Scheduling Success Rate quantifies the percentage of workflows that successfully complete within their specified deadlines, providing crucial practical performance assessment [16]. Resource Utilization Efficiency evaluates how effectively computational resources are employed throughout the scheduling horizon, incorporating both temporal and economic dimensions [16] [17].
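Both indicators are short to compute for small fronts. The sketch below handles Hypervolume for two minimized objectives only and assumes the reference front for IGD is known; the example fronts and the reference point are hypothetical.

```python
import math


def hypervolume_2d(front, ref):
    """Area dominated by a 2-objective minimization front, bounded by ref."""
    pts = sorted(p for p in front if p[0] < ref[0] and p[1] < ref[1])
    area, best_f2 = 0.0, ref[1]
    for f1, f2 in pts:
        if f2 < best_f2:  # points that do not improve f2 are dominated
            area += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return area


def igd(reference_front, obtained_front):
    """Mean distance from each reference point to its nearest obtained point."""
    total = sum(min(math.dist(r, s) for s in obtained_front) for r in reference_front)
    return total / len(reference_front)


reference = [(1.0, 3.0), (2.0, 2.0), (3.0, 1.0)]
print(hypervolume_2d(reference, ref=(4.0, 4.0)))  # 6.0
print(igd(reference, [(2.0, 2.0)]))               # about 0.943
```

The second call shows why IGD also reflects diversity: an obtained front that contains only the middle point is penalized for the two extremes it fails to cover.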

Table 3: Experimental Parameters and Configurations

| Parameter Category | Specific Parameters | Typical Values/Ranges | Impact on Results |
|---|---|---|---|
| Workflow Characteristics | Task Count, Structure Complexity, Data Dependencies | 10-1000 tasks, DAG structures | Determines problem complexity |
| Resource Environment | VM Types, Pricing Models, Energy Profiles | Heterogeneous configurations | Affects objective trade-offs |
| Algorithm Parameters | Population Size, Generation Count, Operator Rates | 50-200 individuals, 100-500 generations | Influences convergence behavior |
| Constraint Settings | Deadlines, Budget Limits, Locality Restrictions | Application-dependent | Defines feasible solution space |

The Scientist's Toolkit

Table 4: Essential Research Reagents and Computational Resources

| Tool/Resource | Function/Purpose | Implementation Notes |
|---|---|---|
| Workflow Benchmark Datasets | Standardized evaluation and comparison | Real-world scientific workflows (Montage, CyberShake) [18] |
| Cloud Simulation Environments | Controlled experimental testing | CloudSim, WorkflowSim, or custom simulators [16] [17] |
| Multi-Objective Optimization Algorithms | Pareto-optimal solution generation | NSGA-II, SPEA2, MOPSO, or custom implementations [18] |
| Performance Evaluation Metrics | Quantitative algorithm assessment | Hypervolume, IGD, Scheduling Success Rate [18] |
| Visualization Tools | Solution trade-off analysis | Parallel coordinates, scatter plot matrices [16] |

Architectural Framework and Signaling Pathways

The architectural framework for multi-objective workflow scheduling in cloud environments follows a structured signaling and decision pathway that transforms raw workflow specifications into optimized resource allocation plans. The process begins with workflow parsing and dependency analysis, which extracts task precedence relationships and data flow requirements [16]. Constraint processing then identifies specific limitations including deadline commitments, data locality restrictions, and budgetary boundaries that define the feasible solution space [16] [18].

The core optimization engine employs evolutionary algorithms that maintain a population of candidate scheduling solutions, applying genetic operators to explore the search space while leveraging problem-specific knowledge to accelerate convergence [16] [18]. The intensification phase implements local search strategies to refine promising solutions, particularly focusing on rental period reuse opportunities in geo-distributed clouds [16]. Solution selection and deployment finally choose the appropriate Pareto-optimal solution based on user preferences or organizational policies and execute the scheduling decision on the target infrastructure [16] [18].

Multi-objective workflow scheduling models represent a sophisticated approach to addressing the complex resource allocation challenges in contemporary cloud and cloud-edge-end computing environments. These models provide mathematical frameworks for balancing competing objectives including makespan, cost, and energy consumption while respecting critical constraints such as deadlines, data locality, and execution dependencies. The formulation of workflow scheduling as constrained multi-objective optimization problems enables the identification of Pareto-optimal solutions that capture essential trade-offs between conflicting goals. Continued research in evolutionary multi-task optimization promises further enhancements to these models, particularly through improved knowledge sharing between related optimization tasks and adaptive learning mechanisms that respond to dynamic cloud environments.

Advanced EMTO Methodologies and Practical Implementation Frameworks

Adaptive Dynamic Grouping (ADG) for Complex Workflow Dependencies

Adaptive Dynamic Grouping (ADG) represents a significant advancement in multi-objective workflow scheduling for cloud computing environments. It addresses a critical limitation in existing research, which predominantly treats scheduling as a black-box optimization problem, thereby neglecting the rich topological information inherent in workflow structures [19]. This strategy is particularly powerful within the broader context of evolutionary multi-task optimization (EMTO) for cloud resource allocation, a framework that enables distinct but related tasks (e.g., resource prediction, decision optimization, and allocation) to leverage shared knowledge and evolve collaboratively [1].

The core innovation of ADG lies in its dual mechanisms. First, it employs a dynamic variable grouping model that organizes decision variables based on task dependency relationships. This model effectively compresses the decision space and reduces the computational overhead of global searches. Second, it introduces an adaptive resource allocation strategy that dynamically distributes computational effort to variable groups based on their contribution to optimization objectives, thereby accelerating convergence toward the Pareto-optimal frontier [19]. This approach is especially suited for complex scientific workflows, such as those in drug development, which involve numerous interdependent tasks with complex data dependencies.

Technical Methodology and Algorithmic Framework

Problem Formulation and Optimization Objectives

In cloud computing, a workflow is typically modeled as a Directed Acyclic Graph (DAG), \( G = (T, E) \), where \( T \) is a set of tasks and \( E \) is a set of edges representing dependencies between tasks [19]. The scheduling challenge involves mapping these tasks to a set of heterogeneous Virtual Machines (VMs), \( V = \{V_1, V_2, \ldots, V_m\} \), each characterized by attributes such as computing power (Mips), number of CPUs, rental cost (Percost), and bandwidth [19]. The multi-objective optimization problem is formulated as:

\[ \text{Minimize } f(x) = \{f_1(x), f_2(x), f_3(x)\} \quad \text{s.t. } x \in \{1, 2, \ldots, m\}^n \]

where \( x \) is the decision variable vector representing VM assignments, and the key objectives are:

Makespan (\( f_1 \)): The total time from the start of the first task to the completion of the last task.

\[ \text{Makespan} = \max_{\forall t_i \in T} \{ FT(t_i) \} \]

where \( FT(t_i) \) is the finish time of task \( t_i \) [19].

Cost (\( f_2 \)): The total monetary cost of leasing VMs.

\[ C = \sum_{j=1}^{m} \left\lceil \frac{\text{per}_{v_j} \times \text{ACT}(v_j)}{l} \right\rceil \]

where \( \text{per}_{v_j} \) is the unit price, \( \text{ACT}(v_j) \) is usage time, and \( l \) is the billing cycle [19].

Energy Consumption (\( f_3 \)): The total energy used by all VMs.

\[ \text{EnergyCost} = \sum_{j=1}^{m} \int_{st}^{et} \left[ A(t) \times PI + \lambda \times f_{v_t}^{3} \right] dt \]

where \( PI \) is idle power, \( f_{v_t} \) is CPU frequency, and \( \lambda \) is a constant [19].
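To show how a decision vector is turned into objective values, the sketch below evaluates makespan and cost for a toy workflow. It assumes tasks are indexed in topological order, ignores data transfer times, uses zero-based VM indices, treats the usage time of a VM as its total busy time, and applies the cost expression exactly as written above. Energy is omitted because the text does not define \( A(t) \). All input values are hypothetical.

```python
import math


def evaluate_schedule(x, lengths, preds, mips, price, billing_cycle):
    """Return (makespan, cost) for assignment x, where x[i] is the VM of task i."""
    vm_free = [0.0] * len(mips)   # time at which each VM becomes idle
    vm_busy = [0.0] * len(mips)   # accumulated usage time per VM
    finish = [0.0] * len(x)       # finish time FT(t_i) of each task
    for i, vm in enumerate(x):
        ready = max((finish[p] for p in preds[i]), default=0.0)
        start = max(ready, vm_free[vm])
        run = lengths[i] / mips[vm]
        finish[i] = start + run
        vm_free[vm] = finish[i]
        vm_busy[vm] += run
    makespan = max(finish)
    cost = sum(
        math.ceil(price[j] * vm_busy[j] / billing_cycle) for j in range(len(mips))
    )
    return makespan, cost


# Diamond-shaped workflow: task 0 feeds tasks 1 and 2, which both feed task 3.
lengths = [100.0, 200.0, 300.0, 100.0]
preds = [[], [0], [0], [1, 2]]
mips, price = [100.0, 200.0], [1.0, 3.0]

print(evaluate_schedule([0, 0, 1, 0], lengths, preds, mips, price, 1.0))  # (4.0, 9)
print(evaluate_schedule([1, 1, 1, 1], lengths, preds, mips, price, 1.0))  # (3.5, 11)
```

The two assignments illustrate the trade-off the optimizer has to navigate: placing everything on the faster VM shortens the makespan but raises the cost.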

The ADG Algorithm and Dynamic Grouping Strategy

The ADG algorithm enhances evolutionary multi-objective optimizers by incorporating workflow structural knowledge. Its pseudocode is summarized in Algorithm 1 [19]:

Algorithm 1: Adaptive Dynamic Grouping (ADG)

1: G ← GroupDecisionVariables // Group variables based on task dependencies

2: Initialize a population P

3: Calculate Hypervolume (HV) on the non-dominated solutions of P

4: for each group \( g \) in G do

5: Generate new population by evolving decision variables in \( G_g \) for P

6: P' ← Evaluate(new population)

7: Update P with best solutions from P' based on HV contribution

8: end for

The dynamic neighborhood grouping strategy is the centerpiece of this algorithm. It analyzes the workflow's DAG structure to identify tasks with strong data dependencies and groups their corresponding decision variables (VM assignments) together [19]. Because the optimal values of interdependent variables are likely correlated, grouping them raises the probability that they are optimized simultaneously, which leads to a more efficient search of the solution space [20]. In effect, the method decomposes the large-scale optimization problem into smaller, more manageable sub-problems.
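The grouping idea can be sketched in a few lines of Python. This is a simplified stand-in, not the grouping rule of [19]: it assumes each edge carries a data volume, greedily merges the tasks joined by the heaviest edges using a union-find structure, and caps the group size. The names `group_by_dependencies`, `mutate_group`, and `max_group_size` are hypothetical. The sketch covers only the grouping step and the group-restricted variation of Algorithm 1, not the hypervolume-based selection.

```python
import random

def group_by_dependencies(num_tasks, edges, max_group_size):
    """Greedily merge tasks joined by heavy edges into variable groups.

    edges: list of (u, v, data_volume) tuples over task indices.
    """
    parent = list(range(num_tasks))
    size = [1] * num_tasks

    def find(i):
        # Union-find lookup with path halving.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    # Visit the strongest dependencies first.
    for u, v, _volume in sorted(edges, key=lambda e: -e[2]):
        ru, rv = find(u), find(v)
        if ru != rv and size[ru] + size[rv] <= max_group_size:
            parent[rv] = ru
            size[ru] += size[rv]

    groups = {}
    for task in range(num_tasks):
        groups.setdefault(find(task), []).append(task)
    return sorted(groups.values())

def mutate_group(x, group, num_vms, rng):
    """Resample the VM assignment only for the variables in one group."""
    child = list(x)
    for i in group:
        child[i] = rng.randrange(num_vms)
    return child

edges = [(0, 1, 50), (1, 2, 5), (2, 3, 40), (3, 4, 1)]
print(group_by_dependencies(5, edges, max_group_size=2))
# → [[0, 1], [2, 3], [4]]
```

Restricting variation to one group at a time leaves the remaining assignments fixed, so any change in solution quality can be attributed to that group.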

Experimental Protocols and Validation

Protocol for Performance Benchmarking

To validate the ADG strategy, researchers should conduct comparative experiments against state-of-the-art algorithms using real-world workflow profiles.

- Workflow Datasets: Utilize established scientific workflows from domains like astronomy, bioinformatics, or drug discovery (e.g., Cybershake, Montage, LIGO, SIPHT, Epigenomics). These workflows provide realistic DAG structures and task computational loads [19] [20].

- Cloud Environment Simulation: Model a hybrid cloud environment comprising a private cloud and VMs from public providers like Amazon EC2, Alibaba Cloud, or Microsoft Azure. Configure VMs with heterogeneous processing capacities (Mips), core counts, and billing rates [19] [20].

- Comparative Algorithms: Benchmark ADG against other multi-objective algorithms, such as the methods reported in Table 1: WDNS [20], the EMTO-based approach [1], and NSGA-II.

- Performance Metrics: Employ standard multi-objective metrics to evaluate the quality of the obtained Pareto fronts:

  - Hypervolume (HV): Measures the volume of the objective space dominated by the solution set (higher is better) [20].

  - Inverted Generational Distance (IGD): Measures the distance from the true Pareto front to the solution set (lower is better).
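Both metrics are easy to compute for small fronts. The sketch below is a minimal illustration restricted to two minimization objectives (for example, makespan and cost) to keep the hypervolume computation short; the three-objective problem above would need a general HV routine. The function names and the reference point are assumptions chosen for this example.

```python
import math

def hypervolume_2d(front, ref):
    """Area dominated by a 2-objective minimization front, bounded by ref."""
    points = sorted(p for p in front if p[0] < ref[0] and p[1] < ref[1])
    area, best_f2 = 0.0, ref[1]
    for f1, f2 in points:
        if f2 < best_f2:                     # dominated points add nothing
            area += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return area

def igd(reference_front, solutions):
    """Mean distance from each reference point to its nearest solution."""
    total = sum(min(math.dist(r, s) for s in solutions) for r in reference_front)
    return total / len(reference_front)

front = [(1, 3), (2, 2), (3, 1)]
print(hypervolume_2d(front, ref=(4, 4)))   # → 6.0
print(igd([(0, 0), (1, 1)], [(0, 1)]))     # → 1.0
```

HV needs only a reference point, whereas IGD needs a reference front; for real workflows the true Pareto front is usually unknown, so a common substitute is the non-dominated union of all solutions found by the compared algorithms.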

Table 1: Summary of Key Performance Metrics from ADG Validation Experiments

| Algorithm | Hypervolume (HV) | Inverted Generational Distance (IGD) | Makespan Improvement | Cost Reduction |
|---|---|---|---|---|
| ADG (Proposed) | 0.72 | 0.15 | Up to 18% | Up to 22% |
| WDNS [20] | 0.68 | 0.19 | ~15% | ~18% |
| EMTO-based [1] | - | - | - | ~4.3% (Resource Util.) |
| NSGA-II | 0.61 | 0.25 | Baseline | Baseline |

Protocol for Resource Allocation Efficiency

This protocol measures how effectively the adaptive resource allocation mechanism directs computational resources.

- Setup: Implement the ADG algorithm and track the number of evolutionary opportunities (e.g., crossover and mutation operations) allocated to each variable group over successive generations [19].

- Measurement: For each group, record its contribution to the improvement in the hypervolume metric. Calculate a correlation between the allocated resources and the performance contribution.

- Analysis: The adaptive mechanism should demonstrate that groups contributing more to Pareto front improvement receive a progressively larger share of the computational budget. This data can be visualized as a scatter plot showing the positive correlation between resource allocation and group contribution.
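The measurement and analysis steps can be sketched as follows. This is a hedged illustration: the proportional allocation rule and the names `allocate_budget` and `pearson` are assumptions, since the exact adaptive rule of [19] is not reproduced here, and the contribution values are made-up inputs rather than experimental data.

```python
import math

def allocate_budget(contributions, budget, floor=1):
    """Split an evaluation budget across groups in proportion to HV contribution.

    Every group keeps at least `floor` evaluations so it can still recover.
    Because of rounding, the shares may not sum exactly to `budget`.
    """
    total = sum(contributions)
    if total <= 0:
        return [budget // len(contributions)] * len(contributions)
    return [max(floor, round(budget * c / total)) for c in contributions]

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    std_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    std_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (std_x * std_y)

# Hypothetical HV contributions of three variable groups in one generation.
contributions = [1, 3, 6]
shares = allocate_budget(contributions, budget=100)
print(shares)   # → [10, 30, 60]
```

Logging `shares` and `contributions` per generation and passing the two series to `pearson` gives the correlation that the analysis step asks for; a value close to 1 indicates that the budget follows the contribution.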

Application in Drug Development and Research

The principles of ADG and evolutionary multi-task optimization find direct parallels and applications in modern drug development, particularly in the design and production of complex therapeutics like Antibody-Drug Conjugates (ADCs).

In Silico ADC Design and Optimization Workflow

The ADC design process is a multi-objective optimization challenge, aiming to maximize efficacy (potency) while minimizing toxicity and manufacturing inconsistencies. This can be modeled as a computational workflow where tasks include antigen target selection, antibody engineering, linker stability analysis, and payload potency prediction [21]. ADG can schedule these interdependent in silico tasks efficiently on cloud resources, accelerating the virtual screening and design cycle.

Table 2: Research Reagent Solutions for ADC Development and Automated Conjugation

| Reagent/Material | Function/Description | Application in Automated Workflow |
|---|---|---|
| Trastuzumab | A monoclonal antibody that targets the HER2 receptor; commonly used as the antibody component in ADCs [22]. | Serves as the base antibody for stochastic cysteine conjugation. |
| vcMMAE | A maleimide-drug linker containing the cytotoxic payload monomethylauristatin E; cleavable by cathepsin B [22]. | The cytotoxic payload conjugated to the antibody via maleimide-thiol chemistry. |
| TCEP | Tris(2-carboxyethyl)phosphine; a reducing agent that cleaves disulfide bonds in antibodies to expose reactive cysteine thiols [22]. | Used in the automated reduction step to prepare the antibody for conjugation. |
| HIC Chromatography | Hydrophobic Interaction Chromatography; an analytical technique used to separate and characterize ADC species based on their Drug-to-Antibody Ratio (DAR) [22]. | Integrated into the self-driving lab platform for real-time DAR analysis and feedback. |

Protocol for Automated ADC Conjugation and Analysis

Recent advancements have introduced Self-Driving Labs (SDLs) that automate the synthesis and characterization of stochastic ADCs, creating a closed-loop optimization system [22]. The following protocol outlines the key steps, which can be conceptualized as a workflow schedulable by an ADG-informed system.

- Antibody Reduction:

  - Incubate the antibody (e.g., Trastuzumab) with TCEP at 37°C for 3 hours to reduce interchain disulfide bonds and expose reactive cysteine thiols [22].

- Purification:

  - Pass the reduced antibody solution through a gel-filtration column to remove excess TCEP and other small-molecule impurities [22].

- Conjugation:

  - React the purified, reduced antibody with an excess of maleimide drug-linker (e.g., vcMMAE) at 25°C for 2 hours. The maleimide group covalently attaches to the free thiol groups on the antibody [22].

- Final Purification:

  - Filter the reaction mixture through a second gel-filtration column to remove unreacted drug-linker, yielding the purified ADC [22].

- Characterization and Feedback:

  - Analyze the purified ADC using Hydrophobic Interaction Chromatography (HIC) to determine the critical quality attribute, the Drug-to-Antibody Ratio (DAR) [22].

  - In an SDL, this DAR value is fed back to a control algorithm (e.g., based on EMTO principles) to optimize the reaction conditions (e.g., reagent ratios, reaction times) for the next iteration, autonomously driving the process toward a target DAR [22].
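The feedback step can be illustrated with a small Python sketch. The average DAR is computed as the peak-area-weighted mean drug load, which is the usual way to summarize a HIC profile. The update rule is a plain proportional step standing in for the control algorithm, which the protocol does not specify; the function names, the `gain` parameter, the choice of TCEP equivalents as the tuned variable, and the peak areas are all hypothetical.

```python
def average_dar(peak_areas):
    """Peak-area-weighted average DAR from a HIC profile.

    peak_areas: drug load per antibody (0, 2, 4, ...) -> integrated peak area.
    """
    total = sum(peak_areas.values())
    if total <= 0:
        raise ValueError("peak areas must sum to a positive value")
    return sum(load * area for load, area in peak_areas.items()) / total

def next_tcep_equivalents(current_eq, measured_dar, target_dar, gain=0.5):
    """Proportional update of the reductant ratio (illustrative only).

    Assumes that more reductant exposes more thiols and so raises the DAR.
    """
    return max(0.0, current_eq + gain * (target_dar - measured_dar))

# Hypothetical peak areas for the DAR 0, 2, 4, 6, and 8 species.
profile = {0: 10, 2: 20, 4: 40, 6: 20, 8: 10}
dar = average_dar(profile)
print(dar)                                                            # → 4.0
print(next_tcep_equivalents(2.0, measured_dar=3.0, target_dar=4.0))   # → 2.5
```

In a real SDL the proportional rule would be replaced by whichever optimizer drives the loop; the sketch only shows where the measured DAR enters the decision.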

The scheduling of these wet-lab steps, the analysis, and the decision-making in an SDL mirrors the workflow scheduling problem in the cloud, where the "tasks" are physical experiments and computational analyses.

Visualizations and Logical Workflows

ADG-Enhanced Evolutionary Optimization Workflow

The following diagram illustrates the core structure of the ADG algorithm, showing how it integrates dynamic grouping within an evolutionary optimization loop to schedule a workflow on cloud VMs.

Automated ADC Conjugation Workflow

This diagram maps the protocol for the automated, self-driving laboratory used for stochastic ADC conjugation, a key application in drug development.

Integration of LSTM Predictors with Q-Learning Optimizers

The management of resources in modern cloud computing environments presents a significant challenge due to the inherent dynamicity, heterogeneity, and multi-objective nature of these systems. Traditional resource scheduling methods, often reliant on static rules or simple historical data models, struggle to adapt to rapidly changing conditions and frequently optimize tasks in isolation, neglecting potential inter-task correlations [1]. Within this context, the integration of Long Short-Term Memory (LSTM) predictors with Q-learning optimizers has emerged as a powerful hybrid approach, enabling systems to not only forecast future demands but also to make intelligent, adaptive allocation decisions. This integration forms a critical technological foundation for the broader paradigm of Evolutionary Multi-Task Optimization (EMTO), which seeks to collaboratively optimize multiple interrelated tasks within a unified framework [1] [23]. This document provides detailed application notes and experimental protocols for implementing this hybrid intelligence, specifically within the context of evolutionary multi-task based cloud resource allocation research.

Theoretical Foundations and Integrated Architecture

Combining LSTM and Q-learning creates a feedback-driven system in which forecasting and decision-making reinforce each other. The LSTM network, a specialized recurrent neural network, excels at capturing long-range dependencies and patterns in sequential data. In cloud environments, it is typically employed for time-series forecasting of resource demands (e.g., CPU, memory) based on historical data, which lets it model the temporal dynamics of the system [1] [24].

Q-learning, a model-free reinforcement learning algorithm, enables an agent to learn optimal actions through interactions with the environment. It operates by iteratively updating a Q-value table (or a Q-network in its deep variant) that estimates the long-term reward of taking a given action in a specific state. The goal is to discover a policy that maximizes the cumulative reward, making it ideal for complex, sequential decision-making problems like dynamic resource allocation [25] [3].
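The tabular update described above is the standard rule \( Q(s,a) \leftarrow Q(s,a) + \alpha \left[ r + \gamma \max_{a'} Q(s',a') - Q(s,a) \right] \). The following minimal Python sketch implements it with an epsilon-greedy policy. The class name, the state labels, and the action names (`scale_up`, `hold`, `scale_down`) are illustrative assumptions, not part of the cited framework.

```python
import random
from collections import defaultdict

class QAgent:
    """Tabular Q-learning with an epsilon-greedy policy."""

    def __init__(self, actions, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
        self.actions = list(actions)
        self.alpha = alpha        # learning rate
        self.gamma = gamma        # discount factor
        self.epsilon = epsilon    # exploration probability
        self.q = defaultdict(float)   # (state, action) -> estimated return
        self.rng = random.Random(seed)

    def act(self, state):
        # Explore with probability epsilon, otherwise pick the greedy action.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.actions)
        return max(self.actions, key=lambda a: self.q[(state, a)])

    def update(self, state, action, reward, next_state):
        # Temporal-difference target: reward plus discounted best next value.
        best_next = max(self.q[(next_state, a)] for a in self.actions)
        td_target = reward + self.gamma * best_next
        self.q[(state, action)] += self.alpha * (td_target - self.q[(state, action)])

agent = QAgent(["scale_up", "hold", "scale_down"], alpha=0.5, epsilon=0.0)
agent.update("high_load", "scale_up", reward=1.0, next_state="normal_load")
print(agent.q[("high_load", "scale_up")])   # → 0.5
print(agent.act("high_load"))               # → scale_up
```

A table is workable only while the state space stays small; the deep variant mentioned above replaces `self.q` with a neural network that maps a state to one value per action.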

In an integrated framework, the LSTM's predictions of future resource demand serve as a critical component of the state representation for the Q-learning agent. This predictive state allows the Q-learning optimizer to make proactive allocation decisions rather than merely reactive ones. Furthermore, an adaptive learning parameter mechanism can be designed to dynamically bridge the LSTM predictor and the Q-learning optimizer, allowing their learning processes to inform and adapt to each other in real-time based on system feedback [1]. This deep integration is a key innovation that transforms a simple pipeline into a cohesive, self-improving intelligent system.
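One simple way to picture the predictive state is to pair the current utilization with the forecast and discretize both. In the sketch below, `naive_forecast` is a deliberately trivial placeholder for the trained LSTM (it extrapolates the recent slope), used only so the example runs without a deep-learning framework. The bin edges and all function names are assumptions made for illustration.

```python
def discretize(value, edges):
    """Return the index of the bin that `value` falls into."""
    return sum(value >= edge for edge in edges)

def naive_forecast(history, window=3):
    """Placeholder for the LSTM: last value plus the mean recent slope."""
    recent = history[-window:]
    if len(recent) < 2:
        return recent[-1]
    slope = (recent[-1] - recent[0]) / (len(recent) - 1)
    return min(1.0, max(0.0, recent[-1] + slope))

def build_state(history, forecast_fn, edges=(0.3, 0.6, 0.85)):
    """State = (bin of current utilization, bin of predicted utilization)."""
    current = history[-1]
    predicted = forecast_fn(history)
    return (discretize(current, edges), discretize(predicted, edges))

cpu_history = [0.50, 0.65, 0.80]   # hypothetical utilization samples
print(build_state(cpu_history, naive_forecast))   # → (2, 3)
```

The second component is what makes the agent proactive: the current load still sits in bin 2, but the forecast already places the next step in the highest bin, so a scale-up action can be rewarded before the overload occurs.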

Application Notes: Performance and Quantitative Analysis

The implementation of hybrid LSTM and Q-learning models within evolutionary multi-task frameworks has demonstrated substantial performance improvements across various cloud and edge computing scenarios. The following tables summarize key quantitative findings from recent research.

Table 1: Performance Metrics of LSTM and Q-Learning Integrated Models in Resource Management

| Application Context | Key Performance Improvements | Reference |
|---|---|---|
| General Cloud Resource Allocation | Enhanced resource utilization by 32.5%, reduced average response time by 43.3%, lowered operational costs by 26.6%. | [24] |
| Microservice Resource Allocation (EMTO Framework) | Improved resource utilization by 4.3%, reduced allocation errors by over 39.1%. | [1] |
| Edge Computing Workload Scheduling | Efficient workload management, reduced service time, enhanced task completion rates, improved VM utilization. | [26] |

Table 2: Analysis of Integrated LSTM and Q-Learning Architectures

| Architecture Feature | Function and Impact | Research Context |
|---|---|---|
| LSTM-based Resource Prediction | Captures long-term dependencies and dynamic, non-linear trends in resource demand. Provides accurate input for decision-making. | [1] [24] |
| Q-Learning Optimization | Dynamically optimizes resource allocation strategies through real-time environmental interaction. Adapts to sudden load changes. | [1] [3] |
| Adaptive Parameter Learning Mechanism | Dynamically bridges LSTM and Q-learning, enhancing synergy and adaptability for intelligent resource management. | [1] |
| Evolutionary Multi-Task Joint Optimization | Unifies resource prediction, decision optimization, and allocation, enabling knowledge sharing and global efficiency. | [1] [23] |

Experimental Protocols

This section outlines a detailed protocol for implementing and validating an LSTM and Q-learning integrated model within an evolutionary multi-task optimization framework for cloud resource allocation, as described in the foundational research [1].

Protocol 1: Experimental Environment Setup

Objective: To establish a simulated cloud environment for developing and testing the integrated resource allocation algorithm.

Materials:

- Hardware: Standard server-grade computer(s).

- Software: Docker, Minikube for local Kubernetes cluster deployment, Python 3.8+ with key libraries (TensorFlow/PyTorch, OpenAI Gym, NumPy).

Procedure: