Evolutionary Multi-Task Optimization (EMTO): A Transformative Approach for Accelerating Drug Development and Engineering Design

Abstract

This article explores the paradigm of Evolutionary Multi-Task Optimization (EMTO) and its significant potential to enhance efficiency in engineering design and drug development. EMTO is a population-based search methodology that enables the simultaneous solving of multiple, related optimization tasks by facilitating knowledge transfer between them, often leading to accelerated convergence and superior solutions. We provide a comprehensive foundation of EMTO principles, detail cutting-edge algorithmic methodologies and their specific applications, address critical troubleshooting and optimization challenges such as negative transfer, and present a rigorous validation framework comparing state-of-the-art EMTO solvers. Tailored for researchers, scientists, and professionals in pharmaceutical development, this review synthesizes theoretical advances with practical applications, highlighting EMTO's role in optimizing complex, multi-faceted problems from preclinical research to manufacturing process design.

The Foundations of Evolutionary Multi-Task Optimization: Principles and Relevance to Drug Development

Evolutionary Algorithms (EAs) have traditionally been designed to solve a single optimization problem at a time. When confronted with multiple tasks, these conventional EAs must optimize each problem separately, often requiring substantial computational resources and time without leveraging potential correlations between tasks [1] [2]. This limitation prompted researchers to explore a novel paradigm inspired by human problem-solving capabilities—where knowledge gained from addressing one challenge often facilitates solving related problems more efficiently. This inspiration led to the emergence of Evolutionary Multi-Task Optimization (EMTO), a groundbreaking branch of evolutionary computation that enables the simultaneous optimization of multiple tasks by automatically transferring valuable knowledge across them [3].

EMTO represents a significant shift from traditional single-task optimization approaches by creating a multi-task environment where implicit parallelism of population-based search is fully exploited [1] [3]. By recognizing that correlated optimization tasks frequently share common useful knowledge, EMTO frameworks strategically transfer insights obtained during one task's optimization process to enhance performance on other related tasks [1]. This bidirectional knowledge transfer enables mutual reinforcement between tasks, potentially accelerating convergence and improving solution quality across all optimized problems [3]. The foundational algorithm that established this research domain was the Multifactorial Evolutionary Algorithm (MFEA), introduced by Gupta et al., which treats each task as a unique cultural factor influencing a unified population's evolution [2] [3].

Fundamental Concepts and Mechanisms

From Single-Task to Multi-Task Optimization

Traditional single-task Evolutionary Algorithms (EAs) operate on the principle of solving one optimization problem in isolation. When applied to multiple problems, each task is optimized independently without any knowledge exchange, potentially missing opportunities for performance improvement through shared insights [2]. In contrast, Evolutionary Multi-Task Optimization (EMTO) represents a paradigm shift by simultaneously addressing multiple optimization tasks while strategically facilitating knowledge transfer between them [1] [3].

The mathematical formulation of an MTO problem comprising K single-objective tasks (all minimization problems) can be formally defined as follows [4]:

$$T_i:\ \min_{x_i \in X_i} F_i(x_i), \qquad i = 1, 2, \ldots, K$$

where Tᵢ represents the i-th task, xᵢ denotes the decision vector for that task, and Xᵢ represents its dᵢ-dimensional search space. Each task has an objective function Fᵢ: Xᵢ → ℝ. The goal of EMTO is to discover the optimal solutions {x₁*, x₂*, ..., x_K*} for all K tasks simultaneously [4].
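Because the dimensionalities dᵢ may differ across tasks, MFEA-style solvers commonly encode every individual in a unified space [0, 1]^D with D = max dᵢ and decode it into each Xᵢ only when that task needs an evaluation. The sketch below illustrates this under stated assumptions: box-constrained tasks, linear decoding, a hypothetical `Task` class, and two illustrative objectives (sphere and Rastrigin) that are not taken from [4].

```python
import math
from typing import Callable, List, Sequence


class Task:
    """Hypothetical box-constrained task T_i: bounds of X_i plus an objective F_i to minimize."""

    def __init__(self, lower: Sequence[float], upper: Sequence[float],
                 objective: Callable[[List[float]], float]) -> None:
        if len(lower) != len(upper):
            raise ValueError("lower and upper bounds must have the same length")
        self.lower = list(lower)
        self.upper = list(upper)
        self.objective = objective

    @property
    def dim(self) -> int:
        return len(self.lower)  # d_i

    def decode(self, y: Sequence[float]) -> List[float]:
        """Map the first d_i genes of a unified vector y in [0, 1]^D linearly into X_i."""
        return [lo + g * (hi - lo)
                for g, lo, hi in zip(y[:self.dim], self.lower, self.upper)]

    def evaluate(self, y: Sequence[float]) -> float:
        return self.objective(self.decode(y))


def sphere(x: List[float]) -> float:
    return sum(v * v for v in x)


def rastrigin(x: List[float]) -> float:
    return 10 * len(x) + sum(v * v - 10 * math.cos(2 * math.pi * v) for v in x)


# Two illustrative tasks with different dimensionalities (d_1 = 3, d_2 = 2).
tasks = [Task([-100.0] * 3, [100.0] * 3, sphere),
         Task([-5.12] * 2, [5.12] * 2, rastrigin)]
D = max(t.dim for t in tasks)  # unified dimensionality D = max_i d_i

y = [0.5] * D  # a single individual in the unified space serves both tasks
print(D, [t.evaluate(y) for t in tasks])
```

Extra genes beyond dᵢ are simply ignored by the lower-dimensional task, which is what lets one population carry genetic material for all tasks at once.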

For multi-objective multitasking optimization, each of the K tasks is itself a multi-objective problem [5]:

$$T_k:\ \min_{x_k \in X_k} F_k(x_k) = \left(f_k^{1}(x_k), f_k^{2}(x_k), \ldots, f_k^{m_k}(x_k)\right), \qquad k = 1, 2, \ldots, K$$

Here, Fₖ(·) represents the k-th task with mₖ objective functions, and each task may have different objective functions and decision variable dimensions [5].

Key Mechanisms for Knowledge Transfer

The efficacy of EMTO fundamentally depends on its knowledge transfer mechanisms, which determine how information is exchanged between tasks. These mechanisms address three critical questions: what knowledge to transfer, when to transfer it, and how to execute the transfer effectively [1] [3].

Table 1: Knowledge Transfer Mechanisms in EMTO

| Mechanism Category | Description | Representative Approaches |
|---|---|---|
| Implicit Transfer | Knowledge is shared through unified representation and genetic operations | MFEA uses assortative mating and cultural transmission [2] |
| Explicit Transfer | Direct mapping between task solutions using transformation techniques | DAMTO uses Transfer Component Analysis for domain adaptation [4] |
| Adaptive Transfer | Dynamically adjusts transfer probability based on success history | SaMTPSO uses success/failure memory to update transfer probabilities [6] |
| Selective Transfer | Identifies and transfers only valuable solutions between tasks | EMT-PKTM uses surrogate models to evaluate solution quality before transfer [5] |

A critical challenge in knowledge transfer is negative transfer, which occurs when knowledge exchange between poorly related tasks deteriorates optimization performance compared to single-task approaches [1]. To mitigate this, advanced EMTO algorithms incorporate similarity measures between tasks or dynamically adjust inter-task knowledge transfer probabilities based on historical success rates [1] [6].

Major Algorithmic Frameworks and Approaches

Foundational and Specialized EMTO Algorithms

The EMTO landscape has evolved from a single foundational algorithm to diverse specialized frameworks, each with distinct knowledge transfer mechanisms and optimization strategies.

Table 2: Comparison of Major EMTO Algorithms

| Algorithm | Base Optimizer | Key Features | Knowledge Transfer Approach |
|---|---|---|---|
| MFEA [2] [3] | Genetic Algorithm | Multifactorial inheritance; skill factors | Implicit through assortative mating with fixed rmp |
| MFEA-II [4] | Genetic Algorithm | Online transfer parameter estimation | Adaptive rmp based on transfer effectiveness |
| MFDE [4] [6] | Differential Evolution | DE/rand/1 mutation strategy | Implicit transfer with fixed probability |
| BOMTEA [4] | GA + DE | Adaptive bi-operator strategy | Dynamically selects between GA and DE operators |
| MTLLSO [2] | Particle Swarm Optimization | Level-based learning | High-level individuals guide evolution of low-level ones |
| SaMTPSO [6] | Particle Swarm Optimization | Self-adaptive knowledge transfer | Probability-based selection from knowledge source pool |
| EMT-PKTM [5] | Multi-Objective EA | Positive knowledge transfer mechanism | Selective transfer using surrogate-assisted evaluation |

The Multifactorial Evolutionary Algorithm (MFEA) represents the pioneering approach in EMTO, inspired by biocultural models of multifactorial inheritance [2] [3]. In MFEA, each individual in a unified population is associated with a skill factor indicating its specialized task. Knowledge transfer occurs implicitly through assortative mating, where individuals with different skill factors may crossover with a specified random mating probability (rmp), facilitating the exchange of genetic material across tasks [2].
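A minimal sketch of this mating step, assuming a unified [0, 1]^D encoding. For brevity it swaps in uniform crossover and Gaussian mutation (the original MFEA uses SBX crossover and polynomial mutation), and all function names are hypothetical. Offspring produced by crossover imitate the skill factor of a randomly chosen parent, while mutants keep their own parent's skill factor (vertical cultural transmission).

```python
import random
from typing import List, Tuple

Individual = Tuple[List[float], int]  # (genes in the unified space [0, 1]^D, skill factor)


def clip01(v: float) -> float:
    return min(1.0, max(0.0, v))


def uniform_crossover(a: List[float], b: List[float],
                      rng: random.Random) -> Tuple[List[float], List[float]]:
    mask = [rng.random() < 0.5 for _ in a]
    c1 = [x if m else y for x, y, m in zip(a, b, mask)]
    c2 = [y if m else x for x, y, m in zip(a, b, mask)]
    return c1, c2


def mutate(genes: List[float], rng: random.Random, sigma: float = 0.05) -> List[float]:
    return [clip01(g + rng.gauss(0.0, sigma)) for g in genes]


def assortative_mating(pa: Individual, pb: Individual, rmp: float,
                       rng: random.Random) -> List[Individual]:
    (ga, ta), (gb, tb) = pa, pb
    if ta == tb or rng.random() < rmp:
        # Same task, or cross-task mating allowed by rmp: crossover.
        # When ta != tb this is where implicit knowledge transfer happens.
        c1, c2 = uniform_crossover(ga, gb, rng)
        # Vertical cultural transmission: each child imitates a random parent.
        return [(c1, rng.choice((ta, tb))), (c2, rng.choice((ta, tb)))]
    # Cross-task mating rejected: each parent yields a mutant of its own task.
    return [(mutate(ga, rng), ta), (mutate(gb, rng), tb)]


rng = random.Random(0)
print(assortative_mating(([0.1, 0.2, 0.3], 0), ([0.9, 0.8, 0.7], 1), rmp=0.3, rng=rng))
```

Setting rmp close to 0 approaches isolated per-task evolution, while rmp = 1 lets every pair of parents mate regardless of skill factor.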

The self-adaptive multi-task particle swarm optimization (SaMTPSO) algorithm introduces a knowledge transfer adaptation strategy in which each task maintains a knowledge source pool containing all component tasks [6]. For each particle, a candidate knowledge source is selected based on probabilities learned from previous transfers, recorded in success and failure memories. The selection probability is updated using the formula:

$$p_{t,k} = \frac{SR_{t,k}}{\sum_{j=1}^{K} SR_{t,j}}$$

where $p_{t,k}$ is the probability that task $T_t$ draws knowledge from source task $T_k$, and $SR_{t,k}$ represents the success rate of knowledge transfers from task $T_k$ to task $T_t$ over recent generations, computed from the success and failure memories [6].
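The source-selection step can be sketched as follows, assuming the success and failure memories have already been summed into per-source counts for one target task. The small constant `eps`, which keeps every source selectable when its success rate is zero, and the function names are assumptions of this sketch rather than details confirmed by [6].

```python
import random
from typing import List


def source_probabilities(successes: List[int], failures: List[int],
                         eps: float = 0.01) -> List[float]:
    """p_{t,k} = SR_{t,k} / sum_j SR_{t,j} for one target task T_t.

    successes[k], failures[k]: successful / failed transfers from source T_k
    to T_t summed over the memory window. eps is an assumed floor.
    """
    rates = []
    for ns, nf in zip(successes, failures):
        total = ns + nf
        rates.append((ns / total if total else 0.0) + eps)  # SR_{t,k}
    z = sum(rates)
    return [r / z for r in rates]


def select_source(probs: List[float], rng: random.Random) -> int:
    # Roulette-wheel selection of a knowledge source index k
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


probs = source_probabilities(successes=[8, 3, 0], failures=[2, 7, 10])
print(probs, select_source(probs, random.Random(0)))
```

With these counts the first source receives roughly 72% of the selection probability, but the zero-success source is still sampled occasionally, so the estimate can recover if task relatedness changes later in the run.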

Evolutionary Search Operators in EMTO

The effectiveness of EMTO algorithms heavily depends on their evolutionary search operators (ESOs). While early approaches typically employed a single ESO throughout optimization, recent research demonstrates that adaptive operator selection can significantly enhance performance across diverse tasks [4].

The adaptive bi-operator evolutionary multitasking algorithm (BOMTEA) strategically combines the strengths of Genetic Algorithm (GA) operators and Differential Evolution (DE) operators [4]. In each generation, BOMTEA adaptively controls the selection probability of each ESO type based on its recent performance, effectively determining the most suitable search operator for different optimization tasks. This approach addresses the limitation of single-operator algorithms that may perform well on some tasks but poorly on others due to operator-task mismatch [4].
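BOMTEA's exact update rule is given in [4]; the sketch below only illustrates the general idea of adaptive operator selection with a simple probability-matching rule, which is an assumption here. Each operator's selection probability is made proportional to the fraction of its offspring that improved on their parents in the last generation, with a floor `p_min` so that neither the GA nor the DE operator is ever switched off.

```python
from typing import Dict


def update_operator_probs(improved: Dict[str, int], produced: Dict[str, int],
                          p_min: float = 0.1) -> Dict[str, float]:
    """Hypothetical probability-matching update for choosing between search operators.

    improved[op]: offspring generated by op this generation that beat their parent
    produced[op]: offspring generated by op this generation
    p_min:        assumed minimum probability per operator
    """
    k = len(produced)
    if k * p_min > 1.0:
        raise ValueError("p_min is too large for the number of operators")
    rates = {op: (improved[op] / produced[op]) if produced[op] else 0.0
             for op in produced}
    total = sum(rates.values())
    if total == 0:
        return {op: 1.0 / k for op in rates}  # no information: choose uniformly
    # Every operator keeps p_min; the remainder is shared by relative success.
    return {op: p_min + (1.0 - k * p_min) * r / total for op, r in rates.items()}


print(update_operator_probs({"GA": 5, "DE": 15}, {"GA": 50, "DE": 50}))
```

With 5 of 50 GA offspring and 15 of 50 DE offspring improving, this rule assigns probabilities of about 0.3 to GA and 0.7 to DE for the next generation.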

Differential Evolution operators in EMTO typically employ the DE/rand/1 mutation strategy:

$$v_i = x_{r_1} + F \cdot \left(x_{r_2} - x_{r_3}\right)$$

where F represents the scaling factor, and $x_{r_1}$, $x_{r_2}$, $x_{r_3}$ are distinct individuals randomly selected from the population [4]. The trial vector is then generated through crossover between the mutant vector $v_i$ and the original individual $x_i$.
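A sketch of DE/rand/1 mutation followed by binomial crossover, the common pairing often written DE/rand/1/bin. The binomial crossover, the crossover rate `CR`, and the clipping into a unified [0, 1]^D space are assumptions of this sketch; the population must contain at least four individuals.

```python
import random
from typing import List


def de_rand_1_bin(pop: List[List[float]], i: int, F: float, CR: float,
                  rng: random.Random) -> List[float]:
    """Build a trial vector for target individual pop[i]."""
    n, d = len(pop), len(pop[i])
    # r1, r2, r3: mutually distinct and different from the target index i
    r1, r2, r3 = rng.sample([j for j in range(n) if j != i], 3)
    # Mutation: v = x_r1 + F * (x_r2 - x_r3)
    v = [a + F * (b - c) for a, b, c in zip(pop[r1], pop[r2], pop[r3])]
    # Binomial crossover: take v_j with probability CR; j_rand guarantees
    # that at least one gene comes from the mutant vector.
    j_rand = rng.randrange(d)
    trial = [v[j] if (rng.random() < CR or j == j_rand) else pop[i][j]
             for j in range(d)]
    return [min(1.0, max(0.0, g)) for g in trial]  # repair into [0, 1]^D


rng = random.Random(0)
population = [[rng.random() for _ in range(4)] for _ in range(6)]
print(de_rand_1_bin(population, 0, F=0.5, CR=0.9, rng=rng))
```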

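Genetic Algorithm operators in EMTO often utilize Simulated Binary Crossover (SBX), which places offspring around the parents according to a polynomial probability distribution of the spread factor [4]:

$$c_1 = 0.5\left[(1 + \beta)\,p_1 + (1 - \beta)\,p_2\right], \qquad c_2 = 0.5\left[(1 - \beta)\,p_1 + (1 + \beta)\,p_2\right]$$

where p₁ and p₂ represent parent individuals, c₁ and c₂ represent offspring, and β is the spread factor, a random value drawn from that distribution [4].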
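A per-variable SBX sketch, assuming the standard sampling of the spread factor β with a distribution index `eta_c` (larger values keep offspring closer to their parents). Production implementations usually also apply a per-variable crossover probability and bound handling, which are omitted here.

```python
import random
from typing import List, Tuple


def sbx(p1: List[float], p2: List[float], eta_c: float,
        rng: random.Random) -> Tuple[List[float], List[float]]:
    """Simulated Binary Crossover applied independently to every variable."""
    c1, c2 = [], []
    for a, b in zip(p1, p2):
        u = rng.random()  # u in [0, 1)
        # Spread factor beta sampled from SBX's polynomial distribution
        if u <= 0.5:
            beta = (2.0 * u) ** (1.0 / (eta_c + 1.0))
        else:
            beta = (1.0 / (2.0 * (1.0 - u))) ** (1.0 / (eta_c + 1.0))
        c1.append(0.5 * ((1.0 + beta) * a + (1.0 - beta) * b))
        c2.append(0.5 * ((1.0 - beta) * a + (1.0 + beta) * b))
    return c1, c2


child1, child2 = sbx([0.2, 0.5, 0.9], [0.8, 0.1, 0.4], eta_c=15.0, rng=random.Random(42))
print(child1, child2)
```

Because the two offspring are placed symmetrically, c₁ + c₂ = p₁ + p₂ holds for every variable, which makes a convenient sanity check.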

Experimental Protocols and Benchmarking

Standardized Test Suites for EMTO

Robust evaluation of EMTO algorithms requires standardized benchmark problems that enable systematic comparison of performance across different approaches. The most widely adopted benchmarks in the field include:

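CEC17 Multitasking Benchmark Suite [4] [2]: This benchmark collection contains nine two-task problems that combine three degrees of global-optimum intersection (complete, partial, and no intersection) with three levels of inter-task similarity (high, medium, and low). The complete-intersection problems, for example, are categorized as: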

- Complete-Intersection, High-Similarity (CIHS)

- Complete-Intersection, Medium-Similarity (CIMS)

- Complete-Intersection, Low-Similarity (CILS)

These classifications enable researchers to evaluate algorithm performance across different task-relatedness scenarios, which is crucial for assessing knowledge transfer effectiveness [4].

CEC22 Multitasking Benchmark Suite [4]: An updated collection featuring more complex problem formulations that challenge algorithms with higher-dimensional search spaces and more diverse task relationships.

Multi-Objective MTO Test Suites [5]: Specialized benchmarks for evaluating multi-objective multitasking algorithms, including the CPLX test suite developed for the WCCI 2020 Competition on Evolutionary Multitasking Optimization, which comprises ten complex MTO problems each involving two tasks with potentially different objective function dimensions.

Performance Evaluation Methodology

Comprehensive assessment of EMTO algorithms involves both quantitative metrics and comparative analyses against established baselines. Standard evaluation protocols include:

Performance Metrics:

- Convergence Speed: Measures the number of generations or function evaluations required to reach satisfactory solutions

- Solution Quality: Evaluates the objective function values achieved for each task

- Knowledge Transfer Efficiency: Assesses the ratio of positive to negative transfers, typically measured by comparing performance with and without transfer mechanisms [1] [3]

Comparative Framework:

- Single-Task EAs: Comparison against traditional EAs optimizing each task independently

- Established EMTO Algorithms: Evaluation relative to foundational approaches like MFEA and MFEA-II

- Statistical Significance Testing: Application of statistical tests (e.g., Wilcoxon signed-rank test) to validate performance differences

Experimental Protocol:

- Initialize algorithm parameters based on recommended settings from literature

- Execute multiple independent runs to account for stochastic variations

- Record performance metrics at regular intervals throughout evolution

- Compare final results using established statistical measures

- Conduct sensitivity analysis on critical parameters (e.g., rmp in MFEA)

Table 3: Key Research Reagents and Computational Resources for EMTO

| Resource Type | Specific Tool/Platform | Function in EMTO Research |
|---|---|---|
| Benchmark Suites | CEC17, CEC22, CPLX | Standardized performance evaluation and comparison [4] [5] |
| Simulation Tools | EMTO-CPA, DFT Calculations | Generate synthetic data for HEA design applications [7] |
| Algorithmic Frameworks | MFEA, MFDE, SaMTPSO | Foundational implementations for extension and comparison [4] [6] |
| Performance Metrics | Convergence Speed, Solution Quality | Quantitative assessment of algorithm effectiveness [3] |

Practical Applications and Case Studies

Engineering Design Optimization

EMTO has demonstrated significant potential in engineering design optimization, where multiple related design problems often share common underlying principles. A prominent case study involves crash safety design of vehicles, where designers must optimize multiple crash scenarios simultaneously [5]. In this application, different types of vehicle collisions (e.g., front impact, side impact) represent distinct but related optimization tasks. EMTO approaches can transfer knowledge between these tasks, leveraging common design principles to accelerate the optimization process while reducing computational costs associated with expensive crash simulations [5].

A second application area is the concurrent global optimization of complex engineering systems, in which multiple components or subsystems must be optimized together. Cheng et al. demonstrated that coevolutionary multitasking approaches can effectively handle concurrent global optimization in such systems, outperforming traditional single-task optimization methods in both solution quality and computational efficiency [3].

Materials Science and HEA Design

The composition design of high-entropy alloys (HEAs) represents a compelling application domain for EMTO techniques. HEAs are multi-principal element materials with diverse structure-property relationships, but exploring their astronomically large composition space presents significant challenges for traditional experimental and computational approaches [7].

In this context, machine learning has been used to navigate the complex composition space efficiently. Researchers have employed high-throughput first-principles calculations using the EMTO-CPA method to generate extensive HEA datasets, which are then used to train machine learning models such as Deep Sets for property prediction [7]. Note that "EMTO" in EMTO-CPA refers to the exact muffin-tin orbitals method combined with the coherent potential approximation, an electronic-structure technique unrelated to evolutionary multi-task optimization. Surrogate models of this kind can predict multiple material properties across a broad composition space, which makes them natural inexpensive objective functions for optimizing several properties at once and could significantly accelerate the discovery of novel HEAs with tailored characteristics.

Cloud Computing and Resource Allocation

EMTO has found substantial applications in cloud computing environments, where multiple resource allocation and scheduling problems must be solved simultaneously [3]. In these scenarios, different resource management tasks (e.g., virtual machine placement, load balancing, energy management) often share common constraints and objectives. EMTO frameworks can leverage these commonalities to transfer knowledge between tasks, leading to more efficient overall resource utilization and improved quality of service compared to optimizing each resource management problem in isolation [3].

Implementation Workflow and Visualization

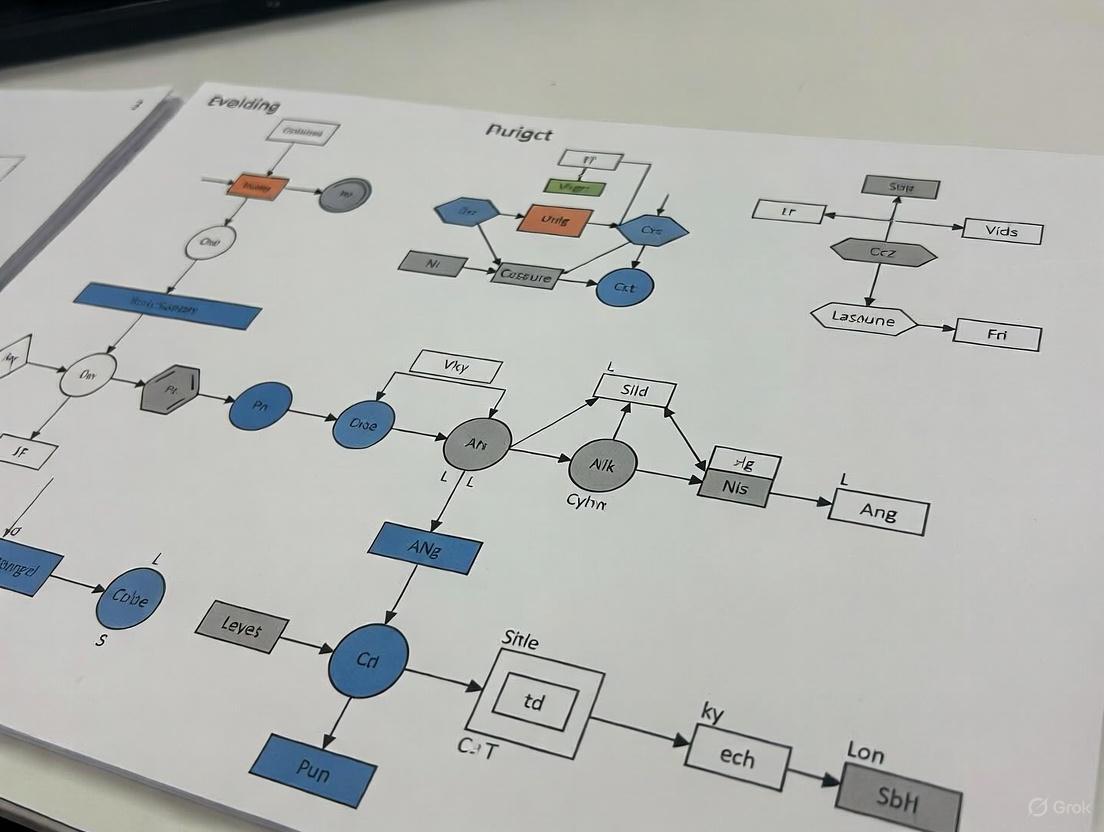

The standard implementation workflow for EMTO algorithms follows a structured process that integrates both single-task optimization and cross-task knowledge transfer mechanisms. The following diagram illustrates this generalized framework:

The knowledge transfer mechanism represents the core innovation in EMTO frameworks. The following diagram details the key decision points and transfer strategies:

Future Research Directions

As EMTO continues to evolve, several promising research directions merit further investigation:

Advanced Knowledge Transfer Mechanisms: Future work should focus on developing more sophisticated transfer approaches that can automatically identify the most valuable knowledge components to share between tasks while minimizing negative transfer [1] [3]. This includes exploring transfer learning techniques from machine learning, such as feature-based transfer and instance-based transfer, adapted to the evolutionary computation context [1].

Theoretical Foundations: While empirical success of EMTO has been widely demonstrated, theoretical analysis of convergence properties and knowledge transfer dynamics remains underdeveloped. Establishing comprehensive theoretical foundations would provide valuable insights into algorithm behavior and guide more effective algorithm design [3].

Large-Scale and Many-Task Optimization: Scaling EMTO approaches to handle larger numbers of tasks (many-task optimization) presents significant challenges in managing complex inter-task relationships and computational complexity. Developing scalable frameworks that can efficiently handle dozens or hundreds of related tasks would substantially expand the applicability of EMTO [3].

Hybrid Paradigms: Integrating EMTO with other optimization paradigms, such as surrogate-assisted evolution, multi-objective optimization, and constrained optimization, offers promising avenues for enhancing performance on complex real-world problems [3] [5]. These hybrid approaches could leverage the strengths of multiple methodologies to address limitations of standalone EMTO algorithms.

Domain-Specific Applications: Applying EMTO to novel application domains beyond engineering and materials science, such as drug discovery, financial modeling, and renewable energy systems, would demonstrate the broader utility of the paradigm while inspiring domain-driven algorithmic innovations [3].

Evolutionary Multitasking Optimization (EMTO) represents a paradigm shift in evolutionary computation, enabling the concurrent solution of multiple optimization tasks. Within this paradigm, the Multifactorial Evolutionary Algorithm (MFEA) has emerged as a cornerstone technique, inspired by the biological concept of multifactorial inheritance [8]. Unlike traditional evolutionary algorithms that handle a single task in isolation, MFEA leverages implicit knowledge transfer between tasks, often leading to accelerated convergence and superior solutions by exploiting synergies [9] [10]. The effectiveness of MFEA hinges on its core mechanisms: knowledge transfer, which facilitates the exchange of information between tasks, and skill factors, which manage task specialization within a unified population. For engineering design optimization—a field replete with complex, competing objectives—EMTO offers a powerful framework for addressing challenges such as parameter tuning, component sizing, and system integration simultaneously [11]. This article details the core protocols of MFEA, providing a structured guide for its application in engineering research.

Foundational Concepts and Definitions

The MFEA framework introduces a specialized set of concepts to operate in a multitasking environment. A multitasking optimization problem involves concurrently solving $K$ distinct tasks, where the $j$-th task, $T_j$, is defined by an objective function $f_j(x): X_j \rightarrow \mathbb{R}$ [8]. To enable comparative assessment across these tasks, individuals in the unified population are characterized by several key properties [8] [12]:

- Factorial Cost ($\Psi_j^i$): Represents the raw objective value $f_j^i$ of an individual $p_i$ when evaluated on a task $T_j$.

- Factorial Rank ($r_j^i$): The rank of individual $p_i$ on task $T_j$, obtained by sorting the entire population in ascending order of factorial cost for that task.

- Skill Factor ($\tau_i$): The task on which an individual $p_i$ performs best, formally defined as $\tau_i = \arg\min_{j \in \{1, \dots, K\}} r_j^i$. The skill factor dictates the only task for which an individual is evaluated, conserving computational resources.

- Scalar Fitness ($\varphi_i$): A unified measure of an individual's overall performance in the multitasking environment, calculated as $\varphi_i = 1 / \min_{j \in \{1, \dots, K\}} r_j^i$.

These definitions collectively allow MFEA to manage a single population of individuals, each with a latent aptitude for multiple tasks, but a specialized skill in one.
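The four definitions above can be computed together from a matrix of factorial costs. The sketch below assumes `costs[i][j]` holds $\Psi_j^i$, with `math.inf` for tasks on which an individual was not evaluated; ties in rank are broken by population index here, whereas some implementations break them randomly. The function name is hypothetical.

```python
import math
from typing import List, Tuple


def mfea_fitness(costs: List[List[float]]) -> Tuple[List[List[int]], List[int], List[float]]:
    """Return factorial ranks r_j^i (1 = best), skill factors tau_i and scalar fitness phi_i."""
    n, K = len(costs), len(costs[0])
    ranks = [[0] * K for _ in range(n)]
    for j in range(K):
        # Sort the whole population by factorial cost on task j (stable sort)
        order = sorted(range(n), key=lambda i: costs[i][j])
        for r, i in enumerate(order, start=1):
            ranks[i][j] = r
    skill = [min(range(K), key=lambda j: ranks[i][j]) for i in range(n)]  # argmin_j r_j^i
    phi = [1.0 / min(ranks[i]) for i in range(n)]                        # 1 / min_j r_j^i
    return ranks, skill, phi


INF = math.inf
# Individuals 0-1 were evaluated only on T_1 (index 0), individuals 2-3 only on T_2 (index 1).
ranks, skill, phi = mfea_fitness([[1.0, INF], [3.0, INF], [INF, 0.5], [INF, 2.0]])
print(ranks, skill, phi)  # [[1, 3], [2, 4], [3, 1], [4, 2]] [0, 0, 1, 1] [1.0, 0.5, 1.0, 0.5]
```

In the example the best individual on each task has scalar fitness 1.0, so specialists of different tasks compete on equal terms during selection.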

Core Mechanism I: Knowledge Transfer

Knowledge transfer is the process by which valuable genetic information is shared between different optimization tasks during the evolutionary process. The primary goal is to achieve positive transfer, where the exchange of information boosts performance on one or both tasks, while avoiding negative transfer, where inappropriate exchange degrades performance [8] [13].

Modes of Knowledge Transfer

Knowledge transfer in EMTO can be broadly classified into two categories:

- Implicit Knowledge Transfer: This is the original method employed by MFEA, where transfer occurs indirectly through genetic operators. The key mechanism is assortative mating, controlled by a random mating probability (rmp) parameter. When two parent individuals with different skill factors are selected for crossover, their genetic material is combined, leading to an implicit transfer of knowledge from one task's domain to another [8] [12]. This process is simple but can be blind to task relatedness.

- Explicit Knowledge Transfer: More advanced algorithms move beyond implicit transfer by actively identifying, extracting, and mapping knowledge. This often involves measuring inter-task similarity and using techniques like domain adaptation or subspace alignment to transform solutions from a source task before injecting them into the population of a target task [14] [9] [15]. This approach offers greater control but increases computational and design complexity.
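As a rough illustration of explicit transfer, the sketch below maps source-task solutions into the target task's region by matching the per-dimension mean and standard deviation of the two populations. This simple affine alignment is an assumption chosen for brevity and is not the specific domain-adaptation or subspace-alignment method of [9], [14], or [15].

```python
import statistics
from typing import List


def affine_align(source: List[List[float]], target: List[List[float]],
                 eps: float = 1e-12) -> List[List[float]]:
    """Map each source solution x to mu_t + (x - mu_s) * sd_t / sd_s, dimension by dimension."""
    d = len(source[0])
    cols_s = [[x[k] for x in source] for k in range(d)]
    cols_t = [[x[k] for x in target] for k in range(d)]
    mu_s = [statistics.mean(c) for c in cols_s]
    mu_t = [statistics.mean(c) for c in cols_t]
    sd_s = [statistics.pstdev(c) for c in cols_s]
    sd_t = [statistics.pstdev(c) for c in cols_t]
    # eps guards against division by zero when a source dimension has collapsed
    return [[mu_t[k] + (x[k] - mu_s[k]) * sd_t[k] / (sd_s[k] + eps) for k in range(d)]
            for x in source]


src = [[0.0, 0.0], [2.0, 4.0]]
tgt = [[10.0, 1.0], [14.0, 1.0]]
print(affine_align(src, tgt))  # approximately [[10.0, 1.0], [14.0, 1.0]]
```

The mapped solutions would then be injected into the target population; the extra alignment step is the source of the additional computational and design complexity mentioned above.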

Advanced Transfer Strategies

Recent research has focused on developing sophisticated strategies to enhance the quality of knowledge transfer. These strategies can be framed around three fundamental questions [14]:

- Where to Transfer? This involves identifying the most beneficial source-target task pairs for knowledge exchange. The Task Routing (TR) Agent in MetaMTO uses an attention-based module to compute pairwise task similarity scores for this purpose [14].

- What to Transfer? This decision concerns the selection of which specific knowledge (e.g., which individuals or what proportion of the population) should be transferred. The Knowledge Control (KC) Agent determines the proportion of elite solutions to transfer from a source task [14].

- How to Transfer? This pertains to the mechanism of the transfer itself. Strategies here include adaptive control of the rmp parameter, using affine transformations for domain alignment, or employing novel crossover operators inspired by residual learning [9] [15] [16]. The Transfer Strategy Adaptation (TSA) Agent group dynamically controls hyper-parameters to govern this process [14].

Table 1: Classification of Knowledge Transfer Strategies in MFEA

| Strategy Category | Core Principle | Key Technique Examples | Advantages |
|---|---|---|---|
| Implicit Transfer | Blind exchange via genetic operators [8] | Assortative mating, rmp | Simple implementation, low overhead |
| Explicit Transfer | Active measurement and mapping of knowledge [15] | Domain Adaptation, Subspace Alignment | Targeted transfer, reduces negative transfer |
| Adaptive rmp | Dynamically adjust transfer probability [13] | Online success rate estimation (MFEA-II) | Responds to changing task relatedness |
| Multi-Knowledge | Combine multiple transfer modes [9] | Dual knowledge transfer (DA + USS) | Robustness across diverse task types |

Core Mechanism II: Skill Factors

The skill factor is a pivotal component in the original MFEA framework that enables efficient multitasking within a single, unified population. It acts as a mechanism for resource allocation and implicit niche formation.

Protocol for Assigning Skill Factors

The assignment and utilization of skill factors follow a well-defined protocol within an MFEA generation [8] [12]:

- Initialization: In the initial population, each individual is randomly assigned a skill factor, or this assignment can be based on preliminary evaluation.

- Evaluation: Each individual is evaluated only on the task corresponding to its skill factor. This is a critical feature that prevents a combinatorial explosion of computational cost; an individual's performance on other tasks remains latent.

- Factorial Rank Calculation: For each task, all individuals in the population are ranked based on their factorial cost for that task, regardless of their skill factor.

- Scalar Fitness Assignment: An individual's overall (scalar) fitness is determined by its best factorial rank across all tasks ($\varphi_i = 1 / \min_j r_j^i$).

- Skill Factor Update: After ranking, an individual's skill factor is updated to be the task on which it achieved its best rank ($\tau_i = \arg\min_j r_j^i$).

This process ensures that individuals gradually specialize in the task where they show the most promise, while the scalar fitness allows for a fair comparison between specialists of different tasks during selection.
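The evaluation step of this protocol can be sketched as below: each individual is evaluated once, on its skill-factor task only, and its factorial cost on every other task is left at infinity. Those infinite costs push unevaluated individuals to the bottom of the factorial ranking for the corresponding tasks. The function name and signature are hypothetical.

```python
import math
from typing import Callable, List, Sequence, Tuple


def evaluate_by_skill_factor(pop: List[Sequence[float]], skill: List[int],
                             objectives: List[Callable[[Sequence[float]], float]]
                             ) -> Tuple[List[List[float]], int]:
    """Return the factorial-cost matrix and the number of objective evaluations used."""
    K = len(objectives)
    costs = [[math.inf] * K for _ in pop]
    n_evals = 0
    for i, (x, t) in enumerate(zip(pop, skill)):
        costs[i][t] = objectives[t](x)  # one evaluation, on the skill-factor task only
        n_evals += 1
    return costs, n_evals


objs = [lambda x: sum(v * v for v in x), lambda x: sum(abs(v) for v in x)]
costs, n_evals = evaluate_by_skill_factor([[1.0, 2.0], [3.0, -1.0]], [0, 1], objs)
print(costs, n_evals)
```

A population of N individuals therefore costs N evaluations per generation instead of N × K.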

Advanced Skill Factor Strategies

While the basic protocol is effective, recent advances have introduced more dynamic approaches:

- Dynamic Assignment: Instead of a fixed assignment, algorithms like MFEA-RL use a ResNet-based mechanism to dynamically assign skill factors by integrating high-dimensional residual information and learning inter-task relationships [16]. This enhances the algorithm's adaptability to complex task landscapes.

- Role in Transfer: The skill factor directly controls assortative mating. When two parents have the same skill factor, crossover proceeds normally. When they differ, crossover, and hence knowledge transfer, occurs with a probability defined by the rmp parameter [8].

Diagram 1: Skill Factor Protocol Workflow

Experimental Protocols and Benchmarking

Rigorous experimental validation is essential for evaluating the performance of any MFEA variant. This section outlines standard protocols for benchmarking.

Standard Benchmark Problems

Researchers typically use established benchmark suites to ensure fair and comparable results. Common suites include:

- CEC2017-MTSO: A standard set of multifactorial test problems for single-objective optimization [8] [16].

- WCCI2020-MTSO / WCCI20-MaTSO: More recent and complex benchmark sets from competition events, featuring both two-task and many-task optimization problems [8] [13] [9].

Table 2: Common MFEA Benchmark Problems (Examples)

| Benchmark Suite | Problem Type | Number of Tasks | Key Characteristics |
|---|---|---|---|
| CEC2017-MTSO [8] | Single-objective | 2 | Well-established, standard landscapes |
| WCCI2020-MTSO [9] [15] | Single-objective | 2 | Higher complexity, modern test set |
| WCCI20-MaTSO [8] [13] | Single-objective | >2 | Many-task optimization (MaTO) |
| CEC2021 MOMTO [10] | Multi-objective | 2 | Multi-objective multi-task problems |

Performance Evaluation Metrics

The performance of EMT algorithms is typically gauged using the following metrics:

- Average Accuracy (Convergence): The average objective value of the best-found solution for each task over multiple runs [9].

- Speed of Convergence: The number of generations or function evaluations required to reach a predefined solution quality [10].

- Success Rate of Knowledge Transfer: The proportion of cross-task transfers that result in improved offspring, indicating positive transfer [14].
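The transfer success rate can be computed from a per-offspring log. This sketch assumes that a cross-task offspring counts as a success when its factorial cost on the target task is lower than its parent's (minimization); other definitions, such as survival into the next generation, are also plausible. The record layout is hypothetical.

```python
from typing import List, Tuple

# (is_cross_task, offspring_cost, parent_cost) for each offspring produced
Record = Tuple[bool, float, float]


def transfer_success_rate(records: List[Record]) -> float:
    """Fraction of cross-task offspring that improved on their parent."""
    cross = [(c, p) for is_cross, c, p in records if is_cross]
    if not cross:
        return 0.0  # no cross-task transfers were attempted
    return sum(1 for c, p in cross if c < p) / len(cross)


log = [(True, 1.0, 2.0), (True, 3.0, 2.0), (False, 0.0, 5.0), (True, 0.5, 1.0)]
print(transfer_success_rate(log))
```

Within-task offspring are excluded, so the metric isolates the effect of cross-task mating.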

Protocol for a Comparative Experiment:

- Algorithm Selection: Select state-of-the-art algorithms for comparison (e.g., MFEA-II, MTEA-ADT, MTDE-ADKT).

- Parameter Setup: Define common parameters like population size, maximum generations, and rmp (or its adaptive equivalent). Use consistent settings across all algorithms.

- Execution: Run each algorithm on the selected benchmark suites for a sufficient number of independent trials (e.g., 30 runs) to support reliable statistical comparison.

- Data Collection & Analysis: Record the best objective values for each task at the end of runs. Perform statistical tests (e.g., Wilcoxon rank-sum test) to validate performance differences.
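A dependency-free sketch of the two-sided Wilcoxon rank-sum test, using the normal approximation with average ranks for ties and no tie correction of the variance. It is adequate for the 30-run comparisons described above; in practice, `scipy.stats.ranksums` or `scipy.stats.mannwhitneyu` are the usual library routes.

```python
import math
from typing import Dict, Sequence, Tuple


def rank_sum_test(a: Sequence[float], b: Sequence[float]) -> Tuple[float, float]:
    """Two-sided Wilcoxon rank-sum test (normal approximation). Returns (z, p_value)."""
    n1, n2 = len(a), len(b)
    values = sorted(list(a) + list(b))
    # Average 1-based rank for each distinct value, so tied values share a rank
    rank_of: Dict[float, float] = {}
    i = 0
    while i < len(values):
        j = i
        while j + 1 < len(values) and values[j + 1] == values[i]:
            j += 1
        rank_of[values[i]] = (i + j) / 2 + 1
        i = j + 1
    w = sum(rank_of[v] for v in a)  # rank sum of sample a
    mu = n1 * (n1 + n2 + 1) / 2
    sigma = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (w - mu) / sigma
    p = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p


# Best objective values of two algorithms over 30 runs each (illustrative data)
alg_a = [float(k) for k in range(30)]
alg_b = [float(k) + 100.0 for k in range(30)]
print(rank_sum_test(alg_a, alg_b))
```

A p-value below the chosen significance level (commonly 0.05) indicates that the two algorithms' final results on that task differ; the sign of z shows which algorithm tends to reach lower objective values.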

The Scientist's Toolkit: Research Reagent Solutions

This section catalogues essential computational "reagents" and resources required for conducting MFEA research.

Table 3: Key Research Reagents and Resources for MFEA

| Reagent / Resource | Function / Description | Example Use Case |
|---|---|---|
| Benchmark Suites (CEC2017, WCCI2020) [8] | Standardized problem sets for algorithm performance evaluation and comparison. | Validating the performance of a new adaptive rmp strategy. |
| Domain Adaptation (DA) Module [9] | A computational component that maps solutions from a source task to the domain of a target task. | Enabling knowledge transfer between tasks with different search space characteristics. |
| Random Mating Probability (rmp) [8] | A scalar or matrix parameter controlling the probability of cross-task crossover. | Governing the intensity of implicit knowledge transfer; can be fixed or adaptive. |
| SHADE Optimizer [8] [9] | A powerful differential evolution variant often used as the search engine within MFEA. | Improving the underlying search capability of the MFEA framework. |
| Decision Tree Predictor [8] | A machine learning model used to predict an individual's transferability before crossover. | Filtering individuals to promote positive transfer and mitigate negative transfer. |
| Attention-based Similarity Module [14] | A neural network component that calculates pairwise similarity scores between tasks. | Answering the "where to transfer" question in an explicit transfer system. |

Application in Engineering Design Optimization

EMTO and MFEA have demonstrated significant potential in solving complex engineering design problems, where multiple, interrelated optimization tasks are common.

- Concurrent Global Optimization: MFEA can be applied to coevolutionary design scenarios, such as optimizing different components of a complex system (e.g., airfoil design and structural support) simultaneously, exploiting shared principles [9].

- Multi-Objective Multi-Task Problems (MOMTO): Engineering design often involves balancing multiple conflicting objectives for several tasks. Algorithms like MOMTPSO extend the MFEA concept to multi-objective problems, using strategies like objective space division and adaptive guiding particles to manage knowledge transfer [10].

- Expensive Optimization Problems: For tasks where function evaluations are computationally prohibitive (e.g., CFD simulations), knowledge transfer can help navigate the search space more efficiently, reducing the total number of evaluations required [13].

- Combinatorial Problems: MFEA has been successfully tailored to solve combinatorial challenges like the Vehicle Routing Problem (VRP) and the Shortest-Path Tree problem, demonstrating the flexibility of the paradigm beyond continuous optimization [8] [13].

Diagram 2: MFEA for Concurrent Engineering Design

The Multifactorial Evolutionary Algorithm provides a robust and efficient framework for evolutionary multitasking by combining implicit knowledge transfer with skill-factor-based task assignment. Its ongoing development, driven by more adaptive and learning-based strategies for controlling transfer and task assignment, continues to improve its performance and applicability. For engineering design optimization, EMTO offers a principled approach to the inherent complexity of multi-component, multi-objective systems. Future research is likely to focus on scaling these methods to many-task optimization (MaTO) scenarios, further reducing the risk of negative transfer through explainable AI techniques, and integrating generative models more deeply for more intelligent exploration of the solution space. The protocols and mechanisms detailed in this article provide a foundational toolkit for researchers embarking on this path.

The drug development pipeline is a complex, costly, and high-attrition process, and it continues to grow. The 2025 Alzheimer's disease drug development pipeline alone includes 182 clinical trials assessing 138 novel drugs, an increase from the previous year [17]. This growing complexity, mirrored across therapeutic areas, calls for approaches that allocate resources more effectively and identify successful candidates sooner. Here, we explore the rationale for adopting Evolutionary Multitasking Optimization (EMTO) in drug development, drawing a parallel to human cognitive multitasking.

Human cognition expertly handles multiple related tasks concurrently, extracting and transferring useful knowledge between them to improve overall efficiency and performance. EMTO, an emerging search paradigm in computational optimization, mimics this capability. It operates on the principle that when solving multiple optimization problems simultaneously, valuable, latent knowledge about one task can be leveraged to accelerate the search for solutions in other, related tasks [18]. For the pharmaceutical industry, this translates to a potential paradigm shift: instead of developing drugs in isolated, single-target silos, EMTO provides a framework to concurrently optimize multiple drug development programs, capturing the synergistic learning across related biological targets, disease models, or patient populations to enhance the efficiency and effectiveness of the entire R&D portfolio.

The EMTO Framework: From Biological Inspiration to Computational Reality

Conceptual Foundations and Mechanism of Knowledge Transfer

Evolutionary Multitasking Optimization is a knowledge-aware search paradigm designed to tackle multiple optimization problems concurrently. It dynamically exploits valuable problem-solving knowledge during the search process, fundamentally relying on the relatedness between tasks [18]. The core mechanism is based on the concept of implicit genetic transfer, where the evolutionary progress in solving one task informs and guides the population search in another.

The conceptual framework of EMTO involves maintaining a population of candidate solutions that are evaluated against multiple tasks. Through specialized genetic operators, the algorithm transfers building blocks (beneficial traits or partial solutions) from one task's search space to another. This process is analogous to a research team working on several related drug targets simultaneously, where a breakthrough in one program provides a hypothesis or methodological insight that benefits the parallel programs. The single-population model, exemplified by the Multifactorial Evolutionary Algorithm (MFEA), uses a unified representation and skill factors to manage this transfer. Multi-population models instead maintain a separate population for each task and use explicit migration protocols [18].

Quantitative Landscape of Modern Drug Development

Table 1: Profile of the 2025 Alzheimer's Disease Drug Development Pipeline

| Pipeline Characteristic | Metric | Proportion/Number |
|---|---|---|
| Total Drugs in Development | 138 drugs in 182 trials | - |
| Therapeutic Modalities | Biological Disease-Targeted Therapies (DTTs) | 30% |
| | Small Molecule DTTs | 43% |
| | Cognitive Enhancement Therapies | 14% |
| | Neuropsychiatric Symptom Therapies | 11% |
| Innovation Strategy | Repurposed Agents | 33% of pipeline |
| Biomarker Utilization | Biomarkers as Primary Outcomes | 27% of active trials |

Source: Adapted from Alzheimer's disease drug development pipeline: 2025 [17]

The data in Table 1 illustrates the complexity and diversity of a modern drug development pipeline. With numerous mechanisms of action—addressing at least 15 distinct disease processes in the case of Alzheimer's—and a significant proportion of repurposed agents, the potential for synergistic learning across programs is substantial [17]. This landscape presents an ideal use case for EMTO, which can exploit the implicit relatedness between, for instance, different biological targets or shared patient stratification biomarkers.

Application Notes: Implementing EMTO in Drug Development Workflows

Protocol 1: Multi-Task Optimization for Lead Compound Identification

Objective: To concurrently identify lead compounds for multiple related therapeutic targets using EMTO, reducing screening time and exploiting cross-target pharmacophore similarities.

Background: Traditional high-throughput screening evaluates compounds against single targets in sequential fashion, potentially missing opportunities presented by polypharmacology and failing to leverage information from related screening campaigns.

Table 2: Research Reagent Solutions for EMTO in Lead Identification

| Reagent / Material | Function in EMTO Context |
|---|---|
| Virtual Compound Libraries (>10^6 compounds) | Provide the diverse solution space (search space) for the evolutionary algorithm to explore. |
| QSAR/QSP Prediction Models | Serve as surrogate fitness functions to evaluate compound properties (e.g., bioavailability, toxicity). |
| Target Binding Site Homology Models | Enable the alignment of genetic representations across related protein targets (task relatedness). |
| High-Performance Computing (HPC) Cluster | Facilitates the parallel evaluation of candidate solutions across multiple target tasks. |

Experimental Workflow:

- Problem Formulation: Define K related drug targets (T1, T2, ..., TK) as distinct optimization tasks. Each task involves finding a compound that maximizes a multi-objective fitness function combining binding affinity, selectivity, and drug-likeness parameters.

- Unified Representation: Encode compounds into a unified genetic representation (chromosome) that can be interpreted across all K tasks. This may involve a descriptor-based approach or a simplified molecular input line entry system (SMILES)-based representation.

- Initialization: Generate a random population of N compounds (c1, c2, ..., cN) and evaluate each one on all K tasks. Each compound is then assigned a skill factor: the task on which it achieves its best factorial rank.

- Assortative Mating & Selective Imitation: Implement mating selection that favors individuals with the same skill factor (intra-task crossover). With a defined probability (the random mating probability, rmp), also allow individuals with different skill factors to cross over (inter-task knowledge transfer).

- Offspring Evaluation: Evaluate the offspring and assign their skill factors. Evaluating every offspring on all K tasks is the most thorough option. The standard MFEA instead uses selective imitation: each offspring is evaluated only on the task of the parent whose skill factor it imitates, which reduces the number of costly evaluations.

- Environmental Selection: Select the next generation based on scalar fitness (the inverse of each individual's best factorial rank across tasks), while also considering population diversity.

- Termination & Output: Upon convergence or after a fixed number of generations, output the Pareto-optimal set of lead compounds for each of the K targets.

Diagram 1: EMTO Lead Identification Workflow
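The workflow above corresponds to the generation loop of the Multifactorial Evolutionary Algorithm (MFEA). Docking, QSAR, and drug-likeness scoring cannot be reproduced here, so the minimal sketch below runs the same loop on two hypothetical toy objectives, a 3-D sphere and a 5-D shifted sphere, which stand in for per-target fitness functions. The arithmetic crossover, Gaussian mutation, rmp = 0.3, population size, and generation count are illustrative assumptions, not settings prescribed by this protocol. Following the standard MFEA's selective evaluation, offspring are scored only on the task of the parent they imitate.

```python
import numpy as np

# Two hypothetical toy tasks (lower is better) standing in for per-target scores:
# (objective, native dimensionality d_k, lower bound, upper bound).
TASKS = [
    (lambda x: float(np.sum(x ** 2)), 3, -5.0, 5.0),          # sphere
    (lambda x: float(np.sum((x - 1.0) ** 2)), 5, -5.0, 5.0),  # shifted sphere
]
D = max(d for _, d, _, _ in TASKS)  # unified chromosome length D_max


def evaluate(z, k):
    """Decode the first d_k genes of unified chromosome z and score it on task k."""
    f, d, lo, hi = TASKS[k]
    return f(lo + z[:d] * (hi - lo))


def scalar_fitness(costs):
    """Scalar fitness and skill factor from factorial costs (np.inf = not evaluated)."""
    ranks = (np.argsort(np.argsort(costs, axis=0), axis=0) + 1).astype(float)
    ranks[np.isinf(costs)] = np.inf  # an unevaluated task never defines the skill factor
    return 1.0 / ranks.min(axis=1), ranks.argmin(axis=1)


def mfea(n=40, gens=100, rmp=0.3, sigma=0.05, seed=0):
    rng = np.random.default_rng(seed)
    K = len(TASKS)
    pop = rng.random((n, D))
    costs = np.array([[evaluate(z, k) for k in range(K)] for z in pop])
    init_best = costs.min(axis=0)
    _, skill = scalar_fitness(costs)
    for _ in range(gens):
        kids, kid_skill = [], []
        for _ in range(n // 2):
            i, j = rng.choice(n, 2, replace=False)
            if skill[i] == skill[j] or rng.random() < rmp:
                # Assortative mating, or cross-task transfer with probability rmp.
                w = rng.random(D)  # simple arithmetic crossover (an assumption)
                c1 = w * pop[i] + (1 - w) * pop[j]
                c2 = w * pop[j] + (1 - w) * pop[i]
                s1, s2 = rng.choice([skill[i], skill[j]], 2)  # imitate a random parent
            else:
                # No cross-task mating: mutate each parent on its own.
                c1 = pop[i] + rng.normal(0.0, sigma, D)
                c2 = pop[j] + rng.normal(0.0, sigma, D)
                s1, s2 = skill[i], skill[j]
            kids += [np.clip(c1, 0.0, 1.0), np.clip(c2, 0.0, 1.0)]
            kid_skill += [s1, s2]
        kid_costs = np.full((len(kids), K), np.inf)
        for r, (z, s) in enumerate(zip(kids, kid_skill)):
            kid_costs[r, s] = evaluate(z, s)  # selective evaluation on one task only
        pop = np.vstack([pop, np.array(kids)])
        costs = np.vstack([costs, kid_costs])
        phi, skill = scalar_fitness(costs)
        keep = np.argsort(-phi, kind="stable")[:n]  # elitist selection on scalar fitness
        pop, costs, skill = pop[keep], costs[keep], skill[keep]
    return init_best, costs.min(axis=0)


init_best, final_best = mfea()
```

The individual that ranks first on any task receives a scalar fitness of 1. As a result, the elitist selection step never discards the best solution found so far for any task.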

Protocol 2: QSP Model Calibration and Validation via EMTO

Objective: To simultaneously calibrate and validate a Quantitative Systems Pharmacology (QSP) model against multiple, disparate clinical datasets, ensuring robustness and predictive power across diverse patient populations.

Background: QSP models are sophisticated mathematical constructs that simulate drug effects within a biological system. Their calibration is often a high-dimensional optimization problem where parameters must be tuned to fit observed clinical data. EMTO enables calibration against multiple studies or patient strata concurrently, preventing overfitting to a single dataset.

Experimental Workflow:

- Task Definition: Let each of the K tasks represent the calibration of the QSP model against a distinct clinical trial dataset (e.g., different patient subgroups, dosing regimens, or combination therapies).

- Parameter Representation: Encode the uncertain parameters of the QSP model into a chromosome. The same parameter set is evaluated across all K tasks.

- Fitness Evaluation: For each task (dataset), the fitness of a parameter set is calculated as the goodness-of-fit (e.g., negative sum of squared errors) between the QSP model simulation outputs and the corresponding clinical data for that task.

- Multitasking Evolution: Employ an EMTO algorithm (e.g., MFEA) to evolve the population of parameter sets. The implicit genetic transfer allows promising parameter combinations discovered while fitting one dataset to inform the search for parameters fitting another, related dataset.

- Model Validation: The final, evolved parameter set is validated against a hold-out clinical dataset not used during the calibration phase to confirm its predictive capability.
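A deliberately simple model can illustrate the per-task fitness in the workflow above. In the sketch below, a hypothetical one-compartment pharmacokinetic function stands in for a full QSP model, and two noise-free synthetic datasets (two dose levels) stand in for clinical studies. Every name and number is an assumption made for illustration. The same parameter vector is scored against each dataset, giving one fitness value per task, which is the kind of input an EMTO solver such as MFEA uses to rank individuals on each task.

```python
import numpy as np


def simulate(theta, times, dose):
    """Hypothetical stand-in for a QSP model: one-compartment IV bolus,
    C(t) = dose / V * exp(-k_el * t), with theta = (k_el, V)."""
    k_el, volume = theta
    return dose / volume * np.exp(-k_el * np.asarray(times, dtype=float))


def task_fitness(theta, dataset):
    """Fitness of one parameter set on one task: negative sum of squared errors."""
    times, dose, observed = dataset
    residuals = simulate(theta, times, dose) - observed
    return -float(np.sum(residuals ** 2))


# Two synthetic, noise-free "studies" (100 and 50 dose units) generated from the
# same assumed true parameters; real clinical data would add noise and subgroup effects.
true_theta = (0.3, 10.0)
t = np.array([0.5, 1.0, 2.0, 4.0, 8.0])
datasets = [(t, dose, simulate(true_theta, t, dose)) for dose in (100.0, 50.0)]

# One fitness value per task for each candidate parameter set.
for theta in [true_theta, (0.5, 10.0)]:
    print(theta, [round(task_fitness(theta, d), 3) for d in datasets])
```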

This protocol leverages the fact that QSP is increasingly integral to drug development, helping to predict clinical outcomes, optimize dosing, and evaluate combination therapies by integrating knowledge across multiple scales [19] [20] [21]. The EMTO approach aligns with the "learn and confirm" paradigm central to physiological modeling in drug development [19].

Analysis and Future Perspectives

The integration of EMTO into drug development workflows represents a significant advancement in portfolio optimization. Drawing from its successful applications in manufacturing services collaboration, where it enhances efficiency by sharing optimization experiences across tasks [18], EMTO offers a systematic methodology for leveraging the intrinsic relatedness within a drug pipeline. This is particularly relevant given the rise of complex, multi-targeted therapies, such as bispecific antibodies, which are designed to address disease complexity by engaging multiple pathways simultaneously [22].

Furthermore, the growing role of AI and automation in drug discovery, as highlighted at recent industry events, underscores the need for sophisticated, data-driven optimization frameworks [23]. EMTO fits seamlessly into this evolving technological landscape, acting as a force multiplier when combined with AI-driven biomarker discovery and automated screening platforms. The application of EMTO can accelerate the identification of novel drug targets and enhance patient stratification, trends that are poised to expand significantly in neuroscience and beyond [24].

The future of EMTO in drug development will likely involve tighter integration with other Model-Informed Drug Development (MIDD) tools and a stronger regulatory acceptance framework. As the industry moves towards more integrated data platforms, the ability of EMTO to perform horizontal integration (across multiple biological pathways) and vertical integration (across multiple time and space scales) will be crucial for translating its theoretical promise into tangible reductions in development timelines and costs, ultimately delivering better therapies to patients faster.

Application Note: Core Concepts in Evolutionary Multitasking Optimization

Evolutionary Multitasking Optimization (EMTO) is a novel paradigm that enables the simultaneous solution of multiple, self-contained optimization tasks in a single run [25]. By leveraging the implicit parallelism of population-based evolutionary search, EMTO facilitates knowledge transfer across tasks, thereby potentially accelerating convergence and improving the quality of solutions for complex problems [26] [25]. This approach stands in contrast to traditional evolutionary algorithms, which typically solve one problem at a time, assuming zero prior knowledge [25]. For engineering design optimization, which often involves navigating complex, high-dimensional search spaces with multiple competing objectives, EMTO offers a powerful framework for discovering robust and high-performing solutions.

Conceptual Foundations

The efficacy of EMTO hinges on three interconnected operational concepts:

- Unified Search Space: This involves mapping the search spaces of several distinct optimization tasks into a common, unified representation [26] [27]. This mapping allows a single population of individuals to evolve solutions for all tasks concurrently. The primary challenge is designing an encoding and decoding scheme that effectively harmonizes disparate dimensionalities and representations across tasks [25].

- Assortative Mating: Inspired by biological principles, this is a mating pattern where individuals with similar phenotypes or genotypes mate more frequently than would be expected by chance [28] [29]. In EMTO, this concept is implemented to encourage the recombination of genetic material from individuals working on the same task, promoting a focused and efficient search within the domain of that specific task [26] [28].

- Selective Imitation: This refers to the strategic transfer of knowledge between different tasks [26] [30]. Rather than blindly copying all information, EMTO algorithms aim to identify and transfer individuals or building blocks that contain knowledge useful to a target task. This mitigates negative transfer, in which unhelpful or harmful knowledge impedes performance [26] [31].

The synergistic relationship between these concepts is foundational to EMTO. The unified search space enables interaction, assortative mating refines solutions within tasks, and selective imitation leverages discoveries across tasks.

Application Note: Implementation and Experimental Protocols

Protocol: Establishing a Unified Search Space for Multi-Objective Problems

Objective: To create a unified search space for K multi-objective optimization tasks, enabling a single evolutionary algorithm to operate across them.

Background: A multi-objective multitasking (MO-MTO) problem consists of K tasks, where the k-th task is defined as: minimize \( F_k(x_k) = \{ f_{k1}(x_k), \dots, f_{k m_k}(x_k) \} \), subject to \( x_k \in \prod_{s=1}^{d_k} [a_{ks}, b_{ks}] \) [26]. Here, \( d_k \) is the dimensionality of the k-th task's decision space and \( m_k \) is its number of objectives.

Materials:

- Computational resources for running evolutionary algorithms.

- Software libraries for multi-objective optimization (e.g., PlatEMO, pymoo).

- Benchmark problems or engineering design problem definitions.

Procedure:

- Task Analysis: For each of the K tasks, identify the dimensionality \( d_k \) of its decision variable vector \( x_k \) and the bounds \( [a_{ks}, b_{ks}] \) for each variable.

- Dimensionality Alignment: Determine the maximum dimensionality \( D_{max} = \max(d_1, d_2, \dots, d_K) \) among all tasks. For tasks with \( d_k < D_{max} \), extend their search space by adding \( D_{max} - d_k \) dummy variables. These variables are not used in the evaluation of that particular task, but they allow for a unified chromosome length [25].

- Domain Normalization: Normalize the decision variables of all tasks to a common range (e.g., [0, 1]) to ensure no single task dominates the search process due to scale differences.

- Chromosome Encoding: Implement a chromosome representation of length \( D_{max} \). Each individual in the population carries this unified chromosome.

- Skill Factor Assignment: Upon evaluation, each individual is assigned a skill factor \( \tau_i \), the index of the task on which it performs best. The scalar fitness \( \varphi_i \) is calculated as the inverse of its factorial rank on that task [25].
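The dimensionality alignment, normalization, and encoding steps above can be sketched as a pair of encode/decode functions. This is a minimal illustration that assumes box-constrained continuous variables, and the helper names are hypothetical. On encoding, the dummy genes are simply set to zero here, whereas in a running EA they hold whatever values evolution assigns them.

```python
import numpy as np


def make_codec(bounds_per_task):
    """Hypothetical helper: build encode/decode functions for a unified search space.

    bounds_per_task[k] = (lower, upper), each of length d_k. The unified chromosome
    has length D_max = max_k d_k and every gene lies in [0, 1].
    """
    d_max = max(len(lower) for lower, _ in bounds_per_task)

    def task_bounds(k):
        lower, upper = bounds_per_task[k]
        return np.asarray(lower, dtype=float), np.asarray(upper, dtype=float)

    def encode(x, k):
        lo, hi = task_bounds(k)
        z = np.zeros(d_max)  # genes d_k..D_max-1 are dummy variables for task k
        z[: len(lo)] = (np.asarray(x, dtype=float) - lo) / (hi - lo)  # normalize to [0, 1]
        return z

    def decode(z, k):
        lo, hi = task_bounds(k)
        return lo + np.asarray(z, dtype=float)[: len(lo)] * (hi - lo)  # dummy genes ignored

    return d_max, encode, decode


# Task 0: 2 variables in [-5, 5]; task 1: 3 variables with different ranges.
bounds = [([-5, -5], [5, 5]), ([0, 0, 0], [1, 10, 100])]
d_max, encode, decode = make_codec(bounds)
z = encode([0.0, 5.0], 0)   # z == [0.5, 1.0, 0.0]; the last gene is a dummy for task 0
x0 = decode(z, 0)           # round trip: [0.0, 5.0]
x1 = decode(z, 1)           # the same chromosome read by task 1: [0.5, 10.0, 0.0]
```

Decoding the same chromosome for task 1 shows why normalization matters. Every task reads genes on the same [0, 1] scale, so no task's units dominate the shared representation.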

Table 1: Definitions for Individual Evaluation in a Unified Search Space

| Property | Mathematical Representation | Description |
|---|---|---|
| Factorial Cost | \( \psi_j^i \) | The objective value of individual \( p_i \) on task \( T_j \) [25]. |
| Factorial Rank | \( r_j^i \) | The rank of \( p_i \) in a sorted list of all individuals based on performance on task \( T_j \) [25]. |
| Skill Factor | \( \tau_i = \arg\min_j r_j^i \) | The task assigned to individual \( p_i \), determined by its best factorial rank [25]. |
| Scalar Fitness | \( \varphi_i = 1 / \min_j r_j^i \) | The unified fitness of \( p_i \), used for selection across all tasks [25]. |
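The quantities in Table 1 follow directly from a matrix of factorial costs. The sketch below computes factorial ranks, skill factors, and scalar fitness for a small hypothetical population of three individuals on two minimization tasks. Ties in cost are broken by individual index and ties in rank by the lower task index. Both tie-breaking rules are assumptions of this sketch, not part of the definitions.

```python
import numpy as np


def multifactorial_properties(factorial_costs):
    """Factorial ranks, skill factors and scalar fitness for a minimization setting.

    factorial_costs[i, j] is psi_j^i, the objective value of individual p_i on task T_j.
    """
    costs = np.asarray(factorial_costs, dtype=float)
    # r_j^i: 1-based position of p_i when the population is sorted by cost on T_j
    ranks = np.argsort(np.argsort(costs, axis=0, kind="stable"), axis=0) + 1
    skill_factor = ranks.argmin(axis=1)       # tau_i = argmin_j r_j^i
    scalar_fitness = 1.0 / ranks.min(axis=1)  # phi_i = 1 / min_j r_j^i
    return ranks, skill_factor, scalar_fitness


costs = [[0.2, 9.0],
         [0.5, 1.0],
         [0.9, 3.0]]
ranks, tau, phi = multifactorial_properties(costs)
# ranks == [[1, 3], [2, 1], [3, 2]]
# tau   == [0, 1, 1]   (the third individual ranks better on the second task)
# phi   == [1.0, 1.0, 0.5]
```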

Protocol: Implementing Assortative Mating

Objective: To promote effective within-task search by biasing mating towards individuals working on the same optimization task.

Background: Assortative mating, or homogamy, is a form of sexual selection where individuals with similar characteristics mate more frequently [28] [32]. In EMTO, this principle is used to maintain and exploit promising genetic lineages within a task.

Materials:

- A population of individuals with assigned skill factors.

- Crossover and mutation operators.

Procedure:

- Parent Selection: Select two parent individuals from the population using a selection method (e.g., tournament selection).

- Assortative Mating Check: If the two parents have the same skill factor (\( \tau_{p_1} = \tau_{p_2} \)), they are considered to be working on the same task. Proceed with a standard crossover operation to produce offspring.

- Random Mating Probability: If the skill factors differ, perform inter-task crossover only when a uniformly drawn random number is below the random mating probability \( rmp \) (e.g., 0.3). Otherwise, mutate each parent separately so that no cross-task genetic material is exchanged.

- Offspring Task Assignment: Offspring of same-task parents inherit their parents' skill factor. Offspring of inter-task crossover randomly imitate the skill factor of one parent, because the skill factor is treated as a cultural trait in the multifactorial environment [25].

This protocol helps in preserving and combining beneficial genetic material that is specifically adapted to a given task's landscape.
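The mating logic can be written as a single function. This sketch follows the convention that rmp is the probability of cross-task crossover. It applies the MFEA rule under which offspring of inter-task crossover randomly imitate one parent's skill factor. The one-point crossover and clamped Gaussian mutation are hypothetical placeholder operators.

```python
import random


def assortative_mating(p1, p2, tau1, tau2, rmp, crossover, mutate, rng=random):
    """One MFEA-style mating event; returns [(child, skill_factor), (child, skill_factor)].

    Same-task parents always recombine. Parents from different tasks recombine
    only with probability rmp; otherwise each parent is mutated on its own.
    """
    if tau1 == tau2 or rng.random() < rmp:
        c1, c2 = crossover(p1, p2)
        if tau1 == tau2:
            return [(c1, tau1), (c2, tau1)]  # intra-task: inherit the shared skill factor
        # Inter-task: each child imitates the skill factor of a randomly chosen parent.
        return [(c1, rng.choice((tau1, tau2))), (c2, rng.choice((tau1, tau2)))]
    return [(mutate(p1), tau1), (mutate(p2), tau2)]


# Hypothetical operators on genes in [0, 1], used only for illustration.
def one_point_crossover(a, b):
    cut = len(a) // 2
    return a[:cut] + b[cut:], b[:cut] + a[cut:]


def gaussian_mutation(a, sigma=0.05):
    return [min(1.0, max(0.0, g + random.gauss(0.0, sigma))) for g in a]


rng = random.Random(1)
same = assortative_mating([0.0] * 4, [1.0] * 4, 0, 0, 0.3,
                          one_point_crossover, gaussian_mutation, rng)
# same == [([0.0, 0.0, 1.0, 1.0], 0), ([1.0, 1.0, 0.0, 0.0], 0)]
blocked = assortative_mating([0.0] * 4, [1.0] * 4, 0, 1, 0.0,
                             one_point_crossover, gaussian_mutation, rng)
# rmp = 0 blocks cross-task mating, so both children are mutants with skills 0 and 1
```

Setting rmp to 0 reduces the algorithm to independent per-task search. Raising it increases the intensity of implicit transfer.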

Protocol: Enabling Selective Imitation via Semi-Supervised Learning

Objective: To identify and transfer high-quality knowledge (individuals) from one task to another to accelerate convergence and avoid negative transfer.

Background: Selective imitation is not a blind process; it involves evaluating which pieces of knowledge will be beneficial [30] [31]. The EMT-SSC algorithm addresses this by using a semi-supervised learning model to classify individuals as positive or negative for transfer [26].

Materials:

- Labeled data (e.g., non-dominated solutions from previous generations).

- Unlabeled data (the current population).

- A semi-supervised classification algorithm (e.g., based on SVM and modified Z-score).

Procedure:

- Data Generation: During the evolutionary optimization process, generate both labeled and unlabeled samples. Labeled samples can be individuals identified as non-dominated in earlier generations [26].

- Model Training: Train a semi-supervised classification model (e.g., combining Support Vector Machines with a modified Z-score) based on the cluster assumption. This model uses both the labeled and unlabeled data to learn the underlying data distribution [26].

- Knowledge Identification: Apply the trained model to the current population to classify individuals into two categories: those containing valuable knowledge (positive) and those that may lead to negative transfer (negative).

- Knowledge Transfer: Allow only the individuals classified as "positive" to participate in inter-task crossover (selective imitation), thereby transferring useful genetic material to assist other tasks [26].

This protocol provides a robust, data-driven method for managing knowledge transfer, which is critical for the success of EMTO in complex engineering domains.
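The published EMT-SSC classifier combines support vector machines with a modified Z-score under the cluster assumption, and it is not reproduced here. The sketch below is a much simpler, hypothetical stand-in that uses only the modified Z-score of Iglewicz and Hoaglin: 0.6745 times the deviation from the median, divided by the median absolute deviation, with 3.5 as the usual outlier threshold. An individual is labeled positive unless its distance to the nearest archived non-dominated solution is an unusually large outlier. Only positive individuals would then be allowed into inter-task crossover.

```python
import numpy as np


def modified_z_scores(values):
    """Modified Z-score (Iglewicz and Hoaglin): 0.6745 * (x - median) / MAD."""
    values = np.asarray(values, dtype=float)
    median = np.median(values)
    mad = np.median(np.abs(values - median))
    if mad == 0:
        return np.zeros_like(values)  # no spread: flag nothing as an outlier
    return 0.6745 * (values - median) / mad


def transfer_mask(population, elite_archive, threshold=3.5):
    """Hypothetical transferability filter (not the EMT-SSC model).

    Returns True for individuals considered safe to transfer: those whose distance
    to the nearest archived non-dominated solution is not an upper outlier.
    """
    pop = np.asarray(population, dtype=float)
    archive = np.asarray(elite_archive, dtype=float)
    # Distance from every individual to its nearest archived elite.
    dists = np.linalg.norm(pop[:, None, :] - archive[None, :, :], axis=2).min(axis=1)
    return modified_z_scores(dists) <= threshold


archive = [[0.5, 0.5]]  # previously non-dominated solutions (the labeled data)
population = [[0.5, 0.5], [0.51, 0.5], [0.5, 0.52], [0.49, 0.5], [0.5, 0.48], [1.0, 1.0]]
mask = transfer_mask(population, archive)
# Only the distant last individual is flagged as a likely source of negative transfer.
```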

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Materials for EMTO Research

| Item / Solution | Function in EMTO Protocols |
|---|---|
| Multi-Task Benchmark Suites (e.g., CEC 2017 MO-MTO) | Provides standardized test problems to validate and compare the performance of EMTO algorithms against state-of-the-art methods [26]. |
| Semi-Supervised Learning Library (e.g., scikit-learn) | Supplies algorithms like SVM for building the classification model central to the selective imitation protocol, identifying valuable individuals for transfer [26]. |
| Evolutionary Algorithm Framework (e.g., PlatEMO, DEAP) | Offers a flexible and reusable codebase for implementing population management, crossover, mutation, and selection operators required for all protocols [25]. |
| Unified Encoding/Decoding Schema | A custom software module that maps task-specific parameters to and from the unified chromosome representation, a prerequisite for the unified search space protocol [25]. |
| Performance Metrics Software (e.g., for IGD, Hypervolume) | Quantifies the performance and convergence of the multi-objective optimization outcomes, enabling empirical validation of the EMTO algorithm's efficacy [26]. |

Workflow and Relationship Diagrams

EMTO Core Workflow

Knowledge Transfer Logic

Electromagnetic Topology Optimization (EMTO) is a computational approach that is transforming engineering design. By applying optimization algorithms to the layout of electromagnetic components, EMTO enables high-performance, lightweight, and material-efficient structures that are difficult or impossible to obtain through conventional design methods. The methodology is developing within the broader industrial automation market, which is projected to grow from USD 169.82 billion in 2025 to USD 443.54 billion by 2035, a compound annual growth rate (CAGR) of 9.12% [33]. As this automation landscape expands, EMTO is well placed to contribute to next-generation industrial technologies, particularly in sectors requiring precision engineering such as medical devices, aerospace, and telecommunications.

Industry interest in related optimization and workflow technologies is reflected in recent awards, including the 2025 IoT Breakthrough Award for "Industrial IoT Innovation of the Year" granted to Emerson for its DeltaV Workflow Management software [34]. This award highlights the industrial community's acknowledgment of technologies that improve workflow efficiency and accelerate innovation cycles. For researchers and drug development professionals, EMTO offers particular promise in the design of medical instrumentation, laboratory equipment, and therapeutic devices, where electromagnetic performance directly affects functionality, safety, and efficacy.

Quantitative Analysis of the EMTO Research Landscape

The growth of EMTO research can be quantitatively analyzed through market segmentation, regional adoption patterns, and technological implementation trends. The tables below summarize key quantitative data points that define the current landscape and projected growth of EMTO and related advanced optimization technologies.

Table 1: Global Industrial Automation Market Overview (Inclusive of EMTO Applications)

| Metric | Value | Time Period | Notes |
|---|---|---|---|
| Market Size | USD 169.82 billion | 2025 (Projected) | Base year for projection [33] |
| Projected Market Size | USD 443.54 billion | 2035 (Projected) | [33] |
| CAGR | 9.12% | 2025-2035 | [33] |
| Dominant Component Segment | Hardware | 2025 | Growing demand for physical automation components [33] |
| Fastest Growing Component Segment | Software | 2025-2035 | Higher CAGR anticipated during forecast period [33] |

Table 2: Market Segmentation Analysis Relevant to EMTO Applications

| Segment Category | Dominant Segment | Market Share Notes | Growth Drivers |
|---|---|---|---|
| Mode of Automation | Programmable Automation | Majority share currently [33] | Swift adjustment to product designs; demand from electronics, automotive, and consumer goods sectors [33] |
| Industry Type | Oil and Gas | Majority share currently [33] | Substantial investments driven by operational complexity and resource management needs [33] |
| Type of Offering | Plant-level Controls | Majority share [33] | Essential role in real-time control and monitoring (PLCs, DCS, HMI) [33] |
| Deployment Model | Cloud-based | Majority share [33] | Remote accessibility, adaptability, lower maintenance needs [33] |
| Geographical Region | North America | Majority share currently [33] | Increased awareness and demand in commercial sectors; government investments [33] |
| Fastest Growing Region | Asia | Highest anticipated CAGR [33] | Not specified in available data |

The quantitative data indicate substantial market momentum for technologies encompassing EMTO principles. The market is projected to reach roughly 2.6 times its 2025 size over the coming decade, which points to significant investment and adoption across industrial sectors. Particularly relevant to EMTO research is the anticipated higher growth rate of software components compared with hardware. This highlights the increasing value of advanced computational methods such as topology optimization in the industrial automation ecosystem.
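The growth figures in Table 1 can be cross-checked with the compound annual growth rate formula, \( \text{CAGR} = (V_{end} / V_{start})^{1/n} - 1 \). The arithmetic below uses the two market sizes reported in [33]. It shows a growth multiple of about 2.61. The stated 9.12% rate reproduces the two figures over 11 compounding years, whereas over exactly 10 years (2025 to 2035) the implied rate is about 10.1%. The period boundaries used in the underlying report may therefore differ from those shown in the table.

```python
def cagr(start, end, years):
    """Compound annual growth rate that turns `start` into `end` over `years` years."""
    return (end / start) ** (1.0 / years) - 1.0


start, end = 169.82, 443.54     # USD billions, as reported in [33]
multiple = end / start          # about 2.61
rate_10 = cagr(start, end, 10)  # about 0.1008, i.e. 10.1% per year over 10 years
rate_11 = cagr(start, end, 11)  # about 0.0912, i.e. 9.12% per year over 11 years
```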

Experimental Protocols for EMTO Implementation

Protocol 1: Multi-Objective EMTO Design Optimization

This protocol details a standardized methodology for implementing electromagnetic topology optimization for engineering design, with particular applicability to medical device components.

1. Problem Definition and Preprocessing

- Define design space and non-design regions using CAD software

- Specify electromagnetic performance objectives (e.g., field uniformity, quality factor, specific absorption rate)

- Identify constraints (e.g., material volume fraction, frequency response, thermal limits)

- Apply boundary conditions and excitation sources

2. Material Property Assignment

- Assign discrete material properties to elements within the design domain

- Define interpolation scheme for intermediate densities (SIMP/RAMP)

- Implement penalty factors to drive solution toward discrete (0-1) values

3. Finite Element Analysis

- Discretize domain using appropriate element type (tetrahedral/hexahedral)

- Solve governing electromagnetic equations (Maxwell's equations)

- Compute field distributions and performance metrics

4. Sensitivity Analysis

- Calculate derivatives of objective function and constraints with respect to design variables

- Employ adjoint variable method for computational efficiency

- Filter sensitivities to ensure mesh-independent solutions

5. Design Update

- Apply optimization algorithm (e.g., Method of Moving Asymptotes, Optimality Criteria)

- Update design variables based on sensitivities and constraints

- Implement regularization techniques to control numerical artifacts

6. Convergence Check

- Evaluate change in objective function and design variables

- Verify constraint satisfaction

- If not converged, return to Step 3; otherwise, proceed to post-processing

7. Post-processing and Interpretation

- Convert continuous density distribution to manufacturable geometry

- Perform validation analysis on final design

- Prepare design documentation including manufacturing specifications
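Steps 2, 4, and 5 rest on two standard ingredients: a material interpolation that penalizes intermediate densities, and a filter that regularizes the design field. The sketch below shows SIMP and RAMP interpolation of a generic material property (for example, relative permittivity between air and a dielectric), together with a 1-D linear density filter. The penalty values p = 3 and q = 8, the 1-D setting, and the integer filter radius are illustrative assumptions. A real implementation filters on the 2-D or 3-D mesh and couples these pieces to a finite element field solver and an optimizer such as the Method of Moving Asymptotes. Step 4 of the protocol filters sensitivities; filtering densities, as done here, is a common alternative.

```python
import numpy as np


def simp(rho, e_min, e_max, p=3.0):
    """SIMP: e(rho) = e_min + rho**p * (e_max - e_min); p > 1 penalizes intermediate rho."""
    return e_min + np.asarray(rho, dtype=float) ** p * (e_max - e_min)


def ramp(rho, e_min, e_max, q=8.0):
    """RAMP: e(rho) = e_min + rho / (1 + q * (1 - rho)) * (e_max - e_min)."""
    rho = np.asarray(rho, dtype=float)
    return e_min + rho / (1.0 + q * (1.0 - rho)) * (e_max - e_min)


def density_filter(x, radius):
    """1-D linear (cone) filter: each density becomes a distance-weighted average of
    its neighbours within `radius` elements, which suppresses checkerboard patterns.
    For mesh independence the radius should be fixed in physical units; here it is
    an element count for simplicity."""
    x = np.asarray(x, dtype=float)
    n = len(x)
    out = np.empty(n)
    for i in range(n):
        j = np.arange(max(0, i - radius), min(n, i + radius + 1))
        w = radius + 1 - np.abs(j - i)  # weights fall off linearly with distance
        out[i] = np.sum(w * x[j]) / np.sum(w)
    return out


rho = np.array([0.0, 0.5, 1.0])
eps_simp = simp(rho, 1.0, 10.0)  # density 0.5 maps to 2.125, well below the linear 5.5
eps_ramp = ramp(rho, 1.0, 10.0)  # RAMP maps density 0.5 to 1.9
smoothed = density_filter([0, 0, 1, 0, 0], radius=1)  # [0, 0.25, 0.5, 0.25, 0]
```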

Protocol 2: Experimental Validation of EMTO Designs

1. Prototype Fabrication

- Employ additive manufacturing (3D printing) for complex EMTO-optimized geometries

- Utilize appropriate materials (conductive polymers, metal composites)

- Implement surface treatments to enhance conductivity where required

2. Experimental Setup

- Calibrate measurement equipment (vector network analyzer, impedance analyzer)

- Establish reference measurements for validation

- Implement fixture de-embedding procedures

3. Performance Characterization

- Measure S-parameters across frequency band of interest

- Quantify field distributions using near-field scanning

- Evaluate efficiency metrics (e.g., radiation efficiency, quality factor)

4. Data Analysis

- Compare simulated and measured performance

- Compute correlation metrics (e.g., mean squared error, correlation coefficient)

- Identify and analyze discrepancies between simulation and measurement

5. Design Refinement

- Implement iterative corrections based on experimental findings

- Update simulation models to improve predictive accuracy

- Validate refined design through additional testing
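The comparison metrics in step 4 of the validation protocol take only a few lines to compute. In this sketch, the simulated and measured arrays are hypothetical |S11| values in dB sampled at the same five frequencies. The function returns the mean squared error, the Pearson correlation coefficient, and the index of the largest discrepancy, the point where discrepancy analysis would naturally start.

```python
import numpy as np


def compare(simulated, measured):
    """Return (MSE, Pearson r, index of largest absolute discrepancy)."""
    sim = np.asarray(simulated, dtype=float)
    meas = np.asarray(measured, dtype=float)
    err = sim - meas
    mse = float(np.mean(err ** 2))
    r = float(np.corrcoef(sim, meas)[0, 1])  # Pearson correlation coefficient
    worst = int(np.argmax(np.abs(err)))
    return mse, r, worst


# Hypothetical |S11| in dB at five frequencies (simulation vs. measurement).
sim_db = [-10.0, -20.0, -30.0, -20.0, -10.0]
meas_db = [-11.0, -19.0, -28.0, -21.0, -10.0]
mse, r, worst = compare(sim_db, meas_db)
# mse == 1.4, r is about 0.993, worst == 2 (the resonance dip)
```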

The following workflow diagram illustrates the integrated computational and experimental methodology for EMTO implementation:

EMTO Design Workflow

The Scientist's Toolkit: Research Reagent Solutions for EMTO

Successful implementation of EMTO requires specialized software tools and computational resources. The table below details essential research "reagents" - the software and platforms that enable advanced electromagnetic topology optimization research.

Table 3: Essential Research Reagent Solutions for EMTO

| Tool Name | Type | Primary Function in EMTO | Key Features |
|---|---|---|---|
| MATLAB [35] | Numerical Computing Environment | Implementation of custom EMTO algorithms | Advanced matrix operations, comprehensive toolbox ecosystem, strong visualization capabilities [35] |
| COMSOL Multiphysics | Physics Simulation Platform | Finite element analysis for electromagnetic systems | Multiphysics capabilities, application-specific modules, live connection to MATLAB [36] |
| ANSYS HFSS | 3D Electromagnetic Simulation | High-frequency electromagnetic field simulation | Finite element method, adaptive meshing, advanced solver technologies [36] |
| STATA [35] | Statistical Software | Analysis of experimental EMTO validation data | Powerful scripting for automation, advanced statistical procedures, excellent data management [35] |
| R/RStudio [35] | Statistical Programming | Statistical analysis of EMTO performance metrics | Extensive CRAN library, advanced statistical capabilities, excellent visualization with ggplot2 [35] |
| Additive Manufacturing Systems | Fabrication Technology | Prototyping of complex EMTO-optimized geometries | 3D printing of conductive materials, support for complex geometries, rapid prototyping capabilities [33] |
| Vector Network Analyzer | Measurement Instrument | Experimental validation of EMTO device performance | S-parameter measurements, frequency domain analysis, calibrated measurements |

These research reagents form the essential toolkit for advancing EMTO methodologies from theoretical concepts to experimentally validated designs. The integration of specialized electromagnetic simulation tools with general-purpose numerical computing environments provides the flexibility required to implement custom optimization algorithms while leveraging validated physics simulation capabilities.

Industrial Recognition and Implementation Case Studies

Industrial interest in digital optimization and workflow technologies related to EMTO is illustrated by recent awards. Emerson's DeltaV Workflow Management software, which received the 2025 IoT Breakthrough Award for "Industrial IoT Innovation of the Year," moves workflow data from paper-based records into searchable, exportable digital records for analysis [34]. This aim parallels EMTO's emphasis on data-driven design. The IoT Breakthrough Awards program received more than 3,850 nominations, which indicates the breadth of industrial engagement with advanced optimization and workflow technologies [34].

The following diagram illustrates the interconnected factors driving industrial recognition and implementation of EMTO technologies:

EMTO Recognition Drivers

Industrial adoption of these digital workflow technologies follows recognizable patterns. In the life sciences sector, companies are adopting them to "accelerate therapy commercialization" and to "provide a simple and scalable solution with no coding experience required" for researchers [34]. The shift from paper-based records to digital workflows parallels the transition from traditional design methods to optimization-driven approaches in engineering design.

For drug development professionals, EMTO offers potential advantages in the design of medical devices, laboratory equipment, and therapeutic technologies. These benefits are likely to be greatest when optimization is integrated with the "advanced tools and technologies, like Industrial Internet of Things (IIoT) technology and integrated Artificial Intelligence (AI) algorithms" that enable "predictive maintenance, analytics, and informed decision-making" in industrial automation [33]. Such capabilities align with the needs of researchers and scientists working to "scale and deliver drugs to market safely, efficiently and quickly" [34].

The current landscape of EMTO research shows robust growth alongside increasing industrial interest. The industrial automation market is projected to reach USD 443.54 billion by 2035, which provides a favorable environment for adopting advanced optimization methodologies like EMTO [33]. Industry awards for related digital-workflow technologies suggest a receptive market for these approaches to complex engineering design challenges.

Future developments in EMTO will likely focus on increased integration with artificial intelligence algorithms, expanded multi-physics capabilities, and enhanced workflow management solutions that make the technology accessible to broader user communities. As these trends continue, EMTO is positioned to become an increasingly essential methodology for researchers, scientists, and drug development professionals seeking to optimize electromagnetic devices and systems for advanced applications across healthcare, communications, and industrial automation sectors.

EMTO Algorithms in Action: Advanced Methodologies and Pharmaceutical Applications

Evolutionary Multi-Task Optimization (EMTO) represents a paradigm shift in evolutionary computation, enabling the simultaneous optimization of multiple tasks by leveraging implicit parallelism and knowledge transfer. A critical design choice within EMTO is the population structure, which governs how genetic material is organized and shared. Single-population models maintain a unified genetic repository, while multi-population models employ distinct, task-specific sub-populations. The selection between these frameworks significantly influences algorithmic behavior, particularly in balancing convergence speed against the risk of negative knowledge transfer. Within engineering design optimization, this choice dictates an algorithm's ability to manage complex, interrelated design tasks efficiently. This document provides a detailed comparison of these frameworks, supported by quantitative data, experimental protocols, and practical implementation tools for researchers.

Theoretical Framework and Comparative Analysis

Foundational Principles of EMTO

Evolutionary Multi-Task Optimization is grounded in the principle that valuable knowledge discovered while solving one task can be transferred to accelerate the optimization of other, related tasks [3]. This process, known as inter-task knowledge transfer, mimics human problem-solving by applying past experiences to new challenges. The first major EMTO algorithm, the Multifactorial Evolutionary Algorithm (MFEA), established the single-population model by creating a unified population where each individual is associated with a specific task through a "skill factor" [3]. This model facilitates knowledge transfer at the genetic level through mechanisms like assortative mating and selective imitation, allowing for the implicit exchange of beneficial traits across different optimization tasks without requiring explicit similarity measures between problem domains.
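The following minimal Python sketch makes the skill-factor bookkeeping concrete. It computes factorial ranks, skill factors, and scalar fitness from a matrix of factorial costs, following the MFEA definitions as they are commonly described.

The sketch rests on a few assumptions:

- Every individual has been evaluated on every task, as happens at initialization.
- The function name `mfea_skill_factors` is hypothetical.
- Ties in skill factor are broken toward the lowest task index.

```python
from typing import List, Tuple


def mfea_skill_factors(costs: List[List[float]]) -> Tuple[List[int], List[float]]:
    """Compute MFEA-style skill factors and scalar fitness.

    costs[i][j] is the factorial cost of individual i on task j (lower is better).
    Returns (skill_factors, scalar_fitness), one entry per individual.
    """
    n, k = len(costs), len(costs[0])
    ranks = [[0] * k for _ in range(n)]
    for j in range(k):
        # Factorial rank: position of each individual when sorted by cost on task j (1 = best).
        order = sorted(range(n), key=lambda idx: costs[idx][j])
        for rank, idx in enumerate(order, start=1):
            ranks[idx][j] = rank
    skill_factors, scalar_fitness = [], []
    for i in range(n):
        best = min(ranks[i])
        # Skill factor: the task on which the individual ranks best (ties -> lowest task index).
        skill_factors.append(ranks[i].index(best))
        # Scalar fitness: reciprocal of the best factorial rank.
        scalar_fitness.append(1.0 / best)
    return skill_factors, scalar_fitness


skills, fitness = mfea_skill_factors([[1.0, 5.0], [2.0, 1.0], [3.0, 2.0]])
print(skills, fitness)  # [0, 1, 1] [1.0, 1.0, 0.5]
```

Because scalar fitness depends only on ranks, individuals specializing in tasks with very different cost scales can still be compared directly during selection.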

Single-Population EMTO Frameworks

The single-population framework operates through a unified genetic pool in which all tasks co-evolve within a shared population. Each individual is assigned a skill factor that determines its primary optimization task. Knowledge transfer occurs when individuals with different skill factors produce offspring through crossover; in MFEA, how often such cross-task mating is allowed is governed by a random mating probability (rmp) [3]. The primary advantage of this approach is efficient resource utilization, because the entire population contributes to solving all tasks simultaneously. This framework is particularly effective when the optimization tasks share strong underlying similarities or common optimal regions in the search space. Its main limitation is the potential for negative transfer, where genetic material beneficial for one task proves detrimental for another, which can degrade performance or cause premature convergence.
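Assortative mating controlled by a random mating probability can be sketched as below. Genes live in a unified [0, 1] search space, as in MFEA.

The following choices are assumptions made for illustration, not the exact operators of [3]:

- uniform crossover;
- Gaussian mutation with step size `sigma`;
- the function name `assortative_mating` and the `Individual` type.

```python
import random
from typing import List, Tuple

# (genes in the unified [0, 1] search space, skill factor)
Individual = Tuple[List[float], int]


def assortative_mating(pa: Individual, pb: Individual, rmp: float,
                       rng: random.Random, sigma: float = 0.05) -> List[Individual]:
    """Produce two offspring from two parents, MFEA-style (illustrative operators)."""
    genes_a, skill_a = pa
    genes_b, skill_b = pb
    if skill_a == skill_b or rng.random() < rmp:
        # Crossover: always allowed within a task, across tasks only with probability rmp.
        child1, child2 = [], []
        for ga, gb in zip(genes_a, genes_b):
            if rng.random() < 0.5:  # uniform crossover: swap this gene with probability 0.5
                ga, gb = gb, ga
            child1.append(ga)
            child2.append(gb)
        # Selective imitation: each child adopts the skill factor of a randomly chosen parent.
        return [(child1, rng.choice((skill_a, skill_b))),
                (child2, rng.choice((skill_a, skill_b)))]

    def mutate(genes: List[float]) -> List[float]:
        # Gaussian perturbation clipped back into the unified [0, 1] space.
        return [min(1.0, max(0.0, g + rng.gauss(0.0, sigma))) for g in genes]

    # No cross-task mating: each parent is mutated on its own and keeps its skill factor.
    return [(mutate(genes_a), skill_a), (mutate(genes_b), skill_b)]
```

Setting `rmp` close to 0 nearly isolates the tasks. Values close to 1 let cross-task crossover happen almost as often as within-task crossover, and that is where the negative-transfer risk described above comes from.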

Multi-Population EMTO Frameworks

Multi-population EMTO frameworks address the limitations of unified models by maintaining a distinct sub-population for each optimization task. These sub-populations evolve semi-independently, and knowledge transfer occurs through structured migration or information exchange protocols [37]. This architecture allows task-specific specialization while still exploiting synergies between related tasks. A key advantage is the reduced risk of negative transfer, because knowledge exchange can be more carefully controlled and monitored. Recent implementations, such as adaptive evolutionary multitasking optimization based on population distribution, extend this framework by using distribution similarity metrics to guide transfer between sub-populations. This lets them identify valuable knowledge even when task optima lie far apart in the search space [37].
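A distribution-similarity measure of the kind used to guide transfer between sub-populations can be sketched with a kernel Maximum Mean Discrepancy (MMD).

The following choices are assumptions of this minimal sketch and not necessarily those made in [37]:

- the Gaussian (RBF) kernel with bandwidth `gamma`;
- the biased (V-statistic) estimator;
- selecting the candidate with the smallest MMD as the transfer source.

```python
import math
from typing import List, Sequence

Point = Sequence[float]


def rbf_kernel(x: Point, y: Point, gamma: float) -> float:
    # Gaussian (RBF) kernel: exp(-gamma * ||x - y||^2).
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))


def mmd_squared(xs: Sequence[Point], ys: Sequence[Point], gamma: float = 1.0) -> float:
    """Biased (V-statistic) estimate of the squared MMD between two samples."""
    k_xx = sum(rbf_kernel(a, b, gamma) for a in xs for b in xs) / len(xs) ** 2
    k_yy = sum(rbf_kernel(a, b, gamma) for a in ys for b in ys) / len(ys) ** 2
    k_xy = sum(rbf_kernel(a, b, gamma) for a in xs for b in ys) / (len(xs) * len(ys))
    return k_xx + k_yy - 2.0 * k_xy


def most_similar_source(target: Sequence[Point],
                        candidates: List[Sequence[Point]],
                        gamma: float = 1.0) -> int:
    """Index of the candidate sub-population whose distribution is closest to target."""
    scores = [mmd_squared(target, cand, gamma) for cand in candidates]
    return min(range(len(scores)), key=scores.__getitem__)
```

A small MMD means the two sub-populations occupy similar regions of the (unified) search space. This kind of signal can be used to decide where transferred material should come from.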

Table 1: Core Architectural Comparison of EMTO Frameworks

| Feature | Single-Population Framework | Multi-Population Framework |
|---|---|---|
| Population Structure | Unified population with skill factors | Multiple dedicated sub-populations |
| Knowledge Transfer Mechanism | Implicit through crossover (assortative mating) | Explicit migration or information sharing |
| Resource Allocation | Dynamic based on task performance | Configurable per sub-population |
| Implementation Complexity | Lower | Higher due to coordination requirements |
| Risk of Negative Transfer | Higher | Lower through controlled exchange |
| Optimal Application Scenario | Highly related tasks with similar optima | Loosely related or disparate tasks |

Quantitative Performance Analysis

Convergence Behavior and Solution Quality

Empirical evaluations reveal distinct performance characteristics for each framework. Single-population models typically converge faster in early generations on strongly related tasks because knowledge is shared immediately [3]. This advantage may diminish in later stages if negative transfer occurs. Multi-population models often reach higher final solution quality on complex or weakly related task combinations, because they maintain population diversity and resist premature convergence [37]. The performance gap tends to widen as task similarity decreases, and multi-population approaches remain robust even for tasks whose global optima lie far apart.

Computational Efficiency Metrics

Computational efficiency varies significantly between frameworks. Single-population models generally have lower memory overhead and are simpler to implement, which makes them suitable for resource-constrained environments. Multi-population models incur additional costs for managing multiple populations and transfer mechanisms. They often achieve better overall efficiency nonetheless, through specialized search and fewer wasted evaluations [37]. The adaptive population distribution-based approach improves efficiency further by triggering knowledge transfer only when distribution similarity suggests a high probability of beneficial exchange.
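Transfer decisions can themselves be made adaptive, so that evaluations are not spent on unproductive exchange. The class below is a hypothetical controller: it uses a randomized interaction probability that is adjusted from the recent transfer success history.

The following details are illustrative assumptions, not a published rule:

- the smoothing factor `alpha`;
- the window length;
- the clipping bounds;
- the default starting probability of 0.2, which simply mirrors the transfer rate used in Protocol 1.

```python
import random
from collections import deque


class AdaptiveTransferController:
    """Hypothetical controller: the probability of attempting a cross-task transfer
    drifts toward the recent success rate of transfers, within [p_min, p_max]."""

    def __init__(self, p_init: float = 0.2, p_min: float = 0.05, p_max: float = 0.9,
                 window: int = 50, alpha: float = 0.1) -> None:
        self.p = p_init
        self.p_min, self.p_max = p_min, p_max
        self.alpha = alpha                   # smoothing factor for probability updates
        self.history = deque(maxlen=window)  # 1 = transfer improved the recipient, 0 = it did not

    def should_transfer(self, rng: random.Random) -> bool:
        # Randomized interaction: a transfer is attempted with probability p.
        return rng.random() < self.p

    def record(self, improved: bool) -> None:
        self.history.append(1 if improved else 0)
        success_rate = sum(self.history) / len(self.history)
        # Exponential smoothing toward the windowed success rate, then clip to the bounds.
        updated = (1.0 - self.alpha) * self.p + self.alpha * success_rate
        self.p = min(self.p_max, max(self.p_min, updated))
```

The lower bound keeps a small amount of exploratory transfer alive even after a run of failures. Without it, a controller could shut off exchange permanently, including between tasks that later become complementary.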

Table 2: Performance Metrics Comparison Across Problem Types

| Performance Metric | Single-Population EMTO | Multi-Population EMTO |
|---|---|---|
| Convergence Speed (Highly Related Tasks) | Fast | Moderate |
| Convergence Speed (Weakly Related Tasks) | Slow, may stagnate | Consistently robust |
| Final Solution Accuracy | Variable, task-dependent | High, more consistent |
| Population Diversity Maintenance | Lower, risk of dominance | Higher, preserves niche specialties |
| Memory Footprint | Lower | Higher due to multiple populations |
| Negative Transfer Susceptibility | Higher | Significantly lower |

Experimental Protocols for EMTO Evaluation

Protocol 1: Benchmark Task Suite Configuration

Objective: Establish standardized benchmark procedures for comparing single-population and multi-population EMTO performance.

Materials: Multi-task test suites with varying inter-task relatedness; computing environment with appropriate computational resources; EMTO algorithm implementations with configurable population structures.

Procedure:

- Task Selection: Select optimization tasks with controlled degrees of similarity, including:
  - High-relatedness suite: Tasks with overlapping optimal regions or similar fitness landscapes
  - Medium-relatedness suite: Tasks with partially shared characteristics but different optima
  - Low-relatedness suite: Tasks with minimal shared characteristics or conflicting optima
- Parameter Configuration:
  - Set population size to 100 individuals per task for both frameworks
  - For single-population: Configure a unified population of size 100 × number of tasks
  - For multi-population: Initialize separate populations of size 100 for each task
  - Set knowledge transfer rate to 0.2 for both frameworks
- Evaluation Metrics:
  - Record convergence trajectories for each task
  - Calculate final solution accuracy relative to known optima
  - Measure computational effort (function evaluations)
  - Quantify negative transfer incidence through cross-task performance correlation
- Execution:
  - Conduct 30 independent runs per configuration to obtain samples large enough for statistical comparison
  - Employ appropriate nonparametric tests to compare performance, for example the Wilcoxon rank-sum test for per-task comparisons between independent runs, or the Wilcoxon signed-rank test for paired comparisons across benchmark problems
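When 30 independent runs of each framework are compared on the same task, the two samples are unpaired. The rank-sum form of the Wilcoxon test is therefore the natural choice; the signed-rank form applies to paired data, such as per-problem mean results across a benchmark suite.

The stdlib-only sketch below makes two simplifying assumptions: it uses the normal approximation, and it applies no tie correction. In practice, `scipy.stats.ranksums` or `scipy.stats.mannwhitneyu` provide tested implementations.

```python
import math
from typing import List, Sequence, Tuple


def rank_sum_test(a: Sequence[float], b: Sequence[float]) -> Tuple[float, float]:
    """Two-sided Wilcoxon rank-sum (Mann-Whitney U) test via the normal approximation.

    Tied values receive average ranks; the variance is not tie-corrected.
    Returns (z, p_value).
    """
    pooled = sorted([(v, 0) for v in a] + [(v, 1) for v in b])
    ranks: List[float] = [0.0] * len(pooled)
    i = 0
    while i < len(pooled):
        j = i
        # Extend j over a run of tied values.
        while j + 1 < len(pooled) and pooled[j + 1][0] == pooled[i][0]:
            j += 1
        average_rank = (i + j) / 2.0 + 1.0  # mean of the 1-based ranks i+1 .. j+1
        for t in range(i, j + 1):
            ranks[t] = average_rank
        i = j + 1
    n1, n2 = len(a), len(b)
    r1 = sum(r for r, (_, group) in zip(ranks, pooled) if group == 0)
    u1 = r1 - n1 * (n1 + 1) / 2.0
    mean_u = n1 * n2 / 2.0
    sd_u = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12.0)
    z = (u1 - mean_u) / sd_u
    p_value = math.erfc(abs(z) / math.sqrt(2.0))  # equals 2 * (1 - Phi(|z|))
    return z, p_value
```

Pass the final errors of the single-population runs as `a` and those of the multi-population runs as `b`. A negative `z` then indicates that `a` tends to be smaller, which means better under minimization.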

Protocol 2: Knowledge Transfer Effectiveness Analysis

Objective: Quantify and compare knowledge transfer efficiency between frameworks.

Materials: Implemented EMTO variants with transfer tracking capability; benchmark problems with known transfer potential.

Procedure:

- Transfer Mechanism Implementation:
  - For single-population: Implement skill-factor-based assortative mating with uniform crossover
  - For multi-population: Implement adaptive transfer based on Maximum Mean Discrepancy (MMD) between sub-populations [37]
- Transfer Tracking:
  - Tag all transferred individuals/solutions with generational metadata
  - Track fitness impact of transferred material on recipient tasks
  - Classify transfers as positive, neutral, or negative based on fitness delta
- Analysis:
  - Calculate transfer effectiveness ratio (positive:negative transfers)
  - Correlate transfer success with task relatedness metrics
  - Compare convergence acceleration attributable to knowledge transfer
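The transfer-tracking and analysis steps of Protocol 2 can be sketched as a small record type plus a classifier.

The sketch rests on these assumptions:

- The objective is minimized.
- The baseline for each transfer is the fitness of the recipient individual being replaced.
- The field names and the tolerance `eps` are illustrative.
- A zero negative count yields infinity, or NaN if there are no positives either; this handling is also an illustrative choice.

```python
import math
from dataclasses import dataclass
from typing import Dict, Iterable, Tuple


@dataclass
class TransferRecord:
    generation: int           # generation in which the transfer happened
    source_task: int          # task the transferred material came from
    target_task: int          # recipient task
    baseline_fitness: float   # assumption: fitness of the recipient individual being replaced
    offspring_fitness: float  # fitness of the transferred offspring on the target task


def classify_transfer(rec: TransferRecord, eps: float = 1e-8) -> str:
    # Minimization: a positive delta means the transferred material improved the recipient.
    delta = rec.baseline_fitness - rec.offspring_fitness
    if delta > eps:
        return "positive"
    if delta < -eps:
        return "negative"
    return "neutral"


def transfer_effectiveness(records: Iterable[TransferRecord],
                           eps: float = 1e-8) -> Tuple[Dict[str, int], float]:
    """Count transfer outcomes and return the positive:negative ratio."""
    counts = {"positive": 0, "neutral": 0, "negative": 0}
    for rec in records:
        counts[classify_transfer(rec, eps)] += 1
    pos, neg = counts["positive"], counts["negative"]
    if neg == 0:
        ratio = math.inf if pos > 0 else math.nan
    else:
        ratio = pos / neg
    return counts, ratio
```

Keeping `source_task` and `target_task` on each record lets the same data be grouped per task pair. That grouping is what the correlation with task relatedness metrics requires.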

Implementation Guidelines and Visualization

Workflow Diagrams for EMTO Frameworks

The following diagrams illustrate the structural and procedural differences between single-population and multi-population EMTO frameworks.

Single-Population EMTO Workflow: This diagram illustrates the unified population approach where knowledge transfer occurs implicitly through assortative mating between individuals from different tasks.

Multi-Population EMTO Workflow: This diagram shows the parallel evolution of task-specific sub-populations with explicit, adaptive knowledge transfer controlled by distribution similarity analysis.

The Researcher's Toolkit: Essential EMTO Components

Table 3: Key Research Reagents and Computational Tools for EMTO

| Tool/Component | Function | Implementation Example |
|---|---|---|
| Maximum Mean Discrepancy (MMD) | Measures distribution similarity between populations to guide knowledge transfer | Kernel-based statistical test comparing sub-population distributions [37] |
| Skill Factor Encoding | Assigns individuals to specific tasks in single-population EMTO | Integer task index identifying the task on which an individual attains its best factorial rank [3] |
| Assortative Mating Operator | Controls crossover between individuals from different tasks | Probability-based mating that always permits crossover between individuals with the same skill factor and allows cross-task reproduction with a set random mating probability [3] |
| Factorial Ranking | Enables fair comparison of individuals across different tasks | Ranks individuals by factorial cost within each task; scalar fitness is the reciprocal of an individual's best rank across tasks [3] |
| Adaptive Transfer Controller | Dynamically regulates knowledge exchange intensity | Randomized interaction probability adjusted based on transfer success history [37] |
| Sub-Population Partitioning | Divides populations based on fitness characteristics | K-means clustering of individuals according to fitness values for targeted transfer [37] |
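The fitness-based partitioning in the last row of Table 3 can be sketched with one-dimensional k-means (Lloyd's algorithm) on fitness values.

Two choices are assumptions of this sketch; [37] may initialize and use the clusters differently:

- evenly spaced centroid initialization;
- relabeling so that cluster 0 holds the best individuals (lowest fitness under minimization).

```python
from typing import List, Optional, Sequence


def kmeans_1d(values: Sequence[float], k: int, max_iter: int = 100) -> List[int]:
    """Cluster scalar fitness values with Lloyd's algorithm.

    Returns one label per individual; labels are renumbered so that cluster 0
    has the lowest centroid (the best cluster under minimization).
    """
    lo, hi = min(values), max(values)
    if k <= 1 or lo == hi:
        return [0] * len(values)
    # Deterministic initialization: centroids evenly spaced over the fitness range.
    centroids = [lo + (hi - lo) * c / (k - 1) for c in range(k)]
    labels: Optional[List[int]] = None
    for _ in range(max_iter):
        # Assignment step: nearest centroid for each fitness value.
        new_labels = [min(range(k), key=lambda c: abs(v - centroids[c])) for v in values]
        if new_labels == labels:
            break  # converged: assignments did not change
        labels = new_labels
        # Update step: move each non-empty cluster's centroid to its members' mean.
        for c in range(k):
            members = [v for v, lab in zip(values, labels) if lab == c]
            if members:
                centroids[c] = sum(members) / len(members)
    # Renumber clusters in order of increasing centroid.
    order = sorted(range(k), key=lambda c: centroids[c])
    remap = {old: new for new, old in enumerate(order)}
    return [remap[lab] for lab in labels]
```

One plausible use is to draw transfer candidates from the best-ranked cluster of a source sub-population. How the clusters actually feed into transfer is left open here.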

Application Notes for Engineering Design Optimization

Framework Selection Guidelines