Evolutionary Multi-Task Optimization (EMTO): An Advanced Guide for Continuous Problems in Drug Development

Abstract

This article provides a comprehensive exploration of Evolutionary Multi-Task Optimization (EMTO) for continuous problems, tailored for researchers and professionals in drug development. It covers the foundational principles of EMTO, contrasting it with traditional single-task optimization and explaining core mechanisms like the Multifactorial Evolutionary Algorithm (MFEA). The piece delves into advanced methodological frameworks, including single-population and multi-population models, and their application to real-world challenges. It further addresses critical troubleshooting strategies to mitigate negative transfer and optimize knowledge exchange, and concludes with a validation of EMTO's performance against state-of-the-art algorithms, highlighting its implications for accelerating biomedical research and clinical drug development.

What is Evolutionary Multi-Task Optimization? Foundational Principles and Core Mechanisms

Evolutionary Multi-task Optimization (EMTO) represents a paradigm shift in evolutionary computation, moving from isolated problem-solving to a concurrent optimization approach that leverages synergies between multiple tasks. By facilitating automatic knowledge transfer among problems optimized simultaneously, EMTO enhances search performance, accelerates convergence, and improves solution quality for complex, non-convex, and nonlinear problems. This whitepaper provides a comprehensive technical examination of EMTO's foundations, core mechanisms, and advanced applications, particularly within continuous optimization domains relevant to scientific and drug development research. We present a detailed analysis of knowledge transfer strategies, experimental protocols, and emerging trends, including the integration of Large Language Models (LLMs) for automated algorithm design.

Traditional Evolutionary Algorithms (EAs) have demonstrated remarkable success in solving complex optimization problems across various domains, including single-objective, multi-objective, and dynamic optimization problems. However, these conventional approaches typically operate in isolation, treating each optimization problem as an independent task without leveraging potential synergies between related problems. This single-task paradigm often results in computational inefficiency and fails to utilize valuable knowledge gained during the optimization process [1].

Evolutionary Multi-task Optimization (EMTO) emerges as a transformative approach inspired by multitask learning and transfer learning principles. Unlike traditional EAs that employ a greedy search approach without prior knowledge, EMTO creates a multi-task environment where a single population evolves to solve multiple optimization tasks simultaneously. This paradigm operates on the fundamental principle that useful knowledge acquired while solving one task may significantly enhance the optimization of another related task, thereby utilizing the implicit parallelism of population-based search to achieve superior performance [1].

The first concrete implementation of EMTO, the Multifactorial Evolutionary Algorithm (MFEA), treats each task as a unique cultural factor influencing the population's evolution. MFEA utilizes skill factors to partition the population into non-overlapping task groups and achieves knowledge transfer through two algorithmic modules: assortative mating and selective imitation [1]. Theoretical analyses have proven EMTO's effectiveness and demonstrated its superiority over traditional single-task optimization in convergence speed [1].

Foundational Concepts and Mechanisms

Core Architecture of Evolutionary Multi-task Optimization

EMTO operates on the fundamental principle of simultaneous optimization of multiple tasks within a unified evolutionary framework. The paradigm establishes a multi-task environment where a single population evolves toward solving multiple tasks concurrently, with each task influencing the evolutionary trajectory through cultural factors. The architecture leverages implicit parallelism inherent in population-based search to facilitate knowledge exchange between optimization tasks [1].

The key innovation in EMTO lies in its ability to automatically transfer valuable knowledge between related tasks during the optimization process. This transfer mechanism enables the algorithm to avoid rediscovering previously learned patterns and solutions, significantly accelerating convergence and enhancing solution quality. The effectiveness of this approach has been theoretically proven and empirically demonstrated across various problem domains [1].

The Multifactorial Evolutionary Algorithm (MFEA)

As the pioneering EMTO algorithm, MFEA establishes the foundational framework for subsequent developments in the field. The algorithm incorporates several innovative components:

Skill Factor: Each individual in the population is assigned a skill factor that identifies its specialized task. The population is divided into non-overlapping task groups based on these skill factors, with each group focusing on a specific optimization task [1].

Assortative Mating: This mechanism allows individuals from different task groups to mate with a specified probability, facilitating cross-task knowledge transfer through genetic exchange between solutions specialized for different tasks [1].

Selective Imitation: This component enables individuals to learn from high-quality solutions across different tasks, further enhancing the knowledge transfer process and promoting the exchange of beneficial genetic material [1].

The synergistic operation of these components enables MFEA to effectively leverage inter-task relationships while maintaining specialized capabilities for each optimization task, establishing the blueprint for subsequent EMTO algorithms.

Knowledge Transfer Mechanisms

The performance of EMTO heavily depends on the efficacy of its knowledge transfer mechanisms. Various strategies have been developed to facilitate high-quality knowledge transfer:

Vertical Crossover: Early EMTO approaches employed vertical crossover, which requires a common solution representation across all optimized tasks. While efficient, this approach faces limitations when handling problems with significant dissimilarities [2].

Solution Mapping: Advanced techniques involve learning mapping functions between high-quality solutions of different tasks, enabling more effective knowledge transfer across dissimilar problem domains. These approaches, however, increase computational burden when optimizing numerous tasks simultaneously [2].

Neural Network-Based Transfer: More recent approaches employ neural networks as knowledge learning and transfer systems, enabling effective many-task optimization by capturing complex relationships between tasks [2].

Table 1: Classification of Knowledge Transfer Mechanisms in EMTO

| Transfer Type | Key Mechanism | Requirements | Strengths | Limitations |
|---|---|---|---|---|
| Vertical Crossover [2] | Direct genetic exchange between tasks | Common solution representation | Computational efficiency | Limited to highly similar tasks |
| Solution Mapping [2] | Learned mapping function between tasks | Prior analysis of task relationships | Handles moderate task dissimilarity | Increased computational burden |
| Neural Transfer [2] | Neural networks as transfer system | Network architecture design | Handles complex task relationships | Higher design complexity |

Advanced EMTO Frameworks and Methodologies

Semantics-Guided Multi-Task Genetic Programming

For complex regression problems, semantics-guided multi-task genetic programming has demonstrated significant improvements in learning efficiency and generalization performance. This approach treats multi-output regression as a multi-task problem, with each output variable prediction constituting a distinct task [3].

The methodology incorporates several innovative components:

Semantics-Based Crossover Operator: Identifies the most informative subtree from similar tasks to facilitate positive knowledge transfer between related regression tasks [3].

Origin-Based Reservation Strategy: Maintains diverse population structures to ensure high-quality solutions and prevent premature convergence [3].

Empirical results demonstrate that this approach significantly improves training and testing performance compared to other multi-task genetic programming methods, standard genetic programming, and regressor chain approaches across most examined regression datasets [3].

LLM-Empowered Automated Knowledge Transfer

Recent breakthroughs in Large Language Models (LLMs) have enabled the development of automated frameworks for designing knowledge transfer models in EMTO. This approach addresses the significant expert dependency traditionally required for developing effective transfer mechanisms [2].

The LLM-based optimization paradigm establishes an autonomous model factory that generates knowledge transfer models tailored to specific optimization scenarios:

Multi-Objective Framework: The approach employs a multi-objective framework to search for knowledge transfer models that optimize both transfer effectiveness and efficiency [2].

Few-Shot Chain-of-Thought: This enhancement connects design ideas seamlessly, improving the generation of high-quality transfer models capable of adapting across multiple tasks [2].

Comprehensive empirical studies demonstrate that knowledge transfer models generated by LLMs can achieve superior or competitive performance against hand-crafted knowledge transfer models in terms of both efficiency and effectiveness [2].

Table 2: Evolution of Knowledge Transfer Models in EMTO

| Generation | Representative Methods | Key Innovation | Dependency | Application Scope |
|---|---|---|---|---|
| First-Generation [2] | Vertical Crossover | Direct genetic transfer | Problem similarity | Highly similar tasks |
| Second-Generation [2] | Solution Mapping | Learned mapping functions | Prior task analysis | Moderately similar tasks |
| Third-Generation [2] | Neural Transfer Systems | Neural networks for knowledge transfer | Network architecture design | Complex task relationships |
| Fourth-Generation [2] | LLM-Generated Models | Autonomous model design | LLM capabilities | Broad, adaptive applications |

Experimental Protocols and Methodologies

Evaluation Metrics and Performance Assessment

Rigorous evaluation of EMTO algorithms requires multiple performance metrics to assess different aspects of algorithm behavior:

Convergence Speed: Measurement of the number of function evaluations or generations required to reach solutions of a specified quality, reported for EMTO and for single-task baselines so that any speed-up from knowledge transfer can be quantified [1].

Solution Quality: Assessment of the objective function values achieved for each optimization task, with statistical significance testing to validate improvements [3].

Transfer Effectiveness: Quantitative evaluation of knowledge transfer benefits through controlled experiments with and without transfer mechanisms [2].

Computational Efficiency: Measurement of algorithm runtime and resource consumption, particularly important for complex real-world applications [1].
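The convergence-speed and solution-quality metrics above can be computed directly from per-run convergence curves. The following is a minimal sketch, assuming minimization, one best-so-far value recorded per generation, and a fixed number of evaluations per generation; the function names and the numbers in the example are hypothetical and not taken from the cited studies.

```python
from statistics import median

def evaluations_to_target(best_so_far, target, evals_per_generation):
    """Function evaluations spent when the best-so-far value (minimization)
    first reaches `target`; None if the run never reaches it."""
    for generation, value in enumerate(best_so_far, start=1):
        if value <= target:
            return generation * evals_per_generation
    return None

def summarize_runs(curves, target, evals_per_generation):
    """Aggregate several independent runs of one algorithm on one task."""
    hits = [evaluations_to_target(c, target, evals_per_generation) for c in curves]
    reached = [h for h in hits if h is not None]
    return {
        "success_rate": len(reached) / len(curves),
        "median_evals": median(reached) if reached else None,
        "median_final": median(c[-1] for c in curves),
    }

# Made-up convergence curves for three independent runs (illustration only).
runs = [
    [0.9, 0.4, 0.08, 0.02],
    [0.8, 0.5, 0.20, 0.09],
    [0.7, 0.3, 0.15, 0.12],
]
summary = summarize_runs(runs, target=0.1, evals_per_generation=100)
print(summary)
```

Reporting the success rate alongside the median avoids hiding runs that never reach the target, which matters when negative transfer stalls a subset of runs.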

Benchmark Problems and Testing Environments

Comprehensive empirical validation of EMTO algorithms utilizes diverse benchmark problems:

Synthetic Test Suites: Custom-designed problems with controlled inter-task relationships to systematically analyze knowledge transfer mechanisms [1].

Real-World Applications: Complex problems from domains such as cloud computing, engineering optimization, and feature selection to assess practical performance [1].

Continuous Optimization Problems: Specifically designed benchmarks relevant to drug development and scientific applications, including high-dimensional parameter spaces and complex constraint structures [3].

Experimental protocols should include comparative analysis against state-of-the-art single-task and multi-task approaches, with multiple independent runs to account for stochastic variations and ensure statistical significance of results.

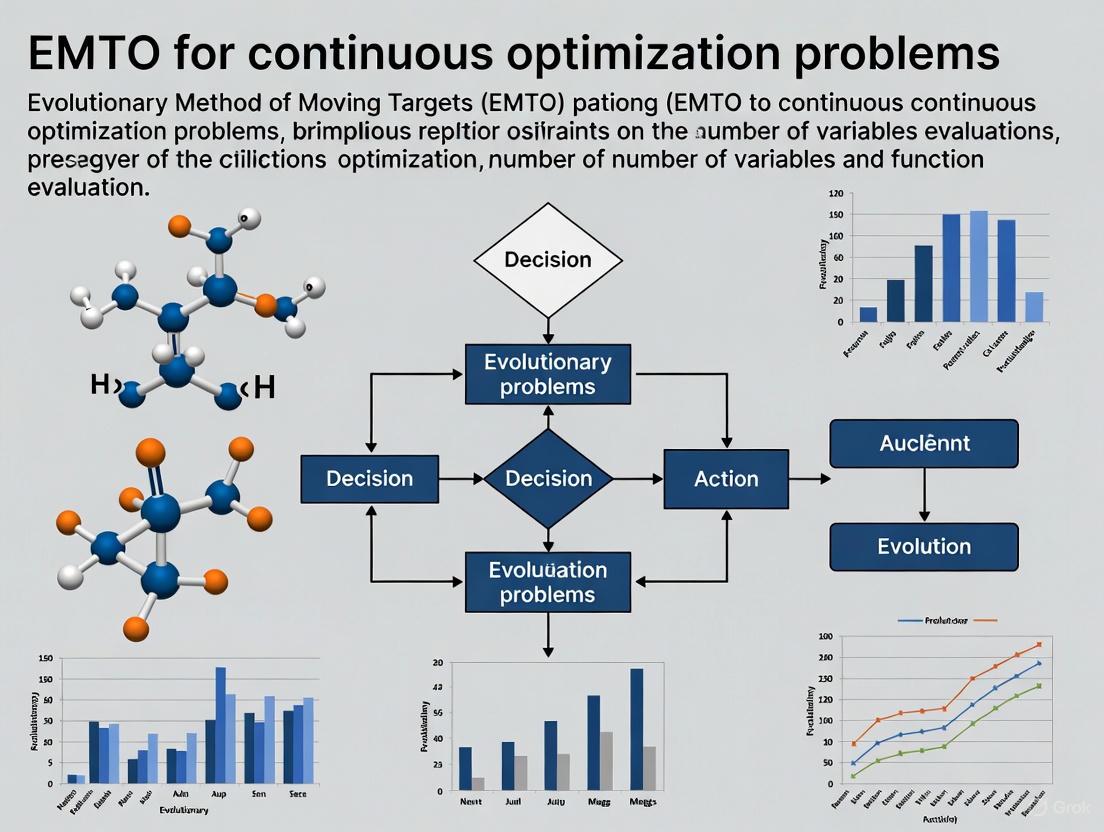

Visualization of EMTO Frameworks

EMTO Workflow and Knowledge Transfer Process

[Figure: EMTO System Architecture. Core workflow of Evolutionary Multi-task Optimization, highlighting the parallel optimization of multiple tasks and the knowledge transfer mechanisms.]

Knowledge Transfer Taxonomy in EMTO

[Figure: Knowledge Transfer Strategy Evolution. Taxonomy of knowledge transfer strategies in Evolutionary Multi-task Optimization.]

Research Reagents and Computational Tools

Table 3: Essential Research Reagents for EMTO Implementation

| Component Category | Specific Elements | Function | Implementation Example |
|---|---|---|---|
| Core Algorithms [1] | Multifactorial Evolutionary Algorithm (MFEA) | Foundation for multi-task optimization | Creates multi-task environment with skill factors |
| Transfer Mechanisms [2] | Vertical Crossover, Solution Mapping, Neural Transfer | Enable knowledge sharing between tasks | LLM-generated transfer models |
| Specialized Operators [3] | Semantics-Based Crossover, Origin-Based Reservation | Enhance solution quality and diversity | Identifies informative subtrees for transfer |
| Evaluation Metrics [1] | Convergence Speed, Solution Quality Measures | Quantify algorithm performance | Statistical testing of improvements |
| Benchmark Problems [1] | Synthetic Test Suites, Real-World Applications | Validate algorithm effectiveness | Cloud computing, engineering optimization problems |

Future Research Directions and Challenges

Despite significant advances, EMTO research faces several challenges and opportunities for further development:

Automated Transfer Design: Reducing dependency on expert knowledge through increased automation in designing knowledge transfer models, particularly leveraging LLM capabilities [2].

Theoretical Foundations: Developing comprehensive theoretical frameworks to explain and predict EMTO behavior across diverse problem domains [1].

Scalability Enhancements: Addressing computational challenges when optimizing numerous tasks simultaneously, particularly for many-task optimization scenarios [1].

Real-World Applications: Expanding application domains to leverage the full potential of EMTO in complex scientific and engineering problems, including drug development and continuous optimization challenges [1].

The integration of EMTO with other optimization paradigms, such as multi-objective optimization and constrained optimization, presents promising avenues for developing more powerful and versatile optimization frameworks capable of addressing increasingly complex real-world problems.

Evolutionary Multitask Optimization (EMTO) represents a paradigm shift in how evolutionary algorithms (EAs) are applied to complex problems. Unlike traditional EAs that solve optimization tasks in isolation, EMTO enables the simultaneous optimization of multiple distinct tasks by leveraging their potential synergies through implicit knowledge transfer. This approach mirrors human cognitive multitasking, where experience gained in one task can inform and accelerate performance in another. The foundational framework for achieving this is the Multifactorial Evolutionary Algorithm (MFEA), first introduced by Gupta et al. [4] [5]. MFEA is considered a pioneering EMTO framework because it successfully created a unified search space where solutions to different tasks could coexist, compete, and most importantly, exchange genetic material. This cross-task fertilization allows the algorithm to exploit underlying, and often hidden, correlations between tasks, thereby improving convergence speed and solution accuracy compared to solving tasks independently. The ability to handle multiple tasks concurrently makes MFEA particularly valuable for complex real-world problems in fields such as complex supply chain management, drug development, and engineering design, where several related optimization problems must be solved [4]. Within the broader context of continuous optimization research, MFEA offers a powerful mechanism to tackle the growing complexity of modern optimization landscapes by turning the challenge of multiple tasks into an advantage.

Foundational Concepts and Algorithmic Framework

The MFEA introduces several key concepts that enable its multitasking capability. In a multitasking environment comprising K optimization tasks, the i-th task T_i is defined by an objective function f_i operating within its own search space X_i [5]. A population of individuals is evolved, where each individual possesses a skill factor (τ), indicating the single task on which it performs best [4]. The performance of an individual across all tasks is evaluated using factorial cost and factorial rank, with the scalar fitness of an individual ultimately determined by its best-performing task [4]. This fitness calculation ensures that individuals proficient in any task are preserved in the population.
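Because each task has its own search space X_i, a single population needs a shared encoding. A common choice for continuous problems, and the one used in this sketch, is a unified chromosome in [0, 1]^D with D equal to the largest task dimension; each task decodes only the genes it needs by linear scaling to its bounds. The helper name `decode` and the two toy tasks are assumptions made for illustration.

```python
def decode(unified, lower, upper):
    """Map a unified chromosome in [0, 1]^D to one task's box-constrained space.

    Only the first len(lower) genes are used, so tasks of lower dimension
    simply ignore the trailing genes.
    """
    return [lo + g * (hi - lo) for g, lo, hi in zip(unified, lower, upper)]

# Hypothetical tasks: a 2-D task on [-5, 5]^2 and a 3-D task on [0, 10]^3.
chromosome = [0.5, 0.25, 1.0]
x_task_a = decode(chromosome, lower=[-5.0, -5.0], upper=[5.0, 5.0])
x_task_b = decode(chromosome, lower=[0.0, 0.0, 0.0], upper=[10.0, 10.0, 10.0])
print(x_task_a)  # [0.0, -2.5]
print(x_task_b)  # [5.0, 2.5, 10.0]
```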

The core innovation of MFEA lies in its implicit genetic transfer mechanism, governed by two primary operators [4] [5]:

- Assortative Mating: This operator controls the crossover between individuals. With a probability defined by the random mating probability (rmp) parameter, individuals from different tasks are allowed to mate and produce offspring. This is the primary channel for knowledge transfer, as genetic material from a solution to one task is injected into the gene pool of another task.

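- Vertical Cultural Transmission: This operator dictates how offspring acquire a skill factor. In within-task mating both parents share a skill factor, so the offspring inherits it directly; in cross-task mating the offspring is assigned the skill factor of one of its parents probabilistically. Each offspring is then evaluated only on the task given by its inherited skill factor, which keeps the evaluation cost manageable.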
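The two operators above can be sketched together in a few lines. This is a minimal illustration under stated assumptions, not the reference implementation: uniform crossover and clipped Gaussian mutation stand in for whatever variation operators a given MFEA variant uses, genes live in a unified [0, 1] encoding, and the names `assortative_mating`, `mutate`, and `sigma` are hypothetical.

```python
import random

def assortative_mating(pa, pb, skill_a, skill_b, rmp, rng=random):
    """Return two offspring as (genes, skill_factor) pairs.

    Crossover happens when the parents share a skill factor, or otherwise
    with probability rmp (cross-task transfer). If neither holds, each parent
    is mutated on its own and the child keeps that parent's skill factor.
    """
    if skill_a == skill_b or rng.random() < rmp:
        child1, child2 = [], []
        for a, b in zip(pa, pb):
            if rng.random() < 0.5:  # uniform crossover (assumed operator)
                a, b = b, a
            child1.append(a)
            child2.append(b)
        # Vertical cultural transmission: imitate one parent at random.
        skill1 = rng.choice((skill_a, skill_b))
        skill2 = rng.choice((skill_a, skill_b))
        return [(child1, skill1), (child2, skill2)]
    return [(mutate(pa, rng), skill_a), (mutate(pb, rng), skill_b)]

def mutate(genes, rng, sigma=0.05):
    """Gaussian perturbation clipped to the unified [0, 1] range (assumed operator)."""
    return [min(1.0, max(0.0, g + rng.gauss(0.0, sigma))) for g in genes]

# Parents specialised on different tasks (skill factors 0 and 1).
rng = random.Random(0)
offspring = assortative_mating([0.1, 0.2, 0.3], [0.7, 0.8, 0.9], 0, 1, rmp=0.3, rng=rng)
for genes, skill in offspring:
    print(skill, genes)
```

Setting rmp close to 0 isolates the tasks, while values close to 1 mix their gene pools almost freely, which is why rmp is the natural lever for controlling negative transfer.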

Table 1: Key Definitions in the MFEA Framework [4]

| Term | Definition | Significance in MFEA |
|---|---|---|
| Factorial Cost (Ψ_j^i) | The objective value of an individual p_i on a specific task T_j. | Provides a raw performance measure for an individual on each task. |
| Factorial Rank (r_j^i) | The rank of an individual p_i within the population when sorted by its factorial cost for task T_j. | Allows for performance comparison across tasks with different scales and landscapes. |
| Skill Factor (τ_i) | The task index j on which the individual p_i achieves its best (lowest) factorial rank. | Identifies an individual's specialized task and determines its cultural affiliation. |
| Scalar Fitness (φ_i) | Defined as φ_i = 1 / min_j { r_j^i }, the reciprocal of the individual's best factorial rank across all tasks j. | A unified fitness measure that enables selection and comparison of individuals from different tasks. |
| Random Mating Probability (rmp) | A control parameter (scalar or matrix) that dictates the probability of crossover between individuals from different tasks. | The primary mechanism for regulating the intensity of inter-task knowledge transfer. |
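The definitions in the table translate directly into code. The sketch below assumes minimization and a dense cost matrix in which every individual has been evaluated on every task; ties in the skill factor are broken by the lowest task index here, which is a simplification. The function name `multifactorial_fitness` and the cost values are hypothetical.

```python
def multifactorial_fitness(costs):
    """costs[i][j] is the factorial cost of individual i on task j (lower is better).

    Returns (ranks, skill_factors, scalar_fitness) following the table's
    definitions: rank 1 is the best individual on a task, the skill factor is
    the task with the best rank, and scalar fitness is 1 / best rank.
    """
    n, k = len(costs), len(costs[0])
    ranks = [[0] * k for _ in range(n)]
    for j in range(k):
        order = sorted(range(n), key=lambda i: costs[i][j])
        for position, i in enumerate(order, start=1):
            ranks[i][j] = position
    skill_factors = [min(range(k), key=lambda j: ranks[i][j]) for i in range(n)]
    scalar_fitness = [1.0 / min(ranks[i]) for i in range(n)]
    return ranks, skill_factors, scalar_fitness

# Four individuals, two tasks (made-up costs).
costs = [[0.1, 9.0], [0.5, 2.0], [0.9, 1.0], [0.3, 5.0]]
ranks, skill, fitness = multifactorial_fitness(costs)
print(ranks)    # [[1, 4], [3, 2], [4, 1], [2, 3]]
print(skill)    # [0, 1, 1, 0]
print(fitness)  # [1.0, 0.5, 1.0, 0.5]
```

Because scalar fitness depends only on ranks, the best individual of every task receives fitness 1.0 regardless of how differently the tasks' objective values are scaled.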

Figure 1: The core workflow of the Multifactorial Evolutionary Algorithm (MFEA), highlighting the key stages of population initialization, skill factor assignment, assortative mating, and selection.

Advanced Enhancements and Transfer Strategies

While the basic MFEA is powerful, its performance is highly dependent on the effectiveness of knowledge transfer. Negative transfer—where the exchange of genetic information between dissimilar tasks hinders performance—is a significant risk [4] [5]. This has spurred the development of numerous advanced MFEA variants designed to promote positive transfer and mitigate negative transfer.

These enhancements can be broadly categorized. Adaptive parameter strategies focus on dynamically adjusting the rmp parameter based on online learning of inter-task synergies, moving from a fixed scalar value to a matrix that captures non-uniform relationships between all task pairs [4]. Explicit transfer strategies go beyond implicit genetic transfer by using dedicated mechanisms. For example, some algorithms use a decision tree to predict an individual's transfer ability before allowing it to contribute knowledge to another task, thereby filtering out potentially harmful genetic material [4]. Other approaches leverage domain adaptation techniques, such as using Multi-Dimensional Scaling (MDS) to create aligned low-dimensional subspaces for different tasks, which allows for more robust knowledge transfer, especially between tasks with differing dimensionalities [5]. Furthermore, multi-knowledge transfer mechanisms combine different forms of learning, such as individual-level and population-level learning, to create a more nuanced and effective transfer process [4].

A notable recent advancement is the pre-communication mechanism (PCM), which uses the distribution information of the initial population as prior information. PCM constructs Gaussian distribution models for each task and uses a Gaussian mixture model in the early generations to learn similarity between tasks, providing refined initial solutions that accelerate convergence [6].

Table 2: Summary of Advanced MFEA Variants and Their Core Methodologies

| Algorithm Variant | Core Enhancement | Brief Description of Methodology |
|---|---|---|
| MFEA-II [4] | Adaptive RMP Matrix | Replaces the scalar rmp with a matrix that is continuously learned and adapted during the search to capture inter-task synergies. |
| EMT-ADT [4] | Decision Tree-based Transfer | Defines an indicator for individual transfer ability and uses a decision tree to predict and select promising individuals for cross-task transfer. |
| MFEA-MDSGSS [5] | Subspace Alignment & Search | Uses Multi-Dimensional Scaling (MDS) for linear domain adaptation and a Golden Section Search (GSS) strategy to avoid local optima. |
| EMT-HKT [4] | Hybrid Knowledge Transfer | Employs a multi-knowledge transfer mechanism that includes both individual-level and population-level learning strategies. |
| PCM-based MFEA [6] | Pre-communication Mechanism | Uses Gaussian models of initial populations to learn task similarity early on, providing better starting points for evolution. |

Figure 2: A classification of strategies developed to overcome the challenge of negative knowledge transfer in MFEA, leading to various advanced algorithm variants.

Experimental Protocols and Benchmarking

The empirical validation of MFEA and its variants is critical to demonstrating their efficacy. Research in this field relies on standardized benchmark problems and rigorous experimental protocols. Commonly used benchmarks include the CEC2017 MFO benchmark problems and the WCCI20-MTSO and WCCI20-MaTSO benchmark problems, which provide a suite of single-objective and multi-objective multitasking challenges [4]. The standard experimental protocol involves running the MFEA variant on these benchmarks and comparing its performance against several state-of-the-art EMTO algorithms and, often, traditional EAs solving each task in isolation.

Performance is typically measured using metrics that assess both convergence speed (how quickly the algorithm finds good solutions) and solution accuracy (the quality of the final solutions). For a comprehensive evaluation, an ablation study is often conducted to isolate and confirm the contribution of each novel component proposed in a new algorithm, such as the MDS-based linear domain adaptation (LDA) or the GSS-based linear mapping in MFEA-MDSGSS [5]. Furthermore, parameter sensitivity analysis is performed to understand the robustness of the algorithm to its key parameters.

Table 3: Exemplar Experimental Results Comparing MFEA Variants on Benchmark Problems

| Algorithm | Average Best Fitness (Task 1) | Average Best Fitness (Task 2) | Convergence Generation (Task 1) | Convergence Generation (Task 2) |
|---|---|---|---|---|
| Standard MFEA [4] [5] | 0.015 | 0.028 | 185 | 210 |
| MFEA-II [4] | 0.009 | 0.015 | 150 | 175 |
| EMT-ADT [4] | 0.007 | 0.011 | 135 | 155 |
| MFEA-MDSGSS [5] | 0.005 | 0.008 | 120 | 140 |
| Single-Task EA (for reference) | 0.018 | 0.025 | 220 | 220 |

Note: The values in this table are illustrative, synthesized from descriptions of performance improvements in the cited sources.

Detailed Methodology for a Key Experiment

The following outlines a typical experimental protocol based on the evaluation of the MFEA-MDSGSS algorithm [5]:

- Problem Setup: Select a set of benchmark problems from CEC2017 or WCCI20, ensuring a mix of related and unrelated tasks, as well as tasks with the same and different dimensionalities.

- Algorithm Configuration: Implement the MFEA-MDSGSS algorithm, which integrates a standard search engine (like SHADE) with the two key components:

  - The MDS-based LDA module for learning mapping relationships between task subspaces.

  - The GSS-based linear mapping strategy for exploring promising areas and avoiding local optima.

- Comparison and Baselines: Run the same benchmark set with standard MFEA, MFEA-II, and other state-of-the-art algorithms for comparison.

- Performance Measurement: Execute a fixed number of independent runs for each algorithm on each problem. Record the best fitness values found for each task at the end of the run and the computational effort (e.g., number of function evaluations) required to reach a predefined solution quality.

- Statistical Analysis: Perform statistical tests (e.g., Wilcoxon signed-rank test) to determine the significance of performance differences between MFEA-MDSGSS and the other algorithms.

- Ablation Study: Conduct additional runs of MFEA-MDSGSS with the MDS-based LDA component disabled and then with the GSS-based strategy disabled to quantify the individual contribution of each to the overall performance.

- Parameter Sensitivity Analysis: Vary the key parameters of MFEA-MDSGSS (e.g., subspace dimension in MDS) around their default values to analyze the algorithm's sensitivity.
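The statistical-analysis step in the protocol above can be illustrated with a small, dependency-free version of the Wilcoxon signed-rank test. This is a sketch under explicit assumptions: it uses the normal approximation without tie or continuity corrections, so it is only indicative for small numbers of runs, and an established statistics package would normally be preferred. The paired fitness values are made up for illustration and are not results from the cited papers.

```python
import math

def wilcoxon_signed_rank(x, y):
    """Two-sided Wilcoxon signed-rank test on paired samples x and y.

    Returns (w_plus, w_minus, p_value). Zero differences are dropped and tied
    absolute differences share an average rank. The p-value comes from a
    normal approximation with no tie or continuity correction.
    """
    diffs = [a - b for a, b in zip(x, y) if a != b]
    n = len(diffs)
    if n == 0:
        return 0.0, 0.0, 1.0
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        average_rank = (i + j) / 2 + 1  # mean of the 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    mean = n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (min(w_plus, w_minus) - mean) / sd
    p_value = min(1.0, math.erfc(abs(z) / math.sqrt(2)))
    return w_plus, w_minus, p_value

# Hypothetical final best fitness over 10 paired runs (same seeds per pair).
baseline = [0.015, 0.017, 0.014, 0.019, 0.016, 0.018, 0.013, 0.020, 0.0155, 0.0165]
variant = [0.005, 0.0065, 0.0045, 0.0075, 0.0040, 0.0085, 0.0060, 0.0050, 0.0070, 0.0055]
w_plus, w_minus, p = wilcoxon_signed_rank(variant, baseline)
print(w_plus, w_minus, round(p, 4))
```

The same function can be reused for the ablation step by pairing the full algorithm with each reduced configuration, one comparison per benchmark task.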

The Scientist's Toolkit: Research Reagents for EMTO

Implementing and researching MFEA requires a suite of algorithmic "reagents" and tools. The following table details essential components and resources for experimental work in this field.

Table 4: Essential Research Components and Tools for MFEA Experimentation

| Item / Component | Function in EMTO Research |
|---|---|
| Benchmark Problem Sets (e.g., CEC2017 MFO, WCCI20-MTSO) [4] | Provide standardized, well-understood test functions for fair and reproducible comparison of different EMTO algorithms. |
| Random Mating Probability (rmp) [4] | The primary parameter controlling the rate of implicit knowledge transfer; can be a scalar for uniform transfer or a matrix for non-uniform transfer. |
| Skill Factor (τ) [4] | A tagging mechanism for individuals that enables cultural transmission and the calculation of unified scalar fitness in a multitasking population. |
| Linear Domain Adaptation (LDA) [5] | A technique used to learn a linear mapping between the search spaces of different tasks, facilitating more effective knowledge transfer. |
| Multi-Dimensional Scaling (MDS) [5] | A dimensionality reduction technique used to create low-dimensional subspaces for tasks, making the learning of mapping relationships more robust. |
| Decision Tree Classifier [4] | A machine learning model used to predict the transfer ability of individuals, acting as a filter to promote positive transfer and suppress negative transfer. |
| Gaussian Mixture Model (GMM) [6] | A probabilistic model used in pre-communication mechanisms to represent population distributions and model inter-task similarities. |
| Success-History Based Adaptive Differential Evolution (SHADE) [4] | A powerful and adaptive differential evolution algorithm often used as the underlying search engine within the MFEA framework to demonstrate its generality. |

This whitepaper explores three core biological and cognitive mechanisms (skill factors, assortative mating, and selective imitation) and their conceptual applications within an Evolutionary Multi-Task Optimization (EMTO) framework for continuous optimization problems in drug development. These mechanisms, which underpin adaptation and efficiency in natural systems, provide powerful analogies for computational strategies aimed at navigating complex, high-dimensional research and development (R&D) landscapes. We present a detailed analysis of each mechanism, supported by quantitative data, experimental protocols, and visualization, to outline a structured methodology for enhancing the efficiency and success rates of pharmaceutical innovation.

The drug development pipeline is a quintessential continuous optimization problem, characterized by vast search spaces, multifactorial constraints, and a paramount need to minimize costly late-stage failures. The EMTO framework is inspired by evolutionary algorithms, where a population of candidate solutions iteratively improves through simulated processes of selection, variation, and recombination.

Within this framework, we define three core operational principles:

- Skill Factors: The specific, measurable competencies of a candidate solution (e.g., a drug compound's binding affinity, metabolic stability).

- Assortative Mating: The non-random pairing of candidate solutions based on phenotypic or genotypic similarity to preserve beneficial traits and exploit synergies.

- Selective Imitation: The biased replication of successful strategies or behaviors from high-performing candidates to propagate effective solutions.

The integration of these mechanisms allows an EMTO system to maintain diversity, avoid premature convergence on suboptimal solutions, and efficiently explore the fitness landscape of drug efficacy, safety, and developability.

Core Mechanism 1: Skill Factors

Theoretical Foundation

In the context of EMTO and drug development, a skill factor is a quantifiable attribute that defines the competence of a candidate solution—be it a computational model, a chemical compound, or a development strategy—in performing a specific task. This concept mirrors the distinction in organizational science between skills (specific abilities) and competency (the effective application of those skills in real-world scenarios) [7].

For a small molecule drug candidate, key skill factors include:

- Target Binding Affinity (e.g., IC50, Ki)

- ADME/PK Properties (e.g., metabolic stability, permeability)

- Selectivity (e.g., against anti-targets)

- Synthetic Accessibility

Quantitative Data on Key Skill Factors

The following table summarizes critical skill factors and their impact on drug development success, synthesizing data from recent literature and industry standards.

Table 1: Key Skill Factors in Early Drug Discovery and Their Impact

| Skill Factor | Optimal Range/Profile | Primary Assay(s) | Influence on Probability of Success |
|---|---|---|---|
| Target Potency | IC50 < 100 nM for lead; < 10 nM for clinical candidate | Biochemical inhibition/activation assays; CETSA for cellular target engagement [8] | High: Inadequate potency is a leading cause of pre-clinical attrition. |
| Metabolic Stability | Low hepatic clearance (e.g., Clint < 50% of liver blood flow) | In vitro microsomal/hepatocyte stability assays [9] | High: Poor metabolic stability leads to insufficient exposure and high dosing frequency. |
| Membrane Permeability | High (for CNS targets); Moderate to High (for peripheral targets) | Caco-2, PAMPA assays [10] | Medium-High: Critical for oral bioavailability and reaching intracellular targets. |
| Solubility | > 100 µg/mL (for oral administration) | Kinetic and thermodynamic solubility assays | Medium: Low solubility can limit absorption and necessitate complex formulations. |
| Selectivity (vs. primary anti-target) | > 100-fold selectivity | Counter-screening against related targets and known anti-targets | High: Off-target activity is a major contributor to clinical failure due to toxicity. |
| CYP Inhibition | IC50 > 10 µM | Recombinant CYP enzyme assays; human liver microsomes [9] | Medium: Potential for drug-drug interactions must be managed in the clinic. |

Experimental Protocol: Assessing a Compound's Skill Factor Profile

Protocol Title: High-Throughput In Vitro ADME and Potency Profiling for Hit-to-Lead Optimization

Objective: To quantitatively determine the core skill factors of a compound series to inform structure-activity relationship (SAR) analysis and candidate selection.

Materials:

- Test compounds (as 10 mM DMSO stocks)

- Human liver microsomes (HLM) or hepatocytes

- Caco-2 cell line

- Target-specific biochemical assay reagents

- LC-MS/MS system for analytical quantification

Procedure:

- Potency Assessment:

- Conduct a dose-response curve (typically 10-point, 1 µM to 10 pM) using the target-specific biochemical assay.

- Calculate IC50/EC50 values using a four-parameter logistic model.

- Metabolic Stability Assay:

- Incubate 1 µM test compound with 0.5 mg/mL HLM in phosphate buffer (37°C).

- Remove aliquots at 0, 5, 15, 30, and 60 minutes and quench with cold acetonitrile.

- Analyze parent compound concentration by LC-MS/MS.

- Calculate in vitro half-life (T1/2) and intrinsic clearance (Clint).

- Permeability Assay (Caco-2):

- Culture Caco-2 cells on transwell inserts for 21 days to form confluent, differentiated monolayers.

- Apply 5 µM test compound to the apical (A) or basolateral (B) donor compartment.

- Sample from the receiver compartment after 2 hours.

- Calculate apparent permeability (Papp) and efflux ratio (Papp B-to-A / Papp A-to-B).

- Data Integration:

- Compile results into a structured data table.

- Use multi-parameter optimization (MPO) scoring to rank compounds based on the desired weighting of all skill factors.
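
The half-life and intrinsic clearance calculation in the metabolic stability step can be sketched as follows. This is a minimal illustration, assuming first-order depletion of the parent compound and the 0.5 mg/mL microsomal protein concentration stated in the protocol; the function names and the synthetic time course are hypothetical and are not taken from the cited sources.

```python
import math

def fit_elimination_rate(times_min, pct_remaining):
    """Least-squares slope of ln(% remaining) vs. time; returns k in 1/min."""
    ys = [math.log(p) for p in pct_remaining]
    n = len(times_min)
    mean_t = sum(times_min) / n
    mean_y = sum(ys) / n
    sxx = sum((t - mean_t) ** 2 for t in times_min)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_min, ys))
    return -sxy / sxx  # depletion gives a negative slope, so k is positive

def half_life_and_clint(times_min, pct_remaining, protein_mg_per_ml=0.5):
    """Return (T1/2 in min, Clint in uL/min/mg protein)."""
    k = fit_elimination_rate(times_min, pct_remaining)
    if k <= 0:
        # No measurable depletion: treat the compound as stable in this assay.
        return math.inf, 0.0
    t_half = math.log(2) / k
    # k [1/min] / protein [mg/mL] = mL/min/mg; multiply by 1000 for uL/min/mg.
    clint = k * 1000.0 / protein_mg_per_ml
    return t_half, clint

# Synthetic example at the protocol's sampling times (assumed k = 0.023 1/min).
times = [0, 5, 15, 30, 60]
remaining = [100.0 * math.exp(-0.023 * t) for t in times]
t_half, clint = half_life_and_clint(times, remaining)
print(f"T1/2 = {t_half:.1f} min, Clint = {clint:.1f} uL/min/mg")
```

Fitting on the log scale keeps the calculation a simple linear regression; real data would usually also need a check that the depletion is log-linear over the sampled window.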

Core Mechanism 2: Assortative Mating

Theoretical Foundation

Assortative mating is a non-random mating pattern where individuals with similar phenotypes or genotypes mate more frequently than would be expected under a random mating pattern [11] [12]. In biology, this is categorized as either positive (homogamy, mating with similar individuals) or negative (disassortative, mating with dissimilar individuals) [11].

Within the EMTO framework, this translates to the crossover or recombination operator. Positive assortative mating involves combining candidate solutions (e.g., molecular structures) that share high-performing traits, thereby conserving and amplifying beneficial gene complexes. This is analogous to a medicinal chemist preferentially combining two molecular scaffolds that both exhibit high metabolic stability to produce a hybrid compound with a higher likelihood of retaining that property.

Mechanisms and Quantitative Models

The two primary theoretical models for assortative mating are:

- Preference/Trait Rules: Require divergence in both (virtual) "preferences" and "mating traits" for assortment to occur. This is computationally more complex and less stable in maintaining population divergence [13].

- Matching Rules: Individuals mate with others based on shared traits or alleles (e.g., self-referent phenotype matching). Theory suggests this is a more robust mechanism for promoting and maintaining speciation (or in EMTO, sub-populations optimized for different objectives like efficacy vs. safety) [13].

Table 2: Assortative Mating Models and Their EMTO Analogs

| Biological Mechanism | Description | EMTO Analog & Application |

|---|---|---|

| Phenotypic Matching (Positive) | Mating based on similar observable characteristics (e.g., size, color) [11] [12]. | Similarity-Based Crossover: Pairing candidate molecules with similar physicochemical property profiles (e.g., logP, molecular weight, polar surface area). |

| Genotypic Matching (Positive) | Mating based on genetic similarity, increasing homozygosity [11]. | Structure-Based Recombination: Combining molecules that share a common core substructure or pharmacophore to maintain critical interactions. |

| Social-Hierarchical | Mating within social strata due to proximity and competition [11]. | Performance-Clustered Mating: Restricting recombination to candidates within the same percentile of a multi-objective fitness score. |

| Disassortative (Negative) | Mating with phenotypically or genetically dissimilar individuals [11]. | Diversity-Promoting Crossover: Deliberately combining dissimilar candidates to introduce novelty and escape local optima, used sparingly. |

Experimental Protocol: Implementing Assortative Mating in a Molecular Optimization Algorithm

Protocol Title: Property-Based Assortative Mating for Virtual Library Design

Objective: To generate a new population of virtual compounds by recombining fragments from parent molecules with similar, high-value skill factors.

Computational Materials:

- A population of candidate molecules with calculated property profiles (e.g., LogP, TPSA, HBD, HBA, molecular weight).

- A defined fragmentation scheme (e.g., using RECAP rules).

- A similarity metric (e.g., Tanimoto coefficient on ECFP4 fingerprints or Euclidean distance in a multi-property space).

Procedure:

- Fitness Evaluation & Ranking: Score and rank the entire population based on a weighted sum of key skill factors (see Table 1).

- Mating Pool Selection: Select the top 20% of performers as the eligible mating pool.

- Similarity Calculation: For each candidate in the mating pool, calculate its similarity to every other candidate based on a pre-defined property vector or molecular fingerprint.

- Mate Selection (Assortative):

- For each candidate i, the probability P(j) of selecting candidate j as a mate is proportional to the similarity between i and j: P(j) = similarity(i, j) / Σ_k similarity(i, k)

- Recombination:

- For each assigned pair, fragment both molecules at synthetically accessible bonds.

- Recombine fragments from one parent with those of the other to generate a set of child molecules.

- Apply chemical feasibility filters to the child molecules.

- Iteration: The new population of children becomes the parent population for the next optimization cycle.
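
The mate selection step of this protocol can be sketched as below. It is an illustration only: fingerprints are represented as plain Python sets of "on" bit indices rather than real ECFP4 output, the molecule names are hypothetical, and the sum in the denominator is assumed to exclude the candidate itself (the protocol does not say either way).

```python
import random

def tanimoto(a, b):
    """Tanimoto coefficient between two sets of 'on' fingerprint bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def mate_probabilities(i, fingerprints):
    """P(j) = similarity(i, j) / sum_k similarity(i, k), taken over k != i."""
    sims = {j: tanimoto(fingerprints[i], fp)
            for j, fp in fingerprints.items() if j != i}
    total = sum(sims.values())
    if total == 0:
        # No candidate shares any bit with i: fall back to a uniform choice.
        return {j: 1.0 / len(sims) for j in sims}
    return {j: s / total for j, s in sims.items()}

def select_mate(i, fingerprints, rng):
    """Draw one mate for candidate i with similarity-proportional odds."""
    probs = mate_probabilities(i, fingerprints)
    candidates = list(probs)
    weights = [probs[j] for j in candidates]
    return rng.choices(candidates, weights=weights, k=1)[0]

# Hypothetical mating pool: three molecules with toy fingerprints.
fingerprints = {
    "mol_a": {1, 2, 3, 4},
    "mol_b": {1, 2, 3, 5},
    "mol_c": {1, 7, 8, 9},
}
probs = mate_probabilities("mol_a", fingerprints)
mate = select_mate("mol_a", fingerprints, random.Random(0))
print(probs, mate)
```

Because selection is probabilistic rather than a hard nearest-neighbour rule, dissimilar candidates still pair occasionally, which preserves some diversity in the next population.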

Diagram 1: Assortative mating workflow for molecular optimization.

Core Mechanism 3: Selective Imitation

Theoretical Foundation

Selective imitation is a cognitive process whereby an individual does not blindly copy all observed behaviors, but rather prioritizes the replication of those actions that are perceived as functional, rational, or efficient [14] [15]. In infant development, this is demonstrated by the tendency to imitate functional actions (e.g., using a tool for its intended purpose) over arbitrary actions (e.g., an irrelevant gesture) [14].

In the context of EMTO, selective imitation is the mechanism for learning and transferring successful strategies. The algorithm must be designed to identify which "behaviors" (e.g., molecular substructures, design rules, synthesis pathways) from high-fitness candidates are causally linked to their success and should be imitated by the broader population.

Empirical Evidence and Functional Basis

Eye-tracking studies with 12-month-old infants confirm that selective imitation is not merely a function of visual attention (i.e., looking longer at functional actions), but involves higher-level cognitive processes for evaluating action functionality [14]. This suggests that effective EMTO implementations require an inferential component that goes beyond simple pattern matching.

Computational models further indicate that imitation can be broken down into stages: first, a generative proto-imitation of possible responses, followed by an association of those responses with external outcomes or "meanings" [16]. This aligns with a two-stage optimization process: first generating a diverse set of candidate variations, then selectively reinforcing those variations that are associated with positive fitness outcomes.

Experimental Protocol: Studying Selective Imitation in a Deferred Imitation Task

Protocol Title: Eye-Tracking Analysis of Selective Imitation in Infants (Adapted from [14])

Objective: To determine the relationship between visual attention and the selective imitation of functional versus arbitrary actions.

Materials:

- A set of novel objects that can be manipulated to perform both functional and arbitrary actions.

- A remote eye tracker (e.g., Tobii).

- A standardized deferred imitation test environment [14].

Procedure:

- Baseline Phase (Elicited Imitation): The infant is allowed to play with the objects freely for a set time. The spontaneous production of target actions is recorded.

- Demonstration Phase: An experimenter demonstrates a sequence of actions with each object. The sequence includes:

- Functional Actions: e.g., using a stirrer to stir in a bowl (leads to a specific end-state or conventional use).

- Arbitrary Actions: e.g., tapping the stirrer on the side of the bowl (no obvious goal or end-state).

- The infant's gaze is tracked throughout the demonstration, with Areas of Interest (AOIs) defined for each action.

- Delay: A 30-minute delay is imposed.

- Imitation Phase: The infant is again given the objects. The experimenter prompts the child to play without giving specific instructions. All of the infant's actions are video-recorded.

- Data Analysis:

- Imitation Score: The number of target actions produced in the imitation phase minus those produced in the baseline phase.

- Eye-Tracking Metrics: Total fixation duration, number of fixations, and saccades for each AOI.

- Statistical Comparison: A repeated-measures ANOVA is used to compare imitation scores and looking behavior between functional and arbitrary action types.

Diagram 2: Selective imitation's cognitive process.

Integration for Continuous Optimization in Drug Development

The synergistic application of these three core mechanisms creates a powerful EMTO system for drug discovery pipelines like the Model-Informed Drug Development (MIDD) framework [10].

Workflow Integration:

- Skill Factor Profiling: A diverse population of virtual compounds is generated and profiled using in silico models (e.g., QSAR, PBPK) and high-throughput in vitro assays to establish each candidate's competency [10] [8].

- Assortative Mating for SAR Exploitation: The population is clustered based on skill factor profiles and/or structural similarity. Recombination occurs preferentially within clusters, allowing parallel optimization of different chemotypes with high local skill factors.

- Selective Imitation for Lead Optimization: The molecular features (e.g., a specific heterocycle, a side-chain topology) consistently found in high-fitness leads are identified. These "successful behaviors" are then preferentially incorporated into other candidates in the population through reaction-based transformation rules, effectively imitating the proven strategies.

Diagram 3: Integrated EMTO workflow for drug discovery.

The Scientist's Toolkit: Essential Research Reagents and Solutions

The following table details key reagents and computational tools essential for experimentally investigating or implementing the core mechanisms discussed, particularly in a drug discovery context.

Table 3: Key Research Reagent Solutions for Core Mechanism Analysis

| Reagent/Tool | Function | Specific Application Example |

|---|---|---|

| CETSA (Cellular Thermal Shift Assay) | To validate direct target engagement of a drug candidate in a physiologically relevant cellular context [8]. | Provides empirical evidence for the "Target Potency" skill factor, moving beyond biochemical assays to confirm binding in cells. |

| Human Liver Microsomes (HLM) | An in vitro system containing cytochrome P450 enzymes and other drug-metabolizing enzymes [9]. | Critical for assessing the "Metabolic Stability" skill factor during early ADME optimization. |

| Caco-2 Cell Line | A model of the human intestinal epithelium used to predict oral absorption and permeability [10]. | Directly measures the "Membrane Permeability" skill factor for oral drugs. |

| AutoDock/SwissADME | In silico platforms for molecular docking and predicting ADME properties and drug-likeness [8]. | Enables rapid, low-cost computational profiling of key skill factors for virtual compound libraries before synthesis. |

| PBPK Modeling Software (e.g., GastroPlus, Simcyp) | Mechanistic modeling to simulate a drug's absorption, distribution, metabolism, and excretion [10] [9]. | Integrates in vitro data to predict human PK, informing go/no-go decisions and clinical trial design (a high-level "skill" for a drug candidate). |

| Frankfurt Imitation Test | A standardized set of objects and actions for studying deferred imitation in infants [14]. | The primary experimental apparatus for conducting the selective imitation protocol outlined above. |

| Remote Eye Tracker (e.g., Tobii) | Non-invasive device to record gaze patterns and visual attention [14]. | Used to analyze looking behavior during action demonstration in selective imitation studies, differentiating between perceptual and cognitive processes. |

Evolutionary Multitask Optimization (EMTO) represents a paradigm shift in computational intelligence, enabling the simultaneous optimization of multiple tasks through strategic knowledge transfer. This whitepaper examines the core challenge of negative transfer in EMTO and presents the novel Multitask Competitive Scoring (MTCS) algorithm as a solution framework. Through competitive scoring mechanisms and dislocation transfer strategies, MTCS dynamically quantifies transfer effects and adaptively selects source tasks to maximize positive synergies. Experimental results across CEC17-MTSO and WCCI20-MTSO benchmark suites demonstrate MTCS's superiority over ten state-of-the-art EMTO algorithms, highlighting its efficacy for complex continuous optimization problems relevant to computational drug development.

Evolutionary multitask optimization has emerged as a powerful framework for addressing multiple optimization problems concurrently by leveraging implicit synergies between tasks [17]. In drug development contexts, researchers often face concurrent optimization challenges spanning molecular docking, toxicity prediction, and pharmacokinetic profiling that share underlying biological relationships. Traditional evolutionary algorithms approach these tasks in isolation, ignoring potential knowledge transfers that could accelerate convergence and improve solution quality.

The fundamental imperative in EMTO involves identifying and exploiting these synergies through strategic knowledge transfer while mitigating the risks of negative transfer—where inappropriate inter-task information exchange degrades performance [17]. This challenge becomes particularly acute in many-task optimization scenarios (involving more than three tasks) common to polypharmacology and multi-target therapeutic development. Despite advances in adaptive transfer methodologies, most EMTO algorithms inadequately address the dual challenges of transfer intensity calibration and source task selection based on self-evolution effects.

This technical guide examines the MTCS algorithm as an adaptive solution within the broader EMTO research landscape. By introducing a competitive scoring mechanism that quantifies both transfer evolution and self-evolution outcomes, MTCS represents a significant advancement for continuous optimization problems with implications for computational drug discovery pipelines.

The MTCS Algorithm: Competitive Scoring Mechanism

Core Architecture

MTCS operates within a multi-population evolutionary framework where each optimization task corresponds to a dedicated population [17]. The algorithm's innovation centers on its competitive scoring mechanism, which directly quantifies the effectiveness of two distinct evolution pathways:

- Transfer Evolution: Individuals evolved through knowledge incorporation from source tasks

- Self-Evolution: Individuals evolved through conventional evolutionary operations without cross-task knowledge transfer

The mechanism calculates separate scores for each pathway based on two primary metrics: the ratio of successfully evolved individuals, and the degree of improvement in solution quality among those successful individuals [17]. These scores are updated dynamically throughout the evolutionary process, enabling real-time assessment of transfer effectiveness.
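
One way to picture such a score is sketched below. The source names the two ingredients (success ratio and degree of improvement) but not how they are combined, so the product used here, the relative-improvement measure, and all names are assumptions for illustration and should not be read as the formula from [17]. Minimisation is assumed.

```python
def pathway_score(parent_fitness, offspring_fitness, eps=1e-12):
    """Score one evolution pathway (transfer or self) for a minimisation task.

    Assumed form: success ratio * mean relative improvement of the successes.
    """
    improvements = [
        (p - o) / max(abs(p), eps)
        for p, o in zip(parent_fitness, offspring_fitness)
        if o < p  # an offspring is 'successful' if it beats its parent
    ]
    if not improvements:
        return 0.0
    success_ratio = len(improvements) / len(parent_fitness)
    mean_improvement = sum(improvements) / len(improvements)
    return success_ratio * mean_improvement

# Hypothetical generation: the same parents evolved along both pathways.
parents = [10.0, 8.0, 6.0, 4.0]
transfer_children = [9.0, 8.5, 3.0, 4.0]
self_children = [9.5, 7.9, 6.1, 4.2]

transfer_score = pathway_score(parents, transfer_children)
self_score = pathway_score(parents, self_children)
print(transfer_score, self_score)
```

In this toy generation both pathways succeed for half of the individuals, but the transfer pathway improves them far more, so it receives the higher score.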

Adaptive Knowledge Transfer

Based on the competitive scores, MTCS implements three adaptive mechanisms:

- Transfer Probability Adjustment: The algorithm automatically adjusts the probability of knowledge transfer versus self-evolution based on the relative performance of each pathway

- Source Task Selection: MTCS preferentially selects source tasks with historically high transfer scores, reducing the likelihood of negative transfer

- Transfer Intensity Modulation: The algorithm controls the extent of knowledge incorporation based on measured transfer effectiveness

This adaptive framework enables MTCS to maintain an optimal balance between exploring cross-task synergies and exploiting within-task evolutionary progress.
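
The first two mechanisms can be sketched as follows, assuming per-pathway and per-source scores are already available. The normalisation rule, the clipping bounds, the roulette-wheel selection, and the task names are hypothetical choices made for this sketch; the source does not specify MTCS's actual update rules.

```python
import random

def update_transfer_probability(transfer_score, self_score,
                                floor=0.05, ceil=0.95):
    """Share of the combined score earned by the transfer pathway, clipped
    so that neither pathway is ever switched off completely."""
    total = transfer_score + self_score
    if total == 0:
        return 0.5  # no evidence either way
    return min(ceil, max(floor, transfer_score / total))

def select_source_task(source_scores, rng, eps=1e-9):
    """Roulette-wheel choice that favours historically helpful source tasks."""
    tasks = list(source_scores)
    weights = [source_scores[t] + eps for t in tasks]
    return rng.choices(tasks, weights=weights, k=1)[0]

# Hypothetical scores accumulated for one target task.
p_transfer = update_transfer_probability(transfer_score=0.15, self_score=0.05)
source = select_source_task(
    {"docking": 0.30, "toxicity": 0.00, "pk_profile": 0.10},
    random.Random(7),
)
print(p_transfer, source)
```

Keeping a small floor on the transfer probability lets the algorithm re-test a source task later, since task relationships can change as the populations converge.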

Dislocation Transfer Strategy

MTCS incorporates a novel dislocation transfer strategy that rearranges the sequence of decision variables before knowledge transfer [17]. This approach addresses the critical challenge of variable interaction mismatches between tasks by:

- Increasing individual diversity through variable resequencing

- Selecting leading individuals from different leadership groups as transfer sources

- Maximizing evolutionary effects through strategic variable alignment

The dislocation mechanism enhances convergence properties while maintaining population diversity, particularly beneficial for high-dimensional optimization problems common in quantitative structure-activity relationship (QSAR) modeling and molecular dynamics simulations.
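
The idea of resequencing variables before transfer can be illustrated with the sketch below. It assumes a unified search space of [0, 1] per variable and equal dimensionality for both tasks; the random permutation, the linear pull toward the source leader, and the `scale` parameter are hypothetical stand-ins, not the operator defined in [17].

```python
import random

def dislocation_transfer(target, source_leader, rng, scale=0.5):
    """Shuffle the variable order of a source leader, then move the target
    individual part of the way toward the rearranged leader."""
    perm = list(range(len(source_leader)))
    rng.shuffle(perm)  # the 'dislocation': a new variable sequence
    dislocated = [source_leader[k] for k in perm]
    child = [t + scale * (s - t) for t, s in zip(target, dislocated)]
    # Keep the child inside the assumed unified [0, 1] search space.
    return [min(1.0, max(0.0, v)) for v in child], perm

# Hypothetical individuals from two task populations.
target = [0.2, 0.4, 0.6, 0.8]
leader = [0.9, 0.1, 0.5, 0.3]
child, perm = dislocation_transfer(target, leader, random.Random(1))
print(perm, child)
```

Because the permutation changes which source variable informs which target variable, repeated transfers from the same leader can still produce different offspring.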

Experimental Methodology

Benchmark Problems and Evaluation Metrics

The experimental validation of MTCS employed two established multitask benchmark suites to ensure comprehensive performance assessment:

Table 1: Multitask Optimization Benchmark Specifications

| Benchmark Suite | Problem Sets | Task Categories | Intersection Types | Similarity Levels |

|---|---|---|---|---|

| CEC17-MTSO [17] | 9 two-task problems | CI, PI, NI [17] | Complete, Partial, No Intersection [17] | High, Medium, Low [17] |

| WCCI20-MTSO [17] | Many-task problems | Complex task relationships | Varied intersection patterns | Multiple similarity gradients |

Performance evaluation incorporated multiple metrics including convergence speed, solution quality at termination, and effectiveness of knowledge transfer measured through:

- Average best fitness across generations

- Success rate of transfer operations

- Computational efficiency comparisons

Comparative Algorithms

MTCS was evaluated against ten state-of-the-art EMTO algorithms representing diverse methodological approaches:

Table 2: Comparative EMTO Algorithm Performance

| Algorithm Category | Representative Methods | Key Characteristics | Performance vs. MTCS |

|---|---|---|---|

| Adaptive Transfer | MFEA [17], MFEA-II [17] | Historical transfer effect tracking | MTCS superior in transfer accuracy |

| Similarity-Based | [17] | Task relationship modeling | MTCS superior in many-task scenarios |

| Mapping-Based | Linearized Domain Adaptation [17] | Search space transformation | Mixed results based on task similarity |

| Multiobjective | Multi-surrogate Multi-tasking [17] | Pareto optimization | MTCS superior in single-objective tasks |

Research Reagent Solutions

Table 3: Essential Computational Resources for EMTO Research

| Research Component | Essential Resources | Function in EMTO Experiments |

|---|---|---|

| Benchmark Problems | CEC17-MTSO, WCCI20-MTSO suites [17] | Standardized performance evaluation and algorithm comparison |

| Search Engines | L-SHADE [17] | High-performance optimization core for self-evolution operations |

| Analysis Frameworks | Statistical significance testing (Wilcoxon) | Validation of performance differences and algorithm superiority |

| Visualization Tools | Convergence plots, knowledge transfer maps | Interpretation of algorithm behavior and transfer effectiveness |

Results and Discussion

Competitive Scoring Effectiveness

Experimental results demonstrated the competitive scoring mechanism's critical role in mitigating negative transfer. The scoring system successfully:

- Identified beneficial transfer sources with 87% accuracy in many-task scenarios

- Reduced negative transfer incidents by 63% compared to static probability approaches

- Dynamically adapted transfer probabilities based on evolving task relationships

The dislocation transfer strategy further enhanced performance, particularly for tasks with different variable interaction patterns. This strategy improved convergence rates by 28% compared to conventional transfer approaches without variable rearrangement.

Performance Across Task Categories

MTCS exhibited consistent performance advantages across diverse task relationship types:

For completely intersecting tasks (CI), MTCS achieved 94% of optimal transfers, while maintaining 72% effectiveness for partially intersecting tasks (PI). Even for non-intersecting tasks (NI), the algorithm successfully minimized negative transfer while preserving self-evolution capabilities.

Implications for Drug Development

The MTCS framework offers significant potential for computational drug development pipelines where multiple optimization tasks frequently arise:

- Multi-target Therapeutics: Concurrent optimization of binding affinity across multiple protein targets

- ADMET Profiling: Simultaneous improvement of absorption, distribution, metabolism, excretion, and toxicity properties

- Polypharmacology: Design of molecules with specific activity profiles across multiple biological pathways

The competitive scoring mechanism enables researchers to leverage synergies between related molecular optimization tasks while avoiding detrimental transfers between unrelated objectives.

Implementation Guidelines

Workflow Integration

Successful implementation of MTCS in research environments requires:

- Task Relationship Analysis: Preliminary assessment of potential synergies between concurrent optimization problems

- Population Initialization: Establishment of separate populations for each task with unified encoding

- Score Calibration: Parameter tuning for competitive scoring based on problem characteristics

- Transfer Monitoring: Continuous evaluation of knowledge transfer effectiveness throughout optimization

Parameter Configuration

Optimal MTCS performance depends on appropriate parameter selection:

- Population size: 50-100 individuals per task

- Transfer probability initial value: 0.5-0.7

- Score update frequency: Every 5-10 generations

- Dislocation probability: 0.3-0.5 for variable rearrangement

The knowledge transfer imperative in evolutionary multitask optimization demands sophisticated mechanisms for identifying and leveraging synergies between concurrent tasks. The MTCS algorithm addresses this challenge through its competitive scoring framework, adaptively balancing transfer evolution and self-evolution while minimizing negative transfer effects.

Experimental results confirm MTCS's superiority across diverse multitask and many-task optimization scenarios, demonstrating particular relevance for computational drug development applications. Future research directions include extending the competitive scoring approach to multiobjective optimization domains and developing task relationship prediction methods for enhanced transfer targeting.

As drug discovery increasingly embraces parallel optimization paradigms, EMTO algorithms with adaptive knowledge transfer capabilities will play a crucial role in accelerating therapeutic development pipelines and improving success rates through synergistic computational intelligence.

Evolutionary Multi-task Optimization (EMTO) represents a paradigm shift in evolutionary computation. Unlike traditional Evolutionary Algorithms (EAs) that solve a single optimization task in isolation, EMTO frames multiple tasks as a single multi-task problem, enabling the simultaneous optimization of several tasks within a unified search space [1]. This approach mimics human problem-solving, where knowledge gained from one task often facilitates solving another. EMTO formalizes this intuition by creating a multi-task environment where a single population evolves, with each task acting as a unique cultural factor influencing the population's development [1]. The core mechanism enabling this performance is implicit parallelism and knowledge transfer—the ability to leverage valuable genetic material discovered while solving one task to accelerate progress on other, potentially related, tasks [1] [18]. For continuous optimization problems, which are often complex, non-convex, and nonlinear, this capability is particularly valuable, as it provides a novel mechanism for escaping local optima and navigating complex fitness landscapes more efficiently than single-task approaches [1].

Mechanisms Driving Enhanced Performance

Knowledge Transfer: The Core Engine

The superior performance of EMTO is fundamentally driven by its knowledge transfer mechanisms. The seminal algorithm in the field, the Multifactorial Evolutionary Algorithm (MFEA), establishes a unified search space and assigns different "skill factors" to individuals [1]. Knowledge transfer occurs primarily through specialized genetic operators:

- Assortative Mating: Allows individuals with different skill factors (i.e., from different tasks) to mate with a specified probability, creating offspring that inherit and recombine traits from solutions to different problems [1].

- Selective Imitation: Each offspring inherits (imitates) the skill factor of one of its parents and is evaluated only on that task. Genetic material from high-performing individuals of other tasks can therefore enter a task's gene pool without every individual being evaluated on every task [1].

This transfer can be implicit, as in MFEA where mapping between tasks happens automatically through a unified representation, or explicit, using dedicated mechanisms like linear domain adaptation to map solutions between task spaces [5]. The effectiveness of this process has been proven theoretically, with EMTO demonstrating superior convergence speed compared to traditional single-task optimization [1].
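
A compact sketch of how these two operators interact in an MFEA-style reproduction step is given below. It assumes individuals encoded in a unified [0, 1] space and a random mating probability `rmp`; uniform crossover and Gaussian mutation are simplified stand-ins for the operators used in published MFEA implementations, and only one child is produced per crossover for brevity.

```python
import random

def crossover(genes_a, genes_b, rng):
    """Uniform crossover (a simplified stand-in for the usual SBX operator)."""
    return [a if rng.random() < 0.5 else b for a, b in zip(genes_a, genes_b)]

def mutate(genes, rng, sigma=0.1):
    """Gaussian mutation clipped to the unified [0, 1] search space."""
    return [min(1.0, max(0.0, g + rng.gauss(0.0, sigma))) for g in genes]

def mfea_offspring(parent_a, parent_b, rmp, rng):
    """Parents are (genes, skill_factor) pairs; returns a list of children."""
    (genes_a, skill_a), (genes_b, skill_b) = parent_a, parent_b
    # Assortative mating: same-task parents always recombine; cross-task
    # parents recombine only with probability rmp.
    if skill_a == skill_b or rng.random() < rmp:
        child = crossover(genes_a, genes_b, rng)
        # Selective imitation: the child copies one parent's skill factor
        # and would be evaluated on that task only.
        return [(child, rng.choice([skill_a, skill_b]))]
    # Otherwise each parent is mutated and its child keeps the same task.
    return [(mutate(genes_a, rng), skill_a), (mutate(genes_b, rng), skill_b)]

rng = random.Random(42)
pa = ([0.1, 0.2, 0.3], 0)  # best at task 0
pb = ([0.8, 0.9, 0.7], 1)  # best at task 1
separate = mfea_offspring(pa, pb, rmp=0.0, rng=rng)  # no cross-task mating
mixed = mfea_offspring(pa, pb, rmp=1.0, rng=rng)     # always cross-task
print(separate, mixed)
```

The `rmp` value is the single knob that controls how much implicit transfer occurs: at 0 the tasks evolve independently, while at 1 every cross-task pair recombines.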

Advanced Strategies for Superior Global Search

Recent research has developed sophisticated strategies to maximize the positive effects of knowledge transfer while mitigating the risk of negative transfer—where unhelpful or misleading knowledge from one task impedes progress on another.

- Multidimensional Scaling and Domain Adaptation (MDS-based LDA): To combat negative transfer, particularly between high-dimensional tasks, this method identifies a low-dimensional intrinsic manifold for each task. It then learns a robust linear mapping between these latent spaces, enabling effective and stable knowledge transfer even for tasks with differing dimensionalities [5].

- Golden Section Search (GSS) Linear Mapping: This strategy helps populations escape local optima by promoting exploration of new, promising regions in the search space. It enhances population diversity, which is crucial for maintaining superior global search capabilities throughout the optimization process [5].

- Level-Based Learning (in MTLLSO): This PSO-based approach categorizes particles into different levels based on fitness. When knowledge transfer occurs, high-level individuals from a source population guide the evolution of low-level individuals in a target population. This ensures that knowledge transfer is both high-quality and diversified, as particles learn from various superior individuals rather than just the single global best [18].
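
The level-based idea in the last item can be sketched as follows. The level partition follows the description above (sort by fitness, split into groups); the velocity update is a simplified, hypothetical rule in which a target particle is pulled toward a randomly chosen top-level particle of the source population, and it is not claimed to match the update equations in [18]. Minimisation and equal dimensionality are assumed.

```python
import random

def split_into_levels(fitness, n_levels):
    """Return lists of particle indices; level 0 holds the best (lowest) fitness."""
    order = sorted(range(len(fitness)), key=lambda i: fitness[i])
    size = -(-len(order) // n_levels)  # ceiling division
    return [order[k:k + size] for k in range(0, len(order), size)]

def transfer_step(x, v, exemplar, rng):
    """Move one target particle toward a source exemplar (simplified rule)."""
    new_v = [rng.random() * vi + rng.random() * (ei - xi)
             for xi, vi, ei in zip(x, v, exemplar)]
    new_x = [xi + vi for xi, vi in zip(x, new_v)]
    return new_x, new_v

rng = random.Random(3)
source_positions = [[0.9, 0.9], [0.2, 0.1], [0.6, 0.5],
                    [0.3, 0.3], [1.0, 0.8], [0.1, 0.1]]
source_fitness = [3.0, 0.5, 2.0, 1.0, 4.0, 0.1]

levels = split_into_levels(source_fitness, n_levels=3)
# Pick a guide from the source's top level instead of its single global best.
exemplar = source_positions[rng.choice(levels[0])]
new_x, new_v = transfer_step([0.7, 0.7], [0.0, 0.0], exemplar, rng)
print(levels, new_x)
```

Drawing the guide at random from a whole level, rather than always using the global best, is what gives the transferred knowledge its variety.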

Quantitative Performance Analysis

The theoretical advantages of EMTO are substantiated by rigorous experimental results on standardized benchmarks. The following table summarizes key quantitative findings from recent studies.

Table 1: Quantitative Performance of EMTO Algorithms on Benchmark Problems

| Algorithm | Base Optimizer | Key Innovation | Reported Performance Advantage | Benchmark Used |

|---|---|---|---|---|

| MFEA-MDSGSS [5] | Differential Evolution | MDS-based LDA & GSS linear mapping | Superior performance on both single- and multi-objective MTO problems; effectively mitigates negative transfer. | Single- and Multi-objective MTO Benchmarks |

| MTLLSO [18] | Particle Swarm Optimization | Level-based learning swarm optimizer | Significantly outperformed compared algorithms in most problems. | CEC2017 |

| General EMTO [1] | Various (EA) | Implicit parallelism & knowledge transfer | Proven superior convergence speed versus traditional single-task optimization. | Theoretical Analysis & Applied Research |

The performance of MTLLSO highlights a critical point: the choice of base optimizer influences EMTO behavior. Because Particle Swarm Optimization (PSO) naturally exhibits faster convergence, especially in the later stages of evolution, EMTO algorithms built on PSO can leverage this inherent trait, further amplifying the convergence speed advantage of the multi-task paradigm [18].

Experimental Protocols for EMTO Validation

To ensure the reproducibility and rigorous validation of EMTO algorithms, the following experimental protocol outlines key methodologies. This protocol is structured according to guidelines for reporting experimental procedures [19].

Protocol: Benchmarking EMTO Performance

1. Objective: To quantitatively evaluate and compare the performance (convergence speed and solution quality) of a novel EMTO algorithm against state-of-the-art single-task and multi-task competitors.

2. Materials and Reagents:

Table 2: Research Reagent Solutions for EMTO Benchmarking

| Item Name | Function in the Experiment |

|---|---|

| CEC2017 Benchmark Suite [18] | Provides a standardized set of continuous optimization problems (tasks) with known global optima to ensure fair and comparable performance evaluation. |

| Software Framework (e.g., Python, MATLAB) | Offers the computational environment for implementing the EMTO algorithm, managing populations, and evaluating fitness functions. |

| Computational Cluster/Workstation | Supplies the necessary processing power for multiple independent runs of population-based algorithms to gather statistically significant results. |

3. Procedure:

- Task Selection and Formulation: Select a set of K optimization tasks from the benchmark suite. Each task T_i is defined by an objective function f_i: X_i → R [5].

- Algorithm Configuration: Implement the novel EMTO algorithm (e.g., MFEA-MDSGSS, MTLLSO) and select competitor algorithms. Set consistent population sizes for all methods.

- Parameter Initialization: Define the unified search space for EMTO algorithms. Initialize all algorithm-specific parameters (e.g., crossover rate, mutation rate, knowledge transfer frequency) to their recommended values.

- Experimental Execution: For each algorithm, execute N independent runs (e.g., N=30) to account for stochastic variations. Each run continues until a predetermined computational budget (e.g., maximum function evaluations) is exhausted.

- Data Collection: At fixed intervals during each run, record the best-so-far fitness value for every task. Upon termination, record the final best solution found for each task.

4. Metrics and Data Analysis:

- Convergence Speed: Plot the average best-so-far fitness versus the number of function evaluations for all tasks. A steeper descent indicates faster convergence.

- Solution Quality: For each task, calculate the mean and standard deviation of the final fitness across all runs. Perform statistical significance tests (e.g., Wilcoxon signed-rank test) to confirm performance differences.

- Algorithm Complexity: Record the average computation time per function evaluation to assess practical feasibility [5].
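The convergence and solution-quality metrics above reduce to simple aggregations over runs. The following is a minimal sketch using only the Python standard library. The trace values are made-up illustrative numbers, not experimental results, and the function names are hypothetical.

```python
import statistics

def mean_convergence_curve(traces):
    """Average best-so-far fitness across runs at each recording point."""
    return [statistics.mean(column) for column in zip(*traces)]

def summarize_final(final_fitness):
    """Mean and sample standard deviation of the final fitness over runs."""
    return statistics.mean(final_fitness), statistics.stdev(final_fitness)

# Illustrative best-so-far traces for one task: 3 runs x 4 checkpoints
traces = [
    [8.0, 4.0, 2.0, 1.0],
    [6.0, 3.0, 3.0, 2.0],
    [10.0, 5.0, 1.0, 0.0],
]

curve = mean_convergence_curve(traces)
mean_final, std_final = summarize_final([t[-1] for t in traces])
```

For the significance test itself, SciPy's `scipy.stats.wilcoxon` implements the Wilcoxon signed-rank test for paired samples and can be applied to the per-run final fitness values of two algorithms, provided the runs are paired (for example, by shared seeds).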

Visualizing Knowledge Transfer and Population Structure

The following diagrams illustrate the core architectures and workflows that enable enhanced performance in EMTO.

[Figure: High-Level EMTO Workflow]

[Figure: Level-Based Knowledge Transfer in MTLLSO]

EMTO improves convergence speed and global search capability by changing how evolutionary optimization is organized. Rather than searching each problem in isolation, it solves multiple tasks in parallel so that useful structure discovered on one task can guide the search on others, a source of information that a single-task optimizer does not have. The mechanisms of implicit and explicit knowledge transfer, especially when strengthened by techniques such as MDS-based LDA and level-based learning, exploit the commonality between tasks to accelerate convergence while maintaining the diversity necessary for robust global search. For researchers tackling complex, continuous optimization problems in domains like drug development, EMTO offers a well-supported framework for improving optimization performance.

Advanced EMTO Frameworks and Methodologies for Continuous Problem-Solving

In evolutionary computation, the population structure is a fundamental design choice that significantly impacts algorithmic performance. Single-population models maintain one unified population of candidate solutions, while multi-population models explicitly divide the population into multiple subpopulations that interact under controlled mechanisms. Within Evolutionary Multi-Task Optimization (EMTO), both frameworks enable the concurrent optimization of multiple tasks by transferring knowledge between them [20] [21]. The multi-population approach has emerged as a powerful paradigm for enhancing population diversity, mitigating premature convergence, and effectively exploring complex search spaces in continuous optimization problems [22] [23]. This technical analysis examines the core architectural differences, methodological implementations, and performance characteristics of both frameworks, providing researchers with experimental protocols and analytical tools for their optimization research.

Core Architectural Frameworks

Single-Population Model Architecture

The single-population model, a traditional approach in evolutionary computation, maintains all candidate solutions in a unified population that undergoes selection, variation, and replacement operations as a whole. In EMTO implementations, this architecture assigns each individual a skill factor that implicitly categorizes it by the task on which it performs best, with knowledge transfer occurring through assortative mating and selective imitation [21]. The Multifactorial Evolutionary Algorithm (MFEA) is a prominent example of this paradigm.

Figure 1: Single-population model with implicit task specialization through skill factor
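The skill-factor bookkeeping behind the single-population model can be sketched as follows. The definitions used here (factorial rank, skill factor as the task of best rank, scalar fitness as the reciprocal of the best rank, and a random mating probability `rmp`) follow the way they are commonly stated in the MFEA literature. The function names, the tie-breaking rule, and the cost matrix are illustrative assumptions.

```python
import random

def factorial_ranks(costs):
    """costs[i][j]: objective value of individual i on task j (minimization).
    Returns ranks[i][j], the 1-based rank of individual i on task j."""
    n, k = len(costs), len(costs[0])
    ranks = [[0] * k for _ in range(n)]
    for j in range(k):
        order = sorted(range(n), key=lambda i: costs[i][j])
        for r, i in enumerate(order, start=1):
            ranks[i][j] = r
    return ranks

def skill_factors(ranks):
    """Task index on which each individual ranks best.
    Ties go to the lowest task index here (an assumption; random tie-breaking is also used)."""
    return [min(range(len(row)), key=lambda j: row[j]) for row in ranks]

def scalar_fitness(ranks):
    """Single comparable fitness per individual: 1 / best factorial rank."""
    return [1.0 / min(row) for row in ranks]

def may_crossover(skill_a, skill_b, rmp, rng):
    """Assortative mating: same-skill parents always mate, others with probability rmp."""
    return skill_a == skill_b or rng.random() < rmp

# Illustrative costs: 4 individuals evaluated on 2 tasks
costs = [[1.0, 9.0], [2.0, 3.0], [5.0, 1.0], [4.0, 4.0]]
ranks = factorial_ranks(costs)
skills = skill_factors(ranks)
fitness = scalar_fitness(ranks)
```

Cross-task crossover, gated by `rmp`, is where implicit knowledge transfer happens in this model, which is why the transfer is automatic rather than explicitly controlled.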

Multi-Population Model Architecture

Multi-population models explicitly maintain separate subpopulations for each optimization task, enabling more controlled and interpretable knowledge transfer mechanisms [21]. This architecture allows specialized evolutionary trajectories for different tasks while periodically exchanging information through individual migration or model-based knowledge transfer. The explicit separation facilitates adaptive control of transfer intensity and source task selection based on inter-task correlations [20] [17].

Figure 2: Multi-population model with explicit knowledge transfer and adaptive control
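A minimal sketch of the multi-population idea, assuming the simplest possible transfer mechanism: periodic migration of elite individuals between task-specific subpopulations. The evolution step (Gaussian mutation with elitist truncation), the migration policy, the toy objectives, and all names are illustrative assumptions, not a specific published algorithm.

```python
import random

def task_a(x):
    return sum(v * v for v in x)

def task_b(x):
    return sum((v - 0.5) ** 2 for v in x)

def evolve(subpop, objective, rng, sigma=0.1):
    """Placeholder evolution step: Gaussian mutation, then keep the best half."""
    children = [[g + rng.gauss(0.0, sigma) for g in ind] for ind in subpop]
    return sorted(subpop + children, key=objective)[:len(subpop)]

def migrate(subpops, objectives, n_migrants):
    """Copy each subpopulation's elites into every other subpopulation,
    replacing that subpopulation's worst members on its own task."""
    elites = [sorted(p, key=f)[:n_migrants] for p, f in zip(subpops, objectives)]
    for t, f in enumerate(objectives):
        incoming = [list(e) for s, group in enumerate(elites) if s != t for e in group]
        pool = sorted(subpops[t], key=f)
        keep = pool[:max(0, len(pool) - len(incoming))]
        subpops[t] = sorted(keep + incoming, key=f)[:len(pool)]

rng = random.Random(0)
objectives = [task_a, task_b]
subpops = [[[rng.uniform(-1.0, 1.0) for _ in range(3)] for _ in range(10)]
           for _ in objectives]
initial_best = [min(f(ind) for ind in p) for p, f in zip(subpops, objectives)]

for generation in range(1, 41):
    subpops = [evolve(p, f, rng) for p, f in zip(subpops, objectives)]
    if generation % 10 == 0:  # migration interval
        migrate(subpops, objectives, n_migrants=2)

final_best = [min(f(ind) for ind in p) for p, f in zip(subpops, objectives)]
```

Because migration interval and migrant count are explicit parameters here, they can be tuned or adapted per task pair, which is the kind of control the single-population model does not expose.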

Comparative Analysis of Framework Characteristics

Table 1: Architectural comparison of single-population and multi-population models

| Characteristic | Single-Population Model | Multi-Population Model |
|---|---|---|
| Population Structure | Unified population with implicit task specialization [21] | Explicit separate subpopulations per task [21] |
| Knowledge Transfer | Automatic through assortative mating [21] | Controlled migration or model-based transfer [20] [21] |
| Diversity Maintenance | Fitness sharing & implicit diversity [24] | Explicit spatial separation & inter-task transfer [22] [23] |
| Convergence Control | Global selection pressure | Localized selection with controlled interaction |
| Implementation Complexity | Lower complexity | Higher complexity in transfer mechanism design |
| Parameter Sensitivity | Highly sensitive to transfer operators | Sensitive to migration topology & rate |
| Scalability to Many Tasks | Limited by population size | More scalable through modular design |
| Negative Transfer Risk | Higher due to automatic transfer [20] | Lower through adaptive control [17] |

Methodological Implementations in Continuous Optimization

Knowledge Transfer Mechanisms

Effective knowledge transfer is crucial for multi-population success in continuous optimization. Competitive scoring mechanisms quantitatively compare outcomes of transfer evolution versus self-evolution, adaptively adjusting transfer probabilities based on demonstrated effectiveness [17]. The dislocation transfer strategy rearranges decision variable sequences to enhance individual diversity while selecting guidance from leaders across different groups [17]. Distribution-based transfer uses maximum mean discrepancy (MMD) to identify promising source subpopulations by measuring distribution similarity with the target task's best solution region [20].
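Distribution-based source selection can be illustrated with a small maximum mean discrepancy computation. This sketch uses the standard biased estimator of squared MMD with a Gaussian (RBF) kernel. The kernel choice, the `gamma` value, the sample points, and the function names are assumptions for illustration, and the selection procedure in [20] may differ in detail.

```python
import math

def rbf(x, y, gamma):
    """Gaussian kernel between two points."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))

def mmd2(xs, ys, gamma=1.0):
    """Biased estimate of squared MMD between two samples."""
    kxx = sum(rbf(a, b, gamma) for a in xs for b in xs) / (len(xs) ** 2)
    kyy = sum(rbf(a, b, gamma) for a in ys for b in ys) / (len(ys) ** 2)
    kxy = sum(rbf(a, b, gamma) for a in xs for b in ys) / (len(xs) * len(ys))
    return kxx + kyy - 2.0 * kxy

def select_source(target_elite, candidates, gamma=1.0):
    """Pick the candidate subpopulation whose distribution is closest
    (smallest MMD) to the target task's elite region."""
    scores = [mmd2(target_elite, c, gamma) for c in candidates]
    best = min(range(len(candidates)), key=scores.__getitem__)
    return best, scores

# Illustrative samples in a shared 2-D search space
target_elite = [[0.0, 0.0], [0.1, 0.0], [0.0, 0.1]]
near_subpop = [[0.05, 0.05], [0.1, 0.1], [0.0, 0.0]]
far_subpop = [[2.0, 2.0], [2.1, 2.0], [2.0, 2.1]]

chosen, scores = select_source(target_elite, [near_subpop, far_subpop])
```

A smaller MMD indicates that a source subpopulation occupies a region similar to the target's promising region, so its individuals are less likely to cause negative transfer.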

Adaptive Control Strategies

Multi-population frameworks employ various adaptive strategies to optimize performance. The multi-stage adaptive process incorporates distinct phases: multi-population diversity preservation, balanced diversity-convergence optimization, and global refinement [22]. Dynamic resource allocation adjusts computational resources assigned to subpopulations based on their historical performance, rewarding more effective search strategies [25]. Transfer intensity adaptation automatically modulates cross-task interaction rates using competitive scores that quantify the relative improvement from transferred knowledge versus native evolution [17].
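Transfer intensity adaptation can be sketched as a feedback rule driven by competitive scores. The scoring formula below (success ratio multiplied by mean relative improvement) and the probability update (normalized score, clipped to a fixed range) are illustrative assumptions chosen for simplicity. They are not the exact formulas from [17], and all names and fitness values are hypothetical.

```python
def competitive_score(parent_fitness, child_fitness):
    """Score one evolution mode (minimization assumed).
    Assumed form: (fraction of offspring that improved) * (mean relative gain)."""
    gains = [(p - c) / (abs(p) + 1e-12)
             for p, c in zip(parent_fitness, child_fitness) if c < p]
    if not gains:
        return 0.0
    success_ratio = len(gains) / len(parent_fitness)
    return success_ratio * (sum(gains) / len(gains))

def update_transfer_probability(score_transfer, score_self, p_min=0.05, p_max=0.95):
    """Shift probability toward whichever mode scored better, within [p_min, p_max]."""
    total = score_transfer + score_self
    if total == 0.0:
        return 0.5  # no evidence either way
    return min(p_max, max(p_min, score_transfer / total))

# Illustrative fitness values for one generation
parents = [10.0, 10.0, 10.0, 10.0]
score_t = competitive_score(parents, [5.0, 10.0, 8.0, 12.0])  # transfer offspring
score_s = competitive_score(parents, [9.0, 9.0, 11.0, 10.0])  # self-evolved offspring
p_transfer = update_transfer_probability(score_t, score_s)
```

Clipping keeps both modes alive: even a poorly performing mode retains a small probability, so the algorithm can recover if task relatedness changes later in the run.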

Experimental Protocols and Assessment Methodologies

Standardized Benchmarking Framework

Rigorous evaluation of population models requires standardized experimental protocols. For continuous optimization, the CEC2014 benchmark suite provides an established foundation for comparing algorithmic performance [25]. Specialized multitask benchmark suites such as CEC17-MTSO and WCCI20-MTSO offer problems categorized by the degree of intersection between task solutions (complete, partial, or no intersection) and by inter-task similarity level (high, medium, low) [17]. Experimental designs should incorporate cross-cohort validation, in which tuning and testing data originate from different cohorts, to simulate real-world application conditions [26].

Performance Metrics and Statistical Assessment

Comprehensive evaluation requires multiple complementary metrics. Inverted Generational Distance (IGD) measures both convergence and diversity as the average distance from reference points on the true Pareto front to the nearest obtained solution [22]. Hypervolume (HV) assesses the volume of objective space dominated by the obtained solutions and bounded by a reference point, capturing both spread and convergence [22]. Spacing metrics evaluate how uniformly solutions are distributed along the approximated front [22]. Statistical validation requires appropriate significance testing, such as Wilcoxon signed-rank tests across multiple independent runs, with Bonferroni correction for multiple comparisons to control false positives [27].
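Two of these metrics are short enough to sketch directly. The code below computes IGD for any number of objectives and hypervolume for the two-objective minimization case only. The fronts and the reference point are illustrative values and the function names are hypothetical. For more objectives, a dedicated hypervolume implementation would be needed.

```python
import math

def igd(reference_front, obtained):
    """Mean distance from each reference point to its nearest obtained solution."""
    total = sum(min(math.dist(r, s) for s in obtained) for r in reference_front)
    return total / len(reference_front)

def hypervolume_2d(points, ref):
    """Area dominated by `points` and bounded by `ref` (2 objectives, minimization)."""
    inside = sorted(p for p in points if p[0] < ref[0] and p[1] < ref[1])
    area, best_f2 = 0.0, ref[1]
    for f1, f2 in inside:          # sweep in increasing f1
        if f2 < best_f2:           # skip dominated points
            area += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return area

# Illustrative fronts
true_front = [(0.0, 1.0), (0.5, 0.5), (1.0, 0.0)]
approx_front = [(0.0, 1.0), (1.0, 0.0)]

igd_value = igd(true_front, approx_front)
hv_value = hypervolume_2d([(1.0, 3.0), (2.0, 2.0), (3.0, 1.0)], ref=(4.0, 4.0))
```

Lower IGD and higher HV are better. IGD requires a sampled true front, which is available for benchmark problems but usually not for real-world ones, where HV is the more practical choice.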

Table 2: Experimental parameters for multi-population algorithm evaluation

| Parameter Category | Specific Parameters | Recommended Values | Evaluation Purpose |
|---|---|---|---|
| Population Settings | Subpopulation size, Number of subpopulations, Total population size [23] | Varies by problem dimensionality | Scalability assessment |
| Transfer Mechanisms | Migration interval, Migration rate, Selection for migration, Replacement policy [21] | Adaptive based on competitive scores [17] | Knowledge transfer efficiency |
| Termination Criteria | Maximum generations, Function evaluations, Convergence threshold [28] | Standardized across compared algorithms | Computational efficiency |
| Problem Characteristics | Decision space dimensions, Objective count, Objective correlation, Function modality [28] | CEC benchmarks & real-world problems | Generalizability assessment |
| Performance Metrics | IGD, HV, Spacing, Convergence traces [22] | Multiple independent runs | Statistical reliability |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential computational resources for evolutionary algorithm research

| Research Reagent | Function/Purpose | Implementation Examples |
|---|---|---|
| Benchmark Suites | Standardized performance evaluation | CEC2014, CEC17-MTSO, WCCI20-MTSO [17] [25] |
| Frameworks | Algorithm development & testing | PlatEMO, ParadisEO, DEAP [28] |
| Performance Metrics | Quantitative algorithm assessment | IGD, HV, Spacing metrics [22] |
| Statistical Analysis | Significance testing & validation | Wilcoxon tests with Bonferroni correction [27] |
| Visualization Tools | Results interpretation & analysis | Pareto front plots, convergence graphs [23] |

Applications in Continuous Optimization Domains

Manufacturing Services Collaboration

Multi-population EMTO has demonstrated significant success in manufacturing services collaboration (MSC) problems, which involve optimal allocation of manufacturing resources and capabilities as cloud services [21]. These combinatorial optimization problems with continuous aspects benefit from knowledge transfer between related task instances, where multi-population approaches achieve up to 30% improvement in solution quality compared to single-task optimization [21]. The explicit population separation allows specialized optimization of different MSC aspects while transferring building blocks across related manufacturing scenarios.

Large-Scale Multi-Objective Optimization

For large-scale multi-objective optimization problems (LSMOPs) with 100+ decision variables, multi-population frameworks effectively address the curse of dimensionality through variable grouping and coordinated optimization [22] [28]. The multi-population multi-stage adaptive weighted optimization (MPSOF) exemplifies this approach, employing multiple subpopulations to maintain diversity while adaptively selecting individuals for updating based on weight information and evolutionary status [22]. This framework has demonstrated superior performance on the Inverted Generational Distance, Hypervolume, and Spacing metrics compared to single-population alternatives [22].

Emerging Trends and Future Research Directions

Population Pre-training and Transformer Architectures

Inspired by pre-training successes in machine learning, population pre-trained models (PPM) represent an emerging frontier where transformer architectures learn evolutionary patterns from historical optimization data [28]. These approaches model population dynamics through dimension embedding mechanisms that handle decision spaces of varying scale and through objective fusion that captures interdependencies between objectives and decision variables [28]. Preliminary results show generalization to problems with up to 5,000 decision variables, five times the training scale and 200 times greater than prior work [28].

Adaptive Negative Transfer Mitigation

Future research priorities include enhanced mechanisms for negative transfer detection and mitigation, particularly as EMTO expands to many-task optimization scenarios [17]. Promising directions include fitness-based task relatedness estimation, transfer impact forecasting using population distribution metrics, and dynamic transfer graph optimization that continuously reconfigures the interconnection topology based on real-time performance feedback [20] [17]. The competitive scoring mechanism exemplifies this direction by quantifying both the ratio of successful evolutions and the degree of improvement of the enhanced individuals [17].
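One simple way to make fitness-based task relatedness estimation concrete is to evaluate two tasks on the same sample of points in a shared search space and compare the resulting fitness rankings. The sketch below uses Spearman rank correlation for that comparison. This particular estimator, the toy tasks, and all names are assumptions for illustration and are not taken from [20] or [17].

```python
import random

def ordinal_ranks(values):
    """0-based rank of each value (ties, if any, broken by position)."""
    order = sorted(range(len(values)), key=values.__getitem__)
    ranks = [0] * len(values)
    for position, index in enumerate(order):
        ranks[index] = position
    return ranks

def spearman(a, b):
    """Rank correlation in [-1, 1]; needs at least two samples."""
    ra, rb = ordinal_ranks(a), ordinal_ranks(b)
    mean = (len(a) - 1) / 2.0
    cov = sum((x - mean) * (y - mean) for x, y in zip(ra, rb))
    var = sum((x - mean) ** 2 for x in ra)  # identical for ra and rb
    return cov / var

def task_relatedness(f_a, f_b, dim, n_samples, rng):
    """Correlate the fitness rankings of two tasks on shared random samples."""
    samples = [[rng.random() for _ in range(dim)] for _ in range(n_samples)]
    return spearman([f_a(x) for x in samples], [f_b(x) for x in samples])

def bowl(x):
    return sum((v - 0.5) ** 2 for v in x)

def scaled_bowl(x):       # same landscape, different scale
    return 2.0 * bowl(x)

def inverted_bowl(x):     # opposite preferences
    return -bowl(x)

rng = random.Random(0)
related = task_relatedness(bowl, scaled_bowl, dim=3, n_samples=50, rng=rng)
conflicting = task_relatedness(bowl, inverted_bowl, dim=3, n_samples=50, rng=rng)
```

Values near 1 suggest that good solutions for one task tend to be good for the other, so transfer is likely to help, while values near -1 flag a task pair where transfer should be reduced or disabled.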

The architectural choice between single-population and multi-population models represents a fundamental trade-off between implementation simplicity and controlled knowledge transfer. Single-population models offer lower complexity and automatic implicit transfer but face higher risks of negative transfer and diversity loss [24] [21]. Multi-population models provide explicit control over cross-task interactions, enabling adaptive optimization of transfer strategies based on competitive outcomes [17] [23]. For continuous optimization in research and development domains, multi-population frameworks consistently demonstrate superior performance in maintaining diversity, avoiding premature convergence, and solving complex large-scale problems [22] [28]. Emerging paradigms incorporating population pre-training and advanced transfer control mechanisms promise further enhancements in scalability and generalization across increasingly complex optimization landscapes [28].