Evolutionary Multi-Objective Optimization (EMO): A Complete Guide for Biomedical Research and Drug Development


Abstract

This article provides a comprehensive overview of Evolutionary Multi-Objective Optimization (EMO), a powerful computational intelligence approach for solving problems with multiple conflicting objectives. Tailored for researchers, scientists, and drug development professionals, it explores EMO's core principles, key algorithms like NSGA-II and MOEA/D, and its transformative applications in complex domains such as peptide vaccine design and small molecule drug discovery. The content delves into advanced methodological challenges in many-objective optimization, performance validation techniques, and provides a forward-looking perspective on integrating EMO with cutting-edge AI technologies to accelerate biomedical innovation.

Understanding Evolutionary Multi-Objective Optimization: Core Principles and Pareto Efficiency

Defining Multi-Objective Optimization Problems and Key Concepts

Multi-objective optimization (MOO), also known as Pareto optimization, multi-criteria optimization, or vector optimization, is an area of multiple-criteria decision-making concerned with mathematical optimization problems involving more than one objective function to be optimized simultaneously [1]. In practical, real-world problems, it is rare that a system's performance can be adequately captured by a single metric. Engineers, economists, and scientists frequently encounter situations where multiple conflicting objectives must be balanced, such as minimizing cost while maximizing performance, or reducing weight while increasing strength [1].

For a multi-objective optimization problem, it is typically impossible to find a single solution that simultaneously optimizes each objective because the objective functions are inherently conflicting. A solution is called nondominated, Pareto optimal, or Pareto efficient if none of the objective functions can be improved in value without degrading some of the other objective values [1]. Without additional subjective preference information, there may exist a (possibly infinite) number of Pareto optimal solutions, all of which are considered equally good.

Mathematical Formulation

A multi-objective optimization problem can be formulated in mathematical terms as follows [1]:

[ \min_{x \in X} (f_1(x), f_2(x), \ldots, f_k(x)) ]

where the integer ( k \geq 2 ) is the number of objectives and ( X ) is the feasible set of decision vectors, typically ( X \subseteq \mathbb{R}^n ) where ( n ) is the number of decision variables. The functions ( f_1, f_2, \ldots, f_k ) are the objective functions; together they map decision vectors to objective vectors: ( f: X \to \mathbb{R}^k ).

If some objective function is to be maximized, it is equivalent to minimize its negative (or, for a strictly positive function, its reciprocal). The image of ( X ) under ( f ) is denoted ( Y \subseteq \mathbb{R}^k ) and represents the feasible set in objective space. A solution ( x^* \in X ) is Pareto optimal if there does not exist another solution ( x \in X ) such that ( f_i(x) \leq f_i(x^*) ) for all ( i = 1, \ldots, k ) and ( f_j(x) < f_j(x^*) ) for at least one index ( j ) [1].
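As a small illustration (not taken from the cited source), the definition can be worked through on the classic one-variable, two-objective test problem usually attributed to Schaffer. The derivation below is a sketch of the reasoning, not a general solution method.

```latex
% Problem: one decision variable, two objectives, both minimized.
\[
\min_{x \in \mathbb{R}} \bigl( f_1(x),\, f_2(x) \bigr),
\qquad f_1(x) = x^2, \quad f_2(x) = (x - 2)^2 .
\]
% For 0 <= x <= 2, increasing x raises f_1 and lowers f_2, so no point in this
% interval can be improved in one objective without degrading the other.
% For x < 0, the point x = 0 is better in both objectives; for x > 2, x = 2 is.
\[
X^* = [0, 2],
\qquad
\text{Pareto front: } f_2 = \bigl( \sqrt{f_1} - 2 \bigr)^2, \quad 0 \le f_1 \le 4 .
\]
```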

Table: Key Elements in Multi-Objective Optimization Formulation

| Element | Notation | Description | Example |
|---|---|---|---|
| Decision Vector | ( x ) | A point in the design space | Wing shape parameters |
| Objective Functions | ( f_1(x), f_2(x), \ldots, f_k(x) ) | Functions to be optimized | Drag coefficient, structural weight |
| Feasible Region | ( X ) | Set of all possible decision vectors satisfying constraints | All manufacturable wing designs |
| Objective Space | ( Y ) | Set of all possible objective vectors | All possible (drag, weight) pairs |

Core Concepts in Multi-Objective Optimization

Pareto Optimality

The fundamental concept in multi-objective optimization is Pareto optimality. A solution ( x_1 \in X ) is said to dominate another solution ( x_2 \in X ) if both of the following conditions are true [1]:

- ( f_i(x_1) \leq f_i(x_2) ) for all indices ( i \in \{1, \dots, k\} )

- ( f_j(x_1) < f_j(x_2) ) for at least one index ( j \in \{1, \dots, k\} )

A solution ( x^* \in X ) is called Pareto optimal if there does not exist another solution that dominates it. The set of all Pareto optimal solutions is called the Pareto set, and the image of the Pareto set in the objective space is called the Pareto front [1].
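The dominance test and the resulting nondominated filter translate almost directly into code. The following is a minimal sketch assuming all objectives are minimized; the function names and the sample (drag, weight) pairs are hypothetical and chosen only for illustration.

```python
from typing import List, Sequence


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """Return True if objective vector a dominates b (all objectives minimized)."""
    no_worse = all(ai <= bi for ai, bi in zip(a, b))
    strictly_better = any(ai < bi for ai, bi in zip(a, b))
    return no_worse and strictly_better


def nondominated(points: Sequence[Sequence[float]]) -> List[Sequence[float]]:
    """Keep the points that no other point in the set dominates (O(n^2) scan)."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical (drag, weight) pairs for five candidate designs.
designs = [(1.0, 5.0), (2.0, 3.0), (3.0, 4.0), (4.0, 1.0), (2.0, 3.5)]
front = nondominated(designs)
print(front)  # [(1.0, 5.0), (2.0, 3.0), (4.0, 1.0)]
```

Here (3.0, 4.0) and (2.0, 3.5) are both dominated by (2.0, 3.0), so only three designs remain. The quadratic scan is adequate for small sets; larger populations usually call for a sorting-based approach.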

Ideal and Nadir Vectors

The ideal objective vector ( z^{ideal} ) and the nadir objective vector ( z^{nadir} ) give lower and upper bounds, respectively, on the objective values of the Pareto front [1]. Writing ( X^* ) for the Pareto set:

- The ideal vector represents the best possible values for each objective individually: [ z^{ideal} = \begin{pmatrix} \inf_{x^* \in X^*} f_1(x^*) \\ \vdots \\ \inf_{x^* \in X^*} f_k(x^*) \end{pmatrix} ]

- The nadir vector represents the worst possible values among Pareto optimal solutions for each objective: [ z^{nadir} = \begin{pmatrix} \sup_{x^* \in X^*} f_1(x^*) \\ \vdots \\ \sup_{x^* \in X^*} f_k(x^*) \end{pmatrix} ]

In practice, the nadir objective vector can only be approximated as the whole Pareto optimal set is typically unknown [1].
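Given a finite set of mutually nondominated objective vectors, both vectors can be estimated component-wise. This is only a sketch: the function name and sample front are hypothetical, and the result approximates the true ideal and nadir vectors only as well as the supplied set approximates the Pareto front.

```python
def ideal_and_nadir(front):
    """Estimate ideal and nadir vectors from mutually nondominated points.

    The caller must pass nondominated points only; including dominated
    points would inflate the nadir estimate.
    """
    k = len(front[0])
    ideal = tuple(min(p[i] for p in front) for i in range(k))
    nadir = tuple(max(p[i] for p in front) for i in range(k))
    return ideal, nadir


# Hypothetical two-objective front (both objectives minimized).
front = [(1.0, 5.0), (2.0, 3.0), (4.0, 1.0)]
ideal, nadir = ideal_and_nadir(front)
print(ideal, nadir)  # (1.0, 1.0) (4.0, 5.0)
```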

Solution Methods

Weighted Sum Method

The weighted sum method is the simplest approach to multi-objective optimization, which combines multiple objective functions by adding them together with assigned weights for each function [2]. The general formulation is:

[ f_{\text{obj}}(x) = \alpha \frac{g(x)}{g_0} + \beta \frac{h(x)}{h_0} ]

where ( g(x) ) and ( h(x) ) are two objectives, ( \alpha ) and ( \beta ) are weighting coefficients, and ( g_0 ), ( h_0 ) are normalization factors to account for different magnitudes of the objective functions [2]. This scalarization approach converts the multi-objective problem into a single-objective problem that can be solved with traditional optimization algorithms.
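The sketch below applies this formula to a small, hypothetical set of candidate objective vectors and sweeps the weights with ( \beta = 1 - \alpha ). The candidate values and the normalization factors of 10 are assumptions made for illustration. The middle candidate is nondominated but lies in a non-convex region of the front, which is a known limitation of the method: no weight combination selects it.

```python
def weighted_sum(point, alpha, beta, g0=1.0, h0=1.0):
    """Scalarize a (g, h) objective pair as alpha * g / g0 + beta * h / h0."""
    g, h = point
    return alpha * g / g0 + beta * h / h0


# Hypothetical candidates; all three are mutually nondominated.
candidates = [(0.0, 10.0), (6.0, 6.0), (10.0, 0.0)]

selected = set()
for step in range(11):
    alpha = step / 10
    beta = 1.0 - alpha
    best = min(candidates, key=lambda p: weighted_sum(p, alpha, beta, g0=10.0, h0=10.0))
    selected.add(best)

print(sorted(selected))  # [(0.0, 10.0), (10.0, 0.0)]
```

The point (6.0, 6.0) always scores 0.6 after normalization, while one of the two extreme points scores at most 0.5, so the sweep returns only the extremes.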

ε-Constraint Method

The ε-constraint method is another scalarization technique in which one objective function is minimized while the remaining objective functions are bounded above by chosen thresholds ( \epsilon_i ) [2]. This method is particularly useful for generating well-distributed Pareto fronts and handles non-convex regions of the Pareto front better than the weighted sum method. The formulation constrains all but one objective function:

[ \begin{aligned} \min & \quad f_j(x) \\ \text{subject to} & \quad f_i(x) \leq \epsilon_i, \quad i = 1, \ldots, k, \; i \neq j \\ & \quad x \in X \end{aligned} ]

By systematically varying the ε values, different Pareto optimal solutions can be obtained.
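A minimal sketch of this idea over a finite, hypothetical candidate set follows. The function name, the candidates, and the three threshold values are assumptions for illustration; in a continuous problem the inner minimization would be handed to a constrained single-objective solver.

```python
def epsilon_constraint(candidates, eps, primary=0):
    """Minimize objective `primary` subject to f_i <= eps[i] for every other i.

    `eps[primary]` is ignored. Returns None when no candidate is feasible.
    """
    feasible = [
        p for p in candidates
        if all(p[i] <= eps[i] for i in range(len(p)) if i != primary)
    ]
    if not feasible:
        return None
    return min(feasible, key=lambda p: p[primary])


# Hypothetical candidates; (6.0, 6.0) lies in a non-convex region of the front.
candidates = [(0.0, 10.0), (6.0, 6.0), (10.0, 0.0)]

# Tighten the bound on the second objective step by step.
found = [epsilon_constraint(candidates, eps=(None, e)) for e in (10.0, 7.0, 2.0)]
print(found)  # [(0.0, 10.0), (6.0, 6.0), (10.0, 0.0)]
```

With the bound at 7.0 the method returns (6.0, 6.0), a point in the non-convex region. In general, ties in the primary objective can yield weakly Pareto optimal points, so results are often filtered for dominance afterwards.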

Evolutionary Multi-Objective Optimization (EMO)

Evolutionary multi-objective optimization (EMO), also called evolutionary multi-criterion optimization, comprises population-based algorithms that approximate multiple Pareto optimal solutions in a single run [3] [4]. Unlike scalarization methods, which require multiple independent runs to approximate the Pareto front, EMO algorithms maintain a diverse population of solutions that collectively converges toward the Pareto optimal set. The 13th International Conference on Evolutionary Multi-Criterion Optimization (EMO 2025) highlights current research in this field, including algorithm design, benchmarking, and applications across various domains [3] [4].

Table: Comparison of Multi-Objective Optimization Methods

| Method | Key Mechanism | Advantages | Limitations |
|---|---|---|---|
| Weighted Sum | Linear combination of objectives with weights | Simple implementation; Works with standard optimizers | Cannot find solutions in non-convex regions; Sensitive to weight selection |
| ε-Constraint | Optimizes one objective while constraining others | Can find solutions in non-convex regions; Direct control over distribution | Requires appropriate setting of ε values; Constraint handling needed |
| Evolutionary | Population-based stochastic search | Finds multiple solutions in one run; Handles complex problems | Computationally intensive; Parameter tuning required |

Experimental Protocols and Benchmarking

Performance Assessment

Benchmarking multi-objective optimization algorithms requires specialized performance indicators that measure various aspects of solution quality [4]. Key indicators include:

- Convergence: How close the obtained solutions are to the true Pareto front

- Diversity: How well the solutions are distributed along the Pareto front

- Coverage: The extent to which the solutions represent the entire Pareto front

Tools like MO-IOHinspector enable anytime benchmarking of multi-objective algorithms, allowing researchers to track algorithm performance throughout the optimization process [4].

Workflow for Multi-Objective Optimization Studies

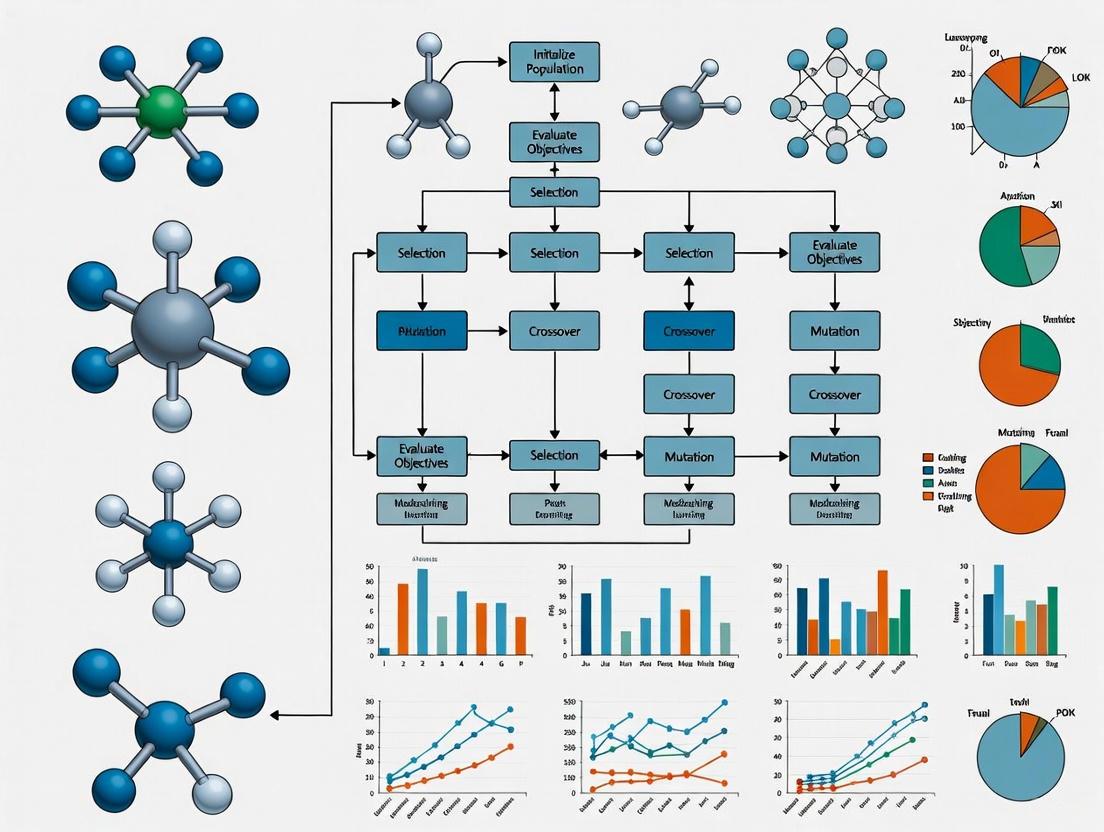

The following diagram illustrates a generalized workflow for conducting multi-objective optimization studies, particularly relevant for EMO research:

Multi-Objective Optimization Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools for Multi-Objective Optimization Research

| Tool Category | Representative Examples | Function in MOO Research |
|---|---|---|
| Optimization Frameworks | OpenMDAO [2], PlatEMO, jMetal | Provide infrastructure for implementing and testing MOO algorithms |
| Benchmark Problems | ZDT, DTLZ, WFG test suites | Standardized problems for comparing algorithm performance |
| Performance Indicators | Hypervolume, Generational Distance, Inverted Generational Distance | Quantify convergence and diversity of obtained solutions |
| Visualization Tools | Pareto front plots, parallel coordinate plots, heat maps | Enable interpretation of high-dimensional optimization results |
| Multi-Criteria Decision Making | AHP (Analytic Hierarchy Process), TOPSIS | Support selection of final solution from Pareto optimal set |

Applications in Research and Industry

Multi-objective optimization has been applied across numerous fields, demonstrating its versatility in addressing complex real-world problems [1]:

- Engineering Design: Balancing performance, cost, and reliability in aerospace, automotive, and structural design [2]

- Economics and Finance: Portfolio optimization to maximize returns while minimizing risk [1]

- Process Optimization: Chemical engineering and manufacturing processes with conflicting objectives like yield, purity, and energy consumption [1]

- Drug Development: Optimizing therapeutic efficacy while minimizing side effects and production costs

The continuing development of EMO algorithms addresses increasingly complex problems across these domains, with current research focusing on scaling to higher-dimensional problems, incorporating machine learning techniques, and improving computational efficiency [3] [4].

Evolutionary Multi-objective Optimization (EMO) research addresses problems where multiple, often conflicting, objectives must be simultaneously optimized. In such problems, improving one objective typically leads to deterioration in another, creating a complex landscape of trade-offs. The concept of Pareto efficiency provides the foundational theory for understanding and solving these multi-objective problems: a solution is Pareto optimal when no objective can be improved without worsening at least one other objective [5]. The objective vectors of all Pareto optimal solutions form the Pareto front (or Pareto frontier), which represents the complete spectrum of optimal trade-offs between competing objectives [5].

Within EMO research, the primary goal is to develop algorithms that can efficiently approximate the true Pareto front of a problem, providing decision-makers with a comprehensive set of alternative solutions. This field has grown significantly from its origins, now encompassing sophisticated evolutionary and population-based algorithms, surrogate models, and hybrid machine learning methods [3]. The ensuing sections explore the theoretical foundations of Pareto optimality, computational methods for identifying Pareto fronts, and their critical applications in scientific domains such as drug development and chemical engineering.

Theoretical Foundations of Pareto Optimality and Frontiers

Formal Definition and Key Concepts

In multi-objective optimization, a system is defined by a function ( f: X \rightarrow \mathbb{R}^m ) that maps a decision variable ( x ) in the space ( X \subseteq \mathbb{R}^n ) to a vector of ( m ) objective values in ( \mathbb{R}^m ) [5]. The Pareto frontier, denoted as ( P(Y) ), where ( Y = \{ y \in \mathbb{R}^m : y = f(x), x \in X \} ), is formally described as follows [5]:

[ P(Y) = \{ y' \in Y : \{ y'' \in Y : y'' \succ y', y' \neq y'' \} = \emptyset \}. ]

This means a point ( y' ) belongs to the Pareto front if no other point ( y'' ) dominates it. Domination (( y'' \succ y' )) occurs when ( y'' ) is at least as good as ( y' ) in all objectives and strictly better in at least one [5]. The Pareto frontier distinguishes acceptable compromises from suboptimal solutions that can be objectively improved [6].

Marginal Rate of Substitution in Pareto Efficiency

A significant aspect of the Pareto frontier in economics and beyond is that at any Pareto-efficient allocation, the marginal rate of substitution (MRS) is identical across all consumers or objectives [5]. For a system with ( m ) consumers and ( n ) goods, this implies:

[ \frac{f_{x_j^i}^{i}}{f_{x_s^i}^{i}} = \frac{\mu_j}{\mu_s} = \frac{f_{x_j^k}^{k}}{f_{x_s^k}^{k}}. ]

Here, ( f_{x_j^i}^{i} ) represents the partial derivative of the utility function ( f^i ) of consumer ( i ) with respect to its consumption ( x_j^i ) of good ( j ), and ( \mu_j ) and ( \mu_s ) are Lagrange multipliers associated with the constraints on goods ( j ) and ( s ) [5]. This equality shows that in a Pareto-optimal state, the rate at which any consumer is willing to trade one good for another is uniform, indicating an efficient allocation of resources where no reallocation can improve one individual's situation without harming another's.

Computational Methods for Pareto Frontier Identification

Computing the Pareto frontier is a central challenge in EMO. Algorithms must find a set of solutions that are both diverse and close to the true Pareto front. The following table summarizes core computational approaches used in EMO research.

Table 1: Algorithms for Computing and Approximating the Pareto Front

| Algorithm Type | Core Principle | Key Advantages | Common Applications |
|---|---|---|---|
| Scalarization (Weighted Sum) [5] | Transforms multi-objective problem into a single objective using a weighted sum of objectives. | Simple to implement; intuitive. | Convex Pareto fronts; preliminary analysis. |
| ε-Constraint Method [5] | Optimizes one primary objective while treating others as constraints with ε thresholds. | Can find solutions on non-convex parts of the Pareto front. | Problems with non-convex Pareto fronts. |
| Multi-objective Evolutionary Algorithms (MOEAs) [5] [3] | Uses population-based search (e.g., selection, crossover, mutation) to approximate the front in a single run. | Finds diverse sets of solutions in parallel; handles complex, non-linear landscapes. | Complex black-box problems; engineering design. |
| Machine Learning / Bayesian Optimization [7] | Builds surrogate models (e.g., Gaussian Processes) to guide the search efficiently. | Highly data-efficient; suitable for expensive objective functions (e.g., chemical experiments). | Automated chemistry; reaction optimization. |
| Skyline Query / Maxima of a Point Set [5] | Directly compares vectors to filter out dominated solutions from a finite set. | Effective for pre-computed datasets or low-dimensional spaces. | Database queries; decision support systems. |

For complex or computationally expensive problems, generating the entire Pareto front can be intractable. Therefore, approximation algorithms are employed. An ε-approximation of a Pareto front ( P ) is a set ( S ) where the directed Hausdorff distance between ( S ) and ( P ) is at most ( ε ), requiring roughly ( (1/ε)^d ) queries for a ( d )-dimensional problem [5]. The performance of these approximations is evaluated based on criteria like invariance to scaling, monotonicity, and computational complexity [5].

The following diagram illustrates a high-level workflow for a machine learning-driven multi-objective optimization, as used in fields like automated chemistry.

Experimental Protocols and Applications

Detailed Protocol: Multi-Objective Optimization in Continuous Flow Chemistry

A seminal application of EMO principles in experimental science is the automated optimization of chemical reactors using machine learning [7]. The following protocol details the methodology.

- Objective: To simultaneously optimize conflicting performance criteria—specifically, reactor productivity (an economic objective) and environmental impact (an environmental objective)—for chemical reactions in a continuous flow reactor [7].

- Algorithm: A multi-objective machine learning algorithm (e.g., based on Gaussian Process models) was implemented for self-optimization [7].

- Procedure:

  - Initialization: The algorithm is initiated with a small set of initial experiments that sample the parameter space (e.g., reactant concentration, flow rate, temperature).

  - Modeling: A Gaussian Process (GP) surrogate model is constructed to approximate the relationship between the input parameters and the multiple objective functions. This model provides predictions of performance and associated uncertainty for untested parameters [7].

  - Iteration (steps repeated in order):

    - Selection: An acquisition function, guided by the GP model, selects the next set of reactor parameters to evaluate. This function balances exploring uncertain regions and exploiting known high-performance areas.

    - Experiment: The selected parameters are automatically executed in the continuous flow reactor system.

    - Evaluation: The productivity and environmental impact (e.g., E-factor, energy consumption) of the reaction are measured.

    - Update: The GP model is updated with the new experimental results.

  - Termination: The loop continues until a predefined budget of experiments is exhausted or the Pareto front is sufficiently well-defined.

- Outcome: The algorithm successfully identifies the Pareto front, visually represented as a trade-off curve between the environmental and economic objectives. This reveals all optimal compromises, showing that increasing productivity requires a concession in environmental performance, and vice versa [7]. The machine learning approach proved highly data-efficient, achieving optimization in fewer experiments than single-objective approaches [7].

The Scientist's Toolkit: Key Reagents and Materials

The following table details essential components for setting up an automated optimization system for chemical synthesis, as described in the protocol.

Table 2: Research Reagent Solutions for Automated Reaction Optimization

| Item Name | Function / Explanation |
|---|---|
| Continuous Flow Reactor | A reactor where reactants are continuously pumped through a reaction channel. Essential for precise parameter control and automation, enabling rapid experimentation [7]. |
| Gaussian Process Model | A probabilistic machine learning model. Serves as a surrogate for expensive experiments, predicting reaction outcomes and their uncertainty based on input parameters [7]. |
| Multi-Objective Acquisition Function | The decision-making engine (e.g., based on Expected Hypervolume Improvement). Uses the surrogate model to select the most informative parameters to test next, balancing multiple goals [7]. |
| Precursor Chemicals | The specific reactants for the synthesis being optimized. Their concentrations and ratios are key variables in the optimization process. |
| In-line Analytical Sensors | Sensors (e.g., IR, UV-Vis) integrated into the flow system. Provide real-time data on reaction conversion and selectivity, enabling immediate evaluation of objectives [7]. |

Application in Pharmaceutical Development

The Pareto principle finds critical application in pharmacoepidemiology for enhancing drug therapy safety. A study investigating adverse drug events (ADE) and medication errors (ME) in an emergency department (ED) demonstrated its utility [8].

- Methodology: Researchers analyzed 752 consecutive cases admitted to a non-traumatic ED. ADEs, MEs, contributing drugs, preventability, and detection rates were recorded. Symptoms, errors, and drugs were sorted by frequency to apply the Pareto principle [8].

- Findings: The study identified 242 ADEs, 61.2% of which were preventable. A limited subset of factors was responsible for the majority of outcomes:

  - The ten most frequent symptoms accounted for 80% of ADE-related hospitalizations [8].

  - A set of only 33 drugs was responsible for 76% of all ADEs [8].

- This frequency-based listing allowed researchers to identify the most locally relevant problems and develop targeted safety interventions, such as wall and pocket charts for ED staff [8].

This application highlights how Pareto analysis can efficiently direct limited resources toward the most impactful factors in complex healthcare systems.

Visualization and Analytical Tools

The Pareto Chart for the 80-20 Rule

A Pareto chart is a specialized bar chart that visualizes the Pareto principle (or the 80-20 rule), which states that a small percentage of factors often contribute to a large percentage of the effects [9]. It is used extensively in quality control and process improvement.

- Construction:

  - Data Preparation: Categories (e.g., types of defects, complaint issues) are sorted in descending order by their frequency or cost.

  - Cumulative Percentage: A line is added to the chart showing the cumulative percentage of the total as each category is added.

- Interpretation: The chart helps identify the "vital few" categories that, if addressed, would resolve the bulk of the problems. For example, an analysis of consumer complaints showed that the top 20% of issue types generated over 80% of all complaints [9].
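The construction steps above amount to a descending sort followed by a running total. The sketch below computes the table behind such a chart; the complaint categories and counts are invented for illustration, and the 80% cut-off used to pick the "vital few" is one common convention rather than a fixed rule.

```python
def pareto_table(counts):
    """Sort categories by descending count and attach cumulative percentages."""
    total = sum(counts.values())
    rows, running = [], 0
    for name, n in sorted(counts.items(), key=lambda kv: kv[1], reverse=True):
        running += n
        rows.append((name, n, 100.0 * running / total))
    return rows


# Hypothetical complaint counts per issue type.
complaints = {"packaging": 10, "billing": 50, "other": 4, "delivery": 30, "website": 6}

rows = pareto_table(complaints)
for name, n, cumulative in rows:
    print(f"{name:<10} {n:>3} {cumulative:6.1f}%")

# Categories whose cumulative share stays within 80% of all complaints.
vital_few = [name for name, _, cumulative in rows if cumulative <= 80.0]
print(vital_few)  # ['billing', 'delivery']
```

The bars of the chart would use the counts in `rows`, and the overlaid line would use the cumulative percentages.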

The diagram below illustrates the logical structure of a system designed to help users navigate the Pareto frontier in an exploratory search context, such as e-commerce or research databases.

The Pareto principle and the concept of the Pareto frontier provide an essential framework for tackling complex, multi-objective problems across science and engineering. Within Evolutionary Multi-Objective Optimization research, the focus is on developing sophisticated algorithms—from evolutionary methods to machine learning hybrids—to efficiently approximate this frontier. As demonstrated by applications in automated chemistry and drug safety, moving beyond single-objective optimization to a holistic trade-off analysis is crucial for informed decision-making. The ongoing integration of EMO with artificial intelligence and adaptive interactive systems promises to further empower researchers and professionals in navigating the intricate landscape of competing objectives.

Evolutionary Algorithms as a Solution Strategy for Complex Problems

Evolutionary Multi-Objective Optimization (EMO) represents a specialized class of evolutionary algorithms that tackle problems with multiple, often conflicting, objectives. Unlike single-objective optimization, which converges toward a single solution, EMO algorithms identify a set of optimal solutions, known as the Pareto-optimal front. This front illustrates the trade-offs between objectives, enabling decision-makers to select the most appropriate solution based on their specific requirements. The 13th International Conference on Evolutionary Multi-Criterion Optimization (EMO 2025) highlights the continued advancement of this field, with proceedings covering algorithm design, benchmarking, applications, and multi-criteria decision support [4].

EMO research is particularly valuable for complex, real-world problems where no single perfect solution exists. By leveraging population-based search inspired by natural evolution, EMO algorithms can explore diverse regions of the search space simultaneously and approximate the entire Pareto-optimal front in a single run. This capability makes them exceptionally suited for challenging domains such as drug development, where researchers must balance efficacy, toxicity, cost, and other critical factors simultaneously.

Core Algorithmic Frameworks in EMO

Fundamental EMO Algorithm Design

EMO algorithms combine evolutionary computation principles with specialized mechanisms for handling multiple objectives. Most modern EMO approaches incorporate three key components: fitness assignment that reflects multiple objectives, diversity preservation mechanisms to maintain a spread of solutions along the Pareto front, and elitism to retain high-quality solutions across generations. The field has evolved from early non-elitist methods like VEGA and MOGA to sophisticated elitist algorithms such as NSGA-II and SPEA2, and to more recent indicator-based and decomposition-based approaches.
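Two of these components, Pareto-based fitness assignment and diversity preservation, can be sketched in the style of NSGA-II: solutions are ranked by nondominated front, and a crowding distance favors solutions in sparsely populated regions. The code below is an illustrative sketch, not the reference implementation; it peels fronts with a simple repeated scan instead of NSGA-II's faster bookkeeping, and the population values are hypothetical.

```python
def dominates(a, b):
    """True if a dominates b when every objective is minimized."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def nondominated_sort(points):
    """Peel successive fronts; returns lists of indices, best front first."""
    remaining = set(range(len(points)))
    fronts = []
    while remaining:
        front = sorted(
            i for i in remaining
            if not any(dominates(points[j], points[i]) for j in remaining)
        )
        fronts.append(front)
        remaining -= set(front)
    return fronts


def crowding_distance(points, front):
    """Crowding distance for the indices in one front (larger = less crowded)."""
    dist = {i: 0.0 for i in front}
    for m in range(len(points[0])):
        order = sorted(front, key=lambda i: points[i][m])
        lo, hi = points[order[0]][m], points[order[-1]][m]
        # Boundary solutions are always kept, so they get infinite distance.
        dist[order[0]] = dist[order[-1]] = float("inf")
        if hi == lo:
            continue
        for prev, cur, nxt in zip(order, order[1:], order[2:]):
            dist[cur] += (points[nxt][m] - points[prev][m]) / (hi - lo)
    return dist


# Hypothetical population of two-objective vectors.
pop = [(1.0, 5.0), (2.0, 3.0), (4.0, 1.0), (3.0, 4.0), (5.0, 5.0)]
fronts = nondominated_sort(pop)
dist = crowding_distance(pop, fronts[0])
print(fronts)  # [[0, 1, 2], [3], [4]]
print(dist)    # {0: inf, 1: 2.0, 2: inf}
```

An elitist selection step would then fill the next generation front by front, breaking ties within the last admitted front by descending crowding distance.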

Key hybrid approaches are also emerging, as evidenced by research presented at EMO 2025. One paper describes "An MaOEA/Local Search Hybrid Based on a Fast, Stochastic BFGS Using Achievement Scalarizing Search Directions," which combines multi-objective evolutionary algorithms with local search refinement for improved performance [4]. Another contribution, "MOAISDX: A New Multi-objective Artificial Immune System Based on Decomposition," demonstrates how biological inspiration beyond natural selection can inform algorithm design [4].

EMO Algorithm Workflow

The following diagram illustrates the generalized workflow of an evolutionary multi-objective optimization algorithm:

Benchmarking and Performance Assessment

Robust benchmarking is essential for advancing EMO research. The "MO-IOHinspector: Anytime Benchmarking of Multi-objective Algorithms Using IOHprofiler" presented at EMO 2025 addresses this need by providing comprehensive tools for performance tracking across different stages of the optimization process [4]. Benchmarking in EMO requires specialized performance indicators that evaluate both convergence to the true Pareto-optimal front and diversity of solutions along the front. Common metrics include:

Table 1: Key Performance Metrics for EMO Algorithms

| Metric | Purpose | Interpretation |
|---|---|---|
| Hypervolume (HV) | Measures the volume of objective space dominated by the solutions and bounded by a reference point | Higher values indicate better convergence and diversity |
| Inverted Generational Distance (IGD) | Computes the average distance from each point of a reference Pareto front to the nearest obtained solution | Lower values indicate better convergence to, and coverage of, the reference front |
| Spread (Δ) | Quantifies the diversity and distribution of solutions | Lower values indicate more uniform distribution along the front |
| Epsilon (ε) Indicator | Measures the smallest factor by which the obtained set must be shifted or scaled to weakly dominate the reference set | Lower values indicate better quality approximation sets |
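For two objectives, hypervolume and IGD are short enough to compute by hand. The sketch below assumes minimization and Python 3.8 or later (for `math.dist`); the function names, the sample front, and the reference point (6.0, 6.0) are illustrative assumptions. Higher-dimensional hypervolume needs a dedicated algorithm and is not covered here.

```python
import math


def hypervolume_2d(front, ref):
    """Area dominated by a 2-objective minimization front, bounded by `ref`."""
    pts = sorted(p for p in front if p[0] < ref[0] and p[1] < ref[1])
    area, best_f2 = 0.0, ref[1]
    for f1, f2 in pts:
        if f2 < best_f2:  # points that do not improve f2 are dominated; skip them
            area += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return area


def igd(reference_front, approx):
    """Mean Euclidean distance from each reference point to its nearest obtained point."""
    total = sum(min(math.dist(r, a) for a in approx) for r in reference_front)
    return total / len(reference_front)


# Hypothetical reference front and a sparser approximation of it.
reference = [(1.0, 5.0), (2.0, 3.0), (4.0, 1.0)]
approx = [(1.0, 5.0), (4.0, 1.0)]

hv = hypervolume_2d(reference, ref=(6.0, 6.0))
print(hv)                      # 17.0
print(igd(reference, approx))  # sqrt(5) / 3, roughly 0.745
```

The IGD value is nonzero only because the approximation misses the middle reference point, which illustrates that IGD penalizes gaps in coverage as well as poor convergence.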

EMO Applications in Scientific and Industrial Domains

Drug Development Applications

EMO algorithms offer significant advantages in pharmaceutical research, where multiple competing objectives must be balanced simultaneously. In drug discovery, these algorithms can optimize molecular structures to maximize therapeutic efficacy while minimizing toxicity, side effects, and production costs. The multi-objective approach allows medicinal chemists to explore the complex trade-off landscape and identify promising candidate molecules worthy of further experimental investigation.

Specific applications in drug development include:

- Molecular Design: Simultaneously optimizing binding affinity, solubility, metabolic stability, and synthetic accessibility

- Clinical Trial Design: Balancing statistical power, patient recruitment feasibility, cost constraints, and ethical considerations

- Formulation Optimization: Maximizing drug stability, bioavailability, and manufacturability while minimizing excipient costs

- Treatment Scheduling: Optimizing dosage regimens for efficacy, reduced side effects, and minimized development of drug resistance

Free-Form Coverage Path Planning

The EMO 2025 proceedings include research on "Encodings for Multi-objective Free-Form Coverage Path Planning," demonstrating how EMO approaches solve complex spatial optimization problems with applications in areas ranging from robotic surgery to agricultural automation [4]. This work highlights the importance of specialized solution representations tailored to specific problem domains, a recurring theme in successful EMO applications.

Experimental Protocols and Methodologies

Standard Experimental Framework for EMO Research

Conducting rigorous EMO experiments requires careful methodology to ensure valid, reproducible results. The following protocol outlines a comprehensive approach for evaluating EMO algorithm performance:

Phase 1: Problem Formulation

- Objective Definition: Clearly specify all objective functions to be optimized, noting any conflicts between objectives.

- Constraint Identification: Define all problem constraints and determine appropriate constraint-handling mechanisms (penalty functions, feasibility rules, etc.).

- Decision Variable Encoding: Establish suitable representation for decision variables (binary, real-valued, permutation, etc.) based on problem structure.

Phase 2: Experimental Setup

- Algorithm Configuration: Set population size, termination criteria (maximum generations, function evaluations, or convergence thresholds), and operator parameters (crossover and mutation rates).

- Benchmark Selection: Choose appropriate test problems with known Pareto-optimal fronts, spanning different characteristics (concave, convex, disconnected, multimodal).

- Performance Metrics: Select relevant quality indicators (see Table 1) aligned with research objectives.

Phase 3: Execution and Analysis

- Multiple Independent Runs: Execute algorithm multiple times (typically 20-30 runs) with different random seeds to account for stochastic variations.

- Statistical Testing: Apply appropriate statistical tests (Wilcoxon signed-rank, Kruskal-Wallis) to determine significance of performance differences.

- Visualization: Generate Pareto front approximations, performance trajectories, and comparative plots to facilitate interpretation.
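The execution phase can be sketched as a seeded loop that records one quality-indicator value per run. Everything named here is a placeholder: `random_search` stands in for the algorithm under test, Schaffer's two-objective problem stands in for a benchmark, and the reference point (10.0, 10.0) is an assumption. A significance test would then be applied to the per-run values of the algorithms being compared.

```python
import random
import statistics


def evaluate(x):
    """Hypothetical benchmark: Schaffer's two-objective problem (both minimized)."""
    return (x * x, (x - 2.0) ** 2)


def hypervolume_2d(points, ref):
    """Area dominated by a set of 2-objective points, bounded by `ref`."""
    pts = sorted(p for p in points if p[0] < ref[0] and p[1] < ref[1])
    area, best_f2 = 0.0, ref[1]
    for f1, f2 in pts:
        if f2 < best_f2:  # dominated points contribute nothing
            area += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return area


def random_search(seed, evaluations=200):
    """Placeholder algorithm under test: uniform random sampling on [-1, 3]."""
    rng = random.Random(seed)  # a private generator keeps each run reproducible
    return [evaluate(rng.uniform(-1.0, 3.0)) for _ in range(evaluations)]


def run_experiment(n_runs=20, ref=(10.0, 10.0)):
    """One hypervolume value per independent run, seeded 0 .. n_runs - 1."""
    return [hypervolume_2d(random_search(seed), ref) for seed in range(n_runs)]


hv_values = run_experiment()
print(f"median HV = {statistics.median(hv_values):.3f}")
print(f"std dev   = {statistics.stdev(hv_values):.3f}")
```

Recording the seed alongside each result makes any single run repeatable, which helps when an outlier needs to be investigated.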

Research Reagent Solutions for EMO Experimentation

Table 2: Essential Computational Tools for EMO Research

| Tool/Category | Function | Examples/Alternatives |
|---|---|---|
| Optimization Frameworks | Provides implementations of EMO algorithms | Platypus, jMetal, pymoo, DEAP |
| Benchmark Suites | Standardized test problems for algorithm comparison | ZDT, DTLZ, WFG test problems |
| Performance Assessment Tools | Calculate quality indicators and generate comparisons | METCO Library, IOHprofiler [4] |
| Visualization Libraries | Generate 2D/3D plots of Pareto fronts and performance metrics | Matplotlib, Plotly, ParaView |
| Statistical Analysis Packages | Perform significance testing and result analysis | SciPy, R, Statistica |
| High-Performance Computing | Enable computationally intensive EMO applications | MPI, OpenMP, GPU computing frameworks |

Advanced Methodologies and Hybrid Approaches

Integration with Machine Learning and Surrogates

A significant trend in EMO research involves combining evolutionary algorithms with machine learning techniques, particularly surrogate modeling. As indicated in the EMO 2025 proceedings, surrogates and machine learning approaches help address one of the fundamental challenges in EMO: the computational expense of evaluating objective functions, especially for real-world problems where each evaluation might involve complex simulations or physical experiments [4].

Surrogate-assisted EMO approaches typically follow this workflow:

- Initial Sampling: Design an initial experimental design to populate the surrogate training set

- Model Building: Construct approximate models of the objective functions using techniques like Kriging, radial basis functions, or neural networks

- Infill Criteria: Balance exploration and exploitation when selecting new points for expensive evaluation

- Model Update: Iteratively refine surrogates as new data becomes available
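The four workflow steps above can be sketched in a few lines. This is a minimal illustration under loud assumptions, not a reference implementation: `expensive_eval` is a hypothetical two-objective toy function standing in for a costly simulation, an inverse-distance-weighted interpolator stands in for Kriging or RBF models, and the infill rule (a random-weight Tchebycheff value of the prediction minus the distance to the nearest sample) is just one simple way to trade exploitation against exploration.

```python
import random

def expensive_eval(x):
    """Hypothetical stand-in for a costly simulation: two conflicting objectives on [0, 2]."""
    return (x ** 2, (x - 2.0) ** 2)

def idw_predict(x, xs, ys, power=2.0):
    """Inverse-distance-weighted prediction (simple stand-in for Kriging/RBF surrogates)."""
    num, den = [0.0] * len(ys[0]), 0.0
    for xi, yi in zip(xs, ys):
        d = abs(x - xi)
        if d == 0.0:
            return list(yi)          # exact hit on a training point
        w = 1.0 / d ** power
        den += w
        num = [n + w * v for n, v in zip(num, yi)]
    return [n / den for n in num]

def surrogate_assisted_search(n_init=5, n_iter=10, n_candidates=200, seed=0):
    rng = random.Random(seed)
    # Step 1: initial sampling on an evenly spaced design
    xs = [2.0 * i / (n_init - 1) for i in range(n_init)]
    ys = [expensive_eval(x) for x in xs]
    for _ in range(n_iter):
        lam = rng.random()           # random trade-off weight for this iteration

        def infill_score(c):
            # Step 2: query the surrogate built from all data seen so far
            p = idw_predict(c, xs, ys)
            exploit = max(lam * p[0], (1.0 - lam) * p[1])
            explore = min(abs(c - xi) for xi in xs)
            # Step 3: infill criterion, lower is better
            return exploit - explore

        candidates = [rng.uniform(0.0, 2.0) for _ in range(n_candidates)]
        best = min(candidates, key=infill_score)
        # Step 4: spend one expensive evaluation and update the training set
        xs.append(best)
        ys.append(expensive_eval(best))
    return xs, ys

if __name__ == "__main__":
    xs, ys = surrogate_assisted_search()
    print(len(xs), "expensive evaluations used")
```

The key design point is that the expensive function is called once per iteration, while the cheap surrogate is queried for every candidate.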

Multi-Criteria Decision Support Systems

EMO 2025 highlights the importance of "Multi-criteria decision support" as a key research topic, recognizing that identifying the Pareto-optimal front is only part of the solution process [4]. Effective decision support systems help stakeholders navigate the trade-off surface and select the most appropriate solution based on their preferences. These systems may incorporate:

- Preference Elicitation Methods: Techniques to capture and model decision-maker preferences

- Interactive EMO Approaches: Algorithms that incorporate decision-maker feedback during the optimization process

- Visual Analytics Tools: Advanced visualization techniques for exploring high-dimensional Pareto fronts

- Robust Decision Making: Methods for selecting solutions that perform well across different scenarios or in the presence of uncertainty

The following diagram illustrates the structure of a surrogate-assisted EMO framework with decision support:

Future Directions in EMO Research

The EMO 2025 conference proceedings indicate several emerging research directions that will shape the future of evolutionary multi-objective optimization. These include:

- Many-Objective Optimization: Developing scalable algorithms for problems with four or more objectives, where traditional Pareto-based approaches face challenges

- Expensive Optimization Problems: Advancing surrogate modeling and model management techniques for problems with computationally intensive evaluations

- Dynamic and Uncertain Environments: Creating algorithms that can adapt to changing environments and handle various forms of uncertainty

- Parallel and Distributed EMO: Leveraging high-performance computing architectures to solve increasingly complex problems

- Real-World Applications: Transferring EMO methodologies to challenging domains including personalized medicine, renewable energy systems, and sustainable engineering design

As EMO research continues to evolve, the integration of evolutionary computation with machine learning, decision science, and domain-specific knowledge will further establish evolutionary algorithms as a powerful solution strategy for complex, multi-objective problems across scientific and industrial domains.

Evolutionary Multiobjective Optimization (EMO) represents a subfield of computational intelligence that uses evolutionary algorithms to solve problems with multiple, often conflicting, objectives [10]. These multi-objective optimization problems (MOPs) yield a set of optimal solutions known as the Pareto set, with their images in the objective space forming the Pareto front [10]. Traditionally, EMO research has focused on problems with two or three objectives, where algorithms such as NSGA-II, SPEA2, and MOEA/D have demonstrated remarkable success [11].

However, many real-world problems involve concurrent optimization of numerous objectives. When the number of objectives exceeds three, these are classified as many-objective optimization problems [11]. This scaling introduces fundamental challenges that have necessitated new algorithmic paradigms and theoretical frameworks within EMO research. The transition from EMO to many-objective optimization represents a significant evolution in the field, driven by practical applications and addressing core computational challenges including selection pressure, diversity maintenance, and computational complexity [11].

Fundamental Challenges in Many-Objective Optimization

Core Algorithmic Difficulties

Scaling evolutionary algorithms to many objectives presents several interconnected challenges that distinguish this domain from traditional EMO research.

Pareto Dominance Degradation: As the number of objectives increases, the proportion of non-dominated solutions in a randomly generated population rises rapidly toward one, so that almost all solutions become mutually non-dominated [11]. This erosion of selection pressure severely impedes convergence toward the true Pareto front, as the search process lacks sufficient guidance to distinguish superior solutions [11].

Visualization and Decision-Making Complexity: While EMO researchers have developed effective visualization techniques for two and three-dimensional objective spaces, visualizing high-dimensional Pareto fronts for many-objective problems remains challenging [11]. This complicates both algorithm development and decision-making processes.

Computational Resource Demands: The assessment of solution quality in many-objective spaces often requires computationally intensive indicators such as hypervolume [11]. Additionally, maintaining diverse solution sets across high-dimensional fronts necessitates larger population sizes and archive mechanisms, further increasing computational costs [11].
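The dominance degradation effect described above is easy to check empirically. The sketch below assumes minimization and uniformly random objective vectors (an idealized setting, not a real problem) and estimates the fraction of a random population that is non-dominated as the number of objectives grows.

```python
import random

def dominates(a, b):
    """True if a Pareto-dominates b (all objectives minimized)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def nondominated_fraction(n_points, n_objectives, rng):
    """Fraction of a uniformly random population that no other member dominates."""
    pop = [[rng.random() for _ in range(n_objectives)] for _ in range(n_points)]
    count = sum(1 for p in pop if not any(dominates(q, p) for q in pop))
    return count / n_points

if __name__ == "__main__":
    rng = random.Random(0)
    for m in (2, 3, 5, 10, 15):
        print(m, "objectives:", round(nondominated_fraction(200, m, rng), 2))
```

With only a handful of objectives the fraction stays small, whereas with ten or more it approaches one, which is why pure Pareto ranking loses its ability to discriminate.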

Performance Assessment in High-Dimensional Spaces

Traditional performance indicators used in EMO require adaptation or replacement for many-objective optimization. The table below summarizes key indicators and their applicability to many-objective problems.

Table 1: Performance Indicators for Many-Objective Optimization

| Indicator | Primary Function | Scalability to Many Objectives | Computational Cost |
|---|---|---|---|
| Hypervolume | Measures dominated volume | Good, but becomes expensive | High, grows with objectives and population |
| IGD (Inverted Generational Distance) | Measures convergence and diversity | Good with true Pareto front reference | Moderate, depends on reference set size |
| R2 Indicator | Uses utility functions | Good, more scalable than hypervolume | Low to moderate |
| Hausdorff Distance | Measures distance between sets | Moderate, can be sensitive to outliers | Moderate |
| Solow-Polasky Diversity | Measures solution diversity | Good for diversity-focused optimization | Moderate, involves matrix calculations |

Algorithmic Approaches for Many-Objective Optimization

Dominance-Based Modifications

Researchers have developed several strategies to address dominance degradation in many-objective optimization:

Relaxed Dominance Criteria: Approaches such as ε-dominance, cone dominance, and fuzzy dominance expand the dominance area to facilitate better solution discrimination [11]. These methods help restore selection pressure by making dominance relationships more likely.

Reference Point-Based Methods: Algorithms like NSGA-III use a set of well-spread reference points, either generated systematically or supplied by the decision maker, to maintain population diversity across high-dimensional objective spaces [11]. This approach shifts focus from Pareto dominance to reference-driven diversity maintenance.

Indicator-Based Selection: Hypervolume-based algorithms and other indicator-based approaches replace Pareto dominance with quality indicators directly in the selection process [11]. While computationally demanding, these methods provide explicit optimization goals.
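To make the relaxed dominance idea above concrete, the following sketch contrasts standard Pareto dominance with one common additive form of ε-dominance. It assumes minimization and a single scalar `eps` applied to every objective; other formulations (multiplicative, per-objective ε) exist and are not shown.

```python
def dominates(a, b):
    """Standard Pareto dominance (all objectives minimized)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def eps_dominates(a, b, eps):
    """Additive epsilon-dominance: a, improved by eps in every objective, is no worse than b."""
    return all(x - eps <= y for x, y in zip(a, b))

if __name__ == "__main__":
    a, b = (1.0, 2.05), (1.2, 2.0)
    print(dominates(a, b))            # a is slightly worse in the second objective
    print(eps_dominates(a, b, 0.1))   # the small deficit is tolerated
```

Because small deficits are tolerated, pairs that are incomparable under strict Pareto dominance can now be ranked, which is how such criteria restore selection pressure.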

Decomposition and Dimension Reduction

Objective Reduction Techniques: These approaches identify redundant objectives that can be eliminated without substantially altering the problem structure, effectively reducing a many-objective problem to one with fewer objectives [11].

Aggregation Methods: Weighted sum approaches and other aggregation methods combine multiple objectives into a single fitness function, though this may obscure trade-off information [11].

Evolutionary Multitasking: A novel paradigm exemplified by the EMCMOA algorithm, which decomposes complex constrained many-objective problems into interdependent tasks [12]. The framework employs a dual-task structure where a helper task addresses unconstrained objective optimization and transfers knowledge to the main task focused on constraint satisfaction [12].

Table 2: Comparison of Many-Objective Optimization Algorithms

| Algorithm | Core Mechanism | Scalability | Constraint Handling | Key Advantages |
|---|---|---|---|---|
| NSGA-III | Reference point-based | Good for 3-15 objectives | Requires extension | Maintains diversity in high-dimensional spaces |
| MOEA/D | Decomposition | Good for many objectives | Requires extension | Leverages single-objective optimizers |
| SPEA2 | Improved fitness assignment | Moderate (2-5 objectives) | Requires extension | Effective archive strategy |
| EMCMOA | Evolutionary multitasking | Good for constrained problems | Native constraint handling | Knowledge transfer between tasks [12] |
| HypE | Hypervolume approximation | Good for many objectives | Requires extension | Scalable hypervolume estimation |

Experimental Protocols and Benchmarking

Standard Test Problems

Researchers typically evaluate many-objective optimization algorithms using scalable test suites that allow controlled assessment of algorithmic performance:

DTLZ Test Suite: The DTLZ (Deb-Thiele-Laumanns-Zitzler) problem family provides scalable test problems with known Pareto fronts, enabling systematic evaluation of convergence and diversity properties [11].

WFG Toolkit: The WFG (Walking Fish Group) test problems feature more complex Pareto set shapes and include position-related parameters that complicate optimization [11].

Performance Evaluation Methodology

Comprehensive algorithm assessment follows standardized experimental protocols:

Algorithm Configuration: Multiple population sizes (typically 36-210 individuals) are tested under fixed evaluation budgets [13]. Smaller populations may be sufficient when using external archives [13].

Performance Metrics Calculation: Algorithms are evaluated using quality indicators including:

- Inverted Generational Distance (IGD) measuring convergence and diversity

- Hypervolume (HV) measuring dominated space

- Δp indicator for completeness of Pareto front coverage [13]
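As an illustration of the first indicator in the list, the sketch below computes IGD in its basic form: the mean Euclidean distance from each point of a reference front to the nearest point of the obtained approximation. The reference front used here is a hypothetical three-point example; in practice it would be sampled from the known or best-known Pareto front.

```python
import math

def igd(reference_front, approximation):
    """Mean distance from each reference point to its nearest approximation point (lower is better)."""
    total = 0.0
    for r in reference_front:
        total += min(math.dist(r, a) for a in approximation)
    return total / len(reference_front)

if __name__ == "__main__":
    reference = [(0.0, 1.0), (0.5, 0.5), (1.0, 0.0)]
    print(igd(reference, reference))                    # perfect coverage
    print(igd(reference, [(0.0, 1.0), (1.0, 0.0)]))     # middle of the front is missed
```

A set that converges well but leaves part of the front uncovered is penalized, which is why IGD is read as a combined convergence and diversity measure.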

Statistical Validation: Results are compared using non-parametric tests such as the Wilcoxon signed-rank test, with Bonferroni correction to control the error rate across multiple comparisons [12].

Constraint Handling Assessment: For constrained problems, algorithms are evaluated on both artificial and real-world problems to identify performance differences across problem types [13].

Research Toolkit for Many-Objective Optimization

Table 3: Essential Research Tools and Resources

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| jMetal | Software Framework | Algorithm implementation | Rapid prototyping of EMO algorithms |
| PlatEMO | Software Platform | Integrated EMO environment | Algorithm benchmarking and comparison |
| DTLZ/WFG | Test Problems | Algorithm performance assessment | Controlled experimental studies |
| Hypervolume | Performance Indicator | Solution quality measurement | Algorithm validation and comparison |
| EMCMOA | Algorithm | Constrained many-objective optimization | Real-world problems with constraints [12] |

Visualization of Algorithm Frameworks

The following diagram illustrates the core structure of an evolutionary multitasking framework for many-objective optimization, as implemented in the EMCMOA algorithm:

Figure 1: EMCMOA Evolutionary Multitasking Framework

The EMCMOA algorithm implements a dual-task structure where knowledge transfer between constrained and unconstrained optimization tasks enhances search efficiency [12]. This framework demonstrates how decomposition and knowledge sharing can address complex constrained many-objective optimization problems.

Application Case Study: Reservoir Scheduling

A practical application of many-objective optimization is found in cascade reservoir scheduling for small watersheds, which must balance multiple competing objectives [12]. The Lushui River Basin case study demonstrates a real-world implementation with the EMCMOA algorithm achieving up to 15.7% improvement in IGD and 12.6% increase in HV compared to state-of-the-art algorithms [12].

The many-objective scheduling model incorporates five critical objectives:

- Flood Control: Minimize peak water levels during precipitation events

- Ecological Water Requirements: Maintain minimum flow rates for ecosystem health

- Hydropower Generation: Maximize energy production from reservoir systems

- Agricultural Irrigation: Meet seasonal water demands for crop cultivation

- Industrial Water Supply: Ensure reliable water access for industrial operations

This application highlights the practical necessity of many-objective optimization approaches for complex real-world systems with numerous competing demands [12].

Future Research Directions

The field of many-objective optimization continues to evolve with several promising research trajectories:

Integration with Machine Learning: Surrogate modeling and Bayesian optimization techniques show potential for reducing computational costs in many-objective optimization [3] [13].

Theoretical Foundations: Enhanced understanding of search space characteristics and problem dimensionality effects will inform more robust algorithm design [10].

Interactive Optimization: Incorporating decision-maker preferences during the optimization process rather than as a post-processing step [10].

Scalable Visualization Techniques: Developing effective methods for visualizing and interpreting high-dimensional Pareto fronts to support decision-making [11].

The progression from EMO to many-objective optimization represents a natural maturation of the field, addressing increasingly complex real-world problems while developing sophisticated computational approaches to manage the challenges of high-dimensional objective spaces.

EMO Algorithms and Breakthrough Applications in Biomedicine and Drug Design

Many real-world problems in science and engineering, including those in drug development and complex system design, require the simultaneous optimization of several conflicting objectives. These are known as Multi-objective Optimization Problems (MOPs). Unlike single-objective problems, MOPs do not have a single optimal solution but instead a set of optimal trade-off solutions, collectively known as the Pareto-optimal front. Evolutionary Multi-objective Optimization (EMO) algorithms are a class of population-based stochastic search methods that are particularly well-suited for solving MOPs, as they can approximate the entire Pareto front in a single run. Within EMO research, dominance-based algorithms form a foundational pillar. These algorithms use the concept of Pareto dominance to compare and select solutions without requiring prior weighting of objectives. Among them, the Non-dominated Sorting Genetic Algorithm II (NSGA-II) and the Strength Pareto Evolutionary Algorithm 2 (SPEA2) are two of the most widely used and cited algorithms. This whitepaper provides an in-depth technical guide to their core mechanisms, performance, and applications, situating them within the broader context of modern EMO research.

Foundational Concepts and Definitions

Pareto Dominance and Optimality

- Pareto Dominance: A solution x is said to dominate a solution y (denoted x ≺ y) if two conditions are met:

  - The solution x is no worse than y in all objectives.

  - The solution x is strictly better than y in at least one objective.

- Pareto-Optimal Set: The set of all solutions in the decision variable space that are non-dominated by any other solution in the feasible space.

- Pareto Front (PF): The image of the Pareto-optimal set in the objective space, representing the set of optimal trade-offs.
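The definitions above translate almost directly into code. This minimal sketch assumes that all objectives are minimized and that solutions are represented by their objective vectors; the sample points are invented for illustration.

```python
from typing import List, Sequence

def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if a Pareto-dominates b (all objectives minimized)."""
    no_worse = all(x <= y for x, y in zip(a, b))
    strictly_better = any(x < y for x, y in zip(a, b))
    return no_worse and strictly_better

def nondominated(points: List[Sequence[float]]) -> List[Sequence[float]]:
    """Keep only the points that no other point in the list dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

if __name__ == "__main__":
    pts = [(1, 5), (2, 3), (4, 1), (3, 4), (5, 5)]
    print(nondominated(pts))   # the approximation of the Pareto front within pts
```

Here (3, 4) is dominated by (2, 3) and (5, 5) by (1, 5), so three mutually non-dominated trade-off points remain.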

Core Goals of EMO Algorithms

The primary goals of an EMO algorithm are:

- Convergence: The population of solutions should converge towards the true Pareto-optimal front.

- Diversity: The solutions should be well-spread and diverse across the Pareto front to provide the decision-maker with a rich set of alternatives.

The NSGA-II Algorithm

Core Mechanism

NSGA-II employs a three-step mechanism to achieve convergence and diversity [14].

- Non-dominated Sorting: The population is hierarchically classified into a sequence of non-dominated fronts (Front 1, Front 2, ..., Front k). Front 1 contains all non-dominated solutions in the current population; after removing these, Front 2 contains the next set of non-dominated solutions, and so on. This sorting provides the primary convergence pressure towards the Pareto front.

- Crowding Distance Assignment: Within each front, a density estimation metric is calculated for each solution. The crowding distance is the average side-length of the cuboid formed by a solution's immediate neighbors in the objective space. A larger crowding distance indicates a less crowded region and is preferred. This metric promotes diversity.

- Elitist Selection: The algorithm combines the parent and offspring populations. It then selects the next generation by first choosing all solutions from the best non-dominated front (Front 1), then Front 2, and so on. When the last front to be included cannot be accommodated fully, the solutions within that front with the largest crowding distance are selected. This ensures elitism and diversity preservation.
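The three steps above can be sketched as follows. This is an illustrative simplification, assuming minimization: the sorting routine peels fronts one at a time rather than using the bookkeeping of the original fast non-dominated sort, and variation operators (crossover, mutation) are left out entirely. Solutions are referred to by their index in `objs`.

```python
def dominates(a, b):
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def non_dominated_sort(objs):
    """Return fronts as lists of indices; fronts[0] is the non-dominated front."""
    remaining = set(range(len(objs)))
    fronts = []
    while remaining:
        front = [i for i in remaining
                 if not any(dominates(objs[j], objs[i]) for j in remaining)]
        fronts.append(sorted(front))
        remaining -= set(front)
    return fronts

def crowding_distance(objs, front):
    """Crowding distance per index in one front; boundary solutions get infinity."""
    dist = {i: 0.0 for i in front}
    for m in range(len(objs[front[0]])):
        ordered = sorted(front, key=lambda i: objs[i][m])
        lo, hi = objs[ordered[0]][m], objs[ordered[-1]][m]
        dist[ordered[0]] = dist[ordered[-1]] = float("inf")
        if hi == lo:
            continue
        for k in range(1, len(ordered) - 1):
            gap = objs[ordered[k + 1]][m] - objs[ordered[k - 1]][m]
            dist[ordered[k]] += gap / (hi - lo)   # normalized side length
    return dist

def select_next_generation(objs, size):
    """Fill front by front; truncate the last front by descending crowding distance."""
    chosen = []
    for front in non_dominated_sort(objs):
        if len(chosen) + len(front) <= size:
            chosen.extend(front)
        else:
            dist = crowding_distance(objs, front)
            ranked = sorted(front, key=lambda i: dist[i], reverse=True)
            chosen.extend(ranked[: size - len(chosen)])
            break
    return chosen

if __name__ == "__main__":
    combined = [(1, 5), (2, 3), (4, 1), (3, 4), (5, 5), (2.5, 2.5)]
    print(non_dominated_sort(combined))
    print(select_next_generation(combined, 3))
```

In the example the first front holds four solutions but only three slots are available, so the most crowded interior solution is the one that is dropped.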

Workflow and Structure

The following diagram illustrates the step-by-step workflow of the NSGA-II algorithm.

The SPEA2 Algorithm

Core Mechanism

SPEA2 addresses some perceived weaknesses of earlier algorithms by using a fine-grained fitness assignment strategy and a density-based archive [15].

- Strength and Raw Fitness: Each solution i in both the main population and an external archive is assigned a strength value S(i), representing the number of solutions it dominates. The raw fitness R(i) of a solution is then determined by the sum of the strength values of all solutions that dominate it. Lower raw fitness is better. This provides a strong convergence pressure.

- Density Estimation: To further distinguish between solutions with identical raw fitness, SPEA2 incorporates a density estimate. The density D(i) is the inverse of the distance σ_k to the k-th nearest neighbor plus a constant, D(i) = 1 / (σ_k + 2), with k typically set to √(population size + archive size). The added constant keeps D(i) below one, so density only breaks ties in raw fitness. A closer neighbor implies a more crowded region and a higher density value.

- Archive Truncation: The total fitness F(i) is the sum of raw fitness and density (F(i) = R(i) + D(i)). The algorithm maintains an archive of fixed size containing the non-dominated solutions from the combined population and archive. If the number of non-dominated solutions exceeds the archive size, a truncation operator is used. It iteratively removes the solution with the smallest distance to its nearest neighbor, breaking ties by the distance to the second-nearest neighbor and so on, and recalculates distances after each removal so that diversity and boundary solutions are preserved.
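The fitness assignment described above can be sketched as follows, assuming minimization and that `objs` already holds the objective vectors of the combined population and archive (at least two solutions). The density uses the commonly cited form D(i) = 1 / (σ_k + 2), where σ_k is the distance to the k-th nearest neighbor; archive truncation is not shown.

```python
import math

def dominates(a, b):
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def spea2_fitness(objs):
    """Return F(i) = R(i) + D(i) for every objective vector in objs (lower is better)."""
    n = len(objs)
    k = max(1, int(math.sqrt(n)))   # k = sqrt(population size + archive size)
    # S(i): how many solutions i dominates
    strength = [sum(dominates(objs[i], objs[j]) for j in range(n)) for i in range(n)]
    fitness = []
    for i in range(n):
        # R(i): summed strength of everything that dominates i (0 if non-dominated)
        raw = sum(strength[j] for j in range(n) if dominates(objs[j], objs[i]))
        dists = sorted(math.dist(objs[i], objs[j]) for j in range(n) if j != i)
        sigma_k = dists[min(k, len(dists)) - 1]
        density = 1.0 / (sigma_k + 2.0)   # always below 1
        fitness.append(raw + density)
    return fitness

if __name__ == "__main__":
    combined = [(1, 5), (2, 3), (4, 1), (3, 4), (5, 5)]
    for point, f in zip(combined, spea2_fitness(combined)):
        print(point, round(f, 3))
```

Non-dominated solutions end up with F(i) below one, so a simple threshold identifies the candidates for the next archive.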

Workflow and Structure

The following diagram illustrates the step-by-step workflow of the SPEA2 algorithm.

Comparative Performance Analysis

Theoretical and Practical Performance

Theoretical and empirical studies have highlighted key differences in the performance and behavior of NSGA-II and SPEA2.

Theoretical Runtime and Population Dynamics: A key theoretical insight is that the selection mechanisms of NSGA-II and SPEA2 lead to different population dynamics. The crowding distance in NSGA-II can cause the population to concentrate in the middle of the Pareto front. In contrast, the selection of SPEA2, which considers distances to all other individuals, tends to distribute solutions more evenly across the Pareto front. Recent runtime analysis shows that SPEA2's performance is less sensitive to increases in population size. For complex problems like OneJumpZeroJump, SPEA2 can achieve a runtime of O(n^(k+1)) for a wider range of population sizes (μ, λ = O(n^k)), whereas NSGA-II's best guarantee is achieved only for a specific population size (μ = Θ(n)), making parameter tuning easier for SPEA2 [16].

Empirical Performance on Benchmarks and Applications:

Table 1: Comparative Algorithm Performance on Various Problems

| Problem Domain | Key Performance Metrics | Reported NSGA-II Performance | Reported SPEA2 Performance | Source |
|---|---|---|---|---|
| Next Release Problem (NRP) [Software Engineering] | Hypervolume (HV), Spread, Runtime | Best CPU run time across all test scales. | -- | [17] |
| Job-Shop Scheduling [Manufacturing] | Hypervolume, R2 Indicator | Less efficient compared to SPEA2 and IBEA. | Most efficient for the task; yields good distribution. | [15] |
| General Multi-objective Benchmarks (e.g., OneJumpZeroJump) | Expected Runtime | Θ(μn log n) for OneMinMax (highly dependent on population size). | O(n^2 log n) for OneMinMax (less dependent on population size). | [16] |

Detailed Experimental Protocol

To ensure reproducibility, the following outlines a standard experimental protocol for comparing EMO algorithms, as inferred from the cited literature [17] [15].

Algorithm Configuration:

- Initialization: Set population size (N), archive size (for SPEA2), crossover probability (P_c), mutation probability (P_m), and distribution indices for simulated binary crossover (SBX) and polynomial mutation.

- Stopping Criterion: Define a maximum number of generations or function evaluations.

Problem Instantiation:

- Test Problems: Select standard benchmark problems (e.g., ZDT, DTLZ, WFG) or a real-world problem like the Next Release Problem (NRP) or Job-Shop Scheduling.

- Data Sets: For applied studies, use real-world datasets (e.g., Eclipse, Gnome, Mozilla for NRP).

- Objective Functions: Clearly define the objective functions to be optimized (e.g., maximize customer satisfaction and minimize cost for NRP; minimize makespan, mean flow time, and variance for Job-Shop Scheduling).

Performance Measurement:

- Independent Runs: Execute each algorithm configuration for a statistically significant number of independent runs (e.g., 30 runs) to account for stochasticity.

- Quality Indicators: Calculate performance indicators for the final population/archive of each run.

  - Hypervolume (HV): Measures the volume of objective space dominated by the solution set relative to a reference point. Higher HV indicates better convergence and diversity.

  - Spread (Δ): Measures the extent of spread achieved among the obtained solutions. A lower value indicates a more uniform distribution.

  - R2 Indicator: Averages, over a set of weight vectors, the best utility value (commonly a weighted Tchebycheff function) attained by any solution in the set. In this common form lower values are better, and the indicator reflects both convergence and diversity.
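For two objectives the hypervolume can be computed exactly with a simple sweep. The sketch below assumes both objectives are minimized and that the reference point is worse than every solution of interest; it does not generalize to three or more objectives, where dedicated algorithms are needed.

```python
def hypervolume_2d(points, ref):
    """Area dominated by `points` and bounded by `ref` (both objectives minimized)."""
    # Ignore points that do not strictly improve on the reference point
    pts = sorted(p for p in points if p[0] < ref[0] and p[1] < ref[1])
    area, best_f2 = 0.0, ref[1]
    for f1, f2 in pts:                 # sweep in ascending order of f1
        if f2 < best_f2:               # dominated points add nothing
            area += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return area

if __name__ == "__main__":
    front = [(1, 3), (2, 2), (3, 1)]
    print(hypervolume_2d(front, ref=(4, 4)))             # 6.0
    print(hypervolume_2d(front + [(3, 3)], ref=(4, 4)))  # 6.0, dominated point adds nothing
```

Each non-dominated point contributes a rectangular slice between its own second objective and the best value seen so far, so the slices never overlap.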

Data Analysis:

- Descriptive Statistics: Report the mean and standard deviation of the performance indicators across all runs.

- Statistical Testing: Perform non-parametric statistical tests (e.g., Wilcoxon rank-sum test) to determine if performance differences between algorithms are statistically significant.
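A dependency-free sketch of the rank-sum comparison is shown below. It uses the normal approximation without tie correction, which is a simplification; for real studies an established routine such as SciPy's `scipy.stats.mannwhitneyu` is the safer choice. The hypervolume samples in the demo are synthetic placeholders, not experimental results.

```python
import math
import random

def rank_sum_test(x, y):
    """Two-sided Wilcoxon rank-sum test (normal approximation, no tie correction)."""
    pooled = sorted((v, g) for g, sample in enumerate((x, y)) for v in sample)
    # Assign mid-ranks so that tied values share the same rank
    ranks, i = [0.0] * len(pooled), 0
    while i < len(pooled):
        j = i
        while j + 1 < len(pooled) and pooled[j + 1][0] == pooled[i][0]:
            j += 1
        for t in range(i, j + 1):
            ranks[t] = (i + j) / 2 + 1
        i = j + 1
    n1, n2 = len(x), len(y)
    w = sum(r for r, (_, g) in zip(ranks, pooled) if g == 0)   # rank sum of sample x
    mean = n1 * (n1 + n2 + 1) / 2
    sd = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (w - mean) / sd
    p = math.erfc(abs(z) / math.sqrt(2))                       # two-sided p-value
    return z, p

if __name__ == "__main__":
    rng = random.Random(42)
    hv_a = [rng.gauss(0.80, 0.02) for _ in range(30)]   # synthetic HV values, algorithm A
    hv_b = [rng.gauss(0.75, 0.02) for _ in range(30)]   # synthetic HV values, algorithm B
    z, p = rank_sum_test(hv_a, hv_b)
    print(f"z = {z:.2f}, p = {p:.4g}")
```

The rank-sum test treats the two sets of runs as independent samples, which matches the usual setup where each algorithm is run with its own random seeds.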

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for EMO Algorithm Implementation and Evaluation

| Component / Tool | Function / Purpose | Example Use-Case |
|---|---|---|
| Standard Benchmark Suites (e.g., ZDT, DTLZ, WFG) | Provides a standardized set of test problems with known Pareto fronts to validate and compare algorithm performance. | Initial validation of a new NSGA-II variant's convergence on DTLZ2. |
| Performance Indicators (Hypervolume, Spread, R2) | Quantifiable metrics to assess the quality of an obtained solution set in terms of convergence and diversity. | Comparing the final archive of SPEA2 and NSGA-II on a scheduling problem using Hypervolume. |
| Statistical Testing Packages (e.g., in R or Python) | To perform rigorous statistical analysis of experimental results and confirm the significance of performance differences. | Using a Wilcoxon rank-sum test on Hypervolume values from 30 independent runs. |
| Reference Point (RP) | A vector in the objective space expressing a decision maker's preferences, used to guide the search towards a Region of Interest (ROI). | Integrating an RP into R-NSGA-II to find solutions near a specific cost-satisfaction trade-off in NRP. |

A significant trend in modern EMO research is the incorporation of decision-maker (DM) preferences to focus the search on a specific Region of Interest (ROI), thus improving scalability to many-objective problems. Both NSGA-II and SPEA2 have been extended in this context.

- Reference Point-Based NSGA-II (R-NSGA-II): This variant uses a supplied reference point to bias the selection process. Solutions closer to the reference point (measured by Euclidean distance) are preferred, leading the population to converge towards the ROI [14]. Recent modifications replace Euclidean distance with the Simplified Karush–Kuhn–Tucker Proximity Measure (S-KKTPM), which can predict convergence behavior without prior knowledge of the true Pareto front, yielding improved results [14].

- Preference-Based SPEA2: Concepts like g-dominance and r-dominance modify the Pareto dominance relation to incorporate preference information, allowing SPEA2 and similar algorithms to focus on the ROI [14].

NSGA-II and SPEA2 remain cornerstone algorithms in the field of evolutionary multi-objective optimization. While NSGA-II is often praised for its speed and effective use of elitism and crowding distance, SPEA2 offers a robust alternative with its fine-grained fitness assignment and archive truncation method, which can lead to more stable performance and better distribution, particularly on complex problems. The choice between them depends on the specific problem characteristics and the need for parameter robustness. The ongoing integration of preference information and theoretical runtime analysis continues to refine these classic algorithms, ensuring their relevance and application in solving complex, real-world optimization challenges in domains ranging from software engineering to drug development.

Evolutionary Multi-objective Optimization (EMO) research addresses problems with multiple conflicting objectives, known as Multi-objective Optimization Problems (MOPs). The core challenge lies in finding a set of optimal trade-off solutions rather than a single optimum [18]. Within this field, decomposition-based and indicator-based approaches represent two fundamental algorithmic philosophies for balancing convergence toward optimality with diversity across the Pareto front [18].

Multi-objective Evolutionary Algorithm based on Decomposition (MOEA/D), introduced by Zhang and Li in 2007, decomposes an MOP into multiple single-objective subproblems using scalarization functions and a set of weight vectors. These subproblems are then optimized simultaneously in a collaborative manner [19] [20]. In contrast, Indicator-based Multi-Objective Evolutionary Algorithms (IB-MOEAs) employ a performance indicator, such as Hypervolume, to guide the selection process, using this single metric to measure the quality of a solution set and drive the population toward both convergence and diversity [18].

This guide provides an in-depth technical examination of these two approaches, framing them within the broader context of EMO research. It details their core mechanisms, advanced variants, and practical applications, particularly in computationally expensive domains like drug design.

Foundational Principles of MOEA/D

Core Decomposition Framework

MOEA/D reformulates a multi-objective optimization problem into a collection of single-objective subproblems. Given an MOP to minimize ( F(x) = (f_1(x), f_2(x), \dots, f_m(x)) ), MOEA/D employs a scalarization method to define ( N ) subproblems, each associated with a weight vector ( \lambda^i = (\lambda_1^i, \dots, \lambda_m^i) ) such that ( \sum_{j=1}^{m} \lambda_j^i = 1 ) and ( \lambda_j^i \geq 0 ) [20]. The most common scalarization approaches are:

- Weighted Sum (WS): ( g^{ws}(x|\lambda) = \sum_{j=1}^{m} \lambda_j f_j(x) ) [21]

- Weighted Tchebycheff (TCH): ( g^{te}(x|\lambda, z^*) = \max_{1 \leq j \leq m} \{ \lambda_j | f_j(x) - z_j^* | \} ) [19]

- Penalty-based Boundary Intersection (PBI): ( g^{pbi}(x|\lambda, z^*) = d_1 + \theta d_2 ), where ( d_1 ) is the distance from ( F(x) ) to the ideal point ( z^* ) along the weight vector, and ( d_2 ) is the perpendicular distance to the weight vector; ( \theta ) is a preset penalty parameter [19]
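The three scalarization functions above can be written compactly as follows. This is a sketch assuming minimization, with `f` the objective vector F(x), `lam` the weight vector, and `z_star` the ideal point; the default `theta=5.0` is a commonly used value chosen here for illustration, not something prescribed by the text.

```python
import math

def weighted_sum(f, lam):
    """g^ws: weighted sum of the objective values."""
    return sum(l * fj for l, fj in zip(lam, f))

def tchebycheff(f, lam, z_star):
    """g^te: largest weighted deviation from the ideal point."""
    return max(l * abs(fj - zj) for l, fj, zj in zip(lam, f, z_star))

def pbi(f, lam, z_star, theta=5.0):
    """g^pbi = d1 + theta * d2."""
    diff = [fj - zj for fj, zj in zip(f, z_star)]
    norm = math.sqrt(sum(l * l for l in lam))
    # d1: length of the projection of F(x) - z* onto the weight direction
    d1 = abs(sum(d * l for d, l in zip(diff, lam))) / norm
    # d2: perpendicular distance from F(x) to the weight direction
    d2 = math.sqrt(sum((d - d1 * l / norm) ** 2 for d, l in zip(diff, lam)))
    return d1 + theta * d2

if __name__ == "__main__":
    f, lam, z = (0.5, 0.5), (0.5, 0.5), (0.0, 0.0)
    print(weighted_sum(f, lam), tchebycheff(f, lam, z), pbi(f, lam, z))
```

In the demo the objective vector lies exactly on the weight direction, so the PBI penalty term vanishes and only the distance along the direction remains.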

The algorithm maintains a population of solutions, each corresponding to a subproblem. A key feature is the neighborhood relationship among subproblems, defined based on the proximity of their weight vectors. The reproduction of new solutions for each subproblem typically relies on information from its neighboring subproblems, which promotes convergence and maintains diversity [20].

Key Design Components and Challenges

The performance of MOEA/D hinges on several design components [20]:

- Weight Vector Settings: The initial distribution and potential adaptation of weight vectors significantly impact the diversity of solutions. Uniform sampling (e.g., via the Simplex Lattice Design) is common but may be ineffective for problems with irregular Pareto Fronts (PFs) [19].

- Sub-problem Formulations: The choice of scalarization function (WS, TCH, PBI) affects the algorithm's ability to handle different PF geometries, such as non-convex or disconnected fronts [19].

- Selection Mechanisms: Strategies for selecting solutions for reproduction and for survival into the next generation are crucial for balancing exploration and exploitation.
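As an illustration of the first design component, the sketch below generates uniformly spaced weight vectors with a simplex lattice design and derives each subproblem's neighborhood from Euclidean distances between weight vectors. The parameter names (`divisions` for the lattice resolution H, `t` for the neighborhood size) are assumptions made for this example.

```python
import math
from itertools import combinations

def simplex_lattice(n_obj, divisions):
    """All weight vectors with components in {0, 1/H, ..., 1} that sum to 1 (H = divisions)."""
    weights = []
    # Stars and bars: place n_obj - 1 bars among H + n_obj - 1 slots
    for bars in combinations(range(divisions + n_obj - 1), n_obj - 1):
        prev, counts = -1, []
        for b in bars:
            counts.append(b - prev - 1)
            prev = b
        counts.append(divisions + n_obj - 2 - prev)
        weights.append(tuple(c / divisions for c in counts))
    return weights

def neighborhoods(weights, t):
    """For each subproblem, the indices of its t closest weight vectors (itself included)."""
    return [sorted(range(len(weights)), key=lambda j: math.dist(w, weights[j]))[:t]
            for w in weights]

if __name__ == "__main__":
    w = simplex_lattice(n_obj=3, divisions=4)
    print(len(w), "weight vectors")        # C(4 + 3 - 1, 3 - 1) = 15
    print(neighborhoods(w, t=3)[0])
```

The number of vectors grows combinatorially with the number of objectives, which is one practical reason why many-objective variants use layered or adaptive weight generation instead.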

A major limitation of the original MOEA/D is its performance deterioration on MOPs with irregular PFs (e.g., disconnected, degenerate, or inverted) [19]. This is partly due to its geometric pattern of using a "single point and multiple directions," where the "single point" is the ideal or nadir point, and "multiple directions" are the preset weight vectors. This can cause solutions to concentrate in specific regions of the PF [19].

Advanced MOEA/D Variants and Methodologies

Adaptations for Irregular Pareto Fronts

Recent research has introduced significant improvements to overcome MOEA/D's limitations.

- Multiple Reference Points Strategy: One advanced variant replaces the "single point and multiple directions" approach with a framework of "multiple reference points and a single direction" [19]. This helps prevent the population from converging to a limited region of the PF. The algorithm includes methods for generating and adjusting these reference points during the evolutionary process to better match the true PF shape.

- Hybrid Decomposition Approaches: To tackle many-objective problems (those with 4+ objectives), MOEA/D-oDE combines the benefits of the Weighted Sum and Tchebycheff approaches. It uses an alterable decomposition method and incorporates optimal Differential Evolution (DE) schemes to enhance search capability [21].

- Adaptive Weight Vector Adjustment: MOEA/D-AWA periodically repairs the distribution of weight vectors by deleting vectors in crowded areas of the PF and generating new ones in sparse areas [19] [21]. This adaptive process allows the algorithm to dynamically respond to the evolving shape of the solution front.

Table 1: Advanced MOEA/D Variants and Their Core Improvements

| Variant Name | Core Improvement | Target Problem Type | Key Mechanism |
|---|---|---|---|
| MOEA/D with Multiple Reference Points [19] | Reference Point Geometry | Irregular PFs | Shifts from single ideal point to multiple reference points |
| MOEA/D-oDE [21] | Decomposition & Operator | Many-objective, Complex PFs | Hybrid WS/TCH decomposition; optimal DE schemes |
| MOEA/D-AWA [21] | Weight Vector Adaptation | General MOPs | Periodically deletes weight vectors in crowded regions and adds new ones in sparse regions |
| LSMOEA/D [22] | Decision Variable Analysis | Large-scale MaOPs | Integrates reference vectors for control variable analysis |

MOEA/D for Large-Scale and Many-Objective Problems

Large-scale high-dimensional many-objective optimization problems (LaMaOPs), characterized by numerous decision variables and objectives, present a substantial challenge. MOEA/D variants address these through:

- Decision Variable Analysis: LSMOEA/D incorporates reference vectors into the analysis of control variables, combining adaptive strategies to optimize high-dimensional decision spaces effectively [22].

- Cooperative Co-evolution: Some approaches break down the high-dimensional decision space into smaller components, optimizing them separately but cooperatively [22].

Foundational Principles of IB-MOEAs

Core Indicator-Based Framework

IB-MOEAs replace the traditional Pareto dominance-based selection with a performance indicator that evaluates the quality of a solution set. The core idea is to use this indicator directly as the selection criterion to drive the population toward the true Pareto front [18].

The most common environmental selection in IB-MOEAs is as follows [18]:

- A combined population ( R_t = P_t \cup Q_t ) (parents and offspring) is generated.

- The indicator value ( I({x}, A) ) is calculated for each solution ( x ) in ( R_t ), where ( A ) is a reference set (often the current population).

- Solutions are selected iteratively to form the next generation ( P_{t+1} ). In each iteration, the solution with the smallest indicator value is removed (minimizing the quality loss), until the population size reaches the desired size.
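The selection loop above can be sketched as follows. This is an illustrative sketch, not the procedure of any specific cited algorithm: the fitness formula follows the exponential aggregation commonly associated with IBEA, the scaling factor `kappa` is an assumed parameter, and the objective normalization that practical implementations apply is omitted for brevity.

```python
import math
from typing import Callable, List, Sequence

Point = Sequence[float]


def eps_plus(a: Point, b: Point) -> float:
    """Smallest shift of point a (in every objective) so that it weakly dominates b."""
    return max(ai - bi for ai, bi in zip(a, b))


def ibea_like_fitness(x: Point, others: List[Point], kappa: float = 0.05) -> float:
    """Assumed fitness: sum over the other solutions y of -exp(-I({y},{x}) / kappa).

    A solution that is dominated by some y receives a strongly negative value.
    """
    return sum(-math.exp(-eps_plus(y, x) / kappa) for y in others)


def environmental_selection(combined: List[Point], size: int,
                            fitness: Callable = ibea_like_fitness) -> List[Point]:
    """Repeatedly remove the solution with the smallest indicator-based fitness."""
    pop = list(combined)
    while len(pop) > size:
        scores = [fitness(x, pop[:i] + pop[i + 1:]) for i, x in enumerate(pop)]
        worst = min(range(len(pop)), key=scores.__getitem__)
        pop.pop(worst)  # fitness is recomputed on the next pass
    return pop


# (0.9, 0.9) is dominated by (0.5, 0.5), so it is the first to be removed.
survivors = environmental_selection(
    [(0.0, 1.0), (1.0, 0.0), (0.5, 0.5), (0.9, 0.9)], size=3)
```

Recomputing all scores after each removal keeps the sketch short; an efficient implementation would update the remaining fitness values incrementally.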

Key Indicators and Their Properties

The choice of indicator is critical, as different indicators balance convergence and diversity differently [18].

- Hypervolume (HV): Measures the volume of the objective space dominated by a solution set and bounded by a reference point. It is Pareto compliant, meaning that if a set A dominates set B, then A will have a higher hypervolume. It is considered a very comprehensive metric but is computationally expensive, especially as the number of objectives increases [18].

- Indicator ( I_{\epsilon+} ): The additive epsilon indicator measures the smallest amount by which one solution set must be shifted in every objective so that it weakly dominates another set. It is much cheaper to compute than hypervolume but can be biased toward convergence at the expense of diversity [18].

- ISDE+: An indicator that combines the sum of objectives with Shift-based Density Estimation (SDE). It is computationally efficient and designed to consider both convergence and diversity simultaneously [18].
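To make the first two indicators concrete, here is a small sketch for minimization problems. The hypervolume routine is restricted to two objectives, where a simple sweep suffices; the general many-objective case needs dedicated algorithms. The function names and the sample points are assumptions chosen for illustration.

```python
from typing import Iterable, Sequence, Tuple

Point = Sequence[float]


def hypervolume_2d(points: Iterable[Point], ref: Tuple[float, float]) -> float:
    """Area dominated by `points` and bounded by `ref` (two minimization objectives)."""
    # Only points that are strictly better than the reference point contribute.
    pts = sorted(tuple(p) for p in points if p[0] < ref[0] and p[1] < ref[1])
    hv, best_f2 = 0.0, ref[1]
    for f1, f2 in pts:            # sweep in ascending f1
        if f2 < best_f2:          # point is non-dominated among those seen so far
            hv += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return hv


def additive_epsilon(A: Sequence[Point], B: Sequence[Point]) -> float:
    """I_eps+(A, B): smallest eps such that shifting A by eps weakly dominates all of B."""
    return max(
        min(max(ai - bi for ai, bi in zip(a, b)) for a in A)
        for b in B
    )


A = [(1, 3), (2, 2), (3, 1)]
B = [(2, 4), (4, 2)]

hv_A = hypervolume_2d(A, ref=(4, 4))   # union of three boxes
eps_AB = additive_epsilon(A, B)        # negative: A already dominates B with margin
eps_BA = additive_epsilon(B, A)        # positive: B must improve to cover A
```

A negative value of `additive_epsilon(A, B)` together with a positive value of `additive_epsilon(B, A)` indicates that `A` is the better set under this indicator.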

Table 2: Comparison of Common Indicators in IB-MOEAs

| Indicator | Pareto Compliant? | Computational Cost | Primary Bias | Key Advantage |
|---|---|---|---|---|
| Hypervolume (HV) | Yes | Very High (exponential in M) | Convergence & Diversity | Comprehensive quality measure |
| ( I_{\epsilon+} ) | No | Low | Convergence | Computational efficiency |
| ISDE+ | No | Low | Convergence & Diversity | Balanced performance; efficient |

Advanced IB-MOEAs and Hybrid Methodologies

Hybrid Selection MOEAs

Recognizing that different selection strategies have complementary strengths, recent research has focused on hybridization. The Hybrid Selection based MOEA (HS-MOEA) is one such algorithm that combines Pareto dominance, reference vectors, and an indicator [18].

HS-MOEA's environmental selection works as follows [18]:

- Pareto Dominance Filtering: The combined population is first sorted using non-dominated sorting. Solutions from the best non-dominated fronts are passed to the next stage.

- Reference Vector Assignment: The selected solutions are associated with predefined reference vectors to ensure diversity. Solutions are assigned to the reference vector with the smallest angle.

- Indicator-Based Final Selection: If a reference vector has more than one solution associated with it, the ISDE+ indicator is used to select the most suitable solution, ensuring both convergence and diversity within the niche.

This hybrid approach leverages the guaranteed convergence pressure of Pareto dominance, the diversity-preserving structure of reference vectors, and the fine-grained, balanced selection capability of an indicator.
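The reference vector assignment and per-niche selection steps can be sketched as below. This is a simplified illustration and not the HS-MOEA implementation: the final pick inside each niche uses the plain sum of objectives as a stand-in for the ISDE+ indicator, and objective vectors are assumed to be non-zero and already translated so that angles are meaningful. All names are hypothetical.

```python
import math
from typing import Callable, Dict, List, Sequence

Point = Sequence[float]


def angle(u: Point, v: Point) -> float:
    """Angle in radians between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.hypot(*u) * math.hypot(*v)
    return math.acos(max(-1.0, min(1.0, dot / norm)))  # clamp rounding noise


def associate(solutions: List[Point], ref_vectors: List[Point]) -> Dict[int, List[Point]]:
    """Assign each solution to the reference vector with the smallest angle."""
    niches: Dict[int, List[Point]] = {i: [] for i in range(len(ref_vectors))}
    for s in solutions:
        nearest = min(range(len(ref_vectors)), key=lambda k: angle(s, ref_vectors[k]))
        niches[nearest].append(s)
    return niches


def select_per_niche(niches: Dict[int, List[Point]],
                     score: Callable[[Point], float] = sum) -> List[Point]:
    """Keep one solution per non-empty niche (stand-in score: sum of objectives)."""
    return [min(members, key=score) for members in niches.values() if members]


refs = [(1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
sols = [(0.9, 0.1), (0.5, 0.6), (0.4, 0.4), (0.1, 0.8)]
niches = associate(sols, refs)
chosen = select_per_niche(niches)
```

Passing a different `score` function is where an indicator such as ISDE+ would plug in; the niche structure itself is what preserves diversity.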

Experimental Protocols and Performance Benchmarking

Standardized Testing and Evaluation Metrics

To validate and compare the performance of MOEA/D variants and IB-MOEAs, researchers employ standardized test suites and quality metrics.

- Test Suites: Common benchmark problems include the DTLZ and WFG test suites, which allow the configuration of various challenging PF characteristics such as convex, concave, disconnected, and degenerate shapes [18]. For large-scale problems, the LSMOP test suite is used [22].

- Performance Metrics:

- Inverted Generational Distance (IGD): Measures the average distance from the points in the true PF to the nearest solution in the approximated set. A lower IGD indicates better convergence and diversity [22].

- Hypervolume (HV): Measures the volume of the objective space dominated by the approximated set and bounded by a reference point. A higher HV indicates a better overall approximation of the PF [18].
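As a brief illustration of the IGD definition above, the following sketch averages, over a sampled reference front, the Euclidean distance to the nearest point of the approximation. The function name and the sample data are assumptions; in practice the reference front is a dense sample of the known true PF of the benchmark problem.

```python
import math
from typing import Sequence

Point = Sequence[float]


def igd(reference_front: Sequence[Point], approximation: Sequence[Point]) -> float:
    """Inverted Generational Distance: mean distance from each reference
    point to its nearest neighbor in the approximation set (lower is better)."""
    total = sum(
        min(math.dist(r, a) for a in approximation)
        for r in reference_front
    )
    return total / len(reference_front)


reference = [(0.0, 1.0), (0.5, 0.5), (1.0, 0.0)]
sparse = [(0.0, 1.0), (1.0, 0.0)]           # misses the middle of the front

igd_sparse = igd(reference, sparse)         # penalized for the uncovered region
igd_perfect = igd(reference, reference)     # 0.0 when every reference point is matched
```

Because the average runs over the reference points rather than over the approximation, a set that converges well but leaves part of the front uncovered is still penalized, which is why IGD reflects both convergence and diversity.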

Sample Experimental Protocol for Algorithm Comparison

A typical experimental protocol for benchmarking MOEAs involves the following steps [18]:

- Parameter Setup: Define the population size (e.g., 100 for 3-objective problems), termination condition (e.g., number of function evaluations), and other algorithm-specific parameters (e.g., neighborhood size in MOEA/D, penalty factor ( \theta ) in PBI).

- Independent Runs: Execute each algorithm a significant number of times (e.g., 20-30 independent runs) on each test instance to account for stochasticity.

- Data Collection: Record the final population from each run and calculate the performance metrics (IGD, HV) relative to the known true Pareto front.

- Statistical Analysis: Perform non-parametric statistical tests (e.g., the Wilcoxon rank-sum test) on the metric results to determine if performance differences between algorithms are statistically significant.
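The statistical analysis step can be sketched with a small, dependency-free version of the Wilcoxon rank-sum test. This sketch uses the large-sample normal approximation with average ranks for ties and no tie correction of the variance; for real studies a statistics library is the safer choice. The function name and the sample values are hypothetical and do not come from any reported experiment.

```python
import math
from statistics import NormalDist
from typing import Sequence, Tuple


def rank_sum_test(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Two-sided Wilcoxon rank-sum test (normal approximation).

    Returns (z, p), where z < 0 means the values in x tend to be smaller.
    """
    pooled = sorted((v, g) for g, sample in enumerate((x, y)) for v in sample)
    ranks = [0.0] * len(pooled)
    i = 0
    while i < len(pooled):
        j = i
        while j + 1 < len(pooled) and pooled[j + 1][0] == pooled[i][0]:
            j += 1                       # extend the run of tied values
        avg_rank = (i + j) / 2 + 1       # 1-based average rank of the run
        for k in range(i, j + 1):
            ranks[k] = avg_rank
        i = j + 1

    n1, n2 = len(x), len(y)
    w = sum(r for r, (_, g) in zip(ranks, pooled) if g == 0)  # rank sum of x
    mean = n1 * (n1 + n2 + 1) / 2
    sd = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (w - mean) / sd
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p


# Hypothetical IGD values from independent runs of two algorithms.
igd_alg_a = [0.021, 0.019, 0.024, 0.020, 0.022]
igd_alg_b = [0.035, 0.031, 0.040, 0.033, 0.037]
z, p = rank_sum_test(igd_alg_a, igd_alg_b)
```

With only five runs per algorithm the normal approximation is rough, which is one reason the protocol above recommends 20 to 30 independent runs.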

Application in Computer-Aided Drug Design

A prominent application of MOEAs is in Computer-Aided Drug Design (CADD), which aims to reduce the tremendous time and resource costs of developing new drugs by traversing the vast chemical space of potential compounds [23].

- Problem Formulation: The task is formulated as a multi-objective optimization problem where potential drug molecules (candidates) need to simultaneously optimize multiple properties, such as drug-likeness (QED), synthesizability (SA Score), and specific biological activities from benchmarks like GuacaMol [23].

- Algorithm Implementation: Studies have successfully deployed MOEAs like NSGA-II, NSGA-III, and MOEA/D for this purpose. A critical implementation detail is the molecular representation. While SMILES was traditionally used, the SELFIES representation is now preferred because it guarantees that every string corresponds to a valid molecular structure, leading to more efficient exploration [23].

- Outcome: Reported results indicate that the MOEAs converge and successfully optimize the defined criteria, and that the resulting Pareto sets contain novel, promising candidate compounds for synthesis [23].

Diagram 1: MOEA Workflow in Computer-Aided Drug Design

Table 3: Key Research Reagents and Computational Tools for EMO Research

| Item / Resource | Type | Primary Function in EMO Research |
|---|---|---|
| SELFIES [23] | Molecular Representation | String-based molecular representation guaranteeing 100% valid chemical structures for evolutionary operators. |
| GuacaMol Benchmark [23] | Benchmark Suite | Provides multi-objective tasks and metrics for benchmarking de novo molecular design algorithms. |
| DTLZ/WFG Test Suites [18] | Benchmark Problems | Standardized test problems for evaluating algorithm performance on various Pareto front geometries. |
| PlatEMO | Software Platform | An integrated MATLAB-based platform for experimental EMO research, featuring numerous algorithms. |
| Reference Vectors [18] | Algorithmic Component | A set of uniformly distributed vectors on a unit simplex used to guide diversity in RV-MOEAs and MOEA/D. |
| Hypervolume (HV) Calculator | Performance Metric | Software tool (e.g., in pymoo) to compute the hypervolume indicator, measuring overall solution set quality. |

Diagram 2: Taxonomy of Multi-Objective Evolutionary Algorithm Frameworks

Decomposition-based (MOEA/D) and Indicator-based (IB-MOEAs) approaches represent two powerful and evolving paradigms within Evolutionary Multi-objective Optimization research. While MOEA/D offers a clear, decomposable structure and computational efficiency, IB-MOEAs provide a direct, quality-driven search guided by comprehensive performance metrics. The ongoing hybridization of these and other concepts, as seen in algorithms like HS-MOEA, demonstrates the field's dynamic nature and its continuous pursuit of more robust and effective optimization tools. The successful application of these algorithms in complex, real-world domains like computer-aided drug design underscores their significant practical value and potential for future impact across science and engineering.

Evolutionary Multi-objective Optimization (EMO) represents a class of population-based heuristic approaches for solving problems involving multiple conflicting objectives [24]. Traditional EMO algorithms aim to approximate the entire Pareto-optimal set, but as the number of objectives increases, this becomes computationally challenging and often impractical. Reference point methods address this limitation by incorporating decision maker (DM) preferences to focus the search on specific regions of interest (ROI) [14]. These methods enable a highly efficient search by allowing the DM to express preferences through desired objective values, guiding the optimization process toward solutions that better align with their expectations and requirements [25] [26].

The fundamental principle behind reference point methods is their ability to transform the multi-objective optimization problem from finding the entire Pareto front to identifying a relevant subset that satisfies the DM's preferences. This approach significantly reduces computational effort while providing more targeted solutions. Within the broader context of EMO research, reference point methods represent a crucial intersection between evolutionary computation and multiple-criteria decision-making (MCDM), fostering cross-fertilization between these two communities [24]. This integration has become increasingly important as EMO researchers recognize the need to develop and integrate practical decision-making capabilities into optimization algorithms.

Theoretical Foundations and Key Concepts

Basic Formulation of Multi-Objective Optimization Problems

A multi-objective optimization problem (MOP) with conflicting objectives can be formally defined as [1]:

$$\begin{aligned} \text{minimize} \quad & \mathbf{f}(\mathbf{x}) = (f_1(\mathbf{x}), f_2(\mathbf{x}), \ldots, f_k(\mathbf{x})) \\ \text{subject to} \quad & \mathbf{x} \in X \end{aligned}$$

where $k \geq 2$ represents the number of objectives, $\mathbf{x}$ is the decision vector, and $X$ denotes the feasible decision space. The image of all feasible solutions in the objective space $\mathbb{R}^k$ is denoted as $Z$.

Pareto Optimality and Efficiency

In multi-objective optimization, Pareto optimality defines the fundamental concept of efficiency [1]. A solution $\mathbf{x}^* \in X$ is Pareto optimal if there does not exist another solution $\mathbf{x} \in X$ such that $f_i(\mathbf{x}) \leq f_i(\mathbf{x}^*)$ for all $i = 1, \ldots, k$ and $f_j(\mathbf{x}) < f_j(\mathbf{x}^*)$ for at least one index $j$. The set of all Pareto optimal solutions constitutes the Pareto set, whose images in the objective space form the Pareto front.
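The dominance relation in this definition translates directly into code. The sketch below, for minimization, checks dominance between two objective vectors and filters a finite set down to its non-dominated members; the function names and sample points are assumptions for illustration, and the quadratic-time filter is meant for clarity rather than speed.

```python
from typing import List, Sequence

Point = Sequence[float]


def dominates(a: Point, b: Point) -> bool:
    """True if a is no worse than b in every objective and strictly better in one."""
    return (all(x <= y for x, y in zip(a, b))
            and any(x < y for x, y in zip(a, b)))


def non_dominated(points: List[Point]) -> List[Point]:
    """Members of `points` that no other member dominates (O(n^2) comparisons)."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


candidates = [(1, 3), (2, 2), (3, 1), (3, 3)]
front = non_dominated(candidates)   # (3, 3) is dominated by (2, 2)
```

Note that a point never dominates itself, because the strict inequality fails, so comparing each point against the full list is safe.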

Reference Points and Regions of Interest

A reference point is a vector $\mathbf{q} = (q_1, q_2, \ldots, q_k)$ in the objective space representing the DM's desired values for each objective function [25]. These values may be achievable or unachievable, but they serve as a navigation tool for the optimization process. The region of interest (ROI) refers to the portion of the Pareto front that aligns with the DM's preferences, typically a neighborhood of solutions closest to the reference point according to a specific distance metric or achievement scalarizing function [14].
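One way to make "closest to the reference point" concrete is an achievement scalarizing function (ASF). The sketch below uses an augmented weighted max-term form for minimization; the weights `w`, the augmentation coefficient `rho`, and the sample front are assumptions for illustration and are not taken from the cited methods.

```python
from typing import List, Sequence

Point = Sequence[float]


def asf(f: Point, q: Point, w: Point, rho: float = 1e-6) -> float:
    """Augmented achievement scalarizing function (lower is better).

    max_i w_i * (f_i - q_i)  +  rho * sum_i w_i * (f_i - q_i)
    """
    terms = [wi * (fi - qi) for fi, qi, wi in zip(f, q, w)]
    return max(terms) + rho * sum(terms)


def closest_to_reference(front: List[Point], q: Point, w: Point) -> Point:
    """Solution of `front` that best matches the reference point q under the ASF."""
    return min(front, key=lambda f: asf(f, q, w))


front = [(1.0, 3.0), (2.0, 2.0), (3.0, 1.0)]
w = (1.0, 1.0)

# An unachievable reference point near the middle selects the balanced solution;
# moving the reference point steers the choice toward another part of the front.
pick_middle = closest_to_reference(front, q=(1.5, 1.5), w=w)
pick_left = closest_to_reference(front, q=(0.5, 2.5), w=w)
```

The function works whether or not the reference point is achievable, which matches the role of the reference point as a navigation tool rather than a hard target.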

Table 1: Key Reference Vectors in Multi-Objective Optimization

| Vector Type | Mathematical Definition | Description | Practical Challenge |
|---|---|---|---|
| Ideal | $z_i^{\text{ideal}} = \inf_{\mathbf{x} \in X} f_i(\mathbf{x})$ | Best achievable value for each objective individually | Computationally accessible through individual optimization |
| Nadir | $z_i^{\text{nadir}} = \sup_{\mathbf{x} \in X^*} f_i(\mathbf{x})$ | Worst value of each objective across Pareto set | Difficult to compute exactly; typically approximated |
| Utopian | $z_i^{\text{utopia}} = z_i^{\text{ideal}} - \epsilon_i$ | Slightly better than ideal point ($\epsilon_i > 0$) | Used to ensure strict Pareto optimality |
| Reference | $\mathbf{q} = (q_1, q_2, \ldots, q_k)$ | DM's desired values for each objective | May be achievable or unachievable |
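In practice these vectors are usually estimated from the current non-dominated set rather than computed exactly. The sketch below shows such an estimate for minimization; it assumes the input points are mutually non-dominated, and the nadir value it returns is only an approximation of the true nadir point, as the table notes. The function names and the default `eps` are assumptions.

```python
from typing import List, Sequence, Tuple

Point = Sequence[float]


def ideal_point(front: List[Point]) -> Tuple[float, ...]:
    """Component-wise minimum over the set (estimate of the ideal point)."""
    return tuple(min(col) for col in zip(*front))


def nadir_point(front: List[Point]) -> Tuple[float, ...]:
    """Component-wise maximum over a non-dominated set (estimate of the nadir point)."""
    return tuple(max(col) for col in zip(*front))


def utopian_point(front: List[Point], eps: float = 1e-6) -> Tuple[float, ...]:
    """Ideal point shifted by a small eps > 0 in every objective."""
    return tuple(z - eps for z in ideal_point(front))


front = [(1.0, 3.0), (2.0, 2.0), (3.0, 1.0)]
z_ideal = ideal_point(front)
z_nadir = nadir_point(front)
z_utopia = utopian_point(front)
```

If dominated points are passed in by mistake, the component-wise maximum can overestimate the nadir point, so filtering to the non-dominated set first is advisable.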

Methodological Approaches and Algorithms

Classification of Preference-Based EMO Methods

Reference point methods belong to the broader category of preference-based EMO approaches, which can be classified according to when preferences are incorporated [14]:

- A priori methods: Preferences are expressed before the optimization process begins

- Interactive methods: Preferences are provided during the optimization process through iterative feedback